# A Proposal to Extend the Common Model of Cognition with Metacognition

**Authors**: John Laird, Christian Lebiere, Paul Rosenbloom, Andrea Stocco

Contact: John.Laird@cic.iqmri.org

## Abstract

The Common Model of Cognition (CMC) provides an abstract characterization of the structure and processing required by a cognitive architecture for human-like minds. We propose a unified approach to integrating metacognition within the CMC. We propose that metacognition involves reasoning over explicit representations of an agent’s cognitive capabilities and processes in working memory. Our proposal exploits the existing cognitive capabilities of the CMC, making minimal extensions in the structure and information available within working memory. We provide examples of metacognition within our proposal.

Keywords: Common Model of Cognition, Cognitive Architecture, Metacognition

## 1 Introduction

The Common Model of Cognition (CMC) [9] was developed as an abstract consensus model of human-like minds, derived from the computational structures and representations of cognitive architectures [6], and informed by our knowledge of the human mind and brain. The CMC specifies the fixed architectural structures for encoding, maintaining, using, and acquiring knowledge to produce behavior, emphasizing routine cognition. Here, we propose extending the CMC to include metacognition [3, 4, 5, 7, 12, 17].

Broadly speaking, metacognition encompasses reasoning about any aspect of cognition, including reasoning, memory, perception [13], motor skills, and learning. It can include partial or even incorrect theories of cognition, such as reasoning about perceived ESP capabilities. We focus on metacognition related to an agent’s own cognition. We also discuss metareasoning (reasoning about reasoning) as a restricted form of metacognition. Examples of metacognition include introspective monitoring, deliberate decision making, deliberate learning [10], predictive and hypothetical reasoning, retrospective reasoning, strategy selection, and self-explanation.

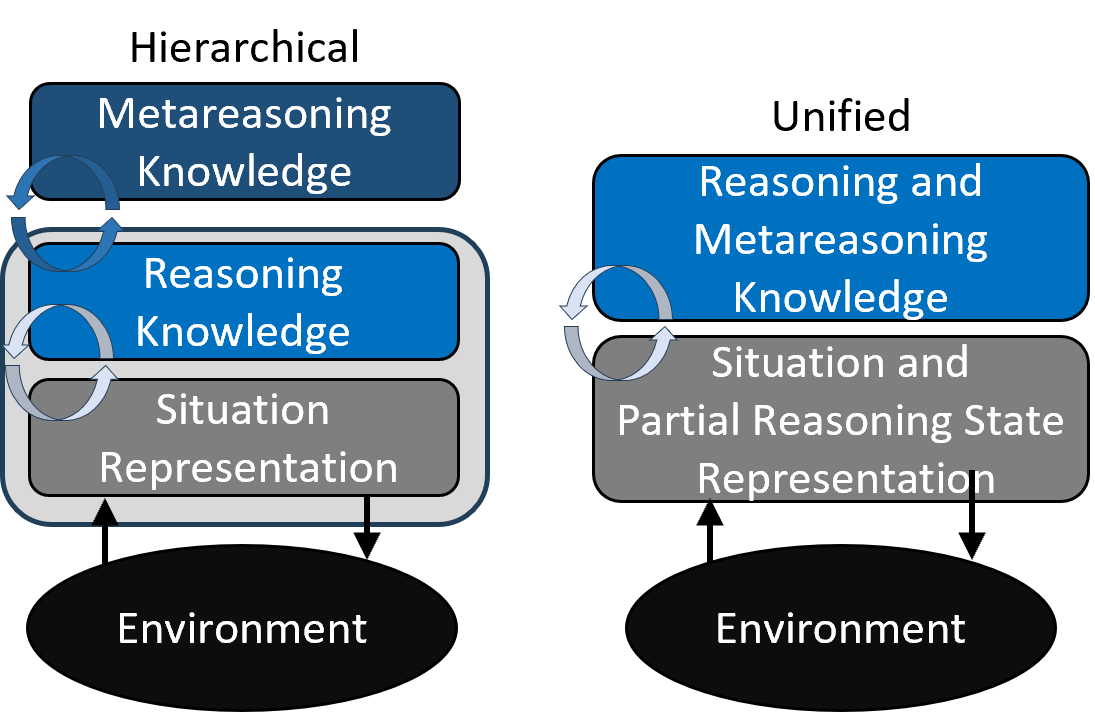

Figure 1 shows two approaches to metacognition in cognitive architectures. In the hierarchical approach, also referred to as the Nelson and Narens model [11], specialized modules are added “on top” of cognition to implement metacognition, as exemplified by MIDCA [2] and Clarion [16]. Those modules monitor cognition, reason about it, and modify its processing. They access the current state of cognitive processing, the histories of processing, and representations of the agent’s procedural knowledge that drives cognition, with reasoning and metareasoning operating in parallel without intermixing.

<details>

<summary>extracted/6529175/Figures/MCArch1.png Details</summary>

### Visual Description

## Diagram: Comparison of Hierarchical vs. Unified Cognitive Architectures

### Overview

The image presents a side-by-side comparison of two conceptual models for cognitive or reasoning systems: a "Hierarchical" model on the left and a "Unified" model on the right. Both models illustrate the relationship between different types of knowledge, internal representations, and an external environment. The diagram uses colored boxes, arrows, and text to depict components and information flow.

### Components/Axes

The diagram is divided into two distinct sections:

**Left Section: Hierarchical Model**

* **Title:** "Hierarchical" (text at the top).

* **Components (from top to bottom):**

1. A dark blue, rounded rectangle labeled **"Metareasoning Knowledge"**.

2. A medium blue, rounded rectangle labeled **"Reasoning Knowledge"**.

3. A gray, rounded rectangle labeled **"Situation Representation"**.

4. A black oval at the bottom labeled **"Environment"**.

* **Arrows & Relationships:**

* A pair of curved, dark blue arrows form a circular feedback loop between the "Metareasoning Knowledge" and "Reasoning Knowledge" boxes.

* A pair of curved, light gray arrows form a circular feedback loop between the "Reasoning Knowledge" and "Situation Representation" boxes.

* A straight, black arrow points upward from the "Environment" oval to the "Situation Representation" box.

* A straight, black arrow points downward from the "Situation Representation" box to the "Environment" oval.

**Right Section: Unified Model**

* **Title:** "Unified" (text at the top).

* **Components (from top to bottom):**

1. A medium blue, rounded rectangle labeled **"Reasoning and Metareasoning Knowledge"**.

2. A gray, rounded rectangle labeled **"Situation and Partial Reasoning State Representation"**.

3. A black oval at the bottom labeled **"Environment"**.

* **Arrows & Relationships:**

* A pair of curved, light gray arrows form a circular feedback loop between the "Reasoning and Metareasoning Knowledge" box and the "Situation and Partial Reasoning State Representation" box.

* A straight, black arrow points upward from the "Environment" oval to the "Situation and Partial Reasoning State Representation" box.

* A straight, black arrow points downward from the "Situation and Partial Reasoning State Representation" box to the "Environment" oval.

### Detailed Analysis

* **Spatial Grounding:** The "Hierarchical" model is positioned on the left half of the image, and the "Unified" model is on the right. In both models, the "Environment" is the foundational element at the bottom. The knowledge/representation boxes are stacked vertically above it.

* **Component Isolation & Flow:**

* **Hierarchical Model Flow:** The environment provides input to the "Situation Representation." This representation interacts via a feedback loop with "Reasoning Knowledge," which in turn interacts via another feedback loop with the higher-level "Metareasoning Knowledge." The system outputs actions back to the environment. The architecture is strictly layered.

* **Unified Model Flow:** The environment provides input to a combined "Situation and Partial Reasoning State Representation." This integrated representation interacts via a single feedback loop with a combined "Reasoning and Metareasoning Knowledge" module. The system outputs actions back to the environment. The architecture merges previously distinct layers.

* **Text Transcription:** All text in the image is in English. The precise labels are as listed in the Components section above.

### Key Observations

1. **Structural Difference:** The core distinction is the separation versus integration of knowledge types. The Hierarchical model has three distinct layers (Metareasoning, Reasoning, Situation), while the Unified model collapses these into two integrated layers.

2. **Representational Change:** In the Unified model, the lower-level representation is not just of the external "Situation" but also includes the "Partial Reasoning State," suggesting internal cognitive state is part of the represented world.

3. **Feedback Loop Simplification:** The Hierarchical model has two separate, sequential feedback loops. The Unified model has a single, direct feedback loop between its two main components.

4. **Consistent Environment Interface:** Both models maintain identical, bidirectional interaction with the external "Environment."

### Interpretation

This diagram contrasts two fundamental approaches to designing intelligent systems.

* The **Hierarchical model** suggests a clear division of labor: low-level perception and situation assessment, middle-level reasoning for problem-solving, and high-level metareasoning for monitoring and controlling the reasoning process itself. This is analogous to a traditional management hierarchy or a computer system with distinct application, operating system, and kernel layers. It offers clarity and modularity but may introduce communication overhead and rigidity between layers.

* The **Unified model** proposes a more integrated architecture where reasoning and the meta-cognitive oversight of that reasoning are fused. Furthermore, the system's representation of the world explicitly includes its own partial reasoning state. This implies a system with greater self-awareness and potentially more fluid, adaptive cognition, as the distinction between "thinking" and "thinking about thinking" is blurred. It may be more efficient but also more complex to analyze and design.

The diagram argues that the choice between these architectures involves a trade-off between the **explicit structure and specialization** of the hierarchical approach versus the **integrated self-awareness and potential fluidity** of the unified approach. The persistence of the "Environment" as a separate, interacted-with entity in both models indicates that regardless of internal architecture, grounding and interaction with the external world remain a fundamental requirement for the system.

</details>

Figure 1: Alternative Metacognitive Architectures.

In the CMC and cognitive architectures more generally, a capability is realized through architectural structures and knowledge. Therefore, we propose a unified approach, shown on the right side of Figure 1, where cognition and metacognition differ only in what is the subject of reasoning. We propose minimal architectural extensions to make information about an agent’s cognition available in working memory. Versions of these extensions are found in existing CMC architectures, including ACT-R [1], Sigma [14], and Soar [8]. We also describe other, non-architectural sources of information about cognition that are needed to support metacognition as a component of overall cognition. As with the original goals for the CMC, our goal is for our proposal of metacognition to apply to both humans and A(G)I systems with similar general capabilities.

Our proposal does not permit information in long-term memories to be examined or reasoned over by other modules. Instead, it restricts reasoning and metareasoning to information available in working memory. It avoids new modules by incorporating the long-term knowledge used in metareasoning within its existing long-term memories. Restricting access to long-term memories sacrifices omniscient metareasoning but enables efficient processing and memory functionalities consistent with neural memory models. Furthermore, all existing cognitive reasoning and learning capabilities are available for metacognition, including interaction with the external environment.

Below, we review the Common Model of Cognition and present our proposal for extending it to include human-like metacognition. Our proposal focuses on adding new representational distinctions and sources of information about cognition that become available to an agent to initiate, carry out, and terminate metacognitive reasoning. We attempt to identify the minimum architectural information required to support general metacognition, as well as non-architectural sources of information about cognition that are available to an agent. We include three examples of metacognition within this framework.

## 2 Common Model of Cognition

<details>

<summary>extracted/6529175/Figures/CMC1.png Details</summary>

### Visual Description

## Diagram: Cognitive Architecture Model

### Overview

The image displays a block diagram illustrating a cognitive architecture model, likely representing a theoretical framework for human or artificial cognition. It shows the structural components of a memory system and their interactions with perceptual and motor systems, all situated within an environment. The diagram uses color-coded blocks and directional arrows to indicate information flow and relationships.

### Components/Axes

The diagram consists of six primary components, each represented by a colored shape with a text label. Their spatial arrangement and connections are as follows:

1. **Declarative Long-term Memory** (Red rectangle, top-center)

2. **Procedural Long-term Memory** (Blue rectangle, left-center)

3. **Working Memory** (Brown rectangle, center)

4. **Perception** (Yellow polygon, lower-center-left)

5. **Motor** (Green polygon, lower-center-right)

6. **Environment** (Purple oval, bottom-center)

**Connections and Labels:**

* A **red, double-headed arrow** connects "Declarative Long-term Memory" and "Working Memory." A small red box labeled **"DL"** is positioned on this arrow, near the "Working Memory" block.

* A **blue, double-headed arrow** connects "Procedural Long-term Memory" and "Working Memory." A small blue box labeled **"RL/PC"** is positioned on this arrow, near the "Working Memory" block.

* A **yellow, double-headed arrow** connects "Perception" and "Working Memory."

* A **green, single-headed arrow** points from "Working Memory" to "Motor."

* A **green, double-headed arrow** connects "Perception" and "Motor."

* A **purple, single-headed arrow** points from the "Environment" to "Perception."

* A **green, single-headed arrow** points from "Motor" to the "Environment."

### Detailed Analysis

**Component Details and Flow:**

* **Working Memory** acts as the central hub. It has direct, bidirectional connections with both long-term memory systems ("Declarative" and "Procedural") and the "Perception" module. It has a direct, output-only connection to the "Motor" module.

* **Declarative Long-term Memory** (knowledge of facts and events) exchanges information with Working Memory via a pathway labeled **"DL"** (declarative learning, per the accompanying text).

* **Procedural Long-term Memory** (knowledge of skills and procedures) exchanges information with Working Memory via a pathway labeled **"RL/PC"** (reinforcement learning and procedural compilation, per the accompanying text).

* **Perception** receives input from the **Environment** and exchanges information bidirectionally with both **Working Memory** and the **Motor** system.

* **Motor** receives commands from **Working Memory**, interacts with **Perception**, and sends output actions to the **Environment**.

* The **Environment** is the external context, providing sensory input to Perception and receiving motor output.

### Key Observations

* **Central Role of Working Memory:** The diagram positions Working Memory as the critical integration point, connecting all other subsystems. It is the only component with direct links to both long-term memory stores, perception, and motor control.

* **Bidirectional vs. Unidirectional Flow:** Most connections are bidirectional (double-headed arrows), indicating a two-way exchange of information. The exceptions are the input from the Environment to Perception, the output from Working Memory to Motor, and the output from Motor to the Environment, which are unidirectional.

* **Process Labels:** The small boxes "DL" and "RL/PC" on the connecting arrows are significant. They don't just show a connection but label the specific cognitive or computational *process* that facilitates the exchange between those specific modules.

* **Hierarchical Layout:** There is a loose top-down hierarchy: Long-term memory systems are at the top, the central processor (Working Memory) is in the middle, and the systems interfacing with the external world (Perception, Motor, Environment) are at the bottom.

### Interpretation

This diagram represents a **closed-loop cognitive system** that learns and acts. It synthesizes classic cognitive psychology models (like the distinction between declarative and procedural memory) with computational frameworks (hinted at by terms like RL for Reinforcement Learning).

The data flow suggests a continuous cycle:

1. The **Environment** provides stimuli.

2. **Perception** processes this input and shares it with **Working Memory** and the **Motor** system.

3. **Working Memory** integrates this perceptual data with relevant knowledge retrieved from **Declarative** (facts) and **Procedural** (skills) **Long-term Memory**.

4. Based on this integration, **Working Memory** sends commands to the **Motor** system.

5. The **Motor** system executes actions, which affect the **Environment**, thus closing the loop and starting the cycle anew.

The inclusion of process labels ("DL," "RL/PC") is crucial. It moves the diagram from a simple structural map to a functional one, specifying *how* learning and memory consolidation occur between the specialized stores and the central workspace. This architecture is foundational for understanding both human cognition and designing advanced artificial intelligence systems that require integrated memory, perception, and action.

</details>

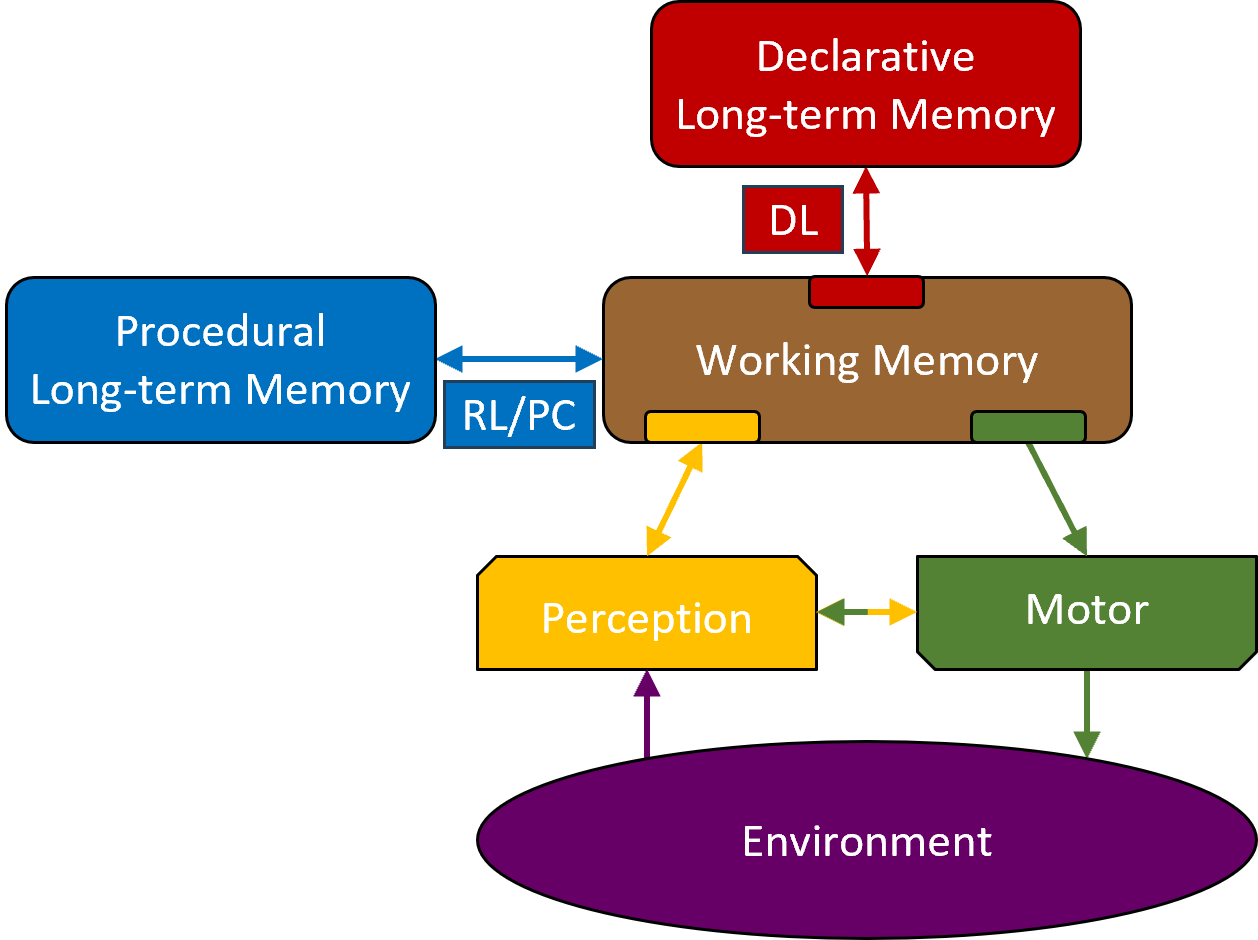

Figure 2: The Common Model of Cognition.

The CMC unifies many similar cognitive architectures by identifying common components, processing, connectivity, and constraints. It does not specify mechanisms or implementations, but emphasizes functionality, focusing on routine cognition and learning. Cognition is the collective processing of the component modules (working memory, procedural memory, declarative memory) and their associated processes (action selection, retrieval, learning).

Figure 2 shows the structure and data flow among the modules, which include short-term working memory, long-term procedural memory, long-term declarative memory, learning, perception, and motor control. Long-term memories have associated automatic learning mechanisms that incrementally modify and extend their contents. The CMC posits procedural compilation, reinforcement learning, and declarative learning. Memories contain relations over symbols annotated with quantitative metadata. Examples include the recency of creation or access, probability, and derived utility. Metadata influences processing within a module, such as retrieval and learning.

Data flow begins with perception and proceeds to working memory, which represents an agent’s understanding of its current situation and goals. Procedural memory contains knowledge about selecting and executing actions that modify working memory. On each cognitive cycle, procedural memory tests the contents of working memory and selects a single action, which makes one or more changes to working memory. Each of the other modules has a buffer in working memory through which procedural memory can initiate memory retrievals, motor actions, or top-down control of perception. Results from those module processes are added to their respective buffers. Thus, behavior unfolds as a sequence of steps, driven by procedural memory, making changes to working memory. We call such step-by-step behavior reasoning: the contents of working memory are what the reasoning is about, and the knowledge in procedural and declarative memory determines the course of the reasoning. The original CMC encompasses routine and skilled performance, where working memory includes only information about the agent’s current goals and task state.
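The cognitive cycle described above can be illustrated with a minimal sketch. It is not taken from ACT-R, Sigma, or Soar: the `Rule` class, the flat dictionary used as working memory, and utility-based selection are all simplifying assumptions made for illustration only.

```python
from dataclasses import dataclass
from typing import Callable, Optional

WorkingMemory = dict  # assumption: a flat attribute -> value store


@dataclass
class Rule:
    """One unit of procedural knowledge: a condition on working memory plus an action."""
    name: str
    condition: Callable[[WorkingMemory], bool]
    action: Callable[[WorkingMemory], None]
    utility: float = 0.0  # quantitative metadata that influences selection


def select_action(rules: list[Rule], wm: WorkingMemory) -> Optional[Rule]:
    """Test working memory and select a single matching rule (highest utility wins)."""
    matching = [r for r in rules if r.condition(wm)]
    return max(matching, key=lambda r: r.utility) if matching else None


def run(rules: list[Rule], wm: WorkingMemory, max_cycles: int = 10) -> list[str]:
    """Run cognitive cycles until no rule matches; return the names of the fired rules."""
    trace = []
    for _ in range(max_cycles):
        rule = select_action(rules, wm)
        if rule is None:
            break
        rule.action(wm)  # the selected action changes working memory
        trace.append(rule.name)
    return trace


rules = [
    Rule("request-retrieval",
         lambda wm: wm.get("goal") == "add" and "retrieval" not in wm,
         # Stand-in for a declarative retrieval whose result lands in a buffer.
         lambda wm: wm.update(retrieval=wm["a"] + wm["b"]),
         utility=1.0),
    Rule("report-answer",
         lambda wm: "retrieval" in wm and "answer" not in wm,
         lambda wm: wm.update(answer=wm["retrieval"], goal="done"),
         utility=1.0),
]

wm = {"goal": "add", "a": 2, "b": 3}
trace = run(rules, wm)
```

In this sketch, behavior is nothing more than the sequence of rule firings recorded in `trace`, and what the reasoning is "about" is whatever happens to be in `wm`.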

## 3 Phases of Metacognition Processing

In our proposal, metacognition employs the same cognitive cycle, utilizing the same modules and often the same knowledge. Metacognition is distinguished by the fact that information about the agent’s own processing is included in its reasoning: information about prior problem solving (“I see where I made a mistake on that problem and need to do something different in the future.”), specific skills (“I’m good at jigsaw puzzles.”), general competences (“I struggle with math.”), and the operation of individual modules (“I have trouble remembering the difference between affect and effect.”). Our proposal does not introduce new modules but relies on existing architectural structures and knowledge, which are extended to make new forms and representations of information available in working memory. Metacognitive processing is thus not carried out by metacognition-specific capabilities alone, but by a combination of new and existing capabilities.

In this section, we describe the three phases of metacognition: initiation, reasoning, and termination. We identify the types of knowledge needed in these phases. Then we describe our proposed extensions to meet those requirements, as well as sources of knowledge available for metareasoning. The following section steps through those phases using three examples of metacognition.

### 3.1 Initiation

The standard cognitive cycle for reasoning involves procedural memory responding to changes in working memory, typically concerning the performance of the current task, which we call base-level reasoning. However, a working memory element can be added that indicates a deviation in base-level reasoning, such as a failure in a retrieval from memory. The general requirement is that a knowledge source creates a structure in working memory that is about the agent’s cognition. Such a structure can be created deliberately through an action of procedural memory, but also as a side effect of other long-term memory retrievals or perception. Our proposal extends these sources by including feedback about the state of individual modules.

### 3.2 Reasoning

Once information is available about the state of the agent’s cognition, the agent can use its cognitive capabilities to respond to it (or ignore it), essentially treating it as the signal that a new (meta)problem may need to be solved. Knowledge for reasoning can combine existing task performance knowledge with knowledge that is specific to metacognition. We have identified three extensions to the existing sources of information that can be crucial for metacognition.

1. Information about the current state of agent processing in its modules.

2. A memory of the contents of working memory over time, where a sequence of past situations can be retrieved into working memory and reasoned over. This allows the agent to detect choices it should not make in the future or ones it should reinforce. It also allows the agent to learn a model of the effects of its actions on its internal state, its environment (changes that come from perception), and its processing state.

3. Some means of creating past or future hypothetical states in working memory with two seemingly contradictory properties. One is that these states are represented such that the agent’s knowledge for reasoning in the current state can apply to them, so that the agent can imagine what it would do in those states. The second is that they are also distinguished in some way, so that the agent does not confuse them with reality. Thus, an agent can plan for the future or retrospectively reconsider past actions, without disrupting or interfering with its reasoning about the present.

### 3.3 Termination

Metacognition terminates when reasoning is no longer sensitive to representations of the agent’s processing, such as when metacognition resolves the reason it was initiated. Depending on the initiating signal, termination can result directly from a deliberate change to working memory or indirectly from the resolution of an impasse in reasoning. Whatever the reason for termination, any result can also change long-term memory (via the existing learning mechanisms).

## 4 Proposal for Metacognition in the CMC

The crux of our proposal is to expand the sources and representations of an agent’s available information to meet the requirements described above. We present our proposal in two stages. First, we describe structural modifications to the CMC that make new representations and direct sources of information available. Second, we describe how the existing sources can also provide some of that information indirectly.

<details>

<summary>extracted/6529175/Figures/CMC2.png Details</summary>

### Visual Description

## Cognitive Architecture Diagram: Memory System Interactions

### Overview

The image is a technical block diagram illustrating a cognitive architecture model. It depicts the structural components of a memory system and their functional interconnections, showing how information flows between different types of memory, perceptual and motor systems, and the external environment. The diagram uses color-coded blocks and directional arrows to represent components and data pathways.

### Components/Axes

The diagram consists of seven primary components, each represented by a colored block with a text label. Their spatial arrangement and connections are as follows:

1. **Semantic Long-term Memory** (Maroon block, top-left)

* Contains the text: "Semantic Long-term Memory"

* Has a smaller attached maroon block labeled "SL".

* **Connections**: Bidirectional arrows (one up, one down) connect it to the central "Working Memory" block.

2. **Episodic Long-term Memory** (Red block, top-right)

* Contains the text: "Episodic Long-term Memory"

* Has a smaller attached red block labeled "EL".

* **Connections**: Bidirectional arrows (one up, one down) connect it to the central "Working Memory" block.

3. **Procedural Long-term Memory** (Blue block, middle-left)

* Contains the text: "Procedural Long-term Memory"

* Has a smaller attached blue block labeled "RL/PC".

* **Connections**: Bidirectional arrows (one right, one left) connect it to the central "Working Memory" block.

4. **Working Memory** (Brown block, center)

* Contains the text: "Working Memory".

* This is the central hub of the diagram. It has colored tabs on its top and bottom edges that correspond to the colors of the connected modules (maroon, red, yellow, green), visually indicating integration points.

* **Connections**: It has a self-referential loop arrow on its right side. It receives inputs from and sends outputs to all other major components except the Environment.

5. **Perception** (Yellow block, bottom-left)

* Contains the text: "Perception".

* **Connections**: A bidirectional arrow connects it to the "Motor" block. Two arrows (one yellow, one brown) connect it to "Working Memory" (one up, one down). A black arrow points upward from the "Environment" to this block.

6. **Motor** (Green block, bottom-right)

* Contains the text: "Motor".

* **Connections**: A bidirectional arrow connects it to the "Perception" block. Two arrows (one green, one brown) connect it to "Working Memory" (one up, one down). A green arrow points downward from this block to the "Environment".

7. **Environment** (Black oval, bottom-center)

* Contains the text: "Environment".

* **Connections**: It is the source of input (black arrow to "Perception") and the target of output (green arrow from "Motor").

### Detailed Analysis

**Information Flow and Relationships:**

* **Central Integration**: "Working Memory" is the central processing unit. It has direct, bidirectional communication channels with all three long-term memory stores (Semantic, Episodic, Procedural) and the Perception/Motor systems.

* **Long-Term Memory Specialization**: The three long-term memory types are distinct but interconnected via Working Memory.

* **Semantic** (facts/knowledge) and **Episodic** (events/experiences) memories are positioned symmetrically at the top, suggesting they are higher-level, declarative memory systems.

* **Procedural** (skills/how-to) memory is positioned to the side, connected via a pathway labeled "RL/PC" (reinforcement learning and procedural compilation, per the accompanying text).

* **Sensorimotor Loop**: A clear loop exists between the external "Environment" and the internal cognitive system:

1. Information flows from the **Environment** to **Perception** (black arrow).

2. **Perception** processes this input and communicates with **Working Memory**.

3. **Working Memory** integrates this with information from long-term memories and sends commands to the **Motor** system.

4. The **Motor** system executes actions, affecting the **Environment** (green arrow).

5. There is also direct cross-talk between **Perception** and **Motor** systems (bidirectional arrow).

* **Internal Processing**: The self-loop on "Working Memory" indicates internal rehearsal, maintenance, or manipulation of information independent of external input or long-term memory retrieval.

### Key Observations

* **Color-Coding Consistency**: The diagram uses color consistently to link components and their connection points. For example, the maroon tab on Working Memory aligns with the Semantic Memory block and its connecting arrows.

* **Asymmetry in Connections**: While Semantic and Episodic memories have identical connection patterns, Procedural memory's connection is labeled with "RL/PC," hinting at a different underlying mechanism (learning-based vs. storage/retrieval).

* **Bidirectional Dominance**: Almost all connections are bidirectional, emphasizing interactive, two-way communication rather than a simple linear pipeline. The primary exceptions are the unidirectional flows from the Environment to Perception and from Motor to the Environment, defining the system's input and output boundaries.

### Interpretation

This diagram presents a functional model of an intelligent agent's cognitive architecture. It suggests that:

1. **Working Memory is the Nexus**: All conscious processing, integration of new sensory data with past knowledge (from long-term memory), and planning of actions occur within Working Memory. Its central position and numerous connections highlight its critical role as a bottleneck and integrator.

2. **Memory is Modular but Integrated**: Different types of knowledge (what, when, how) are stored in specialized long-term memory systems but are dynamically accessed and combined in Working Memory to guide behavior.

3. **Cognition is Embodied and Situated**: The system is not closed; it is fundamentally coupled with an external Environment through Perception and Action (Motor). This reflects an embodied cognition perspective, where thinking is inseparable from interacting with the world.

4. **The Model Implies Learning**: The "RL/PC" label on the Procedural memory pathway and the closed-loop interaction with the environment suggest the system can learn from experience, updating its procedural skills based on outcomes.

The architecture balances specialized processing (different memory types) with a centralized integrator (Working Memory), all grounded in environmental interaction. It provides a blueprint for understanding or building a system that perceives, remembers, thinks, and acts.

</details>

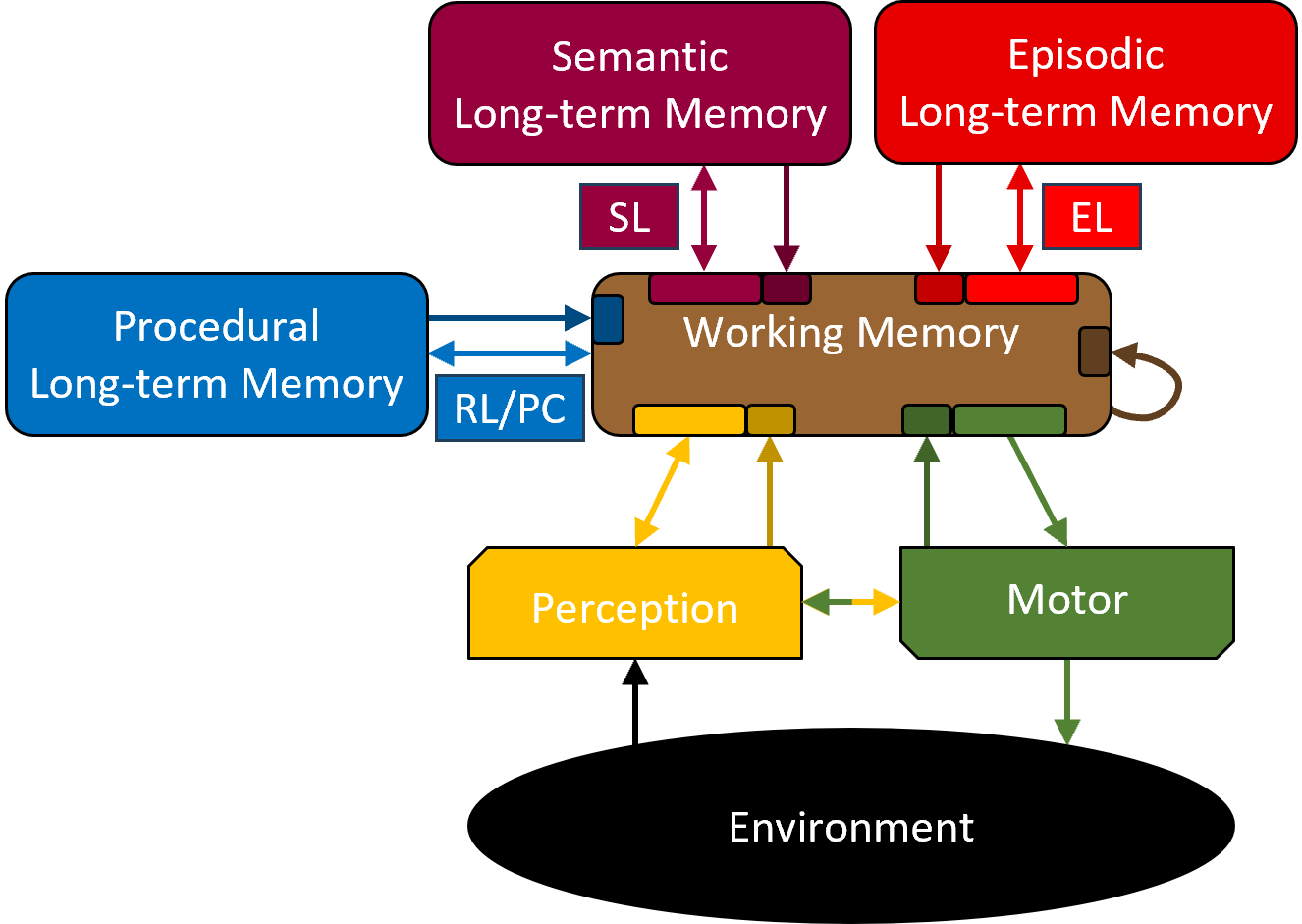

Figure 3: Structural extensions to the CMC to support Metacognition.

### 4.1 CMC Extensions

Figure 3 illustrates our proposed extensions. One is that information about the current processing state of each module, including procedural memory and working memory, is available in working memory buffers. The second is the inclusion of the functionality of episodic memory, which is shown as a separation from semantic memory. The third (not shown) is that working memory supports representations of information about past or future states, distinguished from the current state. We also describe how each extension is, or is not, implemented in ACT-R, Sigma, and Soar.

#### 4.1.1 Module Process-state Buffers

In our proposal, each module has a process-state buffer added to working memory. It summarizes information about the module’s state that can be a signal to initiate metareasoning, as well as the information for understanding the current state of processing during metareasoning. Types of process-state information include the success or failure of requested actions for a given buffer. Additional information can include certainty/confidence in a result, partial results (e.g., the answer starts with an “A”), or indications that an answer is available but not retrieved (feeling of knowing). Perceptual process-state information can include surprise or the inability to recognize parts of the perceptual scene. Process-state information for working memory can include assessments, such as desirability or intrinsic pleasantness. A proposed CMC extension for emotion [15] considers adding a metacognitive assessment module with an associated buffer in working memory, which would be compatible with this proposal.
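A process-state buffer can be pictured as a small summary record that a module writes into working memory next to its ordinary result buffer. The sketch below assumes a toy declarative module. The `ProcessState` fields, the buffer names `retrieval` and `retrieval-state`, and the confidence threshold are hypothetical choices, not part of the CMC or of any existing architecture.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ProcessState:
    """Hypothetical contents of one module's process-state buffer."""
    status: str = "idle"                  # "idle", "success", or "failure"
    confidence: Optional[float] = None    # certainty in the result, if any
    partial_result: Optional[str] = None  # e.g. "starts with A"


@dataclass
class DeclarativeModule:
    """Toy declarative memory: cue -> (value, confidence)."""
    facts: dict = field(default_factory=dict)

    def retrieve(self, cue: str, wm: dict) -> None:
        """Write the result to the retrieval buffer and a summary to the process-state buffer."""
        if cue in self.facts:
            value, confidence = self.facts[cue]
            wm["retrieval"] = value
            wm["retrieval-state"] = ProcessState("success", confidence)
        else:
            wm["retrieval"] = None
            wm["retrieval-state"] = ProcessState("failure")


def should_metareason(wm: dict, threshold: float = 0.5) -> bool:
    """Signal metareasoning on a failed or low-confidence retrieval (assumed policy)."""
    state = wm.get("retrieval-state")
    if state is None:
        return False
    if state.status == "failure":
        return True
    return state.confidence is not None and state.confidence < threshold


memory = DeclarativeModule({
    "capital-of-france": ("Paris", 0.9),
    "affect-vs-effect": ("affect is usually the verb", 0.3),
})
wm = {}

memory.retrieve("capital-of-france", wm)
confident = should_metareason(wm)

memory.retrieve("affect-vs-effect", wm)
unsure = should_metareason(wm)

memory.retrieve("capital-of-atlantis", wm)
failed = should_metareason(wm)
```

The point of the sketch is only that the signal lives in working memory, where ordinary procedural knowledge can test it. No separate monitoring module is involved.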

The existing module buffers in ACT-R, Sigma, and Soar include dedicated areas for process-state information. Sigma and Soar include process-state information associated with procedural memory, whereas ACT-R does not. In Sigma and Soar, if procedural memory cannot select an action, a structure called the substate is added to working memory. The substate describes the reason for the impasse, initiates metareasoning, and provides a context for that metareasoning (see below). Sigma, but not ACT-R or Soar, has something akin to the working memory process-state buffer for representing desirability appraisals.
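The impasse-driven pattern described for Sigma and Soar can be sketched in a few lines. This is a loose illustration rather than either architecture's actual mechanism: the `substate` dictionary, the rule tuples, and the `resolve` helper are all hypothetical.

```python
def cycle(rules, wm):
    """Fire the first matching rule; if none matches, the architecture adds a substate."""
    for name, condition, action in rules:
        if condition(wm):
            action(wm)
            return name
    # No action could be selected. The substate is created by the architecture,
    # not by a rule, and records why base-level reasoning stalled.
    wm["substate"] = {"impasse": "no-action-available", "goal": wm.get("goal")}
    return "impasse"


def resolve(wm):
    """Metareasoning result: supply the missing knowledge and remove the substate."""
    wm["fact"] = "42"
    del wm["substate"]


rules = [
    # Base-level knowledge: answer once a fact is available.
    ("use-fact",
     lambda wm: "fact" in wm,
     lambda wm: wm.update(answer=wm.pop("fact"), goal="done")),
    # Metalevel knowledge: sensitive only to the substate.
    ("recall-by-another-route",
     lambda wm: "substate" in wm,
     resolve),
]

wm = {"goal": "answer-question"}
trace = []
for _ in range(10):  # bounded loop so the sketch always halts
    if wm.get("goal") == "done":
        break
    trace.append(cycle(rules, wm))
```

Both kinds of rule sit in the same rule list and run on the same cycle. Metareasoning starts when the substate appears and ends when it is removed, after which base-level reasoning resumes.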

#### 4.1.2 Hypothetical State Representations

Our proposal requires that an agent can concurrently represent hypothetical past or future states alongside the current state in working memory. The substate mechanisms in Sigma and Soar provide concurrent representations of the current situation for base-level reasoning and of hypothetical states, and they are the locus for metareasoning. The structure of the substates can recreate the current state so that the agent’s long-term knowledge can be used for both metareasoning and base-level reasoning. However, the substates are distinguished by certain features so that hypothetical content is not mistaken for the current state, thereby avoiding hallucination. ACT-R has no similar architectural mechanisms. Instead, in agents that employ planning, knowledge-based conventions reserve specific slots in their working memory structures (chunks) to distinguish hypothetical states.
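One way to picture this requirement is a state copy that carries a distinguishing marker, so the same knowledge applies to both real and imagined states while only real states can drive action. The sketch below is an assumption-laden illustration, not the substate mechanism of Sigma or Soar; `State`, `imagine`, `apply_operator`, and `execute` are hypothetical names.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class State:
    facts: frozenset
    hypothetical: bool = False  # the distinguishing feature (assumed)


def imagine(current: State) -> State:
    """Recreate the current state as a hypothetical copy."""
    return replace(current, hypothetical=True)


def apply_operator(state: State, new_fact: str) -> State:
    """The same knowledge applies to real and hypothetical states."""
    return replace(state, facts=state.facts | {new_fact})


def execute(state: State, command: str, motor_log: list) -> bool:
    """Only non-hypothetical states may issue external commands."""
    if state.hypothetical:
        return False
    motor_log.append(command)
    return True
```

Because the hypothetical copy is a separate immutable value, reasoning over it leaves the representation of the current state untouched.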

#### 4.1.3 Episodic Memory

Process-state buffers provide information about the instantaneous state of the agent, but do not provide any representation of the history of reasoning that can be used for metareasoning. The ability to reconstruct prior reasoning trajectories is precisely what episodic memory can provide. The original CMC had a single long-term declarative memory. Here, we propose that the functionality associated with episodic memory differs from that of semantic memory, as it provides a direct source of knowledge about an agent’s past reasoning. Episodic learning incrementally and automatically acquires episodes and their temporal relations, allowing an agent to reconstruct the extended sequence of reasoning in working memory, possibly through multiple retrievals. Once in working memory, that data, which is about the agent’s reasoning, enables retrospective analysis and other forms of metareasoning.

Soar has distinct semantic and episodic memories as shown in Figure 3; however, ACT-R and Sigma do not. Instead, they have a single long-term declarative memory, where some of the functionalities of episodic memory are supported but not all.
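The functionality described in this section, automatic recording of episodes with their temporal order and reconstruction of a reasoning sequence through multiple retrievals, can be sketched as follows. This is a toy illustration under simplifying assumptions (exact cue matching, a list index as the only temporal relation); it does not reflect Soar's episodic memory implementation, and all names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Episode:
    index: int           # temporal position of the episode
    contents: frozenset  # snapshot of working memory elements


class EpisodicMemory:
    def __init__(self) -> None:
        self._episodes = []

    def record(self, working_memory) -> None:
        """Automatically store a snapshot, preserving temporal order."""
        snapshot = frozenset(working_memory)
        self._episodes.append(Episode(len(self._episodes), snapshot))

    def retrieve(self, cue: set) -> Optional[Episode]:
        """Return the most recent episode containing every cue element."""
        for episode in reversed(self._episodes):
            if cue <= episode.contents:
                return episode
        return None

    def successor(self, episode: Episode) -> Optional[Episode]:
        """Follow the temporal relation to the next episode, if any."""
        nxt = episode.index + 1
        return self._episodes[nxt] if nxt < len(self._episodes) else None


def reconstruct(memory: EpisodicMemory, cue: set, max_steps: int = 10) -> list:
    """Rebuild a reasoning trajectory through repeated retrievals."""
    trajectory = []
    episode = memory.retrieve(cue)
    while episode is not None and len(trajectory) < max_steps:
        trajectory.append(episode)
        episode = memory.successor(episode)
    return trajectory
```

The reconstructed list plays the role of the sequence placed in working memory for retrospective analysis.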

### 4.2 Indirect Sources of Processing Information

In addition to the direct sources provided by process-state buffers and episodic memory, an agent can access information about its processing from its interactions with its environment, by metareasoning, and from its memories of its reasoning, behavior, and metareasoning.

#### 4.2.1 Perception of its Environment

An agent’s environment includes many sources of information that an agent can use to reason about itself. Below are a few general categories.

- Self Observation: By observing the results of its interactions with its world, an agent can learn about the impact of its cognitive processes on its environment. When unexpected results occur, the perception of those results can lead to metacognition. When self-observation is combined with episodic memory, an agent can later review its behavior, determine which approaches work and which do not, and build up a model for future tasks and associated metareasoning.

- Other Agents: Other agents can provide observations about an agent’s cognitive processing, suggest possible reasoning steps or strategies, notify an agent of mistakes (or successes) in its reasoning, or even provide an agent with cognitive strategies (such as through Cognitive Behavior Therapy) to detect its reasoning approaches, evaluate them, and possibly modify them.

- Recorded Information: An agent can read books, watch movies, and even study psychology to acquire a general understanding of reasoning capabilities that it applies to itself.

Many of these sources provide temporal distance between an agent’s original reasoning and using the information, allowing the agent to create internal representations of behavior and capabilities in working memory after the behavior is generated. This can be impossible during routine reasoning, when only task information is available and task urgency prevents metacognitive processing.

#### 4.2.2 Metareasoning

Metareasoning itself composes these other sources of knowledge about an agent’s cognitive capabilities to draw conclusions and generate new insights that fuel future metareasoning. This requires the other originating sources of information, but allows an agent to extend and expand its knowledge by combining information from multiple sources, identifying trends and commonalities, and so on. The knowledge created by metareasoning can then be learned and transferred to long-term memory for future use as described below.

#### 4.2.3 Semantic and Procedural Memories

The automatic learning of procedural and semantic knowledge enables the retention of knowledge about an agent’s cognition produced by other sources. They are indirect sources, as they require information to be present in working memory, which, through learning, becomes available for retrieval into working memory and future metacognition.

## 5 Examples of Metacognitive Processing

We return to the three phases of metacognitive processing with three examples.

### 5.1 Wordle Retrieval

The agent attempts to retrieve a word from semantic memory using a partial specification of a five-letter word.

- Initiation: The semantic memory process-state buffer includes information that the retrieved word (‘Tripe’) is uncommon.

- Reasoning: The agent decides it wants to know more “about what it knows about the word,” and attempts to retrieve the word from episodic memory, embedding it in the context of it being a Wordle answer. Episodic memory does not retrieve a specific episode of it being a previous answer (possibly because of interference from the hundreds of previous times the agent has played Wordle). Still, the process-state buffer indicates that the word is very familiar. The agent reasons that the combination of being uncommon and familiar indicates it is probably a previous Wordle answer, as it wouldn’t come up in any other situation.

- Termination: The agent discards ‘Tripe’ from consideration, which terminates the short bout of metareasoning. The agent returns to generating a potential answer.
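The inference in this example combines two process-state signals into a conclusion about the word. A minimal sketch of that rule is given below; the field names, the boolean encoding of "uncommon" and "familiar", and the treatment of a successfully retrieved episode are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class RetrievalState:
    word: str
    uncommon: bool       # from the semantic memory process-state buffer
    episode_found: bool  # did episodic retrieval return a specific episode?
    familiar: bool       # familiarity signal from the process-state buffer


def probably_previous_answer(rs: RetrievalState) -> bool:
    """Uncommon yet familiar suggests the word was a past Wordle answer.

    Treating a directly retrieved episode as sufficient evidence is an
    added assumption; the example only covers the case where none is found.
    """
    return rs.episode_found or (rs.uncommon and rs.familiar)


def remaining_candidates(candidates: list) -> list:
    """Discard candidates judged to be previous answers."""
    return [c.word for c in candidates if not probably_previous_answer(c)]
```

Under these assumptions, a word flagged as uncommon and familiar is dropped, and the agent continues with the remaining candidates.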

### 5.2 Making a Move in Chess

The agent is playing a chess game and is far enough into the game that its memorized opening moves are exhausted. Here we describe the mechanisms in Sigma and Soar that support metareasoning in such a situation.

- Initiation: Procedural memory does not return a single definite action to take. In Sigma and Soar, this leads to the creation of a substate.

- Reasoning: In the substate, the agent decides to explicitly try out the different available moves on internal copies of the current state, generating states that it then compares.

- Termination: It ultimately decides on a specific move, which terminates the metareasoning.
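The three phases of this example can be sketched as a one-step lookahead that runs only when no single action is available. The code is a generic illustration, not Sigma or Soar code; `choose_with_lookahead`, the `simulate` and `evaluate` callbacks, and the `"hypothetical"` marker are hypothetical names, and a real chess agent would search more deeply.

```python
import copy
from typing import Callable, Sequence


def choose_with_lookahead(
    state: dict,
    candidates: Sequence[str],
    simulate: Callable[[dict, str], None],
    evaluate: Callable[[dict], float],
) -> str:
    """Pick a move, falling back to lookahead when there is an impasse."""
    if not candidates:
        raise ValueError("no candidate moves")
    if len(candidates) == 1:
        return candidates[0]  # no impasse: a single definite action

    # Impasse: try each move on an internal copy of the current state.
    best_move, best_score = candidates[0], float("-inf")
    for move in candidates:
        imagined = copy.deepcopy(state)
        imagined["hypothetical"] = True  # keep it distinct from reality
        simulate(imagined, move)         # mutates only the copy
        score = evaluate(imagined)
        if score > best_score:
            best_move, best_score = move, score
    return best_move  # selecting a move ends the metareasoning
```

Working on deep copies means the representation of the actual board is unchanged when the function returns.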

### 5.3 Repeated Robot Action

A one-armed robot is instructed to store all the leftovers on the counter in the refrigerator. Each time the robot stores an item in the refrigerator, it opens the refrigerator door, fetches the item, places it in the refrigerator, and then closes the refrigerator door.

- Initiation: After completing the task, the robot’s instructor tells it that it needs to improve its performance. This external information triggers procedural knowledge that the robot should do a retrospective analysis of its original performance.

- Reasoning: Using existing procedural knowledge, the robot recalls a trace of its behavior from episodic memory into working memory. It then uses existing procedural knowledge to analyze the trace. It detects that it is repeatedly in exactly the same world state because it closes the refrigerator door as part of one store command, but then immediately opens it for the next. It “imagines” being at the end of a store command when there are other items to store, and inhibits the action to close the refrigerator door. Through its procedural learning mechanism, it learns to inhibit that action in similar situations in the future.

- Termination: On completing its internal inhibition, the robot applies additional procedural knowledge that removes the instructor’s comment from working memory, and it continues with its normal activities.
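The retrospective analysis in this example amounts to scanning a recalled trace for an action that is immediately undone. The following sketch shows one simple way to do that; the action labels and function names are hypothetical, and removing both the close and the subsequent open is an assumption about what the learned inhibition achieves in practice.

```python
def find_redundant_pairs(trace, undo=("close-door", "open-door")):
    """Return indices where an action is immediately undone by the next."""
    return [
        i for i in range(len(trace) - 1)
        if (trace[i], trace[i + 1]) == undo
    ]


def inhibit_redundant(trace, undo=("close-door", "open-door")):
    """Imagine the trace with the redundant close/open pairs inhibited."""
    skip = set()
    for i in find_redundant_pairs(trace, undo):
        skip.update((i, i + 1))
    return [action for i, action in enumerate(trace) if i not in skip]
```

For a trace covering two stored items, the analysis would flag the close at the end of the first store command and the open at the start of the second, leaving a single open and a single close around both placements.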

## 6 Conclusion

We propose that three specific extensions be added to the CMC to support a unified approach to metacognition: module process-state buffers that provide information in working memory on the current state of each of the modules; episodic memory that provides a means for the agent to recreate its behavior so that it becomes available to reason over; and some means of creating hypothetical situations in working memory that supports using base-level reasoning during metareasoning while avoiding interference and confusion between them. Our proposal is a framework, but it does not specify in detail the diverse and extensive indirect learned or pre-encoded long-term knowledge needed for an agent to engage in the forms of metacognition found in humans. An essential point of our proposal is to provide the architectural structure that is necessary above and beyond what is encoded as knowledge in the long-term memories.

Although we introduce some new architectural structures, there are no structural boundaries between base-level (non-meta) reasoning and metareasoning. Within existing architectures consistent with this proposal (ACT-R, Sigma, and Soar), an agent can rapidly switch between base-level reasoning and metareasoning, as the only difference is whether working memory structures are about the agent’s cognitive and reasoning capabilities. In addition, an agent’s reasoning transitions from base-level task reasoning to metareasoning as it responds to internal impasses, failures, and successes in base-level reasoning, and then back to base-level reasoning as learning leads to routine, impasse-free reasoning.

## References

- [1] Anderson, J.R., Bothell, D., Byrne, M.D., Douglass, S.A., Lebiere, C., Qin, Y.: An integrated theory of the mind. Psychological Review 111 (4), 1036–1060 (2004)

- [2] Cox, M., Alavi, Z., Dannenhauer, D., Eyorokon, V., Munoz-Avila, H., Perlis, D.: MIDCA: A Metacognitive, Integrated Dual-Cycle Architecture for Self-Regulated Autonomy. Proceedings of the AAAI Conference on Artificial Intelligence 30 (1) (Mar 2016)

- [3] Cox, M., Raja, A.: Metareasoning: An Introduction, pp. 3–14. MIT Press (2011)

- [4] Flavell, J.H.: Metacognition and cognitive monitoring: A new area of cognitive–developmental inquiry. American Psychologist 34 (10), 906–911 (1979)

- [5] Johnson, S.G.B., Karimi, A.H., Bengio, Y., Chater, N., Gerstenberg, T., Larson, K., Levine, S., Mitchell, M., Rahwan, I., Schölkopf, B., Grossmann, I.: Imagining and building wise machines: The centrality of AI metacognition (2025), https://arxiv.org/abs/2411.02478

- [6] Kotseruba, I., Tsotsos, J.K.: 40 years of cognitive architectures: Core cognitive abilities and practical applications. Artificial Intelligence Review 53 (1), 17–94 (Jan 2020)

- [7] Kralik, J.D., Lee, J.H., Rosenbloom, P.S., Jackson, P.C., Epstein, S.L., Romero, O.J., Sanz, R., Larue, O., Schmidtke, H.R., Lee, S.W., McGreggor, K.: Metacognition for a Common Model of Cognition. Procedia Computer Science 145, 730–739 (Jan 2018)

- [8] Laird, J.E.: The Soar Cognitive Architecture. MIT Press, Cambridge, MA (2012)

- [9] Laird, J.E., Lebiere, C., Rosenbloom, P.S.: A Standard Model of the Mind: Toward a Common Computational Framework across Artificial Intelligence, Cognitive Science, Neuroscience, and Robotics. AI Magazine 38 (4), 13–26 (2017)

- [10] Laird, J.E., Mohan, S.: Learning Fast and Slow. In: Proc. of the 32nd AAAI Conference on Artificial Intelligence. p. 5. AAAI Press, New Orleans (2018)

- [11] Nelson, T.O., Narens, L.: Metamemory: A theoretical framework and new findings. In: Bower, G. (ed.) The Psychology of Learning and Motivation: Advances in Research and Theory, pp. 125–173. Academic Press, New York (1990)

- [12] Nolte, R., Pomarlan, M., Janssen, A., Beßler, D., Javanmardi, K., Jongebloed, S., Porzel, R., Bateman, J., Beetz, M., Malaka, R.: How metacognitive architectures remember their own thoughts: A systematic review (2025), https://arxiv.org/abs/2503.13467

- [13] Rahnev, D., Balsdon, T., Charles, L., de Gardelle, V., Denison, R., Desender, K., Faivre, N., Filevich, E., Fleming, S.M., Jehee, J., Lau, H., Lee, A.L.F., Locke, S.M., Mamassian, P., Odegaard, B., Peters, M., Reyes, G., Rouault, M., Sackur, J., Samaha, J., Sergent, C., Sherman, M.T., Siedlecka, M., Soto, D., Vlassova, A., Zylberberg, A.: Consensus goals for the field of visual metacognition. Perspectives on Psychological Science 17 (6), 1746–1765 (2022)

- [14] Rosenbloom, P.S., Demski, A., Ustun, V.: The Sigma Cognitive Architecture and System: Towards Functionally Elegant Grand Unification. Journal of Artificial General Intelligence 7 (1), 1–103 (Dec 2016)

- [15] Rosenbloom, P.S., Laird, J.E., Lebiere, C., Stocco, A., Granger, R., Huyck, C.: A proposal for extending the Common Model of Cognition to emotion. In: 22nd International Conference on Cognitive Modeling, ICCM 2024. Tilburg University, the Netherlands (Apr 2024)

- [16] Sun, R.: The importance of cognitive architectures: an analysis based on CLARION. Journal of Experimental & Theoretical Artificial Intelligence 19 (2), 159–193 (2007)

- [17] Walker, P., Haase, J., Mehalick, M., Steele, C., Russell, D., Davidson, I.: Harnessing metacognition for safe and responsible AI. Technologies 13 (2025)