# SwS: Self-aware Weakness-driven Problem Synthesis in Reinforcement Learning for LLM Reasoning

**Authors**: Xiao Liang1∗1 1 ∗, Zhong-Zhi Li2∗2 2 ∗, Yeyun Gong, Yang Wang, Hengyuan Zhang, Los AngelesSchool of Artificial Intelligence

Corresponding author:

∗ Equal contribution. Work done during Xiao’s and Zhongzhi’s internships at Microsoft. † Corresponding authors: Yeyun Gong and Weizhu Chen. 🖂: yegong@microsoft.com; wzchen@microsoft.com

Abstract: Reinforcement Learning with Verifiable Rewards (RLVR) has proven effective for training large language models (LLMs) on complex reasoning tasks, such as mathematical problem solving. A prerequisite for the scalability of RLVR is a high-quality problem set with precise and verifiable answers. However, the scarcity of well-crafted human-labeled math problems and limited-verification answers in existing distillation-oriented synthetic datasets limit their effectiveness in RL. Additionally, most problem synthesis strategies indiscriminately expand the problem set without considering the model’s capabilities, leading to low efficiency in generating useful questions. To mitigate this issue, we introduce a S elf-aware W eakness-driven problem S ynthesis framework (SwS) that systematically identifies model deficiencies and leverages them for problem augmentation. Specifically, we define weaknesses as questions that the model consistently fails to learn through its iterative sampling during RL training. We then extract the core concepts from these failure cases and synthesize new problems to strengthen the model’s weak areas in subsequent augmented training, enabling it to focus on and gradually overcome its weaknesses. Without relying on external knowledge distillation, our framework enables robust generalization by empowering the model to self-identify and address its weaknesses in RL, yielding average performance gains of 10.0% and 7.7% on 7B and 32B models across eight mainstream reasoning benchmarks.

| \faGithub | Code | https://github.com/MasterVito/SwS |

| --- | --- | --- |

| \faGlobe | Project | https://MasterVito.SwS.github.io |

<details>

<summary>x1.png Details</summary>

### Visual Description

## Radar Charts: Performance Comparison of Language Models

### Overview

The image presents two radar charts comparing the performance of several language models across different benchmarks and domains. Chart (a) shows performance across benchmarks (GSM8K, AIME@32, AMC23, GaoKao 2023, Olympiad Bench, MATH 500, Minerva Math, Precalculus), while chart (b) displays performance across mathematical domains (Prealgebra, Number Theory, Counting & Probability, Geometry, Algebra, Intermediate Algebra). The models being compared are Qwen2.5-32B, Qwen2.5-32B-IT, ORZ-32B, SimpleRL-32B, Baseline-32B, and SwS-32B.

### Components/Axes

Both charts share the following components:

* **Radial Axes:** Representing different benchmarks/domains. The axes are labeled with the benchmark/domain names.

* **Concentric Circles:** Representing performance levels, ranging from 0% to 100%. The circles are marked at 20% intervals (20%, 40%, 60%, 80%, 100%).

* **Lines:** Each line represents the performance of a specific language model.

* **Legend:** Located in the top-left corner of each chart, identifying each line with a specific color and model name.

**Chart (a) - Performance across Benchmarks:**

* Benchmarks: GSM8K, AIME@32, AMC23, GaoKao 2023, Olympiad Bench, MATH 500, Minerva Math, Precalculus.

**Chart (b) - Performance across Domains:**

* Domains: Prealgebra, Number Theory, Counting & Probability, Geometry, Algebra, Intermediate Algebra.

### Detailed Analysis or Content Details

**Chart (a) - Performance across Benchmarks:**

* **Qwen2.5-32B (Purple):** Shows generally high performance, peaking at approximately 96.3% on GSM8K, and maintaining relatively high scores across all benchmarks. The line is mostly above 80% except for Minerva Math (around 47.1%) and Precalculus (around 72.3%).

* **Qwen2.5-32B-IT (Orange):** Similar to Qwen2.5-32B, with a peak of around 96.3% on GSM8K. It shows slightly lower performance on AIME@32 (around 31.2%) and AMC23 (around 90.0%).

* **ORZ-32B (Green):** Demonstrates strong performance, peaking at approximately 96.3% on GSM8K. It shows a dip on Minerva Math (around 47.1%).

* **SimpleRL-32B (Blue):** Generally lower performance than the other models, with a peak of around 80.3% on GaoKao 2023. It shows the lowest performance on Minerva Math (around 31.2%).

* **Baseline-32B (Red):** Moderate performance, peaking at around 89.4% on MATH 500. It shows lower performance on AIME@32 (around 31.2%).

* **SwS-32B (Teal):** Performance is variable, peaking at around 80.3% on GaoKao 2023. It shows a low score on Minerva Math (around 47.1%).

**Chart (b) - Performance across Domains:**

* **Qwen2.5-32B (Purple):** Highest performance, peaking at 96.3% on Prealgebra. Maintains high scores across most domains, with a slight dip to around 76.6% on Algebra.

* **Qwen2.5-32B-IT (Orange):** Similar to Qwen2.5-32B, peaking at 96.3% on Prealgebra.

* **ORZ-32B (Green):** Strong performance, peaking at 96.3% on Prealgebra.

* **SimpleRL-32B (Blue):** Lower performance, peaking at 84.1% on Intermediate Algebra.

* **Baseline-32B (Red):** Moderate performance, peaking at 84.1% on Intermediate Algebra.

* **SwS-32B (Teal):** Variable performance, peaking at 84.1% on Intermediate Algebra.

### Key Observations

* **Qwen2.5-32B and Qwen2.5-32B-IT consistently outperform other models** across most benchmarks and domains.

* **SimpleRL-32B generally exhibits the lowest performance.**

* **Minerva Math consistently presents a challenge** for most models, resulting in lower scores in Chart (a).

* **Intermediate Algebra is a strong suit** for several models in Chart (b).

* The shapes of the radar charts are similar for Qwen2.5-32B, Qwen2.5-32B-IT, and ORZ-32B, indicating similar performance profiles.

### Interpretation

The data suggests that Qwen2.5-32B and its IT variant are the most capable language models among those tested, demonstrating superior performance across a wide range of benchmarks and mathematical domains. The consistent underperformance of SimpleRL-32B indicates potential limitations in its architecture or training data. The lower scores on Minerva Math suggest that this benchmark presents a unique challenge, potentially requiring specialized knowledge or reasoning abilities. The high scores on Intermediate Algebra for several models suggest a strong foundation in fundamental algebraic concepts.

The radar chart format effectively visualizes the relative strengths and weaknesses of each model across different tasks. The area enclosed by each line represents the overall performance of the model, with larger areas indicating better overall performance. The deviations from the circular shape highlight specific areas where a model excels or struggles. The charts allow for a quick and intuitive comparison of model capabilities, facilitating informed decision-making in selecting the most appropriate model for a given task.

</details>

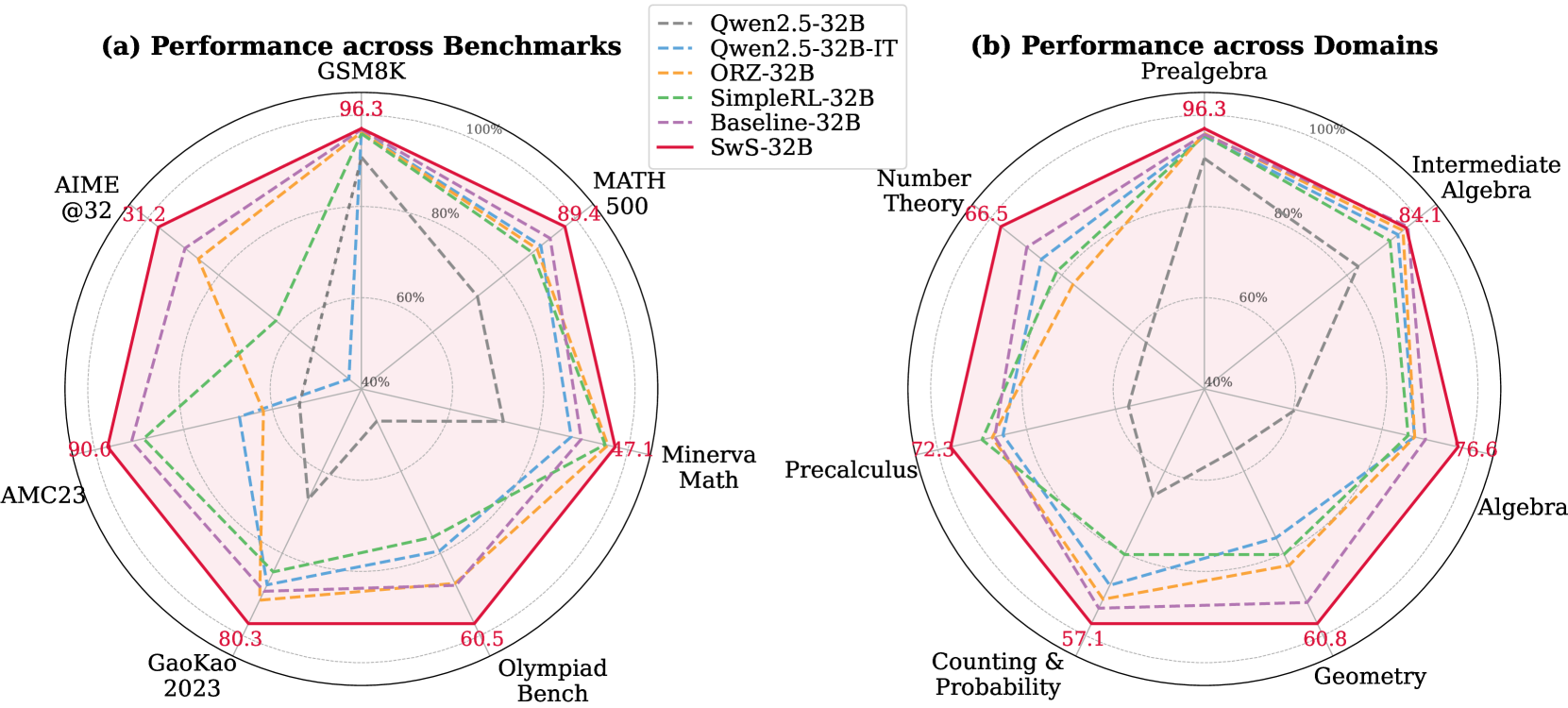

Figure 1: 32B model performance across mainstream reasoning benchmarks and different domains.

1 Introduction

"Give me six hours to chop down a tree and I will spend the first four sharpening the axe."

—Abraham Lincoln

Large-scale Reinforcement Learning with Verifiable Rewards (RLVR) has substantially advanced the reasoning capabilities of large language models (LLMs) [16, 10, 46], where simple rule-based rewards can effectively induce complex reasoning skills. The success of RLVR for eliciting models’ reasoning capabilities heavily depends on a well-curated problem set with proper difficulty levels [63, 28, 55], where each problem is paired with an precise and verifiable reference answer [14, 31, 63, 10]. However, existing reasoning-focused datasets for RLVR suffer from three main issues: (1) High-quality, human-labeled mathematical problems are scarce, and collecting large-scale, well-annotated datasets with precise reference answers is cost-intensive. (2) Most reasoning-focused synthetic datasets are created for SFT distillation, where reference answers are rarely rigorously verified, making them suboptimal for RLVR, which relies heavily on the correctness of the final answer as the training signal. (3) Existing problem augmentation strategies typically involve rephrasing or generating variants of human-written questions [62, 30, 38, 27], or sampling concepts from existing datasets [15, 45, 20, 73], without explicitly considering the model’s reasoning capabilities. Consequently, the synthetic problems may be either too trivial or overly challenging, limiting their utility for model improvement in RL.

More specifically, in RL, it is essential to align the difficulty of training tasks with the model’s current capabilities. When using group-level RL algorithms such as GPRO [40], the advantage of each response is calculated based on its comparison with other responses in the same group. If all responses are either entirely correct or entirely incorrect, the token-level advantages within each rollout collapse to 0, leading to gradient vanishing and degraded training efficiency [28, 63], and potentially harming model performance [55]. Therefore, training on problems that the model has fully mastered or consistently fails to solve does not provide useful learning signals for improvement. However, a key advantage of the failure cases is that, unlike the overly simple questions with little opportunity for improvement, persistently failed problems reveal specific areas of weakness in the model and indicate directions for further enhancement. This raises the following research question: How can we effectively utilize these consistently failed cases to address the model’s reasoning deficiencies? Could they be systematically leveraged for data synthesis that targets the enhancement of the model’s weakest capabilities?

To answer these questions, we propose a S elf-aware W eakness-driven Problem S ynthesis (SwS) framework, which leverages the model’s self-identified weaknesses in RL to generate synthetic problems for training augmentation. Specifically, we record problems that the model consistently struggles to solve or learns inefficiently through iterative sampling during a preliminary RL training phase. These failed problems, which reflect the model’s weakest areas, are grouped by categories, leveraged to extract common concepts, and to synthesize new problems with difficulty levels tailored to the model’s capabilities. To further improve weakness mitigation efficiency during training, the augmentation budget for each category is allocated based on the model’s relative performance across them. Compared with existing problem synthesis strategies for LLM reasoning [73, 45], our framework explicitly targets the model’s capabilities and self-identified weaknesses, enabling more focused and efficient improvement in RL training.

To validate the effectiveness of SwS, we conducted experiments across model sizes ranging from 3B to 32B and comprehensively evaluated performance on eight popular mathematical reasoning benchmarks, showing that its weakness-driven augmentation strategy benefits models across all levels of reasoning capability. Notably, our models trained on the augmented problem set consistently surpass both the base models and those trained on the original dataset across all benchmarks, achieving a substantial average absolute improvement of 10.0% for the 7B model and 7.7% for the 32B model, even surpassing their counterparts trained on carefully curated human-labeled problem sets [14, 6]. We also analyze the model’s performance on previously failed problems and find that, after training on the augmented problem set, it is able to solve up to 20.0% more problems it had consistently failed in its weak domain when trained only on the original dataset. To further demonstrate the robustness and adaptability of the proposed SwS pipeline, we extend it to explore the potential of Weak-to-Strong Generalization, Self-evolving, and Weakness-driven Selection settings, with detailed experimental results and analysis presented in Section 4.

Contributions. (i) We propose a Self-aware Weakness-driven Problem Synthesis (SwS) framework that utilizes the model’s self-identified weaknesses to generate synthetic problems for enhanced RLVR training, paving the way for utilizing high-quality and targeted synthetic data for RL training. (ii) We comprehensively evaluate the SwS framework across diverse model sizes on eight mainstream reasoning benchmarks, demonstrating its effectiveness and generalizability. (iii) We explore the potential of extending our SwS framework to Weak-to-Strong Generalization, Self-evolving, and Weakness-driven Selection settings, highlighting its adaptability through detailed analysis.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Reinforcement Learning Training and Verification Process

### Overview

This diagram illustrates a reinforcement learning (RL) training process with a verification step. It depicts the flow of information from a Policy to a set of Answers, then to a Verifier, and finally to a categorization of success (Failed set) or continuation. The diagram also includes two bar charts representing the accuracy (Acc) of the verifier over time (Epochs).

### Components/Axes

The diagram consists of the following components:

* **Policy θ:** A green box representing the RL policy.

* **Answer 1,1 ... Answer k,1:** A gray box containing multiple answers generated by the policy.

* **Verifier:** A light blue box representing the verification process.

* **Failed set:** A red oval representing the set of failed answers.

* **Bar Charts (2):** Two bar charts showing accuracy (Acc) over time (τ1, τ2, τ3, τr, Epoch).

* **Text:** "RL Training for T1 Epochs" below the Answer box.

* **Question-1:** A text block at the top left describing a geometry problem.

The bar charts have a vertical axis labeled "Acc" (Accuracy) with a scale ranging from approximately 0.0 to 1.0. The horizontal axis represents time steps: τ1, τ2, τ3, τr, and Epoch.

### Detailed Analysis or Content Details

The diagram shows a flow of information:

1. The **Policy θ** generates multiple **Answers** (Answer 1,1 to Answer k,1). The arrows indicate a many-to-many relationship.

2. These **Answers** are fed into the **Verifier**.

3. The **Verifier** evaluates the answers and categorizes them.

4. If the answers are deemed incorrect, they are added to the **Failed set**.

5. The **Verifier's** accuracy is visualized using two bar charts.

**Bar Chart 1 (Top):**

* τ1: Accuracy ≈ 0.3

* τ2: Accuracy ≈ 0.3

* τ3: Accuracy ≈ 0.3

* τr: Accuracy ≈ 0.2

* Epoch: Accuracy ≈ 0.5

The trend in this chart is relatively flat, with accuracy fluctuating around 0.3, and a slight increase to 0.5 at the Epoch.

**Bar Chart 2 (Bottom):**

* τ1: Accuracy ≈ 0.3

* τ2: Accuracy ≈ 0.9

* τ3: Accuracy ≈ 1.0

* τr: Accuracy ≈ 0.8

* Epoch: Accuracy ≈ 1.0

This chart shows a clear upward trend in accuracy, starting at 0.3, rising to 0.9, 1.0, 0.8, and peaking at 1.0 at the Epoch.

The text "RL Training for T1 Epochs" indicates that the process is repeated for T1 epochs.

The question at the top left is: "Tiffany is constructing a fence around a rectangular tennis court. She must use exactly 300 feet of fencing. The fence must enclose all four sides of the court. Regulation states that the length of the fence enclosure must be at least 80 feet and the width must be at least 40 feet. Tiffany wants the area enclosed by the fence to be as large as possible in order to accommodate benches and storage space. What is the optimal area, in square feet?"

### Key Observations

* The two bar charts demonstrate different accuracy trends. The first chart shows a relatively stable, lower accuracy, while the second chart shows a significant improvement in accuracy over time.

* The "Failed set" indicates that the RL policy is not always generating correct answers.

* The diagram highlights the importance of a verification step in RL training.

### Interpretation

The diagram illustrates a typical reinforcement learning workflow where a policy generates actions (answers), and a verifier assesses their quality. The two bar charts likely represent the accuracy of different verification methods or different stages of the verification process. The first chart might represent an initial, less refined verification method, while the second chart represents a more accurate and improved method. The increasing accuracy in the second chart suggests that the verification process is learning and improving over time. The failed set indicates that the policy is still making errors, and further training is needed. The inclusion of a geometry problem suggests that the RL agent is being trained to solve mathematical problems. The diagram emphasizes the iterative nature of RL training, where the policy is continuously refined based on feedback from the verifier.

</details>

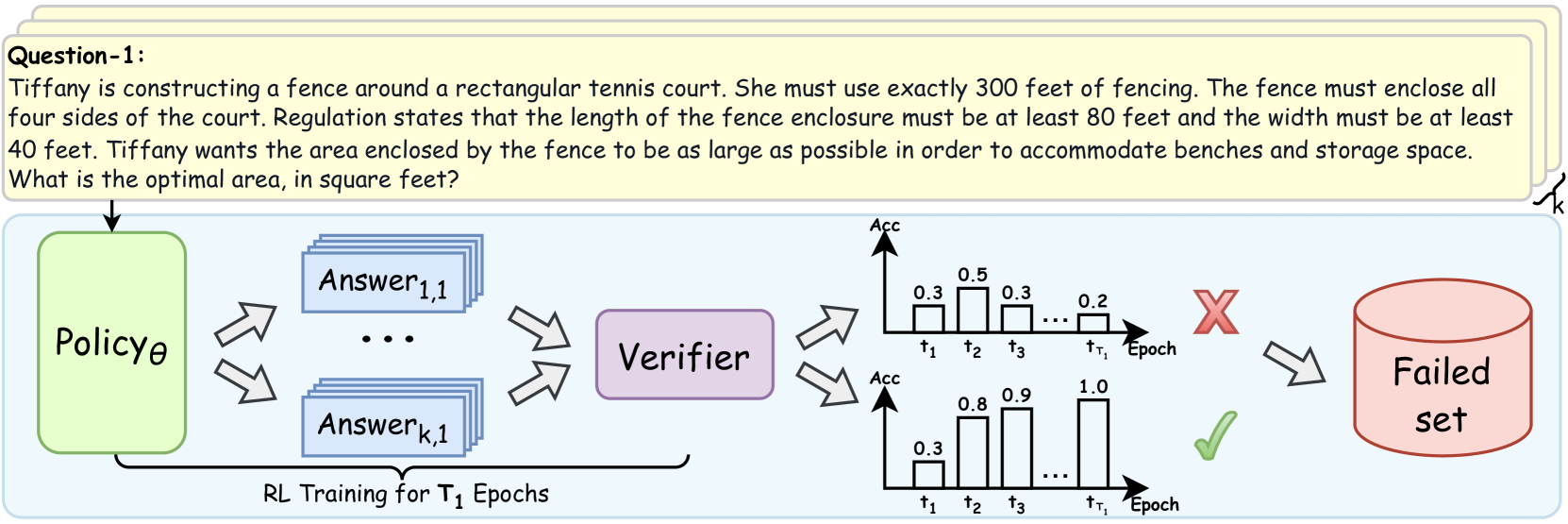

Figure 2: Illustration of the self-aware weakness identification during a preliminary RL training.

2 Method

2.1 Preliminary

Group Relative Policy Optimization (GRPO). GRPO [40] is an efficient optimization algorithm tailored for RL in LLMs, where the advantages for each token are computed in a group-relative manner without requiring an additional critic model to estimate token values. Specifically, given an input prompt $x$ , the policy model $\pi_{\theta_{\text{old}}}$ generates a group of $G$ responses $\mathbf{Y}=\{y_{i}\}_{i=1}^{G}$ , with acquired rewards $\mathbf{R}=\{r_{i}\}_{i=1}^{G}$ . The advantage $A_{i,t}$ for each token in response $y_{i}$ is computed as the normalized rewards:

$$

A_{i,t}=\frac{r_{i}-\text{mean}(\{r_{i}\}_{i=1}^{G})}{\text{std}(\{r_{i}\}_{i=%

1}^{G})}. \tag{1}

$$

To improve the stability of policy optimization, GRPO clips the probability ratio $k_{i,t}(\theta)=\frac{\pi_{\theta}(y_{i,t}\mid x,y_{i,<t})}{\pi_{\theta_{\text%

{old}}}(y_{i,t}\mid x,y_{i,<t})}$ within a trust region [39], and constrains the policy distribution from deviating too much from the reference model using a KL term. The optimization objective is defined as follows:

$$

\displaystyle\mathcal{J}_{\text{GRPO}}(\theta) \displaystyle=\mathbb{E}_{x\sim\mathcal{D},\mathbf{Y}\sim\pi_{\theta_{\text{%

old}}}(\cdot\mid x)} \displaystyle\Bigg{[}\frac{1}{G}\sum_{i=1}^{G}\frac{1}{|y_{i}|}\sum_{t=1}^{|y_%

{i}|}\Bigg{(}\min\Big{(}k_{i,t}(\theta)A_{i,t},\ \text{clip}\Big{(}k_{i,t}(%

\theta),1-\varepsilon,1+\varepsilon\Big{)}A_{i,t}\Big{)}-\beta D_{\text{KL}}(%

\pi_{\theta}||\pi_{\text{ref}})\Bigg{)}\Bigg{]}. \tag{2}

$$

Inspired by DAPO [63], in all experiments of this work, we omit the KL term during optimization, while incorporating the clip-higher, token-level loss and dynamic sampling strategies to enhance the training efficiency of RLVR. Our RLVR training objective is defined as follows:

$$

\displaystyle\mathcal{J}(\theta)=\mathbb{E}_{x\sim\mathcal{D},\,\mathbf{Y}\sim%

\pi_{\theta_{\text{old}}}(\cdot\mid x)}\ \displaystyle\Bigg{[}\frac{1}{\sum_{i=1}^{G}|y_{i}|}\sum_{i=1}^{G}\sum_{t=1}^{%

|y_{i}|}\Big{(}\min\big{(}k_{i,t}(\theta)A_{i,t},\ \text{clip}(k_{i,t}(\theta)%

,1-\varepsilon,1+\varepsilon^{h})A_{i,t}\big{)}\Big{)}\Bigg{]} \displaystyle\text{s.t.}\leavevmode\nobreak\ \leavevmode\nobreak\ \text{acc}_{%

\text{lower}}<\left|\left\{y_{i}\in\mathbf{Y}\;\middle|\;\texttt{is\_accurate}%

(x,y_{i})\right\}\right|<\text{acc}_{\text{upper}}. \tag{3}

$$

where $\varepsilon^{h}$ denotes the upper clipping threshold for importance sampling ratio $k_{i,t}(\theta)$ , and $\text{acc}_{\text{lower}}$ and $\text{acc}_{\text{upper}}$ are thresholds used to filter target prompts for subsequent policy optimization.

2.2 Overview

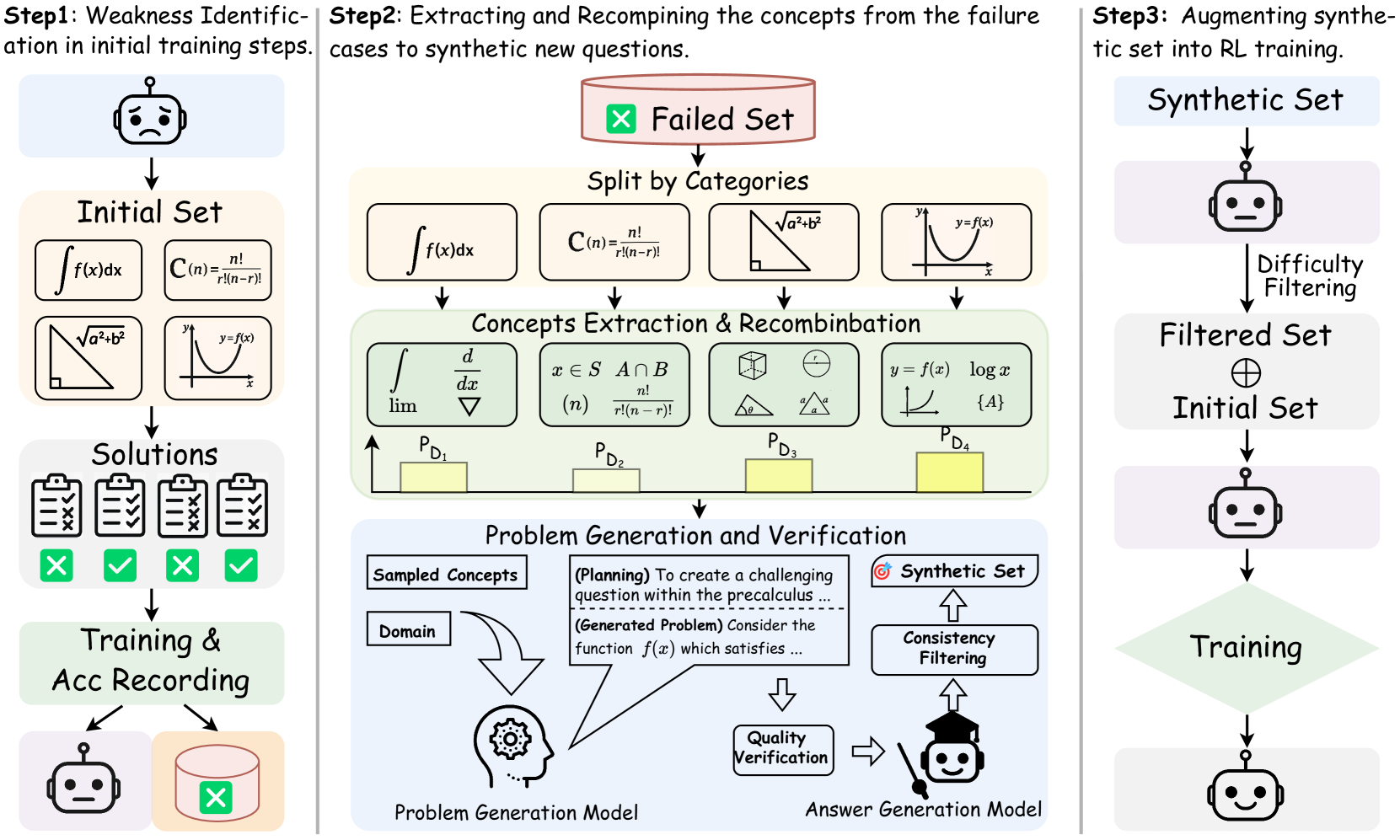

Figure 3 presents an overview of our SwS framework, which generates targeted training samples to enhance the model’s reasoning capabilities in RLVR. The framework initiates with a Self-aware Weakness Identification stage, where the model undergoes preliminary RL training on an initial problem set covering diverse categories. During this stage, the model’s weaknesses are identified as problems it consistently fails to solve or learns ineffectively. Based on failure cases that reflect the model’s weakest capabilities, in the subsequent Targeted Problem Synthesis stage, we group them by category, extract their underlying concepts, and recombine these concepts to synthesize new problems that target the model’s learning and mitigation of its weaknesses. In the final Augmented Training with Synthetic Problems stage, the model receives continuous training with the augmented high-quality synthetic problems, thereby enhancing its general reasoning abilities through more targeted training.

2.3 Self-aware Weakness Identification

Utilizing the policy model itself to identify its weakest capabilities, we begin by training it in a preliminary RL phase using an initial problem set $\mathbf{X}_{S}$ , which consists of mathematical problems from $n$ diverse categories ${\mathbf{\{D\}}}_{i=0}^{n}$ , each paired with a ground-truth answer $a$ . As illustrated in Figure 2, we record the average accuracy $a_{i,t}$ of the model’s responses to each prompt $x_{i}$ at each epoch $t∈\{0,1,...,T_{1}\}$ , where $T_{1}$ is the number of training epochs in this phase. We track the Failure Rate $F$ for each problem in the training set to identify those that the model consistently struggles to learn, which are considered its weaknesses. Specifically, such problems are defined as those the model consistently struggles to solve during RL training, which meet two criteria: (1) The model never reaches a response accuracy of 50% at any training epoch, and (2) The accuracy trend decreases over time, indicated by a negative slope:

$$

F(x_{i})=\mathbb{I}\left[\max_{t\in[1,T]}a_{i,t}<0.5\;\land\;\text{slope}\left%

(\{a_{i,t}\}_{t=1}^{T}\right)<0\right] \tag{4}

$$

This metric captures both problems the model consistently fails to solve and those showing no improvement during sampling-based RL training, making them appropriate targets for training augmentation. After the weakness identification phase via the preliminary training on the initial training set $\mathbf{X}_{S}$ , we employ the collected problems $\mathbf{X}_{F}=\left\{x_{i}∈\mathbf{X}_{S}\;\middle|\;F_{r}(x_{i})=1\right\}$ as seed problems for subsequent weakness-driven problem synthesis.

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Diagram: Reinforcement Learning Training Pipeline with Failure Analysis

### Overview

This diagram illustrates a reinforcement learning (RL) training pipeline that incorporates failure analysis and recombination of concepts to improve the synthetic set used for training. The process is divided into three main steps: Weakness Identification, Extracting and Recombining concepts from failures, and Augmenting synthetic set into RL training. It shows how failed cases are analyzed, broken down into concepts, recombined, and used to generate new training data.

### Components/Axes

The diagram is structured into three main columns representing the three steps. Key components include:

* **Initial Set:** Represents the starting point of the training data.

* **Failed Set:** Represents the subset of training cases that resulted in failure.

* **Synthetic Set:** Represents the artificially generated training data.

* **Filtered Set:** Represents the synthetic set after difficulty filtering.

* **Solutions:** Represents the correct answers or solutions to the training problems.

* **Concepts Extraction & Recombination:** A central block representing the process of analyzing failed cases and recombining concepts.

* **Problem Generation & Verification:** A block representing the generation of new training problems and their verification.

* **Training & Acc Recording:** A block representing the training process and the recording of accuracy.

* **Difficulty Filtering:** A process to filter the synthetic set based on difficulty.

* **Consistency Filtering:** A process to filter the synthetic set based on consistency.

* **Quality Verification:** A process to verify the quality of generated problems.

* **Domain:** Represents the knowledge domain for problem generation.

* **Problem Generation Model:** A model used to generate new problems.

* **Answer Generation Model:** A model used to generate answers to the problems.

### Detailed Analysis or Content Details

**Step 1: Weakness Identification in initial training steps.**

* An image of a sad robot face represents the initial state.

* The "Initial Set" contains mathematical expressions:

* ∫f(x)dx

* C(n) = n! / (n-r)!

* √a² + b²

* y = f(x)

* "Solutions" are represented by a stack of papers with checkmarks and crosses, indicating correct and incorrect solutions.

**Step 2: Extracting and Recombining the concepts from the failure cases to synthetic new questions.**

* "Failed Set" is indicated by a red 'X' symbol.

* "Split by Categories" leads to four distinct concept groups, each represented by a mathematical expression:

* ∫f(x)dx, C(n) = n! / (n-r)!

* d/dx, x ∈ S ∧ B

* lim, ∇

* y = f(x), log a

* "Concepts Extraction & Recombination" is represented by a block containing mathematical symbols (∫, d/dx, lim, ∇, x ∈ S ∧ B, n!, y = f(x), log a, {A}).

* The recombination process is represented by four probability distributions: P<sub>D1</sub>, P<sub>D2</sub>, P<sub>D3</sub>, P<sub>D4</sub>.

* "Problem Generation & Verification" includes:

* "Sampled Concepts" linked to "Domain" (represented by a gear icon).

* "(Planning) To create a challenging question within the precalculus..."

* "(Generated Problem) Consider the function f(x) which satisfies..."

* "Quality Verification" and "Answer Generation Model" (robot face).

**Step 3: Augmenting synthetic set into RL training.**

* A robot face represents the synthetic set generation.

* "Difficulty Filtering" filters the "Synthetic Set".

* "Filtered Set" is combined with the "Initial Set".

* A robot face represents the training process.

* "Training & Acc Recording" is represented by a robot face with a checkmark.

### Key Observations

* The diagram emphasizes a cyclical process of training, failure analysis, and improvement.

* Mathematical expressions are central to the training data and failure analysis.

* The recombination of concepts is a key step in generating new training data.

* Filtering mechanisms (difficulty and consistency) are used to refine the synthetic set.

* The use of robot faces throughout the diagram suggests an automated training process.

### Interpretation

The diagram depicts a sophisticated reinforcement learning training methodology that actively addresses weaknesses in the initial training data. By analyzing failed cases, extracting underlying concepts, and recombining them to generate new training examples, the system aims to improve its performance and robustness. The inclusion of filtering steps suggests a focus on creating high-quality synthetic data that is both challenging and consistent. The cyclical nature of the process indicates a continuous learning loop, where failures are used as opportunities for improvement. The diagram highlights the importance of not only generating synthetic data but also of intelligently analyzing and refining it based on the system's performance. The use of mathematical expressions suggests that the system is being trained on a task involving mathematical reasoning or problem-solving. The overall design suggests a system that is designed to learn from its mistakes and adapt to new challenges.

</details>

Figure 3: An overview of our proposed weakness-driven problem synthesis framework that targets at mitigating the model’s reasoning limitations within the RLVR paradigm.

2.4 Targeted Problem Synthesis

Concept Extraction and Recombination. We synthesize new problems by extracting the underlying concepts $\mathbf{C}_{F}$ from the collected seed questions $\mathbf{X}_{F}$ and strategically recombining them to generate questions that target similar capabilities. Specifically, the extracted concepts are first categorized into their respective categories $\mathbf{D}_{i}$ (e.g., mathematical topics such as Algebra or Geometry) based on the corresponding seed problem $x_{i}$ , and are subsequently sampled and recombined to generate problems within the same category. Inspired by [15, 73], we enhance the coherence and semantic fluency of synthetic problems by computing co-occurrence probabilities and embedding similarities among concepts within each category, enabling more appropriate sampling and recombination of relevant concepts. This targeted sampling approach ensures that the synthesized problems remain semantically coherent and avoids combining concepts from unrelated sub-topics or irrelevant knowledge points, which could otherwise result in invalid or confusing questions. Further details on the co-occurrence calculation and sampling algorithm are provided in Appendix E.

Intuitively, categories exhibiting more pronounced weaknesses demand additional learning support. To optimize the efficiency of targeted problem synthesis and weakness mitigation in subsequent RL training, we allocate the augmentation budget, i.e., the concept combinations used as inputs for problem synthesis, across categories based on the model’s category-specific failure rates $F_{\mathbf{D}}$ from the preliminary training phase. Specifically, we normalize these failure rates $F_{\mathbf{D}}$ across categories to determine the allocation weights for problem synthesis. Given a total augmentation budget $|\mathbf{X}_{T}|$ , the number of concept combinations allocated to domain $\mathbf{D}_{i}$ is computed as:

$$

|\mathbf{X}_{T,\mathbf{D}_{i}}|=|\mathbf{X}_{T}|\cdot P_{\mathbf{D}_{i}}=|%

\mathbf{X}_{T}|\cdot\frac{F_{\mathbf{D}_{i}}}{\sum_{j}^{n}F_{\mathbf{D}_{j}}}, \tag{5}

$$

where $F_{\mathbf{D}_{i}}$ is the failure rate of problems in category $\mathbf{D}_{i}$ within the initial training set. The sampled and recombined concepts then serve as inputs for subsequent problem generation.

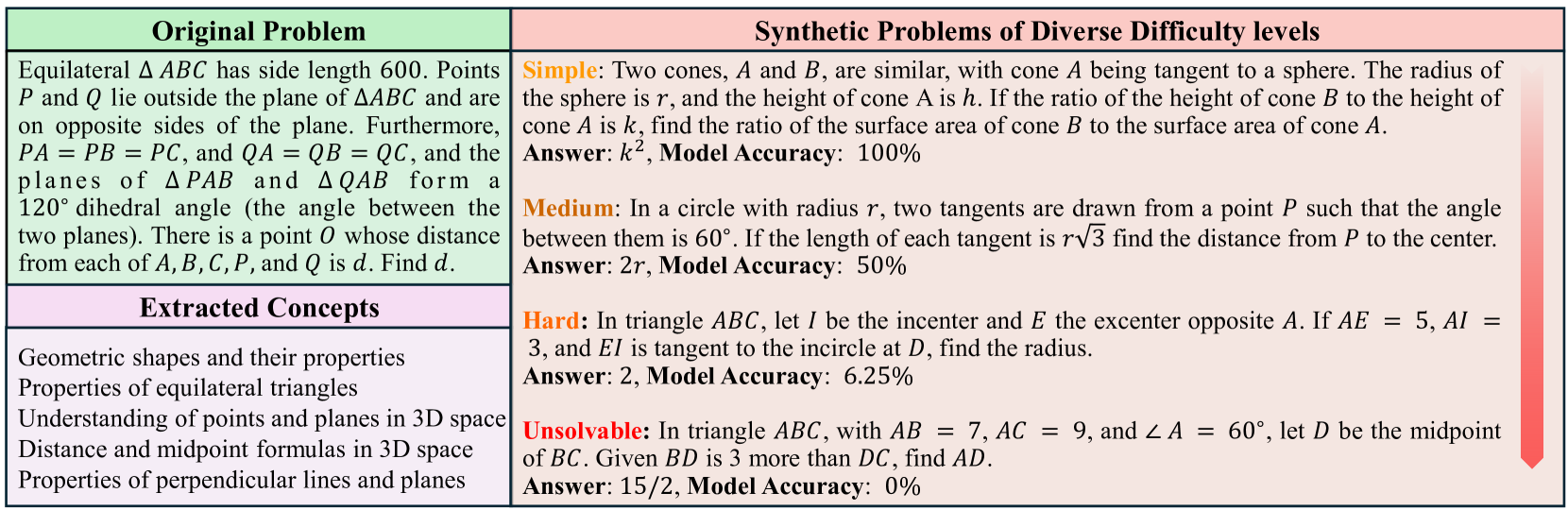

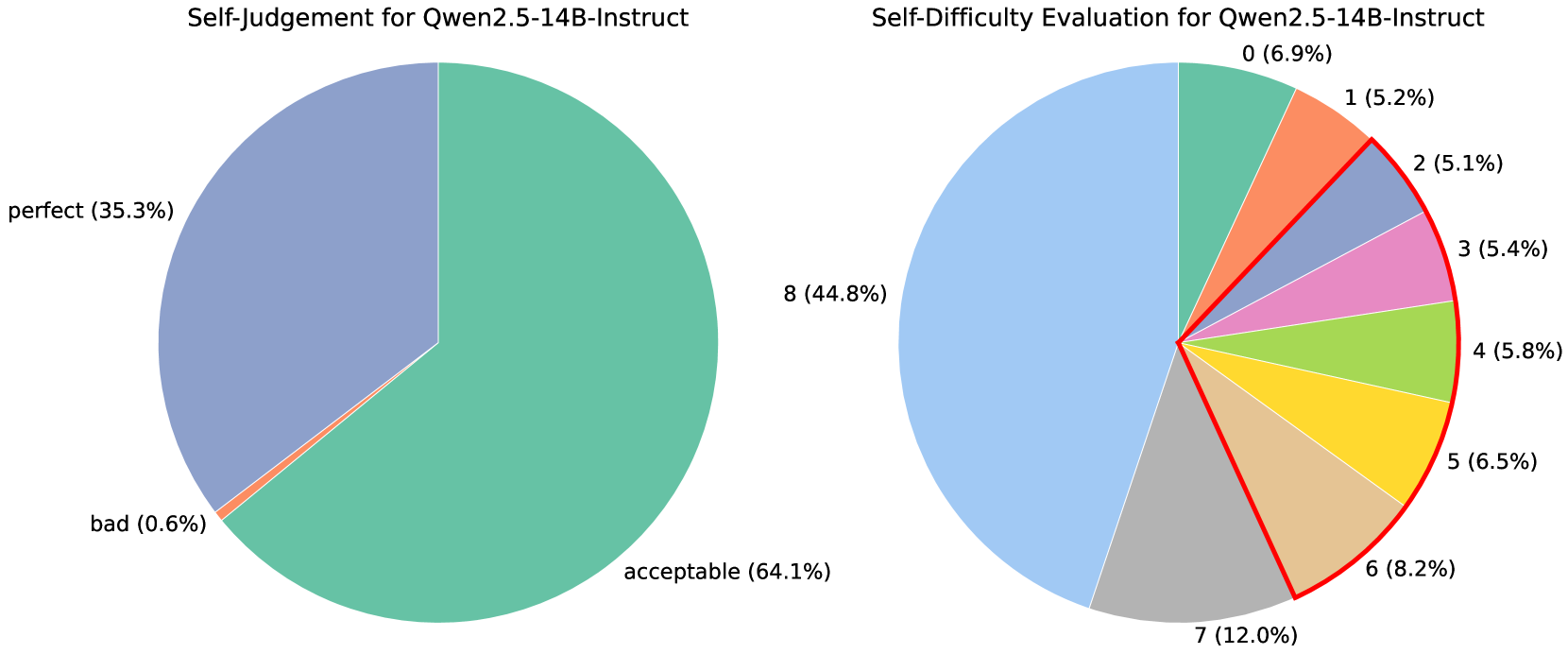

Problem Generation and Quality Verification. After extracting and recombining the concepts associated with the model’s weakest capabilities, we employ a strong instruction model, which does not perform deep reasoning, to generate new problems based on the category label and the recombined concepts. We instruct the model to first generate rationales that explore how the concept combinations can be integrated to produce a well-formed problem. To ensure the synthetic problems align with the RLVR setting, the model is also instructed to avoid generating multiple-choice, multi-part, or proof-based questions [1]. Detailed prompt used for the concept-based problem generation please refer to the Appendix J. For quality verification of the synthetic problems, we prompt general instruction LLMs multiple times to evaluate each problem and its rationale across multiple dimensions, including concept coverage, factual accuracy, and solvability, assigning an overall rating of bad, acceptable, or perfect. Only problems receiving ‘perfect’ ratings above a predefined threshold and no ‘bad’ ratings are retained for subsequent utilization.

Reference Answer Generation. Since alignment between the model’s final answer and the reference answer is the primary training signal in RLVR, a rigorous verification of the reference answers for synthetic problems is essential to ensure training stability and effectiveness. To this end, we employ a strong reasoning model (e.g., QwQ-32B [47]) to label reference answers for synthetic problems through a self-consistency paradigm. Specifically, we prompt it to generate multiple responses for each problem and use Math-Verify to assess answer equivalence, which ensures that consistent answers of different forms (e.g., fractions and decimals) are correctly recognized as equal. Only problems with at least 50% consistent answers are retained, as highly inconsistent answers are unreliable as ground truth and may indicate that the problems are excessively complex or unsolvable.

Difficulty Filtering. The most prevalently used RLVR algorithms, such as GRPO, compute the advantage of each token in a response by comparing its reward to those of other responses for the same prompt. When all responses yield identical accuracy—either all correct or all incorrect—the advantages uniformly degrade to zero, leading to gradient vanishing for policy updates and resulting in training inefficiency [40, 63]. Recent study [53] further shows that RLVR training can be more efficient with problems of appropriate difficulty. Considering this, we select synthetic problems of appropriate difficulty based on the initially trained model’s accuracy on them. Specifically, we sample multiple responses per synthetic problem using the initially trained model and retain only those whose accuracy falls within a target range $[\text{acc}_{\text{low}},\text{acc}_{\text{high}}]$ (e.g., $[25\%,75\%]$ ). This strategy ensures that the model engages with learnable problems, enhancing both the stability and efficiency of RLVR training.

2.5 Augmented Training with Synthetic Problems

After the rigorous problem generation, answer generation, and verification, the allocation budget of synthetic problems in each category is further adjusted using the weights in Eq. 5 to ensure their comprehensive and efficient utilization, resulting in $\mathbf{X}^{\prime}_{T}$ . We incorporate the retained synthetic problems $\mathbf{X}^{\prime}_{T}$ into the initial training set $\mathbf{X}_{S}$ , forming the augmented training set $\mathbf{X}_{A}=[\mathbf{X}_{S};\mathbf{X}^{\prime}_{T}]$ . We then continue training the initially trained model on $\mathbf{X}_{A}$ in a second stage of augmented RLVR, targeting to mitigate the model’s weaknesses through exploration of the synthetic problems.

3 Experiments

| Model | GSM8K | MATH 500 | Minerva Math | Olympiad Bench | GaoKao 2023 | AMC23 | AIME24 (Avg@ 1 / 32) | AIME25 (Avg@ 1 / 32) | Avg. |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Qwen 2.5 3B Base | | | | | | | | | |

| Qwen2.5-3B | 69.9 | 46.0 | 18.8 | 19.9 | 34.8 | 27.5 | 0.0 / 2.2 | 0.0 / 1.5 | 27.1 |

| Qwen2.5-3B-IT | 84.2 | 62.2 | 26.5 | 27.9 | 53.5 | 32.5 | 6.7 / 5.0 | 0.0 / 2.3 | 36.7 |

| BaseRL-3B | 86.3 | 66.0 | 25.4 | 31.3 | 57.9 | 40.0 | 10.0 / 9.9 | 6.7 / 3.5 | 40.4 |

| SwS-3B | 87.0 | 69.6 | 27.9 | 34.8 | 59.7 | 47.5 | 10.0 / 8.4 | 6.7 / 7.1 | 42.9 |

| $\Delta$ | +0.7 | +3.6 | +2.5 | +3.5 | +1.8 | +7.5 | +0.0 / -1.5 | +0.0 / +3.6 | +2.5 |

| Qwen 2.5 7B Base | | | | | | | | | |

| Qwen2.5-7B | 88.1 | 63.0 | 27.6 | 30.5 | 55.8 | 35.0 | 6.7 / 5.4 | 0.0 / 1.2 | 38.3 |

| Qwen2.5-7B-IT | 91.7 | 75.6 | 38.2 | 40.6 | 63.9 | 50.0 | 16.7 / 10.5 | 13.3 / 6.7 | 48.8 |

| Open-Reasoner-7B | 93.6 | 80.4 | 39.0 | 45.6 | 72.0 | 72.5 | 10.0 / 16.8 | 13.3 / 17.9 | 53.3 |

| SimpleRL-Base-7B | 90.8 | 77.2 | 35.7 | 41.0 | 66.2 | 62.5 | 13.3 / 14.8 | 6.7 / 6.7 | 49.2 |

| BaseRL-7B | 92.0 | 78.4 | 36.4 | 41.6 | 63.4 | 45.0 | 10.0 / 14.5 | 6.7 / 6.5 | 46.7 |

| SwS-7B | 93.9 | 82.6 | 41.9 | 49.6 | 71.7 | 67.5 | 26.7 / 18.3 | 20.0 / 18.5 | 56.7 |

| $\Delta$ | +1.9 | +4.2 | +5.5 | +8.0 | +8.3 | +22.5 | +16.7 / +3.8 | +13.3 / +12.0 | +10.0 |

| Qwen 2.5 7B Math | | | | | | | | | |

| Qwen2.5-Math-7B | 43.2 | 72.0 | 35.7 | 17.6 | 31.4 | 47.5 | 10.0 / 9.4 | 0.0 / 2.9 | 32.2 |

| Qwen2.5-Math-7B-IT | 93.3 | 80.6 | 36.8 | 36.6 | 64.9 | 45.0 | 6.7 / 7.2 | 13.3 / 6.2 | 47.2 |

| PRIME-RL-7B | 93.2 | 82.0 | 41.2 | 46.1 | 67.0 | 60.0 | 23.3 / 16.1 | 13.3 / 16.2 | 53.3 |

| SimpleRL-Math-7B | 89.8 | 78.0 | 27.9 | 43.4 | 64.2 | 62.5 | 23.3 / 24.5 | 20.0 / 15.6 | 51.1 |

| Oat-Zero-7B | 90.1 | 79.4 | 38.2 | 42.4 | 67.8 | 70.0 | 43.3 / 29.3 | 23.3 / 11.8 | 56.8 |

| BaseRL-Math-7B | 90.2 | 78.8 | 37.9 | 43.6 | 64.4 | 57.5 | 26.7 / 23.0 | 20.0 / 14.0 | 51.9 |

| SwS-Math-7B | 91.9 | 83.8 | 41.5 | 47.7 | 71.4 | 70.0 | 33.3 / 25.9 | 26.7 / 18.2 | 58.3 |

| $\Delta$ | +1.7 | +5.0 | +3.6 | +4.1 | +7.0 | +12.5 | +6.7 / +2.9 | +6.7 / +4.2 | +6.4 |

| Qwen 2.5 32B base | | | | | | | | | |

| Qwen2.5-32B | 90.1 | 66.8 | 34.9 | 29.8 | 55.3 | 50.0 | 10.0 / 4.2 | 6.7 / 2.5 | 42.9 |

| Qwen2.5-32B-IT | 95.6 | 83.2 | 42.3 | 49.5 | 72.5 | 62.5 | 23.3 / 15.0 | 20.0 / 13.1 | 56.1 |

| Open-Reasoner-32B | 95.5 | 82.2 | 46.3 | 54.4 | 75.6 | 57.5 | 23.3 / 23.5 | 33.3 / 31.7 | 58.5 |

| SimpleRL-Base-32B | 95.2 | 81.0 | 46.0 | 47.4 | 69.9 | 82.5 | 33.3 / 26.2 | 20.0 / 15.0 | 59.4 |

| BaseRL-32B | 96.1 | 85.6 | 43.4 | 54.7 | 73.8 | 85.0 | 40.0 / 30.7 | 6.7 / 24.6 | 60.7 |

| SwS-32B | 96.3 | 89.4 | 47.1 | 60.5 | 80.3 | 90.0 | 43.3 / 33.0 | 40.0 / 31.8 | 68.4 |

| $\Delta$ | +0.2 | +3.8 | +3.7 | +5.8 | +6.5 | +5.0 | +3.3 / +2.3 | +33.3 / +7.2 | +7.7 |

Table 1: We report the detailed performance of our SwS implementation across various base models and multiple benchmarks. AIME is evaluated using two metrics: Avg@1 (single-run performance) and Avg@32 (average over 32 runs).

3.1 Experimental Setup

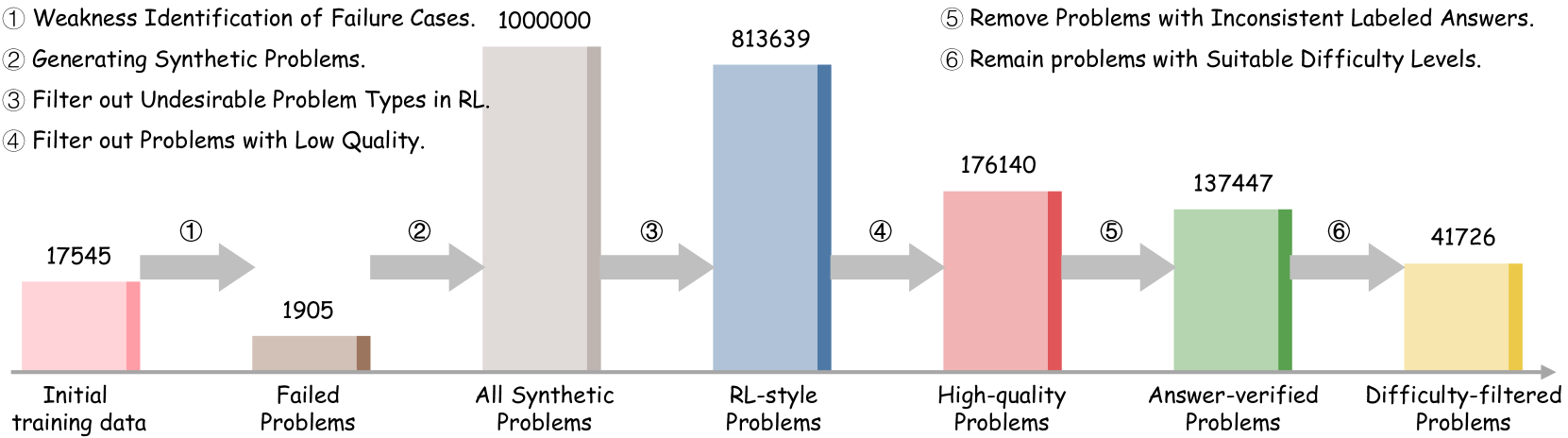

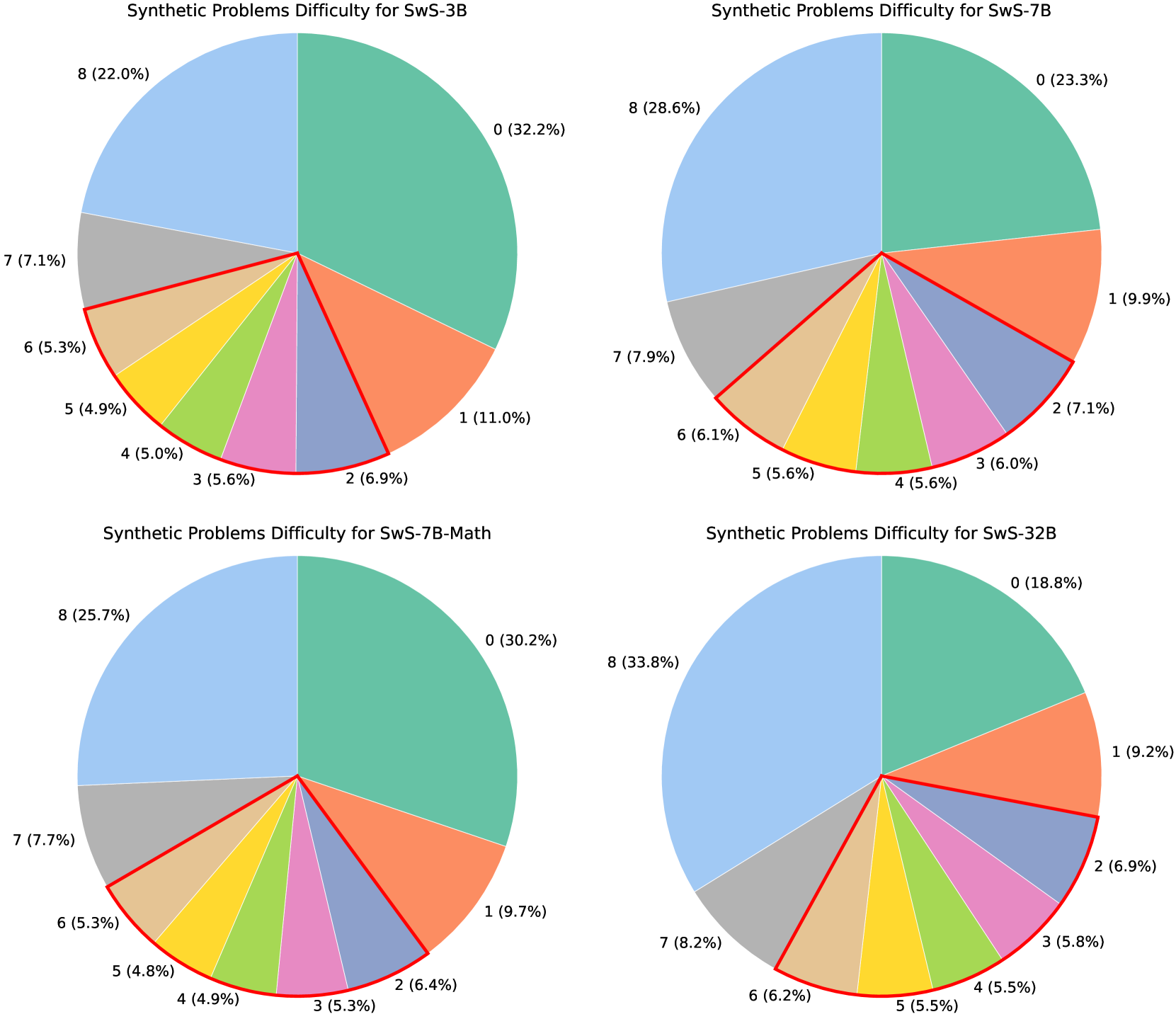

Models and Datasets. We employ the Qwen2.5-base series [57, 58] with model sizes from 3B to 32B in our experiments. For concept extraction and problem generation, we employ the LLaMA-3.3-70B-Instruct model [8], and for concept embedding, we use the LLaMA-3.1-8B-base model. To verify the quality of the synthetic questions, we use both the LLaMA-3.3-70B-Instruct and additionally Qwen-2.5-72B-Instruct [57] to evaluate them and filter out the low-quality samples. For answer generation, we use Skywork-OR1-Math-7B [12] for training models with sizes up to 7B, and QwQ-32B [47] for the 32B model experiments. We employ the SwS pipeline to generate 40k synthetic problems for each base model. All the prompts for each procedure in SwS can be found in Appendix J. We adopt GRPO [40] as the RL algorithm, and full implementation details are in Appendix B.

For the initial training set used in the preliminary RL training for weaknesses identification, we employ the MATH-12k [13] for models with sizes up to 7B. As the 14B and 32B models show early saturation on MATH-12k, we instead use a combined dataset of 17.5k samples from the DAPO [63] English set and the LightR1 [53] Stage-2 set.

Evaluation. We evaluated the models on a wide range of mathematical reasoning benchmarks, including GSM8K [4], MATH-500 [26], Minerva Math [19], Olympiad-Bench [11], Gaokao-2023 [71], AMC [33], and AIME [34]. We report Pass@1 (Avg@1) accuracy across all benchmarks and additionally include the Avg@32 metric for the competition-level AIME benchmark to enhance evaluation robustness. For detailed descriptions of the evaluation benchmarks, see Appendix I.

Baseline Setting. Our baselines include the base model, its post-trained Instruct version (e.g., Qwen2.5-7B-Instruct), and the initial trained model further trained on the initial dataset for the same number of steps as our augmented RL training as the baselines. To further highlight the effectiveness of the SwS framework, we compare the model trained on the augmented problem set against recent advanced RL-based models, including SimpleRL [67], Open Reasoner [14], PRIME [6], and Oat-Zero [28].

3.2 Main Results

The overall experimental results are presented in Table 1. Our SwS framework enables consistent performance improvements across benchmarks of varying difficulty and model scales, with the most significant gains observed in models greater than 7B parameters. Specifically, SwS-enhanced versions of the 7B and 32B models show absolute improvements of +10.0% and +7.7%, respectively, underscoring the effectiveness and scalability of the framework. When initialized with MATH-12k, SwS yields strong gains on competition-level benchmarks, achieving +16.7% and +13.3% on AIME24 and AIME25 with Qwen2.5-7B. These results highlight the quality and difficulty of the synthesized samples compared to well-crafted human-written ones, demonstrating the effectiveness of generating synthetic data based on model capabilities to enhance training.

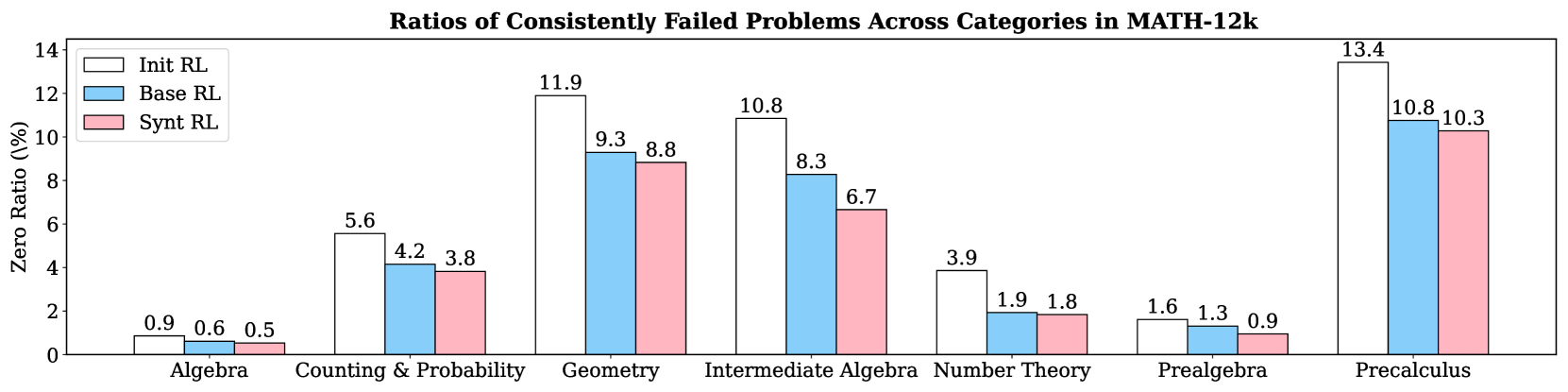

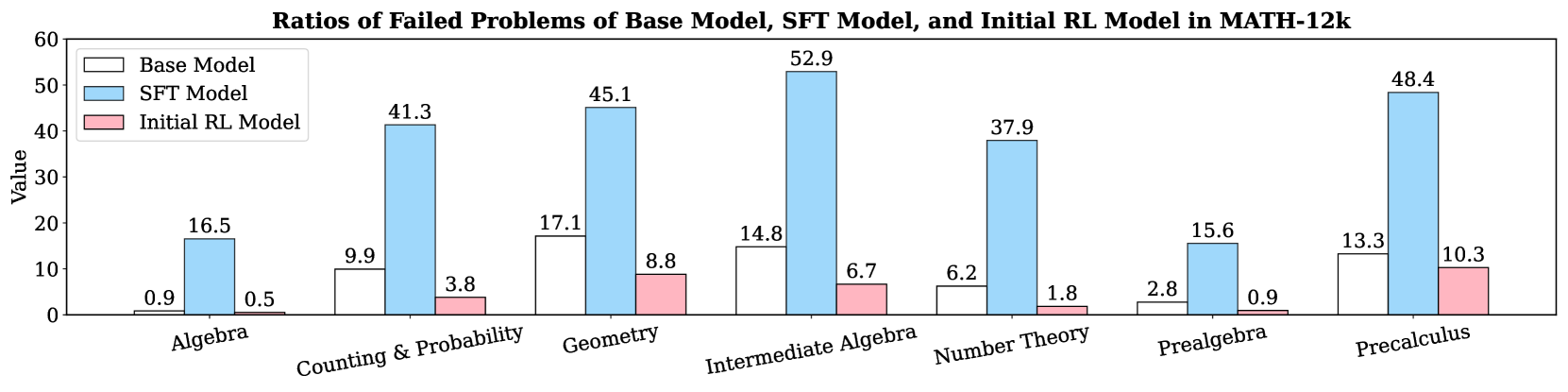

3.3 Weakness Mitigation from Augmented Training

The motivation behind SwS is to mitigate model weaknesses by explicitly targeting failure cases during training. To demonstrate its effectiveness, we use Qwen2.5-7B to analyze the ratios of consistently failed problems in the initial training set (MATH-12k) across three models: the initially trained model, the model continued trained on the initial training set, and the model trained on the augmented set with synthetic problems from the SwS pipeline. As shown in Figure 4, continued training on the augmented set enables the model to solve a greater proportion of previously failed problems across most domains compared to training on the initial set alone, with the greatest gains observed in Intermediate Algebra (20%), Geometry (5%), and Precalculus (5%) as its weakest areas. Notably, these improvements are achieved even though each original problem is sampled four times less frequently in the augmented set than in training on the original dataset alone, highlighting the efficiency of SwS-generated synthetic problems in RL training.

4 Extensions and Analysis

| Model | GSM8K | AIME24 (Pass@32) | Prealgebra | Intermediate Algebra | Algebra | Precalculus | Number Theory | Counting & Probability | Geometry |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Strong Student | 92.0 | 13.8 | 87.7 | 58.7 | 93.8 | 63.2 | 86.4 | 71.2 | 66.8 |

| Weak Teacher | 93.3 | 7.2 | 88.2 | 64.3 | 95.5 | 71.2 | 93.0 | 81.4 | 63.0 |

| Trained Student | 93.6 | 17.5 | 90.5 | 64.4 | 97.7 | 74.6 | 95.1 | 80.4 | 67.5 |

Table 2: Performance on two representative benchmarks and category-specific results on MATH-500 of the weak teacher model and the strong student model.

| Model | GSM8K | MATH 500 | Minerva Math | Olympiad Bench | GaoKao 2023 | AMC23 | AIME24 (Avg@ 1 / 32) | AIME25 (Avg@ 1 / 32) | Avg. |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Qwen2.5-14B-IT | 94.7 | 79.6 | 41.9 | 45.6 | 68.6 | 57.5 | 16.7 / 11.6 | 6.7 / 10.9 | 51.4 |

| + BaseRL | 94.5 | 85.4 | 44.1 | 52.1 | 71.7 | 65.0 | 20.0 / 21.6 | 20.0 / 22.3 | 56.6 |

| + SwS-SE | 95.6 | 85.0 | 46.0 | 53.5 | 74.8 | 67.5 | 20.0 / 19.8 | 20.0 / 17.8 | 57.8 |

| $\Delta$ | +1.1 | -0.4 | +1.9 | +1.4 | +3.1 | +2.5 | +0.0 / -1.8 | +0.0 / -4.5 | +1.2 |

Table 3: Experimental results of extending the SwS framework to the Self-evolving paradigm on the Qwen2.5-14B-Instruct model.

4.1 Weak-to-Strong Generalization for SwS

Employing a powerful frontier model like QwQ [47] helps ensure answer quality. However, when training the top-performing reasoning model, no stronger model exists to produce reference answers for problems identified as its weaknesses. To explore the potential of applying our SwS pipeline to enhancing state-of-the-art models, we extend it to the Weak-to-Strong Generalization [2] setting by using a generally weaker teacher that may outperform the stronger model in specific domains to label reference answers for the synthetic problems.

Intuitively, using a weaker teacher may result in mislabeled answers, which could significantly impair subsequent RL training. However, during the difficulty filtering stage, this risk is mitigated by using the initially trained policy to assess the difficulty of synthetic problems, as it rarely reproduces the same incorrect answers provided by the weaker teacher. As a byproduct, mislabeled cases are naturally filtered out alongside overly complex samples through accuracy-based screening. The experimental analysis on the validity of difficulty-level filtering in ensuring label correctness is presented in Table 5.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Ratios of Consistently Failed Problems Across Categories in MATH-12k

### Overview

This bar chart visualizes the ratios of consistently failed problems across different mathematical categories within the MATH-12k dataset. The chart compares three Reinforcement Learning (RL) models: "Init RL", "Base RL", and "Synt RL". The y-axis represents the "Zero Ratio" (in percentage), indicating the proportion of problems consistently failed. The x-axis displays the mathematical categories: Algebra, Counting & Probability, Geometry, Intermediate Algebra, Number Theory, Prealgebra, and Precalculus.

### Components/Axes

* **Title:** "Ratios of Consistently Failed Problems Across Categories in MATH-12k" (centered at the top)

* **X-axis Label:** Mathematical Categories (Algebra, Counting & Probability, Geometry, Intermediate Algebra, Number Theory, Prealgebra, Precalculus)

* **Y-axis Label:** "Zero Ratio (%)" (ranging from 0 to 14, with increments of 2)

* **Legend:** Located in the top-left corner, identifying the three RL models:

* Init RL (Red)

* Base RL (Light Blue)

* Synt RL (Turquoise)

### Detailed Analysis

The chart consists of grouped bar plots for each category, representing the Zero Ratio for each RL model.

* **Algebra:**

* Init RL: Approximately 0.9%

* Base RL: Approximately 0.6%

* Synt RL: Approximately 0.5%

* **Counting & Probability:**

* Init RL: Approximately 5.6%

* Base RL: Approximately 4.2%

* Synt RL: Approximately 3.8%

* **Geometry:**

* Init RL: Approximately 11.9%

* Base RL: Approximately 9.3%

* Synt RL: Approximately 8.8%

* **Intermediate Algebra:**

* Init RL: Approximately 10.8%

* Base RL: Approximately 8.3%

* Synt RL: Approximately 6.7%

* **Number Theory:**

* Init RL: Approximately 3.9%

* Base RL: Approximately 1.9%

* Synt RL: Approximately 1.8%

* **Prealgebra:**

* Init RL: Approximately 1.6%

* Base RL: Approximately 1.3%

* Synt RL: Approximately 0.9%

* **Precalculus:**

* Init RL: Approximately 13.4%

* Base RL: Approximately 10.8%

* Synt RL: Approximately 10.3%

**Trends:**

* For all categories, "Init RL" generally exhibits the highest Zero Ratio, followed by "Base RL", and then "Synt RL".

* The Zero Ratio is particularly high in "Precalculus" and "Geometry" for all models.

* The Zero Ratio is relatively low in "Algebra", "Number Theory", and "Prealgebra" for all models.

### Key Observations

* "Init RL" consistently performs worse (higher Zero Ratio) than the other two models across all categories.

* "Synt RL" generally shows the lowest Zero Ratio, suggesting it is the most effective model in addressing consistently failed problems.

* The largest difference in Zero Ratio between the models is observed in "Intermediate Algebra", where "Init RL" has a significantly higher ratio than "Synt RL".

* The categories "Precalculus" and "Geometry" present the most significant challenges for all models, as indicated by their high Zero Ratios.

### Interpretation

The data suggests that the "Init RL" model struggles more with consistently failed problems across all mathematical categories compared to "Base RL" and "Synt RL". This could be due to the initial training or configuration of the "Init RL" model. The "Synt RL" model appears to be the most robust, consistently achieving the lowest Zero Ratio, indicating its superior ability to address these challenging problems.

The high Zero Ratios in "Precalculus" and "Geometry" highlight these areas as particularly difficult for the models to solve. This could be due to the complexity of the concepts involved or the lack of sufficient training data for these categories. The relatively low Zero Ratios in "Algebra", "Number Theory", and "Prealgebra" suggest that the models are more proficient in these areas.

The differences in performance between the models across different categories could be attributed to the specific training data and algorithms used for each model. Further investigation is needed to understand the underlying reasons for these differences and to improve the performance of the models on the more challenging categories. The data also suggests that the "Synt RL" model is a promising approach for addressing consistently failed problems in mathematical reasoning.

</details>

Figure 4: The ratios of consistently failed problems from different categories in the MATH-12k training set under different training configurations. (Base model: Qwen2.5-7B).

We use the initially trained Qwen2.5-7B-Base as the student and Qwen2.5-Math-7B-Instruct as the teacher. Table 2 presents their performance on popular benchmarks and MATH-12k categories, where the student model generally outperforms the teacher. However, as shown in Table 2, the student policy further improves after training on weak teacher-labeled problems. This improvement stems from the difficulty filtering process, which removes problems with consistent student-teacher disagreement and retains those where the teacher is reliable but the student struggles, enabling targeted training on weaknesses. Detailed analysis can be found in Appendix 11.

4.2 Self-evolving Targeted Problem Synthesis

In this section, we explore the potential of utilizing the Self-evolving paradigm to address model weaknesses by executing the full SwS pipeline using the policy itself. This self-evolving paradigm for identifying and mitigating weaknesses leverages self-consistency to guide itself to generate effective trajectories toward accurate answers [75], while also integrating general instruction-following capabilities from question generation and quality filtering to enhance reasoning.

We use Qwen2.5-14B-Instruct as the base policy due to its balance between computational efficiency and instruction-following performance. The results are shown in Table 3, where the self-evolving SwS pipeline improves the baseline performance by 1.2% across all benchmarks, especially on the middle-level benchmarks like Gaokao and AMC. Although performance declines on AIME, we attribute this to the initial training data from DAPO and LightR1 already being specifically tailored to that benchmark. For further discussion of the Self-evolve SwS framework, refer to Appendix G.

<details>

<summary>x5.png Details</summary>

### Visual Description

\n

## Line Chart: Overall Accuracy (%)

### Overview

This image presents a line chart illustrating the relationship between Training Steps and Average Accuracy (%). Two data series are plotted: "Target All Pass@1" and "Random All Pass@1". The chart aims to demonstrate how accuracy changes as the training progresses for both approaches.

### Components/Axes

* **Title:** (a) Overall Accuracy (%) - positioned at the top-center.

* **X-axis:** Training Steps - ranging from approximately 0 to 150, with markers at 0, 20, 40, 60, 80, 100, 120, and 140.

* **Y-axis:** Average Accuracy (%) - ranging from approximately 25.0 to 55.0, with markers at 25.0, 30.0, 35.0, 40.0, 45.0, 50.0, and 55.0.

* **Legend:** Located in the bottom-right corner.

* "Target All Pass@1" - represented by a reddish-pink color.

* "Random All Pass@1" - represented by a teal color.

* **Data Series:** Two lines with corresponding data points.

### Detailed Analysis

**Target All Pass@1 (Reddish-Pink):**

The line representing "Target All Pass@1" shows an upward trend, starting at approximately 38% accuracy at 0 training steps. It increases steadily, reaching around 45% at 20 steps, 47% at 40 steps, 49% at 60 steps, 50% at 80 steps, 51% at 100 steps, 52% at 120 steps, and finally reaching approximately 53% at 140 training steps. The data points are scattered around the line, indicating some variance.

* (0, 38%)

* (20, 45%)

* (40, 47%)

* (60, 49%)

* (80, 50%)

* (100, 51%)

* (120, 52%)

* (140, 53%)

**Random All Pass@1 (Teal):**

The line representing "Random All Pass@1" also exhibits an upward trend, but with a steeper initial increase. It starts at approximately 31% accuracy at 0 training steps, rapidly increasing to around 43% at 20 steps. The growth slows down, reaching approximately 46% at 40 steps, 48% at 60 steps, 49% at 80 steps, 48% at 100 steps, 47% at 120 steps, and finally reaching approximately 49% at 140 training steps. The data points are also scattered around the line.

* (0, 31%)

* (20, 43%)

* (40, 46%)

* (60, 48%)

* (80, 49%)

* (100, 48%)

* (120, 47%)

* (140, 49%)

### Key Observations

* Both "Target All Pass@1" and "Random All Pass@1" demonstrate increasing accuracy with more training steps.

* "Random All Pass@1" shows a more rapid initial increase in accuracy compared to "Target All Pass@1".

* The accuracy growth for both series appears to plateau after approximately 80 training steps.

* "Target All Pass@1" consistently achieves higher accuracy than "Random All Pass@1" throughout the training process.

### Interpretation

The chart suggests that both training approaches ("Target All Pass@1" and "Random All Pass@1") are effective in improving accuracy. However, the "Target All Pass@1" approach consistently outperforms the "Random All Pass@1" approach, indicating that targeting specific training examples leads to better results. The plateauing of accuracy after 80 training steps suggests that further training may yield diminishing returns. The initial steep increase in "Random All Pass@1" could indicate faster learning in the early stages, but the "Target All Pass@1" approach ultimately achieves higher overall accuracy. The scatter of data points around the lines indicates some variability in the results, which could be due to factors such as the specific training data or the randomness inherent in the training process.

</details>

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Competition Level Accuracy (%)

### Overview

This chart displays the average accuracy (%) of two different competition setups ("Target Comp Avg@32" and "Random Comp Avg@32") as a function of training steps. The chart aims to compare the performance of a targeted competition approach versus a random competition approach during a training process.

### Components/Axes

* **Title:** (b) Competition Level Accuracy (%) - positioned at the top-center.

* **X-axis:** Training Steps - ranging from approximately 0 to 140.

* **Y-axis:** Average Accuracy (%) - ranging from approximately 1.0 to 16.0.

* **Legend:** Located in the bottom-right corner.

* Target Comp Avg@32 - represented by red circles.

* Random Comp Avg@32 - represented by teal circles.

* **Data Series:** Two lines representing the accuracy trends for each competition setup, with data points marked by circles.

* **Gridlines:** A light gray grid is present to aid in reading values.

### Detailed Analysis

**Target Comp Avg@32 (Red Line):**

The red line shows an upward trend, starting at approximately 3.8% accuracy at 0 training steps. The line initially increases rapidly, then the rate of increase slows down.

* (0, 3.8)

* (10, 8.8)

* (20, 9.6)

* (30, 11.2)

* (40, 12.2)

* (50, 12.8)

* (60, 13.2)

* (70, 13.6)

* (80, 14.0)

* (90, 14.2)

* (100, 14.5)

* (110, 14.8)

* (120, 15.1)

* (130, 15.3)

* (140, 15.5)

**Random Comp Avg@32 (Teal Line):**

The teal line also shows an upward trend, but it plateaus earlier than the red line. It starts at approximately 4.0% accuracy at 0 training steps. The line increases rapidly initially, then levels off around 11-12% accuracy.

* (0, 4.0)

* (10, 8.5)

* (20, 9.5)

* (30, 10.4)

* (40, 10.8)

* (50, 11.1)

* (60, 11.3)

* (70, 11.4)

* (80, 11.5)

* (90, 11.6)

* (100, 11.7)

* (110, 11.6)

* (120, 11.4)

* (130, 11.2)

* (140, 11.0)

### Key Observations

* The "Target Comp Avg@32" consistently outperforms the "Random Comp Avg@32" throughout the training process.

* The "Random Comp Avg@32" reaches a plateau in accuracy around 11-12% after approximately 60 training steps, while the "Target Comp Avg@32" continues to improve, albeit at a decreasing rate.

* Both lines show a steep initial increase in accuracy, suggesting rapid learning in the early stages of training.

### Interpretation

The data suggests that a targeted competition approach ("Target Comp Avg@32") is more effective than a random competition approach ("Random Comp Avg@32") in improving average accuracy during training. The targeted approach demonstrates sustained improvement over a longer period, achieving a higher final accuracy. The plateau observed in the random competition approach indicates that it may reach a limit in its learning capacity or that further training does not yield significant gains. This could be due to the random approach exploring a less optimal search space or getting stuck in local optima. The initial rapid increase in both lines suggests that both approaches benefit from early training, but the targeted approach is better at capitalizing on continued training. The difference in performance highlights the importance of strategic competition design in optimizing training outcomes.

</details>

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Line Chart: Training Batch Accuracy (%)

### Overview

This image presents a line chart illustrating the average accuracy (%) of two training methods – "Target Training Acc" and "Random Training Acc" – over a series of training steps. The chart aims to compare the performance of these two methods during the training process.

### Components/Axes

* **Title:** "(c) Training Batch Accuracy (%)" – positioned at the top-center of the chart.

* **X-axis:** "Training Steps" – ranging from approximately 0 to 140, with tick marks at intervals of 20.

* **Y-axis:** "Average Accuracy (%)" – ranging from 0.0 to 80.0, with tick marks at intervals of 16.

* **Legend:** Located in the bottom-right corner of the chart.

* "Target Training Acc" – represented by red circles.

* "Random Training Acc" – represented by teal circles.

* **Data Series:** Two lines representing the accuracy of each training method.

### Detailed Analysis

**Target Training Acc (Red Line):**

The red line representing "Target Training Acc" starts at approximately 8% accuracy at 0 training steps. It exhibits a steep upward slope initially, reaching around 40% accuracy at 20 training steps. The slope gradually decreases, and the line plateaus around 68% accuracy between 80 and 140 training steps.

* (0, 8%)

* (20, 40%)

* (40, 56%)

* (60, 62%)

* (80, 66%)

* (100, 68%)

* (120, 69%)

* (140, 70%)

**Random Training Acc (Teal Line):**

The teal line representing "Random Training Acc" begins at approximately 1% accuracy at 0 training steps. It also shows a steep initial increase, reaching around 48% accuracy at 20 training steps. The slope then decreases, and the line approaches a plateau around 76% accuracy between 80 and 140 training steps.

* (0, 1%)

* (20, 48%)

* (40, 60%)

* (60, 68%)

* (80, 74%)

* (100, 76%)

* (120, 77%)

* (140, 78%)

### Key Observations

* Both training methods demonstrate increasing accuracy with more training steps.

* "Random Training Acc" consistently outperforms "Target Training Acc" throughout the training process.

* The rate of accuracy improvement diminishes for both methods as training progresses, indicating a potential convergence towards a maximum accuracy level.

* The "Target Training Acc" line appears to flatten out more significantly than the "Random Training Acc" line, suggesting it may be reaching its performance limit sooner.

### Interpretation

The chart suggests that the "Random Training Acc" method is more effective at achieving higher accuracy during the training process compared to the "Target Training Acc" method. The initial steep increase in accuracy for both methods indicates rapid learning in the early stages of training. The subsequent flattening of the curves suggests that the models are approaching their maximum achievable accuracy, and further training may yield diminishing returns. The consistent superiority of "Random Training Acc" could be due to a more effective exploration of the solution space or a better adaptation to the training data. The difference in performance between the two methods highlights the importance of selecting an appropriate training strategy to optimize model accuracy. The data suggests that the "Target Training Acc" method may benefit from adjustments to its parameters or architecture to improve its performance.

</details>

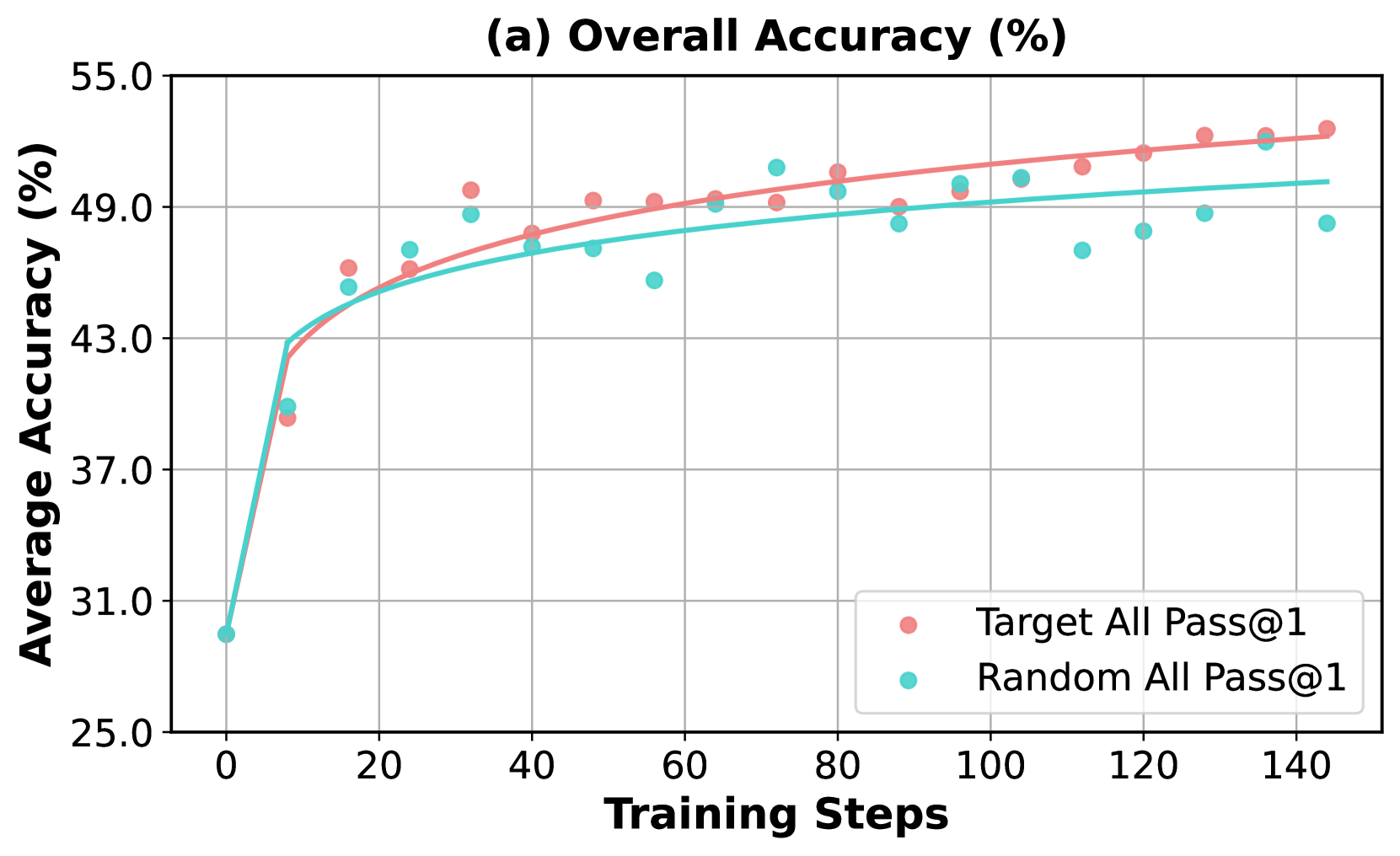

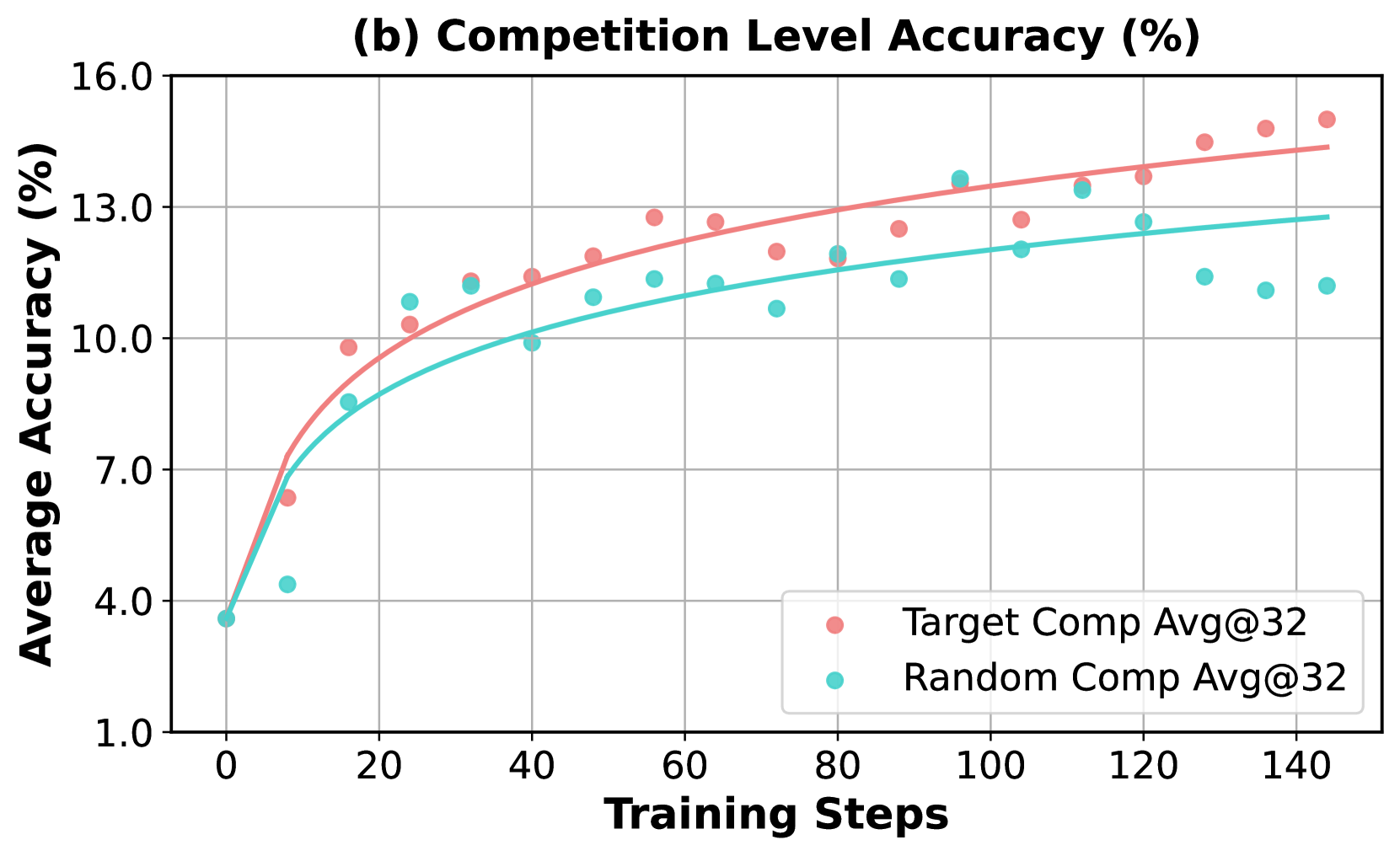

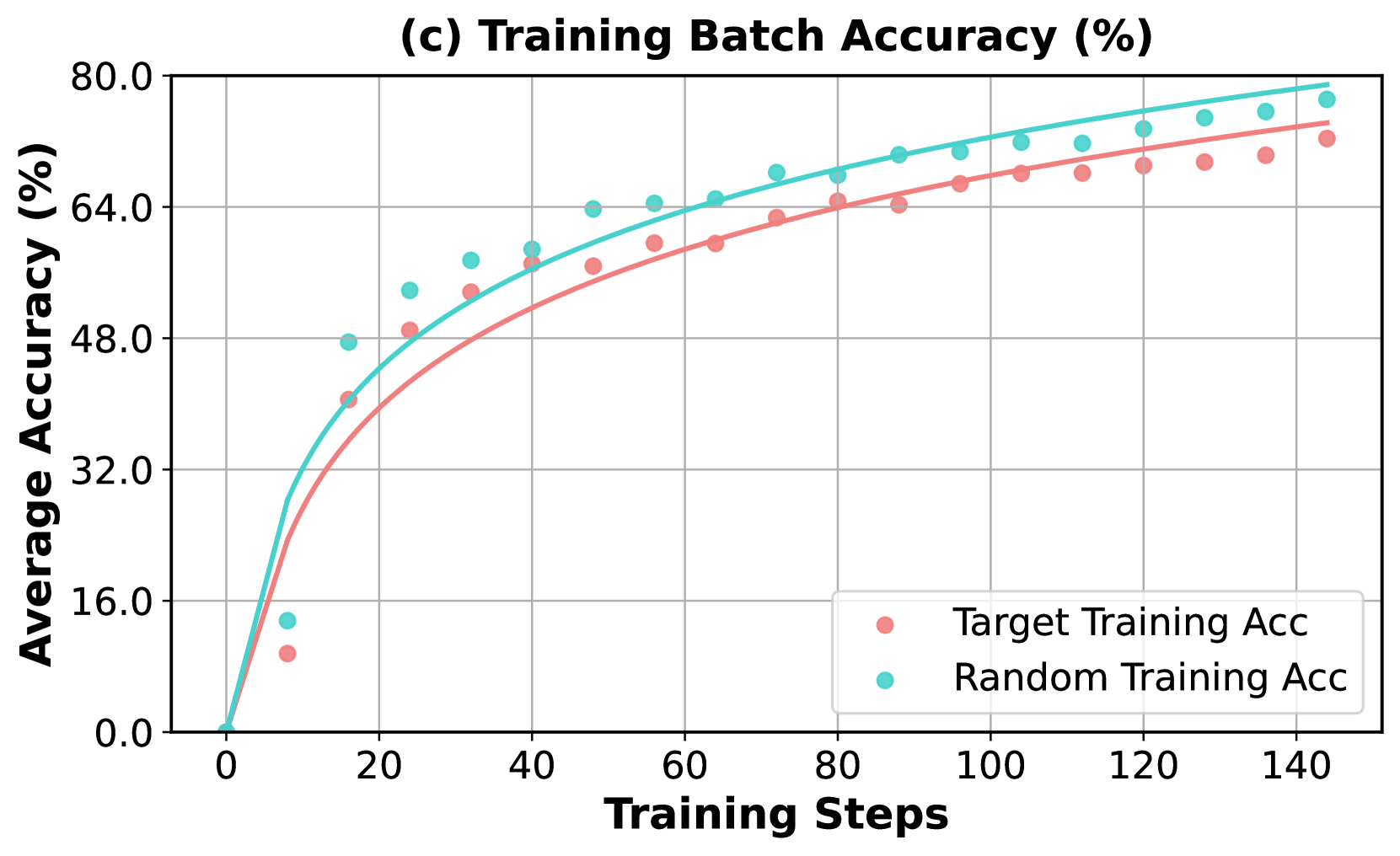

Figure 5: Comparison of accuracy improvements using (a) Pass@1 on full benchmarks evaluated in Table 1 and (b) Avg@32 on the competition-level benchmarks. (c) illustrates the proportion of prompts within a batch that achieved 100% correctness across multiple rollouts during training.

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Overall Accuracy (%)

### Overview

This chart displays the average accuracy (%) over training steps for different difficulty levels. It uses line plots to represent the trend for each difficulty level, overlaid with scatter plots showing individual data points.

### Components/Axes

* **Title:** (a) Overall Accuracy (%) - positioned at the top-center.

* **X-axis:** Training Steps - ranging from approximately 0 to 200.

* **Y-axis:** Average Accuracy (%) - ranging from approximately 45.2 to 53.2.

* **Legend:** Located in the bottom-right corner.

* Difficulty (represented by light red circles)

* Simple (represented by teal circles)

* Medium (represented by blue circles)

* **Gridlines:** Present throughout the chart for easier readability.

### Detailed Analysis

The chart contains three data series, each represented by a line and corresponding scatter points.

**1. Difficulty (Light Red):**

* **Trend:** The line slopes upward, indicating increasing accuracy with more training steps. The initial slope is steep, then gradually flattens.

* **Data Points (approximate):**

* (0, 46.4)

* (25, 47.8)

* (50, 49.2)

* (75, 50.2)

* (100, 50.8)

* (125, 51.2)

* (150, 51.4)

* (175, 51.6)

* (200, 51.8)

**2. Simple (Teal):**

* **Trend:** The line also slopes upward, but is more erratic than the "Difficulty" line. It starts lower than the "Difficulty" line but eventually surpasses it.

* **Data Points (approximate):**

* (0, 47.2)

* (25, 48.6)

* (50, 49.8)

* (75, 50.6)

* (100, 51.0)

* (125, 51.2)

* (150, 51.4)

* (175, 51.8)

* (200, 52.2)

**3. Medium (Blue):**

* **Trend:** The line slopes upward, and is the most stable of the three lines. It starts higher than the "Difficulty" line and remains consistently above it.

* **Data Points (approximate):**

* (0, 48.0)

* (25, 49.0)

* (50, 50.0)

* (75, 50.8)

* (100, 51.2)

* (125, 51.4)

* (150, 51.6)

* (175, 52.0)

* (200, 52.4)

### Key Observations

* All three difficulty levels show an increase in average accuracy with increasing training steps.

* The "Medium" difficulty consistently achieves the highest accuracy.

* The "Simple" difficulty shows the most variability in its data points.

* The "Difficulty" line starts with the lowest accuracy but shows a consistent upward trend.

* The lines converge as the training steps increase, suggesting diminishing returns in accuracy improvement.

### Interpretation

The chart demonstrates that increasing training steps generally leads to improved accuracy for all difficulty levels. The "Medium" difficulty consistently outperforms the others, suggesting it provides an optimal level of challenge for the model. The variability in the "Simple" difficulty might indicate that the model quickly learns the simple task, leading to fluctuations in accuracy as it overfits or encounters minor variations. The convergence of the lines at higher training steps suggests that further training may not yield significant improvements in accuracy. This data could be used to determine the optimal training duration and difficulty level for maximizing model performance. The chart suggests a positive correlation between training steps and accuracy, but also highlights the importance of selecting an appropriate difficulty level.

</details>

<details>

<summary>x9.png Details</summary>

### Visual Description

## Chart: Competition Level Accuracy

### Overview

The image presents a line chart illustrating the relationship between Training Steps and Average Accuracy (%) for different competition difficulty levels. Three difficulty levels – Difficulty, Simple, and Medium – are represented by different colored data points and trend lines. The chart aims to demonstrate how accuracy changes with increasing training steps for each difficulty level.

### Components/Axes

* **Title:** (b) Competition Level Accuracy (%) - positioned at the top-center of the chart.

* **X-axis:** Training Steps - ranging from 0 to 200, with tick marks at intervals of 25.

* **Y-axis:** Average Accuracy (%) - ranging from 8.4 to 15.7, with tick marks at intervals of 0.4.

* **Legend:** Located in the bottom-right corner of the chart.

* Difficulty (represented by red circles)

* Simple (represented by green circles)

* Medium (represented by blue circles)

* **Gridlines:** Horizontal and vertical gridlines are present to aid in reading values.

### Detailed Analysis

The chart displays three lines representing the trend of accuracy for each difficulty level.

* **Difficulty (Red):** The red line shows an upward trend, starting at approximately 9.1% accuracy at 0 training steps and reaching approximately 14.7% accuracy at 200 training steps. The line exhibits a steeper slope in the initial stages (0-100 training steps) and then plateaus.

* (0, 9.1)

* (25, 9.8)

* (50, 10.8)

* (75, 11.8)

* (100, 12.5)

* (125, 13.2)

* (150, 13.8)

* (175, 14.4)

* (200, 14.7)

* **Simple (Green):** The green line also shows an upward trend, but it is less pronounced than the red line. It starts at approximately 9.7% accuracy at 0 training steps and reaches approximately 12.7% accuracy at 200 training steps. The slope is relatively consistent throughout the range.

* (0, 9.7)

* (25, 10.4)

* (50, 11.1)

* (75, 11.6)

* (100, 12.0)

* (125, 12.3)

* (150, 12.5)

* (175, 12.6)

* (200, 12.7)

* **Medium (Blue):** The blue line exhibits a similar upward trend to the green line, starting at approximately 10.2% accuracy at 0 training steps and reaching approximately 13.5% accuracy at 200 training steps. The slope is also relatively consistent.

* (0, 10.2)

* (25, 10.9)

* (50, 11.7)

* (75, 12.3)

* (100, 12.8)

* (125, 13.1)

* (150, 13.3)

* (175, 13.4)

* (200, 13.5)

### Key Observations

* The "Difficulty" level consistently demonstrates the highest accuracy across all training steps.

* The "Simple" and "Medium" levels show similar accuracy trends, with "Medium" generally performing slightly better.

* All three difficulty levels exhibit diminishing returns in accuracy as training steps increase, suggesting a point of saturation.

* The data points are scattered around the trend lines, indicating some variability in accuracy for each difficulty level at each training step.

### Interpretation

The chart suggests that increasing training steps generally improves accuracy for all competition difficulty levels. However, the "Difficulty" level benefits the most from additional training, achieving significantly higher accuracy compared to "Simple" and "Medium" levels. This implies that more complex tasks require more training to reach optimal performance. The diminishing returns observed at higher training steps suggest that there is a limit to the improvement achievable through further training. The spread of data points around the trend lines indicates that individual performance may vary, even within the same difficulty level. This could be due to factors such as individual learning rates or variations in the training data. The chart provides valuable insights into the relationship between training effort and performance in different competition settings, highlighting the importance of tailoring training strategies to the specific difficulty level.

</details>

<details>

<summary>x10.png Details</summary>

### Visual Description

\n

## Chart: Training Batch Accuracy (%)

### Overview

The image presents a line chart illustrating the average accuracy (%) of training batches over training steps for three different difficulty levels: Difficulty, Simple, and Medium. The chart aims to demonstrate how accuracy changes with the number of training steps for each difficulty setting.

### Components/Axes

* **Title:** (c) Training Batch Accuracy (%) - positioned at the top-center.

* **X-axis:** Training Steps - ranging from 0 to 200, with markers at intervals of 25.

* **Y-axis:** Average Accuracy (%) - ranging from 0 to 60, with markers at intervals of 12.

* **Legend:** Located in the bottom-right corner, identifying the data series:

* Difficulty (represented by a red circle)

* Simple (represented by a teal circle)

* Medium (represented by a blue circle)

* **Gridlines:** Present to aid in reading values.

### Detailed Analysis

The chart displays three distinct lines representing the accuracy trends for each difficulty level.

**1. Difficulty (Red Line):**

* The line starts at approximately 2% accuracy at 0 training steps.

* It exhibits a slow, almost linear increase, reaching approximately 22% accuracy at 200 training steps.

* Data points (approximate):

* 0 Steps: 2%

* 25 Steps: 6%

* 50 Steps: 10%

* 75 Steps: 14%

* 100 Steps: 17%

* 125 Steps: 20%

* 150 Steps: 21%

* 175 Steps: 22%

* 200 Steps: 22%

**2. Simple (Teal Line):**

* The line begins at approximately 4% accuracy at 0 training steps.

* It shows a moderate, upward sloping trend, reaching approximately 36% accuracy at 200 training steps.

* Data points (approximate):

* 0 Steps: 4%

* 25 Steps: 12%

* 50 Steps: 20%

* 75 Steps: 27%

* 100 Steps: 31%

* 125 Steps: 33%

* 150 Steps: 34%

* 175 Steps: 35%

* 200 Steps: 36%

**3. Medium (Blue Line):**

* The line starts at approximately 1% accuracy at 0 training steps.

* It demonstrates the steepest upward slope, reaching approximately 49% accuracy at 200 training steps.

* Data points (approximate):

* 0 Steps: 1%

* 25 Steps: 10%

* 50 Steps: 24%

* 75 Steps: 33%

* 100 Steps: 38%

* 125 Steps: 41%

* 150 Steps: 44%

* 175 Steps: 47%

* 200 Steps: 49%

### Key Observations

* The "Medium" difficulty level consistently exhibits the highest accuracy throughout the training process.

* The "Difficulty" level shows the lowest accuracy and the slowest rate of improvement.

* The "Simple" level falls between the other two, demonstrating a moderate improvement in accuracy.

* All three lines show diminishing returns in accuracy as the number of training steps increases, suggesting a potential plateau.

### Interpretation

The chart suggests that the difficulty of the training task significantly impacts the rate at which accuracy improves. More difficult tasks ("Difficulty") require more training steps to achieve comparable accuracy levels to simpler tasks ("Medium"). The steep slope of the "Medium" line indicates that the model learns more effectively on this difficulty level, potentially due to a better balance between challenge and solvability. The plateauing of all lines suggests that further training may not yield substantial improvements in accuracy, and that other factors (e.g., model architecture, hyperparameters) may need to be adjusted to achieve higher performance. The data implies that the model is learning, but the learning rate is decreasing over time. This is a common phenomenon in machine learning and can be addressed with techniques like learning rate scheduling.

</details>

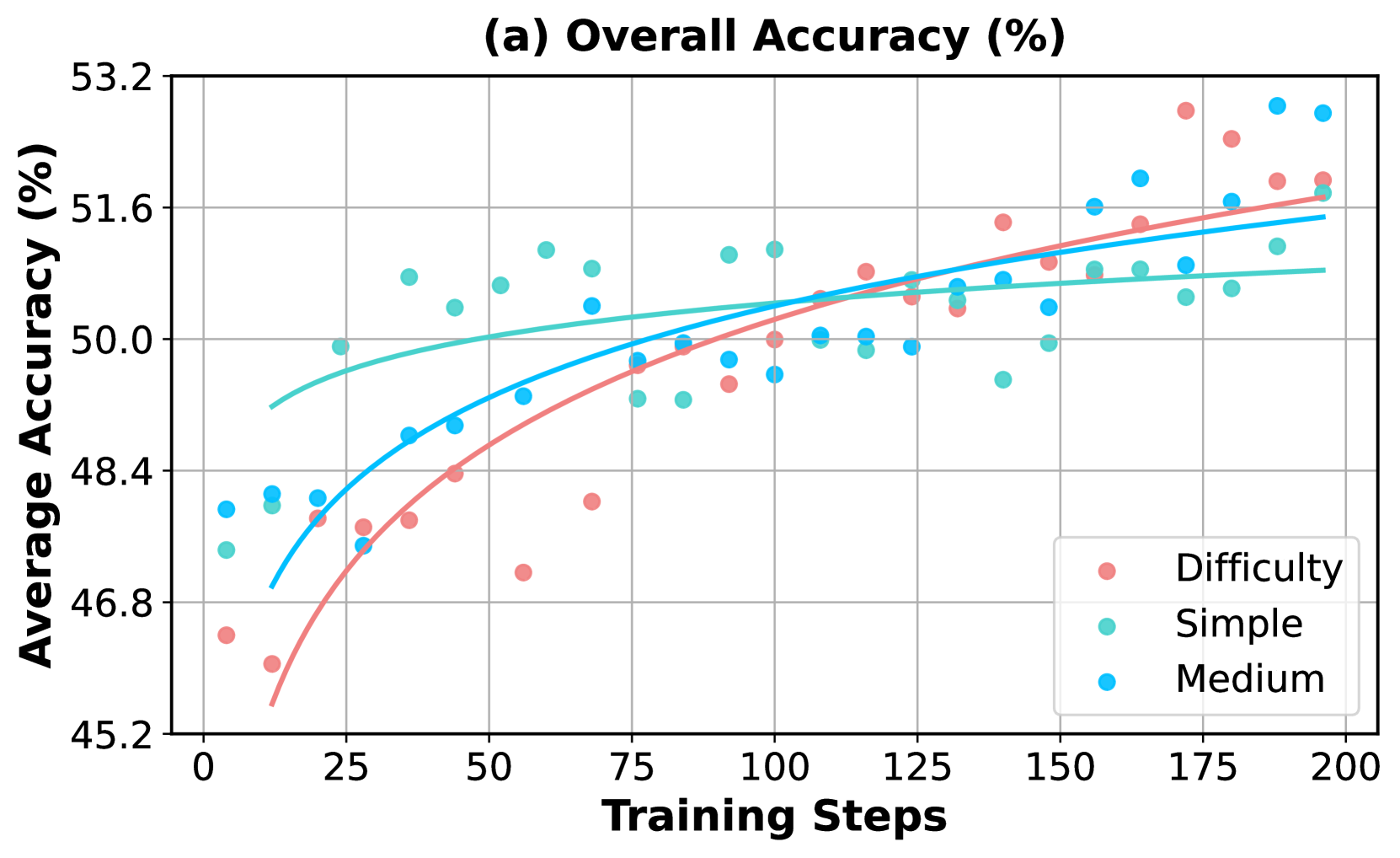

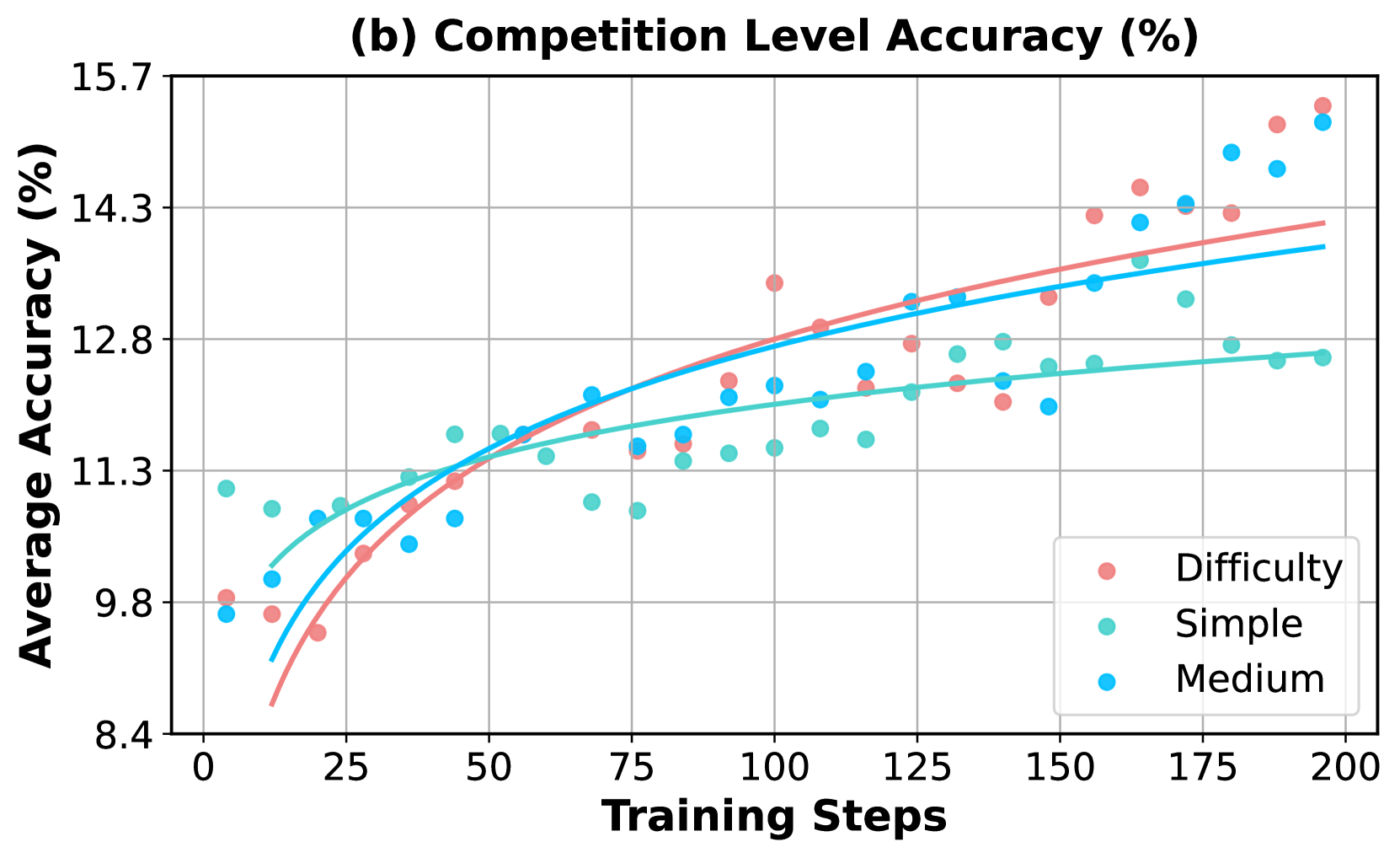

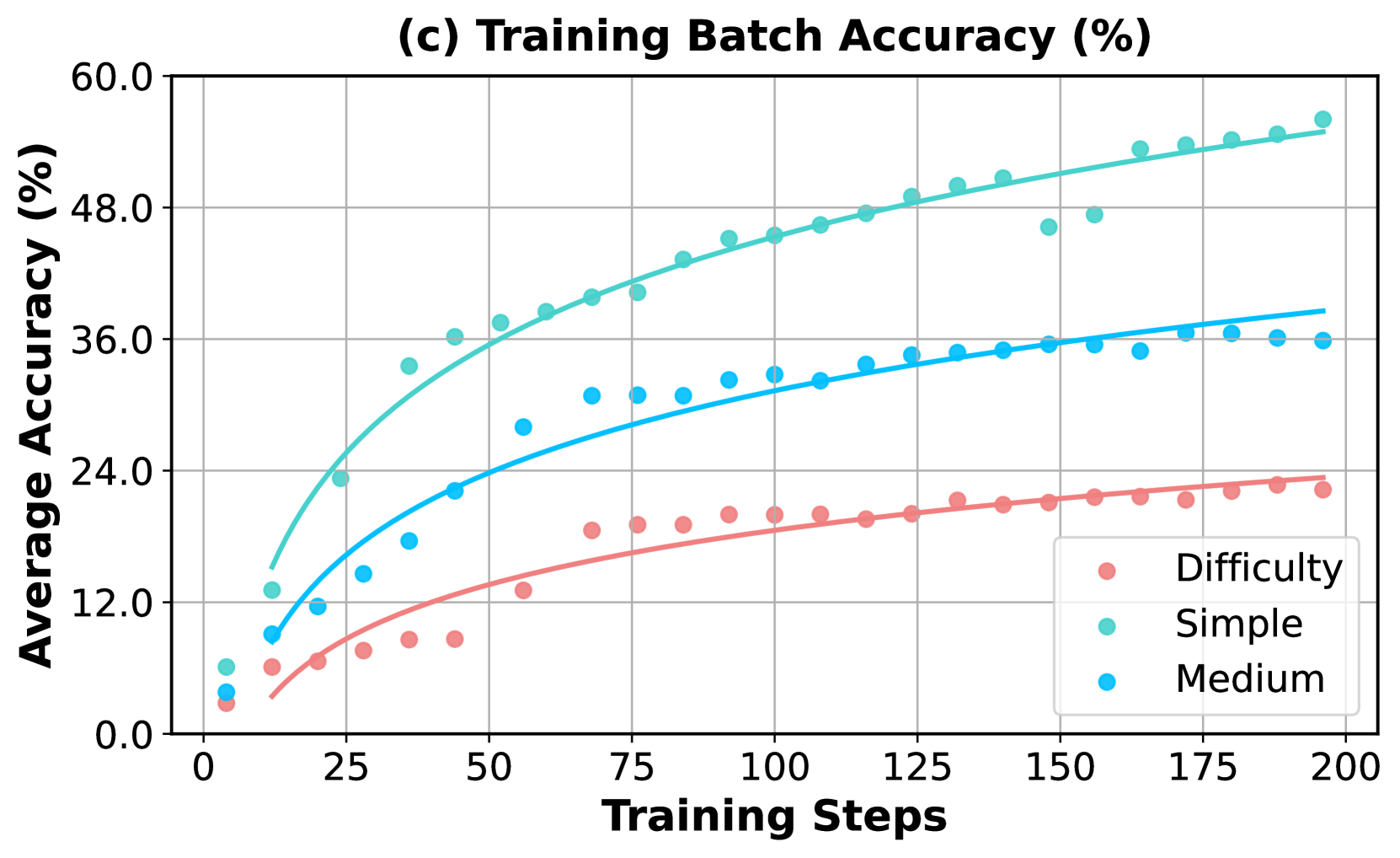

Figure 6: Comparison of incorporating synthetic problems of varying difficulty levels during the augmented RL training. For a detailed description of accuracy trends on evaluation benchmarks and the training set, refer to the caption in Figure 5.

4.3 Weakness-driven Selection

In this section, we explore an alternative extension that augments the initial training set using identified weaknesses and a larger mathematical reasoning dataset. Specifically, we use the Qwen2.5-7B model, identify its weaknesses on the MATH-12k training set, and retrieve augmented problems from Big-Math [1] that align with its failure cases, incorporating them into the initial training set for augmentation. We employ a category-specific selection strategy similar to the budget allocation in Eq. 5, using KNN [5] to identify the most relevant problems within each category. The total augmentation budget is also set to 40k. We compare this approach to a baseline where the model is trained on an augmented set incorporated with randomly selected problems from Big-Math. Details of the selection procedure are provided in Appendix H.

As shown in Figure 5, the model trained with weakness-driven augmentation outperforms the random augmentation strategy in terms of accuracy on both the whole evaluated benchmarks (Figure 5.a) and the competition-level subset (Figure 5.b), demonstrating the effectiveness of the weakness-driven selection strategy. In Figure 5.c, it is worth noting that the model quickly fits the randomly selected problems in training, which then cease to provide meaningful training signals in the GRPO algorithm. In contrast, since the failure cases highlight specific weaknesses of the model’s capabilities, the problems selected based on them remain more challenging and more aligned with its deficiencies, providing richer learning signals and promoting continued development of reasoning skills.

4.4 Impact of Question Difficulty

We ablate the impact of the difficulty levels of synthetic problems used in the augmented RL training. In this section, we define the difficulty of a synthetic problem based on the accuracy of multiple rollouts generated by the initially trained model, base from Qwen2.5-7B. We incorporate synthetic problems of three predefined difficulty levels—simple, medium, and hard—into the augmented RL training. These levels correspond to accuracy ranges of $[5,7]$ , $[3,5]$ , and $[1,4]$ out of 8 sampled responses, respectively. For each level, we sample 40k examples and combine them with the initial training set for a second training stage lasting 200 steps.

The experimental results are shown in Figure 6. Similar to the findings in Section 4.3, the model fits more quickly on the simple augmented set and initially achieves the best performance across all evaluation benchmarks, including competition-level tasks, but then saturates with no further improvement. In contrast, the medium and hard augmented sets lead to slower convergence on the training set but result in more sustained performance gains on the evaluation set, with the hardest problems providing the longest-lasting training benefits.

<details>

<summary>x11.png Details</summary>

### Visual Description

\n

## Document: Geometry Problem Set

### Overview

The image presents a document outlining an original geometry problem alongside a series of synthetic problems of varying difficulty levels. It also lists extracted concepts relevant to the original problem. The document appears to be designed for assessing or training problem-solving skills in geometry.

### Components/Axes

The document is divided into three main sections:

1. **Original Problem:** A detailed description of a complex geometric setup.

2. **Synthetic Problems:** Four problems categorized by difficulty (Simple, Medium, Hard, Unsolvable) with their answers and model accuracy.

3. **Extracted Concepts:** A bulleted list of geometric concepts related to the original problem.

### Detailed Analysis or Content Details

**Original Problem:**

* Equilateral triangle *ABC* has side length 600.

* Points *P* and *Q* lie outside the plane of *ΔABC* and are on opposite sides of the plane.

* *PA* = *PB* = *PC*, and *QA* = *QB* = *QC*.

* The planes of *ΔPAB* and *ΔQAB* form a 120° dihedral angle (the angle between the two planes).

* There is a point *O* whose distance from each of *A, B, C, P,* and *Q* is *d*. Find *d*.

**Synthetic Problems:**

* **Simple:** Two cones, A and B, are similar, with cone A being tangent to a sphere. The radius of the sphere is *r*, and the height of cone A is *h*. If the ratio of the height of cone B to the height of cone A is *k*, find the ratio of the surface area of cone B to the surface area of cone A.

* Answer: *k²*, Model Accuracy: 100%

* **Medium:** In a circle with radius *r*, two tangents are drawn from a point *P* such that the angle between them is 60°. If the length of each tangent is *√3* find the distance from *P* to the center.