# AgentOrchestra: Orchestrating Multi-Agent Intelligence with the Tool-Environment-Agent(TEA) Protocol

**Authors**:

- Wentao Zhang

- Liang Zeng

- Yuzhen Xiao

- Yongcong Li

- Ce Cui

- Yilei Zhao

- Rui Hu

- Yang Liu

- Yahui Zhou

- Bo An (Skywork AI Nanyang Technological University)

Abstract

Recent advances in LLM-based agent systems have shown promise in tackling complex, long-horizon tasks. However, existing LLM-based agentprotocols (e.g., A2A and MCP) under-specify cross-entity lifecycle and context management, version tracking, and ad-hoc environment integration, which in turn encourages fixed, monolithic agent compositions and brittle glue code. To address these limitations, we introduce the Tool–Environment–Agent (TEA) protocol, a unified abstraction that models environments, agents, and tools as first-class resources with explicit lifecycles and versioned interfaces. TEA provides a principled foundation for end-to-end lifecycle and version management, and for associating each run with its context and outputs across components, improving traceability and reproducibility. Moreover, TEA enables continual self-evolution of agent-associated components Unless otherwise specified, agent-associated components include prompts, memory/tool/agent/environment code, and agent outputs (solutions). through a closed feedback loop, producing improved versions while supporting version selection and rollback. Building on TEA, we present AgentOrchestra, a hierarchical multi-agent framework in which a central planner orchestrates specialized sub-agents for web navigation, data analysis, and file operations, and supports continual adaptation by dynamically instantiating, retrieving, and refining tools online during execution. We evaluate AgentOrchestra on three challenging benchmarks, where it consistently outperforms strong baselines and achieves 89.04% on GAIA, establishing state-of-the-art performance to the best of our knowledge. Overall, our results provide evidence that TEA and hierarchical orchestration improve scalability and generality in multi-agent systems.

<details>

<summary>x2.png Details</summary>

### Visual Description

Icon/Small Image (32x33)

</details>

AgentOrchestra: Orchestrating Multi-Agent Intelligence with the Tool-Environment-Agent(TEA) Protocol

1 Introduction

Recent advances in LLM-based agent systems have enabled strong performance on both general-purpose and complex, long-horizon tasks across diverse domains, including web navigation (OpenAI, 2025b; Müller and Žunič, 2024), computer use (Anthropic, 2024a; Qin et al., 2025), code execution (Wang et al., 2024a), game playing (Wang et al., 2023; Tan et al., 2024), and research assistance (OpenAI, 2024; DeepMind, 2024; xAI, 2025). Despite this progress, cross-environment generalization remains limited because context is scattered across prompts and logs, environment integration relies on brittle glue code, and agent-associated components are typically fixed rather than feedback-driven self-evolution.

Additionally, current agent protocols fall short of serving as a general substrate for scalable, general-purpose agents. As summarized in Table 1, representative protocols such as Google’s A2A (Google, 2025) and Anthropic’s MCP (Anthropic, 2024b) provide important building blocks, including task-level collaboration and messaging in A2A, as well as tool and resource schemas, discovery, and invocation in MCP. However, three protocol-level gaps remain: i) Lifecycle and context management are fragmented, as neither standardizes unified primitives to manage lifecycles and maintain consistent, versioned execution context across agent-associated components; ii) Self-evolution is not supported at the protocol level, as both protocols largely treat prompts and resources as externally maintained assets, and do not define a closed loop to refine prompts or tools from execution feedback with traceable versioning; iii) Environments are not first-class, environments are delegated to application-specific runtimes instead of being managed components with clear boundaries and constraints. This makes it difficult to switch agents across environments, reuse environments, and isolate parallel runs, often reducing systems to glue-code orchestration.

Table 1: Comparison of TEA Protocol with A2A and MCP. Symbols: $\checkmark$ = Yes, $\triangle$ = Partial, $×$ = No.

| Dimension | TEA | A2A | MCP |

| --- | --- | --- | --- |

| Core Entities | Tool, Env, Agent | Agent, Tool | Model |

| Lifecycle & Version | $\checkmark$ | $×$ | $×$ |

| Entity Transformations | $\checkmark$ | $×$ | $×$ |

| Self-Evolution Support | $\checkmark$ | $×$ | $×$ |

| Open Ecosystem | ✓ | $\triangle$ | $\triangle$ |

To address these limitations, we propose the Tool–Environment–Agent (TEA) protocol, which treats environments, agents, and tools as explicitly managed components under a unified protocol layer. Concretely, TEA standardizes component identifiers and version semantics, and binds each run to its context and execution state, so that artifacts remain traceable across iterations. Importantly, TEA goes beyond MCP by standardizing cross entity lifecycle semantics, explicit version semantics with stable entity identifiers, run-indexed context capture, explicit environment boundaries with constraints, and closed loop evolution hooks driven by execution feedback. As a result, execution state, artifacts, and context can be consistently persisted, reused, and traced across runs and iterations. TEA further enables self-evolution by defining a closed loop in which execution feedback can trigger agent-associated components during runtime, with updates recorded as new versions. Finally, TEA models environments as first-class components with explicit boundaries and constraints, for example web sandboxes, file systems, and code execution runtimes, improving reuse and isolation across heterogeneous domains and reducing context leakage in parallel executions. This also encourages consolidating functionally related tools into coherent environments; for example, discrete file operations can be organized as a managed file system, reducing context fragmentation and management overhead. Overall, TEA aims to make agent construction more composable and reproducible in practice. Detailed motivations for the TEA protocol and in-depth comparisons with existing protocols are provided in Appendix A, B.

Based on the TEA protocol, we develop AgentOrchestra, a hierarchical multi-agent framework for general-purpose task solving that integrates high-level planning with modular collaboration. AgentOrchestra uses a central planner to decompose a user objective and delegate sub-tasks to specialized agents for research, web navigation, analysis, tool synthesis, and reporting. Compared to flat coordination, where an orchestrator selects from a growing global pool of agents and tools and tends to accumulate irrelevant context, AgentOrchestra adopts hierarchical delegation with localized tool ownership. The planner routes each sub-task to a domain-specific sub-agent (or environment), which maintains and exposes only a curated toolset and context for its domain. This structure converts global coordination into a sequence of localized routing decisions, enabling tree-structured expansion as new capabilities are added while keeping the orchestrator’s decision scope and context footprint bounded. For example, the planner first selects a domain-level agent, which then supplies only the tools and context required for that domain. Furthermore, AgentOrchestra incorporates a self-evolution module that leverages TEA’s lifecycle and versioning mechanisms to refine agent- associated components based on execution feedback. Our contributions are threefold:

- We introduce the TEA protocol, which unifies environments, agents, and tools as first-class, versioned components with lifecycles to support context management and execution.

- We develop AgentOrchestra, a hierarchical multi-agent system built on TEA, demonstrating scalable orchestration through tree-structured routing and feedback-driven self-evolution.

- We conduct extensive evaluations on three challenging benchmarks, including ablations to isolate the effects of key components. AgentOrchestra consistently outperforms strong baselines and achieves 89.04% on GAIA, establishing state-of-the-art performance to the best of our knowledge.

2 Related Work

2.1 Tool and Agent Protocols

Recent protocols standardize tool interfaces and agent communication. For instance, MCP (Anthropic, 2024b) unifies tool integration for LLMs, while A2A (Google, 2025) enables agent-to-agent messaging and coordination. Other efforts, such as the Agent Network Protocol (ANP) (Ehtesham et al., 2025) and frameworks like SAFEFLOW (Li et al., 2025), enhance interoperability and safety in multi-agent systems. While these protocols provide essential building blocks, they primarily treat agents and tools as isolated service endpoints, often overlooking environments as dynamic, first-class components. TEA extends these existing standards rather than replacing them. By integrating tools, environments, and agents into a unified context-aware framework, TEA resolves protocol fragmentation with integrated lifecycle and version management missing in MCP or A2A.

2.2 General-Purpose Agents

Integrating tools with LLMs represents a paradigm shift, enabling agents to exhibit enhanced flexibility, cross-domain reasoning, and natural language interaction (Liang and Tong, 2025). Such systems have demonstrated efficacy across diverse domains, including web browsing (OpenAI, 2025b; Müller and Žunič, 2024), computer operation (Anthropic, 2024a; Qin et al., 2025), code execution (Wang et al., 2024a), and game playing (Wang et al., 2023; Tan et al., 2024). Standardized interfaces like OpenAI’s Function Calling and Anthropic’s MCP (OpenAI, 2023; Anthropic, 2024b), alongside frameworks such as ToolMaker (Wölflein et al., 2025), have further streamlined the synthesis of LLM-compatible tools. Building upon these foundations, multi-agent architectures like MetaGPT (Hong et al., 2023) demonstrate the potential of specialized agent coordination for complex problem-solving. However, many current approaches still struggle with efficient communication, dynamic role allocation, and scalable teamwork. The emergence of generalist frameworks, including Manus (Shen and Yang, 2025), OpenHands (Wang et al., 2024b), and smolagents (Roucher et al., 2025), has advanced unified perception and tool-augmented action. While recent efforts like Alita (Qiu et al., 2025) explore minimal predefinition and maximal self-evolution, these systems often lack unified protocols for cross-layer resource management. This gap motivates our proposal of the TEA Protocol and AgentOrchestra.

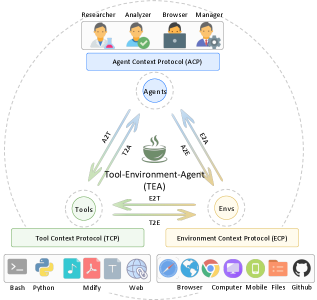

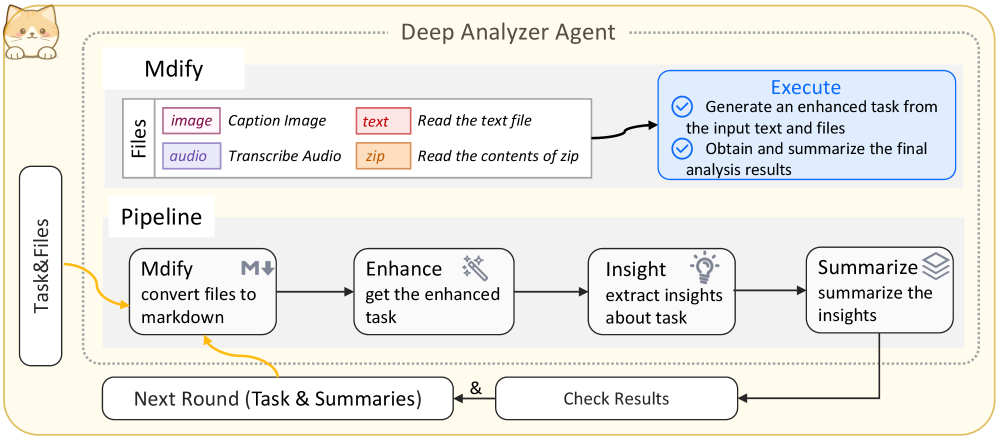

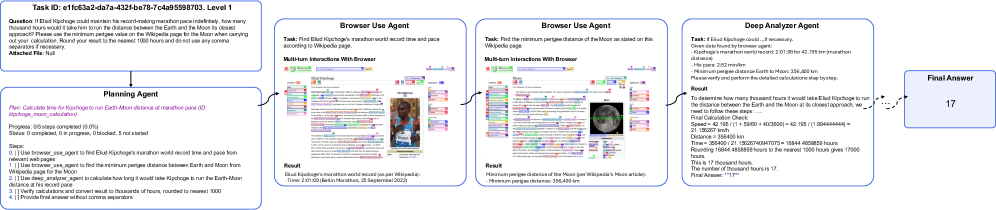

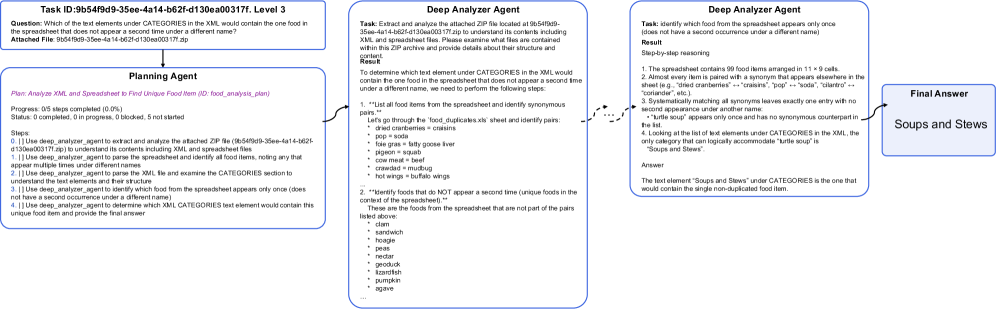

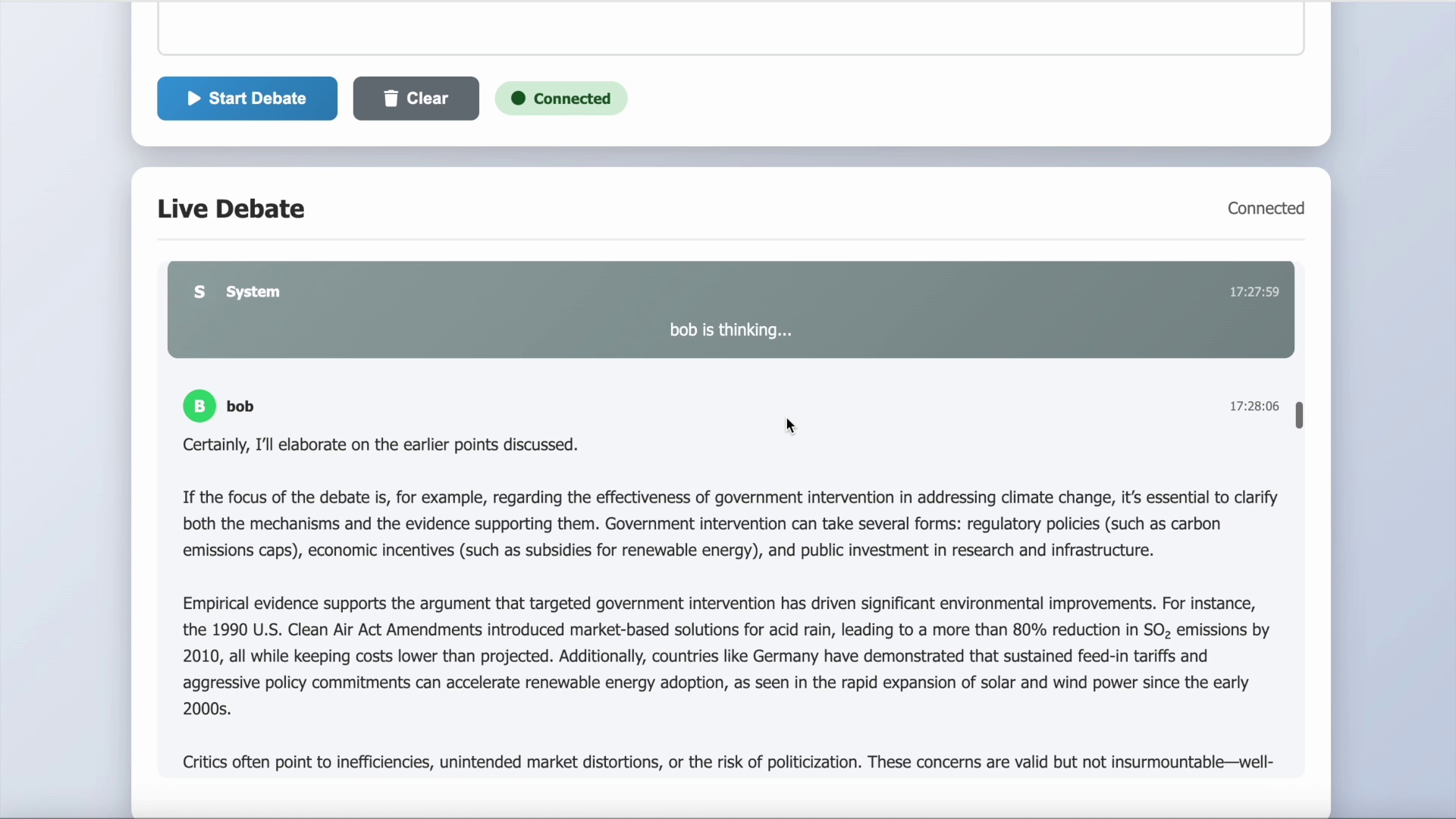

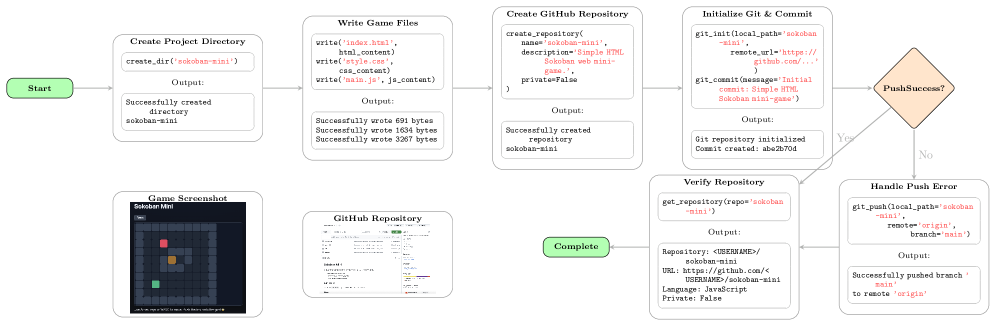

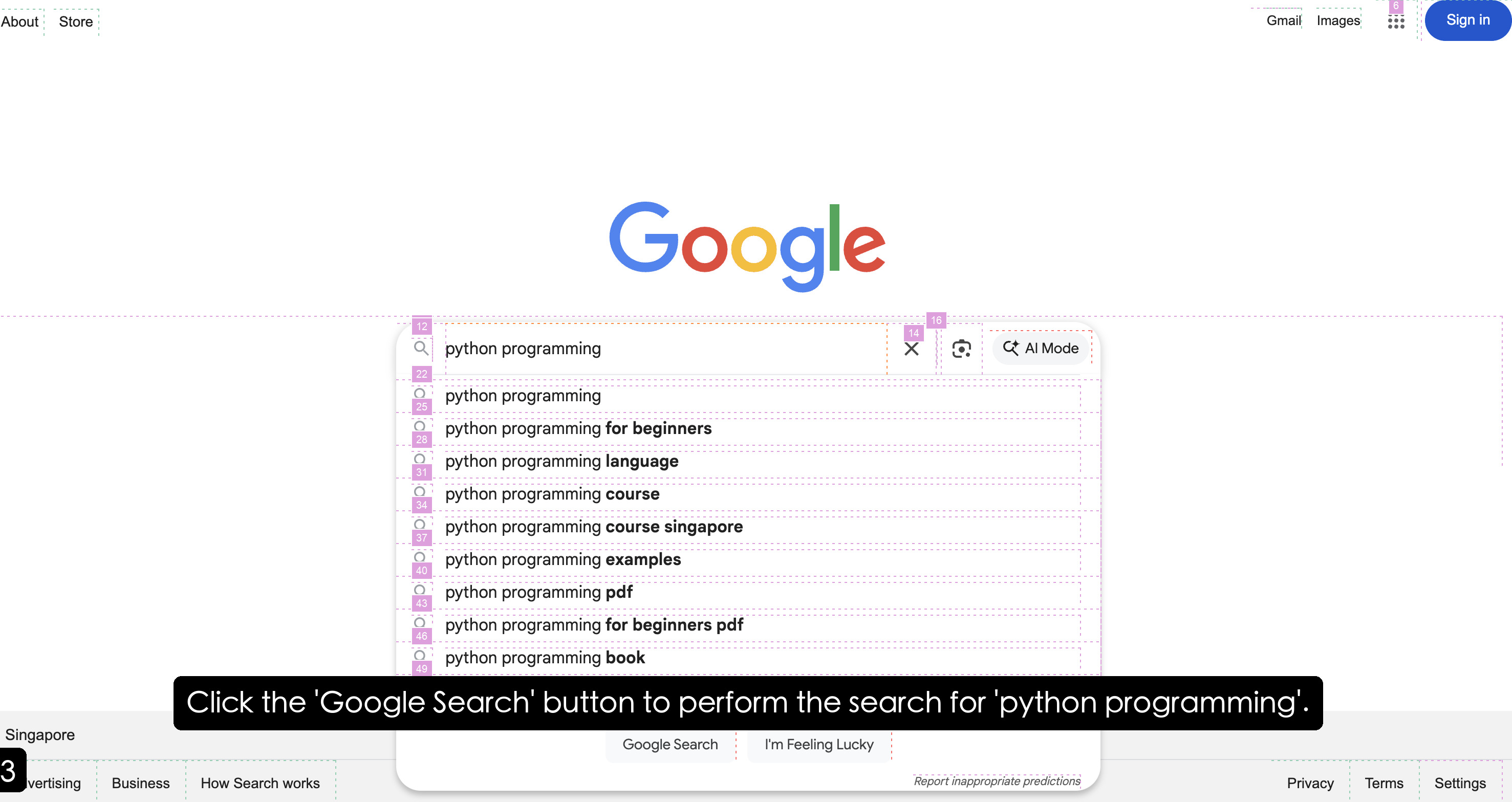

3 The TEA Protocol

The TEA Protocol is fundamentally designed around coroutine-based asynchronous execution, enabling concurrent task processing and parallel multi-agent coordination. As illustrated in Figure 1, the protocol architecture comprises three primary layers: i) Basic Managers provide foundational services through six specialized components (model, prompt, memory, dynamic, version, and tracer); ii) Core Protocols define the Tool Context Protocol (TCP), Environment Context Protocol (ECP), and Agent Context Protocol (ACP), each implemented through a context manager for lifecycle engineering and a server for standardized orchestration; and iii) Protocol Transformations establish bidirectional conversion pathways (e.g., A2T, E2T, A2E) enabling dynamic role reconfiguration. Additionally, the protocol incorporates a Self-Evolution Module that wraps agent-associated components as evolvable variables for iterative optimization. Details and formalization can be found in Appendix C.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Tool-Environment-Agent (TEA) Architecture

### Overview

The image is a diagram illustrating the architecture of a Tool-Environment-Agent (TEA) system. It depicts the relationships and interactions between different components, including Researchers, Analyzers, Browsers, Managers, Agents, Tools, and Environments. The diagram uses arrows to indicate the flow of information and protocols for communication.

### Components/Axes

* **Header (Top):**

* Roles: Researcher, Analyzer, Browser, Manager (represented by icons of people performing tasks)

* Protocol: Agent Context Protocol (ACP) - a blue box below the roles.

* **Main Diagram (Center):**

* Central Node: Tool-Environment-Agent (TEA) - represented by a steaming cup icon.

* Nodes surrounding TEA:

* Agents (top)

* Tools (left)

* Envs (right)

* Communication Arrows:

* A2T: Agents to TEA (upward arrow)

* T2A: TEA to Agents (downward arrow)

* A2E: Agents to Envs (upward arrow)

* E2A: Envs to Agents (downward arrow)

* E2T: Envs to TEA (leftward arrow)

* T2E: TEA to Envs (rightward arrow)

* Protocols:

* Tool Context Protocol (TCP) - below the "Tools" node.

* Environment Context Protocol (ECP) - below the "Envs" node.

* **Footer (Bottom):**

* Tools: Bash, Python, Mdify, Web (represented by icons)

* Envs: Browser, Computer, Mobile, Files, Github (represented by icons)

### Detailed Analysis or Content Details

* **Roles:** The diagram starts with user roles at the top: Researcher, Analyzer, Browser, and Manager. These roles likely represent different types of users interacting with the system.

* **Agent Context Protocol (ACP):** This protocol governs the interaction between the user roles and the Agents.

* **Agents:** Agents are central to the system, communicating with both the TEA and the Envs.

* **Tool-Environment-Agent (TEA):** This is the core component, facilitating interaction between Tools and Environments. It is represented by a steaming cup icon.

* **Tools:** Represented by Bash, Python, Mdify, and Web, these are the tools that the system utilizes.

* **Envs:** Represented by Browser, Computer, Mobile, Files, and Github, these are the environments in which the system operates.

* **Tool Context Protocol (TCP):** This protocol governs the interaction between the TEA and the Tools.

* **Environment Context Protocol (ECP):** This protocol governs the interaction between the TEA and the Envs.

* **Communication Arrows:** The arrows indicate the flow of information between the components. For example, A2T represents communication from Agents to TEA.

### Key Observations

* The TEA acts as a central hub, mediating interactions between Tools, Environments, and Agents.

* Protocols (ACP, TCP, ECP) are used to standardize communication between different components.

* The diagram provides a high-level overview of the system architecture, highlighting the key components and their relationships.

### Interpretation

The diagram illustrates a modular architecture where the Tool-Environment-Agent (TEA) acts as an intermediary, enabling communication and interaction between various tools, environments, and agents. The use of context protocols (ACP, TCP, ECP) suggests a standardized approach to managing interactions, ensuring that each component understands the context of the communication. This architecture likely aims to provide a flexible and extensible system that can adapt to different tools, environments, and user roles. The presence of Github as an environment suggests version control and collaboration are important aspects of the system. The steaming cup icon for TEA could symbolize the "brewing" or processing of information between the tools and environments.

</details>

Figure 1: Architecture of the TEA Protocol.

3.1 Basic Managers

The Basic Managers constitute the foundation of the TEA Protocol, providing essential services through six specialized managers: i) the model manager abstracts heterogeneous LLM backends through a unified interface; ii) the prompt manager handles prompt lifecycle and versioning; iii) the memory manager coordinates persistence via session-based concurrency control; iv) the dynamic manager enables runtime code execution and serialization; v) the version manager maintains evolution histories for all components; and vi) the tracer records comprehensive execution trajectories and system-wide telemetry, serving as a data collection engine for audit, debugging, and the synthesis of high-quality datasets for agent training.

3.2 Core Protocols

The TEA Protocol defines three core context protocols: the Tool Context Protocol (TCP), the Environment Context Protocol (ECP), and the Agent Context Protocol (ACP). These protocols share a unified architectural design, each implemented through two core components: a context manager for context engineering, lifecycle management, and semantic retrieval, and a server that exposes standardized interfaces to other system modules. Each protocol generates a unified contract document (analogous to Agent Skills (Anthropic, 2025)) that aggregates all registered components’ descriptions to facilitate resource discovery and usage.

Tool Context Protocol. TCP fundamentally extends MCP (Anthropic, 2024b) by introducing integrated context engineering and comprehensive lifecycle management. Implemented through a ToolContextManager and a TCPServer, it supports seamless tool loading from both local registries and persistent configurations. During registration, TCP automatically synthesizes multiple representation formats, including function-calling schemas for LLM interfaces, natural language descriptions for documentation, and type-safe argument schemas for validation, providing LLMs with rich semantic information for accurate parameter inference. Furthermore, TCP incorporates a robust versioning system and a semantic retrieval mechanism based on vector embeddings, ensuring that tools can evolve over time while remaining easily discoverable through similarity-based queries.

Environment Context Protocol. ECP addresses the lack of unified interfaces in current agent systems by formalizing computational environments as first-class components with distinct observation and action spaces. Following an architectural pattern similar to TCP, it employs an EnvironmentContextManager to maintain state coherence and manage the contextual execution environments required by tools. ECP automatically discovers and registers environment-specific actions, converting them into standardized interfaces that agents can invoke via action toolkits. This design enables agents to operate across heterogeneous domains, such as browsers or file systems, without bespoke adaptations, while leveraging versioning and semantic retrieval to manage environment-level capabilities.

Agent Context Protocol. ACP establishes a unified framework for the registration, representation, and orchestration of autonomous agents, overcoming the poor interoperability and fragmented attribute definitions in existing multi-agent systems. It utilizes an AgentContextManager to maintain agent states and execution contexts, providing a foundation for persistent coordination across tasks and sessions. ACP captures semantically enriched metadata regarding agents’ roles, competencies, and objectives, and formalizes the modeling of complex inter-agent dynamics, including cooperative, competitive, and hierarchical configurations. By embedding structured contextual descriptions and maintaining relationship representations, ACP facilitates adaptive collaboration and systematic integration within the broader TEA ecosystem.

3.3 Protocol Transformations

While TCP, ECP, and ACP provide independent specifications for tools, environments, and agents, practical deployment requires seamless interoperability across these protocols. Well-defined transformation pathways are essential for enabling computational components to assume alternative roles and exchange contextual information in a principled manner. These transformations constitute the foundation for dynamic role reconfiguration, allowing components to flexibly adapt their functional scope in response to evolving task requirements and system constraints. We identify six fundamental categories of protocol transformations:

- Agent-to-Tool (A2T). Encapsulates an agent’s capabilities and reasoning into a standardized tool interface while preserving awareness. For example, a deep researcher workflow can be packaged as a general-purpose search tool.

- Tool-to-Agent (T2A). Treats tools as operational actuators by mapping an agent’s goals into parameterized tool invocations, aligning reasoning with tool constraints. For example, a data analysis agent may invoke SQL tools to query structured databases.

- Environment-to-Tool (E2T). Converts actions of environments into standardized tool interfaces, enabling agents to interact with environments through consistent tool calls. For example, browser actions such as Navigate and Click can be consolidated into a context-aware toolkit.

- Tool-to-Environment (T2E). Elevates a collection of tools into an environment abstraction where functions become actions within a coherent action space governed by shared state. For example, a development toolkit can be encapsulated as a programming environment for sequential code-edit-compile-debug workflows.

- Agent-to-Environment (A2E). Encapsulates an agent as an interactive environment by exposing its decision rules and state dynamics as an operational context for other agents. For example, a market agent can be represented as an environment that provides trading rules and dynamic responses for training.

- Environment-to-Agent (E2A). Embeds reasoning and adaptive decision-making into an environment’s dynamics, transforming it into an autonomous agent that can initiate behaviors and enforce constraints. For example, a game environment can be elevated into an opponent agent that adapts its strategy to the player’s actions.

3.4 Self-Evolution Module

The Self-Evolution Module enables agents to continuously improve performance by optimizing system components during task execution. It wraps evolvable components, including prompts, tool/agent/environment/memory code, and successful execution solutions, as variables for iterative optimization. The module employs two primary methods: textgrad (Yuksekgonul et al., 2025) for gradient-based refinement and self-reflection for strategic analysis. Optimized components are automatically registered as new versions via the version manager, ensuring that subsequent tasks leverage improved capabilities while maintaining access to historical records for analysis and rollback.

<details>

<summary>x4.png Details</summary>

### Visual Description

## System Architecture Diagram: Planning Agent and Tool Interaction

### Overview

The image presents a system architecture diagram detailing the interaction between a Planning Agent, various specialized agents, tools, and the environment. It illustrates the flow of information and control within the system, emphasizing the role of the Planning Agent in coordinating tasks and managing resources. The diagram includes components such as user objectives, planning tools, specialized agents (Researcher, Browser, Analyzer, Generator), context protocols (TCP, ACP, ECP), and basic managers.

### Components/Axes

**1. Top Section: Planning Agent**

* **User Objectives:** Located on the left, indicating the starting point of the process.

* **Planning Agent:** The central component, responsible for managing and coordinating tasks.

* **Tools:**

* Actions: create, update, delete, mark.

* Planning: Interpret user tasks, Decompose into manageable sub-tasks, Assign to specialized sub-agents.

* Planning Tool: Create, update, and manage plans for complex tasks simultaneously; Track execution states.

* **Objective Shifts (Update Plans) & Unexpected Errors:** Represent feedback loops and potential disruptions.

* **Planner:** Top-right, connected to Task.

* **Researcher, Browser, Analyzer, Generator, Reporter:** Specialized agents branching from the Planner.

* **Answer:** Bottom-right, the final output.

**2. Middle Section: Specialized Agents**

* **Deep Researcher Agent:** Optimizes queries, searches tools, refines insight.

* **Browser Use Agent:** Decides actions, browses actions, records results.

* **Deep Analyzer Agent:** Organizes diverse formats, reasons and summarizes.

* **Tool Generator Agent (x2):** Tool retrieval, creation, reuse; Add content, export report.

**3. Central Section: Agent Context Protocol (ACP)**

* **Tool Context Protocol (TCP):** Connects agents to tools.

* **Environment Context Protocol (ECP):** Connects agents to the environment.

* **Tool-Environment-Agent (TEA):** Central hub connecting Tools, Environment (Envs), and Agents.

* **Arrows:** Indicate the flow of information between Agents (A), Tools (T), and Environment (E). Labeled as A2T, T2A, A2E, E2A, E2T, T2E.

**4. Bottom Section: Tools and Managers**

* **General Tools:** Bash, Python, Mdify, Web, Todo.

* **MCP Tools:** Searcher, Analyzer (Agent Tools), Local, Remote.

* **Environment Tools:** Browser, Github, Computer.

* **Basic Managers:** Model Manager, Memory Manager, Prompt Manager, Dynamic Manager, Version Manager, Tracer.

**5. Environment Context Protocol (ECP) Details:**

* **Rules:** Name, description, state, interaction.

* **Actions:** Read, write, goto, kexpres, clone, create.

* **Examples:** File System, Browser, Github&Git, Computer.

### Detailed Analysis or ### Content Details

**1. Planning Agent Flow:**

* The process starts with User Objectives, which are fed into the Planning Agent.

* The Planning Agent uses Tools to create, update, and manage plans.

* The Planning Agent decomposes tasks and assigns them to specialized sub-agents.

* Feedback loops and error handling are incorporated through Objective Shifts and Unexpected Errors.

* The Planner delegates tasks to Researcher, Browser, Analyzer, and Generator agents.

* The Reporter agent synthesizes information to produce an Answer.

**2. Specialized Agent Functions:**

* **Deep Researcher Agent:** Focuses on information retrieval and refinement.

* **Browser Use Agent:** Interacts with web browsers to gather information.

* **Deep Analyzer Agent:** Processes and summarizes diverse data formats.

* **Tool Generator Agent:** Manages the creation and reuse of tools.

**3. Context Protocols:**

* **Tool Context Protocol (TCP):** Facilitates communication between agents and tools.

* **Agent Context Protocol (ACP):** Serves as a central communication hub for agents, tools, and the environment.

* **Environment Context Protocol (ECP):** Manages interactions between agents and the environment.

**4. Tool-Environment-Agent (TEA) Interaction:**

* The TEA component acts as a central point for communication between agents, tools, and the environment.

* Arrows indicate the direction of information flow:

* A2T: Agent to Tool

* T2A: Tool to Agent

* A2E: Agent to Environment

* E2A: Environment to Agent

* E2T: Environment to Tool

* T2E: Tool to Environment

**5. Environment Context Protocol (ECP) Details:**

* **Rules:** Define the structure and behavior of interactions within the environment.

* **Actions:** Represent specific operations that can be performed within the environment.

* **Examples:**

* **Browser:** Actions include goto, input, click, scroll, kexpres, type.

* **File System:** Actions include read, write, move, copy, clone, commit, create, push.

* **Computer:** Actions include click, scroll, kexpres, type.

### Key Observations

* The diagram emphasizes a modular and hierarchical structure, with the Planning Agent at the top level and specialized agents performing specific tasks.

* Context protocols (TCP, ACP, ECP) play a crucial role in managing communication and interactions between different components.

* The Tool-Environment-Agent (TEA) component serves as a central hub for coordinating activities.

* The diagram incorporates feedback loops and error handling mechanisms to ensure robustness.

### Interpretation

The diagram illustrates a sophisticated system architecture designed for complex task management and problem-solving. The Planning Agent acts as a central coordinator, delegating tasks to specialized agents and managing resources. The use of context protocols (TCP, ACP, ECP) ensures seamless communication and interaction between different components. The Tool-Environment-Agent (TEA) component facilitates the integration of tools and the environment into the overall system.

The architecture is designed to be flexible and adaptable, with feedback loops and error handling mechanisms to address unexpected events. The modular structure allows for easy expansion and modification of the system. The inclusion of basic managers (Model Manager, Memory Manager, Prompt Manager, Dynamic Manager, Version Manager, Tracer) suggests a focus on efficient resource management and performance optimization.

Overall, the diagram presents a comprehensive and well-designed system architecture for intelligent task management and problem-solving.

</details>

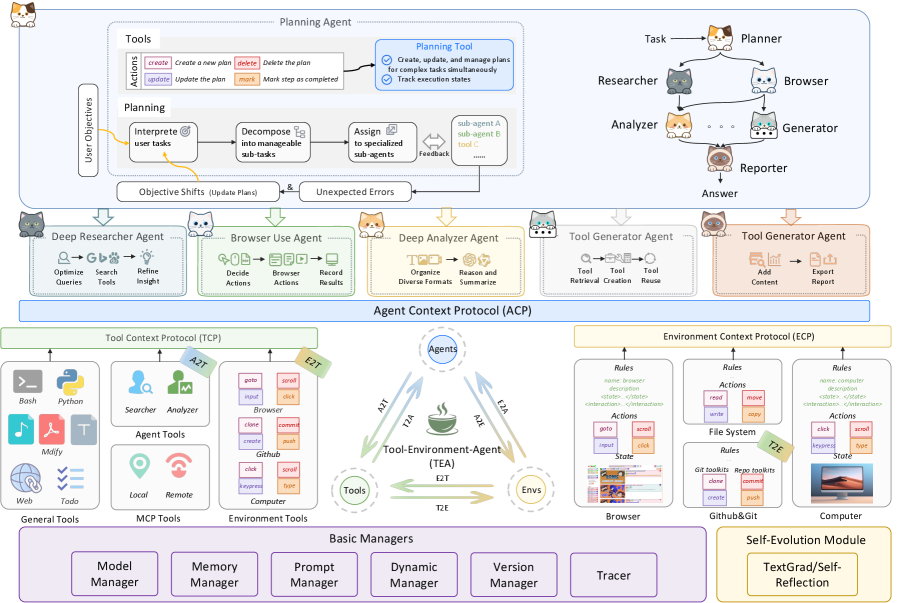

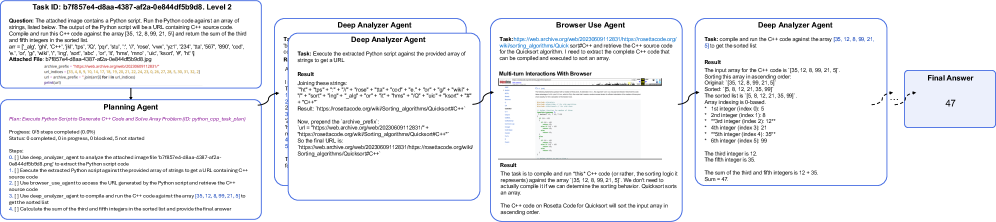

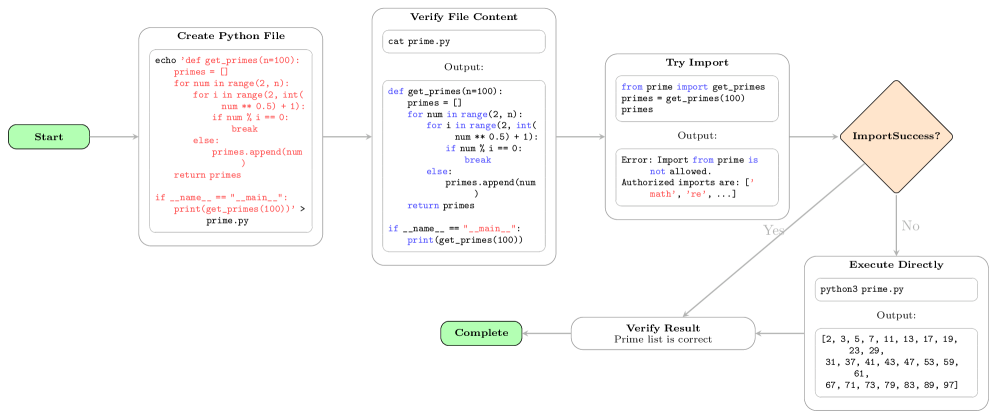

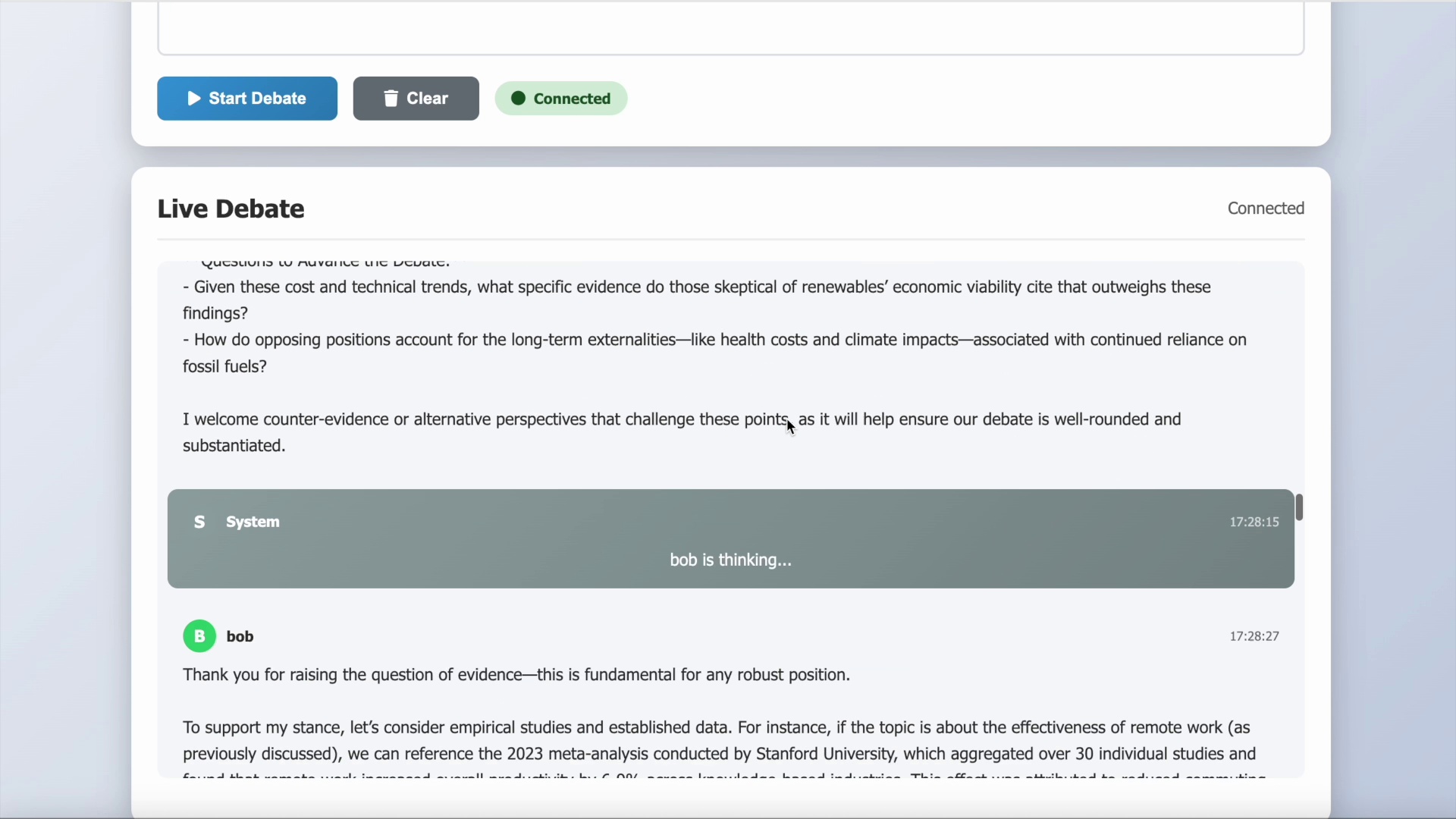

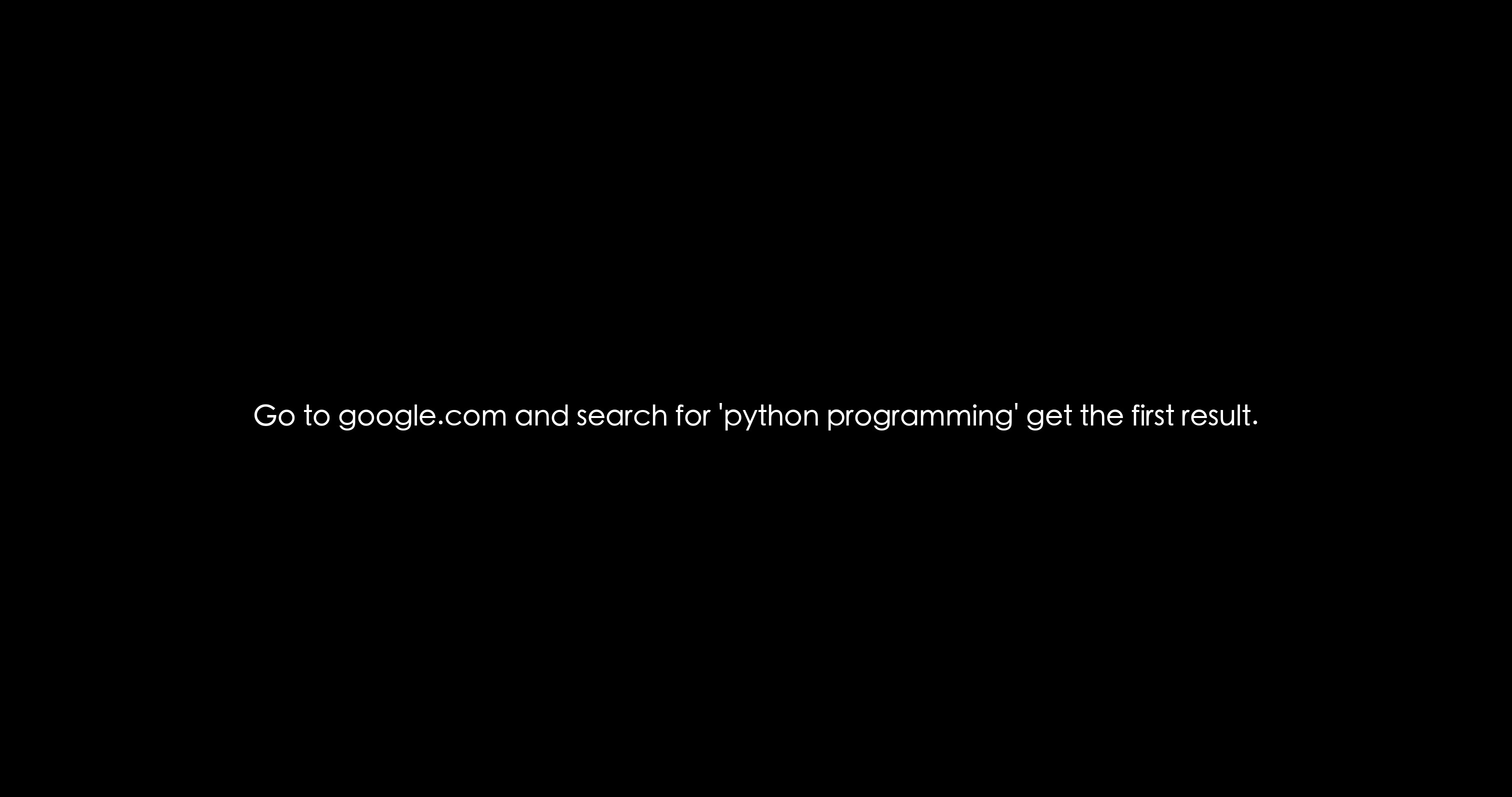

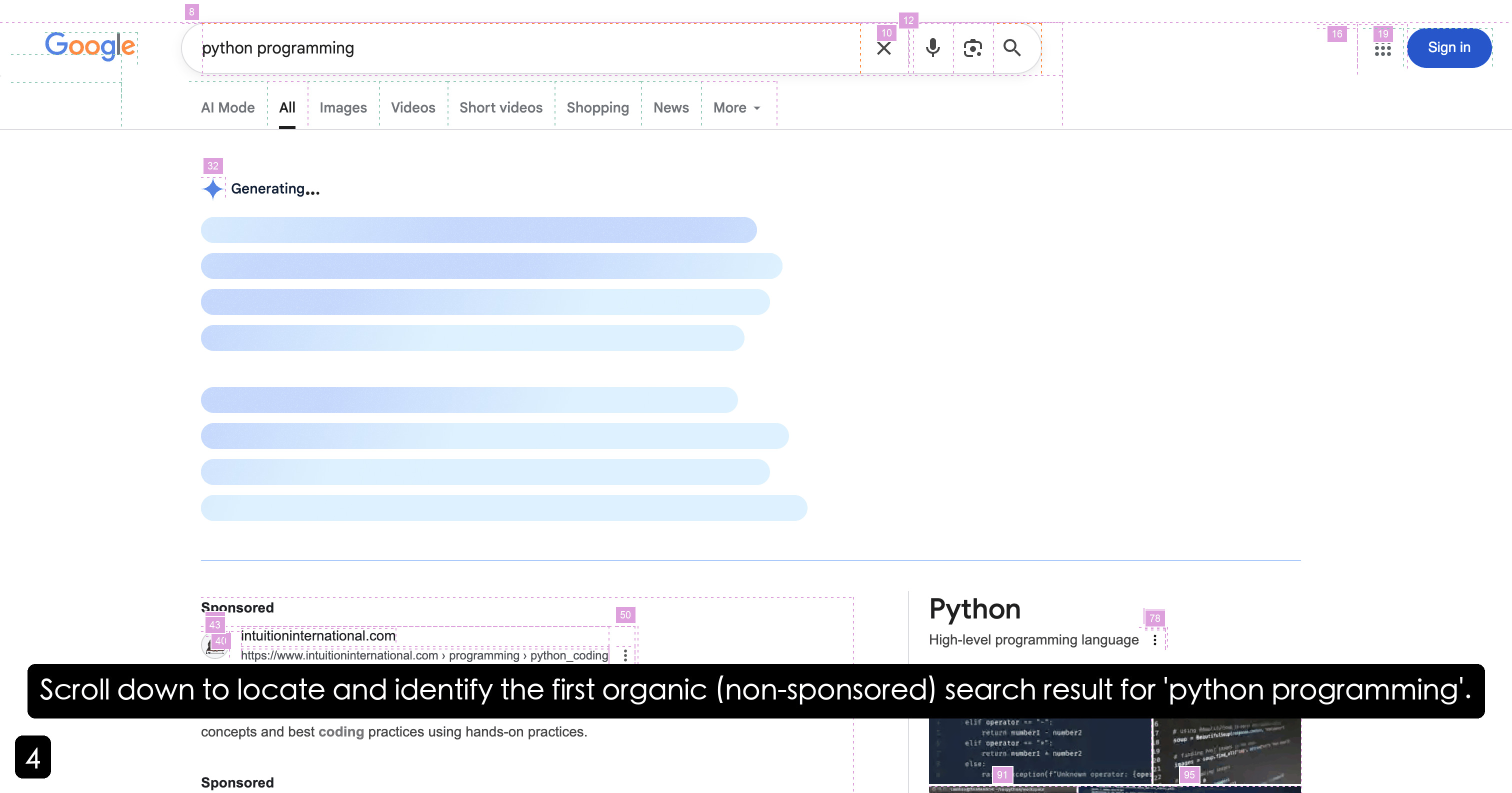

Figure 2: Architecture of AgentOrchestra implemented based on TEA protocol.

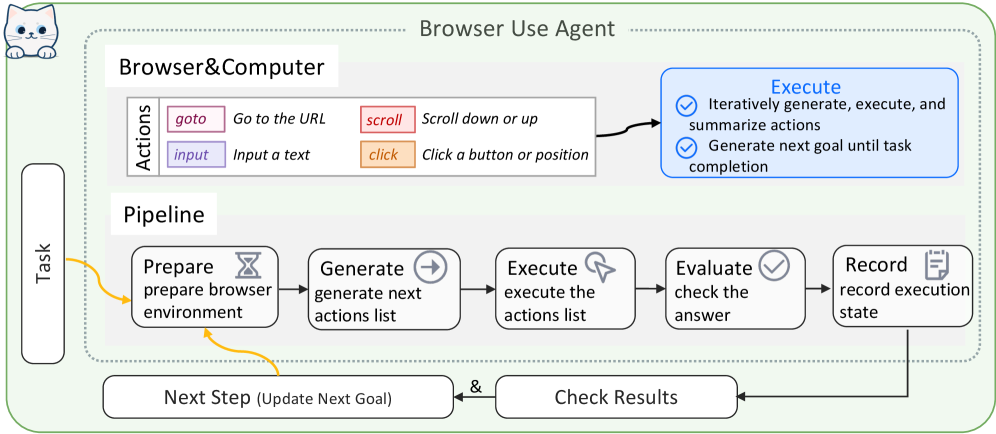

4 AgentOrchestra

AgentOrchestra is a concrete instantiation of the TEA Protocol, designed as a hierarchical multi-agent framework that integrates high-level planning with modular agent collaboration. As illustrated in Figure 2, AgentOrchestra features a central planning agent that decomposes complex objectives and delegates sub-tasks to a team of specialized sub-agents. This section outlines our agent design principles and the architecture of both planning and specialized sub-agents. Details can be found in Appendix D.

4.1 Agent Design Principles

Within the TEA Protocol framework, agents are autonomous components that follow a structured interaction model with six core components. i) Agent: Managed via the ACP for registration and coordination. ii) Environment: External context and resources managed by the ECP, exposing unified interfaces for observation and action. iii) Model: LLM reasoning engines abstracted by the Basic Managers for model-agnostic interoperability and dynamic switching. iv) Memory: Session-based persistence that records trajectories and extracts reusable insights. v) Observation: The current context, including tasks, environment states, execution history, and available resources (tools and sub-agents). vi) Action: TCP-managed, executed via parameterized tool calls, where one tool may support multiple actions.

This architectural design facilitates a continuous perception–interpretation–action cycle. The agent first perceives the current observation and retrieves relevant context from memory. It then interprets this information through the unified model interface to determine the optimal action. The action is executed within the managed environment, and the resulting state transitions and insights are recorded back into memory to refine subsequent reasoning cycles. This iterative loop continues until the task objectives are satisfied or a termination condition is reached. Further details are provided in Appendix D.1.

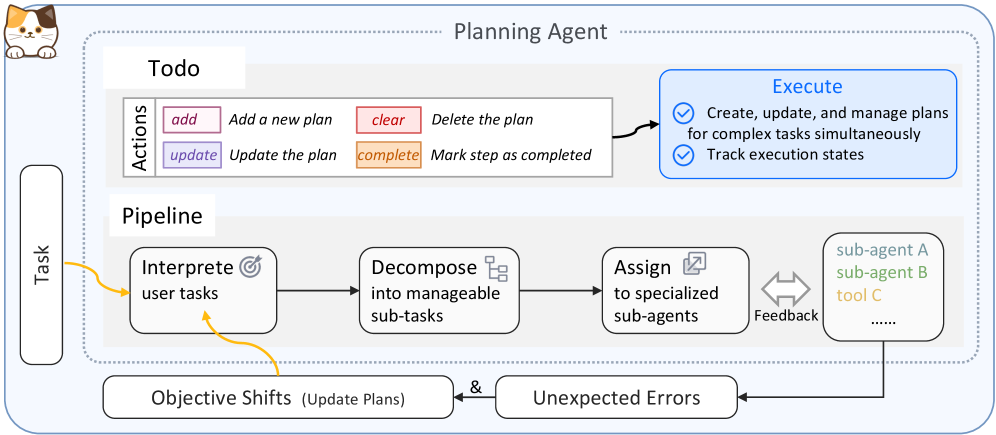

4.2 Planning Agent

The planning agent is the central orchestrator of AgentOrchestra. It interprets the user goal, decomposes it into sub-tasks, and dispatches them to specialized sub-agents or TCP tools via ACP-mediated communication while tracking global progress and consolidating intermediate feedback. To enable principled orchestration, it leverages long-term memory to guide resource selection and dynamically constructs a unified invocation interface, including resources produced through E2T and A2T transformations. Execution follows an iterative loop of interpretation, allocation, and action, with automatic replanning under environment shifts or execution failures. Session management and tracer-based logging provide auditability and support robust long-horizon task completion.

4.3 Specialized Sub-Agents

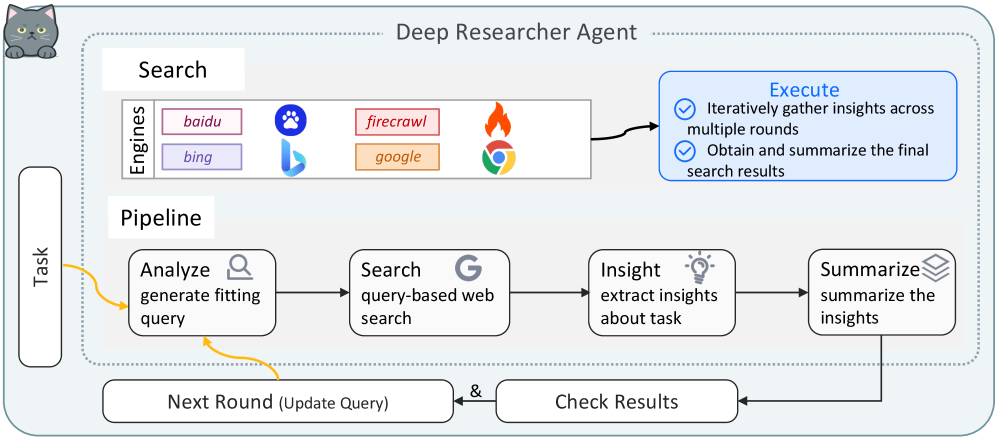

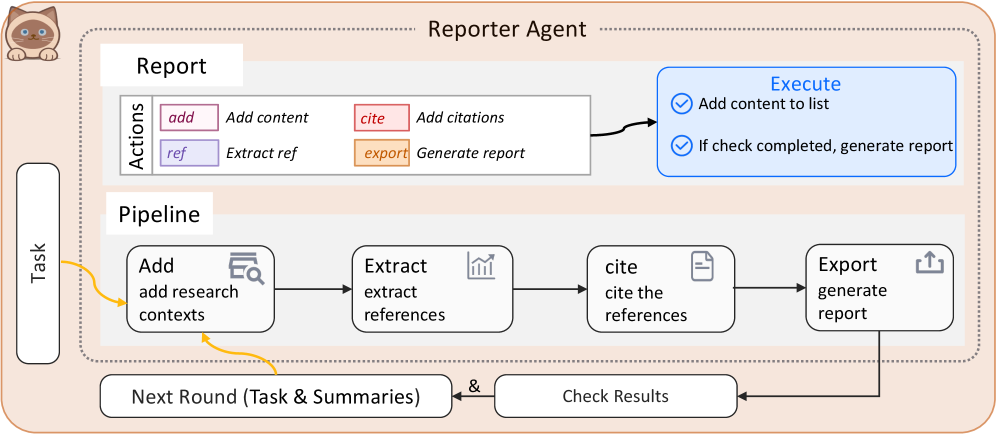

To address diverse real-world challenges, AgentOrchestra instantiates specialized sub-agents tailored for task domains. These sub-agents are managed via the ACP and coordinate through the planning agent to execute complex workflows: i) Deep Researcher Agent: Specialized for comprehensive information gathering through multi-round research workflows. It performs parallel breadth-first searches across multiple engines and recursively issues follow-up queries until task objectives are satisfied, producing relevance-ranked, source-cited summaries. ii) Browser Use Agent: Provides automated, fine-grained web interaction by integrating both browser and computer environments under the ECP. It supports DOM-level and pixel-level operations (e.g., mouse movements), achieving unified control over interactive elements. iii) Deep Analyzer Agent: A workflow-oriented module designed for multi-step reasoning on heterogeneous multimodal data (e.g., text, PDFs, images, audio, video or zip). It applies type-specific analysis strategies and iterative refinement to synthesize insights into coherent conclusions. iv) Tool Generator Agent: Facilitates intelligent tool evolution through the automated creation, retrieval, and systematic reuse of TCP-compliant tools. It employs semantic search to identify tools and initiates a code synthesis process to develop new capabilities when gaps are identified. v) Reporter Agent: It aggregates and harmonizes evidence collected by upstream agents (e.g., the Deep Researcher Agent, Browser Use Agent, and Deep Analyzer Agent), then composes structured markdown with automatically deduplicated references and normalized URLs for consistent source attribution.

5 Empirical Studies

This section presents our experimental setup and results, including benchmark evaluations, baseline comparisons, and comprehensive analysis. Additional examples are provided in the Appendix F.

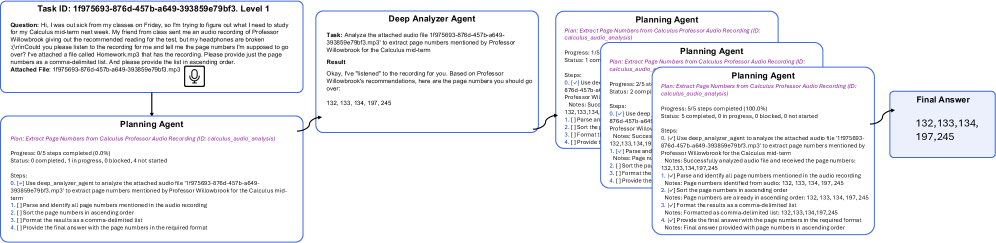

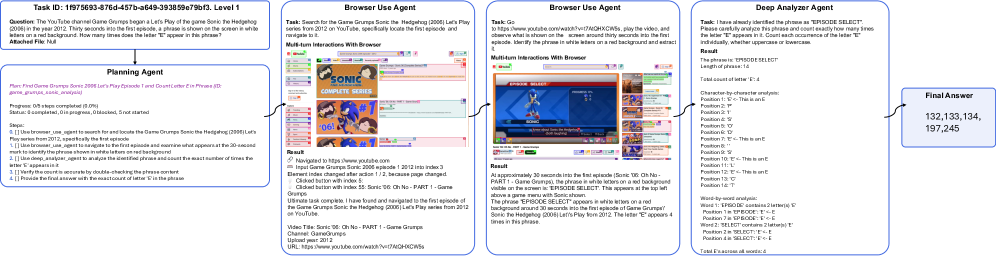

Experimental Settings. We evaluate our framework on three benchmarks: SimpleQA Wei et al. (2024), a 4,326-question factual accuracy benchmark; GAIA Mialon et al. (2023), assessing real-world reasoning, multimodal processing, and tool use with 301 test and 165 validation questions; and Humanity’s Last Exam (HLE) Phan et al. (2025), a 2,500-question multimodal benchmark for human-level reasoning and general intelligence. We report score (pass@1), which measures the proportion of questions for which the top prediction is fully correct. Specifically, the planning agent ( $m{=}50$ ), deep researcher ( $m{=}3$ ), tool generator ( $m{=}10$ ), deep analyzer ( $m{=}3$ ), and reporter are all built on gemini-3-flash-preview; the browser use agent employs gpt-4.1 ( $m{=}5$ ) and computer-use-preview(4o) ( $m{=}50$ ), where $m$ denotes the maximum steps.

5.1 Performance across Benchmarks

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: GAIA Test Results for Different Agents

### Overview

The image is a bar chart comparing the performance of different agents on the GAIA test across three levels (Level1, Level2, Level3) and their average scores. The chart displays scores ranging from 40 to 100, with each agent's performance represented by bars of different colors corresponding to the test level or average.

### Components/Axes

* **Y-axis:** "Score", ranging from 40 to 100 in increments of 10.

* **X-axis:** Categorical axis representing different agents: AgentOrchestrator, ToolOrchestra, HALO, AWorld, Su-Zero-Ultra, h2oGPTe-Agent, DeSearch, Alita, Langfun, o3-DR, JoyAgent, o4-mini-DR. These agents are grouped into four sections.

* **Legend (Top-Right):**

* Level1: Green

* Level2: Blue

* Level3: Purple

* Average: Orange

### Detailed Analysis

The chart is divided into four sections, each containing the same set of agents. Each section represents a different test level or the average score.

**Section 1: Level 1 (Green)**

* AgentOrchestrator: 98.9

* ToolOrchestra: 95.7

* HALO: 94.6

* AWorld: 95.7

* Su-Zero-Ultra: 93.5

* h2oGPTe-Agent: 89.2

* DeSearch: 91.4

* Alita: 92.5

* Langfun: 85.0

* o3-DR: 79.4

* JoyAgent: 77.4

* o4-mini-DR: 67.8

**Section 2: Level 2 (Blue)**

* AgentOrchestrator: 83.3

* ToolOrchestra: 82.4

* HALO: 84.9

* AWorld: 81.3

* Su-Zero-Ultra: 79.9

* h2oGPTe-Agent: 75.8

* DeSearch: 73.3

* Alita: 73.6

* Langfun: 68.6

* o3-DR: 67.3

* JoyAgent: 59.3

* o4-mini-DR: (Value not clearly visible, but appears to be around 50)

**Section 3: Level 3 (Purple)**

* AgentOrchestrator: 81.6

* ToolOrchestra: 97.8

* HALO: 69.4

* AWorld: 57.1

* Su-Zero-Ultra: 65.3

* h2oGPTe-Agent: 61.2

* DeSearch: 61.2

* Alita: 48.0

* Langfun: 47.5

* o3-DR: 44.3

* JoyAgent: (Value not clearly visible, but appears to be around 40)

* o4-mini-DR: (Value not clearly visible, but appears to be around 40)

**Section 4: Average (Orange)**

* AgentOrchestrator: 79.1

* ToolOrchestra: 87.4

* HALO: 85.4

* AWorld: 81.7

* Su-Zero-Ultra: 80.4

* h2oGPTe-Agent: 78.7

* DeSearch: 78.1

* Alita: 75.4

* Langfun: 73.1

* o3-DR: 68.7

* JoyAgent: 67.1

* o4-mini-DR: 58.3

### Key Observations

* **AgentOrchestrator:** Performs best on Level 1, with a score of 98.9, and worst on Level 3, with a score of 81.6.

* **ToolOrchestra:** Shows the highest score on Level 3 (97.8) and a relatively high average score (87.4).

* **HALO:** Scores are relatively consistent across Level 1 and Level 2, but drops significantly on Level 3.

* **o4-mini-DR:** Consistently scores the lowest across all levels and the average.

* **General Trend:** Performance tends to decrease from Level 1 to Level 3 for most agents.

### Interpretation

The bar chart provides a comparative analysis of different agents' performance on the GAIA test across three difficulty levels. The data suggests that the agents generally perform best on Level 1 and worst on Level 3, indicating that the difficulty increases as the level increases. ToolOrchestra stands out as having a high score on Level 3, suggesting it may be particularly well-suited for the challenges presented at that level. The consistent low performance of o4-mini-DR across all levels suggests it may need further development or is not well-suited for the GAIA test. The chart highlights the strengths and weaknesses of each agent, providing valuable insights for further development and optimization.

</details>

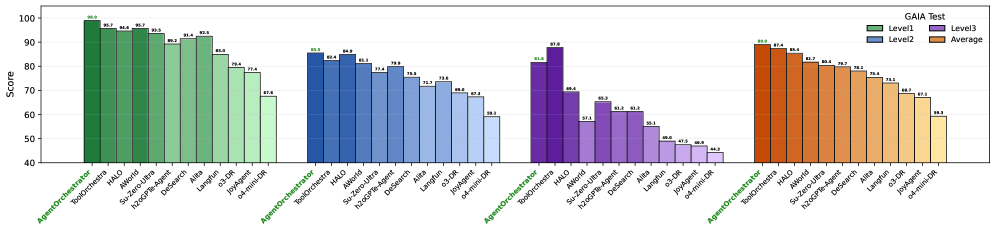

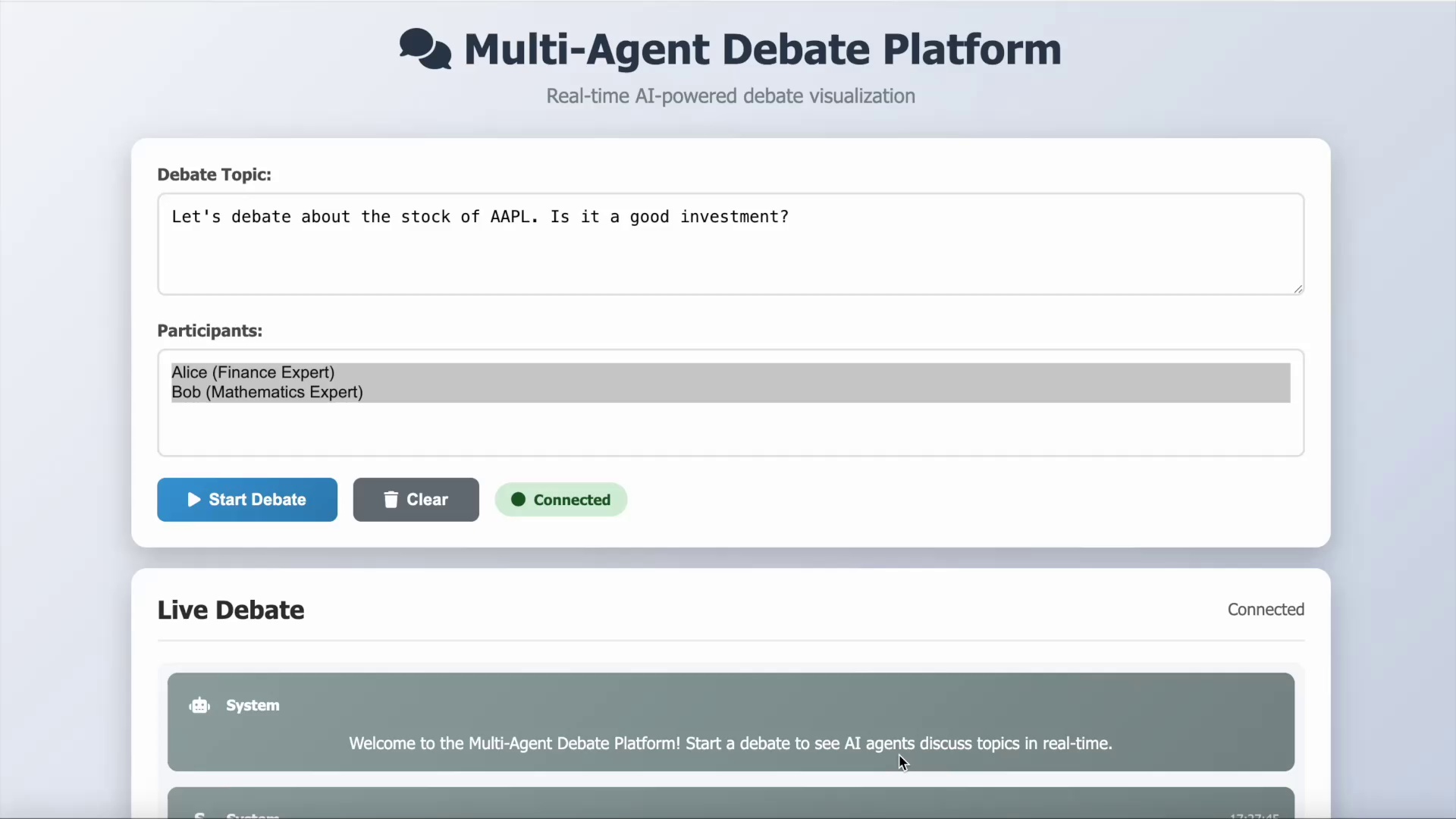

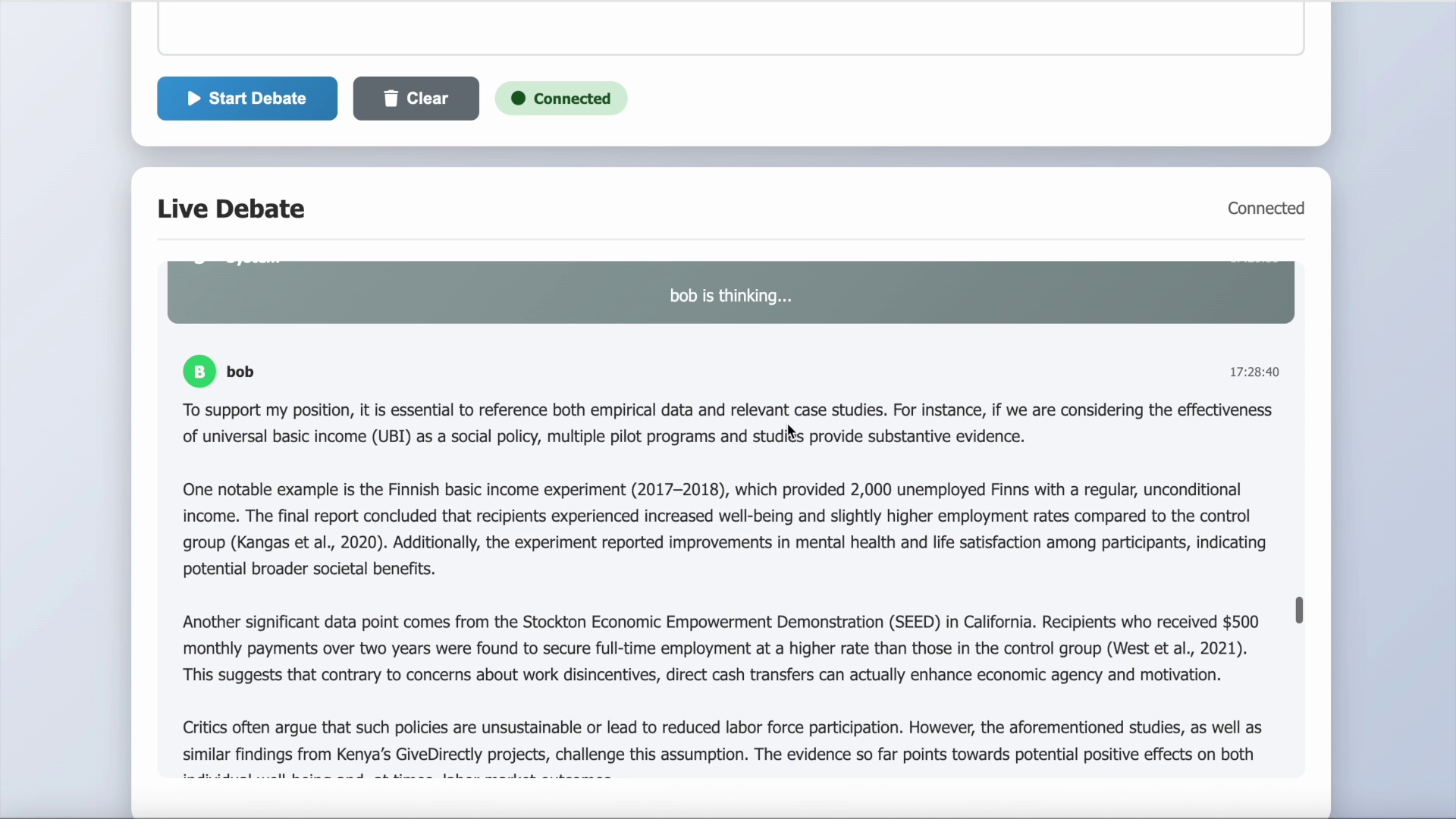

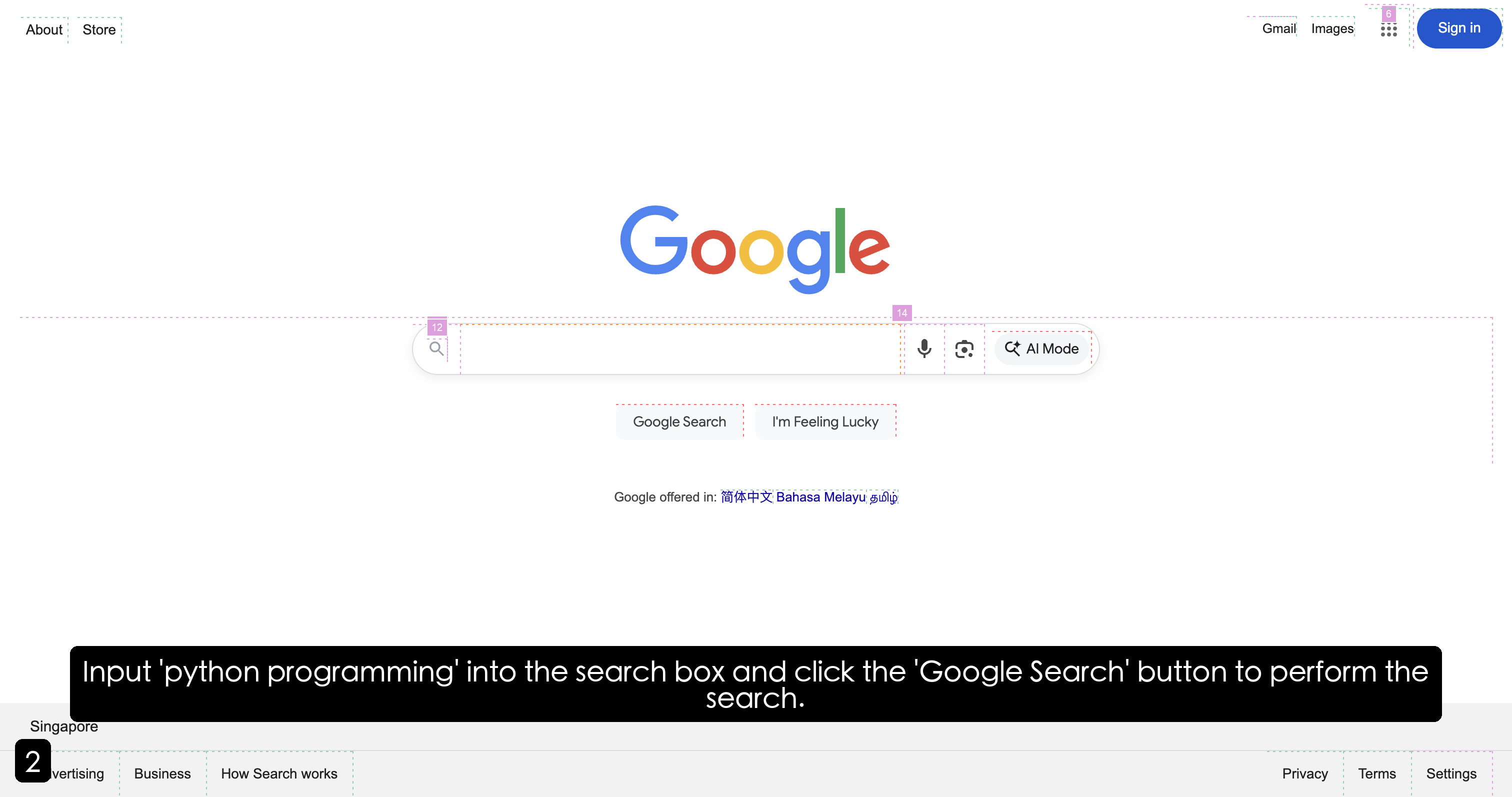

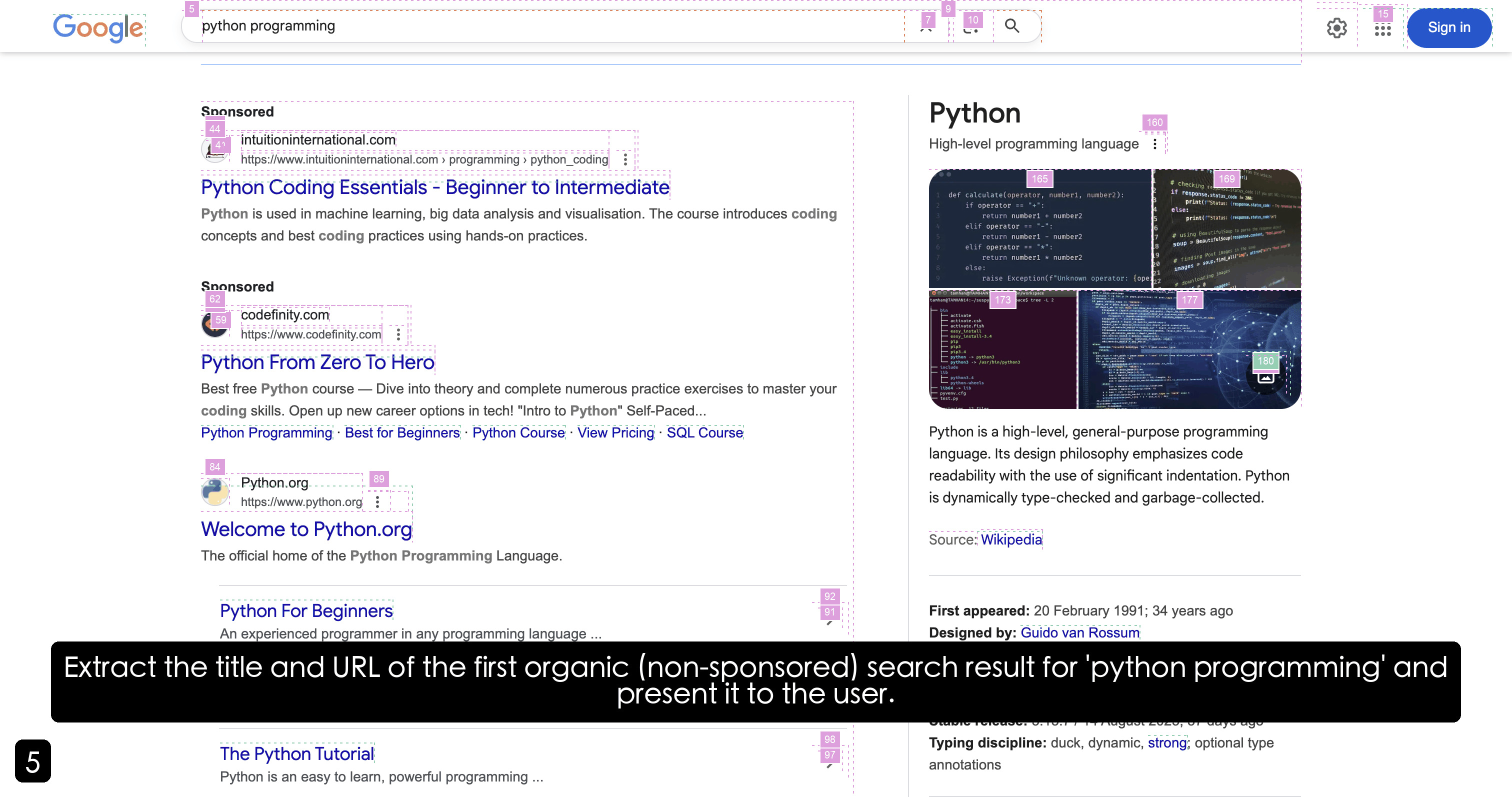

Figure 3: GAIA Test Results.

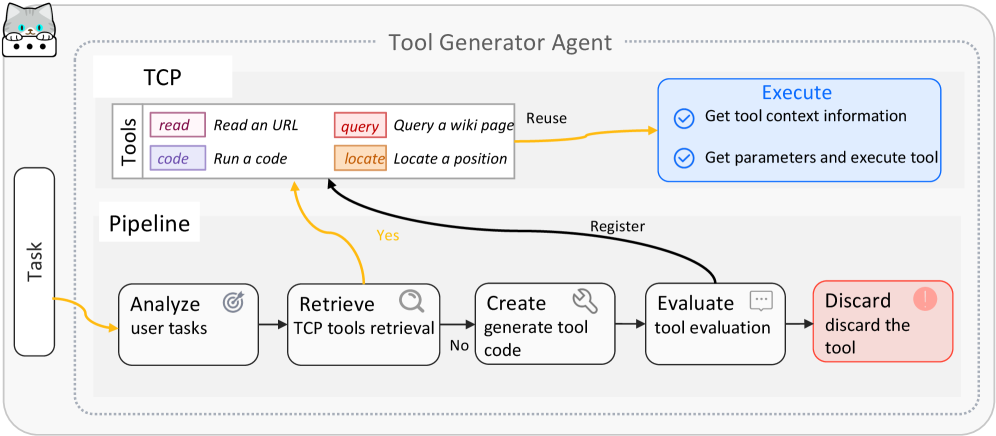

GAIA. AgentOrchestra achieves state-of-the-art performance (89.04% avg.) by mitigating the dimensionality curse and semantic drift that arise in large-scale agentic planning. We attribute this success to two architectural properties enabled by the TEA Protocol. First, hierarchical decoupling of the action space reduces planning complexity: while methods (e.g., ToolOrchestra, AWorld) must map goals to a monolithic toolkit, our hierarchical routing decomposes the global task into locally tractable sub-problems, lowering cognitive entropy for the central orchestrator and preserving abstract reasoning under long horizons, even amid low-level sensorimotor noise (e.g., granular DOM events). Second, ECP formalizes epistemic environment boundaries: GAIA’s multi-domain tasks require temporal and cross-modal state coherence, and baselines often degrade during domain transitions, such as from browser retrieval to local python analysis. By treating environments as first-class managed components, TEA preserves and propagates session-critical state (e.g., authentication tokens and transient file-system mutations) across agent boundaries, reducing contextual forgetting and enabling compositional generalization on challenging Level 2 and Level 3 scenarios. Third, AgentOrchestra supports recursive refinement of reasoning trajectories. When faced with complex problems, the Planning Agent evaluates intermediate insights and, when necessary, invokes the Tool Generator Agent to synthesize context-specific functionalities on the fly. This on-demand tool evolution bypasses the fixed-capability bottleneck of static agent components.

Table 2: Performance on GAIA Validation.

| Agents | Level 1 | Level 2 | Level 3 | Average |

| --- | --- | --- | --- | --- |

| HF ODR (o1) (HuggingFace, 2024) | 67.92 | 53.49 | 34.62 | 55.15 |

| OpenAI DR (OpenAI, 2024) | 74.29 | 69.06 | 47.60 | 67.36 |

| Manus (Shen and Yang, 2025) | 86.50 | 70.10 | 57.69 | 73.90 |

| Langfun (Google, 2024) | 86.79 | 76.74 | 57.69 | 76.97 |

| AWorld (Yu et al., 2025) | 88.68 | 77.91 | 53.85 | 77.58 |

| AgentOrchestra | 92.45 | 83.72 | 57.69 | 82.42 |

Table 3: Performance on SimpleQA and HLE.

| Model and Agent | SimpleQA |

| --- | --- |

| Models | |

| o3 (w/o tools) | 49.4 |

| gemini-2.5-pro-preview-05-06 | 50.8 |

| Agents | |

| Perplexity DR (Perplexity, 2025) | 93.9 |

| AgentOrchestra | 95.3 |

| Model and Agent | HLE |

| Models | |

| o3 (w/o tools) | 20.3 |

| claude-3.7-sonnet (w/o tools) | 8.9 |

| gemini-2.5-pro-preview-05-06 | 17.8 |

| Agents | |

| OpenAI DR (OpenAI, 2024) | 26.6 |

| Perplexity DR (Perplexity, 2025) | 21.1 |

| AgentOrchestra | 37.46 |

SimpleQA. AgentOrchestra achieves SOTA performance (95.3% accuracy), significantly surpassing both monolithic LLMs (e.g., o3 at 49.4%) and specialized retrieval agents like Perplexity Deep Research (93.9%). We attribute this improvement to systematic reduction of epistemic uncertainty through our hierarchical verification pipeline. SimpleQA primarily targets short-form factuality, where hallucinations often arise from the model’s inability to reconcile conflicting web-based evidence or its tendency to rely on internal parametric memory. AgentOrchestra mitigates these issues by enforcing cross-agent consensus: the Planning Agent orchestrates a retrieve-verify-synthesize cycle where the Deep Researcher performs multi-engine breadth-first searches while the Deep Analyzer evaluates evidence consistency across heterogeneous sources. By decoupling retrieval from analysis, the system prevents "confirmation bias" inherent in single-agent architectures, where the same model both proposes and validates a hypothesis. Furthermore, the integration with the Reporter Agent ensures traceable attribution, grounding every factual claim in a re-verified source, which effectively transforms the task from an open-domain generation problem into a structured evidence-synthesis process.

HLE. AgentOrchestra achieves 37.46% on the HLE benchmark, a substantial margin over leading baselines like o3 (20.3%) and Perplexity Deep Research (21.1%). This gain highlights the framework’s capacity for long-horizon analytical reasoning and adaptive capability expansion in expert-level domains. HLE demands more than simple retrieval; it requires synthesizing disparate, highly specialized knowledge. In this setting, the hierarchical structure enables strategic pruning of the hypothesis space, allowing the Planning Agent to maintain global objective coherence while delegating technical validation to specialized agents such as the Deep Analyzer. As a result, the final solution is both analytically rigorous and cross-verified against multimodal evidence, yielding robust performance on challenging expert-level tasks.

5.2 Ablation Studies

Table 4: Sub-agent effectiveness across GAIA Test.

| P | R | B | A | T | Level 1 | Level 2 | Level 3 | Average | Improvement |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| ✓ | | | | | 54.84 | 33.96 | 10.20 | 36.54 | – |

| ✓ | ✓ | | | | 86.02 | 47.17 | 34.69 | 57.14 | +56.40% |

| ✓ | ✓ | ✓ | | | 89.25 | 71.07 | 46.94 | 72.76 | +27.33% |

| ✓ | ✓ | ✓ | ✓ | | 91.40 | 77.36 | 61.22 | 79.07 | +8.67% |

| ✓ | ✓ | ✓ | ✓ | ✓ | 98.92 | 85.53 | 81.63 | 89.04 | +12.61% |

Effectiveness of the specialized sub-agents. Ablation studies on the GAIA Test demonstrate the synergistic effect inherent in our multi-agent coordination. Integrating coarse-grained exploratory retrieval (Researcher) with fine-grained operational interaction (Browser) nearly doubles performance (36.54% to 72.76%), proving that breadth of information and depth of interaction are mutually reinforcing. The Deep Analyzer’s 8% gain highlights the necessity of specialized reasoning pipelines for high-entropy multimodal tasks, while the Tool Generator’s 12.61% boost validates the efficacy of on-demand capability synthesis in overcoming the limitations of static, predefined toolsets. These results suggest that complex problem-solving emerges not just from individual agent strength, but from the structured delegation of specialized roles.

Efficiency analysis. AgentOrchestra ’s operational efficiency is evaluated across varying task complexities. Simple tasks typically complete within 30 seconds using approximately 5k tokens, while medium-complexity tasks average 3 minutes (25k tokens). Complex multimodal or long-horizon scenarios require approximately 10 minutes and 100k tokens. Compared to monolithic baselines, our hierarchical architecture optimizes resource allocation, maintaining operational costs comparable to commercial research agents while delivering significant performance gains.

Effectiveness of the self-evolution module. The TEA Protocol enables self-optimization by treating system components as evolvable variables, helping bridge the gap between base model capacity and task requirements. Evaluations on GPQA-Diamond and AIME benchmarks show that iterative refinement, including gradient-based (TextGrad) and symbolic (self-reflection) approaches, mitigates reasoning bottlenecks in foundation models. The improvement is exemplified by a 13.34% gain on AIME25 for gpt-4.1 under self-reflection, highlighting recursive trajectory refinement. Leveraging execution feedback via TEA’s versioning and tracer mechanisms, the system identifies and corrects logical inconsistencies in its planning. Overall, this shifts reasoning from one-shot inference to a managed optimization process, enabling AgentOrchestra to evolve problem-solving strategies for frontier-level tasks.

Table 5: Effectiveness of the self-evolution module. Direct means using the base model directly.

| Strategy | GPQA-Diamond | AIME24 | AIME25 |

| --- | --- | --- | --- |

| Base Model: gpt-4o | | | |

| Direct | 47.98% | 13.34% | 6.67% |

| w/ TextGrad | 54.04% | 10.00% | 10.00% |

| w/ Self-reflection | 55.05% | 20.00% | 6.67% |

| Base Model: gpt-4.1 | | | |

| Direct | 61.11% | 23.34% | 20.00% |

| w/ TextGrad | 65.15% | 26.67% | 23.34% |

| w/ Self-reflection | 68.18% | 33.34% | 33.34% |

Regarding tool evolution, the tool generator agent demonstrates efficient creation and reuse capabilities within the TCP framework. During our evaluation, the agent autonomously generated over 50 specialized tools, achieving a 30% reuse rate across subsequent tasks. This indicates an effective balance between tool specialization and generalization, ensuring that the system’s capabilities expand adaptively while maintaining resource efficiency.

6 Conclusion

We introduced the TEA Protocol, unifying environments, agents, and tools to address fragmentation in existing standards. Building on this, we presented AgentOrchestra, a hierarchical multi-agent framework with specialized sub-agents for planning, research, web interaction, and multimodal analysis. Evaluations on three benchmarks show that AgentOrchestra achieves SOTA performance and scalable orchestration through dynamic resource transformations. Future work will extend TEA to support dynamic role allocation and autonomous agent reconfiguration. Building on tool and solution evolution, we will pursue deeper self-evolution, such as using RL to optimize agent components and decision policies without fine-tuning LLM parameters. We also aim to expand these mechanisms to agent structures and communication protocols, while enhancing multimodal capabilities for fine-grained real-time video analysis.

7 Limitations

7.1 Limitations of TEA Protocol and AgentOrchestra

Despite its strengths in orchestrating multi-agent systems, AgentOrchestra has several limitations that provide directions for future research:

First, System Complexity and Learning Curve. The TEA protocol introduces a structured abstraction layer for tools, environments, and agents to ensure interoperability. However, this structure may present a steeper learning curve for developers compared to simpler, ad-hoc scripting methods. To address this, we will provide extensive documentation, interactive tutorials, and a variety of pre-configured templates to simplify the onboarding process.

Second, Communication and Execution Overhead. Standardizing interactions through a formal protocol can introduce marginal computational and communication overhead, potentially increasing latency in real-time applications. We plan to optimize the serialization protocols and explore asynchronous execution models to minimize these effects in future versions.

Third, Dependence on Underlying Model Capabilities. The effectiveness of the orchestration is inherently limited by the reasoning and instruction-following performance of the foundation LLMs used. While TEA provides a robust framework, it cannot fully compensate for failures caused by model hallucinations or poor tool-use logic. Future work will focus on developing model-agnostic error recovery strategies and more sophisticated validation layers to enhance system-wide resilience.

7.2 Potential Risks

While AgentOrchestra and the TEA protocol aim to enhance multi-agent productivity, their capability to interact with local environments and web browsers introduces certain ethical and security risks.

One primary concern is the Misuse for Malicious Automation. The framework’s flexibility in controlling browser sessions and executing terminal commands could be repurposed to develop unauthorized "plugins" or "cheats" for online platforms, leading to unfair advantages or automated fraud. Furthermore, there are significant Privacy and Security Risks associated with granting autonomous agents access to personal data or sensitive system resources. If not properly sandboxed or governed by strict security policies, an agent could inadvertently leak private information or perform harmful, irreversible system actions. To mitigate these risks, we emphasize that AgentOrchestra should be used within isolated, monitored environments, and we advocate for the integration of robust human-in-the-loop verification mechanisms and strict access control policies in any real-world deployment.

References

- Anthropic (2024a) Introducing Computer Use, a New Claude 3.5 Sonnet, and Claude 3.5 Haiku. Note: https://www.anthropic.com/news/3-5-models-and-computer-use Accessed: 2025-05-13 Cited by: §1, §2.2.

- Anthropic (2024b) Introducing the Model Context Protocol. Note: https://www.anthropic.com/news/model-context-protocol Cited by: §C.2.1, §D.1, §1, §2.1, §2.2, §3.2.

- Anthropic (2025) Equipping agents for the real world with Agent Skills. Note: https://www.anthropic.com/engineering/equipping-agents -for-the-real-world-with-agent-skills Cited by: §C.2, §3.2.

- K. Black, N. Brown, J. Darpinian, K. Dhabalia, D. Driess, A. Esmail, M. Equi, C. Finn, N. Fusai, M. Y. Galliker, et al. (2025) $\pi$ 0. 5: a vision-language-action model with open-world generalization. arXiv preprint arXiv:2504.16054. Cited by: §A.1.2.

- G. Brockman, V. Cheung, L. Pettersson, J. Schneider, J. Schulman, J. Tang, and W. Zaremba (2016) Openai gym. arXiv preprint arXiv:1606.01540. Cited by: §C.2.2.

- G. DeepMind (2024) Gemini Deep Research. Note: https://gemini.google/overview/deep-research/?hl=en Cited by: §1.

- A. Ehtesham, A. Singh, G. K. Gupta, and S. Kumar (2025) A survey of agent interoperability protocols: Model context protocol (mcp), agent communication protocol (acp), agent-to-agent protocol (a2a), and agent network protocol (anp). arXiv preprint arXiv:2505.02279. Cited by: §2.1.

- Google (2024) LangFun Agent. Note: https://github.com/google/langfun Cited by: Table 2.

- Google (2025) Announcing the Agent2Agent Protocol (A2A). Note: https://developers.googleblog.com/en/a2a-a-new-era-of-agent-interoperability/ Cited by: §C.2.3, §1, §2.1.

- S. Hong, X. Zheng, J. Chen, Y. Cheng, J. Wang, C. Zhang, Z. Wang, S. K. S. Yau, Z. Lin, L. Zhou, et al. (2023) MetaGPT: Meta Programming for Multi-agent Collaborative Framework. arXiv preprint arXiv:2308.00352 3 (4), pp. 6. Cited by: §2.2.

- HuggingFace (2024) Open-source DeepResearch - Freeing Our Search Agents. Note: https://huggingface.co/blog/open-deep-research Cited by: Table 2.

- P. Li, X. Zou, Z. Wu, R. Li, S. Xing, H. Zheng, Z. Hu, Y. Wang, H. Li, Q. Yuan, et al. (2025) Safeflow: A principled protocol for trustworthy and transactional autonomous agent systems. arXiv preprint arXiv:2506.07564. Cited by: §2.1.

- G. Liang and Q. Tong (2025) LLM-Powered AI Agent Systems and Their Applications in Industry. arXiv preprint arXiv:2505.16120. Cited by: §2.2.

- X. Liang, J. Xiang, Z. Yu, J. Zhang, S. Hong, S. Fan, and X. Tang (2025) OpenManus: An Open-Source Framework for Building General AI Agents. Zenodo. External Links: Document, Link Cited by: §D.1.

- G. Mialon, C. Fourrier, C. Swift, T. Wolf, Y. LeCun, and T. Scialom (2023) GAIA: A Benchmark for General AI Assistants. External Links: 2311.12983, Link Cited by: §5.

- M. Müller and G. Žunič (2024) Browser Use: Enable AI to Control Your Browser External Links: Link Cited by: §1, §2.2.

- OpenAI (2023) Function Calling. Note: https://platform.openai.com/docs/guides/function-calling Cited by: §D.1, §2.2.

- OpenAI (2024) Introducing Deep Research. Note: https://openai.com/index/introducing-deep-research Cited by: §1, Table 2, Table 3.

- OpenAI (2025a) Context-Free Grammar. Note: https://platform.openai.com/docs/guides/function-calling#page-top Cited by: §A.1.2.

- OpenAI (2025b) Introducing Operator. Note: https://openai.com/blog/operator Cited by: §1, §2.2.

- Perplexity (2025) Introducing Perplexity Deep Research. Note: https://www.perplexity.ai/hub/blog/introducing-perplexity-deep-research Cited by: Table 3, Table 3.

- L. Phan, A. Gatti, Z. Han, N. Li, J. Hu, H. Zhang, C. B. C. Zhang, M. Shaaban, J. Ling, S. Shi, et al. (2025) Humanity’s Last Exam. arXiv preprint arXiv:2501.14249. Cited by: §5.

- Y. Qin, Y. Ye, J. Fang, H. Wang, S. Liang, S. Tian, J. Zhang, J. Li, Y. Li, S. Huang, et al. (2025) UI-TARS: Pioneering Automated GUI Interaction with Native Agents. arXiv preprint arXiv:2501.12326. External Links: Link Cited by: §1, §2.2.

- J. Qiu, X. Qi, T. Zhang, X. Juan, J. Guo, Y. Lu, Y. Wang, Z. Yao, Q. Ren, X. Jiang, X. Zhou, D. Liu, L. Yang, Y. Wu, K. Huang, S. Liu, H. Wang, and M. Wang (2025) Alita: generalist agent enabling scalable agentic reasoning with minimal predefinition and maximal self-evolution. External Links: 2505.20286, Link Cited by: §2.2.

- A. Roucher, A. V. del Moral, T. Wolf, L. von Werra, and E. Kaunismäki (2025) smolagents: A Smol Library to Build Great Agentic Systems. Note: https://github.com/huggingface/smolagents Cited by: §D.1, §2.2.

- M. Shen and Q. Yang (2025) From Mind to Machine: The Rise of Manus AI as a Fully Autonomous Digital Agent. External Links: 2505.02024, Link Cited by: §2.2, Table 2.

- W. Tan, W. Zhang, X. Xu, H. Xia, Z. Ding, B. Li, B. Zhou, J. Yue, J. Jiang, Y. Li, et al. (2024) Cradle: Empowering Foundation Agents toward General Computer Control. arXiv preprint arXiv:2403.03186. Cited by: §1, §2.2.

- G. Wang, Y. Xie, Y. Jiang, A. Mandlekar, C. Xiao, Y. Zhu, L. Fan, and A. Anandkumar (2023) Voyager: An Open-Ended Embodied Agent with Large Language Models. arXiv preprint arXiv:2305.16291. Cited by: §1, §2.2.

- X. Wang, Y. Chen, L. Yuan, Y. Zhang, Y. Li, H. Peng, and H. Ji (2024a) Executable Code Actions Elicit Better LLM Agents. External Links: 2402.01030, Link Cited by: §1, §2.2.

- X. Wang, B. Li, Y. Song, F. F. Xu, X. Tang, M. Zhuge, J. Pan, Y. Song, B. Li, J. Singh, et al. (2024b) OpenHands: An Open Platform for AI Software Developers as Generalist Agents. In The Thirteenth International Conference on Learning Representations, Cited by: §D.1, §2.2.

- J. Wei, N. Karina, H. W. Chung, Y. J. Jiao, S. Papay, A. Glaese, J. Schulman, and W. Fedus (2024) Measuring Short-Form Factuality in Large Language Models. External Links: 2411.04368, Link Cited by: §5.

- G. Wölflein, D. Ferber, D. Truhn, O. Arandjelović, and J. N. Kather (2025) LLM Agents Making Agent Tools. arXiv preprint arXiv:2502.11705. Cited by: §2.2.

- xAI (2025) Grok 3 Beta — The Age of Reasoning Agents. Note: https://x.ai/news/grok-3 Cited by: §1.

- C. Yu, S. Lu, C. Zhuang, D. Wang, Q. Wu, Z. Li, R. Gan, C. Wang, S. Hou, G. Huang, W. Yan, L. Hong, A. Xue, Y. Wang, J. Gu, D. Tsai, and T. Lin (2025) AWorld: orchestrating the training recipe for agentic ai. External Links: 2508.20404, Link Cited by: Table 2.

- M. Yuksekgonul, F. Bianchi, J. Boen, S. Liu, P. Lu, Z. Huang, C. Guestrin, and J. Zou (2025) Optimizing generative AI by backpropagating language model feedback. Nature 639 (8055), pp. 609–616. Cited by: §C.4, §C.4, §3.4.

\appendixpage

Appendix A Comprehensive Motivation for TEA Protocol

This section provides a comprehensive motivation for the TEA Protocol by examining the fundamental relationships and transformations between agents, environments, and tools in multi-agent systems. The discussion is organized into two main parts: first, we explore the conceptual relationships between agents, environments, and tools, examining how these three fundamental components interact and complement each other in modern AI systems; second, we analyze why transformation relationships between these components are necessary, demonstrating the need for their conversion and integration through the TEA Protocol to create a unified, flexible framework for general-purpose task solving.

A.1 Conceptual Relationships

A.1.1 Environment

The environment constitutes one of the fundamental components of multi-agent systems, providing the external stage upon which agents perceive, act, and accomplish tasks. Within the context of the TEA Protocol, highlighting the role of environments is crucial, since environments not only define the operational boundaries of agents but also exhibit complex structural and evolutionary properties. In what follows, we outline the motivation for explicitly modeling environments in the TEA framework from several perspectives.

Classification of environments. From a broad perspective, environments can be divided into two categories: the real world and the virtual world. The real world is concrete and directly perceivable by humans, such as kitchens, offices, or factories. By contrast, the virtual world cannot be directly perceived or objectively described by humans, including domains such as the network world, simulation platforms, and game worlds. Importantly, these two types of environments are not independent. Rather, they are tightly coupled through physical carriers, such as computers, displays, keyboards, mice, and sensors, which act as mediators that enable the bidirectional flow of information between the real and virtual domains. Hence, environments should be regarded not as isolated domains but as interdependent layers connected through mediating carriers.

Nested and expandable properties. Environments are inherently nested and expandable. For example, when an individual is situated in a kitchen, their observable range and available tools are restricted to kitchen-related objects such as faucets, knives, and microwaves, all governed by the local rules of that sub-environment. When the activity range extends to the living room, new objects such as televisions, remote controls, and chairs become accessible, while the kitchen remains embedded as a sub-environment within a broader space. Furthermore, environments can interact with one another, as when a bottle of milk is taken from the kitchen to the living room. This demonstrates that enlarged environments can be conceptualized not merely as simple unions, but rather as structured integrations of the state and action spaces of smaller constituent environments, where local rules and affordances are preserved while new forms of interaction emerge from their composition.

Relationship with state–action spaces. In reinforcement learning, environments are formalized in terms of state and action spaces. The state space comprises the set of possible environmental states, represented in modalities such as numerical values, text, images, or video. The action space denotes the set of operations available to agents, generally divided into continuous and discrete spaces. Real and virtual environments are naturally continuous, but discrete abstractions are often extracted for the sake of tractability, forming the basis of most reinforcement learning systems. However, this discretization constrains the richness of interaction. In contrast, large language models (LLMs) enable a new paradigm: instead of selecting from a discrete set, LLMs can generate natural language descriptions that encode complex action sequences. These outputs can be understood as an intermediate representation between continuous and discrete action spaces, richer and more expressive than discrete actions, yet still mappable to concrete operations in continuous environments. To realize this mapping, intermediate actions are required as bridges. For instance, the natural language command “boil water” can be decomposed into executable steps such as turning on the kettle, filling it with water, powering it on, and waiting until boiling. This property indicates that LLM-driven interaction expands the definition of action representations and broadens the scope of environmental engagement.

Mediation and interaction. The notion of mediation highlights that environments are not static backdrops but relative constructs whose boundaries depend on available carriers and interfaces. In hybrid physical–virtual systems, for example, Internet-of-Things (IoT) devices serve as mediators: a smart refrigerator in the physical world can be controlled through a mobile application in the virtual world, while the application itself is subject to network protocols. Consequently, the definition of an environment is dynamic and conditioned by interactional means. In the TEA Protocol, this mediation must be explicitly modeled, since it determines accessibility and interoperability across environments.

Toward intelligent environments. Traditionally, environments are passive components that provide states and respond to actions. However, as embedded simulators, interfaces, and actuators grow more sophisticated, environments may gradually acquire semi-agentic properties. For instance, a smart home environment may not only respond to the low-level command “turn on the light” but also understand and execute a high-level instruction such as “create a comfortable atmosphere for reading,” by autonomously adjusting lighting, curtains, and background music. This trend suggests that environments are evolving from passive contexts into adaptive and cooperative components.

In conclusion, the environment should not be regarded as a passive backdrop for agent activity, but as a dynamic and evolving component that fundamentally shapes the scope and feasibility of interaction. Its dual nature across real and virtual domains, its nested and compositional structure, and its formalization through state–action spaces all demonstrate that environments provide both the constraints and the affordances within which agents operate. At the same time, the rise of LLM-based agents introduces new forms of action representation that require environments to support more flexible, language-driven interfaces. Looking ahead, as environments increasingly incorporate adaptive and semi-agentic features, their role in task execution will only become more central. Within the TEA Protocol, this motivates treating environments as a co-equal pillar alongside agents and tools, ensuring that general-purpose task solving remains both grounded in environmental constraints and empowered by environmental possibilities.

A.1.2 Agent

Within the TEA Protocol, the motivation for treating agents as a core component alongside environments and tools extends beyond mere terminological convenience. Agents represent the indispensable connective tissue between the generative capabilities of LLMs, the operational affordances of tools, and the structural dynamics of environments. While environments provide the stage on which tasks unfold and tools extend the range of possible actions, it is agents that unify perception, reasoning, and execution into coherent task-solving processes. Without explicitly recognizing agents as an independent pillar, the TEA Protocol would lack a systematic way to explain how abstract linguistic outputs can be transformed into grounded operations, how tools can be selected and orchestrated, and how autonomy, memory, and adaptivity emerge in multi-agent systems. The following dimensions illustrate why agents must be elevated to a core component of the framework.

Necessity of environment interaction. Unlike large language models (LLMs), which only produce textual descriptions that require conversion into executable actions, agents are fundamentally characterized by their ability to directly interact with environments. While LLMs can generate detailed plans, instructions, or hypotheses, such outputs remain inert unless they are translated into concrete operations that affect the state of an environment. This gap between symbolic reasoning and actionable execution highlights the necessity of an intermediate entity capable of grounding abstract instructions into domain-specific actions. Agents fulfill precisely this role: they map language-level reasoning to executable steps, whether in physical settings, such as controlling robotic arms or sensors, or in virtual contexts, such as interacting with databases, APIs, or software systems.

By serving as this mapping layer, agents enable the closure of full task loops, where perception leads to reasoning, reasoning produces plans, and plans culminate in actions that in turn modify the environment. Without explicitly modeling agents, the process would remain incomplete, as LLMs alone cannot guarantee the translation of reasoning into operational change. Within the TEA Protocol, this necessity justifies the elevation of agents to a core component: they provide the indispensable interface that connects the generative capacities of LLMs with the affordances and constraints of environments, ensuring that tasks are not only conceived but also carried through to completion.

The decisive role of non-internalizable tools. The fundamental distinction between LLMs and agents lies in whether they can effectively employ tools that cannot be internalized into model parameters. Some tools can indeed be absorbed into LLMs, particularly those whose logic can be fully simulated in symbolic space, whose inputs and outputs are representable in language or code, and whose patterns fall within the training distribution (for example, mathematical reasoning, structured text formatting, code generation, and debugging). For example, early LLMs struggled with JSON output formatting and code reasoning, often requiring external correction or checking tools, but reinforcement learning (RL) and supervised fine-tuning (SFT) have progressively enabled such capabilities to be internalized.

In contrast, many tools remain non-internalizable because they are intrinsically tied to environmental properties. These include tools that depend on physical devices such as keyboards, mice, and robotic arms, external infrastructures such as databases and APIs, or proprietary software governed by rigid protocols. Two recent approaches further illustrate this limitation. Vision-language-action (VLA) (Black et al., 2025) models map perceptual inputs directly into actions, which may appear to bypass intermediate symbolic descriptions, yet the resulting actions must still be aligned with the discrete action spaces of environments. This alignment represents not a fundamental internalization but a compromise, adapting model outputs to the constraints of environmental action structures. Similarly, the upgraded function calling mechanism introduced after GPT-5, which incorporates context-free grammar (CFG) (OpenAI, 2025a), allows LLMs to output structured and rule-based actions that conform to external system requirements. However, this remains a syntactic constraint on model outputs, effectively providing a standardized interface to external systems rather than a truly internalized ability of the model.

Agents therefore play a decisive role in mediating this boundary. They allow LLMs to internalize symbolic tools, thereby enhancing reasoning and self-correction, while also orchestrating access to non-internalizable tools through external mechanisms. This dual pathway ensures that LLMs are not confined to their parameterized capabilities alone but can extend into broader operational domains. In this way, agents transform the tension between internalizable and non-internalizable tools from a limitation into an opportunity, enabling robust problem solving in multimodal, embodied, and real-world contexts.

Memory and learning extension. Another crucial motivation for agents lies in their capacity to overcome the intrinsic memory limitations of LLMs. Due to restricted context windows, LLMs struggle to maintain continuity across extended interactions or to accumulate knowledge over multiple sessions. Agents address this shortcoming by incorporating external memory systems capable of storing, retrieving, and contextualizing past experiences. Such systems simulate long-term memory and enable experiential learning, allowing agents to refine strategies based on historical outcomes rather than treating each interaction as isolated. However, in the TEA Protocol, memory is not defined as a core protocol component but is instead positioned at the infrastructure layer. This design choice reflects the anticipation that future LLMs may gradually internalize memory mechanisms into their parameters, thereby reducing or even eliminating the need for external memory systems. In other words, while memory expansion is indispensable for today’s agents, it may represent a transitional solution rather than a permanent defining element of agency.

Bridging virtual and external worlds. It has been suggested that LLMs encode within their parameters a kind of “virtual world,” enabling them to simulate reasoning and predict outcomes internally. However, without an external interface, such simulations remain trapped in closed loops of self-referential inference, disconnected from the contingencies of real-world environments. Agents play a critical role in bridging this gap: they translate the abstract reasoning of LLMs into concrete actions, validate outcomes against environmental feedback, and close the loop between perception, reasoning, and execution. This bridging function transforms LLMs from purely linguistic engines into operationally grounded components whose outputs can be tested, refined, and extended within real or simulated environments.

Autonomy and goal-directedness. Beyond reactivity, agents are motivated by their capacity for autonomy. While LLMs typically operate in a reactive fashion, producing outputs in response to explicit prompts, agents can adopt proactive behaviors. They are capable of formulating subgoals, planning action sequences, and dynamically adapting strategies in light of environmental changes or task progress. This goal-directedness is what elevates agents from passive tools into active participants in problem solving. Autonomy ensures that agents are not merely executing instructions but are able to pursue objectives, adjust course when facing uncertainty, and coordinate with other agents. Such properties are essential for multi-agent collaboration and for tackling open-ended, general-purpose tasks that require initiative as well as adaptability.

Taken together, these motivations highlight why agents must be modeled as a core pillar of the TEA Protocol. Environments provide the stage for interaction, tools expand the operational scope, but it is agents that integrate reasoning, memory, tool usage, and autonomy into cohesive systems of action. By serving as mediators between LLMs and their environments, agents ensure that abstract reasoning is translated into grounded execution, enabling robust and scalable task solving across domains. In this sense, agents represent the crucial entity that transforms language models from passive predictors into active problem solvers within a unified multi-agent framework.

A.1.3 Tool

Within the TEA Protocol, the decision to treat tools as a core component alongside environments and agents extends far beyond a matter of convenience in terminology. Tools represent the crucial mediating constructs that encapsulate and operationalize the action spaces of environments, while simultaneously serving as the primary extension layer of agent capabilities. Environments provide the structural stage on which interactions occur, and agents embody the reasoning and decision-making mechanisms that drive behavior, but it is through tools that such reasoning becomes executable and scalable. Without tools, agents would be confined to abstract planning or primitive environmental actions, and environments would remain underutilized as passive backdrops rather than dynamic arenas of transformation.

Moreover, tools play a unique role in bridging symbolic reasoning and concrete execution, providing the abstraction layers necessary to decompose complex tasks into manageable units, and enabling cross-domain transfer through their modularity and portability. They also reveal the shifting boundary between what can be internalized into an agent’s parameters and what must remain external, highlighting the evolving interplay between intelligence and embodiment. In this sense, tools are not merely auxiliary aids but indispensable pillars that shape the architecture of multi-agent systems. The following dimensions illustrate the motivations for elevating tools to a core component of the TEA.

Extending the operational boundary. The primary function of tools is to expand the operational scope of agents beyond what is directly encoded in model parameters or supported by immediate environment interactions. Environments by themselves typically offer only primitive actions, and LLMs by themselves are limited to symbolic reasoning. Tools bridge this gap by furnishing additional pathways for action, allowing agents to manipulate physical artifacts or virtual systems in ways that exceed the direct expressive capacity of the model. From physical devices such as hammers, keyboards, and robotic arms to virtual infrastructures such as databases, APIs, and code execution engines, tools multiply the modes through which agents can influence their environments. Without tools, agents would be confined to intrinsic reasoning and the primitive action space of environments, leaving them incapable of executing tasks that require domain-specific operations. With tools, however, complex objectives can be decomposed into modular operations that are both tractable and reusable. This decomposition makes problem solving significantly more efficient, while also enhancing adaptability across domains. In this way, tools act as multipliers of agency, transforming abstract reasoning into a wider range of tangible interventions.

Hierarchy and abstraction. Tools are not flat or uniform components but exhibit a hierarchical and abstract structure. At the lowest level, tools correspond to atomic environmental actions, such as “clicking a button” or “moving one step.” These atomic units can then be combined into higher-level compound tools such as “opening a file” or “conducting a search.” At an even higher level, compound tools may evolve into strategy-like constructs, such as “writing a report,” “planning a trip,” or “completing a financial transaction.” Each level builds upon the previous, creating a hierarchy of reusable capabilities. This hierarchical structure is not only efficient but also central to interpretability. Higher-level tools inherently carry semantic labels that communicate their function, which in turn makes agent behavior more transparent to human observers and more predictable to other agents. Such abstraction layers reduce the cognitive and computational load on the agent when planning, since invoking a high-level tool can encapsulate dozens or hundreds of low-level steps. Moreover, in multi-agent systems, the semantic richness of high-level tools serves as a lingua franca, facilitating coordination and collaboration.