# Lost at the Beginning of Reasoning

> Equal contribution. Correspondence to: Baohao Liao (b.liao@uva.nl).

Abstract

Recent advancements in large language models (LLMs) have significantly improved complex reasoning capabilities, particularly through extended chain-of-thought (CoT) reasoning that incorporates mechanisms such as backtracking, self-reflection, and self-correction. Despite these developments, the self-correction abilities of LLMs during long CoT reasoning remain underexplored, and recent findings on overthinking suggest that such models often engage in unnecessarily redundant reasoning. In this work, we empirically show that the first reasoning step exerts a disproportionately large influence on the final prediction: errors introduced at this stage can substantially degrade subsequent reasoning quality. This phenomenon is consistently observed across various state-of-the-art open- and closed-source reasoning models. Building on this insight, we propose an efficient sampling strategy that uses a reward model to identify and retain high-quality first reasoning steps while discarding suboptimal ones, achieving up to a 70% reduction in inference cost without sacrificing accuracy. Our work highlights the central role of the first reasoning step in generating a high-quality reasoning trajectory, thereby enabling significantly more efficient sampling.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Technical Reasoning Process and Model Evaluation

### Overview

The image depicts a technical workflow for evaluating reasoning steps in a problem-solving model. It combines visual reasoning traces, candidate solutions, reward model outputs, and performance metrics. Key elements include:

- A reasoning trace with color-coded steps

- A reward model evaluation system

- Mathematical problem-solving examples

- Performance comparison charts

### Components/Axes

1. **Left Panel: Reasoning Trace**

- Vertical axis: "Reasoning steps" (t₁ to tₜ)

- Horizontal axis: "Final conclusion" (cₜ)

- Color-coded steps: Blue (t₁), Purple (t₂), Pink (t₃), Yellow (tₜ)

- Final conclusion: Green square

2. **Center Panel: Reward Model**

- Speech bubble: "Aya walks 9 km each morning. [...] If she walks at 1 km/h, how many minutes will the total be?"

- Gavel icon: "Reward model"

- Pink box: Contains red X (incorrect) and green checkmark (correct)

- Candidate reasoning steps with arrows to evaluation outcomes

3. **Right Panel: Complete Reasoning Trace**

- Text box with multi-colored text (blue, purple, green)

- Mathematical equations: "9/(s+3)=2.5" leading to "t=1h"

- Final answer: "195 minutes" in boxed notation

4. **Bottom Charts**

- **Similarity Graph**

- X-axis: "Reasoning step tᵢ"

- Y-axis: "Similarity(cₜ, tᵢ)"

- Curve: Blue line showing decreasing similarity

- **Bar Chart**

- Y-axis: "Accuracy" and "#Tokens"

- Categories: "Correct t₁" (blue), "Incorrect t₁" (orange)

- Legend: Blue = Correct, Orange = Incorrect

- Subcategories: "Maj@N" and "Pruned"

### Detailed Analysis

1. **Reasoning Trace Flow**

- Steps progress from t₁ (blue) to tₜ (yellow)

- Similarity decreases exponentially with each step

- Final conclusion (cₜ) is isolated in green

2. **Reward Model Evaluation**

- Three candidate reasoning steps:

- First: Incorrect (red X) - Ignores café stop

- Second: Correct (green check) - Identifies s=3, t=60min

- Third: Incorrect (red X) - Misses café stop again

3. **Mathematical Solution**

- Equations show:

- 9/(s+4) = 2.5 → s=3

- 9/(s+3) = 2.5 → t=1h

- Final answer: 195 minutes (3h 15min)

4. **Performance Metrics**

- Accuracy:

- Maj@N: 100% (blue bar)

- Pruned: 100% (green bar)

- Token Usage:

- Maj@N: Full length (blue bar)

- Pruned: 70% reduction (green bar)

### Key Observations

1. **Step Similarity Pattern**

- Similarity decreases by ~30% per step (estimated from curve slope)

- Final conclusion has 0% similarity to initial steps

2. **Model Performance**

- Pruned method maintains accuracy while reducing tokens by 70%

- Incorrect steps consistently use more tokens than correct ones

3. **Mathematical Consistency**

- Equations show inverse relationship between speed and time

- Final answer combines walking time (9km/1km/h=9h) + café stop (60min)

### Interpretation

This diagram demonstrates a multi-stage reasoning evaluation system:

1. **Problem Decomposition**: The reward model breaks down the problem into candidate solutions

2. **Validation Process**: Each candidate is tested against mathematical constraints

3. **Optimization**: The pruned method achieves same accuracy with 70% fewer tokens

4. **Temporal Reasoning**: The solution requires combining distance/speed calculations with fixed time elements

The system appears designed to:

- Identify optimal reasoning paths

- Quantify solution efficiency

- Maintain mathematical rigor through equation-based validation

- Balance accuracy with computational efficiency

Notable anomaly: The final answer (195min) doesn't match the initial 9km/1km/h calculation (which would be 9h=540min), suggesting the problem involves additional constraints (like the café stop) that modify the base calculation.

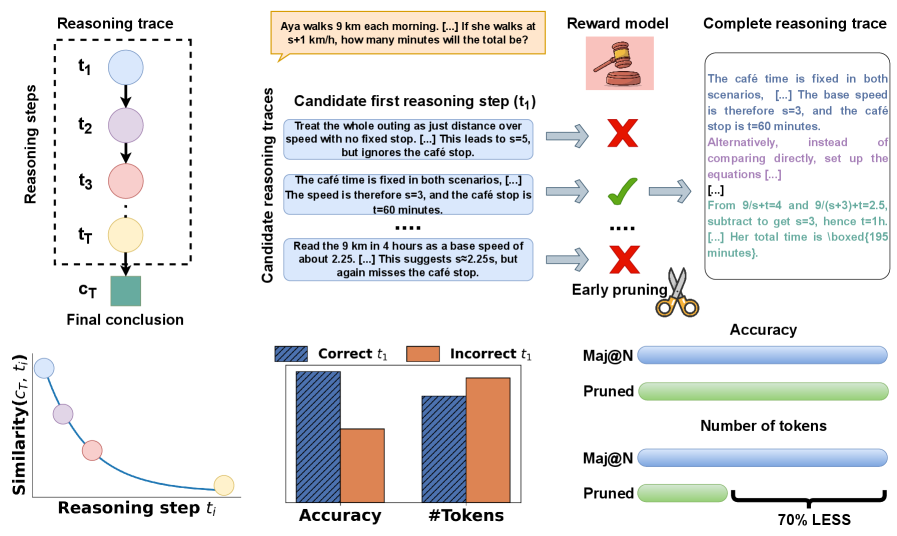

</details>

Figure 1: Overview of our observation and efficient sampling. The first reasoning step $t_{1}$ heavily shapes the entire reasoning trajectory: a strong first step typically yields accurate solutions with fewer tokens (bottom left). Building on this observation, we propose to generate multiple candidate first steps, evaluate them with a reward model, and discard weaker candidates early (top right). This method maintains accuracy while reducing token consumption by up to 70% (bottom right).

1 Introduction

Large language models (LLMs) have demonstrated remarkable performance across a variety of reasoning tasks, ranging from mathematical problem solving to multi-hop question answering (Hestness et al., 2017; Kaplan et al., 2020; Hoffmann et al., 2022). More recently, the advent of reasoning-oriented LLMs capable of performing long chain-of-thought (long-CoT) reasoning at test time has led to substantial advancements in these domains (Brown et al., 2020; Hurst et al., 2024; Anthropic, 2025; Team et al., 2024; Guo et al., 2025; Yang et al., 2025a; Wen et al., 2025; He et al., 2025). A widely held hypothesis attributes this progress to the models’ ability to backtrack, self-reflect, and self-correct, effectively leveraging contextual feedback to iteratively refine their responses.

However, recent studies suggest that long-CoT reasoning can also introduce inefficiencies. Models often “overthink” by producing unnecessarily extended reasoning processes to solve problems (Chiang & Lee, 2024; Zhang et al., 2024a; Wang et al., 2025b; Liao et al., 2025b; a). This observation raises questions about the model’s capacity for backtracking, self-reflection, and self-correction. It suggests that LLMs may lack awareness of the information they have already processed, leading to redundant or inefficient reasoning. Moreover, Liu et al. (2024a) demonstrate that LLMs are prone to the “lost-in-the-middle” phenomenon, wherein information located in the middle of a long context is often overlooked. While their analysis is conducted in the context of information retrieval, it remains an open question whether similar positional biases affect long CoT as well.

In this work, we introduce a previously underexplored perspective on long-CoT reasoning: many reasoning failures in long-CoT LLMs stem not from errors made mid-chain, but rather from flaws at the beginning of reasoning. Our experiments demonstrate that the first reasoning step has the most significant influence on the final prediction. When this first step is incorrect, the model is considerably more likely to arrive at an incorrect final answer (a drop of about 40 percentage points in accuracy), highlighting the limited self-correction capabilities of current long-CoT LLMs. Notably, this phenomenon is consistently observed across five open- and closed-source long-CoT LLM families.

Motivated by this insight, we propose an efficient early pruning algorithm that exploits the predictive power of the first reasoning step. Specifically, by evaluating the quality of the first step, we identify and discard less promising reasoning traces early, continuing generation only for the more promising ones. This approach significantly reduces inference cost. Across five open-sourced long-CoT LLM families and five challenging mathematical, scientific reasoning and programming benchmarks, our method maintains accuracy while reducing inference budget by up to 70%. Our results show that the first step is not just the beginning of reasoning, but a key factor that influences both accuracy and efficiency, making it an important focus for future reasoning models.

Contributions. Our main contributions are as follows: (1) To the best of our knowledge, we are the first to empirically establish a strong positive correlation between the first reasoning step and the final prediction across various open- and closed-source long-CoT LLM families (§ 3); (2) Inspired by this observation, we propose an efficient early pruning algorithm that halts generation for less promising initial steps, thereby improving inference efficiency while maintaining accuracy (§ 4); (3) Both the observation and the proposed efficient sampling method are extensively validated on various long-CoT LLMs across different reasoning tasks, with the control experiments needed to disentangle confounding factors.

2 Related work

Lost in the middle. Liu et al. (2024a) introduced the “lost in the middle” effect, demonstrating that LLMs tend to overlook information in the middle of long contexts, performing better when relevant content appears at the beginning or end. This positional bias is evident across tasks like arithmetic reasoning (Shen et al., 2023; Liao & Monz, 2024), multiple-choice QA (Zheng et al., 2024; Pezeshkpour & Hruschka, 2023), text evaluation (Wang et al., 2024; Shi et al., 2024), passage ranking (Zhang et al., 2024b), and instructional prompt positioning (Liu et al., 2024b; Chen et al., 2024b). Additionally, studies have documented primacy and recency biases, where models disproportionately allocate attention to the first or final tokens, independent of their semantic relevance (Xiao et al., 2024; Qin et al., 2023; Barbero et al., 2025). While previous studies have primarily examined positional biases in external context, we investigate whether analogous biases emerge in the internal reasoning trajectories of long chain-of-thought models. Unlike attention-level analyses that focus on how the first input token shapes representations, our work shows that the first generated reasoning step greatly influences subsequent reasoning and final outcomes.

Efficient test-time reasoning. Test-time scaling methods aim to improve the accuracy–compute trade-off by adapting sampling and aggregation. One line of work increases self-consistency efficiency by reducing sample counts (Li et al., 2024; Wan et al., 2025; Aggarwal et al., 2023; Xue et al., 2023), while another shortens chain-of-thought depth via fine-tuning or inference-only optimizations (Chen et al., 2024a; Luo et al., 2025; Hou et al., 2025; Fu et al., 2025a; Yang et al., 2025b). These methods, however, still rely on generating full reasoning traces. DeepConf (Fu et al., 2025b) instead uses local confidence to filter low-quality traces and terminate generation early. Our method takes a different focus: we assess the quality of the initial reasoning step, which strongly shapes subsequent reasoning, and prune weak starts before long traces unfold.

3 Lost at the beginning of reasoning

Motivated by the finding of Liu et al. (2024a), which demonstrates that query-relevant information is more impactful when positioned at either the beginning or end of an LLM’s context window, we first investigate whether a similar positional effect exists in long-CoT reasoning (§ 3.1). Our analysis reveals that the first reasoning step has a large impact on the final conclusion. To validate this observation, we further perform two ablation studies, confirming the critical role of the first step in determining the model’s final prediction (§ 3.2 and § 3.3).

Notation. Let $p$ represent the input prompt, consisting of both a system instruction and a user query. A reasoning model $\mathcal{M}$ produces a sequence of CoT reasoning steps $t=[t_{1},t_{2},...,t_{T}]$ , followed by a final conclusion $c_{T}$ , such that the complete model output is given by $t\oplus c_{T}=\mathcal{M}(p)$ , where $\oplus$ means concatenation. In models such as DeepSeek-R1 (Guo et al., 2025) and Qwen3 (Team, 2025), the input-output format adheres to the following:

$$
p\ \mathrm{{<}think{>}}\ t_{1},t_{2},\ldots,t_{T}\ \mathrm{{<}/think{>}}\ c_{T}
$$

The final prediction $q_{T}$ is then derived by applying an extraction function $g$ to the conclusion, i.e., $q_{T}=g(c_{T})$ , where $g$ may, for example, extract values enclosed within \boxed{}.
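As an illustration of the extraction function $g$, the following is a minimal sketch that returns the content of the last `\boxed{...}` in a conclusion. The function name `extract_boxed` and the choice to take the last occurrence are assumptions made for this example, not details stated in the paper.

```python
from typing import Optional


def extract_boxed(conclusion: str) -> Optional[str]:
    """Return the content of the last \\boxed{...} in `conclusion`, or None.

    Hypothetical helper illustrating the extraction function g.
    Nested braces (e.g. \\boxed{\\frac{1}{2}}) are handled by depth counting.
    """
    marker = "\\boxed{"
    start = conclusion.rfind(marker)
    if start == -1:
        return None
    depth = 1
    chars = []
    for ch in conclusion[start + len(marker):]:
        if ch == "{":
            depth += 1
        elif ch == "}":
            depth -= 1
            if depth == 0:
                # Matching close brace of \boxed{ found.
                return "".join(chars).strip()
        chars.append(ch)
    return None  # Unbalanced braces: no well-formed boxed answer.
```

A prediction can then be compared with the ground truth as `extract_boxed(c_T) == a`, with both sides normalized to strings.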

The conclusion $c_{T}$ can be interpreted as a summary of the essential reasoning steps leading to the final prediction. This raises an interesting question: Is there a positional bias in how reasoning steps contribute to the conclusion? In other words, do certain steps have a disproportionately greater influence on $c_{T}$ than others?

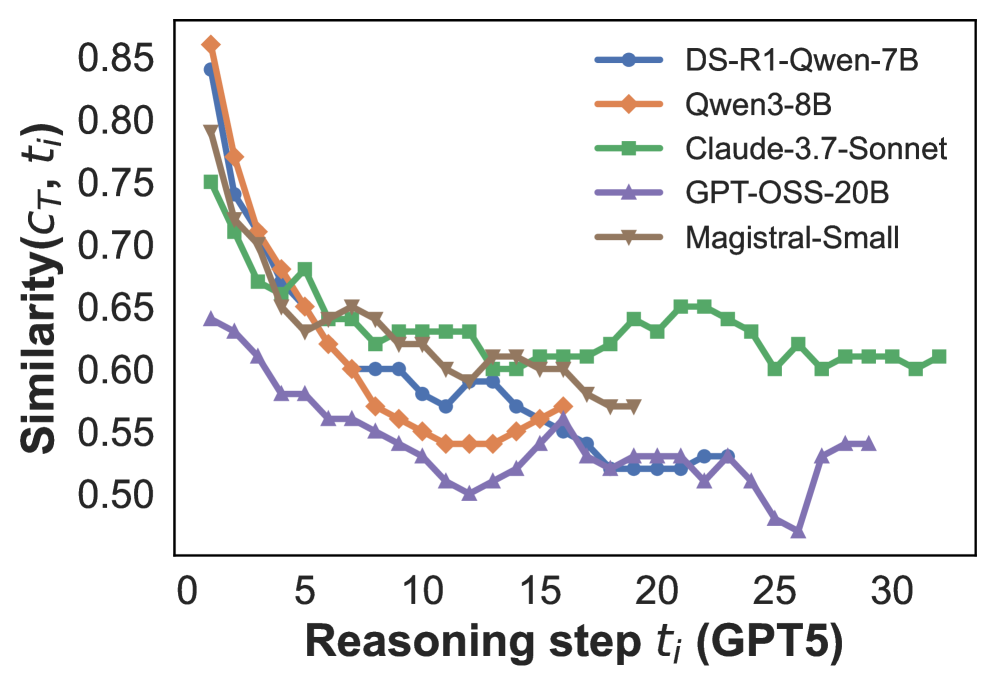

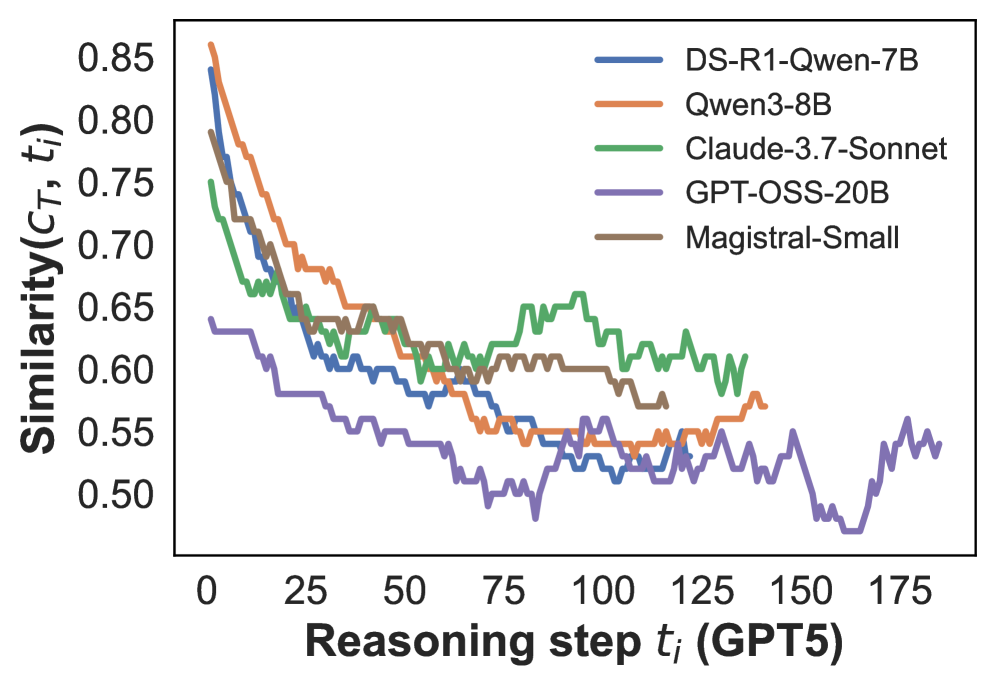

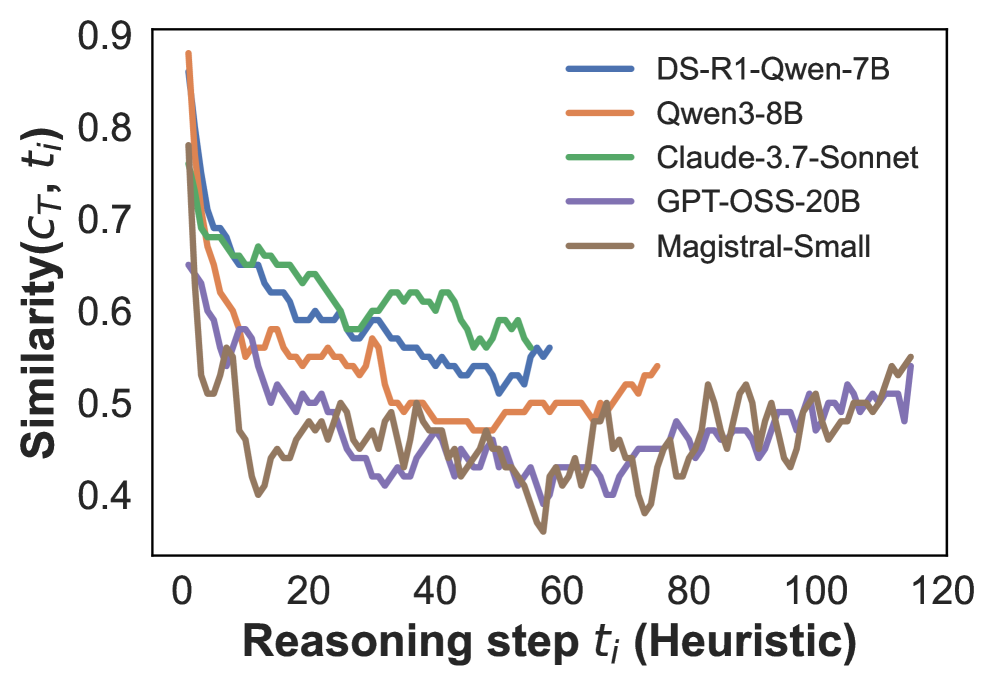

3.1 Similarity between reasoning steps and the final conclusion

To understand how individual reasoning steps contribute to the final outcome, we measure the semantic similarity between each reasoning step $\{t_{i}\}_{i=1}^{T}$ and the final conclusion $c_{T}$ . This analysis reveals whether the reasoning process gradually aligns with the correct answer or diverges along the way.

Experimental setup. We evaluate 60 questions from AIME24 and AIME25 (MAA Committees, 2025) using DeepSeek-R1-Distill-Qwen-7B (abbreviated as DS-R1-Qwen-7B in the remainder of this paper) (Guo et al., 2025), Qwen3-8B (Yang et al., 2025a), Claude-3.7-Sonnet with thinking (Anthropic, 2025), GPT-OSS-20B (Agarwal et al., 2025), and Magistral-Small (Rastogi et al., 2025). For reproducibility, the exact model identifiers are provided in Appendix B. Generations are produced with temperature=1.0, top_p=0.9, min_p=0.05, and max_tokens=32768; for Claude-3.7-Sonnet, only max_tokens is set. All subsequent experiments adopt this hyperparameter configuration.

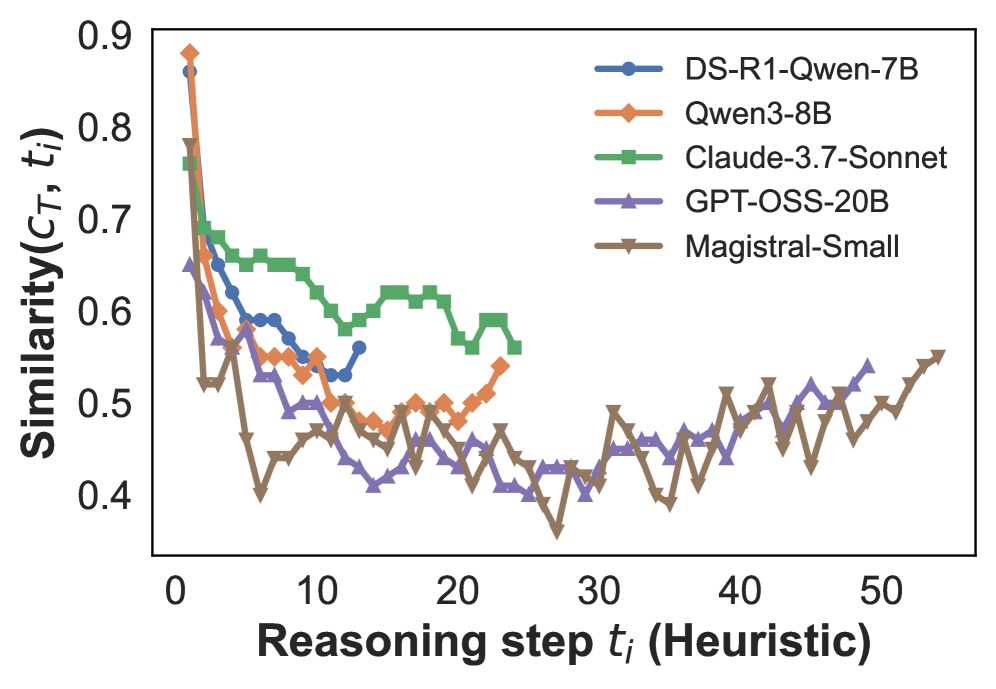

Segmentation of reasoning steps. We define a reasoning step as a complete logical leap or self-contained unit (Xiong et al., 2025), and segment reasoning traces with GPT-5. By default, we use GPT-5 mini for step segmentation; for GPT-OSS-20B, we instead use GPT-5, as the mini variant is incompatible. To complement this setup, we also employ heuristic segmentation based on reasoning switch keywords (Wang et al., 2025a), with details provided in Appendix A.
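The heuristic variant can be sketched as follows. This is only an illustration of keyword-based splitting: the keyword list (`Wait`, `Alternatively`, `Hmm`) and the paragraph-level granularity are assumptions for this example, and the actual rules are the ones given in Appendix A.

```python
import re
from typing import List, Sequence

# Hypothetical switch keywords; the paper's actual list is in its Appendix A.
SWITCH_KEYWORDS = ("Wait", "Alternatively", "Hmm")


def segment_by_keywords(trace: str,
                        keywords: Sequence[str] = SWITCH_KEYWORDS) -> List[str]:
    """Split a reasoning trace into steps.

    A new step starts at every paragraph that begins with a switch keyword;
    all other paragraphs are appended to the current step.
    """
    starts_switch = re.compile(
        r"^(?:%s)\b" % "|".join(re.escape(k) for k in keywords))
    steps: List[str] = []
    current: List[str] = []
    for paragraph in re.split(r"\n\s*\n", trace.strip()):
        paragraph = paragraph.strip()
        if not paragraph:
            continue
        if current and starts_switch.match(paragraph):
            steps.append("\n\n".join(current))
            current = []
        current.append(paragraph)
    if current:
        steps.append("\n\n".join(current))
    return steps
```

Under this sketch, the first element of the returned list plays the role of $t_{1}$.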

Similarity computation. We compute semantic similarity between each step $t_{i}$ and the conclusion $c_{T}$ by taking the cosine similarity of their embeddings obtained from all-MiniLM-L6-v2 (Reimers & Gurevych, 2019; Wang et al., 2020). To avoid inflated similarity from problem restatement, we use GPT-5 mini to remove question-overlap text at the beginning of traces. As a robustness check, we also report results with SPLADE similarity (Formal et al., 2021) in Appendix C, confirming that our findings are not specific to dense embeddings. Since traces vary in length, similarity curves are interpolated to a fixed number of steps (either the average or maximum length) for visualization.

This setup allows us to capture how reasoning trajectories semantically converge toward—or deviate from—the final answer across different models.
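The two numerical ingredients of this analysis, cosine similarity and resampling a per-step similarity curve to a fixed length, can be sketched in plain Python. The embedding vectors are assumed to be produced elsewhere (for example by a sentence-embedding model), and linear interpolation is an assumption here, since the interpolation scheme is not specified.

```python
import math
from typing import List, Sequence


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def resample_curve(values: Sequence[float], num_points: int) -> List[float]:
    """Linearly interpolate `values` onto `num_points` evenly spaced positions,
    so that traces with different numbers of steps can be averaged."""
    if not values or num_points < 1:
        raise ValueError("need at least one value and one output point")
    if len(values) == 1 or num_points == 1:
        return [float(values[0])] * num_points
    last = len(values) - 1
    out = []
    for k in range(num_points):
        pos = k * last / (num_points - 1)  # fractional index into `values`
        lo = int(math.floor(pos))
        hi = min(lo + 1, last)
        frac = pos - lo
        out.append(values[lo] * (1.0 - frac) + values[hi] * frac)
    return out
```

For a trace with step embeddings `e_1, ..., e_T` and conclusion embedding `e_c`, the curve is `[cosine_similarity(e_i, e_c) for e_i in steps]`, resampled to the chosen common length before averaging across traces.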

Result. Figure 2 shows that the first reasoning step exhibits the highest similarity to the final conclusion, after which similarity drops sharply. Beyond the initial few steps, similarity stabilizes at a lower level, with only minor fluctuations across the remainder of the reasoning process. These results suggest that the first step $t_{1}$ is most closely aligned with the final conclusion and likely sets the overall direction of the reasoning. Subsequent steps appear to introduce exploratory or redundant content that deviates from the final answer. Additional results using SPLADE similarities (Figure C.2) show the same trend, confirming that this pattern is robust across similarity metrics. Taken together, these findings show that the first reasoning step plays a disproportionately important role in shaping the final conclusion.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart: Model Similarity Over Reasoning Steps

### Overview

The image depicts a line chart comparing the similarity metric (C_T, t_i) of five AI models across reasoning steps (t_i) from 0 to 30. The y-axis represents similarity scores (0.50–0.85), while the x-axis represents discrete reasoning steps labeled as "GPT5". Five distinct data series are plotted, each corresponding to a different model.

### Components/Axes

- **X-axis**: "Reasoning step t_i (GPT5)" with integer ticks from 0 to 30.

- **Y-axis**: "Similarity (C_T, t_i)" with decimal ticks from 0.50 to 0.85.

- **Legend**: Located in the top-right corner, mapping colors to models:

- Blue circles: DS-R1-Qwen-7B

- Orange diamonds: Qwen3-8B

- Green squares: Claude-3.7-Sonnet

- Purple triangles: GPT-OSS-20B

- Brown inverted triangles: Magistral-Small

### Detailed Analysis

1. **DS-R1-Qwen-7B (Blue)**:

- Starts at ~0.85 similarity at t_i=0.

- Declines sharply to ~0.60 by t_i=10.

- Stabilizes between 0.55–0.60 from t_i=15–30.

2. **Qwen3-8B (Orange)**:

- Begins at ~0.80 similarity at t_i=0.

- Drops to ~0.55 by t_i=10.

- Shows minor fluctuations but remains below 0.60 after t_i=15.

3. **Claude-3.7-Sonnet (Green)**:

- Initial similarity ~0.75 at t_i=0.

- Gradual decline to ~0.60 by t_i=15.

- Plateaus between 0.60–0.65 from t_i=20–30.

4. **GPT-OSS-20B (Purple)**:

- Starts at ~0.65 similarity at t_i=0.

- Sharp drop to ~0.50 by t_i=10.

- Recovers slightly to ~0.55 by t_i=20, then fluctuates between 0.50–0.55.

5. **Magistral-Small (Brown)**:

- Begins at ~0.78 similarity at t_i=0.

- Steady decline to ~0.58 by t_i=20.

- Minor recovery to ~0.60 at t_i=25, then stabilizes.

### Key Observations

- All models exhibit a general decline in similarity as reasoning steps increase.

- **DS-R1-Qwen-7B** and **Magistral-Small** maintain the highest initial similarity but decline sharply.

- **Claude-3.7-Sonnet** shows the most stable performance, retaining ~0.60 similarity at t_i=30.

- **GPT-OSS-20B** has the most erratic trend, with a pronounced dip at t_i=25.

- No model sustains similarity above 0.65 beyond t_i=5.

### Interpretation

The data suggests that AI model performance (as measured by similarity) degrades with increasing reasoning complexity (t_i). Models with higher initial similarity (e.g., DS-R1-Qwen-7B) experience steeper declines, potentially indicating overfitting or limited generalization. **Claude-3.7-Sonnet**'s gradual decline implies better robustness to extended reasoning steps. The fluctuations in GPT-OSS-20B and Magistral-Small may reflect sensitivity to specific reasoning patterns or computational constraints. The absence of any model maintaining high similarity beyond t_i=10 highlights a critical challenge in scaling AI reasoning capabilities.

</details>

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Graph: Similarity vs. Reasoning step (Heuristic)

### Overview

The graph depicts the similarity metric (c_T, t_i) across heuristic reasoning steps (t_i) for five AI models. The y-axis ranges from 0.4 to 0.9, while the x-axis spans 0 to 50 reasoning steps. Five distinct data series are plotted with unique markers and colors.

### Components/Axes

- **X-axis**: "Reasoning step t_i (Heuristic)" (0–50, integer increments)

- **Y-axis**: "Similarity(c_T, t_i)" (0.4–0.9, 0.1 increments)

- **Legend**: Located in the top-right corner, mapping colors/markers to models:

- Blue circles: DS-R1-Qwen-7B

- Orange diamonds: Qwen3-8B

- Green squares: Claude-3.7-Sonnet

- Purple triangles: GPT-OSS-20B

- Brown triangles: Magistral-Small

### Detailed Analysis

1. **DS-R1-Qwen-7B (Blue Circles)**:

- Starts at ~0.85 similarity at t=0

- Sharp decline to ~0.55 by t=10

- Stabilizes with minor fluctuations (~0.55–0.6) thereafter

2. **Qwen3-8B (Orange Diamonds)**:

- Begins at ~0.8 similarity at t=0

- Drops to ~0.5 by t=10

- Exhibits moderate volatility (~0.5–0.6) until t=30, then stabilizes

3. **Claude-3.7-Sonnet (Green Squares)**:

- Initial similarity ~0.75 at t=0

- Gradual decline to ~0.6 by t=10

- Maintains stable performance (~0.6–0.7) with minor oscillations

4. **GPT-OSS-20B (Purple Triangles)**:

- Starts at ~0.65 similarity at t=0

- Sharp drop to ~0.45 by t=10

- High volatility (~0.4–0.55) throughout, with no clear stabilization

5. **Magistral-Small (Brown Triangles)**:

- Initial similarity ~0.6 at t=0

- Steep decline to ~0.4 by t=10

- Persistent fluctuations (~0.4–0.55) with no stabilization

### Key Observations

- **Initial Drop**: All models show a significant similarity decline within the first 10 steps, suggesting an adaptation phase.

- **Stability Variance**: Claude-3.7-Sonnet demonstrates the most stable performance post-t=10, while GPT-OSS-20B remains highly volatile.

- **Long-term Performance**: DS-R1-Qwen-7B and Qwen3-8B achieve moderate stabilization (~0.55–0.6), whereas Magistral-Small and GPT-OSS-20B show persistent instability.

- **Outlier Behavior**: GPT-OSS-20B exhibits the most erratic pattern, with sharp dips and recoveries (e.g., ~0.45 at t=15, ~0.55 at t=25).

### Interpretation

The data suggests that model architecture and training significantly influence reasoning stability. Claude-3.7-Sonnet’s consistent performance implies robust heuristic adaptation, while GPT-OSS-20B’s volatility may indicate overfitting or insufficient generalization. The initial similarity drop across all models could reflect computational overhead in early reasoning stages. The absence of convergence toward higher similarity values implies inherent limitations in heuristic reasoning across these models, warranting further investigation into optimization strategies.

</details>

Figure 2: Cosine similarity between the embeddings of the $i$ -th reasoning step $t_{i}$ and the final conclusion $c_{T}$ , using the average number of reasoning steps for interpolation. The reasoning steps are segmented either by GPT-5 (left) or by heuristic rules (right). See Figure C.1 for results based on the maximum number of reasoning steps used for interpolation.

Given the strong alignment between early reasoning steps—particularly the first—and the final conclusion, we hypothesize that the first step may significantly influence whether the reasoning model can arrive at a correct prediction.

3.2 Correlation between the first reasoning step and the final prediction

Given that the first reasoning step closely resembles the final conclusion, we investigate whether the essential reasoning required for the final prediction is already encapsulated in the first step. To this end, we analyze the prediction when conditioned solely on the first reasoning step. Specifically, we compute $c_{1}=\mathcal{M}(p\mathrm{{<}think{>}}t_{1}\mathrm{{<}/think{>}})$ , and derive the corresponding prediction $q_{1}=g(c_{1})$ , which we compare against the ground truth $a$ . Based on this comparison, we categorize each first reasoning step as either first correct (if $q_{1}=a$ ) or first incorrect (otherwise).
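The construction of the truncated input and the labeling rule can be sketched as below. The exact whitespace around the `<think>` tags and the string-equality comparison are assumptions for illustration; the model call that produces $c_{1}$ is left out.

```python
from typing import Optional


def build_first_step_prompt(prompt: str, first_step: str) -> str:
    """Concatenate the prompt with a closed thinking block that contains only
    the first reasoning step, so the model must conclude from t_1 alone."""
    return f"{prompt}<think>\n{first_step.strip()}\n</think>\n"


def label_first_step(q1: Optional[str], answer: str) -> str:
    """Label a first step by whether its conditioned prediction q_1 matches
    the ground truth a (plain string comparison, an assumption)."""
    if q1 is not None and q1.strip() == answer.strip():
        return "first correct"
    return "first incorrect"
```

Feeding the output of `build_first_step_prompt` to the model yields $c_{1}$, from which $q_{1}$ is extracted and passed to `label_first_step`.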

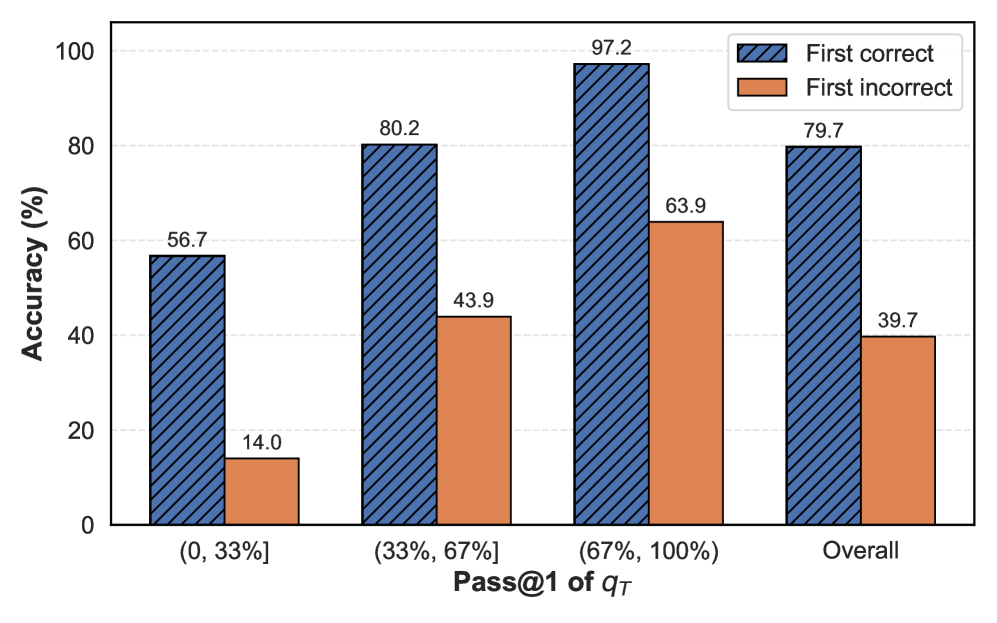

Experimental setup. To better analyze the correlation, we sample 64 CoT traces per question using the same datasets as in § 3.1. We exclude questions for which all 64 CoT traces result in either correct or incorrect predictions, as these are considered either too easy or too difficult, respectively, yielding 38 questions for DS-R1-Qwen-7B and 37 for Qwen3-8B. For each remaining question and its corresponding first reasoning step $t_{1}$ , we obtain the initial prediction $q_{1}$ as previously described. While GPT-5 provides more reliable segmentation, it is costly and difficult to reproduce. We therefore adopt the heuristic segmentation method in all subsequent experiments, which yields results comparable to GPT-5 segmentation in § 3.1. To better visualize the final outcomes, we categorize the questions into three groups based on the pass@1 accuracy of the final prediction $q_{T}$ , that is, the average accuracy across the 64 CoT traces. The groups correspond to the intervals (0, 33%], (33%, 67%], and (67%, 100%). A higher pass@1 indicates a simpler question. This grouping allows us to assess whether our observations hold consistently across varying levels of question difficulty.
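The grouping and the conditional accuracy can be sketched as follows. Each trace is represented as a pair of booleans; the function names and the per-question computation of conditional accuracy are assumptions for this example.

```python
from typing import Dict, Optional, Sequence, Tuple

# (first-step prediction q_1 correct, final prediction q_T correct)
Trace = Tuple[bool, bool]


def pass_at_1(traces: Sequence[Trace]) -> float:
    """Average final-prediction accuracy over the sampled traces."""
    return sum(final for _, final in traces) / len(traces)


def difficulty_bucket(p: float) -> Optional[str]:
    """Map pass@1 to a difficulty interval; None means the question is
    excluded because all traces are correct or all are incorrect."""
    if p <= 0.0 or p >= 1.0:
        return None
    if p <= 0.33:
        return "(0, 33%]"
    if p <= 0.67:
        return "(33%, 67%]"
    return "(67%, 100%)"


def accuracy_by_first_step(traces: Sequence[Trace]) -> Dict[str, Optional[float]]:
    """Final accuracy conditioned on whether the first step was correct."""
    result: Dict[str, Optional[float]] = {}
    for label, flag in (("first_correct", True), ("first_incorrect", False)):
        finals = [final for first, final in traces if first == flag]
        result[label] = sum(finals) / len(finals) if finals else None
    return result
```

With 64 traces per question, `difficulty_bucket(pass_at_1(traces))` assigns the question to its group, and `accuracy_by_first_step` gives the two quantities compared within each group.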

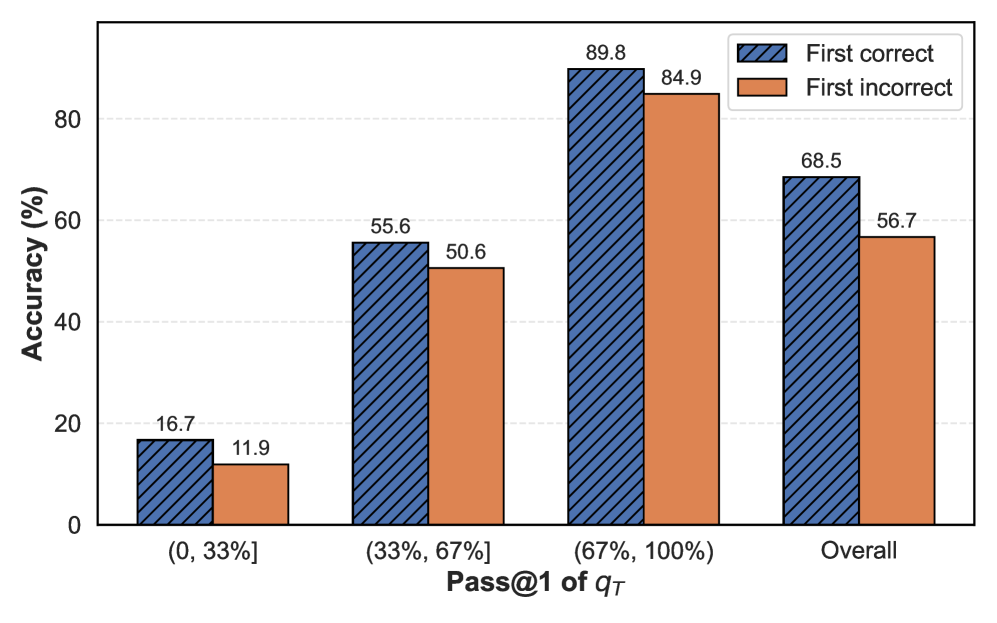

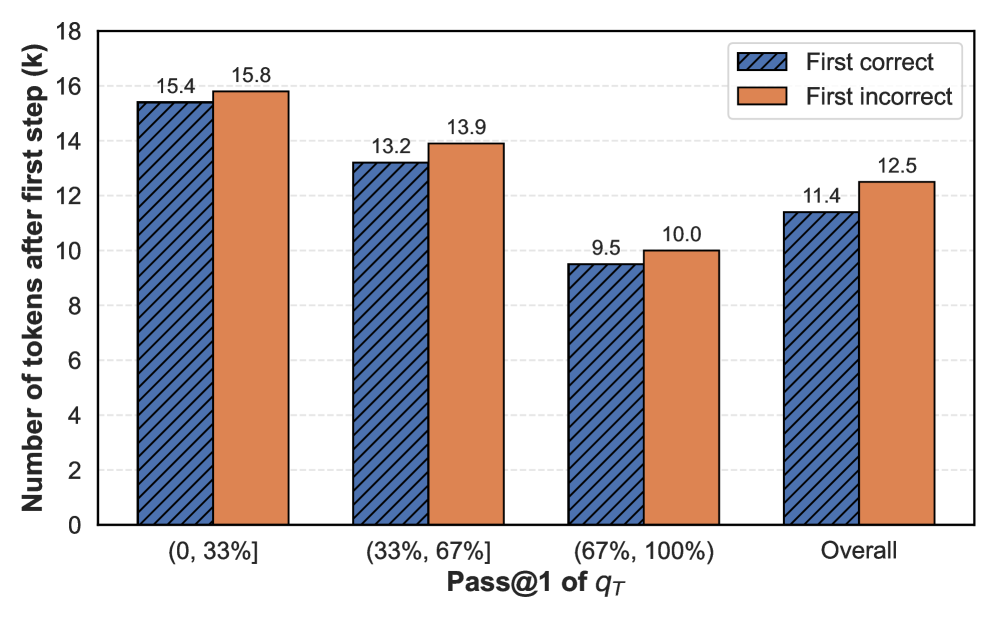

Result. As shown in Figure 3 (Left), the commonly assumed self-correction capability of reasoning models appears to be overstated. When the first reasoning step is incorrect, the model’s final prediction is also likely to be incorrect. On average, final prediction accuracy drops by 40 percentage points when the first reasoning step is incorrect (from 79.7% to 39.7%), with the most substantial decrease (43 points) occurring for difficult questions (0–33% range) and a notable decline (33 points) even for easier questions (67–100% range). In addition, we compute the Pearson correlation between the correctness of the first prediction $q_{1}$ and that of the final prediction $q_{T}$ over all questions. The coefficient $r=0.60$ , with a reported p-value of $0.0$ , indicates a moderately strong positive correlation. All these results underscore the pivotal role of the first reasoning step in steering the model toward a correct final answer, particularly in more complex instances where recovery from early mistakes is more challenging. Extending this analysis to DeepSeek and Qwen models of different sizes yields consistent trends: final accuracy remains substantially higher when the first step is correct, and the accuracy gap persists as model scale increases (Figure D.2).
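For reference, a Pearson coefficient over two binary correctness indicators (equivalent to the phi coefficient) can be computed as in the sketch below. The input lists are hypothetical placeholders with one entry per trace, and the p-value computation is omitted.

```python
import math
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences.

    Booleans are accepted (True == 1, False == 0), in which case the result
    is the phi coefficient of the two binary variables.
    """
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)
```

Here `xs` would hold the correctness of $q_{1}$ and `ys` the correctness of $q_{T}$ for the same traces.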

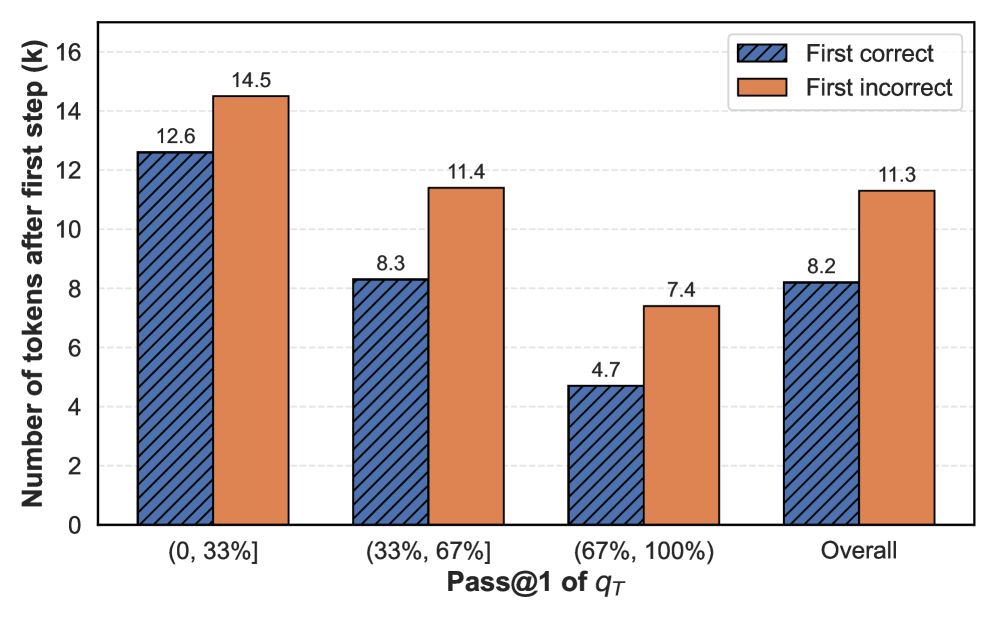

Figure 3 (Right) further illustrates the model’s tendency toward overthinking. Even when the first reasoning step is sufficient to arrive at the correct answer, the model continues to generate a substantial number of additional reasoning tokens, on the same scale as those generated following an incorrect first step. Both findings are also observed for Qwen3-8B in Figure D.1, reinforcing this pattern across models.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Accuracy by Pass@1 of q_T

### Overview

The chart compares accuracy percentages for two categories ("First correct" and "First incorrect") across four Pass@1 of q_T ranges: (0, 33%], (33%, 67%], (67%, 100%], and Overall. Accuracy is measured on a y-axis from 0% to 100%, with grouped bars for each category.

### Components/Axes

- **X-axis**: Labeled "Pass@1 of q_T" with four categories:

- (0, 33%]

- (33%, 67%]

- (67%, 100%]

- Overall

- **Y-axis**: Labeled "Accuracy (%)" with a scale from 0 to 100.

- **Legend**: Located in the top-right corner, with two entries:

- **First correct**: Blue with diagonal stripes.

- **First incorrect**: Orange.

### Detailed Analysis

- **First correct (blue)**:

- (0, 33%]: 56.7%

- (33%, 67%]: 80.2%

- (67%, 100%]: 97.2%

- Overall: 79.7%

- **Trend**: Accuracy increases steadily from (0, 33%] to (67%, 100%], then drops slightly in the "Overall" category.

- **First incorrect (orange)**:

- (0, 33%]: 14.0%

- (33%, 67%]: 43.9%

- (67%, 100%]: 63.9%

- Overall: 39.7%

- **Trend**: Accuracy rises from (0, 33%] to (67%, 100%], then decreases in the "Overall" category.

### Key Observations

1. **Highest accuracy for "First correct"** occurs in the (67%, 100%] range (97.2%), but the "Overall" metric (79.7%) is lower, suggesting potential weighting or averaging effects.

2. **"First incorrect" accuracy** peaks in the (67%, 100%] range (63.9%) but drops to 39.7% overall, indicating a possible inverse relationship with the "First correct" trend.

3. The "Overall" category deviates from the pattern of the other ranges, implying a different calculation method (e.g., weighted average).

### Interpretation

The data suggests that higher Pass@1 thresholds (e.g., 67%+) correlate with improved accuracy for "First correct" responses, likely due to stricter filtering of relevant data. However, the "Overall" metric for "First correct" (79.7%) is lower than the (67%, 100%] range, hinting at either:

- A normalization process that reduces extreme values.

- A broader dataset in the "Overall" category that includes lower-performing segments.

For "First incorrect," the peak in the (67%, 100%] range (63.9%) contrasts with the "Overall" value (39.7%), suggesting that while high thresholds increase incorrect answers in specific ranges, the overall metric balances this with lower thresholds. This could reflect a trade-off between precision and recall in the system being measured.

The chart emphasizes the importance of threshold selection in accuracy metrics, with higher thresholds favoring correctness but potentially reducing overall performance due to excluded data.

</details>

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: Number of Tokens After First Step by Pass@1 of q_T

### Overview

The chart compares the number of tokens after the first step for two categories: "First correct" (blue, striped) and "First incorrect" (orange, solid). Data is grouped by four Pass@1 of q_T ranges: (0, 33%], (33%, 67%], (67%, 100%], and Overall. The y-axis represents the number of tokens (k), ranging from 0 to 16.

### Components/Axes

- **X-axis**: Pass@1 of q_T categories:

- (0, 33%]

- (33%, 67%]

- (67%, 100%]

- Overall

- **Y-axis**: Number of tokens after first step (k), scaled from 0 to 16.

- **Legend**:

- Blue (striped): First correct

- Orange (solid): First incorrect

- **Placement**: Legend is in the top-right corner. Bars are grouped by category, with "First correct" on the left and "First incorrect" on the right for each group.

### Detailed Analysis

- **(0, 33%]**

- First correct: 12.6k tokens (blue)

- First incorrect: 14.5k tokens (orange)

- **(33%, 67%]**

- First correct: 8.3k tokens (blue)

- First incorrect: 11.4k tokens (orange)

- **(67%, 100%]**

- First correct: 4.7k tokens (blue)

- First incorrect: 7.4k tokens (orange)

- **Overall**

- First correct: 8.2k tokens (blue)

- First incorrect: 11.3k tokens (orange)

### Key Observations

1. **Consistent Disparity**: "First incorrect" tokens consistently outnumber "First correct" tokens across all categories.

2. **Smallest Gap in Lowest Range**: The (0, 33%] category shows the smallest difference (14.5k vs. 12.6k tokens, a gap of 1.9k).

3. **Decreasing Correct Tokens**: "First correct" tokens decrease as Pass@1 increases (12.6k → 8.3k → 4.7k).

4. **Overall Trend**: The "First incorrect" average (11.3k) is about 37.8% higher than "First correct" (8.2k).

### Interpretation

The data suggest that traces with an incorrect first step consume more tokens after the first step in every Pass@1 range, with gaps of roughly 1.9k to 3.1k tokens. Token usage falls for both categories as Pass@1 increases, so easier questions require less subsequent reasoning. Even when the first step is correct, several thousand additional tokens are generated (8.2k overall), which is consistent with overthinking.

</details>

Figure 3: Accuracy and number of tokens on DS-R1-Qwen-7B. Left: The relationship between the accuracy of the final prediction ( $q_{T}$ ) and the correctness of the prediction based solely on the first reasoning step ( $q_{1}$ ) across different difficulty levels. If $q_{1}$ is incorrect, $q_{T}$ is more likely to be incorrect. Right: The number of tokens used for the final prediction after the first reasoning step $t_{1}$ , i.e., the number of tokens used for $[t_{2},t_{3},...,t_{T}]$ . Even when $q_{1}$ is correct, the model still consumes a large number of tokens for the following reasoning steps, i.e., it overthinks.

3.3 Minor perturbation to the correct first step leads to significant loss

Building on our findings in § 3.2, which demonstrate a positive correlation between the model’s first and final predictions, we further investigate the significance of the first reasoning step by introducing minor perturbations. Specifically, we slightly alter an initially correct reasoning step and provide it as input to the model to assess whether it can recover from such errors.

Experimental setup. Unlike § 3.2, where we analyze the correctness of the first reasoning step $t_{1}$ , here we treat the final correct conclusion $c_{T}$ , which satisfies $q_{T}=g(c_{T})=a$ , as the new first reasoning step, denoted $t^{\prime}_{1}$ . This choice ensures that the step contains all necessary reasoning for arriving at the correct answer, which cannot be guaranteed for $t_{1}$ : as illustrated in Figure 3 (Left), an initially correct reasoning step can still lead to an incorrect final prediction. To construct $t^{\prime}_{1}$ , we process $c_{T}$ as follows (see Appendix G for an example): (1) we remove not only the explicit answer formatting (e.g., \boxed{a}) but also any surrounding sentences that may directly disclose or repeat the final answer; (2) the resulting text from (1) is treated as the correct version of $t^{\prime}_{1}$ (serving as our baseline); (3) we generate an incorrect version by replacing the correct answer $a$ in the remaining reasoning with $a\pm 1$ or $a\pm 10$ . The answer to each AIME question is an integer in the range $[0,999]$ .
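Perturbation (3) can be illustrated with a minimal sketch. The function name `perturb_answer`, the regular expression, and the example sentence are assumptions made for illustration; the actual preprocessing used in the experiments may differ.

```python
import re

# AIME answers are integers between 0 and 999.
AIME_MIN, AIME_MAX = 0, 999


def perturb_answer(reasoning: str, answer: int, delta: int) -> str:
    """Replace every standalone occurrence of `answer` with `answer + delta`.

    Hypothetical helper: `delta` would be one of +1, -1, +10, -10.
    """
    wrong = answer + delta
    if not AIME_MIN <= wrong <= AIME_MAX:
        raise ValueError(f"perturbed answer {wrong} is outside the AIME range")
    # Lookarounds avoid rewriting digits embedded in longer numbers (e.g. 1204).
    pattern = rf"(?<!\d){answer}(?!\d)"
    return re.sub(pattern, str(wrong), reasoning)


text = "Adding the cases gives 204, so 1204 is too large."
print(perturb_answer(text, 204, 10))
```

Only the answer value changes; the surrounding reasoning text is left untouched, which is what makes the perturbation minimal.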

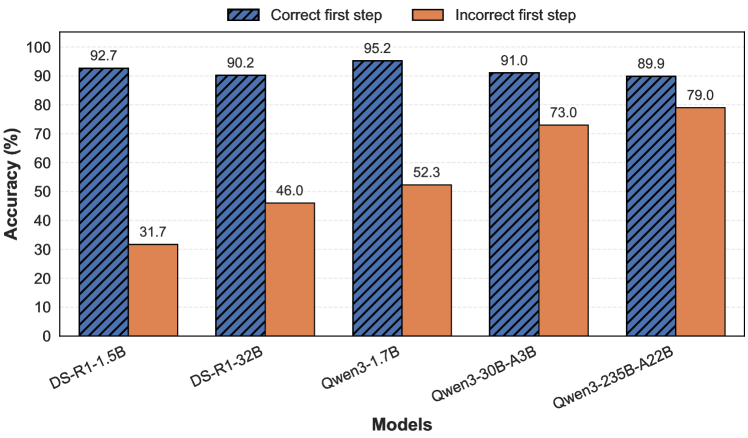

Table 1: Perturbation experiments. Reported accuracy (%) with correct vs. incorrect first step. Even minor perturbations cause significant drops.

| Model | Correct first step | Incorrect first step |
| --- | --- | --- |
| DS-R1-Qwen-1.5B | 95.4 | 64.4 |
| DS-R1-Qwen-7B | 94.8 | 28.5 |
| DS-R1-Qwen-32B | 100.0 | 85.8 |
| Qwen3-1.7B | 96.0 | 46.6 |
| Qwen3-8B | 71.4 | 37.0 |
| Qwen3-30B-A3B | 100.0 | 74.7 |
| Qwen3-235B-A22B | 100.0 | 78.7 |

These perturbations are minimal, as they preserve the core reasoning structure while only altering the final prediction in the incorrect variant. We then combine the prompt $p$ with the modified first reasoning step $t^{\prime}_{1}$ and input it to the model as $\mathcal{M}(p\mathrm{{<}think{>}}t^{\prime}_{1}\mathrm{Alternatively})$ to assess subsequent reasoning behavior.

Result. As shown in Table 1, we make two key observations: (1) Smaller models rarely reach 100% accuracy even when the first reasoning step is correct, suggesting that they may revise or deviate from their initial reasoning. In contrast, larger models (e.g., DS-R1-Qwen-32B) achieve 100% accuracy given a correct first step, indicating greater stability. (2) Accuracy drops substantially when the first reasoning step is incorrect, highlighting that even minor errors early in the reasoning process can significantly affect the final prediction. These findings further indicate that the LLM’s ability to self-correct has been overestimated.

In this section, we observe that the reasoning model is particularly vulnerable at the initial stage of the reasoning process; an error in the first step can propagate and substantially degrade the final prediction. Can we develop a method to identify and retain more promising first reasoning steps while discarding suboptimal ones to enhance the overall generation efficiency?

4 Early pruning with hint from first step

In this section, we propose an efficient and straightforward sampling method to identify a promising first reasoning step. By doing so, we can terminate the generation process early when a suboptimal first step is detected, thereby reducing unnecessary computational overhead.

4.1 Problem definition

In contrast to the notation introduced in § 3, we incorporate a random seed $\epsilon$ to introduce stochasticity into the sampling process. Specifically, a sampled trace is computed as $t\oplus c_{T}=\mathcal{M}(p,\epsilon)$ . By varying the random seed $\epsilon^{n}$ , we obtain diverse generations, denoted as $t^{n}\oplus c^{n}_{T}=\mathcal{M}(p,\epsilon^{n})$ , where $t^{n}=[t^{n}_{1},t^{n}_{2},...,t^{n}_{T}]$ . In prior experiments, we sampled 64 CoT traces per question using 64 distinct random seeds $\{\epsilon^{n}\}_{n=1}^{64}$ .

A widely adopted technique for reasoning tasks is majority voting or self-consistency generation (Wang et al., 2022). To promote exploration of the reasoning space, models are typically sampled at high temperatures, resulting in diverse outputs. Majority voting then serves to aggregate these outputs by selecting the most frequent final prediction. Formally, majority voting over $K$ samples is defined as:

$$

q_{\text{maj@}K}=\text{mode}(\{q^{n}_{T}\}_{n=1}^{K})\quad\text{where}\quad q^{n}_{T}=g(c^{n}_{T})

$$
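The majority-voting rule above can be sketched in a few lines of Python. The function name `majority_vote` and the tie-breaking behaviour (first-seen answer wins) are illustrative assumptions, not details specified in the text.

```python
from collections import Counter


def majority_vote(predictions):
    """Return the most frequent final prediction among K samples (maj@K)."""
    if not predictions:
        raise ValueError("need at least one prediction")
    # most_common orders by count; equal counts keep first-seen order.
    return Counter(predictions).most_common(1)[0][0]


# Five sampled final predictions q_T^n for one question.
samples = ["204", "204", "113", "204", "73"]
print(majority_vote(samples))
```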

However, for models generating long CoT traces, majority voting becomes computationally expensive, as it requires sampling $N$ complete reasoning paths independently. In this section, we propose a more efficient approach that samples only $M$ full traces, where $M<N$ , while maintaining majority voting performance comparable to that obtained with $N$ samples.

4.2 Methodology

In § 3, we demonstrated a strong positive correlation between the first reasoning step and the final prediction. This observation motivates a method that identifies the top $M$ most promising first reasoning steps out of a total of $N$ , and continues generation only for these selected $M$ candidates, while discarding the remaining $(N-M)$ .

Let a reasoning model generate $N$ candidate first reasoning steps $\{t_{1}^{1},t_{1}^{2},...,t_{1}^{N}\}$ from a prompt $p$ with different random seeds $\{\epsilon^{n}\}_{n=1}^{N}$ . Each $t_{1}^{n}$ is the first reasoning step of a full reasoning trajectory. We define a scoring function $r:t_{1}^{n}\to\mathbb{R}$ that estimates the promise of a first step, e.g., a rating from a reward model. We then select the top $M$ first steps based on their scores:

$$

\mathcal{R}_{\text{top}}=\text{TopM}(\{r(t_{1}^{n})\}_{n=1}^{N})

$$

Only the selected $M$ first steps $\{t_{1}^{n}\mid n\in\mathcal{R}_{\text{top}}\}$ are used for further multi-step generation. The remaining $(N-M)$ are discarded. Since the first step typically requires only a small number of tokens to generate, this selection approach efficiently reduces computation by avoiding full sampling for the less promising $(N-M)$ candidates.
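The selection procedure can be sketched as follows. This is a minimal illustration under stated assumptions: `score_fn` stands in for the scoring function $r$ and `continue_fn` for the reasoning model continuing from a first step; both are hypothetical callables, and the toy scores below are made up.

```python
def select_top_m(scores, m):
    """Return the indices of the m highest-scoring first steps (R_top)."""
    if not 0 < m <= len(scores):
        raise ValueError("m must satisfy 0 < m <= N")
    # Sort indices by score, highest first; ties keep their original order.
    ranked = sorted(range(len(scores)), key=lambda n: scores[n], reverse=True)
    return sorted(ranked[:m])


def early_pruning(first_steps, score_fn, continue_fn, m):
    """Score N first steps, keep the top m, and continue only those."""
    scores = [score_fn(t1) for t1 in first_steps]
    keep = select_top_m(scores, m)
    # The remaining N - m candidates are dropped without further generation.
    return [continue_fn(first_steps[n]) for n in keep]


# Toy stand-ins: the score is looked up from a table, continuation appends text.
toy_scores = {"step A": 0.1, "step B": 0.9, "step C": 0.5, "step D": 0.7}
traces = early_pruning(
    list(toy_scores),
    score_fn=toy_scores.get,
    continue_fn=lambda t1: t1 + " ... final answer",
    m=2,
)
print(traces)
```

The final predictions extracted from the returned traces would then be aggregated by majority voting over the $M$ retained samples.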

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Graph: Mean@K Accuracy Comparison Across Datasets

### Overview

The image displays a set of line graphs comparing the performance of two methods, **Vanilla N** (orange) and **TopM** (blue), across five datasets: **Average**, **AIME24**, **AIME25**, **HMMT**, and **GPQA**. Each subplot shows how Mean@K accuracy (%) evolves as the parameter **K** varies. Vertical dashed lines at **K=32** are present in all subplots, likely indicating a threshold or reference point. Annotations in some subplots highlight specific model configurations (e.g., "DS-R1-Qwen-1.5B", "Qwen3-8B").

---

### Components/Axes

- **X-axis (K)**: Labeled with values **1, 4, 8, 16, 32, 64** (non-linear scale).

- **Y-axis (Mean@K Accuracy %)**: Ranges from **25% to 80%**.

- **Legend**:

- **Vanilla N** (orange line)

- **TopM** (blue line)

- **Subplots**:

- **Average** (top-left)

- **AIME24** (top-middle)

- **AIME25** (top-right)

- **HMMT** (middle-left)

- **GPQA** (middle-right)

---

### Detailed Analysis

#### **Average Subplot**

- **Vanilla N**: Starts at ~30% (K=1–8), rises to ~40% (K=16), ~50% (K=32), and ~60% (K=64).

- **TopM**: Starts at ~35% (K=1–8), rises to ~45% (K=16), ~55% (K=32), and ~65% (K=64).

- **Vertical Line (K=32)**: Both lines cross ~50% (Vanilla N) and ~55% (TopM).

#### **AIME24 Subplot**

- **Vanilla N**: ~30% (K=1–8) → ~40% (K=16) → ~50% (K=32) → ~60% (K=64).

- **TopM**: ~35% (K=1–8) → ~45% (K=16) → ~55% (K=32) → ~65% (K=64).

- **Vertical Line (K=32)**: Similar crossing points as Average.

#### **AIME25 Subplot**

- **Vanilla N**: ~30% (K=1–8) → ~40% (K=16) → ~50% (K=32) → ~60% (K=64).

- **TopM**: ~35% (K=1–8) → ~45% (K=16) → ~55% (K=32) → ~65% (K=64).

- **Vertical Line (K=32)**: Consistent with other subplots.

#### **HMMT Subplot**

- **Vanilla N**: ~30% (K=1–8) → ~40% (K=16) → ~50% (K=32) → ~60% (K=64).

- **TopM**: ~35% (K=1–8) → ~45% (K=16) → ~55% (K=32) → ~65% (K=64).

- **Annotations**:

- **DS-R1-Qwen-32B**: 64% (K=64)

- **Qwen3-8B**: 58.5% (K=64)

#### **GPQA Subplot**

- **Vanilla N**: ~30% (K=1–8) → ~40% (K=16) → ~50% (K=32) → ~60% (K=64).

- **TopM**: ~35% (K=1–8) → ~45% (K=16) → ~55% (K=32) → ~65% (K=64).

- **Annotations**:

- **DS-R1-Qwen-1.5B**: 62.5% (K=64)

- **Qwen3-8B**: 55.0% (K=64)

- **SW-OR1-7B-Preview**: 54% (K=64)

---

### Key Observations

1. **Consistent Performance**: **TopM** consistently outperforms **Vanilla N** across all datasets and K values.

2. **Reference Line**: The vertical dashed line in each subplot marks the smallest $M$ whose accuracy is equal to or larger than the accuracy of $N=64$ .

3. **Model-Specific Annotations**:

- In **HMMT**, **DS-R1-Qwen-32B** achieves 64% accuracy at K=64, while **Qwen3-8B** scores 58.5%.

- In **GPQA**, **DS-R1-Qwen-1.5B** reaches 62.5%, **Qwen3-8B** 55.0%, and **SW-OR1-7B-Preview** 54%.

---

### Interpretation

- **Method Comparison**: **TopM** demonstrates superior scalability and accuracy, suggesting it is more effective for the evaluated tasks.

- **Vertical Line as a Reference**: The vertical lines mark the smallest number of retained first steps that matches the accuracy of full sampling with $N=64$ , reflecting a balance between computational cost and performance.

- **Model Variants**: The annotations indicate that specific model configurations (e.g., Qwen3-8B, DS-R1-Qwen) have distinct performance profiles, potentially tied to their architecture or training data.

- **Anomalies**: The **SW-OR1-7B-Preview** in GPQA shows a lower accuracy (54%) compared to other models, suggesting it may be less optimized for this dataset.

This analysis underscores the importance of method selection and model configuration in achieving high Mean@K accuracy, with **TopM** emerging as the more robust approach.

</details>

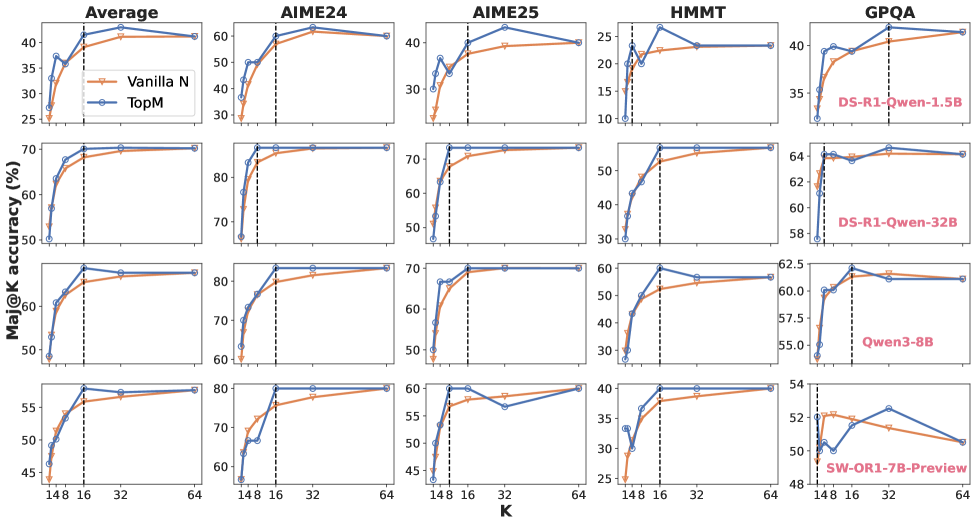

Figure 4: Majority voting accuracy with different numbers of samples for four LLMs. The vertical dashed line denotes the smallest $M$ whose accuracy is equal to or larger than the accuracy of $N=64$ .

4.3 Experiments

Setup. We evaluate five families of reasoning models, DS-R1-Qwen (Guo et al., 2025), Qwen3 (Yang et al., 2025a), Skywork-OR1 (SW-OR1) (He et al., 2025), Magistral (Rastogi et al., 2025) and GPT-OSS (Agarwal et al., 2025), on five challenging reasoning benchmarks spanning mathematics, science and programming: AIME24, AIME25 (MAA Committees, 2025), HMMT Feb 2025 (Balunović et al., 2025), the GPQA Diamond set (Rein et al., 2024) and LiveCodeBench (Jain et al., 2024; latest release, containing problems from Jan–Apr 2025), consisting of 30, 30, 30, 198, and 175 problems, respectively. For decoding, we adopt each model’s recommended temperature and top_p, with a maximum generation length of 32K tokens.

We consider values of $N$ and $M$ from the set $\{1,2,4,8,16,32,64\}$ . For early pruning, we fix $N=64$ and select the top $M$ most promising first-step candidates using reward scores predicted by a process reward model (PRM), Qwen2.5-Math-PRM-7B (Zhang et al., 2025). When $M=64$ , the accuracy is exactly the same as that for $N=64$ , since all candidates are chosen. For the PRM, a “step” is defined as a segment of text ending with “ \n\n ”, and each step receives an individual reward score. The trajectory $t^{n}_{1}$ contains multiple such steps, and we use the score of its final step as the overall reward, $r(t^{n}_{1})$ . Notably, using a PRM to score $t^{n}_{1}$ is inexpensive, because $t^{n}_{1}$ is short and the computation is similar to generating one token with input $p\oplus t^{n}_{1}$ .
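The scoring rule (split the first step into PRM steps, then keep the score of the final step) can be sketched as below. The `step_scorer` callable is a hypothetical stand-in for a process reward model that returns one score per step; it is not the interface of Qwen2.5-Math-PRM-7B, and the toy scorer is made up.

```python
def split_steps(text):
    """Split a first reasoning step into PRM steps at blank-line boundaries."""
    return [segment for segment in text.split("\n\n") if segment.strip()]


def first_step_reward(t1, step_scorer):
    """Return r(t_1): the reward assigned to the final PRM step of t_1."""
    steps = split_steps(t1)
    if not steps:
        raise ValueError("first step contains no text")
    scores = step_scorer(steps)  # assumed: one float per step, in order
    if len(scores) != len(steps):
        raise ValueError("scorer must return one score per step")
    return scores[-1]


# Toy scorer for illustration only: longer steps receive higher scores.
def toy_scorer(steps):
    return [len(step) / 100 for step in steps]


t1 = "Let x be the side length.\n\nThen the area is x squared.\n\nSo x = 12."
print(first_step_reward(t1, toy_scorer))
```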

Unlike the definition used in § 3, where $t^{n}_{1}$ terminates upon generating the keyword “Alternatively”, we redefine $t^{n}_{1}$ in this section to have a fixed token length, $\text{len}(t^{n}_{1})$ . The previous definition made it difficult to control the computational budget for generating first steps, as the trigger phrase might not appear or could occur late in the sequence. By fixing the token length, we achieve precise control over the generation budget when sampling $N$ candidate first steps. By default, $\text{len}(t^{n}_{1})=512$ .

4.3.1 Main results

In Figure 4, we analyze the performance as $M$ varies. We find that selecting the top 16 first reasoning steps from 64 candidates and continuing generation from them achieves accuracy on par with, or even exceeding, conventional sampling with $N=64$ . This trend is consistent across diverse LLMs and benchmarks. Interestingly, in certain cases (such as HMMT on DS-R1-Qwen-1.5B, and AIME24, AIME25, and GPQA on DS-R1-Qwen-32B), using as few as $M\leq 8$ first steps suffices to match the performance obtained with all 64 samples.

Table 2: Early pruning accuracy and efficiency. We select the $M=16$ first steps with the highest reward scores out of $N=64$ candidate first steps. The number of tokens used for the 48 discarded first steps is also included for the early pruning method. Early pruning maintains the accuracy, and sometimes even improves it, while requiring $<30\%$ of the original inference budget.

| Model | Sampling | AIME24 | AIME25 | HMMT | GPQA | Average | Tokens |
| --- | --- | --- | --- | --- | --- | --- | --- |
| DS-R1-Qwen-1.5B | $N=64$ | 60.0 | 40.0 | 23.3 | 41.4 | 41.2 | $\times 1.00$ |
| | $N=16$ | 57.0 | 37.6 | 22.4 | 39.4 | 39.1 (-2.1) | $\times 0.25$ |
| | $M=16$ | 60.0 | 40.0 | 26.7 | 39.4 | 41.5 (+0.3) | $\times 0.28$ |
| DS-R1-Qwen-32B | $N=64$ | 86.7 | 73.3 | 56.7 | 64.1 | 70.2 | $\times 1.00$ |
| | $N=16$ | 85.4 | 70.9 | 52.6 | 63.9 | 68.2 (-2.0) | $\times 0.25$ |
| | $M=16$ | 86.7 | 73.3 | 56.7 | 63.6 | 70.1 (-0.1) | $\times 0.29$ |
| Qwen3-8B | $N=64$ | 83.3 | 70.0 | 56.7 | 61.1 | 67.8 | $\times 1.00$ |
| | $N=16$ | 79.8 | 69.0 | 52.3 | 61.3 | 65.6 (-2.2) | $\times 0.25$ |
| | $M=16$ | 83.3 | 70.0 | 60.0 | 62.1 | 68.9 (+1.1) | $\times 0.28$ |
| SW-OR1-7B | $N=64$ | 80.0 | 60.0 | 40.0 | 50.5 | 57.6 | $\times 1.00$ |
| | $N=16$ | 75.7 | 58.0 | 37.9 | 51.9 | 55.9 (-1.7) | $\times 0.25$ |
| | $M=16$ | 80.0 | 60.0 | 40.0 | 52.5 | 57.9 (+0.3) | $\times 0.29$ |
| Magistral-Small | $N=64$ | 86.7 | 83.3 | 76.7 | 70.2 | 79.2 | $\times 1.00$ |
| | $N=16$ | 87.1 | 82.6 | 71.1 | 69.2 | 77.5 (-1.7) | $\times 0.25$ |
| | $M=16$ | 86.7 | 83.3 | 73.3 | 70.2 | 78.4 (-0.8) | $\times 0.28$ |
| GPT-OSS-20B | $N=64$ | 86.7 | 83.3 | 80.0 | 73.2 | 80.8 | $\times 1.00$ |
| | $N=16$ | 85.3 | 81.7 | 73.3 | 72.7 | 78.3 (-2.5) | $\times 0.25$ |
| | $M=16$ | 86.7 | 83.3 | 80.0 | 73.2 | 80.8 (0.0) | $\times 0.27$ |

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Graphs: Model Performance Comparison Across Datasets

### Overview

The image contains three line graphs comparing two sampling methods ("Vanilla N" and "TopM") for three models: "DS-R1-Qwen-32B", "Qwen3-8B", and "GPT-OSS-20B". Each graph plots "pass@K" (y-axis) against "K" (x-axis), showing how the pass rate improves as K increases. Both methods exhibit similar upward trends, with "TopM" slightly above "Vanilla N".

---

### Components/Axes

1. **X-Axis (K)**:

- Labeled "K" with discrete values: 1, 3, 5, 7, 9, 11, 13, 15.

- Represents the number of top candidates considered for evaluation.

2. **Y-Axis (pass@K)**:

- Labeled "pass@K" with values ranging from 40 to 65.

- Measures the percentage of successful outcomes (e.g., correct predictions) within the top K candidates.

3. **Legends**:

- Positioned in the **bottom-left** of each graph.

- "Vanilla N" is represented by **orange triangles** (▲).

- "TopM" is represented by **blue circles** (●).

4. **Graph Titles**:

- Top-left corner of each graph specifies the model:

- "DS-R1-Qwen-32B"

- "Qwen3-8B"

- "GPT-OSS-20B"

---

### Detailed Analysis

#### DS-R1-Qwen-32B

- **Vanilla N**: Starts at ~48 (K=1), rises sharply to ~63 (K=15).

- **TopM**: Starts at ~49 (K=1), rises to ~63 (K=15).

- **Trend**: TopM holds a ~1-2 point advantage at small K, and the two lines converge at K=15.

#### Qwen3-8B

- **Vanilla N**: Begins at ~42 (K=1), increases to ~54 (K=15).

- **TopM**: Starts at ~43 (K=1), rises to ~55 (K=15).

- **Trend**: TopM outperforms Vanilla N by ~1-2 points across all K values.

#### GPT-OSS-20B

- **Vanilla N**: Starts at ~44 (K=1), climbs to ~60 (K=15).

- **TopM**: Begins at ~45 (K=1), reaches ~61 (K=15).

- **Trend**: TopM maintains a ~1-2 point lead, with both models showing steep initial growth.

---

### Key Observations

1. **Consistent Performance Gap**: "TopM" is ahead of "Vanilla N" by about 1-2 points for all three models at most K values.

2. **Diminishing Returns**: Performance improvements slow as K increases, especially after K=9.

3. **Model Variability**: "GPT-OSS-20B" shows the largest gain from K=1 to K=15 (~16 points), while "Qwen3-8B" shows the smallest (~12 points).

---

### Interpretation

The data suggest that "TopM" (early pruning) is a slightly more effective sampling strategy than vanilla sampling at the same number of complete samples, which is consistent with better candidate prioritization from filtering first steps. The gap is small (about 1-2 points), and both methods approach similar pass rates at higher K values.

</details>

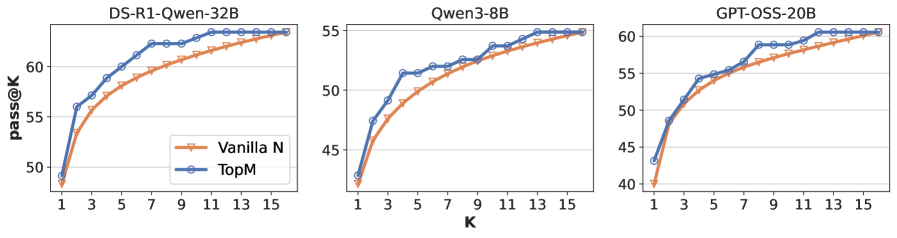

Figure 5: The pass rate on LiveCodeBench, where we set $N=16$ for early pruning (TopM).

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Charts: Accuracy vs Number of Samples for Different Reward Models and First-Step Lengths

### Overview

Two line charts compare early pruning configurations against vanilla sampling. The left chart compares the reward models used to score first steps as K varies (1-64), while the right chart compares different first-step lengths (len(t₁)=256, 512, 1024). Accuracy increases with K in all settings, plateauing near 70%.

### Components/Axes

**Left Chart (K Values):**

- **X-axis (K):** Discrete values [1, 4, 8, 16, 32, 64]

- **Y-axis:** Average m@K accuracy (%) [45-75%]

- **Legend (bottom-right):**

- Vanilla N (orange triangle)

- Qwen2.5-Math-PRM-7B (blue circle)

- Qwen2.5-Math-PRM-72B (dark blue square)

- Qwen2.5-Math-RM-72B (pink diamond)

**Right Chart (len(t₁) Values):**

- **X-axis (len(t₁)):** Values [256, 512, 1024]

- **Y-axis:** Average m@K accuracy (%) [50-75%]

- **Legend (bottom-right):**

- Vanilla N (orange triangle)

- len(t₁)=256 (dark blue square)

- len(t₁)=512 (blue circle)

- len(t₁)=1024 (pink diamond)

### Detailed Analysis

**Left Chart Trends:**

1. **Vanilla N:** Starts at ~45% (K=1), rises sharply to ~65% (K=4), plateaus at ~70% (K≥16)

2. **Qwen2.5-Math-PRM-7B:** Begins at ~50% (K=1), peaks at ~72% (K=16), maintains ~70% (K≥32)

3. **Qwen2.5-Math-PRM-72B:** Similar trajectory to PRM-7B but with slightly smoother ascent

4. **Qwen2.5-Math-RM-72B:** Matches PRM-72B performance across all K values

**Right Chart Trends:**

1. **Vanilla N:** Starts at ~50% (len(t₁)=256), rises to ~68% (len(t₁)=512), plateaus at ~70% (len(t₁)=1024)

2. **len(t₁)=256:** Matches Vanilla N baseline

3. **len(t₁)=512:** Outperforms Vanilla N by ~8% at peak

4. **len(t₁)=1024:** Matches Qwen2.5-Math-PRM-7B performance from left chart

### Key Observations

1. **Effect of K:** All curves show a >15-point accuracy improvement as K increases from its minimum to its maximum value

2. **Reward-Guided Selection:** Curves that use a Qwen2.5 reward model to select first steps lie above Vanilla N at small K

3. **PRM vs RM Variants:** PRM-72B and RM-72B demonstrate nearly identical performance across K

4. **Diminishing Returns:** Accuracy gains become marginal beyond K=16

### Interpretation

The data suggests that:

1. **More Samples Help:** Accuracy rises with the number of samples K, with diminishing returns after about K=16

2. **Early Pruning Advantage:** Selecting first steps with a reward model reaches a given accuracy with fewer complete samples than Vanilla N

3. **Reward Model Choice:** The 7B PRM, the 72B PRM, and the 72B outcome reward model perform comparably, so the choice of reward model has minimal impact

4. **First-Step Length:** A very short first step (len(t₁)=256) is suboptimal, while len(t₁)=512 or 1024 performs better

*Note: All values are approximate due to lack of grid lines. Color coding was verified through multiple cross-references between legend and data points.*

</details>

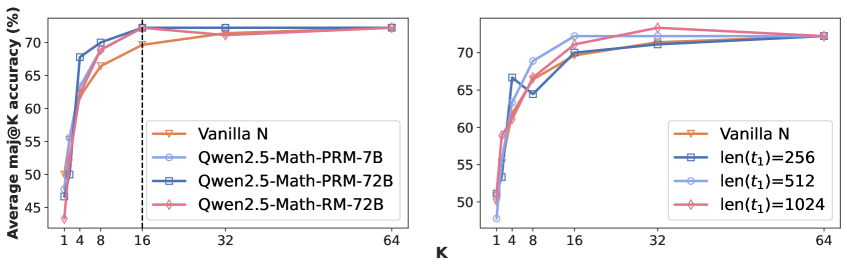

Figure 6: Average accuracy across AIME24, AIME25 and HMMT on DS-R1-Qwen-32B. Left: Comparison of reward signals derived from different reward models. The choice of reward model has minimal impact on overall performance. Right: Effect of varying the length of the first reasoning step. Using a very short first step, like 256 tokens, leads to suboptimal performance, likely because it provides insufficient reasoning context to effectively evaluate the quality of the step.

Table 2 reports the detailed accuracy and token consumption of different methods. With $M=16$ , early pruning matches the average accuracy of $N=64$ to within one point across the evaluated LLMs (from -0.8 to +1.1), while outperforming $N=16$ on average for every model under a comparable token budget. Notably, for Qwen3-8B, early pruning even yields a 1.1-point improvement in average accuracy. Importantly, these results come at less than 30% of the inference cost of majority voting with $N=64$ , underscoring the efficiency advantage of our method.
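To see where a cost ratio slightly above $M/N=0.25$ comes from, consider a simplified estimate. It assumes that every complete trace has the same average length $\bar{L}$ (an assumption made here for illustration; in practice trace lengths vary and the retained traces may differ in length from the discarded ones) and writes $\ell_{1}=\text{len}(t^{n}_{1})$ . Early pruning pays for $N$ first steps plus the continuation of $M$ traces:

$$

\frac{C_{\text{prune}}}{C_{\text{vanilla}}}\approx\frac{N\ell_{1}+M(\bar{L}-\ell_{1})}{N\bar{L}}=\frac{M}{N}+\left(1-\frac{M}{N}\right)\frac{\ell_{1}}{\bar{L}}

$$

With $N=64$ , $M=16$ and $\ell_{1}=512$ , this gives $0.25+0.75\cdot 512/\bar{L}$ . For a hypothetical average trace length of $\bar{L}=12{,}800$ tokens, the ratio is $0.25+0.75\cdot 0.04=0.28$ . The second term is the overhead of the discarded first steps, and it shrinks as traces get longer.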

For the code generation benchmark, LiveCodeBench, where majority voting is not applicable, we present the pass rate in Figure 5. Early pruning consistently surpasses standard sampling given the same number of complete samples.

4.3.2 Ablation studies

Here we further validate our default settings.

Choice of reward model. In Figure 6 (Left), we evaluate two additional reward models: a larger PRM, Qwen2.5-Math-PRM-72B, and an outcome reward model, Qwen2.5-Math-RM-72B (Yang et al., 2024). The results indicate that the choice of reward model has minimal impact on performance. Notably, the smaller 7B PRM achieves comparable results, highlighting the efficiency of our approach.

Length of first step. In Figure 6 (Right), we examine the impact of varying the length of the first reasoning step. We observe that the shortest first step (i.e., $\text{len}(t^{n}_{1})=256$ ) leads to degraded performance. We hypothesize that shorter $t^{n}_{1}$ sequences lack sufficient reasoning content, making them less informative for reliable reward model evaluation. Nevertheless, setting $\text{len}(t^{n}_{1})\geq 512$ tokens yields consistently better performance than vanilla sampling.

Table 3: Average maj@K for early pruning, with first step defined by length or phrase.

| Model | Length-based | Phrase-based |
| --- | --- | --- |
| DS-R1-Qwen-1.5B | 41.5 | 43.8 |
| DS-R1-Qwen-32B | 70.1 | 70.4 |
| Qwen3-8B | 68.9 | 68.2 |
| SW-OR1-7B | 57.9 | 57.7 |

Effect of first step split. Table 3 examines how the definition of the first step influences early pruning performance. The phrase-based definition follows the heuristic described in § 3, where the first step ends at a trigger phrase. Overall, both definitions yield comparable results. Nevertheless, we recommend the length-based definition, as it provides a clearer way to manage the token budget across candidate first steps. Moreover, trigger phrases that signal step boundaries may vary across LLMs.

Table 4: Time spent by early pruning.

| Model | Vanilla sampling | Early pruning |
| --- | --- | --- |
| DS-R1-Qwen-1.5B | $\times 1.00$ | $\times 0.27$ |
| Qwen3-8B | $\times 1.00$ | $\times 0.37$ |

Overhead from reward model. Relative to vanilla sampling, early pruning requires scoring the first step with a reward model, which introduces additional overhead. To ensure a fair comparison, we avoid using extra GPUs for deploying the reward model. Instead, our procedure is as follows: (1) load the reasoning model to generate candidate first steps and then offload it; (2) load the reward model on the same GPU to evaluate these steps and offload it; and (3) reload the reasoning model to continue generation from the selected first steps. The timing results are reported in Table 4. Notably, early pruning remains efficient both in terms of token usage and runtime, since evaluating the first step with the reward model is inexpensive—comparable to computing embeddings for the short first steps.

5 Conclusion

In this paper, we empirically demonstrate that the first reasoning step plays a critical role in determining the final outcome of a model’s reasoning process. Errors introduced early can significantly degrade overall performance. Motivated by this observation, we propose an efficient sampling strategy that identifies and retains high-quality first reasoning steps, reducing inference computation by up to 70% across five model families. These findings suggest that improving or exploiting the very first step is a promising direction for building more accurate and efficient reasoning LLMs.

Acknowledgements

This research was partly supported by the Netherlands Organization for Scientific Research (NWO) under project numbers VI.C.192.080, 024.004.022, NWA.1389.20.183, and KICH3.LTP.20.006, and the European Union under grant agreements No. 101070212 (FINDHR) and No. 101201510 (UNITE). We are very grateful for the computation support from eBay. Views and opinions expressed are those of the author(s) only and do not necessarily reflect those of their respective employers, funders and/or granting authorities.

References

- Agarwal et al. (2025) Sandhini Agarwal, Lama Ahmad, Jason Ai, Sam Altman, Andy Applebaum, Edwin Arbus, Rahul K Arora, Yu Bai, Bowen Baker, Haiming Bao, et al. gpt-oss-120b & gpt-oss-20b model card. arXiv preprint arXiv:2508.10925, 2025.

- Aggarwal et al. (2023) Pranjal Aggarwal, Aman Madaan, Yiming Yang, et al. Let’s sample step by step: Adaptive-consistency for efficient reasoning and coding with llms. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, pp. 12375–12396, 2023.

- Anthropic (2025) Anthropic. Claude 3.7 sonnet system card. 2025. URL https://api.semanticscholar.org/CorpusID:276612236.

- Balunović et al. (2025) Mislav Balunović, Jasper Dekoninck, Ivo Petrov, Nikola Jovanović, and Martin Vechev. Matharena: Evaluating llms on uncontaminated math competitions, February 2025. URL https://matharena.ai/.

- Barbero et al. (2025) Federico Barbero, Alvaro Arroyo, Xiangming Gu, Christos Perivolaropoulos, Michael Bronstein, Petar Veličković, and Razvan Pascanu. Why do llms attend to the first token? arXiv preprint arXiv:2504.02732, 2025.

- Brown et al. (2020) Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901, 2020.

- Chen et al. (2024a) Xingyu Chen, Jiahao Xu, Tian Liang, Zhiwei He, Jianhui Pang, Dian Yu, Linfeng Song, Qiuzhi Liu, Mengfei Zhou, Zhuosheng Zhang, et al. Do not think that much for 2+ 3=? on the overthinking of o1-like llms. arXiv preprint arXiv:2412.21187, 2024a.

- Chen et al. (2024b) Xinyi Chen, Baohao Liao, Jirui Qi, Panagiotis Eustratiadis, Christof Monz, Arianna Bisazza, and Maarten de Rijke. The SIFo benchmark: Investigating the sequential instruction following ability of large language models. In Findings of the Association for Computational Linguistics: EMNLP 2024, pp. 1691–1706. Association for Computational Linguistics, 2024b. URL https://aclanthology.org/2024.findings-emnlp.92/.

- Chiang & Lee (2024) Cheng-Han Chiang and Hung-yi Lee. Over-reasoning and redundant calculation of large language models. In Yvette Graham and Matthew Purver (eds.), Proceedings of the 18th Conference of the European Chapter of the Association for Computational Linguistics (Volume 2: Short Papers), pp. 161–169, St. Julian’s, Malta, March 2024. Association for Computational Linguistics. URL https://aclanthology.org/2024.eacl-short.15/.

- Formal et al. (2021) Thibault Formal, Benjamin Piwowarski, and Stéphane Clinchant. Splade: Sparse lexical and expansion model for first stage ranking. In Proceedings of the 44th International ACM SIGIR Conference on Research and Development in Information Retrieval, pp. 2288–2292, 2021.

- Formal et al. (2022) Thibault Formal, Carlos Lassance, Benjamin Piwowarski, and Stéphane Clinchant. From distillation to hard negative sampling: Making sparse neural ir models more effective, 2022. URL https://arxiv.org/abs/2205.04733.

- Fu et al. (2025a) Yichao Fu, Junda Chen, Yonghao Zhuang, Zheyu Fu, Ion Stoica, and Hao Zhang. Reasoning without self-doubt: More efficient chain-of-thought through certainty probing. In ICLR 2025 Workshop on Foundation Models in the Wild, 2025a. URL https://openreview.net/forum?id=wpK4IMJfdX.

- Fu et al. (2025b) Yichao Fu, Xuewei Wang, Yuandong Tian, and Jiawei Zhao. Deep think with confidence. arXiv preprint arXiv:2508.15260, 2025b.

- Guo et al. (2025) Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948, 2025.

- He et al. (2025) Jujie He, Jiacai Liu, Chris Yuhao Liu, Rui Yan, Chaojie Wang, Peng Cheng, Xiaoyu Zhang, Fuxiang Zhang, Jiacheng Xu, Wei Shen, Siyuan Li, Liang Zeng, Tianwen Wei, Cheng Cheng, Yang Liu, and Yahui Zhou. Skywork open reasoner series. https://capricious-hydrogen-41c.notion.site/Skywork-Open-Reaonser-Series-1d0bc9ae823a80459b46c149e4f51680, 2025. Notion Blog.

- Hestness et al. (2017) Joel Hestness, Sharan Narang, Newsha Ardalani, Gregory Diamos, Heewoo Jun, Hassan Kianinejad, Md Mostofa Ali Patwary, Yang Yang, and Yanqi Zhou. Deep learning scaling is predictable, empirically. arXiv preprint arXiv:1712.00409, 2017.

- Hoffmann et al. (2022) Jordan Hoffmann, Sebastian Borgeaud, Arthur Mensch, Elena Buchatskaya, Trevor Cai, Eliza Rutherford, Diego de Las Casas, Lisa Anne Hendricks, Johannes Welbl, Aidan Clark, et al. Training compute-optimal large language models. arXiv preprint arXiv:2203.15556, 2022.

- Hou et al. (2025) Bairu Hou, Yang Zhang, Jiabao Ji, Yujian Liu, Kaizhi Qian, Jacob Andreas, and Shiyu Chang. Thinkprune: Pruning long chain-of-thought of llms via reinforcement learning. arXiv preprint arXiv:2504.01296, 2025.

- Hurst et al. (2024) Aaron Hurst, Adam Lerer, Adam P Goucher, Adam Perelman, Aditya Ramesh, Aidan Clark, AJ Ostrow, Akila Welihinda, Alan Hayes, Alec Radford, et al. Gpt-4o system card. arXiv preprint arXiv:2410.21276, 2024.

- Jain et al. (2024) Naman Jain, King Han, Alex Gu, Wen-Ding Li, Fanjia Yan, Tianjun Zhang, Sida Wang, Armando Solar-Lezama, Koushik Sen, and Ion Stoica. Livecodebench: Holistic and contamination free evaluation of large language models for code. arXiv preprint arXiv:2403.07974, 2024.

- Kaplan et al. (2020) Jared Kaplan, Sam McCandlish, Tom Henighan, Tom B Brown, Benjamin Chess, Rewon Child, Scott Gray, Alec Radford, Jeffrey Wu, and Dario Amodei. Scaling laws for neural language models. arXiv preprint arXiv:2001.08361, 2020.

- Li et al. (2024) Yiwei Li, Peiwen Yuan, Shaoxiong Feng, Boyuan Pan, Xinglin Wang, Bin Sun, Heda Wang, and Kan Li. Escape sky-high cost: Early-stopping self-consistency for multi-step reasoning. In ICLR, 2024.

- Liao & Monz (2024) Baohao Liao and Christof Monz. 3-in-1: 2d rotary adaptation for efficient finetuning, efficient batching and composability. arXiv preprint arXiv:2409.00119, 2024.

- Liao et al. (2025a) Baohao Liao, Hanze Dong, Yuhui Xu, Doyen Sahoo, Christof Monz, Junnan Li, and Caiming Xiong. Fractured chain-of-thought reasoning. arXiv preprint arXiv:2505.12992, 2025a.

- Liao et al. (2025b) Baohao Liao, Yuhui Xu, Hanze Dong, Junnan Li, Christof Monz, Silvio Savarese, Doyen Sahoo, and Caiming Xiong. Reward-guided speculative decoding for efficient LLM reasoning. arXiv preprint arXiv:2501.19324, 2025b.

- Liu et al. (2024a) Nelson F. Liu, Kevin Lin, John Hewitt, Ashwin Paranjape, Michele Bevilacqua, Fabio Petroni, and Percy Liang. Lost in the middle: How language models use long contexts. Transactions of the Association for Computational Linguistics, 12:157–173, 2024a. doi: 10.1162/tacl_a_00638. URL https://aclanthology.org/2024.tacl-1.9/.

- Liu et al. (2024b) Yijin Liu, Xianfeng Zeng, Chenze Shao, Fandong Meng, and Jie Zhou. Instruction position matters in sequence generation with large language models. In Lun-Wei Ku, Andre Martins, and Vivek Srikumar (eds.), Findings of the Association for Computational Linguistics: ACL 2024, pp. 11652–11663, Bangkok, Thailand, August 2024b. Association for Computational Linguistics. doi: 10.18653/v1/2024.findings-acl.693. URL https://aclanthology.org/2024.findings-acl.693/.

- Luo et al. (2025) Haotian Luo, Li Shen, Haiying He, Yibo Wang, Shiwei Liu, Wei Li, Naiqiang Tan, Xiaochun Cao, and Dacheng Tao. O1-pruner: Length-harmonizing fine-tuning for o1-like reasoning pruning. arXiv preprint arXiv:2501.12570, 2025.

- MAA Committees (2025) MAA Committees. AIME Problems and Solutions. https://artofproblemsolving.com/wiki/index.php/AIME_Problems_and_Solutions, 2025.

- Muennighoff et al. (2025) Niklas Muennighoff, Zitong Yang, Weijia Shi, Xiang Lisa Li, Li Fei-Fei, Hannaneh Hajishirzi, Luke Zettlemoyer, Percy Liang, Emmanuel Candès, and Tatsunori Hashimoto. s1: Simple test-time scaling. arXiv preprint arXiv:2501.19393, 2025.

- Pezeshkpour & Hruschka (2023) Pouya Pezeshkpour and Estevam Hruschka. Large language models sensitivity to the order of options in multiple-choice questions. arXiv preprint arXiv:2308.11483, 2023.

- Qin et al. (2023) Guanghui Qin, Yukun Feng, and Benjamin Van Durme. The NLP task effectiveness of long-range transformers. In Andreas Vlachos and Isabelle Augenstein (eds.), Proceedings of the 17th Conference of the European Chapter of the Association for Computational Linguistics, pp. 3774–3790. Association for Computational Linguistics, 2023. doi: 10.18653/v1/2023.eacl-main.273. URL https://aclanthology.org/2023.eacl-main.273/.

- Rastogi et al. (2025) Abhinav Rastogi, Albert Q Jiang, Andy Lo, Gabrielle Berrada, Guillaume Lample, Jason Rute, Joep Barmentlo, Karmesh Yadav, Kartik Khandelwal, Khyathi Raghavi Chandu, et al. Magistral. arXiv preprint arXiv:2506.10910, 2025.

- Reimers & Gurevych (2019) Nils Reimers and Iryna Gurevych. Sentence-bert: Sentence embeddings using siamese bert-networks. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 2019. URL https://arxiv.org/abs/1908.10084.

- Rein et al. (2024) David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R Bowman. GPQA: A graduate-level Google-proof Q&A benchmark. In First Conference on Language Modeling, 2024.

- Shen et al. (2023) Ruoqi Shen, Sébastien Bubeck, Ronen Eldan, Yin Tat Lee, Yuanzhi Li, and Yi Zhang. Positional description matters for transformers arithmetic. arXiv preprint arXiv:2311.14737, 2023.

- Shi et al. (2024) Lin Shi, Chiyu Ma, Wenhua Liang, Weicheng Ma, and Soroush Vosoughi. Judging the judges: A systematic investigation of position bias in pairwise comparative assessments by llms. arXiv preprint arXiv:2406.07791, 2024.

- Team et al. (2024) Gemini Team, Petko Georgiev, Ving Ian Lei, Ryan Burnell, Libin Bai, Anmol Gulati, Garrett Tanzer, Damien Vincent, Zhufeng Pan, Shibo Wang, et al. Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context. arXiv preprint arXiv:2403.05530, 2024.

- Team (2025) Qwen Team. Qwen3, April 2025. URL https://qwenlm.github.io/blog/qwen3/.

- Wan et al. (2025) Guangya Wan, Yuqi Wu, Jie Chen, and Sheng Li. Reasoning aware self-consistency: Leveraging reasoning paths for efficient LLM sampling. In Luis Chiruzzo, Alan Ritter, and Lu Wang (eds.), Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers), pp. 3613–3635, Albuquerque, New Mexico, April 2025. Association for Computational Linguistics. ISBN 979-8-89176-189-6. doi: 10.18653/v1/2025.naacl-long.184. URL https://aclanthology.org/2025.naacl-long.184/.

- Wang et al. (2024) Peiyi Wang, Lei Li, Liang Chen, Zefan Cai, Dawei Zhu, Binghuai Lin, Yunbo Cao, Lingpeng Kong, Qi Liu, Tianyu Liu, and Zhifang Sui. Large language models are not fair evaluators. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 9440–9450. Association for Computational Linguistics, 2024. URL https://aclanthology.org/2024.acl-long.511/.

- Wang et al. (2020) Wenhui Wang, Furu Wei, Li Dong, Hangbo Bao, Nan Yang, and Ming Zhou. Minilm: Deep self-attention distillation for task-agnostic compression of pre-trained transformers. Advances in Neural Information Processing Systems, 2020. URL https://arxiv.org/abs/2002.10957.

- Wang et al. (2022) Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc Le, Ed Chi, Sharan Narang, Aakanksha Chowdhery, and Denny Zhou. Self-consistency improves chain of thought reasoning in language models. arXiv preprint arXiv:2203.11171, 2022.

- Wang et al. (2025a) Yue Wang, Qiuzhi Liu, Jiahao Xu, Tian Liang, Xingyu Chen, Zhiwei He, Linfeng Song, Dian Yu, Juntao Li, Zhuosheng Zhang, et al. Thoughts are all over the place: On the underthinking of o1-like llms. arXiv preprint arXiv:2501.18585, 2025a.

- Wang et al. (2025b) Zezhong Wang, Xingshan Zeng, Weiwen Liu, Yufei Wang, Liangyou Li, Yasheng Wang, Lifeng Shang, Xin Jiang, Qun Liu, and Kam-Fai Wong. Chain-of-probe: Examining the necessity and accuracy of CoT step-by-step. In Findings of the Association for Computational Linguistics: NAACL 2025, pp. 2586–2606. Association for Computational Linguistics, 2025b. ISBN 979-8-89176-195-7. URL https://aclanthology.org/2025.findings-naacl.140/.

- Wen et al. (2025) Liang Wen, Yunke Cai, Fenrui Xiao, Xin He, Qi An, Zhenyu Duan, Yimin Du, Junchen Liu, Lifu Tang, Xiaowei Lv, Haosheng Zou, Yongchao Deng, Shousheng Jia, and Xiangzheng Zhang. Light-r1: Curriculum sft, dpo and rl for long cot from scratch and beyond. arXiv preprint arXiv:2503.10460, 2025.

- Xiao et al. (2024) Guangxuan Xiao, Yuandong Tian, Beidi Chen, Song Han, and Mike Lewis. Efficient streaming language models with attention sinks. In The Twelfth International Conference on Learning Representations, 2024. URL https://openreview.net/forum?id=NG7sS51zVF.

- Xiong et al. (2025) Wei Xiong, Wenting Zhao, Weizhe Yuan, Olga Golovneva, Tong Zhang, Jason Weston, and Sainbayar Sukhbaatar. Stepwiser: Stepwise generative judges for wiser reasoning. arXiv preprint arXiv:2508.19229, 2025.

- Xue et al. (2023) Mingfeng Xue, Dayiheng Liu, Wenqiang Lei, Xingzhang Ren, Baosong Yang, Jun Xie, Yidan Zhang, Dezhong Peng, and Jiancheng Lv. Dynamic voting for efficient reasoning in large language models. In Findings of the Association for Computational Linguistics: EMNLP 2023, pp. 3085–3104, 2023.

- Yang et al. (2024) An Yang, Beichen Zhang, Binyuan Hui, Bofei Gao, Bowen Yu, Chengpeng Li, Dayiheng Liu, Jianhong Tu, Jingren Zhou, Junyang Lin, Keming Lu, Mingfeng Xue, Runji Lin, Tianyu Liu, Xingzhang Ren, and Zhenru Zhang. Qwen2.5-math technical report: Toward mathematical expert model via self-improvement. arXiv preprint arXiv:2409.12122, 2024.

- Yang et al. (2025a) An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, et al. Qwen3 technical report. arXiv preprint arXiv:2505.09388, 2025a.

- Yang et al. (2025b) Chenxu Yang, Qingyi Si, Yongjie Duan, Zheliang Zhu, Chenyu Zhu, Qiaowei Li, Zheng Lin, Li Cao, and Weiping Wang. Dynamic early exit in reasoning models. arXiv preprint arXiv:2504.15895, 2025b.

- Zhang et al. (2024a) Yusen Zhang, Sarkar Snigdha Sarathi Das, and Rui Zhang. Verbosity $\neq$ veracity: Demystify verbosity compensation behavior of large language models. arXiv preprint arXiv:2411.07858, 2024a.

- Zhang et al. (2025) Zhenru Zhang, Chujie Zheng, Yangzhen Wu, Beichen Zhang, Runji Lin, Bowen Yu, Dayiheng Liu, Jingren Zhou, and Junyang Lin. The lessons of developing process reward models in mathematical reasoning. arXiv preprint arXiv:2501.07301, 2025.

- Zhang et al. (2024b) Zhenyu Zhang, Runjin Chen, Shiwei Liu, Zhewei Yao, Olatunji Ruwase, Beidi Chen, Xiaoxia Wu, and Zhangyang Wang. Found in the middle: How language models use long contexts better via plug-and-play positional encoding. In The Thirty-eighth Annual Conference on Neural Information Processing Systems, 2024b.

- Zheng et al. (2024) Chujie Zheng, Hao Zhou, Fandong Meng, Jie Zhou, and Minlie Huang. Large language models are not robust multiple choice selectors. In The Twelfth International Conference on Learning Representations, 2024.

Appendix A Keywords for Reasoning Step Segmentation

Good reasoning-switching keywords should signal a clear switch in reasoning, but should occur neither too frequently, which often indicates only minor digressions, nor too rarely to serve as reliable delimiters. We collect a list of reasoning-switching keywords from prior work (Muennighoff et al., 2025; Hou et al., 2025). To assess which keywords could serve this role, we compute their average frequency per question in model outputs (Table A.1). After considering the frequencies and manually checking the segmentation results, we found that “alternatively” strikes a balance for DS-R1-Qwen-7B and Qwen3-8B, appearing often enough to capture step boundaries while still reflecting shifts in reasoning. We use both “alternatively” and “wait” for Claude-3.7-Sonnet, GPT-OSS-20B, and Magistral-Small.

Table A.1: Average frequency per question of discourse markers in model outputs.

| Model | but | wait | alternatively | hmm | hold on | let me confirm | however |
| --- | --- | --- | --- | --- | --- | --- | --- |
| DS-R1-Qwen-7B | 82 | 72 | 14 | 7 | 3 | $<1$ | 10.1 |
| Qwen3-8B | 91 | 66 | 20 | 6 | 1 | $<1$ | 10.4 |
| Claude-3.7-Sonnet | 28.5 | 22.6 | 0.2 | 6.1 | 0.7 | 0.0 | 0.9 |
| Magistral-Small | 47.1 | 54.8 | 3.0 | 0.0 | 0.9 | 0.2 | 1.4 |
| GPT-OSS-20B | 171.6 | 60.5 | 4.9 | 1.2 | 0.7 | 0.0 | 5.9 |
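The frequency statistic and the keyword-based segmentation described in this appendix can be illustrated with a minimal sketch. It is not the authors' implementation: the function names (`average_frequency`, `segment_steps`), the case-insensitive whole-word matching, and the choice to keep each keyword at the start of the step it opens are all assumptions made for illustration.

```python
import re
from typing import Dict, List, Sequence

# Keyword list taken from the columns of Table A.1.
KEYWORDS = ["but", "wait", "alternatively", "hmm",
            "hold on", "let me confirm", "however"]


def keyword_counts(text: str, keywords: Sequence[str] = KEYWORDS) -> Dict[str, int]:
    """Count case-insensitive whole-word occurrences of each keyword (assumed matching rule)."""
    lowered = text.lower()
    return {
        kw: len(re.findall(r"\b" + re.escape(kw) + r"\b", lowered))
        for kw in keywords
    }


def average_frequency(outputs: List[str],
                      keywords: Sequence[str] = KEYWORDS) -> Dict[str, float]:
    """Average number of occurrences per question, one model output per question."""
    if not outputs:
        raise ValueError("need at least one model output")
    totals = {kw: 0 for kw in keywords}
    for text in outputs:
        for kw, n in keyword_counts(text, keywords).items():
            totals[kw] += n
    return {kw: totals[kw] / len(outputs) for kw in keywords}


def segment_steps(text: str,
                  delimiters: Sequence[str] = ("alternatively",)) -> List[str]:
    """Split a reasoning trace into steps, starting a new step at each delimiter keyword."""
    alternatives = "|".join(re.escape(d) for d in delimiters)
    # Zero-width split point just before a delimiter, so the keyword stays in its step.
    pattern = r"\b(?=(?:" + alternatives + r")\b)"
    parts = re.split(pattern, text, flags=re.IGNORECASE)
    return [p.strip() for p in parts if p.strip()]
```

Passing `delimiters=("alternatively", "wait")` would correspond to the setting described for Claude-3.7-Sonnet, GPT-OSS-20B, and Magistral-Small, under the same assumptions.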

Appendix B Model Identifiers

For reproducibility, we provide the exact model identifiers used in our experiments. The models are: DeepSeek-R1-Distill-Qwen-7B (deepseek-ai/DeepSeek-R1-Distill-Qwen-7B), Qwen3-8B (Qwen/Qwen3-8B), Claude-3.7-Sonnet (claude-3-7-sonnet-20250219, accessed via the Claude API), GPT-OSS-20B (openai/gpt-oss-20b), and Magistral-Small (mistralai/Magistral-Small-2509).

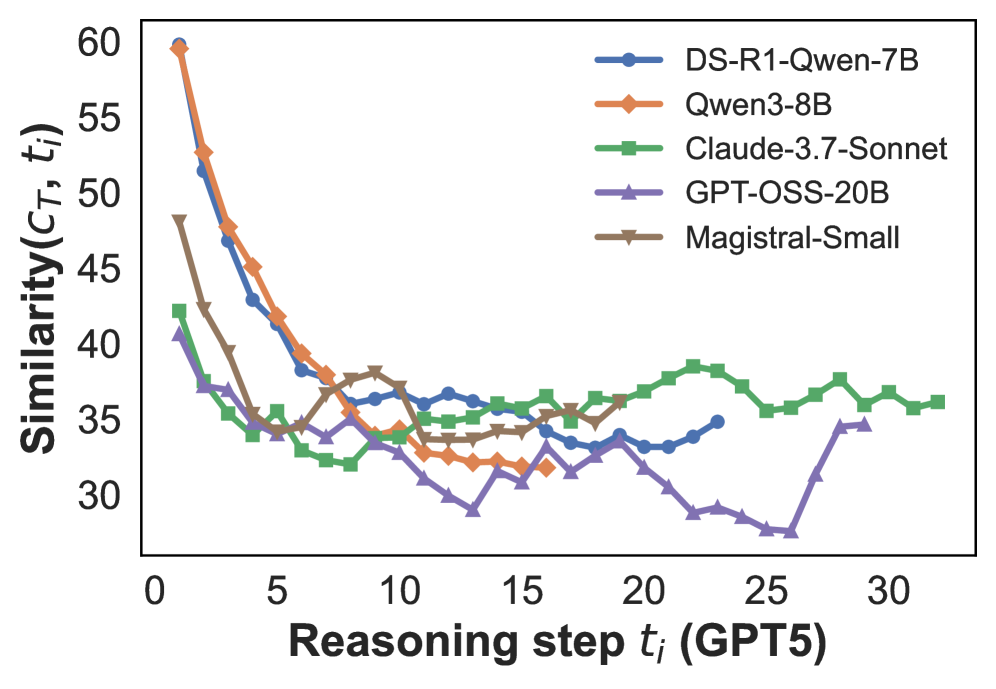

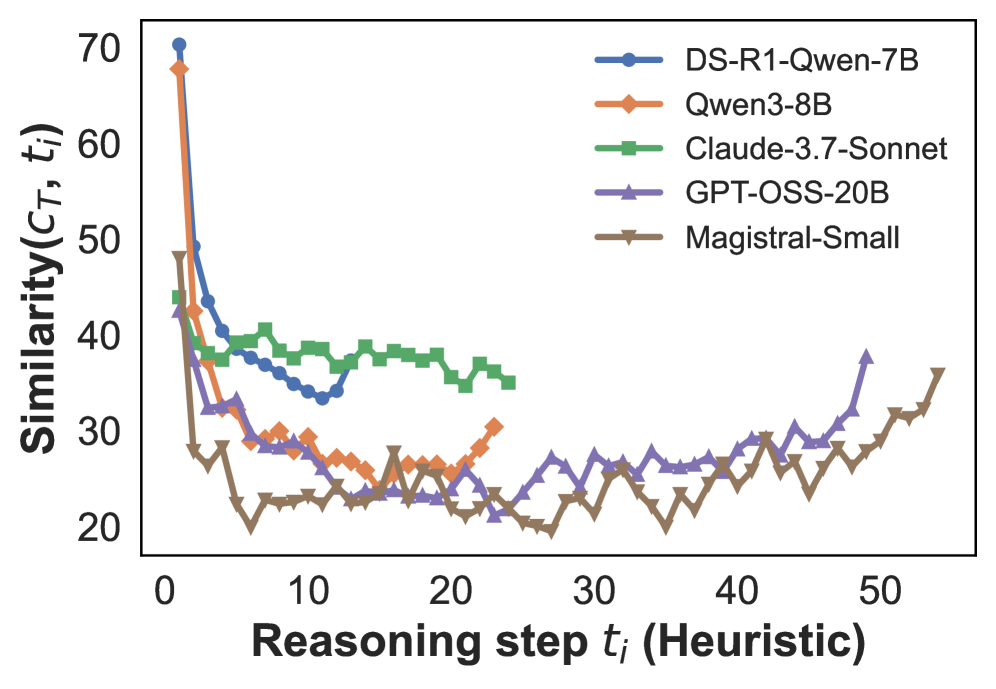

Appendix C Additional Similarity Analyses

We report the cosine similarity between the embedding of the $i$-th reasoning step $t_{i}$ and the final conclusion $c_{T}$, interpolated using the maximum number of reasoning steps (Figure C.1).
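One way to compute such a curve is sketched below. This is an illustration under stated assumptions rather than the authors' code: embeddings are taken as given (plain lists of floats from any sentence encoder), each trajectory's similarity curve is assumed to be linearly resampled to the length of the longest trajectory, and the helper names `similarity_curve`, `interpolate`, and `average_curves` are hypothetical.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two vectors; returns 0.0 if either has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def similarity_curve(step_embeddings: Sequence[Sequence[float]],
                     conclusion_embedding: Sequence[float]) -> List[float]:
    """Similarity between each step embedding t_i and the final conclusion c_T."""
    return [cosine(e, conclusion_embedding) for e in step_embeddings]


def interpolate(curve: Sequence[float], length: int) -> List[float]:
    """Linearly resample a curve to `length` points (assumed interpolation scheme)."""
    if not curve or length < 1:
        raise ValueError("curve must be non-empty and length must be positive")
    if len(curve) == 1 or length == 1:
        return [curve[0]] * length
    scale = (len(curve) - 1) / (length - 1)
    resampled = []
    for j in range(length):
        pos = j * scale
        lo = min(int(math.floor(pos)), len(curve) - 2)  # left neighbour index
        frac = pos - lo
        resampled.append(curve[lo] * (1.0 - frac) + curve[lo + 1] * frac)
    return resampled


def average_curves(curves: Sequence[Sequence[float]]) -> List[float]:
    """Resample every curve to the maximum number of steps, then average pointwise."""
    max_len = max(len(c) for c in curves)
    resampled = [interpolate(c, max_len) for c in curves]
    return [sum(col) / len(resampled) for col in zip(*resampled)]
```

Resampling to the longest trajectory keeps the x-axis in units of reasoning steps while letting trajectories of different lengths contribute to every point of the averaged curve.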

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Graph: Similarity Decay Across Reasoning Steps (GPT5)

### Overview

The image depicts a line graph comparing the similarity decay of five AI models over reasoning steps (t_i) measured in GPT5 units. The y-axis represents similarity scores (C_T, t_i) ranging from 0.50 to 0.85, while the x-axis spans 0 to 175 reasoning steps. Five distinct data series are plotted, each corresponding to a different model.

### Components/Axes

- **X-axis**: "Reasoning step t_i (GPT5)" (0–175, linear scale)

- **Y-axis**: "Similarity (C_T, t_i)" (0.50–0.85, linear scale)

- **Legend**:

- Blue: DS-R1-Qwen-7B

- Orange: Qwen3-8B

- Green: Claude-3.7-Sonnet

- Purple: GPT-OSS-20B

- Brown: Magistral-Small

- **Data Series**: Five colored lines with jagged trends, indicating stepwise measurements.

### Detailed Analysis

1. **DS-R1-Qwen-7B (Blue)**:

- Starts at ~0.85 similarity at t_i=0.

- Declines sharply to ~0.60 by t_i=50.

- Stabilizes with minor fluctuations between t_i=75–175.

2. **Qwen3-8B (Orange)**:

- Begins at ~0.80 similarity at t_i=0.

- Gradual decline to ~0.55 by t_i=100.

- Slight recovery to ~0.58 by t_i=150.

3. **Claude-3.7-Sonnet (Green)**:

- Initial similarity ~0.75 at t_i=0.