# Can Large Language Models Develop Strategic Reasoning? Post-training Insights from Learning Chess

> Equal contributions. This work was done during an internship at KRAFTON. Co-advising.

## Abstract

While reinforcement learning (RL) for large language models (LLMs) has shown promise in mathematical reasoning, strategic reasoning for LLMs using RL remains largely unexplored. We investigate whether LLMs can develop strategic reasoning capabilities through RL in chess. To this end, we leverage a chess-pretrained action-value network to provide dense reward on the LLM’s output move quality, which can be seen as a form of knowledge distillation. Our experiments show that our distillation-based dense rewards often outperform sparse binary rewards. However, surprisingly, all models plateau far below expert levels. We provide SFT and RL ablations on chess reasoning training and find evidence that this limitation stems from a deficit in the pretrained models’ internal understanding of chess—a deficit which RL alone may not be able to fully overcome. The code is available at https://github.com/krafton-ai/Chess-R1.

## 1 Introduction

Reinforcement learning with verifiable rewards (RLVR) has shown strong performance in developing mathematical reasoning capabilities for large language models (LLMs) (Guo et al., 2025; Li et al., 2025; Yu et al., 2025). While these successes highlight LLMs’ capacity for logical thinking, a critical dimension of intelligence remains largely unexplored: strategic reasoning, the ability to plan, anticipate adversary actions, and make decisions in multi-agent environments. Beyond logical reasoning in static settings, strategic reasoning aligns more closely with real-world scenarios such as games, negotiation, and market competition (Zhang et al., 2024; Park et al., 2025).

To investigate this gap, we turn to chess, a strategic game demanding deep strategic reasoning abilities such as positional evaluation, long-term planning, and reasoning about an opponent’s intentions. In addition, chess offers a favorable environment for applying RLVR on LLMs, as it provides abundant publicly available game records and human-annotated reasoning about optimal moves. Given such a testbed for examining strategic reasoning, we raise the following research question:

Can LLMs develop strategic reasoning capabilities through RLVR with chess?

To this end, we train Qwen2.5 (Qwen et al., 2025) and Llama3.1 (Grattafiori et al., 2024) models to predict the next best move in chess using GRPO (Shao et al., 2024). Unlike typical RLVR approaches that rely on sparse binary rewards (correct/incorrect), chess allows for dense reward signals: each move can be evaluated by its estimated win probability, providing graded feedback proportional to the quality of the move. We implement this using a pre-trained chess expert as a reward model, a form of knowledge distillation from a Q-value network to an LLM, which evaluates position strength and provides dense continuous rewards rather than sparse binary ones.

We conduct systematic experiments on a chess puzzle dataset, and observe the following:

- Distillation-based dense rewards substantially outperform sparse binary rewards in chess puzzle accuracy.

- RL fine-tuning outperforms supervised fine-tuning (SFT); yet its performance plateaus at 25–30% puzzle accuracy, well below what is considered human expert performance (60–80%).

- Additional reasoning distillation shows no RLVR gains, indicating the models fail to develop meaningful strategic reasoning despite further post-training.

Through additional failure analysis, we find that base LLMs often struggle to grasp fundamental chess rules. Thus, we hypothesize that, in contrast to logical reasoning in math domains, the limited emergence of strategic reasoning in chess may stem from insufficient exposure to chess-specific knowledge during LLM pre-training. Our empirical findings also support recent claims that RL mainly amplifies existing capabilities of pre-trained LLMs (Li et al., 2025; Zhao et al., 2025b), and offer insight for practitioners aiming to elicit reasoning abilities in new environments: pre-trained domain knowledge is essential to develop advanced reasoning.

## 2 Post-training LLM on Chess

<details>

<summary>fig/chess_training_diagram.png Details</summary>

### Visual Description


## Diagram: Chess Agent Training Pipeline

### Overview

This diagram illustrates the training pipeline for a chess-playing agent. It depicts the flow of data and models, starting from a base language model and ending with a policy model trained by reinforcement learning. The pipeline involves annotation, optional supervised fine-tuning (SFT), and policy training with Group Relative Policy Optimization (GRPO), where a reward model (critic) scores the policy's moves.

### Components/Axes

The diagram consists of the following components:

* **Text Prompt:** "You are a helpful assistant who plays chess professionally."

* **Current FEN string:** "7R/7P/4p3/P1p5/8/2k5/7r/3K4 w - 0 54"

* **Legal moves in SAN:** "Rg8 Rxg8 Re8 Rd8 Rc8 Rb8 Ra8 Ke1 Kc1 a6"

* **Base Model:** Labeled "Qwen/Llama"

* **Annotations:** Labeled "o3 reasoning"

* **SFT (optional):** Supervised Fine-Tuning

* **Policy Model:** Labeled "Base or SFT"

* **Reward Model:** Labeled "Critic Q(s, a)"

* **GRPO:** Group Relative Policy Optimization

* **States:** S1, S2, S3

* **Actions:** a(1), a(2), a(3)

* **Rewards:** r(1), r(2), r(3)

* **Chess Piece Icon:** A white chess knight.

### Detailed Analysis or Content Details

The diagram shows a data flow starting from a text prompt and a chess position (FEN string).

1. **Initial State:** The process begins with a text prompt defining the agent's role and a current chess position represented by the FEN string "7R/7P/4p3/P1p5/8/2k5/7r/3K4 w - 0 54". The side to move is white ("w"), there are no castling rights ("-"), and the halfmove clock is 0, with the fullmove number being 54.

2. **Legal Moves:** A list of legal moves in Standard Algebraic Notation (SAN) is provided: "Rg8 Rxg8 Re8 Rd8 Rc8 Rb8 Ra8 Ke1 Kc1 a6".

3. **Base Model:** The initial model is a "Qwen/Llama" language model.

4. **Annotations:** Reasoning traces labeled "o3 reasoning" serve as annotations. These annotations are used for supervised fine-tuning.

5. **SFT (Optional):** The base model can optionally be fine-tuned using the annotations via Supervised Fine-Tuning (SFT).

6. **Policy Model:** The output of either the base model or the SFT model becomes the "Policy Model".

7. **GRPO & Reward Model:** The policy model interacts with the environment (chess game) and generates actions. These actions are evaluated by a "Reward Model" (Critic Q(s, a)). The Reward Model provides a reward signal based on the state (s) and action (a).

8. **Reward Loop:** The diagram shows three iterations of this reward loop:

* Action a(1) results in reward r(1) = Q(s, a(1))

* Action a(2) results in reward r(2) = Q(s, a(2))

* Action a(3) results in reward r(3) = Q(s, a(3))

9. **GRPO Connection:** The Reward Model is connected to the Policy Model via "GRPO" (Group Relative Policy Optimization), indicating that the reward signal is used to update the policy model.

### Key Observations

* The pipeline emphasizes the use of language models for chess playing.

* The optional SFT stage suggests that supervised learning can improve the base model's performance.

* The GRPO loop highlights the reinforcement learning aspect of the training process.

* The diagram focuses on the interaction between the policy and reward models.

* The FEN string indicates a late-game scenario.

### Interpretation

This diagram represents a modern approach to training chess-playing agents, leveraging the power of large language models and reinforcement learning. The use of a base language model (Qwen/Llama) suggests an attempt to transfer knowledge from general language understanding to the specific domain of chess. The annotations and SFT stage aim to align the model's behavior with expert chess knowledge. The GRPO loop allows the agent to learn from its own experiences and improve its policy over time. The reward model acts as a critic, providing feedback to the policy model and guiding its learning process. The diagram suggests a focus on learning to evaluate chess positions and select optimal moves. The inclusion of the FEN string and legal moves provides concrete context for the training process. The optional SFT step indicates a flexible training approach, allowing for both supervised and reinforcement learning techniques to be combined.

</details>

(a) Chess Training Example. (b) Post-training with GRPO.

Figure 1: Overview: (a) A data sample from the Lichess puzzle dataset is formatted into a prompt that includes the current board state and the set of legal moves. (b) At each GRPO step, the policy model generates multiple rollouts of predicted actions. A reward model evaluates these rollouts with dense feedback, including sub-optimal actions. Optionally, reasoning traces from OpenAI o3 are fine-tuned into the base model before RL.

### 2.1 Chess Dataset

#### Training Data.

We use puzzles from the Lichess Puzzle Database (https://database.lichess.org) as our training dataset. Each puzzle contains an initial board position along with a list of sequential moves which, when played in order, lead to a tactical win. To create our training dataset, we decompose each puzzle’s solution trajectory into individual position-action pairs. Formally, each puzzle is denoted as a trajectory $g^{(i)}=(s^{(i)}_{0},a^{*(i)}_{0},s^{(i)}_{1},a^{*(i)}_{1},\ldots,s^{(i)}_{T_{i}})$, where $i\in\{1,\ldots,N\}$ indexes the $i$-th puzzle, $s^{(i)}_{t}$ is the board position at time step $t$, $a^{*(i)}_{t}$ is the optimal move, and $T_{i}$ is the trajectory length. We construct our position-diverse training dataset by aggregating all such pairs: $\mathcal{D}=\{\,(s^{(i)}_{t},\,a^{*(i)}_{t})\mid i\in\{1,\dots,N\},\;0\leq t<T_{i}\}$, resulting in a total of 19.2k training samples.
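The decomposition above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' preprocessing code: the `Puzzle` container, the `build_dataset` helper, and the placeholder strings standing in for positions and moves are all assumptions introduced here.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Puzzle:
    """Hypothetical container for one puzzle trajectory g^(i)."""
    states: List[str]  # s_0 .. s_T (e.g. FEN strings), length T + 1
    moves: List[str]   # a*_0 .. a*_{T-1} (e.g. SAN strings), length T


def build_dataset(puzzles: List[Puzzle]) -> List[Tuple[str, str]]:
    """Flatten every trajectory into (position, optimal move) pairs."""
    pairs: List[Tuple[str, str]] = []
    for puzzle in puzzles:
        horizon = len(puzzle.states) - 1  # T_i
        if len(puzzle.moves) != horizon:
            raise ValueError("expected exactly one move per non-terminal state")
        for t in range(horizon):  # 0 <= t < T_i
            pairs.append((puzzle.states[t], puzzle.moves[t]))
    return pairs


# Placeholder strings stand in for real positions and moves.
toy_puzzles = [
    Puzzle(states=["s0", "s1", "s2"], moves=["a0", "a1"]),
    Puzzle(states=["u0", "u1"], moves=["b0"]),
]
dataset = build_dataset(toy_puzzles)
```

Here the terminal state $s_{T_i}$ of each trajectory contributes no pair, which matches the index range $0\leq t<T_{i}$ in the definition of $\mathcal{D}$.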

#### Prompt template.

We require a textual interface through which LLMs can reason about chess positions. Based on our collected chess dataset $\mathcal{D}$, we adopt a concise prompt format that uses Forsyth–Edwards Notation (FEN) (Edwards, 1994) for board states and Standard Algebraic Notation (SAN) for moves. Alternative representations of chess data, such as full move histories in Portable Game Notation (PGN) or Universal Chess Interface (UCI) move notation, did not yield measurable improvements. We also require the model to place reasoning in <think> tags and answers in <answer> tags (Guo et al., 2025; Zhao et al., 2025a). See Appendices A and C.1 for qualitative examples and ablation studies on prompt formatting.
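A minimal sketch of such an interface follows. The system sentence and the field labels are taken from the example in Figure 1; the wording of the tag instruction and the helper names `build_prompt` and `parse_answer` are assumptions made for illustration, and the exact template is the one given in Appendix A.

```python
import re
from typing import List, Optional


def build_prompt(fen: str, legal_moves_san: List[str]) -> str:
    """Format one position into a text prompt (wording partly assumed)."""
    return (
        "You are a helpful assistant who plays chess professionally.\n"
        f"Current FEN string: {fen}\n"
        f"Legal moves in SAN: {' '.join(legal_moves_san)}\n"
        # The instruction sentence below is an assumption, not the paper's text.
        "Put your reasoning in <think> </think> tags and your move in "
        "<answer> </answer> tags."
    )


# Accept exactly one reasoning block followed by one answer block.
_TAGGED = re.compile(
    r"\s*<think>(.*?)</think>\s*<answer>(.*?)</answer>\s*", re.DOTALL
)


def parse_answer(completion: str) -> Optional[str]:
    """Return the move inside <answer> tags, or None if the format is violated."""
    match = _TAGGED.fullmatch(completion)
    if match is None:
        return None
    return match.group(2).strip()


prompt = build_prompt("7R/7P/4p3/P1p5/8/2k5/7r/3K4 w - 0 54", ["Rg8", "Re8", "a6"])
move = parse_answer("<think>Consider the rook moves.</think>\n<answer> Re8 </answer>")
```

Returning `None` for malformed completions keeps format checking separate from move checking, so a reward function can penalize the two failure modes independently.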

### 2.2 Reinforcement Learning Fine Tuning

To train LLMs with RL on chess, we adopt GRPO (Shao et al., 2024) for policy improvement, which has recently been shown to be effective by contrasting multiple rollouts of the same prompt (Guo et al., 2025). Specifically, we employ two alternative reward schemes:

- Sparse reward: Binary indicator whether predicted move $\hat{a}_{t}$ matches optimal answer $a_{t}^{\star}$ :

$$

r_{\text{sparse}}\;=\;\mathbf{1}[\,\hat{a}_{t}=a_{t}^{\star}\,].

$$

- Dense reward: A dense real-valued score provided by a pre-trained action-value network $Q_{\theta}(s,a)$ from Ruoss et al. (2024) that predicts post-move win probability:

$$

r_{\text{dense}}\;=\;Q_{\theta}(s_{t},\hat{a}_{t}),\qquad Q_{\theta}(s,a)\in[0,1].

$$

The critic $Q_{\theta}(s,a)$ is a 270M-parameter, 16-layer decoder-only Transformer (Vaswani et al., 2017) trained for 10M optimization steps on 15B Stockfish-annotated state-action pairs with the HL-Gauss loss (Imani & White, 2018). Querying this expert network during RL fine-tuning can be viewed as a form of knowledge distillation (Zhang et al., 2025c): dense win-rate evaluations inject the teacher’s strategic insight, giving the student graded feedback on sub-optimal moves as well as optimal ones. The critic itself attains an Elo of 2299 and achieves 95.4% accuracy on Lichess puzzles.
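To make the difference between the two reward schemes concrete, the sketch below scores one group of rollouts under both and then computes group-relative advantages in the style of GRPO (reward minus group mean, divided by group standard deviation, following Shao et al., 2024). The lookup-table critic `toy_q` and its values are invented for illustration and are not outputs of the real critic; whether the authors' implementation uses exactly this normalization is also an assumption.

```python
from statistics import mean, pstdev
from typing import Callable, List


def sparse_reward(predicted: str, best: str) -> float:
    """1 if the predicted move equals the puzzle solution, else 0."""
    return 1.0 if predicted == best else 0.0


def dense_reward(q: Callable[[str, str], float], state: str, predicted: str) -> float:
    """Critic's post-move win probability for the predicted move."""
    return q(state, predicted)


def group_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Normalize rewards within one group of rollouts for the same prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical critic: made-up win probabilities for a single toy position.
_TOY_TABLE = {"Rd8": 0.91, "Re8": 0.62, "a6": 0.35, "Kc1": 0.08}


def toy_q(state: str, move: str) -> float:
    return _TOY_TABLE.get(move, 0.0)


state, best = "toy-position", "Rd8"
rollouts = ["Re8", "a6", "Kc1", "a6"]  # no rollout finds the best move

sparse_adv = group_advantages([sparse_reward(m, best) for m in rollouts])
dense_adv = group_advantages([dense_reward(toy_q, state, m) for m in rollouts])
```

In this toy group every sparse reward is zero, so all sparse advantages vanish and the group provides no learning signal, whereas the dense rewards still rank the sub-optimal moves against each other.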

We also include two auxiliary binary rewards: (i) $r_{\text{fmt}}\in\{0,1\}$ for proper tag formatting (<think> for reasoning and <answer> for answers), and (ii) $r_{\text{lang}}\in\{0,1\}$ to enforce that the output is in English. The total training reward at step $t$ is therefore $r_{t}=\lambda_{\text{sparse}}r_{\text{sparse}}+\lambda_{\text{dense}}r_{\text{dense}}+\lambda_{\text{fmt}}r_{\text{fmt}}+\lambda_{\text{lang}}r_{\text{lang}}$ . We set $(\lambda_{\text{sparse}}=1,\lambda_{\text{dense}}=0)$ for the sparse reward setting and $(\lambda_{\text{sparse}}=0,\lambda_{\text{dense}}=1)$ for the dense reward setting.
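The weighted sum above can be sketched as follows. Several details are assumptions made for illustration: the values passed for $\lambda_{\text{fmt}}$ and $\lambda_{\text{lang}}$ are hypothetical, the ASCII check is only a crude stand-in for a real English-language detector, and assigning zero move reward to an unparsable completion is one possible convention, not necessarily the authors'.

```python
import re
from typing import Callable, Optional

_TAGGED = re.compile(
    r"\s*<think>(.*?)</think>\s*<answer>(.*?)</answer>\s*", re.DOTALL
)


def extract_move(completion: str) -> Optional[str]:
    """Return the answer text if the completion follows the tag format."""
    match = _TAGGED.fullmatch(completion)
    return match.group(2).strip() if match else None


def total_reward(
    completion: str,
    state: str,
    best_move: str,
    q: Callable[[str, str], float],
    lam_sparse: float,
    lam_dense: float,
    lam_fmt: float,
    lam_lang: float,
) -> float:
    """r = lam_sparse*r_sparse + lam_dense*r_dense + lam_fmt*r_fmt + lam_lang*r_lang."""
    move = extract_move(completion)
    r_fmt = 1.0 if move is not None else 0.0
    # Assumption: ASCII-only output is used as a rough proxy for English.
    r_lang = 1.0 if completion.isascii() else 0.0
    # Assumption: an unparsable completion earns no move reward.
    r_sparse = 1.0 if move is not None and move == best_move else 0.0
    r_dense = q(state, move) if move is not None else 0.0
    return (
        lam_sparse * r_sparse
        + lam_dense * r_dense
        + lam_fmt * r_fmt
        + lam_lang * r_lang
    )


def toy_q(state: str, move: str) -> float:
    """Hypothetical critic with made-up values."""
    return 0.9 if move == "Rd8" else 0.2


completion = "<think>The rook belongs on the d-file.</think><answer>Rd8</answer>"
# Sparse setting: (lam_sparse, lam_dense) = (1, 0); 0.1 weights are hypothetical.
sparse_total = total_reward(completion, "toy-position", "Rd8", toy_q, 1.0, 0.0, 0.1, 0.1)
# Dense setting: (lam_sparse, lam_dense) = (0, 1).
dense_total = total_reward(completion, "toy-position", "Rd8", toy_q, 0.0, 1.0, 0.1, 0.1)
untagged_total = total_reward("Rd8", "toy-position", "Rd8", toy_q, 0.0, 1.0, 0.1, 0.1)
```

Passing the weights explicitly makes the sparse and dense settings two configurations of the same function, mirroring the $(\lambda_{\text{sparse}},\lambda_{\text{dense}})$ switch described above.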

## 3 Experiments

We now present a series of experiments designed to evaluate post-training LLMs on chess with RLVR. Further details on the experimental setup are provided in Appendix B.

### 3.1 RL Fine-tuning

<details>

<summary>fig/chess_rl_training.png Details</summary>

### Visual Description

## Line Chart: Lichess Puzzle Accuracy vs. Training Step for Different Models

### Overview

This image presents three line charts, each displaying the Lichess Puzzle Accuracy (ACC) as a function of Training Step for three different language models: Qwen2.5-3B, Qwen2.5-7B, and Llama3.1-8B. Each chart includes three data series representing different training variants: Dense reward, Sparse reward, and Action SFT.

### Components/Axes

* **X-axis:** Training Step, ranging from 0 to 150.

* **Y-axis:** Lichess Puzzle ACC, ranging from 0.00 to 0.30 (scales vary slightly between charts).

* **Legend:** Located in the bottom-left corner of the first chart (Qwen2.5-3B), and applies to all three charts.

* **Dense reward:** Represented by a solid blue line.

* **Sparse reward:** Represented by a solid light-blue line.

* **Action SFT:** Represented by a dotted red line.

* **Titles:** Each chart is labeled with the corresponding model name: "Qwen2.5-3B", "Qwen2.5-7B", and "Llama3.1-8B".

### Detailed Analysis

**Qwen2.5-3B Chart:**

* **Dense reward:** Starts at approximately 0.01 at Training Step 0, increases steadily to approximately 0.23 at Training Step 150. The line exhibits a slight plateau between Training Steps 90 and 120.

* **Sparse reward:** Starts at approximately 0.00 at Training Step 0, increases rapidly to approximately 0.16 at Training Step 60, then plateaus around 0.16-0.18 until Training Step 150.

* **Action SFT:** Remains relatively constant at approximately 0.15 across all Training Steps.

**Qwen2.5-7B Chart:**

* **Dense reward:** Starts at approximately 0.02 at Training Step 0, increases rapidly and reaches approximately 0.30 at Training Step 120, then remains stable.

* **Sparse reward:** Starts at approximately 0.00 at Training Step 0, increases rapidly to approximately 0.20 at Training Step 60, then continues to increase more slowly to approximately 0.25 at Training Step 150.

* **Action SFT:** Remains relatively constant at approximately 0.15 across all Training Steps.

**Llama3.1-8B Chart:**

* **Dense reward:** Starts at approximately 0.03 at Training Step 0, increases rapidly to approximately 0.30 at Training Step 60, then fluctuates between approximately 0.28 and 0.32 until Training Step 150.

* **Sparse reward:** Starts at approximately 0.00 at Training Step 0, increases rapidly to approximately 0.22 at Training Step 60, then increases more slowly to approximately 0.27 at Training Step 150.

* **Action SFT:** Remains relatively constant at approximately 0.15 across all Training Steps.

### Key Observations

* The "Dense reward" consistently outperforms the "Sparse reward" across all three models.

* The "Action SFT" baseline stays flat across Training Steps, remaining consistently low.

* Qwen2.5-7B and Llama3.1-8B achieve higher overall accuracy compared to Qwen2.5-3B.

* Llama3.1-8B exhibits more fluctuation in the "Dense reward" line after reaching peak accuracy, suggesting potential instability or overfitting.

### Interpretation

The data suggests that the "Dense reward" scheme is the most effective for training these language models on the Lichess puzzle task. The higher accuracy achieved by Qwen2.5-7B and Llama3.1-8B suggests that model size plays a role in performance. "Action SFT" is a supervised baseline rather than a reward scheme, and its consistently low accuracy suggests that direct action prediction is not well suited to this task. The fluctuations observed in the Llama3.1-8B "Dense reward" line could indicate the need for regularization techniques to prevent overfitting, or they could be inherent noise in the training process. The rapid initial increase in accuracy for all models suggests that the early stages of training are crucial. The plateauing of the "Sparse reward" lines indicates diminishing returns as training progresses, highlighting the benefits of more informative reward signals.

</details>

Figure 2: Evaluation performance comparison of RL fine-tuned models.

We fine-tuned Qwen2.5-3B, Qwen2.5-7B and Llama3.1-8B on our Lichess position-action pair dataset $\mathcal{D}$ . For each model, we trained three variants: one with sparse rewards, one with dense expert guidance, and one baseline variant fine-tuned directly via supervised learning (SFT) on optimal actions, without RL. The supervised baseline helps evaluate performance when no explicit reasoning is involved.

Figure 2 shows that dense reward models generally outperform sparse reward variants, with sparse rewards completely failing for Qwen2.5-3B and Llama3.1-8B. Dense reward models also surpass SFT baselines, demonstrating the value of reasoning over direct action prediction. However, all models plateau around 25–30% puzzle accuracy, well below expert performance (1800 Elo models achieve 66.5% (Ruoss et al., 2024)). Qualitative analysis (Appendix D) reveals that while models show structured reasoning and systematic move exploration, they lack strategic coherence and long-term evaluation. In summary, although RL improves tactical reasoning, particularly with expert-guided feedback, achieving expert-level strategic chess understanding from scalar rewards remains challenging for LLMs.

<details>

<summary>fig/chess_reasoning_training.png Details</summary>

### Visual Description


## Line Chart: Lichess Puzzle Accuracy vs. Training Step for Two Models

### Overview

This image presents two line charts comparing the performance of two language models, Qwen2.5-7B and Llama3.1-8B, on the Lichess puzzle accuracy task during training. Each chart displays two lines representing models trained *with* reasoning Supervised Fine-Tuning (SFT) and *without* reasoning SFT. The x-axis represents the training step, and the y-axis represents the Lichess puzzle accuracy.

### Components/Axes

* **X-axis (Both Charts):** "Training Step", ranging from 0 to 150. Gridlines are present at intervals of 30.

* **Y-axis (Both Charts):** "Lichess Puzzle Acc", ranging from 0.00 to 0.30. Gridlines are present at intervals of 0.05.

* **Chart 1 Title:** "Qwen2.5-7B"

* **Chart 2 Title:** "Llama3.1-8B"

* **Legend (Both Charts):** Located in the bottom-left corner.

* Blue Line: "w/ Reasoning SFT"

* Gray Line: "w/o Reasoning SFT"

### Detailed Analysis or Content Details

**Qwen2.5-7B Chart:**

* **w/ Reasoning SFT (Blue Line):** The line starts at approximately 0.02 at Training Step 0. It increases rapidly to around 0.22 at Training Step 30. The line continues to increase, leveling off around 0.28-0.29 between Training Steps 90 and 150.

* **w/o Reasoning SFT (Gray Line):** The line starts at approximately 0.00 at Training Step 0. It increases more slowly than the blue line, reaching around 0.15 at Training Step 30. The line continues to increase, reaching approximately 0.27 at Training Step 90, and then fluctuates between 0.27 and 0.29 until Training Step 150.

**Llama3.1-8B Chart:**

* **w/ Reasoning SFT (Blue Line):** The line starts at approximately 0.02 at Training Step 0. It increases rapidly to around 0.24 at Training Step 30. The line plateaus around 0.26-0.27 between Training Steps 60 and 150, with some minor fluctuations.

* **w/o Reasoning SFT (Gray Line):** The line starts at approximately 0.01 at Training Step 0. It increases rapidly to around 0.20 at Training Step 30. The line continues to increase, reaching approximately 0.31 at Training Step 60, then decreases to around 0.28 at Training Step 90, and fluctuates between 0.28 and 0.31 until Training Step 150.

### Key Observations

* For both models, training *with* reasoning SFT results in higher Lichess puzzle accuracy early in training (around Training Step 30), but the advantage does not persist: the Qwen2.5-7B curves reach a similar plateau, and Llama3.1-8B *without* reasoning SFT ends higher.

* The Qwen2.5-7B model appears to reach a plateau in accuracy earlier than the Llama3.1-8B model.

* The Llama3.1-8B model *without* reasoning SFT shows a significant peak in accuracy around Training Step 60, followed by a slight decrease and then stabilization. This is a notable outlier.

* The difference in performance between the two SFT strategies diminishes as training progresses, suggesting that the benefits of reasoning SFT may decrease over time.

### Interpretation

The data suggests that reasoning SFT speeds up early learning for both Qwen2.5-7B and Llama3.1-8B but does not raise the final accuracy plateau. The Qwen2.5-7B curves converge to roughly the same level, and Llama3.1-8B ends slightly lower with reasoning SFT than without it. The plateau observed in both models suggests that they may have reached their capacity for improvement on this task with the given training data and methodology. The peak and subsequent stabilization in Llama3.1-8B (without reasoning SFT) could reflect noise or a temporary convergence during training. Further investigation would be needed to understand the cause.

</details>

Figure 3: Evaluation performance of models trained with reasoning SFT followed by RL fine-tuning.

| Model | Board-state comprehension | MATE puzzle |
| --- | --- | --- |
| Qwen2.5-3B | 0.0% | 35.8% |
| Qwen2.5-3B-It | 0.0% | 53.7% |
| Qwen2.5-7B | 0.0% | 42.7% |
| Qwen2.5-7B-It | 0.0% | 52.0% |
| Llama3.1-8B | 0.0% | 12.7% |
| Llama3.1-8B-It | 0.0% | 52.4% |

Table 1: LLM performance on chess knowledge diagnostic tasks

### 3.2 Reasoning SFT

To probe the cause of the RL plateau, we asked whether domain-specific reasoning traces from advanced reasoning models could enable LLMs to better leverage RL signals for chess skill acquisition and overcome the previously observed performance ceiling. To do so, we curated a reasoning SFT corpus of 1k high-quality reasoning traces generated by OpenAI o3, featuring candidate move evaluation, tactical assessment, and strategic justifications (see Appendix B.2 for details). After performing SFT on Qwen2.5-7B and Llama3.1-8B with this corpus, we applied our GRPO RL pipeline with expert critic $Q_{\theta}(s,a)$ feedback.

While LLMs produced markedly more comprehensive reasoning traces after reasoning SFT, Figure 3 shows that they exhibited similar puzzle accuracy plateaus when subsequently trained with RL. In fact, Llama3.1-8B’s performance declined relative to its non-SFT baseline. These results suggest that the chess performance plateau may stem not from inadequate reasoning abilities but from insufficient chess knowledge. Without extensive domain knowledge from pre-training, RL alone may not provide the chess-specific understanding that strategic play demands.

### 3.3 Failure Analysis

To study whether the performance limitation of RLVR for chess stems from inadequate chess domain knowledge, we evaluate LLMs on two diagnostic tasks that require basic and essential but non-trivial understanding of chess rules.

Board–state comprehension: Given a FEN string and a legal sequence of one to five SAN moves, the model predicts the resulting position in FEN. The model must have a faithful internal simulator of chess rules to be successful in this task.

MATE puzzle: Each instance in the MATE dataset (Wang et al., 2024) presents a mid-game position together with two candidate moves. The model must identify the superior move through tactical evaluation.
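A scoring routine for the two diagnostics might look like the sketch below. It assumes exact-match scoring on whitespace-normalized FEN strings for board-state comprehension and plain accuracy for the binary MATE choice; the paper does not spell out its scoring rules in this section, so the helper names and the matching criterion are illustrative assumptions.

```python
from typing import List

_PIECES = set("pnbrqkPNBRQK")
_EMPTY_RUNS = set("12345678")


def is_wellformed_placement(placement: str) -> bool:
    """Check that a FEN piece-placement field has 8 ranks of 8 squares each."""
    ranks = placement.split("/")
    if len(ranks) != 8:
        return False
    for rank in ranks:
        squares = 0
        for ch in rank:
            if ch in _EMPTY_RUNS:
                squares += int(ch)  # a digit encodes a run of empty squares
            elif ch in _PIECES:
                squares += 1
            else:
                return False
        if squares != 8:
            return False
    return True


def board_state_correct(predicted_fen: str, reference_fen: str) -> bool:
    """Exact match on all FEN fields after whitespace normalization."""
    predicted, reference = predicted_fen.split(), reference_fen.split()
    if not predicted or not is_wellformed_placement(predicted[0]):
        return False
    return predicted == reference


def accuracy(flags: List[bool]) -> float:
    """Fraction of correct instances (0.0 for an empty list)."""
    return sum(flags) / len(flags) if flags else 0.0


reference = "rnbqkbnr/pppppppp/8/8/4P3/8/PPPP1PPP/RNBQKBNR b KQkq e3 0 1"
unchanged = "rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR b KQkq e3 0 1"
board_flags = [
    board_state_correct(reference, reference),
    board_state_correct(unchanged, reference),
]
board_acc = accuracy(board_flags)

# With two candidate moves, uniform random guessing is correct half the time.
MATE_CHANCE_LEVEL = 0.5
```

Because each MATE instance offers two candidates, 50% is the reference point for reading accuracies on that task, while exact-match FEN prediction has no comparable chance baseline.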

As summarized in Table 1, all tested LLMs show poor performance on both diagnostics, confirming inadequate internal models of chess state transitions and tactics. We tested both Base and Instruct variants; Instruct models offer better formatting compliance, though they share the same underlying knowledge as their Base counterparts. This shortfall strongly supports our earlier hypothesis: current models lack a fundamental understanding of chess, which prevents them from reaching high performance through reward optimization alone. Since models cannot reliably track game states or recognize elementary tactics, reward optimization fails to develop high-quality strategic reasoning or elicit expert-level play.

## 4 Related Work

#### RLVR for LLM reasoning.

RLVR (Lambert et al., 2024) has emerged as a powerful paradigm to elicit LLM reasoning for tasks with deterministic evaluation criteria. Notably, in mathematical domains, simple rule-based rewards have proven surprisingly effective without requiring complex reward models (Guo et al., 2025; Yu et al., 2025; Wang et al., 2025), achieving performance comparable to, or even surpassing, that of humans on mathematical olympiads such as AIME. Furthermore, some works have extended the application of RLVR to other domains as well (Liu et al., 2025b; Zhang et al., 2025a; Gurung & Lapata, 2025).

Despite these successes, fundamental questions about RLVR’s success remain underexplored. Recent studies suggest that its success often originates from abundant knowledge already present in base models rather than being genuinely learned through RL (Liu et al., 2025a; Zhao et al., 2025b; Li et al., 2025; Shao et al., 2025; Yue et al., 2025). A systematic investigation across models, tasks, RL algorithms, and scale remains necessary to fully understand LLM capabilities and their limitations in developing genuine reasoning abilities through RL.

#### LLMs in chess.

Recent work explores how LLMs can tackle chess tasks, complementing traditional chess engines. While specialized networks like AlphaZero (Silver et al., 2017) achieve superhuman performance via self-play and search, they lack human-readable insight. Conversely, LLMs can explain moves in natural language but often lack precise strategic understanding, leading to hallucinations or illegal moves. To bridge this gap, researchers have integrated chess engines with LLMs for fluent move commentary (Kim et al., 2025) and developed chess-centric models like ChessGPT (Feng et al., 2023) through domain-specific pre-training. Fine-tuning on expert rationales from the MATE dataset (Wang et al., 2024) enables Llama models to outperform GPT-4 and Claude at binary move selection. Most notably, ChessLLM (Zhang et al., 2025b) demonstrates that an LLM fine-tuned on 20B tokens of complete games can play chess at an expert level, attaining an Elo of 1788 without external search; however, FEN-centric training risks overfitting and degrading natural language responses into fragmented notation.

Despite these advances, existing approaches primarily rely on supervised learning from human games or engine guidance rather than autonomous strategic development. Whether LLMs can develop genuine chess reasoning through RL remains largely unexplored, particularly in understanding if such learning produces transferable strategic insights or merely pattern memorization. Our work addresses this gap using chess as a controlled testbed for studying autonomous strategic development in LLMs.

## 5 Conclusion & Limitations

We investigated whether LLMs can develop strategic reasoning through RLVR on chess, introducing a novel approach that utilizes dense rewards from pretrained action-value networks. While dense rewards often outperform sparse ones, all models plateau well below human experts, revealing fundamental limitations in current post-training approaches. Surprisingly, despite producing more structured reasoning when trained on advanced traces from OpenAI o3, models still yield similar performance plateaus when subsequently trained with RL. Our failure analysis reveals a potential root cause: current LLMs demonstrate inadequate internal chess knowledge. These results suggest that RL alone may not overcome impoverished domain knowledge. While RL excels at optimizing behavior toward rewards, LLMs cannot learn de novo the foundational knowledge necessary for strategic thinking when it is absent from pretraining. We hypothesize that prior RLVR successes in math domains stem from rich pretraining exposure, unlike chess, which receives minimal coverage. Our findings align with recent work showing that RL mainly amplifies existing capabilities (Li et al., 2025; Liu et al., 2025a; Yue et al., 2025). For strategic reasoning in new domains, adequate domain knowledge during pretraining is essential, and post-training RL alone is insufficient.

## References

- Edwards (1994) Steven J. Edwards. Standard: Portable game notation specification and implementation guide, 1994. URL https://ia802908.us.archive.org/26/items/pgn-standard-1994-03-12/PGN_standard_1994-03-12.txt.

- Feng et al. (2023) Xidong Feng, Yicheng Luo, Ziyan Wang, Hongrui Tang, Mengyue Yang, Kun Shao, David Mguni, Yali Du, and Jun Wang. Chessgpt: Bridging policy learning and language modeling. Advances in Neural Information Processing Systems, 36:7216–7262, 2023.

- Grattafiori et al. (2024) Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, et al. The llama 3 herd of models. arXiv preprint arXiv:2407.21783, 2024.

- Guo et al. (2025) Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948, 2025.

- Gurung & Lapata (2025) Alexander Gurung and Mirella Lapata. Learning to reason for long-form story generation. arXiv preprint arXiv:2503.22828, 2025.

- Imani & White (2018) Ehsan Imani and Martha White. Improving regression performance with distributional losses. In International conference on machine learning, pp. 2157–2166. PMLR, 2018.

- Kim et al. (2025) Jaechang Kim, Jinmin Goh, Inseok Hwang, Jaewoong Cho, and Jungseul Ok. Bridging the gap between expert and language models: Concept-guided chess commentary generation and evaluation. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers), Albuquerque, New Mexico, April 2025. Association for Computational Linguistics.

- Lambert et al. (2024) Nathan Lambert, Jacob Morrison, Valentina Pyatkin, Shengyi Huang, Hamish Ivison, Faeze Brahman, Lester James V Miranda, Alisa Liu, Nouha Dziri, Shane Lyu, et al. Tülu 3: Pushing frontiers in open language model post-training. arXiv preprint arXiv:2411.15124, 2024.

- Li et al. (2025) Dacheng Li, Shiyi Cao, Tyler Griggs, Shu Liu, Xiangxi Mo, Eric Tang, Sumanth Hegde, Kourosh Hakhamaneshi, Shishir G Patil, Matei Zaharia, et al. LLMs can easily learn to reason from demonstrations: Structure, not content, is what matters! arXiv preprint arXiv:2502.07374, 2025.

- Liu et al. (2025a) Zichen Liu, Changyu Chen, Wenjun Li, Penghui Qi, Tianyu Pang, Chao Du, Wee Sun Lee, and Min Lin. Understanding r1-zero-like training: A critical perspective. arXiv preprint arXiv:2503.20783, 2025a.

- Liu et al. (2025b) Ziyu Liu, Zeyi Sun, Yuhang Zang, Xiaoyi Dong, Yuhang Cao, Haodong Duan, Dahua Lin, and Jiaqi Wang. Visual-rft: Visual reinforcement fine-tuning. arXiv preprint arXiv:2503.01785, 2025b.

- Park et al. (2025) Dongmin Park, Minkyu Kim, Beongjun Choi, Junhyuck Kim, Keon Lee, Jonghyun Lee, Inkyu Park, Byeong-Uk Lee, Jaeyoung Hwang, Jaewoo Ahn, et al. Orak: A foundational benchmark for training and evaluating llm agents on diverse video games. arXiv preprint arXiv:2506.03610, 2025.

- Qwen: et al. (2025) Qwen:, An Yang, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chengyuan Li, Dayiheng Liu, Fei Huang, Haoran Wei, Huan Lin, Jian Yang, Jianhong Tu, Jianwei Zhang, Jianxin Yang, Jiaxi Yang, Jingren Zhou, Junyang Lin, Kai Dang, Keming Lu, Keqin Bao, Kexin Yang, Le Yu, Mei Li, Mingfeng Xue, Pei Zhang, Qin Zhu, Rui Men, Runji Lin, Tianhao Li, Tianyi Tang, Tingyu Xia, Xingzhang Ren, Xuancheng Ren, Yang Fan, Yang Su, Yichang Zhang, Yu Wan, Yuqiong Liu, Zeyu Cui, Zhenru Zhang, and Zihan Qiu. Qwen2.5 technical report. 2025. URL https://arxiv.org/abs/2412.15115.

- Ruoss et al. (2024) Anian Ruoss, Grégoire Delétang, Sourabh Medapati, Jordi Grau-Moya, Li K Wenliang, Elliot Catt, John Reid, Cannada A Lewis, Joel Veness, and Tim Genewein. Amortized planning with large-scale transformers: A case study on chess. Advances in Neural Information Processing Systems, 37:65765–65790, 2024.

- Shao et al. (2025) Rulin Shao, Shuyue Stella Li, Rui Xin, Scott Geng, Yiping Wang, Sewoong Oh, Simon Shaolei Du, Nathan Lambert, Sewon Min, Ranjay Krishna, et al. Spurious rewards: Rethinking training signals in rlvr. arXiv preprint arXiv:2506.10947, 2025.

- Shao et al. (2024) Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Y Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300, 2024.

- Sheng et al. (2024) Guangming Sheng, Chi Zhang, Zilingfeng Ye, Xibin Wu, Wang Zhang, Ru Zhang, Yanghua Peng, Haibin Lin, and Chuan Wu. Hybridflow: A flexible and efficient rlhf framework. arXiv preprint arXiv:2409.19256, 2024.

- Silver et al. (2017) David Silver, Thomas Hubert, Julian Schrittwieser, Ioannis Antonoglou, Matthew Lai, Arthur Guez, Marc Lanctot, Laurent Sifre, Dharshan Kumaran, Thore Graepel, et al. Mastering chess and shogi by self-play with a general reinforcement learning algorithm. arXiv preprint arXiv:1712.01815, 2017.

- Vaswani et al. (2017) Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. Attention is all you need. Advances in neural information processing systems, 30, 2017.

- Wang et al. (2024) Shu Wang, Lei Ji, Renxi Wang, Wenxiao Zhao, Haokun Liu, Yifan Hou, and Ying Nian Wu. Explore the reasoning capability of llms in the chess testbed. arXiv preprint arXiv:2411.06655, 2024.

- Wang et al. (2025) Yiping Wang, Qing Yang, Zhiyuan Zeng, Liliang Ren, Liyuan Liu, Baolin Peng, Hao Cheng, Xuehai He, Kuan Wang, Jianfeng Gao, et al. Reinforcement learning for reasoning in large language models with one training example. arXiv preprint arXiv:2504.20571, 2025.

- Yu et al. (2025) Qiying Yu, Zheng Zhang, Ruofei Zhu, Yufeng Yuan, Xiaochen Zuo, Yu Yue, Tiantian Fan, Gaohong Liu, Lingjun Liu, Xin Liu, et al. Dapo: An open-source llm reinforcement learning system at scale. arXiv preprint arXiv:2503.14476, 2025.

- Yue et al. (2025) Yang Yue, Zhiqi Chen, Rui Lu, Andrew Zhao, Zhaokai Wang, Shiji Song, and Gao Huang. Does reinforcement learning really incentivize reasoning capacity in llms beyond the base model? arXiv preprint arXiv:2504.13837, 2025.

- Zhang et al. (2025a) Sheng Zhang, Qianchu Liu, Guanghui Qin, Tristan Naumann, and Hoifung Poon. Med-rlvr: Emerging medical reasoning from a 3b base model via reinforcement learning. arXiv preprint arXiv:2502.19655, 2025a.

- Zhang et al. (2024) Yadong Zhang, Shaoguang Mao, Tao Ge, Xun Wang, Adrian de Wynter, Yan Xia, Wenshan Wu, Ting Song, Man Lan, and Furu Wei. Llm as a mastermind: A survey of strategic reasoning with large language models. arXiv preprint arXiv:2404.01230, 2024.

- Zhang et al. (2025b) Yinqi Zhang, Xintian Han, Haolong Li, Kedi Chen, and Shaohui Lin. Complete chess games enable LLM become a chess master. Association for Computational Linguistics, 2025b.

- Zhang et al. (2025c) Yudi Zhang, Lu Wang, Meng Fang, Yali Du, Chenghua Huang, Jun Wang, Qingwei Lin, Mykola Pechenizkiy, Dongmei Zhang, Saravan Rajmohan, et al. Distill not only data but also rewards: Can smaller language models surpass larger ones? arXiv preprint arXiv:2502.19557, 2025c.

- Zhao et al. (2025a) Andrew Zhao, Yiran Wu, Yang Yue, Tong Wu, Quentin Xu, Matthieu Lin, Shenzhi Wang, Qingyun Wu, Zilong Zheng, and Gao Huang. Absolute zero: Reinforced self-play reasoning with zero data. arXiv preprint arXiv:2505.03335, 2025a.

- Zhao et al. (2025b) Rosie Zhao, Alexandru Meterez, Sham Kakade, Cengiz Pehlevan, Samy Jelassi, and Eran Malach. Echo chamber: Rl post-training amplifies behaviors learned in pretraining. arXiv preprint arXiv:2504.07912, 2025b.

- Zheng et al. (2024) Yaowei Zheng, Richong Zhang, Junhao Zhang, Yanhan Ye, Zheyan Luo, Zhangchi Feng, and Yongqiang Ma. Llamafactory: Unified efficient fine-tuning of 100+ language models. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 3: System Demonstrations), Bangkok, Thailand, 2024. Association for Computational Linguistics. URL http://arxiv.org/abs/2403.13372.

## Appendix A Input prompt format

For our main experiments, we employ the input prompt template shown in Figure 4 for both training and evaluation. This prompt format consists of several key components: (i) the current board state represented in Forsyth–Edwards Notation (FEN), (ii) the complete set of legal moves available from the current position written in Standard Algebraic Notation (SAN), and (iii) structured instructions that guide the model to produce reasoning enclosed in <think> tags followed by the chosen move in <answer> tags. Additionally, the prompt includes a concise reminder of fundamental chess rules to support the model’s reasoning process. This comprehensive prompt design ensures that the model has access to all necessary information about the current game state while maintaining a consistent format that facilitates both learning during training and reliable evaluation during testing.

Input prompt template

A conversation between User and Assistant. The User asks the best move to make for a given chess board state, and the Assistant solves it. The Assistant is a professional chess player who first thinks about the reasoning process in the mind and then provides the User with the answer. The Assistant’s reasoning process and answer must be enclosed within <think> </think> and <answer> </answer> tags, respectively. The reasoning process should describe how the Assistant analyzes the position and decide on the best move, including:

- A strategic evaluation of the position.
- A comparison of key candidate moves.
- For each candidate, consider the opponent’s likely response and outcome.
- Conclude with a clear justification for your final choice.

The answer must be in SAN notation, strictly using the moving piece and the destination square (e.g., Nf3, Rxf2, c5).

Reminder of chess rules:

- Bishops move diagonally.
- Rooks move horizontally or vertically.
- Knights jump in an L-shape.
- Queens combine rook and bishop movement.
- Kings move one square in any direction.
- Pawns move forward, capture diagonally, and can promote.

User: The current FEN string is <fen> and legal moves are <san_1> <san_2> …<san_L>. What is the best move to make out of the list of legal moves?

A: Let me solve this step by step. <think>

Figure 4: Input prompt format for chess reasoning tasks with FEN for board state and SAN notation for move action. This prompt structure was used throughout our main studies.
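As a rough illustration of how the template could be filled and how a completion could be parsed, the sketch below uses only the Python standard library. The names `SYSTEM_TEXT`, `build_prompt`, and `extract_move` are hypothetical and do not come from the released code; `SYSTEM_TEXT` is an abbreviated stand-in for the full instruction text in Figure 4, and treating an illegal or malformed answer as `None` is an assumption.

```python
import re
from typing import Optional, Sequence

# Hypothetical, abbreviated stand-in for the instruction text of the template.
SYSTEM_TEXT = "A conversation between User and Assistant. ..."


def build_prompt(fen: str, legal_sans: Sequence[str]) -> str:
    """Fill the FEN and legal-move slots of the template."""
    moves = " ".join(legal_sans)
    return (
        f"{SYSTEM_TEXT}\n"
        f"User: The current FEN string is {fen} and legal moves are {moves}. "
        "What is the best move to make out of the list of legal moves?\n"
        "Assistant: Let me solve this step by step.\n<think>"
    )


# The prompt already opens <think>, so a completion should close it
# and then give the move inside <answer> tags.
ANSWER_RE = re.compile(r"</think>\s*<answer>\s*(.*?)\s*</answer>", re.DOTALL)


def extract_move(completion: str, legal_sans: Sequence[str]) -> Optional[str]:
    """Return the answered move if it is well-formed and legal, else None."""
    match = ANSWER_RE.search(completion)
    if match is None:
        return None
    move = match.group(1)
    return move if move in legal_sans else None
```

For example, `extract_move("...</think> <answer>Nf3</answer>", ["e4", "Nf3"])` yields `"Nf3"`, while a move outside the legal list yields `None`.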

## Appendix B Experiment Setup

### B.1 Lichess Puzzle Dataset

#### Training data collection.

We collect Lichess puzzles spanning solver ratings from 200 to 2800 Elo from the Lichess Puzzle Database. Each puzzle contains an initial board position and a sequence of moves that leads to a tactical victory. To create position-action training pairs, we decompose each puzzle’s solution trajectory, where a puzzle with $T$ moves generates $T$ individual training samples. This decomposition process yields a total of 19.2k training samples for our dataset $\mathcal{D}$.
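The decomposition can be sketched as follows. This is a minimal illustration, not the released pipeline: `decompose_puzzle` and `toy_apply` are hypothetical names, the transition function is passed in so that the sketch stays independent of any chess library, and treating every move in the solution as a training sample is an assumption about how the $T$ samples are formed.

```python
from typing import Callable, List, Tuple, TypeVar

State = TypeVar("State")


def decompose_puzzle(
    initial_state: State,
    solution: List[str],
    apply_move: Callable[[State, str], State],
) -> List[Tuple[State, str]]:
    """Turn a T-move solution into T (position, move) training pairs."""
    pairs = []
    state = initial_state
    for move in solution:
        pairs.append((state, move))      # position before the move, and the move
        state = apply_move(state, move)  # advance to the next position
    return pairs


def toy_apply(state: str, move: str) -> str:
    """Toy transition: the 'position' is just the move history so far."""
    return f"{state} {move}".strip()


# Placeholder move labels, not a real puzzle.
pairs = decompose_puzzle("", ["m1", "m2", "m3"], toy_apply)
```

In practice `apply_move` would be backed by a chess library that updates the board and re-serializes it to FEN.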

#### Evaluation protocol.

We evaluate chess understanding through puzzle-solving capability using a held-out evaluation dataset of 10k Lichess puzzles across various difficulty levels from Ruoss et al. (2024). Our evaluation metric is puzzle accuracy, defined as the percentage of puzzles where the model’s complete action sequence exactly matches the ground-truth solution sequence. This strict criterion requires models to solve each puzzle entirely and correctly rather than merely identify individual good moves. To ensure consistency, we use the same prompt template during evaluation as in training.
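The metric can be written down in a few lines. The sketch below is an illustration under the assumption that each prediction and each ground-truth solution is given as a list of move strings in the same notation; the function names are hypothetical.

```python
from typing import Sequence


def puzzle_solved(predicted: Sequence[str], solution: Sequence[str]) -> bool:
    """A puzzle counts as solved only if every move matches, in order."""
    return list(predicted) == list(solution)


def puzzle_accuracy(
    predictions: Sequence[Sequence[str]],
    solutions: Sequence[Sequence[str]],
) -> float:
    """Percentage of puzzles whose full move sequence matches exactly."""
    if len(predictions) != len(solutions):
        raise ValueError("predictions and solutions must have equal length")
    if not solutions:
        return 0.0
    solved = sum(puzzle_solved(p, s) for p, s in zip(predictions, solutions))
    return 100.0 * solved / len(solutions)
```

Under this definition a puzzle with a correct first move but a wrong continuation contributes nothing to the score.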

### B.2 Reasoning Trace Collection

We used OpenAI’s o3 model (reasoning: high, summary: detailed) to collect sophisticated chess reasoning data samples for our reasoning SFT experiments. We provide an example of the synthetic o3 reasoning trajectory in Figures 23 and 24. In the o3 reasoning traces, we observed fewer hallucinations about spatial positioning and reasoning than in the outputs of our base models, and the traces evaluate strategic positions in terms of both breadth and depth.

To build the SFT corpus, we sample 1,000 chess problems from our larger collection, balancing them evenly across the entire Elo range. We create each SFT example by appending the o3 reasoning summary and final answer to the corresponding problem. This yields a high-quality chess SFT dataset, which we expected would strengthen our model’s strategic reasoning capability by distilling structured rationale before subsequent RL training. We include the token length distribution of the SFT data in Figure 5.
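One way to balance a sample across the rating range is to bin puzzles by Elo and draw the same number from each bin. The sketch below is only an illustration: the exact sampling procedure is not specified here, and the number of bins, the seed, and the function name `sample_balanced` are assumptions.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def sample_balanced(
    puzzles: Sequence[Tuple[str, int]],  # (puzzle_id, rating) pairs
    n_total: int,
    lo: int = 200,
    hi: int = 2800,
    n_bins: int = 10,  # assumed bin count
    seed: int = 0,
) -> List[str]:
    """Draw roughly n_total puzzle ids, spread evenly over equal-width Elo bins."""
    rng = random.Random(seed)
    width = (hi - lo) / n_bins
    bins: Dict[int, List[str]] = defaultdict(list)
    for puzzle_id, rating in puzzles:
        if lo <= rating <= hi:
            # Clamp so that rating == hi falls into the last bin.
            idx = min(int((rating - lo) / width), n_bins - 1)
            bins[idx].append(puzzle_id)
    per_bin = n_total // n_bins
    chosen: List[str] = []
    for idx in range(n_bins):
        pool = bins[idx]
        chosen.extend(rng.sample(pool, min(per_bin, len(pool))))
    return chosen
```

If a bin holds fewer puzzles than its quota, this version simply returns fewer samples rather than topping up from neighboring bins.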

<details>

<summary>fig/o3_reasoning_token_distributions_qwen_vs_llama.png Details</summary>

### Visual Description


## Histograms: OpenAI o3 Reasoning Data Token Length Distributions

### Overview

The image presents two histograms comparing the distributions of token lengths for the OpenAI o3 reasoning data, using two different tokenizers: Qwen2.5 and Llama3.1. Both histograms share the same x and y axes, allowing for a direct visual comparison of the token length distributions.

### Components/Axes

* **Title:** OpenAI o3 Reasoning Data Token Length Distributions (centered at the top)

* **X-axis Label:** Token Count (present on both histograms, bottom-center)

* Scale: 400 to 1600, with increments of 200.

* **Y-axis Label:** Frequency (present on both histograms, left-center)

* Scale: 0 to 175, with increments of 25.

* **Legend:** Located in the top-right corner of each histogram.

* Qwen2.5 Tokenizer: A red dashed line labeled "Mean: 974.0"

* Llama3.1 Tokenizer: A red dashed line labeled "Mean: 965.4"

### Detailed Analysis or Content Details

**Histogram 1: Qwen2.5 Tokenizer (Left)**

* The histogram is filled with light blue bars.

* The distribution appears approximately normal, but slightly skewed to the right.

* The highest frequency occurs between 800 and 1000 token counts, with a frequency of approximately 130.

* The frequency decreases as the token count moves away from this peak in both directions.

* The mean is indicated by a vertical red dashed line at approximately 974 token count.

* Approximate data points (reading from the histogram):

* 400-600: Frequency ~ 10

* 600-800: Frequency ~ 50

* 800-1000: Frequency ~ 130

* 1000-1200: Frequency ~ 70

* 1200-1400: Frequency ~ 30

* 1400-1600: Frequency ~ 5

**Histogram 2: Llama3.1 Tokenizer (Right)**

* The histogram is filled with light green bars.

* The distribution is also approximately normal, but appears more symmetrical than the Qwen2.5 distribution.

* The highest frequency occurs between 1000 and 1200 token counts, with a frequency of approximately 150.

* The frequency decreases as the token count moves away from this peak in both directions.

* The mean is indicated by a vertical red dashed line at approximately 965 token count.

* Approximate data points (reading from the histogram):

* 400-600: Frequency ~ 5

* 600-800: Frequency ~ 40

* 800-1000: Frequency ~ 100

* 1000-1200: Frequency ~ 150

* 1200-1400: Frequency ~ 60

* 1400-1600: Frequency ~ 10

### Key Observations

* Both tokenizers produce distributions centered around a similar mean token count (974.0 vs. 965.4).

* The Qwen2.5 tokenizer has a slightly higher mean token count.

* The Llama3.1 tokenizer's distribution is more concentrated around the mean, suggesting less variability in token lengths.

* The Qwen2.5 tokenizer's distribution is slightly right-skewed, indicating a higher frequency of longer token sequences.

### Interpretation

The histograms demonstrate the distribution of token lengths in the OpenAI o3 reasoning data when processed by two different tokenizers. The similarity in means suggests that both tokenizers are generally effective at representing the data. However, the differences in distribution shape and variability indicate that the tokenizers handle tokenization differently. The Llama3.1 tokenizer appears to produce more consistent token lengths, while the Qwen2.5 tokenizer allows for more variation, potentially capturing more nuanced linguistic information but also potentially leading to longer sequences. This information is crucial for understanding the computational cost and efficiency of using each tokenizer with this dataset, as longer sequences require more processing power. The slight right skew in the Qwen2.5 tokenizer could indicate a tendency to break down complex phrases into more tokens, while the Llama3.1 tokenizer might be more aggressive in combining words into fewer tokens.

</details>

Figure 5: Token length distribution of the o3 reasoning data under each tokenizer.

### B.3 Training Hyperparameters

#### Reinforcement learning.

We train our models using the verl (Sheng et al., 2024) framework. All RL fine-tuning runs use the same hyperparameters for training and evaluation, listed in Table 2. We fine-tune all models using 4 NVIDIA A100 80GB GPUs. A single training run takes approximately 14 hours.

Table 2: Reinforcement learning hyperparameters.

| Hyperparameter | Value |
| --- | --- |
| Training Configuration | |
| Training Steps | 150 |
| Optimizer | AdamW |
| Learning Rate | 1e-6 |
| Gradient Clipping | 1.0 |
| Mini-batch Size | 128 |
| GRPO Configuration | |
| Epochs | 1 |
| Sampling Batch Size | 128 |
| Number of Rollouts | 8 |
| Rollout Temperature | 1.0 |
| KL Loss Coefficient | 1e-3 |
| Entropy Coefficient | 1e-3 |
| Clip Ratio | 0.2 |
| Reward Configuration | |
| Sparse Coefficient ( $\lambda_{\text{sparse}}$ ) | 1 if sparse reward is used, 0 otherwise |
| Dense Coefficient ( $\lambda_{\text{dense}}$ ) | 1 if dense reward is used, 0 otherwise |
| Format Coefficient ( $\lambda_{\text{fmt}}$ ) | 0.1 |
| Language Coefficient ( $\lambda_{\text{lang}}$ ) | 0.1 |
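To make the role of the reward coefficients and the rollout count concrete, the sketch below combines the four reward terms as a weighted sum and then computes group-relative advantages over the rollouts of one prompt, in the style of GRPO (Shao et al., 2024). Both the weighted-sum form and the use of the population standard deviation are assumptions made for illustration; the function names are hypothetical and this is not the verl implementation.

```python
from statistics import mean, pstdev
from typing import List

LAMBDA_FMT = 0.1   # format coefficient from the table
LAMBDA_LANG = 0.1  # language coefficient from the table


def total_reward(
    r_sparse: float,
    r_dense: float,
    r_fmt: float,
    r_lang: float,
    use_sparse: bool,
    use_dense: bool,
) -> float:
    """Assumed weighted sum of the individual reward terms."""
    lam_sparse = 1.0 if use_sparse else 0.0
    lam_dense = 1.0 if use_dense else 0.0
    return (
        lam_sparse * r_sparse
        + lam_dense * r_dense
        + LAMBDA_FMT * r_fmt
        + LAMBDA_LANG * r_lang
    )


def group_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """Normalize rewards within one group of rollouts for the same prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption of this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]
```

With 8 rollouts per prompt, each rollout is credited relative to its 7 siblings, so a group in which all rollouts receive the same reward contributes no learning signal.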

#### Supervised Fine-Tuning.

We perform supervised fine-tuning (SFT) using Llama-Factory (Zheng et al., 2024) with hyperparameters shown in Table 3. Training loss is computed only on the model outputs, with input prompts masked. We fine-tune all models using 4 NVIDIA A100 80GB GPUs. A single training instance takes approximately 1 hour.

Table 3: Supervised fine-tuning hyperparameters.

| Hyperparameter | Value |
| --- | --- |
| Number of Samples | 979 |
| Epochs | 10 |
| Optimizer | AdamW |
| Learning Rate | 5e-6 |
| Scheduler | Cosine |
| Warmup Ratio | 0.1 |
| Gradient Clipping | 1.0 |
| Mini-batch Size | 32 |
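Masking the prompt in the SFT loss is commonly done by setting the label of every prompt token to an ignore index, so that only response tokens contribute to the cross-entropy. The sketch below illustrates the idea on plain token-id lists; `build_sft_example` is a hypothetical helper and the token ids are made up, while `-100` is the default `ignore_index` of PyTorch's `CrossEntropyLoss`.

```python
from typing import List, Sequence, Tuple

IGNORE_INDEX = -100  # default ignore_index of torch.nn.CrossEntropyLoss


def build_sft_example(
    prompt_ids: Sequence[int],
    response_ids: Sequence[int],
) -> Tuple[List[int], List[int]]:
    """Concatenate prompt and response; supervise only the response tokens."""
    input_ids = list(prompt_ids) + list(response_ids)
    labels = [IGNORE_INDEX] * len(prompt_ids) + list(response_ids)
    return input_ids, labels


# Made-up token ids: three prompt tokens followed by two response tokens.
input_ids, labels = build_sft_example([11, 12, 13], [21, 22])
```

Here the first three label positions are ignored by the loss, and the last two carry the response tokens as targets.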

## Appendix C Ablation studies

We provide several ablations to verify that our findings are not specific to our prompt format or reward design.

### C.1 Prompt Formatting

We conducted systematic experiments on different prompt formats while performing RL with expert critic rewards as our default reward configuration, examining two key design axes: (i) move action representation and (ii) board state representation. Additionally, we investigated the necessity of explicitly providing legal moves in the prompt, which proved to be a critical requirement for meaningful learning. For move action representations, we tested UCI and SAN notations. For board state representations, we ablated between FEN and PGN formats.

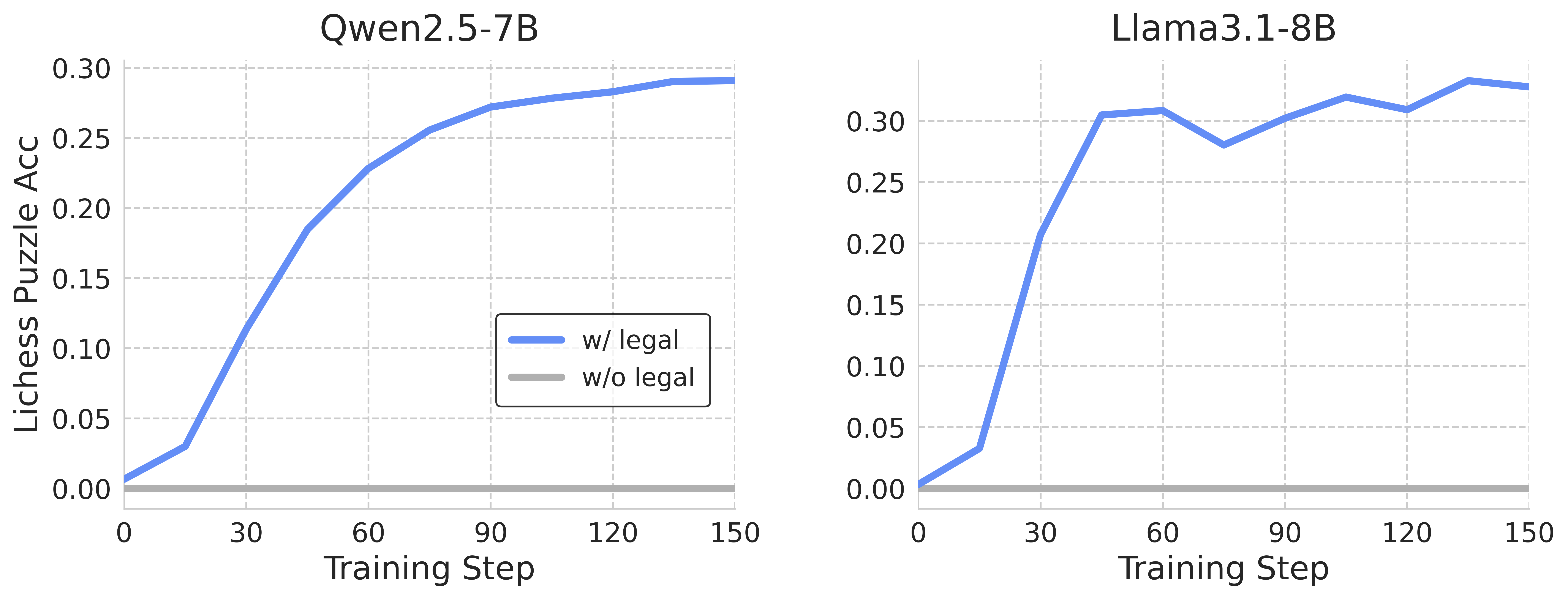

#### Legal moves requirement:

To investigate the necessity of providing legal moves, we conducted experiments using our baseline FEN board representation with SAN move notation, comparing performance with and without explicit legal move information. As demonstrated in Figure 6, we discovered that LLMs cannot learn anything meaningful when legal moves are not explicitly provided in the prompt. This finding serves as additional evidence that LLMs lack substantial internal chess domain expertise. Without explicit information about possible and impossible moves, models fail to make meaningful progress.

<details>

<summary>fig/chess_nolegal_prompt_ablation.png Details</summary>

### Visual Description

## Line Chart: Lichess Puzzle Accuracy vs. Training Step for Two Models

### Overview

The image presents two line charts comparing the performance of two language models, Qwen2.5-7B and Llama3.1-8B, on Lichess puzzles during training. The charts plot "Lichess Puzzle Acc" (Accuracy) on the y-axis against "Training Step" on the x-axis. Each chart displays two lines representing performance "w/ legal" (legal moves listed in the prompt) and "w/o legal" (legal moves omitted from the prompt).

### Components/Axes

* **X-axis:** "Training Step" ranging from 0 to 150. The axis is divided into increments of 30.

* **Y-axis:** "Lichess Puzzle Acc" (Accuracy) ranging from 0.00 to 0.30, divided into increments of 0.05.

* **Left Chart Title:** "Qwen2.5-7B"

* **Right Chart Title:** "Llama3.1-8B"

* **Legend (Left Chart):**

* Blue Line: "w/ legal"

* Gray Line: "w/o legal"

* **Legend (Right Chart):**

* Blue Line: "w/ legal"

* Gray Line: "w/o legal"

* **Gridlines:** Light gray vertical and horizontal gridlines are present in both charts.

### Detailed Analysis or Content Details

**Qwen2.5-7B (Left Chart):**

* **"w/ legal" (Blue Line):** The line starts at approximately 0.01 at Training Step 0. It exhibits a steep upward slope until approximately Training Step 60, reaching around 0.26. The slope then gradually decreases, leveling off around 0.29-0.30 between Training Steps 90 and 150.

* **"w/o legal" (Gray Line):** The line starts at approximately 0.01 at Training Step 0. It rises more slowly than the "w/ legal" line, reaching around 0.15 at Training Step 60. It continues to increase, but at a slower rate, reaching approximately 0.22 at Training Step 150.

**Llama3.1-8B (Right Chart):**

* **"w/ legal" (Blue Line):** The line starts at approximately 0.01 at Training Step 0. It rises rapidly, reaching around 0.28 at Training Step 30. It then plateaus around 0.30-0.32 between Training Steps 60 and 120, with some fluctuations. It decreases slightly to around 0.30 at Training Step 150.

* **"w/o legal" (Gray Line):** The line starts at approximately 0.01 at Training Step 0. It rises quickly, reaching around 0.20 at Training Step 30. It then increases more gradually, reaching approximately 0.27 at Training Step 60. It fluctuates between approximately 0.27 and 0.31 between Training Steps 60 and 150.

### Key Observations

* Both models show a clear positive correlation between training steps and Lichess puzzle accuracy.

* For both models, performance "w/ legal" consistently outperforms performance "w/o legal" throughout the training process.

* Llama3.1-8B reaches a higher peak accuracy than Qwen2.5-7B.

* Llama3.1-8B appears to converge faster than Qwen2.5-7B, reaching a plateau in accuracy earlier in the training process.

* Qwen2.5-7B shows a more gradual increase in accuracy over the entire training period.

### Interpretation

The data suggests that both Qwen2.5-7B and Llama3.1-8B are capable of learning to solve Lichess puzzles through training. The consistent outperformance of the "w/ legal" condition indicates that incorporating legal move constraints significantly improves the models' ability to solve puzzles correctly. The faster convergence and higher peak accuracy of Llama3.1-8B suggest that it may be a more efficient and effective model for this task, or that it benefits more from the legal move constraints. The plateauing of accuracy in both models after a certain number of training steps suggests that further training may yield diminishing returns. The fluctuations in the Llama3.1-8B "w/ legal" line between 60 and 150 training steps could indicate instability or sensitivity to the training data during that period. The difference in the learning curves between the two models could be attributed to differences in model architecture, training data, or hyperparameters.

</details>

Figure 6: Evaluation performance comparison with and without legal moves in the input prompt for Qwen2.5-7B and Llama3.1-8B.

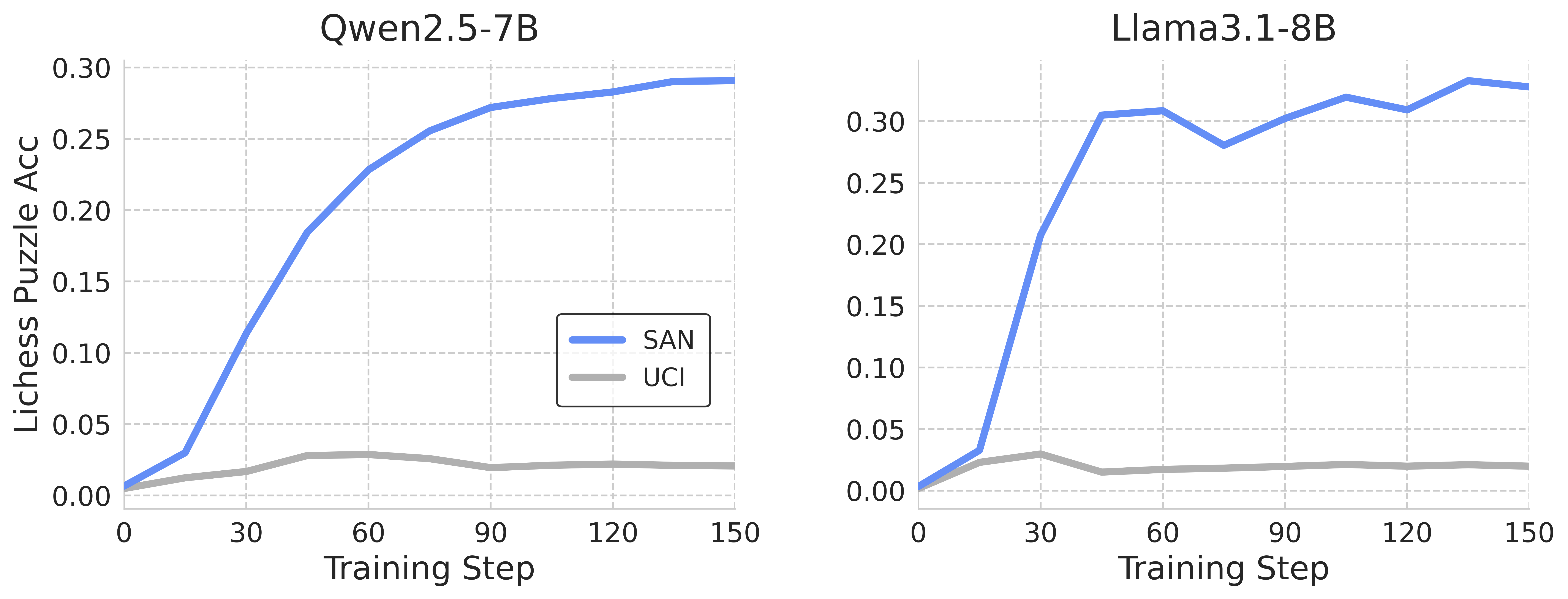

#### Move notation impact:

As shown in Figure 7, we found that while SAN notation enables meaningful learning, UCI notation results in substantially degraded performance. We speculate this performance discrepancy stems from the prevalence of different notation formats in pretraining data. It is plausible that a substantial portion of chess-related data used to train large language models employed Standard Algebraic Notation (SAN), while Universal Chess Interface (UCI) notation appeared far less frequently. Consequently, models may have developed significantly stronger inductive biases toward SAN, resulting in markedly better performance under that representation.

<details>

<summary>fig/chess_action_prompt_ablation.png Details</summary>

### Visual Description

## Line Chart: Lichess Puzzle Accuracy vs. Training Step for Two Models

### Overview

The image presents two line charts side-by-side, comparing the performance of two language models, Qwen2.5-7B and Llama3.1-8B, on Lichess puzzles during training. The y-axis represents the puzzle accuracy (Lichess Puzzle Acc), and the x-axis represents the training step. Each chart displays two lines, one per move notation: SAN and UCI.

### Components/Axes

* **X-axis:** Training Step (ranging from 0 to 150, with markers at 0, 30, 60, 90, 120, and 150).

* **Y-axis:** Lichess Puzzle Acc (ranging from 0.00 to 0.30, with markers at 0.05 intervals).

* **Left Chart Title:** Qwen2.5-7B

* **Right Chart Title:** Llama3.1-8B

* **Legend (Left Chart):**

* SAN (Blue Line)

* UCI (Gray Line)

* **Legend (Right Chart):**

* SAN (Blue Line)

* UCI (Gray Line)

### Detailed Analysis or Content Details

**Qwen2.5-7B Chart:**

* **SAN Line (Blue):** The SAN line starts at approximately 0.02 at Training Step 0. It exhibits a steep upward trend until around Training Step 60, reaching approximately 0.26. It plateaus between Training Steps 60 and 120, hovering around 0.28. Finally, it slightly decreases to approximately 0.27 at Training Step 150.

* **UCI Line (Gray):** The UCI line starts at approximately 0.01 at Training Step 0. It shows a slow, gradual increase throughout the entire training process. At Training Step 150, it reaches approximately 0.04.

**Llama3.1-8B Chart:**

* **SAN Line (Blue):** The SAN line begins at approximately 0.03 at Training Step 0. It rapidly increases to approximately 0.28 by Training Step 30. It then fluctuates between approximately 0.28 and 0.31, peaking at around 0.31 at Training Step 90. It decreases slightly to approximately 0.30 at Training Step 150.

* **UCI Line (Gray):** The UCI line starts at approximately 0.01 at Training Step 0. It shows a slow, gradual increase throughout the training process, similar to the Qwen2.5-7B model. At Training Step 150, it reaches approximately 0.04.

### Key Observations

* Both models show significantly higher accuracy when moves are written in SAN than when they are written in UCI.

* Qwen2.5-7B reaches a plateau in SAN accuracy relatively early in training (around step 60), while Llama3.1-8B continues to fluctuate and maintain a higher accuracy for a longer period.

* The UCI accuracy for both models remains consistently low throughout the training process.

* Llama3.1-8B demonstrates a faster initial learning rate (SAN line) compared to Qwen2.5-7B.

### Interpretation

The data suggests that both Qwen2.5-7B and Llama3.1-8B models improve their ability to solve Lichess puzzles as they are trained. The substantial difference in accuracy between the SAN and UCI curves indicates that the choice of move notation strongly affects how much the models learn.

The plateau observed in Qwen2.5-7B's SAN accuracy could indicate that the model has reached its maximum performance level with the given training data and parameters. The continued fluctuation in Llama3.1-8B's SAN accuracy suggests that it may still be learning and adapting, potentially benefiting from further training.

The consistently low UCI accuracy for both models suggests that UCI notation is a poor fit for these models on Lichess puzzles, and that it may require a different training approach to yield meaningful results. The faster initial learning rate of Llama3.1-8B suggests it may be more efficient at learning the underlying patterns in the Lichess puzzle data.

</details>

Figure 7: Evaluation performance comparison across move notations (SAN vs. UCI) for Qwen2.5-7B and Llama3.1-8B.
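For readers unfamiliar with the two notations: SAN names the moving piece and the destination square (for example `Nf3`), while UCI writes the origin and destination squares (for example `g1f3`). The sketch below distinguishes the two purely by surface syntax using regular expressions. The patterns are simplified assumptions written for this illustration; they check form only, not legality, and are not taken from any chess library.

```python
import re

# Simplified syntactic patterns (assumptions of this sketch).
UCI_RE = re.compile(r"^[a-h][1-8][a-h][1-8][qrbn]?$")
SAN_RE = re.compile(
    r"^(O-O(-O)?|[KQRBN]?[a-h]?[1-8]?x?[a-h][1-8](=[QRBN])?)[+#]?$"
)


def notation_of(move: str) -> str:
    """Classify a move string as 'UCI', 'SAN', or 'unknown' by its syntax."""
    # UCI is checked first because a string such as 'g1f3' also fits the
    # loose SAN pattern above.
    if UCI_RE.match(move):
        return "UCI"
    if SAN_RE.match(move):
        return "SAN"
    return "unknown"
```

For instance, `notation_of("Nf3")` gives `"SAN"` and `notation_of("g1f3")` gives `"UCI"`, two spellings of the same knight move from the starting position.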

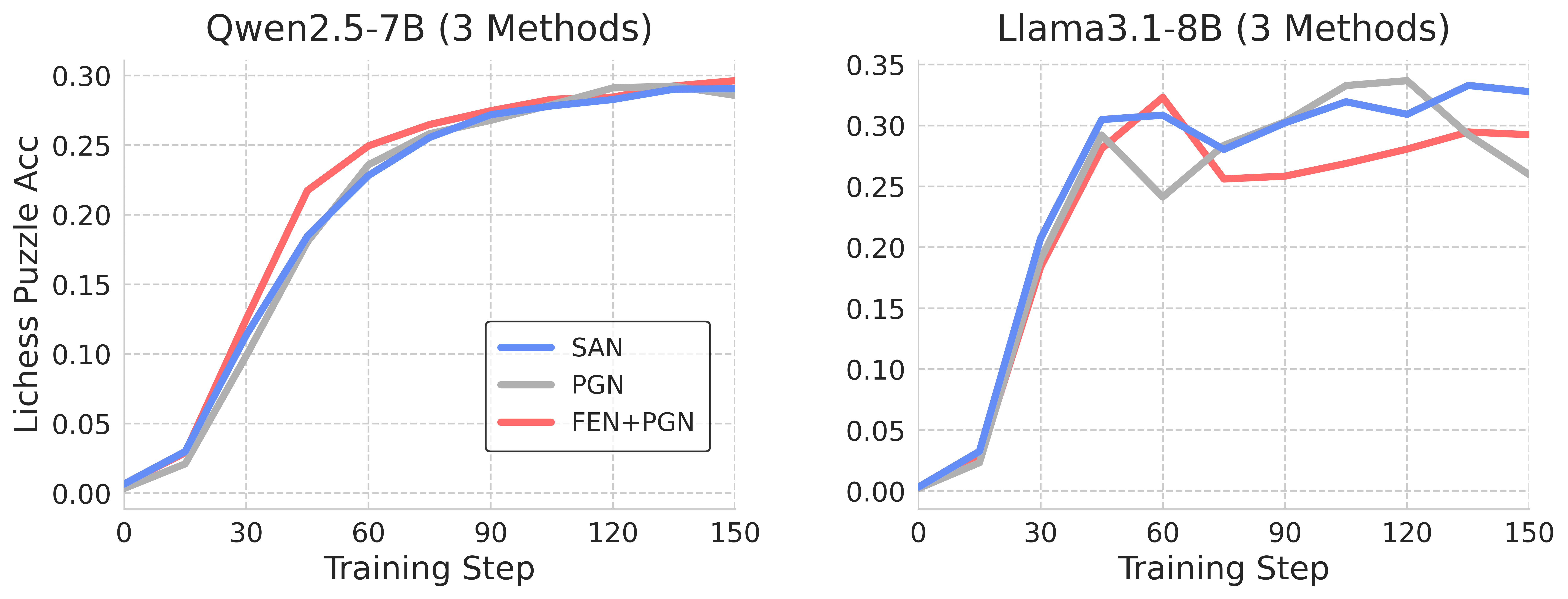

#### Board state representation:

We also ablated different board state representations, noting key differences between FEN and PGN. FEN represents only the current board position, while PGN records the complete move history leading to the current state but does not state the current board position explicitly. As illustrated in Figure 8, despite these structural differences, the choice of board representation was not critical: all variants achieved similar performance. Even providing FEN and PGN together made little difference.

<details>

<summary>fig/chess_board_state_prompt_ablation.png Details</summary>

### Visual Description

## Line Chart: Model Performance on Lichess Puzzle Accuracy

### Overview

The image presents two line charts comparing the performance of two language models, Qwen2.5-7B and Llama3.1-8B, on solving Lichess puzzles. The performance is measured by "Lichess Puzzle Acc" (Accuracy) against "Training Step". Each chart displays three prompt configurations, labeled SAN, PGN, and FEN+PGN in the legend.

### Components/Axes

* **X-axis:** "Training Step" ranging from 0 to 150.

* **Y-axis:** "Lichess Puzzle Acc" ranging from 0.00 to 0.35.

* **Left Chart Title:** "Qwen2.5-7B (3 Methods)"

* **Right Chart Title:** "Llama3.1-8B (3 Methods)"

* **Legend (Bottom-Right of both charts):**

* SAN (Blue)

* PGN (Gray)

* FEN+PGN (Red)

* **Gridlines:** Horizontal and vertical gridlines are present on both charts to aid in reading values.

### Detailed Analysis or Content Details

**Qwen2.5-7B Chart:**

* **SAN (Blue):** The line starts at approximately 0.00 at Training Step 0, rapidly increases to around 0.25 by Training Step 30, and plateaus around 0.28-0.30 from Training Step 60 onwards.

* **PGN (Gray):** The line starts at approximately 0.00 at Training Step 0, increases more slowly than SAN, reaching around 0.18 by Training Step 30, and continues to increase, reaching approximately 0.26 by Training Step 150.

* **FEN+PGN (Red):** The line starts at approximately 0.00 at Training Step 0, increases rapidly, surpassing both SAN and PGN by Training Step 30 (around 0.26), and plateaus around 0.29-0.30 from Training Step 90 onwards.

**Llama3.1-8B Chart:**

* **SAN (Blue):** The line starts at approximately 0.00 at Training Step 0, increases rapidly to around 0.28 by Training Step 30, then fluctuates between approximately 0.28 and 0.32 for the remainder of the training steps.

* **PGN (Gray):** The line starts at approximately 0.00 at Training Step 0, increases to around 0.22 by Training Step 30, then fluctuates between approximately 0.22 and 0.28 for the remainder of the training steps.

* **FEN+PGN (Red):** The line starts at approximately 0.00 at Training Step 0, increases rapidly to around 0.26 by Training Step 30, then fluctuates between approximately 0.24 and 0.29 for the remainder of the training steps.

### Key Observations

* **Qwen2.5-7B:** FEN+PGN consistently outperforms both SAN and PGN throughout the training process. SAN initially shows the fastest improvement but plateaus earlier.

* **Llama3.1-8B:** SAN consistently outperforms both PGN and FEN+PGN throughout the training process. All three methods show more fluctuation in accuracy compared to Qwen2.5-7B.

* **Overall:** The FEN+PGN method appears to be more effective for Qwen2.5-7B, while the SAN method appears to be more effective for Llama3.1-8B.

### Interpretation

The charts demonstrate the impact of different training methods on the performance of two language models in solving Lichess puzzles. The varying performance suggests that the optimal training method is model-dependent.

For Qwen2.5-7B, combining FEN and PGN data seems to provide the most beneficial training signal, leading to higher accuracy. This could be because the model architecture is better suited to leverage the information contained in both FEN and PGN formats. The rapid initial improvement of SAN, followed by a plateau, suggests that the model quickly learns from the SAN data but reaches a limit in its ability to generalize.

For Llama3.1-8B, the SAN method consistently achieves the highest accuracy. The fluctuations in accuracy for all methods in the Llama3.1-8B chart might indicate a more sensitive training process or a higher variance in the puzzle difficulty. The lower overall accuracy compared to Qwen2.5-7B suggests that Llama3.1-8B may require more extensive training or a different architecture to achieve comparable performance on this task.

The differences in performance between the models and training methods highlight the importance of tailoring training strategies to specific model architectures and tasks. Further investigation could explore why certain methods are more effective for specific models and how to mitigate the fluctuations observed in the Llama3.1-8B chart.

</details>

Figure 8: Evaluation performance comparison across board state representations (FEN vs. PGN vs. FEN+PGN) for Qwen2.5-7B and Llama3.1-8B.
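To illustrate what a FEN string encodes, the sketch below splits one into its six standard space-separated fields and counts the pieces on the board. The helper names are hypothetical, and no validation beyond the field count is attempted.

```python
from typing import Dict

FEN_FIELDS = [
    "piece_placement",  # ranks 8 down to 1, separated by '/'
    "side_to_move",     # 'w' or 'b'
    "castling",         # e.g. 'KQkq' or '-'
    "en_passant",       # target square or '-'
    "halfmove_clock",   # plies since the last capture or pawn move
    "fullmove_number",  # starts at 1, incremented after Black moves
]


def parse_fen(fen: str) -> Dict[str, str]:
    """Split a FEN string into its six named fields."""
    parts = fen.split()
    if len(parts) != len(FEN_FIELDS):
        raise ValueError(f"expected 6 FEN fields, got {len(parts)}")
    return dict(zip(FEN_FIELDS, parts))


def count_pieces(piece_placement: str) -> int:
    """Letters are pieces; digits are runs of empty squares."""
    return sum(ch.isalpha() for ch in piece_placement)


start = parse_fen("rnbqkbnr/pppppppp/8/8/8/8/PPPPPPPP/RNBQKBNR w KQkq - 0 1")
```

A PGN movetext such as `1. e4 e5 2. Nf3` carries the complementary information: the moves played so far, from which the current position has to be reconstructed.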

#### Overall:

Based on these ablations, we adopted the FEN board representation with SAN move notation and an explicit list of legal moves. This configuration keeps token count and input prompt length low, enabling more efficient training without sacrificing performance.

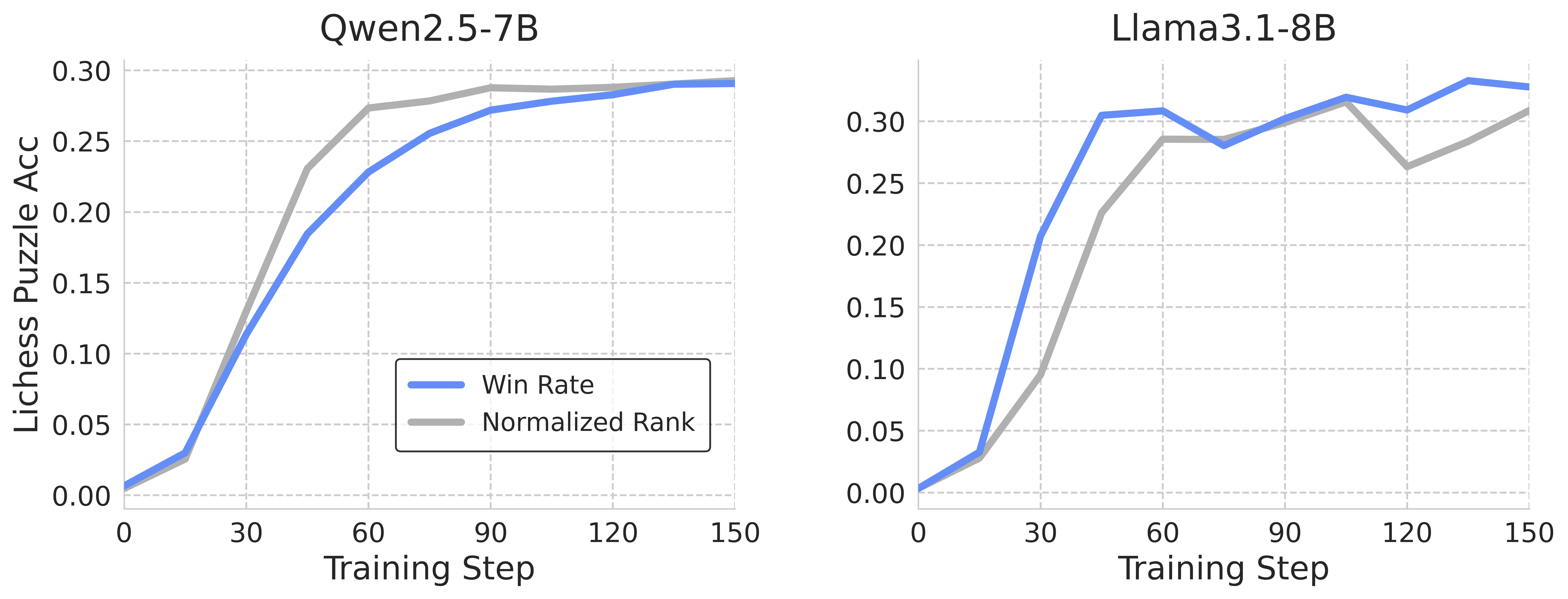

### C.2 Dense reward

When providing dense rewards with our expert critic network $Q_{\theta}(s,a)$, we explored two methods for extracting reward signals from the critic’s win-rate predictions: direct win-rate feedback and normalized rank feedback.

#### Direct win-rate feedback.

As described in Section 2.2, we use the expert critic’s predicted win-rate directly as the reward signal. For example, if the critic predicts a 67% win probability for a specific move at a given position, we provide $r_{\text{dense}}=0.67$ as the reward.

#### Normalized rank feedback.

Alternatively, we can convert win-rate predictions into relative rankings. Given the set of legal moves $\{a_{1},a_{2},\ldots,a_{L}\}$ at state $s$ , we first obtain win-rate predictions $\{Q_{\theta}(s,a_{1}),Q_{\theta}(s,a_{2}),\ldots,Q_{\theta}(s,a_{L})\}$ , then rank these moves by their predicted win-rates. The normalized rank reward is defined as:

$$
r_{\text{dense}}=\frac{L-\text{rank}(a_{t})}{L-1}\in[0,1] \tag{1}
$$

where $\text{rank}(a_{t})$ denotes the rank of the selected action $a_{t}$ (with rank 1 being the highest win-rate and rank $L$ being the lowest win-rate), and $L$ is the number of legal moves. The best move therefore receives a reward of 1 and the worst move a reward of 0.
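The two reward formulations can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: it assumes that a better move should receive a higher reward, so the rank-1 (highest win-rate) move maps to 1 and the rank-$L$ move maps to 0. The function names, the dictionary interface for the critic's outputs, the tie-breaking rule, the handling of a single legal move, and the numeric win-rates are all hypothetical.

```python
from typing import Dict


def win_rate_reward(q_values: Dict[str, float], move: str) -> float:
    """Direct win-rate feedback: the critic's predicted win-rate for the move."""
    return q_values[move]


def normalized_rank_reward(q_values: Dict[str, float], move: str) -> float:
    """Normalized rank feedback in [0, 1], where rank 1 is the highest win-rate.

    Assumption: the best move maps to 1 and the worst move maps to 0.
    """
    num_legal = len(q_values)
    if num_legal == 1:
        # Assumption: a forced move is treated as the best available move.
        return 1.0
    # Sort moves from highest to lowest predicted win-rate. Ties keep their
    # insertion order because sorted() is stable (an arbitrary choice here).
    ranked = sorted(q_values, key=q_values.get, reverse=True)
    rank = ranked.index(move) + 1  # 1-based rank
    return (num_legal - rank) / (num_legal - 1)


# Made-up critic outputs for three legal moves (illustrative values only).
example_q = {"Nf6+": 0.67, "Nd6+": 0.52, "Kh1": 0.31}
print(win_rate_reward(example_q, "Nf6+"))
print(normalized_rank_reward(example_q, "Nd6+"))
```

Both functions raise a `KeyError` for a move that is not in `q_values`. How illegal or unparsable moves are rewarded is outside the scope of this sketch.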

<details>

<summary>fig/chess_reward_ablation.png Details</summary>

### Visual Description

## Line Chart: Model Performance on Lichess Puzzle Accuracy

### Overview

The image presents two line charts comparing the performance of two models, Qwen2.5-7B and Llama3.1-8B, on Lichess puzzles. Both charts plot "Lichess Puzzle Acc" (Accuracy) against "Training Step". Each chart displays two data series: "Win Rate" and "Normalized Rank".

### Components/Axes

* **X-axis:** "Training Step" ranging from 0 to 150. The axis is linearly scaled with markers at intervals of 30.

* **Y-axis:** "Lichess Puzzle Acc" ranging from 0.00 to 0.30. The axis is linearly scaled with markers at intervals of 0.05.

* **Left Chart Title:** "Qwen2.5-7B"

* **Right Chart Title:** "Llama3.1-8B"

* **Legend (Bottom-Left of each chart):**

  * "Win Rate" - Represented by a solid blue line.

  * "Normalized Rank" - Represented by a solid grey line.

### Detailed Analysis or Content Details

**Qwen2.5-7B Chart:**

* **Win Rate (Blue Line):** The line slopes sharply upward from 0 at Training Step 0, reaching approximately 0.15 at Training Step 30. It continues to rise, leveling off around 0.28-0.29 between Training Steps 90 and 150.

  * Step 0: 0.00

  * Step 30: ~0.15

  * Step 60: ~0.23

  * Step 90: ~0.27

  * Step 120: ~0.28

  * Step 150: ~0.29

* **Normalized Rank (Grey Line):** The line also increases rapidly from 0 at Training Step 0, reaching approximately 0.12 at Training Step 30. It continues to increase, reaching approximately 0.28-0.29 between Training Steps 90 and 150.

  * Step 0: 0.00

  * Step 30: ~0.12

  * Step 60: ~0.21

  * Step 90: ~0.27

  * Step 120: ~0.28

  * Step 150: ~0.29

**Llama3.1-8B Chart:**

* **Win Rate (Blue Line):** The line starts at 0 at Training Step 0 and rises very steeply to approximately 0.28 at Training Step 30. It then fluctuates between approximately 0.26 and 0.31 between Training Steps 60 and 150.

  * Step 0: 0.00

  * Step 30: ~0.28

  * Step 60: ~0.26

  * Step 90: ~0.31

  * Step 120: ~0.29

  * Step 150: ~0.30

* **Normalized Rank (Grey Line):** The line starts at 0 at Training Step 0 and rises steeply to approximately 0.15 at Training Step 30. It then fluctuates between approximately 0.22 and 0.28 between Training Steps 60 and 150.

  * Step 0: 0.00

  * Step 30: ~0.15

  * Step 60: ~0.22

  * Step 90: ~0.26

  * Step 120: ~0.25

  * Step 150: ~0.28

### Key Observations

* Both models show a significant improvement in performance during the initial training steps (0-30).

* Qwen2.5-7B exhibits a smoother learning curve, with a more gradual increase in both Win Rate and Normalized Rank.

* Llama3.1-8B shows a more rapid initial increase, followed by fluctuations in performance.

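* In the values listed above, the Win Rate curve matches or exceeds the Normalized Rank curve at every step: the gap closes by Training Step 90 for Qwen2.5-7B and persists for Llama3.1-8B.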

* Both models appear to converge in performance around Training Step 120-150.

### Interpretation

The data suggests that both Qwen2.5-7B and Llama3.1-8B are effective at learning to solve Lichess puzzles. The initial rapid increase in performance indicates that the models quickly grasp the basic principles of puzzle solving. The subsequent leveling off or fluctuations suggest that the models are approaching their performance limits or are encountering more challenging puzzles.

The difference in learning curves between the two models could be attributed to differences in their architectures, training data, or optimization algorithms. The smoother learning curve of Qwen2.5-7B might indicate a more stable training process, while the fluctuations in Llama3.1-8B could suggest a more sensitive or volatile training process.

"Win Rate" and "Normalized Rank" label the two dense reward formulations used during training; both curves report the same evaluation metric, Lichess puzzle accuracy. The higher accuracy of the Win Rate curve for Llama3.1-8B therefore suggests that direct win-rate rewards give that model a more effective training signal, whereas for Qwen2.5-7B the two formulations reach essentially the same accuracy.

The convergence of performance around Training Step 120-150 suggests that both models are reaching a similar level of proficiency in solving Lichess puzzles. Further training might not yield significant improvements in performance.

</details>

Figure 9: Evaluation performance comparison between direct win-rate feedback and normalized rank feedback as dense rewards for Qwen2.5-7B and Llama3.1-8B.

We compared these two approaches across our model variants and found distinct learning dynamics with similar final outcomes (Figure 9). For Qwen2.5-7B, normalized rank feedback initially accelerated learning but eventually achieved performance similar to direct win-rate feedback. For Llama3.1-8B, win-rate feedback performed consistently better throughout training. Despite these different learning trajectories, both methods converge to similar final performance on both models, suggesting that absolute win-rate information and relative ranking are comparably effective for learning chess. The distinct learning dynamics across models and reward formulations nonetheless reveal model-dependent sensitivities that warrant further investigation. Understanding why different models respond differently to different feedback mechanisms could inform more efficient training procedures for chess and other strategic domains, which we leave as a direction for future work.

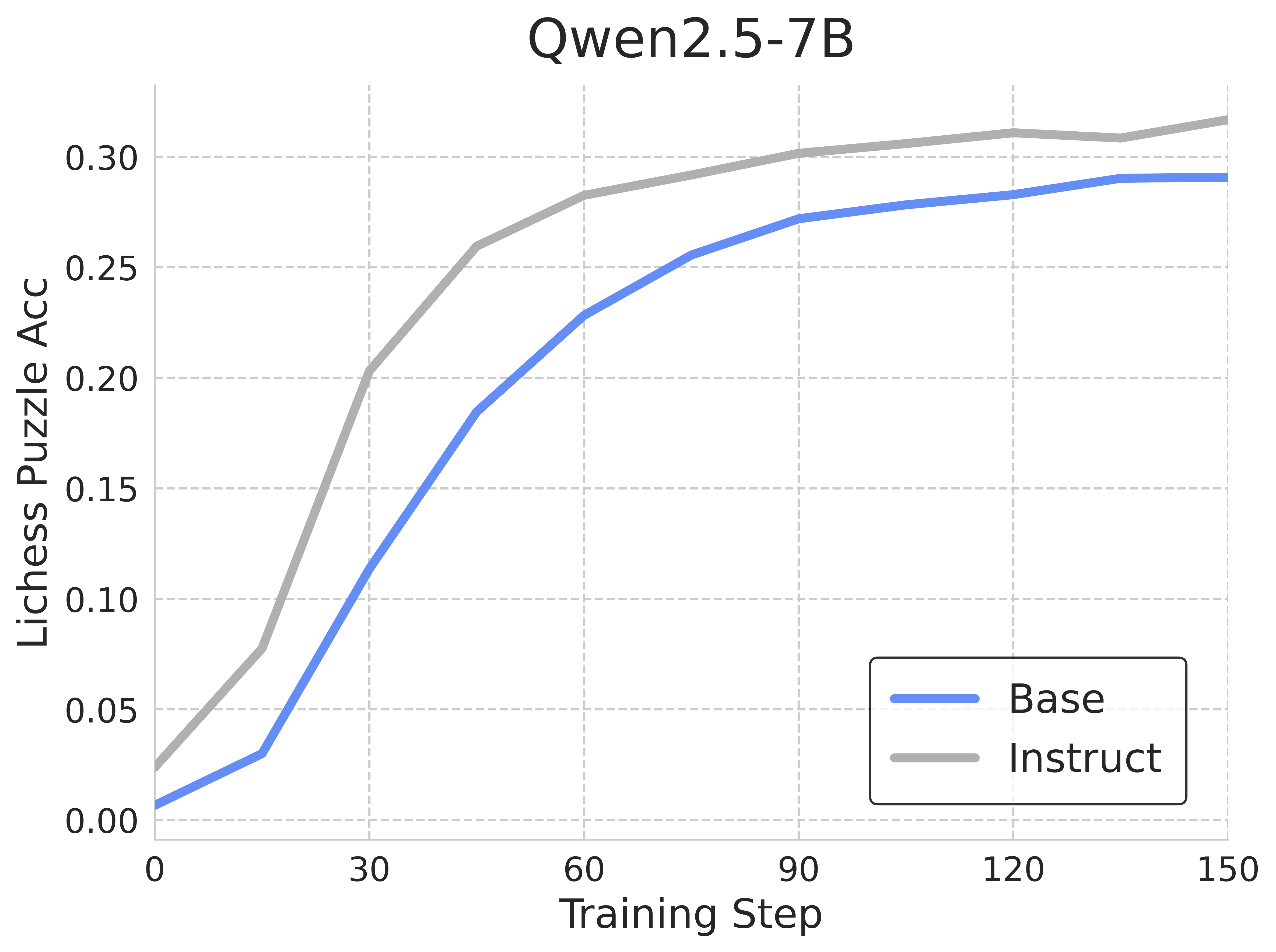

### C.3 Base model vs. Instruct model

<details>

<summary>fig/chess_base_instruct_single_comparison.png Details</summary>

### Visual Description

## Line Chart: Qwen2.5-7B Lichess Puzzle Accuracy vs. Training Step

### Overview

This line chart displays the Lichess Puzzle Accuracy (y-axis) of two models, "Base" and "Instruct", over a range of Training Steps (x-axis). The chart aims to compare the performance of the two models as they are trained. The title of the chart is "Qwen2.5-7B".

### Components/Axes

* **Title:** Qwen2.5-7B (positioned at the top-center)

* **X-axis:** Training Step (ranging from 0 to 150, with tick marks every 30 steps)

* **Y-axis:** Lichess Puzzle Acc (ranging from 0.00 to 0.30, with tick marks every 0.05)

* **Legend:** Located in the bottom-right corner, containing two entries:

  * "Base" - represented by a solid blue line.

  * "Instruct" - represented by a solid gray line.

* **Gridlines:** Horizontal and vertical gridlines are present to aid in reading values.

### Detailed Analysis

**Base Model (Blue Line):**

The blue line representing the "Base" model starts at approximately 0.00 at Training Step 0. It exhibits a steep upward slope from 0 to 30 Training Steps, reaching approximately 0.15. The slope then decreases, becoming more gradual from 30 to 150 Training Steps. At 60 Training Steps, the accuracy is approximately 0.24. At 90 Training Steps, the accuracy is approximately 0.27. At 120 Training Steps, the accuracy is approximately 0.28. At 150 Training Steps, the accuracy plateaus at approximately 0.29.

**Instruct Model (Gray Line):**

The gray line representing the "Instruct" model also starts at approximately 0.00 at Training Step 0. It shows a rapid increase in accuracy from 0 to 30 Training Steps, reaching approximately 0.25. The slope then decreases, becoming more gradual from 30 to 150 Training Steps. At 60 Training Steps, the accuracy is approximately 0.29. At 90 Training Steps, the accuracy is approximately 0.30. At 120 Training Steps, the accuracy is approximately 0.31. At 150 Training Steps, the accuracy plateaus at approximately 0.31.

### Key Observations

* The "Instruct" model consistently outperforms the "Base" model across all Training Steps.

* Both models exhibit diminishing returns in accuracy as the number of Training Steps increases. The initial gains are significant, but the rate of improvement slows down considerably after 30 Training Steps.

* The "Instruct" model reaches a plateau in accuracy earlier than the "Base" model.

* The difference in accuracy between the two models is most pronounced in the early stages of training (0-60 steps).

### Interpretation

The data suggests that starting RL from the "Instruct" checkpoint yields higher Lichess Puzzle Accuracy than starting from the "Base" checkpoint. The rapid initial increase for both models indicates that they learn quickly from the training signal early on. The subsequent plateau suggests that the models are approaching their maximum performance level, or that the training data is no longer providing significant new information. The consistent advantage of the "Instruct" model implies that instruction tuning provides a better starting point for this specific task (Lichess puzzle solving). The diminishing returns in the later training steps suggest that further training may not yield substantial improvements in accuracy. This could be due to the limitations of the model architecture, the quality of the training data, or the optimization algorithm used.

</details>

Figure 10: Training performance comparison between Qwen2.5-7B Base and Instruct models.

Our failure analysis in Section 3.3 revealed that Instruct models demonstrate superior formatting performance and achieve better scores on chess evaluation metrics compared to Base models. Motivated by these findings, we applied our RL framework to Instruct models and found consistent performance improvements (Figure 10). However, we primarily focused on Base models throughout this work, since most prior work on enhancing LLM reasoning with RL starts from Base models rather than Instruct variants. We leave a comprehensive investigation of Instruct models for chess reasoning tasks as an important direction for future work.

## Appendix D Reasoning Qualitative Examples

For each qualitative example below, we use the standardized input prompt format presented in Figure 4. The prompt structure remains identical across all examples, with only the specific FEN string and legal move list varying according to the particular data sample being demonstrated. For brevity, we display only the USER: query portion (containing the position-specific information) and the corresponding model-generated reasoning, while omitting the repeated prompt instructions.

### D.1 Qwen2.5-3B with Sparse Rewards

Qwen2.5-3B with sparse rewards

Input Prompt: [Standardized prompt instructions omitted for brevity]

User: The current FEN string is r3kb1r/1p2np2/p1p4p/4Pbp1/3PN3/8/PPP3PP/R1B2RK1 w kq - 0 16 and legal moves are Nf6+ Nd6+ Nxg5 Nc5 Ng3 Nc3 Nf2 Nd2 Kf2 Kh1 Rxf5 Rf4 Rf3 Rf2 Re1 Rd1 Bxg5 Bf4 Be3 Bd2 Rb1 e6 d5 h3 g3 c3 b3 a3 h4 g4 c4 b4 a4. What is the best move to make out of the list of legal moves?

A: Let me solve this step by step. <think>

Generated Output: So looking at our current board position we have some interesting squares to asses. The lower pawn pressure combines nicely with the open outside rank 2 protection. Wanting to force him to make a puzzlingly precise move seems low key. Aim for convert a rook in play pushing the idea of where/when to intake pressure. Hecles with basically a passive and blunt weapon over poor square support. </think> <answer> h5 </answer><|endoftext|>

Figure 11: Qualitative reasoning example from Qwen2.5-3B after RL fine-tuning with sparse rewards.
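A generation in this format can be checked mechanically against the legal moves listed in the prompt. The sketch below is a hypothetical helper, not the parsing code used in the paper: it assumes the final move appears between `<answer>` tags as in the example above, and the function names and regular expression are illustrative. Applied to the example in Figure 11, it shows that the answer `h5` is not among the listed legal moves.

```python
import re
from typing import Optional

# Hypothetical pattern: capture whatever sits between the <answer> tags.
ANSWER_PATTERN = re.compile(r"<answer>\s*(.*?)\s*</answer>", re.DOTALL)


def extract_answer(generation: str) -> Optional[str]:
    """Return the text inside <answer>...</answer>, or None if the tags are missing."""
    match = ANSWER_PATTERN.search(generation)
    return match.group(1) if match else None


def is_listed_move(generation: str, legal_moves: str) -> bool:
    """Check whether the extracted answer is one of the space-separated legal moves."""
    answer = extract_answer(generation)
    return answer is not None and answer in legal_moves.split()


# Legal moves and the tail of the generation, copied from the example above.
legal_moves = (
    "Nf6+ Nd6+ Nxg5 Nc5 Ng3 Nc3 Nf2 Nd2 Kf2 Kh1 Rxf5 Rf4 Rf3 Rf2 Re1 Rd1 "
    "Bxg5 Bf4 Be3 Bd2 Rb1 e6 d5 h3 g3 c3 b3 a3 h4 g4 c4 b4 a4"
)
generation = "over poor square support. </think> <answer> h5 </answer><|endoftext|>"

print(extract_answer(generation))               # h5
print(is_listed_move(generation, legal_moves))  # False
```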

### D.2 Qwen2.5-3B with Dense Rewards

Qwen2.5-3B with dense rewards