# Towards Unified Neurosymbolic Reasoning on Knowledge Graphs

> Qika Lin, Kai He, and Mengling Feng are with the Saw Swee Hock School of Public Health, National University of Singapore, 117549, Singapore. Fangzhi Xu and Jun Liu are with the School of Computer Science and Technology, Xi’an Jiaotong University, Xi’an, Shaanxi 710049, China. Hao Lu is with the State Key Laboratory of Multimodal Artificial Intelligence Systems, Institute of Automation, Chinese Academy of Sciences, Beijing 100190, China. Rui Mao and Erik Cambria are with the College of Computing and Data Science, Nanyang Technological University, 639798, Singapore.

## Abstract

Knowledge Graph (KG) reasoning has received significant attention in the fields of artificial intelligence and knowledge engineering, owing to its ability to autonomously deduce new knowledge and consequently enhance the availability and precision of downstream applications. However, current methods predominantly concentrate on a single form of neural or symbolic reasoning, failing to effectively integrate the inherent strengths of both approaches. Furthermore, the current prevalent methods primarily focus on addressing a single reasoning scenario, presenting limitations in meeting the diverse demands of real-world reasoning tasks. Unifying the neural and symbolic methods, as well as diverse reasoning scenarios in one model is challenging as there is a natural representation gap between symbolic rules and neural networks, and diverse scenarios exhibit distinct knowledge structures and specific reasoning objectives. To address these issues, we propose a unified neurosymbolic reasoning framework, namely Tunsr, for KG reasoning. Tunsr first introduces a consistent structure of reasoning graph that starts from the query entity and constantly expands subsequent nodes by iteratively searching posterior neighbors. Based on it, a forward logic message-passing mechanism is proposed to update both the propositional representations and attentions, as well as first-order logic (FOL) representations and attentions of each node. In this way, Tunsr conducts the transformation of merging multiple rules by merging possible relations at each step. Finally, the FARI algorithm is proposed to induce FOL rules by constantly performing attention calculations over the reasoning graph. Extensive experimental results on 19 datasets of four reasoning scenarios (transductive, inductive, interpolation, and extrapolation) demonstrate the effectiveness of Tunsr.

Index Terms: Neurosymbolic AI, Knowledge graph reasoning, Propositional reasoning, First-order logic, Unified model

## 1 Introduction

As a fundamental and significant topic in the domains of knowledge engineering and artificial intelligence (AI), knowledge graphs (KGs) have been spotlighted in many real-world applications [1], such as question answering [2, 3], recommendation systems [4, 5], relation extraction [6, 7], and text generation [8, 9]. Thanks to their structured manner of knowledge storage, KGs can effectively capture and represent rich semantic associations between real entities using multi-relational graphical structures. Factual knowledge is often stored in KGs using the fact triple as the fundamental unit, represented in the form of (subject, relation, object), such as (Barack Obama, bornIn, Hawaii) in Figure 1. However, most common KGs, such as Freebase [10] and Wikidata [11], are incomplete due to the limitations of current human resources and technical conditions. Furthermore, incomplete KGs can degrade the accuracy of downstream intelligent applications or lead them to produce completely wrong answers. Therefore, inferring missing facts from the observed ones, a task known as link prediction and one form of KG reasoning [12, 13], is of great significance for downstream KG applications.

The task of KG reasoning is to infer or predict new facts using existing knowledge. For instance, in Figure 1, KG reasoning involves predicting the validity of the target missing triple (Barack Obama, nationalityOf, U.S.A.) based on other available triples. Based on two distinct paradigms, connectionism and symbolicism, which serve as the foundation for implementing AI systems [14, 15], existing methods can be categorized into neural, symbolic, and neurosymbolic models.

Neural methods, drawing inspiration from the connectionism of AI, typically employ neural networks to learn entity and relation representations. Subsequently, a customized scoring function, such as a translation-based distance or a semantic matching strategy, is utilized for model optimization and query reasoning, as illustrated in the top part of Figure 1. However, such an approach lacks transparency and interpretability [16, 17]. On the other hand, symbolic methods draw inspiration from the idea of symbolicism in AI. As shown in the bottom part of Figure 1, they first learn logic rules and then apply these rules to known facts to deduce new knowledge. In this way, symbolic methods offer natural interpretability due to the incorporation of logical rules. However, owing to the limited modeling capacity of the discrete representations and reasoning strategies of logical rules, these methods often fall short in terms of reasoning performance [18].
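To make the notion of a translation-based scoring function concrete, the following is a minimal sketch in the spirit of TransE [19], where a triple is scored by how close the head embedding plus the relation embedding lands to the tail embedding. The two-dimensional embedding values are hand-picked for illustration and are not learned; the function name `transe_score` is an assumption of this sketch.

```python
import math

def transe_score(head, relation, tail):
    """Negative L2 distance ||h + r - t||; a higher score means more plausible."""
    return -math.sqrt(sum((h + r - t) ** 2 for h, r, t in zip(head, relation, tail)))

# Toy 2-dimensional embeddings (illustrative values, not learned from data).
entity_emb = {
    "Barack Obama": [0.1, 0.2],
    "U.S.A.": [0.6, 0.9],
    "Chicago": [-0.5, 0.3],
}
relation_emb = {"nationalityOf": [0.5, 0.7]}

# Score two candidate tails for the query (Barack Obama, nationalityOf, ?).
score_usa = transe_score(
    entity_emb["Barack Obama"], relation_emb["nationalityOf"], entity_emb["U.S.A."]
)
score_chicago = transe_score(
    entity_emb["Barack Obama"], relation_emb["nationalityOf"], entity_emb["Chicago"]
)
```

With these toy values the candidate U.S.A. receives the higher score, but the score alone does not explain why, which is the interpretability limitation noted above.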

<details>

<summary>extracted/6596839/fig/ns.png Details</summary>

### Visual Description

## Diagram: Hybrid Neural-Symbolic Reasoning for Knowledge Graph Inference

### Overview

The image is a technical diagram illustrating a hybrid reasoning system that combines neural and symbolic methods to infer new facts from a Knowledge Graph (KG). The left side displays a sample KG centered on Barack Obama and his family. The right side details a two-step process: (1) Neural Reasoning using Knowledge Graph Embeddings (KGE) and (2) Symbolic Reasoning using a learned Rule Set. The system's goal is to infer the missing relation `nationalityOf` between "Barack Obama" and "U.S.A.".

### Components/Axes

The diagram is divided into two primary sections connected by gray arrows indicating data flow.

**1. Left Section: Knowledge Graph**

* **Title:** "Knowledge Graph" (bottom center).

* **Entities (Nodes):** Represented as colored ovals.

* **Light Blue Ovals (People):** "Michelle Obama", "Barack Obama", "Malia Obama", "Ann Dunham".

* **Yellow Ovals (Locations):** "Chicago", "U.S.A.", "Honolulu", "Hawaii".

* **Orange Oval (Institution):** "Harvard University".

* **Relations (Edges):** Represented as labeled arrows connecting entities. Each relation type has a distinct color.

* **Purple Arrows:** `bornIn` (Michelle Obama → Chicago), `marriedTo` (Michelle Obama ↔ Barack Obama), `placeIn` (Chicago → U.S.A.).

* **Green Arrows:** `bornIn` (Barack Obama → Hawaii), `locatedInCountry` (Hawaii → U.S.A.).

* **Blue Arrows:** `hasCity` (Hawaii → Honolulu), `locatedInCountry` (Honolulu → U.S.A.).

* **Black Arrows:** `fatherOf` (Barack Obama → Malia Obama), `motherOf` (Ann Dunham → Barack Obama), `graduateFrom` (Barack Obama → Harvard University).

* **Highlighted Paths:** Three potential reasoning paths are numbered with colored circles.

* **Path 1 (Green):** `bornIn(Barack Obama, Hawaii) ∧ locatedInCountry(Hawaii, U.S.A.)`

* **Path 2 (Blue):** `bornIn(Barack Obama, Hawaii) ∧ hasCity(Hawaii, Honolulu) ∧ locatedInCountry(Honolulu, U.S.A.)`

* **Path 3 (Purple):** `marriedTo(Barack Obama, Michelle Obama) ∧ bornIn(Michelle Obama, Chicago) ∧ placeIn(Chicago, U.S.A.)`

* **Target Inference:** A red dashed arrow with a question mark points from "Barack Obama" to "U.S.A.", representing the unknown `nationalityOf` relation to be inferred.

**2. Right Section: Reasoning Process**

* **(1) Neural Reasoning:**

* **Input:** The Knowledge Graph.

* **Component 1:** A box labeled "KGE" (Knowledge Graph Embedding) containing a neural network icon.

* **Output 1:** Two grids labeled "Relation Embedding" (green shades) and "Entity Embedding" (blue shades).

* **Component 2:** A box labeled "Score Function" containing a neural network icon.

* **Flow:** KG → KGE → Embeddings → Score Function.

* **(2) Symbolic Reasoning:**

* **Input:** The Knowledge Graph and learned rules.

* **Component:** A box labeled "Rule Set" containing three logical rules with confidence scores (γ).

* **Rule γ₁:** `0.89 ∀X, Y, Z bornIn(X, Y) ∧ locatedInCountry(Y, Z) → nationalityOf(X, Z)`

* **Rule γ₂:** `0.65 ∀X, Y₁, Y₂, Z bornIn(X, Y₁) ∧ hasCity(Y₁, Y₂) ∧ locatedInCountry(Y₂, Z) → nationalityOf(X, Z)`

* **Rule γ₃:** `0.54 ∀X, Y₁, Y₂, Z marriedTo(X, Y₁) ∧ bornIn(Y₁, Y₂) ∧ placeIn(Y₂, Z) → nationalityOf(X, Z)`

* **Final Output:** Both reasoning paths (Neural and Symbolic) converge via gray arrows to the inferred fact: a light blue oval "Barack Obama" connected by a red arrow labeled `nationalityOf` to a yellow oval "U.S.A.".

### Detailed Analysis

* **Knowledge Graph Structure:** The KG is a directed, labeled graph. Entities are typed (Person, Location, Institution) by color. Relations are binary and typed. The graph contains both direct facts (e.g., `bornIn`) and multi-hop paths that can be used for inference.

* **Neural Reasoning Path:** This is a latent, embedding-based approach. The KGE model learns vector representations for entities and relations. The Score Function then uses these embeddings to compute a plausibility score for the candidate triple `(Barack Obama, nationalityOf, U.S.A.)`.

* **Symbolic Reasoning Path:** This is an explicit, rule-based approach. The system uses three first-order logic rules, each with a learned confidence score (γ). The rules correspond directly to the three highlighted paths in the KG:

* Rule γ₁ (confidence 0.89) matches Path 1 (Green).

* Rule γ₂ (confidence 0.65) matches Path 2 (Blue).

* Rule γ₃ (confidence 0.54) matches Path 3 (Purple).

* **Inference:** The system can use the confidence scores from the symbolic rules (e.g., taking the maximum or a weighted combination) alongside the neural score to make a final prediction about the `nationalityOf` relation.

### Key Observations

1. **Rule-Path Correspondence:** There is a perfect one-to-one mapping between the numbered paths in the KG and the rules in the Rule Set. This demonstrates how symbolic rules are derived from or correspond to observable patterns in the graph structure.

2. **Confidence Hierarchy:** The rules have descending confidence scores: γ₁ (0.89) > γ₂ (0.65) > γ₃ (0.54). This suggests the system has learned that the most direct path (birthplace → country) is the strongest indicator of nationality, while paths involving a spouse's birthplace are weaker evidence.

3. **Hybrid Convergence:** The diagram's central theme is the convergence of two distinct AI paradigms. The gray arrows from both the "Score Function" (neural) and the "Rule Set" (symbolic) point to the same final output, illustrating an ensemble or hybrid prediction method.

4. **Target Relation:** The inferred relation `nationalityOf` is not explicitly present in the original KG; it is a new fact derived by the reasoning process.

### Interpretation

This diagram illustrates a **neuro-symbolic AI** approach to knowledge graph completion. The core idea is to combine the strengths of two methods:

* **Neural (KGE):** Good at capturing complex, non-linear patterns and generalizing from data, but often operates as a "black box" with low interpretability.

* **Symbolic (Rules):** Provides high interpretability (the rules are human-readable) and can incorporate logical constraints, but may struggle with scalability and capturing implicit patterns.

The system uses the symbolic rules to generate **interpretable explanations** for its predictions (e.g., "Barack Obama is inferred to be a U.S.A. national because he was born in Hawaii, which is located in the U.S.A."). The confidence scores (γ) quantify the reliability of each explanatory rule. Simultaneously, the neural component provides a complementary, data-driven score.

The example is carefully chosen: the `nationalityOf` relation is not directly stated but is a commonsense inference from the graph. The three rules represent different "reasoning strategies" a human might use, with varying strengths. The hybrid model can leverage all of them, potentially weighting the more confident rules more heavily, to make a robust and explainable prediction. This approach is valuable for applications requiring both accuracy and transparency, such as question answering, decision support, and knowledge base validation.

</details>

Figure 1: Illustration of neural and symbolic methods for KG reasoning. Neural methods learn entity and relation embeddings to calculate the validity of the specific fact. Symbolic methods perform logic deduction using known facts on learned or given rules (like $\gamma_{1}$, $\gamma_{2}$, and $\gamma_{3}$) for inference.

TABLE I: Classical studies for KG reasoning. PL and FOL denote propositional and FOL reasoning, respectively. SKG T, SKG I, TKG I, and TKG E represent transductive, inductive, interpolation, and extrapolation reasoning. “$\checkmark$” marks the reasoning manners (neural and logic) that a model uses and its vanilla application scenarios.

| Model | Neural | Logic (PL) | Logic (FOL) | SKG T | SKG I | TKG I | TKG E |
| --- | --- | --- | --- | --- | --- | --- | --- |
| TransE [19] | ✓ | | | ✓ | | | |
| AMIE [20] | | | ✓ | ✓ | | | |
| Neural LP [21] | ✓ | | ✓ | ✓ | | | |
| TAPR [22] | ✓ | ✓ | | ✓ | | | |
| RLogic [23] | ✓ | | ✓ | ✓ | | | |
| LatentLogic [24] | ✓ | | ✓ | ✓ | | | |
| PSRL [25] | ✓ | ✓ | | ✓ | | | |
| ConGLR [26] | ✓ | | ✓ | | ✓ | | |
| TeAST [27] | ✓ | | | | | ✓ | |
| TLogic [28] | | | ✓ | | | | ✓ |
| TR-Rules [29] | | | ✓ | | | | ✓ |
| TECHS [30] | ✓ | ✓ | ✓ | | | | ✓ |
| Tunsr | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

To leverage the strengths of both neural and symbolic methods while mitigating their respective drawbacks, there has been a growing interest in integrating them to realize neurosymbolic systems [31]. Several approaches such as Neural LP [21], DRUM [32], RNNLogic [33], and RLogic [23] have emerged to address the learning and reasoning of rules by incorporating neural networks into the whole process. Despite achieving some successes, there remains a notable absence of a cohesive modeling approach that integrates both propositional and first-order logic (FOL) reasoning. Propositional reasoning on KGs, generally known as multi-hop reasoning [34], is dependent on entities and predicts answers through specific reasoning paths, which demonstrates strong modeling capabilities by providing diverse reasoning patterns for complex scenarios [35, 36]. On the other hand, FOL reasoning utilizes learned FOL rules to infer information from the entire KG by variable grounding, ultimately scoring candidates by aggregating all possible FOL rules. FOL reasoning is entity-independent and exhibits good transferability. Unfortunately, as shown in Table I, mainstream methods have failed to effectively combine these two reasoning approaches within a single framework, resulting in suboptimal models.
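As an illustration of FOL reasoning by variable grounding, the sketch below applies the three chain rules of Figure 1 (with their confidences 0.89, 0.65, and 0.54) to the facts of the example KG and scores each grounded candidate. The aggregation over rules is not specified at this point in the text, so taking the maximum confidence per candidate is an assumption of this sketch, as are the helper names `ground` and `score_candidates`.

```python
# Facts from Figure 1 as (subject, relation, object) triples.
facts = {
    ("Barack Obama", "bornIn", "Hawaii"),
    ("Hawaii", "locatedInCountry", "U.S.A."),
    ("Hawaii", "hasCity", "Honolulu"),
    ("Honolulu", "locatedInCountry", "U.S.A."),
    ("Barack Obama", "marriedTo", "Michelle Obama"),
    ("Michelle Obama", "bornIn", "Chicago"),
    ("Chicago", "placeIn", "U.S.A."),
}

# Chain rules with head nationalityOf(X, Z): (confidence, body relations).
rules = [
    (0.89, ["bornIn", "locatedInCountry"]),
    (0.65, ["bornIn", "hasCity", "locatedInCountry"]),
    (0.54, ["marriedTo", "bornIn", "placeIn"]),
]

def ground(start, body):
    """Return all entities reachable from `start` by following `body` in order."""
    frontier = {start}
    for relation in body:
        frontier = {o for (s, r, o) in facts if s in frontier and r == relation}
    return frontier

def score_candidates(query_entity):
    """Score candidates by the best grounded rule (max is an assumed aggregator)."""
    scores = {}
    for confidence, body in rules:
        for candidate in ground(query_entity, body):
            scores[candidate] = max(scores.get(candidate, 0.0), confidence)
    return scores

scores = score_candidates("Barack Obama")
```

Because the rules mention only variables and relations, the same rule set can be grounded on any other entity, which is the entity-independence and transferability described above.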

Moreover, as time progresses and society undergoes continuous development, a wealth of new knowledge consistently emerges. Consequently, simple reasoning on static KGs (SKGs), i.e., transductive reasoning, can no longer meet the needs of practical applications. Recently, there has been a gradual shift in the research community’s focus toward inductive reasoning with emerging entities on SKGs, as well as interpolation and extrapolation reasoning on temporal KGs (TKGs) [37] that introduce time information to facts. The latest research, which predominantly concentrates on individual scenarios, is insufficient to provide a comprehensive approach that addresses various reasoning scenarios simultaneously. This limitation significantly hampers the model’s generalization ability and its practical applicability. To sum up, by comparing the state-of-the-art recent studies on KG reasoning in Table I, it is observed that none of them achieves a comprehensive unification across various KG reasoning tasks, in terms of either methodology or application.

The challenges in this domain can be categorized into three main aspects: (1) There is an inherent disparity between the discrete nature of logic rules and the continuous nature of neural networks, which presents a natural representation gap to be bridged. Thus, implementing differentiable logical rule learning and reasoning is not directly achievable. (2) It is intractable to solve the transformation and integration problems for propositional and FOL rules, as they have different semantic representation structures and reasoning mechanisms. (3) Diverse scenarios on SKGs or TKGs exhibit distinct knowledge structures and specific reasoning objectives. Consequently, a model tailored for one scenario may encounter difficulties when applied to another. For example, each fact in an SKG is a triple, whereas each fact in a TKG is a quadruple. Conventional embedding methods for transductive reasoning fail to address inductive reasoning as they do not learn embeddings of emerging entities in the training phase. Similarly, methods employed for interpolation reasoning cannot be directly applied to extrapolation reasoning, as extrapolation involves predicting facts with future timestamps that are not present in the training set.

To address the above challenges, we propose a unified neurosymbolic reasoning framework (named Tunsr) for KG reasoning. Firstly, to realize the unified reasoning on different scenarios, we introduce a consistent structure of reasoning graph. It starts from the query entity and constantly expands subsequent nodes (entities for SKGs and entity-time pairs for TKGs) by iteratively searching posterior neighbors. Upon this, we can seamlessly integrate diverse reasoning scenarios within a unified computational framework, while also implementing different types of propositional and FOL rule-based reasoning over it. Secondly, to combine neural and symbolic reasoning, we propose a forward logic message-passing mechanism. For each node in the reasoning graph, Tunsr learns an entity-dependent propositional representation and attention using the preceding counterparts. Besides, it utilizes a gated recurrent unit (GRU) [38] to integrate the current relation and preceding FOL representations as the edges’ representations, following which the entity-independent FOL representation and attention are calculated by message aggregation. In this process, the information and confidence of the preceding nodes in the reasoning graph are passed to the subsequent nodes and realize the unified neurosymbolic calculation. Finally, with the reasoning graph and learned attention weights, a novel Forward Attentive Rule Induction (FARI) algorithm is proposed to induce different types of FOL rules. FARI gradually appends rule bodies by searching over the reasoning graph and viewing the FOL attentions as rule confidences. It is noted that our reasoning form for link prediction is data-driven to learn rules and utilizes grounding to calculate the fact probabilities, while classic Datalog [39] and ASP (Answer Set Programming) reasoners [40, 41] usually employ declarative logic programming to conduct precise and deterministic deductive reasoning on a set of rules and facts.
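The expansion of the reasoning graph described above can be sketched as a layer-by-layer neighbor search from the query entity. This is only a simplified illustration for SKGs: the fixed number of steps, the absence of neighbor sampling, and the function name `build_reasoning_graph` are assumptions of this sketch, and the message passing and attention computations are omitted.

```python
from collections import defaultdict

def build_reasoning_graph(facts, query_entity, num_steps):
    """Expand from the query entity, one hop per step.

    Returns the layers (sets of entities) and the edges linking consecutive
    layers as (step, source, relation, target) tuples.
    """
    neighbors = defaultdict(list)
    for s, r, o in facts:
        neighbors[s].append((r, o))

    layers = [{query_entity}]
    edges = []
    for step in range(1, num_steps + 1):
        next_layer = set()
        for node in sorted(layers[-1]):
            for relation, target in neighbors[node]:
                edges.append((step, node, relation, target))
                next_layer.add(target)
        if not next_layer:  # nothing left to expand
            break
        layers.append(next_layer)
    return layers, edges

facts = [
    ("Barack Obama", "bornIn", "Hawaii"),
    ("Barack Obama", "marriedTo", "Michelle Obama"),
    ("Hawaii", "locatedInCountry", "U.S.A."),
    ("Hawaii", "hasCity", "Honolulu"),
    ("Michelle Obama", "bornIn", "Chicago"),
]
layers, edges = build_reasoning_graph(facts, "Barack Obama", num_steps=2)
```

For TKGs, the nodes would be entity-time pairs rather than entities, as stated above; the same layered structure would apply.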

In summary, the contributions of this work are threefold:

$\bullet$ Combining the advantages of connectionism and symbolicism of AI, we propose a unified neurosymbolic framework for KG reasoning from both perspectives of methodology and reasoning scenarios. To the best of our knowledge, this is the first attempt to do such a study.

$\bullet$ A forward logic message-passing mechanism is proposed to update both the propositional representations and attentions, as well as FOL representations and attentions of each node in the expanding reasoning graph. Meanwhile, a novel FARI algorithm is introduced to induce FOL rules using learned attentions.

$\bullet$ Extensive experiments are carried out on the current mainstream KG reasoning scenarios, including transductive, inductive, interpolation, and extrapolation reasoning. The results demonstrate the effectiveness of our Tunsr and verify its interpretability.

This study is an extension of our model TECHS [30] published at the ACL 2023 conference. Compared with it, Tunsr has been enhanced in three significant ways: (1) From the theoretical perspective, although propositional and FOL reasoning are integrated in TECHS for extrapolation reasoning on TKGs, these two reasoning types are entangled in the forward process, which limits the interpretability of the model. In contrast, the newly proposed Tunsr framework separates propositional and FOL reasoning in each reasoning step and combines them only for the final reasoning results. This transformation enhances the interpretability of the model from the perspectives of both propositional and FOL rules. (2) From the perspective of FOL rule modeling, Tunsr is not limited to the temporal extrapolation Horn rules modeled in TECHS; it also includes connected and closed Horn rules as well as temporal interpolation Horn rules. (3) From the application perspective, the TECHS model is customized for extrapolation reasoning on TKGs. Based on the further formalization of the reasoning graph and FOL rules, we can utilize the Tunsr model for current mainstream reasoning scenarios of KGs, including transductive, inductive, interpolation, and extrapolation reasoning. The experimental results demonstrate that our Tunsr model performs well in all those scenarios.

## 2 Preliminaries

### 2.1 KGs, Variants, and Reasoning Scenarios

Generally, a static KG (SKG) can be represented as $\mathcal{G}=\{\mathcal{E},\mathcal{R},\mathcal{F}\}$, where $\mathcal{E}$ and $\mathcal{R}$ denote the set of entities and relations, respectively. $\mathcal{F}\subset\mathcal{E}\times\mathcal{R}\times\mathcal{E}$ is the fact set. Each fact is a triple, such as $(s,r,o)$, where $s$, $r$, and $o$ denote the head entity, relation, and tail entity, respectively. By introducing time information in the knowledge, a TKG can be represented as $\mathcal{G}=\{\mathcal{E},\mathcal{R},\mathcal{T},\mathcal{F}\}$, where $\mathcal{T}$ denotes the set of time representations (timestamps or time intervals). $\mathcal{F}\subset\mathcal{E}\times\mathcal{R}\times\mathcal{E}\times\mathcal{T}$ is the fact set. Each fact is a quadruple, such as $(s,r,o,t)$, where $s,o\in\mathcal{E}$, $r\in\mathcal{R}$, and $t\in\mathcal{T}$.
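The two fact formats can be mirrored directly in code. The sketch below represents triples and quadruples as named tuples and collects the entity, relation, and time sets that a fact set uses. Deriving $\mathcal{E}$, $\mathcal{R}$, and $\mathcal{T}$ from the facts is a simplification (the definition treats them as given sets), integer timestamps are an assumption, and the temporal facts are made-up placeholders.

```python
from typing import NamedTuple

class Triple(NamedTuple):
    """A static fact (s, r, o)."""
    s: str
    r: str
    o: str

class Quadruple(NamedTuple):
    """A temporal fact (s, r, o, t); t is an integer timestamp in this sketch."""
    s: str
    r: str
    o: str
    t: int

def components(facts):
    """Collect the entities, relations, and (if present) times used by the facts."""
    entities = {f.s for f in facts} | {f.o for f in facts}
    relations = {f.r for f in facts}
    times = {f.t for f in facts if isinstance(f, Quadruple)}
    return entities, relations, times

skg_facts = {
    Triple("Barack Obama", "bornIn", "Hawaii"),
    Triple("Hawaii", "locatedInCountry", "U.S.A."),
}
# Placeholder temporal facts, invented purely for illustration.
tkg_facts = {
    Quadruple("A", "visits", "B", 3),
    Quadruple("B", "meets", "C", 5),
}
skg_entities, skg_relations, skg_times = components(skg_facts)
tkg_entities, tkg_relations, tkg_times = components(tkg_facts)
```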

For these two types of KGs, there are four main reasoning types, which are illustrated in Figure 2 (a query for predicting the head entity can be converted to tail entity prediction by adding reverse relations):

$\bullet$ Transductive Reasoning on SKGs: Given a background SKG $\mathcal{G}=\{\mathcal{E},\mathcal{R},\mathcal{F}\}$, the task is to predict the missing entity for the query $(\tilde{s},\tilde{r},?)$. The true answer satisfies $\tilde{o}\in\mathcal{E}$, with $\tilde{s}\in\mathcal{E}$, $\tilde{r}\in\mathcal{R}$, and $(\tilde{s},\tilde{r},\tilde{o})\notin\mathcal{F}$.

$\bullet$ Inductive Reasoning on SKGs: New entities appear in the testing stage that were not present during the training phase. Formally, the training graph can be expressed as $\mathcal{G}_{t}=\{\mathcal{E}_{t},\mathcal{R},\mathcal{F}_{t}\}$. The inductive graph $\mathcal{G}_{i}=\{\mathcal{E}_{i},\mathcal{R},\mathcal{F}_{i}\}$ shares the same relation set with $\mathcal{G}_{t}$. However, their entity sets are disjoint, i.e., $\mathcal{E}_{t}\cap\mathcal{E}_{i}=\varnothing$. A model needs to predict the missing entity $\tilde{o}$ for the query $(\tilde{s},\tilde{r},?)$, where $\tilde{s}\in\mathcal{E}_{i}$, $\tilde{o}\in\mathcal{E}_{i}$, $\tilde{r}\in\mathcal{R}$, and $(\tilde{s},\tilde{r},\tilde{o})\notin\mathcal{F}_{i}$.

$\bullet$ Interpolation Reasoning on TKGs: For a query $(\tilde{s},\tilde{r},?,\tilde{t})$ in the testing phase based on a training TKG $\mathcal{G}_{t}=\{\mathcal{E}_{t},\mathcal{R}_{t},\mathcal{T}_{t},\mathcal{F}_{t}\}$, a model needs to predict the answer entity $\tilde{o}$ using the facts in the TKG. The query time satisfies $\min(\mathcal{T}_{t})\leqslant\tilde{t}\leqslant\max(\mathcal{T}_{t})$, where $\min$ and $\max$ return the minimum and maximum timestamp within the set, respectively. Also, the query satisfies $\tilde{s}\in\mathcal{E}_{t}$, $\tilde{o}\in\mathcal{E}_{t}$, $\tilde{r}\in\mathcal{R}_{t}$, and $(\tilde{s},\tilde{r},\tilde{o},\tilde{t})\notin\mathcal{F}_{t}$.

$\bullet$ Extrapolation Reasoning on TKGs: Similar to interpolation reasoning, the task is to predict the target entity $\tilde{o}$ for a query $(\tilde{s},\tilde{r},?,\tilde{t})$ in the testing phase, based on a training TKG $\mathcal{G}_{t}=\{\mathcal{E}_{t},\mathcal{R}_{t},\mathcal{T}_{t},\mathcal{F}_{t}\}$. The difference is that this task predicts future facts: the prediction uses only facts that occur earlier than $\tilde{t}$ in the TKG, i.e., $\tilde{t}>\max(\mathcal{T}_{t})$.
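The conditions in the four scenario definitions above can be checked mechanically. The following sketch shows the reverse-relation conversion for head queries, the disjointness test for inductive splits, and the timestamp comparison that separates interpolation from extrapolation. The `_inv` suffix and all function names are hypothetical conventions chosen for this illustration, and timestamps are assumed to be comparable numbers.

```python
def add_reverse_facts(facts, suffix="_inv"):
    """Add (o, r_inv, s) for every (s, r, o) so head queries become tail queries."""
    return set(facts) | {(o, r + suffix, s) for (s, r, o) in facts}

def is_inductive_split(train_entities, test_entities):
    """Inductive reasoning requires disjoint training and inductive entity sets."""
    return set(train_entities).isdisjoint(test_entities)

def temporal_scenario(query_time, train_times):
    """Classify a TKG query time against the training timestamps."""
    earliest, latest = min(train_times), max(train_times)
    if query_time > latest:
        return "extrapolation"
    if earliest <= query_time <= latest:
        return "interpolation"
    return "before training range"

facts = {("Barack Obama", "bornIn", "Hawaii")}
augmented = add_reverse_facts(facts)
```

With the reverse facts added, a head query such as (?, bornIn, Hawaii) can be posed as the tail query (Hawaii, bornIn_inv, ?), so a single tail-prediction procedure covers both directions.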

<details>

<summary>extracted/6596839/fig/transductive.png Details</summary>

### Visual Description

## Knowledge Graph Diagram: Obama Family and Geographic Relationships

### Overview

The image is a directed graph (knowledge graph) illustrating relationships between five primary entities: two individuals (Michelle Obama, Barack Obama) and three geographic locations (U.S.A., Hawaii, Honolulu). The diagram uses nodes (circles with images and labels) connected by labeled, directional edges (arrows) to represent specific relationships. A background network of unlabeled gray nodes and edges suggests this is a subset of a larger knowledge graph.

### Components/Nodes

The diagram contains five main nodes, each with an associated image and text label:

1. **Node: Michelle Obama**

* **Position:** Top-left quadrant.

* **Visual:** Circular node with a purple border containing a portrait photograph of Michelle Obama. Below the image is a rectangular label with the text "Michelle Obama" in purple serif font.

2. **Node: Barack Obama**

* **Position:** Bottom-left quadrant.

* **Visual:** Circular node with a green border containing a portrait photograph of Barack Obama. Below the image is a rectangular label with the text "Barack Obama" in green serif font.

3. **Node: U.S.A.**

* **Position:** Center of the diagram.

* **Visual:** Circular node with a blue border containing an image of the flag of the United States. Below the flag is a rectangular label with the text "U.S.A." in blue serif font.

4. **Node: Hawaii**

* **Position:** Bottom-right quadrant.

* **Visual:** Circular node with a gold border containing the official seal of the State of Hawaii. The seal includes text: "STATE OF HAWAII", "1959", and the state motto "UA MAU KE EA O KA AINA I KA PONO". Below the seal is a rectangular label with the text "Hawaii" in gold serif font.

5. **Node: Honolulu**

* **Position:** Top-right quadrant.

* **Visual:** Circular node with a red border containing the official seal of the City and County of Honolulu. The seal includes text: "CITY AND COUNTY OF HONOLULU". Below the seal is a rectangular label with the text "Honolulu" in red serif font.

**Background Elements:** Several smaller, solid gray circles (nodes) are connected by thin gray lines (edges) in the background, primarily in the upper half of the image. These nodes have no labels or images, indicating they represent other entities within a larger graph not detailed in this view.

### Detailed Analysis: Relationships (Edges)

The primary information is conveyed through the labeled, directional edges connecting the main nodes. Each edge has a text label describing the relationship.

1. **Edge: `marriedTo`**

* **Direction:** From Barack Obama (bottom-left) to Michelle Obama (top-left).

* **Description:** A solid black arrow points from the Barack Obama node to the Michelle Obama node. The label "marriedTo" is written along the arrow shaft.

2. **Edge: `liveIn`**

* **Direction:** From Michelle Obama (top-left) to U.S.A. (center).

* **Description:** A solid black arrow points from the Michelle Obama node to the U.S.A. node. The label "liveIn" is written along the arrow shaft.

3. **Edge: `bornIn`**

* **Direction:** From Barack Obama (bottom-left) to Hawaii (bottom-right).

* **Description:** A solid black arrow points from the Barack Obama node to the Hawaii node. The label "bornIn" is written along the arrow shaft.

4. **Edge: `locatedIn Country` (from Hawaii)**

* **Direction:** From Hawaii (bottom-right) to U.S.A. (center).

* **Description:** A solid black arrow points from the Hawaii node to the U.S.A. node. The label "locatedIn Country" is written along the arrow shaft.

5. **Edge: `hasCity`**

* **Direction:** From Hawaii (bottom-right) to Honolulu (top-right).

* **Description:** A solid black arrow points from the Hawaii node to the Honolulu node. The label "hasCity" is written along the arrow shaft.

6. **Edge: `locatedIn Country` (from Honolulu)**

* **Direction:** From Honolulu (top-right) to U.S.A. (center).

* **Description:** A solid black arrow points from the Honolulu node to the U.S.A. node. The label "locatedIn Country" is written along the arrow shaft.

7. **Edge: `nationality Of ?` (Query Edge)**

* **Direction:** From Barack Obama (bottom-left) to U.S.A. (center).

* **Description:** A **dashed red arrow** points from the Barack Obama node to the U.S.A. node. The label "nationality Of ?" is written along the arrow shaft. This edge is visually distinct (dashed, red) from the others, indicating it represents a query, an inferred relationship, or a point of investigation within the graph.

### Key Observations

1. **Central Node:** The "U.S.A." node is the central hub, with three incoming edges (`liveIn`, two `locatedIn Country`) and one outgoing query edge.

2. **Transitive Relationship:** The graph implies a transitive geographic relationship: Barack Obama was born in Hawaii, and Hawaii is located in the U.S.A.

3. **Visual Query:** The dashed red edge labeled "nationality Of ?" is the most salient feature. It explicitly poses a question about Barack Obama's nationality based on the other asserted facts in the graph (his birth in Hawaii, which is in the U.S.A.).

4. **Background Context:** The network of unlabeled gray nodes suggests this diagram is an excerpt or a focused view extracted from a more complex knowledge graph containing additional entities and relationships.

### Interpretation

This diagram is a semantic network or knowledge graph fragment designed to model factual assertions and pose a logical query. It demonstrates how structured knowledge can be represented to enable reasoning.

* **What it demonstrates:** The graph asserts several facts: the marital status of the Obamas, Michelle Obama's residence, Barack Obama's birthplace, and the geopolitical hierarchy of Honolulu (city) within Hawaii (state) within the U.S.A. (country).

* **The Core Inference:** The central purpose of this specific view is to highlight a **reasoning task**. By connecting the facts "Barack Obama bornIn Hawaii" and "Hawaii locatedIn Country U.S.A.", the graph sets up the logical premise to infer or query the "nationality Of" Barack Obama. The dashed red line visually represents this inference step or the question it generates.

* **Relationships:** The edges define clear, typed relationships between entities, forming a chain of evidence. The graph structure allows one to trace the path from Barack Obama to the U.S.A. via two routes: directly through the query edge, or indirectly through the `bornIn` and `locatedIn Country` edges.

* **Anomaly/Notable Feature:** The only "anomaly" is the intentionally unresolved query edge, which transforms the diagram from a static display of facts into an illustration of a knowledge-based reasoning problem. The background gray nodes remind the viewer that this reasoning occurs within a broader, more complex knowledge context.

</details>

(a) Transductive reasoning on SKGs.

<details>

<summary>extracted/6596839/fig/inductive.png Details</summary>

### Visual Description

## Knowledge Graph Diagram: Relationships Between Christopher Nolan, Emma Thomas, Syncopy Inc., London, and the United Kingdom

### Overview

The image is a knowledge graph or entity-relationship diagram illustrating connections between five primary entities: two individuals (Christopher Nolan, Emma Thomas), a company (Syncopy Inc.), a city (London), and a country (United Kingdom). The diagram uses nodes (circles with images and labels) connected by directed, labeled edges (arrows) to define specific relationships. A background network of gray nodes and lines suggests a larger, underlying knowledge structure.

### Components/Axes

**Nodes (Entities):**

1. **Christopher Nolan**: Located in the top-left. A circular node with a teal border containing a photograph of a man in a suit. Below the image is a white rectangular label with the text "Christopher Nolan" in teal.

2. **Emma Thomas**: Located in the bottom-left. A circular node with a maroon border containing a photograph of a woman. Below the image is a white rectangular label with the text "Emma Thomas" in maroon.

3. **Syncopy Inc.**: Located at the top-center. A circular node with a gold border containing a dark blue logo with the stylized text "SYNCOPY". Below the logo is a white rectangular label with the text "Syncopy Inc." in gold.

4. **London**: Located in the top-right. A circular node with an olive green border containing a photograph of the Houses of Parliament and Big Ben. Below the image is a white rectangular label with the text "London" in olive green.

5. **United Kingdom**: Located in the bottom-right. A circular node with a purple border containing the Union Jack flag. Below the flag is a white rectangular label with the text "United Kingdom" in purple.

**Edges (Relationships):**

* **cofounderOf**: A solid black arrow from "Christopher Nolan" to "Syncopy Inc."

* **cofounderOf**: A solid black arrow from "Emma Thomas" to "Syncopy Inc."

* **marriedTo**: A solid black arrow from "Christopher Nolan" to "Emma Thomas".

* **hasofficeIn**: A solid black arrow from "Syncopy Inc." to "London".

* **capitalOf**: A solid black arrow from "London" to "United Kingdom".

* **bornIn**: A solid black arrow from "Emma Thomas" to "United Kingdom".

* **nationalityOf-?**: A dashed red arrow from "Christopher Nolan" to "United Kingdom". The label includes a hyphen and question mark, indicating an inferred or uncertain relationship.

**Background:**

A faint gray network of interconnected nodes and lines is visible behind the primary diagram, suggesting these entities are part of a larger knowledge base.

### Detailed Analysis

**Node-by-Node Text Extraction:**

* **Christopher Nolan Node**: Image text: None. Label text: "Christopher Nolan".

* **Emma Thomas Node**: Image text: A partial watermark "lynda.c" is visible on the photograph. Label text: "Emma Thomas".

* **Syncopy Inc. Node**: Image text: "SYNCOPY" (stylized, blue). Label text: "Syncopy Inc.".

* **London Node**: Image text: None. Label text: "London".

* **United Kingdom Node**: Image text: None (flag only). Label text: "United Kingdom".

**Relationship Mapping:**

1. **Christopher Nolan** is the `cofounderOf` **Syncopy Inc.**

2. **Emma Thomas** is the `cofounderOf` **Syncopy Inc.**

3. **Christopher Nolan** is `marriedTo` **Emma Thomas**.

4. **Syncopy Inc.** `hasofficeIn` **London**.

5. **London** is the `capitalOf` the **United Kingdom**.

6. **Emma Thomas** was `bornIn` the **United Kingdom**.

7. **Christopher Nolan** has an inferred or queried (`?`) `nationalityOf` relationship with the **United Kingdom** (indicated by the dashed red line).

### Key Observations

* The diagram explicitly states that both Christopher Nolan and Emma Thomas are co-founders of Syncopy Inc. and are married to each other.

* It establishes a geographic chain: Syncopy Inc. has an office in London, which is the capital of the United Kingdom.

* Emma Thomas's birthplace is explicitly linked to the United Kingdom.

* Christopher Nolan's nationality is not stated as a fact but is posed as a question or inference (`nationalityOf-?`), visually distinguished by a dashed red line. This is the central investigative element of the diagram.

* The use of color is consistent: each node's border, label text, and the text of its outgoing "cofounderOf" edge share the same color (teal for Nolan, maroon for Thomas, gold for Syncopy).

### Interpretation

This knowledge graph visually synthesizes biographical and professional facts about film producer Emma Thomas and director Christopher Nolan, centering on their shared company, Syncopy Inc. The diagram's primary function is to **pose a specific query**: "What is Christopher Nolan's nationality?"

The graph provides contextual clues to help answer this. It shows that his business partner and spouse, Emma Thomas, was born in the United Kingdom. It also shows their company has a physical office in London, UK. These facts create a strong circumstantial link to the UK. The dashed red line labeled `nationalityOf-?` represents the system's or viewer's hypothesis that Nolan's nationality might also be British, based on these associations. The diagram does not confirm this; it highlights it as an open question to be resolved, perhaps by querying a more complete knowledge base (hinted at by the gray background network). It effectively demonstrates how relational data can be used to infer or question attributes of entities.

</details>

(b) Inductive reasoning on SKGs using training data in Figure 2(a).

<details>

<summary>extracted/6596839/fig/interpolation.png Details</summary>

### Visual Description

## Temporal Network Diagram: Geopolitical Interactions Over Time

### Overview

The image is a temporal network diagram illustrating geopolitical interactions between nations and political figures across three discrete time points, labeled \( t_{i-2} \), \( t_{i-1} \), and \( t_i \). The diagram uses a horizontal timeline at the bottom to anchor three distinct network snapshots, showing how relationships and actions evolve. Nodes represent countries (identified by flags and labels) and individuals (identified by portraits and labels). Directed edges (arrows) with text labels describe specific actions or relationships between nodes. A notable element is a red, dashed arrow with a question mark, indicating an uncertain or hypothetical action.

### Components/Axes

* **Timeline Axis:** A horizontal black line at the bottom of the image, labeled "time" at the far right. It has three marked points:

* \( t_{i-2} \) (leftmost)

* \( t_{i-1} \) (center)

* \( t_i \) (rightmost)

* **Network Nodes:** Each time point features a cluster of interconnected nodes. Node types are:

* **Country Nodes:** Circular icons containing a national flag, with a rectangular label below.

* **Person Nodes:** Circular portrait photos, with a rectangular label below.

* **Unlabeled Nodes:** Solid grey circles, likely representing other entities or placeholders in the network.

* **Network Edges:** Directed arrows connecting nodes, each with a text label describing the relationship or action. The primary edge labels are:

* `make VisitTo`

* `express EntendTo`

* `negotiate`

* `consult`

* `censure ?` (red, dashed)

* `sign Agreement`

* `make Statement`

### Detailed Analysis

The diagram is segmented into three temporal regions:

**1. Time \( t_{i-2} \) (Left Region):**

* **Nodes Present:**

* **Barack Obama** (Person, bottom-left)

* **Angela Merkel** (Person, bottom-center)

* **China** (Country, top-left)

* **Russia** (Country, top-center)

* **South Korea** (Country, center)

* Several unlabeled grey nodes.

* **Edges & Actions:**

* Barack Obama → China: `make VisitTo`

* Barack Obama → South Korea: `express EntendTo`

* Barack Obama → Angela Merkel: `express EntendTo`

* South Korea → Russia: `negotiate`

* **Spatial Layout:** Obama and Merkel are positioned at the bottom. China and Russia are at the top. South Korea is centrally located, acting as a hub connecting to both Obama and Russia.

**2. Time \( t_{i-1} \) (Center Region):**

* **Nodes Present:**

* **Angela Merkel** (Person, center-left)

* **Singapore** (Country, top-left)

* **Pakistan** (Country, top-center)

* **North Korea** (Country, center-right)

* **South Korea** (Country, bottom-right)

* Several unlabeled grey nodes.

* **Edges & Actions:**

* Singapore → Angela Merkel: `consult`

* Angela Merkel → North Korea: `express EntendTo`

* Angela Merkel → South Korea: `sign Agreement`

* **Special Edge:** A red, dashed arrow from Angela Merkel to Pakistan labeled `censure ?`. The question mark and distinct styling indicate this action is uncertain, hypothetical, or under investigation.

* **Spatial Layout:** Merkel is the central actor. Singapore and Pakistan are above her, while North and South Korea are to her right.

**3. Time \( t_i \) (Right Region):**

* **Nodes Present:**

* **Barack Obama** (Person, center-left)

* **South Korea** (Country, top-center)

* **North Korea** (Country, top-right)

* **Pakistan** (Country, bottom-center)

* Several unlabeled grey nodes.

* **Edges & Actions:**

* Barack Obama → South Korea: `make Statement`

* Barack Obama → Pakistan: (Arrow present, but no text label is visible on the edge in this snapshot).

* South Korea → North Korea: (Arrow present, but no text label is visible on the edge in this snapshot).

* **Spatial Layout:** Obama is again a central actor on the left. South Korea and North Korea are positioned above and to the right, with Pakistan below.

### Key Observations

1. **Shifting Central Actors:** The focal point of activity shifts from Barack Obama at \( t_{i-2} \) to Angela Merkel at \( t_{i-1} \), and back to Barack Obama at \( t_i \).

2. **Evolving Relationships:** The connections between entities change over time. For example, South Korea interacts with Obama and Russia at \( t_{i-2} \), but with Merkel at \( t_{i-1} \), and with Obama and North Korea at \( t_i \).

3. **Introduction of Uncertainty:** The `censure ?` edge at \( t_{i-1} \) is the only element marked with uncertainty (dashed line, question mark, red color), highlighting it as a point of analytical interest or missing data.

4. **Persistent Network Structure:** Behind the labeled interactions, a mesh of grey nodes and faint connecting lines persists across all time points, suggesting a stable underlying network of potential relationships.

5. **Action Verbs:** The edge labels are all action-oriented verbs (`visit`, `express`, `negotiate`, `consult`, `sign`, `censure`, `make statement`), framing the diagram as a map of diplomatic or political events.

### Interpretation

This diagram models the dynamic and evolving nature of international relations as a temporal network. It suggests that geopolitical influence and interaction are not static; the key players and the nature of their engagements (from cooperative `sign Agreement` to potentially confrontational `censure ?`) shift across discrete time intervals.

The **`censure ?`** arrow is the most significant analytical element. Its distinct visual treatment implies it is either a predicted event, an unconfirmed report, or a relationship the model is attempting to infer. It introduces a layer of hypothesis or ambiguity into the otherwise declarative network of actions.

The diagram effectively demonstrates how a single entity (e.g., South Korea) can be involved in multiple, concurrent relationships (`express EntendTo` with Obama, `negotiate` with Russia at \( t_{i-2} \)), and how these relationships are reconfigured over time. The underlying grey network implies that the labeled actions are just a subset of all possible interactions, emphasizing the complexity of the system being modeled. This type of visualization is crucial for understanding event sequences, identifying central actors in a temporal context, and highlighting areas of uncertainty in intelligence or relational data.

</details>

(c) Interpolation reasoning on TKGs.

<details>

<summary>extracted/6596839/fig/extrapolation.png Details</summary>

### Visual Description

## Temporal Relationship Network Diagram: Geopolitical Interactions and Prediction

### Overview

The image is a conceptual diagram illustrating a temporal sequence of geopolitical interactions between nations and political leaders, culminating in a predictive task. It visualizes a network of relationships at two past time points (`t_i-2` and `t_i-1`) and uses this history to predict a future interaction at time `t_i`. The diagram is structured horizontally along a timeline.

### Components/Axes

* **Timeline:** A horizontal black arrow at the bottom labeled "time" points to the right. It is marked with three discrete time points: `t_i-2` (left), `t_i-1` (center), and `t_i` (right).

* **Nodes:** Represented by circular images with text labels below them. They are of two types:

* **Political Figures:** Portraits of individuals (Barack Obama, Angela Merkel).

* **Countries:** National flags (China, Russia, South Korea, Singapore, Pakistan, North Korea).

* **Edges (Relationships):** Directed arrows connecting nodes, labeled with the nature of the interaction. The labels are in English.

* **Network Background:** Faint gray lines and unlabeled gray circles form a background network, suggesting a broader, unshown web of connections.

* **Prediction Arrow:** A large, dark gray arrow labeled "predict" points from the `t_i-1` cluster to the `t_i` cluster.

### Detailed Analysis

The diagram is segmented into three temporal regions:

**1. Time `t_i-2` (Left Cluster):**

* **Central Node:** Barack Obama (portrait).

* **Outgoing Relationships from Obama:**

* `make VisitTo` → China (flag).

* `express EntendTo` → South Korea (flag).

* `express EntendTo` → Angela Merkel (portrait).

* **Other Relationships:**

* South Korea → `negotiate` → Russia (flag).

* Russia and China are connected by an unlabeled gray line.

* Angela Merkel and South Korea are connected by an unlabeled gray line.

**2. Time `t_i-1` (Center Cluster):**

* **Central Node:** Angela Merkel (portrait).

* **Outgoing Relationships from Merkel:**

* `consult` → Singapore (flag).

* `consult` → Pakistan (flag).

* `express EntendTo` → North Korea (flag).

* `sign Agreement` → South Korea (flag).

* **Other Relationships:**

* Singapore and Pakistan are connected by an unlabeled gray line.

* Pakistan and North Korea are connected by an unlabeled gray line.

* North Korea and South Korea are connected by an unlabeled gray line.

**3. Time `t_i` (Right Cluster - Prediction Target):**

* **Nodes Present:** Barack Obama (portrait) and South Korea (flag).

* **Predicted Relationship:** A red, dashed, double-headed arrow connects Obama and South Korea. It is labeled `make Statement` with a large question mark (`?`) below it, indicating this is the unknown interaction to be predicted based on prior history.

### Key Observations

* **Recurring Actors:** Barack Obama, Angela Merkel, and South Korea appear in multiple time slices, indicating their persistent roles in this modeled network.

* **Shift in Central Actor:** The focus shifts from Obama at `t_i-2` to Merkel at `t_i-1`.

* **Relationship Diversity:** Interactions include diplomatic visits (`make VisitTo`), expressions of intent (`express EntendTo`), negotiations, consultations, and formal agreements (`sign Agreement`).

* **Temporal Flow:** The diagram explicitly models how relationships at `t_i-2` and `t_i-1` provide the context for inferring a future relationship at `t_i`.

* **Prediction Focus:** The core task highlighted is predicting the nature (`make Statement?`) of a future interaction between two specific entities (Obama and South Korea).

### Interpretation

This diagram is a schematic for a forecasting task on a **temporal knowledge graph**, of the kind used in political-event prediction. It illustrates extrapolation: using observed patterns (the historical network of interactions at `t_i-2` and `t_i-1`) to predict a future event (the `make Statement?` relationship at `t_i`).

The data suggests that to predict a future action between Actor A (Obama) and Entity B (South Korea), one must analyze:

1. **Direct Historical Ties:** Their past interaction (`express EntendTo` at `t_i-2`).

2. **Indirect Network Influence:** The actions of other closely connected actors. For instance, Merkel's `sign Agreement` with South Korea at `t_i-1` and her `consult` relationships with other Asian nations create a contextual backdrop that may influence Obama's subsequent actions.

3. **Evolving Roles:** The model accounts for how the central actors and the density of interactions change over time.

The "predict" arrow and the question mark encapsulate the fundamental challenge of predictive analytics in complex systems: using structured historical relational data to forecast future states, where the outcome is uncertain but informed by the weight of prior connections. The diagram argues that future interactions are not isolated but are embedded in a fabric of past diplomatic engagements.

</details>

(d) Extrapolation reasoning on TKGs.

Figure 2: Illustration of four reasoning scenarios on KGs: transductive, inductive, interpolation, and extrapolation. The red dashed arrows indicate the query fact to be predicted.
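The four settings in Figure 2 differ in which entities and timestamps a query may touch relative to the training data. The following is a minimal Python sketch of that distinction, not the paper's formal definitions. The helper `classify_scenario` and its arguments are hypothetical. It assumes the common convention that inductive queries involve entities unseen during training and that extrapolation queries lie after the last observed timestamp.

```python
from typing import Optional, Set


def classify_scenario(
    query_entities: Set[str],
    train_entities: Set[str],
    query_time: Optional[int] = None,
    train_times: Optional[Set[int]] = None,
) -> str:
    """Label a query with one of the four scenarios (illustrative convention only)."""
    if query_time is None:
        # Static KG: the setting depends on whether all query entities were seen in training.
        if query_entities <= train_entities:
            return "transductive"
        return "inductive"
    # Temporal KG: the setting depends on where the query timestamp falls.
    if not train_times:
        raise ValueError("temporal queries need the observed timestamps")
    if query_time > max(train_times):
        return "extrapolation"  # the query lies in the future of all observed facts
    return "interpolation"  # the query lies within the observed time range


seen = {"Barack Obama", "Hawaii", "U.S.A."}
print(classify_scenario({"Barack Obama", "U.S.A."}, seen))               # transductive
print(classify_scenario({"Christopher Nolan", "United Kingdom"}, seen))  # inductive
```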

### 2.2 Logic Reasoning on KGs

Logical reasoning uses a given set of facts (i.e., premises) to deduce new facts (i.e., conclusions) through a rigorous form of thinking [42, 43]. It generally covers propositional logic and first-order logic (also known as predicate logic). Propositional logic deals with declarative sentences that can be definitively assigned a truth value, leaving no room for ambiguity. On KGs, it usually takes the form of multi-hop reasoning [44, 35], which views each fact as a declarative sentence and reasons over query-related paths to obtain an answer. Thus, propositional reasoning on KGs is entity-dependent. First-order logic (FOL) can be regarded as an extension of propositional logic that enables the expression of more refined and nuanced ideas [42, 45]. FOL rules extend the modeling scope and application prospects by introducing quantifiers ( $\exists$ and $\forall$ ), predicates, and variables. The variables range over a specific domain, and the rules describe objects and the relationships among them [46]. FOL rules usually take the form $premise\rightarrow conclusion$ , where $premise$ and $conclusion$ denote the rule body and the rule head, respectively, both of which are composed of atomic formulas. Each atomic formula consists of a predicate and several variables, e.g., $bornIn(X,Y)$ in $\gamma_{1}$ of Figure 1, where $bornIn$ is the predicate and $X$ and $Y$ are both entity variables. Thus, FOL reasoning is entity-independent, applying the same FOL rules to different entities [47]. In this paper, we utilize Horn rules [48], whose rule head is restricted to a single atomic formula, to enhance the adaptability of FOL rules to various KG reasoning tasks. Furthermore, to make Horn rules suitable for multiple reasoning scenarios, we introduce the following definitions.

Connected and Closed Horn (CCH) Rule. Based on Horn rules, CCH rules possess two distinct features, i.e., connected and closed. The term connected means the rule body necessitates a transitive and chained connection between atomic formulas through shared variables. Concurrently, the term closed indicates the rule body and rule head utilize identical start and end variables.

CCH rules of length $n$ take the following form (the quantifier $\forall$ is omitted in the rest of the paper for readability):

$$
\begin{split}\epsilon,\;\forall&X,Y_{1},Y_{2},\cdots,Y_{n-1},Z\;\;r_{1}(X,Y_{1})\land r_{2}(Y_{1},Y_{2})\land\cdots\\
&\land r_{n}(Y_{n-1},Z)\rightarrow r(X,Z),\end{split} \tag{1}
$$

where atomic formulas in the rule body are connected by variables ( $X,Y_{1},Y_{2},\cdots,Y_{n-1},Z$ ). For example, $r_{1}(X,Y_{1})$ and $r_{2}(Y_{1},Y_{2})$ are connected by $Y_{1}$ . Meanwhile, the variables form a path from $X$ to $Z$ , which are the start and end variables of the rule head $r(X,Z)$ , respectively. $r_{1},r_{2},\cdots,r_{n},r$ are relations in KGs that serve as predicates. To model the differing credibility of rules, we assign a rule confidence $\epsilon\in[0,1]$ to each Horn rule. Rule length refers to the number of atomic formulas in the rule body. For example, $\gamma_{1}$ , $\gamma_{2}$ , and $\gamma_{3}$ in Figure 1 are three example Horn rules of lengths 2, 3, and 3, respectively. A Horn rule is grounded by replacing each variable with a real entity, e.g., bornIn(Barack Obama, Hawaii) $\land$ locatedInCountry(Hawaii, U.S.A.) $\rightarrow$ nationalityOf(Barack Obama, U.S.A.) is a grounding of rule $\gamma_{1}$ . CCH rules can be utilized for transductive and inductive reasoning.
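To make rule grounding concrete, the following Python sketch chains the body relations of a CCH rule over a small set of triples and emits the implied head facts. It is an illustrative assumption and not the paper's algorithm: the function `ground_cch_rule` and the `Triple` alias are hypothetical names, and rule confidence $\epsilon$ is ignored.

```python
from typing import Dict, List, Set, Tuple

Triple = Tuple[str, str, str]  # (subject entity, relation, object entity)


def ground_cch_rule(body: List[str], head: str, facts: Set[Triple]) -> Set[Triple]:
    """Return the head facts implied by chaining the body relations over `facts`."""
    # Index facts by relation: relation -> list of (subject, object) pairs.
    by_rel: Dict[str, List[Tuple[str, str]]] = {}
    for s, r, o in facts:
        by_rel.setdefault(r, []).append((s, o))

    # Each partial grounding is (binding of X, binding of the current end variable).
    paths = set(by_rel.get(body[0], []))
    for rel in body[1:]:
        paths = {
            (x, o)
            for (x, y) in paths
            for (s, o) in by_rel.get(rel, [])
            if s == y  # connected: consecutive atoms share a variable
        }
    # Closed: the head reuses the start variable X and the end variable Z.
    return {(x, head, z) for (x, z) in paths}


facts = {
    ("Barack Obama", "bornIn", "Hawaii"),
    ("Hawaii", "locatedInCountry", "U.S.A."),
}
derived = ground_cch_rule(["bornIn", "locatedInCountry"], "nationalityOf", facts)
print(derived)  # {('Barack Obama', 'nationalityOf', 'U.S.A.')}
```

Because the rule is expressed over variables only, the same call applies unchanged to any other entities that match the body, which is what makes FOL reasoning entity-independent.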

Temporal Interpolation Horn (TIH) Rule. Based on CCH rules on static KGs that require connected and closed variables, TIH rules assign each atomic formula a time variable.

An example of a TIH rule is:

$$
\epsilon,\;\forall X,Y,Z\;\;r_{1}(X,Y):t_{1}\land r_{2}(Y,Z):t_{2}\rightarrow r(X,Z):t, \tag{2}
$$

where $t_{1}$ , $t_{2}$ and $t$ are time variables. To expand the model capacity when grounding TIH rules, time variables are virtual and do not have to be instantiated to real timestamps, which distinguishes them from the entity variables (e.g., $X$ , $Y$ , $Z$ ). However, we do model their relative order of occurrence. This implies that TIH rules with the same atomic formulas but different time-variable conditions are distinct and may have different confidence values, e.g., $t_{1}<t_{2}$ vs. $t_{1}>t_{2}$ .
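The role of virtual time variables can be sketched in Python: concrete timestamps are reduced to an ordering symbol, so two bodies with the same relations but opposite temporal order are counted as different rule candidates. This is an illustrative assumption and not the paper's procedure. The names `relative_order` and `count_tih_bodies`, the `=` case, and the toy quadruples (placeholder entities `A` to `F`) are all hypothetical.

```python
from collections import Counter
from typing import List, Tuple

Quad = Tuple[str, str, str, int]  # (subject, relation, object, timestamp)


def relative_order(t1: int, t2: int) -> str:
    """Map two concrete timestamps to a virtual ordering symbol."""
    if t1 < t2:
        return "<"
    if t1 > t2:
        return ">"
    return "="


def count_tih_bodies(quads: List[Quad]) -> Counter:
    """Count connected length-2 bodies, keyed by (r1, r2, order of t1 vs. t2)."""
    counts: Counter = Counter()
    for first in quads:
        for second in quads:
            if first == second:
                continue  # a fact is not chained with itself
            (_x, r1, y, t1), (y2, r2, _z, t2) = first, second
            if y == y2:  # connected through the shared variable Y
                counts[(r1, r2, relative_order(t1, t2))] += 1
    return counts


quads = [
    ("A", "consult", "B", 1),
    ("B", "signAgreement", "C", 2),  # consult happens before signAgreement
    ("D", "consult", "E", 5),
    ("E", "signAgreement", "F", 3),  # consult happens after signAgreement
]
counts = count_tih_bodies(quads)
print(counts[("consult", "signAgreement", "<")])  # 1
print(counts[("consult", "signAgreement", ">")])  # 1
```

Keeping the two orderings under separate keys is what would allow each variant to receive its own confidence.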

Temporal Extrapolation Horn (TEH) Rule. Based on CCH rules on static KGs that require connected and closed variables, TEH rules assign each atomic formula a time variable. Unlike TIH rules, TEH rules have the characteristic of time growth, which means the time sequence in the rule body is non-decreasing and the time in the rule head is strictly later than every time in the body.

For example, the following rule is a TEH rule with length 2:

$$
\begin{split}\epsilon,\;\forall X,Y,Z\;\;&r_{1}(X,Y):t_{1}\land r_{2}(Y,Z):t_{2}\\
&\rightarrow r(X,Z):t,\;\;s.t.\;\;t_{1}\leqslant t_{2}<t.\end{split} \tag{3}
$$

Notably, for rule learning and reasoning, $t_{1}$ , $t_{2}$ and $t$ are also virtual time variables that are only used to enforce the time-growth constraint and do not have to be instantiated.
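The time-growth constraint of Eq. (3) can be checked mechanically when a candidate grounding carries concrete timestamps. The sketch below uses a hypothetical helper, `satisfies_time_growth`. It also generalizes the length-2 condition $t_{1}\leqslant t_{2}<t$ to bodies of any length, which is an assumption and not something the text states.

```python
from typing import Sequence


def satisfies_time_growth(body_times: Sequence[int], head_time: int) -> bool:
    """Check t_1 <= t_2 <= ... <= t_n < t for a candidate TEH grounding."""
    # Body timestamps must be non-decreasing along the chain.
    non_decreasing = all(a <= b for a, b in zip(body_times, body_times[1:]))
    # The head timestamp must be strictly later than every body timestamp.
    return non_decreasing and all(t < head_time for t in body_times)


print(satisfies_time_growth([1, 2], 3))  # True
print(satisfies_time_growth([2, 1], 3))  # False: the body order decreases
print(satisfies_time_growth([1, 3], 3))  # False: the head is not strictly later
```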

<details>

<summary>extracted/6596839/fig/arc.png Details</summary>

### Visual Description

## System Architecture Diagram: Multi-Step Knowledge Graph Reasoning

### Overview

The image is a technical system architecture diagram illustrating a multi-step reasoning process over a Knowledge Graph (KG). The flow proceeds from left to right, starting with an input query and KG, passing through multiple iterative "Logic Blocks," and culminating in an output of reasoning scores. The diagram uses a consistent visual language of colored boxes, arrows, and icons to represent data structures, processing modules, and information flow.

### Components/Axes

The diagram is segmented into four primary horizontal sections, each with a labeled header:

1. **Input (Leftmost Section):**

* **Header:** "Input" in a light green box.

* **Components:**

* A network graph icon labeled **"KG"** (Knowledge Graph).

* A pink box labeled **"Initial Embed"**.

* A yellow box containing the query specification: **"Query : (s, r, ?) or (s, r, ?, t)"**.

* **Flow:** Dotted arrows connect the KG icon to both the "Initial Embed" box and a smaller KG icon in the next block. A solid arrow labeled **"Initialize"** connects the query box to the first Logic Block.

2. **Logic Block #1 (Second Section):**

* **Header:** "Logic Block # 1" in a light green box.

* **Enclosure:** A dashed rectangle contains the block's internal components.

* **Inputs (from left):**

* The smaller **"KG"** icon.

* The **"Initial Embed"** box.

* The **"Initialize"** arrow from the query.

* **Internal Processing Flow (left to right):**

1. A light blue trapezoid labeled **"Neighbor facts"**.

2. A stack of gray boxes labeled **"Fact 1"**, **"Fact 2"**, **"Fact 3"**, **"..."**, **"Fact N-1"**, **"Fact N"**.

3. A light blue trapezoid labeled **"Expanding Reasoning Graph"**.

4. A peach-colored rectangle labeled **"Logical Message-passing"**.

* **Outputs (bottom):**

* A yellow box: **"Reasoning Graph (1 step)"**.

* A pink box: **"Updated Emb & Att"**.

* **Flow to Next Block:** A large white arrow points from the "Logical Message-passing" module to the next section.

3. **Logic Block #N (Third Section):**

* **Header:** "Logic Block # N" in a light green box.

* **Structure:** This block is visually identical to Logic Block #1, indicating a repeated, iterative process.

* **Inputs (from left):**

* A smaller **"KG"** icon.

* A yellow box: **"Reasoning Graph (N-1)"** (output from the previous block).

* A pink box: **"Updated Emb & Att"** (output from the previous block).

* **Internal Processing Flow:** Identical to Block #1: **"Neighbor facts"** -> **"Fact 1...N"** -> **"Expanding Reasoning Graph"** -> **"Logical Message-passing"**.

* **Outputs (bottom):**

* A yellow box: **"Reasoning Graph (N step)"**.

* A pink box: **"Updated Emb & Att"**.

* **Flow to Output:** A large white arrow points from the "Logical Message-passing" module to the final section.

4. **Output (Rightmost Section):**

* **Header:** "Output" in a light green box.

* **Components:**

* A pink box: **"Updated Emb & Att"** (final state).

* A bar chart icon with an arrow pointing down from the "Updated Emb & Att" box.

* A label below the chart: **"Reasoning scores"**.

### Detailed Analysis

* **Data Flow & Transformation:** The core process is iterative. Each Logic Block takes the current state of the Knowledge Graph, its embeddings/attention ("Emb & Att"), and the reasoning graph from the previous step. It performs three key operations:

1. **Neighbor Fact Retrieval:** Identifies relevant facts from the KG.

2. **Reasoning Graph Expansion:** Builds upon the existing reasoning path.

3. **Logical Message-Passing:** Updates the node embeddings and attention weights based on the logical structure.

* **State Evolution:** The pink **"Updated Emb & Att"** box and the yellow **"Reasoning Graph"** box are the persistent states that evolve through each Logic Block. The "Initial Embed" is the starting point for the embeddings.

* **Query Types:** The input query supports two formats: `(s, r, ?)` for finding an object given a subject and relation, and `(s, r, ?, t)`, which additionally carries a timestamp `t` for queries on temporal KGs.

* **Output:** The final output is not a single answer but **"Reasoning scores"**, visualized as a bar chart. This suggests the system produces a ranked list of potential answers or confidence scores for possible query completions.

### Key Observations

* **Modularity and Repetition:** The identical structure of Logic Block #1 and Logic Block #N emphasizes that the system is designed for an arbitrary number (`N`) of reasoning steps.

* **Dual-State Tracking:** The system explicitly maintains and updates two parallel representations: the structural **Reasoning Graph** and the vector-based **Embeddings & Attention** ("Emb & Att").

* **Visual Consistency:** Color coding is used consistently: pink for embedding/attention states, yellow for reasoning graph states and queries, light blue for processing modules, and peach for the core message-passing operation.

* **Spatial Layout:** The linear, left-to-right flow clearly communicates a sequential pipeline. The dashed enclosures around the Logic Blocks clearly define their boundaries and internal processes.

### Interpretation

This diagram depicts a **neuro-symbolic reasoning system** designed for complex query answering over knowledge graphs. It bridges symbolic AI (represented by the explicit "Fact" retrieval and "Logical Message-passing") with neural AI (represented by "Embeddings" and "Attention").

The process can be interpreted as follows: Starting with a query and a knowledge graph, the system initializes embeddings. It then enters a reasoning loop. In each step (Logic Block), it explores the neighborhood of current entities in the KG, gathers relevant facts, expands a dedicated reasoning graph that traces the logical path of inference, and uses a logical message-passing mechanism to update its neural understanding (embeddings) of the entities and relations involved. After `N` steps of this iterative refinement, the final embeddings and attention weights are used to generate a set of reasoning scores, which represent the system's confidence in various possible answers to the original query.

The key innovation suggested by this architecture is the tight coupling between the evolving symbolic reasoning graph and the neural embeddings. The system doesn't just retrieve facts; it builds an explicit, multi-step logical proof path (the Reasoning Graph) while simultaneously refining its vector-space representations to better capture the nuances of the reasoning process. This allows it to handle complex, multi-hop queries that require chaining multiple facts together.

</details>

Figure 3: An overview of Tunsr. It utilizes multiple logic blocks to find the answer, where the reasoning graph is constructed and iteratively expanded. Meanwhile, a forward logic message-passing mechanism is proposed to update embeddings and attentions for unified propositional and FOL reasoning.
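The iterative expansion of the reasoning graph can be sketched in Python as a layer-by-layer neighbor search from the query entity. This is a simplified illustration and not the Tunsr implementation: the function `expand_reasoning_graph` is hypothetical, it omits embeddings, attentions, and any pruning, and its handling of inverse edges (suffix `^-1`) and of implicit self edges for every node is an assumption.

```python
from typing import Dict, List, Set, Tuple

Triple = Tuple[str, str, str]  # (subject entity, relation, object entity)


def expand_reasoning_graph(
    facts: Set[Triple], query_entity: str, num_steps: int
) -> List[Set[str]]:
    """Return the node sets O_0, ..., O_n reached by iterative neighbor expansion."""
    # Build adjacency lists, adding inverse edges so facts can be followed both ways.
    neighbors: Dict[str, Set[Tuple[str, str]]] = {}
    for s, r, o in facts:
        neighbors.setdefault(s, set()).add((r, o))
        neighbors.setdefault(o, set()).add((r + "^-1", s))

    layers: List[Set[str]] = [{query_entity}]
    for _ in range(num_steps):
        frontier = layers[-1]
        next_layer: Set[str] = set(frontier)  # implicit self edges keep current nodes
        for node in frontier:
            for _relation, tail in neighbors.get(node, set()):
                next_layer.add(tail)
        layers.append(next_layer)
    return layers


facts = {
    ("Barack Obama", "bornIn", "Hawaii"),
    ("Hawaii", "locatedInCountry", "U.S.A."),
}
layers = expand_reasoning_graph(facts, "Barack Obama", num_steps=2)
print(sorted(layers[1]))  # ['Barack Obama', 'Hawaii']
print(sorted(layers[2]))  # ['Barack Obama', 'Hawaii', 'U.S.A.']
```

In this toy run, the answer entity `U.S.A.` first appears in the second layer, matching the two-hop path that grounds rule $\gamma_{1}$.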

<details>

<summary>extracted/6596839/fig/rg2.png Details</summary>

### Visual Description

## Knowledge Graph Diagram: Iterative Expansion from Barack Obama

### Overview

The image displays a directed graph illustrating the iterative expansion of a knowledge graph starting from a central entity, "Barack Obama." The graph is organized into four vertical, dashed-line boxes representing sequential iterations (O₀ to O₃). Nodes are circles, and relationships are represented by labeled, directed arrows. The diagram demonstrates how a knowledge base can be traversed or expanded to discover connected entities and facts.

### Components/Axes

* **Structure:** Four vertical columns, each enclosed in a gray dashed rectangle, labeled at the top:

* **O₀** (Iteration 0): The starting point.

* **O₁** (Iteration 1): First-hop connections.

* **O₂** (Iteration 2): Second-hop connections.

* **O₃** (Iteration 3): Third-hop connections.

* **Nodes:** Represent entities. They are colored circles.

* **Blue Node:** "Barack Obama" (located in O₀).

* **Orange Nodes:** All other entities (located in O₁, O₂, and O₃).

* **Edges:** Represent relationships. They are dark blue arrows with text labels indicating the relationship type. Some labels include a superscript "-1" (e.g., `graduateFrom⁻¹`), likely indicating an inverse relationship.

* **Text Labels:** All entity names and relationship types are in English.

### Detailed Analysis

The graph expands from left to right. Below is a complete reconstruction of all visible nodes and their connecting edges, organized by iteration column.

**Iteration O₀ (Leftmost Column):**

* **Node:** `Barack Obama` (Blue circle).

* **Outgoing Edges:**

1. `marriedTo` → `Michelle Obama` (in O₁).

2. `graduateFrom` → `Harvard University` (in O₁).

3. `bornIn` → `Hawaii` (in O₁).

4. `fatherOf` → `Malia Obama` (in O₁).

**Iteration O₁ (Second Column):**

* **Nodes & Their Outgoing Edges:**

1. `Michelle Obama`:

* `bornIn` → `Chicago` (in O₂).

* `graduateFrom` → `Harvard University` (in O₂).

2. `Harvard University`:

* `self` → `Harvard University` (in O₂). *[This appears to be a self-loop or identity link]*

* `graduateFrom⁻¹` → `Bill Gates` (in O₂). *[Inverse of "graduateFrom"]*

3. `Hawaii`:

* `capitalOf⁻¹` → `Honolulu` (in O₂). *[Inverse of "capitalOf"]*

4. `Malia Obama`:

* `growUpIn` → `Honolulu` (in O₂).

* `sisterOf` → `Sasha Obama` (in O₂).

5. `Sasha Obama` (Node is present, but no outgoing edges are drawn from it in this column).

* **Ellipsis (`...`):** Indicates additional, unshown nodes in this iteration.

**Iteration O₂ (Third Column):**

* **Nodes & Their Outgoing Edges:**

1. `Chicago`:

* `self` → `Chicago` (in O₃).

* `placeIn` → `U.S.A.` (in O₃).

2. `Harvard University`:

* `mascot` → `John Harvard` (in O₃).

3. `Bill Gates`:

* `founderOf` → `Microsoft` (in O₃).

4. `Honolulu`:

* `self` → `Honolulu` (in O₃).

5. `Sasha Obama`:

* `growUpIn` → `Honolulu` (in O₃).

* `graduateFrom` → `Sidwell Friends School` (in O₃).

* **Ellipsis (`...`):** Indicates additional, unshown nodes in this iteration.

**Iteration O₃ (Rightmost Column):**

* **Nodes (Terminal in this diagram):**

* `Chicago`

* `U.S.A.`

* `John Harvard`

* `Microsoft`

* `Honolulu`

* `Sidwell Friends School`

* No outgoing edges are drawn from nodes in this column.

### Key Observations

1. **Iterative Expansion:** The graph clearly models a multi-hop traversal process, where each iteration reveals entities one relationship step further from the origin.

2. **Relationship Semantics:** Relationships are specific and typed (e.g., `marriedTo`, `bornIn`, `founderOf`). The `self` edges (`Harvard University` in O₁→O₂; `Chicago` and `Honolulu` in O₂→O₃) carry an entity that was already reached at one step over to the next step, matching the extra `self` relation defined in Section 3.1.

3. **Inverse Relationships:** The presence of `graduateFrom⁻¹` and `capitalOf⁻¹` edges is notable. This suggests the knowledge graph or traversal algorithm can reason about and follow relationships in reverse (e.g., from a university to its alumni, or from a state to its capital).

4. **Entity Color Coding:** The single blue node (`Barack Obama`) versus all orange nodes visually distinguishes the query or seed entity from the discovered entities.

5. **Incomplete Graph:** The ellipses (`...`) in O₁ and O₂ explicitly indicate that the shown graph is a subset of a larger, more complete knowledge base.

### Interpretation

This diagram is a pedagogical or technical illustration of **knowledge graph traversal** or **link prediction**. It demonstrates how a system can start with a known entity (Barack Obama) and, by following a chain of relationships, uncover a web of connected facts about people, places, institutions, and their interrelations.

The progression from O₀ to O₃ shows how the set of reachable entities grows with each hop. The inclusion of inverse relationships (`⁻¹`) is a key technical detail: the traversal can follow an edge against its original direction. For example, the edge `graduateFrom⁻¹` from `Harvard University` to `Bill Gates` is the inverse of the fact that Bill Gates graduated from Harvard University.

The `self` edges keep an already-reached entity available at the following step, so that nodes from preceding steps are still taken into account as the graph expands (see the definition of the `self` relation in Section 3.1). Overall, the diagram shows how a single query entity serves as the root of a multi-hop network of related facts.

</details>

(a) An example of reasoning graph in SKGs.

<details>

<summary>extracted/6596839/fig/rg1.png Details</summary>

### Visual Description

## Directed Graph Diagram: Temporal Entity Relationship Network

### Overview

The image displays a directed graph diagram illustrating the expansion of relationships from a central query entity over three sequential iterations. The graph visualizes how an initial entity (Catherine Ashton) connects to other entities and entity-time pairs through specific actions, with the network growing in complexity from left to right. The diagram is structured into four vertical columns, each representing a node set (O₀ to O₃): the start node and the nodes reached after each of the three iterations.

### Components/Axes

* **Structure:** The diagram is organized into four dashed-line rectangular columns, labeled at the top:

* **O₀:** The leftmost column, containing the start node.

* **O₁:** Labeled "iteration 1" at the top.

* **O₂:** Labeled "iteration 2" at the top.

* **O₃:** Labeled "iteration 3" at the top.

* **Legend (Right Side):**

* **Blue Circle:** "start node: query entity"

* **Orange Circle:** "subsequent node: entity or entity-time pair"

* **Node Types:**

* **Start Node (Blue):** A single blue circle in column O₀, labeled "Catherine Ashton".

* **Subsequent Nodes (Orange):** Multiple orange circles in columns O₁, O₂, and O₃. Each is labeled with an entity name and a date in `YYYY-MM-DD` format (e.g., "Catherine Ashton: 2014-01-01").

* **Edges:** Directed arrows (blue lines with arrowheads) connect nodes from left to right (from O₀ to O₁, O₁ to O₂, O₂ to O₃). Each edge has a text label describing the relationship or action (e.g., "makeStatement", "consult").

### Detailed Analysis

**Node Inventory by Column:**

* **Column O₀:**

* Node: `Catherine Ashton` (Blue, Start Node)

* **Column O₁ (Iteration 1):**

* Node 1: `Catherine Ashton: 2014-01-01`

* Node 2: `Mohammad Javad: 2014-10-01`

* Node 3: `Iran: 2014-10-04`

* Node 4: `Cabient: 2014-10-05` (Note: Likely a typo for "Cabinet")

* (Ellipsis `...` indicates additional nodes not fully drawn)

* **Column O₂ (Iteration 2):**

* Node 1: `Catherine Ashton: 2014-01-01`

* Node 2: `Iran: 2014-11-04`

* Node 3: `China: 2014-10-30`

* Node 4: `John Kerry: 2014-11-05`

* Node 5: `John Kerry: 2014-10-28`

* (Ellipsis `...` indicates additional nodes)

* **Column O₃ (Iteration 3):**

* Node 1: `Oman: 2014-11-04`

* Node 2: `Oman: 2014-11-08`

* Node 3: `Iran: 2014-11-08`

* (Ellipsis `...` indicates additional nodes)

**Edge (Relationship) Inventory:**

* **From O₀ to O₁:**

* `Catherine Ashton` -> `Catherine Ashton: 2014-01-01` via `self`

* `Catherine Ashton` -> `Mohammad Javad: 2014-10-01` via `makeStatement`

* `Catherine Ashton` -> `Iran: 2014-10-04` via `hostVisit`

* `Catherine Ashton` -> `Cabient: 2014-10-05` via `consult`

* **From O₁ to O₂:**

* `Catherine Ashton: 2014-01-01` -> `Catherine Ashton: 2014-01-01` via `self`

* `Catherine Ashton: 2014-01-01` -> `Iran: 2014-11-04` via `expressIntentTo`

* `Mohammad Javad: 2014-10-01` -> `Iran: 2014-11-04` via `makeVisit`

* `Mohammad Javad: 2014-10-01` -> `China: 2014-10-30` via `hostVisit`

* `Iran: 2014-10-04` -> `China: 2014-10-30` via `expressIntentTo`

* `Cabient: 2014-10-05` -> `John Kerry: 2014-11-05` via `consult`

* `Cabient: 2014-10-05` -> `John Kerry: 2014-10-28` via `meetTo`

* **From O₂ to O₃:**

* `Catherine Ashton: 2014-01-01` -> `Oman: 2014-11-04` via `self`

* `Iran: 2014-11-04` -> `Oman: 2014-11-04` via `makeOptimisticComment`

* `China: 2014-10-30` -> `Oman: 2014-11-08` via `consult`

* `John Kerry: 2014-11-05` -> `Iran: 2014-11-08` via `makeVisit`

* `John Kerry: 2014-10-28` -> (An unlabeled orange node in O₃) via `makeVisit`

### Key Observations

1. **Temporal Progression:** The dates on the nodes generally move later from left to right (O₁ nodes are dated 2014-01-01 to 2014-10-05, O₂ nodes mostly 2014-10-28 to 2014-11-05, and O₃ nodes 2014-11-04 to 2014-11-08), indicating a timeline of events.

2. **Network Expansion:** The diagram draws 4 labeled nodes in O₁, 5 in O₂, and 3 in O₃; the ellipses in each column indicate that the underlying network is larger than what is drawn.

3. **Relationship Types:** The edge labels define a specific vocabulary of interactions: `self`, `makeStatement`, `hostVisit`, `consult`, `expressIntentTo`, `makeVisit`, `meetTo`, `makeOptimisticComment`.

4. **Entity Recurrence:** Certain entities appear multiple times with different dates (e.g., `John Kerry` on 2014-10-28 and 2014-11-05; `Iran` on 2014-10-04 and 2014-11-04), showing their involvement in multiple events.

5. **Potential Typo:** The node labeled "Cabient: 2014-10-05" is likely a misspelling of "Cabinet".

### Interpretation

This diagram is a visualization of a **temporal knowledge graph** or an **event sequence model**. It demonstrates a method for expanding a query about a central entity (Catherine Ashton) into a network of related events and actors over time.

* **What it represents:** The graph models diplomatic or political activities. The entities are primarily individuals (Catherine Ashton, Mohammad Javad, John Kerry) and countries (Iran, China, Oman). The relationships (`makeStatement`, `hostVisit`, `consult`, etc.) describe formal diplomatic actions.

* **How elements relate:** The iterations (O₁, O₂, O₃) likely represent steps in a graph traversal or reasoning algorithm. Starting from the query entity, the system discovers direct connections (Iteration 1), then uses those new nodes to discover second-order connections (Iteration 2), and so on. This builds a contextual web of events.

* **Purpose and Anomalies:** The purpose is to uncover indirect relationships and temporal patterns. For example, it shows how Catherine Ashton's actions in early 2014 are linked, through a chain of intermediaries, to events involving Oman and Iran in November 2014. The ellipses (`...`) are a critical component, indicating that the shown graph is a simplified excerpt from a much larger, more complex dataset. The diagram effectively illustrates how a single entity can be the root of a wide-reaching network of dated events.

</details>

(b) An example of reasoning graph in TKGs.

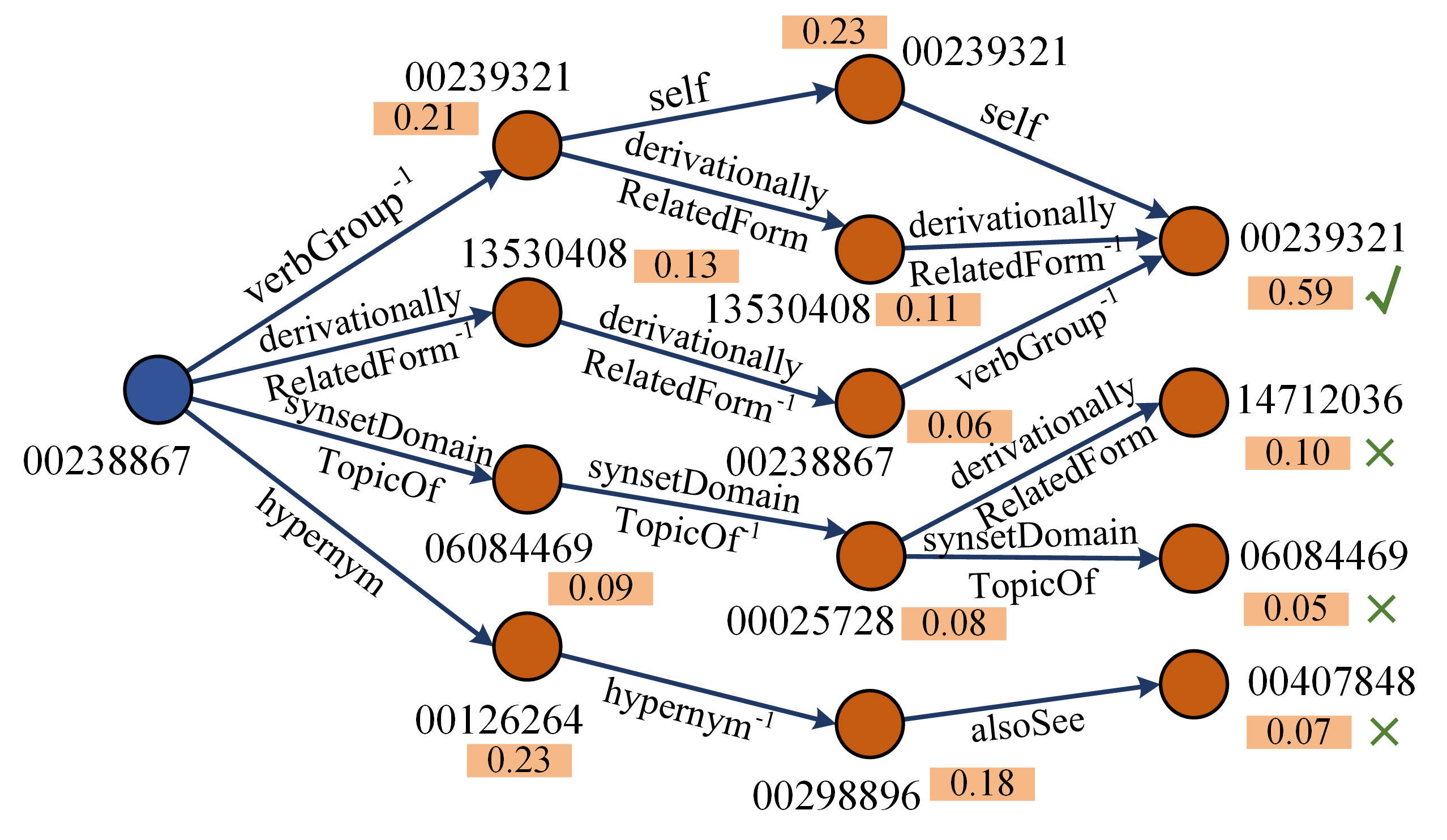

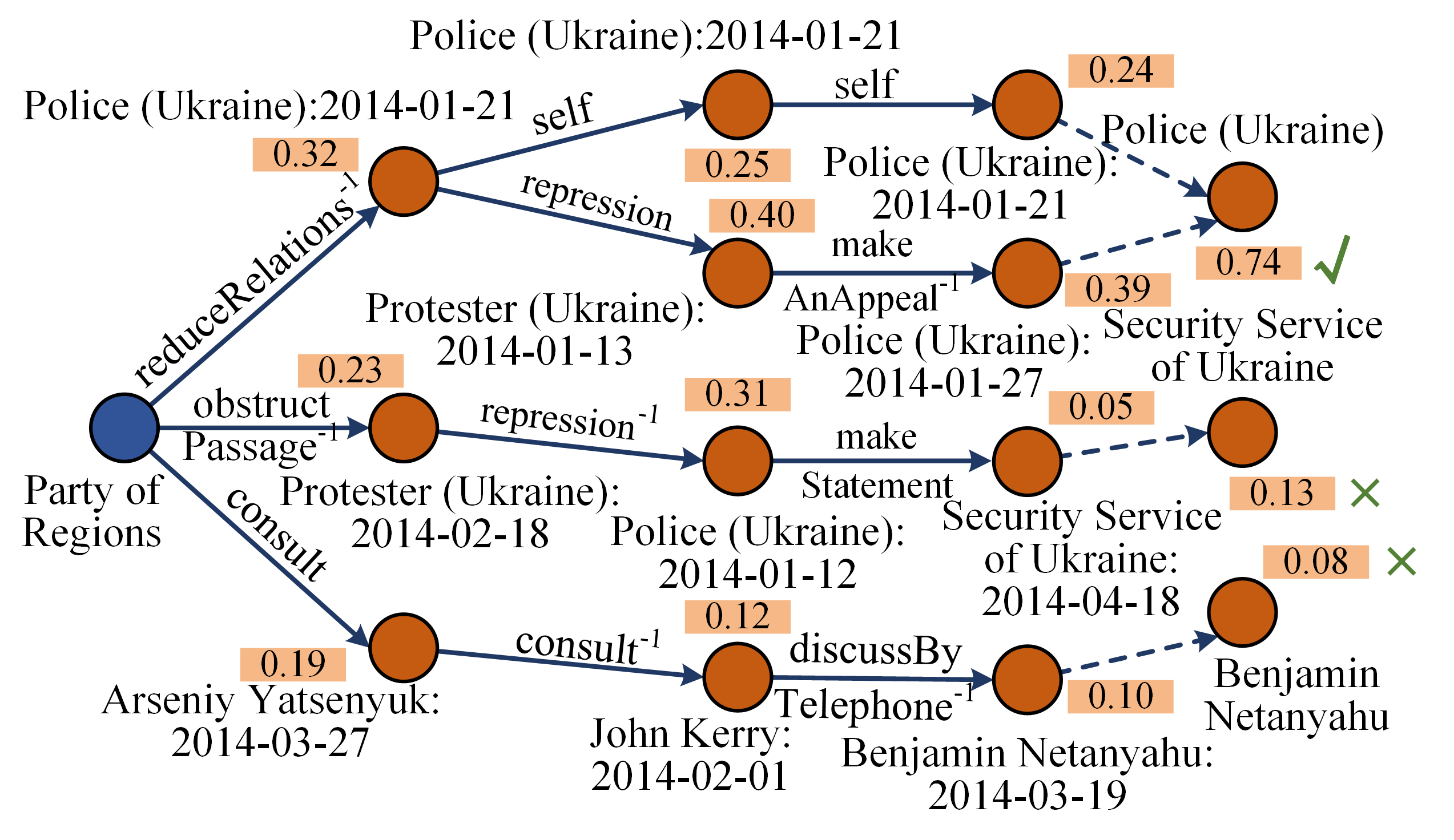

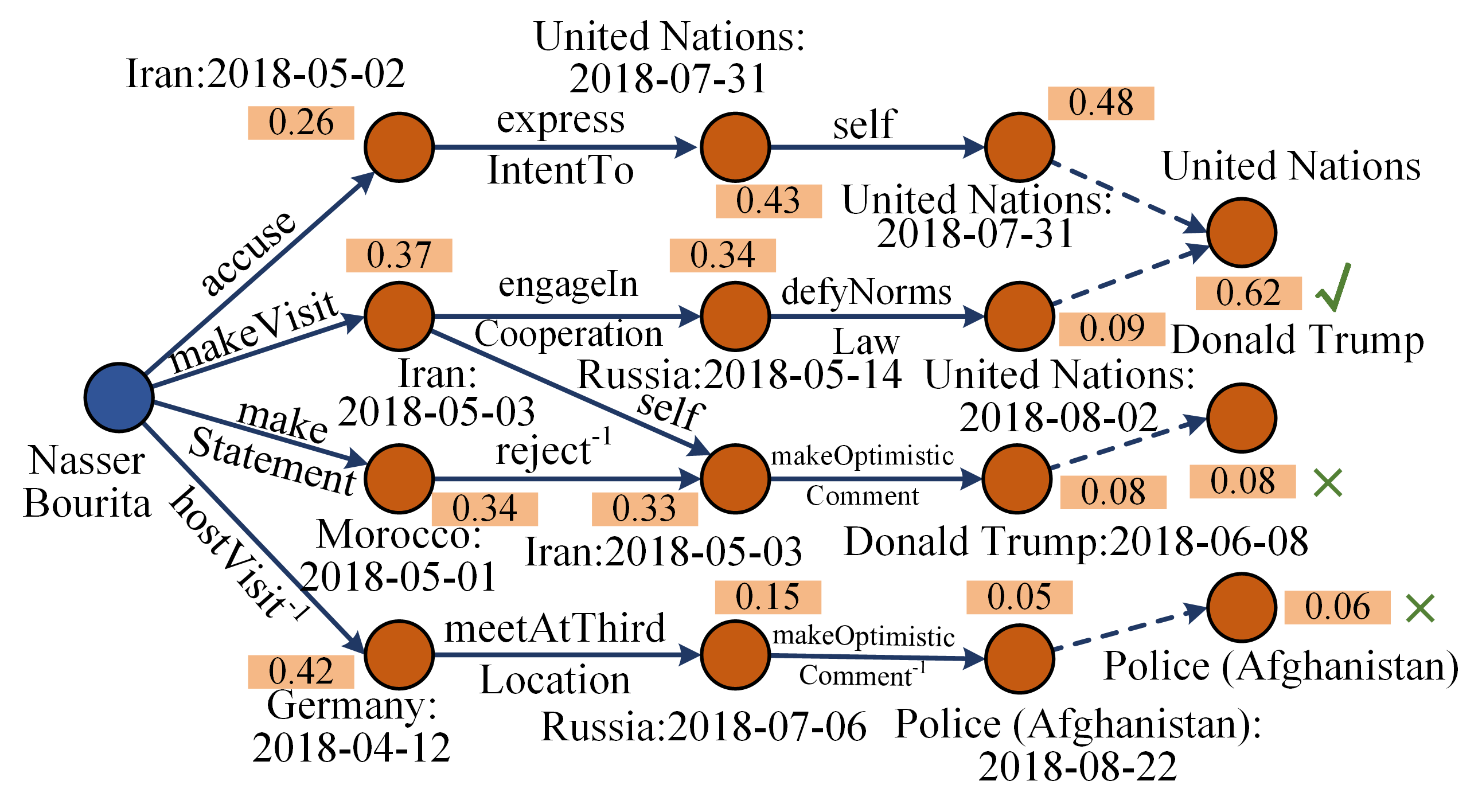

Figure 4: Examples of the reasoning graph with three iterations. (a) is on SKGs while (b) is on TKGs.

## 3 Methodology

In this section, we present the technical details of our Tunsr model. It combines several logic blocks to obtain reasoning results, which involves constructing and expanding a reasoning graph and applying a forward logic message-passing mechanism for propositional and FOL reasoning. The overall architecture is illustrated in Figure 3.

### 3.1 Reasoning Graph Construction

For each KG query, i.e., $\mathcal{Q}=(\tilde{s},\tilde{r},?)$ for SKGs or $\mathcal{Q}=(\tilde{s},\tilde{r},?,\tilde{t})$ for TKGs, we introduce an expanding reasoning graph to find the answer. It is formulated as follows.

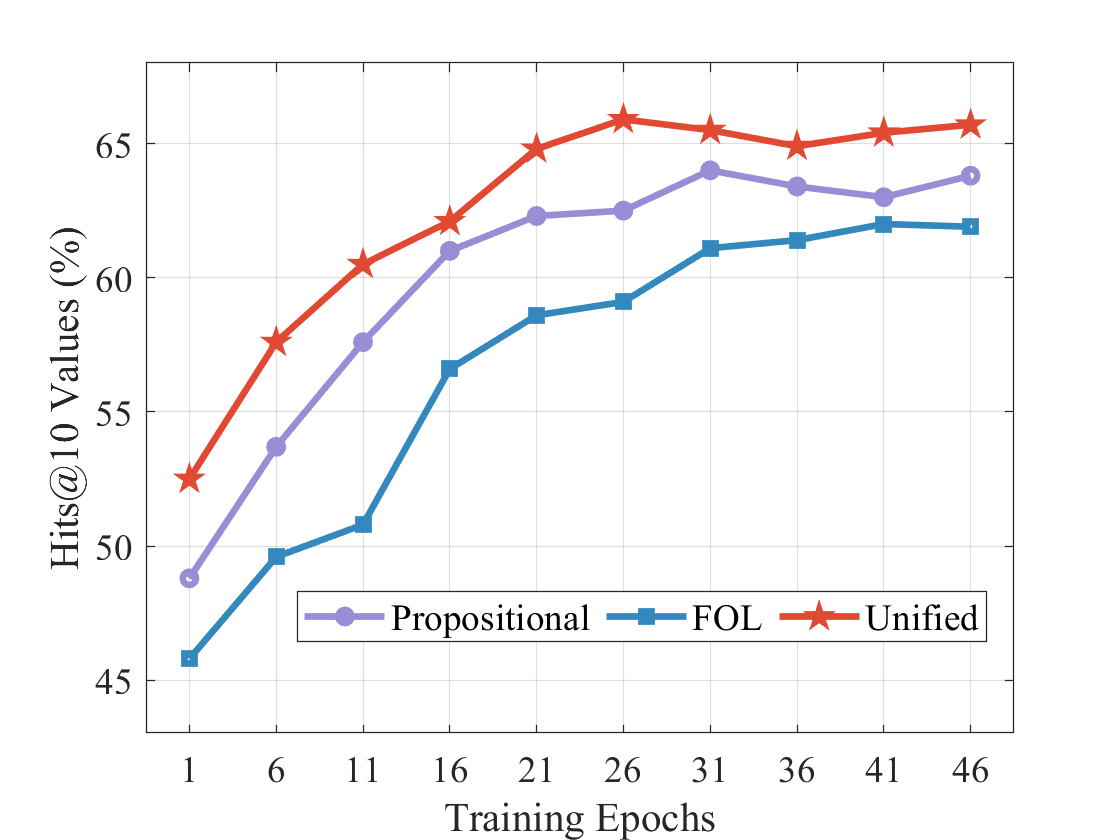

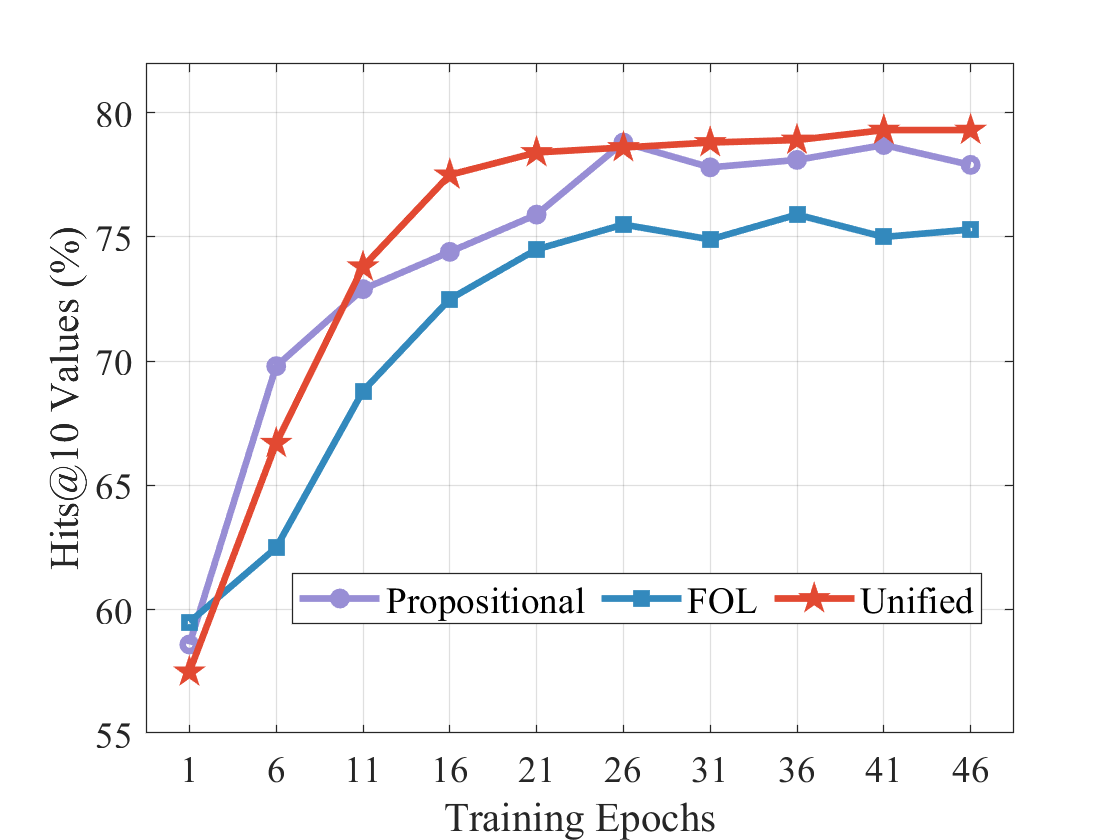

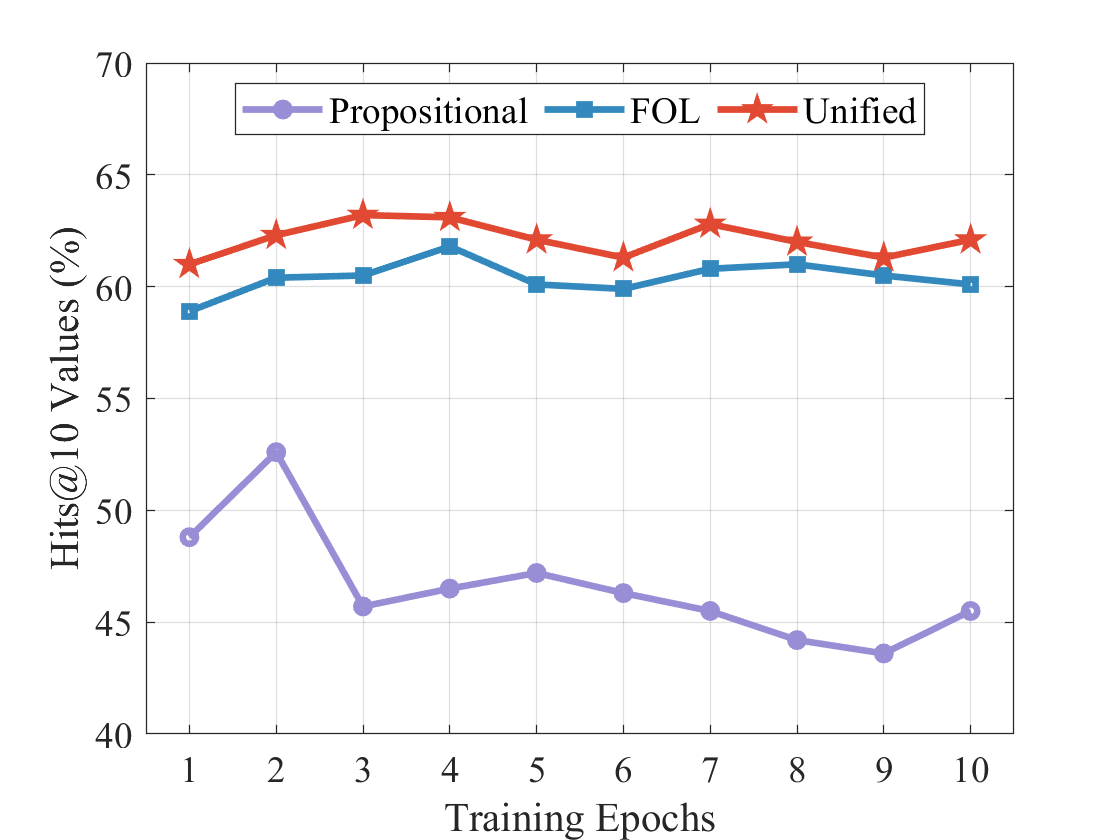

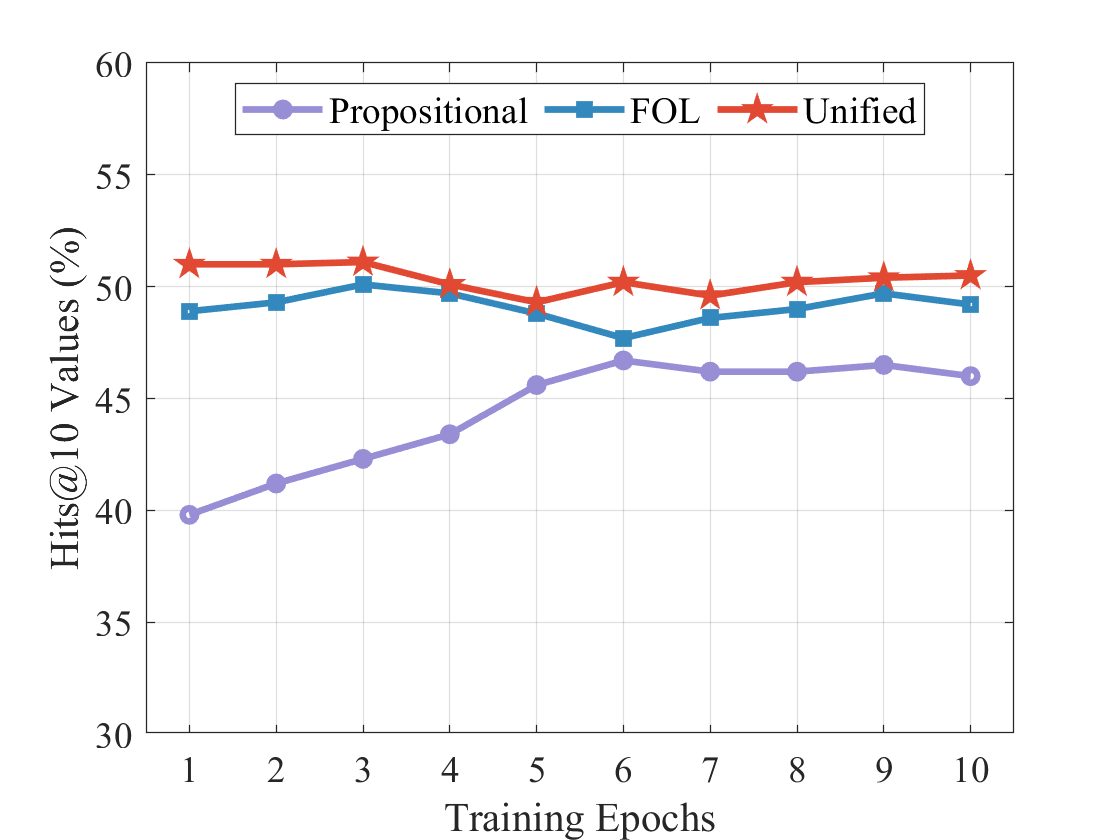

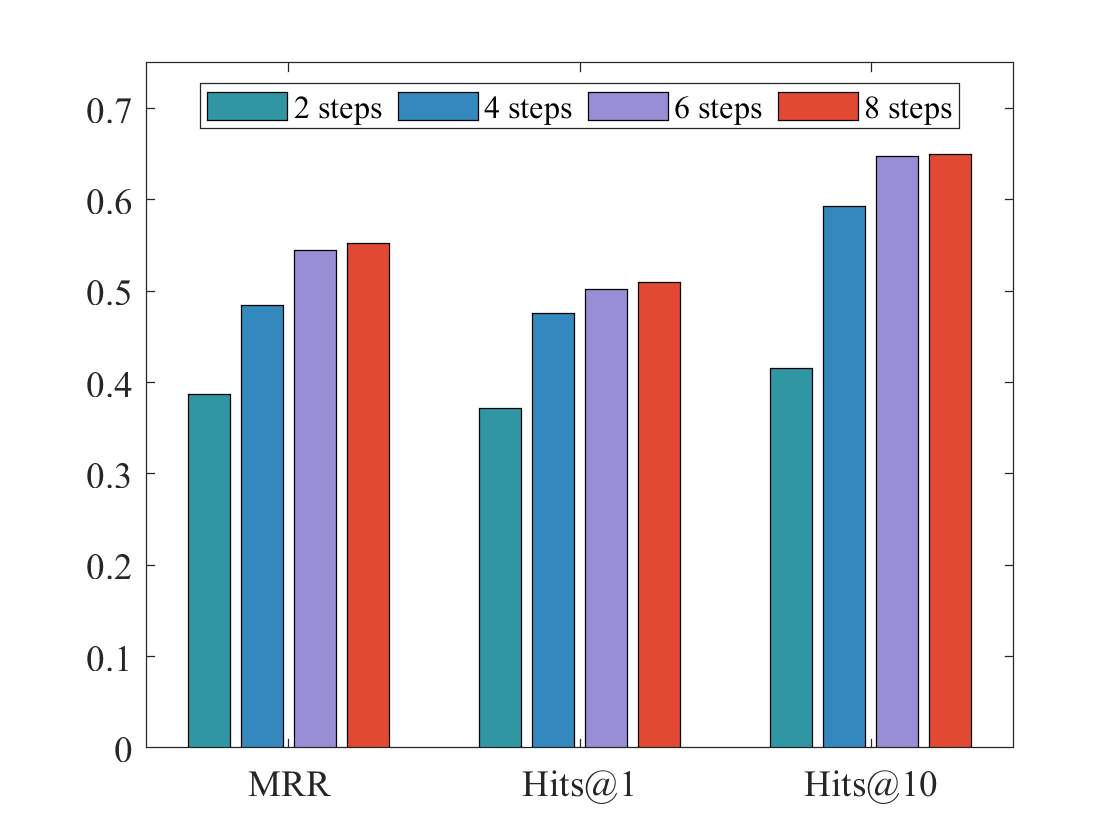

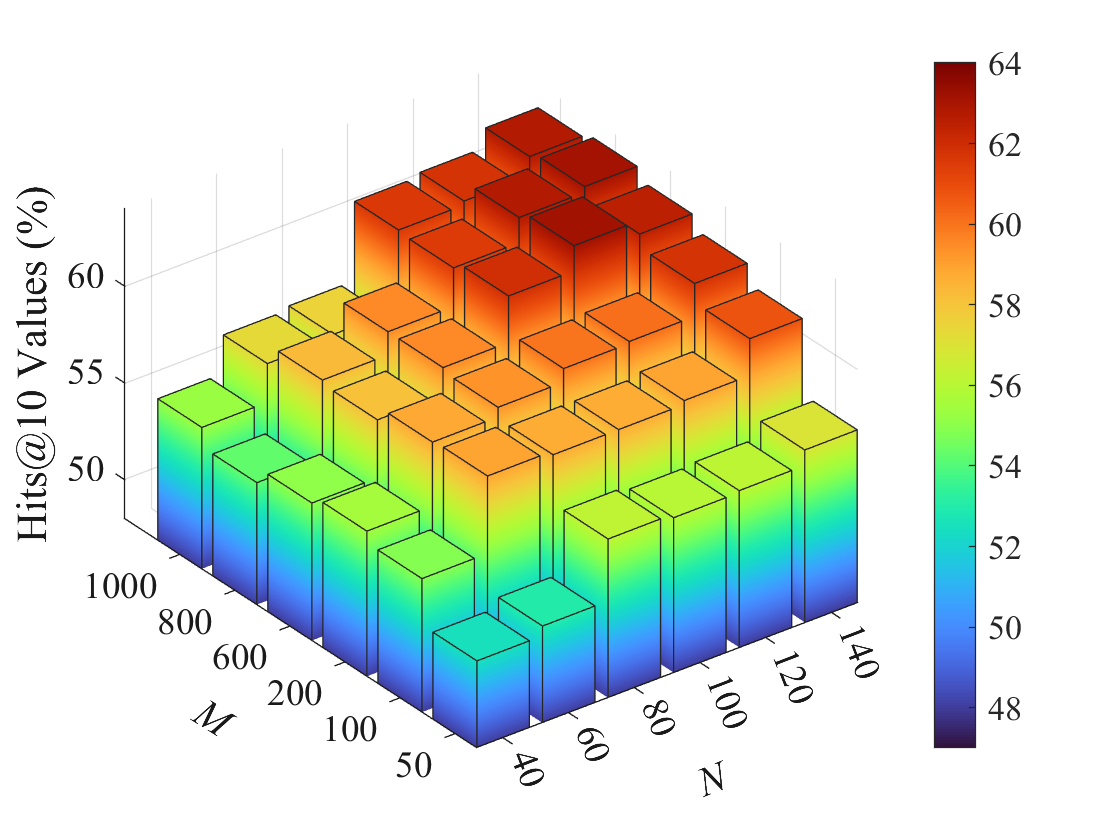

Reasoning Graph. For a specific query $\mathcal{Q}$, a reasoning graph is defined as $\widetilde{\mathcal{G}}=\{\mathcal{O},\mathcal{R},\widetilde{\mathcal{F}}\}$ for propositional and first-order reasoning. $\mathcal{O}$ is a node set that consists of the nodes at different iteration steps, i.e., $\mathcal{O}=\mathcal{O}_{0}\cup\mathcal{O}_{1}\cup\cdots\cup\mathcal{O}_{L}$. For SKGs, $\mathcal{O}_{0}$ contains only the query entity $\tilde{s}$, and the nodes at subsequent steps are entities. An edge $(n_{i}^{l},\bar{r},n_{j}^{l+1})\in\widetilde{\mathcal{F}}$ links nodes at two neighboring steps, i.e., $n_{i}^{l}\in\mathcal{O}_{l}$, $n_{j}^{l+1}\in\mathcal{O}_{l+1}$, and $\bar{r}\in\mathcal{R}$. The reasoning graph is expanded iteratively by searching for posterior neighbor nodes. For the start node $n^{0}=\tilde{s}$, its posterior neighbors are $\mathcal{N}(n^{0})=\{e_{i}|(\tilde{s},\bar{r},e_{i})\in\mathcal{F}\}$. For a node at a later step, $n_{i}^{l}=e_{i}\in\mathcal{O}_{l}$, its posterior neighbors are $\mathcal{N}(n_{i}^{l})=\{e_{j}|(e_{i},\bar{r},e_{j})\in\mathcal{F}\}$, and its preceding parents are $\widetilde{\mathcal{N}}(n_{i}^{l})=\{(n_{j}^{l-1},\bar{r})|n_{j}^{l-1}\in \mathcal{O}_{l-1}\land(n_{j}^{l-1},\bar{r},n_{i}^{l})\in\widetilde{\mathcal{F}}\}$. To take preceding nodes into account at the current step, an extra relation $self$ is added. Thus, a node $n_{i}^{l}=e_{i}$ is carried over to the next step as $n_{i}^{l+1}=e_{i}$, with $(n_{i}^{l},self,n_{i}^{l+1})\in\widetilde{\mathcal{F}}$.
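The expansion described above can be sketched in a few lines of Python for the SKG case. This is only an illustrative sketch, not the Tunsr implementation: the names `build_reasoning_graph`, `preceding_parents`, and `SELF` are hypothetical, the fact set is assumed to be a plain list of `(head, relation, tail)` triples, no neighbor sampling or pruning is applied, and the `self` edge is assumed to be added at every step, including the first.

```python
from collections import defaultdict

SELF = "self"  # hypothetical name for the extra self relation


def build_reasoning_graph(facts, query_entity, num_steps):
    """Expand a reasoning graph from `query_entity` for `num_steps` iterations.

    facts: iterable of (head, relation, tail) triples, playing the role of F.
    Returns (layers, edges), where layers[l] is the node set O_l and edges is
    a set of (l, head, relation, tail) tuples linking O_l to O_{l+1}.
    """
    # Index posterior neighbors: entity -> list of (relation, tail).
    posterior = defaultdict(list)
    for head, rel, tail in facts:
        posterior[head].append((rel, tail))

    layers = [{query_entity}]  # O_0 contains only the query entity
    edges = set()
    for step in range(num_steps):
        next_layer = set()
        for node in layers[step]:
            # Assumption: a self edge carries every node to the next step.
            next_layer.add(node)
            edges.add((step, node, SELF, node))
            # Add all posterior neighbors of the node.
            for rel, tail in posterior[node]:
                next_layer.add(tail)
                edges.add((step, node, rel, tail))
        layers.append(next_layer)
    return layers, edges


def preceding_parents(edges, step, node):
    """Return {(parent, relation)} pairs in O_{step-1} that link to `node` in O_step."""
    return {(h, r) for (l, h, r, t) in edges if l == step - 1 and t == node}


# Toy fact set taken from a few edges of Figure 4(a).
facts = [
    ("Barack Obama", "marriedTo", "Michelle Obama"),
    ("Barack Obama", "bornIn", "Hawaii"),
    ("Michelle Obama", "bornIn", "Chicago"),
    ("Chicago", "placeIn", "U.S.A."),
]
layers, edges = build_reasoning_graph(facts, "Barack Obama", num_steps=3)
for step, layer in enumerate(layers):
    print(step, sorted(layer))
```

Storing the step index with each edge keeps the graph layered, so the same entity can appear at several steps with different preceding parents, as `Chicago` does at steps 2 and 3 in this toy example.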