# A statistical physics framework for optimal learning

**Authors**:

- Francesca Mignacco, Francesco Mori (Graduate Center, City University of New York, New York, NY 10016, USA)

Abstract

Learning is a complex dynamical process shaped by a range of interconnected decisions. Careful design of hyperparameter schedules for artificial neural networks or efficient allocation of cognitive resources by biological learners can dramatically affect performance. Yet, theoretical understanding of optimal learning strategies remains sparse, especially due to the intricate interplay between evolving meta-parameters and nonlinear learning dynamics. The search for optimal protocols is further hindered by the high dimensionality of the learning space, often resulting in predominantly heuristic, difficult to interpret, and computationally demanding solutions. Here, we combine statistical physics with control theory in a unified theoretical framework to identify optimal protocols in prototypical neural network models. In the high-dimensional limit, we derive closed-form ordinary differential equations that track online stochastic gradient descent through low-dimensional order parameters. We formulate the design of learning protocols as an optimal control problem directly on the dynamics of the order parameters with the goal of minimizing the generalization error at the end of training. This framework encompasses a variety of learning scenarios, optimization constraints, and control budgets. We apply it to representative cases, including optimal curricula, adaptive dropout regularization and noise schedules in denoising autoencoders. We find nontrivial yet interpretable strategies highlighting how optimal protocols mediate crucial learning tradeoffs, such as maximizing alignment with informative input directions while minimizing noise fitting. Finally, we show how to apply our framework to real datasets. Our results establish a principled foundation for understanding and designing optimal learning protocols and suggest a path toward a theory of meta-learning grounded in statistical physics.

1 Introduction

Learning is intrinsically a multilevel process. In both biological and artificial systems, this process is defined through a web of design choices that can steer the learning trajectory toward crucially different outcomes. In machine learning (ML), this multilevel structure underlies the optimization pipeline: model parameters are adjusted by a learning algorithm—e.g., stochastic gradient descent (SGD)—that itself depends on a set of higher‐order decisions, specifying the network architecture, hyperparameters, and data‐selection procedures [1]. These meta-parameters are often adjusted dynamically throughout training following predefined schedules to enhance performance. Biological learning is also mediated by a range of control signals across scales. Cognitive control mechanisms are known to modulate attention and regulate learning efforts to improve flexibility and multi-tasking [2, 3, 4]. Additionally, structured training protocols are widely adopted in animal and human training to make learning processes faster and more robust. For instance, curricula that progressively increase the difficulty of the task often improve the final performance [5, 6].

Optimizing the training schedules—effectively “learning to learn”—is a crucial problem in ML. However, the proposed solutions remain largely based on trial-and-error heuristics and often lack a principled assessment of their optimality. The increasing complexity of modern ML architectures has led to a proliferation of meta-parameters, exacerbating this issue. As a result, several paradigms for automatic learning, such as meta-learning and hyperparameter optimization [7, 8], have been developed. Proposed methods range from grid and random hyperparameter searches [9] to Bayesian approaches [10] and gradient‐based meta‐optimization [11, 12]. However, these methods operate in high‐dimensional, nonconvex search spaces, making them computationally expensive and often yielding strategies that are hard to interpret. Although one can frame the selection of training protocols as an optimal‐control (OC) problem, applying standard control techniques to the full parameter space is often infeasible due to the curse of dimensionality.

Statistical physics provides a long-standing theoretical framework for understanding learning through prototypical models [13], a perspective that has carried over into recent advances in ML theory [14, 15]. It exploits the high dimensionality of learning problems to extract low-dimensional effective descriptions in terms of order parameters that capture the key properties of training and performance. A substantial body of theoretical results has been obtained in the Bayes-optimal setting, characterizing the information-theoretically optimal performance for given data-generating processes and providing a bound that no algorithm can surpass [16, 17]. In parallel, the algorithmic performance of practical procedures, such as empirical risk minimization, has been studied both in the asymptotic regime via equilibrium statistical mechanics [18, 19, 20, 21, 22, 23] and through explicit analyses of training dynamics [24, 25, 26, 27, 28]. More recently, neural network models analyzed with statistical physics methods have been used to study various paradigmatic learning settings relevant to cognitive science [29, 30, 31]. However, these lines of work have mainly focused on predefined protocols, often keeping meta-parameters constant during training, without addressing the derivation of optimal learning schedules.

In this paper, we propose a unified framework for optimal learning that combines statistical physics and control theory to systematically identify training schedules across a broad range of learning scenarios. Specifically, we define an OC problem directly on the low-dimensional dynamics of the order parameters, where the meta-parameters of the learning process serve as controls and the final performance is the objective. This approach serves as a testbed for uncovering general principles of optimal learning and offers two key advantages. First, the reduced descriptions of the learning dynamics circumvent the curse of dimensionality, enabling the application of standard control-theoretic techniques. Second, the order parameters capture essential aspects of the learning dynamics, allowing for a more interpretable analysis of why the resulting strategies are effective.

In particular, we consider online training with SGD in a general two-layer network model that includes several learning settings as special cases. Building on the foundational work of [32, 33, 34], we derive exact closed-form equations describing the evolution of the relevant order parameters during training. Control-theoretical techniques can then be applied to identify optimal training schedules that maximize the final performance. This formulation enables a unified treatment of diverse learning paradigms and their associated meta-parameter schedules, such as task ordering, learning rate tuning, and dynamic modulation of the node activations. A variety of learning constraints and control budgets can be directly incorporated. Our work contributes to the broader effort to develop theoretical frameworks for the control of nonequilibrium systems [35, 36, 37], given that learning dynamics are high-dimensional, stochastic, and inherently nonequilibrium processes.

While we present our approach here in full generality, a preliminary application of this method for optimal task-ordering protocols in continual learning was recently presented in the conference paper [38]. Related variational approaches were explored in earlier work from the 1990s, primarily in the context of learning rate schedules [39, 40]. More recently, computationally tractable meta-learning strategies have been studied in linear networks [41, 42]. However, a general theoretical framework for identifying optimal training protocols in nonlinear networks is still missing.

The rest of the paper is organized as follows. In Section 2, we introduce the theoretical framework. Specifically, we present the model in Section 2.1 and we define the order parameters and derive the dynamical equations for online SGD training in Section 2.2. The control-theoretic techniques used throughout the paper are described in Section 2.3. In Section 2.4, we illustrate a range of learning scenarios that can be addressed within this framework. In Section 3, we derive and discuss optimal training schedules in three representative settings: curriculum learning (Section 3.1), dropout regularization (Section 3.2), and denoising autoencoders (Section 3.3). We conclude in Section 4 with a summary of our findings and a discussion of open directions. Additional technical details are provided in the appendices.

2 Theoretical framework

2.1 The model

We study a general learning framework based on the sequence multi-index model introduced in [43]. This model captures a broad class of learning scenarios, both supervised and unsupervised, and admits a closed-form analytical description of its training dynamics. This dual feature allows us to derive optimal learning strategies across various regimes and to highlight multiple potential applications. We begin by presenting a general formulation of the model, followed by several concrete examples.

We consider a dataset $\mathcal{D}=\bigl{\{}(\bm{x}^{\mu},y^{\mu})\bigr{\}}_{\mu=1}^{P}$ of $P$ samples, where $\bm{x}^{\mu}∈\mathbb{R}^{N× L}$ are i.i.d. inputs and $y^{\mu}∈\mathbb{R}$ are the corresponding labels (if supervised learning is considered). Each input sample ${\bm{x}}∈\mathbb{R}^{N× L}$ , a sequence with $L$ elements ${\bm{x}}_{l}$ of dimension $N$ , is drawn from a Gaussian mixture

$$
{\bm{x}}_{l}\sim\mathcal{N}\left(\frac{{\bm{\mu}}_{l,c_{l}}}{\sqrt{N}},\sigma^{2}_{l,c_{l}}\bm{I}_{N}\right)\,, \tag{1}
$$

where $c_{l}∈\{1\,,...\,,C_{l}\}$ denotes cluster membership. The random vector ${\bm{c}}=\{c_{l}\}_{l=1}^{L}$ is sampled from a probability distribution $p_{c}({\bm{c}})$ , which can encode arbitrary correlations. In supervised settings, we will often assume

$$

y=f^{*}_{{\bm{w}}_{*}}({\bm{x}})+\sigma_{n}z,\qquad z\sim\mathcal{N}(0,1), \tag{2}

$$

where $f^{*}_{{\bm{w}}_{*}}({\bm{x}})$ is a fixed teacher network with $M$ hidden units and parameters ${\bm{w}}_{*}∈\mathbb{R}^{N× M}$ , and $\sigma_{n}$ controls label noise. This teacher–student (TS) paradigm is standard in statistical physics and it allows for analytical characterization [44, 45, 32, 33, 34, 13, 24].
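The data model of Eqs. (1)-(2) can be sampled directly. The sketch below is a minimal NumPy illustration, not the authors' code. The function names `sample_inputs` and `sample_labels`, the array layout, and the representation of $p_c$ as a sampling callable (which lets the cluster labels $c_l$ be arbitrarily correlated) are our own assumptions.

```python
import numpy as np

def sample_inputs(P, N, mus, sigmas, sample_c, rng):
    """Draw P i.i.d. sequences x in R^{N x L} from the Gaussian mixture of Eq. (1).

    mus[l]    : (C_l, N) array of centroids mu_{l,c}; entries are O(1), so mu / sqrt(N) has O(1) norm
    sigmas[l] : (C_l,) array of standard deviations sigma_{l,c}
    sample_c  : callable rng -> length-L integer array c, one draw from p_c (hypothetical interface)
    """
    L = len(mus)
    X = np.empty((P, N, L))
    C = np.empty((P, L), dtype=int)
    for i in range(P):
        c = sample_c(rng)
        C[i] = c
        for l in range(L):
            # mean mu_{l,c_l} / sqrt(N), isotropic covariance sigma_{l,c_l}^2 I_N
            X[i, :, l] = mus[l][c[l]] / np.sqrt(N) + sigmas[l][c[l]] * rng.standard_normal(N)
    return X, C

def sample_labels(X, teacher, sigma_n, rng):
    """Noisy labels y = f*(x) + sigma_n z of Eq. (2); `teacher` maps one (N, L) input to a scalar."""
    return np.array([teacher(x) for x in X]) + sigma_n * rng.standard_normal(len(X))
```

Any teacher network, for example a committee of $M$ hidden units built from `w_star`, can be passed as the `teacher` callable.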

We consider a two-layer neural network $f_{\bm{w},\bm{v}}(\bm{x})=\tilde{f}\bigl(\tfrac{\bm{x}^{\top}\bm{w}}{\sqrt{N}},\bm{v}\bigr)$ with $K$ hidden units. In a TS setting, this network serves as the student. The parameters $\bm{w}∈\mathbb{R}^{N× K}$ (first-layer) and $\bm{v}∈\mathbb{R}^{K× H}$ (readout) are both trainable. The readout $\bm{v}$ has $H$ heads, $\bm{v}_{h}∈\mathbb{R}^{K}$ for $h=1,...,H$, which can be switched to adapt to different contexts or tasks. In the simplest case, $H=L=1$, the network will often take the form

$$
f_{\bm{w},\bm{v}}(\bm{x})=\frac{1}{\sqrt{K}}\sum_{k=1}^{K}v_{k}\,g\left(\frac{{\bm{w}}_{k}\cdot{\bm{x}}}{\sqrt{N}}\right)\,, \tag{3}
$$

where we have dropped the head index, and $g(\cdot)$ is a nonlinearity (e.g., $g(z)=\operatorname{erf}(z/\sqrt{2})$).
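For concreteness, here is a minimal NumPy sketch of the student in Eq. (3) with the example nonlinearity $g(z)=\operatorname{erf}(z/\sqrt{2})$. The helper names and the weight layout (`w` of shape `(N, K)`, one column per hidden unit) are illustrative assumptions.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)  # elementwise erf using only the standard library

def g(z):
    """Example nonlinearity from the text: g(z) = erf(z / sqrt(2))."""
    return _erf(z / math.sqrt(2.0))

def student(x, w, v):
    """Two-layer network of Eq. (3) for H = L = 1.

    x : (N,) input, w : (N, K) first-layer weights, v : (K,) readout.
    """
    N, K = w.shape
    local_fields = w.T @ x / math.sqrt(N)      # w_k . x / sqrt(N), one per hidden unit
    return float(v @ g(local_fields)) / math.sqrt(K)
```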

To characterize the learning process, we consider a cost function of the form

$$
\mathcal{L}({\bm{w}},{\bm{v}}|\bm{x},\bm{c})=\ell\left(\frac{{\bm{x}}^{\top}{\bm{w}_{*}}}{\sqrt{N}},\frac{{\bm{x}}^{\top}{\bm{w}}}{\sqrt{N}},\frac{\bm{w}^{\top}\bm{w}}{N},{\bm{v}},{\bm{c}},z\right)+\tilde{g}\left(\frac{\bm{w}^{\top}\bm{w}}{N},{\bm{v}}\right)\,, \tag{4}
$$

where we have introduced the loss function $\ell$ , and the regularization function $\tilde{g}$ , which typically penalizes large values of the parameter norms. Note that the functional form of $\ell(·)$ in Eq. (4) implicitly contains details of the problem, including the network architecture, the specific loss function used, and the shape of the target function. Additionally, it may contain adaptive hyperparameters and controls on architectural features. When considering a TS setting, the loss takes the form

$$
\ell\left(\frac{{\bm{x}}^{\top}{\bm{w}_{*}}}{\sqrt{N}},\frac{{\bm{x}}^{\top}{\bm{w}}}{\sqrt{N}},\frac{\bm{w}^{\top}\bm{w}}{N},{\bm{v}},{\bm{c}},z\right)=\tilde{\ell}(f_{\bm{w},\bm{v}}(\bm{x}),y)\,, \tag{5}
$$

where $y$ is given in Eq. (2) and $\tilde{\ell}(a,b)$ penalizes dissimilar values of $a$ and $b$ . A typical choice is the square loss: $\tilde{\ell}(a,b)=(a-b)^{2}/2$ .

2.2 Learning dynamics

We study the learning dynamics under online (one‐pass) SGD, in which each update is computed using a fresh sample $\bm{x}^{\mu}$ at each training step $\mu$. In contrast, offline (multi-pass) SGD repeatedly reuses the same samples throughout training. The online regime admits an exact analysis via statistical‐physics methods [32, 33, 34, 24]. The parameters evolve as

$$
\begin{aligned}
{\bm{w}}^{\mu+1}&={\bm{w}}^{\mu}-{\eta}\nabla_{\bm{w}}\mathcal{L}({\bm{w}}^{\mu},{\bm{v}}^{\mu}|\bm{x}^{\mu},\bm{c}^{\mu})\;,\\
\bm{v}^{\mu+1}&=\bm{v}^{\mu}-\frac{\eta_{v}}{N}\nabla_{\bm{v}}\mathcal{L}({\bm{w}}^{\mu},{\bm{v}}^{\mu}|\bm{x}^{\mu},\bm{c}^{\mu})\;,
\end{aligned} \tag{6}
$$

where $\eta$ and $\eta_{v}$ denote the learning rates of the first-layer and readout parameters, respectively. Other training algorithms, such as biologically plausible learning rules [46, 47], can be incorporated into this framework, but we leave their analysis to future work. We focus on the high-dimensional limit where the input dimension $N$ and the number of training steps $\mu$ jointly tend to infinity at fixed training time $\alpha=\mu/N$. All other dimensions, i.e., $K$, $H$, $L$ and $M$, are assumed to be $\mathcal{O}_{N}(1)$.
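A minimal sketch of one online SGD step, Eq. (6), assuming the student of Eq. (3) with $g(z)=\operatorname{erf}(z/\sqrt{2})$, the square loss $\tilde{\ell}(a,b)=(a-b)^2/2$ and no regularizer ($\tilde{g}=0$). Function names are hypothetical. The $1/N$ factor in the readout update keeps the per-step change of $\bm{v}$ at $\mathcal{O}(1/N)$, the same order as the per-step change of the order parameters, so both evolve on the time scale $\alpha=\mu/N$.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)

def g(z):
    return _erf(z / math.sqrt(2.0))

def g_prime(z):
    # d/dz erf(z / sqrt(2)) = sqrt(2 / pi) * exp(-z^2 / 2)
    return math.sqrt(2.0 / math.pi) * np.exp(-z**2 / 2.0)

def loss_and_grads(w, v, x, y):
    """Square loss of the Eq. (3) student on one sample, with gradients w.r.t. w (N, K) and v (K,)."""
    N, K = w.shape
    lam = w.T @ x / math.sqrt(N)                 # local fields
    f = v @ g(lam) / math.sqrt(K)                # network output
    err = f - y
    grad_w = np.outer(x, err * v * g_prime(lam)) / math.sqrt(N * K)
    grad_v = err * g(lam) / math.sqrt(K)
    return 0.5 * err**2, grad_w, grad_v

def sgd_step(w, v, x, y, eta, eta_v):
    """One online SGD update, Eq. (6), on a fresh sample (x, y)."""
    N = w.shape[0]
    _, gw, gv = loss_and_grads(w, v, x, y)
    return w - eta * gw, v - (eta_v / N) * gv
```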

The generalization error is given by

$$
\epsilon_{g}({\bm{w}},{\bm{v}})=\mathbb{E}_{\bm{x},\bm{c}}\left[\ell_{g}\left(\frac{{\bm{x}}^{\top}{\bm{w}_{*}}}{\sqrt{N}},\frac{{\bm{x}}^{\top}{\bm{w}}}{\sqrt{N}},\frac{\bm{w}^{\top}\bm{w}}{N},{\bm{v}},{\bm{c}},0\right)\right]\,, \tag{7}
$$

where $\mathbb{E}_{\bm{x},\bm{c}}$ denotes the expectation over the joint distribution of $\bm{x}$ and ${\bm{c}}$ and the label noise $z$ is set to zero. Depending on the context, the function $\ell_{g}$ may coincide with the training loss $\ell$ , or it may represent a different metric—such as the misclassification error in the case of binary labels. Crucially, the generalization error $\epsilon_{g}({\bm{w}},{\bm{v}})$ depends on the high-dimensional first-layer weights only through the following low-dimensional order parameters:

$$
Q^{\mu}_{kk^{\prime}}\coloneqq\frac{{\bm{w}^{\mu}_{k}}\cdot\bm{w}^{\mu}_{k^{\prime}}}{N}\;,\quad M^{\mu}_{km}\coloneqq\frac{{\bm{w}^{\mu}_{k}}\cdot\bm{w}_{*,m}}{N}\;,\quad R^{\mu}_{k(l,c_{l})}\coloneqq\frac{{\bm{w}^{\mu}_{k}}\cdot\bm{\mu}_{l,c_{l}}}{N}\;. \tag{8}
$$

Collecting these together with the readout parameters $\bm{v}^{\mu}$ into a single vector

$$
\mathbb{Q}=\left({\rm vec}\left({\bm{Q}}\right),{\rm vec}\left({\bm{M}}\right),{\rm vec}\left({\bm{R}}\right),{\rm vec}\left({\bm{v}}\right)\right)^{\top}\in\mathbb{R}^{K^{2}+KM+K(C_{1}+\ldots+C_{L})+HK}\,, \tag{9}
$$

we can write $\epsilon_{g}({\bm{w}},{\bm{v}})=\epsilon_{g}(\mathbb{Q})$ (see Appendix A). Additionally, it is useful to define the low-dimensional constant parameters

$$
S_{m(l,c_{l})}\coloneqq\frac{{\bm{w}_{*,m}}\cdot\bm{\mu}_{l,c_{l}}}{N}\;,\quad T_{mm^{\prime}}\coloneqq\frac{{\bm{w}_{*,m}}\cdot\bm{w}_{*,m^{\prime}}}{N}\;,\quad\Omega_{(l,c_{l})(l^{\prime},c^{\prime}_{l^{\prime}})}\coloneqq\frac{\bm{\mu}_{l,c_{l}}\cdot\bm{\mu}_{l^{\prime},c^{\prime}_{l^{\prime}}}}{N}\;. \tag{10}
$$

Note that the scaling of teacher vectors $\bm{w}_{*,m}$ and the centroids $\bm{\mu}_{l,c_{l}}$ with $N$ is chosen so that the parameters in Eq. (10) are $\mathcal{O}_{N}(1)$ .
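To illustrate how $\epsilon_g$ reduces to a function of $\mathbb{Q}$, consider an assumed special case: a single zero-mean cluster with unit variance ($\bm{x}\sim\mathcal{N}(0,\bm{I}_N)$, so $\bm{R}$ plays no role), $L=1$, square loss, $g(z)=\operatorname{erf}(z/\sqrt{2})$, and a teacher of the same committee form, $f^*(\bm{x})=M^{-1/2}\sum_m v^*_m\,g(\bm{w}_{*,m}\cdot\bm{x}/\sqrt{N})$. The local fields are then jointly Gaussian with covariances $\bm{Q}$, $\bm{M}$ and $\bm{T}$, and the classical identity $\mathbb{E}[g(a)g(b)]=\tfrac{2}{\pi}\arcsin\bigl(C_{ab}/\sqrt{(1+C_{aa})(1+C_{bb})}\bigr)$ for this $g$ gives $\epsilon_g$ in closed form. This is our own sketch for that case, not the general expression of Appendix A; names are hypothetical.

```python
import math
import numpy as np

def order_parameters(w, w_star, mus):
    """Eq. (8): Q = w^T w / N, M = w^T w_* / N, R = w^T mu / N.

    w : (N, K) student, w_star : (N, M) teacher, mus : (N, C) centroids as columns.
    """
    N = w.shape[0]
    return w.T @ w / N, w.T @ w_star / N, w.T @ mus / N

def _I2(c12, c11, c22):
    # E[g(a) g(b)] for g(z) = erf(z / sqrt(2)) and zero-mean Gaussian (a, b)
    # with covariance [[c11, c12], [c12, c22]]
    return (2.0 / math.pi) * np.arcsin(c12 / np.sqrt((1.0 + c11) * (1.0 + c22)))

def eps_g_isotropic(Q, M, T, v, v_star):
    """eps_g = E[(f - f*)^2] / 2 for x ~ N(0, I_N), written only in terms of Q, M, T, v, v*."""
    K, Mt = len(v), len(v_star)
    dQ, dT = np.diag(Q), np.diag(T)
    ss = _I2(Q, dQ[:, None], dQ[None, :])      # student-student correlations
    tt = _I2(T, dT[:, None], dT[None, :])      # teacher-teacher correlations
    st = _I2(M, dQ[:, None], dT[None, :])      # student-teacher correlations
    return 0.5 * (v @ ss @ v / K + v_star @ tt @ v_star / Mt
                  - 2.0 * v @ st @ v_star / math.sqrt(K * Mt))
```

A Monte Carlo average of $(f-f^*)^2/2$ over Gaussian inputs agrees with `eps_g_isotropic` up to sampling error, which is a quick way to check such closed forms.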

In the high‐dimensional limit, the stochastic fluctuations of the order parameters $\mathbb{Q}$ vanish and their dynamics concentrate on a deterministic trajectory. Consequently, $\mathbb{Q}(\alpha)$ satisfies a closed system of ordinary differential equations (ODEs) [32, 33, 34, 13, 24]:

$$
\frac{{\rm d}\mathbb{Q}}{{\rm d}\alpha}=f_{\mathbb{Q}}\left(\mathbb{Q}(\alpha),\bm{u}(\alpha)\right)\;,\qquad{\rm with}\quad\alpha\in(0,\alpha_{F}]\;, \tag{11}
$$

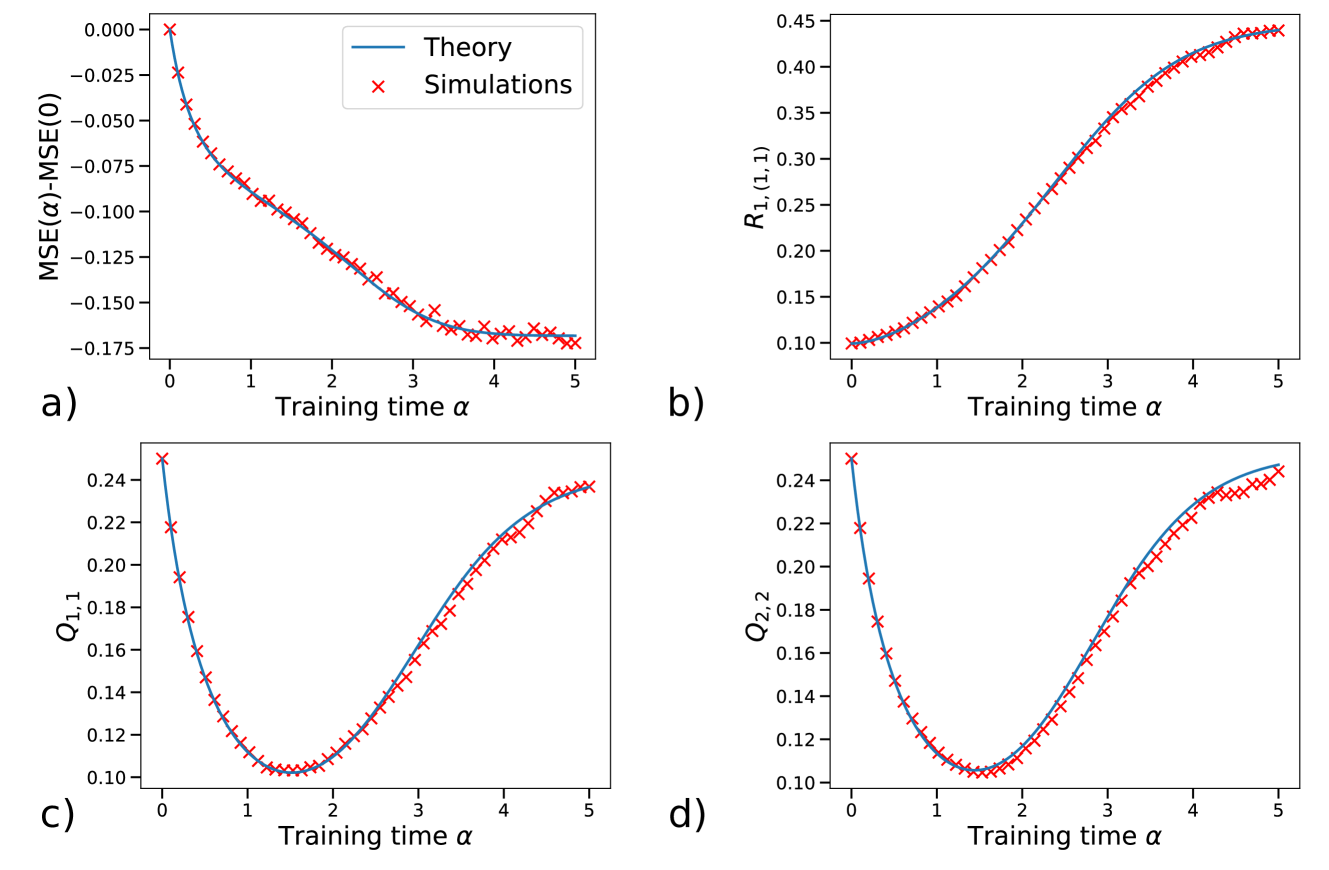

where $\alpha_{F}=P/N$ denotes the final training time and the explicit form of $f_{\mathbb{Q}}$ is provided in Appendix A. In Appendix C, we check these theoretical ODEs via numerical simulations, finding excellent agreement. The vector $\bm{u}(\alpha)$ encodes controllable parameters involved in the training process. We assume that ${\bm{u}}(\alpha)∈\mathcal{U}$ , where $\mathcal{U}$ is the set of feasible controls, whose dimension is $\mathcal{O}_{N}(1)$ . The set $\mathcal{U}$ may include discrete, continuous, or mixed controls. For example, setting $\bm{u}(\alpha)=\eta(\alpha)$ corresponds to dynamic learning‐rate schedules. The control $\bm{u}(\alpha)$ could also parameterize a time-dependent distribution of the cluster variable $\bm{c}$ to encode sample difficulty, e.g., to study curriculum learning. Likewise, $\bm{u}(\alpha)$ could describe aspects of the network architecture, e.g., a time‐dependent dropout rate. Several specific examples are discussed in Section 2.4.

Identifying optimal schedules for $\bm{u}(\alpha)$ is the central goal of this work. Solving this control problem directly in the original high‐dimensional parameter space is computationally challenging. However, the exact low‐dimensional description of the training dynamics in Eq. (11) allows us to readily apply standard OC techniques.
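As a worked instance of Eq. (11), take the simplest special case: linear activation $g(z)=z$, $K=M=1$, a fixed readout $v=1$, a single zero-mean cluster with unit variance, square loss, label noise $\sigma_n$, and control $\bm{u}(\alpha)=\eta(\alpha)$. Averaging the SGD increments of $Q$ and $M$ over a fresh sample (our own short calculation under these assumptions, using $\|\bm{x}\|^2/N\to 1$) gives ${\rm d}M/{\rm d}\alpha=\eta\,(T-M)$ and ${\rm d}Q/{\rm d}\alpha=2\eta\,(M-Q)+\eta^{2}\,(Q-2M+T+\sigma_n^{2})$, with $\epsilon_g=(Q-2M+T)/2$. The sketch integrates these ODEs with forward Euler for an arbitrary schedule; function names and defaults are hypothetical.

```python
import numpy as np

def f_Q(state, eta, T=1.0, sigma_n=0.5):
    """Right-hand side of Eq. (11) in the assumed linear single-unit case; state = (Q, M)."""
    Q, M = state
    dQ = 2 * eta * (M - Q) + eta**2 * (Q - 2 * M + T + sigma_n**2)
    dM = eta * (T - M)
    return np.array([dQ, dM])

def integrate(state0, eta_schedule, alpha_F, n_steps, **kw):
    """Forward Euler for dQ/dalpha = f_Q(Q, u(alpha)), with control u(alpha) = eta_schedule(alpha)."""
    d_alpha = alpha_F / n_steps
    state = np.array(state0, dtype=float)
    traj = [state.copy()]
    for j in range(n_steps):
        state = state + d_alpha * f_Q(state, eta_schedule(j * d_alpha), **kw)
        traj.append(state.copy())
    return np.array(traj)

def eps_g(state, T=1.0):
    """Generalization error (Q - 2M + T) / 2 of the linear student."""
    Q, M = state[..., 0], state[..., 1]
    return 0.5 * (Q - 2 * M + T)
```

For constant $\eta$, the combination $\varepsilon=Q-2M+T$ obeys ${\rm d}\varepsilon/{\rm d}\alpha=-\eta(2-\eta)\varepsilon+\eta^{2}\sigma_n^{2}$, whose exponential solution is a convenient check on the integrator.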

2.3 Optimal control of the learning dynamics

In this section, we describe the OC framework that allows us to identify optimal learning strategies. We seek to identify the OC $\bm{u}(\alpha)∈\mathcal{U}$ that minimizes the generalization error at the end of training, i.e., at training time $\alpha_{F}$ . To this end, we introduce the cost functional

$$

\mathcal{F}[\bm{u}]=\epsilon_{g}(\mathbb{Q}(\alpha_{F}))\,, \tag{12}

$$

where the square brackets indicate functional dependence on the full control trajectory $\bm{u}(\alpha)$ , for $0≤\alpha≤\alpha_{F}$ . The functional dependence on $\bm{u}(\alpha)$ appears implicitly through the ODEs (11), which govern the evolution from the fixed initial state $\mathbb{Q}(0)=\mathbb{Q}_{0}$ to the final state $\mathbb{Q}(\alpha_{F})$ . Note that, while we consider globally optimal schedules—that is, schedules optimized with respect to the final cost functional—previous works have also explored greedy schedules that are locally optimal, maximizing the error decrease or the learning speed at each training step [48, 49]. These schedules are easier to analyze but generally lead to suboptimal results [40]. Furthermore, although our focus is on minimizing the final generalization error, the framework can accommodate alternative objectives. For instance, one may optimize the time‐averaged generalization error as in [41], if the performance during training, rather than only at $\alpha_{F}$ , is of interest. We adopt two types of OC techniques: indirect methods, which solve the boundary‐value problem defined by the Pontryagin maximum principle [50, 51, 52], and direct methods, which discretize the control $\bm{u}(\alpha)$ and map the problem into a finite‐dimensional nonlinear program [53]. Additional costs or constraints associated with the control signal ${\bm{u}}$ can be directly incorporated into both classes of methods.

2.3.1 Indirect methods

Following Pontryagin’s maximum principle [50], we augment the functional in Eq. (12) by introducing the Lagrange multipliers $\hat{\mathbb{Q}}(\alpha)$ to enforce the dynamics (11)

$$
\mathcal{F}[\bm{u},\mathbb{Q},\hat{\mathbb{Q}}]=\epsilon_{g}\bigl(\mathbb{Q}(\alpha_{F})\bigr)+\int_{0}^{\alpha_{F}}{\rm d}\alpha\;\hat{\mathbb{Q}}(\alpha)\cdot\left[-\frac{{\rm d}\mathbb{Q}(\alpha)}{{\rm d}\alpha}+f_{\mathbb{Q}}\bigl(\mathbb{Q}(\alpha),\,\bm{u}(\alpha)\bigr)\right], \tag{13}
$$

where $\hat{\mathbb{Q}}(\alpha)$ are known as adjoint (or costate) variables. The optimality conditions are $\delta\mathcal{F}/\delta\hat{\mathbb{Q}}(\alpha)=0$ and $\delta\mathcal{F}/\delta\mathbb{Q}(\alpha)=0$ . The first yields the forward dynamics (11). For $\alpha<\alpha_{F}$ , the second, after integration by parts, gives the adjoint (backward) ODEs

$$
-\frac{{\rm d}\hat{\mathbb{Q}}(\alpha)^{\top}}{{\rm d}\alpha}=\hat{\mathbb{Q}}(\alpha)^{\top}\nabla_{\mathbb{Q}}f_{\mathbb{Q}}\bigl(\mathbb{Q}(\alpha),\bm{u}(\alpha)\bigr), \tag{14}
$$

with the final condition at $\alpha=\alpha_{F}$ :

$$
\hat{\mathbb{Q}}(\alpha_{F})=\nabla_{\mathbb{Q}}\,\epsilon_{g}\bigl(\mathbb{Q}(\alpha_{F})\bigr). \tag{15}
$$

Variations at $\alpha=0$ are not considered since $\mathbb{Q}(0)=\mathbb{Q}_{0}$ is fixed. Finally, optimizing $\bm{u}$ point-wise yields

$$
\bm{u}^{*}(\alpha)=\underset{\bm{u}\in\mathcal{U}}{\arg\min}\;\bigl\{\hat{\mathbb{Q}}(\alpha)\cdot f_{\mathbb{Q}}\bigl(\mathbb{Q}(\alpha),\bm{u}\bigr)\bigr\}. \tag{16}
$$

In practice, we use the forward-backward sweep method: starting from an initial guess for $\bm{u}$ , we iterate the following steps until convergence.

1. Integrate $\mathbb{Q}$ forward via (11) from $\mathbb{Q}(0)=\mathbb{Q}_{0}$.

2. Integrate $\hat{\mathbb{Q}}$ backward via (14) from $\hat{\mathbb{Q}}(\alpha_{F})$ in (15).

3. Update $\bm{u}^{k+1}(\alpha)=\gamma_{\rm damp}\bm{u}^{k}(\alpha)+(1-\gamma_{\rm damp})\bm{u}^{*}(\alpha)$, where $\bm{u}^{*}(\alpha)$ is given in (16).

We typically choose the damping parameter $\gamma_{\rm damp}>0.9$ . Convergence is usually reached within a few hundred to a few thousand iterations.
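The forward-backward sweep can be sketched on a toy problem. We assume the linear single-unit case ($g(z)=z$, $K=M=1$, fixed readout, isotropic inputs, square loss) in which, by a short calculation of our own, $\varepsilon=Q-2M+T=2\epsilon_g$ obeys the closed scalar ODE ${\rm d}\varepsilon/{\rm d}\alpha=-\eta(2-\eta)\varepsilon+\eta^{2}\sigma_n^{2}$ with control $u=\eta$. The adjoint then follows Eq. (14) with final condition $\hat{\varepsilon}(\alpha_F)=1/2$ from Eq. (15), and Eq. (16) is solved by a grid search over an assumed feasible set $\eta\in[0,1.5]$. Names, grid, noise level and iteration counts are illustrative choices.

```python
import numpy as np

SIGMA2 = 0.25                             # assumed label-noise variance sigma_n^2
ETA_GRID = np.linspace(0.0, 1.5, 301)     # assumed feasible set U, discretized

def f(eps, eta):
    """Toy forward dynamics d eps / d alpha of the linear single-unit case."""
    return -eta * (2.0 - eta) * eps + eta**2 * SIGMA2

def df_deps(eps, eta):
    """Partial derivative of f with respect to the state eps."""
    return -eta * (2.0 - eta)

def forward_backward_sweep(eps0=1.0, alpha_F=5.0, n=500, gamma_damp=0.9, iters=300):
    h = alpha_F / n
    u = np.full(n, 0.5)                   # initial guess: constant eta on each step
    for _ in range(iters):
        # Step 1: integrate the state forward (Euler) from eps(0) = eps0
        eps = np.empty(n + 1)
        eps[0] = eps0
        for j in range(n):
            eps[j + 1] = eps[j] + h * f(eps[j], u[j])
        # Step 2: integrate the adjoint backward from lam(alpha_F) = d eps_g / d eps = 1/2,
        # using -d lam / d alpha = lam * df/deps
        lam = np.empty(n + 1)
        lam[n] = 0.5
        for j in range(n - 1, -1, -1):
            lam[j] = lam[j + 1] + h * lam[j + 1] * df_deps(eps[j + 1], u[j])
        # Step 3: pointwise argmin of lam * f over the grid, then the damped update
        ham = lam[:n, None] * f(eps[:n, None], ETA_GRID[None, :])
        u_star = ETA_GRID[np.argmin(ham, axis=1)]
        u = gamma_damp * u + (1.0 - gamma_damp) * u_star
    return u, eps
```

Because the adjoint stays positive here, the pointwise minimizer is $\eta^*=\varepsilon/(\varepsilon+\sigma_n^2)$, so the schedule lowers the learning rate as the error drops. In this one-dimensional toy problem it coincides with the greedy schedule; that is a special feature of the toy model, not a general property.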

2.3.2 Direct methods

Direct methods discretize the control trajectory $\bm{u}(\alpha)$ on a finite grid of $I=\alpha_{F}/{\rm d}\alpha$ intervals and map the continuous‐time OC problem into a finite‐dimensional nonlinear program (NLP). We introduce optimization variables for $\mathbb{Q}$ and $\bm{u}$ at each node $\alpha_{j}=j\,{\rm d}\alpha$, enforce the dynamics (11) via constraints on each interval, and solve the resulting NLP using the CasADi package [54]. In this paper, we implement a multiple‐shooting scheme: $\bm{u}(\alpha)$ is parameterized as constant on each interval, and continuity of $\mathbb{Q}$ is enforced at the boundaries. While direct methods are conceptually simpler (they rely on standard NLP solvers and avoid the explicit derivation of adjoint equations), in the settings under consideration we find that they tend to perform worse when the control $\bm{u}$ has discrete components. Conversely, indirect methods require computing costate derivatives but yield more accurate solutions for discrete controls. Depending on the problem setting, we therefore choose between direct and indirect approaches as specified in each case.
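A direct multiple-shooting version of a toy problem is sketched below: the scalar error $\varepsilon=Q-2M+T$ with ${\rm d}\varepsilon/{\rm d}\alpha=-\eta(2-\eta)\varepsilon+\eta^{2}\sigma_n^{2}$, which we derived for the linear single-unit case ($g(z)=z$, $K=M=1$, fixed readout, isotropic inputs, square loss). To keep it self-contained we swap CasADi for SciPy's `minimize` with the SLSQP method. The decision vector holds the state at each node and a piecewise-constant control on each interval, and continuity of the state at interval boundaries enters as equality constraints. All constants and names are assumptions for illustration.

```python
import numpy as np
from scipy.optimize import minimize

SIGMA2, EPS0, ALPHA_F, I = 0.25, 1.0, 5.0, 20   # assumed toy problem; I control intervals
D_ALPHA = ALPHA_F / I

def f(eps, eta):
    """Toy dynamics d eps / d alpha of the linear single-unit case."""
    return -eta * (2.0 - eta) * eps + eta**2 * SIGMA2

def shoot(eps, eta, substeps=10):
    """Integrate one interval of length D_ALPHA with constant control eta (classical RK4)."""
    h = D_ALPHA / substeps
    for _ in range(substeps):
        k1 = f(eps, eta)
        k2 = f(eps + 0.5 * h * k1, eta)
        k3 = f(eps + 0.5 * h * k2, eta)
        k4 = f(eps + h * k3, eta)
        eps = eps + h * (k1 + 2 * k2 + 2 * k3 + k4) / 6.0
    return eps

def unpack(z):
    """Decision vector z = (eps_1..eps_I, eta_0..eta_{I-1}); eps_0 = EPS0 is fixed."""
    return np.concatenate([[EPS0], z[:I]]), z[I:]

def continuity(z):
    """Multiple-shooting defects: each node must match the shot from the previous node."""
    eps, eta = unpack(z)
    return np.array([eps[j + 1] - shoot(eps[j], eta[j]) for j in range(I)])

def objective(z):
    return 0.5 * z[I - 1]                 # eps_g(alpha_F) = eps_I / 2

# Feasible initial guess: constant eta = 0.5, nodes filled by forward shooting
eta0 = np.full(I, 0.5)
nodes = [EPS0]
for j in range(I):
    nodes.append(shoot(nodes[-1], eta0[j]))
z0 = np.concatenate([nodes[1:], eta0])

res = minimize(objective, z0, method="SLSQP",
               bounds=[(0.0, 10.0)] * I + [(0.0, 1.5)] * I,
               constraints=[{"type": "eq", "fun": continuity}],
               options={"maxiter": 500, "ftol": 1e-10})
eps_opt, eta_opt = unpack(res.x)
```

`eta_opt` should decrease over training, and `eps_opt[-1]` should end below the value reached with the best constant learning rate. A tool such as CasADi would additionally supply exact derivatives through automatic differentiation instead of the finite differences SLSQP uses here.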

2.4 Special cases of interest

In this section, we illustrate how the proposed framework can be readily applied to describe several representative learning scenarios, addressing theoretical questions emerging in machine learning and cognitive science. We organize the presentation of different learning strategies into three main categories, each reflecting a distinct aspect of the training process: hyperparameters of the optimization, data selection mechanisms, and architectural adaptations.

2.4.1 Hyperparameter schedules

Optimization hyperparameters are external configuration variables that shape the dynamics of the learning process. Dynamically tuning these parameters during training is a standard practice in machine learning, and represents one of the most widely used and studied forms of training protocols.

Learning rate.

The learning rate $\eta$ is often regarded as the single most important hyperparameter [1]. A small $\eta$ mitigates the impact of data noise but slows convergence, whereas a large $\eta$ accelerates convergence at the expense of amplified stochastic fluctuations, which can lead to divergence of the training dynamics. Consequently, many empirical studies have proposed heuristic schedules, such as initial warm‐ups [55] or periodic schemes [56], and methods to optimize $\eta$ via additional gradient steps [57]. From a theoretical perspective, optimal learning rate schedules were already investigated in the 1990s in the context of online training of two-layer networks, using a variational approach closely related to ours [39, 40, 58]. More recently, [59] analytically derived optimal learning rate schedules for optimization in high-dimensional non-convex landscapes. Within our framework, the learning rate can always be included in the control vector $\bm{u}$, as done in [38] in the context of online continual learning. Optimal learning rate schedules are further discussed in the context of curriculum learning in Section 3.1.
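The trade-off between speed and noise can be made explicit in the linear single-unit special case ($g(z)=z$, $K=M=1$, fixed readout, isotropic inputs, square loss, label-noise variance $\sigma_n^2$). Under these assumptions, our own short calculation gives, for constant $\eta$, $\varepsilon(\alpha)=\varepsilon_\infty+(\varepsilon_0-\varepsilon_\infty)e^{-\eta(2-\eta)\alpha}$ with plateau $\varepsilon_\infty=\eta\sigma_n^2/(2-\eta)$, where $\varepsilon=Q-2M+T=2\epsilon_g$. A larger $\eta$ speeds up the decay but raises the plateau, and for $\eta\ge 2$ the error no longer converges. The helper below evaluates this formula; its name and defaults are hypothetical.

```python
import math

def constant_eta_error(eta, alpha, eps0=1.0, sigma2=0.25):
    """eps(alpha) = Q - 2M + T for a constant learning rate eta >= 0 in the assumed linear case,
    i.e. the solution of d eps / d alpha = -eta (2 - eta) eps + eta^2 sigma2."""
    if eta == 0.0:
        return eps0                        # no updates: the error stays at its initial value
    if eta >= 2.0:
        return math.inf                    # decay rate <= 0: non-convergent regime, flagged as inf
    rate = eta * (2.0 - eta)               # speed of the exponential decay
    plateau = eta * sigma2 / (2.0 - eta)   # asymptotic error set by the label noise
    return plateau + (eps0 - plateau) * math.exp(-rate * alpha)
```

For short training budgets a large $\eta$ wins, while for long ones a small $\eta$ does; this is the tension a schedule $\eta(\alpha)$ can resolve.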

Batch size.

Dynamically adjusting the batch size, i.e., the number of data samples used to estimate the gradient at each SGD step, has been proposed as a powerful alternative to learning rate schedules [60, 61, 62]. Mini-batch SGD can be treated within our theoretical formulation by identifying the batch of samples with the input sequence, corresponding to a loss function of the form:

$$
\ell\left(\frac{{\bm{x}}^{\top}{\bm{w}_{*}}}{\sqrt{N}},\frac{{\bm{x}}^{\top}{\bm{w}}}{\sqrt{N}},\frac{\bm{w}^{\top}\bm{w}}{N},{\bm{v}},{\bm{c}},z\right)=\frac{1}{L}\sum_{l=1}^{L}\hat{\ell}\left(\frac{{\bm{w}_{*}}^{\top}{\bm{x}}_{l}}{\sqrt{N}},\frac{{\bm{w}}^{\top}{\bm{x}}_{l}}{\sqrt{N}},\frac{\bm{w}^{\top}\bm{w}}{N},{\bm{v}},c_{l},z\right), \tag{17}
$$

where $L$ here denotes the batch size and can be adapted dynamically during training. An explicit example of this approach is presented in Section 3.3, in the context of batch augmentation to train a denoising autoencoder.
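A minimal sketch of the mini-batch loss in Eq. (17), assuming the committee student of Eq. (3) with $g(z)=\operatorname{erf}(z/\sqrt{2})$ and a square loss $\hat{\ell}=(f-y)^2/2$ on each element; the $L$ columns of `X` play the role of the sequence elements $\bm{x}_l$. Changing `L` between SGD steps is what a dynamic batch-size control would do. The function name is hypothetical.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)

def minibatch_loss(w, v, X, y):
    """Eq. (17) with square loss: w (N, K), v (K,), X (N, L) one sample per column, y (L,)."""
    N, K = w.shape
    fields = w.T @ X / math.sqrt(N)                              # (K, L) local fields
    preds = v @ _erf(fields / math.sqrt(2.0)) / math.sqrt(K)     # (L,) student outputs
    return 0.5 * np.mean((preds - y) ** 2)                       # average over the batch
```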

Weight-decay.

Schedules of regularization hyperparameters, e.g., the strength of the penalty on the $L_2$-norm of the weights, have also been empirically studied, for instance in the context of weight pruning [63]. The early work [64] investigated optimal regularization strategies through a variational approach akin to ours. More generally, hyperparameters of the regularization function $\tilde{g}$ can be directly included in the control vector $\bm{u}$.

2.4.2 Dynamic data selection

Accurately selecting training samples is a central challenge in modern machine learning. In heterogeneous datasets, e.g., composed of examples from multiple tasks or with varying levels of difficulty, the final performance of a model can be significantly influenced by the order in which samples are presented during training.

Task ordering.

The ability to learn new tasks without forgetting previously learned ones is crucial for both artificial and biological learners [65, 66]. Recent theoretical studies have assessed the relative effectiveness of various pre‐specified task sequences [67, 68, 69, 70, 71]. In contrast, our framework allows us to identify optimal task sequences in a variety of settings and was applied in [38] to derive interpretable task‐replay strategies that minimize forgetting. The model in [67, 68, 38] is a special case of our formulation where each of the teacher vectors defines a different task $y_{m}=f^{*}_{\bm{w}^{*}_{m}}(\bm{x})$, $m=1,...,M$, and $L=1$. The student has $K=M$ hidden nodes and $H=M$ task-specific readout heads. When training on task $m$, the loss function takes the simplified form

$$
\ell\left(\frac{{\bm{x}}^{\top}{\bm{w}_{*}}}{\sqrt{N}},\frac{{\bm{x}}^{\top}{\bm{w}}}{\sqrt{N}},\frac{\bm{w}^{\top}\bm{w}}{N},{\bm{v}}\right)=\hat{\ell}\left(\frac{{\bm{w}^{*}_{m}}\cdot{\bm{x}}}{\sqrt{N}},\frac{{\bm{w}}^{\top}{\bm{x}}}{\sqrt{N}},\frac{\bm{w}^{\top}\bm{w}}{N},{\bm{v}}_{m}\right)\,. \tag{18}
$$

The task variable $m$ can then be treated as a control variable to identify optimal task orderings that minimize generalization error across tasks [38].
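A sketch of the task-specific loss of Eq. (18), assuming single-unit teachers $y_m=g(\bm{w}^*_m\cdot\bm{x}/\sqrt{N})$ with $g(z)=\operatorname{erf}(z/\sqrt{2})$, no label noise, a square loss, and a student with $K=M$ hidden units and $H=M$ heads as in the text. The task index `m` is the control: a schedule $m(\alpha)$ selects which loss drives each SGD step. Names are hypothetical.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)

def g(a):
    return _erf(a / math.sqrt(2.0))

def task_loss(w, V, w_star, x, m):
    """Square loss of Eq. (18) on a noiseless sample of task m.

    w      : (N, K) shared first-layer weights
    V      : (K, H) readout, one head per task (H = M)
    w_star : (N, M) teacher vectors, column m defines task m
    """
    N, K = w.shape
    target = g(w_star[:, m] @ x / math.sqrt(N))                  # assumed single-unit teacher
    pred = V[:, m] @ g(w.T @ x / math.sqrt(N)) / math.sqrt(K)    # head m of the student
    return 0.5 * float(pred - target) ** 2
```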

Curriculum learning.

When heterogeneous datasets involve a notion of relative sample difficulty, it is natural to ask whether training performance can be enhanced by using a curriculum, i.e., by presenting examples in a structured order based on their difficulty, rather than sampling them at random. This question has been theoretically explored in recent literature [29, 72, 73] and is investigated within our formulation in Section 3.1.

Data imbalance.

Many real-world datasets exhibit class imbalance, where certain classes are significantly over-represented [74]. Recent theoretical work has used statistical physics to study class-imbalance mitigation through under- and over-sampling in sequential data [75, 76]. Further aspects of data imbalance, such as relative representation imbalance and different sub-population variances, have been explored using a TS setting in [77, 78]. All these types of imbalance can be incorporated in our general formulation, e.g., by tilting the distribution of cluster memberships $p_{c}(\bm{c})$, the cluster variances, and the alignment parameters $\bm{S}$ between teacher vectors and cluster centroids (see Eq. (10)). This framework would allow one to investigate dynamical mitigation strategies—such as optimal data ordering, adaptive loss reweighting, and learning-rate schedules—aimed at restoring balance.

2.4.3 Dynamic architectures

Dynamic architectures allow models to adjust their structure during training based on data or task demands, addressing some limitations of static models [79]. Several heuristic strategies have been proposed to dynamically adapt a network’s architecture, e.g., to avoid overfitting or to facilitate knowledge transfer. Our framework enables the derivation of principled mechanisms for adapting the architecture during training across several settings.

Dropout.

Dropout is a widely adopted dynamic regularization technique in which random subsets of the network are deactivated during training to encourage robust, independent feature representations [80, 81]. While empirical studies have proposed adaptive dropout probabilities to enhance performance [82, 83], a theoretical understanding of optimal dropout schedules remains limited. In recent work, we introduced a two‐layer network model incorporating dropout and analyzed the impact of fixed dropout rates [84]. As shown in Section 3.2, our general framework contains the model of [84] as a special case, enabling the derivation of principled dropout schedules.

Gating.

Gating functions modify the network architecture by selectively activating specific pathways, thereby modulating information flow and allocating computational resources based on input context. This principle improves model efficiency and expressiveness, and underlies diverse systems such as mixture of experts [85], squeeze-and-excitation networks [86], and gated recurrent units [87]. Gated linear networks—introduced in [88] as context-gated models based on local learning rules—have been investigated in several theoretical works [89, 90, 91, 92]. Our framework offers the possibility to study dynamic gating and adaptive modulation, including gain and engagement modulation mechanisms [41], by controlling the hyperparameters of the gating functions. For instance, in teacher-student settings as in Eqs. (2) and (5), the model considered in [92] arises as a special case of our formulation, where $L=1$ and $f_{\bm{w},\bm{v}}(\bm{x})=\sum_{k=1}^{\lfloor K/2\rfloor}g_{k}(\bm{w}_{k}\cdot\bm{x})\,(\bm{w}_{\lfloor K/2\rfloor+k}\cdot\bm{x})$ with gating functions $g_{k}$.

Dynamic attention.

Self-attention is the core building block of the transformer architecture [93]. Dynamic attention mechanisms enhance standard attention by adapting its structure in response to input properties or task requirements, for example, by selecting sparse token interactions [94], varying attention spans [95], or pruning attention heads dynamically [96, 97]. Recent theoretical works have introduced minimal models of dot‐product attention that admit an analytic characterization [43, 98, 99]. These models can be incorporated into our framework to study adaptive attention dynamics. In particular, a multi-head single-layer dot-product attention model can be recovered by setting

$$
f_{\bm{w},\bm{v}}(\bm{x})=\sum_{h=1}^{H}v^{(h)}\,\bm{x}\,\operatorname{softmax}\left(\frac{\bm{x}^{\top}\bm{w}^{(h)}_{\mathcal{Q}}{\bm{w}^{(h)}_{\mathcal{K}}}^{\top}\bm{x}}{N}\right)\in\mathbb{R}^{N\times L}\;, \tag{19}
$$

where $\bm{w}^{(h)}_{\mathcal{Q}}\in\mathbb{R}^{N\times D_{H}}$ and $\bm{w}^{(h)}_{\mathcal{K}}\in\mathbb{R}^{N\times D_{H}}$ denote the query and key matrices of the $h^{\rm th}$ head, with head dimension $D_{H}$, so that the total number of student vectors is $K=2HD_{H}$. The value matrix is set to the identity, while the readout vector $\bm{v}\in\mathbb{R}^{H}$ provides the output weights across heads. In teacher-student settings [98], the model in Eq. (19) is a special case of our formulation (see also [43]). Possible controls in this case include masking variables that dynamically prune attention heads, sparsify token interactions, or modulate context visibility, enabling adaptive structural changes to the model.
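A direct NumPy transcription of Eq. (19) helps fix the shapes: $\bm{x}\in\mathbb{R}^{N\times L}$, the score matrix is $L\times L$, and the output is $N\times L$. We assume the softmax normalizes each column of the score matrix, so that every output token is a convex combination of input tokens (other conventions transpose this), and we add a hypothetical per-head mask `head_mask` as one example of the head-pruning controls mentioned above; neither choice is taken from [98].

```python
import numpy as np


def column_softmax(A):
    """Softmax over the first axis, so that every column sums to one."""
    A = A - A.max(axis=0, keepdims=True)   # shift for numerical stability
    E = np.exp(A)
    return E / E.sum(axis=0, keepdims=True)


def multihead_attention(x, WQ, WK, v, head_mask=None):
    """Sketch of Eq. (19): sum_h v_h * x @ softmax(x^T WQ_h WK_h^T x / N).

    x         : (N, L) input with L tokens of dimension N.
    WQ, WK    : (H, N, D_H) query and key matrices, one pair per head
                (their 2 * H * D_H columns are the K student vectors).
    v         : (H,) readout weights across heads.
    head_mask : optional (H,) array of 0/1 entries; a hypothetical control
                that prunes heads, not part of Eq. (19) itself.
    """
    N = x.shape[0]
    H = v.shape[0]
    mask = np.ones(H) if head_mask is None else np.asarray(head_mask, dtype=float)
    out = np.zeros(x.shape)
    for h in range(H):
        scores = x.T @ WQ[h] @ WK[h].T @ x / N          # (L, L) attention scores
        out += mask[h] * v[h] * (x @ column_softmax(scores))
    return out
```

Setting an entry of `head_mask` to zero removes that head's contribution entirely, which is the kind of structural change a time-dependent control could switch on and off during training.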

3 Applications

In this section, we present three different learning scenarios in which our framework allows us to identify optimal learning strategies.

3.1 Curriculum learning


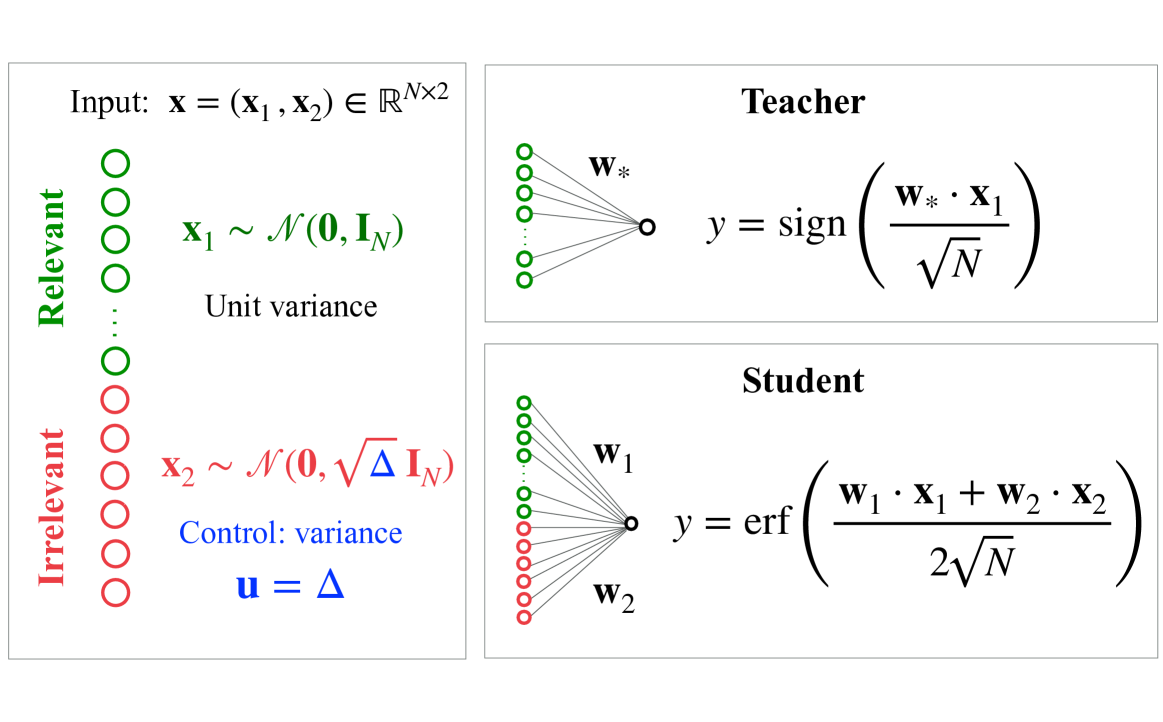

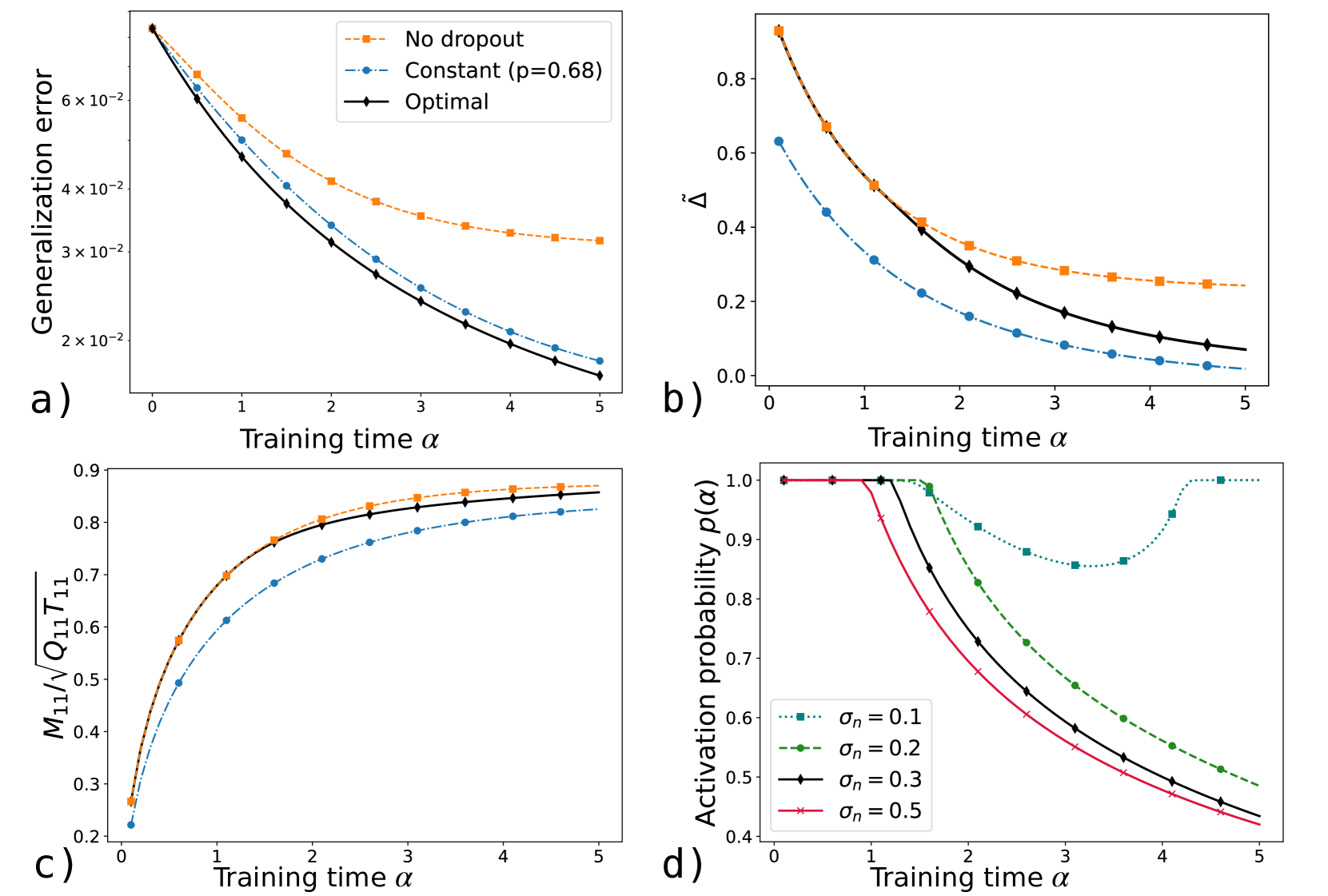

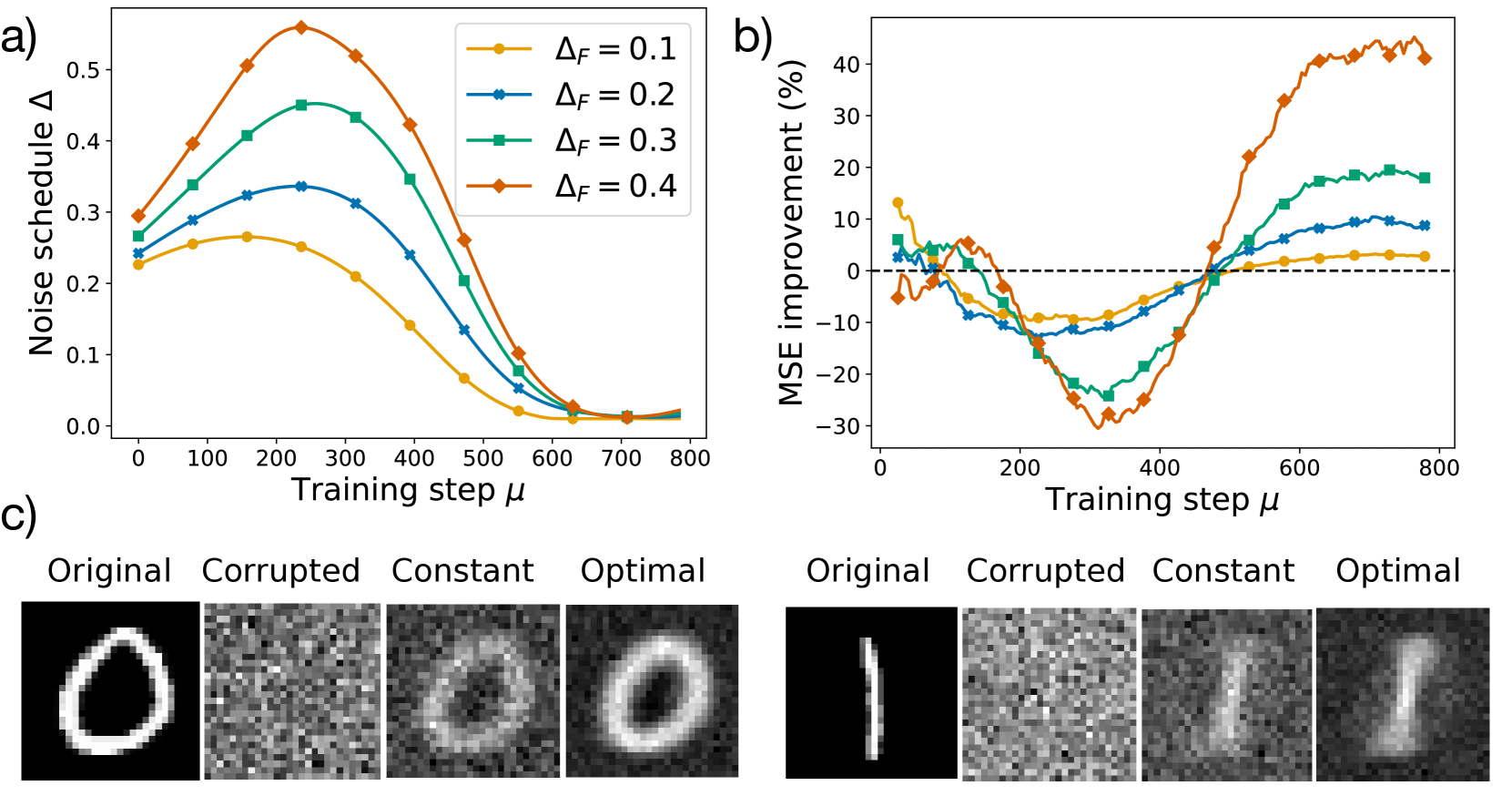

Figure 1: Illustration of the curriculum learning model studied in Section 3.1.

Curriculum learning (CL) refers to a variety of training protocols in which examples are presented in a curated order, typically organized by difficulty or complexity. In animal and human training, CL is widely used and extensively studied in behavioral research, demonstrating clear benefits [100, 101, 102]. For example, shaping (the progressive introduction of subtasks to decompose a complex task) is a common technique in animal training [6, 103]. By contrast, results on the efficacy of CL in machine learning remain sparse and less conclusive [104, 105]. Empirical studies across diverse settings have nonetheless demonstrated that curricula can outperform standard heuristic strategies [106, 107, 108].

Several theoretical studies have explored the benefits of curriculum learning in analytically tractable models. Easy-to-hard curricula have been shown to accelerate learning in convex settings [109, 110] and improve generalization in more complex nonconvex problems, such as XOR classification [111] or parity functions [112, 113]. However, these analyses typically focused on predefined heuristics, which may not be optimal. In particular, it remains unclear under what conditions an easy‐to‐hard curriculum is truly optimal and what alternative strategies might outperform it when it is not. Moreover, although hyperparameter schedules have been shown to enhance curriculum learning empirically [49], a principled approach to their joint optimization remains largely unexplored.

Here, we focus on a prototypical model of curriculum learning introduced in [104] and recently studied analytically in [110], where high-dimensional learning curves for online SGD were derived. This model considers a binary classification problem in a TS setting where both teacher and student are perceptron (one-layer) networks. The input vectors consist of $L=2$ elements: relevant directions $\bm{x}_{1}$, which the teacher ($M=1$) uses to generate labels $y=\operatorname{sign}(\bm{x}_{1}\cdot\bm{w}_{*}/\sqrt{N})$, and irrelevant directions $\bm{x}_{2}$, which do not affect the labels. (For simplicity, we consider an equal proportion of relevant and irrelevant directions; the analysis can be extended to arbitrary proportions as in [110].) The student network ($K=2$) is given by

$$
f_{\bm{w}}(\bm{x})=\operatorname{erf}\left(\frac{\bm{x}_{1}\cdot\bm{w}_{1}+\bm{x}_{2}\cdot\bm{w}_{2}}{2\sqrt{N}}\right)\,. \tag{20}
$$

The student therefore does not know a priori which directions are relevant. The teacher vector is normalized such that $T_{11}=\bm{w}_{*}\cdot\bm{w}_{*}/N=2$. All inputs are single-cluster zero-mean Gaussian variables, and the sample difficulty is controlled by the variance $\Delta$ of the irrelevant directions, while the relevant directions are assumed to have unit variance. We do not include label noise. We consider the squared loss $\ell=(y-f_{\bm{w}}(\bm{x}))^{2}/2$ and ridge regularization $\tilde{g}\left(\bm{w}^{\top}\bm{w}/N\right)=\lambda\left(\bm{w}_{1}\cdot\bm{w}_{1}+\bm{w}_{2}\cdot\bm{w}_{2}\right)/(4N)$, with tunable strength $\lambda\geq 0$. An illustration of the model is presented in Figure 1. Full expressions for the ODEs governing the dynamics of the order parameters $M_{11}=\bm{w}_{*}\cdot\bm{w}_{1}/N$, $Q_{11}=\bm{w}_{1}\cdot\bm{w}_{1}/N$, and $Q_{22}=\bm{w}_{2}\cdot\bm{w}_{2}/N$, as well as for the generalization error, are provided in Appendix A.1.
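To make the setup concrete, the sketch below simulates online SGD on this model at finite $N$ and returns $M_{11}$, $Q_{11}$, $Q_{22}$ after $\alpha_F N$ steps, one fresh sample per step, with training time $\alpha=\mu/N$. It assumes the plain update $\bm{w}_k\leftarrow\bm{w}_k-\eta\nabla_{\bm{w}_k}(\ell+\tilde{g})$; the exact normalization behind the ODEs of Appendix A.1 may differ, so its numbers should not be compared with Figure 2 without checking that convention. The function name and its `schedule` argument (a map $\alpha\mapsto\Delta$) are illustrative.

```python
import math

import numpy as np


def simulate_curriculum(schedule, N=500, eta=3.0, lam=0.0, alpha_F=12.0, seed=0):
    """Online SGD on the curriculum perceptron model (finite-N sketch).

    schedule : callable mapping training time alpha = mu / N to the variance
               Delta of the irrelevant directions for the sample at that step.
    Returns the order parameters (M11, Q11, Q22) at alpha_F.
    """
    rng = np.random.default_rng(seed)
    sqrt_n = math.sqrt(N)

    # Teacher normalized so that T11 = w*.w*/N = 2.
    w_star = rng.standard_normal(N)
    w_star *= math.sqrt(2.0 * N) / np.linalg.norm(w_star)

    # Initial condition of Figure 2: M11 = 0, Q11 = Q22 = 1.
    w1 = rng.standard_normal(N)
    w1 -= (w1 @ w_star) / (w_star @ w_star) * w_star   # remove overlap with teacher
    w1 *= sqrt_n / np.linalg.norm(w1)
    w2 = rng.standard_normal(N)
    w2 *= sqrt_n / np.linalg.norm(w2)

    for mu in range(int(alpha_F * N)):
        delta = schedule(mu / N)
        x1 = rng.standard_normal(N)                      # relevant, unit variance
        x2 = math.sqrt(delta) * rng.standard_normal(N)   # irrelevant, variance Delta
        y = 1.0 if x1 @ w_star >= 0.0 else -1.0          # teacher label
        h = (x1 @ w1 + x2 @ w2) / (2.0 * sqrt_n)         # argument of the student's erf
        # -dl/dh for l = (y - erf(h))^2 / 2, times dh/dw_k = x_k / (2 sqrt(N)).
        g = (y - math.erf(h)) * (2.0 / math.sqrt(math.pi)) * math.exp(-h * h) / (2.0 * sqrt_n)
        # Ridge term: d/dw_k [lam (w1.w1 + w2.w2) / (4N)] = lam w_k / (2N).
        w1 += eta * (g * x1 - lam * w1 / (2.0 * N))
        w2 += eta * (g * x2 - lam * w2 / (2.0 * N))

    return w_star @ w1 / N, w1 @ w1 / N, w2 @ w2 / N
```

For example, `simulate_curriculum(lambda a: 0.0 if a < 6.0 else 2.0)` runs the easy-to-hard schedule with $\Delta_1=0$, $\Delta_2=2$. Under this update convention, easy samples with $\Delta=0$ leave $\bm{w}_2$ untouched except for the ridge term, so $Q_{22}$ decays as $e^{-\eta\lambda\alpha}$ during easy phases; this is one way to see that weight decay alone can suppress the irrelevant weights.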


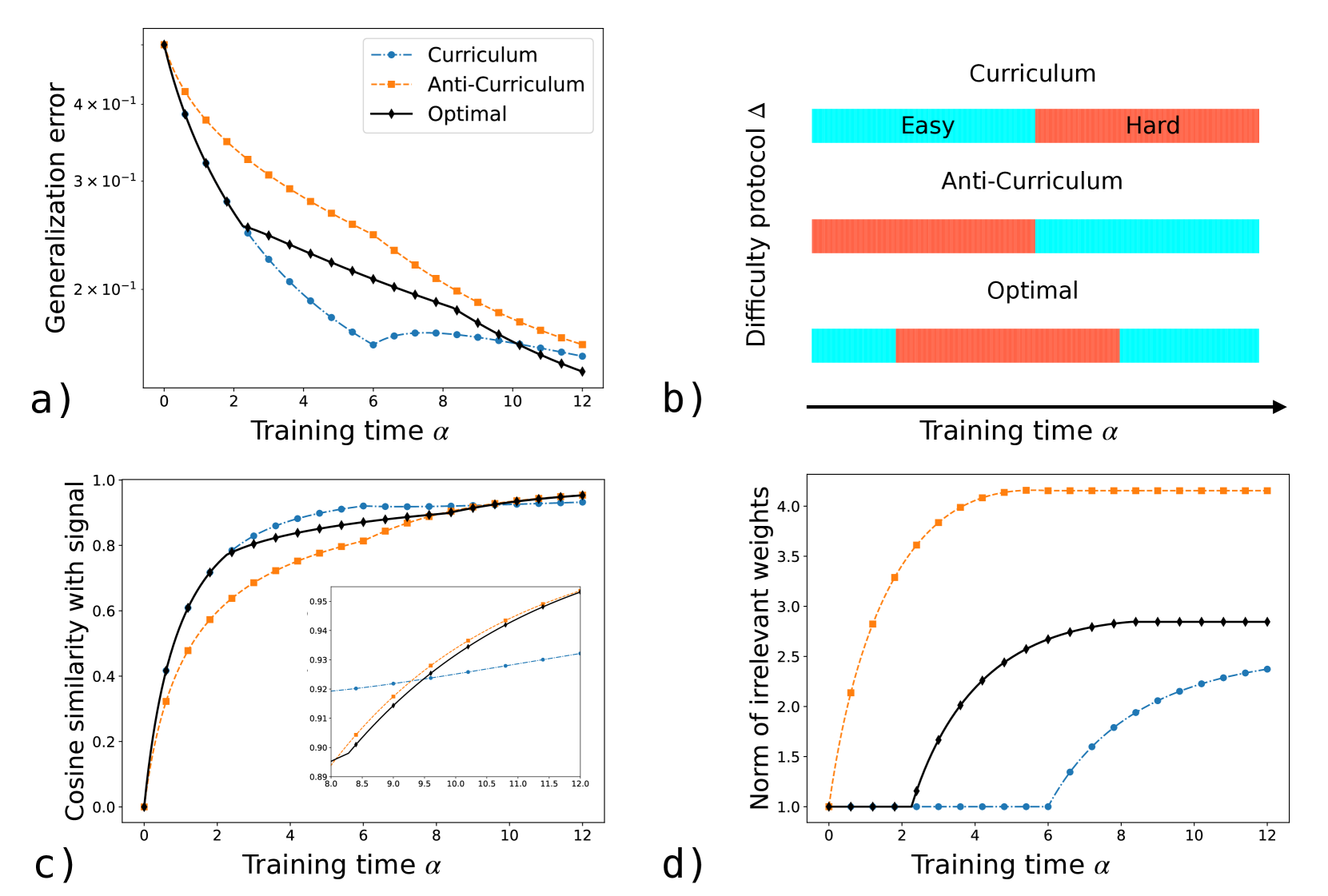

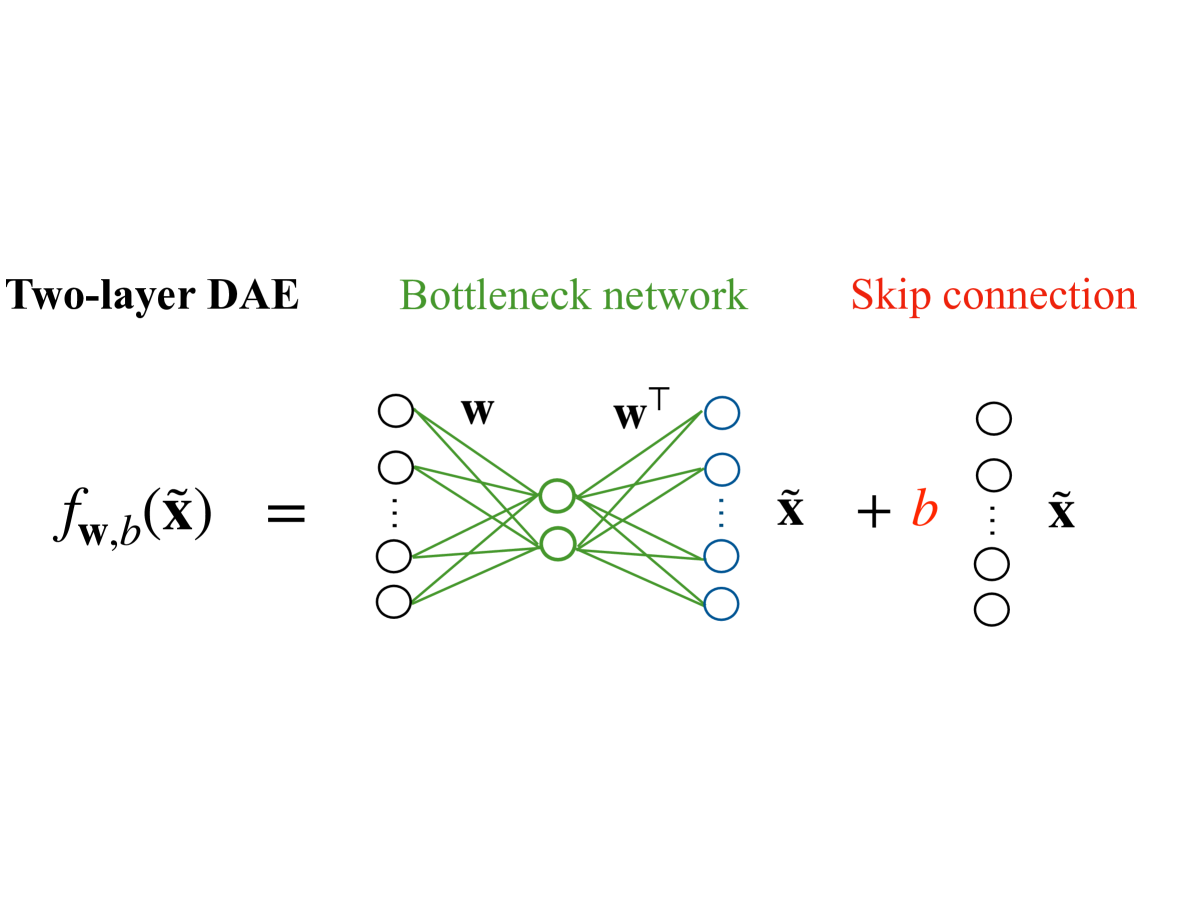

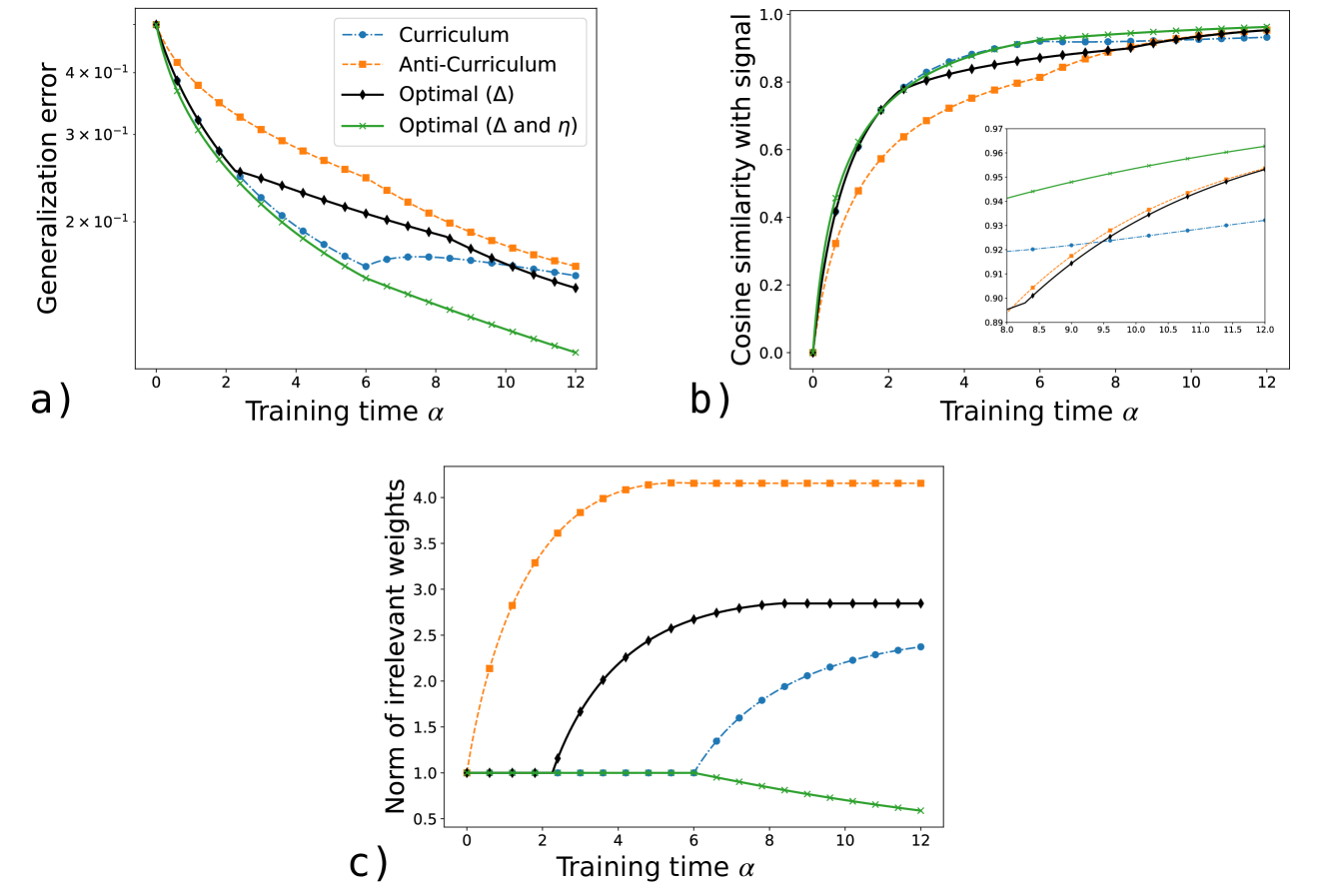

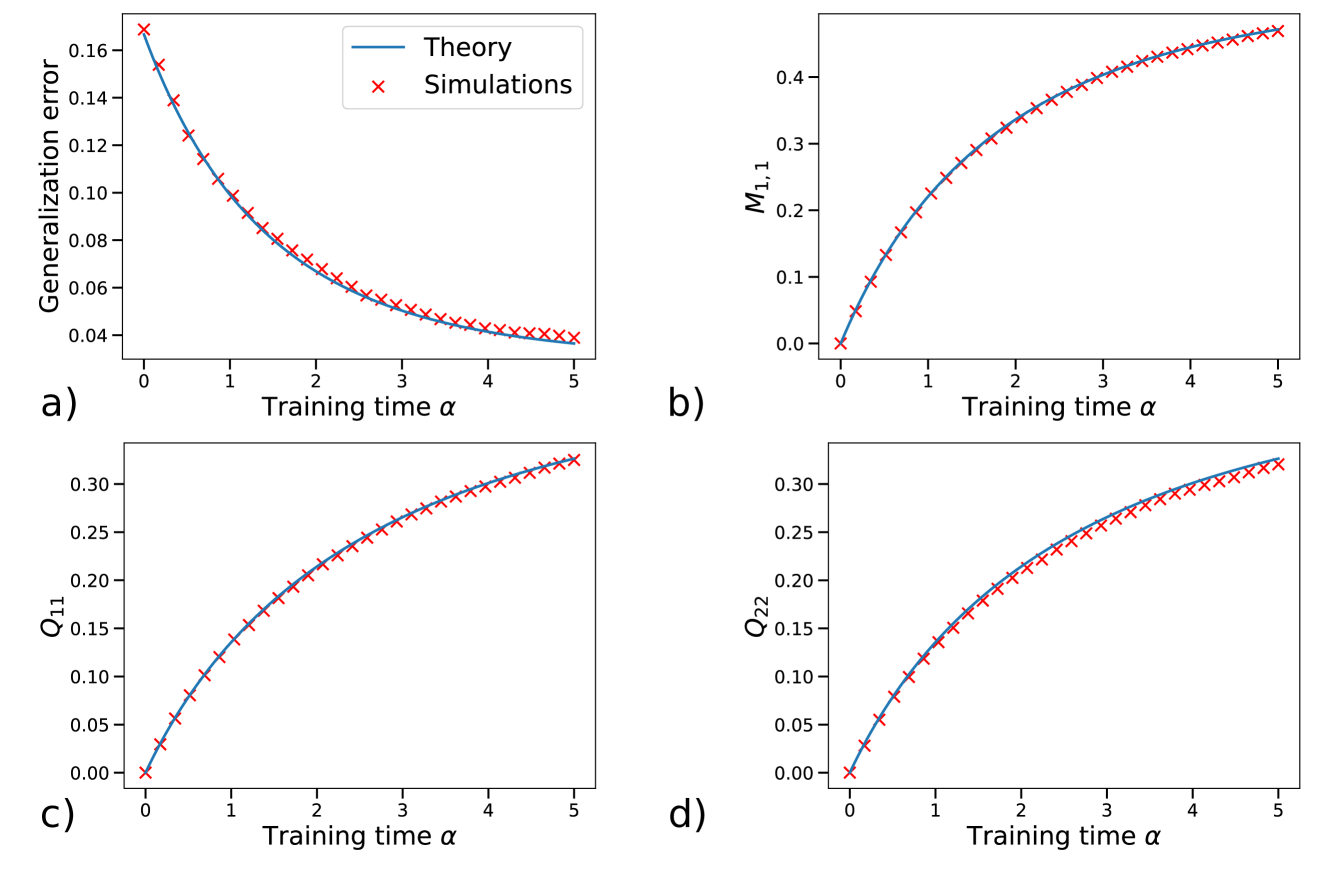

Figure 2: Learning dynamics for different difficulty schedules: curriculum (easy-to-hard), anti-curriculum (hard-to-easy) and the optimal one. a) Generalization error vs. training time $\alpha$ . b) Timeline of each schedule. c) Cosine similarity with the target signal $M_{11}/\sqrt{T_{11}Q_{11}}$ (inset zooms into the late-training regime). d) Squared norm of irrelevant weights $Q_{22}$ vs. $\alpha$ . Parameters: $\alpha_{F}=12$ , $\Delta_{1}=0$ , $\Delta_{2}=2$ , $\eta=3$ , $\lambda=0$ , $T_{11}=2$ . Initialization: $Q_{11}=Q_{22}=1$ , $M_{11}=0$ .

We consider a dataset composed of two difficulty levels: $50\%$ “easy” examples ($\Delta=\Delta_{1}$) and $50\%$ “hard” examples ($\Delta=\Delta_{2}>\Delta_{1}$). We call curriculum the easy-to-hard schedule in which all easy samples are presented first, and anti-curriculum the opposite strategy (see Figure 2 b). We compute the optimal sampling strategy $\bm{u}(\alpha)=\Delta(\alpha)\in\{\Delta_{1},\Delta_{2}\}$ using Pontryagin’s maximum principle, as explained in Section 2.3.1. The constraint on the proportion of easy and hard examples in the training set is enforced via an additional Lagrange multiplier in the cost functional (Eq. (13)). As the final objective in Eq. (12), we use the misclassification error averaged over an equal proportion of easy and hard examples.
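The teacher preactivation $\bm{x}_1\cdot\bm{w}_*/\sqrt{N}$ and the student preactivation $(\bm{x}_1\cdot\bm{w}_1+\bm{x}_2\cdot\bm{w}_2)/\sqrt{N}$ are jointly Gaussian, with variances $T_{11}$ and $Q_{11}+\Delta Q_{22}$ and covariance $M_{11}$. The standard Gaussian result for the probability that their signs disagree then gives

$$
\epsilon(\Delta)=\frac{1}{\pi}\arccos\frac{M_{11}}{\sqrt{T_{11}\left(Q_{11}+\Delta Q_{22}\right)}}\,.
$$

The sketch below evaluates $\epsilon(\Delta)$ and its easy/hard average, the final objective described above. We assume this matches the expression in Appendix A.1; the defaults $\Delta_1=0$, $\Delta_2=2$, $T_{11}=2$ are the values of Figure 2, and the function names are illustrative.

```python
import math


def misclassification_error(M11, Q11, Q22, delta, T11=2.0):
    """P[sign(student) != y] for test inputs whose irrelevant part has variance delta."""
    rho = M11 / math.sqrt(T11 * (Q11 + delta * Q22))
    rho = max(-1.0, min(1.0, rho))   # guard against round-off outside [-1, 1]
    return math.acos(rho) / math.pi


def averaged_error(M11, Q11, Q22, delta1=0.0, delta2=2.0, T11=2.0):
    """Misclassification error averaged over equal fractions of easy and hard examples."""
    return 0.5 * (misclassification_error(M11, Q11, Q22, delta1, T11)
                  + misclassification_error(M11, Q11, Q22, delta2, T11))
```

Because erf is odd and increasing, the factor $1/2$ in the student's argument does not change the predicted sign, which is why it does not enter $\rho$. The formula also makes the tradeoff explicit: $M_{11}$ raises the correlation, while $\Delta Q_{22}$ lowers it on hard examples.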

Good generalization requires balancing two competing objectives: maximizing the teacher-student alignment along the relevant directions, as measured by the cosine similarity with the signal $M_{11}/\sqrt{T_{11}Q_{11}}$, and minimizing the norm of the student’s weights along the irrelevant directions, $\sqrt{Q_{22}}$. We observe that anti-curriculum favors the first objective, while curriculum favors the second. Figure 2 illustrates this for a constant learning rate $\eta=3$ and no regularization ($\lambda=0$). In this case, the optimal strategy is non-monotonic in difficulty: it follows an “easy-hard-easy” schedule that balances the two objectives (see panels 2 c and 2 d) and achieves a lower generalization error than both monotonic strategies.
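The three schedule shapes can be written as maps $\alpha\mapsto\Delta(\alpha)$. The sketch below adds a one-parameter family of easy-hard-easy schedules, assuming a single contiguous hard block of length $\alpha_F/2$, which automatically meets the 50% budget; its start time `a1` is a hypothetical free parameter. This restricted family is only meant to show where the schedules of Figure 2 b sit relative to each other; it is not how the optimal protocol is computed here, which relies on Pontryagin’s principle.

```python
def curriculum(alpha, alpha_F=12.0, delta1=0.0, delta2=2.0):
    """Easy-to-hard: all easy samples in the first half of training."""
    return delta1 if alpha < alpha_F / 2 else delta2


def anti_curriculum(alpha, alpha_F=12.0, delta1=0.0, delta2=2.0):
    """Hard-to-easy: the reverse ordering."""
    return delta2 if alpha < alpha_F / 2 else delta1


def easy_hard_easy(alpha, a1, alpha_F=12.0, delta1=0.0, delta2=2.0):
    """One hard block [a1, a1 + alpha_F/2); easy samples everywhere else.

    a1 = 0 recovers anti-curriculum and a1 = alpha_F/2 recovers curriculum.
    """
    if not 0.0 <= a1 <= alpha_F / 2:
        raise ValueError("the hard block must fit inside [0, alpha_F]")
    return delta2 if a1 <= alpha < a1 + alpha_F / 2 else delta1


def easy_fraction(schedule, alpha_F=12.0, delta1=0.0, n=12000):
    """Fraction of training time spent on easy samples (midpoint rule on n cells)."""
    step = alpha_F / n
    easy = sum(1 for i in range(n) if schedule((i + 0.5) * step) == delta1)
    return easy / n
```

Both monotonic schedules are endpoints of the family, so an interior `a1` is the simplest way to obtain the non-monotonic shape while respecting the same budget that the Lagrange multiplier enforces in the full optimization.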


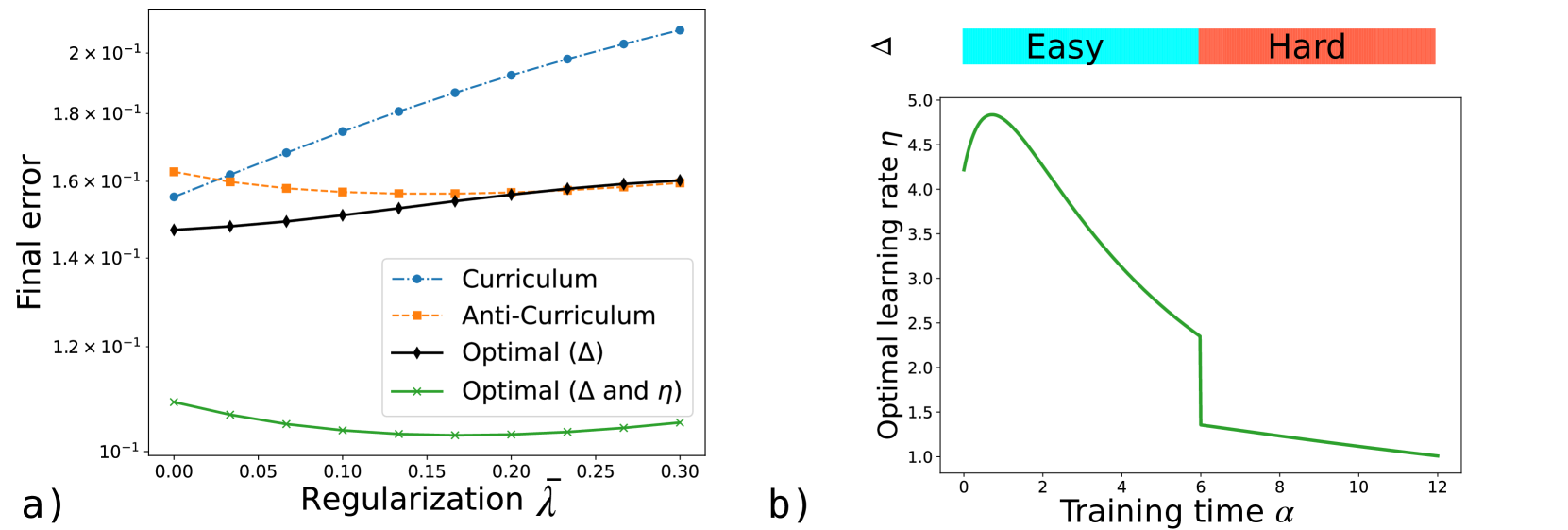

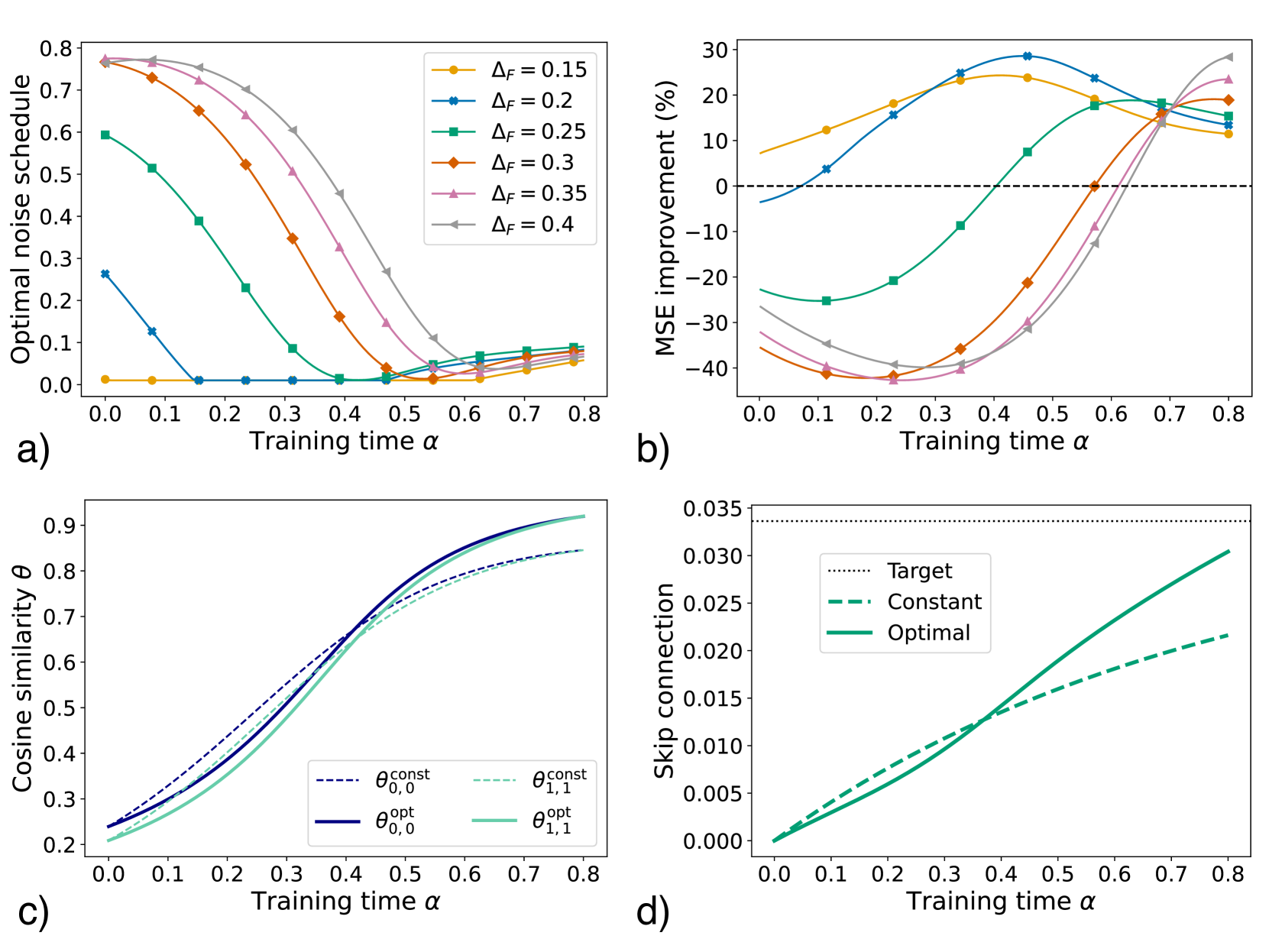

Figure 3: Simultaneous optimization of difficulty protocol $\Delta$ and learning rate $\eta$ in curriculum learning. a) Generalization error at the final time $\alpha_{F}=12$, averaged over an equal fraction of easy and hard examples, as a function of the (rescaled) regularization $\bar{\lambda}=\lambda\eta$, for the three strategies presented in Figure 2 (at constant $\eta=3$) and for the strategy obtained by jointly optimizing $\Delta$ and $\eta$. b) Optimal learning rate schedule $\eta(\alpha)$ of the joint strategy for $\lambda=0$. Same parameters as Figure 2.

Furthermore, the optimal balance between these competing goals depends on the interplay between the difficulty schedule and other problem hyperparameters, such as regularization and learning rate. Figure 3 a shows the final generalization error as a function of the regularization strength (held constant during training) for curriculum (blue), anti-curriculum (orange), and the optimal schedule (black), at fixed learning rate. When the regularization is strong ($\lambda>0.2$), weight decay alone suppresses the norm along the irrelevant directions, so the optimal strategy reduces to anti-curriculum.

We next explore how a time-dependent learning-rate schedule $\eta(\alpha)$ can be coupled with the curriculum to improve generalization. This corresponds to extending the control vector to $\bm{u}(\alpha)=\left(\Delta(\alpha),\eta(\alpha)\right)$, so that the difficulty and learning-rate schedules are optimized jointly. Figure 3 a shows that this joint optimization substantially reduces the generalization error compared to any constant-$\eta$ strategy. Interestingly, for all parameter settings considered, an easy-to-hard curriculum becomes optimal once the learning rate is properly adjusted. Figure 3 b displays the optimal learning rate schedule $\eta(\alpha)$ at $\lambda=0$: it begins with a warm-up phase, transitions to gradual annealing, and then undergoes a sharp drop precisely when the curriculum shifts from easy to hard samples. This behavior is intuitive, since learning harder examples benefits from a lower, more cautious learning rate. As demonstrated in Figure 10 (Appendix B), this combined schedule effectively balances both objectives: maximizing signal alignment and minimizing noise overfitting. These results align with the empirical learning rate scheduling employed in the numerical experiments of [111], where easier samples were trained with a higher (constant) learning rate and harder samples with a lower one. Importantly, our framework provides a principled derivation of the optimal joint schedule, thereby confirming and grounding prior empirical insights.
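In code, extending the control to $\bm{u}(\alpha)=(\Delta(\alpha),\eta(\alpha))$ simply means that the protocol returns a pair at each training time. The sketch below encodes the qualitative shape described above (warm-up, gradual annealing, sharp drop at the easy-to-hard switch) by piecewise-linear interpolation. The knot values are hypothetical placeholders chosen only to reproduce that shape; they are not read off Figure 3 b and are not an optimized schedule.

```python
import numpy as np

ALPHA_SWITCH = 6.0   # easy-to-hard switch for alpha_F = 12 and a 50/50 split

# Hypothetical (alpha, eta) knots: warm-up to alpha = 2, annealing until the
# switch, then a lower, slowly decreasing learning rate on hard samples.
EASY_PHASE = ([0.0, 2.0, ALPHA_SWITCH], [4.0, 5.0, 2.5])
HARD_PHASE = ([ALPHA_SWITCH, 12.0], [1.25, 1.0])


def joint_control(alpha, delta1=0.0, delta2=2.0):
    """Return the control vector u(alpha) = (Delta(alpha), eta(alpha))."""
    if alpha < ALPHA_SWITCH:
        return delta1, float(np.interp(alpha, *EASY_PHASE))
    return delta2, float(np.interp(alpha, *HARD_PHASE))
```

The discontinuity in $\eta$ is placed exactly where $\Delta$ jumps, mirroring the coupling between the two controls that the optimal solution exhibits; in the framework itself, both components would come out of the optimal control problem rather than being hand-specified.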

3.2 Dropout regularization

<details>

<summary>x4.png Details</summary>

Teacher (left): $M$ hidden nodes with weights $\bm{w}_{*}$ and noisy labels $y=\phi(\bm{x})+\sigma_{n}z$, with $z\sim\mathcal{N}(0,1)$. Student at training step $\mu$ (center): $K$ hidden nodes with weights $\bm{w}$ and node-activation variables $r^{(1)}_{\mu},\dots,r^{(K)}_{\mu}\sim\mathrm{Bernoulli}(p_{\mu})$. Student at test time (right): full network with rescaling factor $p_{f}$.

</details>

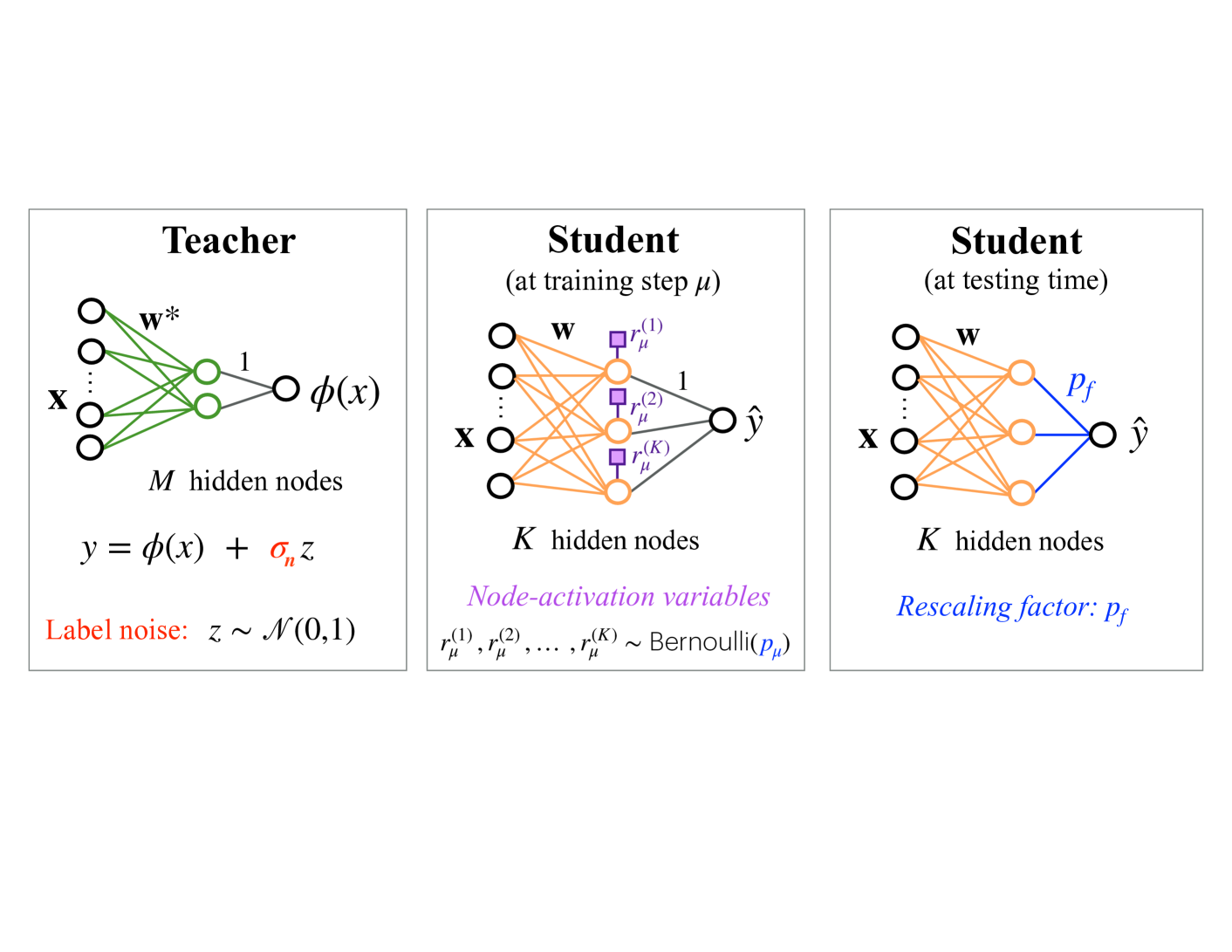

Figure 4: Illustration of the dropout model studied in Section 3.2.

Dropout [80, 81] is a regularization technique designed to prevent harmful co-adaptations of hidden units, thereby reducing overfitting and enhancing the network’s performance. During training, each node is independently kept active with probability $p$ and “dropped” (i.e., its output set to zero) otherwise, effectively sampling a random subnetwork at each iteration. At test time, the full network is used, with node outputs rescaled to match their expected value during training; this approximates averaging over the ensemble of all subnetworks and yields more robust predictions.
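The training/test asymmetry can be sketched for a two-layer student with dropout on its $K$ hidden nodes. We assume a $\tanh$ activation, a $1/\sqrt{N}$ preactivation scaling, and a linear readout $\bm{v}$ (all placeholders), and we read the test-time rescaling factor of Figure 4 as the activation probability passed in as `p`. With a linear readout, the rescaled test output equals the average training output over dropout masks; the `__main__` block checks this by Monte Carlo.

```python
import numpy as np


def student_output(x, W, v, p, train, rng=None, act=np.tanh):
    """Output of a two-layer student with dropout on its K hidden nodes.

    x : (N,) input;  W : (K, N) first-layer weights;  v : (K,) readout.
    Training: node k contributes only if r_k = 1, with r_k ~ Bernoulli(p).
    Test: all nodes are active and their summed contribution is rescaled by p.
    """
    N = x.shape[0]
    hidden = act(W @ x / np.sqrt(N))                  # hidden-node outputs
    if train:
        r = rng.binomial(1, p, size=hidden.shape)     # node-activation variables
        return float(v @ (r * hidden))
    return float(p * (v @ hidden))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    N, K, p = 50, 4, 0.7
    W, v, x = rng.standard_normal((K, N)), np.ones(K), rng.standard_normal(N)
    mc = np.mean([student_output(x, W, v, p, True, rng) for _ in range(20000)])
    # The two numbers should agree up to Monte Carlo error.
    print(mc, student_output(x, W, v, p, False))
```

Rescaling at test time, rather than at training time ("inverted" dropout), is one of two equivalent conventions; here it keeps the training-time forward pass identical to the model with Bernoulli node-activation variables.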

Dropout has become a cornerstone of modern neural-network training [114]. While early works recommended keeping the activation probability fixed throughout training, typically between $0.5$ and $0.8$ [80, 81], recent empirical studies propose varying this probability over time, using adaptive schedules to further enhance performance [115, 82, 83]. In particular, [82] showed that heuristic schedules that decrease the activation probability over time are analogous to easy-to-hard curricula and can lead to improved performance. Although adaptive dropout schedules have attracted practical interest, the conditions under which they outperform constant strategies remain poorly understood, and the theoretical foundations of their potential optimality are largely unexplored.
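A decreasing schedule of the kind discussed above can be written, for illustration, as an exponential decay of the activation probability from $1$ (no dropout) toward a floor $p_{\min}$. This functional form and its parameters `p_min` and `gamma` are our illustrative choice, not necessarily the schedule used in [82].

```python
import math


def decreasing_activation_prob(t, p_min=0.5, gamma=1.0):
    """Activation probability p(t) = (1 - p_min) * exp(-gamma * t) + p_min.

    Starts at p(0) = 1 (every node active, i.e. no dropout) and decreases
    monotonically toward p_min, so that dropout grows stronger as training
    proceeds: the analogue of an easy-to-hard curriculum.
    """
    if not 0.0 < p_min <= 1.0:
        raise ValueError("p_min must lie in (0, 1]")
    return (1.0 - p_min) * math.exp(-gamma * t) + p_min
```

A heuristic of this type fixes the shape in advance and leaves only `p_min` and `gamma` to tune, whereas an optimal control formulation treats the whole function $p(\alpha)$ as the control.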

<details>

<summary>x5.png Details</summary>

Panels a) to c) plot, against training time $\alpha$, the generalization error, $\tilde{\Delta}$, and the cosine similarity $M_{11}/\sqrt{Q_{11}T_{11}}$ for three strategies: no dropout, constant activation probability ($p=0.68$), and the optimal schedule. Panel d) shows the optimal activation probability $p(\alpha)$ for label-noise levels $\sigma_{n}=0.1,\,0.2,\,0.3,\,0.5$.

</details>

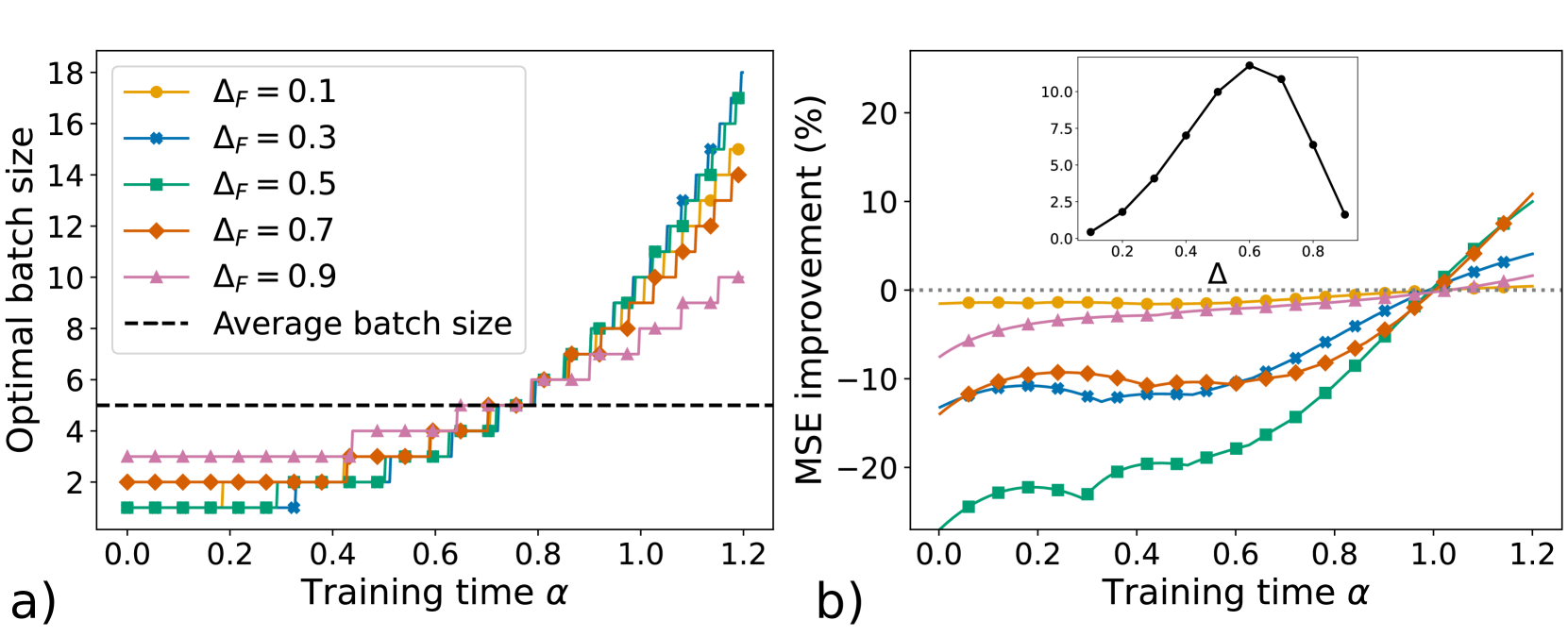

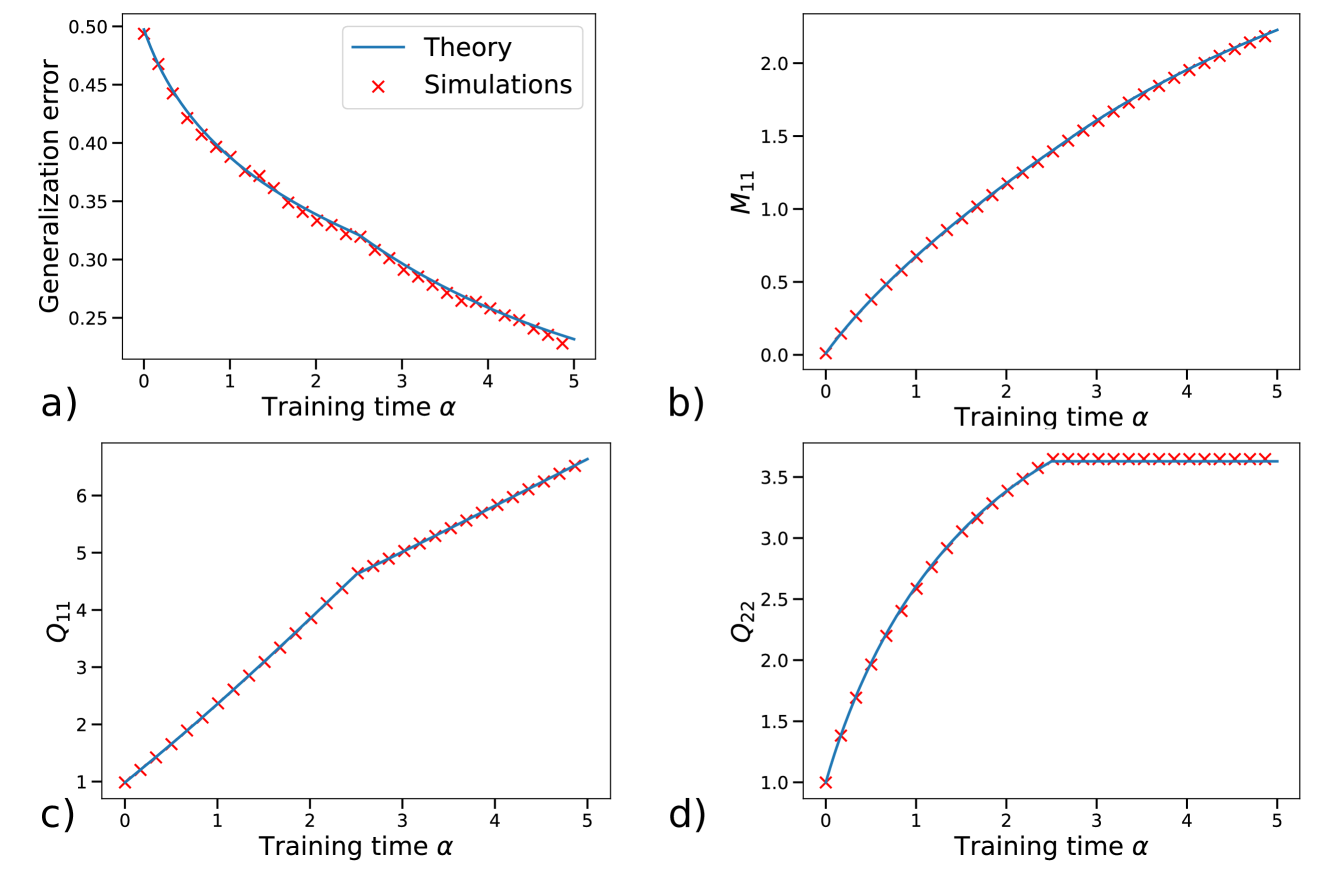

Figure 5: Learning dynamics with dropout regularization. a) Generalization error vs. training time $\alpha$ without dropout (orange), for constant activation probability $p=p_{f}=0.68$ (blue), and for the optimal dropout schedule with $p_{f}=0.678$ (black), at label noise $\sigma_{n}=0.3$. b) Detrimental correlations between the student's hidden nodes, measured by $\tilde{\Delta}=(Q_{12}-M_{11}M_{21})/\sqrt{Q_{11}Q_{22}}$, vs. $\alpha$, at $\sigma_{n}=0.3$. c) Teacher-student cosine similarity $M_{11}/\sqrt{Q_{11}T_{11}}$ vs. $\alpha$, at $\sigma_{n}=0.3$. d) Optimal dropout schedules for different label-noise levels. The black curve ($\sigma_{n}=0.3$) shows the optimal schedule used in panels a–c. Parameters: $\alpha_{F}=5$, $K=2$, $M=1$, $\eta=1$. The teacher weights $\bm{w}_{*}$ are drawn i.i.d. from $\mathcal{N}(0,1)$ with $N=10000$. The student weights are initialized to zero.

In [84], we introduced a prototypical model of dropout and derived analytic results for constant dropout probabilities. We showed that dropout reduces harmful node correlations—quantified via order parameters—and consequently improves generalization. We further demonstrated that the optimal (constant) activation probability decreases as the variance of the label noise increases. In this section, we first recast the model of [84] within our general framework and then extend the analysis to optimal dropout schedules.

We consider a TS setup where both teacher and student networks are soft-committee machines [34], i.e., two-layer networks with untrained readout weights set to one. Specifically, the inputs $\bm{x}\in\mathbb{R}^{N}$ are taken to be standard Gaussian variables and the corresponding labels are produced via Eq. (2) with label noise variance $\sigma^{2}_{n}$:

$$
y=f^{*}_{\bm{w}_{*}}(\bm{x})+\sigma_{n}\,z\,,\qquad z\sim\mathcal{N}(0,1)\,,\qquad f^{*}_{\bm{w}_{*}}(\bm{x})=\sum_{m=1}^{M}\operatorname{erf}\left(\frac{\bm{w}_{*,m}\cdot\bm{x}}{\sqrt{N}}\right)\,. \tag{21}
$$
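A minimal pure-Python sketch of the data model in Eq. (21) is given below. The function names and the use of the standard-library `random` module are illustrative assumptions, not the authors' implementation; the teacher weights are drawn i.i.d. from $\mathcal{N}(0,1)$ as in the setup of Figure 5.

```python
import math
import random


def sample_input(n, rng):
    """Input x in R^N with i.i.d. standard Gaussian entries."""
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


def teacher_output(w_star, x):
    """Noiseless teacher f*(x) = sum_m erf(w*_m . x / sqrt(N)), as in Eq. (21)."""
    sqrt_n = math.sqrt(len(x))
    return sum(
        math.erf(sum(wi * xi for wi, xi in zip(w_m, x)) / sqrt_n) for w_m in w_star
    )


def teacher_label(w_star, x, sigma_n, rng):
    """Noisy label y = f*(x) + sigma_n * z with z ~ N(0, 1)."""
    return teacher_output(w_star, x) + sigma_n * rng.gauss(0.0, 1.0)


rng = random.Random(0)
N, M = 1000, 1
# Hypothetical example: M = 1 teacher vector with i.i.d. N(0, 1) entries.
w_star = [[rng.gauss(0.0, 1.0) for _ in range(N)] for _ in range(M)]
x = sample_input(N, rng)
y = teacher_label(w_star, x, sigma_n=0.3, rng=rng)
```

Since each erf term lies in $(-1,1)$, the noiseless teacher output is bounded by $M$ in absolute value.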

To describe dropout, at each training step $\mu$ we couple i.i.d. node-activation Bernoulli random variables $r^{(k)}_{\mu}\sim{\rm Ber}(p_{\mu})$ to each of the student's hidden nodes $k=1,\dots,K$:

$$
f^{\rm train}_{\bm{w}}(\bm{x}^{\mu})=\sum_{k=1}^{K}r^{(k)}_{\mu}\operatorname{erf}\left(\frac{\bm{w}_{k}\cdot\bm{x}^{\mu}}{\sqrt{N}}\right)\,, \tag{22}
$$

so that node $k$ is active if $r^{(k)}_{\mu}=1$. At testing time, the full network is used as

$$
f^{\rm test}_{\bm{w}}(\bm{x})=\sum_{k=1}^{K}p_{f}\operatorname{erf}\left(\frac{\bm{w}_{k}\cdot\bm{x}}{\sqrt{N}}\right)\,. \tag{23}
$$
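The training-time and test-time networks of Eqs. (22) and (23) can be sketched as follows, assuming weights and inputs stored as plain Python lists; the helper names are hypothetical. The training forward pass returns the sampled mask alongside the output so that a gradient step can reuse the same mask.

```python
import math
import random


def preactivations(w, x):
    """lambda_k = w_k . x / sqrt(N) for each student hidden node k."""
    sqrt_n = math.sqrt(len(x))
    return [sum(wi * xi for wi, xi in zip(w_k, x)) / sqrt_n for w_k in w]


def student_train_output(w, x, p, rng):
    """Eq. (22): node k is kept with probability p, i.e. r_k ~ Ber(p).

    Returns (output, mask); dropped nodes (r_k = 0) contribute nothing.
    """
    r = [1 if rng.random() < p else 0 for _ in w]
    out = sum(r_k * math.erf(l_k) for r_k, l_k in zip(r, preactivations(w, x)))
    return out, r


def student_test_output(w, x, p_f):
    """Eq. (23): all nodes active, each contribution rescaled by p_f."""
    return sum(p_f * math.erf(l_k) for l_k in preactivations(w, x))
```

Since $\mathbb{E}[r^{(k)}_{\mu}]=p_{\mu}$, the mask-averaged training output equals $p_{\mu}\sum_{k}\operatorname{erf}(\bm{w}_{k}\cdot\bm{x}/\sqrt{N})$. This is why a rescaling appears at test time: for constant dropout the natural choice is $p_f=p$, while in the optimal-control setting $p_f$ is treated as a separate parameter.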

The rescaling factor $p_{f}$ ensures that the reduced activity during training is taken into account when testing. We train with the squared loss $\ell=(y-f^{\rm train}_{\bm{w}}(\bm{x}))^{2}/2$ and no weight-decay regularization. The ODEs governing the order parameters $M_{km}$ and $Q_{jk}$, as well as the resulting generalization error, are provided in Appendix A.2. These equations arise from averaging over the binary activation variables $r_{\mu}^{(k)}$, so that the dropout schedule enters the dynamics only through the time-dependent activation probability $p(\alpha)$.
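For concreteness, one online SGD step on this loss can be sketched as below. The sketch assumes the standard high-dimensional scaling in which each step changes the weights by a term of order $\eta/\sqrt{N}$, so that one unit of training time $\alpha=\mu/N$ changes the order parameters by $O(\eta)$; the function names are hypothetical. Because the mask multiplies node $k$'s contribution to the output, a dropped node receives no gradient at that step, which is how dropout lets the two nodes see different updates.

```python
import math
import random


def erf_prime(u):
    """Derivative of erf: (2 / sqrt(pi)) * exp(-u^2)."""
    return 2.0 / math.sqrt(math.pi) * math.exp(-u * u)


def sgd_step_with_dropout(w, x, y, p, eta, rng):
    """One online SGD step on l = (y - f_train)^2 / 2 without weight decay.

    The gradient w.r.t. w_k is -(y - f_train) * r_k * erf'(lambda_k) * x / sqrt(N),
    so a node with r_k = 0 is not updated. Updates w in place, returns the mask.
    """
    sqrt_n = math.sqrt(len(x))
    lam = [sum(wi * xi for wi, xi in zip(w_k, x)) / sqrt_n for w_k in w]
    r = [1 if rng.random() < p else 0 for _ in w]  # r_k ~ Ber(p)
    err = y - sum(r_k * math.erf(l_k) for r_k, l_k in zip(r, lam))
    for w_k, r_k, l_k in zip(w, r, lam):
        if r_k:
            coeff = eta * err * erf_prime(l_k) / sqrt_n
            for i, xi in enumerate(x):
                w_k[i] += coeff * xi
    return r
```

Note that with identical initial weights and no dropout ($p=1$), both nodes receive exactly the same update at every step, so their uninformative components stay perfectly correlated.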

For simplicity, we focus our analysis on the case $M=1$ and $K=2$, although our considerations hold more generally. During training, assuming $T_{11}=1$, each student weight vector can be decomposed as ${\bm{w}}_{i}=M_{i1}{\bm{w}}_{*,1}+\tilde{{\bm{w}}}_{i}$, where $\tilde{\bm{w}}_{i}\perp\bm{w}_{*,1}$ denotes the uninformative component acquired due to noise in the inputs and labels. Generalization requires balancing two competing goals: improving the alignment of each hidden unit with the teacher, measured by $M_{i1}$, and reducing correlations between their uninformative components, $\tilde{\bm{w}}_{1}$ and $\tilde{\bm{w}}_{2}$, so that noise effects cancel rather than compound. Since $Q_{12}-M_{11}M_{21}=\tilde{\bm{w}}_{1}\cdot\tilde{\bm{w}}_{2}/N$ under this decomposition, we quantify these detrimental correlations by the observable $\tilde{\Delta}=(Q_{12}-M_{11}M_{21})/\sqrt{Q_{11}Q_{22}}$. Figure 5 b compares a constant-dropout strategy ($p=p_{f}=0.68$, blue) with no dropout ($p=p_{f}=1$, orange) and shows that dropout sharply reduces $\tilde{\Delta}$ during training. Intuitively, without dropout both nodes receive identical noise realizations at each step, which reinforces their uninformative correlation; with dropout, nodes are occasionally trained individually, which reduces it. Although dropout also slows the growth of the teacher-student cosine similarity (Figure 5 c) by reducing the number of updates per node, the large decrease in $\tilde{\Delta}$ leads to an overall lower generalization error (Figure 5 a).
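The observables of Figure 5 b and c can be computed directly from finite-$N$ weight vectors. The sketch below assumes the normalized overlaps $Q_{jk}=\bm{w}_j\cdot\bm{w}_k/N$, $M_{km}=\bm{w}_k\cdot\bm{w}_{*,m}/N$ and $T_{mn}=\bm{w}_{*,m}\cdot\bm{w}_{*,n}/N$; the function names and the toy vectors are hypothetical. In the toy example, $\bm{w}_{*}=(1,1,1,1)$ gives $T_{11}=1$, and each student equals the teacher plus an uninformative vector orthogonal to it.

```python
import math


def overlap(a, b):
    """Normalized overlap a . b / N."""
    return sum(ai * bi for ai, bi in zip(a, b)) / len(a)


def order_parameters(w, w_star):
    """Return Q (K x K), M (K x M) and T (M x M) as nested lists."""
    Q = [[overlap(a, b) for b in w] for a in w]
    M = [[overlap(a, b) for b in w_star] for a in w]
    T = [[overlap(a, b) for b in w_star] for a in w_star]
    return Q, M, T


def delta_tilde(Q, M):
    """Detrimental correlation (Q12 - M11 M21) / sqrt(Q11 Q22), for K = 2, M = 1.

    With T11 = 1 the numerator is the overlap of the uninformative components.
    """
    return (Q[0][1] - M[0][0] * M[1][0]) / math.sqrt(Q[0][0] * Q[1][1])


def cosine_similarity(Q, M, T, k=0):
    """Teacher-student cosine similarity M_k1 / sqrt(Q_kk T_11)."""
    return M[k][0] / math.sqrt(Q[k][k] * T[0][0])


w_star = [[1.0, 1.0, 1.0, 1.0]]
shared = [[2.0, 0.0, 1.0, 1.0], [2.0, 0.0, 1.0, 1.0]]      # same uninformative part
orthogonal = [[2.0, 0.0, 1.0, 1.0], [1.0, 1.0, 2.0, 0.0]]  # orthogonal uninformative parts
print(delta_tilde(*order_parameters(shared, w_star)[:2]))      # 0.333...
print(delta_tilde(*order_parameters(orthogonal, w_star)[:2]))  # 0.0
```

Both students have the same alignment $M_{i1}=1$ in either case, so the difference in $\tilde{\Delta}$ comes entirely from how their uninformative components overlap.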

To find the optimal dropout schedule, we treat the activation probability as the control variable, $u(\alpha)=p(\alpha)\in[0,1]$. Additionally, we optimize over the final rescaling $p_{f}\in[0,1]$ to minimize the final error. We solve this optimal-control problem using a direct multiple-shooting method implemented in CasADi (Section 2.3.2). Figure 5 d shows the resulting optimal schedules for increasing label-noise levels $\sigma_{n}$. Each schedule exhibits an initial period without dropout ($p(\alpha)=1$), followed by a gradual decrease of $p(\alpha)$. These strategies resemble those heuristically proposed in [82] but are obtained here via a principled procedure.
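The ODEs of Appendix A.2 and the CasADi multiple-shooting solver are not reproduced here. As a rough, hypothetical stand-in, the sketch below parameterizes the control as a piecewise-constant vector $u_j\in[0,1]$ on equal intervals of $[0,\alpha_F]$ (the kind of control discretization used by direct methods), evaluates a candidate schedule by brute-force finite-$N$ simulation of online SGD instead of integrating the ODEs, and picks $p_f$ on a grid. The final error uses the Gaussian identity $\mathbb{E}[\operatorname{erf}(u)\operatorname{erf}(v)]=\frac{2}{\pi}\arcsin\frac{2c}{\sqrt{(1+2a)(1+2b)}}$ for zero-mean Gaussians with variances $a,b$ and covariance $c$. All function names, $N=200$, the $p_f$ grid and the convention $\epsilon_g=\frac{1}{2}\mathbb{E}[(f^{*}-f^{\rm test})^2]$ are assumptions.

```python
import math
import random


def erf_kernel(c, a, b):
    """E[erf(u) erf(v)] for zero-mean Gaussians, Var u = a, Var v = b, Cov(u, v) = c."""
    return 2.0 / math.pi * math.asin(2.0 * c / math.sqrt((1.0 + 2.0 * a) * (1.0 + 2.0 * b)))


def overlap(a, b):
    return sum(ai * bi for ai, bi in zip(a, b)) / len(a)


def order_parameters(w, w_star):
    """Q_jk = w_j.w_k/N, M_km = w_k.w*_m/N, T_mn = w*_m.w*_n/N."""
    Q = [[overlap(a, b) for b in w] for a in w]
    M = [[overlap(a, b) for b in w_star] for a in w]
    T = [[overlap(a, b) for b in w_star] for a in w_star]
    return Q, M, T


def generalization_error(Q, M, T, p_f):
    """Closed-form 0.5 * E[(f* - f_test)^2] (the 1/2 convention is an assumption)."""
    K, L = len(Q), len(T)
    tt = sum(erf_kernel(T[m][n], T[m][m], T[n][n]) for m in range(L) for n in range(L))
    st = sum(erf_kernel(M[k][m], Q[k][k], T[m][m]) for k in range(K) for m in range(L))
    ss = sum(erf_kernel(Q[j][k], Q[j][j], Q[k][k]) for j in range(K) for k in range(K))
    return 0.5 * (tt - 2.0 * p_f * st + p_f ** 2 * ss)


def schedule_from_controls(u, alpha_f=5.0):
    """Piecewise-constant p(alpha) on len(u) equal intervals of [0, alpha_f], clipped to [0, 1]."""
    def p(alpha):
        j = min(int(alpha / alpha_f * len(u)), len(u) - 1)
        return min(max(u[j], 0.0), 1.0)
    return p


def simulate(p_of_alpha, n=200, k=2, alpha_f=5.0, eta=1.0, sigma_n=0.3, seed=0):
    """Finite-N online SGD with dropout: M = 1 teacher, zero-initialized student."""
    rng = random.Random(seed)
    w_star = [[rng.gauss(0.0, 1.0) for _ in range(n)]]
    w = [[0.0] * n for _ in range(k)]
    sqrt_n = math.sqrt(n)
    for mu in range(int(alpha_f * n)):
        x = [rng.gauss(0.0, 1.0) for _ in range(n)]
        nu = sum(a * b for a, b in zip(w_star[0], x)) / sqrt_n
        y = math.erf(nu) + sigma_n * rng.gauss(0.0, 1.0)  # Eq. (21)
        lam = [sum(a * b for a, b in zip(w_j, x)) / sqrt_n for w_j in w]
        p = p_of_alpha(mu / n)  # training time alpha = mu / N
        # Always k uniform draws per step, so inputs and label noise are the
        # same for every schedule with the same seed (common random numbers).
        r = [1 if rng.random() < p else 0 for _ in range(k)]  # Eq. (22) mask
        err = y - sum(r_j * math.erf(l) for r_j, l in zip(r, lam))
        for j in range(k):
            if r[j]:
                c = eta * err * (2.0 / math.sqrt(math.pi)) * math.exp(-lam[j] ** 2) / sqrt_n
                w[j] = [wi + c * xi for wi, xi in zip(w[j], x)]
    return w, w_star


def evaluate_controls(u, alpha_f=5.0, **sim_kwargs):
    """Return (final error, p_f) for control vector u, with p_f chosen on a 0.01 grid."""
    w, w_star = simulate(schedule_from_controls(u, alpha_f), alpha_f=alpha_f, **sim_kwargs)
    Q, M, T = order_parameters(w, w_star)
    return min((generalization_error(Q, M, T, i / 100), i / 100) for i in range(101))


baseline, _ = evaluate_controls([0.0])  # p = 0: nodes always dropped, nothing is learned
err_no_dropout, pf_no_dropout = evaluate_controls([1.0])  # p = 1 throughout
```

A hold-then-decrease candidate such as `evaluate_controls([1.0, 1.0, 0.8, 0.7, 0.6])` (values chosen only for illustration) can be compared against these on the same input stream, and a derivative-free optimizer over `u` could search for better schedules. At small $N$, however, such comparisons carry finite-size noise that the ODE-based approach avoids.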

The order parameters of the theory suggest a simple interpretation of the optimal schedules. In the initial phase of training, it is beneficial to fully exploit the rapid increase in the teacher-student cosine similarity by keeping both nodes active (see Figure 5 c). Once the increase in cosine similarity plateaus, it becomes more advantageous to decrease the activation probability in order to mitigate detrimental correlations among the student's nodes. As a result, the optimal schedule achieves a lower generalization error than any constant-dropout strategy (Figure 5 a).

Noisier tasks, corresponding to higher values of $\sigma_{n}$, induce stronger detrimental correlations between the student nodes and therefore require a lower activation probability, as shown in [84] for the case of constant dropout. This observation remains valid for the optimal dropout schedules in Figure 5 d: as $\sigma_{n}$ grows, the initial no-dropout phase becomes shorter and the activation probability decreases more sharply. Conversely, at low label noise ($\sigma_{n}=0.1$), the activation probability remains close to one and becomes non-monotonic in training time.

3.3 Denoising autoencoder

<details>

<summary>x6.png Details</summary>

Diagram of the two-layer DAE $f_{\bm{w},b}(\tilde{\bm{x}})$: a bottleneck network (green) with input-to-hidden weights $\bm{w}$ and hidden-to-output weights $\bm{w}^{\top}$, plus a skip connection (red) that feeds the noisy input $\tilde{\bm{x}}$ directly to the output, scaled by $b$.

</details>

Figure 6: Illustration of the denoising autoencoder model studied in Section 3.3.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Chart/Diagram Type: Multi-Panel Plot

### Overview

The image presents four plots (a, b, c, d) that analyze the effects of different noise schedules on a machine learning model during training. The plots explore optimal noise schedules, MSE improvement, cosine similarity, and skip connections as functions of training time.

### Components/Axes

**Panel a: Optimal Noise Schedule**

* **Title:** Optimal noise schedule

* **X-axis:** Training time α, ranging from 0.0 to 0.8 in increments of 0.1.

* **Y-axis:** Optimal noise schedule, ranging from 0.0 to 0.8 in increments of 0.1.

* **Legend (Top-Right):**

* Yellow: ΔF = 0.15

* Blue: ΔF = 0.2

* Green: ΔF = 0.25

* Orange: ΔF = 0.3

* Pink: ΔF = 0.35

* Gray: ΔF = 0.4

**Panel b: MSE Improvement**

* **Title:** MSE improvement (%)

* **X-axis:** Training time α, ranging from 0.0 to 0.8 in increments of 0.1.

* **Y-axis:** MSE improvement (%), ranging from -40 to 30 in increments of 10.

* **Horizontal dashed line:** at y = 0

* **Legend:** (Refer to Panel a for color correspondence to ΔF values)