<details>

<summary>Image 1 Details</summary>

### Visual Description

\n

## Logo: Sorbonne Université

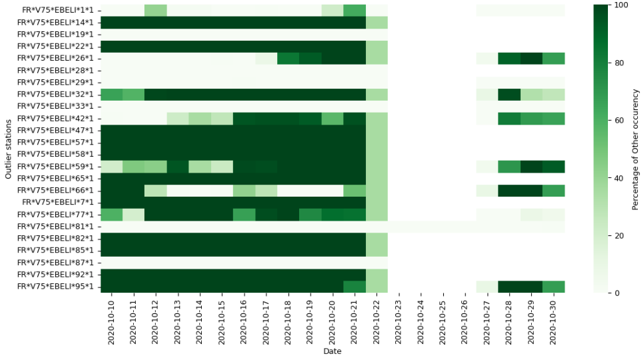

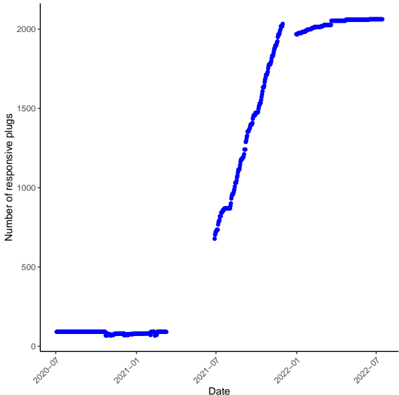

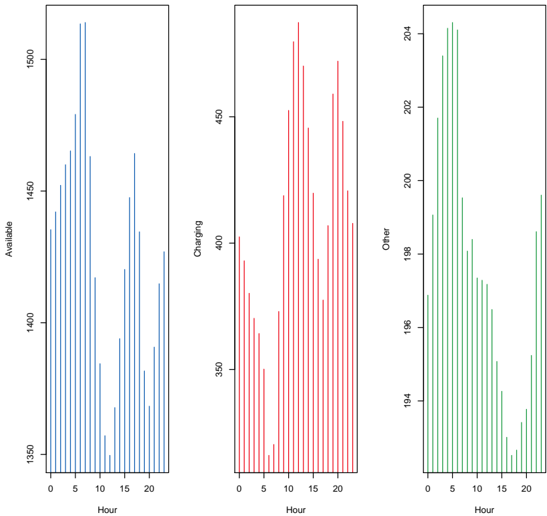

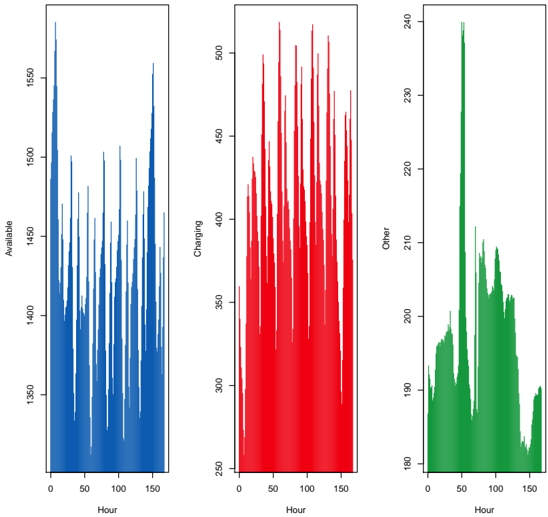

### Overview

The image displays the logo of Sorbonne Université, a French public research university. The logo is a stylized "S" incorporating a depiction of the Sorbonne's dome.

### Components/Axes

The logo consists of two primary elements:

* A stylized, dark blue "S" shape.

* Text reading "SORBONNE UNIVERSITÉ" in red.

### Detailed Analysis or Content Details

The "S" shape is a flowing, cursive form. Integrated within the upper curve of the "S" is a simplified, white depiction of a dome-shaped building, representing the historical Sorbonne building. The text "SORBONNE" is positioned to the right of the "S", in a larger font size, and is colored red. Below "SORBONNE" is the word "UNIVERSITÉ", also in red, but in a smaller font size.

The text "SORBONNE UNIVERSITÉ" is in French. The English translation is "Sorbonne University".

### Key Observations

The logo is visually clean and modern, while still referencing the university's historical architecture. The color scheme of dark blue and red is striking and memorable.

### Interpretation

The logo effectively communicates the identity of Sorbonne Université. The "S" shape is a clear identifier, and the inclusion of the dome symbolizes the university's long history and academic tradition. The use of French text reinforces the university's location and cultural context. The logo is designed to be easily recognizable and to convey a sense of prestige and academic excellence. The logo does not contain any factual data or numerical values. It is a symbolic representation of an institution.

</details>

<details>

<summary>Image 2 Details</summary>

### Visual Description

\n

## Diagram: LPSM Logo

### Overview

The image presents a logo for "LPSM". The logo consists of an abstract graphical element above the text "LPSM". The graphical element appears to be a stylized representation of interconnected nodes or arcs.

### Components/Axes

The logo is composed of two main elements:

1. **Graphical Element:** A series of curved lines connecting two circular nodes. The lines are colored in shades of green and blue.

2. **Text:** The letters "LPSM" in a dark blue, sans-serif font.

### Detailed Analysis or Content Details

The graphical element features two dark blue circular nodes positioned at the extreme left and right of the image. Arcs connect these nodes. There are three arcs connecting the nodes.

* The top arc is green.

* The middle arc is a lighter shade of green.

* The bottom arc is dark blue.

The arcs intersect in the center of the image, creating a complex interwoven pattern. The text "LPSM" is positioned directly below the graphical element, centered horizontally. The font size is relatively large, making the acronym prominent.

### Key Observations

The logo is visually balanced, with the graphical element and text complementing each other. The use of green and blue colors suggests a connection to nature, technology, or trustworthiness. The interconnected arcs could symbolize networking, collaboration, or complex systems.

### Interpretation

The logo likely represents an organization or entity named "LPSM". The abstract graphical element suggests a focus on interconnectedness, systems thinking, or a dynamic approach. Without further context, the specific meaning of "LPSM" and the logo's symbolism remain open to interpretation. The logo is designed to be memorable and visually appealing, conveying a sense of professionalism and innovation. The logo does not contain any factual data or numerical values. It is a symbolic representation of a brand or organization.

</details>

Thèse présentée pour l'obtention du grade de

## DOCTEUR de SORBONNE UNIVERSITÉ

Discipline / Spécialité Mathématiques appliquées / Statistique

École doctorale Sciences Mathématiques de Paris Centre (ED 386)

Physics-informed machine learning: A mathematical framework with applications to time series forecasting

Nathan Doumèche

Rapporteur Rapporteur Président du jury Examinateur Examinatrice Membre invité Membre invité Directeur de thèse Co-directrice de thèse Encadrant industriel de thèse

Soutenue publiquement le 7 juillet 2025

Devant un jury composé de :

Gilles BLANCHARD , Professeur, Université Paris-Saclay Richard NICKL , Professeur, Université de Cambridge Gabriel PEYRÉ , Directeur de recherche, CNRS Jalal FADILI , Professeur, ENSICAEN Mathilde MOUGEOT , Professeure, ENSIIE Francis BACH , Directeur de recherche, CNRS Stéphane TANGUY , Directeur de recherche, CIO & CTO à EDF Labs Gérard BIAU , Professeur, Sorbonne Université Claire BOYER , Professeure, Université Paris-Saclay Yannig GOUDE , Professeur associé, Université Paris-Saclay

<details>

<summary>Image 3 Details</summary>

### Visual Description

\n

## Logo: EDF Logo

### Overview

The image presents the logo of EDF (Électricité de France), a French multinational electric power company. The logo consists of a stylized graphic element above the company's acronym.

### Components/Axes

The logo is composed of two main elements:

1. **Graphic Element:** An abstract design featuring four flame-like or petal-like shapes arranged in a circular pattern. The color of these shapes is a vibrant orange (#FFA500).

2. **Text:** The acronym "EDF" is positioned below the graphic element, rendered in a dark blue color (#000080).

### Detailed Analysis or Content Details

The graphic element is not a chart or diagram with quantifiable data. It is a visual symbol. The text "EDF" is a clear identifier of the company. There are no axes, scales, or legends present.

### Key Observations

The logo's design evokes energy, power, and potentially the sun or flames, aligning with the company's core business of electricity generation and distribution. The color scheme (orange and blue) is visually striking and memorable.

### Interpretation

The EDF logo is a branding element designed to represent the company's identity and values. The abstract graphic element is intended to be recognizable and associated with the EDF brand. The use of orange suggests energy and warmth, while the blue conveys reliability and stability. The logo is a visual shorthand for the company's services and expertise in the energy sector. The logo does not contain any factual data or trends. It is purely a symbolic representation.

</details>

## Remerciements

Trois ans sont donc passés... D'aucuns supplièrent jadis les heures de suspendre leur cours. Sans succès. Quant à moi, pour endiguer le vertige qui me submerge à la clôture de ce chapitre de ma vie, je n'ai d'autre artifice que de recourir au rite consacré. Aussi, j'ai le bonheur teinté de nostalgie d'entamer cette thèse en remerciant ceux qui l'ont rendue possible.

Au commencement était Gérard, et de Gérard naquit cette thèse. Voilà cinq ans maintenant que tu me formes avec exigence et me guides avec bienveillance. Véritable amoureux de la statistique mathématique, tu partages avec générosité ton entrain scientifique et ta vision pour une rédaction scientifique réellement pédagogique. Travailleur acharné, insatisfait de tes rôles de chercheur chevronné et d'enseignant apprécié, tu prends aussi de ton temps pour façonner la statistique de demain et représenter la Sorbonne. J'ose donc affirmer que, fidèle au poète qui t'est cher, tu incarnes bien l'homme des utopies, les pieds ici, les yeux ailleurs. Pour tout cela, je suis heureux et fier d'avoir été ton élève.

Deuxième pilier de cette thèse, il me faut remercier Claire. Tu harmonises rigueur et curiosité scientifique par un plaisir solaire à apprendre, découvrir et écrire. Aventurière des statistiques, tu m'as suivi sur tous les chemins, des réseaux de neurones à la mesurabilité des estimateurs, aux noyaux et aux EDP. C'est sans relâche que tu entretiens ton goût du verbe et de la bonne formule. Ton empathie, ton sang-froid et ton humour m'ont aidé à faire face aux moments les plus durs de cette thèse. Merci aussi d'avoir toujours eu à cœur de mettre en valeur mon travail, et de m'encourager à participer à des conférences, séminaires et écoles d'été.

Ultime panneau de ce triptyque doctoral, je veux témoigner de toute ma gratitude envers Yannig. Avatar de l'esprit sportif, tu appliques avec méthode et ardeur à la prévision énergétique tous les algorithmes prometteurs qui te sont présentés. En compétition, il faut faire feu de tout bois ! De tous les statisticiens que j'ai rencontrés, tu es sûrement celui dont je partage le plus la vision des mathématiques appliquées. En entraîneur attentionné, tu as sans cesse veillé à ma bonne intégration au sein de l'équipe à EDF, et à mon épanouissement tant sur le plan pratique que théorique. Merci pour ta patience, ton expérience, et tes encouragements.

Plus généralement, je tiens à exprimer ma reconnaissance aux membres du jury, qui me consacrent une journée de leur temps que je sais fort précieux. Merci à Gilles et à Richard pour l'enthousiasme dont ils ont fait preuve à la relecture de ce manuscrit de thèse. Bien que le sérieux de l'évaluation repose sur le fait que nous ne nous connaissons pas personnellement, j'ai lu avec gourmandise les travaux de Gilles sur les noyaux; de Jalal, Mathilde et Richard sur les méthodes informées par la physique; et de Gabriel sur les fondements mathématiques de l'IA. C'est un grand honneur pour moi que de vous voir siéger à mon jury.

Ma thèse n'aurait pas été possible sans la joyeuse farandole des statisticiens qui m'ont accompagné, au détour d'un article ou d'un stage, et ont enrichi mon paysage informatique et mathématique. Merci à Stéfania pour ta connaissance des indices téléphoniques, à Yann pour m'avoir épaulé sur ma toute première base de données, et à Yvenn pour tes talents d'organisateur de challenge. Un merci tout particulier à Francis qui, par l'exercice d'un prosélytisme dont il ne se cache pas, m'a totalement converti aux méthodes à noyaux et m'a conduit à la béatitude exaltée que confère la dimension effective. Merci et bravo à mes stagiaires, Éloi et Guillhem, pour le travail, la confiance, et la joie qu'ils m'ont apportés. J'ai été très fier de vous voir tant progresser. Merci en retour à Adeline et Pierre, membres éminents de la dynastie

des Gérardiens et dont j'ai été le stagiaire dans le temps, pour m'avoir donné envie de faire des statistiques.

Ces trois ans n'auraient pas eu la même saveur sans l'ambiance chaleureuse des équipes d'EDF et de la Sorbonne. Merci à Caroline, Éloi, Ferdinand, Guillaume Lambert, Guillaume Principato, Julie et Stanislas pour avoir tant égayé les bureaux que nous avons partagés. Chers collègues d'EDF, merci à Amaury pour ta musique punchy, à Bachir pour ton goût pour la bonne chère, à Christian pour nos discussions de régression linéaire et de randonnée, à Élaine pour notre dévotion commune à Arte et Élisabeth Quin, à Élise pour ta gentillesse sans égal, à Félicie pour ta joie de vivre, à Hugo pour ton humour pince-sans-rire, à Gilles, Sandra et Véronique pour le maintien de la tradition du café et de la conversation matinale, à Joseph pour m'avoir montré la voie, à Manel pour ton affection presque maternelle, à Margaux pour la force de tes convictions, à Virgile pour ton regard émerveillé sur le monde. Honorables collègues du LPSM, merci à mes anciens professeurs de master Anna, Antoine, Arnaud, Erwan, Ismaël et Lorenzo pour leur savoir encyclopédique, à Charlotte pour m'avoir accueilli les bras ouverts comme chargé de travaux dirigés, et à Alice, Miguel, et Paul pour leurs conseils d'enseignement. Merci également à Hugues, Natalie, Nisrine, Nora et Xavier, sans qui les rouages administratifs m'auraient sans doute avalé depuis longtemps.

Au-delà de ma thèse, je sais ce que je dois à ceux qui me soutiennent au quotidien et agrémentent ma vie de leur présence loufoque. Merci à mes amis d'enfance, qui portent en eux la chaleur et la tranquillité du Sud. À Gilliane, avec qui j'ai coévolué au point d'entendre mentalement sa voix. Merci pour ton rire communicatif, le théâtre, nos vacances, et notre amour incompris du Top D17. À mes amis de lycée, Gabrielle et Léo en particulier, pour nos innombrables soirées plage. Merci à mes amis des Mines, fièrement rassemblés sous la bannière de la Piche et régulièrement convoqués par notre roi élu. À Agathe pour avoir partagé ma détresse sur l'Île-Molène, à Amandine pour notre danse sur I Like To Move It à Barcelone, à Antoine pour tes extravagances mégalomanes, à Charlène et Jean pour votre sens du chic et de la fête, à Denis pour tes traits d'esprit caustiques, à Félix pour m'avoir transmis ta passion du Japon, à Victor pour avoir créé un si beau Donjon où tu nous accueilles toujours avec l'hospitalité médiévale de circonstance. Merci à mes amis de l'ENS, notamment aux autoproclamés malins , qui réapparaissent périodiquement pour me professer leur sagesse douteuse. Paraît-il qu'un bol n'est jamais plus utile que quand il est vide...

Une place toute spéciale est prise en mon cœur par ce quatuor étrange et, il faut bien l'avouer, un peu disparate, qui toutefois s'accorde merveilleusement à l'harmonie de mon existence 1 . À Baptiste, avec qui j'ai souvent festoyé jusqu'à l'évanouissement, co-inventeur des fameuses pâtes au gras. À toi, que l'honneur mal placé exhorte régulièrement à des aventures qui forcent l'admiration, tant en sport qu'en sciences, et qui n'a pourtant pas encore pleinement conscience de sa force. À Éric, qui envisage l'existence comme un jeu, et avec qui je prends un plaisir non dissimulé (certes, parfois après-coup) à pousser aux extrêmes limites nos capacités physiques et mentales. À toi, cher voisin du C2 en mes temps de pape, toi qui m'a fait survivre sur une île bordée de phoques, toi qui m'a traîné, mourant, sur la muraille de Chine, bref, toi en qui j'ai une confiance irraisonnable. À Nataniel, dragon majestueux et sautillant, un peu bruyant par moments, mon Doppelgänger flamboyant. À toi qui me suit, chaque année, selon le rituel, arpenter les cimes du Mercantour. À ton amour débordant pour la vie, les amis, les animaux mignons (et les limaces !?), les champignons, la littérature, la musique, la danse... À Alexis, qui partage courageusement ma vie. À tes passions hétéroclites pour les chats, le matcha, le karaoké, la pop, les voyages, le clubbing, les jeux vidéo... À toi qui préfères acheter la whey que pousser à la salle, et à tous ces autres traits qui te rendent si attachant.

Il est des dons que l'on ne peut rendre. Je tâche ici au moins d'en rendre compte. Merci à ma mère, pour ton soutien inconditionnel, indéfectible. Pour ta foi en l'école et dans le savoir,

1 Les férus de solfège reconnaissent ici une septième diminuée.

qui m'a porté jusque-là. Pour ta force devant la maladie, qui t'a fait soigner les autres. À mon père, pour m'avoir transmis ton amour des sciences et de la nature. Pour ces journées passées à pêcher, cueillir les plantes sauvages, tailler des silex, observer les animaux, et ergoter sur les espèces d'arbres. À Anne qui prend soin au quotidien de cet homme préhistorique en puissance. À ma grande sœur, Andréa, qui m'a toujours servi de modèle et qui a initié en moi un intérêt regrettable pour la télé-réalité et les séries. À mon adorable neveu, Arthur, qui fait preuve d'une patience rare, mêlée d'un intérêt sincère, à écouter mes histoires. À mes oncles, tantes, cousins et cousines, Élie, Fabien, Irène, Jokyo, Lucie, Magali, Pierre, Prune, et Rodrigue. À ma marraine Jocelyne, pour sa quête du spirituel et sa tendresse pour la montagne. Aux amis de mes parents, Alain et Thavy, Christian, Christophe, Georges et Janine, qui m'ont tant appris sur les vérités cachées de la vie. J'ai ici une pensée pour ceux dont le feu s'est éteint. À papé Jean Doumèche, médaillé de la Résistance en 47, pour les exploits dont les récits ont bercé ma jeunesse, et qui m'a donné son nom. À pépé Marcel, incorrigible musicien et blagueur, qui m'a légué ses partitions. Le monde en soit témoin, vous êtes partis comme vous avez vécu: dignes.

## Abstract

Physics-informed machine learning (PIML) is an emerging framework that integrates physical knowledge into machine learning models. This physical prior often takes the form of a partial differential equation (PDE) system that the regression function must satisfy. In the first part of this dissertation, we analyze the statistical properties of PIML methods. In particular, we study the properties of physics-informed neural networks (PINNs) in terms of approximation, consistency, overfitting, and convergence. We then show how PIML problems can be framed as kernel methods, making it possible to apply the tools of kernel ridge regression to better understand their behavior. In addition, we use this kernel formulation to develop novel physicsinformed algorithms and implement them efficiently on GPUs. The second part explores industrial applications in forecasting energy signals during atypical periods. We present results from the Smarter Mobility challenge on electric vehicle charging occupancy and examine the impact of mobility on electricity demand. Finally, we introduce a physics-constrained framework for designing and enforcing constraints in time series, applying it to load forecasting and tourism forecasting in various countries.

Keywords: Physics-informed machine learning, neural networks, kernel methods, load forecasting, time series

## Résumé

L'apprentissage automatique informé par la physique est un domaine récent qui consiste à intégrer des connaissances physiques dans des modèles d'apprentissage automatique. L'information physique prend souvent la forme d'un système d'équations aux dérivées partielles (EDPs) que la fonction de régression doit satisfaire. Dans la première partie de cette thèse, nous analysons les propriétés statistiques des méthodes d'apprentissage automatique informé par la physique. En particulier, nous étudions les propriétés des réseaux de neurones informés par la physique, en termes d'approximation, de consistance, de surapprentissage et de convergence. En outre, nous montrons comment l'apprentissage statistique pénalisé par des systèmes d'EDPs linéaires peut se réécrire comme une méthode à noyaux. En s'appuyant sur cette reformulation, nous développons de nouveaux algorithmes informés par la physique, que nous implémentons ensuite efficacement sur carte graphique. La deuxième partie se concentre sur des applications industrielles en prévision de signaux énergétiques en périodes atypiques. Nous présentons les résultats du Smarter Mobility Data Challenge sur l'occupation de la charge des véhicules électriques, et examinons l'impact de la mobilité sur la demande d'électricité. Enfin, nous développons un cadre pour la conception et l'application de contraintes dans les séries temporelles, en l'appliquant à la prévision de la consommation électrique et à la prévision du tourisme dans différents pays.

Keywords: Apprentissage statistique informé par la physique, réseaux de neurones, méthodes à noyau, prévision de consommation électrique, séries temporelles

## Présentation de la thèse

La thèse de doctorat présentée ici est le fruit d'une collaboration entre l'entreprise EDF, spécialisée dans la production et la vente d'électricité, et Sorbonne Université. Afin d'améliorer la performance et l'explicabilité des modèles de prévision énergétique, EDF s'intéresse à l'incorporation de connaissances humaines (souvent regroupées sous le nom de l'expertise métier) dans ses méthodes statistiques d'aide à la décision. À cette fin, le domaine de l'apprentissage automatique informé par la physique (PIML en anglais) permet d'intégrer des contraintes dans des modèles d'apprentissage automatique. Il a été introduit en 2019 par l'invention des réseaux neuronaux informés par la physique. Dans cette thèse, nous nous focalisons donc sur l'étude des algorithmes d'apprentissage automatique informés par la physique et sur la prévision énergétique en période atypique. Par conséquent, nous abordons à la fois des aspects théoriques, tels l'impact des contraintes physiques sur les propriétés statistiques des estimateurs, ainsi que des applications industrielles sur données réelles.

## Intégration de contraintes physiques dans les méthodes statistiques et applications industrielles

## Apprentissage automatique informé par la physique

Interprétabilité des modèles. Les algorithmes d'apprentissage automatique affichent des performances remarquables sur de nombreuses tâches complexes en analyse et de génération de données [Wan+23a]. Cependant, malgré d'impressionnants résultats en reconnaissance d'images, en traitement du langage et en interaction avec des environnements adversoriaux (apprentissage par renforcement), les techniques modernes d'apprentissage profond peinent encore à accomplir certaines tâches simples mais cruciales pour de nombreuses applications pratiques. La prévision de séries temporelles en est peut-être l'exemple le plus saillant [MSA22a; McE+23; Zen+23; Ksh+24]. De surcroît, même lorsqu'ils sont performants, les algorithmes d'apprentissage profond ne présentent pas les mêmes garanties théoriques que les méthodes statistiques plus standard. Il est donc risqué de s'appuyer sur de tels algorithmes, souvent qualifiés de "boîte noire", pour des applications industrielles sensibles ou à fort enjeu [VAT21]. En complément d'efforts de recherche pour mieux comprendre les algorithmes d'apprentissage profond, de nombreux travaux visent à développer de nouveaux algorithmes avec de meilleures garanties théoriques, sous le nom d'apprentissage automatique interprétable ou explicable [LZ21; Lis+23]. Une piste prometteuse pour améliorer l'interprétabilité des algorithmes consiste à intégrer des connaissances issue de la modélisation dans les modèles statistiques. Ces informations préalables sur les caractéristiques du signal à prévoir peuvent prendre la forme d'un modèle physique.

Physique et statistique. L'idée de coupler une modélisation physique à des modèles statistiques n'est pas nouvelle. D'une part, la déduction empirique de lois fondamentales de la

nature est dans l'essence même de la physique en tant que science expérimentale. Par exemple, les célèbres lois de Kepler de 1609 furent découvertes empiriquement, par l'ajustement de courbes sur des observations du mouvement des planètes. D'autre part, la modélisation mathématique de l'intégration d'équations aux dérivées partielles (EDPs) issues de la physique dans des modèles statistiques était déjà formulée dans les travaux de Wahba [Wah90] en 1990. Cependant, jusqu'à peu, deux problèmes principaux étaient restés en suspens. Premièrement, aucun algorithme n'était capable d'incorporer efficacement et de façon systématique des connaissances physiques au sein de méthodes de régression statistique. Cela signifie que les praticiens devaient fournir un travail conséquent afin d'incorporer leurs modélisations physiques dans des méthodes statistiques. Deuxièmement, l'impact mathématique de l'ajout de contraintes physique sur la performance des méthodes statistiques était inconnu. Bien qu'il soit intuitif que les modèles avec plus d'informations devraient être plus puissants, en pratique, l'ajout de connaissances physiques augmente souvent la complexité informatique des modèles et en détériore l'optimisation.

L'apprentissage automatique informé de la physique. Au cours des dernières années, de nouveaux moyens furent découverts pour adapter des algorithmes bien connus afin qu'ils emploient efficacement des a priori physiques. En effet, l'incorporation d'EDPs dans les algorithmes statistiques a été illustrée par Raissi et al. [RPK19] sur les réseaux neuronaux et Nickl [Nic23] sur les méthodes de Monte-Carlo par chaînes de Markov (MCMC en anglais), tandis que l'utilisation des techniques d'EDP pour des tâches statistiques a été illustrée par Arnone et al. [Arn+22] sur la méthode des éléments finis (FEM). Dans cette thèse, nous montrons comment faire de même avec des méthodes à noyaux [Dou+24a]. En particulier, le concept d'incorporer de la physique à des algorithmes d'apprentissage automatique classiques est maintenant connu sous le nom d'apprentissage automatique informé par la physique (PIML en anglais) Karniadakis et al. [Kar+21]. S'appuyer sur des algorithmes bien connus pour l'intégration de contraintes physiques est très avantageux d'un point de vue informatique. Par exemple, les réseaux neuronaux informés par la physique (PINNs en anglais) de Raissi et al. [RPK19] exploitent les écosystèmes publics Pytorch et Tensorflow , développés et maintenus par la communauté de l'apprentissage automatique et soutenus par de riches entreprises d'intelligence artificielles, comme Google et Meta. L'inscription dans ces écosystèmes permet de directement tirer parti des puissantes accélérations de calcul et d'une gestion optimisée de la mémoire issues d'années de recherche en apprentissage automatique. En outre, cela permet de facilement implémenter les algorithmes informés par la physique sur du matériel informatique très efficace. Tyiquement, il s'agit d'exécuter les algorithmes sur des cartes graphiques (GPU en anglais), qui sont des unités de calcul ultra-optimisées pour effectuer des opérations mathématiques spécifiques d'algèbre linéaire, comme le produit matrice-vecteur. Dans cette thèse, nous montrons comment mettre en oeuvre nos méthodes à noyaux sur GPU. Toutes ces accélérations, ainsi que la bonne gestion de la mémoire, sont des améliorations cruciales pour le PIML, car l'ajout de physique à un algorithme statistique rend généralement son apprentissage plus coûteux en calculs.

Vers des contraintes plus faibles. La plupart des algorithmes informés par la physique furent développés pour incorporer des a priori physiques prenant la forme de systèmes d'EDPs. Bien que ce cadre soit approprié pour la description de systèmes physiques dont les lois sont connues, les connaissances humaines ne prennent pas toujours une forme aussi rigide. Par exemple, c'est le cas de nombreux signaux macroéconomiques, tels que la demande d'électricité. Il n'en demeure pas moins que certaines contraintes peuvent être incorporées dans les modèles de prévision de tels signaux. Par exemple, les lois de la macroéconomie stipulent que, toutes choses égales par ailleurs, la demande d'électricité diminuera lorsque le prix augmentera. De telles formes plus faibles de physique (où le terme « physique » est entendu ici au sens large de

toute information issue d'une modélisation) ont été intégrées avec succès dans les PINNs [voir, par exemple, Daw+22]. Nous expliquons au chapitre 7 comment appliquer des contraintes faibles aux séries temporelles.

## Défis mathématiques de l'apprentissage informé de la physique

Cadre mathématique. Le PIML est généralement divisé en quatre tâches [RPK19; Kar+21; Cuo+22]. Soient d ∈ N /star la dimension du problème, Ω ⊆ R d le domaine d'intérêt, et D l'opérateur différentiel correspondant à la physique du problème. L'exemple typique est d = 2 , Ω = [0 , 1] 2 , et D = ∂ 2 1 , 1 + ∂ 2 2 , 2 est l'opérateur laplacien.

La première tâche est la résolution d'EDP. Étant donné un ensemble de conditions limites, l'objectif est de trouver une fonction satisfaisant à la fois le système d'EDPs et les conditions limites. Par exemple, la condition limite de Dirichlet h : ∂ Ω → R se traduit par la recherche d'une fonction f h telle que pour tout x ∈ Ω , D ( f h )( x ) = 0 , et f h | ∂ Ω = h . Dans l'exemple précédent, cela revient à résoudre l'EDP ∂ 2 1 , 1 f h + ∂ 2 1 , 1 f h = 0 compte tenu de la condition aux limites h . Dans ce contexte, l'utilisateur spécifie la condition limite h et entraîne ensuite un algorithme d'apprentissage (par exemple, un PINN) à apprendre la solution de l'EDP. Bien que les méthodes d'EDP telles que la méthode des éléments finis (FEM) soient déjà très efficaces dans la résolution des EDP, elles peuvent être coûteuses en termes de calcul. Ici, les méthodes d'apprentissage automatique sont utiles pour trouver rapidement des approximations pour f h , appelées modèles de substitution. Ceci est particulièrement intéressant lorsque l'EDP doit être résolue de manière répétée, comme c'est le cas des prévisions météorologiques.

La deuxième tâche est la modélisation hybride. Étant données n ∈ N /star observations ( X 1 , Y 1 ) , . . . , ( X n , Y n ) indépendantes et identiquement distribuées (i.i.d.) selon la loi de la variable aléatoire ( X,Y ) , où Y = f /star ( X ) + ε et ε est un bruit, l'objectif est d'estimer la fonction f /star . La particularité de ce cadre d'apprentissage supervisé est l'a priori que f /star est solution de l'EDP D ( f /star ) = 0 , toutefois avec une éventuelle erreur de modélisation. Ce cadre est particulièrement pertinent lorsque l'a priori physique est incomplet. Par exemple, les conditions aux limites peuvent ne pas être entièrement spécifiées, ou l'ensemble des équations différentielles peut admettre un nombre infini de solutions. Dans ce cas, les techniques traditionnelles d'EDP ne peuvent pas être appliquées directement et les données sont nécessaires pour résoudre le problème.

La troisième tâche est l'apprentissage d'EDP. Étant données n ∈ N /star observations ( X 1 , Y 1 ) , . . . , ( X n , Y n ) indépendantes et identiquement distribuées (i.i.d.) selon la loi de la variable aléatoire ( X,Y ) , où Y = f /star ( X ) + ε et ε est un bruit, l'objectif est d'estimer la fonction f /star ainsi que la loi physique à laquelle f /star obéit. Dans ce contexte d'apprentissage supervisé, la seule connaissance préalable est le fait que la fonction f /star est solution d'un système d'EDPs à coefficients inconnus. Un exemple de tel a priori physique pourrait être que f /star satisfait l'EDP ∆ f /star = λ /star f /star , où λ /star ∈ R est un paramètre inconnu devant être estimé grâce aux données. Les PINNs Raissi et al. [RPK19], la régression LASSO [KKB20] et les MCMCs [Nic23] sont des algorithmes efficaces d'apprentissage d'EDP.

La quatrième tâche consiste à directement construire un solveur d'EDP. Formellement, il s'agit d'apprendre l'opérateur φ : h ∈ L 2 ( ∂ Ω) ↦→ f h ∈ L 2 (Ω) qui, à une condition limite h , associe l'unique solution f h telle que f h | ∂ Ω = h et ∀ x ∈ Ω , D ( f h )( x ) = 0 . Ce contexte est appelé apprentissage d'opérateur. L'objectif ici est de fournir des modèles de substitution plus rapides, en apprenant un modèle global φ , plutôt que d'entraîner un algorithme spécifique pour chaque nouvelle condition limite h . DeepONet [Lu+21] et les opérateurs neuronaux de Fourier [Li+21] sont des algorithmes d'apprentissage d'opérateur efficaces.

Dans ce document, nous nous concentrons principalement sur la résolution d'EDP et la modélisation hybride. Les deux autres tâches seront l'objet de de travaux ultérieurs. En effet, l'apprentissage d'EDP et l'apprentissage d'opérateur sont des opérations plus complexes, et leurs propriétés statistiques sont encores inconnues.

Comment créer des algorithmes intégrant de l'information physique ? L'un des principaux défis du PIML est de concevoir des algorithmes efficaces pour traiter les tâches susmentionnées. En pratique, la plupart des implémentations reposent sur la minimisation d'un risque empirique constitué d'une partie d'accroche aux données et d'une pénalité physique. En modélisation hybride, l'EDP est intégrée comme une pénalité L 2 , et le risque empirique devient

$$\mathcal { R } _ { n } ( f ) = \sum _ { j = 1 } ^ { n } \| f ( X _ { j } ) - Y _ { j } \| _ { 2 } ^ { 2 } + \lambda \int _ { \Omega } | \ m a t h s c r { D } ( f ) ( x ) | ^ { 2 } d x ,$$

où λ > 0 est un hyperparamètre fixé par l'utilisateur. Cette minimisation est effectuée sur une classe de fonctions, à savoir les réseaux neuronaux pour les PINNs [RPK19], et une base de Fourier pour nos méthodes à noyaux [Dou+24b]. Cependant, la pénalité EDP rend particulièrement difficile la recherche d'un minimiseur global de R n sur une classe de fonctions donnée. Il faut pour cela pouvoir être capable de dériver une fonction de la classe de fonctions considérée, ce qui n'est pas toujours possible (par exemple, les forêts aléatoires ne sont pas dérivables). D'ailleurs, bien que les PINNs soient la technique qui a reçu le plus d'attention récemment, l'algorithme proposé par Raissi et al. [RPK19] est susceptible de surapprendre [WYP22; DBB25], tandis que son optimisation est fortement dégradée lorsque l'opérateur différentielle D est non linéaire [BBC24]. En outre, la plupart des algorithmes proposés dans la littérature sont très gourmands en ressources informatiques. En particulier, les PINNs nécessitent des milliers d'étapes de descente de gradient pour converger, alors qu'ils ne sont pas toujours significativement plus performants que les solveurs d'EDP traditionnels, tant en termes de vitesse de calcul que de précision, comme le révèle la méta-analyse de McGreivy and Hakim [MH24].

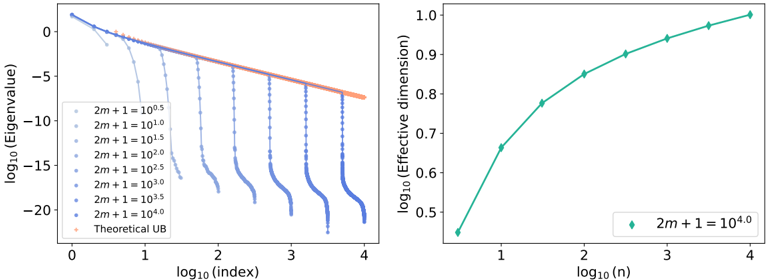

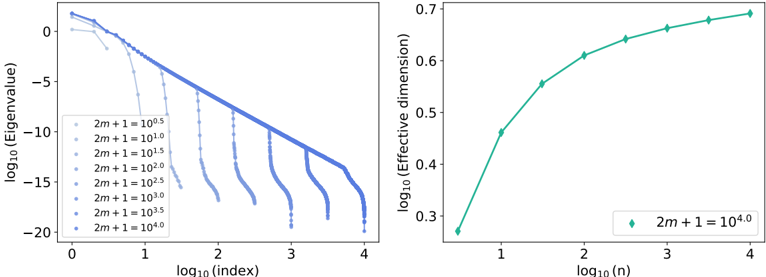

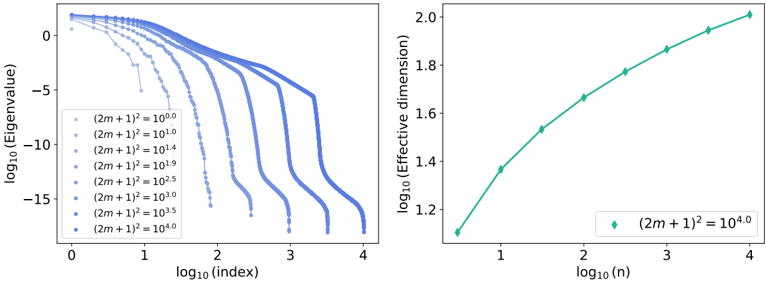

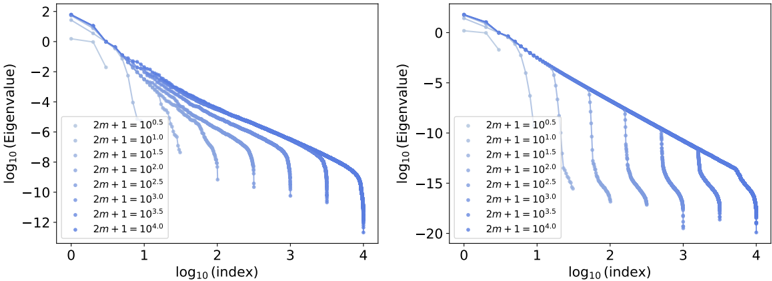

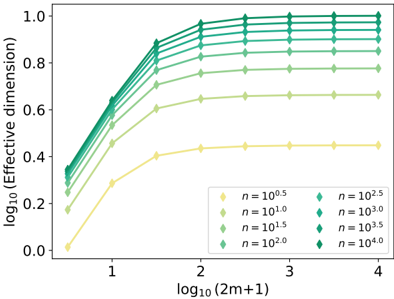

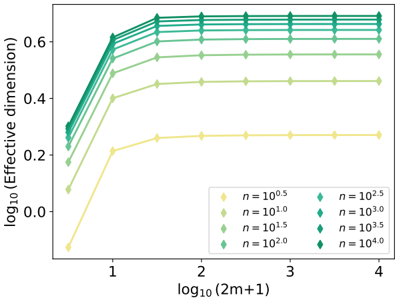

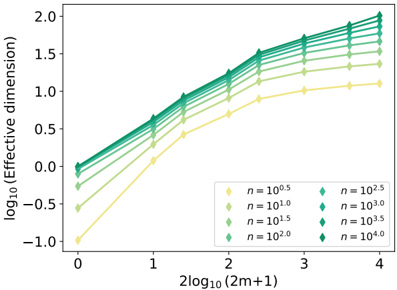

Comment quantifier les gains de la physique ? D'un point de vue théorique, mesurer l'impact de la physique sur la performance de l'algorithme est une question qui n'a toujours pas trouvé de réponse complète. Un avantage de l'apprentissage informé de la physique est que, en s'appuyant sur des algorithmes connus, il devient possible d'adapter les outils spécifiques à l'algorithme initial pour comprendre les propriétés théoriques des versions physiquement pénalisées. Par exemple, l'analyse du noyau tangent neuronal (NTK) des PINNs caractérise la convergence de leur descente de gradient [BBC24], tandis que les outils de l'inférence bayésienne non paramétrique permettent d'étudier le taux de convergence des MCMCs physiquement informées [NGW20]. Dans cette thèse, nous nous appuyons sur l'analyse de la dimension effective de Caponnetto and Vito [CV07] et Blanchard et al. [BBM08] pour caractériser le taux de convergence de nos méthodes à noyaux [Dou+24a]. La plupart de ces résultats théoriques confirment l'intuition selon laquelle les algorithmes PIML sont plus difficiles à optimiser, mais offrent de meilleures performances statistiques lorsqu'ils sont entraînés dans de bonnes conditions.

## Contexte industriel

Au-delà des aspects théoriques liés aux propriétés statistiques et à la complexité des algorithmes, l'apprentissage automatique informé de la physique a démontré son efficacité dans

des applications industrielles. Cette thèse, menée en collaboration avec l'entreprise EDF, se concentre sur les applications aux séries temporelles, en particulier dans le domaine de la prévision énergétique.

Séries temporelles. Les séries temporelles sont omniprésentes dans les applications industrielles [Pet+22], englobant la prévision des signaux macroéconomiques (offre, demande, prix, mobilité humaine...), l'estimation de la propagation des maladies et du trafic hospitalier, le suivi des processus industriels en temps réel (processus de fabrication, réactions chimiques, maintenance préventive...) et l'anticipation des événements environnementaux (vagues de chaleur, incendies, précipitations...). Cependant, les séries temporelles sont particulièrement difficiles à traiter d'un point de vue statistique. Tout d'abord, les observations sont corrélées, ce qui signifie que la loi des grands nombres et le théorème central limite ne peuvent pas être directement appliqués pour créer des estimateurs. Ensuite, il est courant que la distribution des séries temporelles évolue au cours du temps, que cela soit en raison d'une tendance, d'une saisonnalité ou de ruptures. En outre, les séries temporelles comportent souvent des valeurs manquantes, en conséquence de fréquences d'échantillonnage potentiellement différentes dans les données, ou encore de défaillances de capteurs. Tous ces phénomènes limitent la quantité de données pertinentes disponibles pour l'apprentissage et rendent difficile la prévision des séries temporelles. L'ajout de contraintes physiques aux modèles de prévision apparaît comme un moyen prometteur pour en améliorer les performances. Les techniques de PIML ont été appliquées avec succès à des séries temporelles du monde réel présentant des dépendances physiques bien connues, telles que les prévisions météorologiques [Kas+21], la prévision de production d'énergie renouvelable [LO+23], ou le contrôle en temps réel de réactions chimiques industrielles [Ngu+22].

Prévision de signaux énergétiques. Dans cette thèse, nous nous concentrons principalement sur la prévision de signaux énergétiques, comme l'occupation de bornes de recharge de véhicules électriques et la demande d'électricité. Ces deux tâches de prévision sont difficiles, mais utiles pour l'industrie de l'énergie. En effet, le marché des véhicules électriques est émergent et en forte croissance, et les fournisseurs doivent adapter le réseau électrique à ses demandes de haute intensité électrique. Cependant, les bases de données et les modèles de machine learning appliquées aux véhicules électriques sont encore rares [AO+21]. En ce qui concerne la prévision de demande d'électricité (également appelée prévision de charge), le stockage de l'électricité est coûteux et limité, tandis que l'offre doit correspondre à la demande à tout moment pour éviter les pannes. La prévision de la demande est donc nécessaire pour ajuster la production et agir efficacement sur les marchés de l'électricité [Ham+20]. Cependant, aucun de ces signaux n'est régi par un ensemble connu d'EDPs. De fait, l'ensemble complet des variables explicatives responsables de leur variations est inconnu ou non mesuré. Des modèles statistiques, potentiellement guidée par notre connaissance du comportement de ces signaux, sont donc nécessaires pour combler ces défauts de modélisation.

## Organisation du document

Ce document est composé d'une introduction, d'une partie théorique (Partie I), d'une partie appliquée (Partie II) et d'une conclusion. Chaque partie est divisée en plusieurs chapitres, chacun correspondant à une contribution autonome. Nous donnons ci-dessous un bref aperçu de chaque chapitre. Chacun a donné ou donnera lieu à une publication.

## Partie I : Quelques résultats mathématiques sur l'apprentissage automatique informé par la physique

On the convergence of PINNs , Nathan Doumèche, Gérard Biau (Sorbonne Université), et Claire Boyer (Université Paris-Saclay). Publié à Bernoulli.

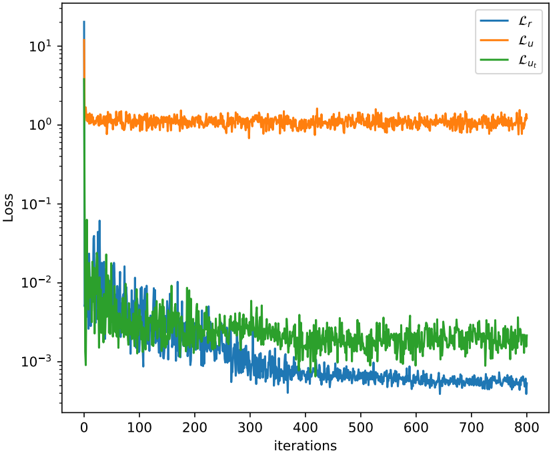

Le chapitre 2 est consacré à l'analyse des propriétés statistiques des réseaux neuronaux informés par la physique (PINNs) pour la résolution d'EDPs et la modélisation hybride. Nous nous concentrons sur l'approximation, la consistance du risque et la cohérence physique des PINNs. Au travers d'exemples, nous illustrons comment les réseaux neuronaux informés par la physique sont sujet à un surapprentissage systémique. Nous montrons également que les techniques usuelles de régularisation sont efficaces pour s'assurer de leur consistance.

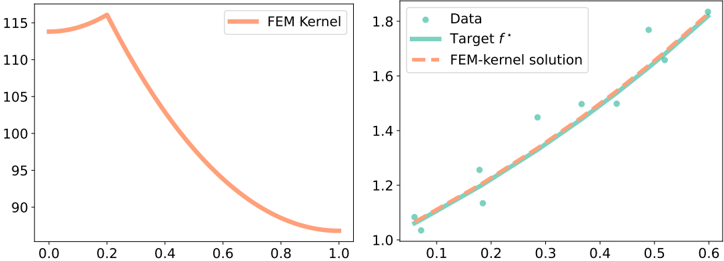

Physics-informed machine learning as a kernel method , Nathan Doumèche, Francis Bach (INRIA Paris), Gérard Biau (Sorbonne Université), et Claire Boyer (Université Paris-Saclay). Publié aux Proceedings of Thirty Seventh Conference on Learning Theory (COLT 2024).

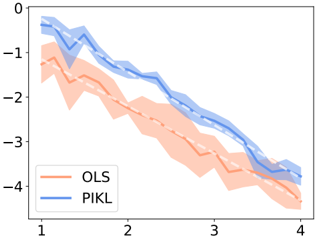

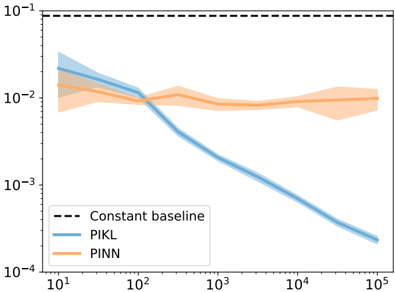

Dans le chapitre 3, nous prouvons que, pour les EDPs linéaires, la résolution d'EDPs et la modélisation hybride sont des méthodes à noyaux. En s'appuyant sur la théorie des méthodes à noyaux, nous montrons que l'estimateur informé par la physique converge au moins au taux minimax de Sobolev. Des taux plus rapides peuvent être atteints, mettant alors en évidence les bénéfices de l'a priori physique.

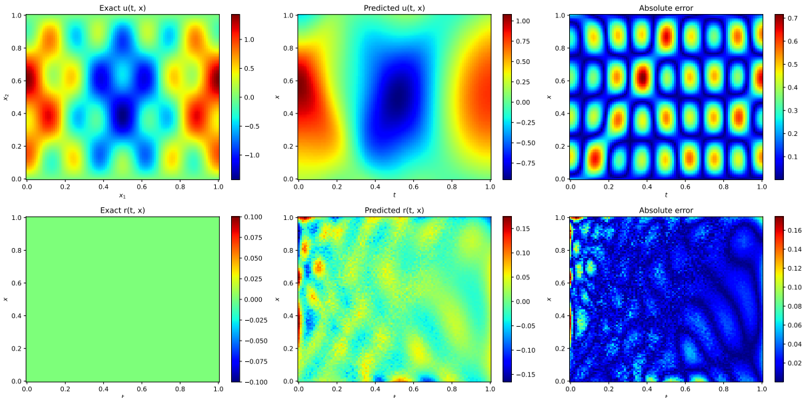

Physics-informed kernel learning , Nathan Doumèche, Francis Bach (INRIA Paris), Gérard Biau (Sorbonne Université), et Claire Boyer (Université Paris-Saclay). Accepté avec révisions mineures au Journal of Machine Learning Research (JMLR).

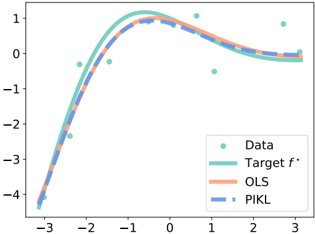

Le chapitre 4 est dédié à l'emploi de séries de Fourier pour approximer le noyau susmentionné. Nous y proposons un estimateur implémentable minimisant le risque empirique informée par la physique. Nous illustrons la performance de l'estimateur à noyaux par des expériences numériques, tant pour la modélisation hybride que pour la résolution d'EDP.

## Partie II: Prévision de séries temporelles en périodes atypiques

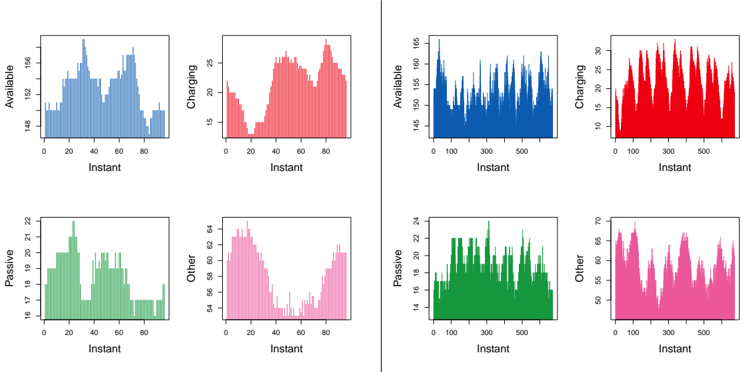

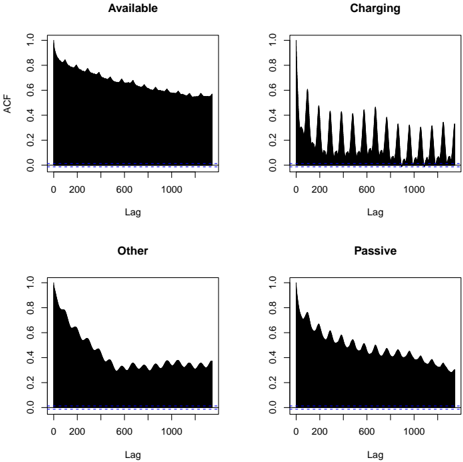

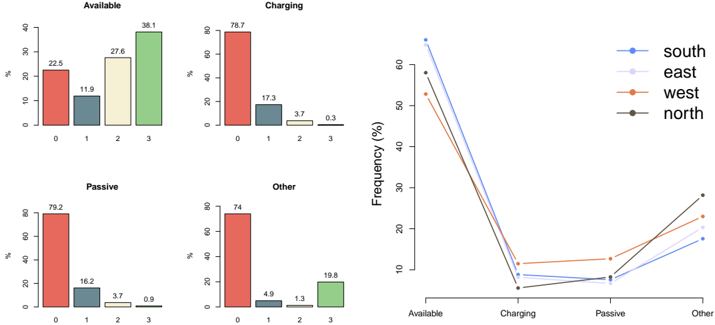

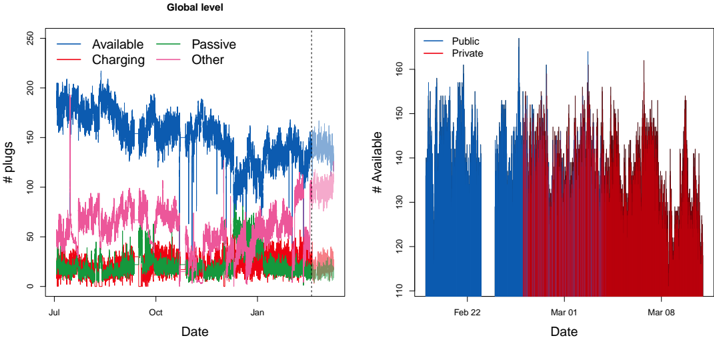

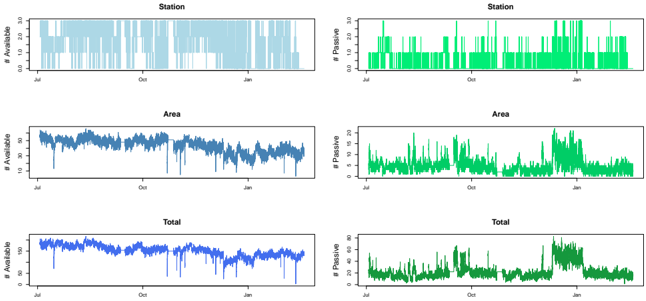

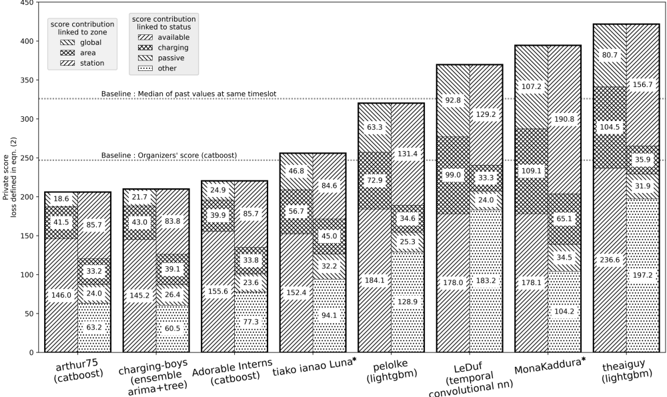

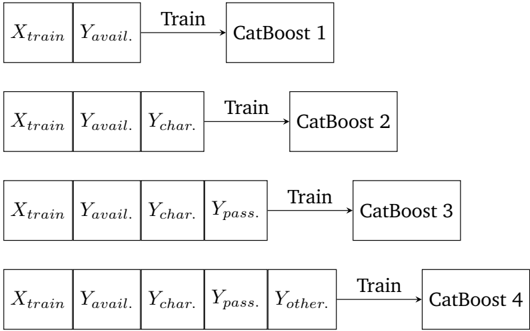

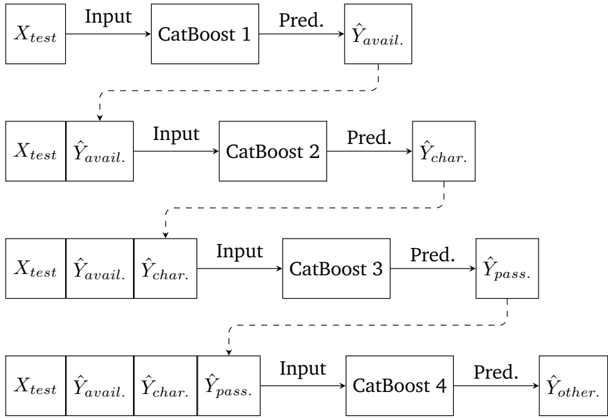

Forecasting Electric Vehicle Charging Station Occupancy: Smarter Mobility Data Challenge , Yvenn Amara-Ouali (Université Paris-Saclay), Yannig Goude (Université Paris-Saclay), Nathan Doumèche (Sorbonne Université), Pascal Veyret (EDF R&D), et al. Publié au Journal of Data-centric Machine Learning Research (DMLR).

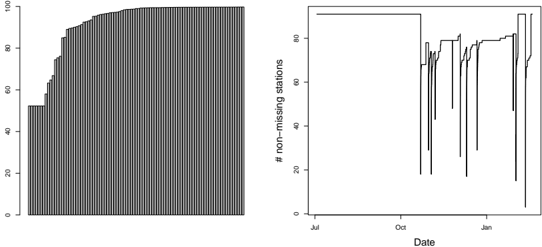

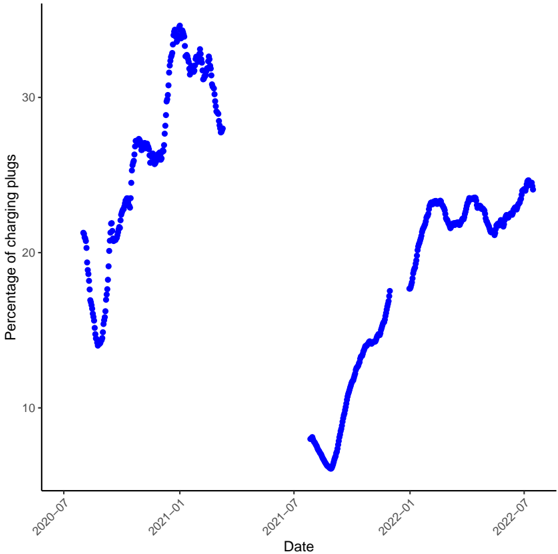

Le chapitre 5 est un chapitre spécial décrivant les résultats des trois équipes gagnantes du Smarter Mobility Data Challenge. L'objectif de ce défi était de prédire l'occupation des bornes de recharge de véhicules électriques à Paris en 2021. Notre équipe s'est classée 3ème de ce challenge.

Human spatial dynamics for electricity demand forecasting , Nathan Doumèche, Yannig Goude (Université Paris-Saclay), Stefania Rubrichi (Orange Innovation), et Yann Allioux (EDF R&D). En évaluation par les pairs.

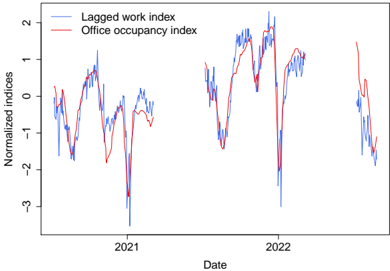

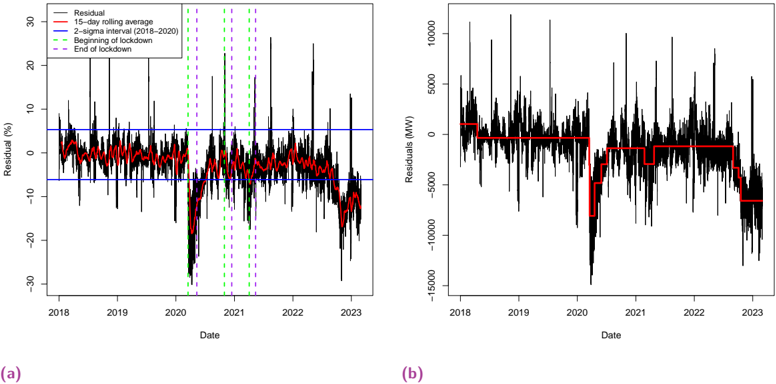

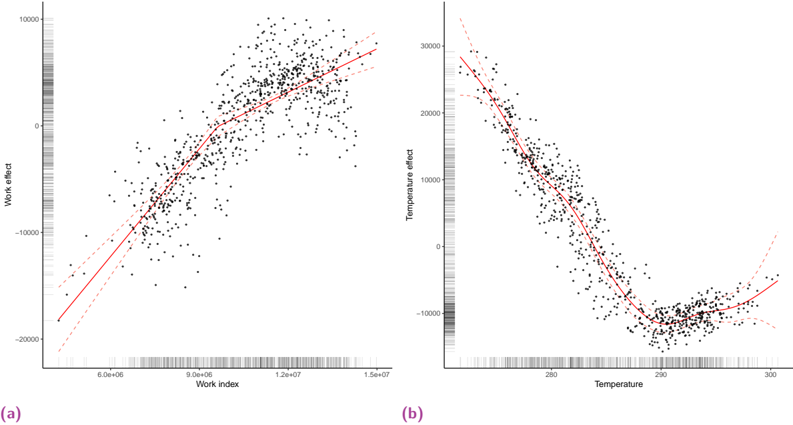

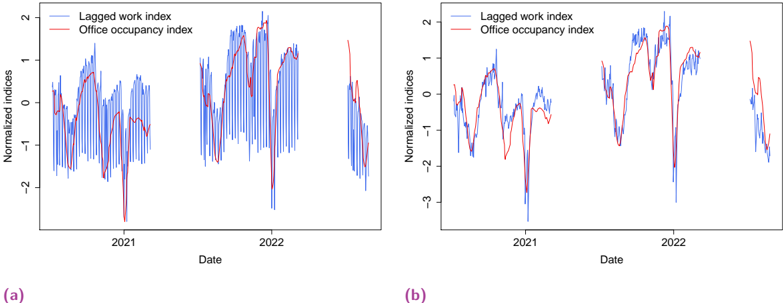

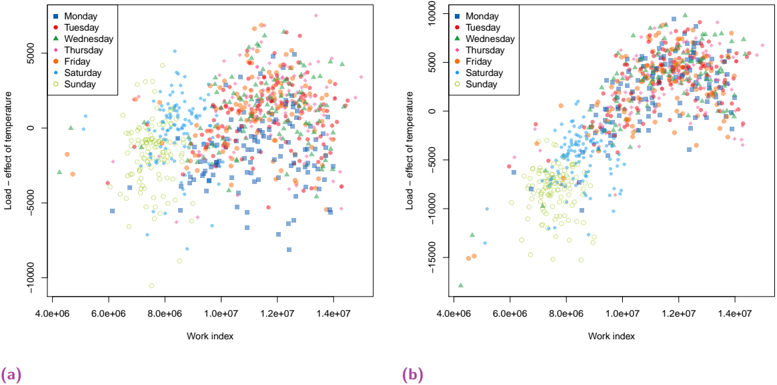

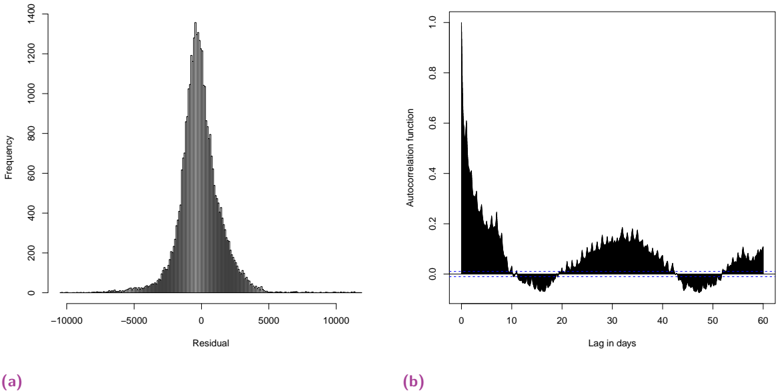

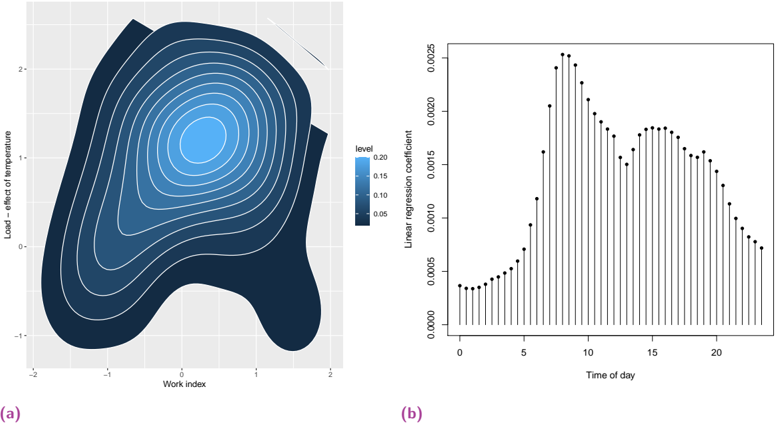

Dans le chapitre 6, nous explorons l'impact des données liées au travail sur la prévision de la demande d'électricité. Nous démontrons que les indices de mobilité dérivés des données des réseaux mobiles améliorent de manière significative la performance des modèles de l'état de l'art, en particulier pendant la période de sobriété énergétique de la France de l'hiver 2022-2023.

Forecasting time series with constraints , Nathan Doumèche, Francis Bach (INRIA Paris), Eloi Bedek (EDF R&D), Gérard Biau (Sorbonne Université), Claire Boyer (Université Paris-Saclay), et Yannig Goude (Université Paris-Saclay). En évaluation par les pairs.

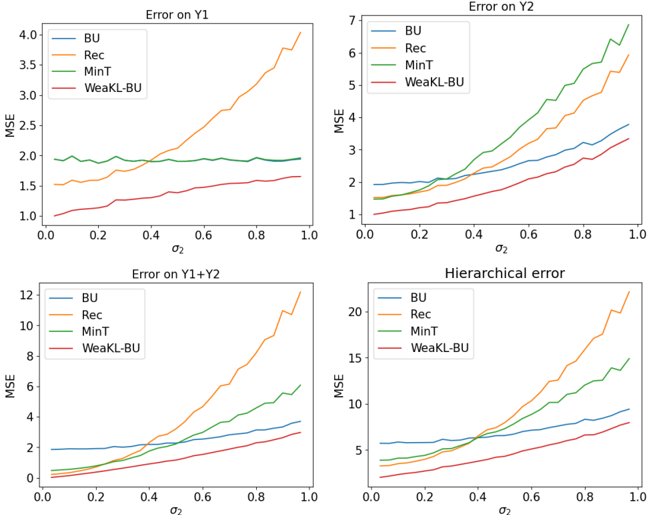

Le chapitre 7 se concentre sur l'extension du cadre de Fourier développé au chapitre 3 à des contraintes spécifiques aux séries temporelles. Les séries temporelles macroéconomiques ne satisfaisant que rarement un jeu d'EDPs connu, nous nous concentrons sur des contraintes plus faibles, comme les modèles additifs, l'adaptation en ligne aux ruptures, la prévision hiérarchique et l'apprentissage par transfert. Nous démontrons que les méthodes à noyaux qui en résultent atteignent des performances de pointe en prévision de charge et de tourisme.

## Contents

| 1 Introduction | 1 Introduction | 1 Introduction | 1 |

|-------------------------------------------------------------------|-------------------------------------------------------------------------|----------------------------------------------------------------------------------|-----|

| | 1.1 | Integrating physical prior into machine learning for industrial applications . . | . 1 |

| | 1.2 Some mathematical insights on | physics-informed machine learning . . . . . . . | 5 |

| | 1.3 Time series forecasting | in atypical periods . . . . . . . . . . . . . . . . . . . . . | 11 |

| I Some mathematical insights on physics-informed machine learning | I Some mathematical insights on physics-informed machine learning | I Some mathematical insights on physics-informed machine learning | 17 |

| On the convergence of PINNs | On the convergence of PINNs | On the convergence of PINNs | 19 |

| 2.1 | Introduction . . . . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 19 |

| 2.2 | The PINN framework . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 20 |

| 2.3 | PINNs can overfit . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 24 |

| 2.4 | Consistency of regularized PINNs for linear and nonlinear PDE systems . | . . . . | 27 |

| 2.5 | Strong convergence of PINNs for linear PDE systems . | . . . . . . . . . . . . . . | 30 |

| 2.6 | Conclusion . . . . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 39 |

| 2.A | Notations . . . . . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 39 |

| 2.B | Some reminders of functional analysis on Lipschitz domains | . . . . . . . . . . . | 40 |

| 2.C | Some useful lemmas . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 42 |

| 2.D | Proofs of Proposition 2.2.3 . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 53 |

| 2.E | Proofs of Section 2.3 . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 54 |

| 2.F | Proofs of Section 2.4 . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 56 |

| 2.G | Proofs of Section 2.5 . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 66 |

| 3 Physics-informed machine learning as a kernel method | 3 Physics-informed machine learning as a kernel method | 3 Physics-informed machine learning as a kernel method | 75 |

| 3.1 | Introduction . . . . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 75 |

| 3.2 | Related works . . . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 76 |

| 3.3 | PIML as a kernel method . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 77 |

| 3.4 | Convergence rates . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 80 |

| 3.5 | Application: speed-up effect of the physical penalty | . . . . . . . . . . . . . . . . | 84 |

| 3.6 | Conclusion . . . . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 85 |

| 3.A | Some fundamentals of functional analysis . | . . . . . . . . . . . . . . . . . . . . | 86 |

| 3.B | The kernel point of view of PIML . . | . . . . . . . . . . . . . . . . . . . . . . . . | 92 |

| 3.C | Integral operator and eigenvalues . . | . . . . . . . . . . . . . . . . . . . . . . . . | 101 |

| 3.D | From eigenvalues of the integral operator to minimax convergence rates | . . . . | 107 |

| 3.E | About the choice of regularization . . | . . . . . . . . . . . . . . . . . . . . . . . . | 111 |

| 3.F | Application: the case D = d dx . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 114 |

| 4 Physics-informed kernel learning | 4 Physics-informed kernel learning | 4 Physics-informed kernel learning | 121 |

| 4.1 | Introduction . . . . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 121 |

| 4.2 | The PIKL estimator . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . 123 | |

| 4.3 | The PIKL algorithm in practice . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 128 |

| | 4.3.1 Hybrid modeling . . . . . . . | . . . . . . . . . . . . . . . . . . . . . . . . | 128 |

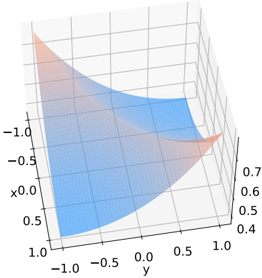

| | 4.3.2 | Measuring the impact of physics with the effective dimension | . . 130 |

|---------|-------------------------------------------------------------------------------------------------|-------------------------------------------------------------------------------------|---------------|

| 4.4 | PDE solving: Mitigating the difficulties of PINNs with PIKL . . . . . . | PDE solving: Mitigating the difficulties of PINNs with PIKL . . . . . . | . . 133 |

| 4.5 | PDE solving with noisy boundary conditions . . . . . . . . . . | . . . | . . 135 |

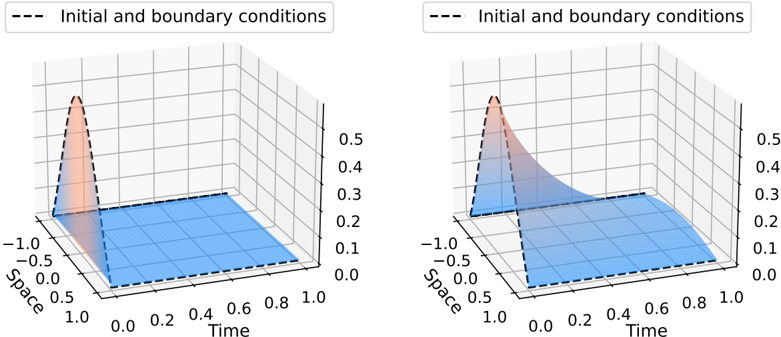

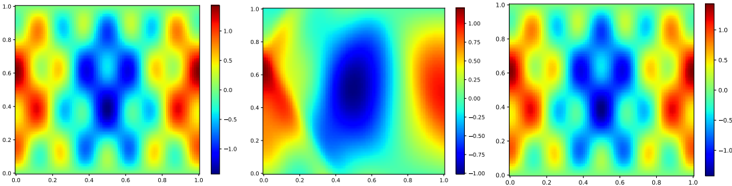

| | 4.5.1 | Wave equation in dimension 2 . . . . . . . . . . . . . . . . . | . . 135 |

| | 4.5.2 | Heat equation in dimension 4 . . . . . . . . . . . . . . . . . | . . 137 |

| 4.6 | Conclusion and future directions . | . . . . . . . . . . . . . . . . . . | . . 137 |

| 4.A | Comments on the PIKL estimator . . . . . . | . . . . . . . . . . . . . | . . 138 |

| | 4.A.1 | Spectral methods and PIKL . . . . . . . . . . . . . . . . . . | . . 138 |

| | 4.A.2 | Choice of the extended domain . . . . . . . . . . . . . . . . | . . 139 |

| | 4.A.3 | Reproducing property . . . . . . . . . . . . . . . . . . . . . | . . 139 |

| 4.B | Fundamentals of functional analysis on complex Hilbert spaces | . . | . . 140 |

| 4.C | Theoretical results for PIKL | . . . . . . . . . . . . . . . . . . . . . . | . . 141 |

| | 4.C.1 | Detailed computation of the Fourier expansion of the differential | penalty 141 |

| | 4.C.2 | Proof of Proposition 4.2.4 . . . . . . . . . . . . . . . . . . . | . . 142 |

| | 4.C.3 | Operations on characteristic functions . . . . . . . . . . . . | . . 142 |

| | 4.C.4 | Operator extensions . . . . . . . . . . . . . . . . . . . . . . | . . 143 |

| | 4.C.5 | Convergence of M - 1 m . . . . . . . . . . . . . . . . . . . . . . | . . 144 |

| | 4.C.6 | Operator norms of C m and C . . . . . . . . . . . . . . . . . | . . 147 |

| | 4.C.7 | Proof of Theorem 4.3.1 . . . . . . . . . . . . . . . . . . . . | . . 148 |

| 4.D | Experiments . . . . . . . . . . . . . . . . . . . . . . . | . . . . . . . . | . . 149 |

| | 4.D.1 | Numerical precision . . . . . . . . . . . . . . . . . . . . . . | . . 149 |

| | 4.D.2 | Convergence of the effective dimension approximation . . . | . . 150 |

| | 4.D.3 | Numerical schemes . . . . . . . . . . . . . . . . . . . . . . . | . . 150 |

| | 4.D.4 | PINN training . . . . . . . . . . . . . . . . . . . . . . . . . . | . . 153 |

| II | Time series forecasting in atypical periods | Time series forecasting in atypical periods | 155 |

| 5 | Forecasting Electric Vehicle Charging Station Occupancy: Smarter Mobility Challenge | Forecasting Electric Vehicle Charging Station Occupancy: Smarter Mobility Challenge | Data 157 |

| 5.1 | | . . . . . . . . . . . . . . . . . . . . . . . . . . | . . |

| | Introduction . . . . . | Introduction . . . . . | 157 |

| 5.2 | EV charging dataset . . | . . . . . . . . . . . . . . . . . . . . . . . . | . . 159 |

| 5.3 | Problem description . . . . . . . . . . . . | . . . . . . . . . . . . . . | . . 162 |

| 5.4 | Solutions of the winning teams . . . . . . . . . . | . . . . . . . . . . | . . 166 |

| 5.5 | Summary of findings and discussion . . | . . . . . . . . . . . . . . . | . . 173 |

| 5.A | Belib's history: pricing mechanism and park evolution . . | . . . . . | . . 174 |

| 5.B | Data description . . . . . . . | . . . . . . . . . . . . . . . . . . . . . | . . 177 |

| 5.C | Further insights on the winning strategies . . | . . . . . . . . . . . . | . . 177 |

| 5.D | perpectives: a longer dataset with more features . . . | . . . | . . 178 |

| | Future | Future | |

| 6 | Human spatial dynamics for electricity demand forecasting | . . . . . . . . . . . . . . . . . . | 183 . . 183 |

| 6.1 6.2 | Introduction . . . . . . . . . . . . . Using mobility data to forecast electricity | demand . . . . . . . . . . | . . 184 |

| 6.3 6.4 | Explainability of the models . | . . . . . . . . . . . . . . . . . . . . . | . . 189 |

| | Conclusion Datasets and features . . . . | . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | . . 192 . 193 |

| 6.A | | . . . . . . . . . . . . . . . . . . . . . . | . |

| 6.B | Benchmark and models . . . | . . . . . . . . . . . . . . . . . . . . . | . . 196 . 202 |

| 6.C 6.D | Change point detection . . . . . . . . . . . . Statistical analysis . . . . . . . . . . . . . . | . . . . . . . . . . . . . . . . . . . . | . . . 204 |

| | . . . . . . Forecasting time series with constraints | . . . . . . Forecasting time series with constraints | 209 |

| 7 | | | |

| | Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | Introduction . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . . | |

| 7.1 | constraints in time series forecasting . . | constraints in time series forecasting . . | |

| 7.2 | Incorporating | . . . | . . 210 |

| 7.3 | Shape constraints . . . . . . . . . . . . . | . 213 |

|----------------|-----------------------------------------------|---------|

| 7.4 | Learning constraints . . . . . . . . . . . | . 219 |

| 7.5 | Conclusion . . . . . . . . . . . . . . . . | . 224 |

| 7.A | Proofs . . . . . . . . . . . . . . . . . . . | . 225 |

| 7.B | More WeaKL models . . . . . . . . . . . | . 228 |

| 7.C | A toy-example of hierarchical forecasting | . 229 |

| 7.D | Experiments . . . . . . . . . . . . . . . . | . 232 |

| III Conclusion | III Conclusion | 241 |

| Bibliography | Bibliography | 245 |

## Introduction

The work presented in this manuscript is the result of a collaboration between EDF, a company specializing in the production and sale of electricity, and Sorbonne University. To improve the performance and explainability of its forecasting models, EDF is particularly interested in incorporating human knowledge into its statistical methods. To this end, physics-informed machine learning (PIML) is a new framework designed to integrate physical constraints into established machine learning models. It was introduced in 2019 with physics-informed neural networks (PINNs). In this thesis, we investigate the mathematical properties of PIML, as well as applications to energy forecasting during atypical periods. Consequently, this thesis addresses both theoretical aspects, such as the impact of physical constraints on the statistical properties of the physics-informed estimators, and real-world applications.

## 1.1 Integrating physical prior into machine learning for industrial applications

## Physics-informed machine learning

Towards interpretable machine learning models. Machine learning techniques have achieved remarkable performance in many complex tasks of data analysis and generation [Wan+23a]. However, despite impressive results in image recognition, language processing, and interaction with adversarial environments (reinforcement learning), modern deep learning techniques still struggle with some simpler tasks which are crucial for practical applications. Time series forecasting is perhaps the most striking of such examples [MSA22a; McE+23; Zen+23; Ksh+24]. Moreover, even when they perform well, deep learning algorithms do not have the same theoretical guarantees as standard statistical techniques. This makes it risky to rely on such black-box algorithms for sensitive or high-stakes industrial applications [VAT21]. In addition to studying the mathematical properties of efficient deep learning algorithms to better understand their behavior, new algorithms with theoretical guarantees are being developed under the name of interpretable or explainable machine learning [LZ21; Lis+23]. A promising way to achieve efficient and explainable machine learning is to develop algorithms that are able to integrate expert knowledge. Such prior knowledge can take the form of a physical model of the phenomenon at hand.

Physics and Statistics. Mixing physical modeling and statistical models is nothing new. On the one hand, using data to infer physical laws is the essence of physics as an experimental science. For example, the famous Keplerian laws of 1609 were derived by fitting curves from observations of planetary motion. On the other hand, the integration of partial differential equations (PDEs) from physics into statistical models was already formally mathematically modeled by Wahba [Wah90] in 1990. However, up until recently, two main problems remained unsolved. First, no algorithm was able to efficiently and systematically incorporate physical priors into statistical regression problems. This meant that practitioners had to work a lot

to incorporate their physical knowledge into statistical methods. Second, the mathematical impact of adding physics to statistical methods in terms of performance was unknown. In fact, although common sense suggests that models with more information should be more powerful, doing so adds complexity to the statistical models and make their training more challenging.

Physics-informed machine learning. What has changed in recent years is the discovery of new ways to efficiently incorporate physical priors into statistical problems using well-known algorithms. Indeed, the incorporation of physics into statistical algorithms has been exemplified by Raissi et al. [RPK19] with neural networks and Nickl [Nic23] with Monte Carlo Markov Chains (MCMCs), while the use of PDE techniques for statistical tasks has been illustrated by Arnone et al. [Arn+22] with the finite element method (FEM). In this dissertation, we show how physics can be incorporated into kernel methods [Dou+24a]. In particular, this idea of adding physics into well-known machine learning algorithms is now called physics-informed machine learning (PIML), as theorized by Karniadakis et al. [Kar+21]. Relying on well-known algorithms to incorporate physical prior is extremely advantageous from a computational point of view. Indeed, the physics-informed neural networks (PINNs) of Raissi et al. [RPK19] can leverage the open source Pytorch and Tensorflow ecosystems, developed and maintained by the machine learning community and supported by wealthy AI companies like Google and Meta. This allows the many powerful computational speedups and efficient memory allocations from years of machine learning research to be implemented directly in PIML. In addition, it allows PIML algorithms to run on powerful hardware such as graphics processing units (GPUs), the ultra-optimized computing units that have been improved by AMD, INTEL, and NVIDIA for many years to perform specific mathematical operations (such as matrix-vector products). In this dissertation, we will show how to implement our kernel methods on GPUs [Dou+24b]. All of these speedups and memory implementations are crucial for PIML, because adding physics to a statistical algorithm generally makes its training more computationally expensive.

Towards weaker constraints. Most PIML algorithms have been developed to incorporate physical priors taking the form of PDE systems. Although this setting is appropriate for wellstudied physical systems, expert knowledge does not always take such a rigid form. For example, many macroeconomic signals, such as electricity demand, do not satisfy a known set of PDEs. Nevertheless, there are some physical insights that can be incorporated into forecasting models. For example, the laws of macroeconomics say that demand for electricity will fall as the price rises. Such weaker forms of physics -where "physics" is understood here as modeling information- have been successfully integrated into PINNs [see, e.g., Daw+22]. We will discuss how to apply weak constraints to time series in Chapter 7.

## Mathematical challenges of PIML

Mathematical framings of PIML. PIML is usually divided into four different tasks [RPK19; Kar+21; Cuo+22]. Let d ∈ N /star be the dimension of the problem, Ω ⊆ R d be the domain of interest, and D be a differential operator. The typical example is d = 2 , Ω = [0 , 1] 2 , and D = ∂ 2 1 , 1 + ∂ 2 2 , 2 is the Laplacian operator.

The first PIML task is PDE solving. Given a set of boundary conditions, the goal is to find a function that satisfies both the PDE system and the boundary conditions. For example, the Dirichlet boundary condition h : ∂ Ω → R translates into finding a function f h such that for all x ∈ Ω , D ( f h )( x ) = 0 , and f h | ∂ Ω = h . In the previous example, this amounts to solving the PDE ∂ 2 1 , 1 f h + ∂ 2 1 , 1 f h = 0 given the boundary condition h . In this context, the user specifies the boundary condition h and then trains a learning algorithm (e.g., a PINN) to learn the

solution to the PDE. Altough PDE methods like the finite element method (FEM) are already very effective in PDE solving, they can be computationally expensive. Here, methods from machine learning are helpful to find computationally efficient approximations for f h , called surrogate models. This is especially interesting when the PDE has to be solved quickly and many times, as in the case of daily weather forecasts [Che+22].

The second task is hybrid modeling. Given n ∈ N /star i.i.d. observations ( X 1 , Y 1 ) , . . . , ( X n , Y n ) distributed as the random variable ( X,Y ) such that Y = f /star ( X ) + ε , where ε is a random noise, the goal is to estimate f /star . What makes this supervised learning setting special is that we know that f /star follows the PDE D ( f /star ) = 0 , up to a possible modeling error. This setting is particularly relevant when the physical prior is incomplete, in the sense that it is ill-posed. For example, the boundary condition may not be fully specified, or the set of differential equations may admit an infinite number of solutions. Therefore, traditional PDE techniques cannot be applied directly, and the data is needed to solve the problem.

The third PIML task is PDE learning. Given n ∈ N /star i.i.d. observations ( X 1 , Y 1 ) , . . . , ( X n , Y n ) distributed as the random variable ( X,Y ) such that Y = f /star ( X ) + ε , where ε is a random noise, the goal is to estimate f /star as well as the physical law it obeys. In this supervised learning setting, the only prior knowledge is that f /star is the solution to a PDE system with unknown coefficients. For instance, the physical prior could be that we know that f /star satisfies the PDE ∆ f /star = λ /star f /star , where λ /star ∈ R is an unknown parameter which must be inferred from the data. Efficient implementation of PDE learning algorithms include PINNs [RPK19], LASSO regression [KKB20], and MCMCs [Nic23].

The fourth PIML task is to learn the PDE solver directly. Formally, the goal is to learn the operator φ : h ∈ L 2 ( ∂ Ω) ↦→ f h ∈ L 2 (Ω) associating to a boundary condition h the unique solution f h such that f h | ∂ Ω = h and ∀ x ∈ Ω , D ( f h )( x ) = 0 . This context is called operator learning. The goal here is to provide faster surrogate models by training a large model to learn the operator φ , instead of training a new algorithm for each new boundary condition h . Efficient operator learning algorithms include DeepONet [Lu+21] and Fourier neural operators [Li+21].

In this manuscript, we will mainly focus on PDE solving and hybrid modeling. The two other tasks are left for future works and will be discussed in the conclusion section. Indeed, operator learning and PDE learning are more complex problems, which statistical properties are still to be uncovered.

How to create efficient PIML algorithms? One of the main challenges in PIML is to design effective algorithms to handle the aforementioned tasks. In practice, many implementations rely on minimizing an empirical loss with a data-driven part and a physical penalty. For instance, most hybrid modeling frameworks use the PDE as a soft penalty, and intend to minimize the empirical risk

$$\mathcal { R } _ { n } ( f ) = \sum _ { j = 1 } ^ { n } \| f ( X _ { j } ) - Y _ { j } \| _ { 2 } ^ { 2 } + \lambda \int _ { \Omega } | \ m a t h s c r { D } ( f ) ( x ) | ^ { 2 } d x ,$$

where λ > 0 is an hyperparameter to be scaled by the user. This minimization is performed over a class of functions, such as neural networks for PINNs [RPK19] or low frequency Fourier modes in our kernel methods [Dou+24b]. However, finding a global minimizer of R n over a given class of function is made particularly difficult because of the PDE penalty. This requires being able to differentiate a function from the function class of interest, which is not always possible (e.g., random forests are not differentiable). In fact, although PINNs is the technique that has received the most attention recently, the algorithm proposed by Raissi et al. [RPK19] has been shown to be prone to overfitting [WYP22; DBB25], while its optimization is highly degraded

when D is nonlinear [BBC24]. Moreover, many of the algorithms proposed in the literature are computationally intensive. In particular, PINNs require thousands of gradient descent steps to converge, and do not clearly outperform the much faster traditional PDE solvers on PDE solving tasks, as revealed by the meta-analysis of McGreivy and Hakim [MH24].

How to quantify the gains from the physics? From a theoretical point of view, measuring the impact of the physics on the performance of the algorithm is challenging. An advantage of PIML is that, by relying on known algorithms, it becomes possible to adapt some well-established tools to understand the theoretical properties of the physically penalized versions of the algorithms. For example, the neural tangent kernel (NTK) analysis of PINNs better characterizes the convergence of their gradient descent [BBC24], whereas tools from nonparametric Bayesian analysis makes it possible to study the convergence rate of physics-informed MCMCs [NGW20]. In this dissertation, we rely on the effective dimension analysis of Caponnetto and Vito [CV07] and Blanchard et al. [BBM08] to characterize the convergence rate of our kernel techniques [Dou+24a]. Most of these theoretical results confirm the intuition that PIML algorithms are harder to optimize, but yield better statistical performance when done right.

## Industrial context

Beyond the theoretical aspects related to the statistical properties and complexity of the algorithms, PIML has demonstrated its efficiency in industrial applications. This PhD, conducted in collaboration with the EDF energy company, focuses on time series applications, particularly in energy forecasting.

Time series. Time series are ubiquitous in real-world applications [Pet+22], including forecasting macroeconomic signals (supply, demand, prices, human mobility...), estimating the spread of disease and hospital traffic, monitoring industrial processes in real time (manufacturing, chemical reactions, preventive maintenance...), and anticipating environmental events (heat waves, wildfires, rainfall...). However, time series are particularly difficult to handle from a statistical point of view. First, the observations are correlated, which means that the law of large numbers and the central limit theorem cannot be directly applied to create estimators. Then, the distribution of the target time series often changes over time, either due to trend, seasonality, or breaks. Moreover, time series often have missing values, due to different sampling frequencies in the data or sensor failures. All of these phenomena limit the amount of relevant data available and make time series forecasting difficult. Adding physical constraints to the models appears like a promising way to improve the performance of the forecasts. PIML techniques have been successfully applied to real-world time series with well-known physical dependencies, such as weather forecasting [Kas+21], renewable energy production forecasting [LO+23], or real-time control of industrial chemical reactions [Ngu+22].

Energy forecasting. In this dissertation, we will mainly focus on forecasting energy signals, like electric vehicle charging station occupancy and electricity demand. Both of these forecasting tasks are challenging but valuable to the energy industry. Indeed, the electric vehicle market is emerging and fast-growing, and providers need to adapt electricity grids to its high-intensity demands. However, open data sets and models are still rare [AO+21]. As for electricity demand forecasting (also called load forecasting), electricity is expensive to store, while supply must match demand at all times to avoid blackouts. Forecasting demand is thus necessary to adjust production, and to act on the electricity markets [Ham+20]. Neither of

these signals is governed by a known set of PDEs. In fact, even the set of explanatory variables responsible for their fluctuation is unknown or unmeasured. Therefore, statistical models are needed to fill this modeling gap.

## Organization of the manuscript

This thesis consists of an introduction, a theoretical part (Part I), an applied part (Part II), and a conclusion. Each part is separated in several chapters, each corresponding to a standalone contribution. Thus, each chapter has led to or should lead to a publication.

The following sections introduce the basic concepts of each chapter and present the main contributions of this thesis. Note that the notation in these sections is unified and may differ slightly from the notation in the chapters, which corresponds to the notation in the corresponding papers.

## 1.2 Some mathematical insights on physics-informed machine learning

## Chapter 2: On the convergence of PINNs

On the convergence of PINNs , Nathan Doumèche, Gérard Biau (Sorbonne Université), and Claire Boyer (Université Paris-Saclay). Published in Bernoulli.

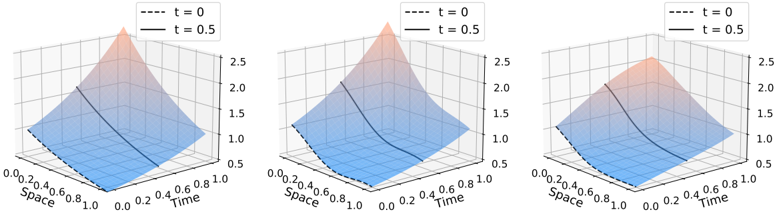

In Chapter 2, we analyze the statistical properties of physics-informed neural networks (PINNs) for PDE solving and hybrid modeling, focusing on approximation, risk consistency, and physical inconsistency. Through specific examples, we show that PINNs are prone to overfitting and demonstrate that standard regularization techniques are effective in ensuring their consistency.

Theoretical risk in hybrid modeling. PINNs intend to tackle hybrid modeling tasks by minimizing a physically penalized theoretical risk over a class of neural networks. In this supervised learning setting, the goal is to learn the function f /star such that Y = f /star ( X ) + ε , where ε is a random noise, given i.i.d. observation ( X 1 , Y 1 ) , . . . , ( X n , Y n ) of the process ( X,Y ) ∈ Ω × R d 2 , where Ω is a bounded Lipschitz domain of R d 1 . Lispchitz domains are a generalization of C 1 -manifolds, which encompasses the square [0 , 1] d 1 for example. What makes this regression setting special is the prior physical knowledge that f /star satisfies the boundary conditions ∀ x ∈ ∂ Ω , f /star ( x ) = h ( x ) , and the PDE system, ∀ 1 ≤ k ≤ M , ∀ x ∈ Ω , D k ( f )( x ) = 0 . The theoretical risk takes the form

$$\mathcal { R } _ { n } ( f ) = \frac { \lambda _ { d } } { n } \sum _ { i = 1 } ^ { n } \| f ( X _ { i } ) - Y _ { i } \| _ { 2 } ^ { 2 } + \lambda _ { e } \mathbb { E } \| f ( X ^ { ( e ) } ) - h ( X ^ { ( e ) } ) \| _ { 2 } ^ { 2 } + \frac { 1 } { | \Omega | } \sum _ { k = 1 } ^ { M } \int _ { \Omega } \mathcal { D } _ { k } ( f ) ( x ) ^ { 2 } d x ,$$

where f is a neural network, λ d > 0 and λ e > 0 are hyperparameters, Ω is the domain of interest, D j are differential operators, X ( e ) is a random variable sampled on ∂ Ω . Though we assume that the practitioner has no control on the data ( X i , Y i ) , the distribution of the random variable X ( e ) can be chosen freely to incorporate the boundary condition. Usually, X ( e ) is taken as the uniform distribution on ∂ Ω . The interest of using neural networks is that it is easy to compute their derivatives by backpropagation, and that neural networks are universal

approximators, meaning that there is no bias intrinsic to the neural network class (given the neural networks are big enough).

The neural network class. To be able to evaluate the operators D k ( f ) , the neural network f must be differentiable. Thus, instead of considering the usual relu activation function, the PINNs community relies on the hyperbolic tangent function tanh . The class of interest is thus the class of fully-connected feedforward neural networks with H ∈ N /star hidden layers of sizes ( L 1 , . . . , L H ) := ( D,.. . , D ) ∈ ( N /star ) H and activation tanh . This corresponds to the space of functions f θ from R d 1 to R d 2 , defined by

$$f _ { \theta } = \mathcal { A } _ { H + 1 } \circ ( t a n h \circ \mathcal { A } _ { H } ) \circ \cdots \circ ( t a n h \circ \mathcal { A } _ { 1 } ) ,$$

where tanh is applied element-wise. Each A k : R L k -1 → R L k is an affine function of the form A k ( x ) = W k x + b k , with W k a ( L k -1 × L k )-matrix, b k ∈ R L k a vector, L 0 = d 1 , and L H +1 = d 2 . The neural network f θ is thus parameterized by θ = ( W 1 , b 1 , . . . , W H +1 , b H +1 ) ∈ Θ H,D , where Θ H,D = R ∑ H i =0 ( L i +1) × L i +1 .

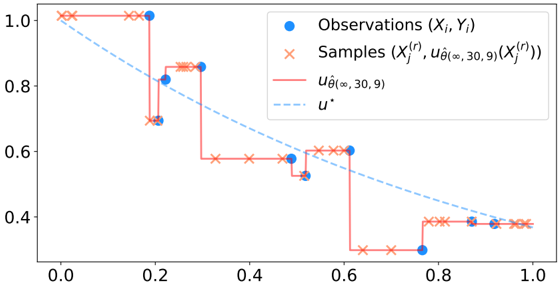

The discretized version of the risk. The idea behind PINNs is that, though minimizing R n is difficult, it is possible to minimized the following discretized version by gradient descent

$$R _ { n , n _ { e } , n _ { r } } ( f _ { \theta } ) & = \frac { \lambda _ { d } } { n } \sum _ { i = 1 } ^ { n } \| f _ { \theta } ( X _ { i } ) - Y _ { i } \| _ { 2 } ^ { 2 } + \frac { \lambda _ { e } } { n _ { e } } \sum _ { j = 1 } ^ { n _ { e } } \| f _ { \theta } ( X _ { j } ^ { ( e ) } ) - h ( X _ { j } ^ { ( e ) } ) \| _ { 2 } ^ { 2 } \\ & \quad + \frac { 1 } { n _ { r } } \sum _ { k = 1 } ^ { M } \sum _ { \ell = 1 } ^ { n _ { r } } \mathcal { D } _ { k } ( f _ { \theta } ) ( X _ { \ell } ^ { ( r ) } ) ^ { 2 } ,$$

where n e and n r are chosen by the practitioner, the n e points X ( e ) j are sampled according to the distribution of X ( e ) , and the n r collocation points X ( r ) /lscript are sampled according to the uniform distribution on Ω . Indeed, the gradient of R n,n e ,n r with respect to θ can be efficiently computed by backpropagation. However, R n,n e ,n r ( f θ ) is not convex in θ , meaning that the gradient descent is not guaranteed to converge towards a global minimum. In what follows, to simplify the analysis, we assume to have at hand a minimizing sequence ( ˆ θ ( p, n e , n r , D )) p ∈ N ∈ Θ N H,D , i.e.,

$$\lim _ { p \to \infty } R _ { n , n _ { e } , n _ { r } } ( f _ { \hat { \theta } ( p , n _ { e } , n _ { r } , D ) } ) = \inf _ { \theta \in \Theta _ { H , D } } \, R _ { n , n _ { e } , n _ { r } } ( f _ { \theta } ) .$$

The hope is that, in the limit n e , n r → ∞ , minimizing the discretized risk R n,n e ,n r is similar to minimizing the theoretical risk R n . When both these minimizations are equivalent, i.e., lim n e ,n r →∞ lim p →∞ R n ( f ˆ θ ( p,n e ,n r ,D ) ) = inf f ∈ NN H ( D ) R n ( f ) , we say that PINNs are riskconsistent. Otherwise, we say that they overfit.

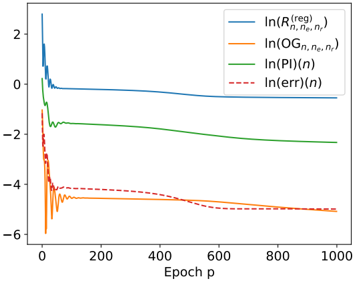

Contributions. In this chapter, we prove the following theoretical results on the convergence of PINNs.

- (i) [Proposition 2.2.3] The class of neural networks is indeed able to approximate simultaneously any function and its derivatives. Formally, for all differentiation order k ∈ N , this class is dense in the space C ∞ ( ¯ Ω , R d 2 ) with respect to the ‖ · ‖ C k (Ω) norm. This generalizes the result of De Ryck et al. [DLM21].

- (ii) [Propositions 2.3.1 and 2.3.2] We have exhibited general cases where PINNs overfit. These results were later complemented by the NTK analysis of Bonfanti et al. [BBC24].

- (iii) [Theorem 2.4.6] When adding a tailored ridge penalty ‖ θ ‖ 2 2 to the discretized risk, PINNs become risk-consistent. This result is very general, as it covers systems of linear and nonlinear PDEs.

- (iv) [Examples 2.5.1 and 2.5.2] Because of the challenging topological properties induced by the PDE penalty in the theoretical risk R n , risk-consistency is not enough to recover a physically-coherent neural network.

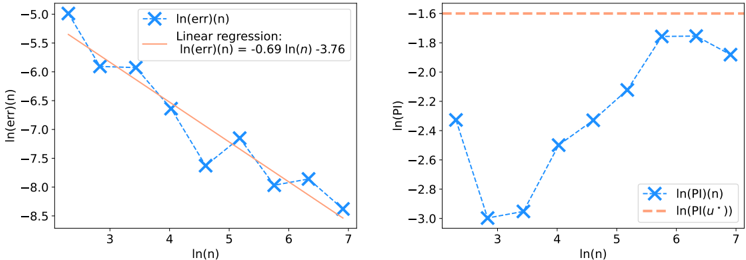

- (v) [Theorem 2.5.13] Adding a Sobolev penalty to the empirical risk ensures that, in the limit p, n e , n r , D →∞ , the PINN f ˆ θ ( p,n e ,n r ,D ) converge to f /star at least at a n -1 / 2 rate, and that f ˆ θ ( p,n e ,n r ,D ) respect the physical prior. This results is only proven for linear PDE systems.

- (vi) [Theorem 2.5.8] We show how PDE solving can be seen as a particular instance of hybrid modeling without data, i.e., n = 0 . We show the convergence of the PINN to the unique solution of a PDE system, when the PDE is well-posed. This result complements those of Shin [Shi20], Shin et al. [SZK23], Mishra and Molinaro [MM23], De Ryck and Mishra [DM22], Wu et al. [Wu+23], and Qian et al. [Qia+23] who focused on intractable modifications of PINNs.

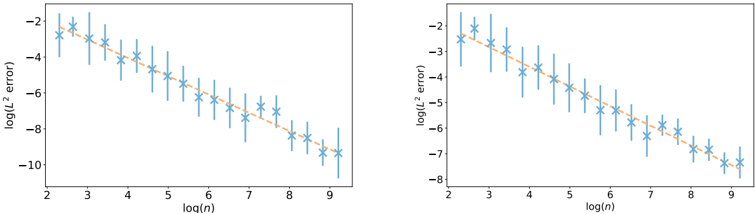

- (vii) [Figure 2.4] We carry out numerical experiments, confirming empirically our results on the convergence rate of PINNs.

Remark on Sobolev spaces. The notion of Sobolev space is central throughout all this manuscript. For instance, here, the Sobolev penalty used in PINNs is nothing but a squared Sobolev norm. Let s ∈ N . The Sobolev space H s (Ω , R d 2 ) is a generalization of the Hölder space C s (Ω , R d 2 ) to functions that are not differentiable under the usual definition (called strong differentiability), but to a weaker sense (involving so-called weak derivatives). Formally, H s (Ω , R d 2 ) is the topological closure of C s (Ω , R d 2 ) with respect to the Sobolev norm ‖ f ‖ 2 H s (Ω) = ∑ α ∈ N d 1 , ‖ α ‖ 1 ≤ s ‖ ∂ α f ‖ 2 L 2 (Ω) , where ∂ ( α 1 ,...,α d ) f = ∂ α 1 1 . . . ∂ α d d f . This norm corresponds to the sum of the L 2 norms of the derivatives up to the order s . For example, though the function f : x ↦→| x | is not differentiable at x = 0 and thus does not belongs to C 1 ([ -1 , 1] , R ) , it belongs to H 1 ([ -1 , 1] , R ) . This L 2 framework is particularly well-suited to PIML, where PDEs are penalized in the risk R n by an L 2 penalty. Sections 2.A and 2.B offer a more detailed introduction to weak derivatives, Sobolev spaces, and Lipschitz domains.

## Chapter 3: PIML as a kernel method

Physics-informed machine learning as a kernel method , Nathan Doumèche, Francis Bach (INRIA Paris), Gérard Biau (Sorbonne Université), and Claire Boyer (Université Paris-Saclay). Published in the Proceedings of Thirty Seventh Conference on Learning Theory (COLT 2024).

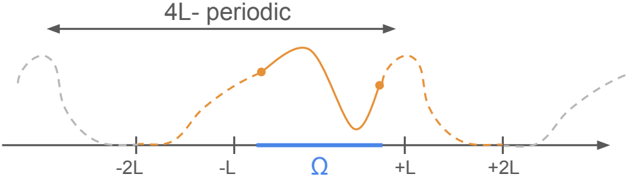

In Chapter 3, we prove that for linear PDEs, PDE solving and hybrid modeling are kernel regression tasks. By leveraging the theory of kernel methods, we show that the physicsinformed estimator converges at least at the minimax Sobolev rate. Faster rates can be achieved, highlighting the benefits of the physical prior.

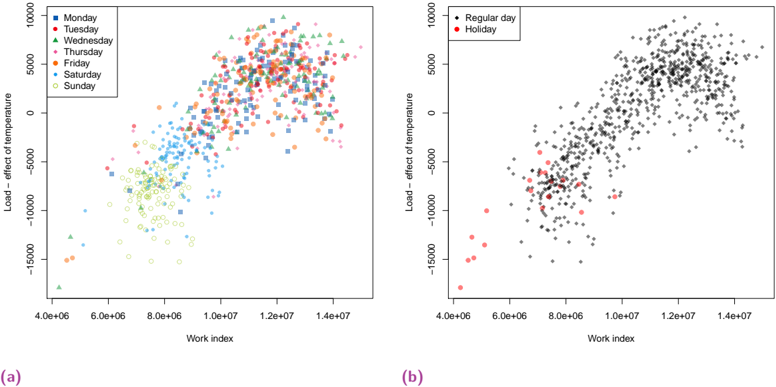

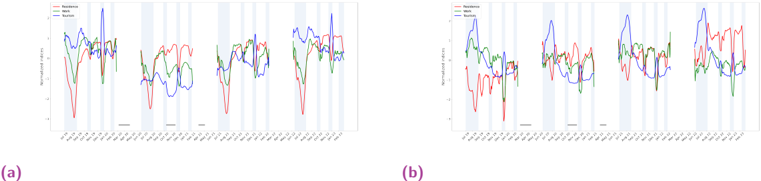

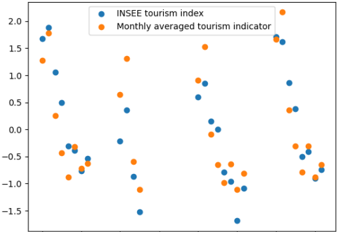

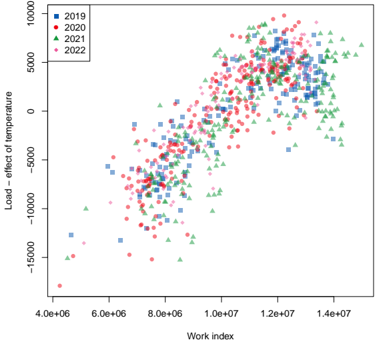

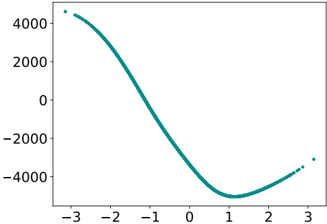

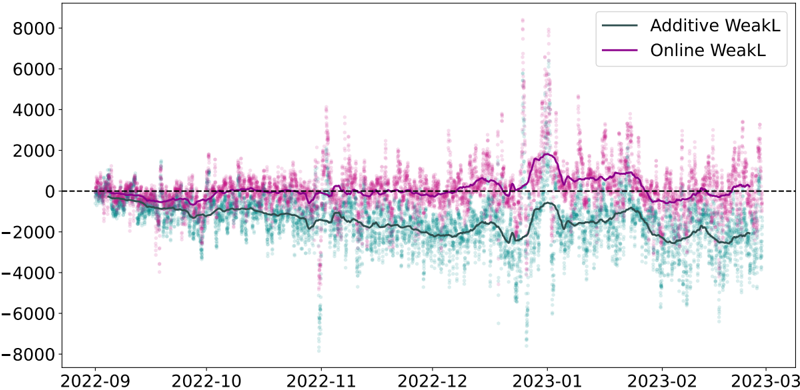

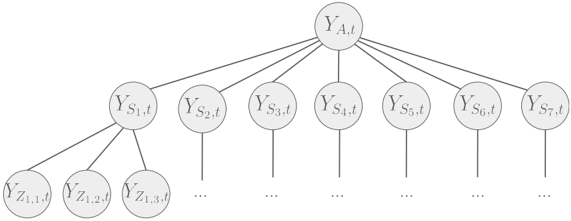

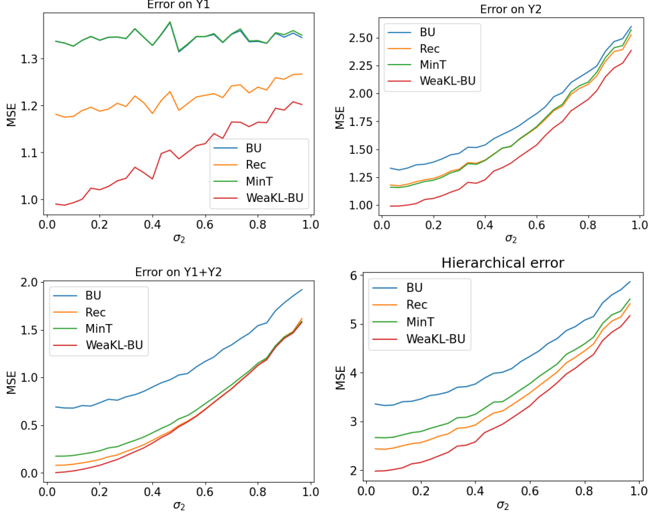

Minimax convergence rate. In Chapter 2, we showed that PINNs with extra regularization terms are risk-consistent, and that they converge to f /star at a n -1 / 2 rate if the PDE system is linear. This result is satisfying because of its generality. Indeed, it encompasses large classes of PDEs, holds for any Lispchitz domain Ω , and only requires the initial condition h to be Lipschitz. An interesting results to compare with is the Sobolev minimax rate [see, e.g., Theorem 2.11, Tsy09]. It states that no algorithm can learn an unknown function of the Sobolev ball { f, ‖ f ‖ H s (Ω , R ) ≤ 1 } more quickly than the rate n -2 s/ (2 s + d 1 ) . Note how the curse of the dimension appears in this rate because of its exponential dependency in d 1 . This rate is attained by many algorithms, like Sobolev kernel methods. In particular, if the target function f /star is very smooth and belongs to C ∞ ( ¯ Ω , R d 2 ) , then it can be learnt at the parametric rate n -1 . Thus, the rate of n -1 / 2 that we computed for PINNs convergence is not optimal.