# PRACtical: Subarray-Level Counter Update and Bank-Level Recovery Isolation for Efficient PRAC Rowhammer Mitigation

**Authors**: Ravan Nazaraliyev Saber Ganjisaffar Nurlan Nazaraliyev Nael Abu-Ghazaleh

> University of California, Riversidernaza005, sganj003, nnaza008, naelag@ucr.edu

Abstract.

As DRAM density continues to scale, the Rowhammer vulnerability increases in severity due to heightened charge leakage, which lowers the activation threshold required to induce bit flips. To mitigate this risk, industry-standard solutions have shifted from memory controller-based row activation counters, which require large SRAM storage with significant area and power overheads, to in-DRAM row activation counters. The DDR5 JEDEC standard incorporates a modified DRAM architecture featuring per-row activation counters (PRAC) and an Alert Back-Off (ABO) signal that notifies the memory controller (MC) to trigger mitigation mechanisms. However, PRAC introduces a performance overheads by incrementing counters during the precharge operation, adding an additional delay to the precharge phase. Furthermore, when the ABO signal is triggered upon a row reaching the Alert threshold, RFM ab indiscriminately stalls all memory requests at the memory channel level, even when only a single bank is being accessed heavily, leading to unnecessary performance degradation. In this work, we propose PRACtical, an optimized approach to improving the performance of existing PRAC+ABO mechanisms while maintaining security guarantees. To reduce counter update latency, we introduce a centralized increment circuit, allowing the memory controller to proceed with subsequent activations to other subarrays without suffering the increment delays. To minimize unnecessary memory stalls and make the system resilient against memory performance attacks based on channel stalling upon Alert, we enhance RFM ab with bank-level granularity, enabling the memory controller to selectively stall only the affected banks rather than the entire memory channel. This is achieved by introducing a register in the DRAM that shows the banks under attack upon an Alert signal. Our proposed techniques improve the performance over state of the art opportunistic PRAC and ABO, by 8% (20% max). Additionally, PRACtical saves an average of 19% energy relative to PRAC+ABO. PRACtical is resilient to performance attacks, showing less than 6% slowdown on an aggressive performance attack, while providing the same security as PRAC+ABO.

DRAM, Rowhammer, PRAC, ABO

1. Introduction

Rowhammer (Cojocar et al., 2019; de Ridder et al., 2021; Mutlu and Kim, 2019; Kim et al., 2014a) is a well-known serious vulnerability affecting DRAM: repeated access to one or more target rows can induce bit flips in adjacent rows. The bit flips occur due to charge leakage from repeated activations of a DRAM row, which can accelerate charge leakage in nearby memory cells, causing their state to change. When a row is activated a sufficient number of times within a single refresh interval, it may corrupt the contents of nearby rows. The estimated minimum number of such activations that could induce a bit flip is referred to as the Rowhammer Threshold, denoted by $N_{\mathrm{RH}}$ . As DRAM technology has scaled to smaller feature sizes, $N_{\mathrm{RH}}$ has significantly decreased—from approximately 140K activations in earlier DDR3 devices to as low as 4.8K in LPDDR4 modules (Kim et al., 2014a, 2020); it is expected to drop further in future generations. Concerningly, the significant drop in $N_{\mathrm{RH}}$ leads to a noticeable increase in the frequency of DRAM bit flips (Loughlin et al., 2021). Accordingly, mitigation strategies must continuously evolve to remain effective against increasingly demanding and sophisticated threat conditions.

DRAM plays a critical role in determining the performance of memory-intensive workloads, leading to the well known memory wall (Wulf and McKee, 1995; Gholami et al., 2024; Ghose et al., 2019). As Rowhammer threats continue to increase with memory scaling, the proposed mitigations have come at a substantial cost to DRAM performance. JEDEC (JEDEC, 2024a) has recently introduced a new standard mitigation mechanism for the Rowhammer vulnerability in DDR5 memory devices. This mechanism incorporates two key components: Per-Row Activation Counters (PRAC) (JEDEC, 2024b) and Alert Back-Off (ABO) protocol. PRAC, inspired by the Panopticon framework (Bennett et al., 2021), implements an in-DRAM architectural enhancement where each row is extended to store an activation counter. When the number of activations to a row exceeds a predefined Alert threshold, the mechanism should trigger mitigation for that row and its neighboring rows. This mechanism enables localized, row-level tracking without incurring the area and power overhead associated with external SRAM-based counters (Qureshi, [n. d.]; Saxena and Qureshi, 2024). The ABO protocol (JEDEC, 2024b) complements PRAC by providing a signaling mechanism through which the DRAM device can notify the memory controller (MC) when a critical activation threshold is crossed. The MC sends all-bank RFM (RFM ab) triggering targeted refresh operations, thereby preventing potential bit flips. While the PRAC+ABO framework marks a significant improvement in integrated, hardware-supported Rowhammer mitigations under reduced thresholds (Canpolat et al., 2025; Qureshi and Qazi, 2024; Woo et al., 2025), it also introduces several critical limitations that compromise both performance and scalability, particularly in high-performance or multi-threaded environments.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: DRAM Timing Sequences - Unsafe Baseline vs. Practical Approaches

### Overview

The image presents a comparative diagram illustrating DRAM timing sequences for an "Unsafe Baseline" and two "Practical" approaches: "PRAC+ABO" and "Practical (this work)". The diagram focuses on the timing of ACT (Activate), RAS (Row Address Strobe), and PRE (Precharge) commands across two memory banks (A and B) or subarrays. The diagram is split into two main sections (a) and (b), representing different scenarios.

### Components/Axes

* **Horizontal Axis:** Represents time, with increasing time moving from left to right. The scale is not explicitly defined but durations are indicated in nanoseconds (ns).

* **Vertical Axis:** Represents memory banks or subarrays. In (a), it shows Subarray A and Subarray B. In (b), it shows Bank A and Bank B.

* **Command Blocks:** Rectangular blocks labeled "ACT", "RAS", and "PRE" represent the timing of these DRAM commands. The blocks are color-coded: ACT (green), RAS (red), PRE (orange).

* **Arrows:** Arrows indicate the duration of commands and the timing relationships between them.

* **Labels:**

* "Subarray A", "Subarray B"

* "Bank A", "Bank B"

* "ACT", "RAS", "PRE"

* "Pre-Recovery"

* "RFM" (Redundant Fault Management)

* "ABO" (Address Bank Optimization)

* "Unsafe Baseline"

* "PRAC+ABO"

* "Practical (this work)"

* "15ns", "180ns", "350ns", "10ns"

### Detailed Analysis / Content Details

**Section (a) - Unsafe Baseline:**

* **Subarray A:** ACT, RAS, PRE sequence.

* **Subarray B:** ACT, RAS, PRE sequence, with a 15ns delay indicated after RAS.

* The timing between ACT and RAS is very short, and the PRE command follows closely after RAS.

**Section (b) - PRAC+ABO and Practical (this work):**

* **PRAC+ABO (Top):**

* Bank A: ACT, RAS, PRE sequence.

* Bank B: ACT, RAS, ABO sequence.

* A 180ns duration is indicated for the PRE command in Bank A.

* ABO is shown to occur after RAS in Bank B.

* RFM is shown to occur after PRE in Bank A.

* **Practical (this work) (Bottom):**

* Bank A: ACT, RAS, PRE sequence.

* Bank B: ACT, RAS, ABO sequence.

* A 180ns duration is indicated for the PRE command in Bank A.

* ABO is shown to occur after RAS in Bank B.

* RFM is shown to occur after PRE in Bank A.

* A 10ns delay is indicated after RAS in Bank B.

* A 350ns duration is indicated for the RFM command.

### Key Observations

* The "Unsafe Baseline" (a) has the shortest timing between commands, potentially leading to timing violations.

* Both "Practical" approaches (PRAC+ABO and Practical) introduce delays and additional steps (ABO, RFM) to improve reliability.

* The "PRAC+ABO" approach has a 180ns PRE command duration in Bank A, while the "Practical" approach has a 350ns RFM duration.

* The "Practical" approach introduces a 10ns delay after RAS in Bank B.

* The diagram highlights the trade-off between performance (shorter timings) and reliability (longer timings with additional steps).

### Interpretation

The diagram demonstrates the evolution of DRAM timing strategies to address reliability concerns. The "Unsafe Baseline" represents a potentially faster but less reliable approach, while the "Practical" approaches prioritize reliability by introducing delays and redundancy management (RFM). The inclusion of Address Bank Optimization (ABO) in "PRAC+ABO" suggests an attempt to mitigate the performance impact of the added delays. The differences in timing durations (180ns vs. 350ns) and the 10ns delay in the "Practical" approach indicate different optimization strategies for managing redundancy and ensuring data integrity. The diagram suggests that the "Practical (this work)" approach is a refinement of the "PRAC+ABO" approach, potentially offering a better balance between performance and reliability. The diagram is a visual representation of a technical problem and the proposed solutions, likely within the context of DRAM design or memory controller development.

</details>

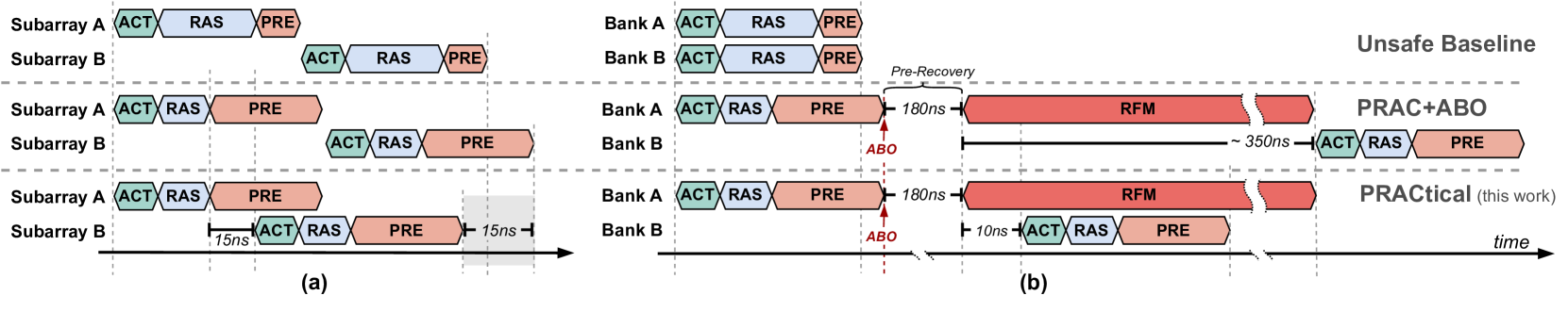

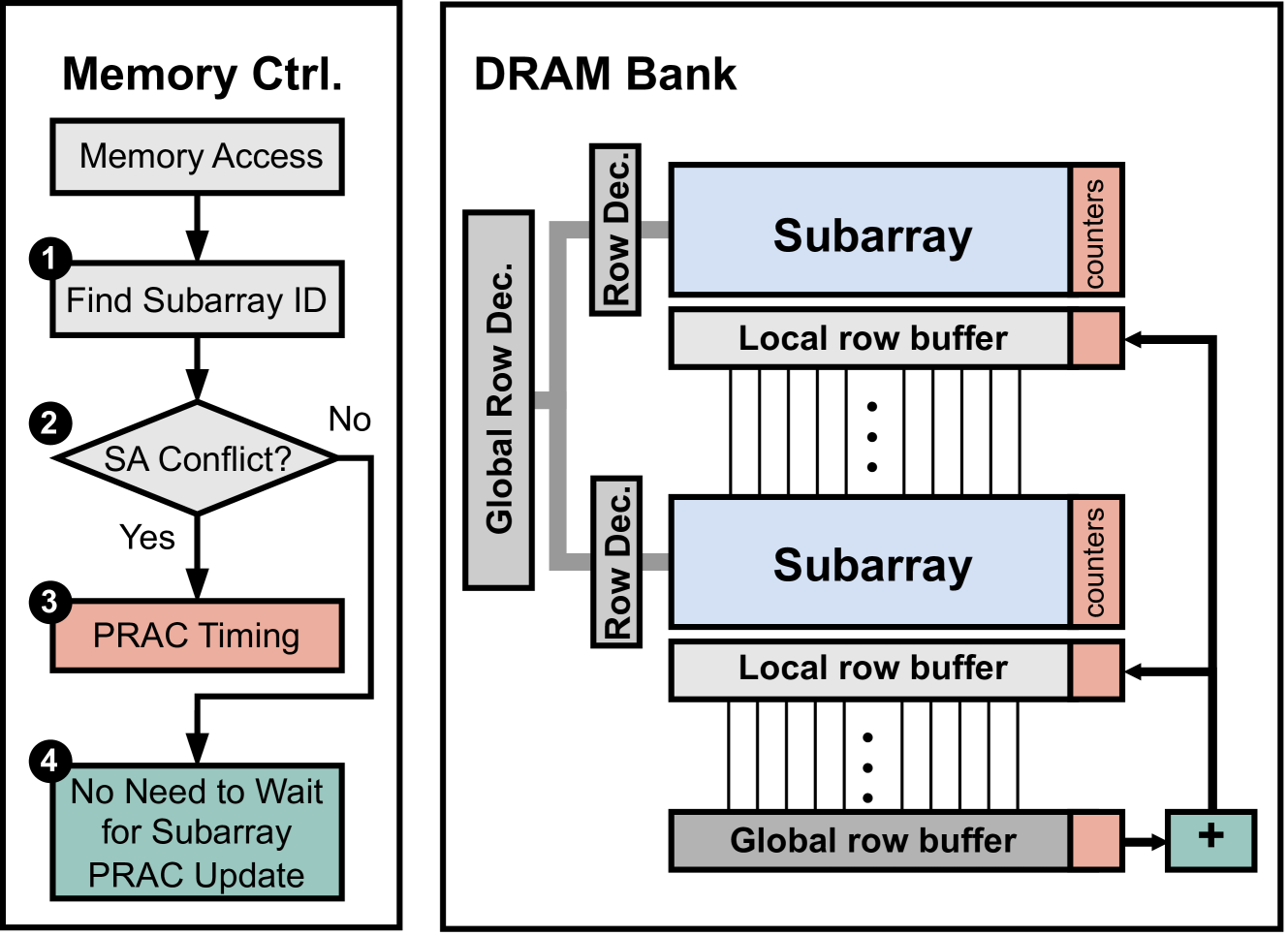

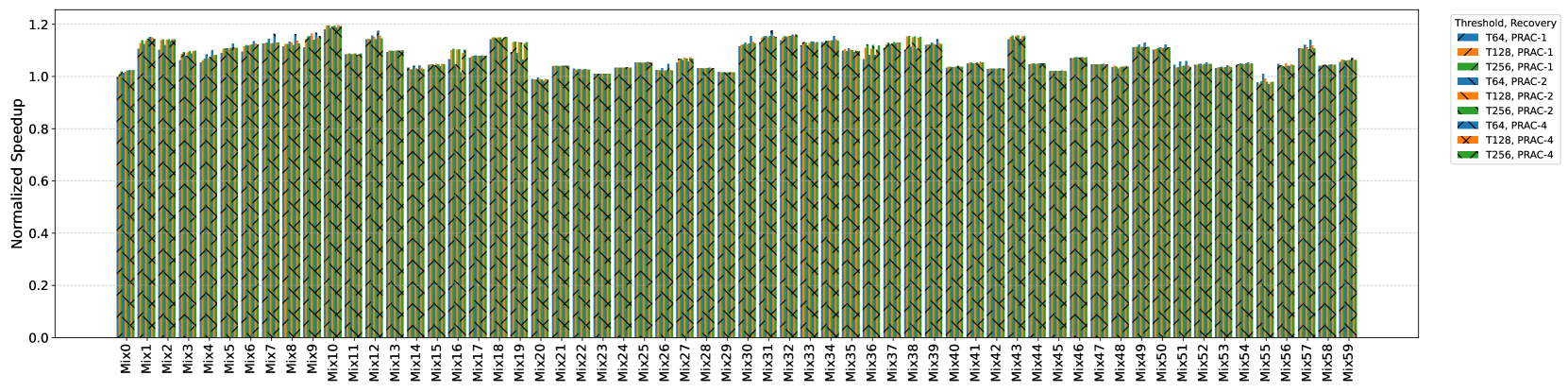

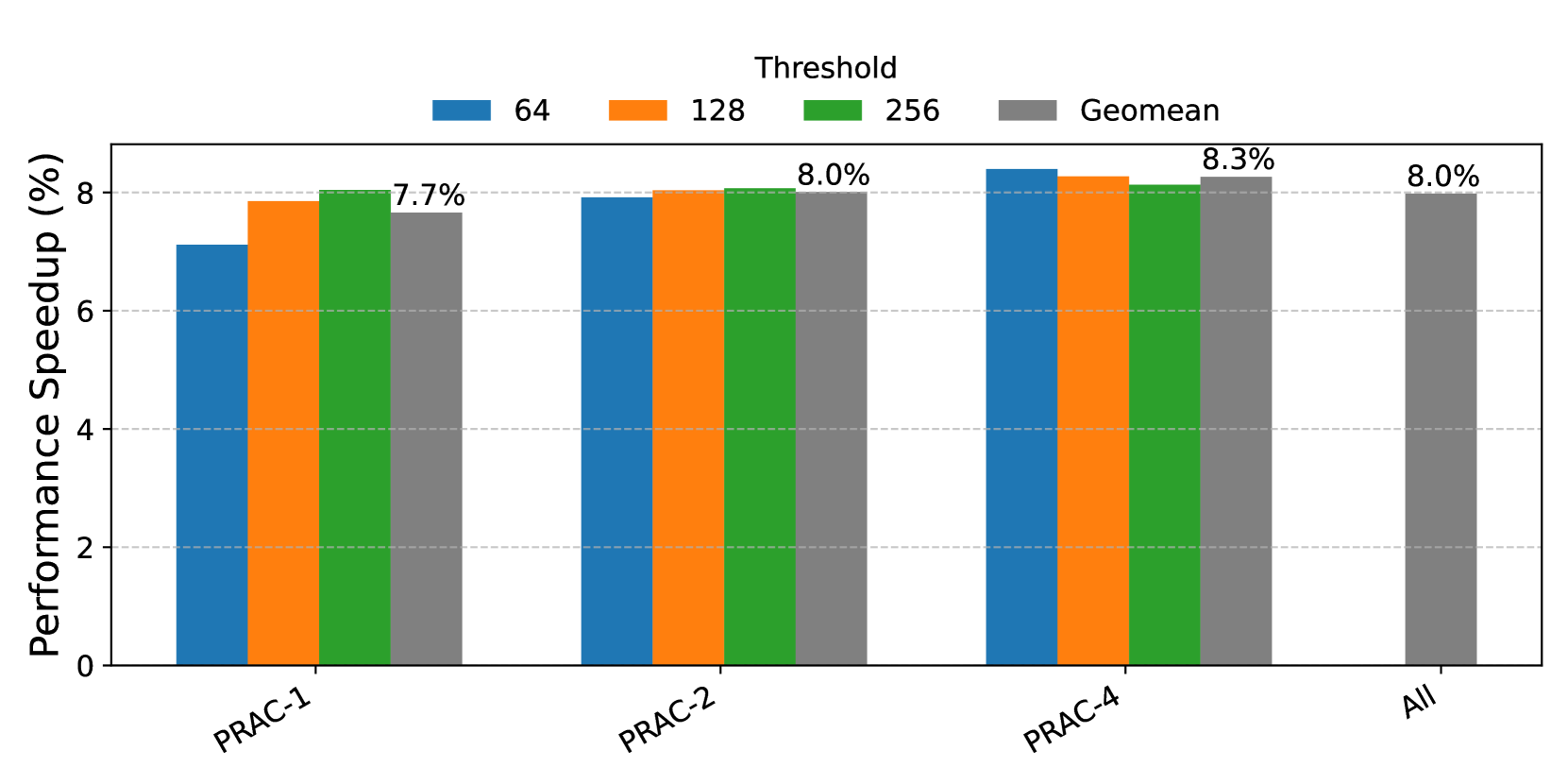

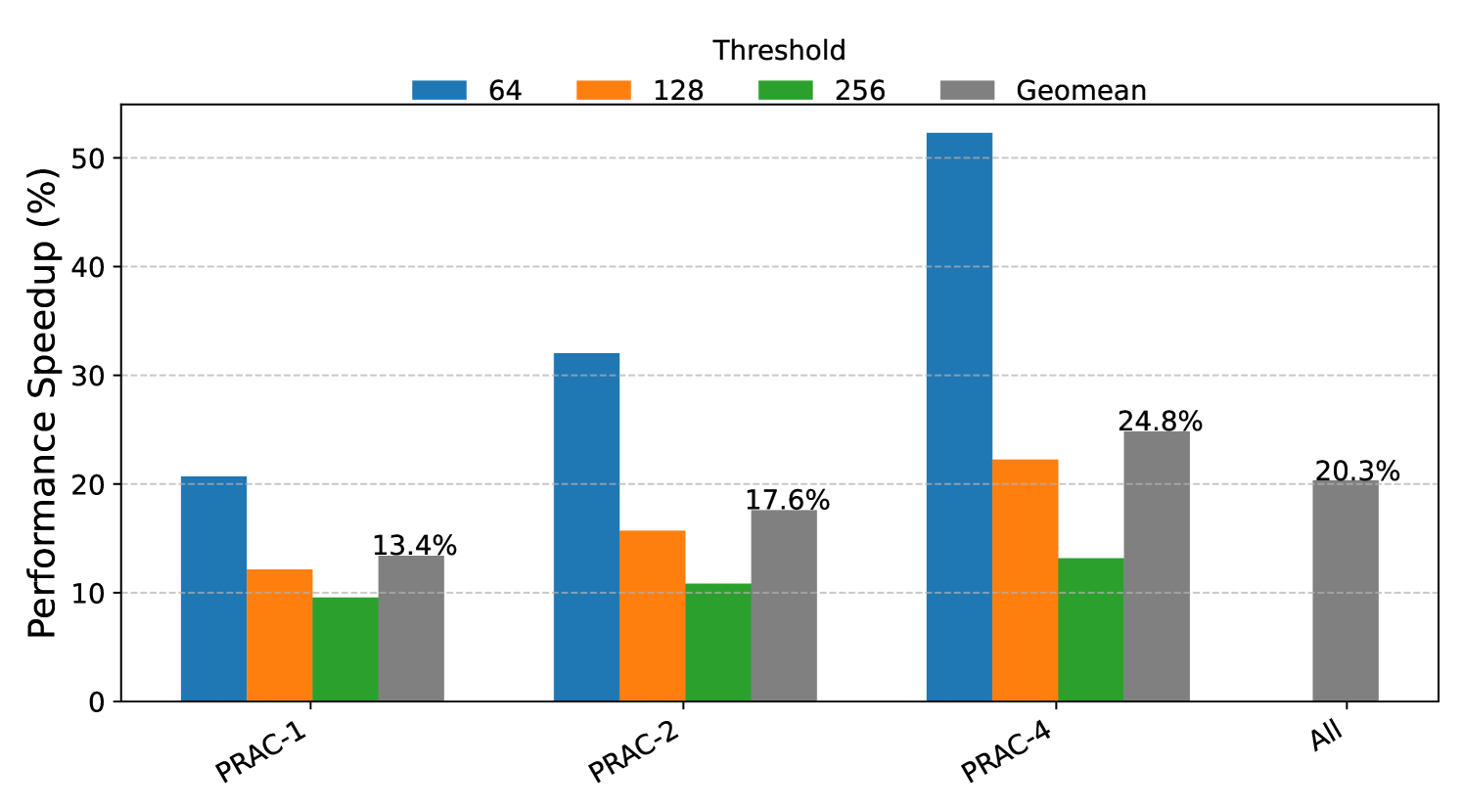

Figure 1. Comparison of baseline DRAM (top), PRAC+ABO (middle), and the proposed design (bottom). PRAC+ABO introduces (a) access latency from counter update overhead and (b) all-bank stalls due to coarse-grained RFM recovery. The proposed mechanism mitigates both by enabling subarray-level PRAC updates and bank-level mitigation. The gray regions illustrate the performance improvements enabled by the proposed approach. Register read operation takes 10ns in PRACtical in part (b).

\Description

First, the integration of PRAC requires architectural modifications that impact critical DRAM timing parameters (Hassan et al., 2024; JEDEC, 2024a). Specifically, the logic responsible for updating the per-row counters introduces an additional latency of around 5 ns to the row cycle time ( $t_{\mathrm{RC}}$ ). Even more significantly, the precharge timing ( $t_{\mathrm{RP}}$ ) is extended from 15 ns to 36 ns to accommodate the read-modify-write (RMW) operations needed for per-row counter updates. These modifications directly affect memory access latency and throughput, especially in workloads with high row buffer conflicts, as illustrated in Figure 1 (a) (Baseline vs. PRAC+ABO).

Second, after ABO is signalled to MC from DRAM, MC sends RFM ab command to DRAM for recovery. This command operates at the memory channel granularity. Therefore, the memory controller must conservatively stall all requests across the entire channel, even if only a single bank is affected, as illustrated in the middle row of Figure 1 (b). Since all banks undergo recovery, even in the absence of an actively hammered (hot) row, the mechanism opportunistically refreshes potential victim rows to preemptively mitigate future attacks. Our analysis reveals that this opportunistic strategy results in a threefold increase in recovery refreshes, underscoring the inefficiency and potential redundancy of these additional operations. We further observe that, across a set of memory-intensive benchmarks, on average, only 1.16 out of 64 banks need recovery at any given time (See § 3.2). Consequently, the remaining banks are unnecessarily stalled, leading to avoidable performance degradation. This coarse-grained design limits memory-level parallelism, degrading performance and responsiveness in scenarios where finer-grained mitigation would suffice.

While the PRAC+ABO mechanism represents meaningful progress toward an in-DRAM Rowhammer mitigation, it introduces two key performance-related drawbacks: (1) increased memory access latency due to counter update overhead, and (2) channel-wide stalls caused by the coarse granularity of RFM ab. These challenges do not undermine the mechanism’s ability to prevent Rowhammer bit flips, but they do limit its efficiency and suitability for performance-sensitive systems. As such, there is a pressing need for a more fine-grained, low-overhead mitigation framework that minimizes latency and preserves DRAM throughput while maintaining effective protection. To address the performance and energy limitations of the existing PRAC+ABO framework, we propose PRACtical — a minimal and efficient redesign that improves both responsiveness and scalability of PRAC+ABO. This new design introduces two key enhancements. First, it leverages subarray-level increment logic to decouple counter updates from global precharge timing, allowing subsequent activations to proceed in other subarrays without incurring the additional latency associated with PRAC’s read-modify-write operations. While prior works have explored and leveraged subarray-level parallelism to enhance memory performance and efficiency (Kim et al., 2012; Hassan et al., 2024), the introduction of the PRAC standard imposes new constraints that limit such parallelism. In this work, we demonstrate that with modest modifications to the DRAM circuitry, it is possible to restore a degree of subarray parallelism by overlapping the activation of a new row with the counter update of the previously accessed row. This significantly reduces access delay and enables known subarray-level parallelism for per-row counter updates. Second, PRACtical provides a new RFM command called RFM_MASK that eliminates stalling every bank once ABO is signalled from DRAM to MC, thereby ensuring that only the affected banks are stalled in response to a threshold violation. This fine-grained control minimizes unnecessary interference with unrelated memory traffic and improves overall system throughput. PRACtical makes small modifications to the memory controller and DRAM.

Together, these enhancements enable precise, low-latency and low-energy Rowhammer mitigation with minimal disruption to normal DRAM operations, as demonstrated in the bottom row of Figure 1 (a) and (b). Our performance evaluation, detailed in Section 6, utilizing DRAM simulation with Ramulator (Luo et al., 2023b), demonstrates that PRACtical achieves mean performance improvement of 8% over opportunistic PRAC+ABO for high-performance and memory-intensive applications while maintaining the security guarantees of PRAC+ABO-based mitigations (Canpolat et al., 2025; Woo et al., 2025; Qureshi and Qazi, 2024). In summary, the key contributions of this paper are:

- We identify two major performance bottlenecks in the PRAC+ABO mechanism: precharge-induced latency caused by PRAC updates, and coarse-grained channel-wide stalling due to RFM ab.

- We propose a centralized increment circuit that enables subarray-level parallelism, minimizing the counter update overhead during precharge.

- We introduce a bank-level recovery scheme that minimizes unnecessary stalls by isolating mitigation to the affected bank.

- We integrate these two mechanisms into PRACtical, a low-overhead Rowhammer mitigation framework that improves performance while preserving the security guarantees of PRAC+ABO.

- We evaluate PRACtical using Ramulator, showing lower performance overheads compared to PRAC+ABO with minimal hardware modifications.

2. Background

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Diagram: DRAM Module Architecture

### Overview

The image presents a hierarchical block diagram illustrating the architecture of a Dynamic Random Access Memory (DRAM) module. It details the organization from the Memory Controller down to the individual DRAM cell, highlighting key components like DRAM Ranks, Chips, Subarrays, PRAC (Per-row Access Counter), and the DRAM cell itself. The diagram is divided into three main sections: a high-level module view, a DRAM Bank view, and a detailed subarray/cell view.

### Components/Axes

The diagram features the following components:

* **Memory Controller:** Top-left corner.

* **I/O Circuitry:** Adjacent to the Memory Controller.

* **DRAM Module:** Encompassing the entire upper section.

* **DRAM Rank:** Within the DRAM Module, containing multiple Chips.

* **Chip:** Individual memory chips within a Rank.

* **DRAM Bank:** Upper-right section, detailing the internal organization of a DRAM Rank.

* **Global Decoder:** Connects the DRAM Bank to the DRAM Rank.

* **Row Dec:** Row Decoder within the DRAM Bank.

* **Subarray:** Multiple subarrays within a DRAM Bank.

* **Local Row Buffer:** Associated with each Subarray.

* **Global Row Buffer:** Connects the subarrays.

* **PRAC (Per-row Access Counter):** Adjacent to the Subarrays.

* **Subarray (Detailed):** Bottom-left section, showing the arrangement of cells.

* **Bitline:** Vertical lines connecting cells within the Subarray.

* **Wordline:** Horizontal lines selecting rows of cells.

* **Row:** Represents a row of cells.

* **DRAM Cell:** Bottom-right section, showing the transistor and capacitor structure.

* **Transistor:** Component of the DRAM cell.

* **Capacitor:** Component of the DRAM cell.

The diagram uses arrows to indicate data flow and connections between components.

### Detailed Analysis or Content Details

**DRAM Module Level:**

* The DRAM Module contains multiple DRAM Ranks.

* Each DRAM Rank contains multiple Chips.

* The I/O Circuitry connects the Memory Controller to the DRAM Ranks.

**DRAM Bank Level:**

* The DRAM Bank consists of multiple Subarrays.

* Each Subarray has a Local Row Buffer.

* A Global Row Buffer connects the Subarrays.

* The Global Decoder connects the DRAM Bank to the DRAM Rank.

* PRAC is positioned adjacent to the Subarrays.

**Subarray Level:**

* The Subarray is organized as a grid of DRAM Cells.

* Bitlines run vertically, and Wordlines run horizontally.

* The Row Decoder selects the Wordline for a specific Row.

* The Local Row Buffer is connected to the rows of the Subarray.

**DRAM Cell Level:**

* The DRAM Cell consists of a Transistor and a Capacitor.

* The Capacitor stores the data bit.

* The Transistor acts as a switch to access the Capacitor.

* The Wordline controls the Transistor.

* The Bitline reads/writes data to the Capacitor.

### Key Observations

* The diagram illustrates a hierarchical structure, with increasing levels of detail as you move from the Module to the Cell.

* The PRAC is a key component for managing access to rows within the Subarray.

* The Local Row Buffer acts as a cache for frequently accessed rows.

* The DRAM cell is a simple but effective storage element.

* The diagram emphasizes the parallel access capabilities of DRAM through the use of multiple Subarrays.

### Interpretation

The diagram demonstrates the complex organization required to implement a DRAM module. The hierarchical structure allows for efficient access to large amounts of data. The PRAC and Local Row Buffers are crucial for optimizing performance by reducing access latency. The diagram highlights the fundamental building block of DRAM – the DRAM cell – and its simple yet effective design. The arrangement of subarrays and the use of bitlines and wordlines enable parallel access to data, which is essential for high-bandwidth memory systems. The diagram suggests a focus on optimizing row access patterns, as evidenced by the prominence of the PRAC and Local Row Buffers. The overall architecture is designed to balance capacity, speed, and power consumption. The diagram is a simplified representation, and real-world DRAM modules are significantly more complex, but it provides a valuable overview of the key components and their relationships.

</details>

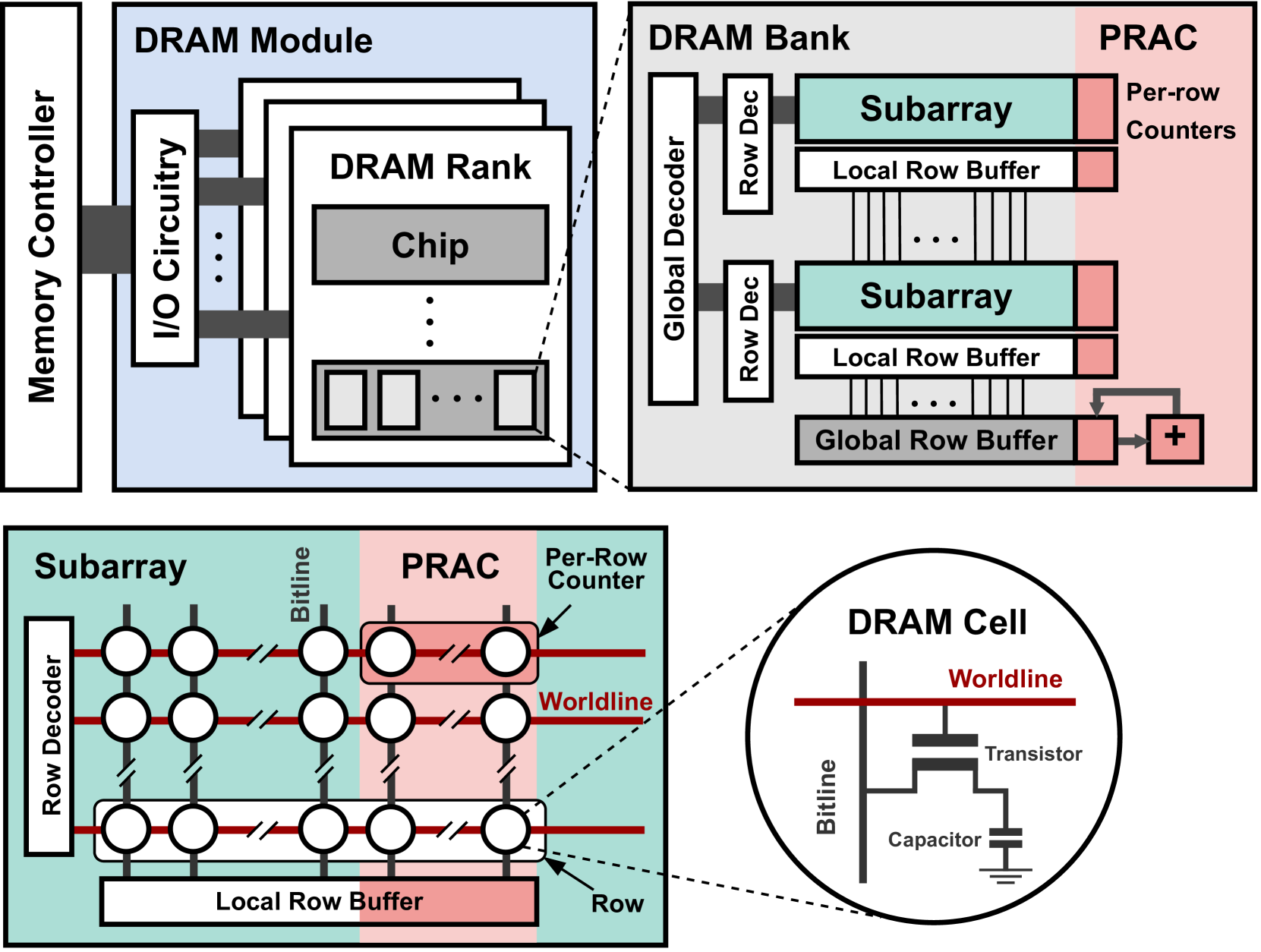

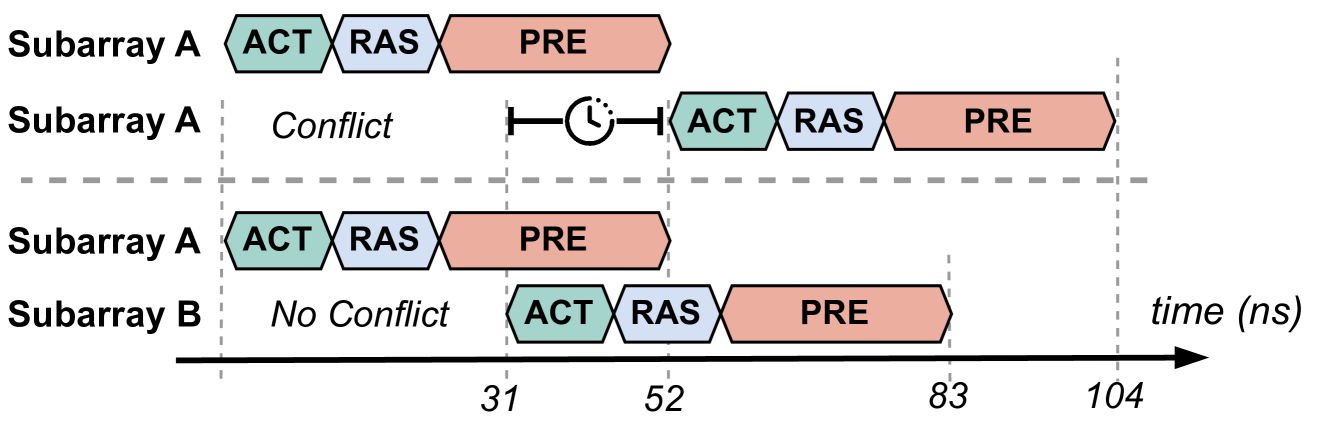

Figure 2. DRAM Organization and Per-Row Activation Counter (PRAC) extension.

In this section, we provide an overview of key background topics, including DRAM architecture, the Rowhammer vulnerability, and existing mitigation strategies. We also describe the PRAC+ABO mechanism introduced in the DDR5 standard, which forms the basis for our proposed performance enhancements.

2.1. DRAM Architecture and Parameters

Modern DRAM is organized hierarchically into ranks, banks, subarrays, rows, and columns, as illustrated in Figure 2. At the top of this hierarchy, the memory controller communicates with DRAM modules via a dedicated memory channel. Each module is composed of one or more ranks, which share access to the memory channel in a time-multiplexed fashion. A rank comprises several DRAM chips, and each chip contains multiple banks. Within each bank are numerous subarrays, forming the fundamental building blocks of memory storage. Each subarray is implemented as a two-dimensional array of cells, accessed via rows (wordlines) and columns (bitlines). A single DRAM cell includes a capacitor, which holds a bit of data as an electric charge, and an access transistor that enables read and write operations. Each subarray contains its own local row buffer, which temporarily holds the contents of an activated row. Only one subarray at a time can forward its data to the global row buffer shared across the bank, enabling read and write operations to proceed. To access data, the memory controller issues an Activate (ACT) command, which opens a specific row by transferring its contents into the row buffer. Subsequent read or write operations are performed on this open row, resulting in a low-latency row hit. If a different row within the same bank must be accessed, the current row must first be closed using a Precharge (PRE) command, which restores the contents of the row buffer to the array and resets the bitlines.

DRAM access behavior is governed by a set of well-defined timing parameters specified by the JEDEC standard (JEDEC, 2024a), as summarized in Table 1. We use DDR5-3200AN timings. For example, the Row Access Strobe (RAS) latency defines the minimum delay between an ACT and a subsequent PRE command within the same bank. Additionally, to maintain data integrity, DRAM cells require periodic refreshing within a retention window denoted as $t_{\mathrm{REFW}}$ , typically 32ms. To amortize the cost of refresh, DRAM divides its cells into 8192 refresh groups, and a Refresh (REF) command is issued every $t_{\mathrm{REFI}}$ = 3900ns to refresh one group at a time.

| $t_{RAS}$ | Minimum time a row must be kept open | 32ns | 16ns |

| --- | --- | --- | --- |

| $t_{RP}$ | Time to precharge an open row | 15ns | 36ns |

| $t_{RC}$ | Time between successive ACTs to a bank | 47ns | 52ns |

| $t_{RTP}$ | Minimum time for a PRE after a RD to the same bank | 7.5ns | 5ns |

| $t_{WR}$ | minimum time for a PRE after a WR to the same bank | 30ns | 10ns |

Table 1. DRAM Timing Standards

2.2. Read Disturbance Attacks

Modern DRAM is increasingly vulnerable to read disturbance effects, where the act of accessing one memory row can inadvertently influence the integrity of data stored in nearby rows. This phenomenon arises from the shrinking physical dimensions of DRAM cells and the reduced noise margins between them. As manufacturing processes continue to scale, the isolation between adjacent rows weakens, making DRAM cells more susceptible to charge leakage and electromagnetic coupling.

The most prominent example of a read disturbance attack is Rowhammer (Kim et al., 2014a), where an attacker repeatedly activates (i.e., opens) a single DRAM row, known as the aggressor row, within a refresh interval. If the number of activations exceeds a certain threshold, electrical interference can induce bit flips in adjacent victim rows. Since its discovery, Rowhammer has been shown to compromise system security in various ways, including enabling privilege escalation (Seaborn and Dullien, 2015), breaking isolation between virtual machines (Razavi et al., 2016), and subverting browser-based sandboxes (Gruss et al., 2016).

Beyond Rowhammer, newer variants continue to expose the fragility of DRAM. RowPress (Luo et al., 2023a) demonstrates that simply holding a row open for an extended duration—without repeated activations—can cause similar disturbance effects in neighboring rows. This shows that both temporal access frequency and row residency time can lead to data corruption, expanding the threat model beyond traditional Rowhammer.

The security implications of these attacks are severe. Bit flips in sensitive memory structures such as page tables, kernel space, or encryption keys can be exploited to gain arbitrary code execution, bypass memory isolation, or compromise confidentiality (Frigo et al., 2020; Gruss et al., 2018; Razavi et al., 2016; Gruss et al., 2016). To mitigate read disturbance attacks, a wide range of defenses have been proposed. These typically follow a two-phase approach: (1) Detecting aggressor rows, using techniques such as memory controller-based row activation counters (Kim et al., 2021), probabilistic sampling (Son et al., 2017; Kim et al., 2014a), or in-DRAM tracking (Jaleel et al., 2024b; Qureshi et al., 2024); and (2) Applying preventive measures, such as Target Row Refresh (TRR) (Marazzi et al., 2022; Frigo et al., 2020), domain-aware memory allocation (Saxena et al., 2024b), or row remapping (Saxena et al., 2024a). While many of these defenses are effective at reducing bit flips, they often incur significant performance overheads and hardware complexity, especially when mitigation is applied conservatively to avoid false negatives. As DRAM continues to scale and disturbance thresholds fall, there is a growing need for efficient, low-latency, and fine-grained mitigation mechanisms that preserve performance without compromising reliability or security.

2.3. PRAC and ABO in Modern DRAM Standards

To address the growing threat of Rowhammer attacks, recent JEDEC standards have introduced two complementary in-DRAM mitigation mechanisms: Per-Row Activation Counter (PRAC) and Alert Back-Off (ABO). These mechanisms aim to detect and mitigate malicious or excessive row activations efficiently, while remaining scalable for high-density DRAM systems.

2.3.1. PRAC

This feature is designed to mitigate Rowhammer attacks with minimal area and power overhead by embedding activation tracking directly within the DRAM array. Specifically, PRAC extends each DRAM row with a dedicated activation counter. Every time a row is precharged, its corresponding counter is incremented by the increment logic in the global row buffer shared between all subarrays within a bank, as illustrated in Figure 2. This increment operation is performed during the precharge phase and is implemented as a read-modify-write (RMW) sequence at the bank level. When a precharge command (PRE) is issued, the DRAM bank reads the activation counter of the recently accessed row, updates its value, and writes it back before deactivating the wordline. This sequence introduces additional latency to the precharge operation, requiring DRAM timing parameters to be extended to accommodate the counter update overhead. While PRAC enables fine-grained tracking of row activations without relying on external memory controller storage, its bank-wide RMW implementation creates performance bottlenecks by delaying subsequent accesses to other non-conflicting subarrays until the counter update completes, as illustrated in top and middle rows of the Figure 1 (a). The performance impact of this overhead is analyzed in detail in Section 3.

2.3.2. ABO

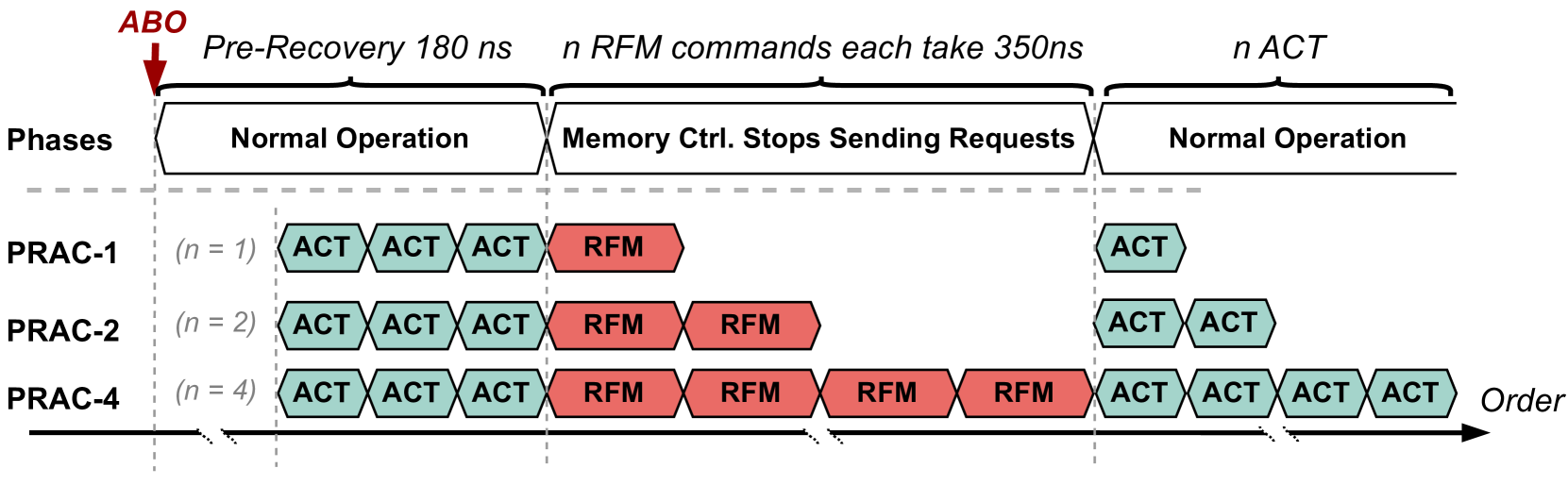

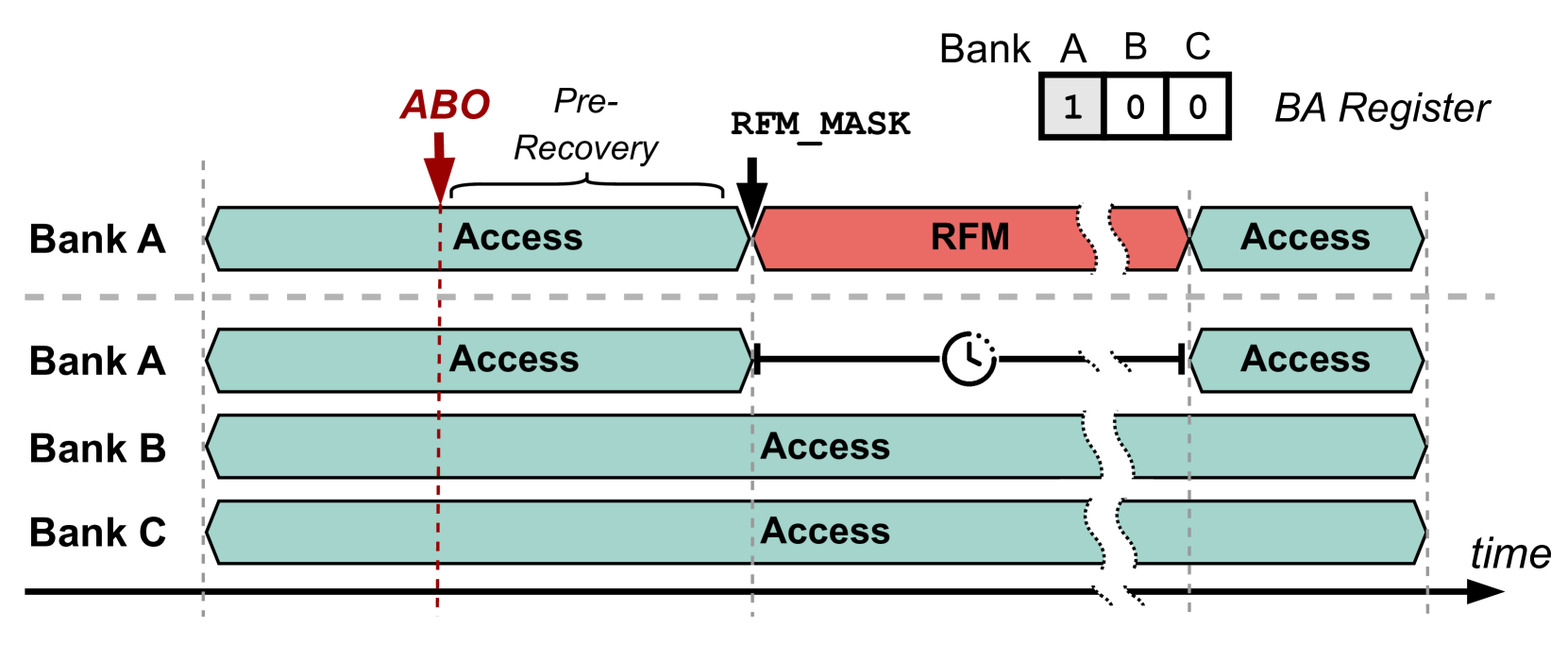

This mechanism complements PRAC by providing a signaling interface between the DRAM device and the memory controller. When a PRAC counter in any bank reaches a pre-defined threshold, the corresponding bank sends an ABO signal to notify the memory controller that mitigation is needed. Upon receiving the ABO signal, the memory controller initiates a pre-recovery phase lasting 180ns, during which it continues to serve memory requests normally as shown in Figure 3. After this window, the controller issues an RFM ab command to trigger targeted mitigation. Each RFM ab command incurs a latency of 350ns and applies to all banks in the channel (typically 64 banks). During this period, the memory controller stalls requests to the channel while the banks perform recovery operations, such as refreshing victim rows, as shown in the middle row of Figure 1 (b). If multiple banks reach the threshold in close succession, the memory controller may need to issue multiple RFM commands, each separated by a recovery window. This can amplify the performance impact, especially under aggressive access patterns that frequently trigger mitigation events.

To prevent back-to-back ABO signals, the protocol requires a minimum number of ACT commands between two consecutive alerts. In Figure 3, this value is denoted by $n$ . It also corresponds to the number of required RFM operations, as each RFM can service only a limited number of victim rows per invocation. After all RFM commands are completed, one additional ACT is permitted. The allowed values for $n$ are 1, 2, or 4, as illustrated by PRAC-1, PRAC-2, and PRAC-4 in Figure 3, as well as in Figures in Sections 3 and 6. Consequently, the minimum and maximum ABO intervals range from 350ns to 1500ns, each preceded by a fixed 180ns pre-recovery window.

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Diagram: Memory Controller Operation Phases

### Overview

The diagram illustrates the phases of operation for a memory controller following an ABO (Abort) event, showing how the number of parallel requests (PRAC-1, PRAC-2, PRAC-4) affects the recovery process. The diagram depicts a timeline divided into phases: Normal Operation, Memory Controller Stops Sending Requests, and a return to Normal Operation. It highlights the execution of ACT (Active) and RFM (Refresh) commands during these phases.

### Components/Axes

* **Vertical Axis:** "Phases" - labeled with "Normal Operation" and "Memory Ctrl. Stops Sending Requests".

* **Horizontal Axis:** "Order" - representing the sequence of commands over time.

* **PRAC-1:** Represents the case with n=1 parallel requests.

* **PRAC-2:** Represents the case with n=2 parallel requests.

* **PRAC-4:** Represents the case with n=4 parallel requests.

* **ACT:** Green rectangles representing Active commands.

* **RFM:** Red rectangles representing Refresh commands.

* **ABO:** Red downward pointing triangle indicating the abort event.

* **Time Annotations:** "Pre-Recovery 180 ns", "n RFM commands each take 350ns", "n ACT".

### Detailed Analysis

The diagram shows three scenarios (PRAC-1, PRAC-2, PRAC-4) illustrating the impact of the number of parallel requests on the recovery process after an ABO event.

* **PRAC-1 (n=1):**

* Before ABO: Three ACT commands are executed.

* After ABO: One RFM command is executed.

* Return to Normal: One ACT command is executed.

* **PRAC-2 (n=2):**

* Before ABO: Three ACT commands are executed.

* After ABO: Two RFM commands are executed.

* Return to Normal: Two ACT commands are executed.

* **PRAC-4 (n=4):**

* Before ABO: Three ACT commands are executed.

* After ABO: Four RFM commands are executed.

* Return to Normal: Four ACT commands are executed.

The diagram indicates that the pre-recovery phase takes 180 ns. Each RFM command takes 350 ns. The number of RFM commands executed during the "Memory Ctrl. Stops Sending Requests" phase corresponds to the value of 'n' for each PRAC scenario. The time taken for the ACT commands is denoted as 'n ACT', but no specific duration is provided.

### Key Observations

* The number of RFM commands executed during the recovery phase directly correlates with the number of parallel requests (n).

* The diagram visually demonstrates that a higher number of parallel requests (PRAC-4) requires more RFM commands to be executed during the recovery phase compared to lower numbers of parallel requests (PRAC-1, PRAC-2).

* The diagram does not provide specific timing information for the ACT commands, only indicating that 'n' ACT commands are executed.

### Interpretation

The diagram illustrates a memory controller's response to an abort event, specifically focusing on the refresh operations needed to restore data integrity. The number of RFM commands executed is directly proportional to the number of active requests at the time of the abort. This suggests that the memory controller needs to refresh the data associated with each outstanding request to ensure consistency after an error. The diagram highlights a trade-off: increasing the number of parallel requests can improve performance during normal operation, but it also increases the recovery time after an abort event due to the need for more refresh operations. The diagram is a simplified model, and doesn't account for potential variations in RFM command execution time or other factors that could influence recovery performance. The diagram is a visual aid for understanding the relationship between parallel requests, abort events, and memory refresh operations in a memory controller.

</details>

Figure 3. Alert-back-off (ABO) overview

2.4. Mitigations Based On PRAC+ABO

Several recent works build on the PRAC+ABO framework to strengthen its security guarantees and reduce its performance overheads. In this paper, we focus on three representative designs—Chronus (Canpolat et al., 2025), QPRAC (Woo et al., 2025), and MOAT (Qureshi and Qazi, 2024) —which propose distinct approaches to improving the effectiveness and efficiency of in-DRAM RowHammer mitigation.

Chronus (Canpolat et al., 2025) introduces architectural modifications to decouple PRAC counter updates from the critical path of DRAM access. By relocating per-row counters to a dedicated metadata subarray, Chronus reduces the serialization overhead associated with precharge operations. It also proposes enhancements to the ABO protocol by holding the alert signal until all mitigations are complete, ensuring stronger coordination between DRAM and the memory controller.

QPARC (Woo et al., 2025) revisits PRAC’s security model as originally proposed in the Panopticon framework (Bennett et al., 2021), and identifies two new attack vectors that exploit timing gaps in counter updates. To address this, it introduces a priority-based queue that tracks frequently accessed rows and schedules them for mitigation through ABO. This queuing mechanism enables timely and targeted mitigation while preserving compatibility with the existing PRAC+ABO interface.

MOAT (Qureshi and Qazi, 2024) focuses on simplifying mitigation logic by replacing queue-based designs with a tracking mechanism. It uses two SRAM registers to monitor one hot row at a time, applying proactive refreshes below a configurable threshold and issuing ABO signals when the threshold is exceeded. While MOAT reduces hardware complexity, it may face limitations under workloads with multiple simultaneous hot rows.

3. PRAC+ABO Limitations and Motivation

While PRAC+ABO provides a low-cost, JEDEC-standardized approach for in-DRAM Rowhammer mitigation, it introduces significant performance bottlenecks and security risks due to its coarse-grained design. This section presents a detailed analysis of the overheads introduced by PRAC updates and ABO-triggered channel-wide stalls. We also show how these issues can be exploited by adversaries to launch memory performance attacks. All insights are derived from cycle-accurate simulations, as described in Section 5.

3.1. Impact of PRAC Updates on DRAM Latency

PRAC extends each DRAM row with an activation counter that is incremented during the Precharge (PRE) command using a read-modify-write (RMW) operation, as illustrated in Figure 2. This update is performed at the bank level, using logic typically located near the global row buffer. As a result, when a PRE command is issued, the DRAM must first read, modify, and write back the counter value associated with the activated row before deactivating the wordline and returning to an idle state. This serialization delays subsequent memory operations, even when they target non-conflicting subarrays. To accommodate this RMW sequence, DRAM timing parameters are modified. Specifically, the precharge latency increases from 15ns to 36ns, and the activation-to-activation interval ( $t_{RC}$ ) increases from 47ns to 52ns. Interestingly, the row active time ( $t_{RAS}$ ) is reduced from 32ns to 16ns, partially offsetting the impact. Nevertheless, the increase in $t_{RC}$ introduces a minimum 5ns delay between successive row activations — particularly impactful in workloads with high row buffer conflicts.

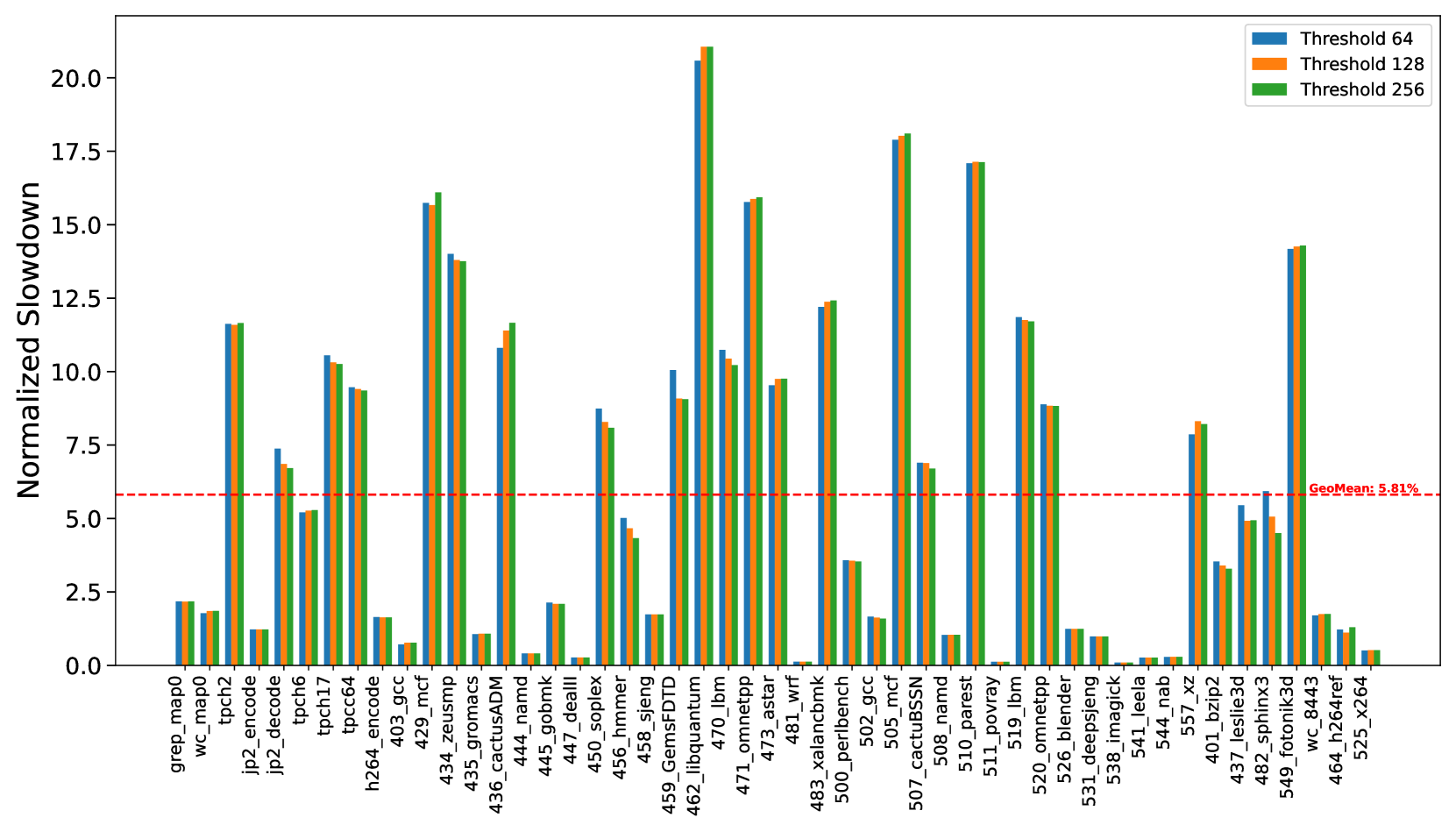

To quantify this impact, we evaluated a set of memory-intensive workloads under two timing configurations: (1) a baseline DRAM configuration using standard DDR5 timings, and (2) a PRAC-enabled configuration with updated timings, as specified in Table 1. The results, shown in Figure 4, indicate that workloads experience an average slowdown of 6%, with a maximum of close to 20% for 462.libquantum. These slowdowns are primarily attributed to increased latency introduced by PRAC counter updates, particularly in scenarios involving frequent row activations within the same bank. Although the row access time increases by only 10% (from 47ns to 52ns), the observed system-level performance overhead can reach up to 20%. This discrepancy arises because rows are not always precharged immediately after access. In many cases, a row remains open for extended durations due to row buffer policy, reducing the impact of a longer row access time. However, the increased precharge latency, nearly a 100% increase in the PRE timing, becomes the dominant contributor to performance degradation, especially when frequent rows are not immediately precharged.

We also evaluated multiple Alert thresholds (64, 128, and 256) and observed only minor variations in slowdown across these configurations. This suggests that the performance degradation is largely due to static timing overheads from PRAC’s counter update logic, rather than the dynamic triggering of ABO or mitigation procedures. As expected, applications with low row conflict rates, such as 447_dealII and 444_namd, exhibited significantly less performance impact.

Observation 1: The updated DDR5 timings introduce performance overhead, slowing down benign workloads by geometric mean of 6%.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Bar Chart: Normalized Slowdown for Different Benchmarks

### Overview

This image presents a bar chart comparing the normalized slowdown of various benchmarks under different threshold settings (64, 128, and 256). The x-axis lists the benchmarks, and the y-axis represents the normalized slowdown. A horizontal dashed red line indicates a geometric mean slowdown of 5.81%.

### Components/Axes

* **X-axis:** Benchmark names (grep, map0, wc, tpch2, jp2\_encode, jp2\_decode, tpch6, tpch16, h264\_encode, tpcc64, 403\_gcc, 434\_zeusmp, 435\_gromacs, 436\_cactusADM, 444\_namd, 445\_gobmk, 446\_dealII, 456\_sheng, 458\_hmmer, 459\_GemsFDTD, 462\_libquantum, 470\_lbm, 471\_omnetpp, 473\_astar, 481\_wrf, 483\_xalancbmk, 500\_perlbench, 502\_gcc, 505\_mcf, 507\_cactusSSN, 508\_namd, 510\_povray, 511\_lbm, 520\_omnetpp, 526\_blender, 531\_deepspnj, 538\_imagick, 541\_leelab, 557\_xz, 401\_lesstif, 437\_sphinx3d, 549\_fotronik3d, 464\_radioss, 525\_x264).

* **Y-axis:** Normalized Slowdown (scale from 0 to 20+, labeled in increments of 2.5).

* **Legend:** Located at the top-right of the chart.

* Blue: Threshold 64

* Orange: Threshold 128

* Yellow: Threshold 256

* **Horizontal Line:** Dashed red line at y = 5.81, labeled "Geomean: 5.81%".

### Detailed Analysis

The chart displays three bars for each benchmark, representing the normalized slowdown at each threshold.

* **grep:** Threshold 64: ~1.2, Threshold 128: ~1.2, Threshold 256: ~1.2

* **map0:** Threshold 64: ~1.5, Threshold 128: ~1.5, Threshold 256: ~1.5

* **wc:** Threshold 64: ~1.5, Threshold 128: ~1.5, Threshold 256: ~1.5

* **tpch2:** Threshold 64: ~2.0, Threshold 128: ~2.0, Threshold 256: ~2.0

* **jp2\_encode:** Threshold 64: ~2.5, Threshold 128: ~2.5, Threshold 256: ~2.5

* **jp2\_decode:** Threshold 64: ~3.0, Threshold 128: ~3.0, Threshold 256: ~3.0

* **tpch6:** Threshold 64: ~3.5, Threshold 128: ~3.5, Threshold 256: ~3.5

* **tpch16:** Threshold 64: ~4.0, Threshold 128: ~4.0, Threshold 256: ~4.0

* **h264\_encode:** Threshold 64: ~4.5, Threshold 128: ~4.5, Threshold 256: ~4.5

* **tpcc64:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **403\_gcc:** Threshold 64: ~16.0, Threshold 128: ~16.0, Threshold 256: ~16.0

* **434\_zeusmp:** Threshold 64: ~16.0, Threshold 128: ~16.0, Threshold 256: ~16.0

* **435\_gromacs:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **436\_cactusADM:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **444\_namd:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **445\_gobmk:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **446\_dealII:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **456\_sheng:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **458\_hmmer:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **459\_GemsFDTD:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **462\_libquantum:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **470\_lbm:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **471\_omnetpp:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **473\_astar:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **481\_wrf:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **483\_xalancbmk:** Threshold 64: ~15.0, Threshold 128: ~15.0, Threshold 256: ~15.0

* **500\_perlbench:** Threshold 64: ~16.0, Threshold 128: ~16.0, Threshold 256: ~16.0

* **502\_gcc:** Threshold 64: ~16.0, Threshold 128: ~16.0, Threshold 256: ~16.0

* **505\_mcf:** Threshold 64: ~16.0, Threshold 128: ~16.0, Threshold 256: ~16.0

* **507\_cactusSSN:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **508\_namd:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **510\_povray:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **511\_lbm:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **520\_omnetpp:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **526\_blender:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **531\_deepspnj:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **538\_imagick:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **541\_leelab:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **557\_xz:** Threshold 64: ~17.0, Threshold 128: ~17.0, Threshold 256: ~17.0

* **401\_lesstif:** Threshold 64: ~5.0, Threshold 128: ~5.0, Threshold 256: ~5.0

* **437\_sphinx3d:** Threshold 64: ~5.0, Threshold 128: ~5.0, Threshold 256: ~5.0

* **549\_fotronik3d:** Threshold 64: ~5.0, Threshold 128: ~5.0, Threshold 256: ~5.0

* **464\_radioss:** Threshold 64: ~5.0, Threshold 128: ~5.0, Threshold 256: ~5.0

* **525\_x264:** Threshold 64: ~5.0, Threshold 128: ~5.0, Threshold 256: ~5.0

### Key Observations

* The normalized slowdown is relatively consistent across the three thresholds for most benchmarks.

* Benchmarks `tpcc64`, `403_gcc`, `434_zeusmp`, `435_gromacs`, `436_cactusADM`, `444_namd`, `445_gobmk`, `446_dealII`, `456_sheng`, `458_hmmer`, `459_GemsFDTD`, `462_libquantum`, `470_lbm`, `471_omnetpp`, `473_astar`, `481_wrf`, `483_xalancbmk`, `500_perlbench`, `502_gcc`, `505_mcf` exhibit significantly higher slowdowns (around 15-17) compared to others.

* Benchmarks `grep`, `map0`, `wc`, `tpch2`, `jp2_encode`, `jp2_decode`, `tpch6`, `tpch16`, `h264_encode` show relatively low slowdowns (below 5).

* Benchmarks `401_lesstif`, `437_sphinx3d`, `549_fotronik3d`, `464_radioss`, `525_x264` show slowdowns around the geometric mean (5).

### Interpretation

The chart demonstrates the impact of different threshold settings on the performance of various benchmarks. The consistent slowdown across thresholds for most benchmarks suggests that the threshold setting does not significantly affect their performance. However, the substantial slowdown observed for certain benchmarks (e.g., `tpcc64`) indicates that these benchmarks are particularly sensitive to the threshold. The geometric mean line provides a baseline for comparison, highlighting benchmarks that perform significantly above or below average. The data suggests that optimizing the threshold for these specific benchmarks might yield performance improvements, while for others, the threshold has minimal impact. The large variation in slowdowns across benchmarks indicates that the performance impact of the threshold is highly workload-dependent.

</details>

Figure 4. Percentage slow-down due to the increase in the latency of PRE command.

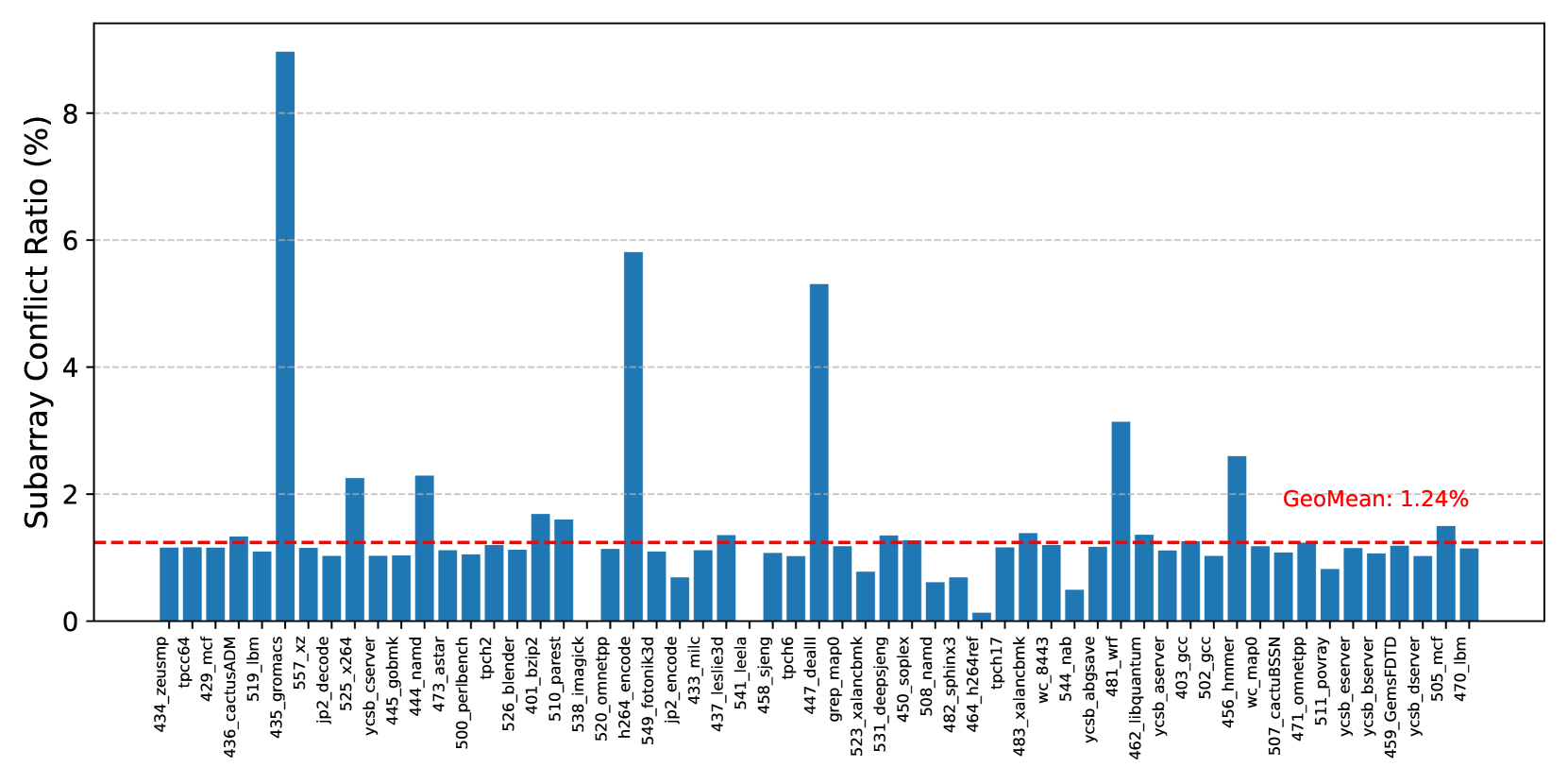

PRACtical proposes reducing the performance overhead of PRAC by performing counter updates within the local row buffers of individual subarrays. Modern DRAM architectures commonly adopt a density-optimized open-bitline structure (Keeth et al., 2007; Itoh, 2001; Chang et al., 2016; Luo et al., 2020), which places sense amplifiers on both sides of a subarray and allows adjacent subarrays to share these amplifiers. As a result, when a subarray is performing a counter update, the memory controller must delay not only accesses to that subarray but also to its neighboring subarrays. To evaluate the practical impact of this constraint, we measure the percentage of subarray conflicts relative to total row buffer conflicts. Assuming a configuration with 256 subarrays per bank, Figure 5 presents the ratio of subarray conflicts, including those involving adjacent subarrays, to total row buffer conflicts. The results demonstrate that subarray conflicts are relatively rare, accounting for only 1.24% on average across a diverse set of workloads. This observation indicates that enabling PRAC updates at the subarray level can enhance performance by permitting concurrent accesses to subarrays that are not involved in counter updates.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Bar Chart: Subarray Conflict Ratio

### Overview

The image presents a bar chart displaying the Subarray Conflict Ratio (%) for a series of identifiers. The chart appears to be comparing the conflict ratio across different systems or components, with a horizontal line indicating the geometric mean.

### Components/Axes

* **Y-axis:** "Subarray Conflict Ratio (%)", ranging from 0 to 8, with increments of 1.

* **X-axis:** A series of identifiers, including: 434\_zeusmcp, 429\_n4, 436\_cactusADM, 519\_lbm, 535\_gromacs, 557\_xz, jp2\_d5e\_x2e, ycsb\_server, 442\_gromk, 471\_tensor, 500\_perbench2, tcdb, 526\_ble\_p2, 401\_pzip2, 516\_imagepk, 520\_omnetpp, 524\_fotonik3d, 549\_leslie3d, 433\_milc, 541\_sheng, 523\_greg\_detail, 447\_tcdb, 531\_x26\_napld, 508\_namd, 464\_h264rx, 482\_sphinx3, 464\_tpcnh7, 483\_xalanc\_843, wc\_8443, ycsb\_abgsve, 544\_lbm, 462\_libquantum, 456\_gcc, 405\_nm\_mpego, 507\_tz\_mpego, 474\_cactusSN, 511\_omnetpp, ycsb\_server, 459\_GemsFDTD, 505\_mcf, 470\_lbm.

* **Horizontal Line:** A dashed orange line labeled "GeoMean: 1.24%". This line represents the geometric mean of the Subarray Conflict Ratio.

### Detailed Analysis

The chart consists of 35 bars, each representing the Subarray Conflict Ratio for a specific identifier. The bars vary significantly in height.

Here's a breakdown of approximate values, reading from left to right:

* 434\_zeusmcp: ~0.8%

* 429\_n4: ~1.2%

* 436\_cactusADM: ~1.6%

* 519\_lbm: ~0.4%

* 535\_gromacs: ~1.0%

* 557\_xz: ~0.6%

* jp2\_d5e\_x2e: ~0.2%

* ycsb\_server: ~0.4%

* 442\_gromk: ~0.8%

* 471\_tensor: ~0.6%

* 500\_perbench2: ~0.4%

* tcdb: ~0.2%

* 526\_ble\_p2: ~0.4%

* 401\_pzip2: ~0.2%

* 516\_imagepk: ~0.6%

* 520\_omnetpp: ~0.8%

* 524\_fotonik3d: ~5.6% (Highest value)

* 549\_leslie3d: ~0.4%

* 433\_milc: ~0.2%

* 541\_sheng: ~0.4%

* 523\_greg\_detail: ~0.2%

* 447\_tcdb: ~0.2%

* 531\_x26\_napld: ~0.2%

* 508\_namd: ~0.4%

* 464\_h264rx: ~0.2%

* 482\_sphinx3: ~0.4%

* 464\_tpcnh7: ~0.2%

* 483\_xalanc\_843: ~0.2%

* wc\_8443: ~0.2%

* ycsb\_abgsve: ~0.4%

* 544\_lbm: ~0.2%

* 462\_libquantum: ~0.4%

* 456\_gcc: ~0.2%

* 405\_nm\_mpego: ~0.2%

* 507\_tz\_mpego: ~0.2%

* 474\_cactusSN: ~0.4%

* 511\_omnetpp: ~0.2%

* ycsb\_server: ~0.4%

* 459\_GemsFDTD: ~0.2%

* 505\_mcf: ~0.2%

* 470\_lbm: ~0.2%

### Key Observations

* The identifier "524\_fotonik3d" has a significantly higher Subarray Conflict Ratio (approximately 5.6%) compared to all other identifiers. This is a clear outlier.

* Most identifiers have a Subarray Conflict Ratio below 1%.

* The geometric mean (1.24%) is relatively low, suggesting that, overall, the Subarray Conflict Ratio is generally low across these identifiers.

* There is a wide range of conflict ratios, indicating varying levels of conflict across the different systems/components.

### Interpretation

The chart demonstrates the distribution of Subarray Conflict Ratios across a set of identifiers. The high conflict ratio observed for "524\_fotonik3d" warrants further investigation. It could indicate a specific issue with that system or component, such as inefficient memory access patterns or contention for shared resources. The geometric mean provides a useful benchmark for assessing the overall performance and identifying potential areas for optimization. The wide variation in conflict ratios suggests that some systems are more prone to conflicts than others, and targeted improvements could be made to reduce these conflicts. The data suggests that the majority of the systems have low conflict ratios, but the outlier highlights a potential problem area. Further analysis would be needed to understand the root cause of the high conflict ratio for "524\_fotonik3d" and determine if similar issues exist in other systems.

</details>

Figure 5. Ratio(%) of Subarray conflicts.

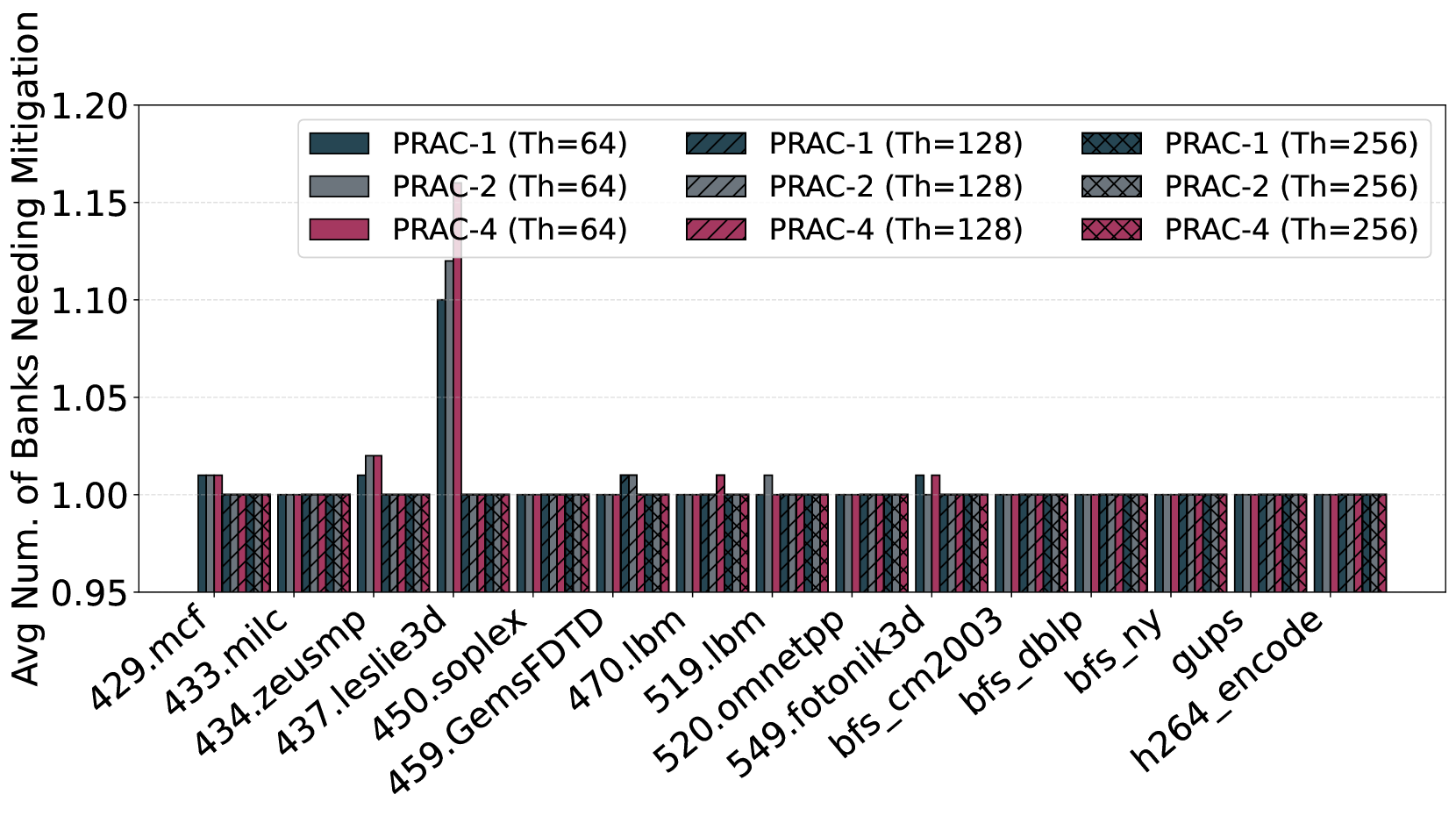

3.2. Inefficiency of Channel-Wide Stall of RFM ab

The ABO mechanism notifies the memory controller when a row’s activation count crosses the threshold. After the 180ns pre-recovery period, the controller issues an RFM ab command, triggering a 350ns stall across the entire memory channel even if only a small subset of banks require mitigation.

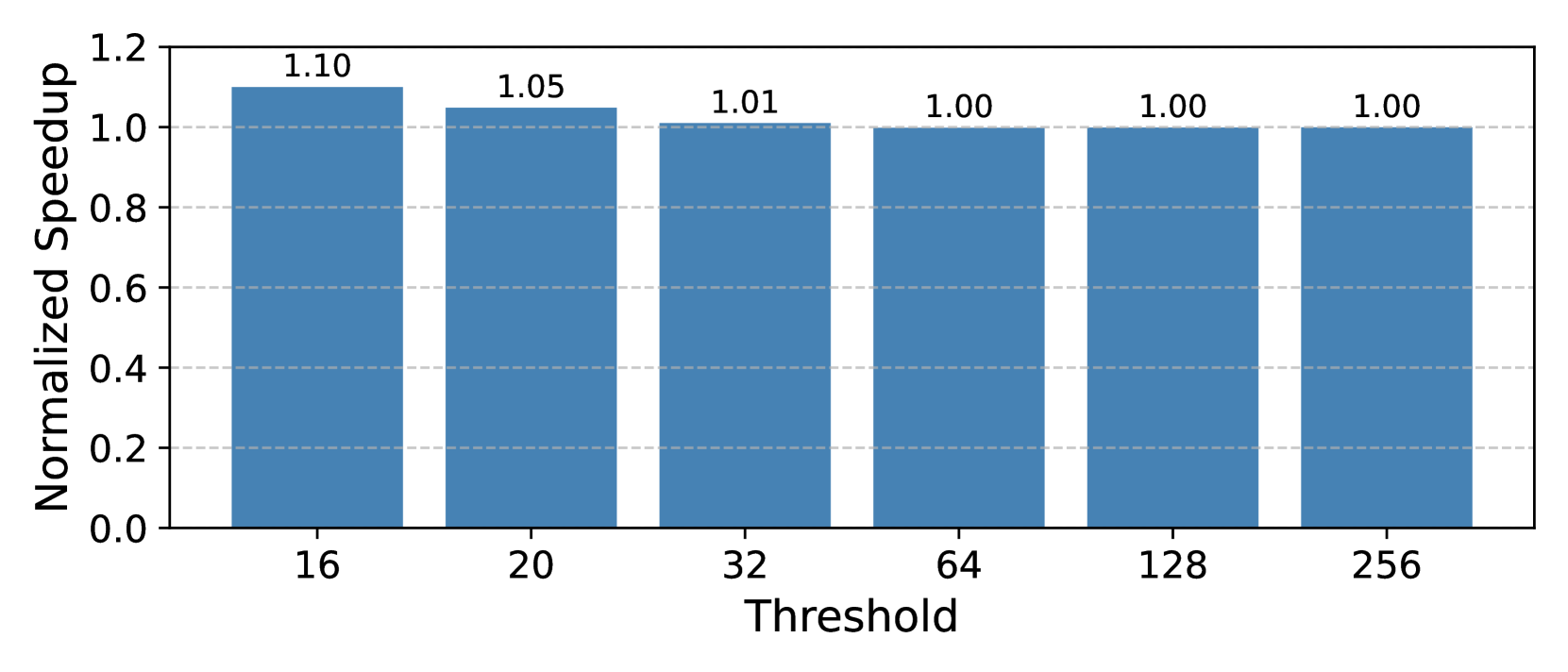

This coarse-grained stall mechanism is overly conservative. Across a wide range of benign workloads, our measurements show that, on average, only 1.16 out of 64 banks require mitigation when an ABO signal is raised (Figure 7) and maximum of 4 banks need mitigation across all recovery periods (Figure 8). As a result, over 90% of the banks are unnecessarily stalled during each recovery phase, significantly reducing memory-level parallelism and penalizing threads accessing unaffected banks.

Although a bank may not require recovery, i.e., it does not contain any hot rows, the issuance of the RFM ab command blocks memory accesses to all banks within the channel. To utilize this otherwise idle period, prior works (Qureshi and Qazi, 2024; Canpolat et al., 2025; Woo et al., 2025) adopt opportunistic mitigation strategies. These strategies proactively refresh potential victim rows whose counters are likely to reach the critical threshold in the near future. Specifically, upon issuance of RFM ab, each bank refreshes rows adjacent to the row with the highest activation count (i.e., the most likely aggressor), thereby ensuring that banks do not remain idle and reducing the need for issuing additional RFM ab commands in the future.

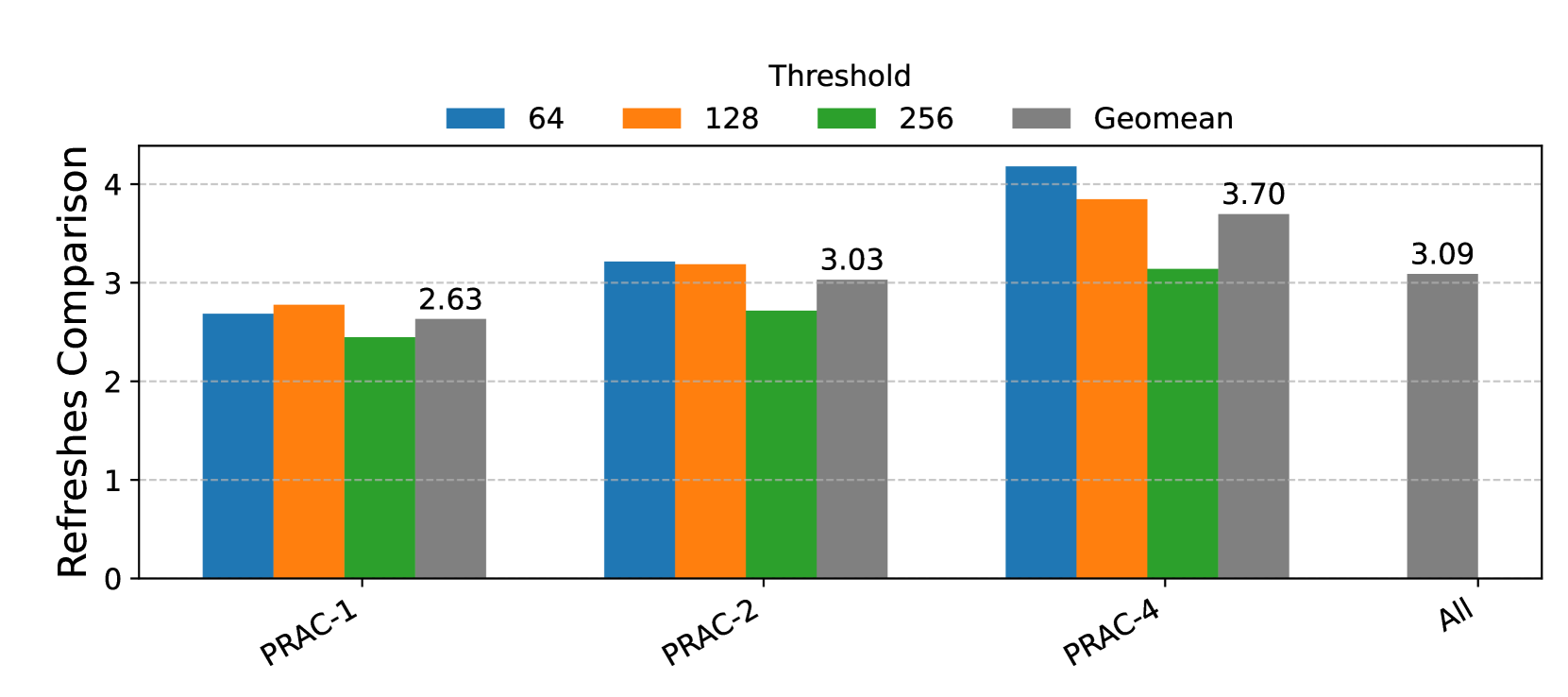

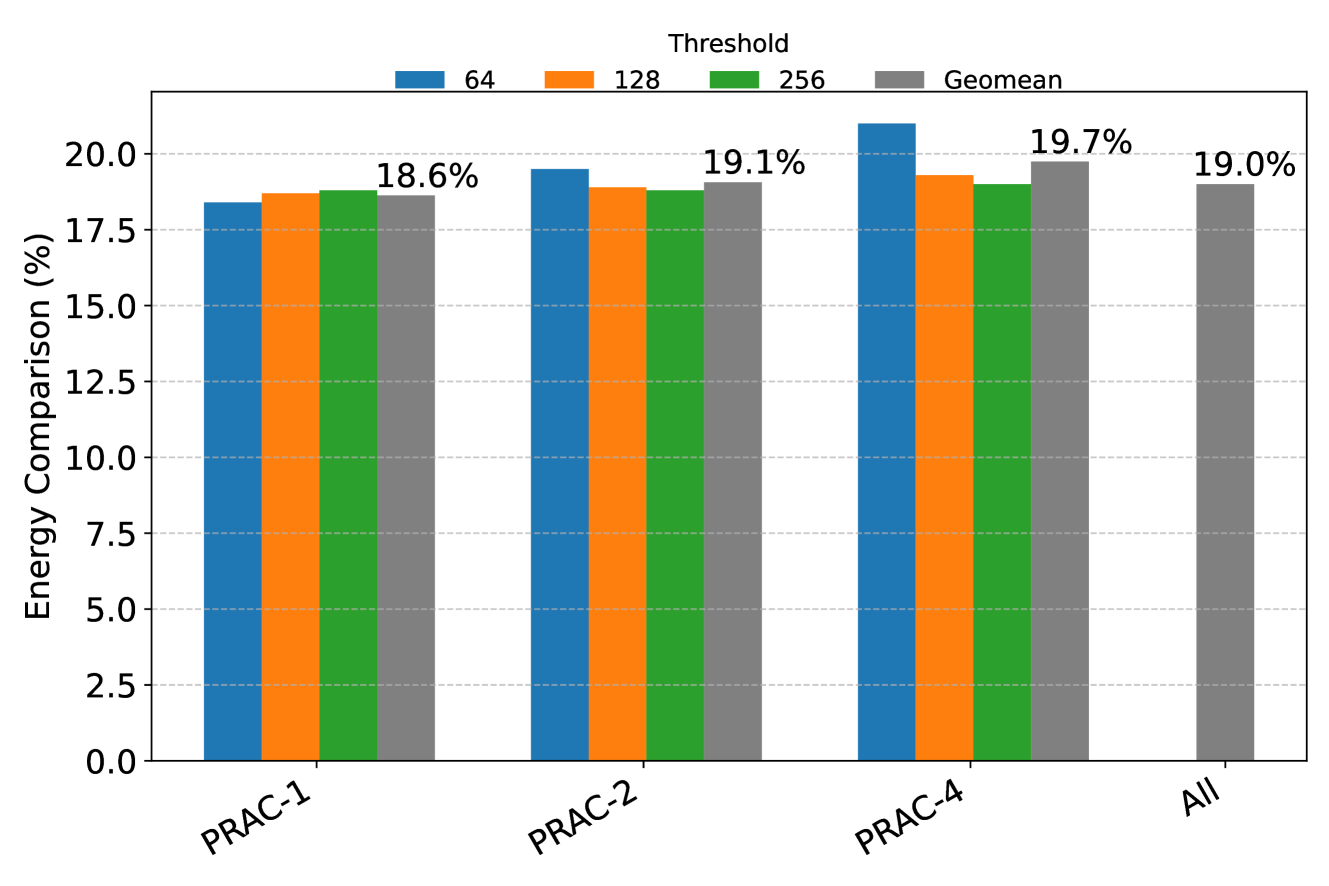

However, this approach introduces inefficiencies. Not all of the refreshes performed are strictly necessary, leading to redundant operations. To quantify this overhead, we compare the number of total RFM refreshes that are strictly required to those actually performed under opportunistic mitigation. As shown in Figure 6, the results, aggregated as the geometric mean across multiple PRAC variants (PRAC-1, PRAC-2, PRAC-4) and thresholds (64, 128, 256), demonstrate that opportunistic mitigation incurs more than a 3× increase in RFM refreshes. This analysis underscores a significant trade-off: while opportunistic mitigation reduces idle bank time and preempts future violations, it comes at the cost of approximately 70% higher RFM -related energy consumption in DRAM.

<details>

<summary>x6.png Details</summary>

### Visual Description

\n

## Bar Chart: Refreshes Comparison

### Overview

The image presents a bar chart comparing "Refreshes" across four different categories: PRAC-1, PRAC-2, PRAC-4, and "All". The chart displays data for four different thresholds: 64, 128, 256, and the geometric mean (Geomean). The y-axis represents the value of "Refreshes Comparison", ranging from 0 to 4.

### Components/Axes

* **Y-axis:** "Refreshes Comparison" (Scale: 0 to 4, increments of 1)

* **X-axis:** Categories: PRAC-1, PRAC-2, PRAC-4, All

* **Legend:** Located at the top-right of the chart.

* Blue: Threshold 64

* Orange: Threshold 128

* Green: Threshold 256

* Gray: Geomean

### Detailed Analysis

The chart consists of four groups of bars, one for each category on the x-axis. Each group contains four bars, representing the four thresholds.

* **PRAC-1:**

* Threshold 64: Approximately 2.80

* Threshold 128: Approximately 2.40

* Threshold 256: Approximately 1.00

* Geomean: 2.63

* **PRAC-2:**

* Threshold 64: Approximately 3.10

* Threshold 128: Approximately 3.20

* Threshold 256: Approximately 1.00

* Geomean: 3.03

* **PRAC-4:**

* Threshold 64: Approximately 4.20

* Threshold 128: Approximately 3.80

* Threshold 256: Approximately 1.00

* Geomean: 3.70

* **All:**

* Threshold 64: Approximately 3.00

* Threshold 128: Approximately 3.00

* Threshold 256: Approximately 1.00

* Geomean: 3.09

The bars for Threshold 256 are consistently low (around 1.00) across all categories. The Geomean values are generally higher than the Threshold 256 bars, but lower than the Threshold 64 and 128 bars.

### Key Observations

* PRAC-4 consistently shows the highest values for Thresholds 64 and 128.

* Threshold 256 consistently shows the lowest values across all categories.

* The Geomean values are relatively stable across the categories, hovering around 3.0.

* The difference between the Threshold 64/128 bars and the Geomean bar is most pronounced in PRAC-4.

### Interpretation

The chart demonstrates the impact of different thresholds on the number of refreshes. The consistently low values for the 256 threshold suggest that this threshold is rarely triggered, or that refreshes are not needed at this level. The higher values for the 64 and 128 thresholds indicate that these thresholds are more frequently met, leading to more refreshes.

The Geomean provides a central tendency measure, indicating the typical number of refreshes across all thresholds. The fact that the Geomean is lower than the 64 and 128 thresholds suggests that the lower thresholds are driving the overall refresh rate.

The significant difference between the thresholds and the Geomean in PRAC-4 suggests that this category is particularly sensitive to the lower thresholds, and that reducing the refresh rate at these thresholds could have a substantial impact on overall performance. The "All" category provides a baseline for comparison, showing that the overall refresh rate is similar to that of PRAC-2.

</details>

Figure 6. Comparison of number of RFM refreshes for opportunistic mitigation for PRAC-1, 2, and 4 and thresholds of 64, 128, and 256.

Observation 2: In benign workloads, fewer than 10% of banks require mitigation during an ABO-triggered recovery period, while opportunistic mitigation performs 3x more recovery refreshes than needed.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: Average Number of Banks Needing Mitigation

### Overview

This bar chart compares the average number of banks needing mitigation across different applications (listed on the x-axis) for different PRAC configurations (PRAC-1, PRAC-2, PRAC-4) and thread counts (Th=64, Th=128, Th=256). The y-axis represents the average number of banks needing mitigation. Each application has three bars representing the different PRAC/thread configurations.

### Components/Axes

* **X-axis:** Application names: 429.mcf, 433.milc, 434.zeusmp, 437.leslie3d, 450.soplex, 459.GemsFDTD, 470.lbm, 519.lbm, 520.omnetpp, 549.fotonik3d, bfs\_cm2003, bfs\_dlbp, bfs\_ny, gups, h264\_encode.

* **Y-axis:** "Avg Num. of Banks Needing Mitigation", ranging from approximately 0.95 to 1.20.

* **Legend:** Located at the top of the chart, horizontally aligned.

* PRAC-1 (Th=64) - Dark Blue

* PRAC-2 (Th=64) - Gray

* PRAC-4 (Th=64) - Pink

* PRAC-1 (Th=128) - Medium Blue

* PRAC-2 (Th=128) - Light Gray

* PRAC-4 (Th=128) - Light Pink

* PRAC-1 (Th=256) - Dark Cyan

* PRAC-2 (Th=256) - Light Cyan

* PRAC-4 (Th=256) - Purple

### Detailed Analysis

The chart consists of 15 applications, each with three bars representing different PRAC and thread configurations. The bars represent the average number of banks needing mitigation.

Here's a breakdown of the approximate values for each application and configuration:

* **429.mcf:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **433.milc:**

* PRAC-1 (Th=64): ~1.02

* PRAC-2 (Th=64): ~1.02

* PRAC-4 (Th=64): ~1.02

* PRAC-1 (Th=128): ~1.02

* PRAC-2 (Th=128): ~1.02

* PRAC-4 (Th=128): ~1.02

* PRAC-1 (Th=256): ~1.02

* PRAC-2 (Th=256): ~1.02

* PRAC-4 (Th=256): ~1.02

* **434.zeusmp:**

* PRAC-1 (Th=64): ~1.03

* PRAC-2 (Th=64): ~1.03

* PRAC-4 (Th=64): ~1.03

* PRAC-1 (Th=128): ~1.03

* PRAC-2 (Th=128): ~1.03

* PRAC-4 (Th=128): ~1.03

* PRAC-1 (Th=256): ~1.03

* PRAC-2 (Th=256): ~1.03

* PRAC-4 (Th=256): ~1.03

* **437.leslie3d:**

* PRAC-1 (Th=64): ~1.04

* PRAC-2 (Th=64): ~1.04

* PRAC-4 (Th=64): ~1.04

* PRAC-1 (Th=128): ~1.04

* PRAC-2 (Th=128): ~1.04

* PRAC-4 (Th=128): ~1.04

* PRAC-1 (Th=256): ~1.04

* PRAC-2 (Th=256): ~1.04

* PRAC-4 (Th=256): ~1.04

* **450.soplex:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **459.GemsFDTD:**

* PRAC-1 (Th=64): ~1.02

* PRAC-2 (Th=64): ~1.02

* PRAC-4 (Th=64): ~1.02

* PRAC-1 (Th=128): ~1.02

* PRAC-2 (Th=128): ~1.02

* PRAC-4 (Th=128): ~1.02

* PRAC-1 (Th=256): ~1.02

* PRAC-2 (Th=256): ~1.02

* PRAC-4 (Th=256): ~1.02

* **470.lbm:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **519.lbm:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **520.omnetpp:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **549.fotonik3d:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **bfs\_cm2003:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **bfs\_dlbp:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **bfs\_ny:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **gups:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

* **h264\_encode:**

* PRAC-1 (Th=64): ~1.01

* PRAC-2 (Th=64): ~1.01

* PRAC-4 (Th=64): ~1.01

* PRAC-1 (Th=128): ~1.01

* PRAC-2 (Th=128): ~1.01

* PRAC-4 (Th=128): ~1.01

* PRAC-1 (Th=256): ~1.01

* PRAC-2 (Th=256): ~1.01

* PRAC-4 (Th=256): ~1.01

### Key Observations

The average number of banks needing mitigation is consistently around 1.01 for most applications and PRAC/thread configurations. There is very little variation across the different configurations. The values are all very close to 1.0, suggesting that the mitigation needs are relatively stable across these parameters.

### Interpretation

The data suggests that the PRAC configuration and thread count (within the tested range of 64, 128, and 256) have a minimal impact on the average number of banks needing mitigation for these applications. The consistently low values (around 1.01) indicate that the mitigation requirements are generally low. This could imply that the applications are well-suited to the PRAC architecture, or that the mitigation techniques employed are effective regardless of the specific configuration. The lack of significant variation suggests that optimizing PRAC or thread count for mitigation purposes may not yield substantial benefits for these workloads. The data does not provide information on *why* mitigation is needed, or the nature of the mitigation itself. Further investigation would be needed to understand the underlying causes of mitigation requirements and the effectiveness of different mitigation strategies.

</details>

Figure 7. Average number of banks that need mitigation on an ABO signal for benign workloads 429.mcf 433.milc 434.zeusmp 437.leslie3d 450.soplex 459.GemsFDTD 470.lbm 519.lbm 520.omnetpp 549.fotonik3d bfs_cm2003 bfs_dblp bfs_ny gups h264_encode $0 0$ $1$ $2$ $3$ $4$ Max Num. of Banks Needing Mitigation PRAC-1 PRAC-2 PRAC-4

Figure 8. Maximum number of banks that need mitigation on an ABO signal for benign workloads.

3.3. Exploiting ABO: Performance Attacks

The coarse-grained nature of the ABO protocol introduces a new form of unfairness in DRAM systems, making them susceptible to performance slowdown attacks. Because the ABO signal triggers channel-wide stalls without identifying the specific bank responsible for the excessive activation, a malicious actor can exploit this limitation to repeatedly disrupt system-level memory access.

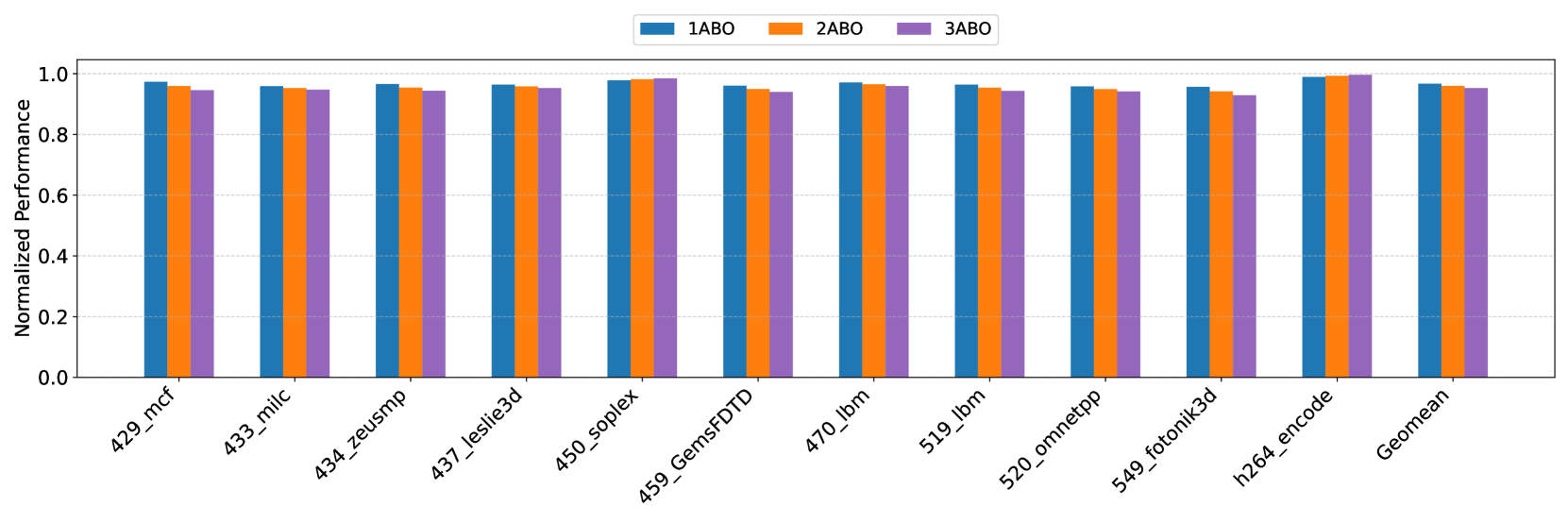

In particular, an attacker can intentionally issue frequent row activations to a single bank to induce an ABO event. Since the memory controller stalls the entire memory channel upon receiving an alert, without visibility into which bank requires mitigation, benign workloads distributed across other banks also suffer from the resulting stall. This lack of spatial granularity makes it extremely difficult to attribute the cause of the alert or to selectively suppress malicious access patterns, rendering existing defenses ineffective against such attacks. MOAT (Qureshi and Qazi, 2024) proposed the Torrent-of-Staggered-ALERT (TSA) attack, a performance degradation strategy that carefully coordinates ALERTs across multiple DRAM banks. In this attack, each bank repeatedly activates a small set of rows (e.g., rows A, B, C, D, E) to trigger an ALERT. Crucially, banks issue ACTs in a staggered fashion: a bank only initiates activation once all targeted rows in the previous bank have caused ALERTs and entered the mitigation phase. This serialized activation ensures that when one bank is under mitigation, other banks are forced to stall, as there are no eligible rows to activate without interference. This deliberate serialization of ALERTs forms a torrent of staggered mitigation events, significantly throttling memory concurrency. The attack was shown to reduce system throughput by 24% with four banks and up to 52% with 17 banks, aligning with the tFAW constraint.

BreakHammer (Canpolat et al., 2024a) introduces a score-based mitigation framework that assigns a score to each hardware thread based on its contribution to Rowhammer mitigation events. This approach is particularly effective when mitigation logic is implemented within the MC, where score attribution can be performed at finer spatial granularity, such as individual banks or row activations. However, performance degradation attacks may evade detection under BreakHammer when mitigations are signaled via coarse-grained mechanisms such as the Alert Back-Off (ABO) signal.

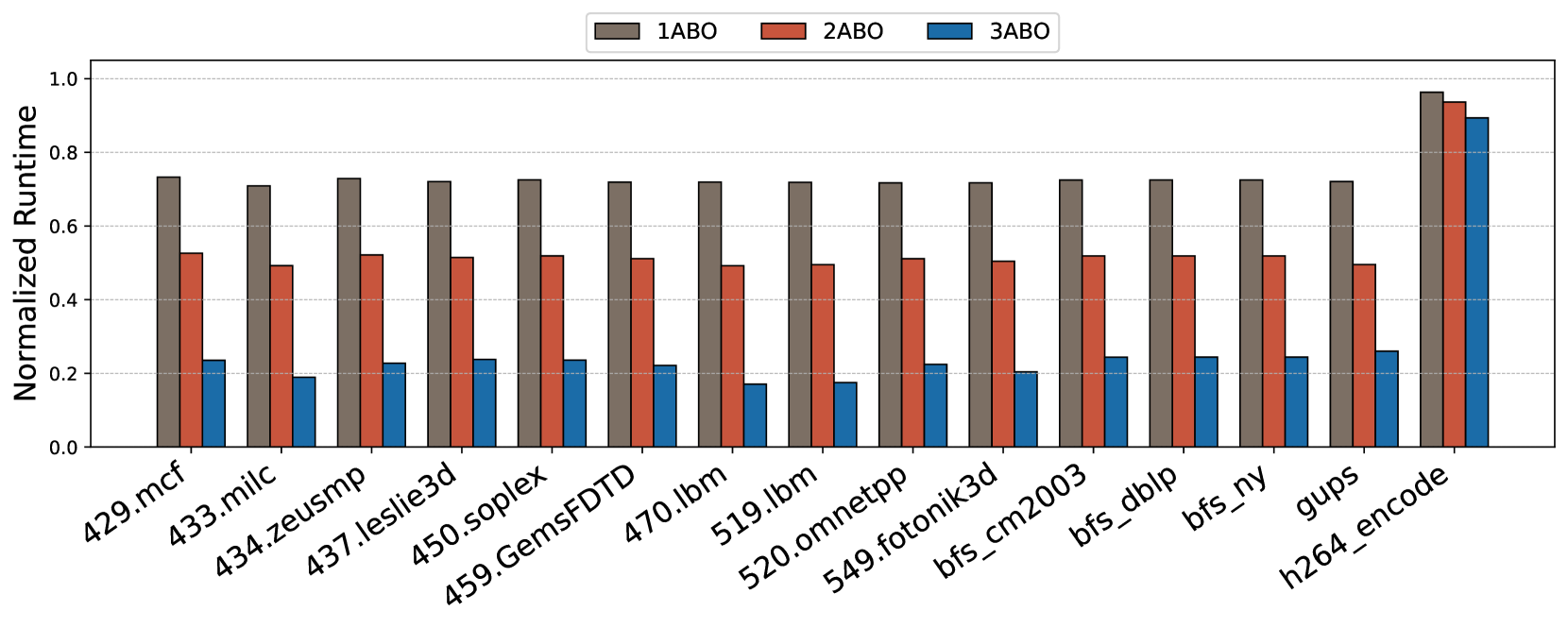

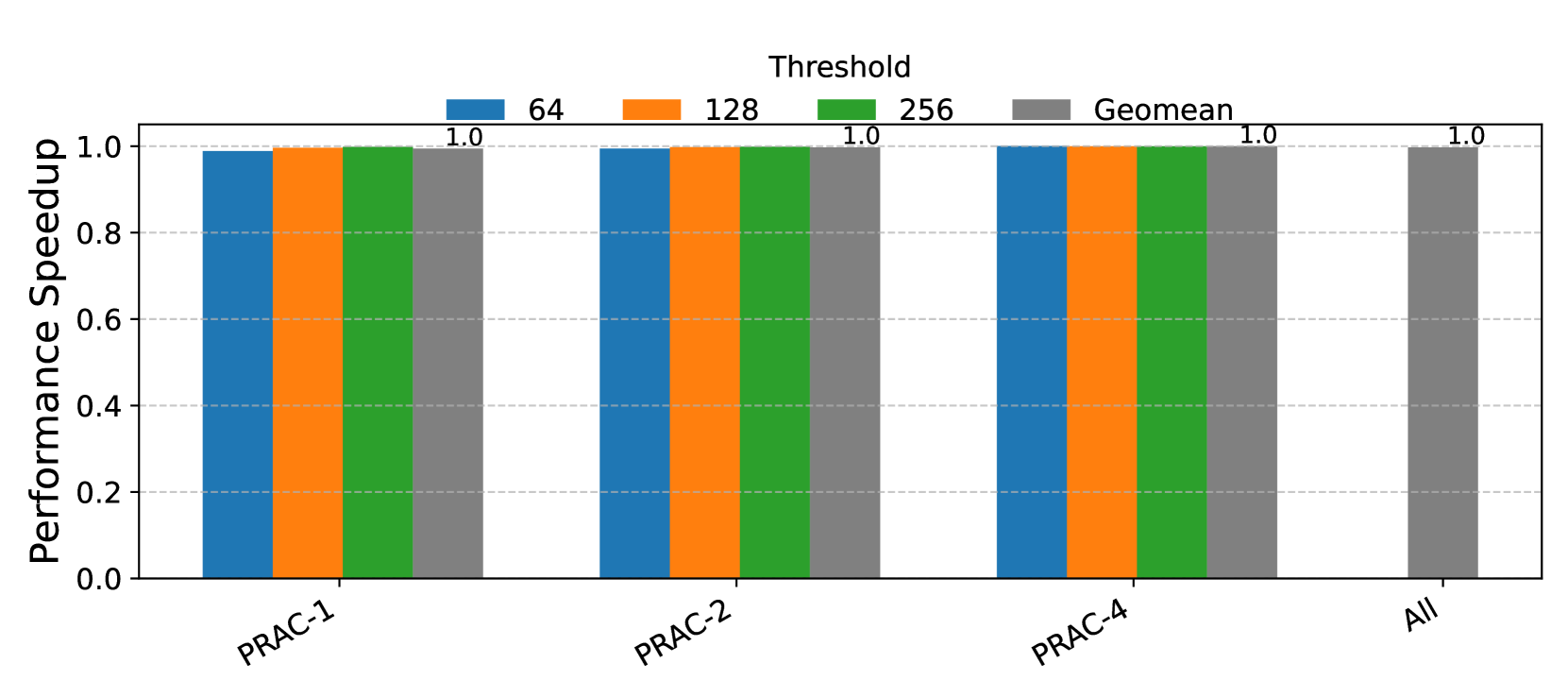

A broader class of memory performance degradation attacks exploits the observation that Rowhammer mitigations, such as recovery operations, are triggered more frequently at lower thresholds. As the mitigation frequency increases, overall system performance degrades significantly. To evaluate the performance cost associated with frequent mitigation, we study the impact of varying thresholds— 64, 32, and 16 as considered in prior works (Woo et al., 2025; Canpolat et al., 2025). Assuming a refresh interval (tREFI) of 3900 ns and a refresh operation latency (REF) of 410 ns, approximately 67 row activations can be issued within one tREFI interval. We consider a closed-row memory policy where each activated row is closed after access. For a realistic scenario, an attacker can alternate between two rows to issue repeated activations. Under a threshold of 64, this behavior can typically trigger one Alert per tREFI. For thresholds of 32 and 16, the number of ALERTs increases proportionally, allowing an attacker to induce two to three (or even four) ALERTs within the same interval. Based on this analysis, we evaluate performance degradation under scenarios involving one, two, and three ALERTs per tREFI duration to quantify the impact of aggressive mitigation triggering. As shown in Figure 9, even a single ABO event per interval results in a 20–30% slowdown for most applications. With three alerts per interval, performance degradation exceeds 80% in several cases. Interestingly, workloads such as h264_encode exhibit resilience due to their lower sensitivity to DRAM stalls, but most others are highly vulnerable. These results demonstrate that the lack of bank-level precision in ABO signaling can be exploited by attackers to inflict disproportionate slowdowns on benign threads, emphasizing the need for spatially-aware mitigation mechanisms.

Observation 3: Coarse-grained ABO signaling enables attackers to trigger repeated channel-wide stalls, leading to excessive performance loss.

<details>

<summary>x8.png Details</summary>

### Visual Description

## Bar Chart: Normalized Runtime Comparison

### Overview

This bar chart compares the normalized runtime of three different configurations (1ABO, 2ABO, and 3ABO) across a series of applications/benchmarks. The y-axis represents the normalized runtime, ranging from 0.0 to 1.0, while the x-axis lists the names of the applications. Each application has three bars representing the runtime for each configuration.

### Components/Axes

* **X-axis:** Application/Benchmark Names: 429.mcf, 433.milc, 434.zeusmp, 437.leslie3d, 450.soplex, 459.GemsFDTD, 470.lbm, 519.lbm, 520.omnetpp, 549.fotonik3d, bfs\_cm2003, bfs\_dlbp, bfs\_ny, gups, h264\_encode.

* **Y-axis:** Normalized Runtime (Scale: 0.0 to 1.0)

* **Legend:**

* 1ABO (Red)

* 2ABO (Blue)

* 3ABO (Gray)

### Detailed Analysis

The chart consists of 15 applications, each with three bars representing the normalized runtime for 1ABO, 2ABO, and 3ABO configurations. I will analyze each application individually, noting approximate values.

* **429.mcf:** 1ABO ≈ 0.72, 2ABO ≈ 0.22, 3ABO ≈ 0.18

* **433.milc:** 1ABO ≈ 0.68, 2ABO ≈ 0.20, 3ABO ≈ 0.16

* **434.zeusmp:** 1ABO ≈ 0.64, 2ABO ≈ 0.24, 3ABO ≈ 0.20

* **437.leslie3d:** 1ABO ≈ 0.56, 2ABO ≈ 0.28, 3ABO ≈ 0.24

* **450.soplex:** 1ABO ≈ 0.52, 2ABO ≈ 0.26, 3ABO ≈ 0.22

* **459.GemsFDTD:** 1ABO ≈ 0.50, 2ABO ≈ 0.24, 3ABO ≈ 0.20

* **470.lbm:** 1ABO ≈ 0.48, 2ABO ≈ 0.22, 3ABO ≈ 0.18

* **519.lbm:** 1ABO ≈ 0.44, 2ABO ≈ 0.20, 3ABO ≈ 0.16

* **520.omnetpp:** 1ABO ≈ 0.40, 2ABO ≈ 0.18, 3ABO ≈ 0.14

* **549.fotonik3d:** 1ABO ≈ 0.36, 2ABO ≈ 0.16, 3ABO ≈ 0.12

* **bfs\_cm2003:** 1ABO ≈ 0.32, 2ABO ≈ 0.14, 3ABO ≈ 0.10

* **bfs\_dlbp:** 1ABO ≈ 0.28, 2ABO ≈ 0.12, 3ABO ≈ 0.08

* **bfs\_ny:** 1ABO ≈ 0.24, 2ABO ≈ 0.10, 3ABO ≈ 0.06

* **gups:** 1ABO ≈ 0.96, 2ABO ≈ 0.24, 3ABO ≈ 0.20

* **h264\_encode:** 1ABO ≈ 0.92, 2ABO ≈ 0.22, 3ABO ≈ 0.18

**Trends:**

* For most applications, 1ABO consistently exhibits the highest normalized runtime, followed by 2ABO, and then 3ABO.

* The difference in runtime between 1ABO and the other configurations is more pronounced for some applications (e.g., gups, h264\_encode) than others.

* The runtime for 2ABO and 3ABO are relatively close for many applications.

### Key Observations

* The application "gups" shows a significantly higher normalized runtime for 1ABO (approximately 0.96) compared to 2ABO (0.24) and 3ABO (0.20). This suggests that 1ABO performs substantially worse on this particular benchmark.

* Similarly, "h264\_encode" also shows a large difference, with 1ABO at approximately 0.92.

* For applications like "bfs\_dlbp" and "bfs\_ny", the runtime differences between the configurations are smaller.

### Interpretation

The chart demonstrates the performance impact of different configurations (1ABO, 2ABO, 3ABO) on a variety of applications. The normalized runtime indicates the relative execution time, with higher values representing longer runtimes.

The consistent dominance of 1ABO in terms of runtime suggests that this configuration may be less optimized for these benchmarks compared to 2ABO and 3ABO. The large differences observed in "gups" and "h264\_encode" indicate that 1ABO is particularly inefficient for these specific workloads.

The relatively similar performance of 2ABO and 3ABO across most applications suggests that the changes between these configurations have a less significant impact on runtime. This could be due to the optimizations already present in 2ABO being sufficient, or that the specific changes in 3ABO do not translate to substantial performance gains for these benchmarks.

Further investigation would be needed to understand the underlying reasons for these performance differences. This could involve profiling the applications under each configuration to identify bottlenecks and areas for optimization. The chart provides a valuable starting point for identifying which applications benefit most from switching to 2ABO or 3ABO.

</details>

Figure 9. Percentage slowdown of benign applications when there is a 1, 2 and 3 ABO alerts happening in each tREFI

4. PRACtical Design and Implementation

To overcome the limitations of PRAC+ABO, we introduce PRACtical—an enhanced version that builds upon the original framework. PRACtical extends the underlying mechanisms to significantly reduce performance overheads while maintaining the robust security guarantees offered by state-of-the-art Rowhammer mitigation techniques. This section presents an overview of the design and implementation of PRACtical.

4.1. Overview and Design Goals

The core design principle of PRACtical is to minimize the performance overheads inherent in the original PRAC+ABO mechanism. Specifically, the design aims to achieve the following two objectives:

1. Reduce PRAC update latency through subarray-level decoupling. Enable subarray-level counter updates to allow overlapping the counter update time of a row with activation of the next row when rows are non-conflicting at subarray-level, eliminating the 21ns counter update delay.

1. Minimize unnecessary memory stalls with fine-grained recovery command. Refine RFM ab to operate at bank-level granularity, allowing the memory controller to stall only the affected banks instead of the entire channel.

4.2. Hardware Modifications

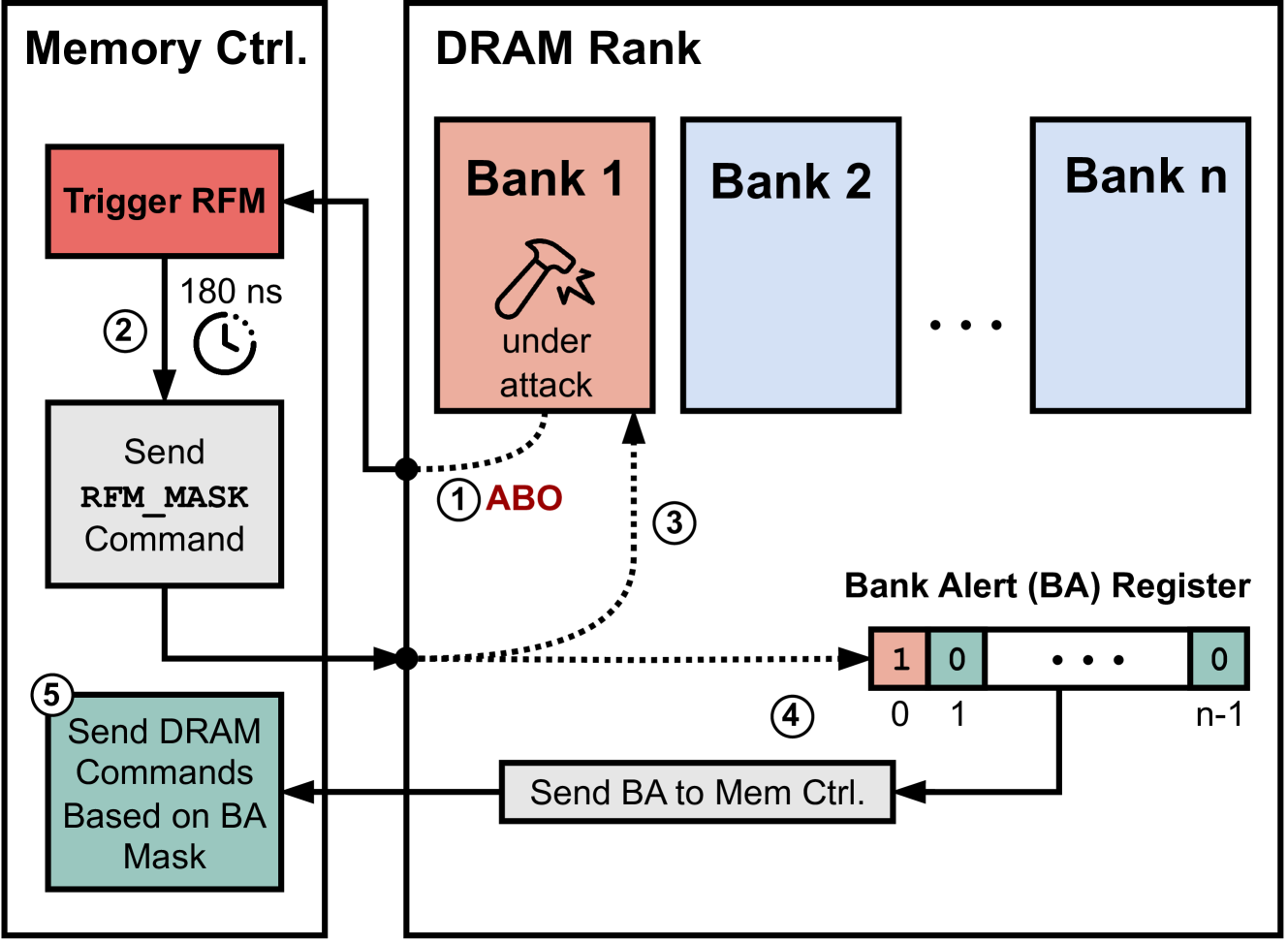

PRACtical introduces minimal hardware modifications to enable fine-grained, low-latency RowHammer mitigation by enhancing the PRAC+ABO feature.

First, to support subarray-level PRAC updates, the traditional bank-level increment logic, typically located near the global row buffer, is replaced with a centralized increment circuit that is connected to local row buffers in subarrays through a different bus (8-wire bus for 8-bit counters). This design allows counter updates without delaying the subsequent memory accesses to the memory bank that do not conflict at the subarray level. Correspondingly, the memory controller is extended with subarray mapping logic and an address decoder capable of identifying the target subarray for each memory request.

Second, to enable fine-grained bank-level recovery stalling, the DRAM chip is modified to include a new control register called the Bank Alert (BA) register. This register contains one bit per bank, where each bit indicates whether a given bank has any rows with an activation number higher than the Alert threshold. The contents of the BA register are communicated to the memory controller and serve as a mask, allowing the controller to selectively stall only the requests to the banks under mitigation while continuing to issue commands to unaffected banks.

4.3. PRACtical Mechanism

PRACtical introduces two core mechanisms to reduce the performance overheads associated with the PRAC+ABO framework while maintaining its security guarantees against RowHammer attacks.

4.3.1. Subarray-Level PRAC Updates

<details>

<summary>x9.png Details</summary>

### Visual Description

\n

## Diagram: DRAM Bank Operation with PRAC Timing

### Overview

The image is a diagram illustrating the operation of a DRAM bank, specifically focusing on how the memory controller handles subarray conflicts and PRAC (Precharge Access) timing. The diagram is split into two main sections: "Memory Ctrl." (Memory Controller) on the left and "DRAM Bank" on the right. It depicts a flow chart within the memory controller and the internal structure of the DRAM bank, showing how they interact.

### Components/Axes

The diagram consists of the following components:

* **Memory Ctrl. (Memory Controller):** Contains blocks representing "Memory Access", "Find Subarray ID", "SA Conflict?", "PRAC Timing", and "No Need to Wait for Subarray PRAC Update".

* **DRAM Bank:** Contains "Global Row Dec.", "Row Dec.", "Subarray" (repeated twice), "Local row buffer" (repeated twice), "Global row buffer", and "counters" (repeated twice).

* **Flow Arrows:** Indicate the direction of data flow and decision-making.

* **Numbered Steps:** 1, 2, 3, and 4 mark the sequence of operations.

### Detailed Analysis or Content Details

The diagram illustrates the following process:

1. **Memory Access:** The process begins with a memory access request.

2. **Find Subarray ID:** The memory controller identifies the subarray associated with the access.

3. **SA Conflict?:** A decision is made whether there is a subarray (SA) conflict.

* **Yes:** If a conflict exists, the process proceeds to "PRAC Timing".

* **No:** If no conflict exists, the process proceeds to "No Need to Wait for Subarray PRAC Update".

4. **PRAC Timing:** This block represents the timing required for precharge access, likely to resolve the subarray conflict.

5. **No Need to Wait for Subarray PRAC Update:** This indicates that the operation can proceed without waiting for a PRAC update, as there was no conflict.

Within the DRAM Bank section:

* **Global Row Dec.:** Drives the "Row Dec." signals.