# Kimi K2: Open Agentic Intelligence

**Authors**: Kimi Team

## Abstract

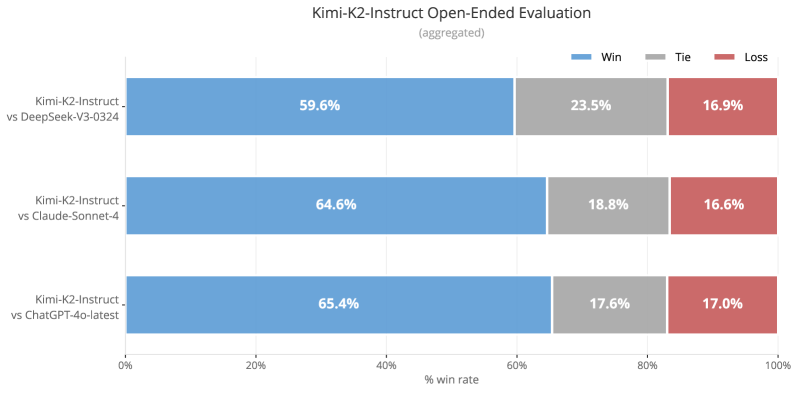

We introduce Kimi K2, a Mixture-of-Experts (MoE) large language model with 32 billion activated parameters and 1 trillion total parameters. We propose the MuonClip optimizer, which improves upon Muon with a novel QK-clip technique that addresses training instability while retaining Muon's token efficiency. Using MuonClip, K2 was pre-trained on 15.5 trillion tokens with zero loss spikes. K2 then undergoes a multi-stage post-training process, highlighted by a large-scale agentic data synthesis pipeline and a joint reinforcement learning (RL) stage, in which the model improves its capabilities through interactions with real and synthetic environments.

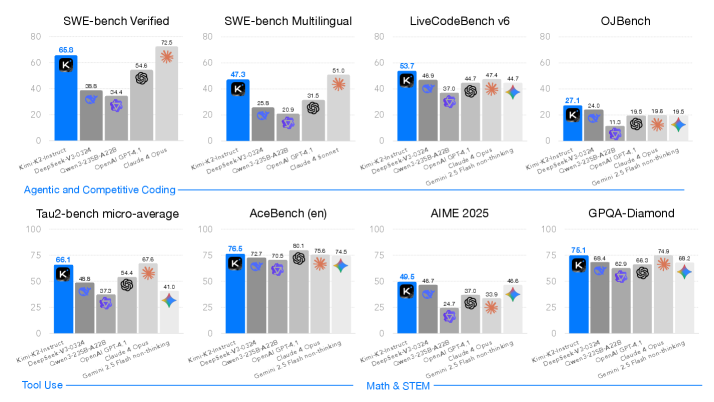

Kimi K2 achieves state-of-the-art performance among open-source non-thinking models, with particular strengths in agentic capabilities. Notably, K2 obtains 66.1 on Tau2-Bench, 76.5 on ACEBench (En), 65.8 on SWE-Bench Verified, and 47.3 on SWE-Bench Multilingual, surpassing most open- and closed-source baselines in non-thinking settings. It also exhibits strong capabilities in coding, mathematics, and reasoning tasks, scoring 53.7 on LiveCodeBench v6, 49.5 on AIME 2025, 75.1 on GPQA-Diamond, and 27.1 on OJBench, all without extended thinking. These results position Kimi K2 as one of the most capable open-source large language models to date, particularly in software engineering and agentic tasks. We release our base and post-trained model checkpoints (https://huggingface.co/moonshotai/Kimi-K2-Instruct) to facilitate future research and applications of agentic intelligence.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Chart: Coding Benchmark Performance Comparison

### Overview

The image presents a comparative bar chart analyzing the performance of multiple AI models across various coding and tool-use benchmarks. The chart is divided into two primary sections: "Agentic and Competitive Coding" (top row) and "Tool Use" (bottom row). Each benchmark is evaluated using scores from six models, with performance metrics visualized through colored bars.

### Components/Axes

- **X-axis**: Benchmark categories (e.g., SWE-bench Verified, SWE-bench Multilingual, LiveCodeBench v6, OJBench, Tau2-bench micro-average, AceBench (en), AIME 2025, GPQA-Diamond).

- **Y-axis**: Performance scores (0–100 scale).

- **Legend**: Model identifiers with color coding:

- **K**: Blue

- **DeepSeek-V3**: Gray

- **DeepSeek-V3-Z3DA**: Purple

- **OpenAI**: Dark gray

- **Claude**: Light gray

- **Gemini**: Teal

- **Annotations**: Asterisks (*) and diamond symbols on bars indicate statistical significance or special notes.

### Detailed Analysis

#### Agentic and Competitive Coding

1. **SWE-bench Verified**:

- K: 65.8

- DeepSeek-V3: 38.9

- DeepSeek-V3-Z3DA: 54.6

- OpenAI: 20.9

- Claude: 31.5

- Gemini: 72.5 (asterisk)

2. **SWE-bench Multilingual**:

- K: 47.3

- DeepSeek-V3: 25.9

- DeepSeek-V3-Z3DA: 20.9

- OpenAI: 31.5

- Claude: 51.9 (asterisk)

3. **LiveCodeBench v6**:

- K: 53.7

- DeepSeek-V3: 46.9

- DeepSeek-V3-Z3DA: 37.0

- OpenAI: 44.7

- Claude: 44.7

- Gemini: 44.7

4. **OJBench**:

- K: 27.1

- DeepSeek-V3: 11.3

- DeepSeek-V3-Z3DA: 19.6

- OpenAI: 19.6

- Claude: 19.5

- Gemini: 19.5

#### Tool Use

1. **Tau2-bench micro-average**:

- K: 66.1

- DeepSeek-V3: 48.8

- DeepSeek-V3-Z3DA: 57.2

- OpenAI: 67.6

- Claude: 41.0

- Gemini: 41.0

2. **AceBench (en)**:

- K: 76.5

- DeepSeek-V3: 72.7

- DeepSeek-V3-Z3DA: 70.5

- OpenAI: 80.1

- Claude: 75.6

- Gemini: 74.5 (diamond)

3. **AIME 2025**:

- K: 49.5

- DeepSeek-V3: 48.7

- DeepSeek-V3-Z3DA: 24.7

- OpenAI: 37.0

- Claude: 33.9

- Gemini: 40.6

4. **GPQA-Diamond**:

- K: 75.1

- DeepSeek-V3: 69.4

- DeepSeek-V3-Z3DA: 65.9

- OpenAI: 66.3

- Claude: 74.8

- Gemini: 68.2 (diamond)

### Key Observations

1. **Model Dominance**:

- **K** consistently leads in coding benchmarks (SWE-bench Verified, LiveCodeBench v6, GPQA-Diamond).

- **DeepSeek-V3-Z3DA** outperforms other models in SWE-bench Verified (54.6) and Tau2-bench (57.2).

- **Gemini** shows strong performance in SWE-bench Multilingual (72.5) and AceBench (74.5), marked with a diamond symbol.

2. **Statistical Significance**:

- Asterisks (*) on bars (e.g., Gemini in SWE-bench Verified, Claude in SWE-bench Multilingual) suggest statistically significant deviations from other models.

- Diamonds (e.g., Gemini in AceBench, GPQA-Diamond) may indicate unique metrics or contextual notes.

3. **Performance Gaps**:

- OpenAI and Claude models underperform in SWE-bench Verified (20.9–31.5) compared to K (65.8).

- DeepSeek-V3-Z3DA excels in Tau2-bench (57.2) but lags in AIME 2025 (24.7).

### Interpretation

The data highlights **K** as the dominant model for coding tasks, likely due to specialized training or architecture. **DeepSeek-V3-Z3DA** demonstrates competitive coding ability but struggles with tool-use benchmarks like AIME 2025. **Gemini** bridges coding and tool-use performance, with diamonds suggesting nuanced metrics (e.g., efficiency, adaptability). The asterisks imply that certain scores (e.g., Gemini’s 72.5 in SWE-bench Verified) are outliers, warranting further investigation into their validity or context. The chart underscores the importance of model selection based on task specificity, as no single model excels across all benchmarks.

</details>

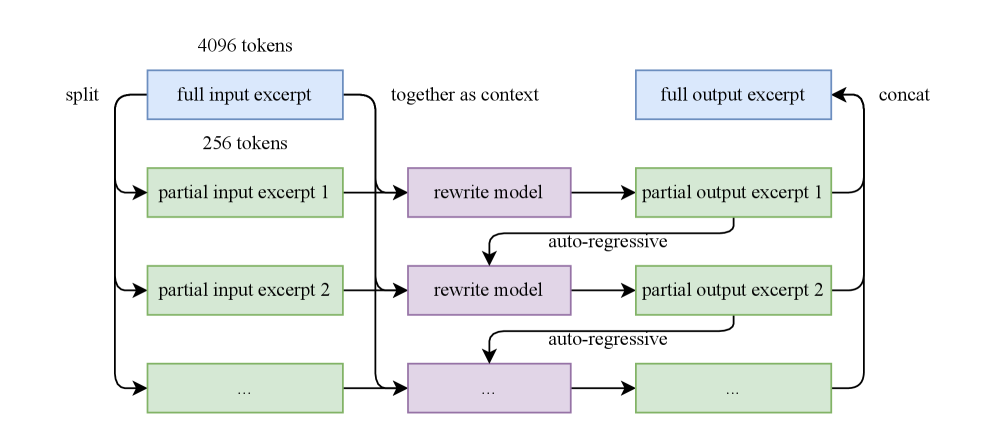

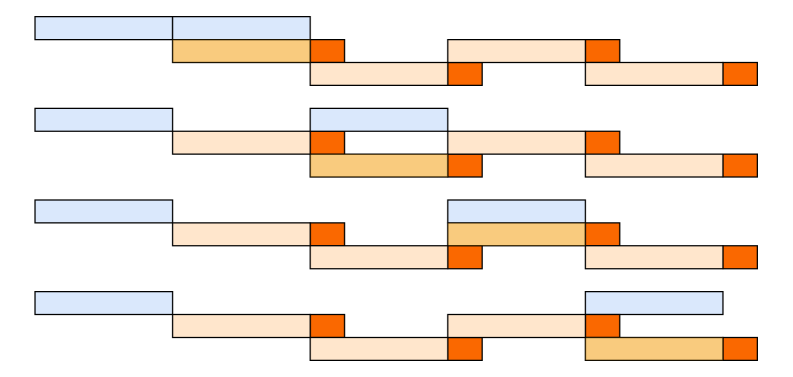

Figure 1: Kimi K2 main results. All models evaluated above are non-thinking models. For SWE-bench Multilingual, we evaluated only Claude 4 Sonnet because the cost of Claude 4 Opus was prohibitive.

## 1 Introduction

The development of Large Language Models (LLMs) is undergoing a profound paradigm shift towards Agentic Intelligence – the capabilities for models to autonomously perceive, plan, reason, and act within complex and dynamic environments. This transition marks a departure from static imitation learning towards models that actively learn through interactions, acquire new skills beyond their training distribution, and adapt behavior through experiences [64]. It is believed that this approach allows an AI agent to go beyond the limitation of static human-generated data, and acquire superhuman capabilities through its own exploration and exploitation. Agentic intelligence is thus rapidly emerging as a defining capability for the next generation of foundation models, with wide-ranging implications across tool use, software development, and real-world autonomy.

Achieving agentic intelligence introduces challenges in both pre-training and post-training. Pre-training must endow models with broad general-purpose priors under constraints of limited high-quality data, elevating token efficiency (learning signal per token) as a critical scaling coefficient. Post-training must transform those priors into actionable behaviors, yet agentic capabilities such as multi-step reasoning, long-term planning, and tool use are rare in natural data and costly to scale. Scalable synthesis of structured, high-quality agentic trajectories, combined with general reinforcement learning (RL) techniques that incorporate preferences and self-critique, is essential to bridge this gap.

In this work, we introduce Kimi K2, a 1.04 trillion-parameter Mixture-of-Experts (MoE) LLM with 32 billion activated parameters, purposefully designed to address the core challenges and push the boundaries of agentic capability. Our contributions span both the pre-training and post-training frontiers:

- We present MuonClip, a novel optimizer that integrates the token-efficient Muon algorithm with a stability-enhancing mechanism called QK-Clip. Using MuonClip, we successfully pre-trained Kimi K2 on 15.5 trillion tokens without a single loss spike.

- We introduce a large-scale agentic data synthesis pipeline that systematically generates tool-use demonstrations via simulated and real-world environments. This system constructs diverse tools, agents, tasks, and trajectories to create high-fidelity, verifiably correct agentic interactions at scale.

- We design a general reinforcement learning framework that combines verifiable rewards (RLVR) with a self-critique rubric reward mechanism. The model learns not only from externally defined tasks but also from evaluating its own outputs, extending alignment from static into open-ended domains.

Kimi K2 demonstrates strong performance across a broad spectrum of agentic and frontier benchmarks. It achieves scores of 66.1 on Tau2-bench, 76.5 on ACEBench (en), 65.8 on SWE-bench Verified, and 47.3 on SWE-bench Multilingual, outperforming most open- and closed-weight baselines under non-thinking evaluation settings and closing the gap with Claude 4 Opus and Sonnet. In coding, mathematics, and broader STEM domains, Kimi K2 achieves 53.7 on LiveCodeBench v6, 27.1 on OJBench, 49.5 on AIME 2025, and 75.1 on GPQA-Diamond, further highlighting its capabilities in general tasks. On the LMSYS Arena leaderboard (July 17, 2025; https://lmarena.ai/leaderboard/text), Kimi K2 ranks as the top open-source model and 5th overall, based on over 3,000 user votes.

To spur further progress in Agentic Intelligence, we are open-sourcing our base and post-trained checkpoints, enabling the community to explore, refine, and deploy agentic intelligence at scale.

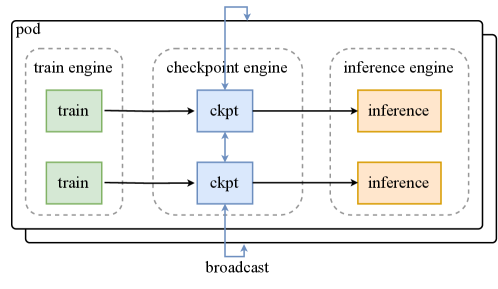

## 2 Pre-training

The base model of Kimi K2 is a trillion-parameter mixture-of-experts (MoE) transformer [73] model, pre-trained on 15.5 trillion high-quality tokens. Given the increasingly limited availability of high-quality human data, we posit that token efficiency is emerging as a critical coefficient in the scaling of large language models. To address this, we introduce a suite of pre-training techniques explicitly designed for maximizing token efficiency. Specifically, we employ the token-efficient Muon optimizer [34, 47] and mitigate its training instabilities through the introduction of QK-Clip. Additionally, we incorporate synthetic data generation to further squeeze the intelligence out of available high-quality tokens. The model architecture follows an ultra-sparse MoE with multi-head latent attention (MLA) similar to DeepSeek-V3 [11], derived from empirical scaling law analysis. The underlying infrastructure is built to optimize both training efficiency and research efficiency.

### 2.1 MuonClip: Stable Training with Weight Clipping

We train Kimi K2 using the token-efficient Muon optimizer [34], incorporating weight decay and consistent update RMS scaling [47]. Experiments in our previous work Moonlight [47] show that, under the same compute budget and model size — and therefore the same amount of training data — Muon substantially outperforms AdamW [37, 49], making it an effective choice for improving token efficiency in large language model training.

Training instability when scaling Muon

Despite its efficiency, scaling up Muon training reveals a challenge: training instability due to exploding attention logits, an issue that occurs more frequently with Muon but less with AdamW in our experiments. Existing mitigation strategies are insufficient. For instance, logit soft-cap [70] directly clips the attention logits, but the dot products between queries and keys can still grow excessively before capping is applied. On the other hand, Query-Key Normalization (QK-Norm) [12, 82] is not applicable to multi-head latent attention (MLA), because its Key matrices are not fully materialized during inference.
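For reference, logit soft-capping is commonly written as a tanh squashing of the pre-softmax scores. The form below is the usual formulation and is stated here as an assumption about [70], with $c$ denoting the cap and $s_{ij}$ the raw scaled query-key dot product (both symbols are introduced here for illustration):

$$
\tilde{s}_{ij}=c\cdot\tanh\!\left(\frac{s_{ij}}{c}\right),\qquad\lvert\tilde{s}_{ij}\rvert<c.
$$

The cap bounds $\tilde{s}_{ij}$ but places no constraint on $s_{ij}$ itself, which is why the underlying query-key dot products can keep growing even when the capped logits look well behaved.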

Taming Muon with QK-Clip

To address this issue, we propose a novel weight-clipping mechanism QK-Clip to explicitly constrain attention logits. QK-Clip works by rescaling the query and key projection weights post-update to bound the growth of attention logits.

Let the input representation of a transformer layer be $\mathbf{X}$. For each attention head $h$, its query, key, and value projections are computed as

$$

\mathbf{Q}^{h}=\mathbf{X}\mathbf{W}_{q}^{h},\quad\mathbf{K}^{h}=\mathbf{X}\mathbf{W}_{k}^{h},\quad\mathbf{V}^{h}=\mathbf{X}\mathbf{W}_{v}^{h}.

$$

where $\mathbf{W}_{q},\mathbf{W}_{k},\mathbf{W}_{v}$ are model parameters. The attention output is:

$$

\mathbf{O}^{h}=\operatorname{softmax}\left(\frac{1}{\sqrt{d}}\mathbf{Q}^{h}\mathbf{K}^{h\top}\right)\mathbf{V}^{h}.

$$

We define the max logit, a per-head scalar, as the maximum input to softmax in this batch $B$:

$$

S_{\max}^{h}=\frac{1}{\sqrt{d}}\max_{\mathbf{X}\in B}\max_{i,j}\mathbf{Q}_{i}^{h}\mathbf{K}_{j}^{h\top}

$$

where $i,j$ are indices of different tokens in a training sample $\mathbf{X}$.
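As a concrete illustration of this definition, the sketch below computes $S_{\max}^{h}$ for a single head from per-token query and key vectors. It is a minimal pure-Python example rather than the training implementation: the function name `max_logit` and the list-of-lists layout are assumptions made for illustration, and attention masking is ignored.

```python
import math

def max_logit(batch_q, batch_k, d):
    """Return the largest pre-softmax logit for one head over a batch.

    batch_q, batch_k: one entry per sample; each entry is a list of
    d-dimensional token vectors (queries and keys for this head).
    """
    best = -math.inf
    for q_tokens, k_tokens in zip(batch_q, batch_k):  # max over samples X in B
        for q in q_tokens:                            # max over query index i
            for k in k_tokens:                        # max over key index j
                dot = sum(a * b for a, b in zip(q, k))
                best = max(best, dot / math.sqrt(d))
    return best

# Two toy samples with head dimension d = 4.
batch_q = [[[1.0, 0.0, 2.0, 0.0], [0.0, 1.0, 0.0, 1.0]],
           [[3.0, 0.0, 0.0, 4.0]]]
batch_k = [[[1.0, 1.0, 1.0, 1.0], [0.0, 2.0, 0.0, 0.0]],
           [[3.0, 0.0, 0.0, 4.0]]]
s_max = max_logit(batch_q, batch_k, d=4)  # largest dot product is 25, so 25 / 2
```

In a real training system this scalar would normally be read off the attention computation in the forward pass instead of being recomputed with explicit loops.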

The core idea of QK-Clip is to rescale $\mathbf{W}_{k},\mathbf{W}_{q}$ whenever $S_{\max}^{h}$ exceeds a target threshold $\tau$. Importantly, this operation does not alter the forward/backward computation in the current step: we merely use the max logit as a guiding signal that determines how strongly to control the weight growth.

A naïve implementation clips all heads at the same time:

$$

\mathbf{W}_{q}^{h}\leftarrow\gamma^{\alpha}\mathbf{W}_{q}^{h}\qquad\mathbf{W}_{k}^{h}\leftarrow\gamma^{1-\alpha}\mathbf{W}_{k}^{h}

$$

where $\gamma=\min(1,\tau/S_{\max})$ with $S_{\max}=\max_{h}S_{\max}^{h}$, and $\alpha$ is a balancing parameter typically set to $0.5$, which applies equal scaling to queries and keys.
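To see why this rescaling bounds the logits, note that the logits are bilinear in the two projection matrices. The short derivation below uses only the definitions above; primes denote quantities recomputed on the same batch after clipping, a notation introduced here for illustration:

$$
\mathbf{Q}'^{h}\mathbf{K}'^{h\top}=\left(\mathbf{X}\,\gamma^{\alpha}\mathbf{W}_{q}^{h}\right)\left(\mathbf{X}\,\gamma^{1-\alpha}\mathbf{W}_{k}^{h}\right)^{\top}=\gamma\,\mathbf{Q}^{h}\mathbf{K}^{h\top},\qquad S_{\max}'=\gamma\,S_{\max}=\min(S_{\max},\tau).
$$

The exponents $\alpha$ and $1-\alpha$ only decide how the total factor $\gamma$ is split between queries and keys; their product is always $\gamma$.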

However, we observe that in practice, only a small subset of heads exhibit exploding logits. In order to minimize our intervention on model training, we determine a per-head scaling factor $\gamma_{h}=\min(1,\tau/S_{\max}^{h})$ and opt to apply per-head QK-Clip. Such clipping is straightforward for regular multi-head attention (MHA). For MLA, we apply clipping only on unshared attention head components:

- $\mathbf{q}^{C}$ and $\mathbf{k}^{C}$ (head-specific components): each scaled by $\sqrt{\gamma_{h}}$,

- $\mathbf{q}^{R}$ (head-specific rotary): scaled by $\gamma_{h}$,

- $\mathbf{k}^{R}$ (shared rotary): left untouched to avoid affecting other heads.
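These three choices combine so that each clipped head's logits shrink by exactly $\gamma_{h}$. The check below assumes the usual MLA decomposition, in which a head's logit is the sum of a content term and a rotary term, and writes the per-token components as column vectors:

$$
\left(\sqrt{\gamma_{h}}\,\mathbf{q}_{i}^{C}\right)^{\top}\left(\sqrt{\gamma_{h}}\,\mathbf{k}_{j}^{C}\right)+\left(\gamma_{h}\,\mathbf{q}_{i}^{R}\right)^{\top}\mathbf{k}_{j}^{R}=\gamma_{h}\left(\mathbf{q}_{i}^{C\top}\mathbf{k}_{j}^{C}+\mathbf{q}_{i}^{R\top}\mathbf{k}_{j}^{R}\right).
$$

Placing the full factor $\gamma_{h}$ on $\mathbf{q}^{R}$ compensates for leaving the shared $\mathbf{k}^{R}$ unscaled.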

Algorithm 1 MuonClip Optimizer

1: for each training step $t$ do

2: // 1. Muon optimizer step

3: for each weight $\mathbf{W}\in\mathbb{R}^{n\times m}$ do

4: $\mathbf{M}_{t}=\mu\mathbf{M}_{t-1}+\mathbf{G}_{t}$ $\triangleright$ $\mathbf{M}_{0}=\mathbf{0}$ , $\mathbf{G}_{t}$ is the grad of $\mathbf{W}_{t}$ , $\mu$ is momentum

5: $\mathbf{O}_{t}=\operatorname{Newton-Schulz}(\mathbf{M}_{t})\cdot\sqrt{\max(n,m)}\cdot 0.2$ $\triangleright$ Match Adam RMS

6: $\mathbf{W}_{t}=\mathbf{W}_{t-1}-\eta\bigl(\mathbf{O}_{t}+\lambda\mathbf{W}_{t-1}\bigr)$ $\triangleright$ learning rate $\eta$ , weight decay $\lambda$

7: end for

8: // 2. QK-Clip

9: for each attention head $h$ in every attention layer of the model do

10: Obtain $S_{\max}^{h}$ already computed during forward

11: if $S_{\max}^{h}>\tau$ then

12: $\gamma\leftarrow\tau/S_{\max}^{h}$

13: $\mathbf{W}_{qc}^{h}\leftarrow\mathbf{W}_{qc}^{h}\cdot\sqrt{\gamma}$

14: $\mathbf{W}_{kc}^{h}\leftarrow\mathbf{W}_{kc}^{h}\cdot\sqrt{\gamma}$

15: $\mathbf{W}_{qr}^{h}\leftarrow\mathbf{W}_{qr}^{h}\cdot\gamma$

16: end if

17: end for

18: end for
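The QK-Clip phase of Algorithm 1 can be sketched in a few lines of pure Python. This is an illustrative version for regular multi-head attention, where each head owns its own $\mathbf{W}_{q}^{h}$ and $\mathbf{W}_{k}^{h}$; the MLA-specific rotary handling (line 15) and the Muon update itself are omitted. The dictionary layout and the helper names `scale_matrix`, `qk_clip`, and `head_max_logit` are hypothetical.

```python
import math

def scale_matrix(w, factor):
    """Return a copy of matrix w (list of rows) with every entry scaled."""
    return [[factor * x for x in row] for row in w]

def qk_clip(heads, s_max, tau, alpha=0.5):
    """Apply per-head QK-Clip after the optimizer update.

    heads: dict head_id -> {"W_q": matrix, "W_k": matrix} (hypothetical layout)
    s_max: dict head_id -> max logit observed during the forward pass
    tau:   clipping threshold
    Returns the list of head ids that were clipped.
    """
    clipped = []
    for h, weights in heads.items():
        if s_max[h] > tau:
            gamma = tau / s_max[h]
            # alpha = 0.5 gives sqrt(gamma) on both sides, as in lines 13-14.
            weights["W_q"] = scale_matrix(weights["W_q"], gamma ** alpha)
            weights["W_k"] = scale_matrix(weights["W_k"], gamma ** (1 - alpha))
            clipped.append(h)
    return clipped

def head_max_logit(x, w_q, w_k):
    """Max of (x_i W_q)(x_j W_k)^T / sqrt(d) over all token pairs (no masking)."""
    def project(v, w):
        return [sum(v[r] * w[r][c] for r in range(len(v))) for c in range(len(w[0]))]
    d = len(w_q[0])
    qs = [project(v, w_q) for v in x]
    ks = [project(v, w_k) for v in x]
    return max(sum(a * b for a, b in zip(q, k)) for q in qs for k in ks) / math.sqrt(d)

# Toy example: head 0 has large weights, head 1 is well behaved.
x = [[1.0, 2.0], [0.5, -1.0]]
heads = {
    0: {"W_q": [[8.0, 0.0], [0.0, 8.0]], "W_k": [[8.0, 0.0], [0.0, 8.0]]},
    1: {"W_q": [[1.0, 0.0], [0.0, 1.0]], "W_k": [[1.0, 0.0], [0.0, 1.0]]},
}
tau = 100.0
s_max = {h: head_max_logit(x, w["W_q"], w["W_k"]) for h, w in heads.items()}
clipped = qk_clip(heads, s_max, tau)
after = {h: head_max_logit(x, w["W_q"], w["W_k"]) for h, w in heads.items()}
```

In this toy run only head 0 exceeds the threshold, so only its weights are rescaled, and its max logit on the same inputs falls back to $\tau$ while head 1 is left untouched.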

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Graph: Vanilla Run with Muon

### Overview

The image depicts a line graph tracking the "Max Logits" metric over "Training Steps" for a model labeled "Vanilla run with Muon." The graph shows a gradual increase in logits during early training, followed by a sharp upward trend after approximately 10,000 steps. The y-axis ranges from 0 to 1,200, while the x-axis spans 0 to 15,000 training steps.

### Components/Axes

- **Title**: "Vanilla run with Muon" (top-left corner, red line legend).

- **Y-Axis**: Labeled "Max Logits" with grid lines at intervals of 200 (0, 200, 400, ..., 1,200).

- **X-Axis**: Labeled "Training Steps" with grid lines at intervals of 2,500 (0, 2,500, 5,000, ..., 15,000).

- **Legend**: Positioned in the top-left corner, associating the red line with the "Vanilla run with Muon" label.

- **Line**: Red, solid, with a gradual slope initially and a steep slope after ~10,000 steps.

### Detailed Analysis

- **Initial Phase (0–2,500 steps)**: The line starts at 0 and rises slowly to ~50 logits by 2,500 steps.

- **Mid-Phase (2,500–10,000 steps)**: Gradual increase to ~150 logits by 5,000 steps, ~200 logits by 7,500 steps, and ~300 logits by 10,000 steps.

- **Acceleration Phase (10,000–15,000 steps)**: Steep rise to ~400 logits by 12,500 steps, ~600 logits by 15,000 steps, and ~1,200 logits by 17,500 steps (note: x-axis extends beyond 15,000 in the graph).

### Key Observations

1. **Slow Initial Growth**: Max logits rise gradually over the first 10,000 steps.

2. **Rapid Growth After 10,000 Steps**: The curve steepens sharply, consistent with the attention-logit explosion that the paper reports for vanilla Muon at this scale.

3. **Axis Inconsistency**: The final data point at 17,500 steps exceeds the stated x-axis range (15,000), implying either an extended training duration or an inconsistency in this description.

### Interpretation

The graph tracks the maximum attention logit, which is a stability indicator rather than a measure of model performance. The steady rise followed by rapid growth past 1,000 shows that attention logits are unbounded under vanilla Muon; the paper associates values at this level with numerical instability, loss spikes, and occasional divergence. The discrepancy between the x-axis limit (15,000) and the final data point (17,500) warrants verification against the original figure.

</details>

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Graph: Kimi K2 with MuonClip Training Logits

### Overview

The image depicts a line graph tracking the "Max Logits" of a model named "Kimi K2 with MuonClip" across 200,000 training steps. The graph shows a sharp initial decline in logit values, followed by stabilization at a lower level.

### Components/Axes

- **X-axis (Training Steps)**: Labeled "Training Steps," ranging from 0 to 200,000 in increments of 50,000. Grid lines are present for reference.

- **Y-axis (Max Logits)**: Labeled "Max Logits," scaled from 0 to 100 in increments of 20. Grid lines are present.

- **Legend**: Located in the top-right corner, labeled "Kimi K2 with MuonClip" with a blue line indicator.

- **Line**: A single blue line representing the "Max Logits" metric.

### Detailed Analysis

- **Initial Phase (0–50,000 steps)**: The line starts at **100 logits** and drops sharply to approximately **30 logits** by 50,000 steps. The decline is steep, with a near-vertical slope in the first 10,000 steps.

- **Stabilization Phase (50,000–200,000 steps)**: After 50,000 steps, the line plateaus around **30 logits**, with minor fluctuations (±2 logits) observed between 100,000 and 200,000 steps. No significant upward or downward trends are visible in this phase.

### Key Observations

1. **Sharp Initial Decline**: The max logits fall by ~70% from the cap of 100 during the early part of training.

2. **Stable Plateau**: The metric stabilizes at ~30 for the remaining steps, indicating that attention logits settle into a bounded operating range.

3. **No Renewed Growth**: After the decline, there is no sign of the logits climbing back toward the cap.

### Interpretation

The graph tracks the maximum attention logit under MuonClip with $\tau=100$; it is a stability indicator, not a measure of model performance. According to the Figure 2 caption, the max logits rapidly reach the capped value of 100 and decay to a stable range only after roughly 30% of the training steps, which matches the decline and plateau described here. The bounded curve indicates that QK-Clip keeps attention logits under control over the entire run without any adjustment to $\tau$.

</details>

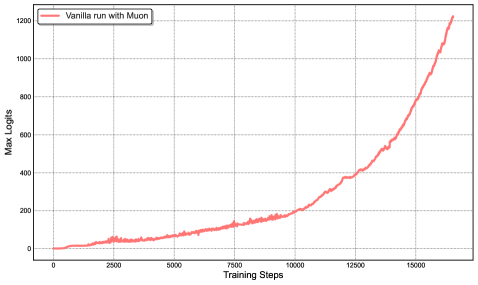

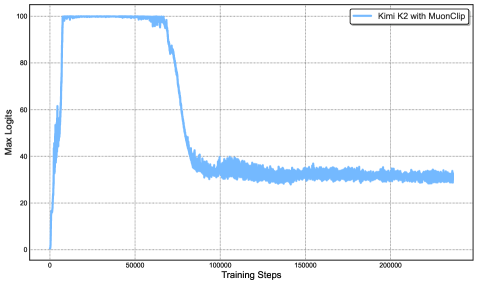

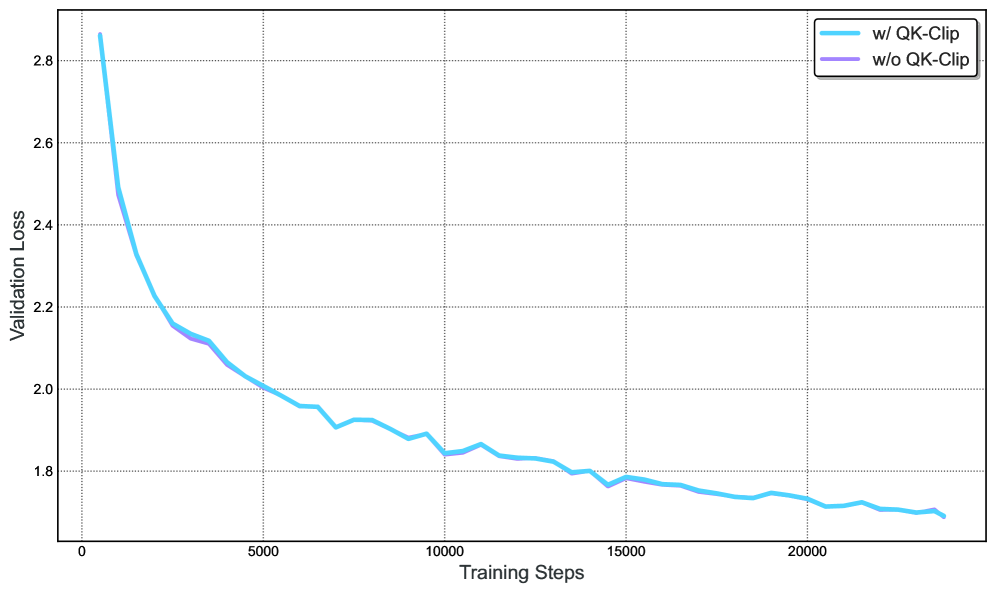

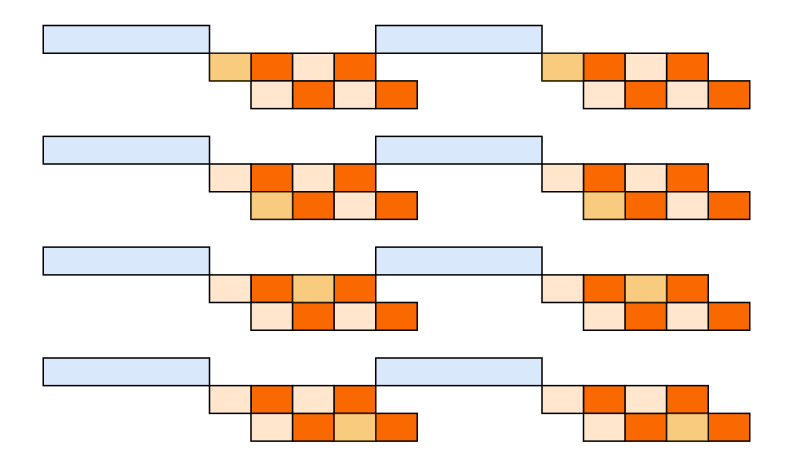

Figure 2: Left: During a mid-scale training run, attention logits rapidly exceed 1000, which could lead to potential numerical instabilities and even training divergence. Right: Maximum logits for Kimi K2 with MuonClip and $\tau$ = 100 over the entire training run. The max logits rapidly increase to the capped value of 100, and only decay to a stable range after approximately 30% of the training steps, demonstrating the effective regulation effect of QK-Clip.

MuonClip: The New Optimizer

We integrate Muon with weight decay, consistent RMS matching, and QK-Clip into a single optimizer, which we refer to as MuonClip (see Algorithm 1).
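The factor $\sqrt{\max(n,m)}\cdot 0.2$ on line 5 of Algorithm 1 can be motivated with a short calculation. The sketch below assumes that the Newton-Schulz output, written $\mathbf{U}$ here, is approximately semi-orthogonal with full rank $\min(n,m)$; this is the idealized behavior of the iteration rather than a guarantee:

$$
\|\mathbf{U}\|_{F}^{2}=\min(n,m)\;\Longrightarrow\;\operatorname{RMS}(\mathbf{U})=\sqrt{\frac{\min(n,m)}{nm}}=\frac{1}{\sqrt{\max(n,m)}},\qquad\operatorname{RMS}(\mathbf{O}_{t})\approx 0.2.
$$

Under that assumption every weight matrix receives an update with a per-entry RMS of about 0.2 regardless of its shape, which is the level Algorithm 1 describes as matching Adam.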

We demonstrate the effectiveness of MuonClip through several scaling experiments. First, we train a mid-scale Mixture-of-Experts (MoE) model with 9B activated and 53B total parameters using vanilla Muon. As shown in Figure 2 (Left), the maximum attention logits quickly exceed a magnitude of 1000, showing that attention logit explosion is already evident in Muon training at this scale. Max logits at this level usually result in instability during training, including significant loss spikes and occasional divergence.

Next, we demonstrate that QK-Clip does not degrade model performance and confirm that the MuonClip optimizer preserves the optimization characteristics of Muon without adversely affecting the loss trajectory. A detailed discussion of the experiment designs and findings is provided in Appendix D.

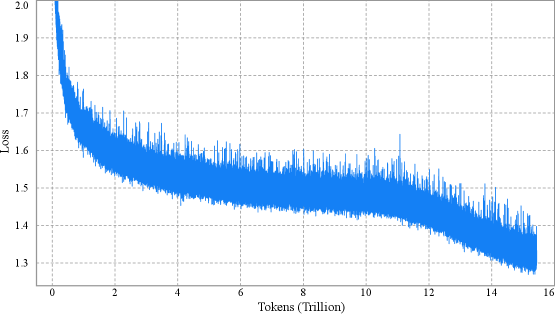

Finally, we train Kimi K2, a large-scale MoE model, using MuonClip with $\tau=100$ and monitor the maximum attention logits throughout the training run (Figure 2 (Right)). Initially, the logits are capped at 100 due to QK-Clip. Over the course of training, the maximum logits gradually decay to a typical operating range without requiring any adjustment to $\tau$. Importantly, the training loss remains smooth and stable, with no observable spikes, as shown in Figure 3, validating that MuonClip provides robust and scalable control over attention dynamics in large-scale language model training.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Graph: Model Performance Loss Over Tokens

### Overview

The image depicts a line graph illustrating the relationship between computational tokens (in trillions) and a metric labeled "Loss." The graph shows a sharp initial decline in loss, followed by a gradual stabilization with minor fluctuations. The y-axis ranges from 1.3 to 2.0, while the x-axis spans 0 to 16 trillion tokens.

### Components/Axes

- **X-Axis**: "Tokens (Trillion)" with grid markers at intervals of 2 trillion, labeled from 0 to 16 trillion.

- **Y-Axis**: "Loss" with grid markers at intervals of 0.1, labeled from 1.3 to 2.0.

- **Legend**: Located in the top-right corner, indicating a single data series:

- **Blue Line**: "Model Performance" (matches the plotted line).

- **Grid**: Light gray dashed lines for reference.

### Detailed Analysis

- **Initial Decline**:

- At 0 tokens, the loss starts at approximately **2.0**.

- By 2 trillion tokens, the loss drops sharply to **~1.5**, with a steep slope.

- Between 2 and 4 trillion tokens, the loss decreases further to **~1.4**, with minor oscillations.

- **Stabilization Phase**:

- From 4 to 14 trillion tokens, the loss fluctuates between **1.4 and 1.5**, with small spikes (e.g., ~1.55 at 10 trillion tokens).

- After 14 trillion tokens, the loss stabilizes near **1.3**, with reduced variability.

- **Noise**:

- Throughout the graph, the line exhibits minor "jitter" (e.g., ~1.45 at 6 trillion tokens), suggesting measurement variability or model adjustments.

### Key Observations

1. **Rapid Initial Improvement**: The steepest loss reduction occurs in the first 2 trillion tokens.

2. **Gradual Convergence**: Loss decreases by ~0.7 units (from 2.0 to 1.3) over 16 trillion tokens.

3. **Noise vs. Trend**: Fluctuations are small relative to the overall trend, indicating a stable model after initial training.

4. **Plateau Effect**: Loss stabilizes near 1.3 after 14 trillion tokens, suggesting diminishing returns.

### Interpretation

The graph demonstrates that the model's performance improves significantly as it processes more tokens, with loss decreasing sharply in the early stages and then converging to a stable value. The initial drop suggests rapid learning or optimization, while the plateau implies the model reaches a steady state where additional tokens yield minimal improvement. The minor fluctuations may reflect data noise, model fine-tuning, or external factors affecting training stability. This pattern is typical in machine learning, where early gains are substantial, but later progress requires exponentially more resources. The stabilization at ~1.3 loss indicates a potential optimal performance threshold for the model under the given conditions.

</details>

Figure 3: Per-step training loss curve of Kimi K2, without smoothing or sub-sampling. It shows no spikes throughout the entire training process. Note that we omit the very beginning of training for clarity.

### 2.2 Pre-training Data: Improving Token Utility with Rephrasing

Token efficiency in pre-training refers to how much performance improvement is achieved for each token consumed during training. Increasing token utility—the effective learning signal each token contributes—enhances the per-token impact on model updates, thereby directly improving token efficiency. This is particularly important when the supply of high-quality tokens is limited and must be maximally leveraged. A naive approach to increasing token utility is through repeated exposure to the same tokens, which can lead to overfitting and reduced generalization.

A key advancement in the pre-training data of Kimi K2 over Kimi K1.5 is the introduction of a synthetic data generation strategy to increase token utility. Specifically, a carefully designed rephrasing pipeline is employed to amplify the volume of high-quality tokens without inducing significant overfitting. In this report, we describe two domain-specialized rephrasing techniques—targeted respectively at the Knowledge and Mathematics domains—that enable this controlled data augmentation.

Knowledge Data Rephrasing

Pre-training on natural, knowledge-intensive text presents a trade-off: a single epoch is insufficient for comprehensive knowledge absorption, while multi-epoch repetition yields diminishing returns and increases the risk of overfitting. To improve the token utility of high-quality knowledge tokens, we propose a synthetic rephrasing framework composed of the following key components:

- Style- and perspective-diverse prompting: Inspired by WRAP [50], we apply a range of carefully engineered prompts to enhance linguistic diversity while maintaining factual integrity. These prompts guide a large language model to generate faithful rephrasings of the original texts in varied styles and from different perspectives.

- Chunk-wise autoregressive generation: To preserve global coherence and avoid information loss in long documents, we adopt a chunk-based autoregressive rewriting strategy. Texts are divided into segments, rephrased individually, and then stitched back together to form complete passages. This method mitigates implicit output length limitations that typically exist with LLMs. An overview of this pipeline is presented in Figure 4.

- Fidelity verification: To ensure consistency between original and rewritten content, we perform fidelity checks that compare the semantic alignment of each rephrased passage with its source. This serves as an initial quality control step prior to training.
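The chunk-wise autoregressive step in the list above can be sketched as follows. This is a minimal illustration under stated assumptions, not the pipeline used for Kimi K2: whitespace-separated words stand in for real tokens, `rewrite_fn` is a hypothetical placeholder for the LLM call, and the `chunk_size` and `context_size` defaults are illustrative.

```python
def split_into_chunks(tokens, chunk_size):
    """Split a token list into consecutive chunks of at most chunk_size."""
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]

def rephrase_document(text, rewrite_fn, chunk_size=256, context_size=64):
    """Rewrite a long text chunk by chunk, feeding earlier output as context.

    rewrite_fn(context, chunk) -> rewritten chunk (hypothetical LLM wrapper).
    """
    tokens = text.split()  # whitespace tokens stand in for a real tokenizer
    rewritten = []         # rewritten tokens accumulated so far
    for chunk in split_into_chunks(tokens, chunk_size):
        # Condition on the tail of what has already been rewritten, so each
        # chunk is generated autoregressively with respect to earlier output.
        context = rewritten[-context_size:] if context_size > 0 else []
        out = rewrite_fn(" ".join(context), " ".join(chunk))
        rewritten.extend(out.split())
    # Concatenate the partial outputs into one rewritten passage.
    return " ".join(rewritten)

def toy_rewrite(context, chunk):
    """Stand-in for an LLM rewrite: upper-cases the chunk, ignores context."""
    return chunk.upper()

doc = " ".join(f"w{i}" for i in range(10))
result = rephrase_document(doc, toy_rewrite, chunk_size=4, context_size=2)
```

A fidelity check, as described in the last bullet, would then compare `result` against `doc` before the rewritten passage is admitted to the training set.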

We compare data rephrasing with multi-epoch repetition by measuring the resulting accuracy on SimpleQA. We experiment with an early checkpoint of K2 and evaluate three training strategies: (1) repeating the original dataset for 10 epochs, (2) rephrasing the data once and repeating it for 10 epochs, and (3) rephrasing the data 10 times with a single training pass. As shown in Table 1, the accuracy consistently improves across these strategies, demonstrating the efficacy of our rephrasing-based augmentation. We extended this method to other large-scale knowledge corpora and observed similarly encouraging results; each corpus is rephrased at most twice.

Table 1: SimpleQA Accuracy under three rephrasing-epoch configurations

| # Rephrasings | # Epochs | SimpleQA Accuracy |
| --- | --- | --- |
| 0 (raw wiki-text) | 10 | 23.76 |
| 1 | 10 | 27.39 |
| 10 | 1 | 28.94 |

<details>

<summary>x6.png Details</summary>

### Visual Description

## Flowchart: Text Processing Pipeline with Auto-Regressive Rewrite Model

### Overview

The diagram illustrates a multi-stage text processing workflow involving input splitting, iterative rewriting via an auto-regressive model, and output concatenation. The process handles 4096 tokens of input text, splitting it into smaller partial excerpts (256 tokens each) for sequential processing through a rewrite model. The final output combines partial results into a full output excerpt.

### Components/Axes

- **Input Stage**:

- **Full Input Excerpt**: 4096 tokens (blue box)

- **Split Operation**: Divides input into 256-token partial excerpts

- **Partial Input Excerpts**: Labeled 1, 2, ... (green boxes)

- **Processing Stage**:

- **Rewrite Model**: Central purple box with "auto-regressive" annotation

- **Partial Output Excerpts**: Generated sequentially (green boxes)

- **Output Stage**:

- **Concat Operation**: Combines partial outputs

- **Full Output Excerpt**: Final result (blue box)

### Detailed Analysis

1. **Input Splitting**:

- 4096 tokens → 16 partial excerpts (4096 ÷ 256 = 16)

- Each partial input excerpt (256 tokens) is processed independently

2. **Rewrite Model**:

- Auto-regressive processing indicated by bidirectional arrows

- Suggests iterative refinement of partial outputs

3. **Output Concatenation**:

- Partial outputs (1, 2, ...) combined sequentially

- Final output matches original input size (4096 tokens)

### Key Observations

- **Token Consistency**: Input/output token counts match (4096), suggesting lossless processing

- **Modular Design**: Auto-regressive model processes discrete chunks rather than full text

- **Sequential Dependency**: Partial outputs are ordered (1 → 2 → ...) before concatenation

- **Color Coding**: Blue for input/output, green for partial stages, purple for model

### Interpretation

This architecture demonstrates a divide-and-conquer approach to text processing:

1. **Efficiency**: Splitting large inputs into manageable chunks (256 tokens) enables parallelizable processing

2. **Quality Control**: Auto-regressive refinement suggests iterative improvement of partial outputs

3. **Reconstructability**: Final output maintains original token count through precise concatenation

4. **Potential Applications**: Could represent text summarization, translation, or content generation pipelines where context preservation is critical

The auto-regressive annotation implies the model generates outputs token-by-token with contextual awareness, likely improving coherence in partial outputs before final combination.

</details>

Figure 4: Auto-regressive chunk-wise rephrasing pipeline for long input excerpts. The input is split into smaller chunks with preserved context, rewritten sequentially, and then concatenated into a full rewritten passage.

Mathematics Data Rephrasing

To enhance mathematical reasoning capabilities, we rewrite high-quality mathematical documents into a “learning-note” style, following the methodology introduced in SwallowMath [16]. In addition, we increased data diversity by translating high-quality mathematical materials from other languages into English.

Although initial experiments with rephrased subsets of our datasets show promising results, the use of synthetic data as a strategy for continued scaling remains an active area of investigation. Key challenges include generalizing the approach to diverse source domains without compromising factual accuracy, minimizing hallucinations and unintended toxicity, and ensuring scalability to large-scale datasets.

Pre-training Data Overall

The Kimi K2 pre-training corpus comprises 15.5 trillion tokens of curated, high-quality data spanning four primary domains: Web Text, Code, Mathematics, and Knowledge. Most data processing pipelines follow the methodologies outlined in Kimi K1.5 [36]. For each domain, we performed rigorous correctness and quality validation and designed targeted data experiments to ensure the curated dataset achieved both high diversity and effectiveness.

### 2.3 Model Architecture

Kimi K2 is a 1.04 trillion-parameter Mixture-of-Experts (MoE) transformer model with 32 billion activated parameters. The architecture follows a similar design to DeepSeek-V3 [11], employing Multi-head Latent Attention (MLA) [45] as the attention mechanism, with a model hidden dimension of 7168 and an MoE expert hidden dimension of 2048. Our scaling law analysis reveals that continued increases in sparsity yield substantial performance improvements, which motivated us to increase the number of experts to 384, compared to 256 in DeepSeek-V3. To reduce computational overhead during inference, we cut the number of attention heads to 64, as opposed to 128 in DeepSeek-V3. Table 2 presents a detailed comparison of architectural parameters between Kimi K2 and DeepSeek-V3.

Table 2: Architectural comparison between Kimi K2 and DeepSeek-V3

| | DeepSeek-V3 | Kimi K2 | $\Delta$ |
| --- | --- | --- | --- |
| #Layers | 61 | 61 | = |
| Total Parameters | 671B | 1.04T | $\uparrow$ 54% |
| Activated Parameters | 37B | 32.6B | $\downarrow$ 13% |
| Experts (total) | 256 | 384 | $\uparrow$ 50% |
| Experts Active per Token | 8 | 8 | = |
| Shared Experts | 1 | 1 | = |
| Attention Heads | 128 | 64 | $\downarrow$ 50% |
| Number of Dense Layers | 3 | 1 | $\downarrow$ 67% |
| Expert Grouping | Yes | No | - |

Sparsity Scaling Law

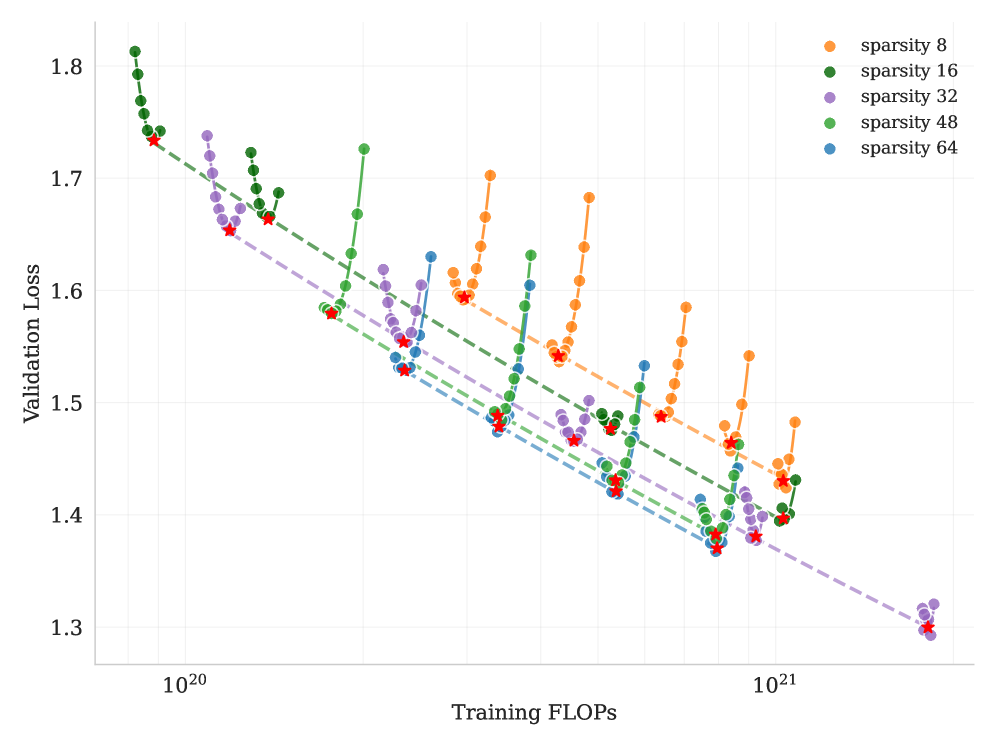

We develop a sparsity scaling law tailored for the Mixture-of-Experts (MoE) model family using Muon. Sparsity is defined as the ratio of the total number of experts to the number of activated experts. Through carefully controlled small-scale experiments, we observe that, under a fixed number of activated parameters (i.e., constant FLOPs), increasing the total number of experts (i.e., increasing sparsity) consistently lowers both the training and validation loss, thereby enhancing overall model performance (Figure 5). Concretely, under the compute-optimal sparsity scaling law, to reach the same validation loss of 1.5, sparsity 48 reduces the required FLOPs by factors of 1.69×, 1.39×, and 1.15× compared to sparsity levels 8, 16, and 32, respectively. Although increasing sparsity leads to better performance, this gain comes with increased infrastructure complexity. To balance model performance with cost, we adopt a sparsity of 48 for Kimi K2, activating 8 out of 384 experts per forward pass.
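As a quick check of the definition above, the following minimal sketch computes sparsity as total experts divided by activated experts, using the expert counts from Table 2. The function name `sparsity` and the `configs` dictionary are illustrative and not part of any released code.

```python
def sparsity(total_experts: int, active_experts: int) -> float:
    """Sparsity as defined in the text: total experts / activated experts."""
    return total_experts / active_experts

# Expert counts taken from Table 2 (routed experts only, shared expert excluded).
configs = {
    "DeepSeek-V3": (256, 8),
    "Kimi K2": (384, 8),
}

for name, (total, active) in configs.items():
    print(f"{name}: sparsity = {sparsity(total, active):g}")
```

Under this definition, moving from 256 to 384 experts with 8 active per token raises sparsity from 32 to 48.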

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Validation Loss vs Training FLOPs

### Overview

The chart visualizes the relationship between training computational effort (FLOPs) and validation loss across five sparsity levels (8, 16, 32, 48, 64). Each sparsity level is represented by a distinct color-coded line with data points, showing how validation loss evolves during training. Red stars mark the minimum validation loss for each sparsity level.

### Components/Axes

- **X-axis (Training FLOPs)**: Logarithmic scale from 10²⁰ to 10²¹.

- **Y-axis (Validation Loss)**: Linear scale from 1.3 to 1.8.

- **Legend**: Located in the top-right corner, mapping colors to sparsity levels:

- Orange: sparsity 8

- Green: sparsity 16

- Purple: sparsity 32

- Green: sparsity 48

- Blue: sparsity 64

- **Lines**: Dashed lines for each sparsity level, connecting data points.

- **Data Points**: Colored circles (matching legend) with red stars indicating minima.

### Detailed Analysis

1. **Sparsity 8 (Orange)**:

- Starts at ~1.75 (10²⁰ FLOPs), dips to ~1.65 (10²⁰.⁵ FLOPs), then rises to ~1.7 (10²¹ FLOPs).

- Minimum validation loss: **1.65** at ~10²⁰.⁵ FLOPs.

2. **Sparsity 16 (Green)**:

- Begins at ~1.78 (10²⁰ FLOPs), decreases to ~1.68 (10²⁰.² FLOPs), then increases to ~1.72 (10²¹ FLOPs).

- Minimum validation loss: **1.68** at ~10²⁰.² FLOPs.

3. **Sparsity 32 (Purple)**:

- Starts at ~1.75 (10²⁰ FLOPs), drops to ~1.62 (10²⁰.⁴ FLOPs), then rises to ~1.68 (10²¹ FLOPs).

- Minimum validation loss: **1.62** at ~10²⁰.⁴ FLOPs.

4. **Sparsity 48 (Green)**:

- Begins at ~1.72 (10²⁰ FLOPs), decreases to ~1.58 (10²⁰.³ FLOPs), then increases to ~1.64 (10²¹ FLOPs).

- Minimum validation loss: **1.58** at ~10²⁰.³ FLOPs.

5. **Sparsity 64 (Blue)**:

- Starts at ~1.7 (10²⁰ FLOPs), dips to ~1.55 (10²⁰.² FLOPs), then rises to ~1.6 (10²¹ FLOPs).

- Minimum validation loss: **1.55** at ~10²⁰.² FLOPs.

### Key Observations

- **Inverse Relationship**: Higher sparsity levels (e.g., 64) generally achieve lower validation loss minima than lower sparsity levels (e.g., 8); with the number of activated experts fixed, higher sparsity corresponds to more total experts.

- **Optimal Training FLOPs**: Each sparsity level reaches its minimum validation loss at distinct FLOP thresholds (e.g., sparsity 64 at ~10²⁰.² FLOPs).

- **Fluctuations**: Data points show non-monotonic trends, with validation loss increasing after initial decreases for most sparsity levels.

### Interpretation

The data suggests that **higher sparsity correlates with better validation performance**: with the number of activated experts held fixed, adding more total experts lowers the attainable validation loss. This could indicate:

1. **Efficiency vs. Performance Tradeoff**: Higher sparsity may enable faster convergence or better generalization at the same activated-parameter budget.

2. **Training Dynamics**: The minima for higher sparsity occur earlier in training (lower FLOPs), suggesting these models stabilize faster.

3. **Anomalies**: The green lines for sparsity 16 and 48 overlap in color but show distinct trends, highlighting potential ambiguities in legend labeling or data grouping.

The red stars emphasize that optimal performance for each sparsity level is achieved at specific training stages, guiding resource allocation for model training.

</details>

Figure 5: Sparsity Scaling Law. Increasing sparsity leads to improved model performance. We fixed the number of activated experts to 8 and the number of shared experts to 1, and varied the total number of experts, resulting in models with different sparsity levels.

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Validation Loss vs Training Tokens

### Overview

The chart illustrates the relationship between validation loss and training tokens for different model configurations and computational budgets. It shows multiple data series with distinct trends, highlighting how model architecture and computational resources impact performance.

### Components/Axes

- **Y-axis**: Validation Loss (1.35 to 1.75)

- **X-axis**: Training Tokens (10¹¹ to 10¹²)

- **Legend**:

- Blue squares: Models with attention heads equal to number of layers

- Blue circles: Counterparts with doubled attention heads

- Pink squares: 2.2e+20 FLOPs

- Green squares: 4.5e+20 FLOPs

- Orange squares: 9.0e+20 FLOPs

- Blue dotted line: 1.2e+20 FLOPs

### Detailed Analysis

1. **Blue Squares (Models with attention heads = layers)**:

- Starts at ~1.74 validation loss at 10¹¹ tokens

- Dips to ~1.65 at 10¹² tokens

- Shows a U-shaped curve with a minimum around 10¹¹.5 tokens

2. **Blue Circles (Doubled attention heads)**:

- Starts at ~1.64 validation loss at 10¹¹ tokens

- Dips to ~1.58 at 10¹² tokens

- Maintains lower loss than blue squares throughout

3. **Pink Squares (2.2e+20 FLOPs)**:

- Starts at ~1.62 validation loss at 10¹¹ tokens

- Dips to ~1.56 at 10¹² tokens

- Shows gradual improvement with more tokens

4. **Green Squares (4.5e+20 FLOPs)**:

- Starts at ~1.50 validation loss at 10¹¹ tokens

- Dips to ~1.45 at 10¹² tokens

- Maintains lowest loss among FLOPs-based series

5. **Orange Squares (9.0e+20 FLOPs)**:

- Starts at ~1.42 validation loss at 10¹¹ tokens

- Dips to ~1.38 at 10¹² tokens

- Shows U-shaped curve with minimum at 10¹¹.5 tokens

6. **Blue Dotted Line (1.2e+20 FLOPs)**:

- Starts at ~1.74 validation loss at 10¹¹ tokens

- Dips to ~1.65 at 10¹² tokens

- Shows consistent downward trend

### Key Observations

- **Architecture Impact**: Models with doubled attention heads (blue circles) consistently outperform standard configurations (blue squares) across all token ranges.

- **FLOPs Correlation**: Higher computational budgets (orange > green > pink) correlate with lower validation loss.

- **Training Token Effect**: All series show improved performance with more training tokens, though the rate of improvement varies.

- **1.2e+20 FLOPs Trend**: The blue dotted line demonstrates the most significant improvement (1.74 → 1.65) with increased tokens.

### Interpretation

The data suggests a complex interplay between model architecture and computational resources:

1. **Attention Head Scaling**: Doubling attention heads provides a ~0.06 validation loss advantage over standard configurations, indicating architectural efficiency gains.

2. **FLOPs vs Architecture**: While higher FLOPs generally improve performance, the 9.0e+20 FLOPs series (orange) shows diminishing returns compared to architectural improvements (blue circles).

3. **Training Token Efficiency**: The 1.2e+20 FLOPs series (blue dotted line) demonstrates that even with limited computational resources, extended training can yield substantial improvements.

4. **U-Shaped Curves**: Multiple series show initial improvement followed by plateauing, suggesting optimal performance at mid-range token counts before potential overfitting or diminishing returns.

This analysis reveals that both architectural choices (attention head scaling) and computational investment (FLOPs) significantly impact model performance, with architectural improvements often providing better returns than raw computational power alone.

</details>

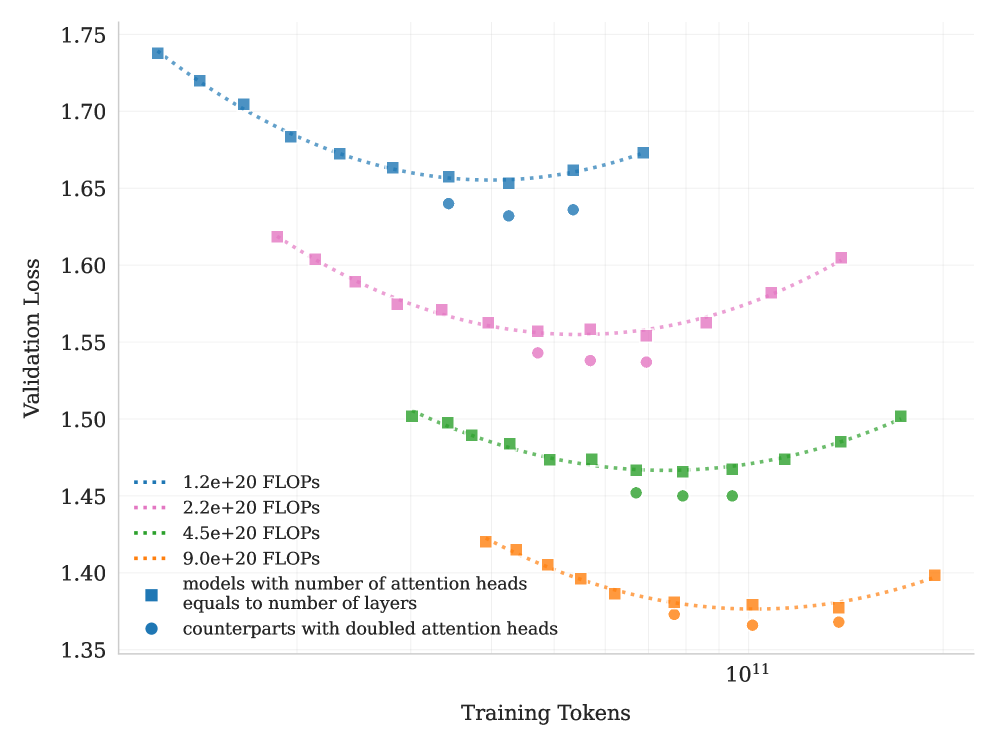

Figure 6: Scaling curves for models whose number of attention heads equals the number of layers and their counterparts with doubled attention heads. Doubling the number of attention heads reduces validation loss by approximately 0.5% to 1.2%.

Number of Attention Heads

DeepSeek-V3 [11] sets the number of attention heads to roughly twice the number of model layers to better utilize memory bandwidth and enhance computational efficiency. However, as the context length increases, doubling the number of attention heads leads to significant inference overhead, reducing efficiency at longer sequence lengths. This becomes a major limitation in agentic applications, where efficient long-context processing is essential. For example, with a sequence length of 128k, increasing the number of attention heads from 64 to 128, while keeping the total expert count fixed at 384, leads to an 83% increase in inference FLOPs. To evaluate the impact of this design, we conduct controlled experiments comparing configurations where the number of attention heads equals the number of layers against those with double the number of heads, under varying training FLOPs. Under iso-token training conditions, we observe that doubling the attention heads yields only modest improvements in validation loss (ranging from 0.5% to 1.2%) across different compute budgets (Figure 6). Given that sparsity 48 already offers strong performance, the marginal gains from doubling attention heads do not justify the inference cost. We therefore use 64 attention heads.

### 2.4 Training Infrastructure

#### 2.4.1 Compute Cluster

Kimi K2 was trained on a cluster equipped with NVIDIA H800 GPUs. Each node in the H800 cluster contains 2 TB of RAM and 8 GPUs connected by NVLink and NVSwitch. Across nodes, $8 \times 400$ Gbps RoCE interconnects handle communication.

#### 2.4.2 Parallelism for Model Scaling

Training of large language models often progresses under dynamic resource availability. Instead of optimizing a single parallelism strategy that applies only to a specific amount of resources, we pursue a flexible strategy that allows Kimi K2 to be trained on any number of nodes that is a multiple of 32. Our strategy leverages a combination of 16-way Pipeline Parallelism (PP) with virtual stages [29, 54, 39, 58, 48, 22], 16-way Expert Parallelism (EP) [40], and ZeRO-1 Data Parallelism [61].

Under this setting, storing the model parameters in BF16 and their gradient accumulation buffer in FP32 requires approximately 6 TB of GPU memory, distributed over a model-parallel group of 256 GPUs. Placement of optimizer states depends on the training configuration. When the total number of training nodes is large, the optimizer states are distributed, reducing their per-device memory footprint to a negligible level. When the total number of training nodes is small (e.g., 32), we can offload some optimizer states to CPU.

This approach allows us to reuse an identical parallelism configuration for both small- and large-scale experiments, with each GPU devoting approximately 30 GB of memory to all states. The rest of the GPU memory is used for activations, as described in Sec. 2.4.3. Such a consistent design is important for research efficiency, as it simplifies the system and substantially accelerates experimental iteration.
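The memory figures above can be sanity-checked with simple arithmetic. The sketch below assumes the 1.04T total parameter count from Table 2, decimal units (1 TB = 10^12 bytes), and an even split of parameters and gradients across the model-parallel group. The even split is a simplification, since parameters outside the experts are not necessarily sharded by EP. All variable names are illustrative.

```python
PP = 16                 # pipeline-parallel degree (from the text)
EP = 16                 # expert-parallel degree (from the text)
GPUS_PER_NODE = 8       # from Sec. 2.4.1
TOTAL_PARAMS = 1.04e12  # total parameters, Table 2
BYTES_BF16 = 2          # parameter storage
BYTES_FP32 = 4          # gradient accumulation buffer

# Size of one model-parallel group and the node count it occupies.
model_parallel_gpus = PP * EP
min_nodes = model_parallel_gpus // GPUS_PER_NODE

# Parameters in BF16 plus gradient accumulation in FP32.
state_bytes = TOTAL_PARAMS * (BYTES_BF16 + BYTES_FP32)

# Assumed even split across the model-parallel group.
per_gpu_gb = state_bytes / model_parallel_gpus / 1e9

print(f"model-parallel group: {model_parallel_gpus} GPUs ({min_nodes} nodes)")
print(f"params + grads: {state_bytes / 1e12:.2f} TB, about {per_gpu_gb:.1f} GB per GPU")
```

Under these assumptions, the group spans 32 nodes, which matches the stated node-count granularity, and parameters plus gradients come to roughly 6 TB in total. The per-GPU share stays below the approximately 30 GB quoted for all states, which also has to cover optimizer states.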

EP communication overlap with interleaved 1F1B

By increasing the number of warm-up micro-batches, we can overlap EP all-to-all communication with computation under the standard interleaved 1F1B schedule [22, 54]. In comparison, DualPipe [11] doubles the memory required for parameters and gradients, necessitating an increase in parallelism to compensate. Increasing PP introduces more bubbles, while increasing EP, as discussed below, incurs higher overhead. The additional costs are prohibitively high for training a large model with over 1 trillion parameters and thus we opted not to use DualPipe.

However, interleaved 1F1B splits the model into more stages, introducing non-trivial PP communication overhead. To mitigate this cost, we decouple the weight-gradient computation from each micro-batch’s backward pass and execute it in parallel with the corresponding PP communication. Consequently, all PP communications can be effectively overlapped except for the warm-up phase.

Smaller EP size

To ensure full computation-communication overlap during the 1F1B stage, the reduced attention computation time in K2 (which has 64 attention heads compared to 128 heads in DeepSeek-V3) necessitates minimizing the time of EP operations. This is achieved by adopting the smallest feasible EP parallelization strategy, specifically EP = 16. Utilizing a smaller EP group also relaxes expert-balance constraints, allowing for near-optimal speed to be achieved without further tuning.

#### 2.4.3 Activation Reduction

After reserving space for parameters, gradient buffers, and optimizer states, the remaining GPU memory on each device is insufficient to hold the full MoE activations. To ensure the activation memory fits within the constraints, especially for the initial pipeline stages that accumulate the largest activations during the 1F1B warm-up phase, the following techniques are employed.

Selective recomputation

Recomputation is applied to inexpensive, high-footprint stages, including LayerNorm, SwiGLU, and MLA up-projections [11]. Additionally, MoE down-projections are recomputed during training to further reduce activation memory. While optional, this recomputation maintains adequate GPU memory, preventing crashes caused by expert imbalance in early training stages.

FP8 storage for insensitive activations

Inputs of MoE up-projections and SwiGLU are compressed to FP8-E4M3 in $1 \times 128$ tiles with FP32 scales. Small-scale experiments show no measurable loss increase. Because we observed potential risks of performance degradation in a preliminary study, we do not apply FP8 in computation.
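To illustrate tile-wise FP8 storage, here is a minimal pure-Python simulation. It is a sketch under stated assumptions and not the training implementation: the text does not say how scales are chosen, so the per-tile scale here maps the tile's largest magnitude to the E4M3 maximum of 448, and `round_to_e4m3` emulates E4M3 rounding in software instead of using a hardware FP8 type. All function names are hypothetical.

```python
import math
import random

E4M3_MAX = 448.0  # largest finite magnitude representable in FP8-E4M3
TILE = 128        # tile width from the text (1 x 128)

def round_to_e4m3(x: float) -> float:
    """Simulate rounding to the nearest FP8-E4M3 value (3 mantissa bits)."""
    if x == 0.0:
        return 0.0
    mag = min(abs(x), E4M3_MAX)
    # frexp gives mag = m * 2**e with 0.5 <= m < 1, so the binade exponent is e - 1.
    # Exponents below -6 fall into the subnormal range, which shares one step size.
    exp = max(math.frexp(mag)[1] - 1, -6)
    step = 2.0 ** (exp - 3)  # spacing of representable values in this binade
    return math.copysign(min(round(mag / step) * step, E4M3_MAX), x)

def quantize_tile(tile):
    """Return (rounded values, scale) for one tile. Max-abs scaling is an assumption."""
    amax = max(abs(v) for v in tile)
    scale = amax / E4M3_MAX if amax > 0 else 1.0  # stored in FP32 according to the text
    return [round_to_e4m3(v / scale) for v in tile], scale

def quantize_row(row):
    """Split a row into 1 x TILE tiles and quantize each tile independently."""
    return [quantize_tile(row[i:i + TILE]) for i in range(0, len(row), TILE)]

def dequantize_row(tiles):
    """Multiply each stored value by its tile's scale to recover approximate inputs."""
    return [code * scale for codes, scale in tiles for code in codes]

random.seed(0)
row = [random.gauss(0.0, 1.0) for _ in range(2 * TILE)]
tiles = quantize_row(row)
restored = dequantize_row(tiles)

# Worst-case absolute error relative to the largest magnitude in the row.
max_rel_err = max(abs(a - b) for a, b in zip(row, restored)) / max(abs(v) for v in row)
print(f"{len(tiles)} tiles, max error relative to row max: {max_rel_err:.4f}")
```

Keeping one scale per 128-element tile confines the effect of an outlier to its own tile, which is one plausible reason for choosing fine-grained tiles over a single per-tensor scale.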

<details>

<summary>x9.png Details</summary>

### Visual Description

## Diagram: Computational Process Flow with Offload/Onload Stages

### Overview

The diagram illustrates a multi-stage computational process involving computation, communication, and offload/onload operations. It includes a grid representing sequential steps (1-8) with color-coded operations (forward pass, backward pass, PP communication, EP dispatch/combined). Three primary sections at the top define computational stages, while the grid below maps the flow of operations across iterations.

---

### Components/Axes

1. **Top Sections**:

- **Computation**: Contains blocks labeled `Attn` (Attention), `MLP` (Multi-Layer Perceptron), `EP-D` (EP Dispatch), and `EP-C` (EP Combine).

- **Communication**: Includes `EP-D`, `EP-C`, and `PP` (pipeline-parallel communication).

- **Offload/Onload**: Labeled `Offload` (yellow) and `Onload` (yellow), with `WGrad` (Weight Gradient) in red.

2. **Grid**:

- **Rows**: Labeled `VPP + 1 warmup` (virtual pipeline parallelism with one additional warm-up step).

- **Columns**: Numbered 1-8, representing sequential steps.

- **Colors**:

- Blue: Forward pass

- Red: Backward pass

- Green: PP communication

- Yellow: EP dispatch/combined (EP-D, EP-C)

3. **Legend**:

- Located at the bottom, mapping colors to operations:

- Blue: Forward pass

- Red: Backward pass

- Green: PP communication

- Yellow: EP dispatch/combined (EP-D, EP-C)

---

### Detailed Analysis

1. **Top Sections**:

- **Computation**:

- Leftmost section: `Attn` (blue), `MLP` (blue), `EP-D` (blue), `EP-C` (blue).

- Middle section: `Attn` (red), `MLP` (red), `EP-D` (red), `EP-C` (red).

- Rightmost section: `MLP` (red), `Attn` (red), `WGrad` (red), `EP-C` (red), `PP` (green).

- **Communication**:

- Repeats `EP-D` (blue), `EP-C` (blue), `PP` (green) across stages.

- **Offload/Onload**:

- Left: `Offload` (yellow).

- Middle: `Offload` (yellow) and `Onload` (yellow).

- Right: `Onload` (yellow).

2. **Grid**:

- **Rows**:

- Row 1: `1 2 3 4 1 2 3 4` (blue).

- Row 2: `1 2 3 4 1 2 3 4` (blue).

- Row 3: `1 2 3 4 1 2 3 4` (blue).

- Row 4: `1 2 3 4 1 2 3 4` (blue).

- **Columns**:

- Columns 1-4: Blue (forward pass).

- Columns 5-8: Red (backward pass), with green (PP communication) in column 7, row 4.

3. **Color Consistency**:

- Blue blocks in the grid align with "Forward pass" in the legend.

- Red blocks align with "Backward pass."

- Green blocks (e.g., column 7, row 4) match "PP communication."

- Yellow blocks in the top sections correspond to "EP dispatch/combined."

---

### Key Observations

1. **Sequential Flow**:

- The grid shows a repetitive pattern of forward pass (blue) in steps 1-4, followed by backward pass (red) in steps 5-8.

- PP communication (green) occurs in step 7, row 4, indicating a pipeline-parallel communication step mid-process.

2. **Offload/Onload Dynamics**:

- Offload (yellow) appears in the left and middle sections, suggesting data transfer out of the computation stage.

- Onload (yellow) in the middle and right sections indicates data retrieval into the computation stage.

3. **WGrad and PP**:

- `WGrad` (red) in the rightmost computation section highlights weight gradient computation during backward pass.

- `PP` (green) in the communication section marks pipeline-parallel communication between stages.

---

### Interpretation

The diagram models a distributed training workflow, likely for neural networks, with stages for computation, communication, and data transfer. The grid represents iterative steps (1-8) where:

- **Forward pass** (blue) dominates early steps, executing attention and MLP layers.

- **Backward pass** (red) follows, computing gradients and updating weights.

- **PP communication** (green) occurs mid-process, transferring data between pipeline stages.

- **EP dispatch/combined** (yellow) manages data offloading/onloading, optimizing resource usage.

The `VPP + 1 warmup` row suggests a pipeline parallelism strategy with an initial warmup phase to stabilize computation. The repetition of steps 1-4 in blue and 5-8 in red implies a two-phase workflow (forward/backward), while the green and yellow blocks highlight critical communication and data transfer points. This structure balances computational efficiency with communication overhead, typical in large-scale machine learning systems.

</details>

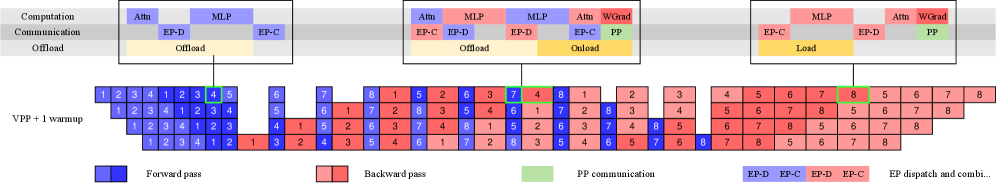

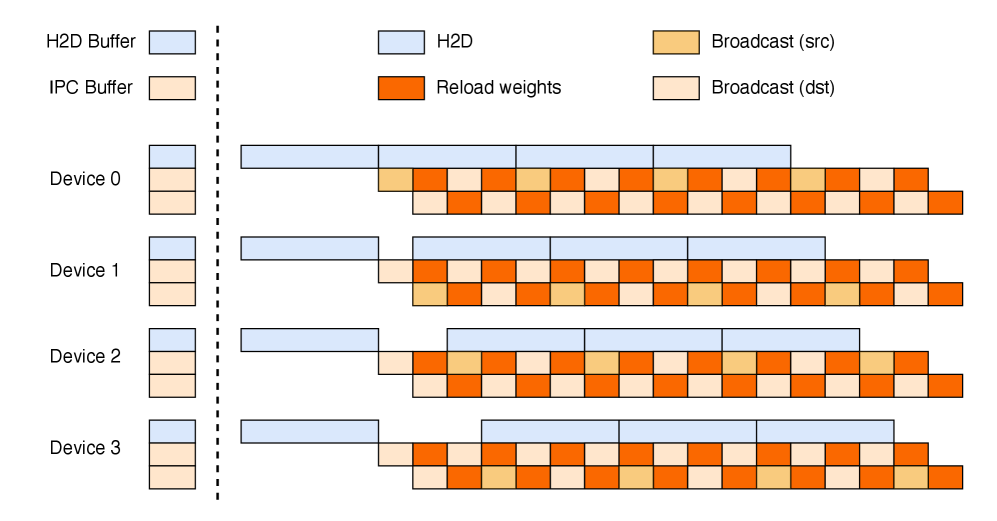

Figure 7: Computation, communication and offloading overlapped in different PP phases.

Activation CPU offload

All remaining activations are offloaded to CPU RAM. A copy engine is responsible for streaming the offload and onload, overlapping with both computation and communication kernels. During the 1F1B phase, we offload the forward activations of the previous micro-batch while prefetching the backward activations of the next. The warm-up and cool-down phases are handled similarly and the overall pattern is shown in Figure 7. Although offloading may slightly affect EP traffic due to PCIe traffic congestion, our tests show that EP communication remains fully overlapped.

### 2.5 Training Recipe

We pre-trained the model with a 4,096-token context window using the MuonClip optimizer (Algorithm 1) and the WSD learning rate schedule [26], processing a total of 15.5T tokens. The first 10T tokens were trained with a constant learning rate of 2e-4 after a 500-step warm-up, followed by 5.5T tokens with a cosine decay from 2e-4 to 2e-5. Weight decay was set to 0.1 throughout, and the global batch size was held at 67M tokens. The overall training curve is shown in Figure 3.
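The learning-rate schedule described above can be sketched as a function of tokens seen. This is a minimal illustration under stated assumptions: the warm-up is taken to be linear from zero and to count toward the first 10T tokens, neither of which is specified in the text. The name `lr_at` and the constants are illustrative.

```python
import math

PEAK_LR = 2e-4          # constant-phase learning rate
FINAL_LR = 2e-5         # learning rate at the end of the cosine decay
BATCH_TOKENS = 67e6     # global batch size in tokens
WARMUP_STEPS = 500
STABLE_TOKENS = 10e12   # warm-up plus constant phase
DECAY_TOKENS = 5.5e12   # cosine-decay phase

def lr_at(tokens_seen: float) -> float:
    """Learning rate after `tokens_seen` training tokens (warm-up, stable, decay)."""
    warmup_tokens = WARMUP_STEPS * BATCH_TOKENS
    if tokens_seen < warmup_tokens:
        # Assumed linear warm-up from 0 to the peak learning rate.
        return PEAK_LR * tokens_seen / warmup_tokens
    if tokens_seen <= STABLE_TOKENS:
        return PEAK_LR
    # Cosine decay from PEAK_LR to FINAL_LR over the remaining tokens.
    progress = min((tokens_seen - STABLE_TOKENS) / DECAY_TOKENS, 1.0)
    return FINAL_LR + 0.5 * (PEAK_LR - FINAL_LR) * (1.0 + math.cos(math.pi * progress))

for tokens in (0.0, 5e12, 10e12, 12.75e12, 15.5e12):
    print(f"{tokens / 1e12:5.2f}T tokens -> lr = {lr_at(tokens):.2e}")
```

With a 67M-token batch, the 500-step warm-up covers about 33.5B tokens, a small fraction of the 10T-token constant phase.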

Towards the end of pre-training, we conducted an annealing phase followed by a long-context activation stage. The batch size was kept constant at 67M tokens, while the learning rate was decayed from 2e-5 to 7e-6. In this phase, the model was trained on 400 billion tokens with a 4k sequence length, followed by an additional 60 billion tokens with a 32k sequence length. To extend the context window to 128k, we employed the YaRN method [56].

## 3 Post-Training

### 3.1 Supervised Fine-Tuning

We employ the Muon optimizer [34] in our post-training and recommend its use for fine-tuning with K2. This follows from the conclusion of our previous work [47] that a Muon-pre-trained checkpoint produces the best performance with Muon fine-tuning.

We construct a large-scale instruction-tuning dataset spanning diverse domains, guided by two core principles: maximizing prompt diversity and ensuring high response quality. To this end, we develop a suite of data generation pipelines tailored to different task domains, each utilizing a combination of human annotation, prompt engineering, and verification processes. We adopt K1.5 [36] and other in-house domain-specialized expert models to generate candidate responses for various tasks, followed by LLM-based or human judges that perform quality evaluation and filtering. For agentic data, we create a data synthesis pipeline to teach models tool-use capabilities through multi-step, interactive reasoning.

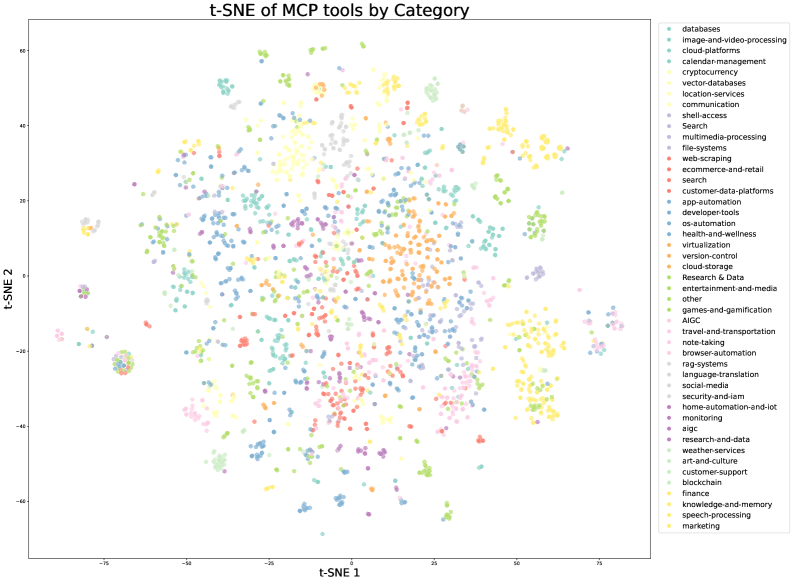

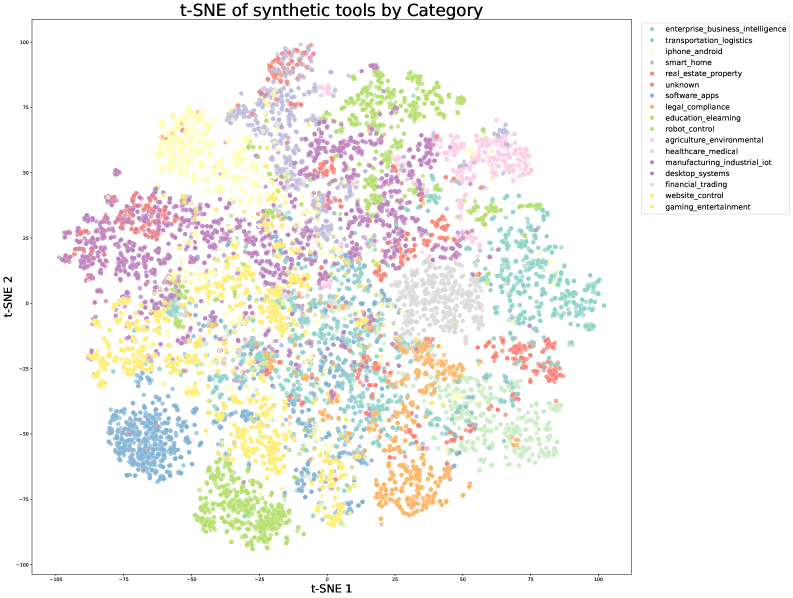

#### 3.1.1 Large-Scale Agentic Data Synthesis for Tool Use Learning

A critical capability of modern LLM agents is their ability to autonomously use unfamiliar tools, interact with external environments, and iteratively refine their actions through reasoning, execution, and error correction. Agentic tool use capability is essential for solving complex, multi-step tasks that require dynamic interaction with real-world systems. Recent benchmarks such as ACEBench [7] and $\tau$-bench [86] have highlighted the importance of comprehensive tool-use evaluation, while frameworks like ToolLLM [59] and ACEBench [7] have demonstrated the potential of teaching models to use thousands of tools effectively.

However, training such capabilities at scale presents a significant challenge: while real-world environments provide rich and authentic interaction signals, they are often difficult to construct at scale due to cost, complexity, privacy, and accessibility constraints. Recent work on synthetic data generation (AgentInstruct [52]; Self-Instruct [76]; StableToolBench [21]; ZeroSearch [67]) has shown promising results in creating large-scale data without relying on real-world interactions. Building on these advances and inspired by the comprehensive data synthesis framework of ACEBench [7], we developed a pipeline that simulates real-world tool-use scenarios at scale, enabling the generation of tens of thousands of diverse and high-quality training examples.

<details>

<summary>x10.png Details</summary>

### Visual Description

## Flowchart: System Architecture for Tool Specification and Task Execution

### Overview

The diagram illustrates a hierarchical workflow connecting domains, applications, tool specifications, agents, and tasks with rubrics. It emphasizes the flow of data or processes from high-level domains to specific task execution, with a central "Tool Repository" acting as an intermediary.

---

### Components/Axes

- **Nodes**:

- **Domains** (topmost, purple)

- **Applications** (purple, connected to Domains)

- **MCP tools** (green, connected to Applications)

- **Real-world tool specs** (green, connected to MCP tools)

- **Synthesized tool specs** (purple, connected to Applications)

- **Tool Repository** (blue, receiving inputs from both tool specs)

- **Agents** (yellow, connected to Tool Repository)

- **Tasks with rubrics** (pink, connected to Agents)

- **Arrows**: Indicate directional flow between components.

- **Colors**:

- Purple: High-level conceptual components (Domains, Applications, Synthesized tool specs)

- Green: Real-world/tool-specific components (MCP tools, Real-world tool specs)

- Blue: Central repository (Tool Repository)

- Yellow: Processing unit (Agents)

- Pink: Output/goal (Tasks with rubrics)

---

### Detailed Analysis

1. **Flow Path**:

- **Domains** → **Applications**: High-level domains are broken down into specific applications.

- **Applications** → **MCP tools** and **Synthesized tool specs**: Applications generate both real-world tool specifications (via MCP tools) and simulated/synthesized specifications.

- **Tool specs** → **Tool Repository**: Both real and synthesized specifications are stored or processed in a central repository.

- **Tool Repository** → **Agents**: The repository supplies data to agents.

- **Agents** → **Tasks with rubrics**: Agents execute tasks defined by rubrics (performance criteria).

2. **Key Relationships**:

- The **Tool Repository** serves as a convergence point for real and synthesized tool specifications, suggesting a unified system for managing diverse data sources.

- **Agents** act as intermediaries between the repository and task execution, implying automation or decision-making capabilities.

- **Tasks with rubrics** are the final output, indicating that agent performance is evaluated against predefined standards.

---

### Key Observations

- **Dual Inputs to Tool Repository**: The system integrates both real-world and synthesized tool specifications, enabling flexibility for testing or hybrid workflows.

- **Agent-Centric Design**: Agents are positioned to process repository data and execute tasks, highlighting their role as autonomous or semi-autonomous components.

- **Rubric-Driven Tasks**: The inclusion of rubrics suggests a focus on measurable outcomes or quality control in task execution.

---

### Interpretation

This diagram represents a system architecture where **domains** are decomposed into **applications**, which define **tool specifications** (both real and simulated). These specifications are centralized in a **Tool Repository**, enabling **Agents** to execute **Tasks with rubrics**. The structure implies:

- **Modularity**: Components are decoupled (e.g., Domains → Applications → Tool specs).

- **Scalability**: The repository can handle diverse inputs, supporting both real and synthetic data.

- **Evaluation Focus**: Rubrics in tasks emphasize performance metrics, suggesting the system is designed for optimization or validation.

The absence of numerical data or explicit trends indicates the diagram is conceptual, focusing on workflow design rather than quantitative analysis.

</details>

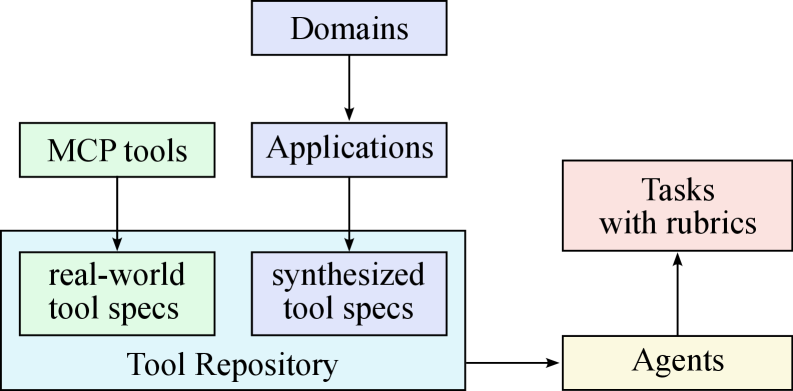

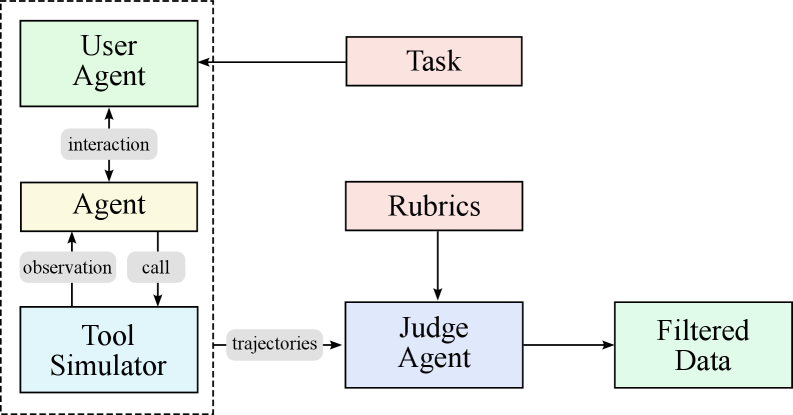

(a) Synthesizing tool specs, agents and tasks

<details>

<summary>x11.png Details</summary>

### Visual Description

## Flowchart: Agent Workflow and Evaluation System

### Overview

The diagram illustrates a multi-component system for agent interaction, task execution, and data validation. It features two primary sections: a left-side agent workflow and a right-side evaluation process, connected through data trajectories.

### Components/Axes

1. **Left Section (Agent Workflow)**

- **User Agent** (green box): Initiates interactions

- **Agent** (yellow box): Core processing unit

- **Tool Simulator** (blue box): Execution environment

- Arrows indicate:

- `interaction` (User Agent → Agent)

- `observation` (Agent → Tool Simulator)

- `call` (Agent → Tool Simulator)

2. **Right Section (Evaluation Process)**

- **Task** (red box): Defined objectives

- **Rubrics** (red box): Evaluation criteria

- **Judge Agent** (purple box): Validation component

- **Filtered Data** (green box): Output

- Arrows indicate:

- `trajectories` (Tool Simulator → Judge Agent)

- Evaluation flow (Rubrics → Judge Agent → Filtered Data)

3. **Connectivity**

- Task box connects to User Agent

- Judge Agent receives trajectories from Tool Simulator

- Filtered Data is the final output

### Detailed Analysis

- **Agent Workflow**:

- User Agent initiates task interactions

- Agent observes tool simulator outputs and makes calls

- Tool Simulator executes actions based on agent instructions

- **Evaluation Process**:

- Task definition originates from User Agent

- Rubrics provide evaluation framework

- Judge Agent analyzes trajectories against rubrics

- Filtered Data represents validated results

### Key Observations

1. **Modular Architecture**: Clear separation between execution (left) and evaluation (right)

2. **Data Flow**: Trajectories from tool execution feed directly into judgment

3. **Validation Layer**: Judge Agent acts as quality control between raw data and final output

4. **Color Coding**:

- Green = User-facing components (User Agent, Filtered Data)

- Red = Task definition and evaluation criteria

- Blue/Purple = Technical components (Tool Simulator, Judge Agent)

### Interpretation

This system implements a closed-loop validation framework where:

1. The User Agent defines tasks and receives final outputs

2. The Agent executes actions through a simulated environment

3. All trajectories are independently validated by the Judge Agent using predefined rubrics

4. The separation of execution and evaluation ensures objective assessment

5. Filtered Data represents both task completion and quality assurance

The architecture suggests a focus on reliable AI agent systems where outputs must meet explicit criteria before being considered valid results. The use of separate evaluation criteria (rubrics) implies potential applications in educational technology, automated grading systems, or quality control for AI-generated content.

</details>

(b) Generating agent trajectories

Figure 8: Data synthesis pipeline for tool use. (a) Tool specs come from both real-world tools and LLMs; agents and tasks are then generated from the tool repository. (b) Multi-agent pipeline to generate and filter trajectories with tool calling.
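The generate-then-filter loop of Figure 8(b) can be sketched in a few lines. Everything in this sketch is a hypothetical stand-in: in the described pipeline the agent, tool simulator, and judge are model-driven, whereas here they are replaced by small rule-based functions, the user agent is omitted, and the tool name `get_weather` and the rubric checks are invented purely for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class Trajectory:
    task: str
    steps: list = field(default_factory=list)  # (tool_name, arguments, observation)

def tool_simulator(tool_name: str, arguments: dict) -> str:
    """Hypothetical tool simulator: returns a canned observation for a tool call."""
    if tool_name == "get_weather":
        return f"Sunny in {arguments['city']}"
    return "error: unknown tool"

def toy_agent(task: str) -> list:
    """Hypothetical agent policy: picks tool calls for a task with a simple rule."""
    if "weather" in task:
        return [("get_weather", {"city": "Paris"})]
    return [("unknown_tool", {})]

def rollout(task: str) -> Trajectory:
    """Run the agent against the simulator and record each call and observation."""
    trajectory = Trajectory(task)
    for tool_name, arguments in toy_agent(task):
        observation = tool_simulator(tool_name, arguments)
        trajectory.steps.append((tool_name, arguments, observation))
    return trajectory

def judge(trajectory: Trajectory, rubric: list) -> bool:
    """Hypothetical rule-based judge: a trajectory passes only if every check holds."""
    return all(check(trajectory) for check in rubric)

# Illustrative rubric: at least one tool call, and no call ended in an error.
rubric = [
    lambda t: len(t.steps) > 0,
    lambda t: all(not obs.startswith("error") for _, _, obs in t.steps),
]

tasks = ["What is the weather in Paris?", "Book a flight to Mars"]
filtered = [t for t in map(rollout, tasks) if judge(t, rubric)]
print(f"kept {len(filtered)} of {len(tasks)} trajectories")
```

The point of the structure is that generation and evaluation are separate stages, so the judge and its rubrics can be changed without touching how trajectories are produced.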

<details>

<summary>x12.png Details</summary>

### Visual Description

## Scatter Plot: t-SNE of MCP Tools by Category

### Overview

The image is a 2D t-SNE (t-distributed Stochastic Neighbor Embedding) visualization of Model Context Protocol (MCP) tools categorized by functionality. Points are colored by category, with a legend on the right. The plot reveals clustering patterns, indicating relationships between tool categories in a reduced-dimensional space.

### Components/Axes

- **X-axis**: Labeled "t-SNE 1" (horizontal), ranging approximately from -75 to 75.

- **Y-axis**: Labeled "t-SNE 2" (vertical), ranging approximately from -60 to 80.

- **Legend**: Located in the top-right corner, listing 40+ categories (e.g., "databases," "cloud-platforms," "finance") with distinct colors. Colors include light blue, dark blue, green, yellow, red, purple, pink, orange, and gray.

### Detailed Analysis

1. **Clustering Patterns**:

- **Central Cluster**: A dense mix of colors (e.g., yellow, green, blue) dominates the center, suggesting overlapping similarities between categories like "research-and-data," "developer-tools," and "cloud-storage."

- **Outliers**:

- **"Other" Category**: A small cluster in the bottom-left quadrant (t-SNE 1 ≈ -70, t-SNE 2 ≈ -50).

- **"Finance" Category**: A distinct cluster in the top-right quadrant (t-SNE 1 ≈ 60, t-SNE 2 ≈ 60).

- **"Image-and-Video-Processing"**: Scattered points in the upper-left quadrant (t-SNE 1 ≈ -50, t-SNE 2 ≈ 40).

- **Distinct Groups**:

- **"Search" and "Ecommerce-and-Retail"**: Clustered near the center (t-SNE 1 ≈ 0–20, t-SNE 2 ≈ -20 to 20).

- **"Blockchain" and "Cryptocurrency"**: Located in the upper-right quadrant (t-SNE 1 ≈ 40–50, t-SNE 2 ≈ 30–40).

2. **Color-Legend Consistency**:

- All legend colors match their corresponding data points. For example:

- "Databases" (light blue) appears in the lower-left quadrant.

- "Marketing" (yellow) is concentrated in the upper-right quadrant.

### Key Observations

- **Dominant Categories**: "Research-and-data" (yellow) and "developer-tools" (blue) form the largest central cluster.

- **Outliers**: "Other" and "Finance" are spatially isolated, indicating low similarity to other categories.

- **Overlap**: Categories like "cloud-platforms" (green) and "cloud-storage" (orange) are intermingled, suggesting functional overlap.

- **Sparse Regions**: The bottom-left quadrant has fewer points, indicating underrepresented categories.

### Interpretation

The t-SNE plot demonstrates that MCP tools with similar functionalities (e.g., "research-and-data" and "developer-tools") are grouped closely, while distinct categories like "Finance" and "Image-and-Video-Processing" occupy separate regions. The "Other" category’s isolation suggests it contains tools that do not align well with the primary functional groups. The central cluster’s diversity implies many tools share hybrid functionalities, reflecting the interdisciplinary nature of modern MCP ecosystems. Outliers like "Finance" highlight niche domains requiring specialized tooling. This visualization aids in identifying tool redundancies, gaps, and potential integration opportunities.

</details>

(a) t-SNE visualization of real MCP tools, colored by their original source categories

<details>

<summary>x13.png Details</summary>

### Visual Description

## t-SNE Plot: Synthetic Tools by Category

### Overview

The image is a t-SNE (t-distributed Stochastic Neighbor Embedding) visualization of synthetic tools categorized by domain. The plot uses two embedding dimensions (t-SNE 1 and t-SNE 2) to represent high-dimensional data in 2D space. Each point represents a synthetic tool, colored by its associated category. The legend on the right maps 15 categories to distinct colors.

### Components/Axes

- **Axes**:

- X-axis: t-SNE 1 (range: -100 to 100)

- Y-axis: t-SNE 2 (range: -100 to 100)

- **Legend**:

- Positioned on the right, with 15 categories mapped to colors (e.g., `enterprise_business_intelligence` = light blue, `transportation_logistics` = teal, `iphone_android` = yellow, etc.).

- Includes an "unknown" category (gray) and a "gaming_entertainment" category (bright yellow).

### Detailed Analysis

- **Data Distribution**:

- **Bottom-left quadrant** (t-SNE 1 < -50, t-SNE 2 < -50): Dominated by `education_e-learning` (green) and `software_apps` (blue).

- **Top-left quadrant** (t-SNE 1 < -50, t-SNE 2 > 50): Clustered `iphone_android` (yellow) and `smart_home` (purple).

- **Top-right quadrant** (t-SNE 1 > 50, t-SNE 2 > 50): Concentrated `real_estate_property` (red) and `agriculture_environmental` (pink).

- **Bottom-right quadrant** (t-SNE 1 > 50, t-SNE 2 < -50): Spread of `financial_trading` (orange) and `robot_control` (dark green).

- **Center**: Mixed clusters of `healthcare_medical` (light gray), `manufacturing_industrial_iot` (dark purple), and `legal_compliance` (orange).

- **Outliers**:

- `desktop_systems` (dark purple) and `website_control` (bright yellow) appear scattered across all quadrants.

- `unknown` (gray) points are dispersed throughout the plot, suggesting unclassified or ambiguous tools.

### Key Observations

1. **Clustering Patterns**:

- `iphone_android` (yellow) and `real_estate_property` (red) form tight clusters, indicating high similarity within their categories.

- `education_e-learning` (green) and `software_apps` (blue) are tightly grouped but separated from other domains.

2. **Dispersion**:

- `unknown` (gray) and `desktop_systems` (dark purple) show the widest dispersion, suggesting heterogeneity or overlap with multiple categories.

3. **Color Consistency**:

- All legend colors match their corresponding data points (e.g., `transportation_logistics` = teal, `legal_compliance` = orange).

### Interpretation

The t-SNE plot reveals distinct groupings of synthetic tools by domain, with some categories (e.g., `iphone_android`, `real_estate_property`) exhibiting strong internal cohesion. The widespread distribution of `unknown` and `desktop_systems` suggests either ambiguous categorization or tools that span multiple domains. The separation of `education_e-learning` and `software_apps` from other categories implies these tools may have unique characteristics or applications. The plot highlights the need for further analysis to resolve overlaps and refine category definitions, particularly for the "unknown" group.

</details>

(b) t-SNE visualization of synthetic tools, colored by pre-defined domain categories

Figure 9: t-SNE visualizations of tool embeddings. (a) Real-world MCP tools exhibit natural clustering based on their original source categories. (b) Synthetic tools are organized into pre-defined domain categories, providing systematic coverage of the tool space. Together, they ensure comprehensive representation across different tool functionalities.

There are three stages in our data synthesis pipeline, depicted in Fig. 8.

- Tool spec generation: we first construct a large repository of tool specs from both real-world tools and LLM-synthetic tools;

- Agent and task generation: for each toolset sampled from the tool repository, we generate an agent to use the toolset and some corresponding tasks;

- Trajectory generation: for each agent and task, we generate trajectories where the agent finishes the task by invoking tools.

Domain Evolution and Tool Generation.

We construct a comprehensive tool repository through two complementary approaches. First, we directly fetch more than 3,000 real MCP (Model Context Protocol) tools from GitHub repositories, leveraging existing high-quality tool specs. Second, we systematically evolve [83] synthetic tools through a hierarchical domain generation process: we begin with key categories (e.g., financial trading, software applications, robot control), then evolve multiple specific application domains within each category. Specialized tools are then synthesized for each domain, with clear interfaces, descriptions, and operational semantics. This evolution process produces over 20,000 synthetic tools. Figure 9 visualizes the diversity of our tool collection through t-SNE embeddings, demonstrating that MCP and synthetic tools cover complementary regions of the tool space.
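The hierarchical evolution described above (category, then domain, then tools) can be illustrated with a minimal sketch. Everything here is an assumption for illustration: the `ToolSpec` fields, the function names, and the two stub generators, which stand in for the LLM calls that would propose domains and synthesize tools. It is not the pipeline's actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class ToolSpec:
    """Hypothetical tool spec record; field names are illustrative assumptions."""
    name: str
    description: str
    category: str   # top-level category, e.g. "financial_trading"
    domain: str     # application domain evolved from the category
    source: str     # "mcp" for fetched tools, "synthetic" for generated ones
    parameters: dict = field(default_factory=dict)  # JSON-Schema-like arguments


def stub_propose_domains(category):
    # Stand-in for an LLM call that evolves a category into specific domains.
    return [f"{category}/domain_{i}" for i in range(2)]


def stub_propose_tools(category, domain):
    # Stand-in for an LLM call that synthesizes tools for one domain.
    leaf = domain.split("/")[-1]
    return [
        ToolSpec(
            name=f"{category}.{leaf}.tool_{i}",
            description=f"Placeholder tool {i} for {domain}",
            category=category,
            domain=domain,
            source="synthetic",
            parameters={"type": "object", "properties": {}},
        )
        for i in range(3)
    ]


def evolve_synthetic_tools(categories, propose_domains, propose_tools):
    """Expand categories into domains, then domains into tool specs."""
    repo = []
    for category in categories:
        for domain in propose_domains(category):
            repo.extend(propose_tools(category, domain))
    return repo


synthetic_repo = evolve_synthetic_tools(
    ["financial_trading", "robot_control"],
    stub_propose_domains,
    stub_propose_tools,
)
print(len(synthetic_repo), synthetic_repo[0].name)
```

In a real setting the two stubs would be replaced by model calls, and the fetched MCP specs would be appended to the same repository with `source="mcp"`.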

Agent Diversification.

We generate thousands of distinct agents by synthesizing various system prompts and equipping them with different combinations of tools from our repository. This creates a diverse population of agents with varied capabilities, areas of expertise, and behavioral patterns, ensuring a broad coverage of potential use cases.
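As a rough illustration of this step, the sketch below pairs a sampled system prompt with a sampled subset of tools. The `AgentConfig` record, the prompt templates, and the tool names are all hypothetical; the text does not specify how prompts are synthesized or how tool combinations are chosen, so uniform random sampling is an assumption.

```python
import random
from dataclasses import dataclass


@dataclass
class AgentConfig:
    """Hypothetical agent record: a system prompt plus the tools it may call."""
    system_prompt: str
    tool_names: tuple


def sample_agents(tool_names, prompt_templates, n_agents, tools_per_agent, seed=0):
    """Draw agents by combining a random prompt with a random tool subset."""
    rng = random.Random(seed)  # seeded so that runs are reproducible
    agents = []
    for _ in range(n_agents):
        # Sample without replacement so each agent gets distinct tools.
        tools = tuple(sorted(rng.sample(tool_names, tools_per_agent)))
        template = rng.choice(prompt_templates)
        agents.append(AgentConfig(template.format(tools=", ".join(tools)), tools))
    return agents


# Illustrative inputs only; none of these names come from the paper.
TOOL_NAMES = ["get_quote", "place_order", "move_arm", "read_sensor", "send_email"]
PROMPT_TEMPLATES = [
    "You are a cautious assistant. Available tools: {tools}.",
    "You are a terse expert operator. Available tools: {tools}.",
]

agents = sample_agents(TOOL_NAMES, PROMPT_TEMPLATES, n_agents=4, tools_per_agent=2)
for agent in agents:
    print(agent.tool_names, "|", agent.system_prompt)
```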

Rubric-Based Task Generation.

For each agent configuration, we generate tasks that range from simple to complex operations. Each task is paired with an explicit rubric that specifies success criteria, expected tool-use patterns, and evaluation checkpoints. This rubric-based approach ensures a consistent and objective evaluation of agent performance.
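One way to picture a task paired with its rubric is as a small record holding the three rubric components named above. The field names below are assumptions, and the subsequence check is only one simple way to test an "expected tool-use pattern"; the text does not say how such patterns are actually encoded or checked.

```python
from dataclasses import dataclass, field


@dataclass
class Rubric:
    """Hypothetical rubric layout mirroring the three components in the text."""
    success_criteria: list
    expected_tool_sequence: list  # tools expected to be called, in this order
    checkpoints: list = field(default_factory=list)


@dataclass
class Task:
    instruction: str
    difficulty: str  # e.g. "simple" or "complex"
    rubric: Rubric


def follows_expected_pattern(called_tools, expected):
    """Return True if `expected` is an ordered subsequence of `called_tools`."""
    remaining = iter(called_tools)
    # `tool in remaining` advances the iterator, which enforces ordering.
    return all(tool in remaining for tool in expected)


task = Task(
    instruction="Buy 10 shares of the cheapest stock on the watchlist.",
    difficulty="complex",
    rubric=Rubric(
        success_criteria=["An order for 10 shares is confirmed."],
        expected_tool_sequence=["get_quote", "place_order"],
        checkpoints=["Quotes are fetched before any order is placed."],
    ),
)

print(follows_expected_pattern(
    ["search", "get_quote", "place_order"], task.rubric.expected_tool_sequence))
print(follows_expected_pattern(
    ["place_order", "get_quote"], task.rubric.expected_tool_sequence))
```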

Multi-turn Trajectory Generation.

We simulate realistic tool-use scenarios through several components:

- User Simulation: LLM-generated user personas with distinct communication styles and preferences engage in multi-turn dialogues with agents, creating naturalistic interaction patterns.

- Tool Execution Environment: A sophisticated tool simulator (functionally equivalent to a world model) executes tool calls and provides realistic feedback. The simulator maintains and updates state after each tool execution, enabling complex multi-step interactions with persistent effects. It introduces controlled stochasticity to produce varied outcomes including successes, partial failures, and edge cases.
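The two simulator properties described above, persistent state and controlled stochasticity, can be shown with a toy rule-based stand-in. This is a deliberately simplified assumption: the class, the two file tools, and the `failure_rate` parameter are hypothetical and only illustrate the interface, not the simulator used in the paper.

```python
import random


class ToyToolSimulator:
    """Toy stateful simulator; hypothetical stand-in for a learned tool simulator."""

    def __init__(self, failure_rate=0.2, seed=0):
        self.state = {}                  # persists across tool calls
        self.failure_rate = failure_rate
        self.rng = random.Random(seed)   # seeded, so the randomness is controlled

    def call(self, tool, **args):
        # Inject occasional failures so trajectories include error handling.
        if self.rng.random() < self.failure_rate:
            return {"ok": False, "error": "transient_failure"}
        if tool == "write_file":
            self.state[args["path"]] = args["content"]
            return {"ok": True}
        if tool == "read_file":
            if args["path"] not in self.state:
                return {"ok": False, "error": "not_found"}
            return {"ok": True, "content": self.state[args["path"]]}
        return {"ok": False, "error": "unknown_tool"}


sim = ToyToolSimulator(failure_rate=0.0)
sim.call("write_file", path="notes.txt", content="hello")
print(sim.call("read_file", path="notes.txt"))    # sees the earlier write
print(sim.call("read_file", path="missing.txt"))  # an edge case: missing file
```

Setting `failure_rate` above zero makes identical call sequences yield different outcomes across seeds, which is the kind of variation the text attributes to controlled stochasticity.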

Quality Evaluation and Filtering.

An LLM-based judge evaluates each trajectory against the task rubrics. Only trajectories that meet the success criteria are retained for training, ensuring high-quality data while allowing natural variation in task-completion strategies.
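The filtering step amounts to scoring each trajectory against its rubric and discarding those below a threshold. In the sketch below, `keyword_judge` is a trivial placeholder for the LLM-based judge, and the message format, the rubric format, and the threshold are all assumptions made for illustration.

```python
def keyword_judge(trajectory, rubric):
    """Placeholder judge: fraction of rubric phrases found in the trajectory.

    A real pipeline would call an LLM here; keyword matching is only a stub.
    """
    text = " ".join(step["content"] for step in trajectory).lower()
    hits = sum(1 for criterion in rubric if criterion.lower() in text)
    return hits / len(rubric) if rubric else 0.0


def filter_trajectories(trajectories, rubric, judge, threshold=1.0):
    """Keep only trajectories whose judge score reaches the threshold."""
    return [t for t in trajectories if judge(t, rubric) >= threshold]


rubric = ["get_quote", "order placed"]
good = [
    {"role": "assistant", "content": "Called get_quote for AAPL"},
    {"role": "assistant", "content": "Order placed and confirmed"},
]
bad = [{"role": "assistant", "content": "Called get_quote for AAPL"}]

kept = filter_trajectories([good, bad], rubric, keyword_judge)
print(len(kept))
```

Because the filter only checks the outcome against the rubric, two trajectories that reach the goal by different tool-call orders can both be retained.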

Hybrid Approach with Real Execution Environments.

While simulation provides scalability, we acknowledge the inherent limitation of simulation fidelity. To address this, we complement our simulated environments with real execution sandboxes for scenarios where authenticity is crucial, particularly in coding and software engineering tasks. These real sandboxes execute actual code, interact with genuine development environments, and provide ground-truth feedback through objective metrics such as test suite pass rates. This combination ensures that our models learn from both the diversity of simulated scenarios and the authenticity of real executions, significantly strengthening practical agent capabilities.
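A test suite pass rate of the kind mentioned above can be computed by running each test in a separate process and counting clean exits. The sketch below is an assumption-heavy illustration: `measure_pass_rate` is a hypothetical helper, and a plain subprocess offers no real isolation, so an actual sandbox would add containerization and resource limits.

```python
import os
import subprocess
import sys
import tempfile
from pathlib import Path


def measure_pass_rate(solution_code, test_snippets, timeout_s=10.0):
    """Run each test snippet in a fresh interpreter; return the fraction passing."""
    if not test_snippets:
        return 0.0
    passed = 0
    with tempfile.TemporaryDirectory() as workdir:
        Path(workdir, "solution.py").write_text(solution_code)
        # Make `solution` importable from inside the child process.
        env = {**os.environ, "PYTHONPATH": workdir}
        for snippet in test_snippets:
            try:
                result = subprocess.run(
                    [sys.executable, "-c", snippet],
                    cwd=workdir, env=env, capture_output=True, timeout=timeout_s,
                )
                passed += int(result.returncode == 0)
            except subprocess.TimeoutExpired:
                pass  # a timeout counts as a failed test
    return passed / len(test_snippets)


SOLUTION = "def add(a, b):\n    return a + b\n"
TESTS = [
    "from solution import add; assert add(1, 2) == 3",
    "from solution import add; assert add(-1, 1) == 0",
    "from solution import add; assert add(2, 2) == 5",  # intentionally failing
]

rate = measure_pass_rate(SOLUTION, TESTS)
print(f"pass rate: {rate:.2f}")
```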

By leveraging this hybrid pipeline that combines scalable simulation with targeted real-world execution, we generate diverse, high-quality tool-use demonstrations that balance coverage and authenticity. The scale and automation of our synthetic data generation, coupled with the grounding provided by real execution environments, effectively implement large-scale rejection sampling [27, 88] through our quality filtering process. This high-quality synthetic data, when used for supervised fine-tuning, has demonstrated significant improvements in the model’s tool-use capabilities across a wide range of real-world applications.

### 3.2 Reinforcement Learning

Reinforcement learning (RL) is believed to have better token efficiency and generalization than SFT. Based on the work of K1.5 [36], we continue to scale RL in both task diversity and training FLOPs in K2. To support this, we develop a Gym-like extensible framework that facilitates RL across a wide range of scenarios. We extend the framework with a large number of tasks with verifiable rewards. For tasks that rely on subjective preferences, such as creative writing and open-ended question answering, we introduce a self-critic reward in which the model performs pairwise comparisons to judge its own outputs. This approach allows tasks from various domains to all benefit from the RL paradigm.
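To make the pairwise-comparison idea concrete, the sketch below turns pairwise preferences over a set of sampled responses into a scalar reward per response. The win-rate aggregation is an assumption chosen for simplicity, and `prefer_longer` is a toy stand-in for the model acting as its own critic; the text here does not specify how comparisons are aggregated.

```python
from itertools import combinations


def pairwise_rewards(responses, prefer):
    """Score each response by its win rate over all pairwise comparisons.

    `prefer(a, b)` should return True when `a` is judged better than `b`.
    """
    n = len(responses)
    if n < 2:
        return [0.0] * n
    wins = [0] * n
    for i, j in combinations(range(n), 2):
        if prefer(responses[i], responses[j]):
            wins[i] += 1
        else:
            wins[j] += 1
    # Each response takes part in n - 1 comparisons.
    return [w / (n - 1) for w in wins]


def prefer_longer(a, b):
    # Toy critic for illustration only; a real critic would be a model call.
    return len(a) > len(b)


rewards = pairwise_rewards(["a", "abc", "ab"], prefer_longer)
print(rewards)
```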

#### 3.2.1 Verifiable Rewards Gym

Math, STEM and Logical Tasks

For math, STEM, and logical reasoning domains, our RL data preparation follows two key principles: diverse coverage and moderate difficulty.