# Is Chain-of-Thought Reasoning of LLMs a Mirage? A Data Distribution Lens

**Authors**: Chengshuai Zhao, Zhen Tan, Pingchuan Ma, Dawei Li, Bohan Jiang, Yancheng Wang, Yingzhen Yang, Huan Liu

> Arizona State University

\pdftrailerid

redacted Corresponding author: {czhao93, ztan36, pingchua, daweili5, bjiang14, yancheng.wang, yingzhen.yang, huanliu}@asu.edu

Abstract

Chain-of-Thought (CoT) prompting has been shown to improve Large Language Model (LLM) performance on various tasks. With this approach, LLMs appear to produce human-like reasoning steps before providing answers (a.k.a., CoT reasoning), which often leads to the perception that they engage in deliberate inferential processes. However, some initial findings suggest that CoT reasoning may be more superficial than it appears, motivating us to explore further. In this paper, we study CoT reasoning via a data distribution lens and investigate if CoT reasoning reflects a structured inductive bias learned from in-distribution data, allowing the model to conditionally generate reasoning paths that approximate those seen during training. Thus, its effectiveness is fundamentally bounded by the degree of distribution discrepancy between the training data and the test queries. With this lens, we dissect CoT reasoning via three dimensions: task, length, and format. To investigate each dimension, we design DataAlchemy, an isolated and controlled environment to train LLMs from scratch and systematically probe them under various distribution conditions. Our results reveal that CoT reasoning is a brittle mirage that vanishes when it is pushed beyond training distributions. This work offers a deeper understanding of why and when CoT reasoning fails, emphasizing the ongoing challenge of achieving genuine and generalizable reasoning. Our code is available at GitHub: https://github.com/ChengshuaiZhao0/DataAlchemy.

1 Introduction

Recent years have witnessed Large Language Models’ (LLMs) dominant role in various domains (Zhao et al., 2023; Li et al., 2025b; Zhao et al., 2025; Ting et al., 2025) through versatile prompting techniques (Wei et al., 2022; Yao et al., 2023; Kojima et al., 2022). Among these, Chain-of-Thought (CoT) prompting (Wei et al., 2022) has emerged as a prominent method for eliciting structured reasoning from LLMs (a.k.a., CoT reasoning). By appending a simple cue such as “Let’s think step by step,” LLMs decompose complex problems into intermediate steps, producing outputs that resemble human-like reasoning. It has been shown to be effective in tasks requiring logical inference Xu et al. (2024), mathematical problem solving (Imani et al., 2023), and commonsense reasoning (Wei et al., 2022). The empirical successes of CoT reasoning lead to the perception that LLMs engage in deliberate inferential processes (Yu et al., 2023; Zhang et al., 2024a; Ling et al., 2023; Zhang et al., 2024c).

However, a closer examination reveals inconsistencies that challenge this optimistic view. Consider this straightforward question: “The day the US was established is in a leap year or a normal year?” When prompted with the CoT prefix, the modern LLM Gemini responded: “The United States was established in 1776. 1776 is divisible by 4, but it’s not a century year, so it’s a leap year. Therefore, the day the US was established was in a normal year.” This response exemplifies a concerning pattern: the model correctly recites the leap year rule and articulates intermediate reasoning steps, yet produces a logically inconsistent conclusion (i.e., asserting 1776 is both a leap year and a normal year). Such inconsistencies suggest that there is a distinction between human-like inference and CoT reasoning.

An expanding body of analyses reveals that LLMs tend to rely on surface-level semantics and clues rather than logical procedures (Bentham et al., 2024; Chen et al., 2025b; Lanham et al., 2023). LLMs construct superficial chains of logic based on learned token associations, often failing on tasks that deviate from commonsense heuristics or familiar templates (Tang et al., 2023). In the reasoning process, performance degrades sharply when irrelevant clauses are introduced, which indicates that models cannot grasp the underlying logic (Mirzadeh et al., 2024). This fragility becomes even more apparent when models are tested on more complex tasks, where they frequently produce incoherent solutions and fail to follow consistent reasoning paths (Shojaee et al., 2025). Collectively, these pioneering works deepen the skepticism surrounding the true nature of CoT reasoning.

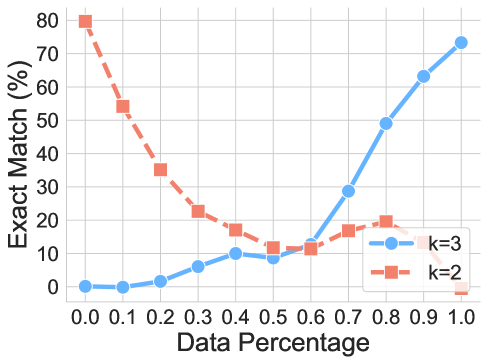

In light of this line of research, we question the CoT reasoning by proposing an alternative lens through data distribution and further investigating why and when it fails. We hypothesize that CoT reasoning reflects a structured inductive bias learned from in-distribution data, allowing the model to conditionally generate reasoning paths that approximate those seen during training. As such, its effectiveness is inherently limited by the nature and extent of the distribution discrepancy between training data and the test queries. Guided by this data distribution lens, we dissect CoT reasoning via three dimensions: (i) task —To what extent CoT reasoning can handle tasks that involve transformations or previously unseen task structures. (2) length —how CoT reasoning generalizes to chains with length different from that of training data; and (3) format —how sensitive CoT reasoning is to surface-level query form variations. To evaluate each aspect, we introduce DataAlchemy, a controlled and isolated experiment that allows us to train LLMs from scratch and systematically probe them under various distribution shifts.

Our findings reveal that CoT reasoning works effectively when applied to in-distribution or near in-distribution data but becomes fragile and prone to failure even under moderate distribution shifts. In some cases, LLMs generate fluent yet logically inconsistent reasoning steps. The results suggest that what appears to be structured reasoning can be a mirage, emerging from memorized or interpolated patterns in the training data rather than logical inference. These insights carry important implications for both practitioners and researchers. For practitioners, our results highlight the risk of relying on CoT as a plug-and-play solution for reasoning tasks and caution against equating CoT-style output with human thinking. For researchers, the results underscore the ongoing challenge of achieving reasoning that is both faithful and generalizable, motivating the need to develop models that can move beyond surface-level pattern recognition to exhibit deeper inferential competence. Our contributions are summarized as follows:

- Novel perspective. We propose a data distribution lens for CoT reasoning, illuminating that its effectiveness stems from structured inductive biases learned from in-distribution training data. This framework provides a principled lens for understanding why and when CoT reasoning succeeds or fails.

- Controlled environment. We introduce DataAlchemy, an isolated experimental framework that enables training LLMs from scratch and systematically probing CoT reasoning. This controlled setting allows us to isolate and analyze the effects of distribution shifts on CoT reasoning without interference from complex patterns learned during large-scale pre-training.

- Empirical validation. We conduct systematic empirical validation across three critical dimensions— task, length, and format. Our experiments demonstrate that CoT reasoning exhibits sharp performance degradation under distribution shifts, revealing that seemingly coherent reasoning masks shallow pattern replication.

- Real-world implication. This work reframes the understanding of contemporary LLMs’ reasoning capabilities and emphasizes the risk of over-reliance on COT reasoning as a universal problem-solving paradigm. It underscores the necessity for proper evaluation methods and the development of LLMs that possess authentic and generalizable reasoning capabilities.

2 Related Work

2.1 LLM Prompting and Co

Chain-of-Thought (CoT) prompting revolutionized how we elicit reasoning from Large Language Models by decomposing complex problems into intermediate steps (Wei et al., 2022). By augmenting few-shot exemplars with reasoning chains, CoT showed substantial performance gains on various tasks (Xu et al., 2024; Imani et al., 2023; Wei et al., 2022). Building on this, several variants emerged. Zero-shot CoT triggers reasoning without exemplars using instructional prompts (Kojima et al., 2022), and self-consistency enhances performance via majority voting over sampled chains (Wang et al., 2023). To reduce manual effort, Auto-CoT generates CoT exemplars using the models themselves (Zhang et al., 2023). Beyond linear chains, Tree-of-Thought (ToT) frames CoT as a tree search over partial reasoning paths (Yao et al., 2023), enabling lookahead and backtracking. SymbCoT combines symbolic reasoning with CoT by converting problems into formal representations (Xu et al., 2024). Recent work increasingly integrates CoT into the LLM inference process, generating long-form CoTs (Jaech et al., 2024; Team, 2024; Guo et al., 2025; Team et al., 2025). This enables flexible strategies like mistake correction, step decomposition, reflection, and alternative reasoning paths (Yeo et al., 2025; Chen et al., 2025a). The success of prompting techniques and long-form CoTs has led many to view them as evidence of emergent, human-like reasoning in LLMs. In this work, we challenge that viewpoint by adopting a data-centric perspective and demonstrating that CoT behavior arises largely from pattern matching over training distributions.

2.2 Discussion on Illusion of LLM Reasoning

While Chain-of-Thought prompting has led to impressive gains on complex reasoning tasks, a growing body of work has started questioning the nature of these improvements. One major line of research highlights the fragility of CoT reasoning. Minor and semantically irrelevant perturbations such as distractor phrases or altered symbolic forms can cause significant performance drops in state-of-the-art models (Mirzadeh et al., 2024; Tang et al., 2023). Models often incorporate such irrelevant details into their reasoning, revealing a lack of sensitivity to salient information. Other studies show that models prioritize the surface form of reasoning over logical soundness; in some cases, longer but flawed reasoning paths yield better final answers than shorter, correct ones (Bentham et al., 2024). Similarly, performance does not scale with problem complexity as expected—models may overthink easy problems and give up on harder ones (Shojaee et al., 2025). Another critical concern is the faithfulness of the reasoning process. Intervention-based studies reveal that final answers often remain unchanged even when intermediate steps are falsified or omitted (Lanham et al., 2023), a phenomenon dubbed the illusion of transparency (Bentham et al., 2024; Chen et al., 2025b). Together, these findings suggest that LLMs are not principled reasoners but rather sophisticated simulators of reasoning-like text. However, a systematic understanding of why and when CoT reasoning fails is still a mystery.

2.3 OOD Generalization of LLMs

Out-of-distribution (OOD) generalization, where test inputs differ from training data, remains a key challenge in machine learning, particularly for large language models (LLMs) Yang et al. (2024, 2023); Budnikov et al. (2025); Zhang et al. (2024b). Recent studies show that LLMs prompted to learn novel functions often revert to similar functions encountered during pretraining (Wang et al., 2024; Garg et al., 2022). Likewise, LLM generalization frequently depends on mapping new problems onto familiar compositional structures (Song et al., 2025). CoT prompting improves OOD generalization (Wei et al., 2022), with early work demonstrating length generalization for multi-step problems beyond training distributions (Yao et al., 2025; Shen et al., 2025). However, this ability is not inherent to CoT and heavily depends on model architecture and training setups. For instance, strong generalization in arithmetic tasks was achieved only when algorithmic structures were encoded into positional encodings (Cho et al., 2024). Similarly, finer-grained CoT demonstrations during training boost OOD performance, highlighting the importance of data granularity (Wang et al., 2025a). Theoretical and empirical evidence shows that CoT generalizes well only when test inputs share latent structures with training data; otherwise, performance declines sharply (Wang et al., 2025b; Li et al., 2025a). Despite its promise, CoT still struggles with genuinely novel tasks or formats. In the light of these brilliant findings, we propose rethinking CoT reasoning through a data distribution lens: decomposing CoT into task, length, and format generalization, and systematically investigating each in a controlled setting.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Computational Generalization Framework

### Overview

The diagram illustrates a framework for text element generalization, showcasing transformations, task adaptation, length handling, and format modifications. It uses color-coded blocks (red, blue, pink) to represent input, output, training, and testing phases, with arrows indicating data flow and transformations.

### Components/Axes

1. **Basic Atoms**:

- A 5x5 grid of uppercase letters (A-Z) labeled "Basic atoms A".

- Example element: "APPLE" split into individual letters (A, P, P, L, E).

2. **Transformations**:

- **f₁: ROT Transformation**: Shifts letters by +13 positions (e.g., A→N, P→C).

- **f₂: Cyclic Shift**: Rotates letters right by +1 position (e.g., APPLE→EAPPLE).

3. **Task Generalization**:

- Components: ID, Comp, POOD, OOD.

- Transformations:

- ID: `f₁ → f₁`

- Comp: `{f₁, f₁, f₁, f₁, f₂} → f₂`

- POOD: `f₁ → f₁`

- OOD: `f₁ → f₂`

4. **Length Generalization**:

- Text lengths: 5, 4, 5 characters.

- Example: "ABCD" → "ABCDA" via insertion.

5. **Format Generalization**:

- Operations: Insertion, Deletion, Modify.

- Example: "ABCD" → "ABC?D" (insertion of "?").

6. **Legend**:

- Red: Input

- Blue: Output

- Pink: Training (solid) / Testing (dashed)

### Detailed Analysis

- **Basic Atoms**: The 5x5 grid represents foundational text units (e.g., letters).

- **Transformations**:

- ROT13 (f₁) and cyclic shifts (f₂) alter letter positions.

- Example: "APPLE" → "NC CYR" (f₁) → "EAPPLE" (f₂).

- **Task Generalization**:

- Tasks (ID, Comp, POOD, OOD) apply transformations to generalize across domains.

- Comp task combines multiple f₁/f₂ operations.

- **Length Generalization**: Handles variable text lengths (e.g., 4→5 characters via insertion).

- **Format Generalization**: Modifies text structure (insertion, deletion, modification).

### Key Observations

- **Color Consistency**:

- Red blocks (Input) align with legend.

- Blue blocks (Output) match legend.

- Pink blocks: Solid (Training) and dashed (Testing) phases.

- **Flow Direction**: Arrows indicate sequential processing (e.g., Input → Training → Output).

- **Transformation Logic**:

- f₁ and f₂ are applied iteratively or combined (e.g., Comp task).

- OOD task shifts from f₁ to f₂, suggesting adaptation to new domains.

### Interpretation

The diagram demonstrates how a model generalizes text processing across tasks, lengths, and formats. Transformations (f₁, f₂) enable adaptability, while task generalization (ID, Comp, POOD, OOD) shows domain-specific adjustments. The Training/Testing phases (pink blocks) highlight evaluation strategies. The framework emphasizes modularity, with components like length and format generalization addressing specific challenges. The use of color coding and structured flow clarifies the relationship between input manipulation and output generation, suggesting a pipeline for robust text processing systems.

</details>

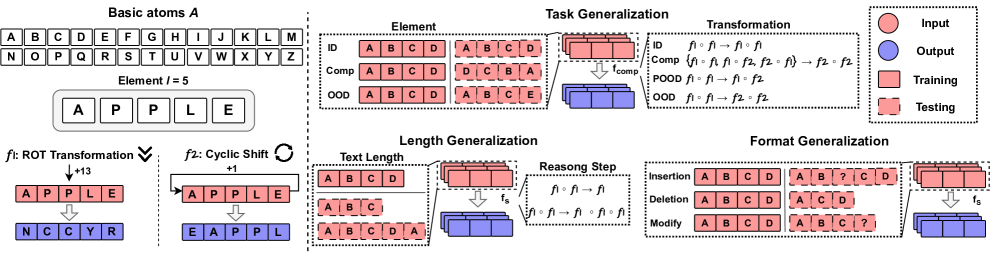

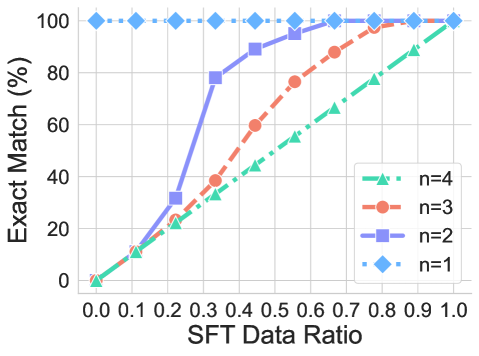

Figure 1: Framework of DataAlchemy. It creates an isolated and controlled environment to train LLMs from scratch and probe the task, length, and format generalization.

3 The Data Distribution Lens

We propose a fundamental reframing to understand what CoT actually represents. We hypothesize that the underlying mechanism is better understood through the lens of data distribution: rather than executing explicit reasoning procedures, CoT operates as a pattern-matching process that interpolates and extrapolates from the statistical regularities present in its training distribution. Specifically, we posit that CoT’s success stems not from a model’s inherent reasoning capacity, but from its ability to generalize conditionally to out-of-distribution (OOD) test cases that are structurally similar to in-distribution exemplars.

To formalize this view, we model CoT prompting as a conditional generation process constrained by the distributional properties of the training data. Let $\mathcal{D}_{\text{train}}$ denote the training distribution over input-output pairs $(x,y)$ , where $x$ represents a reasoning problem and $y$ denotes the solution sequence (including intermediate reasoning steps). The model learns an approximation $f_{\theta}(x)≈ y$ by minimizing empirical risk over samples drawn from $\mathcal{D}_{\text{train}}$ .

Let the expected training risk be defined as:

$$

R_{\text{train}}(f_{\theta})=\mathbb{E}_{(x,y)\sim\mathcal{D}_{\text{train}}}[\ell(f_{\theta}(x),y)], \tag{1}

$$

where $\ell$ is a task-specific loss function (e.g., cross-entropy, token-level accuracy). At inference time, given a test input $a_{\text{test}}$ sampled from a potentially different distribution $\mathcal{D}_{\text{test}}$ , the model generates a response $y_{\text{test}}$ conditioned on patterns learned from $\mathcal{D}_{\text{train}}$ . The corresponding expected test risk is:

$$

R_{\text{test}}(f_{\theta})=\mathbb{E}_{(x,y)\sim\mathcal{D}_{\text{test}}}[\ell(f_{\theta}(x),y)]. \tag{2}

$$

The degree to which the model generalizes from $\mathcal{D}_{\text{train}}$ to $\mathcal{D}_{\text{test}}$ is governed by the distributional discrepancy between the two, which we quantify using divergence measures:

**Definition 3.1 (Distributional Discrepancy)**

*Given training distribution $\mathcal{D}_{\text{train}}$ and test distribution $\mathcal{D}_{\text{test}}$ , the distributional discrepancy is defined as:

$$

\Delta(\mathcal{D}_{\text{train}},\mathcal{D}_{\text{test}})=\mathcal{H}(\mathcal{D}_{\text{train}}\parallel\mathcal{D}_{\text{test}}) \tag{3}

$$

where $\mathcal{H}(·\parallel·)$ is a divergence measure (e.g., KL divergence, Wasserstein distance) that quantifies the statistical distance between the two distributions.*

**Theorem 3.1 (CoT Generalization Bound)**

*Let $f_{\theta}$ denote a model trained on $\mathcal{D}_{\text{train}}$ with expected training risk $R_{\text{train}}(f_{\theta})$ . For a test distribution $\mathcal{D}_{\text{test}}$ , the expected test risk $R_{\text{test}}(f_{\theta})$ is bounded by:

$$

R_{\text{test}}(f_{\theta})\leq R_{\text{train}}(f_{\theta})+\Lambda\cdot\Delta(\mathcal{D}_{\text{train}},\mathcal{D}_{\text{test}})+\mathcal{O}\left(\sqrt{\frac{\log(1/\delta)}{n}}\right) \tag{4}

$$

where $\Lambda>0$ is a Lipschitz constant that depends on the model architecture and task complexity, $n$ is the training sample size, and the bound holds with probability $1-\delta$ , where $\delta$ is the failure propability.*

The proof is provided in Appendix A.1

Building on this data distribution perspective, we identify three critical dimensions along which distributional shifts can occur, each revealing different aspects of CoT’s pattern-matching nature: ➊ Task generalization examines how well CoT transfers across different types of reasoning tasks. Novel tasks may have unique elements and underlying logical structure, which introduces distributional shifts that challenge the model’s ability to apply learned reasoning patterns. ➋ Length generalization investigates CoT’s robustness to reasoning chains of varying lengths. Since training data typically contains reasoning sequences within a certain length range, test cases requiring substantially longer or shorter reasoning chains represent a form of distributional shift along the sequence length dimension. This length discrepancy could result from the reasoning step or the text-dependent solution space. ➌ Format generalization explores how sensitive CoT is to variations in prompt formulation and structure. Due to various reasons (e.g., sophistical training data or diverse background of users), it is challenging for LLM practitioners to design a golden prompt to elicit knowledge suitable for the current case. Their detailed definition and implementation are given in subsequent sections.

Each dimension provides a unique lens for understanding the boundaries of CoT’s effectiveness and the mechanisms underlying its apparent reasoning capabilities. By systematically varying these dimensions in controlled experimental settings, we can empirically validate our hypothesis that CoT performance degrades predictably as distributional discrepancy increases, thereby revealing its fundamental nature as a pattern-matching rather than reasoning system.

4 DataAlchemy: An Isolated and Controlled Environment

To systematically investigate the influence of distributional shifts on CoT reasoning capabilities, we introduce DataAlchemy, a synthetic dataset framework designed for controlled experimentation. This environment enables us to train language models from scratch under precisely defined conditions, allowing for rigorous analysis of CoT behavior across different OOD scenarios. The overview is shown in Figure 1.

4.1 Basic Atoms and Elements

Let $\mathcal{A}=\{\texttt{A},\texttt{B},\texttt{C},...,\texttt{Z}\}$ denote the alphabet of 26 basic atoms. An element $\mathbf{e}$ is defined as an ordered sequence of atoms:

$$

\mathbf{e}=(a_{0},a_{1},\ldots,a_{l-1})\quad\text{where}\quad a_{i}\in\mathcal{A},\quad l\in\mathbb{Z}^{+} \tag{5}

$$

This design provides a versatile manipulation for the size of the dataset $\mathcal{D}$ (i.e., $|\mathcal{D}|=|\mathcal{A}|^{l}$ ) by varying element length $l$ to train language models with various capacities. Meanwhile, it also allows us to systematically probe text length generalization capabilities.

4.2 Transformations

A transformation is an operation that operates on elements $F:\mathbf{e}→\hat{\mathbf{e}}$ . In this work, we consider two fundamental transformations: the ROT Transformation and the Cyclic Position Shift. To formally define the transformations, we introduce a bijective mapping $\phi:\mathcal{A}→\mathbb{Z}_{26}$ , where $\mathbb{Z}_{26}=\{0,1,...,25\}$ , such that $\phi(c)$ maps a character to its zero-based alphabetical index.

**Definition 4.1 (ROT Transformation)**

*Given an element $\mathbf{e}=(a_{0},\\

...,a_{l-1})$ and a rotation parameter $n∈\mathbb{Z}$ , the ROT Transformation $f_{\text{rot}}$ produces an element $\hat{\mathbf{e}}=(\hat{a}_{0},...,\hat{a}_{l-1})$ . Each atom $\hat{a}_{i}$ is:

$$

\hat{a}_{i}=\phi^{-1}((\phi(a_{i})+n)\pmod{26}) \tag{6}

$$

This operation cyclically shifts each atom $n$ positions forward in alphabetical order. For example, if $\mathbf{e}=(\texttt{A},\texttt{P},\texttt{P},\texttt{L},\texttt{E})$ and $n=13$ , then $f_{\text{rot}}(\mathbf{e},13)=(\texttt{N},\texttt{C},\texttt{C},\texttt{Y},\texttt{R})$ .*

**Definition 4.2 (Cyclic Position Shift)**

*Given an element $\mathbf{e}=(a_{0},\\

...,a_{l-1})$ and a shift parameter $n∈\mathbb{Z}$ , the Cyclic Position Shift $f_{\text{pos}}$ produces an element $\hat{\mathbf{e}}=(\hat{a}_{0},...,\hat{a}_{l-1})$ . Each atom $\hat{a}_{i}$ is defined by a cyclic shift of indices:

$$

\hat{a}_{i}=a_{(i-n)\pmod{l}} \tag{7}

$$

This transformation cyclically shifts the positions of the atoms within the sequence by $n$ positions to the right. For instance, if $\mathbf{e}=(\texttt{A},\texttt{P},\texttt{P},\texttt{L},\texttt{E})$ and $n=1$ , then $f_{\text{pos}}(\mathbf{e},1)=(\texttt{E},\texttt{A},\texttt{P},\texttt{P},\texttt{L})$ .*

**Definition 4.3 (Generalized Compositional Transformation)**

*To model multi-step reasoning, we define a compositional transformation as the successive application of a sequence of operations. Let $S=(f_{1},f_{2},...,f_{k})$ be a sequence of operations, where each $f_{i}$ is one of the fundamental transformations $\mathcal{F}=\{f_{\text{rot}},f_{\text{pos}}\}$ with its respective parameters. The compositional transformation $f_{\text{S}}$ for the sequence $S$ is the function composition:

$$

f_{\text{S}}=f_{k}\circ f_{k}\circ\cdots\circ f_{1} \tag{8}

$$

The resulting element $\hat{\mathbf{e}}$ is obtained by applying the operations sequentially to an initial element $\mathbf{e}$ :

$$

\hat{\mathbf{e}}=f_{k}(f_{k-1}(\ldots(f_{1}(\mathbf{e}))\ldots)) \tag{9}

$$*

This design enables the construction of arbitrarily complex transformation chains by varying the type, parameters, order, and length of operations within the sequence. At the sample time, we can naturally acquire the COT reasoning step by decomposing the intermediate process:

$$

\underbrace{f_{\text{S}}(\mathbf{e}):}_{\text{Query}}\quad\underbrace{\mathbf{e}\xrightarrow{f_{1}}\mathbf{e}^{(1)}\xrightarrow{f_{2}}\mathbf{e}^{(2)}\cdots\xrightarrow{f_{k-1}}\mathbf{e}^{(k-1)}\xrightarrow{f_{k}}}_{\text{COT reasoning steps}}\underbrace{\boxed{\hat{\mathbf{e}}}}_{\text{Answer}} \tag{1}

$$

4.3 Environment Setting

Through systematic manipulation of elements and transformations, DataAlchemy offers a flexible and controllable framework for training LLMs from scratch, facilitating rigorous investigation of diverse OOD scenarios. Without specification, we employ a decoder-only language model GPT-2 (Radford et al., 2019) with a configuration of 4 layers, 32 hidden dimensions, and 4 attention heads. We utilize a Byte-Pair Encoding (BPE) tokenizer. Both LLMs and the tokenizer follow the general modern LLM pipeline. During the inference time, we set the temperature to 1e-5. For rigor, we also study LLMs with various parameters, architectures, and temperatures in Section 8. Details of the implementation are provided in the Appendix B. We consider that each element consists of 4 basic atoms, which produces 456,976 samples for each dataset with varied transformations and token amounts. We initialize the two transformations $f_{1}=f_{\text{rot}}(e,13)$ and $f_{2}=f_{\text{pos}}(e,1)$ . We consider the exact match rate, Levenshtein distance (i.e., edit distance) (Yujian and Bo, 2007), and BLEU score (Papineni et al., 2002) as metrics and evaluate the produced reasoning step, answer, and full chain. Examples of the datasets and evaluations are shown in Appendix C

5 Task Generalization

Task generalization represents a fundamental challenge for CoT reasoning, as it directly tests a model’s ability to apply learned concepts and reasoning patterns to unseen scenarios. In our controlled experiments, both transformation and elements could be novel. Following this, we decompose task generalization into two primary dimensions: element generalization and transformation generalization.

Task Generalization Complexity. Guided by the data distribution lens, we first introduce a measure for generalization difficulty:

**Proposition 5.1 (Task Generalization Complexity)**

*For a reasoning chain $f_{S}$ operating on elements $\mathbf{e}=(a_{0},...,a_{l-1})$ , define:

$$

\displaystyle\operatorname{TGC}(C)= \displaystyle\alpha\sum_{i=1}^{m}\mathbb{I}\left[a_{i}\notin\mathcal{E}^{i}_{\text{train }}\right]+\beta\sum_{j=1}^{n}\mathbb{I}\left[f_{j}\notin\mathcal{F}_{\text{train }}\right]+\gamma\mathbb{I}\left[\left(f_{1},f_{2},\ldots,f_{k}\right)\notin\mathcal{P}_{\text{train }}\right]+C_{T} \tag{11}

$$

as a measurement of task discrepancy $\Delta_{task}$ , where $\alpha,\beta,\gamma$ are weighting parameters for different novelty types and $C_{T}$ is task specific constant. $\mathcal{E}^{i}_{\text{train }},\mathcal{F}_{\text{train }},$ and $\mathcal{P}_{\text{train }}$ denote the bit-wise element set, relation set and the order of relation set used during training.*

We establish a critical threshold beyond which CoT reasoning fails exponentially:

**Theorem 5.1 (Task Generalization Failure Threshold)**

*There exists a threshold $\tau$ such that when $\text{TGC}(C)>\tau$ , the probability of correct CoT reasoning drops exponentially:

$$

P(\text{correct}|C)\leq e^{-\delta(\text{TGC}(C)-\tau)} \tag{12}

$$*

The proof is provided in Appendix A.2.

5.1 Transformation Generalization

Transformation generalization evaluates the ability of CoT reasoning to effectively transfer when models encounter novel transformations during testing, which is an especially prevalent scenario in real-world applications.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Scatter Plot: BLEU Score vs Edit Distance with Distribution Shift

### Overview

The image is a scatter plot visualizing the relationship between BLEU Score (y-axis) and Edit Distance (x-axis), with data points color-coded by Distribution Shift. The plot reveals clusters of data points in distinct regions, suggesting patterns in how these metrics interact.

### Components/Axes

- **X-axis (Edit Distance)**: Ranges from 0.00 to 0.30 in increments of 0.05. Represents the magnitude of textual edits.

- **Y-axis (BLEU Score)**: Ranges from 0.2 to 1.0 in increments of 0.1. Measures text similarity to a reference.

- **Legend (Distribution Shift)**: A vertical color gradient from blue (low shift, ~0.2) to red (high shift, ~0.8). Positioned on the right side of the plot.

- **Data Points**: Circular markers with varying opacity, sized uniformly. Colors correspond to the Distribution Shift legend.

### Detailed Analysis

1. **Top-Left Cluster (Blue Points)**:

- **Position**: X ≈ 0.00–0.05, Y ≈ 0.95–1.0.

- **Characteristics**: High BLEU Scores (~0.95–1.0) with minimal Edit Distance (~0.00–0.05). Colors are predominantly blue, indicating low Distribution Shift.

- **Interpretation**: These points represent near-identical text to the reference, with minimal edits and high similarity.

2. **Central Cluster (Purple Points)**:

- **Position**: X ≈ 0.15–0.25, Y ≈ 0.4–0.6.

- **Characteristics**: Moderate BLEU Scores (~0.4–0.6) and Edit Distances (~0.15–0.25). Colors transition from purple to red, indicating mid-to-high Distribution Shift.

- **Interpretation**: This cluster suggests a trade-off between edit magnitude and similarity, with moderate performance and noticeable distribution shifts.

3. **Bottom-Right Cluster (Red Points)**:

- **Position**: X ≈ 0.25–0.30, Y ≈ 0.2–0.4.

- **Characteristics**: Low BLEU Scores (~0.2–0.4) and high Edit Distances (~0.25–0.30). Colors are predominantly red, indicating high Distribution Shift.

- **Interpretation**: These points reflect significant textual alterations, leading to poor similarity and high distribution shifts.

4. **Diagonal Trend**:

- A faint diagonal band of purple-to-red points stretches from (X=0.15, Y=0.5) to (X=0.25, Y=0.4), suggesting a negative correlation between Edit Distance and BLEU Score as Distribution Shift increases.

### Key Observations

- **Outliers**: The blue cluster in the top-left is an outlier group with exceptionally high BLEU Scores and near-zero Edit Distance.

- **Trend**: As Edit Distance increases, BLEU Scores generally decrease, with a steeper decline observed in regions of higher Distribution Shift (redder points).

- **Color Consistency**: All data points align with the legend’s color gradient, confirming accurate spatial grounding.

### Interpretation

The plot demonstrates that **lower Edit Distance** (closer to the original text) correlates with **higher BLEU Scores**, indicating better performance. Conversely, **higher Edit Distance** (more extensive edits) leads to **lower BLEU Scores**, likely due to greater divergence from the reference. The **Distribution Shift** gradient (blue to red) reinforces this trend, showing that increased shift (redder points) exacerbates performance degradation. The central purple cluster highlights a transitional zone where moderate edits and shifts result in mid-range performance, suggesting a threshold effect. This analysis implies that models or systems generating text with minimal edits (blue cluster) perform best, while those introducing significant changes (red cluster) struggle, particularly under high distribution shifts.

</details>

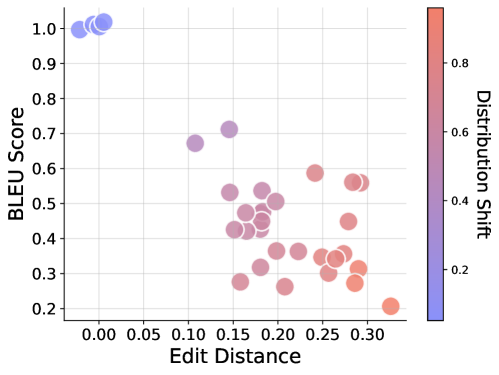

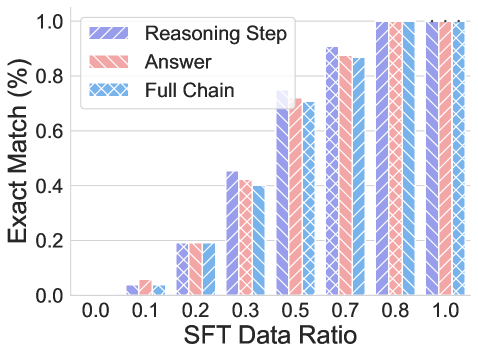

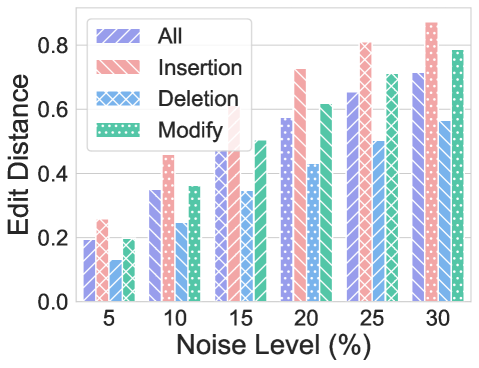

Figure 2: Performance of CoT reasoning on transformation generalization. Efficacy of CoT reasoning declines as the degree of distributional discrepancy increases.

Experimental Setup. To systematically evaluate the impact of transformations, we conduct experiments by varying transformations between training and testing sets while keeping other factors constant (e.g., elements, length, and format). Guided by the intuition formalized in Proposition 5.1, we define four incremental levels of distribution shift in transformations as shown in Figure 1: (i) In-Distribution (ID): The transformations in the test set are identical to those observed during training, e.g., $f_{1}\circ f_{1}→ f_{1}\circ f_{1}$ . (ii) Composition (CMP): Test samples comprise novel compositions of previously encountered transformations, though each individual transformation remains familiar, e.g., ${f_{1}\circ f_{1},f_{1}\circ f_{2},f_{2}\circ f_{1}}→ f_{2}\circ f_{2}$ . (iii) Partial Out-of-Distribution (POOD): Test data include compositions involving at least one novel transformation not seen during training, e.g., $f_{1}\circ f_{1}→ f_{1}\circ f_{2}$ . (iv) Out-of-Distribution (OOD): The test set contains entirely novel transformation types that are unseen in training, e.g., $f_{1}\circ f_{1}→ f_{2}\circ f_{2}$ .

Table 1: Full chain evaluation under different scenarios for transformation generalization.

| $f_{1}\circ f_{1}→ f_{1}\circ f_{1}$ $\{f_{2}\circ f_{2},f_{1}\circ f_{2},f_{2}\circ f_{1}\}→ f_{1}\circ f_{1}$ $f_{1}\circ f_{2}→ f_{1}\circ f_{1}$ | ID CMP POOD | 100.00% 0.01% 0.00% | 0 0.1326 0.1671 | 1 0.6867 0.4538 |

| --- | --- | --- | --- | --- |

| $f_{2}\circ f_{2}→ f_{1}\circ f_{1}$ | OOD | 0.00% | 0.2997 | 0.2947 |

Findings. Figure 2 illustrates the performance of the full chain under different distribution discrepancies computed by task generalize complexities (normalized between 0 and 1) in Definition 5.1. We can observe that, in general, the effectiveness of CoT reasoning decreases when distribution discrepancy increases. For the instance shown in Table 1, from in-distribution to composition, POOD, and OOD, the exact match decreases from 1 to 0.01, 0, and 0, and the edit distance increases from 0 to 0.13, 0.17 when tested on data with transformation $f_{1}\circ f_{1}$ . Apart from ID, LLMs cannot produce a correct full chain in most cases, while they can produce correct CoT reasoning when exposed to some composition and POOD conditions by accident. As shown in Table 2, from $f_{1}\circ f_{2}$ to $f_{2}\circ f_{2}$ , the LLMs can correctly answer 0.1% of questions. A close examination reveals that it is a coincidence, e.g., the query element is A, N, A, N, which happened to produce the same result for the two operations detailed in the Appendix D.1. When further analysis is performed by breaking the full chain into reasoning steps and answers, we observe strong consistency between the reasoning steps and answers. For example, under the composition generalization setting, the reasoning steps are entirely correct on test data distribution $f_{1}\circ f_{1}$ and $f_{2}\circ f_{2}$ , but with wrong answers. Probe these insistent cases in Appendix D.1, we can find that when a novel transformation (say $f_{1}\circ f_{1}$ ) is present, LLMs try to generalize the reasoning paths based on the most similar ones (i.e., $f_{1}\circ f_{2}$ ) seen during training, which leads to correct reasoning paths, yet incorrect answer, which echo the example in the introduction. Similarly, generalization from $f_{1}\circ f_{2}$ to $f_{2}\circ f_{1}$ or vice versa allows LLMs to produce correct answers that are attributed to the commutative property between the two orthogonal transformations with unfaithful reasoning paths. Collectively, the above results indicate that the CoT reasoning fails to generalize to novel transformations, not even to novel composition transforms. Rather than demonstrating a true understanding of text, CoT reasoning under task transformations appears to reflect a replication of patterns learned during training.

Table 2: Evaluation on different components in CoT reasoning on transformation generalization. CoT reasoning shows inconsistency with the reasoning steps and answers.

| $\{f_{1}\circ f_{1},f_{1}\circ f_{2},f_{2}\circ f_{1}\}→ f_{2}\circ f_{2}$ $\{f_{1}\circ f_{2},f_{2}\circ f_{1},f_{2}\circ f_{2}\}→ f_{1}\circ f_{1}$ $f_{1}\circ f_{2}→ f_{2}\circ f_{1}$ | 100.00% 100.00% 0.00% | 0.01% 0.01% 100.00% | 0.01% 0.01% 0.00% | 0.000 0.000 0.373 | 0.481 0.481 0.000 | 0.133 0.133 0.167 |

| --- | --- | --- | --- | --- | --- | --- |

| $f_{2}\circ f_{1}→ f_{1}\circ f_{2}$ | 0.00% | 100.00% | 0.00% | 0.373 | 0.000 | 0.167 |

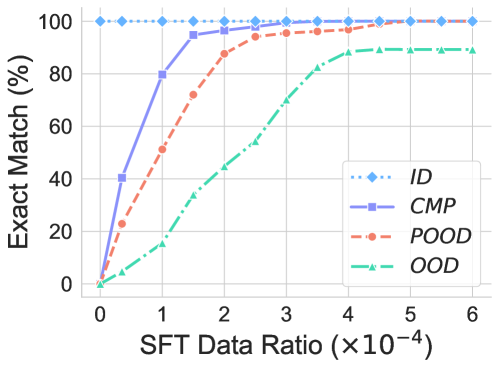

Experiment settings. To further probe when CoT reasoning can generalize to unseen transformations, we conduct supervised fine-tuning (SFT) on a small portion $\lambda$ of unseen data. In this way, we can decrease the distribution discrepancy between the training and test sets, which might help LLMs to generalize to test queries.

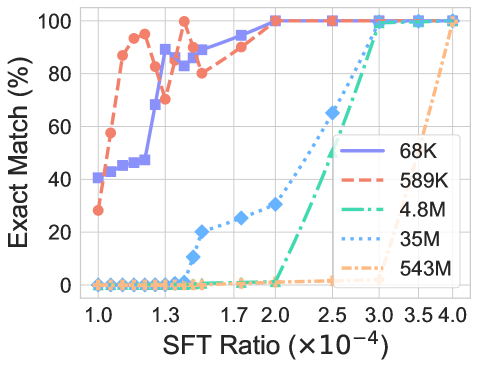

Findings. As shown in Figure 3, we can find that generally a very small portion ( $\lambda=1.5e^{-4}$ ) of data can make the model quickly generalize to unseen transformations. The less discrepancy between the training and testing data, the quicker the model can generalize. This indicates that a similar pattern appears in the training data, helping LLMs to generalize to the test dataset.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Graph: Exact Match Performance vs. SFT Data Ratio

### Overview

The graph illustrates the relationship between the SFT Data Ratio (scaled by ×10⁻⁴) and the Exact Match (%) performance of four distinct methods: ID, CMP, POOD, and OOD. Performance is measured as a percentage, with all methods plateauing near 100% at higher data ratios.

### Components/Axes

- **X-axis**: SFT Data Ratio (×10⁻⁴), ranging from 0 to 6 in increments of 1.

- **Y-axis**: Exact Match (%), ranging from 0 to 100% in increments of 20.

- **Legend**: Located in the bottom-right corner, mapping:

- Blue diamonds (dashed line): ID

- Purple squares (solid line): CMP

- Red circles (dashed line): POOD

- Green triangles (dashed line): OOD

### Detailed Analysis

1. **ID (Blue Diamonds)**:

- Starts at 0% at x=0.

- Rises sharply to 100% by x=1.

- Remains flat at 100% for x ≥ 1.

2. **CMP (Purple Squares)**:

- Begins at 0% at x=0.

- Increases gradually, reaching 100% by x=2.

- Plateaus at 100% for x ≥ 2.

3. **POOD (Red Circles)**:

- Starts at 0% at x=0.

- Rises steadily, surpassing CMP around x=2.

- Reaches 100% by x=4 and plateaus.

4. **OOD (Green Triangles)**:

- Begins at 0% at x=0.

- Increases slowly, reaching ~85% by x=6.

- Stabilizes near 85% for x ≥ 6.

### Key Observations

- **Early Performance**: ID achieves 100% performance fastest (x=1), followed by CMP (x=2) and POOD (x=4).

- **Late-Stage Growth**: OOD lags significantly, only reaching ~85% by x=6.

- **Crossing Trends**: POOD overtakes CMP between x=2 and x=3, indicating superior performance at higher data ratios.

- **Plateaus**: All methods plateau near 100% except OOD, which plateaus at ~85%.

### Interpretation

The graph suggests that **ID** is the most efficient method, achieving full performance with minimal data. **CMP** and **POOD** show similar trajectories but diverge at higher ratios, with POOD outperforming CMP beyond x=3. **OOD** demonstrates the weakest performance, requiring the largest data ratio to reach ~85% and failing to match the others. This could reflect differences in algorithmic efficiency, data utilization, or model architecture. The plateauing behavior implies diminishing returns beyond certain data ratios, highlighting a potential threshold for optimal performance.

</details>

Figure 3: Performance on unseen transformation using SFT in various levels of distribution shift. Introducing a small amount of unseen data helps CoT reasoning to generalize across different scenarios.

5.2 Element Generalization

Element generalization is another critical factor to consider when LLMs try to generalize to new tasks.

Experiment settings. Similar to transformation generalization, we fix other factors and consider three progressive distribution shifts for elements: ID, CMP, and OOD, as shown in Figure 1. It is noted that in composition, we test if CoT reasoning can be generalized to novel combinations when seeing all the basic atoms in the elements, e.g., $(\texttt{A},\texttt{B},\texttt{C},\texttt{D})→(\texttt{B},\texttt{C},\texttt{D},\texttt{A})$ . Based on the atom order in combination (can be measured by edit distance $n$ ), the CMP can be further developed. While for OOD, atoms that constitute the elements are totally unseen during the training.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Heatmap: BLEU Scores and Exact Match Rates Across Scenarios and Transformations

### Overview

The image presents two stacked heatmaps comparing performance metrics (BLEU scores and exact match percentages) across three scenarios (ID, CMP, OOD) and six transformation types (f1, f2, f1·f1, f1·f2, f2·f1, f2·f2). The top heatmap shows BLEU scores (0-1 scale), while the bottom shows exact match rates (0-100%).

### Components/Axes

**Axes:**

- **Y-axis (Scenarios):**

- Top: ID (identical data)

- Middle: CMP (comparison data)

- Bottom: OOD (out-of-distribution data)

- **X-axis (Transformations):**

- f1, f2, f1·f1, f1·f2, f2·f1, f2·f2

- **Color Scales:**

- Right (BLEU Scores): Red (1.0) → Blue (0.0)

- Bottom (Exact Match): Red (100%) → Blue (0%)

**Legend Placement:**

- BLEU score legend: Right side, top heatmap

- Exact match legend: Bottom heatmap, right side

### Detailed Analysis

**BLEU Scores (Top Heatmap):**

- **ID Scenario:** All transformations score 1.00 (perfect BLEU)

- **CMP Scenario:**

- f1: 0.71

- f2: 0.62

- f1·f1: 0.65

- f1·f2: 0.68

- f2·f1: 0.32

- f2·f2: 0.16

- **OOD Scenario:**

- f1·f1: 0.46

- f1·f2: 0.35

- f2·f1: 0.40

- f2·f2: 0.35

- All other transformations: 0.00

**Exact Match Rates (Bottom Heatmap):**

- **ID Scenario:** All transformations score 100%

- **CMP & OOD Scenarios:** All transformations score 0%

### Key Observations

1. **ID Scenario Dominance:** Perfect performance (BLEU=1.00, exact match=100%) across all transformations

2. **CMP Scenario Variability:**

- Highest BLEU for f1·f2 (0.68)

- Lowest BLEU for f2·f2 (0.16)

- No exact matches despite moderate BLEU scores

3. **OOD Scenario Limitations:**

- Only transformation combinations (f1·f1, f1·f2, f2·f1, f2·f2) show partial BLEU scores (0.35-0.46)

- No exact matches despite non-zero BLEU scores

4. **Transformation Impact:**

- Single-feature transformations (f1, f2) perform better than combinations in CMP/OOD

- f2·f2 combination shows worst performance in both metrics

### Interpretation

The data reveals a clear performance hierarchy: ID > CMP > OOD. While BLEU scores suggest some fluency preservation in CMP scenarios, the absence of exact matches indicates these transformations fail to maintain semantic integrity. The OOD scenario's near-zero exact matches despite moderate BLEU scores highlight a critical disconnect between surface-level fluency and factual accuracy in out-of-distribution contexts. The f2·f2 transformation emerges as particularly problematic, showing both the lowest BLEU and no exact matches in CMP scenarios. This suggests that complex feature interactions degrade performance more severely than single-feature transformations.

</details>

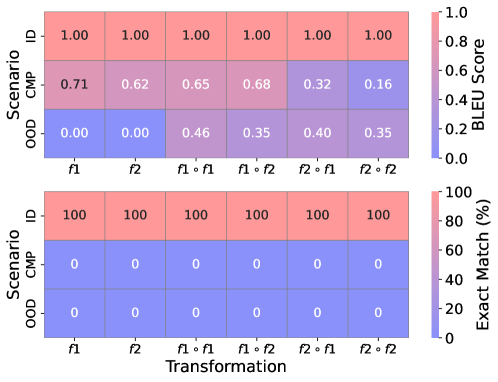

Figure 4: Element generalization results on various scenarios and relations.

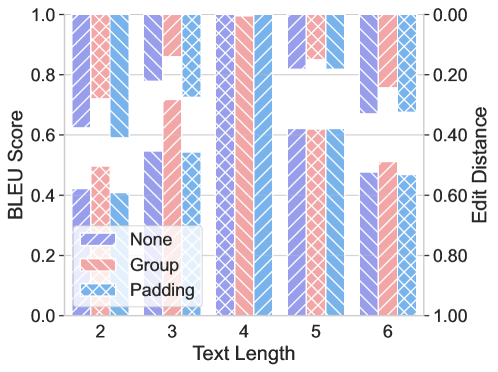

Findings. Similar to transformation generalization, the performances degrade sharply when facing the distribution shift consistently across all transformations, as shown in Figure 4. From ID to CMP and OOD, the exact match decreases from 1.0 to 0 and 0, for all cases. Most strikingly, the BLEU score is 0 when transferred to $f_{1}$ and $f_{2}$ transformations. A failure case in Appendix D.1 shows that the models cannot respond to any words when novel elements are present. We further explore when CoT reasoning can generalize to novel elements by conducting SFT. The results are summarized in Figure 5. We evaluate the performance under three exact matches for the full chain under three scenarios, CMP based on the edit distance n. The result is similar to SFT on transformation. The performance increases rapidly when presented with similar (a small $n$ ) examples in the training data. Interestingly, the exact match rate for CoT reasoning aligns with the lower bound of performance when $n=3$ , which might suggest the generalization of CoT reasoning on novel elements is very limited, even SFT on the downstream task. When we further analyze the exact match of reasoning, answer, and token during the training for $n=3$ , as summarized in Figure 5b. We find that there is a mismatch of accuracy between the answer and the reasoning step during the training process, which somehow might provide an explanation regarding why CoT reasoning is inconsistent in some cases.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Graph: Exact Match (%) vs SFT Data Ratio

### Overview

The graph illustrates the relationship between the SFT Data Ratio (x-axis) and Exact Match percentage (y-axis) for four distinct data series (n=1 to n=4). Each series is represented by a unique line style and marker, with trends showing how Exact Match improves as more data is utilized.

### Components/Axes

- **X-axis**: SFT Data Ratio (0.0 to 1.0 in increments of 0.1).

- **Y-axis**: Exact Match (%) (0% to 100% in increments of 20%).

- **Legend**: Located in the bottom-right corner, mapping:

- **n=4**: Green dashed line with triangle markers.

- **n=3**: Red dashed line with circle markers.

- **n=2**: Blue solid line with square markers.

- **n=1**: Blue dashed line with diamond markers.

### Detailed Analysis

1. **n=1 (Blue Diamonds)**:

- **Trend**: Horizontal line at 100% across all SFT Data Ratios.

- **Key Points**: Remains constant at 100% from 0.0 to 1.0.

2. **n=2 (Blue Squares)**:

- **Trend**: Steep upward slope, starting at 0% and reaching 100% at 1.0.

- **Key Points**:

- 0.3: ~80%

- 0.5: ~90%

- 0.7: ~95%

- 0.9: ~98%

3. **n=3 (Red Circles)**:

- **Trend**: Moderate upward slope, starting at 0% and reaching 100% at 1.0.

- **Key Points**:

- 0.3: ~20%

- 0.5: ~60%

- 0.7: ~85%

- 0.9: ~95%

4. **n=4 (Green Triangles)**:

- **Trend**: Gradual upward slope, starting at 0% and reaching 100% at 1.0.

- **Key Points**:

- 0.3: ~10%

- 0.5: ~40%

- 0.7: ~60%

- 0.9: ~85%

### Key Observations

- **Convergence at 1.0**: All lines intersect at 100% Exact Match when SFT Data Ratio = 1.0.

- **Growth Rates**:

- n=2 (blue squares) shows the steepest improvement.

- n=4 (green triangles) exhibits the slowest growth.

- **n=1 Anomaly**: The blue diamond line (n=1) remains at 100% regardless of data ratio, suggesting a baseline or theoretical maximum.

### Interpretation

The graph demonstrates that higher SFT Data Ratios correlate with improved Exact Match performance across all n values. However, the rate of improvement varies significantly with the number of data points (n):

- **n=1**: Implies perfect performance even with minimal data, possibly indicating an idealized or overfitted scenario.

- **n=2 vs. n=4**: Lower n values (e.g., n=2) achieve higher performance with less data, suggesting efficiency in smaller datasets. Higher n values (e.g., n=4) require near-complete data utilization to reach similar performance levels, highlighting diminishing returns with increased data complexity.

This trend may reflect trade-offs between data quantity and model efficiency, where smaller datasets (lower n) achieve optimal results faster, while larger datasets (higher n) demand more comprehensive data to mitigate variability or noise.

</details>

(a) Performance on unseen element via SFT in various CMP scenarios.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Chart: Exact Match Performance vs SFT Data Ratio

### Overview

The chart compares the performance of three model components (Reasoning Step, Answer, Full Chain) across varying SFT Data Ratios (0.0 to 1.0). Performance is measured as Exact Match percentage, with all components showing increasing effectiveness as data ratio increases.

### Components/Axes

- **X-axis**: SFT Data Ratio (0.0, 0.1, 0.2, 0.3, 0.5, 0.7, 0.8, 1.0)

- **Y-axis**: Exact Match (%) (0.0 to 1.0 in 0.2 increments)

- **Legend**:

- Purple (Reasoning Step)

- Red (Answer)

- Blue (Full Chain)

- **Bar Groups**: Three bars per data ratio (purple, red, blue)

### Detailed Analysis

1. **0.0 Ratio**:

- All components near 0% (purple: ~0.03, red: ~0.05, blue: ~0.02)

2. **0.1 Ratio**:

- Reasoning Step (~0.05) > Answer (~0.03) > Full Chain (~0.02)

3. **0.2 Ratio**:

- All ~0.2% (purple: 0.2, red: 0.2, blue: 0.2)

4. **0.3 Ratio**:

- Reasoning Step (~0.45) > Answer (~0.4) > Full Chain (~0.38)

5. **0.5 Ratio**:

- All ~0.7% (purple: 0.7, red: 0.7, blue: 0.7)

6. **0.7 Ratio**:

- All ~0.9% (purple: 0.9, red: 0.88, blue: 0.87)

7. **0.8 Ratio**:

- All reach 1.0% (purple: 1.0, red: 1.0, blue: 1.0)

8. **1.0 Ratio**:

- All maintain 1.0% (purple: 1.0, red: 1.0, blue: 1.0)

### Key Observations

- **Consistent Growth**: All components show near-linear improvement with increasing data ratio.

- **Full Chain Performance**: Slightly lags behind Reasoning Step and Answer at lower ratios (0.3) but matches them at higher ratios.

- **Saturation Point**: All components achieve 100% exact match at 0.8+ data ratio.

- **Color Consistency**: Legend colors perfectly match bar colors (purple/red/blue).

### Interpretation

The data demonstrates that model performance scales predictably with training data volume. The "Full Chain" component (likely an integrated system) matches the performance of individual components (Reasoning Step and Answer) at higher data ratios, suggesting effective integration. The minor performance gap at 0.3 ratio may indicate data sparsity challenges or architectural limitations in early training stages. The saturation at 0.8 ratio implies diminishing returns beyond this point, making 0.8+ ratios optimal for deployment.

</details>

(b) Evaluation of CoT reasoning in SFT.

Figure 5: SFT performances for element generalization. SFT helps to generalize to novel elements.

6 Length Generalization

Length generalization examines how CoT reasoning degrades when models encounter test cases that differ in length from their training distribution. The difference in length could be introduced from the text space or the reasoning space of the problem. Therefore, we decompose length generalization into two complementary aspects: text length generalization and reasoning step generalization. Guided by instinct, we first propose to measure the length discrepancy.

Length Extrapolation Bound. We establish a power-law relationship for length extrapolation:

**Proposition 6.1 (Length Extrapolation Gaussian Degradation)**

*For a model trained on chain-of-thought sequences of fixed length $L_{\text{train}}$ , the generalization error at test length $L$ follows a Gaussian distribution:

$$

\mathcal{E}(L)=\mathcal{E}_{0}+\left(1-\mathcal{E}_{0}\right)\cdot\left(1-\exp\left(-\frac{\left(L-L_{\text{train }}\right)^{2}}{2\sigma^{2}}\right)\right) \tag{13}

$$

where $\mathcal{E}_{0}$ is the in-distribution error at $L=L_{\text{train}}$ , $\sigma$ is the length generalization width parameter, and $L$ is the test sequence length*

The proof is provided in Appendix A.3.

6.1 Text Length Generalization

Text length generalization evaluates how CoT performance varies when the input text length (i.e., the element length $l$ ) differs from training examples. Considering the way LLMs process long text, this aspect is crucial because real-world problems often involve varying degrees of complexity that manifest as differences in problem statement length, context size, or information density.

Experiment settings. We pre-train LLMs on the dataset with text length merely on $l=4$ while fixing other factors and evaluate the performance on a variety of lengths. We consider three different padding strategies during the pre-training: (i) None: LLMs do not use any padding. (ii) Padding: We pad LLM to the max length of the context window. (iii) Group: We group the text and truncate it into segments with a maximum length.

Table 3: Evaluation for text length generalization.

| 2 3 4 | 0.00% 0.00% 100.00% | 0.00% 0.00% 100.00% | 0.00% 0.00% 100.00% | 0.3772 0.2221 0.0000 | 0.4969 0.3203 0.0000 | 0.5000 0.2540 0.0000 | 0.4214 0.5471 1.0000 | 0.1186 0.1519 1.0000 | 0.0000 0.0000 1.0000 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| 5 | 0.00% | 0.00% | 0.00% | 0.1818 | 0.2667 | 0.2000 | 0.6220 | 0.1958 | 0.2688 |

| 6 | 0.00% | 0.00% | 0.00% | 0.3294 | 0.4816 | 0.3337 | 0.4763 | 0.1174 | 0.2077 |

Findings. As illustrated in the Table 3, the CoT reasoning failed to directly generate two test cases even though those lengths present a mild distribution shift. Further, the performance declines as the length discrepancy increases shown in Figure 6. For instance, from data with $l=4$ to those with $l=3$ or $l=5$ , the BLEU score decreases from 1 to 0.55 and 0.62. Examples in Appendix D.1 indicate that LLMs attempt to produce CoT reasoning with the same length as the training data by adding or removing tokens in the reasoning chains. The efficacy of CoT reasoning length generalization deteriorates as the discrepancy increases. Moreover, we consider using a different padding strategy to decrease the divergence between the training data and test cases. We found that padding to the max length doesn’t contribute to length generalization. However, the performance increases when we replace the padding with text by using the group strategy, which indicates its effectiveness.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: BLEU Score vs. Text Length with Grouping and Padding

### Overview

The image is a grouped bar chart comparing BLEU scores across different text lengths (2–6) for three categories: "None," "Group," and "Padding." A secondary y-axis labeled "Edit Distance" is present on the right, but no bars align with it, suggesting it may represent a separate metric or be a placeholder. The chart uses diagonal hatch patterns (crosshatch) to differentiate categories, with a legend at the bottom left.

---

### Components/Axes

- **X-axis (Text Length)**: Discrete categories labeled 2, 3, 4, 5, 6.

- **Y-axis (BLEU Score)**: Continuous scale from 0.0 to 1.0.

- **Secondary Y-axis (Edit Distance)**: Continuous scale from 0.0 to 1.0, but no bars are plotted against it.

- **Legend**: Located at the bottom left, with three categories:

- **None** (purple, diagonal hatch)

- **Group** (red, diagonal hatch)

- **Padding** (blue, diagonal hatch)

---

### Detailed Analysis

#### BLEU Score Trends

- **Text Length 2**:

- None: ~0.4

- Group: ~0.5

- Padding: ~0.3

- **Text Length 3**:

- None: ~0.6

- Group: ~0.7

- Padding: ~0.5

- **Text Length 4**:

- None: ~0.8

- Group: ~0.9

- Padding: ~0.7

- **Text Length 5**:

- None: ~0.6

- Group: ~0.7

- Padding: ~0.5

- **Text Length 6**:

- None: ~0.5

- Group: ~0.6

- Padding: ~0.4

#### Edit Distance Axis

- No bars are plotted against the Edit Distance axis, which ranges from 0.0 to 1.0. This suggests it may represent a separate metric (e.g., edit distance between original and modified text) but is not visually linked to the BLEU score bars.

---

### Key Observations

1. **BLEU Score Variability**:

- BLEU scores generally increase with text length up to 4, then decline.

- The "Group" category consistently outperforms "None" and "Padding" across most text lengths.

- "Padding" shows the lowest scores, particularly at text lengths 2 and 6.

2. **Edit Distance Discrepancy**:

- The Edit Distance axis is present but unutilized, indicating potential missing data or a design oversight.

3. **Hatch Patterns**:

- Diagonal hatches (crosshatch) are used to differentiate categories, but the legend clarifies the color-coding.

---

### Interpretation

- **Performance Insights**:

- The "Group" category (red) demonstrates the highest BLEU scores, suggesting it may represent a more effective text processing method (e.g., grouping related terms).

- "Padding" (blue) underperforms, possibly due to irrelevant or redundant text additions.

- The decline in scores for longer text lengths (5–6) could indicate overfitting or noise in longer sequences.

- **Design Considerations**:

- The secondary Edit Distance axis lacks corresponding data, raising questions about its purpose.

- The use of hatch patterns for differentiation is effective but could be supplemented with clearer color coding for accessibility.

- **Uncertainties**:

- Exact BLEU scores are approximate due to the lack of numerical labels on the bars.

- The relationship between Edit Distance and BLEU Score remains unclear without additional data.

This chart highlights the impact of text length and grouping strategies on BLEU scores, with "Group" emerging as the most effective approach. Further analysis of the Edit Distance metric is needed to fully contextualize the results.

</details>

Figure 6: Performance of text length generalization across various padding strategies. Group strategies contribute to length generalization.

6.2 Reasoning Step Generalization

The reasoning step generalization investigates whether models can extrapolate to reasoning chains requiring different steps $k$ from those observed during training. which is a popular setting in multi-step reasoning tasks.

Experiment settings. Similar to text length generalization, we first pre-train the LLM with reasoning step $k=2$ , and evaluate on data with reasoning step $k=1$ or $k=3$ .

<details>

<summary>x8.png Details</summary>

### Visual Description

```markdown

## Line Graph: Exact Match Percentage vs Data Percentage

### Overview

The image depicts a line graph comparing two data series (k=1 and k=2) across varying data percentages (0.0 to 1.0). The y-axis represents "Exact Match (%)" from 0 to 100, while the x-axis represents "Data Percentage" in increments of 0.1. Two distinct trends are observed: one series (k=1) increases steadily with data percentage, while the other (k=2) declines sharply after an initial plateau.

### Components/Axes

- **X-axis (Data Percentage)**: Labeled "Data Percentage" with markers at 0.0, 0.1, 0.2, ..., 1.0.

- **Y-axis (Exact Match %)**: Labeled "Exact Match (%)" with increments of 20 from 0 to 100.

- **Legend**: Located in the bottom-right corner, indicating:

- **Blue circles**: k=1

- **Red squares**: k=2

### Detailed Analysis

#### k=1 (Blue Circles)

- **Trend**: Starts at 0% Exact Match at 0.0 Data Percentage.

- **Key Points**:

- 0.1: ~30%

- 0.2: ~55%

- 0.3: ~65%

- 0.4: ~70%

- 0.5–1.0: Plateaus at ~85%.

- **Pattern**: Steep initial growth followed by stabilization.

#### k=2 (Red Squares)

- **Trend**: Begins at ~90% Exact Match at 0.0 Data Percentage.

- **Key Points**:

- 0.1: ~90%

- 0.2: ~85%

- 0.3: ~70%

- 0.4: ~40%

- 0.5: ~15%

- 0.6–1.0: Drops to ~0%.

- **Pattern**: Sharp decline after 0.3 Data Percentage.

### Key Observations

1. **Intersection Point**: Both series intersect near 0.3 Data Percentage (~65–70% Exact Match).

2. **Divergence**: k=1 improves with more data, while k=2 deteriorates sharply beyond 0.3.

3. **Asymptotic Behavior**: k=2 approaches 0% Exact Match as Data Percentage approaches 1.0.

### Interpretation

The graph suggests that k=1 (blue circles) demonstrates robust performance across all data percentages, with diminishing returns after 0.4. In contrast, k=2 (red squares) exhibits a critical threshold at ~0.3 Data Percentage, where its effectiveness collapses. This could indicate:

- **Model Sensitivity**: k=2 may overfit or rely on specific data patterns that degrade with increased data volume.

- **Threshold Effects**: k=1’s plateau at ~85% suggests diminishing marginal gains beyond 0.4 Data Percentage.

- **Practical Implications**: For applications requiring high Exact Match, k=

</details>

(a) Reasoning step. From k=2 to k=1

<details>

<summary>x9.png Details</summary>

### Visual Description

```markdown

## Line Graph: Exact Match Percentage vs Data Percentage

### Overview

The image depicts a line graph comparing the performance of two models (k=2 and k=3) across varying data percentages. The y-axis represents "Exact Match (%)" (0-80%), and the x-axis represents "Data Percentage" (0.0-1.0). Two data series are plotted: a blue line with circular markers (k=3) and a red dashed line with square markers (k=2).

### Components/Axes

- **X-axis (Data Percentage)**: Labeled "Data Percentage" with tick marks at 0.0, 0.1, 0.2, ..., 1.0.

- **Y-axis (Exact Match %)**: Labeled "Exact Match (%)" with increments of 10 (0-80).

- **Legend**: Located in the top-right corner, with:

- Blue line (solid circles): "k=3"

- Red dashed line (squares): "k=2"

### Detailed Analysis

#### k=3 (Blue Line)

- **Trend**: Starts at 0% (0.0 data), rises gradually to 2% (0.2), dips slightly to 9% (0.5), then surges to 72% (1.0).

- **Key Points**:

- 0.0: 0%

- 0.1: 0%

- 0.2: 2%

- 0.3: 5%

- 0.4: 10%

- 0.5: 9%

- 0.6: 12%

- 0.7: 28%

- 0.8: 48%

- 0.9: 62%

- 1.0: 72%

#### k=2 (Red Dashed Line)

- **Trend**: Starts at 80% (0.0 data), declines steadily to 0% (1.0).

- **Key Points**:

- 0.0: 80%

- 0.1: 55%

- 0.2: 35%

- 0.3: 22%

- 0.4: 18%

- 0.5: 12%

- 0.6: 10%

- 0.7: 16%

- 0.8: 20%

- 0.9: 14%

- 1.0: 0%

### Key Observations

1. **Crossover Point**: At 0.5 data percentage, both models show ~10% exact match, but k=3 overtakes k=2 beyond this point.

2. **k=3 Performance**: Shows a U-shaped curve

</details>

(b) Reasoning step. From k=2 to k=3

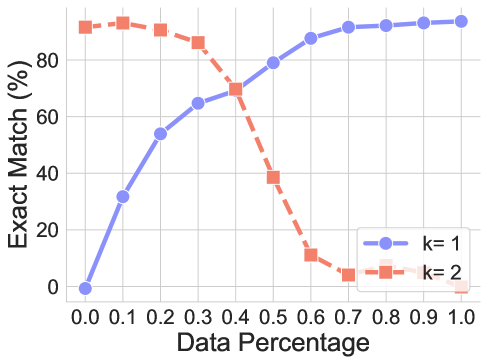

Figure 7: SFT performances for reasoning step generalization.

Findings. As showcased in Figure 7, CoT reasoning cannot generalize across data requiring different reasoning steps, indicating the failure of generalization. Then, we try to decrease the distribution discrepancy introduced by gradually increasing the ratio of unseen data while keeping the dataset size the same when pre-training the model. And then, we evaluate the performance on two datasets. As we can observe, the performance on the target dataset increases along with the ratio. At the same time, the LLMs can not generalize to the original training dataset because of the small amount of training data. The trend is similar when testing different-step generalization, which follows the intuition and validates our hypothesis directly.

7 Format Generalization

Format generalization assesses the robustness of CoT reasoning to surface-level variations in test queries. This dimension is especially crucial for determining whether models have internalized flexible, transferable reasoning strategies or remain reliant on the specific templates and phrasings encountered during training.

Format Alignment Score. We introduce a metric for measuring prompt similarity:

**Definition 7.1 (Format Alignment Score)**

*For training prompt distribution $P_{train}$ and test prompt $p_{test}$ :

$$

\text{PAS}(p_{test})=\max_{p\in P_{train}}\cos(\phi(p),\phi(p_{test})) \tag{14}

$$

where $\phi$ is a prompt embedding function.*

<details>

<summary>x10.png Details</summary>

### Visual Description

## Bar Chart: Edit Distance vs. Noise Level

### Overview

The chart compares the edit distance (a measure of string similarity) across four categories (All, Insertion, Deletion, Modify) at varying noise levels (5% to 30%). Each category is represented by a distinct patterned bar, with edit distance values on the y-axis (0.0–0.8) and noise levels on the x-axis (5%–30%).

### Components/Axes

- **X-axis**: Noise Level (%) with markers at 5%, 10%, 15%, 20%, 25%, and 30%.

- **Y-axis**: Edit Distance (0.0–0.8) in increments of 0.2.

- **Legend**: Located in the top-left corner, mapping categories to patterns/colors:

- **All**: Purple with diagonal stripes.

- **Insertion**: Red with diagonal stripes.

- **Deletion**: Blue with crosshatch.

- **Modify**: Green with dots.

### Detailed Analysis

- **5% Noise**:

- All: ~0.2

- Insertion: ~0.25

- Deletion: ~0.15

- Modify: ~0.2

- **10% Noise**:

- All: ~0.35

- Insertion: ~0.45

- Deletion: ~0.25

- Modify: ~0.35

- **15% Noise**:

- All: ~0.5

- Insertion: ~0.6

- Deletion: ~0.35

- Modify: ~0.5

- **20% Noise**:

- All: ~0.6

- Insertion: ~0.7

- Deletion: ~0.45

- Modify: ~0.6

- **25% Noise**:

- All: ~0.65

- Insertion: ~0.8

- Deletion: ~0.55

- Modify: ~0.7

- **30% Noise**:

- All: ~0.7

- Insertion: ~0.85

- Deletion: ~0.6

- Modify: ~0.8

### Key Observations

1. **Upward Trend**: All categories show increasing edit distance as noise level rises.

2. **Insertion Dominance**: Insertion consistently has the highest edit distance across all noise levels.

3. **Deletion Consistency**: Deletion has the lowest edit distance, remaining stable relative to other categories.

4. **Modify vs. All**: Modify and All categories exhibit similar trends, with Modify slightly outperforming All at higher noise levels (e.g., 25% and 30%).

### Interpretation

The data suggests that **insertion errors are the most disruptive** to edit distance, likely due to their direct impact on string alignment. Deletion errors are the least impactful, possibly because they remove characters without introducing new ones. Modify errors fall between the two, indicating that character substitutions are moderately disruptive. The "All" category aggregates all error types, showing a middle-ground trend. The consistent upward trend across noise levels highlights the sensitivity of edit distance to increasing corruption in input data. This could inform noise-robustness strategies in text processing systems.

</details>

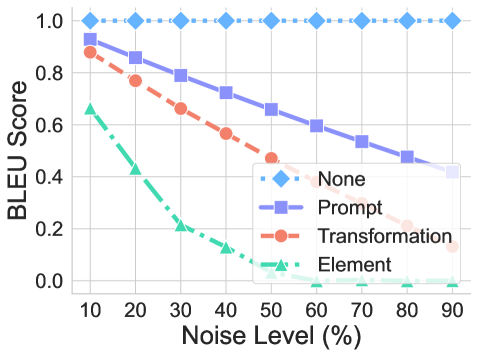

(a) Format generalization. Performance under various perturbation methods.

<details>

<summary>x11.png Details</summary>

### Visual Description

## Line Graph: BLEU Score vs. Noise Level

### Overview

The image is a line graph comparing the performance of four methods ("None," "Prompt," "Transformation," "Element") in terms of BLEU score degradation under increasing noise levels (10% to 90%). The graph shows distinct trends for each method, with "None" maintaining a constant score and others declining progressively.

### Components/Axes

- **X-axis**: Noise Level (%) ranging from 10 to 90 in increments of 10.

- **Y-axis**: BLEU Score ranging from 0.0 to 1.0 in increments of 0.2.

- **Legend**: Located in the bottom-right corner, mapping:

- Blue diamonds: "None"

- Purple squares: "Prompt"

- Red circles: "Transformation"

- Green triangles: "Element"

### Detailed Analysis

1. **"None" (Blue Diamonds)**:

- **Trend**: Horizontal line at **1.0** across all noise levels.

- **Data Points**: Unchanged at 100% noise (1.0).

2. **"Prompt" (Purple Squares)**:

- **Trend**: Steady linear decline from ~0.95 (10% noise) to ~0.4 (90% noise).

- **Data Points**:

- 10%: ~0.95

- 20%: ~0.85

- 30%: ~0.75

- 40%: ~0.65

- 50%: ~0.55

- 60%: ~0.45

- 70%: ~0.35

- 80%: ~0.25

- 90%: ~0.15

3. **"Transformation" (Red Circles)**:

- **Trend**: Sharp linear decline from ~0.85 (10% noise) to ~0.15 (90% noise).

- **Data Points**:

- 10%: ~0.85

- 20%: ~0.75

- 30%: ~0.65

- 40%: ~0.55

- 50%: ~0.45

- 60%: ~0.35

- 70%: ~0.25

- 80%: ~0.15

- 90%: ~0.05

4. **"Element" (Green Triangles)**:

- **Trend**: Steepest decline from ~0.65 (10% noise) to ~0.05 (90% noise).

- **Data Points**:

- 10%: ~0.65

- 20%: ~0.55

- 30%: ~0.45

- 40%: ~0.35

- 50%: ~0.25

- 60%: ~0.15

- 70%: ~0.05

- 80%: ~0.02

- 90%: ~0.01

### Key Observations

- **"None"** remains unaffected by noise, suggesting it may represent a baseline or control condition.

- **"Prompt"** shows moderate resilience, retaining ~40% of its initial score at 90% noise.

- **"Transformation"** and **"Element"** degrade significantly, with "Element" being the most vulnerable.

- All methods except "None" exhibit a consistent linear relationship between noise level and BLEU score.

### Interpretation

The graph demonstrates that noise severely impacts BLEU scores for all methods except "None," which remains constant. This implies:

1. **"None"** may represent an idealized or noise-agnostic scenario (e.g., noiseless data or a theoretical upper bound).

2. **"Prompt"** offers the best trade-off between noise resilience and performance, retaining higher scores than other methods at high noise levels.

3. **"Transformation"** and **"Element"** are highly sensitive to noise, suggesting they rely on precise input conditions or lack robustness mechanisms.

4. The linear degradation patterns indicate that noise introduces systematic errors, disproportionately affecting methods dependent on structural or contextual cues (e.g., "Element" may involve positional or syntactic dependencies).

This analysis highlights the importance of noise robustness in NLP systems, with "Prompt" emerging as the most practical choice for noisy environments.

</details>

(b) Format generalization. Performance vs. various applied perturbation areas.

Figure 8: Performance of format generalization.

Experiment settings. To systematically probe this, we introduce four distinct perturbation modes to simulate scenario in real-world: (i) insertion, where a noise token is inserted before each original token; (ii) deletion: it deletes the original token; (iii) modification: it replaces the original token with a noise token; and (iv) hybrid mode: it combines multiple perturbations. Each mode is applied for tokens with probabilities $p$ , enabling us to quantify the model’s resilience to increasing degrees of prompt distribution shift.

Findings. As shown in Figure 8a, we found that generally CoT reasoning can be easily affected by the format changes. No matter insertion, deletion, modifications, or hybrid mode, it creates a format discrepancy that affects the correctness. Among them, the deletion slightly affects the performance. While the insertions are relatively highly influential on the results. We further divide the query into several sections: elements, transformations, and prompt tokens. As shown in Figure 8b, we found that the elements and transformation play an important role in the format, whereas the changes to other tokens rarely affect the results.

8 Temperature and Model Size

Temperature and model size generalization explores how variations in sampling temperature and model capacity can influence the stability and robustness of CoT reasoning. For the sake of rigorous evaluation, we further investigate whether different choices of temperatures and model sizes may significantly affect our results.

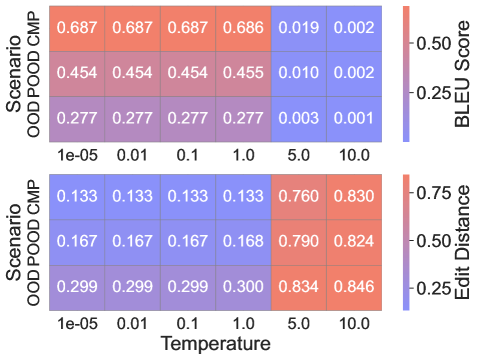

Experiment settings. We explore the impact of different temperatures on the validity of the presented results. We adopt the same setting in the transformation generalization.

Findings. As illustrated in Figure 9a, LLMs tend to generate consistent and reliable CoT reasoning across a broad range of temperature settings (e.g., from 1e-5 up to 1), provided the values remain within a suitable range. This stability is maintained even when the models are evaluated under a variety of distribution shifts.

<details>

<summary>x12.png Details</summary>

### Visual Description

## Heatmap: BLEU Score and Edit Distance Across Scenarios and Temperatures

### Overview

The image is a dual-axis heatmap comparing performance metrics (BLEU Score and Edit Distance) across two scenarios ("Scenario" and "Scenario OOD CMP") at varying temperatures (1e-05 to 10.0). The heatmap uses a color gradient from blue (low values) to red (high values) to represent metric magnitudes.

### Components/Axes

- **Y-Axis (Left)**:

- Top Section: "Scenario" with subcategories:

- OOD POD CMP (0.687, 0.454, 0.277)

- Bottom Section: "Scenario OOD CMP" with subcategories:

- OOD POD CMP (0.133, 0.167, 0.299)

- **X-Axis (Bottom)**: Temperature values: 1e-05, 0.01, 0.1, 1.0, 5.0, 10.0

- **Color Legends (Right)**:

- **BLEU Score**: Red (high) to Blue (low), range 0.002–0.687

- **Edit Distance**: Red (high) to Blue (low), range 0.133–0.846

### Detailed Analysis

#### Top Section ("Scenario")

- **BLEU Score Trends**:

- **OOD POD CMP**:

- 1e-05: 0.687 (red)

- 0.01: 0.687 (red)

- 0.1: 0.687 (red)

- 1.0: 0.686 (red)

- 5.0: 0.019 (blue)

- 10.0: 0.002 (blue)

- **Trend**: Sharp decline in BLEU Score as temperature increases beyond 1.0.

#### Bottom Section ("Scenario OOD CMP")

- **Edit Distance Trends**:

- **OOD POD CMP**:

- 1e-05: 0.133 (blue)

- 0.01: 0.133 (blue)

- 0.1: 0.133 (blue)

- 1.0: 0.133 (blue)

- 5.0: 0.760 (red)

- 10.0: 0.830 (red)

- **Trend**: Gradual increase in Edit Distance with temperature, accelerating after 1.0.

### Key Observations

1. **BLEU Score Degradation**: In the "Scenario" section, BLEU Score drops dramatically at higher temperatures (5.0–10.0), suggesting model performance collapses under extreme conditions.

2. **Edit Distance Correlation**: In "Scenario OOD CMP", Edit Distance increases with temperature, indicating more edits are required as model confidence decreases.

3. **Scenario Comparison**: The "Scenario" section consistently shows higher BLEU Scores and lower Edit Distances than "Scenario OOD CMP", implying better baseline performance.

### Interpretation

- **Model Robustness**: The data highlights a critical temperature threshold (1.0) beyond which both metrics degrade significantly. This suggests models trained on "Scenario" are less robust to out-of-distribution (OOD) conditions compared to "Scenario OOD CMP".

- **Trade-off Analysis**: Lower temperatures (1e-05 to 1.0) optimize BLEU Score but may underfit OOD data, while higher temperatures (5.0–10.0) increase Edit Distance, reflecting overcorrection or noise amplification.

- **Practical Implications**: The stark contrast between scenarios underscores the need for temperature calibration in deployment environments to balance fluency (BLEU) and accuracy (Edit Distance).

### Spatial Grounding & Validation

- **Legend Alignment**: Red in the BLEU Score legend matches high values (0.687), while blue matches low values (0.002). Similarly, Edit Distance red (0.846) aligns with high values, and blue (0.133) with low values.

- **Data Consistency**: All numerical values in the heatmap correspond to the color gradient, with no mismatches observed.

</details>

(a) Influences of various temperatures.

<details>

<summary>x13.png Details</summary>

### Visual Description

## Line Graph: Exact Match (%) vs SFT Ratio (×10⁻⁴)

### Overview

The graph depicts the relationship between SFT Ratio (scaled by 10⁻⁴) and Exact Match (%) for five distinct data series, each represented by unique line styles, colors, and markers. The y-axis ranges from 0% to 100%, while the x-axis spans 1.0 to 4.0 (×10⁻⁴). The legend in the top-right corner maps line styles/colors to labels (e.g., "68K," "589K," etc.).

---

### Components/Axes

- **X-axis**: "SFT Ratio (×10⁻⁴)" with ticks at 1.0, 1.3, 1.7, 2.0, 2.5, 3.0, 3.5, 4.0.

- **Y-axis**: "Exact Match (%)" with ticks at 0%, 20%, 40%, 60%, 80%, 100%.

- **Legend**: Located in the top-right corner, with five entries:

- **68K**: Solid blue line with square markers.

- **589K**: Dashed red line with circular markers.