# A Comparative Study of Neurosymbolic AI Approaches to Interpretable Logical Reasoning

**Authors**: Michael K. Chen michaelchenkj@gmail.com, Raffles Institution

:

## Abstract

General logical reasoning, defined as the ability to reason deductively on domain-agnostic tasks, continues to be a challenge for large language models (LLMs). Current LLMs fail to reason deterministically and are not interpretable. As such, there has been a recent surge in interest in neurosymbolic AI, which attempts to incorporate logic into neural networks. We first identify two main neurosymbolic approaches to improving logical reasoning: (i) the integrative approach comprising models where symbolic reasoning is contained within the neural network, and (ii) the hybrid approach comprising models where a symbolic solver, separate from the neural network, performs symbolic reasoning. Both contain AI systems with promising results on domain-specific logical reasoning benchmarks. However, their performance on domain-agnostic benchmarks is understudied. To the best of our knowledge, there has not been a comparison of the contrasting approaches that answers the following question: Which approach is more promising for developing general logical reasoning? To analyze their potential, the following best-in-class domain-agnostic models are introduced: Logic Neural Network (LNN), which uses the integrative approach, and LLM-Symbolic Solver (LLM-SS), which uses the hybrid approach. Using both models as case studies and representatives of each approach, our analysis demonstrates that the hybrid approach is more promising for developing general logical reasoning because (i) its reasoning chain is more interpretable, and (ii) it retains the capabilities and advantages of existing LLMs. To support future works using the hybrid approach, we propose a generalizable framework based on LLM-SS that is modular by design, model-agnostic, domain-agnostic, and requires little to no human input.

## 1 Introduction

State-of-the-art large language models (SOTA LLMs) steadily inch higher and higher on a wide array of benchmarks, from text summarization to code generation (Achiam et al., 2023). Nonetheless, LLMs continue to exhibit serious deficiencies in their ability to perform logical reasoning (Huang and Chang, 2022). Despite the gradual rise in accuracy in logical reasoning benchmarks, LLMs’ fundamental problems have not been mitigated.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Screenshot: Question-Answer Pair with Reasoning

### Overview

The image is a digital screenshot displaying a two-panel interface, likely from a language model or reasoning system. It presents a question in an "Input" panel on the left and a structured, highlighted answer in an "Output" panel on the right. The design is minimal, with a dark gray background and white text.

### Components/Axes

The image is divided into two distinct, side-by-side rectangular panels with rounded corners.

1. **Left Panel (Input):**

* **Header Label:** "Input" (bold, white text).

* **Content:** A single line of text presenting a question.

* **Text:** `Q: Would a sophist use an épée?`

2. **Right Panel (Output):**

* **Header Label:** "Output" (bold, white text).

* **Content:** A multi-sentence answer with specific text segments highlighted using semi-transparent colored overlays.

* **Full Transcription:** `A: A sophist is a person who is skilled in the art of persuasion. An épée is a type of sword. Thus, a sophist could use an épée. So the answer is yes.`

* **Highlighting Details:**

* **Yellow Highlight:** Covers the first two sentences: `A sophist is a person who is skilled in the art of persuasion. An épée is a type of sword.`

* **Green Highlight:** Covers the third sentence: `a sophist could use an épée.`

### Detailed Analysis

The content is entirely textual and in English. No other languages are present.

* **Input Text:** A direct, closed-ended question combining a philosophical/historical figure ("sophist") with a specific type of weapon ("épée").

* **Output Text:** The answer follows a clear, logical structure:

1. **Definition 1:** Defines "sophist."

2. **Definition 2:** Defines "épée."

3. **Logical Inference:** Connects the two definitions with "Thus," concluding possibility.

4. **Final Answer:** Explicitly states the answer to the original question ("yes").

### Key Observations

* The output does not answer with a simple "yes" or "no" but provides a reasoned argument.

* The highlighting visually segments the answer into its logical components: premises (yellow) and conclusion (green).

* The language is formal and explanatory, resembling a pedagogical or tutorial style.

* The term "épée" includes the correct accent mark (é), indicating attention to typographical detail.

### Interpretation

This image demonstrates a **reasoning or explanation process** rather than presenting raw data. The "data" here is the logical argument itself.

* **What it demonstrates:** The system is designed to break down a compound question into its constituent parts, define the key terms, and then synthesize a conclusion based on those definitions. This mimics a basic form of deductive reasoning.

* **Relationship between elements:** The "Input" poses a problem. The "Output" solves it by first establishing common ground (definitions) before proceeding to inference. The highlighting reinforces this structure, guiding the viewer's attention to the foundational facts and the resulting deduction.

* **Notable pattern:** The answer hinges on the literal, dictionary definitions of the words, not on historical context (e.g., whether ancient Greek sophists historically used swords) or the typical modern usage of "épée" in fencing. This suggests the system operates on semantic and logical relationships within language rather than deep world knowledge.

* **Purpose:** The format is likely intended for educational purposes, to show step-by-step reasoning, or to validate that a language model can perform basic definitional logic. The clear visual separation of input and output, along with the highlighting, makes the reasoning process transparent and easy to follow.

</details>

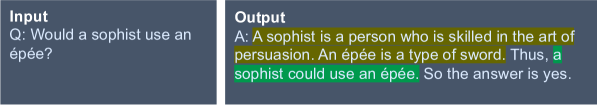

Figure 1: An example CoT output from a StrategyQA question in Wei et al. (2022)

Consider the example in Fig. 1, which shows a chain-of-thought (CoT) response to a question from the StrategyQA dataset (Geva et al., 2021), generated by Wei et al. (2022). In a CoT response, the model will generate intermediate reasoning steps before arriving at the final answer. The text highlighted in yellow shows the LLM’s premises, i.e. the evidence used to support the conclusion, while the text highlighted in green shows the LLM’s conclusion. This example highlights two primary flaws in LLMs: (1) the premises do not lead to the conclusion. Given the two premises, the final answer should have been “no”; (2) the conclusion may not have been derived from the premises at all. This problem is more subtle, for we naturally assume that if the conclusion does not follow from the premises, it implies the LLM has made a logical reasoning mistake. However, we cannot definitively state that the LLM inferred based on any and all of the premises, which are further explained below.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: AI System Architectures - Integrative vs. Hybrid Approaches

### Overview

The image displays a side-by-side comparison of two conceptual architectures for AI systems that combine neural networks with symbolic reasoning. The diagram is a technical flowchart illustrating the structural relationship between these two core components. The left panel is labeled "Integrative Approach," and the right panel is labeled "Hybrid Approach."

### Components/Axes

The diagram is divided into two distinct, bordered panels.

**Left Panel: Integrative Approach**

* **Title:** "Integrative Approach" (positioned top-left within the panel).

* **Flow:** A linear, left-to-right sequence.

* **Components:**

1. **Input:** Text label on the far left.

2. **Neural Network:** A large, light-gray rectangular box. Inside this box is a smaller, dark-gray box.

3. **Symbolic Reasoning:** Text label inside the smaller, dark-gray box, which is itself contained within the "Neural Network" box.

4. **Output:** Text label on the far right.

* **Connections:** A single arrow points from "Input" to the "Neural Network" box. Another arrow points from the "Neural Network" box to "Output."

**Right Panel: Hybrid Approach**

* **Title:** "Hybrid Approach" (positioned top-left within the panel).

* **Flow:** A linear, left-to-right sequence.

* **Components:**

1. **Input:** Text label on the far left.

2. **Neural Network:** A dark-gray rectangular box.

3. **Symbolic Reasoning:** A separate, dark-gray rectangular box of similar size.

4. **Output:** Text label on the far right.

* **Connections:** An arrow points from "Input" to the "Neural Network" box. A second arrow points from the "Neural Network" box to the "Symbolic Reasoning" box. A final arrow points from the "Symbolic Reasoning" box to "Output."

### Detailed Analysis

The core difference between the two architectures is the **topological relationship** between the "Neural Network" and "Symbolic Reasoning" components.

* **Integrative Approach:** Symbolic Reasoning is depicted as a **sub-component or module embedded within** the Neural Network. This suggests a tightly coupled system where symbolic operations are an intrinsic part of the neural processing pipeline, potentially happening in parallel or as an integrated layer.

* **Hybrid Approach:** Neural Network and Symbolic Reasoning are shown as **two distinct, sequential modules**. The flow is strictly serial: Input → Neural Network → Symbolic Reasoning → Output. This suggests a pipeline where the neural network processes the input first, and its output is then passed to a separate symbolic reasoning engine for further processing.

### Key Observations

1. **Structural Contrast:** The primary visual distinction is containment (Integrative) versus sequence (Hybrid).

2. **Component Consistency:** The same two core components ("Neural Network" and "Symbolic Reasoning") are used in both diagrams, emphasizing that the difference is architectural, not compositional.

3. **Flow Direction:** Both architectures maintain a unidirectional, left-to-right data flow from Input to Output.

4. **Visual Weight:** In the Integrative diagram, the "Neural Network" box is larger to encompass the "Symbolic Reasoning" box. In the Hybrid diagram, the two component boxes are of equal visual weight.

### Interpretation

This diagram illustrates a fundamental design choice in neuro-symbolic AI systems.

* The **Integrative Approach** implies a more unified, potentially end-to-end trainable model where symbolic logic and neural pattern recognition are deeply intertwined. This could lead to more seamless reasoning but might be complex to design and interpret.

* The **Hybrid Approach** represents a modular, pipeline-based system. This design offers clearer separation of concerns—the neural network handles perception and pattern matching, while the symbolic engine handles explicit logic and rules. This can be easier to develop, debug, and update individual components, but may suffer from error propagation between stages and a lack of joint optimization.

The choice between these architectures involves trade-offs between integration tightness, modularity, interpretability, and system complexity. The diagram serves as a high-level conceptual map for discussing these trade-offs in technical documentation.

</details>

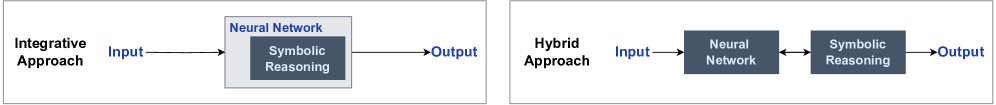

Figure 2: Integrative and hybrid neurosymbolic approaches for general logical reasoning

Both problems are manifestations of the limitations of Transformer-based architectures. Their probabilistic nature causes Flaw (1), while the lack of interpretability prevents Flaw (2) from being resolvable. In response to these challenges, neurosymbolic systems have recently regained prominence as an alternative to purely Transformer-based architectures; their deterministic and interpretable methods appear promising for enabling logical reasoning. Interpretability is defined as a model’s ability to accurately demonstrate its flow of reasoning to arrive at an answer. As the name suggests, it aims to combine the best of both worlds: neural networks and symbolic reasoning. The former enables learning, creativity, and inductive reasoning, while the latter handles logical reasoning with symbolic rules and algorithms.

However, many recent neurosymbolic works (Badreddine et al., 2022; Riegel et al., 2020) are not generalizable as evidenced by how they are typically benchmarked on domain-specific tasks. For example, the CLUTRR benchmark, which only includes family relations, is commonly used (Sinha et al., 2019) to evaluate inference skills. This is because such models require a comprehensive list of task-specific axioms/formulas to be defined before training; additional details and examples are provided in Section A. Since it is unfeasible to manually define logical rules for hundreds of expansive topics, these models cannot be applied to domain-agnostic benchmarks like MMLU (Hendrycks et al., 2020), FOLIO (Han et al., 2022), and StrategyQA (Geva et al., 2021); they cannot achieve the broad applicability of existing LLMs. As such, we focus on general logical reasoning in this paper, which we define as the ability to deductively reason in domain-agnostic tasks.

Inspired by the taxonomies in Kautz (2020) and Ciatto et al. (2024), we identify two main approaches to neurosymbolic AI for general logical reasoning, i.e. integrative and hybrid, which are illustrated in Fig. 2. The integrative approach modifies the neural network architecture to allow it to perform logical reasoning in a deterministic and interpretable way. On the other hand, the hybrid approach sidesteps the limitations of traditional neural networks by coupling them with external symbolic solvers. Recent literature found success with both approaches for domain-specific benchmarks like CLUTRR and StepGame (Shi et al., 2022), but their use in general logical reasoning is still understudied. The Related Works section can be found in Appendix A.

To evaluate the merits of both approaches, we develop a best-in-class model for each approach and compare their strengths and weaknesses. For the integrative approach, we create a novel neural network that consists solely of differentiable logic gates, which we refer to as Logic Neural Network (LNN). It can deterministically represent any and all laws of propositional calculus. For example, the statement “If $a$ and $b$ , then $c$ is true.” can be represented by an AND logic gate ( ${a∧ b}$ ). Moreover, it is interpretable. Once a specific neuron has chosen a logic gate, one can precisely interpret the argument form used. We experimentally validate that LNN is comparable or better than the current SOTA integrative model using both synthetic and real-world datasets.

For the hybrid approach, we introduce LLM-SS, a framework that combines an LLM and a symbolic solver. It is, by design, model-agnostic, domain-agnostic, and requires little human input. Broadly speaking, in the case of question-answering (QA) tasks, the LLM is responsible for generating natural language premises for the question, and then translating them into logical form. Afterward, the logical form is fed to the symbolic solver, which outputs the final conclusion using deductive reasoning. LLM-SS achieved higher or similar performance and lower error rates on domain-agnostic QA tasks compared to other models using the hybrid approach through the use of several novel techniques.

To evaluate which approach holds more potential for general logical reasoning, we formulate our comparison based on the following criteria: (i) ability to reason symbolically, (ii) interpretability of reasoning chain, and (iii) retention of LLM abilities. We find that while both integrative and hybrid approaches are able to reason symbolically, the former’s interpretability decreases when model size increases and the former loses much of the capabilities of existing LLMs, such as knowledge retrieval and generalization. LNN and the integrative approach as a whole suffer from theoretical limitations that limit their potential for tackling general logical reasoning. Given these factors, we contend that the hybrid approach is more promising. Finally, we propose a neurosymbolic framework based on LLM-SS to support future works in this direction.

## 2 Model Architectures

### 2.1 Logic Neural Network (LNN)

Representing the integrative approach, LNN is a regular neural network with an adapted logic gate formula for each neuron, which builds upon the Logic Gate Network (Petersen et al., 2022). Every 2 neurons in a layer connect to a randomly chosen neuron in the subsequent layer. Each neuron has a choice of 16 distinct logic gates, as shown in Table 1. This is because, given 2 binary inputs, there are 4 unique input combinations. Since the output is also binary, there are 16 unique output combinations, each corresponding to a logic gate. 2 neurons are connected instead of 3 or more because the latter’s implementation is considerably more complicated, yet does not exhibit stronger performance empirically given a comparable number of parameters (Petersen et al., 2022; Benamira et al., ).

Table 1: List of logic gates with their corresponding relaxation formulas and outputs values given input neurons $a$ and $b$ . This table is derived from Petersen et al. (2022).

| False $A∧ B$ $¬(A⇒ B)$ | 0 $A· B$ $A-AB$ | 0 0 0 | 0 0 0 | 0 0 1 | 0 1 0 |

| --- | --- | --- | --- | --- | --- |

| $A$ | $A$ | 0 | 0 | 1 | 1 |

| $¬(A⇐ B)$ | $B-AB$ | 0 | 1 | 0 | 0 |

| $B$ | $B$ | 0 | 1 | 0 | 1 |

| $A⊕ B$ | $A+B-2AB$ | 0 | 1 | 1 | 0 |

| $A∨ B$ | $A+B-AB$ | 0 | 1 | 1 | 1 |

| $¬(A∨ B)$ | $1-(A+B-AB)$ | 1 | 0 | 0 | 0 |

| $¬(A⊕ B)$ | $1-(A+B-2AB)$ | 1 | 0 | 0 | 1 |

| $¬ B$ | $1-B$ | 1 | 0 | 1 | 0 |

| $A⇐ B$ | $1-B+AB$ | 1 | 0 | 1 | 1 |

| $¬ A$ | $1-A$ | 1 | 1 | 0 | 0 |

| $A⇒ B$ | $1-A+AB$ | 1 | 1 | 0 | 1 |

| $¬(A∧ B)$ | $1-AB$ | 1 | 1 | 1 | 0 |

| True | 1 | 1 | 1 | 1 | 1 |

However, logic gates are discrete, with values of either 0 or 1, and are therefore non-differentiable. To enable gradient descent, each logic gate is relaxed into a continuous graph with 0 and 1 at each boundary, also known as real-valued logic. For example, the AND logic gate can be represented by $a· b$ while the OR logic gate is represented by $a+b-a· b$ , based on probabilistic T-norm and T-conorm respectively (van Krieken et al., 2022). Notice that all formulas in Table 1 require at most inputs $a$ , $b$ , and $a· b$ , where $a$ and $b$ are the values of the input neurons. We can therefore further generalize the formulas into Eq. 1, where $a$ and $b$ are the input neurons, $c$ is the output neuron, and $w_1-4$ are trainable weights. The sigmoid function $σ$ ensures that output $c$ ranges from 0 to 1 during training. Each neuron there uses Eq. 1 during training.

$$

c=σ(w_1a+w_2b+w_3(a· b)+w_4) \tag{1}

$$

At inference time, Eq. 1 is then translated into a discrete logic gate for each neuron. Each output $o_i$ of the 4 input possibilities is discretized into 0 or 1, such that when $o_i>0.5$ , it is assigned a value of 1. Based on the 4 outputs, the corresponding logic gate is determined. All reported results are based on accuracies from the discretized version.

### 2.2 LLM-Symbolic Solver (LLM-SS)

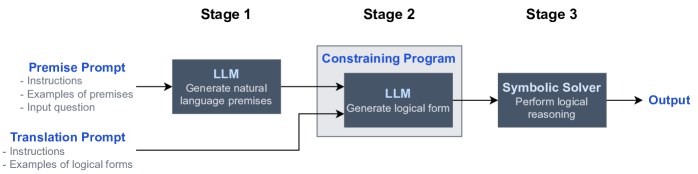

Representing the hybrid approach, LLM-SS is a multi-stage system, as illustrated in Fig. 3. In Stage 1, an LLM is prompted to generate natural language premises for an input question. Two types of propositional logic premises are allowed: (1) declarative sentences, which are statements that have truth values and no connectives, e.g. “A spider has 8 legs.”, and (2) conditional sentences, or if-else statements, e.g. “If an animal has 6 legs, it is not a spider.” (Pospesel, 1974). In other words, this is similar to CoT (Wei et al., 2022), except the final answer is not generated and only two types of sentences are encouraged.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Three-Stage LLM-Symbolic Reasoning Pipeline

### Overview

The image displays a technical flowchart illustrating a three-stage pipeline for processing natural language prompts into logical outputs. The system combines Large Language Models (LLMs) with a symbolic reasoning solver. The diagram is structured horizontally, flowing from left to right, with clear stage demarcations.

### Components/Axes

The diagram is segmented into three distinct stages, labeled at the top:

* **Stage 1** (Left)

* **Stage 2** (Center)

* **Stage 3** (Right)

**Input Prompts (Far Left):**

Two distinct input blocks are shown, both feeding into Stage 1.

1. **Premise Prompt** (Top-left):

* Contains the following bullet points:

* Instructions

* Examples of premises

* Input question

2. **Translation Prompt** (Bottom-left):

* Contains the following bullet points:

* Instructions

* Examples of logical forms

**Processing Blocks:**

* **Stage 1 - LLM Block:** A dark grey rectangle labeled "LLM" with the sub-text "Generate natural language premises". It receives input from the "Premise Prompt".

* **Stage 2 - Constraining Program:** A larger, light grey outlined box labeled "Constraining Program". Inside it is another dark grey "LLM" block with the sub-text "Generate logical form".

* **Stage 3 - Symbolic Solver:** A dark grey rectangle labeled "Symbolic Solver" with the sub-text "Perform logical reasoning".

**Output (Far Right):**

* A final arrow points to the label "Output".

**Flow Arrows:**

* An arrow flows from the "Premise Prompt" to the Stage 1 LLM.

* An arrow flows from the Stage 1 LLM to the Stage 2 LLM (inside the Constraining Program).

* An arrow flows from the "Translation Prompt" directly to the Stage 2 LLM.

* An arrow flows from the Stage 2 LLM to the Stage 3 Symbolic Solver.

* An arrow flows from the Symbolic Solver to the final "Output".

### Detailed Analysis

The pipeline describes a specific workflow:

1. **Stage 1 (Premise Generation):** A Large Language Model (LLM) takes a "Premise Prompt" (containing instructions, examples, and a question) and generates natural language premises.

2. **Stage 2 (Logical Form Generation):** A second LLM, constrained within a "Constraining Program," receives two inputs: the natural language premises from Stage 1 and a separate "Translation Prompt" (containing instructions and examples of logical forms). Its task is to generate a structured logical form.

3. **Stage 3 (Reasoning):** The generated logical form is passed to a "Symbolic Solver," which performs formal logical reasoning on it.

4. **Output:** The final result of the symbolic reasoning is produced as the system's output.

### Key Observations

* **Hybrid Architecture:** The system explicitly combines neural (LLM) and symbolic (Solver) AI components.

* **Constrained Generation:** The LLM in Stage 2 operates within a "Constraining Program," suggesting its output is restricted or guided to ensure it produces a valid logical form suitable for the symbolic solver.

* **Dual-Prompt Input:** The system uses two separate, specialized prompts to guide different parts of the process: one for generating premises and another for guiding the translation to logical form.

* **Sequential Dependency:** The process is strictly sequential; the output of Stage 1 is a necessary input for Stage 2.

### Interpretation

This diagram represents a method for bridging the gap between flexible natural language understanding and rigorous formal reasoning. The LLMs handle the ambiguous, human-like tasks of interpreting questions and translating them into a structured format. The symbolic solver then performs reliable, verifiable logic on that structure. The "Constraining Program" is a critical component, acting as a translator or guardrail to ensure the LLM's output is compatible with the strict requirements of the symbolic system. This architecture aims to leverage the strengths of both AI paradigms: the LLM's language proficiency and the symbolic system's reasoning precision. The separation of "Premise Prompt" and "Translation Prompt" indicates a deliberate design to decouple the problem understanding phase from the formalization phase.

</details>

Figure 3: Architecture of LLM-SS model

In Stage 2, an LLM translates the natural language premises into logical forms, essentially a semantic parsing task. However, the few-shot examples cannot cover all forms of knowledge representations exhibited by uncontrolled natural language, which includes a multitude of vocabulary and grammatical structures, often leading to invalid logical forms. In fact, Lyu et al. (2023) find that syntax errors and infinite loops (a subset of syntax errors) account for 52.9% of all errors made by OpenAI Codex on the StrategyQA dataset. To tackle this issue, we develop a novel constraining program for the LLM. Specifically, based on constraints introduced by the formal grammar of the logical form syntax, the program masks the logits of tokens that violate these constraints. For example, a grammar may dictate that “=” must be followed by True or False. In this case, assuming the subsequent token is “=”, all tokens besides those two will be masked.

In Stage 3, a symbolic solver receives the logical forms as input and then deductively derives the answer. To ensure determinism and interoperability, we turn to answer set programming (ASP) (Lifschitz, 2019), a form of logic programming (Lloyd, 2012). Logic programming represents natural language sentences as logical forms, which allow the logical relationships between entities to be unambiguously parsed by the program, unlike natural language sentences which may have multiple interpretations. ASP is a subset of logical programming, which focuses on solving search problems; finding the truth value of a statement based on a set of premises is one such problem. Clingo (Gebser et al., 2019), an ASP, is used in our experiments (which is further explained in Appendix D).

Importantly, so long as the premises and ASP code are accurate, the final output is necessarily true due to the deterministic nature of Clingo. This is unlike traditional CoT (Wei et al., 2022), where the final output may not be consistent with its premises, as highlighted in Figure 1.

## 3 Experimental Setup

We benchmark LNN and LLM-SS against other methods with integrative and hybrid approaches respectively. Note that different benchmarks are used for each approach. Currently, the integrative and hybrid approaches tackle completely different task domains; there is no common benchmark available to directly compare the approaches. Nonetheless, this experimental setup is sufficient to evaluate the strengths and weaknesses of each approach, which we further justify in Appendix C.

### 3.1 Logic Neural Network (LNN)

Our experiments for LNN aim to understand whether (i) the neurons are able to converge on the appropriate logic gate, and (ii) its performance on existing benchmarks. Both objectives are empirically studied in Experiments 1 and 2 respectively. This will help us understand the integrative approach’s pros and cons, which we discuss in Section 5.1

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Simple Flow Network

### Overview

The image displays a simple directed graph or flow diagram consisting of four nodes connected by three arrows. The diagram illustrates a process where two initial elements feed into a central processing node, which then produces a single output.

### Components/Axes

The diagram contains the following labeled components:

1. **Node `a`**: A light blue circle located in the top-left quadrant.

2. **Node `b`**: A light blue circle located in the bottom-left quadrant.

3. **Node `n1`**: A dark gray square located in the center of the diagram.

4. **Node `c`**: A light blue circle located on the right side.

**Connections (Arrows):**

* A black arrow originates from the right side of node `a` and points to the left side of node `n1`.

* A black arrow originates from the right side of node `b` and points to the left side of node `n1`.

* A black arrow originates from the right side of node `n1` and points to the left side of node `c`.

### Detailed Analysis

The structure defines a clear directional flow:

1. **Input Stage**: Two parallel input nodes, `a` and `b`, are positioned vertically on the left.

2. **Processing/Combination Stage**: Both inputs (`a` and `b`) converge via arrows into a single central node, `n1`. The distinct shape (square vs. circles) and color (dark gray vs. light blue) of `n1` suggest it represents a different type of entity—likely a function, process, operator, or aggregation point.

3. **Output Stage**: A single arrow leads from the central node `n1` to the output node `c` on the right.

### Key Observations

* **Asymmetric Inputs**: The inputs `a` and `b` are visually distinct in their vertical placement but identical in shape and color, implying they are of the same class or type.

* **Central Transformation**: The node `n1` is the only element with a different shape (square) and a darker color, highlighting its role as the core transformative or combinatory component in the system.

* **Single Output**: The system results in one output, `c`, from the two inputs, suggesting a many-to-one relationship or a consolidation process.

### Interpretation

This diagram is a canonical representation of a **binary operation** or a **data fusion process**. It models a system where two separate entities (`a` and `b`) are processed by a single function or unit (`n1`) to yield a resultant entity (`c`).

* **Possible Contexts**: This could represent:

* A mathematical function: `c = n1(a, b)`.

* A logical gate (e.g., AND, OR) where `a` and `b` are inputs.

* A data pipeline where two data streams are merged or joined.

* A decision-making node where two pieces of information are evaluated to produce an outcome.

* **The "n1" Label**: The label `n1` is generic, often used in technical diagrams to denote "node 1" or "network 1." Its specific meaning would be defined by the surrounding context not present in the image.

* **Visual Semantics**: The use of circles for `a`, `b`, and `c` versus a square for `n1` is a common visual shorthand to distinguish between data/state objects (circles) and processing/functional units (squares).

**In essence, the image conveys the fundamental concept of synthesis: two distinct inputs are operated upon by a central processor to create a single, new output.**

</details>

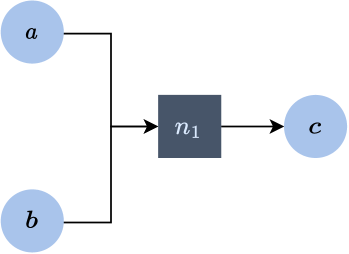

Figure 4: Experiment 1: Model Architecture of LNN & LGN

For Experiment 1, we create a simple synthetic dataset where the model must identify the appropriate logic gate when provided with 4 unique sets of neuron $a$ and $b$ values and their corresponding output. For example, with reference to Table 1, when provided the outputs 0, 0, 0, 1 for their corresponding $a$ and $b$ values, the model is expected to identify the $A∧ B$ logic gate.

We benchmark LNN against the SOTA Logic Gate Network (LGN) model (Petersen et al., 2022) based on (i) whether it converges on the correct logic gate and (ii) how many iterations it takes. Models like the IBM Logical Neural Network and the Logic Tensor Network are excluded because they presuppose that the model already knows the logic gates used before training. This condition does not hold for the aforementioned task, thus making it beyond these models’ existing capabilities. The architecture of both models is shown in Figure 4: a single-layer model containing a neuron with the respective formulas of LNN and LGN. When the neuron’s discretized version chooses the appropriate logic gate, it is considered to have converged.

For Experiment 2, we utilize the Adult Census and Breast Cancer datasets (Asuncion et al., 2007), which are classification tasks containing 48842 and 286 instances respectively. LNN is benchmarked against LGN and a multi-layer perception (MLP). The model architecture and hyperparameters of LGN and MLP can be found in Appendix E.

### 3.2 LLM-Symbolic Solver (LLM-SS)

<details>

<summary>x5.png Details</summary>

### Visual Description

## Process Diagram: Logical Reasoning Workflow via Clingo

### Overview

The image displays a three-stage horizontal flowchart illustrating a logical reasoning process. It demonstrates how natural language premises about historical figures are translated into a formal logic program (Clingo code) and then executed to derive a conclusion. The process flows from left to right, indicated by light blue arrows connecting the stages.

### Components/Axes

The diagram is segmented into three distinct rectangular boxes, each with a dark blue header bar containing white text.

1. **Stage 1 (Left Box):**

* **Header:** "Stage 1" / "Generate premises"

* **Content:** A list of three bulleted statements in natural English.

2. **Stage 2 (Center Box):**

* **Header:** "Stage 2" / "Generate Clingo code"

* **Content:** A block of Clingo (Answer Set Programming) code, including comments (lines starting with `%`) and logic rules.

3. **Stage 3 (Right Box):**

* **Header:** "Stage 3" / "Run Clingo program"

* **Content:** The single word "False" in bold, representing the program's output.

### Detailed Analysis

**Stage 1: Generate premises**

The input consists of three natural language statements:

* "Jackson Pollock lived in the 20th century."

* "Leonardo da Vinci lived in the 17th century."

* "If Jackson Pollock and Leonardo da Vinci lived in different centuries, Jackson Pollock was not trained by Leonardo da Vinci."

**Stage 2: Generate Clingo code**

The natural language premises are translated into formal Clingo syntax. The code is structured as follows:

* **Facts:**

* `% Jackson Pollock lived in the 20th century.` (Comment)

* `lived_century(jackson_pollock, 20).`

* `% Leonardo da Vinci lived in the 17th century.` (Comment)

* `lived_century(leonardo_da_vinci, 17).`

* **Rule:**

* `% If Jackson Pollock and Leonardo da Vinci lived in different centuries, Jackson Pollock was not trained by Leonardo da Vinci.` (Comment)

* `not trained(leonardo_da_vinci, jackson_pollock) :-`

* ` lived_century(jackson_pollock, X),`

* ` lived_century(leonardo_da_vinci, Y), X != Y.`

* **Conclusion Query:**

* `% Conclusion` (Comment)

* `answer() :- trained(leonardo_da_vinci, jackson_pollock).`

**Stage 3: Run Clingo program**

The execution of the Clingo program defined in Stage 2 yields the result: **False**.

### Key Observations

1. **Logical Translation:** The process accurately converts temporal facts ("lived in the Xth century") into predicate logic (`lived_century(person, century)`).

2. **Rule Formulation:** The conditional statement ("If... then...") is correctly encoded as a constraint rule (`:-`) in Clingo. The rule states that the `trained` relationship is *not* true if the centuries are different (`X != Y`).

3. **Conclusion Check:** The final `answer()` predicate is defined to be true only if `trained(leonardo_da_vinci, jackson_pollock)` is true. The program's output of "False" indicates this predicate could not be satisfied.

4. **Data Consistency:** The centuries provided (20 and 17) satisfy the condition `X != Y` in the rule, thereby activating the `not trained(...)` constraint.

### Interpretation

This diagram serves as a concrete example of **automated logical reasoning**. It shows the pipeline from human knowledge representation to machine verification.

* **What the data suggests:** The system is testing the hypothesis "Leonardo da Vinci trained Jackson Pollock." Given the premises that they lived centuries apart and that such a temporal gap precludes a training relationship, the logical engine correctly concludes the hypothesis is **false**.

* **How elements relate:** The stages represent a transformation chain: **Natural Language -> Formal Logic -> Computed Truth Value**. The arrows signify the flow of information and the increasing formality of representation.

* **Notable patterns/anomalies:** The key insight is the encoding of *common sense* (a person cannot train someone who lived centuries later) into a strict logical rule. The "False" result is not a failure but a successful validation of logical consistency based on the given axioms. The diagram effectively demystifies how symbolic AI systems process and reason over factual knowledge.

</details>

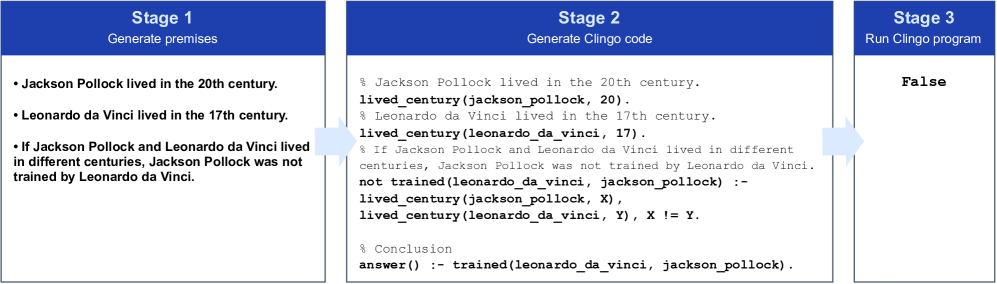

Figure 5: LLM-SS’s response to “Was Jackson Pollock trained by Leonardo da Vinci?”

The task chosen is the StrategyQA dataset (Geva et al., 2021), where the model must infer the appropriate premises based on a domain-agnostic question and reason about those premises. Fig. 5 shows how LLM-SS will answer the question “Was Jackson Pollock trained by Leonardo da Vinci?”. The model must first infer the appropriate premises in Stage 1, followed by their corresponding Clingo representations in Stage 2, which is ultimately used to generate the final answer using the Clingo program. Beyond testing for general logical reasoning, StrategyQA requires the advantages of existing LLMs: (i) learning, storing, and retrieval of knowledge, which is evaluated by whether models have the facts needed for the question from their training data, and (ii) inductive reasoning, which is evaluated by whether models can select the relevant facts.

We compare LLM-SS to (i) traditional CoT, (ii) Faithful-CoT, and (iii) an unconstrained LLM-SS, where the constraining software in Stage 2 is removed. LLM-SS uses Llama2-7B (Touvron et al., 2023) in Stage 1 and CodeQwen1.5-7B (Bai et al., 2023) in Stage 2; unconstrained LLM-SS and the traditional CoT model uses Llama2-7B; Faithful-CoT uses GPT-4. All models use Clingo as their symbolic solver, except Faithful-CoT, which uses Prolog, an alternative logic programming language. Few-shot prompts are executed with four examples due to Llama2-7B’s shorter context length, while Faithful-CoT uses six. As for metrics, other than accuracy, we also measure the error rate, which is the percentage of questions with no answers generated. This happens when the CoT model does not produce a yes or no answer, or when the ASP code has a syntax error.

## 4 Results

### 4.1 Logic Neural Network (LNN)

The results of Experiment 1 are shown in Table 2. LNN’s accuracy of 100% serves as a simple, foundational check that Eq. 1 can converge on the correct logic gate for all 16 options before moving to the more complex Experiment 2. Moreover, while LGN also achieves 100% accuracy, it converges almost 3 times slower than LNN, suggesting the latter’s relaxation formula may be more optimal for training.

Table 2: Results of Experiment 1

| Logic Gate Network Logic Neural Network | 1.00 1.00 | 163.4 63.9 |

| --- | --- | --- |

As for Experiment 2, the results are shown in Table 3. LNN achieves the highest accuracy (78.6%) out of the 3 models for the Breast Cancer dataset. The Adult Census, on the other hand, saw comparable results for all models, with MLP being marginally better (84.9%) than the rest. These results indicate that LNN outperforms LGN on smaller datasets due to the former’s stronger convergence abilities but underperforms on larger datasets. While further research is needed to understand and refine LNN’s scope and applicability, this lies beyond the scope of our work, as it is not essential for the analysis presented in Section 5.1.

Table 3: Results of Experiment 2

| Multi-Layer Perceptron | 0.753 | 1.4KB | 0.849 | 15KB | ✗ |

| --- | --- | --- | --- | --- | --- |

| Logic Gate Network | 0.761 | 320B | 0.848 | 640B | ✓ |

| Logic Neural Network | 0.786 | 320B | 0.848 | 640B | ✓ |

### 4.2 LLM-Symbolic Solver (LLM-SS)

As shown in Table 4, LLM-SS has a significantly higher accuracy and lower error rate compared to its unconstrained counterpart. It is evident that constraining LLM generation to enforce the syntax of Clingo leads to fewer errors during code execution, thereby increasing LLM-SS’s accuracy. This is further highlighted when benchmarked against Faithful-CoT, which suggests a smaller constrained model, e.g., Llama2-7B, can perform better than a larger unconstrained model, e.g., GPT-4. However, while LLM-SS is interpretable, it still lags behind the traditional CoT model in terms of accuracy.

Table 4: Results of LLM-SS experiment

| CoT | Llama2-7B | 60.6 | 0.6 | ✗ |

| --- | --- | --- | --- | --- |

| Faithful-CoT | GPT-4 | 54.0 This result is reported in https://github.com/veronica320/Faithful-COT | - | ✓ |

| LLM-SS (Unconstrained) | Llama2-7B | 48.5 | 17.8 | ✓ |

| LLM-SS | Llama2-7B | 54.5 | 1.5 | ✓ |

The main bottleneck of LLM-SS’s accuracy can be straightforwardly deduced. The process in which the CoT model gathers facts is identical to Stage 1 of LLM-SS. Stage 3 of LLM-SS is a deterministic execution of Clingo, so it cannot be blamed for any errors. Thus, Stages 1 and 3 cannot explain the gap in accuracy between the two models. Thus, Stage 2 is the cause, specifically, the translation from natural language sentences to code. McGinness and Baumgartner (2024) categorizes translation errors into syntactic and semantic errors. Syntactic errors are defined as errors in the logical form that prevent parsing, while semantic errors are defined as logical forms that falsely represent their corresponding sentence despite being parsable. Given that the syntactic error rate for LLM-SS is 1.5%, it is evident that semantic errors are mostly responsible. Semantic error manifests in several ways: first, the naming convention between premises is sometimes inconsistent. For example, one premise may say “1519” while another may say “16th century”, thus making the use of math operators to compare between them impossible. Second, the code translation may also be nonsensical, such as the usage of words and phrases that do not even appear in the premise.

## 5 Discussion

### 5.1 Comparison of Integrative & Hybrid Approaches

Is the integrative or hybrid approach more promising for developing general logical reasoning without sacrificing the capabilities of existing LLMs? Given the strong performance of LNN and LLM-SS against SOTA models, they serve as case studies to answer the aforementioned question. We compare the approaches using the following criteria: (i) ability to reason symbolically, (ii) interpretability of reasoning chain, and (ii) retention of LLM abilities, i.e. whether the model can still preserve the advantages of existing LLMs, such as memorization and generalization. Table 5 summarizes our analysis.

Table 5: Comparison of integrative and hybrid approaches

| Integrative | ✓ | $∼$ | ✗ |

| --- | --- | --- | --- |

| Hybrid | ✓ | ✓ | ✓ |

Criteria 1: Symbolic Reasoning. LNN is able to logically reason, albeit in a limited fashion. Specifically, it is restricted by the connections pre-formed between neurons of one layer to the next: a pre-formed connection may not always accurately represent a given logical argument. Models should be allowed to learn the most optimal connections. Gumbel-Max Equation Learner Networks (Chen, 2020) uses Gumbel-Softmax to learn which outputs of the previous layer should be the input of the next layer. However, each layer’s arithmetic operations are predefined. Since combining both flexible logical formulas and flexible connections has not been achieved but appears plausible, we argue that the integrative approach is capable of symbolic reasoning.

The hybrid approach, on the other hand, is capable of symbolic reasoning due to the use of symbolic programs. While Stage 2 currently hinders reasoning due to its inadequate translation abilities, this can be addressed by designing improved natural language-to-Clingo code translation models. Future works can draw inspiration from semantic parsing tasks like Abstract Meaning Representation (AMR) (Knight et al., 2021). Effective methods for AMR parsing include structure-aware transition-based approaches with pre-trained language models (Zhou et al., 2021) and model ensembling (Lee et al., 2021).

Criteria 2: Interpretability. Both approaches contain some degree of interpretability given their explicit use of symbolic reasoning: the logic gates used by LNN and the Clingo code used by LLM-SS are readily accessible. However, the former’s interpretability scales poorly. Consider the LNN implemented for the Breast Cancer dataset which contains $5× 128=640$ neurons. While one can identify the logic gate of each neuron, a human cannot interpret a line of reasoning containing $640$ logic gates. Therefore, LNN is only realistically interpretable when restricted to just a few logic gates. From a human perspective, as the number of neurons increases, LNN’s interpretability becomes no different from an LLM.

For the hybrid approach, Lyu et al. (2023) point out that while the Problem-Solving stage in Faithful-CoT (or Stage 3 in LLM-SS) is transparent and interpretable, the Translation Stage (or Stages 1 and 2 in LLM-SS) is still opaque. One cannot interpret how premises and logical form translations are generated. However, we argue that this is not a fundamental issue. Consider an analogy to the human brain: a human’s argument is deemed logical so long as the premises retrieved from memory lead to a conclusion. The criteria for an interpretable line of reasoning do not require transparency into how our brain retrieved the premises in the first place. Similarly, for LLM-SS, Stages 1 and 2 do not necessarily need to be deterministic and interpretable for logical reasoning to occur.

Criteria 3: Retention of LLM Abilities. Transformer-based architectures excel at inductive reasoning and the learning, storage, and retrieval of knowledge. To compete against existing LLMs in general logical reasoning, neurosymbolic models must retain these capabilities. The integrative approach replaces the Transformers architecture with a logic-based one, thereby entirely stripping the model of its LLM capabilities. Moreover, attempting to attain the advantages of LLMs conflicts with the reasoning capabilities of integrative models: when LNN is scaled to millions of neurons, it is no longer interpretable.

The hybrid approach, on the other hand, has no such issues. In LLM-SS, Stage 1 still uses a Transformer-based architecture, i.e. Llama2-7B, thereby retaining the capabilities of existing LLMs, while Stage 3 uses Clingo to perform symbolic reasoning. This separation of responsibilities allows LLM-SS to achieve the best of both worlds.

To summarize, the hybrid approach is superior to the integrative approach with regard to interpretability and retention of LLM abilities. We therefore posit that the hybrid approach is more promising for developing general logical reasoning.

### 5.2 LLM-SS: A framework for the hybrid approach

The framework exemplified by LLM-SS in Fig. 3 is generalizable. By segmenting LLM-SS into three distinct stages, it becomes a modular framework, where each stage can be independently tested, updated, and improved. Specifically, the choice of models for each stage is also flexible: alternative LLMs like GPT-4 and Gemini 1.5 can be used in Stage 1, semantic parsing models in Stage 2, and other symbolic solvers like Datalog and Python in Stage 3. Most neurosymbolic models are tailored to solve domain-specific tasks by using domain-specific knowledge modules and manually translated logical forms. In contrast, LLM-SS is designed for general logical reasoning, ensuring its viability across a variety of tasks, regardless of domain. To apply this framework to a new task, only in-context learning is required as human input.

To improve this framework, future directions include the (i) optimization of prompts and choice of models, (ii) mitigation of semantic errors in Stage 2, (iii) use of alternative logical forms in Stage 2 and 3, such as knowledge graphs (Pan et al., 2024), and (iv) evaluation of LLM-SS’s effectiveness in other domain-agnostic tasks.

## 6 Conclusion

Our study finds that the hybrid approach to neurosymbolic AI is more promising than the integrative approach for developing general logical reasoning without sacrificing the capabilities of existing LLMs. To aid our analysis, we introduced the Logic Neural Network (LNN) and LLM-Symbolic Solver (LLM-SS) which serve as case studies and representatives for the integrative and hybrid approaches respectively. Finally, to support future research in neurosymbolic AI, we propose LLM-SS as a modular, model-agnostic, and domain-agnostic framework.

## References

- Achiam et al. (2023) Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. Gpt-4 technical report. arXiv preprint arXiv:2303.08774, 2023.

- Asuncion et al. (2007) Arthur Asuncion, David Newman, et al. Uci machine learning repository, 2007.

- Badreddine et al. (2022) Samy Badreddine, Artur d’Avila Garcez, Luciano Serafini, and Michael Spranger. Logic tensor networks. Artificial Intelligence, 303:103649, 2022. ISSN 0004-3702. https://doi.org/10.1016/j.artint.2021.103649.

- Bai et al. (2023) Jinze Bai, Shuai Bai, Yunfei Chu, Zeyu Cui, Kai Dang, Xiaodong Deng, Yang Fan, Wenbin Ge, Yu Han, Fei Huang, et al. Qwen technical report. arXiv preprint arXiv:2309.16609, 2023.

- (5) Adrien Benamira, Thomas Peyrin, Trevor Yap, Tristan Guérand, and Bryan Hooi. Truth table net: Scalable, compact & verifiable neural networks with a dual convolutional small boolean circuit networks form.

- Chen (2020) Gang Chen. Learning symbolic expressions via gumbel-max equation learner networks. arXiv preprint arXiv:2012.06921, 2020.

- Ciatto et al. (2024) Giovanni Ciatto, Federico Sabbatini, Andrea Agiollo, Matteo Magnini, and Andrea Omicini. Symbolic knowledge extraction and injection with sub-symbolic predictors: A systematic literature review. ACM Computing Surveys, 56(6):1–35, 2024.

- Dai et al. (2019) Wang-Zhou Dai, Qiuling Xu, Yang Yu, and Zhi-Hua Zhou. Bridging machine learning and logical reasoning by abductive learning. Advances in Neural Information Processing Systems, 32, 2019.

- Daniele and Serafini (2019) Alessandro Daniele and Luciano Serafini. Knowledge enhanced neural networks. In PRICAI 2019: Trends in Artificial Intelligence: 16th Pacific Rim International Conference on Artificial Intelligence, Cuvu, Yanuca Island, Fiji, August 26–30, 2019, Proceedings, Part I 16, pages 542–554. Springer, 2019.

- Fischer et al. (2019) Marc Fischer, Mislav Balunovic, Dana Drachsler-Cohen, Timon Gehr, Ce Zhang, and Martin Vechev. Dl2: training and querying neural networks with logic. In International Conference on Machine Learning, pages 1931–1941. PMLR, 2019.

- Garcez and Lamb (2023) Artur d’Avila Garcez and Luís C. Lamb. Neurosymbolic ai: the 3rd wave. Artif. Intell. Rev., 56(11):12387–12406, mar 2023. ISSN 0269-2821. 10.1007/s10462-023-10448-w. URL https://doi.org/10.1007/s10462-023-10448-w.

- Gebser et al. (2019) Martin Gebser, Roland Kaminski, Benjamin Kaufmann, and Torsten Schaub. Multi-shot asp solving with clingo. Theory and Practice of Logic Programming, 19(1):27–82, 2019.

- Geva et al. (2021) Mor Geva, Daniel Khashabi, Elad Segal, Tushar Khot, Dan Roth, and Jonathan Berant. Did aristotle use a laptop? a question answering benchmark with implicit reasoning strategies. Transactions of the Association for Computational Linguistics, 9:346–361, 2021.

- Guo et al. (2005) Yuanbo Guo, Zhengxiang Pan, and Jeff Heflin. Lubm: A benchmark for owl knowledge base systems. Journal of Web Semantics, 3(2-3):158–182, 2005.

- Han et al. (2022) Simeng Han, Hailey Schoelkopf, Yilun Zhao, Zhenting Qi, Martin Riddell, Wenfei Zhou, James Coady, David Peng, Yujie Qiao, Luke Benson, et al. Folio: Natural language reasoning with first-order logic. arXiv preprint arXiv:2209.00840, 2022.

- Hendrycks et al. (2020) Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding. arXiv preprint arXiv:2009.03300, 2020.

- Huang and Chang (2022) Jie Huang and Kevin Chen-Chuan Chang. Towards reasoning in large language models: A survey. arXiv preprint arXiv:2212.10403, 2022.

- Kautz (2020) H Kautz. Third AI Summer, AAAI Robert S. Engelmore Memorial Lecture, 2020. URL https://www.cs.rochester.edu/u/kautz/talks/Kautz%20Engelmore%20Lecture.pdf.

- Knight et al. (2021) Kevin Knight, Bianca Badarau, Laura Baranescu, Claire Bonial, Madalina Bardocz, Kira Griffitt, Ulf Hermjakob, Daniel Marcu, Martha Palmer, Tim O’Gorman, et al. Abstract meaning representation (amr) annotation release 3.0. Linguistic Data Consortium, 2020., 2021.

- Lee et al. (2021) Young-Suk Lee, Ramon Fernandez Astudillo, Thanh Lam Hoang, Tahira Naseem, Radu Florian, and Salim Roukos. Maximum bayes smatch ensemble distillation for amr parsing. arXiv preprint arXiv:2112.07790, 2021.

- Lifschitz (2019) Vladimir Lifschitz. Answer set programming, volume 3. Springer Heidelberg, 2019.

- Lloyd (2012) John W Lloyd. Foundations of logic programming. Springer Science & Business Media, 2012.

- Lyu et al. (2023) Qing Lyu, Shreya Havaldar, Adam Stein, Li Zhang, Delip Rao, Eric Wong, Marianna Apidianaki, and Chris Callison-Burch. Faithful chain-of-thought reasoning. In The 13th International Joint Conference on Natural Language Processing and the 3rd Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics (IJCNLP-AACL 2023), 2023.

- Manhaeve et al. (2018) Robin Manhaeve, Sebastijan Dumancic, Angelika Kimmig, Thomas Demeester, and Luc De Raedt. Deepproblog: Neural probabilistic logic programming. Advances in neural information processing systems, 31, 2018.

- McGinness and Baumgartner (2024) Lachlan McGinness and Peter Baumgartner. Automated theorem provers help improve large language model reasoning. In Proceedings of 25th Conference on Logic for Programming, Artificial Intelligence and Reasoning, volume 100, pages 51–69, 2024.

- Pan et al. (2024) Shirui Pan, Linhao Luo, Yufei Wang, Chen Chen, Jiapu Wang, and Xindong Wu. Unifying large language models and knowledge graphs: A roadmap. IEEE Transactions on Knowledge and Data Engineering, 36(7):3580–3599, 2024. 10.1109/TKDE.2024.3352100.

- Petersen et al. (2022) Felix Petersen, Christian Borgelt, Hilde Kuehne, and Oliver Deussen. Deep differentiable logic gate networks. Advances in Neural Information Processing Systems, 35:2006–2018, 2022.

- Pospesel (1974) Howard Pospesel. Introduction to Logic: Propositional Logic. Prentice-Hall, Englewood Cliffs, NJ, USA, 1974.

- Riegel et al. (2020) Ryan Riegel, Alexander Gray, Francois Luus, Naweed Khan, Ndivhuwo Makondo, Ismail Yunus Akhalwaya, Haifeng Qian, Ronald Fagin, Francisco Barahona, Udit Sharma, et al. Logical neural networks. arXiv preprint arXiv:2006.13155, 2020.

- Shi et al. (2022) Zhengxiang Shi, Qiang Zhang, and Aldo Lipani. Stepgame: A new benchmark for robust multi-hop spatial reasoning in texts. In Proceedings of the AAAI conference on artificial intelligence, volume 36, pages 11321–11329, 2022.

- Silver et al. (2016) David Silver, Aja Huang, Chris J Maddison, Arthur Guez, Laurent Sifre, George Van Den Driessche, Julian Schrittwieser, Ioannis Antonoglou, Veda Panneershelvam, Marc Lanctot, et al. Mastering the game of go with deep neural networks and tree search. nature, 529(7587):484–489, 2016.

- Sinha et al. (2019) Koustuv Sinha, Shagun Sodhani, Jin Dong, Joelle Pineau, and William L Hamilton. Clutrr: A diagnostic benchmark for inductive reasoning from text. arXiv preprint arXiv:1908.06177, 2019.

- Touvron et al. (2023) Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, et al. Llama 2: Open foundation and fine-tuned chat models. arXiv preprint arXiv:2307.09288, 2023.

- Trinh et al. (2024) Trieu H Trinh, Yuhuai Wu, Quoc V Le, He He, and Thang Luong. Solving olympiad geometry without human demonstrations. Nature, 625(7995):476–482, 2024.

- van Krieken et al. (2022) Emile van Krieken, Erman Acar, and Frank van Harmelen. Analyzing differentiable fuzzy logic operators. Artificial Intelligence, 302:103602, 2022.

- Wei et al. (2022) Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems, 35:24824–24837, 2022.

- Yang et al. (2023) Zhun Yang, Adam Ishay, and Joohyung Lee. Coupling large language models with logic programming for robust and general reasoning from text. arXiv preprint arXiv:2307.07696, 2023.

- Zhang et al. (2023) Hanlin Zhang, Jiani Huang, Ziyang Li, Mayur Naik, and Eric Xing. Improved logical reasoning of language models via differentiable symbolic programming. arXiv preprint arXiv:2305.03742, 2023.

- Zhou et al. (2021) Jiawei Zhou, Tahira Naseem, Ramón Fernandez Astudillo, Young-Suk Lee, Radu Florian, and Salim Roukos. Structure-aware fine-tuning of sequence-to-sequence transformers for transition-based amr parsing. arXiv preprint arXiv:2110.15534, 2021.

## Appendix A Related Works

With regards to the integrative approach, Garcez and Lamb (2023) reviewed several systems where symbolic reasoning is contained within the neural network. Most notably, Logic Tensor Network (Badreddine et al., 2022) is designed to learn new predicates via a deep neural network while satisfying a first-order logic knowledge base. Daniele and Serafini (2019), Fischer et al. (2019), and Manhaeve et al. (2018) present models with similar principles. Logical Neural Network (Riegel et al., 2020), developed by IBM, creates a 1-on-1 correspondence between each neuron and a logic gate, a similar concept to our LNN.

However, the primary difference between the two aforementioned systems and LNN is that the former requires Real Logic axioms/formulas specific to the given task to be manually defined prior to training. The model subsequently learns the weights with respect to the axioms provided. LNN, on the other hand, consists of the same 6 logic gates for each neuron across any given task. It learns each neuron’s optimal logic gate during training, thus dynamically constructing the best-performing axioms/formulas. This is a significant disadvantage for the Logic Tensor Network and the IBM Logical Neural Network because it limits their scope to problems with known, well-defined logical rules, thereby diminishing their usefulness in real-world applications. For example, IBM’s Logical Neural Network is tested on the Lehigh University Benchmark (LUBM) (Guo et al., 2005), which contains predefined OWL axioms.

To the best of our knowledge, the only model architecture that allows for the choice of a logic gate is the Logic Gate Network (LGN) (Petersen et al., 2022). Its fundamental principle is the same as LNN: allow neural networks to choose between logic gates through differentiation by continuously relaxing them. However, LNN and LGN differ in how various logic gates are combined. This paper creates a distinct relaxation formula for each logic gate and then uses a categorical probability distribution to create a weighted average across 16 logic gates. However, LGN’s primary objective was to decrease inference time for computer vision tasks by using the highest probability logic gate for each neuron. Hence, its practical ability to converge to the appropriate logic gate for reasoning tasks remains unexamined.

On the other hand, the hybrid approach has seen SOTA results on domain-specific reasoning tasks, but few have applied this approach to domain-agnostic tasks. Yang et al. (2023) applied GPT-3 and Clingo to the benchmark datasets: bABI, StepGame, CLUTRR, and gSCAN. However, the scope of its application is limited. For example, CLUTRR only involves the inference of family relationships. Moreover, the authors manually wrote an ASP knowledge module for each task, e.g. the one for CLUTRR contains an exhaustive list of family relationships. Therefore, Yang’s architecture is not applicable to general QA tasks. Deepmind’s AlphaGeometry (Trinh et al., 2024) combines an LLM with the symbolic engine DD+AR, which contains geometric rules. Similarly, its scope is limited to geometry problems. Moreover, these natural language geometry questions are manually translated into a domain-specific form in order for DD+AR to understand the problem. This is again unrealistic for general QA tasks. Models with similar frameworks, and therefore similar limitations include Silver et al. (2016), McGinness and Baumgartner (2024), Zhang et al. (2023), and Dai et al. (2019).

To our knowledge, only the Faithful-CoT model (Lyu et al., 2023) attempts to combine LLMs with symbolic engines for a wide range of tasks, without knowledge modules or manual translation of problems. However, using GPT-4 and Prolog, an alternative to Clingo, it achieved a mere 54% accuracy rate on the StrategyQA benchmark (Geva et al., 2021).

## Appendix B Are LNN and LLM-SS Generalizable Representatives?

This study compares one method only from each approach, which therefore begs the question: are LNN and LLM-SS reasonable representatives of each approach? In other words, can their results and analyses be extrapolated to form conclusions about each approach as a whole? Our conviction is that both methods’ analyses can be generalized as a comparison between both approaches, justified by the key characteristics shared by the class of models within each approach.

Referencing Appendix A, the integrative approach is characterized by the embedding of logic gates or axioms within the neural network, which are manipulated to various degrees during training via gradient descent. This defining property of integrative models is undoubtedly represented by LNN. The hybrid approach, on the other hand, is a system characterized by two distinct parts: a probabilistic neural network and a deterministic symbolic solver. This is similarly well-represented by LLM-SS. Importantly, our analysis is contingent upon the aforementioned universal key characteristics, rather than any model-specific implementation method. We therefore expect our conclusions in Section 5.1 to hold regardless of the choice of model representatives.

## Appendix C Explaining the Experiment Design

Why are the integrative and hybrid approaches evaluated using different benchmarks, preventing them from being directly comparable? This is due to the current limitations of both approaches. On one hand, the integrative approach has not yet been scaled to accommodate natural language datasets, and thus can only be trained on classification tasks with limited features. The hybrid approach, on the other hand, specializes in natural language datasets, given its use of LLMs; it cannot reliably train on datasets like Adult Census without defeating its purpose. Thus, their incompatible task domains prevent a direct comparison.

Nonetheless, we contend that this experimental setup provides sufficient empirical support for our conclusions. Ultimately, we are interested in studying which approach is more interpretable and which better retains the advantages of existing LLMs; a direct performance comparison is secondary. Our experiments adequately study these questions.

## Appendix D Clingo as Part of LLM-SS

Below are examples of how natural language sentences, both declarative and conditional, can be represented in Clingo. Note the ability to use mathematical expressions in the last example.

- [Declarative] Sam has a cow. no_of_cows_owned(sam, 1).

- [Conditional] If Sam owns a cow, Sam is a farmer. farmer(sam) :- no_of_cows_owned(sam, 1).

- [Conditional] If Sam owns more than five cows, then he is rich. rich(sam) :- no_of_cows_owned(sam, Number), Number >5.

This explains the architectural choices in the earlier stages. Restricting the premises generated in Stage 1 to declarative and conditional forms allows a simpler and therefore more error-free translation into logical form. Moreover, using LLM constraining software in Stage 2 helps ensure the LLM adheres to Clingo’s strict syntax rules.

Choice of Clingo. In theory, any ASP or logic programming language can be used as part of LLM-SS. However, our choice of Clingo over Prolog, another commonly used solver, is twofold. First, Clingo’s syntax is more consistent with propositional logic in terms of semantic meaning. For example, negation in propositional logic ( $¬ P$ ) and in Clingo (not P) both mean $P$ is false. However, negation in Prolog (\+ P) means $P$ cannot be proven, which is distinctly different. This makes the natural language-to-code translation unnecessarily complex. Second, as a secondary consideration, Clingo’s syntax is simpler than Prolog’s and may therefore be less prone to random errors, e.g., for negation (Prolog’s \+ vs. Clingo’s not). We recommend future works to select symbolic solvers based on (i) alignment with the chosen logic system and (ii) ease of parsing and generation by LLMs.

## Appendix E Model Architecture and Hyperparameters

For LNN’s Experiment 1, both models are trained with 1000 iterations, a learning rate of 0.01, and using the Adam optimizer.

For LNN’s Experiment 2, the model architectures and hyperparameters are determined by the recommended settings in Petersen et al. (2022), which are as shown in Table 6. Importantly, model sizes are kept roughly equivalent in the interest of fairness. All models are trained up to 200 epochs at a batch size of 100. The MLPs are ReLU activated.

| Model Breast Cancer | Space | Layers | Neurons/layer |

| --- | --- | --- | --- |

| Logic Neural Network | 320B | 5 | 128 |

| Logic Gate Network | 320B | 5 | 128 |

| Multi-Layer Perceptron | 1.4KB | 2 | 8 |

| Adult | | | |

| Logic Neural Network | 640B | 5 | 256 |

| Logic Gate Network | 640B | 5 | 256 |

| Multi-Layer Perceptron | 15KB | 2 | 32 |

Table 6: Model architecture and hyperparameters for LNN’s Experiment 2