# Intuition emerges in Maximum Caliber models at criticality

**Authors**: Lluís Arola-Fernández

> Instituto de Física Interdisciplinar y Sistemas Complejos IFISC (CSIC-UIB), Campus UIB, 07122 Palma de Mallorca, Spain Departament d’Enginyeria Informàtica i Matemàtiques, Universitat Rovira i Virgili, 43007 Tarragona, Catalonia, Spain Current address.

(September 26, 2025)

## Abstract

Whether large predictive models merely parrot their training data or produce genuine insight lacks a physical explanation. This work reports a primitive form of intuition that emerges as a metastable phase of next-token prediction under future path-entropy maximization. The intuition mechanism is discovered via mind-tuning, the minimal principle that imposes Maximum Caliber in predictive models with a control temperature-like parameter $\lambda$ . Training on random walks in deterministic mazes reveals a rich phase diagram: imitation (low $\lambda$ ), rule-breaking hallucination (high $\lambda$ ), and a fragile in-between window exhibiting strong protocol-dependence (hysteresis) and multistability, where models spontaneously discover novel goal-directed strategies. These results are captured by a mechanistic low-dimensional theory and frame intuition as an emergent property at the critical balance between memorizing what is and wondering what could be.

Introduction.— The rise of large-scale predictive models is reshaping artificial intelligence and transforming science and society. This progress is built upon a dominant scaling paradigm: pre-training autoregressive neural networks [1] with enormous parameter counts on big volumes of data [2] using massive compute resources [3]. When coupled with powerful search at inference time [4], this approach has yielded impressive performance in complex games [5], medical diagnosis [6] and algorithmic discovery [7]. Yet, the brute-force solution does not match the elegant efficiency of natural intelligence, which discovers intuitive shortcuts and novel, creative strategies from sparse data without rewards [8]. This contrast sharpens a foundational debate: are these models showing sparks of artificial general intelligence (AGI) [9], or are they “stochastic parrots” [10] that leverage vast experience to create an illusion of thought [5, 11]? While often addressed via complex reasoning benchmarks [12], the paradigm’s limits can be distilled into a simple Gedankenexperiment (Fig. 1).

<details>

<summary>Figure1.png Details</summary>

### Visual Description

## Icon/Symbol: Abstract Maze-like Pattern

### Overview

The image displays a square, black and white abstract pattern that resembles a maze or a stylized symbol. It consists of thick white lines forming a continuous path against a solid black background, filling the majority of the square area. There are no labels, axes, legends, or text present.

### Components/Axes

The image is a single, self-contained graphic. It does not feature any traditional chart components like axes, labels, or legends. The primary components are:

- **Outer Boundary:** A square frame defined by the edge of the image, which is entirely black.

- **Inner Pattern:** A complex, continuous white line path that navigates through the black space.

### Detailed Analysis

The pattern is a grid-like structure with right-angle turns, characteristic of a maze. The white path has a consistent width throughout.

Starting from the top-left corner:

1. The white path begins as a vertical segment extending downwards from the top edge, approximately one-fifth of the way from the left edge.

2. It turns right, forming a horizontal segment that extends towards the center.

3. It then turns upwards, creating a vertical segment.

4. Another right turn leads to a short horizontal segment.

5. A downward turn creates a vertical segment.

6. This is followed by a left turn, a short horizontal segment, and another downward turn.

7. The path then makes a right turn, extending horizontally towards the right side

</details>

Figure 1: Gedankenexperiment on emergent reasoning. A minimal environment abstracts a reasoning task into its essential components: a constrained space (a maze) and a hidden, optimal solution (to escape). The reader’s own intuition immediately grasps the task, yet a standard predictive model trained on random walk trajectories (i.e., non-intelligent data without rewards) will never discover it.

This work provides a physical explanation for this leap. We introduce mind-tuning, a simple principle that balances next-token prediction against future path-entropy maximization with a temperature-like parameter $\lambda$ . To our knowledge, mind-tuning is the minimal implementation of the Maximum Caliber (MaxCal) principle [13, 14, 15] compatible with autoregressive training. It reveals the emergence of a fragile metastable phase, within a narrow temperature window between imitation and hallucination regimes, that is reminiscent of intuition.

While our intuition mechanism points toward a horizon of diverse futures to explore, the prevailing paradigm remains blind, fixated only on predicting the next token. Constrained path-entropy maximization is already implicit in intrinsic motivation frameworks [16] like Causal Entropic Forces [17], Active Inference [18], Empowerment [19], or the Maximum Occupancy Principle [20]. Yet, a physical basis for such emergent behavior in pure predictive models has remained elusive. The metastable regime reported here, bounded by distinct, entropy and energy-driven transitions with strong hysteresis and multistability, explains that emergent reasoning is both rare and protocol-dependent. Furthermore, the high-dimensional mechanisms behind this phenomenology are captured analytically by a low-dimensional theory.

This perspective casts intelligence as a state of computational matter [21], building on a rich history of minimal models for emergent cognitive behavior, from Hopfield’s memory [22] and Kuramoto’s synchronization [23] to phenomena in deep learning like double-descent [24], grokking [25], neural collapse [26], symmetry-breaking [27], and collective learning [28], often analyzed through spin-glass analogies [29] and phase diagrams [30, 28]. The phase-transition picture is key to research showing that intelligent systems may operate near a critical point, at the “edge of chaos” [31, 32, 33]. At criticality, fluctuations and system responsiveness peak [31, 34], creating the ideal conditions for the leap from mimicry to insight. In the learning problem, our theory points toward a critical scaling axis driven by the system’s intrinsic dynamics and suggests that current models operate in a suboptimal imitation phase, lacking the intuition that a physical mechanism unlocks.

Mind-tuning.— We focus on reasoning problems solvable by generating trajectories $z=(x_{0},a_{0},x_{1},a_{1},\dots)$ . The system’s behavior is governed by a policy $\pi_{\theta,\beta}$ , a neural network with parameters $\theta$ that maps a data history $h_{t}=(x_{0},a_{0},\dots,x_{t})$ to a probability distribution over a discrete set of actions $\mathcal{A}$ via a softmax function

$$

\pi_{\theta,\beta}(a_{t}\!\mid\!h_{t})=\frac{e^{\beta\,\ell_{\theta}(h_{t},a_{t})}}{\sum_{a^{\prime}\in\mathcal{A}}e^{\beta\,\ell_{\theta}(h_{t},a^{\prime})}}, \tag{1}

$$

where $\ell_{\theta}$ are the network’s output logits and $\beta$ controls the policy’s stochasticity. This general setting includes state-decision spaces, standard autoregressive models where histories contain tokens and other representations (see SM Sec. S1 for implementation details).

To isolate the intuition mechanism, we assume an offline, imperfect setting [35]: the model never interacts with the environment, has no external rewards, and learns from a dataset $\mathcal{D}$ of non-optimal histories. How can a purely predictive model discover a better solution than what it has seen? By biasing prediction toward futures with high causal path diversity, as prescribed by the Maximum Caliber (MaxCal) principle [13]: among all dynamics consistent with known constraints, prefer those that maximize the entropy of trajectories.

The most unbiased learning objective that imposes MaxCal is the free-energy-like functional

$$

\mathcal{F}_{\lambda,\beta,\tau}(\theta)=\mathcal{E}_{\beta}(\theta)-\lambda\mathcal{H}_{\tau,\beta}(\theta), \tag{2}

$$

where $\lambda\!\geq\!0$ is an effective temperature controlling the energy–entropy trade-off. The first term is the standard Cross-Entropy or negative log-likelihood $(\mathcal{E}$ ), measuring the cost of imitating the training data

$$

\mathcal{E}_{\beta}(\theta)=\left\langle-\log\pi_{\theta,\beta}(a_{t}|h_{t})\right\rangle_{(h_{t},a_{t})\in\mathcal{D}}. \tag{3}

$$

This energy $\mathcal{E}$ is traded against the causal path-entropy $\mathcal{H}$ , a Shannon entropy of self-generated futures up to a horizon of length $\tau$

$$

\mathcal{H}_{\tau,\beta}(\theta)=\left\langle\frac{1}{\tau}\left\langle-\ln P(z_{\text{future}}|h_{t})\right\rangle_{z_{\text{future}}\sim\pi_{\theta,\beta}}\right\rangle_{h_{t}\in\mathcal{D}}. \tag{4}

$$

Eq.(4) is estimated over the cone of futures induced by the model itself (see SM Sec. S2B for entropy calculations), making the objective function inherently subjective and self-referential, as the internal beliefs dynamically shape the learning landscape. The gradient update

$$

\theta(t+1)\leftarrow\theta(t)+\eta[{-\nabla_{\theta}\mathcal{E}_{\beta}(\theta)}+{\lambda\nabla_{\theta}\mathcal{H}_{\tau,\beta}(\theta)}] \tag{5}

$$

frames learning as a competition between prediction and causal entropic forces acting on the system’s degrees of freedom, i.e. the network weights. To our knowledge, this self-contained mechanism is the minimal MaxCal implementation compatible with prevalent offline auto-regressive training. Unlike surprise-minimization [36, 37], here the entropic term rewards keeping plausible futures open, pulling toward the adjacent possible [38], without environment interaction [19, 20, 39]. The framework also admits a Bayesian interpretation [40, 41]: standard auto-regressive training use flat priors. In mind-tuning, instead, the data likelihood filters an optimistic entropic prior over futures with high diversity.

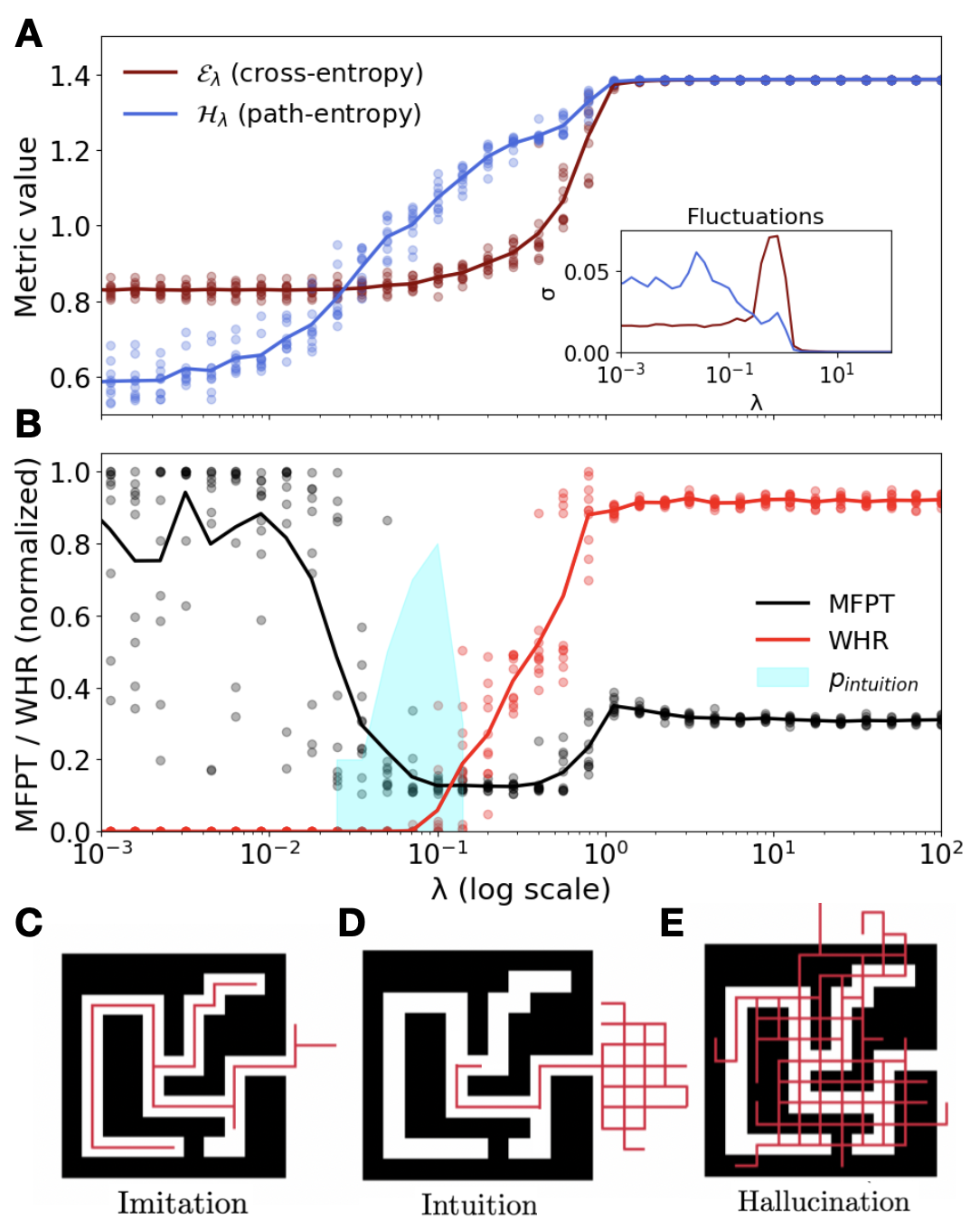

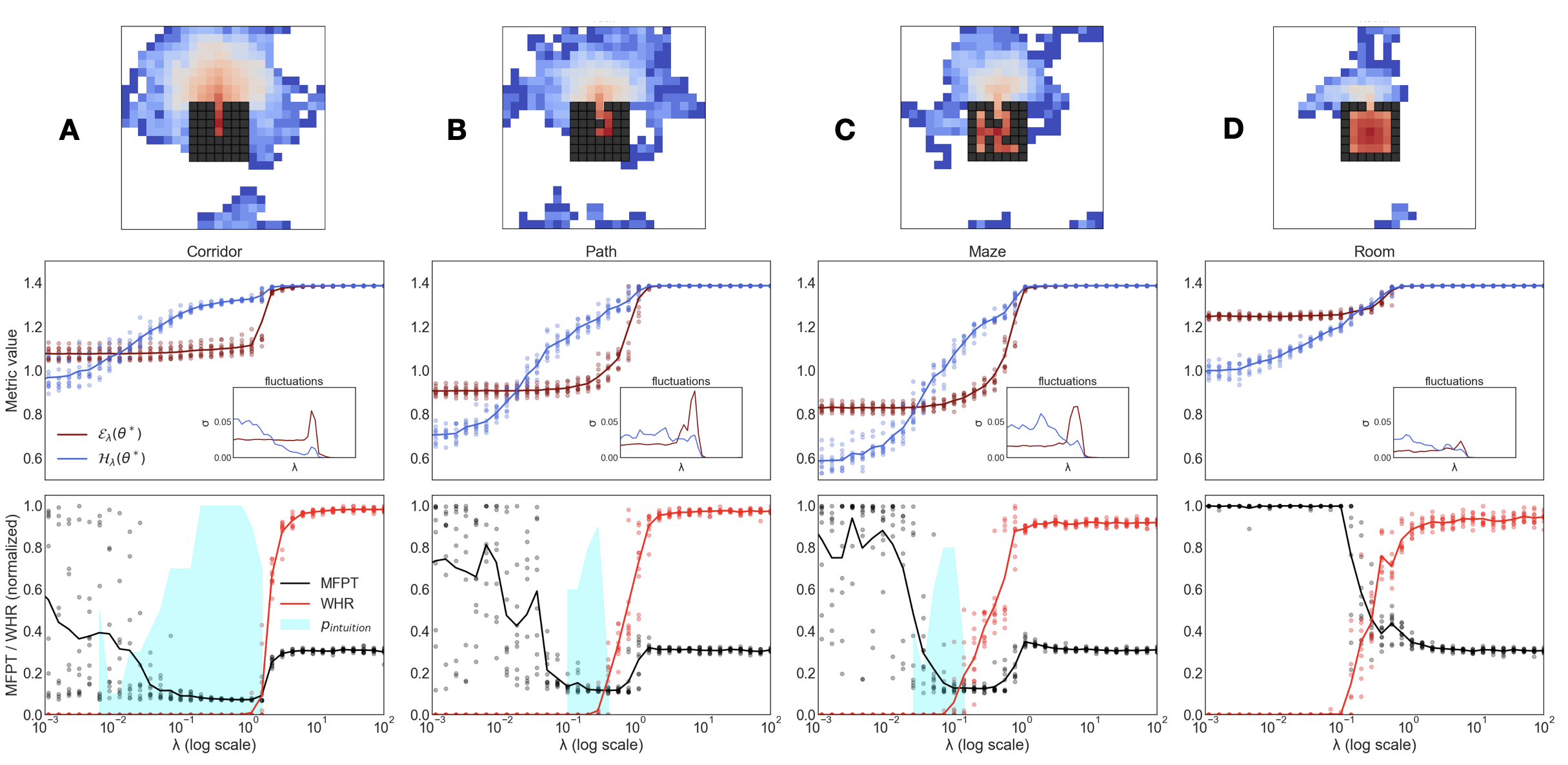

Experiments.— We test this principle in the minimal sandbox of the Gedankenexperiment (Fig. 1). A model is trained on constrained random-walk trajectories, which respect the maze walls but contain no intelligent strategies for escaping. Sweeping the parameter $\lambda$ yields a rich phase diagram, with clear transitions in both genotype (Fig. 2 A) and phenotype (Fig. 2 B) metrics.

<details>

<summary>Figure2.png Details</summary>

### Visual Description

## Chart and Diagram Composite: Analysis of Pathfinding Metrics and Strategies

### Overview

This image is a composite figure consisting of two line charts (A and B) and three illustrative diagrams (C, D, E). The charts display various metric values and normalized pathfinding performance against a parameter 'λ' on a logarithmic scale. The diagrams visually represent different pathfinding strategies—Imitation, Intuition, and Hallucination—within a maze-like environment. The overall figure appears to illustrate how different pathfinding behaviors emerge or are characterized across a range of 'λ' values.

### Components/Axes

**Panel A: Metric Values vs. λ**

* **Type**: Line chart with scatter points.

* **X-axis**: Implicitly 'λ' (log scale), ranging from approximately $10^{-3}$ to $10^2$. Tick marks are at $10^{-3}, 10^{-2}, 10^{-1}, 10^0, 10^1, 10^2$.

* **Y-axis**: "Metric value", ranging from 0.6 to 1.4. Tick marks are at 0.6, 0.8, 1.0, 1.2, 1.4.

* **Legend (Top-left, inside chart area)**:

* Brown line and scatter points: "$\mathcal{E}_\lambda$ (cross-entropy)"

* Blue line and scatter points: "$H_\lambda$ (path-entropy)"

* **Inset Chart (Top-right, inside Panel A)**:

* **Title**: "Fluctuations"

* **X-axis**: "λ", ranging from $10^{-3}$ to $10^2$. Tick marks are at $10^{-3}, 10^{-1}, 10^1$.

* **Y-axis**: "σ", ranging from 0.00 to 0.05. Tick marks are at 0.00, 0.05.

* **Data Series**: Brown line (corresponding to $\mathcal{E}_\lambda$) and Blue line (corresponding to $H_\lambda$).

**Panel B: Normalized Performance Metrics vs. λ**

* **Type**: Line chart with scatter points and a shaded area.

* **X-axis**: "λ (log scale)", ranging from approximately $10^{-3}$ to $10^2$. Tick marks are at $10^{-3}, 10^{-2}, 10^{-1}, 10^0, 10^1, 10^2$.

* **Y-axis**: "MFPT / WHR (normalized)", ranging from 0.0 to 1.0. Tick marks are at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend (Top-right, inside chart area)**:

* Black line and scatter points: "MFPT"

* Red line and scatter points: "WHR"

* Light cyan shaded area: "$p_{intuition}$"

**Panels C, D, E: Illustrative Diagrams**

* **Type**: Maze diagrams with overlaid paths. Each diagram shows a black background representing walls and white paths representing open space. Red lines indicate a trajectory or path.

* **Panel C (Bottom-left)**:

* **Title**: "Imitation"

* **Content**: A simple maze with a single, direct red path following the white open space.

* **Panel D (Bottom-center)**:

* **Title**: "Intuition"

* **Content**: A slightly more complex maze. A red path starts within the maze, follows a segment of the white path, and then extends outside the maze structure into a grid-like pattern of red lines.

* **Panel E (Bottom-right)**:

* **Title**: "Hallucination"

* **Content**: A complex maze. Red paths are extensively overlaid both within the white open spaces and over the black wall areas, forming a dense, grid-like network that does not strictly adhere to the maze's navigable paths.

### Detailed Analysis

**Panel A: Metric Values**

* **$\mathcal{E}_\lambda$ (cross-entropy) - Brown Line**:

* **Trend**: Starts relatively flat around a "Metric value" of 0.82 for λ from $10^{-3}$ to approximately $10^{-1.5}$. It then begins to increase sharply, crossing 1.0 around λ = $10^{-1}$, and continues to rise steeply to reach a plateau at approximately 1.38 for λ values greater than about $10^0$.

* **Scatter Points**: Show some variability, especially in the rising phase, but generally follow the trend line.

* **$H_\lambda$ (path-entropy) - Blue Line**:

* **Trend**: Starts around a "Metric value" of 0.6 for λ from $10^{-3}$ to approximately $10^{-2}$. It then gradually increases, crossing 0.8 around λ = $10^{-1.5}$, and continues to rise, crossing 1.0 around λ = $10^{-1}$. It then rises more steeply, reaching a plateau at approximately 1.38 for λ values greater than about $10^0$.

* **Scatter Points**: Show more variability than the brown line at lower λ values, but converge to the trend line at higher λ values.

* **Comparison**: Both metrics increase with λ, eventually plateauing at a similar high value. $H_\lambda$ starts lower and increases earlier than $\mathcal{E}_\lambda$. They converge around λ = $10^0$ and then plateau together.

* **Inset Chart: Fluctuations (σ)**:

* **Brown Line (for $\mathcal{E}_\lambda$)**: Shows a low fluctuation (near 0.00) for λ < $10^{-1.5}$, then a sharp peak around λ = $10^{-0.5}$ (approximately 0.045), and then drops back to near 0.00 for λ > $10^0$.

* **Blue Line (for $H_\lambda$)**: Shows a moderate fluctuation (around 0.02-0.03) for λ < $10^{-1}$, then decreases to near 0.00 for λ > $10^0$. It has a slight peak around λ = $10^{-1.5}$ (approx 0.035) before decreasing.

**Panel B: Normalized Performance Metrics**

* **MFPT (Mean First Passage Time) - Black Line**:

* **Trend**: Starts high, around 0.9-1.0, for λ from $10^{-3}$ to $10^{-2}$. It then decreases sharply, reaching a minimum around 0.1-0.2 for λ between $10^{-1}$ and $10^0$. After this minimum, it increases slightly to plateau around 0.3-0.4 for λ values greater than $10^0$.

* **Scatter Points**: Show significant variability, especially at lower λ values, but generally follow the trend line.

* **WHR (Weighted Hitting Rate) - Red Line**:

* **Trend**: Starts very low, near 0.0, for λ from $10^{-3}$ to approximately $10^{-1.5}$. It then increases sharply, crossing 0.2 around λ = $10^{-1}$, and continues to rise steeply to reach a plateau around 0.9-0.95 for λ values greater than about $10^0$.

* **Scatter Points**: Show some variability, particularly in the rising phase, but generally follow the trend line.

* **$p_{intuition}$ (Intuition Probability) - Light Cyan Shaded Area**:

* **Distribution**: This area is low for λ < $10^{-1.5}$, then rises to a peak around λ = $10^{-1}$ to $10^{-0.5}$, where it covers a significant range of normalized values (from approx 0.0 to 0.8). It then decreases and is negligible for λ > $10^0$. This area broadly overlaps with the region where MFPT is decreasing and WHR is increasing.

**Panels C, D, E: Illustrative Diagrams**

* **C: Imitation**: The red path perfectly traces the navigable white path within the maze, suggesting a direct, known, or learned route.

* **D: Intuition**: The red path follows the navigable white path for a segment, but then extends beyond the maze's physical boundaries into a structured, grid-like pattern. This suggests a process that starts with known information but then extrapolates or generates possibilities beyond the immediate environment.

* **E: Hallucination**: The red paths are dense and chaotic, covering both navigable white paths and non-navigable black wall areas. This indicates a process that generates many paths without strict adherence to environmental constraints, potentially representing an overactive or unconstrained generation of possibilities.

### Key Observations

* **Phase Transitions**: Both charts A and B show distinct phases or transitions as λ increases.

* In Panel A, both entropy metrics are low and stable, then rise, and finally plateau.

* In Panel B, MFPT starts high and drops, while WHR starts low and rises, both eventually plateauing.

* **Inverse Relationship**: MFPT and WHR in Panel B exhibit an inverse relationship: as one decreases, the other increases, particularly in the range of λ from $10^{-2}$ to $10^0$.

* **Intuition Zone**: The $p_{intuition}$ shaded area in Panel B peaks in the region where MFPT is at its minimum and WHR is rapidly increasing, suggesting that "intuition" is most prevalent when pathfinding efficiency (low MFPT) and success rate (high WHR) are transitioning. This also corresponds to the region where the fluctuations in $\mathcal{E}_\lambda$ are highest (Panel A inset).

* **Entropy Behavior**: Path-entropy ($H_\lambda$) starts lower and increases earlier than cross-entropy ($\mathcal{E}_\lambda$), suggesting that the diversity of paths (path-entropy) might increase before the "error" or divergence from a target distribution (cross-entropy) becomes significant.

* **Diagrammatic Correspondence**: The diagrams C, D, E visually represent distinct pathfinding strategies that likely correspond to different ranges of λ, though this correspondence is not explicitly mapped on the charts. "Imitation" might correspond to low λ, "Intuition" to intermediate λ (where $p_{intuition}$ is high), and "Hallucination" to high λ.

### Interpretation

The data presented in this composite figure likely explores the behavior of a pathfinding or decision-making model as a parameter 'λ' is varied. 'λ' appears to control a trade-off or a transition between different modes of operation.

* **Low λ (e.g., $10^{-3}$ to $10^{-2}$)**: In this regime, $\mathcal{E}_\lambda$ and $H_\lambda$ are low and stable, suggesting a predictable or constrained path generation process. MFPT is high, and WHR is low, indicating poor performance in finding paths. This could correspond to the "Imitation" strategy (Panel C), where the agent strictly follows known paths, which might be inefficient or fail in complex scenarios. The low fluctuations in entropy metrics further support a stable, perhaps rigid, behavior.

* **Intermediate λ (e.g., $10^{-2}$ to $10^0$)**: This is a critical transition zone.

* Both $\mathcal{E}_\lambda$ and $H_\lambda$ are rapidly increasing, indicating a greater diversity of paths and potentially a divergence from a simple, known distribution.

* MFPT drops significantly, and WHR rises sharply, suggesting improved pathfinding efficiency and success.

* Crucially, the $p_{intuition}$ area peaks here, and the fluctuations in $\mathcal{E}_\lambda$ are highest. This suggests that "Intuition" (Panel D) is a strategy characterized by exploring beyond immediate constraints, leading to better performance but also higher variability or uncertainty in the underlying process. The path extending outside the maze in Panel D visually supports this idea of extrapolation or generation beyond the given environment.

* **High λ (e.g., $10^0$ to $10^2$)**: In this regime, both $\mathcal{E}_\lambda$ and $H_\lambda$ plateau at high values, indicating a high diversity of generated paths and a significant divergence from a baseline. MFPT slightly increases from its minimum, and WHR plateaus at a high value, suggesting good but not necessarily optimal performance. The "Hallucination" strategy (Panel E) could correspond to this phase, where the path generation process is overly unconstrained, creating many paths even through walls. While this might ensure finding a path (high WHR), the process itself is inefficient or "noisy" (higher MFPT than the minimum, high entropy values).

In essence, the figure illustrates a model that transitions from a rigid, imitative behavior at low 'λ' to a more exploratory, "intuitive" phase at intermediate 'λ' (which optimizes performance), and finally to an overly generative, "hallucinatory" phase at high 'λ'. The parameter 'λ' likely tunes the balance between adherence to known information and the generation of novel possibilities. The peak in fluctuations for cross-entropy during the "intuitive" phase suggests that this optimal balance comes with inherent uncertainty or exploration.

</details>

Figure 2: Experimental phase diagram. Sweeping $\lambda$ reveals three behavioral phases. (A) Genotype metrics: Cross-Entropy ( $\mathcal{E}$ ) and causal path-entropy ( $\mathcal{H}$ ). Inset: steady-state fluctuations $\sigma$ over different initial realizations depending on $\lambda$ . (B) Phenotype metrics: Mean First Passage Time (MFPT), Wall Hit Ratio (WHR) and intuition likelihood (see SM Sec. 4B). (C-E) Example trajectories for each phase: (C) Imitation, (D) Intuition, and (E) Hallucination.

For low $\lambda$ , the system is in an imitation phase: cross-entropy is low, path-entropy is low, and trajectories reproduce the suboptimal random walks from the data, leading to a high Mean First Passage Time (MFPT) to the exit (Fig. 2 C). For high $\lambda$ , the entropic term dominates and the system enters a hallucination phase: cross- and path-entropy are high; maze rules are broken to maximize path diversity, and the Wall Hit Ratio (WHR) increases sharply (Fig. 2 E). Between these two regimes lies a narrow intuition phase, where the trade-off between $\mathcal{E}$ and $\mathcal{H}$ yields an emergent strategy: the model discovers the shortest legal path to the exit (Fig. 2 D), achieving minimal MFPT with zero WHR. The separation between the fluctuation peaks of $\mathcal{E}$ and $\mathcal{H}$ (Fig. 2 A inset) reveals distinct entropy- and energy-driven phase boundaries.

<details>

<summary>Figure3.png Details</summary>

### Visual Description

## Chart Type: Multi-Panel Line Charts Analyzing Metric Values, MFPT/WHR Ratios, and Mean Weights Across Lambda (λ)

### Overview

This image presents four distinct line charts, labeled A, B, C, and D, along with two inset "Random baseline" charts. All charts plot various metrics against a common parameter, λ (lambda), which is displayed on a logarithmic scale. The plots compare "forward" and "backward" processes or conditions, and analyze metrics such as `E_λ`, `H_λ`, Mean First Passage Time (MFPT), Waiting Time Ratio (WHR), and mean weight `(w)`.

### Components/Axes

The entire figure is composed of four main panels arranged in a 2x2 grid, labeled A (top-left), D (top-right), B (bottom-left), and C (bottom-right).

**Panel A (Top-Left Main Chart)**

* **X-axis**: `λ` (lambda), logarithmic scale from 10⁻² to 10². Major ticks at 10⁻², 10⁻¹, 10⁰, 10¹, 10².

* **Y-axis**: `Metric values`, linear scale from 0.4 to 1.4. Major ticks at 0.4, 0.6, 0.8, 1.0, 1.2, 1.4.

* **Legend (Top-Left)**:

* Dark red solid line with right-pointing triangles: `E_λ forward`

* Blue solid line with right-pointing triangles: `H_λ (fwd)`

* Dark red dashed line with left-pointing triangles: `E_λ backward`

* Blue dashed line with left-pointing triangles: `H_λ (bwd)`

* **Inset Chart (Top-Right within Panel A)**: Labeled "Random baseline".

* **X-axis**: `λ`, logarithmic scale from 10⁻² to 10². Major ticks at 10⁻², 10⁰, 10².

* **Y-axis**: Unlabeled, linear scale from 0.5 to 1.0. Major ticks at 0.5, 1.0.

* **Content**: Two shaded regions (blue and red) with corresponding solid lines.

**Panel B (Bottom-Left Main Chart)**

* **X-axis**: `λ` (lambda), logarithmic scale from 10⁻² to 10². Major ticks at 10⁻², 10⁻¹, 10⁰, 10¹, 10².

* **Y-axis**: `MFPT / WHR (fwd)`, linear scale from 0.00 to 1.00. Major ticks at 0.00, 0.25, 0.50, 0.75, 1.00.

* **Legend (Bottom-Center)**:

* Black solid line: `MFPT`

* Red solid line: `WHR`

* **Highlight**: A light blue shaded vertical region approximately between λ = 0.02 and λ = 0.08.

**Panel C (Bottom-Right Main Chart)**

* **X-axis**: `λ` (lambda), logarithmic scale from 10⁻² to 10². Major ticks at 10⁻², 10⁻¹, 10⁰, 10¹, 10².

* **Y-axis**: `MFPT / WHR (bwd)`, linear scale from 0.00 to 1.00. Major ticks at 0.00, 0.25, 0.50, 0.75, 1.00.

* **Legend (Bottom-Right)**:

* Black dashed line: `MFPT`

* Red dashed line: `WHR`

* **Highlight**: A light blue shaded vertical region approximately between λ = 0.01 and λ = 0.02.

**Panel D (Top-Right Main Chart)**

* **X-axis**: `λ` (lambda), logarithmic scale from 10⁻² to 10². Major ticks at 10⁻², 10⁻¹, 10⁰, 10¹, 10².

* **Y-axis**: `(w) (mean weight)`, linear scale from 0.0 to 1.2. Major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0, 1.2.

* **Legend (Top-Right)**:

* Black solid line with right-pointing triangles: `forward`

* Black dashed line with left-pointing triangles: `backward`

* **Inset Chart (Bottom-Left within Panel D)**: Labeled "Random baseline".

* **X-axis**: `λ`, logarithmic scale from 10⁻² to 10². Major ticks at 10⁻², 10⁰, 10².

* **Y-axis**: Unlabeled, linear scale from 0.2 to 1.0. Major ticks at 0.2, 0.4, 0.6, 0.8, 1.0.

* **Content**: A grey shaded region with a corresponding solid black line.

### Detailed Analysis

**Panel A: Metric values vs. λ**

* **`E_λ forward` (dark red solid line)**: Starts at approximately 0.85 at λ=10⁻². It remains relatively stable around 0.85-0.87 until λ ≈ 0.1. From λ ≈ 0.1, it shows a gradual upward trend, crossing 1.0 around λ ≈ 0.3, and then increases more steeply to reach a plateau at approximately 1.38 for λ ≥ 1.

* **`H_λ (fwd)` (blue solid line)**: Starts at approximately 0.55 at λ=10⁻². It exhibits a steady upward trend, crossing 0.8 around λ ≈ 0.05, crossing 1.0 around λ ≈ 0.15, and then continues to increase, reaching a plateau at approximately 1.38 for λ ≥ 1.

* **`E_λ backward` (dark red dashed line)**: Starts at approximately 0.85 at λ=10⁻². It stays relatively flat around 0.85-0.87 until λ ≈ 0.05. From λ ≈ 0.05, it increases sharply, crossing 1.0 around λ ≈ 0.1, and then reaches a plateau at approximately 1.38 for λ ≥ 0.5.

* **`H_λ (bwd)` (blue dashed line)**: Starts at approximately 0.55 at λ=10⁻². It shows a sharp upward trend, crossing 0.8 around λ ≈ 0.02, crossing 1.0 around λ ≈ 0.05, and then reaches a plateau at approximately 1.38 for λ ≥ 0.2.

* **Inset (Random baseline)**: Both the blue and red lines (with shaded regions indicating variability) start at their respective initial values (blue ~0.5, red ~0.8) at λ=10⁻². They both increase to approximately 1.0 around λ=10⁰ and then remain flat. The blue line shows a steeper initial rise than the red line.

**Panel B: MFPT / WHR (fwd) vs. λ**

* **`MFPT` (black solid line)**: Starts at 1.00 at λ=10⁻². It decreases sharply, reaching a minimum value of approximately 0.10 around λ ≈ 0.05. This minimum occurs within the light blue shaded region (λ ≈ 0.02 to 0.08). After the minimum, it gradually increases to approximately 0.30 at λ=10².

* **`WHR` (red solid line)**: Starts at 0.00 at λ=10⁻². It remains at 0.00 until approximately λ ≈ 0.05 (within the light blue shaded region). From this point, it increases sharply, reaching approximately 0.95 at λ ≈ 0.5, and then stays relatively flat around 0.95-0.98 until λ=10².

**Panel C: MFPT / WHR (bwd) vs. λ**

* **`MFPT` (black dashed line)**: Starts at 1.00 at λ=10⁻². It decreases sharply, reaching a minimum value of approximately 0.10 around λ ≈ 0.015. This minimum occurs within the light blue shaded region (λ ≈ 0.01 to 0.02). After the minimum, it gradually increases to approximately 0.30 at λ=10².

* **`WHR` (red dashed line)**: Starts at 0.00 at λ=10⁻². It remains at 0.00 until approximately λ ≈ 0.015 (within the light blue shaded region). From this point, it increases sharply, reaching approximately 0.95 at λ ≈ 0.5, and then stays relatively flat around 0.95-0.98 until λ=10².

**Panel D: (w) (mean weight) vs. λ**

* **`forward` (black solid line)**: Starts at approximately 1.05 at λ=10⁻². It remains relatively stable, fluctuating between 1.0 and 1.1, until λ ≈ 0.5. It then shows a slight increase to a peak of approximately 1.2 at λ ≈ 1.5. Following this peak, it drops sharply to approximately 0.05 at λ ≈ 10, and remains flat at this value until λ=10².

* **`backward` (black dashed line)**: Starts at approximately 1.05 at λ=10⁻². It remains relatively stable around 1.0-1.05 until λ ≈ 0.1. From λ ≈ 0.1, it decreases sharply, crossing 0.8 around λ ≈ 0.2, crossing 0.4 around λ ≈ 0.4, and reaching approximately 0.05 at λ ≈ 0.8. It then remains flat at this value until λ=10².

* **Inset (Random baseline)**: The black line (with grey shaded region) starts at approximately 1.0 at λ=10⁻². It decreases sharply to approximately 0.2 at λ=10⁰ and then remains flat at this value until λ=10². The shaded region indicates variability around the mean weight.

### Key Observations

1. **Convergence of Metrics in Panel A**: All four metrics (`E_λ` and `H_λ` for both forward and backward processes) converge to a common value of approximately 1.38 at high λ values (λ ≥ 1).

2. **Faster Response for Backward Processes (Panel A)**: The `backward` metrics (`E_λ backward` and `H_λ (bwd)`) show a sharper increase and reach their plateau values at lower λ values compared to their `forward` counterparts. `H_λ (bwd)` is the fastest to rise.

3. **Inverse Relationship between MFPT and WHR (Panels B & C)**: In both forward and backward scenarios, MFPT and WHR exhibit an inverse relationship. When MFPT is high, WHR is low (near 0), and vice-versa.

4. **Critical λ Range for MFPT/WHR Transition**: There's a narrow range of λ where MFPT drops sharply and WHR rises sharply. This range is highlighted by the light blue shaded regions. For the forward process (Panel B), this transition occurs around λ ≈ 0.02-0.08. For the backward process (Panel C), this transition occurs at a lower λ range, around λ ≈ 0.01-0.02.

5. **Mean Weight Behavior (Panel D)**: The `mean weight (w)` for the `backward` process drops sharply at a much lower λ (around λ ≈ 0.1 to 0.8) compared to the `forward` process, which maintains a high mean weight until λ ≈ 1.5 before a sharp drop. Both eventually converge to a low mean weight (around 0.05) at high λ.

6. **Random Baseline Comparison**: The "Random baseline" insets show different behaviors. In Panel A, the baseline metrics increase to 1.0. In Panel D, the baseline mean weight decreases to 0.2. This suggests that the observed behaviors in the main plots are distinct from a purely random process.

### Interpretation

The plots collectively illustrate the behavior of certain metrics (`E_λ`, `H_λ`, MFPT, WHR, and mean weight `w`) as a function of a parameter `λ`, likely representing a regularization strength, a coupling constant, or a similar control parameter in a system. The distinction between "forward" and "backward" processes is central to the analysis.

**Panels A and D** suggest that the "backward" process is more sensitive to changes in `λ` than the "forward" process. In Panel A, `H_λ (bwd)` and `E_λ backward` reach their high plateau values at smaller `λ` values, indicating a faster "activation" or saturation of these metrics. Similarly, in Panel D, the mean weight for the `backward` process drops significantly earlier than for the `forward` process. This implies that the "backward" process might be more efficient or responsive in reaching a certain state or exhibiting a particular behavior at lower `λ` values.

**Panels B and C** highlight a critical transition point for `λ`. The inverse relationship between MFPT (Mean First Passage Time) and WHR (Waiting Time Ratio) suggests a trade-off or a phase transition. When MFPT is high and WHR is low, it implies that the system takes a long time to reach a certain state, and the waiting time is not effectively utilized. Conversely, when MFPT is low and WHR is high, the system reaches the state quickly, and waiting times are efficiently managed. The sharp transition in these metrics within the shaded `λ` regions indicates a critical `λ` value where the system's dynamics fundamentally change. The fact that this transition occurs at a lower `λ` for the "backward" process (Panel C) reinforces the idea of its higher sensitivity or efficiency.

The "Random baseline" insets provide a crucial context. In Panel A, the baseline metrics reaching 1.0 suggests that the observed `E_λ` and `H_λ` values (which go up to ~1.38) represent a performance *above* a random expectation. In Panel D, the baseline mean weight decreasing to 0.2, while the main curves drop to 0.05, indicates that the system's mean weight can be driven even lower than a random process, especially at high `λ`.

Overall, the data suggests that `λ` acts as a control parameter that can significantly alter the system's behavior, particularly in terms of efficiency (MFPT/WHR) and the influence of certain components (mean weight). The "backward" process consistently demonstrates a higher sensitivity or faster response to `λ` compared to the "forward" process, reaching critical transitions and stable states at lower `λ` values. This could be indicative of different underlying mechanisms or optimization strategies for the two directions.

</details>

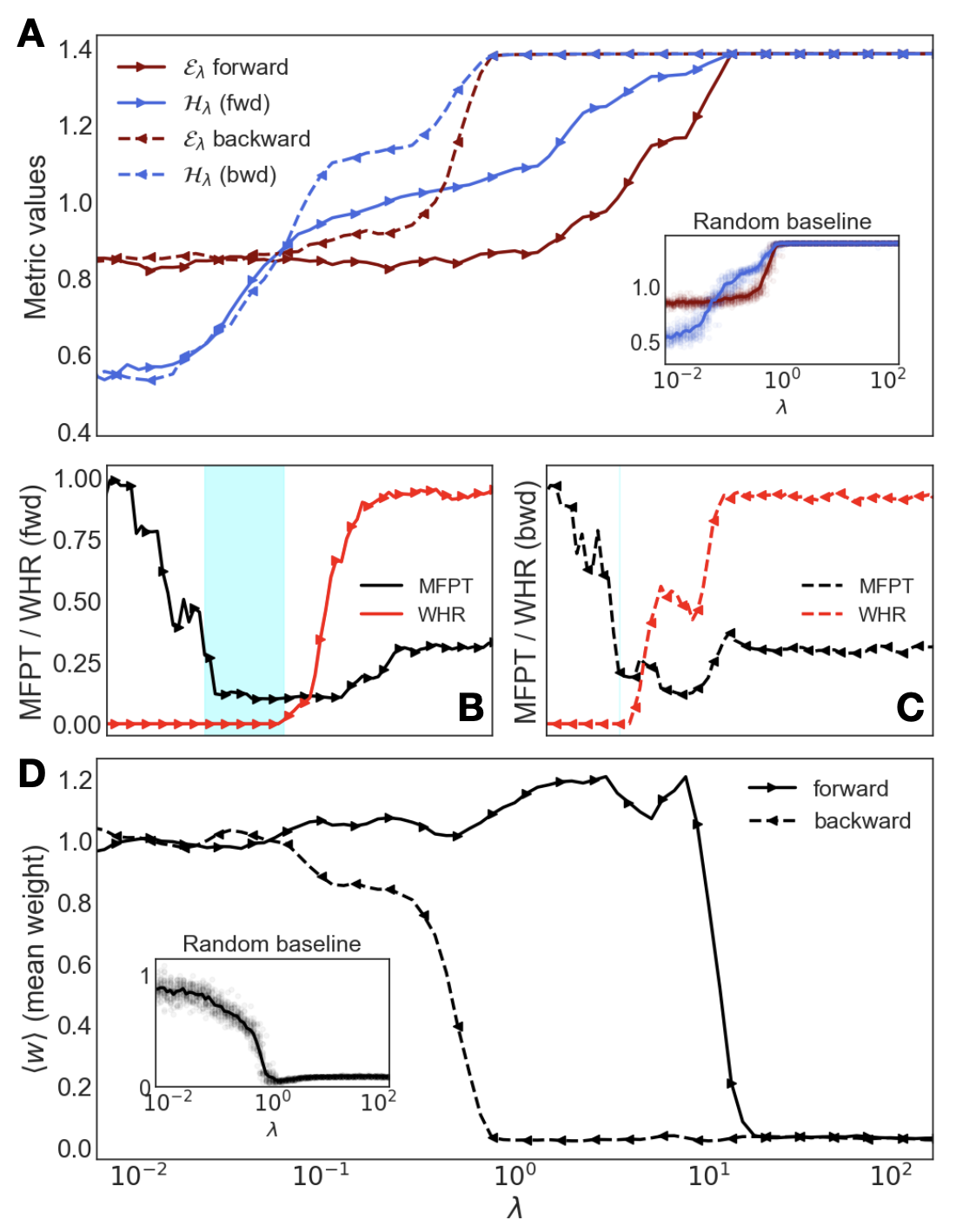

Figure 3: Hysteresis and protocol-dependence. Comparing a forward (solid) and backward (dashed) sweep of $\lambda$ reveals that the intuitive state is stable once found. (A) Hysteresis loop in genotype metrics ( $\mathcal{E},\mathcal{H}$ ). (B, C) Phenotype for the forward and backward sweeps, respectively, with the forward sweep showing a wider intuition window. (D) The mean network weight $\langle w\rangle$ acts as an order parameter capturing the system’s bistability. Insets show baselines without protocol.

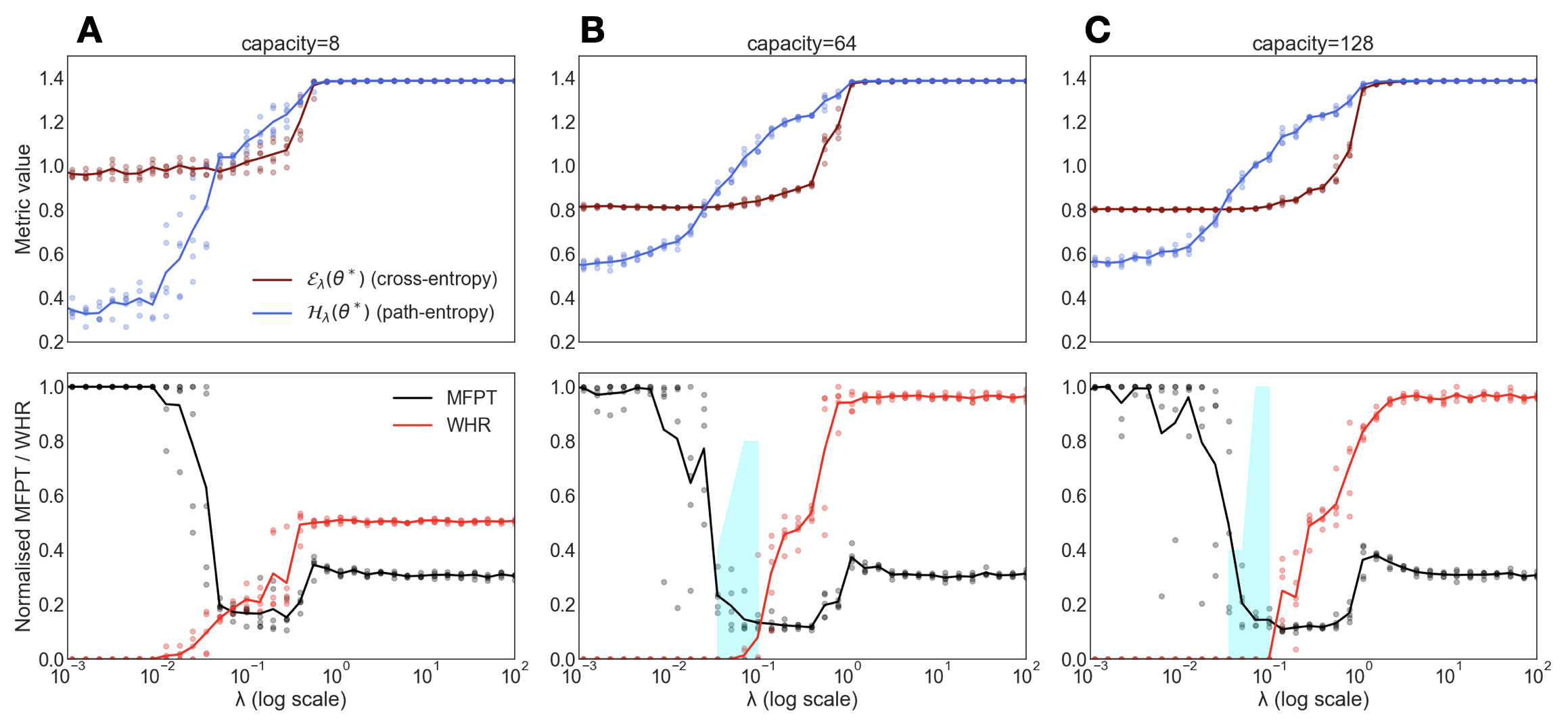

Operationally, this critical learning phase maximizes future path-entropy with minimal cross-entropy, enabling novel, goal-directed behavior at inference without interaction or explicit rewards. Reaching this phase depends on data quality and model complexity, requiring a sufficiently large future horizon and adequate model capacity (see SM Sec. S3 for a parametric study). The fragility of the mechanism is tied to multistability, as observed when applying adiabatic protocols that smoothly sweep the control parameter $\lambda$ (Fig. 3). A large hysteretic loop appears in the genotype metrics (A), which has behavioral consequences in the phenotype: while a forward sweep from $\lambda\approx 0$ opens the intuition window, with low MFPT and low WHR (B), a backward sweep starting from high $\lambda$ does not reach the desired phase (C). The bistability is captured by an effective order parameter –the mean network weight– which remains in an ordered intuitive state once the system has been guided there (D). The adiabatic protocol shows that a self-referential fine-tuning from imitation to controlled imagination allows the system to stabilize in a metastable phase, a process that motivates the term mind-tuning.

Effective theory.— The phenomenology of mind-tuning emerges from a high-dimensional, multistable free-energy landscape. We capture the essential mechanism in a scalar order parameter $m\in[0,1]$ , representing the model’s rationality, and define a Boltzmann policy with an effective potential $U_{m}(a)$ :

$$

p_{m,\beta}(a|h_{t})=\frac{e^{-\beta U_{m}(a)}}{\sum_{a^{\prime}\in\mathcal{A}}e^{-\beta U_{m}(a^{\prime})}}. \tag{6}

$$

Actions, or decisions, are classified into optimal $a^{*}$ , rational-but-suboptimal $a^{r}$ , and non-rational $a^{n}$ and $m_{D}$ is a free parameter representing the training data’s rationality. The effective costs,

$$

\displaystyle U(a^{*}) \displaystyle=0, \displaystyle U(a^{r}) \displaystyle=\frac{\max(0,m-m_{D})}{1-m_{D}}, \displaystyle U(a^{n}) \displaystyle=m, \tag{7}

$$

are designed to create a trade-off: as the model’s rationality $m$ improves beyond the data’s, the cost of suboptimal-but-legal actions grows, forcing a choice between true optimality and rule-breaking. For the simple Markovian maze with a small state-space, the free energy $\mathcal{F}_{\lambda}(m)$ can be computed analytically (see SM Sec. S4A). For a given $\lambda$ , one can also explore the learning dynamics in this landscape by sampling rationality states $m$ from the equilibrium distribution $P(m)\propto e^{-\hat{\beta}\mathcal{F}(m)}$ , where the inverse temperature $\hat{\beta}$ controls the exploration-exploitation trade-off, modeling stochasticity during gradient descent.

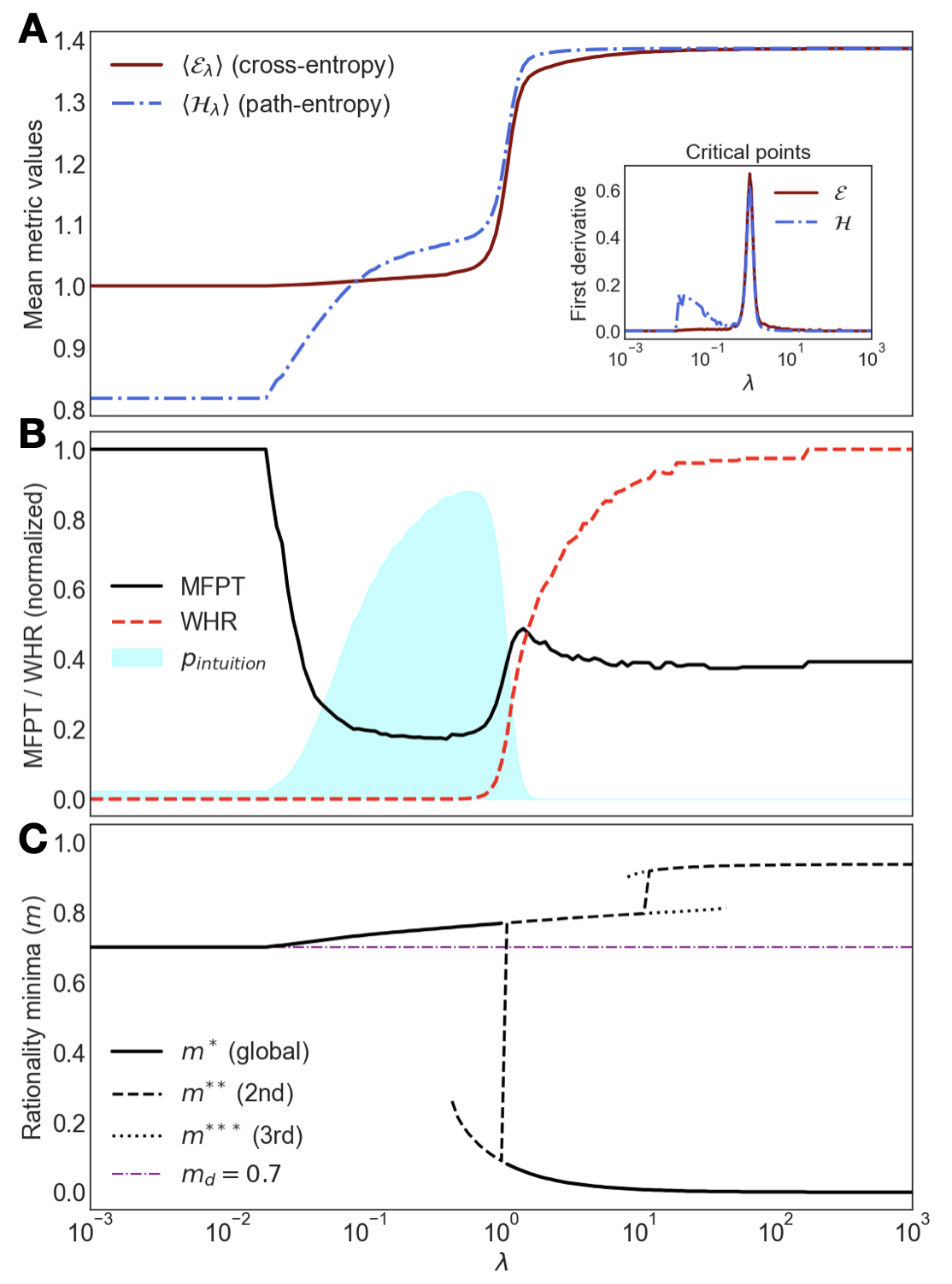

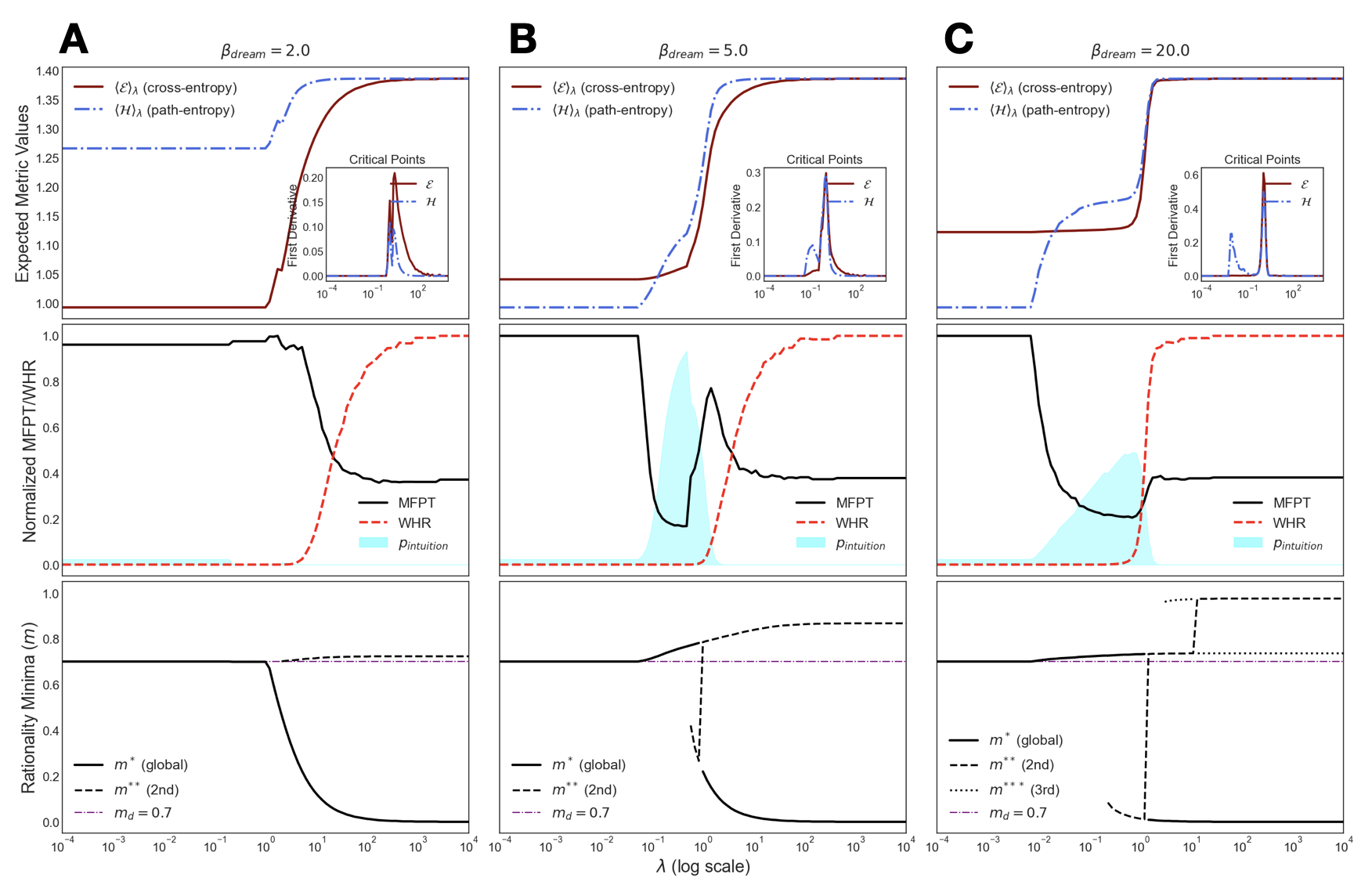

This effective theory qualitatively reproduces the experimental phase diagram, including the transitions in both genotypic (Fig. 4 A) and phenotypic metrics (Fig. 4 B). The underlying mechanism is revealed by exploring the minima of the free-energy landscape, found by solving $\partial\mathcal{F}_{\lambda}(m)/\partial m=0$ . This analysis confirms a smooth, entropy-driven transition followed by an abrupt, first-order energy-driven one, creating a bistable region where intuition ( $m>m_{D}$ ) and hallucination ( $m\ll m_{D}$ ) coexist (Fig. 4 C). Intriguingly, the theory further predicts a more elusive inspiration phase: a third stable solution with $m\approx 1$ , associated to a state of true creative insight. This strategy abruptly departs from data and represents internalized understanding. Unlike the subtle intuitive state, which often requires a high inference $\beta$ to be executed without error, this inspired solution would be robust even with a noisy policy. Yet, it is hidden within a tiny basin of attraction masked by the dominant hallucination phase (see SM Sec. S4.C). These predictions point to a very rich phase diagram, where intuition may be the trigger of even more exotic phenomena.

<details>

<summary>Figure4.png Details</summary>

### Visual Description

## Chart Type: Multi-Panel Line Charts Illustrating System Transitions

### Overview

This image presents three vertically stacked line charts (labeled A, B, and C) that share a common logarithmic x-axis, representing a parameter `λ`. Each chart displays different metrics as a function of `λ`, revealing a system undergoing a transition. Chart A shows mean entropy values, Chart B displays normalized decision-making metrics and an "intuition" probability distribution, and Chart C illustrates rationality minima. An inset in Chart A highlights the critical points of the entropy metrics.

### Components/Axes

**Common X-axis (bottom of Chart C):**

* **Label:** `λ`

* **Scale:** Logarithmic, ranging from 10⁻³ to 10³ (i.e., 0.001 to 1000). Major ticks at 10⁻³, 10⁻², 10⁻¹, 10⁰, 10¹, 10², 10³.

---

**Chart A: Mean Metric Values**

* **Y-axis Label:** Mean metric values

* **Y-axis Scale:** Linear, ranging from 0.8 to 1.4. Major ticks at 0.8, 0.9, 1.0, 1.1, 1.2, 1.3, 1.4.

* **Legend (top-left):**

* Solid brown line: `⟨ε_λ⟩` (cross-entropy)

* Dash-dot blue line: `⟨H_λ⟩` (path-entropy)

**Inset Chart (within Chart A, top-right): Critical points**

* **Title:** Critical points

* **Y-axis Label:** First derivative

* **Y-axis Scale:** Linear, ranging from 0.0 to 0.6. Major ticks at 0.0, 0.2, 0.4, 0.6.

* **X-axis Label:** `λ` (same scale as main charts)

* **Legend (top-right of inset):**

* Solid brown line: `ε`

* Dash-dot blue line: `H`

---

**Chart B: MFPT / WHR (normalized)**

* **Y-axis Label:** MFPT / WHR (normalized)

* **Y-axis Scale:** Linear, ranging from 0.0 to 1.0. Major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend (center-left):**

* Solid black line: MFPT

* Dashed red line: WHR

* Light blue shaded area: `p_intuition`

---

**Chart C: Rationality minima (m)**

* **Y-axis Label:** Rationality minima (m)

* **Y-axis Scale:** Linear, ranging from 0.0 to 1.0. Major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend (bottom-left):**

* Solid black line: `m*` (global)

* Dashed black line: `m**` (2nd)

* Dotted black line: `m***` (3rd)

* Dash-dot purple line: `m_d = 0.7`

### Detailed Analysis

**Chart A: Mean Metric Values**

* **`⟨ε_λ⟩` (cross-entropy) - Solid brown line:**

* Starts at approximately 1.0 for `λ` values from 10⁻³ to about 10⁻¹.

* Begins a sharp upward trend around `λ` = 0.1, increasing rapidly.

* Reaches a plateau at approximately 1.38 for `λ` values greater than about 10.

* **`⟨H_λ⟩` (path-entropy) - Dash-dot blue line:**

* Starts at approximately 0.82 for `λ` values from 10⁻³ to about 10⁻¹.

* Begins a sharp upward trend around `λ` = 0.1, increasing rapidly and crossing `⟨ε_λ⟩` around `λ` = 0.5.

* Reaches a plateau at approximately 1.38 for `λ` values greater than about 10, converging with `⟨ε_λ⟩`.

**Inset Chart: Critical points**

* **`ε` (First derivative of cross-entropy) - Solid brown line:**

* Shows a small peak around `λ` = 0.08 with a value of approximately 0.05.

* Exhibits a much larger and sharper peak around `λ` = 0.8, reaching a value of approximately 0.65.

* **`H` (First derivative of path-entropy) - Dash-dot blue line:**

* Shows an initial small peak around `λ` = 0.005 with a value of approximately 0.15.

* Displays a second, more pronounced peak around `λ` = 0.05 with a value of approximately 0.18.

* Exhibits a third, very large and sharp peak around `λ` = 0.8, reaching a value of approximately 0.65, closely mirroring the `ε` peak.

**Chart B: MFPT / WHR (normalized)**

* **MFPT (solid black line):**

* Starts at 1.0 for `λ` values from 10⁻³ to about 0.01.

* Drops sharply from `λ` = 0.01 to a minimum of approximately 0.18 around `λ` = 0.2.

* Rises sharply from `λ` = 0.2 to approximately 0.48 around `λ` = 1.

* Gradually decreases and stabilizes around 0.38 for `λ` values greater than about 10.

* **WHR (dashed red line):**

* Starts at approximately 0.02 for `λ` values from 10⁻³ to about 0.2.

* Rises sharply from `λ` = 0.2 to approximately 0.95 around `λ` = 10.

* Stabilizes at approximately 0.95 for `λ` values greater than about 10.

* **`p_intuition` (light blue shaded area):**

* Starts from 0 around `λ` = 0.01.

* Increases to a peak value of approximately 0.85 around `λ` = 0.15.

* Decreases back to 0 around `λ` = 1.

**Chart C: Rationality minima (m)**

* **`m*` (global) - Solid black line:**

* Starts at approximately 0.7 for `λ` values from 10⁻³ to about 0.1.

* Gradually increases to approximately 0.78 around `λ` = 0.8.

* Drops sharply from approximately 0.78 to approximately 0.05 around `λ` = 1.

* Gradually decreases further to approximately 0.02 for `λ` values greater than about 100.

* **`m**` (2nd) - Dashed black line:**

* Starts at approximately 0.7 for `λ` values from 10⁻³ to about 0.1, overlapping with `m*`.

* Gradually increases to approximately 0.78 around `λ` = 0.8, overlapping with `m*`.

* Jumps sharply from approximately 0.78 to approximately 0.9 around `λ` = 1.

* Gradually increases to approximately 0.95 for `λ` values greater than about 100.

* **`m***` (3rd) - Dotted black line:**

* Appears only for `λ` values greater than approximately 10.

* Starts at approximately 0.85 around `λ` = 10.

* Increases to approximately 0.88 around `λ` = 20.

* Flattens out at approximately 0.88 for `λ` values greater than about 20.

* **`m_d = 0.7` (dash-dot purple line):**

* A horizontal reference line at `m` = 0.7 across the entire `λ` range.

### Key Observations

* **Phase Transition Region:** All three charts exhibit significant, often abrupt, changes in behavior within the `λ` range of approximately 0.1 to 10. This strongly suggests a critical transition or phase change in the system being modeled.

* **Critical Points Alignment:** The sharpest changes in entropy (Chart A) are precisely identified by the peaks in their first derivatives (inset in Chart A), particularly the large peak around `λ` = 0.8. These critical points align with major shifts in MFPT, WHR (Chart B), and the rationality minima (`m*`, `m**`) (Chart C).

* **Role of `p_intuition`:** The `p_intuition` distribution (Chart B) peaks *before* the most dramatic shifts in other metrics (around `λ` = 0.15), suggesting it might represent a preparatory or initiating phase for the main transition.

* **Entropy Convergence:** While `⟨H_λ⟩` starts lower than `⟨ε_λ⟩`, both entropies converge to the same high value after the transition, implying a new, more complex, but stable state.

* **Bifurcation in Rationality Minima:** Chart C clearly shows a bifurcation around `λ` = 1. The global minimum `m*` drops dramatically to very low values, while the second minimum `m**` jumps to high values. This indicates a fundamental change in the landscape of rationality minima, where the previously dominant minimum becomes less favorable, and a new, very low minimum emerges as global.

### Interpretation

The data presented across these three panels describes a system that undergoes a significant qualitative change as the parameter `λ` increases. This change can be characterized as a phase transition, marked by critical points where the system's properties shift rapidly.

At **low `λ` values (e.g., `λ` < 0.1)**, the system appears to be in a stable, less complex state. Entropies are low and constant, MFPT is high (possibly indicating slower decision processes), WHR is low, and the rationality minima (`m*`, `m**`) are relatively high and stable, suggesting a particular type of optimal behavior.

As `λ` enters the **intermediate range (e.g., 0.1 < `λ` < 10)**, the system transitions. This is where `p_intuition` peaks, potentially indicating a period where intuitive processes are most active or influential in driving the change. The entropies (`⟨ε_λ⟩`, `⟨H_λ⟩`) sharply increase, signifying a rise in complexity, uncertainty, or the number of accessible states. Concurrently, MFPT drops to a minimum and then rises, while WHR sharply increases, suggesting a shift towards more efficient or "rational" decision-making, or a change in the underlying dynamics of the process. The most striking change occurs around `λ` ≈ 1, where the global rationality minimum `m*` collapses to near zero, while `m**` jumps to a high value. This implies a fundamental restructuring of the system's optimal states, where a new, highly "rational" (low `m`) state becomes globally optimal, while other, higher `m` states persist as local minima.

At **high `λ` values (e.g., `λ` > 10)**, the system reaches a new, stable regime. Entropies plateau at a higher level, indicating a more complex but settled state. WHR is high and stable, and MFPT stabilizes at a lower value than its initial state, suggesting a highly efficient and "rational" mode of operation. The rationality minima reflect this, with `m*` remaining very low and `m**` and `m***` stabilizing at high values, confirming the persistence of the new optimal state.

In essence, `λ` acts as a control parameter that drives the system from a state of lower complexity and potentially different decision-making characteristics to a state of higher complexity, marked by a dramatic shift in optimal behaviors and an increased role for "intuition" during the transition itself. The critical points identified by the first derivatives pinpoint the precise `λ` values where these transformations are most pronounced.

</details>

Figure 4: Theoretical predictions. The low-dimensional model reproduces the experimental findings. (A) Theoretical $\mathcal{E}$ and $\mathcal{H}$ vs. $\lambda$ . (B) Corresponding MFPT and WHR. (C) Minima of the free-energy landscape vs the control parameter $\lambda$ . The plot reveals coexisting stable states ( $m^{*},m^{**},m^{***}$ ) and a first-order transition where the global minimum jumps discontinuously, explaining the observed hysteresis.

Accessing these different cognitive phases requires navigating a complex landscape. Indeed, the observed hysteresis and the success of the adiabatic protocol are explained by this multi-stability. The analytical phase-diagram (Fig. 4 C) shows that slowly increasing $\lambda$ is a safe route to guide the system into the intuition basin of attraction. In Bayesian terms, it first grounds the model with the data likelihood before introducing the entropic prior. Reaching more exotic phases in the landscape, like the predicted inspiration state, would likely demand more complex, non-equilibrium protocols.

Discussion.— High-quality human data can carry an implicit drive toward path diversity, and optimization itself can induce entropic pressures that improve generalization [42], yielding an “intelligent simulator” from curated experience. This view predicts that current models should spontaneously increase their causal path entropy with scale. Our framework makes this drive explicit and grounded in MaxCal, providing a shortcut to intuition that encodes implicit search into model weights to reduce the need for expensive search at inference [43]. These results point toward a hidden axis, training-time imagination, that may be key to unlock out-of-distribution generalization in offline predictive models [35].

Our results are demonstrated in a minimal sandbox, a choice that is deliberate. The maze is the simplest non-trivial setting where the mechanism can be isolated and reproduced analytically. Many reasoning tasks can be viewed as navigation through a “conceptual maze” where a key insight unlocks a vastly larger state-space [17, 19, 20, 21]. This argument promises applications in control [17, 20], reasoning [8, 44], and planning [44]. Stefan Zweig’s The Royal Game [45] provides a compelling literary analogue: a prisoner achieves chess mastery by first studying games (imitation) and then playing against himself in his mind (imagination). His triumph occurs at the edge of madness, a state mirroring intuition coexisting with hallucination in our phase diagram.

Yet, scaling mind-tuning to real-world cases faces significant challenges. Computationally, estimating path-entropy for long horizons is hard due to the combinatorial explosion of futures [13]. This requires designing clever sampling strategies [17, 46], perhaps inspired by dreaming, hierarchical reasoning [44] and unconventional methods and architectures [47, 48]. Theoretically, a full characterization of the phase diagrams and universality classe is needed to design optimal tuning protocols [49]. For uncharted domains, identifying the right spaces for entropy maximization can be difficult and the offline theory may need data augmentation from environment interaction [18]. Yet, tuning $\lambda$ for future diversity in practice can turn into an alignment problem, trading benefits for safety [50]. Despite these challenges, this work takes a high-risk, high-reward route to reframing intelligence not merely as compression and computation, but as a physical phenomenon emerging at criticality.

Acknowledgments.— The author thanks many colleagues at IFISC and URV for enriching discussions. This work has been partially supported by the María de Maeztu project CEX2021-001164-M funded by the MICIU/AEI/10.13039/501100011033 and by Programa Maria Goyri URV.

## References

- Vaswani et al. [2017] A. Vaswani et al., Attention is all you need, in Adv. in Neural Info. Processing Systems, Vol. 30 (2017).

- Kaplan et al. [2020] J. Kaplan et al., Scaling laws for neural language models (2020), arXiv:2001.08361 [cs.LG] .

- Hoffmann et al. [2022] J. Hoffmann et al., Training compute-optimal large language models, arXiv preprint (2022), 2203.15556 .

- DeepSeek-AI et al. [2025] DeepSeek-AI et al., Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning (2025), arXiv:2501.12948 [cs.CL] .

- Shojaee et al. [2024] P. Shojaee et al., The illusion of thinking, arXiv preprint (2024), 2401.00675 .

- Brodeur et al. [2024] P. G. Brodeur et al., Superhuman performance of a large language model on the reasoning tasks of a physician, arXiv preprint (2024), 2412.10849 .

- Novikov et al. [2025] A. Novikov et al., Alphaevolve: A coding agent for scientific and algorithmic discovery (2025), arXiv:2506.13131 .

- Chollet [2019] F. Chollet, On the measure of intelligence, arXiv preprint (2019), 1911.01547 .

- Bubeck et al. [2023] S. Bubeck et al., Sparks of artificial general intelligence: Early experiments with gpt-4, (2023), 2303.12712 .

- Bender et al. [2021] E. M. Bender et al., On the dangers of stochastic parrots: Can language models be too big?, in Proceedings ACM (2021) pp. 610–623.

- Mitchell and Krakauer [2023] M. Mitchell and D. C. Krakauer, The debate over understanding in ai’s large language models, PNAS 120, e2215907120 (2023).

- Liang et al. [2022] P. Liang et al., Holistic evaluation of language models, arXiv preprint (2022), 2211.09110 .

- Jaynes [1980] E. T. Jaynes, The minimum entropy production principle, Ann. Rev.of Physical Chemistry 31, 579 (1980).

- Pressé et al. [2013] S. Pressé, K. Ghosh, J. Lee, and K. A. Dill, Principles of maximum entropy and maximum caliber in statistical physics, Reviews of Modern Physics 85, 1115 (2013).

- Dixit et al. [2018] P. D. Dixit et al., Perspective: Maximum caliber is a general variational principle for dynamical systems, The Journal of Chemical Physics 148, 010901 (2018).

- Kiefer [2025] A. B. Kiefer, Intrinsic motivation as constrained entropy maximization, arXiv preprint (2025), 2502.02962 .

- Wissner-Gross and Freer [2013] A. D. Wissner-Gross and C. E. Freer, Causal entropic forces, Physical Review Letters 110, 168702 (2013).

- Wen [2025] B. Wen, The missing reward: Active inference in the era of experience (2025), arXiv:2508.05619 .

- Klyubin et al. [2005] A. S. Klyubin, D. Polani, and C. L. Nehaniv, Empowerment: A universal agent-centric measure of control, in 2005 IEEE CEC, Vol. 1 (2005) pp. 128–135.

- Ramirez-Ruiz et al. [2024] J. Ramirez-Ruiz et al., Complex behavior from intrinsic motivation to occupy future action-state path space, Nature Communications 15, 5281 (2024).

- Friston et al. [2022] K. J. Friston et al., Designing ecosystems of intelligence from first principles, arXiv preprint (2022), 2212.01354 .

- Hopfield [1982] J. J. Hopfield, Neural networks and physical systems with emergent collective computational abilities, Proceedings of the National Academy of Sciences 79, 2554 (1982).

- Kuramoto [1975] Y. Kuramoto, Self-entrainment of a population of coupled non-linear oscillators, in International Symposium on Mathematical Problems in Theoretical Physics (Springer, 1975) pp. 420–422.

- Belkin et al. [2019] M. Belkin, D. Hsu, S. Ma, and S. Mandal, Reconciling modern machine-learning practice and the classical bias–variance trade-off, PNAS 116, 15849 (2019).

- Power et al. [2022] A. Power et al., Grokking: Generalization beyond overfitting in small neural networks, arXiv (2022), 2201.02177 .

- Papyan et al. [2020] V. Papyan, X. Y. Han, and D. L. Donoho, Prevalence of neural collapse during the terminal phase of deep learning training, PNAS 117, 24927 (2020).

- Liu et al. [2025] Z. Liu, Y. Xu, T. Poggio, and I. Chuang, Parameter symmetry potentially unifies deep learning theory, arXiv preprint (2025), 2502.05300 .

- Arola-Fernández and Lacasa [2024] L. Arola-Fernández and L. Lacasa, Effective theory of collective deep learning, Phys. Rev. Res. 6, L042040 (2024).

- Carleo et al. [2019] G. Carleo et al., Machine learning and the physical sciences, Rev. Mod. Phys. 91, 045002 (2019).

- Lewkowycz et al. [2020] A. Lewkowycz et al., The large learning rate phase of deep learning: the catapult mechanism (2020), arXiv:2003.02218 [stat.ML] .

- Muñoz [2018] M. A. Muñoz, Colloq.: Criticality and dynamical scaling in living systems, R. of Mod. Phys. 90, 031001 (2018).

- Zhang et al. [2025] S. Zhang et al., Intelligence at the edge of chaos (2025), arXiv:2410.02536 [cs.AI] .

- Jiménez-González et al. [2025] P. Jiménez-González, M. C. Soriano, and L. Lacasa, Leveraging chaos in the training of artificial neural networks (2025), arXiv:2506.08523 [cs.LG] .

- Arola-Fernández et al. [2020] L. Arola-Fernández et al., Uncertainty propagation in complex networks: From noisy links to critical properties, Chaos: An Interdisciplinary Journal of Nonlinear Science 30, 023129 (2020).

- Levine et al. [2020] S. Levine, A. Kumar, G. Tucker, and J. Fu, Offline reinforcement learning: Tutorial, review, and perspectives on open problems (2020), arXiv:2005.01643 [cs.LG] .

- Heins et al. [2024] C. Heins et al., Collective behavior from surprise minimization, PNAS 121, e2320239121 (2024).

- Friston [2010] K. Friston, The free-energy principle: A unified brain theory?, Nature Reviews Neuroscience 11, 127 (2010).

- Kauffman [2000] S. A. Kauffman, Investigations (Oxford Univ. Pr., 2000).

- Eysenbach and Levine [2022] B. Eysenbach and S. Levine, Maximum entropy rl (provably) solves some robust rl problems (2022), arXiv:2103.06257 [cs.LG] .

- Jaynes [1957] E. T. Jaynes, Information theory and statistical mechanics, The Physical Review 106, 620 (1957).

- Zdeborová and Krzakala [2016] L. Zdeborová and F. Krzakala, Statistical physics of inference: thresholds and algorithms, Adv. in Phys. 65, 453–552 (2016).

- Ziyin et al. [2025] L. Ziyin, Y. Xu, and I. Chuang, Neural thermodynamics i: Entropic forces in deep and universal representation learning (2025), arXiv:2505.12387 [cs.LG] .

- Belcak et al. [2025] P. Belcak et al., Small language models are the future of agentic ai (2025), arXiv:2506.02153 .

- Wang et al. [2025] G. Wang et al., Hierarchical reasoning models, arXiv preprint (2025), 2506.21734 .

- Zweig [1943] S. Zweig, The Royal Game (Viking Press, 1943).

- Aguilar [2022] J. e. a. Aguilar, Sampling rare trajectories using stochastic bridges, Phys. Rev. E 105, 064138 (2022).

- Labay-Mora et al. [2025] Labay-Mora et al., Theoretical framework for quantum associative memories, Quantum Science and Technology 10, 035050 (2025).

- Brunner et al. [2025] D. Brunner et al., Roadmap on neuromorphic photonics (2025), arXiv:2501.07917 [cs.ET] .

- Manzano et al. [2024] G. Manzano et al., Thermodynamics of computations with absolute irreversibility, unidirectional transitions, and stochastic computation times, Phys. Rev. X 14, 021026 (2024).

- Arenas et al. [2011] A. Arenas et al., The joker effect: Cooperation driven by destructive agents, J. of Theo. Bio. 279, 113–119 (2011).

- Maddison et al. [2017] C. J. Maddison, A. Mnih, and Y. W. Teh, The concrete distribution: A continuous relaxation of discrete random variables, in ICLR (2017) 1611.00712 .

- Williams [1992] R. J. Williams, Simple statistical gradient-following algorithms for connectionist rl, ML 8, 229 (1992).

Supplementary Material for: “Intuition emerges in Maximum Caliber models at criticality”

## Appendix A S1. Experimental Setup and Hyperparameters

The experimental setting is a minimal yet non-trivial environment for testing emergent reasoning. It consists of a deterministic $24\times 24$ maze with periodic boundary conditions, where an agent must find the path to a designated exit. This controlled testbed provides a tractable state space for analyzing the learning dynamics. The agent’s behavior is determined by a policy network that maps the current state (2D position $x_{t}$ ) to a probability distribution over the four cardinal actions: $\mathcal{A}=\{\text{Up, Down, Right, Left}\}$ . For auto-regressive training, a simple deterministic function $f(x_{t},a)$ maps the last action to the next state.

The training dataset $\mathcal{D}$ is intentionally non-optimal. In our main experiments, it contains $N=100$ trajectories, each of length $T=60$ steps, generated by a constrained random walks. These walkers respect the maze walls (i.e., never collide with them) but otherwise move randomly, exhibiting no goal-directed behavior. This design ensures that the optimal exit strategy is not present in the training data, forcing the model to discover it.

The model parameters $\theta$ are optimized by minimizing the free-energy functional $\mathcal{F}_{\lambda,\beta,\tau}(\theta)$ (Eq. (2) in the main text) via the Adam optimizer. The results presented in the main text (Fig. 2) are averaged over 20 independent training runs, each with a different random weight initialization, to ensure statistical robustness. The key hyperparameters used in the main experiments are: a policy network structured as a multi-layer perceptron (MLP) with one hidden layer of 128 neurons and ReLU activation; a learning rate of $1\times 10^{-3}$ ; 300 training epochs per $\lambda$ value; and a future horizon of $\tau=40$ steps in the entropy calculation.

The policy stochasticities are set to $\beta=1$ for training, $\beta=5$ for entropy calculation (imagination), and $\beta=10$ at inference time. A high imagination $\beta$ (compared to the training $\beta$ ) is beneficial for discovering hidden solutions that maximize causal entropy (i.e., finding the exit) with a finite $\tau$ and sparse data. A high inference $\beta$ is necessary to induce intuitive behavior in practice. In the intuition phase, the agent finds a superior solution but must execute its policy quite deterministically to follow the optimal path in the minimum time.

For problems that are not Markovian or where the data representation does not contain full state information (e.g., data are sequences of moves or the agent only sees its local environment), a more advanced neural network is required. Transformers are the standard for modeling long, non-Markovian sequences of tokens. Our framework naturally extends to these sequential autoregressive architectures, albeit at the cost of more parameters and computational effort.

Code availability.— PyTorch source code to reproduce the results of this paper is publicly available on GitHub: https://github.com/mystic-blue/mind-tuning.

## Appendix B S2. Calculation of Objective Functionals

The mind-tuning objective function $\mathcal{F}_{\lambda,\beta,\tau}(\theta)=\mathcal{E}_{\beta}(\theta)-\lambda\mathcal{H}_{\tau,\beta}(\theta)$ consists of two key terms. Below we detail their calculation.

### B.1 A. Cross-Entropy Estimation

The cross-entropy term $\mathcal{E}_{\beta}(\theta)$ , defined in Eq. (3) of the main text, measures the model’s ability to imitate the training data. It is estimated by averaging the negative log-likelihood of the actions taken in the dataset $\mathcal{D}$ given the preceding histories:

$$

\hat{\mathcal{E}}_{\beta}(\theta)=\frac{1}{|\mathcal{D}|}\sum_{(h_{t},a_{t})\in\mathcal{D}}[-\log\pi_{\theta,\beta}(a_{t}|h_{t})]

$$

where $|\mathcal{D}|$ is the total number of state-action pairs in the training set. This term encourages the policy to assign high probability to the trajectories observed during training.

### B.2 B. Causal Path-Entropy: Analytic Calculation for Markovian Systems

For systems with fully-observed, discrete, and reasonably small state spaces $\mathcal{V}$ , such as our maze environment, the path-entropy can be computed analytically. Since the system is Markovian ( $h_{t}=x_{t}$ ), we can define a policy-dependent transition matrix $M_{\pi}$ . The element $(M_{\pi})_{x^{\prime},x}$ gives the probability of transitioning from state $x$ to state $x^{\prime}$ under the current policy $\pi_{\theta,\beta}$ . Specifically, $(M_{\pi})_{x^{\prime},x}=\sum_{a\in\mathcal{A}}\pi_{\theta,\beta}(a|x)\delta_{x^{\prime},f(x,a)}$ , where $f(x,a)$ is the deterministic function that returns the next state.

Given a starting state $x_{start}$ , we can compute the probability distribution over future states $\vec{\rho}_{k}$ at any time step $k$ by evolving an initial occupancy vector (a point mass at $x_{start}$ ) via the recursion $\vec{\rho}_{k+1}=M_{\pi}\vec{\rho}_{k}$ . The conditional path-entropy for a trajectory starting at $x_{start}$ is then the time-averaged Shannon entropy of the policy, weighted by the occupancy probability at each future state:

$$

\mathcal{H}_{\tau,\beta}(\theta|x_{start})=\frac{1}{\tau}\sum_{k=0}^{\tau-1}\sum_{x\in\mathcal{V}}(\rho_{k})_{x}\left[-\sum_{a\in\mathcal{A}}\pi_{\theta,\beta}(a|x)\log\pi_{\theta,\beta}(a|x)\right].

$$

The total functional $\mathcal{H}_{\tau,\beta}(\theta)$ is the expectation of Eq. (S2) over all starting states in the training dataset $\mathcal{D}$ . This entire calculation is fully differentiable with respect to the network parameters $\theta$ , allowing for efficient gradient-based optimization. This exact method was used to produce all experimental and theoretical results in this work. Its primary computational cost scales with the size of the state space $|\mathcal{V}|$ , making it suitable for our testbed.

### B.3 C. Causal Path-Entropy: Monte Carlo Estimation for High-Dimensional Systems

For high-dimensional or continuous state spaces, or for non-Markovian sequence models like Transformers, the analytic approach becomes intractable. In these cases, $\mathcal{H}$ must be estimated via Monte Carlo sampling. For each starting history $h_{start}$ in a training mini-batch, we generate $K$ independent future trajectories (rollouts) of length $\tau$ by autoregressively sampling actions from the policy. The estimator for the path-entropy functional is:

$$

\hat{\mathcal{H}}_{\tau,\beta}(\theta)\approx\frac{1}{|\mathcal{B}|}\sum_{h_{start}\in\mathcal{B}}\left(\frac{1}{K\tau}\sum_{k=1}^{K}\sum_{j=0}^{\tau-1}\left[-\ln\pi_{\theta,\beta}(a_{j}^{(k)}|h_{j}^{(k)})\right]_{h_{start}}\right).

$$

To ensure that gradients can be backpropagated through the sampling process, especially for discrete action spaces, reparameterization techniques are required. A standard method is the Gumbel-Softmax trick [51], which provides a continuous, differentiable approximation to the sampling procedure. Alternatively, the gradient of the entropic objective can be estimated using policy gradient methods like REINFORCE [52], though this often suffers from high variance.

## Appendix C S3. Parametric Dependencies of the Intuition Phase

The emergence of the fragile intuition phase is a critical phenomenon highly sensitive to the model, data, and learning protocol parameters. Below, we detail the key dependencies we investigated.

### C.1 A. Future Horizon $\tau$

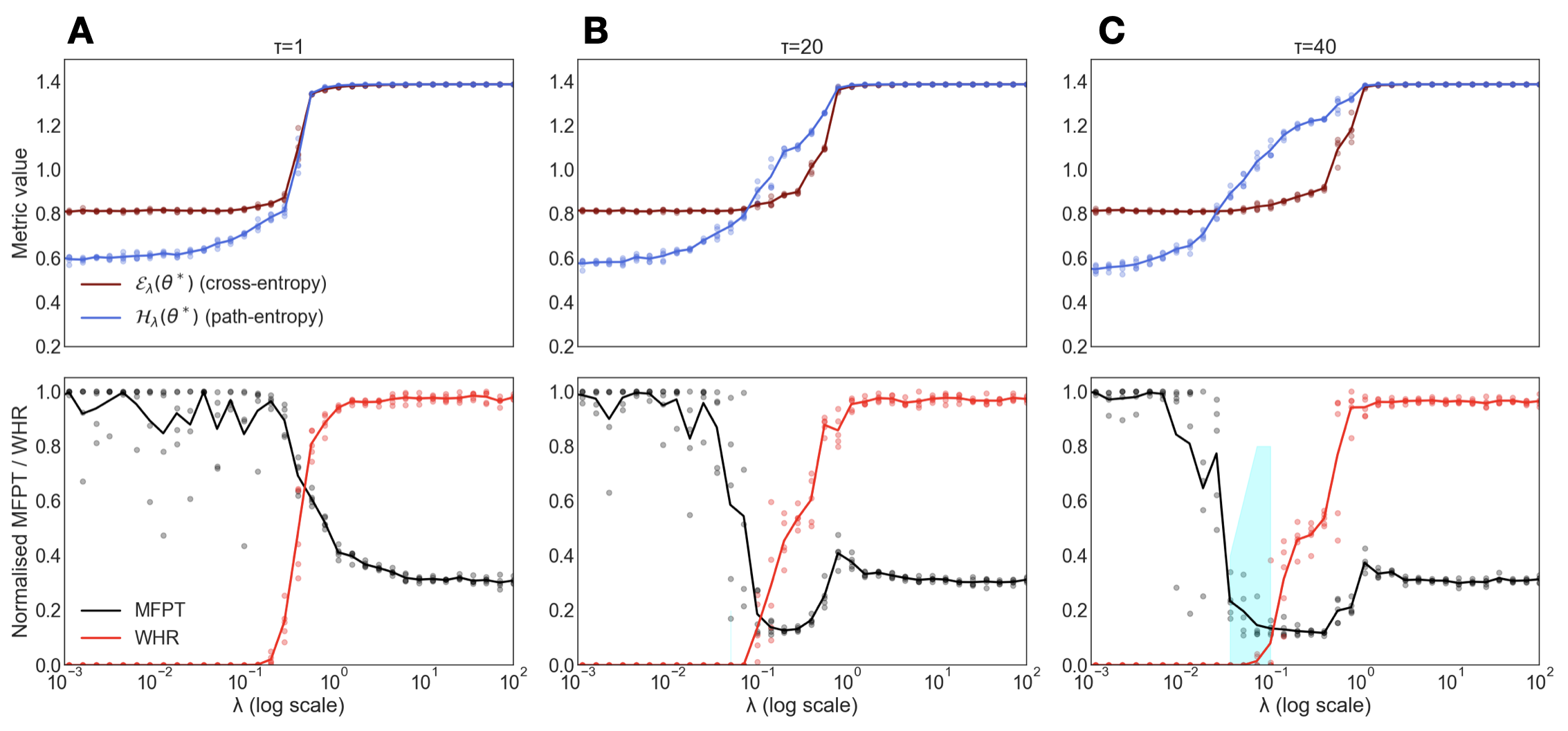

The future horizon $\tau$ dictates the timescale of the model’s “imagination”. Our experiments show that the intuition phase only emerges for a sufficiently long horizon (Fig. S1).

For a small $\tau$ , the model is myopic; the long-term entropic gain from escaping the maze is not visible, so the model defaults to minimizing cross-entropy and remains in the imitation phase. As $\tau$ increases, the model can foresee the vast expansion of possible futures that awaits outside the maze, creating a strong entropic incentive to find an exit. For intermediate horizons, we often observe a cheating phase—a local minimum in the free-energy landscape where the model learns to take a single illegal step through a wall. This strategy is a compromise: it incurs a small penalty for rule-breaking but gains a significant medium-term entropic advantage. Only for large $\tau$ does the incentive to find a legal path to maximal freedom dominate (i.e., virtue over vice).

<details>

<summary>FigureS1.png Details</summary>

### Visual Description

## Line Charts: Metric Values and Normalised Performance Metrics vs. Lambda for Varying Tau

### Overview

This image presents a set of three two-panel line charts, labeled A, B, and C, which illustrate the relationship between various metrics and a parameter `λ` (lambda) on a logarithmic scale. Each main panel (A, B, C) corresponds to a different value of `τ` (tau), specifically `τ=1`, `τ=20`, and `τ=40`, respectively. The top sub-panels display "Metric value" for cross-entropy and path-entropy, while the bottom sub-panels show "Normalised MFPT / WHR" for MFPT and WHR. All metrics are plotted against `λ` on a logarithmic scale, ranging from 10^-3 to 10^2. A light blue shaded region is present in the bottom sub-panel of chart C.

### Components/Axes

The image is composed of three main vertical panels, labeled A, B, and C, from left to right. Each main panel contains two sub-panels stacked vertically.

**Common X-axis (bottom of each main panel):**

* **Label**: `λ` (log scale)

* **Scale**: Logarithmic, ranging from 10^-3 to 10^2.

* **Tick Markers**: 10^-3, 10^-2, 10^-1, 10^0, 10^1, 10^2.

**Common Y-axis (left side of top sub-panels):**

* **Label**: Metric value

* **Scale**: Linear, ranging from 0.2 to 1.4.

* **Tick Markers**: 0.2, 0.4, 0.6, 0.8, 1.0, 1.2, 1.4.

**Common Y-axis (left side of bottom sub-panels):**

* **Label**: Normalised MFPT / WHR

* **Scale**: Linear, ranging from 0.0 to 1.0.

* **Tick Markers**: 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

**Legends:**

**Top Sub-panels (located in the bottom-left of panel A's top sub-panel):**

* **Brown line with circular markers**: `E_λ(θ*)` (cross-entropy)

* **Blue line with circular markers**: `H_λ(θ*)` (path-entropy)

**Bottom Sub-panels (located in the bottom-left of panel A's bottom sub-panel):**

* **Black line with circular markers**: MFPT

* **Red line with circular markers**: WHR

**Panel Titles (top-right of each main panel):**

* **Panel A**: `τ=1`

* **Panel B**: `τ=20`

* **Panel C**: `τ=40`

### Detailed Analysis

**Panel A (τ=1)**

* **Top Sub-panel (Metric value vs. λ):**

* **Brown line (`E_λ(θ*)` - cross-entropy)**: Starts at approximately 0.8 at `λ=10^-3` and remains relatively flat until `λ` approaches 10^-1 (around 0.1). It then sharply increases, crossing 1.0 around `λ=0.2` and reaching a plateau at approximately 1.38 for `λ` values greater than 10^0 (1.0). Each data point is marked with a small brown circle.

* **Blue line (`H_λ(θ*)` - path-entropy)**: Starts at approximately 0.6 at `λ=10^-3` and gradually increases, reaching about 0.8 at `λ=10^-1`. It then sharply increases, crossing 1.0 around `λ=0.2` and reaching a plateau at approximately 1.38 for `λ` values greater than 10^0 (1.0). The blue line intersects the brown line around `λ=0.15` at a metric value of approximately 0.85. Each data point is marked with a small blue circle.

* **Bottom Sub-panel (Normalised MFPT / WHR vs. λ):**

* **Black line (MFPT)**: Starts at approximately 1.0 at `λ=10^-3` and fluctuates between 0.8 and 1.0 until `λ` approaches 10^-1 (around 0.1). It then sharply decreases, crossing 0.6 around `λ=0.2` and reaching a plateau at approximately 0.3 for `λ` values greater than 10^0 (1.0). Each data point is marked with a small black circle.

* **Red line (WHR)**: Starts at approximately 0.0 at `λ=10^-3` and remains at 0.0 until `λ` approaches 10^-1 (around 0.1). It then sharply increases, crossing 0.6 around `λ=0.2` and reaching a plateau at approximately 0.98 for `λ` values greater than 10^0 (1.0). Each data point is marked with a small red circle.

* **Grey scattered points**: Numerous grey circular data points are scattered across the plot. For `λ < 10^-1`, they are mostly clustered between 0.8 and 1.0. For `λ > 10^-1`, they show a wider spread, generally decreasing in value, with some points near 0.0 and others up to 1.0.

**Panel B (τ=20)**

* **Top Sub-panel (Metric value vs. λ):**

* **Brown line (`E_λ(θ*)` - cross-entropy)**: Similar to Panel A, starts at approximately 0.8 and remains flat until `λ` approaches 10^-1.5 (around 0.03). It then sharply increases, reaching a plateau at approximately 1.38 for `λ` values greater than 10^0 (1.0).

* **Blue line (`H_λ(θ*)` - path-entropy)**: Starts at approximately 0.58 and gradually increases. The sharp increase begins earlier than in Panel A, around `λ=10^-1.5` (around 0.03), and it reaches a plateau at approximately 1.38 for `λ` values greater than 10^0 (1.0). The blue line intersects the brown line around `λ=0.08` at a metric value of approximately 0.85.

* **Bottom Sub-panel (Normalised MFPT / WHR vs. λ):**

* **Black line (MFPT)**: Similar to Panel A, starts at approximately 1.0 and fluctuates. The sharp decrease begins earlier than in Panel A, around `λ=10^-1.5` (around 0.03), reaching a minimum of approximately 0.15 around `λ=0.1`, and then slightly increasing to a plateau around 0.3 for `λ` values greater than 10^0 (1.0).

* **Red line (WHR)**: Similar to Panel A, starts at approximately 0.0. The sharp increase begins earlier than in Panel A, around `λ=10^-1.5` (around 0.03), reaching a plateau at approximately 0.98 for `λ` values greater than 10^0 (1.0).

* **Grey scattered points**: Similar distribution to Panel A, but the transition zone appears to shift to lower `λ` values.

**Panel C (τ=40)**

* **Top Sub-panel (Metric value vs. λ):**

* **Brown line (`E_λ(θ*)` - cross-entropy)**: Similar to Panels A and B, starts at approximately 0.8 and remains flat until `λ` approaches 10^-2 (around 0.01). It then sharply increases, reaching a plateau at approximately 1.38 for `λ` values greater than 10^0 (1.0).

* **Blue line (`H_λ(θ*)` - path-entropy)**: Starts at approximately 0.58 and gradually increases. The sharp increase begins even earlier than in Panel B, around `λ=10^-2` (around 0.01), and it reaches a plateau at approximately 1.38 for `λ` values greater than 10^0 (1.0). The blue line intersects the brown line around `λ=0.05` at a metric value of approximately 0.85.

* **Bottom Sub-panel (Normalised MFPT / WHR vs. λ):**

* **Black line (MFPT)**: Similar to Panels A and B, starts at approximately 1.0 and fluctuates. The sharp decrease begins even earlier than in Panel B, around `λ=10^-2` (around 0.01), reaching a minimum of approximately 0.1 around `λ=0.08`, and then slightly increasing to a plateau around 0.3 for `λ` values greater than 10^0 (1.0).

* **Red line (WHR)**: Similar to Panels A and B, starts at approximately 0.0. The sharp increase begins even earlier than in Panel B, around `λ=10^-2` (around 0.01), reaching a plateau at approximately 0.98 for `λ` values greater than 10^0 (1.0).

* **Grey scattered points**: Similar distribution to Panels A and B, with the transition zone further shifted to lower `λ` values and a noticeable spread.

* **Light blue shaded region**: A shaded area is present from approximately `λ=0.04` to `λ=0.15`, covering Y-values from approximately 0.0 to 0.8. This region encompasses the minimum of the MFPT curve and the steep rising phase of the WHR curve.

### Key Observations

1. **Sigmoidal Transition**: All primary metrics (cross-entropy, path-entropy, MFPT, WHR) exhibit a sigmoidal-like transition as `λ` increases. They either increase or decrease sharply within a specific range of `λ` values, reaching a plateau on either side of this transition.

2. **Inverse Relationship**: In the top panels, `E_λ(θ*)` (cross-entropy) and `H_λ(θ*)` (path-entropy) generally increase with `λ`. In the bottom panels, MFPT decreases while WHR increases with `λ`.

3. **Shift with τ**: As `τ` increases from 1 to 20 to 40, the entire transition region for all metrics shifts towards lower `λ` values. This means the sharp changes in metric values occur at smaller `λ` for larger `τ`.

* For `τ=1`, the transition is roughly between `λ=0.1` and `λ=1.0`.

* For `τ=20`, the transition is roughly between `λ=0.01` and `λ=0.5`.

* For `τ=40`, the transition is roughly between `λ=0.005` and `λ=0.2`.

4. **MFPT Minimum**: For `τ=20` and `τ=40`, the MFPT curve shows a distinct minimum before slightly increasing to its plateau. This minimum becomes more pronounced and shifts to lower `λ` values as `τ` increases.

5. **WHR Saturation**: WHR consistently saturates near 1.0 for larger `λ` values across all `τ`.

6. **Cross-entropy vs. Path-entropy**: `E_λ(θ*)` starts higher and remains flat for small `λ`, while `H_λ(θ*)` starts lower and gradually increases before the sharp transition. They converge to the same high value after the transition.

7. **Scattered Data**: The grey scattered points in the bottom panels suggest individual trial results or underlying variability, which the black and red lines represent as averaged or smoothed trends.

### Interpretation

The data presented in these charts likely illustrates the behavior of a system or model under varying conditions, controlled by parameters `λ` and `τ`.