# G2RPO-A: Guided Group Relative Policy Optimization with Adaptive Guidance

> Equal Contribution.

## Abstract

Reinforcement Learning with Verifiable Rewards (RLVR) has markedly enhanced the reasoning abilities of large language models (LLMs). Its success, however, largely depends on strong base models with rich world knowledge, yielding only modest improvements for small-size language models (SLMs). To address this limitation, we investigate Guided GRPO, which injects ground-truth reasoning steps into roll-out trajectories to compensate for SLMs’ inherent weaknesses. Through a comprehensive study of various guidance configurations, we find that naively adding guidance delivers limited gains. These insights motivate G$^2$RPO-A, an adaptive algorithm that automatically adjusts guidance strength in response to the model’s evolving training dynamics. Experiments on mathematical reasoning and code-generation benchmarks confirm that G$^2$RPO-A substantially outperforms vanilla GRPO. Our code and models are available at https://github.com/T-Lab-CUHKSZ/G2RPO-A.

## 1 Introduction

Recent advances in reasoning-centric large language models (LLMs), exemplified by DeepSeek-R1 (Guo et al., 2025), OpenAI-o1 (Jaech et al., 2024), and Qwen3 (Yang et al., 2025a), have significantly expanded the performance boundaries of LLMs. Building on robust base models with comprehensive world knowledge, these models have achieved breakthrough progress in complex domains such as mathematics (Guan et al., 2025), coding (Souza et al., 2025; HUANG et al., 2025), and other grounding tasks (Li et al., 2025b; Wei et al., 2025). At the core of this success lies Reinforcement Learning with Verifiable Rewards (RLVR) (Shao et al., 2024; Chu et al., 2025; Liu et al., 2025), which trains LLMs with reinforcement learning using rule-based outcome rewards. RLVR has demonstrated remarkable improvements in generalization across a wide spectrum of downstream tasks (Jia et al., 2025; Wu et al., 2025).

As the de-facto algorithm, Group Relative Policy Optimization (GRPO) (Shao et al., 2024) improves upon Proximal Policy Optimization (PPO) (Schulman et al., 2017) by removing the need for a critic model through inner-group response comparison, thereby speeding up the training.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Chart: Training Dynamics of Simple Prompt Guidance

### Overview

This is a line chart titled "The training dynamics of simple prompt guidance." It plots the "Accuracy reward" (y-axis) against the "Global step" (x-axis) for two different training methods over the course of approximately 32 steps. The chart demonstrates a comparative performance trend, showing an initial decline followed by a significant recovery and increase for both methods.

### Components/Axes

* **Chart Title:** "The training dynamics of simple prompt guidance" (centered at the top).

* **Y-Axis:**

* **Label:** "Accuracy reward" (rotated vertically on the left).

* **Scale:** Linear scale ranging from 0.45 to 0.60.

* **Major Ticks:** 0.45, 0.50, 0.55, 0.60.

* **X-Axis:**

* **Label:** "Global step" (centered at the bottom).

* **Scale:** Linear scale ranging from -2 to 34.

* **Major Ticks:** -2, 0, 2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30, 32, 34.

* **Legend:**

* **Position:** Bottom-right corner of the chart area, slightly overlapping the plot.

* **Items:**

1. **Blue line with square markers:** "With simple guidance"

2. **Red line with square markers:** "Original GRPO"

### Detailed Analysis

The chart tracks two data series. The visual trend for both is a distinct "U" or "check-mark" shape: a decline in the first half of training, a trough in the middle, and a sharp rise in the final steps.

**1. Data Series: "With simple guidance" (Blue Line)**

* **Trend:** Starts at a moderate value, declines steadily to a minimum around step 20, then rises sharply, ending at the highest point on the chart.

* **Approximate Data Points:**

* Step 0: ~0.542

* Step 4: ~0.525

* Step 10: ~0.485

* Step 15: ~0.480

* Step 20: ~0.475 (Approximate minimum)

* Step 25: ~0.490

* Step 30: ~0.528

* Step 32: ~0.595 (Approximate maximum)

**2. Data Series: "Original GRPO" (Red Line)**

* **Trend:** Follows a nearly identical pattern to the blue line but starts higher and ends slightly lower. It also declines, troughs, and then experiences a late, sharp increase.

* **Approximate Data Points:**

* Step 0: ~0.570

* Step 4: ~0.532

* Step 10: ~0.492

* Step 15: ~0.488

* Step 20: ~0.482 (Approximate minimum)

* Step 25: ~0.490 (Converges with blue line)

* Step 30: ~0.528 (Converges with blue line)

* Step 32: ~0.590

### Key Observations

1. **Parallel Trajectories:** The two lines are remarkably parallel for most of the training process, suggesting the underlying training dynamics are similar regardless of the guidance method.

2. **Performance Crossover:** The "Original GRPO" (red) starts with a higher accuracy reward. The "With simple guidance" (blue) method starts lower but ends at a slightly higher final value (~0.595 vs. ~0.590).

3. **Convergence Point:** The two lines appear to converge and overlap almost exactly between Global steps 25 and 30.

4. **Late-Stage Spike:** The most dramatic feature is the very sharp, near-vertical increase in accuracy reward for both methods after step 30, suggesting a significant event or phase change in the training process at that point.

5. **Mid-Training Trough:** Both methods experience their lowest performance around step 20, indicating a challenging period in the middle of the training run.

### Interpretation

The data suggests that the "simple prompt guidance" method modifies the training trajectory of the Original GRPO algorithm but does not fundamentally alter its learning dynamics. Both methods follow the same pattern of initial performance degradation followed by recovery.

The key finding is the **late-stage performance spike**. This could indicate:

* A delayed effect of the guidance mechanism finally taking hold.

* The model reaching a critical capacity or discovering a more efficient solution pathway after a period of exploration (the trough).

* A potential artifact of the training schedule, such as a learning rate change or the introduction of a new data phase around step 30.

The fact that the guided method ends with a marginally higher reward, despite starting lower, implies it may offer a slight long-term benefit, though the difference is small. The convergence in the middle suggests that for a significant portion of training, the guidance has a negligible effect on the measured accuracy reward. The chart effectively demonstrates that the guidance influences the starting point and the final peak but not the overall shape of the learning curve.

</details>

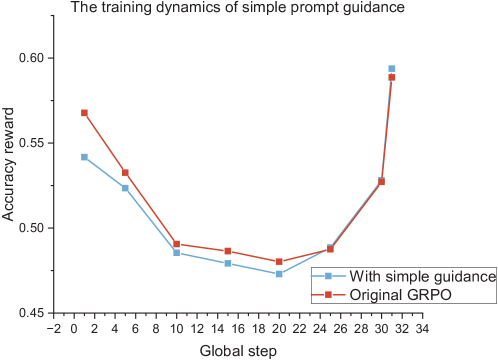

Figure 1: Naive guidance does not help. Using Qwen2.5-Math-7B as the base model, we train it on the s1K-1.1 dataset for a single epoch with a simple, fixed-length guidance (naive guidance). The naive guidance method shows a temporary increase in the accuracy reward during the early training stages, but it quickly becomes indistinguishable from the vanilla GRPO curve.

<details>

<summary>x2.png Details</summary>

### Visual Description


## Diagram: Mathematical Problem and LLM Reasoning Process

### Overview

The image is a horizontally-oriented diagram illustrating a mathematical problem and a subsequent step-by-step reasoning process by a Large Language Model (LLM). It is divided into three distinct sections connected by a directional arrow, showing the flow from problem statement to guided explanation to the LLM's internal reasoning.

### Components/Axes

The diagram consists of three primary text blocks, each accompanied by a small illustrative icon.

1. **Left Section (Problem Statement):**

* **Icon:** A stylized profile of a person's head with a pink question mark bubble, positioned at the bottom-right of the text block.

* **Text Block:** Contains the core mathematical problem statement.

2. **Middle Section (Guidance):**

* **Icon:** A cartoon figure of a person with glasses and a beard, positioned at the top-left of the text block.

* **Text Block:** Provides a guided, step-by-step breakdown of the problem.

3. **Right Section (LLM Response):**

* **Icon:** A cartoon robot, positioned at the top-left of the text block.

* **Text Block:** Shows the LLM's internal reasoning process as it parses and works through the problem.

* **Connector:** A blue arrow points from the Guidance section to the LLM Response section, indicating the flow of information or the prompting sequence.

### Detailed Analysis / Content Details

**1. Left Section - Problem Statement (Transcription):**

> For the sequence of positive numbers:

> {a_n}, the sum of the first n terms is b_n,

> and the product of the first n terms of

> the sequence {b_n} is c_n, and it is given

> that b_n + 2c_n = 1 (n ∈ Z+). Find the

> number in the sequence {1/a_n} that is

> closest to 2013.

**2. Middle Section - Guidance (Transcription):**

> Guidance:

> Alright, let's try to tackle this problem

> step by step. So, we have a sequence of

> positive numbers {a_n}. The sum of the first

> n terms is b_n. Then, the product of the first

> n terms of the sequence {b_n} is c_n. It's given that for every positive

> integer n, b_n + 2c_n = 1. We need to find the

> number in the sequence {1/a_n} that is

> closest to 2013.

**3. Right Section - LLM Response (Transcription):**

> LLMs continue response:

> Hmm, okay. Let me parse this again. The

> sum of the first n terms of {a_n} is b_n.

> Then, the product of the first n terms of

> {b_n} is c_n. So, c_n = b_1 * b_2 * ... * b_n. And

> for each n, b_n + 2c_n = 1. Interesting.

> So, for each n, both b_n and c_n are

> involved in this equation. Since {a_n} are

> positive, all the b_n will be positive as

> well, and hence c_n is also positive.

### Key Observations

* **Structured Problem-Solving:** The diagram explicitly models a pedagogical or debugging process: presenting a problem, offering structured guidance, and then showing an AI's attempt to follow that guidance.

* **Mathematical Notation:** The text uses standard mathematical notation for sequences ({a_n}, {b_n}), summation (implied by "sum of the first n terms"), product, and set membership (n ∈ Z+).

* **LLM Reasoning Pattern:** The LLM's response begins with a parsing step ("Let me parse this again"), restates the problem in its own words, defines terms explicitly (c_n = b_1 * b_2 * ... * b_n), and makes an initial logical deduction about positivity based on the given constraints.

* **Visual Flow:** The arrow creates a clear narrative: Problem -> Human-like Guidance -> AI Reasoning.

### Interpretation

This diagram serves as a meta-illustration of AI-assisted problem-solving. It doesn't contain numerical data or trends but rather documents a *process*.

* **What it demonstrates:** The image captures a moment in the interaction between a human-provided framework (the "Guidance") and an AI's cognitive process. It highlights how an LLM can be prompted to break down a complex, multi-step mathematical problem into constituent parts and begin formalizing the relationships between variables (a_n, b_n, c_n).

* **Relationship between elements:** The Guidance section acts as a scaffold, translating the dense problem statement into a more conversational, step-by-step format. The LLM's response directly mirrors this structure, indicating successful comprehension and the initiation of a solution path. The core mathematical relationship `b_n + 2c_n = 1` is the central constraint that both the guidance and the LLM focus on.

* **Notable aspects:** The LLM's first deductive step—inferring the positivity of all `b_n` and `c_n` from the positivity of `a_n`—is a correct and crucial logical foundation for solving the problem. The diagram stops at this preliminary parsing and setup phase, leaving the actual solution (finding the term in `{1/a_n}` closest to 2013) unexplored. The value "2013" is a specific target number, suggesting the problem may have been crafted for a particular year or context.

</details>

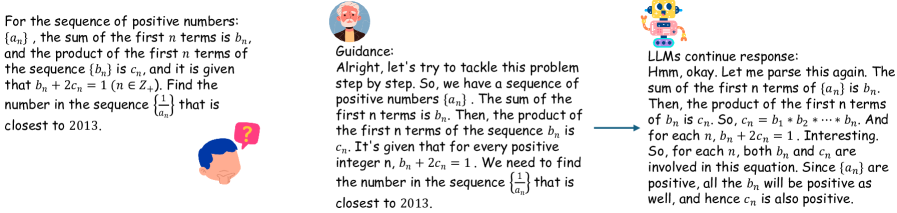

Figure 2: Illustration of roll-outs with guidance. An example of using high-quality thinking trajectories to guide models.

#### Capacity of small-size LLMs limits the performance gains of GRPO.

Despite GRPO’s success with large-scale LLMs, its effectiveness is significantly constrained when applied to smaller LLMs. Recent research (Ye et al., 2025; Muennighoff et al., 2025a) reveals that GRPO’s performance gains depend heavily on the base model’s capacity (Bae et al., 2025; Xu et al., 2025; Zhuang et al., 2025). Consequently, small-scale LLMs (SLMs) show limited improvement under GRPO (Tables 3 and 8), exposing a critical scalability challenge in enhancing reasoning capabilities across model sizes. To address this challenge, researchers have explored various approaches: distillation (Guo et al., 2025), multi-stage training prior to RLVR (Xu et al., 2025), and selective sample filtering (Xiong et al., 2025; Shi et al., 2025). However, these methods either are applied before RLVR or suffer performance degradation on complex problems (Table 7). Optimizing the RLVR process itself for efficient learning in SLMs therefore remains an open challenge.

#### Adaptive guidance as a solution.

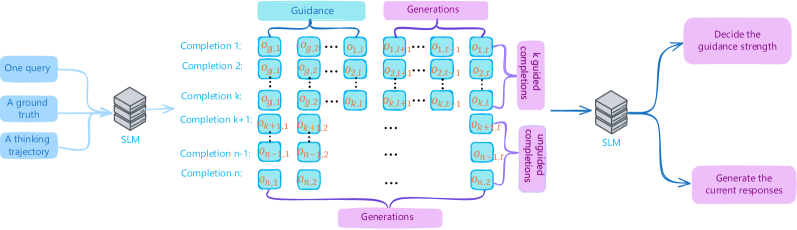

We propose incorporating guidance into the roll-out process to facilitate the generation of high-quality, reward-worthy candidates (Figure 2). However, our initial findings revealed that simply adding fixed-length guidance to the prompts (naive guidance) failed to improve overall performance (Figure 1). By systematically varying both the proportion of guided roll-outs within GRPO batches and the guidance length over training epochs, we obtained two key findings: (1) Code-generation tasks benefit from a higher guidance ratio than mathematical reasoning tasks, and smaller models likewise require more guidance than larger ones. (2) The optimal guidance length evolves throughout training and is highly context-dependent, rendering simple, predefined schedules ineffective. In response, we introduce the Guided Group Relative Policy Optimization with Adaptive Guidance (G$^2$RPO-A) algorithm, which dynamically adjusts the guidance length based on the model’s real-time learning state. The key contributions of this paper are summarized as follows:

- We enhance GRPO for small-scale LLMs by injecting guidance into the rollout thinking trajectories and conduct a systematic analysis of the effects of key guidance configurations, specifically focusing on the guidance ratio and guidance length.

- Our study also examines the importance of hard training samples. We find that integrating these samples into the dataset using a curriculum learning approach, and aided by the guidance mechanism, significantly boosts the training efficiency of our method for SLMs.

- Drawing on these findings, we introduce G$^2$RPO-A, an adaptive algorithm that automatically adjusts guidance length in response to the evolving training state. Our experimental results demonstrate the effectiveness of the proposed G$^2$RPO-A algorithm.

- We evaluate our method on mathematical reasoning and coding tasks with several models, including the Qwen3 series, DeepSeek-Math-7B-Base, and DeepSeek-Coder-6.7B-Base, and observe substantial performance gains over both vanilla GRPO and simple guided baselines.

## 2 Related Works

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: AI Response Generation with Guidance Mechanism

### Overview

The image is a technical flowchart illustrating a process for generating AI responses using a Small Language Model (SLM) with an integrated guidance mechanism. The diagram shows a multi-stage pipeline that takes user inputs, processes them through an SLM to generate multiple completions, applies guidance to these generations, and then uses a second SLM pass to decide on guidance strength and produce the final output. The overall flow moves from left to right.

### Components/Axes

The diagram is organized into several distinct regions and components:

**1. Input Region (Far Left):**

* Three blue, rounded rectangular boxes stacked vertically.

* **Labels (from top to bottom):**

* `One query`

* `A ground truth`

* `A thinking trajectory`

* These three inputs are connected by lines converging into the first processing block.

**2. First Processing Block (Center-Left):**

* A gray, 3D-styled box labeled `SLM` (Small Language Model).

* It receives the three inputs from the left.

**3. Generation & Guidance Region (Center):**

This is the core of the diagram, split into two parallel vertical sections.

* **Left Section - "Guidance":** A light blue header box labeled `Guidance`. Below it is a column of light blue, rounded rectangular boxes representing different "Completions."

* **Labels (from top to bottom):**

* `Completion 1:`

* `Completion 2:`

* `Completion k:`

* `Completion k+1:`

* `Completion n-1:`

* `Completion n:`

* Each "Completion" label is followed by a sequence of mathematical symbols in small boxes (e.g., `η₁₁`, `η₁₂`, ..., `η₁ₘ` for Completion 1). The sequences vary in length and the specific `η` subscripts. The symbols for Completion `k+1` are highlighted in orange.

* **Right Section - "Generations":** A light purple header box labeled `Generations`. Below it is a corresponding column of light purple, rounded rectangular boxes.

* These boxes contain sequences of symbols (e.g., `g₁₁`, `g₁₂`, ..., `g₁ₘ` for the first row) that align horizontally with the "Guidance" completions.

* The sequences for the row corresponding to `Completion k+1` are also highlighted in orange.

* **Connections:** Horizontal lines connect each "Guidance" completion box to its corresponding "Generations" box. A large purple bracket at the bottom labeled `Generations` encompasses all the purple generation boxes.

**4. Comparison & Second Processing Block (Center-Right):**

* Two vertical, purple rectangular boxes labeled `Compare generations` are positioned to the right of the "Generations" column. They appear to aggregate or process the outputs from the generation stage.

* Lines from these comparison boxes converge into a second gray, 3D-styled box labeled `SLM`.

**5. Output Region (Far Right):**

* Two pink, rounded rectangular boxes stacked vertically, connected by lines from the second `SLM` block.

* **Labels (from top to bottom):**

* `Decide the guidance strength`

* `Generate the current responses`

### Detailed Analysis

The diagram depicts a cyclical or iterative refinement process:

1. **Input Stage:** A single query, its associated ground truth, and a "thinking trajectory" (likely a chain-of-thought or reasoning path) are fed into an initial SLM.

2. **Parallel Generation Stage:** The SLM produces `n` different "Completions" (labeled 1 through n). Each completion is a sequence of tokens or steps, represented by `η` variables with double subscripts (e.g., `η₁₁` is the first token of the first completion). Simultaneously, a corresponding set of "Generations" (sequences represented by `g` variables) is created. The highlighting of row `k+1` suggests it may be a focal or example point in the process.

3. **Guidance Application:** The "Guidance" column implies that some form of steering or conditioning is applied to the generation process, influencing the `η` sequences.

4. **Comparison and Decision:** The generated sequences are compared (via the "Compare generations" blocks). This comparison output is fed into a second SLM.

5. **Final Output:** The second SLM performs two tasks: it decides on the appropriate "guidance strength" for future iterations and produces the final "current responses."

### Key Observations

* **Dual SLM Architecture:** The process uses two instances (or stages) of an SLM—one for initial generation and one for meta-decision making.

* **Parallel Processing:** The system generates multiple completions (`n` of them) in parallel, suggesting a strategy like beam search, best-of-n sampling, or ensemble generation.

* **Explicit Guidance Mechanism:** The diagram explicitly separates "Guidance" from "Generations," indicating that guidance is a distinct, controllable input or modifier to the generation process.

* **Iterative Potential:** The output "Decide the guidance strength" suggests the system can adaptively tune its own guidance parameter for subsequent steps, creating a feedback loop.

* **Mathematical Notation:** The use of `η` (eta) and `g` variables with double subscripts provides a formal, mathematical representation of the token sequences, common in machine learning literature.

### Interpretation

This diagram illustrates a sophisticated framework for improving the quality and controllability of text generation from a Small Language Model. The core innovation appears to be an **adaptive guidance loop**.

* **What it demonstrates:** Instead of generating a single response, the system creates a diverse set of candidate completions (`n` paths). A separate process evaluates these candidates ("Compare generations") and uses a second model pass to determine how strongly to guide the model ("guidance strength") for the next iteration. This is akin to a model self-critiquing its outputs and adjusting its own parameters for better results.

* **Relationship between elements:** The inputs (query, ground truth, thinking trajectory) provide the foundation. The first SLM explores the solution space by generating multiple possibilities. The guidance mechanism steers this exploration. The comparison and second SLM act as a controller or manager, optimizing the guidance strategy based on the observed outputs.

* **Notable implications:** This approach could lead to more reliable, accurate, and context-appropriate responses compared to a single-pass generation. The "thinking trajectory" input is particularly interesting, as it suggests the model is being conditioned not just on the desired answer (ground truth) but also on a reasoning process, potentially improving its explanatory capabilities. The system embodies a form of **meta-learning**, where the model learns to improve its own generation process dynamically.

</details>

Figure 3: Overview of G$^2$RPO-A. At each step, we split the roll-outs into a guided set and an unguided set. We then compare the current rewards with those from the previous steps; the resulting ratio determines the future guidance length.

The introduction of chain-of-thought (CoT) prompting has markedly improved LLM performance on complex reasoning tasks (Wei et al., 2022; Kojima et al., 2022). Complementing this advance, Reinforcement Learning with Verifiable Rewards (RLVR) has emerged as a powerful training paradigm for reasoning-centric language models (Yue et al., 2025; Lee et al., 2024). The de facto RLVR algorithm, Group Relative Policy Optimization (GRPO) (Guo et al., 2025), delivers strong gains on various benchmarks while remaining training-efficient because it dispenses with a separate critic network (Wen et al., 2025; Shao et al., 2024). Recent efforts to improve GRPO have explored several directions. Some approaches refine the core GRPO objective, either by pruning candidate completions (Lin et al., 2025) or by removing normalization biases (Liu et al., 2025). Other studies aim to enhance the training signal and stability. DAPO (Yu et al., 2025), for instance, introduces dense, step-wise advantage signals and decouples the actor-critic training to mitigate reward sparsity.

However, adapting GRPO-style algorithms to small-scale LLMs remains challenging due to the sparse-reward problem (Lu et al., 2024; Nguyen et al., 2024; Dang and Ngo, 2025). Recent studies have therefore focused on improved reward estimation (Cui et al., 2025). TreeRPO (Yang et al., 2025b) uses a tree-structured sampling procedure to approximate step-wise expected rewards, and Hint-GRPO (Huang et al., 2025) applies several reward-shaping techniques. Other lines of research investigate knowledge distillation (Guo et al., 2025), multi-stage pre-training before RLVR (Xu et al., 2025), and selective sample filtering (Xiong et al., 2025; Shi et al., 2025). In our experiments, however, these filtering and sampling strategies either are applied only before RLVR or fail to improve performance on more complex tasks. In this paper, we introduce a guidance mechanism that injects ground-truth reasoning steps directly into the model’s roll-out trajectories during RL training. Because the guidance is provided online, the proposed method can still learn effectively from difficult examples while mitigating the sparse-reward issue.

The role of guidance in GRPO-style training remains underexplored. Two concurrent studies have addressed related questions (Nath et al., 2025; Park et al., 2025), but both simply append guidance tokens to the input prompt, offering neither a systematic analysis of guidance configurations nor a mechanism that adapts to the changing training state of the model. We show that naive guidance often fails to improve performance because it yields low expected advantage. To remedy this, we provide a comprehensive examination of how guidance length, ratio, and scheduling affect learning, and we introduce G$^2$RPO-A, an adaptive strategy that dynamically adjusts guidance strength throughout training.

## 3 Preliminary

#### Group Relative Policy Optimization (GRPO).

Given a prompt $q$, GRPO (Shao et al., 2024) samples $G$ completions $\{o_{i}\}_{i=1}^{G}$ and computes their rewards $\{r_{i}\}_{i=1}^{G}$. Let $o_{i,t}$ denote the $t^{\text{th}}$ token of the $i^{\text{th}}$ completion. GRPO then assigns an advantage $\hat{A}_{i,t}$ to it. The optimization objective is defined as:

$$
\mathcal{L}_{\text{GRPO}}(\theta)=-\frac{1}{\sum_{i=1}^{G}|o_{i}|}\sum_{i=1}^{G}\sum_{t=1}^{|o_{i}|}\biggl[\min\Bigl(w_{i,t}\hat{A}_{i,t},\ \text{clip}\left(w_{i,t},1-\epsilon,1+\epsilon\right)\hat{A}_{i,t}\Bigr)-\beta\,\mathcal{D}_{\text{KL}}(\pi_{\theta}\|\pi_{\text{ref}})\biggr], \tag{1}
$$

where the importance weight $w_{i,t}$ is given by

$$
w_{i,t}=\frac{\pi_{\theta}(o_{i,t}\mid q,o_{i,<t})}{\left[\pi_{\theta}(o_{i,t}\mid q,o_{i,<t})\right]_{\text{old}}}. \tag{2}
$$

The clipping threshold $\epsilon$ controls the update magnitude, $[\,\cdot\,]_{\text{old}}$ denotes the policy at the previous step, and $\beta$ weights the KL divergence $\mathcal{D}_{\text{KL}}$, whose detailed definition can be found in the Detailed equations section of the Appendix.
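As a minimal sketch of Eqs. (1) and (2), the snippet below computes the clipped surrogate for one group of completions. Two points are assumptions rather than details taken from this section: the advantage is the group-standardized reward commonly used with outcome-reward GRPO (the text does not spell out $\hat{A}_{i,t}$), and the KL term is omitted. The function names and the default `clip_eps` are illustrative.

```python
import math

def group_relative_advantages(rewards, eps=1e-6):
    """Standardize rewards within one group of G completions.

    Assumption: population standard deviation; some implementations
    use the sample standard deviation instead.
    """
    mean = sum(rewards) / len(rewards)
    var = sum((r - mean) ** 2 for r in rewards) / len(rewards)
    std = math.sqrt(var)
    return [(r - mean) / (std + eps) for r in rewards]

def grpo_loss(logp_new, logp_old, advantages, clip_eps=0.2):
    """Clipped surrogate of Eq. (1), without the KL penalty.

    logp_new, logp_old: one list of per-token log-probabilities per
    completion, under the current and the previous policy.
    advantages: one scalar per completion, shared by all its tokens.
    """
    total, n_tokens = 0.0, 0
    for lp_new, lp_old, adv in zip(logp_new, logp_old, advantages):
        for new, old in zip(lp_new, lp_old):
            w = math.exp(new - old)  # importance weight, Eq. (2)
            w_clipped = min(max(w, 1.0 - clip_eps), 1.0 + clip_eps)
            total += min(w * adv, w_clipped * adv)
            n_tokens += 1
    # Normalize by the total token count, as in Eq. (1).
    return -total / n_tokens

# One correct completion out of four: only it gets a positive advantage.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 0.0])
print(advantages)
```

Because every token of a completion shares the same advantage, a group in which all rewards are equal contributes nothing to the surrogate term.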

#### Limitations of GRPO for small-size LLMs.

Despite the success of GRPO in large language models (LLMs), small-size LLMs (SLMs) face significant challenges when confronted with complex problems requiring long chains of thought (Zhang et al., 2025). Due to their inherently limited capacity, SLMs struggle to generate high-quality, reward-worthy candidates for such tasks (Li et al., 2025a; Zheng et al., 2025). As shown in Figure 4(a), Qwen3-1.7B applied to a code task fails to generate correct answers for most queries. This limitation substantially reduces the probability of sampling high-reward candidates, so the advantage signals vanish (Figure 5(b)), which constrains the performance gains achievable through GRPO in SLMs.
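The vanishing-advantage effect can be illustrated with a short calculation. The sketch below assumes binary rewards and independent samples with a per-sample success probability `p`; both are simplifying assumptions, and the group size of 8 is a hypothetical choice. A group yields a non-zero advantage only if its rewards are not all identical.

```python
def prob_informative_group(p, G):
    """Probability that G i.i.d. binary rewards are not all identical.

    Groups with identical rewards (all 0 or all 1) have zero
    group-relative advantage and provide no learning signal.
    """
    return 1.0 - p ** G - (1.0 - p) ** G

# Hypothetical per-sample success rates for a weak and a stronger model.
for p in (0.01, 0.05, 0.5):
    print(f"p={p:.2f}  P(informative group)={prob_informative_group(p, 8):.3f}")
```

Under these assumptions, a model that solves a query 1% of the time produces an informative group for fewer than one query in ten, whereas a model at 50% does so almost always.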

## 4 Methodology

To address the limitations of GRPO on SLMs, we propose incorporating guidance mechanisms into the thinking trajectories, thereby facilitating the sampling of high-quality candidates. We then conduct a comprehensive investigation into various design choices for guidance strategies. Finally, we introduce the G$^2$RPO-A algorithm, which integrates our empirical observations and significantly reduces the need for extensive hyperparameter tuning.

### 4.1 Guided GRPO as a Solution

Guided GRPO can be formulated as:

$$
\mathcal{L}_{\text{guided}}(\theta)=\mathbb{E}_{(q,a)\sim\mathcal{D},\,\{g_{i}\}_{i=1}^{G}\sim\mathcal{G},\,\{o_{i}\}_{i=1}^{G}\sim\pi_{\text{ref}}(\cdot\mid q,g_{i})}\bigl[\,\cdots\,\bigr],
$$

where $w_{g,i,t}$ and $w_{o,i,t}$ denote the token-level weighting coefficients of the guidance $g_{i}$ and the model outputs $o_{i}$, respectively. As shown in Figure 4(b), this guidance enables SLMs to generate higher-reward candidates, potentially overcoming their inherent limitations.
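The guided roll-out described above can be sketched as follows: the first tokens of a reference thinking trajectory are appended to the query, and the policy continues from there. This is a hypothetical illustration, not the authors' implementation; `build_guided_input`, `guided_rollout`, the token-list representation, and the `generate` callable are all assumed names.

```python
def build_guided_input(query_tokens, trajectory_tokens, guidance_len):
    """Append the first `guidance_len` trajectory tokens to the query.

    Returns the model input and the guidance segment g_i. A length of
    zero (or less) yields an unguided roll-out.
    """
    guidance = list(trajectory_tokens[:max(0, guidance_len)])
    return list(query_tokens) + guidance, guidance

def guided_rollout(query_tokens, trajectory_tokens, guidance_len, generate):
    """Sample one completion o_i conditioned on (q, g_i).

    `generate` is a hypothetical callable that maps an input token list
    to the list of newly generated tokens.
    """
    model_input, guidance = build_guided_input(
        query_tokens, trajectory_tokens, guidance_len
    )
    output = generate(model_input)
    return guidance, output

# Toy usage with a stand-in generator that always emits one token.
guidance, output = guided_rollout(
    ["Find", "x"], ["Alright,", "let's", "try"], 2, lambda tokens: ["Hmm"]
)
print(guidance, output)
```

Keeping the guidance and the generated tokens as separate segments makes it possible to weight them differently in the loss, which is the role of $w_{g,i,t}$ and $w_{o,i,t}$ above.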

#### Naive Guided GRPO fails to boost the final performance.

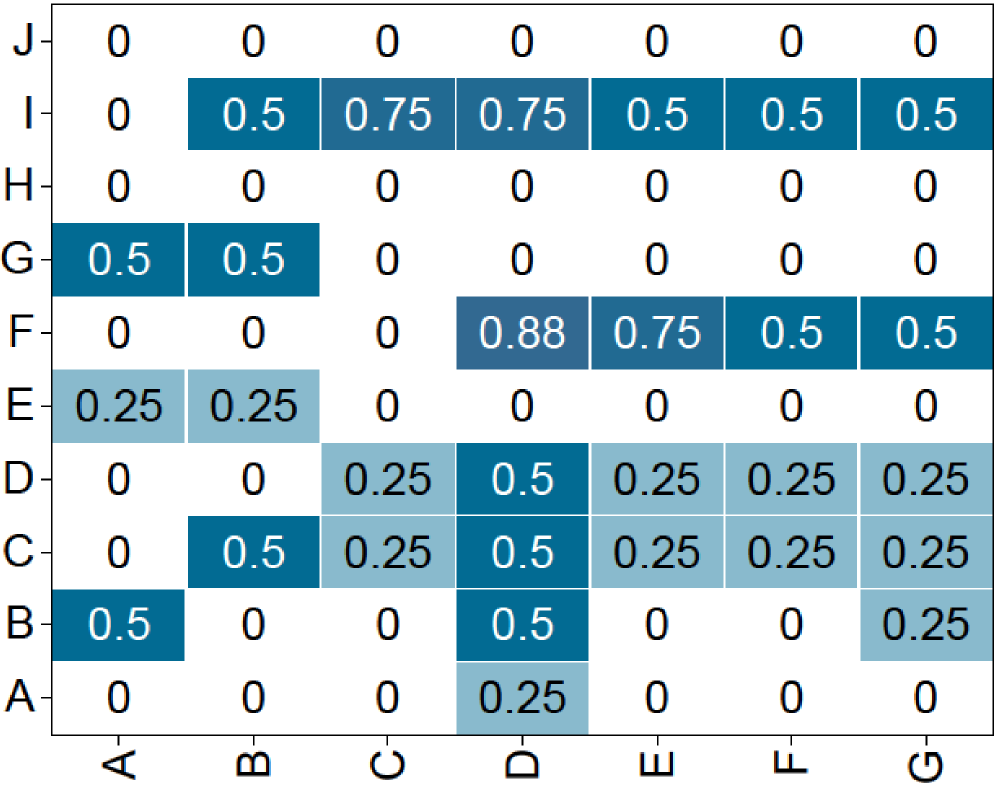

Despite increasing expected rewards (Figure 4(b)), we found that simply adding guidance to the thinking trajectories of all candidates does not enhance final performance and suffers from low advantage.

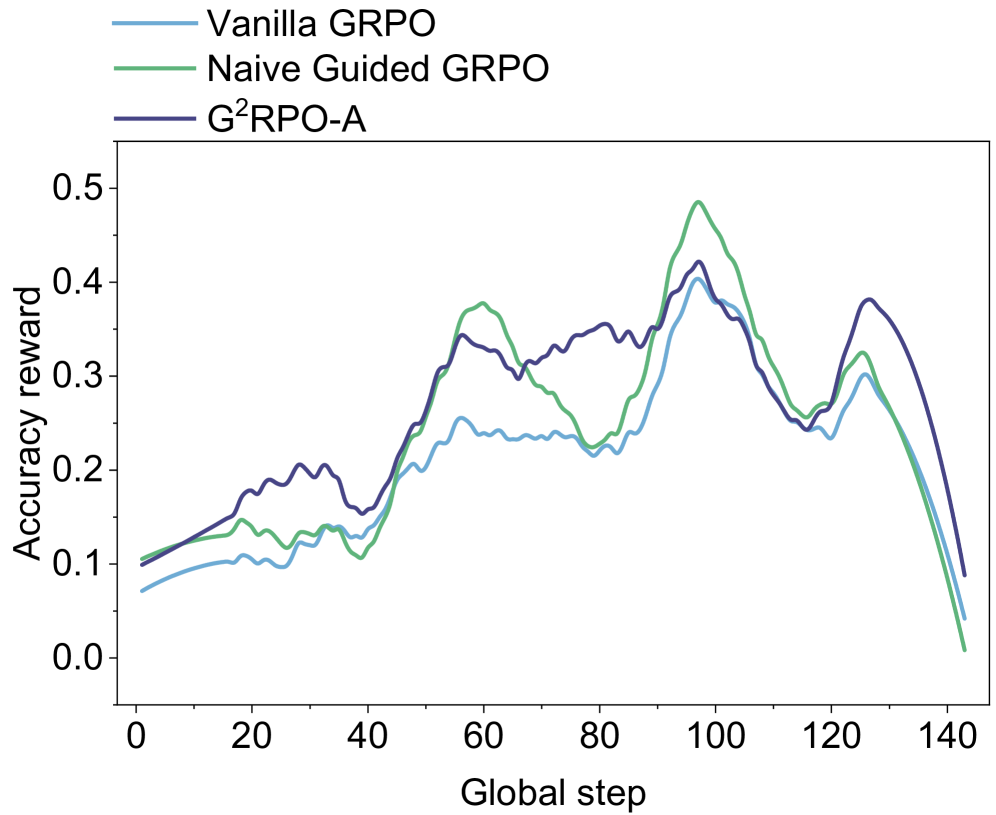

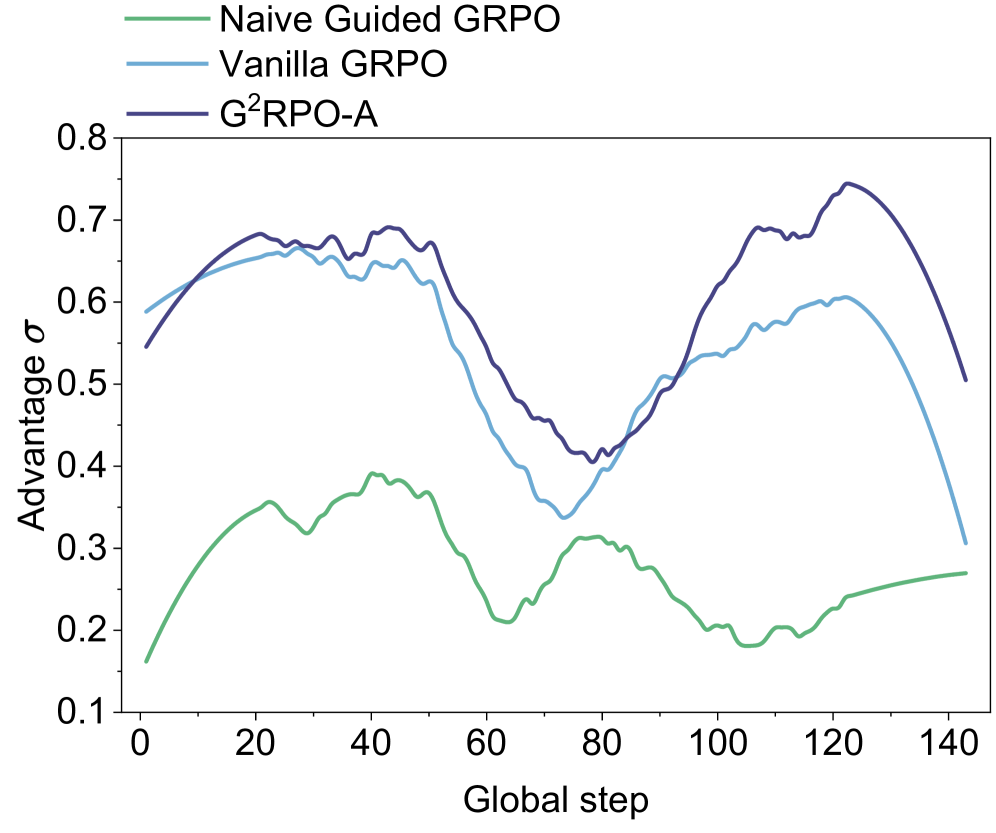

As shown in Figures 5(a) and 5(b), we train Qwen3-1.7B-Base on a math dataset sourced from the math 220k dataset (Wang et al., 2024) and find that: (1) Guided GRPO’s accuracy reward curve almost matches that of the original GRPO. (2) Guided GRPO suffers from a low advantage standard deviation, which hinders optimization of the model. The naive approach therefore fails to exploit the higher rewards that guidance provides, and further investigation is needed to leverage them while ensuring effective training.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Heatmap: Row-Column Value Matrix

### Overview

The image displays a heatmap or matrix chart with 10 rows (labeled A through J) and 7 columns (labeled A through G). Each cell contains a numerical value, and the cell's background color intensity corresponds to that value, with darker shades of blue representing higher numbers. The chart is presented on a plain white background.

### Components/Axes

* **Vertical Axis (Y-axis):** Labeled with single letters from bottom to top: A, B, C, D, E, F, G, H, I, J.

* **Horizontal Axis (X-axis):** Labeled with single letters from left to right: A, B, C, D, E, F, G.

* **Data Encoding:** Each cell's value is displayed as text (e.g., "0.5", "0.25"). The background color is a shade of blue, where the saturation/lightness correlates with the numerical value. Higher values (e.g., 0.88, 0.75) are a darker, more saturated blue. Lower non-zero values (e.g., 0.25) are a lighter, grayish-blue. Zero values have a white background.

* **Legend:** There is no explicit legend provided. The color-to-value mapping must be inferred from the data presented.

### Detailed Analysis

The following table reconstructs the matrix data. Rows are listed from top (J) to bottom (A), matching the visual layout.

| Row \ Col | A | B | C | D | E | F | G |
| :-------- | :---- | :---- | :---- | :---- | :---- | :---- | :---- |
| **J** | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| **I** | 0 | 0.5 | 0.75 | 0.75 | 0.5 | 0.5 | 0.5 |
| **H** | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| **G** | 0.5 | 0.5 | 0 | 0 | 0 | 0 | 0 |
| **F** | 0 | 0 | 0 | 0.88 | 0.75 | 0.5 | 0.5 |
| **E** | 0.25 | 0.25 | 0 | 0 | 0 | 0 | 0 |
| **D** | 0 | 0 | 0.25 | 0.5 | 0.25 | 0.25 | 0.25 |
| **C** | 0 | 0.5 | 0.25 | 0.5 | 0.25 | 0.25 | 0.25 |
| **B** | 0.5 | 0 | 0 | 0.5 | 0 | 0 | 0.25 |
| **A** | 0 | 0 | 0 | 0.25 | 0 | 0 | 0 |

**Trend Verification & Spatial Grounding:**

* **Row J (Top):** All cells are 0 with white backgrounds. No trend.

* **Row I:** Shows a cluster of high values. The line of data slopes upward from left (0 at A-I) to a peak at C-I and D-I (0.75), then slopes gently downward to 0.5 at G-I. The cells from B-I to G-I are all dark blue.

* **Row H:** All cells are 0 with white backgrounds. No trend.

* **Row G:** Contains two 0.5 values at the far left (A-G, B-G), both dark blue. The rest are 0.

* **Row F:** Shows a distinct peak. The value is 0 at A-F, B-F, C-F, then jumps to the highest value in the chart, 0.88, at D-F (darkest blue cell). It then decreases to 0.75 at E-F, and 0.5 at F-F and G-F.

* **Row E:** Contains two 0.25 values at the far left (A-E, B-E), both light blue. The rest are 0.

* **Row D:** Values are mostly 0.25 (light blue) except for a 0.5 (darker blue) at D-D.

* **Row C:** Similar pattern to Row D, with a 0.5 at B-C and D-C, and 0.25 elsewhere from C-C to G-C.

* **Row B:** Scattered values: 0.5 (dark blue) at A-B and D-B, and 0.25 (light blue) at G-B.

* **Row A (Bottom):** Only one non-zero value: 0.25 (light blue) at D-A.

### Key Observations

1. **Zero-Dominant Rows:** Rows J and H are entirely zero, creating two horizontal bands of white across the matrix.

2. **High-Value Clusters:** The highest values (0.75, 0.88) are concentrated in the central columns (C, D, E) of rows I and F.

3. **Column D Prominence:** Column D contains non-zero values in 6 out of 10 rows (all except J, H, G, and E), more than any other column.

4. **Symmetry/Pattern:** There is no obvious diagonal symmetry. The distribution appears asymmetric, with higher values clustered in the upper-middle section (Rows I, F) and lower values scattered in the lower rows (A-E).

5. **Value Discrete Set:** All values except one are multiples of 0.25 (0, 0.25, 0.5, 0.75). The single exception is 0.88.

### Interpretation

This heatmap likely represents a **correlation matrix, a confusion matrix, or a similarity/distance matrix** between entities labeled A-J (rows) and A-G (columns). The asymmetry (10x7 grid) suggests the row and column sets are different, possibly representing two different groups or stages.

* **What the data suggests:** The matrix shows weak to moderate relationships (values ≤ 0.88) between the row and column entities. The strongest relationship (0.88) is between Row F and Column D. The complete lack of relationship (0) between certain pairs (e.g., all of Row J with anything) is also significant.

* **How elements relate:** The color intensity provides an immediate visual cue for strength. The clustering of dark blue in the upper-middle indicates that entities I and F have the strongest and most widespread connections to the column entities, particularly to columns C, D, and E.

* **Notable anomalies:** The value 0.88 at (F, D) is the maximum and stands out. The two all-zero rows (J, H) are notable for their complete lack of measured relationship with any column entity. The pattern suggests that entities A, B, C, D, E, F, G (columns) have varying degrees of connection to the row entities, with D being the most connected column.

</details>

(a) GRPO

<details>

<summary>x5.png Details</summary>

### Visual Description

## Heatmap: Grid of Numerical Values (Rows A-J, Columns A-G)

### Overview

The image displays a heatmap or grid chart with 10 rows (labeled A through J from bottom to top) and 7 columns (labeled A through G from left to right). Each cell contains a numerical value, primarily between 0 and 1, and is shaded in varying intensities of blue. Darker blues correspond to higher values, while lighter blues or white correspond to lower values. The chart presents a matrix of data without an explicit title or legend for the color scale.

### Components/Axes

- **Vertical Axis (Rows):** Labeled from bottom to top as A, B, C, D, E, F, G, H, I, J.

- **Horizontal Axis (Columns):** Labeled from left to right as A, B, C, D, E, F, G.

- **Cell Values:** Each cell contains a decimal number, typically with two decimal places (e.g., 0.25, 0.5, 0.75, 0.98).

- **Color Coding:** Cells are filled with shades of blue. The intensity correlates with the numerical value: higher values (e.g., 1, 0.98) are dark blue, mid-range values (e.g., 0.5) are medium blue, and lower values (e.g., 0.25, 0) are light blue or white.

### Detailed Analysis

The following table reconstructs the grid's data, with rows listed from top (J) to bottom (A) for readability. All values are transcribed directly from the image.

| Row | Col A | Col B | Col C | Col D | Col E | Col F | Col G |
|-----|-------|-------|-------|-------|-------|-------|-------|
| **J** | 0.75 | 0.75 | 1 | 1 | 0.75 | 1 | 0.75 |
| **I** | 1 | 1 | 1 | 1 | 1 | 1 | 1 |
| **H** | 0 | 0 | 0 | 0 | 0 | 0.5 | 0.5 |
| **G** | 0.98 | 0.73 | 0.96 | 0.96 | 0.46 | 0.46 | 0.5 |
| **F** | 0.25 | 0.25 | 0.25 | 0.74 | 0.49 | 0.49 | 0.49 |
| **E** | 0 | 0 | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 |
| **D** | 0 | 0.25 | 0.5 | 0 | 0 | 0 | 0 |
| **C** | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 |
| **B** | 1 | 0.5 | 0.5 | 0.5 | 0.5 | 0.5 | 1 |
| **A** | 1 | 1 | 1 | 1 | 0.75 | 0.5 | 1 |

**Visual Trend Verification:**

- **Row I:** Uniformly dark blue across all columns, indicating consistently maximum values (1).

- **Row H:** Mostly white (0) in columns A-E, shifting to light blue (0.5) in columns F-G.

- **Row G:** Dark blue in columns A-D (values 0.98, 0.73, 0.96, 0.96), transitioning to lighter blue in columns E-G (0.46, 0.46, 0.5).

- **Row F:** Light blue in columns A-C (0.25), a darker patch at column D (0.74), then medium blue in columns E-G (0.49).

- **Row E:** White (0) in columns A-B, then uniform medium blue (0.5) from columns C-G.

- **Row D:** White (0) in columns A, D-G, with light blue patches at B (0.25) and C (0.5).

- **Row C:** Uniform medium blue (0.5) across all columns.

- **Row B:** Dark blue at the edges (columns A and G, value 1), with medium blue (0.5) in between.

- **Row A:** Dark blue in columns A-D and G (value 1), with a slight dip at E (0.75) and F (0.5).

### Key Observations

1. **Uniform Rows:** Row I is entirely 1s. Row C is entirely 0.5s. This suggests these categories (I and C) have consistent, high or mid-range values across all measured columns.

2. **Edge Effects:** In rows B and A, the first and last columns (A and G) often have higher values (1) compared to the middle columns, indicating a potential "edge" or boundary effect.

3. **Value Clustering:** High values (≥0.96) cluster in the top-left quadrant (rows G-J, columns A-D). Low values (0 or 0.25) cluster in the middle-left area (rows D-H, columns A-E).

4. **Color-Value Consistency:** The color coding accurately reflects the numerical values. For example, the darkest blue cells (value 1) are in rows J (columns C, D, F), I, B (columns A, G), and A (columns A-D, G). The lightest cells (value 0) are in rows H, E, and D.

5. **Missing Context:** There is no chart title, axis descriptions, or legend explaining what the rows, columns, or values represent. The data is presented in isolation.

### Interpretation

The heatmap displays a structured matrix of normalized data, likely representing scores, probabilities, correlations, or some metric scaled between 0 and 1. The patterns suggest underlying groupings or relationships:

- **Row I** represents a category with perfect or maximum scores across all measured dimensions (columns A-G).

- **Row C** represents a category with consistent, moderate performance across all dimensions.

- The **high-value cluster** in the top-left (rows G-J, columns A-D) indicates that categories G through J perform particularly well on dimensions A through D.

- The **low-value cluster** in the middle-left (rows D-H, columns A-E) suggests these categories have minimal or zero scores on those dimensions, except for specific exceptions (e.g., row G's high values).

- The **edge effect** in rows A and B (high values at columns A and G) might indicate that the first and last measured dimensions are more strongly associated with these categories, or it could be an artifact of the data structure.

Without additional context, the data implies a system where certain categories (like I, G, J) are strongly associated with certain dimensions (like A, B, C, D), while others (like H, D, E) show weak or selective associations. The uniformity in rows I and C suggests these are control groups, benchmarks, or categories with inherent consistency. The heatmap effectively visualizes a complex set of relationships, highlighting contrasts between high-performance and low-performance clusters.

</details>

(b) Guided GRPO

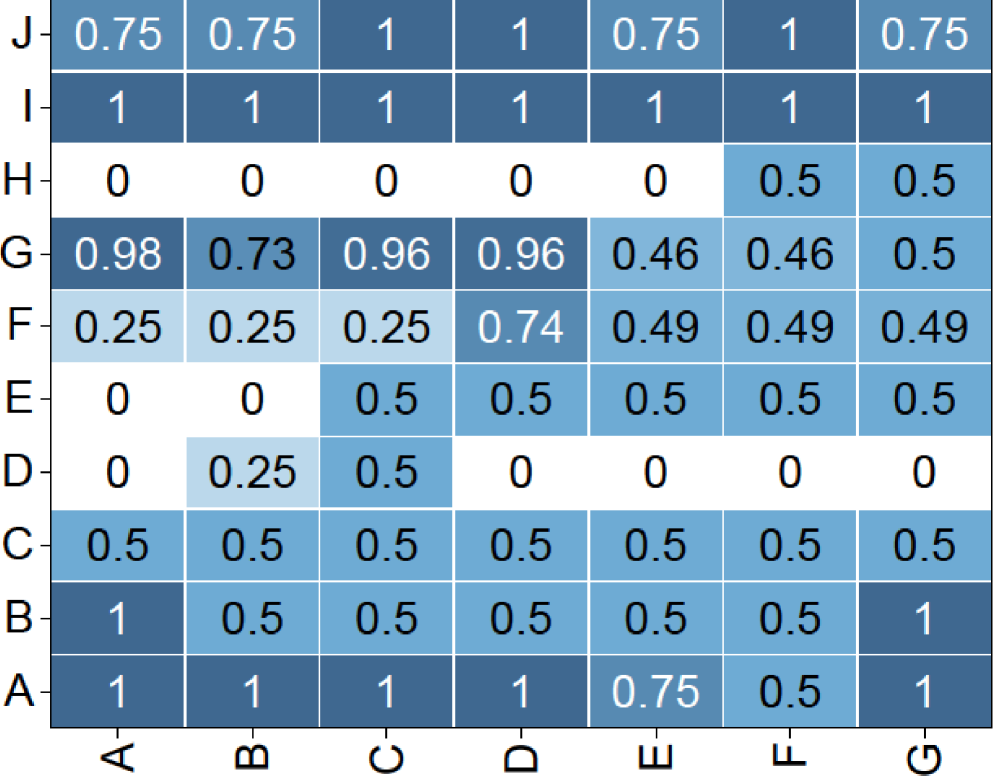

Figure 4: Reward of Guided GRPO. We fine-tuned Qwen3-1.7B on coding tasks, using 10 roll-outs and generating 280 candidates per batch. The candidates’ rewards form a $20\times 14$ matrix, to which we applied $2\times 2$ average pooling to obtain a $10\times 7$ matrix for clearer visualization. The results demonstrate that, when configured with an optimal guidance ratio, G$^2$RPO-A enables the model to sample candidates that yield a significantly denser reward signal.
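The pooling step described in the Figure 4 caption can be sketched as follows. This is a minimal illustration, not the plotting code used for the figure: the function name `average_pool_2x2`, the way the 280 rewards are laid out into 20 rows and 14 columns, and the checkerboard fill are all assumptions made for the example.

```python
def average_pool_2x2(matrix):
    """Average non-overlapping 2x2 blocks of a 2D list with even dimensions."""
    rows, cols = len(matrix), len(matrix[0])
    if rows % 2 or cols % 2:
        raise ValueError("both dimensions must be even for 2x2 pooling")
    pooled = []
    for r in range(0, rows, 2):
        pooled_row = []
        for c in range(0, cols, 2):
            block_sum = (matrix[r][c] + matrix[r][c + 1]
                         + matrix[r + 1][c] + matrix[r + 1][c + 1])
            pooled_row.append(block_sum / 4)
        pooled.append(pooled_row)
    return pooled


# Hypothetical 20x14 reward matrix (280 candidates); the checkerboard
# pattern is illustrative only and does not come from the paper.
rewards = [[(r + c) % 2 for c in range(14)] for r in range(20)]
pooled = average_pool_2x2(rewards)
print(len(pooled), len(pooled[0]))  # 10 7
```

Each pooled cell is the mean reward of four neighbouring candidates, so a darker cell in the heatmap means more of those four candidates earned reward.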

| MATH500 | $\alpha=\frac{5}{6}$ | $\alpha=\frac{3}{6}$ | $\alpha=\frac{1}{6}$ | $\alpha=1$ |
| --- | --- | --- | --- | --- |
| $\ell=50$ | $66.80$ | $66.00$ | $67.20$ | $65.10$ |
| $\ell=100$ | $65.20$ | $63.00$ | $66.20$ | $64.70$ |
| $\ell=200$ | $57.60$ | $52.40$ | $62.00$ | $59.30$ |
| $\ell=500$ | $57.80$ | $62.00$ | $68.20$ | $55.80$ |
| $\ell=0$ | $62.00$ | | | |

Table 1: Empirical study on guidance length $\ell$ and guidance ratio $\alpha$. We use Qwen2.5-Math-7B as the backbone.

### 4.2 Optimizing Guided GRPO Design

In this section, we examine design choices for Guided GRPO, focusing on two factors: the guidance ratio within each GRPO candidate group and the adjustment of guidance strength across training stages. These investigations aim to maximize the effectiveness of Guided GRPO and overcome the limitations observed in the naive implementation.

#### Inner-Group Varied Guidance Ratio.

The insufficiency of naive guidance suggests that a more nuanced approach is required. We begin by investigating the impact of the guidance ratio $\alpha$. In each GRPO group of size $G$, we steer only an $\alpha$-fraction of the candidates. Let $g_{i}$ denote the guidance for the $i$-th candidate (ordered arbitrarily) and $\ell$ the guidance length in tokens. Then:

$$
|g_{i}|=0\quad(i>\alpha G),\qquad|g_{i}|=\ell\quad(i\leq\alpha G). \tag{3}
$$

That is, the first $\alpha G$ candidates receive guidance, while the remaining $(1-\alpha)G$ candidates evolve freely. We conduct experiments on the Qwen2.5-Math-7B model Yang et al. (2024) with a roll-out number $n=6$, training for one epoch on the s1k-1.1 dataset Muennighoff et al. (2025b). We set $\alpha\in\{1/6,\ldots,1\}$ and $\ell\in\{50,100,\dots,500\}$ tokens, with all accuracies reported on the MATH500 benchmark. The results in Table 1 show that:

- Partial inner-group guidance improves model performance. In most settings, Guided GRPO with guidance provided to only a subset of candidates outperforms vanilla GRPO ($\ell=0$, 62.00), confirming the usefulness of the guidance mechanism.

- For Qwen2.5-Math-7B on the MATH500 benchmark, the lowest guidance ratio ($\alpha=\frac{1}{6}$) combined with the longest guidance window ($\ell=500$) yields the best result (68.20). This suggests that Qwen2.5-Math-7B benefits from infrequent but heavyweight guidance.

In summary, selective guidance, in which only a few candidates receive long guidance, strikes the best balance between exploration and control, thereby improving model performance. Moreover, the optimal guidance ratio varies with both the task domain and model capacity. As Tables 8 and 9 show, smaller models and coding tasks benefit from stronger intervention, whereas larger models and math tasks achieve better results with lighter guidance.
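The per-candidate assignment in Equation 3 can be sketched in a few lines. This is an illustrative sketch only: the function name `guidance_lengths` is hypothetical, and rounding $\alpha G$ to the nearest integer is an assumption for cases where the product is not exactly an integer.

```python
def guidance_lengths(group_size, alpha, guidance_len):
    """Return |g_i| for each candidate in a group of size G.

    The first alpha * G candidates receive `guidance_len` tokens of guidance;
    the remaining candidates receive none (Equation 3).
    """
    # Assumption: alpha * G is (numerically close to) an integer.
    num_guided = round(alpha * group_size)
    return [guidance_len if i < num_guided else 0 for i in range(group_size)]


# Setting from Table 1: six roll-outs, alpha = 1/6, 500 guidance tokens.
print(guidance_lengths(6, 1 / 6, 500))  # [500, 0, 0, 0, 0, 0]
```

With $\alpha=1$ every candidate is guided, and with $\alpha=0$ the group reduces to vanilla GRPO sampling.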

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Accuracy Reward vs. Global Step for Three GRPO Variants

### Overview

The image is a line chart comparing the performance of three different methods—Vanilla GRPO, Naive Guided GRPO, and G²RPO-A—over the course of training. The performance metric is "Accuracy reward," plotted against "Global step," which likely represents training iterations or time. The chart shows that all three methods follow a similar general trend of increasing reward, peaking around step 100, and then declining sharply, but with distinct differences in their peak values and volatility.

### Components/Axes

* **Chart Type:** Line chart with three data series.

* **X-Axis:**

* **Title:** "Global step"

* **Scale:** Linear, ranging from 0 to 140.

* **Major Tick Marks:** 0, 20, 40, 60, 80, 100, 120, 140.

* **Y-Axis:**

* **Title:** "Accuracy reward"

* **Scale:** Linear, ranging from 0.0 to 0.5.

* **Major Tick Marks:** 0.0, 0.1, 0.2, 0.3, 0.4, 0.5.

* **Legend:**

* **Position:** Top-left corner of the chart area.

* **Entries:**

1. **Vanilla GRPO:** Represented by a light blue line.

2. **Naive Guided GRPO:** Represented by a green line.

3. **G²RPO-A:** Represented by a dark blue line.

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

| Method | Step 0 | Step 40 | Step 60 | Step 80 | Step 100 (Global Peak) | Step 120 | Step 140 |
| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |
| **Vanilla GRPO** | ~0.07 | ~0.13 | ~0.25 (Local Peak) | ~0.22 | ~0.40 | ~0.23 | ~0.04 |
| **Naive Guided GRPO** | ~0.10 | ~0.10 (Trough) | ~0.38 (Local Peak) | ~0.22 (Trough) | ~0.48 (Highest value) | ~0.26 | ~0.01 (Lowest final) |
| **G²RPO-A** | ~0.10 | ~0.16 | ~0.34 | ~0.35 | ~0.42 | ~0.25 | ~0.09 (Highest final) |

**Trend Descriptions:**

1. **Vanilla GRPO (Light Blue Line):**

* **Trend:** Starts lowest, shows a gradual, relatively smooth increase with minor fluctuations, reaches a moderate peak, and then declines steeply.

2. **Naive Guided GRPO (Green Line):**

* **Trend:** Starts in the middle, exhibits the most volatility with pronounced peaks and troughs, achieves the highest overall peak, and then experiences the most severe decline.

3. **G²RPO-A (Dark Blue Line):**

* **Trend:** Starts slightly above Vanilla GRPO, shows a steadier and more consistent upward trend with less volatility than Naive Guided GRPO, peaks at a value between the other two, and maintains a higher reward than the others during the final decline.

### Key Observations

1. **Common Trajectory:** All three methods follow a macro pattern of rise, peak, and fall. The peak for all occurs around Global Step 100.

2. **Performance Hierarchy at Peak:** At the peak (~Step 100), Naive Guided GRPO > G²RPO-A > Vanilla GRPO.

3. **Volatility:** Naive Guided GRPO is the most volatile, with the largest swings between local maxima and minima. Vanilla GRPO is the smoothest.

4. **Final Performance:** After the peak, all methods degrade. However, G²RPO-A degrades the slowest, ending with the highest reward at Step 140. Naive Guided GRPO degrades the fastest, ending near zero.

5. **Early Training:** In the first 40 steps, G²RPO-A establishes a clear lead over Vanilla GRPO, while Naive Guided GRPO lags initially before catching up.

### Interpretation

This chart likely visualizes the training dynamics of different reinforcement learning or optimization algorithms (variants of "GRPO") on a task where performance is measured by an accuracy-based reward signal.

* **What the data suggests:** The "guided" variants (Naive Guided and G²RPO-A) generally outperform the "Vanilla" baseline, indicating that incorporating guidance improves learning efficiency and peak performance. However, the guidance in "Naive Guided GRPO" appears to introduce instability, leading to higher peaks but also more severe crashes, possibly due to overfitting or aggressive policy updates. G²RPO-A seems to strike a better balance, achieving strong performance with more stability, as evidenced by its smoother curve and better final retention of reward.

* **The Decline Phase:** The sharp, synchronized decline after Step 100 is a critical feature. This could indicate several scenarios: 1) The training task becomes progressively harder after this point, 2) The learning rate or another hyperparameter causes divergence, 3) The agents have overfitted to a certain phase of the environment and fail to generalize, or 4) This is an intentional part of the experimental design (e.g., a curriculum that resets or changes). The fact that G²RPO-A retains more reward suggests it may be more robust to whatever causes this decline.

* **Peircean Reading:** The chart is an indexical sign of the learning process. The jaggedness of the green line is a direct trace of a more reactive, less stable learning policy. The synchronized peak and fall across all three lines point to a common external factor (the environment or training protocol) exerting a strong influence, over and above the differences between the algorithms themselves. The key takeaway for a researcher is not just that G²RPO-A has a good peak, but that its performance profile suggests a more robust and reliable learning trajectory.

</details>

(a) Accuracy Reward

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Comparison of Advantage σ Across Global Steps for Three GRPO Variants

### Overview

The image displays a line chart comparing the performance of three different algorithms—Naive Guided GRPO, Vanilla GRPO, and G²RPO-A—over the course of training, measured in "Global step." The performance metric is "Advantage σ." The chart shows that all three methods exhibit fluctuating performance, with G²RPO-A generally maintaining the highest advantage values throughout the observed period.

### Components/Axes

* **Chart Type:** Line chart with three data series.

* **X-Axis:**

* **Label:** "Global step"

* **Scale:** Linear, ranging from 0 to 140.

* **Major Tick Marks:** 0, 20, 40, 60, 80, 100, 120, 140.

* **Y-Axis:**

* **Label:** "Advantage σ"

* **Scale:** Linear, ranging from 0.1 to 0.8.

* **Major Tick Marks:** 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8.

* **Legend:**

* **Position:** Top-left corner of the chart area.

* **Entries:**

1. **Naive Guided GRPO:** Represented by a green line.

2. **Vanilla GRPO:** Represented by a light blue line.

3. **G²RPO-A:** Represented by a dark blue/purple line.

### Detailed Analysis

**1. Naive Guided GRPO (Green Line):**

* **Trend:** Starts at the lowest point among the three, rises to a moderate peak, then generally declines with some fluctuations, ending with a slight upward trend.

* **Key Data Points (Approximate):**

* Step 0: ~0.16

* Step 20: ~0.35

* Step 40: ~0.39 (Peak)

* Step 60: ~0.21

* Step 80: ~0.31

* Step 100: ~0.20

* Step 120: ~0.24

* Step 140: ~0.27

**2. Vanilla GRPO (Light Blue Line):**

* **Trend:** Starts relatively high, rises to an early peak, experiences a significant mid-training dip, recovers to a second peak, and then declines sharply towards the end.

* **Key Data Points (Approximate):**

* Step 0: ~0.59

* Step 20: ~0.66

* Step 30: ~0.67 (First Peak)

* Step 60: ~0.45

* Step 75: ~0.34 (Trough)

* Step 100: ~0.54

* Step 120: ~0.60 (Second Peak)

* Step 140: ~0.31

**3. G²RPO-A (Dark Blue/Purple Line):**

* **Trend:** Follows a pattern similar to Vanilla GRPO but consistently maintains a higher advantage value after the initial steps. It reaches the highest overall peak on the chart.

* **Key Data Points (Approximate):**

* Step 0: ~0.55

* Step 20: ~0.68

* Step 45: ~0.69 (First Peak)

* Step 60: ~0.55

* Step 75: ~0.40 (Trough)

* Step 100: ~0.62

* Step 120: ~0.75 (Highest Peak on Chart)

* Step 140: ~0.51

### Key Observations

1. **Performance Hierarchy:** G²RPO-A (dark blue) consistently outperforms Vanilla GRPO (light blue) after approximately step 10, and both significantly outperform Naive Guided GRPO (green) for the entire duration.

2. **Correlated Fluctuations:** The Vanilla GRPO and G²RPO-A lines show highly correlated movement patterns—rising and falling in near unison—suggesting they may be responding similarly to training dynamics, with G²RPO-A maintaining a performance offset.

3. **Mid-Training Dip:** All three methods experience a notable performance dip between global steps 60 and 80. The recovery from this dip is much stronger for Vanilla GRPO and G²RPO-A than for Naive Guided GRPO.

4. **Late-Stage Divergence:** After step 120, the performance of Vanilla GRPO and G²RPO-A diverges sharply downward, while Naive Guided GRPO shows a slight upward trend, though it remains at a much lower absolute advantage level.

5. **Peak Performance:** The single highest advantage value (~0.75) is achieved by G²RPO-A around step 120.

### Interpretation

This chart visualizes a training statistic from a reinforcement learning experiment. Per the Figure 5 caption, "Advantage σ" is the advantage standard deviation; a very low value means the candidates in a group receive nearly identical advantages, which weakens the learning signal. "Global step" represents training iterations.

The data suggests that the **G²RPO-A** algorithm is the most effective of the three, achieving the highest peak advantage and maintaining a lead over **Vanilla GRPO** throughout most of the training process. The strong correlation between these two lines implies that G²RPO-A may be an enhanced version of Vanilla GRPO that provides a consistent performance boost.

The **Naive Guided GRPO** method performs poorly in comparison, indicating that its guiding mechanism may be ineffective or even detrimental compared to the other approaches. The universal dip around steps 60-80 could point to a challenging phase in the training environment, a change in data distribution, or a common instability in the optimization process that all algorithms face, albeit with different resilience.

The sharp late-stage decline for the two better-performing methods is a critical observation. It could indicate overfitting, catastrophic forgetting, or that the policy has entered a region of the state space where the advantage estimate becomes unstable. In contrast, the slight rise of the naive method at the end might suggest it is learning a simpler, more stable, but ultimately suboptimal policy. The chart effectively demonstrates not just which method is best on average, but how their performance dynamics differ across the entire training timeline.

</details>

(b) Advantage $\sigma$

Figure 5: Pitfalls of naive Guided GRPO. We trained Qwen3-1.7B-Base on a curriculum-ordered subset of Math-220K Wang et al. (2024), in which problems are presented from easy to hard. Because the curriculum-learning (CL) schedule continually increases task difficulty, the accuracy reward does not plateau at a high level, which is expected. The training dynamics show that the advantage standard deviation is extremely low under naive guidance, which hurts training efficiency for SLMs.
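To see why a low advantage standard deviation is harmful, the following sketch computes group-normalized advantages in the usual GRPO style (reward minus group mean, divided by group standard deviation). It is an illustration under assumptions: the function name `group_advantages`, the population standard deviation, and the `eps` stabilizer are choices made here, and the exact statistic plotted in Figure 5(b) is not specified in this section.

```python
from statistics import mean, pstdev


def group_advantages(rewards, eps=1e-6):
    """Return (advantages, sigma) for one group of candidate rewards.

    Advantages are (r_i - mean) / (sigma + eps), where sigma is the
    population standard deviation of the group's rewards.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards], sigma


# Mixed outcomes: the group carries a useful learning signal.
mixed_adv, mixed_sigma = group_advantages([1, 0, 1, 0, 0, 1])

# Uniform outcomes (e.g. every guided candidate succeeds): sigma is zero
# and every advantage vanishes, so the group contributes no gradient.
uniform_adv, uniform_sigma = group_advantages([1, 1, 1, 1, 1, 1])

print(mixed_sigma, uniform_sigma)
```

When every candidate in a group is guided in the same way, their rewards tend to coincide, which is one plausible reading of the low standard deviation observed for naive guidance.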

| | | | |
| --- | --- | --- | --- |
| $\ell=50$, $\alpha=0.8333$ | 63.80 | 58.40 | 57.60 |
| $\ell=100$, $\alpha=0.1667$ | 60.60 | 62.00 | 66.20 |
| $\ell=200$, $\alpha=0.8333$ | 66.60 | 54.20 | 58.40 |
| $\ell=200$, $\alpha=0.1667$ | 61.20 | 64.20 | 59.60 |
| $\ell=500$, $\alpha=0.1667$ | 59.60 | 69.80 | 62.40 |
| $\ell=0$ | 62.00 | | |

Table 2: Performance of Guided GRPO under different guidance-length adjustment policies. We train Qwen2.5-Math-7B and evaluate it on the MATH500 benchmark. For each guidance-length schedule, we report the results obtained with the guidance ratio that achieves the highest score in Table 1.

| Base Model | $\alpha$ | Benchmark | Base | GRPO | SFT | G$^2$RPO-A |
| --- | --- | --- | --- | --- | --- | --- |
| Qwen3-0.6B-Base | 0.75 | MATH500 | 40.18 | 54.26 | 50.53 | 51.77 |
| | | Minerva | 11.43 | 9.57 | 10.40 | 12.29 |
| | | gpqa | 25.49 | 24.51 | 25.49 | 30.39 |
| Qwen3-1.7B-Base | 0.25 | MATH500 | 50.96 | 63.74 | 62.11 | 67.21 |
| | | Minerva | 13.84 | 16.19 | 18.89 | 15.10 |
| | | gpqa | 27.45 | 29.41 | 24.51 | 32.35 |
| Qwen3-8B-Base | 0.14 | MATH500 | 71.32 | 79.49 | 80.29 | 82.08 |
| | | Minerva | 33.24 | 37.51 | 36.60 | 36.42 |
| | | gpqa | 43.17 | 44.13 | 42.85 | 49.72 |

Table 3: Performance of G$^2$RPO-A on Math Tasks. We report accuracy (%) on various benchmarks. Models are trained for 5 epochs, and guidance ratios are selected based on the best settings obtained from Table 9.

| | | | | | |
| --- | --- | --- | --- | --- | --- |
| Qwen3-0.6B | 0.75 | MATH500 | 76.20 | 85.37 | 87.15 |
| | | Minerva | 12.32 | 20.59 | 21.57 |
| | | gpqa | 24.51 | 25.45 | 26.43 |
| | | AIME24 | 10.00 | 6.67 | 10.00 |
| | | AIME25 | 13.33 | 20.00 | 23.33 |
| Qwen3-1.7B | 0.25 | MATH500 | 92.71 | 94.52 | 91.69 |
| | | Minerva | 33.16 | 35.38 | 38.26 |
| | | gpqa | 48.23 | 51.68 | 55.27 |
| | | AIME24 | 46.67 | 56.67 | 63.33 |
| | | AIME25 | 36.67 | 50.00 | 53.33 |

Table 4: Performance of G$^2$RPO-A on Math Tasks. The experiment settings are the same as in Table 3. Because these models are stronger, we additionally report results on AIME24 and AIME25.

#### Time Varied Guidance Length.

Apart from the guidance ratio, Table 1 shows that performance also depends on the guidance length $\ell$. To investigate this further, we evaluate Guided GRPO while varying the guidance length during training under three schedules:

$$
\begin{aligned}
\text{Concave decay:}\quad \ell_{t} &= \ell_{0}\Bigl(1-\tfrac{t}{T}\Bigr)^{\beta},\\
\text{Linear decay:}\quad \ell_{t} &= \ell_{0}\Bigl(1-\tfrac{t}{T}\Bigr),\\
\text{Stepwise decay:}\quad \ell_{t} &= \ell_{0}\,\gamma^{\lfloor t/s\rfloor},
\end{aligned} \tag{4}
$$

where $T$ is the total number of training steps, $t$ is the current training step, and $\ell_{0}$ is the initial guidance length. The parameter $\beta\in(1,\infty]$ controls the concavity, $\gamma\in(0,1)$ sets the decay rate, and $s$ specifies the decay interval.

We use the same experiment setting as in Table 1, and choose the guidance ratio that performs the best. The results are reported in Table 2. The results indicate that (1) model quality is highly sensitive to the chosen guidance length $\ell_{t}$ , and (2) no single schedule consistently outperforms the others. This highlights the need for more effective methods of controlling guidance length.
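The three schedules in Equation 4 translate directly into code. The sketch below is illustrative: the function names and the example hyperparameter values ($\ell_0=500$, $T=100$, $\beta=2$, $\gamma=0.5$, $s=25$) are assumptions chosen for the demonstration, not settings reported in the paper.

```python
def concave_decay(t, total_steps, l0, beta):
    """l_t = l0 * (1 - t/T)^beta."""
    return l0 * (1 - t / total_steps) ** beta


def linear_decay(t, total_steps, l0):
    """l_t = l0 * (1 - t/T)."""
    return l0 * (1 - t / total_steps)


def stepwise_decay(t, l0, gamma, interval):
    """l_t = l0 * gamma^floor(t/s)."""
    return l0 * gamma ** (t // interval)


# Hypothetical values: l0 = 500 tokens, T = 100 steps, evaluated at t = 50.
print(concave_decay(50, 100, 500, beta=2))            # 125.0
print(linear_decay(50, 100, 500))                     # 250.0
print(stepwise_decay(50, 500, gamma=0.5, interval=25))  # 125.0
```

In practice the result would be rounded to a whole number of tokens before truncating the guidance.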

### 4.3 G$^2$RPO-A: Sampling Difficulty Motivated Adaptive Guidance

In this section, we propose an adaptive algorithm that automatically selects the guidance strength $\ell$ at every optimization step. Our approach is inspired by recent work on data filtering and sampling (Bae et al., 2025; Xiong et al., 2025; Shi et al., 2025), which removes examples that yield uniformly low or uniformly high rewards. Such “uninformative” samples, being either too easy or too hard, contribute little to learning and can even destabilize training. The pseudo-code can be found in the Appendix.

#### Guidance length adjustment.

Our key idea is to control the difficulty of training samples by dynamically adjusting the guidance length according to the current training state. At each training step $k$, the guidance length $\ell_{k+1}$ for the next step is determined by:

$$
\ell_{k+1}=\ell_{k}\cdot\frac{\min(\mathcal{T},k)\,r_{k}}{\sum_{\tau=1}^{\min(\mathcal{T},k)}r_{k-\tau}}, \tag{5}
$$

where $r_{k}$ is the average reward at the $k$-th training step and $\mathcal{T}$ is a hyperparameter that controls how many past steps are considered. We found that setting $\mathcal{T}=2$ is already sufficient to noticeably improve Guided GRPO performance (Tables 10 and 11).

Equation 5 implies the following dynamics:

- When recent rewards rise, $\ell_{k}$ is reduced, making the next batch of examples harder.

- When recent rewards fall, $\ell_{k}$ is increased, making the next batch easier.

Thus, the training difficulty is automatically and continuously adjusted to match the model’s current competence.
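The update in Equation 5 can be sketched as below. The code implements the formula literally as printed: it multiplies $\ell_k$ by the ratio of the current average reward to the mean of the previous $\min(\mathcal{T},k)$ rewards. The function name, the zero-indexed reward history, and the handling of an empty or all-zero history are assumptions made here. Read literally, the factor exceeds 1 whenever $r_k$ is above the recent average; a reader who wants the behaviour listed in the bullets above (shorter guidance as rewards rise) would use the reciprocal factor, which is our assumption and not something the text states.

```python
def next_guidance_length(current_len, reward_history, window=2):
    """Apply Equation 5 as printed.

    reward_history = [r_0, ..., r_k]; the last entry is the average reward
    of the current step k. `window` plays the role of the hyperparameter T.
    """
    k = len(reward_history) - 1
    n = min(window, k)
    if n == 0:
        return current_len  # assumption: no history yet, keep the length
    previous = reward_history[k - n:k]  # r_{k-n}, ..., r_{k-1}
    denom = sum(previous)
    if denom == 0:
        return current_len  # assumption: avoid division by zero
    return current_len * n * reward_history[k] / denom


# Hypothetical reward trace: the current reward (0.5) is twice the mean of
# the two previous rewards (0.25), so the literal factor is 2.
print(next_guidance_length(1000, [0.2, 0.3, 0.5], window=2))
```

The resulting value would normally be clipped to a valid range (for example, between zero and the length of the available guidance trace) before use.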

| Base Model | Guidance Ratio | Benchmark | Base Perf. | GRPO | SFT | G$^2$RPO-A |
| --- | --- | --- | --- | --- | --- | --- |
| Qwen3-0.6B | 0.75 | humaneval | 32.32 | 38.89 | 40.33 | 44.96 |
| | | Live Code Bench | 17.07 | 22.22 | 13.58 | 23.14 |
| Qwen3-1.7B | 1 | humaneval | 46.08 | 67.65 | 63.34 | 75.93 |
| | | Live Code Bench | 34.31 | 53.14 | 56.33 | 51.96 |
| Qwen3-8B | 0.57 | humaneval | 64.36 | 81.48 | 77.42 | 80.33 |
| | | Live Code Bench | 60.58 | 77.12 | 63.82 | 79.71 |

Table 5: Performance of G$^2$RPO-A on Code Tasks. We report accuracy (%) on various benchmarks. Models are trained for 5 epochs, and guidance ratios are selected based on the best settings obtained from Table 8.

#### Curriculum learning for further improvements.

Equation 5 shows that the adaptive guidance-length controller updates $\ell$ by comparing the current reward with rewards from previous steps. When consecutive batches differ markedly in difficulty, these reward variations no longer reflect the model’s true learning progress, which in turn degrades the performance of G$^2$RPO-A.

| | Random GRPO | Random G$^2$RPO-A | CL GRPO | CL G$^2$RPO-A |
| --- | --- | --- | --- | --- |
| **Qwen3-1.7B-Base** | | | | |
| MATH500 | 53.81 | 57.67 | 52.05 | 58.94 |
| Minerva | 12.41 | 15.12 | 14.98 | 16.69 |
| gpqa | 24.79 | 23.53 | 27.45 | 25.49 |
| **Qwen3-0.6B-Base** | | | | |
| MATH500 | 43.25 | 50.72 | 48.16 | 53.59 |
| Minerva | 11.04 | 11.21 | 9.66 | 10.08 |
| gpqa | 23.1 | 25.49 | 24.51 | 32.35 |

Table 6: Comparison of training with random order and curriculum learning (CL) order across different models and benchmarks.

| Setting | | | | | |
| --- | --- | --- | --- | --- | --- |
| Remove | 76.74 | 71.11 | 50.00 | 35.00 | 24.00 |
| Replace | 88.37 | 75.56 | 54.00 | 37.00 | 18.00 |
| Original | 86.05 | 77.77 | 60.00 | 43.00 | 28.00 |

Table 7: Performance of GRPO with different sample-filtering methods. We train the Qwen3-1.7B model using G$^2$RPO-A with $\alpha=0.25$. In the Remove setting, all hard samples are excluded from the original dataset, whereas in the Replace setting, each hard sample is substituted with a sample of moderate difficulty.

To eliminate this mismatch, we embed a curriculum-learning (CL) strategy Parashar et al. (2025); Shi et al. (2025); Zhou et al. (2025). Concretely, we sort the samples by difficulty. Using the math task as an example, we rank examples by source, yielding five ascending difficulty tiers: cn_contest, aops_forum, amc_aime, olympiads, and olympiads_ref. We also tested ADARFT Shi et al. (2025), which orders samples by success rate. Its buckets proved uninformative in our case because most questions were either trivial or impossible (see Appendix Figure 6), so it failed to separate difficulty levels effectively. Table 6 shows that CL improves both vanilla GRPO and G$^2$RPO-A on most benchmarks.
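The source-based curriculum ordering can be sketched as a stable sort over the five tiers named above. This is a minimal illustration: the dictionary layout with a `source` field, the function name `curriculum_sort`, and the choice to place unknown sources last are assumptions, not details taken from the paper.

```python
# Ascending difficulty tiers, in the order given in the text.
TIER_ORDER = ["cn_contest", "aops_forum", "amc_aime", "olympiads", "olympiads_ref"]
TIER_RANK = {name: rank for rank, name in enumerate(TIER_ORDER)}


def curriculum_sort(samples):
    """Sort samples from easy to hard by source tier.

    `sorted` is stable, so the original order is preserved within a tier.
    Samples with an unknown source are placed last (an assumption).
    """
    return sorted(samples, key=lambda s: TIER_RANK.get(s["source"], len(TIER_ORDER)))


# Hypothetical samples for illustration.
samples = [
    {"id": 1, "source": "olympiads"},
    {"id": 2, "source": "cn_contest"},
    {"id": 3, "source": "amc_aime"},
    {"id": 4, "source": "cn_contest"},
]
print([s["id"] for s in curriculum_sort(samples)])  # [2, 4, 3, 1]
```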

#### Comparing G$^2$RPO-A to sample-filtering methods.

Earlier work argues that policy-gradient training benefits most from queries of moderate difficulty. Bae et al. (2025) keep only moderate-difficulty batches via an online filter, and Reinforce-Rej Xiong et al. (2025) discards both the easiest and hardest examples to preserve batch quality. Our experiments show that this exclusion is counter-productive: removing hard problems deprives the model of vital learning signals and lowers accuracy on challenging tasks. Table 7 confirms that either dropping hard items or substituting them with moderate ones reduces Level 4 and 5 test accuracy. G$^2$RPO-A avoids this pitfall by retaining tough examples and attaching adaptive guidance to them, thus exploiting the full difficulty spectrum without sacrificing performance.
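The two filtering baselines compared in Table 7 can be sketched as simple dataset transforms. This is a hedged illustration: the `difficulty` labels, the function names, and the cycling substitution pool are hypothetical, since the text does not specify how hard samples are identified or how replacements are drawn.

```python
from itertools import cycle


def remove_hard(samples):
    """Remove setting: drop every sample labeled as hard."""
    return [s for s in samples if s["difficulty"] != "hard"]


def replace_hard(samples, moderate_pool):
    """Replace setting: swap each hard sample for a moderate one.

    Substitutes are drawn from `moderate_pool` in a repeating cycle,
    which is an assumption made for this sketch.
    """
    if not moderate_pool:
        raise ValueError("moderate_pool must not be empty")
    substitutes = cycle(moderate_pool)
    return [next(substitutes) if s["difficulty"] == "hard" else s for s in samples]


# Hypothetical dataset for illustration.
dataset = [
    {"id": "a", "difficulty": "easy"},
    {"id": "b", "difficulty": "hard"},
    {"id": "c", "difficulty": "moderate"},
    {"id": "d", "difficulty": "hard"},
]
pool = [{"id": "m1", "difficulty": "moderate"}]

print([s["id"] for s in remove_hard(dataset)])         # ['a', 'c']
print([s["id"] for s in replace_hard(dataset, pool)])  # ['a', 'm1', 'c', 'm1']
```

Both transforms discard the hard problems themselves, whereas the adaptive-guidance approach keeps them in the training set.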

## 5 Experiments

| | $\alpha=0$ | $\alpha=\frac{1}{4}$ | $\alpha=\frac{2}{4}$ | $\alpha=\frac{3}{4}$ | $\alpha=1$ |
| --- | --- | --- | --- | --- | --- |
| humaneval | 68.52 | 59.88 | 64.81 | 72.22 | 70.81 |
| LCB | 28.43 | 19.61 | 23.53 | 30.39 | 35.72 |
| **Qwen3-0.6B** | | | | | |
| humaneval | 41.98 | 32.10 | 27.72 | 38.89 | 49.38 |
| LCB | 12.75 | 11.76 | 9.80 | 18.63 | 12.75 |

Table 8: Ablation studies on guidance ratio $\alpha$ for Code Tasks. The group size is set to 12. The initial guidance length for G$^2$RPO-A is set to 3072. LCB denotes Live Code Bench.

| | | | | | |
| --- | --- | --- | --- | --- | --- |
| MATH500 | 52.05 | 58.71 | 53.09 | 55.53 | 45.95 |
| Minerva | 14.98 | 16.69 | 16.25 | 18.21 | 16.11 |
| gpqa | 27.45 | 25.49 | 30.39 | 25.49 | 22.55 |
| **Qwen3-0.6B-Base** | | | | | |
| MATH500 | 48.16 | 49.59 | 50.94 | 53.50 | 38.42 |
| Minerva | 9.66 | 9.10 | 8.96 | 10.08 | 15.69 |
| gpqa | 24.51 | 19.61 | 31.37 | 32.35 | 25.49 |

Table 9: Ablation studies on guidance ratio $\alpha$ for Math Tasks. The group size is set to 12. The initial guidance length for G 2 RPO-A is set to 3072.

| | GRPO | Fixed Guidance (3072) | Fixed Guidance (2048) | Fixed Guidance (1024) | RDP | G 2 RPO-A |
| --- | --- | --- | --- | --- | --- | --- |
| Qwen3-1.7B-Base | | | | | | |
| MATH500 | 52.05 | 51.28 | 60.52 | 46.78 | 51.02 | 58.71 |
| Minerva | 14.98 | 14.40 | 17.16 | 12.22 | 17.99 | 22.46 |
| gpqa | 27.45 | 25.00 | 24.51 | 23.53 | 22.13 | 25.49 |
| Qwen3-0.6B-Base | | | | | | |
| MATH500 | 48.16 | 55.80 | 54.17 | 52.69 | 55.97 | 53.50 |
| Minerva | 9.66 | 13.27 | 15.26 | 11.78 | 14.32 | 15.69 |
| gpqa | 24.51 | 24.00 | 21.57 | 22.55 | 26.00 | 32.35 |

Table 10: Guidance-length ablation on Math Tasks. Each run uses the optimal guidance ratio reported in Table 9. The initial guidance budget for G 2 RPO-A is fixed at 3,072 tokens. RDP refers to the rule-based decay policy.

### 5.1 Experiment Settings

This section outlines our experimental settings. Details of the dataset filtering methods and evaluations on additional models are provided in the Appendix.

#### Datasets and models.

We conduct experiments on math and code tasks. Specifically:

- Mathematical reasoning tasks. We construct a clean subset of the Open-R1 math-220k corpus Wang et al. (2024). Problems are kept only if their solution trajectories are (i) complete, (ii) factually correct, and (iii) syntactically parsable.

- Code generation. For programming experiments we adopt the Verifiable-Coding-Problems-Python benchmark from Open-R1. For every problem we automatically generate a chain-of-thought with QWQ-32B-preview Team (2024). These traces are later used as guidance by our proposed G 2 RPO-A training procedure.

We use the Qwen3 series Yang et al. (2025a) for both tasks. Results for DeepSeek-Math-7B-Base Shao et al. (2024) on math and DeepSeek-Coder-6.7B-Base Guo et al. (2024) on code are also included in the Appendix. Unless otherwise stated, CL is used in all experiments for a fair comparison; the corresponding ablation is reported in Table 6.

#### Evaluation protocol.

We assess our models mainly on three public mathematical-reasoning benchmarks: MATH500 Hendrycks et al. (2021), Minerva-Math Lewkowycz et al. (2022), and GPQA Rein et al. (2024). For the mathematical training of Qwen3-1.7B and Qwen3-0.6B, the AIME24 Li et al. (2024) and AIME25 benchmarks are also used. For code tasks, we use two benchmarks: HumanEval Chen et al. (2021) and LiveCodeBench Jain et al. (2024). Decoding hyper-parameters are fixed to temperature $=0.6$, $\mathrm{top}\text{-}p=0.95$, and $\mathrm{top}\text{-}k=20$. Unless otherwise noted, we generate with a batch size of $128$ and permit a token budget between $1{,}024$ and $25{,}000$, depending on each model's context window.
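For reference, the decoding settings above can be collected into a single configuration object. The numeric values are taken from the text; the class name, the field names, and the clamping rule in `token_budget` are assumptions made for this sketch, since the text does not specify how the budget is derived from the context window.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecodingConfig:
    """Evaluation-time decoding settings (values from the text)."""

    temperature: float = 0.6
    top_p: float = 0.95
    top_k: int = 20
    batch_size: int = 128
    min_token_budget: int = 1024
    max_token_budget: int = 25000

    def token_budget(self, context_window: int) -> int:
        # Assumption: the budget is the context window clamped into the
        # stated range; the actual rule is not given in the text.
        return max(self.min_token_budget, min(self.max_token_budget, context_window))


cfg = DecodingConfig()
budget_small = cfg.token_budget(4096)   # stays at 4096
budget_large = cfg.token_budget(32768)  # capped at 25000
```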

#### Training details.

Our G 2 RPO-A algorithm is implemented on top of the fully open-source Open-R1 framework Face (2025). We use the following hyper-parameters: (i) $12$ roll-outs per sample for the 0.6B and 1.7B backbones, and $7$ for the 7B and 8B backbones; (ii) an initial learning rate of $1\times 10^{-6}$, decayed with a cosine schedule and a warm-up ratio of $0.1$; (iii) a training set of $1{,}000$ problems trained for $5$ epochs, except in ablation experiments, which run for only 1 epoch; (iv) 8 A100 GPUs for all models.
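The learning-rate schedule in (ii) can be written out explicitly. The sketch below assumes a linear warm-up over the first 10% of steps followed by a half-cosine decay to zero, which is a common reading of "cosine schedule with warm-up"; the text fixes only the peak rate and the warm-up ratio, so the warm-up shape and the final value are assumptions.

```python
import math


def lr_at(step, total_steps, peak_lr=1e-6, warmup_ratio=0.1):
    """Learning rate at `step` for linear warm-up followed by cosine decay.

    Assumes the rate rises linearly from 0 to `peak_lr` during warm-up and
    then follows a half cosine down to 0 at `total_steps`.
    """
    warmup_steps = int(total_steps * warmup_ratio)
    if warmup_steps > 0 and step < warmup_steps:
        return peak_lr * step / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))
```

For a hypothetical run of 100 optimizer steps, the rate reaches its peak of $1\times 10^{-6}$ at step 10, is half the peak at step 55, and returns to zero at step 100.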

### 5.2 Numerical Results

#### Superior performance of G 2 RPO-A.

As reported in Tables 3, 4, and 5, (i) our proposed G 2 RPO-A markedly surpasses vanilla GRPO on nearly every benchmark, and (ii) all RL-based methods outperform both the frozen base checkpoints and their SFT variants, mirroring trends previously observed in the literature.

#### Effect of the guidance ratio $\boldsymbol{\alpha}$ .

Tables 8 and 9 show that (1) larger models benefit from weaker guidance, e.g., Qwen3-1.7B peaks at $\alpha{=}0.25/0.5$ on Math, whereas the smaller Qwen3-0.6B prefers $\alpha{=}0.75$; (2) Code tasks consistently require a higher guidance ratio than Math.
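To make the ablated values of $\alpha$ concrete, the sketch below converts a guidance ratio into a number of guided roll-outs per group. It assumes that $\alpha$ denotes the fraction of roll-outs in a group that receive a guidance prefix and that guidance is truncated to a fixed token length; the function names and the token-list representation are hypothetical.

```python
def num_guided_rollouts(group_size, alpha):
    """Number of roll-outs in a group that receive guidance (assumed rule)."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return round(alpha * group_size)


def build_group(prompt, guidance_tokens, group_size=12, alpha=0.75, guidance_len=3072):
    """Return one starting prefix (list of token ids) per roll-out.

    The first k roll-outs start from the prompt plus the truncated guidance;
    the remaining ones start from the prompt alone.
    """
    k = num_guided_rollouts(group_size, alpha)
    prefix = list(guidance_tokens[:guidance_len])
    return [list(prompt) + prefix if i < k else list(prompt) for i in range(group_size)]


# With the group size of 12 used in Tables 8 and 9:
counts = [num_guided_rollouts(12, a) for a in (0, 0.25, 0.5, 0.75, 1)]  # [0, 3, 6, 9, 12]
```

Under this reading, $\alpha=0$ recovers vanilla GRPO (no guided roll-outs) and $\alpha=1$ guides every roll-out in the group.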

#### Ablation on guidance-length schedules.

Tables 10 and 11 contrast our adaptive scheme (G 2 RPO-A) with (i) fixed guidance and (ii) a rule-based decay policy (RDP). (1) G 2 RPO-A achieves the best score on almost every model-benchmark pair, confirming the benefit of on-the-fly adjustment. (2) For fixed guidance, the optimal value varies across both tasks and model sizes, with no clear global pattern, underscoring the need for an adaptive mechanism such as G 2 RPO-A.

| | GRPO | Fixed Guidance (3072) | Fixed Guidance (2048) | Fixed Guidance (1024) | RDP | G 2 RPO-A |
| --- | --- | --- | --- | --- | --- | --- |
| Qwen3-1.7B | | | | | | |
| humaneval | 68.52 | 58.64 | 58.02 | 60.49 | 69.29 | 70.81 |
| LCB | 23.53 | 29.41 | 28.43 | 31.37 | 26.47 | 35.72 |
| Qwen3-0.6B | | | | | | |
| humaneval | 38.89 | 43.93 | 36.54 | 38.40 | 42.27 | 49.38 |
| LCB | 12.75 | 13.73 | 10.78 | 9.80 | 11.67 | 12.75 |

Table 11: Guidance-length ablation on Code Tasks. Each run uses the optimal guidance ratio reported in Table 8. The initial guidance budget for G 2 RPO-A is fixed at 3,072 tokens. RDP refers to the rule-based decay policy.

## 6 Conclusion and Future Work

We introduce a method that injects ground-truth guidance into the thinking trajectories produced during GRPO roll-outs, thereby improving the performance of small-scale LLMs. After an extensive study of guidance configurations, we observe that the guidance ratio strongly affects performance and that the optimal guidance length is context-dependent. Based on these observations, we develop G 2 RPO-A, an auto-tuned approach. Experiments on mathematical reasoning and code generation demonstrate that G 2 RPO-A consistently boosts accuracy. In future work, we plan to evaluate G 2 RPO-A across a broader range of tasks and model architectures, which we believe will further benefit the community.

## References

- Bae et al. [2025] Sanghwan Bae, Jiwoo Hong, Min Young Lee, Hanbyul Kim, JeongYeon Nam, and Donghyun Kwak. Online difficulty filtering for reasoning oriented reinforcement learning, 2025. URL https://arxiv.org/abs/2504.03380.

- Chen et al. [2021] Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde de Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, Alex Ray, Raul Puri, Gretchen Krueger, Michael Petrov, Heidy Khlaaf, Girish Sastry, Pamela Mishkin, Brooke Chan, Scott Gray, Nick Ryder, Mikhail Pavlov, Alethea Power, Lukasz Kaiser, Mohammad Bavarian, Clemens Winter, Philippe Tillet, Felipe Petroski Such, Dave Cummings, Matthias Plappert, Fotios Chantzis, Elizabeth Barnes, Ariel Herbert-Voss, William Hebgen Guss, Alex Nichol, Alex Paino, Nikolas Tezak, Jie Tang, Igor Babuschkin, Suchir Balaji, Shantanu Jain, William Saunders, Christopher Hesse, Andrew N. Carr, Jan Leike, Josh Achiam, Vedant Misra, Evan Morikawa, Alec Radford, Matthew Knight, Miles Brundage, Mira Murati, Katie Mayer, Peter Welinder, Bob McGrew, Dario Amodei, Sam McCandlish, Ilya Sutskever, and Wojciech Zaremba. Evaluating large language models trained on code, 2021. URL https://arxiv.org/abs/2107.03374.

- Chu et al. [2025] Tianzhe Chu, Yuexiang Zhai, Jihan Yang, Shengbang Tong, Saining Xie, Sergey Levine, and Yi Ma. SFT memorizes, RL generalizes: A comparative study of foundation model post-training. In The Second Conference on Parsimony and Learning (Recent Spotlight Track), 2025. URL https://openreview.net/forum?id=d3E3LWmTar.

- Cui et al. [2025] Ganqu Cui, Lifan Yuan, Zefan Wang, Hanbin Wang, Wendi Li, Bingxiang He, Yuchen Fan, Tianyu Yu, Qixin Xu, Weize Chen, et al. Process reinforcement through implicit rewards. CoRR, 2025.

- Dang and Ngo [2025] Quy-Anh Dang and Chris Ngo. Reinforcement learning for reasoning in small llms: What works and what doesn’t, 2025. URL https://arxiv.org/abs/2503.16219.

- Face [2025] Hugging Face. Open r1: A fully open reproduction of deepseek-r1, January 2025. URL https://github.com/huggingface/open-r1.

- Guan et al. [2025] Xinyu Guan, Li Lyna Zhang, Yifei Liu, Ning Shang, Youran Sun, Yi Zhu, Fan Yang, and Mao Yang. rstar-math: Small LLMs can master math reasoning with self-evolved deep thinking. In Forty-second International Conference on Machine Learning, 2025. URL https://openreview.net/forum?id=5zwF1GizFa.

- Guo et al. [2024] Daya Guo, Qihao Zhu, Dejian Yang, Zhenda Xie, Kai Dong, Wentao Zhang, Guanting Chen, Xiao Bi, Y Wu, YK Li, et al. Deepseek-coder: When the large language model meets programming-the rise of code intelligence. CoRR, 2024.

- Guo et al. [2025] Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948, 2025.

- Hendrycks et al. [2021] Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the MATH dataset. In Thirty-fifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track, 2021. URL https://openreview.net/forum?id=7Bywt2mQsCe.

- HUANG et al. [2025] Dong HUANG, Guangtao Zeng, Jianbo Dai, Meng Luo, Han Weng, Yuhao QING, Heming Cui, Zhijiang Guo, and Jie Zhang. Efficoder: Enhancing code generation in large language models through efficiency-aware fine-tuning. In Forty-second International Conference on Machine Learning, 2025. URL https://openreview.net/forum?id=8bgaOg1TlZ.

- Huang et al. [2025] Qihan Huang, Weilong Dai, Jinlong Liu, Wanggui He, Hao Jiang, Mingli Song, Jingyuan Chen, Chang Yao, and Jie Song. Boosting mllm reasoning with text-debiased hint-grpo, 2025. URL https://arxiv.org/abs/2503.23905.

- Jaech et al. [2024] Aaron Jaech, Adam Kalai, Adam Lerer, Adam Richardson, Ahmed El-Kishky, Aiden Low, Alec Helyar, Aleksander Madry, Alex Beutel, Alex Carney, et al. Openai o1 system card. CoRR, 2024.

- Jain et al. [2024] Naman Jain, King Han, Alex Gu, Wen-Ding Li, Fanjia Yan, Tianjun Zhang, Sida Wang, Armando Solar-Lezama, Koushik Sen, and Ion Stoica. Livecodebench: Holistic and contamination free evaluation of large language models for code. CoRR, 2024.

- Jia et al. [2025] Ruipeng Jia, Yunyi Yang, Yongbo Gai, Kai Luo, Shihao Huang, Jianhe Lin, Xiaoxi Jiang, and Guanjun Jiang. Writing-zero: Bridge the gap between non-verifiable tasks and verifiable rewards, 2025. URL https://arxiv.org/abs/2506.00103.

- Kojima et al. [2022] Takeshi Kojima, Shixiang Shane Gu, Machel Reid, Yutaka Matsuo, and Yusuke Iwasawa. Large language models are zero-shot reasoners. Advances in neural information processing systems, 35:22199–22213, 2022.

- Lee et al. [2024] Jung Hyun Lee, June Yong Yang, Byeongho Heo, Dongyoon Han, and Kang Min Yoo. Token-supervised value models for enhancing mathematical reasoning capabilities of large language models. CoRR, 2024.

- Lewkowycz et al. [2022] Aitor Lewkowycz, Anders Johan Andreassen, David Dohan, Ethan Dyer, Henryk Michalewski, Vinay Venkatesh Ramasesh, Ambrose Slone, Cem Anil, Imanol Schlag, Theo Gutman-Solo, Yuhuai Wu, Behnam Neyshabur, Guy Gur-Ari, and Vedant Misra. Solving quantitative reasoning problems with language models. In Alice H. Oh, Alekh Agarwal, Danielle Belgrave, and Kyunghyun Cho, editors, Advances in Neural Information Processing Systems, 2022. URL https://openreview.net/forum?id=IFXTZERXdM7.

- Li et al. [2025a] Chen Li, Nazhou Liu, and Kai Yang. Adaptive group policy optimization: Towards stable training and token-efficient reasoning. arXiv preprint arXiv:2503.15952, 2025a.

- Li et al. [2024] Jia Li, Edward Beeching, Lewis Tunstall, Ben Lipkin, Roman Soletskyi, Shengyi Huang, Kashif Rasul, Longhui Yu, Albert Q Jiang, Ziju Shen, et al. Numinamath: The largest public dataset in ai4maths with 860k pairs of competition math problems and solutions, 2024.

- Li et al. [2025b] Xuefeng Li, Haoyang Zou, and Pengfei Liu. Torl: Scaling tool-integrated rl. arXiv preprint arXiv:2503.23383, 2025b.

- Lin et al. [2025] Zhihang Lin, Mingbao Lin, Yuan Xie, and Rongrong Ji. Cppo: Accelerating the training of group relative policy optimization-based reasoning models, 2025. URL https://arxiv.org/abs/2503.22342.

- Liu et al. [2025] Zichen Liu, Changyu Chen, Wenjun Li, Penghui Qi, Tianyu Pang, Chao Du, Wee Sun Lee, and Min Lin. Understanding r1-zero-like training: A critical perspective, 2025. URL https://arxiv.org/abs/2503.20783.

- Lu et al. [2024] Zhenyan Lu, Xiang Li, Dongqi Cai, Rongjie Yi, Fangming Liu, Xiwen Zhang, Nicholas D Lane, and Mengwei Xu. Small language models: Survey, measurements, and insights. CoRR, 2024.

- Muennighoff et al. [2025a] Niklas Muennighoff, Zitong Yang, Weijia Shi, Xiang Lisa Li, Li Fei-Fei, Hannaneh Hajishirzi, Luke Zettlemoyer, Percy Liang, Emmanuel Candes, and Tatsunori Hashimoto. s1: Simple test-time scaling. In Workshop on Reasoning and Planning for Large Language Models, 2025a. URL https://openreview.net/forum?id=LdH0vrgAHm.