# RynnEC: Bringing MLLMs into Embodied World

**Authors**: Ronghao Dang, Yuqian Yuan, Yunxuan Mao, Kehan Li, Jiangpin Liu, Zhikai Wang, Fan Wang, Deli Zhao, Xin Li

1]DAMO Academy, Alibaba Group 2]Hupan Lab 3]Zhejiang University \contribution [*]Equal contribution

(November 18, 2025)

Abstract

We introduce RynnEC, a video multimodal large language model designed for embodied cognition. Built upon a general-purpose vision-language foundation model, RynnEC incorporates a region encoder and a mask decoder, enabling flexible region-level video interaction. Despite its compact architecture, RynnEC achieves state-of-the-art performance in object property understanding, object segmentation, and spatial reasoning. Conceptually, it offers a region-centric video paradigm for the brain of embodied agents, providing fine-grained perception of the physical world and enabling more precise interactions. To mitigate the scarcity of annotated 3D datasets, we propose an egocentric video based pipeline for generating embodied cognition data. Furthermore, we introduce RynnEC-Bench, a region-centered benchmark for evaluating embodied cognitive capabilities. We anticipate that RynnEC will advance the development of general-purpose cognitive cores for embodied agents and facilitate generalization across diverse embodied tasks. The code, model checkpoints, and benchmark are available at: https://github.com/alibaba-damo-academy/RynnEC

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Diagram: Robotic Task Planning & Visual Question Answering

### Overview

The image depicts a diagram illustrating a robotic task planning and visual question answering system. It showcases a Large Language Model (LLM) integrating visual information from a "Visual Encoder" and "Mask Encoder" to respond to questions about a scene. The scene appears to be a kitchen setting with various objects like a window, a pan, a teddy bear, a pillow, and cleaning supplies. The diagram is structured around a series of steps (Step 1-Step 10) representing questions posed to the system, along with the system's responses. A heatmap at the bottom visualizes attention weights.

### Components/Axes

The diagram consists of the following key components:

* **Visual Encoder:** Processes visual information from the scene.

* **Mask Encoder:** Processes mask information (object segmentation) from the scene.

* **Large Language Model (LLM):** Integrates visual and mask information to generate responses.

* **Mask Decoder:** Decodes the mask token.

* **Question Prompts (Step 1-Step 10):** Textual questions posed to the system.

* **System Responses (A):** The LLM's answers to the questions.

* **Heatmap:** A 10x10 grid visualizing attention weights, with color intensity indicating the strength of attention. The axes are not explicitly labeled, but represent the spatial dimensions of the scene being analyzed.

* **Scene Image:** A photograph of a kitchen scene with various objects.

### Detailed Analysis or Content Details

**Question & Answer Pairs:**

* **Step 1:** "How many panes of glass are there on the window?" A: "Three"

* **Step 2:** "How wide is the pad on the table? Can it be placed on the bookshelf without falling?" A: "The width of is 20 cm, and it can be placed on the bookshelf."

* **Step 3:** "Where are the teddy bear and the pillow located?" A: "The teddy bear is and the pillow is"

* **Step 4:** "Do my feet need to move in the process of moving the teddy bear onto the pillow?" A: "needs to be moved 2.1 meters onto"

* **Step 5:** "Storage box on the bottom bookshelf?" A: ""

* **Step 6:** "Where is the degreasing spray bottle?" A: "is the degreasing spray bottle"

* **Step 7:** "I am going to clean the kitchen windowsill. Which of the two objects, or needs to be removed?" A: "needs to be removed"

* **Step 8:** "Where is the soy sauce?" A: "is the soy sauce"

* **Step 9:** "What is the function of ?" A: "This object is a wok, which is used for stir-frying."

* **Step 10:** "How far is from me? Can I reach it without moving?" A: "is 1.3 meters away from me, and my arm is only 0.8 meters long, so I need to walk up to it to pick it up."

**Heatmap Analysis:**

The heatmap displays attention weights. The color scale ranges from dark blue (low attention) to red (high attention). The heatmap appears to be centered around the objects in the scene.

* **Row 1:** Weights are highest in columns 1-3, then decrease.

* **Row 2:** Weights are highest in columns 2-4, then decrease.

* **Row 3:** Weights are highest in columns 3-5, then decrease.

* **Row 4:** Weights are highest in columns 4-6, then decrease.

* **Row 5:** Weights are highest in columns 5-7, then decrease.

* **Row 6:** Weights are highest in columns 6-8, then decrease.

* **Row 7:** Weights are highest in columns 7-9, then decrease.

* **Row 8:** Weights are highest in columns 8-10, then decrease.

* **Row 9:** Weights are highest in columns 9-1, then decrease.

* **Row 10:** Weights are highest in columns 10-2, then decrease.

**Task Descriptions:**

* **Task 1:** "You need to first stick window stickers onto each pane of glass. Then, tidy up the table by placing the pad on the bookshelf and putting the teddy bear on the pillow. Finally, use the storage box on the bottom bookshelf to organize the smaller items on the table."

* **Task 2:** "I need to stir-fry; please pour some soy sauce into the pan, turn on the heat, and cover it with a lid. Then, use the degreasing spray bottle to clean the kitchen windowsill."

### Key Observations

* The system demonstrates the ability to answer questions about object locations, quantities, and functions within a visual scene.

* The heatmap suggests a sequential attention pattern, moving across the scene from left to right, then right to left.

* The system can reason about distances and reachability for robotic manipulation.

* The responses are not always complete, with some answers being truncated ("" in Step 5).

* The system can generate multi-step task instructions.

### Interpretation

This diagram illustrates a sophisticated robotic system capable of integrating visual perception with natural language understanding. The LLM acts as a central reasoning engine, leveraging information from both the visual and mask encoders to answer questions and plan tasks. The heatmap provides insight into the system's attention mechanism, revealing how it focuses on different parts of the scene to extract relevant information. The sequential attention pattern observed in the heatmap suggests the system may be scanning the scene in a systematic manner. The ability to reason about distances and reachability is crucial for robotic manipulation tasks. The incomplete responses highlight potential limitations of the system, possibly related to the complexity of the scene or the ambiguity of the questions. Overall, the diagram demonstrates a promising approach to building intelligent robots that can interact with the world in a more natural and intuitive way. The system is likely being used for research into embodied AI, where robots learn to perform tasks by interacting with their environment and responding to human instructions. The use of both visual and mask information suggests the system is capable of not only recognizing objects but also understanding their spatial relationships and boundaries.

</details>

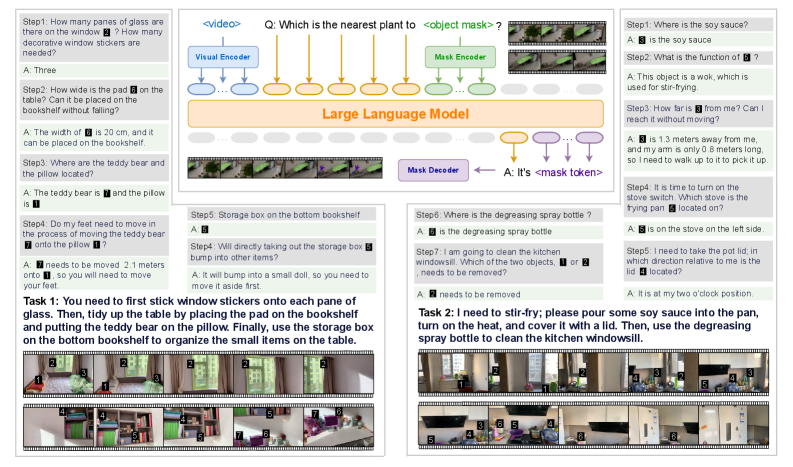

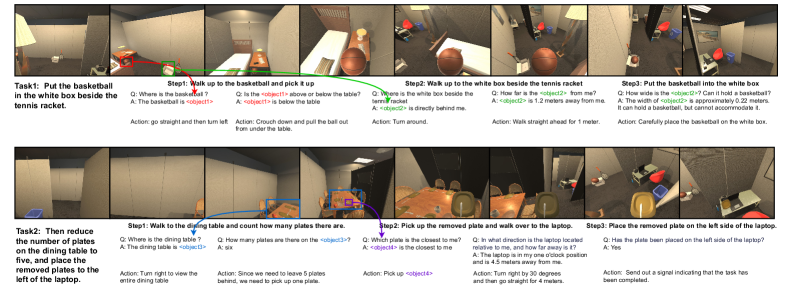

Figure 1: RynnEC is a video multi-modal large language model (MLLM) specifically designed for embodied cognition tasks. It can accept inputs interwoven from video, region masks, and text, and produce output in the form of text or masks based on the question. RynnEC is capable of addressing a diverse range of object and spatial questions within embodied contexts and plays a significant role in indoor embodied tasks.

1 Introduction

In recent years, Multi-modal Large Language Models (MLLMs) Wu et al. [2023], Zhang et al. [2024a] have experienced rapid development, leading to the emergence of models such as Gemini Team et al. [2024] and GPT-4o OpenAI et al. [2024] that can handle image and even video inputs. These MLLMs are attracting increasing attention from researchers due to their powerful contextual understanding Doveh et al. [2025] and generalization Zhang et al. [2024c] abilities. Researchers in embodied intelligence are also beginning to explore the use of MLLMs as the brains of robots Han et al. [2025b], Jin et al. [2024], enabling them to perceive the real world through visual inputs like humans do. However, the current mainstream MLLMs are trained on extensive internet images and lack the foundational visual cognition to match the physical world Dang et al. [2025], Yuan et al. [2025b].

Some works have begun exploring how MLLMs can be applied to ego-centric embodied scenarios. Models like Exo2Ego Zhang et al. [2025b] and EgoLM Hong et al. [2025] enhance the understanding of ego-centric dynamic environment interactions. SpatialVLM Chen et al. [2024a] and SpatialRGPT Cheng et al. [2024a] focus on addressing spatial understanding challenges within embodied contexts. However, these approaches are challenging to directly implement in physical robots to perform complex tasks. The main limitations are as follows:

1. Lack of flexible visual interaction: In complex embodied scenarios, relying solely on textual communication is prone to ambiguity or vagueness. Direct visual interaction references, such as masks or points, can more accurately and flexibly index entities within a scene, facilitating precise task execution.

1. Insufficient detailed understanding of objects: During task execution, objects typically serve as the smallest operational units, making comprehensive and detailed understanding of objects crucial. As illustrated in Task 1 Step 1 in Fig. 1, recognizing the number of panes in a window is essential to determine the quantity of window decals needed.

1. Absence of video-based coherent spatial awareness: For humans, spatial cognition arises from continuous visual perception Pasqualotto and Proulx [2012]. Current methods in spatial intelligence Zhang et al. [2025c], Xu et al. [2025] primarily focus on single or discrete images, lacking the capacity for spatial understanding in high-continuity videos. For example, in Task 1 Step 4 in Fig. 1, the absolute distance between the teddy bear and the pillow requires a spatial scale concept derived from the entire video to be properly inferred.

Thus, we propose RynnEC, an embodied cognitive MLLM designed to enhance robotic understanding of the physical world. As illustrated in Fig. 1, RynnEC is a large video understanding model whose visual encoder and foundational parameters are derived from VideoLLaMA3 Zhang et al. [2025a]. To enable flexible visual interaction, we incorporate an encoder and decoder specifically for region masks in videos, allowing RynnEC to achieve precise instance-level comprehension and grounding.

Within this framework, RynnEC is designed to perform diverse cognitive tasks in embodied scenarios. We categorize embodied cognitive abilities into two essential components: object cognition and spatial cognition. Object cognition necessitates MLLMs’ understanding of object attributes, quantities, and their relationships with the environment, alongside accurate object grounding. Spatial cognition is further divided into world-centric and ego-centric perspectives. World-centric spatial cognition requires the model to grasp absolute scales and relative positions within scenes, as exemplified by object size estimations in Task 1 Step 2 (Fig. 1). Ego-centric spatial cognition connects the robot’s physical embodiment with the world, thereby assisting in behavioral decisions. For example, as depicted in Fig. 1, the reachability estimation in Task 2 Step 3 and the orientation estimation in Task 2 Step 5 assist the robot in clearly defining its relationship with interactive objects. Equipped with enhanced object and spatial reasoning, RynnEC supports more efficient execution of complex, real-world robotic tasks.

Regrettably, the development of embodied cognition models has been slow due to a lack of ego-centric videos and high-quality annotations. Efforts such as Multi-SpatialMLLM Xu et al. [2025], Spatial-MLLM Wu et al. [2025a], and SpaceR Ouyang et al. [2025] leverage open-source datasets with comprehensive 3D point cloud and annotations to generate training data. However, in an era of scarce 3D annotations Hou et al. [2025], Lyu et al. [2024], this approach cannot achieve rapid and cost-effective expansion of data scale. Hence, we propose a data generation pipeline that transforms ego-centric RGB videos into embodied cognition question-answering datasets. This pipeline begins with instance segmentation from videos and diverges into two branches: one generating object cognition data and the other producing spatial cognition data. Ultimately, data from both branches are integrated into a comprehensive embodied cognition dataset. From over 200 households, we collect more than 20,000 egocentric videos. A subset from ten households is manually verified and balanced to create RynnEC-Bench, a fine-grained embodied cognition benchmark encompassing 22 tasks in object and spatial cognition.

Extensive experiments demonstrate that RynnEC significantly outperforms both general OpenAI et al. [2024], Bai et al. [2025], Zhu et al. [2025] and task-specific Yuan et al. [2025a, c], Team et al. [2025] MLLMs in cognitive abilities within embodied scenarios, showcasing scalable application potential. Additionally, we observe notable advantages in multi-task training with RynnEC and identify preliminary signs of emergence in more challenging embodied cognition tasks. Finally, we highlight the potential of RynnEC in facilitating robots to undertake large-scale, long-range tasks.

2 Related Work

2.1 MLLMs for Video Understanding

Early MLLMs primarily relied on sparse sampling and simple connectors, such as MLPs Lin et al. [2023], Ataallah et al. [2024], Maaz et al. [2023] and Q-Formers Zhang et al. [2023], Li et al. [2024b], to integrate visual representation with large language models. Subsequently, to tackle the problem of long video understanding, Zhang et al. [2024b] directly expanded the context window of language models, while Zhang et al. [2024d] introduced pooling in the spatial and temporal dimensions to compress the number of video tokens. As the need for more fine-grained understanding emerged, some studies (VideoRefer Yuan et al. [2025c], DAM Lian et al. [2025] and PAM Lin et al. [2025]) employed region-level feature encoders enabling video MLLMs to accept masked inputs and comprehend the semantic features of objects within the masks. Although these video MLLMs have demonstrated superior capabilities in high-level semantic capture and temporal modeling, they lack robust physical-world comprehension in egocentric embodied scenarios.

2.2 Embodied Scene Understanding Benchmarks

Some studies Ren et al. [2024a], Li et al. [2024c], Han et al. [2025a] have begun to explore leveraging MLLMs to assist robots in solving embodied tasks. However, determining whether these MLLMs possess the ability to understand and interact with the physical world is challenging. Consequently, several benchmarks have emerged to evaluate the capability of MLLMs to perceive the physical world. OpenEQA Majumdar et al. [2024] and IndustryEQA Li et al. [2025a] focus on several key competencies in home and industrial settings, respectively, and manually designed open-vocabulary questions. VSI-Bench Yang et al. [2025c] centers on assessing the spatial cognitive abilities of MLLMs. STI-Bench Li et al. [2025b] introduces more complex kinematic (e.g. velocity) problems. ECBench Dang et al. [2025] systematically categorizes embodied cognitive abilities into static environments, dynamic environments, and overcoming hallucinations, offering a comprehensive evaluation across 30 sub-competencies. While these benchmarks encompass a wide range of abilities, they are unable to assess more fine-grained, region-level understanding capabilities in embodied scenarios. Compared to purely textual question-answering, region-level visual interaction can more accurately refer to targets in the complex real world.

2.3 Improving MLLMs for Embodied Cognition

The aforementioned embodied benchmarks have highlighted the cognitive limitations of current MLLMs in embodied scenarios. Consequently, some studies have started to investigate diverse strategies for enhancing MLLMs’ understanding of the physical world. GPT4Scene Qi et al. [2025] improves MLLMs’ consistent global scene understanding by explicitly adding instance marks between video frames. SAT Ray et al. [2024] explores multi-frame dynamic spatial reasoning in simulated environments. Spatial-MLLM Wu et al. [2025a], Multi-SpatialMLLM Xu et al. [2025], and SpaceR Ouyang et al. [2025] leverage 3D datasets with detailed annotations (e.g., ScanNet Yeshwanth et al. [2023]) to construct the suite of spatial-intelligence tasks introduced in VSI-Bench. In contrast, our data generation pipeline based on RGB videos yields more realistic and scalable training data. More importantly, RynnEC is designed not just to handle selected capabilities in embodied scenarios, but to cover a broad swath of the world cognition required for embodied task execution under a single paradigm.

3 Methodology

RynnEC is a robust video embodied cognition model capable of processing and outputting various video object proposals. This enables it to flexibly address embodied questions about objects and space. Due to a paucity of research in this domain, we comprehensively present the construction process of RynnEC from four perspectives: data generation (Sec. 3.1), evaluation framework establishment (Sec. 3.2), model architecture (Sec. 3.3), and training (Sec. 3.4).

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Diagram: Visual Representation of a Multi-Stage Object and Spatial QA Pipeline

### Overview

The image depicts a diagram illustrating a pipeline for generating object and spatial Question Answering (QA) systems. The pipeline takes video instance segmentation as input and progresses through keyframe extraction, object description generation, and spatial reasoning to ultimately produce answers to questions about objects and their spatial relationships. The diagram is divided into three main sections: Video Instance Segmentation, Keyframe of Objects, and Generate Spatial QA. Each section is visually separated by colored backgrounds (blue, orange, and teal respectively).

### Components/Axes

The diagram doesn't have traditional axes, but it features several key components and labels:

* **Video Instance Segmentation:** Labeled "40s" indicating a time duration. Shows a series of video frames.

* **Keyframe of Objects:** Contains images of a footstool, labeled "Owen 2.5-VL" and "Owen 3". Includes a "Prompt" box and a text description.

* **Generate Object QA:** Contains images of a child and a sofa. Includes "Object Referring Expression" label.

* **Extract Object Name:** Labeled "Grounding DINO" and "Segment Anything". Shows a series of video frames.

* **Mast3r-SLAM + Mask 2D to 3D:** A 3D point cloud representation with "Start Pos" and "End Pos" markers.

* **Ground Level Calibration:** A 3D representation of the footstool with X, Y, and Z axes labeled.

* **Template:** Labeled "Template"

* **Spatial Cognition Question:** Labeled "Spatial Cognition Question"

* **QA Examples:** Several question-answer pairs are provided within the "Generate Object QA" and "Generate Spatial QA" sections.

### Detailed Analysis or Content Details

**Video Instance Segmentation:**

This section shows a sequence of video frames, suggesting the input to the pipeline is a video stream. The "40s" label indicates the video duration being processed.

**Keyframe of Objects:**

* Two images of a footstool are shown, labeled "Owen 2.5-VL" and "Owen 3".

* A "Prompt" box is present, likely representing the input to a language model.

* The text description associated with "Owen 2.5-VL" reads: "The object is a small footstool. It has a rectangular shape with rounded corners and is upholstered in a dark colored material. Likely leather or leather-like fabric."

* Two QA examples are provided:

* Q: "Is the <object> currently being exposed to sunlight?" A: "Yes"

* Q: "How many legs does the <object> have?" A: "4"

**Extract Object Name:**

* Labeled "Grounding DINO" and "Segment Anything".

* Shows a series of video frames.

* Labeled "one second interval"

**Mast3r-SLAM + Mask 2D to 3D:**

* A 3D point cloud representation of a scene is shown, with red lines indicating the trajectory of a camera or sensor.

* "Start Pos" and "End Pos" markers are visible, indicating the beginning and end points of the trajectory.

**Ground Level Calibration:**

* A 3D representation of the footstool is shown, with X, Y, and Z axes labeled. This suggests a calibration process to align the 3D model with the real world.

**Generate Spatial QA:**

* Two QA examples are provided:

* (Egocentric): Q: "Which of the two objects, object1 or object2, is closer to me?" A: "<object1>"

* (World-Centric): Q: "What is the difference in height above the ground between <object1> and <object2>?" A: "1.2 meters"

### Key Observations

* The pipeline appears to be designed for understanding objects and their spatial relationships within a video scene.

* The use of 3D reconstruction ("Mast3r-SLAM + Mask 2D to 3D") suggests the system aims to create a geometric understanding of the environment.

* The QA examples demonstrate the system's ability to answer both egocentric (relative to the viewer) and world-centric (absolute) spatial questions.

* The "Prompt" box in the "Keyframe of Objects" section suggests the use of a language model to generate object descriptions.

### Interpretation

This diagram illustrates a sophisticated system for visual question answering, going beyond simple object recognition to incorporate spatial reasoning. The pipeline leverages video instance segmentation to identify objects, extracts keyframes for detailed analysis, and uses 3D reconstruction to understand the spatial layout of the scene. The generated object descriptions and spatial QA capabilities suggest the system could be used for applications such as robotic navigation, virtual assistants, and scene understanding. The inclusion of both egocentric and world-centric questions indicates a focus on providing contextually relevant answers. The pipeline appears to be modular, with each stage performing a specific task, allowing for potential improvements and customization. The use of "Grounding DINO" and "Segment Anything" suggests the use of state-of-the-art segmentation models. The overall design suggests a system capable of complex scene understanding and interaction.

</details>

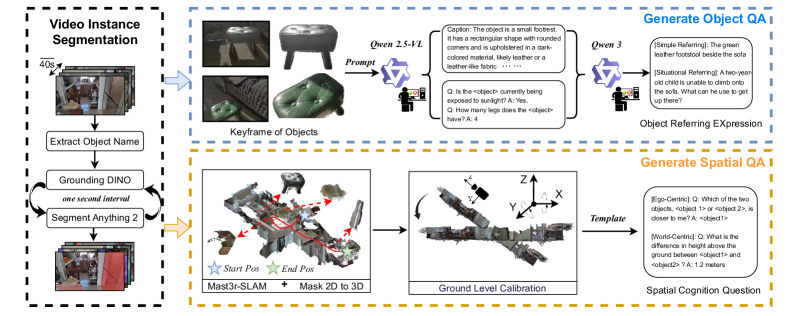

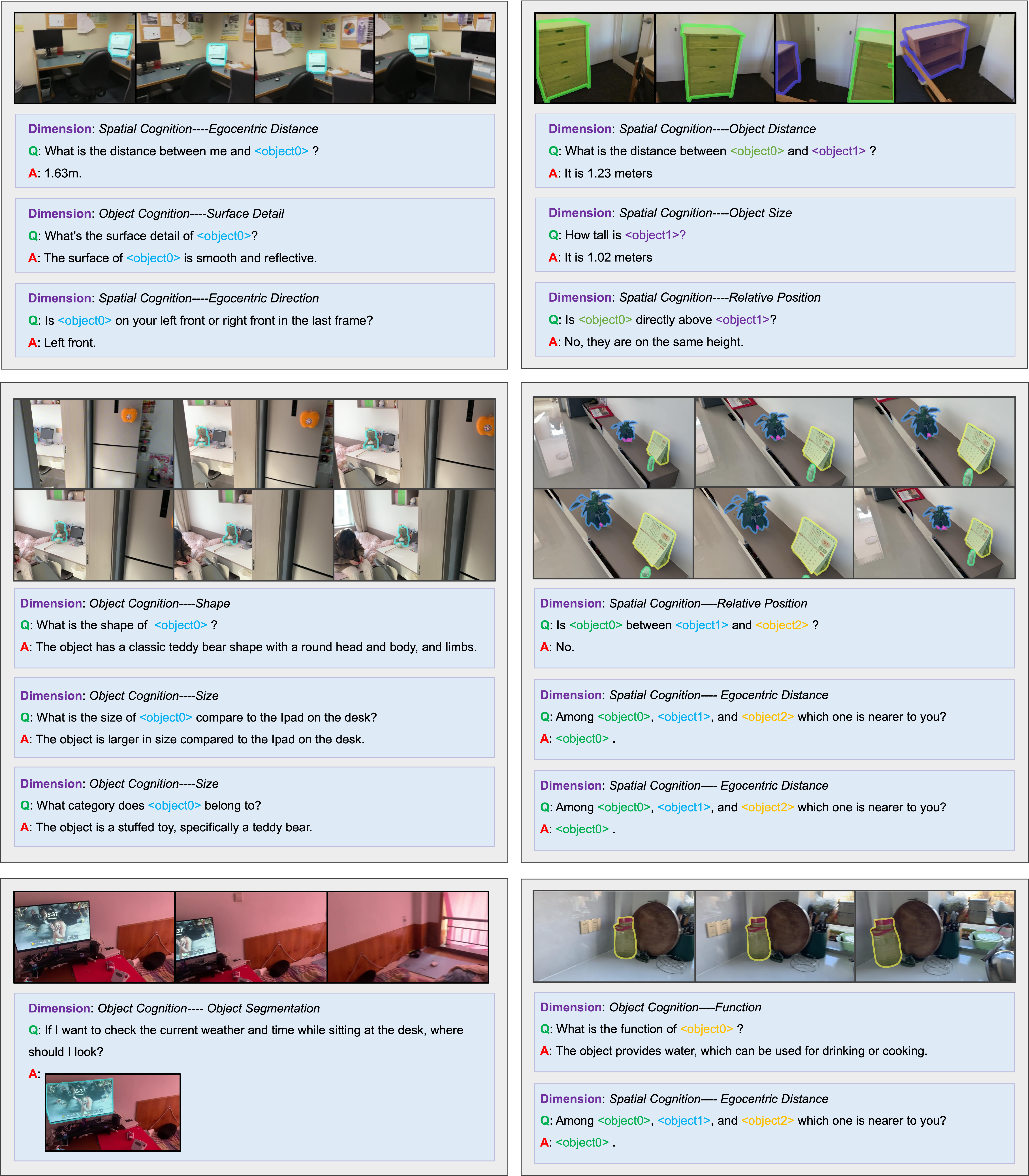

Figure 2: Embodied Cognition Question-Answer (QA) Data Generation Pipeline: First, objects within the scene are segmented from the video. Subsequently, object and spatial QA pairs are generated via two distinct branches.

3.1 Embodied Cognition Data Generation

Our embodied cognition dataset construction (Fig. 2) begins with egocentric video collection and instance segmentation. One branch employs a human-in-the-loop streaming generation approach to construct various object cognition QA pairs. The other branch utilizes a monocular dense 3D reconstruction method and diverse question templates to generate spatial cognition task QA pairs.

3.1.1 Video Collection and Instance Segmentation

Our egocentric video collection encompasses $200+$ houses, with approximately 100 videos per house. To ensure video quality, we require a resolution of at least 1080p and a frame rate no less than 30fps, using a gimbal to maintain shooting stability. To achieve diversity among different video trajectories, each house is divided into multiple zones, with filming trajectories categorized into single-zone, dual-zone, and tri-zone types. Cross-zone filming enhances diversity by altering the sequence of traversed zones. Additionally, we randomly vary lighting conditions and camera height under different trajectories. We require that each video includes both vertical and horizontal rotations, as well as at least two close-ups of objects, simulating the variable field of view in robotic task execution. Ultimately, we collect 20,832 egocentric videos of indoor movement. To control video length, these videos are segmented every 40 seconds.

Previous works Luo et al. [2025], Wang et al. [2024] adopted a strategy of designing separate data generation processes for each task type, leading to limited data reusability and continuity. We aim to create a lineage among different types of foundational data to reduce unnecessary redundancy in data generation. Therefore, this paper proposes a mask-centric embodied cognition QA generation pipeline. This pipeline initiates with the generation of object masks from video instance segmentation within a scene. First, Qwen2.5-VL Bai et al. [2025] observes the raw video and outputs an object list containing the names of all entity categories in the scene. Utilizing this object list, Grounding DINO 1.5 Ren et al. [2024b] detects objects in key frames at one-second intervals. SAM2 Ravi et al. [2024] assists in segmenting and tracking the objects detected by Grounding DINO 1.5 during the intervening one-second interval. To ensure consistency of instance IDs, the tracking results of old instances are compared with the segmentation results of newly detected instances at key frames. If an instance is found to have overlapping masks (IOU > 0.5), it retains the ID of the old tracking instance. Due to the performance limitations of Grounding DINO 1.5, newly detected object instances may already have appeared in preceding frames yet were missed. Thus, SAM2 conducts a reverse four-second instance tracking for each new object in key frames, thereby achieving full lifecycle instance tracking. In total, we obtain 1.14 million video instance masks from all the egocentric videos.

3.1.2 Object QA Generation

In this work, we generate three types of object-related tasks: object captioning, object comprehension QA, and referring video object segmentation. For each instance, we first divide all frames containing the instance into eight equal parts in chronological order. Within each frame group, an instance key frame is selected based on two factors: the size of the instance in the frame and the distance between the instance center and the frame center. Consequently, each instance is associated with eight instance key frames, featuring good instance visibility and diverse viewing angles. Half of these frames have the instance cropped out using a mask, while the other four highlight the instance using a red bounding box and background dimming technique. The final set of object cue images is displayed within the blue box in Fig. 2.

Due to the limitation of SAM2 in consistent object tracking in egocentric videos, the same instance may be assigned multiple IDs if the instance appears intermittently in the video. We employ an object category filtering method that limits each video to a maximum of two instances per object category, thereby minimizing duplicate instances. The presence of multiple video segments per house leads to repeated occurrences of certain salient objects, causing a pronounced long-tail distribution. We downsample object categories that occur frequently to prevent extreme object distribution. After the aforementioned filtering, the cue image sets of retained instances are input into Qwen2.5-VL Bai et al. [2025], generating object caption and object comprehension QA through various prompts. It is noteworthy that in the object comprehension QA, counting QA task is particularly unique and requires specially designed prompts. Subsequently, based on each instance’s caption and QAs, Qwen3 Yang et al. [2025a] generates two types of referring expressions: simple referring expressions and situational referring expressions. Simple referring expressions identify objects through a combination of features such as spatial location and category. Situational referring expressions establish a task scenario, requiring the model to infer the instance needed by the user within this context. Each type of QA undergoes manual filtering post-output to ensure data quality. Detailed prompts are provided in the Appendix A.2.

3.1.3 Spatial QA Generation

Unlike object QA, spatial QA requires more precise 3D information concerning the global scene context. Therefore, we utilize MASt3R-SLAM Murai et al. [2025] to reconstruct 3D point clouds from RGB videos and obtain camera extrinsic parameters. Subsequently, by projecting 2D pixel points to 3D coordinates, the segmentation of each instance in the video can be mapped onto the point cloud. However, it is important to note that the world coordinate system established by MASt3R-SLAM for the 3D point cloud is not aligned with the floor. Therefore, the Random Sample Consensus (RANSAC) Fischler and Bolles [1981] algorithm is implemented to identify inlier points for plane fitting through ten iterative executions. In each iteration, the detected planar surface and its inliers are removed from the point cloud for subsequent plane detection. Given that the initial camera pose was approximately horizontal but not perpendicular to the ground, the ground plane is selected based on minimal angular deviation between its normal vector and the initial camera Y-axis orientation. The point cloud is then aligned to ensure orthogonality between the world coordinate Z-axis and the detected ground plane.

RynnEC dataset encompasses 10 fundamental spatial abilities, each of which is further divided into quantitative and qualitative variants. We construct spatial QA in a template-based manner. Diverse QA templates are designed according to the characteristics of each task, and the missing attributes within the templates (e.g., distance, height) can be calculated from the 3D point cloud. We denote each instance in the format <Object X>. Furthermore, to obtain purely textual spatial QA pairs, we replace <Object X> with simple referring expressions generated in the above object QA pipeline. These texts are then further refined and diversified using GPT-4o, resulting in the final natural language spatial QA data. With training on these data, RynnEC is able to answer spatial questions in various input forms. Examples of the generated spatial QAs are illustrated in Fig. 2, and more examples as well as detailed templates are provided in the Appendix A.3.

Building on insights from prior works Wu et al. [2025a], Ouyang et al. [2025], we recognize that spatial cognition tasks are highly challenging. Therefore, in addition to constructing a large-scale video-based spatial QA dataset, we also develop a relatively simpler image-based spatial QA dataset. This combination of tasks with varying levels of difficulty is intended to improve learning efficiency and enhance model robustness. Specifically, we collect 500k indoor images from 39k houses. Leveraging the single-image-to-3D reconstruction and calibration methods from SpatialRGPT Cheng et al. [2024a], we obtain the 3D spatial relationships between objects in each image. We then select tasks from the video-based spatial cognition set that can also be addressed via single images, and design corresponding QA templates. The format of the image-based spatial QA is kept consistent with that of the video-based spatial QA.

3.2 RynnEC-Bench

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Diagram: Cognition Bench - RyynEC

### Overview

The image depicts a circular diagram titled "Cognition Bench - RyynEC". The diagram is segmented into various cognitive abilities, arranged radially around a central point. Each segment is associated with a question, an image, and an answer, demonstrating how the cognitive ability is applied in a real-world scenario. The diagram is surrounded by questions and answers related to visual reasoning and spatial understanding.

### Components/Axes

The diagram is structured as a circular wheel divided into segments representing different cognitive abilities. These segments include:

* **Direct Referring**

* **Situational Referring**

* **Referring Object Segmentation**

* **Historical Spatial Cognition**

* **Ego-Centric Spatial Cognition**

* **Spatial Cognition**

* **World-Centric Spatial Cognition**

* **Positional Relationship**

* **Object Properties Cognition**

* **Category**

* **Color**

* **Material**

* **Shape**

* **State**

* **Position**

* **Function**

* **Surface Detail**

* **Size**

* **Counting**

Each segment is associated with a question, an image, and an answer. The questions are positioned around the outer edge of the diagram, and the answers are placed near the corresponding questions. The images are located within the segments.

### Detailed Analysis or Content Details

Let's analyze the questions and answers surrounding the diagram:

1. **Q1:** If I want to travel and need to carry a lot of clothes, which item should I take?

* **Image:** A person holding a suitcase.

* **A1:** It is a suitcase.

2. **Q2:** Where is the silver suitcase with a black bag on top?

* **Image:** A scene with a silver suitcase and a black bag.

* **A:** It is.

3. **Q3:** What is the color of ❓?

* **Image:** A red object.

* **A:** The ❓ is red.

4. **Q4:** What is the function of ❓?

* **Image:** An ironing board.

* **A:** It is to provide a flat, heat-resistant surface for efficiently ironing clothes and removing wrinkles.

5. **Q5:** How many white clothes are near ❓?

* **Image:** A scene with clothes.

* **A:** 3.

6. **Q6:** Upon making a 90-degree left turn, how will ❓ be oriented with respect to you? ❓ will located at 11 o'clock direction.

* **Image:** A person walking.

* **A:** Is.

7. **Q7:** Is ❓ on your left front or left rear?

* **Image:** A person walking.

* **A:** Left rear.

8. **Q8:** How far have you walked in total?

* **Image:** A person walking.

* **A:** 2.3m.

9. **Q9:** Among the three objects ❓ ❓ and ❓ which one is the tallest?

* **Image:** Three objects of varying heights.

* **A:** Reaches the greatest height.

10. **Q10:** What is the approximate height of ❓?

* **Image:** An object.

* **A:** It is 0.6m tall.

11. **Q11:** Which is closer to ❓ ❓ or ❓?

* **Image:** Three objects.

* **A:** Is closer.

The central circle reads "Cognition Bench" and "RyynEC". The segments are color-coded, with shades of orange, yellow, and green.

### Key Observations

The diagram demonstrates a range of cognitive abilities, from basic object recognition (color, shape) to more complex spatial reasoning (historical, ego-centric, world-centric). The questions and answers are designed to test the ability to apply these cognitive skills in practical scenarios. The use of images alongside the questions and answers suggests a focus on visual reasoning. The questions are diverse, covering object properties, spatial relationships, and historical context.

### Interpretation

The "Cognition Bench - RyynEC" diagram appears to be a framework for evaluating and understanding different aspects of human cognition, particularly those related to visual perception and spatial reasoning. The arrangement of cognitive abilities in a circular format suggests that these abilities are interconnected and work together to enable intelligent behavior. The questions and answers serve as examples of how these abilities are used in everyday life. The diagram could be used as a tool for assessing cognitive function, developing AI systems, or simply for gaining a deeper understanding of how the human mind works. The use of question marks (❓) in several questions suggests a need for visual input to answer the questions, emphasizing the importance of visual information in these cognitive processes. The diagram highlights the importance of both static object properties (color, shape) and dynamic spatial relationships (position, distance) in cognitive processing. The inclusion of "Historical Spatial Cognition" suggests an ability to recall and reason about past spatial experiences.

</details>

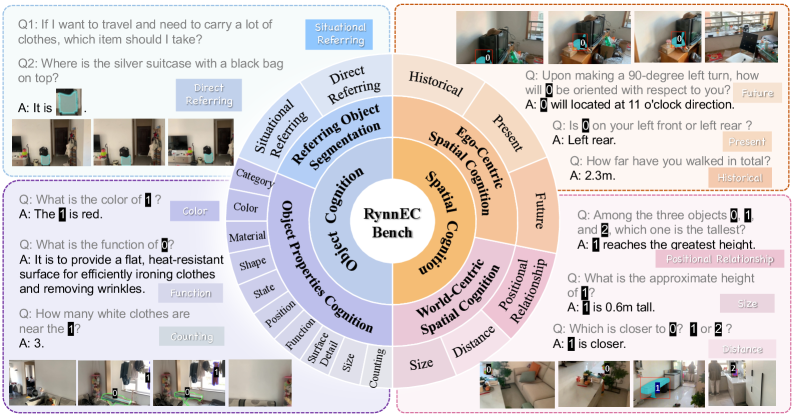

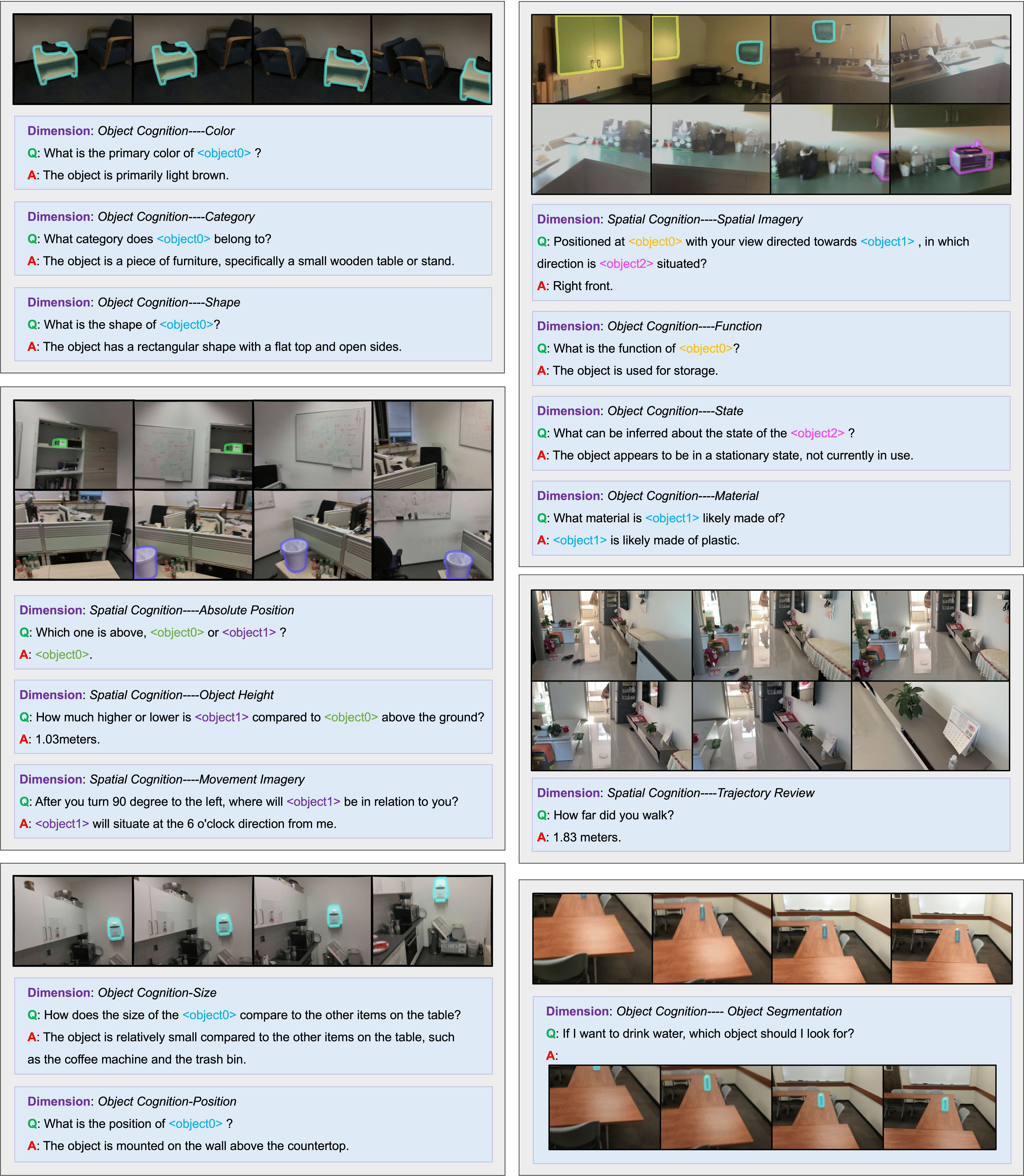

Figure 3: Overview of embodied cognition dimensions in RynnEC-Bench. RynnEC-Bench includes two subsets: object cognition and spatial cognition, evaluating a total of 22 embodied cognitive abilities.

As this work is the first to propose a comprehensive set of fine-grained embodied video tasks, a robust evaluation framework for assessing MLLMs’ overall capabilities in this domain is currently lacking. To address this, we propose RynnEC-Bench, which evaluates fine-grained embodied understanding models from the perspectives of object cognition and spatial cognition in open-world scenarios. Fig. 3 provides a detailed illustration of the capability taxonomy in RynnEC-Bench.

3.2.1 Capability Taxonomy

Object cognition is divided into two tasks: object properties cognition and referring object segmentation. During embodied task execution, robots often require a clear understanding of key objects’ functions, locations, quantities, surface details, relationships with the surrounding environment, etc. Accordingly, the object properties recognition tasks comprehensively and meticulously construct questions in these aspects. In the processes of robotic manipulation and navigation, identifying operation instances and target instances is an essential step. Precise instance segmentation in videos serves as the best approach to indicate the positions of these key objects. Specifically, the referring object segmentation task is categorized into direct referring problems and situational referring problems. Direct referring problems involve only combinations of descriptions for the instance, while situational referring problems are set within a scenario, requiring MLLMs to perform reasoning in order to identify the target object.

Spatial cognition requires MLLMs to derive a 3D spatial awareness from egocentric video. We categorize it into ego-centric and world-centric spatial cognition. Ego-centric spatial cognition maintains awareness of agent-environment spatial relations and supports spatial reasoning and mental simulation; by temporal scope, we consider past, present, and future cases. World-centric spatial cognition focuses on understanding the 3D layout and scale of the physical world, which we further evaluate in terms of size, distance, and positional relations.

3.2.2 Data Balance

The videos in RynnEC-Bench are collected from ten houses that do not overlap with those in the training set. When evaluating object cognition, we observe substantial variation in object-category distributions across houses, making results highly sensitive to which houses are sampled. To mitigate this bias and better reflect real-world deployment, we introduce a physical-world-based evaluation protocol. We first define a taxonomy of 12 coarse and 119 fine-grained indoor object categories. Using GPT-4o, we then estimate an empirical category-frequency distribution by parsing 500,000 indoor images from 39,000 houses; given the scale, this serves as a close approximation to real-world indoor object frequencies. Finally, we perform frequency-proportional sampling so that the object-category distribution in RynnEC-Bench closely matches the empirical distribution, enabling a more objective and realistic evaluation. Specifically, counting questions with answers of 1 or 2 are reduced by 50% to achieve a more balanced difficulty distribution. All QA pairs in RynnEC-Bench are further subjected to meticulous human screening to ensure high quality. Additional implementation details are available in Appendix B.

3.2.3 Evaluation Framework

The questions are categorized into three types based on the nature of their answers: numerical questions, textual questions, and segmentation questions. For numerical questions such as distance estimation and direction estimation, we directly use the formula to calculate the precise indicators. For scale-related questions, Mean Relative Accuracy (MRA) Yang et al. [2025c], Everingham et al. [2010] is used to calculate the scores. Specifically, given a model’s prediction $\hat{y}$ , ground truth $y$ , and a confidence threshold $\theta$ , relative accuracy is calculated by considering $\hat{y}$ correct if the relative error rate, defined as $|\hat{y}-y|/y$ , is less than $1-\theta$ . As single-confidence-threshold accuracy only considers relative error within a narrow scope, the MRA averages the relative accuracy across a range of confidence thresholds $\mathcal{C}=\{0.5,\,0.55,\,...,\,0.95\}$ :

$$

MRA=\frac{1}{|\mathcal{C}|}\sum_{\theta\in\mathcal{C}}\mathbb{I}\Bigg(\frac{|\hat{y}-y|}{y}<1-\theta\Bigg) \tag{1}

$$

where $\mathbb{I}(·)$ is the indicator function. For angle-related questions, MRA is not suitable due to the cyclic nature of angular measurements. We therefore designed a rotational accuracy metric (RoA).

$$

RoA=1-min\Bigg(\frac{min(|\widehat{y}-y|,360-|\widehat{y}-y|)}{90},1\Bigg) \tag{2}

$$

RoA assigns a score only when the angular difference is less than 90 degrees, ensuring consistency in task difficulty across different settings.

Textual questions are further categorized into close-ended and open-ended questions. For the close-ended part, we prompt GPT-4o to assign a straightforward binary score of either 0 or 1. For the open-ended part, answers are scored by GPT-4o on a scale from 0 to 1 in increments of 0.2. This question-type-adaptive evaluation approach enables the metrics of RynnEC-Bench to be both precise and consistent.

For segmentation evaluation, prior work Yuan et al. [2025a], Yan et al. [2024] typically reports the $\mathcal{J}\&\mathcal{F}$ measure, combining region-overlap ( $\mathcal{J}$ ) and boundary-accuracy ( $\mathcal{F}$ ) scores. However, the conventional frame-averaged $\mathcal{J}\&\mathcal{F}$ treats empty frames (i.e., frames with no ground-truth mask) in a binary manner: if any predicted mask appears, the frame score is set to 0; otherwise it is set to 1. This evaluation method fails to account for the actual size of erroneous masks in empty frames, which can have a significant impact on embodied segmentation tasks. To address this, we propose the Global IoU metric, defined as

$$

\overline{\mathcal{J}}=\frac{\sum_{i=1}^{N}|\mathcal{S}_{i}\cap\mathcal{G}_{i}|}{\sum_{i=1}^{N}|\mathcal{S}_{i}\cup\mathcal{G}_{i}|}, \tag{3}

$$

where $N$ is the total number of video frames, $\mathcal{S}_{i}$ denotes the predicted segmentation mask for frame $i$ , and $\mathcal{G}_{i}$ denotes the ground truth mask for frame $i$ . For the boundary accuracy metric $\overline{\mathcal{F}}$ , we compute the average only over non-empty frames. The mean of $\overline{\mathcal{J}}$ and $\overline{\mathcal{F}}$ , denoted as $\overline{\mathcal{J}}\&\overline{\mathcal{F}}$ , provides an accurate reflection of segmentation quality, especially in egocentric videos where the target object appears in relatively few frames.

<details>

<summary>x4.png Details</summary>

### Visual Description

\n

## Diagram: Multimodal LLM Pipeline Stages

### Overview

The image depicts a four-stage pipeline for a multimodal Large Language Model (LLM), showcasing the progression from basic image understanding to complex referring segmentation. Each stage builds upon the previous one, incorporating different components and achieving increasingly sophisticated tasks. The diagram is arranged horizontally, with each stage presented as a distinct block.

### Components/Axes

The diagram consists of four stages labeled "Stage 1", "Stage 2", "Stage 3", and "Stage 4". Each stage has two main sections: an image/question-answer pair at the top and a component diagram at the bottom. The component diagram consistently includes "Vision Encoder", "Region Encoder", and "LLM" (Large Language Model). Stage 4 additionally includes "LoRA" and "Mask Decoder". Icons at the very bottom represent data sources (images, text).

### Detailed Analysis or Content Details

**Stage 1: Mask Alignment**

* **Image:** Shows a kitchen scene with a black kettle on a table.

* **Caption:** "A single black kettle on the table."

* **Components:** Vision Encoder -> Region Encoder -> LLM.

**Stage 2: Object Understanding**

* **Image:** Shows a dining room scene with a table set for a meal.

* **Q:** "What is the purpose of <objects>?"

* **A:** "It enhances flavor, adding umami and richness to dishes."

* **Components:** Vision Encoder -> Region Encoder -> LLM.

**Stage 3: Spatial Understanding**

* **Image:** Shows a room with furniture and a doorway.

* **Q:** "What is the distance of <objects> and <objects>?"

* **A:** "0.7m."

* **Components:** Vision Encoder -> Region Encoder -> LLM.

**Stage 4: Referring Segmentation**

* **Image:** Shows a person holding a brown teddy bear on a table.

* **Q:** "Can you segment the brown teddy bear located on the table in this video?"

* **A:** "Sure, [566]."

* **Components:** Vision Encoder -> Region Encoder -> LLM + LoRA -> Mask Decoder.

The "LLM" component is consistently highlighted with a flame icon. The "Vision Encoder" and "Region Encoder" components also have distinct icons.

### Key Observations

* The complexity of the pipeline increases with each stage. Stage 4 introduces "LoRA" and "Mask Decoder", indicating a more specialized task.

* The questions posed in each stage become progressively more complex, requiring deeper understanding of the image content.

* The consistent presence of "Vision Encoder", "Region Encoder", and "LLM" suggests a core architecture that is augmented with additional components as needed.

* The inclusion of a numerical value "[566]" in Stage 4's answer suggests a segmentation mask or identifier.

### Interpretation

This diagram illustrates a progressive approach to multimodal LLM development. It starts with basic image recognition (mask alignment) and gradually builds towards more sophisticated tasks like object understanding, spatial reasoning, and finally, referring segmentation. The addition of LoRA in Stage 4 suggests a fine-tuning approach to specialize the LLM for the segmentation task. The pipeline demonstrates how different components work together to enable the LLM to "see" and understand images, and then respond to complex queries about them. The flame icon on the LLM component likely signifies its computational intensity or central role in the process. The diagram highlights the increasing complexity of the tasks and the corresponding increase in the number of components required to achieve them. The numerical output in Stage 4 suggests the model is capable of generating precise segmentation masks. This pipeline represents a significant step towards creating AI systems that can interact with the world in a more natural and intuitive way.

</details>

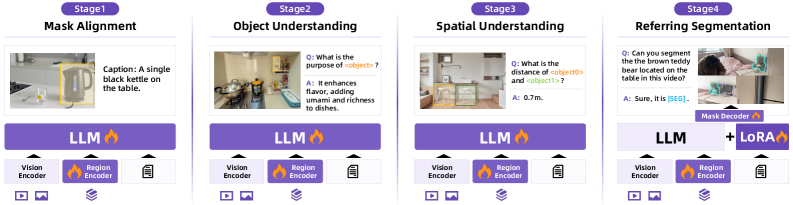

Figure 4: Training paradigm of RynnEC. The model is trained in four progressive stages: 1) Mask Alignment, 2) Object Understanding, 3) Spatial Understanding, and 4) Referring Segmentation.

3.3 RynnEC Architecture

RynnEC consists of three core components: the foundational vision-language model for basic multimodal comprehension, a region-aware encoder for fine-grained object-centric representation learning, an adaptive mask decoder for video segmentation tasks. Notably, the latter two modules are designed as plug-and-play components with independent parameter spaces, ensuring architectural flexibility and modular extensibility.

Foundational Vision-Language Model. We ultilize VideoLLaMA3-Image Zhang et al. [2025a] as the foundational vision-language model for RynnEC, which consists of three main modules: a Vision Encoder, the Projector and the Large Language Model (LLM). For the vision encoder, we use VL3-SigLIP-NaViT Zhang et al. [2025a], which leverages an any-resolution vision tokenization strategy to flexibly encode images of varying resolutions. As the LLM, we employ Qwen2.5-1.5B-Instruct Yang et al. [2024] and Qwen2.5-7B-Instruct Yang et al. [2024], enabling scalable trade-offs between performance and computational cost.

Region Encoder. Egocentric videos often feature cluttered scenes with similar objects that are difficult to distinguish using linguistic cues alone. To address this, we introduce a dedicated object encoder for specific object representation. This facilitates more precise cross-modal alignment during training and enables intuitive, fine-grained user interaction at inference time. Following Yuan et al. [2024, 2025c], we use a simple yet efficient MaskPooling for object tokenization, followed by a two-layer projector to align object features with LLM embedding space. During training, object masks spanning multiple frames in a video are utilized to achieve accurate representations. At inference, the encoder offers flexibility, operating effectively with either single-frame or multi-frame object masks.

Mask Decoder. Accurate object localization is critical for egocentric video understanding. To incorporate robust visual grounding capabilities without degrading the model’s pretrained performance, we fine-tune the LLM with LoRA. Our mask decoder is based on the architecture of SAM2 Ravi et al. [2024], which has demonstrated strong generalization capabilities and prior knowledge in purely visual segmentation tasks. For a given video and the instruction, we adpot a [SEG] token as a specifical token to trigger mask generation for the corresponding visual region. To facilitate this process, an additional linear layer is introduced to align the [SEG] token with SAM2’s feature space.

3.4 Training and Inference

As illustrated in Fig. 4, RynnEC is trained using a progressive four-stage pipeline: 1) Mask Alignment, 2) Object Understanding, 3) Spatial Understanding, and 4) Referring Segmentation. The first three stages are designed to incrementally enhance fine-grained, object-centric understanding, while the final stage focuses on equipping the model with precise object-level segmentation capabilities. This curriculum-based approach ensures gradual integration of visual, spatial, and grounding knowledge without overfitting to a single task. The datasets used in each stage are summarized in Tab. 1. The details of each training stage are as follows:

1) Mask Alignment. The goal of this initial stage is to encourage the model to attend to region-specific tokens rather than relying solely on global visual features. We fine-tune both the region encoder and the LLM on a large-scale object-level captioning dataset, where each caption is explicitly aligned with a specific object mask. This alignment training conditions the model to associate object-centric embeddings with corresponding linguistic descriptions, laying the foundation for localized reasoning in later stages.

2) Object Understanding. In this stage, the focus shifts to enriching the model’s egocentric object knowledge, encompassing attributes such as color, shape, material, size, and functional properties. The region encoder and the LLM are jointly fine-tuned to integrate this object-level information more effectively into the cross-modal embedding space. This stage is the basic for spatial understanding.

3) Spatial Understanding. Building on the previous stage, this phase equips the model with spatial reasoning abilities, enabling it to understand and reason about the relative positions and configurations of objects within a scene. We use a large amount of spatial QA we generated and the previous stage data as well as general VQA to maintain the ability to follow instructions.

4) Referring Segmentation. In the final stage, we integrate the Mask Decoder module after the LLM to endow the model with fine-grained referring segmentation capabilities. The LLM is fine-tuned via LoRA to minimize interference with its pretrained reasoning abilities. The training data includes not only segmentation-specific datasets but also samples from earlier stages to mitigate catastrophic forgetting. This multi-task mixture ensures that segmentation performance is improved without sacrificing the model’s object and spatial understanding.

Table 1: Datasets used at four training stages. IM and OM indicate whether the task involves the input mask and output mask, respectively.

| Training Stage | Task | IM | OM | # Samples | Datasets |

| --- | --- | --- | --- | --- | --- |

| Mask Alignment (Stage-1) | General Mask Captioning | ✓ | ✗ | 1.17M | RefCOCO Yu et al. [2016], Mao et al. [2016], VideoRefer-Caption Yuan et al. [2025c], DAM Lian et al. [2025], Osprey-Caption Yuan et al. [2024], MDVP-Data Lin et al. [2024], HC-STVG Tang et al. [2021] |

| Scene Instance Captioning | ✓ | ✗ | 0.14M | RynnEC-Caption | |

| Object Understanding (Stage-2) | Basic Properties QA | ✓ | ✗ | 1.49M | RynnEC-Object |

| Object-Centric Counting | ✓ | ✗ | 0.25M | RynnEC-Counting | |

| Spatial Understanding (Stage-3) | Our Stage-2 | ✓ | ✗ | 0.30M | RynnEC-Object, RynnEC-Counting |

| Spatial QA | ✓ | ✗ | 0.60M | RynnEC-Spatial (Image), RynnEC-Spatial (Video) | |

| ✗ | ✗ | 0.54M | VLM-3R-Data Fan et al. [2025] | | |

| General VQA | ✗ | ✗ | 0.74M | LLaVA-OV-SI Li et al. [2024a], LLaVA-Video Zhang et al. [2024e], ShareGPT-4o-video Chen et al. [2024b], VideoGPT-plus Maaz et al. [2024], FineVideo Farré et al. [2024], CinePile Rawal et al. [2024], ActivityNet Caba Heilbron et al. [2015], YouCook2 Zhou et al. [2018], LLaVA-SFT Liu et al. [2023] | |

| Referring Segmentation (Stage-4) | Our Stage-2 & Stage-3 | ✓ | ✗ | 0.60M | RynnEC-Object, RynnEC-Counting, RynnEC-Spatial |

| General Segmentation | ✗ | ✓ | 0.32M | ADE20K Zhou et al. [2017], COCOStuff Caesar et al. [2018], Mapillary Neuhold et al. [2017], PACO-LVIS Ramanathan et al. [2023], PASCAL-Part Chen et al. [2014] | |

| Embodied Segmentation | ✗ | ✓ | 0.31M | RynnEC-Segmentation | |

| General VQA | ✗ | ✗ | 0.80M | LLaVA-OV-SI Li et al. [2024a], LLaVA-Video Zhang et al. [2024e], ShareGPT-4o-video Chen et al. [2024b], VideoGPT-plus Maaz et al. [2024], FineVideo Farré et al. [2024], CinePile Rawal et al. [2024], ActivityNet Caba Heilbron et al. [2015], YouCook2 Zhou et al. [2018], LLaVA-SFT Liu et al. [2023] | |

4 Experiments

Table 2: Main evaluation results on RynnEC-Bench. We evaluate in two major categories: Object Cognition and Spatial Cognition. DR and SR represent Direct Referring and Situational Referring. PR represents Positional Relationship.

| Model | Overall Mean | Object Cognition | Spatial Cognition | | | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Object Properties | Segmentation | Mean | Ego-Centric | World-Centric | Mean | | | | | | | |

| DR | SR | His. | Pres. | Fut. | Size | Dis. | PR | | | | | |

| Proprietary Generalist MLLMs | | | | | | | | | | | | |

| GPT-4o OpenAI et al. [2024] | 28.3 | 41.1 | — | — | 33.9 | 13.4 | 22.8 | 6.0 | 24.3 | 16.7 | 36.1 | 22.2 |

| GPT-4.1 OpenAI et al. [2024] | 33.5 | 45.9 | — | — | 37.8 | 17.2 | 27.6 | 6.1 | 35.9 | 30.4 | 45.7 | 28.8 |

| Seed1.5-VL Guo et al. [2025] | 34.7 | 52.1 | — | — | 42.8 | 8.2 | 27.7 | 4.3 | 32.9 | 19.1 | 27.9 | 26.1 |

| Genimi-2.5 Pro Comanici et al. [2025] | 45.5 | 64.0 | — | — | 52.7 | 9.3 | 36.7 | 8.1 | 47.0 | 29.9 | 69.3 | 37.8 |

| Open-source Generalist MLLMs | | | | | | | | | | | | |

| VideoLLaMA3-7B Zhang et al. [2025a] | 27.3 | 36.7 | — | — | 30.2 | 5.1 | 26.8 | 1.2 | 30.0 | 19.0 | 34.9 | 24.1 |

| InternVL3-78B Zhu et al. [2025] | 29.0 | 45.3 | — | — | 37.3 | 9.0 | 31.8 | 2.2 | 10.9 | 30.9 | 26.0 | 20.0 |

| Qwen2.5-VL-72B Bai et al. [2025] | 36.4 | 54.2 | — | — | 44.7 | 11.3 | 24.8 | 7.2 | 27.2 | 22.9 | 83.7 | 27.4 |

| Open-source Object-Level MLLMs | | | | | | | | | | | | |

| DAM-3B Lian et al. [2025] | 15.6 | 22.2 | — | — | 18.3 | 2.8 | 14.1 | 1.3 | 28.7 | 6.1 | 18.3 | 12.6 |

| VideoRefer-VL3-7B Yuan et al. [2025c] | 32.9 | 44.1 | — | — | 36.3 | 5.8 | 29.0 | 6.1 | 38.1 | 30.7 | 28.8 | 29.3 |

| Referring Video Object Segmentation MLLMs | | | | | | | | | | | | |

| Sa2VA-4B Yuan et al. [2025a] | 4.9 | 5.9 | 35.3 | 14.8 | 9.4 | 0.0 | 0.0 | 1.3 | 0.0 | 0.0 | 0.0 | 0.0 |

| VideoGlaMM-4B Munasinghe et al. [2025] | 9.0 | 16.4 | 5.8 | 4.2 | 14.4 | 4.1 | 4.7 | 1.4 | 0.8 | 0.0 | 0.3 | 3.2 |

| RGA3-7B Wang et al. [2025] | 10.5 | 15.2 | 32.8 | 23.4 | 17.5 | 0.0 | 5.5 | 6.1 | 1.2 | 0.9 | 0.0 | 3.0 |

| Open-source Embodied MLLMs | | | | | | | | | | | | |

| RoboBrain-2.0-32B Team et al. [2025] | 24.2 | 25.1 | — | — | 20.7 | 8.8 | 34.1 | 0.2 | 37.2 | 30.4 | 3.6 | 28.0 |

| RynnEC-2B | 54.4 | 59.3 | 46.2 | 36.9 | 56.3 | 30.1 | 47.2 | 23.8 | 67.4 | 31.2 | 85.8 | 52.3 |

| RynnEC-7B | 56.2 | 61.4 | 45.3 | 36.1 | 57.8 | 40.9 | 50.2 | 22.3 | 67.1 | 39.2 | 89.7 | 54.5 |

4.1 Implementation Details

4.1.1 Training

In this part, we briefly introduce the implementation details of each training stage. For all stages, we adopt the cosine learning rate scheduler. The warm up ratio of the learning rate is set as 0.03. The maximum token length is set to 16384, while the maximum token length for vision tokens is set to 8192. In Stage 1, both the vision encoder and the LLM are initialized with pretrained weights from VideoLLaMA3-Image. During this stage, we train the LLM, the projector, and the region encoder, using learning rates of $1× 10^{-5}$ , $1× 10^{-5}$ , and $4× 10^{-5}$ , respectively. In Stages 2 and 3, the learning rates for the LLM, projector, and region encoder are adjusted to $4× 10^{-5}$ , $1× 10^{-5}$ , and $1× 10^{-5}$ , respectively. In the final stage, the LLM is fine-tuned using LoRA with the same learning rates as in Stage 3. The learning rate of Mask Decoder is set to $4× 10^{-5}$ .

4.1.2 Evaluation

We present a comprehensive evaluation of five MLLM categories on RynnEC-Bench, including both general-purpose models and those fine-tuned for region-level understanding and segmentation. For models that do not accept direct region-based inputs, we uniformly highlight target objects using bounding boxes in the video. Multiple objects are distinguished by different colored boxes, which are referenced in the question prompt. We observe that general-purpose MLLMs are incapable of localizing objects in videos; thus, only specialist models fine-tuned for this ability are evaluated on the RynnEC-Bench segmentation subset. To ensure a consistent evaluation protocol, videos are sampled at 1 fps up to a maximum of 30 frames. If the initial sampling exceeds the 30-frame limit, these target-containing frames are kept, and the remaining frames are selected via uniform sampling from the rest of the video.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Radar Charts: Object and Spatial Cognition Performance

### Overview

The image presents two radar charts, labeled (a) Object Cognition and (b) Spatial Cognition. Each chart displays the performance of six different models – Gemini-2.5-Pro, Qwen2.5-VL-72B, VideoRefer-VL3-7B, RoboBrain-2.0-32B, RGA3-7B, and RynnEC-7B (labeled as "Ours") – across various cognitive attributes. The charts use a radial scale to represent performance, with higher values indicating better performance.

### Components/Axes

Each radar chart has the following components:

* **Center:** Represents the minimum performance value (presumably 0).

* **Radial Axes:** Represent different cognitive attributes.

* **Legend:** Located at the top of the image, associating colors with each model.

* **Labels:** Each radial axis is labeled with a specific cognitive attribute.

**Object Cognition (a):**

* Attributes: Situational Seg., Direct Seg., Counting, Size, Surface, Function, Position, State, Shape, Material, Color, Category.

* Scale: The radial axes extend to a maximum value of approximately 70.

**Spatial Cognition (b):**

* Attributes: Trajectory Review, Relative Position, Absolute Position, Object Distance, Object Size, Object Height, Spatial Imagery, Movement Imagery, Egocentric Distance, Egocentric Direction.

* Scale: The radial axes extend to a maximum value of approximately 80.

**Legend:**

* Gemini-2.5-Pro: Orange

* Qwen2.5-VL-72B: Light Blue

* VideoRefer-VL3-7B: Green

* RoboBrain-2.0-32B: Red

* RGA3-7B: Purple

* RynnEC-7B (Ours): Dark Blue

### Detailed Analysis or Content Details

**Object Cognition (a):**

* **Gemini-2.5-Pro (Orange):** Shows relatively high performance in Category (approx. 60), Color (approx. 60), and Material (approx. 68). Performance dips significantly in Counting (approx. 11) and Direct Seg. (approx. 45). The line generally fluctuates, indicating varying performance across attributes.

* **Qwen2.5-VL-72B (Light Blue):** Exhibits moderate performance across most attributes, with a peak in Color (approx. 56) and a low point in Counting (approx. 14). The line is relatively smooth.

* **VideoRefer-VL3-7B (Green):** Shows a peak in Material (approx. 66) and a low point in Counting (approx. 26). The line is somewhat erratic.

* **RoboBrain-2.0-32B (Red):** Demonstrates relatively low performance across all attributes, with a peak in Color (approx. 38) and a low point in Counting (approx. 7).

* **RGA3-7B (Purple):** Shows moderate performance, peaking in Category (approx. 58) and dipping in Counting (approx. 16).

* **RynnEC-7B (Dark Blue):** Displays a relatively consistent performance across attributes, peaking in Category (approx. 62) and dipping in Counting (approx. 22).

**Spatial Cognition (b):**

* **Gemini-2.5-Pro (Orange):** Shows high performance in Trajectory Review (approx. 77) and Relative Position (approx. 41). Performance is lower in Object Height (approx. 21) and Movement Imagery (approx. 15).

* **Qwen2.5-VL-72B (Light Blue):** Exhibits moderate performance, peaking in Trajectory Review (approx. 40) and dipping in Movement Imagery (approx. 13).

* **VideoRefer-VL3-7B (Green):** Shows a peak in Relative Position (approx. 40) and a low point in Object Height (approx. 22).

* **RoboBrain-2.0-32B (Red):** Demonstrates relatively low performance across all attributes, peaking in Object Distance (approx. 30) and dipping in Movement Imagery (approx. 6).

* **RGA3-7B (Purple):** Shows moderate performance, peaking in Trajectory Review (approx. 41) and dipping in Object Height (approx. 21).

* **RynnEC-7B (Dark Blue):** Displays a relatively consistent performance across attributes, peaking in Trajectory Review (approx. 46) and dipping in Movement Imagery (approx. 15).

### Key Observations

* **Counting is a consistent weakness:** Across both charts, all models exhibit the lowest performance in the "Counting" attribute (Object Cognition) and "Movement Imagery" (Spatial Cognition).

* **Gemini-2.5-Pro and RynnEC-7B generally perform well:** These models consistently show higher values across most attributes in both charts.

* **RoboBrain-2.0-32B consistently underperforms:** This model exhibits the lowest values across most attributes in both charts.

* **Trajectory Review is a strength:** In the Spatial Cognition chart, several models (Gemini-2.5-Pro, RGA3-7B, and RynnEC-7B) demonstrate relatively high performance in "Trajectory Review."

### Interpretation

The radar charts provide a comparative analysis of six models' cognitive abilities in object and spatial reasoning. The data suggests that while all models possess some level of competence in these areas, significant performance variations exist. The consistent weakness in "Counting" and "Movement Imagery" across all models indicates a potential area for improvement in current AI architectures. Gemini-2.5-Pro and RynnEC-7B appear to be the most well-rounded performers, while RoboBrain-2.0-32B lags behind.

The separation of object and spatial cognition into distinct charts allows for a focused evaluation of each domain. The higher performance in "Trajectory Review" for several models in the Spatial Cognition chart suggests a stronger ability to understand and predict motion. The charts highlight the complex interplay of different cognitive attributes and provide valuable insights into the strengths and weaknesses of each model. The "Ours" model (RynnEC-7B) appears to be competitive, particularly in Object Cognition, but further investigation is needed to understand the specific architectural features contributing to its performance. The charts are useful for identifying areas where further research and development are needed to improve AI's cognitive capabilities.

</details>

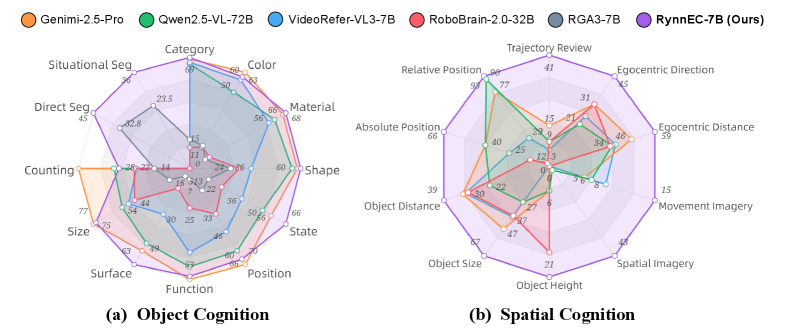

Figure 5: More granular assessments of object cognition and spatial cognition. We compare the best-performing MLLM from each category with our RynnEC-7B.

4.2 Embodied Cognition Evaluation

4.2.1 Main Results

Tab. 2 presents the evaluation results of our RynnEC model and five categories of related MLLMs on the RynnEC-Bench. Although the RynnEC model contains only 7B parameters, it demonstrates robust embodied cognitive abilities, outperforming even the most advanced proprietary model, Gemini-2.5 Pro Comanici et al. [2025], by 10.7 points. Moreover, RynnEC achieves both balanced and superior performance across various tasks. For object cognition, RynnEC achieved a score of 61.4 and possesses the ability to both understand and segment objects. In terms of spatial cognition, RynnEC achieves a score of 54.5, which is 44.2% higher than that of Gemini-2.5 Pro. To support resource-constrained settings, we present a 2B-parameter RynnEC that delivers markedly lower inference latency while maintaining near-parity performance ( $<2$ percentage points drop), enabling on-device deployment for embodied applications. In the following sections, we will introduce the performance of different types of MLLMs on RynnEC-Bench in detail.

Proprietary Generalist MLLMs

Among the four leading proprietary generalist MLLMs evaluated, Gemini-2.5 Pro establishes a clear lead with an overall score of 45.5. This represents a substantial performance margin of 25% over the best open-source generalist MLLM and 38.3% over the premier open-source object-level MLLM. Even more notably, it achieves a remarkable score of 37.8 in the notoriously difficult domain of spatial cognition. This finding provides compelling evidence that spatial awareness can emerge as a byproduct of extensive training on video comprehension tasks.

Open-source Generalist MLLMs

Qwen2.5-VL-72B Bai et al. [2025] exhibits outstanding performance, achieving a score of 36.4 and surpassing GPT-4.1 OpenAI et al. [2024]. This suggests that, in specialized capabilities such as embodied cognition, the gap between open-source and proprietary MLLMs has been significantly narrowed. Furthermore, we observe that Qwen2.5-VL and InternVL3 Zhu et al. [2025] demonstrate superior performance in positional relationship (PR) and distance perception tasks, respectively, even outperforming Gemini-2.5 Pro. Such pronounced differences in various aspects of spatial cognition may be attributed to the distribution of training data.

Open-source Object-Level MLLMs

These MLLMs are capable of accepting region masks as input, enabling more direct localization of target objects and facilitating finer-grained object perception. VideoRefer-VL3-7B Yuan et al. [2025c] is a model fine-tuned from the base model VideoLLaMA3-7B Zhang et al. [2025a]. As shown in Tab. 2, VideoRefer-VL3-7B consistently outperforms VideoLLaMA3-7B in both object cognition and spatial cognition tasks. This demonstrates that, in embodied scenarios, integrating mask understanding within the model is superior to explicit visual prompting.

Referring Video Object Segmentation MLLMs

Recently, several studies have applied MLLMs to object segmentation tasks while retaining the original multimodal understanding capabilities of MLLMs. However, the best-performing model, RGA3-7B Wang et al. [2025], achieves only 15.2 points on the object properties task. Although these MLLMs can still address some general video understanding tasks, their task generalization ability is significantly diminished following segmentation training. In contrast, our RynnEC model, which is specifically designed for embodied scenarios, maintains strong object and spatial understanding capabilities even after segmentation training.

Open-source Embodied MLLMs

With the growing demand for highly generalizable cognitive abilities in the field of embodied intelligence, a number of studies have begun to develop MLLMs specifically tailored for embodied scenarios. A representative model is RoboBrain-2.0 Team et al. [2025], which achieves 24.2 even worse than general-purpose video models such as VideoLLaMA3-7B. There are two primary reasons for this: (1) Loss of object cognition: Embodied MLLMs typically emphasize spatial perception and task planning abilities, but tend to overlook the importance of detailed object understanding. (2) Lack of fine-grained perceptual capability: In egocentric videos, RoboBrain-2.0 demonstrates limited ability to interpret region-level features.

4.2.2 Object Cognition

Fig. 5 (a) presents a more comprehensive evaluation of RynnEC’s capability in object properties cognition from multiple dimensions. Since most object properties cognition abilities are encompassed by general video understanding skills, Gemini-2.5-Pro exhibits superior performance across various competencies. However, due to the high edge deployment requirements of embodied MLLMs, the inference speed of these large-scale models becomes a bottleneck. With only 7B parameters, RynnEC achieves object properties cognition comparable to that of Gemini-2.5-Pro in most categories. Notably, for attributes such as surface details, object state, and object shape, RynnEC-2B even surpasses all other MLLMs. Moreover, most MLLMs lack video object segmentation capabilities, whereas dedicated segmentation MLLMs often sacrifice understanding abilities. RynnEC, while maintaining strong comprehension capabilities, achieves 30.9% and 57.7% improvements over state-of-the-art segmentation MLLMs in direct referring and situational referring object segmentation tasks, respectively.

4.2.3 Spatial Cognition

Fig. 5 (b) demonstrates RynnEC’s spatial cognition capabilities through more fine-grained tasks. As spatial abilities have not been formally defined or systematically explored in previous work, different MLLMs only exhibit strengths in a limited set of specific skills. Overall, spatial cognition abilities such as Spatial Imagery, Movement Imagery, and Trajectory Review are typically absent in prior MLLMs. In contrast, RynnEC possesses a more comprehensive set of spatial abilities, which can facilitate embodied agents in developing spatial awareness within complex environments.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Chart: Performance Comparison of VideoLLaMA3-7B and RynnEC-7B

### Overview

This bar chart compares the performance scores of two models, VideoLLaMA3-7B and RynnEC-7B, across several categories related to spatial reasoning and scene understanding. The chart displays the average score for each model on each category.

### Components/Axes

* **X-axis:** Categories - "Abs. Dist.", "Route Plan", "Rel. Dir. Hard", "Rel. Dist.", "Rel. Dir. Medium", "Rel. Dir. Easy", "Obj. Count", "Obj. Size", "Room Size", "Appear. Order".

* **Y-axis:** Score - ranging from 0 to 70.

* **Models:**

* VideoLLaMA3-7B (represented by a light purple color) with an average score of 35.8.

* RynnEC-7B (represented by a dark purple color) with an average score of 45.8.

* **Legend:** Located at the top of the chart, clearly indicating the color correspondence for each model.

### Detailed Analysis

The chart consists of 10 pairs of bars, one for each model per category. The values are as follows:

* **Abs. Dist. (Absolute Distance):** VideoLLaMA3-7B: 23.5, RynnEC-7B: 25.4

* **Route Plan:** VideoLLaMA3-7B: 25.4, RynnEC-7B: 32.0

* **Rel. Dir. Hard (Relative Direction - Hard):** VideoLLaMA3-7B: 38.7, RynnEC-7B: 30.0

* **Rel. Dist. (Relative Distance):** VideoLLaMA3-7B: 42.9, RynnEC-7B: 39.4

* **Rel. Dir. Medium (Relative Direction - Medium):** VideoLLaMA3-7B: 44.2, RynnEC-7B: 46.3

* **Rel. Dir. Easy (Relative Direction - Easy):** VideoLLaMA3-7B: 51.9, RynnEC-7B: 45.2

* **Obj. Count (Object Count):** VideoLLaMA3-7B: 53.5, RynnEC-7B: 41.9

* **Obj. Size (Object Size):** VideoLLaMA3-7B: 58.5, RynnEC-7B: 42.2

* **Room Size:** VideoLLaMA3-7B: 54.9, RynnEC-7B: 27.1

* **Appear. Order (Appearance Order):** VideoLLaMA3-7B: 42.7, RynnEC-7B: 31.4

**Trends:**

* RynnEC-7B generally outperforms VideoLLaMA3-7B across most categories.

* The performance gap between the two models is most significant in "Obj. Count", "Obj. Size", and "Room Size".

* VideoLLaMA3-7B performs better than RynnEC-7B in "Rel. Dir. Hard".

### Key Observations

* RynnEC-7B consistently achieves higher scores, indicating superior performance overall.

* The largest performance difference is observed in "Room Size", where RynnEC-7B scores significantly lower than VideoLLaMA3-7B. This could indicate a weakness in RynnEC-7B's ability to estimate or understand room dimensions.

* The "Rel. Dir. Hard" category is an outlier, where VideoLLaMA3-7B outperforms RynnEC-7B.

### Interpretation

The data suggests that RynnEC-7B is a more robust model for spatial reasoning and scene understanding tasks, as evidenced by its consistently higher scores across most categories. The models are being evaluated on their ability to understand spatial relationships, object properties, and scene layouts. The significant difference in performance on "Obj. Count", "Obj. Size", and "Room Size" suggests that RynnEC-7B may have a better understanding of object characteristics and spatial configurations. The outlier in "Rel. Dir. Hard" could indicate a specific strength of VideoLLaMA3-7B in handling complex directional reasoning or a weakness of RynnEC-7B in this particular area. The average scores provided at the top of the legend (35.8 and 45.8) confirm the overall trend of RynnEC-7B outperforming VideoLLaMA3-7B. The chart provides a clear quantitative comparison of the two models' capabilities, highlighting their strengths and weaknesses in different aspects of spatial understanding.

</details>

| Models | VSI-Bench |

| --- | --- |

| Qwen2.5-VL-7B Bai et al. [2025] | 35.9 |

| InternVL3-8B Zhu et al. [2025] | 42.1 |

| GPT-4o OpenAI et al. [2024] | 43.6 |

| Magma-8B Yang et al. [2025b] | 12.7 |

| Cosmos-Reason1-7B Azzolini et al. [2025] | 25.6 |

| VeBrain-8B Luo et al. [2025] | 26.3 |

| RoboBrain-7B-1.0 Ji et al. [2025] | 31.1 |

| RoboBrain-7B-2.0 Team et al. [2025] | 36.1 |

| M2-Reasoning-7B AI et al. [2025] | 42.3 |

| ViLaSR Wu et al. [2025b] | 45.4 |

| RynnEC-7B | 45.8 |

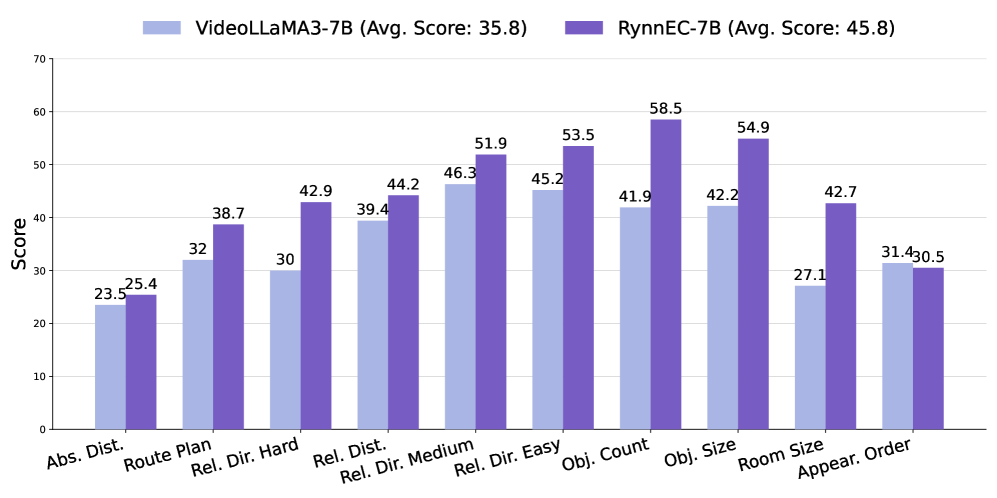

Figure 6: Performance on VSI-Bench Yang et al. [2025c]. Left: per-subtask comparison with VideoLLaMA3, the base model of our RynnEC. Right: overall comparison with generalist MLLMs and embodied MLLMs without explicit 3D encoding.

4.3 Generalization and Scalability

To investigate the generalizability of RynnEC, we conduct experiments on VSI-Bench Yang et al. [2025c], a purely textual spatial intelligence benchmark. As shown in Fig. 6, RynnEC-7B consistently surpasses VideoLLaMA3-7B across almost all capability dimensions. Notably, RynnEC is trained with a mask-centric spatial awareness paradigm, whereas all tasks in VSI-Bench involve purely textual spatial reasoning. This demonstrates that spatial awareness need not be constrained by the modality of representation, and spatial reasoning abilities can be effectively transferred across modalities. Further observation reveals substantial performance gains of RynnEC on the Route Planning task, despite this task not being included during training. This indicates that the navigation performance of embodied agents is currently constrained by foundational spatial perception capabilities, such as the understanding of direction, distance, and spatial relationships. Only with robust foundational spatial cognition can large embodied models achieve superior performance in high-level planning and decision-making tasks. Compared to other embodied MLLMs of comparable size, RynnEC-7B also achieves a leading score of 45.8.

Certain tasks, such as object segmentation and movement imagery, remain significant challenges for RynnEC. We hypothesize that the suboptimal performance on these tasks stems primarily from insufficient training data. To validate this, we conduct an empirical analysis of data scalability across different task categories. As the data volume increases progressively from 20% to 100%, the model’s performance on all tasks improves steadily. This observation motivates further expansion of the dataset to enhance RynnEC’s spatial reasoning capabilities. However, it is noteworthy that the marginal gains diminish as data volume grows, indicating a decreasing return on scale. Investigating strategies to enhance data diversity in order to sustain this scaling behavior remains a critical open challenge for future research.

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## Task Sequence: Robot Navigation and Object Manipulation

### Overview

The image presents a sequence of eight screenshots depicting a robot performing a series of tasks in an indoor environment. Each screenshot illustrates a step in the task, accompanied by questions and action descriptions related to robot perception and control. The tasks involve navigating to specific locations, identifying objects, and manipulating them.

### Components/Axes

Each screenshot contains the following elements: