# Bridging the Analog and the Probabilistic Computing Divide: Configuring Oscillator Ising Machines as P-bit Engines

**Authors**: E.M.Hasantha Ekanayake, Nikhat Khan, Nikhil Shukla

> University of Virginia, Charlottesville, VA, USA

## Abstract

Oscillator Ising Machines (OIMs) and probabilistic bit (p-bit) platforms have emerged as promising non-Von Neumann paradigms for tackling hard computational problems. While OIMs realize gradient-flow dynamics, p-bit platforms operate through stochastic sampling. Although traditionally viewed as distinct approaches, this work presents a theoretically grounded framework for configuring OIMs as p-bit engines. We demonstrate that this functionality can be enabled through a novel interplay between first- and second harmonic injection to the oscillators. Our work identifies new synergies between the two methods and broadens the scope of applications for OIMs beyond combinatorial optimization problems to those that entail stochastic sampling. We further show that the proposed approach can be applied to other analog dynamical systems, such as the Dynamical Ising Machine.

preprint: APS/123-QED

## I Introduction

The endeavor to devise efficient solutions to complex computational problems has been a longstanding focus of science and technology research owing to its far-reaching implications for practical applications. A particularly promising direction is the design of special-purpose hardware that accelerates such tasks while improving energy efficiency, especially for problems that remain challenging for conventional digital platforms. Within this context, two emerging approaches have attracted significant attention: analog oscillator Ising machines (OIMs) and probabilistic bit (p-bit) based computing engines– the focus of the present work.

OIMs, first proposed by Wang et al. [1] exploit the elegant equivalence between the ’energy function’ characterizing the dynamics of a network of coupled oscillators under second harmonic injection (SHI) and the Ising Hamiltonian. Minimizing the Ising Hamiltonian is an archetypal combinatorial optimization problem (COP) where the goal is to find spin configurations $s∈\{-1,+1\}$ that minimizes the Ising Hamiltonian given by $H=-∑{J_ijs_is_j}$ , where $J_ij$ is the interaction between spin $i$ and $j$ . OIMs realize gradient-flow dynamics on a continuous relaxation of the Ising model and have been extensively explored for solving combinatorial optimization problems (COPs) [2, 3]. They have been experimentally realized across diverse platforms, including optical [4, 5], acoustic [6], electronic [7, 8, 9, 10, 11, 12], spin-wave [13], and quantum systems [14]. These demonstrations have been complemented by extensive theoretical studies [15, 16, 17].

P-bit-based computing engines, on the other hand, are a complementary computing paradigm uniquely capable of Boltzmann sampling. A p-bit can be viewed as a tunable random number generator whose output probability depends on the synaptic input. At the network level, this yields a binary stochastic neural network (BSNN) [18], with spin updates governed by,

$$

s_i^+=sgn≤ft[\tanh≤ft(β∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ijs_j\right)-μ\right] \tag{1}

$$

where, $μ$ is a random number typically selected from a uniform distribution between $[-1,1]$ , and $β$ is the equivalent of inverse temperature [19]. Furthermore, if we interpret the spins as the phases of oscillators—a perspective that is useful in the context of this work—then the state update rule can be expressed as,

$$

s_i^+=\cosφ_i=sgn≤ft[\tanh≤ft(β∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\cosφ_j\right)-μ\right] \tag{2}

$$

where, $φ∈\{0,π\}$ (wrapped phase form). In both Eqs. (1) and (2), the self bias term has not been considered although it is relatively straightforward to include it into the approach presented here.

The intrinsic stochastic sampling capability of p-bit engines offers a complementary approach to OIMs in the context of solving COPs [20, 21, 22, 23, 24, 25, 26, 27]. In addition, the stochastic sampling can be exploited in other application such as probabilistic inference and learning. Establishing such a capability within OIMs—hitherto not demonstrated—could therefore significantly broaden their functional scope beyond combinatorial optimization. To date, OIMs and p-bit engines have largely been pursued as independent approaches, with limited exploration of their potential synergies [28, 29]. In this work, we bridge this gap by demonstrating the feasibility of configuring OIMs as p-bit engines, thereby establishing them as an alternative analog platform for probabilistic computing.

<details>

<summary>Figure1r.png Details</summary>

### Visual Description

## Multi-Panel Technical Figure: Phase Dynamics with Harmonic Injection, Synaptic Input, and Energy Landscapes

### Overview

This image is a 4-panel technical figure (labeled a–d) illustrating phase dynamics in a system involving harmonic injection, synaptic input, noise, and energy landscapes. It combines schematic diagrams, a 2D heatmap, a 3D energy surface plot, and 2D energy vs. phase plots to characterize system behavior.

### Components/Axes

#### Panel (a): Schematic Diagram

- **Block Diagram (Top Left)**:

- Components: Square labeled "SHI" (Second Harmonic Injection) → circular oscillator → output labeled "V_out(∅)".

- Annotations: Downward arrow labeled "FHI" (First Harmonic Injection) into the oscillator.

- Text below: "FHI: First Harmonic Injection"; "SHI: Second Harmonic Injection".

- **Phase Diagram (Bottom)**:

- Circular phase space with ∅ (phase) marked from 0 to π (dashed lines).

- Blue dot at ε=0 (ε = phase difference/error).

- Synaptic input: Orange arrows labeled "-γ" (left) and "+γ" (right).

- Noise: Green wavy line labeled "Noise" above the phase circle.

- Trajectory: Pink arc labeled "Trajectory of ∅,ε" around the blue dot.

#### Panel (b): 2D Heatmap (Phase Dynamics)

- **Axes**:

- X-axis: γ (range: -0.5 to 0.5).

- Y-axis: ε(π) (range: -0.5 to 0.5).

- **Color Bar (Right)**:

- Label: "-∇E = ε̇" (time derivative of ε).

- Range: -0.5 (blue) to 0.5 (yellow).

- **Annotations**:

- Dashed line labeled "ε̇=0" (yellow arrow points to it).

- Text: "K_s=0.15" (bottom left).

- Yellow arrows labeled "Phase trajectory resulting from synaptic input" (two arrows, one upward, one downward, with noise wiggles).

#### Panel (c): 3D Energy Surface Plot

- **Axes**:

- X-axis: γ (range: -0.2 to 0.2).

- Y-axis: ε(π) (range: -0.5 to 0.5).

- Z-axis: Energy (a.u., range: -0.25 to 0.1).

- **Color Bar (Right)**:

- Label: "Energy (a.u.)".

- Range: -0.25 (blue) to 0.1 (yellow).

#### Panel (d): 2D Energy vs. Phase Plots (Stacked)

- **Axes (All Plots)**:

- X-axis: ε(π) (range: -0.5 to 0.5).

- Y-axis: Energy (a.u., range: -0.30 to 0.15).

- **Top Plot**:

- Label: "K_s=0.15".

- Legend: γ = -0.1 (pink), -0.15 (purple), -0.2 (green).

- **Middle Plot**:

- Label: "γ=0" (orange line).

- **Bottom Plot**:

- Legend: γ = 0.1 (pink), 0.15 (purple), 0.2 (green).

### Detailed Analysis

#### Panel (a) Schematic

- The block diagram models a system with two harmonic injections (FHI, SHI) and a phase-dependent output V_out(∅).

- The phase diagram shows synaptic input (±γ) and noise perturbing the phase trajectory around ε=0.

#### Panel (b) Heatmap

- **Trend Verification**: The heatmap shows ε̇ (color) as a function of γ and ε. The dashed line ε̇=0 divides regions where ε increases (yellow, ε̇>0) and decreases (blue, ε̇<0).

- **Spatial Grounding**: Yellow arrows (synaptic input trajectories) align with vertical dashed lines, indicating phase shifts induced by synaptic input.

#### Panel (c) 3D Energy Surface

- **Trend Verification**: Energy (z-axis) varies with γ and ε. Higher energy (yellow) occurs at γ ≈ 0 and ε ≈ 0; lower energy (blue) occurs at γ ≈ ±0.2 and ε ≈ ±0.5.

#### Panel (d) Line Plots

- **Top Plot (Negative γ)**: For γ = -0.1, -0.15, -0.2, energy peaks at ε=0 and dips at ε=±0.5.

- **Middle Plot (γ=0)**: Energy peaks at ε=0 and dips at ε=±0.5 (symmetric).

- **Bottom Plot (Positive γ)**: For γ = 0.1, 0.15, 0.2, energy dips at ε=0 and peaks at ε=±0.5 (opposite of negative γ).

### Key Observations

1. **Phase Trajectory Symmetry**: Synaptic input (±γ) induces symmetric phase shifts (Panel b).

2. **Energy Landscape Symmetry**: Energy vs. ε is symmetric around ε=0 for γ=0 (Panel d, middle).

3. **γ-Dependent Energy Inversion**: Negative γ causes energy peaks at ε=0; positive γ causes energy dips at ε=0 (Panel d, top vs. bottom).

4. **Noise Impact**: Noise (Panel a) perturbs the phase trajectory, visible as wiggles in Panel b’s arrows.

### Interpretation

This figure characterizes a phase-based system (likely a neural oscillator or similar) where:

- **Harmonic Injection (FHI/SHI)** modulates the oscillator’s output.

- **Synaptic Input (±γ)** and **noise** perturb the phase trajectory (ε).

- **Energy Landscapes** (Panels c–d) show that γ controls the stability of phase states: negative γ stabilizes ε=0 (energy peak), while positive γ destabilizes it (energy dip).

The data suggests that synaptic input and harmonic injection can tune the system’s phase dynamics, with energy landscapes dictating stable/unstable phase states. This has implications for understanding oscillatory systems (e.g., neural circuits) where phase synchronization and energy stability are critical.

</details>

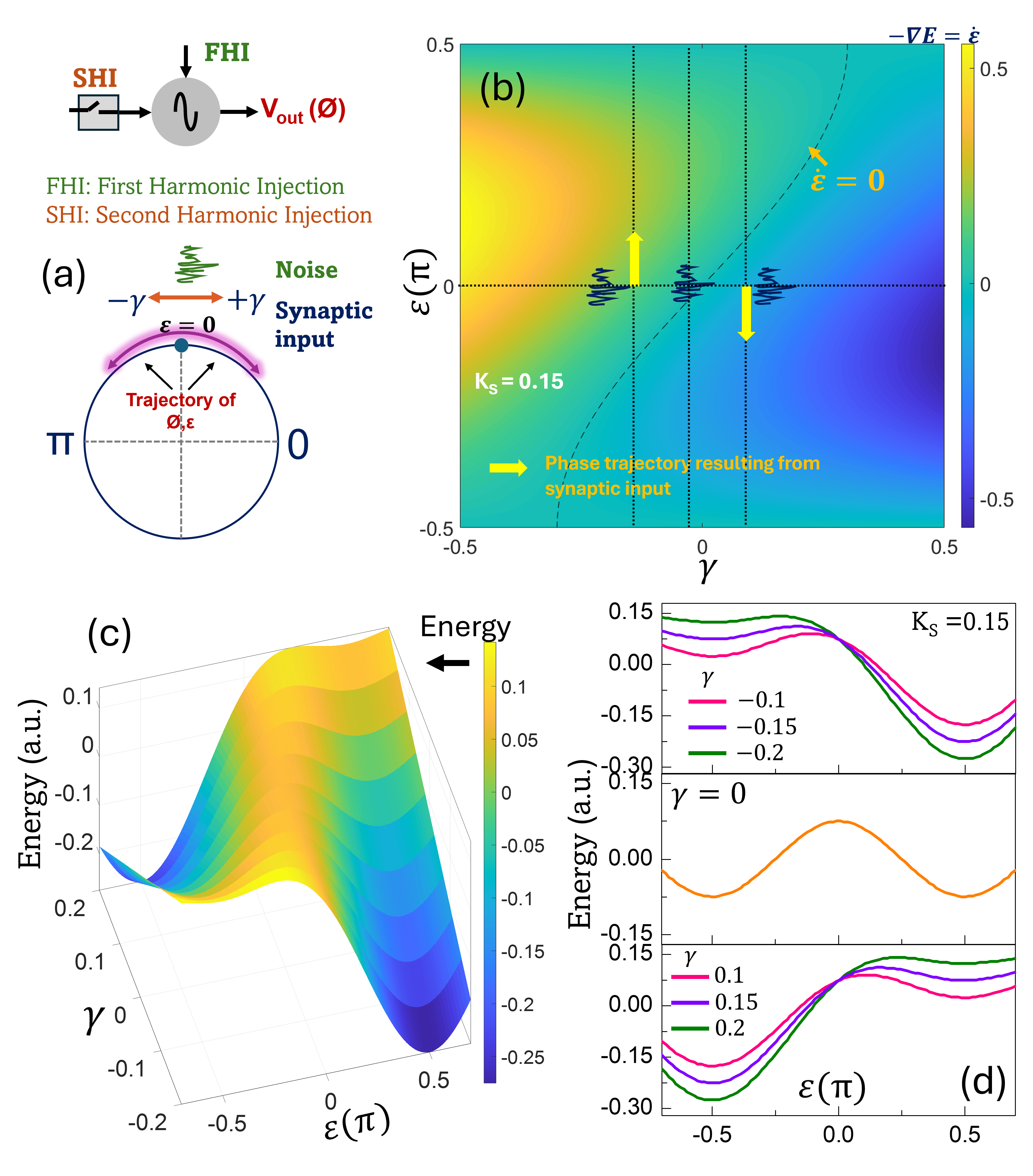

Figure 1: Dynamics of a harmonic oscillator under SHI (a) Schematic illustration of the signals required to program an harmonic oscillator as a p-bit. (b) Force field as a function of $γ$ ( $K_s=0.15$ , where $K_s$ is the strength of SHI.). (c) Corresponding energy landscape demonstrating its evolution with the synaptic input, $γ$ . (d) Specific cuts of the energy landscape at $γ=\{0, ± 0.1, ± 0.15, ± 0.2\}$ ( $K_s=0.15$ ).

To establish the foundation of our oscillator-based p-bit engine, we begin by demonstrating two key properties of harmonic oscillators (the class of harmonic oscillators considered in this study) and their networks operating under SHI: (a) An oscillator subjected to first- and second harmonic injection can function as a binary stochastic neuron (BSN); and (b) A network of such coupled oscillators—specifically an OIM—can operate as a binary stochastic neural network (BSNN). These two properties form the conceptual and mathematical basis for designing an oscillator-based p-bit engine.

## II Configuring Oscillators as Stochastic Neurons

To design a binary stochastic neuron (BSN) using an oscillator, we first examine the dynamics of a harmonic oscillator subjected to two external inputs (Fig. 1 (a)). (a) The first input is a signal with a frequency nearly equal to the oscillator’s natural frequency—a condition commonly referred to as injection locking. Within a specific locking range, this external signal entrains the oscillator, steering its output toward the phase and frequency of the injected signal, effectively synchronizing the oscillator’s behavior with the input. An energetics-based explanation of the relevant phase behavior is provided in Appendix A. We refer to this signal as the fundamental harmonic injection (FHI). All phase values are defined with respect to a common reference signal. (b) The second input is SHI, operating at twice the oscillator’s natural frequency. SHI drives the oscillator phase toward either $φ=0$ or $φ=π$ .

The resulting phase dynamics of such an oscillator can be described by,

$$

\begin{split}\frac{dφ}{dt}&=-K_c\sin{≤ft(φ-θ\right)}-K_s\sin(2φ)\\

\end{split} \tag{3}

$$

where, $φ$ denotes the output phase of the oscillator, while $θ$ represents the phase offset of the FHI signal input. The parameters $K_c$ and $K_s$ are coupling constants of the FHI and SHI signals, with the first and the second terms on the RHS of Eq. (LABEL:neuron1) capturing the influence of the FHI and SHI, respectively.

In this work, we will restrict our attention to cases where $θ∈\{0,\frac{π}{2},π\}$ . Initially, we will focus on the analysis where $θ∈\{0,π\}$ , with the case $θ=\frac{π}{2}$ becoming relevant further on. Under this constraint, we can recast Eq. (LABEL:neuron1) as,

$$

\begin{split}\frac{dφ}{dt}=-γ\sin{≤ft(φ\right)}-K_s\sin(2φ)\end{split} \tag{4}

$$

where $γ (=± K_c)$ denotes the scaled synaptic input; $γ=K_c$ when $θ=0$ and $γ=-K_c$ when $θ=π$ . For simplicity, we will generally refer to $γ$ as the synaptic input in the following discussion. The details of the scaling factor will be elaborated in the subsequent section.

Furthermore, for convenience, we perform a frame rotation by $0.5π$ , redefining the phase as $φ=\frac{π}{2}+ε$ . We also note that the relevant values of $θ$ for this rotated frame shift to $θ^{^\prime}∈\{-\frac{π}{2},0,\frac{π}{2}\}$ . Under this transformation, equation (4) becomes,

$$

\frac{dε}{dt}=-γ\cos{≤ft(ε\right)}+K_s\sin(2ε) \tag{5}

$$

We now analyze the properties and the behavior of Eq. (5). First, the fixed points of this system lie at $ε_1^*=± 0.5π$ , and at values satisfying $\sin(ε_2^*)=\frac{γ}{2K_s}$ . A detailed stability analysis of all the fixed points has been presented in Appendix B.

Next, we investigate the expected dynamical behavior of the system by analyzing the effective force field $(-∇ E=\dot{ε})$ driving the phase evolution as a function of $γ$ , as shown in Fig. 1 (b). The dotted line in the figure represents the set of phase points where the phase velocity vanishes, i.e., $\frac{dε}{dt}=0$ , and is described by the equation:

$$

-γ+2K_s\sin(ε)=0

$$

This curve defines the nullcline of the system, separating regions of positive and negative phase flow.

Interestingly, when the system is initialized at $ε=0$ , the direction of phase evolution depends entirely on the sign of $γ$ . For $γ<0$ , the dynamics flow toward $ε=\frac{π}{2}$ (corresponding to $φ=π$ ). Conversely, for $γ>0$ , the dynamics flow toward $ε=-\frac{π}{2}$ (i.e., $φ=0$ ). Moreover, the magnitude of $γ$ determines the steepness of the phase flow at $ε=0$ . At the critical point $γ=0$ , the direction of phase evolution becomes entirely stochastic in the presence of noise.

Figure 1 (c) shows the evolution of the oscillator’s energy landscape with the synaptic input, $γ$ , with Fig. 1 (d) showing cuts at specific values of $γ=\{0, ± 0.1, ± 0.15, ± 0.2\}$ . In Fig. 1 (d), the relative symmetry about $ε=0$ is evident across all cases, with the energy profiles for $γ=\{+0.1, +0.15, +0.2\}$ and $γ=\{-0.1, -0.15, -0.2\}$ appearing as mirror images, respectively, and the $γ=0$ case exhibiting a perfectly symmetric landscape.

In alignment with prior work on magnetic tunnel junctions (MTJs)-based p-bits [30], we engineer our oscillator-based BSN to operate within the low energy barrier regime. Since the height of the barrier is controlled by $K_s$ (see Appendix C for details), this is achieved by tuning $K_s$ to a small value ( $K_s\ll 1$ ) during the sampling event. Thus, the oscillator-based approach can enable a BSN with a tunable barrier.

From 1 (b) as well as from the form of Eq. (5), it can be observed that the system exhibits a unique symmetry at $ε=0$ , within the domain $ε∈≤ft[-\frac{π}{2},\frac{π}{2}\right]$ , whereby the magnitude of the (scaled) synaptic input has a symmetric effect for $+γ$ and $-γ$ , but induces phase flows in opposite directions. This symmetry plays a critical role in enabling the oscillator to function as a BSN, as it defines a neutral point from which the system can stochastically evolve toward one of two stable states depending on the sign of the synaptic input. As we will show later, this stochastic response is also highly non-linear. In practical settings, the oscillator can be driven to this neutral point $ε=0$ using an FHI signal with a phase offset of $θ^{^\prime}=0$ (we refer to this input as $FHI^0$ ), and without applying the SHI.

Thus, operating the oscillator as a BSN entails the following steps:

(i) Set oscillator phase to the neutral point using $FHI^0$ . (ii) Remove $FHI^0$ followed by application of the synaptic input (also an FHI signal when considering a single oscillator) and SHI signal (applied as a ramp) to evaluate the (stochastic) neuron’s state—this constitutes a sampling event. As we will demonstrate later, in the OIM network, such synaptic input is effectively generated by other connected oscillators within the network.

## III Dynamics of an Oscillator-based BSN

To quantitatively analyze the stochastic nonlinear response of the oscillator-based BSN, we begin with the oscillator dynamics described in Eq. (5). We first define the updated spin state in terms of $ε$ , which, in the context of the continuous time dynamics considered here, is given by,

$$

s^+=sgn≤ft(\cos(φ^+)\right)=-sgn≤ft(\sin(ε^+)\right)

$$

where, $φ^+$ and $ε^+$ refer to the phase of the system at a small time increment $Δ t→ 0$ after the sampling has been initiated.

We now evaluate the solution to the dynamics presented in Eq. (5). Although deriving an explicit solution is challenging, Eq. (5) has an implicit analytical solution given by,

$$

\frac{ζ(ε)-γ\tanh^-1(\sin(ε))}{γ^2-4K_s^2}=t \tag{6}

$$

where,

$$

\begin{split}ζ(ε)&=K_s\big(-2\log(γ-2K_s\sin(ε))\\

&+\log(1-\sin(ε))+\log(\sin(ε)+1)\big)\\

\end{split} \tag{7}

$$

For it’s applications as a BSN, we focus on the direction of the initial phase trajectory. To understand and evaluate the system dynamics at the sampling instant—defined as the moment when (FHI 0) is suppressed and the SHI and the synaptic input are asserted —we adopt the following approximation: (i) The dynamics are evaluated in the limit $t→ 0$ . (ii) We model the noise as Gaussian white noise with $⟨η(t)⟩=0, ⟨η(t)η(t^\prime)⟩=2K_nδ(t-t^\prime),$ with $K_n$ denoting the noise intensity i.e., $η(t) dt=√{2K_n} dW_t,$ where $W_t$ is a Wiener process. Equivalently, over a finite timestep $Δ t$ , $∫_t^t+Δ tη(τ) dτ ∼ N\big(0, 2K_n Δ t\big).$ (iii) At the onset of sampling ( $t→ 0$ ) , we assume $K_s→ 0$ such that $K_s\ll|γ|$ . This reflects the requirement for a low energy barrier in the probabilistic regime. Subsequently, $K_s$ must be ramped up for reasons discussed in the following section.

Under these approximations, the oscillator phase can be expressed as:

$$

\begin{split}\sin(ε_i)&≈\tanh≤ft(-γ t+ε^η(t)\right)\\

\\

&≈\tanh≤ft(-γ t\right)+ε^η(t).sech^2(γ t)\\

\\

\end{split} \tag{8}

$$

Here, $ε^η(t)∼N\big(0, 2K_n t\big)$ , and is small such that the $\tanh(.)$ term can be linearized. Accordingly, at a small time increment $Δ t→ 0$ after the onset of sampling at t=0, the updated state of the oscillator-based BSN can be described as,

$$

\begin{split}s^+&≈sgn≤ft[\tanh(γΔ t)-ε^η(Δ t).sech^2(γΔ t)\right]\\

\\

&≡sgn≤ft[\tanh(γΔ t)-ϑ\right]\end{split} \tag{9}

$$

where, $ϑ≡ε^η(Δ t).sech^2(γΔ t)$ . Since $sech^2(γΔ t)∈(0,1]$ , and noise intensity is assumed to be small, $ϑ$ has a high probability of being in the interval [-1,+1]. The infinitesimal time ( $Δ t$ ) can be interpreted as the time duration required by the oscillator phase to reach a critical threshold magnitude $|ε_th|$ , beyond which the oscillator phase cannot ‘reverse’ trajectory. $Δ t$ can be tuned through properties of the SHI pulse, such as its slew rate. Additional details on $Δ t$ are discussed in the following sections. The derivation of Eqs. (8) and (9) has been presented in Appendix D. Furthermore, while Eq. (8) addresses the regime where $γ\gg K_s$ , we also consider (in Appendix D) the complementary case where both $γ$ and $K_s$ are small and comparable. In this setting, the synaptic bias $γ$ and the stochastic perturbations act as competing drivers of the phase dynamics. A large $|γ|$ produces a predictable exponential drift, whereas strong noise leads to rapid amplification of fluctuations and broad dispersion of trajectories. The observed evolution is therefore governed by the balance between deterministic drive and stochastic forcing.

We note that the actual synaptic input to the BSN would be applied as the voltage (or current) amplitude, $V_inj$ , and phase (in-phase or out-of-phase) of the injected signal, which relate to $γ$ and the coupling constant $K_c$ as $γ=± K_c ≈± \frac{ω_0}{2Q}·\frac{V_inj}{V_osc}$ [31, 32], where $Q$ is the quality factor of the oscillator, $ω_0$ is the natural frequency, and $V_osc$ represents the amplitude of the oscillator, respectively. This formulation implies that $γ$ serves as a scaled representation of the synaptic input, as noted earlier. Furthermore, the effective inverse temperature is then given by $β_eff=\frac{ω_0Δ t}{2V_osc}.\frac{1}{Q}$ implying that $β_eff$ can be modulated using the quality factor, $Q$ , of the oscillator as well as other parameters such as the oscillation amplitude among others. This tunability provides a practical mechanism for controlling the stochastic behavior of the system. The updated state is then expressed as

$$

\begin{split}s^+&≈sgn≤ft[\tanh≤ft(\frac{ω_0Δ t}{2QV_osc}· V_inj\right)-ϑ\right]\\

\\

&≡sgn≤ft[\tanh≤ft(β_eff· V_inj\right)-ϑ\right]\end{split} \tag{10}

$$

<details>

<summary>Figure2r.png Details</summary>

### Visual Description

## [Chart Type]: Probability vs. Injection Voltage (Two Panels: (a) and (b))

### Overview

The image contains two side-by-side plots (labeled (a) and (b)) showing the probability \( p \) of a process (e.g., injection success) as a function of injection voltage \( V_{\text{inj}} \). Both plots use a sigmoidal (tanh-based) fit: \( p = \frac{1 + \tanh(\beta_{\text{eff}} V_{\text{inj}})}{2} \), with different parameter sets ( \( K_n \) in (a), \( \beta_{\text{eff}} \) in (b)).

### Components/Axes

#### Panel (a) (Left):

- **Title/Label**: (a) with \( K_{s,\text{max}} = 0.15 \).

- **Axes**:

- Y-axis: Probability, \( p \) (range: \( 0.0 \) to \( 1.0 \)).

- X-axis: Injection voltage, \( V_{\text{inj}} \) (range: \( -0.8 \) to \( 0.8 \)).

- **Equation**: \( p = \frac{1 + \tanh(\beta_{\text{eff}} V_{\text{inj}})}{2} \) (labeled "fit:").

- **Legend/Table**: A table maps colors to \( K_n \) and \( \beta_{\text{eff}} \):

| Color | \( K_n \) | \( \beta_{\text{eff}} \) |

|-------|----------|------------------------|

| Dark blue | 0.01 | 88.76 |

| Orange | 0.05 | 12.631 |

| Green | 0.10 | 6.289 |

| Purple | 0.15 | 3.837 |

| Olive | 0.20 | 2.704 |

#### Panel (b) (Right):

- **Title/Label**: (b) with \( K_{s,\text{max}} = 0.15 \), \( K_n = 0.15 \).

- **Axes**:

- Y-axis: Probability, \( p \) (range: \( 0.0 \) to \( 1.0 \)).

- X-axis: Injection voltage, \( V_{\text{inj}} \) (range: \( -1.0 \) to \( 1.0 \)).

- **Equation**: Same as (a): \( p = \frac{1 + \tanh(\beta_{\text{eff}} V_{\text{inj}})}{2} \) (labeled "fit:").

- **Legend/Table**: A table maps colors to \( \beta_{\text{eff}} \approx \):

| Color | \( \beta_{\text{eff}} \approx \) |

|-------|-------------------------------|

| Purple | 8 |

| Orange | 6 |

| Green | 4 |

| Dark blue | 2 |

### Detailed Analysis (Data Points & Trends)

#### Panel (a) (Varying \( K_n \), \( K_{s,\text{max}} = 0.15 \)):

- **Dark blue (\( K_n = 0.01 \), \( \beta_{\text{eff}} = 88.76 \))**:

- \( V_{\text{inj}} \approx -0.8 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx -0.4 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx 0.0 \): \( p \approx 0.5 \) (crossing point)

- \( V_{\text{inj}} \approx 0.4 \): \( p \approx 1.0 \)

- \( V_{\text{inj}} \approx 0.8 \): \( p \approx 1.0 \)

- **Trend**: Steepest curve (highest \( \beta_{\text{eff}} \)), fastest transition from \( p \approx 0 \) to \( p \approx 1 \).

- **Orange (\( K_n = 0.05 \), \( \beta_{\text{eff}} = 12.631 \))**:

- \( V_{\text{inj}} \approx -0.8 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx -0.4 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx 0.0 \): \( p \approx 0.5 \)

- \( V_{\text{inj}} \approx 0.4 \): \( p \approx 1.0 \)

- \( V_{\text{inj}} \approx 0.8 \): \( p \approx 1.0 \)

- **Trend**: Less steep than dark blue, more than green.

- **Green (\( K_n = 0.10 \), \( \beta_{\text{eff}} = 6.289 \))**:

- \( V_{\text{inj}} \approx -0.8 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx -0.4 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx 0.0 \): \( p \approx 0.5 \)

- \( V_{\text{inj}} \approx 0.4 \): \( p \approx 1.0 \)

- \( V_{\text{inj}} \approx 0.8 \): \( p \approx 1.0 \)

- **Trend**: Less steep than orange, more than purple.

- **Purple (\( K_n = 0.15 \), \( \beta_{\text{eff}} = 3.837 \))**:

- \( V_{\text{inj}} \approx -0.8 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx -0.4 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx 0.0 \): \( p \approx 0.5 \)

- \( V_{\text{inj}} \approx 0.4 \): \( p \approx 1.0 \)

- \( V_{\text{inj}} \approx 0.8 \): \( p \approx 1.0 \)

- **Trend**: Less steep than green, more than olive.

- **Olive (\( K_n = 0.20 \), \( \beta_{\text{eff}} = 2.704 \))**:

- \( V_{\text{inj}} \approx -0.8 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx -0.4 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx 0.0 \): \( p \approx 0.5 \)

- \( V_{\text{inj}} \approx 0.4 \): \( p \approx 1.0 \)

- \( V_{\text{inj}} \approx 0.8 \): \( p \approx 1.0 \)

- **Trend**: Least steep curve (lowest \( \beta_{\text{eff}} \)), slowest transition.

#### Panel (b) (Varying \( \beta_{\text{eff}} \), \( K_{s,\text{max}} = 0.15 \), \( K_n = 0.15 \)):

- **Purple (\( \beta_{\text{eff}} \approx 8 \))**:

- \( V_{\text{inj}} \approx -1.0 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx -0.5 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx 0.0 \): \( p \approx 0.5 \)

- \( V_{\text{inj}} \approx 0.5 \): \( p \approx 1.0 \)

- \( V_{\text{inj}} \approx 1.0 \): \( p \approx 1.0 \)

- **Trend**: Steepest curve (highest \( \beta_{\text{eff}} \)), fastest transition.

- **Orange (\( \beta_{\text{eff}} \approx 6 \))**:

- \( V_{\text{inj}} \approx -1.0 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx -0.5 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx 0.0 \): \( p \approx 0.5 \)

- \( V_{\text{inj}} \approx 0.5 \): \( p \approx 1.0 \)

- \( V_{\text{inj}} \approx 1.0 \): \( p \approx 1.0 \)

- **Trend**: Less steep than purple, more than green.

- **Green (\( \beta_{\text{eff}} \approx 4 \))**:

- \( V_{\text{inj}} \approx -1.0 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx -0.5 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx 0.0 \): \( p \approx 0.5 \)

- \( V_{\text{inj}} \approx 0.5 \): \( p \approx 1.0 \)

- \( V_{\text{inj}} \approx 1.0 \): \( p \approx 1.0 \)

- **Trend**: Less steep than orange, more than dark blue.

- **Dark blue (\( \beta_{\text{eff}} \approx 2 \))**:

- \( V_{\text{inj}} \approx -1.0 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx -0.5 \): \( p \approx 0.0 \)

- \( V_{\text{inj}} \approx 0.0 \): \( p \approx 0.5 \)

- \( V_{\text{inj}} \approx 0.5 \): \( p \approx 1.0 \)

- \( V_{\text{inj}} \approx 1.0 \): \( p \approx 1.0 \)

- **Trend**: Least steep curve (lowest \( \beta_{\text{eff}} \)), slowest transition.

### Key Observations

1. **Sigmoidal Transition**: All curves follow \( p = \frac{1 + \tanh(\beta_{\text{eff}} V_{\text{inj}})}{2} \), showing a threshold-like transition from \( p \approx 0 \) to \( p \approx 1 \) around \( V_{\text{inj}} = 0 \).

2. **Parameter Dependence**:

- In (a), lower \( K_n \) (higher \( \beta_{\text{eff}} \)) increases curve steepness (faster transition).

- In (b), higher \( \beta_{\text{eff}} \) increases curve steepness (faster transition).

3. **Crossing Point**: All curves cross at \( V_{\text{inj}} = 0 \), \( p = 0.5 \), consistent with \( \tanh(0) = 0 \).

### Interpretation

- The plots model a threshold-like process (e.g., injection success) where probability depends on \( V_{\text{inj}} \) and parameters \( K_n \) (or \( \beta_{\text{eff}} \)).

- The tanh function captures a sigmoidal transition, typical of systems with a sharp threshold (e.g., switching from low to high probability as \( V_{\text{inj}} \) crosses 0).

- Lower \( K_n \) (or higher \( \beta_{\text{eff}} \)) makes the process more sensitive to \( V_{\text{inj}} \) near the threshold (steeper transition), implying smaller \( K_n \) (or larger \( \beta_{\text{eff}} \)) enhances the "sharpness" of the threshold behavior.

- The consistent crossing point (\( V_{\text{inj}} = 0 \), \( p = 0.5 \)) suggests the threshold is fixed at \( V_{\text{inj}} = 0 \), but the transition rate (steepness) depends on \( K_n \) or \( \beta_{\text{eff}} \).

This analysis provides a complete description of the image’s content, trends, and implications, enabling reconstruction of the data and relationships without the original image.

</details>

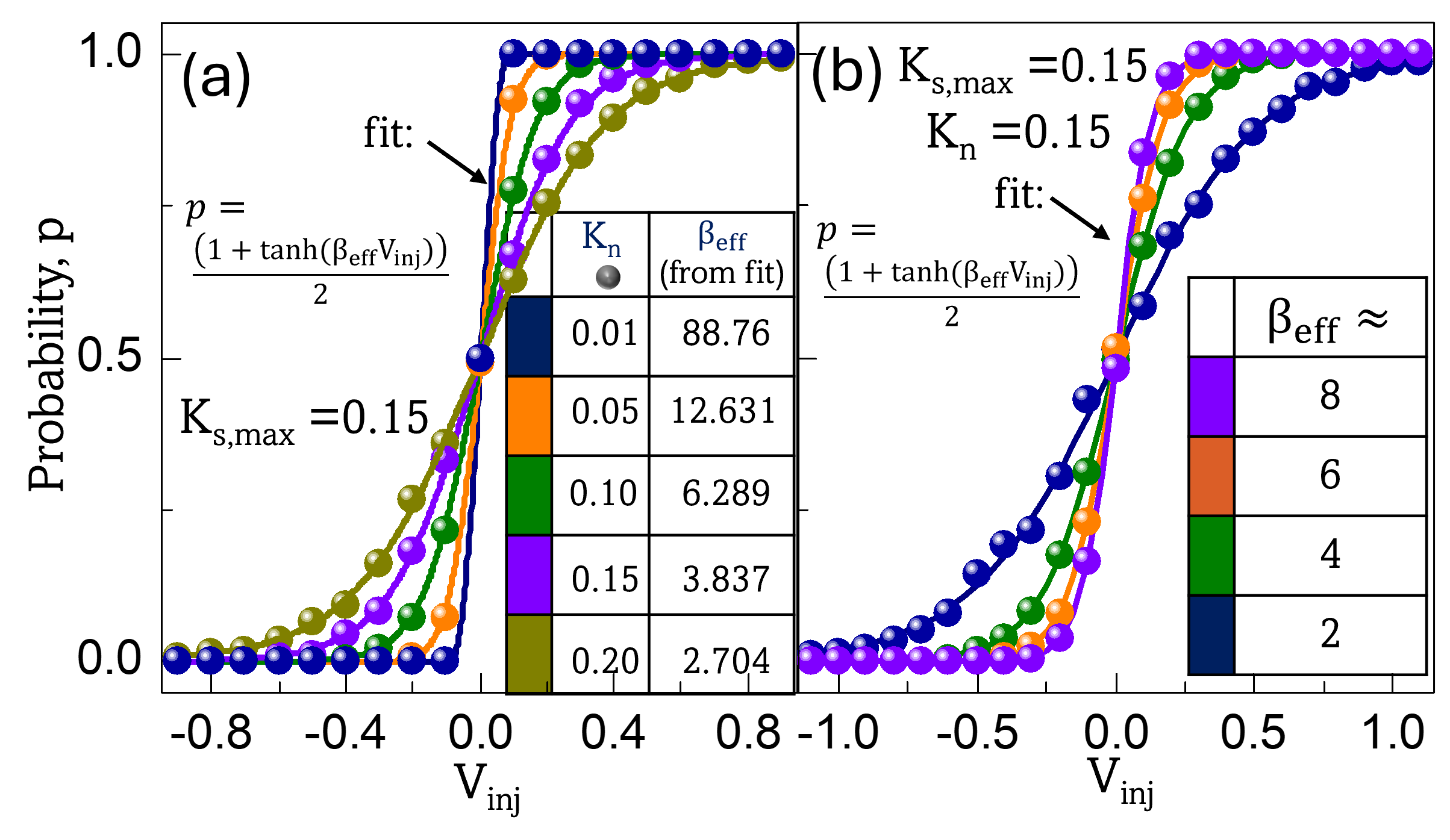

Figure 2: Oscillator-based BSN. Firing probability (symbols) as a function of the synaptic input for: (a) varying levels of noise ( $K_n$ ). (b) different values of $β_eff$ derived for $K_n=0.15$ . We note that the $β_eff$ profile will change for a different $K_n$ . The lines in the plot indicate fits using the equation $p=\frac{1+\tanh(β_effV_inj)}{2}$ along with the calculated $β_eff$ ; $p:$ firing probability. All fits exhibit $R^2>0.999$

Equation (LABEL:final_BSN) showcases the BSN’s capability to perform Boltzmann sampling, and consequently, function as a p-bit when initialized at the critical phase point, $ε=0$ . In the following section, we show that, in OIMs, $V_inj$ —and consequently $γ$ —is generated and regulated through the mutual feedback among the coupled oscillators. To validate the dynamics derived above, we simulate the oscillator-based BSN’s switching using a stochastic differential equation solver implemented in MATLAB ®. We first examine the evolution of stochastic behavior under varying noise levels. The phase is initialized at $ε=0$ and allowed to evolve in the presence of different noise intensities and synaptic inputs. The firing probability is then estimated over 2000 such cycles. Figure 2 (a) presents the simulation results (symbols) for the output firing probability of the oscillator-based BSN as a function of $V_inj$ . These results are fitted using the function $p=\frac{1+\tanh(β_effV_inj)}{2}$ (solid lines), showing excellent agreement with the simulated data ( $R^2>0.999$ ); p is the probability of the neuron firing i.e., switching to $s=+1$ .

In practical implementations, modulating external noise to control temperature and stochasticity may not be feasible. Instead, the effective inverse temperature—and thus the degree of stochastic behavior—can be tuned by adjusting $β_eff$ , which is achievable through modulation of the oscillator’s quality factor $Q$ . Figure 2 (b) illustrates the evolution of firing probability (symbols) for different values of $β_eff$ , where the switching probability again exhibits tanh(.) dependence on $V_inj$ as confirmed by the corresponding fitted curves (solid lines) with $R^2>0.999$ . A noise strength of $K_n=0.15$ was used in the simulation. These simulation results further support that the oscillator exhibits the characteristic behavior of a BSN capable of performing Boltzmann sampling.

A key consideration in engineering the oscillator’s dynamics for BSN functionality is the relative magnitude of $γ$ and the SHI strength, $K_s$ . Realizing effective Boltzmann sampling behavior requires a small energy barrier, which corresponds to the regime $K_s→ 0$ such that $K_s\ll|γ|$ . If this condition is not satisfied, the system may still operate as a BSN; however, its dynamics may deviate from the Boltzmann sampling behavior. This is detailed in the analysis in Appendix D.

In contrast, to preserve the oscillator’s phase trajectory after sampling and to enable reliable readout, a lower bound on $K_s$ must be satisfied. Specifically, this bound ensures that once the phase magnitude exceeds a certain threshold ( $|ε_th|$ ), the phase continues to evolve in the same direction until it reaches the corresponding fixed point.

While a detailed analytical derivation is provided in Appendix E, we offer here a qualitative explanation of the origin of this constraint by examining the dynamics described by Eq. (5). Within the interval $ε∈(-\frac{π}{2},\frac{π}{2})$ , the cosine term satisfies $\cos(ε)>0$ , and the synaptic input term $-γ\cos(ε)$ therefore drives the phase evolution in the direction opposite to the sign of $γ$ . This implies that the synaptic input alone tends to push the phase toward the fixed point opposite to the sign of $γ$ , unless counteracted by the SHI term.

If noise initially drives $\frac{dε}{dt}$ in the direction not favored by the synaptic input (Fig. 8 (b)), and $K_s$ is too small, the oscillator may reverse its trajectory to align with the trajectory favored by the synaptic input. In such cases, the final state may not reflect the oscillator’s initial trajectory or the intended output $s^+$ . The SHI term— specifically, the $K_s\sin(2ε)$ component in Eq. (5), acts to reinforce the initial direction of $\frac{dε}{dt}$ generated by the stochastic sampling process, provided $K_s$ is sufficiently large. This reinforcement helps ensure that the phase continues toward the correct fixed point.

The critical condition to ensure that an oscillator at $ε=|ε_th|$ maintains its trajectory is given by:

$$

|γ|<2K_s\sin(|ε_th|)

$$

It is important to note that due to the presence of noise, this threshold is inherently probabilistic, resulting in a diffuse rather than deterministic boundary. This condition appears to contradict the earlier requirement concerning the relative magnitudes of $γ$ and $K_s$ . To reconcile these seemingly opposing constraints, we propose implementing the SHI input as a ramp signal with a carefully engineered slew rate. Specifically, the SHI can be initialized at a low amplitude—ensuring that $K_s\ll|γ|$ —to facilitate stochastic sampling in the low-barrier regime. Subsequently, the amplitude of the SHI is increased to reinforce the resulting phase trajectory. Additionally, as noted above the characteristics of the applied SHI input, particularly its slew rate, also modulate $Δ t$ . A smaller slew rate increases $Δ t$ , which in turn modifies the inverse temperature and also makes the system less stochastic (see Appendix F for details).

The analysis presented in this section reveals that the competition between synaptic input and noise gives rise to a stochastic bifurcation, which constitutes the core operating principle of the proposed oscillator-based BSN.

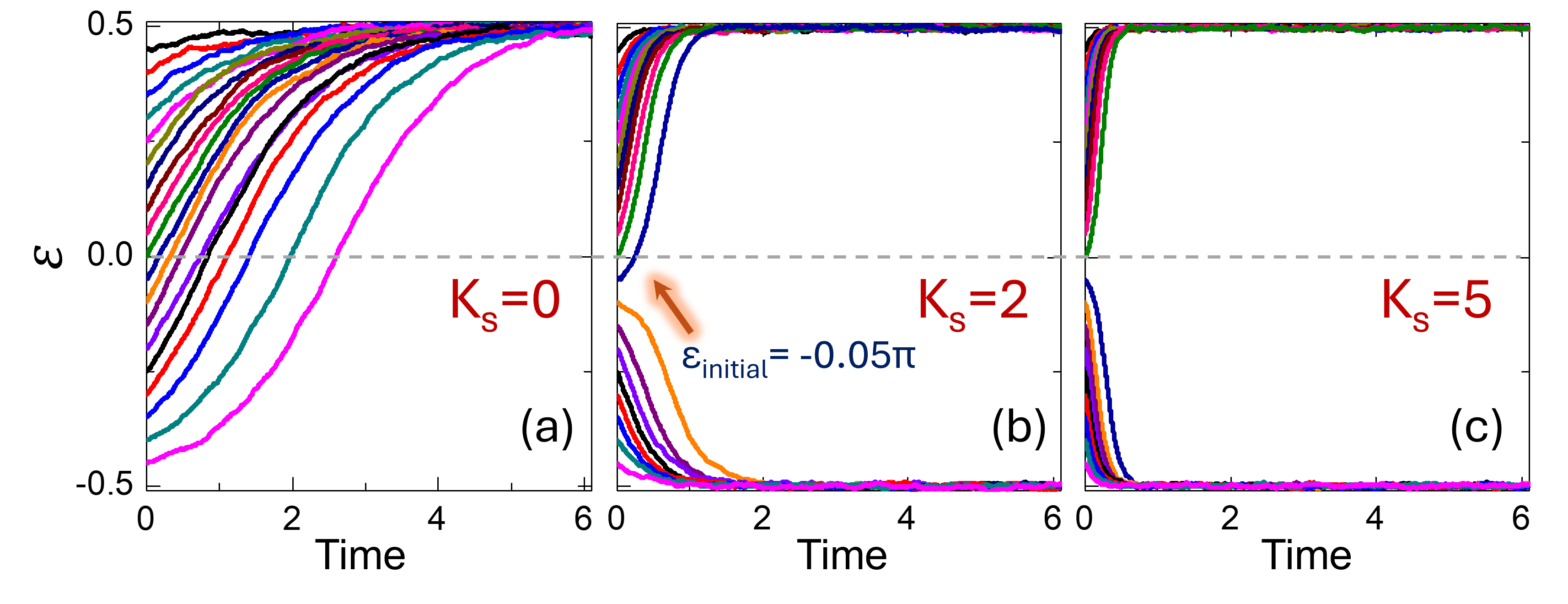

## IV Configuring OIMs As Binary Stochastic Neural Networks

Building upon the ability to configure an oscillator as a BSN capable of performing Boltzmann sampling, we now investigate the possibility of configuring an OIM as a p-bit engine, or in other words, a BSNN. The core premise of this idea is that after the system reaches steady state, i.e., $ε∈\{-\frac{π}{2},\frac{π}{2}\}$ , the dynamics of a randomly sampled oscillator driven to the phase point $ε=0$ (i.e., $φ=\frac{π}{2}$ ) still approximate Boltzmann sampling. Steady state initialization at $ε∈\{-\frac{π}{2},\frac{π}{2}\}$ can be achieved by applying an SHI signal prior to the onset of stochastic sampling. As noted earlier, in the OIM, the feedback from other coupled oscillators acts as the effective synaptic input, which subsequently modulates the oscillator’s stochastic dynamics.

To establish this result, in the following sections, we divide the oscillators in the network into two categories and analyze their dynamics:

(i) Randomly sampled oscillator $i$ initialized to $ε_i=0$ . The phase evolution of an oscillator in the OIM network can be described by the equation,

$$

\frac{dφ_i}{dt}=-K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin(φ_i-φ_j)-K_s\sin(2φ_i)\\

$$

which, in the rotated frame of reference, can be expressed as,

$$

\frac{dε_i}{dt}=-K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin(ε_i-ε_j)+K_s\sin(2ε_i) \tag{11}

$$

As alluded to earlier, we assume that:

(i) The selected oscillator is initialized at $ε=0$ . This can be accomplished using $FHI^0$ signal with large amplitude. (ii) Since we begin performing stochastic sampling after the OIM network has achieved steady state, all other oscillators are at $ε=±\frac{π}{2}\>\>\big(φ∈\{0,π\}\big)$ . Furthermore, we will ensure that the oscillators maintain their state during the sampling event by applying a sufficiently large $K_s$ . This condition is required to ensure that the oscillator dynamics map to Gibbs sampling (see Appendix G). In the subsequent sections, we will discuss how these conditions can be implemented.

Under the constraints outlined above, the phase dynamics of the selected oscillator simplify to:

$$

\begin{split}\frac{dε_i}{dt}&=≤ft(K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin(ε_j)\right)\cos(ε_i)+K_s\sin(2ε_i)\\

\\

&≡-γ_i\cos(ε_i)+K_s\sin(2ε_i)\end{split} \tag{12}

$$

where,

$$

γ_i=-K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin(ε_j)

$$

We now show that $γ_i$ , as defined above, represents the synaptic input to oscillator $i$ from the other connected oscillators in the network. To establish this, we consider the relationship between $ε$ and $φ$ , and the fact that $s=\cos(φ)$ when $φ∈\{0,π\}$ :

$$

\begin{split}γ_i=-K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin(ε_j)&=-K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin≤ft(φ_j-\frac{π}{2}\right)\\

=K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\cos(φ_j)&=K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ijs_j\end{split}

$$

This establishes that $γ_i$ represents the net synaptic input received by oscillator $i$ from the connected oscillators under the conditions described above. Additionally, we note that the self-biasing term can be incorporated by injecting an FHI signal to oscillator with the strength and phase of the signal representing the self-bias input.

The updated state of oscillator $i$ can then be expressed as,

$$

\begin{split}s_i^+&≈-sgn≤ft[\tanh(-γ_iΔ t)+ε^η(Δ t).sech^2(γΔ t)\right]\\

\\

&≈sgn≤ft[\tanh≤ft(KΔ t∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ijs_j\right)-ϑ\right]\end{split} \tag{13}

$$

which closely resembles the state update rule for p-bits (Eq. (1)), with the factor $β_eff=KΔ t$ serving as the effective inverse temperature. As detailed in [1], the value of K depends on the perturbation projection vector function for the oscillator as well as the amplitude of the perturbation from the oscillators in the network, which can be tuned via the coupling element in the network. This equivalence implies that even in the OIM, the oscillator can approximate Boltzmann sampling thereby enabling the network to function as a p-bit platform.

One of the critical requirements of realizing the above dynamics is to drive the randomly selected oscillator $i$ to $ε_i=0\>\>\big(φ_i=\frac{π}{2}\big)$ . This can be achieved by applying a large FHI signal, $FHI^0$ , while suppressing the SHI signal $(K_s=0)$ . The resulting dynamics can be described by:

$$

\frac{dε_i}{dt}=-K_c,i\sin(ε_i)-K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin(ε_i-ε_j) \tag{14}

$$

The largest magnitude of the second term is $D_i$ —the degree of the node (oscillator) $i$ in the network. By designing $K_c,i\gg KD_i$ ensures that the contributions of the second term are small. Consequently, the phase will be driven to $ε_i≈ 0$ , thereby preparing it for the subsequent stochastic sampling event.

(ii) Oscillators not being sampled Under steady state, such oscillators have phases $ε=±\frac{π}{2}$ , and the goal is to maintain the configuration when oscillator $i$ is sampled. To achieve this, we apply a strong SHI signal. The corresponding dynamics for an oscillator $j$ that is not being sampled can be then described as follows:

$$

\begin{split}\frac{dε_j}{dt}=-K∑_\begin{subarray{c}k=1\\

k≠ j\end{subarray}}^NJ_jk\sin(ε_j-ε_k)+K_s\sin(2ε_j)\\

\\

∀ j∈\{1,2,\dots,i-1,i+1,\dots,N\}\end{split} \tag{15}

$$

Furthermore, $\sin(ε_j-ε_k)≈ 0$ since

$$

ε_k∈≤ft\{-\frac{π}{2},\frac{π}{2}\right\} ∀ j,k∈\{1,2,\dots,i-1,i+1,\dots,N\}

$$

Consequently, the dynamics can be reduced to,

$$

\begin{split}\frac{dε_j}{dt}=-KJ_ji\sin(ε_j-ε_i)+K_s\sin(2ε_j)\\

\\

∀ j∈\{1,2,\dots,i-1,i+1,\dots,N\}\end{split} \tag{16}

$$

The maximum magnitude of the first term is $|KJ_ji|$ . Thus, by using $2K_s,j\gg KJ_ji$ , the phase can be maintained at $ε_j≈\{-\frac{π}{2},\frac{π}{2}\}$ . These assumptions are further validated through simulations presented in the following section.

Based on the above analysis, algorithm 1 presents the scheme for configuring OIMs as p-bit engines. Although the analysis above was conducted in a rotated frame of reference, we present the operational scheme in terms of the original phase variable $φ$ , to maintain consistency with the prevailing conventions in the OIM literature, where the spin states are defined as $φ∈\{0,π\}$ . As previously noted, the relationship between the two frames is given by $φ=\frac{π}{2}+ε$ .

Table 1: Operating OIM as a p-bit platform

| 1: 2: 3: | Initialize all oscillators. Apply SHI signal to all oscillators. The OIM performs gradient descent and achieves a steady state characterized by $φ∈\{0,π\}$ . while not converged or for a fixed number of iterations do |

| --- | --- |

| 4: | Randomly select an oscillator $i$ . |

| {Step (i): Prepare oscillator for stochastic sampling} | |

| 5: | Apply $FHI^0$ signal to oscillator $i$ with appropriate amplitude and set SHI signal to oscillator $i$ to $0$ . |

| {Step (ii): Initiate probabilistic evolution} | |

| 6: | Reduce $FHI^0$ signal to $0$ . |

| 7: | Ramp SHI signal to oscillator $i$ . |

| 8: | Let $φ_i$ probabilistically relax to $φ=0$ or $φ=π$ based on synaptic feedback from connected nodes and intrinsic noise. |

| 9: | end while |

## V Computing with OIMs configured as P-bit engines

### V.1 5-Node Adder

<details>

<summary>Figure_sampling.png Details</summary>

### Visual Description

## [Bar Chart with Table Overlay]: Probability Distribution of 5-Bit Binary States (Boltzmann vs. Measured)

### Overview

The image presents a bar graph comparing the probability of 5-bit binary states (represented as \([C_i\ B\ A\ S\ C_o]\)) between the **Boltzmann law** (theoretical, blue bars) and **measured data from oscillators** (experimental, orange bars). A table in the top-left corner maps 5-bit binary values to their decimal equivalents, defining the states plotted on the x-axis.

### Components/Axes

- **Y-axis**: Labeled "Probability" with ticks at \(0, 0.05, 0.1, 0.15, 0.2\).

- **X-axis**: Labeled \([C_i\ B\ A\ S\ C_o]\) with tick marks at decimal values \(0, 6, 10, 13, 18, 21, 25, 31\) (matching the table’s "Decimal" column).

- **Legend**: Top-right, with:

- Blue: "Boltzmann law" (theoretical probability).

- Orange: "Measured (oscillators)" (experimental probability).

- **Table (Top-Left)**: Columns: \(C_i, B, A, S, C_o, \text{Decimal}\). Rows (binary → decimal):

| \(C_i\) | \(B\) | \(A\) | \(S\) | \(C_o\) | Decimal |

|---------|-------|-------|-------|---------|---------|

| 0 | 0 | 0 | 0 | 0 | 0 |

| 0 | 0 | 1 | 1 | 0 | 6 |

| 0 | 1 | 0 | 1 | 0 | 10 |

| 0 | 1 | 1 | 0 | 1 | 13 |

| 1 | 0 | 0 | 1 | 0 | 18 |

| 1 | 0 | 1 | 0 | 1 | 21 |

| 1 | 1 | 0 | 0 | 1 | 25 |

| 1 | 1 | 1 | 1 | 1 | 31 |

### Detailed Analysis

- **Bar Heights (Probability)**:

For each decimal state (x-axis tick: \(0, 6, 10, 13, 18, 21, 25, 31\)):

- Blue (Boltzmann) and orange (Measured) bars have nearly identical heights, approximately \(0.12–0.13\) (visually between \(0.1\) and \(0.15\), closer to \(0.125\)).

- Between these main ticks (e.g., between \(0\) and \(6\), \(6\) and \(10\), etc.), small blue/orange bars (near \(0\) probability) indicate negligible probability for intermediate states.

### Key Observations

1. **Theory-Experiment Alignment**: The Boltzmann law (blue) and measured (orange) bars are nearly identical in height for all plotted states, suggesting the oscillator system’s probability distribution closely matches the Boltzmann distribution.

2. **Negligible Intermediate States**: Small bars between main ticks (e.g., between \(0\) and \(6\)) have near-zero probability, meaning these binary states are rarely observed.

3. **State Selection**: The system preferentially occupies the 5-bit states listed in the table (with non-negligible probability), while other states are rarely occupied.

### Interpretation

- **Boltzmann Law Validation**: The close match between theoretical (Boltzmann) and measured probabilities confirms the oscillator system’s behavior follows the Boltzmann law (a key result in statistical mechanics, relating energy states to probability).

- **State Stability**: The plotted states (e.g., \(0, 6, 10, 13, 18, 21, 25, 31\)) likely correspond to lower-energy configurations (consistent with Boltzmann’s energy-probability relationship), explaining their higher probability.

- **Practical Implication**: The system’s measured probabilities align with theoretical expectations, validating the use of Boltzmann statistics to model its behavior. The negligible probability of intermediate states suggests a discrete, well-defined set of stable configurations.

This analysis confirms the oscillator system’s probability distribution is consistent with the Boltzmann law, with preferential occupation of specific 5-bit binary states.

</details>

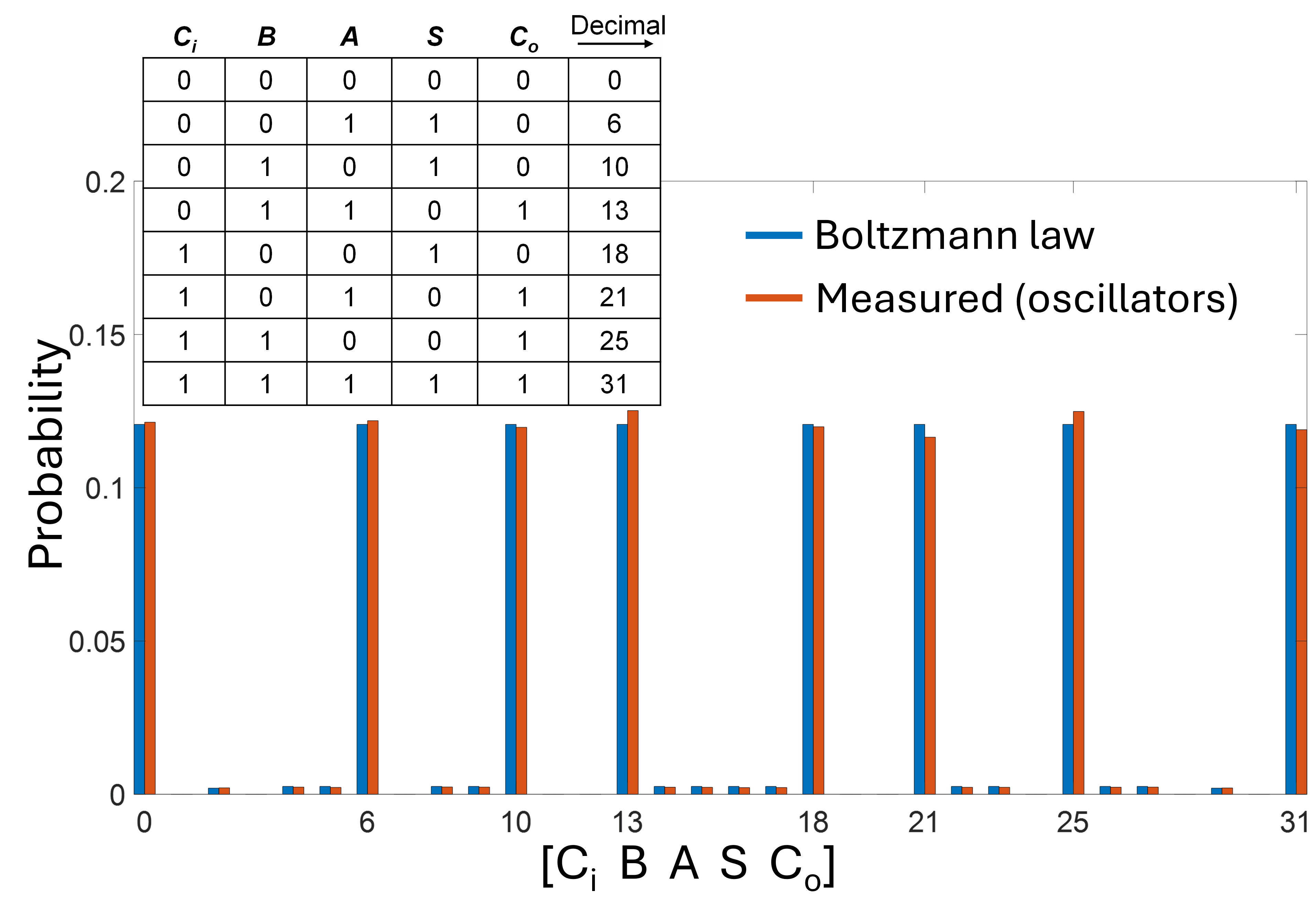

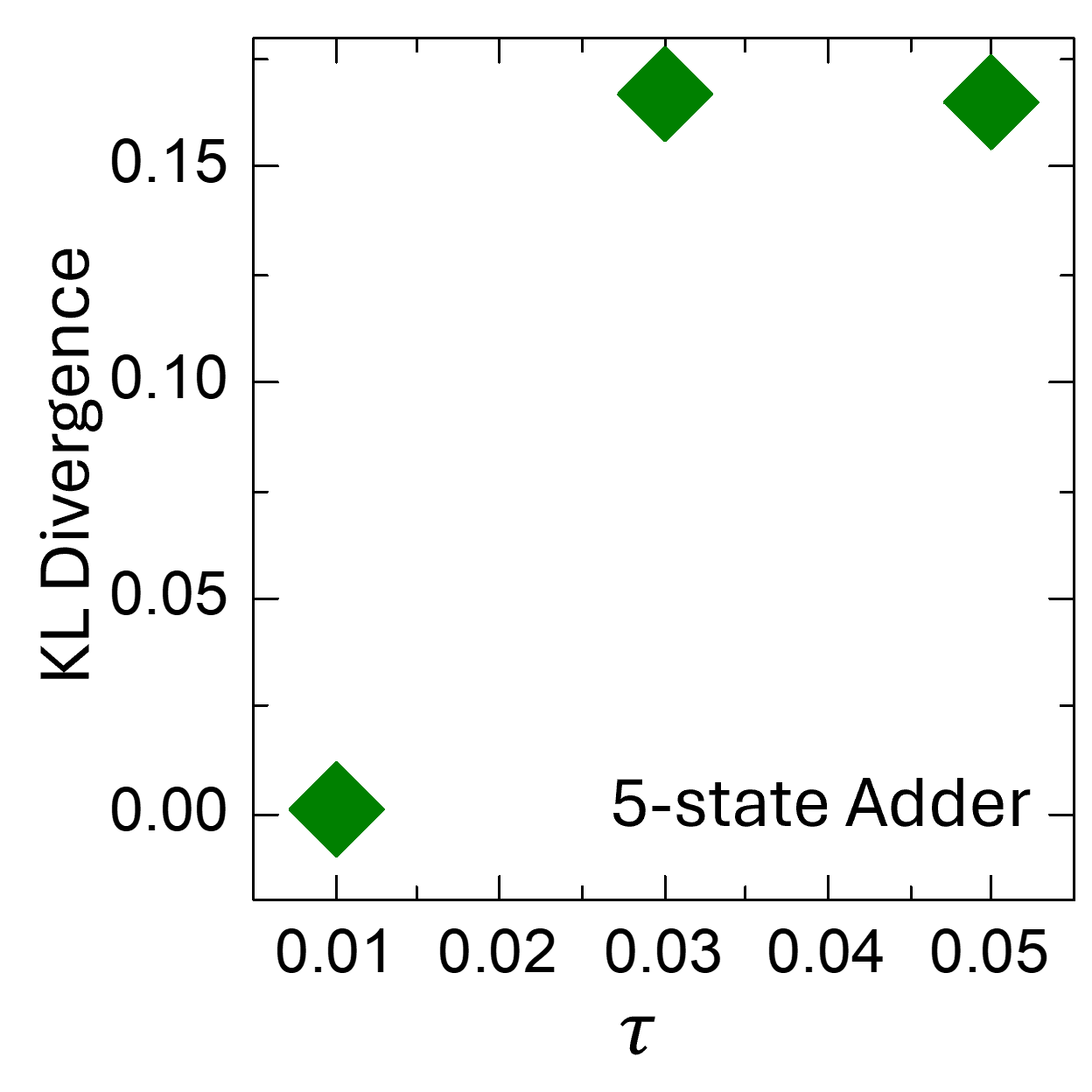

Figure 3: Full adder Probability histogram measured using the oscillators (orange bars, obtained using $5× 10^5$ sweeps) compared with the target Boltzmann distribution (blue bars). Following the convention of Ref. [19], states are indexed by the decimal value of the binary word $[C_in A B S C_out]$ . The dominant peaks correspond to the valid entries of the full-adder truth table, as highlighted in the inset. The two distributions show excellent agreement, with a measured KL divergence of $7.68× 10^-4$ . Simulation parameters: $K=0.18$ ; $K_s(t)=K_s,max(1-e^-\frac{t{10^-2}})$ ; $K_n=0.1$ .

To evaluate the sampling capability of our oscillator-based BSN, we benchmark its output distribution against the target Boltzmann distribution for a full adder, following the approach presented by Camsari et al. [19]. For this purpose, we construct the corresponding $14× 14$ adjacency matrix with the following assignment of nodes: 1–9 represent auxiliary and handle bits, 10 corresponds to $C_in$ , 11 to $A$ , 12 to $B$ , 13 to the sum $S$ , and 14 to the carry-out $C_out$ . The network is operated in the so-called truth table mode, where all input and output terminals are left floating. Under this condition, the distribution obtained using the oscillator-based BSN (using $5× 10^5$ sweeps) aligns closely with the target Boltzmann distribution, thereby confirming its ability to perform correct stochastic sampling. Here, we note that simulating the analog dynamics of all oscillators for $5× 10^5$ sweeps (corresponding to a total simulated time of $∼ 2× 10^7$ per oscillator) within the stochastic differential equation (SDE) framework (to account for noise) is computationally prohibitive. Therefore, we only simulate the dynamics of oscillator being sampled, while the remaining oscillators are held at their fixed phase values. However, in the following example—computing MaxCut—which requires a smaller number of sweeps, we simulate the entire oscillator network.

Figure 3 presents the resulting probability histogram, showing an excellent match to the target Boltzmann distribution (KL divergence= $7.68× 10^-4$ ). Each state is labeled by the decimal value of the binary string $[ C_in A B S C_out ]$ and the inset highlights the valid truth-table states of the full adder, which appear as the tallest peaks in the distribution.

### V.2 Computing MaxCut

<details>

<summary>Figure3r.png Details</summary>

### Visual Description

## Multi-Panel Oscillator Sampling and Network Analysis Figure

### Overview

The image is a three-panel technical figure (labeled (a), (b), (c)) illustrating oscillator sampling dynamics, phase behavior, and a network "Cut" metric, with a top text box defining a sampling sequence. It combines time-series plots, a sampling sequence, and a network diagram to relate oscillator sampling to network properties.

### Components/Axes

#### Top Text Box

- **Title**: "Oscillator sampling sequence:" (red text)

- **Sequence**: A directed sequence of oscillator indices:

`5→6→15→7→1→3→9→4→11→8→14→12→13→10→2→14→9→12→8→5→11→3→15→10→13→6→4→7→2→1`

(Note: Some indices repeat, e.g., 14, 9, 12, 8, 5, 11, 3, 15, 10, 13, 6, 4, 7, 2, 1 appear multiple times.)

#### Panel (a): Oscillator State Plot

- **Left Labels**:

- Top row: `FHI⁰>0 Kₛ=0`

- Bottom row: `FHI⁰=0 Kₛ>0`

- **Content**: Vertical colored bars (matching panel (b)’s line colors) aligned with the sampling sequence above. Each bar likely represents the state of an oscillator at a sampling step.

#### Panel (b): Phase Time-Series Plot

- **Y-axis**: Label `φ(π)` (phase in units of π), ticks at `-1`, `0`, `1`.

- **Content**: Multiple colored lines (matching panel (a)’s bars) showing phase evolution over time. Red arrows mark specific time points (aligned with panel (c)’s step changes).

#### Panel (c): "Cut" Metric Time-Series Plot

- **Y-axis**: Label `Cut`, ticks at `35`, `40`.

- **X-axis**: Label `Time`, ticks at `0`, `20`, `40`, `60`, `80`.

- **Content**: A green stepwise line showing the "Cut" value increasing over time. Red arrows mark step increases (aligned with panel (b)’s arrows).

- **Inset**: A network diagram with 15 blue nodes labeled `1`–`15`, connected by blue edges (indicating oscillator interactions).

### Detailed Analysis

#### Panel (a)

The vertical bars are color-coded (e.g., pink, yellow, black, blue, green, red, orange, purple) and correspond to oscillators in the sampling sequence. The two rows distinguish oscillator states: `FHI⁰>0 Kₛ=0` (top) and `FHI⁰=0 Kₛ>0` (bottom).

#### Panel (b)

- **Phase Trends**: Most lines cluster near `φ=1` or `φ=0`, indicating synchronized or grouped phase behavior. One purple line remains near `φ=-1` with periodic dips (e.g., at time ~30 and ~60).

- **Red Arrows**: Point to time points where the "Cut" value in panel (c) increases, linking phase dynamics to the Cut metric.

#### Panel (c)

- **Cut Metric**: The green line increases in discrete steps:

- Starts below `35` at `Time=0`.

- Jumps to ~`35` at `Time≈10`.

- Jumps to ~`38` at `Time≈30` (first red arrow).

- Jumps to ~`40` at `Time≈60` (second red arrow).

- **Inset Network**: Nodes `1`–`15` are connected by multiple edges, forming a dense, interconnected graph (likely representing oscillator coupling).

### Key Observations

1. **Sampling Sequence**: The sequence includes repeated indices (e.g., 14, 9, 12), suggesting a non-permutational sampling strategy.

2. **Phase Clustering**: Most oscillators cluster in two phase groups (`φ≈1` and `φ≈0`), with one outlier (purple line) at `φ≈-1`.

3. **Cut Metric Steps**: The "Cut" value increases only at specific time points (marked by red arrows), indicating discrete events driving network changes.

4. **Color Consistency**: Colors in panels (a) and (b) are identical, linking oscillator states to their phase dynamics.

### Interpretation

The figure illustrates a process where oscillators are sampled in a defined sequence (top text), their phases are tracked (panel b), and a "Cut" metric (likely a network partition or synchronization measure) evolves over time (panel c). The step increases in the Cut metric correspond to specific sampling events (red arrows), suggesting that certain sampling steps trigger changes in the network’s structure or synchronization. The inset network confirms the oscillators are interconnected, implying the sampling sequence interacts with the network topology to modify the Cut metric. The phase clustering indicates partial synchronization, with the outlier (purple line) possibly representing a desynchronized oscillator. This setup likely models how targeted sampling of oscillators affects network-level properties (e.g., synchronization or partitioning).

</details>

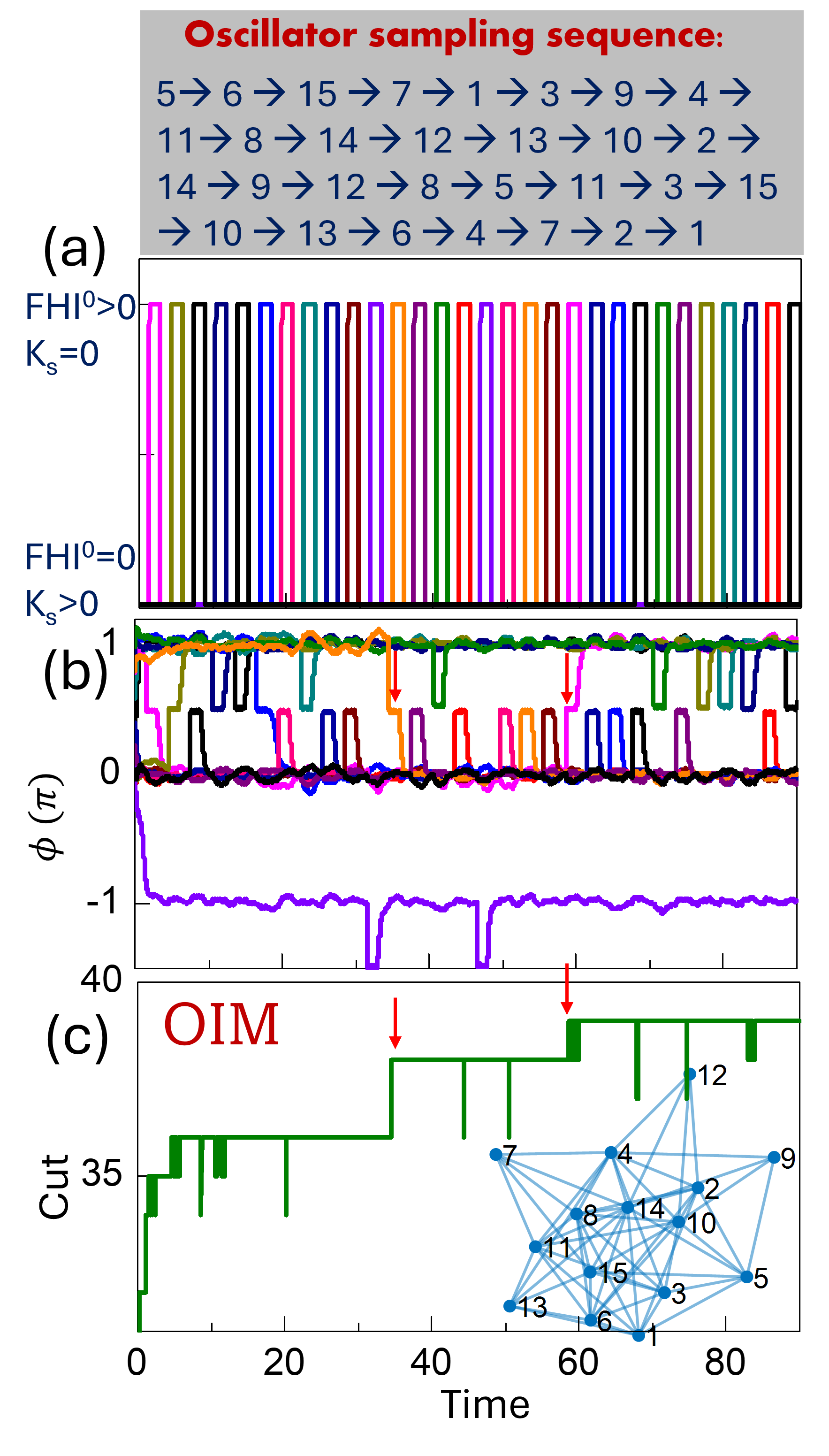

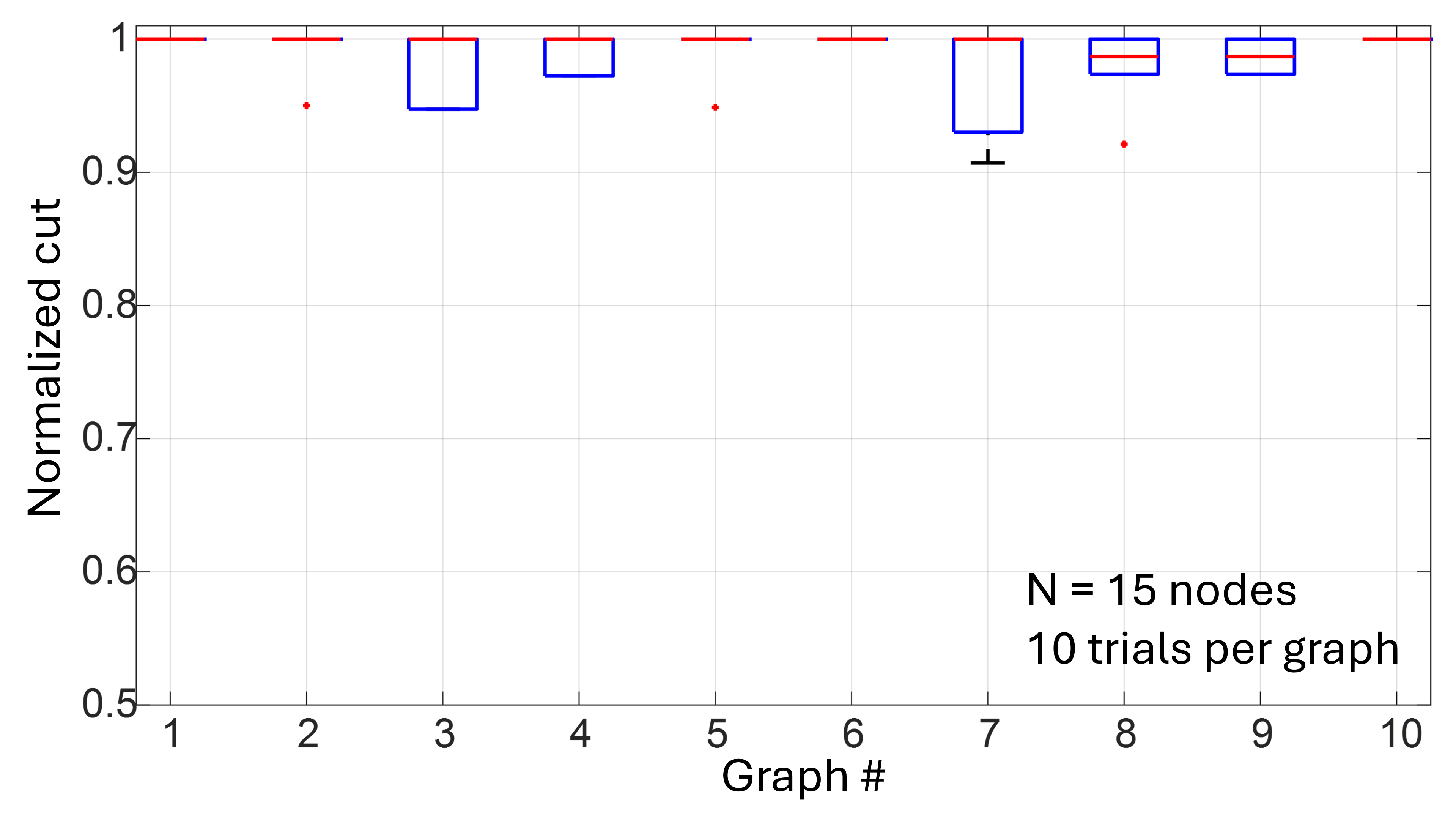

Figure 4: Operating OIMs as p-bit platforms. (a) Randomly generated FHI sequence to the oscillators. The application of FHI to the oscillator is accompanied by the suppression of SHI, and vice-versa. (b) Phase response of the oscillators over time. (c) Evolution of the computed graph cut over time/iterations. With stochastic sampling, the system is able to reach the globally optimal solution (MaxCut =39). The red arrows highlight sampling events that improved cut. Simulation parameters: $K=1;K_s,max=2;\>FHI^0=50;\>K_n for sampled oscillator=0.04$ ; ramping schedule of the SHI signal: $K_s(t)=K_s,max\big(1-e^-\frac{t{τ}}\big)$ , where $K_s,max=2$ and $τ=10^-2$ .

We now evaluate the archetypal MaxCut problem using the OIM’s stochastic sampling mode. Computing the MaxCut of a graph is a NP-hard problem where the objective is to partition the nodes such that the weight of the edges shared among the two sets (i.e., intersect the cut) is maximized. The MaxCut problem directly maps to the solution of the corresponding anti-ferromagnetic Ising Hamiltonian i.e., $J_ij=-W_ij$ , where $\>W_ij$ represents the weight of the edges in the graph to be partitioned. While the MaxCut problem has been used as a representative example, the above approach can be applied to other COPs such as graph coloring and finding maximum independent sets, among others.

We demonstrate the functionality using a randomly generated graph with 15 nodes and 59 edges. Figure 4 (a) illustrates the sequence of $FHI^0$ inputs applied to various randomly sampled oscillators in a sequential manner. The assertion of $FHI^0$ is accompanied by suppression of the SHI signal to that oscillator. The resulting phase dynamics of the oscillators are shown in Fig. 4 (b). It can be observed that during each sampling event, the sampled oscillator is first driven to a phase of $φ_i=\frac{π}{2}$ by the application of the $FHI^0$ signal, while the phases of the other (non-sampled) oscillators remain at $φ∈\{0,π\}$ . Subsequently, the $FHI^0$ signal is suppressed, and the corresponding SHI input is reasserted (not shown in Fig. 4 (a) for clarity). As observed in the dynamics, the sampled oscillator then stochastically relaxes toward either $φ_i=0$ or $φ_i=π$ , with the direction of phase relaxation determined by the competition between the synaptic input and the noise, as noted earlier. Arrows in Fig. 4 (b) indicate representative cases where the oscillator flips its state. It is noteworthy that in traditional OIMs, such transitions are likely to have a low probability of occurrence once all oscillator phases have settled to $φ_i=0$ or $φ_i=π$ since $\frac{dφ}{dt}$ , which effectively represents the diving force ( $-∇ E=\frac{dφ}{dt}$ ) on the oscillator phase is close to zero for all oscillators i.e., $\frac{dφ}{dt}≈ 0$ . Figure 4 (c) shows the corresponding evolution of the graph cut. The red arrows in Figs. 4 (c) highlight the sampling events that lead to an increase in the graph cut allowing the system to compute the optimal MaxCut. Simulation results on 10 additional randomly generated graphs have been presented in Appendix H.

<details>

<summary>Figure_MaxCutBZ.png Details</summary>

### Visual Description

## [Chart Type]: Two Subplots (Scatter Plots with Trend Lines and ACF vs Lag)

### Overview

The image contains two side-by-side subplots (labeled (a) and (b)) analyzing relationships involving a parameter \( \boldsymbol{\beta} \). Subplot (a) shows \( \log(p) \) vs. Energy with linear trend lines, while subplot (b) shows Autocorrelation Function (ACF) vs. lag. Both use three color-coded data series (purple, orange, green) corresponding to \( \beta = 1.145 \), \( 0.662 \), and \( 0.362 \), respectively.

### Components/Axes

#### Subplot (a) (Left):

- **X-axis**: Label = "Energy", Range = \([-20, 10]\), Ticks = \(-20, -10, 0, 10\).

- **Y-axis**: Label = "log(p)", Range = \([-15, 0]\), Ticks = \(-15, -10, -5, 0\).

- **Legend**: Top-right, with three entries:

- Purple: \( \beta = 1.145 \), \( R^2 = 0.99 \)

- Orange: \( \beta = 0.662 \), \( R^2 = 0.977 \)

- Green: \( \beta = 0.362 \), \( R^2 = 0.99 \)

- **Data Series**: Scatter points with linear trend lines (gray) for each \( \beta \).

#### Subplot (b) (Right):

- **X-axis**: Label = "lag", Range = \([0, 30]\), Ticks = \(0, 10, 20, 30\).

- **Y-axis**: Label = "ACF", Range = \([0.0, 1.0]\), Ticks = \(0.0, 0.5, 1.0\).

- **Legend**: Top-right, with three entries (same \( \beta \) values as (a)):

- Purple: \( \beta = 1.145 \)

- Orange: \( \beta = 0.662 \)

- Green: \( \beta = 0.362 \)

- **Data Series**: Scatter points (no trend lines) for each \( \beta \).

### Detailed Analysis

#### Subplot (a) (log(p) vs. Energy):

- **Trend Verification**: All three series show a **decreasing trend** (log(p) decreases as Energy increases).

- Purple (\( \beta = 1.145 \)): Steepest slope (e.g., at Energy = \(-20\), log(p) ≈ \(-5\); at Energy = \(-10\), log(p) ≈ \(-15\)).

- Orange (\( \beta = 0.662 \)): Moderate slope (e.g., at Energy = \(-20\), log(p) ≈ \(-5\); at Energy = \(-10\), log(p) ≈ \(-12\)).

- Green (\( \beta = 0.362 \)): Shallowest slope (e.g., at Energy = \(-20\), log(p) ≈ \(-5\); at Energy = \(-10\), log(p) ≈ \(-10\)).

- **R² Values**: Purple and green have \( R^2 = 0.99 \) (excellent linear fit); orange has \( R^2 = 0.977 \) (good fit).

#### Subplot (b) (ACF vs. lag):

- **Trend Verification**: All three series show a **decreasing trend** (ACF decreases as lag increases).

- Purple (\( \beta = 1.145 \)): Slowest decay (e.g., at lag = 0, ACF ≈ \(1.0\); at lag = 30, ACF ≈ \(0.3\)).

- Orange (\( \beta = 0.662 \)): Moderate decay (e.g., at lag = 0, ACF ≈ \(1.0\); at lag = 30, ACF ≈ \(0.2\)).

- Green (\( \beta = 0.362 \)): Fastest decay (e.g., at lag = 0, ACF ≈ \(1.0\); at lag = 30, ACF ≈ \(0.1\)).

### Key Observations

- In (a), \( \log(p) \) decreases with Energy for all \( \beta \), with steeper slopes for higher \( \beta \).

- In (b), ACF decays with lag for all \( \beta \), with slower decay for higher \( \beta \).

- High \( R^2 \) values in (a) confirm strong linear relationships between \( \log(p) \) and Energy.

### Interpretation

- **Subplot (a)**: The linear relationship between \( \log(p) \) and Energy (high \( R^2 \)) suggests a consistent statistical/physical model. Higher \( \beta \) accelerates the decrease of \( \log(p) \) with Energy, implying \( \beta \) modulates the energy-probability relationship.

- **Subplot (b)**: ACF decay rate inversely correlates with \( \beta \): higher \( \beta \) means longer-range correlations (ACF stays higher for longer lags), while lower \( \beta \) means shorter-range correlations. This suggests \( \beta \) controls the temporal/spatial correlation structure.

- **Overall**: The two plots link \( \beta \) to both the energy-probability relationship (a) and the autocorrelation decay (b), indicating \( \beta \) is a key parameter governing the system’s statistical behavior.

(No non-English text is present; all labels and text are in English.)

</details>

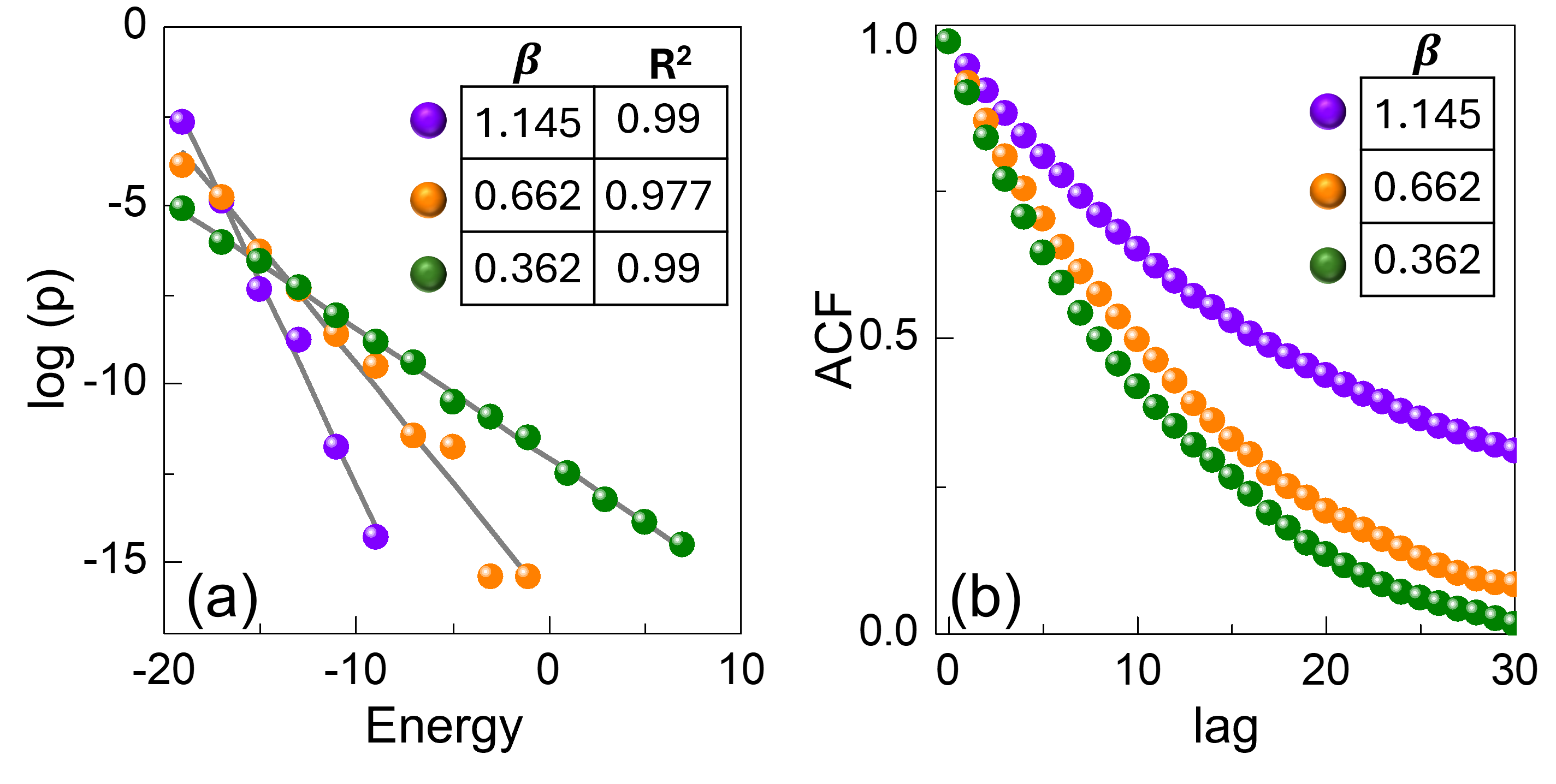

Figure 5: Boltzmann sampling behavior. (a) Plot of $\log$ (p) (p: probability) versus energy for varying noise intensities ( $K_n=0.05$ , 0.1, 0.15), corresponding to different effective temperatures. The extracted effective inverse temperatures ( $β$ ) and the quality of the linear fits ( $R^2$ ) are shown in the inset. (b) Autocorrelation function (ACF) of the system energy as a function of lag, exhibiting a decay toward zero, confirming that the stochastic dynamics effectively decorrelate successive configurations.

While the above simulations demonstrate the application of the OIM’s stochastic sampling capability in solving COPs such as MaxCut, we also employ the same example to further corroborate their Boltzmann sampling behavior. Specifically, we perform simulations on the 15-node graph considered in Fig. 4 under varying noise intensities, which effectively correspond to different effective temperatures. Similar to the five-state adder analysis, we simulate only the dynamics of the oscillator being sampled, while the remaining oscillator phases are held fixed. Figure 5 (a) shows the resulting $\log$ (p) (p: probability) versus energy distributions obtained over 5000 sweeps. The observed linear dependence confirms that the sampled state probabilities follow the expected Boltzmann relation, with the slope yielding the effective inverse temperature ( $β$ ). Furthermore, Fig. 5 (b) presents the normalized autocorrelation function (ACF) [33] of the system energy as a function of the lag. In all cases, the autocorrelation decays toward zero with increasing delay, indicating that the stochastic dynamics efficiently decorrelate successive configurations.

<details>

<summary>Figure4r.png Details</summary>

### Visual Description

## [Two-Panel Time-Series Plot]: Dynamical System Behavior Before and After Bifurcation

### Overview

The image contains two vertically stacked time-series plots (panels (a) and (b)) sharing a common **x-axis (Time)** ranging from 0 to 300. Panel (a) (top) displays a variable \( \boldsymbol{\Phi} \) (in units of \( \pi \)) over time, while panel (b) (bottom) displays a metric labeled “Cut” over time. A red arrow marks a critical time point (≈225–250) associated with a bifurcation event, with “Before Bifurcation” text indicating the pre-bifurcation regime (left of the arrow).

### Components/Axes

#### Panel (a) – Top Plot

- **Y-axis**: Labeled \( \boldsymbol{\Phi (\pi)} \), with values from 0.0 to 1.0 (units of \( \pi \)).

- **X-axis**: Time (0–300, shared with panel (b)).

- **Data Series**: Multiple colored lines (red, blue, green, purple, orange, cyan, brown, etc.) representing distinct components/variables. Lines cluster into two groups:

- Top cluster: \( \Phi \approx 1.0 \) (stable high state).

- Bottom cluster: \( \Phi \approx 0.0 \) (stable low state).

- **Annotations**: A red arrow (≈225–250) marks a critical time; “Before Bifurcation” text (below panel (a)) labels the pre-bifurcation regime (left of the arrow).

#### Panel (b) – Bottom Plot

- **Y-axis**: Labeled “Cut,” with values from 35 to 40.

- **X-axis**: Time (0–300, shared with panel (a)).

- **Data Series**: A green line with stepwise increases:

- Time 0–50: \( \text{Cut} \approx 37–38 \).

- Time 50–225: \( \text{Cut} \approx 39 \) (first step).

- Time 225–300: \( \text{Cut} \approx 40 \) (second step, aligned with the red arrow in panel (a)).

- **Annotations**: “DIM” (red text, bottom right) likely denotes a method/model (e.g., Dynamic Information Measure).

### Detailed Analysis

#### Panel (a) – Order Parameter \( \boldsymbol{\Phi} \)

- **Pre-Bifurcation (Left of Arrow)**: Lines cluster into two stable states (\( \Phi \approx 1.0 \) and \( \Phi \approx 0.0 \)), suggesting **bistability** (two coexisting stable states).

- **Post-Bifurcation (Right of Arrow)**: The red arrow marks a transition; lines may shift or reorganize, indicating a change in the system’s dynamics (e.g., loss of bistability or a new stable state).

#### Panel (b) – “Cut” Metric

- **Trend**: The green line increases in steps, with the largest step at the bifurcation time (≈225–250). This suggests the “Cut” metric (e.g., complexity, information content) **increases at the bifurcation**, correlating with the order parameter’s transition in panel (a).

### Key Observations

1. **Bifurcation Event**: The red arrow (≈225–250) marks a critical time where both panels show qualitative changes:

- Panel (a): Transition in \( \Phi \)’s stable states.

- Panel (b): Stepwise increase in “Cut.”

2. **Bistability in \( \boldsymbol{\Phi} \)**: Before bifurcation, \( \Phi \) exhibits two distinct stable states (high/low), a hallmark of dynamical systems near a bifurcation.

3. **Correlation Between Panels**: The step in “Cut” aligns with the bifurcation time, implying the metric quantifies the system’s state change.

### Interpretation

- **What the Data Suggests**: The system (e.g., a physical, biological, or computational dynamical system) undergoes a **bifurcation** at ≈225–250. Before bifurcation, it is bistable (two stable \( \Phi \) states); at bifurcation, the “Cut” metric (complexity/information) increases, and \( \Phi \)’s dynamics change (e.g., loss of bistability or a new stable regime).

- **Relationship Between Elements**: Panel (a) shows the order parameter’s behavior, while panel (b) quantifies the system’s state via “Cut.” The correlation between the step in “Cut” and the bifurcation arrow suggests the metric captures the system’s transition.

- **Anomalies/Outliers**: The stepwise increase in “Cut” at the bifurcation time is a clear anomaly, indicating a qualitative shift in the system’s dynamics.

This analysis provides a complete, reproducible description of the image’s content, enabling reconstruction of the data and its interpretation without the original image.

</details>

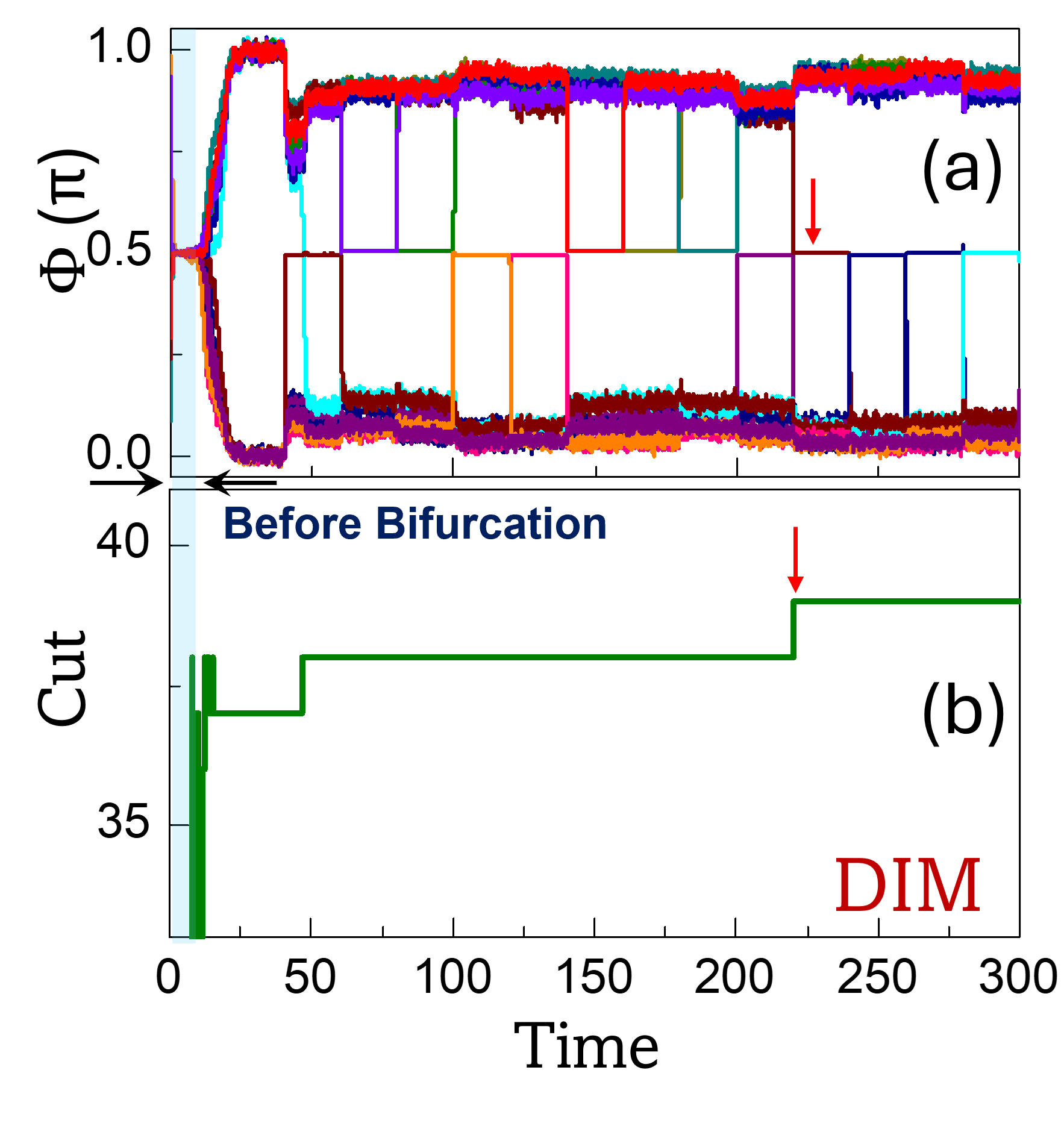

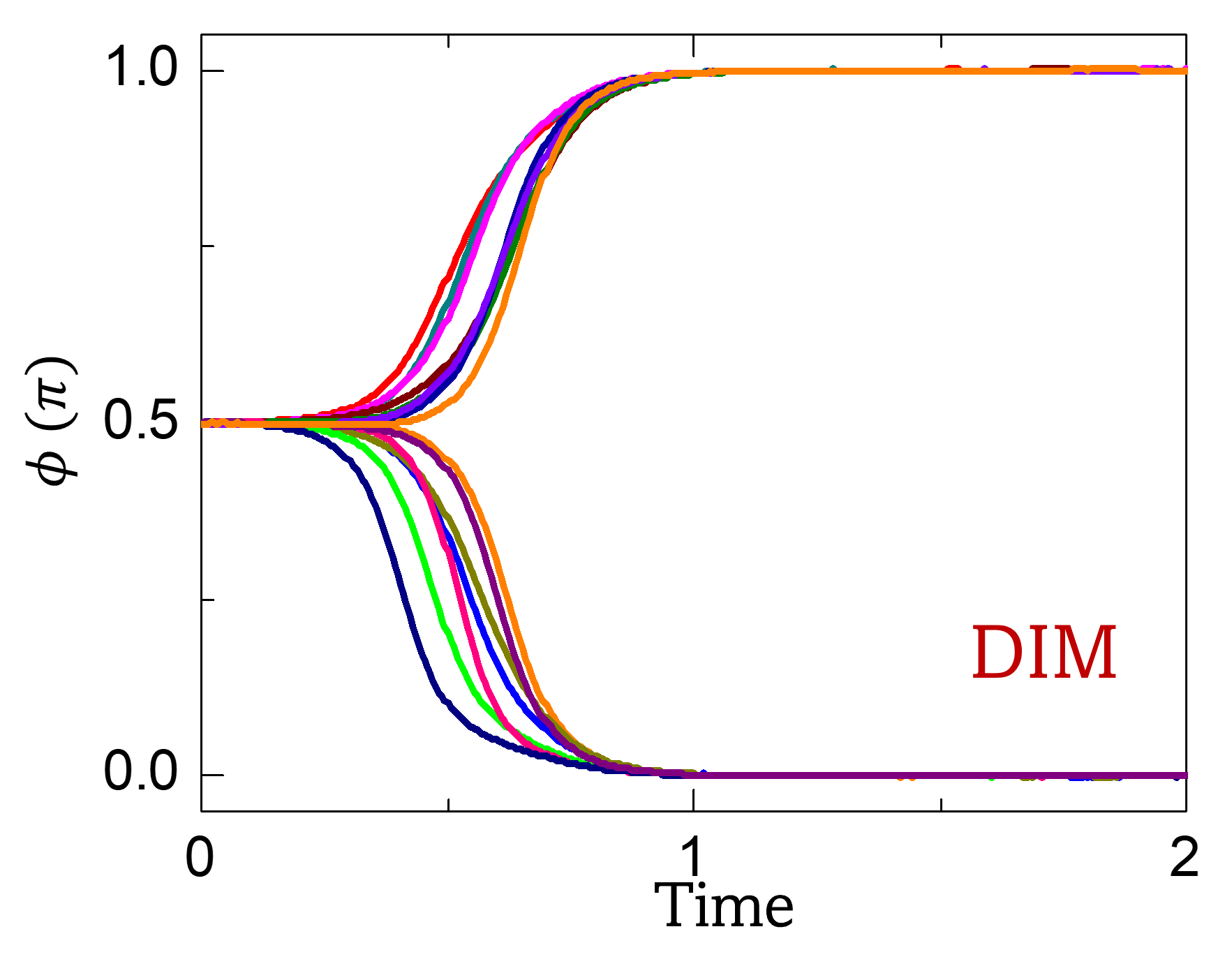

Figure 6: Operating DIMs as p-bit platforms. Evolution of (a) phases in the DIM model; and (b) corresponding graph cut over time. The same graph shown in Fig. 4 has been considered in the simulation. With stochastic sampling, the system is able to compute the MaxCut (=39) ( $K=1;K_s,max=4;\>\>FHI^0=50;\>\>K_n for sampled oscillator=0.006$ ).

## VI Realizing P-bit Engines Using Other Analog Ising Machines

Beyond conventional OIMs, the proposed sampling methodology exhibits potential for generalization to a broader class of analog dynamical systems. As a case in point, we evaluate the implementation of the proposed sampling mode in the Dynamical Ising Machine (DIM) recently introduced by the authors [34]. Unlike the traditional Kuramoto model, which relies on phase differences, the DIM employs additive phase interactions. The DIM dynamics can be described by:

$$

\begin{split}\frac{dφ_i}{dt}&=-K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin(φ_i\bm{+}φ_j)-K_s\sin(2φ_i)\end{split} \tag{17}

$$

Specifically, when $K_s$ is below a certain threshold, the system stabilizes at the trivial state $φ=\frac{π}{2}$ . As $K_s$ increases beyond this threshold, the system undergoes a bifurcation, leading to the emergence of stable phase configurations at $φ∈\{0,π\}^N$ , which can subsequently be mapped to a spin configuration. The phase dynamics of the DIM, without stochastic sampling, are presented in Appendix I for the graph considered in Fig. 4 .

While a detailed analysis of the DIM dynamics has been presented in [34], it is worth noting that the system exhibits a pitchfork bifurcation, qualitatively similar to that observed in the other popular Ising machine models such as the simulated bifurcation machine (SBM) [35]. This similarity suggests the feasibility of performing stochastic sampling in a broad class of analog dynamical systems beyond the OIM.

Similar to the OIM, the DIM dynamics in the rotated frame of reference are given by,

$$

\begin{split}\frac{dε_i}{dt}&=K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin(ε_i+ε_j)+K_s\sin(2ε_i)\\

\\

&=\cos(ε_i)≤ft(K∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ij\sin(ε_j)\right)+K_s\sin(2ε_i)\\

\\

&≡-γ_i\cos(ε_i) +K_s\sin(2ε_i)\end{split} \tag{18}

$$

where $γ_i$ has the same definition and meaning as that in the case of the OIM. Moreover, equation (18) is exactly the same as the corresponding equation (12) derived for the OIM. Therefore, by employing the same approach and constraints used for the OIM, the updated spin state using the DIM can be derived as follows:

$$

\begin{split}s_i^+&≈-sgn≤ft[\tanh(-γ_iΔ t)+ε^η(Δ t).sech^2(γΔ t)\right]\\

\\

&≈sgn≤ft[\tanh≤ft(KΔ t∑_\begin{subarray{c}j=1\\

j≠ i\end{subarray}}^NJ_ijs_j\right)-ϑ\right]\end{split} \tag{19}

$$

Figure 6 presents a simulation demonstrating the operation of the DIM in sampling mode to compute the MaxCut of the same graph considered in Fig. 4. As shown in Fig. 6 (a), the phase dynamics initially exhibit a bifurcation as $K_s$ is ramped up from $K_s=0$ to $K_s=4$ (not shown in the figure). At this stage, however, the system has not yet reached the optimal solution. With the onset of stochastic sampling, the system begins to sample the solution space and eventually converges to the optimal MaxCut value of 39, as shown in Fig. 6 (b).We note that while both the models exhibit Gibbs sampling, the characteristics (e.g., effective temperature) obtained for a specific set of parameters ( $K,K_s,K_n$ ) will depend on the choice of exact dynamics.

## VII Conclusion

This work builds a conceptual bridge between two paradigms that have traditionally been regarded distinct: analog oscillator-based Ising machines (OIMs) and stochastic sampling-based p-bit engines. By leveraging the natural dynamics of coupled oscillators—specifically through the interplay of SHI and FHI signals—we demonstrate that analog OIMs can perform stochastic sampling without requiring explicit computation of energy functions or the synaptic feedback.

An intrinsic feature of this approach is the initial phase evolution, during which the oscillator network naturally performs gradient descent that involves the oscillator phases evolving simultaneously. Starting from random initial conditions, the phases converge to discrete states ( $φ_i≈ 0$ or $φ_i≈π$ ), effectively settling into a local minimum of the Ising energy landscape. In certain applications such as combinatorial optimization, this simultaneous evolution has the potential to offer a potential speed-up, positioning the system in a low-energy configuration even before the onset of the sampling-mode operation. However, these promising features come with the trade-off of requiring physical connectivity among oscillators—digital p-bit designs are better suited to implement the interaction between p-bits. From an implementation standpoint, this makes the analog approach more appropriate for sparse architectures which aligns well with the operational regimes where traditional p-bit platforms are expected to perform well.

Nevertheless, it is important to recognize several key factors that must be optimized to ensure reliable operation: (a) The SHI pulse characteristics (amplitude and slew rate) must be carefully designed, as they determine $Δ t$ , which in turn governs the effective temperature, the effective noise, and the validity of the approximations used in Eq. (8) to achieve Gibbs sampling. In practice, a high slew-rate SHI signal (resulting in a small $Δ t$ ) is generally desirable to simultaneously satisfy these requirements. (b) Beyond $Δ t$ , the effective temperature also depends on the coupling strength, $K$ , among oscillators. Therefore, the coupling strength must be co-designed with $Δ t$ to realize the desired temperature range, while maintaining frequency locking and satisfying the weak-coupling condition implicit in the dynamics considered here. Since $K$ itself is determined by factors such as the natural frequency, oscillation amplitude, and the magnitude of the physical quantity (e.g., coupling resistance) characterizing the coupling element, these parameters must also be carefully considered to ensure robust operation. (c) Finally, the present analysis does not account for parameter variations or natural frequency mismatches–effects that may play a critical role in determining whether robust operation can be achieved. These design aspects and challenges will need to be systematically investigated going forward.

From an algorithmic standpoint, we note that since Gibbs sampling is inherently sequential, incorporating techniques such as the graph-coloring method proposed by Niazi et al. [36] which enables multiple spins to be updated simultaneously— need exploration to further enhance performance, and represents a compelling direction for future exploration. Looking ahead, the capability of OIMs—when configured as p-bit engines—to perform stochastic sampling opens promising avenues for neural network applications, including the training of restricted Boltzmann machines.

The overlaps between the analog and probabilistic paradigms identified in this work motivate the extension of this framework to more generalized computational models, including higher-order Ising machines [37, 38], p-bits with more than two states [39, 22], as well as to other analog systems [35, 40, 41, 42, 43, 44] beyond those explored here.

## ACKNOWLEDGEMENTS

We gratefully acknowledge Prof. Kerem Camsari for providing valuable insights on the sampling properties of p-bits. This material is based upon work supported in part by ARO award W911NF-24-1-0228 and National Science Foundation grants ( $\#$ 2422333, #2433871).

## APPENDIX A INJECTION LOCKING IN SINGLE OSCILLATOR

We analyze the impact of FHI on oscillator dynamics from an energy-based perspective. For an oscillator driven by an FHI signal at its natural frequency but with a phase offset $θ$ , the phase dynamics can be described using Adler’s equation [31, 32] as follows:

$$

\frac{dφ}{dt}=-K_c\sin(φ-θ) \tag{20}

$$

The corresponding energy function that the above dynamics minimize can be expressed as:

$$

E(φ)=-K_c\cos(φ-θ) \tag{21}

$$

For this system, the relationship $-\frac{dE}{dφ}=\frac{dφ}{dt}$ holds, implying that:

$$

\frac{dE}{dt}=\frac{dE}{dφ}·\frac{dφ}{dt}=-≤ft(\frac{dφ}{dt}\right)^2≤ 0

$$

Thus, the energy monotonically decreases over time, and the system evolves toward a stable fixed point.

The minimum of the energy function occurs at $φ^*=θ$ , where $\frac{dE}{dt}=0$ . Within the domain $φ∈[θ,θ+2π)$ , the only other fixed point satisfying $\frac{dE}{dt}=\frac{dφ}{dt}=0$ is at $φ^*=θ+π$ , which corresponds to a local maximum, and is therefore unstable. For all other values of $φ$ in this domain, $\frac{dE}{dt}<0$ . Consequently, the oscillator phase converges to the stable fixed point $φ=θ$ , although perturbations may be necessary to prevent the dynamics from settling at the unstable fixed point $φ^*=θ+π$ .

## APPENDIX B STABILITY OF THE FIXED POINTS OF THE DYNAMICS

We analyze the stability of the fixed points associated with the dynamics described in Eq. (5) of the main text. As previously discussed, the fixed points of the dynamics are given by, $ε_1^*=±\frac{π}{2}$ and $\sin(ε_2^*)=\frac{γ_i}{2K_s}$ .

To investigate the local stability of the system, we analyze the sign of the second derivative:

$$

H(ε_i)=\frac{d^2ε_i}{dt^2}=γ_i\sin(ε_i)+2K_s\cos(2ε_i)

$$

We seek conditions under which $H(ε_i)<0$ , indicating local stability.

- Stability of $ε_1^*=\frac{π}{2}$ At this point, $\sin(ε_1^*)=1$ , $\cos(2ε_1^*)=-1$ , so:

$$

H(ε_1^*)=γ_i+(-2K_s)=γ_i-2K_s

$$

Hence, $H(ε_1^*)<0$ when $γ_i<2K_s$ .

- Stability of $ε_1^*=-\frac{π}{2}$ Here, $\sin(ε_1^*)=-1$ , $\cos(2ε_1^*)=-1$ , therefore:

$$

H(ε_1^*)=-γ_i-2K_s

$$

Thus, $H(ε_1^*)<0$ when $γ_i+2K_s>0$ .

- Combined Condition: Both fixed points $ε_1^*=±\frac{π}{2}$ are stable when:

$$

γ_i^2<4K_s^2

$$

- Stability of $\sin(ε_2^*)=\frac{γ_i}{2K_s}$ Substituting into $H(ε_i)$ , we find that:

$$

H(ε_2^*)<0 when γ_i^2>4K_s^2

$$

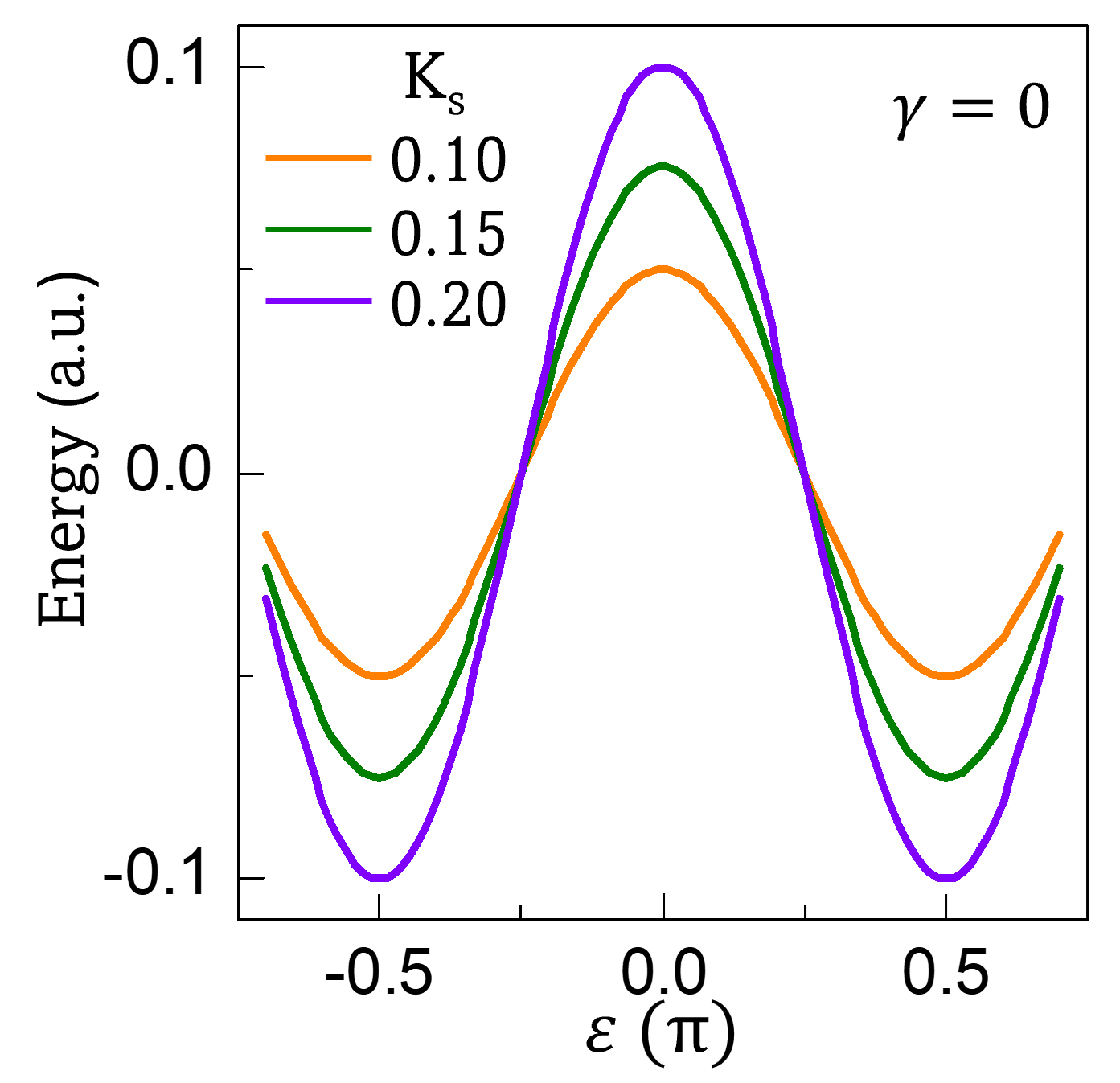

## APPENDIX C TUNING ENERGY BARRIER WITH SHI STRENGTH ( $K_s$ )

<details>

<summary>Figure5r.png Details</summary>

### Visual Description

## Line Plot: Energy vs. ε (π) for Fixed γ=0

### Overview

This is a symmetric 2D line plot illustrating the relationship between Energy (in arbitrary units, a.u.) and the parameter ε (scaled in units of π), for three distinct values of the parameter Kₛ, with γ fixed at 0. All curves exhibit symmetric behavior around ε=0.

### Components/Axes

- **Y-axis**: Labeled *Energy (a.u.)*, with a linear scale ranging from -0.1 to 0.1, marked with major ticks at -0.1, 0.0, and 0.1.

- **X-axis**: Labeled *ε (π)*, with a linear scale ranging from -0.5 to 0.5, marked with major ticks at -0.5, 0.0, and 0.5.

- **Legend**: Positioned at the top-left corner, labeled *Kₛ*, with three color-coded entries:

- Orange line: Kₛ = 0.10

- Green line: Kₛ = 0.15

- Purple line: Kₛ = 0.20

- **Fixed Parameter Annotation**: Located at the top-right corner, text reads *γ = 0*, confirming this parameter is constant for all plotted curves.

### Detailed Analysis

All curves are symmetric about ε=0, with identical behavior for positive and negative ε values:

1. **Orange Line (Kₛ=0.10)**:

- Trend: Starts at ~-0.02 a.u. at ε=-0.5, dips to a minimum of ~-0.05 a.u. at ε≈-0.25, rises to a peak of ~0.05 a.u. at ε=0, dips to ~-0.05 a.u. at ε≈0.25, then returns to ~-0.02 a.u. at ε=0.5.

2. **Green Line (Kₛ=0.15)**:

- Trend: Starts at ~-0.03 a.u. at ε=-0.5, dips to a minimum of ~-0.07 a.u. at ε≈-0.25, rises to a peak of ~0.07 a.u. at ε=0, dips to ~-0.07 a.u. at ε≈0.25, then returns to ~-0.03 a.u. at ε=0.5.

3. **Purple Line (Kₛ=0.20)**: