# Mutual Information Surprise: Rethinking Unexpectedness in Autonomous Systems

**Authors**: Yinsong Wang, Quan Zeng, Xiao Liu, Yu Ding, H. Milton Stewart School of Industrial and Systems Engineering, Georgia Institute of Technology, Atlanta, GA 30332, USA

## Abstract

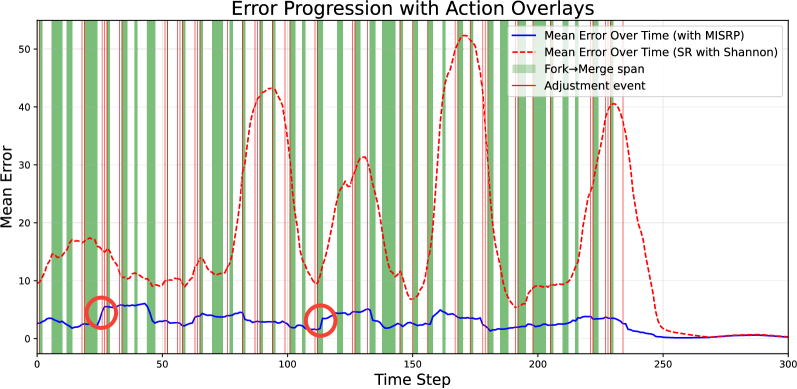

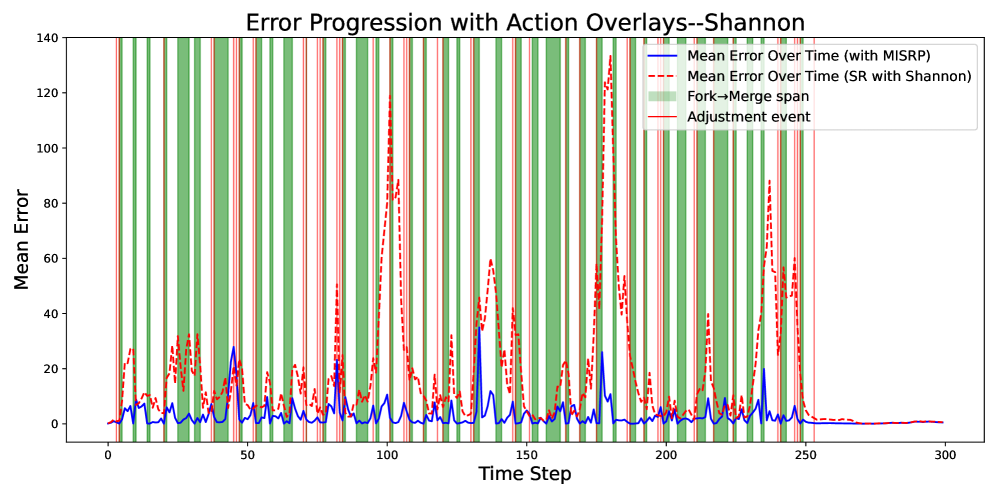

Recent breakthroughs in autonomous experimentation have demonstrated remarkable physical capabilities, yet their cognitive control remains limited, often relying on static heuristics or classical optimization. A core limitation is the absence of a principled mechanism to detect and adapt to unexpectedness. While traditional surprise measures, such as Shannon or Bayesian Surprise, offer momentary detection of deviation, they fail to capture whether a system is truly learning and adapting. In this work, we introduce Mutual Information Surprise (MIS), a new framework that redefines surprise not as anomaly detection, but as a signal of epistemic growth. MIS quantifies the impact of new observations on mutual information, enabling autonomous systems to reflect on their learning progression. We develop a statistical test sequence to detect meaningful shifts in estimated mutual information and propose a mutual information surprise reaction policy (MISRP) that dynamically governs system behavior through sampling adjustment and process forking. Empirical evaluations on both synthetic domains and a dynamic pollution map estimation task show that MISRP-governed strategies significantly outperform classical surprise-based approaches in stability, responsiveness, and predictive accuracy. By shifting surprise from reactive to reflective, MIS offers a path toward more self-aware and adaptive autonomous systems.

## 1 Introduction

In July 2020, Nature published a cover story (?) about an autonomous robotic chemist—locked in a lab for a week with no external communication—independently conducting experiments to search for improved photocatalysts for hydrogen production from water. In the years that followed, Nature featured three more articles (?, ?, ?) highlighting the transformative role of autonomous systems in materials discovery, experimentation, and even manufacturing, each reporting orders-of-magnitude improvements in efficiency. These reports spotlighted the intensifying global race to advance autonomous technologies beyond the already well-established domain of self-driving cars (?, ?, ?, ?). Nature was not alone; numerous other outlets have documented the surge in autonomous research and innovation (?, ?, ?). This rapid expansion is a natural consequence of recent advances in robotics and artificial intelligence, which continue to push the boundaries of what autonomous systems can accomplish.

The systems featured in the Nature publications demonstrate highly capable bodies that can perform complex tasks. Recall that an autonomous system comprises two fundamental components: a brain and a body—colloquial terms for its control mechanism and its sensing-action capabilities, respectively. Unlike traditional automation systems, which follow predefined instructions to execute simple, repetitive tasks, true autonomy requires a higher level of cognitive capacity—an autonomous system is supposedly capable of making decisions with minimal human intervention. However, their brain function, while more sophisticated than rigid pre-programmed instructions, remains relatively limited.

Surveying the literature over the past decade, we found that (?), (?), and (?) rely on classical Bayesian optimization to guide system decisions—a technique that, although effective, does not constitute full autonomy, i.e., completely eliminating human involvement. More recent works in Nature (?, ?) continue in a similar vein, adopting active learning frameworks akin to Bayesian optimization, without fundamentally enhancing the cognitive capabilities of these systems. The conceptual limitations of their decision-making mechanisms continue to impede progress toward genuine autonomy. (?) argue that a core deficiency of current autonomous systems is the absence of a “surprise” mechanism—the capacity to detect and adapt to unforeseen situations. Without this capability, true autonomy remains out of reach.

What is a “surprise,” and how does it differ from existing measures governing automation? Surprise is a fundamental psychological trigger that enables humans to react to unexpected events. Intuitively, it arises when observations deviate from expectations. Traditionally, unexpectedness has been loosely equated with anomalies—quantifying inconsistencies between new observations and historical data. Common approaches to anomaly detection include statistical methods such as z-scores (?) and hypothesis testing (?, ?); distance-based techniques (?), including Euclidean (?) and Mahalanobis distances (?, ?); and machine learning-based models (?, ?), which learn patterns to identify and filter out anomalous data. However, researchers increasingly recognize that simply detecting and discarding unexpected events is insufficient for achieving higher levels of autonomy. In human cognition, unexpectedness is not inherently undesirable; in fact, surprise often signals opportunities for discovery rather than error. Although mathematically similar to anomaly measures, surprise is conceptually distinct: it is not merely a deviation to be rejected, but a valuable learning signal that can enhance adaptation and decision-making.

This shift in perspective aligns with formal definitions of surprise in information theory and computational psychology, such as Shannon surprise (?), Bayesian surprise (?), Bayes Factor surprise (?), and Confidence-Corrected surprise (?). These surprise definitions quantify unexpectedness by modeling deviations from prior beliefs or probability distributions. In the following section, we will delve deeper into these existing measures and evaluate whether they truly serve the intended role of identifying opportunities, as human surprise does, rather than merely flagging anomalies. Using current surprise definitions, (?) demonstrated that treating surprising events not as noise to be removed but as catalysts for learning can significantly enhance a system’s learning speed. Additional empirical evidence shows that incorporating surprise as a learning mechanism can improve autonomy in domains such as autonomous driving (?, ?, ?) and manufacturing (?, ?).

In our research, we find that existing definitions of surprise require significant improvement. Their close resemblance to anomaly detection measures suggests that they may not effectively support higher levels of autonomy. Specifically, a robust surprise measure should emphasize knowledge acquisition and adaptability, rather than treating unexpectedness merely as a deviation from the norm—an approach that current surprise definitions tend to adopt. We therefore argue that it is essential to develop a novel surprise metric that inherently fosters learning and deepens an autonomous system’s understanding of the underlying processes it encounters. To capture this dynamic capability, we introduce the Mutual Information Surprise (MIS)—a new framework that redefines how autonomous systems interpret and respond to unexpected events. MIS quantifies the degree of both frustration and enlightenment associated with new observations, measuring their impact on refining the system’s internal understanding of its environment. We also demonstrate the differences that arise when applying mutual information surprise, as opposed to relying solely on classical surprise definitions, highlighting MIS’s potential to meaningfully enhance autonomous learning and decision-making.

The paper is organized as follows. In Section 2, we revisit the concept of surprise by presenting a taxonomy of existing surprise measures and introducing the intuition, mathematical formulation, and limitations of classical definitions. In Section 3, we formally define the Mutual Information Surprise (MIS) and derive a testing sequence for detecting multiple types of system changes in autonomous systems. We also design an MIS reaction policy (MISRP) that provides high-level guidance to complement existing exploration-exploitation active learning strategies. In Section 4, we compare MIS with classical surprise measures to illustrate its numerical stability and enhanced cognitive capability. We further demonstrate the effectiveness of the MIS reaction policy through a pollution map estimation simulation. In Section 5, we conclude the paper.

## 2 Current Surprise Definitions and Their Limitations

Classical definitions of surprise, such as Shannon and Bayesian Surprise, provide elegant mathematical frameworks for quantifying unexpectedness. However, these approaches often fall short in capturing the core mechanisms driving adaptive behavior: continuous learning and flexible model updating. This section revisits and analyzes existing formulations, elaborating on their conceptual foundations and outlining both their strengths and limitations.

Before proceeding with our discussion, we introduce the notation used throughout this paper. Scalars are denoted by lowercase letters (e.g., $x$ ), vectors by bold lowercase letters (e.g., $\mathbf{x}$ ), and matrices by bold uppercase letters (e.g., $\mathbf{X}$ ). Distributions in the data space are represented by uppercase letters (e.g., $P$ ), probabilities by lowercase letters (e.g., $p$ ), and distributions in the parameter space by the symbol $\pi$ . The $L_{2}$ norm is denoted by $\|\cdot\|_{2}$ , and the absolute value or $L_{1}$ norm is denoted by $|\cdot|$ . We use $\mathbb{E}[\cdot]$ to denote the expectation operator and $\text{sgn}(\cdot)$ for the sign operator. Estimators are denoted with a hat, as in $\hat{\cdot}$ .

### The Family of Shannon Surprises

The family of Shannon Surprise metrics emphasizes the improbability of observed data, typically independent of explicit model parameters. This class broadly aligns with “observation” and “probabilistic-mismatch” surprises as categorized in (?). The central question which the Shannon family of surprises tries to answer is straightforward: How unlikely is the observation?

The most widely recognized measure is Shannon Surprise (?), formally defined as:

$$

S_{\text{Shannon}}(\mathbf{x})=-\log p(\mathbf{x}), \tag{1}

$$

interpreting surprise directly through event rarity. Although conceptually clear and mathematically elegant, this definition has a significant limitation: encountering a Shannon Surprise does not inherently imply knowledge acquisition. Consider, for instance, a uniform dartboard—a stochastic yet entirely understood system. Each outcome has an equally low probability, thus appearing “surprising” under Shannon’s definition, despite humans neither genuinely finding these outcomes surprising nor gaining any additional knowledge by observing them. In other words, the focus of Shannon Surprise is statistical rarity rather than genuine knowledge gain.

To address this limitation, particularly in highly stochastic scenarios, Residual Information Surprise (?) has been introduced, which measures surprise by quantifying the gap between the minimally achievable and observed Shannon Surprises:

$$

S_{\text{Residual}}(\mathbf{x})=|\underset{\mathbf{x}^{\prime}}{\min}\{-\log p(\mathbf{x}^{\prime})\}-(-\log p(\mathbf{x}))|=\underset{\mathbf{x}^{\prime}}{\max}\log p(\mathbf{x}^{\prime})-\log p(\mathbf{x}).

$$

In the dartboard example, Residual Information Surprise becomes zero for all outcomes, as $p(\mathbf{x}^{\prime})$ remains constant for every $\mathbf{x}^{\prime}$ , accurately reflecting an absence of genuine surprise. However, this formulation introduces a conceptual challenge, as determining $\underset{\mathbf{x}^{\prime}}{\max}\log p(\mathbf{x}^{\prime})$ implicitly presumes an omniscient oracle, an assumption typically infeasible in practice.
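The dartboard argument can be checked numerically. The sketch below is illustrative only: the 20-sector board, the biased variant, and the function names are assumptions introduced here, not part of the original formulation.

```python
import math

def shannon_surprise(p_x):
    """Shannon Surprise -log p(x) for an outcome with probability p_x (Eq. 1)."""
    return -math.log(p_x)

def residual_surprise(p_x, probs):
    """Residual Information Surprise: max_x' log p(x') - log p(x)."""
    return math.log(max(probs)) - math.log(p_x)

# Hypothetical uniform dartboard with 20 equally likely sectors.
uniform = [1 / 20] * 20
# Hypothetical biased board: one sector absorbs half of the throws.
biased = [0.5] + [0.5 / 19] * 19

print(shannon_surprise(uniform[0]))            # log(20), identical for every sector
print(residual_surprise(uniform[0], uniform))  # 0.0 for every sector
print(residual_surprise(biased[1], biased))    # log(19) > 0 for a rare sector
```

Note that `residual_surprise` needs the full probability vector `probs` to find the maximum, which is the oracle requirement discussed above.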

Interestingly, Shannon Surprise serves as a foundation for various anomaly measures. For example, under Gaussian assumptions, Shannon Surprise becomes proportional to squared error:

$$

S_{\text{Shannon}}(\mathbf{x})\propto\|\mathbf{x}-\mu_{\mathbf{x}}\|_{2}^{2},

$$

thus linking surprise with deviation from the mean. Similarly, assuming a Laplace distribution recovers an absolute error interpretation, termed Absolute Error Surprise in (?):

$$

S_{\text{Shannon}}(\mathbf{x})\propto|\mathbf{x}-\mu_{\mathbf{x}}|.

$$

We note that both Squared Error Surprise and Absolute Error Surprise are commonly utilized metrics in anomaly detection literature (?, ?, ?).
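To make the two proportionality statements explicit, the following short derivation works under assumptions that are not stated in the original: an isotropic Gaussian with covariance $\sigma^{2}\mathbf{I}$ in $d$ dimensions, and a scalar Laplace distribution with scale $b$. In both cases the relation holds up to a positive scale factor and an additive constant that does not depend on the observation.

$$
-\log p(\mathbf{x})=\frac{1}{2\sigma^{2}}\|\mathbf{x}-\mu_{\mathbf{x}}\|_{2}^{2}+\frac{d}{2}\log(2\pi\sigma^{2})\quad\text{for}\quad\mathbf{x}\sim\mathcal{N}(\mu_{\mathbf{x}},\sigma^{2}\mathbf{I}),
$$

$$
-\log p(x)=\frac{1}{b}|x-\mu_{x}|+\log(2b)\quad\text{for}\quad x\sim\text{Laplace}(\mu_{x},b).
$$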

### The Family of Bayesian Surprises

Bayesian Surprises, by contrast, explicitly model belief updates. These measures quantify the degree to which a new observation alters the internal model, shifting the focus from event rarity to epistemic impact. This concept parallels the “belief-mismatch” surprise in the taxonomy by (?).

The canonical formulation, introduced in (?), defines Bayesian Surprise as the Kullback–Leibler divergence between the prior and posterior distributions over parameters:

$$

S_{\text{Bayes}}(\mathbf{x})=D_{\text{KL}}\left(\pi(\boldsymbol{\theta}\mid\mathbf{x})\,\|\,\pi(\boldsymbol{\theta})\right).

$$

This measure offers a principled approach to belief revision and naturally aligns with learning mechanisms. In theory, it encourages agents to reduce surprise through model updates, providing a pathway toward adaptive autonomy.

However, Bayesian Surprise is not without limitations. As data accumulates, new observations exert diminishing influence on the posterior, rendering the agent increasingly “stubborn.” This behavior can result in Bayesian Surprise overlooking rare but meaningful anomalies. For example, consider the discovery by S. S. Ting of the $J$ particle, characterized by an unusually long lifespan compared to other particles in its class. Under standard Bayesian updating, scientists’ beliefs about particle lifespans would barely shift due to this single observation. Consequently, Bayesian Surprise would classify such an event as merely an anomaly, potentially disregarding it.
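The diminishing influence of a single observation can be illustrated with a conjugate model. The sketch below assumes a scalar Gaussian likelihood with known unit noise variance and a Gaussian belief over the mean; this model, the outlier value, and the function names are hypothetical choices made for illustration and do not come from the original text.

```python
import math

def kl_gauss(m1, v1, m0, v0):
    """KL( N(m1, v1) || N(m0, v0) ) for scalar Gaussians with variances v1 and v0."""
    return 0.5 * (math.log(v0 / v1) + (v1 + (m1 - m0) ** 2) / v0 - 1.0)

def bayesian_surprise(x, prior_mean, prior_var, noise_var=1.0):
    """KL(posterior || prior) after one observation x ~ N(theta, noise_var)."""
    post_var = 1.0 / (1.0 / prior_var + 1.0 / noise_var)
    post_mean = post_var * (prior_mean / prior_var + x / noise_var)
    return kl_gauss(post_mean, post_var, prior_mean, prior_var)

outlier = 5.0  # hypothetical observation far from the current belief mean of 0
for n in (1, 10, 100, 1000):
    # Belief variance after n unit-noise observations, starting from a flat prior.
    belief_var = 1.0 / n
    print(n, bayesian_surprise(outlier, 0.0, belief_var))
```

The same outlier produces a smaller Bayesian Surprise as `n` grows, because the belief variance shrinks and the posterior mean barely moves.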

To mitigate this posterior overconfidence, Confidence-Corrected (CC) Surprise (?) compares the current informed belief against that of a naïve learner with a flat prior:

$$

S_{\text{CC}}(\mathbf{x})=D_{\text{KL}}\left(\pi(\boldsymbol{\theta})\,\|\,\pi^{\prime}(\boldsymbol{\theta}\mid\mathbf{x})\right),

$$

where $\pi^{\prime}(\boldsymbol{\theta}\mid\mathbf{x})$ represents the updated belief assuming a uniform prior. This confidence-corrected formulation remains sensitive to new data irrespective of prior history. In the $J$ particle example, employing Confidence-Corrected Surprise would trigger a genuine surprise, as the posterior remains responsive to the novel observation without the inertia introduced by extensive historical data.

A related idea emerges with Bayes Factor (BF) Surprise (?), which compares likelihoods under naïve and informed beliefs:

$$

S_{\text{BF}}(\mathbf{x})=\frac{p(\mathbf{x}\mid\pi^{0}(\boldsymbol{\theta}))}{p(\mathbf{x}\mid\pi^{t}(\boldsymbol{\theta}))},

$$

where $\pi^{0}(\boldsymbol{\theta})$ represents the naïve (untrained) prior and $\pi^{t}(\boldsymbol{\theta})$ the informed belief based on all prior observations up to time $t$ (before observing $\mathbf{x}$ ). This ratio quantifies how strongly the current observation supports the naïve prior over the informed prior. In practice, the effectiveness of both Confidence-Corrected and Bayes Factor Surprises heavily depends on constructing appropriate priors—a task often challenging and subjective.
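As a small illustration of the ratio, the sketch below assumes a Bernoulli observation model with a uniform Beta(1, 1) naïve prior and an informed Beta belief obtained by counting past outcomes. The model and the function names are hypothetical and are used only to show how the ratio behaves.

```python
def predictive_prob(x, a, b):
    """Marginal likelihood of a Bernoulli outcome x under a Beta(a, b) belief."""
    p_one = a / (a + b)
    return p_one if x == 1 else 1.0 - p_one

def bayes_factor_surprise(x, ones, zeros):
    """Naive Beta(1, 1) predictive divided by the informed Beta(1 + ones, 1 + zeros) predictive."""
    naive = predictive_prob(x, 1.0, 1.0)
    informed = predictive_prob(x, 1.0 + ones, 1.0 + zeros)
    return naive / informed

# After 99 zeros and no ones, a single 1 is far better explained by the naive prior.
print(bayes_factor_surprise(1, ones=0, zeros=99))  # about 50.5
print(bayes_factor_surprise(0, ones=0, zeros=99))  # about 0.505
```

The result depends directly on the choice of the naïve prior, which is the subjectivity noted above.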

Another variant within the Bayesian Surprise family is Postdictive Surprise (?), which operates in the output space rather than parameter space as in the original Bayesian Surprise:

$$

S_{\text{Postdictive}}(\mathbf{x})=D_{\text{KL}}\left(P(\mathbf{y}\mid\boldsymbol{\theta}^{\prime},\mathbf{x})\,\|\,P(\mathbf{y}\mid\boldsymbol{\theta},\mathbf{x})\right), \tag{2}

$$

where $\boldsymbol{\theta}$ and $\boldsymbol{\theta}^{\prime}$ denote parameters before and after the update, respectively. (?) argue that computing KL divergence in the output space is more computationally tractable for variational models but potentially less expressive when output variance depends on the input (e.g., under heteroskedastic conditions).

### Reflection

We acknowledge the presence of alternative categorizations of surprise definitions, notably the taxonomy in (?), which classifies surprise measures into three groups: observation surprises, probabilistic-mismatch surprises, and belief-mismatch surprises. As discussed previously, the Shannon Surprise family aligns closely with the first two categories, whereas the Bayesian Surprise family corresponds to the last.

These categorizations are not strictly delineated. For instance, Residual Information Surprise incorporates a conceptual element common to the Bayesian Surprise family: it provides a baseline against which the observed data are contrasted. On the other hand, Bayes Factor Surprise, despite being explicitly Bayesian in its formulation, closely resembles a Shannon Surprise conditioned on alternative priors. Furthermore, notwithstanding their philosophical distinctions, Bayesian and Shannon Surprises often behave similarly in practice; we provide further details on this observation in Section 4.

It is understandable that researchers initially explored these two foundational surprise definitions, each possessing inherent limitations: Shannon Surprise conflates probability with knowledge gain, while Bayesian Surprise suffers from increasing posterior stubbornness. Subsequent refinements emerged to address these shortcomings, primarily through adjusting the choice of prior to create more meaningful contrasts. The Residual Information Surprise assumes an oracle-like prior, whereas Confidence-Corrected and Bayes Factor Surprises rely on a non-informative prior. Regardless of the priors chosen, defining a suitable prior remains a challenging and unresolved issue in the research community.

Both surprise families share two other critical limitations: they are single-instance measures, and they are inherently one-sided. Being single-instance means that they assess surprise based solely on the marginal impact of individual observations, without explicitly modeling cumulative learning dynamics over time. Being one-sided means that their decision threshold lies on a single side, which limits expressiveness because human perceptions of surprise can range from positive to negative.

## 3 Mutual Information Surprise

In this section, we introduce the concept of Mutual Information Surprise (MIS). We first explore the intuition and motivation underlying this concept, followed by the development of a novel, theoretically grounded testing sequence. We then discuss the implications when this test sequence is violated and propose a reaction policy contingent on different types of violations. Table 1 summarizes how Mutual Information Surprise differs in perspective from the Shannon and Bayesian families of surprises.

Table 1: The perspective differences among Shannon family surprises, Bayesian family surprises, and Mutual Information Surprise.

| Surprise | Single Instance Focused | Capture Transient Changes | Aware of Learning Progression | Parametric Predictive Modeling |
| --- | --- | --- | --- | --- |
| Shannon Family | ✓ | ✗ | ✗ | ✗ |
| Bayesian Family | ✓ | ✗ | ✗ | ✓ |
| MIS | ✗ | ✓ | ✓ | ✗ |

### 3.1 What Do We Expect from a Surprise?

In human cognition, surprise often triggers reflection and adaptation. A computational analog should similarly prompt deeper examination and enhanced understanding, transcending mere statistical rarity and indicating an opportunity for learning.

To formalize this perspective, consider a system governed by a functional mapping $f:\mathbf{x}\rightarrow\mathbf{y}$ , with observations drawn from a joint distribution $P(\mathbf{x},\mathbf{y})$ . This system is well-regulated, meaning the input distribution $P(\mathbf{x})$ , output distribution $P(\mathbf{y})$ , and joint distribution $P(\mathbf{x},\mathbf{y})$ are time-invariant. This definition expands the traditional notion of time-invariance by explicitly including consistent exposure $P(\mathbf{x})$ , aligning closely with human trust in persistent patterns across rules and experiences.

To quantify system understanding, we use mutual information (MI) (?), defined as

$$

I(\mathbf{x},\mathbf{y})=\mathbb{E}_{\mathbf{x},\mathbf{y}}\left[\log\frac{p(\mathbf{y}\mid\mathbf{x})}{p(\mathbf{y})}\right]=H(\mathbf{x})+H(\mathbf{y})-H(\mathbf{x},\mathbf{y})=H(\mathbf{y})-H(\mathbf{y}\mid\mathbf{x}), \tag{3}

$$

where $H(\cdot)$ denotes entropy, measuring uncertainty or chaos of a random variable. Mutual information quantifies the reduction in uncertainty about $\mathbf{y}$ given knowledge of $\mathbf{x}$ . A high $I(\mathbf{x},\mathbf{y})$ indicates strong comprehension of $f$ , whereas stagnation or a decrease in $I(\mathbf{x},\mathbf{y})$ signals stalled learning. For the aforementioned well-regulated system, $I(\mathbf{x},\mathbf{y})$ remains constant.
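The three expressions in Eq. (3) can be verified on a small discrete example. The sketch below assumes a finite joint probability table and natural logarithms; the table values and function names are hypothetical.

```python
import math

def entropy(probs):
    """Shannon entropy in nats; zero-probability entries contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def mi_three_ways(joint):
    """Evaluate the three forms of Eq. (3) for a table joint[i][j] = p(x_i, y_j)."""
    px = [sum(row) for row in joint]
    py = [sum(col) for col in zip(*joint)]
    # Form 1: E[log p(y|x) / p(y)], using p(y|x) / p(y) = p(x, y) / (p(x) p(y)).
    form1 = sum(p * math.log(p / (px[i] * py[j]))
                for i, row in enumerate(joint)
                for j, p in enumerate(row) if p > 0)
    # Form 2: H(x) + H(y) - H(x, y).
    form2 = entropy(px) + entropy(py) - entropy([p for row in joint for p in row])
    # Form 3: H(y) - H(y|x), with H(y|x) = sum_x p(x) H(y | x).
    h_y_given_x = sum(px[i] * entropy([p / px[i] for p in row])
                      for i, row in enumerate(joint) if px[i] > 0)
    form3 = entropy(py) - h_y_given_x
    return form1, form2, form3

noisy = [[0.4, 0.1], [0.1, 0.4]]  # hypothetical noisy binary mapping
print(mi_three_ways(noisy))       # three values that agree up to rounding
```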

Typically, mutual information $I(\mathbf{x},\mathbf{y})$ is estimated via maximum likelihood estimation (MLE) (?); details of the MLE estimator are provided in the Appendix. Empirical estimation of $I(\mathbf{x},\mathbf{y})$ is, however, downward biased for clean data with a low noise level (?):

$$

\mathbb{E}[\hat{I}(\mathbf{x},\mathbf{y})]\leq I(\mathbf{x},\mathbf{y}).

$$

Interestingly, this bias can serve as an informative feature: As experience accumulates, $\mathbb{E}[\hat{I}(\mathbf{x},\mathbf{y})]$ should increase and approach the true value $I(\mathbf{x},\mathbf{y})$ , determined by $p(\mathbf{x})$ and function $f$ . Thus, monotonic growth in the mutual information estimate signals learning.

Returning to our core question—what do we expect from a surprise? Unlike classical surprise measures (Shannon or Bayesian), which focus narrowly on conditional distributions and rarity, we posit that a surprise measure should reflect whether learning occurred. Noticing the connection between mutual information growth and learning, we define surprise as a deviation from expected mutual information growth. Specifically, we define Mutual Information Surprise (MIS) as the difference in mutual information estimates after incorporating new observations:

$$

\text{MIS}\triangleq\hat{I}_{n+m}-\hat{I}_{n}, \tag{4}

$$

where $\hat{I}_{n}$ is the estimate of the mutual information $I_{n}$ based on the first $n$ observations, and $\hat{I}_{n+m}$ is the estimate of $I_{n+m}$ after observing $m$ additional points. From here on, we omit the variable terms $\mathbf{x}$ and $\mathbf{y}$ in the notation for mutual information and its estimate for the sake of simplicity. A large positive MIS (relative to the sample sizes $m$ and $n$ ) signals enlightenment, indicating significant learning, whereas a near-zero or negative MIS indicates frustration, suggesting stalled progress. Hence, MIS provides operational insight into whether a system evolves as expected, transforming it into a practical autonomy test. Significant deviations from the expected MIS trajectory indicate meaningful changes or system stagnation.
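A minimal sketch of Eq. (4) follows. It assumes discrete (or already discretized) observations and a plug-in estimator built from empirical frequencies, which may differ in detail from the MLE estimator described in the Appendix. The data and function names are hypothetical.

```python
import math
from collections import Counter

def plugin_entropy(samples):
    """Plug-in entropy in nats from a list of hashable observations."""
    n = len(samples)
    return -sum(c / n * math.log(c / n) for c in Counter(samples).values())

def plugin_mi(xs, ys):
    """Plug-in estimate of I(x, y) = H(x) + H(y) - H(x, y) from paired samples."""
    return plugin_entropy(xs) + plugin_entropy(ys) - plugin_entropy(list(zip(xs, ys)))

def mis(xs, ys, n):
    """MIS of Eq. (4): estimate on all n + m pairs minus the estimate on the first n."""
    return plugin_mi(xs, ys) - plugin_mi(xs[:n], ys[:n])

# Noise-free, one-to-one mapping with 10 existing and 2 genuinely new inputs.
fresh_x = list(range(12))
fresh_y = [3 * x + 1 for x in fresh_x]
print(mis(fresh_x, fresh_y, n=10))  # log(12) - log(10), a positive value

# Same 10 existing points, but the 2 new samples revisit an old input.
stale_x = list(range(10)) + [0, 0]
stale_y = [3 * x + 1 for x in stale_x]
print(mis(stale_x, stale_y, n=10))  # negative: redundant sampling
```

In the first case every input and output is distinct, so the estimate equals the logarithm of the sample size and the MIS is $\log(n+m)-\log n$. In the second case the repeated input lowers the estimate.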

### 3.2 Bounding MIS

Mutual information estimation is inherently challenging: it is high-dimensional, nonlinear, and exhibits complex variance. The standard approach to testing whether the estimate has changed, though principled, is a computationally expensive permutation test (?, ?), which repeatedly shuffles the $m+n$ observations into two groups, calculates the MI difference for each shuffle, and evaluates the rejection probability:

$$

p=\frac{1}{B}\sum_{i=1}^{B}\mathbf{1}(|\Delta\hat{I}|>|\Delta\hat{I}_{i}|),

$$

where $\Delta\hat{I}=\hat{I}_{n}-\hat{I}_{m}$ represents the observed difference between the mutual information estimates of the two groups, $\Delta\hat{I}_{i}$ represents the $i$-th permuted difference, $B$ is the number of permutations, and $\mathbf{1}(\cdot)$ is the indicator function. In real-time streaming scenarios, however, permutation tests become impractical due to their computational load. Moreover, when $m\ll n$ , permutation tests lose effectiveness, yielding noisy outcomes.
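For reference, the permutation statistic can be sketched as follows. The code assumes discrete observations and a plug-in MI estimator, and it evaluates the displayed quantity literally: the fraction of shuffles whose absolute MI difference is smaller than the observed one. The data, the default number of permutations, and the function names are hypothetical.

```python
import math
import random
from collections import Counter

def plugin_mi(pairs):
    """Plug-in MI estimate in nats from a list of (x, y) tuples."""
    def h(items):
        n = len(items)
        return -sum(c / n * math.log(c / n) for c in Counter(items).values())
    return h([x for x, _ in pairs]) + h([y for _, y in pairs]) - h(pairs)

def permutation_score(pairs, n, num_perm=200, seed=0):
    """Fraction of shuffles whose |MI difference| is below the observed one."""
    rng = random.Random(seed)
    observed = abs(plugin_mi(pairs[:n]) - plugin_mi(pairs[n:]))
    shuffled = list(pairs)
    hits = 0
    for _ in range(num_perm):
        rng.shuffle(shuffled)  # reassign observations to the two groups
        permuted = abs(plugin_mi(shuffled[:n]) - plugin_mi(shuffled[n:]))
        hits += observed > permuted
    return hits / num_perm

# Hypothetical stream: 40 existing pairs, then 10 pairs from an altered mapping.
old = [(i % 8, (i % 8) % 4) for i in range(40)]
new = [(i % 8, (i * 3) % 4) for i in range(10)]
print(permutation_score(old + new, n=40))
```

Each call re-estimates mutual information `2 * num_perm` times, which is the computational load that makes this test unattractive for streaming use.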

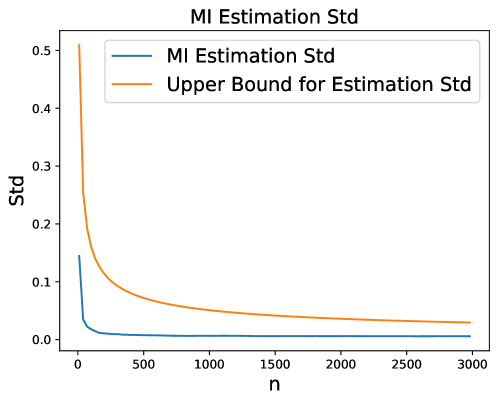

An alternative is standard deviation-based testing. For the MLE mutual information estimator $\hat{I}_{n}$ , the estimation standard deviation satisfies (?):

$$

\sigma\lesssim\frac{\log n}{\sqrt{n}}, \tag{5}

$$

where $\lesssim$ denotes less than or equal to in order. Omitting the bias term, this yields an analytical test on the mutual information change (a brief derivation is provided in the Appendix),

$$

\hat{I}_{m+n}-\hat{I}_{n}\in\pm\sqrt{\frac{\log^{2}(m+n)}{m+n}+\frac{\log^{2}n}{n}}\cdot z_{\alpha}\asymp\mathcal{O}\left(\frac{\log n}{\sqrt{n}}\right), \tag{6}

$$

where $z_{\alpha}$ represents the standard normal quantile at confidence level $\alpha$ and $\asymp$ represents equality in order. This test is also unsatisfying, because the above bound is so loose that it is rarely violated. The root cause is the loose upper bound in Eq. (5): empirical evidence suggests that the true estimation standard deviation is usually much smaller than the theoretical bound. We provide this empirical evidence in the Appendix.

So, we turn to a new path for bounding MIS as follows. First, we impose several mild assumptions on the observations and the physical process.

**Assumption 1**

*We impose the following assumptions on the sampling process and physical system.*

1. *We assume that the existing observations are typical in the sense of the Asymptotic Equipartition Property (?), meaning that empirical statistics computed from the data are representative of their corresponding expected values under the experimental design’s intended distribution, i.e., $\hat{I}_{n}\approx\mathbb{E}[\hat{I}_{n}]$ . This is true when we regard the initial observations as true system information.*

2. *The number of existing observations $n$ is much smaller than the cardinality of the spaces $\mathcal{X}$ and $\mathcal{Y}$ , i.e., $n\ll|\mathcal{X}|,|\mathcal{Y}|$ .*

3. *The number of new observations $m$ is much smaller than the number of existing observations, i.e., $m\ll n$ .*

**Theorem 1**

*Consider a well-regulated autonomous system defined in Section 3.1, which satisfies the conditions in Assumption 1. With probability at least $1-\rho$ , the change in MLE-based mutual information estimates satisfies:

$$

\hat{I}_{n+m}-\hat{I}_{n}\in\left(\log(m+n)-\log n\right)\pm\frac{\sqrt{2m\log\frac{2}{\rho}}\log(m+n)}{m+n}\triangleq MIS_{\pm}.

$$

$MIS_{\pm}$ denotes the upper and lower bound for the test sequence.*

The proof of Theorem 1 is given in the Appendix. These bounds are both tighter ( $\mathcal{O}(\frac{\log n}{n})$ instead of $\mathcal{O}(\frac{\log n}{\sqrt{n}})$ ) and cheaper to evaluate (an analytical test sequence rather than repeated resampling) than the previous methods. The bounds offer theoretically grounded thresholds within which we expect the MI estimate to evolve. When the bounds $MIS_{\pm}$ are breached, either from below or from above, the system has encountered a change that the well-regulated assumption cannot explain.
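The bounds of Theorem 1 and the standard-deviation-based interval of Eq. (6) can be compared numerically. The sketch below assumes natural logarithms and interprets $z_{\alpha}$ as a two-sided standard normal quantile; both choices, along with the function names and the sample sizes, are assumptions made for illustration.

```python
import math
from statistics import NormalDist

def mis_bounds(n, m, rho=0.05):
    """Lower and upper bounds MIS_-, MIS_+ of Theorem 1 (natural logarithms)."""
    center = math.log(m + n) - math.log(n)
    half_width = math.sqrt(2 * m * math.log(2 / rho)) * math.log(m + n) / (m + n)
    return center - half_width, center + half_width

def sd_half_width(n, m, alpha=0.05):
    """Half-width of the standard-deviation-based test in Eq. (6)."""
    z = NormalDist().inv_cdf(1 - alpha / 2)  # assumption: two-sided normal quantile
    return z * math.sqrt(math.log(m + n) ** 2 / (m + n) + math.log(n) ** 2 / n)

def mis_verdict(mis_value, n, m, rho=0.05):
    """Classify an observed MIS as 'below', 'within', or 'above' the Theorem 1 bounds."""
    lower, upper = mis_bounds(n, m, rho)
    if mis_value < lower:
        return "below"
    if mis_value > upper:
        return "above"
    return "within"

lower, upper = mis_bounds(n=1000, m=10)
print((upper - lower) / 2)            # Theorem 1 half-width, roughly 0.06
print(sd_half_width(n=1000, m=10))    # Eq. (6) half-width, roughly 0.6
print(mis_verdict(-0.2, n=1000, m=10))  # "below"
```

For these sample sizes the Theorem 1 interval is about ten times narrower, so a drop in the estimate that Eq. (6) would tolerate is flagged here.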

Some may argue that for an oversampled system, the second condition in Assumption 1 ( $n\ll|\mathcal{X}|,|\mathcal{Y}|$ ) does not hold. That is true, and as a result, the expectation term in Theorem 1, $\log(m+n)-\log n$ , needs to be adjusted. For a noise-free system with a limited number of outcomes and a large number of existing observations, one needs to replace the expectation term with $(|\mathcal{Y}|-1)(\frac{1}{n}-\frac{1}{m+n})$ , and the bounds in Theorem 1 still hold.
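To give a sense of scale for the two expectation terms, the sketch below evaluates both for the same sample sizes. The values of $n$, $m$, and $|\mathcal{Y}|$ and the function names are hypothetical, and natural logarithms are assumed.

```python
import math

def expected_mis_undersampled(n, m):
    """Expectation term of Theorem 1, used when n is much smaller than |X| and |Y|."""
    return math.log(m + n) - math.log(n)

def expected_mis_oversampled(n, m, y_cardinality):
    """Replacement term for a noise-free, oversampled system with |Y| outcomes."""
    return (y_cardinality - 1) * (1.0 / n - 1.0 / (m + n))

n, m = 1000, 10
print(expected_mis_undersampled(n, m))                  # about 9.95e-3
print(expected_mis_oversampled(n, m, y_cardinality=5))  # about 3.96e-5
```

In the oversampled case the expected growth is far smaller, which matches the intuition that additional samples add little once the outcome space has been covered.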

### 3.3 What Does MIS Actually Tell Us?

When the quantity $\text{MIS}=\hat{I}_{n+m}-\hat{I}_{n}$ falls outside the established bounds $MIS_{\pm}$ —either exceeding the upper bound or falling below the lower bound—the system is considered to be surprised, thereby triggering a Mutual Information Surprise (MIS). Essentially, Theorem 1 functions as a statistical hypothesis test: the null hypothesis posits that the underlying system remains well-regulated, implying $\Delta I=I_{n+m}-I_{n}=0$ , where $I_{n}$ denotes the true mutual information at the time of $n$ observations. Any violation indicates a significant shift, with negative deviations ( $\Delta I<0$ ) and positive deviations ( $\Delta I>0$ ) each carrying distinct implications.

Recall that mutual information can be expressed in terms of entropy, as shown in Eq. (3), so a nonzero $\Delta I$ may result from variations in $H(\mathbf{x})$ , $H(\mathbf{y})$ , and $H(\mathbf{y}\mid\mathbf{x})$ . In this subsection, we examine the implications of MIS under different driving forces.

#### Violation from Below: Learning Has Stalled or Regressed

If

$$

\text{MIS}<\text{MIS}_{-},

$$

this implies $\Delta I(\mathbf{x},\mathbf{y})<0$ , signifying a downward shift in mutual information. A negative surprise indicates diminished or stalled learning, potentially due to:

1. Stagnation in Exploration: A downward shift driven by a decrease in input entropy $\Delta H(\mathbf{x})<0$ suggests the system repeatedly samples in a limited region, thus gathering redundant data with minimal new information.

2. Increased Noise or Process Drift: A downward shift could also result from increased conditional entropy $\Delta H(\mathbf{y}\mid\mathbf{x})>0$ , indicating greater uncertainty in predicting $\mathbf{y}$ given $\mathbf{x}$ . Practically, this often signifies increased external noise or a fundamental change in the underlying process.

#### Violation from Above: Sudden Growth in Understanding

If

$$

\text{MIS}>\text{MIS}_{+},

$$

this implies $\Delta I(\mathbf{x},\mathbf{y})>0$ , indicating an upward shift in mutual information. This positive surprise can result from:

1. Aggressive Exploration: If the increase is driven by higher input entropy $\Delta H(\mathbf{x})>0$ , the system is likely exploring previously unvisited regions aggressively, potentially inflating knowledge gains without sufficient validation.

2. Reduction in Noise: An increase due to reduced conditional entropy $\Delta H(\mathbf{y}\mid\mathbf{x})<0$ signals a desirable decrease in uncertainty, thus generally representing a beneficial development.

3. Novel Discovery: An increase in output entropy $\Delta H(\mathbf{y})>0$ suggests discovery of novel and previously rare outputs, which is particularly valuable in exploratory or scientific contexts.

#### Summary Table

| Violation type | Potential cause | Entropy mechanism |
| --- | --- | --- |
| Violation from Below | Stagnation in exploration | $\downarrow H(\mathbf{x})\Rightarrow\downarrow I(\mathbf{x},\mathbf{y})$ |
| | Increased noise / process drift | $\uparrow H(\mathbf{y}\mid\mathbf{x})\Rightarrow\downarrow I(\mathbf{x},\mathbf{y})$ |
| Violation from Above | Aggressive exploration | $\uparrow H(\mathbf{x})\Rightarrow\uparrow I(\mathbf{x},\mathbf{y})$ |
| | Noise reduction | $\downarrow H(\mathbf{y}\mid\mathbf{x})\Rightarrow\uparrow I(\mathbf{x},\mathbf{y})$ |
| | Novel discovery | $\uparrow H(\mathbf{y})\Rightarrow\uparrow I(\mathbf{x},\mathbf{y})$ |

The table above summarizes potential causes for MIS violations and their implications. These patterns help the system differentiate between meaningful learning and misleading deviations, expanding beyond the capacity of classical surprise measures and providing a road map to corrective or adaptive responses for higher-level autonomy. We purposely omit the case where a decrease in $H(\mathbf{y})$ causes a violation from below, as this scenario typically lacks independent significance. Instead, it generally arises from changes in the sampling strategy or the underlying process, which we have already discussed.

### 3.4 Reaction Policy: A Three-Pronged Approach

Following the identification of potential causes behind MIS triggers (Section 3.3), the next question is how the system should respond. Naturally, the system’s reaction should align with the dominant entropy component contributing to the change. In practice, we identify the dominant entropy change by computing and ranking the ratios

$$

\frac{\text{sgn}(\text{MIS})\Delta\hat{H}(\mathbf{x})}{|\text{MIS}|},\quad\frac{\text{sgn}(\text{MIS})\Delta\hat{H}(\mathbf{y})}{|\text{MIS}|},\quad\text{and}\quad\frac{\text{sgn}(\text{MIS})\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})}{|\text{MIS}|},

$$

where $\Delta\hat{H}(\cdot)=\hat{H}_{m+n}(\cdot)-\hat{H}_{n}(\cdot)$ denotes the estimated entropy change.
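The ranking step can be illustrated with a minimal sketch that evaluates the three ratios exactly as written above and sorts them. The function name `rank_entropy_ratios`, the dictionary labels, and the numeric inputs are hypothetical and chosen only for illustration; the entropy changes are assumed to have been estimated elsewhere.

```python
import math


def rank_entropy_ratios(mis, d_hx, d_hy, d_hy_given_x):
    """Return the ratios sgn(MIS) * dH / |MIS|, sorted from largest to smallest."""
    if mis == 0:
        raise ValueError("MIS is zero; the ratios are undefined")
    sign = math.copysign(1.0, mis)
    ratios = {
        "H(x)": sign * d_hx / abs(mis),
        "H(y)": sign * d_hy / abs(mis),
        "H(y|x)": sign * d_hy_given_x / abs(mis),
    }
    return sorted(ratios.items(), key=lambda item: item[1], reverse=True)


# Illustrative numbers only: a negative MIS that coincides with a drop in H(x).
ranking = rank_entropy_ratios(mis=-0.4, d_hx=-0.35, d_hy=-0.05, d_hy_given_x=0.0)
dominant_name, dominant_ratio = ranking[0]
```

In this made-up example the input-entropy term ranks first, which would point toward the sampling-adjustment branch of the policy described next.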

We do not prescribe a specific reaction when $\Delta\hat{H}(\mathbf{y})$ dominates the MIS, as an increase in $H(\mathbf{y})$ is typically a passive consequence of changes in $H(\mathbf{x})$ and $H(\mathbf{y}\mid\mathbf{x})$ . When both $H(\mathbf{x})$ and $H(\mathbf{y}\mid\mathbf{x})$ remain relatively stable, a rise in $H(\mathbf{y})$ indicates that the current sampling strategy is effectively uncovering novel information; thus, no change in action is required.

For $\Delta\hat{H}(\mathbf{x})$ and $\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})$ , situations may arise where their contributions are similar, i.e., no clear dominant entropy component exists and we need a resolution mechanism to break the tie. To address all these scenarios, we propose a three-pronged reaction policy that serves as a supervisory layer, compatible with existing exploration–exploitation sampling strategies:

1. Sampling Adjustment. The first policy addresses variations in input entropy $H(\mathbf{x})$ . If $\Delta\hat{H}(\mathbf{x})>0$ dominates MIS, indicating overly aggressive exploration, the system should moderate exploration and emphasize exploitation to prevent fitting to noise. Conversely, if $\Delta\hat{H}(\mathbf{x})<0$ , suggesting redundant sampling, the system should enhance exploration to restore sample diversity.

2. Process Forking. The second policy responds to variations in conditional entropy $H(\mathbf{y}\mid\mathbf{x})$ , i.e., changes in the function mapping. Upon a surprise triggered by $\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})$ , the system forks into two subprocesses, one holding the $n$ existing observations and the other the $m$ new observations, divided at the surprise moment (Theorem 1). The two subprocesses represent the prior process (existing observations) and the likely altered process (new observations), and they continue their sampling separately. The subprocess that first encounters a $\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})$ -triggered surprise is discarded, and the remaining subprocess continues as the main process. In the extremely rare case where both subprocesses trigger a $\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})$ -dominated MIS surprise at the same time, we discard the subprocess with fewer observations and continue with the subprocess with more observations.

3. Coin Toss Resolution. There are occasions where changes in $\Delta\hat{H}(\mathbf{x})$ and $\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})$ are comparable, making selecting a reaction policy challenging. Instead of arbitrarily favoring the slightly larger change, we always use a biased coin toss approach, stochastically selecting which entropy to address based on the magnitude of changes:

$$

p_{\text{adjust}}=\frac{|\Delta\hat{H}(\mathbf{x})|}{|\Delta\hat{H}(\mathbf{x})|+|\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})|},\quad p_{\text{fork}}=1-p_{\text{adjust}}.

$$

The decision variable $z$ is sampled as $z\sim\text{Bernoulli}(p_{\text{adjust}})$ , with $z=1$ indicating sampling adjustment and $z=0$ indicating process forking. This mechanism keeps reactions balanced and robust, and it prevents overreactions to marginal signals.
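A minimal sketch of the coin toss follows, assuming the two entropy changes have already been estimated. The function name `coin_toss` and the use of Python's `random` module are illustrative choices, not part of the original method description.

```python
import random


def coin_toss(d_hx, d_hy_given_x, rng=random):
    """Return (p_adjust, z); z = 1 means sampling adjustment, z = 0 means process forking."""
    denom = abs(d_hx) + abs(d_hy_given_x)
    if denom == 0:
        raise ValueError("both entropy changes are zero; p_adjust is undefined")
    p_adjust = abs(d_hx) / denom
    # Bernoulli(p_adjust) draw: rng.random() is uniform on [0, 1).
    z = 1 if rng.random() < p_adjust else 0
    return p_adjust, z


# Illustrative inputs: the change in H(x) is three times the change in H(y|x).
p_adjust, z = coin_toss(d_hx=0.3, d_hy_given_x=-0.1, rng=random.Random(0))
```

With these inputs, sampling adjustment is chosen with probability 0.75 and process forking with probability 0.25, so a slightly larger change is favored without being selected every time.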

The description above summarizes the MIS reaction policy. In the remainder of this subsection, we first define a sampling process formally and then present the detailed implementation of the reaction policy in Algorithm 1.

**Definition 1**

*A sampling process $\mathcal{P}(\mathbf{X},g(\cdot))$ consists of two components: existing observations $\mathbf{X}$ and a sampling function $g(\cdot)$ , where the next sample location is determined by

$$

\mathbf{x}_{\text{next}}\sim g(\mathbf{X}),

$$

with $\mathbf{x}_{\text{next}}$ drawn from the stochastic oracle $g(\mathbf{X})$ . If $g(\cdot)$ is deterministic, $\sim$ is replaced by equality ( $=$ ). For clarity, a sampling process with $n$ existing observations is denoted $\mathcal{P}_{n}$ .*
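As a rough illustration of Definition 1, a sampling process can be represented as a container holding the existing observations and the sampling function. The class name `SamplingProcess`, the method `draw`, and both example samplers are hypothetical; appending the drawn location to the stored observations is also an assumption made here for convenience.

```python
import random
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class SamplingProcess:
    """A sampling process P(X, g): existing observations X and a sampling function g."""
    g: Callable[[List[float]], float]
    X: List[float] = field(default_factory=list)

    @property
    def n(self) -> int:
        """Number of existing observations (the subscript in P_n)."""
        return len(self.X)

    def draw(self) -> float:
        """Draw x_next from g(X) and record it (recording is an assumption of this sketch)."""
        x_next = self.g(self.X)
        self.X.append(x_next)
        return x_next


def uniform_sampler(X: List[float]) -> float:
    """A stochastic g: ignores the history and samples uniformly from [0, 30]."""
    return random.uniform(0.0, 30.0)


def step_sampler(X: List[float]) -> float:
    """A deterministic g: the next location is one past the largest visited one."""
    return max(X) + 1.0 if X else 0.0


process = SamplingProcess(g=step_sampler, X=[0.0, 1.0, 2.0])
x_next = process.draw()
```

Keeping $g(\cdot)$ as a swappable attribute mirrors how the reaction policy later modifies the sampling function without touching the stored observations.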

Algorithm 1 Mutual Information Surprise Reaction Policy (MISRP)

1: A sampling process $\mathcal{P}(\mathbf{Z},g(\cdot))$ , where $\mathbf{Z}$ consists of $k$ pairs of input $\mathbf{X}$ and output $\mathbf{Y}$ ; A maximum reflection threshold $T$ ; Reflection period $m=2$

2: while $m\leq\min(T,\frac{k}{2})$ do

3: Set $n=k-m$ ; Compute $MIS=\hat{I}_{m+n}-\hat{I}_{n}$ ; Record $\Delta\hat{H}(\mathbf{x})$ , $\Delta\hat{H}(\mathbf{y})$ , and $\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})$

4: if $MIS\not\in MIS_{\pm}$ and $\frac{\text{sgn}(\text{MIS})\Delta\hat{H}(\mathbf{y})}{|\text{MIS}|}\neq\max\big{\{}\frac{\text{sgn}(\text{MIS})\Delta\hat{H}(\mathbf{x})}{|\text{MIS}|},\frac{\text{sgn}(\text{MIS})\Delta\hat{H}(\mathbf{y})}{|\text{MIS}|},\frac{\text{sgn}(\text{MIS})\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})}{|\text{MIS}|}\big{\}}$ then

5: Compute bias: $p\leftarrow\frac{|\Delta\hat{H}(\mathbf{x})|}{|\Delta\hat{H}(\mathbf{x})|+|\Delta\hat{H}(\mathbf{y}\mid\mathbf{x})|}$

6: Sample $z\sim\text{Bernoulli}(p)$

7: if $z=1$ then $\triangleright$ Sampling Adjustment

8: if $MIS>MIS_{+}$ then

9: Modify $g$ to reduce exploration and increase exploitation

10: else

11: Modify $g$ to increase exploration and reduce redundancy

12: end if

13: break while

14: else $\triangleright$ Process Forking

15: if $\mathcal{P}$ is forked and the other process is not requesting Process Forking then

16: Delete $\mathcal{P}$ ; Merge the other process as the main process

17: break while

18: end if

19: if $\mathcal{P}$ is forked and the other process is requesting Process Forking then

20: Delete the $\mathcal{P}$ with fewer data; Merge the other one as the main process

21: break while

22: end if

23: Fork process into two branches: $\mathcal{P}_{n}$ and $\mathcal{P}_{m}$

24: Call $\text{MISRP}(\mathcal{P}_{n},T)$ and $\text{MISRP}(\mathcal{P}_{m},T)$

25: break while

26: end if

27: else

28: No action required (surprise within expected bounds)

29: end if

30: $m=m+1$

31: end while

We offer several remarks on the MIS reaction policy $\text{MISRP}(\mathcal{P},T)$ :

- In the pseudocode, we introduce two additional notations: the maximum reflection threshold $T$ and the total number of observations $k$ . In practice, MIS is computed retroactively: given a sequence of $k$ observations, we partition them into $m$ recent observations and $n=k-m$ older observations to compute the MIS. We term the $m$ recent observations the reflection period, and we increment $m$ to iterate over different partition points. The reflection period $m$ is constrained to be no greater than $\min(T,\frac{k}{2})$ . This constraint is motivated by the comparative behavior of test statistics derived from Theorem 1 and the variance-based test in Eq. (6). Specifically, when $m=n$ , both our proposed test and the variance-based test yield statistics of order $\mathcal{O}\left(\frac{\log n}{\sqrt{n}}\right)$ . As discussed in Section 3.2, such statistics are typically too loose to be violated in practice, thereby diminishing the sensitivity advantage of our method. Consequently, evaluating MIS beyond $m=\frac{k}{2}$ is unnecessary and computationally inefficient. The reflection threshold $T$ is introduced to ensure computational feasibility, and we recommend selecting $T$ as large as computational resources permit.

- Note that the reflection period $m$ starts at $2$ . This implies that the reaction policy does not respond to a single-instance surprise. Mathematically, this is because the derivation of the bound in Theorem 1 is ill-defined for $m=1$ . Intuitively, MIS measures the progression of learning in a sampling process, and it is impossible to determine whether a single observation is informative or erroneous without additional verification. Therefore, the MIS policy always takes at least two additional samples before it starts to react. One may argue that this requirement for an extra sample imposes additional cost in conducting experiments. That is true, but recall that one insight from the study in (?) is the long-run benefit of “the extra resources spent on deciding the nature of an observation.”

- It is important to emphasize that both the sampling adjustment and process forking approaches are rooted in the active learning literature and practice. Balancing exploration and exploitation, i.e., sampling adjustment, has long been a key topic in Bayesian optimization and active learning (?), whereas discarding irrelevant observations, as we do in process forking, is a common practice in the dataset drift literature (?, ?, ?, ?, ?). Our Mutual Information Surprise reaction framework provides a principled mechanism for autonomous systems to determine how to balance exploration versus exploitation and when or what to discard (i.e., forget).
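The retroactive partitioning described in the first remark can be sketched as a simple scan over reflection periods. This is only an illustration under stated assumptions: it uses a plug-in (empirical-frequency) mutual information estimate in nats on discrete data, which may differ from the estimator and bias adjustment used in the paper, and `bound` is a hypothetical callable standing in for the interval $MIS_{\pm}$ of Theorem 1, which is not reproduced here. Unlike Algorithm 1, the sketch only records violations and takes no action.

```python
import math
from collections import Counter


def entropy(symbols):
    """Plug-in (empirical) entropy in nats of a sequence of hashable symbols."""
    total = len(symbols)
    return -sum((c / total) * math.log(c / total) for c in Counter(symbols).values())


def mutual_information(pairs):
    """Plug-in estimate I(x, y) = H(x) + H(y) - H(x, y) from a list of (x, y) tuples."""
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    return entropy(xs) + entropy(ys) - entropy(pairs)


def reflect(pairs, T, bound):
    """Return (m, MIS, violated) for each reflection period m = 2, ..., min(T, k/2)."""
    k = len(pairs)
    results = []
    m = 2
    while m <= min(T, k / 2):
        n = k - m  # the n older observations; the last m are the reflection period
        mis = mutual_information(pairs) - mutual_information(pairs[:n])
        lower, upper = bound(n, m)  # hypothetical stand-in for MIS_- and MIS_+
        results.append((m, mis, not (lower <= mis <= upper)))
        m += 1
    return results


# Toy data and a placeholder constant bound, both purely illustrative.
pairs = [(x, x % 10) for x in range(20)]
results = reflect(pairs, T=5, bound=lambda n, m: (-0.5, 0.5))
```

Here $k=20$ and $T=5$ , so the scan covers $m=2,\dots,5$ ; a larger $T$ would extend it up to $m=k/2=10$ at a higher computational cost.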

## 4 Numerical Analysis

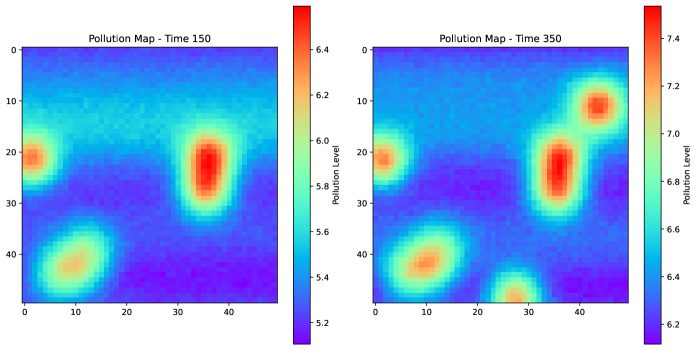

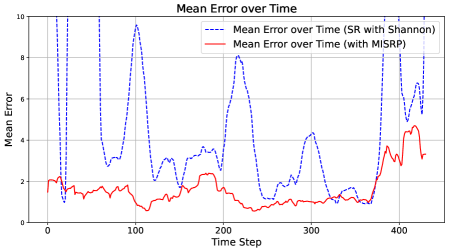

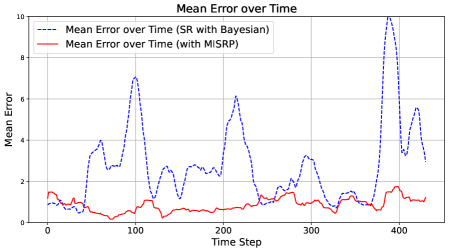

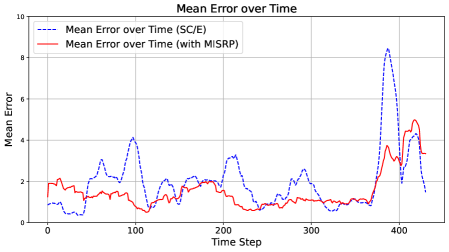

In this section, we illustrate the merits of Mutual Information Surprise (MIS). Section 4.1 demonstrates the strength of MIS compared to classical surprise measures. Section 4.2 showcases the advantages of the MIS reaction policy in the context of dynamically estimating a pollution map using data generated from a physics-based simulator.

### 4.1 Putting Surprise to the Test

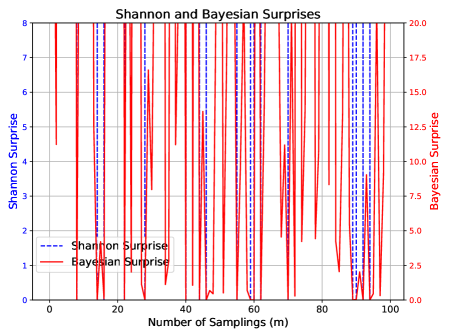

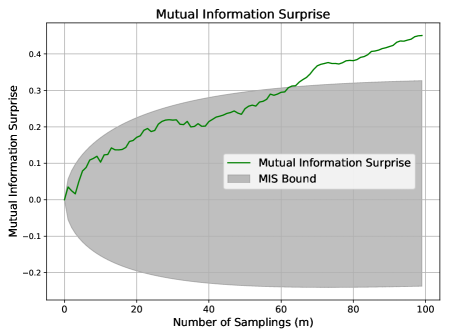

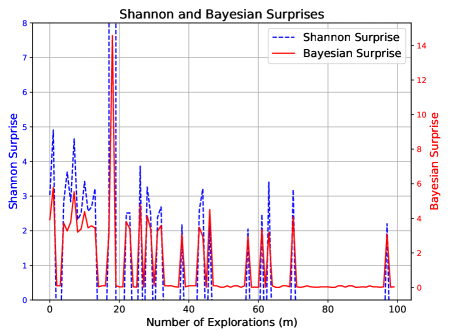

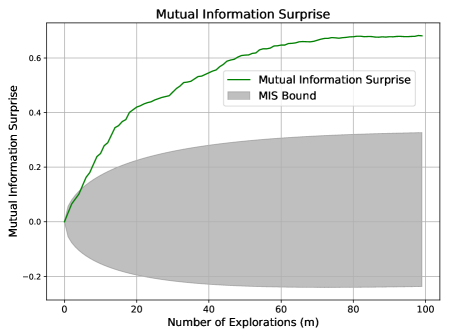

To compare MIS with classical surprise measures—principally Shannon and Bayesian Surprises—we conduct a series of controlled simulations using a simple yet interpretable system, designed to reveal how each measure behaves under varying conditions. The system is governed by the mapping

$$

y=x\bmod 10, \tag{7}

$$

chosen for its simplicity, modifiability, and clarity of interpretation. The first four scenarios are fully deterministic, while the final two introduce noise and perturbations, enabling an assessment of whether each surprise measure responds meaningfully to new observations, structural changes, or stochastic disturbances. Each simulation begins with $100$ samples drawn uniformly from $x\in[0,30]$ to establish the system’s initial knowledge. We then progressively introduce new data under different conditions, recording the response of each surprise measure. Because the magnitudes of MIS, Shannon Surprise, and Bayesian Surprise differ in scale, our analysis focuses on behavioral trends (how each measure changes, spikes, or saturates) rather than on their absolute values.

The surprise measures are computed as follows. Shannon Surprise is calculated using its classical definition in Eq. (1), as the negative log-likelihood of the true label under a Gaussian Process predictive model. Bayesian Surprise is computed as Postdictive Surprise, defined in Eq. (2), using the KL divergence between the prior and posterior predictive distributions of $y$ at each input $x$ . The same Gaussian Process predictive model is used for both, using a Matérn $\nu=2.5$ kernel with a constant noise level set to $0.1$ . After each surprise computation, the model is re-trained with all currently available data.
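For a Gaussian predictive distribution, both quantities have closed forms, sketched below. This is a simplified illustration: the Gaussian Process fit is omitted, the predictive means and standard deviations are assumed to be supplied by such a model, and the direction of the KL divergence (first argument relative to second) is an assumption, since Eq. (2) is not reproduced in this section. The function names are hypothetical.

```python
import math


def shannon_surprise(y, mean, std):
    """Negative log-density of y under a Gaussian predictive N(mean, std^2)."""
    var = std ** 2
    return 0.5 * math.log(2.0 * math.pi * var) + (y - mean) ** 2 / (2.0 * var)


def gaussian_kl(mean_p, std_p, mean_q, std_q):
    """KL( N(mean_p, std_p^2) || N(mean_q, std_q^2) ) for univariate Gaussians."""
    return (
        math.log(std_q / std_p)
        + (std_p ** 2 + (mean_p - mean_q) ** 2) / (2.0 * std_q ** 2)
        - 0.5
    )


# Illustrative values: an observation one standard deviation away from the mean,
# and a predictive mean that shifts by one unit after the update.
nll = shannon_surprise(y=1.0, mean=0.0, std=1.0)
kl = gaussian_kl(mean_p=0.0, std_p=1.0, mean_q=1.0, std_q=1.0)
```

Because the negative log-likelihood is taken over a continuous density, it can be negative when the predictive standard deviation is small, whereas the KL divergence is always non-negative.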

For MIS, we treat the initial $100$ observations as the initial sample size $n=100$ , as defined in Section 3.1. As sampling continues, the number of new observations $m$ increases (represented in the ticks of the X-axis in the figures). The output space has cardinality $|\mathcal{Y}|=10$ , corresponding to the ten possible outcomes of the modulus function, except in Scenario 6 where $|\mathcal{Y}|=20$ . MIS is calculated as defined in Eq. (4). When the theoretical bound in Theorem 1 is used, the probability level is set to $\rho=0.1$ . The bias term is adjusted as discussed in Section 3.2, since $n\gg|\mathcal{Y}|$ in this setting.

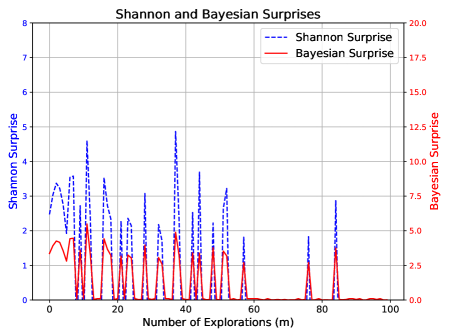

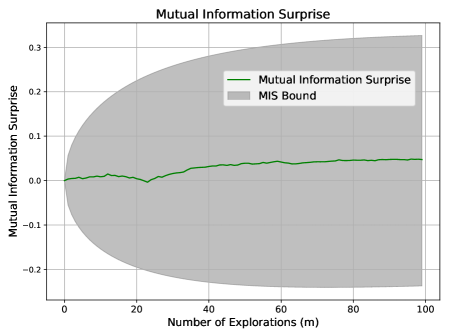

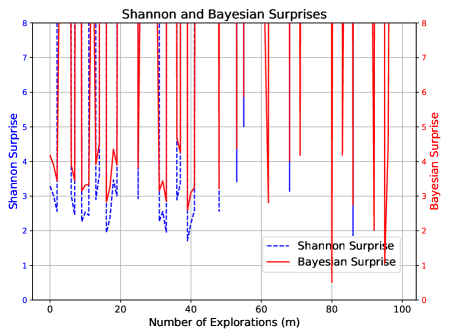

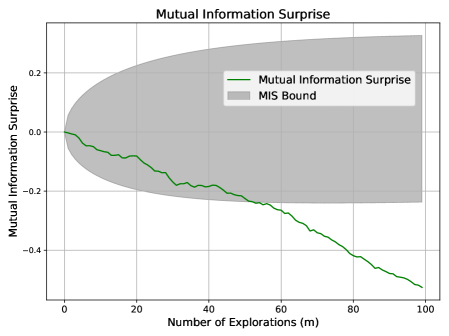

Scenario 1: Standard Exploration.

New data is randomly sampled from $x\in[30,100]$ , expanding the domain without altering the underlying function or aggressively exploring unfamiliar regions. This represents a system exploring new yet consistent areas of its environment.

Expected behavior: A well-calibrated surprise measure should indicate ongoing learning without abrupt fluctuations. We do not expect MIS to be violated.

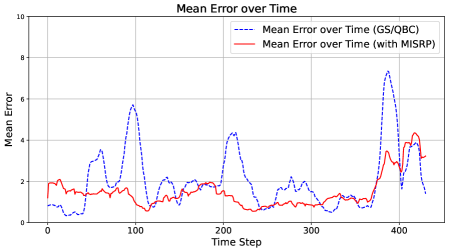

As shown in Figure 1, MIS progresses steadily within its expected bounds, reflecting a stable and well-regulated learning process. In contrast, Shannon and Bayesian Surprises fluctuate erratically, often spiking without clear justification.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Chart: Shannon and Bayesian Surprises

### Overview

This is a dual-axis line chart comparing two metrics, "Shannon Surprise" and "Bayesian Surprise," plotted against the "Number of Explorations (m)." The chart displays how these two measures of surprise or information gain evolve over a sequence of exploratory actions.

### Components/Axes

* **Chart Title:** "Shannon and Bayesian Surprises" (centered at the top).

* **X-Axis:**

* **Label:** "Number of Explorations (m)"

* **Scale:** Linear, ranging from 0 to 100 with major tick marks every 20 units (0, 20, 40, 60, 80, 100).

* **Primary Y-Axis (Left):**

* **Label:** "Shannon Surprise"

* **Scale:** Linear, ranging from 0 to 8 with major tick marks every 1 unit.

* **Associated Data Series:** Blue dashed line.

* **Secondary Y-Axis (Right):**

* **Label:** "Bayesian Surprise"

* **Scale:** Linear, ranging from 0.0 to 20.0 with major tick marks every 2.5 units.

* **Associated Data Series:** Red solid line.

* **Legend:** Located in the top-right corner of the plot area.

* **Blue dashed line:** "Shannon Surprise"

* **Red solid line:** "Bayesian Surprise"

* **Grid:** A light gray grid is present, aligned with the major ticks of both the x-axis and the primary (left) y-axis.

### Detailed Analysis

**Data Series Trends and Key Points:**

1. **Shannon Surprise (Blue Dashed Line, Left Axis):**

* **Trend:** The series exhibits high volatility with frequent, sharp spikes, particularly in the first half of the exploration sequence (m=0 to m=60). The magnitude of the spikes generally decreases as the number of explorations increases. After approximately m=60, the values drop significantly and remain low, with only a few minor spikes.

* **Key Data Points (Approximate):**

* Initial value at m=0: ~2.8

* Major peaks:

* m ≈ 10: ~4.5

* m ≈ 35: ~4.8 (highest peak)

* m ≈ 45: ~3.7

* m ≈ 85: ~2.8

* Values after m=60 are predominantly below 1.0, often near 0.

2. **Bayesian Surprise (Red Solid Line, Right Axis):**

* **Trend:** This series also shows spiky behavior, with peaks that are temporally correlated with the peaks in Shannon Surprise. However, its overall magnitude (on its own scale) is lower relative to its axis maximum. The trend shows a more pronounced decline after the initial explorations, approaching and staying near zero from approximately m=60 onward.

* **Key Data Points (Approximate):**

* Initial value at m=0: ~3.5

* Major peaks (correlated with Shannon peaks):

* m ≈ 10: ~5.0

* m ≈ 35: ~4.0

* m ≈ 45: ~3.5

* m ≈ 85: ~3.5

* Values after m=60 are consistently very low, frequently at or near 0.0.

**Spatial and Visual Correlation:**

The peaks of both lines are closely aligned along the x-axis, indicating that events causing a high Shannon Surprise also cause a high Bayesian Surprise. The red line (Bayesian) appears to have a slightly smoother baseline between spikes compared to the blue line (Shannon).

### Key Observations

1. **Strong Correlation:** The most notable pattern is the tight temporal correlation between spikes in Shannon Surprise and Bayesian Surprise. They rise and fall together.

2. **Diminishing Surprise:** Both metrics show a clear overall trend of diminishing magnitude as the number of explorations (m) increases. The most significant "surprises" occur early in the process.

3. **Phase Transition:** There is a distinct change in behavior around m=60. After this point, both surprise measures become quiescent, suggesting the system or model has largely assimilated the environment or data, leading to few new surprises.

4. **Scale Difference:** While correlated, the absolute values are measured on different scales. A Shannon Surprise of ~4.8 corresponds to a Bayesian Surprise of ~4.0 at m≈35.

### Interpretation

This chart likely visualizes the learning or adaptation process of an agent or model in an unknown environment. "Surprise" quantifies the discrepancy between expectation and observation.

* **What the data suggests:** The early exploratory phase (m < 60) is characterized by frequent, significant updates to the model's beliefs, as evidenced by high surprise values. Each exploration provides substantial new information. The perfect correlation between the two surprise metrics indicates they are capturing related, though mathematically distinct, aspects of information gain. Shannon Surprise is rooted in information theory (reduction in entropy), while Bayesian Surprise measures the divergence between prior and posterior belief distributions.

* **How elements relate:** The x-axis represents time or experience. The dual y-axes allow the comparison of two different quantitative measures of the same conceptual phenomenon ("surprise") on their native scales. The decline in both lines demonstrates the principle of learning: as the agent explores, its predictions become more accurate, and observations become less surprising.

* **Notable anomalies/implications:** The near-zero values after m=60 are critical. They imply the learning process has converged or the environment has become predictable. The few late spikes (e.g., at m≈85) could represent rare, novel events or a change in the environment that temporarily reintroduces uncertainty. The chart effectively argues that both Shannon and Bayesian surprise are valid, correlated signals for guiding exploration, with the most informative explorations happening early on.

</details>

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart with Confidence Interval: Mutual Information Surprise

### Overview

The image displays a line chart titled "Mutual Information Surprise," plotting a metric against the number of explorations. The chart includes a central trend line and a shaded region representing a bound or confidence interval.

### Components/Axes

* **Chart Title:** "Mutual Information Surprise" (centered at the top).

* **X-Axis:**

* **Label:** "Number of Explorations (m)"

* **Scale:** Linear, ranging from 0 to 100.

* **Major Tick Marks:** 0, 20, 40, 60, 80, 100.

* **Y-Axis:**

* **Label:** "Mutual Information Surprise"

* **Scale:** Linear, ranging from approximately -0.25 to 0.35.

* **Major Tick Marks:** -0.2, -0.1, 0.0, 0.1, 0.2, 0.3.

* **Legend:** Located in the top-right quadrant of the chart area.

* **Green Line:** Labeled "Mutual Information Surprise".

* **Gray Shaded Area:** Labeled "MIS Bound".

### Detailed Analysis

* **Data Series - "Mutual Information Surprise" (Green Line):**

* **Trend:** The line shows a generally stable, slightly increasing trend with minor fluctuations.

* **Key Points (Approximate):**

* At m=0, the value is ~0.0.

* The line remains close to 0.0 until approximately m=20.

* There is a slight dip to a local minimum of ~ -0.01 around m=25.

* From m=30 onward, the line exhibits a very gradual upward slope.

* At m=50, the value is ~0.03.

* At m=75, the value is ~0.04.

* At m=100, the value is ~0.05.

* **Data Series - "MIS Bound" (Gray Shaded Area):**

* **Trend:** The bound starts very narrow and expands symmetrically (or nearly symmetrically) around the green line as the number of explorations (m) increases.

* **Key Points (Approximate):**

* At m=0, the bound is negligible, spanning roughly -0.01 to 0.01.

* At m=25, the bound spans approximately -0.15 to 0.15.

* At m=50, the bound spans approximately -0.22 to 0.28.

* At m=100, the bound is at its widest, spanning approximately -0.25 to 0.33.

### Key Observations

1. **Stability of the Core Metric:** The "Mutual Information Surprise" value itself remains relatively small and stable, staying within the narrow range of -0.01 to 0.05 across all 100 explorations.

2. **Diverging Uncertainty:** The most prominent feature is the "MIS Bound," which grows significantly wider as the number of explorations increases. This indicates that the uncertainty or potential range of the surprise metric expands with more data/explorations.

3. **Minor Anomaly:** A small, localized dip in the green line occurs around m=25, which is the only notable deviation from its otherwise smooth, slightly ascending path.

### Interpretation

This chart likely illustrates a concept from information theory or machine learning, possibly related to active learning or Bayesian optimization. "Mutual Information Surprise" could measure how unexpected new data points are given the current model.

* **What the data suggests:** The core finding is that while the *average* or *expected* surprise (green line) increases only marginally with more explorations, the *potential range* of surprise (gray bound) grows dramatically. This implies that as the system explores more, it encounters a wider spectrum of possible outcomes—some highly surprising (positive values) and some highly predictable (negative values)—even if the central tendency changes little.

* **Relationship between elements:** The green line represents the central estimate or mean of the surprise. The gray "MIS Bound" likely represents a confidence interval, standard deviation, or theoretical bound (e.g., based on the Cramér-Rao bound or similar). The widening bound suggests that the estimator's variance increases with `m`, or that the theoretical limits of predictability become more extreme.

* **Notable implication:** The chart demonstrates a key trade-off: more exploration leads to a better understanding of the *possible* range of outcomes (wider bound), but does not necessarily lead to a dramatic shift in the *average* outcome (stable green line). The dip at m=25 could indicate a specific point where the model encountered a cluster of highly predictable data before resuming a trend of slightly increasing average surprise.

</details>

Figure 1: Surprise measures during standard exploration.

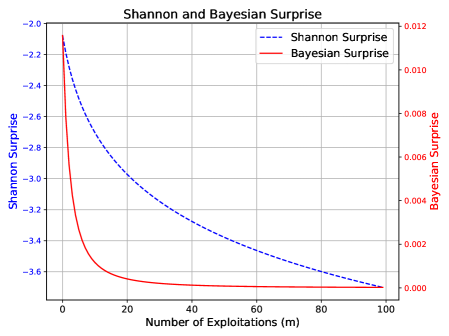

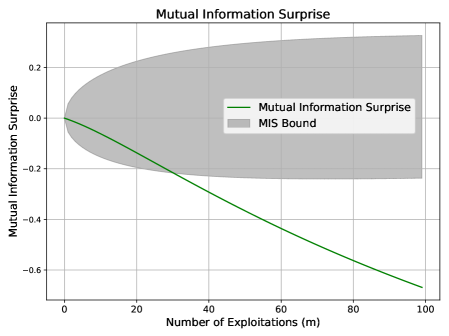

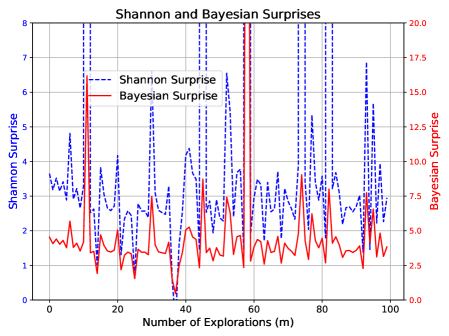

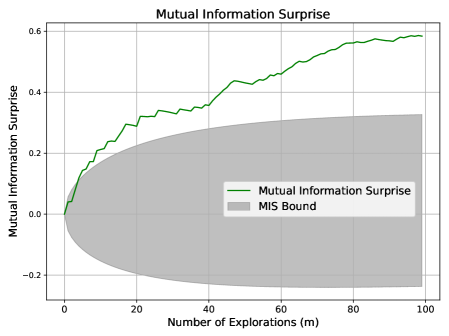

Scenario 2: Over-Exploitation.

In this scenario, the system repeatedly samples a previously seen point from $x\in[0,30]$ , specifically observing the pair $(x,y)=(7,7)$ one hundred times. This simulates stagnation.

Expected behavior: Surprise should diminish as no new information is gained. This mirrors the stagnation case in Section 3.3, and we expect MIS to violate its lower bound.

Figure 2 shows that MIS falls below its lower bound, signaling a lack of knowledge gain. While Shannon and Bayesian Surprises also trend downward, they lack a defined lower threshold, limiting their reliability for flagging such behavior. Recall that both Shannon and Bayesian Surprises are inherently one-sided, as noted in (?) and (?).
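The downward drift of MIS under repeated sampling can be reproduced qualitatively with a small simulation. This is a sketch under several assumptions, not the paper's experimental code: inputs are taken to be integers so that $y=x \bmod 10$ has exactly ten outcomes, mutual information is estimated with a plain plug-in estimator in nats (the estimator in Eq. (4) and its bias adjustment may differ), and the bound from Theorem 1 is not computed.

```python
import math
import random
from collections import Counter


def entropy(symbols):
    """Plug-in (empirical) entropy in nats of a sequence of hashable symbols."""
    total = len(symbols)
    return -sum((c / total) * math.log(c / total) for c in Counter(symbols).values())


def mutual_information(pairs):
    """Plug-in estimate I(x, y) = H(x) + H(y) - H(x, y) from a list of (x, y) tuples."""
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    return entropy(xs) + entropy(ys) - entropy(pairs)


rng = random.Random(0)
# Initial knowledge: n = 100 integer inputs in [0, 30] (integer inputs are an assumption).
initial = [(x, x % 10) for x in (rng.randint(0, 30) for _ in range(100))]
i_n = mutual_information(initial)

# Over-exploitation: observe the pair (7, 7) one hundred more times.
pairs = list(initial)
mis_curve = []
for m in range(1, 101):
    pairs.append((7, 7))
    mis_curve.append(mutual_information(pairs) - i_n)
```

Since the mapping is deterministic, the plug-in estimate reduces to the empirical output entropy, which shrinks as the output $7$ comes to dominate the sample, so `mis_curve` ends below zero.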

<details>

<summary>x3.png Details</summary>

### Visual Description

## [Chart]: Shannon and Bayesian Surprise vs. Number of Exploitations

### Overview

The image is a dual-axis line chart titled "Shannon and Bayesian Surprise". It plots two different metrics of "surprise" against the "Number of Exploitations (m)". The chart demonstrates how both metrics decrease as the number of exploitations increases, but at significantly different rates and scales.

### Components/Axes

* **Title:** "Shannon and Bayesian Surprise" (centered at the top).

* **X-Axis:**

* **Label:** "Number of Exploitations (m)" (centered at the bottom).

* **Scale:** Linear, ranging from 0 to 100.

* **Major Tick Marks:** 0, 20, 40, 60, 80, 100.

* **Primary Y-Axis (Left):**

* **Label:** "Shannon Surprise" (rotated vertically, left side).

* **Scale:** Linear, with negative values.

* **Range:** Approximately -2.0 to -3.6.

* **Major Tick Marks:** -2.0, -2.2, -2.4, -2.6, -2.8, -3.0, -3.2, -3.4, -3.6.

* **Secondary Y-Axis (Right):**

* **Label:** "Bayesian Surprise" (rotated vertically, right side).

* **Scale:** Linear, with positive values.

* **Range:** 0.000 to 0.012.

* **Major Tick Marks:** 0.000, 0.002, 0.004, 0.006, 0.008, 0.010, 0.012.

* **Legend:**

* **Position:** Top-right corner, inside the plot area.

* **Entry 1:** Blue dashed line (`--`) labeled "Shannon Surprise".

* **Entry 2:** Red solid line (`-`) labeled "Bayesian Surprise".

* **Grid:** A light gray grid is present for both axes.

### Detailed Analysis

**1. Shannon Surprise (Blue Dashed Line):**

* **Trend:** The line shows a steady, monotonic decrease (negative slope) across the entire range. The rate of decrease slows slightly as `m` increases.

* **Data Points (Approximate):**

* At m=0: ~ -2.1

* At m=20: ~ -2.9

* At m=40: ~ -3.2

* At m=60: ~ -3.4

* At m=80: ~ -3.5

* At m=100: ~ -3.6

**2. Bayesian Surprise (Red Solid Line):**

* **Trend:** The line exhibits a very sharp, exponential-like decay initially, followed by a rapid plateau. The value approaches zero asymptotically.

* **Data Points (Approximate):**

* At m=0: ~ 0.011

* At m=5: ~ 0.004

* At m=10: ~ 0.001

* At m=20: ~ 0.0002

* At m=40 to m=100: The value is visually indistinguishable from 0.000 on this scale.

### Key Observations

* **Scale Disparity:** The two metrics operate on vastly different scales. Shannon Surprise is measured in negative units (likely bits, given the name), while Bayesian Surprise is a small positive number.

* **Rate of Change:** Bayesian Surprise diminishes to near-zero within the first 20 exploitations. Shannon Surprise continues to decrease meaningfully across the full range of 100 exploitations.

* **Convergence:** Both lines appear to converge towards a lower bound as `m` increases, but the Bayesian metric reaches its effective floor much sooner.

### Interpretation

This chart likely illustrates concepts from information theory and Bayesian statistics in the context of an exploration-exploitation process (e.g., in reinforcement learning or active learning).

* **What it Suggests:** The "surprise" associated with new information decreases as more data is gathered (exploitations increase). The chart quantitatively compares two ways of measuring this surprise.

* **Relationship Between Elements:** The dual-axis format is necessary because the fundamental units and magnitudes of the two metrics are different. The shared x-axis allows for direct comparison of their behavior relative to the same process variable (`m`).

* **Notable Patterns:**

1. **Bayesian Surprise is "Front-Loaded":** It captures almost all of its informational value very early in the process. This suggests it is highly sensitive to initial, novel data and quickly becomes uninformative as the model's beliefs stabilize.

2. **Shannon Surprise has "Long-Tail" Information:** It continues to register decreasing surprise over many more steps, indicating it may be measuring a more persistent form of uncertainty or information gain that diminishes gradually.

3. **The Plateau:** The flatlining of the Bayesian Surprise curve after m≈40 indicates that, according to this metric, virtually no new, surprising information is being obtained from additional exploitations beyond that point. The Shannon metric, however, still registers a (small) decrease, implying some residual uncertainty is still being resolved.

**In summary, the chart demonstrates that while both metrics capture the reduction of uncertainty with experience, Bayesian Surprise is a more rapidly saturating measure, whereas Shannon Surprise provides a more prolonged signal of decreasing uncertainty.**

</details>

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart with Shaded Bound: Mutual Information Surprise

### Overview

The image is a technical line chart titled "Mutual Information Surprise." It plots a single data series against a shaded region representing a bound. The chart illustrates how a metric called "Mutual Information Surprise" changes as the "Number of Exploitations (m)" increases, with an associated uncertainty or confidence interval.

### Components/Axes

* **Chart Title:** "Mutual Information Surprise" (centered at the top).

* **X-Axis:**

* **Label:** "Number of Exploitations (m)"

* **Scale:** Linear, ranging from 0 to 100.

* **Major Tick Marks:** 0, 20, 40, 60, 80, 100.

* **Y-Axis:**

* **Label:** "Mutual Information Surprise"

* **Scale:** Linear, ranging from approximately -0.7 to +0.3.

* **Major Tick Marks:** -0.6, -0.4, -0.2, 0.0, 0.2.

* **Legend:** Located in the center-right region of the plot area.

* **Entry 1:** A solid green line labeled "Mutual Information Surprise".

* **Entry 2:** A gray shaded rectangle labeled "MIS Bound".

* **Grid:** A light gray grid is present, aligning with the major tick marks on both axes.

### Detailed Analysis

* **Data Series (Green Line - "Mutual Information Surprise"):**

* **Trend Verification:** The line exhibits a consistent, nearly linear downward slope from left to right.

* **Data Points (Approximate):**

* At m = 0, y ≈ 0.0.

* At m = 20, y ≈ -0.13.

* At m = 40, y ≈ -0.27.

* At m = 60, y ≈ -0.40.

* At m = 80, y ≈ -0.53.

* At m = 100, y ≈ -0.66.

* **Shaded Region (Gray Area - "MIS Bound"):**

* **Trend Verification:** The region is symmetric about the y=0 line. Its vertical width increases monotonically as the x-value increases.

* **Boundaries (Approximate):**

* At m = 0, the bound is very narrow, centered at y=0.

* At m = 50, the upper bound is ≈ +0.22 and the lower bound is ≈ -0.22.

* At m = 100, the upper bound is ≈ +0.30 and the lower bound is ≈ -0.30.

### Key Observations

1. **Inverse Relationship:** There is a clear inverse relationship between the "Number of Exploitations (m)" and the "Mutual Information Surprise" value. As `m` increases, the surprise metric decreases (becomes more negative).

2. **Expanding Uncertainty:** The "MIS Bound" widens significantly as `m` increases, indicating that the range of possible values for the surprise metric grows with more exploitations.

3. **Divergence:** The green data line and the center of the gray bound (y=0) diverge sharply. By m=100, the data line (≈ -0.66) lies well below the lower edge of the expanded bound (≈ -0.30).

### Interpretation

This chart likely visualizes a concept from information theory or machine learning, possibly related to active learning or exploration strategies.

* **What the data suggests:** The "Mutual Information Surprise" metric appears to quantify how "surprising" or informative new data points are. The downward trend suggests that as an agent or algorithm performs more exploitations (presumably gathering data from the most informative sources), the marginal surprise or information gain from subsequent exploitations diminishes. This is a classic sign of diminishing returns.

* **Relationship between elements:** The "MIS Bound" represents a theoretical or empirical confidence interval around the surprise metric. Its expansion indicates that while the *expected* surprise decreases, the *variability* or uncertainty in that surprise measurement increases with more samples. This could be due to accumulating noise or the exploration of more diverse, less predictable regions of the data space.

* **Notable anomaly/insight:** The most striking feature is that the actual measured surprise (green line) does not stay within the central region of its own bound. It trends strongly negative, suggesting the process is systematically reducing surprise faster than a neutral (zero) expectation. This could imply the exploitation strategy is highly effective at targeting informative data, or that the bound itself is calculated under assumptions (e.g., stationarity) that are being violated as the process continues. The chart effectively argues that more exploitation leads to less surprise but greater uncertainty about that surprise value.

</details>

Figure 2: Surprise measures under over-exploitation.

Scenario 3: Noisy Exploration.

We perform standard exploration over $x\in[30,100]$ but apply random corruption to the outputs $\mathbf{y}$, replacing each with a uniformly random digit between $0$ and $9$. This simulates exploration without informative feedback.

Expected behavior: Despite novel inputs, the system should register confusion if understanding fails to improve. This mirrors the noise-increase case in Section 3.3, and we expect MIS to violate its lower bound.

Figure 3 confirms this expectation: MIS drops below its expected range, correctly signaling a lack of learning. In contrast, the Shannon and Bayesian Surprises again behave erratically, with no consistent trend.
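
The corruption step in this scenario can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: inputs are treated as integers, the prior data follow a hypothetical clean mapping `y = x mod 10` on `x` in `[0, 29]`, mutual information is estimated with a simple plug-in (empirical-frequency) estimator, and MIS is taken as the change in that estimate after the new observations are appended. The function names are invented for the example.

```python
import math
import random
from collections import Counter


def plugin_mi(xs, ys):
    """Plug-in estimate of I(x; y) in nats from paired discrete samples."""
    n = len(xs)
    cx, cy, cxy = Counter(xs), Counter(ys), Counter(zip(xs, ys))
    return sum(
        (c / n) * math.log(c * n / (cx[x] * cy[y]))
        for (x, y), c in cxy.items()
    )


def noisy_exploration(m, rng, low=30, high=100):
    """Sample m integer inputs in [low, high]; outputs are uniform digits 0-9."""
    xs = [rng.randint(low, high) for _ in range(m)]
    # Outputs are drawn independently of xs, so they carry no information.
    ys = [rng.randint(0, 9) for _ in range(m)]
    return xs, ys


def mis_after_noisy_exploration(m=100, seed=0):
    """Change in estimated MI after appending m corrupted observations."""
    rng = random.Random(seed)
    # Hypothetical prior data (an assumption): clean mapping y = x mod 10.
    prior_x = list(range(30))
    prior_y = [x % 10 for x in prior_x]
    new_x, new_y = noisy_exploration(m, rng)
    before = plugin_mi(prior_x, prior_y)
    after = plugin_mi(prior_x + new_x, prior_y + new_y)
    return after - before
```

Under these assumptions the prior estimate already equals its maximum of $\log 10$ nats, so appending corrupted observations cannot raise it and the returned difference is non-positive. The sketch does not compute the MIS bound itself, which is defined by the test sequence in the paper.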

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Chart: Shannon and Bayesian Surprises

### Overview

This is a dual-axis line chart comparing two metrics—"Shannon Surprise" and "Bayesian Surprise"—over a sequence of explorations. The chart visualizes how these two distinct measures of "surprise" or information gain evolve as the number of explorations increases.

### Components/Axes

* **Chart Title:** "Shannon and Bayesian Surprises" (centered at the top).

* **X-Axis:**

  * **Label:** "Number of Explorations (m)"

  * **Scale:** Linear, ranging from 0 to 100.

  * **Major Tick Marks:** At intervals of 20 (0, 20, 40, 60, 80, 100).

* **Primary Y-Axis (Left):**

  * **Label:** "Shannon Surprise"

  * **Scale:** Linear, ranging from 0 to 8.

  * **Major Tick Marks:** At integer intervals (0, 1, 2, 3, 4, 5, 6, 7, 8).

* **Secondary Y-Axis (Right):**

  * **Label:** "Bayesian surprise"

  * **Scale:** Linear, ranging from 0 to 8.

  * **Major Tick Marks:** At integer intervals (0, 1, 2, 3, 4, 5, 6, 7, 8).

* **Legend:**

  * **Placement:** Bottom-right corner of the chart area.

  * **Items:**

    1. A blue dashed line labeled "Shannon Surprise".

    2. A red solid line labeled "Bayesian Surprise".

* **Grid:** A light gray grid is present, aligning with the major ticks of both the x-axis and the primary y-axis.

### Detailed Analysis

**1. Shannon Surprise (Blue Dashed Line):**

* **Trend:** The line exhibits moderate volatility in the first half of the explorations (m=0 to ~40), generally fluctuating between values of 2 and 4 on the left y-axis. After approximately m=40, the line drops significantly and stabilizes at a much lower level, mostly between 1 and 2, with a few isolated points slightly higher.

* **Key Data Points (Approximate):**

  * Starts near 3 at m=0.

  * Peaks around 4 at m≈10, m≈20, and m≈30.

  * Has a notable dip to ~2 at m≈18.

  * Shows a sharp, sustained drop starting around m=38, falling to ~1.5 by m=42.

  * Remains low (1-2 range) from m=42 to m=100, with a minor peak near 2.5 at m≈55.

**2. Bayesian Surprise (Red Solid Line):**

* **Trend:** This line is characterized by extreme, high-frequency volatility. It consists almost entirely of sharp, vertical spikes that frequently reach the maximum value of 8 on the right y-axis, interspersed with rapid drops to values near or below 2. There is no clear upward or downward long-term trend; the pattern of intense spiking is consistent across the entire range of explorations.

* **Key Data Points (Approximate):**

  * The line spikes to 8 or near-8 more than 15 times across the x-axis range.

  * Notable deep troughs (values ≤ 2) occur at approximately m=5, m=15, m=40, m=65, and a very deep one near m=80 where it drops to ~0.5.

  * The spikes are densely packed, especially between m=0-40 and m=60-100.

### Key Observations

1. **Dichotomy in Behavior:** The two metrics display fundamentally different behaviors. Shannon Surprise shows a regime shift (from moderate volatility to low, stable values), while Bayesian Surprise maintains a consistent pattern of high-amplitude, high-frequency spikes throughout.

2. **Divergence Point:** The most significant event in the chart is the divergence that occurs around m=40. At this point, the Shannon Surprise metric drops and stays low, while the Bayesian Surprise continues its spiking pattern unabated.

3. **Value Range:** Both metrics are plotted on a 0-8 scale but use it very differently. Shannon Surprise stays in the lower-to-mid range (1-4) for most of the chart, while Bayesian Surprise repeatedly reaches the ceiling (8) and drops back to the 0-2 range.

4. **Visual Density:** The red line (Bayesian) creates a dense, "barcode-like" visual texture due to its rapid oscillations, whereas the blue line (Shannon) is more sparse and easier to follow visually.

### Interpretation

This chart likely illustrates a comparison between two different mathematical frameworks for quantifying "surprise" or information gain in a sequential learning or exploration process.

* **What the Data Suggests:** The stark contrast implies that the two measures are sensitive to different aspects of the data or the learning process. The **Bayesian Surprise** (red) appears to be a highly reactive, instantaneous measure. Its constant spiking suggests that nearly every new exploration (data point) provides a significant update to the Bayesian model, causing a large "surprise" value. This could indicate a model that is constantly being surprised by new evidence, perhaps due to a high initial uncertainty or a complex underlying distribution.

* The **Shannon Surprise** (blue), based on information theory, seems to measure a more cumulative or smoothed uncertainty. Its drop and stabilization after m=40 suggest that, from the model's perspective, the *informational content* or *reduction in entropy* gained from each new exploration diminishes significantly after a certain point. The system may have learned the broad structure of the environment by then, so new samples provide less "new" information in a Shannon sense, even if they still cause large Bayesian updates.

* **Relationship and Anomaly:** The key relationship is their divergence. The anomaly is not a single data point but the entire behavioral dichotomy. This visual evidence argues that "surprise" is not a monolithic concept. The choice of metric (Bayesian vs. Shannon) fundamentally changes the narrative of the learning process: one tells a story of constant, dramatic updates, while the other tells a story of initial learning followed by saturation. The chart powerfully demonstrates that the interpretation of system behavior is contingent on the chosen analytical lens.

</details>

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart with Confidence Interval: Mutual Information Surprise

### Overview

This image is a line chart titled "Mutual Information Surprise" that plots a metric against the number of explorations. The chart includes a central trend line and a shaded region representing a bound or confidence interval. The overall visual suggests a decreasing trend with increasing uncertainty.

### Components/Axes

* **Chart Title:** "Mutual Information Surprise" (centered at the top).

* **X-Axis:**

  * **Label:** "Number of Explorations (m)"

  * **Scale:** Linear, ranging from 0 to 100.

  * **Major Tick Marks:** 0, 20, 40, 60, 80, 100.

* **Y-Axis:**

  * **Label:** "Mutual Information Surprise"

  * **Scale:** Linear, ranging from approximately -0.5 to 0.3.

  * **Major Tick Marks:** -0.4, -0.2, 0.0, 0.2.

* **Legend:** Located in the top-right quadrant of the chart area.

  * **Green Line:** Labeled "Mutual Information Surprise".

  * **Gray Shaded Area:** Labeled "MIS Bound".

* **Grid:** A light gray grid is present in the background.

### Detailed Analysis

1. **Data Series - "Mutual Information Surprise" (Green Line):**

   * **Trend Verification:** The line exhibits a consistent, near-monotonic downward slope from left to right.

   * **Spatial Grounding & Data Points (Approximate):**

     * At x=0, y ≈ 0.0.

     * At x=20, y ≈ -0.08.

     * At x=40, y ≈ -0.18.

     * At x=60, y ≈ -0.28.

     * At x=80, y ≈ -0.42.

     * At x=100, y ≈ -0.55.

   * The line shows minor local fluctuations, but the overall negative trend is clear and strong.

2. **Data Series - "MIS Bound" (Gray Shaded Region):**

   * **Trend Verification:** The shaded region is narrow at x=0 and widens as x increases, forming a funnel that opens to the right. The widening is asymmetric: the lower edge falls much faster than the upper edge rises.

   * **Spatial Grounding & Bounds (Approximate):**

     * At x=0: The bound is very tight, approximately y = 0.0 ± 0.01.

     * At x=50: The bound spans from approximately y = -0.1 to y = +0.15.

     * At x=100: The bound spans from approximately y = -0.55 (coinciding with the line) to y = +0.25.

   * The upper edge of the bound increases slightly, while the lower edge decreases sharply, following the trend of the green line.

### Key Observations

* **Strong Negative Correlation:** There is a clear, strong inverse relationship between the "Number of Explorations (m)" and the "Mutual Information Surprise" value.

* **Diverging Uncertainty:** The "MIS Bound" widens dramatically as the number of explorations increases. This indicates that the variance or uncertainty associated with the "Mutual Information Surprise" measurement grows substantially with more data/explorations.

* **Asymmetry in Bound:** While the bound expands in both directions, the expansion is more pronounced in the negative direction, closely tracking the downward trend of the central line. The upper bound shows a much gentler positive slope.

### Interpretation

The chart shows "Mutual Information Surprise" decreasing steadily as the number of explorations (m) grows. In the noisy-exploration scenario this figure belongs to, the outputs are replaced by random digits, so the decline is best read as new observations failing to add mutual information, that is, as a lack of learning rather than as model convergence.

However, the critical insight is the **expanding MIS Bound**. This suggests that while the *average* surprise decreases, the *range of possible surprise values* grows. This could indicate:

1. **Increasing Model Uncertainty:** The system's confidence in its own decreasing surprise metric diminishes with more explorations.

2. **Heteroscedasticity:** The underlying process being measured has variability that increases with the scale of exploration.

3. **A Trade-off:** There may be a trade-off between reducing average surprise and maintaining consistent, predictable performance. The system becomes better on average but less predictable in its specific outcomes.

The funnel shape is a classic visual indicator of growing variability. The green line does not stay near the center of the bound: by m=100 it sits at the lower edge (about -0.55), so the decline in MIS is large relative to the range the bound allows.

</details>

Figure 3: Surprise measures under noisy exploration.

Scenario 4: Aggressive Exploration.

This scenario enforces strict exploration over $x\in[30,500]$, where each new sample is far from all observed points (i.e., outside the $\pm 1$ neighborhood of every observed point).

Expected behavior: Aggressive exploration without verification can lead to overconfidence. This mirrors the aggressive exploration case in Section 3.3, and we expect MIS to exceed its upper bound.

Figure 4 shows MIS surpassing its upper bound, consistent with this expectation. Shannon and Bayesian Surprises again fluctuate unpredictably.
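
The sampling rule for this scenario can be sketched with simple rejection sampling. This is an illustrative sketch only: the source does not say how admissible points are drawn, so uniform proposals over a continuous domain, the `max_tries` cap, and the function names are all assumptions made for the example.

```python
import random


def aggressive_sample(observed, rng, low=30.0, high=500.0, radius=1.0,
                      max_tries=10_000):
    """Draw x uniformly from [low, high] with |x - x_i| > radius for all x_i."""
    for _ in range(max_tries):
        x = rng.uniform(low, high)
        # Accept only points outside the +/- radius neighborhood of every
        # previously observed input.
        if all(abs(x - seen) > radius for seen in observed):
            return x
    raise RuntimeError("no admissible point found; the domain may be saturated")


def aggressive_exploration(m, seed=0):
    """Collect m inputs, each far from all inputs collected before it."""
    rng = random.Random(seed)
    observed = []
    for _ in range(m):
        observed.append(aggressive_sample(observed, rng))
    return observed
```

With a domain of width 470 and 100 samples, the excluded neighborhoods cover at most 200 units, so more than half of the proposals remain admissible and the retry cap is not a practical concern at this scale.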

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Shannon and Bayesian Surprises

### Overview

This is a dual-axis line chart comparing two different metrics of "surprise" over a series of explorations. The chart plots "Shannon Surprise" and "Bayesian Surprise" against the "Number of Explorations (m)". The data shows high variability and spiky behavior for both metrics, with notable differences in scale and pattern.

### Components/Axes

* **Title:** "Shannon and Bayesian Surprises" (centered at the top).

* **X-Axis:**

  * **Label:** "Number of Explorations (m)"

  * **Scale:** Linear, ranging from 0 to 100.

  * **Major Ticks:** 0, 20, 40, 60, 80, 100.

* **Primary Y-Axis (Left):**

  * **Label:** "Shannon Surprise"

  * **Scale:** Linear, ranging from 0 to 8.

  * **Major Ticks:** 0, 1, 2, 3, 4, 5, 6, 7, 8.

  * **Associated Data Series:** Blue dashed line.

* **Secondary Y-Axis (Right):**

  * **Label:** "Bayesian Surprise"

  * **Scale:** Linear, ranging from 0.0 to 20.0.

  * **Major Ticks:** 0.0, 2.5, 5.0, 7.5, 10.0, 12.5, 15.0, 17.5, 20.0.

  * **Associated Data Series:** Red solid line.

* **Legend:**

  * **Position:** Top-left corner of the plot area.

  * **Items:**

    1. Blue dashed line: "Shannon Surprise"

    2. Red solid line: "Bayesian Surprise"

### Detailed Analysis

**Data Series Trends:**

1. **Shannon Surprise (Blue Dashed Line):**

* **Trend:** Exhibits high-frequency, high-amplitude oscillations throughout the entire range of explorations. The line frequently spikes to values between 4 and 8 on its axis, with deep troughs often falling below 2.

* **Key Data Points (Approximate):**

* Starts near 3.5 at m=0.

* Major peaks: ~7.5 at m≈12, ~6.5 at m≈45, ~7.8 at m≈58, ~7.5 at m≈75, ~6.8 at m≈95.

* Notable trough: Near 0 at m≈40.