# Diffusion Language Models Know the Answer Before Decoding

**Authors**:

- Soroush Vosoughi, Shiwei Liu (The Hong Kong Polytechnic University, Dartmouth College, University of Surrey, Sun Yat-sen University)

Abstract

Diffusion language models (DLMs) have recently emerged as an alternative to autoregressive approaches, offering parallel sequence generation and flexible token orders. However, their inference remains slower than that of autoregressive models, primarily due to the cost of bidirectional attention and the large number of refinement steps required for high-quality outputs. In this work, we highlight and leverage an overlooked property of DLMs, early answer convergence: in many cases, the correct answer can be internally identified within the first half of the refinement steps, well before the final decoding step, under both semi-autoregressive and random re-masking schedules. For example, on GSM8K and MMLU, up to 97% and 99% of instances, respectively, can be decoded correctly using only half of the refinement steps. Building on this observation, we introduce Prophet, a training-free fast decoding paradigm that enables early commit decoding. Specifically, Prophet dynamically decides whether to continue refinement or to go "all-in" (i.e., decode all remaining tokens in one step), using the confidence gap between the top-2 prediction candidates as the criterion. It integrates seamlessly into existing DLM implementations, incurs negligible overhead, and requires no additional training. Empirical evaluations of LLaDA-8B and Dream-7B across multiple tasks show that Prophet reduces the number of decoding steps by up to $3.4\times$ while preserving high generation quality. These results recast DLM decoding as a problem of when to stop sampling, and demonstrate that early decode convergence provides a simple yet powerful mechanism for accelerating DLM inference, complementary to existing speedup techniques. Our code is available at https://github.com/pixeli99/Prophet.

1 Introduction

Along with the rapid evolution of diffusion models in various domains (Ho et al., 2020; Nichol & Dhariwal, 2021; Ramesh et al., 2021; Saharia et al., 2022; Jing et al., 2022), diffusion language models (DLMs) have emerged as a compelling and competitively efficient alternative to autoregressive (AR) models for sequence generation (Austin et al., 2021a; Lou et al., 2023; Shi et al., 2024; Sahoo et al., 2024; Nie et al., 2025; Gong et al., 2024; Ye et al., 2025). The primary strengths of DLMs over AR models include, but are not limited to, efficient parallel decoding and flexible generation orders. More specifically, DLMs decode all tokens in parallel through iterative denoising and remasking steps: tokens with low-confidence predictions are typically remasked and refined over successive rounds (Nie et al., 2025).

Despite this speed-up potential, DLM inference is slower in practice than that of AR models, due to the lack of KV-cache mechanisms and the significant performance degradation associated with fast parallel decoding (Israel et al., 2025a). Recent work has proposed algorithms to enable KV caching (Ma et al., 2025a; Liu et al., 2025a; Wu et al., 2025a) and to improve the performance of parallel decoding (Wu et al., 2025a; Wei et al., 2025a; Hu et al., 2025).

In this paper, we aim to accelerate the inference of DLMs from a different perspective, motivated by an overlooked yet powerful phenomenon of DLMs: early answer convergence. Through extensive analysis, we observe that a strikingly high proportion of samples can be correctly decoded during the early phase of decoding under both semi-autoregressive remasking and random remasking. This trend is even more pronounced for random remasking. For example, on GSM8K and MMLU, up to 97% and 99% of instances, respectively, can be decoded correctly using only half of the refinement steps.

Motivated by this finding, we introduce Prophet, a training-free fast decoding strategy designed to capitalize on early answer convergence. Prophet continuously monitors the confidence gap between the top-2 answer candidates throughout the decoding trajectory, and opportunistically decides whether it is safe to decode all remaining tokens at once. By doing so, Prophet achieves substantial inference speed-up (up to $3.4\times$) while maintaining high generation quality. Our contributions are threefold:

- Empirical observations of early answer convergence: We demonstrate that a strikingly high proportion of samples (up to 99%) can be correctly decoded during the early phase of decoding for both semi-autoregressive remasking and random remasking. This underscores a fundamental redundancy in conventional full-length slow decoding.

- A fast decoding paradigm enabling early commit decoding: We propose Prophet, which evaluates at each step whether the remaining answer is accurate enough to be finalized immediately, a strategy we call Early Commit Decoding. We find that the confidence gap between the top-2 answer candidates serves as an effective metric for determining the right time to commit. Leveraging this metric, Prophet dynamically decides between continued refinement and immediate answer emission.

- Substantial speed-up gains with high-quality generation: Experiments across diverse benchmarks reveal that Prophet delivers up to a $3.4\times$ reduction in decoding steps. Crucially, this acceleration incurs negligible degradation in accuracy, affirming that early commit decoding is not just computationally efficient but also semantically reliable for DLMs.
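To make the commit criterion concrete, the following is a minimal sketch of a top-2 confidence-gap check. It is an illustration under stated assumptions, not the released Prophet implementation: the gap is measured in probability space, it is averaged over the answer positions, and the threshold value is hypothetical. The function names `top2_gap` and `should_commit` are likewise invented for this example.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top2_gap(logits):
    """Gap between the largest and second-largest probability at one position."""
    probs = sorted(softmax(logits), reverse=True)
    return probs[0] - probs[1]


def should_commit(answer_logits, threshold):
    """Assumed rule: commit when the mean top-2 gap over answer positions
    reaches `threshold` (a hypothetical hyperparameter)."""
    gaps = [top2_gap(row) for row in answer_logits]
    return sum(gaps) / len(gaps) >= threshold


# Toy logits over a 3-token vocabulary for two answer positions.
confident = [[8.0, 0.5, 0.1], [7.0, 0.2, 0.3]]
uncertain = [[1.0, 0.9, 0.1], [0.5, 0.6, 0.4]]

print(should_commit(confident, threshold=0.9))
print(should_commit(uncertain, threshold=0.9))
```

When the check passes, a decoder following this idea would fill every remaining masked position with its current top-1 prediction in a single step; otherwise it would continue the usual refinement loop.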

2 Related Work

2.1 Diffusion Large Language Model

The idea of adapting diffusion processes to discrete domains traces back to the pioneering works of Sohl-Dickstein et al. (2015) and Hoogeboom et al. (2021). A general probabilistic framework was later developed in D3PM (Austin et al., 2021a), which modeled the forward process as a discrete-state Markov chain progressively adding noise to the clean input sequence over time steps. The reverse process is parameterized to predict the clean text sequence based on the current noisy input by maximizing the Evidence Lower Bound (ELBO). This perspective was subsequently extended to the continuous-time setting. Campbell et al. (2022) reinterpreted the discrete chain within a continuous-time Markov chain (CTMC) formulation. An alternative line of work, SEDD (Lou et al., 2023), focused on directly estimating likelihood ratios and introduced a denoising score entropy criterion for training. Recent analyses in MDLM (Shi et al., 2024; Sahoo et al., 2024; Zheng et al., 2024) and RADD (Ou et al., 2024) demonstrate that multiple parameterizations of masked diffusion models (MDMs) are in fact equivalent.

Motivated by these advances, practitioners have successfully built product-level DLMs. Notable examples include commercial releases such as Mercury (Labs et al., 2025), Gemini Diffusion (DeepMind, 2025), and Seed Diffusion (Song et al., 2025b), as well as open-source implementations including LLaDA (Nie et al., 2025) and Dream (Ye et al., 2025). However, DLMs face an efficiency-accuracy tradeoff that limits their practical advantages. While DLMs can theoretically decode multiple tokens per denoising step, increasing the number of simultaneously decoded tokens degrades quality. Conversely, decoding only a few tokens per denoising step leads to high inference latency compared to AR models, as DLMs cannot naively leverage key-value (KV) caching or other advanced optimization techniques due to their bidirectional nature.

2.2 Acceleration Methods for Diffusion Language Models

To enhance the inference speed of DLMs while maintaining quality, recent optimization efforts can be broadly categorized into three complementary directions. The first direction enables caching. One strategy leverages the empirical observation that hidden states exhibit high similarity across consecutive denoising steps, enabling approximate caching (Ma et al., 2025b; Liu et al., 2025b; Hu et al., 2025). An alternative strategy restructures the denoising process in a semi-autoregressive or block-autoregressive manner, allowing the system to cache states from the previous context or blocks. These methods may optionally incorporate cache refreshing, which updates the stored cache at regular intervals (Wu et al., 2025b; Arriola et al., 2025; Wang et al., 2025b; Song et al., 2025a). The second direction reduces attention cost by pruning redundant tokens. For example, DPad (Chen et al., 2025) is a training-free method that treats future (suffix) tokens as a computational "scratchpad" and prunes distant ones before computation. The third direction focuses on optimizing sampling methods or reducing the total number of denoising steps through reinforcement learning (Song et al., 2025b). Sampling optimization methods aim to increase the number of tokens decoded at each denoising step through different selection strategies. These approaches employ various statistical measures, such as confidence scores or entropy, as thresholds for determining the number of tokens to decode simultaneously. The token count can also be dynamically adjusted based on denoising dynamics (Wei et al., 2025b; Huang & Tang, 2025), through alignment with small off-the-shelf AR models (Israel et al., 2025b), or by using the DLM itself as a draft model for speculative decoding (Agrawal et al., 2025).

Different from the above optimization methods, our approach stems from the observation that DLMs can correctly predict the final answer at intermediate steps, enabling early commit decoding to reduce inference time. Early answer convergence was also observed in concurrent work (Wang et al., 2025a), which focuses on averaging predictions across time steps for improved accuracy. In contrast, we develop an early commit decoding method that reduces computational steps while maintaining quality.

3 Preliminary

3.1 Background on Diffusion Language Models

Concretely, let $x_{0}\sim p_{\text{data}}(x_{0})$ be a clean input sequence. At an intermediate noise level $t\in[0,T]$, we denote by $x_{t}$ the corrupted version obtained after applying a masking procedure to a subset of its tokens.

Forward process.

The corruption mechanism can be expressed as a Markov chain

$$
q(x_{1:T}\mid x_{0})\;=\;\prod_{t=1}^{T}q(x_{t}\mid x_{t-1}), \tag{1}
$$

which gradually transforms the original sample $x_{0}$ into a maximally degraded representation $x_{T}$ . At each step, additional noise is injected, so that the sequence becomes progressively more masked as $t$ increases.
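The forward chain can be illustrated with a small simulation. This is a sketch under an assumed linear masking schedule, in which a fraction $t/T$ of tokens is masked on average at level $t$; the text above does not fix a schedule, so both the schedule and the helper names `forward_step` and `forward_chain` are hypothetical.

```python
import random

MASK = "[MASK]"


def forward_step(tokens, t, T, rng):
    """One transition x_{t-1} -> x_t of the masking chain.

    Assumed linear schedule: a still-clean token is masked with probability
    1 / (T - t + 1), so the expected masked fraction at level t is t / T and
    every token is masked at t = T. Masked tokens stay masked (absorbing).
    """
    p = 1.0 / (T - t + 1)
    return [MASK if tok == MASK or rng.random() < p else tok for tok in tokens]


def forward_chain(tokens, T, seed=0):
    """Return the whole trajectory [x_0, x_1, ..., x_T]."""
    rng = random.Random(seed)
    chain = [list(tokens)]
    for t in range(1, T + 1):
        chain.append(forward_step(chain[-1], t, T, rng))
    return chain


chain = forward_chain("the answer is forty two .".split(), T=4)
for t, x_t in enumerate(chain):
    print(t, " ".join(x_t))
```

Each printed row contains at least as many `[MASK]` symbols as the previous one, and the last row is fully masked, which mirrors the "maximally degraded" $x_{T}$ described above.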

While the forward process in Eq.(1) is straightforward, its exact reversal is typically inefficient because it unmasks only one position per step (Campbell et al., 2022; Lou et al., 2023). To accelerate generation, a common remedy is to use the $\tau$ -leaping approximation (Gillespie, 2001), which enables multiple masked positions to be recovered simultaneously. Concretely, transitioning from corruption level $t$ to an earlier level $s<t$ can be approximated as

$$
q_{s|t}=\prod_{i=1}^{n}q_{s|t}(x_{s}^{i}\mid x_{t}),\quad q_{s|t}(x_{s}^{i}\mid x_{t})=\begin{cases}1,&x_{t}^{i}\neq[\text{MASK}],~x_{s}^{i}=x_{t}^{i},\\[4pt]
\frac{s}{t},&x_{t}^{i}=[\text{MASK}],~x_{s}^{i}=[\text{MASK}],\\[4pt]
\frac{t-s}{t}\,q_{0|t}(x_{s}^{i}\mid x_{t}),&x_{t}^{i}=[\text{MASK}],~x_{s}^{i}\neq[\text{MASK}].\end{cases} \tag{2}
$$

Here, $q_{0|t}({x}_{s}^{i}\mid{x}_{t})$ is a predictive distribution over the vocabulary, supplied by the model itself, whenever a masked location is to be unmasked. In conditional generation (e.g., producing a response ${x}_{0}$ given a prompt $p$ ), this predictive distribution additionally depends on $p$ , i.e., $q_{0|t}({x}_{s}^{i}\mid{x}_{t},p)$ .
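The three cases of Eq. (2) translate directly into a per-position sampling rule. The sketch below assumes a hypothetical `predict(x_t, i)` callback that stands in for the model's predictive distribution $q_{0|t}$ and returns a vocabulary list with matching probabilities; the toy predictor is purely illustrative.

```python
import random

MASK = "[MASK]"


def reverse_transition(x_t, s, t, predict, rng):
    """Sample x_s from x_t following the three cases of Eq. (2), for 0 <= s < t."""
    x_s = []
    for i, tok in enumerate(x_t):
        if tok != MASK:
            # Case 1: an already revealed token is copied with probability 1.
            x_s.append(tok)
        elif rng.random() < s / t:
            # Case 2: a masked token stays masked with probability s / t.
            x_s.append(MASK)
        else:
            # Case 3: with probability (t - s) / t, unmask by sampling q_{0|t}.
            vocab, probs = predict(x_t, i)
            x_s.append(rng.choices(vocab, weights=probs, k=1)[0])
    return x_s


def toy_predict(x_t, i):
    """Hypothetical stand-in for the model: a fixed two-token distribution."""
    return ["yes", "no"], [0.9, 0.1]


rng = random.Random(0)
x_t = ["The", MASK, MASK, MASK]
print(reverse_transition(x_t, s=0.5, t=1.0, predict=toy_predict, rng=rng))
print(reverse_transition(x_t, s=0.0, t=1.0, predict=toy_predict, rng=rng))
```

With `s = 0` the stay-masked probability is zero, so every masked position is unmasked in one jump. Larger `s` leaves more positions masked for later steps.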

Reverse generation.

To synthesize text, one needs to approximate the reverse dynamics. The generative model is parameterized as

This reverse process naturally decomposes into two complementary components.

i. Prediction step. The model $p_{\theta}(x_{0}\mid x_{t})$ attempts to reconstruct a clean sequence from the corrupted input at level $t$. We denote the predicted sequence after this step by $x_{0}^{t}$, i.e., $x_{0}^{t}=p_{\theta}(x_{0}\mid x_{t})$.

ii. Re-masking step. Once a candidate reconstruction $x_{0}^{t}$ is obtained, the forward noising mechanism is reapplied in order to produce a partially corrupted sequence $x_{t-1}$ that is less noisy than $x_{t}$. This "re-masking" can be implemented in various ways, such as masking tokens uniformly at random or selectively masking low-confidence positions (Nie et al., 2025).

Through the interplay of these two steps, prediction and re-masking, the model iteratively refines an initially noisy sequence into a coherent text output.
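The prediction and re-masking steps can be sketched as a short loop. This is a toy illustration of low-confidence remasking under simplifying assumptions: the `toy_predict` function is a hypothetical stand-in for $p_{\theta}(x_{0}\mid x_{t})$ that returns a token and a confidence per position, the number of revealed tokens grows linearly with the step index, and tokens revealed earlier are never re-masked.

```python
MASK = "[MASK]"


def toy_predict(x_t, target):
    """Hypothetical predictor: returns (token, confidence) for every position.

    Revealed tokens are returned unchanged with confidence 1.0. For masked
    positions the confidence pattern is arbitrary (later positions are less
    confident) and only serves to drive the re-masking order.
    """
    preds = []
    for i, tok in enumerate(x_t):
        if tok != MASK:
            preds.append((tok, 1.0))
        else:
            preds.append((target[i], 1.0 / (i + 2)))
    return preds


def decode(length, steps, predict):
    """Iterate prediction and low-confidence re-masking from a fully masked input."""
    x = [MASK] * length
    for step in range(1, steps + 1):
        preds = predict(x)  # i. prediction step
        keep = (length * step) // steps  # tokens that should be revealed by now
        masked = [i for i, tok in enumerate(x) if tok == MASK]
        revealed = length - len(masked)
        # ii. re-masking step: keep the most confident predictions and
        # leave the remaining positions masked for later steps.
        masked.sort(key=lambda i: preds[i][1], reverse=True)
        for i in masked[: max(0, keep - revealed)]:
            x[i] = preds[i][0]
    return x


target = "the answer is 42".split()
print(decode(len(target), steps=2, predict=lambda x: toy_predict(x, target)))
```

Random re-masking would replace the confidence-based sort with a random choice of positions to reveal. The rest of the loop would stay the same.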

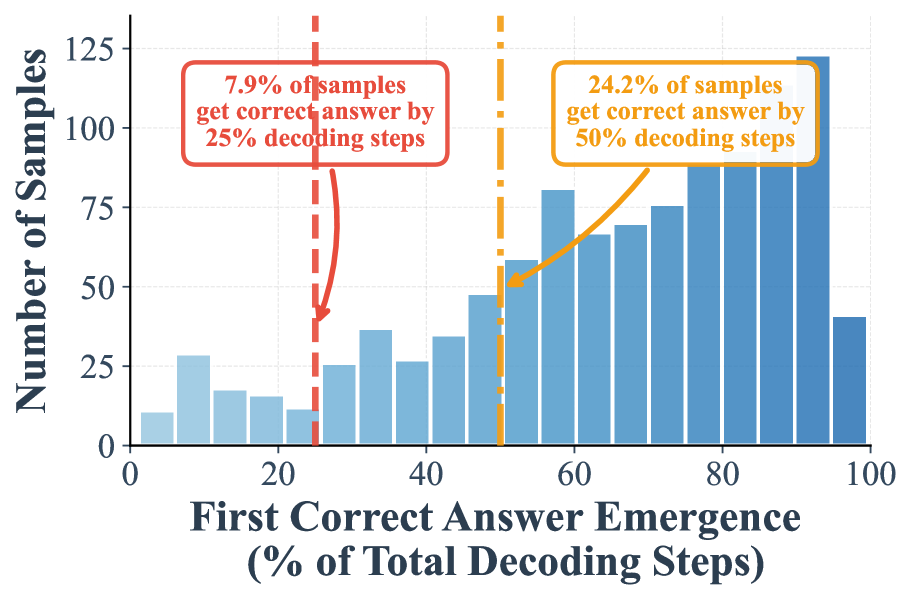

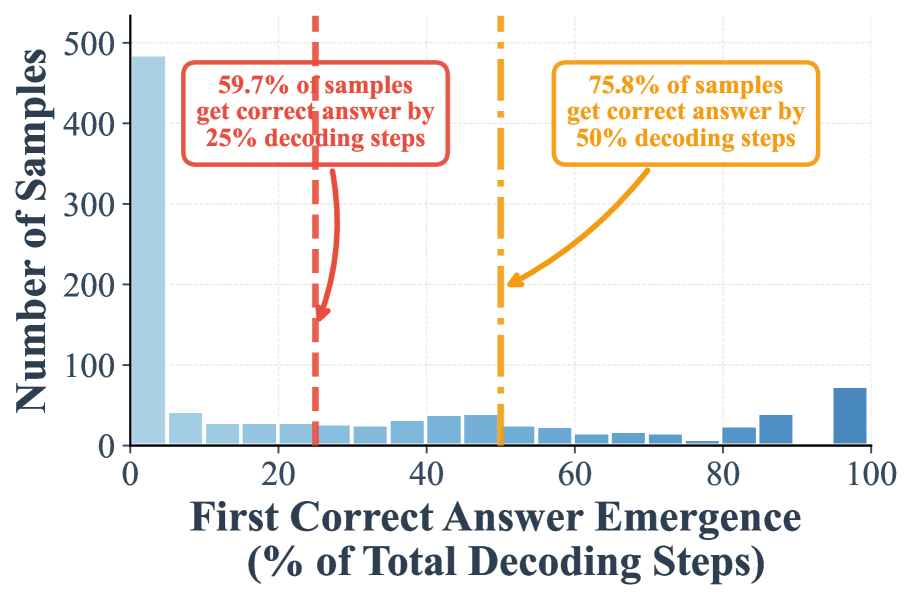

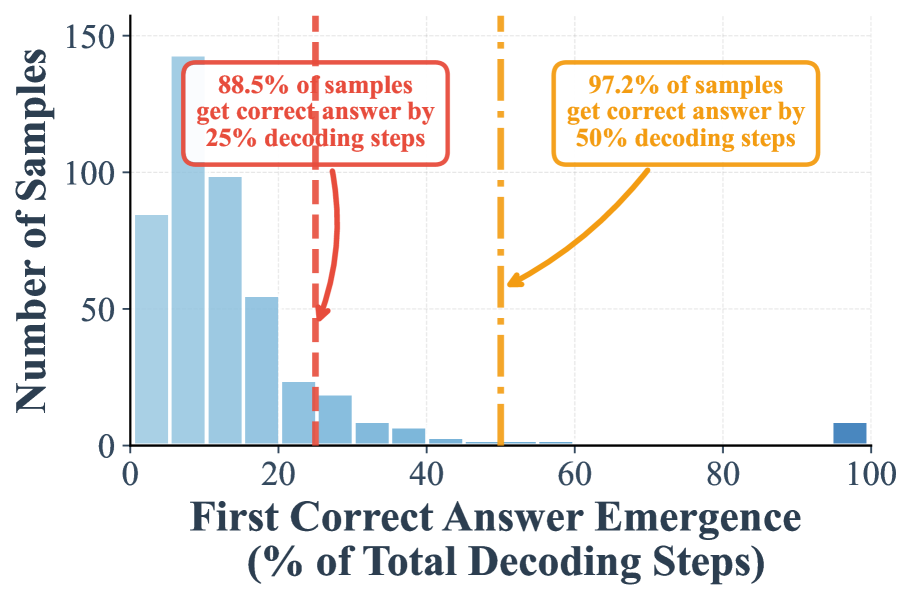

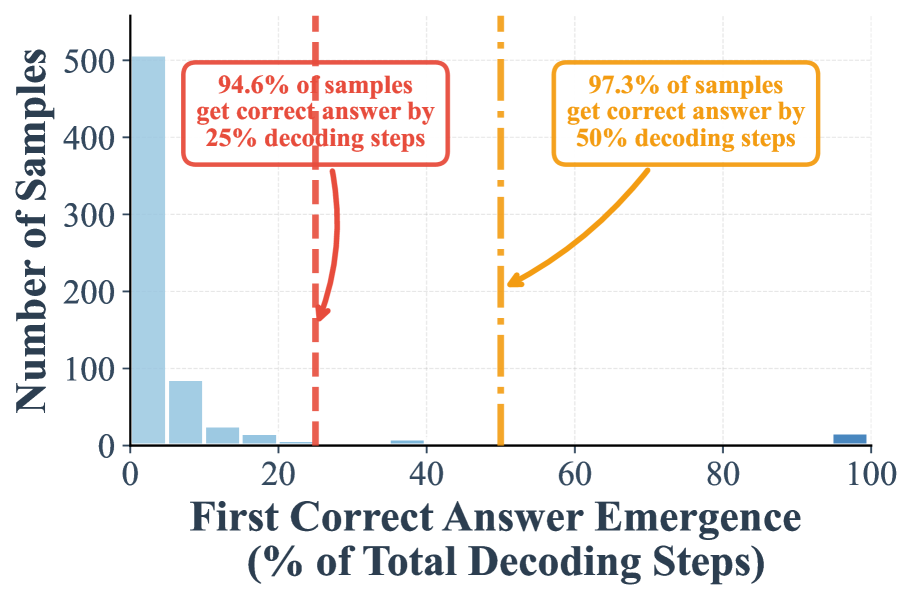

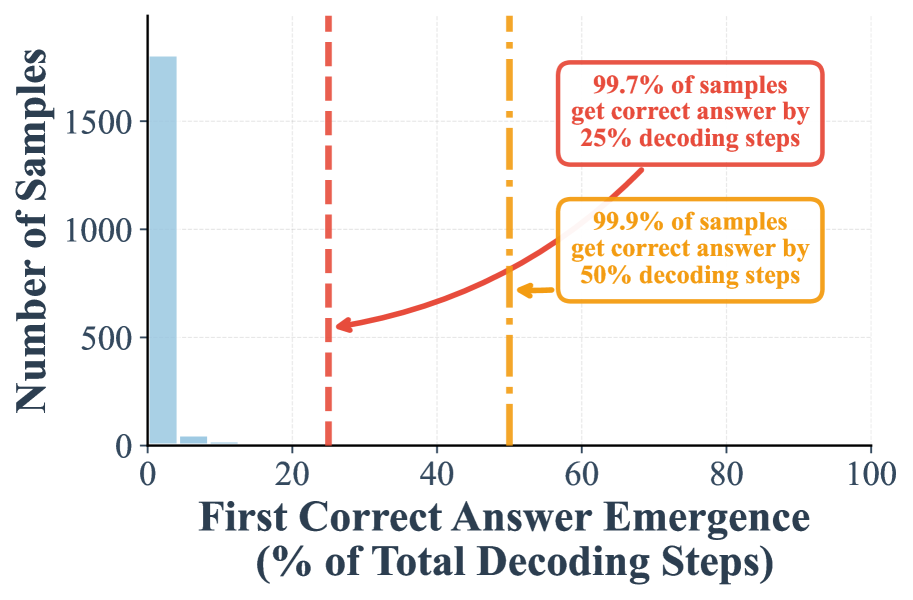

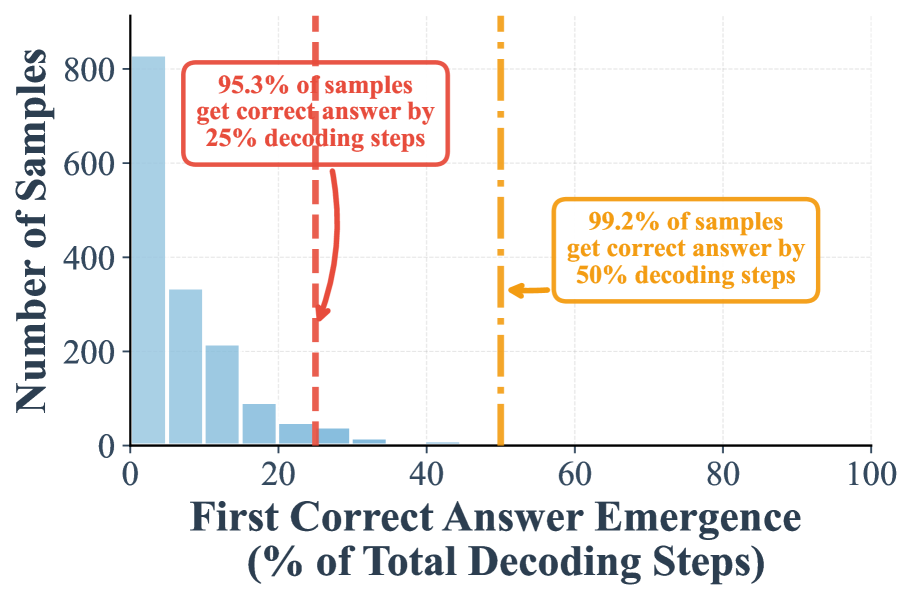

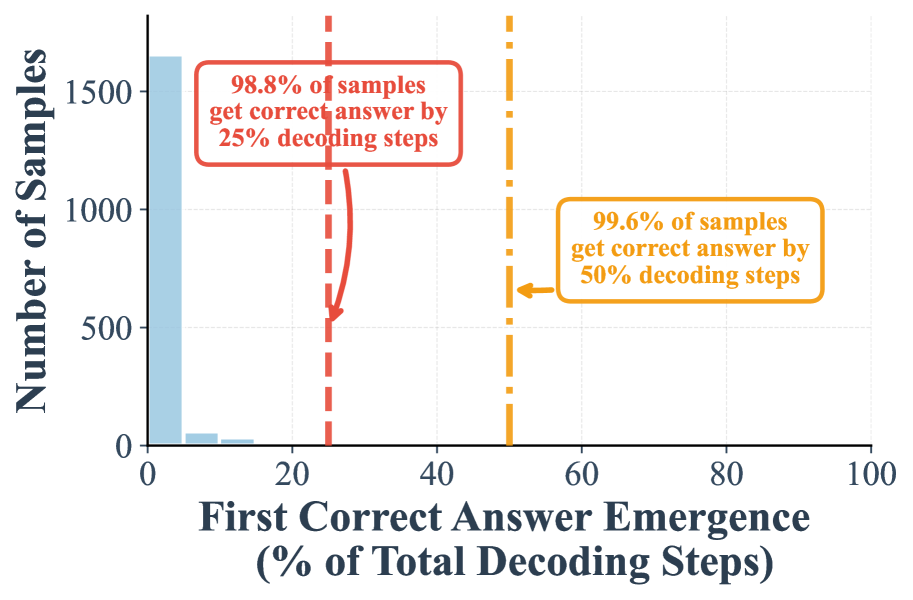

3.2 Early Answer Convergence

In this section, we investigate the early emergence of correct answers in DLMs. We conduct a comprehensive analysis using LLaDA-8B (Nie et al., 2025) on two widely used benchmarks: GSM8K (Cobbe et al., 2021) and MMLU (Hendrycks et al., 2021). Specifically, we examine the decoding dynamics, that is, how the top-1 predicted token evolves across positions at each decoding step, and report the percentage of the full decoding process at which the top-1 predicted tokens first match the ground-truth answer tokens. In this study, we only consider samples where the final output contains the ground-truth answer.

For low-confidence remasking, we set the answer length to 256 and the block length to 32 for GSM8K, and the answer length to 128 and the block length to 128 for MMLU. For random remasking, we set the answer length to 256 and the block length to 256 for GSM8K, and the answer length to 128 and the block length to 128 for MMLU.
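The measurement described above can be sketched as follows. The sketch assumes that, for each sample, the top-1 answer string read off at every decoding step is already available as a list; how the answer is extracted from the token positions is not specified here, and the helper names are hypothetical.

```python
def first_correct_fraction(top1_answers, ground_truth):
    """Fraction of the total decoding steps at which the top-1 answer first
    matches the ground truth, or None if it never matches."""
    total = len(top1_answers)
    for step, answer in enumerate(top1_answers, start=1):
        if answer == ground_truth:
            return step / total
    return None


def share_correct_by(fractions, cutoff):
    """Share of samples whose first correct answer emerges at or before `cutoff`.

    Samples that never match (None) are dropped, mirroring the restriction to
    samples whose final output contains the ground-truth answer.
    """
    valid = [f for f in fractions if f is not None]
    return sum(f <= cutoff for f in valid) / len(valid)


# Toy traces: the top-1 answer at each of 4 decoding steps for three samples.
traces = [
    (["7", "12", "12", "12"], "12"),
    (["3", "3", "5", "12"], "12"),
    (["12", "12", "12", "12"], "12"),
]
fractions = [first_correct_fraction(t, gt) for t, gt in traces]
print(fractions)
print(share_correct_by(fractions, cutoff=0.5))
```

Evaluating `share_correct_by` at cutoffs of 0.25 and 0.5 yields statistics of the kind annotated in Figure 1.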

<details>

<summary>x1.png Details</summary>

### Visual Description

## Bar Chart: First Correct Answer Emergence vs. Number of Samples

### Overview

The chart visualizes the relationship between the percentage of total decoding steps required for the first correct answer emergence and the number of samples achieving correctness at specific thresholds. Two vertical dashed lines highlight critical decoding step percentages (25% and 50%), with annotations indicating the proportion of samples achieving correctness by these thresholds. The bars represent the distribution of samples across decoding step percentages, peaking around 80% before declining.

### Components/Axes

- **X-axis**: "First Correct Answer Emergence (% of Total Decoding Steps)" (0–100% range, labeled in increments of 20%).

- **Y-axis**: "Number of Samples" (0–125, labeled in increments of 25).

- **Annotations**:

- **Red Box**: "7.9% of samples get correct answer by 25% decoding steps" (positioned near the 25% threshold).

- **Yellow Box**: "24.2% of samples get correct answer by 50% decoding steps" (positioned near the 50% threshold).

- **Dashed Lines**:

- Red vertical dashed line at 25% decoding steps.

- Yellow vertical dashed line at 50% decoding steps.

- **Bars**: Blue-colored bars represent the number of samples for each decoding step percentage. Heights increase monotonically up to ~80% decoding steps, then decline sharply.

### Detailed Analysis

- **Annotations**:

- At 25% decoding steps: 7.9% of samples achieve correctness.

- At 50% decoding steps: 24.2% of samples achieve correctness.

- **Bar Trends**:

- Bars rise steadily from 0% to ~80% decoding steps, peaking at approximately 100 samples.

- After 80%, bars drop sharply, with the final bar at 100% decoding steps showing ~40 samples.

- **Thresholds**:

- The 25% and 50% decoding steps are marked with vertical dashed lines and annotations, emphasizing their significance.

### Key Observations

1. **Threshold Performance**:

- Only 7.9% of samples achieve correctness by 25% decoding steps, while 24.2% do so by 50%.

- The gap between these thresholds suggests diminishing returns in early decoding steps.

2. **Peak Efficiency**:

- The highest number of samples (near 100) achieves correctness at ~80% decoding steps, indicating an optimal efficiency point.

3. **Decline Post-80%**:

- Performance drops significantly after 80%, with only ~40 samples achieving correctness at 100% decoding steps.

### Interpretation

The data suggests that model performance improves with increased decoding steps but exhibits a critical threshold around 80%, where the majority of samples achieve correctness. The sharp decline post-80% implies potential inefficiencies or instability in further decoding steps. The annotations highlight that early decoding steps (25–50%) capture a small fraction of correct answers, emphasizing the need for deeper processing in most cases. The peak at 80% may reflect a balance between computational cost and accuracy, while the post-80% drop could indicate overfitting, noise, or model limitations in handling edge cases. This chart underscores the trade-off between decoding effort and accuracy in sequence generation tasks.

</details>

(a) w/o suffix prompt (low-confidence remasking)

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Chart: First Correct Answer Emergence by Decoding Steps

### Overview

The chart visualizes the distribution of samples based on the percentage of total decoding steps required to identify the first correct answer. It highlights two key thresholds (25% and 50% decoding steps) with annotations showing cumulative correct answer rates.

### Components/Axes

- **Y-Axis**: "Number of Samples" (scale: 0–500, increments of 100).

- **X-Axis**: "First Correct Answer Emergence (% of Total Decoding Steps)" (scale: 0–100%, increments of 10%).

- **Legend**: Implicit via color-coded annotations:

- **Red dashed line**: 25% decoding steps (59.7% correct answers).

- **Orange dashed line**: 50% decoding steps (75.8% correct answers).

### Detailed Analysis

- **Bar Distribution**:

- **0% decoding steps**: Tallest bar (~480 samples).

- **10% decoding steps**: ~40 samples.

- **20% decoding steps**: ~30 samples.

- **30% decoding steps**: ~25 samples.

- **40% decoding steps**: ~35 samples.

- **50% decoding steps**: ~10 samples.

- **60–100% decoding steps**: Gradual decline, with the smallest bar at 100% (~70 samples).

- **Annotations**:

- Red box at 25%: "59.7% of samples get correct answer by 25% decoding steps."

- Orange box at 50%: "75.8% of samples get correct answer by 50% decoding steps."

### Key Observations

1. **Skewed Distribution**: About three quarters of samples (75.8%) resolve within the first 50% of decoding steps.

2. **Rapid Initial Drop**: Samples plummet from ~480 at 0% to ~40 at 10%, indicating most correct answers emerge immediately.

3. **Diminishing Returns**: Beyond 50%, the number of new correct answers per step decreases sharply (e.g., ~10 samples at 50% vs. ~70 at 100%).

### Interpretation

The data suggests that the system under analysis is highly efficient, with the majority of correct answers identified early in the decoding process. The 25% threshold captures nearly 60% of correct answers, while 50% captures 75.8%, implying that additional decoding steps yield minimal gains. This pattern could reflect:

- **Model Confidence**: Early steps may leverage strong prior knowledge or high-confidence predictions.

- **Data Characteristics**: Problems may be structured such that critical information is concentrated in initial decoding phases.

- **Resource Optimization**: Focusing computational resources on early decoding steps could maximize efficiency without significant loss of accuracy.

The sharp decline after 50% highlights potential bottlenecks or inefficiencies in later decoding stages, warranting further investigation into why fewer samples resolve in these phases.

</details>

(b) w/ suffix prompt (low-confidence remasking)

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: First Correct Answer Emergence (% of Total Decoding Steps)

### Overview

The chart visualizes the distribution of samples based on the percentage of total decoding steps required for the first correct answer to emerge. It highlights two key thresholds: 25% and 50% decoding steps, with annotations indicating the cumulative percentage of samples achieving correctness at these points.

### Components/Axes

- **X-axis**: "First Correct Answer Emergence (% of Total Decoding Steps)"

- Scale: 0% to 100% in 20% increments.

- Notable markers:

- Red dashed line at 25% (annotated: "88.5% of samples get correct answer by 25% decoding steps").

- Orange dashed line at 50% (annotated: "97.2% of samples get correct answer by 50% decoding steps").

- **Y-axis**: "Number of Samples"

- Scale: 0 to 150 in 50-unit increments.

- **Bars**: Blue, representing sample counts for each percentage bin.

### Detailed Analysis

- **Data Distribution**:

- The majority of samples (highest bar) fall in the 0–10% range (~140 samples).

- Sample counts decrease progressively across bins:

- 10–20%: ~100 samples.

- 20–30%: ~55 samples.

- 30–40%: ~25 samples.

- 40–50%: ~10 samples.

- 50–60%: ~2 samples.

- 60–70%: ~1 sample.

- 70–80%, 80–90%: ~1 sample each.

- The final bin (90–100%) contains ~5 samples.

- **Threshold Annotations**:

- At 25% decoding steps (red line), 88.5% of samples achieve correctness.

- At 50% decoding steps (orange line), 97.2% of samples achieve correctness.

### Key Observations

1. **Rapid Initial Improvement**: Over 88% of samples resolve within the first 25% of decoding steps.

2. **Diminishing Returns**: Additional decoding steps beyond 25% yield minimal gains (only 8.7% additional samples correct between 25% and 50%).

3. **Long-Tail Distribution**: A small fraction of samples (≤5) require nearly the full decoding process (90–100%).

### Interpretation

The chart demonstrates that the model achieves high accuracy early in the decoding process, with diminishing returns as more steps are added. This suggests:

- **Efficiency**: The model is highly effective at generating correct answers quickly, making it suitable for applications requiring rapid inference.

- **Robustness**: The 25% threshold captures most samples, indicating strong performance even with limited computational resources.

- **Outliers**: The long tail (90–100% range) highlights edge cases where the model struggles, potentially pointing to ambiguities or complexities in those inputs.

The annotations confirm that 97.2% of samples are resolved by 50% decoding steps, implying the model’s performance stabilizes well before full decoding. This could inform optimizations for real-time systems or resource-constrained environments.

</details>

(c) w/o suffix prompt (random remasking)

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: First Correct Answer Emergence (% of Total Decoding Steps)

### Overview

The chart visualizes the distribution of samples based on the percentage of total decoding steps required to achieve the first correct answer. Two key thresholds (25% and 50% decoding steps) are highlighted, with annotations indicating cumulative percentages of samples achieving correctness by those steps.

### Components/Axes

- **X-axis**: "First Correct Answer Emergence (% of Total Decoding Steps)" with markers at 0%, 20%, 40%, 60%, 80%, and 100%.

- **Y-axis**: "Number of Samples" scaled from 0 to 500 in increments of 100.

- **Legend**: Implicitly defined by bar colors:

- **Blue bar**: 25% decoding steps (500 samples).

- **Orange bar**: 50% decoding steps (200 samples).

- **Annotations**:

- Red dashed line at 25% decoding steps with text: "94.6% of samples get correct answer by 25% decoding steps."

- Orange dotted line at 50% decoding steps with text: "97.3% of samples get correct answer by 50% decoding steps."

### Detailed Analysis

- **Blue Bar (25% decoding steps)**:

- Height: ~500 samples.

- Position: Leftmost bar, centered at 25% on the x-axis.

- **Orange Bar (50% decoding steps)**:

- Height: ~200 samples.

- Position: Right of the blue bar, centered at 50% on the x-axis.

- **Annotations**:

- Red box (25%): Indicates 94.6% of total samples achieve correctness by 25% decoding steps.

- Orange box (50%): Indicates 97.3% of total samples achieve correctness by 50% decoding steps.

### Key Observations

1. **Dominance at 25% decoding steps**: The majority of samples (500) achieve correctness at 25% decoding steps, with 94.6% of total samples reaching the correct answer by this threshold.

2. **Drop in sample count at 50%**: Only 200 samples require 50% decoding steps, but this group contributes to the remaining 2.7% of samples achieving correctness (97.3% total).

3. **Cumulative correctness**: The annotations suggest near-universal correctness (97.3%) by 50% decoding steps, with minimal additional samples requiring further steps.

### Interpretation

The data demonstrates that **most samples (94.6%) resolve correctly within 25% of decoding steps**, while a smaller subset (2.7%) requires up to 50% decoding steps to achieve correctness. This implies:

- **Efficiency**: The system performs well for the majority of cases, with rapid convergence to correct answers.

- **Long-tail challenges**: A minority of samples (2.7%) demand significantly more computational effort, highlighting potential edge cases or complex scenarios.

- **Diminishing returns**: Increasing decoding steps beyond 25% yields only marginal improvements in correctness (2.7% additional samples), suggesting optimization opportunities for resource allocation.

The chart underscores a trade-off between computational cost and correctness, with the bulk of samples resolving efficiently but a small fraction requiring disproportionate resources.

</details>

(d) w/ suffix prompt (random remasking)

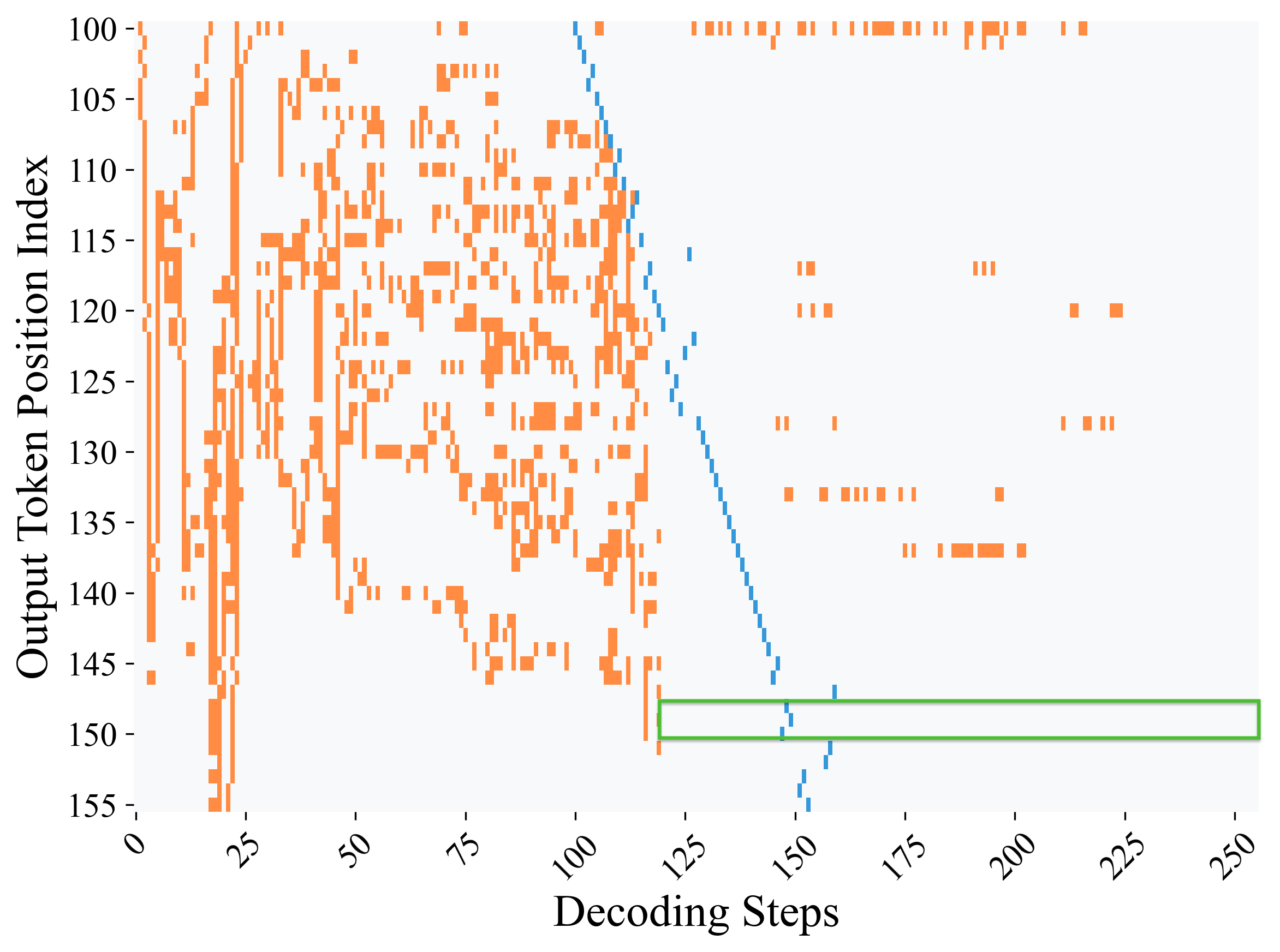

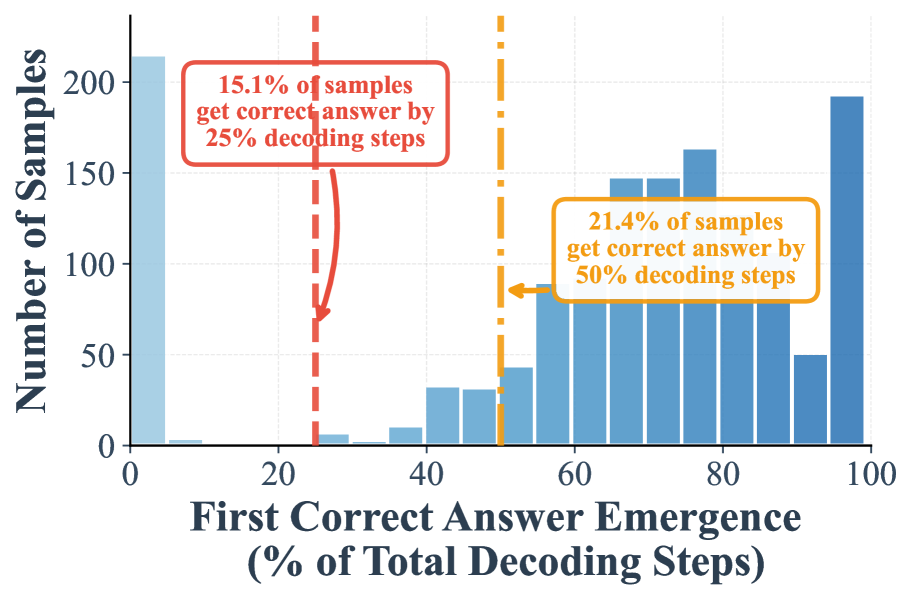

Figure 1: Distribution of early correct answer detection during the decoding process. Histograms show when correct answers first emerge during diffusion decoding, measured as a percentage of total decoding steps, using LLaDA-8B on GSM8K. Red and orange dashed lines indicate the 25% and 50% completion thresholds, with corresponding statistics showing substantial early convergence. Suffix prompting (b, d) dramatically accelerates convergence compared to standard prompting (a, c). This early convergence pattern demonstrates that correct answer tokens stabilize as top-1 candidates well before full decoding.

I. A high proportion of samples can be correctly decoded during the early phase of decoding. Figure 1(a) shows that, when remasking with the low-confidence strategy, 24.2% of samples are already correctly predicted within the first half of the steps, and 7.9% of samples can be correctly decoded within the first 25% of the steps. These two numbers rise to 97.2% and 88.5%, respectively, under random remasking, as shown in Figure 1(c).

II. Our suffix prompt further amplifies the early emergence of correct answers. Adding the suffix prompt "Answer:" significantly improves early decoding. With low-confidence remasking, the proportion of correct samples emerging by the 25% step rises from 7.9% to 59.7%, and by the 50% step from 24.2% to 75.8% (Figure 1(b)). Similarly, under random remasking, the 25% step proportion increases from 88.5% to 94.6% (Figure 1(d)).

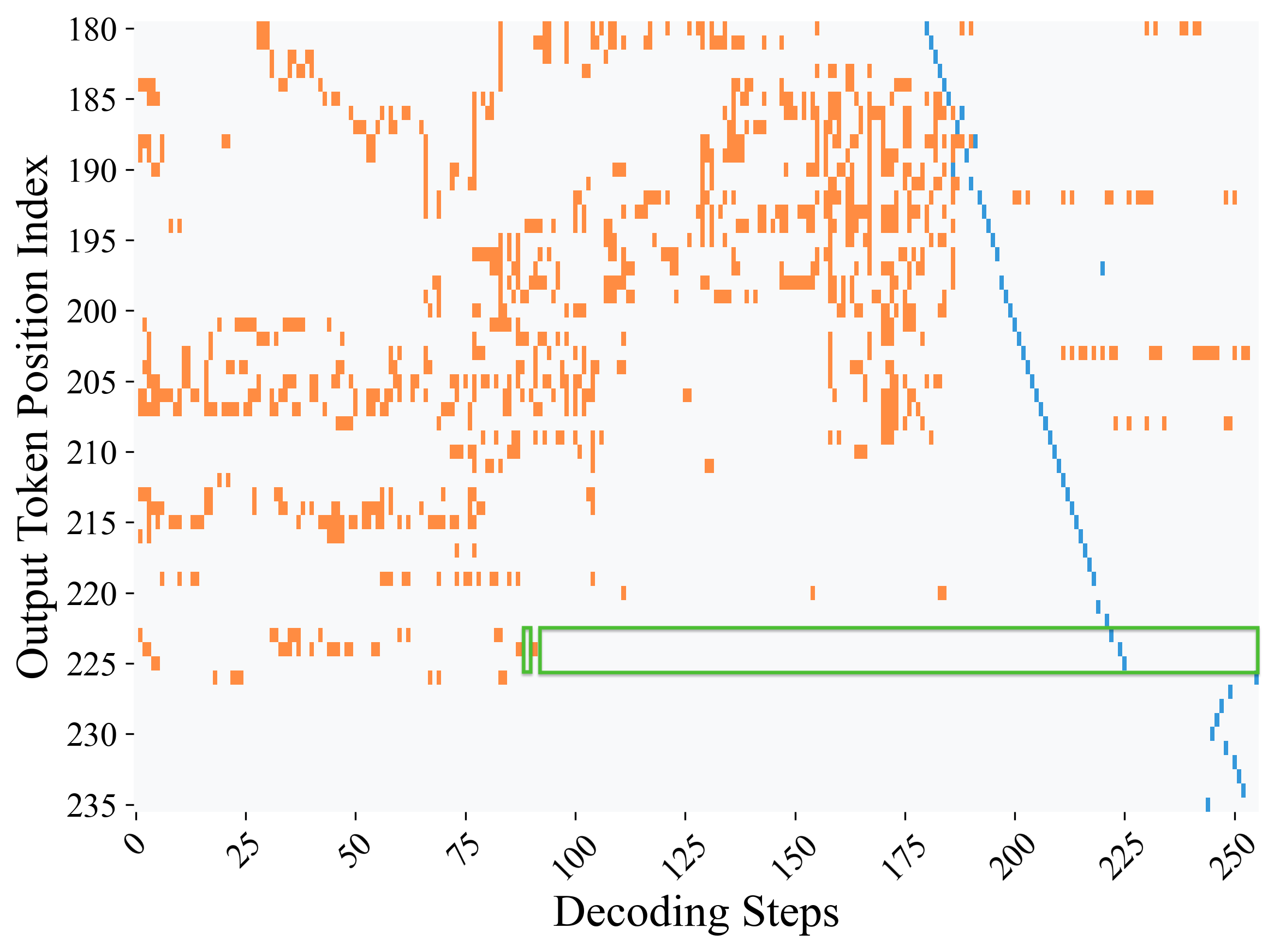

III. Decoding dynamics of chain-of-thought tokens. We further examine the decoding dynamics of chain-of-thought tokens in addition to answer tokens, as shown in Figure 2. First, most non-answer tokens fluctuate frequently before being finalized. Second, answer tokens change far less often and tend to stabilize earlier, remaining unchanged for the rest of the decoding process.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Token State Representation

### Overview

The image depicts a horizontal sequence of four colored rectangles, each labeled with a distinct state. The diagram uses color-coded blocks to represent different token statuses or actions within a system. The legend is positioned at the top-left, establishing a clear mapping between colors and labels.

### Components/Axes

- **Legend**: Located at the top-left corner, with four color-coded entries:

- **Gray**: "No Change"

- **Orange**: "Token Change"

- **Blue**: "Token Deleted"

- **Green**: "Correct Answer Token"

- **Rectangles**: Four horizontally aligned blocks, each corresponding to a legend entry:

1. **Gray Rectangle**: Labeled "No Change" (matches legend color).

2. **Orange Rectangle**: Labeled "Token Change" (matches legend color).

3. **Blue Rectangle**: Labeled "Token Deleted" (matches legend color).

4. **Green Rectangle**: Labeled "Correct Answer Token" (matches legend color).

### Detailed Analysis

- **Spatial Grounding**:

- The legend is positioned at the top-left, above the rectangles.

- Rectangles are evenly spaced from left to right, with labels placed to the right of each block.

- Colors are consistent between legend entries and their corresponding rectangles (e.g., orange rectangle matches "Token Change" in the legend).

- **Content Details**:

- No numerical values, scales, or axes are present.

- Text is minimal and purely categorical, with no embedded data tables or additional annotations.

### Key Observations

1. The diagram uses a strict one-to-one mapping between colors and labels.

2. The sequence of rectangles suggests a potential workflow or state transition (e.g., "No Change" → "Token Change" → "Token Deleted" → "Correct Answer Token").

3. The green rectangle ("Correct Answer Token") is the final element, implying a resolution or validation step.

### Interpretation

This diagram likely represents a state machine or decision tree for token processing in a computational system. The progression from "No Change" to "Correct Answer Token" suggests a workflow where tokens are evaluated, modified, or removed before arriving at a validated state. The use of distinct colors ensures clarity in distinguishing states, which is critical for debugging or auditing processes. The absence of numerical data implies the focus is on categorical transitions rather than quantitative metrics.

</details>

<details>

<summary>figures/position_change_heatmap_low_conf_non_qi_700_step256_blocklen32_box.png Details</summary>

### Visual Description

## Heatmap: Output Token Position Index vs Decoding Steps

### Overview

The image is a heatmap of decoding dynamics, with decoding steps on the x-axis and output token position indices on the y-axis. Orange cells mark steps at which the top-1 prediction at a position changes to a different token, blue cells mark the step at which a position is actually decoded, and a green rectangle highlights the answer region.

### Components/Axes

- **X-axis (Decoding Steps)**: range 0 to 250, major ticks every 25.

- **Y-axis (Output Token Position Index)**: range 100 to 155, major ticks every 5.

- **Highlight**: green rectangle spanning roughly decoding steps 125–250 and token positions 150–155 (visual estimate).

### Key Observations

- Orange cells are densest at early decoding steps (roughly 0–50, token positions 100–120) and become sparse at later steps.

- Blue cells form a roughly diagonal band, because different positions are decoded at different steps.

- No orange cells fall inside the green rectangle: the top-1 tokens in the answer region no longer change there.

### Interpretation

Most top-1 predictions are revised early in decoding and stabilize later. The green rectangle marks the span in which the correct answer tokens already hold the top-1 rank, so the remaining decoding steps in that span leave the answer unchanged.

</details>

(a) w/o suffix prompt

<details>

<summary>figures/position_change_heatmap_low_conf_constraint_qi_700_step256_blocklen32_box.png Details</summary>

### Visual Description

## Heatmap: Output Token Position Index vs Decoding Steps

### Overview

The image is a heatmap of decoding dynamics with **Decoding Steps** on the x-axis and **Output Token Position Index** on the y-axis. Orange cells mark steps at which the top-1 prediction at a position changes to a different token, blue cells mark the step at which a position is actually decoded, and a green rectangle highlights the answer region.

### Components/Axes

- **X-axis (Decoding Steps)**: Ranges from 0 to 250, with increments of 25.

- **Y-axis (Output Token Position Index)**: Ranges from 180 to 235, with increments of 5.

- **Orange**: Densest in the mid-range (Decoding Steps: 50–150; Token Positions: 190–210) and sparser as Decoding Steps approach 250.

- **Blue**: Forms a roughly diagonal band between about (Decoding Steps ~0, Token Positions ~235) and (Decoding Steps ~250, Token Positions ~180), a slope of about -0.22 positions per step when estimated from these endpoints.

- **Green Rectangle**: Spans roughly Decoding Steps 75–100 and Token Positions 225–230 (visual estimate, with potential ±5 units in both axes).

### Interpretation

The plot shows the decoding behavior of a diffusion language model:

- **Decoding Steps** are the iterative denoising and re-masking steps.

- **Output Token Position Index** is the position of each token in the generated sequence.

- The **blue cells** show when each position is decoded, while the **orange cells** show where the top-1 prediction is still being revised.

- The **green rectangle** marks the answer region, where the correct answer tokens hold the top-1 rank.

</details>

(b) w/ suffix prompt

Figure 2: Decoding dynamics across all positions based on maximum-probability predictions. Heatmaps track how the top-1 token changes at each position, if it is decoded at the current step, over the course of decoding. (a) Without our suffix prompts, correct answer tokens reach maximum probability at step 119. (b) With our suffix prompts, this occurs earlier at step 88, showing that the model internally identifies correct answers well before the final output. Results are shown for LLaDA 8B solving problem index 700 from GSM8K under low-confidence decoding. Gray indicates positions where the top-1 prediction remains unchanged, orange marks positions where the prediction changes to a different token, blue denotes the step at which the corresponding y-axis position is actually decoded, and green box highlights the answer region where the correct answer remains stable as the top-1 token and can be safely decoded without further changes as the decoding process progresses.

4 Methodology

<details>

<summary>x6.png Details</summary>

### Visual Description

## Diagram: Comparison of Decoding Methods in Language Models

### Overview

The image compares two decoding strategies in language models:

1. **(a) Standard Full-Step Decoding**: A sequential process with explicit intermediate steps.

2. **(b) Prophet with Early Commit Decoding**: A streamlined approach with confidence-based early termination.

Both methods solve the arithmetic problem "3×3=9, 9×60=?" and output **540**, but differ in computational efficiency.

---

### Components/Axes

#### (a) Standard Full-Step Decoding

- **Timeline (x-axis)**: Steps labeled `t=0`, `t=2`, `t=4`, `t=6`, `t=10`.

- **Y-axis**: Not explicitly labeled; represents stages of decoding.

- **Legend**:

- **Purple**: Chain-of-Thought (CoT) reasoning.

- **Orange**: Answer tokens (e.g., "3", "60", "5400").

- **Green**: Final output ("540").

- **Key Text**:

- "3 sprints [MASK]"

- "3×3=9, 9×60=[MASK]"

- "Redundant Steps" (highlighted at `t=10`).

#### (b) Prophet with Early Commit Decoding

- **Timeline (x-axis)**: Steps labeled `t=0`, `t=2`, `t=4`, `t=6`.

- **Y-axis**: Not explicitly labeled; represents stages of decoding.

- **Legend**:

- **Green**: Confidence Gap > T (threshold for early termination).

- **Yellow**: Early Commit Decoding.

- **Purple**: Final output ("540").

- **Key Text**:

- "Confidence Gap > T"

- "Early Commit Decoding"

- "~55% Steps Saved"

---

### Detailed Analysis

#### (a) Standard Full-Step Decoding

- **Steps**:

- `t=0`: Initial prompt with `[MASK]` placeholders.

- `t=2`: Partial answer "3" (orange).

- `t=4`: Intermediate result "60" (orange).

- `t=6`: Final result "5400" (orange), then corrected to "540" (green).

- `t=10`: Redundant steps (dashed red box) after the correct answer is known.

- **Flow**: Linear progression with no early termination.

#### (b) Prophet with Early Commit Decoding

- **Steps**:

- `t=0`: Initial prompt with `[MASK]` placeholders.

- `t=2`: Partial answer "3" (orange).

- `t=4`: Intermediate result "60" (orange).

- `t=6`: Confidence threshold met (green), triggering early commit.

- **Flow**: Early termination at `t=6` avoids redundant steps.

---

### Key Observations

1. **Efficiency**: Prophet with Early Commit Decoding stops at `t=6` instead of running through `t=10`; the figure labels this as ~55% of steps saved.

2. **Accuracy**: Both methods produce the same final output (**540**).

3. **Confidence Mechanism**: The green "Confidence Gap > T" marker in (b) indicates the step at which the confidence gap exceeds the threshold and the remaining tokens are committed.

4. **Redundancy**: Standard decoding keeps running refinement steps after the answer has stabilized.

---

### Interpretation

The diagram illustrates how **early commit decoding** shortens language model inference by using a confidence threshold to skip redundant computation. The "Confidence Gap > T" check acts as a stopping criterion: once the answer has stabilized, all remaining tokens are decoded in one step. The ~55% saving shown in this example illustrates the potential benefit of adaptive decoding for latency-sensitive applications.

</details>

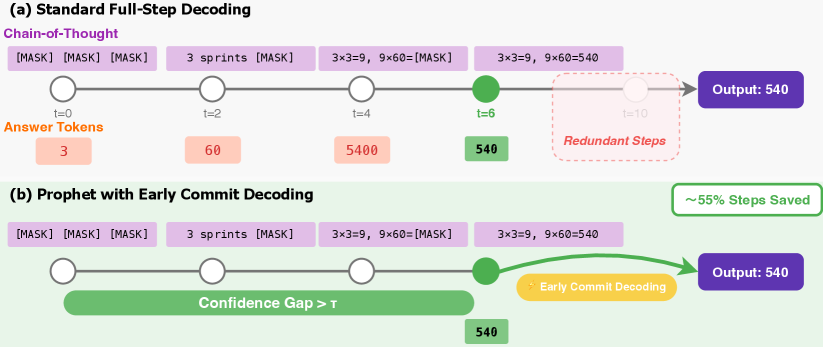

Figure 3: An illustration of Prophet’s early-commit-decoding mechanism. (a) Standard full-step decoding completes all predefined steps (e.g., 10 steps), incurring redundant computations after the answer has stabilized (at t=6). (b) Prophet dynamically monitors the model’s confidence (the “Confidence Gap”). It triggers early commit decoding as soon as the answer converges, saving a significant portion of the decoding steps (in this case, 55%) without compromising the output quality.

Building on the above findings, we introduce Prophet, a training-free fast decoding algorithm designed to accelerate the generation phase of DLMs. Prophet commits to all remaining tokens in one shot, predicting the answer as soon as the model’s predictions have stabilized; we call this Early Commit Decoding. Unlike conventional fixed-step decoding, Prophet actively monitors the model’s certainty at each step to make an informed, on-the-fly decision about when to finalize the generation.

Confidence Gap as a Convergence Metric.

The core mechanism of Prophet is the Confidence Gap, a simple yet effective metric for quantifying the model’s conviction for a given token. At any decoding step $t$ , the DLM produces a logit matrix $\mathbf{L}_{t}∈\mathbb{R}^{N×|\mathcal{V}|}$ , where $N$ is the sequence length and $|\mathcal{V}|$ is the vocabulary size. For each position $i$ , we identify the highest logit value, $L_{t,i}^{(1)}$ , and the second-highest, $L_{t,i}^{(2)}$ . The confidence gap $g_{t,i}$ is defined as their difference:

$$

g_{t,i}=L_{t,i}^{(1)}-L_{t,i}^{(2)}. \tag{1}

$$

This value serves as a robust indicator of predictive certainty. A large logit gap signals that the prediction has likely converged, with the top-ranked token clearly outweighing all others.
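As a minimal sketch of Eq. 1, the snippet below computes the per-position gap between the two largest logits. It uses plain Python lists in place of the model's logit tensor, and the function name `confidence_gaps` and the toy values are illustrative assumptions rather than part of the Prophet codebase.

```python
import heapq

def confidence_gaps(logits):
    """Return g[i] = (largest logit) - (second-largest logit) for each position i."""
    gaps = []
    for row in logits:
        top1, top2 = heapq.nlargest(2, row)
        gaps.append(top1 - top2)
    return gaps

# Toy logit matrix: N = 3 positions, |V| = 4 vocabulary entries (made-up values).
toy_logits = [
    [9.0, 1.0, 0.5, -2.0],  # one token dominates: gap 8.0
    [2.0, 1.5, 1.0, 0.0],   # still ambiguous: gap 0.5
    [0.0, 4.0, -1.0, 3.0],  # two close candidates: gap 1.0
]
print(confidence_gaps(toy_logits))  # [8.0, 0.5, 1.0]
```

In a tensor library the same quantity would typically come from a top-2 selection along the vocabulary dimension; the loop form is used here only to keep the example dependency-free.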

Early Commit Decoding.

The decision of when to terminate the decoding loop can be framed as an optimal stopping problem. At each step, we must balance two competing costs: the computational cost of performing additional refinement iterations versus the risk of error from a premature and potentially incorrect decision. The computational cost is a function of the remaining steps, while the risk of error is inversely correlated with the model’s predictive certainty, for which the Confidence Gap serves as a robust proxy.

Prophet addresses this trade-off with an adaptive strategy that embodies a principle of time-varying risk aversion. Let $p=(T_{\text{max}}-t)/T_{\text{max}}$ denote the decoding progress, where $T_{\text{max}}$ is the total number of decoding steps, and let $\tau(p)$ be the threshold for early commit decoding. Because $t$ counts down from $T_{\text{max}}$ , $p$ grows from 0 toward 1 as decoding proceeds. In the early, noisy stages of decoding (when progress $p$ is small), the potential for significant prediction improvement is high. Committing to an answer at this stage carries a high risk. Therefore, Prophet acts in a risk-averse manner, demanding an exceptionally high threshold ( $\tau_{\text{high}}$ ) to justify an early commit decoding, ensuring such a decision is unequivocally safe. As the decoding process matures (as $p$ increases), two things happen: the model’s predictions stabilize, and the potential computational savings from stopping early diminish. Consequently, the cost of performing one more step becomes negligible compared to the benefit of finalizing the answer. Prophet thus becomes more risk-tolerant, requiring a progressively smaller threshold ( $\tau_{\text{low}}$ ) to confirm convergence.

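This dynamic risk-aversion policy is instantiated through our staged threshold function, which maps the abstract trade-off between inference speed and generation certainty onto a concrete decision rule on $\bar{g}_{t}$ , the confidence gap averaged over the answer-region positions $\mathcal{A}$ (see Algorithm 1):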

$$

\bar{g}_{t}\geq\tau(p),\quad\text{where}\quad\tau(p)=\begin{cases}\tau_{\text{high}}&\text{if }p<0.33\\ \tau_{\text{mid}}&\text{if }0.33\leq p<0.67\\ \tau_{\text{low}}&\text{if }p\geq 0.67\end{cases} \tag{5}

$$

Once the exit condition is satisfied at step $t^{*}$ , the iterative loop is terminated. The final output is then constructed in a single parallel operation by filling any remaining [MASK] tokens with the argmax of the current logits $\mathbf{L}_{t^{*}}$ .
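The decision rule in Eq. 5 can be sketched in a few lines. This is an illustration under stated assumptions, not the authors' implementation: the function names are hypothetical, and the default thresholds are the values reported in the experimental setup ( $\tau_{\text{high}}=7.5$ , $\tau_{\text{mid}}=5.0$ , $\tau_{\text{low}}=2.5$ ).

```python
def staged_threshold(p, tau_high=7.5, tau_mid=5.0, tau_low=2.5):
    """Staged threshold tau(p) of Eq. 5; p in [0, 1] is the decoding progress."""
    if p < 0.33:
        return tau_high
    if p < 0.67:
        return tau_mid
    return tau_low

def should_commit(answer_gaps, t, t_max):
    """Early-commit test: mean gap over the answer region against tau(p)."""
    p = (t_max - t) / t_max  # t counts down from t_max, so p grows from 0
    mean_gap = sum(answer_gaps) / len(answer_gaps)
    return mean_gap >= staged_threshold(p)

# The same mean gap (6.0) is rejected early but accepted mid-way through decoding.
print(should_commit([6.5, 5.5], t=256, t_max=256))  # False (p = 0.0, tau = 7.5)
print(should_commit([6.5, 5.5], t=128, t_max=256))  # True  (p = 0.5, tau = 5.0)
```

The example shows the time-varying risk aversion directly: an identical confidence level is treated as insufficient at the start of decoding and as sufficient once a third of the budget has been spent.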

Algorithm Summary.

The complete Prophet decoding procedure is outlined in Algorithm 1. The integration of the confidence gap check adds negligible computational overhead to the standard DLM decoding loop. Prophet is model-agnostic, requires no retraining, and can be readily implemented as a wrapper around existing DLM inference code.

Algorithm 1 Prophet: Early Commit Decoding for Diffusion Language Models

1: Input: Model $M_{\theta}$ , prompt $\mathbf{x}_{\text{prompt}}$ , max steps $T_{\text{max}}$ , generation length $N_{\text{gen}}$

2: Input: Threshold function $\tau(\cdot)$ , answer region positions $\mathcal{A}$

3: Initialize sequence $\mathbf{x}_{T_{\text{max}}}←\text{concat}(\mathbf{x}_{\text{prompt}},\text{[MASK]}^{N_{\text{gen}}})$

4: Let $\mathcal{M}_{t}$ be the set of masked positions at step $t$ .

5: for $t=T_{\text{max}},T_{\text{max}}-1,...,1$ do

6: Compute logits: $\mathbf{L}_{t}=M_{\theta}(\mathbf{x}_{t})$

7: $\triangleright$ Prophet’s Early-Commit-Decoding Check

8: Calculate average confidence gap $\bar{g}_{t}$ over positions $\mathcal{A}$ using Eq. 4.

9: Calculate progress: $p←(T_{\text{max}}-t)/T_{\text{max}}$

10: if $\bar{g}_{t}≥\tau(p)$ then $\triangleright$ Check condition from Eq. 5

11: $\mathbf{\hat{x}}_{0}←\text{argmax}(\mathbf{L}_{t},\text{dim}=-1)$

12: $\mathbf{x}_{0}←\mathbf{x}_{t}$ . Fill positions in $\mathcal{M}_{t}$ with tokens from $\mathbf{\hat{x}}_{0}$ .

13: Return $\mathbf{x}_{0}$ $\triangleright$ Terminate and finalize

14: end if

15: $\triangleright$ Standard DLM Refinement Step

16: Determine tokens to unmask $\mathcal{U}_{t}\subseteq\mathcal{M}_{t}$ via a re-masking strategy.

17: $\mathbf{\hat{x}}_{0}←\text{argmax}(\mathbf{L}_{t},\text{dim}=-1)$

18: Update $\mathbf{x}_{t-1}←\mathbf{x}_{t}$ , replacing tokens at positions $\mathcal{U}_{t}$ with those from $\mathbf{\hat{x}}_{0}$ .

19: end for

20: Return $\mathbf{x}_{0}$ $\triangleright$ Return result after full iterations if no early commit decoding
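To make the control flow of Algorithm 1 concrete, here is a small runnable sketch in plain Python. Everything model-related is a stand-in: `make_toy_model` is a hypothetical toy whose confidence grows as more tokens are revealed, `MASK = -1` is an arbitrary mask id, and the refinement step unmasks a single most-confident position per iteration, which is an assumed simplification of the re-masking strategies used by real DLMs.

```python
import heapq

MASK = -1  # hypothetical mask-token id used only in this sketch

def argmax(row):
    return max(range(len(row)), key=row.__getitem__)

def tau(p, high=7.5, mid=5.0, low=2.5):
    """Staged threshold of Eq. 5."""
    return high if p < 0.33 else mid if p < 0.67 else low

def prophet_decode(model, prompt, n_gen, t_max, answer_positions):
    """Return (tokens, number of model calls), following Algorithm 1."""
    x = list(prompt) + [MASK] * n_gen
    for t in range(t_max, 0, -1):
        masked = [i for i, tok in enumerate(x) if tok == MASK]
        if not masked:  # nothing left to refine
            return x, t_max - t
        logits = model(x)
        # Early-commit check: mean top-1/top-2 logit gap over the answer region.
        gaps = []
        for i in answer_positions:
            top1, top2 = heapq.nlargest(2, logits[i])
            gaps.append(top1 - top2)
        p = (t_max - t) / t_max
        if sum(gaps) / len(gaps) >= tau(p):
            for i in masked:  # fill every remaining mask in one shot
                x[i] = argmax(logits[i])
            return x, t_max - t + 1
        # Standard refinement (assumption: unmask the single most confident
        # masked position; real DLMs apply their own re-masking strategy).
        best = max(masked, key=lambda i: max(logits[i]))
        x[best] = argmax(logits[best])
    return x, t_max

def make_toy_model(target, vocab_size):
    """Toy stand-in for a DLM: the target token's logit grows with context."""
    def model(x):
        n_known = sum(tok != MASK for tok in x)
        rows = []
        for i in range(len(x)):
            row = [0.0] * vocab_size
            row[target[i]] = float(n_known)
            rows.append(row)
        return rows
    return model

target = [1, 2, 3, 1, 4, 1, 5, 2, 5, 4]
model = make_toy_model(target, vocab_size=6)
tokens, calls = prophet_decode(model, prompt=target[:2], n_gen=8, t_max=8,
                               answer_positions=[8, 9])
print(tokens == target, calls)  # True 4
```

In this toy run the gap over the answer region reaches 5.0 on the fourth model call, when $p=0.375$ and the threshold has dropped to $\tau_{\text{mid}}$ , so the remaining five masked positions are filled at once and half of the eight-step budget is skipped.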

5 Experiments

We evaluate Prophet on diffusion language models (DLMs) to validate two key hypotheses: first, that Prophet can preserve the performance of full-budget decoding while using substantially fewer denoising steps; second, that our adaptive approach provides more reliable acceleration than naive static baselines. We demonstrate that Prophet achieves notable computational savings with negligible quality degradation through comprehensive experiments across diverse benchmarks.

5.1 Experimental Setup

We conduct experiments on two state-of-the-art diffusion language models: LLaDA-8B (Nie et al., 2025) and Dream-7B (Ye et al., 2025). For each model, we compare three decoding strategies: Full uses the standard diffusion decoding with the complete step budget of $T_{\max}$ ; Half is the static baseline that stops after half of that budget; and Prophet employs early commit decoding with dynamic threshold scheduling. The threshold parameters are set to $\tau_{\text{high}}=7.5$ , $\tau_{\text{mid}}=5.0$ , and $\tau_{\text{low}}=2.5$ , with transitions occurring at 33% and 67% of the decoding progress. These hyperparameters were selected through preliminary validation experiments.

Our evaluation spans four capability domains to comprehensively assess Prophet’s effectiveness. For general reasoning, we use MMLU (Hendrycks et al., 2021), ARC-Challenge (Clark et al., 2018), HellaSwag (Zellers et al., 2019), TruthfulQA (Lin et al., 2021), WinoGrande (Sakaguchi et al., 2021), and PIQA (Bisk et al., 2020). Mathematical and scientific reasoning are evaluated through GSM8K (Cobbe et al., 2021) and GPQA (Rein et al., 2023). For code generation, we employ HumanEval (Chen et al., 2021) and MBPP (Austin et al., 2021b). Finally, planning capabilities are assessed using Countdown and Sudoku tasks (Gong et al., 2024). We follow the prompt in simple-evals for LLaDA and Dream, making the model reason step by step. Concretely, we set the generation length $L$ to 128 for general tasks, to 256 for GSM8K and GPQA, and to 512 for the code benchmarks. Unless otherwise noted, all baselines use a number of iterative steps equal to the specified generation length. All experiments employ greedy decoding to ensure deterministic and reproducible results.

5.2 Main Results and Analysis

The results of our experiments are summarized in Table 1. Across the general reasoning tasks, Prophet demonstrates its ability to match or even exceed the performance of the full baseline. For example, using LLaDA-8B, Prophet achieves 54.0% on MMLU and 83.5% on ARC-C, within 0.1 and 0.3 points of full-step decoding (54.1% and 83.2%). Interestingly, on HellaSwag, Prophet (70.9%) improves not only upon the full baseline (68.7%) but also upon the half baseline (70.5%), suggesting that early commit decoding can prevent the model from corrupting an already correct prediction in later, noisy refinement steps. Similarly, Dream-7B maintains competitive performance across benchmarks, with Prophet achieving 66.1% on MMLU compared to the full model’s 67.6%, a drop of 1.5 points while delivering a 2.47× speedup.

Prophet continues to prove its reliability in more complex reasoning tasks, including mathematics, science, and code generation. For the GSM8K dataset, Prophet with LLaDA-8B obtains an accuracy of 77.9%, outperforming the baseline’s 77.1%. This reliability also extends to code generation benchmarks. For instance, on HumanEval, Prophet exactly matches the full baseline’s score with LLaDA-8B (30.5%) and even slightly improves it with Dream-7B (55.5% vs. 54.9%). Notably, the acceleration on these intricate tasks (e.g., 1.20× on HumanEval) is more conservative than on general reasoning. This demonstrates Prophet’s adaptive nature: it dynamically allocates more denoising steps when a task demands further refinement, thereby preserving accuracy on complex problems. This reinforces Prophet’s role as a “safe” acceleration method that avoids the pitfalls of premature, static termination.

In summary, our empirical results strongly support the central hypothesis of this work: DLMs often determine the correct answer long before the final decoding step. Prophet successfully capitalizes on this phenomenon by dynamically monitoring the model’s predictive confidence. It terminates the iterative refinement process as soon as the answer has stabilized, thereby achieving significant computational savings with negligible, and in some cases even positive, impact on task performance. This stands in stark contrast to static truncation methods, which risk cutting off the decoding process prematurely and harming accuracy. Prophet thus provides a robust and model-agnostic solution to accelerate DLM inference, enhancing its practicality for real-world deployment.

Table 1: Benchmark results on LLaDA-8B-Instruct and Dream-7B-Instruct. Sudoku and Countdown are evaluated using 8-shot setting; all other benchmarks use zero-shot evaluation. Detailed configuration is listed in the Appendix.

| Benchmark | LLaDA 8B | LLaDA 8B (Ours) | Gain ( $\Delta$ ) | Dream-7B | Dream-7B (Ours) | Gain ( $\Delta$ ) |
| --- | --- | --- | --- | --- | --- | --- |
| General Tasks | | | | | | |
| MMLU | 54.1 | 54.0 (2.34×) | -0.1 | 67.6 | 66.1 (2.47×) | -1.5 |
| ARC-C | 83.2 | 83.5 (1.88×) | +0.3 | 88.1 | 87.9 (2.61×) | -0.2 |
| Hellaswag | 68.7 | 70.9 (2.14×) | +2.2 | 81.2 | 81.9 (2.55×) | +0.7 |
| TruthfulQA | 34.4 | 46.1 (2.31×) | +11.7 | 55.6 | 53.2 (1.83×) | -2.4 |
| WinoGrande | 73.8 | 70.5 (1.71×) | -3.3 | 62.5 | 62.0 (1.45×) | -0.5 |
| PIQA | 80.9 | 81.9 (1.98×) | +1.0 | 86.1 | 86.6 (2.29×) | +0.5 |
| Mathematics & Scientific | | | | | | |
| GSM8K | 77.1 | 77.9 (1.63×) | +0.8 | 75.3 | 75.2 (1.71×) | -0.1 |
| GPQA | 25.2 | 25.7 (1.82×) | +0.5 | 27.0 | 26.6 (1.66×) | -0.4 |
| Code | | | | | | |
| HumanEval | 30.5 | 30.5 (1.20×) | 0.0 | 54.9 | 55.5 (1.44×) | +0.6 |
| MBPP | 37.6 | 37.4 (1.35×) | -0.2 | 54.0 | 54.6 (1.33×) | +0.6 |
| Planning Tasks | | | | | | |
| Countdown | 15.3 | 15.3 (2.67×) | 0.0 | 14.6 | 14.6 (2.37×) | 0.0 |
| Sudoku | 35.0 | 38.0 (2.46×) | +3.0 | 89.0 | 89.0 (3.40×) | 0.0 |

5.3 Ablation Studies

Beyond the coarse step–budget ablation above, we further dissect why Prophet outperforms static truncation by examining (i) sensitivity to the generation length $L$ and available step budget, (ii) robustness to the granularity of semi-autoregressive block updates, and (iii) compatibility with different re-masking heuristics. Together, these studies consistently show that Prophet’s adaptive early-commit rule improves the compute–quality Pareto frontier, whereas static schedules either under-compute (hurting accuracy) or over-compute (wasting steps).

Accuracy vs. step budget under different $L$ .

Table 2(a) summarizes GSM8K accuracy as we vary the number of refinement steps under two generation lengths ( $L\!=\!256$ and $L\!=\!128$ ). Accuracy under a static step cap rises monotonically with more steps (e.g., $7.7\%\!→\!22.5\%\!→\!58.8\%\!→\!76.2\%$ for 16/32/64/128 at $L\!=\!256$ ), but at $L\!=\!256$ it still falls short of both full-budget decoding and Prophet. In contrast, Prophet stops adaptively at $≈ 160$ steps for $L\!=\!256$ (saving $≈ 38\%$ steps; $256/160\!≈\!1.63×$ ) and yields a higher score than the 256-step baseline (77.9% vs. 77.1%). When the target length is shorter ( $L\!=\!128$ ), Prophet again surpasses the 128-step baseline (72.7% vs. 71.3%) while using only $≈ 74$ steps (saving $≈ 42\%$ ; $128/74\!≈\!1.73×$ ). These results reaffirm that the gains are not a byproduct of simply using fewer steps: Prophet avoids late-stage over-refinement when the answer has already stabilized, while still allocating extra iterations when needed.
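As a quick check of the figures quoted above, the speedup and the fraction of steps saved both follow from the ratio of the average number of steps used to the step budget. The symbol $T_{\text{used}}$ is introduced here only for illustration and does not appear elsewhere in the paper:

$$

\text{speedup}=\frac{T_{\max}}{T_{\text{used}}},\qquad\text{steps saved}=1-\frac{T_{\text{used}}}{T_{\max}}

$$

For $L=256$ with $T_{\text{used}}≈ 160$ , this gives $256/160=1.60$ and $1-160/256=37.5\%$ ; the reported $1.63×$ corresponds to an unrounded average of about $157$ steps. For $L=128$ with $T_{\text{used}}≈ 74$ , it gives $128/74≈ 1.73$ and $1-74/128≈ 42.2\%$ .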

Granularity of semi-autoregressive refinement (block length).

Table 3 shows that static block schedules are brittle: accuracy peaks around moderate blocks and collapses for large blocks (e.g., 59.9 at 64 and 33.1 at 128). Prophet markedly attenuates this brittleness, delivering consistent gains across the entire range, and especially at large blocks where over-aggressive parallel updates inject more noise. For instance, at block lengths 64 and 128, Prophet improves accuracy by $+9.9$ and $+19.1$ points, respectively. This robustness is a direct consequence of Prophet’s time-varying risk-aversion: when coarse-grained updates raise uncertainty, the threshold schedule defers early commit; once predictions settle, Prophet exits promptly to avoid additional noisy revisions.

Re-masking strategy compatibility.

Table 2(b) evaluates three off-the-shelf re-masking heuristics (random, low-confidence, top- $k$ margin). Prophet consistently outperforms the static counterparts, with the largest gain under random re-masking (+2.8 points), aligning with our earlier observation that random schedules accentuate early answer convergence. The improvement persists under more informed heuristics (low-confidence: +1.4; top- $k$ margin: +0.7), indicating that Prophet’s stopping rule complements, rather than replaces, token-selection policies.

Table 2: GSM8K ablations. (a) Accuracy vs. step budget under two generation lengths $L$ . Prophet stops early (average steps in parentheses) yet matches/exceeds the full-budget baseline. (b) Accuracy under different re-masking strategies; Prophet complements token-selection policies.

(a) Accuracy vs. step budget and generation length

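| Generation length $L$ | 16 steps | 32 steps | 64 steps | 128 steps | Prophet (avg. steps; speedup) | Full |
| --- | --- | --- | --- | --- | --- | --- |
| 256 | 7.7 | 22.5 | 58.8 | 76.2 | 77.9 ( $≈$ 160; 1.63 $×$ ) | 77.1 |
| 128 | 21.8 | 50.3 | 67.9 | 71.3 | 72.7 ( $≈$ 74; 1.73 $×$ ) | 71.3 |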

(b) Re-masking strategy

| Re-masking strategy | Baseline | Prophet |
| --- | --- | --- |
| Random | 63.8 | 66.6 |
| Low-confidence | 71.3 | 72.7 |
| Top- $k$ margin | 72.4 | 73.1 |

Table 3: Sensitivity to block length on GSM8K (semi-autoregressive updates). Prophet is less brittle to coarse-grained updates and yields larger gains as block length increases.

| Block length | | | | 64 | 128 |
| --- | --- | --- | --- | --- | --- |
| Baseline | 67.1 | 68.7 | 71.3 | 59.9 | 33.1 |
| Ours (Prophet) | 72.8 | 73.3 | 72.7 | 69.8 | 52.2 |
| $\Delta$ (Abs.) | +5.7 | +4.6 | +1.4 | +9.9 | +19.1 |

6 Conclusion

In this work, we identified and leveraged a fundamental yet overlooked property of diffusion language models: early answer convergence. Our analysis revealed that up to 99% of instances can be correctly decoded using only half the refinement steps, challenging the necessity of conventional full-length decoding. Building on this observation, we introduced Prophet, a training-free early commit decoding paradigm that dynamically monitors confidence gaps to determine optimal termination points. Experiments on LLaDA-8B and Dream-7B demonstrate that Prophet achieves up to 3.4× reduction in decoding steps while maintaining generation quality. By recasting DLM decoding as an optimal stopping problem rather than a fixed-budget iteration, our work opens new avenues for efficient DLM inference and suggests that early convergence is a core characteristic of how these models internally resolve uncertainty, across diverse tasks and settings.

References

- Agrawal et al. (2025) Sudhanshu Agrawal, Risheek Garrepalli, Raghavv Goel, Mingu Lee, Christopher Lott, and Fatih Porikli. Spiffy: Multiplying diffusion llm acceleration via lossless speculative decoding, 2025. URL https://arxiv.org/abs/2509.18085.

- Arriola et al. (2025) Marianne Arriola, Aaron Gokaslan, Justin T. Chiu, Zhihan Yang, Zhixuan Qi, Jiaqi Han, Subham Sekhar Sahoo, and Volodymyr Kuleshov. Block diffusion: Interpolating between autoregressive and diffusion language models, 2025. URL https://arxiv.org/abs/2503.09573.

- Austin et al. (2021a) Jacob Austin, Daniel D Johnson, Jonathan Ho, Daniel Tarlow, and Rianne Van Den Berg. Structured denoising diffusion models in discrete state-spaces. Advances in neural information processing systems, 34:17981–17993, 2021a.

- Austin et al. (2021b) Jacob Austin, Augustus Odena, Maxwell Nye, Maarten Bosma, Henryk Michalewski, David Dohan, Ellen Jiang, Carrie Cai, Michael Terry, Quoc Le, et al. Program synthesis with large language models. arXiv preprint arXiv:2108.07732, 2021b.

- Bisk et al. (2020) Yonatan Bisk, Rowan Zellers, Jianfeng Gao, Yejin Choi, et al. Piqa: Reasoning about physical commonsense in natural language. In Proceedings of the AAAI conference on artificial intelligence, 2020.

- Campbell et al. (2022) Andrew Campbell, Joe Benton, Valentin De Bortoli, Thomas Rainforth, George Deligiannidis, and Arnaud Doucet. A continuous time framework for discrete denoising models. Advances in Neural Information Processing Systems, 35:28266–28279, 2022.

- Chen et al. (2021) Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde De Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, et al. Evaluating large language models trained on code. arXiv preprint arXiv:2107.03374, 2021.

- Chen et al. (2025) Xinhua Chen, Sitao Huang, Cong Guo, Chiyue Wei, Yintao He, Jianyi Zhang, Hai Li, Yiran Chen, et al. Dpad: Efficient diffusion language models with suffix dropout. arXiv preprint arXiv:2508.14148, 2025.

- Clark et al. (2018) Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? try arc, the ai2 reasoning challenge. arXiv:1803.05457v1, 2018.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- DeepMind (2025) Google DeepMind. Gemini-diffusion, 2025. URL https://blog.google/technology/google-deepmind/gemini-diffusion/.

- Gillespie (2001) Daniel T Gillespie. Approximate accelerated stochastic simulation of chemically reacting systems. The Journal of chemical physics, 115(4):1716–1733, 2001.

- Gong et al. (2024) Shansan Gong, Shivam Agarwal, Yizhe Zhang, Jiacheng Ye, Lin Zheng, Mukai Li, Chenxin An, Peilin Zhao, Wei Bi, Jiawei Han, et al. Scaling diffusion language models via adaptation from autoregressive models. arXiv preprint arXiv:2410.17891, 2024.

- Hendrycks et al. (2021) Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the math dataset. arXiv preprint arXiv:2103.03874, 2021.

- Ho et al. (2020) Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. Advances in neural information processing systems, 33:6840–6851, 2020.

- Hoogeboom et al. (2021) Emiel Hoogeboom, Didrik Nielsen, Priyank Jaini, Patrick Forré, and Max Welling. Argmax flows and multinomial diffusion: Learning categorical distributions. Advances in Neural Information Processing Systems, 34:12454–12465, 2021.

- Hu et al. (2025) Zhanqiu Hu, Jian Meng, Yash Akhauri, Mohamed S Abdelfattah, Jae-sun Seo, Zhiru Zhang, and Udit Gupta. Accelerating diffusion language model inference via efficient kv caching and guided diffusion. arXiv preprint arXiv:2505.21467, 2025.

- Huang & Tang (2025) Chihan Huang and Hao Tang. Ctrldiff: Boosting large diffusion language models with dynamic block prediction and controllable generation. arXiv preprint arXiv:2505.14455, 2025.

- Israel et al. (2025a) Daniel Israel, Guy Van den Broeck, and Aditya Grover. Accelerating diffusion llms via adaptive parallel decoding. arXiv preprint arXiv:2506.00413, 2025a.

- Israel et al. (2025b) Daniel Israel, Guy Van den Broeck, and Aditya Grover. Accelerating diffusion llms via adaptive parallel decoding, 2025b. URL https://arxiv.org/abs/2506.00413.

- Jing et al. (2022) Bowen Jing, Gabriele Corso, Jeffrey Chang, Regina Barzilay, and Tommi Jaakkola. Torsional diffusion for molecular conformer generation. Advances in neural information processing systems, 35:24240–24253, 2022.

- Labs et al. (2025) Inception Labs, Samar Khanna, Siddhant Kharbanda, Shufan Li, Harshit Varma, Eric Wang, Sawyer Birnbaum, Ziyang Luo, Yanis Miraoui, Akash Palrecha, Stefano Ermon, Aditya Grover, and Volodymyr Kuleshov. Mercury: Ultra-fast language models based on diffusion, 2025. URL https://arxiv.org/abs/2506.17298.

- Lin et al. (2021) Stephanie Lin, Jacob Hilton, and Owain Evans. Truthfulqa: Measuring how models mimic human falsehoods. arXiv preprint arXiv:2109.07958, 2021.

- Liu et al. (2025a) Zhiyuan Liu, Yicun Yang, Yaojie Zhang, Junjie Chen, Chang Zou, Qingyuan Wei, Shaobo Wang, and Linfeng Zhang. dllm-cache: Accelerating diffusion large language models with adaptive caching. arXiv preprint arXiv:2506.06295, 2025a.

- Liu et al. (2025b) Zhiyuan Liu, Yicun Yang, Yaojie Zhang, Junjie Chen, Chang Zou, Qingyuan Wei, Shaobo Wang, and Linfeng Zhang. dllm-cache: Accelerating diffusion large language models with adaptive caching, 2025b. URL https://arxiv.org/abs/2506.06295.

- Lou et al. (2023) Aaron Lou, Chenlin Meng, and Stefano Ermon. Discrete diffusion language modeling by estimating the ratios of the data distribution. arXiv preprint arXiv:2310.16834, 2023.

- Ma et al. (2025a) Xinyin Ma, Runpeng Yu, Gongfan Fang, and Xinchao Wang. dkv-cache: The cache for diffusion language models. arXiv preprint arXiv:2505.15781, 2025a.

- Ma et al. (2025b) Xinyin Ma, Runpeng Yu, Gongfan Fang, and Xinchao Wang. dkv-cache: The cache for diffusion language models, 2025b. URL https://arxiv.org/abs/2505.15781.

- Nichol & Dhariwal (2021) Alexander Quinn Nichol and Prafulla Dhariwal. Improved denoising diffusion probabilistic models. In International conference on machine learning, pp. 8162–8171. PMLR, 2021.

- Nie et al. (2025) Shen Nie, Fengqi Zhu, Zebin You, Xiaolu Zhang, Jingyang Ou, Jun Hu, Jun Zhou, Yankai Lin, Ji-Rong Wen, and Chongxuan Li. Large language diffusion models. arXiv preprint arXiv:2502.09992, 2025.

- Ou et al. (2024) Jingyang Ou, Shen Nie, Kaiwen Xue, Fengqi Zhu, Jiacheng Sun, Zhenguo Li, and Chongxuan Li. Your absorbing discrete diffusion secretly models the conditional distributions of clean data. arXiv preprint arXiv:2406.03736, 2024.

- Ramesh et al. (2021) Aditya Ramesh, Mikhail Pavlov, Gabriel Goh, Scott Gray, Chelsea Voss, Alec Radford, Mark Chen, and Ilya Sutskever. Zero-shot text-to-image generation. In International conference on machine learning, pp. 8821–8831. PMLR, 2021.

- Rein et al. (2023) David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R Bowman. GPQA: A graduate-level Google-proof Q&A benchmark. arXiv preprint arXiv:2311.12022, 2023.

- Saharia et al. (2022) Chitwan Saharia, Jonathan Ho, William Chan, Tim Salimans, David J Fleet, and Mohammad Norouzi. Image super-resolution via iterative refinement. IEEE transactions on pattern analysis and machine intelligence, 45(4):4713–4726, 2022.

- Sahoo et al. (2024) Subham Sahoo, Marianne Arriola, Yair Schiff, Aaron Gokaslan, Edgar Marroquin, Justin Chiu, Alexander Rush, and Volodymyr Kuleshov. Simple and effective masked diffusion language models. Advances in Neural Information Processing Systems, 37:130136–130184, 2024.

- Sakaguchi et al. (2021) Keisuke Sakaguchi, Ronan Le Bras, Chandra Bhagavatula, and Yejin Choi. WinoGrande: An adversarial Winograd schema challenge at scale. Communications of the ACM, 64(9):99–106, 2021.

- Shi et al. (2024) Jiaxin Shi, Kehang Han, Zhe Wang, Arnaud Doucet, and Michalis K Titsias. Simplified and generalized masked diffusion for discrete data. arXiv preprint arXiv:2406.04329, 2024.

- Sohl-Dickstein et al. (2015) Jascha Sohl-Dickstein, Eric Weiss, Niru Maheswaranathan, and Surya Ganguli. Deep unsupervised learning using nonequilibrium thermodynamics. In International conference on machine learning, pp. 2256–2265. PMLR, 2015.

- Song et al. (2025a) Yuerong Song, Xiaoran Liu, Ruixiao Li, Zhigeng Liu, Zengfeng Huang, Qipeng Guo, Ziwei He, and Xipeng Qiu. Sparse-dllm: Accelerating diffusion llms with dynamic cache eviction, 2025a. URL https://arxiv.org/abs/2508.02558.

- Song et al. (2025b) Yuxuan Song, Zheng Zhang, Cheng Luo, Pengyang Gao, Fan Xia, Hao Luo, Zheng Li, Yuehang Yang, Hongli Yu, Xingwei Qu, Yuwei Fu, Jing Su, Ge Zhang, Wenhao Huang, Mingxuan Wang, Lin Yan, Xiaoying Jia, Jingjing Liu, Wei-Ying Ma, Ya-Qin Zhang, Yonghui Wu, and Hao Zhou. Seed diffusion: A large-scale diffusion language model with high-speed inference, 2025b. URL https://arxiv.org/abs/2508.02193.

- Wang et al. (2025a) Wen Wang, Bozhen Fang, Chenchen Jing, Yongliang Shen, Yangyi Shen, Qiuyu Wang, Hao Ouyang, Hao Chen, and Chunhua Shen. Time is a feature: Exploiting temporal dynamics in diffusion language models, 2025a. URL https://arxiv.org/abs/2508.09138.

- Wang et al. (2025b) Xu Wang, Chenkai Xu, Yijie Jin, Jiachun Jin, Hao Zhang, and Zhijie Deng. Diffusion LLMs can do faster-than-AR inference via discrete diffusion forcing, 2025b. URL https://arxiv.org/abs/2508.09192.

- Wei et al. (2025a) Qingyan Wei, Yaojie Zhang, Zhiyuan Liu, Dongrui Liu, and Linfeng Zhang. Accelerating diffusion large language models with slowfast sampling: The three golden principles. arXiv preprint arXiv:2506.10848, 2025a.

- Wei et al. (2025b) Qingyan Wei, Yaojie Zhang, Zhiyuan Liu, Dongrui Liu, and Linfeng Zhang. Accelerating diffusion large language models with slowfast sampling: The three golden principles, 2025b. URL https://arxiv.org/abs/2506.10848.

- Wu et al. (2025a) Chengyue Wu, Hao Zhang, Shuchen Xue, Zhijian Liu, Shizhe Diao, Ligeng Zhu, Ping Luo, Song Han, and Enze Xie. Fast-dllm: Training-free acceleration of diffusion llm by enabling kv cache and parallel decoding. arXiv preprint arXiv:2505.22618, 2025a.

- Wu et al. (2025b) Chengyue Wu, Hao Zhang, Shuchen Xue, Zhijian Liu, Shizhe Diao, Ligeng Zhu, Ping Luo, Song Han, and Enze Xie. Fast-dllm: Training-free acceleration of diffusion llm by enabling kv cache and parallel decoding, 2025b. URL https://arxiv.org/abs/2505.22618.

- Ye et al. (2025) Jiacheng Ye, Zhihui Xie, Lin Zheng, Jiahui Gao, Zirui Wu, Xin Jiang, Zhenguo Li, and Lingpeng Kong. Dream 7B: Diffusion large language models. arXiv preprint arXiv:2508.15487, 2025.

- Zellers et al. (2019) Rowan Zellers, Ari Holtzman, Yonatan Bisk, Ali Farhadi, and Yejin Choi. HellaSwag: Can a machine really finish your sentence? arXiv preprint arXiv:1905.07830, 2019.

- Zheng et al. (2024) Kaiwen Zheng, Yongxin Chen, Hanzi Mao, Ming-Yu Liu, Jun Zhu, and Qinsheng Zhang. Masked diffusion models are secretly time-agnostic masked models and exploit inaccurate categorical sampling. arXiv preprint arXiv:2409.02908, 2024.

Appendix

Appendix A Additional results

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: First Correct Answer Emergence (% of Total Decoding Steps)

### Overview

The chart visualizes the distribution of samples based on the percentage of total decoding steps required to produce the first correct answer. Two vertical dashed lines highlight key thresholds (25% and 50% decoding steps), with annotations indicating the percentage of samples achieving correctness by those points.

### Components/Axes

- **X-axis**: "First Correct Answer Emergence (% of Total Decoding Steps)"

- Scale: 0% to 100% in 20% increments.

- Key markers:

- Red dashed line at **25%** with annotation:

*"15.1% of samples get correct answer by 25% decoding steps"*

- Orange dashed line at **50%** with annotation:

*"21.4% of samples get correct answer by 50% decoding steps"*

- **Y-axis**: "Number of Samples"

- Scale: 0 to 200 in 50-sample increments.

- **Bars**: Blue bars represent the frequency of samples at each percentage interval.

### Detailed Analysis

- **Distribution**:

- The tallest bar (~200 samples) sits near **0–20% decoding steps**, although the annotations show that only 15.1% of samples are correct by the 25% mark.

- A sharp decline follows, with fewer samples at higher decoding steps (e.g., ~50 samples at 40–60%, ~25 samples at 80–100%).

- **Thresholds**:

- At **25% decoding steps**, 15.1% of samples achieve correctness (red line).

- At **50% decoding steps**, 21.4% of samples achieve correctness (orange line).

- Only 6.3 percentage points are gained between the two thresholds, and 78.6% of samples have still not produced a correct answer at the 50% mark.

### Key Observations

1. **Limited Early Convergence**: Only 15.1% of samples produce the correct answer within the first 25% of decoding steps.

2. **Slow Mid-Stage Gains**: Only an additional 6.3 percentage points of samples (21.4% – 15.1%) resolve between 25% and 50% of decoding steps.

3. **Late Emergence**: The remaining 78.6% of samples have not produced a correct answer by the 50% mark, so they either need the later decoding steps or never reach the correct answer.

### Interpretation

The annotations show that, in this setting (MMLU without the suffix prompt), most samples do not reach the correct answer early: only 15.1% have done so after 25% of the steps and 21.4% by the halfway point. This suggests:

- **Optimization Opportunity**: Improving early decoding accuracy could significantly reduce the need for longer decoding steps.

- **Model Behavior**: The steep decline implies the model either converges quickly or struggles with ambiguous cases requiring full decoding.

- **Threshold Utility**: The 25% and 50% markers provide actionable benchmarks for evaluating model performance or setting computational limits.

The chart quantifies the relationship between decoding progress and correctness.

</details>

(a) MMLU w/o suffix prompt (low confidence)

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: First Correct Answer Emergence vs. Number of Samples

### Overview

The chart illustrates the relationship between the percentage of decoding steps required to reach the first correct answer and the number of samples achieving that outcome. Two data series (red and orange lines) show cumulative sample counts as decoding steps increase from 0% to 100%.

### Components/Axes

- **X-axis**: "First Correct Answer Emergence (% of Total Decoding Steps)" (0–100% range, linear scale).

- **Y-axis**: "Number of Samples" (0–1500, linear scale).

- **Legend**: Located on the right, with:

- **Red line**: "99.7% of samples get correct answer by 25% decoding steps".

- **Orange line**: "99.9% of samples get correct answer by 50% decoding steps".

- **Annotations**:

- Red dashed vertical line at 25% decoding steps.

- Orange dashed vertical line at 50% decoding steps.

- Arrows linking annotations to data points.

### Detailed Analysis

1. **Red Line (99.7% at 25%)**:

- Starts at **25% decoding steps** with **~500 samples**.

- Rises to **~1000 samples** at **50% decoding steps**.

- Slope: Steep upward trend between 25% and 50%.

2. **Orange Line (99.9% at 50%)**:

- Begins at **50% decoding steps** with **~1000 samples**.

- Increases to **~1500 samples** at **100% decoding steps**.

- Slope: Gradual upward trend after 50%.

3. **Key Data Points**:

- At **0% decoding steps**: ~1500 samples (y-intercept, no decoding steps required).

- At **25% decoding steps**: Red line intersects at ~500 samples.

- At **50% decoding steps**: Orange line intersects at ~1000 samples.

- At **100% decoding steps**: Orange line reaches ~1500 samples.

### Key Observations

- **Rapid Initial Coverage**: 99.7% of samples achieve correctness by 25% decoding steps, suggesting high efficiency in early decoding.

- **Diminishing Returns**: The orange line shows slower growth after 50%, indicating most samples are resolved by mid-decoding steps.

- **Saturation**: By 100% decoding steps, all samples (1500) are resolved, but the orange line plateaus near this value.

### Interpretation

The data show that **early decoding steps (≤50%) resolve nearly all samples**: 99.7% are correct by 25% of the decoding steps and 99.9% by 50%. Only 0.2 percentage points are gained between the 25% and 50% marks, and at most 0.1 percentage points can be gained by continuing to 100%, so the later steps have minimal impact on correctness. The annotations indicate that, with the suffix prompt, even a small fraction of the decoding steps yields near-complete correctness.

</details>

(b) MMLU w/ suffix prompt (low confidence)
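The threshold annotations in these panels (for example, 15.1% and 21.4% without the suffix prompt versus 99.7% and 99.9% with it) are cumulative shares of samples whose first correct answer appears by a given fraction of the decoding steps. The sketch below shows one way such a statistic could be computed. It is an illustration, not the authors' evaluation code: the helper names `first_correct_pct` and `share_correct_by`, the per-step boolean trace format, and the choice of all samples as the denominator are assumptions, and the toy data are made up.

```python
from typing import Optional, Sequence


def first_correct_pct(correct_per_step: Sequence[bool]) -> Optional[float]:
    """First step with a correct answer, as a percentage of total steps.

    Returns None when the answer is never correct (hypothetical helper).
    """
    total = len(correct_per_step)
    for step, ok in enumerate(correct_per_step, start=1):
        if ok:
            return 100.0 * step / total
    return None


def share_correct_by(traces: Sequence[Sequence[bool]], threshold_pct: float) -> float:
    """Percentage of ALL samples whose first correct answer appears by threshold_pct.

    Assumption: the denominator is every sample, including never-correct ones.
    """
    hits = 0
    for trace in traces:
        pct = first_correct_pct(trace)
        if pct is not None and pct <= threshold_pct:
            hits += 1
    return 100.0 * hits / len(traces)


# Toy data: four samples, eight decoding steps each (made-up values).
toy_traces = [
    [False, True, True, True, True, True, True, True],       # first correct at 25%
    [False, False, False, True, True, True, True, True],     # first correct at 50%
    [False, False, False, False, False, False, True, True],  # first correct at 87.5%
    [False] * 8,                                              # never correct
]

by_25 = share_correct_by(toy_traces, 25.0)
by_50 = share_correct_by(toy_traces, 50.0)
print(f"{by_25:.1f}% by 25% of steps, {by_50:.1f}% by 50% of steps")
```

A histogram of the non-`None` values returned by `first_correct_pct` would correspond to the bars in each panel, while `share_correct_by` evaluated at 25 and 50 would correspond to the two dashed-line annotations.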

<details>

<summary>x9.png Details</summary>

### Visual Description

## Bar Chart: First Correct Answer Emergence by Decoding Steps

### Overview

The chart visualizes the distribution of samples based on the percentage of total decoding steps required to produce the first correct answer. Two vertical dashed lines highlight key thresholds (25% and 50% decoding steps), with annotations indicating cumulative sample coverage at these points.

### Components/Axes

- **Y-Axis**: "Number of Samples" (linear scale, 0–800+).

- **X-Axis**: "First Correct Answer Emergence (% of Total Decoding Steps)" (0–100%).

- **Annotations**:

- **Red Dashed Line (~25%)**: "95.3% of samples get correct answer by 25% decoding steps."

- **Yellow Dashed Line (~50%)**: "99.2% of samples get correct answer by 50% decoding steps."

### Detailed Analysis

- **Bar Distribution**:

- The majority of samples (800+) cluster near 0–10% decoding steps, indicating most correct answers emerge early.

- The count decreases sharply as decoding steps increase, with fewer samples requiring higher percentages (e.g., ~200 samples at 10–20%, ~100 at 20–30%).

- Bars become sparse beyond 30%, with minimal samples beyond 50%.

- **Thresholds**:

- At **25% decoding steps**, 95.3% of samples achieve correctness (red annotation).

- At **50% decoding steps**, coverage increases to 99.2% (yellow annotation), suggesting diminishing returns after 25%.

### Key Observations