# Robustness Assessment and Enhancement of Text Watermarking for Google’s SynthID

**Authors**: Xia Han, Qi Li, Jianbing Niand Mohammad Zulkernine

> ∗ Xia Han and Qi Li contributed equally to this work.

## Abstract

Recent advances in LLM watermarking methods such as SynthID-Text by Google DeepMind offer promising solutions for tracing the provenance of AI-generated text. However, our robustness assessment reveals that SynthID-Text is vulnerable to meaning-preserving attacks, such as paraphrasing, copy-paste modifications, and back-translation, which can significantly degrade watermark detectability. To address these limitations, we propose SynGuard, a hybrid framework that combines the semantic alignment strength of Semantic Invariant Robust (SIR) with the probabilistic watermarking mechanism of SynthID-Text. Our approach jointly embeds watermarks at both lexical and semantic levels, enabling robust provenance tracking while preserving the original meaning. Experimental results across multiple attack scenarios show that SynGuard improves watermark recovery by an average of 11.1% in F1 score compared to SynthID-Text. These findings demonstrate the effectiveness of semantic-aware watermarking in resisting real-world tampering. All code, datasets, and evaluation scripts are publicly available at: https://github.com/githshine/SynGuard.

## I Introduction

Text watermarking has emerged as a promising solution for tracing the origin of AI-generated content, offering a lightweight, model-agnostic method for content provenance verification [1, 2]. It identifies generated text from surface form alone, without access to the original prompt or underlying model. This makes watermarking especially appealing in open-world scenarios, where black-box models and unknown sources proliferate.

Among existing approaches, Google DeepMind’s SynthID-Text is state-of-the-art [3], notable as the only watermarking method integrated into a real-world product (Google’s Gemini models), a rare industrial deployment in this domain. It embeds imperceptible statistical signals during generation via tournament sampling, departing from earlier post-hoc or green-list based methods [4, 1]. This approach introduces controlled stochasticity in token selection and shows improved detectability in benign settings. However, its resilience to malicious tampering remains underexplored. Previous studies note the fragility of lexical watermarks under meaning-preserving, surface-altering transformations [5, 6]; SynthID-Text, despite advancements, shares this limitation, motivating deeper analysis of its practical robustness.

In this work, we systematically assess SynthID-Text under real-world meaning-preserving transformations: paraphrasing, synonym substitution, copy-paste rearrangement, and back-translation, attacks preserving semantic content while modifying lexical or syntactic surface form. Results reveal a critical vulnerability: detection accuracy drops sharply even under light paraphrasing or translation. These findings align with prior concerns, highlighting a gap in current capabilities.

To address this, we propose SynGuard, a hybrid scheme integrating Semantic Invariant Robust (SIR) alignment [6] with SynthID’s token-level probabilistic masking. Our method embeds provenance signals at both lexical and semantic levels: the semantic component guides generation toward SIR-favored contexts (enhancing robustness to synonym and paraphrase attacks), while SynthID’s token logic retains seed-derived randomness (resisting keyless removal).

Unlike prior lexical-only approaches [1, 3], SynGuard adds a semantic signal to detect tampering that preserves meaning but alters surface structure. This hybrid design better balances false positive rate and tampering robustness. We formalize this via theoretical analysis (Section V-C), showing semantically consistent transformations rarely suppress SIR-guided scores unless meaning is significantly distorted, one of the first formal analyses of watermark resilience under semantic equivalence.

Empirical evaluation across four attacks shows SynGuard improves average F1 by 11.1% over SynthID-Text, performing especially well under paraphrasing and round-trip translation (common in content reposting and cross-lingual reuse). We uncover a new vulnerability axis: back-translation-induced watermark degradation correlates with translation quality, as poorer machine translation distorts signals more even with preserved semantics. This insight introduces new considerations for evaluating robustness across linguistic contexts and highlights the need for multilingual benchmarks.

Our contributions are summarized as follows:

1. Conduct the first comprehensive robustness evaluation of SynthID-Text under four meaning-preserving transformations: paraphrasing, synonym substitution, copy-paste tampering, back-translation.

1. Propose SynGuard, a hybrid algorithm combining semantic-aware token preferences with token-level probabilistic sampling.

1. Demonstrate SynGuard consistently improves detection robustness, particularly for surface-altered but meaning-preserved content.

1. Reveal back-translation attack vulnerability correlates with machine translation quality, an overlooked axis.

## II Related Work

Text watermarking distinguishes AI vs human text by embedding specific information into text sequences without quality loss. By watermark insertion stage in text generation, methods fall into two types [4]: watermarking for existing text and during generation. The first type adds watermarks via post-processing of existing text, typically via reformatting sentences with Unicode, altering lexicon or syntax. Though easy to implement, they are easy to remove via reformatting/normalization.

Watermarking during generation is achieved by modifying logits in token generation. This approach is more stable, imperceptible, harder for attackers to detect/remove. A key method is the KGW algorithm [1]: it splits vocabulary into green/red lists via pseudorandom seed. Adding positive bias to green list tokens makes them more likely selected than red ones. This skew enables high-confidence post hoc detection. KGW balances robustness and imperceptibility, underpinning recent frameworks [7, 8, 9].

Google DeepMind’s SynthID-Text [3] advances generation-based watermarking by using pseudorandom functions (PRFs) and tournament sampling to guide token generation in a more randomized and less perceptible manner. During the sampling process, each token candidate is assigned $m$ independent $g$ -values $(g_{1},...,g_{m})$ , and the token with the highest total $g$ -value (e.g., the sum of all $g_{i}$ ) among all candidates is selected. These $g$ -values can later be used for watermark detection. This design improves robustness against removal attacks such as truncation and basic paraphrasing.

Despite these strengths, most generation-time watermarking algorithms, including SynthID-Text, do not incorporate semantic information when adjusting logits. As a result, they remain vulnerable to semantic-preserving adversarial attacks. Recent studies have begun exploring semantic-aware watermarking strategies [6, 10, 11]. A Semantic Invariant Robust watermarking algorithm is introduced [6], which maps extracted semantic features from preceding context into the logit space to guide next-token generation. In this approach, semantic similarity becomes a key indicator for detecting watermarks. While promising in terms of robustness, this method relies on additional language models, which increases computational complexity and resource consumption. Furthermore, enforcing semantic consistency reduces output diversity and naturalness.

## III Preliminaries

### III-A Large Language Model

A large language model (LLM) $M$ operates over a defined set of tokens, known as the vocabulary $V$ . Given a sequence of tokens $t=[t_{0},t_{1},\ldots,t_{T-1}]$ , also referred to as the prompt, the model computes the probability distribution over the next token $t_{T}$ as $P_{M}(t_{T}\mid t_{:T-1})$ . The model $M$ then samples one token from the vocabulary $V$ according to this distribution and other sampling parameters (e.g., temperature). This process is repeated iteratively until the maximum token length is reached or an end-of-sequence (EOS) token is generated.

This next-token prediction is typically implemented using a neural network architecture called the Transformer [12]. The process involves two main steps:

1. The Transformer computes a vector of logits $z_{T}=M_{t_{:T-1}}$ over all tokens in $V$ , based on the current context $t_{:T-1}$ .

1. The softmax function is applied to these logits to produce a normalized probability distribution: $P_{M}(t_{T}\mid t_{:T-1})$ .

### III-B SynthID-Text in LLM Text Watermarking

Text watermarking for LLMs operates mainly at two stages: embedding-level (modifying internal embedding vectors, which is complex and less generalizable) and generation-level (altering token generation via logits adjustment or sampling strategies). Generation-level methods include logits-based approaches (e.g., KGW algorithm [1], biasing logits toward “green list” tokens) and sampling-based approaches (e.g., Christ algorithm [13], using pseudorandom functions to guide sampling without logit modification).

SynthID-Text is a sampling-based algorithm featuring a novel tournament sampling mechanism for token selection. Candidate tokens are sampled from the original LLM-generated probability distribution $p_{LM}$ , so higher-probability tokens may appear multiple times in the candidate set. Each candidate token is evaluated using $m$ independent pseudorandom binary watermark functions $g_{1},g_{2},...,g_{m}$ . These functions assign a value of 0 or 1 to a token $x\in V$ based on both the token and a random seed $r\in\mathbb{R}$ : $g_{l}(x,r)\in\{0,1\}.$ The tournament sampling procedure selects the token with statistically high $g$ -values across the $m$ functions, while respecting the base LLM distribution. To detect if a text $t=[t_{1},...,t_{T}]$ is watermarked, the average $g$ -value across all tokens and functions is computed:

$$

\text{Score}(t)=\frac{1}{mT}\sum_{i=1}^{T}\sum_{l=1}^{m}g_{l}(t_{i},r_{i}). \tag{1}

$$

### III-C Text Watermarking Challenges

Compared to watermarking techniques in other media such as images or audio [14, 15, 16, 17], embedding watermarks in text introduces a distinct set of challenges:

Token Budget Constraints: A standard $256\times 256$ image offers over 65K potential pixel positions for embedding watermarks [18]. In contrast, the maximum token length for LLMs like GPT-4 is around 8.2K tokens (with limited access to 32K https://openai.com/index/gpt-4-research/), which is significantly smaller. This limited capacity makes it harder to embed watermarks without detection by human readers and increases vulnerability to adversarial edits. As a result, watermarking algorithms for text require more careful design to ensure both imperceptibility and robustness.

Perturbation Sensitivity: Text data is highly sensitive to editing [19]. While small pixel changes in an image are often imperceptible to the human eye, even minor alterations in a text, such as character replacements or word substitutions, can be easily noticed by readers or detected by spelling and grammar tools. Moreover, replacing entire words can unintentionally alter the meaning, introduce ambiguity, or degrade sentence fluency.

Vulnerability: Watermarks in text are particularly susceptible to removal through common natural language transformations. An attacker can easily re-edit the content by substituting synonyms, or paraphrasing with new sentence structures [20].

## IV Evaluating the Robustness of SynthID-Text

This chapter presents the experimental settings, evaluation metrics, and results from robustness analysis of the SynthID-Text watermarking algorithm. Section VI-A outlines the experimental setup, including the backbone model, dataset, and metrics used for evaluation. Sections IV-B through IV-E report SynthID-Text’s performance under four types of text editing attacks: synonym substitution, copy-and-paste, paraphrasing, and re-translation. Finally, Section IV-F summarizes and compares results across all attack types to provide a comprehensive evaluation.

### IV-A Experimental Setup

Backbone Model and Dataset. All experiments were conducted using Sheared-LLaMA-1.3B [21], a model further pre-trained from meta-llama/Llama-2-7b-hf https://huggingface.co/meta-llama/Llama-2-7b-hf. The model used is publicly available via HuggingFace https://huggingface.co/princeton-nlp/Sheared-LLaMA-1.3B. For the dataset, we adopt the Colossal Clean Crawled Corpus (C4) [22], which includes diverse, high-quality web text. Each C4 sample is split into two segments: the first segment serves as the prompt for generation, while the second (human-written) segment is used as reference text. These unaltered human texts are treated as control data for evaluating the watermark detector’s false positive rate.

Evaluation Metrics. The robustness of SynthID-Text is evaluated using the following metrics:

- True Positive Rate (TPR): The proportion of watermarked texts correctly identified.

- False Positive Rate (FPR): The proportion of unwatermarked texts incorrectly identified as watermarked.

- F1 Score: The harmonic mean of precision and recall, computed at the best threshold.

- ROC-AUC: The area under the Receiver Operating Characteristic (ROC) curve, measuring overall classification performance across all thresholds.

Each experiment was conducted using 200 watermarked and 200 unwatermarked samples, each with a fixed length of $T=200$ tokens. All experiments were implemented using the MarkLLM toolkit [23].

### IV-B Synonym Substitution Attack

Given an original text sequence, the synonym substitution attack aims to replace words with their synonyms until a specified replacement ratio $\epsilon$ is reached, or no further substitutions are possible. This approach maintains semantic fidelity while subtly altering the lexical surface of the text. A well-chosen $\epsilon$ ensures that the semantic meaning remains largely intact, which aligns with the attack’s objective—to disrupt watermark detection without affecting readability or content.

In this work, synonym replacement is guided by a context-aware language model to ensure substitutions remain semantically appropriate. Specifically, we implemented a method that uses WordNet [24], a widely used lexical database of English, to retrieve synonym sets for eligible words. For each target word, a synonym is randomly selected using the NumPy library’s random function [25]. The substitution is further refined using BERT-Large [26], which predicts contextually suitable replacements. The process is repeated iteratively until the desired substitution ratio $\epsilon$ is reached or no more valid substitutions remain. This ensures the altered text remains semantically coherent while maximally disrupting watermark patterns.

Details of the BERT Span Attack. To perform context-aware synonym substitution, BERT-Large https://huggingface.co/google-bert/bert-large-uncased is first used to tokenize the watermarked text. Then, eligible words are iteratively replaced with contextually appropriate synonyms until either the maximum replacement ratio $\epsilon$ is reached or no further substitutions are possible. The substitution process proceeds as follows:

- Randomly select a word that has at least one synonym and replace it with a [MASK] token:

⬇

"I love programming."

"I [MASK] programming."

Listing 1: Word Masking

- Feed the masked sentence into the BERT-Large model, which produces a logits vector over the vocabulary using a forward pass.

- Rank all candidate words based on their logits and select the word with the highest probability to replace the masked token.

BERT-Large is chosen for its bidirectional architecture, allowing it to consider both preceding and succeeding context when predicting the masked word. This contextual understanding ensures that substituted words maintain semantic consistency with the original sentence.

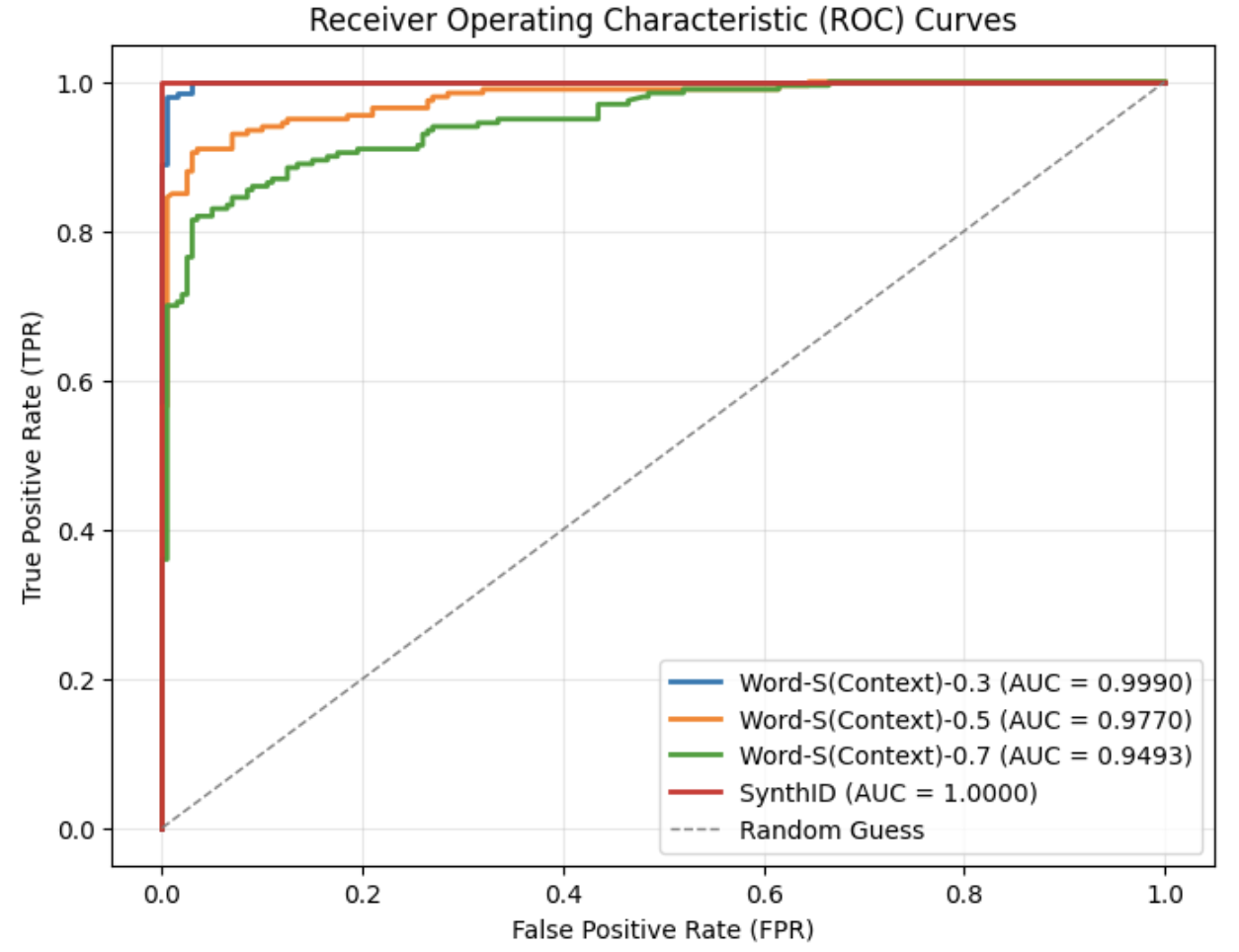

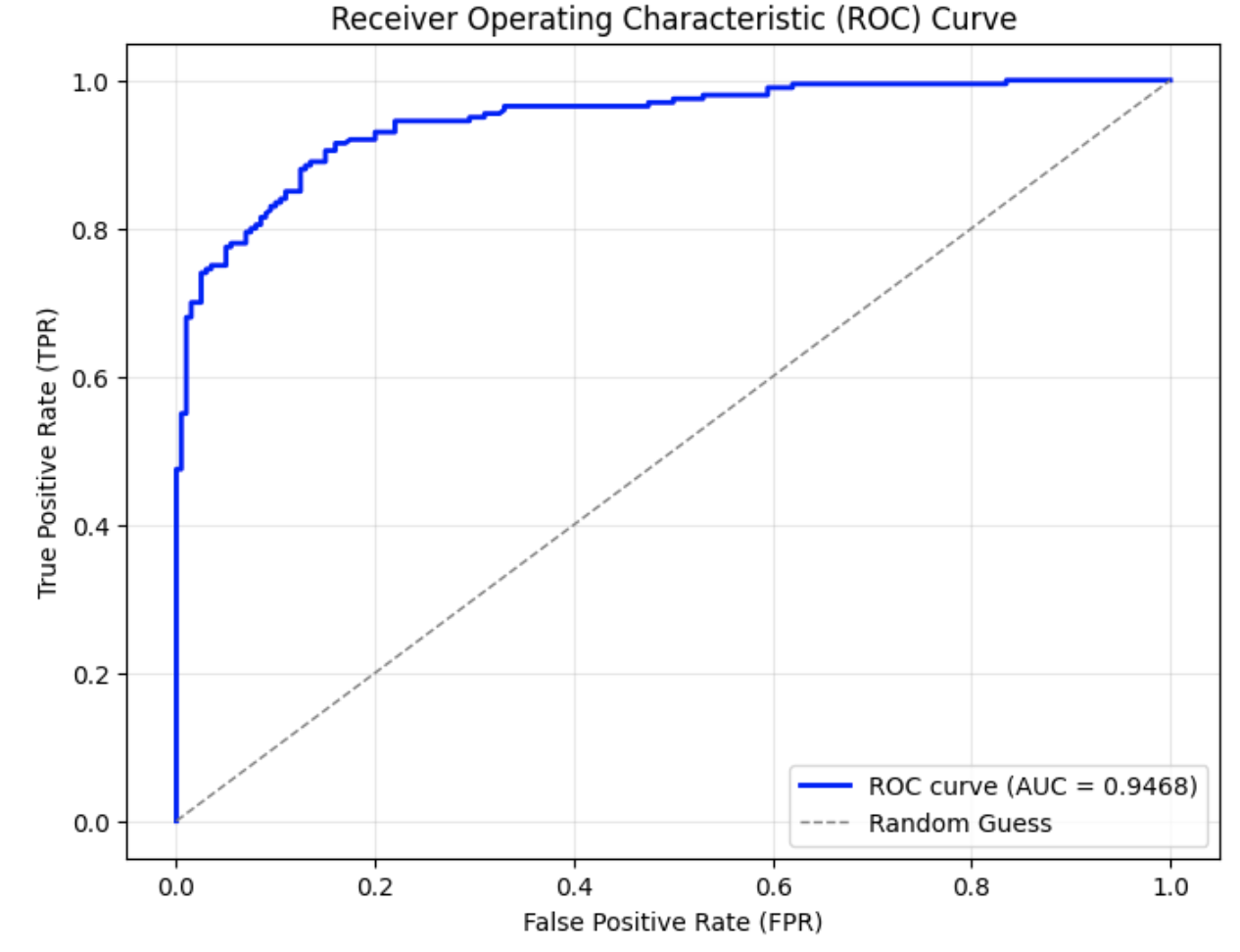

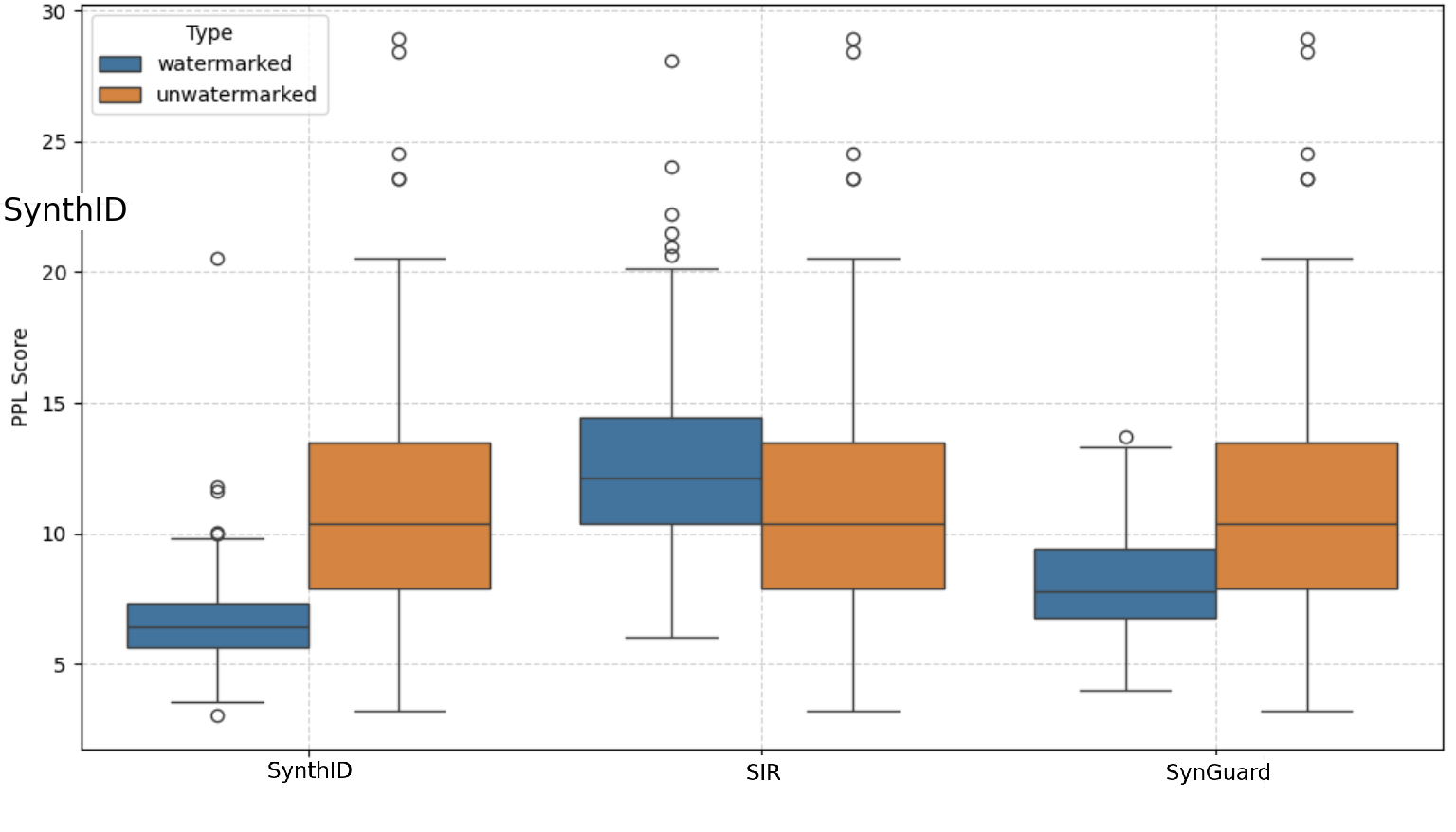

After applying the synonym substitution strategy to a set of 200 watermarked texts, each with a token length of $T=200$ , the resulting ROC curves are presented in Fig. 1. As shown, the area under the curve (AUC) gradually decreases as the replacement ratio increases. Even with a replacement ratio as high as 0.7, the AUC remains above 0.94, and the corresponding F1 score is relatively high at 0.884, as reported in Table I. These results demonstrate that SynthID-Text exhibits strong robustness against context-preserving lexical substitutions.

<details>

<summary>Synonym_Substitution_attack_for_SynthID-Text.png Details</summary>

### Visual Description

## Chart: Receiver Operating Characteristic (ROC) Curves

### Overview

The image presents Receiver Operating Characteristic (ROC) curves for four different models: Word-S(Context)-0.3, Word-S(Context)-0.5, Word-S(Context)-0.7, and SynthID. The curves plot True Positive Rate (TPR) against False Positive Rate (FPR), and are used to evaluate the performance of binary classification models. A diagonal dashed line represents a random guess. The Area Under the Curve (AUC) is provided for each model, indicating its overall performance.

### Components/Axes

* **Title:** Receiver Operating Characteristic (ROC) Curves

* **X-axis:** False Positive Rate (FPR) - Scale: 0.0 to 1.0

* **Y-axis:** True Positive Rate (TPR) - Scale: 0.0 to 1.0

* **Legend:** Located in the bottom-right corner. Contains the following entries:

* Blue Solid Line: Word-S(Context)-0.3 (AUC = 0.9990)

* Orange Solid Line: Word-S(Context)-0.5 (AUC = 0.9770)

* Green Solid Line: Word-S(Context)-0.7 (AUC = 0.9493)

* Red Solid Line: SynthID (AUC = 1.0000)

* Gray Dashed Line: Random Guess

### Detailed Analysis

* **Random Guess (Gray Dashed Line):** This line represents the performance of a classifier that randomly guesses the class label. It slopes diagonally from (0.0, 0.0) to (1.0, 1.0).

* **Word-S(Context)-0.3 (Blue Line):** This line starts at approximately (0.0, 0.0) and quickly rises to a TPR of nearly 1.0 at an FPR of approximately 0.1. It remains at a TPR close to 1.0 for the remainder of the FPR range. The AUC is 0.9990.

* **Word-S(Context)-0.5 (Orange Line):** This line starts at approximately (0.0, 0.0) and rises to a TPR of nearly 1.0 at an FPR of approximately 0.2. It remains at a TPR close to 1.0 for the remainder of the FPR range. The AUC is 0.9770.

* **Word-S(Context)-0.7 (Green Line):** This line starts at approximately (0.0, 0.0) and rises to a TPR of nearly 1.0 at an FPR of approximately 0.3. It remains at a TPR close to 1.0 for the remainder of the FPR range. The AUC is 0.9493.

* **SynthID (Red Line):** This line starts at approximately (0.0, 0.0) and immediately rises vertically to a TPR of 1.0, remaining at this level for all FPR values. The AUC is 1.0000.

### Key Observations

* The SynthID model exhibits perfect classification performance (AUC = 1.0000).

* The Word-S(Context) models demonstrate good performance, with AUC values decreasing as the context value increases (0.9990, 0.9770, 0.9493).

* All models perform significantly better than random guessing.

* The curves show that increasing the context value in the Word-S(Context) models leads to a trade-off between sensitivity and specificity, resulting in a lower AUC.

### Interpretation

The ROC curves demonstrate the ability of each model to distinguish between positive and negative classes. The AUC value provides a single metric to quantify this ability, with higher values indicating better performance. The SynthID model achieves perfect separation, suggesting it is highly effective at classifying the data. The Word-S(Context) models show a decreasing performance with increasing context, potentially indicating that the added context introduces noise or irrelevant information that hinders the classification process. The curves visually represent the trade-off between TPR and FPR, allowing for a comprehensive evaluation of each model's performance across different classification thresholds. The fact that all curves are above the random guess line indicates that all models are performing better than chance. The steepness of the curves also indicates how quickly the models can achieve a high TPR while maintaining a low FPR.

</details>

(a) Overall ROC curves under synonym substitution with different replacement ratios

<details>

<summary>zoom-in_synonym_substitution_attack_for_SynthID.png Details</summary>

### Visual Description

## Chart: Zoomed-in ROC Curve (Log-scaled FPR)

### Overview

The image presents a Receiver Operating Characteristic (ROC) curve, specifically a zoomed-in view with the False Positive Rate (FPR) plotted on a logarithmic scale. The chart compares the performance of four different models or configurations, labeled "Word-S(Context)-0.3", "Word-S(Context)-0.5", "Word-S(Context)-0.7", and "SynthID". The curves illustrate the trade-off between the True Positive Rate (TPR) and the FPR for each model.

### Components/Axes

* **Title:** "Zoomed-in ROC Curve (Log-scaled FPR)" - positioned at the top-center of the chart.

* **X-axis:** "False Positive Rate (FPR, log scale)" - ranging from 10<sup>-4</sup> to 10<sup>0</sup> (i.e., 0.0001 to 1). The scale is logarithmic.

* **Y-axis:** "True Positive Rate (TPR)" - ranging from 0.90 to 1.00.

* **Legend:** Located in the top-right corner of the chart. It identifies each line with its corresponding model name and Area Under the Curve (AUC) value.

* "Word-S(Context)-0.3" (AUC = 0.9990) - Blue line

* "Word-S(Context)-0.5" (AUC = 0.9770) - Orange line

* "Word-S(Context)-0.7" (AUC = 0.9493) - Green line

* "SynthID" (AUC = 1.0000) - Red line

* **Grid:** A light gray grid is present, aiding in the reading of values.

### Detailed Analysis

The chart displays four ROC curves. Let's analyze each one:

* **Word-S(Context)-0.3 (Blue):** This line exhibits a very steep initial rise, quickly reaching a TPR of approximately 0.98 at an FPR of around 0.001 (10<sup>-3</sup>). It remains at a TPR of nearly 1.0 for the rest of the FPR range.

* **Word-S(Context)-0.5 (Orange):** This line starts lower than the blue line, with a TPR of approximately 0.94 at an FPR of around 0.001. It gradually increases, reaching a TPR of approximately 0.98 at an FPR of around 0.01 (10<sup>-2</sup>).

* **Word-S(Context)-0.7 (Green):** This line has the lowest initial TPR, starting at approximately 0.90 at an FPR of around 0.001. It shows a more gradual increase, reaching a TPR of approximately 0.95 at an FPR of around 0.1 (10<sup>-1</sup>).

* **SynthID (Red):** This line maintains a TPR of 1.0 across the entire FPR range, indicating perfect classification performance.

The AUC values provided in the legend quantify the overall performance of each model.

### Key Observations

* The "SynthID" model has a perfect AUC score of 1.0, indicating flawless performance.

* "Word-S(Context)-0.3" performs the best among the Word-S models, with an AUC of 0.9990.

* As the context value increases from 0.3 to 0.7, the AUC score decreases, suggesting that increasing the context negatively impacts performance.

* The curves demonstrate that all models achieve high TPR values, but at varying FPR levels.

### Interpretation

This ROC curve analysis suggests that the "SynthID" model is the most effective at distinguishing between positive and negative cases. The "Word-S(Context)" models show a trade-off between TPR and FPR, with lower context values (0.3) leading to better performance. The decreasing AUC scores with increasing context values indicate that incorporating more context may introduce noise or irrelevant information, hindering the model's ability to accurately classify instances. The logarithmic scale on the FPR axis emphasizes the performance of the models at low false positive rates, which is often crucial in applications where minimizing false alarms is paramount. The steep initial rise of the blue line suggests that the "Word-S(Context)-0.3" model can achieve high accuracy with a very low rate of false positives. The chart provides a visual and quantitative comparison of the models' performance, allowing for informed decision-making regarding model selection and parameter tuning.

</details>

(b) Zoomed-in ROC curves under synonym substitution with different replacement ratios

Figure 1: ROC curves of SynthID-Text under synonym substitution attacks with varying replacement ratios.

TABLE I: Watermark detection accuracy under different synonym substitution attack ratios.

| Attack | TPR | FPR | F1 with best threshold |

| --- | --- | --- | --- |

| No attack | 1.0 | 0.0 | 1.0 |

| Word-S(Context)-0.3 | 0.98 | 0.005 | 0.987 |

| Word-S(Context)-0.5 | 0.91 | 0.035 | 0.936 |

| Word-S(Context)-0.7 | 0.82 | 0.035 | 0.884 |

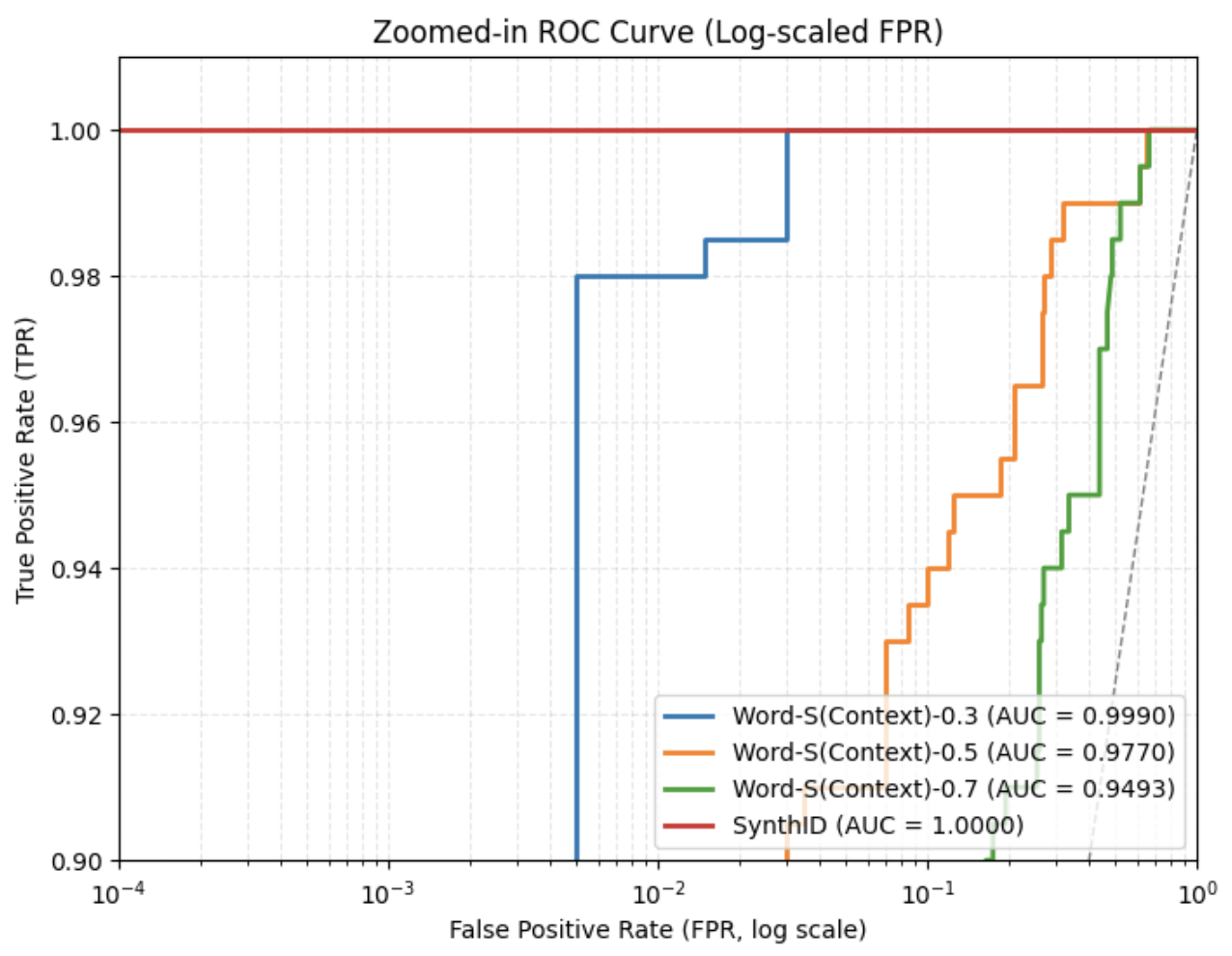

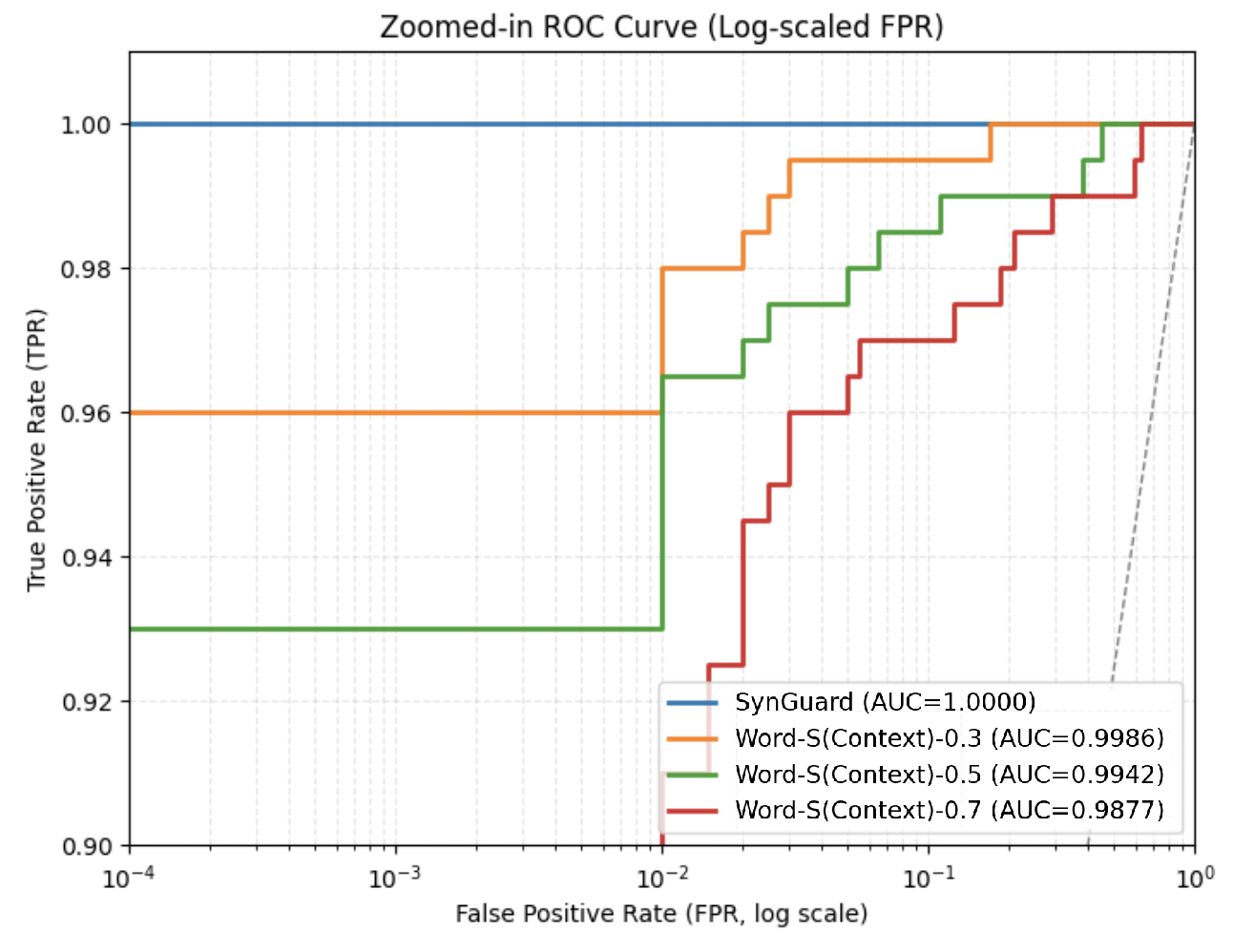

### IV-C Copy-and-Paste Attack

Unlike synonym substitution attacks, the copy-and-paste attack does not alter the original watermarked text. Instead, it embeds the watermarked segment within a larger body of human-written or unwatermarked content. This type of attack exploits the fact that detection algorithms typically analyze text holistically; by diluting the watermarked portion, the overall watermark signal becomes weaker and harder to detect.

Prior work [9] has shown that when the watermarked portion comprises only 10% of the total text, the attack can outperform many paraphrasing methods in reducing watermark detectability. In this work, we experiment with different copy-and-paste ratios and evaluate the detection performance to assess robustness.

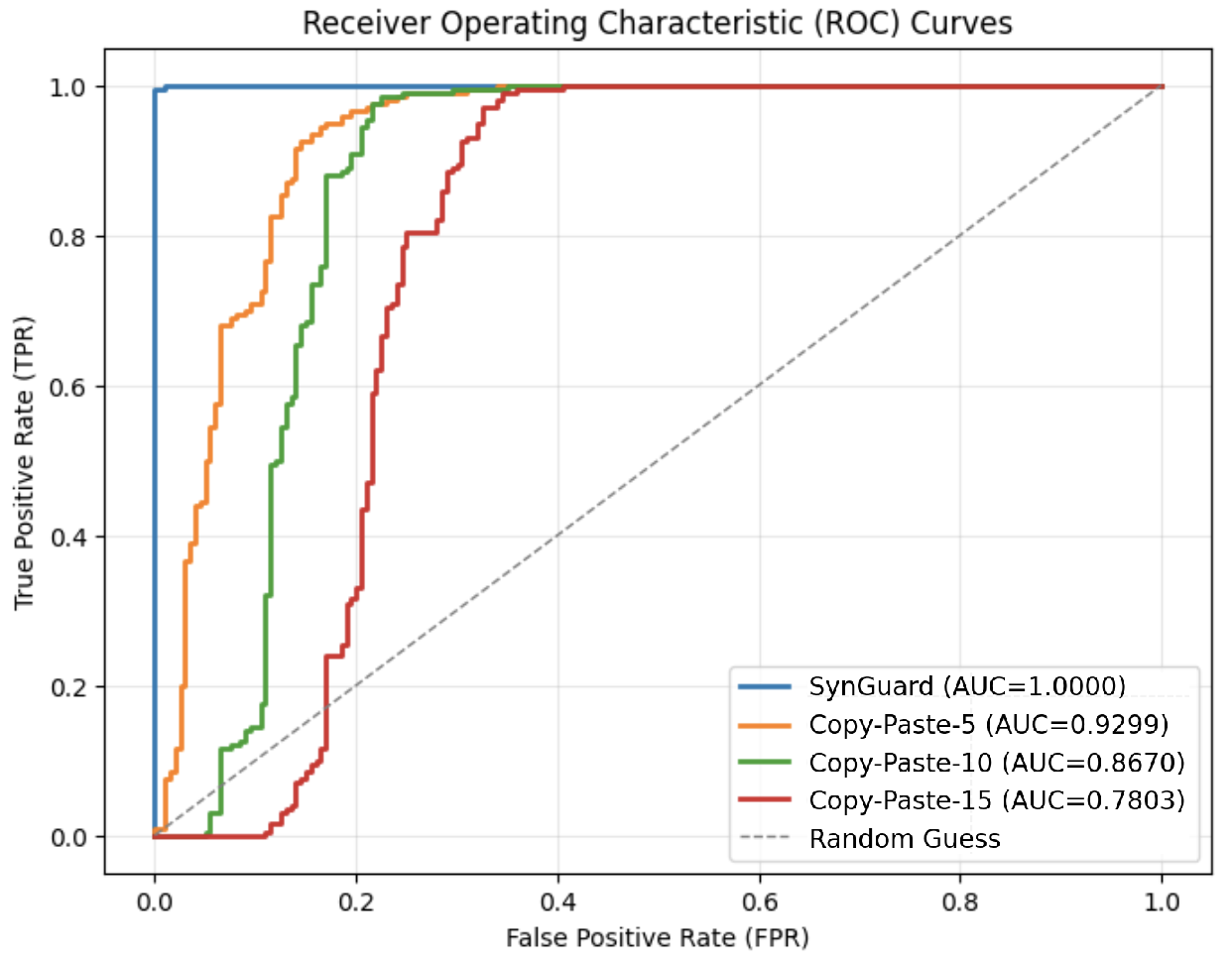

Fig. 2 presents the ROC curves for varying copy-and-paste ratios. The green curve represents the case where the added natural text is ten times longer than the original watermarked text, resulting in an AUC of 0.62—only slightly above random guess. As shown in Table II, the false positive rate (FPR) for ratio $=10$ reaches 0.53, meaning that more than half of unwatermarked texts are incorrectly identified as watermarked. As the copy-and-paste ratio increases, detection performance degrades further. When the ratio reaches 20 or higher, the AUC decreases to around or below 0.5, effectively equating to or falling below random guessing performance.

<details>

<summary>figures/copy-paste_attack_roc.png Details</summary>

### Visual Description

\n

## Chart: Receiver Operating Characteristic

</details>

Figure 2: ROC curves under different copy-and-paste attack ratios. The blue curve represents the original SynthID-Text ROC curve without attack; the gray curve indicates random guessing. Other curves depict results under varying ratios, where the ratio denotes how many times longer the inserted natural text is compared to the original watermarked text.

TABLE II: Watermark detection accuracy under different copy-and-paste attack ratios

| Attack | TPR | FPR | F1 with best threshold |

| --- | --- | --- | --- |

| No attack | 1.0 | 0.005 | 0.9975 |

| Copy-and-Paste-5 | 0.985 | 0.27 | 0.874 |

| Copy-and-Paste-10 | 0.995 | 0.53 | 0.788 |

| Copy-and-Paste-20 | 0.99 | 0.565 | 0.775 |

| Copy-and-Paste-30 | 0.99 | 0.565 | 0.775 |

### IV-D Paraphrasing Attack

Paraphrasing attacks aim to modify the structure and wording of a paragraph while preserving its original semantic meaning. This is typically done by rephrasing sentences or altering word choice and sentence order. Therefore, paraphrasing can be characterized along two key dimensions: lexical diversity, which measures variation in vocabulary, and order diversity, which reflects changes in sentence or phrase order.

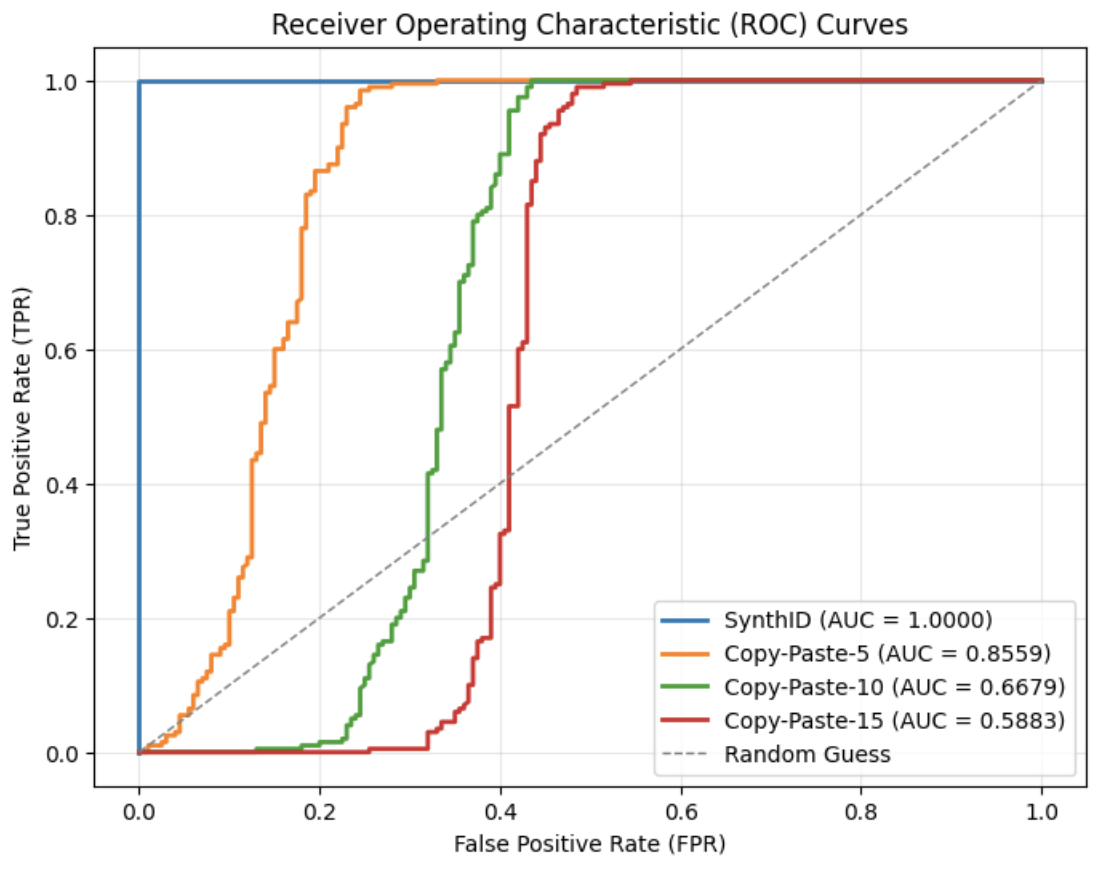

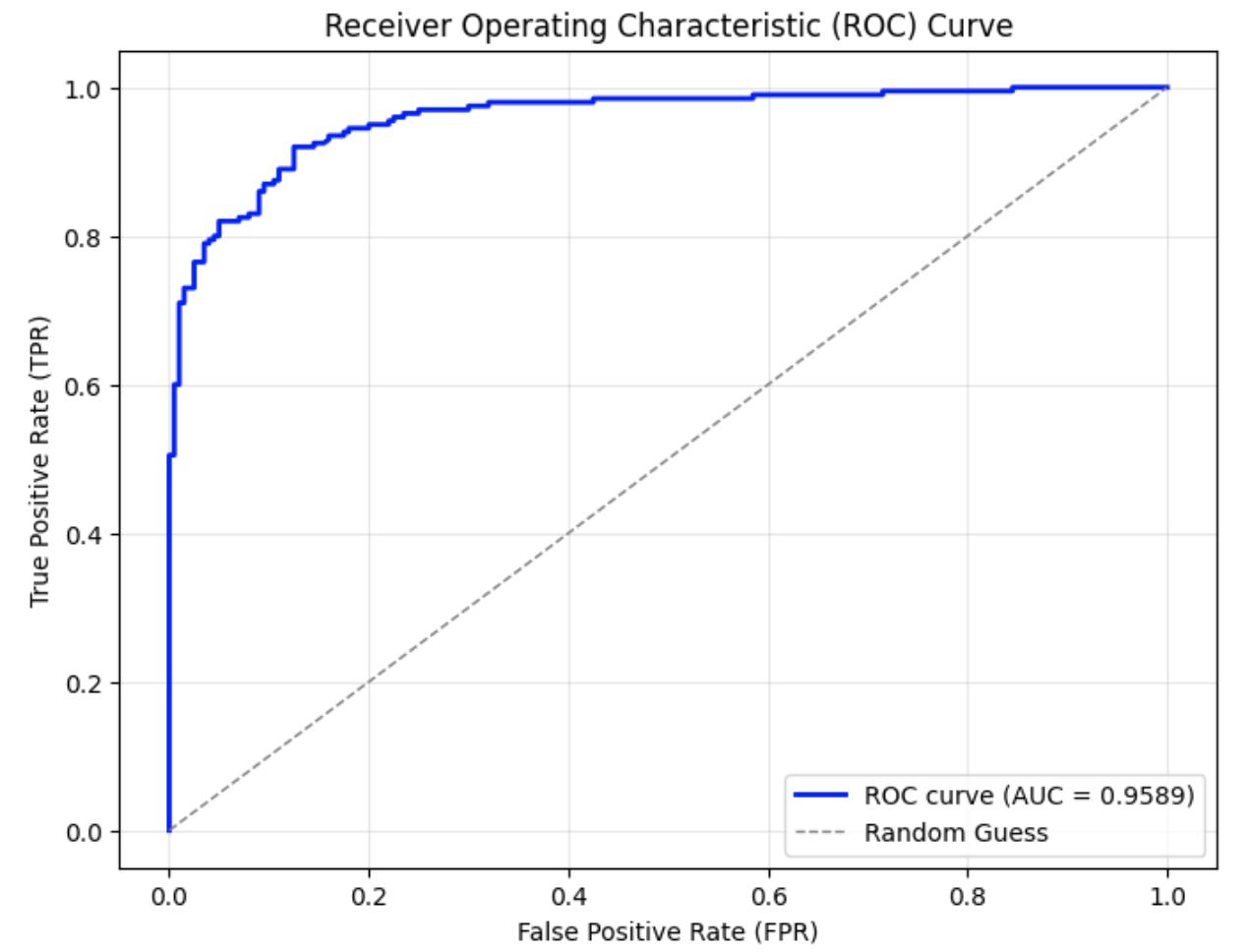

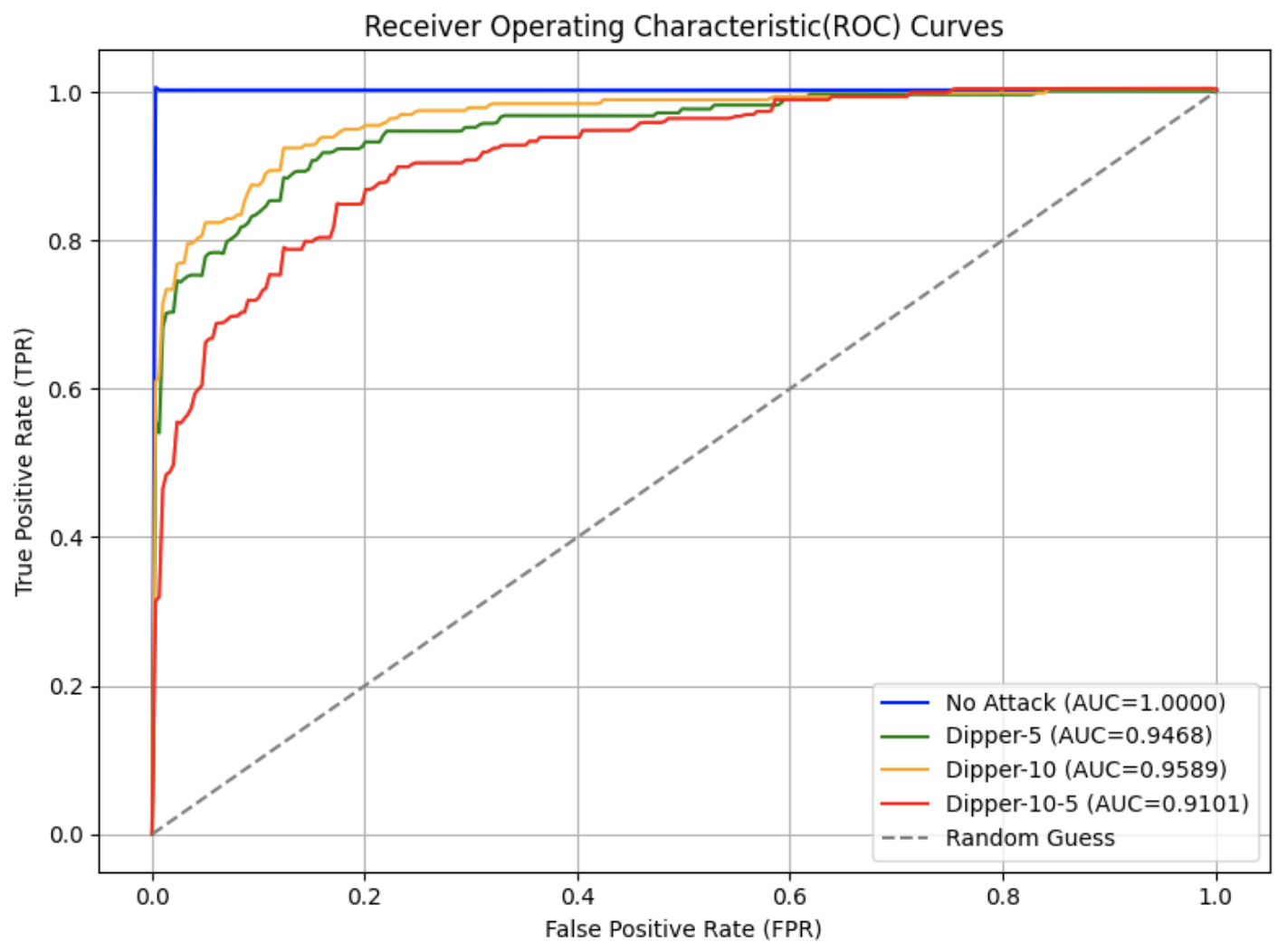

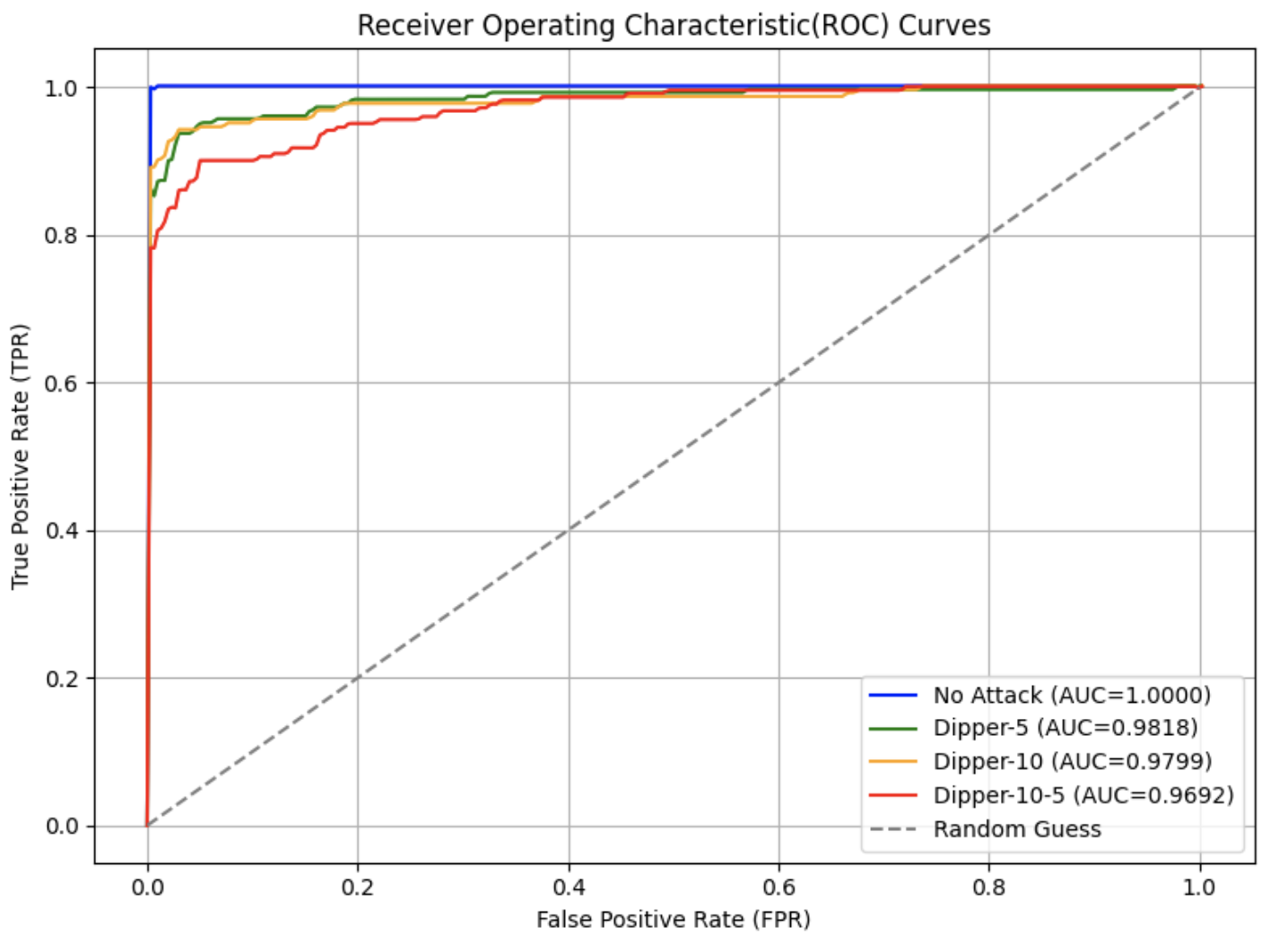

In this experiment, we adopted the Dipper paraphrasing model [27], which is built on the T5-XXL [22] architecture. Dipper allows fine-tuned control over both lexical and order diversity through configurable parameters. Two levels of lexical diversity were used to conduct the attacks, and the results are shown in Fig. 3.

From the graphs, it can be observed that compared to the original ROC curve of SynthID-Text without attack in Fig. 3 (a), the AUC in Fig. 3 (b) and (c) decrease by approximately 0.04–0.05 when only lexical diversity was applied. When both lexical diversity and order diversity were set simultaneously, the AUC experienced a decline to 0.91 in Fig. 3 (d) from 1.00 in the no attack setting. The corresponding FPR and F1 scores are presented in Table III. Particularly, when lex_diversity=10 and order_diversity=5 (shown in the fourth row), the FPR exceeded 20%, and the F1 score dropped to 0.84, indicating a significant reduction in detection accuracy under this paraphrasing condition.

<details>

<summary>figures/SynthID_solo_curve.png Details</summary>

### Visual Description

\n

## Chart: Receiver Operating Characteristic (ROC) Curve

### Overview

The image displays a Receiver Operating Characteristic (ROC) curve, a graphical representation of the performance of a binary classification model at various threshold settings. The curve plots the True Positive Rate (TPR) against the False Positive Rate (FPR). A diagonal line represents random guessing.

### Components/Axes

* **Title:** Receiver Operating Characteristic (ROC) Curve

* **X-axis:** False Positive Rate (FPR), ranging from 0.0 to 1.0.

* **Y-axis:** True Positive Rate (TPR), ranging from 0.0 to 1.0.

* **Legend:** Located in the bottom-right corner.

* **ROC curve (AUC = 1.0000):** Represented by a solid blue line.

* **Random Guess:** Represented by a dashed gray line.

* **Grid:** A light gray grid is present in the background to aid in reading values.

### Detailed Analysis

The chart contains two data series: the ROC curve and the random guess line.

**1. ROC Curve (Solid Blue Line):**

The ROC curve starts at approximately (0.0, 0.0) and rises sharply to approximately (0.2, 0.8), then continues with a slight upward slope to reach (1.0, 1.0). The curve is very close to the top-left corner of the plot, indicating excellent classification performance. The Area Under the Curve (AUC) is reported as 1.0000.

**2. Random Guess (Dashed Gray Line):**

The random guess line is a diagonal line that starts at (0.0, 0.0) and ends at (1.0, 1.0). This line represents the performance of a classifier that randomly guesses the class label.

### Key Observations

* The ROC curve is significantly above the random guess line, indicating that the model performs much better than random chance.

* The AUC of 1.0000 indicates perfect classification performance. The model can perfectly distinguish between the positive and negative classes.

* The curve's proximity to the top-left corner suggests a high sensitivity and specificity.

### Interpretation

The ROC curve demonstrates that the binary classification model has excellent performance. The AUC of 1.0000 signifies that the model is capable of perfectly separating the two classes. This suggests that the features used in the model are highly informative and that the model has learned to effectively discriminate between the positive and negative instances. The model is not prone to false positives or false negatives. This is an ideal scenario, but it's important to consider the context of the data and whether this level of performance is realistic or potentially indicative of overfitting. The model is performing optimally, and any threshold chosen will yield the best possible trade-off between true positive rate and false positive rate.

</details>

(a) No attack (original SynthID-Text)

<details>

<summary>figures/Dipper-5_synthID.png Details</summary>

### Visual Description

\n

## Chart: Receiver Operating Characteristic (ROC) Curve

### Overview

The image displays a Receiver Operating Characteristic (ROC) curve, a graphical representation of the performance of a binary classification model at all classification thresholds. It plots the True Positive Rate (TPR) against the False Positive Rate (FPR). A diagonal line represents random guessing. The area under the curve (AUC) is a measure of the model's ability to distinguish between positive and negative classes.

### Components/Axes

* **Title:** Receiver Operating Characteristic (ROC) Curve

* **X-axis:** False Positive Rate (FPR) - Scale ranges from 0.0 to 1.0 with increments of 0.2.

* **Y-axis:** True Positive Rate (TPR) - Scale ranges from 0.0 to 1.0 with increments of 0.2.

* **Legend:** Located in the bottom-right corner.

* **ROC curve (AUC = 0.9468):** Represented by a solid blue line.

* **Random Guess:** Represented by a dashed grey line.

### Detailed Analysis

The solid blue line, representing the ROC curve, starts at approximately (0.0, 0.75) and rises steeply to approximately (0.1, 0.9) before leveling off and approaching (1.0, 1.0). The curve remains consistently above the diagonal dashed grey line, indicating that the model performs better than random guessing.

Here's a breakdown of approximate data points extracted from the blue ROC curve:

* (0.0, 0.75)

* (0.05, 0.85)

* (0.1, 0.9)

* (0.2, 0.95)

* (0.3, 0.97)

* (0.4, 0.98)

* (0.5, 0.99)

* (0.6, 0.99)

* (0.7, 1.0)

* (0.8, 1.0)

* (0.9, 1.0)

* (1.0, 1.0)

The dashed grey line, representing random guessing, is a diagonal line from (0.0, 0.0) to (1.0, 1.0).

### Key Observations

* The ROC curve is significantly above the random guess line, indicating good discriminatory power.

* The AUC value of 0.9468 suggests a high probability that the model will be able to distinguish between positive and negative classes.

* The curve rises quickly initially, indicating that the model can effectively identify true positives with a low false positive rate.

* The curve plateaus at a high TPR, suggesting that the model reaches a point where increasing the threshold does not significantly improve the TPR.

### Interpretation

The ROC curve demonstrates that the binary classification model has excellent performance. The high AUC value (0.9468) indicates that the model is highly effective at distinguishing between the two classes. The curve's position well above the random guess line confirms that the model's predictions are significantly better than chance. The initial steep rise suggests the model is particularly good at identifying true positives without generating many false positives. The plateau indicates that there's a limit to how much the model's performance can be improved by adjusting the classification threshold. This information is crucial for understanding the model's strengths and weaknesses and for selecting an appropriate classification threshold based on the specific application's requirements.

</details>

(b) Dipper paraphrasing with $lex\_diversity=5$

<details>

<summary>figures/Dipper-10_synthID.png Details</summary>

### Visual Description

\n

## Chart: Receiver Operating Characteristic (ROC) Curve

### Overview

The image displays a Receiver Operating Characteristic (ROC) curve, a graphical representation of the performance of a binary classification model at all classification thresholds. It plots the True Positive Rate (TPR) against the False Positive Rate (FPR). A diagonal line represents random guessing.

### Components/Axes

* **Title:** Receiver Operating Characteristic (ROC) Curve

* **X-axis:** False Positive Rate (FPR) - Scale ranges from 0.0 to 1.0 with markers at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Y-axis:** True Positive Rate (TPR) - Scale ranges from 0.0 to 1.0 with markers at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Legend:** Located in the bottom-right corner.

* **ROC curve (AUC = 0.9589):** Solid blue line.

* **Random Guess:** Gray dashed line.

### Detailed Analysis

The chart contains two data series: the ROC curve and a line representing random guessing.

**1. ROC Curve (Solid Blue Line):**

The ROC curve starts at approximately (0.0, 0.0) and initially rises steeply, indicating a high true positive rate for low false positive rates. The curve then plateaus, maintaining a high TPR as the FPR increases.

* Approximate data points (estimated from the graph):

* (0.0, 0.0)

* (0.05, 0.7)

* (0.1, 0.85)

* (0.2, 0.92)

* (0.3, 0.95)

* (0.4, 0.96)

* (0.5, 0.97)

* (0.6, 0.98)

* (0.7, 0.99)

* (0.8, 0.99)

* (0.9, 1.0)

* (1.0, 1.0)

The Area Under the Curve (AUC) is reported as 0.9589.

**2. Random Guess (Gray Dashed Line):**

This line is a diagonal from approximately (0.0, 0.0) to (1.0, 1.0). It represents the performance of a classifier that randomly guesses the class label.

### Key Observations

* The ROC curve is significantly above the random guess line, indicating that the classification model performs better than random chance.

* The AUC of 0.9589 is very high, suggesting excellent discrimination ability of the model. A value close to 1.0 indicates a near-perfect ability to distinguish between positive and negative classes.

* The steep initial rise of the ROC curve indicates that the model can achieve a high true positive rate with a relatively low false positive rate.

### Interpretation

The ROC curve demonstrates that the binary classification model has strong predictive power. The high AUC value (0.9589) suggests that the model is capable of effectively distinguishing between the two classes. The curve's position well above the random guess line confirms that the model's performance is significantly better than chance. This model is likely a good candidate for deployment, as it minimizes both false positives and false negatives. The rapid initial ascent of the curve suggests that the model is particularly good at identifying true positives without generating many false alarms.

</details>

(c) Dipper paraphrasing with $lex\_diversity=10$ aaaaaaa aaaaaaaa

<details>

<summary>figures/Dipper-10-5_SynthID.png Details</summary>

### Visual Description

\n

## Chart: Receiver Operating Characteristic (ROC) Curve

### Overview

The image displays a Receiver Operating Characteristic (ROC) curve, a graphical representation of the performance of a binary classification model at all classification thresholds. It plots the True Positive Rate (TPR) against the False Positive Rate (FPR). A diagonal line represents random guessing. The area under the curve (AUC) is a measure of the model's ability to distinguish between

</details>

(d) Dipper paraphrasing with $lex\_diversity=10$ and $order\_diversity=5$

Figure 3: ROC curves under paraphrasing attacks with different settings.

Note ∗: Due to hardware limitations in Google Colab Pro—specifically, a maximum GPU memory of 40 GB—Dipper could only be run once per session. As a result, the ROC curves were generated in separate runs, requiring a restart between each execution, and are presented across multiple graphs.

TABLE III: Watermark detection accuracy under different paraphrasing attack settings

| Attack | TPR | FPR | F1 with best threshold |

| --- | --- | --- | --- |

| No attack | 1.0 | 0.0 | 1.0 |

| Dipper-5 | 0.915 | 0.16 | 0.882 |

| Dipper-10 | 0.92 | 0.125 | 0.8998 |

| Dipper-10-5 | 0.895 | 0.23 | 0.842 |

Note ∗: In this figure, Dipper- $x$ denotes that the Dipper model was run with a lexical diversity parameter of $x$ , while Dipper- $x$ - $y$ indicates a lexical diversity of $x$ and an order diversity of $y$ .

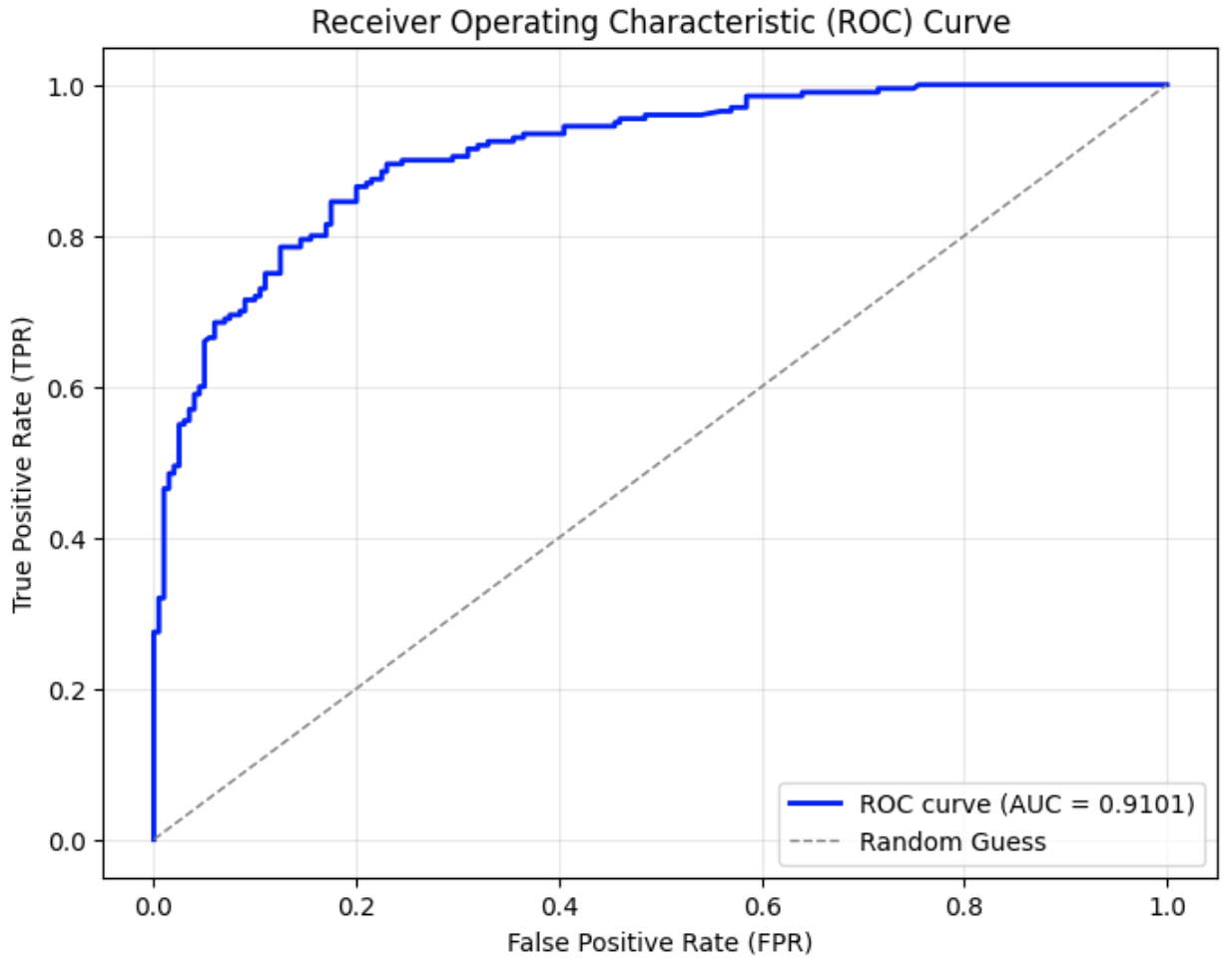

### IV-E Re-Translation Attack

The re-translation attack involves translating the original watermarked text into a pivot language and then translating it back into the original language. This process preserves the overall meaning, but may disrupt the watermark signal due to intermediate transformations applied by a translation model, as illustrated in Fig. 4.

<details>

<summary>figures/watermark_dilution_through_translation.jpg Details</summary>

### Visual Description

\n

## Diagram: Watermark Strength & Translation System Flow

### Overview

This diagram illustrates a system involving a Large Language Model (LLM), a Watermark Algorithm, a Translation System, and the resulting responses in both English (En) and Chinese (Zh). The diagram depicts the flow of text through these components and highlights the concept of "Watermark Strength," ranging from Strong to Weak.

### Components/Axes

* **Components:** LLM, Watermark Algorithm, Translation System, Response (En), Response (Zh).

* **Axis:** A horizontal axis labeled "Watermark Strength" with endpoints "Strong" and "Weak".

* **Arrows:** Arrows indicate the direction of information flow.

* **Text Blocks:** Several text blocks contain statements about college applications and the company ZeeMee.

### Detailed Analysis or Content Details

The diagram can be segmented into three main areas: Input Text, Processing Flow, and Output Responses.

**1. Input Text (Left Side):**

* A text block states: "Students have long applied to colleges and universities with applications that are heavy on test scores and grades. While that’s not necessarily wrong, the founders of…"

**2. Processing Flow (Center):**

* The input text flows into an "LLM" (Large Language Model) component.

* The output of the LLM flows into a "Watermark Algorithm" component, which then produces a "Watermark".

* The Watermark and the LLM output are fed into a "Translation System".

* The Translation System produces two responses: "Response (En)" and "Response (Zh)".

**3. Output Responses (Right Side):**

* **Response (En) - Top:** "ZeeMee believe it doesn’t tell the whole story. This Redwood City, California-based company created a platform that lets students bring their stories to life."

* **Response (En) - Bottom:** "ZeeMee believes it doesn’t tell the full story. The Redwood City, California-based company has created a platform that enables students to bring their own stories to life."

* **Response (Zh) - Center:** “ZeeMee 认为并没有讲述完整的故事情节。这家位于加利福尼亚的红木城公司创建了一个平台,让学生能够将自己的故事变成现实。”

* *English Translation:* "ZeeMee believes it doesn't tell the complete story. This Redwood City, California-based company has created a platform that allows students to bring their own stories to life."

**Watermark Strength:**

* The "Watermark" component is positioned above the "Watermark Strength" axis.

* The axis indicates that the watermark strength varies from "Strong" on the left to "Weak" on the right.

* The diagram implies that the strength of the watermark influences the output of the Translation System.

### Key Observations

* The diagram demonstrates a process where text is processed by an LLM, watermarked, translated, and then output in two languages.

* There are two versions of the English response, suggesting potential variations or refinements in the translation or post-processing.

* The "Watermark Strength" axis suggests that the presence and strength of a watermark can affect the output.

* The diagram does not provide specific numerical data or quantitative measurements.

### Interpretation

The diagram illustrates a system designed to detect or verify the origin of text generated by an LLM. The Watermark Algorithm likely embeds a subtle signal within the text that can be detected even after translation. The "Watermark Strength" axis suggests that the robustness of this signal can be adjusted. The two English responses may represent different levels of watermark strength or different processing steps.

The diagram highlights the challenges of maintaining provenance and authenticity in the age of LLMs, particularly when dealing with multilingual content. The system aims to address these challenges by embedding a detectable signal within the generated text. The diagram suggests that the system is designed to verify that the text originated from a specific source (ZeeMee, in this case) and has not been altered or misrepresented. The fact that the diagram includes both English and Chinese responses suggests that the watermark is designed to be resilient to translation.

The diagram is a conceptual illustration of a system and does not provide detailed technical specifications or performance metrics. It serves as a high-level overview of the key components and their interactions.

</details>

Figure 4: Illustration of watermark dilution through translation

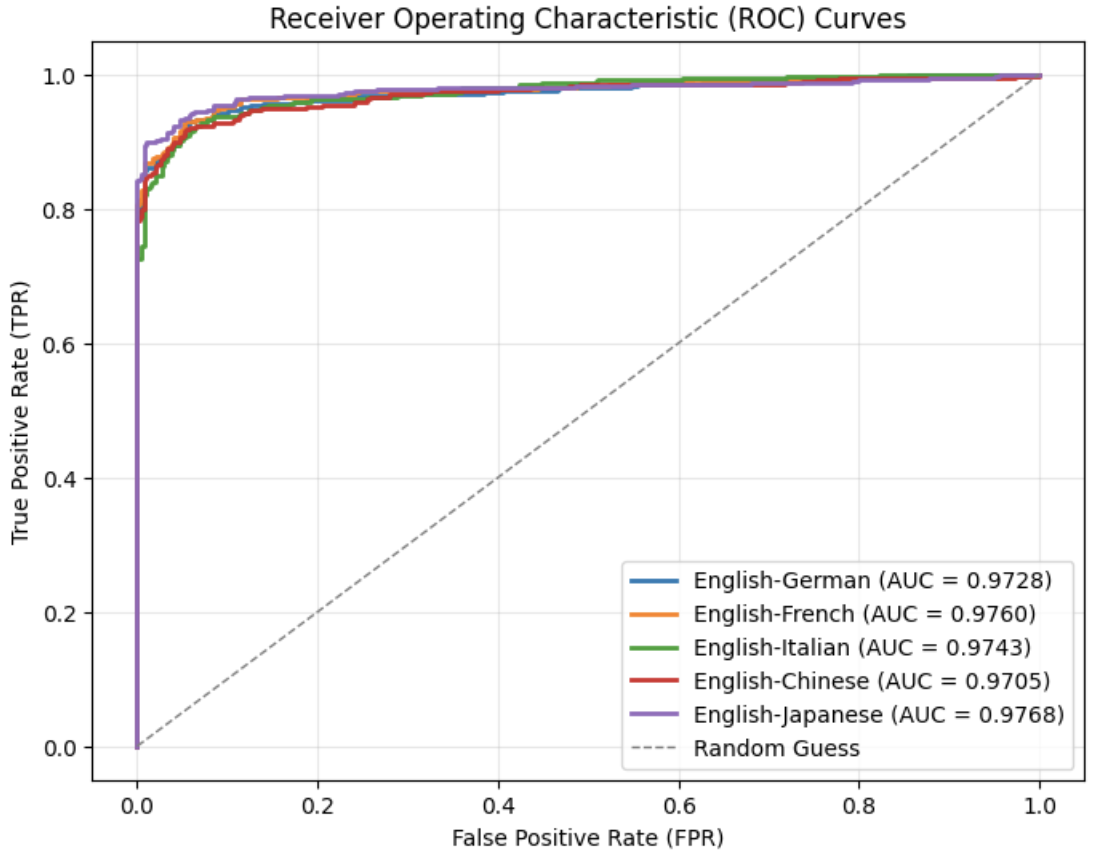

For this experiment, we used the nllb-200-distilled-600M https://huggingface.co/facebook/nllb-200-distilled-600M model, a distilled 600M-parameter variant of NLLB-200 [28]. NLLB-200 is a multilingual machine translation model that supports direct translation between 200 languages and is designed for research purposes. Several different languages were selected as pivot languages, including French, Italian, Chinese, and Japanese. Since the original dataset only consists of English prompts and human-written English completions, the watermarked outputs were first translated into pivot language and then re-translated into English to maintain consistency with the original prompt language.

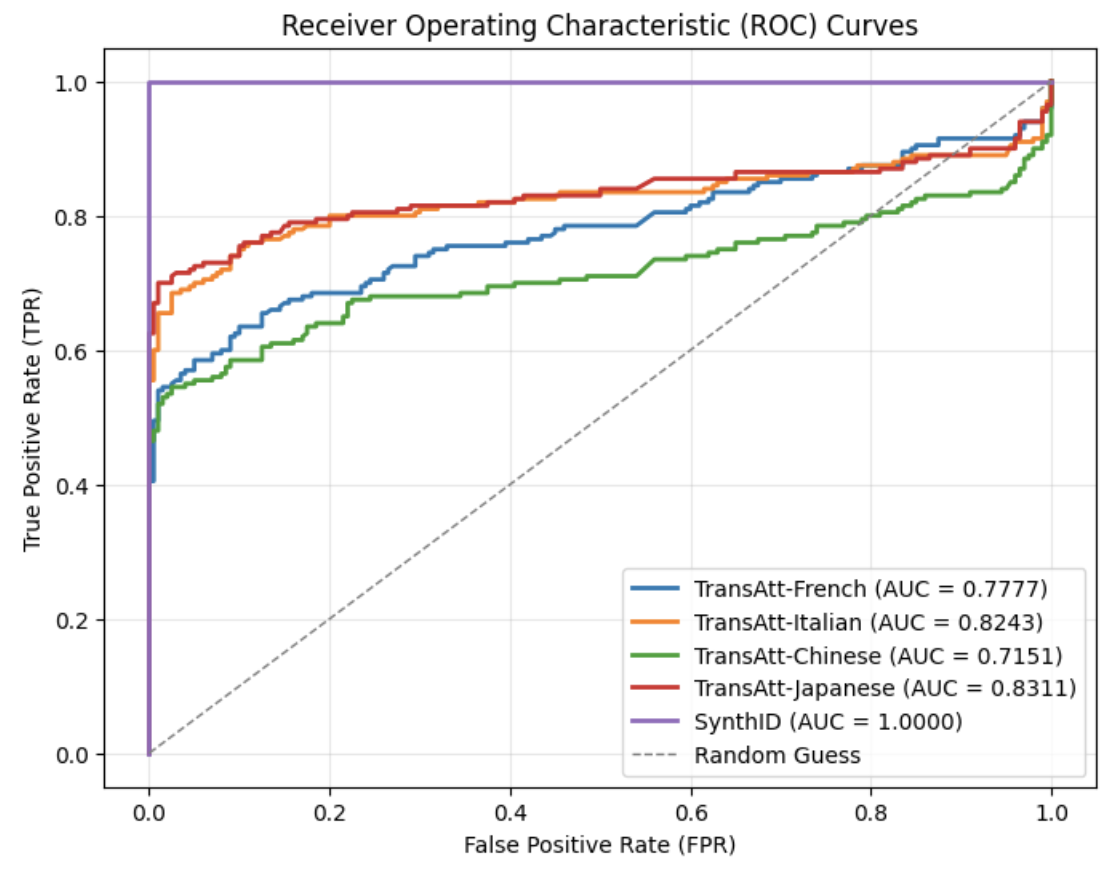

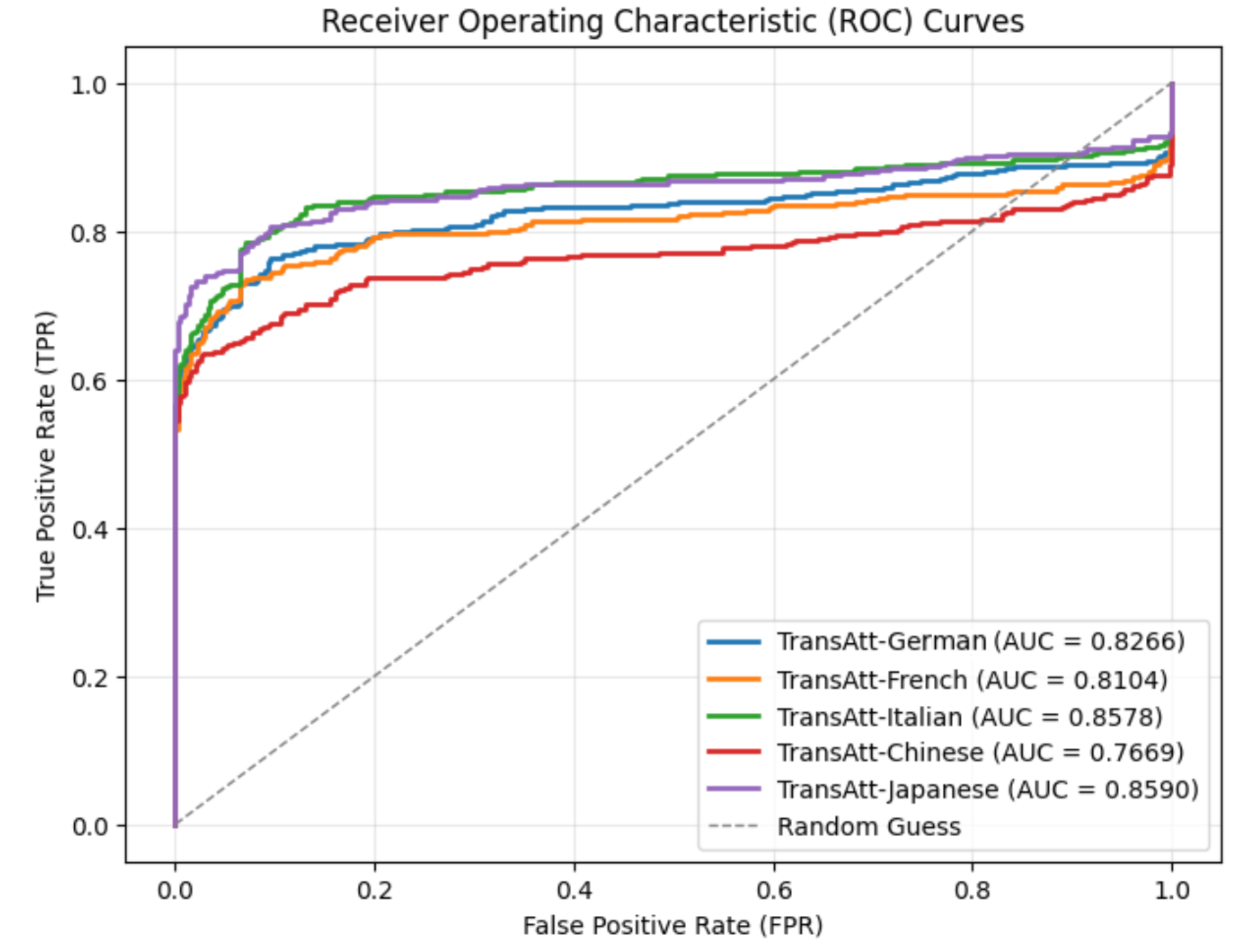

The ROC curves under this re-translation attacks using different pivot languages are presented in Fig. 5. The results indicate that the choice of pivot language significantly influences the effectiveness of re-translation attacks. French and Italian, which both belong to the Latin language family , share substantial linguistic similarities with English, which has been heavily influenced by Latin. As a result, the round-trip retranslated texts maintain relatively high AUC scores. In contrast, Chinese is more significantly different from English, leading to the lowest AUC observed after re-translation. Surprisingly, Japanese produces the highest AUC among all tested pivot languages, even slightly surpassing Italian. This outcome may be attributed to the specific design of English-to-Japanese translation systems. Given the syntactic differences between Japanese and English (such as SOV versus SVO word order), many modern translation tools adopt a linear translation strategy when translating from English to Japanese [29, 30]. This approach attempts to preserve the original sentence structure as much as possible to enhance translation quality. Consequently, round-trip translation using Japanese tends to retain more of the original semantics and structure, making the re-translation attack less effective. Compared to the baseline performance of SynthID-Text without attack, the F1 score for the re-translation attack using Chinese reduces significantly from 1.00 to 0.711, while the F1 score remains 0.819 for Japanese, which is the highest, as shown in Table IV.

<details>

<summary>Retranslation_attacks_for_SynthID-Text.png Details</summary>

### Visual Description

## Chart: Receiver Operating Characteristic (ROC) Curves

### Overview

The image displays Receiver Operating Characteristic (ROC) curves for several models, evaluating their performance in distinguishing between positive and negative cases. The curves plot the True Positive Rate (TPR) against the False Positive Rate (FPR) for each model. A diagonal dashed line represents random guessing. The Area Under the Curve (AUC) is provided for each model, indicating its overall performance.

### Components/Axes

* **Title:** Receiver Operating Characteristic (ROC) Curves

* **X-axis:** False Positive Rate (FPR) - Scale: 0.0 to 1.0

* **Y-axis:** True Positive Rate (TPR) - Scale: 0.0 to 1.0

* **Legend:** Located in the bottom-right corner. Contains the following models and their corresponding AUC scores:

* TransAtt-French (AUC = 0.7777) - Blue

* TransAtt-Italian (AUC = 0.8243) - Orange

* TransAtt-Chinese (AUC = 0.7151) - Green

* TransAtt-Japanese (AUC = 0.8311) - Red

* SynthID (AUC = 1.0000) - Purple

* Random Guess - Gray dashed line

### Detailed Analysis

The chart shows the performance of different models. The curves start at (0,0) and extend towards (1,1).

* **SynthID (Purple):** This model exhibits perfect performance, with the curve immediately rising to TPR = 1.0 at FPR = 0.0. AUC = 1.0000.

* **TransAtt-Japanese (Red):** This model performs very well, with a curve that is close to the top-left corner. The curve rises quickly and maintains a high TPR even at relatively low FPRs. Approximate data points: (0.0, 0.0), (0.1, ~0.85), (0.3, ~0.95), (0.6, ~0.98), (0.8, ~0.99), (1.0, ~1.0). AUC = 0.8311.

* **TransAtt-Italian (Orange):** This model performs well, but slightly less than the Japanese model. The curve is consistently above the random guess line. Approximate data points: (0.0, 0.0), (0.1, ~0.75), (0.3, ~0.85), (0.6, ~0.92), (0.8, ~0.95), (1.0, ~0.98). AUC = 0.8243.

* **TransAtt-French (Blue):** This model has moderate performance. The curve is above the random guess line, but is lower than the Italian and Japanese models. Approximate data points: (0.0, 0.0), (0.1, ~0.65), (0.3, ~0.75), (0.6, ~0.85), (0.8, ~0.90), (1.0, ~0.95). AUC = 0.7777.

* **TransAtt-Chinese (Green):** This model has the lowest performance among the TransAtt models. The curve is relatively close to the random guess line. Approximate data points: (0.0, 0.0), (0.1, ~0.55), (0.3, ~0.65), (0.6, ~0.75), (0.8, ~0.85), (1.0, ~0.92). AUC = 0.7151.

* **Random Guess (Gray dashed):** This line represents the baseline performance of a random classifier. It runs diagonally from (0,0) to (1,1).

### Key Observations

* SynthID demonstrates perfect classification ability.

* TransAtt-Japanese and TransAtt-Italian models perform the best among the TransAtt models.

* TransAtt-Chinese has the lowest performance among the TransAtt models.

* The AUC scores provide a quantitative measure of the models' performance, with higher scores indicating better performance.

### Interpretation

The ROC curves demonstrate the ability of each model to discriminate between positive and negative instances. The AUC score quantifies this ability, with a score of 1.0 representing perfect discrimination and a score of 0.5 representing random guessing. The SynthID model achieves perfect discrimination, suggesting it is highly effective at the task. The TransAtt models show varying degrees of performance, with the Japanese and Italian models performing better than the French and Chinese models. This suggests that the language used in the data may influence the model's ability to learn and generalize. The random guess line serves as a baseline for evaluating the performance of the models; any model with a curve above this line is performing better than random guessing. The differences in AUC scores between the models indicate that some models are more effective at distinguishing between positive and negative instances than others. This information can be used to select the best model for a given task or to identify areas for improvement in the less-performing models.

</details>

Figure 5: ROC curves of re-translation attacks on SynthID

TABLE IV: Watermark detection accuracy under re-translation attacks using different pivot languages

| Attack | TPR | FPR | F1 |

| --- | --- | --- | --- |

| No attack | 1.0 | 0.0 | 1.0 |

| Re-trans-French | 0.675 | 0.155 | 0.738 |

| Re-trans-Italian | 0.76 | 0.11 | 0.813 |

| Re-trans-Chinese | 0.675 | 0.225 | 0.711 |

| Re-trans-Japanese | 0.715 | 0.03 | 0.819 |

### IV-F Summary

Table V summarizes the watermark detection performance of SynthID-Text under various attack scenarios. For the re-translation attack, we present the result for Chinese as it is one of the three most widely spoken languages in the world.

Without any attack, the algorithm achieves a perfect F1 score of 1.0 and a false positive rate (FPR) of 0.0, demonstrating excellent baseline performance in detecting watermarked text. Under synonym substitution attacks, the F1 score decreases to 0.884, slightly below 0.9, indicating a moderate level of resilience to lexical variation.

For the copy-and-paste attack with a length ratio of 10, the F1 score decreases more substantially to 0.788, while the FPR rises sharply to 0.53. This suggests that simply appending large segments of natural (unwatermarked) text can significantly weaken watermark detectability, even if the original watermarked content remains unchanged. The paraphrasing attack, particularly when involving both high lexical diversity (lex_diversity = 10) and syntactic reordering (order_diversity = 5), also lead to a notable decrease in robustness. In this setting, the FPR increases to 0.23, and the F1 score falls to 0.842.

The most severe degradation occurs under the re-translation attack. Translating the watermarked text into Chinese and subsequently back into English results in a significant decline in detection performance: the F1 score falls to 0.711, and the TPR declines to 0.675, only slightly better than random guessing. This highlights the substantial vulnerability of SynthID-Text to semantic-preserving transformations.

These findings suggest that while SynthID-Text remains robust against simple lexical substitutions, it is significantly less effective under complex semantic-preserving attacks such as paraphrasing and round-trip translation, which pose the greatest challenges for reliable watermark detection.

TABLE V: Watermark detection accuracy of SynthID-Text under various attacks

| Attack | TPR | FPR | F1 |

| --- | --- | --- | --- |

| No attack | 1.0 | 0.0 | 1.0 |

| Substitution ( $\epsilon=0.7$ ) | 0.82 | 0.035 | 0.884 |

| Copy-and-Paste (ratio = 10) | 0.995 | 0.53 | 0.788 |

| Paraphrasing (lex_diversity | | | |

| = 10, order_diversity = 5) | 0.895 | 0.23 | 0.842 |

| Re-Translation (Chinese) | 0.675 | 0.225 | 0.711 |

## V SynGuard: An Enhanced SynthID-Text Watermarking

Since SynthID-Text embeds watermarks during the text generation process, if the generated text is regenerated or modified by another translation or language model, the original watermarking signals may be disrupted. As a result, the watermark information is prone to being destroyed. This vulnerability becomes especially apparent in the detection performance when subjected to back-translation attacks. The results could be found in Section VI.

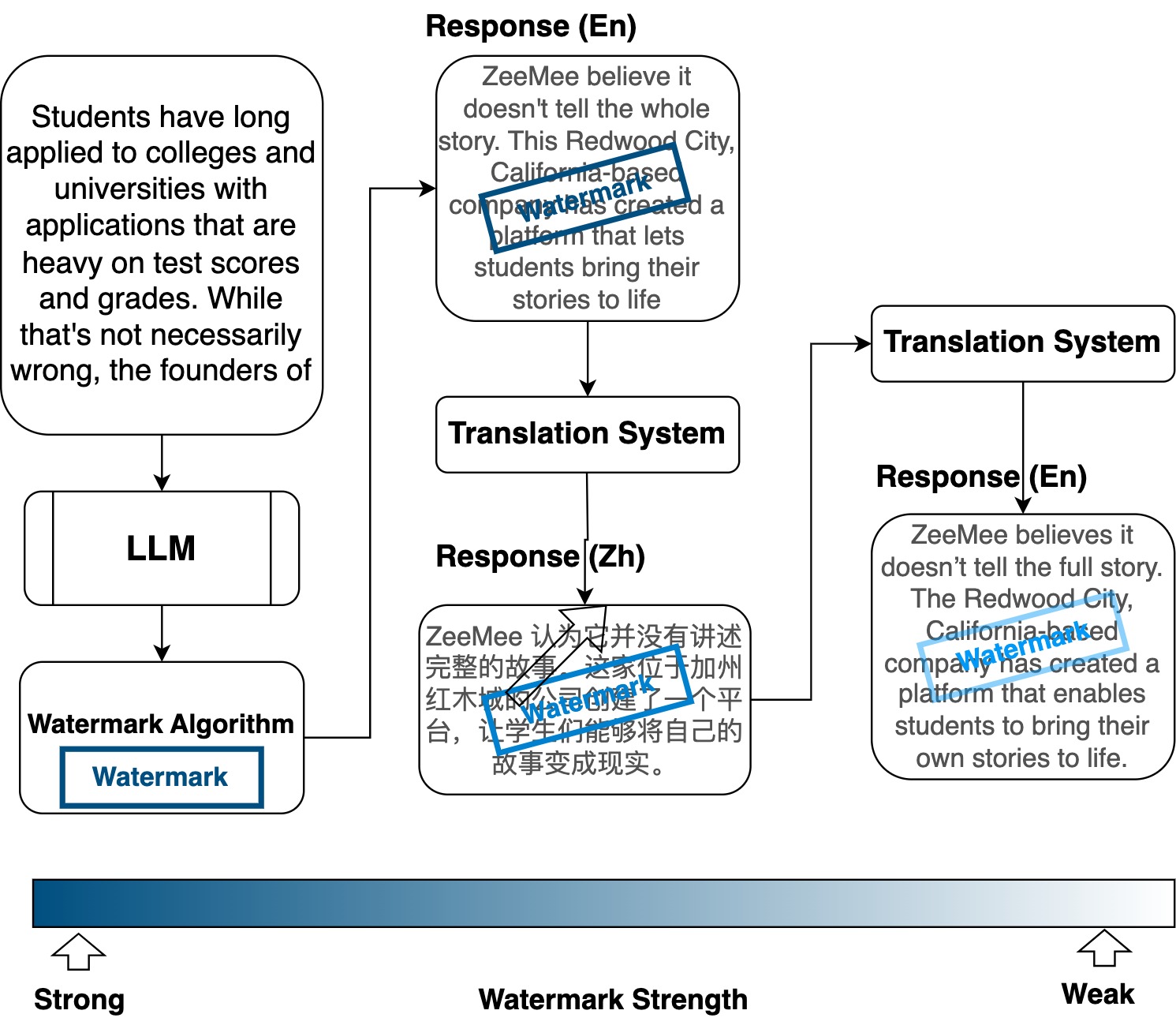

In this section, we introduce a novel watermarking method, SynGuard, which combines the Semantic Invariant Robust (SIR) watermarking algorithm [6] with the SynthID-Text tournament sampling mechanism [3].

### V-A Watermark Embedding

Watermarking algorithms embed watermarks by modifying logits during the token generation process. SynthID-Text achieves this by using the hash values of preceding tokens along with a secret key $k$ to generate pseudorandom numbers. These numbers are then used to guide the token sampling process. This design, based on pseudorandom functions and a fixed key, makes the watermark difficult to remove unless the attacker has access to both the key and the random seed.

However, if the entire text is regenerated by another language model, such as in the back-translation scenario, the watermark signal can be severely degraded. This vulnerability stems from the fact that SynthID-Text does not incorporate semantic understanding into its watermarking process. By contrast, the SIR algorithm [6] embeds watermark signals by mapping semantic features of preceding tokens to specific token preferences. This semantic-aware approach has demonstrated resilience to meaning-preserving transformations.

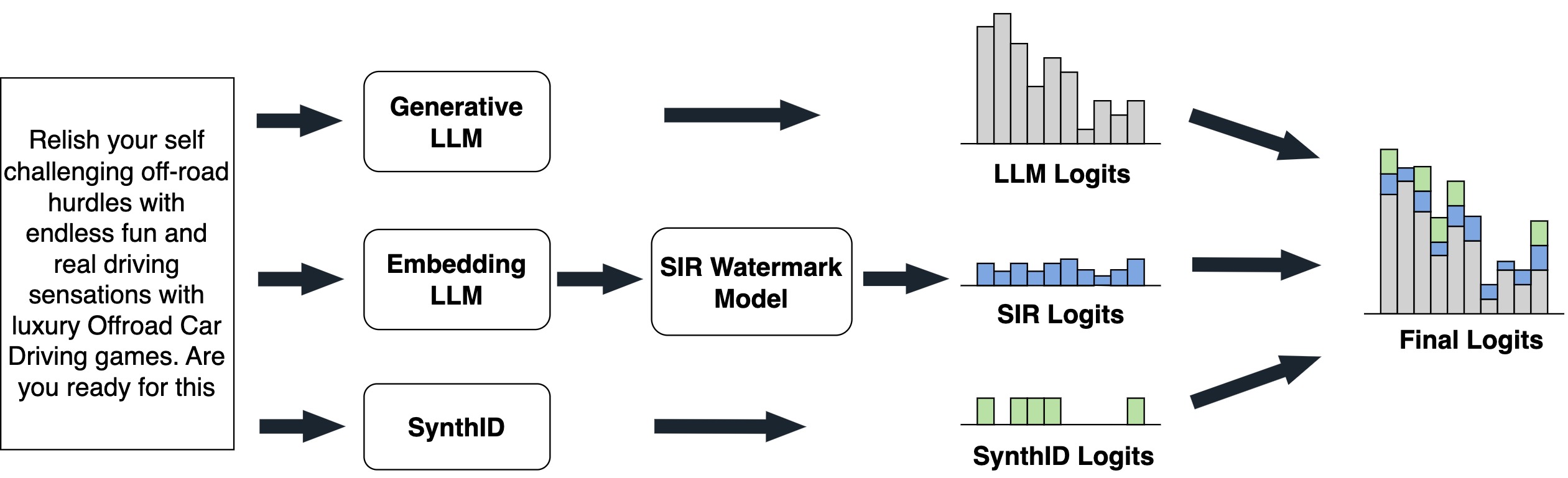

To enhance robustness against semantic perturbations, we propose a hybrid approach that integrates SynthID-Text with SIR. This new method, called SynGuard, generates three separate sets of logits at different stages and combines them to form the final logits vector. This vector is then passed through a softmax function to obtain a probability distribution over the vocabulary $V$ . The three component logits are:

- Base LLM logits: Generated directly from the backbone LLM, representing the standard token probabilities.

- SIR logits: Derived from a semantic watermarking model conditioned on the preceding text, encoding semantic consistency.

- SynthID logits: Computed using the pseudorandom watermarking mechanism based on hash values of tokens, a random seed and a secret key.

The overall embedding process is illustrated in Fig. 6, and the detailed procedure is described in Algorithm 1.

<details>

<summary>figures/new_algorithm_structure.jpg Details</summary>

### Visual Description

\n

## Diagram: System Architecture for Watermarking LLM Outputs

### Overview

The image depicts a system architecture diagram illustrating a method for watermarking outputs from Large Language Models (LLMs). The diagram shows three input sources (Generative LLM, Embedding LLM, and SynthID) feeding into a "SIR Watermark Model," which then produces outputs ("SIR Logits") that are combined with the original LLM outputs ("LLM Logits" and "SynthID Logits") to generate "Final Logits." A block of text is present on the left side of the diagram.

### Components/Axes

The diagram consists of the following components:

* **Input Sources:**

* Generative LLM

* Embedding LLM

* SynthID

* **Watermarking Component:** SIR Watermark Model

* **Output Components:**

* LLM Logits (represented as a bar chart)

* SIR Logits (represented as a bar chart)

* SynthID Logits (represented as a bar chart)

* Final Logits (represented as a bar chart)

* **Arrows:** Indicate the flow of data between components.

* **Text Block:** Located on the left side of the diagram.

There are no explicit axes or scales present in the bar charts. The charts are qualitative representations of logits.

### Detailed Analysis or Content Details

The diagram shows a flow of information from three sources into a central watermarking model.

* **Generative LLM** feeds into **LLM Logits**. The LLM Logits bar chart shows approximately 8 bars of varying heights. The heights are roughly between 0.2 and 0.8, with some bars being slightly higher. The bars are colored in shades of gray and light blue.

* **Embedding LLM** feeds into the **SIR Watermark Model**, which then outputs **SIR Logits**. The SIR Logits bar chart shows approximately 6 bars of varying heights. The heights are roughly between 0.1 and 0.5. The bars are colored in shades of blue.

* **SynthID** feeds into **SynthID Logits**. The SynthID Logits bar chart shows approximately 6 bars of varying heights. The heights are roughly between 0.1 and 0.6. The bars are colored in shades of gray.

* **Final Logits** are generated by combining **LLM Logits**, **SIR Logits**, and **SynthID Logits**. The Final Logits bar chart shows approximately 8 bars of varying heights. The heights are roughly between 0.2 and 0.9. The bars are colored in shades of gray and light blue.

The text block on the left reads:

"Relish your self challenging off-road hurdles with endless fun and real driving sensations with luxury Offroad Car Driving games. Are you ready for this"

### Key Observations

* The diagram illustrates a parallel processing architecture where outputs from different LLMs are combined through a watermarking process.

* The bar charts representing logits are qualitative and do not provide precise numerical values.

* The "SIR Watermark Model" appears to be the core component responsible for embedding the watermark.

* The final logits are a combination of the original LLM logits and the watermarked logits.

### Interpretation

The diagram demonstrates a system for embedding a watermark into the outputs of LLMs. The use of multiple input sources (Generative LLM, Embedding LLM, SynthID) suggests a robust watermarking scheme that leverages different aspects of the LLM's output. The SIR Watermark Model likely introduces a subtle but detectable signal into the logits, allowing for the identification of watermarked text. The combination of logits in the "Final Logits" stage indicates that the watermark is integrated into the LLM's output without significantly altering its overall characteristics. The text block appears to be an advertisement for off-road driving games and is likely unrelated to the watermarking process itself, serving as a placeholder or example text. The diagram suggests a method for tracing the origin of LLM-generated text, potentially to combat misinformation or plagiarism.

</details>

Figure 6: SynGuard watermark embedding.

Algorithm 1 Watermark Embedding of SynGuard

1: Language model $M$ , prompt $x^{\text{prompt}}$ , text $t=[t_{0},...,t_{T-1}]$ , embedding model $E$ , watermark model $W$ , semantic weight $\delta$ , tournament sampler $G$ , key $k$ , token $x$

2: Generate logits from $M$ : $P_{M}(x^{\text{prompt}},t_{:T-1})$ ;

3: Generate embedding $E_{:T-1}$ ;

4: Get SIR watermark logits $P_{W}(E_{:T-1})$ ;

5: Get SynthID-Text watermark logits $P_{G}(x^{\text{prompt}},k,x)$ ;

6: Compute:

| | $\displaystyle P_{\hat{M}}(x^{\text{prompt}},t_{:T-1})$ | $\displaystyle=P_{M}(x^{\text{prompt}},t_{:T-1})$ | |

| --- | --- | --- | --- |

7: Final watermarked logits $P_{\hat{M}}(t_{T})$

### V-B Watermark Extraction

SynGuard determines whether a given text is watermarked by evaluating both the semantic similarity to the preceding context and the statistical watermark signal encoded as $g$ -values. Intuitively, the more semantically aligned a token is with its context, and the higher its corresponding $g$ -value, the more probable it is that the text was generated by a watermarking algorithm.

Watermark Strength. The probability that a text contains a watermark is quantified by a composite score s. A higher s indicates a higher probability that the text is watermarked. Given a text $t=[t_{0},t_{1},\ldots,t_{T}]$ , we compute two components:

- Semantic similarity score: Let $P_{W}(x_{i},t_{:T-1})$ denote the semantic similarity between the token and the preceding generated text, computed using a pretrained semantic watermark model $W$ . The normalized semantic score is:

$$

s_{\text{semantic}}=\frac{1}{T}\sum\limits{}_{i=0}^{T}\left(P_{W}(x_{i},t_{:T-1})-0\right).

$$

- G-value score: Let $g_{l}$ represent the output of the $l_{th}$ SynthID-Text watermarking function for tokens. The average $g$ -value score is:

$$

s_{\text{g-value}}=\frac{1}{T*m}\sum_{i=0}^{T}\sum_{l=0}^{m}g_{l}(x_{i},t_{:T-1}).

$$

Since $s_{\text{semantic}}\in[-1,1]$ and $s_{\text{g-value}}\in[0,1]$ , we normalize $s_{\text{semantic}}$ to fall within the same range by applying a linear transformation. The final score $s$ is computed as:

$$

s=\delta\cdot\frac{s_{\text{semantic}}+1}{2}+(1-\delta)\cdot s_{\text{g-value}}. \tag{2}

$$

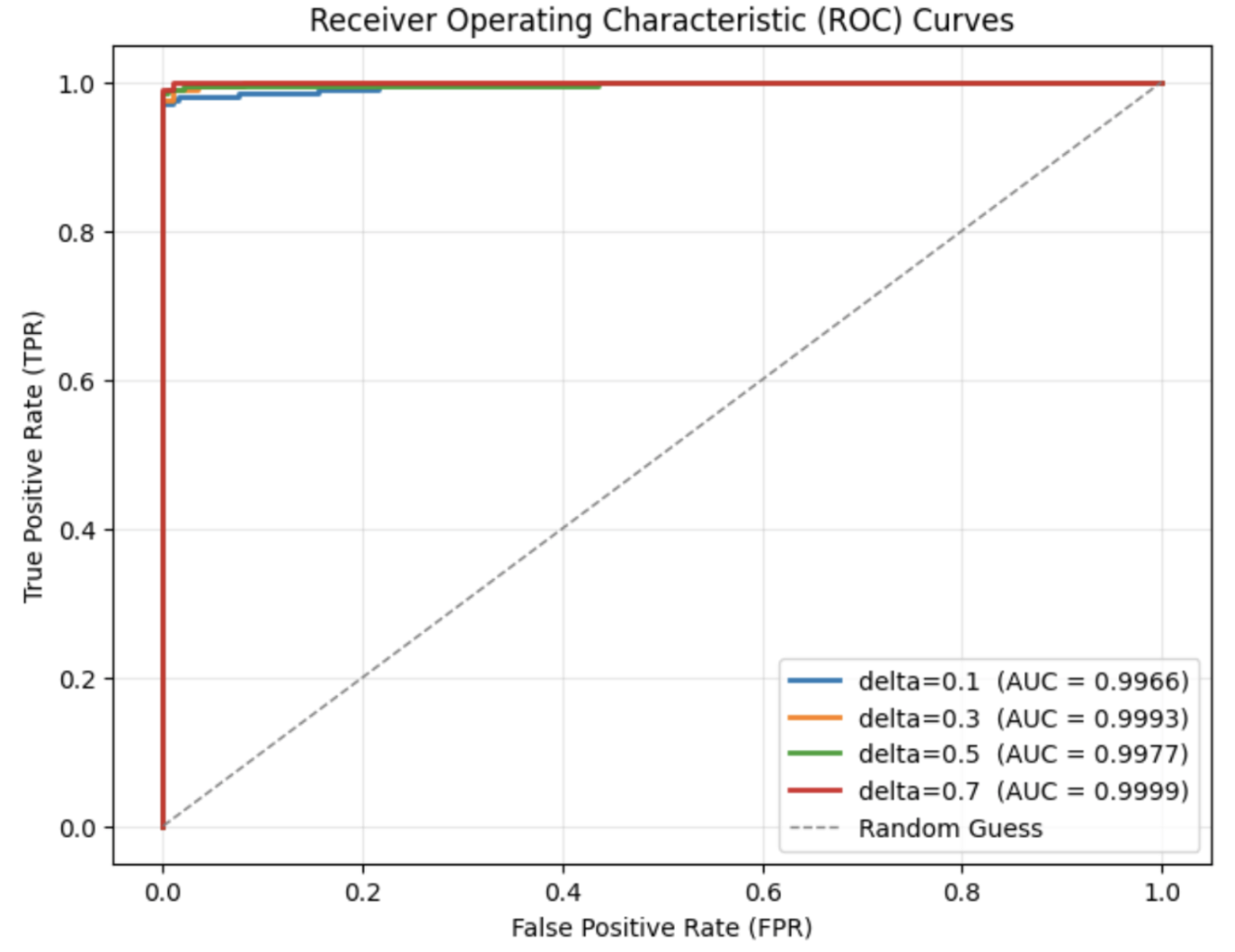

Here, $\delta\in[0,1]$ is a hyperparameter that controls the relative weighting between the semantic similarity signal and the token-level watermark signal. A larger $\delta$ places more emphasis on semantic alignment, while a smaller $\delta$ favors the token sampling randomness.

### V-C Robustness Analysis

To evaluate the robustness of SynGuard, we consider adversaries who attempt to remove or forge the watermark while preserving the underlying semantics. Our hybrid approach combines semantic-awareness from SIR and pseudorandom unpredictability from SynthID, offering both attack robustness and key-based security guarantees.

**Theorem 1**

*Let $t=[t_{0},t_{1},\ldots,t_{T}]$ be a watermarked text and $t^{\prime}$ be a meaning-preserving transformation of $t$ . Then, with high probability, the watermark detection score $s(t^{\prime})$ remains above detection threshold $\tau$ , i.e., the watermark is still detectable.*

* Proof*

The detection score $s$ is a weighted sum of two components: a semantic alignment score $s_{\text{semantic}}$ and a pseudorandom signature score $s_{\text{g-value}}$ . Because $t^{\prime}$ preserves the meaning of $t$ , the contextual embeddings of $t^{\prime}$ remain close to those of $t$ . Let $E(t_{:i})$ denote the semantic embedding of the prefix up to token $t_{i}$ . Since $t^{\prime}$ has nearly the same context at each position in a semantic sense, we have $\|E(t_{:i})-E(t^{\prime}_{:i})\|$ small for all $i$ . The semantic watermark model $W$ is assumed to be Lipschitz continuous [6]:

$$

|P_{W}(E(t_{:i}))-P_{W}(E(t^{\prime}_{:i}))|\leq L\cdot\|E(t_{:i})-E(t^{\prime}_{:i})\|,

$$

where $L>0$ denotes the Lipschitz constant. In other words, the watermark bias for the next token does not drastically change under a semantically invariant perturbation. Consequently, for each token position $i$ , the semantic preference $P_{W}(x_{i},t_{:i-1})$ assigned by $W$ to the actual token $x_{i}$ in $t^{\prime}$ will be close to the value it was for $t$ . If $t$ was watermarked, most tokens had high semantic preference values (the watermark favored those choices); $t^{\prime}$ , using synonymous or rephrased tokens, will on average still yield high $P_{W}$ values for each token, since the tokens remain well-aligned with a similar context. Thus, for each token $x^{\prime}_{i}$ in $t^{\prime}$ , we get

$$

s^{\prime}_{\text{semantic}}=\frac{1}{T}\sum\limits{}_{i=0}^{T}\left(P_{W}(x^{\prime}_{i},t^{\prime}_{:i-1})-0\right)\approx s_{\text{semantic}}-\varepsilon,

$$

for some small $\varepsilon$ . The SynthID component uses a secret key $k$ to generate pseudorandom preferences. Without $k$ , $s^{\prime}_{\text{g-value}}\approx 0.5$ . In the original watermarked $t$ , tokens are biased toward higher $g$ -values. Hence, under semantic-preserving transformation, the g-value component drops to 0.5, but $s_{\text{semantic}}$ remains high. Therefore, the overall score: $s(t^{\prime})=\delta\cdot\frac{s^{\prime}_{\text{semantic}}+1}{2}+(1-\delta)\cdot s^{\prime}_{\text{g-value}}$ is still above threshold if $\delta$ is reasonably large. In conclusion, the watermark remains detectable in $t^{\prime}$ . ∎

**Theorem 2**

*Let $k$ be the watermark key for SynGuard. For any text $u$ not generated by the watermarking algorithm, the probability that $s(u)>\tau$ is exponentially small in $T$ .*

* Proof*

The robustness stems from the pseudorandom behavior of the SynthID component, which introduces a hidden bias into token selection based on a watermark key $k$ . The watermarking model adds a preference signal $g_{k}(x_{i},t_{:T-1})\in[0,1]$ for candidate tokens, and combines it with the semantic alignment score $P_{W}$ . The detector computes a combined score:

| | $\displaystyle s=\frac{\delta}{T}\sum_{i=1}^{T}\frac{P_{W}(x_{i},t_{:T-1})+1}{2}+\frac{(1-\delta)}{T}\sum_{i=1}^{T}g_{k}(x_{i},t_{:T-1}).$ | |

| --- | --- | --- | Now consider an attacker attempting to generate a fake watermarked text without access to $k$ :

- Since $g_{k}$ is keyed and pseudorandom, its outputs are statistically independent of the attacker’s choices.

- Therefore, the second term in $s$ , the SynthID component, behaves like uniform noise with expected value $\approx 0.5$ and variance $O(1/T)$ .

- The first term (semantic preference) is not optimized in the attacker’s text either, since only the original watermarker uses $P_{W}$ for guidance.

- Hence, the attacker’s overall score $s_{\text{fake}}\approx 0.5$ , with small deviations bounded by concentration inequalities. Let $Y_{i}=\frac{P_{W}(x_{i},t_{:i-1})+1}{2}$ and $Z_{i}=g_{k}(x_{i},t_{:i-1})$ , both taking values in $[0,1]$ . Define $X_{i}:=\delta Y_{i}+(1-\delta)Z_{i}$ , so $X_{i}\in[0,1]$ . Since $g_{k}$ is pseudorandom with no attacker control, and $P_{W}$ is optimized only during watermark generation, their expected values over attacker-generated text are both approximately $0.5$ . Hence $\mathbb{E}[X_{i}]=0.5$ . With $\mathbb{E}[X_{i}]=0.5$ , and $X_{1},\dots,X_{T}$ are i.i.d., Hoeffding’s inequality gives:

$$

\Pr(s>\tau)=\Pr\left(\frac{1}{T}\sum_{i=1}^{T}X_{i}>\tau\right)\leq e^{-2T(\tau-0.5)^{2}}.

$$ This shows that for any non-watermarked text $u$ , the probability of it being misclassified as watermarked (i.e., $s(u)>\tau$ ) decays exponentially with length $T$ . Meanwhile, a genuine watermarked text has both components biased upward (semantic tokens aligned and token scores chosen with positive $g_{k}$ bias), yielding $s_{\text{true}}>\tau$ , where $\tau\in(0.6,0.9)$ is the detection threshold. Therefore, false positives (attacker’s text exceeding threshold) are exponentially rare as $T$ increases. Likewise, removal attempts (via editing tokens) cannot reduce the score below threshold unless semantic meaning is also damaged. ∎

## VI Experimental Evaluation

This section presents the experimental settings, evaluation metrics, and results of SynGuard compared to the baselines.

### VI-A Experimental Setup

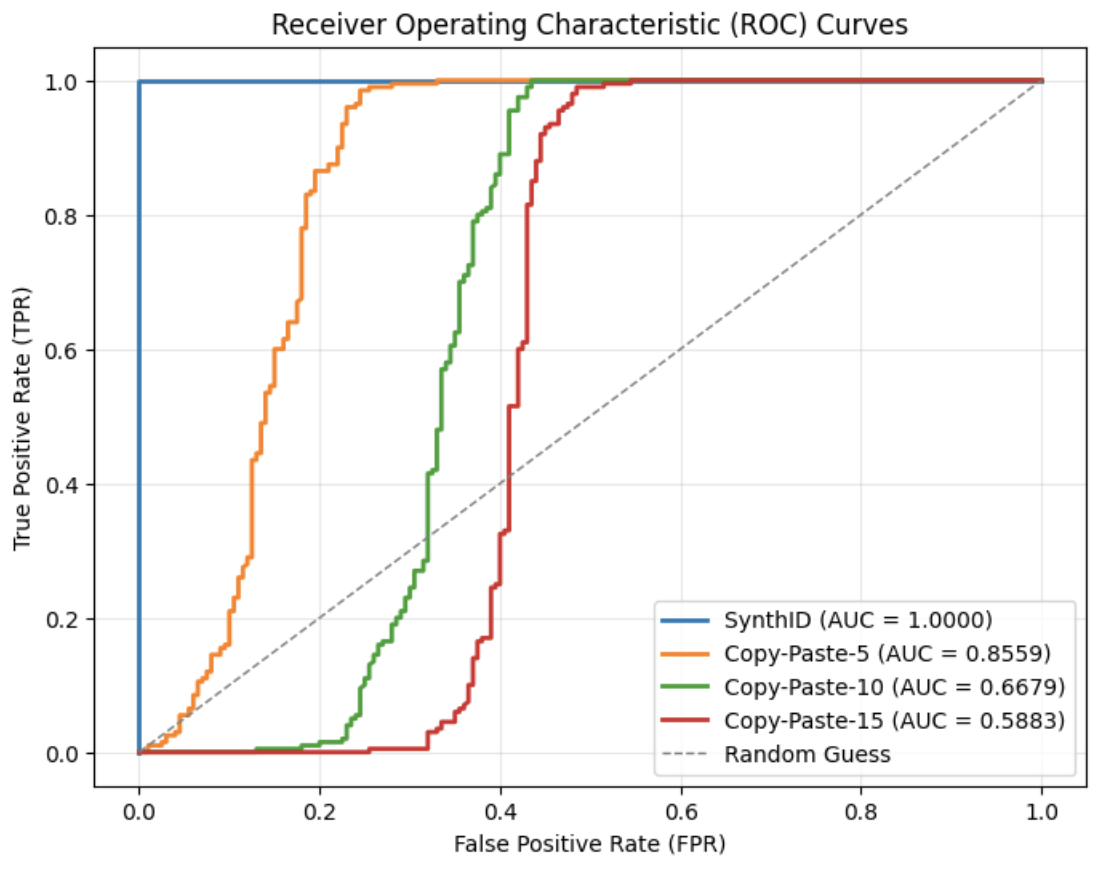

Backbone Model and Dataset. All experiments were conducted using Sheared-LLaMA-1.3B [21], a model further pre-trained from meta-llama/Llama-2-7b-hf https://huggingface.co/meta-llama/Llama-2-7b-hf and opt-1.3B https://huggingface.co/facebook/opt-1.3b from Meta. These models used are publicly available via HuggingFace. For the dataset, we adopt the Colossal Clean Crawled Corpus (C4) [22], which includes diverse, high-quality web text. Each C4 sample is split into two segments: the first segment serves as the prompt for generation, while the second (human-written) segment is used as reference text. The quality of the generated text is assessed using Perplexity (PPL) scores, which reflect how fluent and natural the output text is. These unaltered human texts are treated as control data for evaluating the watermark detector’s false positive rate.

Evaluation Metrics. The robustness is evaluated using the following metrics: True Positive Rate (TPR), False Positive Rate (FPR), F1 Score, and ROC-AUC. Each experiment was conducted using 200 watermarked and 200 unwatermarked samples, each with a fixed length of $T=200$ tokens, as same as the default setting of [23, 5]. All experiments were implemented using the MarkLLM toolkit [23].

### VI-B Main Results

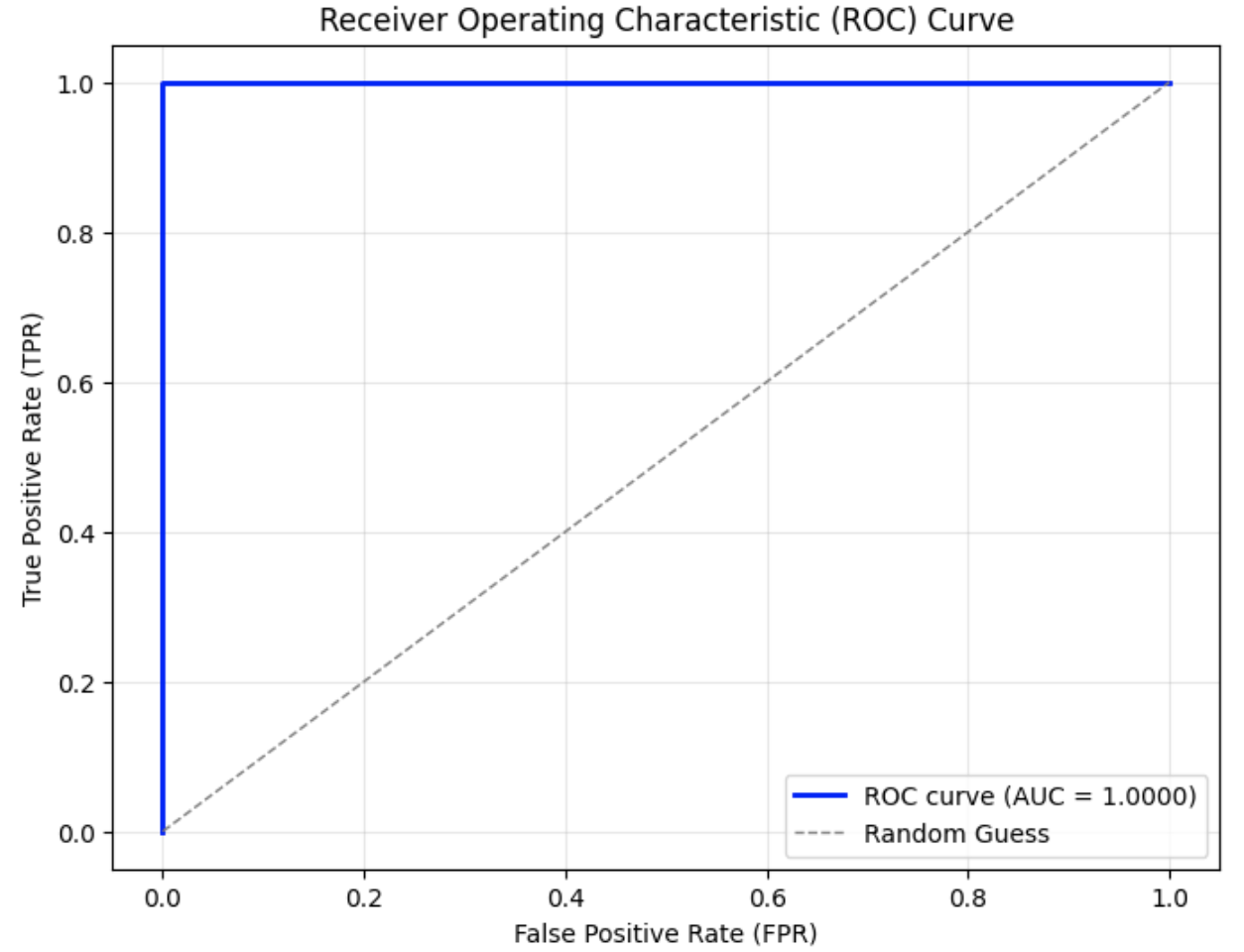

This section uses the F1 score to demonstrate the detection accuracy of SynGuard, and compares it to the baseline methods, SIR and SynthID-Text. The naturalness of the output texts generated by these three algorithms is also evaluated to assess their textual quality.

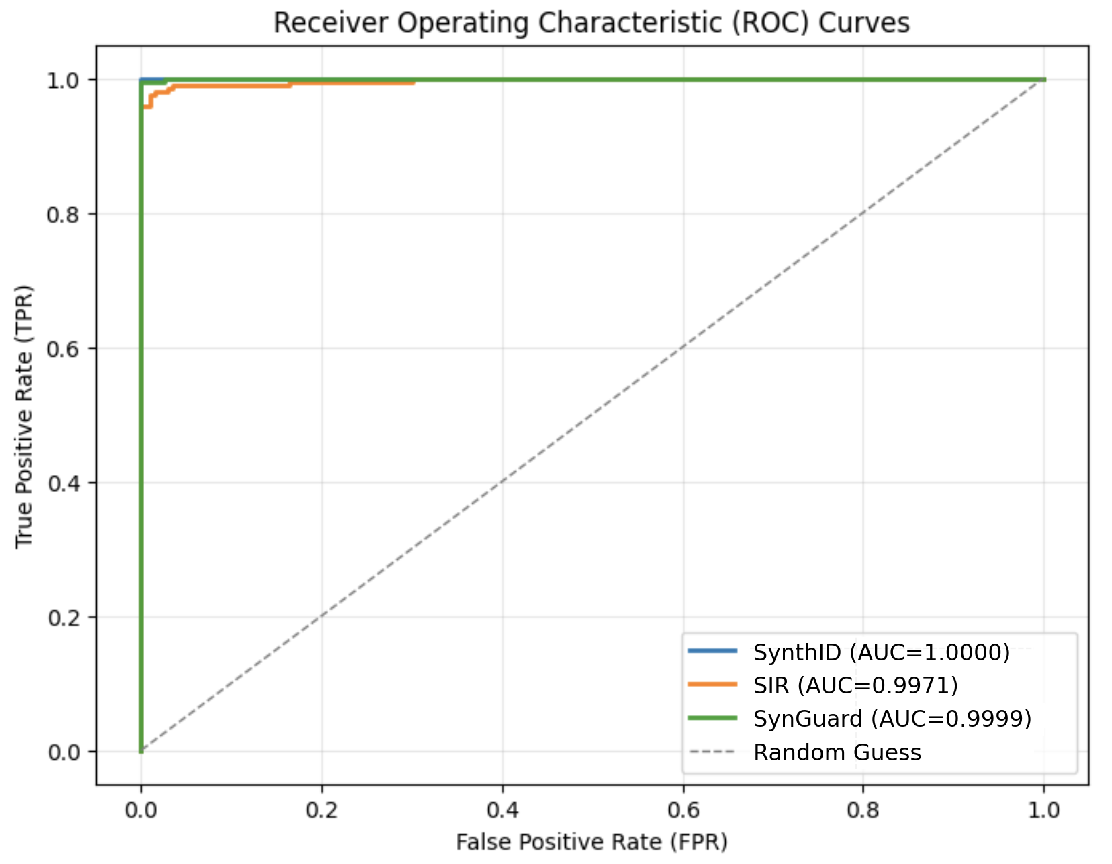

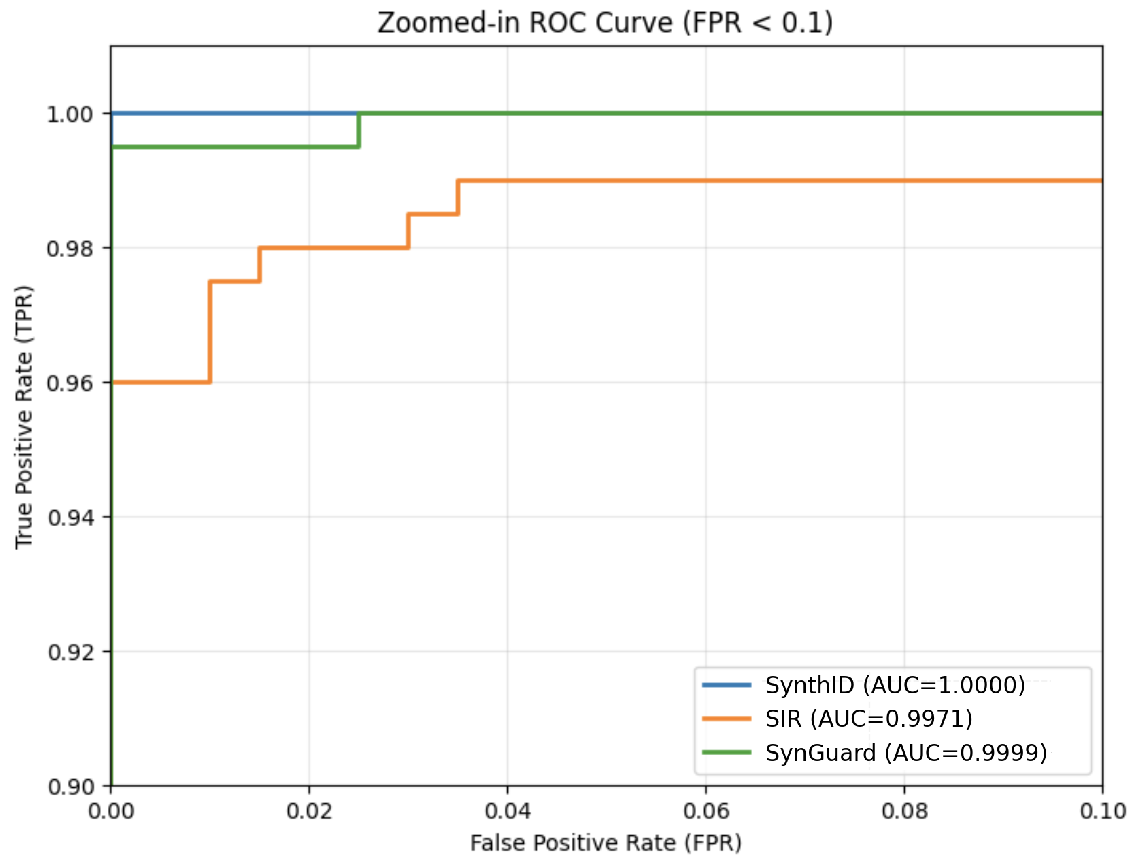

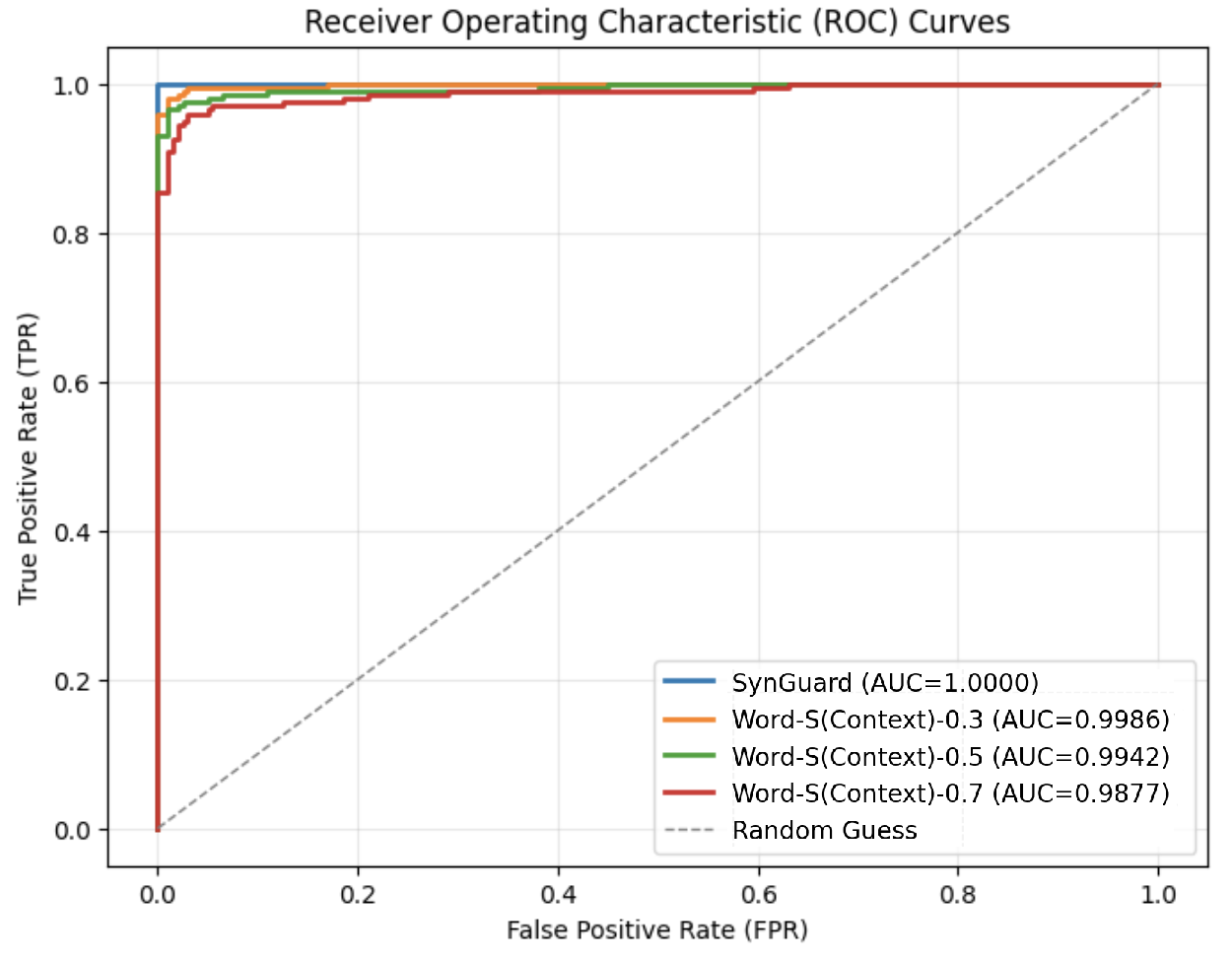

Detection Accuracy and ROC Curves. Fig. 7 (a) illustrates that all three algorithms achieve high detection accuracy, with AUC values above 0.9. From Fig. 7 (b), it is evident that SynthID-Text achieves the highest detection accuracy of 1.00. SIR yields the lowest detection accuracy at 0.9971, exhibiting a noticeable gap compared to SynthID-Text. The detection accuracy of SynGuard is slightly lower than SynthID-Text by only 0.0001, but higher than that of SIR.

<details>

<summary>figures/roc_curves_of_SynthID_SIR_SIR-SynthID.png Details</summary>

### Visual Description

\n

## Chart: Receiver Operating Characteristic (ROC) Curves

### Overview

The image displays Receiver Operating Characteristic (ROC) curves for three different models: SynthID, SIR, and SynGuard, along with a diagonal line representing a random guess. The chart assesses the performance of these models in distinguishing between two classes (positive and negative) as their discrimination threshold is varied.

### Components/Axes

* **Title:** Receiver Operating Characteristic (ROC) Curves

* **X-axis:** False Positive Rate (FPR) - Scale ranges from 0.0 to 1.0.

* **Y-axis:** True Positive Rate (TPR) - Scale ranges from 0.0 to 1.0.

* **Legend:** Located in the bottom-right corner.

* SynthID (Blue) - AUC = 1.0000

* SIR (Orange) - AUC = 0.9971

* SynGuard (Green) - AUC = 0.9999

* Random Guess (Gray dashed line)

* **Gridlines:** Present to aid in reading values.

### Detailed Analysis

The chart shows three curves representing the performance of the three models.

* **SynthID (Blue):** The curve starts at approximately (0.0, 1.0) and remains close to TPR = 1.0 across the entire FPR range. This indicates excellent performance. The Area Under the Curve (AUC) is reported as 1.0000.

* **SIR (Orange):** The curve starts at approximately (0.0, 1.0) and descends gradually as FPR increases. It remains above the random guess line. The AUC is reported as 0.9971.

* **SynGuard (Green):** The curve starts at approximately (0.0, 1.0) and descends gradually as FPR increases. It remains above the random guess line. The AUC is reported as 0.9999.

* **Random Guess (Gray dashed line):** This is a diagonal line from (0.0, 0.0) to (1.0, 1.0). It represents the performance of a classifier that randomly guesses the class label.

### Key Observations

* All three models (SynthID, SIR, and SynGuard) significantly outperform the random guess line.

* SynthID has the highest AUC (1.0000), indicating perfect discrimination.

* SynGuard has a very high AUC (0.9999), nearly as good as SynthID.

* SIR has a slightly lower AUC (0.9971) but still demonstrates excellent performance.

* The curves for all three models are very close to the top-left corner of the chart, indicating high TPR and low FPR.

### Interpretation

The ROC curves demonstrate that all three models are highly effective at distinguishing between the two classes. SynthID appears to be the best performing model, achieving perfect discrimination (AUC = 1.0). SynGuard is a very close second, and SIR also exhibits excellent performance. The high AUC values for all models suggest they are reliable and can be used with confidence. The fact that all curves are well above the random guess line confirms that the models are significantly better than chance at making predictions. The curves' proximity to the top-left corner indicates that the models can achieve high true positive rates with low false positive rates. This is desirable in many applications where minimizing false positives is crucial.

</details>

(a) ROC curves of three algorithms.

<details>

<summary>figures/zoom-in_rocs_for_3_algorithms.png Details</summary>

### Visual Description

\n

## Chart: Zoomed-in ROC Curve (FPR < 0.1)

### Overview

The image presents a Receiver Operating Characteristic (ROC) curve, specifically a zoomed-in view focusing on False Positive Rates (FPR) less than 0.1. The chart compares the performance of three different models: SynthID, SIR, and SynGuard, based on their True Positive Rate (TPR) versus FPR. The Area Under the Curve (AUC) is provided for each model.

### Components/Axes

* **Title:** "Zoomed-in ROC Curve (FPR < 0.1)" - positioned at the top-center.

* **X-axis:** "False Positive Rate (FPR)" - ranging from 0.00 to 0.10, with markers at 0.00, 0.02, 0.04, 0.06, 0.08, and 0.10.

* **Y-axis:** "True Positive Rate (TPR)" - ranging from 0.90 to 1.00, with markers at 0.90, 0.92, 0.94, 0.96, 0.98, and 1.00.

* **Legend:** Located in the bottom-right corner. It identifies the three data series:

* SynthID (Blue) - AUC = 1.0000

* SIR (Orange) - AUC = 0.9971

* SynGuard (Green) - AUC = 0.9999

### Detailed Analysis

* **SynthID (Blue):** The line starts at approximately (0.00, 0.96), rises sharply to (0.02, 0.98), remains at 1.00 from approximately (0.02, 1.00) to (0.10, 1.00).

* **SIR (Orange):** The line begins at approximately (0.00, 0.98), dips slightly to around (0.02, 0.97), then rises to approximately (0.04, 0.98), fluctuates between 0.98 and 0.99, and reaches approximately (0.10, 0.99).

* **SynGuard (Green):** The line is nearly flat, starting at approximately (0.00, 1.00) and remaining at 1.00 throughout the entire range of FPR values (0.00 to 0.10).

### Key Observations

* SynthID exhibits the highest performance, maintaining a TPR of 1.00 across the entire FPR range.

* SynGuard also demonstrates very high performance, with a TPR consistently at 1.00.

* SIR shows slightly lower performance, with some fluctuations in TPR, but still remains above 0.97.

* All three models perform exceptionally well within the FPR < 0.1 range.

### Interpretation

The ROC curve demonstrates the ability of each model to distinguish between positive and negative cases. A higher AUC indicates better performance. SynthID has a perfect AUC of 1.0000, suggesting it perfectly separates the positive and negative classes within the observed FPR range. SynGuard and SIR also have very high AUCs (0.9999 and 0.9971 respectively), indicating excellent performance. The zoomed-in view highlights the performance of these models when the risk of false positives is relatively low (FPR < 0.1). The nearly flat line for SynGuard suggests it consistently achieves a high TPR even with a very low FPR. The slight fluctuations in the SIR curve indicate a minor trade-off between TPR and FPR. Overall, the data suggests that all three models are highly effective at the task they are designed for, with SynthID exhibiting the best performance in this specific FPR range.

</details>

(b) Zoom-in ROC curves for the three algorithms.

Figure 7: Comparison and zoomed-in view of ROC curves for three watermarking algorithms: SynthID-Text, SIR, and SynGuard.

TABLE VI: Detection accuracy of SynthID-Text, SIR, and SynGuard.

| Algorithm | TPR | FPR | F1 with best threshold | Running Time(s/it) |

| --- | --- | --- | --- | --- |

| SynthID-Text | 1.0 | 0.0 | 1.0 | 6.09 |

| SIR | 0.98 | 0.015 | 0.9825 | 12.50 |

| SynGuard | 0.995 | 0.0 | 0.9975 | 12.93 |