# DeepTRACE: Auditing Deep Research AI Systems for Tracking Reliability Across Citations and Evidence

**Authors**:

- Pranav Narayanan Venkit (Salesforce AI Research)

- Philippe Laban (Microsoft Research)

- Yilun Zhou (Salesforce AI Research)

- Kung-Hsiang Huang (Salesforce AI Research)

- Yixin Mao (Salesforce AI Research)

- Chien-Sheng Wu (Salesforce AI Research)

Abstract

Generative search engines and deep research LLM agents promise trustworthy, source-grounded synthesis, yet users regularly encounter overconfidence, weak sourcing, and confusing citation practices. We introduce DeepTRACE, a novel sociotechnically grounded audit framework that turns prior community-identified failure cases into eight measurable dimensions spanning answer text, sources, and citations. DeepTRACE uses statement-level analysis (decomposition, confidence scoring) and builds citation and factual-support matrices to audit how systems reason with and attribute evidence end-to-end. Using automated extraction pipelines for popular public models (e.g., GPT-4.5/5, You.com, Perplexity, Copilot/Bing, Gemini) and an LLM-judge with validated agreement to human raters, we evaluate both web-search engines and deep-research configurations. Our findings show that generative search engines and deep research agents frequently produce one-sided, highly confident responses on debate queries and include large fractions of statements unsupported by their own listed sources. Deep-research configurations reduce overconfidence and can attain high citation thoroughness, but they remain highly one-sided on debate queries and still exhibit large fractions of unsupported statements, with citation accuracy ranging from 40–80% across systems.

1 Introduction

Large language models (LLMs) have recently become part of daily life for many, offering AI-based conversational assistance with information retrieval and text generation to hundreds of millions of users (Ferrara, 2024; Pulapaka et al., 2024). In doing so, such systems have graduated from purely research-based systems used from a technical standpoint to public sociotechnical tools (Cooper & Foster, 1971) that now impact both technical and social elements.

As the capabilities of text generation models grow, these systems are evolving from purely generative operations to functioning as “Generative Search Engines” capable of synthesizing information retrieved from external sources. They are now designed to autonomously conduct in-depth research on complex topics by exploring the web, synthesizing information, and generating comprehensive reports with citations. Such systems are therefore dubbed generative search engines (GSEs) or deep research agents (DR). A generative search engine summarizes and presents retrieved information, whereas a deep research agent executes multi-step reasoning to derive insights, resulting in a long-form report. These deep research agents first retrieve relevant
source documents that likely contain answer elements for the user’s question or request, using a retrieval system (which can be a traditional search engine). The model then composes a textual prompt that contains the user’s query and the retrieved sources, and instructs an LLM to generate a long and self-contained
answer based on the user’s preferences and the content of the sources. Importantly,
citations are inserted into the answer, with each citation linking to the source or sources that support the corresponding statement. This citation-enriched answer is provided to the user in a
user interface, where clicking a citation lets the user navigate to the source or sources that support a given statement. These systems are therefore intended to go beyond simple search and text generation to provide detailed analysis and structured outputs, often resembling human-written research papers.

In essence, the GSE and deep research pipelines promise to streamline a user’s information-seeking journey (Shah & Bender, 2024). Deep research agents are sold on the premise that they concisely summarize the information the user is looking for, while sources remain a click away in case the user wants to deepen their understanding or verify the information’s veracity. Recently, several free deep research agents, such as Perplexity.ai and YouChat, have become popular, with some reporting millions of daily searches performed by their users (Narayanan Venkit et al., 2025).

Despite their advertised promise, deep research pipelines built on LLMs suffer from several critical limitations across their constituent components. First, LLMs are prone to hallucination and struggle to identify factual fallacies even when provided with authoritative sources (Venkit et al., 2024; Huang et al., 2023). Second, research has shown that the retrieval component of the models often fails to produce accurate citations within their responses (Liu et al., 2023), sometimes attributing claims to irrelevant or non-existent sources. Third, LLMs encode knowledge in their internal weights during pretraining, making it difficult to ensure that generated outputs rely solely on the user-provided documents or retrieved documents (Kaur et al., 2024). Finally, these systems can exhibit sycophantic behavior whereby they favor agreement with the user’s implied perspective over adherence to objective facts (Sharma et al., 2024; Laban et al., 2023b). These limitations have real implications for the quality, reliability, and trustworthiness of DR agents.

Yet, there remains a significant gap in evaluating and auditing these systems as a whole. Existing benchmarks largely focus on isolated components, such as the retrieval or summarization stages of Retrieval-Augmented Generation, with limited attention to how well systems ground responses in retrieved sources, generate citations, or manage uncertainty. To address this gap, we build on the findings of Narayanan Venkit et al. (2025) and Sharma et al. (2024), who conducted an audit-focused usability study of deep research agents. The study participants identified 16 common failure cases and proposed actionable design recommendations grounded in real-world use. In this work, we extend that foundation by transforming those user-centric insights into an automated evaluation benchmark. Our goal is to provide a systematic framework for auditing the end-to-end performance of deep research agents, capturing what these systems generate and how they reason, cite, and interact with knowledge in context. Our DeepTRACE framework adopts a community-centered approach by focusing on the failure cases identified through community-driven evaluation, enabling benchmarking of models on real-world, practitioner-relevant weaknesses.

Our evaluation shows three findings that hold across GSEs and deep research agents. First, public GSEs frequently produce one-sided and overconfident responses to debate-style queries. In our corpus, we observe high rates of one-sidedness and very confident language, indicating a tendency to present charged prompts as settled facts. Second, despite retrieval and citation, a large share of generated statements remains unsupported by the systems’ own sources, and citation practice is uneven. Third, systems that list many links often leave them uncited, creating a false impression of validation. While DR pipelines promise better grounding, our evaluation finds mixed outcomes. DR systems lower overconfidence relative to GSE modes and increase citation thoroughness for some models, yet they are still one-sided for a majority of debate queries (e.g., GPT-5(DR) 54.7%; YouChat(DR) 63.1%; Copilot(DR) 94.8%). Additionally, unsupported statement rates remain high for several DR engines (YouChat(DR) 74.6%; PPLX(DR) 97.5%) and citation accuracy is well below perfect (40–80%). Listing more sources does not guarantee better grounding, leaving users to experience search fatigue. Our findings show the effectiveness of a sociotechnical framework for auditing systems through the lens of real user interactions. At the same time, they highlight that search-based AI systems require substantial progress to ensure safety and effectiveness, while mitigating risks such as echo chamber formation and the erosion of user autonomy in search.

2 Related Works

2.1 Evolution of Deep Research Systems

LLMs are increasingly embedded in sociotechnical settings that shape how people access and interact with information (Züger & Asghari, 2023; Narayanan Venkit, 2023). As these models transition from research-only demonstrations to public-facing tools, their impact extends beyond technical performance into social, epistemic, and political domains (Dolata et al., 2022; Cooper & Foster, 1971). This shift has catalyzed the development of what are increasingly called generative search engines or deep research agents, defined as a class of LLM-based systems that integrate information retrieval, summarization, and generation in response to complex user queries.

Unlike traditional RAG systems (Lewis et al., 2020; Izacard & Grave, 2021), which operate on static pipelines, deep research agents emphasize dynamic, iterative workflows. As defined by Huang et al. (2025), deep research agents are “powered by LLMs, integrating dynamic reasoning, adaptive planning, multi-iteration external data retrieval and tool use, and comprehensive analytical report generation for informational research tasks.” This framing situates such systems as more than passive tools: they are positioned as active collaborators in knowledge production. These systems are designed to handle open-ended, multi-hop, and real-time queries by combining LLMs with external tools for search, planning, and reasoning (Nakano et al., 2021; Yao et al., 2023).

Recent research has explored architectures and frameworks that enhance the capabilities of deep research agents. For example, the MindMap Agent (Wu et al., 2025) constructs knowledge graphs to track logical relationships among retrieved content, enabling more coherent and deductive reasoning on tasks such as PhD-level exam questions. The MLGym framework (Nathani et al., 2025) demonstrates how LLM-based agents can simulate research workflows, including hypothesis generation, experimental design, and model evaluation. Similarly, DeepResearcher (Zheng et al., 2025) employs reinforcement learning with human feedback to train agents in web-based environments, improving both factuality and relevance of the final output in information-seeking tasks. With web browsing enabled, these research-oriented agents are mirrored in commercial deep research models such as Bing Copilot, Perplexity AI, YouChat, and ChatGPT (Narayanan Venkit et al., 2025). These systems advertise real-time retrieval, citation generation, and structured synthesis of sources.

2.2 Beyond a Positivism and Technical Lens of Evaluation

As GSEs and deep research agents gain traction in the NLP and AI communities, there has been growing interest in evaluating their performance (Jeong et al., 2024; Wu et al., 2024; Es et al., 2023; Zhu et al., 2024). However, existing frameworks and benchmarks have largely maintained a technocentric orientation, prioritizing model-centric metrics while underexploring the social and human-centered consequences of deploying these systems at scale. This trend reflects what Wyly (2014) describes as a positivist approach to technology: one that assumes universal evaluative truths through formal metrics, often abstracted from real-world user interactions.

Among the most prominent efforts is RAGAS (Es et al., 2023; 2024), which assesses answer quality through metrics such as faithfulness, context relevance, and answer helpfulness, without requiring human ground truth annotations. Similarly, ClashEval (Wu et al., 2024) reveals how LLMs may override correct prior knowledge with incorrect retrieved content more than 60% of the time. Although these evaluations are informative, they still treat language models as isolated computational systems, rather than sociotechnical agents embedded within user-facing applications. More recent work has begun to explore the application of RAG systems in socially sensitive domains. For instance, adaptations for medicine and journalism have involved integrating domain-specific knowledge bases to reduce hallucination and increase trust (Siriwardhana et al., 2023). Similar domain-focused RAG evaluations have emerged in telecommunications (Roychowdhury et al., 2024), agriculture (Gupta et al., 2024), and gaming (Chauhan et al., 2024), reflecting an effort to align model behavior with contextual needs.

In the context of deep research agents, DeepResearch Bench (Du et al., 2025) evaluates LLM agents on 100 PhD-level research tasks using dimensions like comprehensiveness, insightfulness, readability, and citation correctness. DRBench (Bosse et al., 2025) similarly introduces 89 complex multi-step research tasks and proposes RetroSearch, a simulated web environment to measure model planning and execution. BrowseComp-Plus (Chen et al., 2025) employs a static corpus of 100,000 web documents to evaluate the accuracy, recall, and number of searches of a deep research agent. While valuable, these three benchmarks emphasize task completion and analytic quality from a technical standpoint, with evaluation criteria determined solely by researchers, without input from actual end-users or community stakeholders. This gap motivates our work. Inspired by calls to center human values in AI evaluation (Bender, 2024; Ehsan et al., 2024; Narayanan Venkit, 2023), our framework builds on the results of a usability study in which domain experts engaged with GSEs across technical and opinionated search queries (Narayanan Venkit et al., 2025). Participants identified key system weaknesses, which then inform the design of our DeepTRACE framework. Rather than relying solely on researcher-defined metrics, we build our evaluation around the three dimensions that participants surfaced: (i) the relevance and diversity of retrieved sources, (ii) the correctness and transparency of citations, and (iii) the factuality, balance, and framing of the generated language.

3 Methodology

Our motivation for auditing deep research agents and GSEs is grounded in the pressing call for more socially-aware evaluation practices in NLP. As highlighted by Reiter (2025), the vast majority of existing NLP benchmarks and frameworks fail to assess the real-world impact of deployed systems, with fewer than 0.1% of papers including any form of societal evaluation. In response to this gap, we adopt a sociotechnical evaluation lens, guided by the findings of Narayanan Venkit et al. (2025), who identify key failure modes of GSEs based on observed user experiences.

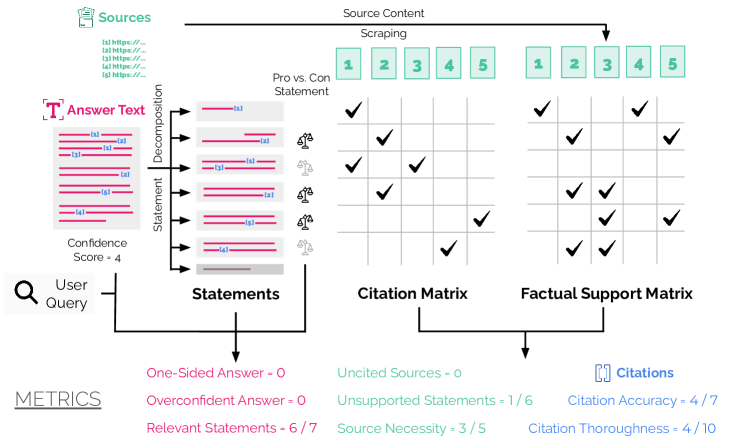

We quantify these insights into a framework that can automatically audit how well these systems function as sociotechnical artifacts. To make the findings from Narayanan Venkit et al. (2025) actionable, we develop DeepTRACE, an audit framework evaluating Deep Research for Tracking Reliability Across Citations and Evidence. Table 3, in Appendix C, outlines the mapping between qualitative insights, proposed system design recommendations, and their associated metrics. The recommendations lead us to parameterize 8 metrics that measure the performance of a deep research agent. We describe each metric in detail below.

3.1 DeepTRACE Metrics

<details>

<summary>x1.png Details</summary>

### Visual Description

## Information Flow Diagram: Answer Text Analysis

### Overview

The image is a diagram illustrating the process of analyzing answer text, likely generated by a language model, by comparing it against source content. The diagram shows the flow from sources to answer text, decomposition into statements, comparison with source content via citation and factual support matrices, and finally, metrics related to the answer's quality.

### Components/Axes

* **Sources:** A list of five URLs, labeled \[1] through \[5].

* **Source Content:** Represented by two sets of five blocks, labeled 1 through 5, indicating source content being scraped.

* **Answer Text:** A block of text with a "T" icon, decomposed into multiple statements.

* **Confidence Score:** A numerical value of 4 associated with the answer text.

* **User Query:** Represented by a magnifying glass icon.

* **Statement Decomposition:** The process of breaking down the answer text into individual statements.

* **Statements:** A series of six statements, labeled \[1] through \[6], with indicators of whether they are "Pro vs. Con" statements.

* **Citation Matrix:** A grid showing which sources are cited by which statements.

* **Factual Support Matrix:** A grid showing which statements are factually supported by which sources.

* **Metrics:** A list of metrics evaluating the answer, including:

* One-Sided Answer: 0

* Overconfident Answer: 0

* Relevant Statements: 6/7

* Uncited Sources: 0

* Unsupported Statements: 1/6

* Source Necessity: 3/5

* Citation Accuracy: 4/7

* Citation Thoroughness: 4/10

* **Icons:**

* Document Icon: Associated with "Sources"

* "T" Icon: Associated with "Answer Text"

* Magnifying Glass Icon: Associated with "User Query"

* Scales Icon: Associated with "Pro vs. Con Statement"

* Document Icon with Brackets: Associated with "Citations"

### Detailed Analysis

* **Sources:** Five sources are listed, presumably web pages, indicated by URLs.

* **Source Content Scraping:** The diagram shows the content from these sources being scraped.

* **Answer Text:** The answer text is decomposed into six statements. Each statement is labeled \[1] through \[6].

* **Statement Decomposition:** The answer text is broken down into individual statements.

* **Pro vs. Con Statement:** Each statement has an associated "Pro vs. Con" indicator, represented by a scales icon. The last statement's icon is greyed out, suggesting it is neither pro nor con.

* **Citation Matrix:** This matrix shows the relationship between statements and sources. A checkmark indicates that a statement cites a particular source.

* Statement 1 cites sources 1 and 4.

* Statement 2 cites source 2.

* Statement 3 cites sources 1 and 3.

* Statement 4 cites source 5.

* Statement 5 cites source 4.

* **Factual Support Matrix:** This matrix shows whether the statements are factually supported by the sources. A checkmark indicates that a statement is supported by a particular source.

* Statement 1 is supported by sources 1 and 2.

* Statement 2 is supported by sources 2 and 3.

* Statement 3 is supported by sources 2 and 3.

* Statement 4 is supported by sources 4 and 5.

* Statement 5 is supported by source 5.

* **Metrics:** The metrics provide a quantitative assessment of the answer.

* The answer is not one-sided or overconfident.

* 6 out of 7 statements are relevant.

* No sources are uncited.

* 1 out of 6 statements is unsupported.

* 3 out of 5 sources are necessary.

* Citation accuracy is 4/7.

* Citation thoroughness is 4/10.

### Key Observations

* The diagram illustrates a pipeline for evaluating the quality of an answer generated from multiple sources.

* The matrices provide a structured way to assess citation and factual support.

* The metrics offer a summary of the answer's strengths and weaknesses.

### Interpretation

The diagram presents a comprehensive approach to evaluating the quality of an answer generated from multiple sources. The process involves decomposing the answer into individual statements, comparing these statements against the source content, and calculating metrics to assess the answer's accuracy, relevance, and thoroughness.

The matrices are crucial for identifying potential issues such as unsupported statements or inaccurate citations. The metrics provide a high-level overview of the answer's quality, allowing for quick identification of areas that need improvement.

The "Pro vs. Con" indicator suggests that the system is also considering the sentiment or stance of each statement, which is important for ensuring a balanced and unbiased answer. The greyed out scales icon for the last statement indicates that it is neither pro nor con, suggesting a neutral or background statement.

The metrics show that the answer has some weaknesses. While the answer is not one-sided or overconfident, and most statements are relevant, the citation accuracy and thoroughness are relatively low (4/7 and 4/10, respectively). This suggests that the answer may not be citing all relevant sources or that the citations may not be entirely accurate. Additionally, 1/6 statements are unsupported, indicating a potential factual issue.

</details>

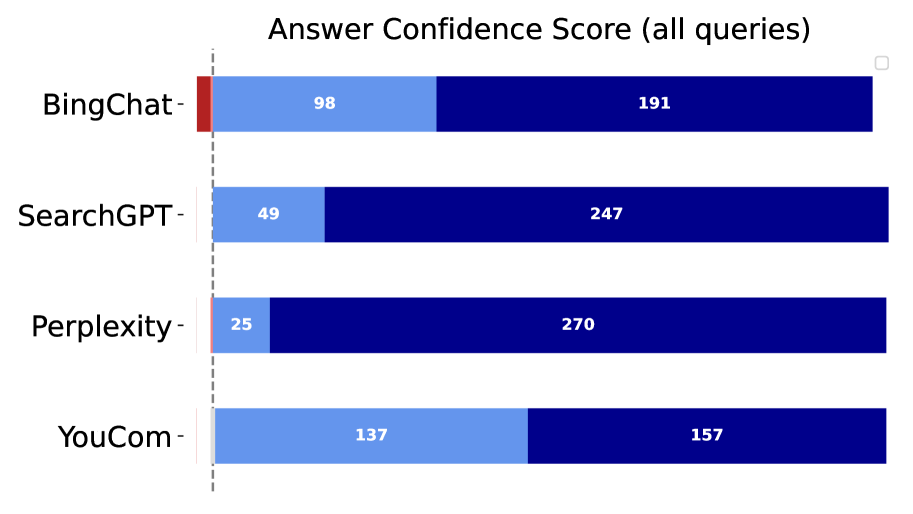

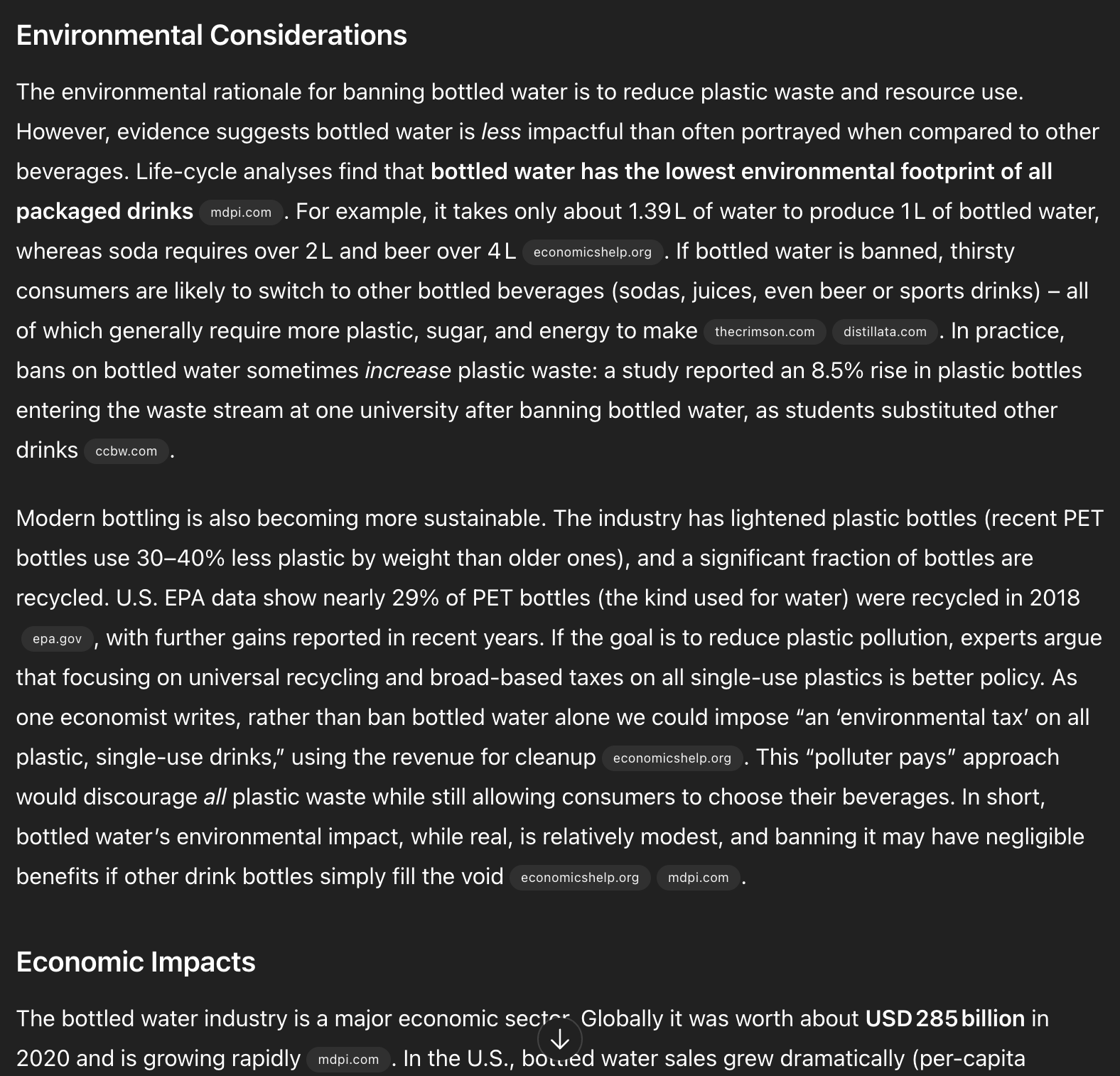

Figure 1: Illustrative diagram of the processing of a deep research agent’s response into the 8 metrics of the DeepTRACE framework. The description of each metric is given in Section 4.2.

Figure 1 shows the processing of a deep research model’s response into the 8 metrics of the DeepTRACE framework. We first go over the preliminary processing common to several metrics, then define each metric.

3.1.1 Preliminary Processing

When evaluating a GSE or a deep research agent, our evaluation framework requires the extraction of four content elements: (1) the user query, (2) the generated answer text, (3) the citations embedded in it, and (4) the sources they point to, each represented by a publicly accessible URL. Because the APIs made available by deep research agents and GSEs do not provide all of these elements, we implemented automated browser scripts to extract them for four popular GSEs (GPT 4.5/5, You.com, Perplexity.ai, and BingChat) and for the following deep research agents: GPT 5 Deep Research, You.com Deep Research, Perplexity.ai Deep Research, BingChat Think Deeper, and Gemini Deep Research. Extending the evaluation to other GSEs would require adapting the scripts to the specific website structure of the target GSE. Some operations below rely on LLM-based processing, for which we default to GPT-5; the prompts used are listed in Appendix E. When necessary, we evaluate the accuracy of LLM-based processing and report the level of agreement with manual annotation.

A first operation consists of decomposing the answer text into statements. Decomposing the answer into statements allows us to study how well the sources factually back the answer at a granular level, and is common in the fact-checking literature (Laban et al., 2022; Tang et al., 2024; Huang et al., 2024; Qiu et al., 2024). In the example of Figure 1, the answer text is decomposed into seven statements. Each statement is further assigned two attributes. Query Relevance is a binary attribute that indicates whether the statement contains answer elements relevant to the user query. Irrelevant statements are typically introductory or concluding statements that do not contain factual information (e.g., “That’s a great question!”, “Let me see what I can do here”). Pro vs. Con Statement is calculated only for leading debate queries (discussed in the next section) and is a ternary label that indicates whether the statement is pro, con, or neutral with respect to the bias implied in the query formulation.
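As a rough illustration of the statement-level record described above, the following sketch stores each decomposed statement with its two attributes. The class name, field names, and example sentences are hypothetical and not taken from the paper; in the paper the labels themselves are produced by LLM-based processing.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Statement:
    """One decomposed statement of an answer (hypothetical container)."""
    text: str
    query_relevant: bool           # Query Relevance: binary attribute
    stance: Optional[str] = None   # "pro", "con", or "neutral"; None for non-debate queries


def relevant_statements(statements: List[Statement]) -> List[Statement]:
    """Keep only statements that carry answer elements relevant to the query."""
    return [s for s in statements if s.query_relevant]


# Made-up example for a debate query; the labels are assigned by hand here.
example = [
    Statement("That's a great question!", query_relevant=False, stance="neutral"),
    Statement("Proponents argue the policy lowers costs.", True, "pro"),
    Statement("Critics note the policy may reduce access.", True, "con"),
]
kept = relevant_statements(example)
```

Keeping the stance optional reflects that the Pro vs. Con attribute is only computed for debate queries.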

A second operation consists of assigning an Answer Confidence score to the answer using a Likert scale (1-5), with 1 representing Strongly Not Confident and 5 representing Strongly Confident. Answer confidence is assigned by an LLM judge instructed with a prompt that provides examples of phrases used to express different levels of confidence based on the tone of the answer. This is specifically done for debate questions (Section 3.2). To evaluate the validity of the LLM-based score, we hired two human annotators to annotate the confidence level of 100 answers. We observed a Pearson correlation of 0.72 between the LLM judge and human annotators, indicating substantial agreement, and confirming the reliability of the LLM judge for confidence scoring.
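The agreement check above relies on the Pearson correlation between judge scores and human scores. A minimal sketch of that computation follows; the score lists are invented for illustration (they are not the paper's data), and the sketch assumes a single human score per answer, since the text does not specify how the two annotators' scores are combined.

```python
from math import sqrt
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two lists of equal length with at least 2 items")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / sqrt(var_x * var_y)


# Hypothetical Likert (1-5) confidence scores for six answers.
llm_scores = [5, 4, 2, 3, 5, 1]
human_scores = [4, 4, 2, 3, 5, 2]
r = pearson(llm_scores, human_scores)
```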

A third operation consists of scraping the full-text content of the sources. We leverage Jina.ai’s Reader tool (https://jina.ai/reader/) to extract the full text of a webpage given its URL. Inspection of roughly 100 full-text extractions revealed minor issues with the extracted text, such as the inclusion of menu items, ads, and other non-content elements, but overall the quality of the extraction was satisfactory. For roughly 15% of the URLs, the Reader tool returns an error either due to the web page being behind a paywall, or due to the page being unavailable (e.g., a 404 error). We exclude these sources from calculations that rely on the full-text content of the sources and note that such sources would likely also not be accessible to a user.

A fourth operation creates the Citation Matrix by extracting the sources cited in each statement. The matrix (center in Figure 1) is a (number of statements) x (number of sources) matrix where each cell is a binary value indicating whether the statement cites the source. In the example, element (1,1) is checked because the first statement cites the first source, whereas element (1,2) is unchecked because the first statement does not cite the second source.

A fifth operation creates the Factual Support Matrix by assigning for each (statement, source) pair a binary value indicating whether the source factually supports the statement. We leverage an LLM judge to assign each value in the matrix. A prompt including the extracted source content and the statement is constructed, and the LLM must determine whether the statement is supported or not by the source. Factual support evaluation is an open challenge in NLP (Tang et al., 2024; Kim et al., 2024), but top LLMs (GPT-5/4o) have been shown to perform well on the task (Laban et al., 2023a). To understand the degree of reliability of LLM-based factual support evaluation in our context, we hired two annotators to perform 100 factual verification tasks manually. We observed a Pearson correlation of 0.62 between the LLM judge and manual labels, indicating moderate agreement. Relying on an LLM to measure factual support is a limiting factor of our evaluation framework, necessary to scale our experiments: we ran on the order of 80,000 factual support evaluations in upcoming experiments, which would have been cost-prohibitive through manual annotation. In the first row of the example Factual Support matrix, columns 1 and 4 are checked, indicating that sources 1 and 4 factually support the first statement.
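The two matrices can be sketched as nested lists with one row per statement and one column per source. The sketch below assumes citations appear in the statement text as bracketed numbers such as `[1]`, which is an assumption about the extracted format rather than something the paper specifies. The `judge` callable is a hypothetical stand-in for the LLM judge and is replaced here by a toy substring check.

```python
import re
from typing import Callable, List


def citation_matrix(statements: List[str], num_sources: int) -> List[List[int]]:
    """Rows are statements, columns are sources; 1 if the statement cites the source."""
    matrix = []
    for text in statements:
        row = [0] * num_sources
        # Assumed citation format: 1-indexed bracketed markers like "[3]".
        for marker in re.findall(r"\[(\d+)\]", text):
            idx = int(marker) - 1
            if 0 <= idx < num_sources:
                row[idx] = 1
        matrix.append(row)
    return matrix


def factual_support_matrix(
    statements: List[str],
    sources: List[str],
    judge: Callable[[str, str], bool],
) -> List[List[int]]:
    """Rows are statements, columns are sources; 1 if judge(statement, source) is True."""
    return [[1 if judge(st, src) else 0 for src in sources] for st in statements]


# Toy inputs; a real run would pass scraped source text and an LLM-backed judge.
cited = citation_matrix(["Claim A [1][4].", "Claim B [2]."], num_sources=5)
supported = factual_support_matrix(
    ["alpha", "delta"],
    ["alpha beta", "gamma"],
    judge=lambda statement, source: statement in source,
)
```

Sources whose full text could not be extracted would need to be dropped before building the support matrix, in line with the exclusion rule described for the scraping step.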

For the annotation efforts, we hired a total of four annotators who are either professional annotators recruited through User Interviews (www.userinterviews.com/) or graduate students enrolled in a computer science degree. We provided clear guidelines to annotators for the task and had individual Slack conversations where each annotator could discuss the task with the authors of the paper. Annotators were compensated at a rate of $25 USD per hour. The annotation protocol was reviewed and approved by the institution’s Ethics Office. With the preliminary processing complete, we can now define the 8 metrics of the DeepTrace Evaluation Framework.

3.1.2 DeepTrace Metrics and Definitions

I. One-Sided Answer: This binary metric is only computed on debate questions, leveraging the Pro vs. Con statement attribute. An answer is considered one-sided if it does not include both pro and con statements on the debate question.

$$

\text{One-Sided Answer}=\begin{cases}0&\text{if both pro and con statements are present}\\1&\text{otherwise}\end{cases} \tag{1}

$$

In the example of Figure 1, One-Sided Answer = 0 as there are three pro statements and two con statements. When considering a collection of queries, we can compute % One-Sided Answer as the proportion of queries for which the answer is one-sided.
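A minimal sketch of this metric follows, assuming each statement carries a stance label of `"pro"`, `"con"`, or `"neutral"`. The label strings and function names are hypothetical choices for illustration.

```python
def one_sided_answer(stances):
    """Eq. 1: return 0 if both pro and con statements are present, else 1."""
    has_pro = "pro" in stances
    has_con = "con" in stances
    return 0 if (has_pro and has_con) else 1


def percent_one_sided(answers):
    """Percentage of debate answers that are one-sided."""
    return 100.0 * sum(one_sided_answer(a) for a in answers) / len(answers)


# Stance counts mirroring the Figure 1 example: three pro, two con.
figure1_like = ["pro", "pro", "pro", "con", "con", "neutral"]
result = one_sided_answer(figure1_like)  # balanced answer, so 0
```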

II. Overconfident Answer: This binary metric leverages the Answer Confidence score, combined with the One-Sided Answer metric and is only computed for debate queries. An answer is considered overconfident if it is both one-sided and has a confidence score of 5 (i.e., Strongly Confident).

$$

\text{Overconfident Answer}=\begin{cases}1&\text{if One-Sided Answer = 1 and Answer Confidence = 5}\\0&\text{otherwise}\end{cases} \tag{2}

$$

We implement a confidence metric in conjunction with the one-sided metric as it is challenging to determine the acceptable confidence level for any query. However, based on the user study findings by Narayanan Venkit et al. (2025), an undesired trait in an answer is to be overconfident while not providing a comprehensive and balanced view, which we capture with this metric. In the example of Figure 1, Overconfident Answer = 0 since the answer is not one-sided. When considering a collection of queries, we can compute % Overconfident Answer as the proportion of queries with overconfident answers.
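The conjunction in Eq. 2 can be sketched as below, assuming the Answer Confidence score is an integer on a 1 to 5 scale where 5 means Strongly Confident. The function names and the toy inputs are illustrative assumptions.

```python
def overconfident_answer(one_sided, confidence):
    """Eq. 2: 1 only when the answer is one-sided AND confidence equals 5."""
    return 1 if (one_sided == 1 and confidence == 5) else 0


def percent_overconfident(answers):
    """answers: list of (one_sided, confidence) pairs for debate queries."""
    flags = [overconfident_answer(o, c) for o, c in answers]
    return 100.0 * sum(flags) / len(flags)


# Hypothetical debate answers: (one-sided flag, confidence on a 1-5 scale).
debate_answers = [(1, 5), (1, 4), (0, 5), (1, 5)]
share = percent_overconfident(debate_answers)
```

Note that a balanced answer with confidence 5 is not flagged, which reflects the choice to penalize high confidence only when it accompanies a one-sided view.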

III. Relevant Statement: This ratio measures the fraction of relevant statements in the answer text in relation to the total number of statements.

$$

\text{Relevant Statement}=\frac{\text{Number of Relevant Statements}}{\text{Total Number of Statements}} \tag{3}

$$

This metric captures how to the point the answer is, penalizing introductory and concluding statements that do not directly address the user query. In the example of Figure 1, Relevant Statement = 6/7.
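As a small sketch of Eq. 3, assume each statement has a boolean relevance flag (the flag list below is hypothetical and only matches the 6/7 count of the Figure 1 example, not its actual statements).

```python
from fractions import Fraction


def relevant_statement_ratio(is_relevant):
    """Eq. 3: relevant statements divided by total statements."""
    return Fraction(sum(is_relevant), len(is_relevant))


# Seven statements, six of them relevant, as in the Figure 1 example.
flags = [True, True, True, True, True, True, False]
ratio = relevant_statement_ratio(flags)
```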

3.1.3 Sources Metrics

IV. Uncited Sources: This ratio metric measures the fraction of listed sources that are never cited in the answer text.

$$

\text{Uncited Sources}=\frac{\text{Number of Uncited Sources}}{\text{Number of Listed Sources}} \tag{4}

$$

This metric can be computed from the citation matrix: any empty column corresponds to an uncited source. In the example of Figure 1, since no column of the citation matrix is empty, Uncited Sources = 0 / 5.
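The empty-column check can be sketched as follows, assuming the citation matrix is stored as a list of rows (one per statement) with one binary entry per listed source. The function name and the toy matrix are hypothetical.

```python
from fractions import Fraction


def uncited_sources(citation_matrix):
    """Return (indices of uncited sources, uncited / listed ratio).

    A source is uncited when its column contains no checked cell.
    """
    num_sources = len(citation_matrix[0])
    uncited = [
        j for j in range(num_sources)
        if not any(row[j] for row in citation_matrix)
    ]
    return uncited, Fraction(len(uncited), num_sources)


# Hypothetical 3-statement x 4-source matrix; source index 2 is never cited.
toy_citations = [
    [1, 0, 0, 0],
    [0, 1, 0, 1],
    [1, 0, 0, 0],
]
uncited, ratio = uncited_sources(toy_citations)
```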

V. Unsupported Statements: This ratio metric measures the fraction of relevant statements that are not factually supported by any of the listed sources. Any row of the factual support matrix with no checked cell corresponds to an unsupported statement.

$$

\text{Unsupported Statements}=\frac{\text{No. of Unsupported St.}}{\text{No. of Relevant St.}} \tag{5}

$$

In the example of Figure 1, the third row of the factual support matrix is the only entirely unchecked row, indicating that the third statement is unsupported. Therefore, Unsupported Statements = 1 / 6.
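A minimal sketch of the empty-row check, assuming the factual support matrix is a list of binary rows and that relevance flags are available per statement. The names and the toy data are illustrative assumptions.

```python
from fractions import Fraction


def unsupported_statements(support_matrix, is_relevant):
    """Return (indices of unsupported relevant statements, Eq. 5 ratio).

    A relevant statement is unsupported when its row has no checked cell.
    """
    relevant_rows = [i for i, rel in enumerate(is_relevant) if rel]
    unsupported = [i for i in relevant_rows if not any(support_matrix[i])]
    return unsupported, Fraction(len(unsupported), len(relevant_rows))


# Hypothetical data: four statements, two sources, last statement irrelevant.
toy_support = [
    [1, 0],
    [0, 0],
    [0, 1],
    [0, 0],
]
toy_relevance = [True, True, True, False]
unsupported, ratio = unsupported_statements(toy_support, toy_relevance)
```

The irrelevant fourth statement has an empty row as well, but it is excluded from both the numerator and the denominator.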

VI. Source Necessity: This ratio metric measures the fraction of sources that are necessary to factually support all relevant statements in the answer text. Determining which sources are necessary and which are redundant can be formulated as a graph problem. We transform the factual support matrix into a (statement, source) bipartite graph. Finding which sources are necessary is equivalent to determining the minimum vertex cover for source nodes on the bipartite graph. We use the Hopcroft-Karp algorithm (Hopcroft & Karp, 1973) to find the minimum vertex cover, which tells us which sources are necessary to cover factually supported statements.

$$

\text{Source Necessity}=\frac{\text{Number of Necessary Sources}}{\text{Number of Listed Sources}} \tag{6}

$$

In the example of Figure 1, one possible minimum vertex cover consists of sources 1, 2, and 3 (another consists of 2, 3, and 4). Therefore, Source Necessity = 3 / 5. This metric captures not only whether a source is cited but also whether it truly provides support for statements in the answer that would not be covered by other sources.
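To make the idea of a smallest covering set of sources concrete, the sketch below uses a plain exhaustive search over source subsets. This is a stand-in chosen for readability, not the Hopcroft-Karp-based procedure named above, and it is exponential in the number of sources, so it only suits small examples. The function names and the toy matrix are hypothetical, and the matrix is not the one from Figure 1.

```python
from fractions import Fraction
from itertools import combinations


def necessary_sources(support_matrix):
    """Smallest set of source indices that together support every statement
    that has at least one supporting source (exhaustive search)."""
    num_sources = len(support_matrix[0])
    supported_rows = [row for row in support_matrix if any(row)]
    for size in range(num_sources + 1):
        for subset in combinations(range(num_sources), size):
            if all(any(row[j] for j in subset) for row in supported_rows):
                return list(subset)
    return list(range(num_sources))  # not reached: the full set always covers


def source_necessity(support_matrix):
    """Eq. 6: necessary sources divided by listed sources."""
    needed = necessary_sources(support_matrix)
    return Fraction(len(needed), len(support_matrix[0]))


# Hypothetical 4-statement x 4-source factual support matrix.
toy_support = [
    [1, 0, 0, 1],  # supported by sources 0 and 3
    [0, 1, 0, 0],  # supported only by source 1
    [0, 0, 0, 0],  # unsupported statement, ignored by the cover
    [0, 1, 0, 1],  # supported by sources 1 and 3
]
needed = necessary_sources(toy_support)
ratio = source_necessity(toy_support)
```

As in the Figure 1 discussion, the smallest covering set need not be unique: here sources 1 and 3 would cover the same statements, and the search simply returns the first set it finds.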

3.1.4 Citation Metrics

VII. Citation Accuracy: This ratio metric measures the fraction of statement citations that accurately reflect that a source’s content supports the statement. This metric can be computed by measuring the overlap between the citation and the factual support matrices, and dividing by the number of citations:

$$

\text{Cit. Acc.}=\frac{\sum{\text{Citation Mtx}\odot\text{Factual Support Mtx}}}{\sum{\text{Citation Mtx}}} \tag{7}

$$

where $\odot$ is element-wise multiplication and $\sum$ is the sum of all elements in the matrix. In the example of Figure 1, there are four accurate citations ((1,1), (2,2), (4,2), and (5,5)) and three inaccurate citations ((3,1), (3,3), and (6,4)), so Citation Accuracy = 4 / 7.
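Eq. 7 can be sketched in plain Python as follows, assuming both matrices are lists of binary rows with identical shapes. The function name, the `None` return for answers without citations, and the toy matrices are assumptions made for illustration.

```python
from fractions import Fraction


def citation_accuracy(citation_matrix, support_matrix):
    """Eq. 7: overlap of citations and factual support, divided by the
    number of citations. Returns None when the answer has no citations."""
    accurate = sum(
        c * s
        for c_row, s_row in zip(citation_matrix, support_matrix)
        for c, s in zip(c_row, s_row)
    )
    total_citations = sum(sum(row) for row in citation_matrix)
    if total_citations == 0:
        return None
    return Fraction(accurate, total_citations)


# Hypothetical 3-statement x 2-source matrices.
toy_citations = [[1, 0], [1, 1], [0, 0]]
toy_support = [[1, 1], [0, 1], [1, 0]]
accuracy = citation_accuracy(toy_citations, toy_support)
```

Of the three citations in the toy data, two point at a source that supports the statement, so the metric behaves like a precision over citations.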

VIII. Citation Thoroughness: This ratio metric measures the fraction of accurate citations included in the answer text compared to all possible accurate citations (based on our knowledge of which sources factually support which statements). This metric can be computed by measuring the overlap between the citation and the factual support matrices:

$$

\text{Cit. Th.}=\frac{\sum{\text{Citation Mtx}\odot\text{Factual Support Mtx}}}{\sum{\text{Factual Support Mtx}}} \tag{8}

$$

In the example of Figure 1, there are four accurate citations, and ten factual support relationships (such as (1,4), (2,5), etc.), so Citation Thoroughness = 4 / 10.
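Eq. 8 can equivalently be written over sets of (statement, source) pairs, where each pair stands for a checked cell. The sketch below takes that view; the function name, the `None` return when nothing is supported, and the toy pairs are hypothetical.

```python
from fractions import Fraction


def citation_thoroughness(cited_pairs, supported_pairs):
    """Eq. 8 over sets of (statement, source) pairs: accurate citations
    divided by all factual support relationships."""
    if not supported_pairs:
        return None
    return Fraction(len(cited_pairs & supported_pairs), len(supported_pairs))


# Hypothetical checked cells of the two matrices.
cited = {(1, 1), (2, 1), (2, 2)}
supported = {(1, 1), (1, 2), (2, 2), (3, 1)}
thoroughness = citation_thoroughness(cited, supported)
```

In this form the metric reads as a recall: of the four support relationships available, the answer cites two.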

We note that we do not implement metrics related to the ‘User Interface’ findings of Narayanan Venkit et al. (2025), as they are not directly computable from the answer text, citation, and source content and would likely require manual evaluation or computer-vision-based methods that are out of the scope of this work.

3.2 DeepTrace Corpus and Framework

To perform the above evaluation, we use and release the DeepTrace dataset, which is used to prompt responses and assess model behavior. The dataset comprises 303 questions drawn from the sessions conducted by Narayanan Venkit et al. (2025). These questions are divided into two categories:

- Debate Questions (N=168): These questions are sourced from the ProCon website (https://www.procon.org/), a nonpartisan platform providing balanced information on contentious issues. They tend to have multiple perspectives and are often subjects of debate.

- Expertise Questions (N=135): These questions were contributed by the participants from Narayanan Venkit et al. (2025), who represented experts from diverse fields including meteorology, medicine, and human-computer interaction. They are research-oriented questions that tend to require multiple searches/hops.

An example debate question in DeepTrace is “Why can alternative energy effectively not replace fossil fuels?”, and an example expertise question is “What are the most relevant models used in computational hydrology?”. We then use browser scripts that we developed to run each query through a total of 9 public GSE and DR agents and extract all components required for metric-based evaluation. We compute the metrics on the relevant queries: most metrics are computed on all 2,727 samples (303 queries × 9 models), while a few are computed only on the debate queries (e.g., One-Sided Answer, Overconfident Answer). Using the DeepTrace dataset, we evaluate the models with the above 8 metrics to characterize their behavior and weaknesses. The modular design of the DeepTrace framework and dataset allows for flexible adaptation: the dataset can be modified for continued evaluation of GSE and deep research agents across different contexts, so the framework is not solely dependent on this specific dataset.

3.3 Public Deep Research Agents Evaluation

| Generative Search Engines | You | Bing | PPLX | GPT 4.5 |
| --- | --- | --- | --- | --- |
| Basic Statistics | | | | |
| Number of Sources | 3.5 | 4.0 | 3.4 | 3.4 |
| Number of Statements | 13.9 | 10.5 | 18.8 | 12.0 |
| # Citations / Statement | 0.4 | 0.4 | 0.5 | 0.4 |
| Answer Text Metrics | | | | |
| %One-Sided Answer | 51.6 ⚫ | 48.7 ⚫ | 83.4 ▼ | 90.4 ▼ |
| %Overconfident Answer | 19.4 ▲ | 29.5 ⚫ | 81.6 ▼ | 70.7 ▼ |
| %Relevant Statements | 75.5 ⚫ | 79.3 ⚫ | 82.0 ⚫ | 85.4 ⚫ |
| Sources Metrics | | | | |
| %Uncited Sources | 1.1 ▲ | 36.2 ▼ | 8.4 ⚫ | 0.0 ▲ |
| %Unsupported Statements | 30.8 ▼ | 23.1 ⚫ | 31.6 ▼ | 47.0 ▼ |
| %Source Necessity | 69.0 ⚫ | 50.4 ▼ | 68.9 ⚫ | 67.3 ⚫ |
| Citation Metrics | | | | |
| %Citation Accuracy | 68.3 ⚫ | 65.8 ⚫ | 49.0 ▼ | 39.8 ▼ |
| %Citation Thoroughness | 24.4 ⚫ | 20.5 ⚫ | 23.0 ⚫ | 23.8 ⚫ |
| DeepTrace Score Card | | | | |
| Answer Text Metrics | ⚫ ▲ ⚫ | ⚫ ⚫ ⚫ | ▼ ▼ ⚫ | ▼ ▼ ⚫ |
| Sources Metrics | ▲ ▼ ⚫ | ▼ ⚫ ▼ | ⚫ ▼ ⚫ | ▲ ▼ ⚫ |
| Citation Metrics | ⚫ ⚫ | ⚫ ⚫ | ▼ ⚫ | ▼ ⚫ |

(a) Score Card Evaluation of GSE

<details>

<summary>x2.png Details</summary>

### Visual Description

## Horizontal Bar Chart: Answer Confidence Score (all queries)

### Overview

The image is a horizontal bar chart comparing the answer confidence scores of four different search engines: BingChat, SearchGPT, Perplexity, and YouCom. The chart displays two components for each search engine: the number of answers that were not confident (light blue) and the number of answers that were confident (dark blue). BingChat also has a small red bar, indicating the number of answers that were incorrect. The title of the chart is "Answer Confidence Score (all queries)".

### Components/Axes

* **Title:** Answer Confidence Score (all queries)

* **Y-axis Labels (Search Engines):** BingChat, SearchGPT, Perplexity, YouCom

* **X-axis:** The x-axis is not explicitly labeled, but it represents the number of answers.

* **Bar Colors:**

* Light Blue: Number of answers that were not confident

* Dark Blue: Number of answers that were confident

* Red: Number of answers that were incorrect (only present for BingChat)

### Detailed Analysis

* **BingChat:**

* Incorrect Answers: Represented by a small red bar at the beginning of the bar. The value is not explicitly stated, but it appears to be approximately 5.

* Not Confident Answers: 98 (light blue)

* Confident Answers: 191 (dark blue)

* **SearchGPT:**

* Not Confident Answers: 49 (light blue)

* Confident Answers: 247 (dark blue)

* **Perplexity:**

* Not Confident Answers: 25 (light blue)

* Confident Answers: 270 (dark blue)

* **YouCom:**

* Not Confident Answers: 137 (light blue)

* Confident Answers: 157 (dark blue)

### Key Observations

* Perplexity has the highest number of confident answers (270) and the lowest number of not confident answers (25).

* YouCom has the highest number of not confident answers (137).

* BingChat is the only search engine with incorrect answers, indicated by the red bar.

* SearchGPT has a relatively low number of not confident answers (49) and a high number of confident answers (247).

### Interpretation

The chart suggests that Perplexity has the highest answer confidence score among the four search engines, as it provides the most confident answers and the fewest not confident answers. YouCom appears to have the lowest confidence score, with the highest number of not confident answers. BingChat's score is impacted by the presence of incorrect answers. SearchGPT performs well, with a high number of confident answers and a low number of not confident answers.

The data demonstrates the relative performance of these search engines in terms of answer confidence. It highlights the trade-offs between providing a large number of answers and ensuring the confidence of those answers. The presence of incorrect answers for BingChat suggests a potential area for improvement in its answer generation process.

</details>

<details>

<summary>x3.png Details</summary>

### Visual Description

## Horizontal Bar Chart: Answer Confidence Score (debate queries)

### Overview

The image is a horizontal bar chart comparing the answer confidence scores of different search engines (BingChat, SearchGPT, Perplexity, and YouCom) for debate queries. The chart displays two confidence scores for each search engine, represented by two different shades of blue, except for BingChat which has a red segment.

### Components/Axes

* **Title:** Answer Confidence Score (debate queries)

* **Y-axis (Labels):** BingChat, SearchGPT, Perplexity, YouCom

* **X-axis:** Implied, but not explicitly labeled. Represents the answer confidence score.

* **Colors:**

* Light Blue: Represents the first confidence score.

* Dark Blue: Represents the second confidence score.

* Red: Represents a different category for BingChat.

* **Vertical Dashed Line:** A vertical dashed line is present near the left side of the chart, possibly indicating a threshold or baseline.

### Detailed Analysis

Here's a breakdown of the confidence scores for each search engine:

* **BingChat:**

* Red: Approximately 10

* Light Blue: 78

* Dark Blue: 83

* **SearchGPT:**

* Light Blue: 37

* Dark Blue: 131

* **Perplexity:**

* Light Blue: Approximately 15

* Dark Blue: 160

* **YouCom:**

* Light Blue: 110

* Dark Blue: 56

### Key Observations

* Perplexity has the highest dark blue confidence score (160).

* SearchGPT has the second highest dark blue confidence score (131).

* YouCom has the highest light blue confidence score (110).

* BingChat is the only search engine with a red segment.

* Perplexity has the lowest light blue confidence score (approximately 15).

### Interpretation

The chart compares the answer confidence scores of different search engines for debate queries. The two shades of blue likely represent different aspects or methods of calculating the confidence score. The red segment for BingChat suggests a different evaluation metric or category. Perplexity appears to have the highest overall confidence based on the dark blue score, while YouCom has a relatively high light blue score but a lower dark blue score. The vertical dashed line may represent a minimum acceptable confidence level. The data suggests that different search engines have varying strengths in terms of answer confidence for debate queries.

</details>

<details>

<summary>x4.png Details</summary>

### Visual Description

## Chart: Answer Confidence Score for Expertise Queries

### Overview

The image is a horizontal bar chart comparing the answer confidence scores of four different search engines (BingChat, SearchGPT, Perplexity, and YouCom) for expertise queries. The chart displays the number of responses categorized into different confidence levels: "Strongly Not Confident," "Not Confident," "Neutral," "Confident," and "Strongly Confident."

### Components/Axes

* **Title:** Answer Confidence Score (expertise queries)

* **X-axis:** Number of Responses

* **Y-axis:** Search Engines (BingChat, SearchGPT, Perplexity, YouCom)

* **Legend:** Located at the bottom of the chart.

* Strongly Not Confident (Dark Red)

* Not Confident (Light Red)

* Neutral (Light Gray)

* Confident (Light Blue)

* Strongly Confident (Dark Blue)

### Detailed Analysis

The chart presents the number of responses for each search engine, broken down by confidence level.

* **BingChat:**

* Confident: 20

* Strongly Confident: 108

* **SearchGPT:**

* Confident: 12

* Strongly Confident: 116

* **Perplexity:**

* Not Confident: 17

* Strongly Confident: 110

* **YouCom:**

* Confident: 27

* Strongly Confident: 101

### Key Observations

* All search engines have a significant number of responses categorized as "Strongly Confident."

* Perplexity has a small number of "Not Confident" responses, while the others have "Confident" responses.

* SearchGPT has the highest number of "Strongly Confident" responses among the four.

### Interpretation

The chart suggests that, for expertise queries, all four search engines generally elicit confident responses. SearchGPT appears to perform slightly better in terms of generating "Strongly Confident" answers compared to the others. Perplexity stands out with a small number of "Not Confident" responses, indicating a potential area for improvement. The data highlights the varying levels of confidence users have in the answers provided by these search engines when seeking expert information.

</details>

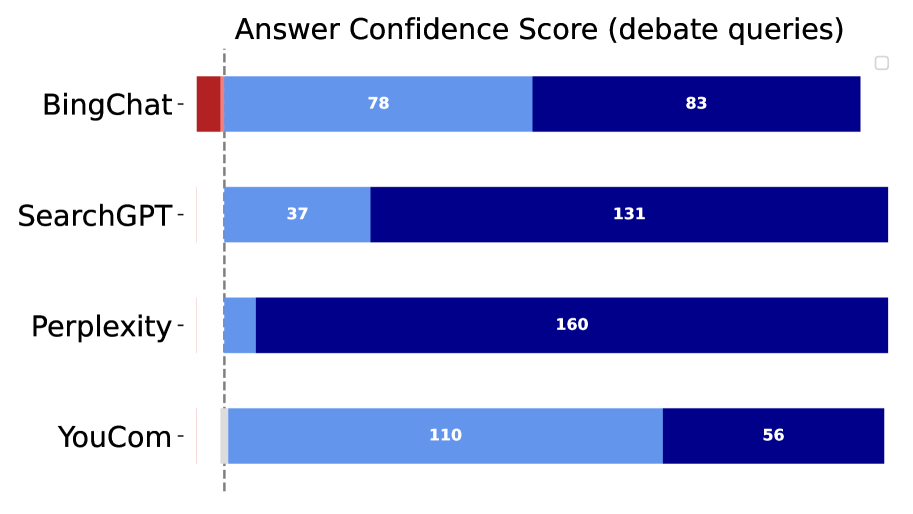

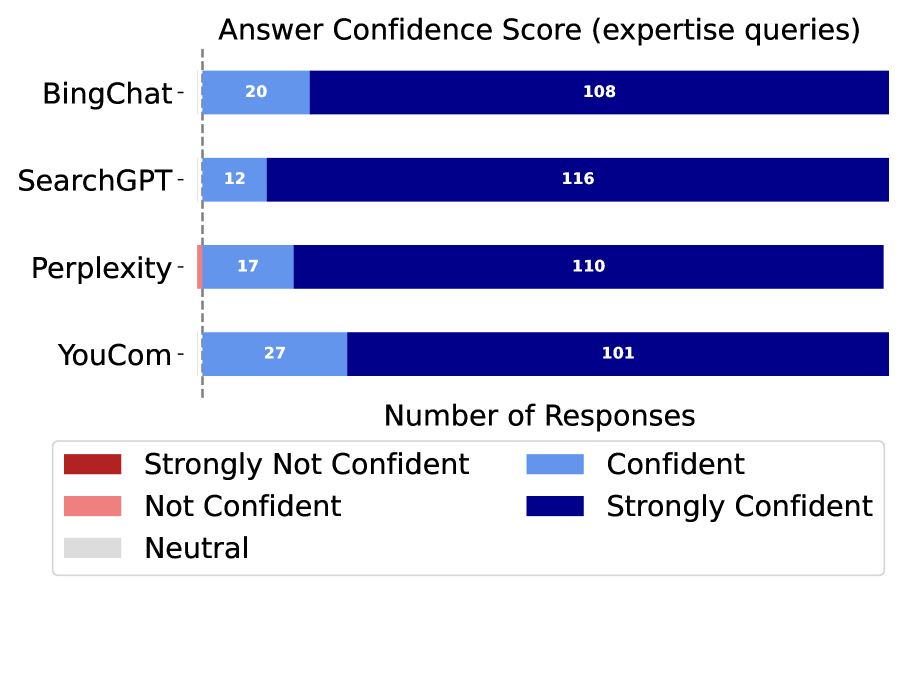

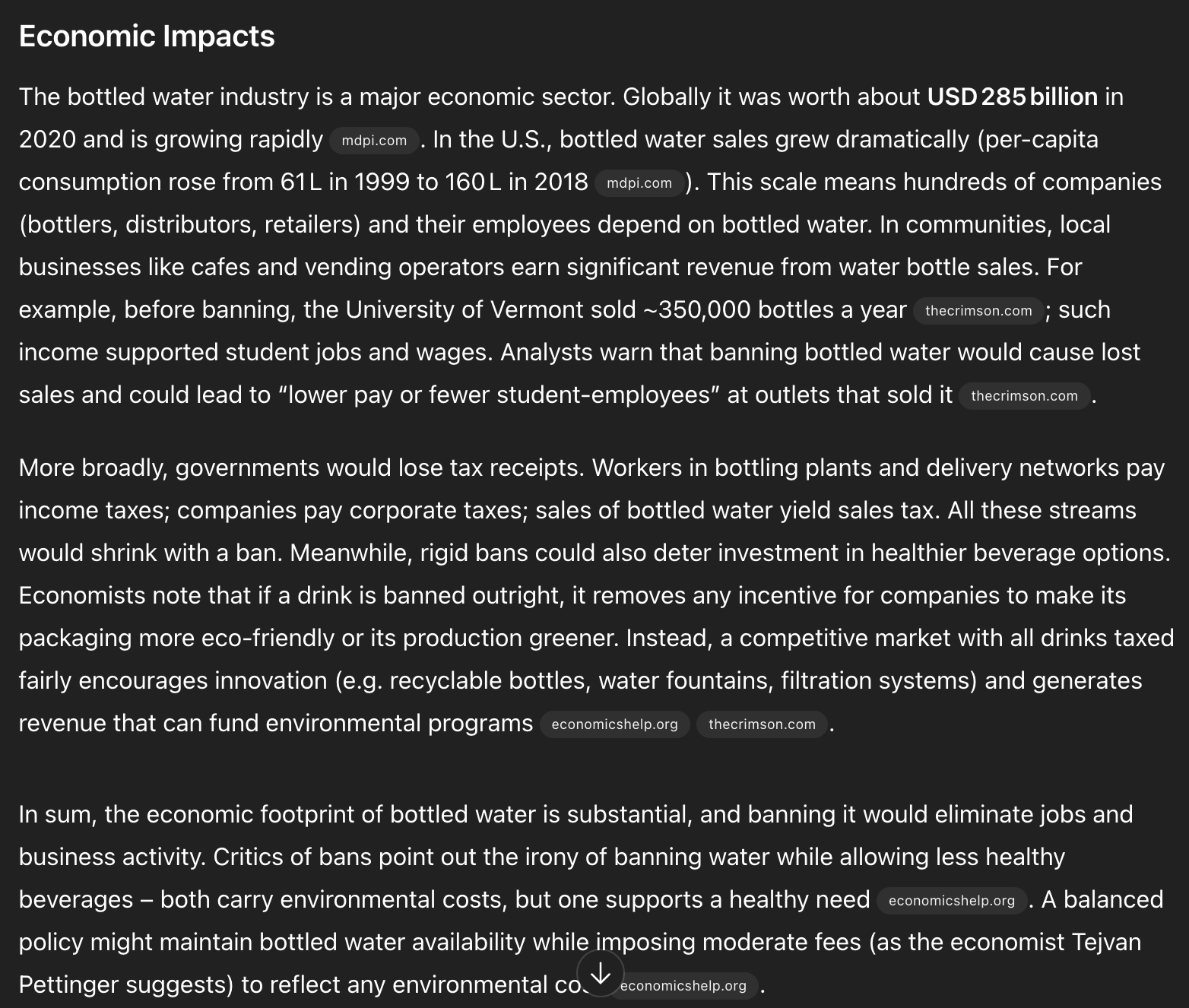

(b) Confidence Score Distribution

Figure 2: Quantitative evaluation of four GSE (You.com, BingChat, Perplexity, and GPT 4.5) based on the eight metrics of the DeepTrace framework. Panel (a) is the metric report, color-coded for ▲ acceptable, ⚫ borderline, and ▼ problematic performance. Panel (b) plots distributions of answer confidence.

In the following section, we audit publicly available deep research agents and GSE to assess their societal impact. These systems, often referred to as AIaaS (AI as a Service) (Lins et al., 2021), are marketed as ready-to-use models requiring no prior expertise. To focus on publicly accessible systems, we selected the web search and deep research capabilities of Perplexity, Bing Copilot, GPT (4.5/5), and YouChat for evaluation.

4 Results

Figure 2 (GSE) and Table 1 (Deep Research) show the results of the metrics-based evaluation on the DeepTrace corpus as of August 27, 2025. In the table on the left, each numerical value is assigned a color based on whether the score reflects ▲ acceptable, ⚫ borderline, or ▼ problematic performance. Thresholds for the colors are listed in Table 2, and Appendix B explains each threshold based on the qualitative inputs obtained from Narayanan Venkit et al. (2025).

| Deep Research Agents | GPT-5(DR) | YouChat(DR) | GPT-5(S) | PPLX(DR) | Copilot (TD) | Gemini (DR) |
| --- | --- | --- | --- | --- | --- | --- |
| Basic Statistics | | | | | | |
| Number of Sources | 18.3 | 57.2 | 13.5 | 7.7 | 3.6 | 33.2 |
| Number of Statements | 141.6 | 52.7 | 34.9 | 30.1 | 36.7 | 23.9 |
| # Citations / Statement | 1.4 | 0.8 | 0.4 | 0.2 | 0.3 | 0.2 |
| Answer Text Metrics | | | | | | |
| %One-Sided Answer | 54.67 ▼ | 63.1 ▼ | 69.7 ▼ | 63.1 ▼ | 94.8 ▼ | 80.1 ▼ |
| %Overconfident Answer | 15.2 ▲ | 19.6 ▲ | 16.4 ▲ | 5.6 ▲ | 0.0 ▲ | 11.2 ▲ |
| %Relevant Statements | 87.5 ⚫ | 45.5 ▼ | 41.1 ▼ | 22.5 ▼ | 13.2 ▼ | 12.4 ▼ |
| Sources Metrics | | | | | | |
| %Uncited Sources | 0.0 ▲ | 66.3 ▼ | 51.7 ▼ | 57.5 ▼ | 32.6 ▼ | 14.5 ▼ |
| %Unsupported Statements | 12.5 ⚫ | 74.6 ▼ | 58.9 ▼ | 97.5 ▼ | 90.2 ▼ | 53.6 ▼ |
| %Source Necessity | 87.5 ▲ | 63.2 ⚫ | 32.8 ▼ | 5.5 ▼ | 31.2 ▼ | 33.1 ▼ |
| Citation Metrics | | | | | | |
| %Citation Accuracy | 79.1 ⚫ | 72.3 ⚫ | 31.4 ▼ | 58.0 ⚫ | 62.1 ⚫ | 50.3 ⚫ |
| %Citation Thoroughness | 87.5 ▲ | 83.5 ▲ | 17.9 ▼ | 9.1 ▼ | 13.2 ▼ | 27.1 ⚫ |

| DeepTRACE Eval Score Card | | | | | | |

|

<details>

<summary>Images/icons/answer_text_color.png Details</summary>

Icon: a pink letter "T" inside a selection box.

</details>

Answer Text Metrics | ▼ ▲ ⚫ | ▼ ▲ ▼ | ▼ ▲ ▼ | ▼ ▲ ▼ | ▼ ▲ ▼ | ▼ ▲ ▼ |

|

Sources Metrics | ▲ ⚫ ▲ | ▼▼ ⚫ | ▼▼▼ | ▼▼▼ | ▼▼▼ | ▼▼▼ |

|

Citation Metrics | ▲▲ | ⚫ ▲ | ▼▼ | ⚫ ▼ | ⚫ ▼ | ⚫⚫ |

Table 1: DeepTRACE results for our Deep Research (DR) based models: GPT-5, YouChat, Perplexity (PPLX), Copilot Think Deeper, and Gemini. The table also includes the GPT-5 Web Search (S) setting. Metrics are rated according to DeepTRACE thresholds: ▲ acceptable, ⚫ borderline, ▼ problematic. These results show that deep research agents still struggle with unsupported statements, poor source usage, and unreliable citation practices across models.
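To make the sources and citation rows of Table 1 easier to interpret, the following is a minimal sketch of how such percentages could be derived from a binary citation matrix and a binary factual-support matrix. The function name `audit_metrics`, the dictionary keys, the toy matrices, and the simplified metric definitions used here are assumptions for illustration only; the formal definitions are those given in the framework description, and source necessity is omitted because it depends on that definition.

```python
from typing import Dict, List

# Rows are statements, columns are listed sources, entries are 0 or 1.
Matrix = List[List[int]]


def audit_metrics(citation: Matrix, support: Matrix) -> Dict[str, float]:
    """Illustrative (assumed) definitions of four percentage metrics.

    citation[i][j] == 1: statement i cites source j.
    support[i][j] == 1: source j factually supports statement i.
    """
    n_statements = len(citation)
    n_sources = len(citation[0]) if n_statements else 0

    pairs = [(i, j) for i in range(n_statements) for j in range(n_sources)]
    cited = [(i, j) for i, j in pairs if citation[i][j]]
    supported = [(i, j) for i, j in pairs if support[i][j]]
    cited_and_supported = [(i, j) for i, j in cited if support[i][j]]

    # A source is uncited if no statement cites it.
    uncited_sources = [
        j for j in range(n_sources)
        if not any(citation[i][j] for i in range(n_statements))
    ]
    # A statement is unsupported if no listed source supports it.
    unsupported_statements = [
        i for i in range(n_statements) if not any(support[i])
    ]

    def pct(part: int, whole: int) -> float:
        return 100.0 * part / whole if whole else 0.0

    return {
        "pct_uncited_sources": pct(len(uncited_sources), n_sources),
        "pct_unsupported_statements": pct(len(unsupported_statements), n_statements),
        # Of the citations made, how many point to a supporting source.
        "pct_citation_accuracy": pct(len(cited_and_supported), len(cited)),
        # Of the available supporting pairs, how many are actually cited.
        "pct_citation_thoroughness": pct(len(cited_and_supported), len(supported)),
    }


# Toy example (hypothetical data): 4 statements, 3 listed sources.
example_citation = [[1, 0, 0], [1, 1, 0], [0, 0, 0], [0, 1, 0]]
example_support = [[1, 0, 0], [0, 1, 0], [0, 0, 0], [1, 1, 0]]
metrics = audit_metrics(example_citation, example_support)
print(metrics)
```

In this toy case one of the three sources is never cited, one of the four statements has no supporting source, and three of the four citations point to a supporting source. Under these assumed definitions, accuracy and thoroughness can diverge: a system may cite correctly but sparsely, or cite densely but inaccurately, which is the kind of pattern visible across the columns of Table 1.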

Generative Search Engines.

As shown in Figure 2, for answer text metrics, one-sidedness remains an issue (50–80%), with Perplexity performing worst, generating one-sided responses in over 83% of debate queries despite producing the longest answers (18.8 statements per response on average). Confidence calibration also varies: BingChat and You.com reduce confidence when addressing debate queries, whereas Perplexity maintains uniformly high confidence (90%+ very confident), resulting in overconfident yet one-sided answers on politically or socially contentious prompts. On relevance, GSE models perform comparably (75–85% relevant statements), which indicates better alignment with user queries relative to their DR counterparts. For source metrics, BingChat exemplifies the quantity-without-quality trade-off: it lists more sources on average (4.0), yet over a third remain uncited and only about half are necessary. You.com and Perplexity list slightly fewer sources (3.4–3.5) but still struggle with unsupported claims (23–47%). Finally, on citation metrics, all three engines show relatively low citation accuracy (40–68%), with frequent misattribution. Even when a supporting source exists, models often cite an irrelevant one, preventing users from verifying factual validity. Citation thoroughness is also limited, with engines typically citing only a subset of the available supporting evidence. Our results therefore align with the findings of Narayanan Venkit (2023) that such models can contribute to generating echo chambers, leaving users with very little autonomy to search for and select the articles they prefer.

Deep Research Agents.

In the context of answer text, Table 1 shows that DR modes do not eliminate one-sidedness: rates remain high across the board (54.7–94.8%). Appendix D shows how GPT-5 deep research gives one-sided answers to questions framed pro and con on the same debate, without covering the debate more generally. This showcases the sycophantic behavior of aligning only with the user's perspective, which can create echo chambers in search. Overconfidence is consistently low across DR engines (<20%), indicating that calibration of language hedging is one relative strength of this pipeline. On relevance, however, performance is uneven: GPT-5(DR) attains borderline results (87.5%), while all other engines fall below 50%, including Gemini(DR) at just 12.4%. This suggests that verbosity or sourcing breadth does not translate to actually answering the user query. Turning to sources metrics, GPT-5(DR) remains the strongest, with 0% uncited sources, only 12.5% unsupported statements, and 87.5% source necessity. By contrast, YouChat(DR), PPLX(DR), Copilot(DR), and Gemini(DR) all fare poorly, with unsupported rates ranging from 53.6% (Gemini) to 97.5% (PPLX). Gemini(DR) in particular leaves 14.5% of its sources uncited, and only one-third (33.1%) of its sources are necessary, reflecting inefficient citation usage. For citation metrics, GPT-5(DR) and YouChat(DR) again stand out with high citation thoroughness (87.5% and 83.5% respectively), although their citation accuracy has dropped to the borderline range (79.1% and 72.3%). Gemini(DR) demonstrates weak citation performance: only 40.3% citation accuracy (problematic) and 27.1% thoroughness (borderline). PPLX(DR) and Copilot(DR) also show poor grounding, with citation accuracies between 58% and 62%.

Taken together, the results reveal that neither GSEs nor deep research agents deliver uniformly reliable outputs across DeepTRACE’s dimensions. GSEs excel at producing concise, relevant answers but fail at balanced perspective-taking, confidence calibration, and factual support. Deep research agents, by contrast, improve balance and citation correctness, but at the cost of overwhelming verbosity, low relevance, and large fractions of unsupported claims. Our results show that more sources and longer answers do not translate into reliability. Over-citation (as in YouChat(DR)) leads to ‘search fatigue’ for users, while under-grounded, verbose text (as in Perplexity(DR)) erodes trust. At the same time, carefully calibrated systems (as with GPT-5(DR)) demonstrate that near-ideal reliability across multiple dimensions is achievable.

5 Discussion and Conclusion

Our work introduced DeepTRACE, a sociotechnically grounded framework for auditing generative search engines (GSEs) and deep research agents (DRs). By translating community-identified failure cases into measurable dimensions, our approach evaluates not just isolated components but the end-to-end reliability of these systems across balance, factual support, and citation integrity.