# seqBench: A Tunable Benchmark to Quantify Sequential Reasoning Limits of LLMs

**Authors**:

- M.R. Ramezanali

- Salesforce AI

- Palo Alto, CA

- &M. Vazifeh (Capital One, MIT)

- Cambridge, MA

- &P. Santi (MIT)

- Cambridge, MA

> ⋆ \star denotes equal contribution.

## Abstract

We introduce seqBench, a parametrized benchmark for probing sequential reasoning limits in Large Language Models (LLMs) through precise, multi-dimensional control over several key complexity dimensions. seqBench allows systematic variation of (1) the logical depth, defined as the number of sequential actions required to solve the task; (2) the number of backtracking steps along the optimal path, quantifying how often the agent must revisit prior states to satisfy deferred preconditions (e.g., retrieving a key after encountering a locked door); and (3) the noise ratio, defined as the ratio between supporting and distracting facts about the environment. Our evaluations on state-of-the-art LLMs reveal a universal failure pattern: accuracy collapses exponentially beyond a model-specific logical depth. Unlike existing benchmarks, seqBench ’s fine-grained control facilitates targeted analyses of these reasoning failures, illuminating universal scaling laws and statistical limits, as detailed in this paper alongside its generation methodology and evaluation metrics. We find that even top-performing models systematically fail on seqBench ’s structured reasoning tasks despite minimal search complexity, underscoring key limitations in their commonsense reasoning capabilities. Designed for future evolution to keep pace with advancing models, the seqBench datasets are publicly released to spur deeper scientific inquiry into LLM reasoning, aiming to establish a clearer understanding of their true potential and current boundaries for robust real-world application.

seqBench: A Tunable Benchmark to Quantify Sequential Reasoning Limits of LLMs

M.R. Ramezanali thanks: $\star$ denotes equal contribution. Salesforce AI Palo Alto, CA 94301 mramezanali@salesforce.com M. Vazifeh footnotemark: Capital One, MIT Cambridge, MA 02143 mvazifeh@mit.edu P. Santi MIT Cambridge, MA 02143 psanti@mit.edu

Large Language Models (LLMs) have shown remarkable performance (Vaswani et al., 2017; Brown et al., 2020; Lieber et al., 2021; Rae et al., 2021; Smith et al., 2022; Thoppilan et al., 2022; Hoffmann et al., 2022; Du et al., 2021; Fedus et al., 2022; Zoph et al., 2022) on a wide range of tasks and benchmarks spanning diverse human-like capabilities; however, these successes can obscure fundamental limitations in sequential reasoning that still persist. Arguably, reasoning captures a more pure form of intelligence, going beyond mere pattern matching or fact memorization, and is thus a critical capability to understand and enhance in AI systems. Recent studies show that state-of-the-art LLMs (OpenAI, 2025; Google DeepMind, 2025; Meta AI, 2025; Mistral AI, 2024; Anthropic, 2025) excel at complex benchmarks, yet stumble upon simple common-sense inferences trivial for an adult human (Nezhurina et al., 2025; Han et al., 2024; Sharma, 2024; Berglund et al., 2024; Yang et al., 2019). Most existing benchmarks saturate quickly, leaving little room for fine-grained attribution studies to perform systemic probes of LLM failure modes. Consequently, a robust understanding of why and under what circumstances these models fail, especially on problems requiring sequential reasoning, remains elusive.

This gap, we argue, stems from the lack of evaluation benchmarks allowing systematic, multi-dimensional control over key independent factors that influence a task’s overall reasoning difficulty. Most benchmarks (Cobbe et al., 2021; Hendrycks et al., 2021; Srivastava et al., 2023; Weston et al., 2015; Clark et al., 2018; Dua et al., 2019; Rein et al., 2023), despite their evaluation merits, often do not support a systematic variation of crucial complexity dimensions. This makes it difficult to isolate the specific conditions under which reasoning in LLMs falter. For instance, discerning whether a failure is due to the length of the required reasoning chain, the necessity to revise intermediate conclusions, or the density of distracting information is often not quantitatively possible. While prompting strategies like chain-of-thought (CoT) and model scaling have boosted aggregate performance, they often obscure sharp performance cliffs that can emerge when these underlying complexity dimensions are varied independently (Wei et al., 2023; Kojima et al., 2022). Without such systematic control, disentangling inherent architectural limitations from those addressable via scaling (model size, data, or compute), fine-tuning, or prompting techniques is challenging. A fine-grained understanding of these performance boundaries is crucial for developing more robust and reliable reasoning systems.

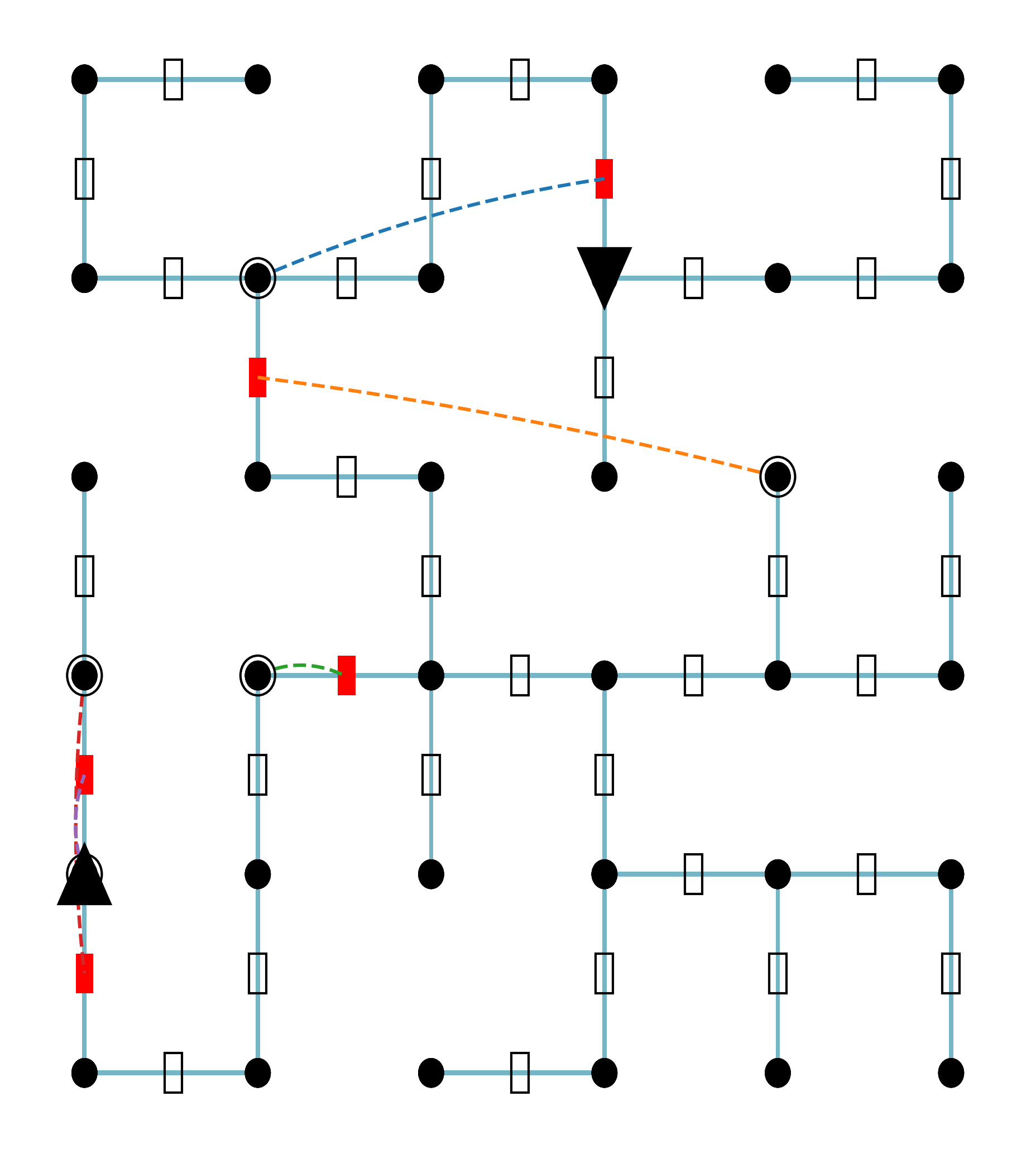

To complement recent efforts (Sprague et al., 2024; Tyagi et al., 2024; Kuratov et al., 2024; Tang and Kejriwal, 2025; Mirzaee et al., 2021; Tikhonov, 2024; Mirzaee and Kordjamshidi, 2022; Shi et al., 2022) in evaluating reasoning, and to address the need for more controlled analysis, we introduce seqBench, a tunable benchmark designed explicitly to probe and analyze sequential reasoning capabilities in language models. The dataset comprises synthetic yet linguistically grounded pathfinding task configurations on two-dimensional grids. Solving each problem requires sequential inference over relevant and distracting structured facts. Each instance is automatically verifiable and parameterized by controllable factors that directly address the previously identified gaps: (1) logical depth (total number of actions in the ground-truth solution, reflecting the length of the reasoning chain); (2) backtracking count (number of locked-door detours on the optimal path, requiring revision of tentative solution paths); and (3) noise ratio (proportion of distracting vs. supporting facts, testing robustness to irrelevant information). Performance against these dimensions can be quantified with fine-grained metrics (e.g., via progress ratio as we define here). We observe that beyond a certain logical depth, Pass@1 success collapses to near zero for all models (see Figure 1). These features enable precise attribution studies of model failure modes, offering insights into the brittle boundaries of current LLM generalization.

<details>

<summary>x1.png Details</summary>

### Visual Description

\n

## Performance Comparison Chart: AI Model Success Rate vs. Number of Actions

### Overview

The image contains two line charts comparing the performance of nine different AI models. The charts plot the "Success Rate" of each model against the "Number of Actions (L)" it is required to perform. The top chart uses a linear scale for the y-axis, while the bottom chart uses a logarithmic scale for the y-axis to better visualize performance differences at lower success rates. The data suggests an exponential decay in success rate as the number of actions increases for all models.

### Components/Axes

* **X-Axis (Both Plots):** Labeled "Number of Actions (L)". The scale runs from 0 to 300, with major tick marks at 0, 50, 100, 150, 200, 250, and 300.

* **Y-Axis (Top Plot):** Labeled "Success Rate". The scale is linear, running from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Y-Axis (Bottom Plot):** Labeled "Success Rate (Log Scale)". The scale is logarithmic (base 10), running from 10⁻³ (0.001) to 10⁰ (1.0), with major tick marks at 10⁻³, 10⁻², 10⁻¹, and 10⁰.

* **Legend (Top-Right of Top Plot):** A box containing the model names, their corresponding line colors and markers, and the parameters for their fitted exponential decay curves. The fit formula is given at the top of the legend box as: `Fit: ~ exp(-L / L₀)`.

* **gemini-2.5-flash-preview-04-17:** Red line with circle markers. (Fit: L₀ = 85.7)

* **gemini-2.0-flash:** Green line with circle markers. (Fit: L₀ = 40.2)

* **Llama-4-Maverick-17B-128E-Instruct-FP8:** Gray line with circle markers. (Fit: L₀ = 16.7)

* **Llama-3.3-70B-Instruct-Turbo:** Pink line with circle markers. (Fit: L₀ = 10.2)

* **gemma-2-27b-it:** Purple line with circle markers. (Fit: L₀ = 8.1)

* **Qwen2.5-Coder-32B-Instruct:** Orange line with circle markers. (Fit: L₀ = 4.8)

* **Qwen2.5-7B-Instruct-Turbo:** Blue line with circle markers. (Fit: L₀ = 4.0)

* **Llama-3.2-3B-Instruct-Turbo:** Brown line with circle markers. (Fit: L₀ = 1.6)

### Detailed Analysis

**Trend Verification:** All data series show a downward trend, with success rate decreasing as the number of actions (L) increases. The decay appears exponential, as indicated by the fitted dashed lines.

**Data Points & Model Performance (Approximate values from visual inspection):**

1. **gemini-2.5-flash-preview-04-17 (Red):**

* **Trend:** Slowest decay, highest overall performance.

* **Points (Top Plot):** L=0, Success≈1.0; L=50, Success≈0.5; L=100, Success≈0.25; L=200, Success≈0.1; L=300, Success≈0.05.

* **Points (Bottom Plot):** Confirms the trend, showing a near-linear decline on the log scale.

2. **gemini-2.0-flash (Green):**

* **Trend:** Second-best performance, decay faster than gemini-2.5-flash.

* **Points (Top Plot):** L=0, Success≈0.9; L=50, Success≈0.15; L=100, Success≈0.05; L=200, Success≈0.01.

* **Points (Bottom Plot):** Shows a steeper slope than the red line on the log scale.

3. **Llama-4-Maverick-17B-128E-Instruct-FP8 (Gray):**

* **Trend:** Moderate decay, performance falls between gemini-2.0-flash and Llama-3.3-70B.

* **Points (Top Plot):** L=0, Success≈0.85; L=50, Success≈0.1; L=100, Success≈0.02.

* **Points (Bottom Plot):** Line ends near L=100, Success≈10⁻².

4. **Llama-3.3-70B-Instruct-Turbo (Pink):**

* **Trend:** Faster decay than the gray line.

* **Points (Top Plot):** L=0, Success≈0.8; L=50, Success≈0.05; L=100, Success≈0.01.

* **Points (Bottom Plot):** Line ends near L=75, Success≈10⁻².

5. **gemma-2-27b-it (Purple):**

* **Trend:** Rapid decay.

* **Points (Top Plot):** L=0, Success≈0.7; L=25, Success≈0.1; L=50, Success≈0.02.

* **Points (Bottom Plot):** Line ends near L=50, Success≈10⁻².

6. **Qwen2.5-Coder-32B-Instruct (Orange):**

* **Trend:** Very rapid decay.

* **Points (Top Plot):** L=0, Success≈0.65; L=25, Success≈0.05.

* **Points (Bottom Plot):** Line ends near L=25, Success≈10⁻².

7. **Qwen2.5-7B-Instruct-Turbo (Blue):**

* **Trend:** Extremely rapid decay.

* **Points (Top Plot):** L=0, Success≈0.5; L=10, Success≈0.05.

* **Points (Bottom Plot):** Line ends near L=15, Success≈10⁻².

8. **Llama-3.2-3B-Instruct-Turbo (Brown):**

* **Trend:** Fastest decay, lowest overall performance.

* **Points (Top Plot):** L=0, Success≈0.4; L=10, Success≈0.02.

* **Points (Bottom Plot):** Line ends near L=10, Success≈10⁻².

### Key Observations

1. **Clear Performance Hierarchy:** There is a distinct and consistent ordering of model performance across the entire range of actions. `gemini-2.5-flash-preview-04-17` is the top performer, followed by `gemini-2.0-flash`, then the Llama and other models in descending order.

2. **Exponential Decay Fit:** The dashed lines representing the exponential fit `~ exp(-L / L₀)` align closely with the data points for each model, confirming this as a good model for the performance drop-off. The `L₀` parameter (decay constant) quantifies this: a higher `L₀` indicates slower decay (e.g., 85.7 for the top model vs. 1.6 for the bottom model).

3. **Log Scale Revelation:** The bottom logarithmic plot clearly shows that while all models start at different success rates, their decay *rates* (the slopes of the lines on the log plot) also differ significantly. The top models have shallower slopes.

4. **Performance Convergence at High L:** On the linear plot, all models' success rates converge towards zero as the number of actions approaches 300, though the top model maintains a small but non-zero rate.

### Interpretation

This data demonstrates a strong inverse relationship between task length (number of sequential actions) and the reliability of AI models in completing them successfully. The exponential decay suggests that each additional action introduces a roughly constant probability of failure, compounding over the sequence.

The significant differences in the `L₀` values (ranging from 85.7 to 1.6) highlight vast disparities in model capability for multi-step reasoning or planning. The `gemini-2.5-flash-preview-04-17` model is approximately 50 times more resilient to increasing task length than the `Llama-3.2-3B-Instruct-Turbo` model, as indicated by their `L₀` ratio.

**Practical Implication:** For applications requiring long sequences of actions (e.g., complex problem-solving, multi-turn dialogue, autonomous agent tasks), model selection is critical. Using a model with a low `L₀` value would lead to a very high likelihood of failure for all but the shortest tasks. The chart provides a quantitative basis for choosing a model based on the expected task length and required reliability. The near-perfect fit to an exponential model also allows for predicting success rates for action counts not explicitly tested.

</details>

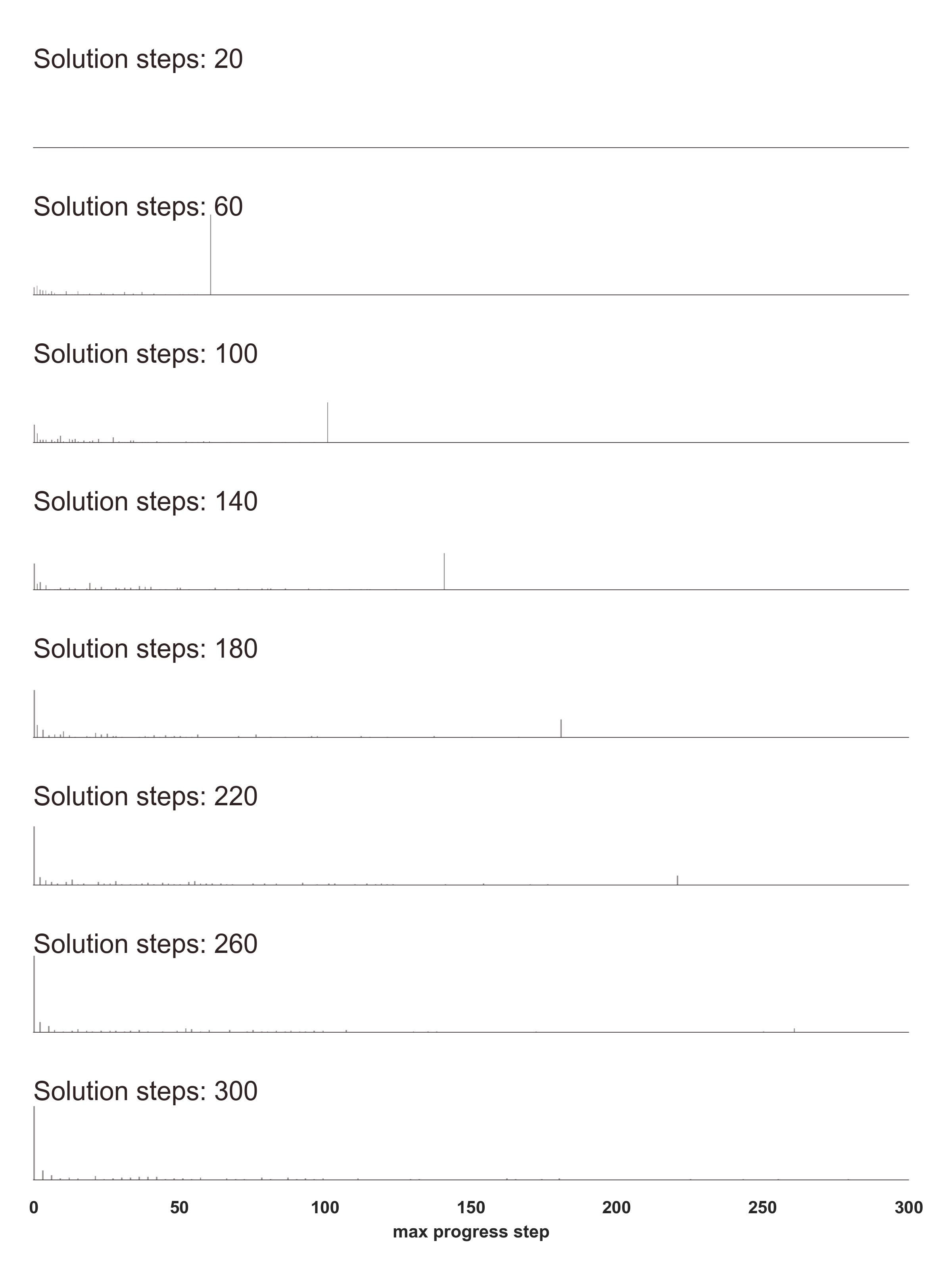

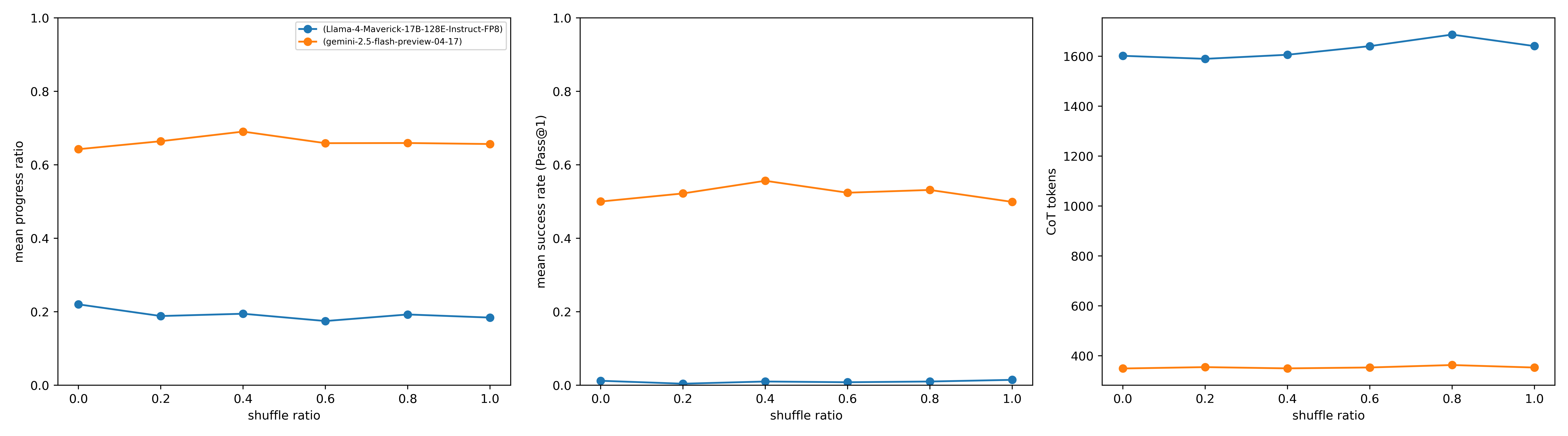

Figure 1: Performance collapse of various models with increasing logical depth $L$ for a pathfinding task ( $N,M=40,\mathcal{B}=2$ keys, Noise Ratio $\mathcal{N}=0.0$ ). Success rates (Pass@1) are shown on linear (top panel) and logarithmic (bottom panel) y-axes, averaged from 5 runs/problem across 40 problems per unit $L$ -bin. All evaluations used Temperature=1.0 and top-p=0.95 (Gemini-2.5-flash: ’auto’ thinking). The displayed fits employ a Weighted Least Squares (WLS) Carroll and Ruppert (2017) method on log-success rates. Weights are derived from inverse squared residuals of a preliminary Ordinary Least Squares (OLS) fit. (In the supplementary section, we have added Figure 16 to show a similar pattern is observed in recently released OpenAI models.)

Furthermore, the seqBench benchmark is built upon a scalable data generation framework, allowing it to evolve alongside increasingly capable models to help with both model training and evaluation. Through evaluations on popular LLMs, we reveal that top-performing LLMs exhibit steep universal declines as either of the three complexity dimensions increases, while remaining comparatively robust to fact shuffle, despite the underlying logical structure being unchanged.

#### Contributions.

Our main contributions are:

1. seqBench: A Tunable Benchmark for Sequential Reasoning. We introduce an open-source framework for generating pathfinding tasks with fine-grained, orthogonal control over logical depth, backtracking steps, and noise ratio. We also evaluate secondary factors like fact ordering (shuffle ratio; See supplementary material for details).

1. Comprehensive LLM Attribution Study. Using seqBench, we demonstrate the significant impact of these controlled complexities on LLM performance, revealing sharp performance cliffs in state-of-the-art models even when search complexity is minimal.

The seqBench dataset is publicly available https://huggingface.co/datasets/emnlp-submission/seqBench under the CC BY 4.0 license to facilitate benchmarking.

<details>

<summary>figs/llama4_deepdive.png Details</summary>

### Visual Description

## Line Graphs: Model Performance Metrics vs. Number of Actions

### Overview

The image contains two vertically stacked line charts sharing the same x-axis ("Number of actions"). The top chart plots the "Success rate" of a specific AI model against the number of actions, accompanied by an exponential decay fit. The bottom chart plots three related performance metrics ("Precision", "Recall", "Progress ratio") against the number of actions, with error bars indicating variability.

### Components/Axes

**Top Chart:**

* **X-axis:** Label: "Number of actions". Scale: Linear, from 0 to 300, with major ticks at 0, 50, 100, 150, 200, 250, 300.

* **Y-axis:** Label: "Success rate". Scale: Linear, from 0.0 to 0.6 (approx. 0.7 at top), with major ticks at 0.0, 0.2, 0.4, 0.6.

* **Legend (Top-right corner):**

* Blue line with circle markers: "Llama-4-Maverick-17B-128E-Instruct-FP8"

* Orange dashed line: "∝ exp(−L/L₀), L₀ = 16.7"

**Bottom Chart:**

* **X-axis:** Label: "Number of actions". Scale: Linear, from 0 to 400, with major ticks at 0, 100, 200, 300, 400.

* **Y-axis:** No explicit label, but values range from 0.0 to 1.0, with major ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Legend (Top-right corner):**

* Blue line with circle markers and vertical error bars: "Precision"

* Orange line with circle markers and vertical error bars: "Recall"

* Green line with circle markers and vertical error bars: "Progress ratio"

### Detailed Analysis

**Top Chart - Success Rate:**

* **Trend Verification:** The blue data series ("Llama-4-Maverick...") shows a steep, concave-upward decay. It starts high and decreases rapidly, then asymptotically approaches zero. The orange dashed line (exponential fit) follows this trend very closely.

* **Data Points (Approximate):**

* At ~10 actions: Success rate ≈ 0.63

* At ~20 actions: Success rate ≈ 0.26

* At ~30 actions: Success rate ≈ 0.14

* At ~40 actions: Success rate ≈ 0.09

* At ~50 actions: Success rate ≈ 0.06

* At ~60 actions: Success rate ≈ 0.04

* At ~100 actions: Success rate ≈ 0.02

* From ~150 to 300 actions: Success rate is very close to 0.0, with data points hovering just above the axis.

**Bottom Chart - Precision, Recall, Progress Ratio:**

* **Trend Verification:**

* **Precision (Blue):** Starts high (~0.9) and remains relatively stable, showing a very slight downward trend with large error bars.

* **Recall (Orange):** Starts moderately high (~0.8) and shows a clear, steady downward trend.

* **Progress Ratio (Green):** Starts moderately high (~0.75) and shows the steepest decline of the three metrics.

* **Data Points & Error Bars (Approximate):**

* **Precision (Blue):**

* ~10 actions: Mean ≈ 0.90, Error bar range ≈ 0.85 to 0.95

* ~50 actions: Mean ≈ 0.92, Error bar range ≈ 0.88 to 0.96

* ~100 actions: Mean ≈ 0.91, Error bar range ≈ 0.84 to 0.98

* ~200 actions: Mean ≈ 0.87, Error bar range ≈ 0.76 to 0.98

* ~300 actions: Mean ≈ 0.87, Error bar range ≈ 0.79 to 0.95

* **Recall (Orange):**

* ~10 actions: Mean ≈ 0.79, Error bar range ≈ 0.68 to 0.90

* ~50 actions: Mean ≈ 0.62, Error bar range ≈ 0.40 to 0.84

* ~100 actions: Mean ≈ 0.54, Error bar range ≈ 0.18 to 0.90

* ~200 actions: Mean ≈ 0.38, Error bar range ≈ 0.16 to 0.60

* ~300 actions: Mean ≈ 0.28, Error bar range ≈ 0.10 to 0.46

* **Progress Ratio (Green):**

* ~10 actions: Mean ≈ 0.74, Error bar range ≈ 0.22 to 1.00 (very large)

* ~50 actions: Mean ≈ 0.26, Error bar range ≈ 0.02 to 0.50

* ~100 actions: Mean ≈ 0.11, Error bar range ≈ 0.02 to 0.20

* ~200 actions: Mean ≈ 0.09, Error bar range ≈ 0.02 to 0.16

* ~300 actions: Mean ≈ 0.04, Error bar range ≈ 0.01 to 0.08

### Key Observations

1. **Strong Exponential Decay:** The success rate of the "Llama-4-Maverick" model decays exponentially with the number of actions, with a characteristic length scale (L₀) of 16.7 actions. The fit is excellent.

2. **Divergent Metric Trends:** While the model's **Precision** remains high and stable (though with high variance) as actions increase, its **Recall** and **Progress Ratio** degrade significantly. The Progress Ratio degrades the fastest.

3. **Increasing Variability:** The error bars for all three metrics in the bottom chart are substantial, particularly for Recall and Progress Ratio at lower action counts, indicating high variance in model performance across different trials or tasks.

4. **Performance Plateau:** All metrics, especially Success Rate and Progress Ratio, appear to plateau near zero after approximately 150-200 actions, suggesting a functional limit to the model's effective operational range in this context.

### Interpretation

This data demonstrates a critical limitation in the evaluated AI model's performance on sequential or multi-step tasks. The exponential decay in success rate indicates that the probability of completing a task successfully diminishes rapidly with each additional action required.

The divergence between Precision and Recall is particularly insightful. The model maintains high **Precision** (when it claims to have completed a step or identified something, it is often correct), but its **Recall** plummets (it misses an increasing number of required steps or relevant items as the task length grows). This suggests the model becomes increasingly "conservative" or "forgetful" in longer action sequences—it may avoid making incorrect predictions but at the cost of failing to complete necessary actions.

The **Progress Ratio**, which likely measures the proportion of actions that meaningfully advance the task goal, decays fastest. This implies that in longer sequences, a growing fraction of the model's actions are either redundant, corrective, or non-productive.

**In summary:** The model is reliable for short action sequences but suffers from a severe "horizon problem." Its ability to maintain goal-directed behavior and recall necessary information degrades exponentially with task length, even while the correctness of its individual, isolated predictions remains relatively stable. This highlights a fundamental challenge in scaling such models to complex, long-horizon problems. The provided exponential fit (L₀=16.7) offers a quantitative benchmark for this limitation.

</details>

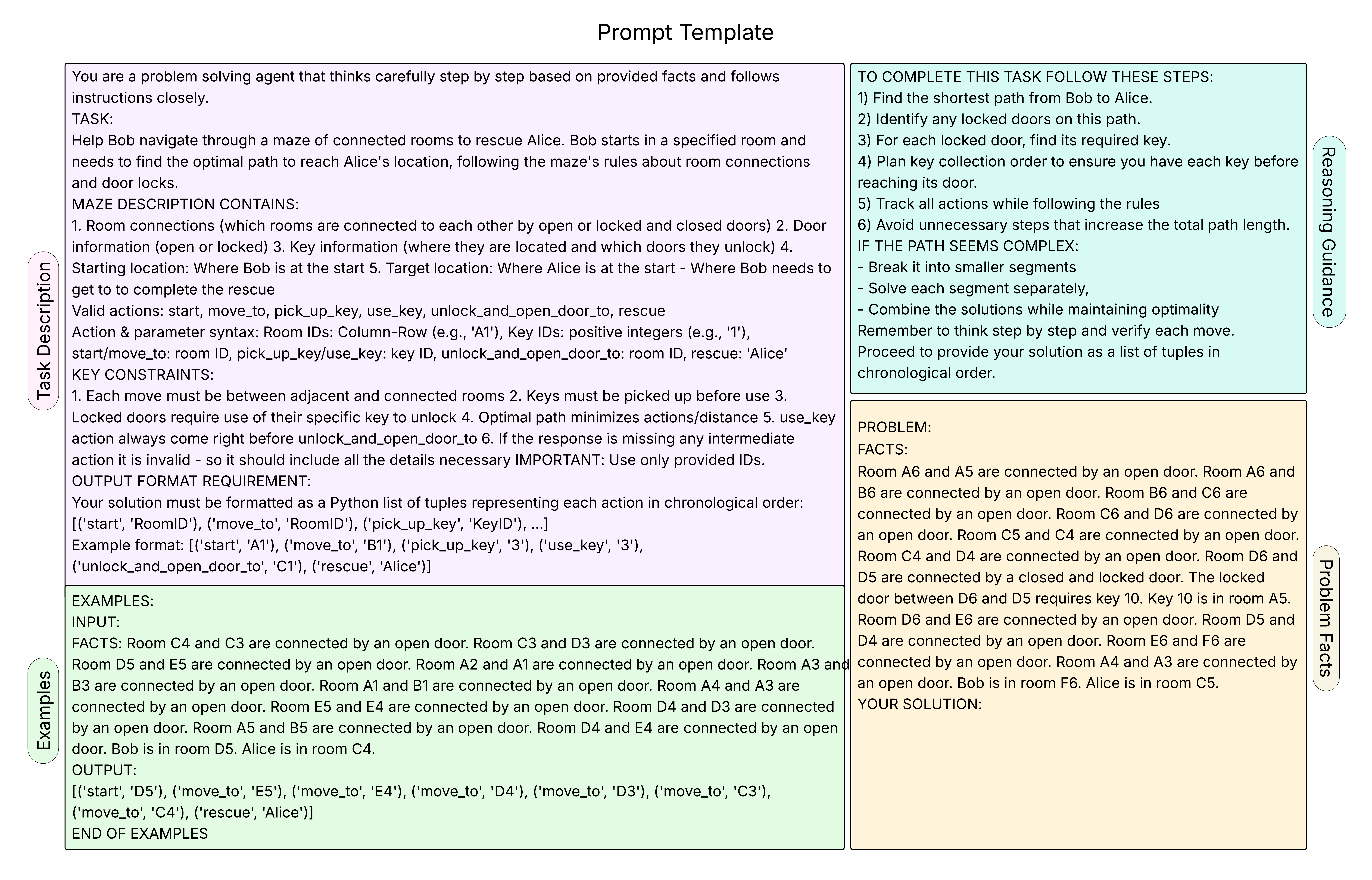

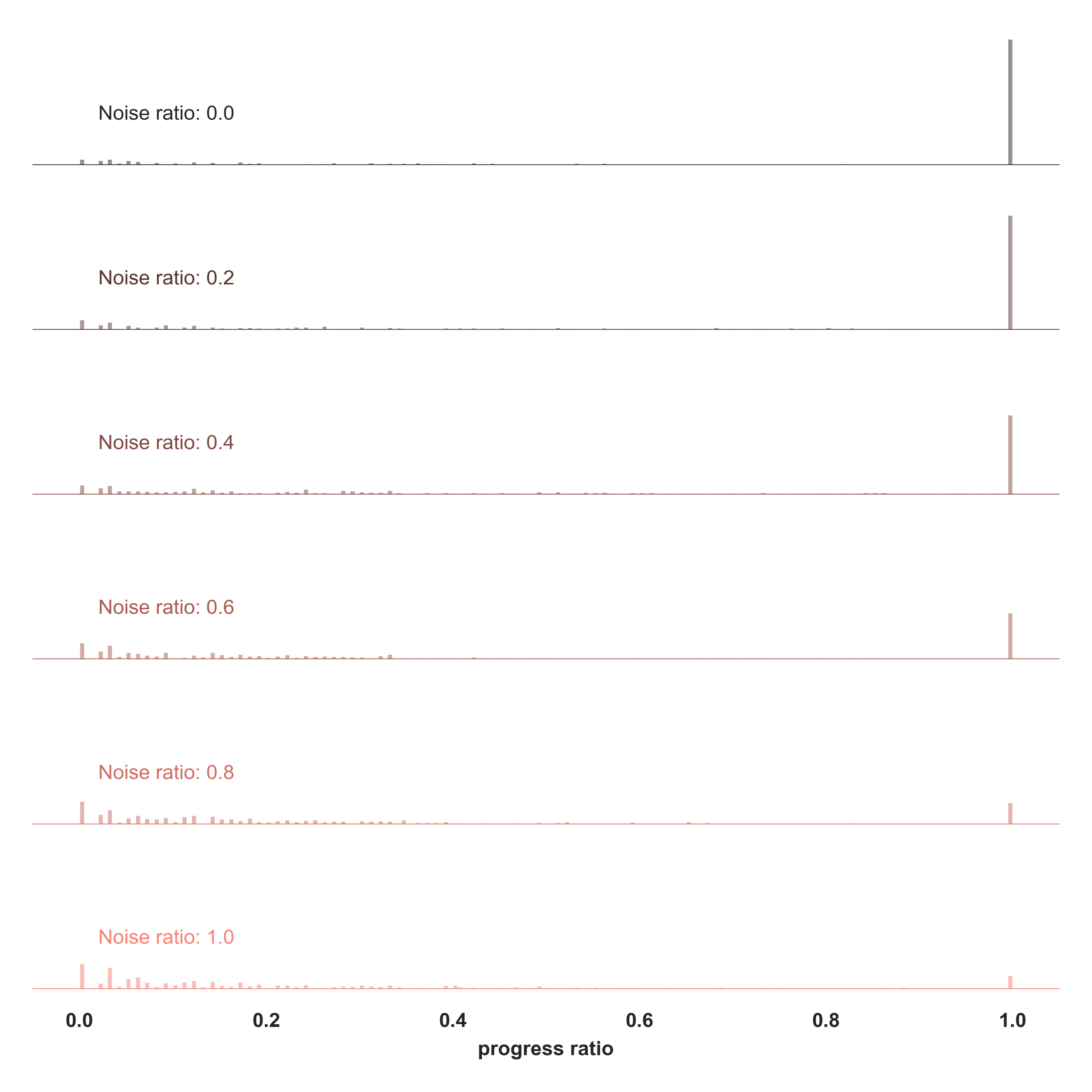

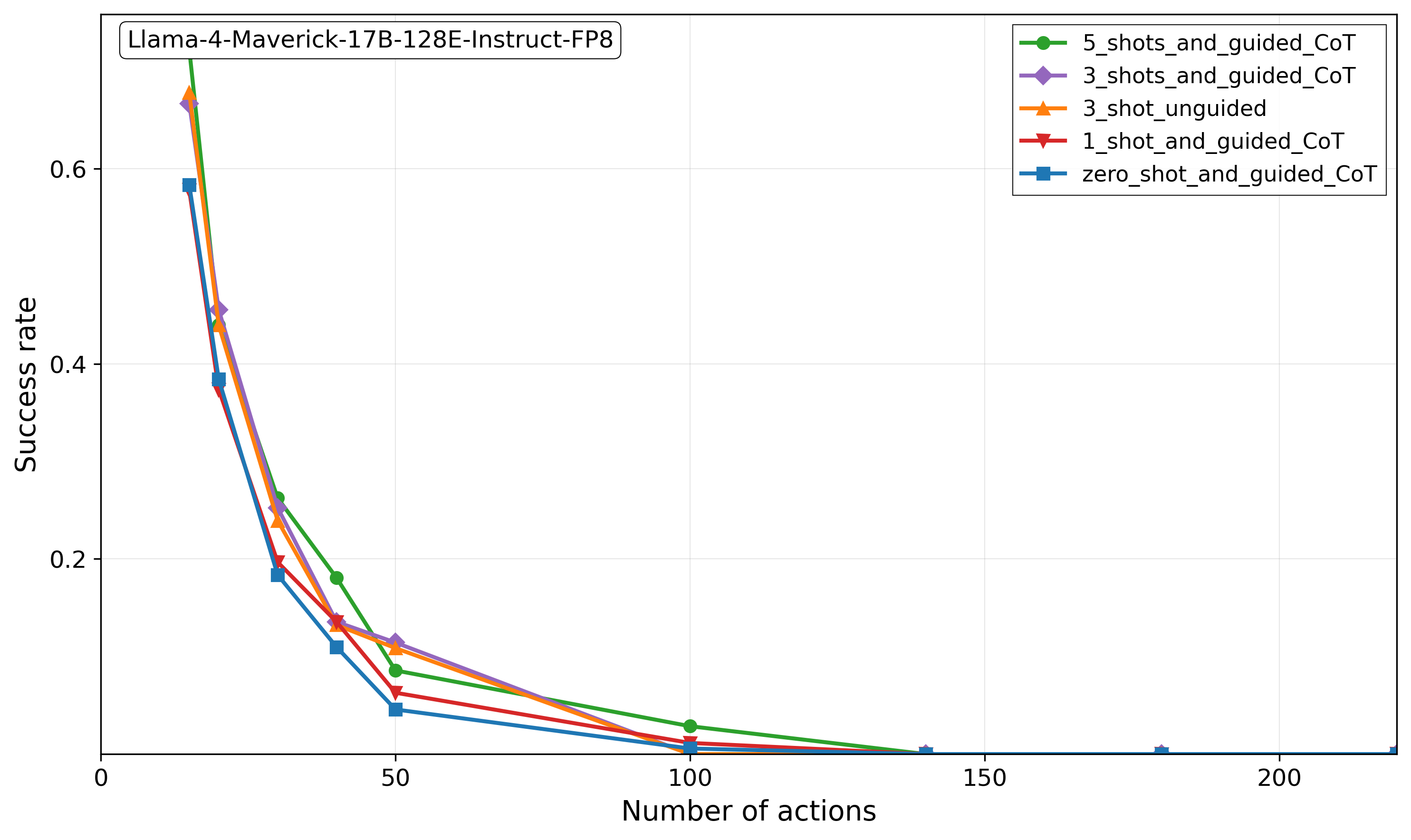

Figure 2: On the left: Llama-4 Maverick-17B-128E-Instruct Model’s performance (pass@1 success rate) versus number of actions in the ground truth path of the pathfinding problems ( $N,M=40,\mathcal{B}=2$ keys, Noise Ratio $\mathcal{N}=0.0$ ) is shown. This Pass@1 success rate across 5 runs per problem is averaged over the problem instances sampled from different actions count bins of width equal to 1. On the right: The mean of progress ratio across all problems as well as mean of precision and recall is shown to highlight models gradually increasing struggle in completing the path. The Temperature is set to 1.0 and the top-p is set to 0.95 in all runs.

## 1 Methods

### 1.1 Dataset Generation

The seqBench dataset consists of spatial pathfinding tasks. Task instance generation, detailed below (Algorithm 1; See Appendix A for details), is predicated on the precise independent control of the three key complexity dimensions introduced earlier: Logical Depth ( $L$ ), Backtracking Count ( $\mathcal{B}$ ), and Noise Ratio ( $\mathcal{N}$ ). This allows the creation of instances with specific values for these parameters, enabling targeted studies of their impact on LLM reasoning.

Task instances are produced in a multi-stage process. Initially, primary generation parameters—maze dimensions ( $N,M$ ), target backtracks ( $\mathcal{B}_{\text{target}}$ ), and target noise ratio ( $\mathcal{N}_{\text{target}}$ )—are specified. An acyclic maze graph ( $M_{g}$ ) is formed on an $N\times M$ grid using Kruskal’s algorithm (Kleinberg and Tardos, 2006). Our "Rewind Construction" method (Algorithm 1) then embeds $\mathcal{B}_{\text{target}}$ backtracking maneuvers by working backward from a goal to strategically place keys and locked doors, yielding the instance’s actual backtracking count $\mathcal{B}$ . Finally, a natural language fact list ( $\mathcal{F}$ ) is derived from the maze, and distracting facts are added according to $\mathcal{N}_{\text{target}}$ to achieve the final noise ratio $\mathcal{N}$ . The logical depth $L$ (optimal path length) emerges from these generative steps, influenced by $N,M,\mathcal{B}_{\text{target}}$ , and construction stochasticity. While $L$ is not a direct input to the generation algorithm, the process is designed to yield a wide spectrum of logical depths. Each generated instance is then precisely annotated with its emergent $L$ value, alongside its effective $\mathcal{B}$ and $\mathcal{N}$ values. This annotation effectively makes $L$ a key, selectable parameter for users of the seqBench dataset, enabling them to choose or filter tasks by their desired logical depth. Our rewind construction method guarantees task solvability. The full seqBench benchmark is constructed by systematically applying this instance generation process (detailed in Algorithm 1) across a wide range of initial parameters. This includes varied grid sizes (e.g., $N\in\{5..50\},M\approx N$ ) and target backtracks ( $\mathcal{B}_{\text{target}}\in\{0..7\}$ ), yielding a large and diverse data pool. For each $(N,M,\mathcal{B}_{\text{target}})$ configuration, multiple unique base mazes are generated, to which different noise ratios (e.g., $\mathcal{N}_{\text{target}}\in\{0..1\}$ ) are subsequently applied. It is important to note that the algorithm constrains backtracking complexity to a simple dependency chain. In this setting, retrieving the key for each locked door involves at most one backtracking step to pick up its corresponding key, without requiring the unlocking of additional doors along the optimal path. Combined with the uniform random placement of keys, this design ensures a well-balanced distribution of backtracking difficulty across the generated instances for each logical depth $L$ . Nevertheless, the same backward-in-time construction can be extended to generate tasks with higher backtracking complexity—for example, doors that require multiple keys, or intermediate doors that must be unlocked en route to other keys. Such extensions would introduce richer tree-structured dependency graphs and allow seqBench to probe model performance under more complex long-horizon reasoning regimes. The creation of this comprehensive data pool was computationally efficient, requiring approximately an hour of computation on a standard laptop while using minimal memory. The publicly released benchmark comprises a substantial collection of these generated instances, each annotated with its specific emergent logical depth $L$ , effective backtracking count $\mathcal{B}$ , and noise ratio $\mathcal{N}$ . This rich annotation is key, enabling researchers to readily select or filter task subsets by these dimensions for targeted studies (e.g., as done for Figure 1, where instances were sampled into $L$ -bins with other parameters fixed). For the experiments presented in this paper, specific subsets were drawn from this benchmark pool, often involving further filtering or parameter adjustments tailored to the objectives of each study; precise details for each experiment are provided in the relevant sections and figure captions. Full details on path derivation, fact compilation, and overall dataset generation parameters are provided in the Appendix A.

Input : Grid $N\times M$ , Target backtracks $\mathcal{B}$

Output : Maze graph $M_{g}$ , Locked doors $\mathcal{D}_{L}$ , Key info $\mathcal{K}_{I}$ , Path skeleton $\Pi_{S}$

1

2 $M_{g}\leftarrow$ Acyclic graph on grid (Kruskal’s);

3 $x\leftarrow C_{goal}\leftarrow$ Random goal cell in $M_{g}$ ;

4 $\mathcal{D}_{L},\mathcal{K}_{I}\leftarrow\emptyset,\emptyset$ ; $b\leftarrow 0$ ;

5 $\Pi_{S}\leftarrow[(C_{goal},\text{GOAL})]$ ;

6

7 while $b<\mathcal{B}$ do

8 $c_{key}\leftarrow$ Random cell in $M_{g}$ accessible from $x$ (path avoids $\mathcal{D}_{L}$ for this step);

9 $\pi_{seg}\leftarrow$ Unique path in $M_{g}$ from $x$ to $c_{key}$ ;

10 if $\exists e\in\pi_{seg}$ such that $e\notin\mathcal{D}_{L}$ then

11 $d\leftarrow$ Randomly select such an edge $e$ ;

12 $\mathcal{D}_{L}\leftarrow\mathcal{D}_{L}\cup\{d\}$ ;

13 $K_{id}\leftarrow$ New unique key ID;

14 $\mathcal{K}_{I}[K_{id}]\leftarrow\{\text{opens}:d,\text{loc}:c_{key}\}$ ;

15 $\Pi_{S}$ .prepend( $(c_{key},\text{PICKUP }K_{id})$ , $(d,\text{UNLOCK }K_{id})$ , $(\pi_{seg},\text{MOVE})$ );

16 $x\leftarrow c_{key}$ ; $b\leftarrow b+1$ ;

17

18 end if

19 else

20 Break

21 end if

22

23 end while

24 $\Pi_{S}$ .prepend( $(x,\text{START}))$ ;

25 return $M_{g},\mathcal{D}_{L},\mathcal{K}_{I},\Pi_{S}$ ;

Algorithm 1 Rewind Construction of Path Skeleton

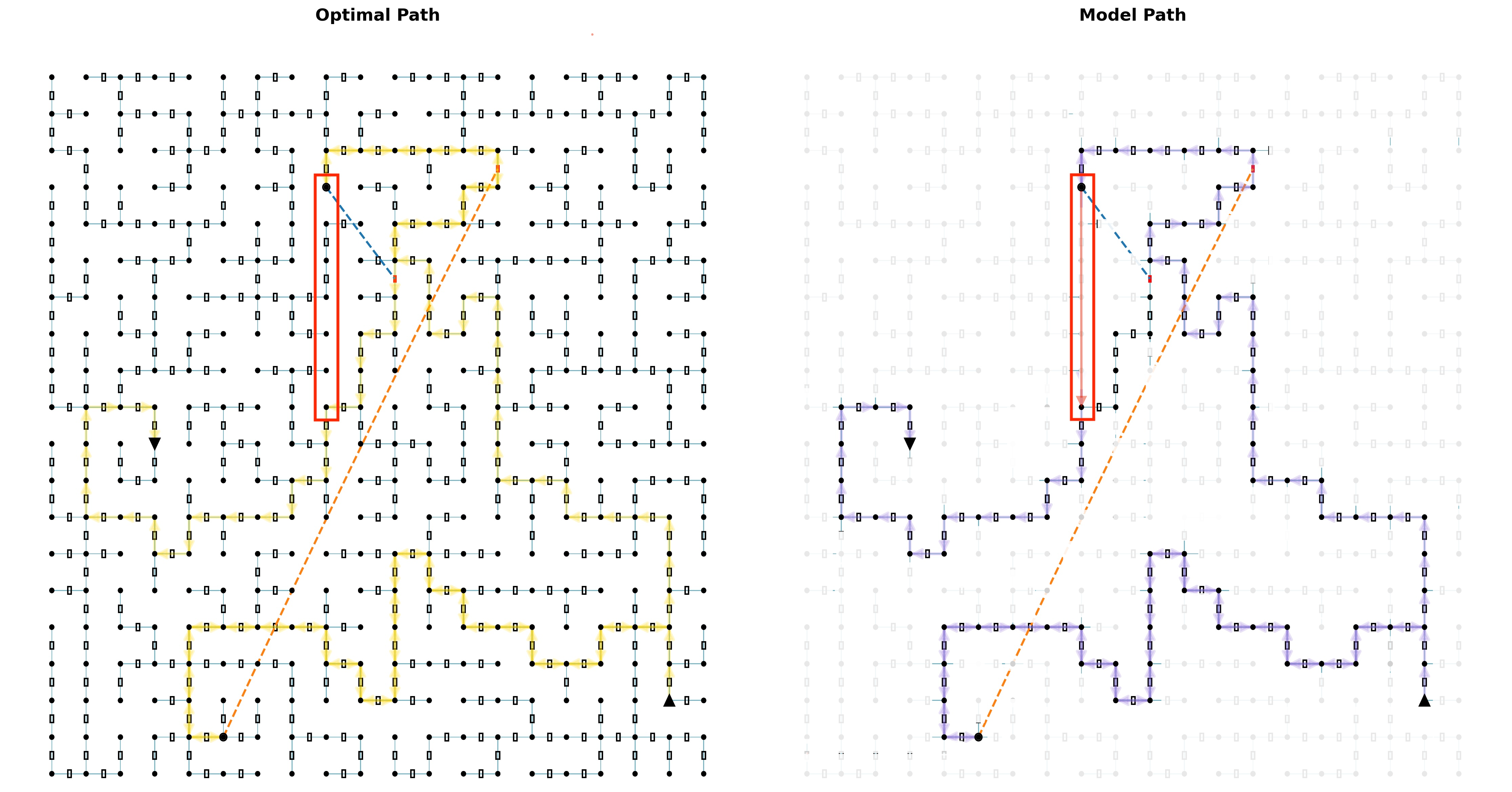

### 1.2 Prompt Construction and Model Configuration

Our evaluation uses a standardized prompt template with four components: (i) task instructions and action schema, (ii) three few-shot examples of increasing complexity (simple navigation, single-key, and multi-key backtracking), (iii) optional reasoning guidance, and (iv) the problem’s natural-language facts. All models are queried using temperature $T{=}1.0$ , nucleus sampling $p{=}0.95$ , and maximum allowed setting in terms of output token limits on a per model basis. For each instance, we compute 5 independent runs to establish robust performance statistics. The complete prompt structure, shown in Figure 6, is provided in the Appendix B.

### 1.3 Evaluation Metrics

To analyze not just success but also how models fail, we employ several complementary metrics. Success Rate (Pass@1) measures the proportion of runs where the predicted action sequence exactly matches the ground truth. The Progress Ratio (Tyagi et al., 2024), calculated as $k/n$ (where $n$ is the total ground-truth actions and $k$ is the number correctly executed before the first error), pinpoints the breakdown position in reasoning. We also use Precision and Recall. Precision is the proportion of predicted actions that are correct, while Recall is the proportion of ground-truth actions that were correctly predicted. Low precision indicates hallucinated actions, while low recall signifies missed necessary actions. Additionally, we visualize error locations via a Violation Map. This multi-faceted approach reveals each model’s effective "reasoning horizon"—the maximum sequence length it can reliably traverse. Further details on all metrics and visualizations are provided in the supplementary material.

## 2 Benchmarking Results

<details>

<summary>figs/fig_vs_backtracking_fixed_L_shuffle1.0_noise0.0.png Details</summary>

### Visual Description

## Line Charts: Performance Metrics vs. Backtracking Steps

### Overview

The image displays three horizontally aligned line charts comparing the performance of five different Large Language Models (LLMs) across varying numbers of backtracking steps (0 to 5). The charts measure three distinct metrics: Progress Ratio Mean, Success Rate, and Number of Tokens. A shared legend is present in the first chart.

### Components/Axes

* **Common X-Axis (All Charts):** "Number of backtracking steps" with integer markers from 0 to 5.

* **Chart 1 (Left):**

* **Y-Axis:** "Progress ratio mean" with a scale from 0.0 to 1.0.

* **Legend (Top-Left):** Contains five entries, each with a colored line and marker:

* Blue circle: `(Llama-4-maverick-17b-128e-instruct-fp8)`

* Orange circle: `(Qwen2.5-coder-32b-instruct)`

* Green circle: `(Llama-3.1-nemotron-70b-instruct-hf)`

* Red circle: `(Gemini-2.0-flash)`

* Purple circle: `(Gemini-2.5-flash-preview-04-17)`

* **Chart 2 (Middle):**

* **Y-Axis:** "Success rate" with a scale from 0.0 to 1.0.

* **Chart 3 (Right):**

* **Y-Axis:** "Number of tokens" with a scale from 250 to 1750.

### Detailed Analysis

**Chart 1: Progress Ratio Mean**

* **Trend Verification:** All models show a general downward trend in progress ratio as backtracking steps increase.

* **Data Points (Approximate):**

* **Purple (Gemini-2.5-flash-preview-04-17):** Starts highest at ~0.95 (step 0), declines to ~0.72 (step 5). It remains the top performer throughout.

* **Red (Gemini-2.0-flash):** Starts at ~0.75 (step 0), declines steadily to ~0.12 (step 5).

* **Blue (Llama-4-maverick):** Starts at ~0.50 (step 0), declines to ~0.18 (step 5).

* **Green (Llama-3.1-nemotron):** Starts at ~0.38 (step 0), declines to ~0.14 (step 5).

* **Orange (Qwen2.5-coder):** Starts lowest at ~0.29 (step 0), declines to ~0.04 (step 5).

**Chart 2: Success Rate**

* **Trend Verification:** Most models show a sharp decline in success rate with increased backtracking, except for the purple line which maintains a relatively high rate.

* **Data Points (Approximate):**

* **Purple (Gemini-2.5-flash-preview-04-17):** Starts highest at ~0.90 (step 0), dips to ~0.63 (step 2), and stabilizes around ~0.65-0.63 (steps 3-5).

* **Red (Gemini-2.0-flash):** Starts at ~0.54 (step 0), drops sharply to ~0.02 (step 5).

* **Blue (Llama-4-maverick):** Starts at ~0.26 (step 0), drops to near zero by step 2 and remains there.

* **Green (Llama-3.1-nemotron):** Starts very low at ~0.03 (step 0), remains near zero throughout.

* **Orange (Qwen2.5-coder):** Starts at 0.0 (step 0) and remains at 0.0 for all steps.

**Chart 3: Number of Tokens**

* **Trend Verification:** Token usage shows varied trends. The blue line increases, the orange and green lines show moderate increases, while the red and purple lines remain relatively low and stable.

* **Data Points (Approximate):**

* **Blue (Llama-4-maverick):** Shows a clear upward trend, starting at ~1580 (step 0) and rising to ~1820 (step 5). It uses the most tokens.

* **Orange (Qwen2.5-coder):** Starts at ~900 (step 0), peaks at ~1240 (step 4), and ends at ~1100 (step 5).

* **Green (Llama-3.1-nemotron):** Starts at ~640 (step 0), rises to ~880 (step 3), and stabilizes around ~870 (steps 4-5).

* **Red (Gemini-2.0-flash):** Starts at ~340 (step 0), fluctuates slightly, and ends at ~410 (step 5).

* **Purple (Gemini-2.5-flash-preview-04-17):** Starts lowest at ~280 (step 0), rises slowly to ~410 (step 5). It uses the fewest tokens overall.

### Key Observations

1. **Performance Hierarchy:** The `Gemini-2.5-flash-preview-04-17` (purple) model consistently outperforms the others in both progress ratio and success rate across all backtracking steps, while also using the fewest tokens.

2. **Backtracking Impact:** Increasing backtracking steps generally degrades performance (progress and success) for all models, but the magnitude of degradation varies significantly.

3. **Token Efficiency:** There is a clear inverse relationship between performance and token usage for the top-performing model (purple). The model with the highest token usage (blue, Llama-4-maverick) shows middling progress and poor success rates.

4. **Model Grouping:** The two Gemini models (red and purple) start with the highest progress and success rates. The Llama and Qwen models start lower and decline. The `Qwen2.5-coder` (orange) has a 0% success rate regardless of backtracking steps.

### Interpretation

The data suggests a significant performance advantage for the `Gemini-2.5-flash-preview-04-17` model in this specific evaluation context. It demonstrates superior robustness, maintaining high success rates even as backtracking steps increase, and does so with remarkable token efficiency.

The stark contrast between the purple and red lines (both Gemini models) indicates that the "preview-04-17" version likely incorporates substantial architectural or training improvements over the "2.0-flash" version, particularly in handling backtracking or complex reasoning tasks.

The general decline in success rate with more backtracking steps for most models is counter-intuitive, as backtracking is typically a strategy to improve correctness. This could imply that the backtracking mechanism itself is poorly implemented or that the models struggle to effectively utilize the additional steps, potentially getting "stuck" in unproductive loops. The anomaly is the purple line, which resists this trend, suggesting it has a more effective backtracking or recovery strategy.

The token usage chart reveals different operational strategies. The high token consumption of the Llama-4-maverick model (blue) without commensurate performance gains suggests inefficiency. Conversely, the Gemini-2.5-flash model's low token count combined with high performance points to a highly optimized and effective inference process for this task.

</details>

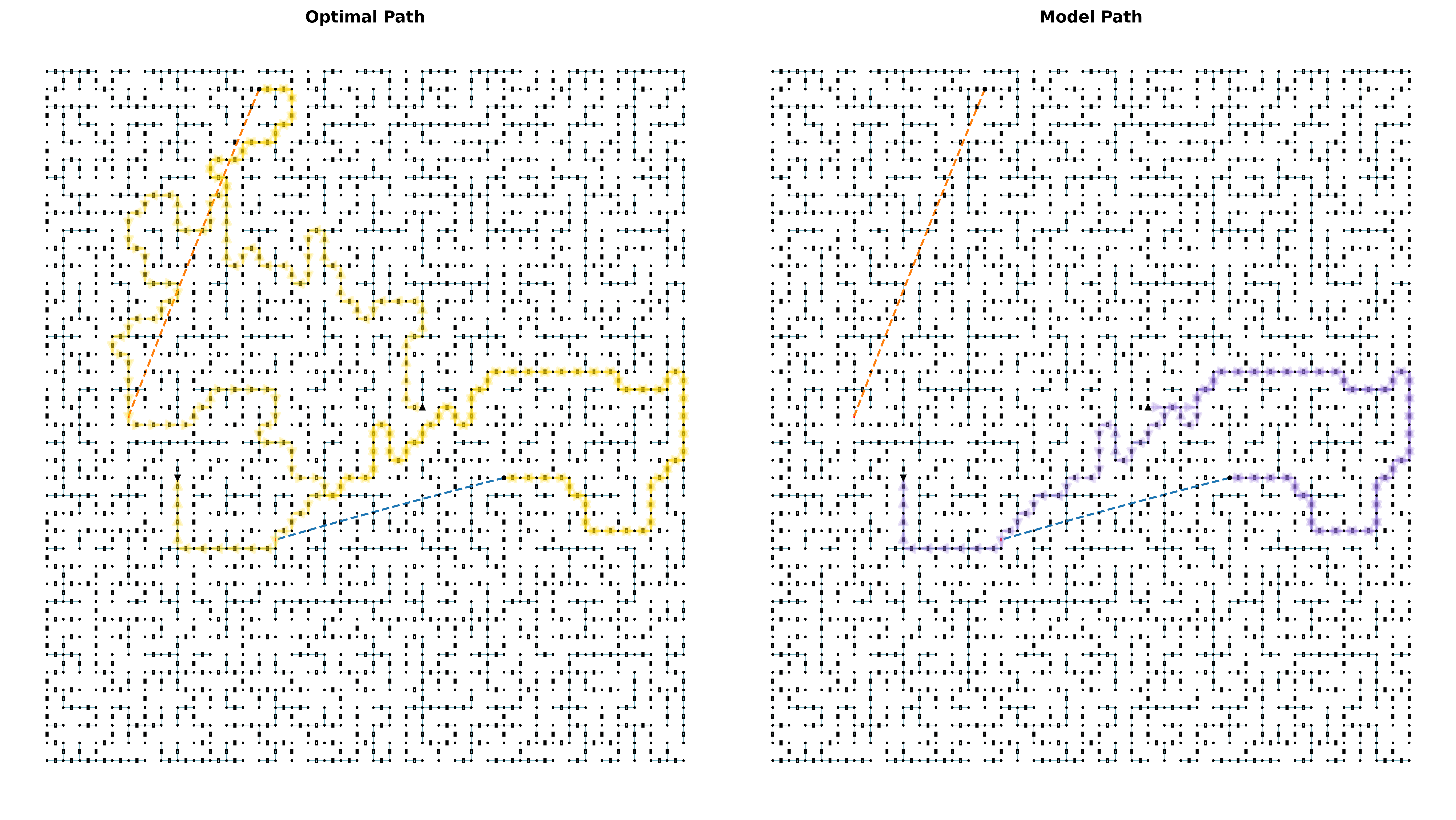

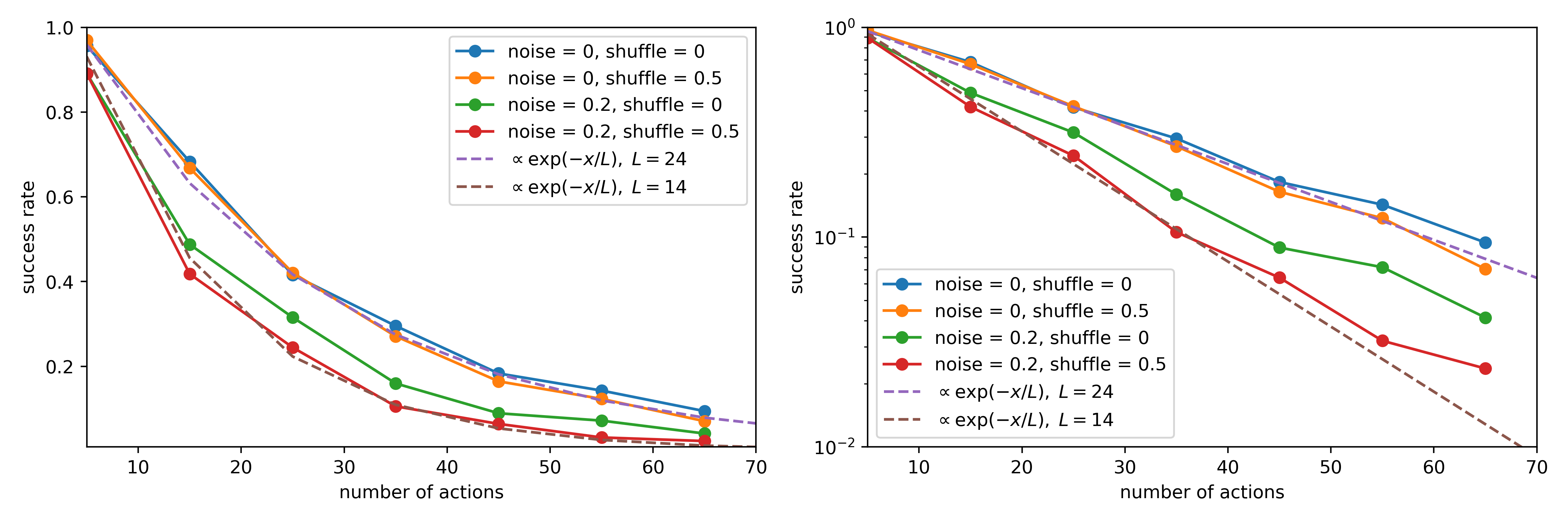

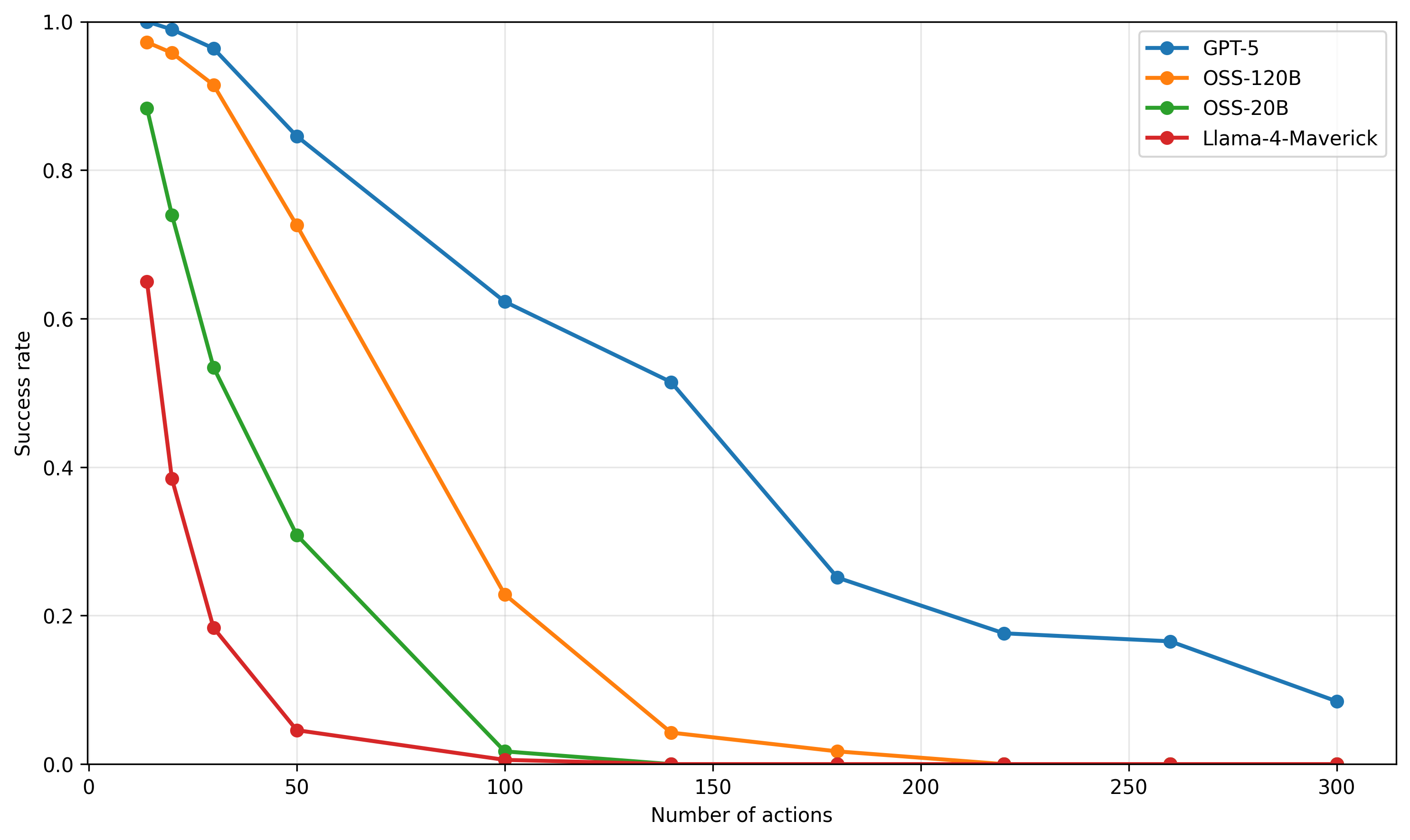

Figure 3: Performance as a function of the number of required backtracking steps, operationalized via the number of locked doors with distributed keys along the optimal path. Holding all other complexity factors constant, all models exhibit a clear decline in both progress ratio and success rate as backtracking demands increase. Additionally, we report the corresponding rise in output token counts per model, highlighting the increased reasoning burden associated with longer dependency chains. Fixed experimental parameters in this figure are the same as those in Figure 1. (for each point 100 problems sampled from $L=[40,60]$ )

### 2.1 Evaluated Models

We evaluate a diverse set of transformer-based LLMs across different model families and parameter scales. Our analysis includes Gemini models (2.5-flash-preview, 2.0-flash), Meta’s Llama family (4-Maverick-17B, 3.3-70B, 3.2-3B), Google’s Gemma-2-27b, and Alibaba’s Qwen models (2.5-Coder-32B, 2.5-7B). [Note: GPT-5 was released during the preparation of this paper’s final version. Our analysis shows that this model exhibits the same performance degradation, as shown in Figure 16]. Access to some open-weight models and benchmarking infrastructure was facilitated by platforms such as Together AI https://www.together.ai/ and Google AI Studio https://aistudio.google.com/. Problem instances for varying logical depths ( $L$ ) were generated by sampling 40 problems for each $L$ , using a fixed maze size of $40\times 40$ and 2 keys, unless otherwise specified for specific experiments (e.g., when varying the number of keys for backtracking analysis). All models were evaluated using the standardized prompt template (see Figure 6), the inference settings detailed in Section 1.2, and a common response parsing methodology. For each task instance, we perform 5 independent runs to establish robust performance statistics, primarily analyzing Pass@1 success rates.

### 2.2 Universal Performance Collapse with Increasing Logical Depth

A central finding of our study is the universal collapse in reasoning performance observed across all evaluated LLMs when confronted with tasks requiring increasing sequential inference steps. As illustrated in Figure 1, Pass@1 success rates exhibit a consistent and sharp exponential decay as the ground-truth path length ( $L$ ) increases. Performance rapidly approaches near-zero past a model-specific point in this decay. To quantify and compare this exponential decay, we fit an exponential decay curve $P(L)=\exp(-L/L_{0})$ to the success rates, deriving a characteristic path length $L_{0}$ . This $L_{0}$ value, representing the path length at which performance drops by a factor of $e^{-1}$ , serves as a robust metric for each model’s sequential reasoning horizon. Plotting success rates on a semi-logarithmic (log-y) scale against $L$ reveals an approximately linear decay trend across the evaluated regime. This log-linear relationship suggests that errors may accumulate with a degree of independence at each reasoning step, eventually overwhelming the model’s capacity for coherent inference. The observed $L_{0}$ values vary significantly, from 85.7 for Gemini-2.5-Flash down to 1.6 for Llama-3.2-3B (Figure 1), underscoring a fundamental bottleneck in current transformer architectures for extended multi-step reasoning.

### 2.3 Impact of Independently Controlled Complexity Dimensions

Beyond the universal impact of logical depth ( $L$ ) discussed in Section 2.2, our benchmark’s ability to independently vary key complexity dimensions allows for targeted analysis of their distinct impacts on LLM reasoning performance. We highlight the effects of noise, backtracking, and fact ordering, primarily focusing on Pass@1 success rates, mean progress ratios, and response token counts.

<details>

<summary>figs/fig_vary_noise_fixed_L_keys2_shuffle1.0.png Details</summary>

### Visual Description

## [Line Charts]: Performance of Two AI Models Under Increasing Noise

### Overview

The image displays three horizontally arranged line charts comparing the performance of two AI models—**Llama-4-maverick-17b-128e-instruct-fp8** (blue line) and **Gemini-2.5-flash-preview-04-17** (orange line)—across three different metrics as a function of "Noise ratio." The charts share a common x-axis but have different y-axes, measuring "Mean progress ratio," "Mean success rate (pass@1)," and "Cot tokens," respectively.

### Components/Axes

* **Legend:** Located in the top-left corner of the first (leftmost) chart. It identifies the two data series:

* Blue line with circle markers: `(Llama-4-maverick-17b-128e-instruct-fp8)`

* Orange line with circle markers: `(Gemini-2.5-flash-preview-04-17)`

* **Common X-Axis (All Charts):**

* **Label:** `Noise ratio`

* **Scale:** Linear, from `0.00` to `1.00`.

* **Tick Marks:** `0.00`, `0.25`, `0.50`, `0.75`, `1.00`.

* **Left Chart Y-Axis:**

* **Label:** `Mean progress ratio`

* **Scale:** Linear, from `0.0` to `1.0`.

* **Tick Marks:** `0.0`, `0.2`, `0.4`, `0.6`, `0.8`, `1.0`.

* **Middle Chart Y-Axis:**

* **Label:** `Mean success rate (pass@1)`

* **Scale:** Linear, from `0.0` to `1.0`.

* **Tick Marks:** `0.0`, `0.2`, `0.4`, `0.6`, `0.8`, `1.0`.

* **Right Chart Y-Axis:**

* **Label:** `Cot tokens`

* **Scale:** Linear, from `500` to `1750`.

* **Tick Marks:** `500`, `750`, `1000`, `1250`, `1500`, `1750`.

### Detailed Analysis

#### Chart 1: Mean Progress Ratio vs. Noise Ratio

* **Trend Verification:** Both lines show a clear downward trend as noise increases. The orange line (Gemini) has a steeper negative slope than the blue line (Llama).

* **Data Points (Approximate):**

* **Llama (Blue):** Starts at ~0.24 (Noise=0.00), decreases gradually to ~0.18 (0.25), ~0.16 (0.50), ~0.12 (0.75), and ends at ~0.11 (1.00).

* **Gemini (Orange):** Starts significantly higher at ~0.72 (0.00), decreases to ~0.65 (0.25), ~0.54 (0.50), ~0.38 (0.75), and ends at ~0.24 (1.00).

* **Key Observation:** Gemini consistently achieves a higher mean progress ratio than Llama across all noise levels, though the gap narrows as noise increases.

#### Chart 2: Mean Success Rate (pass@1) vs. Noise Ratio

* **Trend Verification:** Both lines show a strong downward trend. The orange line (Gemini) starts high and declines sharply. The blue line (Llama) starts very low and declines to near zero.

* **Data Points (Approximate):**

* **Llama (Blue):** Starts very low at ~0.03 (0.00), drops to ~0.01 (0.25), and is at or near `0.0` for noise ratios of 0.50, 0.75, and 1.00.

* **Gemini (Orange):** Starts at ~0.62 (0.00), decreases to ~0.50 (0.25), ~0.34 (0.50), ~0.19 (0.75), and ends at ~0.04 (1.00).

* **Key Observation:** Gemini has a substantially higher success rate than Llama at all noise levels. Llama's success rate is negligible, especially beyond a noise ratio of 0.25.

#### Chart 3: Cot Tokens vs. Noise Ratio

* **Trend Verification:** The blue line (Llama) shows a clear downward trend. The orange line (Gemini) is relatively flat with a very slight upward trend.

* **Data Points (Approximate):**

* **Llama (Blue):** Starts at ~1720 tokens (0.00), decreases to ~1640 (0.25), ~1620 (0.50), ~1510 (0.75), and ends at ~1460 (1.00).

* **Gemini (Orange):** Remains consistently low, starting at ~350 tokens (0.00), with values around ~350 (0.25), ~360 (0.50), ~355 (0.75), and ~370 (1.00).

* **Key Observation:** There is a massive disparity in token usage. Llama uses approximately 4-5 times more Chain-of-Thought (Cot) tokens than Gemini across all noise levels. Llama's token count decreases with noise, while Gemini's remains stable.

### Key Observations

1. **Performance Hierarchy:** Gemini-2.5-flash-preview-04-17 outperforms Llama-4-maverick-17b-128e-instruct-fp8 on both "Mean progress ratio" and "Mean success rate" metrics at every tested noise level.

2. **Noise Sensitivity:** Both models' performance metrics degrade as the noise ratio increases. The degradation in success rate is particularly severe for both.

3. **Efficiency Disparity:** The models exhibit opposite behaviors in resource usage. The higher-performing Gemini model uses a consistently low and stable number of Cot tokens. The lower-performing Llama model uses a very high number of Cot tokens, which decreases as noise increases (possibly indicating a failure to generate lengthy reasoning chains in noisy conditions).

4. **Llama's Near-Zero Success:** Llama's mean success rate (pass@1) is effectively zero for noise ratios of 0.50 and above, indicating a complete failure to produce correct final answers under moderate to high noise.

### Interpretation

The data suggests a fundamental trade-off or difference in model architecture/behavior between the two tested models. **Gemini-2.5-flash-preview-04-17** appears to be both more robust (higher performance) and more efficient (lower token usage) on this specific task under noisy conditions. Its performance degrades gracefully with noise.

In contrast, **Llama-4-maverick-17b-128e-instruct-fp8** struggles significantly. Its low progress and near-zero success rates, coupled with very high token consumption, indicate it may be generating lengthy but ineffective reasoning chains ("Cot tokens") that fail to lead to correct solutions, especially when the input is corrupted by noise. The decrease in its token count with higher noise might reflect an inability to sustain coherent reasoning rather than increased efficiency.

The "Noise ratio" likely represents the proportion of corrupted or irrelevant information in the input prompt. The charts demonstrate that maintaining performance in the presence of such noise is a critical challenge, and the two models handle it with vastly different levels of efficacy and efficiency. This analysis would be crucial for selecting a model for real-world applications where input data may be imperfect.

</details>

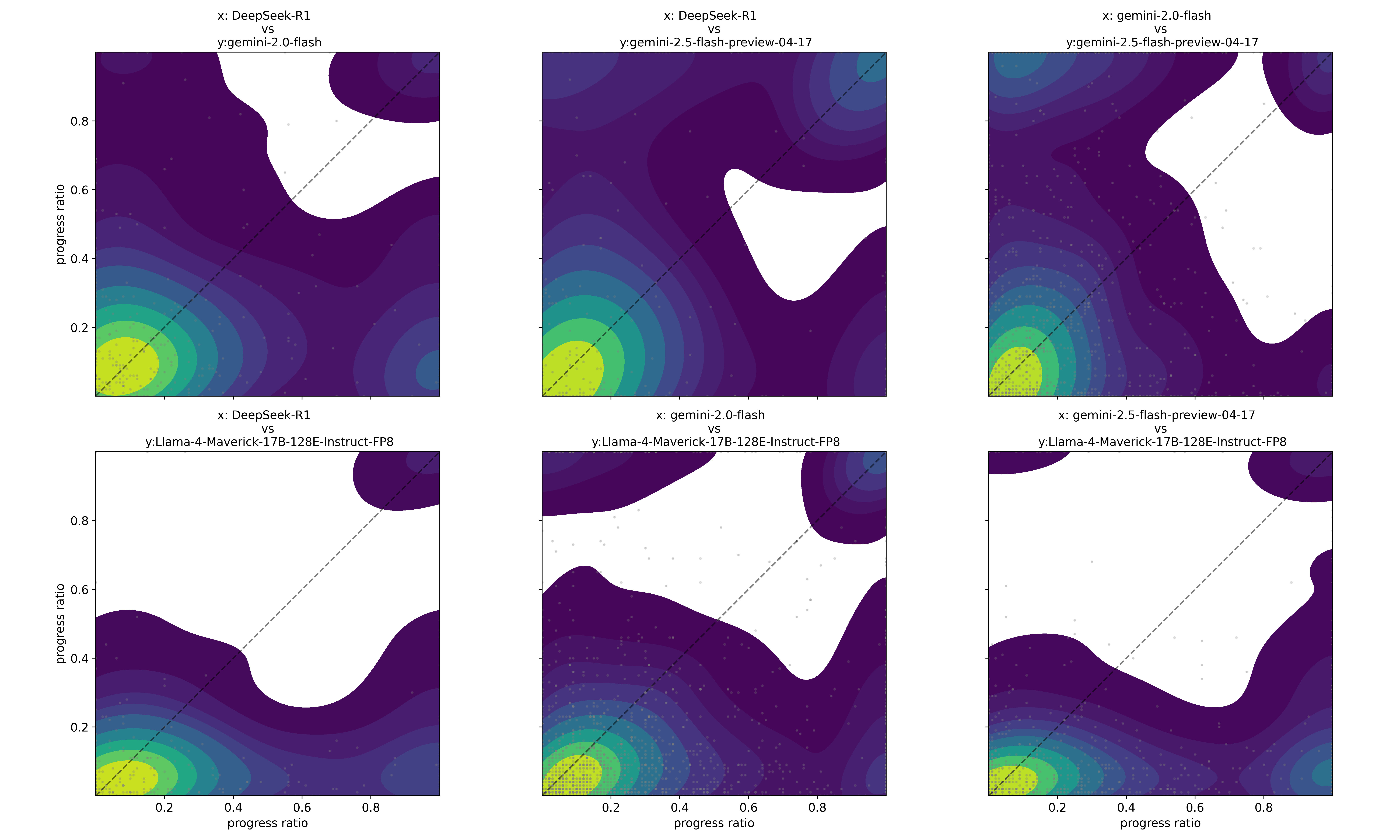

Figure 4: Performance as a function of contextual noise for Gemini 2.5 flash and Llama-4 Maverick-17B-128E-Instruct models. As noise increases through the inclusion of distracting or irrelevant facts, both models exhibit a clear and consistent decline in performance. Fixed experimental parameters in this figure are the same as those in Figure 1 (for each point 100 problems sampled from $L=[40,60]$ and number of keys is equal to 2).

#### Impact of Backtracking Requirements.

Increasing the number of required backtracking steps—operationalized via key-door mechanisms—also leads to a clear and significant decline in Pass@1 success rates and mean progress ratios across all evaluated models as shown in Figure 3. Gemini 2.5 Flash-preview maintains the highest performance but still exhibits a notable drop as backtracking count increases from 0 to 5. This decline in reasoning accuracy is generally accompanied by an increase or sustained high level in the mean number of response tokens (Figure 3, right panel). For example, models like Llama-4 Maverick and Gemini 2.5 Flash-preview show a clear upward trend or maintain high token counts as backtracking complexity rises, reflecting the increased reasoning effort or path length articulated by the models when managing more complex sequential dependencies.

#### Sensitivity to Noise Ratio.

Model performance is highly sensitive to the noise ratio—the proportion of distracting versus supporting facts. As demonstrated in Figure 4 for Gemini 2.5 Flash and Llama-4 Maverick, increasing the proportion of irrelevant facts consistently and significantly degrades both Pass@1 success rates and mean progress ratios. For instance, Gemini 2.5 Flash’s Pass@1 success rate drops from over 0.7 at zero noise to approximately 0.2 at a noise ratio of 1.0. Llama-4 Maverick, starting with lower performance, also shows a consistent decline. Interestingly, for these two models, the number of CoT (output) tokens remains relatively stable despite the increasing noise and degrading performance (Figure 4, right panel), suggesting that models do not necessarily "work harder" (in terms of output length) when faced with more distractors, but their accuracy suffers.

#### Fact Ordering (Shuffle Ratio).

In contrast to the strong effects of noise and backtracking, shuffle ratio (entropy of fact presentation order) within the prompt appears to play a secondary role when varied in isolation. Our experiments, exemplified by the performance of Gemini 2.5 Flash and Llama-4 Maverick (see Appendix C Figure 14 for details), show that complete shuffling of facts (randomizing their presentation order without adding or removing any information) has a minimal impact on Pass@1 success rates and mean progress ratios. Output token counts also remain stable. This suggests a relative robustness to presentation order as long as all necessary information is present and distinguishable. However, as details provided in supplementary material, when high noise and high shuffle co-occur, the combined effect can be more detrimental than either factor alone, though noise remains the dominant degrading factor.

### 2.4 Characterizing Key Failure Modes and Error Patterns

#### A Key Failure Mode: Omission of Critical Steps.

Beyond simply taking illegal shortcuts, detailed analysis reveals that LLMs often fail by omitting critical sub-goals necessary for task completion. Figure 2 (bottom panel) provides a quantitative view for Llama-4 Maverick (Meta AI, 2025), showing that while precision generally remains high (models infrequently hallucinate non-existent rooms or facts), recall and progress ratio plummet with increasing path length ( $L$ ). This indicates that models predominantly fail by missing necessary actions or entire crucial sub-sequences. For a qualitative example, even capable models like Gemini-2.5-Flash can neglect essential detours, such as collecting a required key, thereby violating sequential dependencies and rendering the task unsolvable (illustrative examples are provided in the Appendix B.4; see Figures 8 and 9). This pattern highlights a fundamental breakdown in robust multi-step planning and execution.

#### Path-Length Dependent First Errors: The Burden of Anticipated Complexity.

The propensity for models to make critical errors is not uniformly distributed across the reasoning process, nor is it solely a feature of late-stage reasoning fatigue. Examining the distribution of steps at which the first constraint violations occur reveals a counterintuitive pattern: as the total required path length ( $L$ ) of a problem increases, models tend to fail more frequently even at the earliest steps of the reasoning chain. This leftward shift in the first-error distribution also observed under increasing noise, (Appendix B.4; Figures 10 and 11) contradicts a simple cumulative error model where each step carries a fixed, independent failure probability. Instead, an error at an early step (e.g., step 5) becomes substantially more likely when the model is attempting to solve an 80-step problem versus a 20-step problem. This suggests that the overall anticipated complexity of the full problem influences reasoning quality from the very outset, indicating a struggle with global planning or maintaining coherence over longer horizons, rather than just an accumulation of local errors. This phenomenon may help explain why prompting techniques that decompose long problems into smaller, manageable sub-problems often succeed.

### 2.5 Disparity: Information Retention vs. Reasoning Capacity

On seqBench tasks, this disparity is quantitatively striking. While modern LLMs boast million-token contexts, their effective sequential reasoning depth typically remains on the order of hundreds of actions (Figure 1). This functional limit, even at several hundred actions (e.g., 300 actions, with each like (’move_to’, ’A12’) being 5-7 tokens, totaling 1.5k-2.1k tokens), still consumes a minute fraction of their nominal context. Consequently, the ratio of context capacity to reasoning tokens often spans from several hundred-fold (e.g., 500:1 for 300 actions consuming 2k tokens within a 1M context) to potentially higher values given fewer limiting actions or larger model contexts. This striking gap suggests that while transformers can store and retrieve vast information, their ability to reliably chain it for coherent, multi-step inference appears surprisingly constrained.

### 2.6 Challenging the Conventional Performance Hierarchy

While metrics like average $L_{0}$ provide a general ranking of model capabilities, our fine-grained analysis reveals instances that challenge a simple linear performance hierarchy. Scatter plots of progress ratios across different models on identical tasks (see Appendix C Figure 13) show intriguing cases where models with lower overall $L_{0}$ values (i.e., typically weaker models) occasionally solve specific complex problems perfectly, while models with higher average $L_{0}$ values fail on those same instances. These performance inversions suggest that sequential reasoning failures may not solely stem from insufficient scale (parameters or general training) but could also arise from more nuanced reasoning limitations.

## 3 Related Work

Recent advancements in benchmarks evaluating sequential reasoning capabilities of LLMs have illuminated various strengths and limitations across different dimensions of complexity. These benchmarks typically differ in how they isolate and quantify reasoning challenges, such as logical deduction, retrieval difficulty, combinatorial complexity, and sensitivity to irrelevant information. ZebraLogic (Lin et al., 2025), for instance, targets formal deductive inference through logic-grid puzzles framed as constraint-satisfaction problems (csp, 2008). While valuable for probing deduction, its core methodology leads to a search space that grows factorially with puzzle size (Sempolinski, 2009). This makes it challenging to disentangle intrinsic reasoning failures from the sheer combinatorial complexity of the search. As the ZebraLogic authors themselves acknowledge: “ solving ZebraLogic puzzles for large instances may become intractable… the required number of reasoning tokens may increase exponentially with the size of the puzzle. ” This inherent characteristic means that for larger puzzles, performance is primarily dictated by the manageability of the search space rather than the limits of sequential reasoning depth. GridPuzzle (Tyagi et al., 2024) complements this by providing a detailed error taxonomy for grid puzzles, focusing on what kinds of reasoning mistakes LLMs make. However, like ZebraLogic, it doesn’t offer independent control over key complexity dimensions such as logical depth, backtracking needs, or noise, separate from the puzzle’s inherent search complexity.

Other benchmarks conflate reasoning with different cognitive demands. BABILong (Kuratov et al., 2024) tests models on extremely long contexts (up to 50M tokens), primarily assessing the ability to retrieve "needles" (facts) from a "haystack" (distracting text that does not contribute to solving the task). While valuable for evaluating long-context processing, this design makes it hard to disentangle retrieval failures from reasoning breakdowns, as performance is often dictated by finding the relevant information rather than reasoning over it. MuSR (Sprague et al., 2024) embeds reasoning tasks within lengthy narratives (e.g., murder mysteries), mixing information extraction challenges with complex, domain-specific reasoning structures. This realism obscures which specific aspect—extraction or reasoning depth—causes model failures. Dyna-bAbI (Tamari et al., 2021) offers a dynamic framework for compositional generalization but focuses on qualitative combinations rather than systematically varying quantitative complexity metrics needed to find precise failure points.

Spatial reasoning benchmarks, while relevant, also target different aspects. GRASP (Tang and Kejriwal, 2025) assesses practical spatial planning efficiency (like obstacle avoidance) in 2D grids, a different skill than the abstract sequential reasoning seqBench isolates. SPARTQA (Mirzaee et al., 2021) focuses on specialized spatial relational complexity (transitivity, symmetry) using coupled dimensions, preventing independent analysis of factors like path length. SpaRTUN (Mirzaee and Kordjamshidi, 2022) uses synthetic data primarily for transfer learning in Spatial Question Answering (SQA), aiming to improve model performance rather than serve as a diagnostic tool with controllable complexity. Similarly, StepGame (Shi et al., 2022) demonstrates performance decay with more reasoning steps in SQA but lacks the fine-grained, orthogonal controls over distinct complexity factors provided by seqBench.

In contrast, seqBench takes a targeted diagnostic approach. By deliberately simplifying the spatial environment to minimize search complexity, it isolates sequential reasoning. Its core contribution lies in the independent, fine-grained control over (1) logical depth (the number of sequential actions required to solve the task), (2) backtracking count (the number of backtracking steps along the optimal path), and (3) noise ratio (the ratio of supporting to distracting facts). This orthogonal parameterization allows us to precisely pinpoint when and why sequential reasoning capabilities degrade, revealing fundamental performance cliffs even when search and retrieval demands are trivial. seqBench thus offers a complementary tool for understanding the specific limitations of sequential inference in LLMs.

## 4 Limitations

While seqBench offers precise control over key reasoning complexities, our study has limitations that open avenues for future research:

1. Generalizability and Task Design Fidelity: Our current findings are rooted in synthetic spatial pathfinding tasks. While this allows for controlled experimentation, future work must extend seqBench ’s methodology to more diverse reasoning domains (e.g., mathematical proofs) and incorporate greater linguistic diversity (e.g., ambiguity) to assess the broader applicability of the observed phenomena of performance collapse (quantified by $L_{0}$ ) and failure patterns. Moreover, this work did not investigate whether similar failure modes arise when the problem is also presented visually (e.g., as maze images). Multimodal capabilities could influence spatial reasoning outcomes, and we have already extended the benchmark by releasing maze image generation code alongside the HuggingFace dataset. This dataset can also be used to help train multimodal reasoning models.

1. Model Scope and Understanding Deeper Failure Dynamics: Our current evaluation, while covering diverse public models, should be expanded to a wider array of LLMs—including recent proprietary and newer open-source variants (e.g., GPT, Claude, DeepSeek series)—to rigorously assess the universality of our findings on the characteristic length $L_{0}$ and failure patterns. Furthermore, while seqBench effectively characterizes how reasoning performance degrades with logical depth (i.e., by determining $L_{0}$ ), two complementary research thrusts are crucial for understanding why. First, systematic investigation is needed to disentangle how $L_{0}$ is influenced by factors such as model architecture, scale (parameters, training data, compute), fine-tuning strategies, and inference-time computation (e.g., chain-of-thought depth). Second, deeper analysis is required to explain the precise mechanisms underlying the observed exponential performance collapse characterized by $L_{0}$ and to account for other non-trivial error patterns, such as path-length dependent first errors. Additionally, the evaluation presented here does not consider how agentic systems capable of tool use perform as the reasoning complexity is tuned across various dimensions. Exploring such setups, where the LLM can externalize sub-problems, invoke tools, or backtrack programmatically, could provide valuable insights into whether the same exponential failure modes persist. In particular, one can define sequential problems where the degree of backtracking or sequential tool use can be systematically varied, and to test whether similar performance drop emerge as the dependency chain grows. We highlight this as a promising direction for future research.

1. Impact of Prompting: Our current study employed standardized prompts and inference settings. A crucial next step is a robust sensitivity analysis to determine overall decay behavior are influenced by different prompting strategies (e.g., zero-shot vs. few-shot, decomposition techniques), varied decoding parameters (temperature, top-p), and interactive mechanisms such as self-verification or self-correction. Investigating the potential of these techniques to mitigate the observed sequential inference failures, particularly given seqBench ’s minimal search complexity, remains a key avenue for future research.

Addressing these points by leveraging frameworks like seqBench will be vital for developing LLMs with more robust and generalizable sequential reasoning capabilities, and for understanding their fundamental performance limits.

## 5 Conclusion

We introduced seqBench, a novel benchmark framework designed for the precise attribution of sequential reasoning failures in Large Language Models. seqBench ’s core strength lies in its unique capability for fine-grained, independent control over fundamental complexity dimensions; most notably, logical depth ( $L$ ), backtracking requirements, and noise ratio, its provision of automatically verifiable solutions, and critically minimizing confounding factors like search complexity. This design allows seqBench to isolate and rigorously evaluate the sequential inference capabilities of LLMs, enabling the automatic quantification of fine-grained performance metrics (such as progress ratio) and providing a clear lens into mechanisms often obscured in most other benchmarks. The framework’s inherent scalability and open-source nature position it as a durable tool for assessing and driving progress in current and future generations of models, ultimately aiming to enhance their utility for complex, real-world problems that often span multiple domains. Our comprehensive evaluations using seqBench reveal that reasoning accuracy consistently collapses exponentially with increasing logical depth across a diverse range of state-of-the-art LLMs. This collapse is characterized by a model-specific parameter $L_{0}$ (Section 2.2), indicating an inherent architectural bottleneck in maintaining coherent multi-step inference. In alignment with the goal of advancing NLP’s reach and fostering its responsible application in other fields by offering this precise analysis, seqBench provides a valuable resource. It encourages a shift beyond aggregate benchmark scores towards a more nuanced understanding of model capabilities, an essential step for rigorously assessing the true impact and potential risks of applying LLMs in new domains. The insights gleaned from seqBench can inform both NLP developers in building more robust models, and experts in other disciplines in setting realistic expectations and co-designing NLP solutions that are genuinely fit for purpose. Targeted improvements, guided by such fundamental understanding, are key to enhancing the robustness of sequential reasoning, making LLMs more reliable partners in interdisciplinary endeavors. Future work should leverage these insights to develop models that can overcome the observed performance cliffs and extend their effective reasoning horizons, thereby unlocking their transformative potential in diverse interdisciplinary applications—such as navigating complex scientific literature, supporting intricate legal analysis, or enabling robust multi-step planning in critical autonomous systems. Focusing on commonsense reasoning is paramount for NLP to achieve transformative societal impact, moving beyond incremental improvements to genuine breakthroughs.

## References

- csp (2008) 2008. Rina dechter , constraint processing, morgan kaufmann publisher (2003) isbn 1-55860-890-7, francesca rossi, peter van beek and toby walsh, editors, handbook of constraint programming, elsevier (2006) isbn 978-0-444-52726-4. Computer Science Review, 2:123–130.

- Anthropic (2025) Anthropic. 2025. Claude 3.7 sonnet. https://www.anthropic.com/news/claude-3-7-sonnet.

- Berglund et al. (2024) Lukas Berglund, Meg Tong, Max Kaufmann, Mikita Balesni, Asa Cooper Stickland, Tomasz Korbak, and Owain Evans. 2024. The reversal curse: Llms trained on "a is b" fail to learn "b is a". Preprint, arXiv:2309.12288.

- Brown et al. (2020) Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, and 1 others. 2020. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901.

- Carroll and Ruppert (2017) Raymond J Carroll and David Ruppert. 2017. Transformation and weighting in regression. Chapman and Hall/CRC.

- Clark et al. (2018) Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. 2018. Think you have solved question answering? try arc, the ai2 reasoning challenge. Preprint, arXiv:1803.05457.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. 2021. Training verifiers to solve math word problems. Preprint, arXiv:2110.14168.

- Du et al. (2021) Nan Du, Yanping Huang, Andrew M. Dai, Simon Tong, Dmitry Lepikhin, Yuanzhong Xu, Maxim Krikun, Yanqi Zhou, Adams Wei Yu, Orhan Firat, Barret Zoph, Liam Fedus, Maarten Bosma, Zongwei Zhou, Tao Wang, Yu Emma Wang, Kellie Webster, Marie Pellat, Kevin Robinson, and 8 others. 2021. Glam: Efficient scaling of language models with mixture-of-experts. In International Conference on Machine Learning.

- Dua et al. (2019) Dheeru Dua, Yizhong Wang, Pradeep Dasigi, Gabriel Stanovsky, Sameer Singh, and Matt Gardner. 2019. Drop: A reading comprehension benchmark requiring discrete reasoning over paragraphs. Preprint, arXiv:1903.00161.

- Fedus et al. (2022) William Fedus, Barret Zoph, and Noam Shazeer. 2022. Switch transformers: Scaling to trillion parameter models with simple and efficient sparsity. Journal of Machine Learning Research, 23(120):1–39.

- Google DeepMind (2025) Google DeepMind. 2025. Gemini 2.5 pro experimental. https://blog.google/technology/google-deepmind/gemini-model-thinking-updates-march-2025/.

- Han et al. (2024) Pengrui Han, Peiyang Song, Haofei Yu, and Jiaxuan You. 2024. In-context learning may not elicit trustworthy reasoning: A-not-b errors in pretrained language models. Preprint, arXiv:2409.15454.

- Hendrycks et al. (2021) Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. 2021. Measuring massive multitask language understanding. Preprint, arXiv:2009.03300.

- Hoffmann et al. (2022) Jordan Hoffmann, Sebastian Borgeaud, Arthur Mensch, Elena Buchatskaya, Trevor Cai, Eliza Rutherford, Diego de Las Casas, Lisa Anne Hendricks, Johannes Welbl, Aidan Clark, Tom Hennigan, Eric Noland, Katie Millican, George van den Driessche, Bogdan Damoc, Aurelia Guy, Simon Osindero, Karen Simonyan, Erich Elsen, and 3 others. 2022. Training compute-optimal large language models. Preprint, arXiv:2203.15556.

- Kleinberg and Tardos (2006) Jon Kleinberg and Eva Tardos. 2006. Algorithm Design. Pearson/Addison-Wesley, Boston.

- Kojima et al. (2022) Takeshi Kojima, Shixiang (Shane) Gu, Machel Reid, Yutaka Matsuo, and Yusuke Iwasawa. 2022. Large language models are zero-shot reasoners. In Advances in Neural Information Processing Systems, volume 35, pages 22199–22213. Curran Associates, Inc.

- Kuratov et al. (2024) Yury Kuratov, Aydar Bulatov, Petr Anokhin, Ivan Rodkin, Dmitry Sorokin, Artyom Sorokin, and Mikhail Burtsev. 2024. Babilong: Testing the limits of llms with long context reasoning-in-a-haystack. Advances in Neural Information Processing Systems, 37:106519–106554.

- Lieber et al. (2021) Opher Lieber, Or Sharir, Barak Lenz, and Yoav Shoham. 2021. Jurassic-1: Technical details and evaluation. https://www.ai21.com/blog/jurassic-1-technical-details-and-evaluation. White Paper.

- Lin et al. (2025) Bill Yuchen Lin, Ronan Le Bras, Kyle Richardson, Ashish Sabharwal, Radha Poovendran, Peter Clark, and Yejin Choi. 2025. Zebralogic: On the scaling limits of llms for logical reasoning. Preprint, arXiv:2502.01100.

- Meta AI (2025) Meta AI. 2025. Llama 4: Open and efficient multimodal language models. https://github.com/meta-llama/llama-models.

- Mirzaee et al. (2021) Roshanak Mirzaee, Hossein Rajaby Faghihi, Qiang Ning, and Parisa Kordjmashidi. 2021. Spartqa: : A textual question answering benchmark for spatial reasoning. Preprint, arXiv:2104.05832.

- Mirzaee and Kordjamshidi (2022) Roshanak Mirzaee and Parisa Kordjamshidi. 2022. Transfer learning with synthetic corpora for spatial role labeling and reasoning. Preprint, arXiv:2210.16952.

- Mistral AI (2024) Mistral AI. 2024. Mistral large 2. https://mistral.ai/news/mistral-large-2407.

- Nezhurina et al. (2025) Marianna Nezhurina, Lucia Cipolina-Kun, Mehdi Cherti, and Jenia Jitsev. 2025. Alice in wonderland: Simple tasks showing complete reasoning breakdown in state-of-the-art large language models. Preprint, arXiv:2406.02061.

- OpenAI (2025) OpenAI. 2025. Openai gpt-5, o3 and o4-mini. https://openai.com/index/introducing-o3-and-o4-mini/, https://openai.com/index/introducing-gpt-5/. Paper’s supplementary material (appendix) was revised, after GPT-5 release, with a new figure, to reflect that GPT-5 also suffers from the same failure pattern we have observed in this paper.

- Rae et al. (2021) Jack W. Rae, Sebastian Borgeaud, Trevor Cai, Katie Millican, Jordan Hoffmann, Francis Song, John Aslanides, Sarah Henderson, Roman Ring, Susannah Young, Eliza Rutherford, Tom Hennigan, Jacob Menick, Albin Cassirer, Richard Powell, George van den Driessche, Lisa Anne Hendricks, Matthias Rauh, Po-Sen Huang, and 58 others. 2021. Scaling language models: Methods, analysis & insights from training Gopher. Preprint, arXiv:2112.11446.

- Rein et al. (2023) David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R. Bowman. 2023. Gpqa: A graduate-level google-proof q&a benchmark. Preprint, arXiv:2311.12022.

- Sempolinski (2009) Peter Sempolinski. 2009. Automatic solutions of logic puzzles.

- Sharma (2024) Manasi Sharma. 2024. Exploring and improving the spatial reasoning abilities of large language models. In I Can’t Believe It’s Not Better Workshop: Failure Modes in the Age of Foundation Models.

- Shi et al. (2022) Zhengxiang Shi, Qiang Zhang, and Aldo Lipani. 2022. Stepgame: A new benchmark for robust multi-hop spatial reasoning in texts. In Proceedings of the AAAI conference on artificial intelligence, volume 36, pages 11321–11329.

- Smith et al. (2022) Samuel Smith, Mostofa Patwary, Brian Norick, Patrick LeGresley, Samyam Rajbhandari, Jared Casper, Zhenhao Liu, Shrimai Prabhumoye, Georgios Zerveas, Vikas Korthikanti, Eric Zhang, Rewon Child, Reza Yazdani Aminabadi, Jared Bernauer, Xia Song Song, Mohammad Shoeybi, Yuxin He, Michael Houston, Shishir Tiwary, and Bryan Catanzaro. 2022. Using deepspeed and megatron to train megatron-turing nlg 530b, a large-scale generative language model. Preprint, arXiv:2201.11990.

- Sprague et al. (2024) Zayne Sprague, Xi Ye, Kaj Bostrom, Swarat Chaudhuri, and Greg Durrett. 2024. Musr: Testing the limits of chain-of-thought with multistep soft reasoning. Preprint, arXiv:2310.16049.

- Srivastava et al. (2023) Aarohi Srivastava, Abhinav Rastogi, Abhishek Rao, Abu Awal Md Shoeb, Abubakar Abid, Adam Fisch, Adam R. Brown, Adam Santoro, Aditya Gupta, Adrià Garriga-Alonso, Agnieszka Kluska, Aitor Lewkowycz, Akshat Agarwal, Alethea Power, Alex Ray, Alex Warstadt, Alexander W. Kocurek, Ali Safaya, Ali Tazarv, and 432 others. 2023. Beyond the imitation game: Quantifying and extrapolating the capabilities of language models. Preprint, arXiv:2206.04615.

- Tamari et al. (2021) Ronen Tamari, Kyle Richardson, Aviad Sar-Shalom, Noam Kahlon, Nelson Liu, Reut Tsarfaty, and Dafna Shahaf. 2021. Dyna-babi: unlocking babi’s potential with dynamic synthetic benchmarking. Preprint, arXiv:2112.00086.

- Tang and Kejriwal (2025) Zhisheng Tang and Mayank Kejriwal. 2025. Grasp: A grid-based benchmark for evaluating commonsense spatial reasoning. Preprint, arXiv:2407.01892.

- Thoppilan et al. (2022) Rami Thoppilan, Daniel De Freitas, Jamie Hall, Noam Shazeer, Apoorv Kulshreshtha, Heng-Tze Cheng, Alicia Jin, Taylor Bos, Leslie Baker, Yi Du, Yanping Li, Hongrae Lee, Huaixiu Steven Zheng, Amin Ghafouri, Marcelo Menegali, Yanping Huang, Max Krikun, Dmitry Lepikhin, James Qin, and 38 others. 2022. Lamda: Language models for dialog applications. arXiv preprint. Technical report, Google Research.

- Tikhonov (2024) Alexey Tikhonov. 2024. Plugh: A benchmark for spatial understanding and reasoning in large language models. Preprint, arXiv:2408.04648.

- Tyagi et al. (2024) Nemika Tyagi, Mihir Parmar, Mohith Kulkarni, Aswin RRV, Nisarg Patel, Mutsumi Nakamura, Arindam Mitra, and Chitta Baral. 2024. Step-by-step reasoning to solve grid puzzles: Where do llms falter? Preprint, arXiv:2407.14790.

- Vaswani et al. (2017) Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Ł ukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. In Advances in Neural Information Processing Systems, volume 30. Curran Associates, Inc.

- Wei et al. (2023) Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Brian Ichter, Fei Xia, Ed Chi, Quoc Le, and Denny Zhou. 2023. Chain-of-thought prompting elicits reasoning in large language models. Preprint, arXiv:2201.11903.

- Weston et al. (2015) Jason Weston, Antoine Bordes, Sumit Chopra, Alexander M. Rush, Bart van Merriënboer, Armand Joulin, and Tomas Mikolov. 2015. Towards ai-complete question answering: A set of prerequisite toy tasks. Preprint, arXiv:1502.05698.

- Yang et al. (2019) Kaiyu Yang, Olga Russakovsky, and Jia Deng. 2019. SpatialSense: An adversarially crowdsourced benchmark for spatial relation recognition. In International Conference on Computer Vision (ICCV).

- Zoph et al. (2022) Barret Zoph, Irwan Bello, Sameer Kumar, Nan Du, Yanping Huang, Jeff Dean, Noam Shazeer, and William Fedus. 2022. St-moe: Designing stable and transferable sparse expert models. Preprint, arXiv:2202.08906.

## Appendices

## Appendix A Dataset Generation Details

The seqBench benchmark generates pathfinding tasks by systematically controlling several complexity dimensions. As described in Section 1 (main paper), Algorithm 1 is central to this process. This appendix provides further details on the generation phases, natural language encoding of tasks, and specific dataset parameters.

### A.1 Generation Phases

The generation process, guided by Algorithm 1, involves three main phases:

1. Base Maze Construction: An initial $N\times M$ grid is populated, and an acyclic maze graph ( $M_{g}$ ) is formed using Kruskal’s algorithm (Kleinberg and Tardos, 2006). This ensures a simply connected environment where a unique path exists between any two cells if all internal "walls" (potential door locations) were open. The overall process results in maze instances like the one visualized in Figure 5.

1. Rewind Construction for Path Skeleton and Key/Door Placement: This phase implements the "Rewind Construction" (Algorithm 1 in the main paper). Starting from a randomly selected goal cell ( $C_{goal}$ ), the algorithm works backward to define a solvable path skeleton ( $\Pi_{S}$ ). It iteratively:

1. Selects a cell $c_{key}$ that would be a preceding point on a path towards the current cell $x$ (initially $C_{goal}$ ).

1. Identifies the unique path segment $\pi_{seg}$ in $M_{g}$ from $x$ to $c_{key}$ .

1. Randomly selects an edge $d$ on this segment $\pi_{seg}$ to become a locked door. This edge $d$ is added to the set of locked doors $\mathcal{D}_{L}$ .

1. A new unique key $K_{id}$ is conceptually placed at $c_{key}$ , and its information (which door it opens, its location) is stored in $\mathcal{K}_{I}$ .

1. The conceptual steps (moving along $\pi_{seg}$ , unlocking door $d$ with $K_{id}$ , picking up $K_{id}$ at $c_{key}$ ) are prepended (in reverse logical order) to the path skeleton $\Pi_{S}$ .

1. The current cell $x$ is updated to $c_{key}$ , and the process repeats until the target number of backtracks ( $\mathcal{B}$ ) is achieved or no valid placements remain.

This backward construction ensures solvability and controlled backtracking complexity. The final agent starting position is the cell $x$ at the end of this phase.

1. Fact Compilation and Noise Injection: Based on the final maze structure ( $M_{g},\mathcal{D}_{L},\mathcal{K}_{I}$ ), a set of natural language facts $\mathcal{F}$ is compiled. This includes facts describing room connections, key locations, and door states. Distracting facts are then introduced based on the target noise ratio $\mathcal{N}$ . These distractors might describe non-existent connections, spurious keys, or misleading adjacencies, chosen to be plausible yet incorrect.

<details>

<summary>figs/compath_viz.png Details</summary>

### Visual Description

## Diagram: Network Topology with Annotated Connections

### Overview

The image displays a schematic diagram of a network or grid-based system. It consists of nodes (represented by circles and triangles) connected by lines (edges) in a structured, grid-like layout. The diagram includes annotations in the form of colored dashed lines and highlighted components (red rectangles), suggesting specific relationships, paths, or states within the network. There is no textual information, labels, axes, or legends present in the image.