# Kimi-Dev: Agentless Training as Skill Prior for SWE-Agents

> Indicates equal contribution. † Joint leads.

## Abstract

Large Language Models (LLMs) are increasingly applied to software engineering (SWE), with SWE-bench as a key benchmark. Solutions are split into SWE-Agent frameworks with multi-turn interactions and workflow-based Agentless methods with single-turn verifiable steps. We argue these paradigms are not mutually exclusive: reasoning-intensive Agentless training induces skill priors, including localization, code edit, and self-reflection, which enable efficient and effective SWE-Agent adaptation. In this work, we first curate the Agentless training recipe and present Kimi-Dev, an open-source SWE LLM achieving 60.4% on SWE-bench Verified, the best among workflow approaches. With additional SFT adaptation on 5k publicly available trajectories, Kimi-Dev powers SWE-Agents to 48.6% pass@1, on par with Claude 3.5 Sonnet (20241022 version). These results show that structured skill priors from Agentless training can bridge workflow and agentic frameworks for transferable coding agents.

## 1 Introduction

Recent years have witnessed the rapid development of Large Language Models (LLMs) that automate Software Engineering (SWE) tasks (jimenez2023swe; yang2024swe; xia2024agentless; anthropic_claude_3.5_sonnet_20241022; pan2024training; wang2024openhands; wei2025swe; yang2025qwen3; team2025kimi_k2; openai_gpt5_system_card_2025). Among the benchmarks that track the progress of LLM coding agents in SWE scenarios, SWE-bench (jimenez2023swe) stands out as one of the most representative. Given an issue that reports a bug in a real-world GitHub repository, a model must produce a patch that fixes the bug. The patch is judged correct if the corresponding unit tests pass after it is applied. Three factors have made SWE-bench a focal point of the field: the difficulty of the task (as of the date the benchmark was proposed), the outcome reward available through the provided auto-evaluation harness, and the real-world economic value it reflects.

Two lines of solutions have emerged for the SWE-bench task. Agent-based solutions like SWE-Agent (yang2024swe) and OpenHands (wang2024openhands) take an interactionist approach. The agent receives the necessary task description, a predefined set of available tools, and the specific problem statement. It then interacts with an executable environment over multiple turns, makes changes to the source code, and decides autonomously when to stop. In contrast, workflow-based solutions like Agentless (xia2024agentless) predefine the solving process as a pipeline of steps such as localization, bug repair, and test composition. Such task decomposition transforms the agentic task into generating correct responses for a chain of single-turn problems with verifiable rewards (guo2025deepseek; wei2025swe; SWESwiss2025).

The two paradigms have been widely viewed as mutually exclusive. On the one hand, SWE-Agents have higher potential and better adaptability, thanks to the greater freedom of multi-turn interaction without fixed routines. However, they have also proved more difficult to train because of their end-to-end nature (deepswe2025; cao2025skyrl). On the other hand, Agentless methods offer better modularity and are easier to train with Reinforcement Learning with Verifiable Rewards (RLVR) techniques. The trade-off is a more limited exploration space and less flexibility. Their behavior is also harder to monitor, because erroneous patterns appear only in single-turn long reasoning content (pan2024training). We challenge this dichotomy from the perspective of the training recipe. We argue that Agentless training should not be viewed as the ultimate deliverable, but rather as a way to induce skill priors. These priors are atomic capabilities such as localizing buggy implementations and updating erroneous code snippets, as well as self-reflection and verification. All of them help scaffold the efficient adaptation of more capable and generalizable SWE-agents.

Guided by this perspective, we introduce Kimi-Dev, an open-source code LLM for SWE tasks. Specifically, we first develop an Agentless training recipe, which includes mid-training, cold-start, reinforcement learning, and test-time self-play. This yields 60.4% accuracy on SWE-bench Verified, the SoTA performance among workflow-based solutions. Building on this, we show that Agentless training induces skill priors. A minimal SFT cold-start from Kimi-Dev with 5k publicly available trajectories enables efficient SWE-agent adaptation and reaches a 48.6% pass@1 score. This is similar to Claude 3.5 Sonnet (the 20241022 version, anthropic_claude_3.5_sonnet_20241022). We demonstrate that these induced skills transfer from non-agentic workflows to agentic frameworks. Agentless training also bakes self-reflection into long Chains-of-Thought, which further enables the agentic model to leverage more turns and succeed over longer horizons. Finally, we show that the skills from Agentless training generalize beyond SWE-bench Verified to broader benchmarks such as SWE-bench-Live (zhang2025swe) and SWE-bench Multilingual (yang2025swesmith). Together, these results reframe Agentless and agentic frameworks as complementary stages in building transferable coding LLMs, rather than as mutually exclusive alternatives. This shift offers a principled view that training with structured skill priors can scaffold autonomous agentic interaction.

The remainder of this paper is organized as follows. Section 2 reviews the background of the framework dichotomy and outlines the challenges of training SWE-Agents. Section 3 presents our Agentless training recipe and the experimental results. Section 4 demonstrates how these Agentless-induced skill priors enable efficient SWE-Agent adaptation, and evaluates the skill transfer and generalization beyond SWE-bench Verified.

## 2 Background

In this section, we first review the two dominant frameworks for SWE tasks and their dichotomy in Section 2.1. We then summarize the progress and challenges of training SWE-Agents in Section 2.2. The background introduction sets the stage for reinterpreting Agentless training as skill priors for SWE-Agents, a central theme developed throughout the later sections.

### 2.1 Framework Dichotomy

Two paradigms currently dominate the solutions for automating software engineering tasks. Agentless approaches decompose SWE tasks into modular workflows (xia2024agentless; wei2025swe; ma2024lingma; ma2025alibaba; swe-fixer). Typical workflows consist of bug localization, bug repair, and test generation. This design provides modularity and stability: each step can be optimized separately as a single-turn problem with verifiable rewards (wei2025swe; SWESwiss2025). However, such rigidity comes at the cost of flexibility. In scenarios that require multiple rounds of incremental updates, Agentless approaches struggle to adapt.

By contrast, SWE-agents adopt an end-to-end, multi-turn reasoning paradigm (yang2024swe; wang2024openhands). Rather than following a fixed workflow, they iteratively plan, act, and reflect, much as human developers do when debugging complex issues. This design enables greater adaptability but introduces significant difficulties. Trajectories often extend over tens or even hundreds of steps, and the LLM's context window must span the entire interaction history. The model must also handle exploration, reasoning, and tool use simultaneously.

The dichotomy between fixed workflows (e.g., Agentless) and agentic frameworks (e.g., SWE-Agent) has shaped much of the community’s perspective. The two paradigms are often regarded as mutually exclusive: one trades off flexibility and performance ceiling for modularity and stability, whereas the other makes the reverse compromise. Our work challenges this dichotomy, as we demonstrate that Agentless training induces skill priors that make further SWE-agent training both more stable and more efficient.

### 2.2 Training SWE-agents

Training SWE-agents relies on acquiring high-quality trajectories through interactions with executable environments. Constructing such large-scale environments and collecting reliable trajectories, however, requires substantial human labor as well as costly calls to frontier models, making data collection slow and resource-demanding (pan2024training; badertdinov2024sweextra). Recent studies also attempt to scale environment construction by synthesizing bugs and building executable runtimes around them in reverse (jain2025r2e; yang2025swesmith).

However, credit assignment across long horizons remains challenging, because outcome rewards are sparse and often available only when a final patch passes its tests. Reinforcement learning techniques have been proposed, but they frequently suffer from instability or collapse when trajectories exceed dozens of steps (deepswe2025; cao2025skyrl). SWE-agent training is also highly sensitive to initialization. Starting from a generic pre-trained model often leads to brittle behaviors, such as failing to use tools effectively or getting stuck repeating the same action pattern in an infinite loop (pan2024training; yang2025swesmith).

These limitations motivate our central hypothesis. Instead of training SWE-agents entirely from scratch, one can first induce skill priors through Agentless training. This enhances atomic capabilities such as localization, repair, test composition, and self-reflection. These priors lay a foundation that makes subsequent agentic training both more efficient and more generalizable.

## 3 Agentless Training Recipe

Instead of training SWE-agents from scratch, we leverage Agentless training to induce skill priors. Skill priors enhanced by Agentless training include, but are not limited to, bug localization, patch generation, self-reflection, and verification. Together they lay the foundation for end-to-end agentic interaction. In this section, we elaborate on our Agentless training recipe: the duo framework of BugFixer and TestWriter, mid-training and cold-start, reinforcement learning, and test-time self-play. Sections 3.1–3.4 detail these ingredients, and Section 3.5 presents the experimental results for each of them. This training recipe results in Kimi-Dev, an open-source 72B model that achieves 60.4% on SWE-bench Verified, the SoTA performance among workflow-based solutions.

<details>

<summary>x3.png Details</summary>

Diagram: a single LLM plays two roles, BugFixer and TestWriter, each equipped with File Localization and Code Edit; arrows labeled "Generate Test Case" and "Fix Bugs" connect the two roles.

</details>

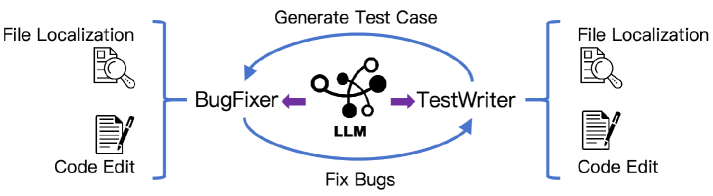

Figure 1: Agentless framework for Kimi-Dev: the duo of BugFixer and TestWriter.

### 3.1 Framework: The Duo of BugFixer and TestWriter

In GitHub issue resolution, we conceptualize the process as the collaboration between two important roles: the BugFixer, who produces patches that correctly address software bugs, and the TestWriter, who creates reproducible unit tests that capture the reported bug. A resolution is considered successful when the BugFixer’s patch passes the tests provided for the issue, while a high-quality test from the TestWriter should fail on the pre-fix version of the code and pass once the fix is applied.

Each role relies on two core skills: (i) file localization, the ability to identify the specific files relevant to the bug or test, and (ii) code edit, the ability to implement the necessary modifications. For BugFixer, effective code edits repair the defective program logic. For TestWriter, they insert precise unit test functions that reproduce the issue into the test files. As illustrated in Figure 1, these two skills constitute the fundamental abilities underlying GitHub issue resolution. We therefore strengthen them through the following training stages: mid-training, cold-start, and RL.

### 3.2 Mid-Training & Cold Start

To strengthen the model's prior as both a BugFixer and a TestWriter, we perform mid-training on $\sim$150B tokens of high-quality, real-world data. Starting from the Qwen 2.5-72B-Base model (qwen2025qwen25technicalreport), we collect millions of GitHub issues and PR commits to form the mid-training dataset. It consists of three parts:

- $\sim$50B tokens in the Agentless format, derived from natural diff patches;
- $\sim$20B tokens of curated PR commit packs;
- $\sim$20B tokens of synthetic data with reasoning and agentic interaction patterns, upsampled by a factor of 4 during training.

The data recipe is designed to teach the model how human developers reason about GitHub issues, implement code fixes, and develop unit tests. We also perform strict data decontamination, excluding every repository in the SWE-bench Verified test set. Mid-training substantially enriches the model's knowledge of practical bug fixes and unit tests, making it a better starting point for later stages. The details of the recipe are covered in Appendix A.
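As a quick consistency check, the raw sizes above add up to the ~150B budget only if the 4x upsampling of the synthetic data is counted: 50 + 20 + 4 × 20 = 150B. The sketch below makes that arithmetic explicit. It assumes the ~150B figure refers to upsampled (effective) tokens, which the text does not state outright. The source labels are hypothetical names chosen for illustration.

```python
from typing import Dict, Tuple

# Raw sizes in billions of tokens, taken from the text; the source labels are hypothetical.
RAW_TOKENS_B: Dict[str, int] = {
    "agentless_from_diffs": 50,
    "curated_pr_commit_packs": 20,
    "synthetic_reasoning_agentic": 20,
}
# Assumption: only the synthetic data is upsampled (x4); other sources are seen once.
UPSAMPLE: Dict[str, int] = {"synthetic_reasoning_agentic": 4}


def effective_mixture(
    raw: Dict[str, int], upsample: Dict[str, int]
) -> Tuple[Dict[str, int], Dict[str, float]]:
    """Return effective tokens per source and each source's share of the total budget."""
    effective = {name: size * upsample.get(name, 1) for name, size in raw.items()}
    total = sum(effective.values())
    shares = {name: tokens / total for name, tokens in effective.items()}
    return effective, shares


effective, shares = effective_mixture(RAW_TOKENS_B, UPSAMPLE)
print(sum(effective.values()))  # 150
for name, share in shares.items():
    print(f"{name}: {share:.1%}")
```

Under this reading, the synthetic reasoning and agentic data account for slightly more than half of the tokens seen during mid-training (80B of 150B).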

To activate the model's long Chain-of-Thought (CoT) capability, we also construct a cold-start dataset of reasoning trajectories based on the SWE-Gym (pan2024training) and SWE-bench-extra (badertdinov2024scaling) datasets. The trajectories are generated by the DeepSeek R1 model (guo2025deepseek, the 20250120 version). In this setup, R1 plays the roles of BugFixer and TestWriter, producing outputs such as file localizations and code edits. Supervised finetuning on this dataset serves as a cold start. It lets the model acquire essential reasoning skills, including problem analysis, method sketching, self-refinement, and exploration of alternative solutions.

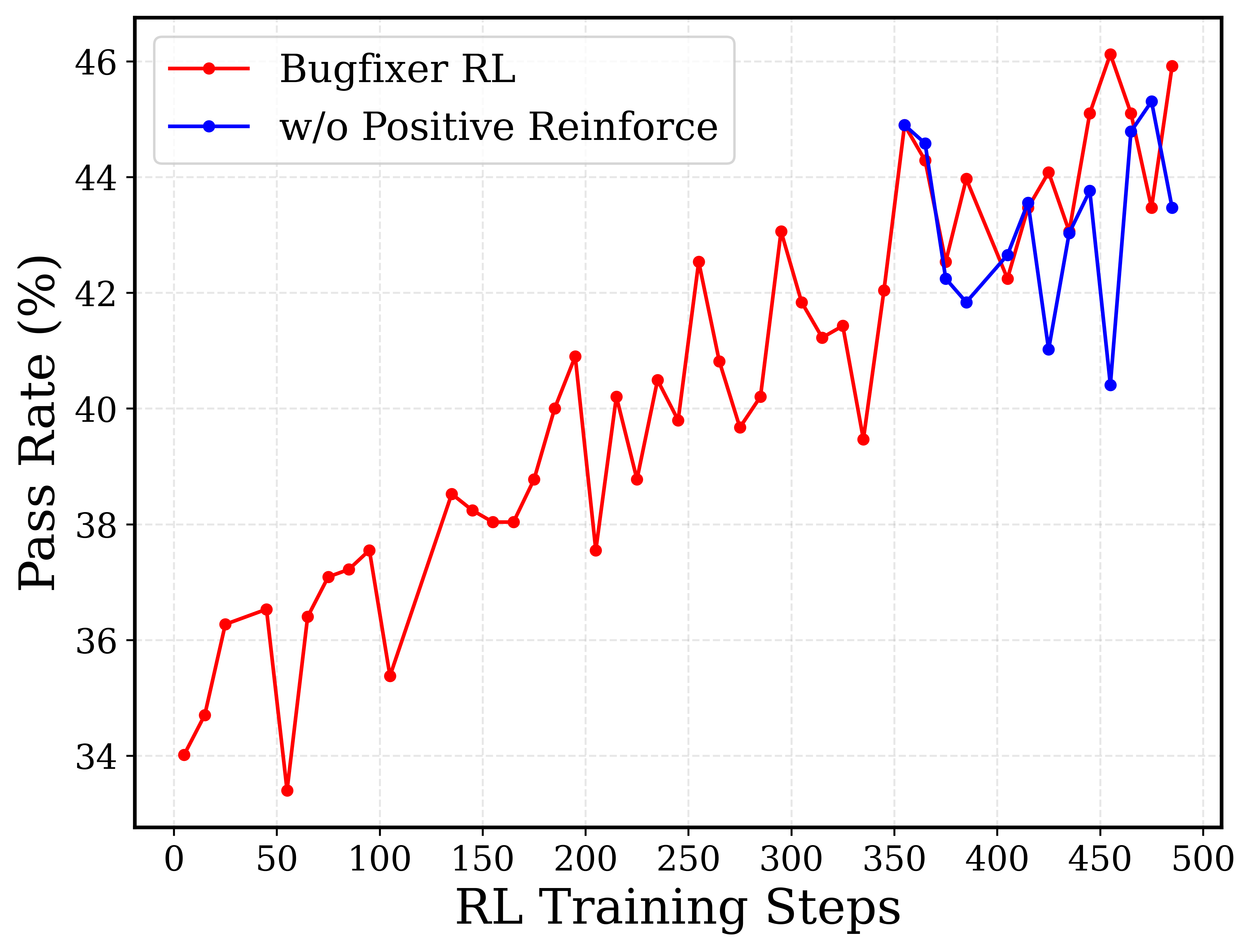

### 3.3 Reinforcement Learning

After mid-training and cold-start, the model already performs strongly at localization, so reinforcement learning (RL) focuses solely on the code edit stage. We construct a training set specifically for this stage, in which each prompt is paired with an executable environment. We also sample multiple localization rollouts from the initial model to obtain varied file-location predictions, which diversifies the prompts used in code-edit RL.
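The prompt-diversification step can be illustrated with a minimal sketch. It assumes a hypothetical `localize(issue, seed)` callable that returns the predicted file paths for one sampled localization rollout. Deduplicating the predictions and ordering them by frequency are our own illustrative choices, not details given in the text.

```python
from collections import Counter
from typing import Callable, List, Tuple

Localization = Tuple[str, ...]  # sorted tuple of predicted file paths


def build_code_edit_prompts(
    issue: str,
    localize: Callable[[str, int], List[str]],  # hypothetical sampler: (issue, seed) -> files
    num_rollouts: int = 8,
) -> List[Tuple[str, Localization]]:
    """Sample several localization rollouts and turn each distinct prediction
    into its own code-edit prompt (issue text + predicted files)."""
    seen: Counter = Counter()
    for seed in range(num_rollouts):
        files = tuple(sorted(set(localize(issue, seed))))
        if files:  # skip rollouts that predicted no files
            seen[files] += 1
    # One prompt per distinct localization, most frequent first (ties keep first-seen order)
    return [(issue, files) for files, _ in seen.most_common()]


# Usage with a stand-in localizer that alternates between two predictions
def fake_localize(issue: str, seed: int) -> List[str]:
    return ["src/a.py"] if seed % 2 == 0 else ["src/b.py", "src/a.py"]


prompts = build_code_edit_prompts("Fix crash in parser", fake_localize, num_rollouts=5)
```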

For the RL algorithm, we adopt the policy optimization method proposed by Kimi k1.5 (team2025kimi_k15), which has shown promising results on reasoning tasks in both math and coding. Kimi k1.5 (team2025kimi_k15) adopts a simple policy gradient approach based on the REINFORCE algorithm (williams1992simple). Similar to GRPO (shao2024deepseekmath), we use the average reward of multiple rollouts as the baseline to normalize the returns. When adapting the algorithm to our SWE-bench setting, we highlight the following three key desiderata:

1. Outcome-based reward only: We rely solely on the final execution outcome from the environment as the raw reward (0 or 1), without incorporating any format- or process-based signals. For BugFixer, a positive reward is given if the generated patch passes all ground-truth unit tests. For TestWriter, a positive reward is assigned when (i) the predicted test raises a failure in the repo without the ground-truth bugfix patch applied, AND (ii) the failure is resolved once the ground-truth bugfix patch is applied.

1. Adaptive prompt selection: Prompts with pass@16 = 0 are initially discarded. All of their rollouts receive the same zero reward, so every advantage relative to the mean baseline is zero and they contribute nothing to the batch loss. This yields an initial prompt set of 1,200 problems and enlarges the effective batch size. A curriculum learning scheme is then applied. Once the success rate on the current set exceeds a threshold, 500 previously excluded prompts are reintroduced every 100 RL steps to gradually raise task difficulty. These are prompts whose initial pass@16 was 0 but which have become solvable under RL.

1. Positive example reinforcement: As performance improvements begin to plateau in later stages of training, we incorporate the positive samples from the recent RL iterations into the training batch of the current iteration. This approach reinforces the model’s reliance on successful patterns, thereby accelerating convergence in the final phase.

Robust sandbox infrastructure. We build the Docker environments on Kubernetes (kubernetes), which provides a secure and scalable sandbox infrastructure for efficient training and rollouts. The infrastructure supports over 10,000 concurrent instances with robust performance, making it well suited to competitive programming and software engineering tasks (see Appendix D for details).

### 3.4 Test-Time Self-Play

After RL, the model masters the roles of both a BugFixer and a TestWriter. During test time, it adopts a self-play mechanism to coordinate its bug-fixing and test-writing abilities.

Following Agentless (xia2024agentless), we use the model to generate 40 candidate patches and 40 tests for each instance. Each patch is produced by an independent run of BugFixer's localization and code edit. The first run uses greedy decoding (temperature 0), and the remaining 39 use temperature 1 to ensure diversity. Similarly, 40 tests are generated independently from TestWriter. To ensure the validity of the test candidates, we first filter out those that fail to raise a failure in the original repo, before any BugFixer patch is applied.
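The candidate-generation schedule and the test-filtering step can be sketched as below. The `sample` and `fails_on_original_repo` callables are hypothetical stand-ins. The first represents one BugFixer or TestWriter generation at a given temperature. The second represents executing a test patch on the unpatched repository.

```python
from typing import Callable, List


def sampling_temperatures(n: int = 40) -> List[float]:
    """First run uses greedy decoding (temperature 0); the other n - 1 use temperature 1."""
    return [0.0] + [1.0] * (n - 1)


def generate_candidates(sample: Callable[[float], str], n: int = 40) -> List[str]:
    """n independent generations, one per temperature in the schedule."""
    return [sample(t) for t in sampling_temperatures(n)]


def filter_reproduction_tests(
    tests: List[str], fails_on_original_repo: Callable[[str], bool]
) -> List[str]:
    """Keep only test patches that raise a failure before any BugFixer patch is applied."""
    return [t for t in tests if fails_on_original_repo(t)]
```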

Denote the remaining TestWriter patches as set $T$, and the BugFixer patches as set $B$. For each $b_i\in B$ and $t_j\in T$, we execute the test suite over the test file modified by $t_j$ twice: first without $b_i$, and then with $b_i$ applied. From the execution log of the first run, we count the failed and passed tests from $t_j$, denoted ${\rm F}(j)$ and ${\rm P}(j)$. Comparing the execution logs of the two runs, we count the fail-to-pass and pass-to-pass tests, denoted ${\rm FP}(i,j)$ and ${\rm PP}(i,j)$, respectively. We then calculate the score for each $b_i$ with

$$
S_i=\frac{\sum_j{\rm FP}(i,j)}{\sum_j{\rm F}(j)}+\frac{\sum_j{\rm PP}(i,j)}{\sum_j{\rm P}(j)}, \tag{1}
$$

where the first part reflects the performance of $b_i$ under reproduction tests, and the second part could be viewed as the characterization of $b_i$ under regression tests (xia2024agentless). We select the BugFixer patch $b_i$ with the highest $S_i$ score as the ultimate answer.
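Eq. (1) can be computed from per-test outcomes as in the sketch below. It assumes each execution log has already been parsed into a mapping from test name to pass/fail; the log parsing itself is not shown. Two further choices are ours and not stated in the text. A test missing from the second run is treated as failed. An empty denominator contributes 0.

```python
from typing import Dict, List

Outcomes = Dict[str, bool]  # test name -> passed?


def score_patches(before: List[Outcomes], after: List[List[Outcomes]]) -> List[float]:
    """Score each BugFixer patch b_i with Eq. (1).

    before[j]   : outcomes of test file t_j on the original repo (no BugFixer patch)
    after[i][j] : outcomes of test file t_j with patch b_i applied
    """
    total_f = sum(sum(not ok for ok in run.values()) for run in before)  # sum_j F(j)
    total_p = sum(sum(ok for ok in run.values()) for run in before)      # sum_j P(j)
    scores = []
    for runs_i in after:
        fp = pp = 0  # sum_j FP(i, j) and sum_j PP(i, j)
        for run_before, run_after in zip(before, runs_i):
            for name, ok_before in run_before.items():
                ok_after = run_after.get(name, False)  # assumption: missing test = failed
                if ok_after and not ok_before:
                    fp += 1
                elif ok_after and ok_before:
                    pp += 1
        reproduction = fp / total_f if total_f else 0.0
        regression = pp / total_p if total_p else 0.0
        scores.append(reproduction + regression)
    return scores


def select_best_patch(before: List[Outcomes], after: List[List[Outcomes]]) -> int:
    """Index of the patch with the highest S_i (ties go to the lower index)."""
    scores = score_patches(before, after)
    return max(range(len(scores)), key=scores.__getitem__)
```

Ties are broken in favor of the lower index, so the greedy-decoded patch (index 0) wins a tie. This tie-breaking rule is our choice and is not specified in the text.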

Table 1: Performance comparison for models on SWE-bench Verified under Agentless-like frameworks. All results are obtained under the standard 40-patch, 40-test setting (xia2024agentless), except for Llama3-SWE-RL, which uses 500 patches and 30 tests.

### 3.5 Experiments

#### 3.5.1 Main Results

<details>

<summary>figs/sec3_mid_training/mid-train_perf.png Details</summary>

Bar chart: pass rate (%) versus mid-training tokens: 28.6 (50B), 32.6 (100B), 36.6 (150B).

</details>

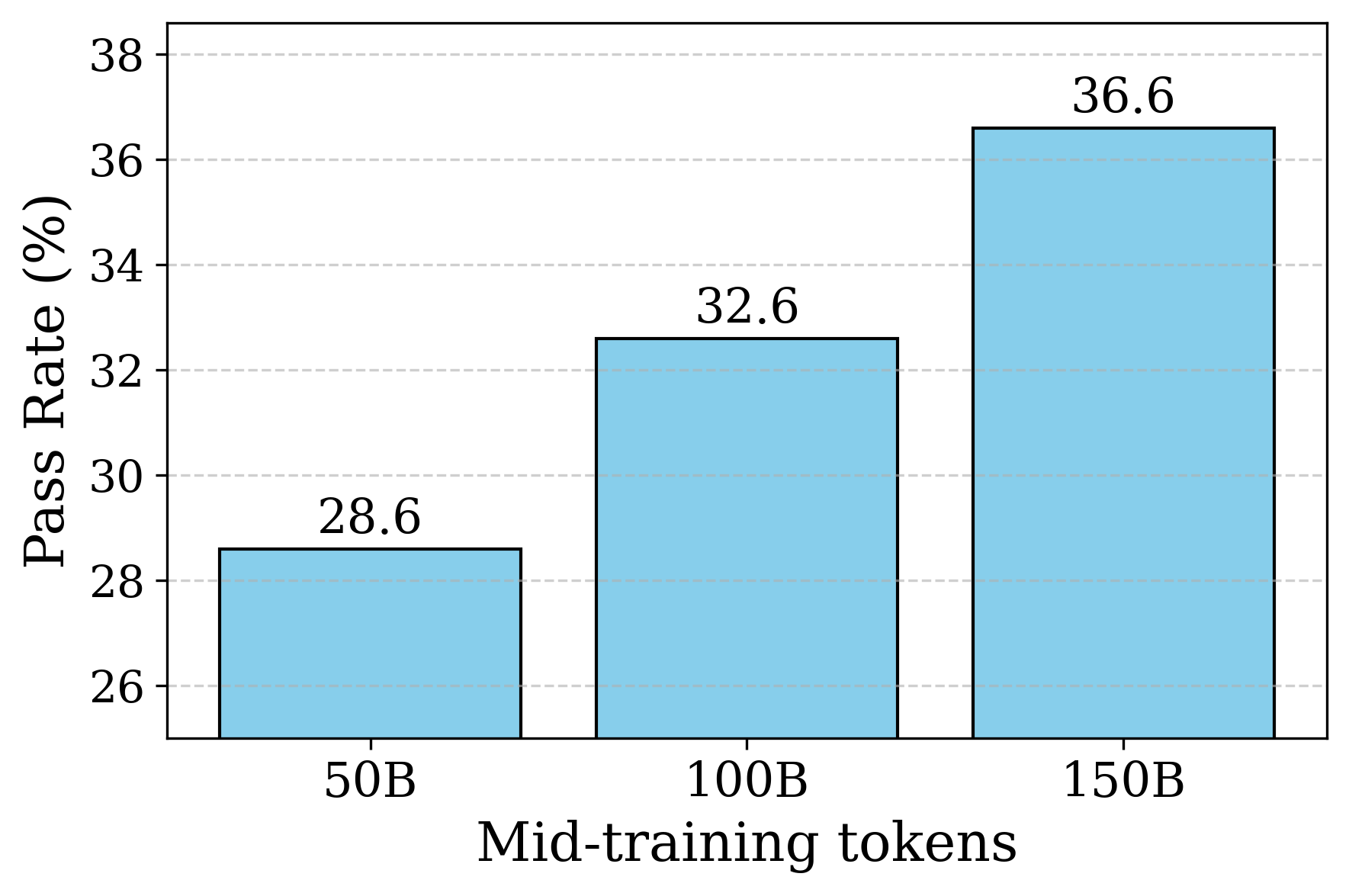

Figure 2: The performance on SWE-bench Verified after mid-training with different training token budgets.

Table 1 shows the performance of Kimi-Dev on SWE-bench Verified (jimenez2023swe). Instead of the text-similarity rewards used in SWE-RL (wei2025swe), we adopt execution-based signals, which measure fix quality more reliably. Prior Agentless systems (xia2024agentless; guo2025deepseek; SWESwiss2025) rely on a single root-level test. Our two-stage TestWriter improves on them by better capturing repository context and mirroring real developer workflows (OpenAI-Codex-2025). Kimi-Dev attains state-of-the-art performance among open-source models, resolving 60.4% of issues.

#### 3.5.2 Mid-Training

In this section, we evaluate the relationship between the amount of mid-training data and model performance. Specifically, we mid-train Qwen 2.5-72B-Base on subsets of the mid-training data containing 50B, 100B, and approximately 150B tokens. We then lightly activate these mid-trained models with the same set of 2,000 BugFixer input-output pairs for the SFT cold start. For simplicity of evaluation, we report only BugFixer pass@1 here. Figure 2 shows that increasing the number of mid-training tokens consistently improves model performance, highlighting the effectiveness of this stage.

#### 3.5.3 Reinforcement Learning

<details>

<summary>figs/sec3_rl_scaling/quick_plot_twin_bf_final.png Details</summary>

Dual-axis line chart over 500 RL training steps: token length (left axis) grows from roughly 3,900 to 7,800, and pass rate (right axis) grows from roughly 34% to 46%, with noticeable step-to-step fluctuations.

</details>

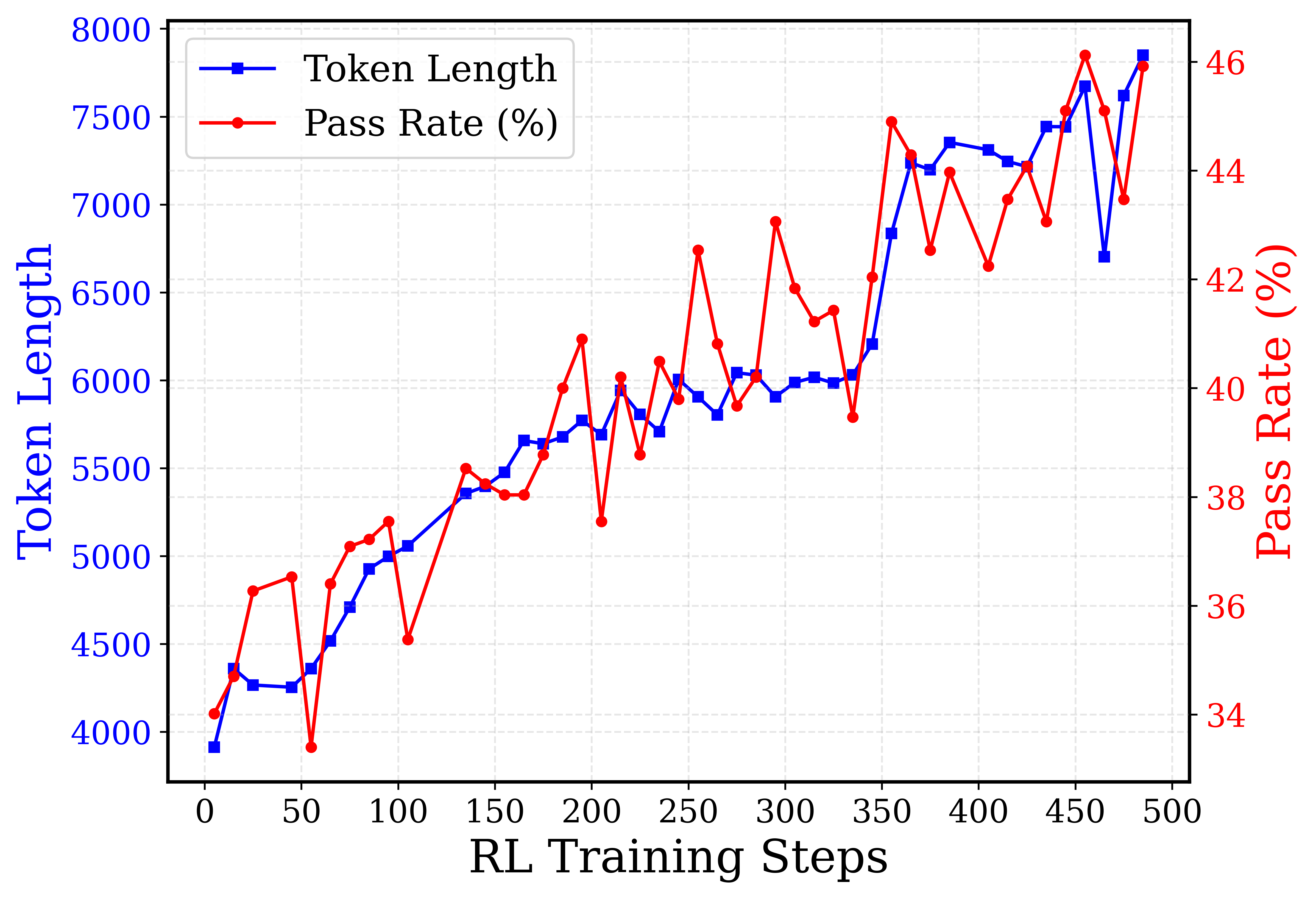

(a) 72B Joint RL, BugFixer

<details>

<summary>figs/sec3_rl_scaling/quick_plot_twin_tw_final.png Details</summary>

Dual-axis line chart: token length (left axis) and TestWriter reproduced rate (%) (right axis) versus RL training steps (0 to 500).

</details>

(b) 72B Joint RL, TestWriter

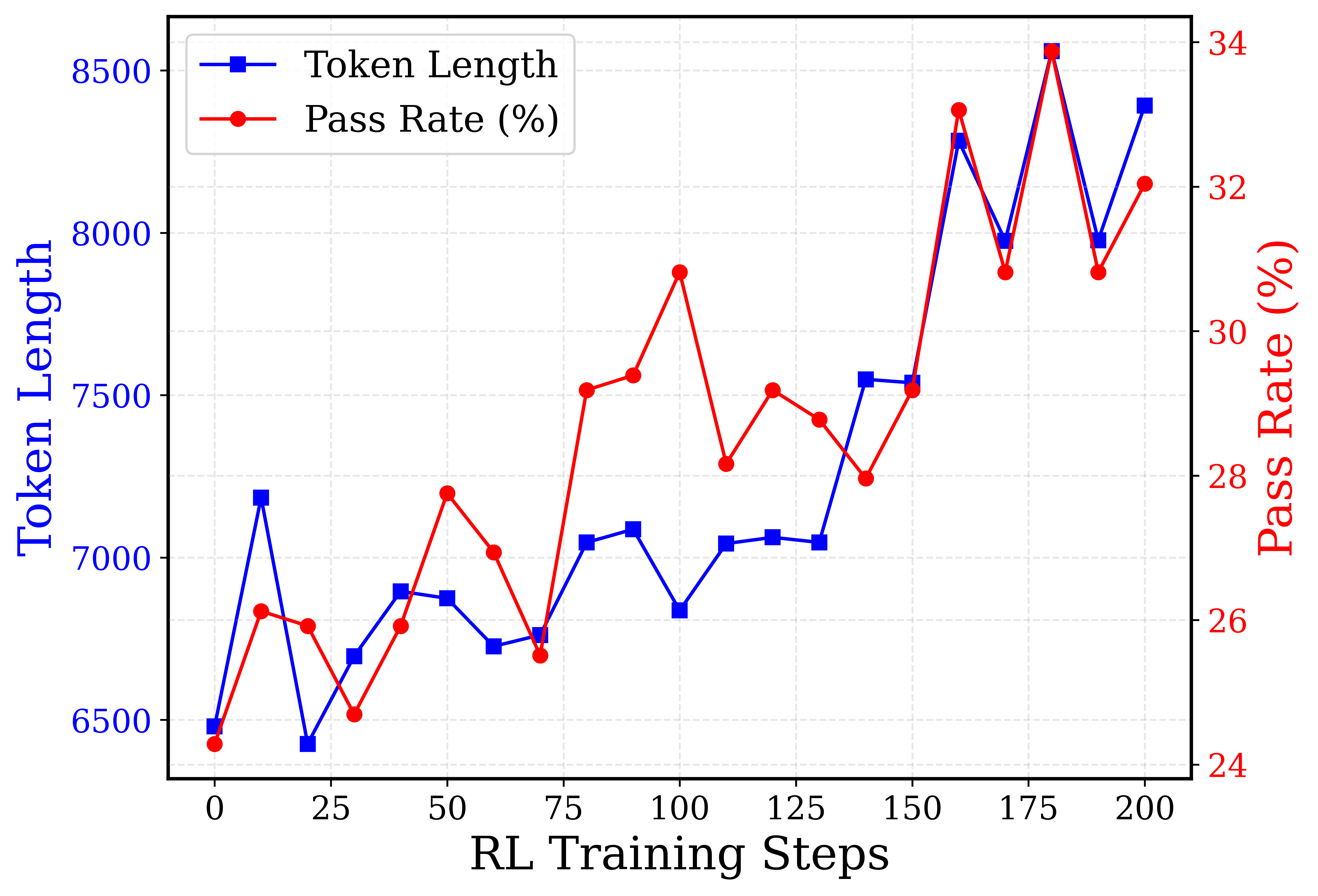

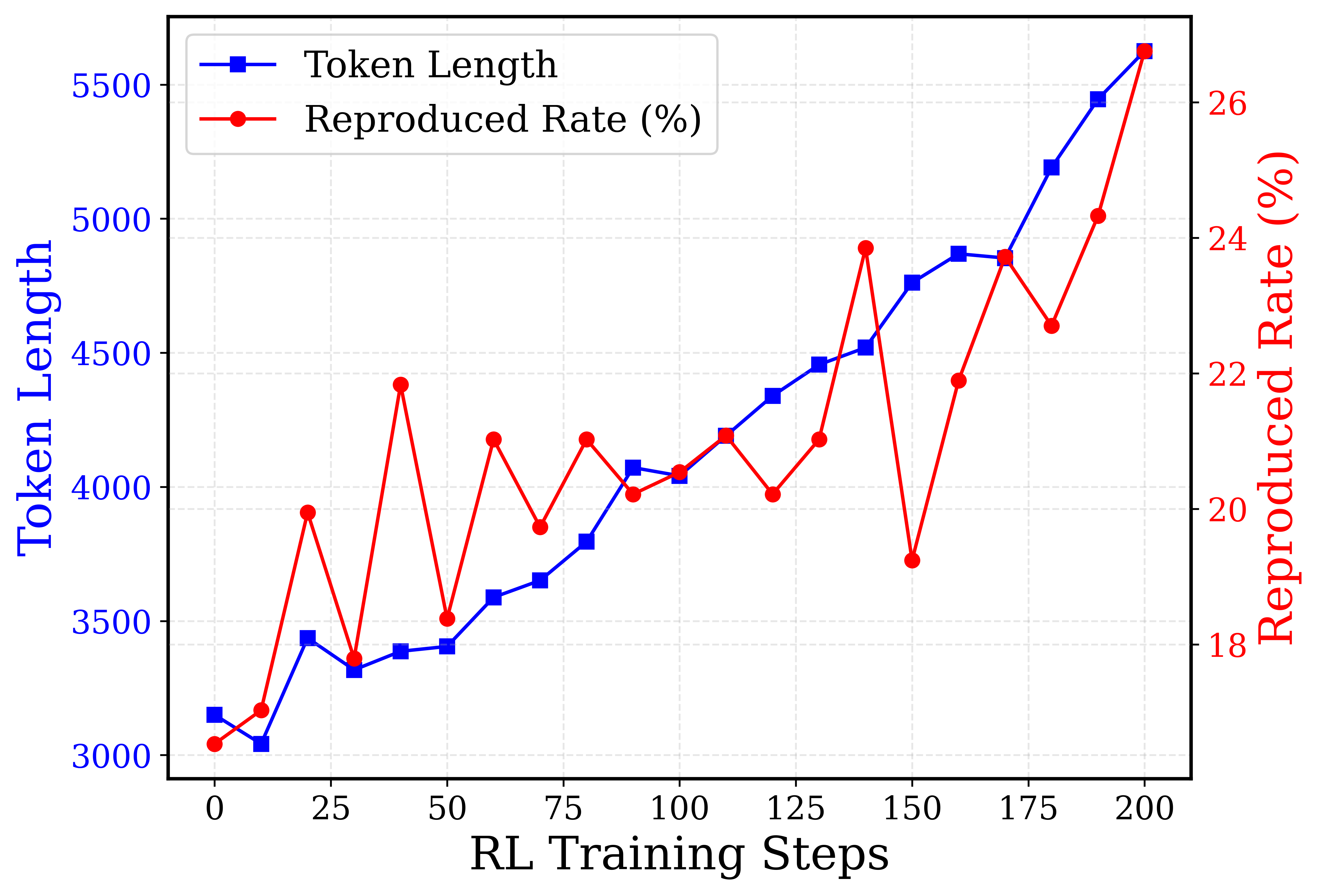

Figure 3: Joint code-edit RL experiments on the model after mid-training and cold-start. The pass rate for BugFixer and the reproduced rate for TestWriter are reported as pass@1 with temperature=1.0. Performance improves consistently as the outputs grow longer.

Experimental setup

We set the number of training steps per RL iteration to 5 and sample 10 rollouts for each of 1,024 problems drawn from the union of SWE-gym (pan2024training) and SWE-bench-extra (badertdinov2024sweextra). We dynamically adjust the prompt set every 20 iterations to gradually increase task difficulty. We fix the maximum training context length at 64k tokens, since the prompt contains the full contents of the files localized in advance by the initial model.
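The setup above can be summarized as a small configuration plus a prompt-set refresh step. The refresh rule below is hypothetical: the text only states that the prompt set is adjusted every 20 iterations to raise difficulty, so we assume, for illustration, that problems the current policy already solves reliably (pass rate above an arbitrary `max_rate`) are dropped before sampling the next set. The names `RLSetup` and `refresh_prompt_set` are illustrative, and "64k" is taken as 64,000 tokens.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class RLSetup:
    # Numbers taken from the experimental setup in the text.
    steps_per_iteration: int = 5
    rollouts_per_problem: int = 10
    problems_per_iteration: int = 1024
    refresh_every: int = 20           # iterations between prompt-set updates
    max_context_tokens: int = 64_000  # "64k"; exact count is an assumption

    def rollouts_per_iteration(self) -> int:
        return self.problems_per_iteration * self.rollouts_per_problem


def refresh_prompt_set(pass_rates: dict[str, float], k: int,
                       max_rate: float = 0.8, seed: int = 0) -> list[str]:
    """Hypothetical curriculum rule: drop problems whose current pass rate
    exceeds max_rate, then sample up to k of the remaining ones. As the
    policy improves, more problems cross the threshold, so the retained
    set drifts toward harder tasks."""
    pool = sorted(p for p, r in pass_rates.items() if r <= max_rate)
    random.Random(seed).shuffle(pool)
    return pool[:k]


setup = RLSetup()
print(setup.rollouts_per_iteration())  # 10240
rates = {"a": 1.0, "b": 0.9, "c": 0.5, "d": 0.0}
print(sorted(refresh_prompt_set(rates, k=1024)))  # ['c', 'd']
```

A threshold filter is only one way to raise difficulty; difficulty buckets or pass-rate-weighted sampling would fit the description equally well.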

Results

Figure 3 shows the performance and response-length curves on the test set during RL training, with the pass rate and the reproduced rate computed as pass@1 at temperature 1.0. Both model performance and response length increase steadily, reflecting the expected benefits of RL scaling. Similar RL scaling curves also appear in our ablation experiments on Qwen2.5-14B-Instruct models, supporting the effectiveness of the RL training recipe across model sizes. The experimental details, as well as the ablation studies on positive example reinforcement from Section 3.3, are listed in Appendix C.2. The lengthy outputs consist of in-depth problem analysis and self-reflection patterns, similar to those observed in math and code reasoning tasks (team2025kimi_k15; guo2025deepseek). We also observe occasional false-positive examples for TestWriter during RL training, caused by the lack of reproduction coverage. Case studies are given in Appendix E; we leave further improvement to future work.
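The reproduced rate can be sketched as a per-instance check. As a simplifying assumption, a generated test is counted as reproducing the issue when it fails on the original repository and passes once the reference fix is applied; the exact criterion used in training may differ. The sketch also makes the false-positive issue concrete: the check only inspects pass/fail status, not whether the test actually exercises the reported behavior. `RunOutcome`, `reproduces`, and `reproduced_rate` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunOutcome:
    fails_on_original: bool   # generated test result before any fix
    passes_on_gold_fix: bool  # generated test result after the reference fix


def reproduces(o: RunOutcome) -> bool:
    # Assumed fail-to-pass criterion across the reference fix.
    return o.fails_on_original and o.passes_on_gold_fix


def reproduced_rate(outcomes: list[RunOutcome]) -> float:
    """Percentage of instances whose single (pass@1) generated test reproduces the issue."""
    if not outcomes:
        return 0.0
    return 100.0 * sum(reproduces(o) for o in outcomes) / len(outcomes)


outs = [RunOutcome(True, True), RunOutcome(True, False),
        RunOutcome(False, True), RunOutcome(True, True)]
print(reproduced_rate(outs))  # 50.0
```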

<details>

<summary>figs/sec3_sp_scaling/selfplay_figure_v2.png Details</summary>

Two line charts of pass rate (%) versus the number of patches, BugFixer × TestWriter (1×1, 3×3, 5×5, 10×10, 20×20, 40×40). Left: self-play vs. BugFixer majority voting. Right: self-play vs. pass@N.

</details>

Figure 4: Test-time self-play on SWE-bench Verified. Performance improves with more generated patches and tests. Left: execution-based self-play consistently surpasses BugFixer majority voting. Right: self-play performance remains below pass@N, where the ground-truth test patch is used, suggesting room for TestWriter to improve.

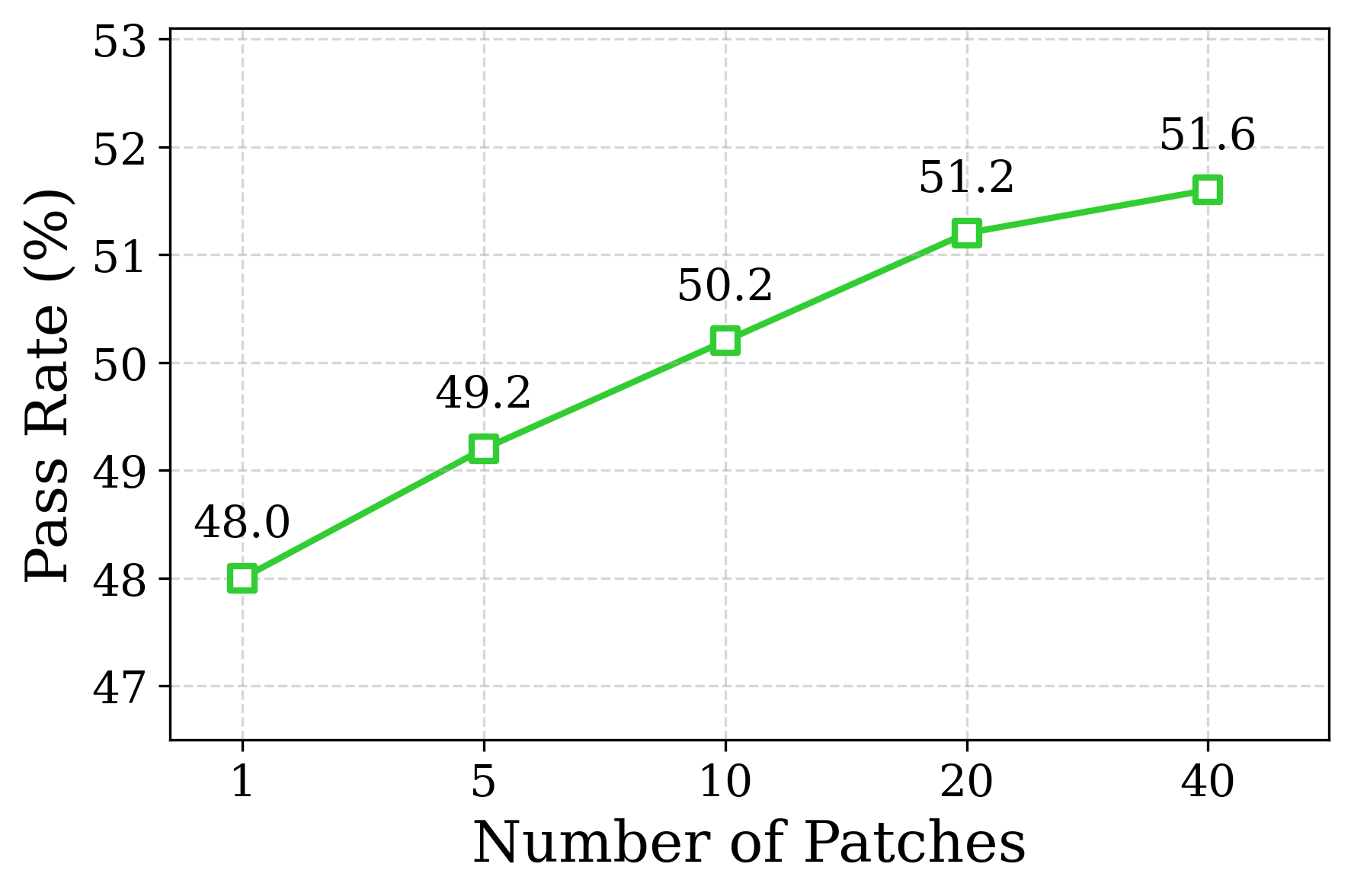

#### 3.5.4 Test-time Self-Play

Following Section 3.4, we evaluate how the final performance on SWE-bench Verified scales with the number of patches and tests generated. The temperature is fixed at 0 for the initial rollout and set to 1.0 for the subsequent 39 rollouts. As shown on the left of Figure 4, the final performance improves from 48.0% to 60.4% as the number of patch-test pairs increases from $1\times1$ to $40\times40$, and consistently surpasses the results obtained from majority voting over the BugFixer patches alone.

Notably, the self-play result obtained from 3 patches and 3 tests per instance already surpasses majority voting over 40 BugFixer patches, demonstrating the value of the additional information from test-time execution. TestWriter, however, still leaves room for more powerful self-play: as shown on the right of Figure 4, self-play performance remains below pass@N, where ground-truth test cases serve as the criterion for issue resolution. This finding aligns with anthropic_claude_3.5_sonnet_20241022, which introduced a final edge-case checking phase to generate a more diverse set of test cases, thereby strengthening the role of the “TestWriter” in their SWE-Agent framework. We also report preliminary observations of a potential parallel scaling phenomenon, which requires no additional training and may enable scalable performance improvements; the details and analyses are covered in Appendix F.
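The contrast between majority voting, execution-based self-play, and pass@N can be illustrated with a toy selector. This is a minimal sketch under a hypothetical rule (pick the patch that passes the most generated tests, breaking ties by sampling frequency); the actual selection procedure of Section 3.4 may weigh patches and tests differently. Function names are illustrative.

```python
from collections import Counter


def majority_vote(patches: list[str]) -> str:
    """Baseline: return the most frequently sampled patch (whitespace-stripped)."""
    return Counter(p.strip() for p in patches).most_common(1)[0][0]


def self_play_select(patches: list[str], passes: list[list[bool]]) -> str:
    """Pick a patch using execution feedback from generated tests.

    passes[i][j] is True if BugFixer patch i passes TestWriter test j.
    Hypothetical rule: maximize the number of generated tests passed,
    then break ties by how often the patch was sampled.
    """
    counts = Counter(p.strip() for p in patches)
    best = max(range(len(patches)),
               key=lambda i: (sum(passes[i]), counts[patches[i].strip()]))
    return patches[best].strip()


def pass_at_n(resolved_by_gold_tests: list[bool]) -> bool:
    """Oracle upper bound: solved if any sampled patch passes the ground-truth tests."""
    return any(resolved_by_gold_tests)


# 3 patches x 3 tests: the rarer patch passes every generated test.
patches = ["fix_a", "fix_b", "fix_b"]
passes = [[True, True, True], [True, False, False], [True, False, False]]
print(majority_vote(patches))             # fix_b
print(self_play_select(patches, passes))  # fix_a
```

The toy case mirrors the observation above: frequency alone favors `fix_b`, while execution feedback recovers `fix_a`. Pass@N stays an upper bound because it consults the ground-truth tests instead of generated ones.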

## 4 Initializing SWE-Agents from Agentless Training

End-to-end multi-turn frameworks, such as SWE-Agent (yang2024swe; anthropic_claude_3.5_sonnet_20241022) and OpenHands (wang2024openhands), enable agents to leverage tools and interact with environments. Specifically, the system prompt employed in the SWE-Agent framework (anthropic_claude_3.5_sonnet_20241022) outlines a five-stage workflow: (i) repo exploration, (ii) error reproduction via a test script, (iii) code edit for bug repair, (iv) test re-execution for validation, and (v) edge-case generation and checks. Unlike Agentless, the SWE-Agent framework does not enforce a strict stage-wise workflow; the agent can reflect, move between stages, and redo steps freely until it deems the task complete and submits.

The performance potential is therefore higher without a fixed routine; however, training SWE-Agents is more challenging because the sparse outcome reward makes long-horizon credit assignment difficult. Meanwhile, our Kimi-Dev model has undergone Agentless training, which deliberately strengthens its localization and code-edit skills for both BugFixer and TestWriter. In this section, we investigate whether it can serve as an effective prior for multi-turn SWE-Agent scenarios.

Table 2: Single-attempt performance of different models on SWE-bench Verified under end-to-end agentic frameworks, categorized as proprietary or open-weight and by size (over or under 100B parameters), as of 2025.09. “Internal” denotes results achieved with the providers' in-house agentic frameworks.

### 4.1 Performance after SWE-Agent Fine-tuning

<details>

<summary>figs/sec4_main/v-sweeping-new-FINAL.png Details</summary>

Line chart: SWE-Agent pass@1, pass@2, and pass@3 (%) on SWE-bench Verified versus the number of SWE-Agent SFT tokens (0 to 1.5 × 2^28), for the RL, SFT, MT, and Base priors.

</details>

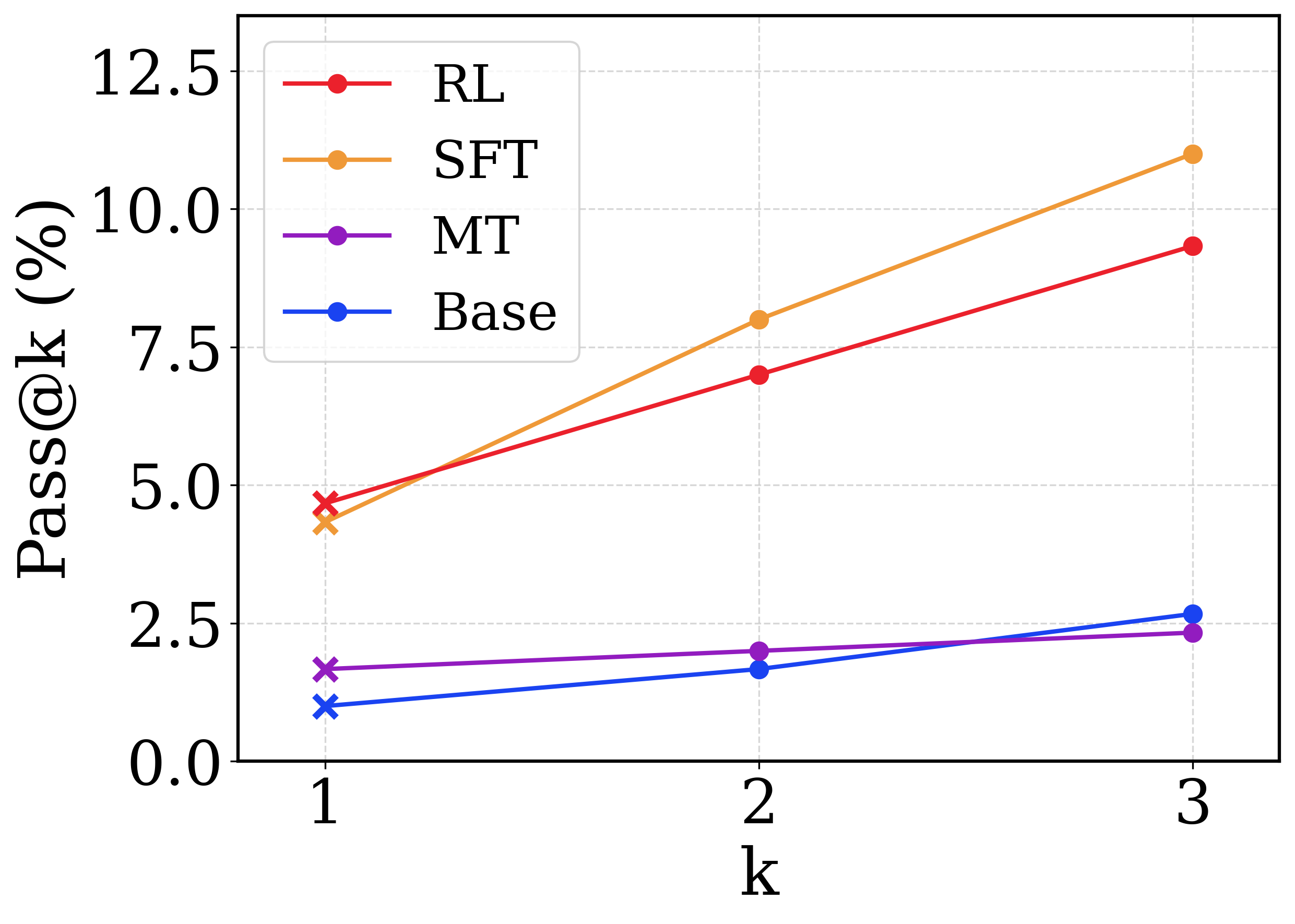

Figure 5: Comparing the quality of the raw Base, the Agentless mid-trained (MT), the Agentless mid-trained with reasoning-intensive cold-start (SFT), and the Kimi-Dev model after RL as the prior for SWE-Agent adaptation. The tokens of the SWE-Agent SFT trajectories are swept over different scales, and the SWE-Agent performances are reported up to pass@3 on SWE-bench Verified.

We use publicly available SWE-Agent trajectories to finetune Kimi-Dev. The finetuning dataset, released by SWE-smith (yang2025swe), consists of 5,016 SWE-Agent trajectories collected with Claude 3.7 Sonnet (Anthropic-Claude3.7Sonnet-2025) in synthetic environments. We perform supervised fine-tuning on Kimi-Dev with a maximum context length of 64K tokens during training, and allow up to 128K tokens and 100 turns during inference.
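The inference limits can be pictured as the stopping conditions of a free-form agent loop. The sketch below is hypothetical: `policy` and `execute` stand in for the model and the sandboxed tool environment, a whitespace count stands in for a real tokenizer, and "128K" is taken as 128,000 tokens. It only illustrates that an episode ends on submission, after 100 turns, or when the context budget would be exceeded.

```python
from dataclasses import dataclass, field
from typing import Callable

# Inference limits stated in the text; "128K" taken as 128,000 here (assumption).
MAX_TURNS = 100
MAX_CONTEXT_TOKENS = 128_000


@dataclass
class Episode:
    history: list[str] = field(default_factory=list)  # alternating actions and observations
    tokens_used: int = 0
    turns: int = 0
    submitted: bool = False


def whitespace_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer (hypothetical).
    return len(text.split())


def run_agent(policy: Callable[[list[str]], str],
              execute: Callable[[str], str],
              count_tokens: Callable[[str], int] = whitespace_tokens) -> Episode:
    """Multi-turn loop: the agent acts until it submits or a limit is hit."""
    ep = Episode()
    while ep.turns < MAX_TURNS:
        action = policy(ep.history)
        ep.turns += 1
        if action == "submit":
            ep.submitted = True
            break
        observation = execute(action)
        cost = count_tokens(action) + count_tokens(observation)
        if ep.tokens_used + cost > MAX_CONTEXT_TOKENS:
            break  # context budget exhausted before submission
        ep.history += [action, observation]
        ep.tokens_used += cost
    return ep


# Usage: a toy policy that runs three commands, then submits.
ep = run_agent(lambda h: "submit" if len(h) >= 6 else "run tests", lambda a: "ok")
print(ep.turns, ep.submitted)  # 4 True
```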

As shown in Table 2, without collecting more trajectory data in realistic environments or conducting additional multi-turn agentic RL, our finetuned model achieves a pass@1 score of 48.6% on SWE-bench Verified under the agentic framework, without additional test-time scaling. Using the same SFT data, our finetuned Kimi-Dev model outperforms SWE-agent-LM (yang2025swesmith), with performance comparable to that of Claude 3.5 Sonnet (49% for the 241022 version). The pass@10 of our SWE-Agent-adapted model is 74.0%, surpassing the pass@30 of our model under Agentless (73.8%), which indicates the higher potential of the SWE-Agent framework.
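Pass@k figures such as pass@1, pass@10, and pass@30 can be computed per instance from n attempts of which c succeed. The sketch below uses the standard unbiased estimator popularized by the HumanEval evaluation; whether the numbers reported here use this estimator or simply check the first k attempts is not stated, so treat that choice as an assumption.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Probability that at least one of k attempts, drawn without replacement
    from n attempts of which c are correct, is correct."""
    if n - c < k:
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """results holds (n attempts, c correct) per instance; returns a percentage."""
    return 100.0 * sum(pass_at_k(n, c, k) for n, c in results) / len(results)


print(benchmark_pass_at_k([(2, 1), (2, 0)], k=1))  # 25.0
```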

### 4.2 Skill Transfer and Generalization

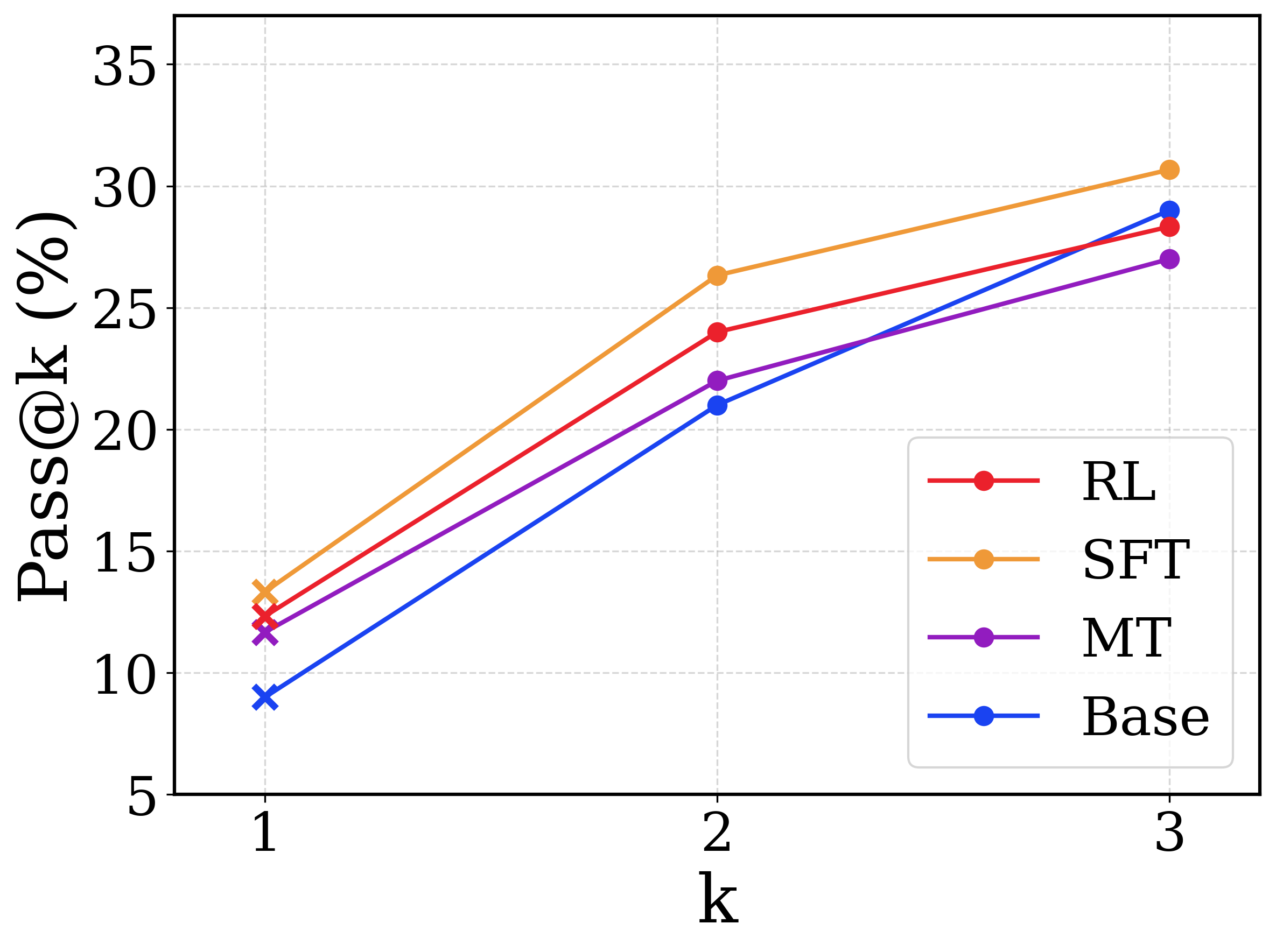

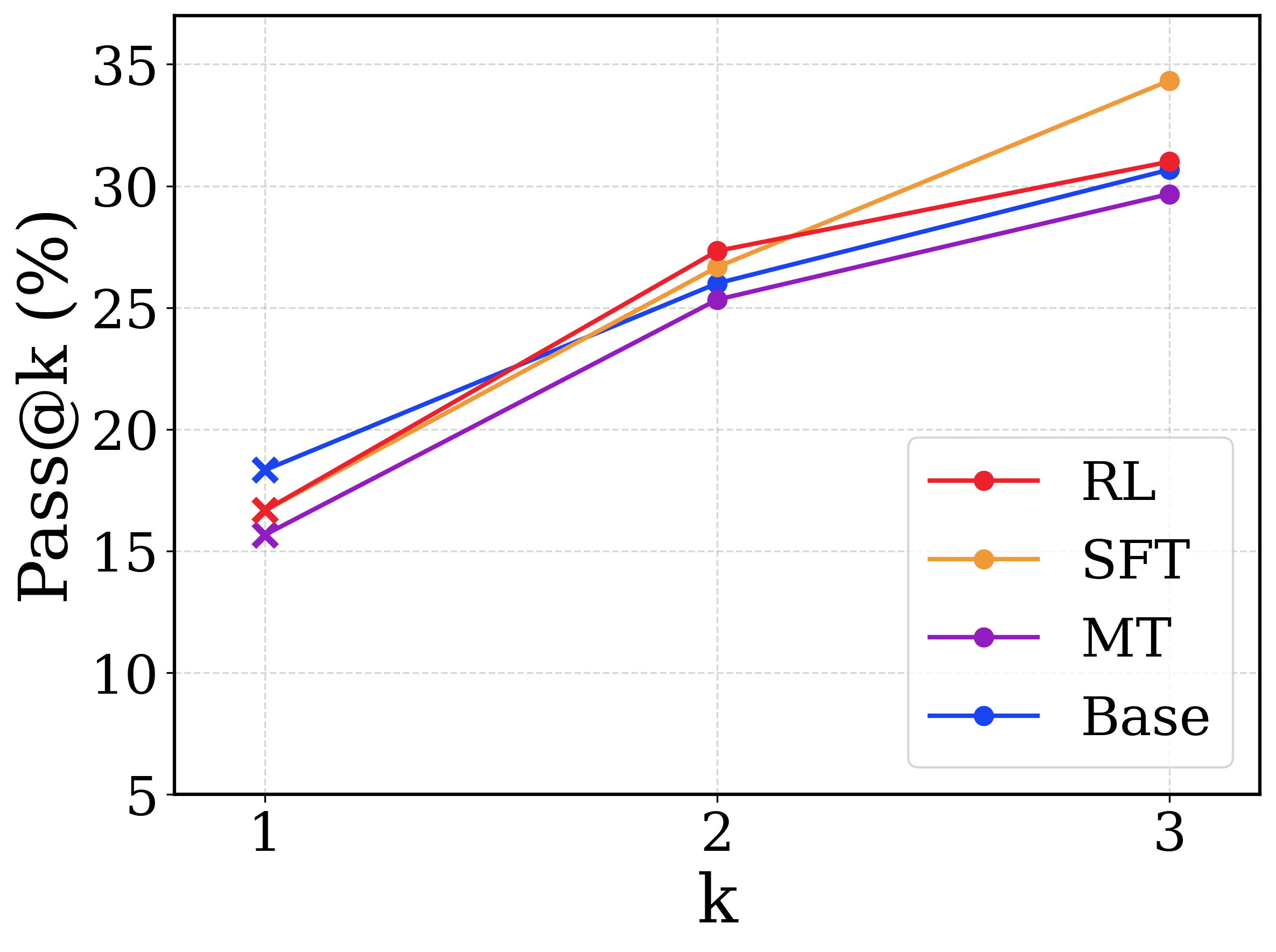

The results shown in Section 4.1 demonstrate that Kimi-Dev, a model with extensive Agentless training, could be adapted to end-to-end SWE-Agents with lightweight supervised finetuning. As the Agentless training recipe consists of mid-training, cold-start (SFT) and RL, we explore the contribution of each part in the recipe to the SWE-Agent capability after adaptation.

To this end, we perform SWE-Agent SFT on the original Qwen2.5-72B (Base), the mid-trained model (MT), the model then activated with Agentless-formatted long CoT data (SFT), and the Kimi-Dev model after finishing RL training (RL). Since we treat the four models as priors for SWE-Agents (we slightly abuse the term “prior” to refer to a model to be finetuned with SWE-Agent trajectories in the following analysis), and a good prior should adapt quickly from a few shots (finn2017model; brown2020language), we also sweep the amount of SWE-Agent SFT data to measure how efficiently each prior adapts to SWE-Agent.

Specifically, we randomly shuffle the 5,016 SWE-Agent trajectories and construct nested subsets of sizes 100, 200, 500, 1,000, and 2,000, where each smaller subset is contained within the larger ones. In addition, we add two extreme baselines: (i) zero-shot, where the prior model is directly evaluated under the SWE-Agent framework without finetuning, and (ii) one-step gradient descent, where the model is updated with a single gradient step using the 100-trajectory subset. Together with the full set of 5,016 trajectories, this yields SFT token budgets of $\{0, 2^{21}, 2^{23}, 2^{24}, 1.1\times 2^{25}, 1.1\times 2^{26}, 1.1\times 2^{27}, 1.5\times 2^{28}\}$. After these lightweight SFT experiments, we evaluate performance in terms of pass@{1,2,3} under the SWE-Agent framework, with pass@1 evaluated at temperature 0 and pass@2 and pass@3 at temperature 1.0.
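Nested subsets follow from shuffling once and taking prefixes, which guarantees that every smaller subset is contained in every larger one. The sketch below also writes the token budgets out as integers; since the 1.1× and 1.5× factors are presumably rounded, these integers are approximate. The function name and seed are illustrative.

```python
import random


def nested_subsets(items, sizes, seed=0):
    """Shuffle once and take prefixes, so each smaller subset sits inside every larger one."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    return {s: shuffled[:s] for s in sorted(sizes)}


# SFT token budgets from the text, as approximate integer token counts.
BUDGETS = [0, 2**21, 2**23, 2**24,
           int(1.1 * 2**25), int(1.1 * 2**26), int(1.1 * 2**27), int(1.5 * 2**28)]

subsets = nested_subsets(range(5016), [100, 200, 500, 1000, 2000])
for small, large in zip([100, 200, 500, 1000], [200, 500, 1000, 2000]):
    assert set(subsets[small]) <= set(subsets[large])
print([round(b / 1e6, 1) for b in BUDGETS])  # [0.0, 2.1, 8.4, 16.8, 36.9, 73.8, 147.6, 402.7]
```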

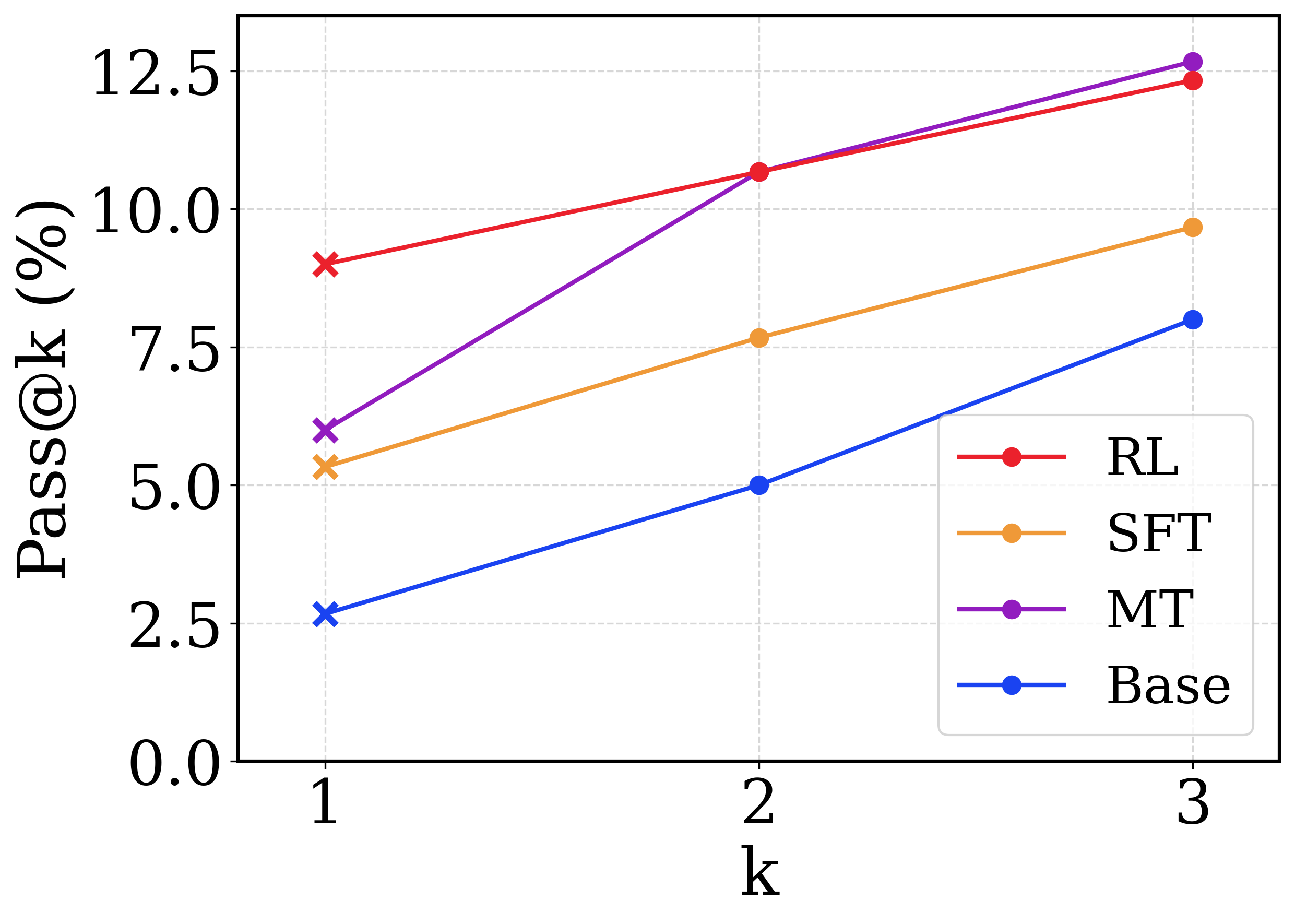

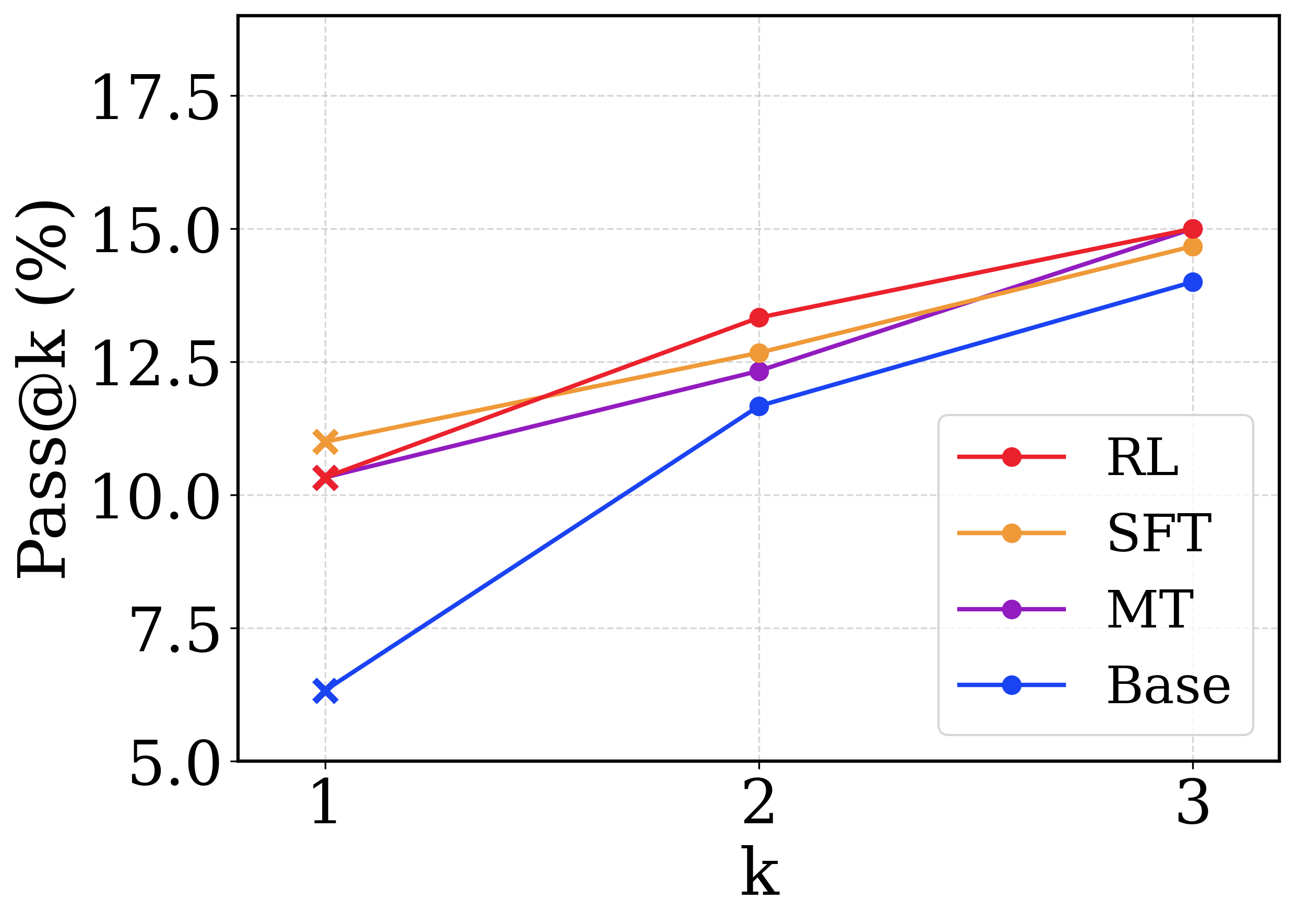

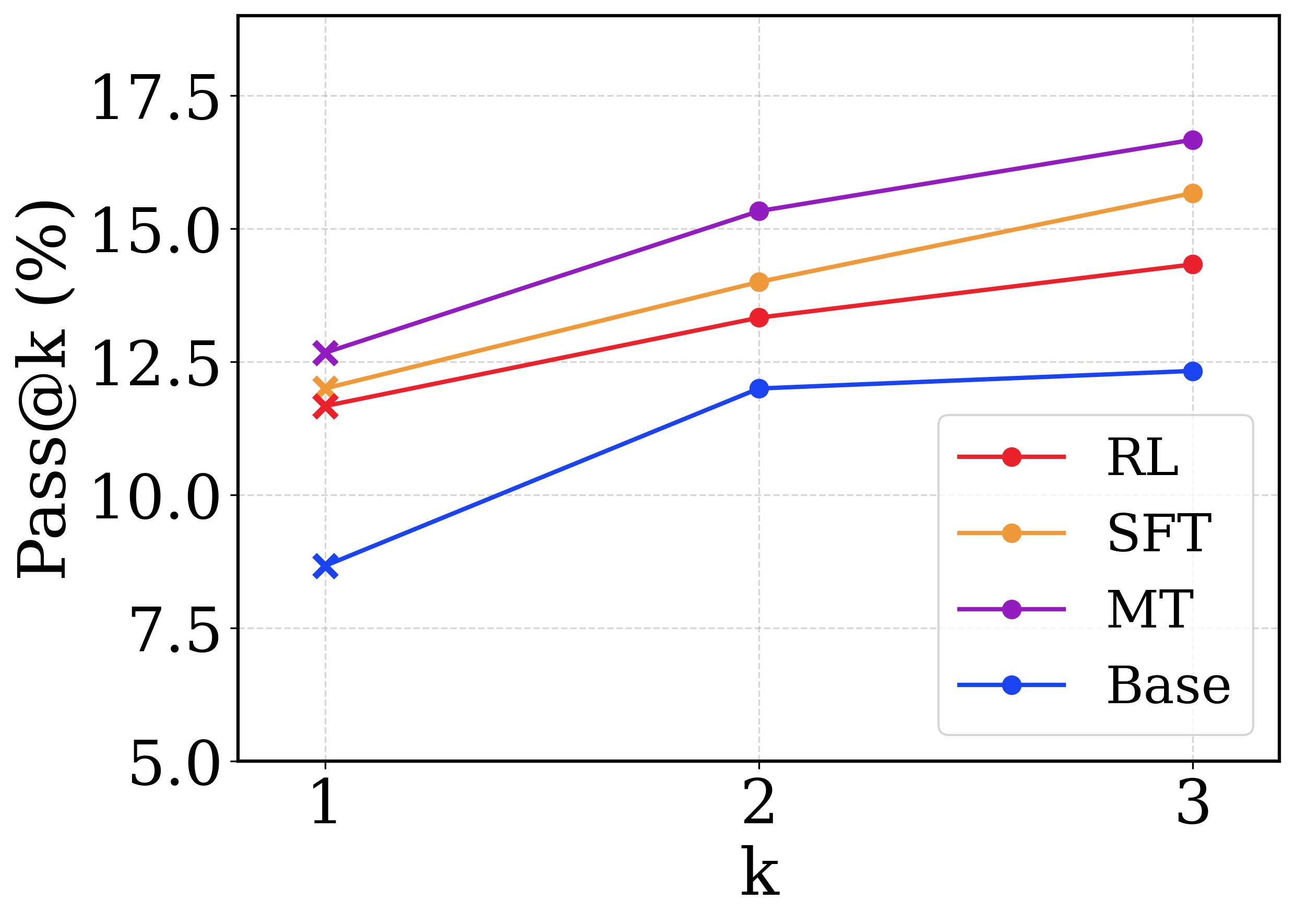

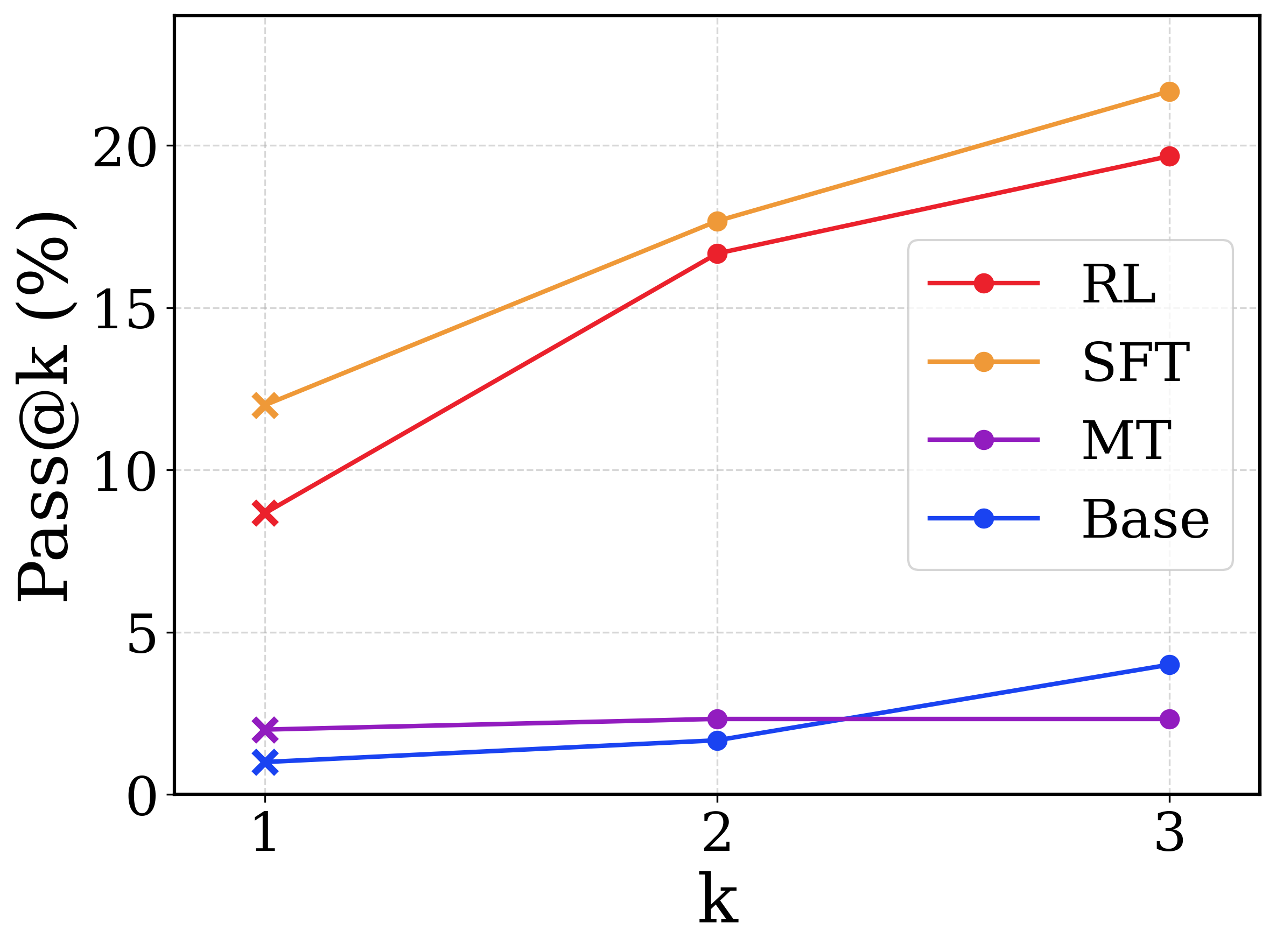

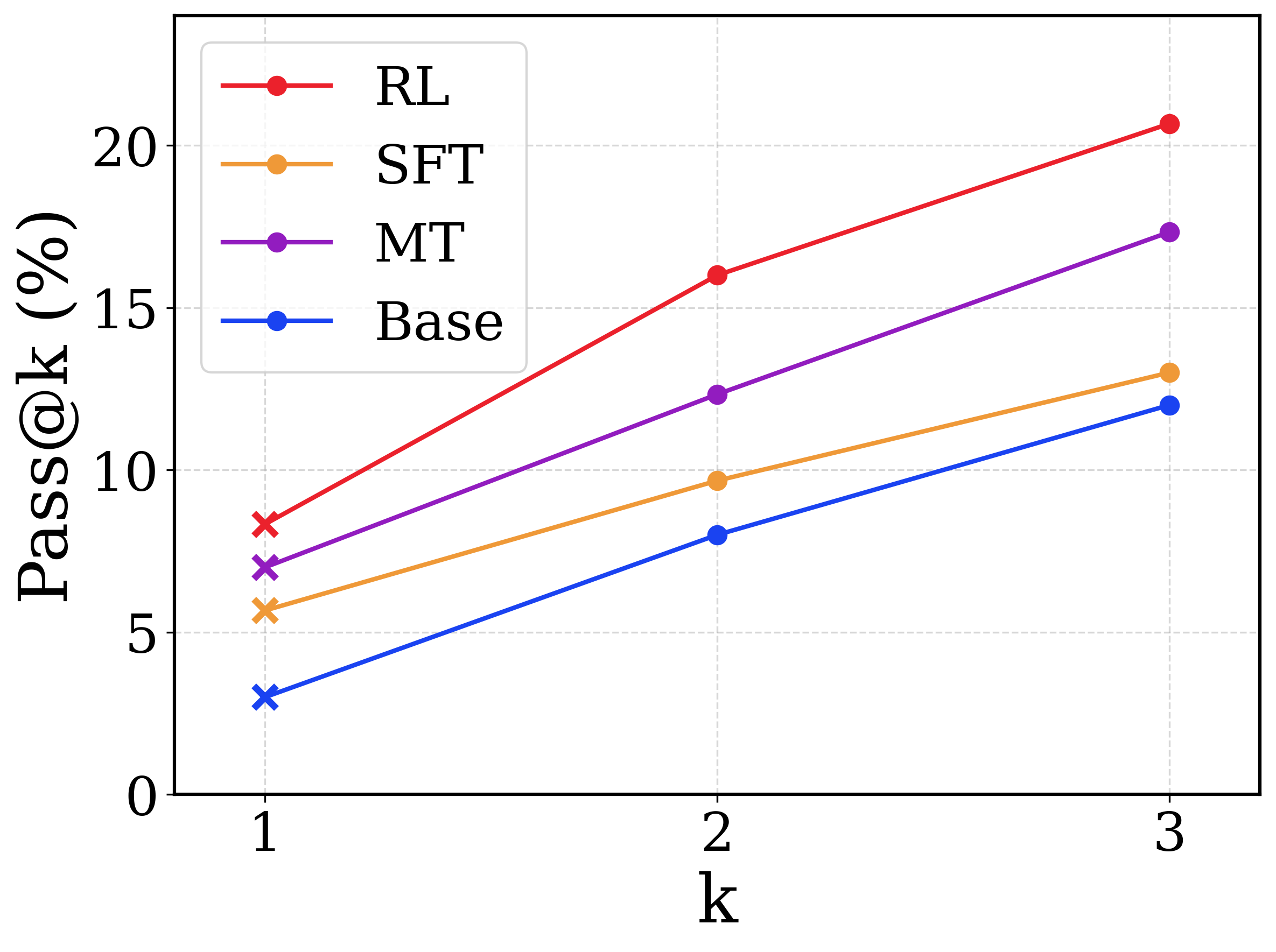

Figure 5 presents the SWE-Agent performances of each prior (Base, MT, SFT, RL) after being fine-tuned with different amounts of agentic trajectories. We have the following observations:

1. The RL prior outperforms all the other priors in nearly all SWE-Agent SFT settings. This demonstrates that the Agentless training recipe indeed strengthens the prior for SWE-Agent adaptation. For example, to match the top pass@1 performance of the Base prior, the RL prior needs only $2^{23}$ SWE-Agent SFT tokens, whereas the Base prior consumes $1.5\times 2^{28}$ tokens.

2. The MT prior lags behind the SFT and RL priors in extremely data-scarce settings (zero-shot ($0$) and one-step gradient descent ($2^{21}$)), but quickly becomes on par with them once 200 trajectories ($2^{24}$) are available for finetuning. This indicates that, given a modest amount of data, the prior strengthened by Agentless mid-training adapts about as efficiently as the SFT and RL priors.

3. The performance of the SFT prior is mostly similar to that of the RL prior, except in two cases: (i) The SFT prior outperforms the RL prior in the zero-shot setting. This is reasonable, as the RL prior might overfit to the Agentless input-output format, whereas the SFT prior suffers less from this. (ii) The SFT prior degrades significantly with 200 SWE-Agent trajectories ($2^{24}$). A potential reason is that the 200 trajectories collapse onto a single data mode, leading the SFT prior to overfit through memorization (chu2025sft); the RL prior instead embeds stronger transferable skills and thus generalizes better.
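The data saving in the first observation follows directly from the two token budgets: the RL prior reaches the Base prior's best pass@1 with roughly 48 times fewer SWE-Agent SFT tokens.

```latex
\frac{1.5 \times 2^{28}}{2^{23}} = 1.5 \times 2^{5} = 1.5 \times 32 = 48
```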

<details>

<summary>figs/sec4_long_cot_to_multi_turn/hist_steps_6x4.png Details</summary>

### Visual Description

## Step Histogram: Number of Instances Resolved (Per Bin of Turns)

### Overview

This image is a step histogram (or step plot) comparing the performance of four different models or methods—RL, SFT, MT, and Base—on a task. The chart displays how many problem instances were successfully resolved, grouped by the number of conversational turns required for resolution. The data suggests a performance comparison across different interaction lengths.

### Components/Axes

* **Chart Title:** "Number of instances resolved (per bin of turns)"

* **Y-Axis:**

* **Label:** "#Instances resolved"

* **Scale:** Linear, from 0 to 160.

* **Major Tick Marks:** 0, 40, 80, 120, 160.

* **X-Axis:**

* **Label:** "#Turns"

* **Scale:** Linear, binned in increments of 10.

* **Bins (Tick Marks):** 0, 10, 20, 30, 40, 50, 60, 70, 80, 90, 100.

* **Legend:** Located in the top-right corner of the plot area.

* **RL:** Solid red line.

* **SFT:** Dash-dot orange line.

* **MT:** Dotted purple line.

* **Base:** Dashed blue line.

### Detailed Analysis

The chart shows the count of resolved instances for each model within specific turn-count bins (e.g., 0-10 turns, 10-20 turns). Values are approximate based on visual inspection of the step heights.

**Trend Verification:** All four data series follow a similar visual trend: a sharp peak in the 10-20 turn bin, followed by a general decline as the number of turns increases. The RL series consistently shows the highest or near-highest resolved count in most bins.

**Data Points by Bin (Approximate Values):**

* **Bin 0-10 Turns:**

* MT: ~55 instances (highest)

* SFT: ~38

* RL: ~38

* Base: ~25 (lowest)

* **Bin 10-20 Turns (Peak for all models):**

* RL: ~155 instances (highest peak)

* SFT: ~145

* Base: ~140

* MT: ~140

* **Bin 20-30 Turns:**

* SFT: ~70 instances (highest)

* RL: ~55

* Base: ~55

* MT: ~50

* **Bin 30-40 Turns:**

* RL: ~30 instances

* Base: ~28

* MT: ~25

* SFT: ~22

* **Bin 40-50 Turns:**

* RL: ~20 instances

* SFT: ~15

* Base: ~12

* MT: ~8

* **Bin 50-60 Turns:**

* SFT: ~12 instances

* RL: ~8

* Base: ~5

* MT: ~5

* **Bin 60-70 Turns:**

* SFT: ~8 instances

* RL: ~5

* Base: ~2

* MT: ~2

* **Bin 70-80 Turns:**

* RL: ~8 instances (notable small rise)

* SFT: ~5

* Base: ~2

* MT: ~2

* **Bin 80-90 Turns:**

* SFT: ~5 instances

* RL: ~2

* Base: ~2

* MT: ~2

* **Bin 90-100 Turns:**

* RL: ~8 instances (another small rise)

* SFT: ~5

* Base: ~2

* MT: ~2

### Key Observations

1. **Universal Peak:** All models achieve their highest number of resolved instances in the 10-20 turn bin, indicating this is the most common length for successful resolution.

2. **RL Dominance at Peak:** The RL model has the highest peak performance (~155 instances) in the 10-20 turn range.

3. **Performance Decline:** For all models, the number of resolved instances drops significantly as the required number of turns increases beyond 20.

4. **MT's Early Strength:** The MT model performs best relative to others in the shortest bin (0-10 turns).

5. **SFT's Mid-Range Strength:** The SFT model shows the highest resolved count in the 20-30 turn bin.

6. **Long-Tail Performance:** In the higher turn bins (70+), the resolved counts are very low for all models, though RL shows minor, isolated increases in the 70-80 and 90-100 bins.

### Interpretation

This chart evaluates models on a multi-step agentic task. Given the Figure 6 caption, it most likely shows SWE-Agent runs of the four priors after adaptation. The "turns" represent interaction steps, and "instances resolved" are successful task completions.

* **What the data suggests:** The task is most frequently solved within 10-20 interactions. Resolutions that require more than 30 turns are progressively rarer, suggesting either increased difficulty or a dataset skewed towards shorter solutions.

* **Model Comparison:** RL appears most effective for the most common case (10-20 turns). MT may be better for very quick resolutions, while SFT holds an edge for slightly longer interactions (20-30 turns). The "Base" model generally underperforms the specialized methods (RL, SFT, MT).

* **Anomalies:** The small bumps for RL in the 70-80 and 90-100 turn bins are interesting. They could indicate a subset of very difficult problems that the RL model is uniquely capable of solving, or they could be statistical noise given the low counts.

* **Underlying Message:** The visualization argues for the effectiveness of trained models (RL, SFT, MT) over a base model, with RL showing particular strength for the most common resolution path. It also highlights the inherent challenge of the task as interaction length grows.

</details>

<details>

<summary>figs/skill_analysis_figure.png Details</summary>

### Visual Description

## Stacked Bar Chart: Model Performance in Resolving Cases

### Overview

The image displays a stacked bar chart comparing the performance of four different models (Base, MT, SFT, RL) in terms of the "Number of Resolved Cases." Each bar is divided into two segments: a solid-colored base representing the "Bugfixer cutoff" and a hatched top section representing "Reflection." The chart demonstrates a clear upward trend in total resolved cases across the models, with each subsequent model showing improvement.

### Components/Axes

* **Chart Type:** Stacked Bar Chart.
* **Y-Axis:**
  * **Label:** "Number of Resolved Cases"
  * **Scale:** Linear, ranging from 0 to 800, with major tick marks every 100 units.
* **X-Axis:**
  * **Label:** "Models"
  * **Categories (from left to right):** "Base", "MT", "SFT", "RL".
* **Legend:**
  * **Position:** Top-left corner of the chart area.
  * **Item 1:** A solid blue rectangle labeled "Bugfixer cutoff".
  * **Item 2:** A blue rectangle with diagonal hatching labeled "Reflection".
* **Data Series & Colors:**
  * **Base Model:** Solid blue base, blue hatched top.
  * **MT Model:** Solid purple base, purple hatched top.
  * **SFT Model:** Solid orange base, orange hatched top.
  * **RL Model:** Solid red base, red hatched top.

### Detailed Analysis

The chart presents the following data for each model, broken down by component:

| Model | Bugfixer cutoff (solid) | Reflection (hatched) | Total resolved (annotation) |
|---|---|---|---|
| Base | 484 | 94 | 578 ("578(+94)") |
| MT | 542 | 100 | 642 ("642(+100)") |
| SFT | 584 | 109 | 693 ("693(+109)") |
| RL | 605 | 113 | 718 ("718(+113)") |

**Trend Verification:**

* The **"Bugfixer cutoff"** component shows a steady upward trend: 484 → 542 → 584 → 605.

* The **"Reflection"** component also shows a steady upward trend: 94 → 100 → 109 → 113.

* The **Total Resolved Cases** consequently show a consistent upward trend: 578 → 642 → 693 → 718.

### Key Observations

* **Consistent Improvement:** Each model (Base → MT → SFT → RL) outperforms the previous one in both the "Bugfixer cutoff" and "Reflection" components, leading to a higher total.

* **Dominant Component:** The "Bugfixer cutoff" constitutes the majority of resolved cases for all models, ranging from approximately 83.7% (Base) to 84.3% (RL) of the total.

* **Growth of "Reflection":** The contribution from "Reflection" increases in absolute terms (from 94 to 113), but its share of the total decreases slightly (from ~16.3% to ~15.7%) because the "Bugfixer cutoff" component grows faster.

* **Largest Gains:** The most significant total improvement occurs between the "Base" and "MT" models (+64 cases). The incremental gain from "SFT" to "RL" is the smallest (+25 cases), suggesting potential diminishing returns.

### Interpretation

This chart likely illustrates the results of an iterative model development or training process in a technical domain, such as automated bug fixing or problem resolution. The "Bugfixer cutoff" may represent a baseline or initial resolution capability, while "Reflection" could signify an additional, perhaps more sophisticated, reasoning or self-correction step that yields further resolutions.

The data suggests that sequential training or refinement techniques (represented by MT, SFT, RL) are effective. The "RL" (likely Reinforcement Learning) model achieves the highest performance, indicating that this training paradigm is the most successful among those tested for this task. The consistent, additive contribution of the "Reflection" component across all models implies it is a valuable and complementary module to the core "Bugfixer" system. The narrowing gap between later models (SFT to RL) might indicate that the problem space is approaching a performance ceiling with the current methodology, or that further gains require more substantial architectural changes.

</details>

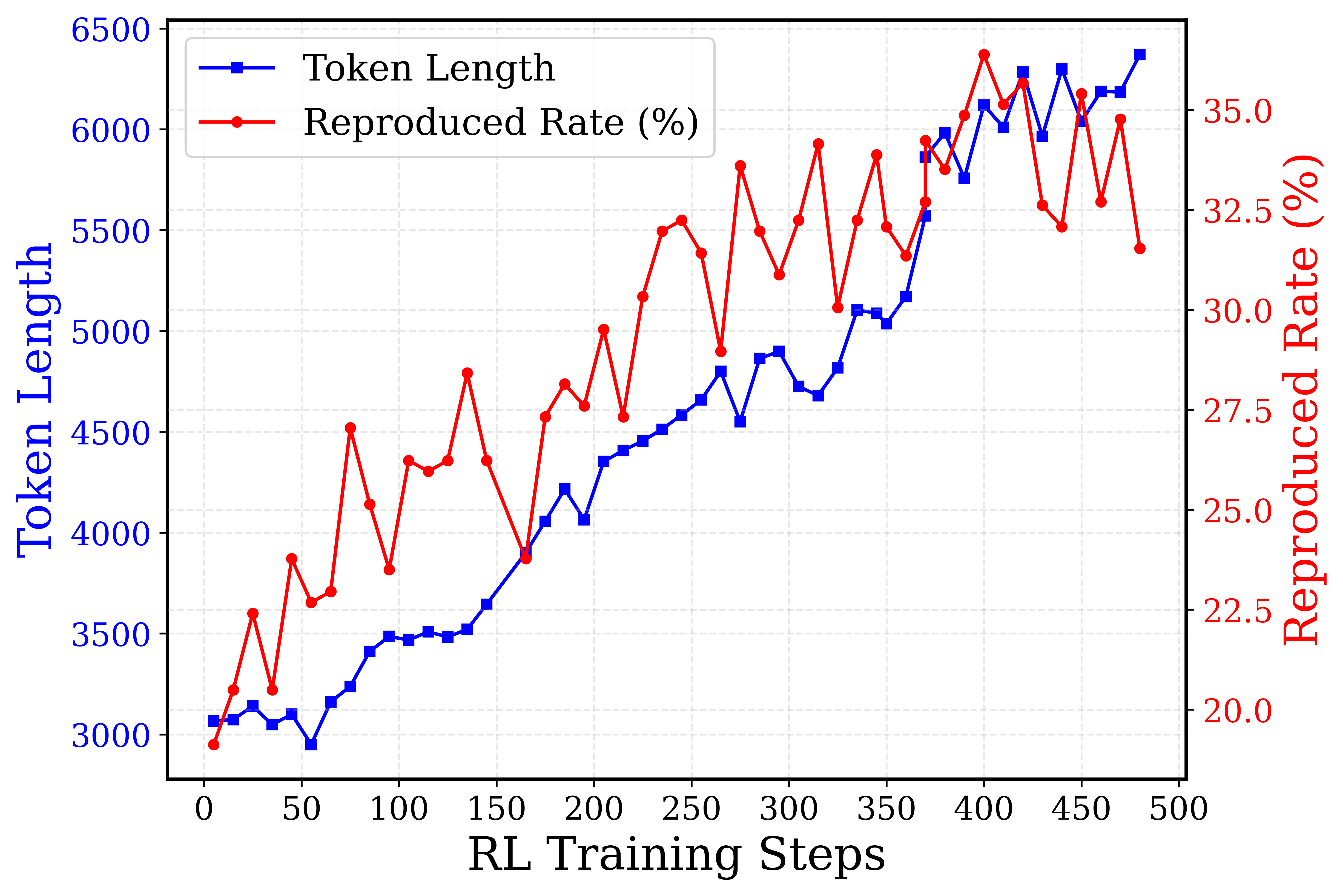

Figure 6: Left: Performance of the four priors under turn limits after SWE-Agent adaptation. Right: Characterization of the BugFixer and reflection skills for each prior, obtained by counting the resolved cases of the 3 runs at the Stage-3 cutoff moment and comparing them with the final successful cases.

From long CoT to extended multi-turn interactions.

We hypothesize that reflective behaviors cultivated through long chain-of-thought reasoning may transfer to settings that require extended multi-turn interactions. To examine this, we finetune each of the four priors (Base, MT, SFT, and RL) on the 5,016 trajectories and test the resulting models on SWE-bench Verified under varying turn limits, using pass@3 as the metric (Figure 6, left). The distinct interaction-length profiles provide supporting evidence: after finetuning, the RL prior continues to make progress beyond 70 turns, while the SFT, mid-trained, and raw models show diminishing returns at around 70, 60, and 50 turns, respectively.
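The turn-limited pass@3 evaluation can be sketched as follows. This is a minimal illustration built on assumptions the text does not state. Each run is summarized by a hypothetical `Run` record that holds its final outcome and the number of turns it used. A run that needs more turns than the limit is treated as unresolved. A problem counts as solved when at least one of its three runs succeeds within the limit.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Run:
    """One SWE-Agent rollout on a problem (hypothetical record format)."""
    resolved: bool   # whether the submitted patch passes the tests
    num_turns: int   # number of turns used before submission


def pass_at_k_under_limit(runs_by_problem: Dict[str, List[Run]], turn_limit: int) -> float:
    """Fraction of problems with at least one run resolved within `turn_limit` turns.

    Assumption: a run that needs more than `turn_limit` turns counts as a failure.
    """
    if not runs_by_problem:
        return 0.0
    solved = sum(
        any(run.resolved and run.num_turns <= turn_limit for run in runs)
        for runs in runs_by_problem.values()
    )
    return solved / len(runs_by_problem)


# Toy data with 3 runs per problem (pass@3); not results from the paper.
toy = {
    "issue-a": [Run(True, 15), Run(False, 40), Run(True, 22)],
    "issue-b": [Run(False, 30), Run(True, 75), Run(False, 90)],
    "issue-c": [Run(False, 12), Run(False, 18), Run(False, 100)],
}

for limit in (20, 50, 80):
    print(limit, round(pass_at_k_under_limit(toy, limit), 3))
# 20 0.333
# 50 0.333
# 80 0.667
```

Sweeping `turn_limit` traces performance as a function of the interaction budget. When the curve flattens as the limit grows, raising the limit brings diminishing returns.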

We further evaluate how well the Agentless skill priors (BugFixer and reflection) carry over to the SWE-Agent adapted model. For BugFixer, since the SWE-Agent may autonomously reflect across the five stages, we examine the moment in each trajectory when the bug fix of the third stage is first completed and the test rerun of the fourth stage has not yet begun. Heuristically, at this point the SWE-Agent has not yet received execution feedback from the fourth stage, so it has neither reflected on that feedback nor refined its bug fix. We therefore compute the success rate of submitting the patch directly at this cutoff moment, which reflects the capability of the BugFixer skill. For reflection, we compare, for each problem, the performance at the cutoff point with the performance after the trajectory completes. The increase in the number of successfully resolved problems serves as a measure of the reflection skill.
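A minimal sketch of the cutoff measurement follows. It assumes a hypothetical trajectory format in which each turn carries its annotated stage label (1 to 5) and the patch the agent would submit if stopped there. The function `passes_tests` is a stand-in for running the SWE-bench evaluation harness on that patch. The cutoff is taken as the last turn of the first contiguous stage-3 segment. Following the Figure 6 caption, resolved cases are counted per run rather than aggregated per problem.

```python
from typing import Callable, List, Optional, Sequence, Tuple

# Hypothetical record: each turn is (stage_label, patch_snapshot). Labels 1-5
# come from the LLM annotator; the snapshot is the diff the agent would submit
# if it stopped after that turn.
Turn = Tuple[int, str]


def stage3_cutoff(turns: Sequence[Turn]) -> Optional[int]:
    """Index of the last turn in the first contiguous stage-3 (bug-fix) segment."""
    start = next((i for i, (stage, _) in enumerate(turns) if stage == 3), None)
    if start is None:
        return None  # the agent never reached the bug-fix stage
    end = start
    while end + 1 < len(turns) and turns[end + 1][0] == 3:
        end += 1
    return end


def skill_counts(
    runs: List[Tuple[List[Turn], bool]],
    passes_tests: Callable[[str], bool],
) -> Tuple[int, int]:
    """Return (resolved at the Stage-3 cutoff, increment from cutoff to the end).

    `runs` holds (turns, final_resolved) pairs, counted per run, not per problem.
    """
    at_cutoff = 0
    for turns, _ in runs:
        idx = stage3_cutoff(turns)
        if idx is not None and passes_tests(turns[idx][1]):
            at_cutoff += 1
    at_end = sum(1 for _, final_resolved in runs if final_resolved)
    return at_cutoff, at_end - at_cutoff


def passes(patch: str) -> bool:
    """Stand-in for the SWE-bench harness: only the 'good' patch passes."""
    return patch == "good"


toy_runs = [
    ([(1, ""), (2, ""), (3, "good"), (4, "good")], True),   # fixed before testing
    ([(1, ""), (3, "bad"), (4, "bad"), (3, "good")], True),  # fixed after reflection
    ([(1, ""), (2, "")], False),                             # never fixed
]
print(skill_counts(toy_runs, passes))  # (1, 1)
```

The second toy run shows the distinction the measurement draws. Its patch fails at the cutoff and is only fixed after the agent returns to stage 3 following the test rerun, so it counts toward the reflection increment rather than toward BugFixer.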

We use kimi-k2-0711-preview (team2025kimi_k2) to annotate the SWE-Agent trajectories, identifying the stage to which each turn belongs. Figure 6 (right) shows that both skills are strengthened by each stage of the Agentless training recipe. For the BugFixer skill, the Stage-3 cutoff performance within the SWE-Agent trajectories of the four adapted models improves consistently, from 484 cases resolved by the Base prior to 605 cases by the RL prior, as measured by the number of successful resolutions across three passes. For the reflection skill, the performance gain from Stage-3 to the end of the trajectories follows a similar trend, increasing from +94 under the Base prior to +113 under the RL prior. Taken together, the model adapted from the RL prior achieves the strongest overall performance across both skills. Note that our analysis of the reflection skill remains coarse-grained: the measured gains between the two checkpoints capture not only agentic reflection and redo behaviors, but also the intermediate test-writing process performed by the SWE-Agent. A more fine-grained evaluation that isolates the TestWriter skill prior is left for future work. The prompt for SWE-Agent stage annotation, extended qualitative studies, and additional discussions of skill transfer and generalization are covered in Appendix G.
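The BugFixer cutoff counts and the reflection increments add up to the totals annotated in Figure 6 (right). The short tally below uses only the reported numbers. Both components grow from the Base prior to the RL prior. The share contributed by reflection stays roughly constant, between about 15.6% and 16.3%.

```python
# Counts reported for Figure 6 (right), counted over the 3 runs.
cutoff = {"Base": 484, "MT": 542, "SFT": 584, "RL": 605}     # BugFixer at the Stage-3 cutoff
reflection = {"Base": 94, "MT": 100, "SFT": 109, "RL": 113}  # gain from cutoff to trajectory end

totals = {p: cutoff[p] + reflection[p] for p in cutoff}
for p, total in totals.items():
    print(f"{p}: {total} resolved, reflection share {reflection[p] / total:.1%}")
# Base: 578 resolved, reflection share 16.3%
# MT: 642 resolved, reflection share 15.6%
# SFT: 693 resolved, reflection share 15.7%
# RL: 718 resolved, reflection share 15.7%

# Relative BugFixer gain of the RL prior over the Base prior.
gain = cutoff["RL"] - cutoff["Base"]
print(f"BugFixer RL vs Base: +{gain} ({cutoff['RL'] / cutoff['Base'] - 1:.1%})")
# BugFixer RL vs Base: +121 (25.0%)
```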

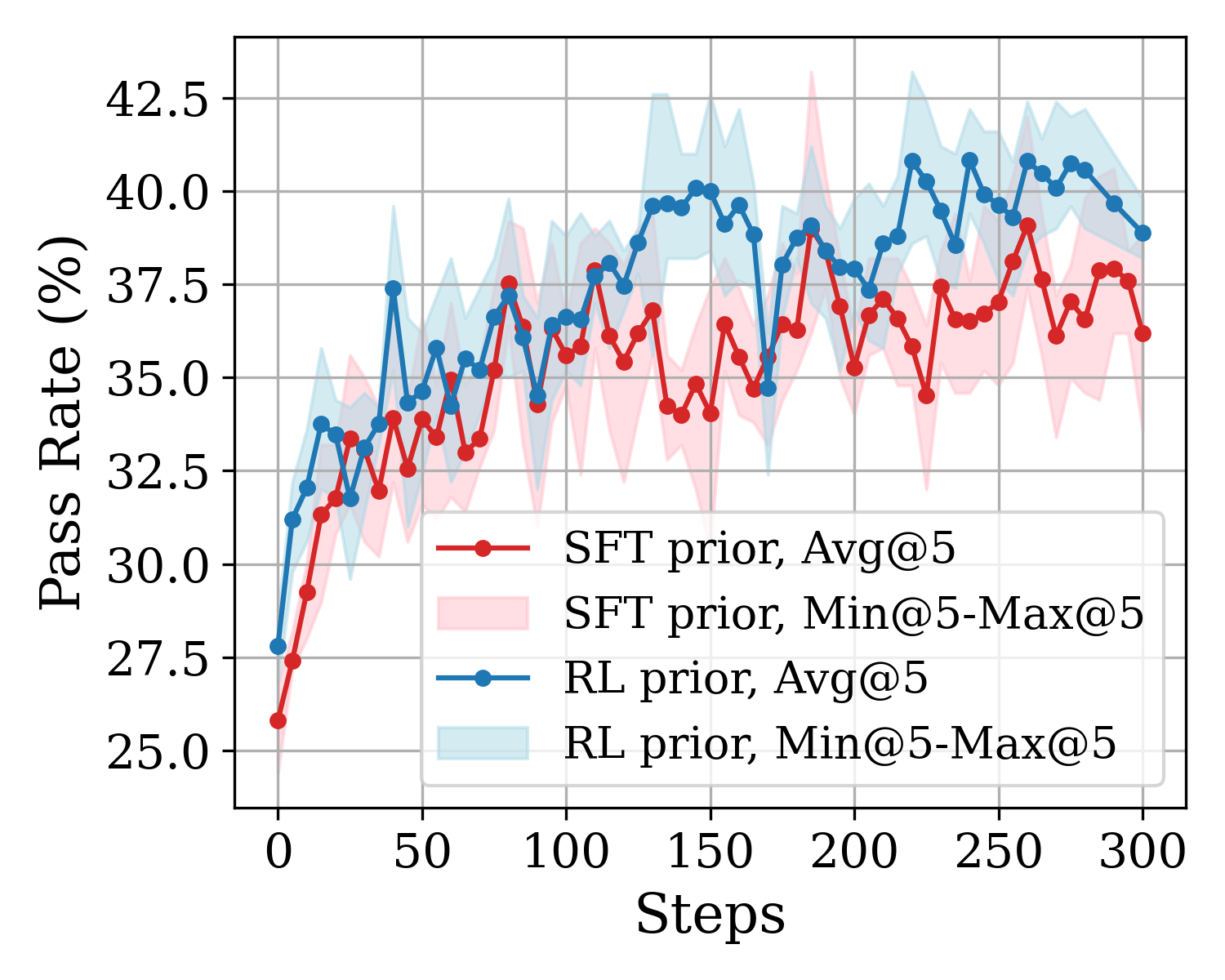

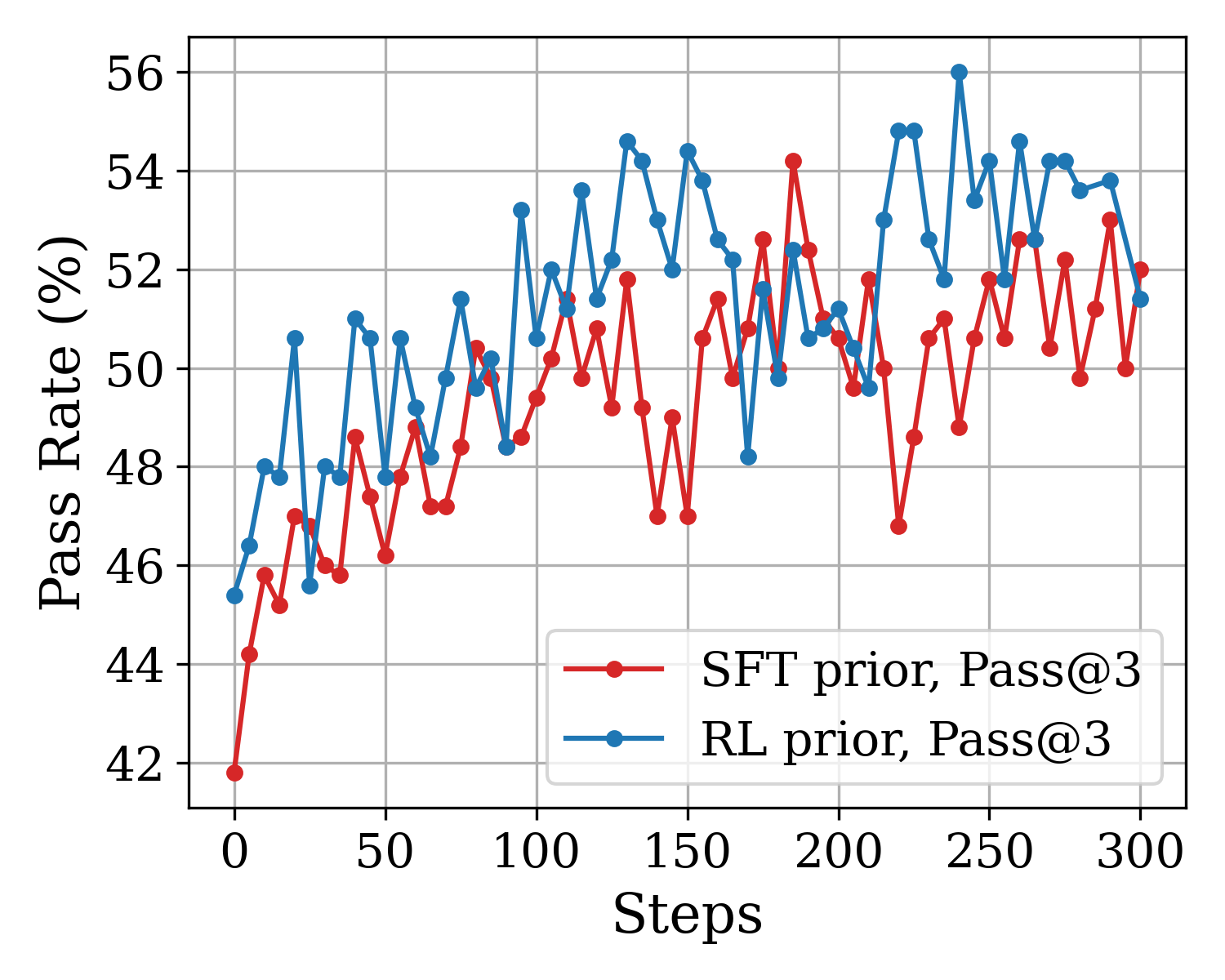

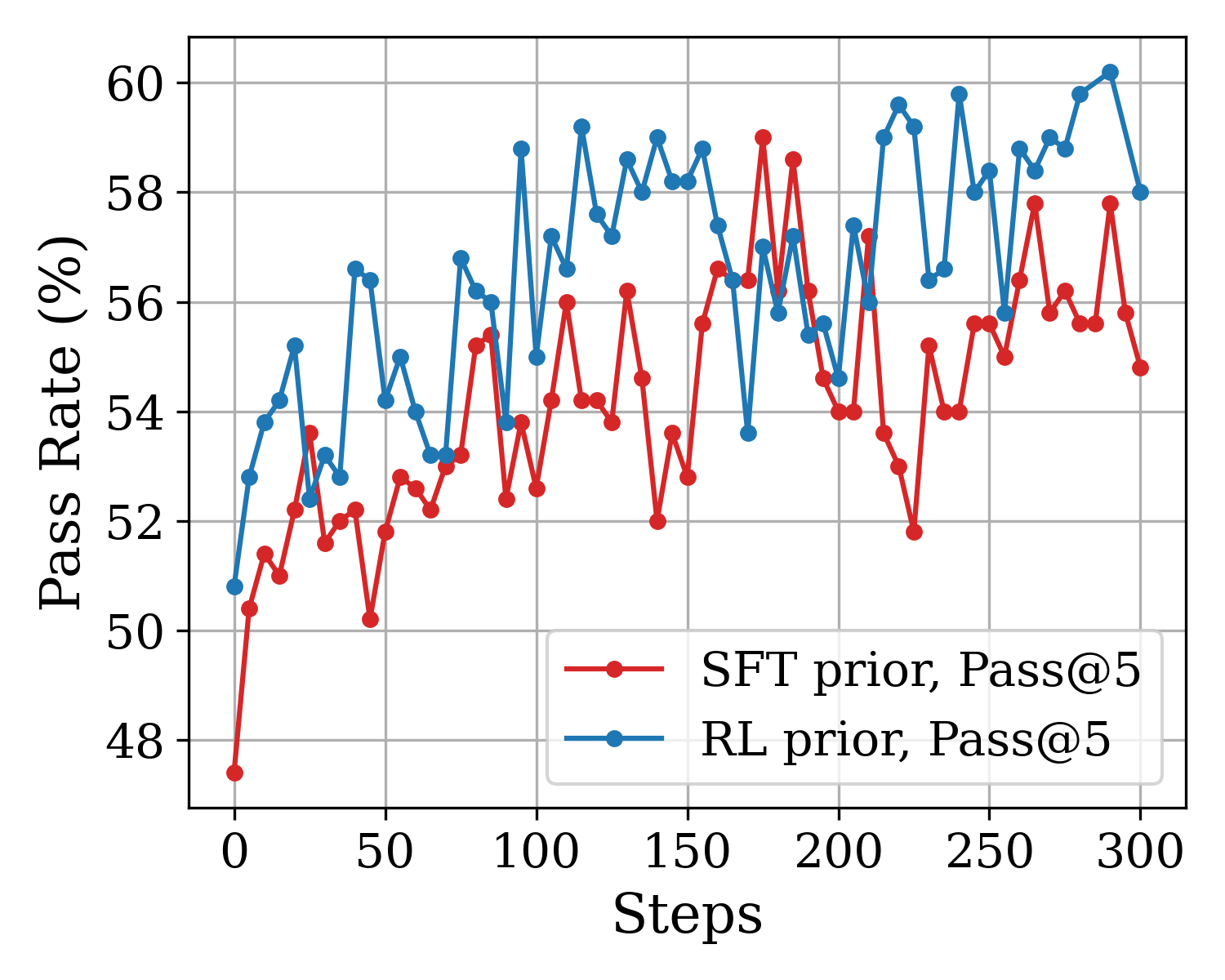

<details>

<summary>figs/sec4_swe_agent_rl/rebuttal_cmp_prior_pass1.png Details</summary>

### Visual Description


## Line Chart: Performance Progression of SFT vs. RL Priors

### Overview

The image is a line chart comparing the performance of two different model training approaches—SFT (Supervised Fine-Tuning) prior and RL (Reinforcement Learning) prior—over the course of 300 training steps. Performance is measured by a "Pass Rate (%)" metric. Each approach is represented by an average line (Avg@5) and a shaded region indicating the range between the minimum and maximum values over 5 runs (Min@5-Max@5).

### Components/Axes

* **Chart Type:** Line chart with shaded min-max range bands.
* **X-Axis:**
  * **Label:** "Steps"
  * **Scale:** Linear, from 0 to 300.
  * **Major Tick Marks:** 0, 50, 100, 150, 200, 250, 300.
* **Y-Axis:**
  * **Label:** "Pass Rate (%)"
  * **Scale:** Linear, from 25.0 to 42.5.
  * **Major Tick Marks:** 25.0, 27.5, 30.0, 32.5, 35.0, 37.5, 40.0, 42.5.
* **Legend (positioned in the bottom-right quadrant of the plot area):**
  1. **Red line with circular markers:** "SFT prior, Avg@5"
  2. **Light pink shaded area:** "SFT prior, Min@5-Max@5"
  3. **Blue line with circular markers:** "RL prior, Avg@5"
  4. **Light blue shaded area:** "RL prior, Min@5-Max@5"

### Detailed Analysis

**1. SFT prior, Avg@5 (Red Line):**

* **Trend:** Starts at the lowest point (~25.8% at step 0). Shows a rapid initial increase, followed by a generally upward but highly volatile trend with frequent peaks and troughs. The growth rate slows after approximately step 150.
* **Key Data Points (Approximate):**
  * Step 0: ~25.8%
  * Step 50: ~33.5%
  * Step 100: ~36.0%
  * Step 150: ~34.5% (a local trough)
  * Step 200: ~37.0%
  * Step 250: ~37.0%
  * Step 300: ~36.2%
* **Range (Pink Shaded Area):** The min-max range is substantial throughout, often spanning 3-5 percentage points. The range appears widest around steps 150-200 and 250-300, indicating high variance in performance across different runs at those stages.

**2. RL prior, Avg@5 (Blue Line):**

* **Trend:** Starts higher than SFT (~27.8% at step 0). Also shows a rapid initial increase. Its upward trend appears slightly more consistent and less volatile than the SFT line, especially after step 100. It maintains a performance lead over the SFT average for nearly the entire duration.
* **Key Data Points (Approximate):**
  * Step 0: ~27.8%
  * Step 50: ~37.5% (a sharp peak)
  * Step 100: ~36.5%
  * Step 150: ~40.0%
  * Step 200: ~39.0%
  * Step 250: ~41.0%
  * Step 300: ~38.8%
* **Range (Blue Shaded Area):** The min-max range is also significant but appears slightly narrower on average than the SFT range, particularly in the later stages (steps 200-300). This suggests the RL prior may yield more consistent results across runs.

### Key Observations

1. **Performance Gap:** The RL prior (blue) consistently outperforms the SFT prior (red) on average after the initial steps. The gap is most pronounced around steps 150 and 250.

2. **Volatility:** Both methods exhibit high volatility, as seen in the jagged average lines and wide shaded ranges. However, the SFT prior's average line appears more erratic.

3. **Convergence and Divergence:** The two average lines converge briefly around step 100 and step 175 but otherwise maintain a separation. The shaded ranges overlap significantly throughout, indicating that while the averages differ, individual runs from either method can achieve similar performance levels.

4. **Peak Performance:** The highest observed average pass rate is achieved by the RL prior, reaching approximately 41% around step 250. The SFT prior's average peaks lower, at around 38-39%.

### Interpretation

This chart demonstrates a comparative analysis of two training methodologies for a task measured by a pass rate. The data suggests that, on average, the **RL prior approach leads to better final performance and a more stable learning trajectory** than the SFT prior approach over 300 steps.