# Chapter 1 Introduction

<details>

<summary>x1.png Details</summary>

### Visual Description

Icon/Small Image (458x51)

</details>

Imperial College London Department of Computing

Bayesian Mixture-of-Experts: Towards Making LLMs Know What They Don’t Know

Author:

Albus Yizhuo Li

Supervisor: Dr Matthew Wicker Second Marker: Dr Yingzhen Li

<details>

<summary>x2.png Details</summary>

### Visual Description

## Coat of Arms: McGill University

### Overview

The image depicts the coat of arms of McGill University. It features a shield divided into sections, an open book with the word "SCIENTIA" on it, and a banner with the Latin phrase "SCIENTIA IMPERII DECUS ET TUTAMEN".

### Components/Axes

* **Top-Left Quarter:** Three gold lions passant guardant on a red field.

* **Top-Right Quarter:** A red lion rampant on a gold field.

* **Bottom-Left Quarter:** A gold Irish harp on a blue field.

* **Bottom Section:** A gold shield with an open book bearing the word "SCIENTIA".

* **Banner:** A banner below the shield with the Latin phrase "SCIENTIA IMPERII DECUS ET TUTAMEN".

### Detailed Analysis or Content Details

* **Lions:** The three lions in the top-left quarter are gold and are positioned walking with their heads turned towards the viewer on a red background.

* **Lion Rampant:** The lion in the top-right quarter is red and standing upright on a gold background.

* **Harp:** The harp in the bottom-left quarter is gold and set against a blue background.

* **Book:** The open book in the bottom section has the word "SCIENTIA" written on its pages. The book is gold, and there are three buckles on either side of the book.

* **Banner Text:** The banner reads "SCIENTIA IMPERII DECUS ET TUTAMEN" in Latin.

### Key Observations

* The coat of arms is divided into quarters, each with distinct heraldic symbols.

* The open book with "SCIENTIA" is a central element, emphasizing knowledge and learning.

* The Latin motto on the banner encapsulates the university's mission.

### Interpretation

The coat of arms of McGill University is a symbolic representation of the institution's history, values, and mission. The heraldic symbols in the upper quadrants likely represent the university's historical connections or affiliations. The open book with "SCIENTIA" highlights the importance of knowledge and learning. The Latin motto, "SCIENTIA IMPERII DECUS ET TUTAMEN," translates to "Knowledge is the beauty and protection of the empire," emphasizing the role of education in societal advancement and security. The overall design conveys a sense of tradition, scholarship, and institutional pride.

</details>

Submitted in partial fulfillment of the requirements for the MSc degree in Computing (Artificial Intelligence and Machine Learning) of Imperial College London

September 2025

Abstract

The Mixture-of-Experts (MoE) architecture has enabled the creation of massive yet efficient Large Language Models (LLMs). However, the standard deterministic routing mechanism presents a significant limitation: its inherent brittleness is a key contributor to model miscalibration and overconfidence, resulting in systems that often do not know what they don’t know.

This thesis confronts this challenge by proposing a structured Bayesian MoE routing framework. Instead of forcing a single, deterministic expert selection, our approach models a probability distribution over the routing decision itself. We systematically investigate three families of methods that introduce this principled uncertainty at different stages of the routing pipeline: in the weight-space, the logit-space, and the final selection-space.

Through a series of controlled experiments on a 3-billion parameter MoE model, we demonstrate that this framework significantly improves routing stability, in-distribution calibration, and out-of-distribution (OoD) detection. The results show that by targeting this core architectural component, we can create a more reliable internal uncertainty signal. This work provides a practical and computationally tractable pathway towards building more robust and self-aware LLMs, taking a crucial step towards making them know what they don’t know.

Acknowledgments

This thesis is dedicated to my demanding, fulfilling and joyous year at Imperial College London, my Hogwarts.

This journey to this thesis was made possible by the support, guidance, and inspiration of many people, to whom I owe my deepest gratitude:

First and foremost, I would like to express my sincere gratitude to my supervisor, Dr. Matthew Wicker. His amazing 70015: Mathematics for Machine Learning module lured me down the rabbit hole of Probabilistic & Bayesian Machine Learning, a journey from which I have happily not returned. His initial ideation of Bayesianfying Mixture-of-Experts provides the foundation of this thesis. Since mid-stage of this project, his careful guidance and detailed feedback on both experiments and writing were invaluable. Thank you for being a great supervisor and friend.

My thanks also extend to my second marker, Dr. Yingzhen Li, whose lecture notes on Variational Inference and Introduction to BNNs are the best I have ever seen. I am grateful for her interest in this project and for the insightful meeting she arranged with her PhD student, Wenlong, which provided crucial perspective at a key stage.

The work was sharpened by the weekly discussions of LLM Shilling Crew, a reading group I had the pleasure of co-founding with my best friend at Imperial, James Kerns. Thank you all for the stimulating discussion and the fun we had, which were instrumental during the early research phase of this project.

To my parents, Yuhan and Wei, thank you for the unconditional love and the unwavering financial and emotional support you have provided for the past 22 years.

Last but certainly not least, I must thank my close friends at the Department of Computing, fellow habitants of the deep, dark, and cold basement of the Huxley building (you know who you are). You are a priceless treasure in my life. Contents

1. 1 Introduction

1. 1.1 Overview

1. 1.2 Contributions

1. 1.3 Thesis Outline

1. 2 Background

1. 2.1 Mixture-of-Experts (MoE) Architecture

1. 2.1.1 Modern LLM: A Primer

1. 2.1.2 MoE: From Dense Layers to Sparse Experts

1. 2.2 Uncertainty and Calibration in Large Language Models

1. 2.2.1 The Problem of Overconfidence and Miscalibration

1. 2.2.2 Evaluating Uncertainty: From Sequences to Controlled Predictions

1. 2.2.3 Formal Metrics for Calibration

1. 2.2.4 Related Work in LLM Calibration

1. 2.3 Bayesian Machine Learning: A Principled Approach to Uncertainty

1. 2.3.1 The Bayesian Framework

1. 2.3.2 Bayesian Neural Networks (BNNs)

1. 2.3.3 Variational Inference (VI)

1. 3 Motivation

1. 3.1 Motivation 1: Brittleness of Deterministic Routing

1. 3.1.1 Methodology

1. 3.1.2 Results and Observations

1. 3.1.3 Conclusion

1. 3.2 Motivation 2: Potentials of Stochastic Routing

1. 3.2.1 Methodology

1. 3.2.2 Results and Observations

1. 3.2.3 Conclusion

1. 3.3 Chapter Summary

1. 4 Methodology: Bayesian MoE Router

1. 4.1 Standard MoE Router: A Formal Definition

1. 4.2 Bayesian Inference on Expert Centroid Space

1. 4.2.1 Core Idea: Bayesian Multinomial Logistic Regression

1. 4.2.2 Method 1: MC Dropout Router (MCDR)

1. 4.2.3 Method 2: Stochastic Weight Averaging Gaussian Router (SWAGR)

1. 4.2.4 Method 3: Deep Ensembles of Routers (DER)

1. 4.2.5 Summary of Centroid-Space Methods

1. 4.3 Bayesian Inference on Expert Logit Space

1. 4.3.1 Core Idea: Amortised Variational Inference on the Logit Space

1. 4.3.2 Method 4: The Mean-Field Variational Router (MFVR)

1. 4.3.3 Method 5: The Full-Covariance Variational Router (FCVR)

1. 4.3.4 Summary of Logit-Space Methods

1. 4.4 Bayesian Inference on Expert Selection Space

1. 4.4.1 Core Idea: Learning Input-Dependent Temperature

1. 4.4.2 Method 6: Variational Temperature Sampling Router (VTSR)

1. 4.4.3 Summary of the Selection-Space Method

1. 4.5 Chapter Summary

1. 5 Experiments and Analysis

1. 5.1 Experimental Setup

1. 5.1.1 Model, Baselines, and Proposed Methods

1. 5.1.2 Datasets and Tasks

1. 5.1.3 Evaluation Metrics

1. 5.2 Implementation Details and Training Strategy

1. 5.2.1 Training Pipeline

1. 5.2.2 MoE Layer Selection Strategies

1. 5.2.3 Method-Specific Tuning and Considerations

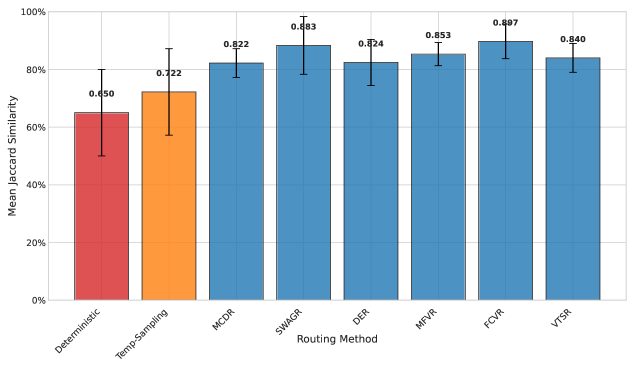

1. 5.3 Experiment 1: Stability Under Perturbation

1. 5.3.1 Goal and Methodology

1. 5.3.2 Results and Analysis

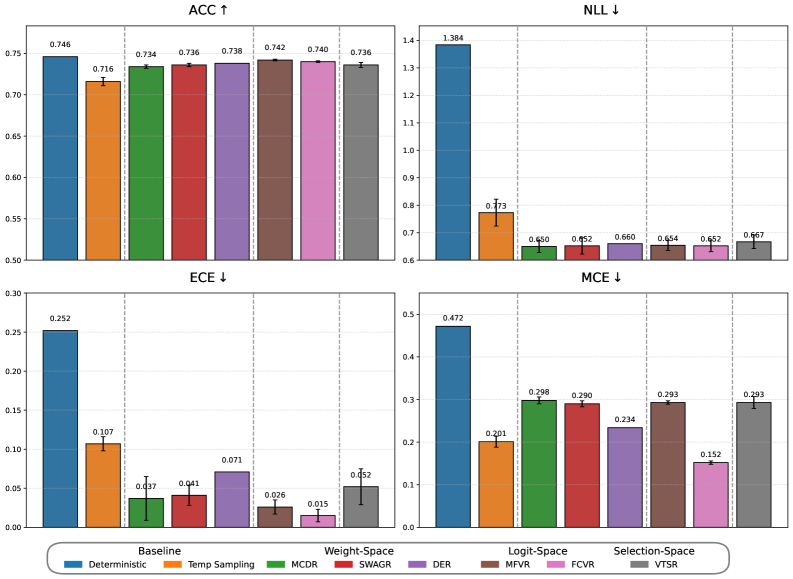

1. 5.4 Experiment 2: In-Distribution Calibration

1. 5.4.1 Goal and Methodology

1. 5.4.2 Results and Analysis

1. 5.5 Experiment 3: Out-of-Distribution Detection

1. 5.5.1 Goal and Methodology

1. 5.5.2 Experiment 3a: Improving Standard Uncertainty Signal

1. 5.5.3 Experiment 3b: Router-Level Uncertainty as Signal

1. 5.6 Ablation Study: Comparative Analysis of Layer Selection

1. 5.7 Practicality: Efficiency Analysis of Bayesian Routers

1. 5.7.1 Memory Overhead

1. 5.7.2 Computation Overhead

1. 5.7.3 Parallelisation and Practical Trade-offs

1. 5.8 Chapter Summary

1. 6 Discussion and Conclusion

1. 6.1 Limitations and Future works

1. 6.2 Conclusion

1. Declarations

1. A Models & Datasets

1. B Proof of KL Divergence Equivalence

1. C In Distribution Calibration Full Results

1. D Out of Distribution Detection Full Results

1. D.1 Formal Definitions of Router-Level Uncertainty Signals

1. D.2 Full Results: Standard Uncertainty Signal (Experiment 3a)

1. D.3 Full Results: Router-Level Uncertainty Signals (Experiment 3b)

1.1 Overview

Modern Large Language Models (LLMs) have achieved remarkable success through clever techniques for scaling both dataset and model size. A key architectural innovation enabling this progress is the Mixture-of-Experts (MoE) model [1, 2]. The computational cost of dense, all-parameter activation in traditional LLMs creates a bottleneck that limits further scaling and hinders wider, more accessible deployment. The MoE architecture elegantly circumvents this by using a routing network (gating network) to activate only a fraction of the model’s parameters for any given input. This sparsity allows for a massive increase in the total number of parameters, enhancing the model’s capacity for specialised knowledge without a proportional increase in computational cost. This dual benefit of specilisation and sparsity has made MoE a cornerstone of state-of-the-art LLMs.

Despite their power, the practical deployment of LLMs is hindered by fundamental challenges in robustness and calibration [3]. These models often produce highly confident yet incorrect outputs, a phenomenon known as overconfidence, which has been shown to be a persistent issue across a wide range of models and tasks [4]. This unreliability frequently manifests as hallucination, the generation of plausible but factually fictitious content, which poses a significant barrier to their adoption in high-stake domains [5], such as medicine and the law. At its core, this untrustworthiness stems from the models’ inability to quantify their own predictive uncertainty.

This thesis argues that in an MoE model, the classic deterministic routing mechanism represents a critical point of failure. The router’s decision is not a minor adjustment, but dictates which specialised sub-networks are activated for inference. An incorrect or brittle routing choice means the wrong knowledge-domain expert is applied to a token, leading to a flawed output. In modern LLMs with dozens of stacked MoE layers, this problem is magnified: A single routing error in an early layer creates a corrupted representation that is then passed to all subsequent layers, initiating a cascading failure.

This thesis proposes to address potential failure mode by introducing a Bayesian routing framework. Instead of forcing the router to make a single, deterministic choice, our approach is to model a probability distribution over the routing decisions themselves. This allows us to perform principled uncertainty quantification directly at the point of expert selection, drawing on foundational concepts in Bayesian deep learning [6, 7, 8]. While applying Bayesian methods to an entire multi-billion parameter LLM is often computationally daunting, focusing this treatment only on the lightweight routing networks is a highly pragmatic and tractable approach. The ultimate purpose is to leverage this targeted uncertainty to enable better calibrated and robust LLM inference, creating models that are not only powerful but also aware of the limits of their own knowledge.

1.2 Contributions

This thesis makes the following primary contributions to the study of reliable and calibrated Mixture-of-Experts models:

1. Diagnosis of Router Brittleness and Rationale for Probabilistic Routing: We establish the empirical foundation for this thesis with a two-part investigation, which reveals the inherent brittleness of standard deterministic routing and potentials for probablistic approaches respectively.

1. A Structured Framework for Bayesian Routing: We formulate and evaluate a novel framework that categorises Bayesian routing methods based on where uncertainty is introduced. This taxonomy provides a clear and structured landscape for analysis, focussed on Bayesian modelling of weight-space, logit-space and routing-space respectively.

1. Rigorous Evaluation of Calibration and Robustness: We conduct a series of controlled experiments on a pre-trained MoE model with 3B parameters, then systematically measure the impact of our proposed methods on in-distribution (ID) performance and calibration, out-of-distribution (OoD) detection, and overall router stability.

1. Memory and Computation Overhead Analysis: We assess the practical feasibility of the proposed Bayesian routing methods by performing a detailed analysis of their memory and computational overhead. This provides a clear picture of the trade-offs involved, demonstrating which methods are most viable for deployment in large-scale systems.

1.3 Thesis Outline

The remainder of this thesis is organised as follows. Chapter 2 provides a review of the foundational literature on Mixture-of-Experts models, uncertainty in LLMs, and Bayesian machine learning. Chapter 3 presents the motivational experiments that quantitatively establish the problem of router instability. Chapter 4 details the methodology behind our proposed Bayesian Routing Networks framework. Chapter 5 is dedicated to the main experiments and analysis, evaluating the impact of our methods on stability, calibration, and robustness, with further efficiency analysis. Finally, Chapter 6 concludes the thesis with a discussion that includes the limitations of this study, and promising directions for future work.

Chapter 2 Background

2.1 Mixture-of-Experts (MoE) Architecture

2.1.1 Modern LLM: A Primer

To understand the innovation of the Mixture-of-Experts (MoE) architecture, one must first understand the standard model it enhances. The foundational architecture for virtually all modern Large Language Models (LLMs) is the Transformer [9]. This section provides a brief but essential overview of the key components of a contemporary, dense LLM, establishing a baseline before we introduce the concept of sparsity.

Decoder-Only Transformer Blueprint

The dominant architecture for modern generative LLMs, such as those in the GPT family [10], is the Decoder-only Transformer [11]. As illustrated in Figure 2.1 (A), this model processes text through a sequential pipeline. The process begins with an input sequence of tokens, which are represented in the form of indices from the vocabulary by Tokeniser. These discrete IDs are first converted into continuous vector representations by an Embedding layer, which is a learnable lookup table. Positional encoding is also usually incorporated at the embedding stage.

The resulting embeddings are then processed by the core of the model: a stack of $N$ identical Decoder Layers. The output of one layer serves as the input to the next, allowing the model to build progressively more abstract and contextually rich representations of the sequence. After the final decoder layer, a concluding LayerNorm is applied. This final hidden state is then projected into the vocabulary space by a linear layer known as the Language Modelling Head [12], which produces a logit for every possible token from the vocabulary. Finally, a softmax function is applied to these logits to generate a probability distribution, from which the output Token ID is predicted. Each of these decoder blocks contains the same set of internal sub-layers, which we will describe next.

Inside the Transformer Block

As shown in Figure 2.1 (B), each identical decoder block is composed of two primary sub-layers, wrapped with essential components that enable stable training of deep networks.

The first sub-layer is the Multi-Head Self-Attention mechanism. This is the core innovation of the Transformer, allowing each token to weigh the importance of all other preceding tokens in the sequence. The output of this sub-layer, $\mathbf{u}$ , is computed by applying the attention function to the block’s input, $\mathbf{h}$ , with residual connection and layer normalisation added:

$$

\mathbf{u}=\text{LayerNorm}(\text{SA}(\mathbf{h})+\mathbf{h})

$$

As the attention mechanism is not the primary focus of this thesis, we will not detail its internal mechanics.

The second sub-layer is a position-wise Feed-Forward Network (FFN). This is a non-linear transformation that is applied independently to each token representation $\mathbf{u}_{t}$ after it has been updated by the attention mechanism. Skip connections and layer normalisation are again applied, yielding the final output of the Transformer block, $\mathbf{h^{\prime}}$ :

$$

\mathbf{h^{\prime}}=\text{LayerNorm}(\text{FFN}(\mathbf{u})+\mathbf{u})

$$

In modern LLMs, this is typically implemented as a Gated Linear Unit (GLU) variant such as SwiGLU [13], which has been shown to be highly effective:

$$

\text{FFN}(\mathbf{u}_{t})=\left(\sigma(\mathbf{u}_{t}W_{\text{Up}})\odot\mathbf{u}_{t}W_{\text{Gate}}\right)W_{\text{Down}}

$$

This FFN is the specific component that the Mixture-of-Experts architecture modifies and enhances.

Crucially, as stated, each of these two sub-layers is wrapped by two other components: a residual connection (or skip connection) and a layer normalisation step. The residual connection is vital for preventing the vanishing gradient problem. Layer normalisation stabilises the activations, ensuring that the training of dozens or even hundreds of stacked layers remains feasible.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Decode-only LLM and Transformer Block

### Overview

The image presents two diagrams side-by-side. Diagram (A) illustrates the architecture of a Decode-only LLM (Language Model), while diagram (B) depicts the structure of a Transformer Block. Both diagrams use a top-down flow to represent the processing steps.

### Components/Axes

**Diagram (A): Decode-only LLM**

* **Input:** Token IDs Input (Green box at the top)

* **Layers:**

* Embedding (Blue box)

* Decoder Layer (Blue box, repeated N times within a dashed box labeled "Decoder Stack N Layers")

* LayerNorm (Blue box)

* LM Head (Blue box)

* **Output:** Token IDs Output (Green box at the bottom)

* **Label:** (A) Decode-only LLM (bottom-left)

**Diagram (B): Transformer Block**

* **Input:** Sequence Hidden Input (Green box at the top)

* **Layers:**

* Self-Attention (Blue box)

* LayerNorm (Blue box)

* Feed-Forward Network (Blue box)

* LayerNorm (Blue box)

* **Connections:**

* A direct connection (arrow) bypasses the Self-Attention and LayerNorm layers, adding to the output of the first LayerNorm.

* A direct connection (arrow) bypasses the Feed-Forward Network and LayerNorm layers, adding to the output of the second LayerNorm.

* **Output:** Sequence Hidden Output (Green box at the bottom)

* **Label:** (B) Transformer Block (bottom-right)

### Detailed Analysis

**Diagram (A): Decode-only LLM**

1. **Token IDs Input:** The process begins with inputting token IDs.

2. **Embedding:** The token IDs are then passed through an embedding layer.

3. **Decoder Stack:** The core of the model consists of N Decoder Layers. The exact number of layers is not specified, but it is represented by "N Layers".

4. **LayerNorm:** A Layer Normalization layer follows the decoder stack.

5. **LM Head:** The output is then fed into a Language Model Head.

6. **Token IDs Output:** Finally, the model outputs token IDs.

**Diagram (B): Transformer Block**

1. **Sequence Hidden Input:** The block receives a sequence of hidden states as input.

2. **Self-Attention:** The input is processed through a self-attention mechanism.

3. **LayerNorm:** The output of the self-attention is normalized using LayerNorm. A residual connection adds the original input to the output of this LayerNorm.

4. **Feed-Forward Network:** The result is then passed through a feed-forward network.

5. **LayerNorm:** Another LayerNorm layer normalizes the output of the feed-forward network. A residual connection adds the input of the feed-forward network to the output of this LayerNorm.

6. **Sequence Hidden Output:** The block outputs a sequence of hidden states.

### Key Observations

* Diagram (A) shows a sequential flow of data through the layers of a Decode-only LLM.

* Diagram (B) highlights the internal structure of a Transformer Block, emphasizing the self-attention mechanism, feed-forward network, and residual connections.

* Both diagrams use similar visual elements (boxes, arrows) to represent layers and data flow.

* The "Decoder Stack N Layers" in diagram (A) indicates that the Decoder Layer is repeated multiple times, a key characteristic of deep learning models.

* The residual connections in diagram (B) are crucial for training deep networks, as they help to mitigate the vanishing gradient problem.

### Interpretation

The diagrams illustrate the architecture of a Decode-only LLM and the internal structure of a Transformer Block, which are fundamental components in modern natural language processing models. The Decode-only LLM processes input tokens through a series of embedding, decoding, and normalization layers, culminating in the generation of output tokens. The Transformer Block, with its self-attention mechanism and feed-forward network, enables the model to capture complex relationships between words in a sequence. The residual connections in the Transformer Block are essential for training deep networks effectively. The diagrams highlight the modularity and hierarchical structure of these models, where individual blocks can be stacked to create more complex architectures.

</details>

Figure 2.1: From Decoder-only LLM to Transformer Block. (A) The high-level of a decoder-only LLM, composed of a stack of identical Transformer blocks. (B) The internal structure of a single Transformer block.

Architectural Advances

Beyond the core components, the performance of modern LLMs relies on several key innovations, including:

- Root Mean Square Normalisation (RMSNorm): A computationally efficient alternative to LayerNorm that stabilises training by normalising activations based on their root-mean-square magnitude [14].

- Rotary Position Embeddings (RoPE): A method for encoding the relative positions of tokens by rotating their vector representations, which has been shown to improve generalisation to longer sequences [15].

- Advanced Attention Mechanisms: Techniques such as Latent Attention are used to handle longer contexts more efficiently by first compressing the input sequence into a smaller set of latent representations [16].

While these techniques optimise existing components of the Transformer, a more fundamental architectural shift for scaling model capacity involves reimagining the Feed-Forward Network (FFN) itself. This leads us directly to the Mixture-of-Experts paradigm, which is a sparsity-inducing modification of the FFN.

2.1.2 MoE: From Dense Layers to Sparse Experts

The architectural innovations described previously optimise existing components of the Transformer. The Mixture-of-Experts (MoE) paradigm introduces a more fundamental change by completely redesigning the Feed-Forward Network (FFN), the primary source of a dense model’s parameter count and computational cost [17, 1, 2].

Motivation and Key Benefits

The core idea of an MoE layer is to replace a single FFN with a collection of many smaller, independent FFNs called experts. For each incoming token, a lightweight routing mechanism dynamically selects a small subset of these experts (e.g., 2 or 4 out of 64) to process it. This strategy of sparse activation yields two significant benefits:

Massive Parameter Count for Specialised Knowledge. The first benefit is a dramatic increase in the model’s total number of learnable parameters. The total knowledge capacity of the model is the sum of all experts, enabling different experts to learn specialised functions for different types of data or tasks.

Constant Computational Cost for Efficient Inference. The second benefit is that this increased capacity does not come with a proportional rise in computational cost. Despite the vast number of total parameters, the cost (in FLOPs) per token remains constant and manageable, as it only depends on the small number of activated experts. This breaks the direct link between model size and inference cost, enabling a new frontier of scale.

This paradigm has been successfully adopted by many state-of-the-art open-source LLMs. A detailed comparison of their respective sizes and expert configurations is presented in Table A.2, Appendix A.

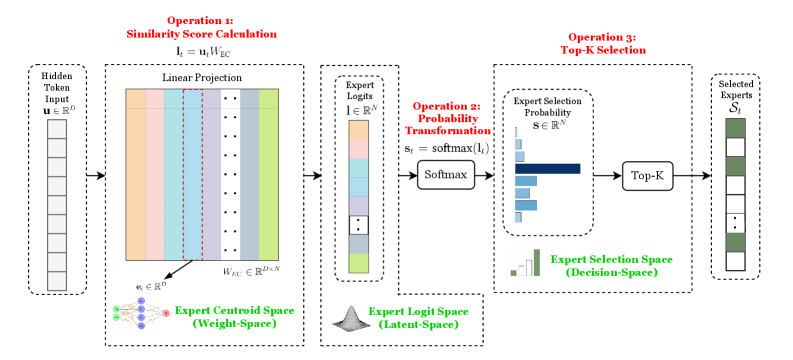

The MoE Routing Mechanism

The core of the MoE layer is a deterministic routing mechanism, which decides which subset of experts to activate during inference for each individual tokens. The entire MoE FFN layer’s working procedure is demonstrated in Figure 2.2. We can break this process down into three distinct stages:

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Mixture of Experts (MoE) Layer

### Overview

The image presents a diagram of a Mixture of Experts (MoE) layer within a neural network architecture. It illustrates the flow of data through self-attention, layer normalization, feed-forward networks, and the MoE component, which involves routing tokens to a selected set of experts and weighting their outputs.

### Components/Axes

* **Input/Output Blocks:**

* "Sequence Hidden Input" (top-left, green block)

* "Sequence Hidden Output" (bottom-left, green block)

* "Token hidden input" (top-center, green block)

* "Token hidden Output" (bottom-center, green block)

* **Processing Blocks (left side):**

* "Self-Attention" (blue block)

* "LayerNorm" (blue block)

* "Feed-Forward Network" (blue block)

* "LayerNorm" (blue block)

* **MoE Components (right side, within dashed box):**

* "Router" (blue block, top-right)

* "Selected Expert Set" (horizontal array of black/white blocks, labeled as "S<sub>t</sub>")

* "Expert 1", "Expert 2", "Expert 3", "Expert 4", ..., "Expert N" (blue blocks)

* "Similarity Scores (Logits)" (horizontal array of green blocks, top-right)

* "Top-K Select" (blue block, right)

* "Top-K Weighting Vector" (horizontal array of green blocks, bottom-center, labeled as "g<sub>t</sub>")

* **Variables:**

* "u<sub>t</sub>" (input to the experts)

* "l<sub>t</sub>" (output of Top-K Select)

* "W<sub>EC</sub>" (Router parameter)

* **Operations:**

* ⊕ (addition)

* ⊗ (multiplication)

* **Functions:**

* FFN<sup>expert</sup><sub>i</sub>(u<sub>t</sub>)

* FFN<sup>MoE</sup>(u<sub>t</sub>)

### Detailed Analysis

1. **Left Side (Sequence Processing):**

* The "Sequence Hidden Input" feeds into a "Self-Attention" block.

* The output of "Self-Attention" is normalized by "LayerNorm".

* This normalized output is added to the original input of "Self-Attention" (residual connection).

* The result is processed by a "Feed-Forward Network".

* The output of the "Feed-Forward Network" is normalized by "LayerNorm".

* This normalized output is added to the original input of the "Feed-Forward Network" (residual connection).

* The final output is the "Sequence Hidden Output".

2. **Right Side (Mixture of Experts):**

* The "Token hidden input" (u<sub>t</sub>) is fed into a "Router" with parameter "W<sub>EC</sub>".

* The "Router" outputs "Similarity Scores (Logits)" (l<sub>t</sub>), represented as a horizontal array of green blocks with varying shades of green.

* These scores are used by "Top-K Select" to select the top-K experts.

* The "Token hidden input" (u<sub>t</sub>) is also fed into each of the "Expert" blocks (Expert 1 to Expert N).

* The output of each expert, FFN<sup>expert</sup><sub>i</sub>(u<sub>t</sub>), is multiplied (⊗) by the corresponding weight from the "Top-K Weighting Vector" (g<sub>t</sub>).

* The weighted outputs of the experts are summed (⊕) to produce the "Token hidden Output", which is processed by FFN<sup>MoE</sup>(u<sub>t</sub>).

* The "Selected Expert Set" (S<sub>t</sub>) indicates which experts are selected (black blocks) and which are not (white blocks).

### Key Observations

* The diagram illustrates a common architecture for incorporating a Mixture of Experts layer into a neural network.

* The MoE layer allows the network to selectively route different tokens to different experts, enabling specialization and increased capacity.

* Residual connections are used around the "Self-Attention" and "Feed-Forward Network" blocks on the left side.

* The "Top-K Select" mechanism ensures that only the most relevant experts are used for each token.

### Interpretation

The diagram depicts a Mixture of Experts (MoE) layer, a technique used to increase the capacity of neural networks. The input sequence is processed through standard layers like self-attention and feed-forward networks. The MoE layer then routes different tokens to different experts based on the router's output. This allows the network to specialize its processing for different types of inputs. The "Top-K Select" mechanism ensures that only the most relevant experts are used, which can improve efficiency and performance. The residual connections help to stabilize training and improve the flow of information through the network. The varying shades of green in the "Similarity Scores" and "Top-K Weighting Vector" likely represent different levels of activation or importance.

</details>

Figure 2.2: Routing Mechanism in MoE Feed-Forward Network Layer.

Stage 1: Expert Similarity Scoring. First, the router computes a similarity score between the input token’s hidden state, $\mathbf{u}_{t}∈\mathbb{R}^{D}$ , and each of the $N$ unique, learnable expert centroid vectors, $\mathbf{e}_{i}∈\mathbb{R}^{D}$ . This is achieved using a dot product to measure the alignment between the token’s representation and each expert’s specialised focus. For computational efficiency, these $N$ centroid vectors are collected as the columns of a single weight matrix:

$$

W_{\text{EC}}=[\mathbf{e}_{1},\dots,\mathbf{e}_{N}]

$$

The similarity calculation for all experts is then performed with a single matrix multiplication. In neural network terms, this is a simple linear projection that produces a vector of unnormalised scores, or logits ( $\mathbf{l}_{t}∈\mathbb{R}^{N}$ ):

$$

\mathbf{l}_{t}=\mathbf{u}_{t}W_{\text{EC}}

$$

Stage 2: Probability Transformation. Next, these raw logit scores are transformed into a discrete probability distribution over all $N$ experts using the softmax function:

$$

\mathbf{s}_{t}=\text{softmax}(\mathbf{l}_{t})

$$

Taken together, this two-step process of a linear projection followed by a softmax function is a multinomial logistic regression [18] model.

Stage 3: Top-K Expert Selection. Finally, to enforce sparse activation, a hard, deterministic Top-K selection mechanism is applied to this probability vector $\mathbf{s}_{t}$ . This operation identifies the indices of the $K$ experts with the highest probabilities. Many practical implementations select the Top-K experts directly from the logits before applying a renormalising softmax to the scores of only the selected experts [16]. Since the softmax function is monotonic, this yields the exact same set of chosen experts. Our softmax $→$ Top-K framing is mathematically equivalent for the final selection and provides a more natural foundation for the probabilistic methods developed in this thesis.

$$

g^{\prime}_{t,i}=\begin{cases}s_{t,i}&\text{if }s_{t,i}\in\textsc{Top-K}(\{s_{t,j}\}_{j=1}^{N})\\

0&\text{otherwise}\end{cases}

$$

Let $\mathcal{S}_{t}$ be the set of the Top-K expert indices selected for token $\mathbf{u}_{t}$ , which contains $K$ indices. The probabilities for these selected experts are then renormalised to sum to one,

$$

\mathbf{g}_{t}=\frac{\mathbf{g}^{\prime}_{t}}{\sum_{i=1}^{N}g^{\prime}_{t,i}}

$$

forming the final sparse gating weights, $\mathbf{g}_{t}$ , which are used to compute the weighted sum of expert outputs.

$$

\text{FFN}^{\text{MoE}}(\mathbf{u}_{t})=\sum_{i\in\mathcal{S}_{t}}g_{t,i}\cdot\text{FFN}^{\text{expert}}_{i}(\mathbf{u}_{t})

$$

Auxiliary Losses for Router Training

The hard, competitive nature of the Top-K selection mechanism can lead to a training pathology known as routing collapse [1]. This occurs when a positive feedback loop causes the router to consistently send the majority of tokens to a small, favored subset of experts. The remaining experts are starved of data and fail to learn, rendering a large portion of the model’s capacity useless. To counteract this and ensure all experts are trained effectively, auxiliary loss functions are added to the main training task objective with a scaling hyperparameter $\beta$ :

$$

\mathcal{L}=\mathcal{L}_{\text{task}}+\beta\cdot\mathcal{L}_{\text{auxiliary}}

$$

Numerous auxillary losses for stablising and balancing router training have been proposed over the past few years [19, 20, 21]. Here we only introuduced two most famous ones:

Load-Balancing Loss

The most common auxiliary loss is a load-balancing loss designed to incentivise the router to distribute tokens evenly across all $N$ experts. For a batch of $T$ tokens, this loss is typically calculated as the dot product of two quantities for each expert $i$ : the fraction of tokens in the batch routed to it ( $f_{i}$ ), and the average router probability it received over those tokens ( $P_{i}$ ) [22]:

$$

\mathcal{L}_{\text{balance}}=N\sum_{i=1}^{N}f_{i}\cdot P_{i}

$$

This loss is minimised when each expert receives an equal share of the routing responsibility.

Router Z-Loss

Some models also employ a router Z-loss to regularise the magnitude of the pre-softmax logits [23]. This loss penalises large logit values, which helps to prevent the router from becoming overly confident in its selections early in training. This can improve training stability and encourage a smoother distribution of routing scores. The loss is calculated as the mean squared log-sum-exp of the logits over a batch:

$$

\mathcal{L}_{\text{Z}}=\frac{1}{T}\sum_{t=1}^{T}\left(\log\sum_{i=1}^{N}\exp(l_{t,i})\right)^{2}

$$

These auxiliary losses are combined with the primary task loss to guide the model towards a stable and balanced routing policy.

2.2 Uncertainty and Calibration in Large Language Models

Having detailed the architecture of a modern LLM, we now turn to the fundamental challenges of reliability that motivate our work. To understand the need for a Bayesian MoE router, it is crucial to first understand the general problems of overconfidence and miscalibration inherent in standard, deterministic models.

2.2.1 The Problem of Overconfidence and Miscalibration

A fundamental challenge in modern LLMs is the frequent mismatch between the model’s predictive probabilities and its true underlying knowledge. The softmax outputs of a well-trained network cannot be reliably interpreted as a true measure of the model’s confidence. This phenomenon is known as miscalibration, and for most modern deep networks, it manifests as consistent overconfidence, a tendency to produce high-probability predictions that are, in fact, incorrect [3].

This overconfidence is a primary driver of one of the most significant failure modes in LLMs: hallucination. Defined as the generation of plausible-sounding but factually baseless or fictitious content, hallucination makes models fundamentally untrustworthy [5]. In high-stakes domains such as medicine or law, the tendency to state falsehoods with unwavering certainty poses a critical safety risk and a major barrier to adoption.

The formal goal is to achieve good calibration. A model is considered perfectly calibrated if its predicted confidence aligns with its empirical accuracy. For instance, across the set of all predictions to which the model assigns an 80% confidence, a calibrated model will be correct on 80% of them. Achieving better calibration is therefore a central objective in the pursuit of safe and reliable AI, and it is a primary motivation for the methods developed in this thesis.

2.2.2 Evaluating Uncertainty: From Sequences to Controlled Predictions

Quantifying the uncertainty of an LLM’s output is a complex task, especially for open-ended, autoregressive generation. The output space is vast, and uncertainty can accumulate at each step, making it difficult to obtain a reliable and interpretable measure. This remains an active and challenging area of research, with various proposed methods.

The most traditional metric is Perplexity (PPL), the exponentiated average negative log-likelihood of a sequence, which measures how “surprised” a model is by the text:

$$

\text{PPL}(\mathbf{s})=\exp\left\{-\frac{1}{T}\sum_{t=1}^{T}\log p(s_{t}|s_{<t})\right\}

$$

More advanced approaches, like Semantic Entropy, aim to measure uncertainty by clustering the semantic meaning of many possible generated sequences [24, 25]. The entropy is calculated over the probability of these semantic clusters rather than individual tokens. Each semantic cluster $\mathbf{c}$ is defined as $∀\mathbf{s},\mathbf{s}^{\prime}∈\mathbf{c}:E(\mathbf{s},\mathbf{s}^{\prime})$ , where $E$ is a semantic equivalence relation. $\mathcal{C}$ is semantic cluster space. The semantic entropy is then given by:

$$

\mathcal{H}_{\text{sem}}(p(y|\mathbf{x}))=-\sum_{\mathbf{c}\in\mathcal{C}}p(\mathbf{c}|\mathbf{x})\log p(\mathbf{c}|\mathbf{x})

$$

Other methods focus on explicitly teaching the model to assess its own confidence, either through direct prompting or by using Supervised Fine-Tuning (SFT) to train the model to state when it does not know the answer [26]. An example of such prompting strategies is shown in Table 2.1.

Table 2.1: Examples of prompting strategies for outputing model confidence.

| Name | Format | Confidence |

| --- | --- | --- |

| Zero-Shot Classifier | “Question. Answer. True/False: True ” | $\frac{P(\text{``{\color[rgb]{1,0,0}\definecolor[named]{pgfstrokecolor}{rgb}{1,0,0}True}''})}{P(\text{``{\color[rgb]{1,0,0}\definecolor[named]{pgfstrokecolor}{rgb}{1,0,0}True}''})+P(\text{``{\color[rgb]{1,0,0}\definecolor[named]{pgfstrokecolor}{rgb}{1,0,0}False}''})}$ |

| Verbalised | “Question. Answer. Confidence: 90% ” | float(‘‘ 90% ’’) |

While these methods are valuable for sequence-level analysis, in order to rigorously and quantitatively evaluate the impact of the architectural changes proposed in this thesis, a more controlled and standardised evaluation setting is required. A common and effective strategy is to simplify the task to the fundamental problem of next-token prediction in a constrained environment.

For this purpose, Multiple-Choice Question Answering (MCQA) A detailed summary of the MCQA datasets used later in this thesis is provided in Table LABEL:tab:mcqa_datasets_summary, Appendix A. provides an ideal testbed. In this setting, the model’s task is reduced to assigning probabilities over a small, discrete set of predefined answer choices. This allows for a direct and unambiguous comparison between the model’s assigned probability for the correct answer (its confidence) and the actual outcome. This provides a clean, reliable signal for measuring the model’s calibration, which is the focus of our evaluation.

2.2.3 Formal Metrics for Calibration

Within the controlled setting of Multiple-Choice Question Answering (MCQA), we can use a suite of formal metrics to quantify a model’s performance and, more importantly, its calibration.

A primary metric for any probabilistic classifier is the Negative Log-Likelihood (NLL), also known as the cross-entropy loss. It measures how well the model’s predicted probability distribution aligns with the ground-truth outcome. A lower NLL indicates that the model is not only accurate but also assigns high confidence to the correct answers.

To measure miscalibration directly, the most common metric is the Expected Calibration Error (ECE) [27, 3]. ECE measures the difference between a model’s average confidence and its actual accuracy. To compute it, predictions are first grouped into $M$ bins based on their confidence scores. For each bin $B_{m}$ , the average confidence, $\text{conf}(B_{m})$ , is compared to the actual accuracy of the predictions within that bin, $\text{acc}(B_{m})$ . The ECE is the weighted average of the absolute differences across all bins:

$$

\text{ECE}=\sum_{m=1}^{M}\frac{|B_{m}|}{n}\left|\text{acc}(B_{m})-\text{conf}(B_{m})\right|

$$

where $n$ is the total number of predictions. A lower ECE signifies a better-calibrated model. A complementary metric is the Maximum Calibration Error (MCE), which measures the worst-case deviation by taking the maximum of the differences:

$$

\text{MCE}=\max_{m=1,\dots,M}\left|\text{acc}(B_{m})-\text{conf}(B_{m})\right|

$$

These metrics are often visualised using Reliability Diagrams. As shown in Figure 2.3, this plot shows the actual accuracy for each confidence bin. For a perfectly calibrated model, the bars align perfectly with the diagonal line, where confidence equals accuracy.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Calibration Plots: Model Confidence vs. Accuracy

### Overview

The image presents four calibration plots, each visualizing the relationship between predicted confidence and actual accuracy for a classification model under different calibration scenarios: "Well-Calibrated", "Overconfident", "Underconfident", and "Uncalibrated (Random)". Each plot displays the accuracy and the gap (ECE - Expected Calibration Error) as stacked bars, along with a dashed line representing perfect calibration.

### Components/Axes

* **Titles:**

* Top-left: "Well-Calibrated"

* Top-middle-left: "Overconfident"

* Top-middle-right: "Underconfident"

* Top-right: "Uncalibrated (Random)"

* **Y-axis (Actual Accuracy):** Ranges from 0.0 to 1.0, with tick marks at 0.2 intervals (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

* **X-axis (Predicted Confidence):** Ranges from 0.0 to 1.0, without explicit tick marks, but implicitly divided into 10 bins of width 0.1.

* **Legend (Top-left of each plot):**

* Dashed Black Line: "Perfect Calibration"

* Blue Bars: "Accuracy"

* Red Bars: "Gap (ECE)"

* **ECE Value:** Each plot displays the ECE (Expected Calibration Error) value at the bottom-right.

### Detailed Analysis

**1. Well-Calibrated**

* **Trend:** The blue "Accuracy" bars closely follow the "Perfect Calibration" line. The red "Gap (ECE)" bars are relatively small.

* **Data Points:**

* ECE = 0.038

**2. Overconfident**

* **Trend:** The "Accuracy" bars are generally above the "Perfect Calibration" line for lower predicted confidence values and below the line for higher confidence values. This indicates overconfidence in low-confidence predictions and underconfidence in high-confidence predictions. The "Gap (ECE)" bars are more prominent than in the "Well-Calibrated" plot.

* **Data Points:**

* ECE = 0.065

**3. Underconfident**

* **Trend:** The "Accuracy" bars are generally below the "Perfect Calibration" line for lower predicted confidence values and above the line for higher confidence values. This indicates underconfidence in low-confidence predictions and overconfidence in high-confidence predictions. The "Gap (ECE)" bars are more prominent than in the "Well-Calibrated" plot.

* **Data Points:**

* ECE = 0.079

**4. Uncalibrated (Random)**

* **Trend:** The "Accuracy" bars show a scattered relationship with the "Perfect Calibration" line. The "Gap (ECE)" bars are large and inconsistent.

* **Data Points:**

* ECE = 0.289

### Key Observations

* The "Well-Calibrated" plot demonstrates the ideal scenario where predicted confidence aligns with actual accuracy.

* The "Overconfident" plot shows a tendency for the model to overestimate its accuracy, especially at lower confidence levels.

* The "Underconfident" plot shows a tendency for the model to underestimate its accuracy, especially at lower confidence levels.

* The "Uncalibrated (Random)" plot represents a poorly calibrated model with a high ECE, indicating a significant mismatch between predicted confidence and actual accuracy.

### Interpretation

The calibration plots provide a visual assessment of how well a classification model's predicted probabilities reflect the true likelihood of its predictions being correct. A well-calibrated model is crucial in applications where confidence scores are used for decision-making. The plots highlight the importance of calibration techniques to improve the reliability of model outputs, especially when dealing with overconfident or underconfident models. The ECE values quantify the degree of miscalibration, with lower values indicating better calibration. The "Uncalibrated (Random)" plot serves as a baseline, demonstrating the impact of poor calibration on the relationship between predicted confidence and actual accuracy.

</details>

Figure 2.3: An example of a Reliability Diagram. The blue bars represent the model’s accuracy within each confidence bin, while the red bars show the gap to perfect calibration (the diagonal line).

In addition to calibration, a key aspect of our evaluation is a model’s ability to distinguish in-domain data from out-of-distribution (OoD) data. This is framed as a binary classification task where the model’s uncertainty score is used as a predictor. We evaluate this using two standard metrics: the Area Under the Receiver Operating Characteristic curve (AUROC) and the Area Under the Precision-Recall curve (AUPRC) [28]. The AUROC measures the trade-off between true positive and false positive rates, while the AUPRC is more informative for imbalanced datasets. For both metrics, a higher score indicates a more reliable uncertainty signal for OoD detection.

2.2.4 Related Work in LLM Calibration

Improving the calibration of neural networks is an active area of research. Several prominent techniques have been proposed, which can be broadly categorised as post-hoc methods or training-time regularisation.

The most common and effective post-hoc method is Temperature Scaling [3]. This simple technique learns a single scalar temperature parameter, $T$ , on a held-out validation set. At inference time, the final logits of the model are divided by $T$ before the softmax function is applied. This “softens” the probability distribution, reducing the model’s overconfidence without changing its accuracy. While more complex methods exist, Temperature Scaling remains a very strong baseline.

Another approach is to regularise the model during training to discourage it from producing overconfident predictions. A classic example is Label Smoothing [29]. Instead of training on hard, one-hot labels (e.g., [0, 1, 0]), the model is trained on softened labels (e.g., [0.05, 0.9, 0.05]). This prevents the model from becoming excessively certain by discouraging the logits for the correct class from growing infinitely larger than others.

Towards Making MoE-based LLMs Know What They Don’t Know

In contrast to these approaches, which operate either as a post-processing step on the final output (Temperature Scaling) or as a modification to the training objective (Label Smoothing), the work in this thesis explores a fundamentally different, architectural solution. We hypothesise that miscalibration in MoE models can be addressed at a more foundational level, by improving the reliability of the expert selection mechanism itself. Rather than correcting the final output, we aim to build a more inherently calibrated model by introducing principled Bayesian uncertainty directly into the MoE router.

2.3 Bayesian Machine Learning: A Principled Approach to Uncertainty

This final section of our background review introduces the mathematical and conceptual tools used to address the challenges of uncertainty and calibration. While standard machine learning often seeks a single set of “best” model parameters, a point estimate, the Bayesian paradigm takes a different approach. Instead of a single answer, it aims to derive a full probability distribution over all possible parameters. This distribution serves as a principled representation of the model’s uncertainty, providing a foundation for building more reliable and robust systems.

2.3.1 The Bayesian Framework

Prior, Likelihood, and Posterior

Bayesian inference is a framework for updating our beliefs in light of new evidence. It involves three core components:

- The Prior Distribution, $p(\theta)$ , which represents our initial belief about the model parameters $\theta$ before observing any data. It often serves as a form of regularisation.

- The Likelihood, $p(\mathcal{D}|\theta)$ , which is the probability of observing our dataset $\mathcal{D}$ given a specific set of parameters $\theta$ .

- The Posterior Distribution, $p(\theta|\mathcal{D})$ , which is our updated belief about the parameters after having observed the data.

These components are formally connected by Bayes’ Theorem, which provides the mathematical engine for updating our beliefs:

$$

p(\theta|\mathcal{D})=\frac{p(\mathcal{D}|\theta)p(\theta)}{p(\mathcal{D})}

$$

The Challenge of the Marginal Likelihood

While elegant, this framework presents a major practical challenge. The denominator in Bayes’ Theorem, $p(\mathcal{D})$ , is the marginal likelihood, also known as the model evidence. It is calculated by integrating over the entire parameter space:

$$

p(\mathcal{D})=\int p(\mathcal{D}|\theta)p(\theta)d\theta

$$

For any non-trivial model like a neural network, where $\theta$ can represent millions or billions of parameters, this high-dimensional integral is computationally intractable. Since the marginal likelihood cannot be computed, the true posterior distribution is also inaccessible. This intractability is the central challenge in Bayesian deep learning and motivates the need for the approximation methods we will discuss next.

2.3.2 Bayesian Neural Networks (BNNs)

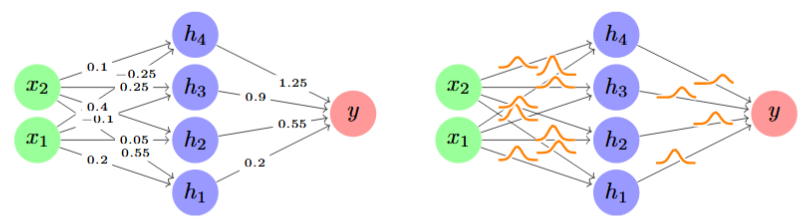

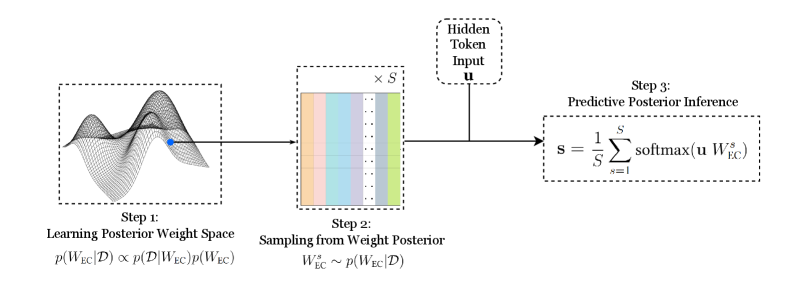

The general principles of Bayesian inference can be directly applied to neural networks, where the parameters $\theta$ correspond to the network’s weights and biases, $W$ . Instead of training to find a single, optimal point-estimate for these weights, a Bayesian Neural Network (BNN) aims to infer the full posterior distribution over them, $p(W|\mathcal{D})$ , as illlustrated in Figure 2.4 Illustration taken from the Murphy textbook [8]..

<details>

<summary>figures/bg/bnn_from_point_to_dist.png Details</summary>

### Visual Description

## Diagram: Neural Network Representations

### Overview

The image presents two diagrams of a simple neural network. The left diagram shows the network with numerical weights assigned to each connection, while the right diagram illustrates the same network with orange curves on the connections, possibly representing activation functions or distributions.

### Components/Axes

* **Nodes:**

* Input nodes: x1, x2 (green circles)

* Hidden layer nodes: h1, h2, h3, h4 (blue circles)

* Output node: y (red circle)

* **Connections:** Arrows indicating the flow of information between nodes.

* **Weights (Left Diagram):** Numerical values associated with each connection, representing the strength of the connection.

* **Curves (Right Diagram):** Orange curves on the connections, possibly representing activation functions or distributions.

### Detailed Analysis or ### Content Details

**Left Diagram (Numerical Weights):**

* **Connections from x2:**

* x2 to h4: 0.1

* x2 to h3: -0.25

* x2 to h2: 0.05

* x2 to h1: 0.2

* **Connections from x1:**

* x1 to h4: 0.4

* x1 to h3: 0.25

* x1 to h2: 0.55

* x1 to h1: -0.1

* **Connections to y:**

* h4 to y: 1.25

* h3 to y: 0.9

* h2 to y: 0.55

* h1 to y: 0.2

**Right Diagram (Curves on Connections):**

* The network structure is identical to the left diagram.

* Each connection has an orange curve on it. The shape of the curve varies slightly between connections. These curves likely represent activation functions or probability distributions associated with the connections.

### Key Observations

* The left diagram provides specific numerical weights for each connection in the neural network.

* The right diagram replaces the numerical weights with curves, suggesting a different representation of the connection strength or the transformation applied to the signal passing through the connection.

* The input nodes are x1 and x2, the hidden layer has nodes h1, h2, h3, and h4, and the output node is y.

### Interpretation

The image illustrates two different ways of representing a neural network. The left diagram uses numerical weights to quantify the strength of each connection, which is a common representation in many neural network models. The right diagram uses curves on the connections, which could represent activation functions, probability distributions, or some other form of transformation applied to the signal as it passes through the connection. The curves provide a more abstract representation of the network's behavior compared to the numerical weights. The curves could represent the uncertainty or variability associated with each connection.

</details>

Figure 2.4: From Point Estimate to Weight Distribution: The Bayesian Neural Network Paradigm. (A) A standard neural network learns a single set of weights, represented as a point estimate in weight space. (B) A Bayesian Neural Network learns a full posterior distribution over weights, capturing uncertainty and enabling more robust predictions.

Weight-Space Posterior and Predictive Distribution

The posterior distribution over the weights, $p(W|\mathcal{D})$ , captures the model’s epistemic uncertainty, that is, the uncertainty that arises from having limited training data. A wide posterior for a given weight indicates that many different values for that weight are plausible given the data, while a narrow posterior indicates high certainty.

To make a prediction for a new input $\mathbf{x}$ , a BNN marginalises over this entire distribution of weights. The resulting posterior predictive distribution averages the outputs of an infinite ensemble of networks, each weighted by its posterior probability:

$$

p(y|\mathbf{x},\mathcal{D})=\int p(y|\mathbf{x},W)p(W|\mathcal{D})dW

$$

The variance of this predictive distribution provides a principled measure of the model’s uncertainty in its output.

An Overview of Approximation Methods

As the true posterior $p(W|\mathcal{D})$ is intractable, BNNs must rely on approximation methods. The goal of these methods is to enable the approximation of the posterior predictive distribution, typically via Monte Carlo integration:

$$

p(y|\mathbf{x},\mathcal{D})=\int p(y|\mathbf{x},W)p(W|\mathcal{D})dW\approx\frac{1}{S}\sum_{s=1}^{S}p(y|\mathbf{x},W^{s})

$$

where $W^{s}$ are samples from a distribution that approximates the true posterior. The key difference between methods lies in how they obtain these samples.

Hamiltonian Monte Carlo (HMC)

MCMC methods like Hamiltonian Monte Carlo (HMC) [30] are a class of algorithms that can, given enough computation, generate samples that converge to the true posterior $p(W|\mathcal{D})$ . HMC is a gold-standard method that uses principles from Hamiltonian dynamics to explore the parameter space efficiently and produce high-quality samples. However, its significant computational cost makes it impractical for the vast parameter spaces of modern LLMs.

MC Dropout

A highly scalable alternative is Monte Carlo Dropout [31], which reinterprets dropout as approximate Bayesian inference. The key insight is to keep dropout active during inference. Each of the $S$ stochastic forward passes, with its unique random dropout mask, is treated as a sample from an approximate weight posterior. The resulting predictions are then averaged to approximate the predictive distribution, where each $W^{s}$ represents the base weights with the $s$ -th dropout mask applied.

Stochastic Weight Averaging Gaussian (SWAG)

SWAG [32] approximates the posterior with a multivariate Gaussian distribution, $\mathcal{N}(\boldsymbol{\mu}_{\text{SWAG}},\boldsymbol{\Sigma}_{\text{SWAG}})$ , by leveraging the trajectory of weights during SGD training. After an initial convergence phase, the first and second moments of the weight iterates are collected to form the mean and a low-rank plus diagonal covariance. Inference is performed by drawing $S$ weight samples, $W^{s}\sim\mathcal{N}(\boldsymbol{\mu}_{\text{SWAG}},\boldsymbol{\Sigma}_{\text{SWAG}})$ , and averaging their predictions.

Deep Ensembles

Deep Ensembles [33] provide a powerful, non-explicitly Bayesian approach. The method involves training an ensemble of $M$ identical networks independently from different random initialisations. This collection of trained models, $\{W_{1},...,W_{M}\}$ , is treated as an empirical sample from the true posterior. The predictive distribution is approximated by averaging the predictions of all $M$ models in the ensemble (i.e., where $S=M$ and $W^{s}$ is the weight matrix of the $s$ -th model).

These scalable methods provide computationally feasible ways to approximate the weight posterior. An alternative family of approximation methods, which reframes the problem as one of optimisation, is Variational Inference, which we will detail next.

2.3.3 Variational Inference (VI)

The final piece of theoretical background we require is Variational Inference (VI), a powerful and widely used alternative to MCMC for approximating intractable posterior distributions [34]. Instead of drawing samples, VI reframes the inference problem as one of optimisation, making it a natural fit for the gradient-based methods used in deep learning.

Core Idea: Posterior Approximation via Optimisation

The goal of VI is to approximate a complex and intractable true posterior, $p(\boldsymbol{z}|\boldsymbol{x})$ , with a simpler, tractable distribution, $q_{\phi}(\boldsymbol{z})$ , from a chosen family of distributions. The parameters $\phi$ of this “variational distribution” are optimised to make it as close as possible to the true posterior. This closeness is measured by the Kullback-Leibler (KL) Divergence.

Directly minimising the KL divergence is not possible, as its definition still contains the intractable posterior $p(\boldsymbol{z}|\boldsymbol{x})$ . However, we can derive an alternative objective. The log marginal likelihood of the data, $\log p(\boldsymbol{x})$ , can be decomposed as follows:

$$

\displaystyle\log p(\boldsymbol{x}) \displaystyle=\log\int p(\boldsymbol{x}|\boldsymbol{z})p(\boldsymbol{z})d\boldsymbol{z} \displaystyle=\log\int q_{\phi}(\boldsymbol{z})\frac{p(\boldsymbol{x}|\boldsymbol{z})p(\boldsymbol{z})}{q_{\phi}(\boldsymbol{z})}d\boldsymbol{z} \displaystyle\geq\int q_{\phi}(\boldsymbol{z})\log\frac{p(\boldsymbol{x}|\boldsymbol{z})p(\boldsymbol{z})}{q_{\phi}(\boldsymbol{z})}d\boldsymbol{z}\quad{\color[rgb]{0,0,0.8046875}\definecolor[named]{pgfstrokecolor}{rgb}{0,0,0.8046875}\text{(Jenson's Inequality)}} \displaystyle=\mathbb{E}_{q_{\phi}(\boldsymbol{z})}\left[\log p(\boldsymbol{x}|\boldsymbol{z})\right]-D_{\mathbb{KL}}\left[q_{\phi}(\boldsymbol{z})||p(\boldsymbol{z})\right]:=\mathcal{L}(\phi).

$$

This gives us the Evidence Lower Bound (ELBO), $\mathcal{L}(\phi)$ . As its name and the math suggest, ELBO is a lower bound on the log marginal likelihood. Besides, there’s also a connection between optimising ELBO and the original intention of optimising KL divergence between $q_{\phi}(\boldsymbol{z})$ and $p(\boldsymbol{z}|\boldsymbol{x})$ :

$$

\displaystyle\log p(\boldsymbol{x})-D_{\mathbb{KL}}(q_{\phi}(\boldsymbol{z})||p(\boldsymbol{z}|\boldsymbol{x})) \displaystyle=\log p(\boldsymbol{x})-\mathbb{E}_{q_{\phi}(\boldsymbol{z})}\left[\log\frac{q_{\phi}(\boldsymbol{z})}{p(\boldsymbol{z}|\boldsymbol{x})}\right] \displaystyle=\log p(\boldsymbol{x})+\mathbb{E}_{q_{\phi}(\boldsymbol{z})}\left[\log\frac{p(\boldsymbol{x}|\boldsymbol{z})p(\boldsymbol{z})}{q_{\phi}(\boldsymbol{z})p(\boldsymbol{x})}\right]\quad{\color[rgb]{0,0,0.8046875}\definecolor[named]{pgfstrokecolor}{rgb}{0,0,0.8046875}\text{(Bayes' Theorem)}} \displaystyle=\mathbb{E}_{q_{\phi}(\boldsymbol{z})}[\log p(\boldsymbol{x}|\boldsymbol{z})]-D_{\mathbb{KL}}(q_{\phi}(\boldsymbol{z})||p(\boldsymbol{z}))=\mathcal{L}(\phi).

$$

Crucially, because $\log p(\boldsymbol{x})$ is a constant with respect to $\phi$ , maximising the ELBO is equivalent to minimising the KL divergence Equations 2.21 and 2.22 are adapted from lecture note [35]..

The ELBO is typically written in a more intuitive form:

$$

\mathcal{L}(\phi)=\underbrace{\mathbb{E}_{q_{\phi}(\boldsymbol{z})}[\log p(\boldsymbol{x}|\boldsymbol{z})]}_{\text{Reconstruction Term}}-\underbrace{D_{\mathbb{KL}}(q_{\phi}(\boldsymbol{z})||p(\boldsymbol{z}))}_{\text{Regularisation Term}}

$$

The reconstruction term encourages the model to explain the observed data, while the regularisation term keeps the approximate posterior close to the prior $p(\boldsymbol{z})$ .

Structuring $q_{\phi}$ : Multivariate Gaussian and the Mean-Field Assumption

A primary design choice in VI is the family of distributions used for the approximate posterior, $q_{\phi}(\boldsymbol{z})$ . A common and flexible choice is the multivariate Gaussian distribution, $\mathcal{N}(\boldsymbol{z}|\boldsymbol{\mu}_{\phi},\boldsymbol{\Sigma}_{\phi})$ , as it can capture both the central tendency and the variance of the latent variables. When the prior is chosen to be a standard multivariate normal, $p(\boldsymbol{z})=\mathcal{N}(\boldsymbol{z}|\mathbf{0},I)$ , the KL divergence term in the ELBO has a convenient analytical solution:

$$

D_{\mathbb{KL}}\left(\mathcal{N}(\boldsymbol{\mu}_{\phi},\boldsymbol{\Sigma}_{\phi})||\mathcal{N}(\mathbf{0},I)\right)=\frac{1}{2}\left(\text{tr}(\boldsymbol{\Sigma}_{\phi})+\boldsymbol{\mu}_{\phi}^{\top}\boldsymbol{\mu}_{\phi}-k-\log|\boldsymbol{\Sigma}_{\phi}|\right)

$$

where $k$ is the dimensionality of the latent space $\boldsymbol{z}$ .

However, for high-dimensional latent spaces common in deep learning, parameterising and computing with a full-rank covariance matrix $\boldsymbol{\Sigma}_{\phi}$ is often computationally prohibitive. A standard and effective simplification is the mean-field assumption [7]. This assumes that the posterior distribution factorises across its dimensions, i.e., $q_{\phi}(\boldsymbol{z})=\prod_{i}q_{\phi_{i}}(z_{i})$ . For a Gaussian, this is equivalent to constraining the covariance matrix to be diagonal, $\boldsymbol{\Sigma}_{\phi}=\text{diag}(\boldsymbol{\sigma}_{\phi}^{2})$ .

This simplification significantly reduces the computational complexity. The KL divergence for the mean-field case reduces to a simple sum over the dimensions, avoiding all expensive matrix operations like determinants or inversions:

$$

D_{\mathbb{KL}}\left(\mathcal{N}(\boldsymbol{\mu}_{\phi},\text{diag}(\boldsymbol{\sigma}_{\phi}^{2}))||\mathcal{N}(\mathbf{0},I)\right)=\frac{1}{2}\sum_{i=1}^{k}\left(\mu_{{\phi}_{i}}^{2}+\sigma_{{\phi}_{i}}^{2}-\log(\sigma_{{\phi}_{i}}^{2})-1\right)

$$

This tractable and efficient formulation is a cornerstone of most practical applications of VI in deep learning. However, if the dimensionality of the latent space is tractable, it is possible to model the full-rank covariance matrix by parameterising it via its Cholesky decomposition [36]. This more expressive approach, which we detail later in our Methodology section 4.3.3, allows the model to capture correlations between the latent variables.

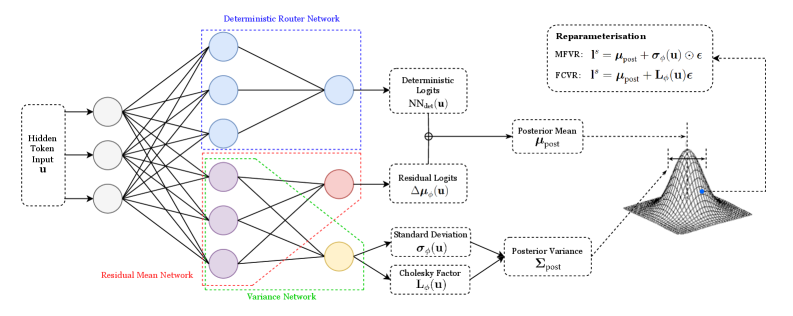

Amortised VI: VAE Case Study

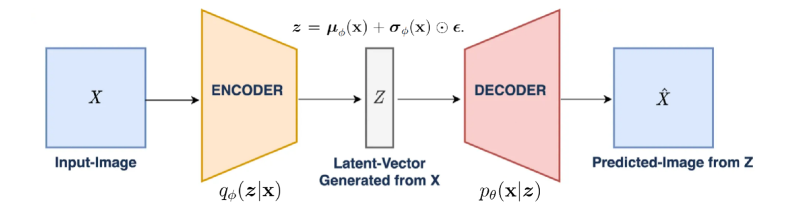

In the traditional formulation of VI, a separate set of variational parameters $\phi$ must be optimised for each data point. For large datasets, this is computationally infeasible. Amortised VI solves this by learning a single global function, an inference network, that maps any input data point $\mathbf{x}$ to the parameters of its approximate posterior, $q_{\phi}(\boldsymbol{z}|\mathbf{x})$ . The cost of training this network is thus “amortised” over the entire dataset.

The quintessential example of this approach is the Variational Autoencoder (VAE) [37]. A VAE is a generative model composed of two neural networks: an encoder ( $q_{\phi}(\boldsymbol{z}|\mathbf{x})$ ) that learns to map inputs to a latent distribution, and a decoder ( $p_{\theta}(\mathbf{x}|\boldsymbol{z})$ ) that learns to reconstruct the inputs from samples of that distribution. Typically, the latent distribution is assumed to be a mean-field Gaussian, so the encoder network has two heads to predict the mean $\boldsymbol{\mu}_{\phi}(\mathbf{x})$ and the log-variance $\log\boldsymbol{\sigma}^{2}_{\phi}(\mathbf{x})$ . $\boldsymbol{z}$ $\mathbf{x}$ $\phi$ $\theta$ $× N$

Figure 2.5: Probabilistic Graphical Model of the Variational Autoencoder (VAE). The solid lines represent the generative model $p_{\theta}(\mathbf{x}|\mathbf{z})$ , while the dashed lines represent the VI model (encoder) $q_{\phi}(\mathbf{z}|\mathbf{x})$ .

The VAE’s structure is represented by the probabilistic graphical model in Figure 2.5 PGM adapted from [37]. Note that in our depiction, latent prior $p(\boldsymbol{z})$ is not parameterised by $\theta$ .. This PGM clarifies how the two networks are trained jointly by maximising the ELBO. The reconstruction term, $\mathbb{E}_{q_{\phi}(\boldsymbol{z}|\mathbf{x})}[\log p_{\theta}(\mathbf{x}|\boldsymbol{z})]$ , corresponds directly to the generative path of the model (solid arrows), forcing the decoder (parametrised by $\theta$ ) to accurately reconstruct the input $\mathbf{x}$ from the latent code $\boldsymbol{z}$ . The regularisation term, $D_{\mathbb{KL}}(q_{\phi}(\boldsymbol{z}|\mathbf{x})||p(\boldsymbol{z}))$ , corresponds to the inference path (dashed arrows), forcing the encoder’s output (parametrised by $\phi$ ) to stay close to a simple prior, $p(\boldsymbol{z})$ .

To optimise the ELBO, we must backpropagate gradients through the sampling step $\boldsymbol{z}\sim q_{\phi}(\boldsymbol{z}|\mathbf{x})$ , which is non-differentiable. The VAE enables this with the reparameterisation trick. For a Gaussian latent variable, a sample is drawn by first sampling a standard noise variable $\boldsymbol{\epsilon}\sim\mathcal{N}(\textbf{0},I)$ and then computing the sample as $\boldsymbol{z}=\boldsymbol{\mu}_{\phi}(\mathbf{x})+\boldsymbol{\sigma}_{\phi}(\mathbf{x})\odot\boldsymbol{\epsilon}$ . This separates the stochasticity from the network parameters, creating a differentiable path for gradients. The entire VAE schematic is illustrated in Figure 2.6 VAE Schematic adapted from [38]. .

<details>

<summary>x6.png Details</summary>

### Visual Description

## Diagram: Variational Autoencoder (VAE) Architecture

### Overview

The image depicts a diagram of a Variational Autoencoder (VAE) architecture. It illustrates the flow of data from an input image through an encoder, a latent space representation, a decoder, and finally to a predicted output image.

### Components/Axes

* **Input-Image (X):** A blue square on the left, representing the input image.

* **Encoder:** An orange trapezoid labeled "ENCODER". The encoder transforms the input image into a latent representation. The function below the encoder is labeled as q<sub>φ</sub>(z|x).

* **Latent-Vector Generated from X (Z):** A gray rectangle in the center, representing the latent vector. The equation above the latent vector is z = μ<sub>φ</sub>(x) + σ<sub>φ</sub>(x) ⊙ ε.

* **Decoder:** A red trapezoid labeled "DECODER". The decoder transforms the latent vector back into an image. The function below the decoder is labeled as p<sub>θ</sub>(x|z).

* **Predicted-Image from Z (X̂):** A blue square on the right, representing the predicted output image.

### Detailed Analysis

The diagram shows the following flow:

1. An input image (X) is fed into the encoder.

2. The encoder maps the input image to a latent space, represented by the latent vector (Z). The latent vector is generated from X. The equation z = μ<sub>φ</sub>(x) + σ<sub>φ</sub>(x) ⊙ ε describes how the latent vector z is constructed from the mean μ<sub>φ</sub>(x) and standard deviation σ<sub>φ</sub>(x) of the encoder's output, combined with a random noise term ε.

3. The latent vector (Z) is then fed into the decoder.

4. The decoder reconstructs the image from the latent vector, producing a predicted image (X̂).

### Key Observations

* The diagram illustrates the core components of a VAE: encoder, latent space, and decoder.

* The encoder and decoder are represented as trapezoids, suggesting a transformation or compression/decompression process.

* The latent space is represented as a rectangle, indicating a lower-dimensional representation of the input data.

* The arrows indicate the flow of data through the network.

### Interpretation

The diagram illustrates the fundamental principle of a Variational Autoencoder (VAE). The VAE learns a probabilistic mapping from the input data to a latent space, and then learns to reconstruct the input data from this latent representation. The latent space is designed to capture the underlying structure and variability of the input data. The equation z = μ<sub>φ</sub>(x) + σ<sub>φ</sub>(x) ⊙ ε highlights the probabilistic nature of the latent space, where the latent vector z is sampled from a distribution parameterized by the encoder's output (mean μ<sub>φ</sub>(x) and standard deviation σ<sub>φ</sub>(x)) and a random noise term ε. This allows the VAE to generate new data points by sampling from the latent space and decoding them.

</details>

Figure 2.6: Schematic of the Variational Autoencoder (VAE) architecture.

A common modification to the VAE objective is the introduction of a hyperparameter $\beta$ to scale the KL divergence term, a model known as a $\beta$ -VAE [39].

$$

\mathcal{L}_{\beta\text{-VAE}}=\mathbb{E}_{q_{\phi}(\boldsymbol{z}|\mathbf{x})}[\log p_{\theta}(\mathbf{x}|\boldsymbol{z})]-\beta\cdot D_{\mathbb{KL}}(q_{\phi}(\boldsymbol{z}|\mathbf{x})||p(\boldsymbol{z}))

$$

This can be a crucial tool for preventing posterior collapse, a failure mode where the KL term is minimised too aggressively, causing the latent variables to become uninformative.

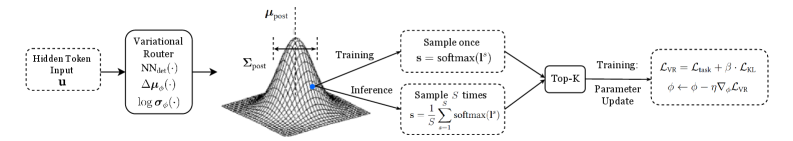

This amortised encoder-decoder architecture provides a direct conceptual blueprint for the Variational Routers developed in Section 4.3.

Chapter 3 Motivation

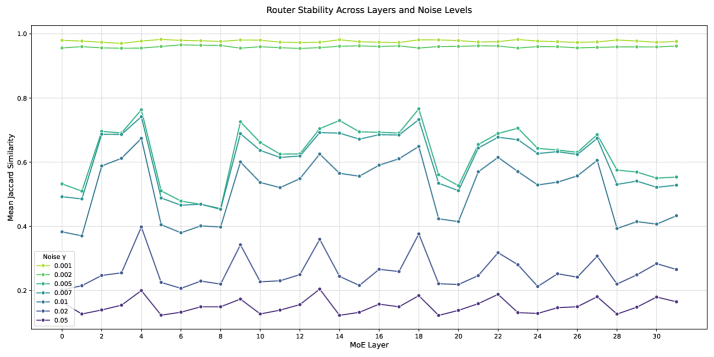

This chapter outlines two motivational experiments designed to understand the limitations of deterministic routing strategies in current MoE-based language models. The results reveal a fundamental brittleness in the standard routing mechanism under purturbation, while also demonstrating the clear potential of introducing stochasticity. Besides, since current LLMs are stacked with multiple MoE layers, the experiments are conducted across the network’s depth to identify which layers are most sensitive to these issues. Together, these findings motivate the central goal of this thesis: to develop a principled Bayesian routing approach for better uncertainty quantification, aiming to achieve robust expert selection and calibrated output confidence.

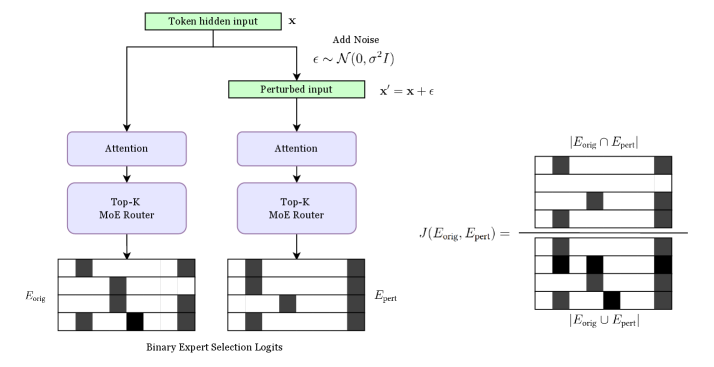

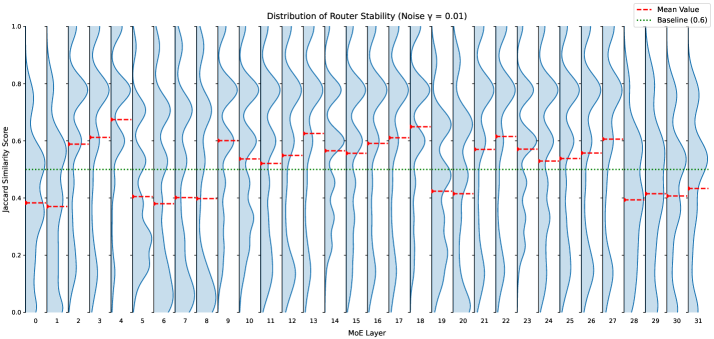

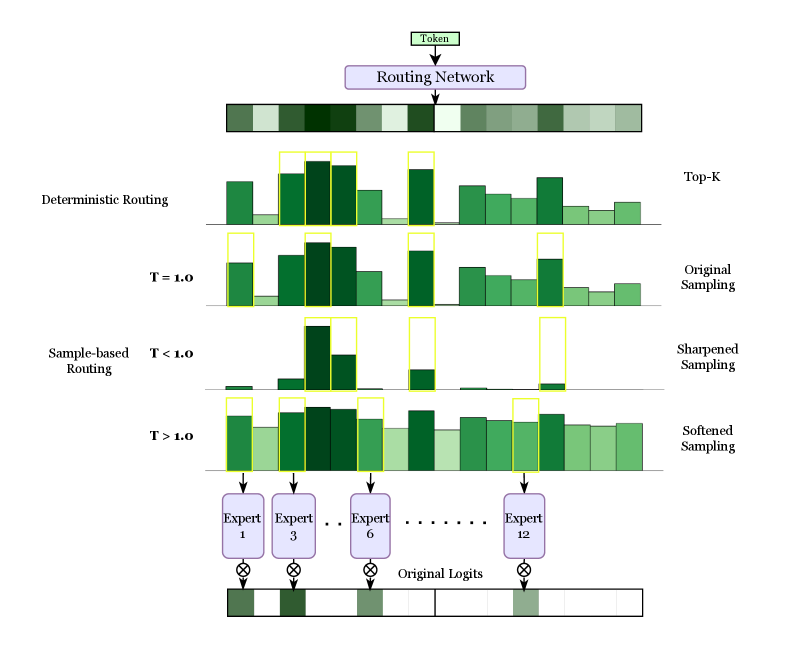

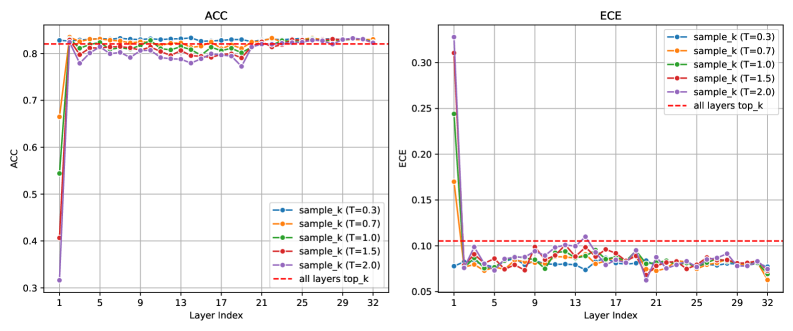

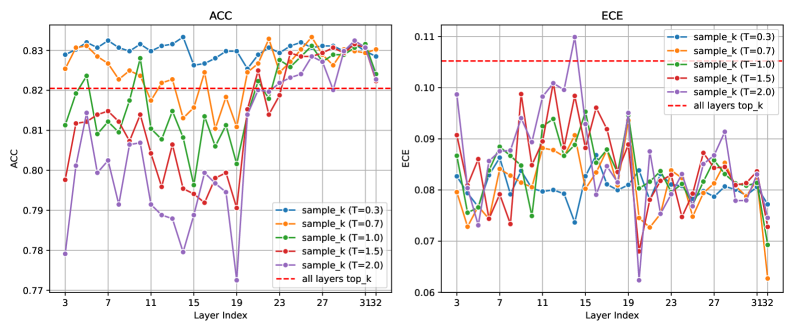

3.1 Motivation 1: Brittleness of Deterministic Routing

Our first experiment investigates a fundamental hypothesis: if a router has learned a robust mapping from input representations to expert selections, its decisions should be stable under minimal, non-semantic perturbations. A significant change in expert selection in response to meaningless noise would reveal that the routing mechanism is brittle and inherently unreliable. This section details the experiment designed to quantify this brittleness across the depth of the network.

3.1.1 Methodology

The experiment is conducted on our fine-tuned MAP baseline model using a randomly sampled subset of data from our In-Domain (ID) test set. The experimental methodology is illustrated in Figure 3.1.

To test stability, we introduce a minimal perturbation to the input of each MoE transformer layer. For each token embedding $\mathbf{x}$ , a perturbed version $\mathbf{x^{\prime}}$ is generated by adding Gaussian noise:

$$

\mathbf{x^{\prime}}=\mathbf{x}+\epsilon,\quad\text{where }\epsilon\sim\mathcal{N}(0,\sigma^{2}I)

$$

To ensure the noise is meaningful yet non-semantic, the choice of standard deviation $\sigma$ is in proportion to the average L2 norm of the token embeddings, $\bar{L}$ . We test multiple noise levels defined by a scaling factor $\gamma$ :

$$

\sigma=\gamma\cdot\bar{L},\quad\text{where }\gamma\in\{0.001,0.002,0.005,0.007,0.01,0.02,0.05\}

$$

For each token and for each noise level $\gamma$ , we record the set of $K$ experts selected for the original input ( $E_{\text{orig}}$ ) and the perturbed input ( $E_{\text{pert}}$ ) at every MoE layer. To quantify the change in expert selection, we compute the Jaccard Similarity between these two sets:

$$

J(E_{\text{orig}},E_{\text{pert}})=\frac{|E_{\text{orig}}\cap E_{\text{pert}}|}{|E_{\text{orig}}\cup E_{\text{pert}}|}

$$

A score of 1.0 indicates perfect stability, while a score of 0.0 indicates a complete change in the selected experts.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Diagram: MoE Router with Noise Injection

### Overview

The image is a diagram illustrating a process involving a Token hidden input, the addition of noise to create a Perturbed input, and the subsequent routing of both inputs through Attention and Top-K MoE Router modules. The diagram also visualizes the binary expert selection logits for both the original and perturbed inputs, and presents a formula for calculating the Jaccard index between them.

### Components/Axes

* **Top-Left:** "Token hidden input" (green box)

* Multiplication symbol "x" to the right of the box.

* **Top-Right:** "Add Noise"

* Equation: "ε ~ N(0, σ^2 I)"

* **Mid-Top:** "Perturbed input" (green box)

* Equation: "x' = x + ε"

* **Middle-Left:** "Attention" (blue box)

* **Middle-Right:** "Attention" (blue box)

* **Mid-Bottom Left:** "Top-K MoE Router" (blue box)

* **Mid-Bottom Right:** "Top-K MoE Router" (blue box)

* **Bottom-Left:** "Eorig" (label)

* **Bottom-Right:** "Epert" (label)

* **Bottom:** "Binary Expert Selection Logits" (label)

* **Far Right:** Jaccard Index Formula: "J(Eorig, Epert) = |Eorig ∩ Epert| / |Eorig ∪ Epert|"

* Numerator: "|Eorig ∩ Epert|" with a visual representation of intersecting expert selections.

* Denominator: "|Eorig ∪ Epert|" with a visual representation of the union of expert selections.

### Detailed Analysis

The diagram depicts a process where an initial "Token hidden input" is subjected to noise injection, resulting in a "Perturbed input." Both the original and perturbed inputs are then processed through identical pathways: an "Attention" module followed by a "Top-K MoE Router." The outputs of these routers are visualized as binary expert selection logits, represented by horizontal bars with filled (dark) and empty (white) segments. The Jaccard index formula on the right quantifies the similarity between the expert selections of the original and perturbed inputs.

**Left Branch (Original Input):**

* **Eorig (Binary Expert Selection Logits):**

* Row 1: White, Dark, White, White, Dark

* Row 2: White, White, Dark, White, White

* Row 3: White, White, White, White, Dark

* Row 4: Dark, Dark, Dark, White, Dark

* Row 5: White, White, White, Dark, Dark

**Right Branch (Perturbed Input):**

* **Epert (Binary Expert Selection Logits):**

* Row 1: White, Dark, White, White, White

* Row 2: White, White, Dark, White, White

* Row 3: White, White, White, White, Dark

* Row 4: White, Dark, White, White, Dark

* Row 5: White, White, White, Dark, Dark

**Jaccard Index Components:**

* **|Eorig ∩ Epert| (Intersection):**

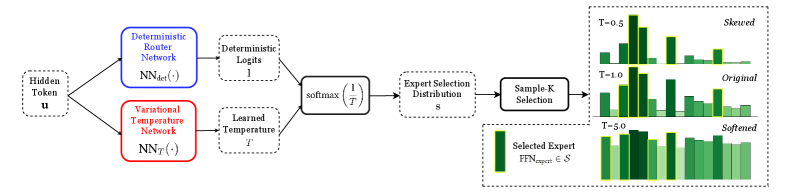

* Row 1: White, Dark, White, White, White