# Group-Relative REINFORCE Is Secretly an Off-Policy Algorithm: Demystifying Some Myths About GRPO and Its Friends

Abstract

Off-policy reinforcement learning (RL) for large language models (LLMs) is attracting growing interest, driven by practical constraints in real-world applications, the complexity of LLM-RL infrastructure, and the need for further innovations of RL methodologies. While classic REINFORCE and its modern variants like Group Relative Policy Optimization (GRPO) are typically regarded as on-policy algorithms with limited tolerance of off-policyness, we present in this work a first-principles derivation for group-relative REINFORCE without assuming a specific training data distribution, showing that it admits a native off-policy interpretation. This perspective yields two general principles for adapting REINFORCE to off-policy settings: regularizing policy updates, and actively shaping the data distribution. Our analysis demystifies some myths about the roles of importance sampling and clipping in GRPO, unifies and reinterprets two recent algorithms, Online Policy Mirror Descent (OPMD) and Asymmetric REINFORCE (AsymRE), as regularized forms of the REINFORCE loss, and offers theoretical justification for seemingly heuristic data-weighting strategies. Our findings lead to actionable insights that are validated with extensive empirical studies, and open up new opportunities for principled algorithm design in off-policy RL for LLMs. Source code for this work is available at https://github.com/modelscope/Trinity-RFT/tree/main/examples/rec_gsm8k.

Author notes: Equal contribution. Contact: chaorui@ucla.edu, chenyanxi.cyx@alibaba-inc.com. Affiliations: UCLA (work done during an internship at Alibaba Group); Alibaba Group.

1 Introduction

The past few years have witnessed rapid progress in reinforcement learning (RL) for large language models (LLMs). This began with reinforcement learning from human feedback (RLHF) (Bai et al., 2022; Ouyang et al., 2022) that aligns pre-trained LLMs with human preferences, followed by reasoning-oriented RL that enables LLMs to produce long chains of thought (OpenAI, 2024; DeepSeek-AI, 2025; Kimi-Team, 2025b; Zhang et al., 2025b). More recently, agentic RL (Kimi-Team, 2025a; Gao et al., 2025; Zhang et al., 2025a) aims to train LLMs for agentic capabilities such as tool use, long-horizon planning, and multi-step task execution in dynamic environments.

Alongside these developments, off-policy RL has been attracting growing interest. In the “era of experience” (Silver and Sutton, 2025), LLM-powered agents need to be continually updated through interaction with the environment. Practical constraints in real-world deployment and the complexity of LLM-RL infrastructure often render on-policy training impractical (Noukhovitch et al., 2025): rollout generation and model training can proceed at mismatched speeds, data might be collected from different policies, reward feedback might be irregular or delayed, and the environment may be too costly or unstable to query for fresh trajectories. Moreover, in pursuit of higher sample efficiency and model performance, it is desirable to go beyond the standard paradigm of independent rollout sampling, e.g., via replaying past experiences (Schaul et al., 2016; Rolnick et al., 2019; An et al., 2025), synthesizing higher-quality experiences based on auxiliary information (Da et al., 2025; Liang et al., 2025; Guo et al., 2025), or incorporating expert demonstrations into online RL (Yan et al., 2025; Zhang et al., 2025c) — all of which incur off-policyness.

However, the prominent algorithms in LLM-RL, Proximal Policy Optimization (PPO) (Schulman et al., 2017) and Group Relative Policy Optimization (GRPO) (Shao et al., 2024), are essentially on-policy methods: as modern variants of REINFORCE (Williams, 1992), their fundamental rationale is to produce unbiased estimates of the policy gradient, which requires fresh data sampled from the current policy. PPO and GRPO can handle a limited degree of off-policyness via importance sampling, but require that the current policy remain sufficiently close to the behavior policy. Truly off-policy LLM-RL often demands ad-hoc analysis and algorithm design; worse still, as existing RL infrastructure (Sheng et al., 2024; Hu et al., 2024; von Werra et al., 2020; Wang et al., 2025; Pan et al., 2025; Fu et al., 2025a) is typically optimized for REINFORCE-style algorithms, its support for specialized off-policy RL algorithms can be limited. All of this has motivated our investigation into principled and infrastructure-friendly algorithm design for off-policy RL.

Core finding: a native off-policy interpretation for group-relative REINFORCE.

Consider a one-step RL setting and a group-relative variant of REINFORCE that, as in GRPO, assumes access to multiple responses $\{y_{1},...,y_{K}\}$ for the same prompt $x$ and uses the group mean reward $\overline{r}$ as the baseline in advantage calculation. Each response is a sequence of tokens $y_{i}=(y^{1}_{i},y^{2}_{i},...)$ and receives a response-level reward $r_{i}=r(x,y_{i})$. Let $\pi_{\bm{\theta}}(\cdot|x)$ denote an autoregressive policy parameterized by $\bm{\theta}$. The update rule for each iteration of group-relative REINFORCE is $\bm{\theta}^{\prime}=\bm{\theta}+\eta\bm{g}$, where $\eta$ is the learning rate, and $\bm{g}$ is the sum of updates from multiple prompts and their corresponding responses. For a specific prompt $x$, the update is given by Eq. (1a) and (1b), displayed after the following note.

Note: For notational simplicity and consistency, we use the same normalization factor $1/K$ for both the response-wise and token-wise formulas in Eq. (1a) and (1b). In practical implementations, the gradient is calculated with samples from a mini-batch, and is typically normalized by the total number of response tokens. This mismatch does not affect our theoretical studies in this work. Interestingly, our analysis of REINFORCE provides some justification for calculating the token-mean loss within a mini-batch, instead of first taking the token-mean loss within each sequence and then averaging across sequences (Shao et al., 2024); our perspective is complementary to the rationales explained in prior works like DAPO (Yu et al., 2025), although a deeper understanding of this aspect is beyond our current focus.

$$

\begin{aligned}
\bm{g}\big(\bm{\theta};x,\{y_{i},r_{i}\}_{1\leq i\leq K}\big)
&=\frac{1}{K}\sum_{1\leq i\leq K}(r_{i}-\overline{r})\,\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x) &&\text{(response-wise)}\quad\text{(1a)}\\
&=\frac{1}{K}\sum_{1\leq i\leq K}\sum_{1\leq t\leq|y_{i}|}(r_{i}-\overline{r})\,\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t}). &&\text{(token-wise)}\quad\text{(1b)}
\end{aligned}

$$

Here, the response-wise and token-wise formulas are linked by the elementary decomposition $\log\pi_{\bm{\theta}}(y_{i}\,|\,x)=\sum_{t}\log\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})$ , where $y^{<t}_{i}$ denotes the first $t-1$ tokens of $y_{i}$ .
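As a minimal sketch of how Eq. (1a) and (1b) might be realized in code, the snippet below computes group-relative advantages and a scalar surrogate whose gradient with respect to $\bm{\theta}$ would be $-\bm{g}$ under automatic differentiation. The function names, the toy log-probabilities, and the rewards are hypothetical and chosen only for illustration; a real implementation would obtain `token_logps` from the policy model.

```python
def group_relative_advantages(rewards):
    """Return r_i - mean(r) for one group of K responses to the same prompt."""
    mean_reward = sum(rewards) / len(rewards)
    return [r - mean_reward for r in rewards]


def reinforce_surrogate_loss(token_logps, rewards):
    """Scalar whose gradient w.r.t. theta is -g in Eq. (1b).

    token_logps[i][t] stands for log pi_theta(y_i^t | x, y_i^{<t}).
    Advantages are treated as constants (no gradient flows through them).
    """
    K = len(rewards)
    advantages = group_relative_advantages(rewards)
    loss = 0.0
    for adv, logps in zip(advantages, token_logps):
        # Summing token log-probs recovers log pi_theta(y_i | x), as in Eq. (1a).
        loss -= adv * sum(logps) / K
    return loss


# Hypothetical group of K = 4 responses with binary rewards (illustrative values).
rewards = [1.0, 0.0, 0.0, 1.0]
token_logps = [[-0.1, -0.2], [-0.3], [-0.4, -0.5, -0.6], [-0.7]]
advantages = group_relative_advantages(rewards)
loss = reinforce_surrogate_loss(token_logps, rewards)
```

Note that every token of response $y_{i}$ receives the same coefficient $(r_{i}-\overline{r})/K$, which is exactly what makes the response-wise and token-wise forms coincide.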

A major finding of this work is that group-relative REINFORCE admits a native off-policy interpretation. We establish this in Section 2 via a novel, first-principles derivation that makes no explicit assumption about the sampling distribution of the responses $\{y_{i}\}$ , in contrast to the standard policy gradient theory. Our derivation provides a new perspective for understanding how REINFORCE makes its way towards the optimal policy by constructing a series of surrogate objectives and taking gradient steps for the corresponding surrogate losses. Such analysis can be extended to multi-step RL settings as well, with details deferred to Appendix A.

Implications: principles and concrete methods for augmenting REINFORCE.

While the proposed off-policy interpretation does not imply that vanilla REINFORCE should converge to the optimal policy when given arbitrary training data (which is too good to be true), our analysis in Section 3 identifies two general principles for augmenting REINFORCE in off-policy settings: (1) regularize the policy update step to stabilize learning, and (2) actively shape the training data distribution to steer the policy update direction. As we will see in Section 4, this unified framework demystifies common myths about the rationales behind many recent RL algorithms: (1) It reveals that in GRPO, clipping (as a form of regularization) plays a much more essential role than importance sampling, and it is often viable to enlarge the clipping range far beyond conventional choices for accelerated convergence without sacrificing stability. (2) Two recent algorithms — Kimi’s Online Policy Mirror Descent (OPMD) (Kimi-Team, 2025b) and Meta’s Asymmetric REINFORCE (AsymRE) (Arnal et al., 2025) — can be reinterpreted as adding a regularization loss to the standard REINFORCE loss, which differs substantially from the rationales explained in their original papers. (3) Our framework justifies heuristic data-weighting strategies like discarding certain low-reward samples or up-weighting high-reward ones, even though they violate assumptions in policy gradient theory and often require ad-hoc analysis in prior works.

Extensive empirical studies in Section 4 and Appendix B validate these insights and demonstrate the efficacy and/or limitations of various algorithms under investigation. By revealing the off-policy nature of group-relative REINFORCE, our work opens up new opportunities for principled, infrastructure-friendly algorithm design in off-policy LLM-RL with solid theoretical foundation.

2 Two interpretations for REINFORCE

Consider the standard reward-maximization objective in reinforcement learning:

$$

\max_{\bm{\theta}}\quad J(\bm{\theta})\coloneqq{\mathbb{E}}_{x\sim D}\;J(\bm{\theta};x),\quad\text{where}\quad J(\bm{\theta};x)\coloneqq{\mathbb{E}}_{y\sim\pi_{\bm{\theta}}(\cdot|x)}\;r(x,y), \tag{2}

$$

where $D$ is a distribution over the prompts $x$ .

We first recall the standard on-policy interpretation of REINFORCE in Section 2.1, and then present our proposed off-policy interpretation in Section 2.2.

2.1 Recap: on-policy interpretation via policy gradient theory

In the classical on-policy view, REINFORCE updates policy parameters $\bm{\theta}$ using samples that are drawn directly from $\pi_{\bm{\theta}}$ . The policy gradient theorem (Sutton et al., 1998) tells us that

$$

\nabla_{\bm{\theta}}J(\bm{\theta};x)=\nabla_{\bm{\theta}}\;{\mathbb{E}}_{y\sim\pi_{\bm{\theta}}(\cdot|x)}\;r(x,y)={\mathbb{E}}_{y\sim\pi_{\bm{\theta}}(\cdot|x)}\!\Big[\big(r(x,y)-b(x)\big)\,\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y|x)\Big],

$$

where $b(x)$ is a baseline for reducing variance when $\nabla_{\bm{\theta}}J(\bm{\theta};x)$ is estimated with finite samples. If samples are drawn from a different behavior policy $\pi_{\textsf{b}}$ instead, the gradient can be rewritten as

$$

\nabla_{\bm{\theta}}J(\bm{\theta};x)={\mathbb{E}}_{y\sim\pi_{\textsf{b}}(\cdot|x)}\!\bigg[\big(r(x,y)-b(x)\big)\,\frac{\pi_{\bm{\theta}}(y\mid x)}{\pi_{\textsf{b}}(y\mid x)}\,\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y\mid x)\bigg].

$$

While the raw importance-sampling weight ${\pi_{\bm{\theta}}(y|x)}/{\pi_{\textsf{b}}(y|x)}$ yields an unbiased policy gradient estimate, it may be unstable when $\pi_{\bm{\theta}}$ and $\pi_{\textsf{b}}$ diverge. Modern variants of REINFORCE address this by modifying the probability ratios (e.g., via clipping or normalization), which achieves a better bias-variance trade-off in the policy gradient estimate and leads to a more stable learning process.

In the LLM context, we have $\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y\,|\,x)=\sum_{t}\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y^{t}\,|\,x,y^{<t})$, but the response-wise probability ratio $\pi_{\bm{\theta}}(y|x)/\pi_{\textsf{b}}(y|x)$ can blow up or shrink exponentially with the sequence length. Practical implementations typically adopt token-wise probability ratios instead:

$$

\widetilde{g}(\bm{\theta};x)={\mathbb{E}}_{y\sim\pi_{\textsf{b}}(\cdot|x)}\!\bigg[\big(r(x,y)-b(x)\big)\,\sum_{1\leq t\leq|y|}\frac{\pi_{\bm{\theta}}(y^{t}\,|\,x,y^{<t})}{\pi_{\textsf{b}}(y^{t}\,|\,x,y^{<t})}\,\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y^{t}\,|\,x,y^{<t})\bigg].

$$

Although this becomes a biased approximation of $\nabla_{\bm{\theta}}J(\bm{\theta};x)$, classical RL theory still offers policy improvement guarantees if $\pi_{\bm{\theta}}$ is sufficiently close to $\pi_{\textsf{b}}$ (Kakade and Langford, 2002; Fragkiadaki, 2018; Schulman et al., 2015, 2017; Achiam et al., 2017).
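To make the exponential blow-up of the response-wise ratio concrete, here is a small illustrative sketch. The per-token probabilities are hypothetical: every token is assumed to be 5% more likely under the current policy than under the behavior policy.

```python
import math


def response_ratio(logp_new, logp_old):
    """pi_theta(y|x) / pi_b(y|x), computed from per-token log-probabilities."""
    return math.exp(sum(logp_new) - sum(logp_old))


def token_ratios(logp_new, logp_old):
    """Per-token ratios pi_theta(y^t | x, y^{<t}) / pi_b(y^t | x, y^{<t})."""
    return [math.exp(a - b) for a, b in zip(logp_new, logp_old)]


# Hypothetical 200-token response; each token is 5% more likely under pi_theta.
T = 200
logp_old = [math.log(0.5)] * T
logp_new = [math.log(0.525)] * T

resp = response_ratio(logp_new, logp_old)  # equals 1.05 ** T, grows exponentially in T
tok = token_ratios(logp_new, logp_old)     # each entry stays close to 1.05
```

Even though the two policies differ only mildly at every position, the response-wise ratio is several orders of magnitude away from 1, whereas each token-wise ratio remains bounded.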

2.2 A new interpretation: REINFORCE is inherently off-policy

We now provide an alternative off-policy interpretation for group-relative REINFORCE. Let us think of policy optimization as an iterative process $\bm{\theta}_{1},\bm{\theta}_{2},...$, and focus on the $t$-th iteration that updates the policy model parameters from $\bm{\theta}_{t}$ to $\bm{\theta}_{t+1}$. Our derivation consists of three steps: (1) define a KL-regularized surrogate objective, and show that its optimal solution must satisfy certain consistency conditions; (2) define a surrogate loss (with finite samples) that enforces such consistency conditions; and (3) take one gradient step of the surrogate loss, which turns out to be equivalent to the group-relative REINFORCE method.

Step 1: surrogate objective and consistency condition.

Consider the following KL-regularized surrogate objective that incentivizes the policy to make a stable improvement over $\pi_{\bm{\theta}_{t}}$ :

$$

\max_{\bm{\theta}}\quad J(\bm{\theta};\pi_{\bm{\theta}_{t}})\coloneqq{\mathbb{E}}_{x\sim D}\Big[{\mathbb{E}}_{y\sim\pi_{\bm{\theta}}(\cdot|x)}[r(x,y)]-\tau\cdot D_{\textsf{KL}}\big(\pi_{\bm{\theta}}(\cdot|x)\,\|\,\pi_{\bm{\theta}_{t}}(\cdot|x)\big)\Big], \tag{3}

$$

where $\tau$ is a regularization coefficient. It is a well-known fact that the optimal policy $\pi$ for this surrogate objective satisfies the following (Nachum et al., 2017; Korbak et al., 2022; Rafailov et al., 2023; Richemond et al., 2024; Kimi-Team, 2025b): for any prompt $x$ and response $y$ ,

$$

\displaystyle\pi(y|x)=\frac{\pi_{\bm{\theta}_{t}}(y|x)e^{r(x,y)/\tau}}{Z(x,\pi_{\bm{\theta}_{t}})},\,\,\text{where}\,\,Z(x,\pi_{\bm{\theta}_{t}})\coloneqq\int\pi_{\bm{\theta}_{t}}(y^{\prime}|x)e^{r(x,y^{\prime})/\tau}\mathop{}\!\mathrm{d}y^{\prime}. \tag{4}

$$

Note that Eq. (4) is equivalent to the following: for any pair of responses $y_{1}$ and $y_{2}$ ,

$$

\frac{\pi(y_{1}|x)}{\pi(y_{2}|x)}=\frac{\pi_{\bm{\theta}_{t}}(y_{1}|x)}{\pi_{\bm{\theta}_{t}}(y_{2}|x)}\exp\bigg(\frac{r(x,y_{1})-r(x,y_{2})}{\tau}\bigg).

$$

Taking the logarithm of both sides and rearranging, we obtain the following pairwise consistency condition, where $r_{i}\coloneqq r(x,y_{i})$:

$$

\displaystyle r_{1}-\tau\cdot\big(\log\pi(y_{1}|x)-\log\pi_{\bm{\theta}_{t}}(y_{1}|x)\big)=r_{2}-\tau\cdot\big(\log\pi(y_{2}|x)-\log\pi_{\bm{\theta}_{t}}(y_{2}|x)\big). \tag{5}

$$
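The following sketch checks numerically, on a small discrete response space, that the closed-form optimum in Eq. (4) satisfies the consistency condition in Eq. (5): the quantity $r-\tau(\log\pi-\log\pi_{\bm{\theta}_{t}})$ takes the same value, $\tau\log Z$, for every response. The reference policy, rewards, and $\tau$ are randomly generated placeholders, not values from the paper.

```python
import math
import random


def kl_regularized_optimum(pi_ref, rewards, tau):
    """Closed-form optimum of Eq. (3): pi(y) proportional to pi_ref(y) * exp(r(y) / tau)."""
    weights = [p * math.exp(r / tau) for p, r in zip(pi_ref, rewards)]
    Z = sum(weights)
    return [w / Z for w in weights], Z


random.seed(0)
n, tau = 5, 0.7  # hypothetical number of responses and regularization strength
raw = [random.random() + 0.1 for _ in range(n)]
pi_ref = [v / sum(raw) for v in raw]
rewards = [random.random() for _ in range(n)]

pi_opt, Z = kl_regularized_optimum(pi_ref, rewards, tau)

# Left- and right-hand sides of Eq. (5), evaluated for every response.
consistency = [
    r - tau * (math.log(p) - math.log(q))
    for r, p, q in zip(rewards, pi_opt, pi_ref)
]
```

All entries of `consistency` coincide up to floating-point error, which is the pairwise condition stated for every pair $(y_{1},y_{2})$.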

Step 2: surrogate loss with finite samples.

Given a prompt $x$ and $K$ responses $y_{1},...,y_{K}$ , we define the following mean-squared surrogate loss that enforces the consistency condition:

$$

\widehat{L}({\bm{\theta}};x,\pi_{\bm{\theta}_{t}})\coloneqq\frac{1}{K^{2}}\sum_{1\leq i<j\leq K}\frac{(a_{i}-a_{j})^{2}}{(1+\tau)^{2}},\,\,\text{where}\,\,a_{i}\coloneqq r_{i}-\tau\Big(\log\pi_{\bm{\theta}}(y_{i}|x)-\log\pi_{\bm{\theta}_{t}}(y_{i}|x)\Big). \tag{6}

$$

Here, we normalize $a_{i}-a_{j}$ by $1+\tau$ to account for the loss scale. In theory, if this surrogate loss is defined by infinite samples with sufficient coverage of the action space, then its minimizer is the same as the optimal policy for the surrogate objective in Eq. (3).

Step 3: one gradient step of the surrogate loss.

Let us analyze the gradient of $(a_{i}-a_{j})^{2}$. The trick here is that, if we take only one gradient step of this loss at $\bm{\theta}=\bm{\theta}_{t}$, then the values of $\log\pi_{\bm{\theta}}(y_{i}|x)-\log\pi_{\bm{\theta}_{t}}(y_{i}|x)$ and $\log\pi_{\bm{\theta}}(y_{j}|x)-\log\pi_{\bm{\theta}_{t}}(y_{j}|x)$ are simply zero, so that $a_{i}-a_{j}=r_{i}-r_{j}$ at this point, while $\nabla_{\bm{\theta}}a_{i}=-\tau\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}|x)$. As a result,

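$$

\begin{aligned}
\nabla_{\bm{\theta}}(a_{i}-a_{j})^{2}\big|_{\bm{\theta}_{t}}&=-2\tau\,(r_{i}-r_{j})\Big(\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}|x)\big|_{\bm{\theta}_{t}}-\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{j}|x)\big|_{\bm{\theta}_{t}}\Big)\quad\Rightarrow\\
\nabla_{\bm{\theta}}\sum_{1\leq i<j\leq K}\frac{(a_{i}-a_{j})^{2}}{(1+\tau)^{2}}\bigg|_{\bm{\theta}_{t}}&=\frac{-2\tau K}{(1+\tau)^{2}}\sum_{1\leq i\leq K}(r_{i}-\overline{r})\,\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}|x)\big|_{\bm{\theta}_{t}}.
\end{aligned}

$$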
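The passage from pairwise differences to the pointwise form in Eq. (7) rests on an elementary identity, spelled out here for completeness. The shorthand $\bm{v}_{i}\coloneqq\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}|x)\big|_{\bm{\theta}_{t}}$ is introduced only for this sketch and is not used elsewhere in the paper.

$$

\begin{aligned}
\sum_{1\leq i<j\leq K}(r_{i}-r_{j})(\bm{v}_{i}-\bm{v}_{j})
&=\frac{1}{2}\sum_{1\leq i,j\leq K}(r_{i}-r_{j})(\bm{v}_{i}-\bm{v}_{j})\\
&=K\sum_{1\leq i\leq K}r_{i}\bm{v}_{i}-\Big(\sum_{1\leq i\leq K}r_{i}\Big)\Big(\sum_{1\leq j\leq K}\bm{v}_{j}\Big)\\
&=K\sum_{1\leq i\leq K}(r_{i}-\overline{r})\,\bm{v}_{i}.
\end{aligned}

$$

Multiplying by the prefactor $-2\tau/\big(K^{2}(1+\tau)^{2}\big)$ that comes from Eq. (6) and the gradient of each squared term gives $\nabla_{\bm{\theta}}\widehat{L}\big|_{\bm{\theta}_{t}}=-\frac{2\tau}{(1+\tau)^{2}}\cdot\frac{1}{K}\sum_{i}(r_{i}-\overline{r})\,\bm{v}_{i}$, whose negative is the update direction.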

Plugging this into the surrogate loss defined in Eq. (6) and taking a descent step, i.e., moving along the negative gradient, we end up with this policy update step:

$$

\bm{g}\big(\bm{\theta};x,\{y_{i},r_{i}\}_{1\leq i\leq K}\big)=\frac{2\tau}{(1+\tau)^{2}}\cdot\frac{1}{K}\sum_{1\leq i\leq K}\big(r_{i}-\overline{r}\big)\,\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x). \tag{7}

$$

That’s it! We have just derived the group-relative REINFORCE method, but without any on-policy assumption about the distribution of training data $\{x,\{y_{i},r_{i}\}_{1\leq i\leq K}\}$. The regularization coefficient $\tau>0$ controls the update step size; a larger $\tau$ effectively corresponds to a smaller learning rate.
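As a sanity check of Steps 2 and 3, the sketch below compares the update in Eq. (7) with a finite-difference gradient of the surrogate loss in Eq. (6), for a softmax policy over a handful of discrete responses. The parameters, the responses (deliberately chosen by hand rather than sampled from $\pi_{\bm{\theta}_{t}}$), the rewards, and $\tau$ are all hypothetical.

```python
import math


def log_softmax(theta):
    m = max(theta)
    lse = m + math.log(sum(math.exp(v - m) for v in theta))
    return [v - lse for v in theta]


def surrogate_loss(theta, theta_t, ys, rs, tau):
    """Eq. (6) for one prompt, with a softmax policy over discrete responses."""
    lp, lp_t = log_softmax(theta), log_softmax(theta_t)
    a = [r - tau * (lp[y] - lp_t[y]) for y, r in zip(ys, rs)]
    K = len(ys)
    total = sum((a[i] - a[j]) ** 2 for i in range(K) for j in range(i + 1, K))
    return total / (K ** 2 * (1 + tau) ** 2)


def reinforce_update(theta_t, ys, rs, tau):
    """Eq. (7): scaled group-relative REINFORCE direction at theta_t."""
    K, n = len(ys), len(theta_t)
    probs = [math.exp(v) for v in log_softmax(theta_t)]
    r_bar = sum(rs) / K
    scale = 2 * tau / (1 + tau) ** 2
    g = [0.0] * n
    for y, r in zip(ys, rs):
        for k in range(n):
            grad_logp = (1.0 if k == y else 0.0) - probs[k]  # d log pi(y) / d theta_k
            g[k] += scale * (r - r_bar) * grad_logp / K
    return g


def numerical_grad(f, theta, eps=1e-6):
    """Central finite differences, used only to validate the analytic formula."""
    grads = []
    for k in range(len(theta)):
        up, dn = list(theta), list(theta)
        up[k] += eps
        dn[k] -= eps
        grads.append((f(up) - f(dn)) / (2 * eps))
    return grads


theta_t = [0.2, -0.5, 1.0, 0.3]
ys = [0, 2, 2, 3, 1]            # arbitrary responses, NOT sampled from pi_theta_t
rs = [1.0, 0.0, 0.0, 0.5, 1.0]  # their rewards
tau = 0.5

g = reinforce_update(theta_t, ys, rs, tau)
neg_grad = [-v for v in numerical_grad(
    lambda th: surrogate_loss(th, theta_t, ys, rs, tau), theta_t)]
```

The two vectors `g` and `neg_grad` agree up to finite-difference error, regardless of how the responses in `ys` were obtained, which is the sense in which the derivation makes no on-policy assumption.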

Summary and remarks.

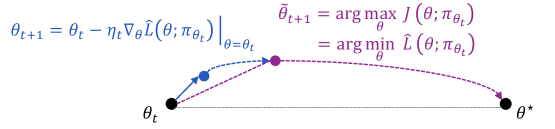

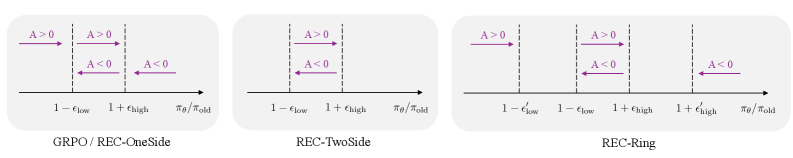

Figure 1 visualizes the proposed interpretation of what REINFORCE is actually doing. The curve going through $\bm{\theta}_{t}\to\bm{\theta}_{t+1}\to\widetilde{\bm{\theta}}_{t+1}\to\bm{\theta}^{\star}$ stands for the ideal optimization trajectory from $\bm{\theta}_{t}$ to the optimal policy model parametrized by $\bm{\theta}^{\star}$, if the algorithm solves each intermediate surrogate objective $J(\bm{\theta};\pi_{\bm{\theta}_{t}})$ / surrogate loss $\widehat{L}(\bm{\theta};\pi_{\bm{\theta}_{t}})$ exactly at each iteration $t$. In comparison, REINFORCE is effectively taking a single gradient step of the surrogate loss and immediately moving on to the next iteration $\bm{\theta}_{t+1}$ with a new surrogate objective.

Two remarks are in order. (1) Our derivation of group-relative REINFORCE can be generalized to multi-step RL settings, by replacing a response $y$ in the previous analysis with a full trajectory consisting of multiple turns of agent-environment interaction. For example, regarding the surrogate objective in Eq. (3), we need to replace the response-level reward and KL divergence with their trajectory-level counterparts. Interested readers may refer to Appendix A for the full analysis. (2) The above analysis suggests that we might interpret group-relative REINFORCE from a pointwise or pairwise perspective. While the policy update in Eq. (7) is stated in a pointwise manner, we have also seen that, at each iteration, REINFORCE is implicitly enforcing the pairwise consistency condition in Eq. (5) among multiple responses. This gives us the flexibility to choose whichever perspective offers more intuition for our analysis later in this work.

<details>

<summary>x1.png Details</summary>

### Visual Description

Diagram of an optimization path in parameter space. A dashed curve runs from $\bm{\theta}_{t}$ (left) to the optimal parameter $\bm{\theta}^{\star}$ (right), with marked points along it and an arrow indicating the update step taken from $\bm{\theta}_{t}$.

Equations shown in the figure:

* $\bm{\theta}_{t+1}=\bm{\theta}_{t}-\eta\nabla_{\bm{\theta}}\widehat{L}(\bm{\theta};\pi_{\bm{\theta}_{t}})$: a single gradient step on the surrogate loss, with learning rate $\eta$.

* $\widetilde{\bm{\theta}}_{t+1}=\arg\max_{\bm{\theta}}J(\bm{\theta};\pi_{\bm{\theta}_{t}})=\arg\min_{\bm{\theta}}\widehat{L}(\bm{\theta};\pi_{\bm{\theta}_{t}})$: the exact solution of the surrogate problem at iteration $t$.

</details>

Figure 1: A visualization of our off-policy interpretation for group-relative REINFORCE. Here $\widehat{L}(\bm{\theta};\pi_{\bm{\theta}_{t}})={\mathbb{E}}_{x\sim\widehat{D}}[\widehat{L}(\bm{\theta};x,\pi_{\bm{\theta}_{t}})]$ , where $\widehat{D}$ is the sampling distribution for prompts, and $\widehat{L}(\bm{\theta};x,\pi_{\bm{\theta}_{t}})$ is the surrogate loss defined in Eq. (6) for a specific prompt $x$ .

3 Pitfalls and augmentations

Although we have provided a native off-policy interpretation for REINFORCE, it certainly does not guarantee convergence to the optimal policy when given arbitrary training data. This section identifies pitfalls that could undermine vanilla REINFORCE, which motivate two principles for augmentations in off-policy settings.

Pitfalls of vanilla REINFORCE.

In Figure 1, we might expect that ideally, (1) $\widetilde{\bm{\theta}}_{t+1}-\bm{\theta}_{t}$ aligns with the direction of $\bm{\theta}^{\star}-\bm{\theta}_{t}$ ; and (2) $\bm{\theta}_{t+1}-\bm{\theta}_{t}$ aligns with the direction of $\widetilde{\bm{\theta}}_{t+1}-\bm{\theta}_{t}$ . One pitfall, however, is that even if both conditions hold, they do not necessarily imply that $\bm{\theta}_{t+1}-\bm{\theta}_{t}$ should align well with $\bm{\theta}^{\star}-\bm{\theta}_{t}$ . That is, $\langle\widetilde{\bm{\theta}}_{t+1}-\bm{\theta}_{t},\bm{\theta}^{\star}-\bm{\theta}_{t}\rangle>0$ and $\langle\bm{\theta}_{t+1}-\bm{\theta}_{t},\widetilde{\bm{\theta}}_{t+1}-\bm{\theta}_{t}\rangle>0$ do not imply $\langle\bm{\theta}_{t+1}-\bm{\theta}_{t},\bm{\theta}^{\star}-\bm{\theta}_{t}\rangle>0$ . Moreover, it is possible that $\bm{\theta}_{t+1}-\bm{\theta}_{t}$ might not align well with $\widetilde{\bm{\theta}}_{t+1}-\bm{\theta}_{t}$ . Recall from Eq. (7) that, from $\bm{\theta}_{t}$ to $\bm{\theta}_{t+1}$ , we take one gradient step for a surrogate loss that enforces the pairwise consistency condition among a finite number of samples. Given the enormous action space of an LLM, some implicit assumptions about the training data (e.g., balancedness and coverage) would be needed to ensure that the gradient aligns well with the direction towards the optimum of the surrogate objective, namely $\widetilde{\bm{\theta}}_{t+1}-\bm{\theta}_{t}$ .

In fact, without a mechanism that ensures boundedness of the policy update under a sub-optimal data distribution, vanilla REINFORCE could eventually converge to a sub-optimal policy. Let us show this with a minimal example in a didactic 3-arm bandit setting. Suppose that there are three actions $\{a_{j}\}_{1\leq j\leq 3}$ with rewards $\{r(a_{j})\}$. Consider $K$ training samples $\{y_{i}\}_{1\leq i\leq K}$, where $y_{i}\in\{a_{j}\}_{1\leq j\leq 3}$ is sampled from some behavior policy $\pi_{\textsf{b}}$. Denote by $\mu_{r}\coloneqq\sum_{1\leq j\leq 3}\pi_{\textsf{b}}(a_{j})r(a_{j})$ the expected reward under $\pi_{\textsf{b}}$, and $\overline{r}\coloneqq\sum_{i}r(y_{i})/K$ the average reward of training samples. We consider the softmax parameterization, i.e., $\pi_{\bm{\theta}}(a_{j})=e^{\theta_{j}}/\sum_{\ell}e^{\theta_{\ell}}$ for a policy parameterized by $\bm{\theta}\in{\mathbb{R}}^{3}$. A standard fact is that $\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(a_{j})=\bm{e}_{j}-\pi_{\bm{\theta}}$, where $\bm{e}_{j}\in{\mathbb{R}}^{3}$ is a one-hot vector with value 1 at entry $j$. Now we examine the policy update direction of REINFORCE, as $K\to\infty$:

$$

\bm{g}=\frac{1}{K}\sum_{1\leq i\leq K}(r(y_{i})-\overline{r})\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i})\;\to\;\sum_{1\leq j\leq 3}\pi_{\textsf{b}}(a_{j})(r(a_{j})-\mu_{r})\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(a_{j}).

$$

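Since $\sum_{j}\pi_{\textsf{b}}(a_{j})(r(a_{j})-\mu_{r})=0$, the $\pi_{\bm{\theta}}$ term in $\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(a_{j})=\bm{e}_{j}-\pi_{\bm{\theta}}$ cancels, and the $j$-th entry of the limiting update is $g_{j}=\pi_{\textsf{b}}(a_{j})(r(a_{j})-\mu_{r})$, independent of $\bm{\theta}$. For example, if $\bm{r}=[r(a_{j})]_{1\leq j\leq 3}=[0,0.8,1]$ and $\pi_{\textsf{b}}=[0.3,0.6,0.1]$, then basic calculation says $\mu_{r}=0.58$, $\bm{r}-\mu_{r}=[-0.58,0.22,0.42]$, and finally $g_{2}=\pi_{\textsf{b}}(a_{2})(r(a_{2})-\mu_{r})=0.132>0.042=\pi_{\textsf{b}}(a_{3})(r(a_{3})-\mu_{r})=g_{3}$. Hence $\theta_{2}$ grows faster than $\theta_{3}$ at every step, which implies that the policy will converge to the sub-optimal action $a_{2}$.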
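The bandit example can be reproduced with a few lines of code. The sketch below evaluates the limiting update direction for the rewards and behavior policy given above, and then iterates the update from a uniform initialization; the learning rate and the number of steps are arbitrary choices made for illustration.

```python
import math


def softmax(theta):
    m = max(theta)
    exps = [math.exp(v - m) for v in theta]
    total = sum(exps)
    return [e / total for e in exps]


def limiting_update(pi_b, r, pi_theta):
    """K -> infinity limit of the REINFORCE direction with data drawn from pi_b."""
    n = len(r)
    mu = sum(p * x for p, x in zip(pi_b, r))
    g = [0.0] * n
    for j in range(n):
        coef = pi_b[j] * (r[j] - mu)
        for k in range(n):
            # grad of log pi_theta(a_j) under softmax is e_j - pi_theta
            g[k] += coef * ((1.0 if k == j else 0.0) - pi_theta[k])
    return g


r = [0.0, 0.8, 1.0]     # rewards from the example; a_3 is the optimal action
pi_b = [0.3, 0.6, 0.1]  # behavior policy from the example
theta = [0.0, 0.0, 0.0]
eta = 1.0               # arbitrary learning rate for this sketch

for _ in range(200):
    g = limiting_update(pi_b, r, softmax(theta))
    theta = [t + eta * gk for t, gk in zip(theta, g)]

pi_final = softmax(theta)
```

Because the update direction does not depend on $\bm{\theta}$, the gap $\theta_{2}-\theta_{3}$ grows linearly with the number of steps, and `pi_final` concentrates on $a_{2}$ even though $a_{3}$ has the highest reward.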

Two principles for augmenting REINFORCE.

The identified pitfalls of vanilla REINFORCE suggest two general principles for augmenting REINFORCE in off-policy scenarios:

- One is to regularize the policy update step, ensuring that the optimization trajectory remains bounded and reasonably stable when given training data from a sub-optimal distribution;

- The other is to steer the policy update direction, by actively weighting the training samples rather than naively using them as is.

These two principles are not mutually exclusive, and might be integrated within a single algorithm. We will see in the next section that many RL algorithms can be viewed as instantiations of them.

4 Rethinking the rationales behind recent RL algorithms

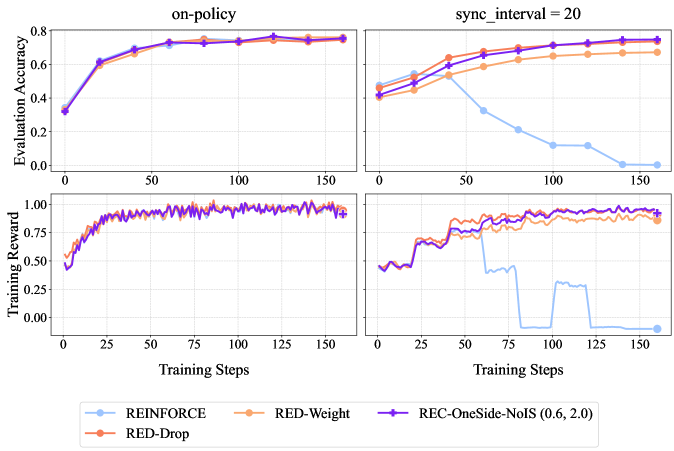

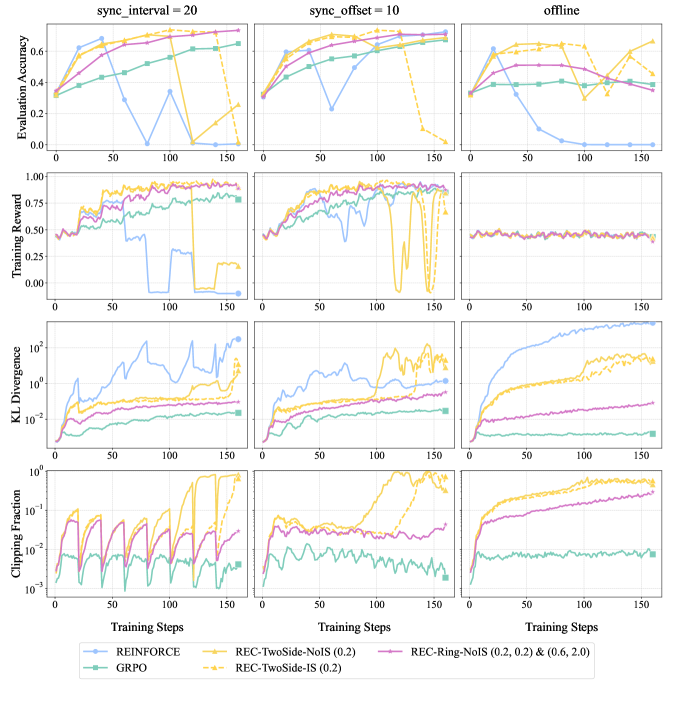

This section revisits various RL algorithms through a unified lens — the native off-policy interpretation of group-relative REINFORCE and its augmentations — and demystifies some common myths about their working mechanisms. Our main findings are summarized as follows:

| ID | Finding | Analysis & Experiments |
| --- | --- | --- |
| F1 | GRPO’s effectiveness in off-policy settings stems from clipping as regularization rather than importance sampling. A wider clipping range than usual often accelerates training without harming stability. | Section 4.1, Figures 3, 4, 6, 9, 10 |
| F2 | Kimi’s OPMD and Meta’s AsymRE can be interpreted as REINFORCE loss + regularization loss, a perspective that is complementary to the rationales in their original papers. | Section 4.2, Figure 11 |
| F3 | Data-oriented heuristics, such as dropping excess negatives or up-weighting high-reward rollouts, fit naturally into our off-policy view and show strong empirical performance. | Section 4.3, Figures 5, 6, 7 |

Experimental setup.

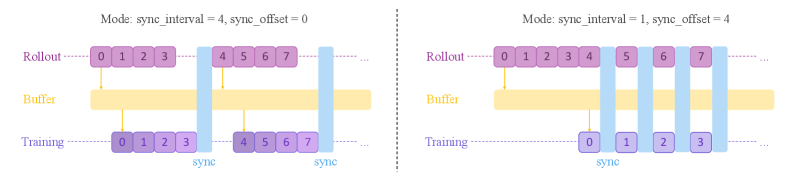

We primarily consider two off-policy settings in our experiments, specified by the sync_interval and sync_offset parameters in the Trinity-RFT framework (Pan et al., 2025). sync_interval specifies the number of generated rollout batches (each corresponding to one gradient step) between two model synchronization operations, while sync_offset specifies the relative lag between the generation and consumption of each batch. These parameters can be deliberately set to large values in practice, for improving training efficiency via pipeline parallelism and reduced frequency of model synchronization. In addition, $\texttt{sync\_offset}>1$ serves to simulate realistic scenarios where environmental feedback could be delayed. We also consider a stress-test setting that only allows access to offline data generated by the initial policy model. See Figure 2 for an illustration of these off-policy settings, and Appendix B.2 for further details.

We conduct experiments on math reasoning tasks like GSM8k (Cobbe et al., 2021), MATH (Hendrycks et al., 2021) and Guru (math subset) (Cheng et al., 2025), as well as tool-use tasks like ToolACE (Liu et al., 2025a). We consider models of different families and scales, including Qwen2.5-1.5B-Instruct, Qwen2.5-7B-Instruct (Qwen-Team, 2025), Llama-3.1-8B-Instruct, and Llama-3.2-3B-Instruct (Dubey et al., 2024). Additional experiment details can be found in Appendix B.

<details>

<summary>x2.png Details</summary>

### Visual Description

Two side-by-side scheduling diagrams. Each has three rows: Rollout (numbered batch blocks), Buffer (a long yellow bar), and Training (numbered batch blocks). Vertical blue bars labeled "sync" mark model synchronization points.

* Left, `sync_interval = 4`, `sync_offset = 0`: Rollout and Training both show batches 0 to 7. Synchronization occurs once per group of four batches (after batches 0 to 3, and after batches 4 to 7).

* Right, `sync_interval = 1`, `sync_offset = 4`: Rollout shows batches 0 to 7, while Training shows batches 0 to 3. Synchronization points appear at every batch, and the Training row lags behind the Rollout row.

</details>

Figure 2: A visualization of the rollout-training scheduling in sync_interval = 4 (left) or sync_offset = 4 (right) modes. Each block denotes one batch of samples for one gradient step, and the number in it denotes the corresponding batch id. Training blocks are color-coded by data freshness, with lighter color indicating increasing off-policyness.

4.1 Demystifying myths about GRPO

Recall that in GRPO, the advantage for each response $y_{i}$ is defined as $A_{i}={(r_{i}-\overline{r})}/{\sigma_{r}}$ , where $\overline{r}$ and $\sigma_{r}$ denote the within-group mean and standard deviation of the rewards $\{r_{i}\}_{1\leq i\leq K}$ respectively. In our experiments with GRPO, we neglect KL regularization with respect to an extra reference model, as well as entropy regularization that encourages output diversity; recent works (Yu et al., 2025; Liu et al., 2025b) have shown that these practical techniques are often unnecessary. We consider the practical implementation of GRPO with token-wise importance-sampling (IS) weighting and clipping, whose loss function for a specific prompt $x$ and responses $\{y_{i}\}$ is

$$

\widehat{L}=\frac{1}{K}\sum_{1\leq i\leq K}\sum_{1\leq t\leq|y_{i}|}\min\bigg\{\frac{\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}{\pi_{\textsf{old}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}A_{i},\,\operatorname{clip}\Big(\frac{\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}{\pi_{\textsf{old}}(y_{i}^{t}\,|\,x,y_{i}^{<t})},1-\epsilon_{\textsf{low}},1+\epsilon_{\textsf{high}}\Big)A_{i}\bigg\},

$$

where $\pi_{\textsf{old}}$ denotes the older policy version that generated this group of rollout data. The gradient of this loss can be written as (Schulman et al., 2017)

$$

\bm{g}\big(\bm{\theta};x,\{y_{i},r_{i}\}_{1\leq i\leq K}\big)=\frac{1}{K}\sum_{1\leq i\leq K}\sum_{1\leq t\leq|y_{i}|}\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})\cdot A_{i}\frac{\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}{\pi_{\textsf{old}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}M_{i}^{t},

$$

where $M_{i}^{t}$ denotes a one-side clipping mask:

$$

M_{i}^{t}=\mathbbm{1}\bigg(A_{i}>0,\;\frac{\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}{\pi_{\textsf{old}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}\leq 1+\epsilon_{\textsf{high}}\bigg)+\mathbbm{1}\bigg(A_{i}<0,\;\frac{\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}{\pi_{\textsf{old}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}\geq 1-\epsilon_{\textsf{low}}\bigg). \tag{8}

$$
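As a minimal sketch of the mask in Eq. (8), assuming that per-token probability ratios and the corresponding advantages are already available as NumPy arrays, the rule can be written as follows. The function name `one_side_mask` and its argument names are hypothetical and are not taken from the released code.

```python
import numpy as np

def one_side_mask(ratio, adv, eps_low, eps_high):
    """Eq. (8): a token keeps its gradient unless its ratio has already
    moved past the clipping bound in the direction favoured by its advantage."""
    ratio = np.asarray(ratio, dtype=float)
    adv = np.asarray(adv, dtype=float)
    keep_pos = (adv > 0) & (ratio <= 1.0 + eps_high)  # positive advantage: upper bound only
    keep_neg = (adv < 0) & (ratio >= 1.0 - eps_low)   # negative advantage: lower bound only
    return (keep_pos | keep_neg).astype(float)

# Illustrative values: two tokens with positive advantage, two with negative.
ratio = np.array([1.1, 1.5, 0.9, 0.5])
adv = np.array([1.0, 1.0, -1.0, -1.0])
mask = one_side_mask(ratio, adv, eps_low=0.2, eps_high=0.2)
```

The mask is one-sided in the sense that a token with positive advantage is never masked for having a small ratio, and a token with negative advantage is never masked for having a large one. Tokens with exactly zero advantage receive a zero mask here, which is harmless since they contribute nothing to the gradient.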

Ablation study with the REC series.

To isolate the roles of importance sampling and clipping, we consider a series of REINFORCE-with-Clipping (REC) algorithms. Due to space limitations, we defer our studies of more clipping mechanisms to Appendix B.3, and focus on REC with one-side clipping in this section. More specifically, REC-OneSide-IS removes advantage normalization in GRPO (to reduce variability), and REC-OneSide-NoIS further removes IS weighting:

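$$

\begin{aligned}
\text{REC-OneSide-IS:}\;\;\bm{g}&=\frac{1}{K}\sum_{1\leq i\leq K}\sum_{1\leq t\leq|y_{i}|}\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})\cdot(r_{i}-\overline{r})\,\frac{\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}{\pi_{\textsf{old}}(y_{i}^{t}\,|\,x,y_{i}^{<t})}\,M_{i}^{t},\\
\text{REC-OneSide-NoIS:}\;\;\bm{g}&=\frac{1}{K}\sum_{1\leq i\leq K}\sum_{1\leq t\leq|y_{i}|}\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}^{t}\,|\,x,y_{i}^{<t})\cdot(r_{i}-\overline{r})\,M_{i}^{t}.
\end{aligned}

$$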
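The difference between the two REC-OneSide variants can be illustrated by the scalar coefficient that multiplies each token's $\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}$ term. The sketch below assumes that the per-token ratios $\pi_{\bm{\theta}}/\pi_{\textsf{old}}$ have been computed elsewhere; `rec_oneside_coeff` and the `use_is` flag are hypothetical names introduced only for illustration.

```python
import numpy as np

def rec_oneside_coeff(rewards, ratios, eps_low, eps_high, use_is):
    """Per-token scalar multiplying grad log pi(y_i^t | x, y_i^{<t}).

    rewards: length-K sequence of scalar rewards for one prompt's group.
    ratios:  list of K 1-D sequences, pi_theta / pi_old for each token.
    use_is:  True -> REC-OneSide-IS, False -> REC-OneSide-NoIS.
    """
    rewards = np.asarray(rewards, dtype=float)
    adv = rewards - rewards.mean()  # mean baseline only, no division by the group std
    K = len(rewards)
    coeffs = []
    for a, rho in zip(adv, ratios):
        rho = np.asarray(rho, dtype=float)
        if a > 0:
            mask = rho <= 1.0 + eps_high
        elif a < 0:
            mask = rho >= 1.0 - eps_low
        else:
            mask = np.zeros_like(rho, dtype=bool)
        weight = rho if use_is else np.ones_like(rho)  # the IS weight is the only difference
        coeffs.append(a * weight * mask / K)
    return coeffs

# Illustrative group of K = 2 responses with two tokens each.
example_ratios = [[1.1, 1.5], [0.9, 0.5]]
nois = rec_oneside_coeff([1.0, 0.0], example_ratios, 0.2, 0.2, use_is=False)
with_is = rec_oneside_coeff([1.0, 0.0], example_ratios, 0.2, 0.2, use_is=True)
```

In this toy example both variants mask out the same tokens; they differ only in whether the surviving coefficients are rescaled by the probability ratio.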

<details>

<summary>x3.png Details</summary>

### Visual Description

## Chart: Training Performance Comparison

### Overview

The image presents a comparison of training performance for different reinforcement learning algorithms under varying synchronization conditions. It consists of six line charts arranged in a 2x3 grid. The top row displays "Evaluation Accuracy" versus "Training Steps", while the bottom row shows "Training Reward" versus "Training Steps". The charts are grouped by "sync_interval" and "sync_offset" settings, with the final column representing an "offline" condition.

### Components/Axes

* **X-axis:** "Training Steps" (Scale: 0 to 150, increments of 25)

* **Y-axis (Top Row):** "Evaluation Accuracy" (Scale: 0.2 to 0.8, increments of 0.1)

* **Y-axis (Bottom Row):** "Training Reward" (Scale: 0.0 to 1.0, increments of 0.1)

* **Titles (Columns):**

* "sync\_interval = 20"

* "sync\_offset = 10"

* "offline"

* **Legend:** Located at the bottom-center of the image.

* REINFORCE (Light Blue Solid Line)

* GRPO (Light Green Solid Line)

* REC-OneSide-NoIS (0.2) (Light Purple Solid Line)

* REC-OneSide-IS (0.2) (Light Purple Dotted Line)

* REC-OneSide-NoIS (0.6, 2.0) (Dark Purple Solid Line)

* REC-OneSide-IS (0.6, 2.0) (Dark Purple Dotted Line)

### Detailed Analysis or Content Details

**Column 1: sync\_interval = 20**

* **Evaluation Accuracy:**

* REINFORCE: Starts at approximately 0.3, fluctuates significantly, reaching a peak of around 0.6 at step 75, then declines to approximately 0.45 by step 150.

* GRPO: Starts at approximately 0.3, increases steadily to around 0.65 by step 150.

* REC-OneSide-NoIS (0.2): Starts at approximately 0.4, increases steadily to around 0.7 by step 150.

* REC-OneSide-IS (0.2): Starts at approximately 0.4, increases steadily to around 0.7 by step 150.

* REC-OneSide-NoIS (0.6, 2.0): Starts at approximately 0.35, increases steadily to around 0.7 by step 150.

* REC-OneSide-IS (0.6, 2.0): Starts at approximately 0.35, increases steadily to around 0.7 by step 150.

* **Training Reward:**

* REINFORCE: Fluctuates around 0.6, with some dips below 0.5.

* GRPO: Starts around 0.5, drops to approximately 0.2 at step 25, then recovers to around 0.6 by step 150.

* REC-OneSide-NoIS (0.2): Relatively stable around 0.7.

* REC-OneSide-IS (0.2): Relatively stable around 0.7.

* REC-OneSide-NoIS (0.6, 2.0): Relatively stable around 0.7.

* REC-OneSide-IS (0.6, 2.0): Relatively stable around 0.7.

**Column 2: sync\_offset = 10**

* **Evaluation Accuracy:**

* REINFORCE: Starts at approximately 0.3, increases to around 0.65 by step 50, then fluctuates between 0.5 and 0.7.

* GRPO: Starts at approximately 0.3, increases steadily to around 0.7 by step 150.

* REC-OneSide-NoIS (0.2): Starts at approximately 0.4, increases steadily to around 0.75 by step 150.

* REC-OneSide-IS (0.2): Starts at approximately 0.4, increases steadily to around 0.75 by step 150.

* REC-OneSide-NoIS (0.6, 2.0): Starts at approximately 0.35, increases steadily to around 0.75 by step 150.

* REC-OneSide-IS (0.6, 2.0): Starts at approximately 0.35, increases steadily to around 0.75 by step 150.

* **Training Reward:**

* REINFORCE: Fluctuates around 0.6, with some dips below 0.5.

* GRPO: Starts around 0.5, drops to approximately 0.2 at step 25, then recovers to around 0.6 by step 150.

* REC-OneSide-NoIS (0.2): Relatively stable around 0.7.

* REC-OneSide-IS (0.2): Relatively stable around 0.7.

* REC-OneSide-NoIS (0.6, 2.0): Relatively stable around 0.7.

* REC-OneSide-IS (0.6, 2.0): Relatively stable around 0.7.

**Column 3: offline**

* **Evaluation Accuracy:**

* REINFORCE: Starts at approximately 0.4, declines steadily to around 0.3 by step 150.

* GRPO: Starts at approximately 0.4, declines steadily to around 0.3 by step 150.

* REC-OneSide-NoIS (0.2): Remains relatively stable around 0.6.

* REC-OneSide-IS (0.2): Remains relatively stable around 0.6.

* REC-OneSide-NoIS (0.6, 2.0): Remains relatively stable around 0.6.

* REC-OneSide-IS (0.6, 2.0): Remains relatively stable around 0.6.

* **Training Reward:**

* REINFORCE: Remains relatively stable around 0.5.

* GRPO: Remains relatively stable around 0.5.

* REC-OneSide-NoIS (0.2): Remains relatively stable around 0.7.

* REC-OneSide-IS (0.2): Remains relatively stable around 0.7.

* REC-OneSide-NoIS (0.6, 2.0): Remains relatively stable around 0.7.

* REC-OneSide-IS (0.6, 2.0): Remains relatively stable around 0.7.

### Key Observations

* The "REC-OneSide" algorithms consistently outperform REINFORCE and GRPO in terms of evaluation accuracy, especially in the "sync\_interval = 20" and "sync\_offset = 10" conditions.

* GRPO exhibits a significant dip in training reward around step 25 in both the "sync\_interval = 20" and "sync\_offset = 10" conditions.

* REINFORCE shows high variability in evaluation accuracy, particularly in the "sync\_interval = 20" condition.

* In the "offline" condition, REINFORCE and GRPO experience a decline in evaluation accuracy, while the "REC-OneSide" algorithms maintain relatively stable performance.

### Interpretation

The data suggests that the "REC-OneSide" algorithms are more robust and effective for training reinforcement learning agents compared to REINFORCE and GRPO, particularly when synchronization is enabled ("sync\_interval = 20" and "sync\_offset = 10"). The consistent performance of "REC-OneSide" in the "offline" condition indicates that these algorithms are less reliant on real-time interaction and can still achieve good results without synchronization. The dip in GRPO's training reward suggests a potential instability or learning challenge during the initial training phase. The variability in REINFORCE's evaluation accuracy highlights its sensitivity to training conditions. Overall, the results indicate that the "REC-OneSide" algorithms offer a more stable and reliable approach to reinforcement learning training.

</details>

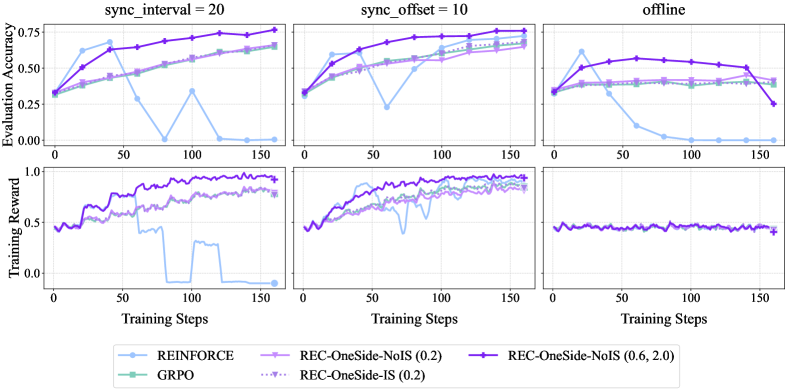

Figure 3: Empirical results for REC algorithms on GSM8k with Qwen2.5-1.5B-Instruct. Training reward curves are smoothed with a running-average window of size 3. Numbers in the legend denote clipping parameters $\epsilon_{\textsf{low}},\epsilon_{\textsf{high}}$ .

<details>

<summary>x4.png Details</summary>

### Visual Description

## Chart: Training Reward and Clipping Fraction vs. Training Steps

### Overview

The image presents two charts side-by-side, both displaying data related to training progress. The left chart shows "Training Reward" against "Training Steps," while the right chart shows "Clipping Fraction" against "Training Steps." Both charts share the same x-axis (Training Steps) and have a common title indicating a `sync_interval = 20`. Four different training configurations are represented by different colored lines in both charts.

### Components/Axes

* **X-axis (Both Charts):** "Training Steps" ranging from 0 to 400, with gridlines at increments of 50.

* **Left Chart Y-axis:** "Training Reward" ranging from 0.0 to 1.0, with gridlines at increments of 0.25.

* **Right Chart Y-axis:** "Clipping Fraction" on a logarithmic scale, ranging from 10<sup>-1</sup> to 10<sup>-5</sup>, with gridlines at increments of 10<sup>-2</sup>, 10<sup>-3</sup>, and 10<sup>-4</sup>.

* **Legend (Bottom Center):** Lists the four training configurations and their corresponding line colors:

* REC-OneSide-NoIS (0.2, 0.25) - Purple Solid Line

* REC-OneSide-IS (0.2, 0.25) - Blue Dotted Line

* REC-Ring-NoIS (0.2, 0.25) & (0.6, 2.0) - Purple Dashed Line

* REC-TwoSide-NoIS (0.2, 0.25) - Yellow Solid Line

### Detailed Analysis

**Left Chart (Training Reward):**

* **REC-OneSide-NoIS (0.2, 0.25) (Purple Solid):** Starts at approximately 0.25 at step 0, rises sharply to around 0.75 by step 50, then plateaus around 0.85-0.95 for the remainder of the training steps.

* **REC-OneSide-IS (0.2, 0.25) (Blue Dotted):** Starts at approximately 0.25 at step 0, rises to around 0.65 by step 50, then continues to increase more slowly, reaching approximately 0.85-0.95 by step 400.

* **REC-Ring-NoIS (0.2, 0.25) & (0.6, 2.0) (Purple Dashed):** Starts at approximately 0.25 at step 0, rises rapidly to around 0.80 by step 50, then fluctuates between 0.80 and 0.95 for the remainder of the training steps.

* **REC-TwoSide-NoIS (0.2, 0.25) (Yellow Solid):** Starts at approximately 0.25 at step 0, rises quickly to around 0.75 by step 50, then continues to increase, reaching approximately 0.90-0.95 by step 400.

**Right Chart (Clipping Fraction):**

* **REC-OneSide-NoIS (0.2, 0.25) (Purple Solid):** Exhibits a periodic oscillation, starting around 0.01, dipping to approximately 0.001 at steps 50, 150, 250, and 350, and peaking around 0.01 at steps 25, 125, 225, and 325.

* **REC-OneSide-IS (0.2, 0.25) (Blue Dotted):** Also exhibits a periodic oscillation, but with a smaller amplitude than the purple solid line. It starts around 0.003, dips to approximately 0.0003 at steps 50, 150, 250, and 350, and peaks around 0.003 at steps 25, 125, 225, and 325.

* **REC-Ring-NoIS (0.2, 0.25) & (0.6, 2.0) (Purple Dashed):** Shows a similar oscillating pattern to the purple solid line, but with a slightly higher amplitude. It starts around 0.02, dips to approximately 0.002 at steps 50, 150, 250, and 350, and peaks around 0.02 at steps 25, 125, 225, and 325.

* **REC-TwoSide-NoIS (0.2, 0.25) (Yellow Solid):** Exhibits a periodic oscillation, starting around 0.005, dipping to approximately 0.0005 at steps 50, 150, 250, and 350, and peaking around 0.005 at steps 25, 125, 225, and 325.

### Key Observations

* All four configurations show increasing training reward over time, but the rate of increase varies.

* The "REC-OneSide-NoIS" configuration (purple solid) reaches a high reward relatively quickly but exhibits the largest oscillations in clipping fraction.

* The "REC-OneSide-IS" configuration (blue dotted) has a slower initial reward increase but a lower clipping fraction.

* The "REC-Ring-NoIS" configuration (purple dashed) shows a similar reward pattern to "REC-OneSide-NoIS" but with a slightly lower peak reward and a higher clipping fraction.

* The "REC-TwoSide-NoIS" configuration (yellow solid) demonstrates a steady increase in reward and a moderate clipping fraction.

* The clipping fraction oscillates periodically for all configurations, suggesting a cyclical pattern in gradient clipping.

### Interpretation

The charts demonstrate the training dynamics of four different reinforcement learning configurations. The "Training Reward" chart indicates how well each configuration is learning to achieve its objective, while the "Clipping Fraction" chart provides insight into the stability of the training process. A high clipping fraction suggests that gradients are frequently being clipped, which can indicate instability or a need for a smaller learning rate.

The differences in reward and clipping fraction between the configurations suggest that the choice of training parameters (NoIS vs. IS, OneSide vs. TwoSide, and the specific parameter values) significantly impacts both learning performance and training stability. The periodic oscillation in clipping fraction across all configurations suggests that the training process is subject to a cyclical pattern, potentially related to the update frequency or the nature of the environment. The `sync_interval = 20` likely influences this periodicity.

The fact that all configurations eventually achieve relatively high rewards suggests that the environment is learnable, but the optimal configuration depends on the desired trade-off between learning speed and training stability. The "REC-OneSide-NoIS" configuration appears to learn fastest but at the cost of higher instability (as indicated by the clipping fraction).

</details>

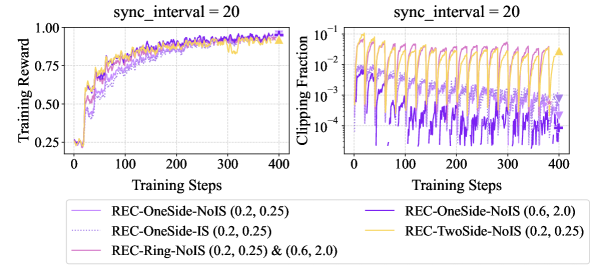

Figure 4: Empirical results for REC on ToolACE with Llama-3.2-3B-Instruct. Training reward curves are smoothed with a running-average window of size 3. Details about REC-TwoSide and REC-Ring are provided in Appendix B.3.

Experiments.

Figure 3 presents GSM8k results with Qwen2.5-1.5B-Instruct in various off-policy settings. REC-OneSide-IS / NoIS and GRPO (with the same $\epsilon_{\textsf{low}}=\epsilon_{\textsf{high}}=0.2$ ) have nearly identical performance, indicating that importance sampling is non-essential, whereas the collapse of REINFORCE highlights the critical role of clipping. Radically enlarging $(\epsilon_{\textsf{low}},\epsilon_{\textsf{high}})$ to $(0.6,2.0)$ accelerates REC-OneSide-NoIS without compromising stability in both the sync_interval = 20 and sync_offset = 10 settings. Similar patterns also appear in Figure 4 (ToolACE with Llama-3.2-3B-Instruct) and other results in Appendix B. As for the stress-test (“offline”) setting, Figure 3 reveals an intrinsic trade-off between the speed and stability of policy improvement, motivating future work toward better algorithms that achieve both.

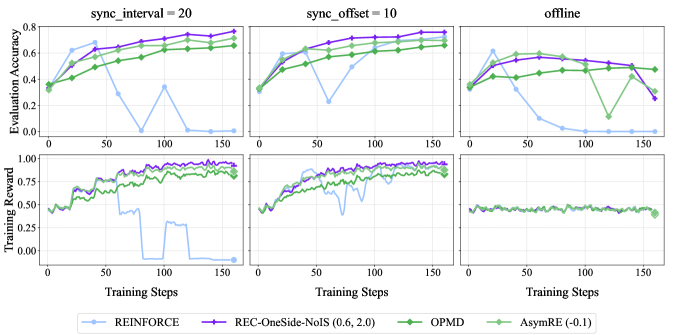

4.2 Understanding Kimi’s OPMD and Meta’s AsymRE

Besides clipping, another natural method is to add a regularization loss $R(\cdot)$ to vanilla REINFORCE:

$$

\widehat{L}\big(\bm{\theta};x,\{y_{i},r_{i}\}_{1\leq i\leq K}\big)=-\frac{1}{K}\sum_{i\in[K]}(r_{i}-\overline{r})\log\pi_{\bm{\theta}}(y_{i}\,|\,x)+\tau\cdot R\big(\bm{\theta};x,\{y_{i},r_{i}\}_{1\leq i\leq K}\big),

$$

and take $\bm{g}=-\nabla_{\bm{\theta}}\widehat{L}$ . We show below that Kimi’s OPMD and Meta’s AsymRE are indeed special cases of this unified formula, with empirical validation of their efficacy deferred to Appendix B.5.

Kimi’s OPMD.

Kimi-Team (2025b) derives an OPMD variant by taking the logarithm of both sides of Eq. (4), which leads to a consistency condition and further motivates the following surrogate loss:

$$

\widetilde{L}=\frac{1}{K}\sum_{1\leq i\leq K}\bigg(r_{i}-\tau\log Z(x,\pi_{\bm{\theta}_{t}})-\tau\,\Big(\log\pi_{\bm{\theta}}(y_{i}\,|\,x)-\log\pi_{\bm{\theta}_{t}}(y_{i}|x)\Big)\bigg)^{2}.

$$

With $K$ responses generated by $\pi_{\textsf{old}}=\pi_{\bm{\theta}_{t}}$ , the term $\tau\log Z(x,\pi_{\bm{\theta}_{t}})$ can be approximated by a finite-sample estimate $\tau\log(\sum_{i}e^{r_{i}/\tau}/K)$ , which can be further approximated by the mean reward $\overline{r}=\sum_{i}r_{i}/K$ if $\tau$ is large. With these approximations, the gradient of $\widetilde{L}$ becomes equivalent to that of the following loss (which is the final version of Kimi’s OPMD):

$$

\widehat{L}=-\frac{1}{K}\sum_{1\leq i\leq K}(r_{i}-\overline{r})\log\pi_{\bm{\theta}}(y_{i}\,|\,x)+\frac{\tau}{2K}\sum_{1\leq i\leq K}\Big(\log\pi_{\bm{\theta}}(y_{i}\,|\,x)-\log\pi_{\textsf{old}}(y_{i}\,|\,x)\Big)^{2}.

$$

In comparison, our analysis in Sections 2 and 3 suggests that this is in itself a principled loss function for off-policy RL, adding a mean-squared regularization loss to the vanilla REINFORCE loss.
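The two ingredients of this derivation can be checked numerically. The sketch below, with hypothetical function names, first evaluates the finite-sample estimate $\tau\log(\sum_{i}e^{r_{i}/\tau}/K)$ to show that it approaches the mean reward for large $\tau$, and then evaluates the final OPMD loss as a REINFORCE term plus a mean-squared regularizer. Sequence-level log-probabilities are assumed to be given as arrays.

```python
import numpy as np

def log_partition_estimate(rewards, tau):
    """tau * log((1/K) * sum_i exp(r_i / tau)), computed in a numerically stable way."""
    z = np.asarray(rewards, dtype=float) / tau
    m = z.max()
    return tau * (m + np.log(np.mean(np.exp(z - m))))

def opmd_loss(rewards, logp, logp_old, tau):
    """REINFORCE term plus (tau / 2) times the mean squared log-probability gap."""
    rewards = np.asarray(rewards, dtype=float)
    logp = np.asarray(logp, dtype=float)
    logp_old = np.asarray(logp_old, dtype=float)
    reinforce = -np.mean((rewards - rewards.mean()) * logp)
    regularizer = 0.5 * tau * np.mean((logp - logp_old) ** 2)
    return reinforce + regularizer

rewards = [0.0, 1.0, 1.0, 0.0]
large_tau_estimate = log_partition_estimate(rewards, tau=100.0)  # close to the mean reward
small_tau_estimate = log_partition_estimate(rewards, tau=0.01)   # close to the max reward
```

For small $\tau$ the estimate instead approaches the maximum reward in the group, which is why the mean-reward baseline should be read as a large-$\tau$ approximation.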

Meta’s AsymRE.

AsymRE (Arnal et al., 2025) modifies REINFORCE by tuning down the baseline (from $\overline{r}$ to $\overline{r}-\tau$ ) in advantage calculation, which was motivated by the intuition of prioritizing learning from positive samples and justified by multi-armed bandit analysis in the original paper. We offer an alternative interpretation for AsymRE by rewriting its loss function:

$$

\begin{aligned}
\widehat{L}&=-\frac{1}{K}\sum_{i}\Big(r_{i}-(\overline{r}-\tau)\Big)\log\pi_{\bm{\theta}}(y_{i}\,|\,x)\\
&=-\frac{1}{K}\sum_{i}(r_{i}-\overline{r})\log\pi_{\bm{\theta}}(y_{i}\,|\,x)-\frac{\tau}{K}\sum_{i}\log\pi_{\bm{\theta}}(y_{i}\,|\,x).
\end{aligned}

$$

Note that the first term on the right-hand side is the REINFORCE loss, and the second term serves as regularization, enforcing imitation of responses from an older version of the policy model. For the latter, we may also add a term that is independent of $\bm{\theta}$ and take the limit $K\to\infty$ :

$$

-\frac{1}{K}\sum_{1\leq i\leq K}\log\pi_{\bm{\theta}}(y_{i}\,|\,x)+\frac{1}{K}\sum_{1\leq i\leq K}\log\pi_{\textsf{old}}(y_{i}\,|\,x)=\frac{1}{K}\sum_{1\leq i\leq K}\log\frac{\pi_{\textsf{old}}(y_{i}\,|\,x)}{\pi_{\bm{\theta}}(y_{i}\,|\,x)},

$$

which turns out to be a finite-sample approximation of KL regularization: when the responses $y_{i}$ are sampled from $\pi_{\textsf{old}}$ , it converges to the KL divergence from $\pi_{\textsf{old}}(\cdot\,|\,x)$ to $\pi_{\bm{\theta}}(\cdot\,|\,x)$ as $K\to\infty$ .
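The rewriting of the AsymRE loss can be verified numerically. The following sketch, with hypothetical function names and made-up sequence-level log-probabilities, compares the loss with the shifted baseline against the sum of the REINFORCE loss and the imitation term, and includes the Monte Carlo estimate of the KL term discussed above.

```python
import numpy as np

def asymre_loss(rewards, logp, tau):
    """REINFORCE loss with the baseline lowered from mean(r) to mean(r) - tau."""
    rewards = np.asarray(rewards, dtype=float)
    logp = np.asarray(logp, dtype=float)
    baseline = rewards.mean() - tau
    return -np.mean((rewards - baseline) * logp)

def reinforce_plus_imitation(rewards, logp, tau):
    """Standard REINFORCE loss plus tau times the negative mean log-likelihood."""
    rewards = np.asarray(rewards, dtype=float)
    logp = np.asarray(logp, dtype=float)
    reinforce = -np.mean((rewards - rewards.mean()) * logp)
    imitation = -tau * np.mean(logp)
    return reinforce + imitation

def kl_estimate(logp_old, logp):
    """Monte Carlo estimate of KL(pi_old || pi_theta) from samples y_i ~ pi_old."""
    return np.mean(np.asarray(logp_old, dtype=float) - np.asarray(logp, dtype=float))

# Illustrative values only.
rewards = [1.0, 0.0, 0.0, 1.0]
logp = [-1.0, -2.0, -0.5, -3.0]
lhs = asymre_loss(rewards, logp, tau=0.3)
rhs = reinforce_plus_imitation(rewards, logp, tau=0.3)
```

The two values agree for any choice of rewards, log-probabilities, and $\tau$, since the identity is purely algebraic.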

4.3 Understanding data-weighting methods

We now shift our attention to the second principle for augmenting REINFORCE, i.e., actively shaping the training data distribution.

Pairwise weighting.

Recall from Section 2 that we define the surrogate loss in Eq. (6) as an unweighted sum of pairwise mean-squared losses. However, if we have certain knowledge about which pairs are more informative for RL training, we may assign higher weights to them. This motivates generalizing $\sum_{i<j}(a_{i}-a_{j})^{2}$ to $\sum_{i<j}w_{i,j}(a_{i}-a_{j})^{2}$ , where $\{w_{i,j}\}$ are non-negative weights. Assuming that $w_{i,j}=w_{j,i}$ and following the steps in Section 2, we end up with

$$

\bm{g}\big(\bm{\theta};x,\{y_{i},r_{i}\}_{1\leq i\leq K}\big)=\frac{1}{K}\sum_{1\leq i\leq K}\Big(\sum_{1\leq j\leq K}w_{i,j}\Big)\bigg(r_{i}-\frac{\sum_{j}w_{i,j}r_{j}}{\sum_{j}w_{i,j}}\bigg)\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x).

$$

In the special case where $w_{i,j}=w_{i}w_{j}$ , this becomes

$$

\bm{g}=\Big(\sum_{j}w_{j}\Big)\;\frac{1}{K}\sum_{1\leq i\leq K}w_{i}\big(r_{i}-\overline{r}_{w}\big)\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x),\,\,\text{where}\,\,\overline{r}_{w}\coloneqq\frac{\sum_{j}w_{j}r_{j}}{\sum_{j}w_{j}}. \tag{9}

$$
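As a sanity check on the two formulas above, the per-response coefficients multiplying $\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x)$ can be computed both from a general symmetric weight matrix and from the rank-one form of Eq. (9). This is a sketch with hypothetical function names, assuming that every row of the weight matrix has a positive sum.

```python
import numpy as np

def pairwise_coeffs(rewards, W):
    """Coefficient of grad log pi(y_i | x) under general pairwise weights w_{i,j}."""
    r = np.asarray(rewards, dtype=float)
    W = np.asarray(W, dtype=float)
    K = len(r)
    row_sum = W.sum(axis=1)       # sum_j w_{i,j}
    baseline = (W @ r) / row_sum  # weighted baseline for response i
    return row_sum * (r - baseline) / K

def rank_one_coeffs(rewards, w):
    """Coefficient of grad log pi(y_i | x) in Eq. (9), where w_{i,j} = w_i * w_j."""
    r = np.asarray(rewards, dtype=float)
    w = np.asarray(w, dtype=float)
    K = len(r)
    r_w = (w @ r) / w.sum()       # weighted mean reward
    return w.sum() * w * (r - r_w) / K

# Illustrative values only.
r = [1.0, 0.0, 0.0, 1.0]
w = [2.0, 1.0, 0.5, 1.5]
general = pairwise_coeffs(r, np.outer(w, w))
special = rank_one_coeffs(r, w)
```

With $w_{i,j}=w_{i}w_{j}$ the two functions return the same coefficients, and with uniform weights they reduce to the mean-baseline advantages $r_{i}-\overline{r}$.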

Based on this, we investigate two REINFORCE-with-Data-weighting (RED) methods.

RED-Drop: sample dropping.

The idea is to use a filtered subset $\mathcal{S}\subseteq[K]$ of responses for training; for example, the Kimi-Researcher technical blog (Kimi-Team, 2025a) proposes to “discard some negative samples strategically”, as negative gradients increase the risk of entropy collapse. This is indeed a special case of Eq. (9), by setting $w_{i}=\sqrt{K}/|\mathcal{S}|$ for $i\in\mathcal{S}$ and $0$ otherwise:

$$

\bm{g}\big(\bm{\theta};x,\{y_{i},r_{i}\}_{1\leq i\leq K}\big)=\frac{1}{|\mathcal{S}|}\sum_{i\in\mathcal{S}}(r_{i}-\overline{r}_{\mathcal{S}})\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x),\,\,\text{where}\,\,\overline{r}_{\mathcal{S}}=\frac{1}{|\mathcal{S}|}\sum_{i\in\mathcal{S}}r_{i}. \tag{10}

$$

While this is no longer an unbiased estimate of the policy gradient even if all responses are sampled from the current policy, it is still well justified by our off-policy interpretation of REINFORCE.
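To spell out why the weights $w_{i}=\sqrt{K}/|\mathcal{S}|$ for $i\in\mathcal{S}$ (and $0$ otherwise) recover Eq. (10), one can substitute them into Eq. (9). The weight sum and the weighted mean reward become

$$

\sum_{j}w_{j}=|\mathcal{S}|\cdot\frac{\sqrt{K}}{|\mathcal{S}|}=\sqrt{K},\qquad\overline{r}_{w}=\frac{\sum_{j\in\mathcal{S}}r_{j}}{|\mathcal{S}|}=\overline{r}_{\mathcal{S}},

$$

so that

$$

\bm{g}=\sqrt{K}\cdot\frac{1}{K}\sum_{i\in\mathcal{S}}\frac{\sqrt{K}}{|\mathcal{S}|}\big(r_{i}-\overline{r}_{\mathcal{S}}\big)\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x)=\frac{1}{|\mathcal{S}|}\sum_{i\in\mathcal{S}}\big(r_{i}-\overline{r}_{\mathcal{S}}\big)\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x).

$$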

RED-Weight: pointwise loss weighting.

Another approach for prioritizing high-reward responses is to directly up-weight their gradient terms in Eq. (1a). To better understand the working mechanism of this seemingly heuristic method, we rewrite its policy update:

$$

\begin{aligned}
\bm{g}&=\sum_{1\leq i\leq K}w_{i}(r_{i}-\overline{r})\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x)\\
&=\sum_{1\leq i\leq K}w_{i}(r_{i}-\overline{r}_{w}+\overline{r}_{w}-\overline{r})\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x).
\end{aligned}

$$

This is the pairwise-weighted REINFORCE gradient in Eq. (9) (up to a positive scaling factor), plus a regularization term (weighted by $\overline{r}_{w}-\overline{r}>0$ ) that resembles the one in AsymRE but prioritizes imitating higher-reward responses, echoing the finding from the offline RL literature (Hong et al., 2023a, b) that regularizing against high-reward trajectories can be more effective than conservatively imitating all trajectories in the dataset.
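Written out explicitly, the RED-Weight update separates into the two parts just described:

$$

\bm{g}=\sum_{1\leq i\leq K}w_{i}\big(r_{i}-\overline{r}_{w}\big)\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x)+\big(\overline{r}_{w}-\overline{r}\big)\sum_{1\leq i\leq K}w_{i}\nabla_{\bm{\theta}}\log\pi_{\bm{\theta}}(y_{i}\,|\,x).

$$

The first sum matches Eq. (9) up to the positive factor $\sum_{j}w_{j}/K$, and the second sum is the gradient of a weighted log-likelihood of the sampled responses. The sign condition follows from the identity

$$

\overline{r}_{w}-\overline{r}=\frac{\sum_{i}w_{i}(r_{i}-\overline{r})}{\sum_{i}w_{i}}=\frac{\sum_{i}(w_{i}-\overline{w})(r_{i}-\overline{r})}{\sum_{i}w_{i}},\qquad\overline{w}\coloneqq\frac{1}{K}\sum_{i}w_{i},

$$

which is positive whenever the weights are positively correlated with the rewards within the group, as is the case when higher-reward responses are up-weighted and the rewards are not all equal.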

Implementation details.

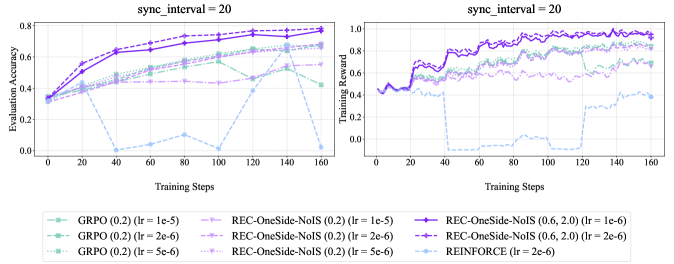

Below are the concrete instantiations adopted in our empirical studies:

- RED-Drop: When the number of negative samples in a group exceeds the number of positive ones, we randomly drop the excess negatives so that positives and negatives are balanced. After this subsampling step, we recompute the advantages using the remaining samples, which are then fed into the loss.

- RED-Weight: Each sample $i$ is weighted by $w_{i}=\exp({A_{i}}/{\tau})$ , where $A_{i}$ denotes its advantage estimate and $\tau>0$ is a temperature parameter controlling the sharpness of weighting. This scheme amplifies high-advantage samples while down-weighting low-advantage ones. We fix $\tau=1$ for all experiments.
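The two instantiations above can be sketched as follows. This is an illustrative reading rather than the released implementation: it assumes that a response counts as positive when its reward is strictly greater than zero (as with binary correctness rewards), and that the advantage $A_{i}$ used for weighting is the unnormalized $r_{i}-\overline{r}$. The function names `red_drop` and `red_weight` are hypothetical.

```python
import numpy as np

def red_drop(rewards, rng):
    """Randomly drop excess negatives so positives and negatives are balanced,
    then recompute advantages on the kept responses.

    Assumption: 'positive' means reward > 0 (e.g. binary correctness rewards).
    """
    rewards = np.asarray(rewards, dtype=float)
    pos = np.flatnonzero(rewards > 0)
    neg = np.flatnonzero(rewards <= 0)
    if len(neg) > len(pos):
        neg = rng.choice(neg, size=len(pos), replace=False)
    kept = np.sort(np.concatenate([pos, neg]))
    if kept.size == 0:  # group without positives: nothing is kept
        return kept, np.zeros(0)
    adv = rewards[kept] - rewards[kept].mean()
    return kept, adv

def red_weight(rewards, tau=1.0):
    """Weights w_i = exp(A_i / tau), with A_i = r_i - mean(r) assumed unnormalized.

    Returns the weights and the per-response coefficients w_i * (r_i - mean(r)).
    """
    rewards = np.asarray(rewards, dtype=float)
    adv = rewards - rewards.mean()
    weights = np.exp(adv / tau)
    return weights, weights * adv

# Illustrative group with one correct response out of six.
rng = np.random.default_rng(0)
kept, adv = red_drop([1.0, 0.0, 0.0, 0.0, 0.0, 0.0], rng)
weights, coeffs = red_weight([1.0, 0.0], tau=1.0)
```

In the example, RED-Drop keeps the single positive response together with one randomly chosen negative, so the recomputed advantages are $\pm 0.5$; RED-Weight assigns a weight above one to the positive response and below one to the negative response.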

<details>

<summary>x5.png Details</summary>

### Visual Description

## Charts: Training Performance Comparison

### Overview

The image presents a comparison of training performance for different reinforcement learning algorithms. It consists of two columns of charts, one labeled "on-policy" and the other "sync_interval = 20". Each column contains two charts: one displaying "Evaluation Accuracy" versus "Training Steps", and the other displaying "Training Reward" versus "Training Steps". Four algorithms are compared: REINFORCE, RED-Weight, RED-Drop, and REC-OneSide-NoIS (0.6, 2.0).

### Components/Axes

* **X-axis (both charts):** Training Steps, ranging from 0 to 150.

* **Y-axis (top charts):** Evaluation Accuracy, ranging from 0 to 0.8.

* **Y-axis (bottom charts):** Training Reward, ranging from 0 to 1.0.

* **Legend:** Located at the bottom center of the image.

* REINFORCE (Light Blue)

* RED-Weight (Orange)

* REC-OneSide-NoIS (0.6, 2.0) (Purple)

* RED-Drop (Brown)

### Detailed Analysis or Content Details

**On-Policy Column:**

* **Evaluation Accuracy:**

* REINFORCE (Light Blue): Starts at approximately 0.15, increases rapidly to around 0.65 by step 25, and plateaus around 0.7 with minor fluctuations.

* RED-Weight (Orange): Starts at approximately 0.15, increases to around 0.5 by step 25, and plateaus around 0.6 with minor fluctuations.

* REC-OneSide-NoIS (0.6, 2.0) (Purple): Starts at approximately 0.15, increases steadily to around 0.7 by step 50, and remains relatively stable around 0.7.

* RED-Drop (Brown): Starts at approximately 0.15, increases to around 0.5 by step 25, and plateaus around 0.6 with minor fluctuations.

* **Training Reward:**

* REINFORCE (Light Blue): Fluctuates around 0.8-0.9 throughout the training process.

* RED-Weight (Orange): Fluctuates around 0.8-0.9 throughout the training process.

* REC-OneSide-NoIS (0.6, 2.0) (Purple): Fluctuates around 0.7-0.9 throughout the training process, with a dip around step 25.

* RED-Drop (Brown): Fluctuates around 0.7-0.9 throughout the training process.

**Sync\_interval = 20 Column:**

* **Evaluation Accuracy:**

* REINFORCE (Light Blue): Starts at approximately 0.2, increases to around 0.5 by step 50, and then declines to around 0.2 by step 150.

* RED-Weight (Orange): Starts at approximately 0.4, increases to around 0.6 by step 50, and then declines to around 0.3 by step 150.

* REC-OneSide-NoIS (0.6, 2.0) (Purple): Starts at approximately 0.4, increases to around 0.7 by step 50, and remains relatively stable around 0.6-0.7.

* RED-Drop (Brown): Starts at approximately 0.4, increases to around 0.6 by step 50, and then declines sharply to around 0.1 by step 150.

* **Training Reward:**

* REINFORCE (Light Blue): Fluctuates around 0.7-0.8 throughout the training process.

* RED-Weight (Orange): Fluctuates around 0.8-0.9 throughout the training process.

* REC-OneSide-NoIS (0.6, 2.0) (Purple): Fluctuates around 0.7-0.8 throughout the training process.

* RED-Drop (Brown): Exhibits a large drop in reward around step 75, falling from approximately 0.8 to 0.0, and remains low for the rest of the training process.

### Key Observations

* In the "on-policy" setting, all algorithms achieve relatively high and stable evaluation accuracy and training reward. REINFORCE and REC-OneSide-NoIS perform slightly better in terms of evaluation accuracy.

* In the "sync\_interval = 20" setting, the performance of REINFORCE and RED-Drop deteriorates significantly over time, while REC-OneSide-NoIS maintains a relatively stable performance. RED-Weight also shows a decline, but less drastic than REINFORCE and RED-Drop.

* RED-Drop exhibits a catastrophic failure in the "sync\_interval = 20" setting, with a sudden drop in both evaluation accuracy and training reward around step 75.

### Interpretation

The charts demonstrate the impact of the synchronization interval on the training performance of different reinforcement learning algorithms. In the "on-policy" setting, where updates are applied immediately, all algorithms perform reasonably well. However, when a synchronization interval of 20 is introduced, the performance of REINFORCE and RED-Drop degrades significantly, suggesting that these algorithms are sensitive to delayed updates.

REC-OneSide-NoIS appears to be more robust to delayed updates, maintaining a stable performance even with a synchronization interval of 20. This is consistent with its one-side clipping, which regularizes policy updates; note that "NoIS" denotes the absence of importance-sampling weighting. The catastrophic failure of RED-Drop in the "sync\_interval = 20" setting suggests that this algorithm is particularly vulnerable to the effects of delayed updates, potentially due to instability in the learning process.

The difference in performance between the two settings highlights the importance of considering the synchronization interval when designing and training reinforcement learning algorithms. The choice of synchronization interval should be based on the specific characteristics of the algorithm and the environment.

</details>

Figure 5: Empirical performance of RED-Drop and RED-Weight on GSM8k with Qwen2.5-1.5B-Instruct, in both on-policy and off-policy settings. Training reward curves are smoothed with a running-average window of size 3.

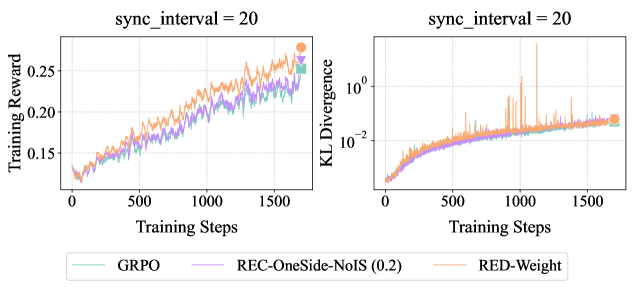

Experiments.

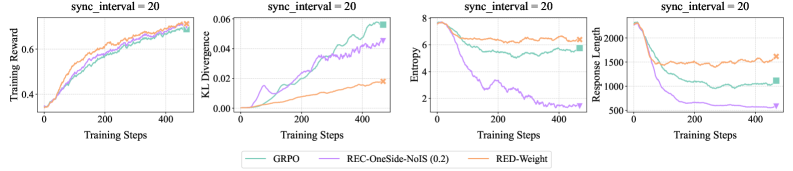

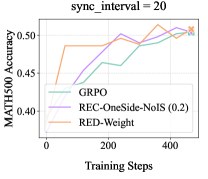

Figure 5 presents GSM8k results with Qwen2.5-1.5B-Instruct, which confirm the efficacy of RED-Drop and RED-Weight in on/off-policy settings, comparable to REC-OneSide-NoIS with enlarged $(\epsilon_{\textsf{low}},\epsilon_{\textsf{high}})$ . Figure 6 reports larger-scale experiments on Guru-Math with Qwen2.5-7B-Instruct, where RED-Weight achieves higher rewards than GRPO, with similar KL distance to the initial policy. Figure 7 further validates the efficacy of RED-Weight on MATH with Llama-3.1-8B-Instruct; compared to GRPO and REC-OneSide-NoIS, RED-Weight achieves higher rewards with lower KL divergence, while maintaining more stable entropy and response lengths.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Charts: Training Reward and KL Divergence vs. Training Steps

### Overview

The image presents two line charts, side-by-side, both with the title "sync_interval = 20". The left chart displays "Training Reward" against "Training Steps", while the right chart shows "KL Divergence" against "Training Steps". Both charts share the same x-axis (Training Steps) and display data for three different algorithms: GRPO, REC-OneSide-NoIS (0.2), and RED-Weight. A legend is positioned at the bottom of the image, identifying the color-coded lines for each algorithm.

### Components/Axes

* **Left Chart:**

* X-axis: "Training Steps" (Scale: 0 to 1600, increments of 100)

* Y-axis: "Training Reward" (Scale: 0.14 to 0.26, increments of 0.02, logarithmic scale is not used)

* **Right Chart:**

* X-axis: "Training Steps" (Scale: 0 to 1600, increments of 100)

* Y-axis: "KL Divergence" (Scale: 1e-2 to 1e0, logarithmic scale)

* **Legend:** Located at the bottom center of the image.

* GRPO: Light Green

* REC-OneSide-NoIS (0.2): Purple

* RED-Weight: Orange

### Detailed Analysis or Content Details

**Left Chart (Training Reward):**

* **GRPO (Light Green):** The line starts at approximately 0.155 at 0 Training Steps and generally slopes upward, reaching approximately 0.245 at 1600 Training Steps. There are fluctuations, but the overall trend is positive.

* **REC-OneSide-NoIS (0.2) (Purple):** The line begins at approximately 0.15 at 0 Training Steps and also slopes upward, reaching approximately 0.25 at 1600 Training Steps. It exhibits more pronounced fluctuations than GRPO.

* **RED-Weight (Orange):** The line starts at approximately 0.15 at 0 Training Steps and increases to approximately 0.26 at 1600 Training Steps. It shows the most significant fluctuations of the three algorithms.

**Right Chart (KL Divergence):**

* **GRPO (Light Green):** The line starts at approximately 0.02 at 0 Training Steps and decreases to approximately 0.01 at 1600 Training Steps. It remains relatively stable, with minor fluctuations.

* **REC-OneSide-NoIS (0.2) (Purple):** The line begins at approximately 0.02 at 0 Training Steps and decreases to approximately 0.01 at 1600 Training Steps. It is similar to GRPO in its trend and stability.

* **RED-Weight (Orange):** The line starts at approximately 0.02 at 0 Training Steps and initially increases sharply to approximately 0.1 at 200 Training Steps, then fluctuates significantly between approximately 0.01 and 0.08 before decreasing to approximately 0.02 at 1600 Training Steps. This line exhibits the most volatility.

### Key Observations

* All three algorithms show an increasing trend in Training Reward over time.

* RED-Weight consistently achieves the highest Training Reward, but also exhibits the greatest fluctuations.

* GRPO and REC-OneSide-NoIS (0.2) have similar Training Reward curves.

* KL Divergence decreases over time for GRPO and REC-OneSide-NoIS (0.2), indicating convergence.

* RED-Weight exhibits a highly unstable KL Divergence, with a large spike early in training.

### Interpretation

The charts demonstrate the performance of three reinforcement learning algorithms (GRPO, REC-OneSide-NoIS (0.2), and RED-Weight) during training. The increasing Training Reward suggests that all algorithms are learning to improve their performance. The higher Training Reward achieved by RED-Weight indicates that it may be the most effective algorithm, but its high KL Divergence and fluctuations suggest it may be less stable or prone to overfitting. The lower KL Divergence and more stable curves of GRPO and REC-OneSide-NoIS (0.2) suggest they may be more robust and generalize better. The "sync_interval = 20" indicates that the model parameters are synchronized every 20 training steps, which could influence the observed training dynamics. The spike in RED-Weight's KL Divergence at the beginning of training could indicate a significant update or change in the policy. The logarithmic scale on the KL Divergence chart emphasizes the relative magnitude of the fluctuations in RED-Weight.

</details>

Figure 6: Empirical results on Guru-Math with Qwen2.5-7B-Instruct. Training reward curves are smoothed with a running-average window of size 3.
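
The smoothing mentioned in the caption can be sketched as follows. This is only an illustration: the caption does not say whether the window is trailing or centered, so a trailing window is assumed here, and the function name `running_average` is hypothetical rather than taken from the released code.

```python
def running_average(values, window=3):
    """Smooth a curve with a trailing running average.

    Assumption: the window covers the current point and up to
    `window - 1` preceding points; early points use a shorter window.
    """
    smoothed = []
    for i in range(len(values)):
        start = max(0, i - window + 1)
        chunk = values[start:i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


# Toy reward curve (made-up numbers, not taken from any experiment).
rewards = [1.0, 2.0, 3.0, 4.0]
smoothed_rewards = running_average(rewards, window=3)
```

With a window of size 3, each plotted point averages at most three consecutive raw values, so step-to-step noise is damped while the overall trend is preserved.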

<details>

<summary>x7.png Details</summary>

### Visual Description

## Chart: Training Performance Metrics

### Overview

The image presents four line charts displaying training performance metrics over training steps, all with a `sync_interval` of 20. The metrics are Training Reward, KL Divergence, Entropy, and Response Length. Each chart plots the performance of three different methods: GRPO, REC-OneSide-NoIS (0.2), and RED-Weight.

### Components/Axes

* **X-axis (all charts):** Training Steps, ranging from 0 to approximately 450-500.

* **Y-axis (Chart 1):** Training Reward, ranging from 0 to approximately 8.

* **Y-axis (Chart 2):** KL Divergence, ranging from 0 to approximately 0.06.

* **Y-axis (Chart 3):** Entropy, ranging from 0 to approximately 8.

* **Y-axis (Chart 4):** Response Length, ranging from approximately 500 to 2000.

* **Legend (bottom-center):**

* GRPO (Green)

* REC-OneSide-NoIS (0.2) (Purple)

* RED-Weight (Orange)

* **Title (top of each chart):** `sync_interval = 20`

### Detailed Analysis or Content Details

**Chart 1: Training Reward**

* **GRPO (Green):** Line slopes upward, starting at approximately 0 at step 0, reaching approximately 7.5 at step 450.

* **REC-OneSide-NoIS (0.2) (Purple):** Line slopes upward, starting at approximately 0 at step 0, reaching approximately 6.5 at step 450.

* **RED-Weight (Orange):** Line slopes upward, starting at approximately 0 at step 0, reaching approximately 7.0 at step 450.

**Chart 2: KL Divergence**

* **GRPO (Green):** Line slopes upward, starting at approximately 0.01 at step 0, reaching approximately 0.05 at step 450.

* **REC-OneSide-NoIS (0.2) (Purple):** Line slopes upward, starting at approximately 0.02 at step 0, reaching approximately 0.04 at step 450.

* **RED-Weight (Orange):** Line slopes upward, starting at approximately 0.005 at step 0, reaching approximately 0.03 at step 450.

**Chart 3: Entropy**

* **GRPO (Green):** Line initially decreases from approximately 7.5 at step 0 to approximately 2.5 at step 100, then fluctuates around 2.5 to 3.5 until step 450.

* **REC-OneSide-NoIS (0.2) (Purple):** Line slopes downward, starting at approximately 6 at step 0, reaching approximately 1.5 at step 450.

* **RED-Weight (Orange):** Line initially decreases from approximately 7.5 at step 0 to approximately 2.5 at step 100, then fluctuates around 2.5 to 3.5 until step 450.

**Chart 4: Response Length**

* **GRPO (Green):** Line slopes downward, starting at approximately 1800 at step 0, reaching approximately 1000 at step 450.

* **REC-OneSide-NoIS (0.2) (Purple):** Line slopes downward, starting at approximately 1700 at step 0, reaching approximately 700 at step 450.

* **RED-Weight (Orange):** Line slopes downward, starting at approximately 1900 at step 0, reaching approximately 1200 at step 450.

### Key Observations

* All three methods show increasing Training Reward over time.

* KL Divergence increases for all methods, indicating that the learned policy moves progressively further from the initial model.

* Entropy decreases for all methods, suggesting the model is becoming more confident in its predictions.

* Response Length decreases for all methods, indicating the model is generating shorter responses.

* The GRPO and RED-Weight methods exhibit similar behavior in Training Reward and Entropy.

* REC-OneSide-NoIS (0.2) consistently has the lowest Response Length.

### Interpretation

The charts demonstrate the training dynamics of three different methods. The increasing Training Reward suggests that all methods are learning to improve their performance. The increasing KL Divergence indicates that the models are diverging from the prior distribution, which could be a sign of overfitting or learning a more specialized distribution. The decreasing Entropy suggests that the models are becoming more certain in their predictions, which is generally desirable. The decreasing Response Length suggests that the models are learning to generate more concise responses.

The similarities between GRPO and RED-Weight suggest that they may be based on similar principles or have similar inductive biases. The consistently shorter Response Length of REC-OneSide-NoIS (0.2) could be due to its specific regularization or training objective. The `sync_interval = 20` parameter likely controls the frequency of synchronization between different components of the training process, and its value of 20 appears to be suitable for all three methods. Further analysis would be needed to determine the optimal value for this parameter.

</details>

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: MATH500 Accuracy vs. Training Steps

### Overview

This line chart depicts the MATH500 accuracy of three different models (GRPO, REC-OneSide-NoIS (0.2), and RED-Weight) as a function of training steps. The chart is labeled with a `sync_interval = 20`.

### Components/Axes

* **X-axis:** Training Steps (ranging from 0 to 500, with markers at 0, 200, and 400)

* **Y-axis:** MATH500 Accuracy (ranging from 0.40 to 0.52, with markers at 0.40, 0.45, 0.50)

* **Legend:** Located in the bottom-left corner.

* GRPO (Light Green Line)

* REC-OneSide-NoIS (0.2) (Purple Line)

* RED-Weight (Orange Line)

### Detailed Analysis

* **GRPO (Light Green Line):** The line starts at approximately 0.43 at 0 training steps. It increases to around 0.48 at 200 training steps, then decreases to approximately 0.47 at 400 training steps, and finally reaches around 0.50 at 500 training steps.

* **REC-OneSide-NoIS (0.2) (Purple Line):** The line begins at approximately 0.40 at 0 training steps. It rises steadily to around 0.47 at 200 training steps, continues to increase to approximately 0.51 at 400 training steps, and then slightly decreases to around 0.50 at 500 training steps.

* **RED-Weight (Orange Line):** The line starts at approximately 0.48 at 0 training steps. It initially decreases to around 0.47 at 100 training steps, then increases to approximately 0.51 at 300 training steps, decreases to around 0.49 at 400 training steps, and finally reaches approximately 0.51 at 500 training steps.

### Key Observations

* All three models show an increasing trend in MATH500 accuracy as training steps increase.

* The REC-OneSide-NoIS (0.2) model appears to achieve the highest accuracy at 400 training steps, reaching approximately 0.51.

* The RED-Weight model starts with the highest accuracy at 0 training steps, but its performance fluctuates more than the other two models.

* The GRPO model has the slowest initial increase in accuracy.

### Interpretation

The chart demonstrates the learning progress of three different methods on the MATH500 dataset. The `sync_interval = 20` suggests that model parameters are synchronized every 20 training steps, which could influence the observed performance. The REC-OneSide-NoIS (0.2) method reaches approximately 0.51 accuracy at 400 training steps, while RED-Weight reaches approximately 0.51 at both 300 and 500 training steps. The fluctuations in the RED-Weight curve might indicate sensitivity to the training process or a need for parameter tuning. The overall upward trend for all methods suggests that continued training could lead to further improvements in accuracy. The apparent difference in accuracy at the first plotted point cannot be attributed to different initialization or pre-training, since all three runs start from Llama-3.1-8B-Instruct.

</details>

Figure 7: Comparison of RED-Weight, REC-OneSide-NoIS, and GRPO on MATH with Llama-3.1-8B-Instruct. Reported metrics for training include reward, KL distance to the initial model, entropy, and response length. We also report evaluation accuracy on the MATH500 subset.

5 Related works

Off-policy RL for LLMs has been studied from various perspectives. Importance sampling has long been considered a foundational mechanism for off-policy RL; besides PPO and GRPO, recent extensions include GSPO (Zheng et al., 2025) and GMPO (Zhao et al., 2025), which work with sequence-wise probability ratios, CISPO (Chen et al., 2025), which clips probability ratios rather than token updates, and decoupled PPO (Fu et al., 2025a), which adapts PPO to asynchronous RL, among others. AsymRE (Arnal et al., 2025) offers an alternative baseline-shift approach (with ad-hoc analysis for discrete bandit settings), while OPMD (Kimi-Team, 2025b) overlaps with our analysis up to Eq. (4) before diverging, as discussed earlier in Section 4.2. Contrastive Policy Gradient (Flet-Berliac et al., 2024) overlaps with our analysis up to Eq. (6), but it requires paired responses within the same micro-batch in order to optimize the pairwise surrogate loss, which makes it less infrastructure-friendly than REINFORCE variants. Other perspectives include the learning dynamics of DPO and SFT (Ren and Sutherland, 2025), training offline loss functions with negative gradients on on-policy data (Tajwar et al., 2024), and improving the generalization of SFT via probability-aware rescaling (Wu et al., 2025). Another line of research integrates expert data into online RL (Yan et al., 2025; Zhang et al., 2025c; Fu et al., 2025b). Our work contributes complementary perspectives to this growing toolkit for off-policy LLM-RL.
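
To make the distinction between token-wise and sequence-wise probability ratios concrete, the following minimal sketch computes both for a single response. It assumes that the sequence-wise ratio is the length-normalized geometric mean of the token-wise ratios, which is one common choice and not necessarily the exact form used by every method cited above. The function names, the toy log-probabilities, and the clipping range of 0.2 are hypothetical.

```python
import math


def token_ratios(logp_new, logp_old):
    """Token-wise ratios pi_new(token) / pi_old(token), one per token."""
    return [math.exp(n - o) for n, o in zip(logp_new, logp_old)]


def sequence_ratio(logp_new, logp_old):
    """Sequence-wise ratio, assumed here to be the geometric mean of
    the token-wise ratios (exp of the mean log-ratio)."""
    diffs = [n - o for n, o in zip(logp_new, logp_old)]
    return math.exp(sum(diffs) / len(diffs))


def clip_ratio(ratio, eps_low=0.2, eps_high=0.2):
    """Clip a probability ratio to [1 - eps_low, 1 + eps_high]."""
    return min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)


# Toy per-token log-probabilities for one 3-token response (made up).
logp_new = [-1.0, -0.5, -2.0]
logp_old = [-1.2, -0.5, -1.0]

per_token = token_ratios(logp_new, logp_old)
per_sequence = sequence_ratio(logp_new, logp_old)
clipped_tokens = [clip_ratio(r) for r in per_token]
clipped_sequence = clip_ratio(per_sequence)
```

In this toy example, token-wise clipping treats each of the three tokens separately, whereas sequence-wise clipping applies a single decision to the whole response, so one outlier token affects every token's weight in the sequence-wise case.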

6 Limitations and future work