# A Formal Comparison Between Chain of Thought and Latent Thought

**Authors**: Kevin Xu, Issei Sato

## Abstract

Chain of thought (CoT) elicits reasoning in large language models by explicitly generating intermediate tokens. In contrast, latent thought reasoning operates directly in the continuous latent space, enabling computation beyond discrete linguistic representations. While both approaches exploit iterative computation, their comparative capabilities remain underexplored. In this work, we present a formal analysis showing that latent thought admits more efficient parallel computation than inherently sequential CoT. In contrast, CoT enables approximate counting and sampling through stochastic decoding. These separations suggest the tasks for which depth-driven recursion is more suitable, thereby offering practical guidance for choosing between reasoning paradigms.

Machine Learning, ICML

## 1 Introduction

Transformer-based large language models (LLMs) (Vaswani et al., 2017) have shown strong performance across diverse tasks and have recently been extended to complex reasoning. Rather than directly predicting final answers, generating intermediate reasoning steps, known as chain of thought (CoT) (Wei et al., 2022), enhances reasoning abilities. This naturally raises the question: why is CoT effective for complex tasks? Recent studies have approached this question by framing reasoning as a computational problem and analyzing its complexity (Feng et al., 2023; Merrill and Sabharwal, 2024; Li et al., 2024; Nowak et al., 2024), showing that CoT improves performance by increasing the model’s effective depth through iterative computation, thereby enabling the solution of problems that would otherwise be infeasible.

As an alternative to CoT, recent work has explored latent thought, which reasons directly in the hidden state space rather than in the discrete token space. This paradigm includes chain of continuous thought (Coconut) (Hao et al., 2025), which replaces next tokens with hidden states, and the looped Transformer (looped TF), in which output hidden states are iteratively fed back as inputs (Dehghani et al., 2019). Such iterative architectures have been shown to enhance expressivity: Coconut enables the simultaneous exploration of multiple traces (Zhu et al., 2025a; Gozeten et al., 2025), while looped TF satisfies universality (Giannou et al., 2023; Xu and Sato, 2025). Empirically, looped TF achieves competitive performance with fewer parameters (Csordás et al., 2024; Bae et al., 2025; Zhu et al., 2025b), and improves reasoning performance (Saunshi et al., 2025).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Computational Approach Comparison

### Overview

The diagram compares two reasoning paradigms, **"Chain of thought"** (blue) and **"Latent thought"** (orange), along two axes: parallel computation and approximate counting. Each paradigm receives a bound on each axis, and the bounds are annotated with the lemmas and theorems that establish them.

### Components/Axes

- **Color Coding**:

- Blue: Chain of thought

- Orange: Latent thought

- **Rows**:

1. **Parallel computation**:

- Labels:

- "Upper bound Sequentially" (left box)

- "Exact bound Parallelizability" (right box)

- Arrows:

- "Thm. 3.12" (top-right)

- "Thm. 3.14, 3.15" (bottom-center)

2. **Approximate counting**:

- Labels:

- "Lower bound Stochasticity" (left box)

- "Upper bound Determinism" (right box)

- Arrows:

- "Lem. 4.3" (bottom-left)

- "Thm. 4.4, 4.5" (bottom-center)

### Detailed Analysis

- **Parallel computation**:

- Chain of thought is upper-bounded because of its sequentiality (left box), whereas latent thought receives an exact bound because of its parallelizability (right box, **Thm. 3.12**).

- The resulting separation in favor of latent thought is referenced as **Thm. 3.14, 3.15**.

- **Approximate counting**:

- Chain of thought receives a lower bound through stochasticity (left box, **Lem. 4.3**), whereas latent thought is upper-bounded because of its determinism (right box).

- The resulting separation in favor of chain of thought is referenced as **Thm. 4.4, 4.5**.

### Key Observations

1. **Duality**: Latent thought has the advantage in parallel computation, while chain of thought has the advantage in approximate counting.

2. **Theorem/Lemma References**: Each relationship is anchored to a specific lemma or theorem in the paper.

3. **No Numerical Data**: The diagram is conceptual, emphasizing structural relationships over quantitative values.

### Interpretation

The diagram summarizes the formal comparison developed in the paper:

- **Latent thought** supports efficient parallel computation with an exact characterization, while **chain of thought** is limited in this regime by its sequential nature.

- **Chain of thought** supports approximate counting through stochastic decoding, while deterministic **latent thought** is upper-bounded in this setting.

- The bounds are upper, lower, or exact bounds on expressive power, consistent with the caption of Figure 1.

- The absence of numerical data reflects the theoretical focus on expressivity rather than empirical performance metrics.

</details>

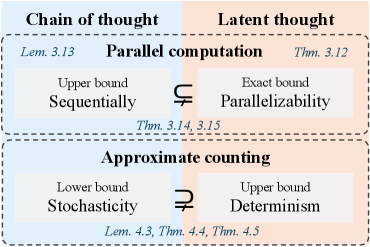

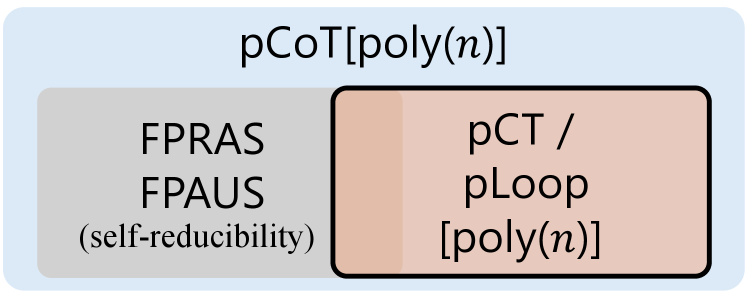

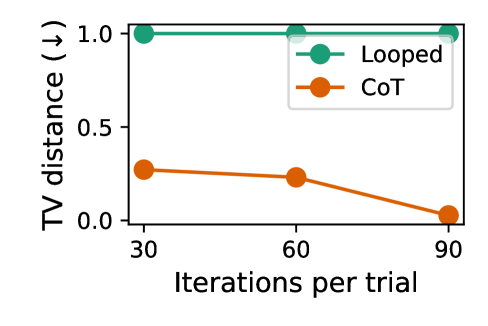

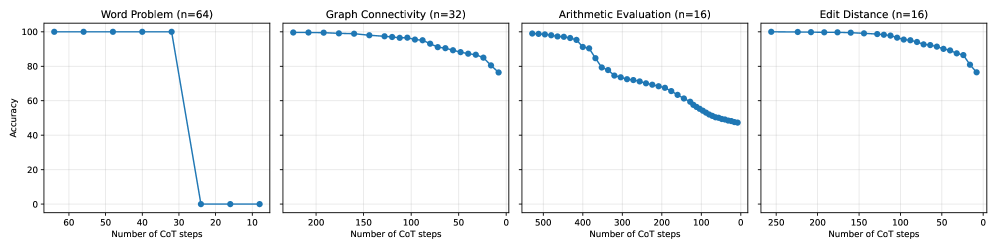

Figure 1: Overview of the formal comparison between chain of thought and latent thought reasoning with respect to parallel computation and approximate counting, providing upper, lower, or exact bounds that highlight their respective characteristics.

These reasoning paradigms share the core idea of iteratively applying Transformers to enhance expressive power, which naturally leads to a fundamental question:

What is the separation between chain of thought and latent thought?

Recent studies characterize how expressivity scales with the number of iterations. Specifically, it has been shown that looped TF subsumes deterministic CoT (Saunshi et al., 2025), and exhibits a strict separation with only a logarithmic number of iterations (Merrill and Sabharwal, 2025a). Nevertheless, fundamental questions remain open:

Does the separation extend beyond the logarithmic regime?

Is latent thought always more expressive than CoT?

### 1.1 Our Contributions

In this work, we address both questions by clarifying the respective strengths and limitations of the two reasoning paradigms through a formal complexity-theoretic analysis of their expressive power. Specifically, we show that latent thought gains efficiency from its parallelizability, yielding separations that extend beyond the logarithmic regime to any polylogarithmic number of iterations. In contrast, CoT benefits from stochasticity, which enables approximate counting. An overview is given in Fig. 1.

Latent thought enables parallel reasoning.

By formalizing decision problems as the evaluation of directed acyclic graphs (DAGs), we reveal the parallel computational capability of latent thought utilizing continuous hidden states. This analysis can be formalized by relating the class of decision problems realizable by the model to Boolean circuits. In particular, Boolean circuits composed of logic gates such as AND, OR, NOT, and Majority, with polylogarithmic depth $\log^{k}n$ for $k\in\mathbb{N}$ and input size $n$ , define the class $\mathsf{TC}^{k}$ , a canonical model of parallel computation. Circuit complexity plays a central role in analyzing the computational power of Transformer models: fixed-depth Transformers without CoT are known to be upper-bounded by $\mathsf{TC}^{0}$ (Merrill and Sabharwal, 2023), and subsequent studies analyze how CoT scales this computational power in terms of Boolean circuit complexity (Li et al., 2024). We show that latent thought with $\log^{k}n$ iterations exactly captures the power of $\mathsf{TC}^{k}$ (Thm. 3.12); in contrast, CoT with $\log^{k}n$ steps cannot realize the full power of $\mathsf{TC}^{k}$ (Lemma 3.13) due to its inherent sequentiality. This yields a strict separation in favor of latent thought in the polylogarithmic regime (Thm. 3.15), showing its efficiency in terms of the required number of iterations.

CoT enables approximate counting.

A counting problem is a fundamental task in mathematics and computer science that determines the number of solutions satisfying a given set of constraints, including satisfying assignments of Boolean formulas, graph colorings, and partition functions (Arora and Barak, 2009). While exact counting for the complexity class $\#\mathsf{P}$ is generally computationally intractable, approximation provides a feasible alternative. We show that CoT supports fully polynomial-time randomized approximation schemes ( $\mathsf{FPRAS}$ ), yielding reliable estimates even in cases where exact counting via deterministic latent thought reasoning is intractable (Lemma 4.3). Furthermore, leveraging classical results connecting approximate counting and sampling (Jerrum et al., 1986), we extend this separation to distribution modeling: there exist target distributions that CoT can approximately represent and sample from, but that remain inaccessible to latent thought (Theorem 4.4). To the best of our knowledge, this constitutes the first formal separation in favor of CoT.

## 2 Background

### 2.1 Models of Computation

We define a class of reasoning paradigms in which a Transformer block (Vaswani et al., 2017) is applied iteratively. Informally, CoT generates intermediate reasoning steps explicitly as tokens in an autoregressive manner. Formal definitions and illustrations are given in Appendix A.

**Definition 2.1 (CoT, following Merrill and Sabharwal (2024))**

*Let ${\mathcal{V}}$ be a vocabulary, and let $\mathrm{TF}_{\mathrm{dec}}:{\mathcal{V}}^{*}\to{\mathcal{V}}$ denote a decoder-only Transformer. Given an input sequence $x=(x_{1},\dots,x_{n})\in{\mathcal{V}}^{n}$ , the outputs of CoT are defined by

$$

f_{\mathrm{cot}}^{0}(x)\coloneq x,\quad f_{\mathrm{cot}}^{k+1}(x)\coloneq f_{\mathrm{cot}}^{k}(x)\cdot\mathrm{TF}_{\mathrm{dec}}(f_{\mathrm{cot}}^{k}(x)),

$$

where $\cdot$ denotes concatenation. We define the output to be the last tokens of $f_{\mathrm{cot}}^{T(n)}(x)\in{\mathcal{V}}^{\,n+T(n)}$ .*
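The recursion in Definition 2.1 can be sketched in a few lines of Python. This is only an illustration: `toy_xor_decoder` is a hypothetical stand-in for $\mathrm{TF}_{\mathrm{dec}}$ (it is not a Transformer), and the function and variable names are assumptions introduced here.

```python
from typing import Callable, List, Sequence

Token = str


def chain_of_thought(
    x: Sequence[Token],
    tf_dec: Callable[[Sequence[Token]], Token],
    num_steps: int,
) -> List[Token]:
    """Iterate f^{k+1}(x) = f^k(x) . TF_dec(f^k(x)) for num_steps steps."""
    sequence = list(x)  # f^0(x) = x
    for _ in range(num_steps):
        next_token = tf_dec(sequence)  # one decoder call on the current prefix
        sequence.append(next_token)    # concatenation with the new token
    return sequence


def toy_xor_decoder(prefix: Sequence[Token]) -> Token:
    """Hypothetical decoder: XOR of the last two tokens of the prefix."""
    return str(int(prefix[-1]) ^ int(prefix[-2]))


trace = chain_of_thought(["1", "0", "1"], toy_xor_decoder, num_steps=2)
print(trace)  # ['1', '0', '1', '1', '0']
```

The sequence grows by exactly one token per step, so after $T(n)$ steps it has length $n+T(n)$, matching the definition.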

Coconut feeds the final hidden state as the embedding of the next token. Although the original Coconut model (Hao et al., 2025) can generate both language tokens and hidden states, we focus exclusively on hidden state reasoning steps, in order to compare its representational power with that of CoT. Here, $\mathbb{F}$ denotes the set of finite-precision floating-point numbers, and $d\in\mathbb{N}$ denotes the embedding dimension.

**Definition 2.2 (Coconut)**

*Let ${\mathcal{V}}$ be a vocabulary and let $\mathrm{TF}^{\mathrm{Coconut}}_{\mathrm{dec}}:{\mathcal{V}}^{*}\times(\mathbb{F}^{d})^{*}\to\mathbb{F}^{d}$ be a decoder-only Transformer that maps a fixed token prefix together with a hidden state to the next hidden state. Given an input sequence $x=(x_{1},\dots,x_{n})\in{\mathcal{V}}^{n}$ , we define the hidden states recursively by

$$

h^{0}\coloneq\bigl(e(x_{i})\bigr)_{i=1}^{n},\quad h^{k+1}\coloneq\mathrm{TF}^{\mathrm{Coconut}}_{\mathrm{dec}}(x,h^{k}),

$$

where $e:{\mathcal{V}}\to\mathbb{F}^{d}$ denotes an embedding. The output after $T(n)$ steps is obtained by decoding a suffix of the hidden state sequence ending at $h^{T(n)}$ .*

Looped TFs, by contrast, feed the entire model output back into the input without generating explicit tokens, recomputing all hidden states of the sequence at every iteration.

**Definition 2.3 (Looped TF)**

*Let $\mathrm{TF}:\mathbb{F}^{d\times*}\to\mathbb{F}^{d\times*}$ denote a Transformer block. Given an input sequence $x=(x_{1},\dots,x_{n})\in{\mathcal{V}}^{n}$ , the outputs are defined recursively by

$$

f_{\mathrm{loop}}^{0}(x)\coloneq\bigl(e(x_{i})\bigr)_{i=1}^{n},\quad f_{\mathrm{loop}}^{k+1}(x)\coloneq\mathrm{TF}(f_{\mathrm{loop}}^{k}(x)),

$$

where $e:{\mathcal{V}}\to\mathbb{F}^{d}$ denotes an embedding. The output after $T(n)$ loop iterations is the decoded last tokens of $f_{\mathrm{loop}}^{T(n)}(x)$ .*
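A minimal sketch of the looped recursion in Definition 2.3 is given below. The embedding and the block are toy placeholders chosen for readability, not a Transformer: `toy_embed` and `toy_block` are hypothetical names, and the block simply adds each position's left neighbor to it.

```python
from typing import Callable, List, Sequence

Vector = List[float]
Hidden = List[Vector]  # one d-dimensional vector per sequence position


def looped_tf(
    x: Sequence[str],
    embed: Callable[[str], Vector],
    tf_block: Callable[[Hidden], Hidden],
    num_loops: int,
) -> Hidden:
    """Iterate f^{k+1}(x) = TF(f^k(x)), starting from the embedded input."""
    hidden = [embed(token) for token in x]  # f^0(x)
    for _ in range(num_loops):
        hidden = tf_block(hidden)  # every position is recomputed each loop
    return hidden


def toy_embed(token: str) -> Vector:
    """Hypothetical embedding with d = 1."""
    return [float(token)]


def toy_block(hidden: Hidden) -> Hidden:
    """Hypothetical block: add the left neighbor's value to each position."""
    return [
        [h[0] + (hidden[i - 1][0] if i > 0 else 0.0)]
        for i, h in enumerate(hidden)
    ]


out = looped_tf(["1", "1", "1"], toy_embed, toy_block, num_loops=2)
print(out)  # [[1.0], [3.0], [4.0]]
```

Unlike the CoT recursion, the sequence length stays fixed and no tokens are emitted between iterations; only the hidden states change.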

Here, we assume that the input for looped TF may include sufficient padding so that its length is always at least as large as the output length, as in (Merrill and Sabharwal, 2025b). The definitions of the models describe their core architectures; the specific details may vary depending on the tasks to which they are applied.

Table 1: Comparison between prior theoretical analyses and our work on the computational power of CoT, Coconut, and looped TF.


### 2.2 Related Work

To understand the expressive power of Transformers, previous work studies which classes of problems can be solved and with what computational efficiency. These questions can be naturally analyzed within the framework of computational complexity theory. Such studies on CoT and latent thought are summarized below and in Table 1.

Computational power of chain of thought.

The expressivity of Transformers is limited by bounded depth (Merrill and Sabharwal, 2023), whereas CoT enhances their expressiveness by effectively increasing the number of sequential computational steps, enabling the solution of problems that would otherwise be intractable for fixed-depth architectures (Feng et al., 2023). Recent work has investigated how the expressivity of CoT scales with the number of reasoning steps, formalizing CoT for decision problems and computational complexity classes (Merrill and Sabharwal, 2024; Li et al., 2024). Beyond decision problems, CoT has been further formalized in a probabilistic setting for representing probability distributions over strings (Nowak et al., 2024).

Computational power of latent thought.

Latent thought is an alternative paradigm for increasing the number of computational steps without being constrained to the language space, with the potential to enhance model expressivity. In particular, Coconut has been shown to enable the simultaneous exploration of multiple candidate reasoning traces (Zhu et al., 2025a; Gozeten et al., 2025). Looped TFs can simulate iterative algorithms (Yang et al., 2024; de Luca and Fountoulakis, 2024) and, more generally, realize polynomial-time computations (Giannou et al., 2023). Recent results further demonstrate advantages over chain of thought reasoning: looped TFs can subsume the class of deterministic computations realizable by CoT using the same number of iterations (Saunshi et al., 2025), and exhibit a strict separation within the same logarithmic iterations (Merrill and Sabharwal, 2025a). Concurrent work (Merrill and Sabharwal, 2025b) further establishes that looped TFs, when equipped with padding and a polylogarithmic number of loops, are computationally equivalent to uniform $\mathsf{TC}^{k}$ .

## 3 Latent Thought Enables Parallel Reasoning

We formalize the reasoning problem as a graph evaluation problem. Section 3.2 illustrates how each model approaches the same problem differently, providing intuitive insight into their contrasting capabilities. Building on these observations, Section 3.3 characterizes their expressive power and establishes a formal separation between them.

### 3.1 Problem Setting

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Computation Graph and Simulation Models

### Overview

The image presents three interconnected diagrams illustrating computational processes:

1. **(a) Computation Graph (DAG)**: A directed acyclic graph (DAG) with nodes labeled `x1–x5` (inputs) and `y1–y4` (outputs), connected by operations (`+`, `-`, `×`, `÷`).

2. **(b) Latent Thought Simulation**: A layer-by-layer simulation of the DAG, showing iterative processing with grayed-out nodes.

3. **(c) Chain-of-Thought Simulation**: A sequential, node-by-node execution of operations, represented as a linear chain.

### Components/Axes

- **Nodes**:

- Inputs: `x1`, `x2`, `x3`, `x4`, `x5` (blue circles).

- Outputs: `y1`, `y2`, `y3`, `y4` (black circles).

- **Operations**:

- Arithmetic: `+`, `-`, `×`, `÷` (labeled on edges).

- Set of operations: `Fn = {+, −, ×, ÷}`.

- **Annotations**:

- Size: `12` (number of nodes in the DAG, i.e., $\mathrm{size}(G_{n})$).

- Depth: `2` (length of the longest path from an input to an output, i.e., $\mathrm{depth}(G_{n})$).

- Labels: `iter` (iteration steps in latent thought), `step` (sequential steps in Chain-of-Thought).

### Detailed Analysis

- **Computation Graph (DAG)**:

- Nodes `x1–x5` are connected to `y1–y4` via operations. For example:

- `x1 +6` → `y1`

- `x2 ×7` → `y2`

- `x3 ÷8` → `y3`

- `x4 ×12` → `y4`

- The graph has size 12 and depth 2, indicating two layers of computation.

- **Latent Thought Simulation**:

- Nodes are processed in layers, with `iter` labels indicating sequential iterations.

- Grayed-out nodes (`x4`, `x5`) suggest partial or deferred computation.

- **Chain-of-Thought Simulation**:

- A linear sequence of operations:

`x1 → + → y1 → × → y2 → − → y3 → × → y4`.

- Each `step` label represents a single operation, emphasizing sequential reasoning.

### Key Observations

1. **DAG Structure**: The graph is compact (size 12) with a shallow depth (2), suggesting efficient computation.

2. **Simulation Differences**:

- Latent thought emphasizes layer-wise processing (parallelism).

- Chain-of-Thought emphasizes step-by-step execution (serialism).

3. **Operation Distribution**:

- `+` and `×` appear most frequently in the DAG.

- `÷` is used only once (e.g., `x3 ÷8`).

### Interpretation

- **Computational Efficiency**: The DAG's depth (2) is much smaller than its size (12), so a layer-wise evaluation needs far fewer iterations than a node-wise one.

- **Simulation Trade-offs**:

- Latent thought (layer-by-layer) evaluates all nodes at the same depth in one iteration, so the number of iterations scales with $\mathrm{depth}(G_{n})$ .

- Chain-of-Thought (node-by-node) evaluates one node per step, so the number of steps scales with $\mathrm{size}(G_{n})$ .

- **Operation Roles**:

- The arithmetic operations `+`, `-`, `×`, `÷` are illustrative examples of node functions drawn from the set `Fn`.

The diagrams collectively illustrate how the same computation graph can be simulated layer by layer (parallel) or node by node (sequential), with the number of iterations governed by the depth and the size of the graph, respectively.

</details>

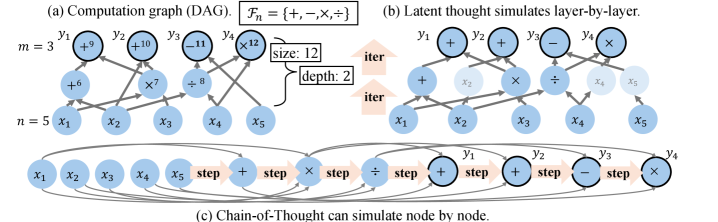

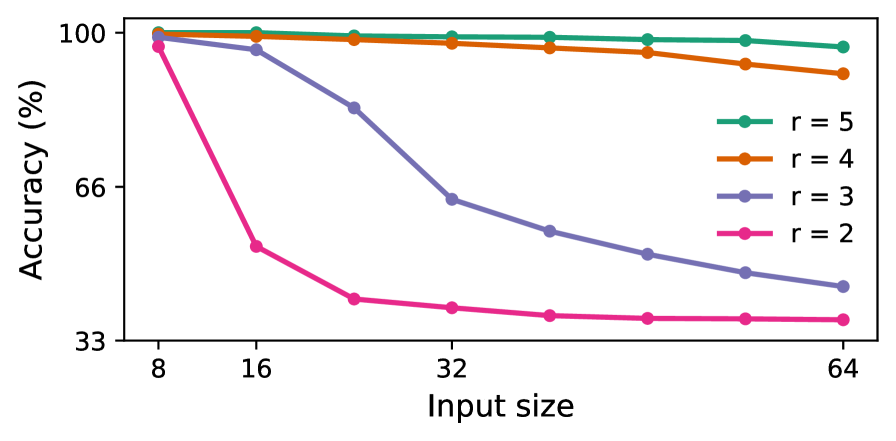

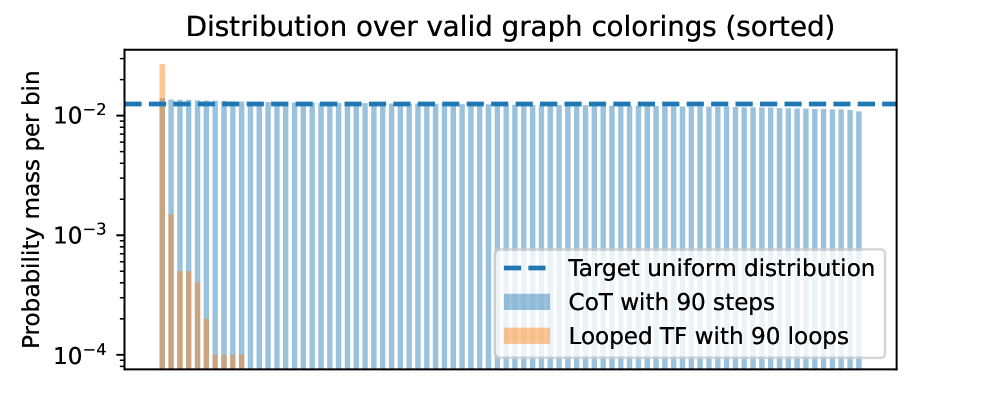

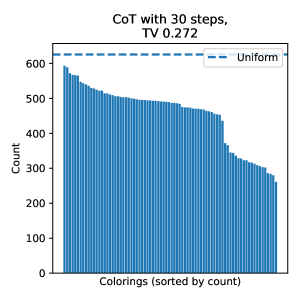

Figure 2: Comparison of reasoning paradigms for evaluating a DAG. (a) A computation graph $G_{n}$ . (b) Latent thought can simulate the computation layer by layer in parallel, using a number of loops equal to the depth of the graph, $\mathrm{depth}(G_{n})$ . (c) CoT can sequentially simulate the computation node by node, using a number of steps proportional to the size of the graph, $O(\mathrm{size}(G_{n}))$ .

Reasoning problems that can be solved by straight-line programs admit representations as directed acyclic graphs (DAGs) (Aho and Ullman, 1972), as illustrated in Fig. 2 (a).

**Definition 3.1 (Computation graph)**

*Let $\Sigma$ be a finite alphabet, and let $\mathcal{F}$ denote a finite set of functions $f:\Sigma^{*}\to\Sigma$ . A computation graph is a directed acyclic graph $G_{n}=(V_{n},E_{n})$ that defines a function $F_{G_{n}}:\Sigma^{n}\to\Sigma^{m(n)}$ , where $m(n)$ denotes the output length. Here $V_{n}$ denotes the set of nodes, consisting of (i) $n$ input nodes with in-degree $0$ , (ii) function nodes labeled by $f\in\mathcal{F}$ , which take as arguments the predecessor nodes specified by their incoming edges in $E_{n}$ , and (iii) $m(n)$ output nodes with out-degree $0$ . The overall function is obtained by evaluating the graph in topological order. The size of the graph is $|V_{n}|$ , denoted by $\mathrm{size}(G_{n})$ , and its depth is the length of the longest path from an input to an output node, denoted by $\mathrm{depth}(G_{n})$ .*
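To make Definition 3.1 concrete, the following sketch evaluates a small computation graph over $\Sigma=\{0,1\}$ in topological order and reports its size and depth. The dictionary encoding, the names `evaluate_graph` and `graph_nodes`, and the choice to treat output nodes as function nodes without successors are assumptions made for this illustration.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical encoding: function node name -> (node function, predecessor names).
FunctionNodes = Dict[str, Tuple[Callable[..., int], List[str]]]


def evaluate_graph(
    inputs: Dict[str, int],
    nodes: FunctionNodes,
    outputs: Sequence[str],
) -> Tuple[List[int], int, int]:
    """Return (output values, size, depth) of a computation graph."""
    preds = {name: set() for name in inputs}
    preds.update({name: set(p) for name, (_, p) in nodes.items()})

    values = dict(inputs)
    depth = {name: 0 for name in inputs}  # input nodes sit at depth 0
    # static_order() yields every node after all of its predecessors.
    for name in TopologicalSorter(preds).static_order():
        if name in inputs:
            continue
        fn, p = nodes[name]
        values[name] = fn(*(values[q] for q in p))
        depth[name] = 1 + max(depth[q] for q in p)

    size = len(inputs) + len(nodes)  # |V_n|
    return [values[o] for o in outputs], size, max(depth[o] for o in outputs)


graph_nodes: FunctionNodes = {
    "g1": (lambda a, b: a & b, ["x1", "x2"]),  # AND
    "g2": (lambda a, b: a | b, ["x2", "x3"]),  # OR
    "y": (lambda a, b: a ^ b, ["g1", "g2"]),   # XOR, the output node
}
result = evaluate_graph({"x1": 1, "x2": 1, "x3": 0}, graph_nodes, ["y"])
print(result)  # ([0], 6, 2)
```

Here the graph has six nodes (three inputs and three function nodes) and its longest input-to-output path has length two.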

Assumptions on models.

Our goal is to evaluate the computational efficiency of each model via an asymptotic analysis of how the required number of reasoning steps or loops scales with the input size $n$ . Beyond time complexity, we also allow the space complexity of the model to scale with the input size $n$ . In particular, the embedding dimension in Transformer blocks can be viewed as analogous to the number of processors in classical parallel computation models. Accordingly, we adopt a non-uniform computational model, in which a different model is allowed for each input size. This non-uniform setting is standard in the study of circuit complexity and parallel computation (Cook, 1985), and is consistent with prior analyses of Transformers and CoT (Sanford et al., 2024b; Li et al., 2024).

On the fairness of comparing steps and loops.

We analyze expressivity in terms of the number of reasoning steps. Although this may appear unfair in terms of raw computation, it is justified when comparing latency. Specifically, CoT benefits from KV caching, which makes each step computationally inexpensive; however, accessing cached states is typically memory-bound, leaving compute resources underutilized. In contrast, looped TFs recompute over the full sequence at each iteration, incurring higher arithmetic cost but achieving higher arithmetic intensity and better utilization of modern parallel hardware. As a result, the latency of looped TFs is comparable to that of CoT.

### 3.2 CoT Suffices with Size-scaled Steps and Latent Thought Suffices with Depth-scaled Iterations

We show how each model can evaluate DAGs, which provides a lower bound on their expressivity and offers intuition for the distinctions between the models, in terms of sequentiality and parallelizability. Before presenting our main result, we first state the underlying assumptions.

**Definition 3.2 (Merrill and Sabharwal, 2023)**

*The model is log-precision, where each scalar is stored with $O(\log n)$ bits and every arithmetic operation is rounded to that precision.*

**Assumption 3.3 (Poly-size graph)**

*$\mathrm{size}(G_{n})\in\mathsf{poly}(n)$ .*

**Assumption 3.4 (Poly-efficient approximation, cf. Feng et al., 2023)**

*Each node function of $G_{n}$ can be approximated by a log-precision feedforward network whose parameter size is polynomial in the input length and the inverse of the approximation error. We denote by $\mathrm{ff\_param}(G_{n})$ an upper bound such that every $f\in\mathcal{F}$ admits such a network with at most $\mathrm{ff\_param}(G_{n})$ parameters.*

Under these assumptions, we show that CoT can simulate computation by sequentially decoding nodes, where intermediate tokens serve as a scratchpad allowing the evaluation of each node once all its predecessors have been computed.

**Theorem 3.5 (CoT for DAGs)**

*Let $\{G_{n}\}_{n\in\mathbb{N}}$ be a family of computation graphs that satisfy Assumptions 3.3 and 3.4. Then, for each $n\in\mathbb{N}$ , there exists a log-precision CoT with parameter size bounded by $O(\mathrm{ff\_param}(G_{n}))$ , such that for every input $x\in\Sigma^{n}$ , the model outputs $F_{G_{n}}(x)$ with steps proportional to the “size” of the graph, i.e., $O(\mathrm{size}(G_{n}))$ .*

* Proof sketch*

At each step, the attention layer retrieves the outputs of predecessor nodes from previously generated tokens, and a feed-forward layer then computes the node function, whose output is generated as the next token. ∎

In contrast, latent thought can operate in parallel, layer by layer, where all nodes at the same depth are computed simultaneously, provided that the model has sufficient size.

**Theorem 3.6 (Latent thought for DAGs)**

*Let $\{G_{n}\}_{n\in\mathbb{N}}$ be a family of computation graphs that satisfy Assumptions 3.3 and 3.4. Then, for each $n\in\mathbb{N}$ , there exist a log-precision Coconut and a log-precision looped TF, each with parameter size $O(\mathrm{ff\_param}(G_{n})\cdot\mathrm{size}(G_{n}))$ , such that for every input $x\in\Sigma^{n}$ , each computes $F_{G_{n}}(x)$ with a number of iterations proportional to the “depth” of the graph $G_{n}$ , i.e., $O(\mathrm{depth}(G_{n}))$ .*

* Proof sketch*

The role assignment of each layer is based on (Li et al., 2024), as shown in Figure 9. An attention layer aggregates its inputs into a single hidden state. Unlike discrete tokens, continuous latent states allow the simultaneous encoding of the outputs of multiple nodes, enabling the feed-forward layer to compute node functions in parallel. ∎
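The contrast between Theorems 3.5 and 3.6 can be illustrated by counting iterations for the two evaluation schedules on the same graph. The sketch below only counts steps and does not simulate a Transformer; the helper names and the balanced-tree example are assumptions introduced for illustration.

```python
from typing import Dict, List, Tuple


def node_depths(preds: Dict[str, List[str]]) -> Dict[str, int]:
    """Depth of each node: 0 for inputs, else 1 + max depth of its predecessors."""
    depth: Dict[str, int] = {}

    def visit(v: str) -> int:
        if v not in depth:
            depth[v] = 0 if not preds[v] else 1 + max(visit(u) for u in preds[v])
        return depth[v]

    for v in preds:
        visit(v)
    return depth


def schedule_costs(preds: Dict[str, List[str]]) -> Tuple[int, int, Dict[int, List[str]]]:
    """Return (node-by-node steps, layer-by-layer iterations, layers)."""
    depth = node_depths(preds)
    function_nodes = [v for v in preds if preds[v]]
    layers: Dict[int, List[str]] = {}
    for v in function_nodes:
        layers.setdefault(depth[v], []).append(v)
    cot_steps = len(function_nodes)          # one decoded token per function node
    latent_iterations = max(depth.values())  # one iteration per layer
    return cot_steps, latent_iterations, layers


# Hypothetical example: a balanced binary tree over 8 inputs.
preds: Dict[str, List[str]] = {f"x{i}": [] for i in range(8)}
level = list(preds)
layer_index = 0
while len(level) > 1:
    layer_index += 1
    next_level = []
    for j in range(0, len(level), 2):
        name = f"g{layer_index}_{j // 2}"
        preds[name] = [level[j], level[j + 1]]
        next_level.append(name)
    level = next_level

cot_steps, latent_iterations, layers = schedule_costs(preds)
print(cot_steps, latent_iterations)  # 7 3
```

For this tree the node-by-node schedule needs seven steps while the layer-by-layer schedule needs three iterations, at the price of evaluating up to four nodes within a single iteration.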

Remark.

Illustrations are provided in Fig. 2, with formal proofs deferred to Appendix B. These results reveal distinct characteristics: CoT can utilize intermediate steps as scratchpad memory and perform computations sequentially, whereas latent thought can leverage structural parallelism to achieve greater efficiency with sufficient resources.

### 3.3 Separation in Polylogarithmic Iterations

In this section, we shift to formal decision problems to precisely characterize the computational power of each reasoning paradigm, clarify what cannot be achieved, and use these limitations to derive rigorous separations. We begin by defining their complexity classes, as in (Li et al., 2024).

**Definition 3.7 (Complexity classes $\mathsf{CoT}$, $\mathsf{CT}$, and $\mathsf{Loop}$)**

*Let $\mathsf{CoT}[T(n),d(n),s(n)]$ , $\mathsf{CT}[T(n),d(n),s(n)]$ , and $\mathsf{Loop}[T(n),d(n),s(n)]$ denote the sets of languages ${\mathcal{L}}:\{0,1\}^{*}\to\{0,1\}$ for which there exists a deterministic CoT, Coconut, and looped TF, respectively, denoted by $M_{n}$ for each input size $n$ , with embedding size $O(d(n))$ and $O(s(n))$ bits of precision, such that for all $x\in\{0,1\}^{n}$ , the final output token after $O(T(n))$ iterations equals ${\mathcal{L}}(x)$ .*

Boolean circuits serve as a standard formal model of computation, where processes are defined by the evaluation of DAGs with well-established complexity measures.

**Definition 3.8 (Informal)**

*A Boolean circuit is a DAG over the alphabet $\Sigma=\{0,1\}$ , where each internal node (gate) computes a Boolean function such as AND, OR, or NOT. $\mathsf{SIZE}[s(n)]$ and $\mathsf{DEPTH}[d(n)]$ denote the classes of languages decidable by non-uniform circuit families $\{C_{n}\}$ with size $O(s(n))$ and depth $O(d(n))$ , respectively.*

First, we formalize the results of the previous section to show that latent thought iterations can represent circuit depth, whereas CoT corresponds to circuit size.

**Theorem 3.9 (Li et al., 2024)**

*$\forall T(n)\in\mathrm{poly}(n),$

$$

\mathsf{SIZE}[T(n)]\subseteq\mathsf{CoT}[T(n),\log{n},1].

$$*

**Theorem 3.10**

*For any function $T(n)\in\mathrm{poly}(n)$ and any non-uniform circuit family $\{C_{n}\}$ , it holds that

$$

\mathsf{DEPTH}[T(n)]\subseteq\mathsf{Loop}[T(n),\mathrm{size}(C_{n}),1].

$$*

Boolean circuits serve as a formal model of parallel computations that run in polylogarithmic time using a polynomial number of processors (Stockmeyer and Vishkin, 1984).

**Definition 3.11**

*For each $k\in\mathbb{N}$ , the classes $\mathsf{NC}^{k}$ , $\mathsf{AC}^{k}$ , and $\mathsf{TC}^{k}$ consist of languages decidable by non-uniform circuit families of size $\mathsf{poly}(n)$ and depth $O(\log^{k}n)$ , using bounded-fanin Boolean gates, unbounded-fanin $\mathsf{AND}$ / $\mathsf{OR}$ gates, and threshold gates, respectively.*
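The gate types in Definition 3.11 can be illustrated with a short sketch. It shows that an unbounded-fanin threshold gate recovers AND, OR, and Majority by varying the threshold, which is the standard reason why $\mathsf{AC}^{k}\subseteq\mathsf{TC}^{k}$. The function names are chosen here for illustration.

```python
from typing import Sequence


def threshold_gate(bits: Sequence[int], k: int) -> int:
    """Output 1 iff at least k of the input bits are 1 (unbounded fan-in)."""
    return int(sum(bits) >= k)


def and_gate(bits: Sequence[int]) -> int:
    """AND is the threshold gate with k equal to the number of inputs."""
    return threshold_gate(bits, len(bits))


def or_gate(bits: Sequence[int]) -> int:
    """OR is the threshold gate with k = 1."""
    return threshold_gate(bits, 1)


def majority_gate(bits: Sequence[int]) -> int:
    """Majority: strictly more than half of the inputs are 1."""
    return threshold_gate(bits, len(bits) // 2 + 1)


bits = [1, 0, 1, 1, 0]
print(and_gate(bits), or_gate(bits), majority_gate(bits))  # 0 1 1
```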

We then characterize the exact computational power of latent thought in the parallel computation regime.

**Theorem 3.12**

*For each $k\in\mathbb{N},$ it holds that

$$

\mathsf{Loop}[\log^{k}n,\,\mathsf{poly}(n),1\ \textup{(resp.\ }\log n)]=\mathsf{AC}^{k}\ \textup{(resp.\ }\mathsf{TC}^{k}\textup{)}.

$$*

* Proof sketch*

The inclusion from circuits to latent thought follows from Theorem 3.10. For the converse inclusion, we build on the arguments of prior work (Merrill and Sabharwal, 2023; Li et al., 2024), which show that a fixed-depth Transformer block under finite precision is contained in $\mathsf{AC}^{0}$ (or $\mathsf{TC}^{0}$ under logarithmic precision). We extend their analysis to the looped setting, which can be unrolled into a $\mathsf{TC}^{k}$ circuit by composing a $\mathsf{TC}^{0}$ block for $\log^{k}n$ iterations. ∎

Moreover, we establish an upper bound on the power of CoT in the parallel computation regime. This limitation arises from the inherently sequential nature of CoT.

**Lemma 3.13**

*For each $k\in\mathbb{N},$ it holds that

$$

\mathsf{CoT}[\log^{k}{n},\mathsf{poly}(n),\log{n}]\subseteq\mathsf{TC}^{k-1}.

$$*

* Proof*

The total $\log^{k}n$ steps can be divided into $\log^{k-1}n$ blocks, each consisting of $\log n$ steps. Since $\mathsf{CoT}[\log n,\mathsf{poly}(n),\log n]\subseteq\mathsf{TC}^{0}$ (Li et al., 2024), each block with the previous block’s outputs fed as inputs to the next block can be simulated in $\mathsf{TC}^{0}$ ; iterating this over $\log^{k-1}n$ layers yields a circuit in $\mathsf{TC}^{k-1}$ . ∎
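The depth accounting behind this proof can be spelled out as follows. The constant $c$ and the polynomial $p(n)$ are names introduced here for illustration: $c$ bounds the depth and $p(n)$ the size of the $\mathsf{TC}^{0}$ circuit that simulates one block of $\log n$ CoT steps, whose input (the original input together with the previously generated tokens) has length at most $n+\log^{k}n\in\mathsf{poly}(n)$ .

$$

\#\text{blocks}=\frac{\log^{k}n}{\log n}=\log^{k-1}n,\qquad\mathrm{depth}\leq c\cdot\log^{k-1}n=O(\log^{k-1}n),\qquad\mathrm{size}\leq p(n)\cdot\log^{k-1}n\in\mathsf{poly}(n).

$$

Polynomial size and depth $O(\log^{k-1}n)$ with threshold gates is exactly the resource bound that defines $\mathsf{TC}^{k-1}$ in Definition 3.11.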

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Computational Complexity Relationships

### Overview

The diagram presents a hierarchical relationship between the model classes from Definition 3.7 (CT for Coconut, Loop for looped TF, CoT for chain of thought) and the threshold circuit classes TC^k, parameterized by the number of iterations. It uses three horizontal sections to illustrate how these classes relate across different polylogarithmic iteration counts.

### Components/Axes

1. **Top Section**

- Label: `CT / Loop[log^k(n)] = TC^k`

- Color: Light beige background

- Position: Topmost horizontal band

2. **Middle Section**

- Label: `CT / Loop[log^{k-1}(n)] = TC^{k-1}`

- Color: Light beige background

- Position: Middle horizontal band

3. **Bottom Section**

- Label: `CoT[log^k(n)] ⊆ TC^{k-1}`

- Color: Dark blue background

- Position: Bottom horizontal band

**Key Symbols**:

- `⊆` (subset symbol) in bottom section

- Exponent notation: `log^k(n)` and `log^{k-1}(n)`

- Class abbreviations: CT, Loop, CoT, TC

### Detailed Analysis

1. **Top Section**

- Equation: `CT / Loop[log^k(n)] = TC^k`

- Interpretation: Coconut (CT) and looped TF (Loop) with `log^k(n)` iterations decide exactly the languages in the threshold circuit class TC^k. The slash reads as "CT or Loop", not as division.

2. **Middle Section**

- Equation: `CT / Loop[log^{k-1}(n)] = TC^{k-1}`

- Interpretation: The same correspondence one level down: with `log^{k-1}(n)` iterations, latent thought captures exactly TC^{k-1}.

3. **Bottom Section**

- Equation: `CoT[log^k(n)] ⊆ TC^{k-1}`

- Interpretation: Chain of thought (CoT) with `log^k(n)` steps is contained in TC^{k-1}, one level below what latent thought achieves with the same number of iterations.

### Key Observations

- **Exponent Progression**: Reducing the number of latent thought iterations from `log^k(n)` to `log^{k-1}(n)` lowers the captured circuit class from TC^k to TC^{k-1}.

- **Subset Relationship**: The bottom section uses subset notation (`⊆`), so CoT is bounded by TC^{k-1} but not necessarily equal to it.

- **Color Coding**: The darker blue in the bottom section distinguishes CoT from the lighter beige sections for latent thought.

### Interpretation

The diagram illustrates the separation between latent thought and CoT under polylogarithmic iterations:

1. **Exact Characterization**: Latent thought with `log^k(n)` iterations corresponds exactly to TC^k.

2. **Inclusion Relationship**: CoT with `log^k(n)` steps is confined to TC^{k-1}, reflecting its sequential nature.

3. **Structural Implication**: If TC^{k-1} is strictly contained in TC^k, then latent thought is strictly more expressive than CoT at the same number of iterations.

This structure matches the caption of Figure 3 and the results of Section 3.3.

</details>

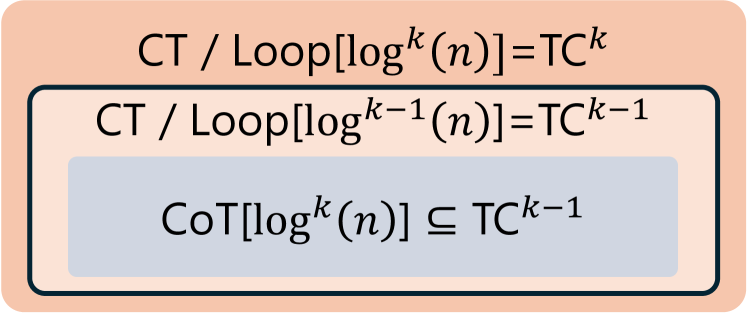

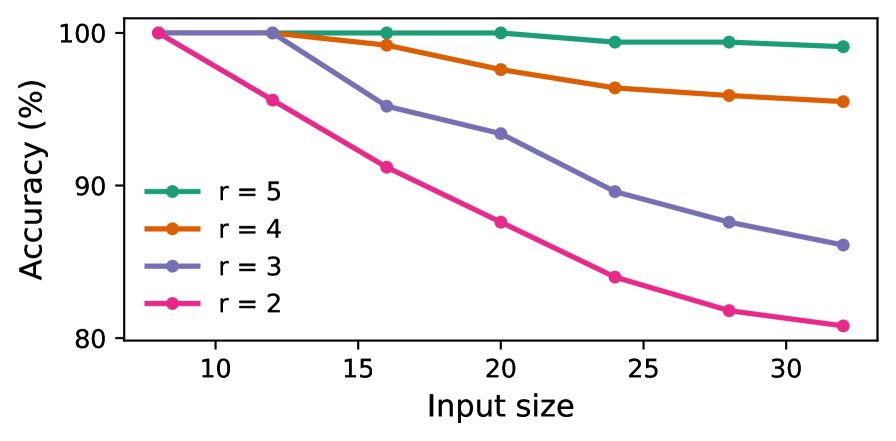

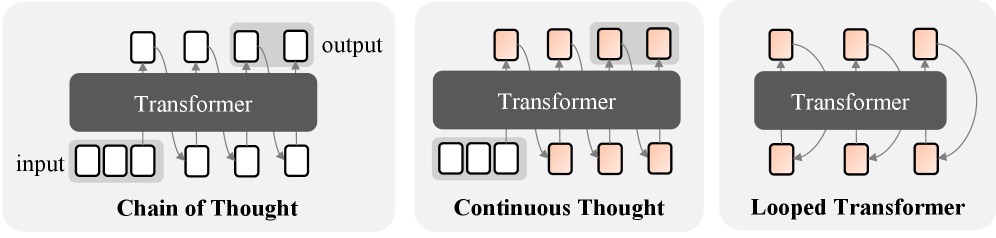

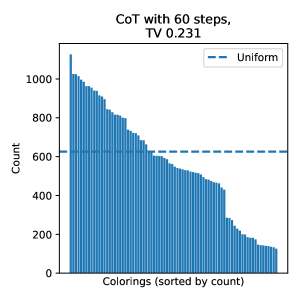

Figure 3: The separation between latent thought and CoT for decision problems, under polylogarithmic iterations.

These results lead to a separation in expressive power under standard complexity assumptions, as illustrated in Figure 3.

**Theorem 3.14**

*For each $k\in\mathbb{N}$, if $\mathsf{TC}^{k-1}\subsetneq\mathsf{NC}^{k}$, then

$$

\mathsf{CoT}[\log^{k}n,\mathsf{poly}(n),\log{n}]\subsetneq\mathsf{Loop}[\log^{k}n,\mathsf{poly}(n),1].

$$*

**Theorem 3.15**

*For each $k\in\mathbb{N}$, if $\mathsf{TC}^{k-1}\subsetneq\mathsf{TC}^{k}$, then

$$

\mathsf{CoT}[\log^{k}n,\mathsf{poly}(n),\log n]\subsetneq\mathsf{Loop}[\log^{k}n,\mathsf{poly}(n),\log n].

$$*

Remark.

The claims follow directly from Theorem 3.12 and Lemma 3.13. The established separations of the complexity classes, as summarized in Fig. 3, show that latent thought reasoning supports parallel solutions more efficiently than CoT, which is inherently sequential.
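As an illustrative instance (our own specialization, not stated in this form above), setting $k=1$ in Theorem 3.14 reads:

$$

\mathsf{TC}^{0}\subsetneq\mathsf{NC}^{1}\ \Longrightarrow\ \mathsf{CoT}[\log n,\mathsf{poly}(n),\log n]\subsetneq\mathsf{Loop}[\log n,\mathsf{poly}(n),1].

$$

Under this assumption, an $\mathsf{NC}^{1}$-complete problem such as the word problem for finite non-solvable groups (Section 5.1) lies outside $\mathsf{TC}^{0}$, which is the kind of task on which the separation would be expected to show.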

## 4 CoT Enables Approximate Counting

In the previous section, we showed that for decision problems, latent thought can yield more efficient solutions than CoT. This naturally raises the question of whether latent thought is universally more powerful than CoT. While prior work has shown that CoT can be simulated by looped Transformer models for deterministic decision problems under deterministic decoding (Saunshi et al., 2025), we found that this result does not directly extend to probabilistic settings with stochastic decoding. Accordingly, we shift our focus from efficiency in terms of the number of reasoning steps to expressive capability under polynomially many iterations.

### 4.1 Preliminaries

Approximate counting.

Formally, let $\Sigma$ be a finite alphabet and let $R\subseteq\Sigma^{*}\times\Sigma^{*}$ be a relation. For an input $x\in\Sigma^{*}$ , define $R(x):=\{\,y\in\Sigma^{*}\mid(x,y)\in R\,\},$ and the counting problem is to determine $|R(x)|$ . A wide class of natural relations admits a recursive structure, which allows solutions to be constructed from smaller subproblems.

**Definition 4.1 (Informal: Self-reducibility (Schnorr, 1976))**

*A relation $R$ is self-reducible if there exists a polynomial-time procedure that, given any input $x$ and prefix $y_{1:k}$ (with respect to a fixed output order), produces a sub-instance $\psi(x,y_{1:k})$ such that every solution $z$ of $\psi(x,y_{1:k})$ extends $y_{1:k}$ to a solution of $R(x)$ (and conversely), i.e., $R\bigl(\psi(x,y_{1:k})\bigr)=\{z\mid\ \mathrm{concat}(y_{1:k},z)\in R(x)\,\}.$*
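To make the definition concrete, the following minimal sketch checks the identity $R(\psi(x,y_{1:k}))=\{z\mid\mathrm{concat}(y_{1:k},z)\in R(x)\}$ by brute force on a toy relation. The choice of relation (satisfying assignments of a small CNF formula) and the helper names `solutions` and `psi` are our own illustrative assumptions, not part of the paper.

```python
from itertools import product

def solutions(clauses, n):
    """Brute-force R(x): satisfying assignments of a CNF over n variables.

    A clause is a list of non-zero ints; literal l > 0 means variable |l|
    is true, l < 0 means it is false (hypothetical encoding for this sketch).
    """
    out = set()
    for y in product((0, 1), repeat=n):
        if all(any((y[abs(l) - 1] == 1) == (l > 0) for l in c) for c in clauses):
            out.add(y)
    return out

def psi(clauses, n, prefix):
    """Sub-instance psi(x, y_{1:k}): fix the first k variables, renumber the rest."""
    k = len(prefix)
    reduced = []
    for c in clauses:
        new_clause, satisfied = [], False
        for l in c:
            v = abs(l)
            if v <= k:
                # Literal on a fixed variable: either satisfies the clause or drops out.
                if (prefix[v - 1] == 1) == (l > 0):
                    satisfied = True
                    break
            else:
                new_clause.append(v - k if l > 0 else -(v - k))
        if not satisfied:
            reduced.append(new_clause)  # an empty clause makes the sub-instance unsatisfiable
    return reduced, n - k
```

For any prefix, `solutions(*psi(clauses, n, prefix))` should equal the set of suffixes of full solutions that start with that prefix, which is exactly the set identity in the definition.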

While exact counting is intractable, there exist efficient randomized approximation algorithms (Karp and Luby, 1983).

**Definition 4.2 (FPRAS)**

*An algorithm is called a fully polynomial-time randomized approximation scheme (FPRAS) for a function $f$ if, for any $\varepsilon>0$ and $\delta>0$ , it outputs an estimate $\hat{f}(x)$ such that

$$

\Pr\!\left[(1-\varepsilon)f(x)\leq\hat{f}(x)\leq(1+\varepsilon)f(x)\right]\geq 1-\delta,

$$

and runs in time polynomial in $|x|$ , $1/\varepsilon$ , and $\log(1/\delta)$ .*

The class of counting problems that admit an FPRAS is denoted by $\mathsf{FPRAS}$ . Although randomized algorithms provide only probabilistic guarantees, they are often both more efficient and simpler than their deterministic counterparts, denoted by $\mathsf{FPTAS}$ (Definition C.11). For example, counting the number of satisfying assignments of a DNF formula admits an FPRAS based on Monte Carlo methods (Karp et al., 1989), whereas no FPTAS is known for this problem. Moreover, probabilistic analysis enables us to capture algorithmic behavior on typical instances arising in real-world applications (Mitzenmacher and Upfal, 2017).
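The Monte Carlo approach to DNF counting can be sketched as follows. This is a minimal coverage-style estimator in the spirit of Karp et al. (1989), written under our own assumptions: each term is a list of signed integers over distinct variables, and the function names are hypothetical. It is not the training or evaluation code used in the experiments.

```python
import random
from itertools import product

def term_satisfied(term, a):
    """True if assignment a (sequence of bools) satisfies every literal of the term."""
    return all(a[abs(l) - 1] == (l > 0) for l in term)

def exact_count(terms, n):
    """Brute-force number of satisfying assignments (exponential; for checking only)."""
    return sum(any(term_satisfied(t, a) for t in terms)
               for a in product((False, True), repeat=n))

def karp_luby(terms, n, samples, rng):
    """Coverage estimator for the number of satisfying assignments of a DNF."""
    # |S_i| = 2^(n - |term_i|), assuming each term mentions distinct variables.
    sizes = [2 ** (n - len(t)) for t in terms]
    total = sum(sizes)
    hits = 0
    for _ in range(samples):
        # Pick a term proportionally to |S_i|, then a uniform assignment satisfying it.
        i = rng.choices(range(len(terms)), weights=sizes)[0]
        a = [rng.random() < 0.5 for _ in range(n)]
        for l in terms[i]:
            a[abs(l) - 1] = (l > 0)
        # Count the sample only if term i is the first term that a satisfies,
        # so each satisfying assignment is counted under exactly one term.
        first = next(j for j, t in enumerate(terms) if term_satisfied(t, a))
        hits += (first == i)
    return total * hits / samples
```

The estimate is unbiased because each satisfying assignment is hit with probability `1 / total` under its first satisfied term. Calling `karp_luby(terms, n, samples, random.Random(0))` with a larger `samples` tightens the estimate around `exact_count(terms, n)`.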

Probabilistic models of computation.

In contrast to the deterministic models considered in the previous section, we now study probabilistic models that define a conditional distribution over output strings $y=(y_{1},\ldots,y_{m})\in\Sigma^{*}$ . We consider autoregressive next-token prediction of the form

$$

p(y\mid x)=\prod_{i=1}^{m}p(y_{i}\mid x,y_{<i}),

$$

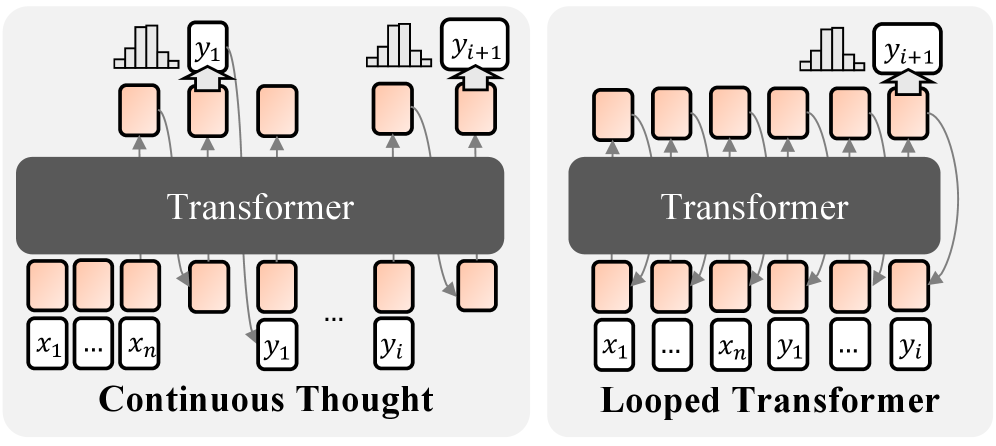

where the model is allowed to perform additional reasoning steps before producing each output token $y_{i}$ . This formulation was first used to formalize CoT for language modeling by Nowak et al. (2024). For latent thought, reasoning iterations are performed entirely in hidden space; no linguistic tokens are sampled except for the output token $y_{i}$ , as illustrated in Fig. 4. This definition is consistent with practical implementations (Csordás et al., 2024; Bae et al., 2025). Within this framework, we define complexity classes of probabilistic models, denoted by $\mathsf{pCoT}$ , $\mathsf{pCT}$ , and $\mathsf{pLoop}$ , respectively. Formal definitions are in Section C.1.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Transformer Architectures Comparison

### Overview

The image compares two Transformer-based architectures: "Continuous Thought" (left) and "Looped Transformer" (right). Both diagrams illustrate input-output relationships and processing flows within a Transformer model.

### Components/Axes

- **Central Block**: Labeled "Transformer" in both diagrams, representing the core processing unit.

- **Input Sequence**:

- Left diagram: Labeled `x₁ ... xₙ` (input tokens).

- Right diagram: Same input sequence, but with additional outputs (`y₁ ... yᵢ`) fed back into the input.

- **Output Sequence**:

- Left diagram: Labeled `y₁ ... yᵢ` (output tokens).

- Right diagram: Outputs `y₁ ... yᵢ` with a feedback loop connecting `yᵢ₊₁` back to the input.

- **Thought Process**:

- Left diagram: Labeled "Continuous Thought," showing sequential processing without feedback.

- Right diagram: Labeled "Looped Transformer," showing a circular feedback loop from `yᵢ₊₁` to `x₁`.

### Detailed Analysis

- **Continuous Thought**:

- Inputs (`x₁ ... xₙ`) flow unidirectionally through the Transformer to produce outputs (`y₁ ... yᵢ`).

- No feedback mechanism; processing stops after the final output.

- **Looped Transformer**:

- Outputs (`y₁ ... yᵢ`) are fed back into the input sequence via a looped connection (`yᵢ₊₁ → x₁`).

- Enables iterative processing, where outputs influence subsequent inputs.

### Key Observations

1. **Feedback Loop**: The Looped Transformer introduces a circular dependency between outputs and inputs, absent in the Continuous Thought model.

2. **Sequential vs. Iterative**: The left diagram represents a one-pass Transformer, while the right diagram supports multi-pass processing.

3. **Token Flow**: Both diagrams use identical input/output token labels (`x₁ ... xₙ`, `y₁ ... yᵢ`), but the looped architecture modifies the flow.

### Interpretation

The diagrams highlight a critical architectural difference:

- **Continuous Thought** models standard Transformer behavior, where inputs are processed once to generate outputs.

- **Looped Transformer** introduces a feedback mechanism, enabling recursive reasoning. This could allow the model to refine outputs iteratively, mimicking human-like "chain-of-thought" reasoning.

The looped architecture suggests potential applications in tasks requiring dynamic context updates, such as real-time decision-making or self-correcting language models. However, the added complexity may increase computational overhead compared to the simpler Continuous Thought design.

</details>

Figure 4: Probabilistic models of computation with latent thought. Each output token $y_{i}$ is generated autoregressively, with computation permitted in a continuous latent space prior to each token.

### 4.2 Separation in Approximate Counting

We first analyze the expressivity of the token-level conditional prediction at each step, $p(y_{i}\mid x,y_{<i})$ , and show that CoT is strictly more expressive than latent thought in this setting. The key distinction is whether intermediate computation permits sampling. CoT explicitly samples intermediate reasoning tokens, inducing stochastic computation and enabling the emulation of randomized algorithms. In contrast, latent thought performs only deterministic transformations in latent space, resulting in deterministic computation.

**Lemma 4.3 (Informal)**

*Assume that $\mathsf{FPTAS}\subsetneq\mathsf{FPRAS}$ for self-reducible relations. There exists a self-reducible relation $R$ and an associated function $f:\Sigma^{*}\times\Sigma^{*}\to\mathbb{N}$ defined by $f(x,y_{<i})\coloneqq|\{\,z\in\Sigma^{*}:(x,y_{<i}z)\in R\,\}|$ such that CoT with polynomially many steps admits an FPRAS for $f$, whereas no latent thought model with polynomially many iterations admits the same approximation guarantee.*

*Proof sketch.*

For self-reducible relations, approximating the counting function $f$ on subproblems is polynomial-time inter-reducible with approximating $|R(x)|$ (Jerrum et al., 1986). Because latent thought performs only deterministic computation, a latent thought model with polynomially many iterations that achieved this guarantee for $f$ would yield an FPTAS for $|R(x)|$, contradicting the assumption.∎

### 4.3 Separation in Approximate Sampling

We then move from token-level conditional distributions $p(y_{i}\mid x,y_{<i})$ to the full sequence-level distribution $p(y\mid x)$ . Beyond approximate counting, we establish a separation for approximate sampling problems. Specifically, we construct target distributions for which the complexity of each conditional can be reduced to approximate counting.

**Theorem 4.4**

*Assume that $\mathsf{FPTAS}\subsetneq\mathsf{FPRAS}$ for self-reducible relations. There exists a distribution $p(y\mid x)$ over $y\in\Sigma^{*}$ and $x\in\Sigma^{n}$ such that a CoT with a polynomial number of steps, whose induced output conditionals are denoted by $q(y_{i}\mid x,y_{<i})$, admits an FPRAS for approximating the conditional probabilities $p(y_{i}\mid x,y_{<i})$ for all $x\in\Sigma^{n}$, indices $i\geq 1$, and prefixes $y_{<i}\coloneqq(y_{1},\ldots,y_{i-1})$. In contrast, no latent thought model with polynomially many iterations admits the same approximation guarantee.*

*Proof sketch.*

Define the target distribution $p$ to be the uniform distribution supported on the solution set $R(x)$ of a self-reducible relation, denoted by $U(\cdot\mid x)$. We rely on the classical result that approximate sampling from the uniform distribution over solutions, captured by the class $\mathsf{FPAUS}$, is polynomial-time inter-reducible with approximate counting for self-reducible relations (Jerrum et al., 1986). This distribution admits an autoregressive factorization $U(y\mid x)=\prod_{i=1}^{m}p(y_{i}\mid x,y_{<i}),$ where each conditional probability is given by $p(y_{i}\mid x,y_{<i})=\frac{\bigl|\{\,z\in\Sigma^{*}:(x,y_{1:i}z)\in R\,\}\bigr|}{\bigl|\{\,z\in\Sigma^{*}:(x,y_{1:i-1}z)\in R\,\}\bigr|},$ that is, $f(x,y_{<i+1})/f(x,y_{<i})$ in the notation of Lemma 4.3. We show that each conditional probability, expressed as a ratio of subproblem counts, reduces to approximate counting. Then, applying Lemma 4.3 to these conditionals yields the desired separation for approximate sampling. ∎
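The factorization of the uniform distribution into ratios of completion counts can be checked on a small example. The sketch below assumes a toy relation of our own choosing (binary strings of length $m$ with no two adjacent ones) and uses exact brute-force counts in place of the approximate counts discussed in the proof. All function names are hypothetical.

```python
import random
from fractions import Fraction
from itertools import product

def is_solution(y):
    """Toy relation R(x): binary tuples with no two adjacent 1s (x is the length m)."""
    return all(not (a and b) for a, b in zip(y, y[1:]))

def count_completions(m, prefix):
    """f(x, prefix): number of suffixes z such that prefix + z is a solution."""
    return sum(is_solution(prefix + z)
               for z in product((0, 1), repeat=m - len(prefix)))

def conditional(m, prefix, token):
    """p(y_i = token | x, y_{<i}) as completions of the extended prefix over completions of the prefix."""
    return Fraction(count_completions(m, prefix + (token,)),
                    count_completions(m, prefix))

def sample(m, rng):
    """Draw one solution token by token from the exact conditionals."""
    y = ()
    for _ in range(m):
        p_one = float(conditional(m, y, 1))
        y += (1 if rng.random() < p_one else 0,)
    return y
```

Multiplying `conditional(m, y[:i], y[i])` over all positions of a solution `y` telescopes to `1 / count_completions(m, ())`, so every solution receives the same probability. Replacing the exact counts with approximate ones is where the randomized approximation scheme enters the argument.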

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: pCoT[poly(n)] Architecture

### Overview

The image presents a two-part diagram with a title "pCoT[poly(n)]" at the top. It visually separates two conceptual components: a gray rectangle labeled "FPRAS FPAUS (self-reducibility)" on the left and a pink rectangle labeled "pCT / pLoop [poly(n)]" on the right. The layout suggests a comparative or hierarchical relationship between these elements.

### Components/Axes

- **Title**: "pCoT[poly(n)]" (centered at the top)

- **Left Component**:

- Label: "FPRAS FPAUS"

- Sub-label: "(self-reducibility)" (in parentheses)

- **Right Component**:

- Label: "pCT / pLoop"

- Sub-label: "[poly(n)]" (in brackets)

- **Visual Structure**:

- Two horizontally aligned rectangles with distinct colors (gray and pink)

- No axes, scales, or numerical data present

### Detailed Analysis

- **Textual Elements**:

- All labels are explicitly stated with no ambiguity.

- The bracketed "[poly(n)]" and parenthetical "(self-reducibility)" indicate contextual modifiers.

- **Spatial Relationships**:

- Title is centered above both components.

- Left component (gray) is positioned to the left of the right component (pink).

- No overlapping or hierarchical layering between components.

### Key Observations

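1. The diagram contrasts two regions: approximate counting and sampling for self-reducible relations (FPRAS, FPAUS; left) and the latent thought classes pCT and pLoop with polynomially many iterations (right).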

2. The use of brackets and parentheses suggests nuanced distinctions within each component.

3. The title "pCoT[poly(n)]" implies a unifying framework or higher-level concept encompassing both components.

### Interpretation

This diagram depicts the separation for approximate counting and sampling. The title `pCoT[poly(n)]` denotes the class of probabilistic CoT models with polynomially many steps. The left component ("FPRAS FPAUS") marks approximate counting and sampling for self-reducible relations, where problems can be broken into smaller instances of the same problem. The right component ("pCT / pLoop") denotes the probabilistic latent thought classes (continuous thought and looped Transformer) with polynomially many iterations. The layout suggests that `pCoT[poly(n)]` covers both regions, while the latent thought classes do not cover the FPRAS/FPAUS region under the stated assumption.

</details>

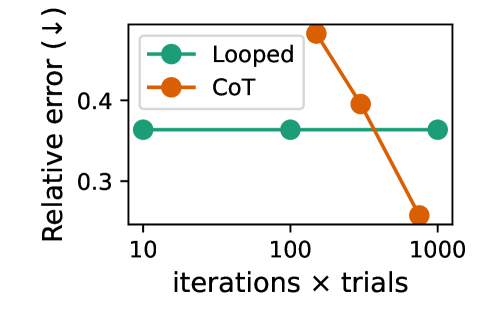

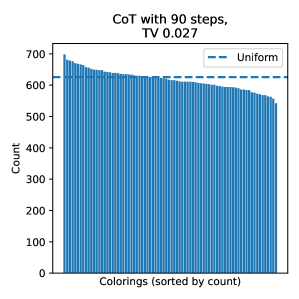

Figure 5: The separation for approximate counting (sampling).

Consequently, we obtain the following separations in favor of CoT, as also shown in Fig. 5.

**Theorem 4.5**

*Assuming $\mathsf{FPTAS}\subsetneq\mathsf{FPRAS}$ for self-reducible relations, it holds that

$$

\forall\,\mathcal{M}\in\{\mathsf{pCT},\mathsf{pLoop}\},\quad\mathcal{M}[\mathsf{poly}(n)]\subsetneq\mathsf{pCoT}[\mathsf{poly}(n)].

$$*

## 5 Experiments

In this section, we provide empirical validation of our theoretical results on tasks with well-characterized complexity. Specifically, we study parallelizable tasks to empirically validate the efficiency of latent thought predicted in Section 3, and approximate counting and sampling tasks to demonstrate the effectiveness of CoT as shown in Section 4.

### 5.1 Experimental Setting

Fundamental algorithmic reasoning tasks.

We use four problems. (1) Word problems for finite non-solvable groups: given a sequence of generators, the task is to evaluate their composition, which is $\mathsf{NC}^{1}$ -complete (Barrington, 1986), also studied for Looped TF (Merrill and Sabharwal, 2025a). (2) $s$ – $t$ connectivity (STCON): given a directed graph $G=(V,E)$ and two vertices $s,t\in V$ , the task is to decide whether $t$ is reachable from $s$ , which belongs to $\mathsf{TC}^{1}$ (Gibbons and Rytter, 1989). (3) Arithmetic expression evaluation: given a formula consisting of $+,\times,-,/$ operations on integers, the task is to evaluate it. This problem is $\mathsf{TC}^{0}$ -reducible to Boolean formula evaluation (Feng et al., 2023), which is $\mathsf{NC}^{1}$ -complete (Buss, 1987). (4) Edit distance: given two strings $x$ and $y$ , the task is to compute the minimum cost to transform $x$ into $y$ . By reducing the dynamic programming formulation to shortest paths, this problem is in $\mathsf{TC}^{1}$ (Apostolico et al., 1990).
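To illustrate why a task such as STCON needs only logarithmically many parallel rounds, the following sketch decides reachability by repeated Boolean squaring of the adjacency matrix. It is a classical textbook procedure shown under our own naming (`reachable_by_squaring` is hypothetical); it is not the looped Transformer construction analyzed in Section 3.

```python
def reachable_by_squaring(n, edges, s, t):
    """Decide s-t reachability with about log2(n) Boolean matrix squarings.

    Returns (is_reachable, rounds). Each round doubles the path length
    covered, and every entry of a round can be computed independently,
    so a round is a natural unit of parallel work.
    """
    # reach[i][j] is True if j is reachable from i by a path of length <= span.
    reach = [[i == j for j in range(n)] for i in range(n)]
    for u, v in edges:
        reach[u][v] = True
    span, rounds = 1, 0
    while span < n - 1:  # simple paths have length at most n - 1
        reach = [[any(reach[i][k] and reach[k][j] for k in range(n))
                  for j in range(n)]
                 for i in range(n)]
        span *= 2
        rounds += 1
    return reach[s][t], rounds
```

On a directed path with 8 vertices, for example, three rounds suffice, whereas a step-by-step traversal from `s` would need up to seven sequential steps.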

Approximate counting tasks.

We consider DNF counting and uniform sampling of graph colorings, both of which admit a fully polynomial randomized approximation scheme (FPRAS) for counting and a fully polynomial almost uniform sampler (FPAUS) for sampling. Specifically, DNF counting admits an FPRAS via Monte Carlo sampling (Karp et al., 1989), while graph colorings admit an FPAUS, which in turn yields approximate counting, based on rapidly mixing Markov chain Monte Carlo under suitable degree and color constraints (Jerrum, 1995).
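The Markov chain approach to sampling colorings can be sketched with single-site (Glauber) dynamics. This is a minimal illustration under our own assumptions: the graph is an adjacency list, the number of colors `q` exceeds the maximum degree so that a valid recoloring always exists, and the function name is hypothetical. The number of steps needed for near-uniformity depends on the mixing time, which this sketch does not estimate.

```python
import random

def glauber_coloring(adj, q, steps, rng):
    """Run single-site Glauber dynamics on proper q-colorings of a graph.

    adj[v] lists the neighbors of vertex v. Assumes q > max degree, so a
    greedy start and every recoloring step have at least one valid color.
    """
    n = len(adj)
    # Greedy initial proper coloring.
    colors = [None] * n
    for v in range(n):
        used = {colors[u] for u in adj[v] if colors[u] is not None}
        colors[v] = next(c for c in range(q) if c not in used)
    for _ in range(steps):
        # Pick a vertex uniformly and recolor it uniformly among the
        # colors not used by its neighbors; the coloring stays proper.
        v = rng.randrange(n)
        used = {colors[u] for u in adj[v]}
        colors[v] = rng.choice([c for c in range(q) if c not in used])
    return colors
```

Each step consumes fresh randomness, which is the ingredient that stochastic decoding provides to CoT and that a deterministic loop lacks.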

Training strategy.

Since our primary objective is to study expressive power, we allow flexibility in optimization and training strategies. For CoT models, training is performed with supervision from explicit sequential algorithms. For fewer CoT steps, we compare two strategies: uniformly selecting steps from the indices of the complete trajectory (Bavandpour et al., 2025), and stepwise internalization (distillation) methods (Deng et al., 2024). For latent thought, we observe that looped TF is easier to train than Coconut, and therefore adopt looped TF as our instantiation of latent thought, with curriculum learning applied to certain tasks.

Table 2: Accuracy (%) of CoT and looped TF on parallelizable tasks across different numbers of iterations. Here, $n$ denotes the problem size. For CoT, we report the best accuracy achieved across the two training strategies.

| Task | $n$ | | | | | | | | |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Word Problem | 64 | 0.8 | 0.8 | 100.0 | 100.0 | 0.8 | 0.8 | 100.0 | 100.0 |
| Graph Connectivity | 32 | 80.8 | 95.8 | 99.0 | 99.0 | 81.0 | 81.4 | 88.2 | 100.0 |
| Arithmetic Evaluation | 32/16 | 43.7 | 99.4 | 99.5 | 99.7 | 47.3 | 47.6 | 48.2 | 82.5 |
| Edit Distance | 32/16 | 57.3 | 72.9 | 86.2 | 90.7 | 76.5 | 80.9 | 87.5 | 94.8 |

### 5.2 Results

Table 2 reports results on parallelizable tasks, comparing latent thought and CoT under varying numbers of iterations. Latent thought solves the problems with fewer iterations than CoT requires to reach comparable performance. These empirical results are consistent with our theoretical analysis: latent thought supports efficient parallel reasoning, in contrast to the inherently sequential nature of CoT.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Graph: Accuracy vs. Input Size for Different 'r' Values

### Overview

The graph illustrates how accuracy (%) varies with input size (8 to 64) for four distinct 'r' values (2, 3, 4, 5). Accuracy is plotted on the y-axis (33–100%), and input size is on the x-axis (logarithmic scale). Four lines represent different 'r' values, with trends showing diminishing accuracy as input size increases, particularly for lower 'r' values.

### Components/Axes

- **X-axis (Input size)**: Labeled "Input size" with values 8, 16, 32, 64 (logarithmic spacing).

- **Y-axis (Accuracy %)**: Labeled "Accuracy (%)" with a range of 33–100%.

- **Legend**: Located in the top-right corner, mapping colors to 'r' values:

- Teal: r = 5

- Orange: r = 4

- Purple: r = 3

- Pink: r = 2

### Detailed Analysis

1. **r = 5 (Teal)**:

- Starts at ~100% accuracy at input size 8.

- Remains nearly flat, declining slightly to ~98% at input size 64.

- Trend: Minimal degradation with increasing input size.

2. **r = 4 (Orange)**:

- Begins at ~100% at input size 8.

- Gradually declines to ~95% at input size 64.

- Trend: Slow, steady decrease.

3. **r = 3 (Purple)**:

- Starts at ~100% at input size 8.

- Drops sharply to ~65% at input size 16, then declines further to ~40% at 64.

- Trend: Steep initial drop, followed by a gradual decline.

4. **r = 2 (Pink)**:

- Begins at ~100% at input size 8.

- Plummets to ~35% at input size 16, stabilizing near 33% for larger inputs.

- Trend: Catastrophic drop after input size 8, then plateau.

### Key Observations

- **r = 5** maintains the highest accuracy across all input sizes, showing robustness.

- **r = 2** exhibits the most severe accuracy degradation, dropping below 40% for input sizes ≥16.

- All lines start near 100% at input size 8, suggesting optimal performance at minimal input.

- Accuracy degradation correlates with lower 'r' values. Per the figure caption, 'r' is the loop count of the looped TF.

### Interpretation

The data suggests that higher 'r' values (e.g., r = 5) are needed to maintain accuracy as input size increases. Since 'r' is the loop count, lower values (e.g., r = 2) provide too few iterations for larger inputs. The sharp decline for r = 2 highlights this sensitivity to input size.

</details>

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Graph: Accuracy vs. Input Size for Different r Values

### Overview

The graph illustrates the relationship between input size (x-axis) and accuracy percentage (y-axis) for four distinct values of parameter `r` (2, 3, 4, 5). Accuracy declines as input size increases, with higher `r` values maintaining higher accuracy across all input sizes.

### Components/Axes

- **X-axis (Input size)**: Ranges from 10 to 30 in increments of 5.

- **Y-axis (Accuracy %)**: Ranges from 80% to 100%.

- **Legend**: Located on the left, with four entries:

- `r = 5` (teal line)

- `r = 4` (orange line)

- `r = 3` (purple line)

- `r = 2` (pink line)

### Detailed Analysis

1. **r = 5 (teal line)**:

- Starts at 100% accuracy at input size 10.

- Remains nearly flat, dropping slightly to ~99.5% at input size 30.

- **Trend**: Minimal decline, indicating high stability.

2. **r = 4 (orange line)**:

- Begins at 100% accuracy at input size 10.

- Declines gradually to ~95% at input size 30.

- **Trend**: Moderate decline, less steep than lower `r` values.

3. **r = 3 (purple line)**:

- Starts at 100% accuracy at input size 10.

- Drops sharply to ~85% at input size 30.

- **Trend**: Steeper decline compared to higher `r` values.

4. **r = 2 (pink line)**:

- Begins at 100% accuracy at input size 10.

- Declines most steeply, reaching ~80% at input size 30.

- **Trend**: Sharpest decline, indicating sensitivity to input size.

### Key Observations

- **Inverse relationship**: Accuracy decreases as input size increases for all `r` values.

- **r-dependent performance**: Higher `r` values (5, 4) maintain higher accuracy, while lower `r` values (2, 3) degrade faster.

- **Threshold behavior**: At input size 10, all `r` values achieve 100% accuracy, suggesting a baseline performance limit.

### Interpretation

The graph demonstrates that parameter `r` significantly influences model robustness to input size. Higher `r` values (e.g., 5) exhibit near-constant accuracy, suggesting they are better suited for large-scale inputs. Lower `r` values (e.g., 2) show rapid performance degradation, implying they may be less efficient or require smaller input sizes for optimal results. This trend could inform parameter tuning strategies for systems handling variable input sizes, prioritizing higher `r` values for scalability.

</details>

Figure 6: Accuracy of looped TFs on the arithmetic evaluation (top) and the connectivity (bottom). Each curve shows the performance for a fixed loop count $r$ as the input size $n$ increases.

We also evaluate the relationship between performance, input size, and the number of iterations, as in prior studies (Sanford et al., 2024b; Merrill and Sabharwal, 2025a). Figure 6 presents our results for looped TFs, illustrating that as the input size $n$ increases, the number of loops required to maintain high accuracy grows only logarithmically, supporting our theoretical claim in the (poly-)logarithmic regime.

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Relative Error Comparison of Looped and CoT Methods

### Overview

The chart compares the relative error of two methods ("Looped" and "CoT") across three experimental conditions: 10, 100, and 1000 iterations × trials. The y-axis represents relative error (↓), while the x-axis represents the scale of iterations × trials.

### Components/Axes

- **X-axis**: "iterations × trials" with logarithmic spacing (10, 100, 1000)

- **Y-axis**: "Relative error (↓)" ranging from 0.3 to 0.4

- **Legend**:

- Green circles (■) = Looped method

- Orange circles (■) = CoT method

- **Data Points**:

- Looped: Three green data points at (10, 0.35), (100, 0.35), (1000, 0.35)

- CoT: Three orange data points at (10, 0.45), (100, 0.40), (1000, 0.25)

### Detailed Analysis

1. **Looped Method**:

- Maintains a constant relative error of ~0.35 across all conditions

- Data points form a horizontal line with no visible variation

- Error margin appears consistent (±0.02)

2. **CoT Method**:

- Shows a clear downward trend

- Error decreases from 0.45 (10 iterations × trials) to 0.25 (1000 iterations × trials)

- Rate of improvement: ~0.10 error reduction per order-of-magnitude increase on average (0.05, then 0.15)

### Key Observations

- CoT demonstrates a ~44% error reduction (0.45 → 0.25) when scaling from 10 to 1000 iterations × trials

- Looped's error remains stable but is ~40% higher than CoT's final error (0.35 vs. 0.25)

- Both methods show minimal error variation within individual conditions

### Interpretation

The data suggests CoT's performance improves significantly with increased computational effort, likely due to its iterative refinement mechanism. The Looped method's stability might indicate a simpler but less adaptive approach. At 1000 iterations, CoT achieves 28% lower error than Looped, suggesting it becomes more effective at larger scales. However, the initial higher error of CoT (0.45 vs 0.35) implies potential tradeoffs in early-stage performance. This pattern aligns with CoT's theoretical advantage in complex problem-solving through progressive approximation.

</details>

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Chart: TV Distance vs. Iterations per Trial

### Overview

The image is a line chart comparing two methods ("Looped" and "CoT") across three iterations per trial (30, 60, 90). The y-axis measures "TV distance" (ranging from 0.0 to 1.0), while the x-axis represents "Iterations per trial." The chart includes a legend in the top-right corner, with green circles for "Looped" and orange circles for "CoT."

### Components/Axes

- **X-axis (Horizontal)**: Labeled "Iterations per trial," with markers at 30, 60, and 90.

- **Y-axis (Vertical)**: Labeled "TV distance (↓)," scaled from 0.0 to 1.0 in increments of 0.5.

- **Legend**: Located in the top-right corner, with:

- Green circles labeled "Looped"

- Orange circles labeled "CoT"

### Detailed Analysis

1. **Looped (Green Line)**:

- **Trend**: Constant at 1.0 across all iterations (30, 60, 90).

- **Data Points**: Three green circles aligned horizontally at y = 1.0.

2. **CoT (Orange Line)**:

- **Trend**: Decreasing linearly from 30 to 90 iterations.

- **Data Points**:

- At 30 iterations: ~0.3 (orange circle).

- At 60 iterations: ~0.2 (orange circle).

- At 90 iterations: ~0.0 (orange circle).

### Key Observations

- The "Looped" method maintains a maximum TV distance of 1.0 throughout all iterations, showing no improvement.

- The "CoT" method demonstrates a consistent reduction in TV distance, dropping from ~0.3 at 30 iterations to ~0.0 at 90 iterations.

- The orange line (CoT) slopes downward, indicating a negative correlation between iterations and TV distance.

### Interpretation

The data suggests that the "CoT" method significantly improves performance (as measured by TV distance) with increasing iterations, while the "Looped" method remains static and ineffective. The stark contrast implies that CoT adapts or optimizes over time, whereas Looped lacks such capability. The TV distance metric likely quantifies deviation from a target, with lower values indicating better performance. This trend could inform iterative optimization strategies in technical workflows.

</details>

<details>

<summary>x10.png Details</summary>

### Visual Description

## Bar Chart: Distribution over valid graph colorings (sorted)

### Overview

The chart compares three distributions of valid graph colorings across sorted bins, visualized as vertical bars on a logarithmic y-axis. The x-axis represents sorted categories (unspecified labels), while the y-axis shows probability mass per bin in logarithmic scale (10⁻⁴ to 10⁻²). Three data series are compared: a target uniform distribution (dashed blue line), CoT with 90 steps (light blue bars), and Looped TF with 90 loops (orange bars).

### Components/Axes

- **Y-axis**: "Probability mass per bin" (logarithmic scale: 10⁻⁴ to 10⁻²)

- **X-axis**: Unlabeled, but categories are sorted (likely by probability or another metric)

- **Legend**:

- Dashed blue line: Target uniform distribution

- Light blue bars: CoT with 90 steps

- Orange bars: Looped TF with 90 loops

- **Placement**: Legend in bottom-right corner; bars sorted left-to-right

### Detailed Analysis

1. **Target Uniform Distribution** (dashed blue line):

- Horizontal line at ~10⁻² probability across all bins

- Represents ideal uniform distribution

2. **CoT with 90 steps** (light blue bars):

- Uniform distribution matching the target line

- All bars approximately equal to 10⁻²

- No visible variation between bins

3. **Looped TF with 90 loops** (orange bars):

- Non-uniform distribution

- First 5-7 bins show significantly higher probability (~10⁻³ to 10⁻²)

- Remaining bins drop to ~10⁻⁴

- Long tail of low-probability bins

### Key Observations

- CoT with 90 steps perfectly matches the target uniform distribution

- Looped TF with 90 loops shows strong deviation from uniformity

- First 5-7 bins of Looped TF contain ~90% of total probability mass

- Logarithmic scale emphasizes differences in low-probability bins

### Interpretation

The data demonstrates that CoT with 90 steps successfully achieves the target uniform distribution, while Looped TF with 90 loops exhibits a skewed distribution with concentration in early bins. This suggests:

1. CoT maintains consistent performance across all categories

2. Looped TF may have bias toward specific categories or graph structures

3. The logarithmic scale reveals critical differences in tail probabilities

4. Sorting of bins implies an underlying ordering metric (possibly by probability or category importance)

The stark contrast between the two methods highlights potential limitations in Looped TF's ability to generalize across all valid colorings, possibly due to algorithmic constraints or parameter choices.

</details>

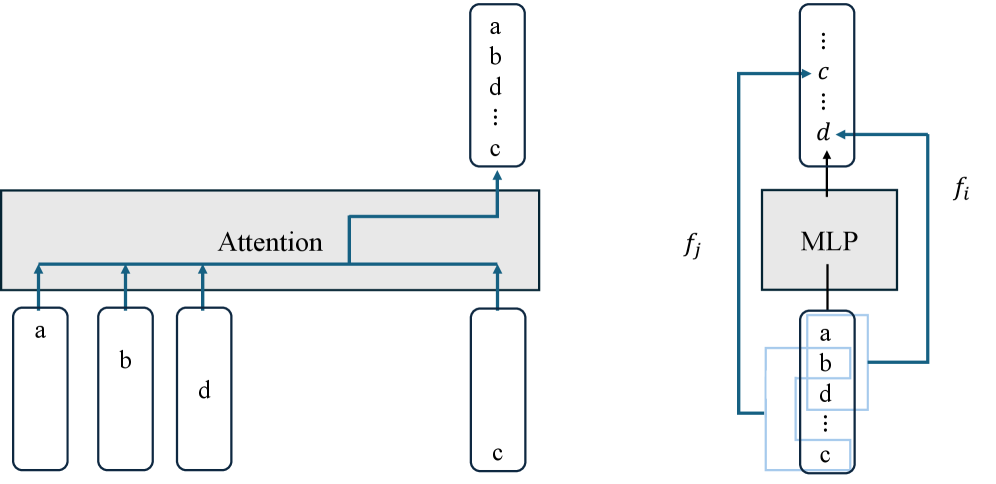

Figure 7: Performance of Looped TF and CoT. Top: Relative error for counting (left) and sampling (right). Bottom: Empirical distributions for approximate sampling of graph colorings.

Figure 7 shows the results on the approximate counting and approximate sampling tasks. For approximate counting, CoT performs Monte Carlo estimation: the effective number of samples is given by the product of the number of reasoning steps per trial and the number of independent trials. Accordingly, increasing the iteration count corresponds to increasing this total sample budget. For approximate sampling, CoT emulates Markov chain Monte Carlo, where the iteration axis represents the number of Markov chain steps per trial. In contrast, for looped TF on both tasks, the iteration count simply corresponds to the number of loop iterations applied within a single trial. These results support the separation in favor of CoT with stochastic decoding.

## 6 Conclusion

We formally analyze the computational capabilities of chain-of-thought and latent thought reasoning, providing a rigorous comparison that reveals their respective strengths and limitations. Specifically, we show that latent thought enables efficient parallel computation, whereas CoT enables randomized approximate counting. Our theoretical results are further supported by experiments. These insights offer practical guidance for reasoning model design. For future work, an important direction is to investigate whether techniques such as distillation can reduce the number of iterations without compromising computational power. Another promising avenue is to extend our analysis to diffusion language models, which possess both parallelizability and stochasticity. Moreover, extending the analysis to realistic downstream tasks remains an important direction.

## Impact Statement

This paper presents work whose goal is to advance the field of Machine Learning. There are many potential societal consequences of our work, none of which we feel must be specifically highlighted here.

## References

- A. V. Aho and J. D. Ullman (1972) Optimization of straight line programs. SIAM Journal on Computing 1 (1), pp. 1–19. Cited by: §3.1.

- A. Apostolico, M. J. Atallah, L. L. Larmore, and S. McFaddin (1990) Efficient parallel algorithms for string editing and related problems. SIAM Journal on Computing. Cited by: §5.1.

- S. Arora and B. Barak (2009) Computational complexity: a modern approach. Cambridge University Press. Cited by: §1.1.

- S. Bae, Y. Kim, R. Bayat, S. Kim, J. Ha, T. Schuster, A. Fisch, H. Harutyunyan, Z. Ji, A. Courville, et al. (2025) Mixture-of-recursions: learning dynamic recursive depths for adaptive token-level computation. arXiv preprint arXiv:2507.10524. Cited by: §1, §4.1.

- D. A. Barrington (1986) Bounded-width polynomial-size branching programs recognize exactly those languages in NC$^1$. In ACM symposium on Theory of computing. Cited by: §5.1.

- A. A. Bavandpour, X. Huang, M. Rofin, and M. Hahn (2025) Lower bounds for chain-of-thought reasoning in hard-attention transformers. In Forty-second International Conference on Machine Learning. Cited by: §D.1.2, §D.1.2, §5.1.

- S. R. Buss (1987) The Boolean formula value problem is in ALOGTIME. In Proceedings of the nineteenth annual ACM symposium on Theory of computing, pp. 123–131. Cited by: §5.1.

- S. A. Cook (1985) A taxonomy of problems with fast parallel algorithms. Information and control 64 (1-3), pp. 2–22. Cited by: §3.1.

- R. Csordás, K. Irie, J. Schmidhuber, C. Potts, and C. D. Manning (2024) MoEUT: mixture-of-experts universal transformers. In The Thirty-eighth Annual Conference on Neural Information Processing Systems. Cited by: §1, §4.1.

- A. B. de Luca and K. Fountoulakis (2024) Simulation of graph algorithms with looped transformers. In Forty-first International Conference on Machine Learning. Cited by: §2.2.

- M. Dehghani, S. Gouws, O. Vinyals, J. Uszkoreit, and L. Kaiser (2019) Universal transformers. In International Conference on Learning Representations. Cited by: §1.

- Y. Deng, Y. Choi, and S. Shieber (2024) From explicit CoT to implicit CoT: learning to internalize CoT step by step. arXiv preprint arXiv:2405.14838. Cited by: §D.1.2, §5.1.

- P. Erdős and A. Rényi (1959) On random graphs I. Publicationes Mathematicae Debrecen 6, pp. 290–297. Cited by: §D.1.1.

- G. Feng, B. Zhang, Y. Gu, H. Ye, D. He, and L. Wang (2023) Towards revealing the mystery behind chain of thought: a theoretical perspective. Advances in Neural Information Processing Systems 36, pp. 70757–70798. Cited by: §B.2, §D.1.1, §D.1.1, §D.1.2, §1, §2.2, Assumption 3.4, §5.1.

- A. Giannou, S. Rajput, J. Sohn, K. Lee, J. D. Lee, and D. Papailiopoulos (2023) Looped transformers as programmable computers. In International Conference on Machine Learning, pp. 11398–11442. Cited by: §1, §2.2.

- A. Gibbons and W. Rytter (1989) Efficient parallel algorithms. Cambridge University Press. Cited by: §5.1.

- H. A. Gozeten, M. E. Ildiz, X. Zhang, H. Harutyunyan, A. S. Rawat, and S. Oymak (2025) Continuous chain of thought enables parallel exploration and reasoning. arXiv preprint arXiv:2505.23648. Cited by: §1, §2.2, Table 1.

- S. Hao, S. Sukhbaatar, D. Su, X. Li, Z. Hu, J. E. Weston, and Y. Tian (2025) Training large language models to reason in a continuous latent space. In Second Conference on Language Modeling. Cited by: §1, §2.1.

- M. R. Jerrum, L. G. Valiant, and V. V. Vazirani (1986) Random generation of combinatorial structures from a uniform distribution. Theoretical computer science 43, pp. 169–188. Cited by: Proposition C.13, Proposition C.16, Theorem C.19, §1.1, §4.2, §4.3.

- M. Jerrum (1995) A very simple algorithm for estimating the number of k-colorings of a low-degree graph. Random Structures & Algorithms 7 (2), pp. 157–165. Cited by: §D.2.2, §5.1.

- R. M. Karp, M. Luby, and N. Madras (1989) Monte-carlo approximation algorithms for enumeration problems. Journal of algorithms 10 (3), pp. 429–448. Cited by: §4.1, §5.1.

- R. M. Karp and M. Luby (1983) Monte-carlo algorithms for enumeration and reliability problems. In 24th Annual Symposium on Foundations of Computer Science, pp. 56–64. Cited by: §D.2.1, §4.1.

- Z. Li, H. Liu, D. Zhou, and T. Ma (2024) Chain of thought empowers transformers to solve inherently serial problems. In The Twelfth International Conference on Learning Representations. Cited by: §B.1, §B.3.1, §B.7, Definition B.1, Lemma B.10, Definition B.2, Definition B.3, Lemma B.5, Theorem B.9, §1.1, §1, §2.2, Table 1, §3.1, §3.2, §3.3, §3.3, §3.3, Theorem 3.9.

- Y. Liang, Z. Sha, Z. Shi, Z. Song, and Y. Zhou (2024) Looped ReLU MLPs may be all you need as practical programmable computers. arXiv preprint arXiv:2410.09375. Cited by: §B.5.

- W. Merrill, A. Sabharwal, and N. A. Smith (2022) Saturated transformers are constant-depth threshold circuits. Transactions of the Association for Computational Linguistics. Cited by: item 3, §C.1.

- W. Merrill and A. Sabharwal (2023) The parallelism tradeoff: limitations of log-precision transformers. Transactions of the Association for Computational Linguistics 11, pp. 531–545. Cited by: §1.1, §2.2, §3.3, Definition 3.2.

- W. Merrill and A. Sabharwal (2024) The expressive power of transformers with chain of thought. In The Twelfth International Conference on Learning Representations. Cited by: §C.1, §1, §2.2, Definition 2.1.

- W. Merrill and A. Sabharwal (2025a) A little depth goes a long way: the expressive power of log-depth transformers. arXiv preprint arXiv:2503.03961. Cited by: §A.2, §1, §2.2, §5.1, §5.2.

- W. Merrill and A. Sabharwal (2025b) Exact expressive power of transformers with padding. arXiv preprint arXiv:2505.18948. Cited by: §D.1.1, §2.1, §2.2, Table 1.

- M. Mitzenmacher and E. Upfal (2017) Probability and computing: randomization and probabilistic techniques in algorithms and data analysis. Cambridge University Press. Cited by: §4.1.

- F. Nowak, A. Svete, A. Butoi, and R. Cotterell (2024) On the representational capacity of neural language models with chain-of-thought reasoning. In Association for Computational Linguistics. Cited by: §C.1, Lemma C.6, §1, §2.2, Table 1, §4.1.

- C. Sanford, B. Fatemi, E. Hall, A. Tsitsulin, M. Kazemi, J. Halcrow, B. Perozzi, and V. Mirrokni (2024a) Understanding transformer reasoning capabilities via graph algorithms. In The Thirty-eighth Annual Conference on Neural Information Processing Systems. Cited by: §D.1.1.

- C. Sanford, D. Hsu, and M. Telgarsky (2024b) Transformers, parallel computation, and logarithmic depth. In Forty-first International Conference on Machine Learning. Cited by: §B.5, §3.1, §5.2.

- N. Saunshi, N. Dikkala, Z. Li, S. Kumar, and S. J. Reddi (2025) Reasoning with latent thoughts: on the power of looped transformers. In The Thirteenth International Conference on Learning Representations. Cited by: §1, §1, §2.2, Table 1, §4.

- C. Schnorr (1976) Optimal algorithms for self-reducible problems. In Proceedings of the Third International Colloquium on Automata, Languages and Programming. Cited by: Definition C.15, Definition 4.1.

- L. Stockmeyer and U. Vishkin (1984) Simulation of parallel random access machines by circuits. SIAM Journal on Computing 13 (2), pp. 409–422. Cited by: §3.3.

- A. Svete and A. Sabharwal (2025) On the reasoning abilities of masked diffusion language models. arXiv preprint arXiv:2510.13117. Cited by: Table 1.

- L. G. Valiant (1979) The complexity of enumeration and reliability problems. SIAM Journal on Computing 8 (3), pp. 410–421. Cited by: §C.3.

- A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A. N. Gomez, Ł. Kaiser, and I. Polosukhin (2017) Attention is all you need. Advances in neural information processing systems 30. Cited by: §1, §2.1.

- J. Wei, X. Wang, D. Schuurmans, M. Bosma, F. Xia, E. Chi, Q. V. Le, D. Zhou, et al. (2022) Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems 35, pp. 24824–24837. Cited by: §1.

- K. Xu and I. Sato (2025) On expressive power of looped transformers: theoretical analysis and enhancement via timestep encoding. In Forty-second International Conference on Machine Learning. Cited by: §D.1.2, §1.

- L. Yang, K. Lee, R. D. Nowak, and D. Papailiopoulos (2024) Looped transformers are better at learning learning algorithms. In The Twelfth International Conference on Learning Representations. Cited by: §2.2.

- H. Zhu, S. Hao, Z. Hu, J. Jiao, S. Russell, and Y. Tian (2025a) Reasoning by superposition: a theoretical perspective on chain of continuous thought. In The Thirty-ninth Annual Conference on Neural Information Processing Systems. Cited by: §1, §2.2, Table 1.

- R. Zhu, Z. Wang, K. Hua, T. Zhang, Z. Li, H. Que, B. Wei, Z. Wen, F. Yin, H. Xing, et al. (2025b) Scaling latent reasoning via looped language models. arXiv preprint arXiv:2510.25741. Cited by: §1.

## Appendix A Formal Definitions

### A.1 Notation

Vectors are written in lowercase bold letters (e.g., ${\bm{x}}$) and matrices in uppercase bold letters (e.g., ${\bm{W}}$). The $i$-th entry of a vector ${\bm{x}}$ is ${\bm{x}}_{i}$, the vector from the $i$-th to the $j$-th entry is denoted by ${\bm{x}}_{i:j}$, and the $(i,j)$-th entry of a matrix ${\bm{W}}$ is ${\bm{W}}_{i,j}$. We use the symbol $*$ to denote a “don’t care” value (or block of values). For $n\in\mathbb{N}^{+}$, let $[n]\coloneq\{1,2,\ldots,n\}$. We sometimes write column vectors horizontally, e.g., ${\bm{x}}=(x_{1},\ldots,x_{n})$, for brevity. The Hadamard (element-wise) product is $\odot$. The vector ${\bm{e}}_{i}\in\{0,1\}^{d}$ is the $i$-th standard basis vector, ${\bm{1}}_{d}\in\mathbb{R}^{d}$ (or $1_{d}$) the all-ones vector, and ${\bm{0}}_{d}\in\mathbb{R}^{d}$ the zero vector. The matrix ${\bm{I}}_{d}\in\mathbb{R}^{d\times d}$ denotes the $d\times d$ identity matrix, and $\mathbf{0}_{m\times n}\in\mathbb{R}^{m\times n}$ the $m\times n$ zero matrix. The indicator function is ${\bm{1}}[\cdot]$, and $\bigoplus$ denotes block-diagonal concatenation. Functions on scalars or vectors are written in upright letters (e.g., $\mathrm{FFN}$), while functions on matrices are boldface (e.g., $\mathbf{ATTN}$). Boldface is also used when scalar- or vector-level functions are extended to sequence level and applied independently to each token (e.g., $\mathbf{FFN}$). Finally, $\mathsf{poly}(n)$ denotes the set of functions growing at most polynomially: $\mathsf{poly}(n)\coloneq\left\{f:\mathbb{N}\to\mathbb{N}\;\middle|\;\exists k\in\mathbb{N},\;\exists c>0,\;\forall n\in\mathbb{N},\;f(n)\leq c\cdot n^{k}\right\}.$

### A.2 Transformer Block

We define the computational components of a Transformer block following the notation of Merrill and Sabharwal (2025a). Let $\mathbb{F}_{s}$ denote the set of $s$-bit floating-point numbers with truncated arithmetic (Definition B.1).

**Definition A.1 (Transformer)**

*A Transformer consists of the following components:

1. A word embedding function $\mathrm{WE}:{\mathcal{V}}\to\mathbb{F}_{s}^{m}$ , where ${\mathcal{V}}$ denotes the vocabulary set.

1. A positional embedding function $\mathrm{PE}:\mathbb{N}\to\mathbb{F}_{s}^{m}$ .

1. A multi-head self-attention layer $\mathbf{SA}:\mathbb{F}_{s}^{m\times N}\to\mathbb{F}_{s}^{m\times N}$ for arbitrary sequence length $N$, parameterized by a matrix ${\bm{O}}:\mathbb{F}_{s}^{s\times H}\to\mathbb{F}_{s}^{m}$ and, for each head $h\in[H]$ with head size $s$, matrices ${\bm{Q}}_{h},{\bm{K}}_{h},{\bm{V}}_{h}:\mathbb{F}_{s}^{m}\to\mathbb{F}_{s}^{s}$. Given an input ${\bm{x}}_{i}\in\mathbb{F}_{s}^{m}$ for each position $i\in[N]$, it computes the query $\mathbf{q}_{i,h}={\bm{Q}}_{h}{\bm{x}}_{i}$, key ${\bm{k}}_{i,h}={\bm{K}}_{h}{\bm{x}}_{i}$, and value $\mathbf{v}_{i,h}={\bm{V}}_{h}{\bm{x}}_{i}$, and outputs ${\bm{O}}\cdot({\bm{a}}_{i,1},\ldots,{\bm{a}}_{i,H})$, where each attention output ${\bm{a}}_{i,h}$ is defined, for softmax attention, as:

$$

{\bm{a}}_{i,h}=\sum_{j=1}^{c(i)}\frac{\exp(\mathbf{q}_{i,h}^{\top}{\bm{k}}_{j,h})}{Z_{i,h}}\cdot\mathbf{v}_{j,h},\quad Z_{i,h}=\sum_{j=1}^{c(i)}\exp(\mathbf{q}_{i,h}^{\top}{\bm{k}}_{j,h}), \tag{1}

$$

with $c(i)=i$ for causal attention and $c(i)=N$ for full attention. For the saturated hardmax attention (Merrill et al., 2022), each attention output ${\bm{a}}_{i,h}$ is defined as:

$$

{\bm{a}}_{i,h}=\sum_{j\in M_{i,h}}\frac{1}{|M_{i,h}|}\,\mathbf{v}_{j,h},\quad M_{i,h}=\left\{j\in[c(i)]\;\middle|\;\mathbf{q}_{i,h}^{\top}{\bm{k}}_{j,h}=\max_{j^{\prime}}\mathbf{q}_{i,h}^{\top}{\bm{k}}_{j^{\prime},h}\right\}. \tag{2}

$$