# Meaningless Tokens, Meaningful Gains: How Activation Shifts Enhance LLM Reasoning

Abstract

Motivated by the puzzling observation that inserting long sequences of meaningless tokens before the query prompt can consistently enhance LLM reasoning performance, this work analyzes the underlying mechanism driving this phenomenon and based on these insights proposes a more principled method that allows for similar performance gains. First, we find that the improvements arise from a redistribution of activations in the LLM’s MLP layers, where near zero activations become less frequent while large magnitude activations increase. This redistribution enhances the model’s representational capacity by suppressing weak signals and promoting stronger, more informative ones. Building on this insight, we propose the Activation Redistribution Module (ARM), a lightweight inference-time technique that modifies activations directly without altering the input sequence. ARM adaptively identifies near-zero activations after the non-linear function and shifts them outward, implicitly reproducing the beneficial effects of meaningless tokens in a controlled manner. Extensive experiments across diverse benchmarks and model architectures clearly show that ARM consistently improves LLM performance on reasoning tasks while requiring only a few lines of simple code to implement. Our findings deliver both a clear mechanistic explanation for the unexpected benefits of meaningless tokens and a simple yet effective technique that harnesses activation redistribution to further improve LLM performance. The code has been released at ARM-Meaningless-tokens.

1 Introduction

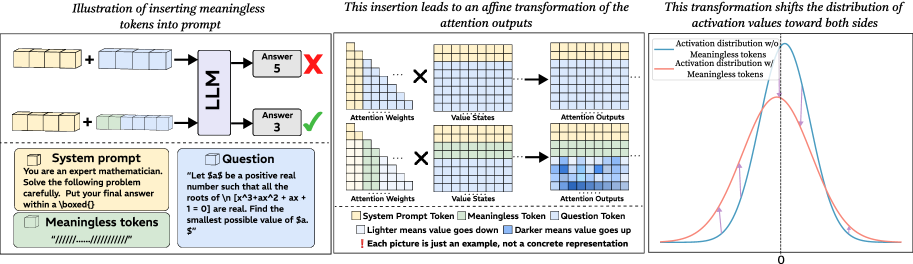

Large language models (LLMs) are known to be sensitive to subtle variations in their inputs, which makes it important to understand how tokens influence predictions (Guan et al., 2025; Errica et al., 2024; Zhuo et al., 2024). In this paper, we present a counterintuitive finding that we call the meaningless-token effect: inserting long sequences of meaningless tokens, such as repeated punctuation or separators, into prompts can consistently improve the performance of LLMs, particularly on reasoning tasks. Contrary to the common intuition that long and irrelevant tokens act as noise and are thus useless or even harmful during inference (Jiang et al., 2024; Guan et al., 2025), our experiments reveal the opposite. When long sequences of meaningless tokens are inserted before query prompts, models that previously struggled with certain problems can produce correct solutions, as illustrated in the left panel of Figure 1 (see more examples in Appendix J). This effect occurs consistently across tasks and models, suggesting a counterintuitive behavior of LLMs that warrants deeper investigation.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Insertion of Meaningless Tokens into Prompt & Effect on Attention Outputs

### Overview

This diagram illustrates the effect of inserting meaningless tokens into a prompt given to a Large Language Model (LLM). It shows how this insertion leads to an affine transformation of the attention outputs, and consequently shifts the distribution of activation values. The diagram is divided into three main sections: Prompt Insertion, Attention Transformation, and Activation Distribution.

### Components/Axes

The diagram contains the following components:

* **System Prompt:** A text box containing the prompt: "You are an expert mathematician. Solve the following problem carefully. Put your final answer within a boxed [0]".

* **Question:** A text box containing the question: "Let $a$ be a positive real number such that all the roots of $(x^3 + ax^2 + 2x + 1 = 0)$ are real. Find the smallest possible value of $a$."

* **Meaningless Tokens:** Represented by a series of dashes ("-----"), these tokens are inserted into the prompt.

* **LLM:** A block labeled "LLM" representing the Large Language Model.

* **Attention Weights:** A grid of squares representing attention weights, with varying shades of gray.

* **Value States:** A grid of squares representing value states, with varying shades of gray.

* **Attention Outputs:** A grid of squares representing attention outputs, with varying shades of gray.

* **Activation Distribution:** A graph showing the distribution of activation values, with two curves: one representing the distribution without meaningless tokens (blue), and one representing the distribution with meaningless tokens (orange).

* **Legend:** A legend explaining the color coding for System Prompt Token, Meaningless Token, and Question Token.

* **Scale:** A scale at the bottom of the Activation Distribution graph, labeled "0".

* **Annotations:** Text annotations explaining the effects of the insertion and the meaning of the color gradients.

### Detailed Analysis / Content Details

**Prompt Insertion (Leftmost Section):**

* The top row shows a system prompt and a question being fed into the LLM. The output is "Answer 5" with a red "X" over it.

* The bottom row shows the same system prompt and question, but with meaningless tokens inserted before the question. The output is "Answer 3".

* The meaningless tokens are visually represented as a series of dashes.

**Attention Transformation (Middle Section):**

* The top row shows the transformation of Attention Weights, Value States, and Attention Outputs when the prompt *without* meaningless tokens is used. The Attention Weights and Value States are grids of light and dark gray squares. The Attention Outputs are also a grid of squares.

* The bottom row shows the same transformation when the prompt *with* meaningless tokens is used. The Attention Weights and Value States are grids of light and dark gray squares. The Attention Outputs are also a grid of squares.

* The annotation states: "Lighter means value goes down" and "Darker value goes up".

* The annotation states: "Each picture is just an example, not a concrete representation".

**Activation Distribution (Rightmost Section):**

* The graph shows two curves:

* **Blue Curve (Activation distribution w/o Meaningless tokens):** This curve is approximately bell-shaped, peaking around a value of 0.5 on the x-axis. The curve extends from approximately 0 to 1.

* **Orange Curve (Activation distribution w/ Meaningless tokens):** This curve is wider and flatter than the blue curve. It has two peaks, one around 0.2 and another around 0.8 on the x-axis. The curve extends from approximately 0 to 1.

### Key Observations

* The insertion of meaningless tokens leads to a shift in the distribution of activation values. The blue curve (without tokens) is more concentrated, while the orange curve (with tokens) is more spread out.

* The attention outputs appear to be affected by the insertion of meaningless tokens, as indicated by the changes in the grid of squares.

* The LLM produces different answers depending on whether meaningless tokens are present in the prompt (5 vs. 3).

### Interpretation

The diagram demonstrates that inserting meaningless tokens into a prompt can subtly alter the internal workings of an LLM, specifically affecting the attention mechanism and the distribution of activation values. This alteration can lead to different outputs, even for the same question. The shift in the activation distribution suggests that the meaningless tokens introduce noise or ambiguity into the model's processing, potentially influencing its decision-making process. The diagram highlights the sensitivity of LLMs to even seemingly insignificant changes in input and the importance of carefully crafting prompts to avoid unintended consequences. The annotation "Each picture is just an example, not a concrete representation" suggests that the specific patterns in the attention weights and value states are illustrative rather than definitive. The diagram is a conceptual illustration of a phenomenon rather than a precise empirical measurement.

</details>

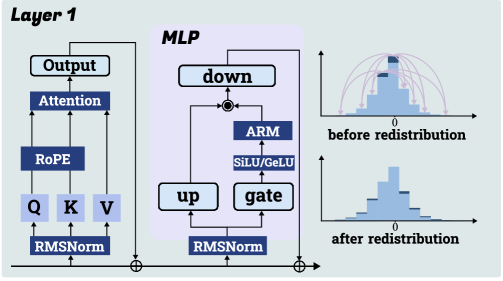

Figure 1: The left panel illustrates how the meaningless-token effect can improve model performance. The middle panel shows the changes occurring in the attention module after introducing meaningless tokens. The right panel depicts the redistribution of activations that results from adding these tokens.

This unexpected result raises fundamental questions about how LLMs process input and what aspects of their internal computation are being affected. Why should tokens that convey no meaning lead to measurable performance gains? Are they simply acting as noise, or do they restructure representations in a systematic way that supports better reasoning? To answer these questions, we move beyond surface level observations and conduct a detailed investigation of the mechanisms behind this effect. Our analysis shows that the influence of meaningless tokens arises primarily in the first layer, and their effect on meaningful tokens can be approximated as an affine transformation of the attention outputs. As demonstrated in the middle schematic diagram of Figure 1, the resulting transformation shifts the distribution of activations in the MLP: the proportion of near-zero activations decreases, while more activations are pushed outward toward larger positive and negative values. The rightmost plot in Figure 1 gives a visualization of this process. We hypothesize that redistribution fosters richer exploration, enhancing reasoning performance, and clarify the mechanism by decomposing the transformation into coefficient and bias terms. Our theoretical analysis shows how each component shapes activation variance and induces the observed distributional shift.

Building on these insights, we propose ARM (an Activation Redistribution Module), a lightweight alternative to explicit meaningless-token insertion. ARM requires only a few lines of code modification and no additional training. It automatically identifies a proportion of near-zero activations after the non-linear function and shifts their values outward, yielding a smoother and less sparse activation distribution. In doing so, ARM reproduces the beneficial effects of meaningless tokens without altering the input sequence and consistently improves LLM performance on reasoning and related tasks. In summary, the key findings and contributions of our work are:

- We uncover a meaningless-token effect in LLMs: inserting meaningless tokens, far from being harmful, systematically improves reasoning in LLMs. This runs counter to the common assumption that such tokens only add noise.

- Through theoretical and empirical analysis, we show that these tokens induce an activation redistribution effect in the first-layer MLP, reducing near-zero activations and increasing variance.

- Building on this understanding, we present ARM, a lightweight inference-time instantiation to demonstrate that the phenomenon can be directly harnessed.

2 Observation: Inserting Meaningless Tokens Induces an Affine Transformation on Meaningful Token Representations

We observe that meaningless tokens, such as a sequence of slashes (“/”) of appropriate length, can enhance the performance of LLMs, particularly on reasoning tasks. (Varying the token length, type, and position affects performance, as shown in Appendix F.) As shown in Table 1, when we insert a fixed-length sequence of meaningless tokens between the system prompt and the question, all evaluated models improve on Math-500 and AIME2024 to varying degrees. This consistent improvement suggests that the inserted meaningless tokens are not simply ignored by or detrimental to the models; rather, they exert a positive influence, likely through non-trivial interactions with the models’ internal representations. To investigate this phenomenon, we start our analysis from the attention module. The formula of attention is:

Table 1: Performance on mathematical reasoning datasets with and without meaningless tokens across different models. “w/o” denotes the absence of meaningless tokens, while “w/” denotes their presence. We test each model five times to get the average result.

| Model | Math-500 (w/o) | Math-500 (w/) | AIME2024 (w/o) | AIME2024 (w/) |
| --- | --- | --- | --- | --- |
| Qwen2.5-Math-1.5B | 63.9 | 65.9 | 14.4 | 17.5 |
| Qwen2.5-Math-7B | 72.3 | 74.6 | 23.1 | 23.3 |
| DS-R1-Qwen-7B | 52.7 | 53.1 | 3.2 | 4.4 |
| DS-Math-7B-instruct | 39.5 | 42.1 | 7.8 | 12.3 |
| Llama-3.1-8B-Instruct | 41.8 | 42.1 | 7.9 | 9.9 |
| Qwen-2.5-32B-Instruct | 81.3 | 81.7 | 17.6 | 22.8 |

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Charts: Average Attention Weight vs. Token Position

### Overview

The image presents six line charts comparing the average attention weight with and without "meaningless tokens" for three different language models: Qwen-2.5-7B-Math, Llama-3-8B-Instruct, and Gemma-3-4b-it. Each model is represented by two charts, one showing attention weights up to token position 60 and the other up to token position 120. The charts aim to visualize the impact of meaningless tokens on attention distribution.

### Components/Axes

* **X-axis:** Token Position (ranging from 0 to 60 in the top row and 0 to 120 in the bottom row).

* **Y-axis:** Average Attention Weight (ranging from 0 to approximately 0.08 for Qwen, 0 to 0.10 for Llama, and 0 to 0.17 for Gemma).

* **Lines:**

* Blue Line: Represents the average attention weight *without* meaningless tokens ("w/o Meaningless tokens").

* Orange Line: Represents the average attention weight *with* meaningless tokens ("w/ Meaningless tokens").

* **Titles:** Each chart has a title indicating the model name, layer number, and head number.

* Qwen-2.5-7B-Math Layer 1 Head 22

* Llama-3-8B-Instruct Layer 1 Head 27

* Gemma-3-4b-it Layer 1 Head 3

* **Legend:** Located in the top-left corner of each chart, clearly labeling the blue and orange lines.

### Detailed Analysis or Content Details

**Qwen-2.5-7B-Math Layer 1 Head 22 (Top Left)**

* The blue line (w/o meaningless tokens) exhibits high-frequency oscillations, fluctuating between approximately 0.01 and 0.06.

* The orange line (w/ meaningless tokens) also oscillates, but with a generally lower average attention weight, mostly between 0.005 and 0.04.

* The trend is generally erratic for both lines, with no clear upward or downward slope.

* Approximate data points (blue line):

* Token 0: ~0.02

* Token 20: ~0.05

* Token 40: ~0.03

* Token 60: ~0.01

* Approximate data points (orange line):

* Token 0: ~0.01

* Token 20: ~0.02

* Token 40: ~0.01

* Token 60: ~0.005

**Llama-3-8B-Instruct Layer 1 Head 27 (Top Middle)**

* The blue line (w/o meaningless tokens) shows similar high-frequency oscillations as Qwen, ranging from approximately 0.02 to 0.08.

* The orange line (w/ meaningless tokens) also oscillates, with a lower average attention weight, mostly between 0.01 and 0.05.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.04

* Token 20: ~0.06

* Token 40: ~0.04

* Token 60: ~0.02

* Approximate data points (orange line):

* Token 0: ~0.02

* Token 20: ~0.03

* Token 40: ~0.02

* Token 60: ~0.01

**Gemma-3-4b-it Layer 1 Head 3 (Top Right)**

* The blue line (w/o meaningless tokens) oscillates between approximately 0.03 and 0.15.

* The orange line (w/ meaningless tokens) oscillates between approximately 0.01 and 0.12.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.06

* Token 20: ~0.12

* Token 40: ~0.08

* Token 60: ~0.05

* Approximate data points (orange line):

* Token 0: ~0.02

* Token 20: ~0.06

* Token 40: ~0.04

* Token 60: ~0.03

**Qwen-2.5-7B-Math Layer 1 Head 22 (Bottom Left)**

* The blue line (w/o meaningless tokens) oscillates between approximately 0.01 and 0.05.

* The orange line (w/ meaningless tokens) oscillates between approximately 0.005 and 0.04.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.02

* Token 40: ~0.03

* Token 80: ~0.02

* Token 120: ~0.01

* Approximate data points (orange line):

* Token 0: ~0.01

* Token 40: ~0.02

* Token 80: ~0.01

* Token 120: ~0.005

**Llama-3-8B-Instruct Layer 1 Head 27 (Bottom Middle)**

* The blue line (w/o meaningless tokens) oscillates between approximately 0.02 and 0.06.

* The orange line (w/ meaningless tokens) oscillates between approximately 0.01 and 0.04.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.03

* Token 40: ~0.04

* Token 80: ~0.03

* Token 120: ~0.02

* Approximate data points (orange line):

* Token 0: ~0.01

* Token 40: ~0.02

* Token 80: ~0.01

* Token 120: ~0.005

**Gemma-3-4b-it Layer 1 Head 3 (Bottom Right)**

* The blue line (w/o meaningless tokens) oscillates between approximately 0.04 and 0.14.

* The orange line (w/ meaningless tokens) oscillates between approximately 0.02 and 0.10.

* The trend is erratic for both lines.

* Approximate data points (blue line):

* Token 0: ~0.07

* Token 40: ~0.10

* Token 80: ~0.08

* Token 120: ~0.06

* Approximate data points (orange line):

* Token 0: ~0.03

* Token 40: ~0.06

* Token 80: ~0.04

* Token 120: ~0.03

### Key Observations

* In all charts, the attention weights with meaningless tokens are generally lower than those without.

* The attention weights exhibit high-frequency oscillations across all models and layers, suggesting a dynamic attention mechanism.

* Gemma-3-4b-it consistently shows higher average attention weights compared to Qwen-2.5-7B-Math and Llama-3-8B-Instruct.

* The extended x-axis (up to 120 tokens) in the bottom row does not reveal any significantly different patterns compared to the top row (up to 60 tokens).

### Interpretation

The data suggests that the inclusion of meaningless tokens generally reduces the average attention weight across all three models. This indicates that the models are less focused on these tokens, which is expected. The high-frequency oscillations in attention weights suggest that the models are dynamically adjusting their attention based on the input sequence. The higher attention weights observed in Gemma-3-4b-it might indicate a more sensitive or complex attention mechanism. The lack of significant changes when extending the x-axis suggests that the attention patterns stabilize after a certain number of tokens. These charts provide insights into how different language models distribute their attention and how meaningless tokens affect this distribution. The erratic nature of the attention weights suggests that a more granular analysis, potentially involving averaging over larger datasets or examining specific token types, might be necessary to uncover more nuanced patterns.

</details>

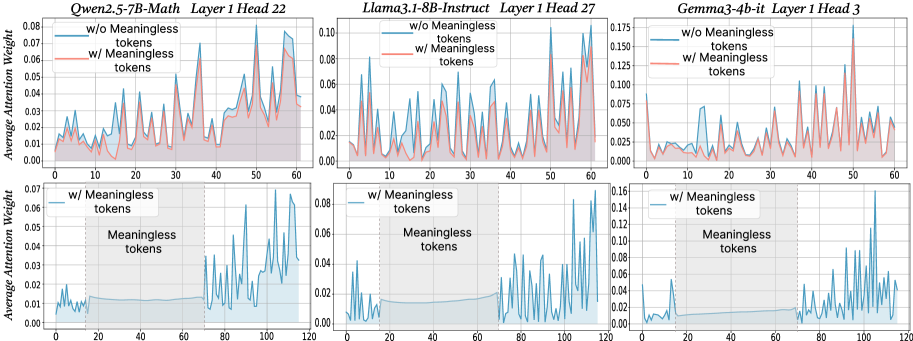

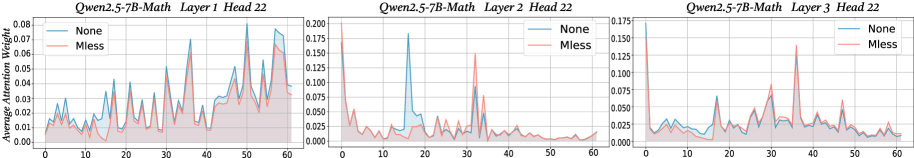

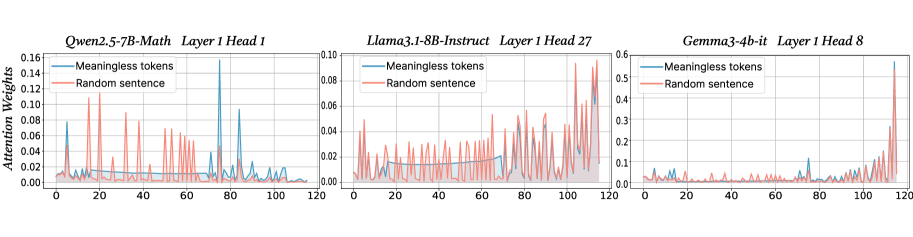

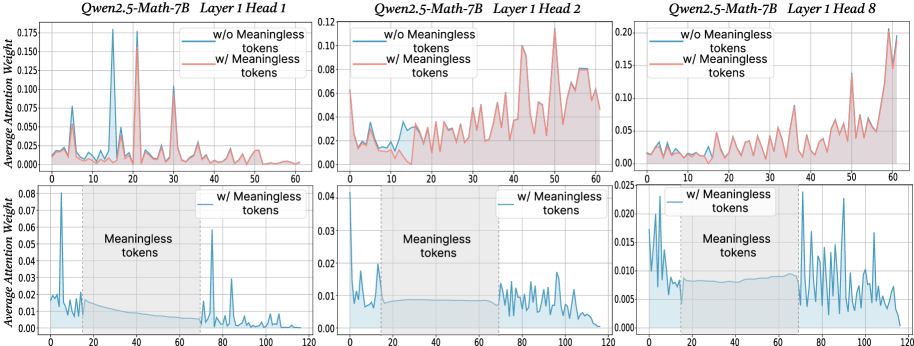

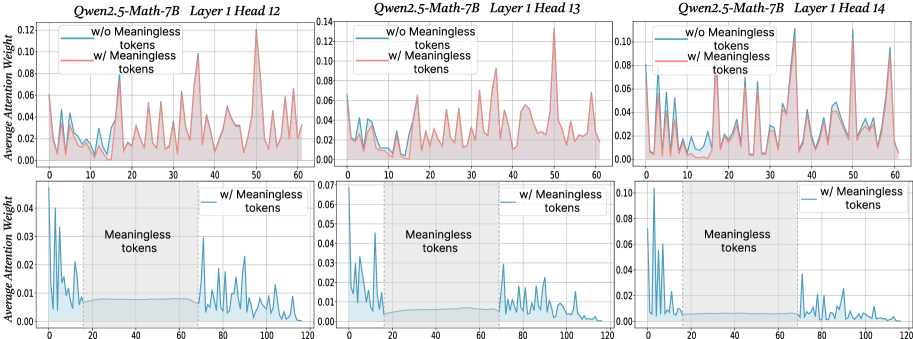

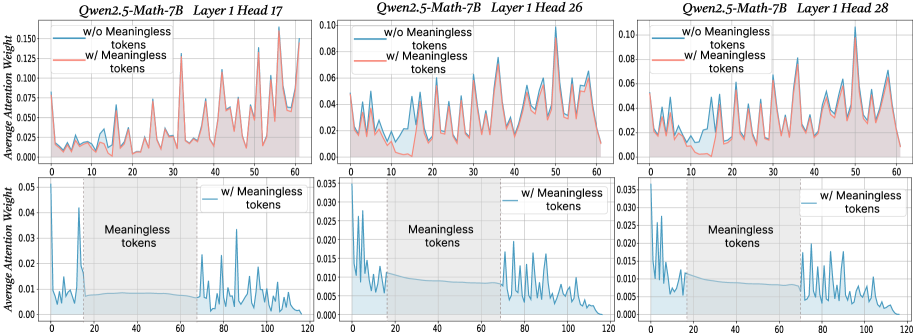

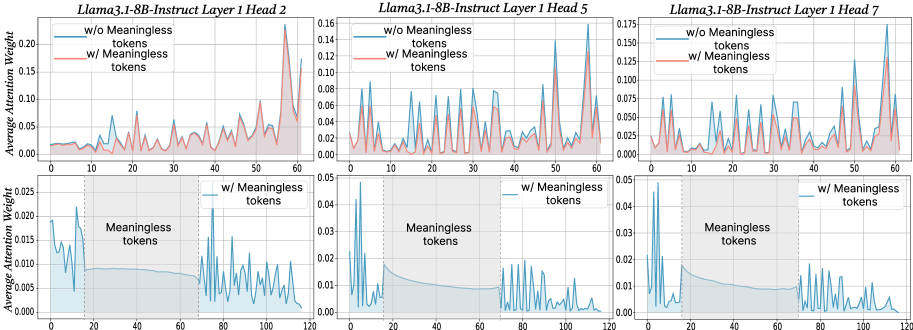

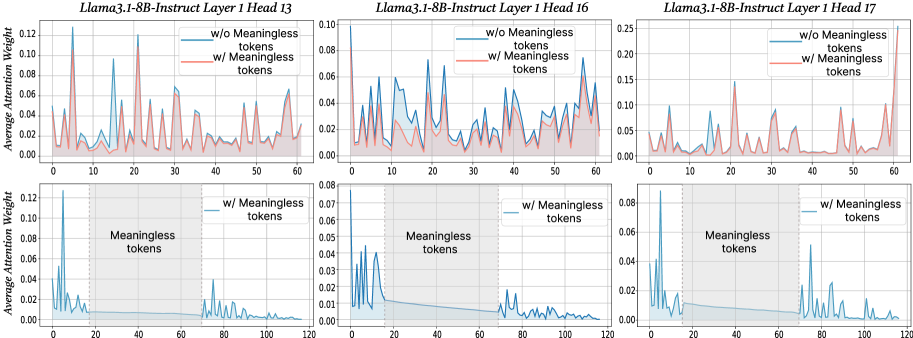

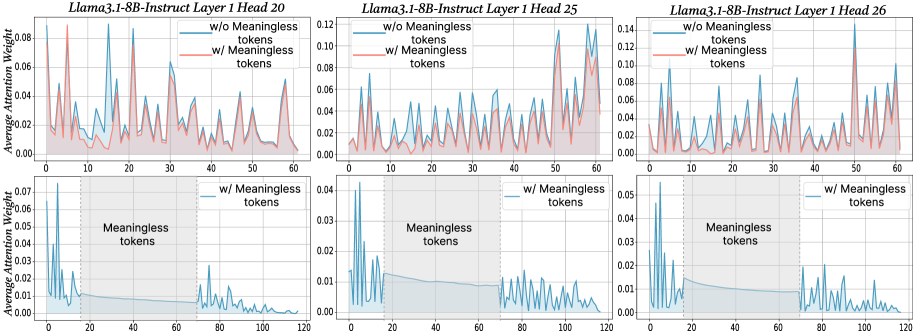

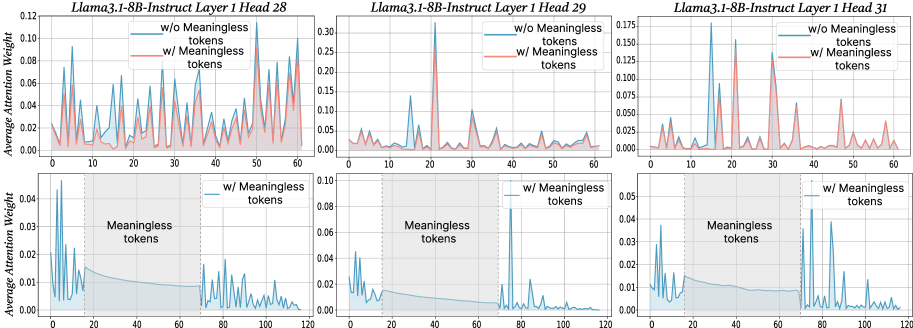

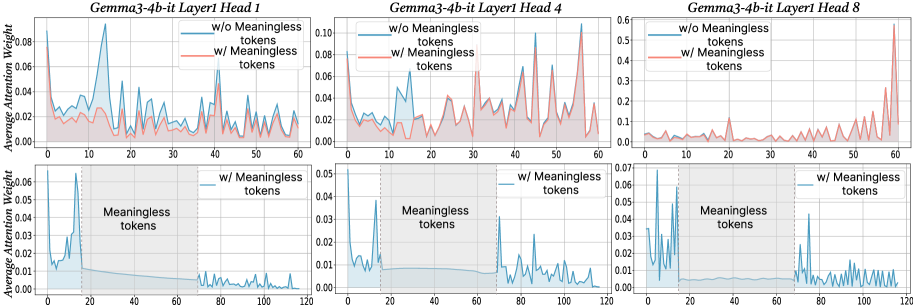

Figure 2: The x-axis shows token indices. Subsequent tokens assign lower average attention weights to the original prompt overall, while meaningless tokens receive similarly near-zero weights. We show additional average attention weights in Appendix I and layer-wise analyses in Section F.4.
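As a minimal sketch of the per-token quantity plotted in Figure 2, the snippet below computes, for each token, the mean of its column below the diagonal of a causal attention matrix, i.e. the average attention it receives from later tokens. The function name `received_attention` and the toy uniform attention matrix are illustrative assumptions, not taken from the released code.

```python
import numpy as np

def received_attention(attn):
    """Mean attention each token receives from later tokens.

    attn: (T, T) causal attention weights for one head; row i is the
    query at position i, column j is the key at position j.
    Returns an array of length T - 1 (the last token has no later tokens).
    """
    T = attn.shape[0]
    # Column j, rows j+1..T-1: entries strictly below the diagonal.
    return np.array([attn[j + 1:, j].mean() for j in range(T - 1)])

# Toy example (assumption): uniform causal attention over T = 3 tokens.
T = 3
attn = np.tril(np.ones((T, T)))
attn = attn / attn.sum(axis=1, keepdims=True)  # each row sums to 1
scores = received_attention(attn)
```

In practice `attn` would be one head's attention matrix from the first layer, computed once with and once without the meaningless tokens, with the inserted positions dropped before comparing the two curves.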

$\text{Attention}(Q,K,V)=\text{softmax}\!\left(\frac{QK^{\top}}{\sqrt{d_{k}}}\right)V$, where $Q$, $K$, and $V$ are the query, key, and value vectors, respectively, and $d_{k}$ is the dimensionality of the keys and queries. From this equation, adding extra tokens introduces additional terms into the softmax normalization, enlarging its denominator. Although the new tokens typically receive small weights, their presence redistributes probability mass and reduces the relative share of attention allocated to the original tokens. To probe the underlying cause, we directly compare the input’s attention weights with and without meaningless tokens in the first layer while keeping token indices aligned. For every token, we compute the mean of its column below the diagonal of the attention matrix, which measures how much attention the token receives from all downstream tokens (Bogdan et al., 2025). When a string of meaningless tokens is present, the model assigns only a small weight to each of them, indicating that it pays little attention to them (see Figure 2, bottom row). The top row of Figure 2 directly compares the attention to meaningful tokens without (blue) and with (red) meaningless tokens; meaningless-token indices are removed from the visualization to allow a direct comparison. Among meaningful tokens, the average attention decreases in the meaningless-token condition, driven especially by reduced high-attention spikes. The attention weights of the original prompt after inserting meaningless tokens are $W^{\prime}=\lambda\cdot\text{softmax}\!\left(\frac{QK^{\top}}{\sqrt{d_{k}}}\right)=\lambda\cdot W_{attn}$, where $W_{attn}$ are the original attention weights after softmax, and $\lambda$ is the scaling factor that reflects the percentage drop in the attention weights of the original prompt after adding meaningless tokens. The attention output for each token then contains not only the weighted combination of the original tokens but also the contribution of the attention weights and values of the meaningless tokens. Thus, the attention output can be expressed as:

$$

\text{Attn\_Output}_{new}=W_{j}^{\prime}V_{j}+W_{i}V_{i}, \tag{1}

$$

<details>

<summary>x3.png Details</summary>

### Visual Description

## Scatter Plot: Attention Output Displacement

### Overview

This image presents a scatter plot comparing the attention output with and without meaningless tokens. The plot visualizes the displacement between these two outputs, indicated by arrows connecting corresponding points. Two curved lines approximate the general trend of each data set.

### Components/Axes

* **X-axis:** The x-axis is not explicitly labeled, but represents a numerical value ranging approximately from 0 to 10. The scale is linear with markers at integer values.

* **Y-axis:** The y-axis is also not explicitly labeled, but represents a numerical value ranging approximately from 0 to 6. The scale is linear with markers at integer values.

* **Legend:** Located in the top-left corner, the legend defines the following:

* Blue 'x' markers: "Attn\_output w/o meaningless tokens"

* Red 'x' markers: "Attn\_output w/ meaningless tokens"

* Blue dashed arrows: "Displacement from x to x"

* Blue dotted line: "Attn\_output set w/o meaningless tokens"

* Red solid line: "Attn\_output set w/ meaningless tokens"

### Detailed Analysis

The plot contains two sets of scattered data points, one in blue and one in red. Each point represents an attention output value. Arrows connect corresponding points in the two sets, illustrating the displacement caused by the inclusion of meaningless tokens. Two curved lines approximate the trend of each data set.

**Blue Data (w/o meaningless tokens):**

The blue data points (Attn\_output w/o meaningless tokens) generally follow an upward trend, starting from approximately (0.5, 0.5) and reaching approximately (9.5, 5.5). The blue dotted line (Attn\_output set w/o meaningless tokens) provides a smoothed representation of this trend.

* Approximate data points (x, y): (0.5, 0.5), (1.5, 1.0), (2.5, 1.5), (3.5, 2.0), (4.5, 2.5), (5.5, 3.0), (6.5, 3.5), (7.5, 4.0), (8.5, 4.5), (9.5, 5.5).

**Red Data (w/ meaningless tokens):**

The red data points (Attn\_output w/ meaningless tokens) also exhibit an upward trend, but are generally shifted to the right and slightly above the blue data. The red solid line (Attn\_output set w/ meaningless tokens) represents this trend.

* Approximate data points (x, y): (1.0, 1.0), (2.0, 1.5), (3.0, 2.5), (4.0, 3.0), (5.0, 3.5), (6.0, 4.0), (7.0, 4.5), (8.0, 5.0), (9.0, 5.5), (10.0, 6.0).

**Displacement Arrows:**

The blue dashed arrows indicate the direction and magnitude of the displacement between corresponding blue and red points. The arrows generally point upwards and to the right, indicating that the inclusion of meaningless tokens increases both the x and y values of the attention output.

Two large, roughly elliptical shapes are drawn around the data. One encompasses the blue data, and the other the red data.

### Key Observations

* The inclusion of meaningless tokens consistently shifts the attention output to higher values.

* The displacement is not uniform; the magnitude of the shift varies across the range of x-values.

* The trend lines suggest a roughly linear relationship between the attention output and the presence/absence of meaningless tokens.

* The elliptical shapes highlight the overall distribution and spread of the data for each condition.

### Interpretation

The plot demonstrates the impact of meaningless tokens on attention output. The consistent upward and rightward displacement suggests that these tokens introduce a bias, inflating the attention scores. This could be problematic in applications where accurate attention weights are crucial, as it might lead to the model focusing on irrelevant information. The trend lines provide a visual summary of this effect, while the individual data points and displacement arrows reveal the variability in the impact of meaningless tokens. The elliptical shapes suggest that the data is somewhat clustered, but also exhibits some degree of spread, indicating that the effect of meaningless tokens is not entirely consistent. The visualization strongly suggests that removing meaningless tokens is beneficial for obtaining more accurate and reliable attention outputs.

</details>

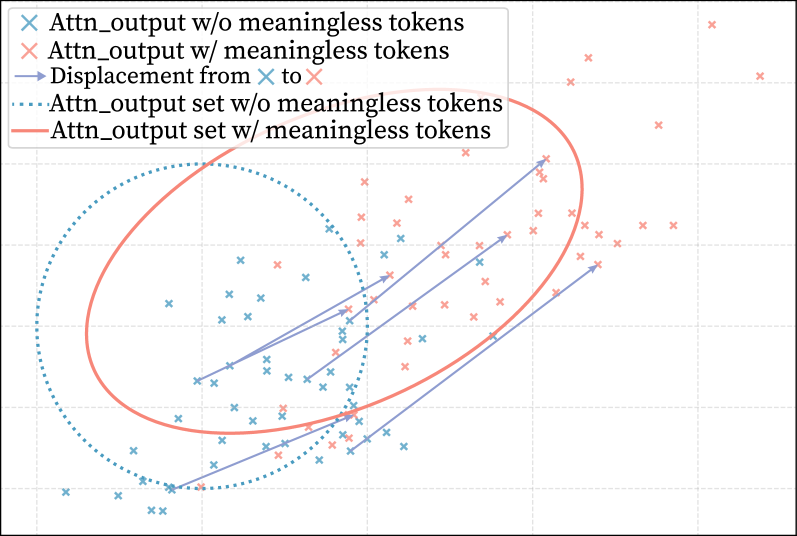

Figure 3: After adding meaningless tokens, each token vector is affinely transformed: blue points show the original vectors, and red points show them after the addition. Arrows indicate the direction of change.

where $\text{Attn\_Output}_{new}$ is the output of the attention mechanism for each token in the original prompt, $W_{j}^{\prime}$ and $V_{j}$ are the attention weights and value vectors of the original prompt, and $W_{i}$ and $V_{i}$ are the attention weights and value vectors of the meaningless tokens. Because the meaningless tokens are repeated in long sequences and contribute no semantic information, their value vectors are identical and their attention weights are small and of similar magnitude. Therefore, as shown in Equation 1, the term $W_{i}V_{i}$ primarily shifts the final attention output along an approximately uniform direction as the tokens accumulate, without introducing diverse semantic components. Writing $W_{j}V_{j}$ for the original attention output (so that $W_{j}^{\prime}V_{j}=\lambda\cdot W_{j}V_{j}$) and denoting $W_{i}V_{i}$ by $\Sigma_{\sigma}$, the attention output of meaningful tokens after adding meaningless tokens can be seen as an affine transformation:

$$

\text{Attn\_Output}_{new}=\lambda\cdot\text{Attn\_Output}+\Sigma_{\sigma}, \tag{2}

$$

where Attn_Output is $W_{j}V_{j}$. Following this equation, the introduction of meaningless tokens turns the attention output of meaningful tokens into an affine function of the original output, consisting of a scaled original term ($\lambda\cdot\text{Attn\_Output}$) and an additional bias ($\Sigma_{\sigma}$). Figure 3 illustrates this transformation. After the attention module, the affinely transformed output passes through RMSNorm and serves as the input to the MLP. In the next section, we examine in detail how this transformation propagates through the subsequent MLP layers and shapes the model’s overall activation distribution.
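The step from Equation 1 to Equation 2 can be checked numerically for a single query and a single head. The sketch below assumes no positional encoding and gives every meaningless token the same key and value vector; under these simplifying assumptions the relation is exact, with $\lambda$ equal to the attention mass left on the original tokens and $\Sigma_{\sigma}=(1-\lambda)\,v_{fill}$. All variable names (`k_fill`, `v_fill`, `lam`) and the random toy tensors are illustrative, and real models only approximate this behaviour.

```python
import numpy as np

def softmax(s):
    e = np.exp(s - s.max())  # shift for numerical stability
    return e / e.sum()

rng = np.random.default_rng(0)
d_k, n_orig, n_fill = 16, 6, 40

q = rng.normal(size=d_k)                 # one query vector
K_orig = rng.normal(size=(n_orig, d_k))  # keys of the original prompt
V_orig = rng.normal(size=(n_orig, d_k))  # values of the original prompt
k_fill = rng.normal(size=d_k)            # shared key of the filler token
v_fill = rng.normal(size=d_k)            # shared value of the filler token
K_fill = np.tile(k_fill, (n_fill, 1))
V_fill = np.tile(v_fill, (n_fill, 1))

# Attention weights without and with the filler tokens.
w_orig = softmax(K_orig @ q / np.sqrt(d_k))
w_all = softmax(np.concatenate([K_fill, K_orig]) @ q / np.sqrt(d_k))
w_fill_part, w_orig_part = w_all[:n_fill], w_all[n_fill:]

lam = w_orig_part.sum()        # attention mass left on original tokens
out_old = w_orig @ V_orig      # Attn_Output
bias = w_fill_part @ V_fill    # Sigma_sigma = W_i V_i
out_new = w_all @ np.concatenate([V_fill, V_orig])  # Attn_Output_new
```

Because $\lambda$ depends on the query, each token in the original prompt has its own scaling factor in this toy setting.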

3 Analysis: Why Affine Transformation Improves Reasoning Performance

Having established in the previous section that the meaningless-token effect induces scaling and bias terms that produce an affine transformation of the attention output, we next examine how this transformation propagates through the subsequent MLP modules and affects reasoning. In Equation 2, we decompose the transformation’s influencing factors into two primary components: the scaling factor $\lambda$, which controls the magnitude of activations, and the bias factor $\Sigma_{\sigma}$, a bounded zero-mean bias term that reflects the variation in attention outputs before and after meaningless-token insertion and introduces structured shifts in the activation distribution. Together, these two factors determine how the transformed attention representations shape the dynamics of the MLP layers.

3.1 Affine Transformation influences the output of the gate layer

Key Takeaway

We demonstrate that applying an affine transformation, through both scaling and bias factors, systematically increases the variance of the gate layer’s output.

In this part, we show that these two factors increase the variance of the gate projection layer in the MLP. As discussed above, because these tokens have low attention weights and nearly identical values, they shift the RMSNorm input almost entirely along a single direction by a small margin; consequently, RMSNorm largely absorbs this change, producing only a minor numerical adjustment without adding semantic information. Specifically, the two factors act through different mechanisms. For the scaling factor, before entering the MLP, the attention output undergoes the output projection and the residual connection, which can be written as $x(\lambda)=\text{res}+\lambda\,UA$, where $A$ is the attention output and $U$ the projection weights. Treating $\lambda$ as a variable, the RMSNorm output becomes $y(\lambda)=\text{RMS}(x(\lambda))$. For the $j$-th gate dimension, $z_{j}(\lambda)=w_{j}^{\top}y(\lambda)$, and a small variation $\Delta\lambda$ changes the variance of this dimension as follows:

$$

\text{Var}[z_{j}(\lambda+\Delta\lambda)]=\text{Var}[z_{j}(\lambda)]+2\text{Cov}(z_{j}(\lambda),g_{j}(\lambda))\Delta\lambda+\text{Var}[g_{j}(\lambda)]\Delta\lambda^{2}, \tag{3}

$$

where $g_{j}(\lambda)$ denotes the derivative of $z_{j}$ with respect to $\lambda$, as in the first-order expansion $z_{j}(\lambda+\Delta\lambda)\approx z_{j}(\lambda)+g_{j}(\lambda)\Delta\lambda$. The third term in Equation 3 remains strictly positive for all admissible parameters. Moreover, as $\Delta\lambda$ increases, this term grows monotonically and asymptotically dominates the second term, thereby guaranteeing a strictly increasing overall variance. We analyze the range of $\Delta\lambda$ in Appendix E. For the bias factor, we model the perturbation as stochastic noise that is bounded, zero-mean, and statistically independent of the original attention output across all dimensions; it contributes an additional variance component and interacts non-trivially with the subsequent RMSNorm operation. Formally, after noise injection, the RMSNorm input can be written as $x=x_{0}+W\Sigma_{\sigma}$, where $W$ is the linear coefficient matrix applied before RMSNorm. After normalization, the covariance of the output can be expressed as:

$$

\text{Cov}(y)=J_{q}\text{Cov}(x)J_{q}^{{\top}}+o(\|x-x_{0}\|^{2}) \tag{4}

$$

where $x_{0}$ is the mean expansion point, $J_{q}$ is the Jacobian matrix of the RMSNorm mapping. Since the variance of the added perturbation is very small, the higher-order terms can be disregarded. In this case, the bias factor will bias the input of RMSNorm and lead to an increase in the covariance $\mathrm{Cov}(y)$ . Subsequently, the input to the activation function can be written as $z=W_{gate}(x+W\Sigma_{\sigma})$ . Based on the properties of the covariance, the variance of the $j$ -th dimension is given by:

$$

\text{Var}[z_{j}]\approx e_{j}^{\top}W_{gate}[J_{q}\text{Cov}(x)J_{q}^{\top}]W_{gate}^{\top}e_{j}, \tag{5}

$$

since the projection of the vector onto the tangent space is almost never zero in LLMs’ high dimensions, the resulting variance must be strictly greater than zero. From this, we can deduce that these two factors increase the variance of the output. In general, the scaling factors increase variance by amplifying inter-sample differences, whereas the bias factors correspondingly increase variance by enlarging the covariance structure across dimensions.
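Equations 4 and 5 amount to a first-order (delta-method) propagation of a small perturbation through RMSNorm and the gate projection. The sketch below compares that prediction with a Monte Carlo estimate. It assumes RMSNorm without a learned gain or epsilon, isotropic Gaussian noise in place of $W\Sigma_{\sigma}$, and a random matrix `W_gate` standing in for the gate projection; all sizes and names are illustrative.

```python
import numpy as np

def rmsnorm(x):
    # RMSNorm without learned gain or epsilon (simplifying assumption).
    return x / np.sqrt(np.mean(x**2, axis=-1, keepdims=True))

def rmsnorm_jacobian(x0):
    # d(rmsnorm)/dx at x0: (I - x0 x0^T / (d r^2)) / r, with r = RMS(x0).
    d = x0.shape[0]
    r = np.sqrt(np.mean(x0**2))
    return (np.eye(d) - np.outer(x0, x0) / (d * r**2)) / r

rng = np.random.default_rng(0)
d, n, noise_std = 8, 200_000, 1e-3
x0 = rng.normal(size=d)              # expansion point
W_gate = rng.normal(size=(4, d))     # hypothetical gate projection

# Monte Carlo: perturb the RMSNorm input and push it through the gate.
noise = rng.normal(scale=noise_std, size=(n, d))
y = rmsnorm(x0 + noise)
z = y @ W_gate.T
cov_y_emp = np.cov(y, rowvar=False)
var_z_emp = z.var(axis=0)

# First-order prediction (Equations 4 and 5).
J = rmsnorm_jacobian(x0)
cov_x = noise_std**2 * np.eye(d)
cov_y_pred = J @ cov_x @ J.T
var_z_pred = np.diag(W_gate @ cov_y_pred @ W_gate.T)
```

The Jacobian maps the direction of `x0` itself to zero, so only the component of the perturbation tangent to that direction contributes to the predicted variance.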

3.2 Variance change leads to activation redistribution

Key Takeaway

Our analysis shows that an increase in the input variance of activation functions broadens and reshapes the output activation distribution by raising both its mean and its variance.

As the variance of gate layer outputs grows under perturbations, the subsequent activation function further reshapes these signals by compressing values near zero. This motivates redistributing near-zero activations. For each sample in the hidden state, a second-order Taylor expansion of the activation function $\phi$ around $\mu$ gives:

$$

\phi(\mu+\sigma)=\phi(\mu)+\phi^{{}^{\prime}}(\mu)\sigma+\frac{1}{2}\phi^{{}^{\prime\prime}}(\mu)\sigma^{2}+o(|\sigma|^{3}), \tag{6}

$$

where $\sigma$ can represent either $\Delta k$ for the scaling factor or $\Sigma_{\sigma}$ for the bias factor. We denote the input to the activation function as $z=\mu+\sigma$. For the $j$-th dimension of the hidden state, the expectation and variance of the activation output can be expressed as:

$$

\mathbb{E}[\phi(z_{j})]=\mathbb{E}[\phi(\mu_{j})]+\mathbb{E}[\phi^{{}^{\prime}}(\mu_{j})\sigma]+\mathbb{E}[\frac{1}{2}\phi^{{}^{\prime\prime}}(\mu_{j})\sigma^{2}]+o(\mathbb{E}|\sigma|^{3}), \tag{7}

$$

$$

\text{Var}[\phi(z_{j})]=\phi^{{}^{\prime}}(\mu_{j})^{2}\text{Var}_{j}+o(\text{Var}_{j}^{2}). \tag{8}

$$

From the above equations, we infer that distributional changes map to variations in expectation and variance. On a single dimension, activations shift in both directions; from Equation 6, higher-order terms are negligible, and the first derivative of GeLU/SiLU near zero is positive. Since perturbations include both signs, extrapolated activations also fluctuate around zero. From Equation 7, $\mathbb{E}[\sigma^{2}]=\text{Var}_{j}$. For the bias factor, the zero-mean perturbation removes the first-order term. For scaling factors, expanding at the population mean gives $\mathbb{E}[\phi^{{}^{\prime}}(z_{j})g_{j}]=0$, again canceling the first-order term. The second derivative near zero is strictly positive, so the second-order term raises the expected activation. From Equation 8, $\text{Var}_{j}$ increases, and so does the activation histogram variance, as the function is nearly linear near zero. In summary, scaling and bias factors jointly enlarge activation variance, expressed as:

$$

\text{Var}_{j}\approx\mathbb{E}[\text{Var}_{j}^{(\Sigma_{\sigma})}]+\text{Var}(g_{j}^{\lambda}). \tag{9}

$$

<details>

<summary>x4.png Details</summary>

### Visual Description

## Bar Chart: Sparsity, L1 Norm, L2 Norm, and Gini Comparison with/without Transformation

### Overview

This image presents a comparative bar chart showing the values of Sparsity, L1 Norm, L2 Norm, and Gini for three different models: Qwen2.5-7B-Math, Llama3.1-8B-Instruct, and Gemma3-4b-it. Each model is presented in two conditions: with transformation (left) and without transformation (right). The bars are color-coded, with a light blue/red scheme. Each chart also includes a density plot along the x-axis.

### Components/Axes

* **X-axis:** Represents the metrics: Sparsity, L1 Norm, L2 Norm, and Gini.

* **Y-axis:** Represents the values of the metrics, ranging from 0 to 700,000 (left charts) and 0 to 50,000 (middle chart) and 0 to 400,000 (right chart).

* **Legend:** Located at the top-left corner, indicates that light blue bars represent "w/ transformation" and light red bars represent "w/o transformation".

* **Model Labels:** Located at the bottom of each chart, identifying the model being analyzed: Qwen2.5-7B-Math, Llama3.1-8B-Instruct, and Gemma3-4b-it.

* **Density Plots:** Red shaded areas along the x-axis, likely representing the distribution of values for each metric.

### Detailed Analysis or Content Details

**Qwen2.5-7B-Math:**

* **Sparsity (w/ transformation):** Approximately 0.185.

* **L1 Norm (w/ transformation):** Approximately 0.160.

* **L2 Norm (w/ transformation):** Approximately 0.120.

* **Gini (w/ transformation):** Approximately 0.045.

* **Sparsity (w/o transformation):** Approximately 0.0.

* **L1 Norm (w/o transformation):** Approximately 0.0.

* **L2 Norm (w/o transformation):** Approximately 0.0.

* **Gini (w/o transformation):** Approximately 0.40.

**Llama3.1-8B-Instruct:**

* **Sparsity (w/ transformation):** Approximately 0.10.

* **L1 Norm (w/ transformation):** Approximately 4.50.

* **L2 Norm (w/ transformation):** Approximately 7.00.

* **Gini (w/ transformation):** Approximately 4.30.

* **Sparsity (w/o transformation):** Approximately 0.0.

* **L1 Norm (w/o transformation):** Approximately 12.0.

* **L2 Norm (w/o transformation):** Approximately 13.0.

* **Gini (w/o transformation):** Approximately 5.00.

**Gemma3-4b-it:**

* **Sparsity (w/ transformation):** Approximately 0.10.

* **L1 Norm (w/ transformation):** Approximately 1.4.

* **L2 Norm (w/ transformation):** Approximately 0.6.

* **Gini (w/ transformation):** Approximately 1.0.

* **Sparsity (w/o transformation):** Approximately 0.6.

* **L1 Norm (w/o transformation):** Approximately 0.0.

* **L2 Norm (w/o transformation):** Approximately 0.0.

* **Gini (w/o transformation):** Approximately 0.0.

### Key Observations

* The "w/ transformation" condition consistently shows lower L1 and L2 Norm values compared to the "w/o transformation" condition for all three models.

* Sparsity is generally low for the "w/o transformation" condition, often close to zero.

* Gini values vary significantly between models and conditions.

* The density plots show a concentration of values near zero for the "w/o transformation" condition, particularly for L1 and L2 Norms.

### Interpretation

The data suggests that applying the transformation significantly impacts the sparsity, L1 norm, L2 norm, and Gini index of the models. The transformation appears to reduce the L1 and L2 norms, indicating a more compressed or regularized representation. The differences in Gini values suggest that the transformation affects the distribution of weights within the models. The near-zero values for L1 and L2 norms in the "w/o transformation" condition, coupled with the density plots, indicate that the models without transformation have a highly concentrated weight distribution, potentially leading to overfitting or reduced generalization ability. The transformation seems to introduce more diversity in the weight distribution, as reflected in the higher Gini values and non-zero L1/L2 norms. The Gemma3-4b-it model exhibits a particularly strong effect from the transformation, with a substantial decrease in L1 and L2 norms. This suggests that the transformation is more effective for this model.

</details>

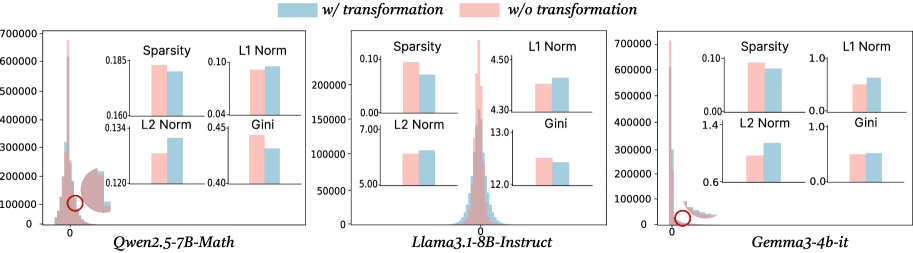

Figure 4: Histogram of activation frequencies after the activation function in the MLP; the sub-figure compares four metrics before and after the transformation.

The first term represents the expected variance of the $j$-th hidden state under the influence of the bias factor. Since the bias factor varies across individual cases, taking the expectation is necessary to capture its overall impact. The second term corresponds to the variance induced by scaling factors, which inherently reflects the aggregate change. When the two are combined, the overall variance of the outputs of nonlinear activation functions increases, the mean shifts upward, and the activation distribution becomes broader, manifested as heavier tails and a thinner center. Further details of the above analysis and the related proofs are in Appendix E. Moreover, we presume that this redistribution has a positive impact on reasoning tasks because reasoning-critical tokens (digits, operators, conjunctions) have a higher fraction of near-zero activations. Elevating their activation levels strengthens their representations and improves reasoning performance; see Section 6 for details.
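As a quick numerical sanity check of the second-order argument above, the sketch below estimates $\mathbb{E}[\phi(\mu+\sigma)]$ by Monte Carlo for SiLU and compares it with the prediction $\phi(\mu)+\frac{1}{2}\phi^{\prime\prime}(\mu)\text{Var}$. The Gaussian form of the perturbation, the sample count, and all function names are assumptions chosen for illustration; they are not taken from the released code.

```python
import math
import random


def silu(x):
    """SiLU activation: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


def silu_second_derivative(x):
    """Closed form of SiLU''(x) = s(1 - s)(2 + x(1 - 2s)), with s = sigmoid(x)."""
    s = 1.0 / (1.0 + math.exp(-x))
    return s * (1.0 - s) * (2.0 + x * (1.0 - 2.0 * s))


def second_order_prediction(mu, std):
    """phi(mu) + 0.5 * phi''(mu) * Var; the first-order term vanishes for zero-mean noise."""
    return silu(mu) + 0.5 * silu_second_derivative(mu) * std ** 2


def mean_activation(mu, std, n_samples=200_000, seed=0):
    """Monte Carlo estimate of E[phi(mu + sigma)] with sigma ~ N(0, std^2) (assumed noise model)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        total += silu(mu + rng.gauss(0.0, std))
    return total / n_samples


if __name__ == "__main__":
    for std in (0.1, 0.3, 0.5):
        print(std, mean_activation(0.0, std), second_order_prediction(0.0, std))
```

Under these assumptions, the estimated mean at $\mu=0$ grows with the perturbation variance, which is the upward shift of the mean described above; the agreement with the second-order prediction is only expected to hold for small perturbations.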

3.3 Verification of activation redistribution

To verify whether the activation redistribution pattern in Section 3.2 indeed occurs in LLMs, Figure 4 illustrates the activation distribution after the first-layer MLP, explicitly comparing states before and after the transformation defined in Equation 2. We also comprehensively assess the transformation of activation states using several quantitative indicators, including:

- Relative Sparsity: Defined as the proportion of activations after the transformation whose values fall below the pre-transformation threshold.

- L1 Norm: The sum of the absolute activation values; smaller values indicate higher sparsity.

- L2 Norm: A measure of the overall magnitude of activations.

- Gini Coefficient: An indicator of the smoothness of the histogram distribution, where smaller absolute values correspond to smoother distributions.

From Figure 4, we observe that after transformation, the frequency of near-zero activations decreases, while the frequency of high-magnitude activations increases. Both sparsity and smoothness in the activation distribution are improved. Specifically, the relative sparsity consistently decreases across all three models while the L1 and L2 norms increase, consistent with the redistribution described in Section 3.2.
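The four indicators above can be sketched in a few lines of plain Python. This is an illustrative reading rather than the exact evaluation code: the near-zero `threshold` is supplied by the caller because its precise definition is not given here, relative sparsity is measured on absolute values, and the Gini coefficient is computed with the standard sorted-rank formula over absolute activation values. All of these choices, and the function names, are assumptions.

```python
import math


def l1_norm(values):
    """Sum of absolute activation values."""
    return sum(abs(v) for v in values)


def l2_norm(values):
    """Euclidean norm of the activation values."""
    return math.sqrt(sum(v * v for v in values))


def relative_sparsity(after, threshold):
    """Share of post-transformation activations with |value| below a given threshold.

    The threshold is assumed to be derived from the pre-transformation activations.
    """
    return sum(1 for v in after if abs(v) < threshold) / len(after)


def gini(values):
    """Standard Gini coefficient of |values| (0 = perfectly even)."""
    xs = sorted(abs(v) for v in values)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum((i + 1) * x for i, x in enumerate(xs))
    return 2.0 * weighted / (n * total) - (n + 1) / n


def compare_metrics(before, after, threshold):
    """Collect the four indicators before and after a transformation."""
    return {
        "relative_sparsity": relative_sparsity(after, threshold),
        "l1": (l1_norm(before), l1_norm(after)),
        "l2": (l2_norm(before), l2_norm(after)),
        "gini": (gini(before), gini(after)),
    }
```

A call such as `compare_metrics(before, after, threshold)` on flattened first-layer MLP activations would return the paired values compared in the sub-figure, up to the assumptions listed above.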

4 Method: Activation Redistribution Module

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: Layer 1 Architecture

### Overview

This diagram illustrates the architecture of Layer 1 in a neural network, likely a transformer-based model. It depicts the flow of data through several components, including Attention, RoPE, RMSNorm, MLP (Multi-Layer Perceptron), and activation functions. The diagram also includes visualizations of data distribution before and after a redistribution process.

### Components/Axes

The diagram is segmented into three main regions: a left-side processing chain, a central MLP block, and a right-side visualization of data distributions.

* **Left Side:**

* "Output"

* "Attention"

* "RoPE"

* "Q K V" (Query, Key, Value)

* "RMSNorm"

* **Central MLP Block:**

* "MLP" (title)

* "down"

* "up"

* "ARM"

* "SiLU/GeLU"

* "gate"

* "RMSNorm"

* **Right Side:**

* "before redistribution" (title of top histogram)

* "after redistribution" (title of bottom histogram)

* X-axis: Numerical scale, ranging from approximately -4 to +4.

* Y-axis: Represents frequency or probability density.

### Detailed Analysis or Content Details

The diagram shows a data flow starting from the bottom with "RMSNorm". The output of "RMSNorm" feeds into "Q K V". The output of "Q K V" is then passed to "RoPE", which in turn feeds into "Attention". The output of "Attention" is labeled "Output".

The "MLP" block receives input from "RMSNorm" (bottom) and "Attention" (top). Inside the MLP:

* The input splits into two paths: "up" and "down".

* "down" feeds into "ARM" and then into "SiLU/GeLU".

* "up" feeds into "RMSNorm" and then into "gate".

* The outputs of "ARM/SiLU/GeLU" and "gate" are combined at a circular node (likely an addition operation).

The right side shows two histograms:

* **Before Redistribution:** The histogram is multi-modal, with several peaks. The peaks are centered around approximately -2.5, -1, 0, +1, and +2.5. The height of the peaks are roughly equal. Arcs are drawn from the top of each peak to the x-axis, indicating the spread of the distribution.

* **After Redistribution:** The histogram is unimodal, centered around approximately 0. The distribution is more concentrated and has a narrower spread than the "before redistribution" histogram.

### Key Observations

* The diagram illustrates a typical transformer layer structure with attention and a feedforward network (MLP).

* The MLP block includes a complex internal structure with "ARM", "SiLU/GeLU", and "gate" components.

* The redistribution process appears to transform a multi-modal distribution into a unimodal distribution, suggesting a regularization or normalization effect.

* The circular node within the MLP suggests an additive operation.

### Interpretation

The diagram likely represents a component within a larger neural network architecture, possibly a transformer model. The "RoPE" component suggests the use of Rotary Positional Embeddings, a technique for incorporating positional information into the attention mechanism. The MLP block is designed to process the output of the attention mechanism and introduce non-linearity.

The redistribution process, as visualized by the histograms, is a key aspect of the architecture. It transforms a potentially complex and dispersed distribution into a more focused and stable distribution. This could be a form of normalization, regularization, or feature selection. The change from a multi-modal to a unimodal distribution suggests that the redistribution process is reducing the variance and concentrating the data around a central value.

The diagram provides a high-level overview of the data flow and component interactions within Layer 1. It does not provide specific numerical values or parameters, but it conveys the overall structure and functionality of the layer.

</details>

```python
def forward(self, x, layer_idx):  # MLP forward pass, in the first layer
    activation = self.act_fn(self.gate_proj(x))
    # Our function: redistribute near-zero activations
    activation_alter = self.arm(activation.clone())
    down_proj = self.down_proj(activation_alter * self.up_proj(x))
    return down_proj
```

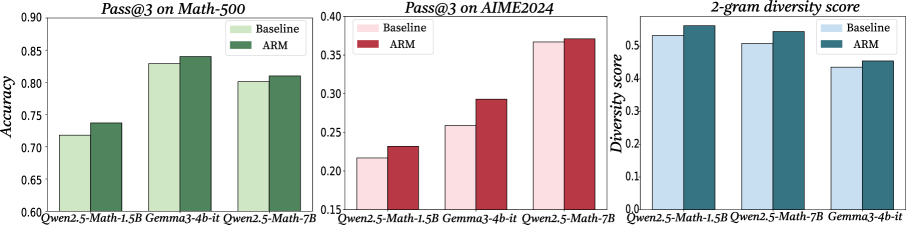

Figure 5: The upper panel illustrates the first-layer LLM architecture with ARM, while the lower panel presents the corresponding ARM code in the MLP module.

Inspired by the previous finding that meaningless tokens can shift meaningful activations and boost LLM performance, we propose ARM, a simple method that replaces explicit meaningless tokens with an implicit mechanism that adjusts the MLP activation distribution after the activation function. Our approach has two steps: first, it adaptively identifies a proportion of near-zero activations based on the model and input; then, it extrapolates them outward to redistribute the activation pattern. The top half of Figure 5 shows the first-layer MLP with ARM, where selected activations around zero are shifted outward, reducing the frequency of near-zero activations and increasing that of larger-magnitude ones. The bottom half of Figure 5 presents the ARM-specific code, a lightweight function inserted into the first-layer MLP without affecting inference speed. As shown in Appendix D, ARM’s time complexity is negligible within the MLP context. The significance of the ARM method is twofold. First, it provides further evidence for our theoretical analysis in Section 3: by directly replacing explicit meaningless-token insertion with implicit activation redistribution, ARM yields a similar improvement in reasoning across models and benchmarks, thus strengthening our theoretical framework. Second, we introduce ARM as a lightweight inference-time trick for boosting reasoning, which is not only robustly effective on its own (see experiments in Section 5) but also compatible with existing inference-time scaling methods (see Appendix G.3).

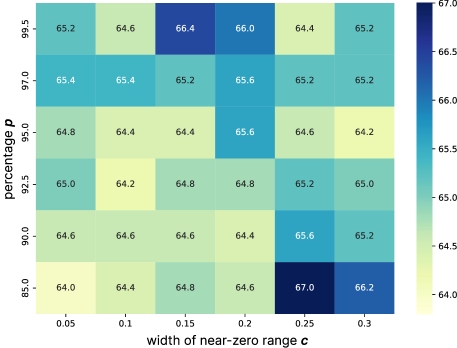

4.1 Selecting an Appropriate Change Proportion

Our method first selects a proportion of activations to be modified. However, different models exhibit varying sensitivities to meaningless tokens. To address this, we propose a dynamic strategy that adjusts the fraction of near-zero activations to be altered during inference. To determine this proportion, we measure the dispersion of activations around zero. Specifically, we define a neighborhood $\epsilon$ based on the activation distribution to identify which activations are considered “close to zero”. We adopt the Median Absolute Deviation (MAD) as our dispersion metric, since MAD is robust to outliers and better captures the core distribution. The threshold $\epsilon$ is given by $\epsilon=\kappa\cdot\text{MAD}\cdot c$, where $\kappa$ is a consistency constant and $c$ is a hyperparameter controlling the width of the near-zero range. Next, we compute the fraction $p$ of activations falling within $[-\epsilon,\epsilon]$, which represents the proportion of activations that we consider to be near zero. The fraction we modify is then $\text{fraction}=\text{clip}(p,p_{\text{min}},p_{\text{max}})$, where $p_{\text{min}}$ and $p_{\text{max}}$ serve as bounds that prevent the fraction from becoming either too small or excessively large. In our experiments, we set $p_{\text{min}}=0.02$ and $p_{\text{max}}=0.25$.
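The selection rule can be sketched as follows, written in plain Python on a flat list for readability (a tensor implementation would be vectorized). Several details are assumptions made for this sketch: MAD is taken about the median, $\kappa$ defaults to 1.4826 (the usual consistency constant for normally distributed data), and the default `c=1.0` is a placeholder rather than a recommended setting. The function name `near_zero_fraction` is hypothetical.

```python
import statistics

KAPPA = 1.4826  # assumed: standard MAD consistency constant for Gaussian data


def near_zero_fraction(activations, c=1.0, p_min=0.02, p_max=0.25, kappa=KAPPA):
    """Return (epsilon, p, fraction) for a flat list of activation values.

    epsilon  = kappa * MAD * c
    p        = share of activations inside [-epsilon, epsilon]
    fraction = p clipped to [p_min, p_max]
    """
    median = statistics.median(activations)
    # Assumption: MAD is the median absolute deviation about the median.
    mad = statistics.median([abs(a - median) for a in activations])
    epsilon = kappa * mad * c
    p = sum(1 for a in activations if abs(a) <= epsilon) / len(activations)
    fraction = min(max(p, p_min), p_max)
    return epsilon, p, fraction
```

Clipping keeps the modified share within $[p_{\text{min}},p_{\text{max}}]$ even when the activation distribution is unusually concentrated or unusually spread out.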

4.2 Redistribution of Activation Values

After selecting the elements, we preserve their signs and adjust only their magnitudes. Specifically, we add a positive or negative value depending on each element’s sign. To constrain the modified values within a reasonable range, the range is defined as follows:

$$

\text{R}=\begin{cases}[0,\text{Q}_{p_{1}}(\text{Activations})],&\text{sign}=1,\\[6.0pt]
[\text{min}(\text{Activations}),0],&\text{sign}=-1.\end{cases} \tag{10}

$$

Here, R is the range of modified values. We set the lower bound to the minimum activation value when $\text{sign}=-1$, since activation functions such as SiLU and GeLU typically attain their smallest values on the negative side. For the upper bound when $\text{sign}=1$, we select $\text{Q}_{p_{1}}(\text{Activations})$, the value at the $p_{1}$-th percentile of the activation distribution. Here, $p_{1}$ is a hyperparameter whose value depends on the distribution of activations and on the value of $c$. Finally, we generate a random value in R and add it to the activation in order to modify its value. In this way, we adaptively adjust an appropriate proportion of activations, enriching the distribution with more effective values. We show how to choose the hyperparameters in Appendix H.
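A minimal sketch of the redistribution step is given below, again in plain Python on a flat list. It is one possible reading of the description, not the released implementation: the elements to modify are assumed to be the `fraction` of activations with the smallest absolute value, the added value is assumed to be drawn uniformly from R, exact zeros are treated as positive, and `p1` is expressed as a fraction in $[0,1]$ with a placeholder default. The names `redistribute` and `quantile` are hypothetical.

```python
import random


def quantile(sorted_values, q):
    """Simple lower-rank quantile of an already sorted list, with q in [0, 1]."""
    return sorted_values[int(q * (len(sorted_values) - 1))]


def redistribute(activations, fraction, p1=0.95, seed=0):
    """Shift the activations closest to zero outward while preserving their sign."""
    rng = random.Random(seed)
    n = len(activations)
    ordered = sorted(activations)
    upper = max(quantile(ordered, p1), 0.0)  # Q_{p1}(Activations), bound for sign = 1
    lower = min(ordered[0], 0.0)             # min(Activations), bound for sign = -1

    # Assumption: modify the `fraction` of entries with the smallest magnitude.
    k = int(fraction * n)
    chosen = sorted(range(n), key=lambda i: abs(activations[i]))[:k]

    out = list(activations)
    for i in chosen:
        if out[i] >= 0:
            out[i] += rng.uniform(0.0, upper)   # positive side: add a value in [0, Q_p1]
        else:
            out[i] += rng.uniform(lower, 0.0)   # negative side: add a value in [min, 0]
    return out
```

Because the added value always has the same sign as the activation it modifies, magnitudes can only grow, which is what moves mass away from zero.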

Table 2: After adding ARM to the first-layer MLP, we report reasoning-task performance for seven models, using a dash (‘–’) for accuracies below 5% to indicate incapability.

| Model | Setting | GPQA Diamond (Pass@1) | Math-500 (Pass@1) | AIME 2024 (Pass@1) | AIME 2025 (Pass@1) | LiveCodeBench (Pass@1) | HumanEval (Pass@1) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Qwen2.5-Math-1.5B | Baseline | 27.3 | 63.8 | 14.4 | 6.7 | – | 6.1 |
| | ARM | 28.8 | 67.0 | 18.9 | 10.0 | – | 8.5 |
| | Improve Rate (%) | 1.5 $\uparrow$ | 3.2 $\uparrow$ | 4.5 $\uparrow$ | 3.3 $\uparrow$ | – | 2.4 $\uparrow$ |
| Qwen2.5-Math-7B | Baseline | 30.3 | 72.4 | 23.3 | 10.0 | – | 15.2 |
| | ARM | 34.9 | 73.4 | 25.6 | 13.3 | – | 17.7 |
| | Improve Rate (%) | 4.6 $\uparrow$ | 1.0 $\uparrow$ | 2.3 $\uparrow$ | 3.3 $\uparrow$ | – | 2.5 $\uparrow$ |
| Qwen2.5-7B-Instruct | Baseline | 28.3 | 61.4 | 20.0 | 10.0 | 29.7 | 43.9 |
| | ARM | 29.8 | 62.4 | 20.0 | 23.3 | 31.9 | 47.6 |
| | Improve Rate (%) | 1.5 $\uparrow$ | 1.0 $\uparrow$ | 0 | 13.3 $\uparrow$ | 2.2 $\uparrow$ | 3.7 $\uparrow$ |
| Qwen2.5-32B-Instruct | Baseline | 35.4 | 82.6 | 16.7 | 20.0 | 49.5 | 50.0 |
| | ARM | 35.9 | 82.6 | 18.8 | 26.7 | 49.5 | 51.2 |
| | Improve Rate (%) | 0.5 $\uparrow$ | 0 | 2.1 $\uparrow$ | 6.7 $\uparrow$ | 0 | 1.2 $\uparrow$ |
| Llama3.1-8B-Instruct | Baseline | 28.3 | 43.0 | 11.1 | – | 11.9 | 45.7 |
| | ARM | 31.3 | 45.8 | 13.3 | – | 17.0 | 47.6 |
| | Improve Rate (%) | 3.0 $\uparrow$ | 2.8 $\uparrow$ | 2.2 $\uparrow$ | – | 5.1 $\uparrow$ | 1.9 $\uparrow$ |
| Gemma3-4b-it | Baseline | 34.3 | 72.6 | 13.3 | 20.0 | 20.2 | 17.1 |
| | ARM | 35.9 | 74.0 | 17.8 | 23.3 | 20.6 | 20.7 |
| | Improve Rate (%) | 1.5 $\uparrow$ | 1.4 $\uparrow$ | 4.5 $\uparrow$ | 3.3 $\uparrow$ | 0.4 $\uparrow$ | 3.6 $\uparrow$ |
| Gemma3-27b-it | Baseline | 33.3 | 85.4 | 25.6 | 26.7 | 31.9 | 9.1 |
| | ARM | 33.8 | 86.2 | 31.1 | 30.0 | 34.2 | 11.6 |
| | Improve Rate (%) | 0.5 $\uparrow$ | 0.8 $\uparrow$ | 4.4 $\uparrow$ | 3.3 $\uparrow$ | 2.3 $\uparrow$ | 2.5 $\uparrow$ |

5 Experiments

We evaluate our method on reasoning and non-reasoning tasks using seven models: Qwen2.5-Math-1.5B, Qwen2.5-Math-7B, Qwen2.5-7B-Instruct, Qwen2.5-32B-Instruct (qwe, 2025), Llama3.1-8B-Instruct (gra, 2024), Gemma3-4b-it, and Gemma3-27b-it (gem, 2025). All models use default generation parameters. For reasoning tasks, we cover three skill areas: (1) General: GPQA (Rein et al., 2024), a challenging expert-authored multiple-choice dataset; (2) Math & Text Reasoning: MATH-500 (Lightman et al., 2023), AIME’24 (AIME, 2024), and AIME’25 (AIME, 2025); (3) Agent & Coding: LiveCodeBench (Jain et al., 2024) and HumanEval (Chen et al., 2021). For non-reasoning tasks, we use GSM8K (Cobbe et al., 2021), ARC-E (Clark et al., 2018), ARC-C (Clark et al., 2018), MMLU (Hendrycks et al., 2021), BoolQ (Clark et al., 2019), HellaSwag (Zellers et al., 2019), and OpenBookQA (Mihaylov et al., 2018).

5.1 Experiment Results Analysis

For reasoning tasks, the results in Table 2 show pass@1 accuracy across multiple benchmarks. Our method consistently improves performance across most models and datasets, with the effect more pronounced in smaller models (e.g., Qwen2.5-Math-7B shows larger gains than Qwen2.5-32B-Instruct). On challenging benchmarks, however, improvements are limited when models lack sufficient capacity or when baseline accuracy is near saturation. For non-reasoning tasks (see Table 3(b)), applying ARM to the first-layer MLP yields little change. We attribute this to their largely factual nature, where models already have the necessary knowledge and response formats, requiring minimal reasoning. By contrast, for reasoning tasks, altering early activations helps reorganize knowledge, strengthens intermediate representations, and facilitates more effective and consistent reasoning.

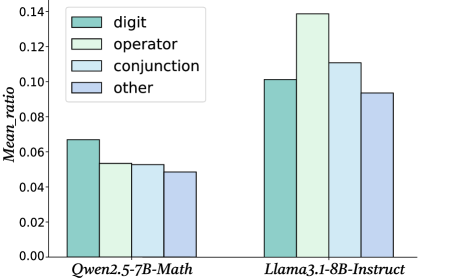

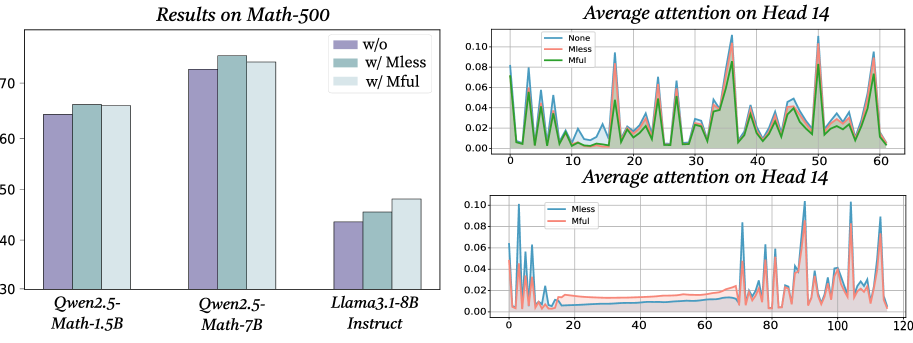

5.2 Comparison of Meaningless tokens and ARM

In Table 3(a), we provide a direct comparison between our proposed ARM method and the strategy of inserting a suitable number of meaningless tokens. The results demonstrate that both approaches are capable of improving model performance and that neither requires post-training; both are therefore lightweight interventions that lead to performance gains. However, since ARM directly exploits the fundamental principle driving the meaningless-token effect, it provides more stable results. While the meaningless-token effect is pervasive, our experiments show that the effect itself depends heavily on the specific choice of token length and placement, and thus may be unstable or difficult to generalize across tasks. ARM provides a more principled and model-internal mechanism that directly reshapes the activation distribution within the MLP, yielding more consistent gains without relying on heuristic token engineering. In sum, while inserting a meaningless token string at the prompt level might seem like a promising prompt-tuning adjustment on the surface, its effect is unstable, and ARM eliminates this instability. This contrast highlights the trade-off between ease of use and robustness, and further underscores the value of ARM as a systematic method for enhancing the reasoning ability of large language models.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Bar Charts: Performance Comparison of Baseline and ARM Models

### Overview

The image presents three bar charts comparing the performance of "Baseline" and "ARM" models across three different metrics: Pass@3 on Math-500, Pass@3 on AIME2024, and 2-gram diversity score. The x-axis of each chart represents different models: Qwen2.5-Math-1.5B, Gemma3-4b-it, and Qwen2.5-Math-7B.

### Components/Axes

* **Chart 1:**

* Title: "Pass@3 on Math-500"

* X-axis: Model names (Qwen2.5-Math-1.5B, Gemma3-4b-it, Qwen2.5-Math-7B)

* Y-axis: Accuracy (ranging from 0.60 to 0.90)

* Legend:

* Baseline (Light Green)

* ARM (Dark Green)

* **Chart 2:**

* Title: "Pass@3 on AIME2024"

* X-axis: Model names (Qwen2.5-Math-1.5B, Gemma3-4b-it, Qwen2.5-Math-7B)

* Y-axis: Pass@3 Score (ranging from 0.15 to 0.40)

* Legend:

* Baseline (Light Red)

* ARM (Dark Red)

* **Chart 3:**

* Title: "2-gram diversity score"

* X-axis: Model names (Qwen2.5-Math-1.5B, Gemma3-4b-it, Qwen2.5-Math-7B)

* Y-axis: Diversity score (ranging from 0.1 to 0.6)

* Legend:

* Baseline (Light Blue)

* ARM (Dark Blue)

### Detailed Analysis or Content Details

**Chart 1: Pass@3 on Math-500**

* Qwen2.5-Math-1.5B: Baseline ≈ 0.72, ARM ≈ 0.78

* Gemma3-4b-it: Baseline ≈ 0.82, ARM ≈ 0.85

* Qwen2.5-Math-7B: Baseline ≈ 0.85, ARM ≈ 0.88

* Trend: ARM consistently outperforms Baseline across all models. The performance increases with model size.

**Chart 2: Pass@3 on AIME2024**

* Qwen2.5-Math-1.5B: Baseline ≈ 0.22, ARM ≈ 0.28

* Gemma3-4b-it: Baseline ≈ 0.32, ARM ≈ 0.30

* Qwen2.5-Math-7B: Baseline ≈ 0.36, ARM ≈ 0.38

* Trend: ARM generally outperforms Baseline, but the difference is smaller than in the Math-500 chart. Gemma3-4b-it shows a slight decrease in ARM performance compared to Baseline.

**Chart 3: 2-gram diversity score**

* Qwen2.5-Math-1.5B: Baseline ≈ 0.52, ARM ≈ 0.55

* Gemma3-4b-it: Baseline ≈ 0.58, ARM ≈ 0.57

* Qwen2.5-Math-7B: Baseline ≈ 0.60, ARM ≈ 0.60

* Trend: ARM and Baseline perform similarly, with slight variations. The diversity score appears relatively stable across different models.

### Key Observations

* ARM consistently improves accuracy on the Math-500 dataset.

* The performance gain from ARM is less pronounced on the AIME2024 dataset, and even slightly negative for Gemma3-4b-it.

* The 2-gram diversity score is relatively consistent across models and between Baseline and ARM.

* Larger models (Qwen2.5-Math-7B) generally achieve higher accuracy on Math-500.

### Interpretation

The data suggests that the ARM technique is effective in improving performance on the Math-500 benchmark, indicating its ability to enhance mathematical reasoning capabilities. However, its impact on the AIME2024 benchmark is less significant, and in some cases, slightly detrimental. This could indicate that ARM is more specialized for mathematical problem-solving than general reasoning tasks. The consistent 2-gram diversity scores suggest that ARM does not significantly alter the diversity of generated responses. The positive correlation between model size and accuracy on Math-500 highlights the importance of model capacity for complex mathematical tasks. The slight dip in ARM performance for Gemma3-4b-it on AIME2024 could be due to specific characteristics of that model or dataset interaction. Further investigation is needed to understand the reasons behind this anomaly.

</details>

Figure 6: The first two figures show pass@3 on Math-500 and AIME2024 for three models with and without ARM, and the last shows their 2-gram diversity under both conditions.

Table 3: Table (a) compares the performance of meaningless tokens and ARM, and Table (b) reports ARM’s results on non-reasoning tasks.

(a) Pass@1 on Math-500 and AIME2024 with meaningless tokens (Mless) or ARM.

| Model | Setting | Math-500 | AIME2024 |
| --- | --- | --- | --- |
| Qwen2.5-Math-7B | Baseline | 72.4 | 23.3 |
| | Mless | 75.0 | 24.4 |
| | ARM | 73.4 | 25.6 |
| Llama3.1-8B-Instruct | Baseline | 43.0 | 11.1 |
| | Mless | 44.9 | 13.3 |
| | ARM | 45.8 | 13.3 |

(b) Performance of models with ARM on non-reasoning tasks. Additional results are in Appendix G.

| Model | Setting | GSM8K | ARC-E | HellaSwag |
| --- | --- | --- | --- | --- |
| Qwen2.5-Math-1.5B | Baseline | 78.0 | 39.3 | 39.1 |
| | ARM | 78.6 | 39.3 | 39.5 |
| | Improve Rate (%) | 0.6 $\uparrow$ | 0 | 0.4 $\uparrow$ |
| Llama3.1-8B-Instruct | Baseline | 80.0 | 46.6 | 56.8 |
| | ARM | 82.4 | 47.1 | 57.3 |
| | Improve Rate (%) | 2.4 $\uparrow$ | 0.5 $\uparrow$ | 0.5 $\uparrow$ |

5.3 Exploration capabilities after ARM

As discussed earlier, we hypothesize that redistributing activations enables the model to explore the reasoning space more effectively. To test this hypothesis, we evaluate the model’s pass@3 performance on the Math-500 and AIME2024 benchmarks as well as its 2-gram diversity. As shown in Figure 6, applying activation redistribution consistently yields higher pass@3 scores compared to the baselines on both tasks. In addition, the 2-gram diversity under ARM is also greater than that without ARM. These findings indicate that activation redistribution not only improves the likelihood of arriving at correct solutions within multiple samples but also promotes more diverse reasoning paths. This dual effect suggests that ARM enhances both the effectiveness and the breadth of the model’s internal reasoning processes, reinforcing our hypothesis that carefully manipulating internal activations can expand a model’s reasoning capacity without additional training or parameter growth.
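For readers who want to reproduce this kind of measurement, the sketch below shows one common way to compute the two quantities. The exact formulas used for Figure 6 are not spelled out in this section, so both choices are assumptions: pass@k uses the unbiased estimator introduced with HumanEval (Chen et al., 2021), and 2-gram diversity is taken to be the ratio of distinct 2-grams to total 2-grams over whitespace-tokenized generations. The function names are hypothetical.

```python
import math


def pass_at_k(n, c, k):
    """Unbiased pass@k estimate from n samples of which c are correct."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one correct sample
    return 1.0 - math.comb(n - c, k) / math.comb(n, k)


def distinct_n(texts, n=2):
    """Ratio of unique n-grams to total n-grams (assumed diversity measure)."""
    total = 0
    unique = set()
    for text in texts:
        tokens = text.split()  # assumption: whitespace tokenization
        grams = list(zip(*(tokens[i:] for i in range(n))))
        total += len(grams)
        unique.update(grams)
    return len(unique) / total if total else 0.0
```

With `k=3`, `pass_at_k` gives the per-problem probability that at least one of three sampled solutions is correct; averaging it over problems yields a benchmark-level pass@3 score.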

6 Discussion: Why Activation Redistribution Enhances LLM Reasoning Performance

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: Mean Ratio of Token Types by Model

### Overview

This bar chart compares the mean ratio of different token types (digit, operator, conjunction, and other) for two language models: Qwen2.5-7B-Math and Llama3.1-8B-Instruct. The chart uses grouped bars to represent each token type within each model.

### Components/Axes

* **X-axis:** Model Name (Qwen2.5-7B-Math, Llama3.1-8B-Instruct)

* **Y-axis:** Mean\_ratio (ranging from 0.00 to 0.14)

* **Legend:**

* digit (light green)

* operator (pale green)

* conjunction (light blue)

* other (pale blue)

### Detailed Analysis

The chart consists of two groups of four bars, one for each model. Within each group, each bar represents the mean ratio for a specific token type.

**Qwen2.5-7B-Math:**

* **digit:** The light green bar for 'digit' is approximately 0.065.

* **operator:** The pale green bar for 'operator' is approximately 0.055.

* **conjunction:** The light blue bar for 'conjunction' is approximately 0.050.

* **other:** The pale blue bar for 'other' is approximately 0.048.

**Llama3.1-8B-Instruct:**

* **digit:** The light green bar for 'digit' is approximately 0.135.

* **operator:** The pale green bar for 'operator' is approximately 0.115.

* **conjunction:** The light blue bar for 'conjunction' is approximately 0.105.

* **other:** The pale blue bar for 'other' is approximately 0.090.

### Key Observations

* Llama3.1-8B-Instruct consistently exhibits higher mean ratios across all token types compared to Qwen2.5-7B-Math.

* For both models, the 'digit' token type has the highest mean ratio, followed by 'operator', 'conjunction', and 'other'.

* The difference in mean ratios between the two models is most pronounced for the 'digit' token type.

### Interpretation

The data suggests that Llama3.1-8B-Instruct is more inclined to generate or process text containing digits, operators, conjunctions, and other token types compared to Qwen2.5-7B-Math. This could indicate that Llama3.1-8B-Instruct is better suited for tasks involving mathematical reasoning or code generation, where these token types are more prevalent. The consistently higher ratios across all categories suggest a fundamental difference in the models' token distribution preferences. The large difference in the 'digit' category is particularly noteworthy, potentially indicating a stronger mathematical capability in Llama3.1-8B-Instruct. The chart provides a quantitative comparison of the token type distributions, offering insights into the models' strengths and weaknesses.

</details>

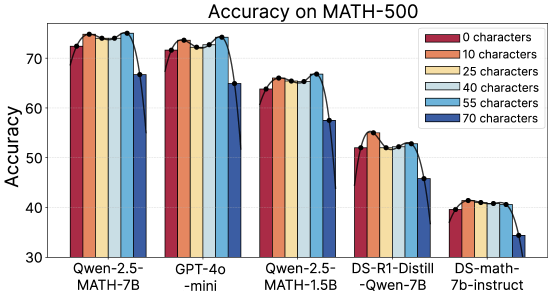

Figure 7: Percentage of near-zero activations across the four token types in the Math-500 dataset.

We offer one possible explanation for why redistributing near-zero activations can improve the reasoning performance of LLMs. We categorize all tokens in Math-500 into four classes: digits, conjunctions, operators, and other tokens. For each class, we compute the average proportion of activations that fall within the near-zero range, which reflects how many dimensions of the hidden representation remain nearly inactive. The results are presented in Figure 7. Tokens in the "other" class exhibit the lowest near-zero proportion, while digits, operators, and conjunctions show substantially higher proportions. In other words, among the frequent near-zero activations observed after the activation function, a disproportionately large share comes from these three classes. This suggests that although these tokens are crucial for reasoning, their information is insufficiently activated by the model. Our observation is consistent with the findings of Huan et al. (2025), which highlight the increasing importance of conjunctions after reinforcement learning, and it also aligns with the recognized role of digits and operators in reasoning tasks such as mathematics and coding. Consequently, redistributing activations around zero can enhance the representation of under-activated yet semantically important tokens, which in turn improves reasoning performance.
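The per-class statistic described above can be sketched as follows. This is an illustrative sketch under stated assumptions rather than the authors' analysis code: the classification rules in `token_class`, the conjunction and operator lists, and the near-zero threshold `eps` are all hypothetical choices, since this section does not specify them. The function expects one row of post-activation MLP values per token.

```python
import numpy as np

# Assumed word and symbol lists; the paper does not list its exact classes.
CONJUNCTIONS = {"and", "or", "but", "so", "because", "if", "then",
                "therefore", "thus", "since"}
OPERATORS = set("+-*/=<>^")


def token_class(token: str) -> str:
    """Assign a token to one of: digit, operator, conjunction, other."""
    t = token.strip().lower()
    if t.isdigit():
        return "digit"
    if t and all(ch in OPERATORS for ch in t):
        return "operator"
    if t in CONJUNCTIONS:
        return "conjunction"
    return "other"


def near_zero_ratio_by_class(tokens, activations, eps=1e-2):
    """Mean fraction of near-zero activation dimensions per token class.

    tokens:      sequence of token strings, length T
    activations: array-like of shape (T, D), post-activation values
    eps:         assumed threshold; |a| < eps counts as near zero
    """
    acts = np.asarray(activations, dtype=float)
    if acts.ndim != 2 or acts.shape[0] != len(tokens):
        raise ValueError("activations must have shape (len(tokens), D)")
    # Fraction of dimensions that are near zero, computed per token.
    per_token = (np.abs(acts) < eps).mean(axis=1)
    classes = np.array([token_class(t) for t in tokens])
    return {
        c: float(per_token[classes == c].mean())
        for c in sorted(set(classes.tolist()))
    }
```

In practice the activations would be collected with a forward hook on the MLP activation function of a chosen layer, and the per-class means would be averaged over all Math-500 prompts.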

7 Related Work

Recent studies have observed that symbols in an LLM’s input can affect its internal mechanisms. Sun et al. (2024) show large activations for separators, periods, or newlines, suggesting that these tokens carry model biases. Razzhigaev et al. (2025) find that commas are essential for contextual memory, while Chauhan et al. (2025) and Min et al. (2024) highlight punctuation as attention sinks, memory aids, and semantic cues. Moreover, Chadimová et al. (2024) show that replacing words with meaningless tokens can reduce cognitive biases, whereas Li et al. (2024) report that such “glitch tokens” may also cause misunderstandings, refusals, or irrelevant outputs. Our work explains the puzzling downstream benefit that a string of meaningless tokens brings to reasoning performance, and it shows how a close investigation of the underlying mechanism can lead to improved inference-time methods. We provide an extended discussion of related work in Appendix B.

8 Conclusion

In this paper, we report the meaningless-token effect: inserting long sequences of meaningless tokens improves model performance, particularly on reasoning tasks. Our analysis suggests that the effect arises because meaningless tokens induce an affine transformation on meaningful tokens, which redistributes their activations and enables key information to be used more effectively. Building on this insight, we introduce ARM, a lightweight and training-free method for activation redistribution, which supports our analysis and serves as a practical approach for consistently improving LLM performance on reasoning tasks.

Ethics Statement

All datasets used in this work are publicly available and contain no sensitive information. Our method enhances LLM reasoning without introducing new data collection or human interaction. While stronger reasoning ability may be misused, we emphasize that this work is intended for beneficial research and responsible applications.

Reproducibility Statement

We will release our code and data once the paper is published. The appendix includes detailed experimental setups and hyperparameters so that others can reproduce our results. We also encourage the community to follow good research practices when using our code and data, to help maintain the reliability and transparency of future work.

References

- gra (2024) The llama 3 herd of models, 2024. URL https://arxiv.org/abs/2407.21783.

- gem (2025) Gemma 3 technical report, 2025. URL https://arxiv.org/abs/2503.19786.

- qwe (2025) Qwen2.5 technical report, 2025. URL https://arxiv.org/abs/2412.15115.

- AIME (2024) AIME. AIME problems and solutions, 2024. URL https://aime24.aimedicine.info/.

- AIME (2025) AIME. AIME problems and solutions, 2025. URL https://artofproblemsolving.com/wiki/index.php/AIME_Problems_and_Solutions.

- Bogdan et al. (2025) Paul C Bogdan, Uzay Macar, Neel Nanda, and Arthur Conmy. Thought anchors: Which llm reasoning steps matter? arXiv preprint arXiv:2506.19143, 2025.

- Chadimová et al. (2024) Milena Chadimová, Eduard Jurášek, and Tomáš Kliegr. Meaningless is better: hashing bias-inducing words in llm prompts improves performance in logical reasoning and statistical learning. arXiv preprint arXiv:2411.17304, 2024.

- Chauhan et al. (2025) Sonakshi Chauhan, Maheep Chaudhary, Koby Choy, Samuel Nellessen, and Nandi Schoots. Punctuation and predicates in language models. arXiv preprint arXiv:2508.14067, 2025.

- Chen et al. (2021) Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde de Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, Alex Ray, Raul Puri, Gretchen Krueger, Michael Petrov, Heidy Khlaaf, Girish Sastry, Pamela Mishkin, Brooke Chan, Scott Gray, Nick Ryder, Mikhail Pavlov, Alethea Power, Lukasz Kaiser, Mohammad Bavarian, Clemens Winter, Philippe Tillet, Felipe Petroski Such, Dave Cummings, Matthias Plappert, Fotios Chantzis, Elizabeth Barnes, Ariel Herbert-Voss, William Hebgen Guss, Alex Nichol, Alex Paino, Nikolas Tezak, Jie Tang, Igor Babuschkin, Suchir Balaji, Shantanu Jain, William Saunders, Christopher Hesse, Andrew N. Carr, Jan Leike, Josh Achiam, Vedant Misra, Evan Morikawa, Alec Radford, Matthew Knight, Miles Brundage, Mira Murati, Katie Mayer, Peter Welinder, Bob McGrew, Dario Amodei, Sam McCandlish, Ilya Sutskever, and Wojciech Zaremba. Evaluating large language models trained on code. 2021.

- Clark et al. (2019) Christopher Clark, Kenton Lee, Ming-Wei Chang, Tom Kwiatkowski, Michael Collins, and Kristina Toutanova. Boolq: Exploring the surprising difficulty of natural yes/no questions. In NAACL, 2019.

- Clark et al. (2018) Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? try arc, the ai2 reasoning challenge. arXiv:1803.05457v1, 2018.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- Dhanraj & Eliasmith (2025) Varun Dhanraj and Chris Eliasmith. Improving rule-based reasoning in llms via neurosymbolic representations. arXiv e-prints, pp. arXiv–2502, 2025.

- Errica et al. (2024) Federico Errica, Giuseppe Siracusano, Davide Sanvito, and Roberto Bifulco. What did i do wrong? quantifying llms’ sensitivity and consistency to prompt engineering. arXiv preprint arXiv:2406.12334, 2024.

- Guan et al. (2025) Bryan Guan, Tanya Roosta, Peyman Passban, and Mehdi Rezagholizadeh. The order effect: Investigating prompt sensitivity to input order in llms. arXiv preprint arXiv:2502.04134, 2025.

- Hendrycks et al. (2021) Dan Hendrycks, Collin Burns, Steven Basart, Andrew Critch, Jerry Li, Dawn Song, and Jacob Steinhardt. Aligning ai with shared human values. Proceedings of the International Conference on Learning Representations (ICLR), 2021.

- Højer et al. (2025) Bertram Højer, Oliver Jarvis, and Stefan Heinrich. Improving reasoning performance in large language models via representation engineering. arXiv preprint arXiv:2504.19483, 2025.

- Huan et al. (2025) Maggie Huan, Yuetai Li, Tuney Zheng, Xiaoyu Xu, Seungone Kim, Minxin Du, Radha Poovendran, Graham Neubig, and Xiang Yue. Does math reasoning improve general llm capabilities? understanding transferability of llm reasoning. arXiv preprint arXiv:2507.00432, 2025.

- Jain et al. (2024) Naman Jain, King Han, Alex Gu, Wen-Ding Li, Fanjia Yan, Tianjun Zhang, Sida Wang, Armando Solar-Lezama, Koushik Sen, and Ion Stoica. Livecodebench: Holistic and contamination free evaluation of large language models for code. arXiv preprint arXiv:2403.07974, 2024.

- Jiang et al. (2024) Ming Jiang, Tingting Huang, Biao Guo, Yao Lu, and Feng Zhang. Enhancing robustness in large language models: Prompting for mitigating the impact of irrelevant information. In International Conference on Neural Information Processing, pp. 207–222. Springer, 2024.

- Kaul et al. (2024) Prannay Kaul, Chengcheng Ma, Ismail Elezi, and Jiankang Deng. From attention to activation: Unravelling the enigmas of large language models. arXiv preprint arXiv:2410.17174, 2024.

- Kawasaki et al. (2024) Amelia Kawasaki, Andrew Davis, and Houssam Abbas. Defending large language models against attacks with residual stream activation analysis. arXiv preprint arXiv:2406.03230, 2024.

- Li et al. (2024) Yuxi Li, Yi Liu, Gelei Deng, Ying Zhang, Wenjia Song, Ling Shi, Kailong Wang, Yuekang Li, Yang Liu, and Haoyu Wang. Glitch tokens in large language models: Categorization taxonomy and effective detection. Proceedings of the ACM on Software Engineering, 1(FSE):2075–2097, 2024.

- Lightman et al. (2023) Hunter Lightman, Vineet Kosaraju, Yuri Burda, Harrison Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let’s verify step by step. In The Twelfth International Conference on Learning Representations, 2023.

- Liu et al. (2024) Weize Liu, Yinlong Xu, Hongxia Xu, Jintai Chen, Xuming Hu, and Jian Wu. Unraveling babel: Exploring multilingual activation patterns of llms and their applications. arXiv preprint arXiv:2402.16367, 2024.

- London & Kanade (2025) Charles London and Varun Kanade. Pause tokens strictly increase the expressivity of constant-depth transformers. arXiv preprint arXiv:2505.21024, 2025.

- Luo et al. (2025) Yifan Luo, Zhennan Zhou, and Bin Dong. Inversescope: Scalable activation inversion for interpreting large language models. arXiv preprint arXiv:2506.07406, 2025.

- Luo et al. (2024) Yuqi Luo, Chenyang Song, Xu Han, Yingfa Chen, Chaojun Xiao, Xiaojun Meng, Liqun Deng, Jiansheng Wei, Zhiyuan Liu, and Maosong Sun. Sparsing law: Towards large language models with greater activation sparsity. arXiv preprint arXiv:2411.02335, 2024.

- Mihaylov et al. (2018) Todor Mihaylov, Peter Clark, Tushar Khot, and Ashish Sabharwal. Can a suit of armor conduct electricity? a new dataset for open book question answering. In EMNLP, 2018.

- Min et al. (2024) Junghyun Min, Minho Lee, Woochul Lee, and Yeonsoo Lee. Punctuation restoration improves structure understanding without supervision. arXiv preprint arXiv:2402.08382, 2024.

- Owen et al. (2025) Louis Owen, Nilabhra Roy Chowdhury, Abhay Kumar, and Fabian Güra. A refined analysis of massive activations in llms. arXiv preprint arXiv:2503.22329, 2025.

- Pfau et al. (2024) Jacob Pfau, William Merrill, and Samuel R Bowman. Let’s think dot by dot: Hidden computation in transformer language models. arXiv preprint arXiv:2404.15758, 2024.

- Pham & Nguyen (2024) Van-Cuong Pham and Thien Huu Nguyen. Householder pseudo-rotation: A novel approach to activation editing in llms with direction-magnitude perspective. arXiv preprint arXiv:2409.10053, 2024.

- Rai & Yao (2024) Daking Rai and Ziyu Yao. An investigation of neuron activation as a unified lens to explain chain-of-thought eliciting arithmetic reasoning of llms. arXiv preprint arXiv:2406.12288, 2024.

- Razzhigaev et al. (2025) Anton Razzhigaev, Matvey Mikhalchuk, Temurbek Rahmatullaev, Elizaveta Goncharova, Polina Druzhinina, Ivan Oseledets, and Andrey Kuznetsov. Llm-microscope: Uncovering the hidden role of punctuation in context memory of transformers. arXiv preprint arXiv:2502.15007, 2025.

- Rein et al. (2024) David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R Bowman. Gpqa: A graduate-level google-proof q&a benchmark. In First Conference on Language Modeling, 2024.