# In-the-Flow Agentic System Optimization for Effective Planning and Tool Use

## Abstract

Outcome-driven reinforcement learning has advanced reasoning in large language models (LLMs), but prevailing tool-augmented approaches train a single, monolithic policy that interleaves thoughts and tool calls under full context; this scales poorly with long horizons and diverse tools and generalizes weakly to new scenarios. Agentic systems offer a promising alternative by decomposing work across specialized modules, yet most remain training-free or rely on offline training decoupled from the live dynamics of multi-turn interaction. We introduce AgentFlow, a trainable, in-the-flow agentic framework that coordinates four modules (planner, executor, verifier, generator) through an evolving memory and directly optimizes its planner inside the multi-turn loop. To train on-policy in live environments, we propose Flow-based Group Refined Policy Optimization (Flow-GRPO), which tackles long-horizon, sparse-reward credit assignment by converting multi-turn optimization into a sequence of tractable single-turn policy updates. It broadcasts a single, verifiable trajectory-level outcome to every turn to align local planner decisions with global success and stabilizes learning with group-normalized advantages. Across ten benchmarks, AgentFlow with a 7B-scale backbone outperforms top-performing baselines with average accuracy gains of 14.9% on search, 14.0% on agentic, 14.5% on mathematical, and 4.1% on scientific tasks, even surpassing larger proprietary models like GPT-4o. Further analyses confirm the benefits of in-the-flow optimization, showing improved planning, enhanced tool-calling reliability, and positive scaling with model size and reasoning turns.

*Equal contribution. † Co-senior authors. Work was partially done while ZL and HZ were visiting Stanford.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Radar Chart & Bar Charts: AgentFlow Performance Comparison

### Overview

The image presents a comparison of the AgentFlow model's performance against a baseline (AgentFlow w/o Flow-GRPO) across several benchmarks. The comparison is visualized using a radar chart for an overview and a series of bar charts for detailed performance on individual benchmarks. The benchmarks cover diverse areas like question answering (2Wiki, HotpotQA, GAIA, GPQA), reasoning (Math, GameOf24, AMC23, AIME24), and science (MedQA, Musique, Bamboogle).

### Components/Axes

* **Radar Chart:**

* **Axes:** MedQA, Science, GPQA, GameOf24, Math, AMC23, AIME24, GAIA, 2Wiki, HotpotQA, Musique, Bamboogle. These represent the different benchmarks.

* **Scale:** 0 to 80 (approximately).

* **Lines:**

* AgentFlow (w/o Flow-GRPO) - Blue line

* AgentFlow - Red line

* **Legend:** Located in the top-left corner.

* **Bar Charts:**

* **X-axis:** Model names (Qwen-2.5-7B, GPT-4o (~200B), Search-R1 (7B), ReSearch (7B), AutoGen (7B), AgentFlow (7B)).

* **Y-axis:** Accuracy (%) - Scale from 0 to 80 (approximately).

* **Charts:** 2Wiki (Search), HotpotQA (Search), GAIA (Agentic), AIME24 (Math), GameOf24 (Math), GPQA (Science).

* **Legend:** Color-coded bars representing each model.

### Detailed Analysis or Content Details

**Radar Chart Analysis:**

The radar chart displays the performance of AgentFlow with and without Flow-GRPO across 12 benchmarks. The red line represents AgentFlow *with* Flow-GRPO, and the blue line represents AgentFlow *without* Flow-GRPO.

* **MedQA:** AgentFlow (w/o Flow-GRPO): ~80.0%, AgentFlow: ~76.0%

* **Science:** AgentFlow (w/o Flow-GRPO): ~76.0%, AgentFlow: ~69.6%

* **GPQA:** AgentFlow (w/o Flow-GRPO): ~47.0%, AgentFlow: ~37.0%

* **GameOf24:** AgentFlow (w/o Flow-GRPO): ~53.0%, AgentFlow: ~47.4%

* **Math:** AgentFlow (w/o Flow-GRPO): ~61.5%, AgentFlow: ~40.0%

* **AMC23:** AgentFlow (w/o Flow-GRPO): ~61.5%, AgentFlow: ~31.0%

* **AIME24:** AgentFlow (w/o Flow-GRPO): ~17.2%, AgentFlow: ~16.7%

* **GAIA:** AgentFlow (w/o Flow-GRPO): ~58.4%, AgentFlow: ~33.1%

* **2Wiki:** AgentFlow (w/o Flow-GRPO): ~71.2%, AgentFlow: ~60.0%

* **HotpotQA:** AgentFlow (w/o Flow-GRPO): ~51.3%, AgentFlow: ~57.0%

* **Musique:** AgentFlow (w/o Flow-GRPO): ~25.3%, AgentFlow: ~19.2%

* **Bamboogle:** AgentFlow (w/o Flow-GRPO): ~69.6%, AgentFlow: ~60.0%

The chart also indicates overall performance improvements: +7.0% (GPQA), +19.8% (Math), +15.9% (GAIA), +10.1% (2Wiki).

**Bar Chart Analysis:**

* **2Wiki (Search):** Qwen-2.5-7B: ~49.5%, GPT-4o (~200B): ~72.2%, Search-R1 (7B): ~38.2%, AutoGen (7B): ~44.0%, ReSearch (7B): ~21.0%, AgentFlow (7B): ~23.3%

* **HotpotQA (Search):** Qwen-2.5-7B: ~54.0%, GPT-4o (~200B): ~43.5%, Search-R1 (7B): ~37.0%, AutoGen (7B): ~30.0%, ReSearch (7B): ~3.2%, AgentFlow (7B): ~6.3%

* **GAIA (Agentic):** Qwen-2.5-7B: ~50.0%, GPT-4o (~200B): ~33.1%, Search-R1 (7B): ~17.3%, AutoGen (7B): ~19.1%, ReSearch (7B): ~6.3%, AgentFlow (7B): ~17.3%

* **AIME24 (Math):** Qwen-2.5-7B: ~40.0%, GPT-4o (~200B): ~13.3%, Search-R1 (7B): ~10.0%, AutoGen (7B): ~20.0%, ReSearch (7B): ~6.7%, AgentFlow (7B): ~10.0%

* **GameOf24 (Math):** Qwen-2.5-7B: ~53.0%, GPT-4o (~200B): ~31.0%, Search-R1 (7B): ~33.0%, AutoGen (7B): ~30.0%, ReSearch (7B): ~24.0%, AgentFlow (7B): ~33.0%

* **GPQA (Science):** Qwen-2.5-7B: ~42.0%, GPT-4o (~200B): ~35.0%, Search-R1 (7B): ~34.0%, AutoGen (7B): ~31.0%, ReSearch (7B): ~47.0%, AgentFlow (7B): ~42.0%

### Key Observations

* AgentFlow consistently performs better than the baseline (AgentFlow w/o Flow-GRPO) across all benchmarks in the radar chart.

* The largest performance gains with AgentFlow are observed in GPQA and Math.

* GPT-4o (~200B) generally achieves the highest accuracy across most benchmarks in the bar charts.

* AgentFlow (7B) generally performs lower than GPT-4o (~200B), Qwen-2.5-7B, and Search-R1 (7B) in the bar charts.

* ReSearch (7B) shows very low performance in HotpotQA and AIME24.

### Interpretation

The data suggests that the Flow-GRPO component significantly improves the performance of the AgentFlow model across a diverse set of tasks. The radar chart provides a holistic view of these improvements, while the bar charts offer a more granular comparison against other models. The consistent outperformance of GPT-4o highlights the current state-of-the-art in large language models. The relatively low performance of AgentFlow (7B) compared to larger models suggests that scaling model size remains a crucial factor in achieving high accuracy. The significant drop in ReSearch (7B)'s performance on HotpotQA and AIME24 could indicate a specific weakness in that model's architecture or training data for those tasks. The combination of radar and bar charts provides a comprehensive assessment of AgentFlow's capabilities and areas for potential improvement.

</details>

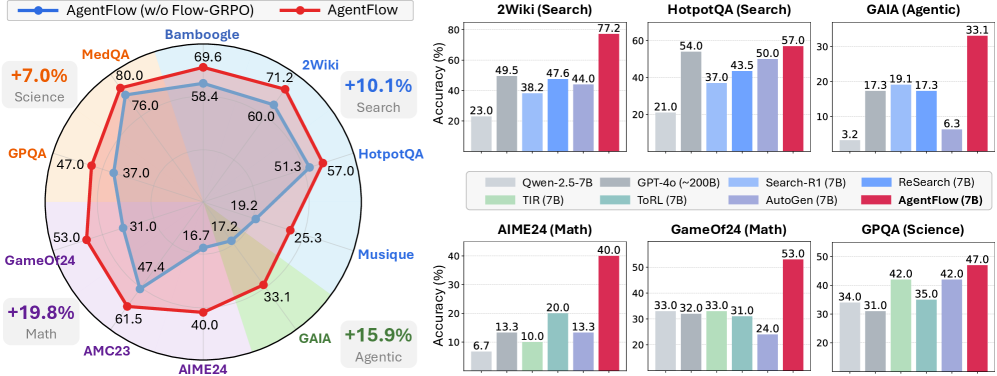

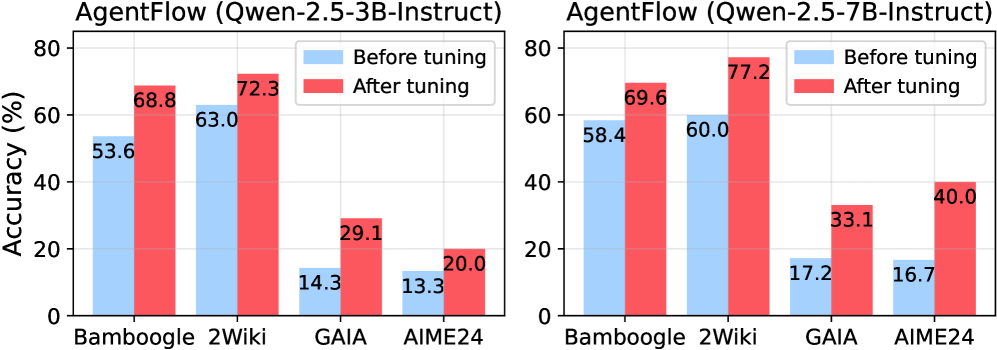

Figure 1: Left: Performance of AgentFlow with a 7B-scale backbone before and after Flow-GRPO tuning across ten diverse reasoning benchmarks. Flow-GRPO substantially improves performance by enhancing planning quality and tool-calling reliability. Right: AgentFlow achieves consistent gains over top baselines, including base LLMs, tool-integrated RL models, and training-free agentic systems. All 7B results use Qwen2.5-7B-Base/Instruct as the backbone and tools.

## 1 Introduction

Recent advances in large language models (LLMs) have unlocked remarkable reasoning capabilities, largely driven by reinforcement learning (RL) from outcome-based feedback. By fine-tuning models to maximize verifiable rewards, LLMs like DeepSeek-R1 (Guo et al., 2025) and SimpleRL (Zeng et al., 2025b) have demonstrated sophisticated behaviors in self-correction and multi-step deduction.

A complementary line of work augments LLMs with external tools (e.g., web search, code execution) for knowledge retrieval and precise computation. Tool-integrated reasoning (TIR) extends reinforcement learning with verifiable rewards to learn when and how to call tools by interleaving reasoning (e.g., <think>) with tool invocations (e.g., <tool_call>) under full context (Jin et al., 2025; Song et al., 2025; Chen et al., 2025; Feng et al., 2025). Early systems supported only a single tool type, whereas recent work enables multi-tool settings by encoding tool metadata into prompts (Dong et al., 2025; Qian et al., 2025a; Zhang et al., 2025). However, these methods still train a single, monolithic policy under multi-turn full-context reasoning, which introduces scaling challenges: (i) training becomes increasingly unstable as horizons lengthen, tool diversity grows, and environments shift with tool feedback (Wang et al., 2025c; Mai et al., 2025; Moonshot AI, 2025; Xue et al., 2025); and (ii) inference-time generalization remains brittle to unseen tasks or tools (Dong et al., 2025; Hu et al., 2025b).

Agentic systems (Wu et al., 2024; Hong et al., 2024; Hu et al., 2025b) offer a promising alternative to monolithic tool-integrated reasoning models. They consist of multiple modules—often distinct LLMs with prescribed roles (e.g., planner, critic) or specialized components with dedicated tools and capabilities (e.g., executor, coder)—that coordinate via shared memory and inter-module communication. By decomposing problems into sub-goals and iterating over multiple turns, these systems can tackle tasks that demand diverse tools, long horizons, or multi-stage reasoning. However, achieving robust coordination in such systems ultimately requires training, since handcrafted logic or static prompting cannot reliably capture when and how modules should collaborate, adapt to evolving tool outputs, or recover from early mistakes. At the same time, they introduce new training challenges: modules coordinate sequentially, outcome feedback propagates through long reasoning chains, and state distributions shift with evolving tool outputs. As a result, most systems remain training-free, relying on handcrafted logic or prompting heuristics. While some employ supervised fine-tuning or preference optimization for key modules (Motwani et al., 2024; Park et al., 2025), these off-policy approaches are decoupled from live dynamics and learn poorly from downstream successes or failures. Thus, agentic systems struggle with sparse rewards, brittle adaptation, and inefficient orchestration in dynamic environments.

To address the central challenge of learning long-horizon reasoning with sparse rewards in tool-integrated agentic systems, we introduce AgentFlow, a trainable framework for effective planning and tool use (Figure 2). AgentFlow comprises four specialized modules—planner, executor, verifier, and generator—that interact iteratively over multiple turns via a shared evolving memory and a toolset. The system operates in the flow, with each turn cycling through planning, execution, and verification. Unlike prior agentic systems, AgentFlow directly optimizes its planner on-policy, inside the live multi-turn loop, allowing it to dynamically adapt to trajectories shaped by tool calls, verifier signals, and memory updates. This evolving memory serves as a deterministic, structured record of the reasoning process, enabling transparent state tracking, controllable behavior, and bounded context growth.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: AgentFlow: In-the-Flow Agentic System

### Overview

The image presents a diagram illustrating an agentic system called "AgentFlow". It depicts the flow of information and processing within the system across multiple turns (labeled Turn 1, Turn 2, ... Turn T). The diagram is split into two main sections: (a) AgentFlow: In-the-Flow Agentic System, which shows the overall system architecture, and (b) In-the-Flow Rollout at Turn t, which details the input and output of each component at a specific turn.

### Components/Axes

The diagram consists of several key components:

* **Query:** The initial input to the system.

* **Toolkit Set:** A collection of tools available to the agent (represented by icons).

* **Planner:** Generates a plan based on the query and available tools.

* **Executor:** Executes the plan.

* **Verifier:** Verifies the results of the execution.

* **Generator:** Generates the final answer.

* **Memory:** Stores information across turns.

* **Input/Output Blocks:** Represent the data flow into and out of each component at a given turn.

* **Turn Indicators:** Labels indicating the current turn (Turn 1, Turn 2, ... Turn T).

* **a<sup>1</sup>, a<sup>2</sup>, ..., a<sup>T</sup>:** Represent the actions taken at each turn.

* **q, K, M<sup>t</sup>:** Inputs to the Planner at turn t.

* **a<sup>t</sup>, K:** Inputs to the Executor at turn t.

* **e<sup>t</sup>, M<sup>t</sup>:** Inputs to the Verifier at turn t.

* **v<sup>t</sup>:** Output of the Verifier.

* **o:** The final answer.

### Detailed Analysis or Content Details

**(a) AgentFlow: In-the-Flow Agentic System**

* The system starts with a "Query" and a "Toolkit Set".

* The query is fed into a "Planner" at each turn (Turn 1, Turn 2, ... Turn T).

* The Planner outputs an action (a<sup>1</sup>, a<sup>2</sup>, ..., a<sup>T</sup>) which is passed to an "Executor".

* The Executor then passes its output to a "Verifier".

* At the final turn (Turn T), the Verifier's output is passed to a "Generator", which produces the "Answer".

* "Memory" is used across turns, with feedback loops from the Verifier to the Memory.

* The arrows indicate the flow of information.

**(b) In-the-Flow Rollout at Turn t**

* **Planner Input:** [Query Analysis], [Global Goal], [Required Skills].

* **Planner Output:** [Current Sub-goal], [Selected Tool], [Context for Tool Use].

* **Executor Input:** [Current Sub-goal], [Selected Tool & Context].

* **Executor Output:** [Generated Command], [Execution Result].

* **Verifier Input:** [Generated Command], [Execution Result].

* **Verifier Output:** [Execution Analysis], [Memory Analysis], [Verification Status].

* The "Memory" block is divided into "Trained" and "Frozen" sections.

* The diagram shows the inputs and outputs of each component at a single turn 't'.

* The inputs to the Planner are 'q', 'K', and 'M<sup>t</sup>'.

* The inputs to the Executor are 'a<sup>t</sup>' and 'K'.

* The inputs to the Verifier are 'e<sup>t</sup>' and 'M<sup>t</sup>'.

### Key Observations

* The system operates iteratively across multiple turns.

* Each turn involves planning, execution, and verification.

* The "Memory" component plays a crucial role in retaining information across turns.

* The system utilizes a toolkit of tools to accomplish tasks.

* The diagram clearly delineates the input and output of each component at each stage.

* The "Memory" is divided into "Trained" and "Frozen" sections, suggesting a learning or adaptation process.

### Interpretation

The diagram illustrates a sophisticated agentic system designed to solve complex tasks through iterative planning, execution, and verification. The system's ability to leverage a toolkit and maintain memory across turns suggests a capacity for learning and adaptation. The separation of "Trained" and "Frozen" memory indicates a mechanism for preserving knowledge while allowing for ongoing refinement. The detailed breakdown of inputs and outputs for each component at turn 't' highlights the system's transparency and modularity. The flow of information is clearly defined, allowing for a comprehensive understanding of the system's operation. The system appears to be designed for tasks that require reasoning, tool use, and memory retention. The diagram suggests a robust and adaptable agent capable of tackling complex challenges.

</details>

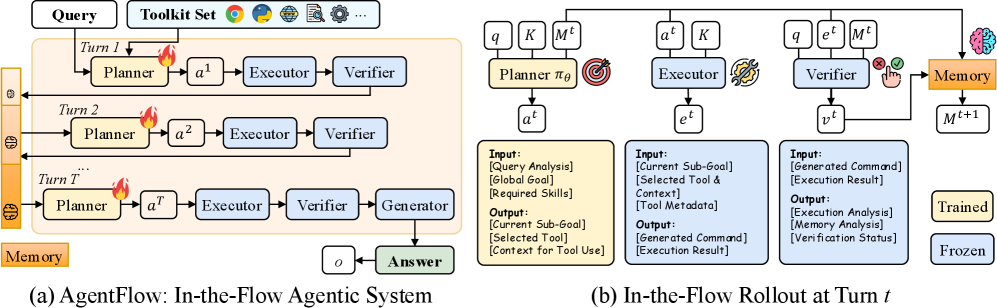

Figure 2: (a) Overview of AgentFlow, a trainable agentic system for in-the-flow planning and tool use. Four modules (planner, executor, verifier, generator) coordinate via a shared evolving memory $M$ and toolset $K$, given a query $q$. The planner policy is optimized on-policy inside the system’s multi-turn loop to enable adaptive, long-horizon reasoning. (b) A single state transition, showing the action $a^{t}$, execution result $e^{t}$, and verifier signal $v^{t}$ that update the memory from $M^{t}$ to $M^{t+1}$.

To train the planner on-policy within this agentic system, we need to overcome the long-horizon credit assignment problem inherent to sparse, trajectory-level rewards. We introduce Flow-based Group Refined Policy Optimization (Flow-GRPO, Figure 4), an on-policy algorithm designed for this setting. Flow-GRPO operates on in-the-flow rollouts, which capture the full trajectory of states, actions, and tool events induced by the live system. Instead of attempting to assign credit with brittle, intermediate heuristics, we assign a single, verifiable final-outcome reward to the entire trajectory and broadcast it to every turn. This design effectively transforms the multi-turn reinforcement learning challenge into a series of single-turn updates: at each turn, the planner has access to the full memory context and receives a consistent reward signal aligned with global success. This approach, coupled with group-normalized advantages to stabilize training, enables robust credit assignment and allows the planner to learn effective long-horizon strategies from sparse feedback.
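The reward-broadcasting idea described above can be illustrated with a small sketch. This is not the authors' implementation: the `Turn` and `TurnSample` containers and the `broadcast_outcome` helper are hypothetical names introduced here, and memory states and actions are represented as plain strings purely for illustration. The sketch only shows how one trajectory-level reward might be copied onto every turn so that each turn becomes an independent single-turn training sample.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Turn:
    """One planner decision in a rollout (hypothetical container)."""
    memory: str  # stand-in for the memory state M^t seen by the planner
    action: str  # stand-in for the planner action a^t


@dataclass
class TurnSample:
    """A single-turn training sample carrying the trajectory-level reward."""
    query: str
    memory: str
    action: str
    reward: float


def broadcast_outcome(query: str, turns: List[Turn], outcome_reward: float) -> List[TurnSample]:
    """Attach the same final-outcome reward to every turn of one rollout."""
    return [TurnSample(query, t.memory, t.action, outcome_reward) for t in turns]


# Toy rollout with three turns and a successful final outcome (reward 1.0).
rollout = [
    Turn(memory="M1", action="search"),
    Turn(memory="M2", action="compute"),
    Turn(memory="M3", action="finish"),
]
samples = broadcast_outcome("toy query", rollout, outcome_reward=1.0)
```

Each element of `samples` pairs a planner input (query and memory) with the action taken and the shared reward, which is the shape of data a single-turn policy update would consume under these assumptions.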

We evaluate AgentFlow on ten benchmarks across diverse reasoning domains, with results highlighted in Figure 1. In our main setting, all four modules use Qwen2.5-7B-Instruct (Yang et al., 2024a) as a backbone, with only the planner trained via Flow-GRPO. AgentFlow substantially outperforms top-performing specialized tool-integrated reasoning models and agentic systems, achieving average accuracy gains of 14.9% on knowledge-intensive search, 14.0% on broader agentic tasks, 14.5% on mathematical reasoning, and 4.1% on scientific reasoning (§ 4.2). Notably, our 7B-backbone system even surpasses the $\sim$200B-parameter GPT-4o (Hurst et al., 2024) across all domains. The trained planner learns to optimize planning, enhance tool-calling reliability, and discover effective solution pathways (§ 4.3). Further analyses confirm that our in-the-flow optimization with Flow-GRPO is crucial, far surpassing offline supervised tuning (§ 4.4). Moreover, our training approach proves highly efficient, leading to increased rewards and condensed responses compared to traditional tool-integrated RL methods (§ 4.5). Finally, we demonstrate that these benefits generalize, with consistent gains from scaling backbone size and turn budget (§ 4.6).

Our work makes three key contributions: (1) We present AgentFlow, a trainable in-the-flow agentic system that directly optimizes its planner inside the multi-turn loop. By coordinating specialized modules through an evolving memory, it enables adaptive long-horizon planning and robust tool orchestration. (2) We introduce Flow-GRPO, an on-policy, outcome-driven algorithm that converts multi-turn RL into a sequence of tractable single-turn policy updates by broadcasting a single, verifiable final-outcome reward to every turn. (3) Through comprehensive experiments on ten benchmarks, we show that AgentFlow with a 7B backbone outperforms specialized baselines and even larger proprietary models. Further analyses reveal improved planning, enhanced tool-calling reliability, and positive scaling with model size and turn budgets.

## 2 Preliminary

Reinforcement learning for reasoning LLMs.

Recent progress in reasoning LLMs has been significantly driven by reinforcement learning from outcome feedback, using a verifiable reward signal (Shao et al., 2024; Yu et al., 2025). This paradigm fine-tunes a language model to maximize an outcome-based reward while remaining close to a reference policy. Formally, the objective is to optimize a policy LLM $\pi_{\theta}$ to generate a response $o$ for a given query $q$ from dataset $\mathcal{D}$:

$$
\max_{\pi_{\theta}}\;\mathbb{E}_{q\sim\mathcal{D},\,o\sim\pi_{\theta}(\cdot\mid q)}\big[R(q,o)\big]-\beta\,\mathbb{D}_{\text{KL}}\!\left(\pi_{\theta}(o\mid q)\,\|\,\pi_{\text{ref}}(o\mid q)\right), \tag{1}
$$

where $R(q,o)$ is the outcome-based reward, $\pi_{\text{ref}}$ is a reference model to prevent policy collapse, and $\beta$ controls KL regularization. Algorithms like Group Relative Policy Optimization (GRPO) (Shao et al., 2024) implement this by sampling a group of responses per query, computing advantages by normalizing rewards within each group, and updating the policy with a clipped objective to encourage high-reward outputs.
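To make the two GRPO ingredients mentioned above concrete, here is a minimal sketch of group-normalized advantages and a PPO-style clipped surrogate for one sample. Several details are assumptions made for illustration and are not taken from the text: the function names, the use of the population standard deviation, the stabilizing constant `eps`, the clip range of 0.2, and the omission of the KL term from Eq. (1).

```python
import math
from statistics import mean, pstdev
from typing import List


def group_normalized_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Normalize rewards within one sampled group: (r - mean) / (std + eps).

    Assumption: population standard deviation; eps avoids division by zero
    when all rewards in the group are identical.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def clipped_surrogate(logp_new: float, logp_old: float, advantage: float,
                      clip_eps: float = 0.2) -> float:
    """Clipped objective for a single sample (to be maximized).

    The probability ratio is clipped to [1 - clip_eps, 1 + clip_eps], and the
    smaller of the clipped and unclipped terms is kept.
    """
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
    return min(ratio * advantage, clipped_ratio * advantage)


# A group of four responses to the same query with binary outcome rewards.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_normalized_advantages(rewards)
```

With binary rewards, successful responses receive positive advantages and failed ones negative advantages, and the advantages within a group sum to approximately zero.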

<details>

<summary>x5.png Details</summary>

### Visual Description

## Diagram: LLM Agent Architectures

### Overview

The image presents a comparative diagram illustrating two distinct architectures for Large Language Model (LLM) agents: Tool-Integrated Reasoning Models (LLM Agents) and Training-Free Agentic Systems. Both architectures take a "Query" as input and produce an "Answer" as output. The diagram highlights the internal processes and components within each architecture.

### Components/Axes

The diagram consists of two main sections, labeled (a) and (b), each representing a different system. Each section includes the following components:

* **Query:** The initial input to the system.

* **LLM (or Modules):** The core processing unit. In (a), it's a single LLM; in (b), it's a series of Modules.

* **Intermediate Steps:** Represented by boxes containing text like `<tools>`, `<think>`, `<tool_call>`, `<answer>`.

* **Answer:** The final output of the system.

* **"o":** A small circle placed near the "Answer" box, potentially indicating an output signal or completion marker.

* **Trained, Frozen, Token:** Labels associated with the LLM in section (a).

### Detailed Analysis or Content Details

**Section (a): Tool-Integrated Reasoning Models (LLM Agents)**

* **Input:** "Query" enters the system.

* **LLM:** The "Query" is fed into an LLM, which is labeled as "Trained," "Frozen," and "Token."

* **Process Flow:**

1. The LLM generates `<think>`.

2. `<think>` leads to `<tool_call>`.

3. `<tool_call>` results in `<answer>`.

4. `<answer>` produces the final "Answer."

* **Connections:** Arrows indicate a sequential flow of information from the "Query" through the LLM and intermediate steps to the "Answer."

**Section (b): Training-Free Agentic Systems**

* **Input:** "Query" enters the system.

* **Modules:** The "Query" is distributed to multiple "Module" components (Module 1, Module 2, ... Module N).

* **Process Flow:**

1. The "Query" is processed by multiple Modules in parallel.

2. The outputs of the Modules converge to produce the final "Answer."

* **Connections:** Arrows indicate the flow of information from the "Query" to the Modules and then to the "Answer." The Modules are connected in a cascading manner, with Module 1 feeding into Module 2, and so on, until Module N.

### Key Observations

* **Sequential vs. Parallel Processing:** The key difference between the two architectures is the processing approach. (a) uses a sequential, single-LLM approach, while (b) employs a parallel, multi-module approach.

* **Tool Integration:** Architecture (a) explicitly highlights the use of "tools" through the `<tools>` and `<tool_call>` steps, suggesting the LLM can interact with external resources.

* **Training Status:** The labels "Trained," "Frozen," and "Token" associated with the LLM in (a) indicate the LLM's training state.

* **Scalability:** Architecture (b) suggests scalability through the use of "Module N," implying the system can be expanded by adding more modules.

### Interpretation

The diagram illustrates two contrasting approaches to building LLM agents. The Tool-Integrated Reasoning Model (a) relies on a single, pre-trained LLM that leverages external tools to enhance its reasoning capabilities. The Training-Free Agentic System (b) utilizes a network of specialized modules, potentially allowing for more flexible and scalable agent behavior without requiring further training of the core LLM.

The use of `<think>`, `<tool_call>`, and `<answer>` tags in (a) suggests a structured reasoning process where the LLM explicitly considers its actions and utilizes tools before generating a final response. The parallel processing in (b) could lead to faster response times and improved robustness, as the system can leverage the strengths of multiple modules.

The "Trained, Frozen, Token" labels in (a) indicate that the LLM is likely a pre-trained model that has been fine-tuned or adapted for specific tasks. The "Training-Free" designation in (b) suggests that the modules are designed to operate without requiring additional training, potentially making the system more adaptable to new tasks and environments. The "o" symbol near the answer may indicate a completion signal or a flag indicating the answer is ready.

The diagram effectively communicates the core architectural differences between these two approaches, highlighting their respective strengths and weaknesses. It suggests a trade-off between the complexity of managing a single, powerful LLM versus the scalability and flexibility of a modular system.

</details>

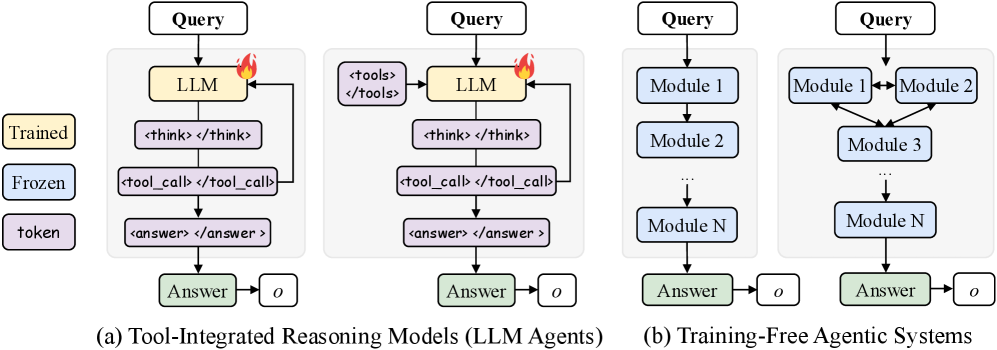

Figure 3: Comparison of two paradigms of LLMs with tool use. (a) Monolithic tool-integrated reasoning models train a single policy to interleave reasoning (e.g., <think>) and tool calls (e.g., <tool_call>) within a single, full-context trajectory. (b) Agentic systems decompose tasks across multiple specialized modules (e.g., planner, coder) that collaborate. These systems are typically training-free, orchestrated by handcrafted logic or prompting.

Tool-integrated reasoning models (LLM agents).

LLMs can be augmented with external tools to access knowledge and perform precise computation under reinforcement learning with outcome-based reward. As shown in Figure 3 (a), the LLM interleaves reasoning and tool calls, producing a chain of thought within <think></think> tokens followed by tool invocations (e.g., <tool_call></tool_call>). The resulting trajectory $\tau$ is a sequence of model generations and tool observations: $\tau=\{s^{1},a^{1},e^{1},\ldots,s^{T},a^{T}\}$, where $s^{t}$ denotes the context, $a^{t}$ the generated action (thought + tool call), and $e^{t}$ the tool’s execution result. The policy model $\pi_{\theta}$ is then trained to maximize a final outcome reward. Prior work has explored single- and multi-tool settings for search and code execution (Jin et al., 2025; Chen et al., 2025; Feng et al., 2025; Qian et al., 2025a).

Agentic systems with tool usage.

An alternative approach is the use of agentic systems (Wu et al., 2024; Hong et al., 2024; Lu et al., 2025). As shown in Figure 3 (b), these frameworks deploy multiple specialized modules—often distinct LLMs with carefully designed prompts and roles—within a collaborative workflow. By decomposing tasks and assigning subproblems to modules with dedicated tools and capabilities (e.g., planner, coder, critic), they can address complex problems such as web browsing, document processing, and multi-stage programming that exceed the scope of a single model. A central limitation, however, is that these systems are typically training-free: modules remain frozen pre-trained models orchestrated by handcrafted logic or prompting heuristics.

## 3 In-the-Flow Agentic System Optimization

We aim to bridge the gap between trainable but monolithic reasoning models and flexible yet static agentic systems. We present AgentFlow, a flexible and trainable agentic system that integrates four specialized modules with an evolving memory (§ 3.1). Unlike prior agentic systems, AgentFlow directly optimizes the planner within the multi-turn loop of an agentic system (§ 3.2).

### 3.1 AgentFlow: An In-the-Flow Agentic System

We propose AgentFlow, a general-purpose tool-integrated agentic framework for solving complex reasoning tasks through fine-grained planning and effective tool use within a multi-turn architecture. As shown in Figure 2, the framework comprises four specialized modules (Action Planner $\mathcal{P}$, Tool Executor $\mathcal{E}$, Execution Verifier $\mathcal{V}$, and Solution Generator $\mathcal{G}$) coordinated by a shared evolving memory $M$ and a toolset $K$. These modules interact sequentially and iteratively to perform action planning, tool execution, context verification, and solution generation, thereby enabling tool-integrated reasoning across multiple turns.

We formalize AgentFlow's problem-solving process as a multi-turn Markov Decision Process (MDP). Given a query $q$ and a toolset $K$, the system proceeds for a variable number of turns. Let $M^{t}$ denote the memory state before turn $t$ (with $M^{1}$ initialized from $q$). At turn $t$, the planner $\mathcal{P}$ (a trainable policy $\pi_{\theta}$) formulates a sub-goal, selects an appropriate tool $k\in K$, and retrieves relevant context from memory, producing an action: $a^{t}\sim\pi_{\theta}(a^{t}\mid q,K,M^{t})$.

The executor $\mathcal{E}$ invokes the chosen tool with context, yielding an execution observation $e^{t}\sim\mathcal{E}(e^{t}\mid a^{t},K)$. The verifier $\mathcal{V}$ then evaluates whether $e^{t}$ is valid and whether the accumulated memory is sufficient to solve the query, producing a binary verification signal $v^{t}\sim\mathcal{V}(v^{t}\mid q,e^{t},M^{t})$. If $v^{t}=0$, the memory is updated deterministically to incorporate new evidence: $M^{t+1}=f_{\text{mem}}\!(M^{t},a^{t},e^{t},v^{t})$, where $f_{\text{mem}}(\cdot)$ denotes the memory-update function, which records agent-process information in a concise, structured form along with contextual details such as time, turn index, and error signals.

The process repeats until $v^{t}=1$ (termination) or a predefined maximum turn budget is reached. Upon termination at turn $T$, the solution generator $\mathcal{G}$ produces the final solution $o$, conditioned on the query and the accumulated memory: $o\sim\mathcal{G}(o\mid q,M^{T})$.
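The multi-turn process described above can be sketched as a simple control loop. This is an illustrative skeleton only, not the released system: `run_agentflow`, the list-of-dicts memory, and the toy planner, executor, verifier, and generator stubs are all hypothetical stand-ins, and in the real system these modules are LLM-backed. The sketch follows the text literally, updating memory only when $v^{t}=0$ and stopping when $v^{t}=1$ or the turn budget is exhausted.

```python
from typing import Any, Callable, Dict, List, Tuple

Memory = List[Dict[str, Any]]


def run_agentflow(query: str, toolset: Dict[str, Callable[[str], str]],
                  planner: Callable, executor: Callable,
                  verifier: Callable, generator: Callable,
                  max_turns: int = 10) -> Tuple[str, List[tuple]]:
    """Run plan -> execute -> verify turns, then generate the final answer."""
    memory: Memory = [{"turn": 0, "query": query}]  # M^1 is initialized from q
    trajectory = []
    for t in range(1, max_turns + 1):
        action = planner(query, toolset, memory)    # a^t from the planner policy
        result = executor(action, toolset)          # e^t from the tool executor
        verdict = verifier(query, result, memory)   # v^t in {0, 1}
        trajectory.append((action, result, verdict))
        if verdict == 1:                            # verifier signals termination
            break
        # Deterministic memory update f_mem, applied when v^t = 0.
        memory = memory + [{"turn": t, "action": action,
                            "result": result, "verdict": verdict}]
    return generator(query, memory), trajectory


# Toy stand-ins for the four modules (assumptions for illustration only).
toy_tools = {"echo": lambda text: text.upper()}
toy_planner = lambda q, K, M: {"tool": "echo", "input": f"step {len(M)}"}
toy_executor = lambda a, K: K[a["tool"]](a["input"])
toy_verifier = lambda q, e, M: 1 if len(M) >= 3 else 0
toy_generator = lambda q, M: f"answer from {len(M)} memory entries"

answer, trajectory = run_agentflow("toy query", toy_tools, toy_planner,
                                   toy_executor, toy_verifier, toy_generator)
```

Keeping the memory update as a pure function of the previous memory and the current turn's outputs mirrors the text's point that the memory is a deterministic, structured record rather than a latent state.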

This formulation decomposes multi-turn, tool-integrated reasoning into structured, observable transitions. After $T$ turns, the trajectory $\tau=\{(a^{t},e^{t},v^{t})\}_{t=1}^{T}$ records the history of planning, execution, and verification. The joint generative process can be written as

$$
p_{\theta}\!\left(\{a^{t},e^{t},v^{t}\}_{t=1}^{T},\,o\mid q\right)=\Bigg[\prod_{t=1}^{T}\pi_{\theta}(a^{t}\mid q,K,M^{t})\;\mathcal{E}(e^{t}\mid a^{t},K)\;\mathcal{V}(v^{t}\mid q,e^{t},M^{t})\Bigg]\;\mathcal{G}(o\mid q,M^{T}), \tag{2}
$$

where $\{a^{t},e^{t},v^{t}\}_{t=1}^{T}$ are explicit realizations of the latent reasoning chain. Importantly, unlike latent thoughts behind trajectories, our memory $M$ is an explicit and deterministic record of the reasoning process, ensuring transparency and controllability of multi-turn decisions.
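A brief consequence of Eq. (2) is worth spelling out. It is sketched here under two assumptions: the executor, verifier, and generator are frozen (as in the main setting, where only the planner is trained), and each $M^{t}$ is a deterministic function of the realized earlier turns. Taking the logarithm turns the product into a sum:

$$
\log p_{\theta}\!\left(\{a^{t},e^{t},v^{t}\}_{t=1}^{T},\,o\mid q\right)=\sum_{t=1}^{T}\Big[\log\pi_{\theta}(a^{t}\mid q,K,M^{t})+\log\mathcal{E}(e^{t}\mid a^{t},K)+\log\mathcal{V}(v^{t}\mid q,e^{t},M^{t})\Big]+\log\mathcal{G}(o\mid q,M^{T}).
$$

Only the planner terms depend on $\theta$, so for a realized trajectory the gradient reduces to a sum of per-turn planner terms:

$$
\nabla_{\theta}\log p_{\theta}\!\left(\{a^{t},e^{t},v^{t}\}_{t=1}^{T},\,o\mid q\right)=\sum_{t=1}^{T}\nabla_{\theta}\log\pi_{\theta}(a^{t}\mid q,K,M^{t}).
$$

This is the standard score-function identity applied to this factorization, not a statement of the exact Flow-GRPO objective; it simply indicates why per-turn planner decisions are a natural unit for policy updates.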

<details>

<summary>x6.png Details</summary>

### Visual Description

## Diagram: Flow-GRPO

### Overview

This diagram illustrates the Flow-GRPO (Flow-based Group Refined Policy Optimization) process, depicting the interaction between a Policy Model, a Reference Model, and a Reward Model across multiple turns of agentic system rollouts. The diagram shows how actions are generated, observations are received, and rewards are calculated, with a distinction between trained and frozen models.

### Components/Axes

The diagram consists of the following key components:

* **Policy Model:** Represented by a flame icon, receiving input 'q' and outputting actions (a).

* **Reference Model:** Represented by a cube icon, receiving input 'K' and contributing to the KL divergence calculation.

* **Reward Model:** Represented by a cube icon, receiving observations (o) and outputting rewards (r).

* **Multi-turn Agentic System Rollouts:** A grid-like structure showing the sequence of actions, observations, and rewards across multiple turns (1 to G).

* **Multi-turn Group Computation:** A box indicating the aggregation of results across turns.

* **KL:** Label indicating the Kullback-Leibler divergence calculation.

* **Legend:** Distinguishes between "Trained Models" (light green) and "Frozen Models" (light blue).

* **Inputs:** 'q', 'M', 'K' are labeled as inputs.

* **Outputs:** 'a', 'o', 'r' are labeled as outputs.

### Detailed Analysis or Content Details

The diagram shows a flow from left to right.

1. **Inputs:** The process begins with inputs 'q', 'M', and 'K'. 'q' feeds into the Policy Model. 'K' feeds into the Reference Model. 'M' is connected to the KL divergence calculation.

2. **Policy Model & Actions:** The Policy Model generates a series of actions (a<sub>1</sub><sup>1</sup>, a<sub>1</sub><sup>2</sup>, a<sub>1</sub><sup>3</sup>, ... a<sub>G</sub><sup>1</sup>, a<sub>G</sub><sup>2</sup>, a<sub>G</sub><sup>3</sup>, ... a<sub>G</sub><sup>G</sup>) across G turns. The actions are arranged in a grid of G rows and G columns.

3. **Observations:** These actions lead to a series of observations (o<sub>1</sub>, o<sub>2</sub>, o<sub>3</sub>, ... o<sub>G</sub>).

4. **Reward Model & Rewards:** The Reward Model receives the observations and outputs corresponding rewards (r<sub>1</sub><sup>1</sup>, r<sub>1</sub><sup>2</sup>, r<sub>1</sub><sup>3</sup>, ... r<sub>G</sub><sup>1</sup>, r<sub>G</sub><sup>2</sup>, r<sub>G</sub><sup>3</sup>, ... r<sub>G</sub><sup>G</sup>), also arranged in a G x G grid.

5. **Multi-turn Group Computation:** The outputs from the rollouts are then fed into a "Multi-turn Group Computation" block.

6. **Model Status:** The Policy Model and Reference Model are color-coded. The Policy Model is shown as a light green box, indicating it is a "Trained Model". The Reference Model is shown as a light blue box, indicating it is a "Frozen Model". The Reward Model is also light green, indicating it is a "Trained Model".

### Key Observations

* The diagram emphasizes the iterative nature of the process, with multiple turns of agentic rollouts.

* The distinction between trained and frozen models suggests a specific training strategy where some models are updated while others remain fixed.

* The KL divergence calculation likely plays a role in regularizing the Policy Model's behavior relative to the Reference Model.

* The grid structure of actions and rewards indicates a parallel or batched processing of multiple rollouts.

### Interpretation

The Flow-GRPO diagram represents a reinforcement learning framework that leverages a reference model to guide the training of a policy model. The use of a frozen reference model suggests a desire to maintain a certain level of stability or prior knowledge during learning. The multi-turn rollouts and group computation indicate that the algorithm explores a range of possible actions and evaluates their cumulative rewards. The KL divergence term likely encourages the policy to stay close to the reference model, preventing it from deviating too far from established behavior. The diagram highlights a sophisticated approach to reinforcement learning that combines elements of imitation learning (through the reference model) and exploration (through the policy model). The color coding of the models is crucial for understanding the training dynamics of the system. The diagram suggests a system designed for complex, multi-step decision-making tasks.

</details>

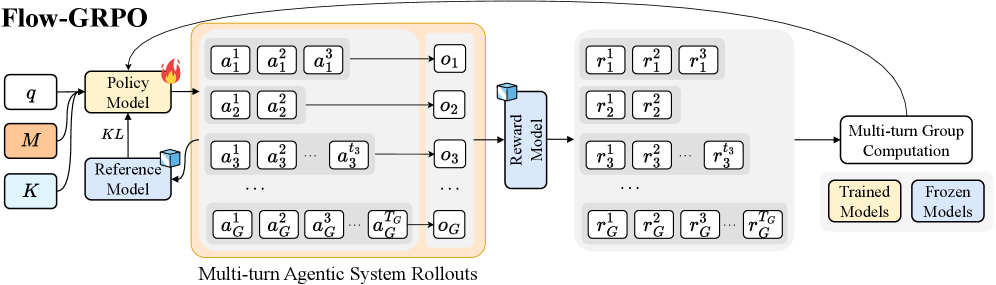

Figure 4: Optimization for our proposed agentic system AgentFlow. Given a query $q$ , an evolving memory $M$ , and a toolset $K$ , the policy model generates actions that target sub-goals and select tools. It is trained via Flow-based Group Refined Policy Optimization (Flow-GRPO), which enables multi-turn reinforcement learning and stable optimization under collaborative dynamics.

### 3.2 In-the-Flow Reinforcement Learning Optimization

We target tool-integrated agentic systems operating under long-horizon tasks with sparse rewards. In this setting, the Action Planner (the trainable policy of AgentFlow) selects a sequence of interdependent actions while the state $(q,K,M^{t})$ evolves with tool results and verifier feedback. Conventional offline training—e.g., supervised fine-tuning or preference fine-tuning on curated traces—optimizes the planner outside the active loop (Motwani et al., 2024; Park et al., 2025). This decoupling prevents real-time coordination with the executor, verifier, and solution generator, induces distribution shift between training and deployment, and provides limited guidance about which intermediate decisions truly matter. As a result, planners often adapt poorly to multi-turn dynamics; early errors cascade, and post-hoc fixes are brittle.

In-the-flow learning.

To address these issues, we optimize the planner in the flow of execution. We roll out the full AgentFlow system under the current policy, collect the actual trajectory $\tau$ of states, actions, and tool events it induces, and update the policy within the agentic system using a verifiable final-outcome signal. This exposes the multi-turn credit-assignment problem directly and trains the planner on the exact states it will face at inference. Our objective, Flow-GRPO, is designed to stabilize learning under sparse, trajectory-level rewards over multiple turns.

As established in § 3.1, rollouts in AgentFlow define a finite-horizon MDP with a variable horizon $T$ . At turn $t$ , the planner observes the state $(q,K,M^{t})$ , selects an action $a^{t}$ , the executor and verifier return $(e^{t},v^{t})$ , and the memory updates deterministically to $M^{t+1}$ .

Policy optimization objective.

The planner policy $\pi_{\theta}$ is trained to maximize the expected return over on-policy rollouts. Let $R(\tau)$ be the reward for a complete trajectory $\tau$ . The objective is:

$$

\mathcal{J}(\theta)=\mathbb{E}_{\tau\sim\pi_{\theta}}\!\big[R(\tau)\big],\qquad\theta^{\star}=\arg\max_{\theta}\mathcal{J}(\theta), \tag{3}

$$

where a rollout $\tau$ is the sequence of decisions $\{a^{t}\}_{t=1}^{T}$ generated on-policy by $\pi_{\theta}$ .

Final-outcome reward.

Assigning credit to intermediate actions is challenging because each $a^{t}$ influences the final solution only indirectly, and their value may only emerge after several turns (e.g., error or improvement accumulation). To avoid brittle local feedback, we adopt a final-outcome-based reward: every action within a rollout receives the same global reward signal, based on the correctness of the final solution $o$ with respect to query $q$ and ground truth $y^{*}$ :

$$

\displaystyle r=R(a^{t})=\bar{R}(o,q,y^{*}),\quad\forall t=1,\dots,T, \tag{4}

$$

where $\bar{R}(o,q,y^{*})\in\{0,1\}$ is assigned by an LLM-as-judge rubric for semantic, numeric, and option-level equivalence (see § E.3). This propagates a trajectory-level success signal back through the reasoning chain, aligning every decision $a^{t}$ with global correctness.
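As an illustration of Eq. 4, the sketch below broadcasts one binary outcome to every turn of a rollout. The function names are hypothetical, and the normalized exact-match check is only a placeholder for the LLM-as-judge rubric, which is not reproduced here.

```python
def final_outcome_reward(answer: str, ground_truth: str) -> int:
    """Placeholder judge (assumption): normalized exact match instead of an LLM rubric."""
    return int(answer.strip().lower() == ground_truth.strip().lower())


def broadcast_reward(num_turns: int, reward: int) -> list:
    """Give every turn t = 1..T the same trajectory-level reward, as in Eq. 4."""
    return [reward] * num_turns


per_turn_rewards = broadcast_reward(3, final_outcome_reward(" Paris", "paris"))
print(per_turn_rewards)
```

Because the reward depends only on the final solution, a turn whose action was locally poor still receives a reward of 1 if the rollout ends correctly, and vice versa.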

Objective function.

We formalize Flow-based Group Refined Policy Optimization (Flow-GRPO) for the planner. The goal is to optimize the policy $\pi_{\theta}$ by maximizing the expected return over a group of parallel rollouts. For each query-label pair from the training corpus $(q,y^{*})\sim\mathcal{D}$ , we sample a group of $G$ on-policy trajectories $\{\tau_{i}\}_{i=1}^{G}$ by running the current behavior policy $\pi_{\theta_{\text{old}}}$ inside AgentFlow, where $\tau_{i}=\{a_{i}^{1},\dots,a_{i}^{T_{i}},o_{i}\}$ . Let $s_{i}^{t}=(q,K,M_{i}^{t})$ be the state at turn $t$ of rollout $i$ , $a_{i}^{t}$ the planner’s action (a token sequence of length $|a_{i}^{t}|$ ), and $o_{i}$ the final response. This structure is key to addressing the long-horizon credit assignment challenge: by broadcasting a single trajectory-level reward to all turns, we effectively decompose the multi-turn RL problem into a set of independent, single-turn policy updates; we provide a formal proof of this equivalence and analyze its convergence properties in § B. Each update for an action $a_{i}^{t}$ is conditioned on the full historical context encapsulated in the state $s_{i}^{t}$ and receives the same global success signal, simplifying optimization. The objective is

$$

\mathcal{J}_{\text{Flow-GRPO}}(\theta)=\mathbb{E}_{(q,y^{*})\sim\mathcal{D},\;\{\tau_{i}\}_{i=1}^{G}\sim\pi_{\theta_{\text{old}}}}\Bigg[\frac{1}{G}\sum_{i=1}^{G}\frac{1}{T_{i}}\sum_{t=1}^{T_{i}}\frac{1}{|a_{i}^{t}|}\sum_{j=1}^{|a_{i}^{t}|}\min\!\Big\{\rho_{i,j}^{t}A_{i}^{t},\,\mathrm{clip}(\rho_{i,j}^{t},1-\epsilon,1+\epsilon)\,A_{i}^{t}\Big\}\;-\;\beta\,\mathbb{D}_{\mathrm{KL}}\!\big(\pi_{\theta}\,\|\,\pi_{\text{ref}}\big)\Bigg], \tag{5}

$$

where $T_{i}$ is the (variable) number of turns in rollout $i$ , and

$$

\rho_{i,j}^{t}=\frac{\pi_{\theta}\!\big(a_{i,j}^{t}\,\big|\,s_{i}^{t},a_{i,1:j-1}^{t}\big)}{\pi_{\theta_{\text{old}}}\!\big(a_{i,j}^{t}\,\big|\,s_{i}^{t},a_{i,1:j-1}^{t}\big)} \tag{6}

$$

is the token-level importance ratio for the $j$ -th token of $a_{i}^{t}$ , $\epsilon>0$ is the PPO clipping parameter, and $\beta>0$ controls the KL penalty to a fixed reference policy $\pi_{\text{ref}}$ .
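To make the nested averaging in Eqs. 5 and 6 concrete, here is a minimal pure-Python sketch of the clipped surrogate for one group of rollouts. It is an illustration under stated assumptions: the KL penalty is omitted, the input layout and function names are hypothetical, and `eps=0.2` is an assumed default rather than a value given in the text.

```python
import math


def clipped_term(logp_new, logp_old, advantage, eps=0.2):
    """One token's contribution: min(rho * A, clip(rho, 1 - eps, 1 + eps) * A)."""
    ratio = math.exp(logp_new - logp_old)            # token-level importance ratio (Eq. 6)
    clipped = min(max(ratio, 1.0 - eps), 1.0 + eps)
    return min(ratio * advantage, clipped * advantage)


def flow_grpo_surrogate(group, eps=0.2):
    """Average over rollouts, then turns, then action tokens (KL term omitted).

    group: list of rollouts; each rollout is a list of turns; each turn is
    (advantage, [(logp_new, logp_old), ...]) over the tokens of that action.
    """
    total = 0.0
    for turns in group:
        turn_sum = 0.0
        for advantage, token_logps in turns:
            token_sum = sum(clipped_term(n, o, advantage, eps) for n, o in token_logps)
            turn_sum += token_sum / len(token_logps)  # 1/|a_i^t| normalization
        total += turn_sum / len(turns)                # 1/T_i normalization
    return total / len(group)                         # 1/G normalization


# Two rollouts: one single-turn success, one two-turn failure, policy unchanged.
group = [
    [(1.0, [(-1.0, -1.0), (-2.0, -2.0)])],
    [(-1.0, [(-0.5, -0.5)]), (-1.0, [(-0.3, -0.3)])],
]
print(flow_grpo_surrogate(group))
```

The per-turn and per-token normalizations mean that a long rollout or a verbose action does not dominate the update simply because it contains more tokens.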

Group-normalized advantages.

Because the reward in Eq. 4 is a single trajectory-level signal, the per-turn advantage $A_{i}^{t}$ is constant over $t$ within a rollout $i$ . We reduce variance and sharpen credit assignment across the group by using a group-normalized advantage:

$$

A_{i}^{t}=\frac{\bar{R}(o_{i},q,y^{*})-\mathrm{mean}\left(\{\bar{R}(o_{k},q,y^{*})\}_{k=1}^{G}\right)}{\mathrm{std}\left(\{\bar{R}(o_{k},q,y^{*})\}_{k=1}^{G}\right)}. \tag{7}

$$
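A small numerical sketch of Eq. 7 follows. Two details are assumptions not stated in the text: the use of the population standard deviation, and the small constant `eps` added to the denominator so that a group in which all rollouts receive the same reward yields zero advantages rather than a division by zero.

```python
from statistics import mean, pstdev


def group_normalized_advantages(rewards, eps=1e-6):
    """Normalize each rollout's final-outcome reward against its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std (assumption)
    return [(r - mu) / (sigma + eps) for r in rewards]


# One group of G = 4 rollouts for the same query: two correct, two incorrect.
advantages = group_normalized_advantages([1, 0, 0, 1])
print(advantages)
```

Every turn of rollout $i$ then reuses the same value $A_{i}^{t}$, so successful rollouts push up the likelihood of all their planner actions and failed rollouts push them down.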

Technical contribution summary. To tackle long-horizon, sparse-reward training in multi-module agentic systems, we propose Flow-GRPO. This novel algorithm (i) reformulates the multi-turn RL problem in agentic systems as a series of tractable, single-turn policy updates, and (ii) broadcasts a single trajectory-level outcome to every turn to align local planner decisions with global success. Training uses an LLM-based rubric to assign verifiable final-outcome rewards, with group-normalized advantages, KL regularization, and clipping to stabilize learning.

## 4 Experiments

### 4.1 Experimental Setup

| | | Search Intensive | | | | | | Agentic | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Model | Size | Bamboogle | 2Wiki | HotpotQA | Musique | Avg. | $\Delta$ | GAIA | $\Delta$ |

| Qwen-2.5-7B-Instruct | 7B-Inst | 12.0 | 23.0 | 21.0 | 6.0 | 15.5 | $\uparrow$ 41.8 | 3.2 | $\uparrow$ 29.9 |

| Qwen-2.5-14B-Instruct | 14B-Inst | 21.6 | 26.7 | 20.0 | 8.0 | 19.1 | $\uparrow$ 38.2 | 5.5 | $\uparrow$ 27.6 |

| Qwen-2.5-32B-Instruct | 32B-Inst | 24.0 | 26.7 | 27.0 | 6.0 | 20.9 | $\uparrow$ 36.4 | 9.5 | $\uparrow$ 23.6 |

| Llama-3.3-70B-Instruct | 70B-Inst | 18.4 | 22.7 | 52.0 | 16.0 | 27.3 | $\uparrow$ 30.0 | 3.2 | $\uparrow$ 29.9 |

| GPT-4o-mini (Hurst et al., 2024) | $\sim$ 8B | 40.8 | 35.6 | 41.0 | 15.0 | 33.1 | $\uparrow$ 24.2 | 7.1 | $\uparrow$ 26.0 |

| GPT-4o (Hurst et al., 2024) | $\sim$ 200B | 68.8 | 49.5 | 54.0 | 24.0 | 49.1 | $\uparrow$ 8.2 | 17.3 | $\uparrow$ 15.8 |

| Supervised Fine-Tuning (SFT) | 7B-Inst | 12.0 | 25.9 | 22.0 | 6.6 | 16.6 | $\uparrow$ 40.7 | 3.2 | $\uparrow$ 29.9 |

| Iter-RetGen (Shao et al., 2023) | 7B-Inst | 36.8 | 33.6 | 37.4 | 17.8 | 31.4 | $\uparrow$ 25.9 | 3.9 | $\uparrow$ 29.2 |

| Search-R1 (Jin et al., 2025) | 7B-Inst | 43.2 | 38.2 | 37.0 | 14.6 | 33.3 | $\uparrow$ 24.0 | 19.1 | $\uparrow$ 14.0 |

| ZeroSearch (Sun et al., 2025) | 7B-Base | 27.8 | 35.2 | 34.6 | 18.0 | 28.9 | $\uparrow$ 28.4 | 16.5 | $\uparrow$ 16.6 |

| ReSearch (Chen et al., 2025) | 7B-Base | 42.4 | 47.6 | 43.5 | 22.3 | 39.0 | $\uparrow$ 18.3 | 17.3 | $\uparrow$ 15.8 |

| StepSearch (Wang et al., 2025d) | 7B-Base | 40.0 | 36.6 | 38.6 | 22.6 | 34.5 | $\uparrow$ 22.8 | – | – |

| VerlTool (Jiang et al., 2025) | 7B-Base | 46.4 | 45.3 | 44.8 | 19.3 | 39.0 | $\uparrow$ 18.3 | 11.2 | $\uparrow$ 21.9 |

| AutoGen (Wu et al., 2024) | 7B-Inst | 59.6 | 44.0 | 50.0 | 15.9 | 42.4 | $\uparrow$ 14.9 | 6.3 | $\uparrow$ 26.8 |

| AgentFlow | 7B-Inst | 58.4 | 60.0 | 51.3 | 19.2 | 47.2 | $\uparrow$ 12.1 | 17.2 | $\uparrow$ 15.9 |

| AgentFlow (w/ Flow-GRPO) | 7B-Inst | 69.6 | 77.2 | 57.0 | 25.3 | 57.3 | – | 33.1 | – |

Table 1: Accuracy comparison on search-intensive and agentic tasks. 7B-Base refers to Qwen-2.5-7B-Base and 7B-Inst refers to Qwen-2.5-7B-Instruct. AutoGen and our AgentFlow method are agentic systems, which use Qwen-2.5-7B-Instruct for the LLM-powered agents and tools for fair comparison. The $\Delta$ columns show the gains of AgentFlow (w/ Flow-GRPO) over each baseline.

| | | Math Reasoning | | | | | Scientific Reasoning | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Model | Size | AIME24 | AMC23 | GameOf24 | Avg. | $\Delta$ | GPQA | MedQA | Avg. | $\Delta$ |

| Qwen-2.5-7B-Instruct | 7B-Inst | 6.7 | 47.5 | 33.0 | 29.1 | $\uparrow$ 22.5 | 34.0 | 66.0 | 50.0 | $\uparrow$ 13.5 |

| Qwen-2.5-14B-Instruct | 14B-Inst | 6.7 | 60.0 | 25.0 | 30.6 | $\uparrow$ 21.0 | 31.0 | 75.0 | 53.0 | $\uparrow$ 10.5 |

| Llama-3.3-70B-Instruct | 70B-Inst | 6.7 | 47.5 | 31.0 | 28.4 | $\uparrow$ 23.1 | 35.0 | 67.0 | 51.0 | $\uparrow$ 12.5 |

| Llama-3.1-405B-Instruct | 405B-Inst | 26.7 | 47.5 | 23.0 | 32.4 | $\uparrow$ 19.1 | 30.0 | 62.0 | 46.0 | $\uparrow$ 17.5 |

| GPT-4o-mini (Hurst et al., 2024) | $\sim$ 8B | 13.3 | 57.5 | 16.0 | 28.9 | $\uparrow$ 22.6 | 27.0 | 66.0 | 46.5 | $\uparrow$ 17.0 |

| GPT-4o (Hurst et al., 2024) | $\sim$ 200B | 13.3 | 60.0 | 32.0 | 35.1 | $\uparrow$ 16.4 | 31.0 | 60.0 | 45.5 | $\uparrow$ 18.0 |

| Supervised Fine-Tuning (SFT) | 7B-Inst | 6.7 | 47.5 | 33.0 | 29.1 | $\uparrow$ 22.5 | 34.0 | 66.0 | 50.0 | $\uparrow$ 13.5 |

| SimpleRL-reason (Zeng et al., 2025b) | 7B-Base | 16.7 | 60.0 | 33.0 | 36.6 | $\uparrow$ 15.0 | 45.0 | 65.0 | 50.0 | $\uparrow$ 13.5 |

| Open-Reasoner-Zero (Hu et al., 2025a) | 7B-Base | 16.7 | 54.9 | 32.0 | 34.5 | $\uparrow$ 17.0 | 34.0 | 54.0 | 44.0 | $\uparrow$ 19.5 |

| General-Reasoner (Ma et al., 2025) | 7B-Base | 13.3 | 55.0 | 33.0 | 33.8 | $\uparrow$ 17.7 | 35.5 | 61.0 | 48.3 | $\uparrow$ 15.2 |

| Luffy (Yan et al., 2025) | 7B-Inst | 30.7 | 44.8 | 33.0 | 36.2 | $\uparrow$ 15.3 | 34.0 | 77.0 | 55.5 | $\uparrow$ 8.0 |

| TIR (Yang et al., 2024b) | 7B-Inst | 10.0 | 50.0 | 33.0 | 31.0 | $\uparrow$ 20.5 | 42.0 | 76.8 | 59.4 | $\uparrow$ 4.1 |

| ToRL (Li et al., 2025b) | 7B-Inst | 20.0 | 60.0 | 31.0 | 37.0 | $\uparrow$ 14.5 | 35.0 | 76.5 | 55.8 | $\uparrow$ 7.7 |

| AutoGen (Wu et al., 2024) | 7B-Inst | 13.3 | 57.5 | 24.0 | 31.6 | $\uparrow$ 19.9 | 42.0 | 72.0 | 57.0 | $\uparrow$ 6.5 |

| AgentFlow | 7B-Inst | 16.7 | 47.4 | 31.0 | 31.7 | $\uparrow$ 19.8 | 37.0 | 76.0 | 56.5 | $\uparrow$ 7.0 |

| AgentFlow (w/ Flow-GRPO) | 7B-Inst | 40.0 | 61.5 | 53.0 | 51.5 | – | 47.0 | 80.0 | 63.5 | – |

Table 2: Accuracy comparison on mathematical and scientific reasoning tasks. As in Table 1, AutoGen and AgentFlow use Qwen-2.5-7B-Instruct for the LLM-powered tools.

Implementation.

In our main experiments, all modules—Action Planner, Tool Executor, Executive Verifier, and Solution Generator—are instantiated with the Qwen2.5-7B-Instruct model (Yang et al., 2024a). Among these, only the Action Planner is trainable. The system operates with five interactive tools: Base Generator is an instance of Qwen2.5-7B-Instruct that acts as the default reasoning engine if the planner decides not to use an external tool; Python Coder generates and executes Python code given a query and returns the execution result; Google Search searches the web and returns a summarization of Top-K search results; Wikipedia Search searches articles matching a given query and returns a summarization; and Web Search returns summarized information from a given web page. During the RL fine-tuning phase, we mix data from Search-R1 (Jin et al., 2025) and DeepMath (He et al., 2025) as training data, which provides paired question-answer examples across search and mathematical domains.

Training.

We provide further details on the training setup for AgentFlow. Our Flow-GRPO implementation uses a learning rate of $1\times 10^{-6}$ . The Action Planner generates actions with a sampling temperature of $0.5$ to balance exploration and exploitation. To prevent policy collapse and stabilize training, we incorporate a KL-divergence penalty against a reference policy with a coefficient $\beta=0.001$ . The maximum output length for the planner is set to 2048 tokens to ensure complete exploration during rollouts. We use a batch size of 32 with 8 rollouts per sample.

To speed up training, we limit the maximum number of turns per rollout to $3$ . The final-outcome reward signal (Eq. 4) is provided by an LLM-as-judge, for which we use GPT-4o. All tool calls are executed synchronously with a 500-second timeout to tolerate external service latency. The LLM engines within the tools are set to a temperature of 0.0 to ensure deterministic and stable outputs. The full training process was conducted on 8 NVIDIA A100 GPUs. Further details on agent prompts and the memory update mechanism are provided in § E.1.
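For reference, the hyperparameters stated above can be gathered into a single configuration object. This is only a convenience sketch: the class and field names are hypothetical, and the derived rollout count assumes that the batch size counts queries, each expanded into 8 rollouts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowGRPOConfig:
    """Hypothetical container; the values are those reported in the text."""
    learning_rate: float = 1e-6
    sampling_temperature: float = 0.5   # planner sampling during rollouts
    kl_coefficient: float = 0.001       # beta in Eq. 5
    max_planner_tokens: int = 2048
    batch_size: int = 32
    rollouts_per_sample: int = 8        # group size G
    max_turns: int = 3
    tool_timeout_seconds: int = 500
    tool_llm_temperature: float = 0.0
    judge_model: str = "GPT-4o"
    num_gpus: int = 8

    @property
    def rollouts_per_batch(self) -> int:
        # Assumes batch_size counts queries, each sampled rollouts_per_sample times.
        return self.batch_size * self.rollouts_per_sample


config = FlowGRPOConfig()
print(config.rollouts_per_batch)
```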

Evaluation.

To comprehensively evaluate tool-use capabilities of AgentFlow, we conduct experiments on four types of reasoning tasks: (1) Knowledge-intensive search including Bamboogle (Press et al., 2023), 2Wiki (Ho et al., 2020), HotpotQA (Yang et al., 2018), and Musique (Trivedi et al., 2022); (2) Agentic reasoning such as GAIA (Mialon et al., 2023) (where we adopt the textual split); (3) Logic-dense mathematical reasoning including AIME2024 (Art of Problem Solving, 2025), AMC23 (MAA, 2023), and GameOf24 (Lightman et al., 2023); and (4) Scientific reasoning including GPQA (Rein et al., 2024) and MedQA (Yang et al., 2024c). To mitigate randomness, we report the average accuracy across three trials for all experiments. More evaluation details are provided in § C.

### 4.2 Main Results

Baselines.

As presented in Tables 1 and 2, we include five categories of baselines: (1) Open-source LLMs: Qwen2.5 (Yang et al., 2024a), Llama-3.1, and Llama-3.3 (Dubey et al., 2024); (2) Proprietary LLMs: GPT-4o-mini and GPT-4o; (3) Reasoning LLMs: supervised fine-tuning (Yang et al., 2024b), SimpleRL-reason, Open-Reasoner-Zero, General-Reasoner, and LUFFY; (4) Tool-integrated reasoning LLMs: both search-enhanced, including Iter-RetGen, Search-R1, ZeroSearch, ReSearch, StepSearch, and VerlTool, and code-enhanced, including TIR and ToRL; (5) Training-free agentic system: AutoGen. More details on baseline implementations are in § C.2.

Key insights.

AgentFlow consistently outperforms all baseline models by large margins. Compared to the best-performing 7B models without tool integration, AgentFlow achieves absolute gains of 40.7% on search (SFT), 29.9% on agentic reasoning (SFT), 15.0% on math (SimpleRL-reason), and 8.0% on scientific tasks (Luffy). Against specialized tool-integrated systems, AgentFlow surpasses the top models by 14.9% in search (AutoGen), 14.0% in agentic reasoning (Search-R1), 14.5% in math (ToRL), and 4.1% in science (TIR). Notably, our 7B-backbone AgentFlow even outperforms the $\sim$ 200B-parameter GPT-4o across all domains, with gains ranging from 8.2% to 18.0%. A detailed analysis is provided in § D.1.
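The headline gains over tool-integrated baselines can be recomputed directly from the averages in Tables 1 and 2. The short check below is illustrative; the dictionary names are hypothetical, and the numbers are copied from the tables.

```python
# Average accuracy of AgentFlow (w/ Flow-GRPO) per domain, from Tables 1 and 2.
agentflow = {"search": 57.3, "agentic": 33.1, "math": 51.5, "science": 63.5}

# Strongest tool-integrated or agentic baseline per domain, from the same tables.
best_baseline = {
    "search": ("AutoGen", 42.4),
    "agentic": ("Search-R1", 19.1),
    "math": ("ToRL", 37.0),
    "science": ("TIR", 59.4),
}

gains = {
    domain: round(agentflow[domain] - score, 1)
    for domain, (_, score) in best_baseline.items()
}
print(gains)
```

These differences are absolute gaps in accuracy, matching the $\Delta$ columns of the corresponding baseline rows.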

### 4.3 In-depth Analysis of Optimized Planning

Flow-GRPO optimizes tool usage.

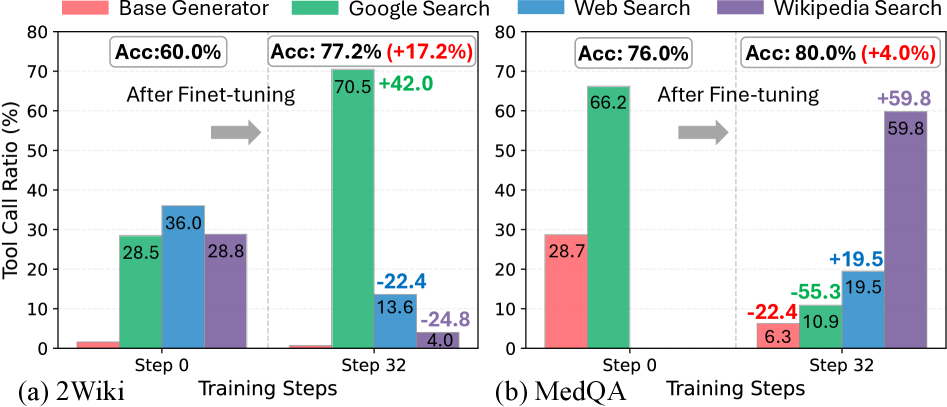

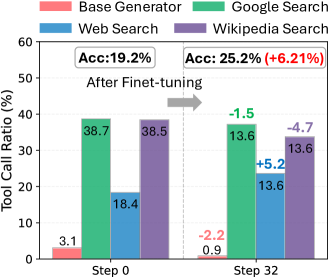

We compare tool usage distributions before and after in-the-flow RL training. Figure 5 shows results on two knowledge-intensive tasks, 2Wiki and MedQA, which exhibit distinct optimization patterns alongside improved task accuracy. For 2Wiki, which requires broad factual knowledge, Flow-GRPO optimizes the planner to increase Google Search usage by 42.0%. In contrast, for the specialized MedQA benchmark, which requires deep, domain-specific information retrieval, fine-tuning shifts the planner away from general tools, reducing Google Search calls (66.2% $\rightarrow$ 10.9%) in favor of in-document Web Search (0% $\rightarrow$ 19.5%) and specialized Wikipedia Search (0% $\rightarrow$ 59.8%). This demonstrates that the planner learns to select task-appropriate tools.

<details>

<summary>x7.png Details</summary>

### Visual Description


## Bar Charts: Tool Call Ratio vs. Training Steps for 2Wiki and MedQA

### Overview

The image presents two bar charts, labeled (a) 2Wiki and (b) MedQA, comparing the Tool Call Ratio (%) at two training steps: Step 0 and Step 32. Each chart displays the ratio for three different search methods: Base Generator, Google Search, and Wikipedia Search. The charts also show the accuracy (Acc) at each step, with the percentage increase after fine-tuning indicated.

### Components/Axes

* **X-axis:** Training Steps (Step 0, Step 32)

* **Y-axis:** Tool Call Ratio (%) - Scale ranges from 0 to 80.

* **Legend:**

* Red: Base Generator

* Green: Google Search

* Blue: Wikipedia Search

* **Accuracy Labels:** "Acc: [value]%" displayed above each set of bars for Step 0 and Step 32, with the percentage increase in parentheses.

* **Arrow:** A gray arrow indicates the progression from Step 0 to Step 32, labeled "After Fine-tuning".

### Detailed Analysis or Content Details

**Chart (a) 2Wiki:**

* **Step 0:**

* Base Generator: Approximately 28.5%

* Google Search: Approximately 36.0%

* Wikipedia Search: Approximately 28.8%

* Accuracy: 60.0%

* **Step 32:**

* Base Generator: Approximately 13.6% (-22.4%)

* Google Search: Approximately 70.5% (+42.0%)

* Wikipedia Search: Approximately 24.8% (-4.0%)

* Accuracy: 77.2% (+17.2%)

**Chart (b) MedQA:**

* **Step 0:**

* Base Generator: Approximately 28.7%

* Google Search: Approximately 66.2%

* Wikipedia Search: Approximately 59.8%

* Accuracy: 76.0%

* **Step 32:**

* Base Generator: Approximately 10.9% (-55.3%)

* Google Search: Approximately 6.3% (-22.4%)

* Wikipedia Search: Approximately 19.5% (+19.5%)

* Accuracy: 80.0% (+4.0%)

### Key Observations

* In both charts, the Google Search method shows a significant increase in Tool Call Ratio after fine-tuning (Step 32).

* The Base Generator consistently experiences a decrease in Tool Call Ratio after fine-tuning.

* The Wikipedia Search method shows a moderate increase in Tool Call Ratio for MedQA, but a decrease for 2Wiki.

* The accuracy increases in both datasets after fine-tuning.

* The MedQA dataset shows a more dramatic decrease in Tool Call Ratio for the Base Generator and Google Search after fine-tuning compared to the 2Wiki dataset.

### Interpretation

The data suggests that fine-tuning improves the overall accuracy of the model in both 2Wiki and MedQA datasets. However, the impact on the Tool Call Ratio varies significantly depending on the search method and the dataset.

The substantial increase in Tool Call Ratio for Google Search in both datasets indicates that fine-tuning effectively leverages the information retrieved through Google Search. Conversely, the decrease in Tool Call Ratio for the Base Generator suggests that fine-tuning might be reducing its reliance on its internal knowledge or that the fine-tuning process is negatively impacting its ability to generate tool calls.

The differing behavior of the Wikipedia Search method between the two datasets could be due to the nature of the information available in Wikipedia for each task. The MedQA dataset might benefit more from the structured knowledge available in Wikipedia, while the 2Wiki dataset might require more nuanced information retrieval from Google Search.

The large negative changes in the MedQA dataset for the Base Generator and Google Search suggest that the fine-tuning process may be overfitting to the training data, or that the initial model was particularly reliant on these methods, and the fine-tuning process has altered this reliance. Further investigation is needed to understand the underlying reasons for these trends.

</details>

Figure 5: Tool call ratio change by Flow-GRPO fine-tuning.

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Chart: Calling Error Rate vs. Training Steps

### Overview

This line chart depicts the relationship between the calling error rate (in percentage) and the number of training steps on four benchmarks: GAIA, 2Wiki, Bamboogle, and AIME24. The chart shows how the error rate on each benchmark changes as training progresses. The error rates are shown as lines plotted against the training steps. Final error rates are highlighted with boxes.

### Components/Axes

* **X-axis:** Training Steps (ranging from 0 to 32, with markers at 0, 8, 18, 28, and 32)

* **Y-axis:** Calling Error Rate (%) (ranging from 0 to 50, with markers at 0, 10, 20, 30, 40, and 50)

* **Legend:** Located in the top-right corner, identifying each line with a color and benchmark name:

* GAIA (Green)

* 2Wiki (Magenta)

* Bamboogle (Blue)

* AIME24 (Orange)

### Detailed Analysis

* **GAIA (Green):** The green line slopes downward, indicating a decreasing error rate with increasing training steps.

* At 0 training steps: approximately 51%

* At 8 training steps: approximately 41%

* At 18 training steps: approximately 33%

* At 28 training steps: approximately 26%

* At 32 training steps: approximately 23% (Highlighted as -28.4%)

* **2Wiki (Magenta):** The magenta line also slopes downward, but less steeply than the GAIA line.

* At 0 training steps: approximately 34%

* At 8 training steps: approximately 28%

* At 18 training steps: approximately 21%

* At 28 training steps: approximately 18%

* At 32 training steps: approximately 15% (Highlighted as -19.4%)

* **Bamboogle (Blue):** The blue line shows a relatively stable error rate, with a slight downward trend.

* At 0 training steps: approximately 16%

* At 8 training steps: approximately 14%

* At 18 training steps: approximately 13%

* At 28 training steps: approximately 11%

* At 32 training steps: approximately 10% (Highlighted as -7.8%)

* **AIME24 (Orange):** The orange line initially decreases rapidly, then plateaus.

* At 0 training steps: approximately 9%

* At 8 training steps: approximately 4%

* At 18 training steps: approximately 3%

* At 28 training steps: approximately 5%

* At 32 training steps: approximately 4% (Highlighted as -8.4%)

### Key Observations

* GAIA exhibits the largest reduction in error rate over the training steps.

* AIME24 reaches a low error rate quickly and then stabilizes.

* Bamboogle shows the smallest change in error rate throughout the training process.

* 2Wiki shows a consistent, but moderate, decrease in error rate.

* All benchmarks show a lower error rate at the end of training than at the start, suggesting that further training generally improves tool-calling reliability.

### Interpretation

The chart shows how training reduces the calling error rate on each of the four benchmarks. GAIA shows the largest reduction, suggesting it benefits most from training. AIME24 starts from a low error rate and plateaus after relatively few training steps. Bamboogle's error rate changes least, suggesting its tool calls were already fairly reliable before training. 2Wiki's error rate decreases steadily but moderately. The highlighted percentage changes at the final training step (32) summarize the overall improvement on each benchmark.

</details>

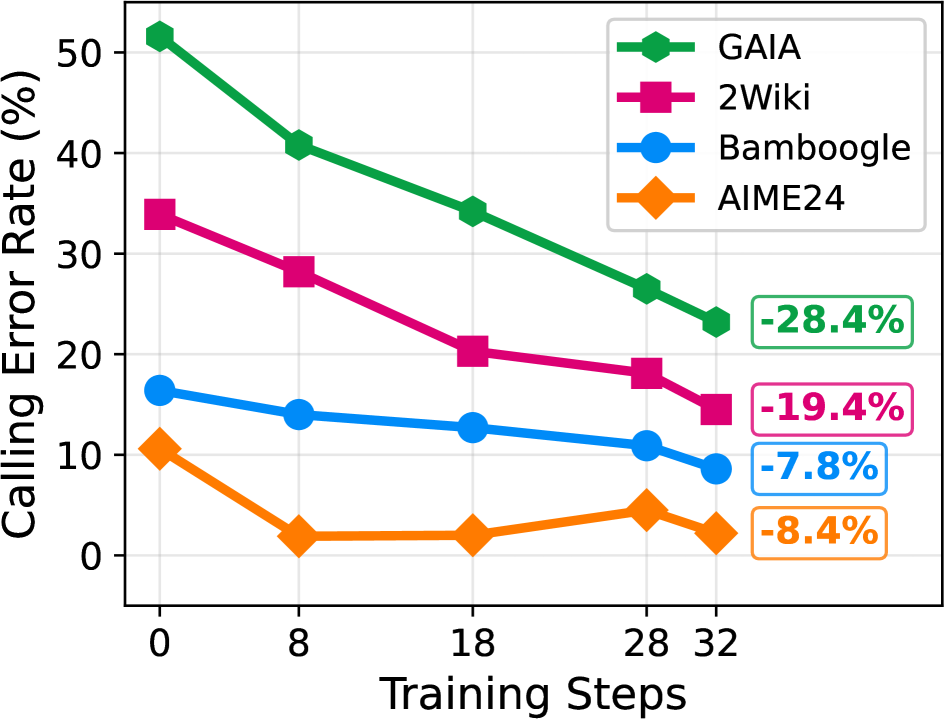

Figure 6: Calling error rate.

Flow-GRPO enhances tool-calling efficacy.

A key aspect of the model’s improvement is its increased reliability in tool usage. As shown in Figure 6, the tool-calling error rate consistently decreases across tasks during training, with a reduction of up to 28.4% on GAIA. This trend indicates that the training process not only teaches the model which tool to use but also how to invoke it correctly with proper arguments and format, leading to more robust and effective tool integration.

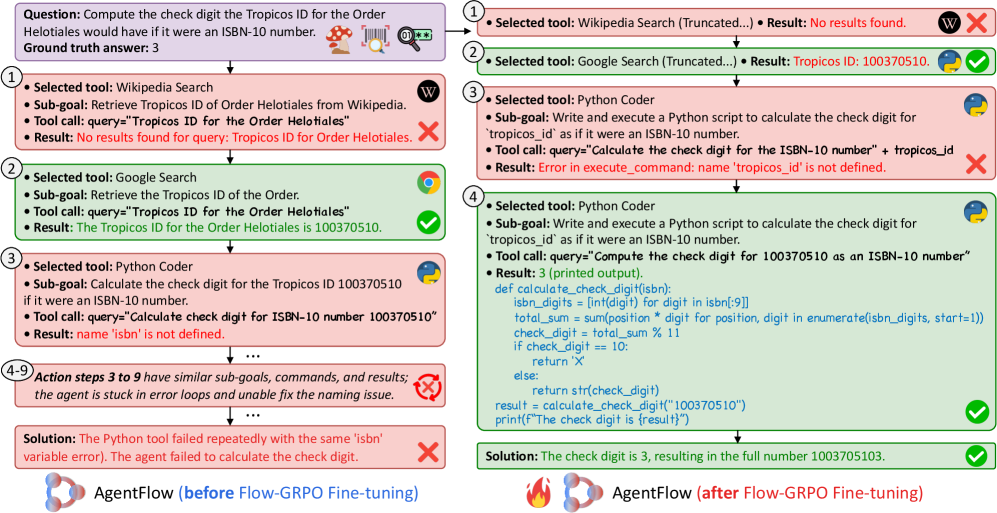

Flow-GRPO incentivizes autonomous discovery of new solutions.

We further examine qualitative examples in Figure 7 and additional cases in § F. These cases show that AgentFlow, trained with Flow-GRPO, develops enhanced capabilities for task planning and tool use. The planner exhibits adaptive efficiency, stronger self-correction, and spontaneous new integration of tools throughout step-by-step problem-solving, autonomously discovering effective solution pathways.

<details>

<summary>x9.png Details</summary>

### Visual Description


## Diagram: Agent Execution Log - Check Digit Calculation

### Overview

This diagram depicts a log of an agent's attempts to compute the check digit for the Tropicos ID of the Order Helotiales, treating it as an ISBN-10 number. The agent utilizes a sequence of tools (Wikipedia Search, Google Search, Python Code) and encounters errors related to variable naming. The diagram shows the question, the ground truth answer, and the agent's step-by-step execution with results.

### Components/Axes

The diagram is structured as a series of numbered steps (1-9, with steps 4-9 grouped as "4-9"). Each step includes:

* **Step Number:** A circled number indicating the sequence of execution.

* **Selected Tool:** The tool used in that step (Wikipedia Search, Google Search, Python Code).

* **Sub-goal:** A description of the task the agent attempted to perform.

* **Tool call:** The specific query or command sent to the tool.

* **Result:** The output or outcome of the tool call.

* **Status Indicator:** A colored checkmark or 'X' indicating success or failure.

There is also a "Solution" section at the bottom summarizing the agent's final approach and result.

### Detailed Analysis or Content Details

**Step 1: Selected tool: Wikipedia Search**

* Sub-goal: Retrieve Tropicos ID of Order Helotiales from Wikipedia.

* Tool call: query="Tropicos ID for the Order Helotiales"

* Result: No results found for query: Tropicos ID for Order Helotiales.

* Status: 'X' (Red)

**Step 2: Selected tool: Google Search**

* Sub-goal: Retrieve the Tropicos ID of the Order.

* Tool call: query="Tropicos ID for the Order Helotiales"

* Result: The Tropicos ID for the Order Helotiales is 100370510.

* Status: Checkmark (Green)

**Step 3: Selected tool: Python Code**

* Sub-goal: Calculate the check digit for the Tropicos ID 100370510 if it were an ISBN-10 number.

* Tool call: "Calculate check digit for ISBN-10 number 100370510"

* Result: name 'isbn' is not defined.

* Status: 'X' (Red)

**Steps 4-9 (grouped):** Similar sub-goals, commands, and results. The agent is stuck in error loops and unable to fix the naming issue.

**Solution:**

The Python tool failed repeatedly using the same 'isbn' variable error. The agent fixed the naming issue.

* Result: The check digit is 3, resulting in the full number 100370513.

* Status: Checkmark (Green)

**Python Code Snippet (Step 4):**

```python
def calculate_check_digit(isbn):
    # Use the first nine digits; the tenth position is the check digit being computed.
    isbn_digits = [int(digit) for digit in isbn[:9]]
    # ISBN-10 rule: weight each digit by its 1-based position, then reduce modulo 11.
    total_sum = sum(position * digit for position, digit in enumerate(isbn_digits, start=1))
    check_digit = total_sum % 11
    if check_digit == 10:
        return 'X'
    else:
        return str(check_digit)

result = calculate_check_digit("100370510")
print("The check digit is " + result)
```

### Key Observations

* The agent initially fails to find the Tropicos ID on Wikipedia.

* Google Search successfully retrieves the Tropicos ID: 100370510.

* The agent repeatedly fails to calculate the check digit due to an undefined variable ('isbn').

* The agent eventually resolves the naming issue and successfully calculates the check digit as 3, resulting in the ISBN-10 number 100370513.

* The diagram highlights the iterative nature of the agent's problem-solving process and the importance of debugging.

### Interpretation

This diagram demonstrates an agent attempting a task involving information retrieval and calculation. The agent's initial attempts are unsuccessful, highlighting the challenges of natural language understanding and tool usage. The repeated errors with the 'isbn' variable suggest a limitation in the agent's ability to correctly map variables within the Python code. The successful resolution indicates the agent's capacity for learning and adaptation. The diagram provides insight into the agent's reasoning process, error handling, and eventual success in achieving the desired outcome. The grouping of steps 4-9 suggests a period of repetitive failure before the agent identifies and corrects the underlying issue. The final result (100370513) confirms the agent's ability to perform the calculation once the variable naming issue is resolved. The ground truth answer of 3 is confirmed by the agent's final calculation. The diagram is a valuable case study for understanding the strengths and weaknesses of AI agents in complex problem-solving scenarios.

</details>

Figure 7: A case study example. Before training, AgentFlow fails with repetitive errors (left); after training with Flow-GRPO, it explores a new solution pathway at turn 4 after two failed attempts (right).

### 4.4 Training Strategies on the Planner

We conduct an ablation study to analyze the impact of different training strategies for the Action Planner module in AgentFlow, with results reported in Table 3. The executor, verifier, and generator modules remain fixed as Qwen2.5-7B-Instruct, consistent with our main setup (§ 4.1).

| Planner Model | Training | Bamboogle | 2Wiki | GAIA | AIME24 | AMC23 | GameOf24 | Avg. |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Qwen-2.5-7B | Frozen | 58.4 | 60.0 | 17.2 | 16.7 | 47.4 | 31.0 | 38.5 |
| GPT-4o | Frozen | 65.0 ${}_{\uparrow~6.6}$ | 70.0 ${}_{\uparrow~10.0}$ | 23.6 ${}_{\uparrow~6.4}$ | 16.7 ${}_{\uparrow~0.0}$ | 48.7 ${}_{\uparrow~1.3}$ | 42.0 ${}_{\uparrow~11.0}$ | 44.3 ${}_{\uparrow~5.8}$ |
| Qwen-2.5-7B | SFT | 30.4 ${}_{\downarrow~28.0}$ | 32.7 ${}_{\downarrow~27.3}$ | 6.3 ${}_{\downarrow~10.9}$ | 3.3 ${}_{\downarrow~13.4}$ | 37.5 ${}_{\downarrow~9.9}$ | 7.0 ${}_{\downarrow~24.0}$ | 19.5 ${}_{\downarrow~19.0}$ |
| Qwen-2.5-7B | Flow-GRPO | 69.6 ${}_{\uparrow~11.2}$ | 77.2 ${}_{\uparrow~17.2}$ | 33.1 ${}_{\uparrow~15.9}$ | 40.0 ${}_{\uparrow~23.3}$ | 61.5 ${}_{\uparrow~14.1}$ | 53.0 ${}_{\uparrow~22.0}$ | 55.7 ${}_{\uparrow~17.2}$ |

Table 3: Performance comparison of AgentFlow across different training methods. Subscripts give the change relative to the frozen Qwen2.5-7B-Instruct planner.
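As a quick consistency check on Table 3, the sketch below subtracts each reported delta from its score to recover the frozen-planner baseline that every row is compared against. The scores and deltas are copied from the table (six benchmark columns followed by the average); the dictionary layout and the helper name `implied_baseline` are illustrative assumptions.

```python
# Scores and signed deltas (relative to the frozen planner) copied from Table 3.
# Each list holds the six benchmark columns followed by the average column.
rows = {
    "GPT-4o / Frozen": (
        [65.0, 70.0, 23.6, 16.7, 48.7, 42.0, 44.3],
        [+6.6, +10.0, +6.4, +0.0, +1.3, +11.0, +5.8],
    ),
    "Qwen-2.5-7B / SFT": (
        [30.4, 32.7, 6.3, 3.3, 37.5, 7.0, 19.5],
        [-28.0, -27.3, -10.9, -13.4, -9.9, -24.0, -19.0],
    ),
    "Qwen-2.5-7B / Flow-GRPO": (
        [69.6, 77.2, 33.1, 40.0, 61.5, 53.0, 55.7],
        [+11.2, +17.2, +15.9, +23.3, +14.1, +22.0, +17.2],
    ),
}


def implied_baseline(scores, deltas):
    """Hypothetical helper: baseline = score - delta, rounded to one decimal."""
    return [round(s - d, 1) for s, d in zip(scores, deltas)]


baselines = {name: implied_baseline(s, d) for name, (s, d) in rows.items()}
for name, base in baselines.items():
    print(name, base)
```

All three rows imply the same baseline, so the reported deltas share a single reference point.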

A more capable planner is beneficial, but has limits. Replacing the frozen Qwen2.5-7B-Instruct baseline with a stronger proprietary model, GPT-4o, yields only a modest 5.8% average gain. This points to a key bottleneck: although a more powerful model improves planning, its static nature prevents co-adaptation with the live dynamics of AgentFlow.

Offline SFT leads to performance collapse, while in-the-flow RL is crucial. The limitations of a static planner are further exposed when GPT-4o's behavior is distilled into the planner via offline supervised fine-tuning (SFT) on trajectories collected with GPT-4o as the Action Planner in AgentFlow. This results in a catastrophic performance collapse, with an average accuracy drop of 19.0% compared to the frozen baseline. The failure arises from SFT's token-level imitation objective, which is misaligned with trajectory-level task success and prevents the planner from adapting to dynamic tool feedback or recovering from compounding errors. In contrast, training the planner with our on-policy Flow-GRPO method proves highly effective: by optimizing for the final outcome, the planner learns to handle long-horizon workflows, achieving a 17.2% average gain over the frozen baseline.
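To make the contrast with token-level imitation concrete, the sketch below illustrates the two ingredients the abstract attributes to Flow-GRPO: a single trajectory-level outcome broadcast to every turn, and advantages normalized within a group of rollouts. It is a minimal illustration, not the authors' implementation. The function name `broadcast_group_advantages`, the binary rewards, the use of the population standard deviation, and the `eps` constant are all assumptions made for this example.

```python
from statistics import mean, pstdev


def broadcast_group_advantages(outcomes, turns_per_rollout, eps=1e-6):
    """Return one advantage per turn for each rollout in a group.

    outcomes: final verifiable reward of each rollout (assumed 1.0 if the
        final answer is correct, else 0.0).
    turns_per_rollout: number of planner turns taken in each rollout.
    """
    mu = mean(outcomes)
    sigma = pstdev(outcomes)  # assumption: population std over the group
    per_turn = []
    for reward, n_turns in zip(outcomes, turns_per_rollout):
        # Group-normalized advantage of the whole trajectory.
        advantage = (reward - mu) / (sigma + eps)
        # Broadcast the same trajectory-level signal to every turn.
        per_turn.append([advantage] * n_turns)
    return per_turn


# Four rollouts of the same query: two succeed, two fail.
advantages = broadcast_group_advantages([1.0, 0.0, 0.0, 1.0], [3, 5, 2, 4])
for rollout in advantages:
    print([round(a, 3) for a in rollout])
```

Every turn of a successful rollout receives the same positive signal and every turn of a failed rollout the same negative one, so each turn can be treated as a single-turn update that is still aligned with the final outcome.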

### 4.5 Training Efficiency Analysis

<details>

<summary>x10.png Details</summary>

### Visual Description


## Line Chart: Training Reward and Response Length Trend

### Overview

This image presents a line chart illustrating the trends of "Training Reward" and "Response Length" over "Training Steps". The chart displays two distinct lines, each representing one of the tracked metrics, plotted against the number of training steps. A secondary y-axis is present on the right side of the chart to display the Response Length.

### Components/Axes

* **X-axis:** "Training Steps" ranging from 0 to 60, with tick marks at intervals of 10.

* **Y-axis (left):** "Training Reward (Acc.)" ranging from approximately 0.45 to 0.8, with tick marks at intervals of 0.1.

* **Y-axis (right):** "Response Length (tokens)" ranging from approximately 180 to 230, with tick marks at intervals of 10.

* **Legend (top-left):**

* Blue Line: "Training Reward Trend"

* Orange Line: "Response Length Trend"

* **Label (bottom-left):** "(a)" - likely a figure identifier.

* **Data Points:** Numerous small grey dots are scattered throughout the chart, representing individual data points for both metrics.

### Detailed Analysis

**Training Reward Trend (Blue Line):**

The blue line generally slopes upward, indicating an increasing training reward as the number of training steps increases.

* At Training Step 0, the Training Reward is approximately 0.51.

* At Training Step 10, the Training Reward is approximately 0.62.

* At Training Step 20, the Training Reward is approximately 0.67.

* At Training Step 30, the Training Reward is approximately 0.71.

* At Training Step 40, the Training Reward is approximately 0.68.

* At Training Step 50, the Training Reward is approximately 0.73.

* At Training Step 60, the Training Reward is approximately 0.77.

There are fluctuations in the trend, with dips around Training Steps 35 and 45.

**Response Length Trend (Orange Line):**

The orange line shows a generally decreasing trend in response length, with some fluctuations.

* At Training Step 0, the Response Length is approximately 215 tokens.

* At Training Step 10, the Response Length is approximately 225 tokens.

* At Training Step 20, the Response Length is approximately 210 tokens.

* At Training Step 30, the Response Length is approximately 205 tokens.

* At Training Step 40, the Response Length is approximately 195 tokens.

* At Training Step 50, the Response Length is approximately 200 tokens.

* At Training Step 60, the Response Length is approximately 190 tokens.

There is a peak around Training Step 10.

### Key Observations

* The Training Reward generally increases with training steps, while the Response Length generally decreases.

* The fluctuations in both lines suggest that the training process is not perfectly smooth.

* The initial increase in Response Length at the beginning of training is notable.

* The scales of the two y-axes are different, indicating that the metrics are measured in different units and have different ranges.

### Interpretation

The chart suggests that as the model is trained (increasing Training Steps), it becomes more accurate (increasing Training Reward) and generates shorter responses (decreasing Response Length). This could indicate that the model is learning to provide more concise and relevant answers. The fluctuations in both metrics suggest that the training process is dynamic and may require further optimization. The initial increase in response length could be due to the model exploring different response strategies early in training, before converging on more efficient ones. The relationship between the two trends is interesting; it's possible that the model is learning to achieve higher rewards by being more concise, or that the reduction in response length is a side effect of improving the model's accuracy. Further analysis would be needed to determine the exact nature of this relationship.

</details>

<details>

<summary>x11.png Details</summary>

### Visual Description


## Line Chart: Validation Reward vs. Training Steps

### Overview

This image presents a line chart comparing the validation reward achieved by two reinforcement learning algorithms, Flow-GRPO (labeled as "ours") and ToRL, over a series of training steps. The chart displays the relationship between training progress (x-axis) and the resulting validation reward (y-axis).

### Components/Axes

* **X-axis:** "Training Steps" ranging from 0 to approximately 35. The axis is marked with tick intervals at 0, 10, 20, and 30.

* **Y-axis:** "Validation Reward (Acc.)" ranging from 0.05 to 0.45. The axis is marked with tick intervals at 0.1, 0.2, 0.3, and 0.4.

* **Legend:** Located in the top-left corner of the chart.

* **Flow-GRPO (ours):** Represented by a solid blue line with circular markers.

* **ToRL:** Represented by a solid orange line with circular markers.

* **Label:** "(b)" is present in the bottom-left corner.

### Detailed Analysis

* **Flow-GRPO (ours) - Blue Line:** The line starts at approximately 0.12 at Training Step 0. It fluctuates between approximately 0.15 and 0.22 until Training Step 15. From Training Step 15 to 30, the line exhibits a strong upward trend, increasing from approximately 0.18 to 0.32. Finally, it rises sharply to approximately 0.41 at Training Step 35.

* **ToRL - Orange Line:** The line begins at approximately 0.17 at Training Step 0. It decreases to approximately 0.11 at Training Step 5, then increases to approximately 0.18 at Training Step 10. From Training Step 10 to 20, the line fluctuates around 0.15. After Training Step 20, the line remains relatively stable, fluctuating between approximately 0.12 and 0.16.

Specific Data Points (approximate):

| Training Steps | Flow-GRPO (ours) | ToRL |
|---|---|---|
| 0 | 0.12 | 0.17 |
| 5 | 0.15 | 0.11 |
| 10 | 0.22 | 0.18 |
| 15 | 0.18 | 0.15 |
| 20 | 0.21 | 0.15 |
| 25 | 0.32 | 0.12 |
| 30 | 0.36 | 0.14 |
| 35 | 0.41 | 0.16 |

### Key Observations

* Flow-GRPO starts below ToRL at Training Step 0 but outperforms it from Training Step 5 onward.

* The performance gap between the two algorithms widens significantly after Training Step 15.

* ToRL's validation reward plateaus after Training Step 20, while Flow-GRPO continues to improve.

* Flow-GRPO exhibits a more volatile learning curve, with larger fluctuations in validation reward, especially between Training Steps 5 and 20.

### Interpretation

The data suggests that the Flow-GRPO algorithm is more effective at learning and improving its validation reward compared to the ToRL algorithm. The increasing trend of Flow-GRPO's validation reward indicates successful learning and adaptation during training. The plateauing of ToRL's performance suggests that it may have reached its learning limit or is struggling to generalize to the validation set. The volatility in Flow-GRPO's learning curve could indicate a more sensitive algorithm that requires careful tuning of hyperparameters. The significant performance difference after Training Step 15 suggests that Flow-GRPO benefits from continued training, while ToRL does not. The label "(b)" suggests this is part of a larger figure or set of experiments.

</details>

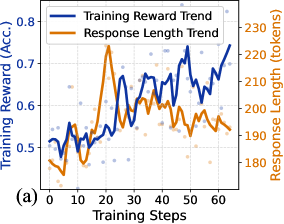

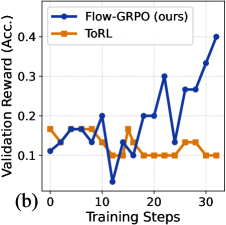

Figure 8: Training dynamics and efficiency of Flow-GRPO.

Optimized planning with increased rewards and condensed responses.

We analyze the training dynamics of the AgentFlow planner by tracking its average reward and response length on the training set (Figure 8a). Training rewards steadily increase, indicating effective policy improvement via Flow-GRPO. Meanwhile, response length, after an initial exploratory rise, progressively shortens and stabilizes. This shows that the planner learns to balance conciseness and informativeness, avoiding unnecessarily long outputs.

Flow-GRPO efficiency over tool-integrated reasoning RL.

We compare AgentFlow (trained with Flow-GRPO) against a monolithic tool-integrated reasoning baseline (ToRL) on AIME24. As shown in Figure 8b, AgentFlow achieves sustained performance gains, with validation accuracy growing steadily. In contrast, ToRL's performance quickly stagnates and trends downward. This highlights the superior efficiency of our agentic training approach, whose decomposition and stable credit assignment avoid the instability seen in monolithic training.

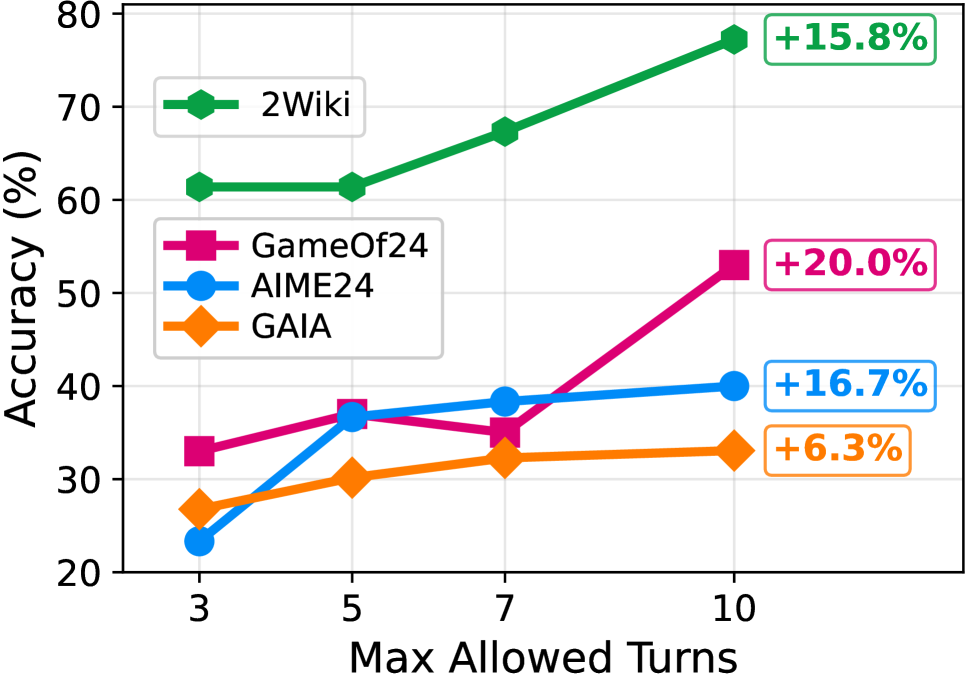

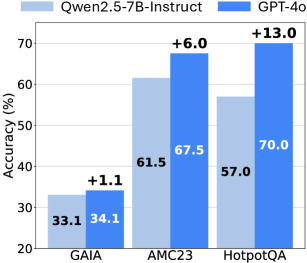

### 4.6 Scaling Trends in AgentFlow

<details>

<summary>x12.png Details</summary>

### Visual Description


## Bar Chart: AgentFlow Accuracy Comparison

### Overview

This image presents a comparative bar chart illustrating the accuracy of AgentFlow using two different models: Qwen-2.5-3B-Instruct and Qwen-2.5-7B-Instruct. The charts compare accuracy "Before tuning" and "After tuning" across four datasets: Bamboogle, 2Wiki, GAIA, and AIME24.

### Components/Axes

* **X-axis:** Datasets - Bamboogle, 2Wiki, GAIA, AIME24

* **Y-axis:** Accuracy (%) - Scale ranges from 0 to 80, with increments of 10.

* **Legend:**

* Light Blue: "Before tuning"

* Red: "After tuning"

* **Titles:**

* Left Chart: "AgentFlow (Qwen-2.5-3B-Instruct)"

* Right Chart: "AgentFlow (Qwen-2.5-7B-Instruct)"

### Detailed Analysis or Content Details

**Left Chart: AgentFlow (Qwen-2.5-3B-Instruct)**

* **Bamboogle:**

* Before tuning: Approximately 53.6%

* After tuning: Approximately 68.8%

* **2Wiki:**

* Before tuning: Approximately 63.0%

* After tuning: Approximately 72.3%

* **GAIA:**

* Before tuning: Approximately 14.3%

* After tuning: Approximately 29.1%

* **AIME24:**

* Before tuning: Approximately 13.3%

* After tuning: Approximately 20.0%

**Right Chart: AgentFlow (Qwen-2.5-7B-Instruct)**