# Large Language Models Do NOT Really Know What They Don’t Know

Abstract

Recent work suggests that large language models (LLMs) encode factuality signals in their internal representations, such as hidden states, attention weights, or token probabilities, implying that LLMs may “know what they don’t know”. However, LLMs can also produce factual errors by relying on shortcuts or spurious associations. These errors are driven by the same training objective that encourages correct predictions, raising the question of whether internal computations can reliably distinguish between factual and hallucinated outputs. In this work, we conduct a mechanistic analysis of how LLMs internally process factual queries by comparing two types of hallucinations based on their reliance on subject information. We find that when hallucinations are associated with subject knowledge, LLMs employ the same internal recall process as for correct responses, leading to overlapping and indistinguishable hidden-state geometries. In contrast, hallucinations detached from subject knowledge produce distinct, clustered representations that make them detectable. These findings reveal a fundamental limitation: LLMs do not encode truthfulness in their internal states but only patterns of knowledge recall, demonstrating that LLMs don’t really know what they don’t know.

Chi Seng Cheang 1 Hou Pong Chan 2 Wenxuan Zhang 3 Yang Deng 1 1 Singapore Management University 2 DAMO Academy, Alibaba Group 3 Singapore University of Technology and Design cs.cheang.2025@phdcs.smu.edu.sg, houpong.chan@alibaba-inc.com wxzhang@sutd.edu.sg, ydeng@smu.edu.sg

1 Introduction

Large language models (LLMs) demonstrate remarkable proficiency in generating coherent and contextually relevant text, yet they remain plagued by hallucination Zhang et al. (2023b); Huang et al. (2025), a phenomenon where outputs appear plausible but are factually inaccurate or entirely fabricated, raising concerns about their reliability and trustworthiness. To this end, researchers suggest that the internal states of LLMs (e.g., hidden representations Azaria and Mitchell (2023); Gottesman and Geva (2024), attention weights Yüksekgönül et al. (2024), output token logits Orgad et al. (2025); Varshney et al. (2023), etc.) can be used to detect hallucinations, indicating that LLMs themselves may actually know what they don’t know. These methods typically assume that when a model produces hallucinated outputs (e.g., “Barack Obama was born in the city of Tokyo” in Figure 1), its internal computations for the outputs (“Tokyo”) are detached from the input information (“Barack Obama”), thereby differing from those used to generate factually correct outputs. Thus, the hidden states are expected to capture this difference and serve as indicators of hallucinations.

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: LLM Internal States and Hallucination Analysis

## 1. Overview

This image is a conceptual technical diagram illustrating how a Large Language Model (LLM) processes factual queries and the relationship between its internal latent states and the resulting generated output. It specifically categorizes outputs into factual associations, associated hallucinations, and unassociated hallucinations based on their proximity within the model's internal representation space.

---

## 2. Component Segmentation

### Region A: Factual Query (Input)

Located on the far left, this section represents the prompts fed into the model.

* **Icon:** 🔍 (Magnifying glass)

* **Label:** Factual Query

* **Transcribed Text (Input Prompts):**

1. *Barack Obama studied in the city of*

2. *Barack Obama was born in the city of*

3. *Barack Obama was born in the city of*

* **Visual Flow:** Three black arrows point from these text prompts toward the central LLM block.

### Region B: Internal States (Processing)

Located in the center, this section visualizes the model's latent space.

* **Icon:** 🧠 (Brain)

* **Label:** Internal States

* **Components:**

* **LLM Block:** A grey vertical rounded rectangle labeled "**LLM**".

* **Latent Space Projection:** A dashed-line square box connected to the LLM by diverging dashed lines, indicating a "zoom-in" on internal activations.

* **Data Distribution:** Inside the box is a scatter plot of colored dots arranged in a roughly circular/annular distribution.

* **Top Half:** Contains a mix of **Green** and **Blue** dots.

* **Bottom Half:** Contains primarily **Red** dots.

* **Center:** A void or empty space in the middle of the distribution.

### Region C: Generated Output (Legend and Results)

Located on the right, this section defines the categories of the model's response.

* **Icon:** 💬 (Speech bubble)

* **Label:** Generated Output

* **Legend and Classification:**

1. **Green Dot + ✅ Factual Associations**

* *Example:* e.g., *Chicago*

* *Spatial Grounding:* Corresponds to the green dots in the top half of the internal state plot.

2. **Blue Dot + ❌ Associated Hallucinations**

* *Example:* e.g., *Chicago*

* *Spatial Grounding:* Corresponds to the blue dots intermingled with green dots in the top half of the internal state plot.

3. **Red Dot + ❌ Unassociated Hallucinations**

* *Example:* e.g., *Tokyo*

* *Spatial Grounding:* Corresponds to the cluster of red dots in the bottom half of the internal state plot.

---

## 3. Logic and Trend Analysis

### Data Relationship Logic

The diagram establishes a spatial correlation between the "correctness" of an answer and its position in the LLM's internal state:

* **Clustering of Truth and Related Errors:** The **Green** (Factual) and **Blue** (Associated Hallucination) dots are spatially clustered together. This suggests that when the model hallucinates a "related" but incorrect fact (e.g., saying Obama was born in Chicago because he is strongly associated with that city), the internal state is nearly identical to the state for a factual truth.

* **Isolation of Unrelated Errors:** The **Red** dots (Unassociated Hallucinations, like "Tokyo") are clustered in a completely different region of the latent space. This indicates that "random" or unassociated hallucinations represent a distinct internal state compared to factual or contextually relevant information.

### Summary of Mappings

| Category | Internal State Region | Example Output | Status |
| :--- | :--- | :--- | :--- |
| **Factual Association** | Top Cluster (Mixed) | Chicago (as study location) | Correct |
| **Associated Hallucination** | Top Cluster (Mixed) | Chicago (as birth location) | Incorrect (but related) |
| **Unassociated Hallucination** | Bottom Cluster | Tokyo (as birth location) | Incorrect (unrelated) |


</details>

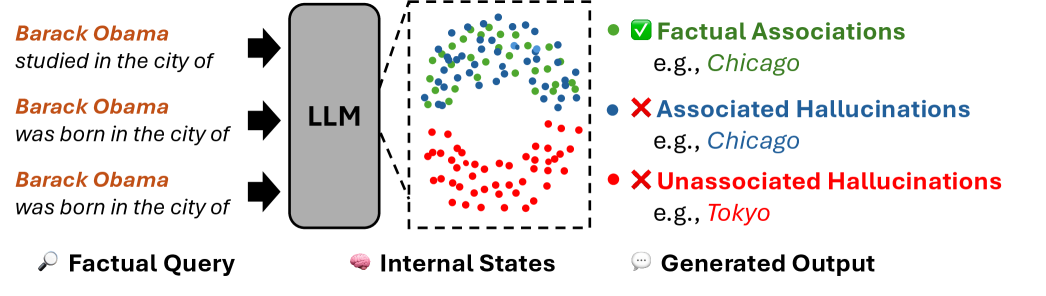

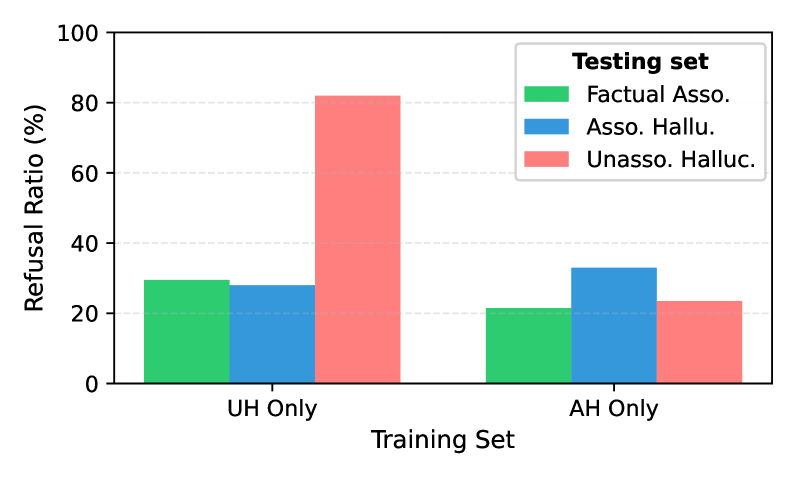

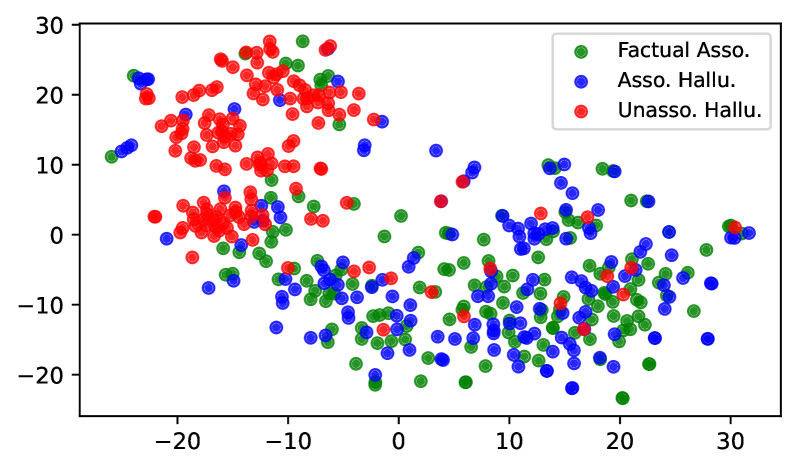

Figure 1: Illustration of three categories of knowledge. Associated hallucinations follow similar internal knowledge recall processes with factual associations, while unassociated hallucinations arise when the model’s output is detached from the input.

However, other research (Lin et al., 2022b; Kang and Choi, 2023; Cheang et al., 2023) shows that models can also generate false information that is closely associated with the input information. In particular, models may adopt knowledge shortcuts, favoring tokens that frequently co-occur in the training corpus over factually correct answers Kang and Choi (2023). As shown in Figure 1, given the prompt “Barack Obama was born in the city of”, an LLM may rely on the subject tokens’ representations (i.e., “Barack Obama”) to predict a hallucinated output (e.g., “Chicago”), which is statistically associated with the subject entity but in other contexts (e.g., “Barack Obama studied in the city of Chicago”). Therefore, we suspect that the internal computations may not exhibit distinguishable patterns between correct predictions and input-associated hallucinations, as LLMs rely on the input information to produce both of them. Only when the model produces hallucinations unassociated with the input do the hidden states exhibit distinct patterns that can be reliably identified.

To this end, we conduct a mechanistic analysis of how LLMs internally process factual queries. We first perform causal analysis to identify hidden states crucial for generating Factual Associations (FAs) — factually correct outputs grounded in subject knowledge. We then examine how these hidden states behave when the model produces two types of factual errors: Associated Hallucinations (AHs), which remain grounded in subject knowledge, and Unassociated Hallucinations (UHs), which are detached from it. Our analysis shows that when generating both FAs and AHs, LLMs propagate information encoded in subject representations to the final token during output generation, resulting in overlapping hidden-state geometries that cannot reliably distinguish AHs from FAs. In contrast, UHs exhibit distinct internal computational patterns, producing clearly separable hidden-state geometries from FAs.

Building on the analysis, we revisit several widely-used hallucination detection approaches Gottesman and Geva (2024); Yüksekgönül et al. (2024); Orgad et al. (2025) that adopt internal state probing. The results show that these representations cannot reliably distinguish AHs from FAs due to their overlapping hidden-state geometries, though they can effectively separate UHs from FAs. Moreover, this geometry also limits the effectiveness of Refusal Tuning Zhang et al. (2024), which trains LLMs to refuse uncertain queries using a refusal-aware dataset. Because UH samples exhibit consistent and distinctive patterns, refusal tuning generalizes well to unseen UHs but fails to generalize to unseen AHs. We also find that AH hidden states are more diverse, and thus refusal tuning with AH samples fails to generalize across both AH and UH samples.

Together, these findings highlight a central limitation: LLMs do not encode truthfulness in their hidden states but only patterns of knowledge recall and utilization, showing that LLMs don’t really know what they don’t know.

2 Related Work

Existing hallucination detection methods can be broadly categorized into two types: representation-based and confidence-based. Representation-based methods assume that an LLM’s internal hidden states can reflect the correctness of its generated responses. These approaches train a classifier (often a linear probe) using the hidden states from a set of labeled correct/incorrect responses to predict whether a new response is hallucinatory Li et al. (2023); Azaria and Mitchell (2023); Su et al. (2024); Ji et al. (2024); Chen et al. (2024); Ni et al. (2025); Xiao et al. (2025). Confidence-based methods, in contrast, assume that lower confidence during generation implies a higher probability of hallucination. These methods quantify uncertainty through various signals, including: (i) token-level output probabilities (Guerreiro et al., 2023; Varshney et al., 2023; Orgad et al., 2025); (ii) directly querying the LLM to verbalize its own confidence (Lin et al., 2022a; Tian et al., 2023; Xiong et al., 2024; Yang et al., 2024b; Ni et al., 2024; Zhao et al., 2024); or (iii) measuring the semantic consistency across multiple outputs sampled from the same prompt (Manakul et al., 2023; Kuhn et al., 2023; Zhang et al., 2023a; Ding et al., 2024). A response is typically flagged as a hallucination if its associated confidence metric falls below a predetermined threshold.
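The thresholding step used by confidence-based detectors can be illustrated with a minimal sketch. The aggregation rule (geometric mean of token probabilities), the function names, and the default threshold of 0.5 are assumptions chosen for illustration and are not taken from any of the cited methods.

```python
import math
from typing import Sequence


def sequence_confidence(token_probs: Sequence[float]) -> float:
    """Aggregate token probabilities into one score (assumed: geometric mean)."""
    if not token_probs:
        raise ValueError("token_probs must not be empty")
    # Average the log-probabilities, then map back to probability space.
    # Every probability is assumed to be strictly positive.
    mean_log_prob = sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_log_prob)


def flag_hallucination(token_probs: Sequence[float], threshold: float = 0.5) -> bool:
    """Flag a response when its confidence falls below the (assumed) threshold."""
    return sequence_confidence(token_probs) < threshold
```

Such a detector only helps to the extent that confidence tracks correctness, which is the calibration issue discussed next.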

However, a growing body of work reveals a critical limitation: even state-of-the-art LLMs are poorly calibrated, meaning their expressed confidence often fails to align with the factual accuracy of their generations (Kapoor et al., 2024; Xiong et al., 2024; Tian et al., 2023). This miscalibration limits the effectiveness of confidence-based detectors and raises a fundamental question about the extent of LLMs’ self-awareness of their knowledge boundary, i.e., whether they can “know what they don’t know” Yin et al. (2023); Li et al. (2025). Despite recognizing this problem, prior work does not provide a mechanistic explanation for its occurrence. Our work addresses this explanatory gap by employing mechanistic interpretability techniques to trace the internal computations underlying knowledge recall within LLMs.

3 Preliminary

Transformer Architecture

Given an input sequence of $T$ tokens $t_{1},\dots,t_{T}$, an LLM is trained to model the conditional probability distribution of the next token $p(t_{T+1}\mid t_{1},\dots,t_{T})$ conditioned on the preceding $T$ tokens. Each token is first mapped to a continuous vector by an embedding layer. The resulting sequence of hidden states is then processed by a stack of $L$ Transformer layers. At layer $\ell\in\{1,\dots,L\}$, each token representation is updated by a Multi-Head Self-Attention (MHSA) module and a Feed-Forward Network (MLP) module:

$$

\mathbf{h}^{\ell}=\mathbf{h}^{\ell-1}+\mathbf{a}^{\ell}+\mathbf{m}^{\ell}, \tag{1}

$$

where $\mathbf{a}^{\ell}$ and $\mathbf{m}^{\ell}$ correspond to the MHSA and MLP outputs, respectively, at layer $\ell$.
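As a toy illustration of Eq. (1), the sketch below applies the residual update for a single token across a stack of layers. The per-layer MHSA and MLP outputs are supplied directly as vectors rather than computed, and the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

Vector = List[float]


def residual_update(h_prev: Vector, a: Vector, m: Vector) -> Vector:
    """Eq. (1): h^l = h^{l-1} + a^l + m^l, applied element-wise."""
    return [h + x + y for h, x, y in zip(h_prev, a, m)]


def run_layers(h0: Vector, layer_outputs: Sequence[Tuple[Vector, Vector]]) -> List[Vector]:
    """Return [h^0, h^1, ..., h^L] given the (a^l, m^l) pair of each layer."""
    states = [h0]
    for a, m in layer_outputs:
        states.append(residual_update(states[-1], a, m))
    return states
```

Because each layer only adds to the previous state, replacing one $\mathbf{a}^{\ell}$ or $\mathbf{m}^{\ell}$ changes every later hidden state, which is what makes layer-wise interventions informative.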

Internal Process of Knowledge Recall

Prior work investigates the internal activations of LLMs to study the mechanics of knowledge recall. For example, an LLM may encode many attributes that are associated with a subject (e.g., Barack Obama) (Geva et al., 2023). Given a prompt like “Barack Obama was born in the city of”, if the model has correctly encoded the fact, the attribute “Honolulu” propagates through self-attention to the last token, yielding the correct answer. We hypothesize that non-factual predictions follow the same mechanism: spurious attributes such as “Chicago” are also encoded and propagated, leading the model to generate false outputs.

Categorization of Knowledge

To investigate how LLMs internally process factual queries, we define three categories of knowledge, according to two criteria: 1) factual correctness, and 2) subject representation reliance.

- Factual Associations (FA) refer to factual knowledge that is reliably stored in the parameters or internal states of an LLM and can be recalled to produce correct, verifiable outputs.

- Associated Hallucinations (AH) refer to non-factual content produced when an LLM relies on input-triggered parametric associations.

- Unassociated Hallucinations (UH) refer to non-factual content produced without reliance on parametric associations to the input.
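The two criteria above amount to a simple decision rule, sketched below. The boolean `relies_on_subject` is a hypothetical input standing in for whatever test determines subject representation reliance; that test is not specified in this section, so the sketch assumes it is computed elsewhere.

```python
from enum import Enum


class Category(Enum):
    FA = "factual association"
    AH = "associated hallucination"
    UH = "unassociated hallucination"


def categorize(is_correct: bool, relies_on_subject: bool) -> Category:
    """Assign a knowledge category from the two criteria.

    `relies_on_subject` is a hypothetical flag produced by a separate
    reliance test that is not implemented here.
    """
    if is_correct:
        return Category.FA
    # Incorrect outputs are split by their reliance on the subject.
    return Category.AH if relies_on_subject else Category.UH
```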

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Data Extraction: Average JS Divergence Heatmap

## 1. Image Overview

This image is a heatmap visualization representing the **Avg JS Divergence** (Average Jensen-Shannon Divergence) across different layers of a neural network model. The data is categorized by three distinct components or methods across 32 layers.

## 2. Component Isolation

### A. Header/Axes

* **Y-Axis (Left):** Categorical labels representing different components:

* `Subj.` (Top row)

* `Attn.` (Middle row)

* `Last.` (Bottom row)

* **X-Axis (Bottom):** Numerical labels representing `Layer` index, ranging from `0` to `30`. The labels are placed at intervals of 2 (0, 2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30). There are 32 vertical columns in total (0-31).

* **Legend/Color Bar (Right):** A vertical gradient scale labeled `Avg JS Divergence`.

* **Range:** 0.2 (Lightest blue/white) to 0.6 (Darkest blue).

* **Markers:** 0.2, 0.3, 0.4, 0.5, 0.6.

### B. Main Chart Area (Heatmap Data)

The heatmap consists of a grid of 3 rows by 32 columns.

#### Row 1: `Subj.` (Subject-related divergence)

* **Trend:** High divergence in early layers, sharp drop-off in middle layers, near-zero divergence in late layers.

* **Data Points:**

* **Layers 0–15:** Darkest blue (~0.6). Indicates maximum divergence.

* **Layers 16–17:** Medium-light blue (~0.35 - 0.4). Transition phase.

* **Layers 18–31:** Very light blue to white (~0.2). Indicates minimal divergence.

#### Row 2: `Attn.` (Attention-related divergence)

* **Trend:** Near-zero divergence throughout most of the model, with a localized peak in the middle layers.

* **Data Points:**

* **Layers 0–10:** White (~0.2).

* **Layers 11–15:** Light blue (~0.3).

* **Layers 16–17:** Very light blue (~0.25).

* **Layers 18–31:** White (~0.2).

#### Row 3: `Last.` (Last-token/Final-state divergence)

* **Trend:** Near-zero divergence in early and middle layers, followed by a steady increase in the final third of the model.

* **Data Points:**

* **Layers 0–10:** White (~0.2).

* **Layers 11–17:** Very light blue (~0.22 - 0.25).

* **Layers 18–30:** Gradual increase in blue saturation (~0.3 - 0.4).

* **Layer 31:** Noticeable jump to a darker blue (~0.45 - 0.5).

## 3. Summary of Key Findings

* **Phase Separation:** The three components show high divergence at different stages of the model's depth. `Subj.` dominates the first half (Layers 0-15), `Attn.` has a minor peak in the middle (Layers 11-15), and `Last.` increases in the final third (Layers 18-31).

* **Maximum Divergence:** The highest recorded divergence (~0.6) occurs in the `Subj.` component during the initial 16 layers.

* **Minimum Divergence:** All components reach the baseline of ~0.2 at various points, specifically `Attn.` and `Last.` in the earliest layers, and `Subj.` in the final layers.

</details>

(a) Factual Associations

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Data Extraction: Heatmap Analysis of JS Divergence across Layers

## 1. Image Overview

This image is a heatmap visualization representing the **Average Jensen-Shannon (JS) Divergence** across different layers of a neural network model (likely a Transformer-based model with 32 layers). The data is segmented by three distinct categories or components of the model.

## 2. Component Isolation

### A. Header / Axis Labels

* **Y-Axis (Categories):** Located on the left. Contains three labels:

* `Subj.` (Top row)

* `Attn.` (Middle row)

* `Last.` (Bottom row)

* **X-Axis (Layers):** Located at the bottom. Represents layer indices from 0 to 31.

* Markers are labeled every 2 units: `0, 2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30`.

* Axis Title: `Layer`

### B. Legend (Color Bar)

* **Spatial Placement:** Located on the far right.

* **Label:** `Avg JS Divergence`

* **Scale:** Linear gradient from light blue/white to dark navy blue.

* **Numerical Markers:** `0.2, 0.3, 0.4, 0.5, 0.6`.

* **Interpretation:** Darker blue indicates higher JS Divergence (~0.6), while white/light blue indicates lower JS Divergence (~0.2).

## 3. Data Extraction and Trend Verification

The heatmap is organized into three horizontal series. Each cell represents a specific layer for that category.

### Series 1: `Subj.` (Subject)

* **Visual Trend:** High divergence (dark blue) in the early layers, followed by a sharp decline (fading to white) in the middle-to-late layers.

* **Data Points:**

* **Layers 0–15:** Consistently high divergence, appearing at the maximum value of approximately **0.6**.

* **Layer 16:** Slight decrease (~0.5).

* **Layer 17:** Moderate decrease (~0.45).

* **Layer 18:** Significant drop (~0.35).

* **Layers 19–21:** Low divergence (~0.25–0.3).

* **Layers 22–31:** Minimum divergence, appearing near the baseline of **0.2**.

### Series 2: `Attn.` (Attention)

* **Visual Trend:** Low divergence throughout most of the model, with a localized "bump" or increase in divergence specifically in the middle layers.

* **Data Points:**

* **Layers 0–10:** Minimum divergence (~0.2).

* **Layers 11–18:** Increased divergence. The peak occurs around layers 13–15, reaching approximately **0.35 to 0.4**.

* **Layers 19–31:** Returns to minimum divergence (~0.2).

### Series 3: `Last.` (Last/Final)

* **Visual Trend:** Low divergence in the early and middle layers, with a steady increase starting from the middle and peaking at the very final layer.

* **Data Points:**

* **Layers 0–10:** Minimum divergence (~0.2).

* **Layers 11–16:** Very slight, gradual increase (~0.22–0.25).

* **Layers 17–30:** Sustained moderate divergence, plateauing around **0.4**.

* **Layer 31:** Sharp increase to the highest value for this series, approximately **0.55**.

## 4. Summary Table of Key Observations

| Category | Peak Divergence Phase | Peak Value (Approx) | Low Divergence Phase |
| :--- | :--- | :--- | :--- |
| **Subj.** | Early Layers (0-15) | 0.6 | Late Layers (22-31) |
| **Attn.** | Middle Layers (11-18) | 0.4 | Early & Late Layers |
| **Last.** | Final Layers (17-31) | 0.55 (at Layer 31) | Early Layers (0-10) |

## 5. Technical Conclusion

The visualization demonstrates a clear transition of information or "divergence" through the model's depth. The **Subject** component is most active/divergent in the initial half of the model, the **Attention** component shows a specific localized divergence in the center, and the **Last** component (likely referring to final token or output representations) becomes dominant in the latter half of the network.

</details>

(b) Associated Hallucinations

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Data Extraction: Heatmap Analysis of JS Divergence

## 1. Image Overview

This image is a heatmap visualization representing the **Avg JS Divergence** (Jensen-Shannon Divergence) across different layers of a neural network model, categorized by three specific components or methods.

## 2. Component Isolation

### A. Header / Title

* No explicit title is present within the image frame.

### B. Main Chart (Heatmap)

* **Type:** Heatmap with a grid structure.

* **X-Axis (Horizontal):** Labeled "**Layer**". It contains 32 discrete units, indexed from **0 to 31**. Major numerical markers are provided every 2 units (0, 2, 4, 6, 8, 10, 12, 14, 16, 18, 20, 22, 24, 26, 28, 30).

* **Y-Axis (Vertical):** Contains three categories:

1. **Subj.** (Top row)

2. **Attn.** (Middle row)

3. **Last.** (Bottom row)

### C. Legend / Color Bar

* **Location:** Right side of the plot.

* **Label:** "**Avg JS Divergence**" (oriented vertically).

* **Scale:** Linear gradient from light blue to dark blue.

* **Numerical Markers:** 0.2, 0.3, 0.4, 0.5, 0.6.

* **Color Mapping:**

* **0.2 (Minimum):** Near-white/very light blue.

* **0.6 (Maximum):** Deep navy blue.

---

## 3. Data Extraction and Trend Verification

### Row 1: "Subj." (Subject)

* **Visual Trend:** This row shows the highest divergence values in the dataset. It starts with a moderate-to-high blue intensity in the early layers, maintains this through the mid-layers, and then sharply drops to the minimum value (near-white) in the later layers.

* **Data Points (Approximate JS Divergence):**

* **Layers 0 - 15:** Values range between approximately **0.35 and 0.45**. The color is a consistent medium blue.

* **Layers 16 - 31:** Values drop significantly to approximately **0.20 - 0.22**. The color is nearly white.

### Row 2: "Attn." (Attention)

* **Visual Trend:** This row is consistently flat and low. There is almost no variation across the layers.

* **Data Points (Approximate JS Divergence):**

* **Layers 0 - 31:** Values are consistently at the minimum baseline of approximately **0.20**. The entire row appears as a very light, near-white band.

### Row 3: "Last." (Last)

* **Visual Trend:** Similar to the "Attn." row, this row remains at the baseline for almost the entire duration, with a very slight, negligible increase at the final layer.

* **Data Points (Approximate JS Divergence):**

* **Layers 0 - 30:** Values are at the minimum baseline of approximately **0.20**.

* **Layer 31:** There is a very slight darkening, suggesting a value of approximately **0.22 - 0.25**, though it remains much lighter than the "Subj." early layers.

---

## 4. Summary Table of Extracted Information

| Category (Y-Axis) | Layer Range (X-Axis) | Visual Intensity | Estimated Avg JS Divergence |
| :--- | :--- | :--- | :--- |
| **Subj.** | 0 - 15 | Medium Blue | 0.35 - 0.45 |
| **Subj.** | 16 - 31 | Near White | ~0.20 |
| **Attn.** | 0 - 31 | Near White | ~0.20 (Constant) |
| **Last.** | 0 - 30 | Near White | ~0.20 |
| **Last.** | 31 | Very Light Blue | ~0.23 |

## 5. Technical Observations

The data indicates that the "Subj." component experiences significantly higher Jensen-Shannon Divergence in the first half of the model's layers (0-15) compared to the "Attn." and "Last." components. After Layer 15, the divergence for "Subj." converges to the same low baseline seen in the other two categories. This suggests that the specific behavior or information captured by "Subj." is most distinct or volatile in the earlier stages of processing.

</details>

(c) Unassociated Hallucinations

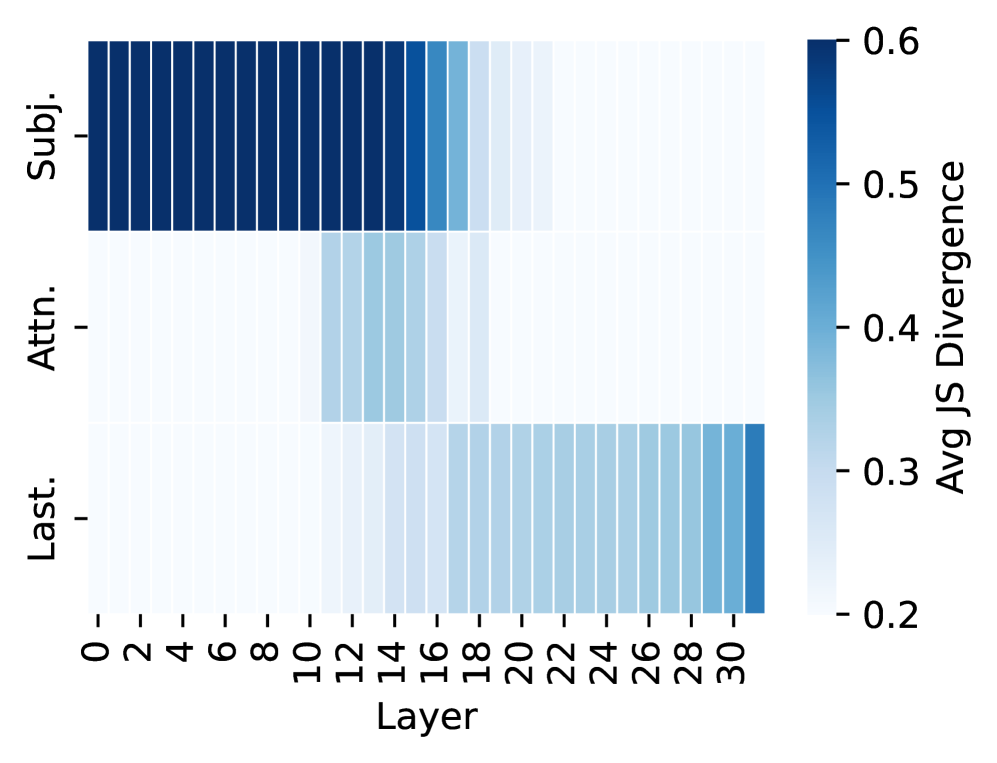

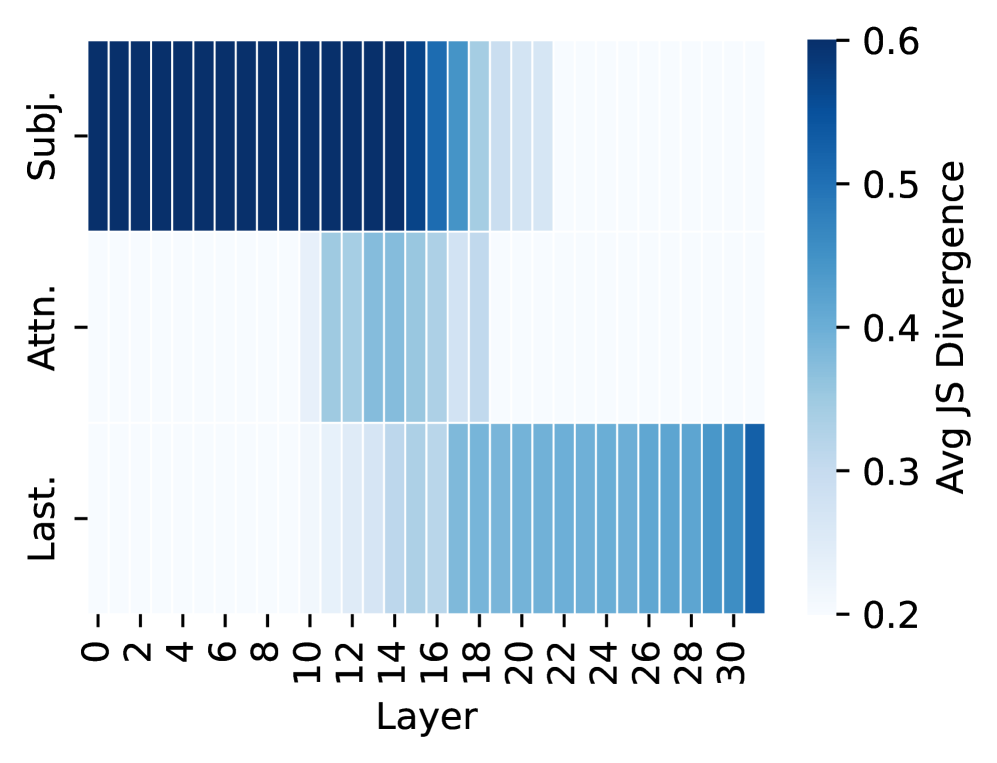

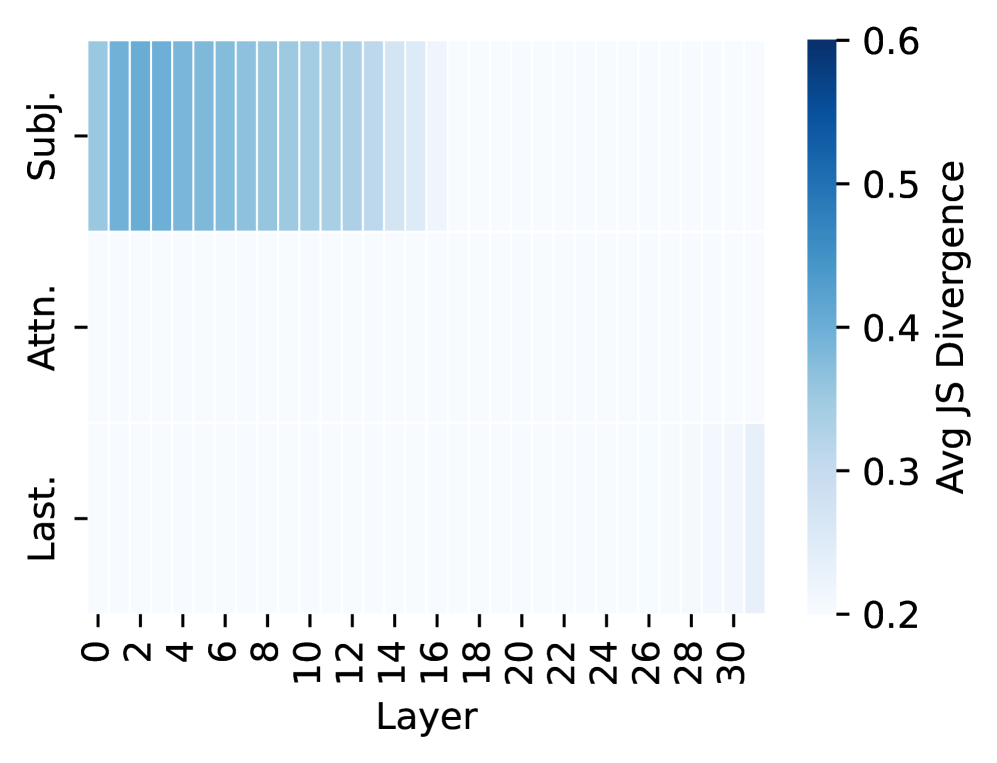

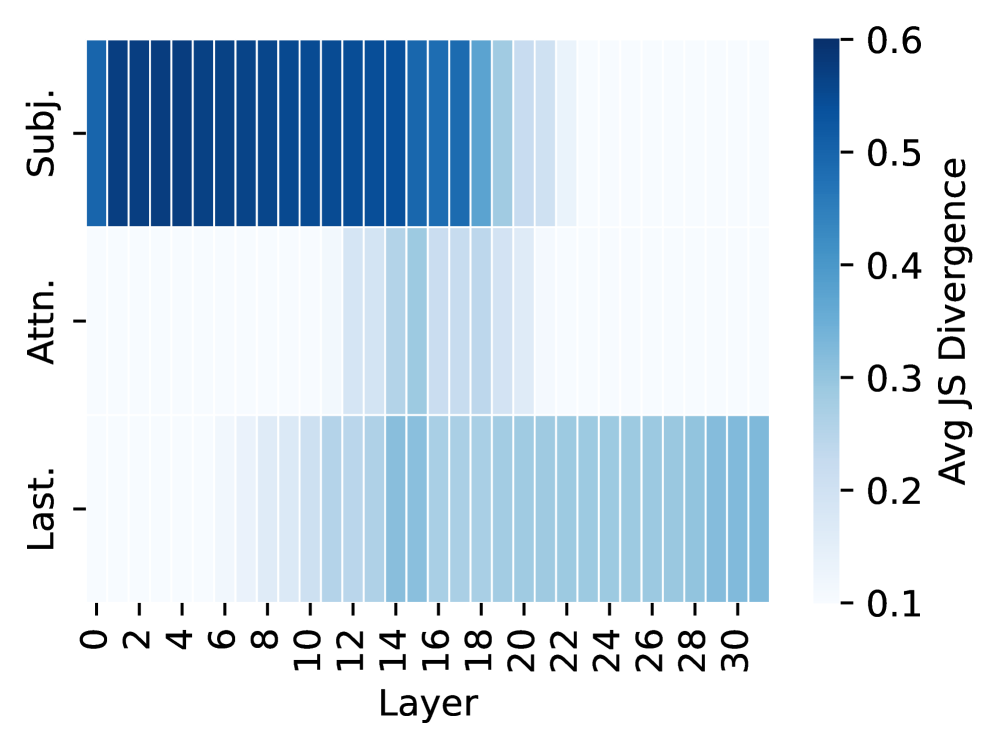

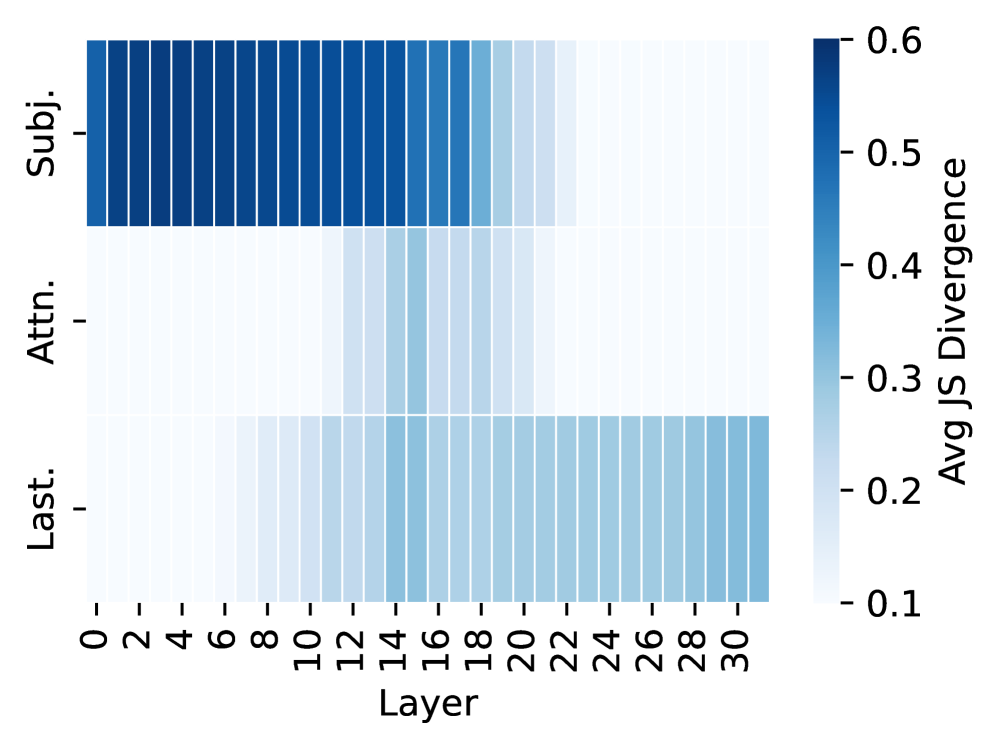

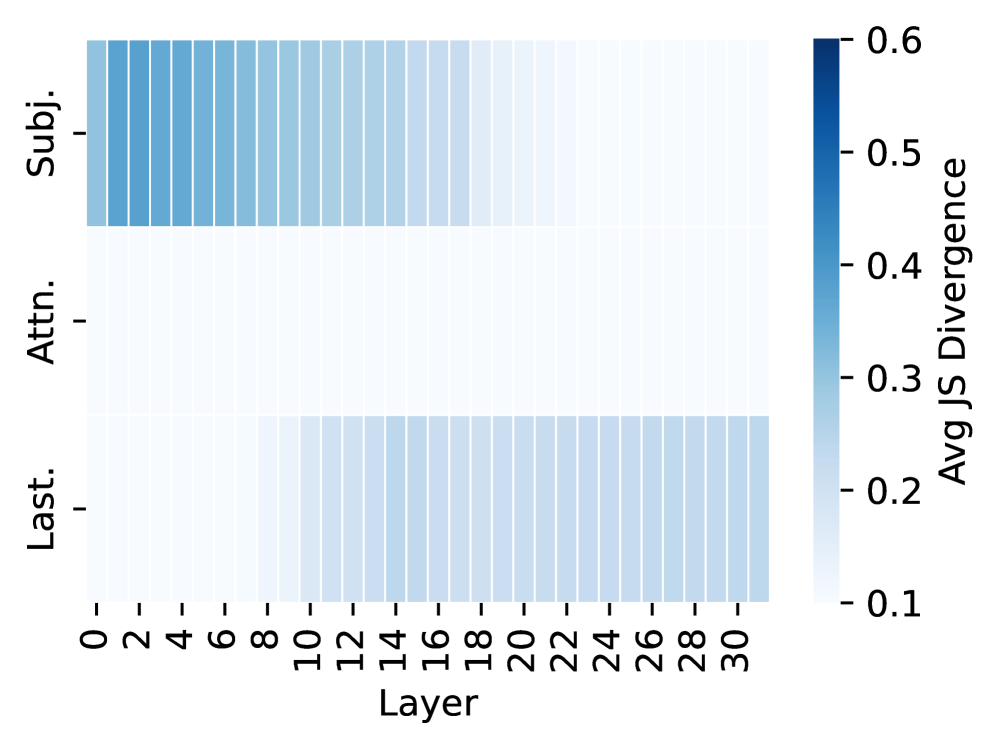

Figure 2: Effect of interventions across layers of LLaMA-3-8B. The heatmap shows JS divergence between the output distribution before and after intervention. Darker color indicates that the intervened hidden states are more causally influential on the model’s predictions. Top row: patching representations of subject tokens. Middle row: blocking attention flow from subject to the last token. Bottom row: patching representations of the last token.
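For reference, the JS divergence shown in the heatmaps can be computed between two next-token distributions as in the sketch below. It assumes the natural logarithm, so values lie between 0 and $\ln 2$; the base used for Figure 2 is not stated here, and the function names are illustrative.

```python
import math
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q), skipping entries where p is zero."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)


def js_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """Jensen-Shannon divergence between two distributions of equal length."""
    # The mixture m is non-zero wherever p or q is non-zero,
    # so both KL terms below are always finite.
    m = [0.5 * (pi + qi) for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)
```

Here `p` would be the output distribution of the clean run and `q` the distribution after an intervention; a value of 0 means the intervention left the prediction unchanged.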

Dataset Construction

| Category | | |
| --- | --- | --- |
| Factual Association | 3,506 | 3,354 |
| Associated Hallucination | 1,406 | 1,284 |
| Unassociated Hallucination | 7,381 | 7,655 |
| Total | 12,293 | 12,293 |

Table 1: Dataset statistics across categories.

Our study is conducted under a basic knowledge-based question answering setting. The model is given a prompt containing a subject and relation (e.g., “Barack Obama was born in the city of”) and is expected to predict the corresponding object (e.g., “Honolulu”). To build the dataset, we collect knowledge triples $(\text{subject},\text{relation},\text{object})$ from Wikidata. Each relation is paired with a handcrafted prompt template to convert triples into natural language queries. The details of relation selection and prompt templates are provided in Appendix A.1. We then apply the labeling scheme presented in Appendix A.2: correct predictions are labeled as FAs, while incorrect ones are classified as AHs or UHs depending on their subject representation reliance. Table 1 summarizes the final data statistics.
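The conversion from triples to queries can be sketched as follows. The two template strings are the example prompts quoted in this paper, but the relation keys (`place_of_birth`, `father`) and the function name are hypothetical; the actual relations and templates are those of Appendix A.1.

```python
from typing import Dict

# Hypothetical relation keys mapped to example templates quoted in the text.
TEMPLATES: Dict[str, str] = {
    "place_of_birth": "{subject} was born in the city of",
    "father": "The name of the father of {subject} is",
}


def build_query(subject: str, relation: str, obj: str) -> Dict[str, str]:
    """Turn a (subject, relation, object) triple into a prompt and its gold answer."""
    prompt = TEMPLATES[relation].format(subject=subject)
    return {"prompt": prompt, "answer": obj}
```

For example, `build_query("Barack Obama", "place_of_birth", "Honolulu")` yields the prompt used as the running example, with "Honolulu" as the expected object.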

Models

We conduct the experiments on two widely-adopted open-source LLMs, LLaMA-3 Dubey et al. (2024) and Mistral-v0.3 Jiang et al. (2023). Due to the space limit, details are presented in Appendix A.3, and parallel experimental results on Mistral are summarized in Appendix B.

4 Analysis of Internal States in LLMs

To focus our analysis, we first conduct causal interventions to identify hidden states that are crucial for eliciting factual associations (FAs). We then compare their behavior across associated hallucinations (AHs) and unassociated hallucinations (UHs). Prior studies Azaria and Mitchell (2023); Gottesman and Geva (2024); Yüksekgönül et al. (2024); Orgad et al. (2025) suggest that hidden states can reveal when a model hallucinates. This assumes that the model’s internal computations differ when producing correct versus incorrect outputs, causing their hidden states to occupy distinct subspaces. We revisit this claim by examining how hidden states update when recalling three categories of knowledge (i.e., FAs, AHs, and UHs). If hidden states primarily signal hallucination, AHs and UHs should behave similarly and diverge from FAs. Conversely, if hidden states reflect reliance on encoded knowledge, FAs and AHs should appear similar, and both should differ from UHs.

4.1 Causal Analysis of Information Flow

We identify hidden states that are crucial for factual prediction. For each knowledge triple (subject, relation, object), the model is prompted with a factual query (e.g., “The name of the father of Joe Biden is”). Correct predictions indicate that the model successfully elicits parametric knowledge. Using causal mediation analysis Vig et al. (2020); Finlayson et al. (2021); Meng et al. (2022); Geva et al. (2023), we intervene on intermediate computations and measure the change in output distribution via JS divergence. A large divergence indicates that the intervened computation is critical for producing the fact. Specifically, to test whether token $i$’s hidden states in the MLP at layer $\ell$ are crucial for eliciting knowledge, we replace the computation with a corrupted version and observe how the output distribution changes. Similarly, following Geva et al. (2023), we mask the attention flow between tokens at layer $\ell$ using a window size of 5 layers. To streamline implementation, interventions target only subject tokens, attention flow, and the last token. Notable observations are as follows:

Obs1: Hidden states crucial for eliciting factual associations.

The results in Figure 2(a) show that three components dominate factual predictions: (1) subject representations in early-layer MLPs, (2) mid-layer attention between subject tokens and the final token, and (3) the final token representations in later layers. These results trace a clear information flow: subject representation, attention flow from the subject to the last token, and last-token representation, consistent with Geva et al. (2023). We examine each of these three types of internal states in turn (§ 4.2 - 4.4).

Obs2: Associated hallucinations follow the same information flow as factual associations.

When generating AHs, interventions on these same components also produce large distribution shifts (Figure 2(b)). This indicates that, although outputs are factually wrong, the model still relies on encoded subject information.

Obs3: Unassociated hallucinations present a different information flow.

In contrast, interventions during UH generation cause smaller distribution shifts (Figure 2(c)), showing weaker reliance on the subject. This suggests that UHs emerge from computations not anchored in the subject representation, different from both FAs and AHs.
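The attention-blocking intervention used in this analysis can be illustrated with a single-head, single-layer toy sketch: the last token's pre-softmax attention scores toward the subject positions are set to negative infinity, so those positions receive zero weight. The function names and this simplification are assumptions for illustration; the actual experiments apply the mask over a window of layers in a full model.

```python
import math
from typing import List, Sequence, Set


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax that tolerates -inf entries."""
    finite = [s for s in scores if s != -math.inf]
    if not finite:
        raise ValueError("at least one position must remain unblocked")
    top = max(finite)
    exps = [0.0 if s == -math.inf else math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def block_attention(last_token_scores: Sequence[float], blocked: Set[int]) -> List[float]:
    """Attention weights of the last token after masking the blocked positions."""
    masked = [-math.inf if i in blocked else s for i, s in enumerate(last_token_scores)]
    return softmax(masked)
```

Comparing the output distribution with and without this mask gives the quantity plotted in the middle row of Figure 2.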

4.2 Analysis of Subject Representations

The analysis in § 4.1 reveals that, in the early layers of LLMs, unassociated hallucinations (UHs) are processed differently from factual associations (FAs) and associated hallucinations (AHs), while FAs and AHs share a similar pattern. We examine how these differences emerge in the subject representations and why early-layer modules behave this way.

4.2.1 Norm of Subject Representations

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Document Extraction: Norm Ratio by Model Layers

## 1. Component Isolation

* **Header/Legend:** Located in the top-left quadrant of the chart area.

* **Main Chart:** A line graph with markers plotted against a grid.

* **Axes:** Y-axis (left) representing "Norm Ratio"; X-axis (bottom) representing "Layers".

## 2. Metadata and Axis Labels

* **Y-Axis Title:** Norm Ratio

* **Y-Axis Markers:** 0.94, 0.96, 0.98, 1.00, 1.02

* **X-Axis Title:** Layers

* **X-Axis Markers:** 0, 5, 10, 15, 20, 25, 30

* **Legend [Spatial Grounding: Top-Left]:**

* **Blue Line with Circle Markers:** `Asso. Hallu./Factual Asso.`

* **Red Line with Square Markers:** `Unasso. Hallu./Factual Asso.`

---

## 3. Data Series Analysis and Trend Verification

### Series 1: Asso. Hallu./Factual Asso. (Blue Circles)

* **Visual Trend:** This series is remarkably stable. It begins slightly below 1.00, peaks very early (around Layer 5), and then maintains a nearly horizontal trajectory just below the 1.00 mark for the remainder of the layers.

* **Key Data Points (Approximate):**

* **Layer 0:** ~0.994

* **Layer 5 (Peak):** ~1.003

* **Layers 10-30:** Fluctuates minimally between 0.995 and 0.998.

* **Layer 31 (Final):** ~0.998

### Series 2: Unasso. Hallu./Factual Asso. (Red Squares)

* **Visual Trend:** This series exhibits high volatility. It starts significantly lower than the blue series, undergoes a deep "V" shaped dip reaching its lowest point at Layer 12, recovers sharply until Layer 19, plateaus, and then finishes with a dramatic upward spike at the final layer.

* **Key Data Points (Approximate):**

* **Layer 0:** ~0.968

* **Layer 5:** ~0.958

* **Layer 12 (Global Minimum):** ~0.941

* **Layer 19 (Recovery Plateau):** ~0.988

* **Layers 20-29:** Relatively stable around 0.984.

* **Layer 31 (Global Maximum/Spike):** ~1.023

---

## 4. Comparative Summary

The chart compares two types of hallucination ratios across 32 layers (0-31) of a neural network.

1. **Stability vs. Volatility:** The "Asso. Hallu." (Associated Hallucination) ratio remains consistently close to 1.00, suggesting a stable relationship with factual association throughout the model's depth. In contrast, the "Unasso. Hallu." (Unassociated Hallucination) ratio varies significantly, indicating that the middle layers (specifically around Layer 12) have a much lower norm ratio compared to the initial and final layers.

2. **Final Layer Behavior:** At the final layer (Layer 31), the "Unasso. Hallu." ratio spikes aggressively, surpassing the "Asso. Hallu." ratio for the first time in the sequence, reaching the highest value recorded on the chart (~1.023).

3. **Convergence:** Between layers 20 and 30, the two metrics are at their closest proximity prior to the final layer divergence, though the blue line remains consistently higher than the red line during this interval.

</details>

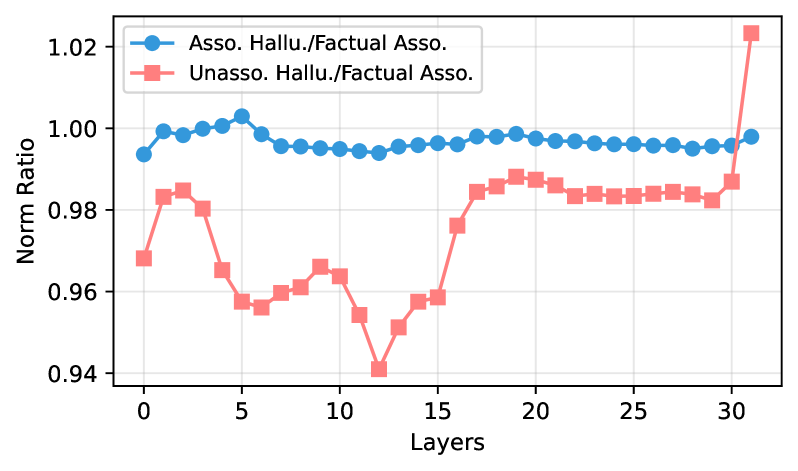

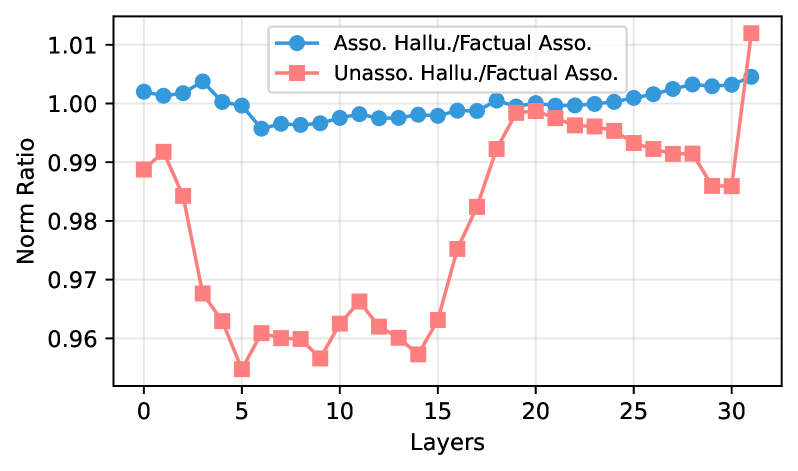

Figure 3: Norm ratio curves of subject representations in LLaMA-3-8B, comparing AHs and UHs against FAs as the baseline.

To test whether subject representations differ across categories, we measure the average $L_{2}$ norm of subject-token hidden activations across layers. For subject tokens $t_{s_{1}},\ldots,t_{s_{n}}$ at layer $\ell$, the average norm is $\|\mathbf{h}_{s}^{\ell}\|=\tfrac{1}{n}\sum_{i=1}^{n}\|\mathbf{h}_{s_{i}}^{\ell}\|_{2}$, computed by Equation (1). We compare the norm ratio between hallucination samples (AHs or UHs) and correct predictions (FAs), where a ratio near 1 indicates similar norms. Figure 3 shows that in LLaMA-3-8B, AH norms closely match those of correct samples (ratio $\approx$ 0.99), while UH norms are consistently smaller, starting at the first layer (ratio $\approx$ 0.96) and diverging further through mid-layers.
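The norm-ratio measurement can be sketched as follows. This is a minimal illustration rather than the authors' code: the function names, the array layout, and the choice to average per-sample norms within each category before taking the ratio are assumptions.

```python
import numpy as np

def mean_subject_norm(hidden, subject_idx):
    """Average L2 norm of the subject-token hidden states at one layer.

    hidden: array of shape (seq_len, d_model) for a single sample.
    subject_idx: positions of the subject tokens (hypothetical input format).
    """
    return float(np.linalg.norm(hidden[subject_idx], axis=-1).mean())

def norm_ratio(halluc_samples, factual_samples):
    """Mean subject norm of a hallucination category divided by the FA baseline.

    Each argument is a list of (hidden, subject_idx) pairs for one layer.
    Averaging over samples before dividing is an assumption of this sketch.
    """
    h = np.mean([mean_subject_norm(x, idx) for x, idx in halluc_samples])
    f = np.mean([mean_subject_norm(x, idx) for x, idx in factual_samples])
    return float(h / f)

# Toy data: random "hidden states", with the second group scaled down slightly.
rng = np.random.default_rng(0)
fa = [(rng.normal(size=(6, 16)), [1, 2]) for _ in range(50)]
uh = [(0.9 * rng.normal(size=(6, 16)), [1, 2]) for _ in range(50)]
print(f"toy UH/FA norm ratio: {norm_ratio(uh, fa):.3f}")
```

A ratio below 1 here only reflects the scaling applied to the toy data; with real activations the inputs would be the per-layer hidden states of each sample.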

Findings:

At early layers, UH subject representations exhibit weaker activations than FAs, whereas AHs exhibit norms similar to FAs.

4.2.2 Relation to Parametric Knowledge

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Document Extraction: Model Hallucination Ratio Comparison

## 1. Component Isolation

* **Header:** None present.

* **Main Chart Area:** A grouped bar chart comparing two Large Language Models (LLMs) across two specific metrics.

* **Footer/Legend:** Located at the bottom center, containing two color-coded categories.

## 2. Axis and Label Extraction

* **Y-Axis Title:** "Ratio" (Vertical orientation).

* **Y-Axis Markers:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0 (Linear scale with light grey horizontal grid lines).

* **X-Axis Categories:**

* **LLaMA-3-8B**

* **Mistral-7B-v0.3**

* **Legend Labels (Spatial Grounding: Bottom Center):**

* **Red Bar:** "Unasso. Hallu./Factual Asso." (Unassociated Hallucination / Factual Association)

* **Blue Bar:** "Asso. Hallu./Factual Asso." (Associated Hallucination / Factual Association)

## 3. Data Table Reconstruction

Based on the visual alignment with the Y-axis grid lines, the following values are extracted:

| Model | Unasso. Hallu./Factual Asso. (Red) | Asso. Hallu./Factual Asso. (Blue) |

| :--- | :---: | :---: |

| **LLaMA-3-8B** | ~0.68 | ~1.08 |

| **Mistral-7B-v0.3** | ~0.38 | ~0.80 |

## 4. Trend Verification and Analysis

* **Trend 1 (Inter-Model Comparison):** Mistral-7B-v0.3 exhibits lower ratios across both metrics compared to LLaMA-3-8B. Specifically, the red bar for Mistral is significantly lower (nearly half) than that of LLaMA.

* **Trend 2 (Intra-Model Comparison):** For both models, the "Associated Hallucination" ratio (Blue) is consistently higher than the "Unassociated Hallucination" ratio (Red).

* **Trend 3 (Magnitude):** LLaMA-3-8B's blue bar is the only data point exceeding the 1.0 ratio mark, indicating that in this model the value measured for associated hallucinations is slightly higher than that for factual associations.

## 5. Detailed Description

This image is a grouped bar chart titled by its axes as a "Ratio" comparison between two AI models: **LLaMA-3-8B** and **Mistral-7B-v0.3**.

The chart uses a light grey background with horizontal grid lines every 0.2 units. The data is presented in two clusters. The first cluster (LLaMA-3-8B) shows a red bar reaching approximately 0.68 and a blue bar reaching approximately 1.08. The second cluster (Mistral-7B-v0.3) shows a red bar reaching approximately 0.38 and a blue bar reaching approximately 0.80.

The legend indicates that the red bars represent the ratio of unassociated hallucinations to factual associations, while the blue bars represent the ratio of associated hallucinations to factual associations. In both models, the unassociated-hallucination ratio is well below the associated-hallucination ratio.

</details>

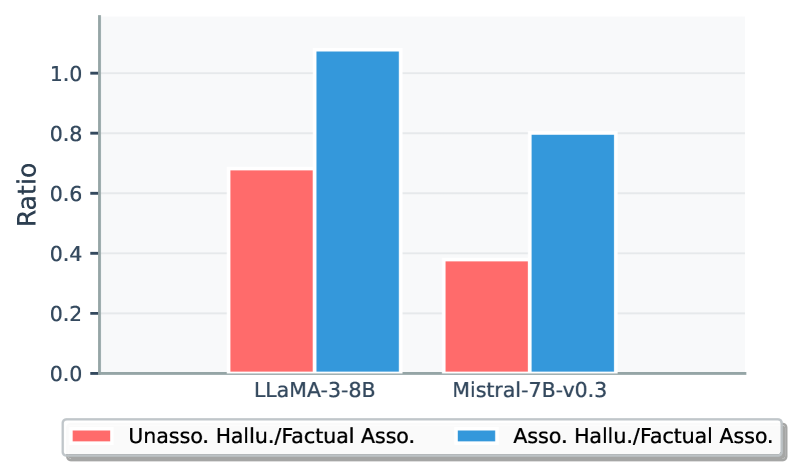

Figure 4: Comparison of subspace overlap ratios.

We next investigate why early layers encode subject representations differently across knowledge types by examining how inputs interact with the parametric knowledge stored in MLP modules. Following Kang et al. (2024), we build on the observation that the output norm of an MLP layer depends on how well its input aligns with the subspace spanned by the weight matrix: poorly aligned inputs yield smaller output norms.

For each MLP layer $\ell$ , we analyze the down-projection weight matrix $W_{\text{down}}^{\ell}$ and its input $x^{\ell}$ . Given the input $x_{s}^{\ell}$ corresponding to the subject tokens, we compute its overlap ratio with the top singular subspace $V_{\text{top}}$ of $W_{\text{down}}^{\ell}$ :

$$

r(x_{s}^{\ell})=\frac{\left\lVert{x_{s}^{\ell}}^{\top}V_{\text{top}}V_{\text{top}}^{\top}\right\rVert^{2}}{\left\lVert x_{s}^{\ell}\right\rVert^{2}}. \tag{2}

$$

A higher overlap ratio $r(x_{s}^{\ell})$ indicates stronger alignment to the subspace spanned by $W_{\text{down}}^{\ell}$ , leading to larger output norms.
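As a rough sketch of Equation (2), the overlap ratio can be computed from a singular value decomposition of the weight matrix. The function name, the convention that the weight acts as `y = W @ x`, and the subspace size `k` are assumptions made for illustration; the text does not state how many singular directions form $V_{\text{top}}$.

```python
import numpy as np

def overlap_ratio(x, W, k):
    """r(x) = ||x^T V V^T||^2 / ||x||^2, with V the top-k right singular vectors of W.

    W has shape (d_out, d_in) and is assumed to act as y = W @ x,
    so x lives in the input space R^{d_in}. k is a hypothetical parameter.
    """
    # Rows of vt are right singular vectors, sorted by decreasing singular value.
    _, _, vt = np.linalg.svd(W, full_matrices=False)
    V = vt[:k].T                  # (d_in, k), orthonormal columns
    proj = V @ (V.T @ x)          # orthogonal projection of x onto span(V)
    return float(proj @ proj / (x @ x))

# Toy check: an input lying in the dominant direction overlaps fully,
# an input in the weakest direction does not overlap at all.
W = np.diag([3.0, 2.0, 1.0])
print(overlap_ratio(np.array([1.0, 0.0, 0.0]), W, k=1))
print(overlap_ratio(np.array([0.0, 0.0, 1.0]), W, k=1))
```

Because the projection is orthogonal, the ratio always lies in $[0, 1]$, which makes it comparable across samples with different input norms.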

To highlight relative deviations from the factual baseline (FA), we report the ratios AH/FA and UH/FA. Focusing on the layer with the largest UH norm shift, Figure 4 shows that UHs have significantly lower $r(x_{s}^{\ell})$ than AHs in both LLaMA and Mistral. This reveals that early-layer parametric weights are more aligned with FA and AH subject representations than with UH subject representations, producing higher norms for the former. These results also suggest that the model has sufficiently learned representations for FA and AH subjects during pretraining but not for UH subjects.

Findings:

Similar to FAs, AH hidden activations align closely with the weight subspace, while UHs do not. This indicates that the model has sufficiently encoded subject representations into parametric knowledge for FAs and AHs but not for UHs.

4.2.3 Correlation with Subject Popularity

<details>

<summary>x7.png Details</summary>

### Visual Description

# Technical Document Extraction: Hallucination and Association Analysis

## 1. Image Overview

This image is a grouped bar chart illustrating the relationship between three categories of data—**Factual Associations**, **Associated Hallucinations**, and **Unassociated Hallucinations**—across three distinct levels of a variable labeled as **Low**, **Mid**, and **High**.

## 2. Component Isolation

### A. Header / Title

* No explicit title is present within the image frame.

### B. Main Chart Area

* **Y-Axis Label:** Percentage (%)

* **Y-Axis Scale:** 0 to 100, with major tick marks every 20 units (0, 20, 40, 60, 80, 100).

* **X-Axis Categories:** Low, Mid, High.

* **Grid:** Light gray horizontal and vertical grid lines are present.

* **Data Labels:** Numerical percentages are printed directly above each bar for precision.

### C. Legend (Footer)

* **Location:** Bottom center of the image.

* **Green (Hex approx #58D68D):** Factual Associations

* **Blue (Hex approx #5DADE2):** Associated Hallucinations

* **Red/Salmon (Hex approx #EC7063):** Unassociated Hallucinations

---

## 3. Data Table Reconstruction

| Category (X-Axis) | Factual Associations (Green) | Associated Hallucinations (Blue) | Unassociated Hallucinations (Red) |

| :--- | :---: | :---: | :---: |

| **Low** | 5% | 1% | 94% |

| **Mid** | 27% | 7% | 66% |

| **High** | 52% | 14% | 34% |

---

## 4. Trend Verification and Analysis

### Series 1: Factual Associations (Green)

* **Visual Trend:** Slopes upward significantly from left to right.

* **Description:** As the level moves from Low to High, Factual Associations increase more than tenfold, starting at a negligible 5% and reaching a majority share of 52%.

### Series 2: Associated Hallucinations (Blue)

* **Visual Trend:** Slopes upward gradually.

* **Description:** This series represents the smallest portion of the data in all categories but shows a consistent upward trend, increasing from 1% at the Low level to 14% at the High level.

### Series 3: Unassociated Hallucinations (Red)

* **Visual Trend:** Slopes downward sharply.

* **Description:** This series dominates the "Low" category at 94%. However, it decreases steadily as the level increases, dropping to 66% at Mid and further to 34% at High.

---

## 5. Key Findings

* **Inverse Correlation:** There is a clear inverse relationship between "Factual Associations" and "Unassociated Hallucinations." As one increases, the other decreases.

* **Dominance Shift:** At the **Low** level, Unassociated Hallucinations are the overwhelming majority (94%). By the **High** level, Factual Associations become the primary category (52%), though Unassociated Hallucinations still maintain a significant presence (34%).

* **Hallucination Types:** "Unassociated Hallucinations" are consistently more prevalent than "Associated Hallucinations" across all three measured levels, though the gap narrows significantly at the "High" level.

</details>

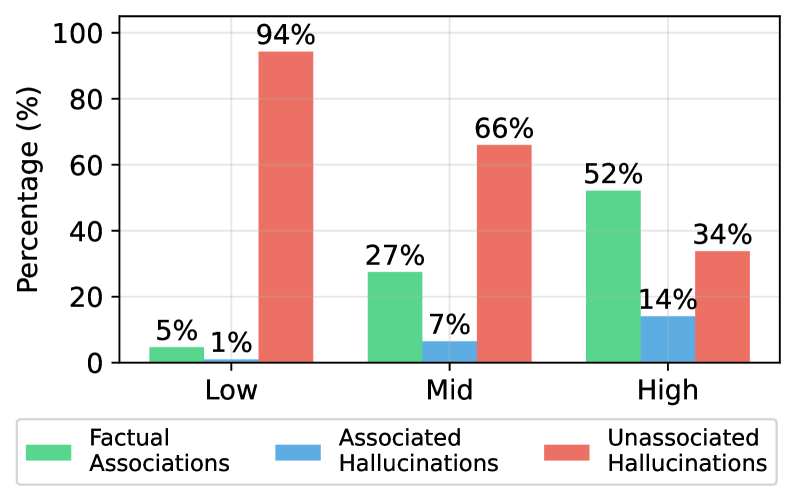

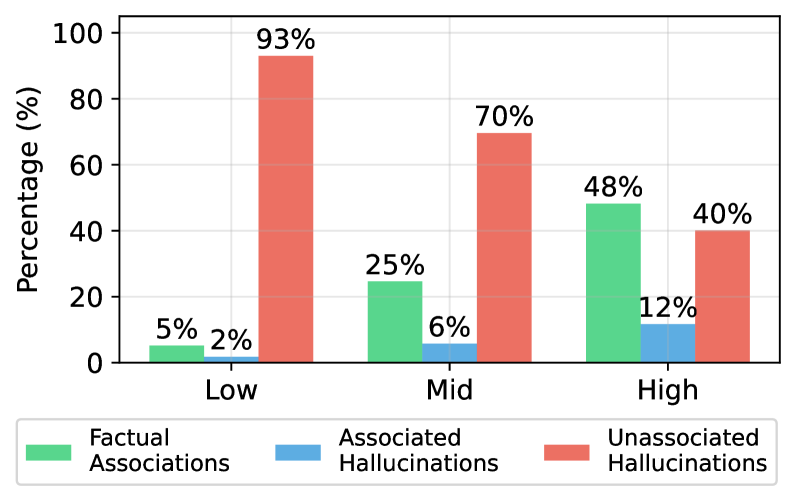

Figure 5: Sample distribution across different subject popularity (low, mid, high) in LLaMA-3-8B, measured by monthly Wikipedia page views.

We further investigate why AH representations align with weight subspaces as strongly as FAs, while UHs do not. A natural hypothesis is that this difference arises from subject popularity in the training data. We use average monthly Wikipedia page views as a proxy for subject popularity during pre-training, bin subjects by popularity, and measure the distribution of UHs, AHs, and FAs within each bin. Figure 5 shows a clear trend: UHs dominate among the least popular subjects (94% for LLaMA), while AHs are rare (1%). As subject popularity rises, UH frequency falls and both FAs and AHs become more common, with AHs rising to 14% among high-popularity subjects. This indicates that subject representation norms reflect training frequency, not factual correctness.
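The binning step can be illustrated with a short sketch. The thresholds `low_max` and `mid_max`, the function name, and the input format are hypothetical; the text does not specify how the low, mid, and high popularity bins are delimited.

```python
from collections import Counter, defaultdict

def category_share_by_bin(samples, low_max, mid_max):
    """Percentage of FA / AH / UH samples within each popularity bin.

    samples: iterable of (monthly_page_views, category) pairs, where
    category is one of "FA", "AH", "UH".
    low_max, mid_max: assumed bin boundaries on monthly page views.
    """
    counts = defaultdict(Counter)
    for views, category in samples:
        if views <= low_max:
            bin_name = "low"
        elif views <= mid_max:
            bin_name = "mid"
        else:
            bin_name = "high"
        counts[bin_name][category] += 1
    # Normalize counts within each bin so the shares sum to 100.
    return {
        bin_name: {c: 100.0 * n / sum(cnt.values()) for c, n in cnt.items()}
        for bin_name, cnt in counts.items()
    }

# Toy usage with made-up page-view counts.
toy = [(10, "UH"), (20, "UH"), (30, "FA"), (500, "FA"), (5000, "AH"), (6000, "FA")]
shares = category_share_by_bin(toy, low_max=100, mid_max=1000)
print(shares["low"], shares["mid"], shares["high"])
```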

Findings:

Popular subjects yield stronger early-layer activations. AHs arise mainly on popular subjects and are therefore indistinguishable from FAs by popularity-based heuristics, contradicting prior work Mallen et al. (2023a) that links popularity to hallucinations.

4.3 Analysis of Attention Flow

Having examined how the model forms subject representations, we next study how this information is propagated to the last token of the input, where the model generates the object of a knowledge tuple. To produce factually correct outputs at the last token, the model must process the subject representation and propagate it via attention layers, so that it can be read from the last position to produce the output Geva et al. (2023).

To quantify how much the subject tokens $(s_{1},\ldots,s_{n})$ contribute to the last token, we compute their attention contribution to the last position:

$$

\mathbf{a}^{\ell}_{\text{last}}=\sum\nolimits_{k}\sum\nolimits_{h}A^{\ell,h}_{\text{last},s_{k}}(\mathbf{h}^{\ell-1}_{s_{k}}W^{\ell,h}_{V})W^{\ell,h}_{O}. \tag{3}

$$

where $A^{\ell,h}_{i,j}$ denotes the attention weight assigned by the $h$-th head in layer $\ell$ from the last position $i$ to subject token $j$. Here, $\mathbf{a}^{\ell}_{\text{last}}$ represents the subject-to-last attention contribution at layer $\ell$. Intuitively, if subject information is critical for prediction, this contribution should have a large norm; otherwise, the norm should be small.
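Equation (3) can be sketched for a single layer as below. The tensor layout (per-head value and output projections stored as separate slices) and the function name are assumptions for illustration; the sketch takes the attention weights as given and ignores implementation details such as grouped-query attention.

```python
import numpy as np

def subject_to_last_contribution(attn, h_prev, W_V, W_O):
    """Sum over subject tokens k and heads h of A[h, k] * (h_prev[k] @ W_V[h]) @ W_O[h].

    attn:   (n_heads, n_subj)  attention weights from the last position to subject tokens
    h_prev: (n_subj, d_model)  subject-token hidden states from the previous layer
    W_V:    (n_heads, d_model, d_head)  per-head value projections
    W_O:    (n_heads, d_head, d_model)  per-head output projections
    """
    values = np.einsum("kd,hde->hke", h_prev, W_V)   # per-head value vectors
    mixed = np.einsum("hk,hke->he", attn, values)    # attention-weighted sum over subject tokens
    return np.einsum("he,hed->d", mixed, W_O)        # project each head back and sum over heads

# Toy usage with random tensors; the norm is the quantity tracked per layer.
rng = np.random.default_rng(0)
attn = rng.random((2, 3))
h_prev = rng.normal(size=(3, 4))
W_V = rng.normal(size=(2, 4, 2))
W_O = rng.normal(size=(2, 2, 4))
a_last = subject_to_last_contribution(attn, h_prev, W_V, W_O)
print(np.linalg.norm(a_last))
```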

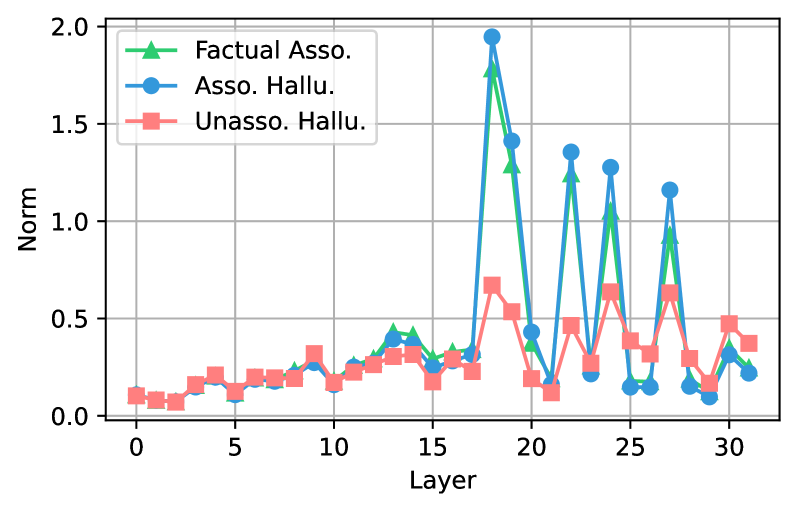

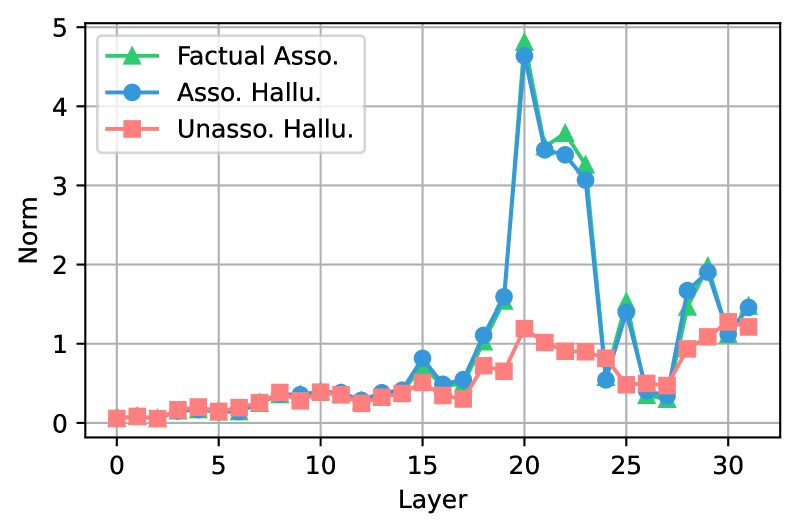

Figure 6 shows that in LLaMA-3-8B, both AHs and FAs exhibit large attention-contribution norms in mid-layers, indicating a strong information flow from subject tokens to the target token. In contrast, UHs show consistently lower norms, implying that their predictions rely far less on subject information. Yüksekgönül et al. (2024) previously argued that high attention flow from subject tokens signals factuality and proposed using attention-based hidden states to detect hallucinations. Our results challenge this view: the model propagates subject information just as strongly when generating AHs as when producing correct facts.

Findings:

Mid-layer attention flow from subject to last token is equally strong for AHs and FAs but weak for UHs. Attention-based heuristics can therefore separate UHs from FAs but cannot distinguish AHs from factual outputs, limiting their reliability for hallucination detection.

<details>

<summary>x8.png Details</summary>

### Visual Description

# Technical Document Extraction: Layer-wise Norm Analysis

## 1. Image Overview

This image is a line graph illustrating the relationship between neural network layers and a "Norm" metric across three distinct categories of data. The chart uses a coordinate grid system with markers to denote specific data points.

## 2. Component Isolation

### Header / Legend

* **Location:** Top-left quadrant (approximate [x, y] coordinates: [0.05, 0.95] relative to the plot area).

* **Legend Items:**

* **Green Line with Triangle Markers ($\blacktriangle$):** "Factual Asso."

* **Blue Line with Circle Markers ($\bullet$):** "Asso. Hallu."

* **Red Line with Square Markers ($\blacksquare$):** "Unasso. Hallu."

### Main Chart Area

* **X-Axis Label:** "Layer"

* **X-Axis Scale:** 0 to 32 (increments marked every 5 units: 0, 5, 10, 15, 20, 25, 30).

* **Y-Axis Label:** "Norm"

* **Y-Axis Scale:** 0.0 to 2.0 (increments marked every 0.5 units: 0.0, 0.5, 1.0, 1.5, 2.0).

* **Grid:** Major grid lines are present for both X and Y axes.

---

## 3. Data Series Analysis and Trend Verification

### Series 1: Factual Asso. (Green, Triangles)

* **Trend Description:** This series remains relatively flat and low (below 0.5) from Layer 0 to Layer 17. At Layer 18, it exhibits a massive spike, followed by a series of high-amplitude oscillations between Layers 18 and 32.

* **Key Data Points (Approximate):**

| Layer | Norm (Approx.) |

| :--- | :--- |

| 0–17 | 0.1 – 0.4 |

| 18 | 1.8 |

| 20 | 0.4 |

| 22 | 1.25 |

| 24 | 1.1 |

| 27 | 1.0 |

### Series 2: Asso. Hallu. (Blue, Circles)

* **Trend Description:** This series tracks very closely with "Factual Asso." throughout the entire range. It remains low until Layer 17, then experiences the highest peaks in the graph starting at Layer 18.

* **Key Data Points (Approximate):**

| Layer | Norm (Approx.) |

| :--- | :--- |

| 0–17 | 0.1 – 0.4 |

| 18 | 1.95 |

| 19 | 1.4 |

| 22 | 1.35 |

| 24 | 1.3 |

| 27 | 1.15 |

### Series 3: Unasso. Hallu. (Red, Squares)

* **Trend Description:** This series shows much lower variance than the other two. While it also experiences a rise in activity after Layer 17, the magnitude of its peaks is significantly dampened compared to the "Associated" categories.

* **Key Data Points (Approximate):**

| Layer | Norm (Approx.) |

| :--- | :--- |

| 0–17 | 0.1 – 0.3 |

| 18 | 0.65 |

| 24 | 0.65 |

| 27 | 0.65 |

| General | Rarely exceeds 0.7 |

---

## 4. Comparative Observations

* **Phase Shift:** There is a clear behavioral shift in the model after **Layer 17**. Prior to this, all three categories behave similarly with low Norm values.

* **Association Correlation:** The "Factual Asso." (Green) and "Asso. Hallu." (Blue) series are highly correlated, often peaking and dipping at the same layers with similar magnitudes.

* **Unassociated Divergence:** The "Unasso. Hallu." (Red) series diverges significantly from the other two in the latter half of the network (Layers 18–32), maintaining a much lower Norm profile despite following a similar oscillatory pattern.

* **Peak Layers:** Layers 18, 22, 24, and 27 represent significant "spikes" in Norm values for all categories, though the intensity varies by category.

</details>

Figure 6: Subject-to-last attention contribution norms across layers in LLaMA-3-8B. Values show the norm of the attention contribution from subject tokens to the last token at each layer.

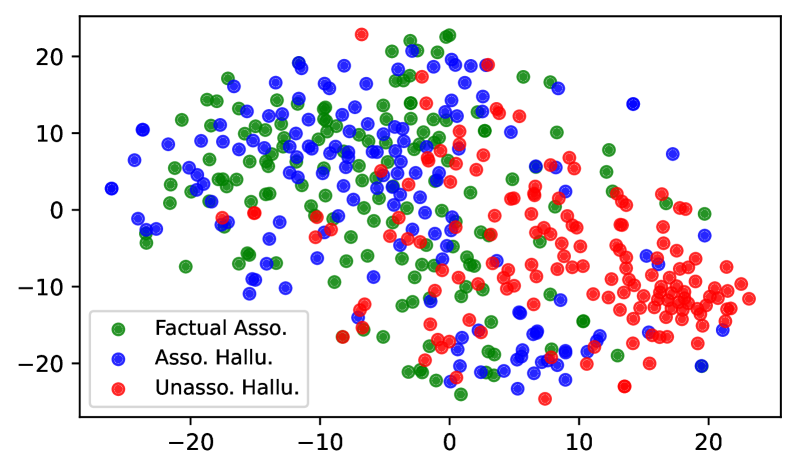

4.4 Analysis of Last Token Representations

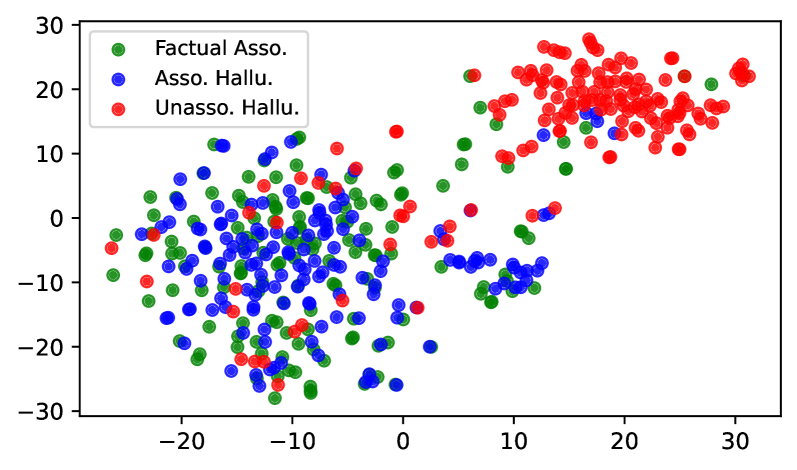

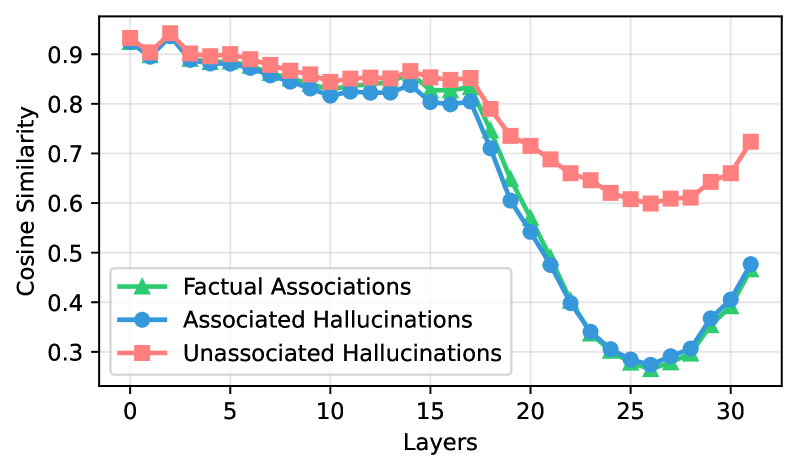

Our earlier analysis showed strong subject-to-last token information transfer for both FAs and AHs, but minimal transfer for UHs. We now examine how this difference shapes the distribution of last-token representations. When subject information is weakly propagated (UHs), last-token states receive little subject-specific update. For UH samples sharing the same prompt template, these states should therefore cluster in the representation space. In contrast, strong subject-driven propagation in FAs and AHs produces diverse last-token states that disperse into distinct subspaces.

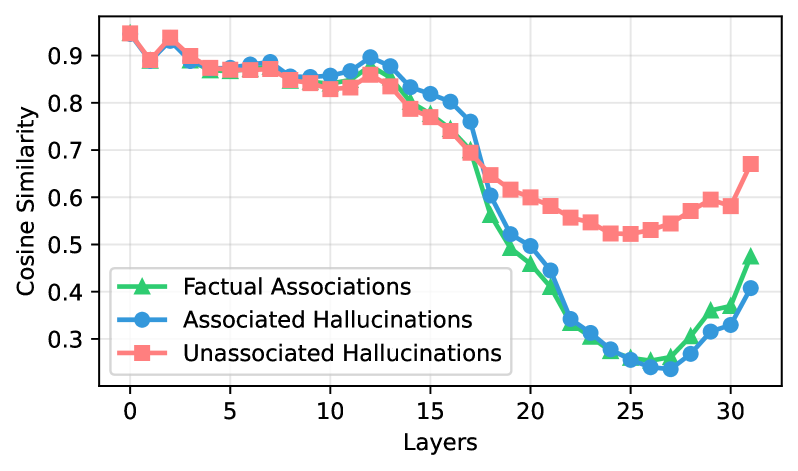

To test this, we compute cosine similarity among last-token representations $\mathbf{h}_{T}^{\ell}$. As shown in Figure 7, similarity is high ($\approx$ 0.9) for all categories in early layers, when little subject information is transferred. From mid-layers onward, FAs and AHs diverge sharply, dropping to $\approx$ 0.2 by layer 25. UHs remain moderately clustered, with similarity only declining to $\approx$ 0.5.
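One way to obtain a single similarity value per category and layer is to average the cosine similarity over all distinct pairs of samples. The sketch below does this; the all-pairs averaging and the function name are assumptions, since the text does not spell out how the similarities are aggregated.

```python
import numpy as np

def mean_pairwise_cosine(H):
    """Mean cosine similarity over all distinct pairs of rows in H.

    H: (n_samples, d_model) last-token hidden states of one category at one layer.
    """
    U = H / np.linalg.norm(H, axis=1, keepdims=True)  # unit-normalize each row
    S = U @ U.T                                       # all pairwise cosine similarities
    n = len(H)
    # Exclude the diagonal (self-similarity) from the average.
    return float((S.sum() - np.trace(S)) / (n * (n - 1)))

# Toy usage: tightly clustered vectors score higher than dispersed ones.
rng = np.random.default_rng(0)
base = rng.normal(size=16)
clustered = base + 0.1 * rng.normal(size=(20, 16))
dispersed = rng.normal(size=(20, 16))
print(mean_pairwise_cosine(clustered), mean_pairwise_cosine(dispersed))
```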

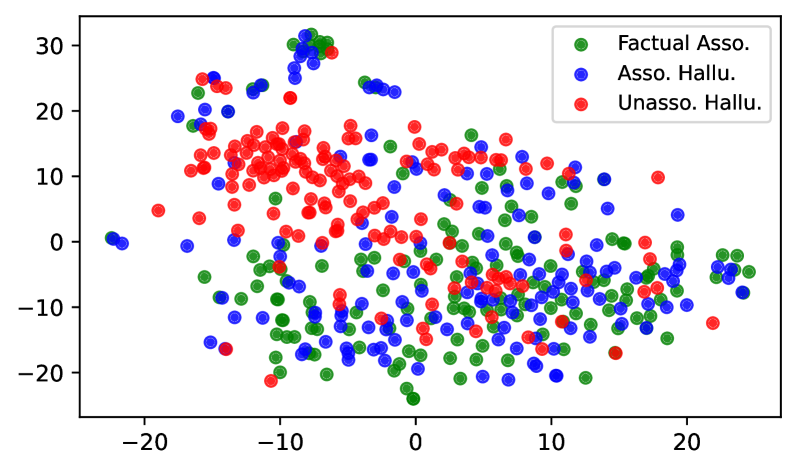

Figure 8 shows the t-SNE visualization of the last token’s representations at layer 25 of LLaMA-3-8B. The hidden representations of UHs are clearly separated from those of FAs, whereas those of AHs substantially overlap with FAs. These results indicate that the model processes UHs differently from FAs, while processing AHs in a manner similar to FAs. More visualizations can be found in Appendix C.

<details>

<summary>x9.png Details</summary>

### Visual Description

# Technical Document Extraction: Cosine Similarity across Model Layers

## 1. Image Overview

This image is a line graph illustrating the relationship between model depth (Layers) and the Cosine Similarity of three distinct data categories. The chart uses a grid background for precise value estimation.

## 2. Component Isolation

### A. Header/Axes

* **Y-Axis Label:** Cosine Similarity (Vertical, left side).

* **Y-Axis Markers:** 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9.

* **X-Axis Label:** Layers (Horizontal, bottom center).

* **X-Axis Markers:** 0, 5, 10, 15, 20, 25, 30.

### B. Legend (Spatial Grounding: Bottom-Left [x≈0.1, y≈0.2])

The legend identifies three data series:

1. **Factual Associations:** Green line with triangle markers ($\triangle$).

2. **Associated Hallucinations:** Blue line with circle markers ($\bigcirc$).

3. **Unassociated Hallucinations:** Pink/Light Red line with square markers ($\square$).

---

## 3. Data Series Analysis and Trend Verification

### Series 1: Factual Associations (Green, Triangles)

* **Trend:** Starts high (~0.95), remains relatively stable with a slight downward drift until Layer 15. It then experiences a sharp, steep decline reaching a nadir at Layer 25, followed by a moderate recovery in the final layers.

* **Key Data Points:**

* Layer 0: ~0.95

* Layer 15: ~0.78

* Layer 25: ~0.26 (Minimum)

* Layer 31: ~0.48

### Series 2: Associated Hallucinations (Blue, Circles)

* **Trend:** Closely tracks the "Factual Associations" series for the first 15 layers. Between Layers 15 and 20, it maintains a slightly higher similarity than the factual series before following the same sharp decline. It reaches the lowest absolute similarity of all three groups around Layer 27.

* **Key Data Points:**

* Layer 0: ~0.95

* Layer 12: ~0.90 (Local peak)

* Layer 27: ~0.24 (Absolute Minimum)

* Layer 31: ~0.41

### Series 3: Unassociated Hallucinations (Pink, Squares)

* **Trend:** Starts at the same high point (~0.95). While it follows the general downward trend of the other two, its decline is significantly less severe. After Layer 17, it diverges upward from the other two series, maintaining a much higher cosine similarity through the middle and late layers.

* **Key Data Points:**

* Layer 0: ~0.95

* Layer 17: ~0.70

* Layer 25: ~0.52 (Minimum)

* Layer 31: ~0.68

---

## 4. Comparative Observations

* **Initial Convergence:** All three categories begin with nearly identical cosine similarity (~0.95) at Layer 0 and stay tightly clustered until approximately Layer 13.

* **The Divergence Point:** Significant divergence occurs after Layer 15.

* **The "U-Shape" Phenomenon:** All three series exhibit a "U-shaped" curve, where similarity drops in the middle-to-late layers (20-27) and begins to rise again toward the final layer (31).

* **Separation of Hallucinations:** "Unassociated Hallucinations" maintain a consistently higher similarity in the later layers (Layers 20-31) compared to both "Factual Associations" and "Associated Hallucinations." The "Associated Hallucinations" and "Factual Associations" remain closely coupled throughout the entire model depth.

</details>

Figure 7: Cosine similarity of target-token hidden states across layers in LLaMA-3-8B.

<details>

<summary>x10.png Details</summary>

### Visual Description

# Technical Document Extraction: t-SNE Visualization of Hallucination Types

## 1. Image Overview

This image is a 2D scatter plot generated with t-SNE. It visualizes the clustering behavior of three distinct categories of data points based on their last-token hidden representations.

## 2. Component Isolation

### Header / Legend

* **Location:** Top-left quadrant (approximate [x, y] coordinates: [10, 85] in percentage of frame).

* **Legend Content:**

* **Green Circle ($\bullet$):** Factual Asso. (Factual Association)

* **Blue Circle ($\bullet$):** Asso. Hallu. (Associated Hallucination)

* **Red Circle ($\bullet$):** Unasso. Hallu. (Unassociated Hallucination)

### Axis Configuration

* **X-Axis:** Numerical scale ranging from approximately **-30 to +35**. Major tick marks are labeled at **-20, -10, 0, 10, 20, 30**.

* **Y-Axis:** Numerical scale ranging from approximately **-30 to +30**. Major tick marks are labeled at **-30, -20, -10, 0, 10, 20, 30**.

* **Note:** The axes represent abstract dimensions typical of manifold learning visualizations and do not have specific units.

---

## 3. Data Series Analysis and Trends

### Series 1: Factual Asso. (Green)

* **Visual Trend:** This series is primarily concentrated in a large, dense central cluster but is highly interleaved with the "Asso. Hallu." series.

* **Spatial Distribution:**

* **Primary Cluster:** Located between X: [-25, 5] and Y: [-30, 15].

* **Outliers:** A few green points are scattered toward the upper right (near X: 15, Y: 10 and X: 28, Y: 21), showing some overlap with the red cluster.

* **Observation:** The high degree of overlap with blue points suggests that "Factual Association" and "Associated Hallucination" share very similar feature spaces.

### Series 2: Asso. Hallu. (Blue)

* **Visual Trend:** This series follows a distribution almost identical to the green series, forming a unified central-left mass.

* **Spatial Distribution:**

* **Primary Cluster:** Concentrated between X: [-25, 5] and Y: [-30, 15].

* **Secondary Grouping:** A small, distinct "tail" or sub-cluster of blue points is visible on the right side between X: [5, 15] and Y: [-10, -5].

* **Observation:** The blue points act as a bridge between the main factual cluster and the lower-right region of the plot.

### Series 3: Unasso. Hallu. (Red)

* **Visual Trend:** This series exhibits a distinct "bimodal" distribution. While some points are scattered within the main green/blue cluster, a significant majority forms a separate, isolated cluster in the top-right.

* **Spatial Distribution:**

* **Isolated Cluster:** A dense concentration of red points is located between X: [10, 32] and Y: [10, 28]. This cluster has very little interference from green or blue points.

* **Scattered Points:** Several red points are interspersed within the main cluster (X: [-25, 0], Y: [-25, 10]).

* **Observation:** The clear separation of the top-right red cluster indicates that "Unassociated Hallucinations" possess distinct features that differentiate them significantly from both factual data and associated hallucinations.

---

## 4. Summary of Findings

| Category | Color | Primary Location (X, Y) | Clustering Behavior |

| :--- | :--- | :--- | :--- |

| **Factual Asso.** | Green | [-25 to 5, -30 to 15] | Highly integrated with Asso. Hallu. |

| **Asso. Hallu.** | Blue | [-25 to 5, -30 to 15] | Highly integrated with Factual Asso.; small sub-cluster at [10, -8]. |

| **Unasso. Hallu.** | Red | [10 to 32, 10 to 28] | Forms a distinct, isolated cluster in the upper-right quadrant. |

**Conclusion:** The visualization demonstrates that while Factual Associations and Associated Hallucinations are difficult to distinguish in this feature space, Unassociated Hallucinations form a visibly separable group, particularly in the positive X and positive Y coordinate space.

</details>

Figure 8: t-SNE visualization of last token’s representations at layer 25 of LLaMA-3-8B.

<details>

<summary>x11.png Details</summary>

### Visual Description

# Technical Document Extraction: Token Probability Analysis

## 1. Document Overview

This image is a violin plot comparing the token probability distributions of two Large Language Models (LLMs) across three distinct categories of outputs. The chart visualizes the density, range, and median values of probabilities assigned to specific tokens.

## 2. Component Isolation

### A. Header / Axis Labels

* **Y-Axis Title:** Token Probability

* **Y-Axis Scale:** Linear, ranging from `0.0` to `1.0` with major tick marks and dashed horizontal grid lines at intervals of `0.2`.

* **X-Axis Categories (Models):**

1. LLaMA-3-8B

2. Mistral-7B-v0.3

### B. Legend (Footer)

The legend is located at the bottom of the chart. Note: There are typographical errors in the original labels ("Associatied" instead of "Associated").

* **Green (Left):** Factual Associations

* **Blue (Middle):** Associated Hallucinations

* **Red/Pink (Right):** Unassociated Hallucinations

---

## 3. Data Extraction and Trend Analysis

Each model group contains three violin plots. Each violin includes a vertical line representing the full range (min to max) and a horizontal crossbar representing the median value.

### Model 1: LLaMA-3-8B

| Category | Color | Visual Trend/Shape | Median (Approx) | Range (Approx) |

| :--- | :--- | :--- | :--- | :--- |

| **Factual Associations** | Green | Wide base at 0.2, tapering to a long thin neck reaching near 1.0. | 0.35 | 0.05 to 0.96 |

| **Associated Hallucinations** | Blue | Bimodal-leaning; wide at 0.2 and 0.5, reaching near 1.0. | 0.38 | 0.02 to 0.96 |

| **Unassociated Hallucinations** | Red | Heavily bottom-weighted; bulbous at 0.1, sharp drop-off. | 0.12 | 0.02 to 0.50 |

### Model 2: Mistral-7B-v0.3

| Category | Color | Visual Trend/Shape | Median (Approx) | Range (Approx) |

| :--- | :--- | :--- | :--- | :--- |

| **Factual Associations** | Green | Similar to LLaMA; wide base at 0.2, long neck to 1.0. | 0.35 | 0.05 to 0.96 |

| **Associated Hallucinations** | Blue | More concentrated density between 0.2 and 0.6. | 0.40 | 0.08 to 0.92 |

| **Unassociated Hallucinations** | Red | Heavily bottom-weighted; very low density above 0.2. | 0.11 | 0.03 to 0.42 |

---

## 4. Key Observations and Data Patterns

1. **High-Confidence Hallucinations:** Both models exhibit "Associated Hallucinations" (Blue) with token probabilities reaching as high as ~0.95. This indicates that when a hallucination is contextually "associated," the models can be extremely confident in the incorrect output.

2. **Factual vs. Associated Hallucination Overlap:** The distributions for Green (Factual) and Blue (Associated Hallucinations) are remarkably similar in shape and median. This suggests that token probability alone is a poor discriminator for distinguishing factual statements from contextually relevant hallucinations.

3. **Unassociated Hallucinations:** The "Unassociated Hallucinations" (Red) consistently show the lowest token probabilities. The medians are near 0.1, and the maximum values rarely exceed 0.5. This suggests that completely random or irrelevant hallucinations are typically generated with lower model confidence.

4. **Model Comparison:** The behavior between `LLaMA-3-8B` and `Mistral-7B-v0.3` is highly consistent, suggesting these probability distribution patterns are a common characteristic of current transformer-based LLMs rather than a specific model quirk.

</details>

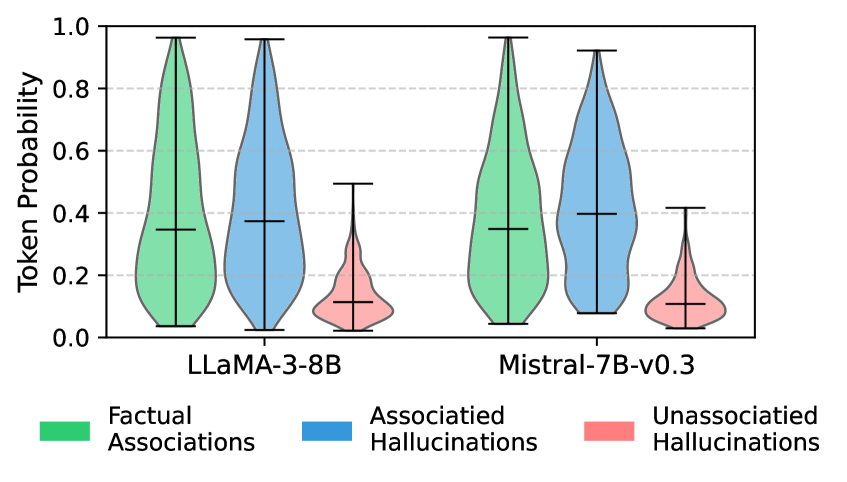

Figure 9: Distribution of last token probabilities.

This separation also appears in the entropy of the output distribution (Figure 9). Strong subject-to-last propagation in FAs and AHs yields low-entropy predictions concentrated on the correct or associated entity. In contrast, weak propagation in UHs produces broad, high-entropy distributions, spreading probability mass across many plausible candidates (e.g., multiple possible names for “ The name of the father of <subject> is ”).
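To make the entropy contrast concrete, the sketch below computes the Shannon entropy of two made-up next-token distributions: one concentrated on a single candidate and one spread evenly over several. The distributions are illustrative values, not measurements from the models.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a discrete probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

# Peaked distribution: most mass on one entity, as described for FAs and AHs.
peaked = [0.90, 0.05, 0.03, 0.02]
# Flat distribution: mass spread over many plausible candidates, as described for UHs.
flat = [0.25, 0.25, 0.25, 0.25]

print(f"peaked: {entropy(peaked):.3f} nats, flat: {entropy(flat):.3f} nats")
```

The uniform distribution attains the maximum entropy for its support size, $\log 4$ nats here, while the peaked distribution falls well below it.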

Finding:

From mid-layers onward, UHs retain clustered last-token representations and high-entropy outputs, while FAs and AHs diverge into subject-specific subspaces with low-entropy outputs. This provides a clear signal for separating UHs from FAs and AHs, but not for separating AHs from FAs.

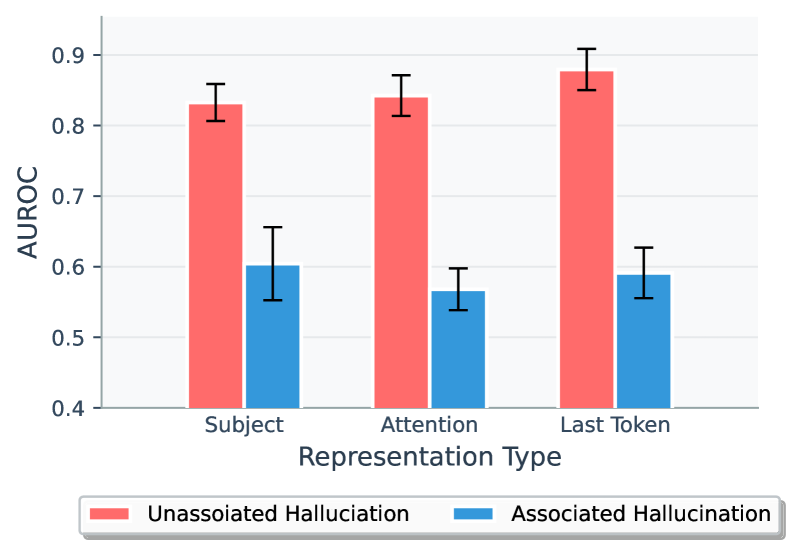

5 Revisiting Hallucination Detection

The mechanistic analysis in § 4 reveals that internal states of LLMs primarily capture how the model recalls and utilizes its parametric knowledge, not whether the output is truthful. As both factual associations (FAs) and associated hallucinations (AHs) rely on the same subject-driven knowledge recall, their internal states show no clear separation. We therefore hypothesize that internal or black-box signals cannot effectively distinguish AHs from FAs, even though they could be effective in distinguishing unassociated hallucinations (UHs), which do not rely on parametric knowledge, from FAs.

Experimental Setups

To verify this, we revisit widely adopted white-box hallucination detection approaches that probe internal states, as well as black-box approaches that rely on scalar features. We evaluate three settings: 1) AH Only (1,000 FAs and 1,000 AHs for training; 200 of each for testing), 2) UH Only (1,000 FAs and 1,000 UHs for training; 200 of each for testing), and 3) Full (1,000 FAs and 1,000 hallucination samples, mixing AHs and UHs, for training; 200 of each for testing). For each setting, we construct the training and test sets with five random seeds and report the mean AUROC with its standard deviation across seeds.
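The reporting protocol can be sketched with a small, dependency-free AUROC implementation. The function names are hypothetical, and the use of the sample standard deviation is an assumption, since the text does not say which estimator is used.

```python
import statistics

def auroc(scores, labels):
    """AUROC as the probability that a random positive outscores a random negative.

    Ties between a positive and a negative count as half a win.
    labels: 1 for hallucination, 0 for factual (an assumed convention).
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def summarize_seeds(per_seed_aurocs):
    """Mean and sample standard deviation of AUROC across random seeds."""
    return statistics.mean(per_seed_aurocs), statistics.stdev(per_seed_aurocs)

# Toy usage: a detector that ranks every hallucination above every factual sample.
print(auroc([0.9, 0.8, 0.3, 0.1], [1, 1, 0, 0]))
```

An AUROC near 0.5 means the detector's scores carry no ranking information about the label, which is the reference point for reading the AH Only results.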

White-box methods: We extract and normalize internal features and then train a probe.

- Subject representations: last subject token hidden state from three consecutive layers Gottesman and Geva (2024).

- Attention flow: attention weights from the last token to subject tokens across all layers Yüksekgönül et al. (2024).

- Last-token representations: final token hidden state from the last layer Orgad et al. (2025).
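The white-box recipe (extract features, normalize, train a probe) can be sketched as below. The paper does not specify the probe architecture in this section, so the logistic-regression probe, z-score normalization, learning rate, and epoch count are all assumptions, and the two-dimensional feature vectors are toy stand-ins for real hidden states or attention weights.

```python
import math

def fit_standardizer(rows):
    """Per-feature mean and standard deviation, computed on training rows only."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    stds = []
    for j in range(d):
        var = sum((r[j] - means[j]) ** 2 for r in rows) / n
        stds.append(math.sqrt(var) or 1.0)  # constant features keep scale 1.0
    return means, stds

def standardize(rows, means, stds):
    """Apply z-score normalization with previously fitted statistics."""
    return [[(x - m) / s for x, m, s in zip(r, means, stds)] for r in rows]

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def probe_score(x, w, b):
    """Probability that feature vector x belongs to the hallucination class."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def train_probe(rows, labels, lr=0.1, epochs=200):
    """Logistic regression fitted by full-batch gradient descent (assumed probe)."""
    n, d = len(rows), len(rows[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(rows, labels):
            err = probe_score(x, w, b) - y
            for j in range(d):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

# Toy features: label 0 = factual association, label 1 = hallucination.
train_x = [[0.1, 2.0], [0.2, 1.8], [0.0, 2.2], [1.9, 0.1], [2.1, 0.0], [2.0, 0.3]]
train_y = [0, 0, 0, 1, 1, 1]

means, stds = fit_standardizer(train_x)
w, b = train_probe(standardize(train_x, means, stds), train_y)

test_x = standardize([[2.0, 0.1], [0.1, 2.0]], means, stds)
halluc_score = probe_score(test_x[0], w, b)
factual_score = probe_score(test_x[1], w, b)
```

Fitting the normalization statistics on the training split and reusing them at test time avoids leaking test information into the probe. The resulting scores are what an AUROC computation would consume.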

Black-box methods: We test two commonly used scalar features: answer token probability (Orgad et al., 2025) and subject popularity, measured as average monthly Wikipedia page views (Mallen et al., 2023a). As discussed in § 4.2.3 and § 4.4, these features also reflect whether the model relies on encoded knowledge to produce its outputs rather than truthfulness itself.

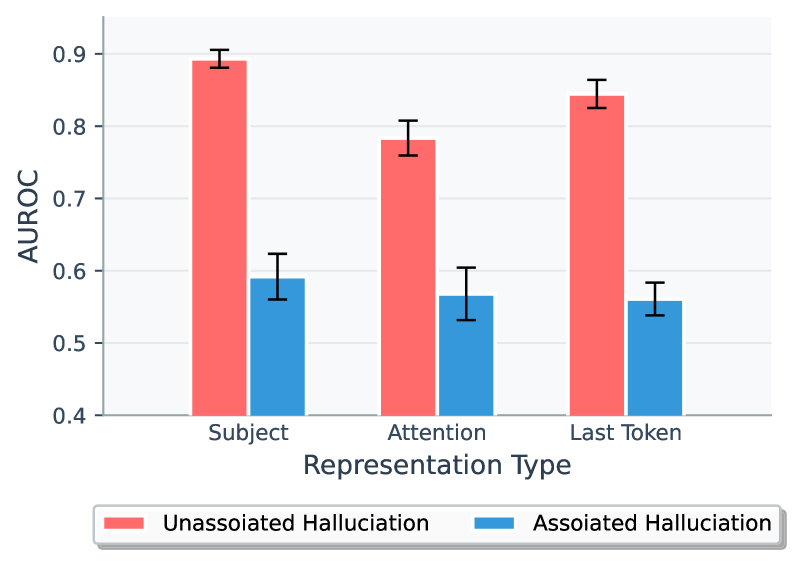

Experimental Results

| Method | LLaMA, AH Only | LLaMA, UH Only | Mistral, AH Only | Mistral, UH Only |
| --- | --- | --- | --- | --- |
| Subject | $0.65 \pm 0.02$ | $0.91 \pm 0.01$ | $0.57 \pm 0.02$ | $0.81 \pm 0.02$ |
| Attention | $0.58 \pm 0.04$ | $0.92 \pm 0.02$ | $0.58 \pm 0.07$ | $0.87 \pm 0.01$ |
| Last Token | $\mathbf{0.69 \pm 0.03}$ | $\mathbf{0.93 \pm 0.01}$ | $\mathbf{0.63 \pm 0.02}$ | $\mathbf{0.92 \pm 0.01}$ |
| Probability | $0.49 \pm 0.01$ | $0.86 \pm 0.01$ | $0.46 \pm 0.00$ | $0.89 \pm 0.00$ |
| Subject Pop. | $0.48 \pm 0.01$ | $0.87 \pm 0.01$ | $0.52 \pm 0.01$ | $0.84 \pm 0.01$ |

Table 2: Hallucination detection performance on AH Only and UH Only settings.

<details>

<summary>x12.png Details</summary>

### Visual Description

# Technical Document Extraction: AUROC Performance by Representation Type

## 1. Image Classification and Overview

This image is a **grouped bar chart** comparing the performance (measured in AUROC) of two different hallucination categories across three distinct representation types. The chart includes error bars representing variability or confidence intervals for each data point.

## 2. Component Isolation

### A. Header / Axis Labels

* **Y-Axis Title:** `AUROC` (Area Under the Receiver Operating Characteristic curve).

* **X-Axis Title:** `Representation Type`.

* **Y-Axis Scale:** Numerical values ranging from `0.4` to `0.9` with major gridline increments of `0.1`.

* **X-Axis Categories:** `Subject`, `Attention`, `Last Token`.

### B. Legend (Spatial Grounding: Bottom Center)

The legend is located in a boxed area at the bottom of the chart.

* **Red Bar (Left):** `Unassoiated Halluciation` [sic] (Note: The image contains a typo for "Unassociated Hallucination").

* **Blue Bar (Right):** `Associated Hallucination`.

### C. Main Chart Area

The chart consists of three pairs of bars. In every pair, the red bar is significantly higher than the blue bar.

---

## 3. Data Extraction and Trend Verification

### Trend Analysis

* **Unassociated Hallucination (Red):** Shows a consistent **upward trend** across the categories. Performance is high at "Subject," slightly higher at "Attention," and reaches its peak at "Last Token."

* **Associated Hallucination (Blue):** Shows a **fluctuating/downward trend**. Performance starts at its highest in "Subject," drops to its lowest in "Attention," and recovers slightly in "Last Token," though it remains lower than the initial "Subject" value.

* **Comparative Gap:** The performance gap between "Unassociated" and "Associated" hallucinations increases as we move from left to right across the X-axis.

### Reconstructed Data Table

Values are estimated based on the Y-axis scale and gridlines.

| Representation Type | Unassociated Hallucination (Red) | Associated Hallucination (Blue) |
| :--- | :--- | :--- |
| **Subject** | ~0.83 (Error: ±0.03) | ~0.60 (Error: ±0.05) |
| **Attention** | ~0.84 (Error: ±0.03) | ~0.57 (Error: ±0.03) |
| **Last Token** | ~0.88 (Error: ±0.03) | ~0.59 (Error: ±0.04) |

---

## 4. Detailed Component Description

* **Subject Representation:**

    * The Red bar (Unassociated) sits between 0.8 and 0.9, centered at approximately 0.83.

    * The Blue bar (Associated) sits exactly on the 0.6 gridline.

* **Attention Representation:**

    * The Red bar (Unassociated) shows a marginal increase compared to Subject, sitting at approximately 0.84.

    * The Blue bar (Associated) shows a decrease, sitting below the 0.6 line at approximately 0.57.

* **Last Token Representation:**

    * The Red bar (Unassociated) shows the highest performance in the set, approaching the 0.9 mark (approx. 0.88).

    * The Blue bar (Associated) shows a slight recovery from the Attention phase but remains below the 0.6 mark (approx. 0.59).

## 5. Explicit Language Declaration

* **Primary Language:** English.

* **Note on Transcription:** The legend contains a typographical error: "Unassoiated Halluciation". This has been transcribed exactly as it appears in the image for technical accuracy. The intended meaning is "Unassociated Hallucination."

</details>

Figure 10: Hallucination detection performance on the Full setting (LLaMA-3-8B).

Table 2 shows that hallucination detection methods behave very differently in the AH Only and UH Only settings. For white-box probes, all approaches effectively distinguish UHs from FAs, with last-token hidden states reaching AUROC scores of about 0.93 for LLaMA and 0.92 for Mistral. In contrast, performance drops sharply on the AH Only setting, where the last-token probe falls to 0.69 for LLaMA and 0.63 for Mistral. Black-box methods follow the same pattern. Figure 10 further highlights this disparity under the Full setting: detection is consistently stronger on UH samples than on AH samples, and adding AHs to the training set significantly dilutes performance on UHs (AUROC $≈$ 0.9 on UH Only vs. $≈$ 0.8 on Full).

These results confirm that both internal probes and black-box methods capture whether a model draws on parametric knowledge, not whether its outputs are factually correct. Unassociated hallucinations are easier to detect because they bypass this knowledge, while associated hallucinations are produced through the same recall process as factual answers, leaving no internal cues to distinguish them. As a result, LLMs lack intrinsic awareness of their own truthfulness, and detection methods relying on these signals risk misclassifying associated hallucinations as correct, fostering harmful overconfidence in model outputs.

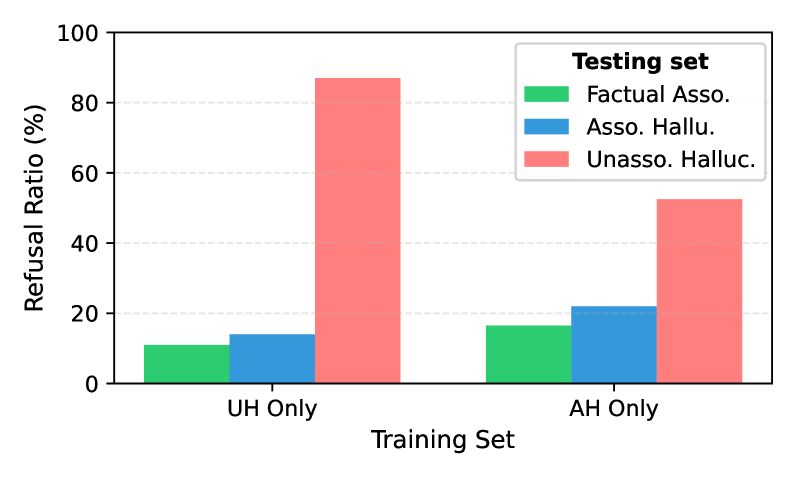

6 Challenges of Refusal Tuning

A common strategy to mitigate potential hallucination in the model’s responses is to fine-tune LLMs to refuse to answer when they cannot provide a factual response, an approach known as refusal tuning Zhang et al. (2024). For such refusal capability to generalize, the training data must contain a shared feature pattern across hallucinated outputs, allowing the model to learn and apply it to unseen cases.

Our analysis in the previous sections shows that this prerequisite is not met. The structural mismatch between UHs and AHs suggests that refusal tuning on UHs may generalize to other UHs, because their hidden states occupy a common activation subspace, but will not transfer to AHs. Refusal tuning on AHs is even less effective, as their diverse representations prevent generalization to either unseen AHs or UHs.

Experimental Setups

To verify the hypothesis, we conduct refusal tuning on LLMs under two settings: 1) UH Only, where 1,000 UH samples are paired with 10 refusal templates and 1,000 FA samples are preserved with their original answers; and 2) AH Only, where 1,000 AH samples are paired with refusal templates, with 1,000 FA samples again left unchanged. We then evaluate both models on 200 samples each of FAs, UHs, and AHs. A response matching any refusal template is counted as a refusal, and we report the Refusal Ratio as the proportion of samples eliciting refusals. This measures not only whether the model refuses appropriately on UHs and AHs, but also whether it “over-refuses” on FA samples.
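The Refusal Ratio metric can be sketched as follows. The matching rule is an assumption: the paper states only that a response "matching any refusal template" counts as a refusal, so this sketch uses exact matching after lowercasing and whitespace normalization. The templates and responses shown are hypothetical examples, not the ones used in the experiments.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return " ".join(text.lower().split())

def refusal_ratio(responses, templates):
    """Percentage of responses that match any refusal template (assumed exact match)."""
    if not responses:
        raise ValueError("responses must be non-empty")
    normalized_templates = {normalize(t) for t in templates}
    refused = sum(1 for r in responses if normalize(r) in normalized_templates)
    return 100.0 * refused / len(responses)

# Hypothetical refusal templates and model responses.
templates = ["I don't know.", "I am not sure about that."]
responses = ["Paris", "I don't know.", "  i am NOT sure about that. ", "Tokyo"]

ratio = refusal_ratio(responses, templates)
print(f"Refusal Ratio: {ratio:.1f}%")
```

Computing this ratio separately on the FA, UH, and AH test sets gives the three bars per training setting: a high value is desirable on UHs and AHs, while a high value on FAs indicates over-refusal.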

Experimental Results

<details>

<summary>x13.png Details</summary>

### Visual Description

# Technical Document Extraction: Refusal Ratio Analysis

## 1. Image Overview

This image is a grouped bar chart illustrating the "Refusal Ratio (%)" of a model across different testing scenarios based on two distinct training configurations. The chart compares how training on specific types of data (UH vs. AH) affects the model's tendency to refuse to answer factual associations versus different types of hallucinations.

## 2. Component Isolation

### A. Header / Legend

* **Location:** Top right quadrant of the chart area.

* **Legend Title:** **Testing set**

* **Categories & Color Mapping:**

* **Factual Asso.** (Green): Represents factual association testing.

* **Asso. Hallu.** (Blue): Represents associated hallucination testing.

* **Unasso. Halluc.** (Light Red/Pink): Represents unassociated hallucination testing.

### B. Axis Definitions

* **Y-Axis (Vertical):**

* **Label:** Refusal Ratio (%)

* **Scale:** 0 to 100

* **Markers:** 0, 20, 40, 60, 80, 100

* **Gridlines:** Horizontal dashed light-gray lines at every 20% interval.

* **X-Axis (Horizontal):**

* **Label:** Training Set

* **Categories:** "UH Only" and "AH Only"

## 3. Data Extraction & Trend Verification

### Training Set: UH Only

* **Trend:** In this group, the model shows a significantly higher refusal rate for unassociated hallucinations compared to factual associations or associated hallucinations.

* **Data Points:**

* **Factual Asso. (Green):** ~30%

* **Asso. Hallu. (Blue):** ~28%

* **Unasso. Halluc. (Red):** ~82%

### Training Set: AH Only

* **Trend:** In this group, the refusal rates are much more balanced and lower overall. The model is most likely to refuse associated hallucinations, while the refusal rate for unassociated hallucinations drops drastically compared to the "UH Only" training set.

* **Data Points:**

* **Factual Asso. (Green):** ~22%

* **Asso. Hallu. (Blue):** ~33%

* **Unasso. Halluc. (Red):** ~24%

## 4. Data Table Reconstruction

| Training Set | Factual Asso. (Green) | Asso. Hallu. (Blue) | Unasso. Halluc. (Red) |
| :--- | :---: | :---: | :---: |
| **UH Only** | ~30% | ~28% | ~82% |
| **AH Only** | ~22% | ~33% | ~24% |

## 5. Key Observations & Technical Summary

* **Impact of UH Training:** Training exclusively on UHs (unassociated hallucinations) leads to a very high refusal rate (~82%) on unassociated hallucinations at test time, but it does not prepare the model to refuse associated hallucinations (~28%).

* **Impact of AH Training:** Training exclusively on AHs (associated hallucinations) results in a more uniform refusal profile. While it raises the refusal rate on associated hallucinations slightly (~33%), the refusal rate on unassociated hallucinations is far lower (~24%) than under UH Only training.

* **Factual Refusal:** Both training methods result in a baseline refusal of factual associations between 20% and 30%, with "AH Only" training showing a slightly lower (better) refusal rate for factual information.

</details>

Figure 11: Refusal tuning performance across three types of samples (LLaMA-3-8B).

Figure 11 shows that training with UHs leads to strong generalization across UHs, with a refusal ratio of 82% for LLaMA. However, this effect does not transfer to AHs, where the refusal ratio falls to 28%. Moreover, some FA cases are mistakenly refused (29.5%). These results confirm that UHs share a common activation subspace, supporting generalization within the category, while AHs and FAs lie outside this space. By contrast, training with AHs produces poor generalization. On AH test samples, the refusal ratio is only 33%, validating that their subject-specific hidden states prevent consistent refusal learning. Generalization to UHs is also weak (23.5%), again reflecting the divergence between AH and UH activation spaces.

Overall, these findings show that the generalizability of refusal tuning is fundamentally limited by the heterogeneous nature of hallucinations. UH representations are internally consistent enough to support refusal generalization, but AH representations are too diverse for either UH-based or AH-based training to yield a broadly applicable and reliable refusal capability.

7 Conclusions and Future Work

In this work, we revisit the widely accepted claim that hallucinations can be detected from a model’s internal states. Our mechanistic analysis reveals that hidden states encode whether models rely on their parametric knowledge rather than whether their outputs are truthful. As a result, detection methods succeed only when outputs are detached from the input but fail when hallucinations arise from the same knowledge-recall process as correct answers.