# ADEPT: Continual Pretraining via Adaptive Expansion and Dynamic Decoupled Tuning

> These authors contributed equally. Corresponding author.

Abstract

Conventional continual pretraining (CPT) for large language model (LLM) domain adaptation often suffers from catastrophic forgetting and limited domain capacity. Existing strategies adopt layer expansion, introducing additional trainable parameters to accommodate new knowledge. However, uniform expansion and uniform updates still entangle general and domain learning, undermining their effectiveness. Our pilot studies reveal that LLMs exhibit functional specialization, where layers and units differentially encode general-critical capabilities, suggesting that parameter expansion and optimization should be function-aware. We then propose ADEPT (Adaptive Expansion and Dynamic Decoupled Tuning for continual pretraining), a two-stage framework for domain-adaptive CPT. ADEPT first performs General-Competence Guided Selective Layer Expansion, duplicating layers least critical for the general domain to increase representational capacity while minimizing interference with general knowledge. It then applies Adaptive Unit-Wise Decoupled Tuning, disentangling parameter units within expanded layers according to their general-domain importance and assigning asymmetric learning rates to balance knowledge injection and retention. Experiments on mathematical and medical benchmarks show that ADEPT outperforms full-parameter CPT by up to 5.76% on the general domain and 5.58% on the target domain with only 15% of parameters tuned and less than 50% of the training time. Ablation studies, theoretical analysis, and extended investigations further demonstrate the necessity of targeted expansion and decoupled optimization, providing new principles for efficient and robust domain-adaptive CPT. Our code is open-sourced at https://github.com/PuppyKnightUniversity/ADEPT.

1 Introduction

Large language models (LLMs) have demonstrated remarkable performance across a wide range of general-domain tasks (OpenAI, 2023; Dubey et al., 2024c). However, their deployment in specialized domains, such as mathematics or healthcare, requires targeted adaptation (Ding et al., 2024; Chen et al., 2024; Ahn et al., 2024). Continual pretraining (CPT), which conducts post-pretraining on domain-specific corpora, has emerged as a crucial paradigm for injecting domain knowledge and capabilities into pretrained LLMs (Wu et al., 2024a; Ibrahim et al., 2024; Yıldız et al., 2024).

Despite its promise, CPT faces a persistent challenge: catastrophic forgetting. After pretraining, LLMs already encode substantial general knowledge, leaving limited parameter capacity for integrating new domain-specific information. While domain signals can be forcefully fitted through gradient-based optimization, the aggressive updates on the existing parameters come at the cost of overfitting to the target corpora, which in turn disrupts general abilities and triggers catastrophic forgetting (Liu et al., 2024a; Luo et al., 2025). This tension between new knowledge injection and previous knowledge retention poses a central obstacle to reliable and stable domain adaptation.

To address catastrophic forgetting, some approaches adopt data-centric strategies, such as data replay or rehearsal (Huang et al., 2024; Zhang et al., 2025). While replay partially preserves prior knowledge, it fails to expand model capacity, leaving the conflict between knowledge injection and retention unresolved. Others focus on increasing capacity via transformer-layer extension (Wu et al., 2024b), yet typically insert new layers uniformly and update all parameters indiscriminately. This expansion strategy neglects the functional specialization within LLMs, where different layers and neurons serve distinct functional roles. Our pilot studies reveal that general-critical layers in LLMs are mainly located at early depths, and that functional units within layers contribute unequally to general-domain performance, highlighting functional specialization similar to that found in the human brain (Xu et al., 2025; Zheng et al., 2024; Dai et al., 2022). Consequently, indiscriminate expansion and optimization may overwrite general-critical regions with new knowledge, compromising the preservation of general competencies and leaving forgetting unresolved.

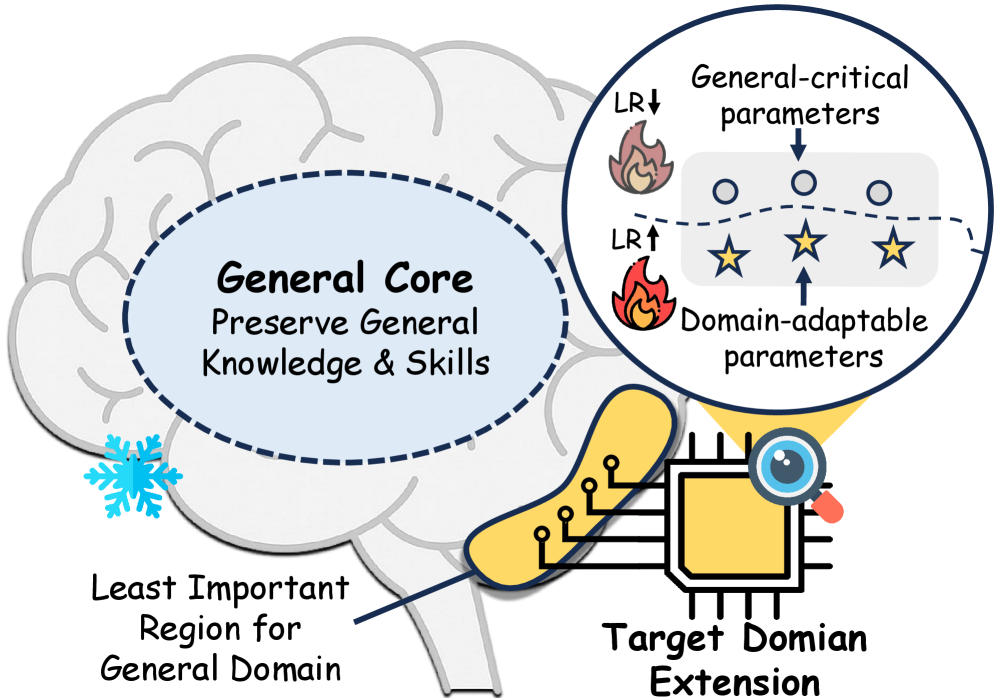

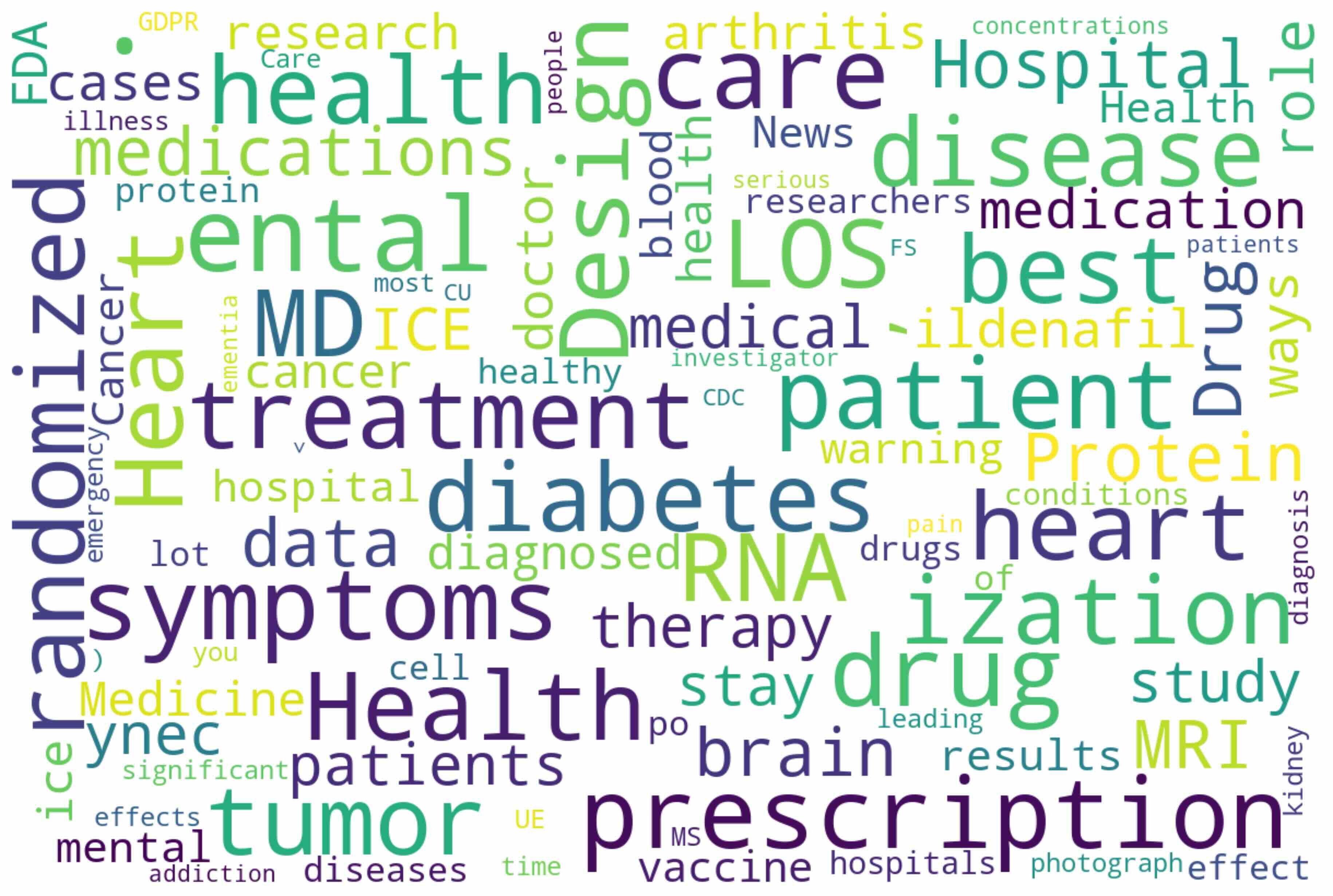

Inspired by the functional specialization perspective, we propose our core insight: effective CPT should expand and update the model adaptively, preserving the regions responsible for the general domain and targeting more adaptable parameters. Specifically, we argue that capacity allocation must be importance-guided, and optimization must be function-decoupled to minimize interference with general competencies. As illustrated in Figure 1, domain-specific extension should be allocated to the regions less constrained by general-domain knowledge and skills, and parameters within these regions should be decoupled and tuned accordingly, preserving general-critical parameters and allowing the rest to be more adaptable to absorb new domain-specific information.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Brain with Domain Adaptation

### Overview

The image is a diagram illustrating the concept of domain adaptation in the context of a brain-like structure. It shows a brain with labeled regions representing general knowledge, less important regions, and a target domain extension. A magnified view highlights the distinction between general-critical parameters and domain-adaptable parameters, indicating how learning rates (LR) are adjusted during adaptation.

### Components/Axes

* **Brain:** A simplified representation of a brain, serving as the central metaphor.

* **General Core:** A blue oval within the brain, labeled "General Core" and "Preserve General Knowledge & Skills."

* **Least Important Region for General Domain:** A region at the bottom-left of the brain, marked with a snowflake icon.

* **Target Domain Extension:** A yellow square with integrated circuit-like connections, representing the area for domain-specific learning.

* **Magnified View:** A circular inset showing "General-critical parameters" (represented by circles) and "Domain-adaptable parameters" (represented by stars).

* **Learning Rate (LR) Indicators:** Flames with arrows pointing up (LR↑) and down (LR↓) next to the parameters in the magnified view.

### Detailed Analysis

* **General Core:** Located in the center of the brain, this area is responsible for preserving general knowledge and skills.

* **Least Important Region:** Situated at the bottom-left, this region is deemed less crucial for general domain tasks, indicated by a blue snowflake.

* **Target Domain Extension:** A yellow square at the bottom-right, connected to the brain via a yellow extension, represents the area where domain-specific learning occurs. It has a magnifying glass icon, suggesting closer inspection.

* **Magnified View Details:**

* **General-critical parameters:** Represented by circles, these parameters are associated with a decreased learning rate (LR↓), indicated by a flame icon with a downward arrow.

* **Domain-adaptable parameters:** Represented by stars, these parameters are associated with an increased learning rate (LR↑), indicated by a flame icon with an upward arrow.

* The parameters are separated by a dashed line.

### Key Observations

* The diagram emphasizes the distinction between general knowledge and domain-specific learning.

* Learning rates are adjusted differently for general-critical and domain-adaptable parameters.

* The "Least Important Region" suggests a potential area for modification or pruning during domain adaptation.

### Interpretation

The diagram illustrates a domain adaptation strategy where the brain (or a neural network) retains its core general knowledge while adapting to a specific target domain. The "General Core" represents the pre-trained knowledge, while the "Target Domain Extension" signifies the area where new, domain-specific knowledge is acquired. The magnified view highlights the key mechanism: adjusting learning rates. General-critical parameters, essential for general knowledge, have their learning rates reduced to prevent catastrophic forgetting. Domain-adaptable parameters, crucial for the new domain, have their learning rates increased to facilitate learning. The "Least Important Region" might represent parameters that can be pruned or repurposed without significantly affecting general performance. This approach aims to balance knowledge retention and adaptation, enabling the system to perform well in both general and specific domains.

</details>

Figure 1: Illustration of the core idea of ADEPT. Target-domain extension is applied to the region least important for the general domain, minimizing catastrophic forgetting. Asymmetric learning rates are applied to parameter subsets for targeted knowledge injection.

Building on this insight, we propose Adaptive Expansion and Dynamic Decoupled Tuning for continual pretraining (ADEPT), a framework for domain-adaptive continual pretraining. ADEPT comprises two stages: (1) General-Competence Guided Selective Layer Expansion, which identifies and duplicates the layers least critical for the general domain, allocating additional capacity precisely where interference with general capabilities is minimized, thereby preventing catastrophic forgetting; and (2) Adaptive Unit-Wise Decoupled Tuning, which disentangles the parameters within the expanded layers based on their importance to the general domain. Asymmetric learning rates are then applied to the resulting subsets, ensuring that general-critical parameters are preserved while more adaptable parameters can fully absorb domain-specific knowledge. Extensive experiments on the mathematical and medical domains demonstrate that ADEPT enables efficient and robust domain knowledge injection while substantially alleviating catastrophic forgetting. Specifically, compared to full-parameter CPT, ADEPT achieves up to a 5.58% accuracy gain on target-domain benchmarks and up to a 5.76% gain on the general domain, confirming both effective knowledge acquisition and strong retention of general competencies. Furthermore, ADEPT attains these improvements with only 15% of parameters tuned and greatly reduces training time relative to other baselines, highlighting its efficiency. Ablation studies and theoretical analysis further validate the design of ADEPT.

To summarize, our contributions are threefold:

1. Insightfully, we highlight the importance of considering functional specialization in LLMs for continual pretraining through empirical experiments and theoretical analysis, advocating for targeted layer expansion and decoupled training as a principled solution to domain adaptation.

2. Technically, we propose ADEPT, a framework that consists of General-Competence Guided Selective Layer Expansion and Adaptive Unit-Wise Decoupled Tuning, enabling adaptive and effective domain knowledge integration while minimizing catastrophic forgetting.

3. Empirically, we conduct extensive experiments on both mathematical and medical domains, demonstrating that ADEPT consistently outperforms baselines in domain performance while preserving general competencies.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Heatmaps: Qwen3 Model Layer Analysis

### Overview

The image presents three heatmaps comparing the activity across different layers and components of Qwen3 models with varying sizes (1.7B, 4B, and 8B parameters). The heatmaps visualize the relative activation levels within each layer for different modules, providing insights into how the model's processing changes with scale.

### Components/Axes

* **Titles:**

* Top-left: "Qwen3-1.7B-Base"

* Top-center: "Qwen3-4B-Base"

* Top-right: "Qwen3-8B-Base"

* **Y-axis:** "Layer"

* Leftmost heatmap: Ranges from 0 to 27, incrementing by 1.

* Center heatmap: Ranges from 0 to 34, incrementing by 2.

* Rightmost heatmap: Ranges from 0 to 34, incrementing by 2.

* **X-axis:** (Modules, from left to right)

* "mlp.down_proj"

* "mlp.up_proj"

* "self_atten.o_proj"

* "mlp.gate_proj"

* "self_atten.v_proj"

* "self_atten.q_proj"

* "self_atten.k_proj"

* "post_attention_layernorm"

* "input_layernorm"

* "self_atten.k_norm"

* "self_atten.a_norm"

* **Color Legend:** Located on the right side of the rightmost heatmap. The color gradient ranges from light green (0) to dark blue (1), representing the activation level.

### Detailed Analysis

**Qwen3-1.7B-Base (Left Heatmap):**

* The heatmap shows activity concentrated in the lower layers (0-10).

* **mlp.down_proj:** High activation in layers 0-9, decreasing gradually.

* **mlp.up_proj:** Similar to mlp.down_proj, high activation in layers 0-9.

* **self_atten.o_proj:** Moderate activation in layers 0-5.

* **mlp.gate_proj:** Low to moderate activation in layers 0-5.

* **self_atten.v_proj:** Very low activation across all layers.

* **self_atten.q_proj:** Very low activation across all layers.

* **self_atten.k_proj:** Very low activation across all layers.

* **post_attention_layernorm:** Very low activation across all layers.

* **input_layernorm:** Very low activation across all layers.

* **self_atten.k_norm:** Very low activation across all layers.

* **self_atten.a_norm:** Very low activation across all layers.

**Qwen3-4B-Base (Center Heatmap):**

* Activity is more spread out across the layers compared to the 1.7B model.

* **mlp.down_proj:** High activation in layers 0-15, then decreases.

* **mlp.up_proj:** High activation in layers 0-15, then decreases.

* **self_atten.o_proj:** Moderate activation in layers 0-10.

* **mlp.gate_proj:** Low to moderate activation in layers 0-10.

* **self_atten.v_proj:** Very low activation across all layers.

* **self_atten.q_proj:** Very low activation across all layers.

* **self_atten.k_proj:** Very low activation across all layers.

* **post_attention_layernorm:** Very low activation across all layers.

* **input_layernorm:** Very low activation across all layers.

* **self_atten.k_norm:** Very low activation across all layers.

* **self_atten.a_norm:** Very low activation across all layers.

**Qwen3-8B-Base (Right Heatmap):**

* The activity pattern is similar to the 4B model, but with slightly higher activation in the lower layers.

* **mlp.down_proj:** High activation in layers 0-15, then decreases.

* **mlp.up_proj:** High activation in layers 0-15, then decreases.

* **self_atten.o_proj:** Moderate activation in layers 0-10.

* **mlp.gate_proj:** Low to moderate activation in layers 0-10.

* **self_atten.v_proj:** Very low activation across all layers.

* **self_atten.q_proj:** Very low activation across all layers.

* **self_atten.k_proj:** Very low activation across all layers.

* **post_attention_layernorm:** Very low activation across all layers.

* **input_layernorm:** Very low activation across all layers.

* **self_atten.k_norm:** Very low activation across all layers.

* **self_atten.a_norm:** Very low activation across all layers.

### Key Observations

* The mlp.down_proj and mlp.up_proj modules show the highest activation levels across all three models, particularly in the lower layers.

* The self_atten.o_proj and mlp.gate_proj modules exhibit moderate activation, also concentrated in the lower layers.

* The remaining modules (self_atten.v_proj, self_atten.q_proj, self_atten.k_proj, post_attention_layernorm, input_layernorm, self_atten.k_norm, self_atten.a_norm) show very low activation across all layers and models.

* As the model size increases (1.7B to 4B to 8B), the activity tends to spread out more across the layers, suggesting that larger models utilize more layers for processing.

### Interpretation

The heatmaps provide a visual representation of the internal workings of the Qwen3 models. The concentration of activity in the mlp.down_proj and mlp.up_proj modules suggests that these modules play a crucial role in the model's processing, especially in the initial layers. The low activation of other modules might indicate that they are either less important or that their activity is more distributed and less concentrated in specific layers.

The trend of activity spreading out across more layers as the model size increases suggests that larger models are able to distribute the processing load more effectively, potentially leading to improved performance. The consistent patterns across the different model sizes also indicate that the overall architecture and processing flow remain similar, with the larger models simply scaling up the existing structure.

</details>

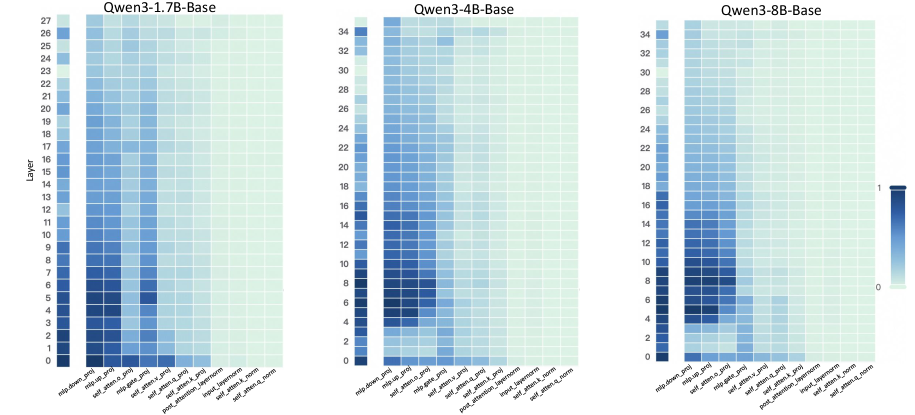

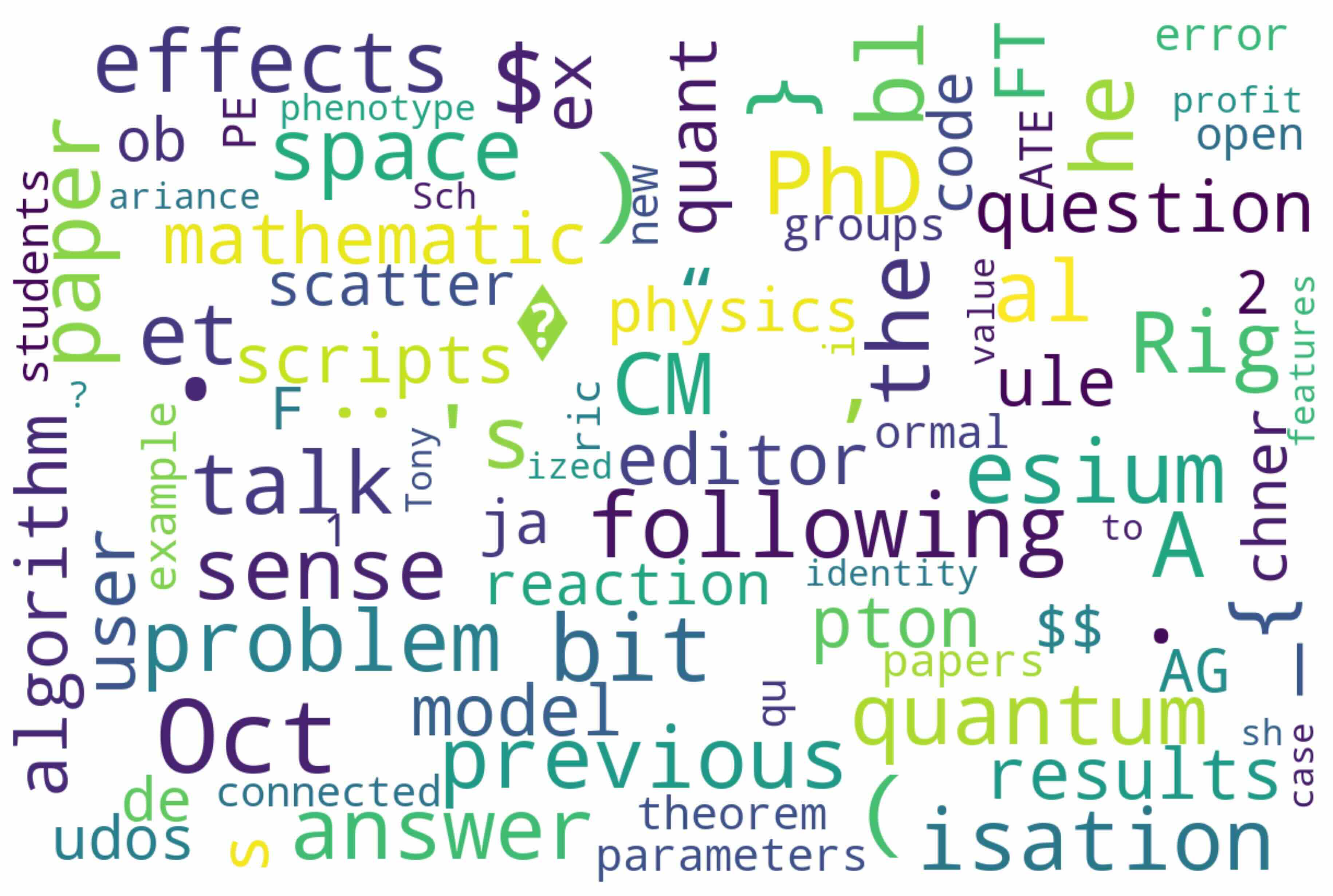

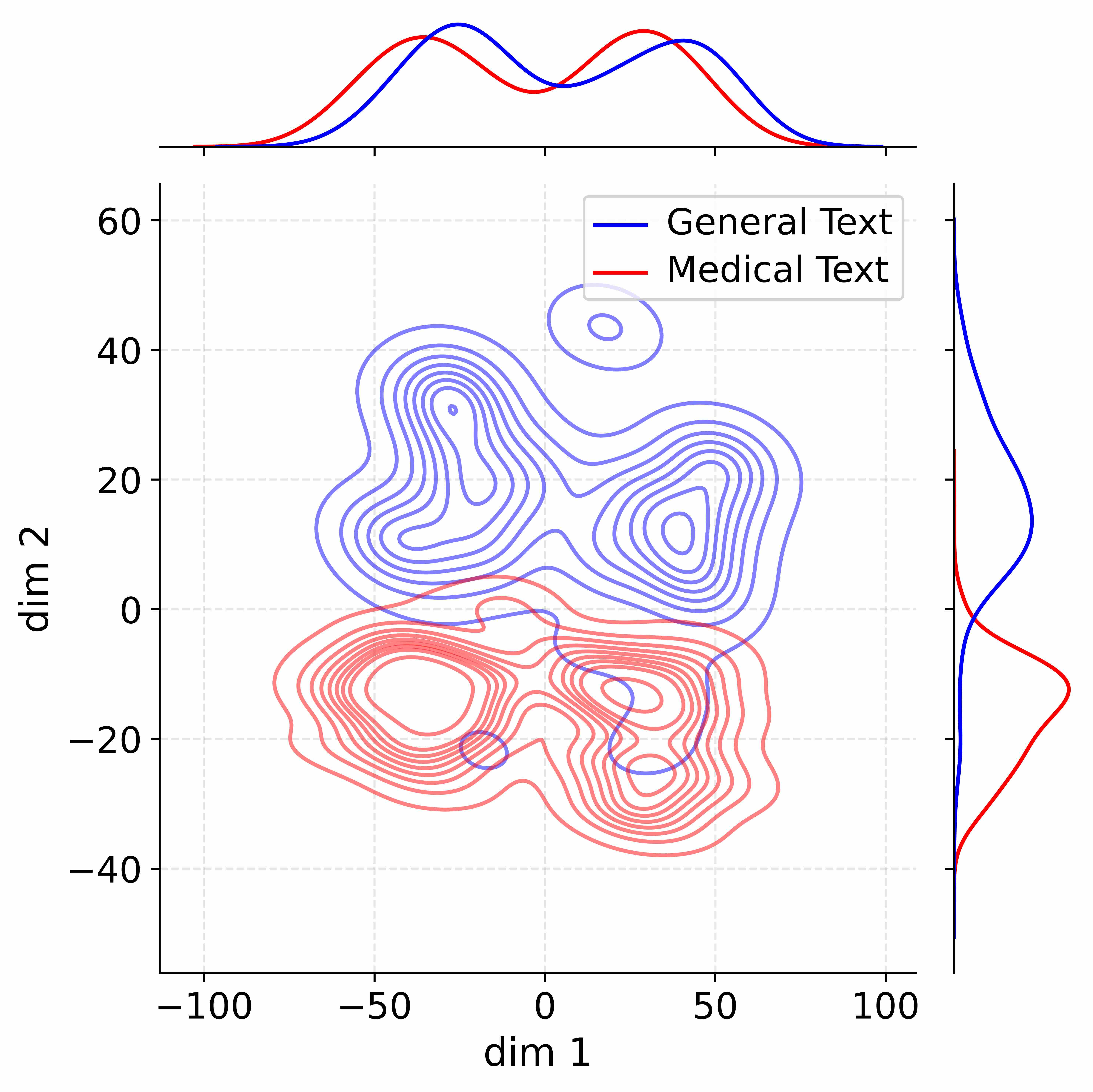

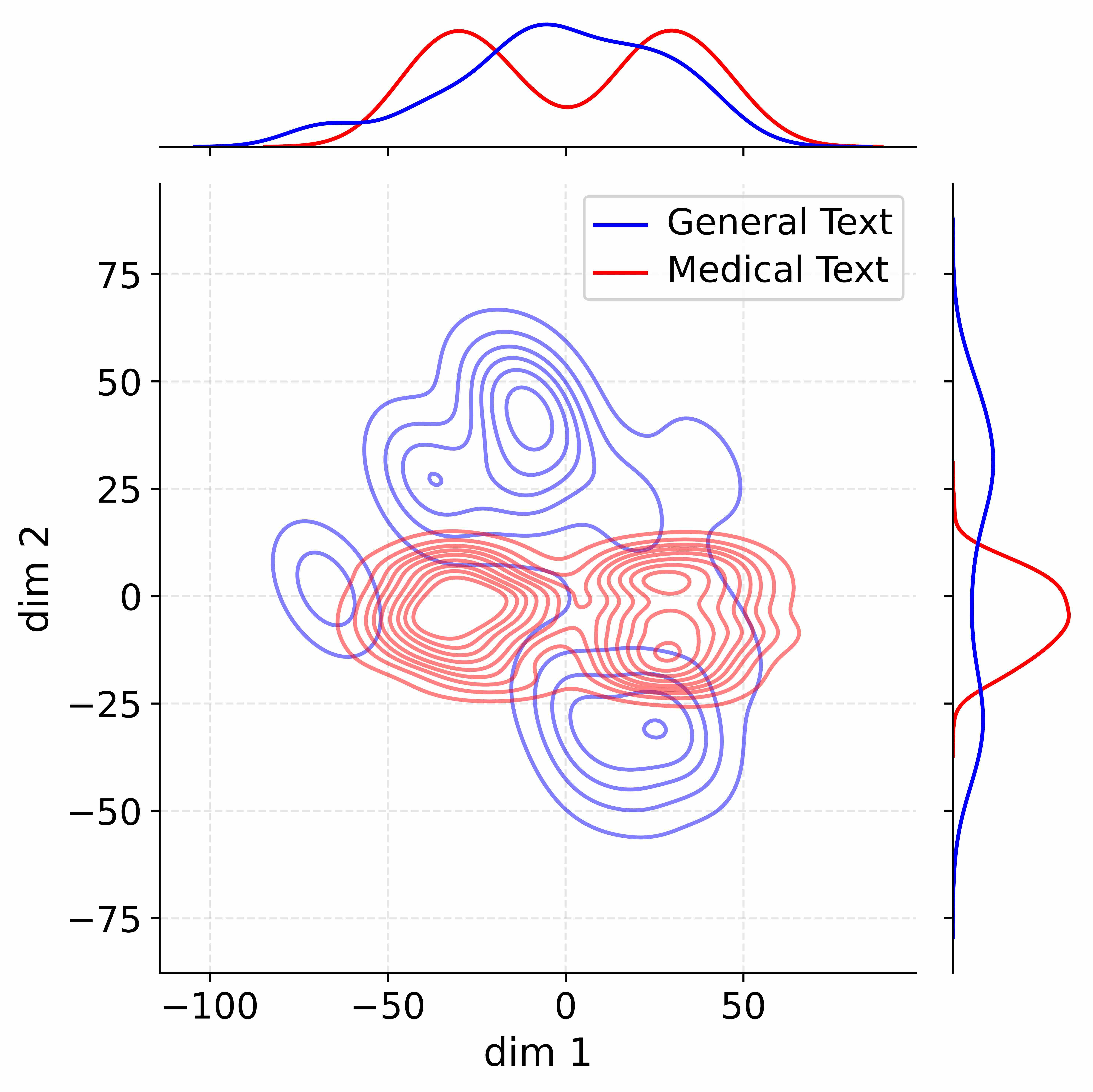

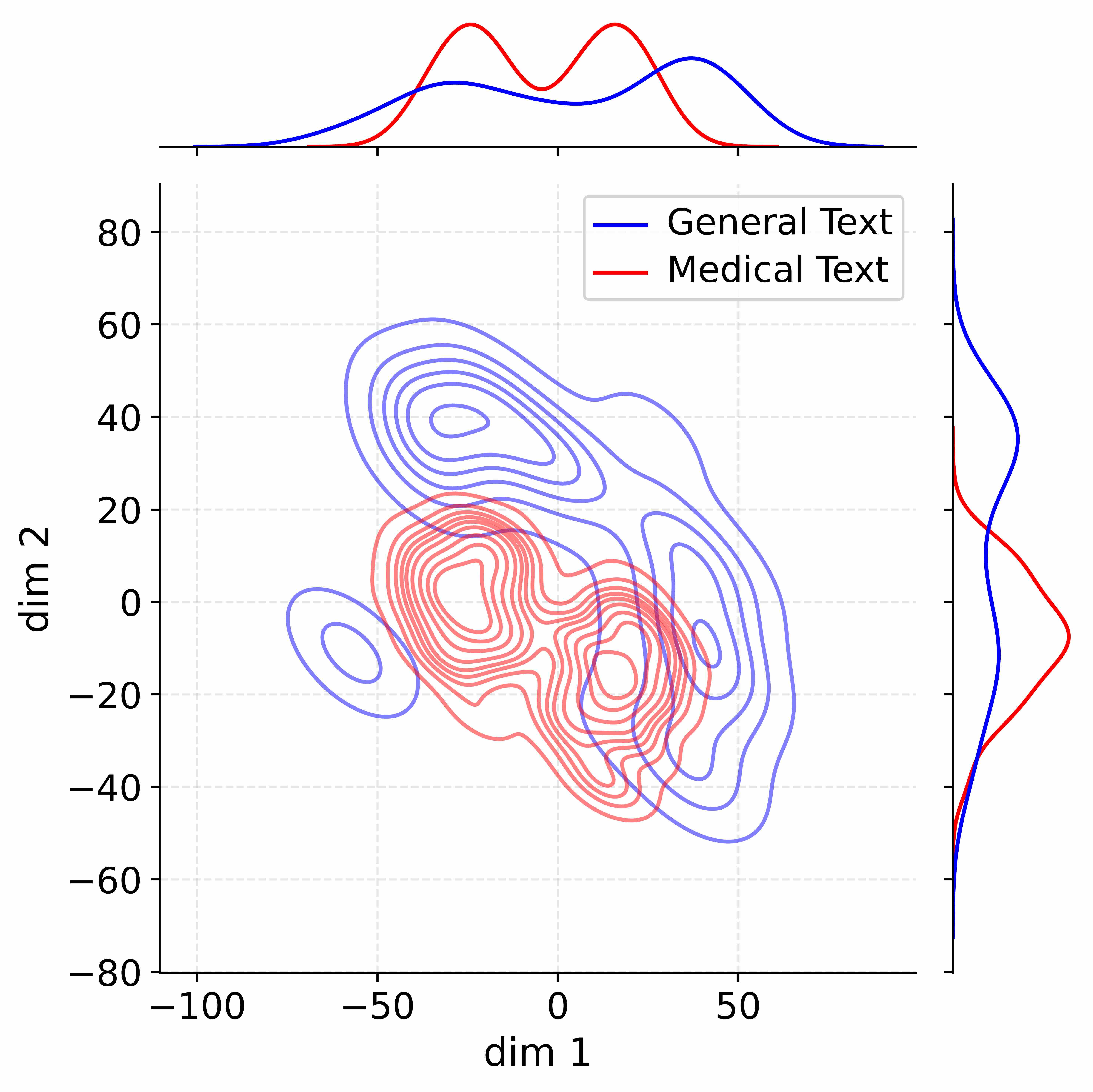

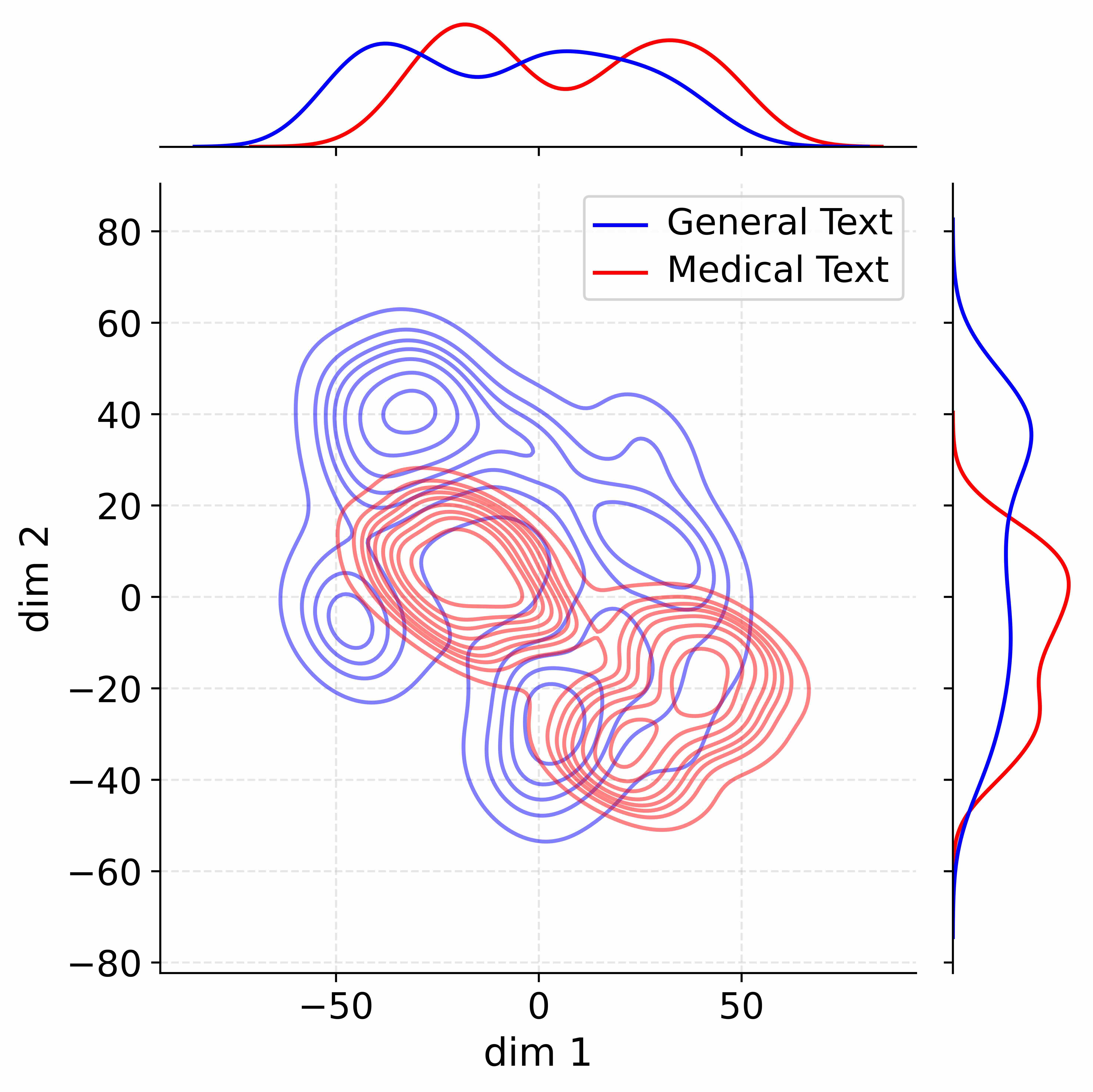

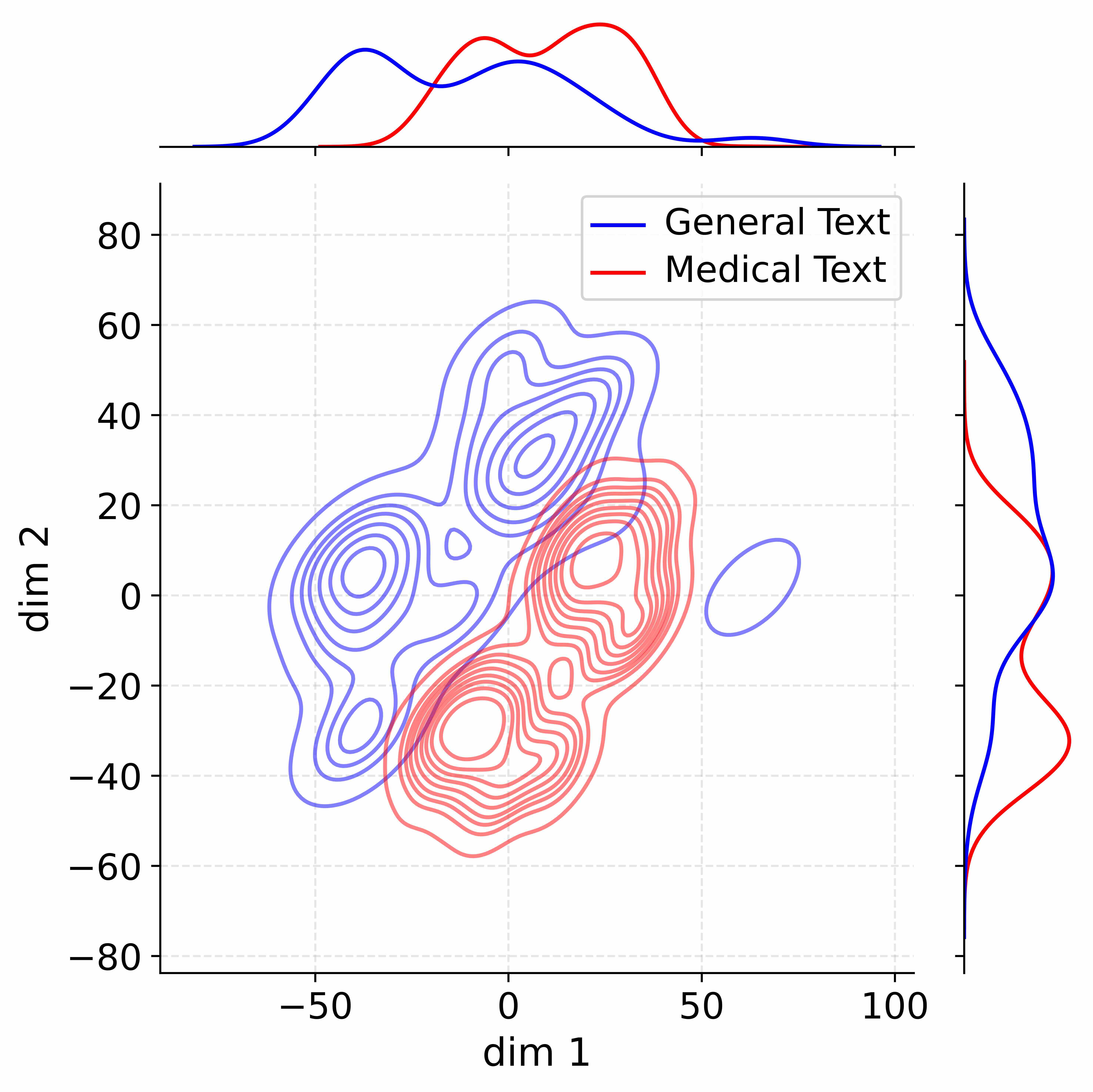

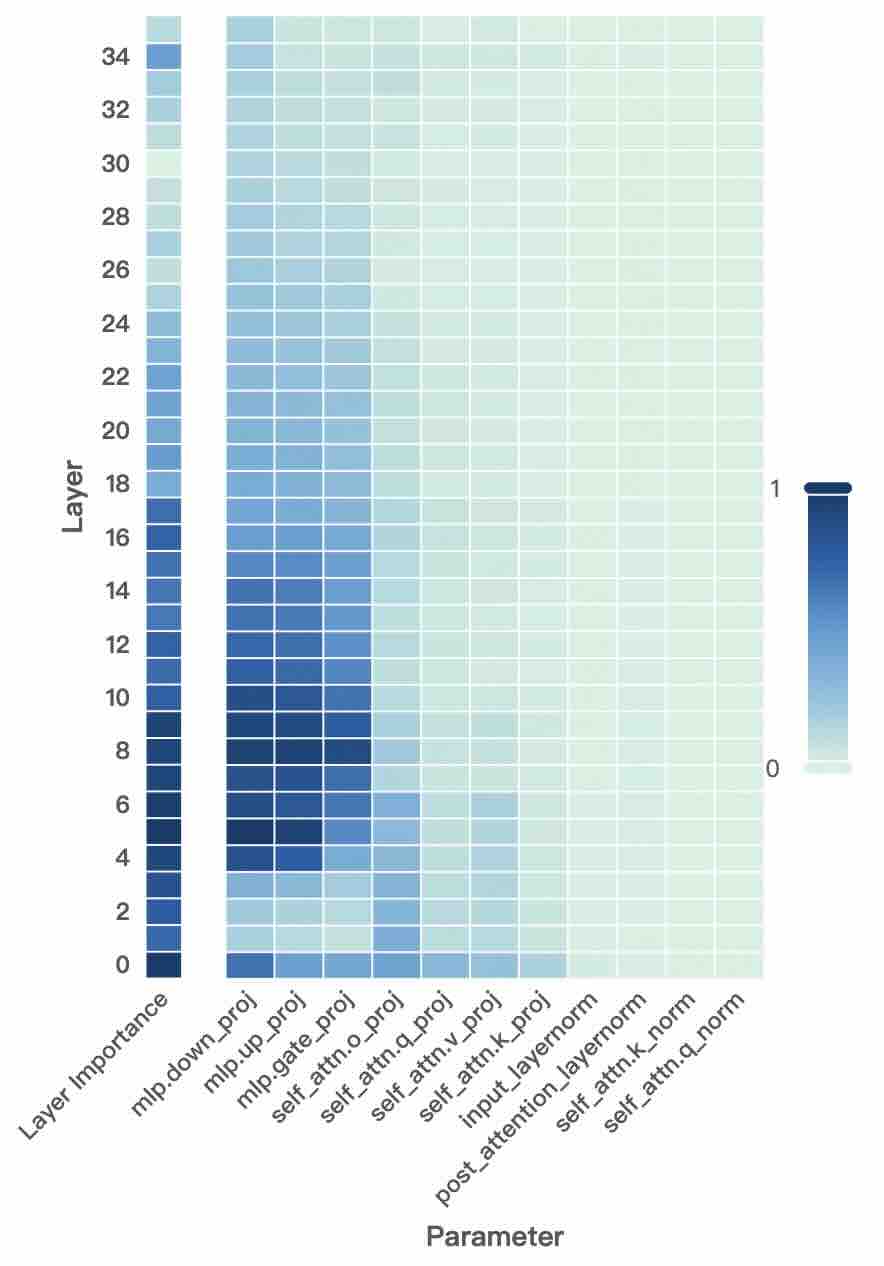

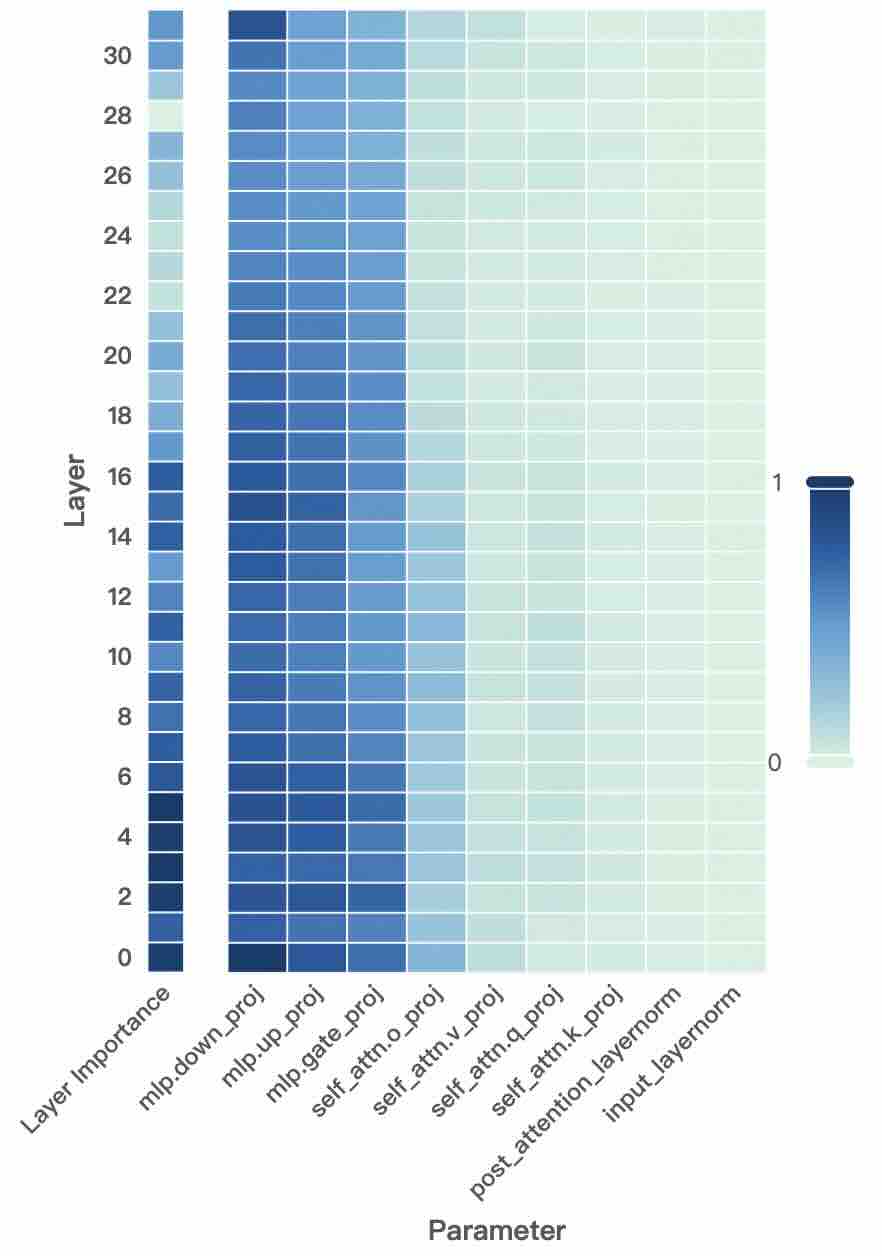

Figure 2: Layer- and unit-level importance distribution of the Qwen3 family. The vertical axis corresponds to different layers, while the horizontal axis denotes parameter units within each layer. Deeper blue indicates higher importance for preserving general-domain competencies.

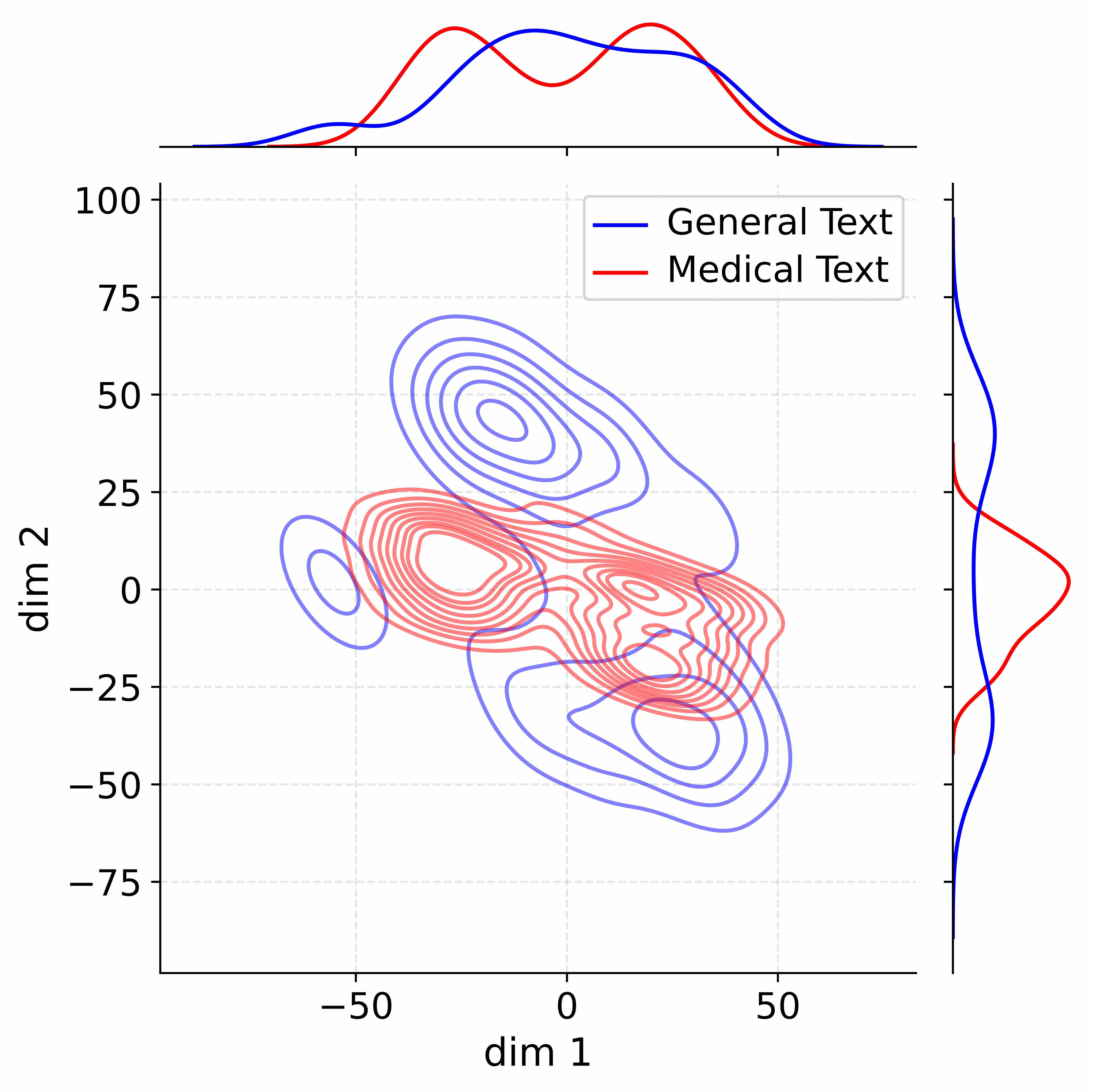

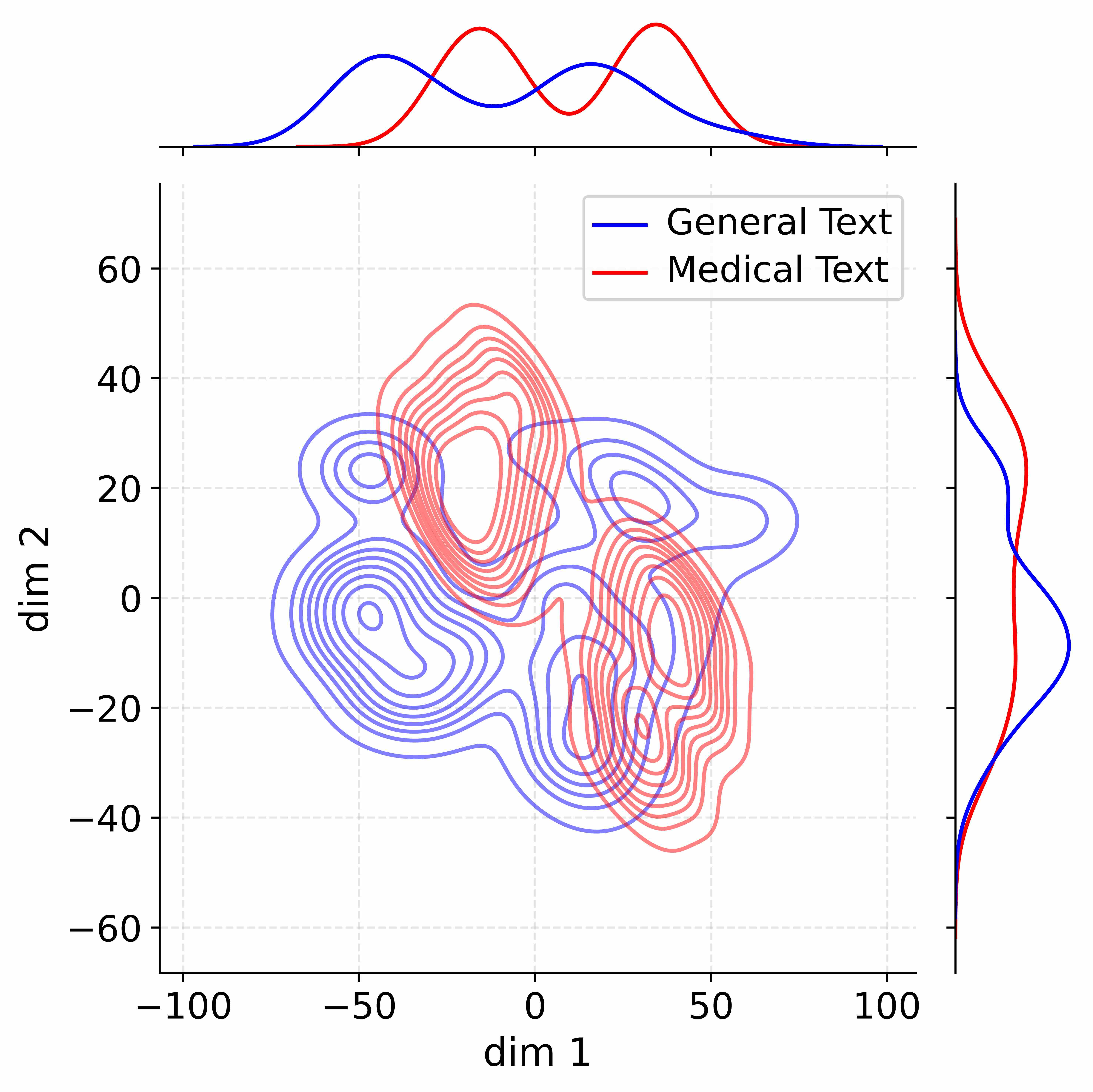

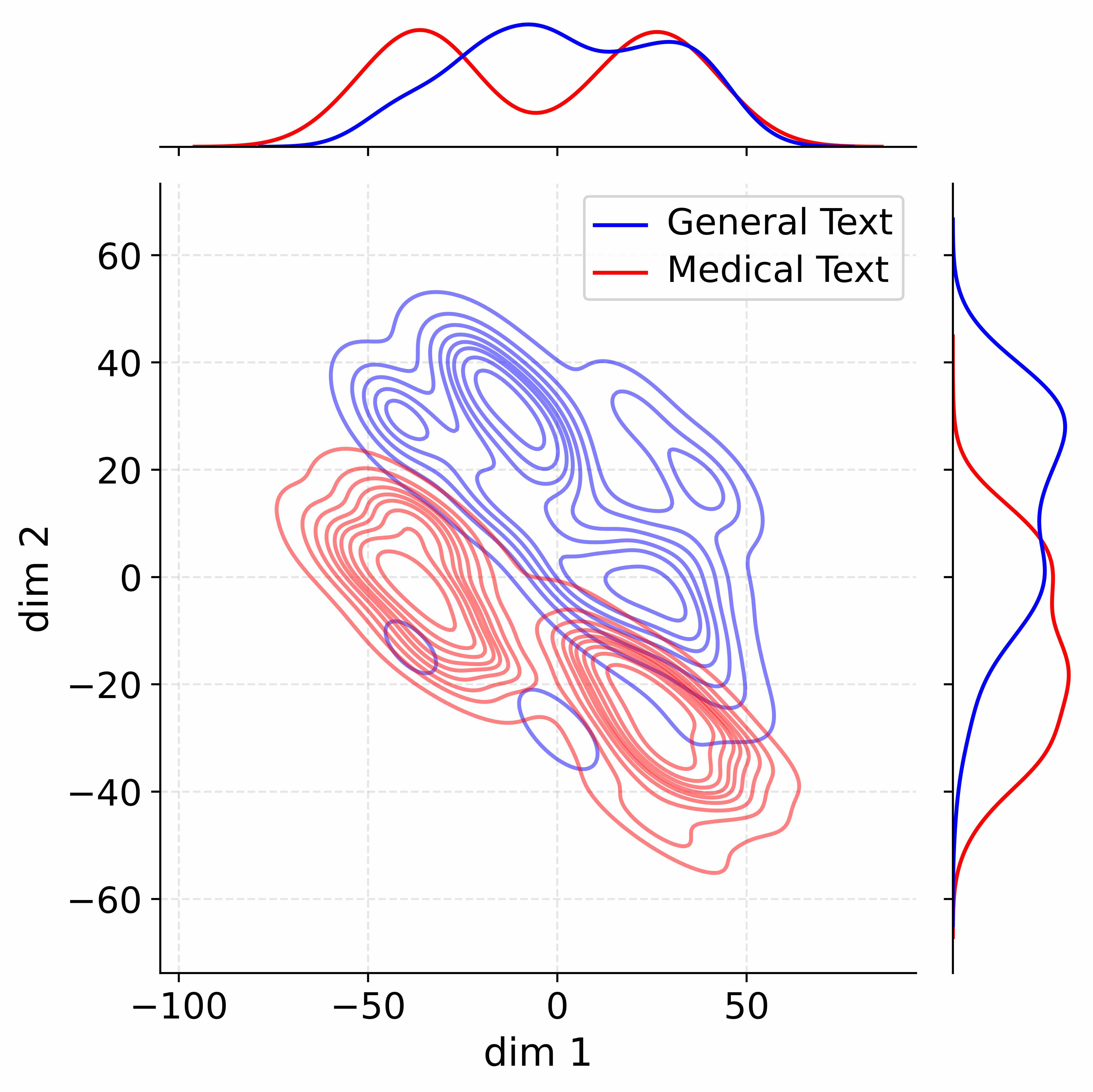

2 Pilot Study: Probing Parameter Importance

2.1 Experimental Setup for Importance Probing

To investigate the functional specialization of LLMs and understand how different parameters contribute to preserving general-domain knowledge during CPT, we conduct importance probing on multiple backbone models, including Qwen3-Base (1.7B, 4B, 8B) (Yang et al., 2025a) and LLaMA3-8B (Dubey et al., 2024b). Our analyses focus on probing general-knowledge-critical parameters rather than domain-specific ones. The rationale is that successful CPT must inject new, domain-specific knowledge without inducing catastrophic forgetting. This necessitates identifying and preserving the model's core parameters that are crucial for its general-domain competencies. Domain knowledge can then be allocated to less critical parameters without risking the erosion of pre-existing knowledge and skills. To support this analysis, we construct a General Competence Detection Corpus containing broad world knowledge and instruction-following tasks in both English and Chinese, which serves as the probing ground to reflect a model's general competencies. Details of its construction are provided in Appendix B.3.

2.2 Layer-Level Importance Probing

Our first research question is: How do different layers contribute to preserving general knowledge? To answer this, we measure the importance of each transformer layer by the model’s degradation in general-domain performance when that layer is ablated. Formally, given the General Competence Detection Corpus $\mathcal{D}_{\text{probe}}$ , we first compute the baseline next-token prediction loss of the pretrained LLM $M_{0}$ :

$$

\mathcal{L}_{\text{base}}=\frac{1}{|\mathcal{D}_{\text{probe}}|}\sum_{x\in\mathcal{D}_{\text{probe}}}\ell\big(M_{0}(x),x\big), \tag{1}

$$

where $\ell(\cdot)$ denotes the standard next-token prediction loss in CPT. For each transformer layer $l\in\{1,\ldots,L\}$, we mask its output via a residual bypass and recompute the loss:

$$

\hat{\mathcal{L}}^{(l)}=\frac{1}{|\mathcal{D}_{\text{probe}}|}\sum_{x\in\mathcal{D}_{\text{probe}}}\ell\big(M_{0}^{(-l)}(x),x\big), \tag{2}

$$

where $M_{0}^{(-l)}$ denotes the model with the $l$ -th layer masked. The importance of layer $l$ is defined as the loss increase relative to the baseline:

$$

I_{\text{layer}}^{(l)}=\hat{\mathcal{L}}^{(l)}-\mathcal{L}_{\text{base}}. \tag{3}

$$

A larger $I_{\text{layer}}^{(l)}$ indicates that layer $l$ plays a more critical role in preserving general knowledge. Figure 2 (left-hand bars) reports the layer-level importance distributions of the Qwen3 family (results for LLaMA3-8B provided in Appendix D). We find that general-knowledge-critical layers are concentrated in the early layers, with importance gradually decreasing toward later layers. This uneven distribution suggests that uniformly expanding layers across the entire depth would be suboptimal. Since some layers are tightly coupled with general knowledge while others are more flexible, uniform expansion not only risks representational interference in critical layers but also allocates parametric budget where it is too constrained to be leveraged for domain learning. In contrast, identifying more adaptable layers with minimal impact on general knowledge and allocating expansion there for knowledge injection is a superior strategy. This leads to our first key observation:

Observation I: Layers exhibit heterogeneous importance for preserving general competencies, which motivates a selective expansion strategy that targets layers less constrained by general abilities yet more adaptable for domain adaptation.
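The probing procedure of Eqs. 1 to 3 can be sketched on a toy residual stack. This is a minimal illustration under stated assumptions, not the authors' implementation: the scalar "layers", the squared-error loss (a stand-in for the next-token prediction loss), and the names `forward`, `mean_loss`, and `layer_importance` are all hypothetical. Masking a layer via a residual bypass is modeled by skipping its branch so that the hidden state passes through unchanged.

```python
from typing import Callable, List, Sequence, Tuple

Layer = Callable[[float], float]   # toy residual branch f_l(h); hypothetical
Example = Tuple[float, float]      # (input, target) pair from the probe set


def forward(layers: Sequence[Layer], x: float, skip: int = -1) -> float:
    """Residual stack h <- h + f_l(h); layer `skip` is bypassed (h <- h)."""
    h = x
    for idx, f in enumerate(layers):
        if idx != skip:
            h = h + f(h)
    return h


def mean_loss(layers: Sequence[Layer], data: Sequence[Example], skip: int = -1) -> float:
    """Average squared error over the probe set (stand-in for next-token loss)."""
    return sum((forward(layers, x, skip) - y) ** 2 for x, y in data) / len(data)


def layer_importance(layers: Sequence[Layer], data: Sequence[Example]) -> List[float]:
    """Loss increase when each layer is masked, relative to the baseline."""
    base = mean_loss(layers, data)                    # Eq. 1
    return [mean_loss(layers, data, skip=l) - base    # Eq. 2 and Eq. 3
            for l in range(len(layers))]


# Toy probe: targets are the unablated model's own outputs, so the base loss is 0.
toy_layers: List[Layer] = [lambda h: 2.0 * h, lambda h: 0.1 * h, lambda h: 0.0 * h]
probe = [(x, forward(toy_layers, x)) for x in (1.0, 2.0)]
scores = layer_importance(toy_layers, probe)
print(scores)  # largest for layer 0, exactly zero for the no-op layer 2
```

In this toy setting the first layer dominates the output, so masking it yields the largest loss increase, while the no-op last layer scores zero and would be the first candidate for expansion.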

2.3 Unit-Level Importance Probing

Building on the layer-level exploration, our next research question is: How do parameter units within each layer contribute to preserving general knowledge? To answer this, we partition each transformer layer into functional units (e.g., attention projections, MLP components, and normalization) and assess their relative contributions to preserving general competencies. The detailed partitioning scheme is provided in Appendix C. This granularity provides a more fine-grained perspective than layer-level probing, while avoiding the prohibitive cost of neuron-level analysis. Formally, for each parameter $\theta_{j}$ in a unit $U$ , we estimate its importance using a first-order Taylor approximation:

$$

I_{j}=\theta_{j}\cdot\nabla_{\theta_{j}}\mathcal{L}, \tag{4}

$$

where $\mathcal{L}$ is the autoregressive training loss. The importance of unit $U$ is then defined as the average importance of its parameters:

$$

I_{\text{unit}}=\frac{1}{|U|}\sum_{j\in U}I_{j}. \tag{5}

$$

A higher $I_{\text{unit}}$ indicates that the unit plays a more critical role in preserving general competencies. Figure 2 (right-hand heatmaps) illustrates the unit-level importance distributions of the Qwen3 family (results for LLaMA3-8B provided in Appendix D). We observe that importance is unevenly distributed across modules within a layer, with some units contributing more to general competencies and others more flexible. This finding suggests that treating all parameter units equally would be suboptimal, as a single update rule cannot simultaneously protect critical units and fully train adaptable ones, risking either damaging previous knowledge or failing to sufficiently learn new knowledge. This motivates us to pursue unit-level decoupling, where training can selectively protect critical units while enabling less general-relevant units to absorb new knowledge without constraint. This leads to our second key observation:

Observation II: Parameter units within each layer exhibit heterogeneous importance, which motivates unit-level decoupling that selectively protects critical units while enabling more adaptable ones to sufficiently absorb domain knowledge.
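As a rough sketch of Eqs. 4 and 5, the snippet below averages the per-parameter products $\theta_{j}\cdot\nabla_{\theta_{j}}\mathcal{L}$ within each unit. The unit names, the flat-list representation of weights, and the function `unit_importance` are illustrative assumptions. Gradients are passed in directly here, whereas in practice they would come from backpropagation on the probing corpus. Eq. 4 is written without an absolute value, while Figure 3 shows a magnitude; the `use_abs` flag is only an illustrative switch between the two readings, not something the text specifies.

```python
from typing import Dict, List


def unit_importance(
    params: Dict[str, List[float]],
    grads: Dict[str, List[float]],
    use_abs: bool = False,
) -> Dict[str, float]:
    """Average first-order Taylor score per unit.

    params / grads map a (hypothetical) unit name to flattened weights and
    to the matching loss gradients.
    """
    scores: Dict[str, float] = {}
    for name, theta in params.items():
        per_param = [t * g for t, g in zip(theta, grads[name])]   # Eq. 4
        if use_abs:
            per_param = [abs(s) for s in per_param]               # magnitude variant
        scores[name] = sum(per_param) / len(per_param)            # Eq. 5
    return scores


# Illustrative values only; not taken from any real model.
toy_params = {"mlp.down_proj": [0.5, -1.0], "self_attn.q_proj": [0.1, 0.2]}
toy_grads = {"mlp.down_proj": [2.0, -1.0], "self_attn.q_proj": [0.5, -0.5]}

signed = unit_importance(toy_params, toy_grads)
magnitude = unit_importance(toy_params, toy_grads, use_abs=True)
print(signed, magnitude)
```

With signed scores, contributions of opposite sign inside a unit can cancel, which is one reason a magnitude-based variant is often preferred for ranking units.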

Summary. Building on the above observations, we propose ADEPT, a continual pretraining framework designed to enable effective domain knowledge injection while preserving general competencies. Inspired by the uneven importance distribution of layers (Observation I), ADEPT selectively expands layers less constrained by general abilities but more receptive to domain adaptation, thereby introducing fresh parameter capacity rather than uniformly expanding layers as in LLaMA-Pro (Wu et al., 2024b). Guided by the heterogeneous importance of parameter units within layers (Observation II), ADEPT further performs unit-level decoupling on the expanded layers, protecting critical units while enabling adaptable ones to specialize in domain knowledge.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: General-Competence Guided Selective Layer Expansion and Adaptive Unit-Wise Decoupled Tuning

### Overview

The image presents a two-stage diagram illustrating a method for selective layer expansion and adaptive unit-wise decoupled tuning in a neural network. Stage 1 focuses on probing layer importance and expanding the layers least important for general competence, while Stage 2 focuses on decoupling and tuning individual units.

### Components/Axes

**Stage 1: General-Competence Guided Selective Layer Expansion**

* **Step 1: General-Competence Aware Layer Importance Probing:**

* **Parameters:** Represented by a brain icon.

* **LLM:** A cartoon avatar wearing a VR headset.

* **General-Competence Detection Corpus:** Represented by a document icon with scribbles.

* **Probing Iteration:** Denoted by 'i'.

* **Layers:** L1, L2, Li, LM, represented as stacked blocks. One layer (Li) is shown as "Deactivated" (greyed out), while another is "Activated".

* **ΔLi:** Change in loss.

* **L:** Loss.

* **Step 2: Selective Expansion via Identity Copy:**

* **General-Competence Importance:** Y-axis of a bar graph.

* **ΔLi:** Change in loss.

* **Select Lowest-K Layers:** Text label.

* **Layer Index:** X-axis of the bar graph.

* **Copy:** Arrows indicating copying of layers.

* **Selective Expansion:** Expanded layers.

* **L(M+k):** Label indicating the copied layer.

**Stage 2: Adaptive Unit-Wise Decoupled Tuning**

* **Step 1: Unit-wise Neuron Decoupling:**

* **General-Competence Detection Corpus:** Represented by a document icon with scribbles.

* **Domain-adaptive Units:** Units highlighted with a star icon.

* **General-critical Units:** Units highlighted with a circle icon.

* **Calculate Unit-wise Importance:** I(wij) = |wij∇wijL|

* Two 3D surface plots, one labeled "Domain-adaptive Units" (red/orange) and the other "General-critical Units" (purple/blue).

* **Step 2: Dynamic Learning Rate Adaptation:**

* **Pretrain Dataset:** Represented by a document icon.

* **Decoupled Tuning:** Text label.

* **Higher Learning Rate:** Indicated by an upward-pointing arrow and a flame icon.

* **Lower Learning Rate:** Indicated by a downward-pointing arrow and a flame icon.

* **Update:** Text label with an arrow.

* Two 3D surface plots, one showing "Higher Learning Rate" (red/orange) and the other "Lower Learning Rate" (purple/blue).

**Legend (Right Side)**

* **Trainable:** Flame icon.

* **Frozen:** Snowflake icon.

* **Identity Copy:** Dashed arrow.

* **Update Flow:** Dotted arrow.

* **Forward Flow:** Solid arrow.

* **Probing:** Magnifying glass icon.

* **Domain Units:** Star icon.

* **General Units:** Circle icon.

* **Original Layer:** Light brown rectangle.

* **Masked Layer:** Grey rectangle.

* **Expanded Layer:** Red rectangle.

* **L:** Next Token Prediction Loss.

### Detailed Analysis

**Stage 1:**

* The process starts with "Parameters" and "General-Competence Detection Corpus" feeding into an LLM.

* The LLM probes the layers (L1 to LM) to determine their importance.

* The probing iteration is denoted by 'i'.

* Layers are either "Deactivated" or "Activated" based on the probing.

* The "Selective Expansion via Identity Copy" step involves selecting the lowest-K layers based on their importance (ΔLi).

* These selected layers are then copied and expanded.

**Stage 2:**

* The "Unit-wise Neuron Decoupling" step involves calculating the importance of individual units within the layers.

* The formula for calculating unit-wise importance is given as I(wij) = |wij∇wijL|.

* The "Dynamic Learning Rate Adaptation" step adjusts the learning rate for each unit based on its importance.

* Units with higher general-domain importance receive a lower learning rate, while units with lower importance receive a higher learning rate.

### Key Observations

* The diagram highlights a two-stage process for optimizing neural networks.

* Stage 1 focuses on identifying and expanding the layers least important for general competence, while Stage 2 focuses on decoupling and tuning individual units.

* The use of visual cues, such as icons and arrows, helps to illustrate the flow of information and the different steps involved in the process.

* The 3D surface plots in Stage 2 provide a visual representation of the learning rate adaptation process.

### Interpretation

The diagram illustrates a method for improving the efficiency and effectiveness of continual pretraining. By selectively expanding the layers least important for general competence and adaptively tuning individual units, the network can absorb domain knowledge while retaining general abilities. The method uses "General-Competence" importance to guide both the selection of layers and the tuning of units. Decoupled tuning allows fine-grained control over the learning process, with general-critical units updated conservatively and domain-adaptive units updated more aggressively. The diagram thus combines layer-level and unit-level techniques.

</details>

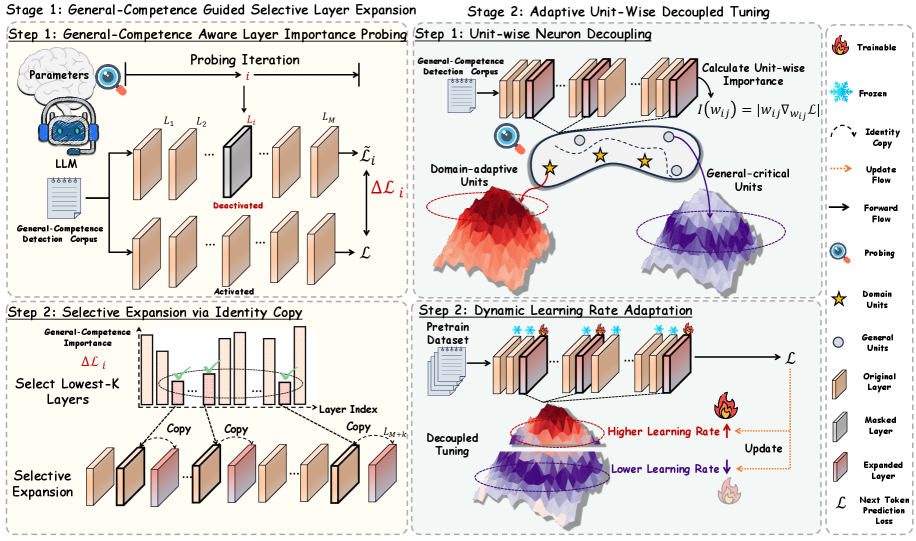

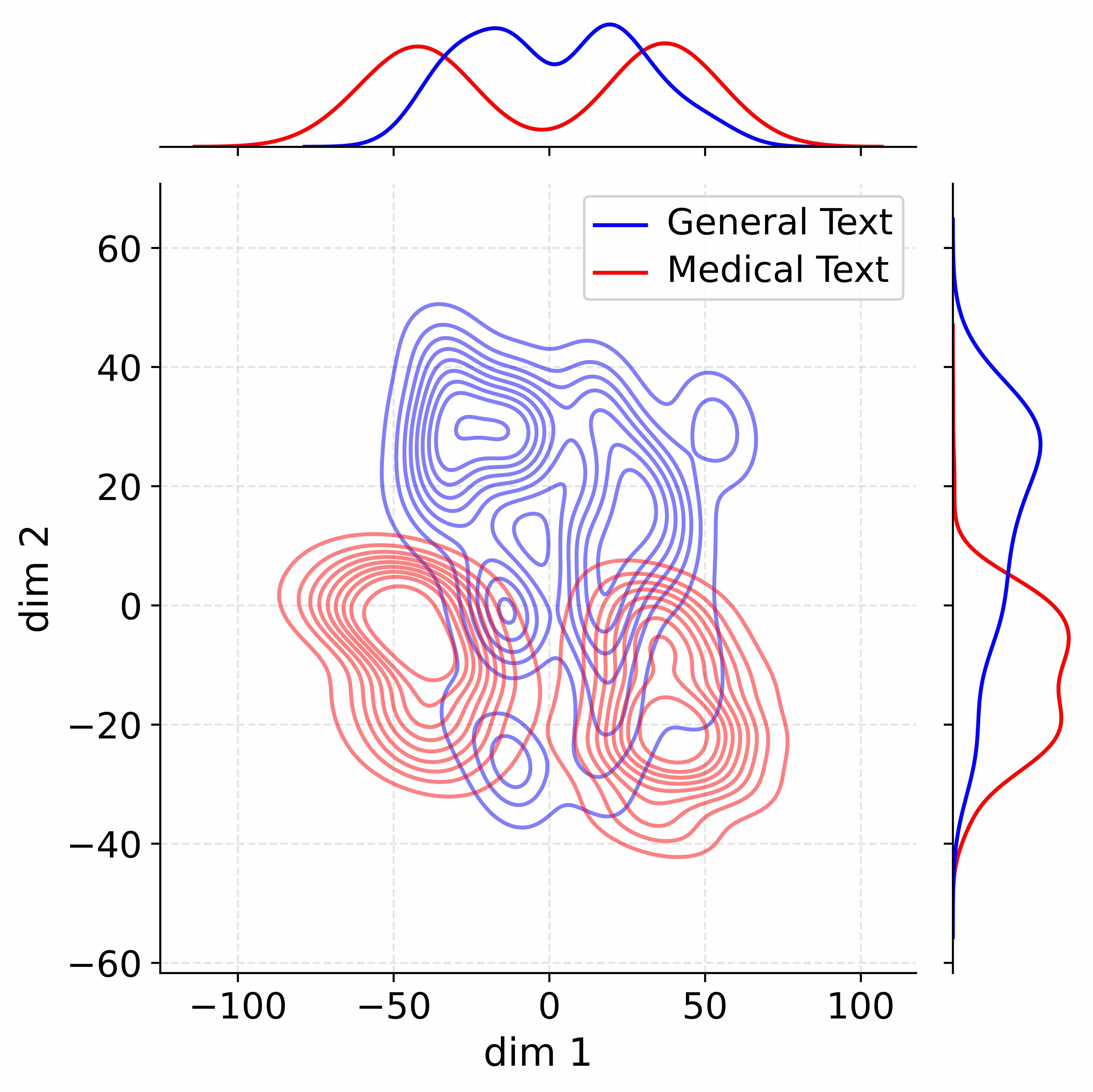

Figure 3: Illustration of ADEPT.

3 Methodology

As illustrated in Figure 3, ADEPT includes two stages:

- Stage 1: General-Competence Guided Selective Layer Expansion adaptively selects and duplicates layers that minimally affect general competencies while being more adaptable to domain-specific knowledge, thereby introducing fresh representational capacity for domain adaptation.

- Stage 2: Adaptive Unit-Wise Decoupled Tuning further decouples units within the expanded layers and applies learning-rate-driven adaptive tuning according to their importance to the general domain, ensuring knowledge injection while preserving general competencies.

3.1 General-Competence Guided Selective Layer Expansion

This stage aims to selectively expand model parameters in a way that introduces fresh representational capacity for domain adaptation while preserving general-domain competencies. To this end, we first estimate the contribution of each transformer layer to preserving general knowledge through General-Competence Aware Layer Importance Probing, and then perform Selective Parameter Expansion via Identity Copy to duplicate layers that are least critical for general abilities yet more adaptable to domain-specific knowledge.

General-Competence Aware Layer Importance Probing. To guide selective expansion, we leverage the layer importance scores $I_{\text{layer}}^{(l)}$ defined in Eq. 3. Intuitively, $I_{\text{layer}}^{(l)}$ quantifies how much the $l$-th layer contributes to preserving general-domain knowledge. Layers with lower scores are deemed less critical for general competencies and are thus selected for expansion, as they can accommodate domain-specific adaptation with minimal risk of catastrophic forgetting.

Selective Parameter Expansion via Identity Copy. Based on the importance scores $I_{\text{layer}}^{(l)}$ , we sort layers by ascending importance and select the $k$ least-important ones for general competence:

$$

\mathcal{S}_{k}=\operatorname*{arg\,min}_{\begin{subarray}{c}\mathcal{S}\subseteq\{1,\ldots,L\}\\ |\mathcal{S}|=k\end{subarray}}\sum_{l\in\mathcal{S}}I_{\text{layer}}^{(l)}. \tag{6}

$$

We denote the selected set $\mathcal{S}_{k}$ as the Domain-Adaptable Layers. For each selected layer $l\in\mathcal{S}_{k}$, we create a parallel copy by directly duplicating its parameters without re-initialization ($\tilde{\Theta}^{(l)}=\Theta^{(l)}$). To preserve stability, we follow the Function Preserving Initialization (FPI) principle (Chen et al., 2015), ensuring that the expanded model $M_{1}$ produces the same outputs as the original model $M_{0}$ at initialization. Concretely, in the duplicated branch, we set the output projections of both the attention and feed-forward sublayers to zero ($W_{\text{MHSA}}^{\text{out}}=0,\,W_{\text{FFN}}^{\text{out}}=0$), so the forward computation remains unchanged ($M_{1}(x)=M_{0}(x),\,\forall x$). The duplicated layers thus provide fresh representational capacity that can specialize for domain signals with minimal risk of eroding general-knowledge-critical parameters in the original pathway. As formally established in Appendix F.1, expanding the layers with the lowest general-competence importance provably minimizes the risk of forgetting. Intuitively, this strategy ensures that new capacity is added where interference with general abilities is weakest, yielding the most favorable trade-off between domain adaptation and knowledge retention.
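The selection rule of Eq. 6 and the function-preserving copy can be illustrated with a toy residual model. Everything below is a sketch under assumptions: each block is reduced to two scalars with branch $f(h)=w_{\text{out}}\tanh(w_{\text{in}}h)$, the names `select_lowest_k`, `expand`, and `run` are hypothetical, and the copy is placed directly after its source layer in the residual stack, which is one possible reading of the "parallel copy" described above. Zeroing the copy's output weight makes its residual branch contribute nothing at initialization, so the expanded model reproduces the original outputs.

```python
import copy
import math
from typing import Dict, List

Block = Dict[str, float]  # toy block with branch f(h) = w_out * tanh(w_in * h)


def select_lowest_k(importance: List[float], k: int) -> List[int]:
    """Eq. 6: indices of the k layers with the smallest importance scores."""
    order = sorted(range(len(importance)), key=lambda l: importance[l])
    return sorted(order[:k])


def expand(blocks: List[Block], selected: List[int]) -> List[Block]:
    """Insert a duplicate after each selected block, with zeroed output weight."""
    chosen = set(selected)
    expanded: List[Block] = []
    for l, blk in enumerate(blocks):
        expanded.append(blk)
        if l in chosen:
            dup = copy.deepcopy(blk)   # parameters copied, not re-initialized
            dup["w_out"] = 0.0         # zero output projection: branch outputs 0
            expanded.append(dup)
    return expanded


def run(blocks: List[Block], x: float) -> float:
    """Residual forward pass h <- h + w_out * tanh(w_in * h)."""
    h = x
    for b in blocks:
        h = h + b["w_out"] * math.tanh(b["w_in"] * h)
    return h


# Illustrative scores and weights only.
toy_importance = [12.1, 0.225, 0.0, 3.0]
toy_blocks: List[Block] = [
    {"w_in": 0.5, "w_out": 1.0},
    {"w_in": 1.5, "w_out": -0.3},
    {"w_in": 0.2, "w_out": 0.7},
    {"w_in": 1.0, "w_out": 0.1},
]
selected = select_lowest_k(toy_importance, k=2)
expanded_blocks = expand(toy_blocks, selected)
print(selected, len(expanded_blocks))
```

Because the per-layer scores enter Eq. 6 additively, sorting and taking the first $k$ indices solves the subset minimization exactly. Only the duplicated blocks would then be trained, while the original blocks stay frozen.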

3.2 Adaptive Unit-Wise Decoupled Tuning

This stage aims to further reduce catastrophic forgetting and enable fine-grained control over parameters within the expanded layers. To achieve this, we first decouple each expanded layer into semantic units and evaluate their importance using gradient-based estimation (Unit-wise Neuron Decoupling), and then dynamically adjust learning rates for different units according to their importance scores during training (Dynamic Learning Rate Adaptation).

Unit-wise Neuron Decoupling. Guided by the heterogeneous importance of parameter units within layers, we perform unit-level decoupling on the expanded layers. Following the probing analysis in Section 2.3, we quantify unit importance $I_{\text{unit}}$ using gradient sensitivity signals (cf. Eq. 5), which aggregate the first-order contributions of parameters $\theta_{j}$ to the training loss $\mathcal{L}$ via $\nabla_{\theta_{j}}\mathcal{L}$. A higher $I_{\text{unit}}$ indicates a greater contribution to general competencies and thus warrants more conservative updates, whereas less important units are encouraged to adapt more aggressively to domain-specific signals.

Dynamic Learning Rate Adaptation. Based on the unit importance $I_{\text{unit}}$ in Eq. 5, we assign adaptive learning rates to different units within the expanded layers:

$$
\text{lr}_{U}=2\cdot(1-I_{\text{unit}})\cdot\text{lr}_{\text{base}}, \tag{7}
$$

where $\text{lr}_{\text{base}}$ is the base learning rate, and the coefficient $2$ normalizes the global scale to keep the effective average approximately unchanged. Units more important for general knowledge (higher $I_{\text{unit}}$ ) receive smaller learning rates to reduce overwriting, while less important units are encouraged to adapt more aggressively to domain-specific data. Training proceeds with the standard autoregressive objective: $\mathcal{L}=-\sum_{t=1}^{T}\log P(x_{t}\mid x_{<t};\Theta)$ . Since the importance of units may change as training progresses, we periodically recompute $I_{\text{unit}}$ and update learning rates accordingly, ensuring dynamic adaptation throughout learning. The full training procedure is provided in Appendix L. Appendix F.2 further shows that allocating learning rates inversely to unit importance minimizes an upper bound on general-domain forgetting. In essence, this design formalizes the intuition that highly general-critical units should be preserved via conservative updates, while less critical yet more adaptable ones can update more aggressively to absorb domain-specific information.
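As a worked illustration of Eq. 7, the sketch below maps unit importance scores to learning rates and re-derives them at a fixed interval. It assumes that $I_{\text{unit}}$ is already normalized to $[0, 1]$ (Eq. 5 is not reproduced here), and the unit names, the scores, the refresh interval, and the `estimate_importance` callback are all hypothetical. Note that the mean learning rate equals $\text{lr}_{\text{base}}$ exactly only when the mean importance is 0.5, which is how the factor 2 keeps the average scale roughly unchanged.

```python
from typing import Callable, Dict, List


def unit_learning_rates(importance: Dict[str, float], lr_base: float) -> Dict[str, float]:
    """Eq. 7: lr_U = 2 * (1 - I_unit) * lr_base, with I_unit assumed in [0, 1]."""
    rates: Dict[str, float] = {}
    for unit, score in importance.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"importance of {unit!r} must lie in [0, 1], got {score}")
        rates[unit] = 2.0 * (1.0 - score) * lr_base
    return rates


def scheduled_rates(
    num_steps: int,
    refresh_every: int,
    estimate_importance: Callable[[int], Dict[str, float]],
    lr_base: float,
) -> List[Dict[str, float]]:
    """Recompute importance every `refresh_every` steps and reuse it in between."""
    history: List[Dict[str, float]] = []
    rates: Dict[str, float] = {}
    for step in range(num_steps):
        if step % refresh_every == 0:
            rates = unit_learning_rates(estimate_importance(step), lr_base)
        history.append(dict(rates))
    return history


# Hypothetical unit names and scores, for illustration only.
importance = {"attn.q_proj": 0.9, "attn.k_proj": 0.5, "ffn.up_proj": 0.1}
rates = unit_learning_rates(importance, lr_base=1e-5)
mean_rate = sum(rates.values()) / len(rates)
print(rates)      # the most general-critical unit gets the smallest rate
print(mean_rate)  # close to lr_base because the mean importance here is 0.5
```

In a real training loop the resulting per-unit rates would typically be passed to the optimizer as separate parameter groups; that wiring is omitted here.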

Table 1: Performance comparison across Mathematical and Medical domains. Bold numbers indicate the best performance, and underlined numbers denote the second best.

| Method | Mathematics: MMLU (General) | Mathematics: CMMLU (General) | Mathematics: GSM8K (Domain) | Mathematics: ARC-Easy (Domain) | Mathematics: ARC-Challenge (Domain) | Medical: MMLU (General) | Medical: CMMLU (General) | Medical: MedQA (Domain) | Medical: MMCU-Medical (Domain) | Medical: CMB (Domain) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Qwen3-1.7B-Base | | | | | | | | | | |
| Vanilla | 62.57 | 66.86 | 57.62 | 81.44 | 51.19 | 62.57 | 66.86 | 48.39 | 69.17 | 63.67 |
| PT-Full | 60.07 | 62.84 | 51.86 | 81.24 | 49.65 | 59.44 | 62.84 | 48.45 | 67.45 | 62.77 |
| Replay | 60.69 | 63.52 | 54.74 | 81.01 | 49.73 | 60.52 | 63.85 | 49.00 | 67.32 | 62.20 |
| Llama-Pro | 61.54 | 63.40 | 60.03 | 81.08 | 49.80 | 59.80 | 65.51 | 50.43 | 66.51 | 63.54 |
| PT-LoRA | 60.07 | 62.69 | 59.50 | 80.22 | 49.34 | 57.31 | 59.68 | 47.29 | 61.55 | 57.60 |
| TaSL | 60.34 | 62.95 | 59.07 | 79.76 | 48.89 | 62.48 | 66.14 | 47.06 | 67.62 | 61.15 |
| ADEPT | 62.62 | 67.06 | 70.51 | 82.48 | 52.62 | 62.80 | 66.89 | 50.75 | 71.98 | 65.43 |
| Qwen3-4B-Base | | | | | | | | | | |
| Vanilla | 73.19 | 77.92 | 69.07 | 85.52 | 59.13 | 73.19 | 77.92 | 62.77 | 82.44 | 78.92 |
| PT-Full | 70.33 | 73.07 | 60.96 | 85.31 | 57.59 | 69.48 | 72.77 | 62.84 | 81.34 | 76.88 |
| Replay | 70.46 | 73.72 | 63.91 | 85.06 | 57.68 | 70.74 | 73.81 | 63.55 | 80.60 | 76.74 |
| Llama-Pro | 72.42 | 77.39 | 73.16 | 85.14 | 57.76 | 72.28 | 77.28 | 62.53 | 81.20 | 78.12 |
| PT-LoRA | 70.20 | 72.90 | 71.34 | 84.18 | 57.25 | 72.73 | 76.78 | 61.59 | 80.49 | 76.92 |
| TaSL | 70.50 | 73.20 | 70.84 | 83.68 | 56.75 | 73.03 | 77.08 | 60.99 | 79.20 | 77.08 |
| ADEPT | 73.21 | 78.30 | 76.19 | 88.44 | 60.98 | 72.95 | 78.77 | 64.49 | 84.58 | 79.87 |
| Qwen3-8B-Base | | | | | | | | | | |
| Vanilla | 76.94 | 82.09 | 69.98 | 87.12 | 64.25 | 76.94 | 82.09 | 66.30 | 86.45 | 81.67 |
| PT-Full | 74.90 | 78.49 | 80.21 | 85.90 | 61.77 | 74.06 | 78.82 | 67.24 | 87.69 | 85.27 |
| Replay | 75.19 | 78.92 | 81.12 | 85.98 | 62.37 | 74.51 | 78.86 | 68.89 | 86.66 | 84.73 |
| Llama-Pro | 76.16 | 81.42 | 80.97 | 86.62 | 63.91 | 76.58 | 81.69 | 66.77 | 87.19 | 83.76 |
| PT-LoRA | 75.66 | 80.81 | 82.87 | 86.36 | 62.46 | 76.60 | 81.57 | 67.01 | 86.70 | 83.04 |
| TaSL | 76.63 | 80.37 | 80.54 | 84.81 | 59.09 | 76.42 | 81.86 | 66.51 | 86.20 | 82.54 |
| ADEPT | 76.80 | 82.11 | 83.87 | 89.29 | 64.51 | 76.77 | 82.11 | 69.24 | 89.84 | 85.80 |
| Llama3-8B-Base | | | | | | | | | | |
| Vanilla | 65.33 | 50.83 | 36.84 | 84.18 | 54.01 | 65.33 | 50.83 | 58.91 | 46.29 | 35.61 |
| PT-Full | 61.62 | 46.21 | 49.73 | 84.01 | 53.52 | 59.15 | 51.39 | 59.23 | 66.58 | 61.65 |
| Replay | 62.00 | 53.31 | 49.51 | 82.49 | 54.18 | 59.98 | 54.52 | 59.07 | 65.84 | 61.71 |
| Llama-Pro | 64.53 | 50.26 | 48.29 | 83.29 | 53.07 | 64.19 | 50.59 | 59.94 | 53.96 | 47.05 |
| PT-LoRA | 64.86 | 49.82 | 48.82 | 83.80 | 54.01 | 64.34 | 50.13 | 58.84 | 56.05 | 48.22 |
| TaSL | 65.16 | 50.11 | 35.43 | 83.29 | 53.51 | 64.64 | 50.43 | 55.55 | 58.34 | 47.69 |
| ADEPT | 65.35 | 51.90 | 50.57 | 84.96 | 55.52 | 65.17 | 51.92 | 61.17 | 67.03 | 61.78 |

4 Experiments

4.1 Experimental Setup

Datasets. We evaluate ADEPT across two domains, Mathematics and Medicine. For the mathematical domain, we use OpenWebMath (Paster et al., 2023), together with AceReason-Math (Chen et al., 2025), concatenated into the continual pretraining corpora. For the medical domain, we adopt the multilingual MMedC corpus (Qiu et al., 2024), together with IndustryIns and MMedBench, forming the medical pretraining corpora. Dataset statistics are provided in Appendix B.1 and Appendix B.2. In addition, for detecting general-knowledge-critical regions, we construct a General Competence Detection Corpus, following the same setting as in Section 2 and described in Appendix B.3.

Baselines. We compare ADEPT with a broad range of baselines from four perspectives:

- Full-parameter tuning. PT-Full directly updates all model parameters on the target corpora.

- Replay-based tuning. Replay mitigates catastrophic forgetting by mixing general-domain data into the training process (Que et al., 2024).

- Architecture expansion. LLaMA-Pro (Wu et al., 2024b) expands the model by uniformly inserting new layers into each transformer block while freezing the original weights. Only the newly introduced parameters are trained, enabling structural growth while preserving prior knowledge.

- Parameter-efficient tuning. PT-LoRA performs CPT using Low-Rank Adaptation (Hu et al., 2022), updating only a small set of task-adaptive parameters. TaSL (Feng et al., 2024a) extends PT-LoRA to a multi-task regime by decoupling LoRA matrices across transformer layers, allowing different subsets of parameters to specialize for different tasks.

See Appendix B.6 for implementation details of all baselines.

Backbone Models. To assess the generality of our method, we instantiate ADEPT on multiple backbone models, including Qwen3-Base (1.7B, 4B, 8B) (Yang et al., 2025a) and LLaMA3.1-8B-Base (Dubey et al., 2024b), covering a wide range of parameter scales and architectural variants.

Evaluation Metrics and Strategy. We adopt multiple-choice question answering accuracy as the primary evaluation metric across all tasks (see Appendix B.9 for further details). For the Mathematics domain, we evaluate on GSM8K (Cobbe et al., 2021), ARC-Easy (Clark et al., 2018), and ARC-Challenge (Clark et al., 2018), which collectively span a wide range of reasoning difficulties. For the Medical domain, we use MedQA (Jin et al., 2021), MMCU-Medical (Zeng, 2023), and CMB (Wang et al., 2023b), covering diverse medical subjects and varying levels of complexity. Among them, MedQA is an English benchmark, while MMCU-Medical and CMB are in Chinese. To assess the model’s ability to retain general-domain knowledge during continual pretraining, we additionally evaluate on MMLU (Hendrycks et al., 2020) and CMMLU (Li et al., 2023), two broad-coverage benchmarks for general knowledge and reasoning in English and Chinese, respectively.

4.2 Experimental Results

Performance Comparison. As shown in Table 1, ADEPT achieves the best result on every domain benchmark across all four backbones and matches or exceeds the CPT baselines on most general benchmarks, confirming its effectiveness in domain-specific knowledge acquisition while substantially alleviating catastrophic forgetting. On the domain side, the gains are large. For instance, on Qwen3-1.7B-Base, ADEPT raises GSM8K accuracy from 57.62% to 70.51%, highlighting its advantage in enhancing LLMs’ complex reasoning. Similarly, on LLaMA3-8B-Base, it raises CMB accuracy from 35.61% to 61.78%, underscoring the strong enhancement of medical-domain capabilities. On average, ADEPT achieves up to 5.58% gains over full-parameter CPT on target-domain benchmarks. ADEPT also demonstrates clear advantages in mitigating catastrophic forgetting. Whereas most baselines suffer noticeable degradation on general benchmarks such as MMLU and CMMLU, ADEPT preserves the pretrained LLMs’ general-domain competencies, and in some cases even surpasses the vanilla backbone. Notably, with Qwen3-4B under medical CPT, ADEPT improves CMMLU accuracy from 77.92% to 78.77%. It also yields an average performance increase of up to 5.76% on general benchmarks over full-parameter CPT. We attribute this to the disentanglement of domain-specific and general parameters, which prevents harmful representational interference during adaptation, so that learning specialized knowledge does not corrupt the model’s foundational abilities. Instead, this focused learning process appears to refine the model’s overall competencies, leading to synergistic improvements on general-domain tasks. In summary, ADEPT offers a robust solution for CPT, achieving superior domain adaptation while effectively preserving general knowledge.

Table 2: Ablation study on ADEPT in Medical domain. Bold numbers indicate the best performance, and underlined numbers denote the second best.

| Method | Qwen3-1.7B-Base: MMLU | Qwen3-1.7B-Base: CMMLU | Qwen3-1.7B-Base: MedQA | Qwen3-1.7B-Base: MMCU-Medical | Qwen3-1.7B-Base: CMB | Llama3-8B-Base: MMLU | Llama3-8B-Base: CMMLU | Llama3-8B-Base: MedQA | Llama3-8B-Base: CMB | Llama3-8B-Base: MMCU-Medical |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| ADEPT | 62.80 | 66.89 | 50.75 | 70.98 | 65.43 | 65.17 | 51.92 | 61.17 | 61.78 | 67.03 |
| w/o Stage-1 | 57.31 | 59.68 | 47.29 | 61.55 | 57.60 | 57.88 | 50.76 | 58.32 | 53.32 | 60.32 |
| w/o Stage-2 | 61.56 | 64.33 | 49.23 | 66.19 | 64.36 | 64.34 | 50.74 | 59.60 | 50.68 | 57.36 |
| Uniform Expansion | 59.80 | 65.51 | 50.43 | 66.51 | 63.54 | 64.19 | 50.59 | 59.94 | 47.05 | 53.96 |

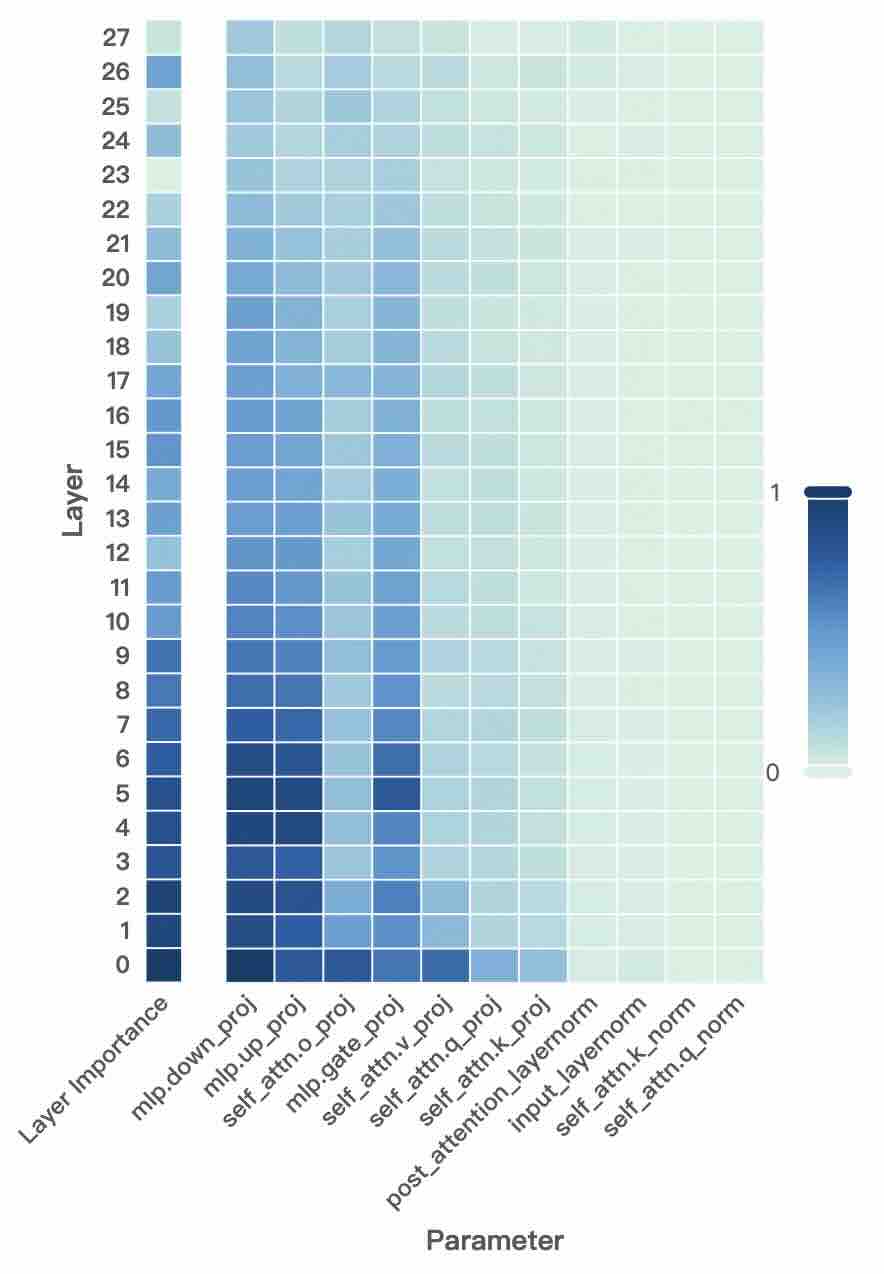

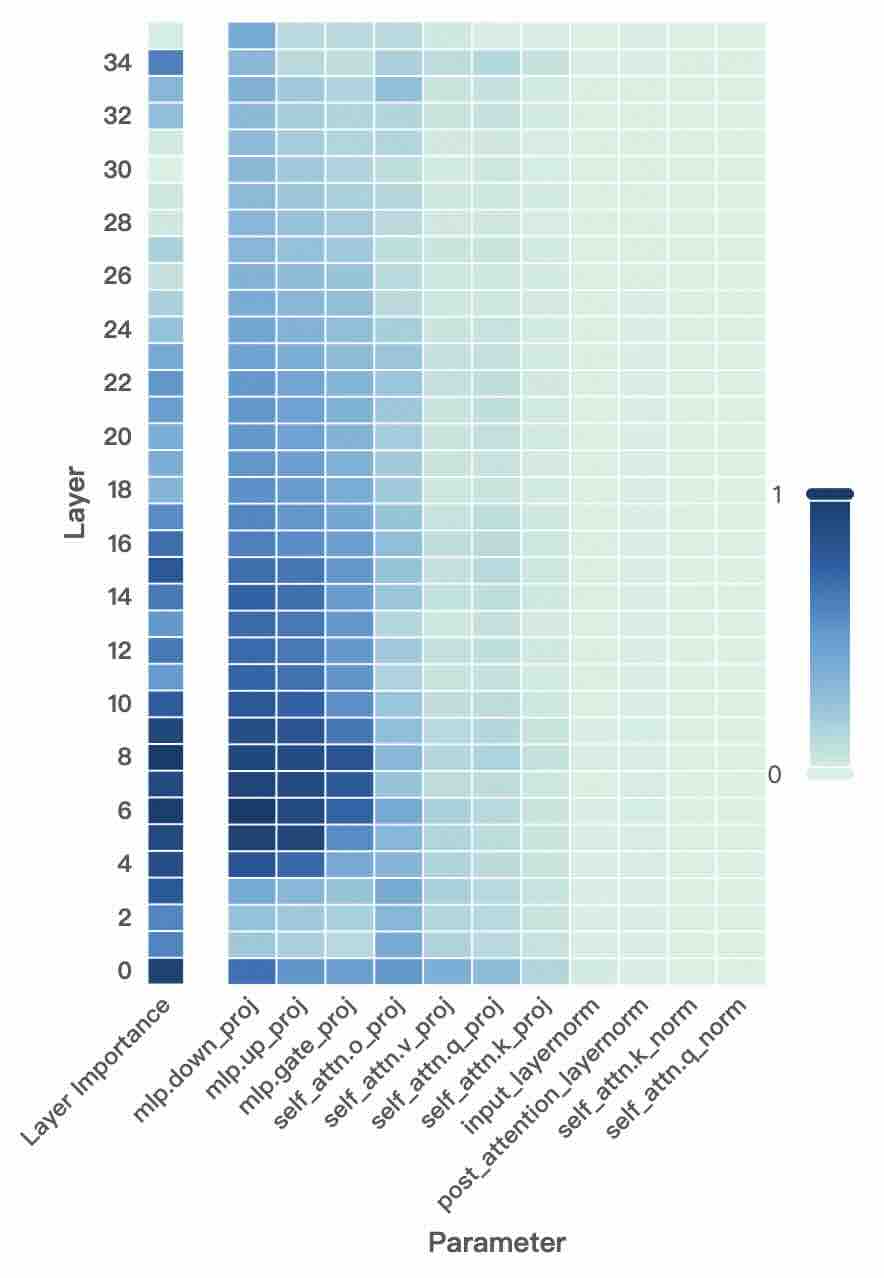

Ablation Study. To investigate the effectiveness of each component in ADEPT, we conduct ablation experiments in the medical domain using two representative backbones, Qwen3-1.7B and Llama3-8B. In w/o Stage-1, we remove the General-Competence Guided Selective Layer Expansion and directly apply Adaptive Unit-Wise Decoupled Tuning on the $k$ Domain-Adaptable Layers without introducing any new parameters. In w/o Stage-2, we discard the dynamic decoupled tuning stage and instead directly fine-tune the expanded layers from Stage-1. In Uniform Expansion, we replace importance-guided expansion with uniformly inserted layers followed by fine-tuning, which is equivalent to the strategy adopted in LLaMA-Pro. As shown in Table 2, removing either Stage-1 or Stage-2 leads to clear degradation in both general and domain-specific performance, confirming that both adaptive expansion and decoupled tuning are indispensable. In particular, eliminating Stage-1 results in the largest performance drop, suggesting that adaptive capacity allocation is crucial for enabling effective domain adaptation without sacrificing general-domain competencies. Meanwhile, replacing importance-guided expansion with uniform expansion yields inferior results, underscoring the advantage of expanding only the most domain-adaptable layers.

<details>

<summary>x4.png Details</summary>

Three density contour panels titled "Vanilla", "W/o stage1", and "ADEPT". Each panel plots "General Text" (blue) and "Medical Text" (red) embeddings over the axes dim 1 and dim 2, with marginal density curves above (dim 1) and to the right (dim 2).

</details>

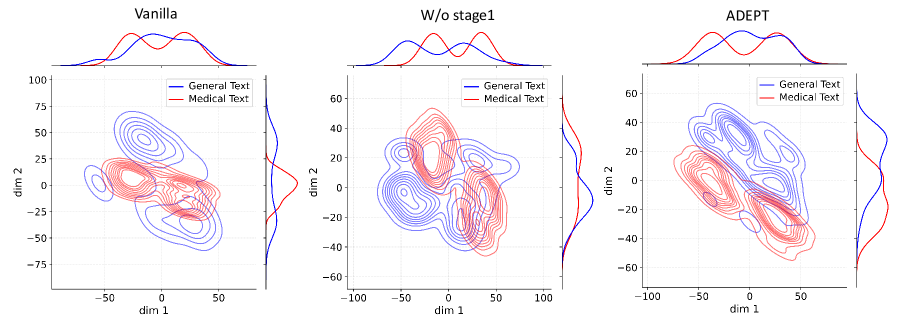

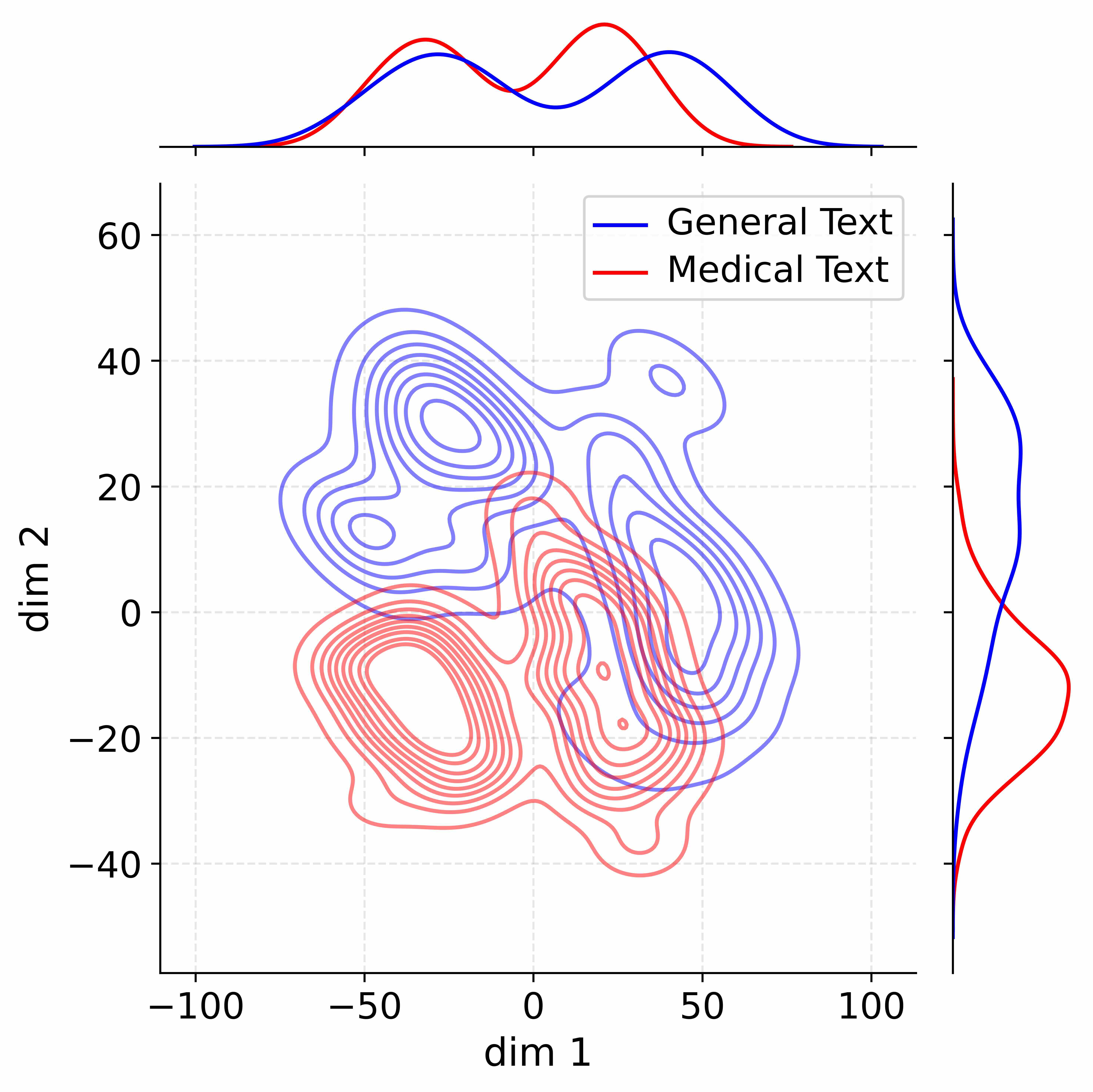

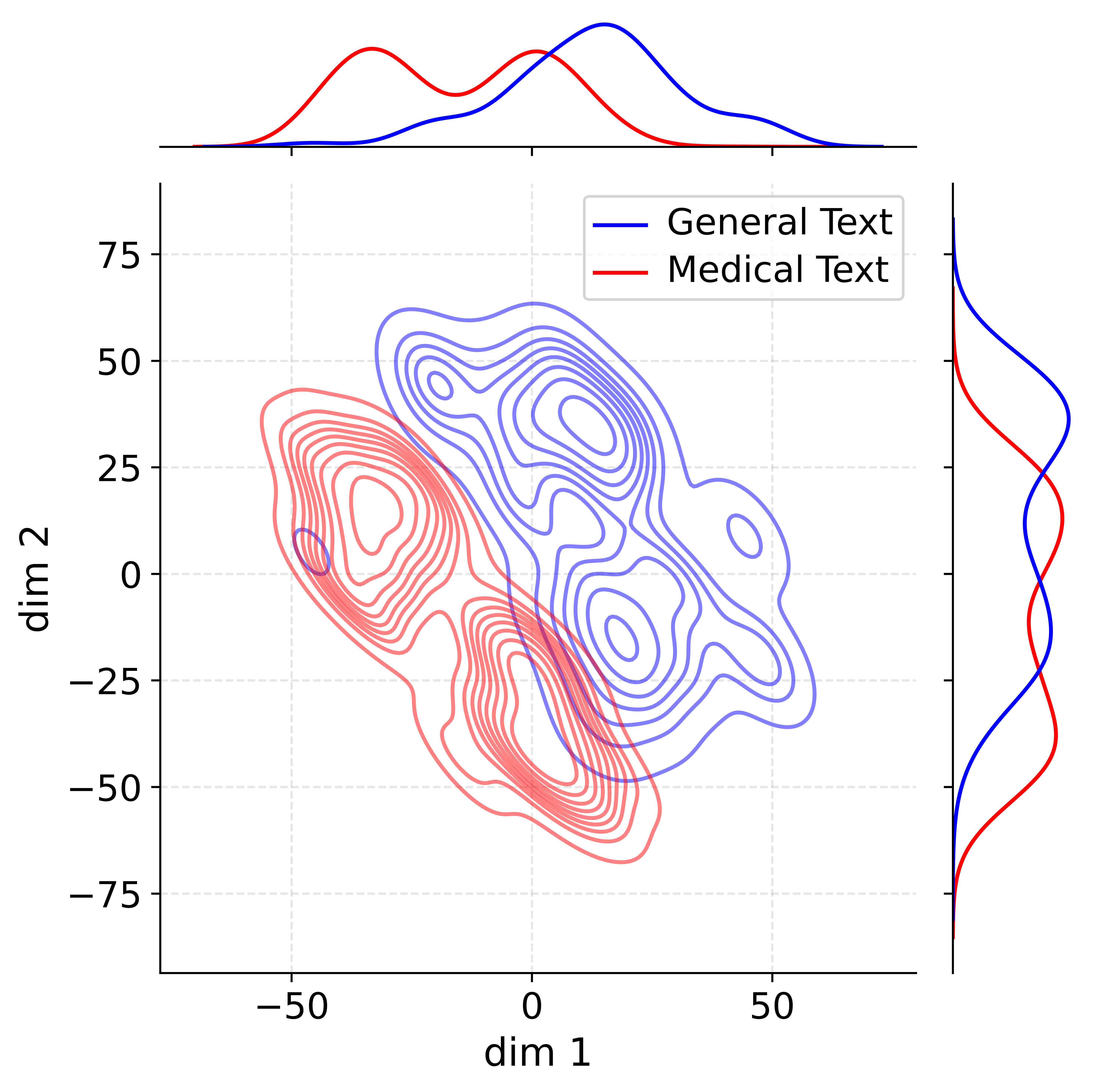

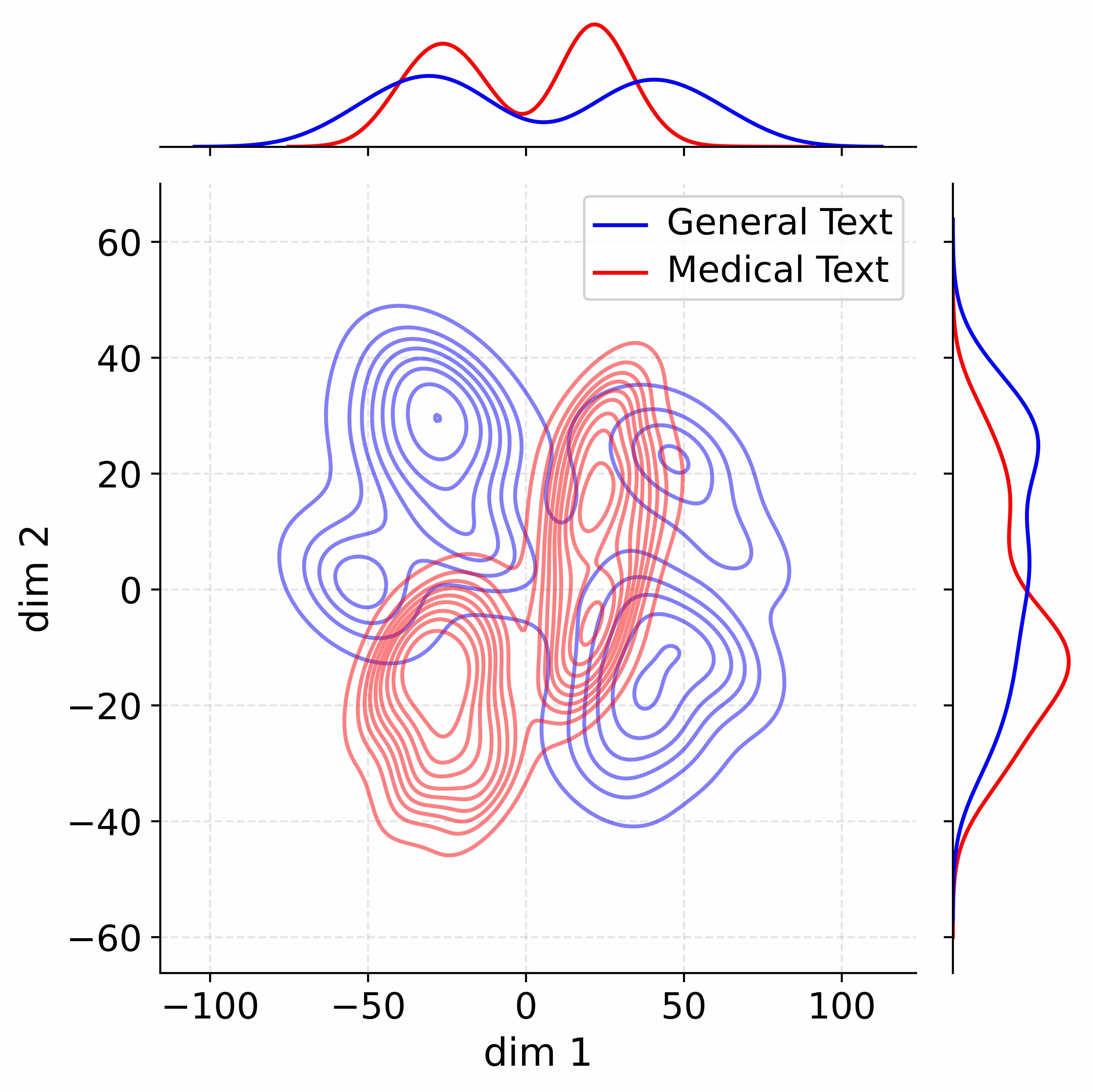

Figure 4: Activation distribution analysis of Qwen3-8B.

Decoupling Effectiveness on Expanded Parameters. We visualize cross-domain activations using Kernel Density Estimation (KDE) (Silverman, 2018), sampling 500 instances from both Medical and General corpora. For the original Qwen3-8B-Base (left in Figure 4), the most domain-adaptable layer (lowest $I_{\text{layer}}$ ) still shows heavy overlap between general and medical activations, evidencing strong parameter coupling. Direct decoupling without expansion (w/o Stage-1, middle) on the same layer fails to reduce this entanglement, confirming that pretrained parameters are inherently difficult to separate. In contrast, after expansion (right), the duplicated layers serve as a “blank slate,” yielding clearly separated activations across domains. Additional analyses on more backbones are provided in Appendix C.1, where we observe that this trend consistently holds across nearly all evaluated LLMs, further validating the generality of our approach.
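The degree of overlap that the KDE plots show visually can also be expressed as a single number. The sketch below is only an illustration, not the procedure used for Figure 4: it assumes activations have already been projected to one dimension, uses a fixed hypothetical bandwidth, and computes the overlap coefficient (the integral of the pointwise minimum of the two estimated densities), which is near 1 for coinciding distributions and near 0 for well-separated ones.

```python
import math
from typing import Callable, Sequence


def gaussian_kde(samples: Sequence[float], bandwidth: float) -> Callable[[float], float]:
    """Return a 1D Gaussian kernel density estimate for `samples`."""
    norm = 1.0 / (len(samples) * bandwidth * math.sqrt(2.0 * math.pi))

    def density(x: float) -> float:
        return norm * sum(math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in samples)

    return density


def overlap_coefficient(
    a: Sequence[float], b: Sequence[float], bandwidth: float, grid_size: int = 400
) -> float:
    """Integrate min(f_a, f_b) with the midpoint rule over a padded range."""
    lo = min(min(a), min(b)) - 4.0 * bandwidth
    hi = max(max(a), max(b)) + 4.0 * bandwidth
    step = (hi - lo) / grid_size
    f_a = gaussian_kde(a, bandwidth)
    f_b = gaussian_kde(b, bandwidth)
    total = 0.0
    for i in range(grid_size):
        x = lo + (i + 0.5) * step
        total += min(f_a(x), f_b(x))
    return total * step


# Made-up 1D projections of activations, for illustration only.
general = [0.0, 0.1, 0.2, -0.1, 0.05]
medical_entangled = [0.05, 0.15, 0.1, -0.05, 0.0]
medical_separated = [10.0, 10.1, 10.2, 9.9, 10.05]

print(overlap_coefficient(general, medical_entangled, bandwidth=0.5))  # close to 1
print(overlap_coefficient(general, medical_separated, bandwidth=0.5))  # close to 0
```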

<details>

<summary>figures/wordcloud_med.jpg Details</summary>

Word cloud of shifted tokens in the medical domain, with word size indicating prominence. The largest words are "Health", "Patient", "Treatment", and "Drug"; other prominent words include "Care", "Hospital", "Disease", "Medication", "Heart", "Diabetes", "Symptoms", "Therapy", "Brain", "Tumor", "Prescription", and "Randomized".

</details>

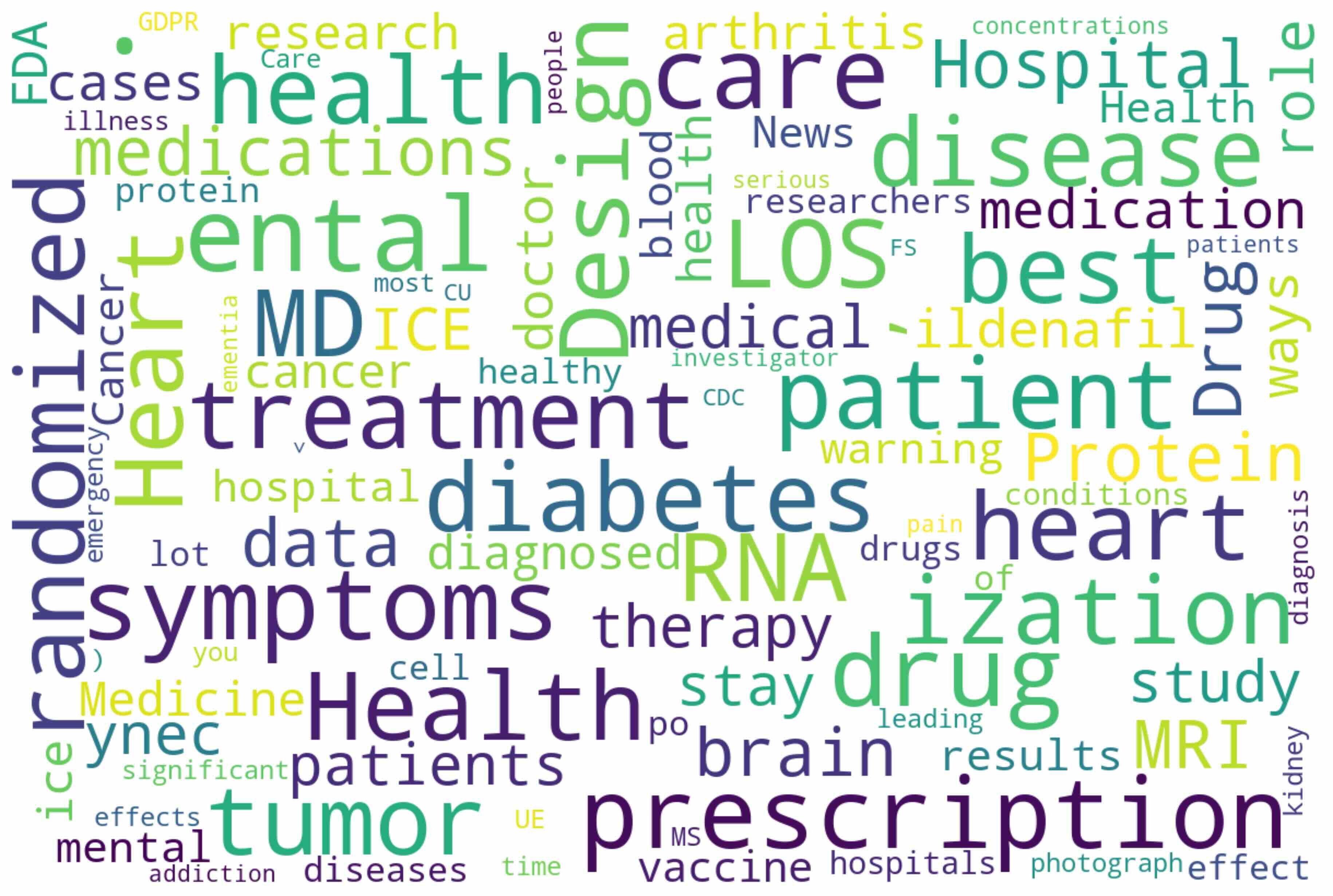

(a) Token distribution shift in the medical domain

<details>

<summary>figures/wordcloud_math.jpg Details</summary>

Word cloud of shifted tokens in the mathematical domain, with word size indicating prominence. The largest words include "algorithm", "mathematics", "space", "effects", "following", "editor", "physics", "answer", "previous", "quantum", "question", "PhD", and "results"; smaller entries include "theorem", "parameters", "model", and "variance", along with assorted symbols and subword fragments.

</details>

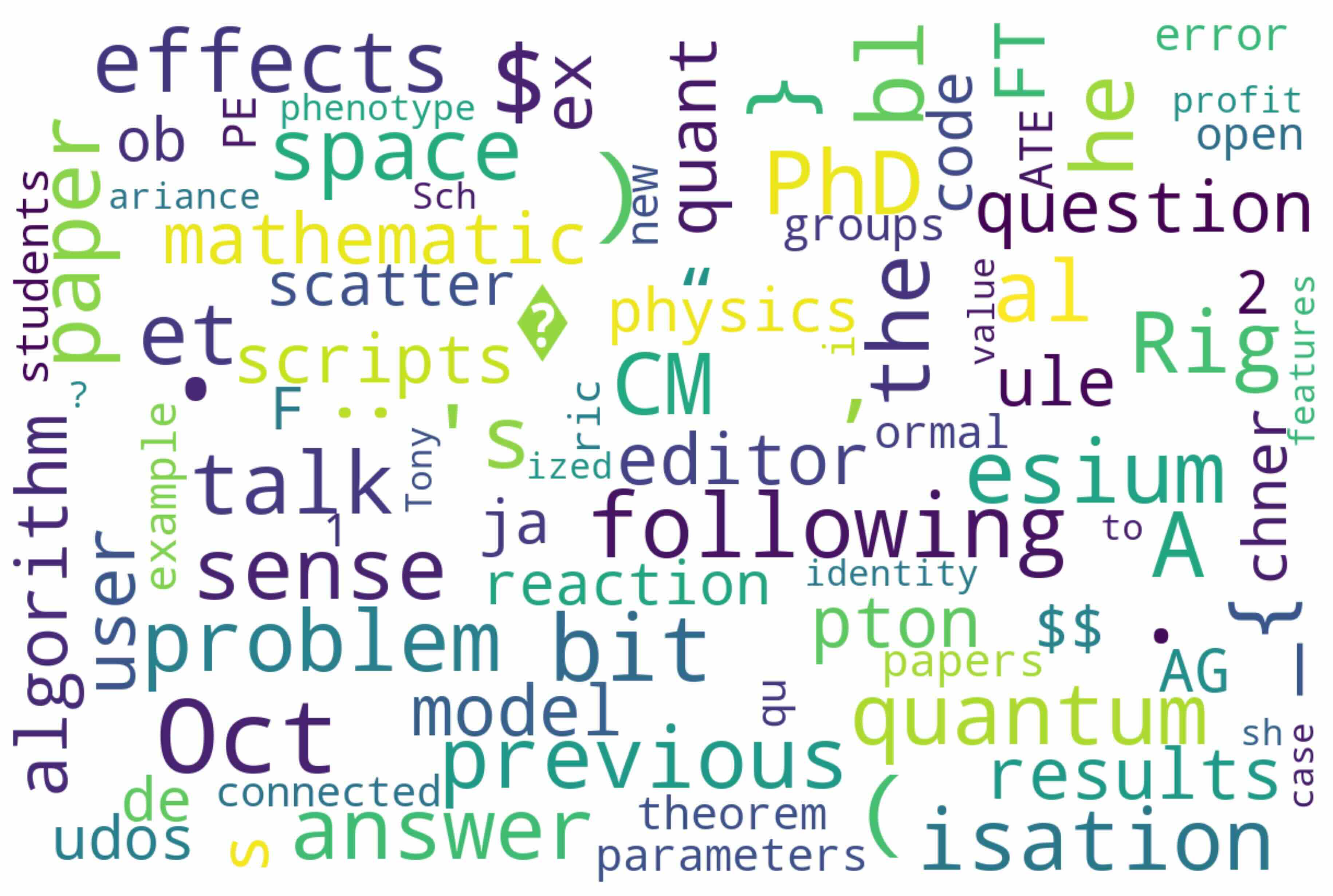

(b) Token distribution shift in the mathematical domain

Figure 5: Token distribution shifts across domains. Word cloud visualizations of shifted tokens reveal that ADEPT achieves highly focused alignment, with most changes concentrated on domain-specific terminology.

Token Distribution Shift Analysis. To assess how ADEPT injects domain knowledge while preserving general competencies, we analyze token-level shifts between the base and continually pretrained models. Following Lin et al. (2024), tokens are categorized as unshifted, marginal, or shifted. Only a small proportion of tokens shift, while most remain unchanged, indicating stable adaptation. In the medical domain, merely 2.18% shift (vs. 5.61% under full pretraining), largely medical terms such as “prescription,” “diagnosis,” and “therapy” (Figure 5(a)). In the mathematical domain, only 1.24% shift, mainly scientific terms such as “theorem” and “equation” (Figure 5(b)). Further details and analyses are provided in Appendix I. These results demonstrate that ADEPT achieves precise and economical domain knowledge injection while minimizing perturbation to general competence.
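The three-way categorization can be sketched as follows. The thresholds are an assumption based on our reading of Lin et al. (2024), since they are not restated here: at each position, the token chosen by the continually pretrained model is ranked under the base model's next-token distribution, and the position counts as unshifted at rank 1, marginal at rank 2 or 3, and shifted otherwise. The toy distributions and the helper names are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple


def rank_in_base(base_probs: Dict[str, float], token: str) -> int:
    """1-based rank of `token` under the base model's next-token distribution."""
    if token not in base_probs:
        return len(base_probs) + 1  # unseen tokens rank below every listed one
    ordered = sorted(base_probs, key=lambda t: base_probs[t], reverse=True)
    return ordered.index(token) + 1


def categorize(rank: int) -> str:
    """Assumed thresholds: rank 1 unshifted, rank 2-3 marginal, otherwise shifted."""
    if rank == 1:
        return "unshifted"
    if rank <= 3:
        return "marginal"
    return "shifted"


def shift_summary(positions: List[Tuple[Dict[str, float], str]]) -> Dict[str, float]:
    """Fraction of positions per category.

    Each entry pairs the base model's distribution at a position with the
    token chosen by the continually pretrained model at that position.
    """
    if not positions:
        raise ValueError("need at least one position")
    counts = Counter(categorize(rank_in_base(p, tok)) for p, tok in positions)
    return {c: counts[c] / len(positions) for c in ("unshifted", "marginal", "shifted")}


# Toy distributions, for illustration only.
positions = [
    ({"the": 0.6, "a": 0.3, "an": 0.06, "this": 0.04}, "the"),
    ({"is": 0.5, "was": 0.3, "has": 0.15, "shows": 0.05}, "was"),
    ({"pain": 0.4, "care": 0.3, "risk": 0.2, "therapy": 0.1}, "therapy"),
    ({"of": 0.7, "in": 0.2, "for": 0.1}, "of"),
]
print(shift_summary(positions))
```

Under this scheme, the shifted fraction corresponds to the percentages reported above, and the tokens at shifted positions are the ones a word cloud would display.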

Extended Investigations and Key Insights. We further investigate several design choices of ADEPT in the appendix. Appendix E compares alternative strategies for probing layer importance and observes that different measurement methods are consistent with one another, offering insight into how importance estimation affects adaptation outcomes. Appendix G explores the effect of expanding different numbers of layers, showing how the number of expansion layers should be chosen under different circumstances and discussing the likely reasons. Appendix H shows that even with a relatively low-quality importance detection corpus drawn from pretraining data, our approach maintains strong generalization across domains, suggesting the robustness of ADEPT. Appendix J examines the potential for merging expanded layers that are independently trained on different domains, an intriguing direction for achieving multi-domain adaptation with minimal catastrophic forgetting. In addition, Appendix B.8 analyzes the training efficiency of ADEPT, showing that our selective updating design substantially accelerates convergence compared to baselines.

5 Conclusions and Future Work

We present ADEPT, a framework for domain-adaptive continual pretraining of LLMs that tackles catastrophic forgetting by leveraging functional specialization in LLMs. By selectively expanding layers less critical to the general domain and adaptively updating decoupled parameter units, ADEPT minimizes catastrophic forgetting while efficiently incorporating domain-specific expertise. Our experiments show significant improvements in both domain performance and general knowledge retention compared to baselines. Future work could refine the decoupled tuning mechanism, for example by designing learning rate strategies more sophisticated than the linear mapping to allow for more precise adjustments. Another direction is to explore better dynamic and real-time methods for measuring parameter importance during training.

6 Ethics Statement

All datasets used for training and evaluation in this study are publicly available versions obtained from the Hugging Face platform. The datasets have been curated, cleaned, and de-identified by their respective data providers prior to release. No patient personal information or identifiable medical data is present. Consequently, the research does not involve human subjects, and there are no related concerns regarding privacy, confidentiality, or legal liability. For full transparency, we report all aspects of large language model (LLM) involvement in Appendix K.

We strictly adhered to the usage and redistribution licenses provided by the original dataset authors and hosting platforms. Our research poses no risk of harm to individuals or groups and does not contain any potentially harmful insights, models, or applications. Additionally, there are no conflicts of interest or sponsorship concerns associated with this work. We are committed to research integrity and ethical standards consistent with the ICLR Code of Ethics.

7 Reproducibility Statement

We actively support the spirit of openness and reproducibility advocated by ICLR. To ensure the reproducibility of our research, we have taken the following measures:

1. Disclosure of Base Models: All base models used in our experiments are explicitly identified and described in the main text. This allows readers to directly reference and obtain these models.

2. Datasets and Experimental Details: All experiments are conducted on publicly available datasets from the Hugging Face platform. In Appendix B, we provide a comprehensive description of our experimental implementation, including dataset sources, links, and detailed data processing procedures. We also detail the experimental setup, such as training duration, hardware environment (e.g., GPU type), and hyperparameter configuration, including LoRA_rank, number of expanded layers, batch_size, and max_length. These details facilitate transparent verification and replication of our results.

3. Open-Source Code Release: To further support reproducibility, we release all training and evaluation code in an anonymous repository (https://anonymous.4open.science/status/ADEPT-F2E3). The repository contains clear instructions on installation, data downloading, preprocessing, and experimentation, allowing interested researchers to replicate our results with minimal effort.

References

- Aghajanyan et al. (2021) Armen Aghajanyan, Sonal Gupta, and Luke Zettlemoyer. Intrinsic dimensionality explains the effectiveness of language model fine-tuning. In Chengqing Zong, Fei Xia, Wenjie Li, and Roberto Navigli (eds.), Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing, ACL/IJCNLP 2021, (Volume 1: Long Papers), Virtual Event, August 1-6, 2021, pp. 7319–7328. Association for Computational Linguistics, 2021. doi: 10.18653/V1/2021.ACL-LONG.568. URL https://doi.org/10.18653/v1/2021.acl-long.568.

- Ahn et al. (2024) Janice Ahn, Rishu Verma, Renze Lou, Di Liu, Rui Zhang, and Wenpeng Yin. Large language models for mathematical reasoning: Progresses and challenges. In Proceedings of the 18th Conference of the European Chapter of the Association for Computational Linguistics: Student Research Workshop, pp. 225–237, 2024.

- Arbel et al. (2024) Iftach Arbel, Yehonathan Refael, and Ofir Lindenbaum. Transformllm: Adapting large language models via llm-transformed reading comprehension text. arXiv preprint arXiv:2410.21479, 2024.

- Chen et al. (2024) Junying Chen, Zhenyang Cai, Ke Ji, Xidong Wang, Wanlong Liu, Rongsheng Wang, Jianye Hou, and Benyou Wang. Huatuogpt-o1, towards medical complex reasoning with llms. arXiv preprint arXiv:2412.18925, 2024.

- Chen et al. (2015) Tianqi Chen, Ian Goodfellow, and Jonathon Shlens. Net2net: Accelerating learning via knowledge transfer. arXiv preprint arXiv:1511.05641, 2015.

- Chen et al. (2025) Yang Chen, Zhuolin Yang, Zihan Liu, Chankyu Lee, Peng Xu, Mohammad Shoeybi, Bryan Catanzaro, and Wei Ping. Acereason-nemotron: Advancing math and code reasoning through reinforcement learning. arXiv preprint arXiv:2505.16400, 2025.

- Cheng et al. (2023) Daixuan Cheng, Shaohan Huang, and Furu Wei. Adapting large language models via reading comprehension. In The Twelfth International Conference on Learning Representations, 2023.

- Clark et al. (2019) Kevin Clark, Urvashi Khandelwal, Omer Levy, and Christopher D. Manning. What does BERT look at? An analysis of BERT’s attention. In Tal Linzen, Grzegorz Chrupala, Yonatan Belinkov, and Dieuwke Hupkes (eds.), Proceedings of the 2019 ACL Workshop BlackboxNLP: Analyzing and Interpreting Neural Networks for NLP, BlackboxNLP@ACL 2019, Florence, Italy, August 1, 2019, pp. 276–286. Association for Computational Linguistics, 2019. doi: 10.18653/V1/W19-4828. URL https://doi.org/10.18653/v1/W19-4828.

- Clark et al. (2018) Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? try arc, the ai2 reasoning challenge. arXiv preprint arXiv:1803.05457, 2018.

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.

- Dai et al. (2022) Damai Dai, Li Dong, Yaru Hao, Zhifang Sui, Baobao Chang, and Furu Wei. Knowledge neurons in pretrained transformers. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 8493–8502, 2022.

- Ding et al. (2024) Hongxin Ding, Yue Fang, Runchuan Zhu, Xinke Jiang, Jinyang Zhang, Yongxin Xu, Xu Chu, Junfeng Zhao, and Yasha Wang. 3ds: Decomposed difficulty data selection’s case study on llm medical domain adaptation. arXiv preprint arXiv:2410.10901, 2024.

- Ding et al. (2025) Hongxin Ding, Baixiang Huang, Yue Fang, Weibin Liao, Xinke Jiang, Zheng Li, Junfeng Zhao, and Yasha Wang. Promed: Shapley information gain guided reinforcement learning for proactive medical llms. arXiv preprint arXiv:2508.13514, 2025.

- Dubey et al. (2024a) Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. The llama 3 herd of models. CoRR, abs/2407.21783, 2024a. doi: 10.48550/ARXIV.2407.21783. URL https://doi.org/10.48550/arXiv.2407.21783.

- Dubey et al. (2024b) Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. The llama 3 herd of models. arXiv e-prints, pp. arXiv–2407, 2024b.

- Dubey et al. (2024c) Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. The llama 3 herd of models. arXiv e-prints, pp. arXiv–2407, 2024c.

- Fang et al. (2025) Yue Fang, Yuxin Guo, Jiaran Gao, Hongxin Ding, Xinke Jiang, Weibin Liao, Yongxin Xu, Yinghao Zhu, Zhibang Yang, Liantao Ma, et al. Toward better ehr reasoning in llms: Reinforcement learning with expert attention guidance. arXiv preprint arXiv:2508.13579, 2025.

- Feng et al. (2024a) Yujie Feng, Xu Chu, Yongxin Xu, Guangyuan Shi, Bo Liu, and Xiao-Ming Wu. Tasl: Continual dialog state tracking via task skill localization and consolidation. arXiv preprint arXiv:2408.09857, 2024a.

- Feng et al. (2024b) Yujie Feng, Xu Chu, Yongxin Xu, Guangyuan Shi, Bo Liu, and Xiao-Ming Wu. Tasl: Continual dialog state tracking via task skill localization and consolidation. In Lun-Wei Ku, Andre Martins, and Vivek Srikumar (eds.), Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), ACL 2024, Bangkok, Thailand, August 11-16, 2024, pp. 1266–1279. Association for Computational Linguistics, 2024b. doi: 10.18653/V1/2024.ACL-LONG.69. URL https://doi.org/10.18653/v1/2024.acl-long.69.

- Hendrycks et al. (2020) Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding. arXiv preprint arXiv:2009.03300, 2020.

- Hewitt & Manning (2019) John Hewitt and Christopher D. Manning. A structural probe for finding syntax in word representations. In Jill Burstein, Christy Doran, and Thamar Solorio (eds.), Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, NAACL-HLT 2019, Minneapolis, MN, USA, June 2-7, 2019, Volume 1 (Long and Short Papers), pp. 4129–4138. Association for Computational Linguistics, 2019. doi: 10.18653/V1/N19-1419. URL https://doi.org/10.18653/v1/n19-1419.

- Hu et al. (2022) Edward J Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, and Weizhu Chen. LoRA: Low-rank adaptation of large language models. In International Conference on Learning Representations (ICLR), 2022.

- Huang et al. (2024) Jianheng Huang, Leyang Cui, Ante Wang, Chengyi Yang, Xinting Liao, Linfeng Song, Junfeng Yao, and Jinsong Su. Mitigating catastrophic forgetting in large language models with self-synthesized rehearsal. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 1416–1428, 2024.

- Ibrahim et al. (2024) Adam Ibrahim, Benjamin Thérien, Kshitij Gupta, Mats L Richter, Quentin Anthony, Timothée Lesort, Eugene Belilovsky, and Irina Rish. Simple and scalable strategies to continually pre-train large language models. arXiv preprint arXiv:2403.08763, 2024.

- Jacot et al. (2018) Arthur Jacot, Clément Hongler, and Franck Gabriel. Neural tangent kernel: Convergence and generalization in neural networks. In Samy Bengio, Hanna M. Wallach, Hugo Larochelle, Kristen Grauman, Nicolò Cesa-Bianchi, and Roman Garnett (eds.), Advances in Neural Information Processing Systems 31: Annual Conference on Neural Information Processing Systems 2018, NeurIPS 2018, December 3-8, 2018, Montréal, Canada, pp. 8580–8589, 2018. URL https://proceedings.neurips.cc/paper/2018/hash/5a4be1fa34e62bb8a6ec6b91d2462f5a-Abstract.html.

- Jiang et al. (2024) Xinke Jiang, Yue Fang, Rihong Qiu, Haoyu Zhang, Yongxin Xu, Hao Chen, Wentao Zhang, Ruizhe Zhang, Yuchen Fang, Xu Chu, et al. Tc-rag: turing-complete rag’s case study on medical llm systems. arXiv preprint arXiv:2408.09199, 2024.

- Jiang et al. (2025) Xinke Jiang, Ruizhe Zhang, Yongxin Xu, Rihong Qiu, Yue Fang, Zhiyuan Wang, Jinyi Tang, Hongxin Ding, Xu Chu, Junfeng Zhao, et al. Hykge: A hypothesis knowledge graph enhanced rag framework for accurate and reliable medical llms responses. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pp. 11836–11856, 2025.

- Jin et al. (2021) Di Jin, Eileen Pan, Nassim Oufattole, Wei-Hung Weng, Hanyi Fang, and Peter Szolovits. What disease does this patient have? a large-scale open domain question answering dataset from medical exams. Applied Sciences, 11(14):6421, 2021.

- Ke et al. (2023) Zixuan Ke, Yijia Shao, Haowei Lin, Tatsuya Konishi, Gyuhak Kim, and Bing Liu. Continual pre-training of language models. In The Eleventh International Conference on Learning Representations, ICLR 2023, Kigali, Rwanda, May 1-5, 2023. OpenReview.net, 2023. URL https://openreview.net/forum?id=m_GDIItaI3o.

- Kirkpatrick et al. (2017) James Kirkpatrick, Razvan Pascanu, Neil Rabinowitz, Joel Veness, Guillaume Desjardins, Andrei A Rusu, Kieran Milan, John Quan, Tiago Ramalho, Agnieszka Grabska-Barwinska, et al. Overcoming catastrophic forgetting in neural networks. Proceedings of the National Academy of Sciences, 114(13):3521–3526, 2017.

- Li et al. (2023) Haonan Li, Yixuan Zhang, Fajri Koto, Yifei Yang, Hai Zhao, Yeyun Gong, Nan Duan, and Timothy Baldwin. Cmmlu: Measuring massive multitask language understanding in chinese. arXiv preprint arXiv:2306.09212, 2023.