# Self-Verifying Reflection Helps Transformers with CoT Reasoning

## Abstract

Advanced large language models (LLMs) frequently reflect in reasoning chain-of-thoughts (CoTs), where they self-verify the correctness of current solutions and explore alternatives. However, given recent findings that LLMs detect limited errors in CoTs, how reflection contributes to empirical improvements remains unclear. To analyze this issue, in this paper, we present a minimalistic reasoning framework to support basic self-verifying reflection for small transformers without natural language, which ensures analytic clarity and reduces the cost of comprehensive experiments. Theoretically, we prove that self-verifying reflection guarantees improvements if verification errors are properly bounded. Experimentally, we show that tiny transformers, with only a few million parameters, benefit from self-verification in both training and reflective execution, reaching remarkable LLM-level performance in integer multiplication and Sudoku. Similar to LLM results, we find that reinforcement learning (RL) improves in-distribution performance and incentivizes frequent reflection for tiny transformers, yet RL mainly optimizes shallow statistical patterns without faithfully reducing verification errors. In conclusion, integrating generative transformers with discriminative verification inherently facilitates CoT reasoning, regardless of scaling and natural language.

## 1 Introduction

Numerous studies have explored the ability of large language models (LLMs) to reason through a chain of thought (CoT), an intermediate sequence leading to the final answer. While simple prompts can elicit CoT reasoning [13], subsequent works have further enhanced CoT quality through reflective thinking [10] and the use of verifiers [4]. Recently, reinforcement learning (RL) [33] has achieved notable success in advanced reasoning models, such as OpenAI-o1 [20] and Deepseek-R1 [5], which show frequent reflective behaviors that self-verify the correctness of current solutions and explore alternatives, integrating generative processes with discriminative inference. However, researchers also report that the ability of these LLMs to detect errors is rather limited, and a large portion of reflection fails to bring correct solutions [11]. Given the weak verification ability, the experimental benefits of reflection and the emergence of high reflection frequency in RL require further explanation.

To address this challenge, we seek to analyze two main questions in this paper: 1) what role self-verifying reflection plays in training and execution of reasoning models, and 2) how reflective reasoning evolves in RL with verifiable outcome rewards [15]. However, the complexity of natural language and the prohibitive training cost of LLMs make it difficult to draw clear conclusions from theoretical abstraction and comprehensive experiments across settings. Inspired by Zeyuan et al. [2], we observe that task-specific reasoning and self-verifying reflection do not necessitate complex language. This allows us to investigate reflective reasoning through tiny transformer models [36], which provide efficient tools to understand self-verifying reflection through massive experiments.

To enable tiny transformers to produce long reflective CoTs and ensure analytic simplicity, we introduce a minimalistic reasoning framework, which supports essential reasoning behaviors that are operable without natural language. In our study, the model self-verifies the correctness of each thought step; then, it may resample incorrect steps or trace back to previous steps. Based on this framework, we theoretically prove that self-verifying reflection improves reasoning accuracy if verification errors are properly bounded, which does not necessitate a strong verifier. Additionally, a trace-back mechanism that allows revisiting previous solutions conditionally improves performance if the problem requires a sufficiently large number of steps.

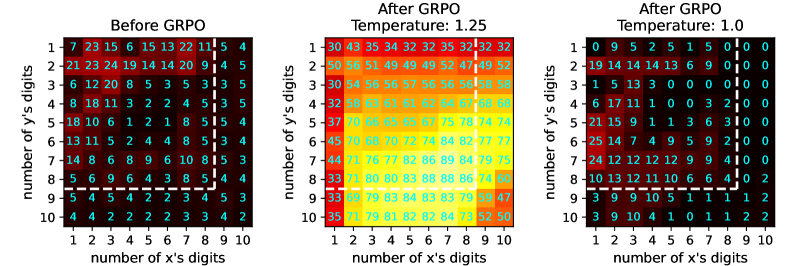

Our experiments evaluate 1M, 4M, and 16M transformers in solving integer multiplication [7] and Sudoku puzzles [3], which have simple definitions (thus, operable by transformers without language) yet still challenging for even LLM solvers. To maintain relevance to broader LLM research, the tiny transformers are trained from scratch through a pipeline similar to that of training LLM reasoners. Our main findings are listed as follows: 1) Learning to self-verify greatly facilitates the learning of forward reasoning. 2) Reflection improves reasoning accuracy if true correct steps are not excessively verified as incorrect. 3) Resembling the results of DeepSeek-R1 [5], RL can incentivize reflection if the reasoner can effectively explore potential solutions. 4) However, RL fine-tuning increases performance mainly statistically, with limited improvements in generalizable problem-solving skills.

Overall, this paper contributes to the fundamental understanding of reflection in reasoning models by clarifying its effectiveness and synergy with RL. Our findings based on minimal reasoners imply a general benefit of reflection for more advanced models, which operate on a super-set of our simplified reasoning behaviors. In addition, our implementation also provides insights into the development of computationally efficient reasoning models.

## 2 Related works

CoT reasoning

Pretrained LLMs emerge the ability to produce CoTs from simple prompts [13, 38], which can be explained via the local dependencies [25] and probabilistic distribution [35] of natural-language reasoning. Many recent studies develop models targeted at reasoning, e.g., scaling test-time inference with external verifiers [4, 17, 18, 32] and distilling large general models to smaller specialized models [34, 9]. In this paper, we train tiny transformers from scratch to not only generate CoTs but also self-verify, i.e., detect errors in their own thoughts without external models.

RL fine-tuning for CoT reasoning

RL [33] recently emerges as a key method for CoT reasoning [31, 40]. It optimizes the transformer model by favoring CoTs that yield high cumulated rewards, where PPO [29] and its variant GRPO [31] are two representative approaches. Central to RL fine-tuning are reward models that guide policy optimization: the 1) outcome reward models (ORM) assessing final answers, and the 2) process reward models (PRM) [17] evaluating intermediate reasoning steps. Recent advances in RL with verifiable rewards (RLVR) [5, 41] demonstrate that simple ORM based solely on answer correctness can induce sophisticated reasoning behaviors.

Reflection in LLM reasoning

LLM reflection provides feedback to the generated solutions [19] and may accordingly refine the solutions [10]. Research shows that supervised learning from verbal reflection improves performance, even though the reflective feedback is omitted during execution [42]. Compared to the generative verbal reflection, self-verification uses discriminative labels to indicate the correctness of reasoning steps, which supports reflective execution and is operable without linguistic knowledge. Recently, RL is widely used to develop strong reflective abilities [14, 27, 20]. In particular, DeepSeek-R1 [5] shows that RLVR elicits frequent reflection, and such a result is reproduced in smaller LLMs [24]. In this paper, we further investigate how reflection evolves during RLVR by examining the change of verification errors.

Understanding LLMs through small transformers

Small transformers are helpful tools to understand LLMs, for their architectural consistency with LLMs and low development cost to support massive experiments. For example, transformers smaller than 1B provide insights into how data mixture and data diversity influence LLM training [39, 2]. They also contribute to foundational understanding of CoT reasoning, such as length generalization [12], internalization of thoughts [6], and how CoTs inherently extend the problem-solving ability [8, 16]. In this paper, we further use tiny transformers to better understand reflection in CoT reasoning.

## 3 Reflective reasoning for transformers

In this section, we develop transformers to perform simple reflective reasoning in long CoTs. Focusing on analytic clarity and broader implications, the design of our framework follows the minimalistic principle, providing only essential reasoning behavior operable without linguistic knowledge. More advanced reasoning frameworks optimized for small-scale models are certainly our next move in future work. In the following, we first introduce the basic formulation of CoT reasoning; then, based on this formulation, we introduce our simple reasoning framework for self-verifying reflection; afterwards, we describe how transformers are trained to reason through this framework.

### 3.1 Reasoning formulation

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Sequential Reasoning Process with State Transitions

### Overview

The image displays a technical flowchart illustrating a sequential reasoning process. It depicts a model that progresses through a series of discrete reasoning steps, transitioning from an initial query to a final answer. The diagram is structured in three horizontal rows: a header row with labels, a central flowchart row, and a footer row with corresponding state transition equations.

### Components/Axes

The diagram is organized into three distinct rows:

1. **Header Row (Top):**

* **Left Label:** "Model"

* **Center Label:** "Reasoning Steps"

* **Right Label:** "A" (This appears to be a label for the final output box, not a separate header).

2. **Main Flowchart Row (Center):**

* **Components:** A sequence of rectangular boxes connected by right-pointing arrows.

* **Box Labels (from left to right):**

* `Q` (Initial Query, shaded gray)

* `R₁` (First Reasoning Step)

* `R₂` (Second Reasoning Step)

* `...` (Ellipsis indicating intermediate steps)

* `R_{T-1}` (Penultimate Reasoning Step)

* `A` (Final Answer, shaded gray)

* **Arrow Annotations:** Above each arrow connecting the boxes, there is a mathematical expression describing the action taken at that step.

* Above arrow from `Q` to `R₁`: `R₁ ~ π(·|S₀)`

* Above arrow from `R₁` to `R₂`: `R₂ ~ π(·|S₁)`

* Above arrow from `R_{T-1}` to `A`: `A ~ π(·|S_{T-1})`

3. **Footer Row (Bottom):**

* **Left Label:** "State Transition"

* **Equations (aligned below each box in the main row):**

* Below `Q`: `S₀ = Q`

* Below `R₁`: `S₁ = T(S₀, R₁)`

* Below `R₂`: `S₂ = T(S₁, R₂)`

* Below `...`: `...`

* Below `R_{T-1}`: `S_{T-1} = T(S_{T-2}, R_{T-1})`

* Below `A`: `S_T = T(S_{T-1}, A)`

### Detailed Analysis

The diagram formalizes a step-by-step reasoning or decision-making process:

1. **Initialization:** The process begins with an initial state `S₀`, which is defined as the input query `Q`.

2. **Iterative Reasoning:** For each step `t` from 1 to `T-1`:

* A reasoning action `R_t` is sampled from a policy `π` conditioned on the current state `S_{t-1}`. This is denoted as `R_t ~ π(·|S_{t-1})`.

* The state is then updated via a transition function `T` using the previous state and the chosen action: `S_t = T(S_{t-1}, R_t)`.

3. **Termination:** The process concludes when a final action, the answer `A`, is sampled from the policy conditioned on the penultimate state: `A ~ π(·|S_{T-1})`.

4. **Final State:** The terminal state `S_T` is computed by applying the transition function to the penultimate state and the final answer: `S_T = T(S_{T-1}, A)`.

The flow is strictly linear and sequential, moving from left to right. The ellipsis (`...`) indicates that the number of intermediate reasoning steps (`R₂` through `R_{T-2}`) is variable.

### Key Observations

* **Dual Notation:** The process is described using two parallel notations: a visual flowchart of actions (`Q -> R₁ -> R₂ -> ... -> A`) and a formal mathematical sequence of state updates (`S₀ -> S₁ -> S₂ -> ... -> S_T`).

* **Policy-Driven Actions:** Each action (reasoning step `R_t` or final answer `A`) is generated stochastically (`~`) from a policy distribution `π`, which depends on the current state.

* **State as Memory:** The state `S_t` encapsulates all information necessary for the next decision, acting as the process's memory. It is updated deterministically by the function `T`.

* **Symmetry of Final Step:** The final answer `A` is treated analogously to a reasoning step `R_t` in the policy sampling (`A ~ π(·|S_{T-1})`), but it also triggers the final state update to `S_T`.

### Interpretation

This diagram models a **sequential decision-making or reasoning framework**, common in fields like reinforcement learning, planning, and advanced AI reasoning systems.

* **What it represents:** It illustrates how an AI agent (the "Model") can break down a complex query (`Q`) into a series of intermediate reasoning steps (`R₁, R₂, ...`) before producing a final answer (`A`). Each step refines the agent's understanding (the state `S`).

* **Relationship between elements:** The "Reasoning Steps" row shows the *observable actions*, while the "State Transition" row shows the *underlying, evolving context or belief state* that drives those actions. The policy `π` is the core "brain" that decides what to do next based on the current context.

* **Underlying logic:** The framework suggests that good answers (`A`) are not produced in a single leap but are the culmination of a deliberate, iterative process of information gathering or logical deduction (`R₁, R₂, ...`). The state `S` ensures continuity and coherence throughout this process.

* **Notable abstraction:** The diagram is highly abstract. The functions `T` (transition) and `π` (policy) are not defined, making this a general template applicable to various specific algorithms (e.g., Chain-of-Thought reasoning, Monte Carlo Tree Search, or a recurrent neural network processing a sequence). The use of `~` implies a probabilistic or sampling-based approach, rather than purely deterministic logic.

</details>

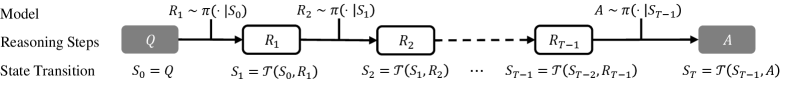

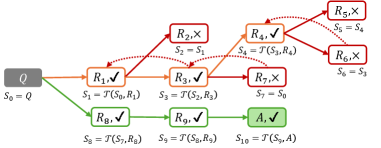

Figure 1: The illustration of MTP, where the transformer model $\pi$ reasons the answer $A$ of a query $Q$ through $T-1$ intermediate steps.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: State Transition Process with Intermediate Computation

### Overview

The image displays a technical flowchart or state transition diagram illustrating a three-stage computational process. It shows how an initial state \( S_t \) is transformed via an intermediate computational block \( R_{t+1} \) into a final state \( S_{t+1} \). The diagram uses mathematical notation and arrows to indicate the flow of operations.

### Components/Axes

The diagram consists of three primary rectangular boxes with rounded corners, connected by directional arrows.

1. **Left Box (Initial State):**

* **Label:** \( S_t \) (positioned above the box).

* **Content (transcribed):**

```

145

× 340

+ 290

```

2. **Central Box (Intermediate Computation/Reward):**

* **Label:** \( R_{t+1} \) (positioned above the box).

* **Content (transcribed):**

```

340 → 300

145 × 4 = 580

290 + 5800 = 6090

```

* **Note:** The arrow "→" indicates a transformation or mapping.

3. **Right Box (Final State):**

* **Label:** \( S_{t+1} \) (positioned above the box).

* **Content (transcribed):**

```

145

× 300

+ 6090

```

4. **Connecting Arrows:**

* An arrow labeled **\( \pi \)** (Greek letter pi) points from the right edge of the \( S_t \) box to the left edge of the \( R_{t+1} \) box.

* An arrow labeled **\( T \)** points from the right edge of the \( R_{t+1} \) box to the left edge of the \( S_{t+1} \) box.

### Detailed Analysis

The diagram depicts a sequential transformation of numerical values.

* **From \( S_t \) to \( R_{t+1} \) (via \( \pi \)):**

* The value `340` from the multiplication line in \( S_t \) is transformed to `300` in the first line of \( R_{t+1} \).

* A new computation is introduced: `145 × 4 = 580`. The multiplicand `145` matches the first number in \( S_t \).

* Another computation is shown: `290 + 5800 = 6090`. The addend `290` matches the last number in \( S_t \). However, the source of the value `5800` is not explicitly derived from the previous line (`580`). This is a notable inconsistency or potential error in the diagram's internal logic.

* **From \( R_{t+1} \) to \( S_{t+1} \) (via \( T \)):**

* The first number `145` is carried over unchanged from \( S_t \) through \( R_{t+1} \) to \( S_{t+1} \).

* The transformed multiplier `300` (from `340 → 300` in \( R_{t+1} \)) becomes the new multiplier in \( S_{t+1} \).

* The computed sum `6090` (from `290 + 5800` in \( R_{t+1} \)) becomes the new addend in \( S_{t+1} \).

### Key Observations

1. **Value Persistence:** The number `145` remains constant throughout all three stages.

2. **Value Transformation:** The multiplier changes from `340` to `300`. The addend changes dramatically from `290` to `6090`.

3. **Internal Inconsistency:** The computation `290 + 5800 = 6090` within \( R_{t+1} \) uses the value `5800`, which is not the result of the immediately preceding line (`145 × 4 = 580`). This suggests either a typo (where `5800` should be `580`, making the sum `870`) or that `5800` is an external input not shown in the flow.

4. **Symbolic Flow:** The labels \( \pi \) and \( T \) are common in reinforcement learning and control theory, often representing a *policy* and a *transition function*, respectively. This context suggests the diagram models a decision-making or state-update process.

### Interpretation

This diagram likely represents a single step in an iterative algorithm, such as those found in dynamic programming or reinforcement learning.

* **What it demonstrates:** It shows how a state \( S_t \), defined by a set of parameters (145, 340, 290), is processed. A policy \( \pi \) generates an intermediate result \( R_{t+1} \), which involves modifying one parameter (340→300) and performing auxiliary calculations. A transition function \( T \) then uses these results to compute the next state \( S_{t+1} \), updating the parameters to (145, 300, 6090).

* **Relationships:** The process is linear and causal. \( R_{t+1} \) acts as a computational workspace that depends on \( S_t \) and determines \( S_{t+1} \). The unchanged value `145` may represent a fixed feature of the environment or agent.

* **Notable Anomaly:** The discrepancy between `580` and `5800` is critical. If `5800` is correct, it implies a large, unexplained external input. If it is a typo for `580`, then the final state \( S_{t+1} \) would be (145, 300, 870), which is a much smaller update. The current diagram, as drawn, contains a logical gap in the data flow.

</details>

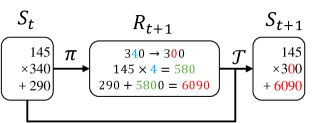

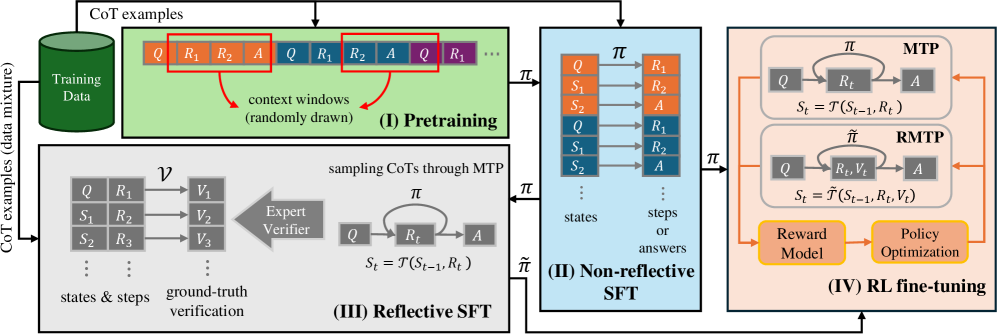

(a) Multiplication

<details>

<summary>x3.png Details</summary>

### Visual Description

\n

## Diagram: Sudoku State Transformation Process

### Overview

The image is a technical diagram illustrating a single-step transformation process for a Sudoku puzzle state. It shows an initial grid state (S_t), a transformation rule (R_{t+1}) that updates specific cells, and the resulting grid state (S_{t+1}). The process is mediated by two functions or operators, denoted by the Greek letters π (pi) and T.

### Components/Axes

The diagram consists of three primary components arranged horizontally from left to right:

1. **Left Component (S_t):** A 9x9 grid representing the initial state of a Sudoku puzzle at time step `t`. The grid is divided into nine 3x3 boxes by thicker lines. Cells contain numbers (1-9) or are empty.

2. **Middle Component (R_{t+1}):** A rounded rectangle labeled `R_{t+1}`. It contains two lines of text specifying cell updates:

* `cell_{6,2} ← 7`

* `cell_{7,9} ← 2`

An arrow labeled with the symbol `π` points from the S_t grid to this box.

3. **Right Component (S_{t+1}):** A 9x9 grid representing the resulting state at time step `t+1`. It is structurally identical to S_t. An arrow labeled with the symbol `T` points from the R_{t+1} box to this grid.

**Spatial Grounding:** The legend/transformation rule (R_{t+1}) is positioned centrally between the two state grids. The flow of information is strictly left-to-right: S_t → π → R_{t+1} → T → S_{t+1}.

### Detailed Analysis

**1. Initial State Grid (S_t):**

The grid contains the following numbers (row, column, value). Empty cells are denoted by `-`.

* Row 1: (1,1)=1, (1,3)=7, (1,7)=8, (1,9)=2

* Row 2: (2,2)=3, (2,5)=2, (2,8)=5, (2,9)=2

* Row 3: (3,2)=9, (3,7)=4, (3,8)=7

* Row 4: (4,1)=2, (4,3)=8, (4,4)=6, (4,9)=9

* Row 5: (5,2)=3, (5,3)=9, (5,4)=4, (5,5)=1, (5,8)=6

* Row 6: (6,1)=7, (6,4)=8, (6,8)=4

* Row 7: (7,2)=8, (7,9)=4

* Row 8: (8,1)=?, (8,2)=?, (8,3)=?, (8,4)=?, (8,5)=?, (8,6)=?, (8,7)=?, (8,8)=?, (8,9)=? (All cells in row 8 appear empty in the image).

* Row 9: (9,1)=?, (9,2)=?, (9,3)=?, (9,4)=?, (9,5)=?, (9,6)=?, (9,7)=?, (9,8)=?, (9,9)=? (All cells in row 9 appear empty in the image).

**2. Transformation Rule (R_{t+1}):**

The rule specifies two precise updates:

* Update 1: The cell at row 6, column 2 is assigned the value 7.

* Update 2: The cell at row 7, column 9 is assigned the value 2.

**3. Resulting State Grid (S_{t+1}):**

This grid is identical to S_t except for the two cells specified in R_{t+1}. These updated cells are highlighted in **red**.

* **Change 1 (Trend Verification):** The rule `cell_{6,2} ← 7` is applied. In S_t, cell (6,2) was empty (`-`). In S_{t+1}, cell (6,2) now contains the number **7** (in red).

* **Change 2 (Trend Verification):** The rule `cell_{7,9} ← 2` is applied. In S_t, cell (7,9) contained the number **4**. In S_{t+1}, cell (7,9) now contains the number **2** (in red).

All other numbers in S_{t+1} remain in the exact same positions as in S_t.

### Key Observations

1. **Targeted Update:** The transformation is not a full solve or a random change; it is a precise, rule-based update of two specific cells.

2. **Visual Highlighting:** The diagram uses the color red to draw immediate attention to the modified cells in the output state (S_{t+1}), making the effect of the transformation rule visually explicit.

3. **Process Abstraction:** The symbols `π` and `T` abstract the computational steps. `π` likely represents a policy or function that determines *which* cells to update (generating R_{t+1}), while `T` represents the transition function that *applies* those updates to the state.

4. **State Consistency:** Aside from the two specified changes, the Sudoku grid's state is perfectly preserved, indicating a deterministic and localized update process.

### Interpretation

This diagram models a single, discrete step in a computational process for solving or manipulating a Sudoku puzzle. It exemplifies a **state-transition model** common in reinforcement learning, search algorithms, or constraint satisfaction problems.

* **What it demonstrates:** The process shows how an agent or algorithm (`π`) observes a state (`S_t`), decides on a minimal set of actions (updating two cells to specific values, encoded in `R_{t+1}`), and then executes those actions via a transition function (`T`) to produce a new, slightly more solved state (`S_{t+1}`).

* **Relationship between elements:** The flow is causal and sequential. The initial state is the input. The policy `π` acts as the "brain," generating a compact action plan (`R_{t+1}`). The transition `T` is the "hands" that execute the plan on the environment (the grid). The output is a new state that is one step closer to a complete solution.

* **Notable implications:** The update from 4 to 2 in cell (7,9) suggests the algorithm is correcting a value, possibly because the 4 was a violation of Sudoku rules (e.g., a duplicate in its row, column, or 3x3 box). The insertion of a 7 into an empty cell (6,2) represents filling in a known, deduced value. This two-step action—correcting an error and filling a blank—is a microcosm of the entire solving process. The diagram effectively breaks down the complex task of solving a Sudoku into atomic, understandable operations.

</details>

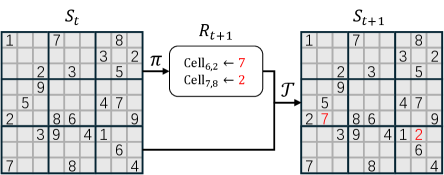

(b) Sudoku

Figure 2: Example reasoning steps for multiplication and Sudoku, where the core planning is presented in the reasoning step ${R}_{t+1}$ .

CoT Reasoning as a Markov decision process

A general form of CoT reasoning is given as a tuple $({Q},\{{R}\},{A})$ , where ${Q}$ is the input query, $\{{R}\}=({R}_{1},\ldots,{R}_{T-1})$ is the sequence of $T-1$ intermediate steps, and ${A}$ is the final answer. Following Wang [37], we formulate the CoT reasoning as a Markov thought process (MTP). As shown in Figure 1, an MTP follows that [37]:

$$

\displaystyle{R}_{t+1}\sim\pi(\cdot\mid{S}_{t}),\ {S}_{t+1}=\mathcal{T}({S}_{t},{R}_{t+1}), \tag{1}

$$

where ${S}_{t}$ is the $t$ -th reasoning state, $\pi$ is the planning policy (the transformer model), and $\mathcal{T}$ is the (usually deterministic) transition function. The initial state ${S}_{0}:=Q$ is given by the input query. In each reasoning step ${R}_{t+1}$ , the policy $\pi$ plans the next reasoning action that determines the state transition, which is then executed by $\mathcal{T}$ to obtain the next state. The process terminates when the step presents the answer, i.e., $A={R}_{T}$ . For clarity, a table of notations is presented in Appendix A.

An MTP is implemented by specifying the state representations and transition function $\mathcal{T}$ . Since we use tiny transformers that are weak in inferring long contexts, we suggest reducing the length of state representations, so that each state ${S}_{t}$ carries only necessary information for subsequent reasoning. Here, we present two examples to better illustrate how MTPs are designed for tiny transformers.

**Example 1 (An MTP for integer multiplication)**

*As shown in Figure 2(a), to reason the product of two integers $x,y\geq 0$ , each state is an expression ${S}_{t}:=[x_{t}\times y_{t}+z_{t}]$ mathematically equal to $x\times y$ , initialized as ${S}_{0}=[x\times y+0]$ . On each step, $\pi$ plans $y_{t+1}$ by eliminate a non-zero digit in $y_{t}$ to $0$ , and it then computes $z_{t+1}=z_{t}+x_{t}(y_{t}-y_{t+1})$ . Consequently, $\mathcal{T}$ updates ${S}_{t+1}$ as $[x_{t+1}\times y_{t+1}+z_{t+1}]$ with $x_{t+1}=x_{t}$ . Similarly, $\pi$ may also eliminate non-zero digits in $x_{t}$ in a symmetric manner. Finally, $\pi$ yields $A=z_{t}$ as the answer if either $x_{t}$ or $y_{t}$ becomes $0$ .*

**Example 2 (An MTP for Sudoku[3])**

*As shown in Figure 2(b), each Sudoku state is a $9\times 9$ game board. On each step, the model $\pi$ fills some blank cells to produce a new board, which is exactly the next state. The answer $A$ is a board with no blank cells.*

### 3.2 The framework of self-verifying reflection

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: State Transition Flowchart with Decision Points

### Overview

The image displays a directed graph or flowchart illustrating a sequential decision-making process. It shows a starting state, multiple intermediate steps with branching paths (some correct, some incorrect), and a final goal state. The diagram uses color-coding (green for correct/successful paths, red for incorrect/failed paths) and symbols (checkmarks ✓, crosses ✗) to indicate the validity of each transition.

### Components/Axes

The diagram is composed of nodes (boxes) and directed edges (arrows). There are no traditional chart axes. The components are:

1. **Nodes (States/Actions):**

* **Start Node (Leftmost):** A gray box labeled `Q` with the equation `S₀ = Q` below it.

* **Intermediate Nodes (Red - Incorrect):**

* `R₁, ✗` with `S₁ = S₀` below it.

* `R₄, ✗` with `S₄ = S₂` below it.

* `R₅, ✗` with `S₅ = S₂` below it.

* **Intermediate Nodes (Green - Correct):**

* `R₂, ✓` with `S₂ = J(S₁, R₂)` below it.

* `R₃, ✓` with `S₃ = J(S₂, R₃)` below it.

* `R₆, ✓` with `S₆ = J(S₅, R₆)` below it.

* **Goal Node (Rightmost):** A green box labeled `A, ✓` with `S₇ = J(S₆, A)` below it.

2. **Edges (Transitions):**

* **Red Arrows:** Indicate transitions from correct paths to incorrect nodes (`R₂` to `R₁`; `R₃` to `R₄` and `R₅`).

* **Green Arrows:** Indicate the primary successful path (`Q` -> `R₂` -> `R₃` -> `R₆` -> `A`) and a secondary path from an incorrect node (`R₅` to `R₆`).

3. **Legend/Key (Implied):**

* **Color:** Green = Correct/Successful Path. Red = Incorrect/Failed Path.

* **Symbols:** ✓ = Checkmark (Success). ✗ = Cross (Failure).

* **Positioning:** The legend is not in a separate box but is embedded in the node styling. The primary successful path flows horizontally from left to right across the lower portion of the diagram. Incorrect branches extend upward from the main path.

### Detailed Analysis

The diagram models a process where a state `S` is updated through a function `J` based on an action `R` or `A`.

* **Initial State:** The process begins at state `S₀`, which is equal to the initial query or condition `Q`.

* **Path 1 (Incorrect):** From `S₀`, taking action `R₁` leads to a state `S₁` that is unchanged from `S₀` (`S₁ = S₀`). This path is marked as incorrect (red, ✗).

* **Path 2 (Correct Start):** From `S₀`, taking action `R₂` leads to a new state `S₂` computed as `J(S₁, R₂)`. This is the first correct step (green, ✓).

* **Branching from `S₂`:**

* **Incorrect Branches:** From state `S₂`, actions `R₄` and `R₅` are possible but incorrect (red, ✗). Both lead to states that revert to `S₂` (`S₄ = S₂`, `S₅ = S₂`), indicating no progress.

* **Correct Continuation:** From state `S₂`, taking action `R₃` leads to state `S₃ = J(S₂, R₃)`. This is correct (green, ✓).

* **Convergence and Final Steps:**

* From state `S₃`, an incorrect action `R₆` is shown (red, ✗), but the arrow points to the correct `R₆` node, suggesting a possible mislabeling or that `R₆` can be reached from multiple states.

* The correct path continues from `S₃` to action `R₆`. Notably, the equation for the state after `R₆` is `S₆ = J(S₅, R₆)`. This implies that the state `S₅` (from the incorrect `R₅` branch) is used as input, not `S₃`. This is a critical detail.

* Finally, from state `S₆`, taking the goal action `A` leads to the terminal state `S₇ = J(S₆, A)`, marked as successful (green, ✓).

### Key Observations

1. **Non-Linear Progression:** The successful path is not a simple straight line. It involves a main sequence (`Q` -> `R₂` -> `R₃`) but then incorporates an element (`S₅`) from a previously failed branch (`R₅`) to compute the next state (`S₆`).

2. **State Reversion:** Incorrect actions (`R₁`, `R₄`, `R₅`) result in states that are equal to a previous state (`S₀` or `S₂`), representing a lack of progress or a loop.

3. **Critical Equation:** The equation `S₆ = J(S₅, R₆)` is pivotal. It shows that the successful path to the goal depends on information or a state (`S₅`) generated by an *incorrect* action (`R₅`). This suggests the process may learn from or require exploring dead ends.

4. **Spatial Layout:** The primary successful path is anchored along the bottom. Incorrect branches create upward "spurs," visually separating failure from progress. The final goal `A` is positioned at the far right, signifying the endpoint.

### Interpretation

This diagram likely represents a **state-space search, a reinforcement learning policy, or a logical proof tree** where an agent or algorithm must navigate through possible actions to reach a goal.

* **What it demonstrates:** The process is not about avoiding all wrong turns. Instead, it shows that reaching the goal (`A`) may require traversing a specific sequence of correct actions (`R₂`, `R₃`) while also leveraging the outcome (`S₅`) of an exploratory, incorrect action (`R₅`). The function `J` acts as a state transition or update function.

* **Relationship between elements:** The green arrows define the viable policy or solution path. The red arrows represent explored but suboptimal or erroneous actions. The connection from the incorrect `R₅` node to the correct `R₆` node is the most significant relationship, indicating that failure is not always terminal and can provide necessary input for eventual success.

* **Notable anomaly:** The equation `S₆ = J(S₅, R₆)` is the central anomaly. It breaks the simple sequential flow (`S₃` should logically feed into `R₆`) and instead creates a dependency on a side branch. This implies the system has memory or that the value of action `R₆` is contingent on having first attempted `R₅`, even though `R₅` itself was incorrect. This is a sophisticated concept, suggesting the model values exploration or has conditional logic where some "wrong" steps are prerequisites for later "right" steps.

</details>

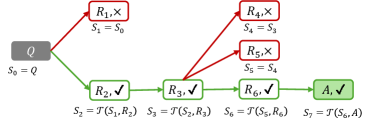

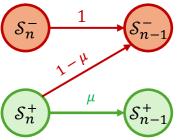

(a) Reflective MTP

<details>

<summary>x5.png Details</summary>

### Visual Description

\n

## Diagram: State Transition Flowchart with Decision Paths

### Overview

The image displays a directed graph or flowchart illustrating a state transition process, likely representing a search algorithm, decision tree, or reinforcement learning trajectory. It maps the progression from an initial state `Q` through various intermediate states (`R₁` to `R₁₀`) to a final state `A`. The diagram uses color-coding, symbols (✓/✗), and mathematical notation to denote success/failure and state transformations.

### Components/Axes

* **Node Types & Labels:** Each node is a rounded rectangle containing a label (e.g., `R₁`, `A`, `Q`) and a symbol (✓ or ✗). Below each node is a state transition equation.

* **Color Coding:**

* **Orange:** Nodes `R₁`, `R₂`, `R₃`, `R₄`, `R₅`, `R₇`, `R₁₀`. This appears to be the primary or explored branch.

* **Green:** Nodes `Q`, `R₈`, `R₉`, `A`. This appears to be a successful or alternative branch.

* **Red:** Nodes `R₂`, `R₅`, `R₇`, `R₆`. These nodes are marked with ✗, indicating failure or termination.

* **Connections:** Solid arrows indicate primary flow. Dotted red arrows indicate secondary or alternative transitions (e.g., from `R₄` to `R₆` and `R₅`).

* **Spatial Layout:** The flowchart originates from a single node `Q` on the far left. It branches into two main paths: an upper/orange path and a lower/green path. The final node `A` is positioned at the bottom right.

### Detailed Analysis

**Path 1 (Upper/Orange Branch):**

1. **Start:** `Q` (Green) - `S₀ = Q`

2. **Step 1:** `R₁, ✓` (Orange) - `S₁ = T(S₀, R₁)`

3. **Step 2 (from R₁):**

* `R₂, ✗` (Red) - `S₂ = S₁` (No state change)

* `R₃, ✓` (Orange) - `S₃ = T(S₂, R₃)`

4. **Step 3 (from R₂):**

* `R₄, ✓` (Orange) - `S₄ = T(S₃, R₄)`

* `R₅, ✗` (Red) - `S₅ = S₄` (No state change)

5. **Step 4 (from R₃):**

* `R₇, ✗` (Red) - `S₇ = S₆` (Note: `S₆` is defined later, suggesting a loop or reference).

6. **Step 5 (from R₄):**

* `R₆, ✗` (Red) - `S₆ = S₄` (No state change)

* `R₁₀, ✓` (Orange) - `S₁₀ = T(S₇, R₁₀)` (References `S₇` from the `R₃` branch).

**Path 2 (Lower/Green Branch):**

1. **Start:** `Q` (Green) - `S₀ = Q`

2. **Step 1:** `R₈, ✓` (Green) - `S₈ = T(S₀, R₈)`

3. **Step 2:** `R₉, ✓` (Green) - `S₉ = T(S₈, R₉)`

4. **Step 3 (Final):** `A, ✓` (Green) - `S₁₀ = T(S₉, A)`

**State Transition Notation:** The notation `Sₙ = T(Sₘ, Rₖ)` indicates that state `Sₙ` is the result of applying transformation `T` to previous state `Sₘ` using action/rule `Rₖ`. Notation like `S₂ = S₁` indicates a terminal or non-transformative step.

### Key Observations

1. **Two Distinct Outcomes:** The green path (`Q -> R₈ -> R₉ -> A`) leads directly to a successful terminal state `A`. The orange/red path is more complex, involving multiple branches, failures (✗), and loops (e.g., `R₇` referencing `S₆`).

2. **Failure States:** Nodes marked with ✗ (`R₂`, `R₅`, `R₇`, `R₆`) are all colored red and often result in no state change (`Sₙ = Sₘ`), indicating dead ends.

3. **Complex Interconnection:** The orange path is not linear. It features branching (`R₁` to `R₂/R₃`), convergence (dotted lines from `R₄`), and cross-branch references (`R₁₀` using `S₇` from the `R₃` sub-branch).

4. **Final State Convergence:** Both main branches ultimately reference a state `S₁₀`. The green path defines it as `T(S₉, A)`, while the orange path defines it via `R₁₀` as `T(S₇, R₁₀)`. This suggests `S₁₀` is a goal state achievable via different sequences.

### Interpretation

This diagram models a **search or decision-making process** where an agent explores possible actions from an initial state `Q`. The green path represents an **optimal or successful policy**—a direct, three-step sequence (`R₈, R₉, A`) that achieves the goal. The orange/red path represents a **more exploratory, trial-and-error process**. It includes successful steps (`R₁, R₃, R₄, R₁₀`) but also encounters failures (`R₂, R₅, R₇, R₆`) and complex state dependencies.

The notation `T(S, R)` strongly suggests a **formal transition function**, common in fields like:

* **Reinforcement Learning:** Where an agent takes actions (`R`) in states (`S`) to receive rewards and new states.

* **Automated Theorem Proving or Planning:** Where `R` represents rule applications to transform a logical state `S`.

* **Algorithmic Search:** Where nodes represent states and edges represent operations.

The key insight is that **multiple pathways can lead to the same goal state (`S₁₀`)**, but they differ dramatically in efficiency and risk of failure. The diagram visually argues for the superiority of the direct green policy over the convoluted orange exploration. The presence of failures (✗) and non-transformative steps highlights the cost of suboptimal decision-making.

</details>

(b) Reflective trace-back search (width $m=2$ )

Figure 3: Reflective reasoning based on MTP. “ $\checkmark$ ” and “ $\times$ ” are self-verification labels for positive and negative steps, respectively. The steps that are instantly verified as negative are highlighted in red. In RTBS, the dashed-line arrows back-propagate the negative labels, causing parental steps to be recursively rejected (orange). The green shows the steps that successfully lead to the answer.

Conceptually, reflection provides feedback for the proposed steps and may alter the subsequent reasoning accordingly. Reflection takes flexible forms in natural language (e.g., justifications and comprehensive evaluations), making it extremely costly to analyze. In this work, we propose to equip transformers with the simplest discriminative form of reflection, where the model self-verifies the correctness of each step and is allowed to retry those incorrect attempts. We currently do not consider the high-level revisory behavior that maps incorrect steps to correct ones, as we find learning such a mapping is challenging for tiny models and leads to no significant gain in practice. Specifically, we analyze two basic variants of reflective reasoning in this paper: the reflective MTP and the reflective trace-back search, as described below (see pseudo-code in Appendix D.1).

Reflective MTP (RMTP)

Given any MTP with a policy $\pi$ and transition $\mathcal{T}$ , we use a verifier $\mathcal{V}$ to produce a verification sequence after each reasoning step, denoted as ${V}_{t}\sim\mathcal{V}(\cdot|{R}_{t})$ . Such ${V}_{t}$ includes verification label(s): The positive “ $\checkmark$ ” and negative “ $\times$ " signifying correct and incorrect reasoning of ${R}_{t}$ , respectively. Given the verified step ${\tilde{R}}_{t+1}:=({R}_{t+1},{V}_{t+1})$ that contains verification, we define $\tilde{\mathcal{T}}$ as the reflective transition function that rejects incorrect steps:

$$

{S}_{t+1}=\tilde{\mathcal{T}}({S}_{t},{\tilde{R}}_{t+1})=\tilde{\mathcal{T}}({S}_{t},({R}_{t+1},{V}_{t+1})):=\begin{cases}{S}_{t},&\text{``$\times$''}\in{V}_{t+1};\\

\mathcal{T}({S}_{t},{R}_{t+1}),&\text{otherwise.}\end{cases} \tag{2}

$$

In other words, if $\mathcal{V}$ detects any error (i.e. “ $\times$ ") in ${R}_{t+1}$ , the state remains unchanged so that $\pi$ may re-sample another attempt. Focusing on self-verification, we use a single model called the self-verifying policy $\tilde{\pi}:=\{\pi,\mathcal{V}\}$ to serve simultaneously as the planning policy $\pi$ and the verifier $\mathcal{V}$ . By operating tokens, $\tilde{\pi}$ outputs the verified step ${\tilde{R}}_{t}$ for each input state ${S}_{t}$ . In this way, $\tilde{\mathcal{T}}$ and $\tilde{\pi}$ constitute a new MTP called the RMTP, with illustration in Figure 3(a).

Reflective trace-back search (RTBS)

Though RMTP allows instant rejections of incorrect steps, sometimes the quality of a step can be better determined by actually trying it. For example, a Sudoku solver occasionally makes tentative guesses and traces back if the subsequent reasoning fails. Inspired by o1-journey [26], a trace-back search allowing the reasoner to revisit previous states may be applied to explore solution paths in an MTP. We implement simple RTBS by simulating the depth-first search in the trajectory space. Let $m$ denote the RTBS width, i.e., the maximal number of attempts on each step. As illustrated in Figure 3(b), if $m$ proposed steps are rejected on a state ${S}_{t}$ , the negative label “ $\times$ ” will be propagated back to recursively reject the previous step ${R}_{t}$ . As a result, the state traces back to the closest ancestral state that has remaining attempt opportunities.

### 3.3 Training

<details>

<summary>x6.png Details</summary>

### Visual Description

\n

## Technical Diagram: Multi-Stage Training Pipeline for Reasoning Models

### Overview

The image is a technical flowchart illustrating a four-stage training pipeline for a language model designed to perform reasoning tasks, likely using Chain-of-Thought (CoT) methods. The pipeline progresses from left to right, showing data flow and transformations through Pretraining, Non-reflective Supervised Fine-Tuning (SFT), Reflective SFT, and Reinforcement Learning (RL) fine-tuning. The diagram uses color-coded boxes, arrows, and mathematical notation to represent processes, data structures, and model components.

### Components/Axes

The diagram is segmented into four primary, labeled stages:

1. **(I) Pretraining (Green Box, Top-Left):**

* **Input:** "Training Data" (depicted as a green cylinder) and "CoT examples" (arrow from top).

* **Process:** Shows sequences of tokens (Q, R₁, R₂, A, etc.) being processed. Red boxes highlight "context windows (randomly drawn)".

* **Output:** A policy model denoted by π.

2. **(II) Non-reflective SFT (Blue Box, Center):**

* **Input:** The policy model π from stage (I).

* **Process:** Depicts a sequence of "states" (Q, S₁, S₂, ...) mapping to "steps or answers" (R₁, R₂, A, ...). The transition is governed by the policy π.

* **Output:** A refined policy model π.

3. **(III) Reflective SFT (Gray Box, Bottom-Left):**

* **Input:** "CoT examples (data mixture)" and the policy model π from stage (II).

* **Process:** Involves "sampling CoTs through MTP" (Model-Thought-Process, inferred). Shows a matrix with columns Q, S₁, S₂, ... and rows R₁, R₂, R₃, ... leading to "ground-truth verification" (V₁, V₂, V₃, ...). An "Expert Verifier" block processes this.

* **Output:** A policy model denoted by π̃ (π-tilde).

4. **(IV) RL fine-tuning (Orange Box, Right):**

* **Input:** The policy model π̃ from stage (III).

* **Process:** Contains two sub-modules:

* **MTP:** Shows a loop: `Q -> [π] -> R_t -> A`. State update: `S_t = T(S_{t-1}, R_t)`.

* **RMTP:** Shows a loop: `Q -> [π] -> (R_t, V_t) -> A`. State update: `S_t = T(S_{t-1}, R_t, V_t)`.

* **Components:** Includes a "Reward Model" and "Policy Optimization" block, with feedback loops (orange arrows) connecting them to the MTP/RMTP processes.

**Flow Arrows:** Black arrows indicate the primary data/model flow from (I) -> (II) -> (III) -> (IV). An additional arrow feeds "CoT examples" from the start into stage (III).

### Detailed Analysis

**Stage (I) Pretraining:**

* **Data Structure:** Sequences consist of a Question (Q), reasoning steps (R₁, R₂, ...), and an Answer (A). The diagram shows two example sequences: `Q, R₁, R₂, A` and `Q, R₁, A, Q, R₁, R₂, A, Q, R₁...`.

* **Key Mechanism:** "Context windows (randomly drawn)" are highlighted, suggesting the model is pretrained on variable-length segments of these reasoning chains.

**Stage (II) Non-reflective SFT:**

* **Mapping:** Establishes a direct mapping from a sequence of states (starting with Q, then S₁, S₂...) to a sequence of steps/answers (R₁, R₂, A). This represents standard supervised fine-tuning on reasoning traces.

**Stage (III) Reflective SFT:**

* **Verification Process:** Introduces a verification step. For a given question (Q) and state sequence (S₁, S₂...), multiple reasoning steps (R₁, R₂, R₃...) are generated and paired with verification scores or labels (V₁, V₂, V₃...). An "Expert Verifier" evaluates these against ground truth.

* **Model Update:** This process uses the policy π to sample CoTs and produces an updated policy π̃, incorporating reflective or verified reasoning.

**Stage (IV) RL fine-tuning:**

* **MTP (Model-Thought-Process):** A basic reactive model where the action (A) is taken based on the current reasoning step (R_t), and the state (S_t) is updated based only on the previous state and the new reasoning step.

* **RMTP (Reflective/Reinforced MTP):** An enhanced model where the action (A) is based on both a reasoning step (R_t) and its associated verification/value (V_t). The state update incorporates both R_t and V_t.

* **Optimization Loop:** The Reward Model provides feedback, which drives Policy Optimization. This optimized policy is then used in the MTP/RMTP modules, creating a reinforcement learning loop.

### Key Observations

1. **Progressive Complexity:** The pipeline evolves from simple sequence modeling (I) to supervised step-by-step reasoning (II), then adds verification (III), and finally integrates reinforcement learning with value feedback (IV).

2. **Notation Consistency:** The policy model is consistently denoted by π, with a tilde (π̃) used after the reflective stage to indicate a modified version.

3. **State Representation:** The state `S_t` is explicitly defined as a function `T` of previous states and reasoning steps/values, formalizing the reasoning trajectory.

4. **Dual RL Paths:** The RL stage explicitly contrasts a basic MTP with an enhanced RMTP, highlighting the integration of verification signals (V_t) into the decision and state-update process.

### Interpretation

This diagram outlines a sophisticated methodology for training AI models to perform complex reasoning. The pipeline's core innovation lies in its multi-stage approach that moves beyond standard pretraining and SFT.

* **From Imitation to Reflection:** Stages (I) and (II) teach the model to mimic reasoning patterns from data. Stage (III) introduces a critical reflective component, where the model's outputs are verified, likely teaching it to distinguish between valid and invalid reasoning paths.

* **From Supervision to Optimization:** Stage (IV) transitions from learning from static examples (SFT) to learning from outcomes via RL. The inclusion of the RMTP module suggests that the verification signal (V_t) from stage (III) is not just used for filtering data but is integrated as a core component of the decision-making process during RL, potentially guiding the model towards more reliable and high-reward reasoning strategies.

* **Overall Goal:** The pipeline aims to produce a model (final policy π) that doesn't just generate plausible-sounding reasoning chains but can engage in a verifiable, step-by-step thought process (`S_t = T(...)`) that is optimized for correctness, as determined by a reward model. This is a common framework for developing "reasoning" or "chain-of-thought" capabilities in large language models.

</details>

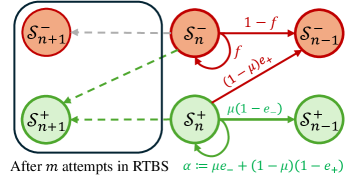

Figure 4: The training workflow for transformers to perform CoT reasoning.

As shown in Figure 4, we train the tiny transformers from scratch through consistent techniques of LLM counterparts, such as pretraining, supervised fine-tuning (SFT), and RL fine-tuning. First, we use conventional pipelines to train a baseline model $\pi$ with only the planning ability in MTPs. During (I) pretraining, these CoT examples are treated as a textual corpus, where sequences are randomly drawn to minimize cross-entropy loss of next-token prediction. Then, in (II) non-reflective SFT, the model learns to map each state ${S}_{t}$ to the corresponding step ${R}_{t+1}$ by imitating examples.

Next, we employ (III) reflective SFT to integrate the planning policy $\pi$ with the knowledge of self-verification. To produce ground-truth verification labels, we use $\pi$ to sample non-reflective CoTs, in which the sampled steps are then labeled by an expert verifier (e.g., a rule-based process reward model). Reflective SFT learns to predict these labels from the states and the proposed steps, i.e., $({S}_{t},{R}_{t+1})\to{V}_{t+1}$ . To prevent disastrous forgetting, we also mix the same CoT examples as in non-reflective SFT. This converts $\pi$ to a self-verifying policy $\tilde{\pi}$ that can self-verify reasoning steps.

Thus far, we have obtained the planning policy $\pi$ and the self-verifying policy $\tilde{\pi}$ , which can be further strengthened through (IV) RL fine-tuning. As illustrated in Figure 4, RL fine-tuning involves iteratively executing $\pi$ ( $\tilde{\pi}$ ) to collect experience CoTs through an MTP (RMTP), evaluating these CoTs with a reward model, and updating the policy to favor higher-reward solutions. Following the RLVR paradigm [15], we use binary outcome rewards (i.e., $1$ for correct answers and $0$ otherwise) computed by a rule-based answer checker $\operatorname{ORM}(Q,A)$ . When training the self-verifying policy $\tilde{\pi}$ , the RMTP treats verification ${V}_{t}$ as a part of the augmented step ${\tilde{R}}_{t}$ , simulating R1-like training [5] where reflection and solution planning are jointly optimized. We mainly use GRPO [31] as the algorithms to optimize policies. Details of RL fine-tuning are elaborated in Appendix B.

## 4 Theoretical results

This section establishes theoretical conditions under which self-verifying reflection (RMTP or RTBS in Section 3.2) enhances reasoning accuracy (the probability of deriving correct answers). The general relationship between the verification ability and reasoning accuracy (discussed in Appendix C.1) for any MTP is intractable as the states and transitions can be arbitrarily specified. Therefore, to derive interpretable insights, we discuss a simplified prototype of reasoning that epitomizes the representative principle of CoTs — to incrementally express complex relations by chaining the local relation in each step [25]. Specifically, Given query $Q$ as the initial state, we view a CoT as the step-by-step process that reduces the complexity within states:

- We define $\mathcal{S}_{n}$ as the set of states with a complexity scale of $n$ . For simplicity, we assume that each step, if not rejected by reflection, reduces the complexity scale by $1$ . Therefore, the scale $n$ is the number of effective steps required to derive an answer.

- An answer $A$ is a state with a scale of $0$ , i.e. $A\in\mathcal{S}_{0}$ . Given an input query $Q$ , the answers $\mathcal{S}_{0}$ are divided into positive (correct) answers $\mathcal{S}_{0}^{+}$ and negative (wrong) answers $\mathcal{S}_{0}^{-}$ .

- States $\mathcal{S}_{n}$ ( $n$ > 0) are divided into 1) positive states $\mathcal{S}_{n}^{+}$ that potentially lead to correct answers and 2) negative states $\mathcal{S}_{n}^{-}$ leading to only incorrect answers through forward transitions.

Consider a self-verifying policy $\tilde{\pi}=\{\pi,\mathcal{V}\}$ to solve this simplified task. We describe its fundamental abilities using the following probabilities (whose meanings will be explained afterwards):

$$

\displaystyle\mu:=p_{{R}\sim\pi}(\mathcal{T}({S},{R})\in\mathcal{S}^{+}_{n-1}\mid{S}\in\mathcal{S}_{n}^{+}) \displaystyle e_{+}:=p_{{R},{V}\sim\tilde{\pi}}(\mathcal{T}({S},{R})\in\mathcal{S}^{-}_{n-1},\text{``$\times$''}\notin{V}\mid{S}\in\mathcal{S}_{n}^{+}), \displaystyle e_{-}:=p_{{R},{V}\sim\tilde{\pi}}(\mathcal{T}({S},{R})\in\mathcal{S}^{+}_{n-1},\text{``$\times$''}\in{V}\mid{S}\in\mathcal{S}_{n}^{+}), \displaystyle f:=p_{{R},{V}\sim\tilde{\pi}}(\text{``$\times$''}\in{V}\mid{S}\in\mathcal{S}_{n}^{-}). \tag{3}

$$

To elaborate, $\mu$ measures the planning ability, defined as the probability that $\pi$ plans a step that leads to a positive next state, given that the current state is positive. For verification abilities, we measure the rates of two types of errors: $e_{+}$ (false positive rate) is the probability of accepting a step that leads to a negative state, and $e_{-}$ (false negative rate) is the probability of rejecting a step that leads to a positive state. Additionally, $f$ is the probability of rejecting any step on negative states, providing the chance of tracing back to previous states. Given these factors, Figure 5 illustrates the state transitions in non-reflective (vanilla MTP) and reflective (RMTB and RTBS) reasoning.

<details>

<summary>x7.png Details</summary>

### Visual Description

\n

## State Transition Diagram: Two-State System with Probabilistic Transitions

### Overview

The image displays a directed graph diagram representing a state transition model, likely a Markov chain or similar stochastic process. It consists of four nodes arranged in a 2x2 grid, connected by three directed edges (arrows) with associated transition probabilities or rates. The diagram uses color-coding (red and green) and mathematical notation to define states and their relationships.

### Components/Axes

**Nodes (States):**

* **Top-Left Node:** A red circle labeled `S_n^-`.

* **Top-Right Node:** A red circle labeled `S_{n-1}^-`.

* **Bottom-Left Node:** A green circle labeled `S_n^+`.

* **Bottom-Right Node:** A green circle labeled `S_{n+1}^+`.

**Edges (Transitions):**

1. A horizontal red arrow from `S_n^-` (top-left) to `S_{n-1}^-` (top-right). It is labeled with the number `1`.

2. A diagonal red arrow from `S_n^+` (bottom-left) to `S_{n-1}^-` (top-right). It is labeled with the expression `1 - μ`.

3. A horizontal green arrow from `S_n^+` (bottom-left) to `S_{n+1}^+` (bottom-right). It is labeled with the Greek letter `μ` (mu).

**Spatial Layout:**

* The diagram is organized into two distinct rows.

* The **top row** contains the two red nodes (`S_n^-` and `S_{n-1}^-`), connected by a direct horizontal transition.

* The **bottom row** contains the two green nodes (`S_n^+` and `S_{n+1}^+`), connected by a direct horizontal transition.

* The **diagonal transition** connects the bottom-left green node (`S_n^+`) to the top-right red node (`S_{n-1}^-`), crossing from the lower to the upper row.

### Detailed Analysis

**State Notation:**

* The states are denoted by `S` with a subscript (`n`, `n-1`, `n+1`) and a superscript (`+` or `-`).

* The subscript likely represents a discrete index, such as time step, generation, or a counter.

* The superscript (`+`/`-`) denotes a binary attribute or category, visually reinforced by the green/red color coding.

**Transition Logic:**

* From state `S_n^-` (red, index `n`), there is a **certain transition** (probability/rate = `1`) to state `S_{n-1}^-` (red, index `n-1`). This represents a deterministic decrease in the index while staying in the "negative" (`-`) category.

* From state `S_n^+` (green, index `n`), there are two possible transitions:

1. To state `S_{n-1}^-` (red, index `n-1`) with a weight of `1 - μ`. This represents a change from the "positive" (`+`) to the "negative" (`-`) category, accompanied by a decrease in index.

2. To state `S_{n+1}^+` (green, index `n+1`) with a weight of `μ`. This represents staying in the "positive" (`+`) category while increasing the index.

* The sum of the weights for transitions originating from `S_n^+` is `(1 - μ) + μ = 1`, indicating these are complementary probabilities.

### Key Observations

1. **Asymmetric Connectivity:** The "negative" (`-`) states only have outgoing transitions to other "negative" states (specifically, decreasing the index). The "positive" (`+`) state `S_n^+` is the only node with branching transitions, leading to both a "negative" state and another "positive" state.

2. **Index Flow:** The index (`n`) can decrease (`n -> n-1`), increase (`n -> n+1`), or stay conceptually similar (the transition from `S_n^-` to `S_{n-1}^-` changes the index but maintains the `-` category).

3. **Color-Coded Categories:** The red/green dichotomy is consistent. All nodes with a `-` superscript are red. All nodes with a `+` superscript are green. The diagonal transition arrow is also red, matching its destination node's color (`S_{n-1}^-`).

4. **Parameter `μ`:** The Greek letter `μ` acts as a critical parameter controlling the fate of the system when in the `S_n^+` state. It determines the probability of remaining in the "positive" lineage versus defecting to the "negative" lineage.

### Interpretation

This diagram models a **two-type branching process or a state-dependent random walk**. The states likely represent populations, strategies, or conditions in a system (e.g., in evolutionary game theory, population genetics, or stochastic modeling).

* **The "Negative" (`-`) Lineage:** Appears to be a **declining or absorbing pathway**. Once in a `-` state, the system deterministically moves to a `-` state with a lower index (`n-1`), suggesting a countdown or a process that terminates or reduces a count.

* **The "Positive" (`+`) Lineage:** Represents a **growth or persistence pathway**. From `S_n^+`, there's a probability `μ` to advance to a higher index (`S_{n+1}^+`), indicating growth, reproduction, or success. However, there's a risk (`1 - μ`) of "failure" or "mutation," where the system transitions to the declining `-` lineage at a lower index (`S_{n-1}^-`).

* **System Dynamics:** The overall behavior hinges on the value of `μ`. A high `μ` favors the expansion of the `+` lineage. A low `μ` leads to frequent collapse into the `-` lineage, which then deterministically winds down. The diagram captures a fundamental tension between growth and decay, persistence and failure, governed by a single parameter. The absence of transitions *into* the `S_n^-` state from any other state except `S_n^+` suggests it might be a specific entry point into the declining pathway.

</details>

(a) Non-reflective reasoning

<details>

<summary>x8.png Details</summary>

### Visual Description

\n

## State Transition Diagram: RTBS Process Model

### Overview

The image displays a state transition diagram modeling a process labeled "RTBS" (likely an acronym for a specific algorithm or system). The diagram illustrates transitions between positive (`+`) and negative (`-`) states across three sequential time steps or stages: `n+1`, `n`, and `n-1`. The flow moves generally from left (most recent/future state `n+1`) to right (past state `n-1`). The model incorporates probabilistic transitions and error terms, culminating in a defined parameter `α`.

### Components/Axes

The diagram is composed of nodes (states) and directed edges (transitions). There are no traditional chart axes.

**Nodes (States):**

* **Left Group (within a rounded rectangle):**

* `S_{n+1}^-` (Red circle, top-left)

* `S_{n+1}^+` (Green circle, bottom-left)

* **Middle Group:**

* `S_n^-` (Red circle, top-center)

* `S_n^+` (Green circle, bottom-center)

* **Right Group:**

* `S_{n-1}^-` (Red circle, top-right)

* `S_{n-1}^+` (Green circle, bottom-right)

**Edges (Transitions) and Labels:**

* **From `S_{n+1}^-`:**

* Solid red arrow to `S_n^-`, labeled `f`.

* Solid red arrow to `S_{n-1}^-`, labeled `1-f`.

* Dashed green arrow to `S_n^+` (no explicit label).

* **From `S_{n+1}^+`:**

* Dashed green arrow to `S_n^+` (no explicit label).

* Dashed green arrow to `S_n^-` (no explicit label).

* **From `S_n^-`:**

* Red self-loop arrow, labeled `μ(e_+)`.

* Solid red arrow to `S_{n-1}^-`, labeled `f`.

* Solid red arrow to `S_{n-1}^+`, labeled `(1-μ)e_+`.

* **From `S_n^+`:**

* Green self-loop arrow, labeled `μ(e_+)`.

* Solid green arrow to `S_{n-1}^+`, labeled `μ(e_+)`.

* Solid green arrow to `S_{n-1}^-`, labeled `(1-μ)e_+`.

**Legend/Footer Text:**

* Text at bottom-left: `After m attempts in RTBS`

* Equation at bottom-center/right: `α = μ e_- + (1-μ)(1-e_+)`

### Detailed Analysis

The diagram defines a Markov-like process where the system can be in a positive (`+`) or negative (`-`) state at each step. The transitions are governed by parameters `f`, `μ`, `e_+`, and `e_-`.

1. **Temporal Flow:** The primary flow is from stage `n+1` to `n` to `n-1`. The dashed arrows from `n+1` to `n` suggest a direct but possibly conditional or different-type transition compared to the solid arrows.

2. **Transition Logic from `S_n^-` (Negative State at step n):**

* It can remain in a negative state at the next step (`n-1`) with probability `f`.

* It can transition to a positive state at step `n-1` with probability `(1-μ)e_+`.

* It has a self-loop (staying in `S_n^-`?) with weight `μ(e_+)`. The notation is ambiguous; it may represent an internal process or a transition back to itself within the same time step.

3. **Transition Logic from `S_n^+` (Positive State at step n):**

* It can remain in a positive state at step `n-1` with probability `μ(e_+)`.

* It can transition to a negative state at step `n-1` with probability `(1-μ)e_+`.

* It also has a self-loop with weight `μ(e_+)`.

4. **Parameter `α`:** Defined as `α = μ e_- + (1-μ)(1-e_+)`. This is a weighted sum combining a term related to the negative state (`e_-`) and the complement of a term related to the positive state (`e_+`), with `μ` acting as a mixing weight.

### Key Observations

* **Symmetry and Asymmetry:** The transition structure from `S_n^-` and `S_n^+` to the `n-1` stage is symmetric in form but uses the same parameters (`f`, `μ`, `e_+`) for both source states. The asymmetry lies in the outcome: from a negative source, `f` leads to negative; from a positive source, `μ(e_+)` leads to positive.

* **Dashed vs. Solid Arrows:** Dashed arrows only appear from the `n+1` stage to the `n` stage, indicating these transitions might be of a different nature (e.g., initialization, observation, or a separate process layer) compared to the solid-arrow transitions between `n` and `n-1`.

* **Color Consistency:** Red is consistently used for negative (`-`) states and their outgoing solid transitions. Green is used for positive (`+`) states and their outgoing solid transitions. The dashed arrows from `S_{n+1}^+` are green, and from `S_{n+1}^-` is green, which is an interesting cross-color transition.

* **Parameter Reuse:** The term `μ(e_+)` appears as a self-loop on both `S_n^-` and `S_n^+`, and as the transition from `S_n^+` to `S_{n-1}^+`. The term `(1-μ)e_+` appears as the cross-state transition from both `S_n^-` to `S_{n-1}^+` and from `S_n^+` to `S_{n-1}^-`.

### Interpretation

This diagram models a sequential decision-making or error-propagation process, likely in the context of reliability, learning, or signal detection (RTBS could stand for something like "Repeated Trial Bayesian System" or "Response Threshold Based System").

* **What it represents:** The system's state (`+` or `-`) evolves over discrete attempts or time steps (`m` attempts). At each step, the state can persist, flip, or self-reinforce based on probabilistic rules involving a base rate (`f`), a modulation parameter (`μ`), and state-dependent error or sensitivity terms (`e_+`, `e_-`).

* **Relationships:** The parameter `α` is the key output or derived metric of the process after `m` attempts. It is not a simple average but a specific combination of the error terms, suggesting it might represent an overall system bias, confidence, or adjusted error rate. The process from `n+1` to `n` (dashed arrows) may represent the input or observation phase, while the process from `n` to `n-1` (solid arrows) represents the state update or memory consolidation phase.

* **Notable Implications:** The model suggests that maintaining a positive state (`S^+`) is governed by the same parameters (`μ`, `e_+`) that also govern escaping a negative state (`S^-`). This implies a system where the mechanisms for success and failure are intertwined. The definition of `α` indicates that system performance (`α`) depends on both the likelihood of errors in the negative state (`e_-`) and the likelihood of *not* making a correct response in the positive state (`1-e_+`), weighted by `μ`. This is characteristic of models in psychophysics, machine learning (e.g., multi-armed bandits), or quality control.

</details>

(b) Reflective reasoning through an RMTP or RTBS

Figure 5: The diagram of state transitions starting from scale $n$ in the simplified reasoning, where probabilities are attached to solid lines. In (b) reflective reasoning, the dashed-line arrow presents the trace-back move after $m$ attempts in RTBS.

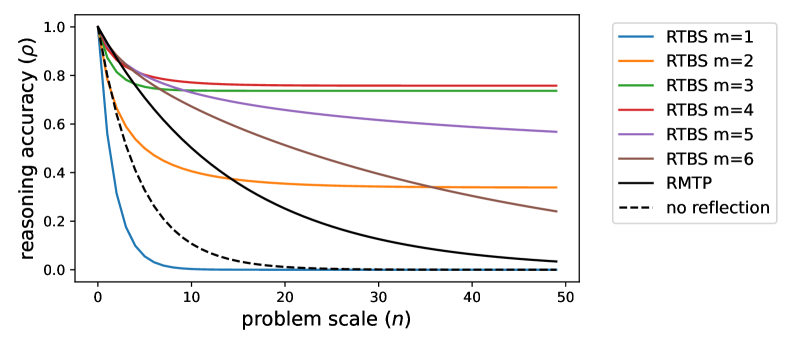

For input problems with scale $n$ , we use $\rho(n)$ , $\tilde{\rho}(n)$ , and $\tilde{\rho}_{m}(n)$ to respectively denote the reasoning accuracy using no reflection, RMTP, and RTBS (with width $m$ ). Obviously, we have $\rho(n)=\mu^{n}$ . In contrast, the mathematical forms of $\tilde{\rho}(n)$ and $\tilde{\rho}_{m}(n)$ are more complicated and therefore left to Appendix C.2. Our main result provides simple conditions for the above factors $(\mu,e_{-},e_{+},f)$ to ensure an improved accuracy when reasoning through an RMTP or RTBS.

**Theorem 1**

*In the above simplified problem, consider a self-verifying policy $\tilde{\pi}$ where $\mu$ , $e_{-}$ , and $e_{+}$ are non-trivial (i.e. neither $0$ nor $1$ ). Let $\alpha:=\mu e_{-}+(1-\mu)(1-e_{+})$ denote the rejection probability on positive states. Given an infinite computation budget, for $n>0$ we have:

- $\tilde{\rho}(n)\geq\rho(n)$ if and only if $e_{-}+e_{+}\leq 1$ , where equalities hold simultaneously; furthermore, reducing either $e_{-}$ or $e_{+}$ strictly increases $\tilde{\rho}(n)$ .

- $\tilde{\rho}_{m}(n)>\tilde{\rho}(n)$ for a sufficiently large $n$ if and only if $f>\alpha$ and $m>\frac{1}{1-\alpha}$ ; furthermore, such a gap of $\tilde{\rho}_{m}(n)$ over $\tilde{\rho}(n)$ increases strictly with $f$ .*

Does reflection require a strong verifier? Theorem 1 shows that RMTP improves performance over vanilla MTP if the verification errors $e_{+}$ and $e_{-}$ are properly bounded, which does not necessitate a strong verifier. In our simplified setting, this only requires the verifier $\mathcal{V}$ to be better than random guessing (which ensures $e_{-}+e_{+}=1$ ). This also indicates a trivial guarantee of RTBS, as an infinitely large width ( $m\to+\infty$ ) substantially converts RTBS to RMTB.

When does trace-back search facilitate reflection? Theorem 1 provides the conditions for RTBS to outperform RMTP for a sufficiently large $n$ : 1) The width $m$ is large enough to ensure effective exploration. 2) $f>\alpha$ indicates that negative states are inherently discriminated from positive ones, leading to a higher rejection probability on negative states than on positive states (see Figure 5(b)). In other words, provided $f>\alpha$ , RTBS is ensured to be more effective on complicated queries using a finite $m$ . However, this also implies a risk of over-thought on simple queries that have a small $n$ .

The derivation and additional details of Theorem 1 are provided in Appendix C.3. In addition, we also derive how many steps it costs to find a correct solution in RMTP. The following Proposition 1 (see proof in Appendix C.4) shows that a higher $e_{-}$ causes more steps to be necessarily rejected and increases the solution cost. In contrast, although a higher $e_{+}$ reduces accuracy, it forces successful solutions to rely less on reflection, leading to fewer expected steps. Therefore, a high false negative rate $e_{-}$ is worse than a high $e_{+}$ given the limited computational budget in practice.

**Proposition 1 (RMTP Reasoning Length)**

*For a simplified reasoning problem with scale $n$ , the expected number of steps $\bar{T}$ for $\tilde{\pi}$ to find a correct answer is $\bar{T}=\frac{n}{(1-\mu)e_{+}+\mu(1-e_{-})}$ . Especially, a correct answer will never be found if the denominator is $0$ .*

Appendix C.5 further extends our analysis to more realistic reasoning, where rejected attempts lead to a posterior drop of $\mu$ (or rise of $e_{-}$ ), indicating that the model may not well generalize the current state. In this case, the bound of $e_{-}$ to ensure improvements becomes stricter than that in Theorem 1.

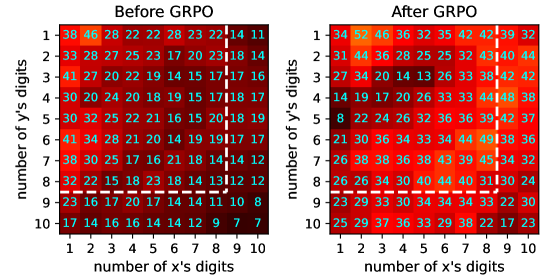

## 5 Experiments

We conduct comprehensive experiments to examine the reasoning performance of tiny transformers under various settings. We trained simple causal-attention transformers [36] (implemented by LitGPT [1]) with 1M, 4M, and 16M parameters, through the pipelines described in Section 3.3. Details of training data, model architectures, tokenization, and hyperparameters are included in Appendix D. The source code is available at https://github.com/zwyu-ai/self-verifying-reflection-reasoning.

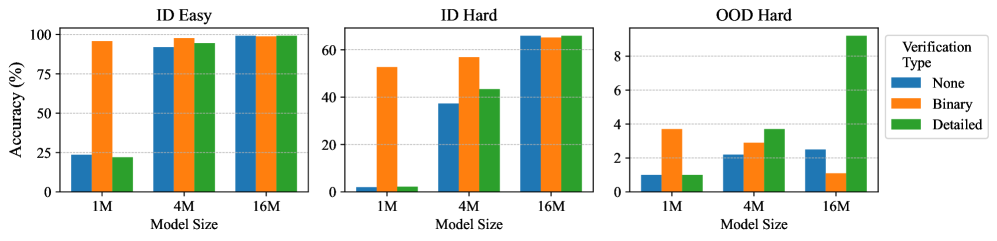

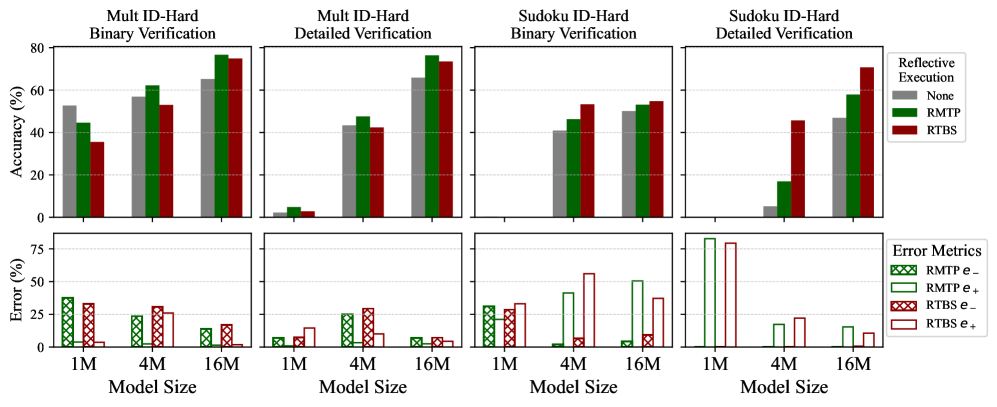

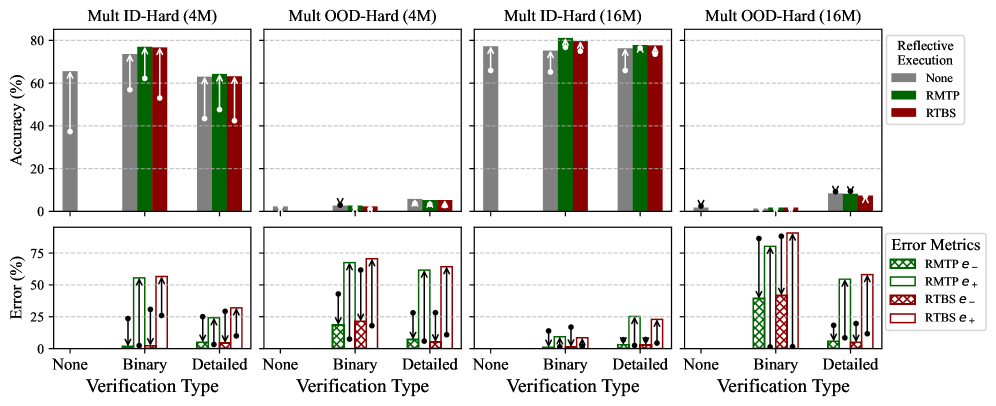

We test tiny transformers in two reasoning tasks: The integer multiplication task (Mult for short) computes the product of two integers $x$ and $y$ ; the Sudoku task fills numbers into blank positions of a $9\times 9$ matrix, such that each row, column, or $3\times 3$ block is a permutation of $\{1,\ldots,9\}$ . For both tasks, we divide queries into 3 levels of difficulties: The in-distribution (ID) Easy, ID Hard, and out-of-distribution (OOD) Hard. The models are trained on ID-Easy and ID-Hard problems, while tested additionally on OOD-Hard cases. We define the difficulty of a Mult query by the number $d$ of digits of the greater multiplicand, and that of a Sudoku puzzle is determined by the number $b$ of blanks to be filled. Specifically, we have $1\leq d\leq 5$ or $9\leq b<36$ for ID Easy, $6\leq d\leq 8$ or $36\leq b<54$ for ID Hard, and $9\leq d\leq 10$ or $54\leq b<63$ for OOD Hard.

Our full results are presented in Appendix E. Shown in Appendix E.1, these seemingly simple tasks pose challenges even for some well-known LLMs. Remarkably, through simple self-verifying reflection, our best 4M Sudoku model is as good as OpenAI o3-mini [21], and our best 16M Mult model outperforms DeepSeek-R1 [5] in ID difficulties.

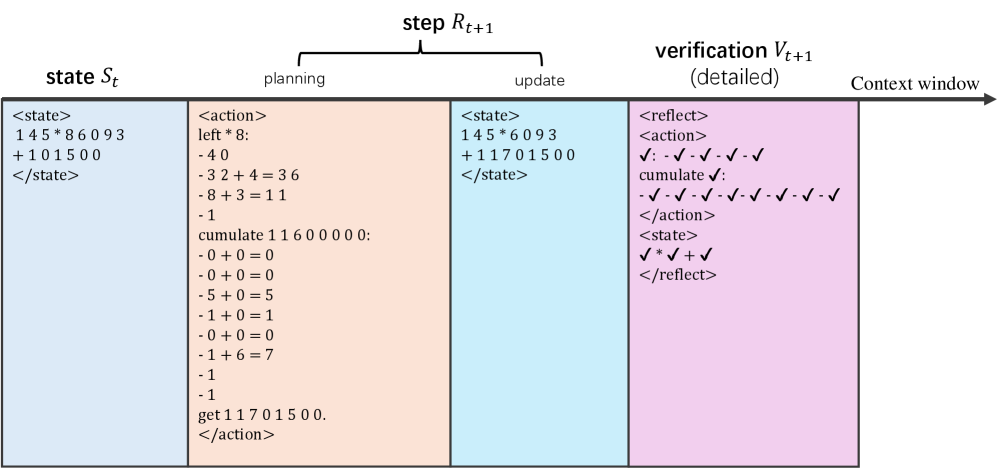

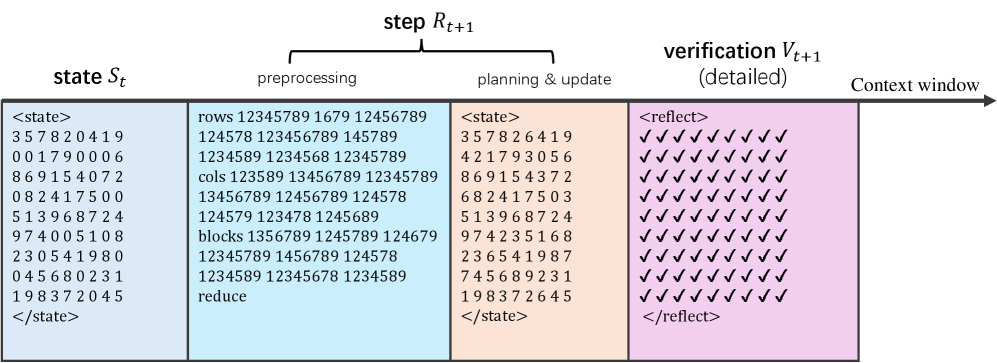

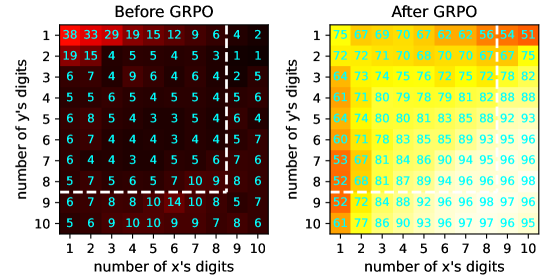

### 5.1 Results of supervised fine-tuning

First, we conduct (I) pretraining, (II) non-reflective SFT, and (III) reflective SFT as described in Section 3.3. In reflective SFT, we consider learning two types of self-verification: 1) The binary verification includes a single binary label indicating the overall correctness of a planned step; 2) the detailed verification includes a series of binary labels checking the correctness of each meaningful element in the step. The implementation of verification labels is elaborated in Appendix D.2.3. We present our full SFT results in Appendix E.2, which includes training 30 models and executing 54 tests. In the following, we discuss our main findings through visualizing representative results.

<details>

<summary>x9.png Details</summary>

### Visual Description

## Grouped Bar Chart: Model Accuracy by Size and Verification Type

### Overview

The image displays three grouped bar charts arranged horizontally, comparing the accuracy (%) of machine learning models across three different evaluation settings ("ID Easy", "ID Hard", "OOD Hard") and three model sizes (1M, 4M, 16M). Performance is further broken down by three "Verification Types": None, Binary, and Detailed.

### Components/Axes

* **Chart Type:** Grouped Bar Chart (3 subplots).

* **Subplot Titles (Top Center):**

* Left: `ID Easy`

* Center: `ID Hard`

* Right: `OOD Hard`

* **Y-Axis (Leftmost chart only, shared scale concept):**

* **Label:** `Accuracy (%)`

* **Scale:** 0 to 100, with major ticks at 0, 25, 50, 75, 100.

* **X-Axis (Each subplot):**

* **Label:** `Model Size`

* **Categories:** `1M`, `4M`, `16M`.

* **Legend (Position: Far right, outside the "OOD Hard" chart):**

* **Title:** `Verification Type`

* **Categories & Colors:**

* `None` - Blue

* `Binary` - Orange

* `Detailed` - Green

### Detailed Analysis

**1. ID Easy (Left Subplot)**

* **Trend:** All verification types show high accuracy, with a slight upward trend as model size increases. Binary verification is consistently the highest.

* **Data Points (Approximate %):**

* **1M Model:** None ≈ 25%, Binary ≈ 95%, Detailed ≈ 25%.

* **4M Model:** None ≈ 90%, Binary ≈ 98%, Detailed ≈ 95%.

* **16M Model:** None ≈ 98%, Binary ≈ 99%, Detailed ≈ 98%.

**2. ID Hard (Center Subplot)**

* **Trend:** Clear positive correlation between model size and accuracy for all verification types. Binary verification maintains a lead, but the gap narrows at larger sizes.

* **Data Points (Approximate %):**

* **1M Model:** None ≈ 2%, Binary ≈ 52%, Detailed ≈ 2%.

* **4M Model:** None ≈ 38%, Binary ≈ 58%, Detailed ≈ 42%.

* **16M Model:** None ≈ 65%, Binary ≈ 65%, Detailed ≈ 65%.

**3. OOD Hard (Right Subplot)**

* **Trend:** Overall accuracy is drastically lower than in-distribution (ID) tasks. The trend is less uniform. Notably, Detailed verification shows a dramatic, disproportionate increase for the largest model.

* **Data Points (Approximate %):**

* **1M Model:** None ≈ 1%, Binary ≈ 3.8%, Detailed ≈ 1%.

* **4M Model:** None ≈ 2.2%, Binary ≈ 3%, Detailed ≈ 3.8%.

* **16M Model:** None ≈ 2.5%, Binary ≈ 1.2%, Detailed ≈ 9%.

### Key Observations

1. **Performance Hierarchy:** In easier tasks (ID Easy), Binary verification is superior. In harder tasks (ID Hard, OOD Hard), the advantage diminishes or changes.

2. **Model Scaling Benefit:** Increasing model size from 1M to 16M parameters consistently improves accuracy across all settings and verification types, though the absolute gain is smallest in OOD Hard (except for Detailed verification).

3. **Verification Type Impact:** The effect of verification is highly context-dependent. It provides a massive boost in "ID Easy" for the 1M model but has minimal or even negative impact in "OOD Hard" for some sizes.

4. **Critical Outlier:** The performance of **Detailed verification on the 16M model in the OOD Hard setting** is a major outlier. It jumps to ~9% accuracy, while all other data points in that chart are below 4%. This suggests a potential breakthrough or specific synergy for large models using detailed verification on out-of-distribution tasks.

5. **Task Difficulty Gradient:** There is a stark drop in baseline accuracy (None verification) from ID Easy (~25-98%) to ID Hard (~2-65%) to OOD Hard (~1-2.5%), clearly illustrating the increasing difficulty of these evaluation settings.

### Interpretation

This data suggests several important insights for model development and evaluation:

* **Verification is Not Universally Beneficial:** The "Binary" verification method acts as a strong performance crutch for smaller models on easier tasks but does not scale its advantage to harder problems or larger models. Its utility is context-specific.

* **The Promise of Detailed Verification:** While often underperforming on easier tasks, **Detailed verification shows unique potential for large models (16M) on the hardest, out-of-distribution tasks**. This could indicate that detailed reasoning or verification steps become crucial when a model has sufficient capacity to leverage them in unfamiliar scenarios.

* **Scaling Laws Hold, But Are Task-Dependent:** The consistent improvement with model size confirms scaling laws, but the *rate* of improvement varies dramatically. Gains are massive in ID settings but marginal in OOD settings, highlighting a fundamental challenge in generalization.

* **OOD Generalization Remains the Core Challenge:** The extremely low accuracy across the board in "OOD Hard" (all values <10%) underscores that current methods, even with large models and verification, struggle significantly with distribution shift. The spike for Detailed/16M is a promising but isolated data point that requires further investigation.

In summary, the charts argue for a nuanced approach: simple verification may suffice for known domains, but advancing to truly out-of-distribution problems may require both large model scale and more sophisticated, detailed verification mechanisms.

</details>

Figure 6: The accuracy of non-reflective execution of models in Mult. In each group, we compare training with various types of verification (“None” for no reflective SFT).