# SMEC: Rethinking Matryoshka Representation Learning for Retrieval Embedding Compression

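**Authors**: Biao Zhang, Lixin Chen, Tong Liu (Taobao & Tmall Group of Alibaba, Hangzhou, China); Bo Zheng (Taobao & Tmall Group of Alibaba, Beijing, China)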

## Abstract

Large language models (LLMs) generate high-dimensional embeddings that capture rich semantic and syntactic information. However, high-dimensional embeddings exacerbate computational complexity and storage requirements, thereby hindering practical deployment. To address these challenges, we propose a novel training framework named Sequential Matryoshka Embedding Compression (SMEC). This framework introduces the Sequential Matryoshka Representation Learning (SMRL) method to mitigate gradient variance during training, the Adaptive Dimension Selection (ADS) module to reduce information degradation during dimension pruning, and the Selectable Cross-batch Memory (S-XBM) module to enhance unsupervised learning between high- and low-dimensional embeddings. Experiments on image, text, and multimodal datasets demonstrate that SMEC achieves significant dimensionality reduction while maintaining performance. For instance, on the BEIR dataset, our approach improves the performance of compressed LLM2Vec embeddings (256 dimensions) by 1.1 points and 2.7 points compared to the Matryoshka-Adaptor and Search-Adaptor models, respectively.

Biao Zhang, Lixin Chen, Tong Liu Taobao & Tmall Group of Alibaba Hangzhou, China {zb372670,tianyou.clx,yingmu}@taobao.com Bo Zheng Taobao & Tmall Group of Alibaba Beijing, China bozheng@alibaba-inc.com

## 1 Introduction

<details>

<summary>figures/fig_intr.png Details</summary>

### Visual Description

## Line Chart: NDCG@10 Performance vs. Embedding Dimensions

### Overview

The chart compares the performance of four language model variants (LLM2Vec-7B, LLM2Vec-7B with SMEC, LLM2Vec-1B, and LLM2Vec-1B with SMEC) across different embedding dimensions (128 to 3584). Performance is measured as NDCG@10, a metric for ranking quality, plotted against embedding dimensions. The chart includes annotations for lossless dimension compression ratios (~12x and ~14x) and visualizes trends in model efficiency and effectiveness.

### Components/Axes

- **X-axis**: "Embedding dimensions" (logarithmic scale: 128, 256, 512, 1024, 1536, 3584).

- **Y-axis**: "NDCG@10" (normalized Discounted Cumulative Gain at 10 results, range: 0.4–0.9).

- **Legend**: Located in the bottom-right corner, mapping colors/markers to model variants:

- **Blue line with triangles**: LLM2Vec-7B

- **Maroon line with triangles**: LLM2Vec-7B(w/ SMEC)

- **Dashed cyan line with circles**: LLM2Vec-1B

- **Dotted blue line with squares**: LLM2Vec-1B(w/ SMEC)

- **Annotations**:

- Green dashed lines labeled "~12x lossless dimension compression" and "~14x lossless dimension compression."

- Arrows pointing to specific data points (e.g., 0.832 at 512 dimensions for LLM2Vec-7B(w/ SMEC)).

### Detailed Analysis

1. **LLM2Vec-7B(w/ SMEC)** (Maroon line):

- Starts at **0.772** (128 dimensions) and increases steadily to **0.862** (3584 dimensions).

- Shows the highest NDCG@10 across all dimensions, with a ~14x lossless compression annotation at 512 dimensions.

2. **LLM2Vec-7B** (Blue line):

- Begins at **0.718** (128 dimensions) and rises to **0.802** (3584 dimensions).

- Outperforms LLM2Vec-1B variants but lags behind its SMEC-enhanced counterpart.

3. **LLM2Vec-1B(w/ SMEC)** (Dotted blue line):

- Starts at **0.492** (128 dimensions) and improves to **0.802** (3584 dimensions).

- Demonstrates the largest relative gain (~12x compression) at 512 dimensions.

4. **LLM2Vec-1B** (Dashed cyan line):

- Begins at **0.568** (128 dimensions) and reaches **0.715** (3584 dimensions).

- Shows minimal improvement compared to its SMEC-enhanced version.

### Key Observations

- **SMEC Enhancement**: All SMEC variants (7B and 1B) outperform their base models, with the 1B SMEC variant showing the most dramatic improvement (~12x compression).

- **Model Size Impact**: Larger models (7B) consistently achieve higher NDCG@10 than smaller models (1B), even without SMEC.

- **Diminishing Returns**: Performance gains plateau as embedding dimensions increase, particularly beyond 1024 dimensions.

### Interpretation

The data highlights the interplay between model size, embedding dimensions, and efficiency techniques like SMEC. While larger models (7B) inherently perform better, SMEC significantly boosts the efficiency of smaller models (1B), enabling them to approach the performance of larger models with reduced computational overhead. The ~12x and ~14x compression annotations suggest that SMEC allows for substantial dimensionality reduction without sacrificing ranking quality. This implies that SMEC is a critical optimization for deploying resource-constrained models in real-world applications.

</details>

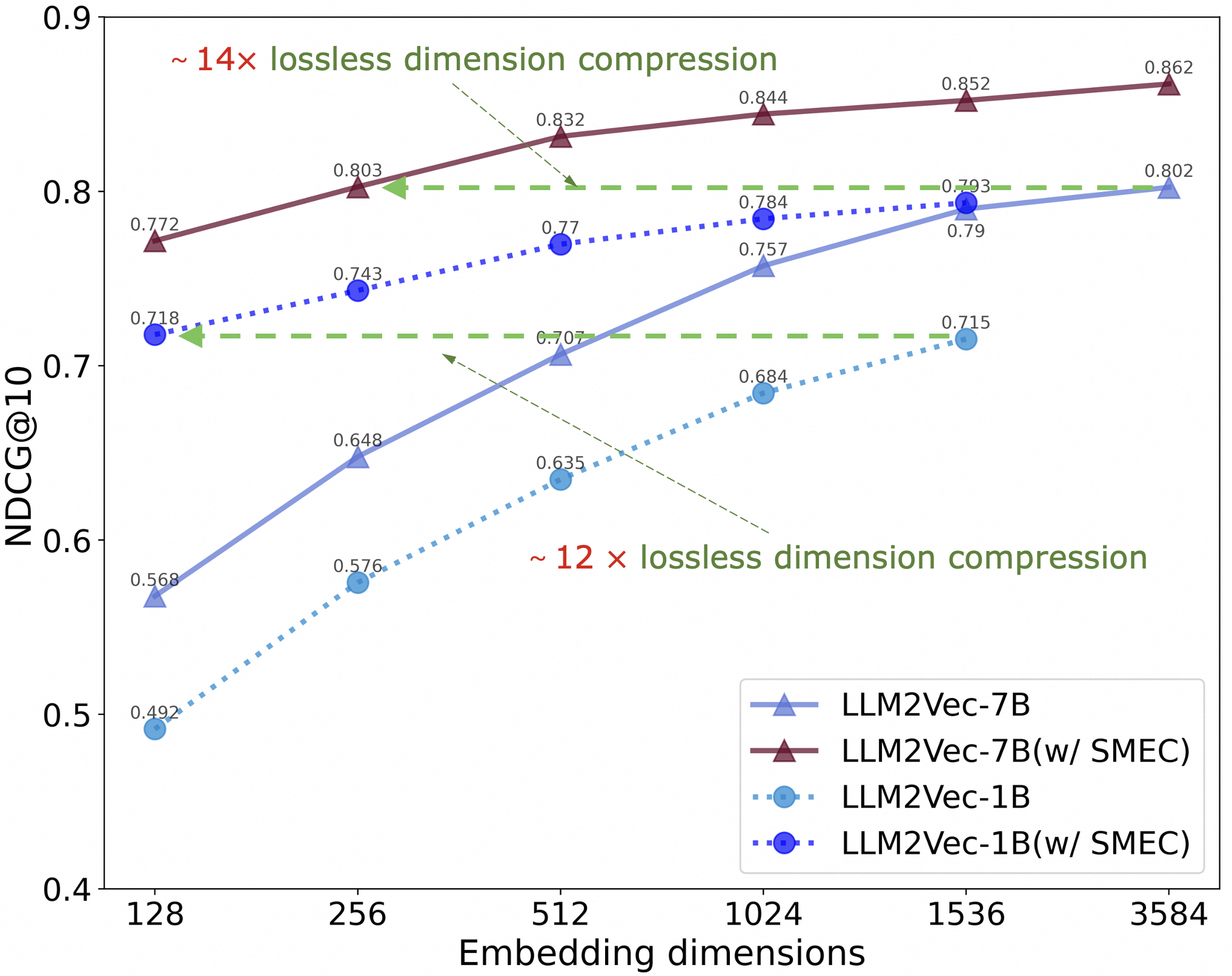

Figure 1: The effectiveness of SMEC in dimensionality reduction. After customized training with the SMEC method on the BEIR Quora dataset, the embeddings of LLM2Vec-7B (3584 dimensions) and LLM2Vec-1B (1536 dimensions) can achieve $14\times$ and $12\times$ lossless compression, respectively.

<details>

<summary>figures/overview.png Details</summary>

### Visual Description

## Flowchart Diagram: Neural Network Architecture Comparison

### Overview

The diagram compares three neural network architectures (Search-Adaptor, Matryoshka-Adaptor, SMEC) through visual workflows. Each architecture processes input through an encoder, followed by distinct layer configurations and loss functions. The right side includes visual metaphors (Matryoshka dolls, SMEC logo) to represent the methods.

### Components/Axes

- **Input**: Top box labeled "Input" for all architectures.

- **Encoder**: Blue trapezoid labeled "Encoder" feeding into each architecture.

- **Layers**:

- **Search-Adaptor (a)**: Four fully connected layers (M×N, M×N/2, M×N/4, M×N/8) with loss functions L(x₁₈), L(x₁₄), L(x₁₂), L(x₁₁).

- **Matryoshka-Adaptor (b)**: Single fully connected layer (M×N) with loss functions L(x₁₈), L(x₁₄), L(x₁₂), L(x₁₁).

- **SMEC (c)**: Three-step process with sub-layers (M×N/2, M×N/4, M×N/8) and loss functions L₁(x), L₂(x), L₃(x).

- **Legend**: Right side shows Matryoshka-Adaptor (dolls) and SMEC (logo).

### Detailed Analysis

1. **Search-Adaptor (a)**:

- Input → Encoder → Four fully connected layers with progressively smaller dimensions.

- Loss functions applied at each layer (L(x₁₈) to L(x₁₁)).

2. **Matryoshka-Adaptor (b)**:

- Input → Encoder → Single fully connected layer (M×N).

- Loss functions applied at four stages (L(x₁₈) to L(x₁₁)), despite only one layer.

3. **SMEC (c)**:

- Input → Encoder → Three-step sub-layer process:

- **Step 1**: M×N layer with loss L₁(x).

- **Step 2**: M×N/2 layer with loss L₂(x).

- **Step 3**: M×N/4 layer with loss L₃(x).

### Key Observations

- **Complexity**: Search-Adaptor has the most layers (4), Matryoshka-Adaptor the fewest (1), and SMEC a moderate three-step process.

- **Loss Functions**: All architectures use similar loss terms (L(x₁₈), L(x₁₄), etc.), but SMEC introduces stepwise losses (L₁, L₂, L₃).

- **Visual Metaphors**: Matryoshka dolls (nested) represent Matryoshka-Adaptor’s layered structure; SMEC logo emphasizes its stepwise approach.

### Interpretation

The diagram highlights trade-offs between architectural complexity and adaptability:

- **Search-Adaptor** prioritizes granularity with multiple layers but may increase computational cost.

- **Matryoshka-Adaptor** simplifies the process but risks underfitting due to fewer layers.

- **SMEC** balances complexity and efficiency through stepwise sub-layers, potentially offering better generalization.

The Matryoshka dolls and SMEC logo visually reinforce the conceptual differences, suggesting SMEC’s method is both innovative and optimized for performance.

</details>

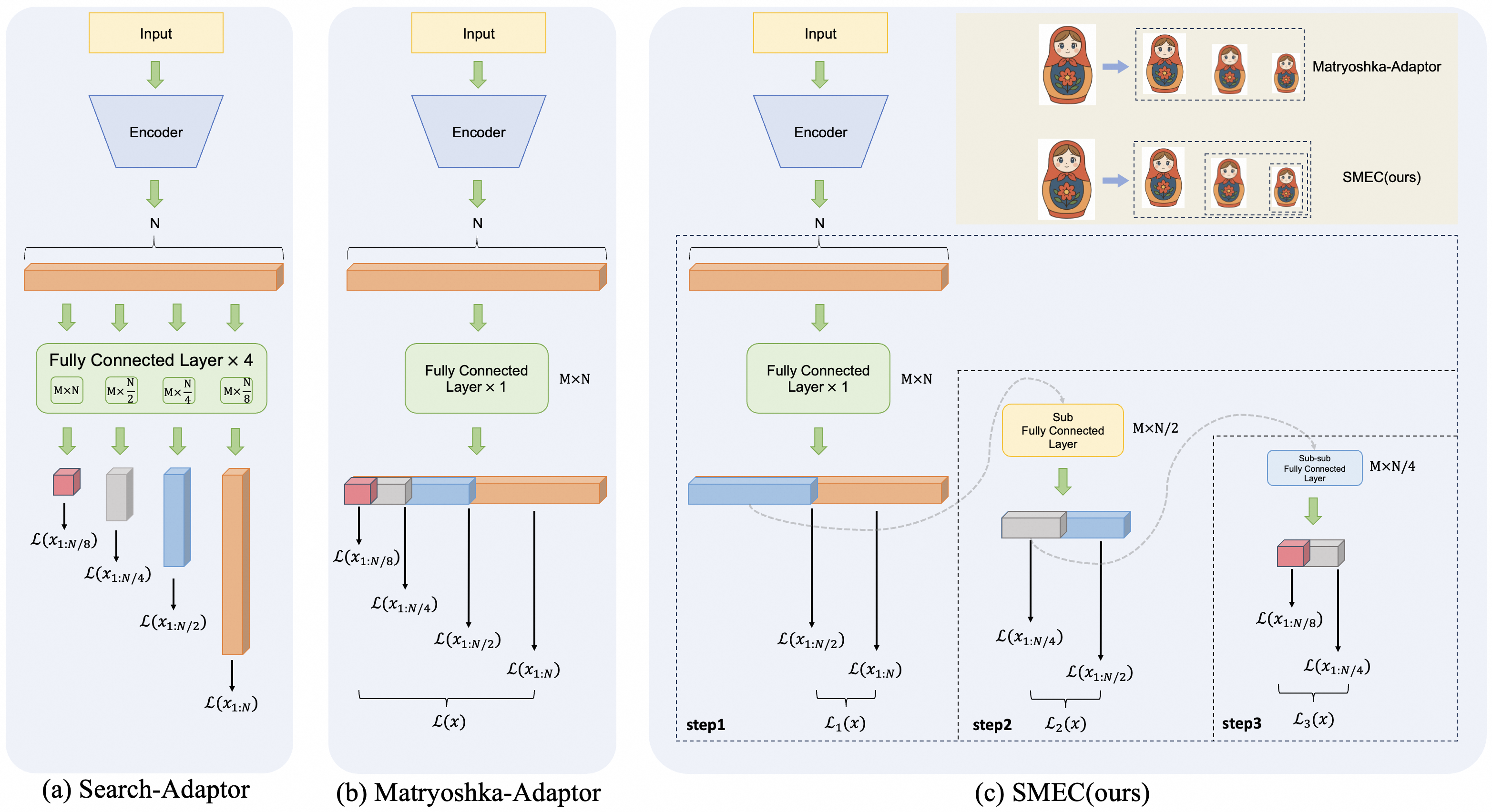

Figure 2: Illustration of embedding compression architectures and our proposed approach. (a) presents the direct feature dimensionality reduction performed by the Search-Adaptor using FC layers. (b) illustrates the Matryoshka-Adaptor, which employs a shared set of FC layers to generate low-dimensional embeddings with multiple output dimensions. A Matryoshka-like hierarchical inclusion relationship exists between the high- and low-dimensional embeddings. (c) presents our proposed Sequential Matryoshka Embedding Compression (SMEC) framework, which adopts a sequential approach to progressively reduce high-dimensional embeddings to the target dimension. The animated diagram in the upper-right corner vividly highlights the distinction between Matryoshka-Adaptor and SMEC.

Large language models excel in diverse text tasks due to their ability to capture nuanced linguistic structures and contextual dependencies. For instance, GPT-4 achieves state-of-the-art performance on benchmarks like GLUE Wang et al. (2018) and SuperGLUE Wang et al. (2019), demonstrating its proficiency in tasks such as natural language inference (NLI), question answering (QA), and text classification. This success is attributed to their transformer-based architectures Vaswani et al. (2017), which enable parallel processing of sequential data and capture long-range dependencies through self-attention mechanisms. Similarly, Llama-3 Grattafiori et al. (2024) and ChatGPT Brown et al. (2020) leverage similar principles to achieve comparable or superior performance in domain-specific and multi-lingual tasks.

LLMs are increasingly integrated into commercial information retrieval (IR) systems, such as search engines (e.g., Google’s MUM) and recommendation platforms (e.g., Netflix’s content retrieval). Their ability to generate embeddings for long documents (e.g., books, research papers) and dynamic queries (e.g., conversational search) makes them indispensable for modern applications. For example, the BEIR benchmark Thakur et al. (2021) evaluates cross-domain retrieval performance, where LLMs outperform traditional BM25 Robertson and Walker (1994) and BERT-based models Devlin et al. (2019) by leveraging contextual embeddings.

While LLMs’ high-dimensional embeddings enable sophisticated semantic modeling, their storage and computational costs hinder scalability. Embedding dimensions of LLMs typically range from 1,024 (e.g., GPT-3) to 4,096 (e.g., Llama-3), exacerbating storage overhead and computational inefficiency, especially in real-time systems requiring dynamic updates. Moreover, high-dimensional vectors degrade the performance of retrieval algorithms due to the curse of dimensionality Beyer et al. (1999). For example, exact nearest-neighbor search in high-dimensional spaces becomes computationally infeasible, necessitating approximate methods like FAISS Johnson et al. (2017) or HNSW Yury et al. (2018). Even with optimizations, query latency increases exponentially with dimensionality, limiting responsiveness in real-world applications.

To address these challenges, Matryoshka Representation Learning (MRL) Kusupati et al. (2022) encodes multi-scale information into a single embedding, balancing task complexity and efficiency. It achieves strong results in large-scale classification and retrieval tasks and has inspired variants like Matryoshka-Adaptor Yoon et al. (2024), which offers a scalable framework for transforming embeddings into structured representations with Matryoshka properties under both supervised and unsupervised settings. However, MRL’s multi-scale parallel training strategy simultaneously limits its practical application in industry. When the retrieval system requires a new low-dimensional embedding, retraining from scratch is necessary to achieve effective dimensionality reduction.

In this paper, we systematically analyze the limitations of MRL and its variants in embedding compression and propose three key enhancements: (1) a continued-training-friendly training framework named Sequential Matryoshka Representation Learning (SMRL); (2) an adaptive dimension selection (ADS) mechanism to minimize information degradation during dimension pruning; and (3) a Selectable Cross-batch Memory (S-XBM) strategy to enhance unsupervised learning between high- and low-dimensional embeddings.

## 2 Related Work

### 2.1 Matryoshka representation learning

Matryoshka representation learning introduces a novel paradigm where embeddings are pretrained to inherently support progressive dimension truncation. This enables fine-grained control over the trade-off between computational latency (via reduced dimensionality) and accuracy (via retained semantic structure). Key innovations include the design of Matryoshka properties, such as hierarchical information encoding and intra-cluster compactness, which ensure that even truncated embeddings retain utility for downstream tasks.

In addition to representation learning, the concept of MRL has been applied to image generation, such as Matryoshka Diffusion Models (MDM) Gu et al. (2023); multimodal content understanding, such as $M^{3}$ Cai et al. (2024); and multimodal large language models (MLLMs), such as Matryoshka Query Transformer (MQT) Hu et al. (2024).

### 2.2 Embedding Compression

Embedding compression aims to reduce the computational and memory footprint of neural network models or embeddings while preserving their utility for downstream tasks. This objective has driven research across multiple paradigms, each addressing different trade-offs between compression efficiency, performance retention, and adaptability. Early approaches primarily focused on unsupervised techniques based on linear algebra, such as Principal Component Analysis (PCA) Jolliffe and Cadima (2016), Linear Discriminant Analysis (LDA) Mclachlan, and Non-negative Matrix Factorization (NMF) Lee and Seung (2000). Building upon these, autoencoders and their variants, such as Variational Autoencoders (VAEs) Kingma et al. (2013), have gradually emerged as powerful tools for nonlinear dimensionality reduction, capable of capturing complex data distributions. With the development of deep learning, methods such as Contrastive Predictive Coding (CPC) Oord et al. (2018) and Momentum Contrast (MoCo) He et al. (2020) are capable of learning robust and compact representations from unlabeled data.

Recently, customized methods such as Search-Adaptor Yoon et al. (2023) and Matryoshka-Adaptor Yoon et al. (2024) have emerged as a new trend in embedding compression. They achieve significant dimensionality reduction by adding only a small number of parameters to the original representation model and retraining it on specific data.

## 3 Method

### 3.1 Rethinking MRL for embedding compression

MRL employs a nested-dimensional architecture to train models that learn hierarchical feature representations across multiple granularities. This allows adaptive deployment of models based on computational constraints. Specifically, MRL defines a series of models $f_{1},f_{2},\ldots,f_{M}$ that share identical input and output spaces but progressively expand their hidden dimensions.

The term Matryoshka derives from the hierarchical parameter structure where the parameters of model $f_{m}$ are nested within those of its successor $f_{m+1}$. To illustrate, consider an FC layer within the largest model $f_{M}$, which contains $d_{M}$ neurons in its hidden layer. Correspondingly, the FC layer of $f_{m}$ retains the first $d_{m}$ neurons of this structure, with dimensions satisfying $d_{1}\leq d_{2}\leq\dots\leq d_{M}$. MRL jointly trains these models using the following objective:

$$

\sum_{m=1}^{M}c_{m}\cdot\mathcal{L}(f_{m}(\mathbf{x});y), \tag{1}

$$

where $\mathcal{L}$ denotes the loss function, $y$ represents the ground-truth label, and $c_{m}$ are task-specific weighting coefficients. Notably, each training iteration requires forward and backward propagation for all $M$ models, resulting in substantial computational overhead compared to training a single standalone model. Upon convergence, MRL enables flexible inference by selecting any intermediate dimension $d_{i}\leq d_{M}$ , thereby accommodating diverse computational constraints.
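The objective in Equation 1 can be illustrated with a minimal sketch. It assumes that each $f_{m}$ is obtained by keeping the first $d_{m}$ coordinates of an embedding; the helper names and the squared-error `pair_loss` are hypothetical stand-ins for $\mathcal{L}$ and are not taken from the paper.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm > 0 else 0.0

def pair_loss(query, doc, label):
    """Hypothetical stand-in for L: squared error between cosine similarity and a 0/1 label."""
    return (cosine(query, doc) - label) ** 2

def mrl_objective(query, doc, label, dims, weights):
    """Equation (1): sum over m of c_m * L(f_m(x); y), with f_m taken as prefix truncation."""
    return sum(
        c * pair_loss(query[:d], doc[:d], label)
        for d, c in zip(dims, weights)
    )

# Toy 4-dimensional embeddings evaluated at d = 2 and d = 4 with equal weights.
query = [1.0, 0.0, 1.0, 0.0]
doc = [1.0, 0.0, 0.0, 1.0]
total = mrl_objective(query, doc, label=1.0, dims=[2, 4], weights=[1.0, 1.0])
```

In this toy case the 2-dimensional prefixes are identical, so only the 4-dimensional term contributes to `total`. Every nested dimension adds its own loss term, which is why each training step pays for all $M$ models.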

Although the MRL method has partially mitigated the performance degradation of representations during dimensionality reduction, we contend that it still faces the following three unresolved issues:

Gradient Fluctuation. In large-scale vector retrieval systems, sample similarity is measured by the distance between their representation vectors. Consequently, the optimization of embedding models typically employs loss functions based on embedding similarity. In this condition, according to the derivation in Appendix A, the loss function $\mathcal{L}^{d}$ of MRL under dimension $d$ satisfies the following relationship with respect to the parameter $\mathbf{w}_{i}$ in the $i$ -th dimension of the FC layer:

$$

\frac{\partial\mathcal{L}^{d}}{\partial\mathbf{w}_{i}}\propto\frac{1}{\delta(d)^{2}}. \tag{2}

$$

Here, $\delta(d)$ is a complex function that is positively correlated with the dimension $d$. This equation provides a mathematical foundation for analyzing gradient fluctuations in multi-dimensional joint optimization architectures. It indicates that during MRL training, loss functions from different dimensions produce gradients of varying magnitudes on the same model parameter, thereby increasing gradient variance. In Section 5.2, we empirically demonstrate that this conclusion holds for different loss functions. We propose a solution to this problem in Section 3.2.

Information Degradation. Neural network parameters exhibit heterogeneous contributions to model performance, as demonstrated by the non-uniform distribution of their gradients and feature importance metrics Frankle and Carbin (2018). The MRL method employs a dimension truncation strategy (e.g., $D\rightarrow D/2\rightarrow D/4\ldots$ ) to prune parameters and reduce feature dimensions by retaining partial parameters. However, this approach fails to adequately preserve critical parameters because it relies on a rigid, static truncation rule. Although MRL employs joint training of high- and low-dimensional vectors to redistribute information between truncated and retained parameters, this process is unavoidably accompanied by information degradation. Specifically, discarded parameters may contain essential information, such as unique feature mappings or high-order dependencies, that cannot be effectively recovered by the remaining ones. Empirical evidence, such as accuracy degradation and increased generalization gaps, demonstrates that such loss leads to suboptimal model performance and slower convergence Li et al. (2023). In summary, while MRL enables hierarchical dimensionality reduction, its inability to selectively retain critical parameters and the inherent information degradation during post-truncation training ultimately undermine its effectiveness in maintaining model performance. In Section 3.3, we propose a more effective dimension pruning method.

<details>

<summary>figures/fig_ads.png Details</summary>

### Visual Description

## Diagram: Parameter Optimization and Dimension Pruning Process

### Overview

The diagram illustrates a two-stage process for optimizing learnable parameters in a neural network, involving stochastic sampling and dimensionality reduction. It shows how parameters are selected, pruned, and evaluated through rank loss metrics.

### Components/Axes

1. **Left Section (Learnable Parameter + topk select)**:

- **Input**: "Learnable Parameter" block with four green rectangles

- **Process**:

- "gumbel_softmax" operation (blue arrow)

- "topk select" operation (blue arrow)

- **Output**: Sequence of layers labeled [1,3,...,X_{i-2}, X_{i-1}]

- **Layer Stack**:

- X1 (lightest green)

- X2 (medium green)

- X3 (darker green)

- ...

- X_{i-2} (darkest green)

- X_{i-1} (darkest green)

- X_i (darkest green)

2. **Right Section (Dimension Pruning)**:

- **Input**: Full layer stack (X1 to X_i)

- **Process**: "Dimension Pruning" (blue arrow)

- **Output**: Pruned layer stack (X1, X2, ..., X_{i-2}, X_{i-1})

- **Dimensionality**: Reduced to D/2 dimensions

3. **Final Output**:

- "Rank Loss" metric (red block)

- Connection to both processing paths via blue arrows

### Detailed Analysis

- **Color Coding**:

- Green gradient represents parameter importance (light = less important, dark = more important)

- Red block for rank loss (critical evaluation metric)

- **Key Elements**:

- Gumbel-softmax: Stochastic sampling method for differentiable top-k selection

- Top-k selection: Identifies most important parameters

- Dimension pruning: Reduces computational complexity by half

- Rank loss: Measures performance degradation after pruning

### Key Observations

1. The process maintains critical parameters (darkest green layers) while pruning less important ones

2. Dimensionality reduction occurs after parameter selection, not before

3. Both processing paths converge on the same rank loss metric

4. The pruned version maintains the same evaluation standard as the full model

### Interpretation

This diagram demonstrates a parameter optimization strategy that:

1. Uses stochastic sampling (gumbel-softmax) to identify important parameters

2. Applies top-k selection to retain only the most critical parameters

3. Reduces dimensionality by half while preserving performance (as measured by rank loss)

4. Maintains evaluation consistency between full and pruned models

The process suggests a balance between computational efficiency (through pruning) and model performance (through careful parameter selection). The use of rank loss as the final metric indicates that the optimization aims to preserve the relative ordering/importance of parameters rather than absolute values.

</details>

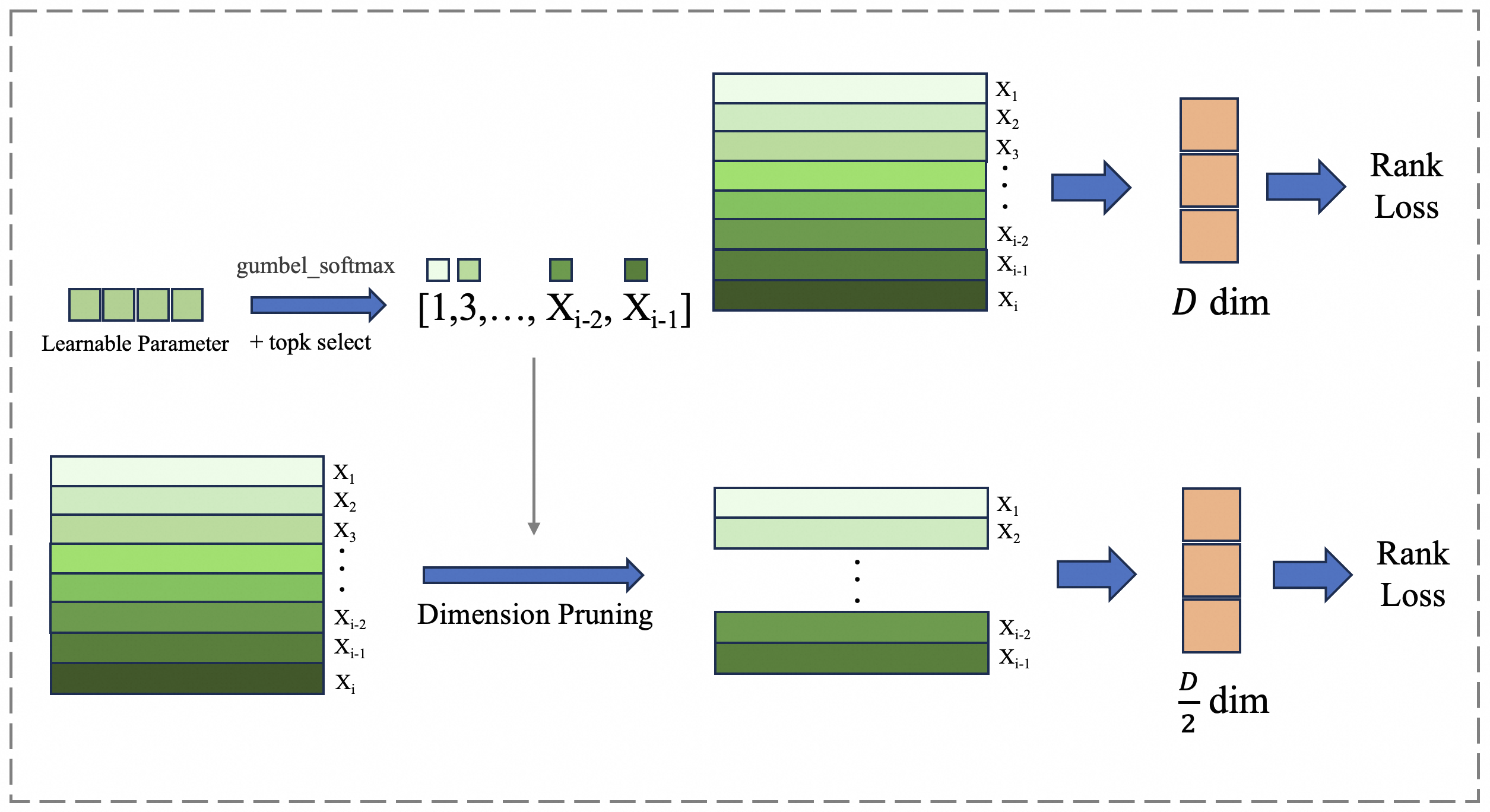

Figure 3: The ADS module introduces a set of learnable parameters to dynamically select dimensions based on their importance during the dimensionality reduction process.

Sample Selection. The MRL framework employs supervised learning to jointly train high-dimensional ( $D$ ) and low-dimensional ( $D^{\prime}$ ) features. However, the number of available samples is limited by manual annotation. Matryoshka-Adaptor introduces in-batch sample mining strategies to expand the training sample scale, thereby addressing this limitation. Specifically, it generates cross-sample pairs via the Cartesian product of batch samples:

$$

\mathcal{P}=\{(x_{i},x_{j})\mid x_{i},x_{j}\in\text{Batch},\ i\neq j\}. \tag{3}

$$

This approach creates $B(B-1)$ pairs per batch (where $B$ denotes the batch size), enabling cross-sample comparisons within large batches. However, this indiscriminate pairing introduces noise from non-representative or irrelevant sample pairs.

In light of this limitation, the method employs Top- $k$ similarity-based selection:

$$

\begin{split}\mathcal{P}_{\text{top-}k}&=\text{Top}_{k}\left(\text{similarity}(x_{i},x_{j})\right),\\

&\quad\forall\ (x_{i},x_{j})\in\mathcal{P}.\end{split} \tag{4}

$$

Here, only the top-$k$ most similar pairs are retained for training, reducing computational overhead while focusing on informative interactions. Despite this improvement, the diversity of effective samples remains fundamentally constrained by the original batch size $B$. In Section 3.4, we develop a strategy that enables the model to mine global samples beyond the current batch.
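As a rough illustration of Equations 3 and 4, the sketch below enumerates the $B(B-1)$ ordered in-batch pairs and keeps the $k$ most similar ones. It assumes cosine similarity and a single global top-$k$ over all pairs. Both are assumptions made for illustration, and the function names are hypothetical.

```python
import math
from itertools import permutations

def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm > 0 else 0.0

def in_batch_pairs(batch_size):
    """Equation (3): all ordered index pairs (i, j) with i != j."""
    return list(permutations(range(batch_size), 2))

def top_k_pairs(batch, k):
    """Equation (4): keep the k pairs with the highest similarity."""
    pairs = in_batch_pairs(len(batch))
    ranked = sorted(
        pairs,
        key=lambda p: cosine(batch[p[0]], batch[p[1]]),
        reverse=True,
    )
    return ranked[:k]

batch = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [-1.0, 0.0]]
pairs = in_batch_pairs(len(batch))   # B(B-1) = 4 * 3 ordered pairs
selected = top_k_pairs(batch, k=2)   # the two orderings of the closest pair
```

However large $k$ is, the candidates come only from the current batch, which is the constraint on sample diversity noted above.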

### 3.2 Sequential Matryoshka Representation Learning

Applying the conclusions from Section 3.1 to the MRL training process, we take the parallel dimensionality reduction process $[D,D/2,D/4]$ as an example. The ratio of the average gradients for parameters $\mathbf{w}_{i}(i\in[0,D/4])$ and $\mathbf{w}_{j}(j\in[D/4,D/2])$ is as follows:

$$

\begin{split}\overline{\text{grad}_{i}}:\overline{\text{grad}_{j}}&=\left(\frac{\partial\mathcal{L}^{D}}{\partial\mathbf{w}_{i}}+\frac{\partial\mathcal{L}^{D/2}}{\partial\mathbf{w}_{i}}+\frac{\partial\mathcal{L}^{D/4}}{\partial\mathbf{w}_{i}}\right):\left(\frac{\partial\mathcal{L}^{D}}{\partial\mathbf{w}_{j}}+\frac{\partial\mathcal{L}^{D/2}}{\partial\mathbf{w}_{j}}\right)\\
&\approx 1+\frac{\delta(D/2)^{2}}{\delta(D/4)^{2}}.\end{split} \tag{5}

$$
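The approximation can be traced back to Equation 2. As a sketch, assume the same proportionality constant applies to every loss term (an assumption made here for illustration, not stated in the text). Each partial derivative can then be replaced by the corresponding $1/\delta(\cdot)^{2}$:

$$
\overline{\text{grad}_{i}}:\overline{\text{grad}_{j}}
=\frac{\dfrac{1}{\delta(D)^{2}}+\dfrac{1}{\delta(D/2)^{2}}+\dfrac{1}{\delta(D/4)^{2}}}{\dfrac{1}{\delta(D)^{2}}+\dfrac{1}{\delta(D/2)^{2}}}
=1+\frac{1/\delta(D/4)^{2}}{1/\delta(D)^{2}+1/\delta(D/2)^{2}}
\leq 1+\frac{\delta(D/2)^{2}}{\delta(D/4)^{2}}.
$$

Under this reading, the bound turns into the stated approximation when the $1/\delta(D)^{2}$ term is small relative to $1/\delta(D/2)^{2}$. That is plausible because $\delta(d)$ grows with $d$.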

As shown in Equation 5, the average gradient magnitude of parameter $\mathbf{w}_{i}$ is approximately $1+\frac{\delta(D/2)^{2}}{\delta(D/4)^{2}}$ times that of parameter $\mathbf{w}_{j}$, primarily due to the influence of the lower-dimensional loss function $\mathcal{L}^{D/4}$. To resolve this issue, we propose Sequential Matryoshka Representation Learning (SMRL), which replaces the original parallel compression of embeddings with a sequential approach, as illustrated in Figure 2. Assume a dimensionality reduction trajectory of $[D,D/2,D/4,\dots,D/2^{n}]$. In each iteration, only the immediate transition (e.g., $D/2^{n-1}\rightarrow D/2^{n}$) is trained, avoiding the inclusion of lower-dimensional losses that amplify gradients for low-dimensional parameters. With this factor eliminated, the gradients of $\mathbf{w}_{i}(i\in[0,D/2^{n}])$ follow a consistent distribution with reduced variance, improving convergence speed and performance. Once the loss converges in the current iteration, the reduction $D/2^{n-1}\rightarrow D/2^{n}$ is complete, and the process proceeds to the next stage, $D/2^{n}\rightarrow D/2^{n+1}$, repeating until the target dimension is reached. After convergence in each iteration, the optimal parameters for the current dimension are fixed so that subsequent reductions do not degrade their performance.

Notably, compared to MRL, the SMRL framework is more amenable to continued training. In scenarios where low-dimensional retrieval embeddings (e.g., $D/8$) or intermediate embeddings (e.g., $D/3$) are required, these can be obtained through further dimensionality reduction training based on the already preserved $D/4$ or $D/2$ parameters, eliminating the need for retraining from scratch as is typically required in MRL.
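The sequential schedule described above can be sketched as follows. This is only an outline of the control flow, assuming a pure halving trajectory; `train_stage` is a hypothetical callback that stands in for training one transition to convergence and returning its parameters.

```python
def smrl_stages(full_dim, target_dim):
    """List the sequential transitions D -> D/2 -> ... -> target_dim."""
    if target_dim < 1 or target_dim > full_dim:
        raise ValueError("target_dim must lie in [1, full_dim]")
    stages = []
    current = full_dim
    while current > target_dim:
        if current % 2 != 0 or current // 2 < target_dim:
            raise ValueError("target_dim must be reachable by repeated halving")
        stages.append((current, current // 2))
        current //= 2
    return stages

def run_smrl(full_dim, target_dim, train_stage):
    """Train one transition at a time and record each converged stage as frozen."""
    frozen = []
    for src, dst in smrl_stages(full_dim, target_dim):
        params = train_stage(src, dst)   # only the src -> dst loss is optimized here
        frozen.append((dst, params))     # kept fixed during all later stages
    return frozen

# Placeholder "training" that just names the layer it would have produced.
frozen = run_smrl(1024, 128, lambda src, dst: f"fc_{src}_to_{dst}")
```

Continued training fits the same loop. With $D=1024$, calling `smrl_stages(256, 128)` describes going from the preserved $D/4$ parameters to $D/8$ without revisiting earlier stages.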

### 3.3 Adaptive Dimension Selection Module

Since directly truncating dimensions to obtain low-dimensional representations in MRL inevitably leads to information degradation, we propose the Adaptive Dimension Selection (ADS) module to dynamically identify important dimensions during training. As illustrated in Figure 3, we introduce a set of learnable parameters that represent the importance of different dimensions in the original representation $\mathbf{Z}(\text{dim}=D)$ , and use these parameters to perform dimensional sampling, obtaining a reduced-dimension representation $\mathbf{Z}^{\prime}(\text{dim}=D/2)$ . Since the sampling operation is non-differentiable, during the training phase, we utilize the Gumbel-Softmax Jang et al. (2016) to approximate the importance of different dimensions. This is achieved by adding Gumbel-distributed noise $G\sim\text{Gumbel}(0,1)$ to the logits parameters $\hat{\mathbf{z}}$ for each dimension, followed by applying the softmax function to the perturbed logits to approximate the one-hot vector representing dimension selection. Mathematically, this can be expressed as:

$$

\mathbf{z}=\text{softmax}_{\tau}(\hat{\mathbf{z}}+G). \tag{6}

$$

Importantly, the Gumbel approximation allows the softmax scores of dimension importance to be interpreted as the probability of selecting each dimension, rather than enforcing a deterministic selection of the top- $k$ dimensions. This achieves a fully differentiable reparameterization, transforming the selection of embedding dimensions into an optimizable process.
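A minimal sketch of Equation 6 using only the Python standard library is given below. The importance logits, the temperature value, and the final `select_dimensions` step (keeping the $k$ highest-scoring dimensions, as suggested by the top-$k$ select step in Figure 3) are illustrative assumptions; how the scores feed into pruning during training is not reproduced here.

```python
import math
import random

def sample_gumbel(rng):
    """Draw G ~ Gumbel(0, 1) by inverse transform sampling."""
    u = min(max(rng.random(), 1e-12), 1.0 - 1e-12)  # keep u strictly inside (0, 1)
    return -math.log(-math.log(u))

def gumbel_softmax(logits, tau, rng):
    """Equation (6): softmax with temperature tau over Gumbel-perturbed logits."""
    perturbed = [(z + sample_gumbel(rng)) / tau for z in logits]
    peak = max(perturbed)                        # subtract the max for numerical stability
    exps = [math.exp(p - peak) for p in perturbed]
    total = sum(exps)
    return [e / total for e in exps]

def select_dimensions(scores, k):
    """Indices of the k highest scores, returned in ascending index order."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    return sorted(top)

rng = random.Random(0)
logits = [2.0, -1.0, 0.5, 3.0]                   # hypothetical per-dimension importance logits
probs = gumbel_softmax(logits, tau=1.0, rng=rng)
kept = select_dimensions(probs, k=2)             # D = 4 reduced to D/2 = 2 dimensions
```

Because of the noise, repeated calls can select different dimensions. Lowering `tau` pushes the scores closer to a one-hot vector.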

<details>

<summary>figures/fig3.png Details</summary>

### Visual Description

## Flowchart: Machine Learning Pipeline with Pair-Based Loss

### Overview

The diagram illustrates a machine learning pipeline involving a frozen model, data enqueueing/dequeueing processes, a score matrix, top-k sampling, and a fully connected (FC) layer leading to pair-based loss computation. The flow is directional, with components connected by arrows indicating data or control flow.

### Components/Axes

1. **Frozen Model** (gray box): Starting point of the pipeline.

2. **Enqueue** (blue arrow): Adds data to the XBM queue.

3. **XBM Queue** (blue dashed box): Contains multiple data blocks (orange, blue, green).

4. **Dequeue** (blue arrow with curved path): Removes data from the XBM queue.

5. **Score Matrix** (grid): Generates a matrix of scores.

6. **Top-k Sample** (blue arrow): Selects top-k elements from the score matrix.

7. **FC Layer** (light green box): Processes the top-k sample.

8. **Pair-Based Loss** (green box): Final output of the pipeline.

### Detailed Analysis

- **Frozen Model**: Positioned at the bottom-left, it feeds data into the enqueue process.

- **Enqueue**: Connects the frozen model to the XBM queue via a blue arrow. The XBM queue contains six data blocks (three orange, three blue, two green), suggesting batch processing.

- **XBM Queue**: Labeled explicitly, it acts as a buffer for incoming data.

- **Dequeue**: A curved arrow from the XBM queue to the score matrix indicates data retrieval. The dequeue process is visually distinct with a dashed outline.

- **Score Matrix**: A 4x4 grid representing pairwise comparisons or similarity scores.

- **Top-k Sample**: A downward arrow from the score matrix to the FC layer, implying selection of highest-scoring elements.

- **FC Layer**: Processes the top-k sample before passing it to the pair-based loss.

- **Pair-Based Loss**: Final component, colored green, indicating optimization target.

### Key Observations

1. **Color Coding**:

- Orange blocks in the XBM queue correspond to enqueued data.

- Blue blocks represent intermediate processing steps.

- Green blocks in the XBM queue and the final loss component suggest prioritization or finalization.

2. **Flow Direction**:

- Data flows from the frozen model → enqueue → XBM queue → dequeue → score matrix → top-k sample → FC layer → pair-based loss.

3. **Dashed vs. Solid Boxes**:

- Dashed boxes (XBM queue, score matrix) represent intermediate structures, while solid boxes (frozen model, FC layer, pair-based loss) denote fixed components.

### Interpretation

This pipeline likely implements a contrastive learning framework, where:

- The **frozen model** provides embeddings for input data.

- The **XBM queue** manages batch processing, with dequeueing enabling dynamic sampling.

- The **score matrix** computes pairwise similarities, and **top-k sampling** focuses on the most relevant pairs.

- The **FC layer** refines these pairs, and **pair-based loss** optimizes the model to maximize agreement between similar pairs and minimize it for dissimilar ones.

The use of a frozen model suggests transfer learning, while the XBM queue and dequeue mechanism imply efficient handling of large datasets. The pair-based loss aligns with methods like SimCLR or MoCo, emphasizing contrastive learning for tasks such as image retrieval or recommendation systems.

</details>

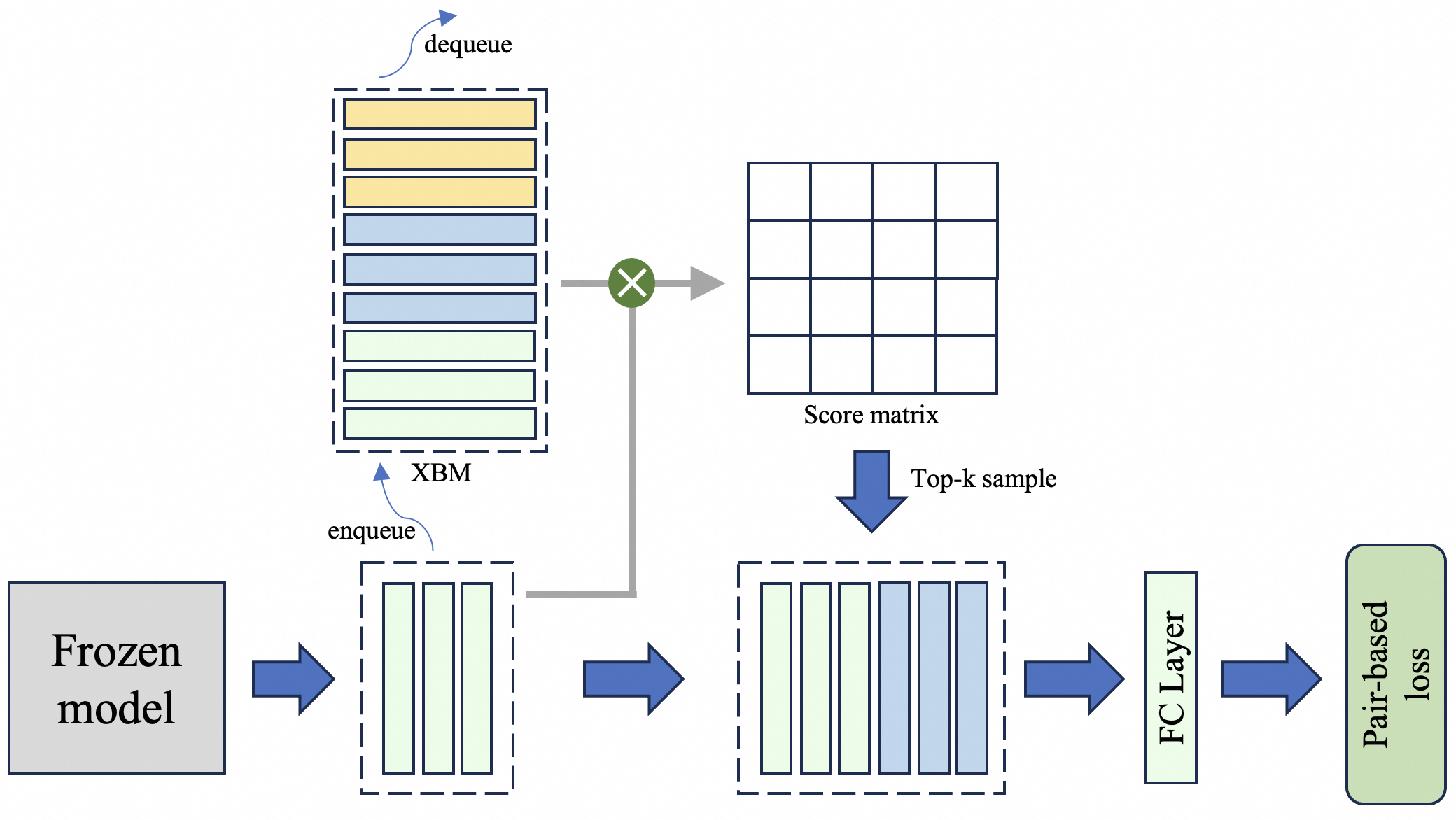

Figure 4: S-XBM maintains a queue during training to store historical features across batches. Rather than incorporating all stored features into the current batch, it selectively leverages hard samples that exhibit high similarity to the current batch samples.

### 3.4 Selectable Cross-Batch Memory

A natural teacher-student relationship inherently exists between the original embedding and its reduced-dimensional counterpart, making it feasible to improve the compressed embedding through unsupervised learning Yoon et al. (2024). However, as discussed in Section 3.1, performing this process within a single batch suffers from sample noise and insufficient diversity. As illustrated in Figure 4, we propose the Selectable Cross-Batch Memory (S-XBM) module, which constructs a first-in-first-out (FIFO) queue during training to store original embeddings across batches, with the aim of addressing this limitation. Unlike the original XBM Wang et al. (2020), we introduce two task-specific improvements: (1) retrieving only the top-$k$ most similar samples from the memory bank to construct new batches, and (2) deferring the trainable FC layer and only storing features generated by the frozen backbone, thereby avoiding feature drift. The unsupervised loss between original embedding $emb$ and low-dimensional embedding $emb[:d]$ is as follows:

$$

\mathcal{L}_{un-sup}=\sum_{i}\sum_{j\in\mathcal{N}_{K}(i)}\left|\text{Sim}(emb_{i},emb_{j})-\text{Sim}(emb_{i}[:d],emb_{j}[:d])\right| \tag{7}

$$

where $\mathcal{N}_{K}(i)$ denotes the set of the top $k$ most similar embeddings to $emb_{i}$ within the S-XBM module.
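The mechanism can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `SelectableMemory`, `cosine`, and `unsup_loss` are hypothetical names, $\text{Sim}$ is taken to be cosine similarity, and the trainable FC layer is omitted, so the low-dimensional embedding is a plain truncation to the first `d` coordinates.

```python
import math
from collections import deque


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu > 0 and nv > 0 else 0.0


class SelectableMemory:
    """FIFO queue of frozen-backbone embeddings (hypothetical helper)."""

    def __init__(self, max_size):
        # A deque with maxlen silently drops the oldest entries: FIFO behaviour.
        self.queue = deque(maxlen=max_size)

    def enqueue(self, batch):
        self.queue.extend(batch)

    def top_k(self, query, k):
        """Return the k stored embeddings most similar to `query`."""
        ranked = sorted(self.queue, key=lambda e: cosine(query, e), reverse=True)
        return ranked[:k]


def unsup_loss(batch, memory, d, k):
    """Eq. (7): sum of |Sim(full) - Sim(first d dims)| over each sample's top-k neighbours."""
    total = 0.0
    for emb_i in batch:
        for emb_j in memory.top_k(emb_i, k):
            total += abs(cosine(emb_i, emb_j) - cosine(emb_i[:d], emb_j[:d]))
    return total


# Toy usage: three stored embeddings, one query, keep the first 2 of 3 dimensions.
memory = SelectableMemory(max_size=4)
memory.enqueue([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [1.0, 1.0, 0.0]])
loss = unsup_loss([[1.0, 0.0, 1.0]], memory, d=2, k=2)
```

Restricting the inner loop to the top-$k$ neighbours is what distinguishes this from using every stored feature: only the hardest (most similar) samples in the queue contribute to the loss.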

<details>

<summary>figures/fig_openai.png Details</summary>

### Visual Description

## Line Chart: NDCG@10/% vs Dimensions

### Overview

The chart compares the performance of four different methods (Original(MRL), search-adaptor, MRL-Adaptor, SMEC) across varying dimensions (128 to 3072) using the metric NDCG@10/%. All methods show upward trends, with SMEC consistently outperforming others.

### Components/Axes

- **X-axis (Dimensions)**: Logarithmic scale with values 128, 256, 512, 768, 1536, 3072.

- **Y-axis (NDCG@10/%)**: Linear scale from 50% to 62%.

- **Legend**: Located in the bottom-right corner, mapping:

- Blue circles: Original(MRL)

- Orange crosses: search-adaptor

- Green triangles: MRL-Adaptor

- Yellow squares: SMEC

### Detailed Analysis

1. **Original(MRL)** (Blue circles):

- Starts at ~49% at 128 dimensions.

- Gradually increases to ~57% at 3072 dimensions.

- Slope: Gentle upward trend.

2. **search-adaptor** (Orange crosses):

- Begins at ~51.5% at 128 dimensions.

- Sharp rise to ~57.5% at 256 dimensions.

- Continues upward to ~61% at 3072 dimensions.

- Slope: Steeper than Original(MRL), overtakes it at ~256 dimensions.

3. **MRL-Adaptor** (Green triangles):

- Starts at ~54.5% at 128 dimensions.

- Rises steadily to ~61.5% at 3072 dimensions.

- Slope: Moderate upward trend, consistently above Original(MRL) and search-adaptor until ~768 dimensions.

4. **SMEC** (Yellow squares):

- Begins at ~56.5% at 128 dimensions.

- Increases to ~61.5% at 3072 dimensions.

- Slope: Steady upward trend, maintaining the highest performance across all dimensions.

### Key Observations

- **Performance Hierarchy**: SMEC > MRL-Adaptor > search-adaptor > Original(MRL) at higher dimensions.

- **Convergence**: Differences between methods narrow at 3072 dimensions (e.g., MRL-Adaptor and SMEC differ by ~0.5%).

- **Divergence**: search-adaptor surpasses Original(MRL) by ~256 dimensions and maintains a lead.

### Interpretation

The data suggests that **SMEC** and **MRL-Adaptor** are superior to the baseline **Original(MRL)** and **search-adaptor** methods, particularly at larger dimensions. The search-adaptor shows rapid improvement but fails to surpass MRL-Adaptor. The convergence of lines at higher dimensions implies diminishing returns or similar scalability across methods. SMEC’s consistent lead highlights its robustness, while MRL-Adaptor’s steady growth indicates effective adaptation. The Original(MRL) baseline serves as a reference for incremental improvements in the other methods.

</details>

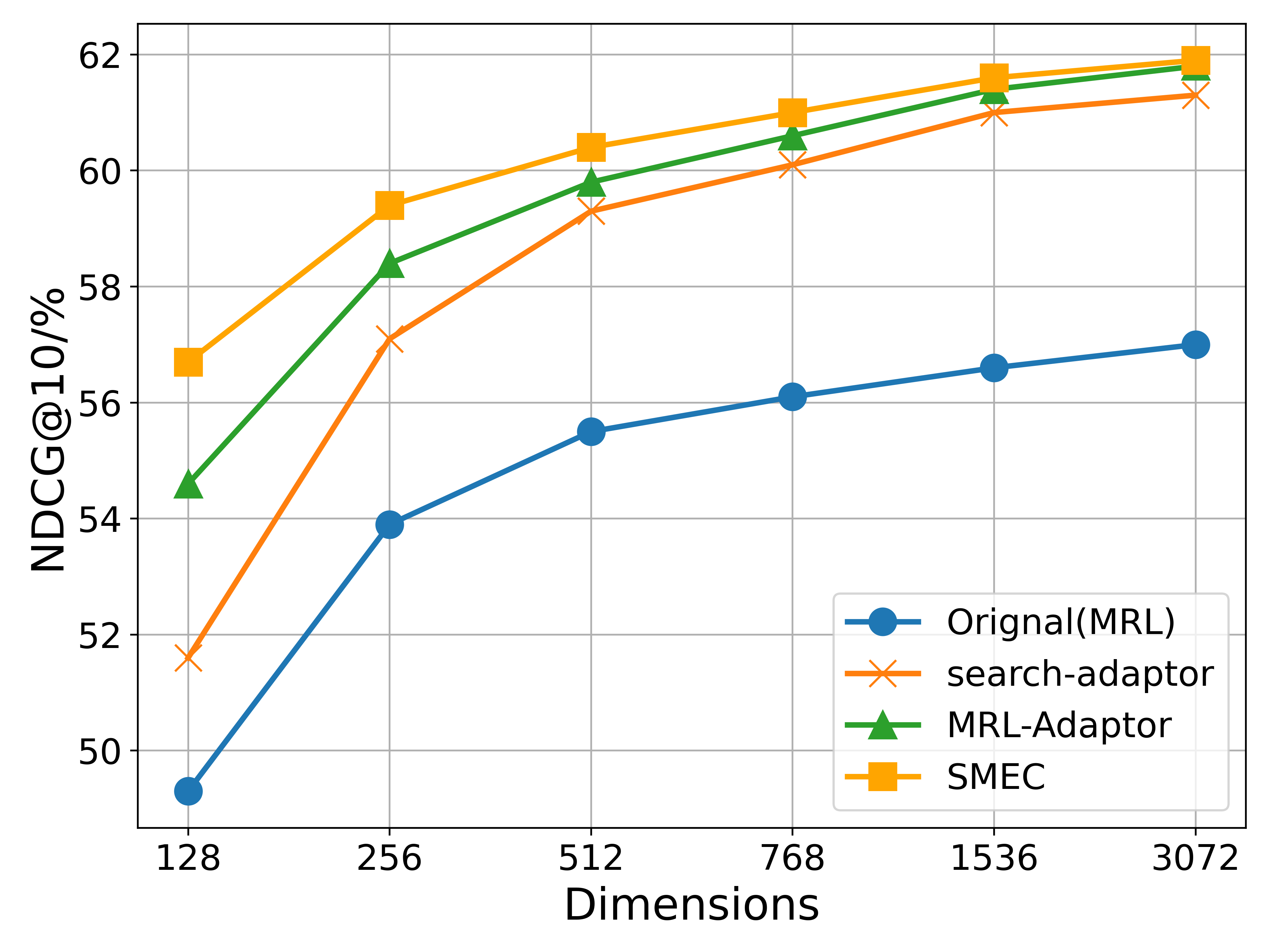

(a) OpenAI text embeddings

<details>

<summary>figures/fig_llm2vec.png Details</summary>

### Visual Description

## Line Graph: NDCG@10% Performance Across Dimensions

### Overview

The image is a line graph comparing the performance of four different methods (Original(PCA), Search-Adaptor, MRL-Adaptor, SMEC) in terms of NDCG@10% across varying dimensions. The x-axis represents dimensions (128 to 3584), and the y-axis represents NDCG@10% (30% to 60%). All methods show upward trends, with SMEC consistently performing best.

### Components/Axes

- **X-axis (Dimensions)**: Labeled "Dimensions" with values 128, 256, 512, 768, 1536, 3584.

- **Y-axis (NDCG@10%)**: Labeled "NDCG@10%" with values 30% to 60%.

- **Legend**: Located in the bottom-right corner, associating:

- Blue circles: Original(PCA)

- Orange crosses: Search-Adaptor

- Green triangles: MRL-Adaptor

- Yellow squares: SMEC

### Detailed Analysis

1. **Original(PCA)** (Blue circles):

- Starts at ~31% at 128 dimensions.

- Rises steeply to ~41% at 256 dimensions.

- Continues increasing to ~55% at 3584 dimensions.

- Values: 31% (128), 41% (256), 46% (512), 51% (768), 53% (1536), 55% (3584).

2. **Search-Adaptor** (Orange crosses):

- Begins at ~51% at 128 dimensions.

- Increases gradually to ~56% at 256 dimensions.

- Reaches ~59% at 3584 dimensions.

- Values: 51% (128), 56% (256), 58% (512), 59% (768), 60% (1536), 61% (3584).

3. **MRL-Adaptor** (Green triangles):

- Starts at ~54% at 128 dimensions.

- Rises to ~58% at 256 dimensions.

- Peaks at ~60% at 3584 dimensions.

- Values: 54% (128), 58% (256), 59% (512), 60% (768), 61% (1536), 61% (3584).

4. **SMEC** (Yellow squares):

- Begins at ~56% at 128 dimensions.

- Increases steadily to ~61% at 3584 dimensions.

- Values: 56% (128), 59% (256), 60% (512), 61% (768), 61% (1536), 61% (3584).

### Key Observations

- **Performance Trends**: All methods improve with higher dimensions, but SMEC maintains the highest NDCG@10% across all dimensions.

- **Original(PCA) Gap**: Original(PCA) starts significantly lower (~31% at 128 dimensions) but closes the gap to ~55% by 3584 dimensions.

- **Convergence**: Search-Adaptor, MRL-Adaptor, and SMEC converge closely at higher dimensions (e.g., 59–61% at 3584 dimensions).

### Interpretation

The data suggests that increasing dimensions enhances performance for all methods, with SMEC being the most robust. Original(PCA) underperforms initially but improves substantially with scale. The convergence of Search-Adaptor, MRL-Adaptor, and SMEC at higher dimensions implies diminishing returns for further dimensional increases beyond 768. The steep rise of Original(PCA) indicates it may benefit more from dimensional scaling compared to other methods. This could reflect architectural differences in how these methods handle dimensionality.

</details>

(b) LLM2Vec

Figure 5: Experimental results on the BEIR dataset comparing two models: OpenAI’s text-embedding-3-large (with 3072 dimensions) and LLM2Vec (with 3584 dimensions), the latter built upon the Qwen2-7B model. OpenAI text embeddings inherently contain multi-scale representations (enabled by MRL during pretraining), while LLM2Vec obtains its original low-dimensional representations via PCA.

## 4 Experiments

In this section, we compare our approach with state-of-the-art methods in the field of embedding dimensionality reduction.

### 4.1 Dataset Description

We evaluate the model’s retrieval performance across diverse datasets: BEIR Thakur et al. (2021) (text retrieval), Products-10K Bai et al. (2020) (image retrieval), and Fashion-200K Han et al. (2017) (cross-modal retrieval). BEIR is a comprehensive text retrieval benchmark consisting of 13 selected datasets from diverse domains. Products-10K contains approximately 10,000 products with over 150,000 images for large-scale product image retrieval. Fashion-200K includes over 200,000 fashion items with paired image-text data for cross-modal tasks.

### 4.2 Implementation Details

We use state-of-the-art models to extract the original embeddings for different datasets. Specifically, the BEIR dataset employs OpenAI text embeddings ope and LLM2Vec BehnamGhader et al. (2024) for text representation; the Products-10K dataset utilizes LLM2CLIP Huang et al. (2024) to obtain cross-modal embeddings; and the Fashion-200K dataset extracts image embeddings using the ViT-H Dosovitskiy et al. (2020) model. All dimensionality reduction methods are performed based on these original representations. To align with other methods, SMEC also adopts rank loss Yoon et al. (2023) as the supervised loss function, which is defined as follows:

$$

\mathcal{L}_{rank}=\sum_{i}\sum_{j}\sum_{k}\sum_{m}I(y_{ij}>y_{ik})\,(y_{ij}-y_{ik})\,\log\left(1+\exp(s_{ik}[:m]-s_{ij}[:m])\right), \tag{8}

$$

where $I(y_{ij}>y_{ik})$ is an indicator function that equals 1 if $y_{ij}>y_{ik}$ and 0 otherwise, and $s_{ij}[:m]$ represents the cosine similarity between the query embedding $emb_{i}[:m]$ and the corpus embedding $emb_{j}[:m]$. The total loss function is:

$$

\displaystyle\mathcal{L}_{total}=\mathcal{L}_{rank}+\alpha\mathcal{L}_{un-sup}, \tag{9}

$$

where $\alpha$ is a hyperparameter fixed at $\alpha=1.0$. Because SMEC involves multi-stage training, the number of training epochs for the other methods is aligned with the total number of epochs used by SMEC, and their best performance is reported.
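Equations (8) and (9) can be written out directly. The sketch below is an illustration only: the function names, the nested-list data layout (`relevance[i][j]` holding $y_{ij}$), and the list `dims` of prefix lengths $m$ are assumptions introduced here, and the loops are unvectorized for readability.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu > 0 and nv > 0 else 0.0


def rank_loss(queries, corpus, relevance, dims):
    """Eq. (8): pairwise logistic loss weighted by the relevance gap, summed over prefix lengths."""
    total = 0.0
    for m in dims:
        for i, query in enumerate(queries):
            # s[j] is the cosine similarity s_ij[:m] on the first m dimensions.
            s = [cosine(query[:m], doc[:m]) for doc in corpus]
            for j in range(len(corpus)):
                for k in range(len(corpus)):
                    gap = relevance[i][j] - relevance[i][k]
                    if gap > 0:  # indicator I(y_ij > y_ik)
                        total += gap * math.log1p(math.exp(s[k] - s[j]))
    return total


def total_loss(l_rank, l_unsup, alpha=1.0):
    """Eq. (9): supervised rank loss plus the weighted unsupervised term."""
    return l_rank + alpha * l_unsup


# Toy usage: one query, two documents, the first one relevant.
queries = [[1.0, 0.0]]
corpus = [[1.0, 0.0], [0.0, 1.0]]
good = rank_loss(queries, corpus, relevance=[[1, 0]], dims=[2])
bad = rank_loss(queries, corpus, relevance=[[0, 1]], dims=[2])
```

In the toy usage, `good` is smaller than `bad` because the document labeled relevant is also the one with the higher similarity; the loss grows when the similarity ordering disagrees with the labels.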

### 4.3 Results

In this subsection, the results on the BEIR, Fashion-200K, and Products-10K datasets are given. Retrieval performance is evaluated using the normalized discounted cumulative gain at rank 10 (nDCG@10) Kalervo et al. (2002) metric.
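For reference, nDCG@10 can be computed along the following lines. This is a minimal sketch assuming linear gain and a $\log_2$ discount; the function names are ours, and the evaluation code behind the reported numbers may differ (for example, by using exponential gain). The ideal ordering is derived from the supplied relevance list, so that list should cover all judged documents for the query.

```python
import math


def dcg_at_k(relevances, k=10):
    """Discounted cumulative gain over the first k results (rank 1 has discount log2(2) = 1)."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(ranked_relevances, k=10):
    """DCG of the given ranking divided by the DCG of the ideal (sorted) ranking."""
    ideal_dcg = dcg_at_k(sorted(ranked_relevances, reverse=True), k)
    return dcg_at_k(ranked_relevances, k) / ideal_dcg if ideal_dcg > 0 else 0.0


# Toy usage: the single relevant document is retrieved at rank 2.
score = ndcg_at_k([0, 1, 0])
```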

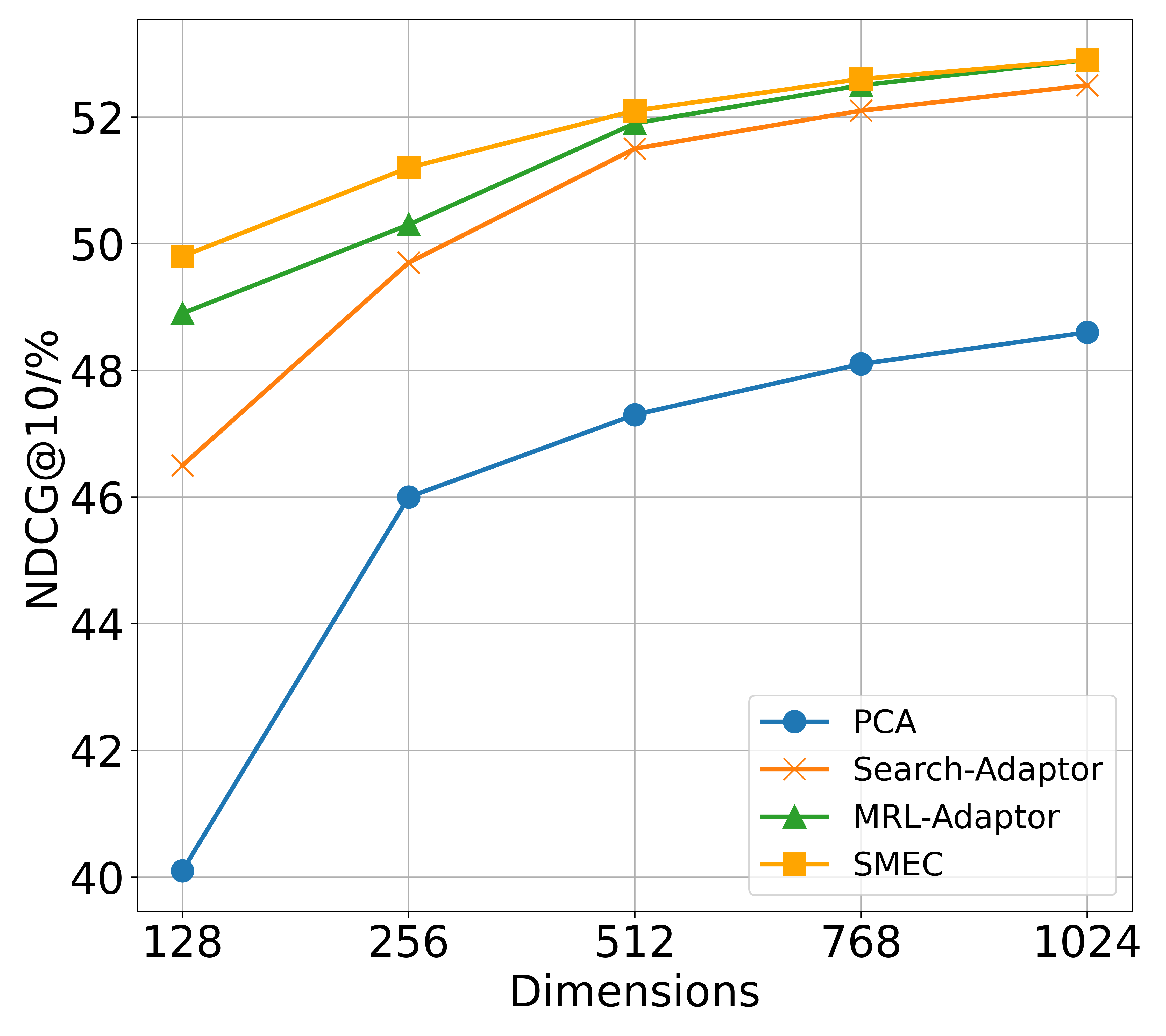

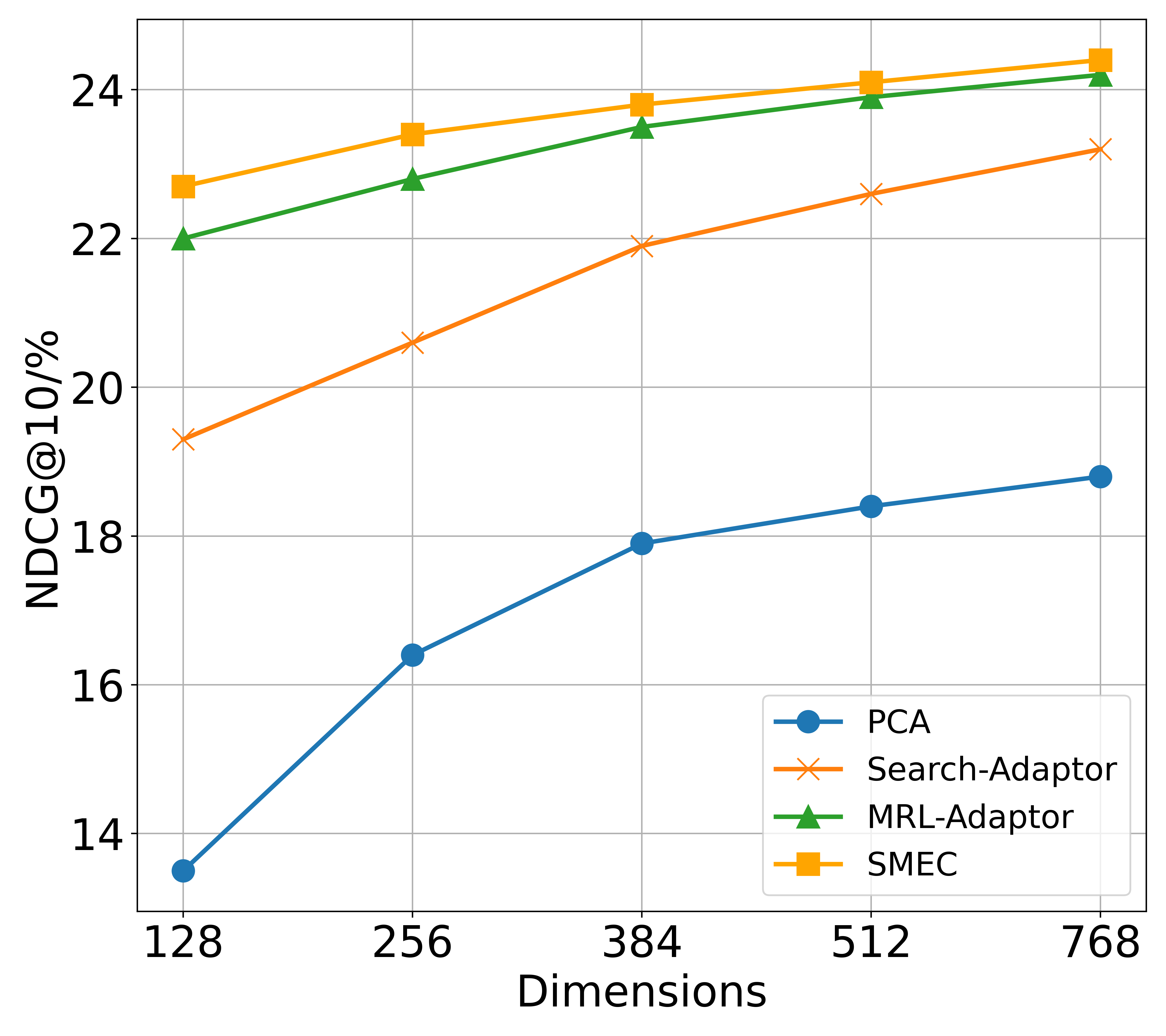

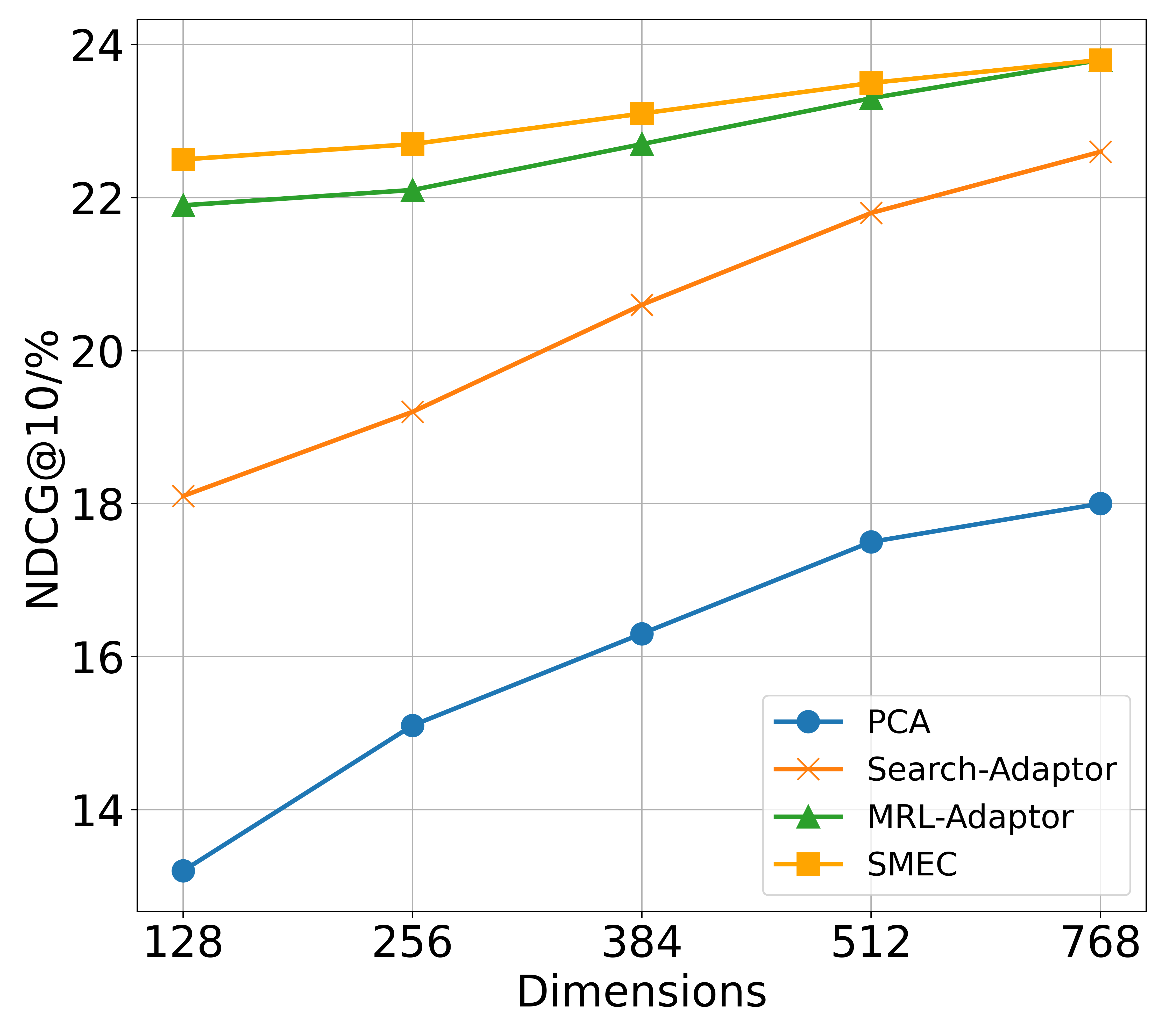

BEIR. As shown in Figure 5, we compare SMEC with other state-of-the-art methods on two types of models, the API-based OpenAI text embedding and the open-source LLM2Vec, across various compressed dimensions. Notably, SMEC exhibits the strongest performance retention, particularly at lower dimensions. For example, when compressed to 128 dimensions, SMEC improves the performance of the OpenAI and LLM2Vec models by 1.9 and 1.1 points, respectively, compared to the best-performing baseline, Matryoshka-Adaptor.

Products-10K. Images naturally contain denser features than text O Pinheiro et al. (2020). As shown in Figure 8(a) of Appendix C, SMEC surpasses other dimensionality reduction methods in image retrieval tasks, highlighting the effectiveness of the ADS module in mitigating information degradation during dimension pruning.

Fashion-200K. Unlike unimodal datasets, Fashion-200K involves cross-modal queries and documents, such as image-to-text and text-to-image retrieval. As illustrated in Figures 8(b) and 8(c) of Appendix C, SMEC achieves superior performance in both directions, demonstrating strong robustness in multimodal scenarios.

<details>

<summary>figures/fig_var.png Details</summary>

### Visual Description

## Line Graph: Gradient Variance vs. Epochs for MRL and SMRL

### Overview

The image is a line graph comparing the gradient variance of two algorithms, MRL (black line) and SMRL (cyan line), across 40 epochs. The y-axis uses a logarithmic scale (10⁻⁹ to 10⁻⁷) to represent gradient variance, while the x-axis tracks epochs from 0 to 40. Both lines show a general downward trend, but with distinct decay rates.

---

### Components/Axes

- **X-axis (Epochs)**: Labeled "Epochs," ranging from 0 to 40 in increments of 5.

- **Y-axis (Gradient Variance)**: Labeled "Gradient Variance," with a logarithmic scale from 10⁻⁹ to 10⁻⁷.

- **Legend**: Located in the top-right corner, with:

- **Black line**: MRL

- **Cyan line**: SMRL

---

### Detailed Analysis

1. **MRL (Black Line)**:

- Starts at ~10⁻⁷ at epoch 0.

- Gradually decreases, reaching ~10⁻⁸ by epoch 10.

- Continues to decline, stabilizing near ~10⁻⁹ by epoch 40.

- Shows minor fluctuations (e.g., slight peaks at epochs 15 and 30) but maintains a consistent downward trajectory.

2. **SMRL (Cyan Line)**:

- Begins at ~10⁻⁸ at epoch 0.

- Declines more steeply, reaching ~10⁻⁹ by epoch 10.

- Continues to drop sharply, hitting ~10⁻¹⁰ by epoch 40.

- Exhibits a smoother, more linear decay compared to MRL.

---

### Key Observations

- **Decay Rates**: SMRL exhibits a faster gradient variance reduction than MRL, with a steeper slope on the logarithmic scale.

- **Stability**: MRL shows minor fluctuations but remains relatively stable after epoch 20, while SMRL’s decline is more aggressive.

- **No Intersection**: The lines do not cross, indicating SMRL consistently outperforms MRL in reducing gradient variance over time.

- **Logarithmic Scale Impact**: The y-axis compression emphasizes the magnitude differences, making SMRL’s faster decay visually dominant.

---

### Interpretation

The graph suggests that SMRL achieves a more rapid reduction in gradient variance compared to MRL, potentially indicating faster convergence or optimization efficiency. However, the minor fluctuations in MRL’s line might imply occasional instability or slower adaptation. The logarithmic scale highlights the exponential nature of gradient variance decay, emphasizing SMRL’s superior performance in early epochs. This could reflect differences in algorithm design, such as learning rate adjustments or regularization strategies. Further analysis of hyperparameters or loss landscapes would clarify the practical implications of these trends.

</details>

(a) Gradient Variance

<details>

<summary>figures/fig_loss.png Details</summary>

### Visual Description

## Line Graph: Loss Comparison Between MRL and SMRL Over Epochs

### Overview

The image depicts a line graph comparing the loss values of two algorithms, MRL (black line) and SMRL (blue line), across 40 training epochs. Both lines show a general downward trend, with MRL starting at a higher loss value and converging toward SMRL's trajectory over time.

### Components/Axes

- **X-axis (Epochs)**: Ranges from 0 to 40 in increments of 5.

- **Y-axis (Loss)**: Ranges from 0.05 to 0.10 in increments of 0.01.

- **Legend**: Located in the top-right corner, with:

- **Black line**: Labeled "MRL"

- **Blue line**: Labeled "SMRL"

### Detailed Analysis

1. **MRL (Black Line)**:

- **Initial Value**: At epoch 0, loss ≈ 0.102.

- **Trend**: Steep decline until epoch 5 (loss ≈ 0.078), followed by a gradual decrease.

- **Final Value**: At epoch 40, loss ≈ 0.055.

- **Notable**: Sharpest drop occurs between epochs 0–5.

2. **SMRL (Blue Line)**:

- **Initial Value**: At epoch 0, loss ≈ 0.090.

- **Trend**: Gradual decline until epoch 10 (loss ≈ 0.052), then stabilizes with minor fluctuations.

- **Final Value**: At epoch 40, loss ≈ 0.045.

- **Notable**: Smoother, less volatile trajectory compared to MRL.

3. **Convergence**:

- The two lines intersect near epoch 10 (MRL ≈ 0.062, SMRL ≈ 0.052).

- Post-convergence, MRL maintains a slightly higher loss than SMRL but at a reduced rate.

### Key Observations

- **Early Performance**: MRL exhibits a faster initial reduction in loss, suggesting stronger early optimization.

- **Long-Term Stability**: SMRL demonstrates more consistent performance after epoch 10, with smaller fluctuations.

- **Convergence Point**: Both algorithms achieve similar loss values (~0.055–0.058) by epoch 20, indicating comparable effectiveness in later stages.

### Interpretation

The graph suggests that MRL may be more effective in rapidly reducing loss during the initial training phases, while SMRL offers greater stability and sustained improvement over time. The convergence near epoch 10 implies that both methods could achieve comparable results with sufficient training, though SMRL’s smoother trajectory might reduce the risk of overfitting or erratic behavior. The slight dip in SMRL’s loss around epoch 20 (≈0.048) could indicate a minor optimization milestone or noise in the data. Overall, the trends highlight trade-offs between early efficiency (MRL) and long-term reliability (SMRL).

</details>

(b) Validation loss

<details>

<summary>figures/fig_ndcg.png Details</summary>

### Visual Description

## Line Graph: NDCG@10 Performance Comparison Across Epochs

### Overview

The image depicts a line graph comparing the performance of two algorithms, MRL (black line) and SMRL (blue line), measured by NDCG@10 metric across 40 training epochs. Both lines exhibit fluctuating trends with distinct divergence patterns over time.

### Components/Axes

- **X-axis (Epochs)**: Labeled "Epochs" with integer markers from 0 to 40 in increments of 5.

- **Y-axis (NDCG@10)**: Labeled "NDCG@10" with decimal markers from 0.41 to 0.47 in increments of 0.01.

- **Legend**: Positioned in the top-left corner, explicitly labeling:

- Black line: MRL

- Blue line: SMRL

- **Gridlines**: Subtle gray gridlines for reference.

### Detailed Analysis

1. **Initial Phase (Epochs 0–10)**:

- Both lines start near **0.44** at epoch 0.

- MRL (black) dips below SMRL (blue) by epoch 5, reaching ~0.43.

- SMRL recovers slightly but remains below MRL until epoch 10, where both lines cross (~0.42).

2. **Mid-Phase (Epochs 10–25)**:

- SMRL (blue) rises sharply, peaking at ~0.46 by epoch 25.

- MRL (black) remains flat (~0.42–0.43) until epoch 20, then begins a gradual ascent.

3. **Final Phase (Epochs 25–40)**:

- MRL overtakes SMRL around epoch 25, reaching ~0.46 by epoch 30.

- SMRL stabilizes near ~0.47, while MRL fluctuates between ~0.455–0.46.

### Key Observations

- **Crossing Points**:

- First crossover at epoch 10 (~0.42).

- Second crossover at epoch 25 (~0.46 for MRL vs. ~0.455 for SMRL).

- **Performance Divergence**:

- SMRL dominates early (epochs 0–25), while MRL outperforms later (epochs 25–40).

- **Stability**:

- SMRL shows sharper fluctuations (e.g., sharp rise at epoch 20), while MRL exhibits smoother growth post-epoch 20.

### Interpretation

The data suggests that SMRL achieves higher initial performance but experiences diminishing returns after epoch 25. In contrast, MRL demonstrates delayed but sustained improvement, surpassing SMRL by epoch 25 and maintaining a ~0.005 advantage by epoch 40. This could indicate that MRL’s optimization strategy (e.g., adaptive learning rates or regularization) becomes more effective with prolonged training, whereas SMRL may overfit or plateau earlier. The crossing points highlight critical epochs where algorithmic efficiency shifts, warranting further investigation into hyperparameter tuning or architectural differences.

</details>

(c) Retrieval performance

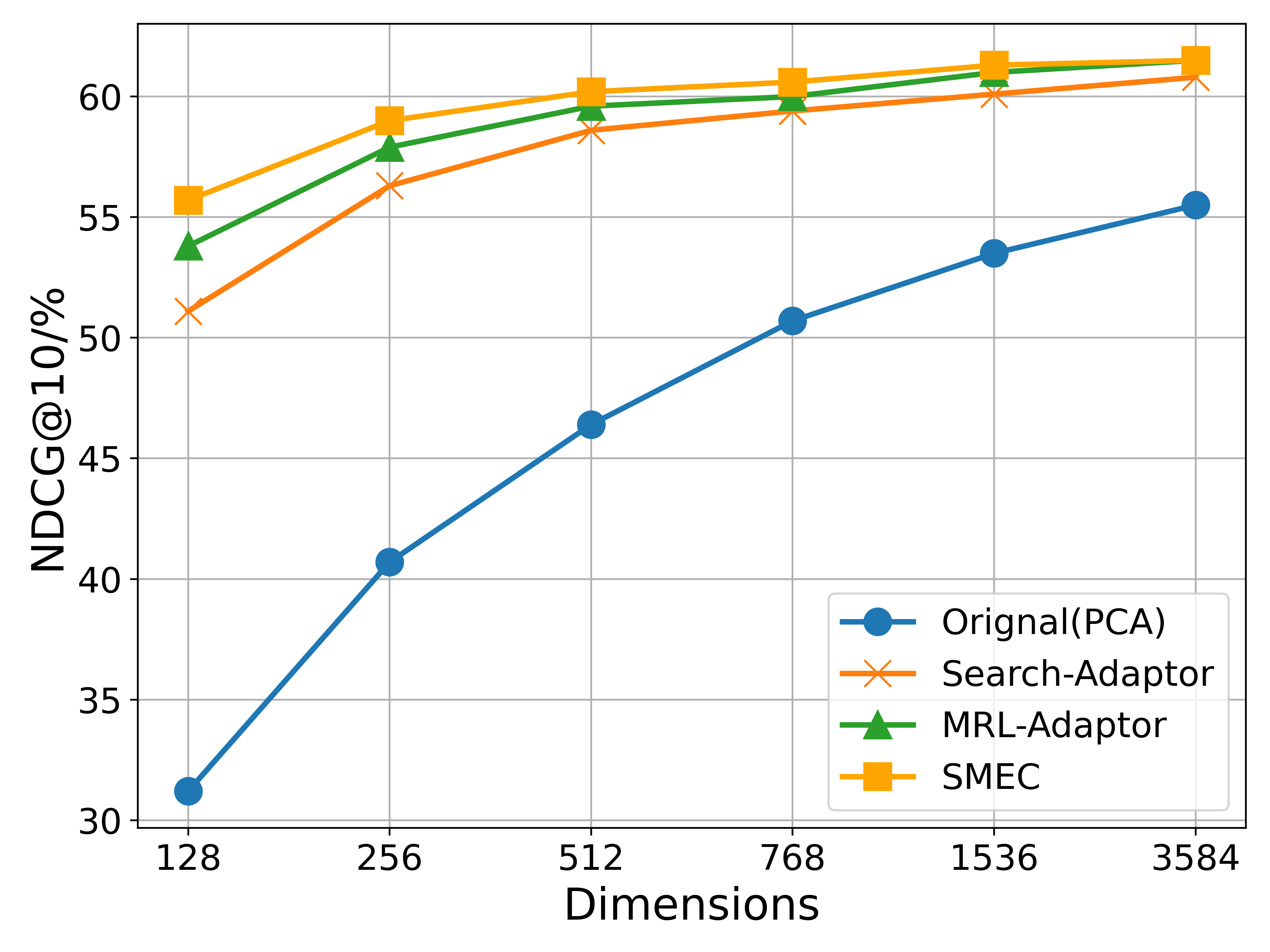

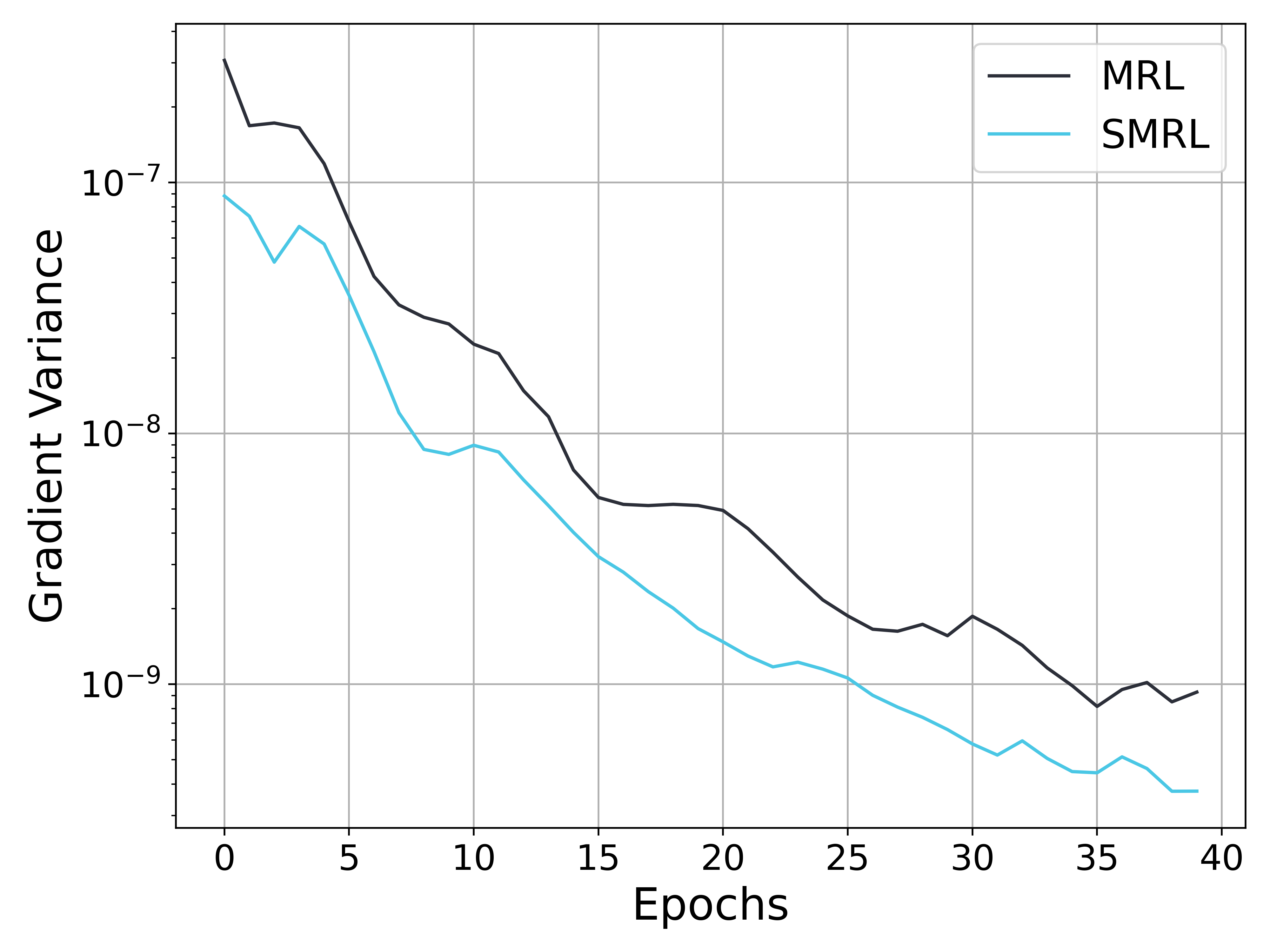

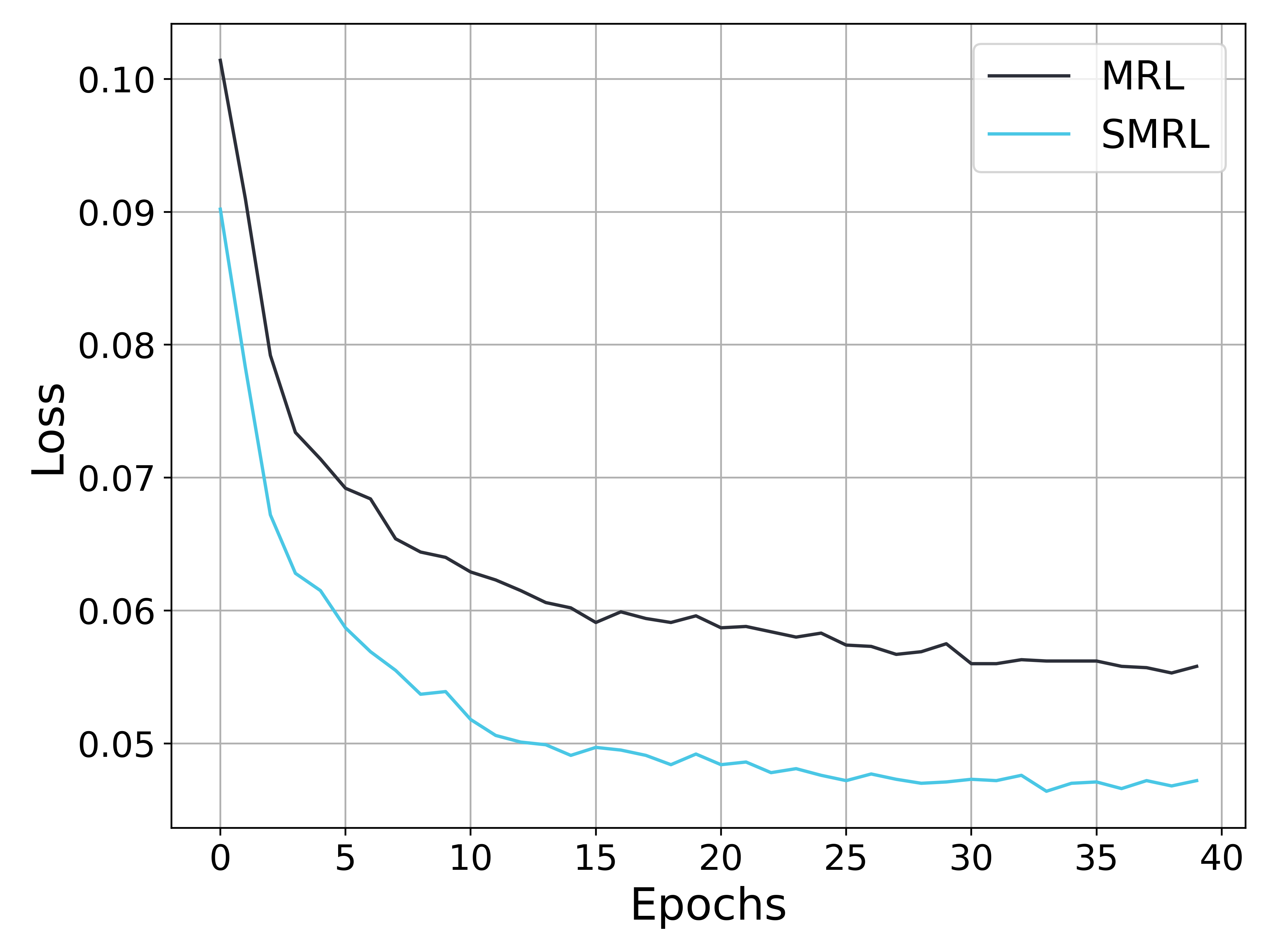

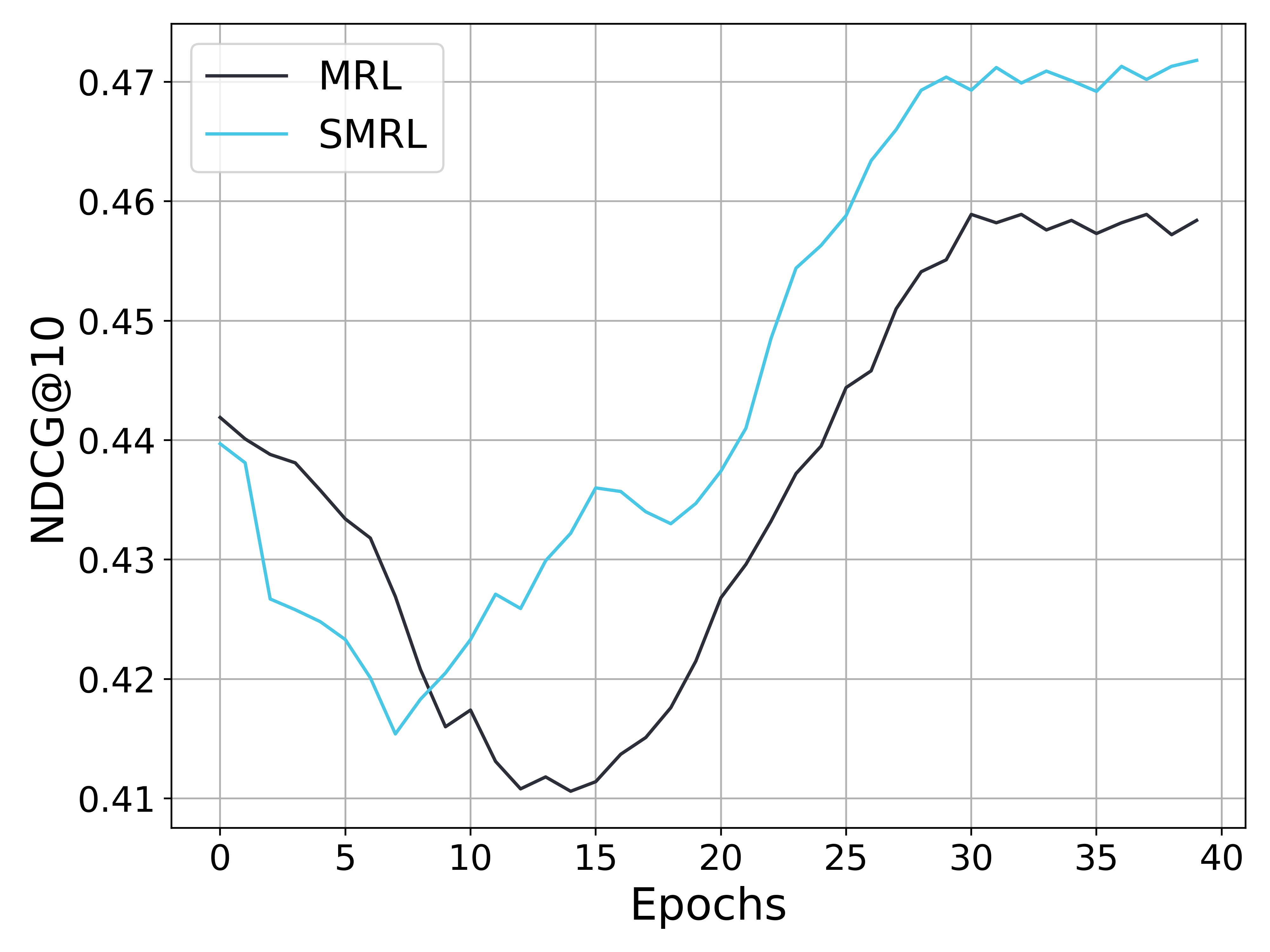

Figure 6: Analysis of metrics during the training process. (a) shows the gradient variance curve (with the vertical axis in a logarithmic scale), (b) presents the loss curve on the validation set, and (c) illustrates the performance variations on the test set. As training progresses, the gradient variances of both MRL and SMRL decrease; however, the gradient variance of MRL remains several times higher than that of SMRL. Consequently, the loss curve of SMRL converges more quickly to a lower value, and the compressed embedding demonstrates better retrieval performance.

## 5 Discussions

### 5.1 The influence of gradient variance

To validate the impact of gradient variance on convergence speed and model performance (as discussed in Section 3.2), we conducted comparative experiments between SMRL and MRL using the MiniLM model on the BEIR dataset. As shown in Figure 6(a), MRL consistently exhibits significantly higher gradient variance than SMRL throughout training. Consequently, the loss of MRL continues to decline beyond the 20th epoch, whereas SMRL’s loss starts to converge at the 15th epoch (Figure 6(b)). A similar trend is observed in Figure 6(c), where SMRL enters the improvement phase earlier and converges to superior performance.

<details>

<summary>figures/fig_discuss2_rank.png Details</summary>

### Visual Description

## Bar Chart with Line Overlays: Gradient Size and Variance Across Epochs

### Overview

The image is a combined bar chart and line graph visualizing gradient size and variance at four training epochs (0, 10, 20, 30). The chart compares two optimization methods (SMRL and MRL) using gradient metrics. Gradient size is plotted on a logarithmic scale (10⁻² to 10⁰) on the left y-axis, while gradient variance uses a secondary logarithmic scale (10⁻⁹ to 10⁻⁷) on the right y-axis. The x-axis represents training epochs.

### Components/Axes

- **X-axis**: Epochs (0, 10, 20, 30)

- **Left Y-axis**: Gradient Size (log scale: 10⁻² to 10⁰)

- **Right Y-axis**: Gradient Variance (log scale: 10⁻⁹ to 10⁻⁷)

- **Legend**:

- Light blue: Average Gradient (ωᵢ,ᵢ∈[0,96], SMRL)

- Blue: Average Gradient (ωⱼ,ⱼ∈[96,192], SMRL)

- Light orange: Average Gradient (ωᵢ,ᵢ∈[0,96], MRL)

- Orange: Average Gradient (ωⱼ,ⱼ∈[96,192], MRL)

- Blue line: Gradient Variance (ωₖ,ₖ∈[0,192], SMRL)

- Red line: Gradient Variance (ωₖ,ₖ∈[0,192], MRL)

### Detailed Analysis

#### Gradient Size (Bars)

- **Epoch 0**:

- Light blue (ωᵢ,ᵢ∈[0,96], SMRL): 1.198

- Blue (ωⱼ,ⱼ∈[96,192], SMRL): 1.054

- Light orange (ωᵢ,ᵢ∈[0,96], MRL): 3.666

- Orange (ωⱼ,ⱼ∈[96,192], MRL): 1.188

- **Epoch 10**:

- Light blue: 0.063

- Blue: 0.062

- Light orange: 0.184

- Orange: 0.062

- **Epoch 20**:

- Light blue: 0.024

- Blue: 0.026

- Light orange: 0.081

- Orange: 0.025

- **Epoch 30**:

- Light blue: 0.015

- Blue: 0.013

- Light orange: 0.038

- Orange: 0.013

#### Gradient Variance (Lines)

- **SMRL (Blue line)**:

- Epoch 0: 8.84e-8

- Epoch 10: 2.27e-8

- Epoch 20: 1.48e-9

- Epoch 30: 5.78e-10

- **MRL (Red line)**:

- Epoch 0: 3.07e-7

- Epoch 10: 2.27e-8

- Epoch 20: 4.93e-9

- Epoch 30: 1.87e-9

### Key Observations

1. **Gradient Size Trends**:

- All gradient magnitudes decrease over epochs. In the [0,96] range, MRL shows values roughly three times those of SMRL; in the [96,192] range, the two methods are nearly equal.

- The largest gradient magnitude occurs in the [0,96] range for MRL at epoch 0 (3.666).

- By epoch 30, SMRL gradients stabilize near 0.013, while MRL remains slightly higher at 0.038.

2. **Gradient Variance Trends**:

- Both SMRL and MRL show decreasing variance over epochs.

- MRL starts with significantly higher variance (3.07e-7 at epoch 0) but converges closer to SMRL by epoch 30 (1.87e-9 vs. 5.78e-10).

- SMRL maintains lower variance throughout training.

3. **Notable Patterns**:

- The [0,96] range under MRL (light orange bars) dominates gradient magnitudes at every epoch, whereas under SMRL the two ranges have nearly equal magnitudes.

- Variance lines (blue/red) intersect at epoch 10, indicating similar stability for both methods at this stage.

### Interpretation

The data demonstrates that both SMRL and MRL reduce gradient magnitudes and variance as training progresses, with SMRL reaching lower variance by epochs 20 and 30. MRL maintains larger gradient magnitudes in the [0,96] range, indicating stronger updates to those parameters but at the cost of higher initial variance. The matching variance values at epoch 10 suggest both methods pass through a comparable stability level early in training. The logarithmic scales emphasize the rapid decay in gradient magnitudes, highlighting the importance of monitoring both size and variance for effective optimization.

</details>

(a) Rank Loss

<details>

<summary>figures/fig_discuss2_mse.png Details</summary>

### Visual Description

## Bar Chart with Line Overlays: Gradient Size and Variance Across Epochs

### Overview

The chart visualizes gradient size and variance across four training epochs (0, 10, 20, 30) for different parameter ranges and methods (SMRL vs. MRL). It uses dual y-axes: left for gradient size (log scale) and right for gradient variance (log scale). Four bar categories and two line series are plotted, with distinct color coding for clarity.

### Components/Axes

- **X-axis**: Epochs (0, 10, 20, 30)

- **Left Y-axis**: Gradient Size (log scale, 10⁻¹ to 10⁰)

- **Right Y-axis**: Gradient Variance (log scale, 10⁻⁸ to 10⁻⁵)

- **Legend**:

- Light Blue: Average Gradient (ωᵢ,ᵢ∈[0,96], SMRL)

- Dark Blue: Average Gradient (ωⱼ,ⱼ∈[96,192], SMRL)

- Light Orange: Average Gradient (ωᵢ,ᵢ∈[0,96], MRL)

- Dark Orange: Average Gradient (ωⱼ,ⱼ∈[96,192], MRL)

- Blue Circle: Gradient Variance (ωₖ,ₖ∈[0,192], SMRL)

- Red Square: Gradient Variance (ωₖ,ₖ∈[0,192], MRL)

### Detailed Analysis

#### Bars (Gradient Size)

- **Epoch 0**:

- Light Blue: 1.124

- Dark Blue: 1.037

- Light Orange: 2.717

- Dark Orange: 1.093

- **Epoch 10**:

- Light Blue: 0.088

- Dark Blue: 0.083

- Light Orange: 0.18

- Dark Orange: 0.078

- **Epoch 20**:

- Light Blue: 0.039

- Dark Blue: 0.04

- Light Orange: 0.077

- Dark Orange: 0.037

- **Epoch 30**:

- Light Blue: 0.023

- Dark Blue: 0.023

- Light Orange: 0.062

- Dark Orange: 0.025

#### Lines (Gradient Variance)

- **SMRL (Blue Circle)**:

- Epoch 0: 1.51e-5

- Epoch 10: 2.43e-7

- Epoch 20: 2.09e-8

- Epoch 30: 6.51e-9

- **MRL (Red Square)**:

- Epoch 0: 5.32e-5

- Epoch 10: 9.88e-8

- Epoch 20: 4.68e-8

- Epoch 30: 2.75e-8

### Key Observations

1. **Gradient Size Decay**: All bar categories show exponential decay in gradient size over epochs. The largest initial gradient size (2.717) occurs in the light orange category (ωᵢ,ᵢ∈[0,96], MRL) at epoch 0.

2. **Variance Trends**:

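- SMRL variance (blue line) starts lower than MRL (red line) at epoch 0 (1.51e-5 vs. 5.32e-5), is higher at epoch 10 (2.43e-7 vs. 9.88e-8), and falls below MRL again from epoch 20, reaching 6.51e-9 by epoch 30.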

- MRL variance remains relatively stable after epoch 10, hovering around 2.75e-8.

3. **Parameter Range Differences**:

- Under MRL, the [0,96] range (light orange bars) consistently has gradient sizes more than twice those of [96,192] (dark orange bars); under SMRL (light/dark blue bars) the two ranges are nearly equal.

- Variance for [0,192] (blue/red lines) dominates over sub-range variances.

### Interpretation

The data demonstrates that gradient magnitudes and variances decrease with training epochs, indicating convergence. SMRL exhibits lower gradient variance than MRL at epochs 0, 20, and 30, with MRL lower only at epoch 10. Under MRL, the [0,96] parameter range keeps markedly larger gradients than [96,192] throughout training, whereas SMRL keeps the two ranges balanced. This suggests that SMRL distributes updates more evenly across parameter ranges and stabilizes more effectively over time.

</details>

(b) MSE Loss

<details>

<summary>figures/fig_discuss2_ce.png Details</summary>

### Visual Description

## Bar Chart with Dual Y-Axes: Gradient Size and Variance Across Epochs

### Overview

The chart visualizes the evolution of gradient magnitudes and variances during training epochs for two optimization methods (SMRL and MRL). It uses a logarithmic scale for both y-axes to accommodate wide-ranging values. The left y-axis tracks "Gradient Size" (average gradient magnitudes), while the right y-axis tracks "var" (gradient variance). Data is plotted at four epoch intervals: 0, 10, 20, and 30.

### Components/Axes

- **X-Axis**: Epochs (0, 10, 20, 30)

- **Left Y-Axis**: Gradient Size (log scale: 10⁻¹ to 10¹)

- **Right Y-Axis**: var (log scale: 10⁻⁷ to 10⁻⁴)

- **Legend**:

- Light blue: Average Gradient (ωᵢ,ᵢ∈[0,96], SMRL)

- Blue: Average Gradient (ωⱼ,ⱼ∈[96,192], SMRL)

- Light orange: Average Gradient (ωᵢ,ᵢ∈[0,96], MRL)

- Orange: Average Gradient (ωⱼ,ⱼ∈[96,192], MRL)

- Blue line with circles: Gradient Variance (ωₖ,ₖ∈[0,192], SMRL)

- Red line with squares: Gradient Variance (ωₖ,ₖ∈[0,192], MRL)

### Detailed Analysis

#### Gradient Size (Left Y-Axis)

- **Epoch 0**:

- SMRL (ωᵢ,ᵢ∈[0,96]): 2.281

- SMRL (ωⱼ,ⱼ∈[96,192]): 2.298

- MRL (ωᵢ,ᵢ∈[0,96]): 2.381

- MRL (ωⱼ,ⱼ∈[96,192]): 5.556

- **Epoch 10**:

- SMRL (ωᵢ,ᵢ∈[0,96]): 0.249

- SMRL (ωⱼ,ⱼ∈[96,192]): 0.255

- MRL (ωᵢ,ᵢ∈[0,96]): 0.204

- MRL (ωⱼ,ⱼ∈[96,192]): 0.528

- **Epoch 20**:

- SMRL (ωᵢ,ᵢ∈[0,96]): 0.099

- SMRL (ωⱼ,ⱼ∈[96,192]): 0.113

- MRL (ωᵢ,ᵢ∈[0,96]): 0.156

- MRL (ωⱼ,ⱼ∈[96,192]): 0.311

- **Epoch 30**:

- SMRL (ωᵢ,ᵢ∈[0,96]): 0.084

- SMRL (ωⱼ,ⱼ∈[96,192]): 0.082

- MRL (ωᵢ,ᵢ∈[0,96]): 0.102

- MRL (ωⱼ,ⱼ∈[96,192]): 0.257

#### Gradient Variance (Right Y-Axis)

- **Epoch 0**:

- SMRL: 7.17e-5

- MRL: 2.11e-4

- **Epoch 10**:

- SMRL: 9.97e-7

- MRL: 2.24e-6

- **Epoch 20**:

- SMRL: 1.64e-7

- MRL: 8.12e-7

- **Epoch 30**:

- SMRL: 9.17e-8

- MRL: 4.99e-7

### Key Observations

1. **Gradient Magnitude Trends**:

- All gradient magnitudes decrease monotonically across epochs.

- MRL consistently exhibits larger gradients than SMRL (e.g., MRL ωⱼ,ⱼ∈[96,192] starts at 5.556 vs. SMRL’s 2.298 at epoch 0).

- The largest gradient magnitude (5.556) occurs in MRL’s ωⱼ,ⱼ∈[96,192] at epoch 0.

2. **Variance Trends**:

- Both SMRL and MRL show exponential decay in variance.

- MRL variance remains higher than SMRL at all epochs (e.g., MRL variance at epoch 30: 4.99e-7 vs. SMRL’s 9.17e-8).

- The largest variance (2.11e-4) occurs in MRL at epoch 0.

3. **Divergence Between Methods**:

- MRL’s gradients and variances are systematically larger than SMRL’s, suggesting stronger updates but potentially less stability.

### Interpretation

The data demonstrates that both SMRL and MRL experience diminishing gradient magnitudes and variances as training progresses, indicating convergence toward stable parameter updates. However, MRL maintains consistently higher gradient magnitudes and variances compared to SMRL, implying:

- **Stronger Learning Dynamics**: MRL’s larger gradients may enable faster convergence but at the cost of increased variability.

- **Stability Trade-offs**: SMRL’s lower variances suggest more stable updates, potentially at the expense of slower learning.

- **Epoch-Specific Behavior**: The sharpest declines in gradient size occur between epochs 0–10, while variance reductions are more gradual. This could reflect an initial "explosive" phase of learning followed by refinement.

The logarithmic scaling highlights the exponential decay patterns, emphasizing the importance of monitoring both magnitude and variability in gradient-based optimization.

</details>

(c) CE Loss

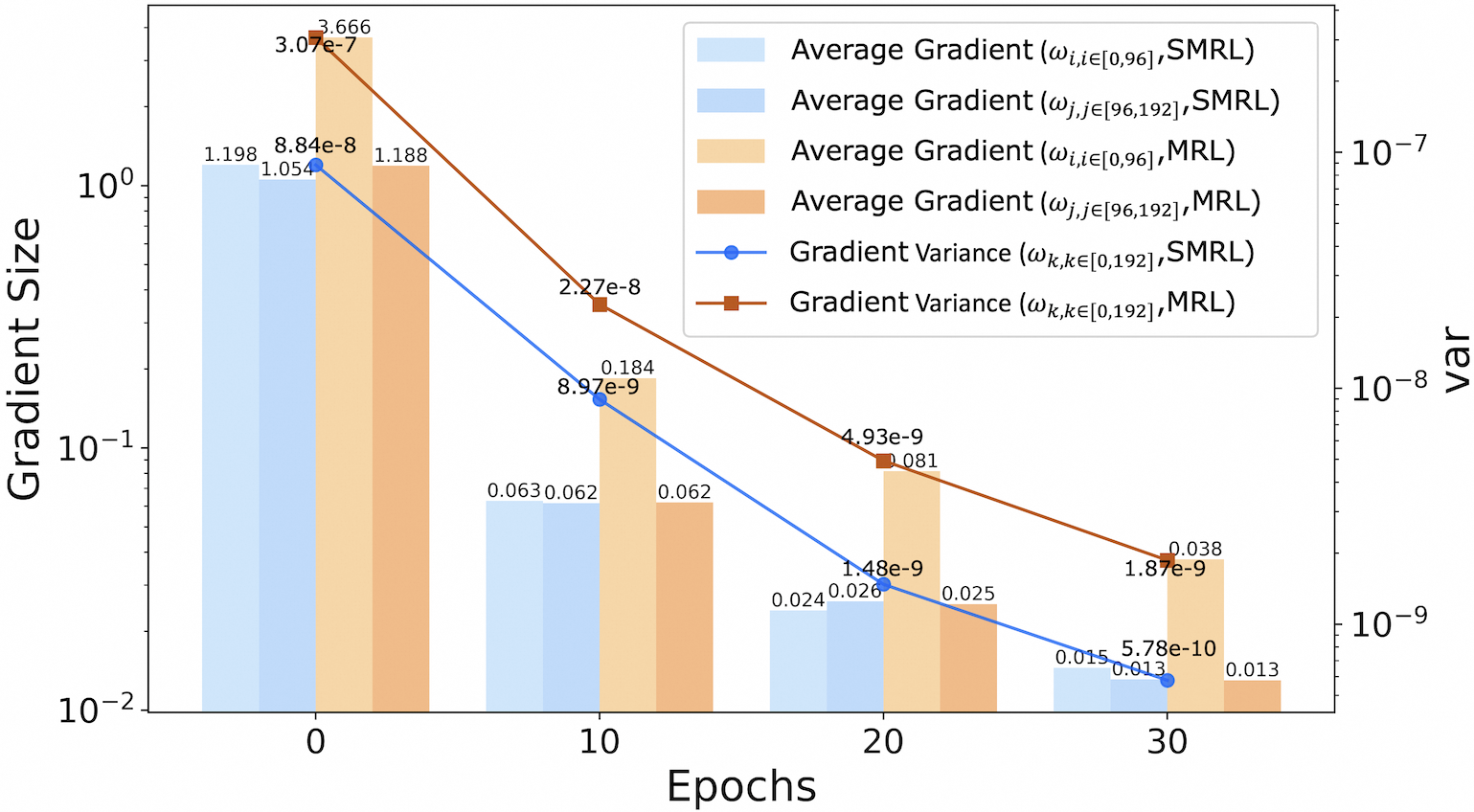

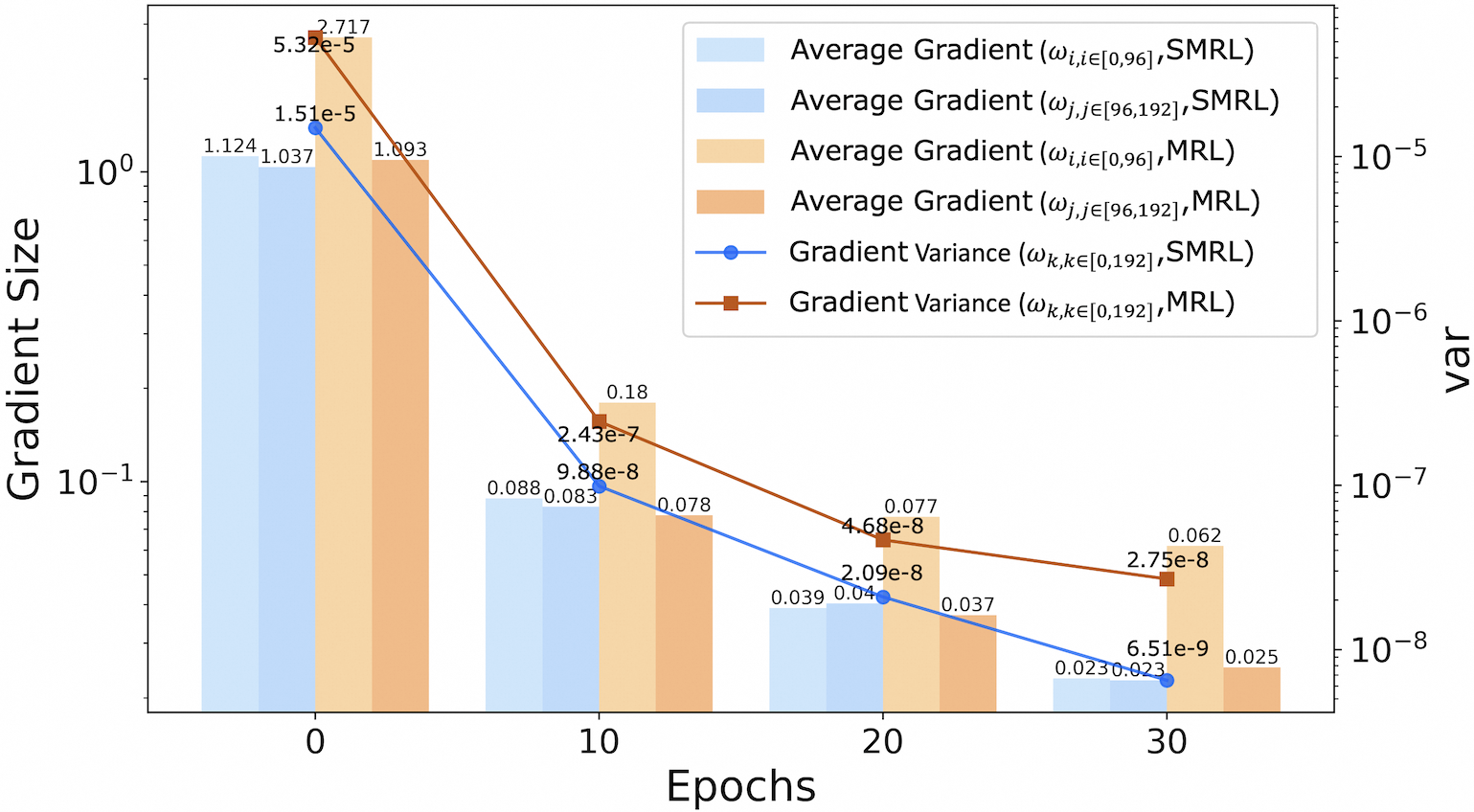

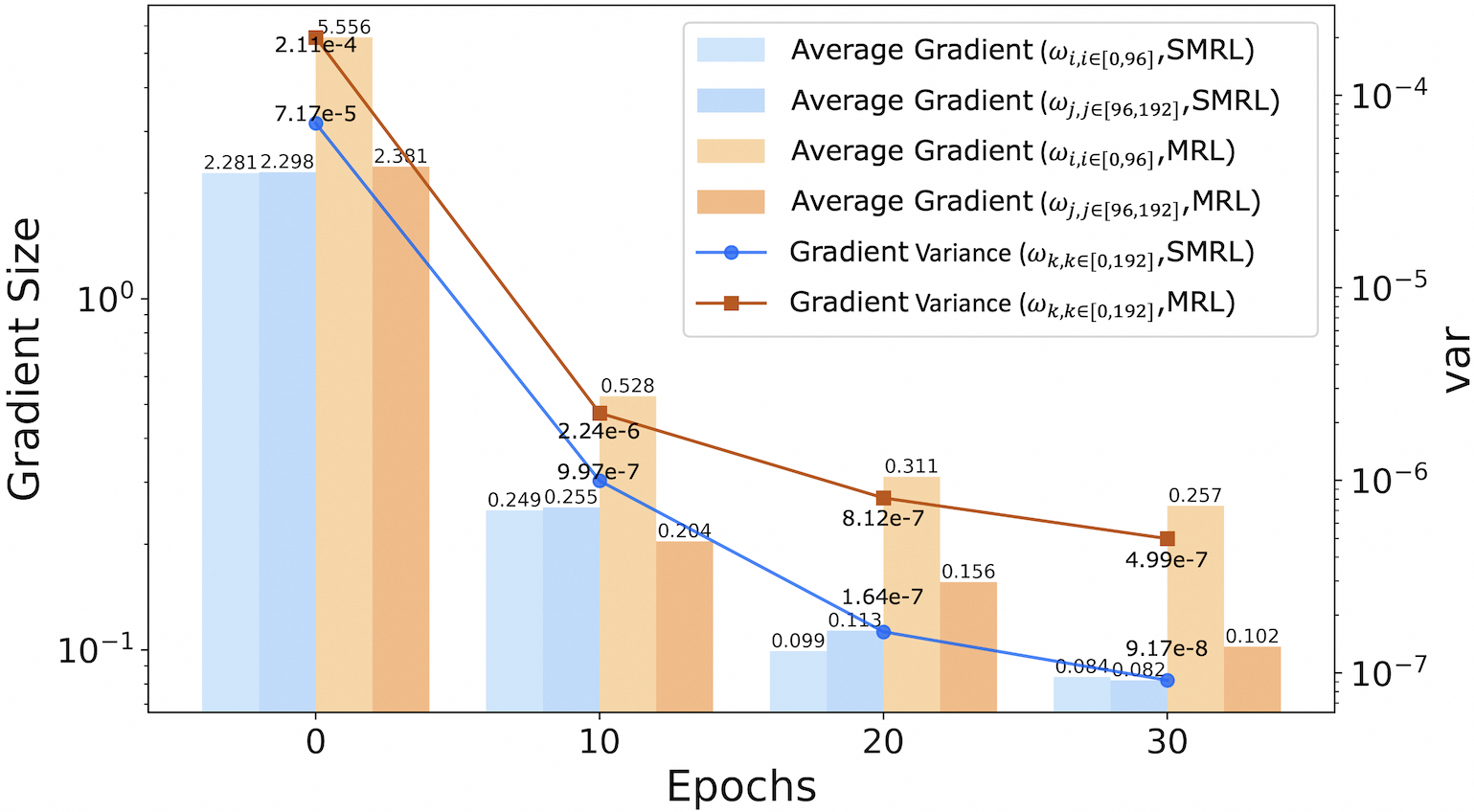

Figure 7: Gradient statistics with Rank, MSE and CE loss (with the vertical axis in a logarithmic scale): average gradient magnitudes of parameters in the ranges $[0,96]$ and $[96,192]$ , as well as the gradient variance over all parameters in the range $[0,192]$ , during training.

### 5.2 Gradient variance of different loss functions

Section 5.1 demonstrates that MRL exhibits higher gradient variance than SMRL when rank loss is employed as the loss function, thereby corroborating the findings presented in Section 3.2. To strengthen this validation, we conducted additional experiments on the BEIR dataset using rank loss, MSE loss, and cross-entropy (CE) loss under identical settings. The results in Figure 7 reveal a consistent pattern across all three loss functions, validating the robustness of our conclusions.

### 5.3 Ablation studies

To evaluate the contribution of each component in SMEC to the overall performance, we conduct ablation studies using MRL as the baseline. Different modules are incrementally added on top of MRL, as detailed in Table 1. When examined individually, the SMRL strategy achieves the most significant performance gain, suggesting that its reduced gradient variance contributes positively to model performance. In addition, both the ADS module and the S-XBM module provide notable improvements. The combination of all three components improves the performance of the 128-dimensional embedding by 3.1 points.

| Method | | | | |
| --- | --- | --- | --- | --- |
| w/ SMRL | 0.3808 | 0.4621 | 0.4895 | 0.5283 |
| w/ ADS | 0.3765 | 0.4583 | 0.4863 | 0.5254 |
| w/ S-XBM | 0.3778 | 0.4583 | 0.4853 | 0.5256 |
| SMEC (Ours) | 0.4053 | 0.4848 | 0.5002 | 0.5459 |

Table 1: Ablation studies of SMEC on 8 BEIR datasets with MRL as the baseline.

### 5.4 The contribution of ADS in preserving key information

The selection of important parameters in neural networks is a well-established research area, with numerous studies demonstrating that network parameters are often redundant. As a result, parameter pruning has been widely adopted for model compression. Although ADS (or, more generally, the MEC family of methods) focuses on dimension selection within embeddings, we consider it to be fundamentally implemented through network parameter selection. Therefore, ADS can be regarded as a form of pruning with theoretical feasibility.

To fully demonstrate the effectiveness of ADS, we evaluate both the dimension selection strategies of ADS and MRL using WARE Yu et al. (2018) (Weighted Average Reconstruction Error), a commonly used metric in the pruning area for assessing parameter importance. The WARE is defined as follows:

$$

\text{WARE}=\frac{1}{M}\sum_{m=1}^{M}\frac{|\hat{y}_{m}-y_{m}|}{|y_{m}|} \tag{10}

$$

where $M$ denotes the number of samples; $\hat{y}_{m}$ and $y_{m}$ represent the model’s score (which can be interpreted as the similarity between embedding pairs) for the $m$-th sample before and after dimension pruning, respectively. The core idea of WARE is to quantify the change in the model’s output induced by removing a specific dimension; a larger change indicates higher importance of that dimension.
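A per-dimension WARE computation following Eq. (10) might look like the sketch below. It rests on several assumptions that the text does not specify: the score is cosine similarity between an embedding pair, "removing" a dimension means dropping that coordinate from both embeddings, the error is normalized by the unpruned score, and pairs whose unpruned score is zero are skipped to avoid division by zero. The function names are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu > 0 and nv > 0 else 0.0


def ware_per_dimension(pairs):
    """Return one WARE value per dimension; larger means removing it changes scores more."""
    dim = len(pairs[0][0])
    base = [cosine(u, v) for u, v in pairs]  # scores with all dimensions present
    scores = []
    for d in range(dim):
        err, count = 0.0, 0
        for (u, v), y in zip(pairs, base):
            if y == 0.0:
                continue  # relative error undefined; skipped (an assumption of this sketch)
            y_pruned = cosine(u[:d] + u[d + 1:], v[:d] + v[d + 1:])
            err += abs(y_pruned - y) / abs(y)
            count += 1
        scores.append(err / count if count else 0.0)
    return scores


# Toy usage: dimension 0 matters most, dimension 2 is unused by both vectors.
ware = ware_per_dimension([([1.0, 1.0, 0.0], [1.0, 0.0, 0.0])])
ranking = sorted(range(len(ware)), key=lambda d: ware[d], reverse=True)
```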

We randomly sampled 10,000 instances from multiple sub-datasets of BEIR. For the LLM2Vec embeddings (3072 dimensions), we computed the WARE of each dimension. We then used both ADS and MRL to generate low-dimensional embeddings of 1536, 768, and 256 dimensions. For each method and compression level, we calculated the achievement rate, defined as the proportion of the $N$ selected dimensions that appear among the top-$N$ most important dimensions according to the WARE-based ranking.
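The achievement rate can be sketched as follows, assuming a vector of per-dimension importance scores (for example, WARE values) and the indices of the retained dimensions are available. The helper name `achievement_rate` and the toy scores are hypothetical.

```python
import numpy as np


def achievement_rate(importance, selected):
    """Fraction of the N selected dimensions that fall in the top-N by importance."""
    importance = np.asarray(importance, dtype=np.float64)
    selected = np.unique(np.asarray(selected))  # N distinct retained indices
    n = selected.size
    # Indices of the N dimensions with the largest importance scores.
    top_n = np.argsort(-importance, kind="stable")[:n]
    hits = np.intersect1d(selected, top_n).size
    return hits / n


# Toy importance scores for a 6-dimensional embedding.
importance = [0.9, 0.1, 0.8, 0.3, 0.7, 0.2]
# Truncation keeps the first 3 dimensions regardless of importance.
truncation_rate = achievement_rate(importance, [0, 1, 2])
# A selection that picks exactly the 3 most important dimensions.
selection_rate = achievement_rate(importance, [0, 2, 4])
```

In this toy case truncation retains two of the three most important dimensions, while the importance-aware selection retains all three.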

| Dimension | ADS (Dimension Selection) | MRL (Dimension Truncation) |
| --- | --- | --- |
| 1536 | 94.3% | 50.3% |
| 768 | 90.1% | 32.8% |
| 256 | 83.6% | 17.4% |

Table 2: Achievement Rate of Important Dimension Selection at Different Dimension Levels.

The results in Table 2 show that the achievement rate of MRL is roughly linear with the compression ratio, indicating that the importance of a dimension has no strong correlation with its position. The achievement rate of ADS also decreases as fewer dimensions are retained, because selecting the top-N most important dimensions becomes harder at higher compression ratios. However, even when compressed by a factor of 12 (from 3072 to 256 dimensions), ADS still selects over 80% of the most important dimensions. This explains why, as seen in Figure 5, SMEC demonstrates stronger performance at lower dimensions.

### 5.5 Memory size of S-XBM

In this subsection, we explore how the memory size of the S-XBM module affects training speed and model performance. In theory, a larger memory makes it easier for the S-XBM module to mine hard samples, thereby improving model performance. However, an excessively large memory increases the retrieval time for the top-k samples, which can reduce training efficiency. To test this hypothesis experimentally, we train the SMEC framework with memory sizes of 1000, 2000, 5000, 10000, and 15000, as reported in Table 3. The results show a clear trade-off between training speed and model performance. We select a memory size of 5000 as our final choice to balance the two.

| Memory Size | 1000 | 2000 | 5000 | 10000 | 15000 |
| --- | --- | --- | --- | --- | --- |
| Forward Time/s $\downarrow$ | 0.06 | 0.08 | 0.11 | 0.15 | 0.21 |
| NDCG@10 $\uparrow$ | 0.4631 | 0.4652 | 0.4675 | 0.4682 | 0.4689 |

Table 3: Trade-off analysis of training speed and model performance under different memory sizes of S-XBM.
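To illustrate why retrieval time grows with memory size, the sketch below implements a generic cross-batch memory in the spirit of Wang et al. (2020): a FIFO queue of past embeddings with brute-force top-k retrieval. It is not the S-XBM implementation; the class name `MemoryBank`, the cosine similarity, and the brute-force search are assumptions made for illustration, and all embeddings are assumed to be non-zero.

```python
import numpy as np


class MemoryBank:
    """FIFO queue of past embeddings with brute-force top-k retrieval."""

    def __init__(self, size, dim):
        self.size = size  # maximum number of stored embeddings
        self.bank = np.zeros((0, dim), dtype=np.float32)

    def enqueue(self, embeddings):
        # Append the newest batch, then keep only the most recent `size` rows.
        embeddings = np.asarray(embeddings, dtype=np.float32)
        self.bank = np.concatenate([self.bank, embeddings])[-self.size:]

    def top_k(self, queries, k):
        # Cosine similarity between each query and every stored embedding.
        queries = np.asarray(queries, dtype=np.float32)
        q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
        b = self.bank / np.linalg.norm(self.bank, axis=1, keepdims=True)
        sims = q @ b.T  # shape (batch, memory): cost scales with memory size
        k = min(k, self.bank.shape[0])
        # Indices of the k most similar stored embeddings for each query.
        return np.argsort(-sims, axis=1)[:, :k]


bank = MemoryBank(size=3, dim=2)
bank.enqueue([[1, 0], [0, 1]])
bank.enqueue([[1, 1], [-1, 0]])  # the oldest row [1, 0] is evicted
neighbours = bank.top_k([[1.0, 0.1]], k=2)
```

The similarity matrix has one column per stored embedding, so both the matrix product and the sort scale with the memory size, consistent with the rising forward time in Table 3.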

## 6 Conclusions

Although high-dimensional embeddings from large language models (LLMs) capture rich semantic features, their practical use is often limited by computational cost and storage constraints. To mitigate these limitations, this paper proposes the Sequential Matryoshka Embedding Compression (SMEC) framework for efficient embedding compression. SMEC comprises the Sequential Matryoshka Representation Learning (SMRL) module, the Adaptive Dimension Selection (ADS) module, and the Selectable Cross-batch Memory (S-XBM) module. The SMRL module mitigates gradient variance during training, the ADS module minimizes information degradation during feature compression, and the S-XBM module enhances unsupervised learning between high- and low-dimensional embeddings. Compared to existing approaches, SMEC preserves higher performance at the same compression rate.

## Limitations

The SMEC framework introduces only a small number of additional parameters on top of a pre-trained model and is trained using labeled data from a specific domain, along with mined hard samples, with the aim of reducing the dimensionality of the original embeddings. However, this design and objective limit its generalizability and applicability to broader scenarios. Future work could explore extending the SMEC approach to full-parameter training of representation models, enabling them to directly generate embeddings of multiple dimensions. The feasibility of training the model on diverse datasets is also worth investigating.

## References

- (1) OpenAI text embedding. https://platform.openai.com/docs/guides/embeddings/embeddings.

- Bai et al. (2020) Yalong Bai, Yuxiang Chen, Wei Yu, Linfang Wang, and Wei Zhang. 2020. Products-10k: A large-scale product recognition dataset.

- BehnamGhader et al. (2024) Parishad BehnamGhader, Vaibhav Adlakha, Marius Mosbach, Dzmitry Bahdanau, Nicolas Chapados, and Siva Reddy. 2024. Llm2vec: Large language models are secretly powerful text encoders. arXiv preprint arXiv:2404.05961.

- Beyer et al. (1999) K. Beyer, J. Goldstein, R. Ramakrishnan, and U. Shaft. 1999. When is nearest neighbor meaningful. Springer.

- Brown et al. (2020) Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, and 1 others. 2020. Language models are few-shot learners. Advances in neural information processing systems, 33:1877–1901.

- Cai et al. (2024) Mu Cai, Jianwei Yang, Jianfeng Gao, and Yong Jae Lee. 2024. Matryoshka multimodal models. In Workshop on Video-Language Models@ NeurIPS 2024.

- Devlin et al. (2019) Jacob Devlin, Ming Wei Chang, Kenton Lee, and Kristina Toutanova. 2019. Bert: Pre-training of deep bidirectional transformers for language understanding. ArXiv, abs/1810.04805.

- Dosovitskiy et al. (2020) Alexey Dosovitskiy, Lucas Beyer, Alexander Kolesnikov, Dirk Weissenborn, Xiaohua Zhai, Thomas Unterthiner, Mostafa Dehghani, Matthias Minderer, Georg Heigold, Sylvain Gelly, and 1 others. 2020. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929.

- Frankle and Carbin (2018) Jonathan Frankle and Michael Carbin. 2018. The lottery ticket hypothesis: Finding sparse, trainable neural networks. arXiv preprint arXiv:1803.03635.

- Grattafiori et al. (2024) Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, and 1 others. 2024. The llama 3 herd of models. arXiv preprint arXiv:2407.21783.

- Gu et al. (2023) Jiatao Gu, Shuangfei Zhai, Yizhe Zhang, Joshua M Susskind, and Navdeep Jaitly. 2023. Matryoshka diffusion models. In The Twelfth International Conference on Learning Representations.

- Han et al. (2017) Xintong Han, Zuxuan Wu, Phoenix X. Huang, Xiao Zhang, and Larry S. Davis. 2017. Automatic spatially-aware fashion concept discovery. IEEE.

- He et al. (2020) Kaiming He, Haoqi Fan, Yuxin Wu, Saining Xie, and Ross Girshick. 2020. Momentum contrast for unsupervised visual representation learning. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 9729–9738.

- Hu et al. (2024) Wenbo Hu, Zi-Yi Dou, Liunian Li, Amita Kamath, Nanyun Peng, and Kai-Wei Chang. 2024. Matryoshka query transformer for large vision-language models. Advances in Neural Information Processing Systems, 37:50168–50188.

- Huang et al. (2024) Weiquan Huang, Aoqi Wu, Yifan Yang, Xufang Luo, Yuqing Yang, Liang Hu, Qi Dai, Xiyang Dai, Dongdong Chen, Chong Luo, and Lili Qiu. 2024. Llm2clip: Powerful language model unlock richer visual representation. Preprint, arXiv:2411.04997.

- Jang et al. (2016) Eric Jang, Shixiang Gu, and Ben Poole. 2016. Categorical reparameterization with gumbel-softmax. arXiv preprint arXiv:1611.01144.

- Johnson et al. (2017) Jeff Johnson, Matthijs Douze, and Hervé Jégou. 2017. Billion-scale similarity search with gpus.

- Jolliffe and Cadima (2016) Ian T. Jolliffe and Jorge Cadima. 2016. Principal component analysis: a review and recent developments. Philos Trans A Math Phys Eng, 374(2065):20150202.

- Kalervo et al. (2002) Kalervo Järvelin and Jaana Kekäläinen. 2002. Cumulated gain-based evaluation of ir techniques. ACM Transactions on Information Systems (TOIS), 20(4):422–446.

- Kingma et al. (2013) Diederik P Kingma and Max Welling. 2013. Auto-encoding variational bayes.

- Kusupati et al. (2022) Aditya Kusupati, Gantavya Bhatt, Aniket Rege, Matthew Wallingford, Aditya Sinha, Vivek Ramanujan, William Howard-Snyder, Kaifeng Chen, Sham Kakade, Prateek Jain, and 1 others. 2022. Matryoshka representation learning. Advances in Neural Information Processing Systems, 35:30233–30249.

- Lee and Seung (2000) Daniel Lee and H Sebastian Seung. 2000. Algorithms for non-negative matrix factorization. Advances in neural information processing systems, 13.

- Li et al. (2023) Yixiao Li, Yifan Yu, Qingru Zhang, Chen Liang, Pengcheng He, Weizhu Chen, and Tuo Zhao. 2023. Losparse: Structured compression of large language models based on low-rank and sparse approximation. In International Conference on Machine Learning, pages 20336–20350. PMLR.

- (24) Geoffrey J McLachlan. Discriminant analysis and statistical pattern recognition. Wiley-Interscience.

- O Pinheiro et al. (2020) Pedro O Pinheiro, Amjad Almahairi, Ryan Benmalek, Florian Golemo, and Aaron C Courville. 2020. Unsupervised learning of dense visual representations. Advances in neural information processing systems, 33:4489–4500.

- Oord et al. (2018) Aaron van den Oord, Yazhe Li, and Oriol Vinyals. 2018. Representation learning with contrastive predictive coding. arXiv preprint arXiv:1807.03748.

- Robertson and Walker (1994) Stephen E. Robertson and Steve Walker. 1994. Some simple effective approximations to the 2-poisson model for probabilistic weighted retrieval. ACM.

- Thakur et al. (2021) Nandan Thakur, Nils Reimers, Andreas Rücklé, Abhishek Srivastava, and Iryna Gurevych. 2021. Beir: A heterogenous benchmark for zero-shot evaluation of information retrieval models.

- Vaswani et al. (2017) Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. 2017. Attention is all you need. Advances in neural information processing systems, 30.

- Wang et al. (2019) Alex Wang, Yada Pruksachatkun, Nikita Nangia, Amanpreet Singh, Julian Michael, Felix Hill, Omer Levy, and Samuel Bowman. 2019. Superglue: A stickier benchmark for general-purpose language understanding systems. Advances in neural information processing systems, 32.

- Wang et al. (2018) Alex Wang, Amanpreet Singh, Julian Michael, Felix Hill, Omer Levy, and Samuel R Bowman. 2018. Glue: A multi-task benchmark and analysis platform for natural language understanding.

- Wang et al. (2020) Xun Wang, Haozhi Zhang, Weilin Huang, and Matthew R Scott. 2020. Cross-batch memory for embedding learning. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 6388–6397.

- Yoon et al. (2023) Jinsung Yoon, Sercan O Arik, Yanfei Chen, and Tomas Pfister. 2023. Search-adaptor: Embedding customization for information retrieval. arXiv preprint arXiv:2310.08750.

- Yoon et al. (2024) Jinsung Yoon, Rajarishi Sinha, Sercan O Arik, and Tomas Pfister. 2024. Matryoshka-adaptor: Unsupervised and supervised tuning for smaller embedding dimensions. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pages 10318–10336, Miami, Florida, USA. Association for Computational Linguistics.

- Yu et al. (2018) Ruichi Yu, Ang Li, Chun-Fu Chen, Jui-Hsin Lai, Vlad I Morariu, Xintong Han, Mingfei Gao, Ching-Yung Lin, and Larry S Davis. 2018. Nisp: Pruning networks using neuron importance score propagation. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 9194–9203.

- Yury et al. (2018) Yury A Malkov and Dmitry A Yashunin. 2018. Efficient and robust approximate nearest neighbor search using hierarchical navigable small world graphs. IEEE Transactions on Pattern Analysis and Machine Intelligence.

## Appendix A Derivation of the Gradient Fluctuation