# The Mechanistic Emergence of Symbol Grounding in Language Models

**Authors**:

- Freda Shi, Joyce Chai (University of Michigan; University of Waterloo; Vector Institute; UNC at Chapel Hill)

## Abstract

Symbol grounding (Harnad, 1990) describes how symbols such as words acquire their meanings by connecting to real-world sensorimotor experiences. Recent work has shown preliminary evidence that grounding may emerge in (vision-)language models trained at scale without using explicit grounding objectives. Yet, the specific loci of this emergence and the mechanisms that drive it remain largely unexplored. To address this problem, we introduce a controlled evaluation framework that systematically traces how symbol grounding arises within the internal computations through mechanistic and causal analysis. Our findings show that grounding concentrates in middle-layer computations and is implemented through the aggregate mechanism, where attention heads aggregate the environmental ground to support the prediction of linguistic forms. This phenomenon replicates in multimodal dialogue and across architectures (Transformers and state-space models), but not in unidirectional LSTMs. Our results provide behavioral and mechanistic evidence that symbol grounding can emerge in language models, with practical implications for predicting and potentially controlling the reliability of generation.

Footnotes: Authors contributed equally to this work. Advisors contributed equally to this work.

## 1 Introduction

Symbol grounding (Harnad, 1990) refers to the problem of how abstract and discrete symbols, such as words, acquire meaning by connecting to perceptual or sensorimotor experiences. In multimodal machine learning, grounding has been leveraged as an explicit pre-training objective for vision-language models (VLMs), connecting linguistic units to the world that gives language its meaning (Li et al., 2022; Ma et al., 2023). Through supervised fine-tuning with grounding signals, such as entity-phrase mappings, modern VLMs have achieved fine-grained understanding at both region (You et al., 2024; Peng et al., 2024; Wang et al., 2024) and pixel (Zhang et al., 2024b; Rasheed et al., 2024; Zhang et al., 2024a) levels.

With the rise of powerful autoregressive language models (LMs; OpenAI, 2024; Anthropic, 2024; Comanici et al., 2025, inter alia) and their VLM extensions, there is growing interest in identifying and interpreting their emergent capabilities. Recent work has shown preliminary correlational evidence that grounding may emerge in LMs (Sabet et al., 2020; Shi et al., 2021; Wu et al., 2025b) and VLMs (Cao et al., 2025; Bousselham et al., 2024; Schnaus et al., 2025) trained at scale, even when solely optimized with the simple next-token prediction objective. However, the underlying mechanisms that lead to such emergence are not well understood. To address this limitation, our work seeks to understand the emergence of symbol grounding in LMs, causally and mechanistically tracing how symbol grounding arises within the internal computations.

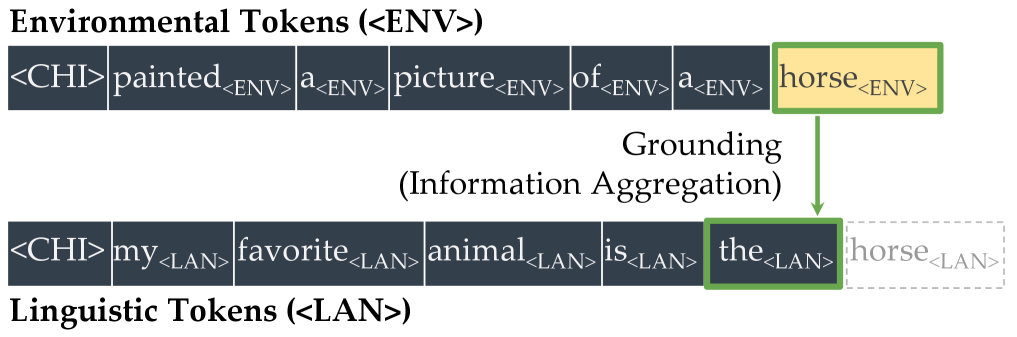

We begin by constructing a minimal testbed, motivated by the annotations provided in the CHILDES corpora (MacWhinney, 2000), where child–caregiver interactions provide cognitively plausible contexts for studying symbol grounding alongside verbal utterances. In our framework, each word is represented in two distinct forms: one token that appears in non-verbal scene descriptions (e.g., a box in the environment) and another that appears in spoken utterances (e.g., box in dialogue). We refer to these as environmental tokens ( $\langle$ ENV $\rangle$ ) and linguistic tokens ( $\langle$ LAN $\rangle$ ), respectively. A deliberately simple word-level tokenizer assigns separate vocabulary entries to each form, ensuring that they are treated as entirely different tokens by the language model. This framework enforces a structural separation between scenes and symbols, preventing correspondences from being reduced to trivial token identity. Under this setup, we can evaluate whether a model trained from scratch is able to predict the linguistic form from its environmental counterpart.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Token Grounding and Information Aggregation

### Overview

The image illustrates a two-tiered token grounding process, mapping environmental tokens (<ENV>) to linguistic tokens (<LAN>). It emphasizes the relationship between concrete environmental concepts (e.g., "horse") and their linguistic representations, with explicit grounding via a highlighted connection.

### Components/Axes

1. **Sections**:

- **Environmental Tokens (<ENV>)**: Top row, labeled with `<ENV>` tags.

- **Linguistic Tokens (<LAN>)**: Bottom row, labeled with `<LAN>` tags.

2. **Highlighted Tokens**:

- `horse_<ENV>` (yellow background, green border).

- `the_<LAN>` (green border, connected via arrow).

3. **Arrow**:

- Green arrow labeled "Grounding (Information Aggregation)" links `horse_<ENV>` to `the_<LAN>`.

### Detailed Analysis

#### Environmental Tokens (<ENV>)

- Sequence: `<CHI> painted_<ENV> a_<ENV> picture_<ENV> of_<ENV> a_<ENV> horse_<ENV>`.

- Structure:

- `<CHI>`: Likely a context or scene identifier.

- Tokens describe a painted picture of a horse, with `<ENV>` tags indicating environmental grounding.

- `horse_<ENV>` is emphasized via color (yellow) and the grounding arrow.

#### Linguistic Tokens (<LAN>)

- Sequence: `<CHI> my_<LAN> favorite_<LAN> animal_<LAN> is_<LAN> the_<LAN> horse_<LAN>`.

- Structure:

- `<CHI>`: Matches the environmental section, suggesting shared context.

- Tokens form a sentence fragment: "my favorite animal is the horse."

- `the_<LAN>` is highlighted, mirroring the environmental `horse_<ENV>` via the arrow.

#### Grounding Mechanism

- The arrow explicitly connects `horse_<ENV>` (environmental) to `the_<LAN>` (linguistic), indicating a semantic mapping.

- Both highlighted tokens share a green border, reinforcing their linkage.

### Key Observations

1. **Repetition of `<CHI>`**: Appears in both sections, possibly denoting a shared context or identifier.

2. **Token Alignment**:

- Environmental tokens describe a scene (`painted picture of a horse`).

- Linguistic tokens form a sentence fragment referencing the same scene.

3. **Highlighting**:

- `horse_<ENV>` and `the_<LAN>` are visually linked, suggesting they represent the same entity across modalities.

4. **Tagging**:

- `<ENV>` and `<LAN>` tags differentiate token types, critical for grounding tasks.

### Interpretation

This diagram demonstrates **cross-modal grounding**, where environmental data (e.g., visual or sensory tokens) is mapped to linguistic representations. The highlighted connection between `horse_<ENV>` and `the_<LAN>` implies that the system aggregates information to associate concrete entities (e.g., a horse in a scene) with their linguistic counterparts (e.g., the word "the horse").

The repetition of `<CHI>` suggests a shared context or identifier, possibly denoting a specific instance or scenario. The grounding arrow acts as a bridge between modalities, emphasizing the importance of aligning environmental and linguistic data for tasks like natural language understanding or multimodal AI systems.

Notably, `horse_<LAN>` is grayed out, which may indicate that it is the token to be predicted through the grounding process rather than part of the given context. This aligns with how grounding often involves implicit mappings rather than explicit annotations.

</details>

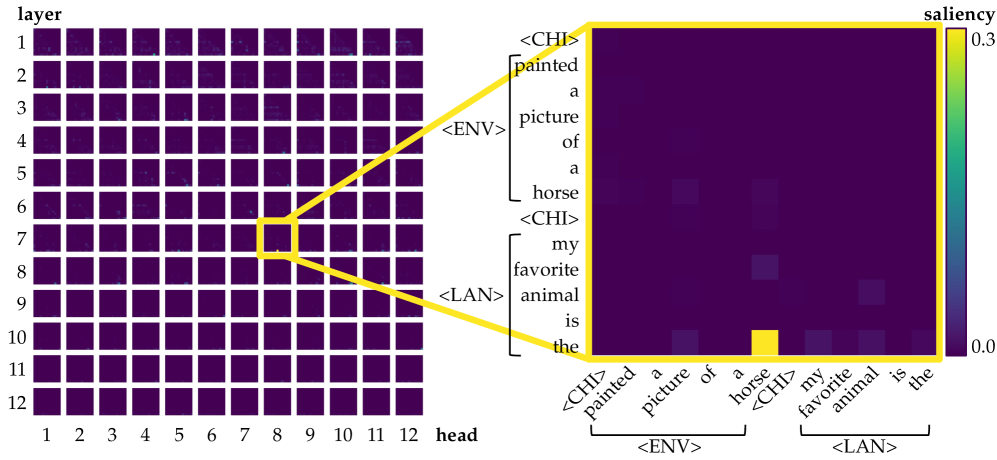

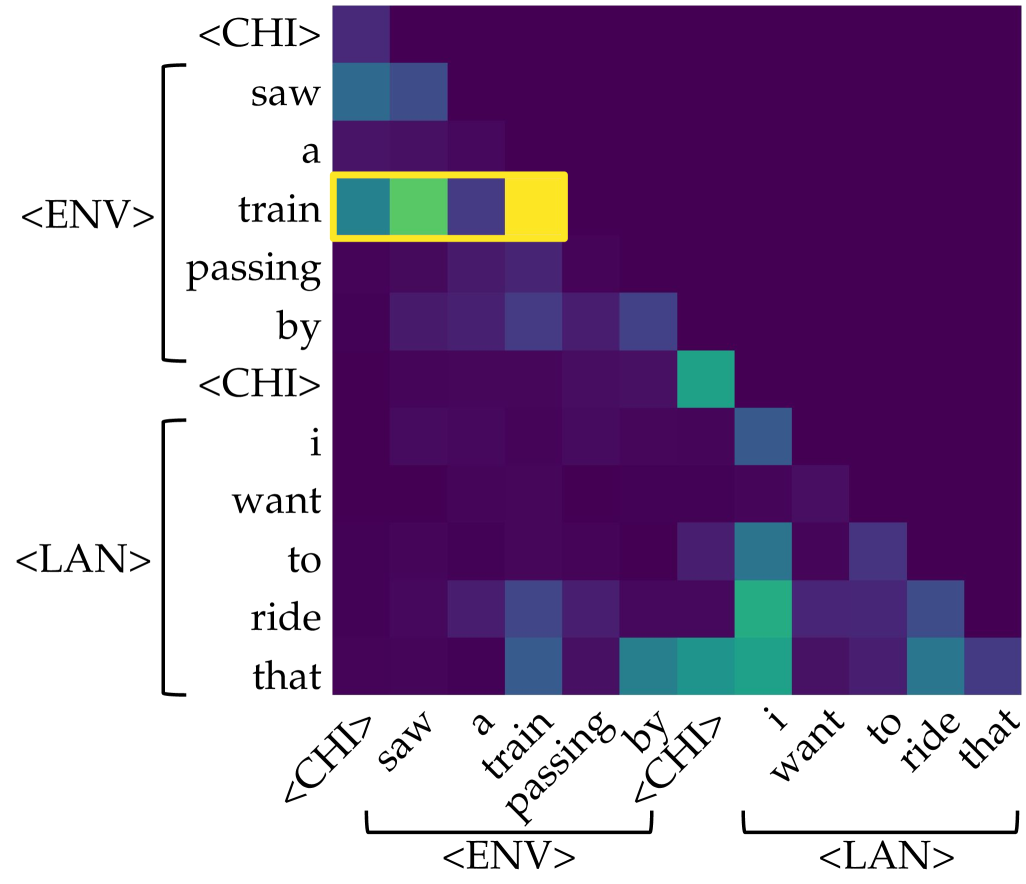

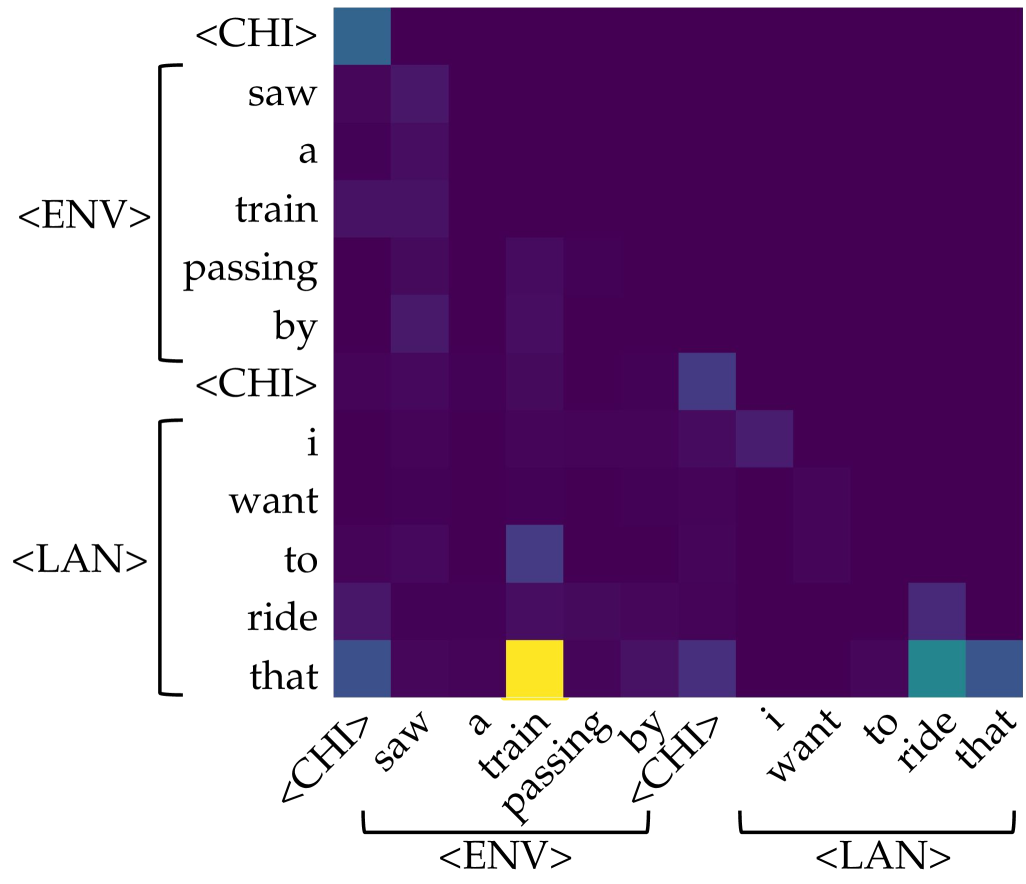

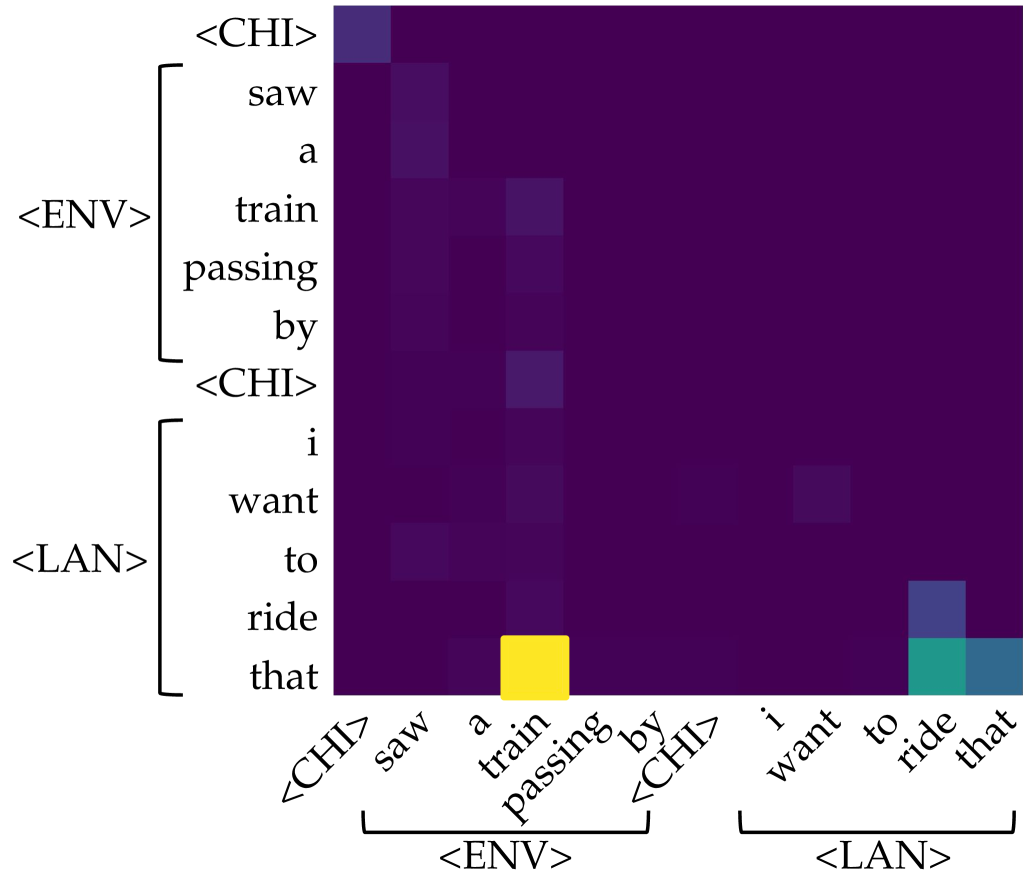

(a) Attention head 8 of layer 7 in GPT-CHILDES.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: Environmental Token Grounding Process

### Overview

The diagram illustrates the relationship between environmental tokens (<ENV>) and linguistic tokens (<LAN>) through a grounding process called "Information Aggregation." It combines a visual representation of a llama with textual analysis and token segmentation.

### Components/Axes

1. **Visual Element**:

- Image of a llama in a fenced enclosure with desert vegetation

- Colored bounding boxes (red/yellow) on the llama's body

2. **Textual Component**:

- Question: "what would you name this ? alpaca"

- Words segmented into individual dark blue boxes

- Green box highlighting the question mark ("?")

3. **Connecting Element**:

- Green arrow from yellow box on llama to "this ?" in text

4. **Token Labels**:

- Environmental Tokens (<ENV>)

- Linguistic Tokens (<LAN>)

### Detailed Analysis

1. **Visual Annotation**:

- Red boxes: Likely represent environmental features (e.g., "desert", "fence")

- Yellow box: Highlights the llama as the primary subject

2. **Text Segmentation**:

- Each word in "what would you name this ? alpaca" is individually boxed

- Question mark ("?") emphasized with green box

3. **Token Flow**:

- Green arrow connects visual grounding (llama) to linguistic output ("alpaca")

- Suggests information flow from environmental context to language model

### Key Observations

1. The grounding process transforms visual input into structured linguistic tokens

2. The question mark acts as a critical junction between perception and language

3. Color coding differentiates token types:

- Red/Yellow: Environmental features

- Dark Blue: Linguistic tokens

- Green: Connection/grounding element

4. "alpaca" appears as the final output token, disconnected from the question structure

### Interpretation

This diagram demonstrates a multimodal grounding process where:

1. Environmental context (visual scene) is analyzed through tokenized features

2. The system generates a question ("what would you name this ?") to bridge perception and language

3. The question mark serves as the critical interface between visual and linguistic processing

4. The final output ("alpaca") emerges from aggregating environmental information through the grounding mechanism

The color-coded tokenization suggests a structured approach to:

- Spatial analysis (ENV tokens)

- Semantic decomposition (LAN tokens)

- Contextual integration (green arrow connection)

The absence of numerical values indicates this is a conceptual diagram rather than data visualization, focusing on process flow rather than quantitative analysis.

</details>

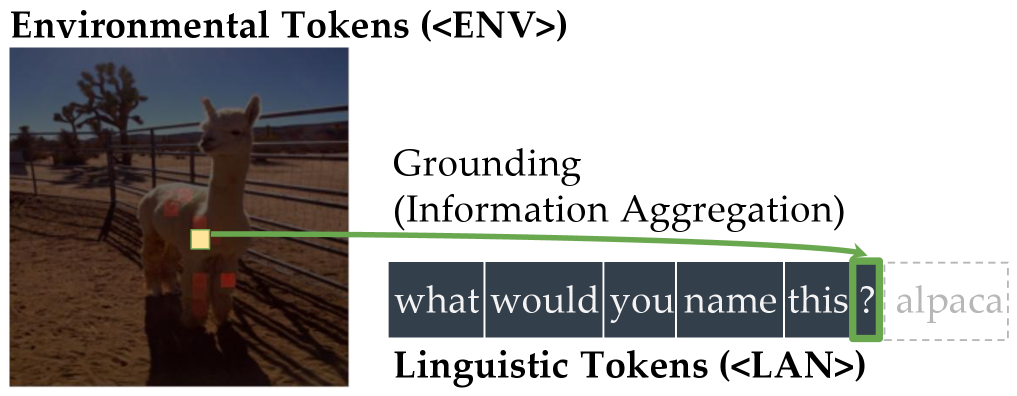

(b) Attention head 7 of layer 20 in LLaVA-1.5-7B.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Heatmap Visualization: Attention and Saliency Analysis

### Overview

The image presents a dual-part visualization:

1. **Left Grid**: A 12x12 matrix labeled "layer" (vertical axis) and "head" (horizontal axis), with a highlighted cell at layer 7, head 8.

2. **Right Heatmap**: A word-based saliency map with a color scale from 0.0 (dark purple) to 0.3 (yellow), highlighting the word "horse" in yellow.

The visualization connects the left grid to the right heatmap via yellow arrows, suggesting a relationship between attention patterns and word saliency.

---

### Components/Axes

#### Left Grid (Attention Matrix)

- **Vertical Axis (Layer)**: Labeled "layer" with values 1–12.

- **Horizontal Axis (Head)**: Labeled "head" with values 1–12.

- **Highlighted Cell**: Layer 7, Head 8 (marked with a yellow square).

- **Color Scale**: Not explicitly labeled, but the highlighted cell is yellow, implying higher attention.

#### Right Heatmap (Saliency Map)

- **Vertical Axis (Words)**: Contains phrases like `<CHI> painted a picture of a horse <CHI> my favorite animal is the`.

- **Horizontal Axis (Words)**: Contains phrases like `<ENV> <LAN>`.

- **Color Scale**: Labeled "saliency" with values 0.0 (dark purple) to 0.3 (yellow).

- **Highlighted Cell**: The word "horse" (yellow).

#### Legend

- **Color Bar**: Positioned to the right of the heatmap, transitioning from dark purple (0.0) to yellow (0.3).

---

### Detailed Analysis

#### Left Grid (Attention Matrix)

- **Structure**: 12x12 grid with uniform dark purple cells except for the highlighted cell at (7, 8).

- **Trend**: No discernible pattern in the grid; the highlighted cell is an outlier.

- **Uncertainty**: No numerical values provided for other cells, only the highlighted cell’s saliency is implied.

#### Right Heatmap (Saliency Map)

- **Structure**: 12x12 grid with varying shades of purple and yellow.

- **Key Data Points**:

- The word "horse" is the brightest (yellow), indicating the highest saliency.

- Other words (e.g., "painted," "picture," "favorite") show lower saliency (darker purple).

- **Trend**: The saliency decreases from "horse" outward, with no other words reaching the yellow threshold.

---

### Key Observations

1. **Outlier in Left Grid**: The highlighted cell at layer 7, head 8 is the only cell with a distinct color, suggesting it is the most active attention head in that layer.

2. **Saliency Focus**: The word "horse" dominates the right heatmap, indicating it is the most salient term in the text.

3. **Connection**: The yellow arrows linking the left grid to the right heatmap imply that the attention in layer 7, head 8 is directly tied to the saliency of "horse."

---

### Interpretation

- **Attention-Saliency Relationship**: The visualization suggests that the model’s attention in layer 7, head 8 is concentrated on the word "horse," which is the most salient term in the text. This could indicate that the model prioritizes this word for tasks like classification or generation.

- **Model Behavior**: The lack of other highlighted cells in the left grid implies that this specific head-layer combination is uniquely responsible for processing the salient word.

- **Implications**: This could reflect how the model encodes specific concepts (e.g., "horse") in its internal representations, with certain attention heads specializing in particular linguistic features.

---

**Note**: The image does not contain numerical values for non-highlighted cells, limiting quantitative analysis. The interpretation relies on visual cues and the explicit connection between the grid and heatmap.

</details>

(c) Left: saliency over tokens of each head in each layer for the prompt $\langle$ CHI $\rangle$ $\textit{painted}_{\langle\texttt{ENV}\rangle}$ $\textit{a}_{\langle\texttt{ENV}\rangle}$ $\textit{picture}_{\langle\texttt{ENV}\rangle}$ $\textit{of}_{\langle\texttt{ENV}\rangle}$ $\textit{a}_{\langle\texttt{ENV}\rangle}$ $\textit{horse}_{\langle\texttt{ENV}\rangle}$ $\langle$ CHI $\rangle$ $\textit{my}_{\langle\texttt{LAN}\rangle}$ $\textit{favorite}_{\langle\texttt{LAN}\rangle}$ $\textit{animal}_{\langle\texttt{LAN}\rangle}$ $\textit{is}_{\langle\texttt{LAN}\rangle}$ $\textit{the}_{\langle\texttt{LAN}\rangle}$. Right: among all, only one of them (head 8 of layer 7) is identified as an aggregate head, where information flows from $\textit{horse}_{\langle\texttt{ENV}\rangle}$ to the current position, encouraging the model to predict $\textit{horse}_{\langle\texttt{LAN}\rangle}$ as the next token.

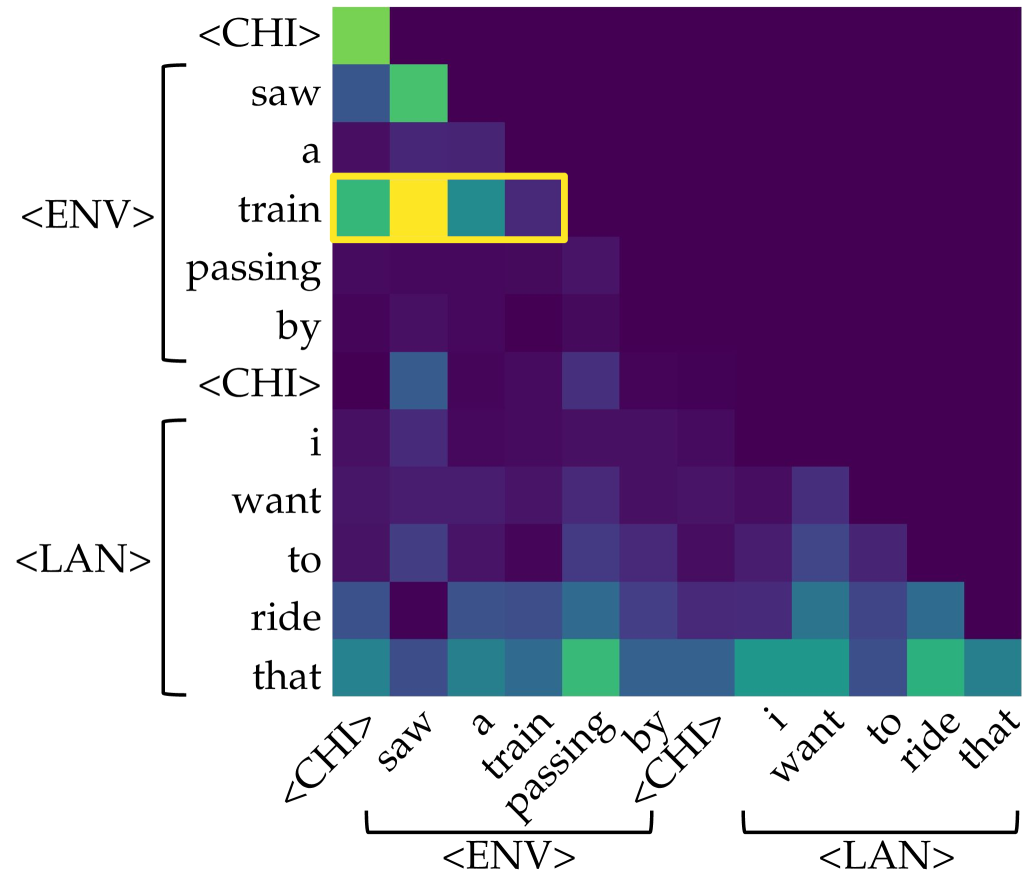

Figure 1: Illustration of the symbol grounding mechanism through information aggregation. Lighter colors denote more salient attention, quantified by saliency scores, i.e., gradient $\times$ attention contributions to the loss (Wang et al., 2023). When predicting the next token, aggregate heads (Bick et al., 2025) emerge to exclusively link environmental tokens (visual or situational context; $\langle$ ENV $\rangle$ ) to linguistic tokens (words in text; $\langle$ LAN $\rangle$ ). These heads provide a mechanistic pathway for symbol grounding by mapping external environmental evidence into its linguistic form.
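To make the "gradient $\times$ attention" quantity in the caption concrete, the following is a toy sketch for a single head and a single query position. It is not the paper's implementation: the squared-error loss, the scalar value readouts, and all numbers are illustrative assumptions (a real LM would use the next-token cross-entropy loss and automatic differentiation), and `attention_saliency` is a hypothetical helper name.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_saliency(scores, values, target):
    """Toy saliency per context token: |a_j * dL/da_j|.

    Assumed toy model: output o = sum_j a_j * v_j and loss L = (o - target)^2,
    so the gradient w.r.t. each attention weight is dL/da_j = 2 * (o - target) * v_j.
    """
    attn = softmax(scores)
    out = sum(a * v for a, v in zip(attn, values))
    grads = [2.0 * (out - target) * v for v in values]
    return [abs(a * g) for a, g in zip(attn, grads)]

tokens = ["painted_ENV", "a_ENV", "horse_ENV", "is_LAN", "the_LAN"]
scores = [0.1, 0.0, 2.0, 0.2, 0.1]  # hypothetical pre-softmax attention scores
values = [0.1, 0.0, 1.0, 0.1, 0.0]  # hypothetical scalar value readouts
sal = attention_saliency(scores, values, target=1.5)

# The context token with the largest saliency for this head.
top = tokens[max(range(len(tokens)), key=sal.__getitem__)]
print(top)  # prints horse_ENV
```

In this toy setting, a token scores high only when the head both attends to it and the loss is sensitive to that attention weight, which is the intuition behind reading saliency as information flow.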

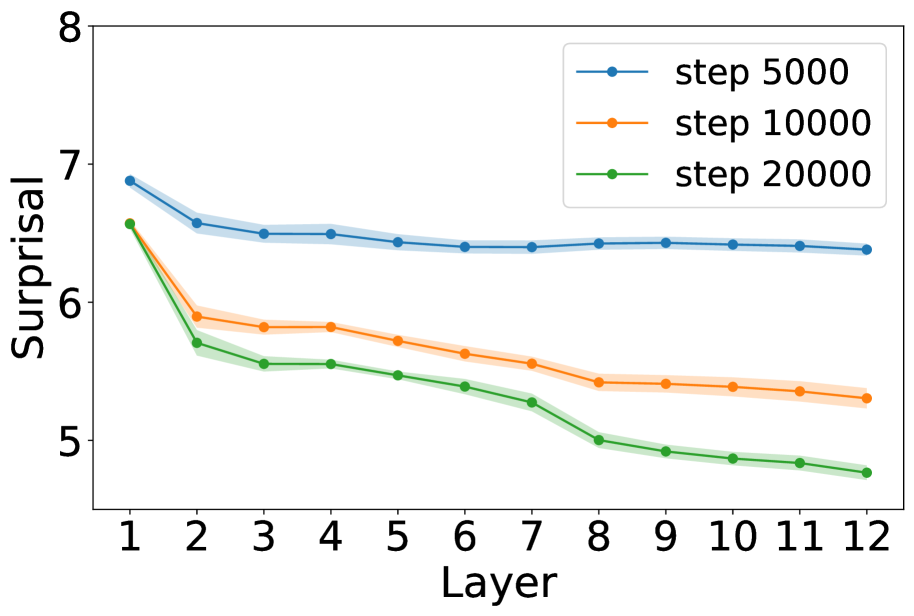

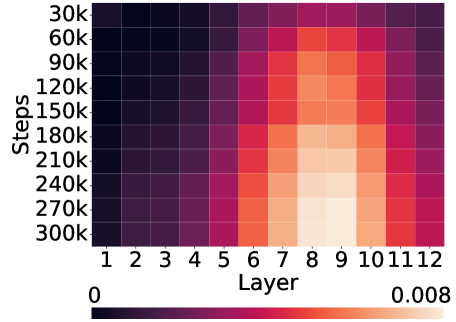

We quantify the level of grounding using surprisal: specifically, we compare how easily the model predicts a linguistic token ( $\langle$ LAN $\rangle$ ) when its matching environmental token ( $\langle$ ENV $\rangle$ ) is present versus when unrelated cues are given instead. A lower surprisal in the former condition indicates that the model has learned to align environmental grounds with linguistic forms. We find that LMs do learn to ground: the presence of environmental tokens consistently reduces surprisal for their linguistic counterparts, in a way that simple co-occurrence statistics cannot fully explain. To study the underlying mechanisms, we apply saliency analysis (Wang et al., 2023) and the tuned lens (Belrose et al., 2023), which converge on the result that grounding relations are concentrated in the middle layers of the network. Further analysis of attention heads reveals patterns consistent with the aggregate mechanism (Bick et al., 2025), where attention heads support the prediction of linguistic forms by retrieving their environmental grounds in the context.
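The surprisal comparison described above can be sketched as follows. This is a minimal illustration, not the paper's evaluation code: the probabilities are made-up placeholders rather than reported results, the natural logarithm is an assumed choice of base, and `surprisal` and `grounding_gain` are hypothetical helper names.

```python
import math

def surprisal(prob: float) -> float:
    """Surprisal in nats: -log p(token | context)."""
    if not 0.0 < prob <= 1.0:
        raise ValueError("prob must be in (0, 1]")
    return -math.log(prob)

def grounding_gain(p_match: float, p_mismatch: float) -> float:
    """Surprisal with a mismatched <ENV> context minus surprisal with the matched one.

    A positive value means the matching environmental token makes the
    linguistic token easier to predict.
    """
    return surprisal(p_mismatch) - surprisal(p_match)

# Placeholder probabilities of the <LAN> target under the two contexts.
p_match = 0.20     # matching <ENV> token is in the context
p_mismatch = 0.02  # an unrelated <ENV> token is in the context instead
gain = grounding_gain(p_match, p_mismatch)
print(f"{gain:.3f}")  # prints 2.303
```

Because the gain is a difference of log-probabilities, it equals the log of the probability ratio between the two conditions, so it is unaffected by how frequent the target word is overall in a way that raw probabilities are not.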

Finally, we demonstrate that these findings generalize beyond the minimal CHILDES data and Transformer models. They appear in a multimodal setting with the Visual Dialog dataset (Das et al., 2017), and in state-space models (SSMs) such as Mamba-2 (Dao & Gu, 2024). In contrast, we do not observe grounding in unidirectional LSTMs, consistent with their sequential state compression and lack of content-addressable retrieval. Taken together, our results show that symbol grounding can mechanistically emerge in autoregressive LMs, while also delineating the architectural conditions under which it can arise.

## 2 Related Work

### 2.1 Language Grounding

Referential grounding has long been framed as the lexicon acquisition problem: how words map to referents in the world (Harnad, 1990; Gleitman & Landau, 1994; Clark, 1995). Early work focused on word-to-symbol mappings, designing learning mechanisms that simulate children’s lexical acquisition and explain psycholinguistic phenomena (Siskind, 1996; Regier, 2005; Goodman et al., 2007; Fazly et al., 2010). Subsequent studies incorporated visual grounding, first by aligning words with object categories (Roy & Pentland, 2002; Yu, 2005; Xu & Tenenbaum, 2007; Yu & Ballard, 2007; Yu & Siskind, 2013), and later by mapping words to richer visual features (Qu & Chai, 2010; Mao et al., 2019; 2021; Pratt et al., 2020). More recently, large-scale VLMs trained with paired text–image supervision have advanced grounding to finer levels of granularity, achieving region-level (Li et al., 2022; Ma et al., 2023; Chen et al., 2023; You et al., 2024; Wang et al., 2024) and pixel-level (Xia et al., 2024; Rasheed et al., 2024; Zhang et al., 2024b) grounding, with strong performance on referring expression comprehension (Chen et al., 2024a).

Recent work suggests that grounding emerges as a property of VLMs trained without explicit supervision, with evidence drawn from attention-based spatial localization (Cao et al., 2025; Bousselham et al., 2024) and cross-modal geometric correspondences (Schnaus et al., 2025). However, all prior work focused exclusively on static final-stage models, overlooking the training trajectory, a crucial aspect for understanding when and how grounding emerges. In addition, existing work has framed grounding through correlations between visual and textual signals, diverging from the definition by Harnad (1990), which emphasizes causal links from symbols to meanings. To address these issues, we systematically examine learning dynamics throughout the training process, applying causal interventions to probe model internals and introducing control groups to enable rigorous comparison.

### 2.2 Emergent Capabilities and Learning Dynamics of LMs

A central debate concerns whether larger language models exhibit genuinely new behaviors: Wei et al. (2022) highlight abrupt improvements in tasks, whereas later studies argue such effects are artifacts of thresholds or in-context learning dynamics (Schaeffer et al., 2023; Lu et al., 2024). Beyond end performance, developmental analyses show that models acquire linguistic abilities in systematic though heterogeneous orders with variability across runs and checkpoints (Sellam et al., 2021; Blevins et al., 2022; Biderman et al., 2023; Xia et al., 2023; van der Wal et al., 2025). Psychology-inspired perspectives further emphasize controlled experimentation to assess these behaviors (Hagendorff, 2023), and comparative studies reveal both parallels and divergences between machine and human language learning (Chang & Bergen, 2022; Evanson et al., 2023; Chang et al., 2024; Ma et al., 2025). At a finer granularity, hidden-loss analyses identify phase-like transitions (Kangaslahti et al., 2025), while distributional studies attribute emergence to stochastic differences across training seeds (Zhao et al., 2024). Taken together, these studies suggest that emergent abilities are not sharp discontinuities but probabilistic outcomes of developmental learning dynamics. Following this line of work, we present a probability- and model internals–based analysis of how symbol grounding emerges during language model training.

### 2.3 Mechanistic Interpretability of LMs

Mechanistic interpretability has largely focused on attention heads in Transformers (Elhage et al., 2021; Olsson et al., 2022; Meng et al., 2022; Bietti et al., 2023; Lieberum et al., 2023; Wu et al., 2025a). A central line of work established that induction heads emerge to support in-context learning (ICL; Elhage et al., 2021; Olsson et al., 2022), with follow-up studies tracing their training dynamics (Bietti et al., 2023) and mapping factual recall circuits (Meng et al., 2022). At larger scales, Lieberum et al. (2023) identified specialized content-gatherer and correct-letter heads, and Wu et al. (2025a) showed that a sparse set of retrieval heads is critical for reasoning and long-context performance. Relatedly, Wang et al. (2023) demonstrated that label words in demonstrations act as anchors: early layers gather semantic information into these tokens, which later guide prediction. Based on these insights, Bick et al. (2025) proposed that retrieval is implemented through a coordinated gather-and-aggregate (G&A) mechanism: some heads collect content from relevant tokens, while others aggregate it at the prediction position. Other studies extended this line of work by analyzing failure modes and training dynamics (Wiegreffe et al., 2025) and contrasting retrieval mechanisms in Transformers and SSMs (Arora et al., 2025). Whereas prior analyses typically investigate ICL with repeated syntactic or symbolic formats, our setup requires referential alignment between linguistic forms and their environmental contexts, providing a complementary testbed for naturalistic language grounding.

## 3 Method

Table 1: Training and test examples across datasets with target word *book*. The training examples combine environmental tokens ( $\langle$ ENV $\rangle$ ; shaded) with linguistic tokens ( $\langle$ LAN $\rangle$ ). Test examples are constructed with either matched (*book*) or mismatched (*toy*) environmental contexts, paired with corresponding linguistic prompts. Note that in child-directed speech and caption-grounded dialogue, $\textit{book}_{\langle\texttt{ENV}\rangle}$ and $\textit{book}_{\langle\texttt{LAN}\rangle}$ are two distinct tokens received by LMs.

| Child-Directed Speech | tticblue!10 $\langle$ CHI $\rangle$ takes book from mother | $\langle$ CHI $\rangle$ what’s that $\langle$ MOT $\rangle$ a book in it … | tticblue!10 $\langle$ CHI $\rangle$ asked for a new book | tticblue!10 $\langle$ CHI $\rangle$ asked for a new toy | $\langle$ CHI $\rangle$ I love this |

| --- | --- | --- | --- | --- | --- |

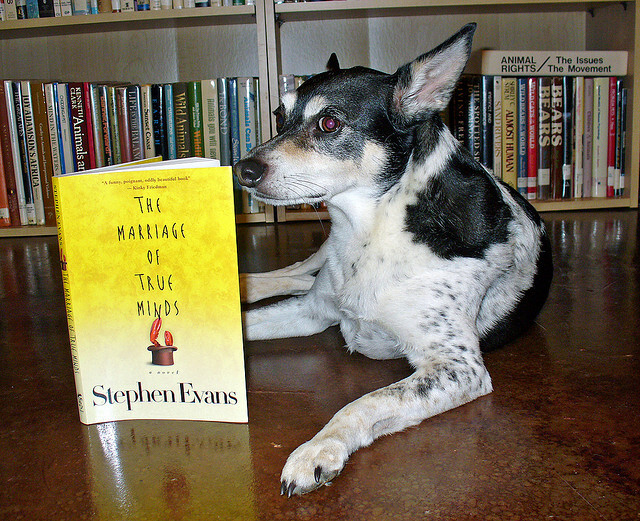

| Caption-Grounded Dialogue | tticblue!10 a dog appears to be reading a book with a full bookshelf behind | $\langle$ Q $\rangle$ can you tell what book it’s reading $\langle$ A $\rangle$ the marriage of true minds by stephen evans | tticblue!10 this is a book | tticblue!10 this is a toy | $\langle$ Q $\rangle$ can you name this object $\langle$ A $\rangle$ |

Image-Grounded Dialogue row (the $\langle$ ENV $\rangle$ cells are images). Training $\langle$ ENV $\rangle$ image:

<details>

<summary>figs/data/book-train.jpg Details</summary>

### Visual Description

## Photograph: Dog with Book in Library Setting

### Overview

A black-and-white speckled dog sits on a polished wooden floor, facing a yellow book titled *"The Marriage of True Minds"* by Stephen Evans. The dog’s head is tilted slightly toward the book, with its ears perked. Behind the dog, bookshelves filled with books are visible, including titles related to animal rights (e.g., *"Animal Rights / The Issues," "Bears," "Wild Animals"*).

### Components/Axes

- **Foreground**:

- **Dog**: Black-and-white speckled coat, pink nose, dark eyes, alert posture.

- **Book**: Yellow cover with bold black text: *"THE MARRIAGE OF TRUE MINDS"* (title), *"Stephen Evans"* (author). A small illustration of a red candle is visible near the bottom of the cover.

- **Background**:

- **Bookshelves**: Light-colored wooden shelves with books arranged vertically. Labels on shelves include:

- *"ANIMAL RIGHTS / The Issues / The Movement"* (top shelf).

- *"Wild Animals," "Bears," "Sanders," "Almost Human"* (middle shelves).

### Detailed Analysis

- **Text Extraction**:

- Book title: *"The Marriage of True Minds"* (Stephen Evans).

- Shelf labels: *"ANIMAL RIGHTS / The Issues / The Movement," "Wild Animals," "Bears," "Sanders," "Almost Human."*

- No other legible text or numerical data present.

### Key Observations

- The dog’s positioning suggests a staged, anthropomorphic scene, as if the dog is "reading" the book.

- The book’s theme (love/intellect) contrasts with the animal rights titles in the background, potentially implying a narrative about human-animal relationships.

- No numerical data, charts, or diagrams are present.

### Interpretation

The image likely serves as a creative or symbolic representation, juxtaposing the dog’s curiosity with the book’s philosophical themes. The animal rights titles in the background may hint at the owner’s interests or the dog’s role as a companion in a household engaged with ethical or intellectual pursuits. The absence of explicit data suggests the image prioritizes visual storytelling over factual or analytical content.

**Note**: No numerical or structured data (e.g., charts, tables) is present in the image. All textual elements are transcribed as described.

</details>

Training $\langle$ LAN $\rangle$: $\langle$ Q $\rangle$ can you tell what book it’s reading $\langle$ A $\rangle$ the marriage of true minds by stephen evans. Test $\langle$ ENV $\rangle$ image (matched context):

<details>

<summary>figs/data/book-test.jpg Details</summary>

### Visual Description

## Photograph: Wooden Bookshelf with Decorative Items

### Overview

The image depicts a large, dark-stained wooden bookshelf against a yellow wall. The shelves are densely packed with books, framed photographs, and small decorative objects. A model ship is placed atop the bookshelf, and the arrangement suggests a personal library or study space. No readable text is visible on the books, photos, or decorative items.

### Components/Axes

- **Bookshelf Structure**:

- Three vertical sections with upper cabinets (no visible handles) and lower open shelves.

- Lower section includes drawers with dark metal handles.

- **Decorative Items**:

- Framed photographs (small, rectangular, placed on shelves and drawers).

- Stacked books (various sizes, some with visible spines but unreadable titles).

- Small figurines (e.g., a toy car, a small animal figurine).

- A model ship (wooden, multi-masted, positioned on the top shelf).

- **Wall**: Mustard-yellow color, plain with no additional decor.

### Detailed Analysis

- **Books**:

- Spines visible but titles indiscernible due to resolution.

- Colors range from red, blue, green, to black, suggesting diverse genres or authors.

- **Photographs**:

- Framed in simple black or metallic frames.

- Placed on lower shelves and drawers, suggesting personal significance.

- **Decorative Objects**:

- Toy car (blue and yellow, placed on the bottom shelf).

- Small animal figurine (white, near the center of the bookshelf).

- **Model Ship**:

- Positioned on the top shelf, centered.

- No visible text or markings on the ship.

### Key Observations

1. **No Readable Text**: No labels, titles, or inscriptions are legible in the image.

2. **Symmetry and Organization**: Books are arranged vertically, with decorative items interspersed for aesthetic balance.

3. **Color Contrast**: Dark wood of the bookshelf contrasts with the yellow wall and colorful books.

4. **Personalization**: Framed photos and figurines indicate the space is curated for personal use.

### Interpretation

The bookshelf serves as a functional and decorative element, blending literature with personal memorabilia. The absence of readable text suggests the focus is on visual appeal rather than cataloging. The model ship and framed photos imply a narrative of travel, family, or hobbies, while the toy car adds a playful touch. The yellow wall enhances the warmth of the wooden tones, creating a cohesive, inviting atmosphere.

**Note**: No textual data (e.g., titles, labels) is extractable from the image. All descriptions are based on visible spatial and aesthetic elements.

</details>

Test $\langle$ ENV $\rangle$ image (mismatched control context):

<details>

<summary>figs/data/book-test-control.jpg Details</summary>

### Visual Description

## Photograph: Wooden Cabinet with Glass Display Sections

### Overview

The image depicts a large, dark-stained wooden cabinet with multiple glass-fronted sections. Each section contains indistinct items, likely collectibles or decorative objects. A small model ship is placed atop the cabinet. The background wall is painted a warm yellow, and the cabinet occupies the majority of the frame.

### Components/Axes

- **Cabinet Structure**:

- Dark wood finish with recessed paneling.

- Glass-fronted compartments (at least three visible).

- Metal hinges and handles on lower sections.

- **Model Ship**:

- Positioned centrally on the cabinet’s top surface.

- Wooden construction with three masts and rigging.

- **Items in Display Sections**:

- Blurred contents visible through glass (no discernible labels or text).

- Objects include figurines, books, and possibly mechanical or glassware items.

### Detailed Analysis

- **Cabinet Design**:

- Uniform dark brown wood with lighter wood inlays.

- Glass panels are rectangular, framed by dark metal.

- Lower sections have horizontal drawers or doors with brass handles.

- **Model Ship**:

- Scale model, approximately 1:100 ratio.

- Rigging details suggest a historical sailing vessel (e.g., 18th/19th century).

- **Display Items**:

- No visible text, labels, or branding.

- Items appear curated, suggesting personal or thematic significance.

### Key Observations

1. **No Textual Elements**: The image contains no legible text, labels, or legends.

2. **Uniformity of Sections**: All glass-fronted compartments share identical design and spacing.

3. **Decorative Focus**: The cabinet and model ship emphasize aesthetic display over functional storage.

### Interpretation

The cabinet serves as a curated display unit, likely for personal or historical artifacts. The absence of text suggests the items are valued for their visual or sentimental appeal rather than informational content. The model ship atop the cabinet may indicate a nautical theme or personal interest in maritime history. The blurred contents of the glass sections imply the items are intentionally obscured, possibly to maintain privacy or focus attention on the cabinet’s craftsmanship.

No data trends, numerical values, or structured datasets are present. The image prioritizes visual composition over technical or analytical content.

</details>

Test $\langle$ LAN $\rangle$ prompt: what do we have here?

### 3.1 Dataset and Tokenization

To capture the emergent grounding from multimodal interactions, we design a minimal testbed with a custom word-level tokenizer, in which every lexical item is represented in two corresponding forms: one token that appears in non-verbal descriptions (e.g., a book in the scene description) and another that appears in utterances (e.g., book in speech). We refer to these as environmental tokens ( $\langle$ ENV $\rangle$ ) and linguistic tokens ( $\langle$ LAN $\rangle$ ), respectively. For instance, $\textit{book}_{\langle\texttt{ENV}\rangle}$ and $\textit{book}_{\langle\texttt{LAN}\rangle}$ are treated as distinct tokens with separate integer indices; that is, the tokenization provides no explicit signal that these tokens are related, so any correspondence between them must be learned during training rather than inherited from their surface form. We instantiate this framework in three datasets, ranging from child-directed speech transcripts to image-based dialogue.
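As a minimal sketch of this tokenization scheme, assuming nothing about the actual implementation, the class below assigns a separate integer id to each (word, form) pair and then builds a matched and a mismatched test sequence in the style of Table 1. `DualFormTokenizer` and its methods are hypothetical names introduced for illustration only.

```python
class DualFormTokenizer:
    """Word-level tokenizer giving each word separate <ENV> and <LAN> ids."""

    def __init__(self):
        self.vocab = {}  # maps (word, form) -> integer id

    def _id(self, key):
        # Assign the next free integer id the first time a key is seen.
        return self.vocab.setdefault(key, len(self.vocab))

    def special(self, tag):
        """Id for a shared special token such as a speaker tag."""
        return self._id((tag, "SPECIAL"))

    def encode(self, words, form):
        """Encode words as environmental ('ENV') or linguistic ('LAN') tokens."""
        if form not in ("ENV", "LAN"):
            raise ValueError("form must be 'ENV' or 'LAN'")
        return [self._id((w, form)) for w in words]

tok = DualFormTokenizer()
chi = tok.special("<CHI>")

# Linguistic prompt shared by both test conditions.
prompt = [chi] + tok.encode("i love this".split(), "LAN")

# Environmental contexts: matched (book) versus mismatched (toy).
matched = [chi] + tok.encode("asked for a new book".split(), "ENV") + prompt
mismatched = [chi] + tok.encode("asked for a new toy".split(), "ENV") + prompt

# The target is the linguistic form, which never appears in the context itself.
target = tok.encode(["book"], "LAN")[0]
differ = [i for i, (a, b) in enumerate(zip(matched, mismatched)) if a != b]
print(differ)  # prints [5]
```

The two test sequences differ in exactly one environmental token, and the target id is absent from both contexts, so a model cannot solve the task by copying a token it has already seen.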

Child-directed speech. The Child Language Data Exchange System (CHILDES; MacWhinney, 2000) provides transcripts of speech enriched with environmental annotations (see the manual for data usage: https://talkbank.org/0info/manuals/CHAT.pdf). We use the spoken utterances as the linguistic tokens ( $\langle$ LAN $\rangle$ ) and the environmental descriptions as the environmental tokens ( $\langle$ ENV $\rangle$ ). The environmental context is drawn from three annotation types:

- Local events: simple events, pauses, long events, or remarks interleaved with the transcripts.

- Action tiers: actions performed by the speaker or listener (e.g., %act: runs to toy box). These also include cases where an action replaces speech (e.g., 0 [% kicks the ball]).

- Situational tiers: situational information tied to utterances or to larger contexts (e.g., %sit: dog is barking).

Caption-grounded dialogue. The Visual Dialog dataset (Das et al., 2017) pairs MSCOCO images (Lin et al., 2014) with multi-turn question-answering dialogues that exchange information about each image. Our setup uses MSCOCO captions as the environmental tokens ( $\langle$ ENV $\rangle$ ) and the dialogue turns as the linguistic tokens ( $\langle$ LAN $\rangle$ ). In this pseudo cross-modal setting, textual descriptions of visual scenes ground natural conversational interaction. Compared to CHILDES, this setup introduces richer semantics and longer utterances, while still using text-based inputs for both token types, thereby offering a stepping stone toward grounding in fully visual contexts.

Image-grounded dialogue. To move beyond textual proxies, we consider an image-grounded dialogue setup, using the same dataset as the caption-grounded dialogue setting. Here, a frozen vision transformer (ViT; Dosovitskiy et al., 2020) directly tokenizes each RGB image into patch embeddings, with each embedding treated as an $\langle$ ENV $\rangle$ token, analogously to the visual tokens in modern VLMs. We use DINOv2 (Oquab et al., 2024) as our ViT tokenizer, as it is trained purely on vision data without auxiliary text supervision (in contrast to models like CLIP; Radford et al., 2021), thereby ensuring that environmental tokens capture only visual information. The linguistic tokens ( $\langle$ LAN $\rangle$ ) remain unchanged from the caption-grounded dialogue setting, resulting in a realistic multimodal interaction where conversational utterances are grounded directly in visual input.

### 3.2 Evaluation Protocol

We assess symbol grounding with a contrastive test that asks whether a model assigns a higher probability to the correct linguistic token when the matching environmental token is in context, following the idea of priming in psychology. This evaluation applies uniformly across datasets (Table 1): in CHILDES and caption-grounded dialogue, environmental priming comes from descriptive contexts; in image-grounded dialogue, from ViT-derived visual tokens. We compare the following conditions:

- Match (experimental condition): The context contains the corresponding $\langle$ ENV $\rangle$ token for the target word, and the model is expected to predict its $\langle$ LAN $\rangle$ counterpart.

- Mismatch (control condition): The context is replaced with a different $\langle$ ENV $\rangle$ token. The model remains tasked with predicting the same $\langle$ LAN $\rangle$ token; however, in the absence of corresponding environmental cues, its performance is expected to be no better than chance.

For example (first row in Table 1), when evaluating the word $\textit{book}_{\texttt{$\langle$LAN$\rangle$}}$ , the input context is

$$

\langle\textit{CHI}\rangle\textit{ asked}_{\texttt{$\langle$ENV$\rangle$}}\textit{ for}_{\texttt{$\langle$ENV$\rangle$}}\textit{ a}_{\texttt{$\langle$ENV$\rangle$}}\textit{ new}_{\texttt{$\langle$ENV$\rangle$}}\textit{ book}_{\texttt{$\langle$ENV$\rangle$}}\ \langle\textit{CHI}\rangle\textit{ I}_{\texttt{$\langle$LAN$\rangle$}}\textit{ love}_{\texttt{$\langle$LAN$\rangle$}}\textit{ this}_{\texttt{$\langle$LAN$\rangle$}}\ \underline{\hspace{3em}}, \tag{1}

$$

where the model is expected to predict $\textit{book}_{\texttt{$\langle$LAN$\rangle$}}$ for the blank, and the role token $\langle$ CHI $\rangle$ indicates that the speaker or actor is a child. In the control (mismatch) condition, the environmental token book ${}_{\texttt{$\langle$ENV$\rangle$}}$ is replaced by another valid noun such as toy ${}_{\texttt{$\langle$ENV$\rangle$}}$ .

Context templates. For a target word $v$ with linguistic token $v_{\texttt{$\langle$LAN$\rangle$}}$ and environmental token $v_{\texttt{$\langle$ENV$\rangle$}}$ , we denote $\overline{C}_{v}$ as a set of context templates of $v$ . For example, when $v=\textit{book}$ , a $\overline{c}\in\overline{C}_{v}$ can be

$$

\langle\textit{CHI}\rangle\textit{ asked}_{\texttt{$\langle$ENV$\rangle$}}\textit{ for}_{\texttt{$\langle$ENV$\rangle$}}\textit{ a}_{\texttt{$\langle$ENV$\rangle$}}\textit{ new}_{\texttt{$\langle$ENV$\rangle$}}\ \texttt{[FILLER]}\ \langle\textit{CHI}\rangle\textit{ I}_{\texttt{$\langle$LAN$\rangle$}}\textit{ love}_{\texttt{$\langle$LAN$\rangle$}}\ \underline{\hspace{3em}}, \tag{2}

$$

where [FILLER] is to be replaced with an environmental token, and the blank indicates the expected prediction as in Eq. (1). In the match condition, the context $\overline{c}(v)$ is constructed by replacing [FILLER] with $v_{\texttt{$\langle$ENV$\rangle$}}$ in $\overline{c}$ . In the mismatch condition, the context $\overline{c}(u)$ uses $u_{\texttt{$\langle$ENV$\rangle$}}(u\neq v)$ as the filler, while the prediction target remains $v_{\texttt{$\langle$LAN$\rangle$}}$ .
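As a sketch of how the match and mismatch contexts could be assembled from one template, consider the following. The string notation `word<ENV>` / `word<LAN>`, the helper names, and the three-word toy vocabulary are illustrative assumptions; the actual experiments use 100 nouns and integer token ids.

```python
from typing import List, Tuple

FILLER = "[FILLER]"  # placeholder slot inside a context template


def fill_template(template: List[str], word: str) -> List[str]:
    """Replace the filler slot with the environmental token of `word`."""
    return [f"{word}<ENV>" if tok == FILLER else tok for tok in template]


def build_conditions(
    template: List[str], target: str, vocab: List[str]
) -> Tuple[List[str], List[List[str]]]:
    """Return the match context and one mismatch context per other word."""
    match = fill_template(template, target)
    mismatches = [fill_template(template, u) for u in vocab if u != target]
    return match, mismatches


# Toy template following Eq. (2); the prediction target is book<LAN>.
template = ["<CHI>", "asked<ENV>", "for<ENV>", "a<ENV>", "new<ENV>",
            FILLER, "<CHI>", "I<LAN>", "love<LAN>"]
vocab = ["book", "toy", "ball"]
match, mismatches = build_conditions(template, "book", vocab)
```

Only the filler slot differs between conditions, so any difference in the model's prediction for the target can be attributed to the environmental token.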

For the choices of $v$ and $u$ , we construct the vocabulary $V$ with 100 nouns from the MacArthur–Bates Communicative Development Inventories (Fenson et al., 2006) that occur frequently in our corpus. Each word serves once as the target, with the remaining $M=99$ used to construct mismatched conditions. For each word, we create $N=10$ context templates, which contain both $\langle$ ENV $\rangle$ and $\langle$ LAN $\rangle$ tokens. Details of the vocabulary and context template construction can be found in Appendix A.

Grounding information gain. Following prior work, we evaluate how well an LM learns a word using the mean surprisal over instances. The surprisal of a word $w$ given a context $c$ is defined as $s_{\boldsymbol{\theta}}(w\mid c)=-\log P_{\boldsymbol{\theta}}(w\mid c),$ where $P_{\boldsymbol{\theta}}(w\mid c)$ denotes the probability, under an LM parameterized by ${\boldsymbol{\theta}}$ , that the next word is $w$ conditioned on the context $c$ . Here, $s_{\boldsymbol{\theta}}(w\mid c)$ quantifies the unexpectedness of predicting $w$ , or the pointwise information carried by $w$ conditioned on the context.

The grounding information gain $G_{\boldsymbol{\theta}}(v)$ for $v$ is defined as

$$

G_{\boldsymbol{\theta}}(v)=\frac{1}{N}\sum_{n=1}^{N}\left(\frac{1}{M}\sum_{u\neq v}^{M}\Big[s_{\boldsymbol{\theta}}\left(v_{\texttt{$\langle$LAN$\rangle$}}\mid\overline{c}_{n}\left(u_{\texttt{$\langle$ENV$\rangle$}}\right)\right)-s_{\boldsymbol{\theta}}\left(v_{\texttt{$\langle$LAN$\rangle$}}\mid\overline{c}_{n}\left(v_{\texttt{$\langle$ENV$\rangle$}}\right)\right)\Big]\right).

$$

This is a sample-based estimation of the expected log-likelihood ratio between the match and mismatch conditions

$$

G_{\boldsymbol{\theta}}(v)=\mathbb{E}_{c,u}\left[\log\frac{P_{\boldsymbol{\theta}}(v_{\texttt{$\langle$LAN$\rangle$}}\mid c,v_{\texttt{$\langle$ENV$\rangle$}})}{P_{\boldsymbol{\theta}}(v_{\texttt{$\langle$LAN$\rangle$}}\mid c,u_{\texttt{$\langle$ENV$\rangle$}})}\right],

$$

which quantifies how much more information the matched ground provides for predicting the linguistic form, compared to a mismatched one. A positive $G_{\boldsymbol{\theta}}(v)$ indicates that the matched environmental token increases the predictability of its linguistic form. We report $G_{\boldsymbol{\theta}}=\frac{1}{|V|}\sum_{v\in V}G_{\boldsymbol{\theta}}(v)$ , and track $G_{{\boldsymbol{\theta}}^{(t)}}$ across training steps $t$ to analyze how grounding emerges over time.
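The per-word estimator can be sketched in a few lines, assuming the model's next-token probabilities for the target have already been extracted. The function names, the input layout (one match probability per template and an $N\times M$ grid of mismatch probabilities), and the use of natural logarithms are assumptions for illustration.

```python
import math
from typing import Sequence


def surprisal(prob: float) -> float:
    """Surprisal in nats: s(w | c) = -log P(w | c)."""
    return -math.log(prob)


def grounding_information_gain(
    match_probs: Sequence[float],               # N values: P(v_LAN | c_n(v_ENV))
    mismatch_probs: Sequence[Sequence[float]],  # N x M values: P(v_LAN | c_n(u_ENV))
) -> float:
    """Mean over templates of (mean mismatch surprisal - match surprisal)."""
    total = 0.0
    for p_match, row in zip(match_probs, mismatch_probs):
        s_match = surprisal(p_match)
        total += sum(surprisal(p_u) - s_match for p_u in row) / len(row)
    return total / len(match_probs)


# Toy numbers (not from the paper): N = 2 templates, M = 2 mismatched fillers.
match_probs = [0.5, 0.25]
mismatch_probs = [[0.125, 0.125], [0.0625, 0.0625]]
gain = grounding_information_gain(match_probs, mismatch_probs)
```

In this toy case the target is four times more likely under every matched context than under the mismatched ones, so the gain equals $\log 4$ nats, which agrees with the log-likelihood-ratio form of the definition.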

### 3.3 Model Training

We train LMs from random initialization, ensuring that no prior linguistic knowledge influences the results. Our training uses the standard causal language modeling objective, as in most generative LMs. To account for variability, we repeat all experiments with 5 random seeds, randomizing both model initialization and corpus shuffle order. Our primary architecture is the Transformer (Vaswani et al., 2017) in the style of GPT-2 (Radford et al., 2019), with 18, 12, and 4 layers, all with residual connections. We extend the experiments to 4-layer unidirectional LSTMs (Hochreiter & Schmidhuber, 1997) with no residual connections, as well as 12- and 4-layer state-space models (specifically, Mamba-2; Dao & Gu, 2024). For fair comparison with LSTMs, the 4-layer Mamba-2 models do not involve residual connections, whereas the 12-layer ones do. For multimodal settings, while standard LLaVA (Liu et al., 2023) uses a two-layer perceptron to project ViT embeddings into the language model, we bypass this projection and feed the DINOv2 representations directly into the LM. We obtain the developmental trajectory of the model by saving checkpoints at various training steps, sampling more heavily from earlier steps, following Chang & Bergen (2022).
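One simple way to sample checkpoints more densely early in training is geometric spacing, sketched below. This particular schedule, the function name `checkpoint_steps`, and the choice of 30 checkpoints are assumptions for illustration only; the exact schedule used in the experiments is not specified here.

```python
from typing import List


def checkpoint_steps(total_steps: int, num_checkpoints: int) -> List[int]:
    """Geometrically spaced training steps in [1, total_steps], sorted, no duplicates."""
    if num_checkpoints < 2:
        raise ValueError("need at least two checkpoints")
    ratio = total_steps ** (1.0 / (num_checkpoints - 1))
    steps = {min(total_steps, max(1, round(ratio ** i)))
             for i in range(num_checkpoints)}
    steps.add(total_steps)  # always keep the final step
    return sorted(steps)


# Hypothetical configuration: 20,000 training steps, up to 30 checkpoints.
steps = checkpoint_steps(20000, 30)
```

Rounding can merge a few of the earliest steps, so the returned list may be slightly shorter than `num_checkpoints`.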

## 4 Behavioral Evidence

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Graph: Surprisal vs Training Steps

### Overview

The image depicts a line graph comparing two data series ("Match" and "Mismatch") across 20,000 training steps. The y-axis measures "Surprisal" (negative log probability), while the x-axis represents training progression. Two distinct trends emerge: a sharp decline in "Match" surprisal followed by stabilization, and a gradual decline in "Mismatch" surprisal with minimal variability.

### Components/Axes

- **Y-axis**: "Surprisal" (negative log probability), scaled from 5.0 to 12.5 in increments of 2.5

- **X-axis**: "Training steps" (0 to 20,000), marked at 0, 10,000, and 20,000

- **Legend**:

- Blue line: "Match"

- Orange line: "Mismatch"

- **Placement**: Legend positioned in the top-right quadrant of the plot area

### Detailed Analysis

1. **Match (Blue Line)**:

- Initial value: ~12.5 at 0 steps

- Sharp decline to ~7.5 by ~2,500 steps

- Gradual decrease to ~5.0 by 20,000 steps

- Variability: ±0.2 around the trendline

2. **Mismatch (Orange Line)**:

- Initial value: ~10.0 at 0 steps

- Steady decline to ~7.5 by ~10,000 steps

- Minimal change after 10,000 steps (~7.5–7.7)

- Variability: ±0.1 around the trendline

### Key Observations

- **Convergence**: Both lines converge near 7.5 surprisal by 10,000 steps

- **Rate of Change**: "Match" shows a steeper initial decline (Δ~5.0 over 2,500 steps) vs "Mismatch" (Δ~2.5 over 10,000 steps)

- **Stability**: "Mismatch" demonstrates lower variance (±0.1) compared to "Match" (±0.2)

### Interpretation

The data suggests differential learning dynamics between matched and mismatched conditions:

1. **Match Condition**: Rapid initial reduction in surprisal indicates effective pattern recognition/learning, with diminishing returns after 2,500 steps

2. **Mismatch Condition**: Slower, more stable decline suggests either:

- Inherent difficulty in learning mismatched patterns

- Different optimization landscape characteristics

3. **Convergence Point**: Both conditions reach similar surprisal levels by 10,000 steps, implying comparable asymptotic performance despite divergent learning trajectories

The graph highlights the importance of data alignment in training efficiency, with matched conditions achieving faster initial learning but both approaches eventually reaching similar performance ceilings.

</details>

(a) 12-layer Transformer.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Graph: Surprisal vs Training Steps

### Overview

The image depicts a line graph comparing two data series ("Match" and "Mismatch") across 20,000 training steps. Both lines show decreasing trends in "Surprisal" values, with distinct initial trajectories and convergence patterns.

### Components/Axes

- **Y-axis (Surprisal)**: Labeled "Surprisal" with values ranging from 5.0 to 12.5 in increments of 2.5.

- **X-axis (Training steps)**: Labeled "Training steps" with values from 0 to 20,000 in increments of 10,000.

- **Legend**: Located in the top-right corner, with:

- **Blue line**: "Match"

- **Orange line**: "Mismatch"

### Detailed Analysis

1. **Initial Values (0 training steps)**:

- "Match" (blue): Starts at ~12.5

- "Mismatch" (orange): Starts at ~11.5

2. **Early Decline (0–5,000 steps)**:

- "Match" drops sharply from 12.5 to ~7.5

- "Mismatch" declines gradually from 11.5 to ~7.0

3. **Midpoint (10,000 steps)**:

- Both lines converge near ~6.5

4. **Late Training (20,000 steps)**:

- "Match": ~5.2

- "Mismatch": ~5.0

### Key Observations

- **Convergence**: Both lines merge near 10,000 steps and remain parallel thereafter.

- **Rate of Change**: "Match" shows a steeper initial decline compared to "Mismatch."

- **Stabilization**: Surprisal values plateau after ~15,000 steps for both conditions.

### Interpretation

The data suggests that:

1. **Learning Dynamics**: The rapid decline in "Match" surprisal indicates faster adaptation to predictable patterns, while "Mismatch" reflects slower learning from less predictable data.

2. **Model Robustness**: Convergence at later stages implies the model achieves similar generalization performance regardless of input type after sufficient training.

3. **Surprisal Thresholds**: The final surprisal values (~5.0–5.2) may represent the model's baseline uncertainty floor for both conditions.

No anomalies or outliers are observed. The graph demonstrates a clear trade-off between initial data predictability and long-term model performance.

</details>

(b) 4-layer Transformer.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Graph: Surprisal Trends in Match vs. Mismatch Conditions

### Overview

The image depicts a line graph comparing the "Surprisal" metric across two conditions ("Match" and "Mismatch") over 20,000 training steps. The graph shows distinct trends for each condition, with the "Match" line exhibiting a sharp decline followed by stabilization, while the "Mismatch" line remains relatively stable.

### Components/Axes

- **Y-Axis (Surprisal)**: Labeled "Surprisal," scaled from 0 to 12.5 in increments of 2.5.

- **X-Axis (Training Steps)**: Labeled "Training steps," scaled from 0 to 20,000 in increments of 10,000.

- **Legend**: Positioned in the top-right corner, with:

- **Blue line**: Labeled "Match"

- **Orange line**: Labeled "Mismatch"

- **Shaded Regions**: Light blue and orange bands around each line, likely representing variability or confidence intervals.

### Detailed Analysis

1. **Match (Blue Line)**:

- **Initial Value**: Starts at ~12.5 (with uncertainty ±0.5) at 0 training steps.

- **Trend**: Drops sharply to ~5.0 by ~5,000 steps, then stabilizes with minor fluctuations (~4.5–5.5) between 10,000 and 20,000 steps.

- **Shaded Region**: Narrower than the Mismatch line, suggesting lower variability in later stages.

2. **Mismatch (Orange Line)**:

- **Initial Value**: Begins at ~7.5 (with uncertainty ±0.5) at 0 training steps.

- **Trend**: Remains stable between ~7.0 and ~7.8 across all training steps, with no significant upward or downward movement.

- **Shaded Region**: Broader than the Match line, indicating higher variability.

### Key Observations

- The "Match" condition shows a steep decline in surprisal during early training, followed by stabilization.

- The "Mismatch" condition exhibits no meaningful change in surprisal over time.

- The shaded regions suggest that variability in the "Match" condition decreases as training progresses, while the "Mismatch" condition maintains consistent uncertainty.

- No crossover or interaction between the two lines is observed.

### Interpretation

The data suggests that the "Match" condition involves a learning process where surprisal (a measure of prediction error or uncertainty) decreases as the system adapts to predictable patterns. In contrast, the "Mismatch" condition lacks such a learning effect, as surprisal remains constant, implying no adaptation to unpredictable or irrelevant stimuli. The narrowing shaded region for "Match" may reflect increased confidence in predictions over time, while the broader region for "Mismatch" indicates persistent uncertainty. These trends align with theories of associative learning, where repeated exposure to predictable stimuli reduces cognitive surprise.

</details>

(c) 4-layer Mamba-2.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Graph: Surprisal vs. Training Steps

### Overview

The image depicts a line graph comparing two data series ("Match" and "Mismatch") across 20,000 training steps. Both lines show a sharp initial decline in surprisal values, followed by a plateau. The "Match" line (blue) starts slightly higher than the "Mismatch" line (orange) but converges with it as training progresses.

### Components/Axes

- **Y-axis (Surprisal)**: Ranges from 5.0 to 12.5 in increments of 2.5.

- **X-axis (Training steps)**: Spans 0 to 20,000 in increments of 10,000.

- **Legend**: Located in the top-right corner, with:

- **Blue line**: Labeled "Match"

- **Orange line**: Labeled "Mismatch"

### Detailed Analysis

- **Initial values (0 training steps)**:

- Both lines begin near **12.5** surprisal.

- The "Match" line peaks slightly higher (~12.7) before dropping.

- **Midpoint (10,000 steps)**:

- "Match": ~7.5 surprisal

- "Mismatch": ~8.0 surprisal

- **Final values (20,000 steps)**:

- Both lines plateau near **7.5** surprisal.

- **Trends**:

- "Match" declines faster initially (steeper slope) but flattens earlier.

- "Mismatch" declines more gradually, maintaining a slight lead until ~15,000 steps.

### Key Observations

1. Both data series exhibit a **rapid decrease in surprisal** during early training, followed by stabilization.

2. The "Match" line demonstrates a **sharper initial drop** compared to "Mismatch."

3. By 20,000 steps, the lines **converge**, suggesting diminishing differences between Match and Mismatch outcomes.

### Interpretation

The graph indicates that training reduces surprisal for both Match and Mismatch scenarios, implying the model becomes less uncertain or "surprised" over time. The convergence of the lines suggests that the distinction between Match and Mismatch outcomes weakens with prolonged training, potentially reflecting improved generalization or reduced sensitivity to input variations. The initial peak (~12.5) may represent baseline surprisal before training, while the plateau (~7.5) signifies the model's stabilized performance threshold.

</details>

(d) 4-layer LSTM.

Figure 2: Average surprisal of the experimental and control conditions over training steps.

<details>

<summary>x8.png Details</summary>

### Visual Description

## Line Graph: Information Gain vs R² Values Over Training Steps

### Overview

The image depicts a line graph comparing two metrics—**Information gain** (blue line) and **R² value** (orange line)—across **20,000 training steps**. The graph includes a secondary y-axis for Information gain (right side) and a primary y-axis for R² values (left side). Both lines exhibit distinct trends, with the R² value peaking early and declining, while Information gain rises steadily after an initial dip.

---

### Components/Axes

- **X-axis**: "Training steps" (0 to 20,000, linear scale).

- **Left Y-axis**: "R² values" (0 to 0.8, linear scale).

- **Right Y-axis**: "Information gain" (0 to 6, linear scale).

- **Legend**: Located in the top-left corner, with:

- **Blue line**: "Information gain"

- **Orange line**: "R² value"

---

### Detailed Analysis

1. **R² Value (Orange Line)**:

- Starts near 0 at 0 training steps.

- Peaks sharply at ~5,000 steps (~0.45 R² value).

- Declines steadily to ~0.05 by 20,000 steps.

- Shaded orange region indicates uncertainty (standard error), narrowing as training progresses.

2. **Information Gain (Blue Line)**:

- Begins near 0 at 0 steps.

- Dips slightly below 1,000 steps.

- Rises steadily to ~2.5 by 20,000 steps.

- Shaded blue region shows increasing uncertainty over time.

3. **Inverse Relationship**:

- The R² value and Information gain exhibit an inverse correlation: as R² peaks early, Information gain remains low, then diverges as R² declines while Information gain increases.

---

### Key Observations

- **Early Overperformance**: R² value peaks at ~5,000 steps (~0.45), suggesting initial model improvement.

- **Divergence Post-5,000 Steps**: After the R² peak, Information gain becomes the dominant metric, rising to ~2.5 by 20,000 steps.

- **Uncertainty Trends**: Both metrics show increasing uncertainty (wider shaded regions) as training progresses, particularly for Information gain.

---

### Interpretation

The graph suggests a trade-off between model performance metrics during training:

- **Early Training**: High R² values indicate strong initial correlations, but Information gain remains low, possibly due to limited data exploration.

- **Later Training**: Declining R² values may signal overfitting or diminishing returns in predictive accuracy, while rising Information gain implies improved model efficiency or feature relevance.

- **Practical Implications**: The divergence highlights the importance of balancing accuracy (R²) with efficiency (Information gain) in model selection, especially in resource-constrained scenarios.

The inverse relationship raises questions about whether the model prioritizes accuracy early on but shifts toward efficiency as training matures, or if the metrics reflect competing objectives (e.g., memorization vs. generalization).

</details>

(a) 12-layer Transformer.

<details>

<summary>x9.png Details</summary>

### Visual Description

## Line Graph: Training Steps vs. R² and Information Gain

### Overview

The image depicts a dual-axis line graph comparing two metrics across training steps: **R² values** (left y-axis) and **Information gain** (right y-axis). The x-axis represents training steps from 0 to 20,000. Two lines are plotted: a blue line for Information gain and an orange line for R² values. The legend is positioned in the top-left corner.

---

### Components/Axes

- **X-axis**: "Training steps" (0 to 20,000, linear scale).

- **Left Y-axis**: "R² values" (0 to 0.8, linear scale).

- **Right Y-axis**: "Information gain" (0 to 6, linear scale).

- **Legend**:

- Blue line: "Information gain"

- Orange line: "R² value"

- **Secondary Axis**: Right y-axis for Information gain (blue line).

---

### Detailed Analysis

1. **R² Values (Orange Line)**:

- Starts near 0 at 0 training steps.

- Peaks at ~0.35 around 5,000 steps.

- Declines steadily to ~0.05 by 20,000 steps.

- Shaded region (confidence interval) widens slightly after 5,000 steps.

2. **Information Gain (Blue Line)**:

- Starts at 0 at 0 training steps.

- Increases monotonically, reaching ~2.5 by 20,000 steps.

- Slope flattens slightly after ~15,000 steps.

3. **Intersection Point**:

- The two lines cross near 10,000 steps, where R² ≈ 0.2 and Information gain ≈ 2.

---

### Key Observations

- **R² Divergence**: The orange line peaks early and then declines, suggesting diminishing returns in model performance (as measured by R²) after ~5,000 steps.

- **Information Gain Growth**: The blue line shows sustained improvement, indicating continued learning or feature relevance even as R² plateaus.

- **Metric Discrepancy**: R² and Information gain diverge significantly after 10,000 steps, highlighting potential limitations of R² for this task.

---

### Interpretation

- **Training Dynamics**: The graph suggests that while R² initially improves with training, it eventually degrades, possibly due to overfitting or noise in the data. In contrast, Information gain continues to rise, implying the model is still capturing meaningful patterns.

- **Metric Selection**: The divergence between R² and Information gain raises questions about the suitability of R² as a sole evaluation metric. Information gain may better reflect long-term learning in this context.

- **Anomaly**: The sharp peak in R² at 5,000 steps could indicate a temporary overfit or a specific feature alignment that later becomes irrelevant.

---

### Spatial Grounding

- **Legend**: Top-left corner, clearly associating colors with metrics.

- **Secondary Axis**: Right y-axis for Information gain, avoiding overlap with R² values.

- **Line Placement**: Blue (Information gain) consistently above orange (R²) after 10,000 steps.

---

### Content Details

- **R² Values**:

- Peak: ~0.35 (5,000 steps).

- Final Value: ~0.05 (20,000 steps).

- **Information Gain**:

- Final Value: ~2.5 (20,000 steps).

- Slope: ~0.000125 per step (linear approximation).

---

### Key Observations (Reiterated)

- R² values decline after 5,000 steps, while Information gain continues to rise.

- The two metrics cross at ~10,000 steps, signaling a shift in model behavior.

- Information gain’s sustained growth suggests it is a more reliable metric for this task.

</details>

(b) 4-layer Transformer.

<details>

<summary>x10.png Details</summary>

### Visual Description

## Line Graph: Model Performance Metrics Over Training Steps

### Overview

The image depicts a line graph comparing two metrics—**Information gain** and **R² value**—across 20,000 training steps. The graph includes two y-axes: the left axis (orange) tracks R² values (0–0.8), and the right axis (blue) tracks Information gain (0–6). A legend in the top-left corner distinguishes the two metrics by color.

### Components/Axes

- **X-axis**: "Training steps" (0 to 20,000), with markers at 0, 10,000, and 20,000.

- **Left Y-axis**: "R² values" (0–0.8), labeled in orange.

- **Right Y-axis**: "Information gain" (0–6), labeled in blue.

- **Legend**: Top-left corner, with:

- **Blue line**: Information gain.

- **Orange line**: R² value.

### Detailed Analysis

1. **Information gain (blue line)**:

- Starts at 0 at step 0.

- Increases steadily, reaching approximately **4** by 10,000 steps.

- Plateaus slightly above 4 after 10,000 steps, with minor fluctuations.

- Final value at 20,000 steps: ~4.2.

2. **R² value (orange line)**:

- Begins at 0, rises sharply to a peak of **~0.3** at ~5,000 steps.

- Declines sharply after 5,000 steps, dropping to near 0 by 10,000 steps.

- Remains close to 0 for the remainder of training (10,000–20,000 steps).

### Key Observations

- **Divergence of metrics**: R² value peaks early (5,000 steps) and collapses, while Information gain continues to rise.

- **Stability**: Information gain stabilizes after 10,000 steps, suggesting diminishing returns in information acquisition.

- **Anomaly**: R² value’s sharp decline after 5,000 steps contrasts with the sustained growth of Information gain.

### Interpretation

The graph suggests that the model’s **R² value** (a measure of predictive accuracy) improves rapidly during initial training but plateaus and eventually degrades, indicating potential overfitting or saturation. Meanwhile, **Information gain** (a measure of new knowledge acquired) grows steadily, implying that the model continues to learn meaningful patterns even after R² stabilizes. This divergence highlights a trade-off: while R² reflects immediate performance, Information gain may better capture long-term learning dynamics. The sharp drop in R² after 5,000 steps warrants further investigation—it could signal data leakage, noise in the training process, or a mismatch between the model’s capacity and the task complexity.

</details>

(c) 4-layer Mamba-2.

<details>

<summary>x11.png Details</summary>

### Visual Description

## Line Graph: Training Performance Metrics

### Overview

The image depicts a line graph comparing two performance metrics ("Information gain" and "R² value") across training steps. The graph includes two y-axes: the left axis measures "R² values" (0–0.8), and the right axis measures "Information gain" (0–6). The x-axis represents "Training steps" (0–20,000). Two data series are plotted: a blue line for "Information gain" and an orange line for "R² value," with a shaded uncertainty region around the orange line.

### Components/Axes

- **X-axis**: "Training steps" (0–20,000), with tick marks at 0, 10,000, and 20,000.

- **Left Y-axis**: "R² values" (0–0.8), with increments of 0.2.

- **Right Y-axis**: "Information gain" (0–6), with increments of 2.

- **Legend**: Located in the top-right corner, with:

- Blue line: "Information gain"

- Orange line: "R² value"

- **Shaded Region**: Light orange area surrounding the orange line, indicating uncertainty in "R² value" estimates.

### Detailed Analysis

1. **R² Value (Orange Line)**:

- Starts at 0 at 0 training steps.

- Rises sharply to ~0.6 by 10,000 steps.

- Plateaus near 0.6–0.7 by 20,000 steps.

- Shaded uncertainty region widens slightly during the initial rise but narrows as the line plateaus.

2. **Information Gain (Blue Line)**:

- Starts near 0 at 0 training steps.

- Increases gradually, reaching ~0.1 by 20,000 steps.

- Remains relatively flat after ~5,000 steps.

3. **Axis Inconsistencies**:

- The right y-axis ("Information gain") scales to 6, but the blue line never exceeds ~0.1, suggesting a potential mismatch in axis scaling or data representation.

### Key Observations

- **R² Value**: Rapid improvement in early training steps, followed by saturation, indicating diminishing returns.

- **Information Gain**: Minimal improvement over training steps, suggesting limited sensitivity to additional training.

- **Uncertainty**: The shaded region around the orange line highlights variability in "R² value" estimates, particularly during the initial rise.

- **Axis Mismatch**: The right y-axis ("Information gain") scale (0–6) does not align with the blue line's actual values (~0–0.1), raising questions about visualization accuracy.

### Interpretation

The data suggests that the model's performance, as measured by "R² value," improves significantly during early training but plateaus by ~10,000 steps, indicating potential overfitting or model saturation. In contrast, "Information gain" shows minimal improvement, implying that additional training steps may not meaningfully enhance the model's ability to capture relevant information. The shaded uncertainty region around "R² value" underscores variability in early-stage performance estimates, which could reflect instability in the model's learning process. The discrepancy between the right y-axis scale and the blue line's values warrants further investigation, as it may misrepresent the magnitude of "Information gain" relative to "R² value."

</details>

(d) 4-layer LSTM.

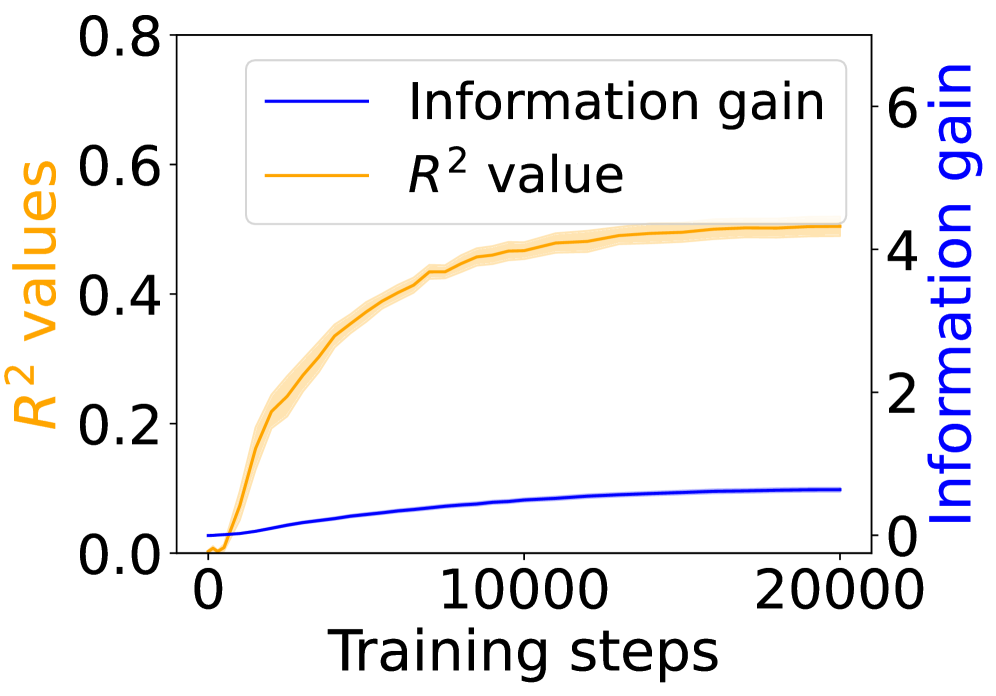

Figure 3: Grounding information gain and its correlation to the co-occurrence of linguistic and environment tokens over training steps.

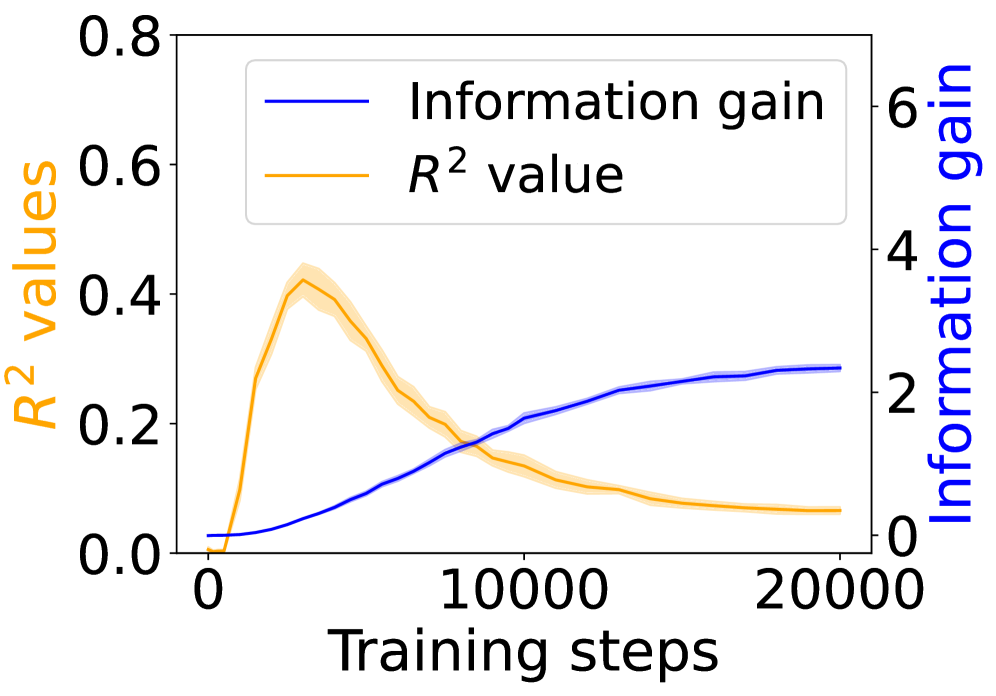

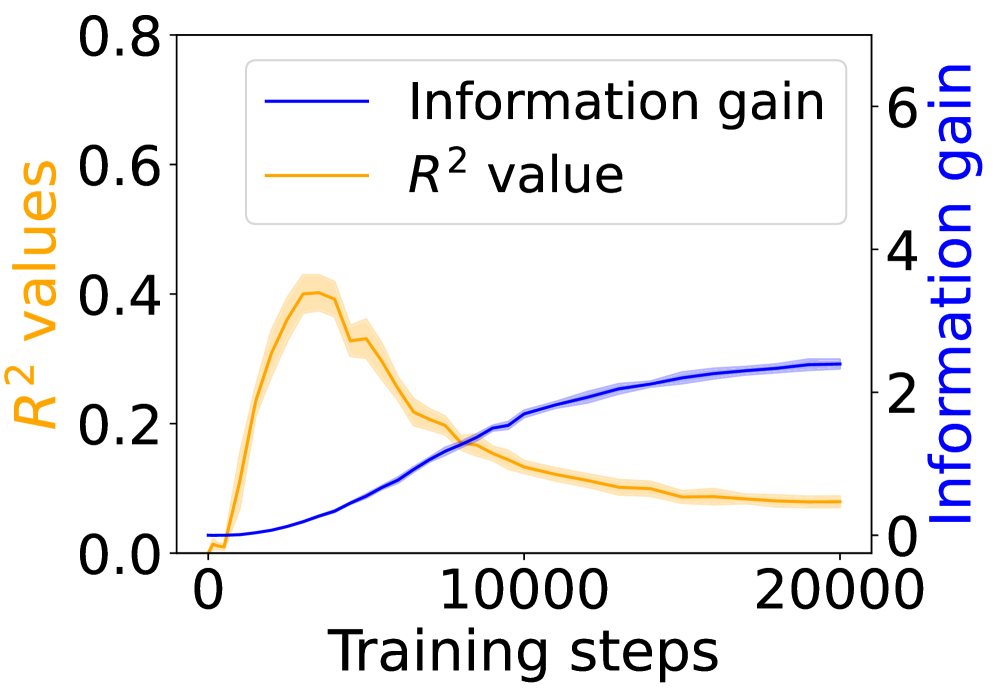

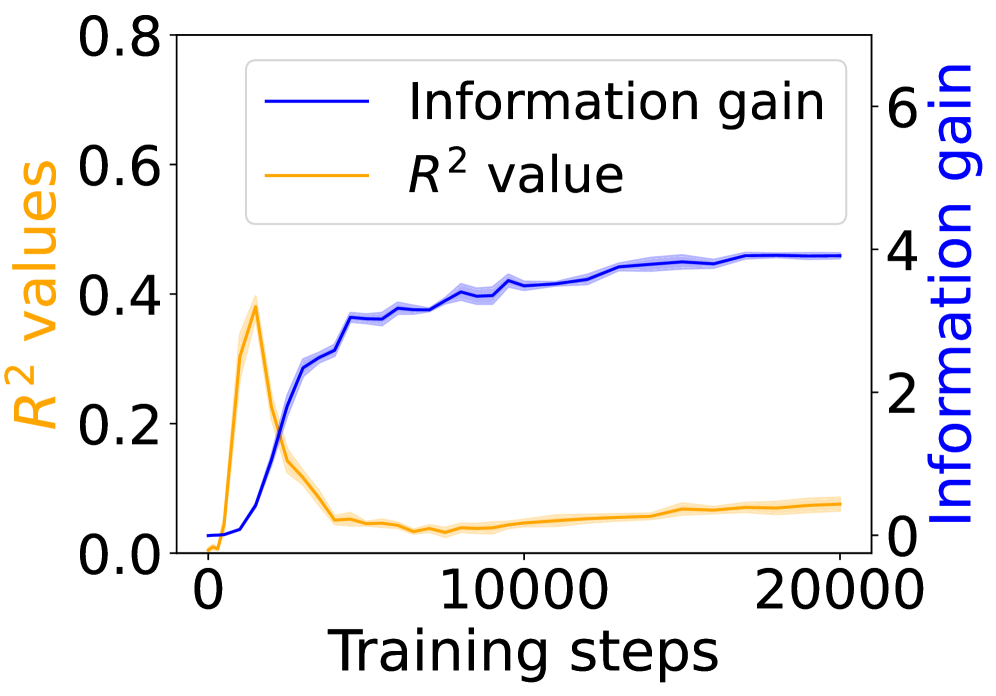

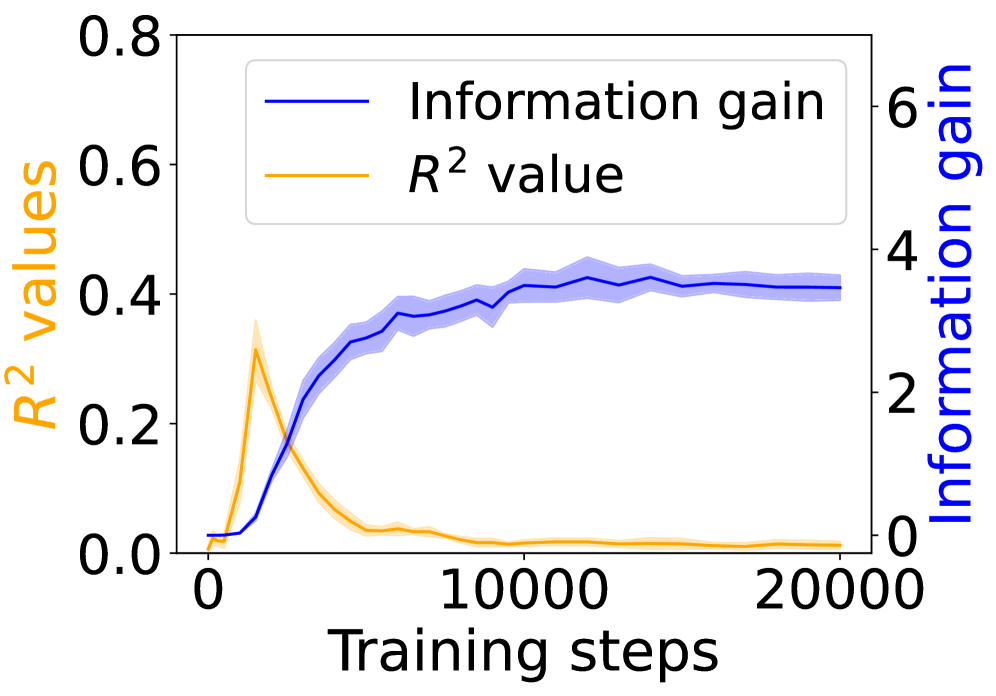

### 4.1 Behavioral Evidence of Emergent Grounding

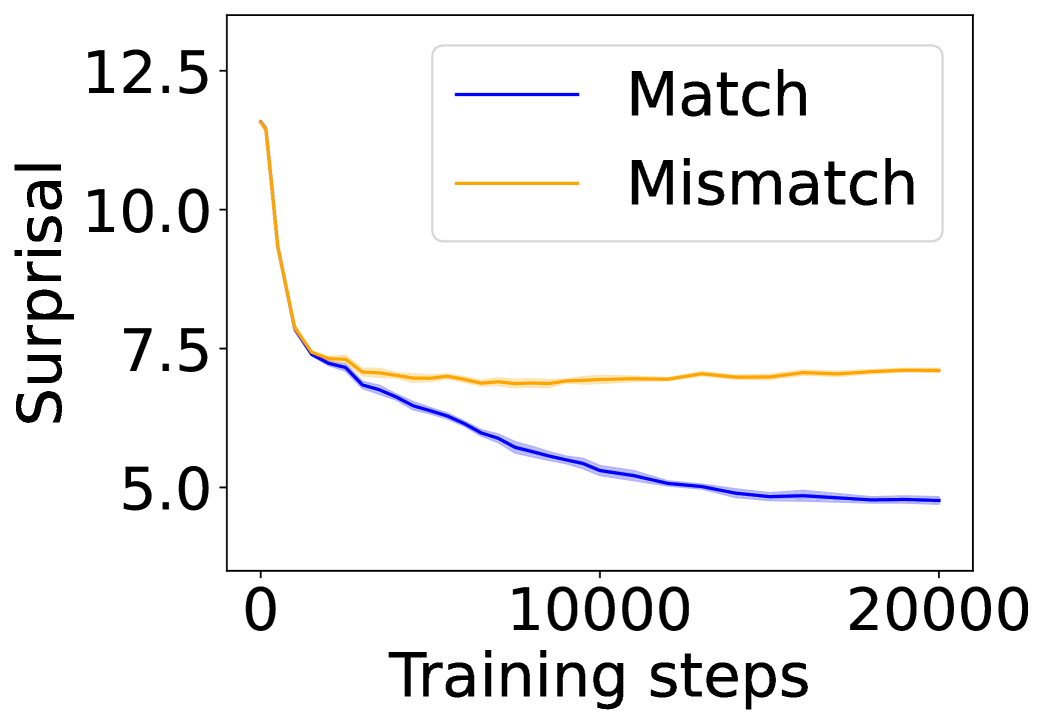

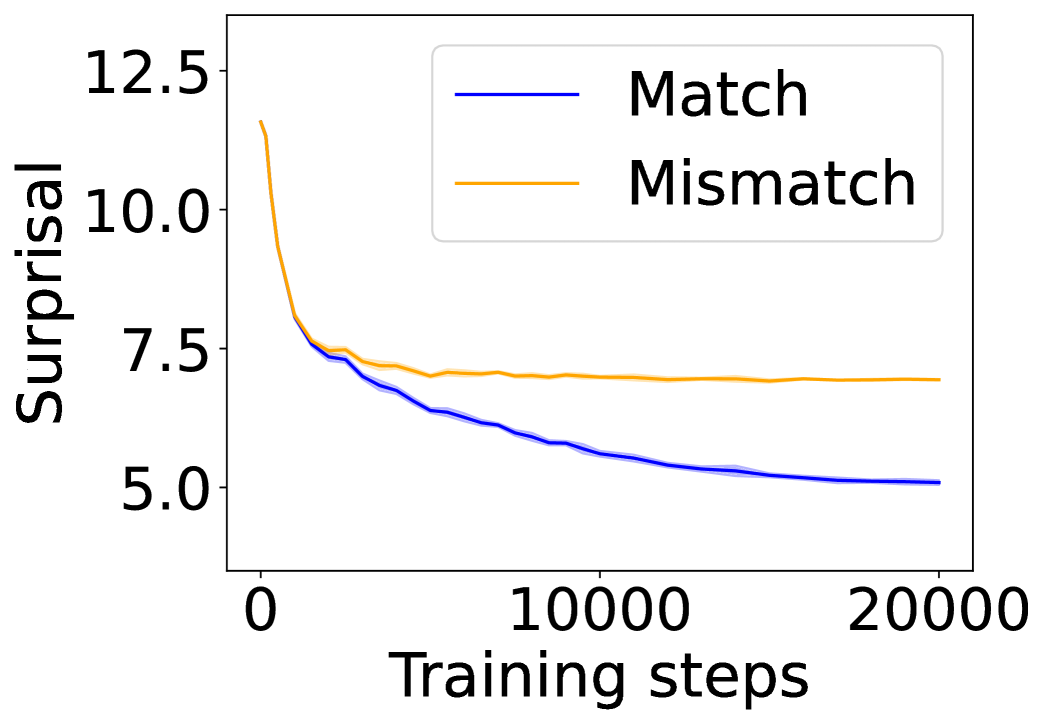

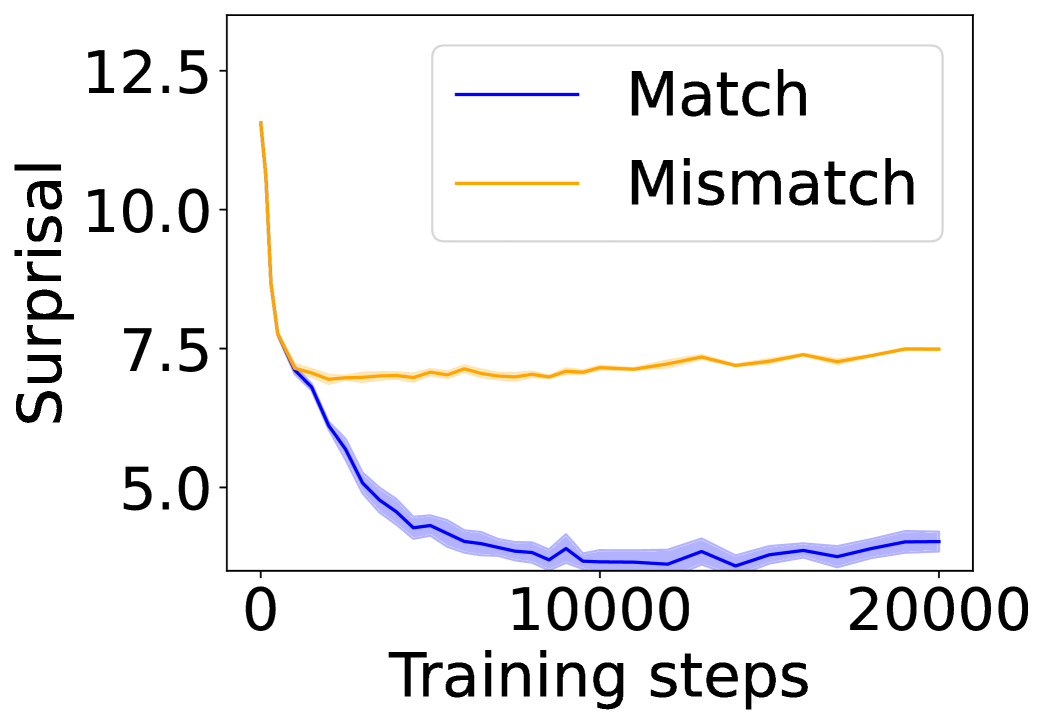

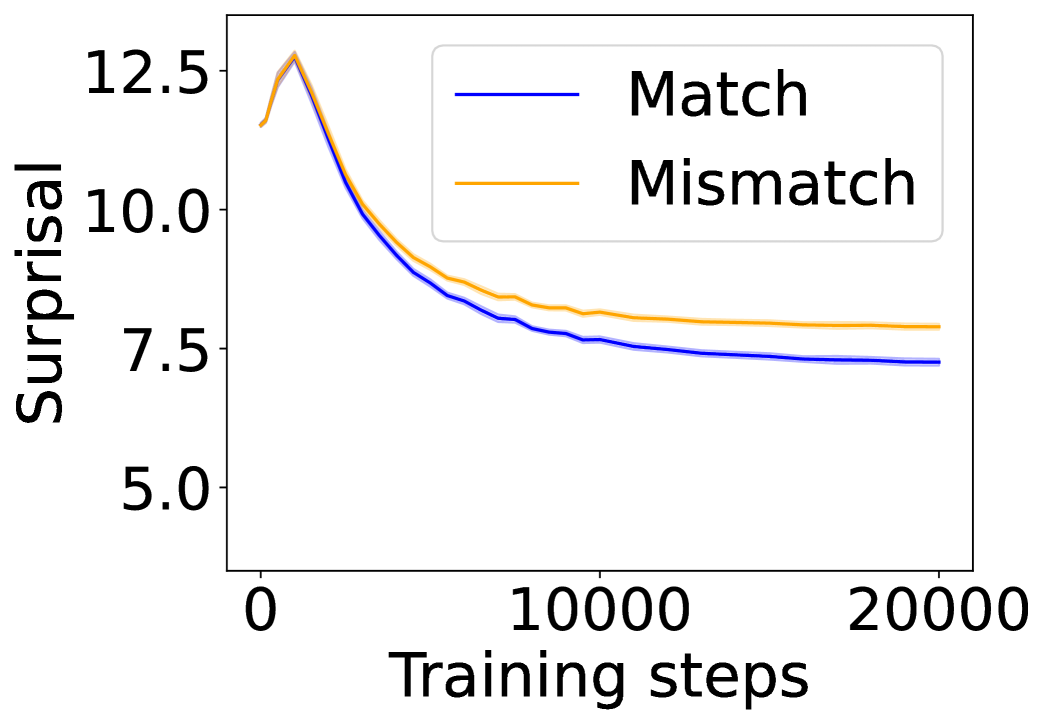

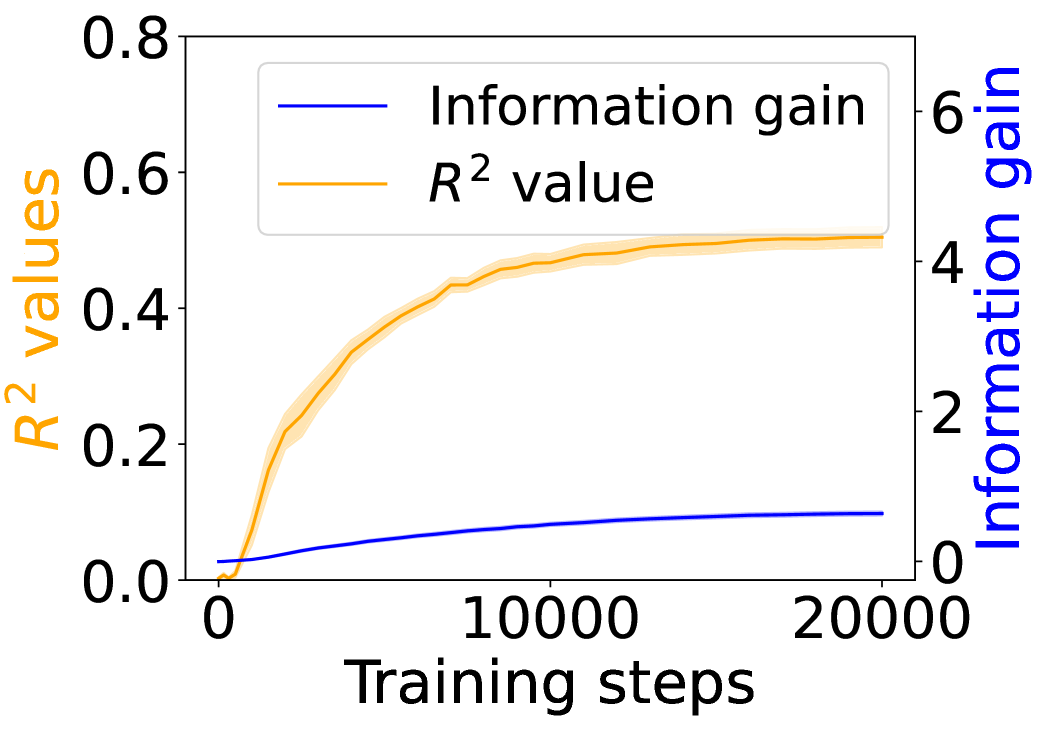

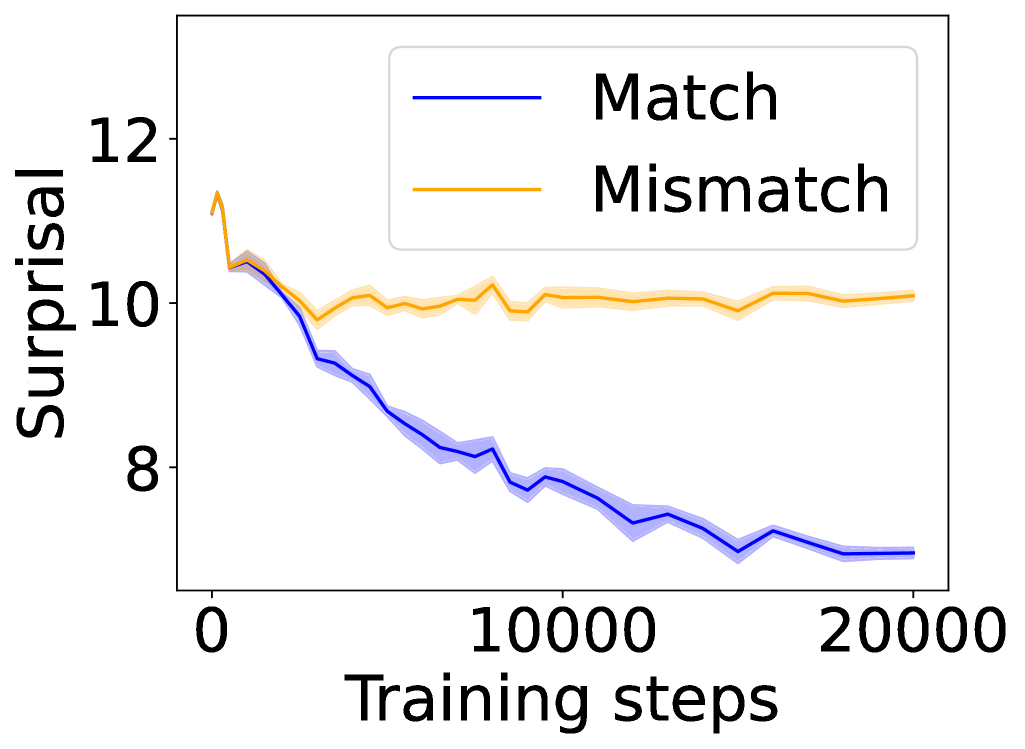

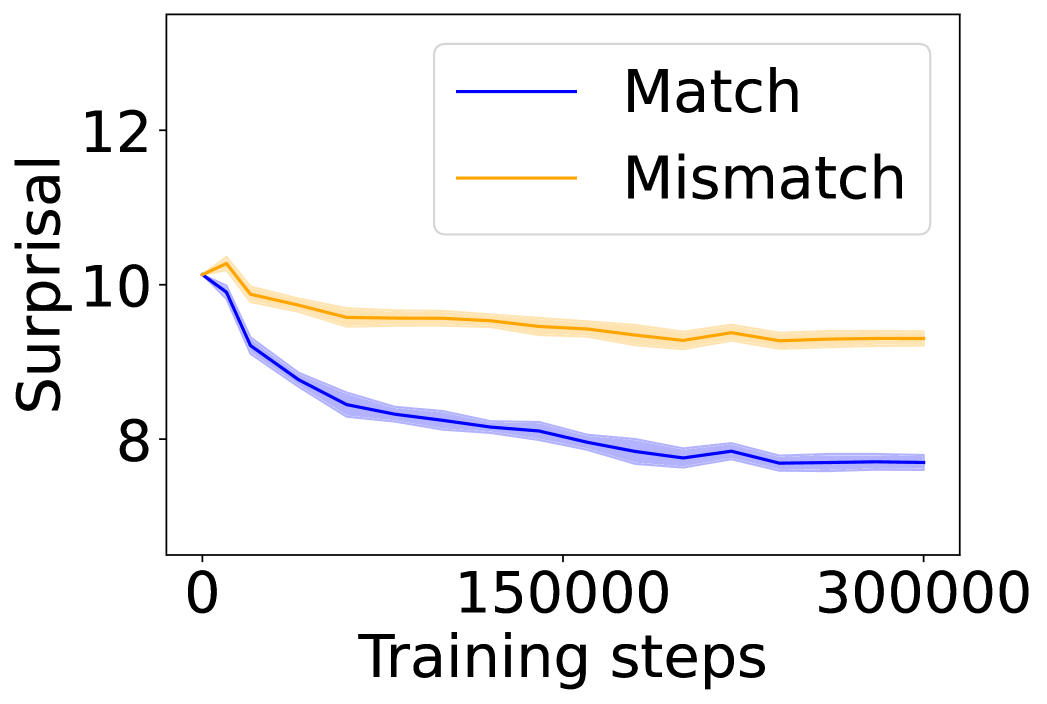

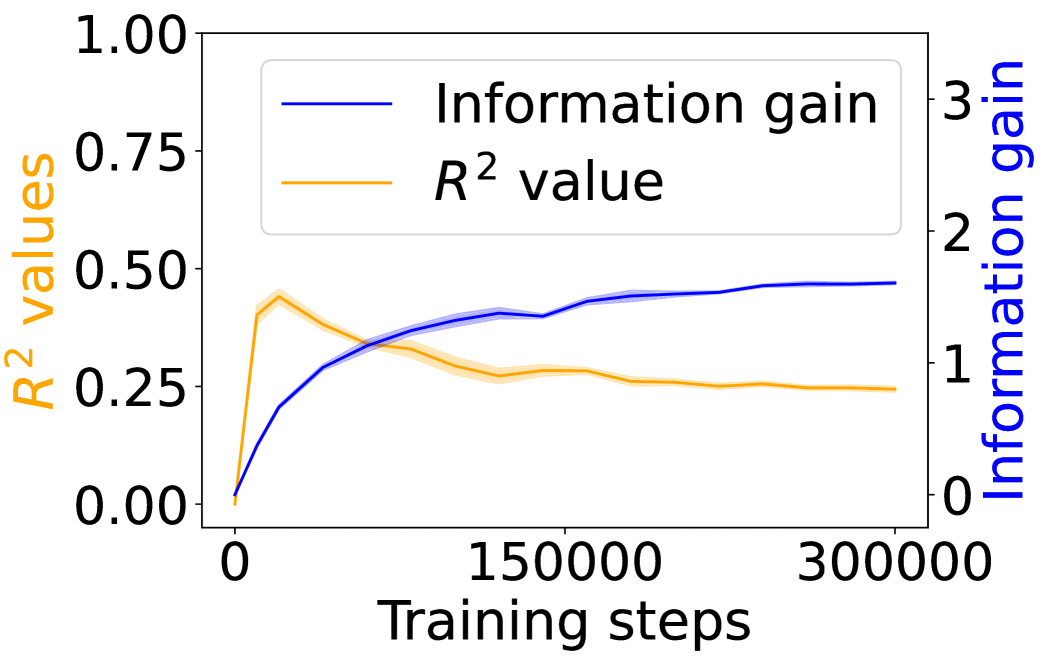

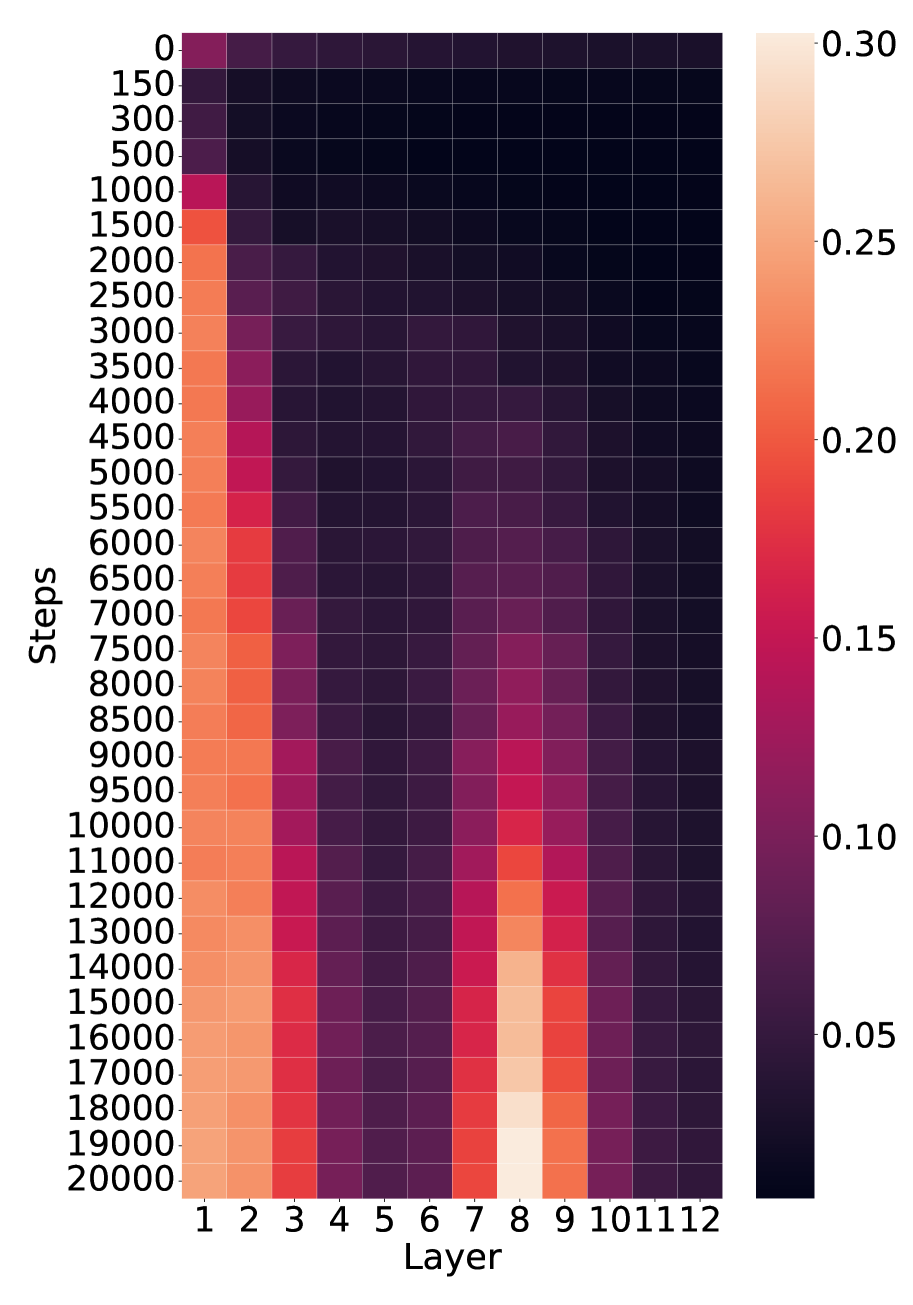

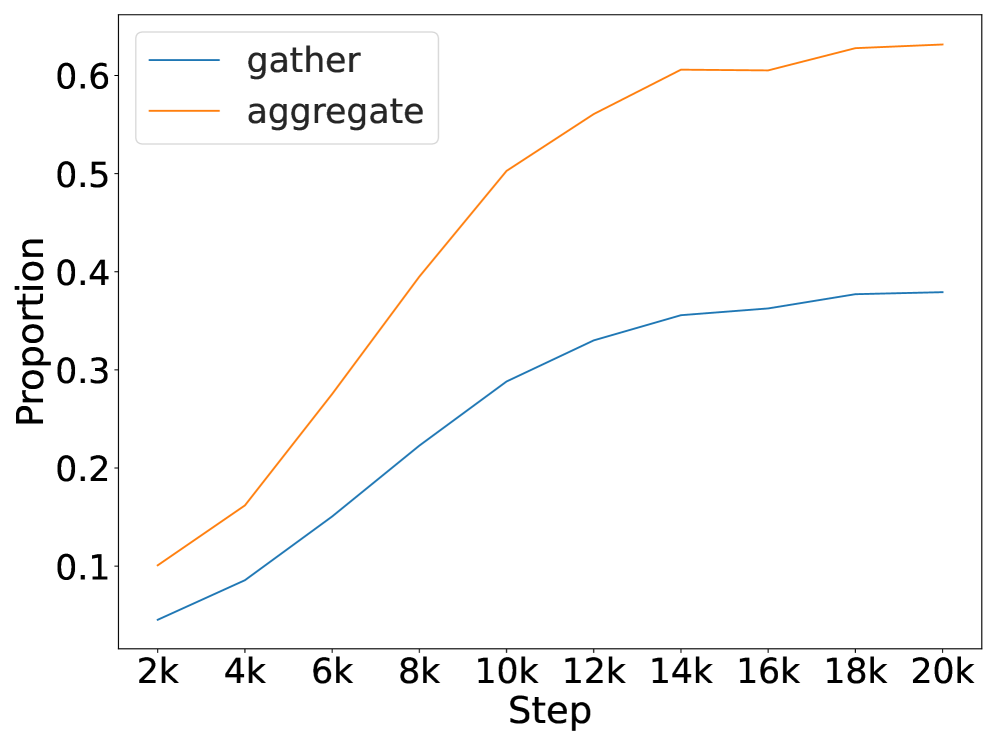

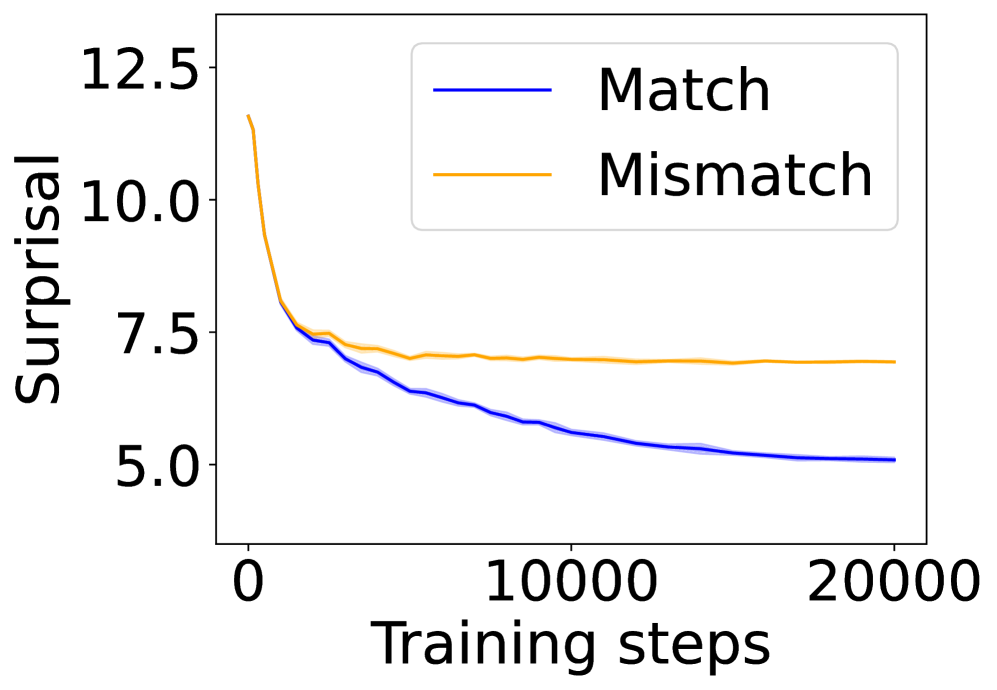

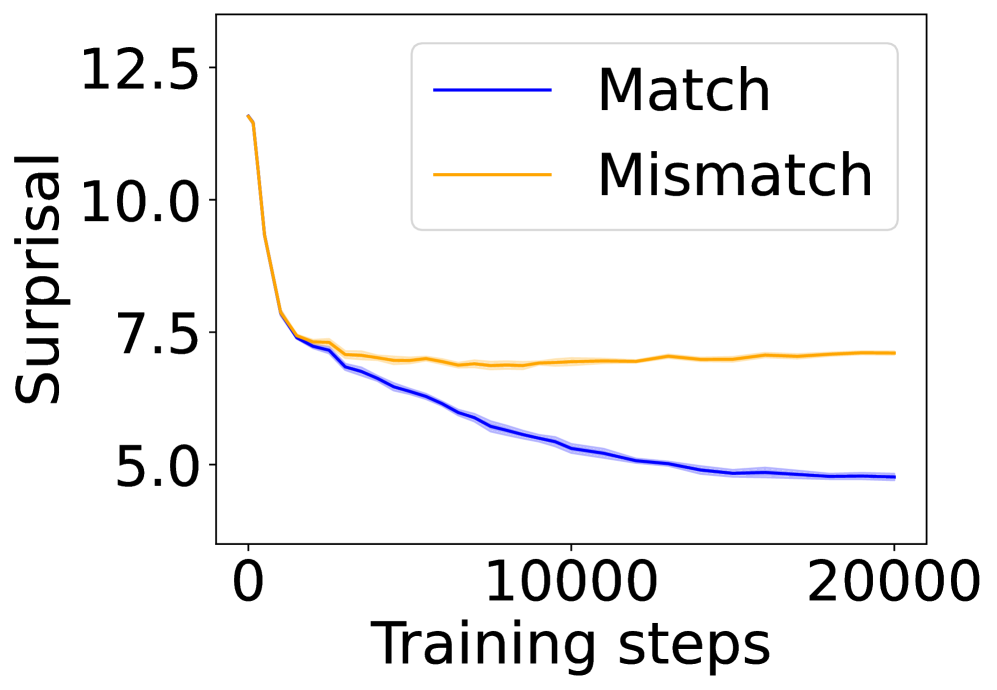

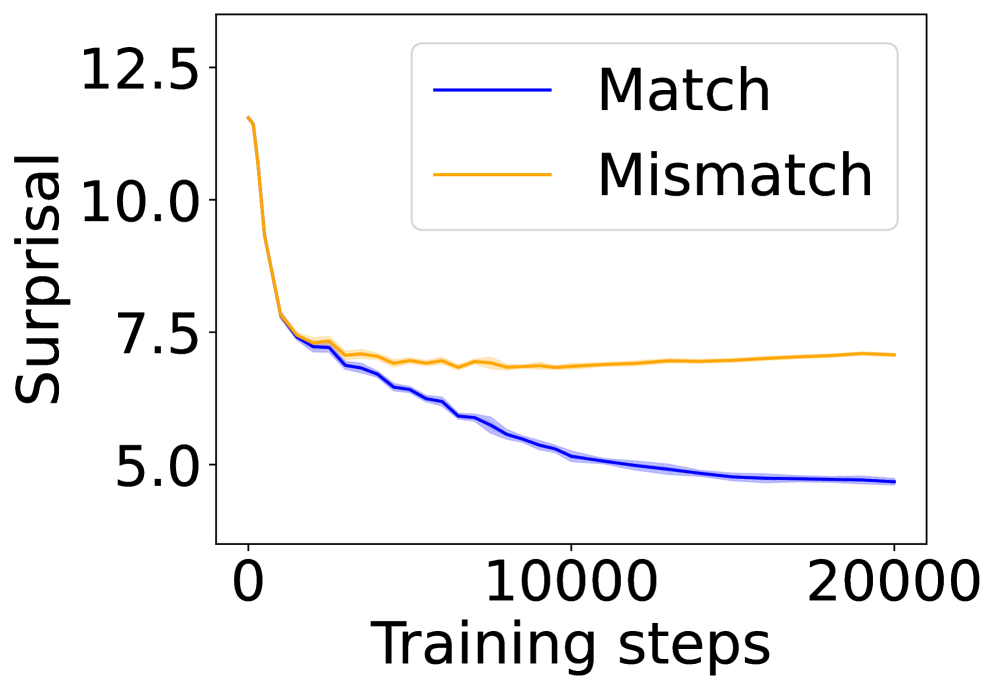

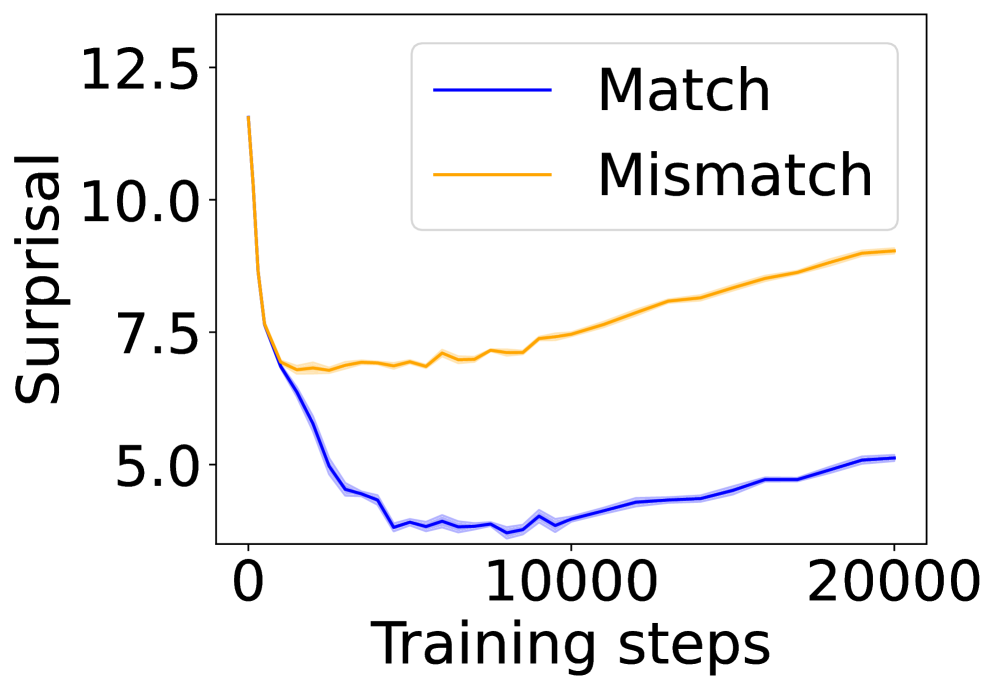

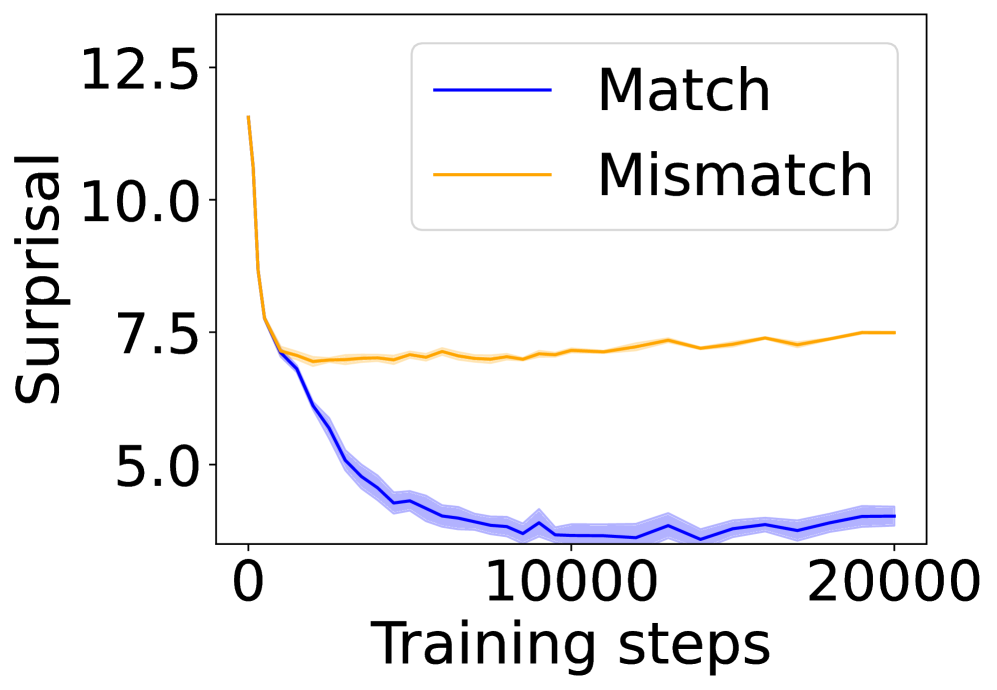

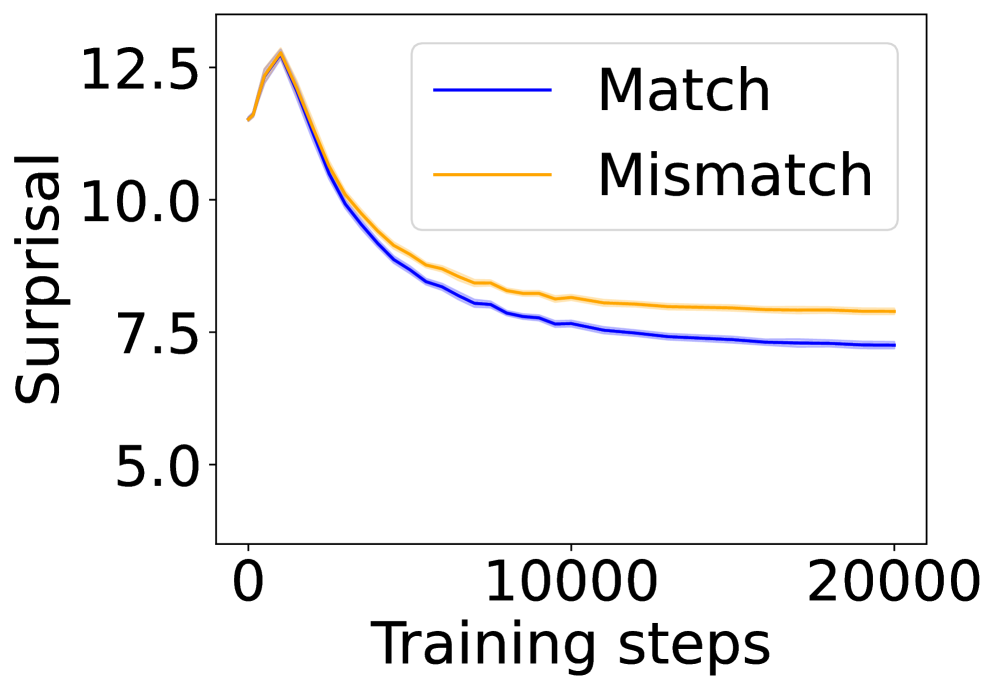

In this section, we ask: does symbol grounding emerge behaviorally in autoregressive LMs? We first test whether models show a systematic surprisal reduction when predicting a linguistic token whose environmental counterpart is in context (Figure 2, where the gap between the lines represents the grounding information gain). For Transformers (Figures 2(a) and 2(b)) and Mamba-2 (Figure 2(c)), surprisal in the match condition decreases steadily, while surprisal in the mismatch condition enters a high-surprisal plateau early, indicating that the models leverage environmental context to predict the linguistic form. In contrast, the unidirectional LSTM (Figure 2(d)) shows little separation between the conditions, reflecting the absence of grounding. Overall, these results provide behavioral evidence of emergent grounding: in sufficiently expressive architectures (Transformers and Mamba-2), the correct environmental context reliably lowers surprisal for its linguistic counterpart, whereas LSTMs fail to exhibit this effect, marking an architectural boundary on where grounding can emerge.
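As a rough illustration of the quantity plotted as the gap between the curves, the sketch below computes surprisal for a target token under the match and mismatch conditions and takes their difference. It assumes that the grounding information gain is the mismatch surprisal minus the match surprisal and that surprisal is measured in bits; the function names and the probabilities are hypothetical and are not taken from the paper.

```python
import math


def surprisal(prob: float) -> float:
    """Surprisal (in bits, an assumed unit) of a token with probability `prob`."""
    return -math.log2(prob)


def grounding_information_gain(p_match: float, p_mismatch: float) -> float:
    """Gap between mismatch and match surprisal for the same target token.

    Assumption: the gain is defined as surprisal(mismatch) - surprisal(match),
    i.e. how much the matching environmental context helps the prediction.
    """
    return surprisal(p_mismatch) - surprisal(p_match)


# Hypothetical model probabilities for one linguistic token.
p_match = 0.25        # environmental counterpart is in context
p_mismatch = 0.03125  # environmental counterpart is not in context

gain = grounding_information_gain(p_match, p_mismatch)
print(gain)  # 3.0
```

A gain near zero corresponds to overlapping curves, as described for the LSTM; a growing positive gain corresponds to the widening gap described for the Transformers and Mamba-2.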

### 4.2 Behavioral Effects Beyond Co-occurrence

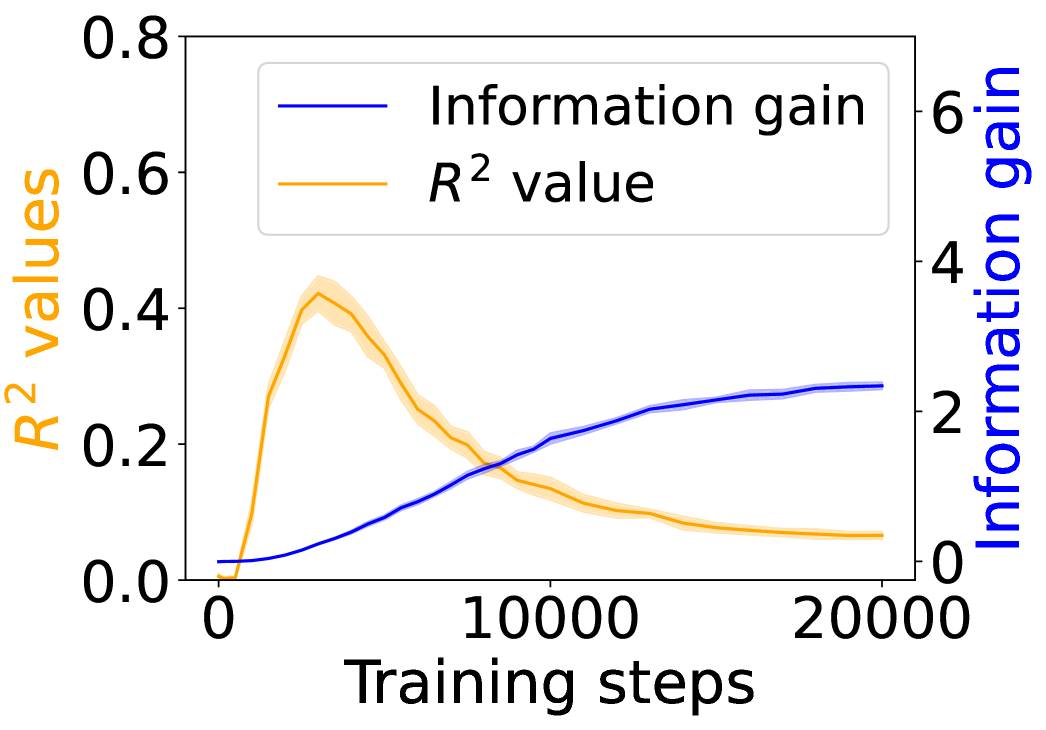

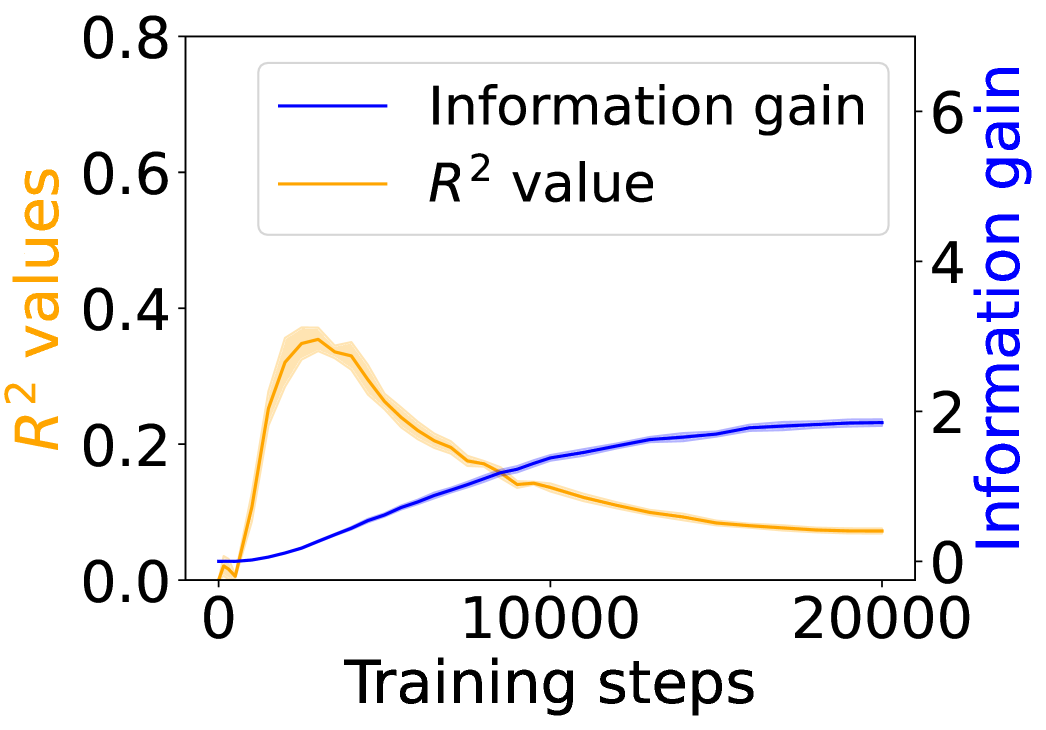

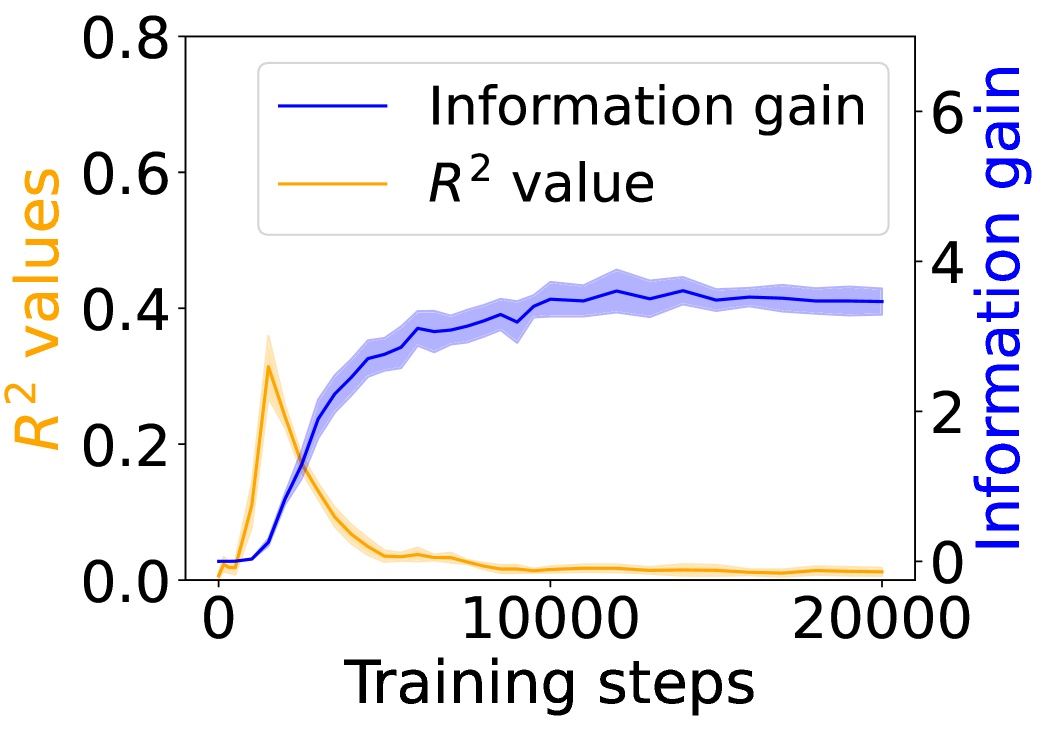

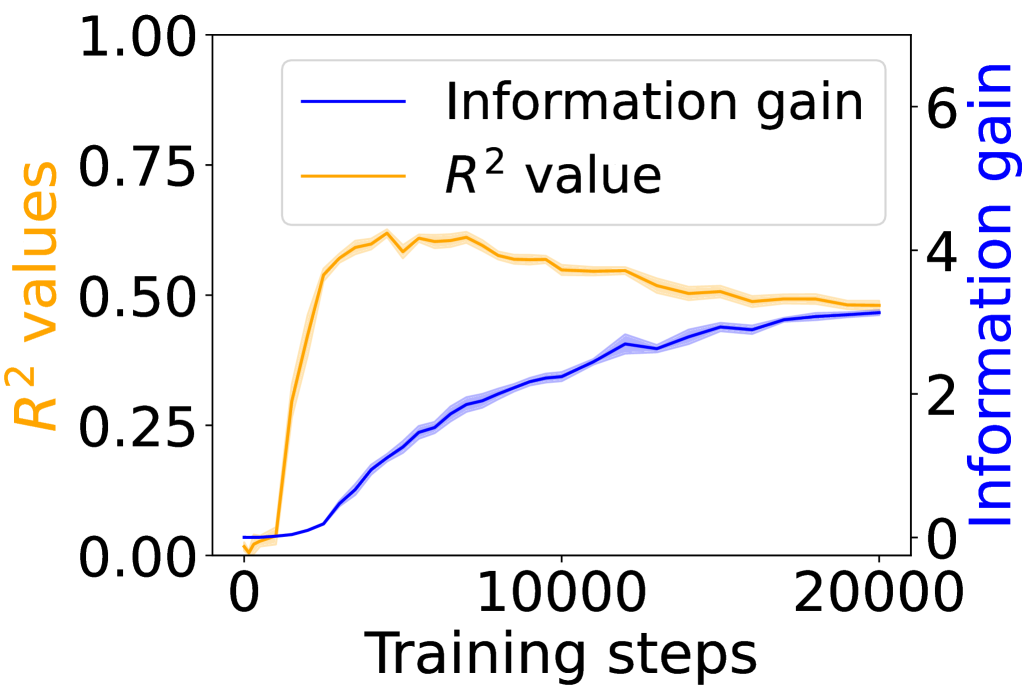

A natural concern is that the surprisal reductions might be fully explainable by shallow statistics: the models might have simply memorized frequent co-occurrences of $\langle$ ENV $\rangle$ and $\langle$ LAN $\rangle$ tokens, without learning a deeper and more general mapping. We test this hypothesis by comparing the tokens’ co-occurrence with the grounding information gain in the child-directed speech data.

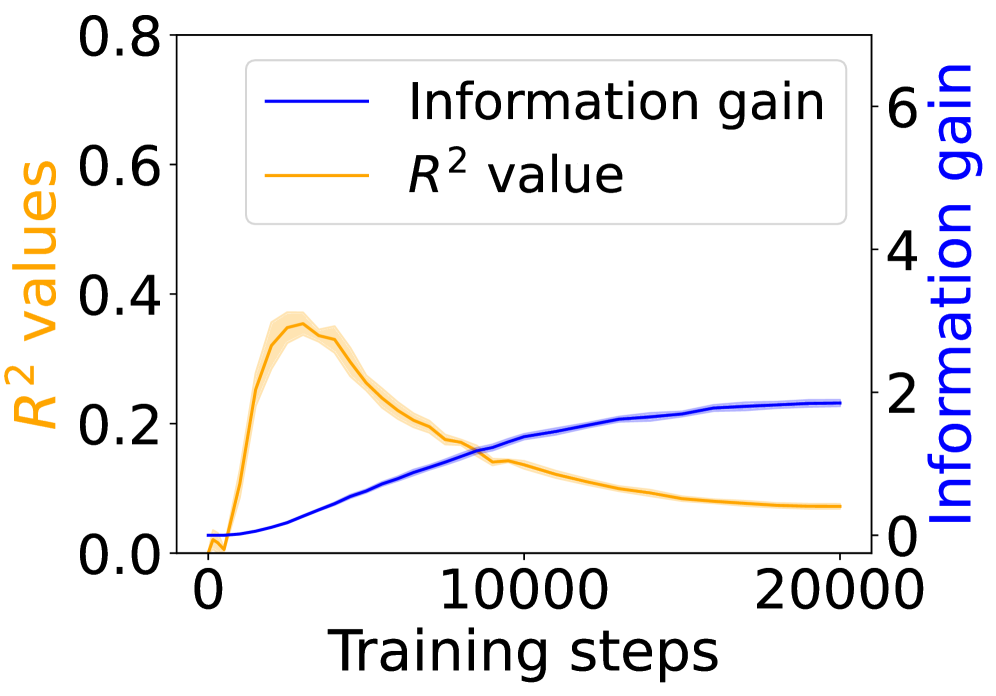

We define co-occurrence between the corresponding $\langle$ ENV $\rangle$ and $\langle$ LAN $\rangle$ tokens at the granularity of a 512-token training chunk. For each target word $v$ , we count the number of chunks in which both its $\langle$ ENV $\rangle$ and $\langle$ LAN $\rangle$ tokens appear. Following standard corpus-analysis practice, these raw counts are log-transformed. For each model checkpoint, we run linear regression between the log co-occurrence and the grounding information gain of words, obtaining an $R^{2}$ statistic as a function of training time.
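The procedure above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the token strings, the toy chunk size of 4 (the text uses 512), the use of `log1p` as the log transform, and all numeric values are hypothetical. For a simple linear regression with an intercept, $R^{2}$ equals the squared Pearson correlation, which is what `r_squared` computes.

```python
import math
from collections import Counter


def split_chunks(tokens, size=512):
    """Split a token sequence into consecutive chunks of at most `size` tokens."""
    return [tokens[i:i + size] for i in range(0, len(tokens), size)]


def cooccurrence_counts(chunks, pairs):
    """For each word, count the chunks that contain both its ENV and LAN token."""
    counts = Counter({word: 0 for word in pairs})
    for chunk in chunks:
        present = set(chunk)
        for word, (env_tok, lan_tok) in pairs.items():
            if env_tok in present and lan_tok in present:
                counts[word] += 1
    return counts


def r_squared(xs, ys):
    """R^2 of a simple linear regression of ys on xs (squared Pearson r)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    if sxx == 0 or syy == 0:
        return 0.0  # no variance in one variable: nothing to explain
    return sxy ** 2 / (sxx * syy)


# Toy corpus with hypothetical token names; chunk size 4 stands in for 512.
tokens = [
    "<ENV>book", "the", "<LAN>book", "is",
    "<ENV>book", "<ENV>ball", "a", "<LAN>ball",
    "<LAN>book", "red", "<ENV>book", "here",
]
pairs = {
    "book": ("<ENV>book", "<LAN>book"),
    "ball": ("<ENV>ball", "<LAN>ball"),
}
counts = cooccurrence_counts(split_chunks(tokens, size=4), pairs)

# Regression at one checkpoint, using hypothetical per-word values.
raw_counts = [3, 10, 40, 120]
info_gain = [0.2, 0.9, 1.1, 2.0]
log_counts = [math.log1p(c) for c in raw_counts]  # assumed log transform
r2 = r_squared(log_counts, info_gain)
```

Repeating the last step for every checkpoint yields $R^{2}$ as a function of training time.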

Figure 3 shows the $R^{2}$ values (orange) alongside the grounding information gain (blue) for different architectures. In both the Transformer and Mamba-2, $R^{2}$ rises sharply in the early steps but then declines, even as the grounding information gain continues to increase. These results suggest that grounding in Transformers and Mamba-2 cannot be fully accounted for by co-occurrence statistics: while models initially exploit surface co-occurrence regularities, later improvements in grounding diverge from these statistics, indicating reliance on richer and more complex features acquired during training. In contrast, the LSTM shows persistently increasing $R^{2}$ but little increase in grounding information gain over training steps, suggesting that it encodes co-occurrence but lacks the architectural mechanism to transform it into predictive grounding.

### 4.3 Visual Dialogue with Captions and Images

<details>

<summary>x12.png Details</summary>

### Visual Description

## Line Graph: Surprisal Trends Across Training Steps

### Overview

The image depicts a line graph comparing two data series labeled "Match" (blue) and "Mismatch" (orange) across 20,000 training steps. The y-axis measures "Surprisal" (a metric likely representing prediction uncertainty or error), while the x-axis tracks training progress. Both lines exhibit distinct trends, with the "Match" series declining sharply and the "Mismatch" series remaining relatively stable.

### Components/Axes

- **Y-axis (Surprisal)**: Labeled "Surprisal," scaled from 8 to 12 in increments of 1.

- **X-axis (Training steps)**: Labeled "Training steps," scaled from 0 to 20,000 in increments of 10,000.

- **Legend**: Positioned in the top-right corner, with:

- **Blue line**: "Match"

- **Orange line**: "Mismatch"

### Detailed Analysis

1. **Match (Blue Line)**:

- Starts at ~11.5 surprisal at 0 training steps.

- Declines steadily to ~7.0 surprisal by 20,000 steps.

- Exhibits minor fluctuations (e.g., slight dips at ~5,000 and ~15,000 steps).

- Shaded blue region (confidence interval?) narrows as training progresses.

2. **Mismatch (Orange Line)**:

- Begins at ~10.5 surprisal at 0 steps.

- Remains relatively flat (~10.0–11.0 surprisal) throughout training.

- Shows minor oscillations (e.g., peaks at ~3,000 and ~12,000 steps).

- Shaded orange region remains consistent in width.

### Key Observations

- The "Match" series shows a **steady decline** in surprisal: the model predicts the target token better over training when the matching context is present.

- The "Mismatch" series shows **no significant change**: without the matching context, surprisal stays high throughout training.

- Both lines start with overlapping surprisal values (~10.5–11.5) but diverge sharply after ~5,000 steps.

### Interpretation

Training lowers surprisal in the "Match" condition while surprisal in the "Mismatch" condition stays high. The widening gap between the two curves corresponds to the grounding information gain: the model increasingly uses the caption context to predict the matching linguistic token. A mismatched context does not carry that information, so the flat "Mismatch" curve is consistent with the model relying on the context rather than on the target word's prior alone.

</details>

(a) Surprisal curves (w/ caption).

<details>

<summary>x13.png Details</summary>

### Visual Description

## Line Graph: Surprisal vs Training Steps

### Overview

The image depicts a line graph comparing two data series ("Match" and "Mismatch") across 300,000 training steps. The y-axis measures "Surprisal" (8–12), while the x-axis tracks "Training steps" (0–300,000). A shaded band accompanies each line, indicating measurement uncertainty.

### Components/Axes

- **Y-axis (Surprisal)**: Linear scale from 8 to 12, with ticks at 8, 10, and 12.

- **X-axis (Training steps)**: Linear scale from 0 to 300,000, with ticks at 0, 150,000, and 300,000.

- **Legend**: Located in the top-right corner, with:

- **Blue line**: "Match" (solid blue)

- **Orange line**: "Mismatch" (solid orange)

- **Shaded regions**: Gray bands around each line represent 95% confidence intervals.

### Detailed Analysis

1. **Match (Blue Line)**:

- Starts at **10.0** (x=0) with a steep decline.

- Drops to **8.5** at 150,000 steps, then plateaus near **8.0** by 300,000 steps.

- Confidence interval narrows from ±0.5 at x=0 to ±0.2 at x=300,000.

2. **Mismatch (Orange Line)**:

- Begins at **10.0** (x=0) with a slight dip to **9.5** at 150,000 steps.

- Stabilizes at **9.3** by 300,000 steps, showing minimal change.

- Confidence interval remains consistent at ±0.3 throughout.

### Key Observations

- **Match** demonstrates a **20% reduction** in surprisal over training steps, while **Mismatch** shows only a **7% reduction**.

- The **blue line** (Match) exhibits a **non-linear decline**, with the steepest drop occurring in the first 50,000 steps.

- **Mismatch** maintains a **flat trajectory** after 150,000 steps, suggesting diminishing returns in training.

### Interpretation

In the "Match" condition, surprisal decreases substantially over training, while in the "Mismatch" condition it stays higher and changes little. The gap between the two curves corresponds to the grounding information gain: with image input, the model increasingly uses the matching context to predict the linguistic token. The narrowing band around the "Match" curve indicates less variability in its surprisal estimates as training progresses.

</details>

(b) Surprisal curves (w/ image).

<details>

<summary>x14.png Details</summary>

### Visual Description

## Line Graph: Training Metrics Over Steps

### Overview

The image depicts a line graph comparing two metrics—**Information gain** and **R² values**—across **20,000 training steps**. The graph uses dual y-axes: the left axis measures **R² values** (0–1), and the right axis measures **Information gain** (0–6). Both metrics show distinct trends over training steps.

---

### Components/Axes

- **X-axis**: Training steps (0 to 20,000, linear scale).

- **Left Y-axis**: R² values (0 to 1.00, increments of 0.25).

- **Right Y-axis**: Information gain (0 to 6, increments of 2).

- **Legend**: Located in the top-left corner, with:

- **Blue line**: Information gain.

- **Orange line**: R² value.

---

### Detailed Analysis

1. **Information gain (Blue line)**:

- Starts at **0** at step 0.

- Increases steadily, reaching **~4.5** by 20,000 steps.

- Slope is consistent, with minor fluctuations (e.g., slight dips around 10,000 steps).

2. **R² value (Orange line)**:

- Begins at **0** at step 0.

- Rises sharply to **~0.75** by ~5,000 steps.

- Plateaus around **~0.5** after 10,000 steps, with minor oscillations.

---

### Key Observations

- **Information gain** increases roughly linearly throughout training.

- **R² value** peaks early (~5,000 steps) and then falls back to ~0.5.

- The two metrics diverge: Information gain keeps growing after R² has stopped rising, so co-occurrence explains a shrinking share of the gain.

---

### Interpretation

The data suggests that as training progresses:

1. **Information gain** grows steadily: the caption context increasingly lowers surprisal for the matching linguistic token.

2. **R² value** is the fit of a linear regression between log co-occurrence and grounding information gain (Section 4.2). It peaks early and then falls back to ~0.5.

3. The divergence between the two metrics indicates that early gains track surface co-occurrence, while later gains are not explained by it.

This is the same pattern that Section 4.2 reports for the Transformer and Mamba-2.

</details>

(c) $R^{2}$ and information gain (w/ caption).

<details>

<summary>x15.png Details</summary>

### Visual Description

## Line Graph: Information Gain vs R² Value Over Training Steps

### Overview

The graph depicts two metrics—**Information gain** (blue line) and **R² value** (orange line)—plotted against **Training steps** (x-axis). The left y-axis represents **R² values** (0–1), while the right y-axis represents **Information gain** (0–3). The legend is positioned in the top-left corner, with blue and orange lines corresponding to their respective metrics.

---

### Components/Axes

- **X-axis**: Training steps (0 to 300,000, linear scale).

- **Left Y-axis**: R² values (0 to 1, linear scale).

- **Right Y-axis**: Information gain (0 to 3, linear scale).

- **Legend**: Top-left corner, with blue = Information gain, orange = R² value.

---

### Detailed Analysis

#### R² Value (Orange Line)

- **Initial trend**: Starts near **0.4** at 0 training steps, peaks at **~0.45** around 50,000 steps, then declines steadily.

- **Final value**: Stabilizes at **~0.25** by 300,000 steps.

- **Visual trend**: Slopes downward after the initial peak, with minor fluctuations.

#### Information Gain (Blue Line)

- **Initial trend**: Begins near **0** at 0 training steps, rises sharply to **~2.5** by 300,000 steps.

- **Visual trend**: Consistently upward-sloping with no plateaus or declines.

#### Key Intersection

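- The two lines cross visually at **~50,000 training steps**. Because the metrics are plotted on different y-axes (R² on 0–1, Information gain on 0–3), the crossing point depends on the axis scaling and does not mean that the two values are equal.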

---

### Key Observations