# REAP the Experts: Why Pruning Prevails for One-Shot MoE compression

**Authors**:

- Yani Ioannou, Vithursan Thangarasa (Cerebras Systems Inc., Schulich School of Engineering, University of Calgary)

Abstract

Sparsely-activated Mixture-of-Experts (SMoE) models offer efficient pre-training and low latency but their large parameter counts create significant memory overhead, motivating research into expert compression. Contrary to recent findings favouring expert merging on discriminative benchmarks, we demonstrate that expert pruning is a superior strategy for generative tasks. We prove that merging introduces an irreducible error by causing a “functional subspace collapse”, due to the loss of the router’s independent, input-dependent control over experts. Leveraging this insight, we propose Router-weighted Expert Activation Pruning (REAP), a novel pruning criterion that considers both router gate-values and expert activation norms. Across a diverse set of SMoE models ranging from 20B to 1T parameters, REAP consistently outperforms merging and other pruning methods on generative benchmarks, especially at 50% compression. Notably, our method achieves near-lossless compression on code generation and tool-calling tasks with Qwen3-Coder-480B and Kimi-K2, even after pruning 50% of experts. Correspondence to mklasby@ucalgary.ca & vithu@cerebras.net $\dagger$ Work completed while on internship at Cerebras

|

<details>

<summary>x1.png Details</summary>

### Visual Description

Icon/Small Image (25x24)

</details>

| https://github.com/CerebrasResearch/reap |

| --- | --- |

|

<details>

<summary>x2.png Details</summary>

### Visual Description

Icon/Small Image (26x24)

</details>

| https://hf.co/cerebras/Qwen3-Coder-REAP-363B-A35B-FP8 |

|

<details>

<summary>x3.png Details</summary>

### Visual Description

Icon/Small Image (26x24)

</details>

| https://hf.co/cerebras/Qwen3-Coder-REAP-246B-A35B-FP8 |

1 Introduction

Interest in the Sparsely-activated Mixture-of-Experts (SMoE) architecture for Large Language Models (LLMs) surged following the release of DeepSeek-V3 (DeepSeek-AI et al., 2024) and other high-quality open-weight SMoE LLMs (Jiang et al., 2024; Meta AI Team, 2025; Yang et al., 2025a; Zeng et al., 2025; Baidu, 2025; Kimi Team et al., 2025). Compared to dense models, the SMoEs offer lower latency and more efficient pre-training (Fedus et al., 2022). However, SMoEs require more parameters than dense models to achieve similar accuracy, resulting in significant memory overhead. Further, expert usage imbalance during inference causes poor accelerator utilization, leading to increased latency or compromises such as dropped tokens (Balmau et al., 2025). Expert usage imbalance also represents an opportunity, motivating prior work which investigates whether experts can be compressed without negatively impairing accuracy (Li et al., 2023; Lu et al., 2024). By eliminating or compressing redundant experts, memory overhead is reduced. A more uniform distribution of expert usage would also improve hardware utilization. Expert compression is particularly valuable for use cases which feature small batch sizes such as local deployments and academic research.

Initial expert compression efforts focused on expert pruning, the removal of experts in their entirety. However, expert pruning is a strong intervention on the model’s weights. Techniques such as quantization, low-rank compression, and expert merging also offer memory savings but maintain a lossy representation of the less important experts. Crucially, expert merging has recently been demonstrated to outperform expert pruning when evaluated with perplexity and on Multiple Choice (MC) question answering benchmarks (Li et al., 2023; Liu et al., 2024b). However, an evaluation comparing these methods on generative benchmarks has yet to be conducted. In this work, we demonstrate that — when paired with a suitable saliency criterion — expert pruning outperforms expert merging, particularly on generative benchmark tasks such as code generation, creative writing, and mathematical reasoning. Specifically, our main contributions are as follows:

- We prove that expert merging introduces irreducible error due to the loss of the router’s independent, input-dependant modulation of the expert outputs resulting in functional subspace collapse, substantially reducing the functional output space of the compressed SMoE layer. In contrast, in expert pruned SMoEs the router maintains independent control over the remaining experts;

- We introduce Router-weighted Expert Activation Pruning (REAP), a novel expert pruning saliency criterion, which selects experts to prune which contribute minimally to the layer output by considering both the router gate-values and average activation norm of the experts;

- Across diverse SMoE architectures ranging from 20B to 1T parameters and a suite of generative evaluations, we demonstrate the significant and consistent advantage of REAP over existing expert pruning and merging approaches, particularly at 50% compression. Notably, our method achieves near-lossless compression on code generation tasks after pruning 50% of experts from Qwen3-Coder-480B and Kimi-K2;

- We open-source our code and select compressed model checkpoints to facilitate further research on compressed SMoEs and their applications.

2 Related Work

Sparsely activated SMoE architecture.

A Mixture-of-Experts (MoE) layer is comprised of multiple, specialized feed-forward subnetworks known as experts and a router which produces gate-values (i.e., gates) to dynamically modulate the output of the experts based on the input. The architecture was revived in the deep learning era by the introduction of the SMoE by Shazeer et al. (2017). SMoEs layers only select a subset of experts to use for each input, enabling massive scaling of model parameters without a commensurate increase in computational cost (Lepikhin et al., 2021; Fedus et al., 2022). In transformer-based LLMs, SMoE layers are integrated by replacing the traditional feed-forward layers. Further innovations such as auxiliary-loss-free load balancing (DeepSeek-AI et al., 2024), shared experts, and fined-grained experts (Dai et al., 2024) have propelled SMoE architectures to become the de facto standard for LLMs in recent months.

Expert pruning.

Although SMoE layers effectively decouple total model parameters from inference costs, the memory overhead of storing large SMoEs restricts their deployment in resourced-constrained environments, motivating research in expert pruning to reduce total number of parameters. Early efforts demonstrated that progressively pruning experts based on router weights during fine-tuning until a single expert remained could preserve model quality in task-specific settings (Chen et al., 2022). Koishekenov et al. (2023) found expert pruning to be effective without further fine-tuning despite aggressively pruning up to 80% of experts. Muzio et al. (2024) found that global pruning using gate-values as a saliency criterion was more effective than uniform, layer-wise frequency-based pruning. Other sophisticated pruning criteria have been proposed: Lu et al. (2024) introduced an exhaustive search strategy which prunes experts that minimize the reconstruction loss between the original and pruned layer outputs; Liu et al. (2024a) used a gradient-free evolutionary algorithm to prune experts. Both of these works demonstrated significant improvements over naive frequency-based pruning. A comprehensive evaluation of 16 diverse pruning criteria was conducted by Jaiswal et al. (2025). Expert Activation Norm (EAN) was empirically found to be the highest performing criterion and the benefits of iterative pruning were presented.

Expert merging.

While the above-noted works prove that expert compression is feasible via pruning, an alternative compression technique is to merge experts. Generally, merging requires both a clustering algorithm and a merging technique. Li et al. (2023) introduced Merge Sparse Mixture of Experts (M-SMoE) which first initializes expert cluster centres by identifying the dominant experts with the highest usage frequency globally across all layers. The remaining non-dominant experts are clustered based on the cosine similarity of router logits. Finally, experts weights are aligned via permutation with the weight matching algorithm (Ainsworth et al., 2023) and merged using frequency-weighted parameter averaging. Li et al. (2023) found that their technique outperformed Chen et al. ’s (2022) pruning method on MC benchmarks. Chen et al. (2025) proposed Hierarchical Clustering for Sparsely activated Mixture of Experts (HC-SMoE). HC-SMoE clusters experts based on the euclidean similarity of their representative vectors — the average activation of each expert measured on every token in a calibration dataset — using hierarchical agglomerative clustering. Similar to M-SMoE, HC-SMoE uses frequency-weighted parameter averaging to merge clusters into a single merged expert. Without any fine-tuning, Chen et al. (2025) found that their technique outperformed expert pruning based on router logits (He et al., 2025a), frequency, and Lu et al. ’s (2024) method when benchmarked on a suite of MC question answering tasks.

Other compression techniques.

In addition to pruning and merging, experts may be compressed through quantization (Huang et al., 2025), low-rank decomposition (Yang et al., 2024a; Gu et al., 2025; He et al., 2025b), weight sparsity (He et al., 2025a), or a combination of any of the above techniques (Liu et al., 2025). These other approaches are orthogonal to expert pruning and merging; however, note that expert merging necessitates re-quantization for block quantization formats that share common scaling coefficients across a group of weights.

Model merging.

Model merging aims to combine parameters from multiple trained neural networks and has been rapidly adopted as a cost-effective way to improve model quality across diverse domains. The initial motivation for merging was based on the finding that mode connectivity exists between the loss landscapes of two or more trained neural networks, enabling interpolation of their parameters without incurring an increase in loss (Garipov et al., 2018; Ainsworth et al., 2023; Ito et al., 2024). Simple parameter averaging remains an effective technique; however, more sophisticated strategies based on task vectors have also been proposed to minimize interference in the merged model parameters (Ilharco et al., 2023; Yadav et al., 2023; Yu et al., 2024). Much of the existing literature focuses on the setting in which multiple fine-tunes of a single checkpoint are merged. Non-local merging in which the models do not share a common checkpoint is more closely related to expert merging. Sharma et al. (2024) found that re-scaling of model activations was necessary to achieve high-quality non-local merging.

LLM evaluation.

Evaluating LLMs is challenging; prior work demonstrated that simple metrics such as perplexity can be misleading when used to evaluate compressed LLMs (Jaiswal et al., 2024). MC benchmarks typically measure the log-likelihood of answer tokens to determine a model’s response to a question (Gao et al., 2023; Chandak et al., 2025). As such, each response choice is evaluated in a single forward pass, without any tokens being generated by the model. Perplexity and MC accuracy can therefore be viewed as discriminative metrics. In contrast, generative benchmarks require the model to output a response, more closely corresponding with real-world use-cases of LLMs. Tasks such as code generation, mathematical reasoning with structured outputs, and creative writing are examples of generative benchmarks.

3 Merging Experts Causes Functional Subspace Collapse

Setup.

To motivate our proposed expert pruning method, we first formally develop the expected errors of both expert merging and pruning. Consider a SMoE layer with $K$ experts $f_{1},...,f_{K}$ , each a function $f_{k}:\mathbb{R}^{d}→\mathbb{R}^{d}$ , and a router producing non-negative gates $\mathbf{g(x)}=(g_{1}(x),...,g_{K}(x))∈\Delta^{K-1}$ . Top- $k$ routing is achieved by zeroing all but the largest $k$ gates. The output of the original layer is

$$

h(x):=\sum_{k=1}^{K}g_{k}(x)\,f_{k}(x). \tag{1}

$$

Two operations at fixed compression.

To analyse the fundamental difference between compression operations, we focus on the elementary case of reducing two experts, $(f_{i},f_{j})$ , to one. This pairwise analysis is the building block for any larger merge within a cluster. Pruning removes expert $j$ and re-normalizes the router outputs over the remaining $K-1$ experts, producing a new set of gates $\bar{g}(x)$ . Merging replaces $(f_{i},f_{j})$ with a new expert $\tilde{f}$ . Existing one-shot expert merging methods such as HC-SMoE and M-SMoE sum the gates for the original experts $g_{i}(x)+g_{j}(x)$ . The pruned, $\bar{h}(x)$ , and merged, $\tilde{h}(x)$ , layer outputs are

$$

\bar{h}(x):=\sum_{k\neq j}\bar{g}_{k}(x)\,f_{k}(x), \tag{2}

$$

$$

\tilde{h}(x):=\sum_{k\neq i,j}g_{k}(x)f_{k}(x)+\big(g_{i}(x)+g_{j}(x)\big)\tilde{f}(x). \tag{3}

$$

3.1 Merging induces an input-dependent target a single expert cannot realize

Define the router’s input-dependent mixing ratio $r(x):=\frac{g_{i}(x)}{g_{i}(x)+g_{j}(x)}∈[0,1]$ on the set where $g_{i}+g_{j}>0$ . Substituting $g_{i}(x)$ and $g_{j}(x)$ in terms of $r(x)$ , the original contribution of the pair $(i,j)$ can be written as

$$

\displaystyle g_{i}(x)f_{i}(x)+g_{j}(x)f_{j}(x) \displaystyle=\big[r(x)(g_{i}(x)+g_{j}(x))\big]f_{i}(x)+\big[(1-r(x))(g_{i}(x)+g_{j}(x))\big]f_{j}(x) \displaystyle=\big(g_{i}(x)+g_{j}(x)\big)\underbrace{\Big(r(x)f_{i}(x)+\big(1-r(x)\big)f_{j}(x)\Big)}_{\text{The ideal, input-dependent target expert}}. \tag{4}

$$

After merging, the router must apply the summed gate, $g_{i}(x)+g_{j}(x)$ , to a constant convex combination of the constituent experts which is independent of $x$ . The core issue is that the merged model is forced to approximate the dynamic, input-dependent target expert with a static one. The following theorem quantifies this unavoidable approximation error.

**Theorem 1 (Irreducible error of merging)**

*Let $\tilde{f}_{\alpha}(x)=\alpha f_{i}(x)+(1-\alpha)f_{j}(x)$ with a constant $\alpha∈[0,1]$ and define $\Delta_{ij}:=f_{i}(x)-f_{j}(x)$ . The $L^{2}$ error of the merged pair is minimized when $\alpha$ is chosen to be the expected mixing ratio, $\alpha^{\star}:=\mathbb{E}[r(x)]$ . Omitting the argument $(x)$ for brevity, this minimal error is

$$

\displaystyle\big\|\,\big(g_{i}{+}g_{j}\big)\!\big(rf_{i}{+}(1{-}r)f_{j}\big)\;-\;\big(g_{i}{+}g_{j}\big)\!\big(\alpha f_{i}{+}(1{-}\alpha)f_{j}\big)\big\|^{2} \displaystyle=\underbrace{\mathbb{E}\!\left[(g_{i}{+}g_{j})^{2}\right]}_{\text{router scale}}\cdot\underbrace{\mathrm{Var}[r(x)]}_{\text{policy variability}}\cdot\underbrace{\|\Delta_{ij}\|^{2}}_{\text{expert gap}}. \tag{5}

$$

In particular, if the router’s policy is not constant ( $\mathrm{Var}[r(x)]>0$ ) and the experts are not functionally identical ( $\|\Delta_{ij}\|>0$ ), then every constant- $\alpha$ merge incurs strictly positive excess risk.*

* Proof*

The error term simplifies to $\big\|\big(g_{i}{+}g_{j}\big)\big(r-\alpha\big)\Delta_{ij}\big\|^{2}$ . Assuming independence between the router policy and expert functions, this is proportional to $\mathbb{E}[(r-\alpha)^{2}]$ . This is a standard least-squares problem minimized when $\alpha=\mathbb{E}[r]$ , and the minimal value is $\mathrm{Var}[r]$ . ∎

Consequences.

Theorem 1 illustrates that merging with summed gates is fundamentally flawed whenever (i) the router has learned an input-dependent policy for mixing two experts ( $\mathrm{Var}[r]>0$ ), and (ii) the experts are themselves distinct ( $\|\Delta_{ij}\|>0$ ). Any fixed $\alpha$ cannot overcome the irreducible error bound established in equation 5.

3.2 Pruning preserves independent control

Pruning removes one function but importantly does not tie the remaining gates. The router still modulates each surviving expert independently. In contrast, merging removes a degree of freedom in the policy by replacing individual experts with their mergers. For a direct comparison under no fine-tuning, pruning expert $j$ and reallocating its gate-value to expert $i$ produces the error

$$

\big\|(g_{i}(x)f_{i}(x)+g_{j}(x)f_{j}(x))-(g_{i}(x){+}g_{j}(x))f_{i}(x)\big\|^{2}\;=\;\mathbb{E}\!\left[g_{j}(x)^{2}\,\|\Delta_{ij}(x)\|_{2}^{2}\right]. \tag{6}

$$

Unlike equation 5, equation 6 does not penalize policy variability, the router still controls surviving experts independently. Whenever the router substantially mixes $i$ and $j$ (large $\mathrm{Var}[r]$ ) while the pruned expert $j$ has a small average gate-value ( $\mathbb{E}[g_{j}^{2}]$ ), pruning admits a strictly smaller error than merging.

Synthesis.

Theorem 1 establishes that summed gate merging incurs an irreducible error directly proportional to the router’s policy variability ( $\mathrm{Var}[r(x)]$ ). In contrast, the error from pruning a low-usage expert (Eq. 6) is proportional to its gate-value ( $\mathbb{E}[g_{j}^{2}]$ ) and is insensitive to policy variability. Therefore, when the router actively mixes between two distinct experts, merging is fundamentally disadvantaged.

Remarks.

(i) The constant-mixture model $\tilde{f}_{\alpha}$ is mathematically related to the frequency weighted parameter averaging merge used in practice. (ii) Even if $\tilde{f}$ was dependent on $x$ , the router after merging cannot independently modulate the two latent functions, so the original policy is invalidated. (iii) With top-k routers, the specific irreducible error from policy variability ( $\mathrm{Var}[r(x)]$ ) is generated exclusively on the support where both experts are selected. Outside that support, this component vanishes, leaving only a static error term that depends on the functional expert gap. (iv) See Appendix ˜ A for an extension of the above analysis to hierarchical clustering.

3.3 Empirical evidence for loss of independent control

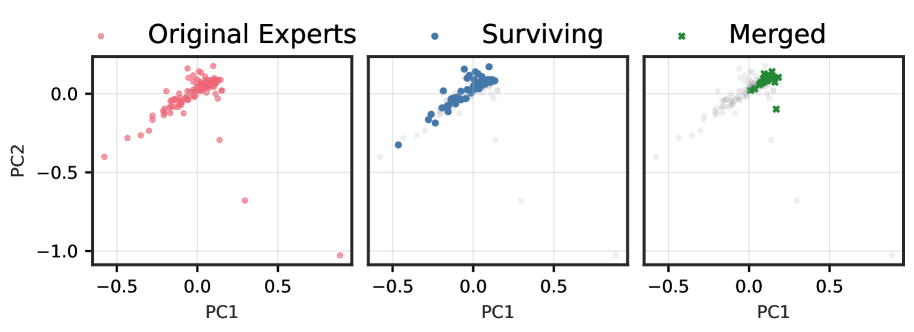

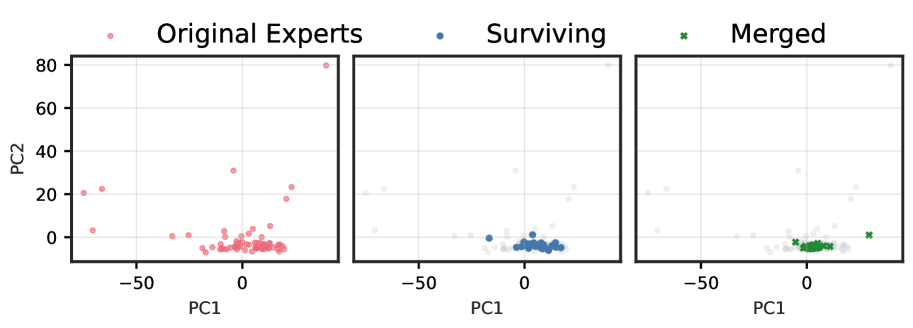

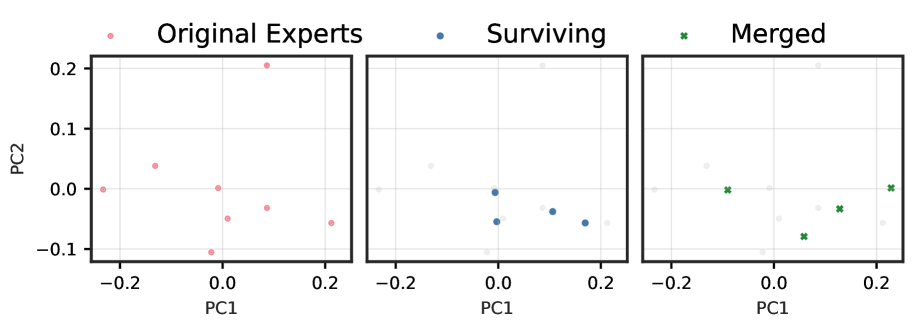

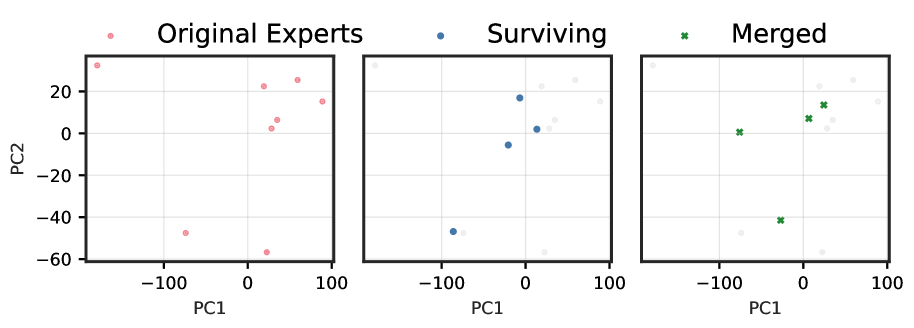

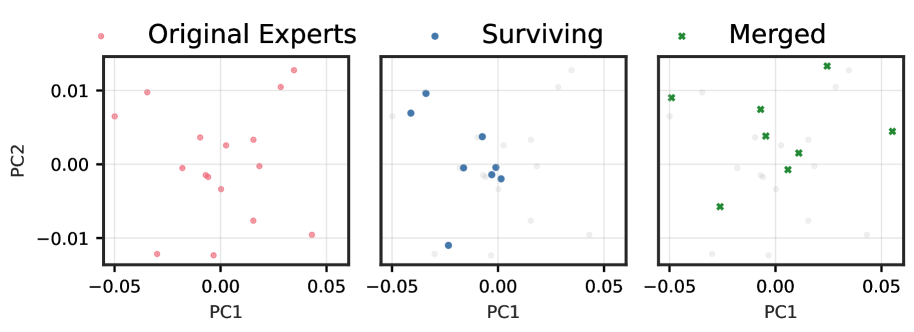

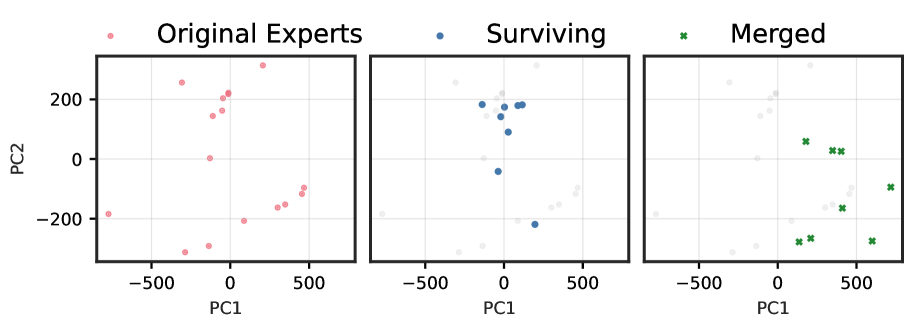

Setup.

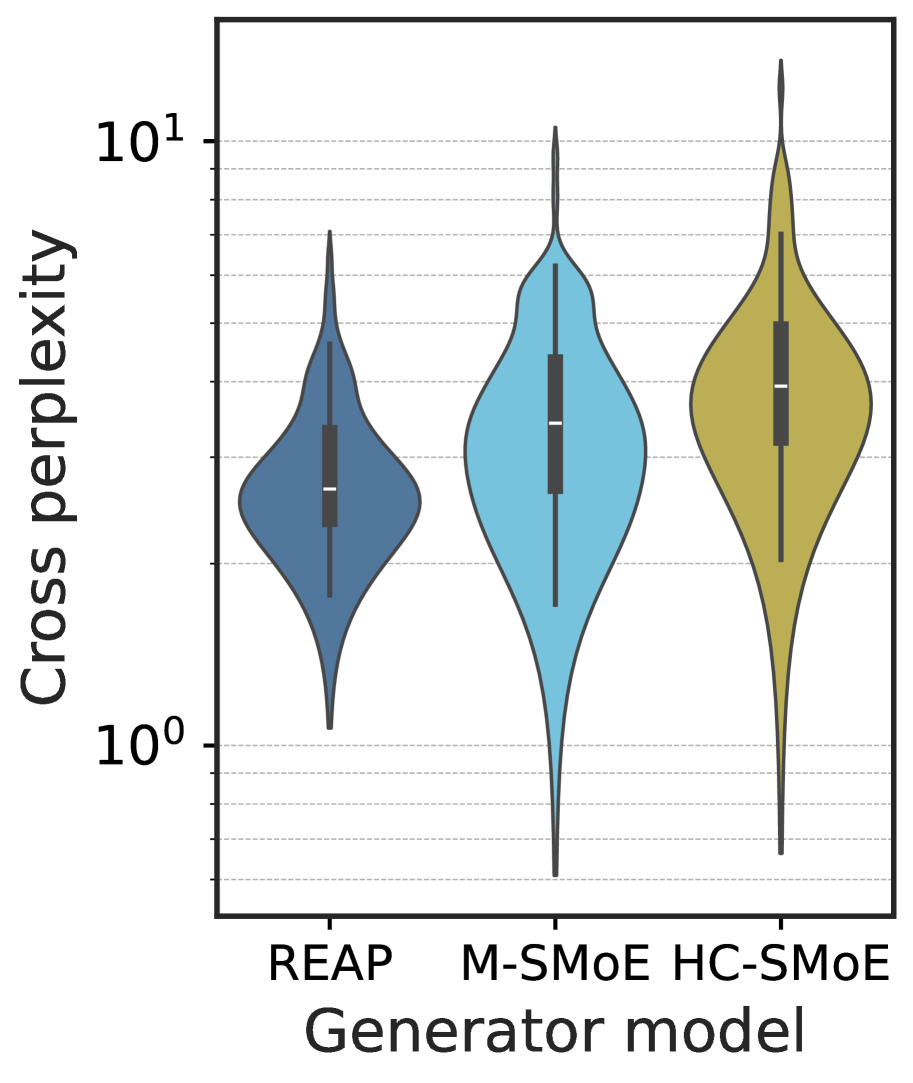

We analyse the functional subspaces of expert outputs across four diverse state-of-the-art SMoE architectures by recording mean expert activations from 32 samples of 2048 tokens from the c4 dataset (Raffel et al., 2020). By projecting expert activations onto their first two principal components, we visualize how pruning and merging affect the learned representations. See Appendix ˜ B for additional discussion.

Early vs. late behaviour.

Figures ˜ 1 and A4 demonstrate a striking progression of functional collapse from early to late layers across all architectures. In early layers, the original experts form relatively compact manifolds with moderate spread. After pruning, the surviving experts maintain their positions on the original manifold, preserving its geometric structure with reduced density. In contrast, merging produces a visible contraction toward the manifold’s centre. The contrast becomes dramatic in late layers, where experts are more specialized, and in high granularity architectures with many experts per layer.

The progression from early to late layers validates our theoretical prediction that the irreducible error is proportional to $\mathrm{Var}[r(x)]$ . Early layers, which typically learn more generic features, exhibit lower policy variability and thus less dramatic collapse. Late layers, where experts have specialized for distinct computational roles, demonstrate high policy variability, resulting in the severe functional collapse observed when these specialized experts are merged into static averages.

Synthesis across architectures.

The consistency of these patterns across architectures with vastly different expert counts (8 to 128), sparsity levels (6.25% to 25% active), and parameter scales (21.9B to 109B) demonstrates that functional collapse under merging is a fundamental property of the operation rather than an artifact of specific implementations. These visualizations reveal that the core issue is not the reduction in the number of experts per se, but rather the qualitative change in the router’s control structure.

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Data Extraction: Principal Component Analysis (PCA) of Experts

This image consists of three side-by-side scatter plots visualizing the distribution and transformation of "Experts" in a 2D principal component space (PC1 vs. PC2).

## 1. General Chart Metadata

* **X-Axis Label:** PC1 (Principal Component 1)

* **Y-Axis Label:** PC2 (Principal Component 2)

* **X-Axis Scale:** Ranges from approximately -0.6 to +0.9. Major ticks at -0.5, 0.0, and 0.5.

* **Y-Axis Scale:** Ranges from approximately -1.1 to +0.2. Major ticks at -1.0, -0.5, and 0.0.

* **Grid:** Light gray grid lines are present on all three plots.

---

## 2. Individual Plot Analysis

### Plot 1: Original Experts

* **Legend Label:** Original Experts (indicated by a light red circle icon).

* **Data Representation:** All data points are shown as semi-transparent red circles.

* **Distribution:**

* The majority of points are clustered in a dense diagonal formation extending from roughly (-0.2, -0.1) to (0.1, 0.1).

* A tail of sparse points extends toward the bottom-left (negative PC1, negative PC2), reaching approximately (-0.6, -0.4).

* There are three distinct outliers in the bottom-right quadrant:

* One near (0.15, -0.3)

* One near (0.3, -0.7)

* One near (0.9, -1.0)

### Plot 2: Surviving

* **Legend Label:** Surviving (indicated by a blue circle icon).

* **Data Representation:**

* **Active Points:** Solid blue circles.

* **Background Points:** The "Original Experts" from the first plot are shown as faint, light-gray circles for context.

* **Distribution:**

* The "Surviving" experts are primarily concentrated in the upper-right portion of the main cluster, centered around (0.0, 0.1).

* The sparse tail in the negative PC1/PC2 region and the extreme outliers in the bottom-right quadrant are largely excluded (rendered in gray), indicating they did not "survive."

### Plot 3: Merged

* **Legend Label:** Merged (indicated by a green 'x' icon).

* **Data Representation:**

* **Active Points:** Green 'x' markers.

* **Background Points:** The "Original Experts" are shown as faint, light-gray circles.

* **Distribution:**

* The "Merged" points are highly localized in the top-right corner of the main cluster, specifically around the coordinates (0.1, 0.1).

* One green 'x' outlier is visible at approximately (0.18, -0.1).

* This plot shows the highest level of pruning/consolidation, as the green markers occupy a much smaller area of the PC space compared to the "Surviving" or "Original" sets.

---

## 3. Summary of Trends

* **Data Reduction:** Moving from left to right, the number of active points decreases.

* **Spatial Filtering:** The process (Surviving -> Merged) appears to filter out experts located in the lower-left tail and the extreme bottom-right outliers.

* **Convergence:** The "Merged" experts represent a tight consensus group located at the positive extreme of the main density cluster on both the PC1 and PC2 axes.

</details>

(a) Qwen3-30B Layer 0

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Data Extraction: Principal Component Analysis (PCA) of Expert Distribution

This document provides a detailed extraction of data from a three-panel visualization showing the evolution of "Experts" in a PCA feature space.

## 1. General Layout and Axis Information

The image consists of three side-by-side scatter plots sharing the same coordinate system.

* **X-Axis (PC1):** Ranges from approximately -150 to 200. Major tick marks are at -100, 0, 100, and 200.

* **Y-Axis (PC2):** Ranges from approximately -150 to 75. Major tick marks are at -150, -100, -50, 0, and 50.

* **Grid:** A light gray grid is present in all panels to facilitate spatial orientation.

---

## 2. Panel-by-Panel Analysis

### Panel 1: Original Experts

* **Legend Label:** Original Experts (indicated by a light red/pink circle icon).

* **Data Series Color:** Light red/pink semi-transparent circles.

* **Spatial Distribution:**

* The points represent the baseline distribution of all experts.

* The highest density is clustered around the origin (0,0).

* There is a distinct vertical "spine" extending upwards along the PC2 axis to approximately +70.

* There is a horizontal spread along the PC1 axis reaching nearly +200.

* Outliers are visible at the extreme bottom-left (approx. [-150, -140]) and far right (approx. [190, -70]).

### Panel 2: Surviving

* **Legend Label:** Surviving (indicated by a medium blue circle icon).

* **Data Series Color:** Medium blue solid circles.

* **Background Context:** The "Original Experts" from Panel 1 are shown as faint, light gray circles in the background.

* **Spatial Distribution:**

* The "Surviving" experts are primarily located in the lower-left and central regions of the original distribution.

* They occupy the space between PC1 [-100 to +150] and PC2 [-150 to +10].

* Notably, the vertical "spine" extending into positive PC2 values (seen in Panel 1) is largely absent in this group, with only one or two blue points near PC2 = 0.

### Panel 3: Merged

* **Legend Label:** Merged (indicated by a dark green 'x' icon).

* **Data Series Color:** Dark green 'x' markers.

* **Background Context:** The "Original Experts" from Panel 1 are shown as faint, light gray circles in the background.

* **Spatial Distribution:**

* The "Merged" experts are highly localized in a tight cluster.

* **Cluster Center:** Approximately PC1 = -10, PC2 = +25.

* **Cluster Range:** PC1 [-30 to +10], PC2 [+15 to +45].

* This group corresponds to the upper section of the vertical "spine" identified in the "Original Experts" panel, which was notably missing from the "Surviving" panel.

---

## 3. Summary of Trends and Relationships

By cross-referencing the three panels, the following technical conclusions can be drawn:

1. **Total Population:** The "Original Experts" represent the full set of data points.

2. **Partitioning:** The "Surviving" and "Merged" groups appear to be mutually exclusive subsets of the "Original Experts."

3. **Spatial Separation:**

* **Surviving Experts** represent the "lower" and "right-leaning" portion of the PCA space (negative PC2 and positive PC1).

* **Merged Experts** represent a specific "upper-central" cluster (positive PC2, near-zero PC1).

4. **Dimensionality:** The merging process appears to collapse a specific sub-region of the feature space (the vertical spine) into a dense, localized cluster, while the surviving experts maintain a more dispersed distribution across the remaining original space.

</details>

(b) Qwen3-30B Layer 47

Figure 1: (1(a)) Functional subspace (PCA) for early SMoE layers in Qwen3-30B. Pruning (blue) preserves the manifold geometry; merging (green) collapses it toward the centre. (1(b)) Functional subspace (PCA) for late MoE layers. The contraction under merging is dramatically more pronounced, with up to 100 $×$ reduction in spread for models with many experts. See Figure ˜ A4 for results from other models.

4 Router-weighted Expert Activation Pruning (REAP)

The above analysis demonstrates that the functional output space of a SMoE layer is defined by the coordinated behaviour of the router and experts. An expert’s total contribution to its layer’s output is determined by both its gate-value, $g_{k}(x)$ , and the magnitude of its output vector, $\big\|f_{k}(x)\big\|_{2}$ . However, naive frequency-based pruning fails to consider these properties. Intuitively, pruning experts which contribute minimally to the layer output minimizes the difference between the original and pruned layer outputs. Let $h(x)$ be the original output and $\bar{h}_{\setminus j}(x)$ be the output after pruning expert $j$ and re-normalizing the remaining router weights. The error induced by pruning expert $j$ is

$$

\Delta\bar{h}_{\setminus j}(x):=h(x)-\bar{h}_{\setminus j}(x)=\sum_{k}g_{k}(x)f_{k}(x)-\sum_{k\neq j}\frac{g_{k}(x)}{1-g_{j}(x)}f_{k}(x). \tag{7}

$$

Re-normalization of the router weights after pruning expert $j$ modulates all other remaining expert outputs, making direct minimization of $\Delta h_{j}$ complex. However, since our goal is to prune unimportant experts, we can reasonably assume their gate-values are small when active $\mathbb{E}_{x\sim\mathcal{X}}[g_{j}(x)]\ll 1$ . Under this assumption, the weight re-normalization factor is negligible, i.e., $1-g_{j}(x)≈ 1$ , and the error induced by pruning expert $j$ is approximately equal to the expert’s direct contribution to the layer output

$$

\Delta\bar{h}_{\setminus j}(x)\approx\sum_{k}g_{k}(x)f_{k}(x)-\sum_{k\neq j}g_{k}(x)f_{k}(x))=g_{j}(x)f_{j}(x). \tag{8}

$$

To select which experts to prune, we propose a novel saliency criterion, REAP, which approximates an expert’s importance by measuring its direct contribution to the layer’s output magnitude. Specifically, the saliency score, $S_{j}$ , is defined as the average of this contribution over tokens for which the expert is active

where $S_{j}$ is the saliency of expert $f_{j}$ and $\mathcal{X}_{j}$ is the set of inputs where $g_{j}(x)∈ TopK(\mathbf{g(x))}$ .

$$

S_{j}=\frac{1}{|\mathcal{X}_{j}|}\sum_{x\in\mathcal{X}_{j}}g_{j}(x)\cdot\big\|f_{j}(x)\big\|_{2}, \tag{9}

$$

where $\mathcal{X}_{j}$ is the set of tokens where expert $j$ is active (i.e., $\mathcal{X}_{j}=\{x\mid j∈\text{TopK}(\mathbf{g}(x))\}$ ). The experts with the minimum saliency score are selected for pruning. REAP is robust to outlier activations and has a direct, intuitive interpretation by quantifying the average magnitude an expert adds to the output vector when it is selected by the router. Pruning experts with the lowest $S_{j}$ removes those with the least impactful contribution.

5 Experiments

Setup.

We implement REAP and other expert compression baselines in PyTorch (Ansel et al., 2024). We collect router logits and expert activation data to calibrate the compression algorithms using a variety of general pre-training and domain-specific Supervised Fine-Tuning (SFT) datasets. For calibration, 1,024 samples are randomly selected and packed to 2,048 sequence length for models with $≤$ 110B parameters. For models with $≥$ 110B parameters, we select 12,228 samples with a maximum sequence length of 16,384 tokens without truncation or packing.

We compress models by pruning or merging 25% or 50% of experts in each layer, except for M-SMoE which determines the number of clusters per layer based on global expert usage frequency. When evaluating models with $≤$ 50B parameters on coding and MC, we calibrate and compress the models using three different seeds and report the mean. Larger models, creative writing, and mathematical reasoning evaluations are reported using a single seed, except where explicitly noted otherwise. All models are evaluated in the one-shot setting, with no additional fine-tuning after compression.

Models and data.

We evaluate the expert compression algorithms on a diverse set of six SMoE architectures covering model sizes from 21B to 1T with varying degrees of sparsity and expert granularity, see Table ˜ 1 for details. For MC question answering and code generation benchmarks, we use c4 (Raffel et al., 2020; Allen Institute for AI, 2024) and evol-codealpaca (Chaudhary, 2023; Luo et al., 2024; Tam, 2023) datasets to assess both general and domain-specific calibration. Models with $≥$ 110B parameters are additionally calibrated with data from xlam-function-calling (Liu et al., 2024c; Salesforce, 2025) and SWE-smith-trajectories (Yang et al., 2025c; b) datasets. For creative writing and math benchmarks we employ WritingPrompts curated (Pritsker, 2024) and tulu-3-sft-personas-math (Lambert et al., 2025; Allen Institute for AI, 2025), respectively. The default chat template is applied to all SFT datasets and </think> tags are explicitly closed to disable reasoning in hybrid reasoning models.

Table 1: Comparison of SMoE models included in our study.

| Model | Routed Experts | Shared Experts | Top-K | Sparsity | Parameters (1e9) | Active Params. (1e9) | First layer dense |

| --- | --- | --- | --- | --- | --- | --- | --- |

| ERNIE-4.5-21B-A3B-PT | 64 | 2 | 6 | 87.88% | 21.9 | 3 | Yes |

| Qwen3-30B-A3B | 128 | 0 | 8 | 93.75% | 30.5 | 3 | No |

| Mixtral-8x7B-Instruct-v0.1 | 8 | 0 | 2 | 75.00% | 46.7 | 13 | No |

| GLM-4.5-Air | 128 | 1 | 8 | 93.02% | 106.9 | 12 | Yes |

| Llama-4-Scout-17B-16E-Instruct | 16 | 1 | 1 | 88.24% | 107.8 | 17 | No |

| Qwen3-Coder-480B-A35B-Instruct-FP8 | 160 | 0 | 8 | 95.00% | 480.2 | 35 | No |

| Kimi-K2-Instruct-W4A16 (RedHatAI, 2025) | 384 | 1 | 8 | 97.66% | 1026.4 | 32 | Yes |

Evaluation.

Compressed SMoE models are evaluated on a suite of benchmarks including MC question answering, code generation, mathematical reasoning, creative writing, and tool calling. See Appendix ˜ C for details. We implement HC-SMoE and M-SMoE as expert merging baselines. Average linkage criterion is used for HC-SMoE. M-SMoE does not include low-rank compression from the complete MC-SMoE method. Pruning baselines consist of frequency-based pruning and EAN. See Appendix ˜ D for formal definitions.

5.1 Results

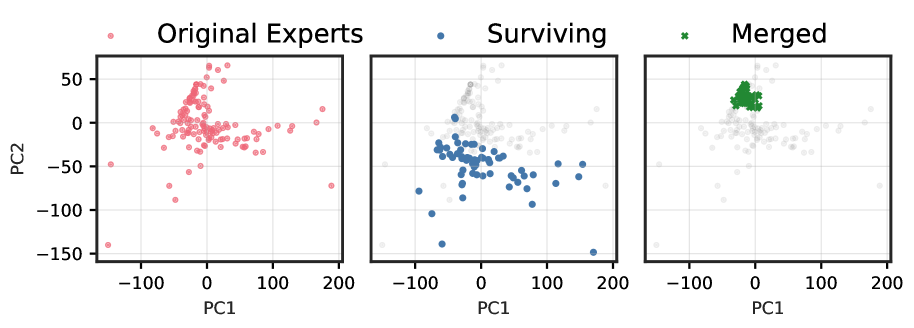

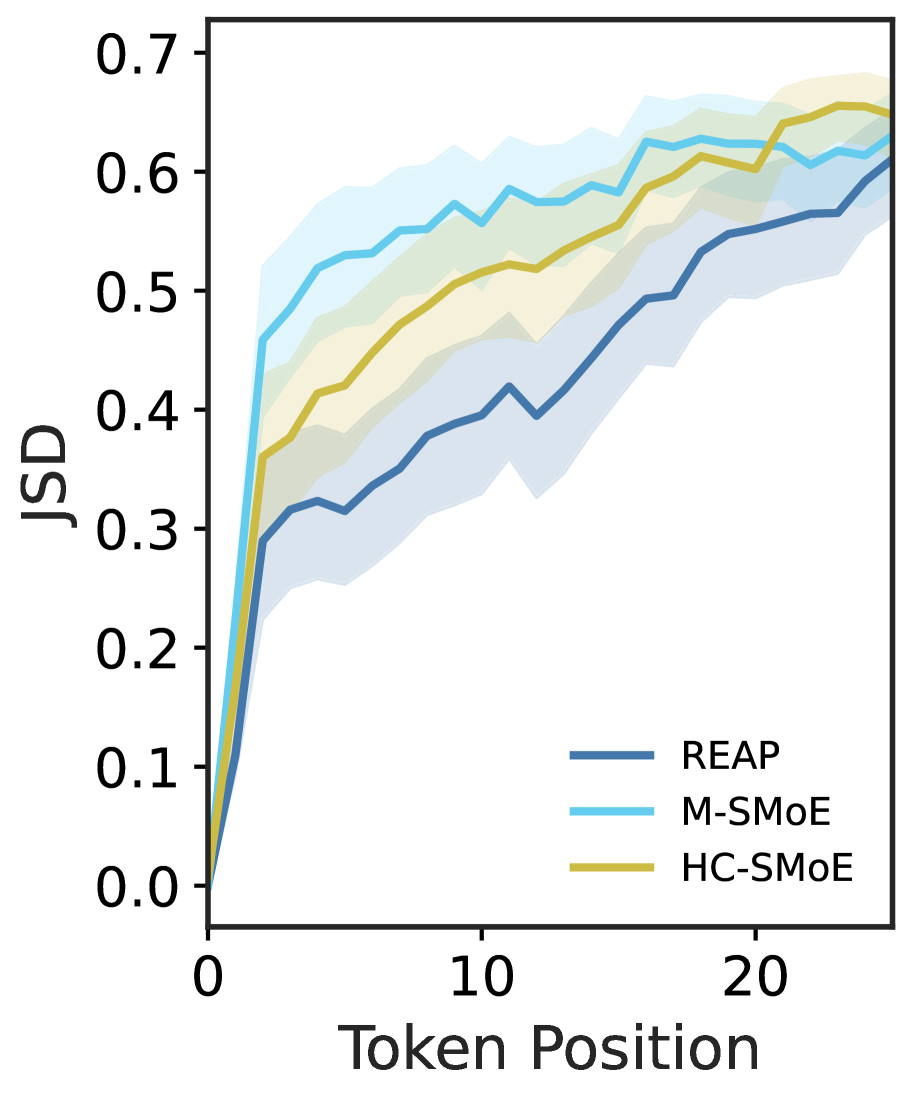

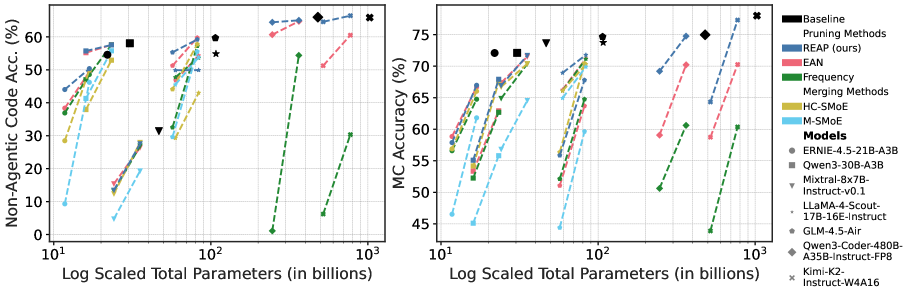

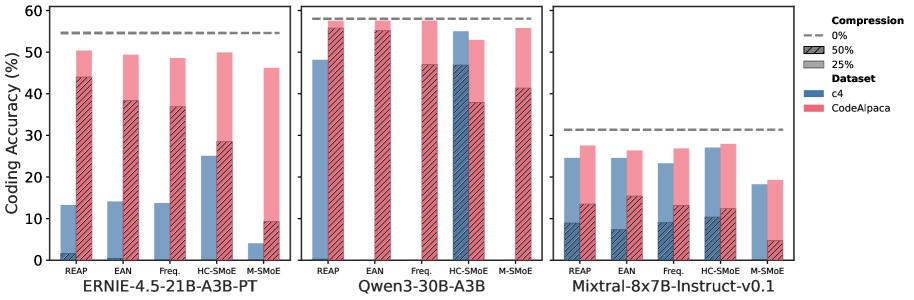

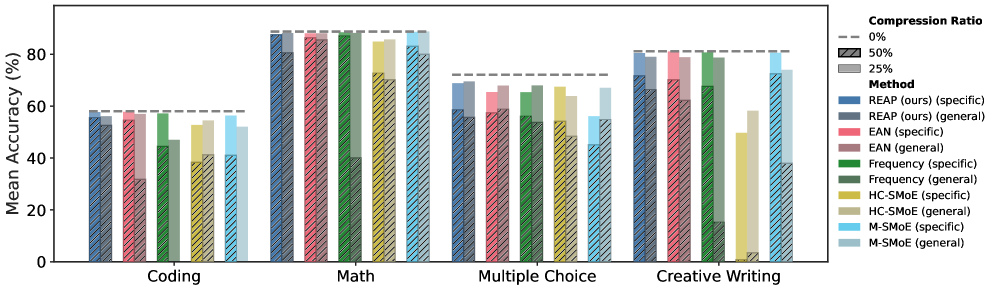

In Tables ˜ 2 and 2 code generation, creative writing, math reasoning, and MC results are presented for Qwen3-30B and GLM-4.5-Air after calibration with the evol-codealpaca dataset. Table ˜ 3 contains results for large-scale SMoE pruned models on code generation, tool calling, and MC benchmarks. See Table ˜ A4 and Table ˜ A5 for detailed MC and code generation results, respectively. Figure ˜ A5 depicts coding generation and MC accuracy verses model parameters. See Appendix ˜ E for additional results.

Zero-shot MC question answering.

Both merging and pruning are capable of producing accurate compressed SMoE models for MC question answering. HC-SMoE and REAP have a mean decrease in accuracy of approximately 4% and 13% for compression ratios of 25% and 50%, respectively, excluding large-scale SMoEs. REAP achieves first or second rank among all methods, models and compression ratios, suggesting strong consistency regardless of specific model architecture. When calibrated on c4, we find slightly improved accuracies for all compression methods with similar rankings as noted above, see Table ˜ A6.

Generative benchmarks.

Compared to MC, generative benchmarks are more representative of real-world use cases of LLMs. In this setting, pruning emerges as the clearly superior compression method on the generative task benchmarks. Excluding large-scale SMoEs, REAP achieves a mean decrease in accuracy of 2.8% and 8.0% at 25% and 50% compression ratios, respectively, on coding. In comparison, both HC-SMoE and M-SMoE produce mean decreases in accuracy >5% at 25% compression and >20% at 50% compression. Notably, REAP maintains significantly higher accuracy at 50% compression than other pruning methods. On creative writing, REAP and EAN are near-lossless at 25% compression with REAP offering improved quality at 50% compression. Merging methods are less consistent across various model architectures and compression ratios. For example, M-SMoE is the best method for Qwen3-30B at 50% compression, but the worst on GLM-4.5-Air. REAP attains the best mathematical reasoning results with a remarkable mean decrease in accuracy of just 1.1% at 50% compression. HC-SMoE and M-SMoE offer high accuracy at 25% compression but are significantly less accurate than pruning at 50% compression.

Table 2: MC and generative benchmark results for Qwen3-30B and GLM-4.5-Air.

| | Coding | Creative Writing | Math | MC | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Model | Compression | Technique | Method | Eval+ | LiveCode | Code Avg | WildBench | GSM8K | MATH-500 | Math Avg | MC Avg |

| Qwen3-30B-A3B | Baseline | 0.859 | 0.302 | 0.581 | 0.811 | 0.903 | 0.872 | 0.887 | 0.721 | | |

| 25% | Merging | M-SMoE | 0.822 | 0.293 | 0.558 | 0.805 | 0.901 | 0.872 | 0.886 | 0.558 | |

| HC-SMoE | 0.800 | 0.258 | 0.529 | 0.497 | 0.864 | 0.834 | 0.849 | 0.674 | | | |

| Pruning | Frequency | 0.849 | 0.302 | 0.576 | 0.807 | 0.905 | 0.864 | 0.885 | 0.600 | | |

| EAN | 0.840 | 0.311 | 0.576 | 0.811 | 0.895 | 0.866 | 0.881 | 0.603 | | | |

| REAP | 0.843 | 0.308 | 0.575 | 0.804 | 0.892 | 0.864 | 0.878 | 0.669 | | | |

| 50% | Merging | M-SMoE | 0.621 | 0.205 | 0.413 | 0.725 | 0.824 | 0.838 | 0.831 | 0.451 | |

| HC-SMoE | 0.574 | 0.185 | 0.379 | 0.008 | 0.760 | 0.696 | 0.728 | 0.542 | | | |

| Pruning | Frequency | 0.704 | 0.236 | 0.470 | 0.677 | 0.882 | 0.860 | 0.871 | 0.483 | | |

| EAN | 0.798 | 0.306 | 0.552 | 0.702 | 0.886 | 0.842 | 0.864 | 0.493 | | | |

| REAP | 0.821 | 0.293 | 0.557 | 0.718 | 0.878 | 0.872 | 0.875 | 0.518 | | | |

| GLM-4.5-Air | Baseline | 0.820 | 0.374 | 0.597 | 0.839 | 0.846 | 0.918 | 0.882 | 0.747 | | |

| 25% | Merging | M-SMoE | 0.781 | 0.330 | 0.555 | 0.781 | 0.848 | 0.880 | 0.864 | 0.596 | |

| HC-SMoE | 0.793 | 0.363 | 0.578 | 0.788 | 0.842 | 0.908 | 0.875 | 0.704 | | | |

| Pruning | Frequency | 0.805 | 0.341 | 0.573 | 0.793 | 0.832 | 0.908 | 0.870 | 0.648 | | |

| EAN | 0.821 | 0.374 | 0.597 | 0.824 | 0.839 | 0.908 | 0.874 | 0.637 | | | |

| REAP | 0.794 | 0.390 | 0.592 | 0.831 | 0.835 | 0.926 | 0.880 | 0.678 | | | |

| 50% | Merging | M-SMoE | 0.493 | 0.099 | 0.296 | 0.391 | 0.465 | 0.466 | 0.465 | 0.444 | |

| HC-SMoE | 0.662 | 0.220 | 0.441 | 0.593 | 0.667 | 0.732 | 0.700 | 0.564 | | | |

| Pruning | Frequency | 0.546 | 0.104 | 0.325 | 0.604 | 0.615 | 0.612 | 0.613 | 0.521 | | |

| EAN | 0.773 | 0.253 | 0.513 | 0.702 | 0.781 | 0.838 | 0.809 | 0.511 | | | |

| REAP | 0.755 | 0.352 | 0.553 | 0.754 | 0.820 | 0.926 | 0.873 | 0.559 | | | |

Table 3: Large-scale pruned SMoEs on agentic, non-agentic coding, tool-use tasks, and MC benchmarks.

| | Non-Agentic Coding | Agentic Coding | Tool-Use (BFCLv3) | MC | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Model | Compression | Method | Eval+ | LiveCode | Code Avg | SWE-Bench-Verified | Non-Live | Live | Multi-Turn | Overall | MC Avg |

| Qwen3-Coder- 480B-A35B- Instruct-FP8 | Baseline | 0.889 | 0.431 | 0.660 | 0.540 | 0.866 | 0.825 | 0.380 | 0.690 | 0.750 | |

| 25% | Frequency | 0.792 | 0.296 | 0.544 | 0.378 | 0.844 | 0.763 | 0.355 | 0.654 | 0.606 | |

| EAN | 0.876 | 0.419 | 0.647 | 0.534 | 0.831 | 0.813 | 0.384 | 0.676 | 0.702 | | |

| REAP | 0.884 | 0.416 | 0.650 | 0.540 | 0.878 | 0.823 | 0.392 | 0.698 | 0.748 | | |

| 50% | Frequency | 0.011 | 0.012 | 0.011 | 0.000 | 0.200 | 0.392 | 0.000 | 0.197 | 0.506 | |

| EAN | 0.831 | 0.382 | 0.607 | 0.536 | 0.822 | 0.774 | 0.383 | 0.659 | 0.591 | | |

| REAP | 0.873 | 0.415 | 0.644 | 0.522 | 0.849 | 0.801 | 0.371 | 0.674 | 0.692 | | |

| Kimi-K2- Instruct- W4A16 | Baseline | 0.883 | 0.434 | 0.659 | 0.554 | 0.840 | 0.802 | 0.355 | 0.666 | 0.780 | |

| 25% | Frequency | 0.524 | 0.082 | 0.303 | 0.000 | 0.644 | 0.603 | 0.045 | 0.431 | 0.604 | |

| EAN | 0.831 | 0.379 | 0.605 | 0.562 | 0.819 | 0.802 | 0.335 | 0.652 | 0.703 | | |

| REAP | 0.889 | 0.440 | 0.664 | 0.580 | 0.842 | 0.801 | 0.263 | 0.635 | 0.773 | | |

| 50% | Frequency | 0.124 | 0.000 | 0.062 | 0.000 | 0.255 | 0.397 | 0.003 | 0.218 | 0.439 | |

| EAN | 0.772 | 0.253 | 0.513 | 0.576 | 0.778 | 0.767 | 0.173 | 0.573 | 0.587 | | |

| REAP | 0.863 | 0.429 | 0.646 | 0.576 | 0.785 | 0.743 | 0.164 | 0.564 | 0.643 | | |

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Data Extraction: Model Compression Performance Comparison

This document contains a detailed extraction of data from a grouped bar chart comparing different model compression methods across two large language models (LLMs) and four task categories.

## 1. General Metadata

* **Y-Axis Title:** Mean Accuracy (%)

* **Y-Axis Scale:** 0 to 90 (increments of 10)

* **X-Axis Categories (Tasks):** Coding, Math, Creative Writing, MC (Multiple Choice)

* **Models Evaluated:**

* **GLM – 4.5 – Air** (Left Plot)

* **Qwen3 – 30B – A3B** (Right Plot)

## 2. Legend and Key

The chart uses a combination of colors and textures to represent different methods and compression ratios.

### Compression Ratio (Texture/Line)

* **0%:** Indicated by a horizontal dashed grey line above each task group (Baseline).

* **50%:** Indicated by bars with a **diagonal hatching pattern** (forward slash).

* **25%:** Indicated by **solid color bars**.

### Methods (Color)

* **Pruning methods:**

* **REAP (ours):** Blue

* **EAN:** Pink/Red

* **Frequency:** Green

* **Merging methods:**

* **HC-SMoE:** Gold/Yellow

* **M-SMoE:** Light Blue/Cyan

---

## 3. Data Extraction: GLM – 4.5 – Air (Left Plot)

| Task | Baseline (0%) | Method | 25% Ratio (Solid) | 50% Ratio (Hatched) |

| :--- | :--- | :--- | :--- | :--- |

| **Coding** | ~60% | REAP (ours) | ~60% | ~55% |

| | | EAN | ~59% | ~51% |

| | | Frequency | ~57% | ~32% |

| | | HC-SMoE | ~58% | ~44% |

| | | M-SMoE | ~55% | ~30% |

| **Math** | ~88% | REAP (ours) | ~88% | ~81% |

| | | EAN | ~87% | ~61% |

| | | Frequency | ~87% | ~70% |

| | | HC-SMoE | ~87% | ~47% |

| | | M-SMoE | ~86% | ~39% |

| **Creative Writing** | ~84% | REAP (ours) | ~84% | ~75% |

| | | EAN | ~82% | ~70% |

| | | Frequency | ~79% | ~60% |

| | | HC-SMoE | ~78% | ~59% |

| | | M-SMoE | ~78% | ~39% |

| **MC** | ~75% | REAP (ours) | ~68% | ~56% |

| | | EAN | ~64% | ~51% |

| | | Frequency | ~65% | ~52% |

| | | HC-SMoE | ~70% | ~56% |

| | | M-SMoE | ~59% | ~45% |

---

## 4. Data Extraction: Qwen3 – 30B – A3B (Right Plot)

| Task | Baseline (0%) | Method | 25% Ratio (Solid) | 50% Ratio (Hatched) |

| :--- | :--- | :--- | :--- | :--- |

| **Coding** | ~58% | REAP (ours) | ~58% | ~56% |

| | | EAN | ~58% | ~55% |

| | | Frequency | ~58% | ~47% |

| | | HC-SMoE | ~53% | ~38% |

| | | M-SMoE | ~55% | ~41% |

| **Math** | ~89% | REAP (ours) | ~88% | ~86% |

| | | EAN | ~88% | ~87% |

| | | Frequency | ~88% | ~73% |

| | | HC-SMoE | ~85% | ~1% |

| | | M-SMoE | ~88% | ~72% |

| **Creative Writing** | ~81% | REAP (ours) | ~81% | ~72% |

| | | EAN | ~81% | ~70% |

| | | Frequency | ~81% | ~68% |

| | | HC-SMoE | ~50% | ~0% |

| | | M-SMoE | ~81% | ~72% |

| **MC** | ~72% | REAP (ours) | ~68% | ~55% |

| | | EAN | ~63% | ~53% |

| | | Frequency | ~63% | ~54% |

| | | HC-SMoE | ~67% | ~56% |

| | | M-SMoE | ~56% | ~45% |

---

## 5. Key Observations and Trends

* **Performance Retention:** The "REAP (ours)" method consistently maintains the highest accuracy across almost all tasks and models, especially at the 50% compression ratio (hatched bars), where other methods often show significant degradation.

* **Task Sensitivity:** "Math" and "Creative Writing" generally show higher baseline accuracies (~80-90%) compared to "Coding" and "MC" (~60-75%).

* **Method Failure:** In the Qwen3 model, the "HC-SMoE" method shows a near-total failure (accuracy drops to near 0%) in the "Math" and "Creative Writing" tasks at the 50% compression ratio.

* **Compression Impact:** Performance at 25% compression (solid bars) is generally very close to the 0% baseline for most methods, while 50% compression (hatched bars) reveals the robustness of the "REAP" method compared to "Frequency" or "Merging" methods.

</details>

Figure 2: GLM-4.5-Air and Qwen3-30B accuracy vs. task type. REAP offers significant improvements compared to other methods at 50% compression. Note the significant performance drop for merging methods on generative tasks (Coding, Math, Creative Writing) compared to their relative strength on MC.

Expert pruning at scale.

To asses whether pruning remains viable at scale, we prune Qwen3-Coder-480B and Kimi-K2-Instruct. On MC questions, REAP outperforms other pruning methods. On non-agentic coding tasks, REAP achieves near-lossless accuracy with a 0.20% and 1.4% mean decrease in accuracy compared to baseline at 25% and 50%, respectively, outperforming EAN and frequency-based pruning, particularly at 50% compression. On the challenging SWE-Bench task, both REAP and EAN maintain high accuracy at 25% and 50% compression, with some scores slightly exceeding the baseline. On tool use, EAN and REAP are comparable, with REAP slightly outperforming at 50% compression with a mean decrease in accuracy of 5.9% versus 6.2% for EAN. Frequency-based pruning suffers from a sharp degradation in quality at 50% compression, highlighting the importance of pruning saliency criteria which consider expert activations. Scaling the pruning methods is relatively trivial. Unlike HC-SMoE, calibration for pruning does not require recording activations from every expert for every token, facilitating efficient calibration. Further, pruning can be easily applied to quantized models without any additional steps required to reconcile block scales or re-quantize following compression.

<details>

<summary>x7.png Details</summary>

### Visual Description

# Technical Data Extraction: N-gram Diversity Analysis

## 1. General Overview

This image is a grouped box plot illustrating the distribution of **N-gram diversity** scores across four different models/methods, evaluated at three different **N-gram sizes**.

## 2. Axis Information

* **Y-Axis Title:** N-gram diversity

* **Y-Axis Scale:** Linear, ranging from 0.2 to 1.0 (markers at 0.2, 0.4, 0.6, 0.8, 1.0).

* **X-Axis Title:** N-gram size

* **X-Axis Categories:** 2, 3, and 4.

## 3. Legend and Color Coding

The chart compares four distinct methods, represented by specific colors:

* **Baseline:** Grey

* **REAP:** Dark Blue/Slate

* **M-SMoE:** Light Blue/Cyan

* **HC-SMoE:** Olive Green/Gold

## 4. Data Trends and Observations

The data is grouped by N-gram size (2, 3, and 4). Within each group, the models are presented in the order listed in the legend (Baseline, REAP, M-SMoE, HC-SMoE).

### Group 1: N-gram size = 2

* **Baseline:** Median ~0.83. Tightest distribution among the four.

* **REAP:** Median ~0.82. Slightly lower than Baseline with a few outliers below 0.7.

* **M-SMoE:** Median ~0.78. Larger interquartile range (IQR) than REAP, with outliers extending down to ~0.4.

* **HC-SMoE:** Median ~0.75. Lowest median in this group, with the largest IQR and outliers extending down to ~0.25.

### Group 2: N-gram size = 3

* **Baseline:** Median ~0.93. High diversity with outliers between 0.7 and 0.8.

* **REAP:** Median ~0.92. Very similar to Baseline.

* **M-SMoE:** Median ~0.90. Slightly lower median and wider IQR than REAP.

* **HC-SMoE:** Median ~0.87. Lowest median in the group, significantly wider IQR, and numerous outliers extending down to ~0.3.

### Group 3: N-gram size = 4

* **Baseline:** Median ~0.97. Highest diversity scores overall.

* **REAP:** Median ~0.96. Nearly identical to Baseline.

* **M-SMoE:** Median ~0.94. High diversity but with a noticeable spread of outliers down to ~0.4.

* **HC-SMoE:** Median ~0.92. Lowest median in the group. Shows the highest variance (largest box and whiskers) and significant outliers reaching as low as ~0.25.

## 5. Key Technical Findings

1. **Positive Correlation:** As the **N-gram size** increases (from 2 to 4), the **N-gram diversity** generally increases for all models.

2. **Performance Hierarchy:** Across all N-gram sizes, the **Baseline** and **REAP** models consistently maintain the highest diversity scores with the lowest variance.

3. **Model Stability:** The **HC-SMoE** model (Olive) consistently exhibits the lowest median diversity and the highest variance/instability, as evidenced by the larger box sizes and the high density of low-value outliers.

4. **Outlier Behavior:** All models show a "bottom-heavy" outlier distribution, indicating that while they usually achieve high diversity, there are specific instances where diversity drops significantly, particularly for the SMoE variants.

</details>

(a) N-Gram diversity

<details>

<summary>x8.png Details</summary>

### Visual Description

# Technical Data Extraction: Cross Perplexity Violin Plot

## 1. General Description

This image is a violin plot comparing the distribution of **Cross perplexity** across three different **Generator models**. The y-axis is presented on a logarithmic scale. Each violin includes an internal box plot showing the median, interquartile range (IQR), and whiskers.

---

## 2. Axis Information

* **Y-Axis Label:** Cross perplexity

* **Y-Axis Scale:** Logarithmic (Base 10)

* **Major Markers:** $10^0$ (1), $10^1$ (10)

* **Minor Gridlines:** Represented by dashed horizontal lines between $10^0$ and $10^1$.

* **X-Axis Label:** Generator model

* **X-Axis Categories:**

1. **REAP** (Dark Blue)

2. **M-SMoE** (Light Blue)

3. **HC-SMoE** (Olive/Gold)

---

## 3. Data Distribution Analysis

### Category 1: REAP (Dark Blue)

* **Distribution Shape:** The most compact distribution of the three. The bulk of the density is concentrated between 2 and 4.

* **Median:** Approximately 2.5.

* **Range:** Extends from approximately 1.1 to 7.0.

* **Interquartile Range (IQR):** Roughly between 2.0 and 3.5.

### Category 2: M-SMoE (Light Blue)

* **Distribution Shape:** Wider and taller than REAP, indicating higher variance. The density is relatively uniform across the middle section.

* **Median:** Approximately 3.2.

* **Range:** Extends from approximately 0.6 to 11.0.

* **Interquartile Range (IQR):** Roughly between 2.2 and 4.5.

### Category 3: HC-SMoE (Olive/Gold)

* **Distribution Shape:** Shows the highest overall perplexity values. The density is concentrated higher up the y-axis compared to the other two models.

* **Median:** Approximately 3.8.

* **Range:** Extends from approximately 0.7 to 15.0 (the highest peak in the chart).

* **Interquartile Range (IQR):** Roughly between 2.8 and 5.5.

---

## 4. Key Trends and Observations

* **Performance Comparison:** The **REAP** model demonstrates the lowest median cross perplexity and the tightest distribution, suggesting more consistent and lower (better) perplexity scores.

* **Complexity Trend:** There is a visible upward trend in perplexity from REAP to M-SMoE to HC-SMoE.

* **Outliers/Extremes:** HC-SMoE has the highest maximum perplexity values, reaching well above $10^1$. M-SMoE and HC-SMoE both show longer "tails" toward the lower end (below $10^0$) compared to REAP.

</details>

(b) Cross perplexity

<details>

<summary>x9.png Details</summary>

### Visual Description

# Technical Data Extraction: JSD vs. Token Position Chart

## 1. General Description

This image is a line graph illustrating the relationship between **Token Position** (x-axis) and **JSD** (Jensen-Shannon Divergence, y-axis) for three different models or methods. Each method is represented by a solid colored line accompanied by a semi-transparent shaded area indicating a confidence interval or variance.

## 2. Axis Information

* **Y-Axis Label:** JSD

* **Y-Axis Scale:** 0.0 to 0.7, with major tick marks every 0.1 units.

* **X-Axis Label:** Token Position

* **X-Axis Scale:** 0 to 25, with major tick marks every 10 units (0, 10, 20). The data points appear to be sampled at integer intervals from 0 to 25.

## 3. Legend and Data Series

The legend is located in the bottom-right quadrant of the plot:

| Series Label | Line Color | Shaded Area Color |

| :--- | :--- | :--- |

| **REAP** | Dark Blue | Light Blue-Grey |

| **M-SMoE** | Cyan / Light Blue | Very Light Cyan |

| **HC-SMoE** | Gold / Olive Yellow | Light Yellow-Green |

## 4. Key Trends and Data Points

All three series show a positive correlation, where JSD increases as the Token Position increases, eventually showing signs of plateauing or slowing growth toward the end of the sequence.

### REAP (Dark Blue)

* **Initial State:** Starts at (0, 0).

* **Growth:** Shows the slowest initial growth compared to the other two models.

* **Mid-point:** Reaches a JSD of approximately 0.4 at Token Position 10.

* **Final State:** Ends at a JSD of approximately 0.6 at Token Position 25.

* **Variance:** Maintains a relatively consistent shaded variance band throughout the sequence.

### M-SMoE (Cyan)

* **Initial State:** Starts at (0, 0).

* **Growth:** Exhibits the sharpest initial increase, jumping to ~0.45 by Token Position 2.

* **Mid-point:** Reaches a JSD of approximately 0.55–0.58 at Token Position 10.

* **Final State:** Ends at a JSD of approximately 0.63 at Token Position 25.

* **Trend:** This model maintains the highest JSD values for the majority of the sequence (from position 1 to 20).

### HC-SMoE (Gold)

* **Initial State:** Starts at (0, 0).

* **Growth:** Growth rate is intermediate between REAP and M-SMoE.

* **Mid-point:** Reaches a JSD of approximately 0.5 at Token Position 10.

* **Final State:** Ends at the highest final JSD value of approximately 0.65 at Token Position 25.

* **Trend:** While it starts lower than M-SMoE, it converges with and slightly overtakes M-SMoE around Token Position 22.

## 5. Comparative Analysis

* **Early Sequence (0-5):** M-SMoE has the highest JSD, followed by HC-SMoE, with REAP being the lowest.

* **Late Sequence (20-25):** HC-SMoE and M-SMoE converge at the top of the scale (0.6-0.65), while REAP remains the lowest (0.55-0.6).

* **Uncertainty:** The shaded regions for all three models overlap significantly after Token Position 15, suggesting that the performance differences between the models become less statistically distinct as the sequence length increases.

</details>

(c) Completion logit JSD

<details>

<summary>x10.png Details</summary>

### Visual Description

# Technical Data Extraction: Model Layer Analysis Chart

## 1. General Overview

This image is a line graph plotting two different metrics across various model layers. It compares different expert clustering methods and a baseline model transition.

## 2. Axis Information

* **Y-Axis Label:** SV Align. ↑ / L2 Distance ↓

* **Note:** The upward arrow indicates higher is better for Singular-vector alignment; the downward arrow indicates lower is better for L2 Distance.

* **Scale:** 0.0 to 1.4 (increments of 0.2).

* **X-Axis Label:** Layer

* **Scale:** 0 to 48 (labeled markers at 0, 8, 16, 24, 32, 40).

## 3. Legend Information

The legend is divided into two categories:

### Dist. Type (Line Style)

| Style | Metric |

| :--- | :--- |

| Solid Line (—) | Singular-vector alignment |

| Dashed Line (---) | L2 Distance |

### Expert clusters (Color Coding)

| Color | Method |

| :--- | :--- |

| Grey | Base to IFT |

| Gold/Yellow | HC-SMoE |

| Light Blue | M-SMoE |

| Magenta/Purple | M-SMoE - permuted |

## 4. Data Trends and Observations

### Singular-vector alignment (Solid Lines)

* **Base to IFT (Grey):** Maintains a constant horizontal line at exactly **1.0** across all layers.

* **M-SMoE (Light Blue):** Remains constant at approximately **0.0** across all layers.

* **M-SMoE - permuted (Magenta):** Remains constant at a very low value, slightly above 0.0 (approx. **0.02 - 0.03**).

* **HC-SMoE (Gold):** This line is not visible in the lower section of the graph, suggesting it may overlap with other data or is primarily represented in the L2 distance section.

### L2 Distance (Dashed Lines)

All L2 Distance metrics are clustered at the top of the chart, significantly higher than the alignment metrics.

* **M-SMoE (Light Blue):** Highest L2 distance, fluctuating slightly around **1.42**.

* **HC-SMoE (Gold):** Fluctuates between **1.38 and 1.41**.

* **M-SMoE - permuted (Magenta):** Fluctuates between **1.38 and 1.40**.

* **Base to IFT (Grey):** This dashed line is located at the bottom of the graph, fluctuating slightly around **0.08 - 0.10**, indicating a much lower L2 distance compared to the SMoE variants.

## 5. Summary of Key Findings

* There is a massive disparity between the L2 Distance of SMoE-based clusters (approx. 1.4) and the Base to IFT transition (approx. 0.1).

* Conversely, the Singular-vector alignment for Base to IFT is perfect (1.0), while all SMoE variants show near-zero alignment across all layers.

* The "M-SMoE - permuted" variant shows slightly higher alignment and slightly lower L2 distance than the standard "M-SMoE" in some layers, but they remain very close.

</details>

(d) Expert distance

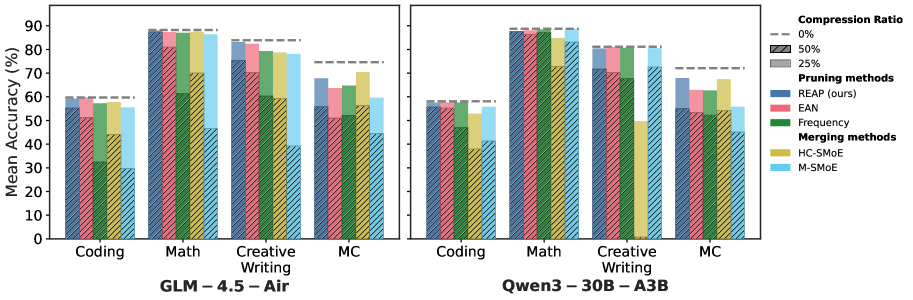

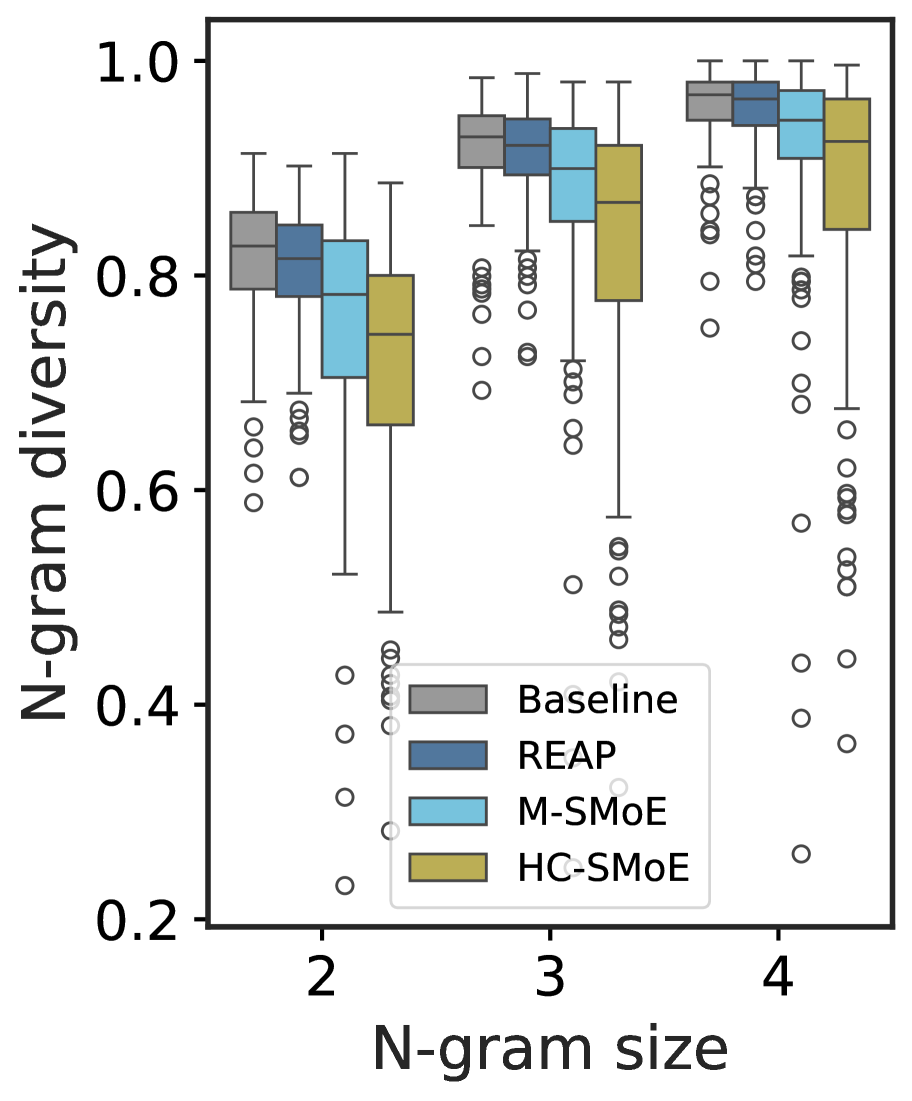

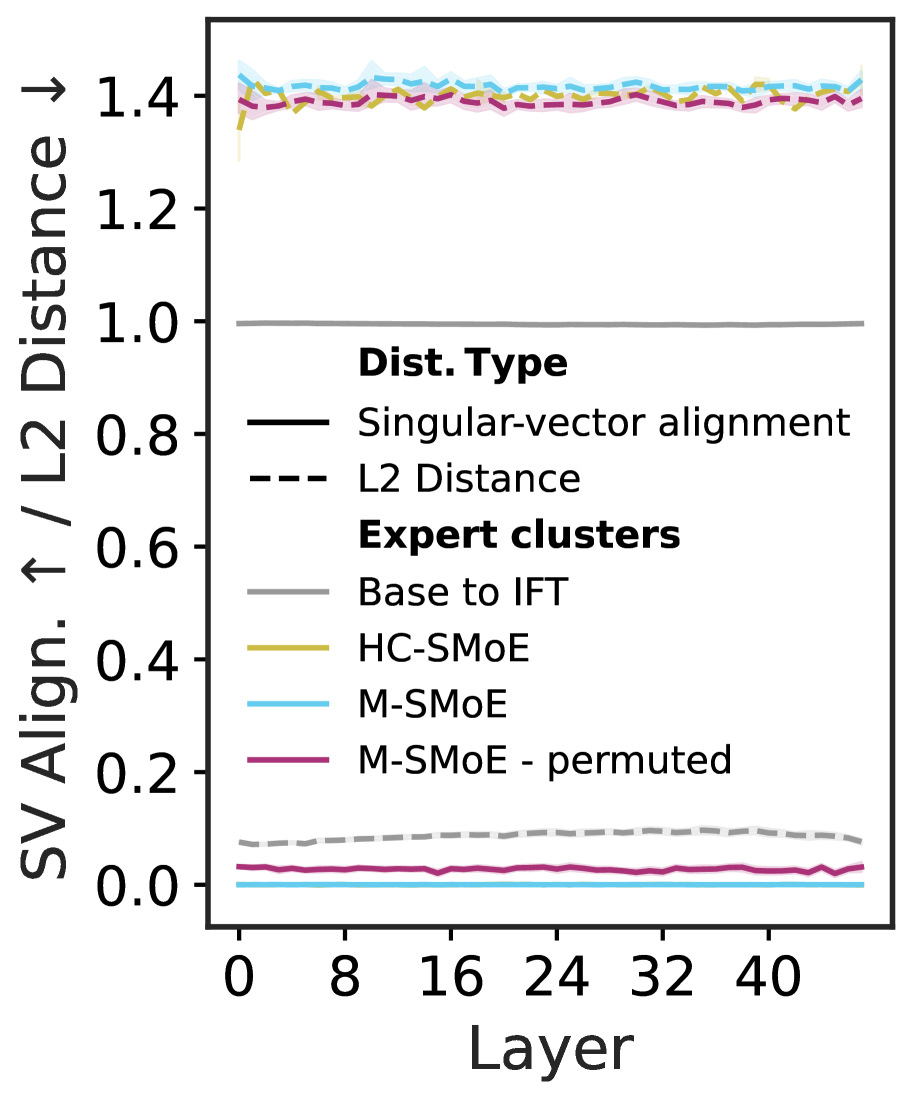

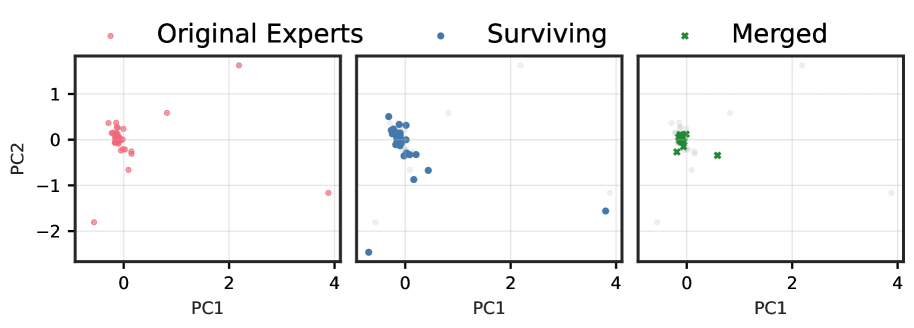

Figure 3: (3(a)) & (3(b)) N-Gram diversity and cross-perplexity of compressed Qwen3-30B-A3B models at 50% compression, respectively. (3(c)) JSD of compressed and baseline model logits vs. completion token position for Qwen3-30B-A3B at 50% compression. Initially, all compressed models share close alignment with the baseline model. However, as the completion token position increases the merged models diverge from the baseline more rapidly than the REAP pruned model. (3(d)) The mean relative L2-distance and singular-vector alignment between Qwen3-30B expert weights at 50% compression. Expert merging is more challenging than model merging due to large distances between experts in weight space and low singular-vector alignment.

Quantifying merged MoE generation quality.

While merged expert SMoEs offer reasonable quality for discriminative tasks such as MC question and answering, they fail to remain competitive on generative tasks. To help explain the performance gap of merged models between discriminative and generative tasks, we perform an analysis of the compressed model outputs and compare with REAP pruned models. We prompt 50% compressed Qwen3-30B models with 100 questions randomly sampled from the evol-codealpaca dataset and record their outputs. In Figure ˜ 3(a), we measure the N-gram diversity and find that the merged models have significantly lower diversity across all N-gram sizes measured. In contrast, the REAP pruned model remains similar to the base model, albeit slightly less diverse. In Figure ˜ 3(b), we measure the perplexity of the text generated by the compressed models with the original baseline model. The text generated by the merged models has both a higher mean and higher variance than the pruned model generations, suggesting that the REAP pruned model outputs are more closely aligned to the original model. The alignment between the baseline and REAP pruned SMoEs is further supported by Figure ˜ 3(c), which plots the JSD of the compressed and baseline logits vs. output token position. The merged model logits diverge from the baseline more rapidly than the pruned model.

The challenges of expert merging.

Model merging has been widely adopted to facilitate LLM fine-tuning. Why does expert merging miss the mark? In addition to the loss of the router’s input-dependent modulation of experts explored in Section ˜ 3, we argue that the non-local nature of expert merging and high cardinality of expert clusters pose significant unresolved challenges.

In Figure ˜ 3(d), we plot the mean relative L2-distance between experts clustered by HC-SMoE or M-SMoE and compare with the distance between expert weights from the pretrained to Instruct Fine-Tuned (IFT) checkpoints. We find that the distance between clustered experts within the same layer greatly exceeds that of experts in the IFT checkpoint after fine-tuning. Ito et al. (2024) found that weight matching permutations improved alignment of parameters’ singular vectors. Following their approach, we decompose expert weights with Singular Value Decomposition (SVD) and plot the singular-vector alignment in Figure ˜ 3(d). Even after applying weight matching permutations, the M-SMoE expert clusters remain far apart both in weight space and singular-vector alignment. The relatively poorly aligned experts highlight the considerable challenge of coherently merging their parameters.

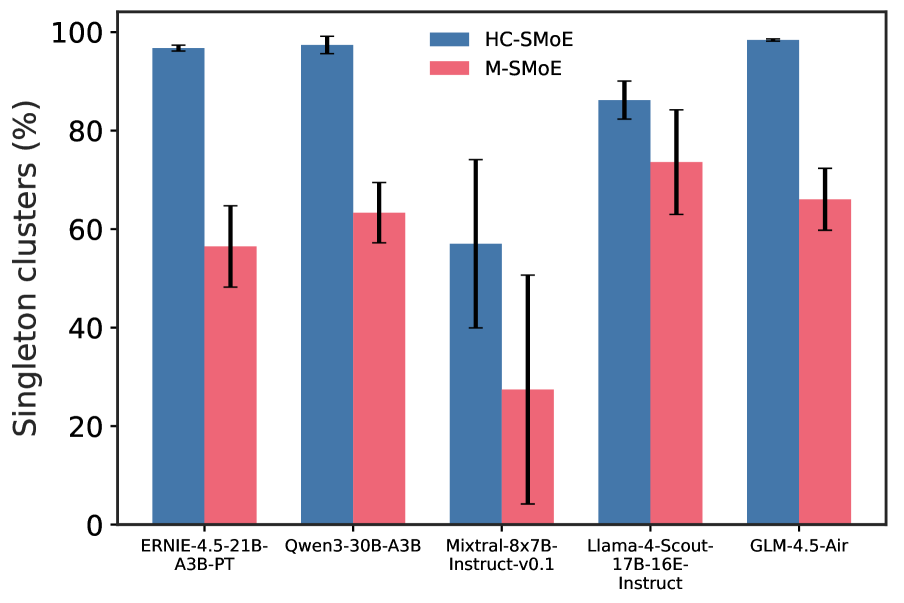

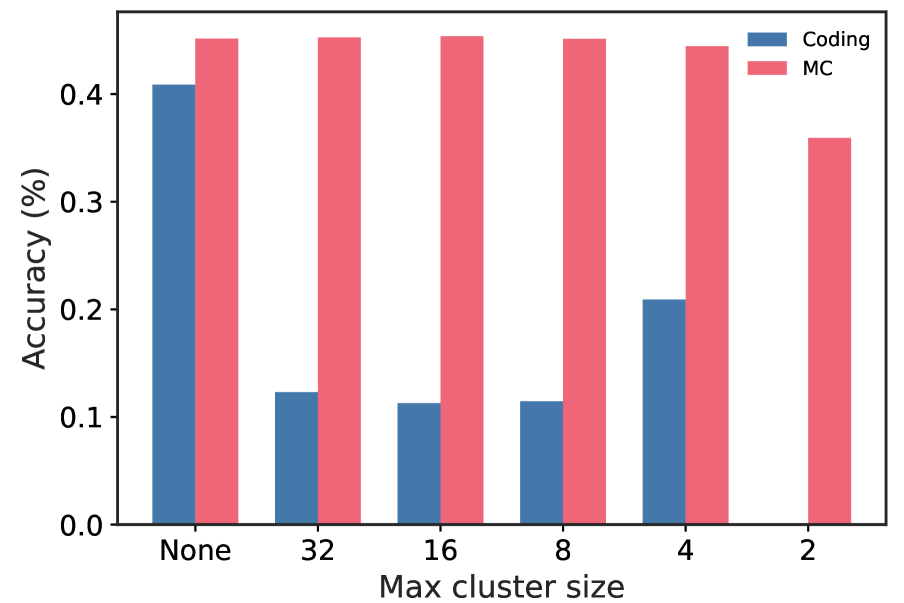

When merging works well, it’s more closely related to pruning than one might expect. In Figure ˜ 6(a), we depict the frequency of singleton clusters — clusters containing a single expert — for both HC-SMoE and M-SMoE. A singleton cluster is directly analogous to an expert that remains after pruning. We find that HC-SMoE in particular has a high prevalence of singleton clusters, leaving important experts unadulterated and compressing the rest into a few mega -clusters containing tens of experts. This is particularly true of the high granularity models which contain more experts per layer. We hypothesize that the cardinality of these mega-clusters poses a challenge for existing merging algorithms and test this intuition in Figure ˜ 6(b). Unfortunately, even modest restrictions of the maximum cluster size to 32 — half the number of experts to compress — results in large decreases in model quality on coding tasks.

The importance of domain-specific calibration.

In Figure ˜ A7, we plot the code generation accuracy of the various compression methods and models when calibrated on either c4 or evol-codealpaca. The difference is stark, c4 calibration results in a collapse in accuracy, with several compressed model instances failing to produce coherent outputs, resulting in 0% accuracy. In Figure ˜ A8, we compare the accuracy of compressed Qwen3-30B models calibrated with either domain-specific data or the combined calibration data across all generative tasks. The domain-specific calibrated models achieve significantly higher accuracy, especially at 50% compression.

6 Discussion

Similar to prior work, we find that expert merging performs reasonably well on MC benchmarks. This may be because MC tasks only require a discriminative function that can be approximated by an average expert. In contrast, merging fails to maintain model quality on generative tasks, particularly at 50% compression. Generative tasks require auto-regressive generation, a capability that is lost when the router’s fine-grained control is removed. Compared to expert pruning, merging is less consistent, exhibiting higher variance across models and compression ratios. The outputs of expert merged models are more repetitive and less closely aligned with the base model compared with pruned models. Taken together, these observations are direct evidence of alterations to the functional manifold of the SMoE layers discussed in Section ˜ 3.3 stemming from the loss of the router’s input-dependent control over the experts and subsequent introduction of novel functions due to tying of the merged expert gates.

Overall, expert pruned models offer consistently higher accuracy than merged models on generative tasks. REAP is a robust pruning criterion that generalizes across a wide array of SMoE architectures, compression ratios, and generative tasks. By taking into consideration both the router gate-values and expert activation norms, REAP prunes the experts which contribute the least to each layers output on a per-token average, regardless of usage frequency. REAP is scalable, achieving near-lossless compression on coding tasks with Qwen3-Coder-480B and Kimi-K2. The successes of REAP highlights the crucial importance of preserving coordination between the router and experts. Compression methods which impair the router’s ability to independently modulate expert outputs or tie gate-values are less likely to succeed.

Finally, this work highlights the importance of comprehensive downstream evaluations and the significant challenges involved with evaluating LLMs. Discriminative metrics such as perplexity and log-likelihood based MC benchmarks are not necessarily good proxies for generative model quality.

7 Conclusion

Our analysis of current SMoE expert merging techniques finds that the router’s loss of independent control over experts results in functional subspace collapse. In contrast, expert pruning produces a coordinate subspace of the original layer which maintains the topology of the functional manifold. Based on our findings that the coordination between the router and experts is fundamental, we introduce REAP, a novel expert pruning method which prunes experts that contribute the least to the layer’s output. Empirically, we demonstrate that REAP retains remarkably high accuracy on an wide array of generative tasks across a diverse set of model architectures. We hope that this work inspires further compression techniques for SMoEs and facilitates the deployment of accurate, domain-specific models in resource constrained settings.

Acknowledgments

We would like to acknowledge the helpful feedback of Mohammed Adnan and Rohan Jain. ML and YI gratefully acknowledge the support of Alberta Innovates (ALLRP-577350-22, ALLRP-222301502), the Natural Sciences and Engineering Research Council of Canada (NSERC) (RGPIN-2022-03120, DGECR-2022-00358), and Defence Research and Development Canada (DGDND-2022-03120). ML and YI are grateful for computational resources made available to us by the Digital Research Alliance of Canada. YI is supported by a Schulich Research Chair.

Ethics Statement

This work research focused on the algorithmic compression of SMoE models and does not involve the use of human subjects, personally identifiable information, or sensitive data. The datasets used for calibration and evaluation (e.g., c4, evol-codealpaca) are publicly available. Our aim is enable the use of large-scale SMoE models in resource constrained settings. However, we acknowledge that compression techniques such as REAP could potentially facilitate deployment of models for malicious purposes. Further, our compression methods are applied to pre-trained models and any biases related to fairness, discrimination, or representation inherent in the original models may be present in their compressed versions. We make no attempt in this work to mitigate these potential biases. The primary contribution of this paper is technical, and we do not foresee any new, direct ethical concerns arising from our proposed methodology beyond those already associated with the deployment of large language models.

Reproducibility Statement

We are committed to ensuring the reproducibility of our research. We have open-sourced our code and released select compressed model checkpoints to facilitate further research on compressed SMoEs. REAP is formally described in Section 4. The baseline methods we compare against, including frequency-based pruning, EAN, M-SMoE, and HC-SMoE, are formally defined in Appendix D. Section 5 provides a detailed description of our experimental setup, including the specific models used, the calibration and evaluation datasets, and the implementation details for all compression experiments. Further evaluation details are provided in Appendix C.

References

- Ainsworth et al. (2023) Samuel Ainsworth, Jonathan Hayase, and Siddhartha Srinivasa. Git re-basin: Merging models modulo permutation symmetries. In Proceedings of the Eleventh International Conference on Learning Representations, 2023. URL https://openreview.net/forum?id=CQsmMYmlP5T.

- Allen Institute for AI (2024) Allen Institute for AI. allenai/c4 · Datasets at Hugging Face, August 2024. URL https://huggingface.co/datasets/allenai/c4.

- Allen Institute for AI (2025) Allen Institute for AI. allenai/tulu-3-sft-personas-math · Datasets at Hugging Face, 2025. URL https://huggingface.co/datasets/allenai/tulu-3-sft-personas-math.

- Ansel et al. (2024) Jason Ansel, Edward Yang, Horace He, Natalia Gimelshein, Animesh Jain, Michael Voznesensky, Bin Bao, Peter Bell, David Berard, Evgeni Burovski, Geeta Chauhan, Anjali Chourdia, Will Constable, Alban Desmaison, Zachary DeVito, Elias Ellison, Will Feng, Jiong Gong, Michael Gschwind, Brian Hirsh, Sherlock Huang, Kshiteej Kalambarkar, Laurent Kirsch, Michael Lazos, Mario Lezcano, Yanbo Liang, Jason Liang, Yinghai Lu, CK Luk, Bert Maher, Yunjie Pan, Christian Puhrsch, Matthias Reso, Mark Saroufim, Marcos Yukio Siraichi, Helen Suk, Michael Suo, Phil Tillet, Eikan Wang, Xiaodong Wang, William Wen, Shunting Zhang, Xu Zhao, Keren Zhou, Richard Zou, Ajit Mathews, Gregory Chanan, Peng Wu, and Soumith Chintala. PyTorch 2: Faster Machine Learning Through Dynamic Python Bytecode Transformation and Graph Compilation. In 29th ACM International Conference on Architectural Support for Programming Languages and Operating Systems, Volume 2 (ASPLOS ’24). ACM, April 2024. doi: 10.1145/3620665.3640366. URL https://docs.pytorch.org/assets/pytorch2-2.pdf.

- Artificial Analysis (2025) Artificial Analysis. Artificial analysis intelligence benchmarking methodology. https://artificialanalysis.ai/methodology/intelligence-benchmarking, September 2025. Version 3.0.

- Baidu (2025) Baidu. Ernie 4.5 technical report, 2025. URL https://yiyan.baidu.com/blog/publication/ERNIE_Technical_Report.pdf.

- Balmau et al. (2025) Oana Balmau, Anne-Marie Kermarrec, Rafael Pires, André Loureiro Espírito Santo, Martijn de Vos, and Milos Vujasinovic. Accelerating moe model inference with expert sharding. In Proceedings of the 5th Workshop on Machine Learning and Systems, pp. 192–199, 2025.

- Barres et al. (2025) Victor Barres, Honghua Dong, Soham Ray, Xujie Si, and Karthik Narasimhan. $\tau^{2}$ -bench: Evaluating conversational agents in a dual-control environment. arXiv preprint arXiv:2506.07982, 2025.

- Bentivogli et al. (2009) Luisa Bentivogli, Peter Clark, Ido Dagan, and Danilo Giampiccolo. The fifth pascal recognizing textual entailment challenge. TAC, 7(8):1, 2009.

- Chandak et al. (2025) Nikhil Chandak, Shashwat Goel, Ameya Prabhu, Moritz Hardt, and Jonas Geiping. Answer Matching Outperforms Multiple Choice for Language Model Evaluation, July 2025. URL http://arxiv.org/abs/2507.02856. arXiv:2507.02856 [cs].

- Chaudhary (2023) Sahil Chaudhary. Code alpaca: An instruction-following llama model for code generation. https://github.com/sahil280114/codealpaca, 2023.

- Chen et al. (2025) I-Chun Chen, Hsu-Shen Liu, Wei-Fang Sun, Chen-Hao Chao, Yen-Chang Hsu, and Chun-Yi Lee. Retraining-free merging of sparse moe via hierarchical clustering. In Proceedings of the Forty-second International Conference on Machine Learning, 2025. URL https://openreview.net/forum?id=hslOzRxzXL.

- Chen et al. (2022) Tianyu Chen, Shaohan Huang, Yuan Xie, Binxing Jiao, Daxin Jiang, Haoyi Zhou, Jianxin Li, and Furu Wei. Task-Specific Expert Pruning for Sparse Mixture-of-Experts, June 2022. URL http://arxiv.org/abs/2206.00277. arXiv:2206.00277 [cs].

- Clark et al. (2019) Christopher Clark, Kenton Lee, Ming-Wei Chang, Tom Kwiatkowski, Michael Collins, and Kristina Toutanova. BoolQ: Exploring the surprising difficulty of natural yes/no questions. In Jill Burstein, Christy Doran, and Thamar Solorio (eds.), Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pp. 2924–2936, Minneapolis, Minnesota, June 2019. Association for Computational Linguistics. doi: 10.18653/v1/N19-1300. URL https://aclanthology.org/N19-1300/.

- Clark et al. (2018) Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? try arc, the ai2 reasoning challenge, 2018. URL https://arxiv.org/abs/1803.05457. arXiv:1803.05457 [cs].

- Cobbe et al. (2021) Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. Training verifiers to solve math word problems, 2021. URL https://arxiv.org/abs/2110.14168. arXiv:2110.14168 [cs].

- Dai et al. (2024) Damai Dai, Chengqi Deng, Chenggang Zhao, R. X. Xu, Huazuo Gao, Deli Chen, Jiashi Li, Wangding Zeng, Xingkai Yu, Y. Wu, Zhenda Xie, Y. K. Li, Panpan Huang, Fuli Luo, Chong Ruan, Zhifang Sui, and Wenfeng Liang. DeepSeekMoE: Towards Ultimate Expert Specialization in Mixture-of-Experts Language Models, January 2024. URL http://arxiv.org/abs/2401.06066. arXiv:2401.06066 [cs] version: 1.

- DeepSeek-AI et al. (2024) DeepSeek-AI, Aixin Liu, Bei Feng, Bing Xue, Bingxuan Wang, Bochao Wu, Chengda Lu, Chenggang Zhao, Chengqi Deng, Chenyu Zhang, Chong Ruan, Damai Dai, Daya Guo, Dejian Yang, Deli Chen, Dongjie Ji, Erhang Li, Fangyun Lin, Fucong Dai, Fuli Luo, Guangbo Hao, Guanting Chen, Guowei Li, H. Zhang, Han Bao, Hanwei Xu, Haocheng Wang, Haowei Zhang, Honghui Ding, Huajian Xin, Huazuo Gao, Hui Li, Hui Qu, J. L. Cai, Jian Liang, Jianzhong Guo, Jiaqi Ni, Jiashi Li, Jiawei Wang, Jin Chen, Jingchang Chen, Jingyang Yuan, Junjie Qiu, Junlong Li, Junxiao Song, Kai Dong, Kai Hu, Kaige Gao, Kang Guan, Kexin Huang, Kuai Yu, Lean Wang, Lecong Zhang, Lei Xu, Leyi Xia, Liang Zhao, Litong Wang, Liyue Zhang, Meng Li, Miaojun Wang, Mingchuan Zhang, Minghua Zhang, Minghui Tang, Mingming Li, Ning Tian, Panpan Huang, Peiyi Wang, Peng Zhang, Qiancheng Wang, Qihao Zhu, Qinyu Chen, Qiushi Du, R. J. Chen, R. L. Jin, Ruiqi Ge, Ruisong Zhang, Ruizhe Pan, Runji Wang, Runxin Xu, Ruoyu Zhang, Ruyi Chen, S. S. Li, Shanghao Lu, Shangyan Zhou, Shanhuang Chen, Shaoqing Wu, Shengfeng Ye, Shengfeng Ye, Shirong Ma, Shiyu Wang, Shuang Zhou, Shuiping Yu, Shunfeng Zhou, Shuting Pan, T. Wang, Tao Yun, Tian Pei, Tianyu Sun, W. L. Xiao, Wangding Zeng, Wanjia Zhao, Wei An, Wen Liu, Wenfeng Liang, Wenjun Gao, Wenqin Yu, Wentao Zhang, X. Q. Li, Xiangyue Jin, Xianzu Wang, Xiao Bi, Xiaodong Liu, Xiaohan Wang, Xiaojin Shen, Xiaokang Chen, Xiaokang Zhang, Xiaosha Chen, Xiaotao Nie, Xiaowen Sun, Xiaoxiang Wang, Xin Cheng, Xin Liu, Xin Xie, Xingchao Liu, Xingkai Yu, Xinnan Song, Xinxia Shan, Xinyi Zhou, Xinyu Yang, Xinyuan Li, Xuecheng Su, Xuheng Lin, Y. K. Li, Y. Q. Wang, Y. X. Wei, Y. X. Zhu, Yang Zhang, Yanhong Xu, Yanhong Xu, Yanping Huang, Yao Li, Yao Zhao, Yaofeng Sun, Yaohui Li, Yaohui Wang, Yi Yu, Yi Zheng, Yichao Zhang, Yifan Shi, Yiliang Xiong, Ying He, Ying Tang, Yishi Piao, Yisong Wang, Yixuan Tan, Yiyang Ma, Yiyuan Liu, Yongqiang Guo, Yu Wu, Yuan Ou, Yuchen Zhu, Yuduan Wang, Yue Gong, Yuheng Zou, Yujia He, Yukun Zha, Yunfan Xiong, Yunxian Ma, Yuting Yan, Yuxiang Luo, Yuxiang You, Yuxuan Liu, Yuyang Zhou, Z. F. Wu, Z. Z. Ren, Zehui Ren, Zhangli Sha, Zhe Fu, Zhean Xu, Zhen Huang, Zhen Zhang, Zhenda Xie, Zhengyan Zhang, Zhewen Hao, Zhibin Gou, Zhicheng Ma, Zhigang Yan, Zhihong Shao, Zhipeng Xu, Zhiyu Wu, Zhongyu Zhang, Zhuoshu Li, Zihui Gu, Zijia Zhu, Zijun Liu, Zilin Li, Ziwei Xie, Ziyang Song, Ziyi Gao, and Zizheng Pan. DeepSeek-V3 Technical Report, December 2024. URL http://arxiv.org/abs/2412.19437. arXiv:2412.19437 [cs] version: 1.

- Fedus et al. (2022) William Fedus, Barret Zoph, and Noam Shazeer. Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity, June 2022. URL http://arxiv.org/abs/2101.03961. arXiv:2101.03961 [cs].

- Gao (2021) Leo Gao. Multiple Choice Normalization in LM Evaluation, October 2021. URL https://blog.eleuther.ai/multiple-choice-normalization/.

- Gao et al. (2023) Leo Gao, Jonathan Tow, Baber Abbasi, Stella Biderman, Sid Black, Anthony DiPofi, Charles Foster, Laurence Golding, Jeffrey Hsu, Alain Le Noac’h, Haonan Li, Kyle McDonell, Niklas Muennighoff, Chris Ociepa, Jason Phang, Laria Reynolds, Hailey Schoelkopf, Aviya Skowron, Lintang Sutawika, Eric Tang, Anish Thite, Ben Wang, Kevin Wang, and Andy Zou. A framework for few-shot language model evaluation, 12 2023. URL https://github.com/EleutherAI/lm-evaluation-harness.

- Garipov et al. (2018) Timur Garipov, Pavel Izmailov, Dmitrii Podoprikhin, Dmitry P Vetrov, and Andrew G Wilson. Loss surfaces, mode connectivity, and fast ensembling of dnns. Advances in neural information processing systems, 31, 2018.

- Gu et al. (2025) Hao Gu, Wei Li, Lujun Li, Zhu Qiyuan, Mark G. Lee, Shengjie Sun, Wei Xue, and Yike Guo. Delta decompression for moe-based LLMs compression. In Proceedings of the Forty-second International Conference on Machine Learning, 2025. URL https://openreview.net/forum?id=ziezViPoN1.

- He et al. (2025a) Shwai He, Daize Dong, Liang Ding, and Ang Li. Towards Efficient Mixture of Experts: A Holistic Study of Compression Techniques, March 2025a. URL http://arxiv.org/abs/2406.02500. arXiv:2406.02500 [cs] version: 3.

- He et al. (2025b) Yifei He, Yang Liu, Chen Liang, and Hany Hassan Awadalla. Efficiently Editing Mixture-of-Experts Models with Compressed Experts, March 2025b. URL http://arxiv.org/abs/2503.00634. arXiv:2503.00634 [cs].

- Hendrycks et al. (2021a) Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding. In Proceedings of the Ninth International Conference on Learning Representations, 2021a. URL https://openreview.net/forum?id=d7KBjmI3GmQ.

- Hendrycks et al. (2021b) Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the MATH dataset. In Proceedings of the Thirty-fifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track (Round 2), 2021b. URL https://openreview.net/forum?id=7Bywt2mQsCe.