# Symbol Grounding in Neuro-Symbolic AI: A Gentle Introduction to Reasoning Shortcuts

**Authors**:

- Emanuele Marconato (University of Trento)

- &Samuele Bortolotti (University of Trento)

- &Emile van Krieken

- Vrije Universiteit Amsterdam

- The Netherlands

- Paolo Morettin (University of Trento)

- &Elena Umili (Sapienza University of Rome)

- &Antonio Vergari (University of Edinburgh)

- United Kingdom

- Efthymia Tsamoura (Huawei Labs)

- Cambridge United Kingdom

- &Andrea Passerini (University of Trento)

- &Stefano Teso (University of Trento)

> ∗Corresponding author.†\daggerEqual contribution.‡\ddaggerWork started before Efthymia Tsamoura joined Huawei Labs.

Abstract

Neuro-symbolic (NeSy) AI aims to develop deep neural networks whose predictions comply with prior knowledge encoding, e.g., safety or structural constraints. As such, it represents one of the most promising avenues for reliable and trustworthy AI. The core idea behind NeSy AI is to combine neural and symbolic steps: neural networks are typically responsible for mapping low-level inputs into high-level symbolic concepts, while symbolic reasoning infers predictions compatible with the extracted concepts and the prior knowledge. Despite their promise, it was recently shown that – whenever the concepts are not supervised directly – NeSy models can be affected by Reasoning Shortcuts (RSs). That is, they can achieve high label accuracy by grounding the concepts incorrectly. RSs can compromise the interpretability of the model’s explanations, performance in out-of-distribution scenarios, and therefore reliability. At the same time, RSs are difficult to detect and prevent unless concept supervision is available, which is typically not the case. However, the literature on RSs is scattered, making it difficult for researchers and practitioners to understand and tackle this challenging problem. This overview addresses this issue by providing a gentle introduction to RSs, discussing their causes and consequences in intuitive terms. It also reviews and elucidates existing theoretical characterizations of this phenomenon. Finally, it details methods for dealing with RSs, including mitigation and awareness strategies, and maps their benefits and limitations. By reformulating advanced material in a digestible form, this overview aims to provide a unifying perspective on RSs to lower the bar to entry for tackling them. Ultimately, we hope this overview contributes to the development of reliable NeSy and trustworthy AI models. Contents

1. 1 Introduction

1. 2 Preliminaries

1. 2.1 From Neuro-Symbolic AI to Neuro-Symbolic Predictors

1. 2.2 The Variety of NeSy Predictor Architectures

1. 2.3 NeSy Predictor Architectures: Differences and Similarities

1. 2.4 NeSy Predictors: Benefits

1. 3 A Gentle Introduction to Reasoning Shortcuts

1. 3.1 Causes, Frequency and Impact

1. 4 Theory of Reasoning Shortcuts

1. 4.1 Setting and Assumptions

1. 4.2 Perspective: Identification

1. 4.2.1 Model class of NeSy predictors

1. 4.2.2 Non-identifiability of the concept extractor

1. 4.2.3 Concept remapping distributions.

1. 4.2.4 Reasoning shortcuts as unintended, optimal concept remapping distributions

1. 4.2.5 Characterization of deterministic reasoning shortcuts.

1. 4.3 Perspective: Statistical Learning

1. 4.3.1 Knowledge complexity

1. 4.3.2 Impossibility result in bounding the reasoning shortcut risk

1. 4.3.3 Setting

1. 4.3.4 PAC learnability with $k$ -unambiguous inference layers

1. 4.4 Relationship between Theories

1. 5 Handling Reasoning Shortcuts

1. 5.1 Root Causes

1. 5.1.1 The knowledge

1. 5.1.2 The training distribution

1. 5.1.3 The optimality condition

1. 5.1.4 The family of learnable maps

1. 5.2 How to Diagnose Reasoning Shortcuts

1. 5.2.1 Task-level diagnosis

1. 5.2.2 Model metrics

1. 5.3 A Tour of Mitigation Strategies

1. 5.3.1 Concept supervision

1. 5.3.2 Multi-task learning

1. 5.3.3 Abductive weak supervision

1. 5.3.4 Entropy maximization

1. 5.3.5 Smoothing

1. 5.3.6 Reconstruction

1. 5.3.7 Contrastive learning

1. 5.3.8 Architectural disentanglement

1. 5.3.9 Which mitigation strategy is best?

1. 5.3.10 Can one simply recover learned concepts?

1. 5.4 Awareness Strategies

1. 5.4.1 RS-awareness as mixtures of deterministic RSs

1. 5.4.2 Building RS-aware NeSy predictors

1. 5.4.3 RS-Awareness via Ensembles

1. 5.4.4 RS-Awareness via Diffusion

1. 5.5 Awareness Helps Mitigation

1. 6 Extensions and Open Problems

1. 6.1 Reasoning Shortcuts in NeSy AI Beyond Predictors

1. 6.2 Reasoning Shortcuts in Concept-based Models

1. 6.3 Foundation and Large Language Models

1. 6.4 Reasoning Shortcuts in Reinforcement Learning

1. 6.5 Imbalanced Learning

1. 6.6 Additional open problems

1. 7 Related Work

1. 8 Conclusion

1 Introduction

In cognitive science and psychology, symbols —or more generally, concepts —are the compositional building blocks of human thought (Mandler, 2004; Spelke and Kinzler, 2007). They enable the abstraction and structuring of perception, supporting high-level cognitive functions (Whitehead, 1927; Johnson-Laird, 1994) such as language, reasoning, and planning. In recent years, there has been growing interest in using concepts in neural networks (Smolensky, 1987; Sun, 1992; Greff et al., 2020), which bring not only improved generalization but also greater interpretability to their decision-making processes. While the emergence of abstract concepts in the human brain is well-documented (Mandler, 2004), how—and even whether—such entities arise in neural networks remains fundamentally unclear (Jo and Bengio, 2017; Lake and Baroni, 2018; Park et al., 2024).

Neuro-symbolic (NeSy) models aim to bridge this gap by integrating neural learning with symbolic reasoning (Harnad, 1990; McMillan et al., 1991; Sun, 1992), thereby enabling explicit manipulation of concepts within the inference process. Some approaches embed prior symbolic structure—such as logical rules (De Raedt et al., 2021) —while others attempt to learn such structure through specialized, trainable components (Lake and Baroni, 2018; Ellis et al., 2021). A key promise of this integration is reliability and trustworthiness: by making all decisions traceable to interpretable, well-defined concepts, decisions become more transparent (Kambhampati et al., 2022). Moreover, thanks to the modularity of symbolic components, learned concepts can be reused in novel scenarios without significant performance degradation. Yet, the effectiveness of this integration hinges on solving a core challenge in NeSy AI: the problem of symbol grounding (Harnad, 1990) —that is, how to connect low-level perceptual data with high-level, abstract concepts.

**Example 1.1**

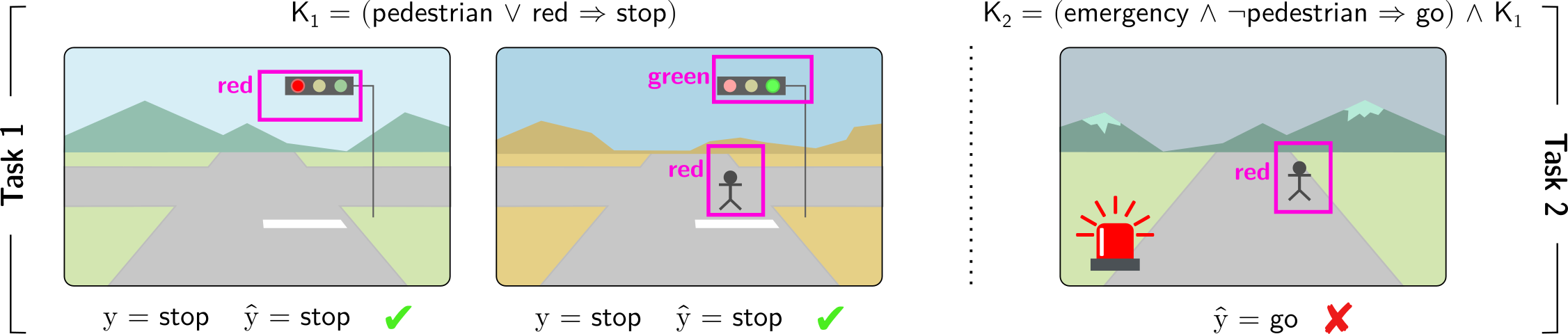

*Consider a model trained on autonomous driving scenarios, where the car must decide whether to stop or go based on the visual input, as per Fig. 1. The model leverages prior knowledge $\mathsf{K}_{1}$ which imposes that whenever pedestrian or red_light are detected, the car must stop. Attaining accurate action predictions requires assigning valid binary values to the concepts pedestrian and red_light (among others) from the visual input. Therefore, concepts are grounded by the model through learning to give correct driving predictions. Because pedestrian and red_light are treated symmetrically by the knowledge (due to the disjunction) We will precisely define the symmetries of the knowledge in later sections. the self-driving car may confuse the two while still achieving correct predictions (e.g., in Fig. 1 (Left), red_light fires when either “pedestrians” or “red lights” are detected). This example shows that model concepts can be grounded differently from their intended meaning: training the autonomous car to give correct predictions does not guarantee that concepts activate when they should; e.g., the autonomous car’s red_light may not solely contain information about the presence of “red lights” in the raw sensory data.*

Footnote 1 illustrates the central challenge of symbol grounding in Neuro-Symbolic AI: ensuring that abstract predicted concepts inside the model maintain a consistent and semantically correct link to the real-world entities they are meant to represent. Unfortunately, in many learning contexts NeSy models— even when they perform nearly optimally on a task—may fail to assign the right meaning to learned concepts, leading to concepts with unintended semantics (Marconato et al., 2023a, b). This issue originates from reasoning shortcuts (RSs): unwanted concept assignments that allow models to reach correct label predictions. These have been shown to affect many NeSy models (Marconato et al., 2023b; Yang et al., 2024). While the decision-making appears correct on the surface, the underlying concepts are flawed, undermining the reliability and trustworthiness of the NeSy model. This directly impacts the model’s interpretability, as well as generalization when concepts are reused in out-of-distribution scenarios and continual learning. For example, in the context of autonomous driving of Footnote 1 and of Fig. 1, the correct “ stop ” prediction can be achieved by mistaking “ pedestrians ” with “ red lights ”, as both concepts induce the same decision. While this misalignment does not induce a drop in prediction on data in-distribution, it becomes critical in situations where such distinctions matter. In fact, poorly grounded concepts do not transfer to out-of-distribution scenarios, as displayed in Fig. 1 (Right), where the wrong activation of model concepts leads to a faulty prediction.

RSs were observed in early NeSy work (Manhaeve et al., 2018; Chang et al., 2020; Topan et al., 2021), but only recently received a formal treatment, revealing that RSs are hard to tackle (Marconato et al., 2023b; Umili et al., 2024a; Yang et al., 2024; DeLong et al., 2024), despite arising naturally from certain symmetries in the symbolic component of NeSy systems. In turn, theoretical studies (Marconato et al., 2023b; Wang et al., 2023; Yang et al., 2024; Bortolotti et al., 2025) have begun to identify under which conditions RSs can be provably avoided. This often requires pairing standard NeSy training methods with mitigation strategies that encourage better concept grounding. However, designing effective mitigation strategies that do not require a significant annotation cost remains an open problem. In this paper, we aim to consolidate the scattered literature on RSs in NeSy AI and offer a comprehensive overview of different approaches to mitigating them.

Contributions

This article provides a gentle introduction to reasoning shortcuts, unifies the existing literature on the topic, and connects it to the well-known symbol grounding problem. We provide a general perspective on RSs, highlighting that they cannot be avoided simply by designing different neuro-symbolic architectures. To this end, we compile all relevant theory on RSs through the perspectives of identifiability and statistical learning. We then present known mitigation strategies to prevent RSs using a taxonomy, and explain which strategies can effectively reduce the likelihood of RSs. Finally, we identify open problems and future directions, showing that RSs — and thus correct symbol grounding — extend beyond the NeSy models studied so far.

<details>

<summary>figures/nesy-road-kill.png Details</summary>

### Visual Description

## Logical Reasoning with Visual Scenes

### Overview

The image presents a series of visual scenes depicting traffic scenarios, each associated with a logical rule and a predicted outcome. The scenes are divided into two tasks, each involving a different set of conditions and rules. The image evaluates the correctness of the predicted outcomes based on the given rules and visual inputs.

### Components/Axes

* **Task 1**: Labeled vertically on the left side of the first two images.

* **Task 2**: Labeled vertically on the right side of the third image.

* **Visual Scenes**: Three distinct scenes depicting roads, traffic lights, pedestrians, and emergency vehicles.

* **Logical Rules**:

* K1 = (pedestrian ∨ red → stop)

* K2 = (emergency ∧ ¬pedestrian → go) ∧ K1

* **Object Detection**: Magenta bounding boxes around traffic lights and pedestrians, with labels indicating the color of the traffic light (red or green) or the presence of a pedestrian (red).

* **Predicted Outcomes**: "y = stop", "ŷ = stop", "ŷ = go"

* **Correctness Indicators**: Green checkmark for correct predictions, red "X" for incorrect predictions.

### Detailed Analysis

**Scene 1 (Task 1)**

* **Visual Elements**: A road scene with a traffic light showing red. The background includes mountains and a sky.

* **Object Detection**: A magenta box surrounds the traffic light, labeled "red".

* **Logical Rule**: K1 = (pedestrian ∨ red → stop)

* **Predicted Outcome**: y = stop, ŷ = stop

* **Correctness**: Green checkmark, indicating the prediction is correct.

**Scene 2 (Task 1)**

* **Visual Elements**: A road scene with a traffic light showing green and a pedestrian crossing. The background includes mountains and a sky.

* **Object Detection**: A magenta box surrounds the traffic light, labeled "green". Another magenta box surrounds the pedestrian, labeled "red".

* **Logical Rule**: K1 = (pedestrian ∨ red → stop)

* **Predicted Outcome**: y = stop, ŷ = stop

* **Correctness**: Green checkmark, indicating the prediction is correct.

**Scene 3 (Task 2)**

* **Visual Elements**: A road scene with a pedestrian crossing and an emergency vehicle with flashing red lights. The background includes mountains and a sky.

* **Object Detection**: A magenta box surrounds the pedestrian, labeled "red". The emergency vehicle is also visible.

* **Logical Rule**: K2 = (emergency ∧ ¬pedestrian → go) ∧ K1

* **Predicted Outcome**: ŷ = go

* **Correctness**: Red "X", indicating the prediction is incorrect.

### Key Observations

* Task 1 focuses on the basic rule of stopping at a red light or when a pedestrian is present.

* Task 2 introduces an additional rule involving emergency vehicles and the absence of pedestrians.

* The third scene highlights a potential conflict between the two rules, where the presence of an emergency vehicle might suggest "go," but the presence of a pedestrian should override this and require a "stop."

### Interpretation

The image demonstrates a system for evaluating logical reasoning in visual scenes. The system uses object detection to identify relevant elements (traffic lights, pedestrians, emergency vehicles), applies logical rules to these elements, and predicts an outcome (stop or go). The correctness of the prediction is then assessed.

The incorrect prediction in the third scene suggests a limitation in the system's ability to resolve conflicts between different rules or to prioritize rules based on context. Specifically, the system incorrectly predicts "go" when an emergency vehicle is present, even though a pedestrian is also present, which should trigger the "stop" rule. This highlights the need for more sophisticated reasoning mechanisms that can handle complex scenarios and prioritize rules appropriately.

</details>

Figure 1: Reasoning shortcuts are failures of correct symbol grounding. Consider an autonomous driving car that must drive in compliance with traffic rules. (Left) The car learns to make correct predictions by leveraging background knowledge $\mathsf{K}_{1}$ in Task $1$ , i.e., “if there is a pedestrian or a red light the car has to stop”. However, in the process, it may incorrectly associate the concept of red_light with the presence of either pedestrians or red lights in the dashcam, resulting in what is known as a reasoning shortcut. (Right) Consequently, the learned concepts can lead to incorrect (and potentially catastrophic) predictions when the background knowledge changes. For example, $\mathsf{K}_{2}$ in Task $2$ introduces an exception in the presence of an emergency situation, i.e., “if there is an emergency and no pedestrians are on the street, the car may proceed”.

Outline

The remainder of the article is organized as follows. Section 2 introduces the preliminaries necessary for understanding the theoretical material. Section 3 presents the problem of reasoning shortcuts at a high level, providing examples and discussing their causes and impacts. Section 4 delves into the details by introducing the mathematical tools required to analyze reasoning shortcuts, exploring the issue from two perspectives: identification and statistical learning. Section 5 adopts a more applied perspective by examining the root causes of RSs, together with practical strategies for diagnosing their presence and for mitigating or addressing their effects, and discusses the benefits of each strategy. Section 6 explores various extensions of the reasoning shortcut problem across different domains and discusses open problems and future directions. Finally, Section 7 reviews relevant related research, and Section 8 provides concluding remarks.

2 Preliminaries

Table 1: Glossary of used symbols.

| $x$ , $y$ , $z$ , $X$ , $Y$ , $Z$ ${\bm{\mathrm{x}}}$ , ${\bm{\mathrm{X}}}$ | Scalar constants Scalar variables Vectors |

| --- | --- |

| $\mathcal{X},\mathcal{Y},\mathcal{C}$ | Sets |

| $f({\bm{\mathrm{x}}})$ , $p({\bm{\mathrm{c}}}\mid{\bm{\mathrm{x}}})$ | Concept extractor |

| $\beta({\bm{\mathrm{c}}})$ , $p({\bm{\mathrm{y}}}\mid{\bm{\mathrm{c}}};\mathsf{K})$ | Inference layer |

| $\models$ | Logical entailment |

| $\mathsf{K}$ | Prior knowledge |

| $\theta$ | Network parameters |

| $\mathcal{D}$ | Dataset |

Notation.

Throughout, we denote scalar constants by lowercase letters $x$ , scalar (random) variables by uppercase letters $X$ , vectors of constants ${\bm{\mathrm{x}}}$ and (random) variables ${\bm{\mathrm{X}}}$ in bold typeface, and sets (e.g., $\mathcal{X}$ ) by calligraphic letters. If $\varphi$ is a logical formula over variables ${\bm{\mathrm{X}}}$ , we say that the constants ${\bm{\mathrm{x}}}$ entail the formula $\varphi$ ( ${\bm{\mathrm{x}}}\models\varphi$ ) if and only if replacing the variables ${\bm{\mathrm{X}}}$ in the formula $\varphi$ with ${\bm{\mathrm{x}}}$ makes the formula true. In this case, we say that ${\bm{\mathrm{x}}}$ satisfies or is consistent with the formula; otherwise, it violates or is inconsistent with the formula. See Table 1 for a glossary.

2.1 From Neuro-Symbolic AI to Neuro-Symbolic Predictors

Neuro-symbolic models aim to solve the long-standing problem of integrating learning and reasoning. Over time, many radically different NeSy architectures have emerged (Garcez et al., 2022; De Raedt et al., 2021; Feldstein et al., 2024) that differ not only in what kind of logic they implement reasoning with (e.g., propositional (Hoernle et al., 2022; Ahmed et al., 2022; Buffelli and Tsamoura, 2023) vs. first-order (Lippi and Frasconi, 2009; Diligenti et al., 2012; Manhaeve et al., 2018) and classical (Zhou, 2019) vs. fuzzy (Donadello et al., 2017; van Krieken et al., 2020) vs. probabilistic (Manhaeve et al., 2018; Ahmed et al., 2022; Feldstein et al., 2023)), but also in how they interpret logic reasoning itself (e.g., model vs. proof-based inference (De Raedt et al., 2021)) and in how they implement the computations (e.g., fully neural (Rocktäschel and Riedel, 2016) vs. hybrid neural-symbolic (Manhaeve et al., 2018)). This variety is reflected by the diversity of tasks that NeSy architectures can tackle, which range from hierarchical classification (Giunchiglia and Lukasiewicz, 2020; Hoernle et al., 2022; Ahmed et al., 2022) and knowledge base completion (Rocktäschel and Riedel, 2016), to open-ended reasoning (Manhaeve et al., 2018; Badreddine et al., 2022) and learning knowledge graph embeddings (Maene and Tsamoura, 2025).

Reasoning shortcuts have been studied mainly in the context of NeSy predictors, a class of NeSy architectures specialized for constrained prediction tasks (Giunchiglia et al., 2022; Dash et al., 2022; Marconato et al., 2023a), denoted here as NeSy tasks; a discussion of RS in other NeSy architectures is left to Section 6.1. A NeSy task requires predicting labels that are consistent with known constraints. Each such task is defined by four elements: a description of the input ${\bm{\mathrm{X}}}$ with domain $\mathcal{X}$ , a description of the $k$ concepts ${\bm{\mathrm{C}}}=(C_{1},...,C_{k})$ with domain $\mathcal{C}=\mathcal{C}_{1}×...×\mathcal{C}_{k}$ , a description of $m$ discrete labels ${\bm{\mathrm{Y}}}=(Y_{1},...,Y_{m})$ with domain $\mathcal{Y}=\mathcal{Y}_{1}×...×\mathcal{Y}_{m}$ , and prior knowledge $\mathsf{K}$ . Here, $\mathcal{X}$ is the input domain (e.g., a vector space), $\mathcal{C}$ is a finite set of concept vectors ${\bm{\mathrm{c}}}$ , and so are the label sets $\mathcal{Y}_{i}$ .

The input ${\bm{\mathrm{X}}}$ is usually high-dimensional and sub-symbolic (e.g., an image), while the concepts ${\bm{\mathrm{C}}}$ are high-level and possibly human readable properties of the input (e.g., the objects appearing in the image). The prior knowledge $\mathsf{K}$ encodes the constraints that we wish the model to satisfy— e.g., safety constraints or relevant regulations—as a single propositional logical formula. Although some NeSy predictor architectures support first-order logical formulas, we restrict our description to propositional formulas, for ease of exposition. We postpone a discussion of how RSs transfer to the first-order case to Section 6.1. Both the concepts ${\bm{\mathrm{C}}}$ and the labels ${\bm{\mathrm{Y}}}$ appear as logic variables in $\mathsf{K}$ . We ground these notions using a running example:

**Example 2.1**

*Consider the simplified BDD-OIA autonomous driving task (Xu et al., 2020). Here, the inputs ${\bm{\mathrm{x}}}$ are dashcam images and the (binary) label $y$ indicates whether the vehicle is allowed to proceed. The task involves three binary concepts $C_{\texttt{grn}}$ , $C_{\texttt{red}}$ , $C_{\texttt{ped}}$ encoding the presence of green lights, red lights, and pedestrians in the input image, respectively, and the prior knowledge $\mathsf{K}$ encodes the constraint that if either a pedestrian or a red light is visible in the image, the vehicle must stop: $\mathsf{K}=(C_{\texttt{ped}}\lor C_{\texttt{red}}\Leftrightarrow\lnot Y)$ . In this scenario, a NeSy predictor has to predict vehicle actions that are both accurate and comply with traffic regulations.*

Given a (noiseless) training set $\mathcal{D}=\{({\bm{\mathrm{x}}},{\bm{\mathrm{y}}})\}$ , a NeSy predictor is tasked with learning a mapping from inputs ${\bm{\mathrm{x}}}$ to labels ${\bm{\mathrm{y}}}$ that comply with the knowledge, that is, $({\bm{\mathrm{c}}},{\bm{\mathrm{y}}})\models\mathsf{K}$ . Here, $({\bm{\mathrm{c}}},{\bm{\mathrm{y}}})$ is to be read as the concatenation of ${\bm{\mathrm{c}}}$ and ${\bm{\mathrm{y}}}$ . The key challenge is that, just like in Example 2.1, the prior knowledge $\mathsf{K}$ is not specified at the input level, but rather over the concepts. These, in turn, are complex functions of the input that, in practice, cannot be manually specified. For this reason, NeSy predictors adopt modular architectures that comprise a learnable concept extractor responsible for predicting the concepts ${\bm{\mathrm{C}}}$ from the inputs ${\bm{\mathrm{X}}}$ , and an inference layer responsible for inferring the labels ${\bm{\mathrm{Y}}}$ from ${\bm{\mathrm{C}}}$ compatibly with the prior knowledge $\mathsf{K}$ . The former is typically implemented as a feed-forward neural network, Although other architectures can be used (Diligenti et al., 2012). and the latter using some form of (differentiable) symbolic reasoning. Given an input ${\bm{\mathrm{x}}}$ , a NeSy predictor first applies the concept extractor to obtain a distribution $p({\bm{\mathrm{C}}}\mid{\bm{\mathrm{x}}})$ over concepts, and then applies the inference layer to obtain a distribution $p({\bm{\mathrm{Y}}}\mid{\bm{\mathrm{C}}};\mathsf{K})$ over outputs ${\bm{\mathrm{y}}}$ , given the concepts. Overall, the predictor defines a predictive distribution $p({\bm{\mathrm{Y}}}\mid{\bm{\mathrm{X}}};\mathsf{K})$ (see Section 2.2 for details).

**Example 2.2**

*Consider the BDD-OIA (Xu et al., 2020) dataset illustrated in Example 2.1. Here, the input ${\bm{\mathrm{x}}}∈\mathcal{X}$ is a dashcam image. The concept extractor is a neural network that takes ${\bm{\mathrm{x}}}$ and predicts the probabilities of three binary concepts $(C_{\texttt{grn}},C_{\texttt{red}},C_{\texttt{ped}})$ . For example, given an image ${\bm{\mathrm{x}}}$ , the extractor may output $p(C_{\texttt{red}}=1\mid{\bm{\mathrm{x}}})=0.9,\quad p(C_{\texttt{ped}}=1\mid{\bm{\mathrm{x}}})=0.2,\quad p(C_{\texttt{grn}}=1\mid{\bm{\mathrm{x}}})=0.1$ . These concept predictions are then passed to an inference layer, which combines them with prior knowledge $\mathsf{K}=(C_{\texttt{ped}}\lor C_{\texttt{red}})\Leftrightarrow\texttt{stop}$ to obtain the final decision $y$ . Depending on the chosen implementation, this reasoning step may enforce the rule strictly, approximate it through fuzzy logic, or learn it implicitly during training. In this example, since the concept extractor assigns high probability to $C_{\texttt{red}}=1$ , the system will likely infer $Y=\texttt{stop}$ , indicating that the vehicle should stop.*

In most cases, NeSy predictors can be, and in fact are, trained using label supervision only. This is not surprising if we consider that per-concept annotations can be expensive to collect in practice. However – and this is critical for our discussion of RSs – this also means that the concepts themselves are often not supervised, and that therefore they act as latent variables.

2.2 The Variety of NeSy Predictor Architectures

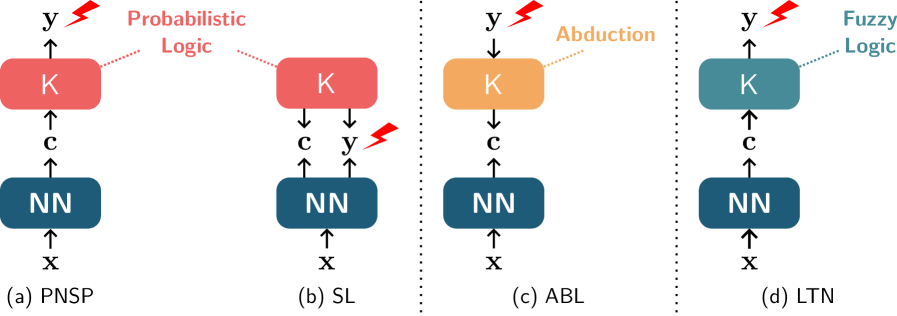

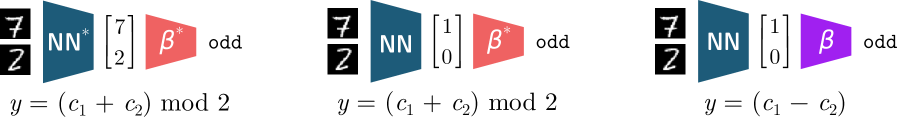

Different NeSy predictor architectures differ in how they implement and use the concept extractor and the reasoning layer. Next, we introduce the four architectures that have been most extensively studied in the RS literature, namely probabilistic NeSy predictors, the Semantic Loss, Logic Tensor Networks, and Abductive Learning. These are illustrated in Fig. 2.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Neural-Symbolic Architectures

### Overview

The image presents a comparative diagram illustrating four different neural-symbolic architectures: PNSP, SL, ABL, and LTN. Each architecture combines a neural network (NN) with a symbolic component (K) and depicts the flow of information between them. The diagrams highlight the type of logic associated with each architecture, such as Probabilistic Logic, Abduction, and Fuzzy Logic.

### Components/Axes

* **NN:** Represents the Neural Network component, depicted as a rounded rectangle. Input is 'x', output is 'c'.

* **K:** Represents the symbolic knowledge component, depicted as a rounded rectangle.

* **x:** Input to the Neural Network.

* **c:** Output from the Neural Network, input to the symbolic component K.

* **y:** Output from the symbolic component K.

* **Arrows:** Indicate the direction of information flow.

* **Dotted Lines:** Connect the symbolic component K to the type of logic it represents.

* **Lightning Bolt:** Indicates a point of potential error or uncertainty.

* **(a) PNSP:** Probabilistic Neural-Symbolic Program.

* **(b) SL:** Semantic Loss.

* **(c) ABL:** Abductive Learning.

* **(d) LTN:** Logic Tensor Networks.

### Detailed Analysis

**Diagram (a) PNSP:**

* **NN:** Located at the bottom, receives input 'x' and outputs 'c'.

* **K:** Located above the NN, receives input 'c' and outputs 'y'. K is colored red.

* **Logic:** "Probabilistic Logic" is associated with K via a dotted line. The text "Probabilistic Logic" is colored red.

* **Flow:** Information flows from NN to K.

* **Error:** A red lightning bolt is present near the output 'y' of K, indicating a potential error.

**Diagram (b) SL:**

* **NN:** Located at the bottom, receives input 'x' and outputs 'c' and 'y'.

* **K:** Located above the NN, receives input 'c' and 'y'. K is colored red.

* **Flow:** Information flows from NN to K.

* **Error:** A red lightning bolt is present near the output 'y' of the NN, indicating a potential error.

**Diagram (c) ABL:**

* **NN:** Located at the bottom, receives input 'x' and outputs 'c'.

* **K:** Located above the NN, receives input 'c' and outputs 'y'. K is colored orange.

* **Logic:** "Abduction" is associated with K via a dotted line. The text "Abduction" is colored orange.

* **Flow:** Information flows from NN to K.

**Diagram (d) LTN:**

* **NN:** Located at the bottom, receives input 'x' and outputs 'c'.

* **K:** Located above the NN, receives input 'c' and outputs 'y'. K is colored teal.

* **Logic:** "Fuzzy Logic" is associated with K via a dotted line. The text "Fuzzy Logic" is colored teal.

* **Flow:** Information flows from NN to K.

### Key Observations

* All four architectures share a common structure: an NN feeding into a symbolic component K.

* The architectures differ in the type of logic associated with K and the flow of information between NN and K.

* PNSP and SL diagrams include a lightning bolt symbol, suggesting potential errors or uncertainties in these architectures.

* The color of the K component and the associated logic text are consistent within each diagram.

### Interpretation

The diagram illustrates different approaches to integrating neural networks with symbolic reasoning. Each architecture leverages a specific type of logic (Probabilistic, Abductive, Fuzzy) to enhance the capabilities of the neural network. The presence of error indicators in PNSP and SL suggests potential challenges or limitations in these specific approaches. The diagram provides a high-level overview of the design and information flow in these neural-symbolic systems, highlighting the diversity of approaches in this field.

</details>

Figure 2: Schematic illustration of NeSy predictors. All of them employ a neural network for extracting concepts and prior knowledge $\mathsf{K}$ . PNSPs (a) map inputs ${\bm{\mathrm{x}}}$ to (a distribution over) concepts ${\bm{\mathrm{c}}}$ and then uses probabilistic logic to infer labels ${\bm{\mathrm{y}}}$ consistent with $\mathsf{K}$ . The SL (b) maps inputs to both concepts ${\bm{\mathrm{c}}}$ and labels ${\bm{\mathrm{y}}}$ and uses $\mathsf{K}$ to define a loss term penalizing inconsistent predictions. During training, ABL (c) abduces concepts from the ground-truth label ${\bm{\mathrm{y}}}$ using $\mathsf{K}$ and uses them as pseudo-labels to train the concept extractor. LTN (d) works similarly to PNSPs but uses fuzzy logic to make $\mathsf{K}$ differentiable. Lightning strikes indicate supervision.

Probabilistic NeSy Predictors

A common method for designing NeSy architectures is the family of Probabilistic NeSy Predictors (PNSPs) (Manhaeve et al., 2018, 2021a; Yang et al., 2020; Huang et al., 2021a). PNSPs can be viewed as combining a neural concept extractor with a probabilistic-logic reasoning layer (De Raedt and Kimmig, 2015), as shown in Fig. 2 (a). Perhaps the best-known member of this family is DeepProbLog (DPL) (Manhaeve et al., 2018, 2021a), a fully-fledged neuro-symbolic programming language based on Prolog and its probabilistic extension, ProbLog (De Raedt et al., 2007; Kimmig et al., 2011). From a simplified point of view, PNSPs define the following predictive distribution for any ${\bm{\mathrm{x}}}$ and ${\bm{\mathrm{y}}}$ :

$$

p({\bm{\mathrm{y}}}\mid{\bm{\mathrm{x}}};\mathsf{K})=\sum_{{\bm{\mathrm{c}}}}p({\bm{\mathrm{y}}},{\bm{\mathrm{c}}}\mid{\bm{\mathrm{x}}};\mathsf{K})=\sum_{{\bm{\mathrm{c}}}}p({\bm{\mathrm{y}}}\mid{\bm{\mathrm{c}}};\mathsf{K})\,p({\bm{\mathrm{c}}}\mid{\bm{\mathrm{x}}})=\frac{1}{Z_{{\bm{\mathrm{x}}}}}\sum_{{\bm{\mathrm{c}}}}\text{1}\!\left\{({\bm{\mathrm{c}}},{\bm{\mathrm{y}}})\models\mathsf{K}\right\}\,p({\bm{\mathrm{c}}}\mid{\bm{\mathrm{x}}}) \tag{1}

$$

Here, $p({\bm{\mathrm{C}}}\mid{\bm{\mathrm{x}}})$ is the predictive distribution over concepts computed by the concept extractor, $\text{1}\!\left\{·\right\}$ is the indicator function, and $Z_{{\bm{\mathrm{x}}}}$ is a normalization constant ensuring that the result is indeed a conditional distribution. In words, the probability of a label ${\bm{\mathrm{y}}}$ is the sum of the probabilities of all concept vectors ${\bm{\mathrm{c}}}$ that are consistent with that label ${\bm{\mathrm{y}}}$ according to the knowledge $\mathsf{K}$ . Inference in PNSPs amounts to computing a most likely label, which usually requires solving the MAP problem $\operatorname*{argmax}_{{\bm{\mathrm{y}}}}\ p({\bm{\mathrm{y}}}\mid{\bm{\mathrm{x}}};\mathsf{K})$ (Koller and Friedman, 2009). Importantly, if a label ${\bm{\mathrm{y}}}$ , together with the predicted concepts, would violate the knowledge $\mathsf{K}$ , then its probability is exactly zero and it will not be predicted. Learning in PNSPs is implemented via maximum likelihood estimation. That is, given a training set $\mathcal{D}=\{({\bm{\mathrm{x}}},{\bm{\mathrm{y}}})\}$ , learning amounts to maximizing the log-likelihood of the data, namely

$$

\mathcal{L}(p,\mathcal{D},\mathsf{K})=\frac{1}{|\mathcal{D}|}\sum_{({\bm{\mathrm{x}}},{\bm{\mathrm{y}}})\in\mathcal{D}}\ \log{p({\bm{\mathrm{y}}}\mid{\bm{\mathrm{x}}};\mathsf{K})}. \tag{2}

$$

by tuning the parameters of the concept extractor. In practice, this is done via gradient descent, as the predictive distribution $p({\bm{\mathrm{y}}}\mid{\bm{\mathrm{x}}};\mathsf{K})$ is differentiable (Manhaeve et al., 2018).

PNSPs require evaluating the predictive probability $p({\bm{\mathrm{y}}}\mid{\bm{\mathrm{x}}};\mathsf{K})$ , which involves summing over a potentially exponential number (in the cardinality of the concept vector $k$ ) of vectors ${\bm{\mathrm{c}}}$ , as per Eq. 1. Hence, both inference and learning are worst-case intractable. DPL works around this issue by exploiting knowledge compilation techniques (Darwiche and Marquis, 2002) that leverage symmetries in the summation to rewrite it into a data structure – a probabilistic circuit (Choi et al., 2020; Vergari et al., 2021; Maene et al., 2025; Derkinderen et al., 2025a) – that is potentially much more compact and supports efficient evaluation. This allows DPL to scale to practical NeSy tasks. Other PNSPs refine and approximate these ideas (specifically, Eq. 1) to further improve scalability (Manhaeve et al., 2021b; Huang et al., 2021a; Winters et al., 2022; De Smet et al., 2023a; van Krieken et al., 2023; Choi et al., 2025; Chen et al., 2025).

Semantic Loss

The Semantic Loss (SL) (Xu et al., 2018) also relies on probabilistic logic and knowledge compilation, but rather than defining an inference layer proper, it uses them to convert the prior knowledge $\mathsf{K}$ into a differentiable penalty term. This can be used to steer any neural network classifier toward allocating low or even zero probability to inconsistent outputs. While the SL is a general purpose strategy, here we discuss how it can be exploited for defining a NeSy predictor (Marconato et al., 2023a). The idea is to apply the SL to a neural network that acts both as concept extractor and as classifier, i.e., that outputs both $p({\bm{\mathrm{C}}}\mid{\bm{\mathrm{X}}})$ and $p({\bm{\mathrm{Y}}}\mid{\bm{\mathrm{X}}})$ . This encourages the concepts and labels predicted by the network to be logically consistent with each other according to $\mathsf{K}$ . Whether this is a single neural network or two specialized networks makes no difference from a modeling perspective. The SL grows proportionally to how much probability mass the concept extractor associates to invalid configurations, or more precisely:

$$

\texttt{SL}(p_{\theta},({\bm{\mathrm{x}}},{\bm{\mathrm{y}}}),\mathsf{K})=-\log\sum_{\bm{\mathrm{c}}}\text{1}\!\left\{({\bm{\mathrm{c}}},{\bm{\mathrm{y}}})\models\mathsf{K}\right\}p_{\theta}({\bm{\mathrm{c}}}\mid{\bm{\mathrm{x}}}) \tag{3}

$$

As in DPL, efficient computation of this sum relies on knowledge compilation. During training, the SL is paired with a standard supervised loss $\ell$ (e.g., the cross-entropy loss), resulting in a joint objective of the form:

$$

\mathcal{L}(p_{\theta},\mathcal{D},\mathsf{K})=\frac{1}{|\mathcal{D}|}\sum_{({\bm{\mathrm{x}}},{\bm{\mathrm{y}}})\in\mathcal{D}}\ell(p_{\theta},({\bm{\mathrm{x}}},{\bm{\mathrm{y}}}))+\mu\,\texttt{SL}(p_{\theta},({\bm{\mathrm{x}}},{\bm{\mathrm{y}}}),\mathsf{K}) \tag{4}

$$

with $\mu>0$ a hyperparameter. Learning amounts to minimizing this joint objective on training data. At test time, predictions are computed directly by the neural network classifier $p_{\theta}({\bm{\mathrm{y}}}\mid{\bm{\mathrm{x}}})$ rather than by a symbolic layer as in PNSPs.

Logic Tensor Networks

Another well-known approach is Logic Tensor Networks (LTN s) (Donadello et al., 2017; Badreddine et al., 2022), which share elements with both PNSPs and SL. As with the SL, they transform the symbolic knowledge $\mathsf{K}$ into a differentiable real-valued function $\mathcal{T}_{\mathsf{K}}$ that evaluates how well predictions conform to the logical constraints using fuzzy logic (van Krieken et al., 2020). This transformation is a proper relaxation. Specifically, it converts all the original Boolean variables in $\mathsf{K}$ (namely, the concepts and the labels) into real-valued variables in the range $[0,1]$ encoding degrees of truth. At the same time, it converts all logic connectives (conjunctions, disjunctions, and negations) into real-valued operators capable of handling soft degrees of truth. The translation yields a function $\mathcal{T}_{\mathsf{K}}$ that takes as input a distribution over the concepts ${\bm{\mathrm{C}}}$ and a distribution over the labels ${\bm{\mathrm{Y}}}$ , and outputs the degree (in $[0,1]$ ) to which these satisfy the knowledge $\mathsf{K}$ , the higher the better. By default, LTN s employ a transformation based on the product real logic (Donadello et al., 2017) T-norm to ensure that $\mathcal{T}_{\mathsf{K}}$ is fully differentiable, although other options are available (van Krieken et al., 2020). During training, LTN s penalize the concept extractor $p({\bm{\mathrm{C}}}\mid{\bm{\mathrm{X}}})$ for violating the prior knowledge by minimizing the following:

$$

\mathcal{L}(p,\mathcal{D},\mathsf{K})=1-\frac{1}{|\mathcal{D}|}\sum_{({\bm{\mathrm{x}}},{\bm{\mathrm{y}}})\in\mathcal{D}}\mathcal{T}_{\mathsf{K}}(p({\bm{\mathrm{C}}}\mid{\bm{\mathrm{x}}}),\text{1}\!\left\{{\bm{\mathrm{Y}}}={\bm{\mathrm{y}}}\right\}) \tag{5}

$$

Here, $\text{1}\!\left\{{\bm{\mathrm{Y}}}={\bm{\mathrm{y}}}\right\}$ is a (deterministic) distribution that assigns all probability mass to the ground-truth label ${\bm{\mathrm{y}}}$ . The purpose of this objective is to encourage $p({\bm{\mathrm{C}}}\mid{\bm{\mathrm{X}}})$ to concentrate its mass on concepts that satisfy $\mathsf{K}$ . Similarly to PNSPs, LTN s employ the prior knowledge at inference time. They proceed in two steps: they first compute the most probable concept vector $\hat{\bm{\mathrm{c}}}=\operatorname*{argmax}_{{\bm{\mathrm{c}}}}p_{\theta}({\bm{\mathrm{c}}}\mid{\bm{\mathrm{x}}})$ in a forward pass, then select the label $\hat{\bm{\mathrm{y}}}$ that maximizes the satisfaction of the knowledge $\mathcal{T}_{\mathsf{K}}(\hat{\bm{\mathrm{c}}},\text{1}\!\left\{{\bm{\mathrm{Y}}}=\hat{\bm{\mathrm{y}}}\right\})$ .

Abductive Learning

Another well-studied approach is Abductive Learning (ABL) (Zhou, 2019). Compared to the above NeSy predictors, it works backwards: rather than inferring a prediction from the concepts, it constrains the predicted concepts using the ground-truth label. To this end, during training, ABL finds the concepts vectors $\hat{\bm{\mathrm{c}}}$ that are both close to those predicted by the concept extractor (according to some pre-defined distance metric) and that according to $\mathsf{K}$ entail the ground-truth label ${\bm{\mathrm{y}}}$ . It then uses them as pseudo-labels to supervise the concept extractor. The pseudo-labels are obtained by solving the following optimization problem:

$$

\hat{\bm{\mathrm{c}}}=\operatorname*{argmin}_{{\bm{\mathrm{c}}}^{\prime}\in\mathcal{C}}d(\bar{\bm{\mathrm{c}}},{\bm{\mathrm{c}}}^{\prime})\qquad\mathrm{s.t.}\qquad({\bm{\mathrm{c}}}^{\prime},{\bm{\mathrm{y}}})\models\mathsf{K} \tag{6}

$$

where $\bar{\bm{\mathrm{c}}}=\operatorname*{argmax}_{{\bm{\mathrm{c}}}∈\mathcal{C}}p({\bm{\mathrm{c}}}\mid{\bm{\mathrm{x}}})$ , and $d$ is a suitable distance metric. The constraint ensures that $\hat{\bm{\mathrm{c}}}$ entail the ground-truth label ${\bm{\mathrm{y}}}$ . The choice of distance metric influences the type of weak supervision obtained, thereby intuitively biasing learning toward certain solutions. The training objective of the concept extractor is to maximize the (log)-likelihood of the pseudo-labels using $\hat{\bm{\mathrm{c}}}$ from Eq. 6:

$$

\mathcal{L}(p,\mathcal{D},\mathsf{K})=\cfrac{1}{|\mathcal{D}|}\sum_{({\bm{\mathrm{x}}},\hat{\bm{\mathrm{c}}})}\log p(\hat{\bm{\mathrm{c}}}\mid{\bm{\mathrm{x}}}) \tag{7}

$$

At inference time, ABL first predicts the concepts and then uses the symbolic knowledge to obtain the final prediction.

2.3 NeSy Predictor Architectures: Differences and Similarities

These different NeSy predictor architectures all share a key property: if the concept extractor allocates all probability mass to a single concept vector, then all architectures will output a label compatible with the rules of propositional logic. Consider MNIST-Add: if the concepts $C_{1}=2$ and $C_{2}=3$ are predicted with certainty, then all architectures will predict the label $Y=5$ with certainty—thus matching what any any logical encoding of the arithmetic sum would do. However, for concept predictions that are not certain, different architectures can output different label distributions. This fact will become relevant in Section 4.

At the same time, these four NeSy predictor architectures differ in several subtle but significant ways. The first one concerns efficiency. LTN and ABL can have an advantage over probabilistic logic approaches (like PNSPs and the SL) in that inference—and therefore training–does not require to sum over all possible concept configurations, making it potentially more efficient, although knowledge compilation and approximation strategies help bridge the gap (Huang et al., 2021a). This, however, often comes at a cost, in that LTN and ABL tend to be more susceptible to local minima (Badreddine et al., 2022). On the other hand, fuzzy relaxations (as used by LTN) may not be entirely accurate to the original prior knowledge, in the sense that, for certain problems, the optima of the satisfaction function $\mathcal{T}_{\mathsf{K}}$ may not correspond to models (that is, $0$ – $1$ solutions) of $\mathsf{K}$ (Giannini et al., 2018; van Krieken et al., 2022). Moreover, the fuzzy transformation may lead to mathematically different relaxations for logically equivalent constraints (Di Liello et al., 2020; van Krieken et al., 2022). Probabilistic logic is not affected by these issues (Xu et al., 2018). ABL is special in that it does not require the reasoning step to be differentiable, and as such it does not need to relax or extend the semantics of the prior knowledge at all.

A second difference concerns validity guarantees, which set apart layer-based approaches from penalty-based ones. The SL “bakes” the prior knowledge directly into the neural network, meaning that finding a high-quality output amounts to a simple forward pass over the network: $\mathsf{K}$ plays no role during inference. This also results in a lower memory footprint compared to PNSPs, as the probabilistic circuit can be dropped after training (Di Liello et al., 2020). The downside is that, while PNSPs and ABL ensure invalid outputs will not be predicted, this is not the case for the SL, unless the neural network attains exactly zero Semantic Loss during training, and even so this property might not carry over to test and out-of-distribution samples. Regardless, it has been shown that PNSPs and the SL have the same effect on the underlying neural network, that is, when learned to optimality both models yield concept extractors that comply with the prior knowledge $\mathsf{K}$ (Marconato et al., 2023a). LTN sits in-between these alternatives, as it uses the prior knowledge at inference time, but may output inconsistent predictions if the most likely inputs violate the knowledge. To resolve this issue, fuzzy logic layers such as CCN+ (Giunchiglia et al., 2024) ensure all outputs comply with the knowledge.

2.4 NeSy Predictors: Benefits

Despite these differences, all NeSy predictors offer a number of key benefits:

- Performance: Just like regular neural networks, they are fully-fledged deep learning architectures that can handle complex low-level inputs via end-to-end training and latent representations.

- Validity: Unlike regular neural networks, their predictions comply with the prior knowledge $\mathsf{K}$ , possibly also with guarantees and out-of-distribution, cf. Section 2.3. This is essential in high-stakes applications where predictions have to comply with safety or structural constraints.

- Reusability: It is straightforward to reuse the learned concept extractors in downstream tasks, e.g., out-of-distribution tasks (Marconato et al., 2023b), continual learning scenarios (Marconato et al., 2023a), model verification (Xie et al., 2022; Zaid et al., 2023; Morettin et al., 2024), and shielding (Yang et al., 2023a).

- Interpretability: Users can not only inspect the concept-level predictions and the symbolic inference steps to make sense of the model’s predictions, they can also trace back the model’s prediction to the underlying concepts using gradient-based (Sundararajan et al., 2017) or formal (Huang et al., 2021b) explainability techniques. This supports stakeholders in assessing the reliability of the predictor and potentially enables them to supply corrective feedback (Teso et al., 2023).

Compared to regular neural networks, which primarily target B1, benefits B2 – B4 make NeSy predictors an ideal choice for high-stakes applications (Di Liello et al., 2020; Hoernle et al., 2022; Marconato et al., 2023b; Yang et al., 2023a). However, reusability (B3) and interpretability (B4) hinge on the concepts being grounded appropriately. In fact, unless the learned concepts possess reasonable semantics, their meaning might be opaque to stakeholders, making it difficult to properly explain the model’s predictions with them. Furthermore, as exemplified by Fig. 1, reusing poorly grounded concepts in downstream applications may lead to unintended consequences. This is precisely where reasoning shortcuts enter the picture, as discussed next.

3 A Gentle Introduction to Reasoning Shortcuts

When does a NeSy predictor ground the concepts incorrectly? It may be tempting to assume that – as long as the training data $\mathcal{D}=\{({\bm{\mathrm{x}}},{\bm{\mathrm{y}}})\}$ is abundant and noiseless, and the prior knowledge is complete and correct – NeSy predictors that minimize the training loss will predict the concepts correctly, just like in standard supervised learning. However, this is not the case. This is because of reasoning shortcuts (RSs), which we informally define as follows:

Definition 3.1 (Reasoning Shortcut, Informal).

A reasoning shortcut is a situation in which a NeSy predictor attains accurate label predictions that comply with the prior knowledge by grounding concepts incorrectly.

We illustrate two prototypical RSs in the following examples.

**Example 3.2 (RSs inMNIST-Add(Manhaeve et al.,2018))**

*In MNIST-Add, the task is to predict the sum (e.g., $y=9$ ) of the digits appearing in two MNIST (LeCun, 1998) images (e.g., ${\bm{\mathrm{x}}}=\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-4.png}}\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-5.png}}$ ). The concepts ${\bm{\mathrm{C}}}=(C_{1},C_{2})$ encode the individual digits (e.g., ${\bm{\mathrm{c}}}=(4,5)$ ). Here and elsewhere, we simplify the presentation by using numerical variables. It is always possible to encode these and the corresponding constraints into propositional logic. The prior knowledge enforces the prediction to be their arithmetic sum:

$$

\mathsf{K}=(Y=C_{1}+C_{2}), \tag{8}

$$

Consider a toy scenario where the training set consists of just two examples:

$$

(\raisebox{-1.0pt}{\includegraphics[width=7.96527pt]{figures/mnist-4.png}}\raisebox{-1.0pt}{\includegraphics[width=7.96527pt]{figures/mnist-5.png}})\mapsto 9\quad\text{and}\quad(\raisebox{-1.0pt}{\includegraphics[width=7.96527pt]{figures/mnist-3.png}}\raisebox{-1.0pt}{\includegraphics[width=7.96527pt]{figures/mnist-2.png}})\mapsto 5. \tag{9}

$$

Assume that the concept extractor processes the MNIST images separately. In this case, it may learn the intended image-concept mapping – that is, $\{\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-2.png}}\mapsto 2,\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-3.png}}\mapsto 3,\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-4.png}}\mapsto 4,\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-5.png}}\mapsto 5\}$ – as it adheres to the constraints and attains high label accuracy. But it can alternatively learn a different input-concept mapping $\{\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-2.png}}\mapsto 4,\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-3.png}}\mapsto 1,\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-4.png}}\mapsto 3,\raisebox{-1.0pt}{∈cludegraphics[width=7.96527pt]{figures/mnist-5.png}}\mapsto 6\}$ , which also complies with the constraints and achieves high label accuracy, but it does so by grounding the concepts incorrectly.*

While this is not a realistic application, the same issue affects also high-stakes tasks:

**Example 3.3 (RSs inBDD-OIA)**

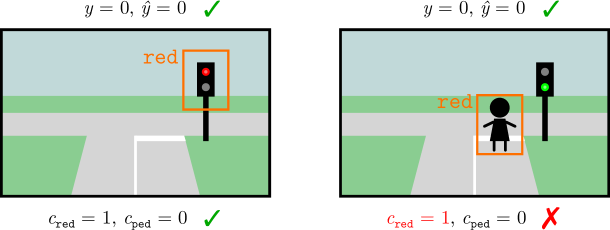

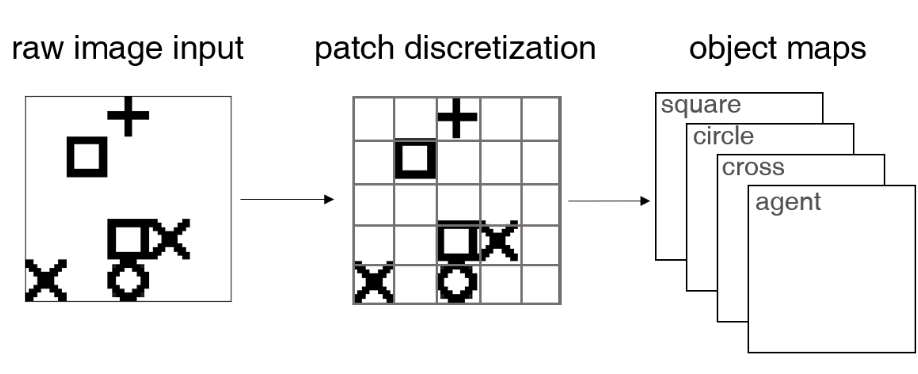

*Consider Example 2.1. A NeSy predictor that grounds the concepts of green light, red light, and pedestrian correctly achieves high accuracy, as it will also correctly infer that it has to stop whenever a pedestrian or a red light appear in the input image. However, a different NeSy predictor that systematically confuses pedestrians with red lights achieves the same accuracy, since, according to $\mathsf{K}$ , both lead to the correct ${\tt stop}$ action (Marconato et al., 2023b), as shown in Fig. 3.*

| 0 | 0 | 1 (go) |

| --- | --- | --- |

| 0 | 1 | 0 (stop) |

| 1 | 0 | 0 (stop) |

| 1 | 1 | 0 (stop) |

<details>

<summary>x2.png Details</summary>

### Visual Description

## Image Analysis: Object Detection Scenarios

### Overview

The image presents two scenarios related to object detection in a street scene. Each scenario depicts a simplified street intersection with a traffic light. The scenarios are used to illustrate the success or failure of an object detection model in identifying specific objects (traffic light color and pedestrians) and their corresponding labels.

### Components/Axes

Each scenario includes the following elements:

* **Street Scene:** A simplified representation of a street intersection with roads, sidewalks, and grass.

* **Traffic Light:** A traffic light with three lights (red, yellow, green).

* **Bounding Box:** An orange rectangle indicating the detected object.

* **Object Label:** Text indicating the type of object detected (e.g., "red").

* **Ground Truth Labels:** Variables representing the actual state of the scene (y), the predicted state (ŷ), the presence of a red light (c_red), and the presence of a pedestrian (c_ped).

* **Correctness Indicator:** A green checkmark or a red "X" indicating whether the prediction is correct or incorrect.

### Detailed Analysis or ### Content Details

**Scenario 1 (Left):**

* **Scene:** A street intersection with a traffic light showing a red light.

* **Bounding Box:** An orange bounding box surrounds the red light.

* **Object Label:** The text "red" is displayed above the bounding box.

* **Ground Truth Labels:**

* `y = 0, ŷ = 0`: Indicates that the model correctly predicted the absence of a pedestrian.

* `c_red = 1, c_ped = 0`: Indicates that a red light is present and no pedestrian is present.

* **Correctness Indicator:** A green checkmark indicates that the prediction is correct.

**Scenario 2 (Right):**

* **Scene:** A street intersection with a traffic light showing a green light. A stick figure representing a pedestrian is present.

* **Bounding Box:** An orange bounding box surrounds the pedestrian.

* **Object Label:** The text "red" is displayed above the bounding box.

* **Ground Truth Labels:**

* `y = 0, ŷ = 0`: Indicates that the model correctly predicted the absence of a pedestrian.

* `c_red = 1, c_ped = 0`: Indicates that a red light is present and no pedestrian is present.

* **Correctness Indicator:** A red "X" indicates that the prediction is incorrect.

### Key Observations

* In the first scenario, the model correctly identifies the red light and its absence of a pedestrian.

* In the second scenario, the model incorrectly identifies the pedestrian as "red" and incorrectly identifies the traffic light as red.

### Interpretation

The image demonstrates a scenario where an object detection model performs well in one case (correctly identifying a red light) but fails in another (misidentifying a pedestrian as "red"). This highlights the challenges of object detection, particularly in scenarios with varying object appearances and potential for misclassification. The model seems to be confusing the pedestrian with the "red" class, possibly due to visual similarities or biases in the training data. The incorrect prediction in the second scenario indicates a need for improvement in the model's ability to distinguish between different object classes and to generalize to new scenarios.

</details>

Figure 3: Left: truth table of the simplified prior knowledge $\mathsf{K}$ used in Example 2.1: the two concepts are interchangeable, in that as soon as one of them fires, the predictor infers the same (correct) action. Right: illustration of this RS: the model can confuse pedestrians and red lights with no drop in training loss.

These examples show that correct and faulty NeSy predictors cannot be distinguished by label accuracy alone, and therefore there is no reason why, during training, NeSy methods should favor one concept mapping over the other. They also indicate that RSs can occur even when the prior knowledge is accurate and complete, and the training data is noiseless. Moreover, RSs can persist even if the training data is exhaustive (e.g., if the BDD-OIA training set encompasses examples containing all possible combinations of green lights, red lights and pedestrians), as the correct and faulty models yield the same predictions on all examples.

3.1 Causes, Frequency and Impact

Why do RSs arise?

Roughly speaking, RSs stem from two related issues: on the one hand, the prior knowledge $\mathsf{K}$ may allow inferring the correct labels ${\bm{\mathrm{y}}}$ from improperly grounded concept vectors ${\bm{\mathrm{c}}}$ ; on the other, the concept extractor is expressive enough to learn the improperly grounded concepts. Our two examples satisfy both conditions. Their combination introduces ambiguity in the learning problem, meaning that NeSy predictors are free to learn incorrect concept mappings and still achieve high accuracy. Properly understanding the root causes of RSs, however, requires more technical background, which we provide in Section 4, hence we postpone a more comprehensive discussion to Section 5.1. Importantly, clarifying the causes of RSs allows us to discriminate between risky and safe NeSy tasks, and to design RS mitigation strategies. We explore these topics in Section 5.

Do RSs occur in practice?

The above discussion entails that RSs can occur whenever the prior knowledge is not “strict” enough, but it does not prove that they must occur. Arguably, NeSy predictors can learn the intended concepts even in this case. The question is then whether RSs occur in practice. While it is difficult to gauge their frequency in real-world applications, the literature abounds with situations in which NeSy predictors, trained in standard conditions, fall prey to RSs (Manhaeve et al., 2018; Wang et al., 2019; Chang et al., 2020; Manhaeve et al., 2021a; Marconato et al., 2023b, 2024; Bortolotti et al., 2024; Yang et al., 2024; DeLong et al., 2024), suggesting that RSs occur naturally in NeSy benchmarks.

Do RSs make NeSy predictors unusable?

The short answer is no. More precisely, in applications where the only goal is to obtain accurate (B1) and valid (B2) predictions, RSs are not an issue. In fact, Definition 3.1 makes it clear that RSs are a symbol grounding issue that leaves label accuracy unchanged. Hence, the label predictions of affected and unaffected NeSy can be just as accurate. We also remark that RSs have no impact on validity (B2). As mentioned in Section 2.3, predictors like PNSPs, ABL, and LTN ensure their output is always consistent with the prior knowledge by explicitly searching for a label ${\bm{\mathrm{y}}}$ that, paired with the predicted concepts vectors $\hat{\bm{\mathrm{c}}}$ (or their predictive distribution $p({\bm{\mathrm{C}}}\mid{\bm{\mathrm{x}}})$ ), satisfies the knowledge $\mathsf{K}$ . Validity is built-in, i.e., it does not depend on the concepts predicted by the model.

A tricky aspect of RSs is that we cannot detect them by monitoring label predictions alone. We will discuss more reliable diagnostic techniques in Section 5.2 and strategies for rendering predictors aware of their own RSs in Section 5.4. Furthermore, RS mitigation strategies can greatly reduce the presence of RSs or even entirely remove them to ensure NeSy predictors are also reusable (B3) and interpretable (B4). We overview these strategies in Section 5.3.

What consequences do RSs have?

RSs can seriously compromise reusability (B3) and interpretability (B4). Let us begin from reusability. RSs may exploit concept groundings that do not work in other application settings, meaning that affected concepts suffer from poor generalization beyond the specific data and prior knowledge used during training (Marconato et al., 2023b; Li et al., 2023; Bortolotti et al., 2024). To see this, consider the following example, taken from (Marconato et al., 2023b):

**Example 3.4**

*Consider the NeSy predictor confusing pedestrians with red lights in Example 3.3. Imagine pairing it with prior knowledge intended for an autonomous ambulance use-case, encoding that, in case of an emergency, it is allowed to cross red lights. When emergencies arise, the resulting NeSy predictor would decide to $y={\tt go}$ when there are pedestrians on the road.*

RSs also affect concept reuse in downstream applications where the semantics of the concepts matters. For instance, in continual learning one is concerned with learning models that generalize across a sequence of learning tasks. NeSy predictors were shown to struggle with this precisely because of RSs (Marconato et al., 2023a):

**Example 3.5**

*Consider the shortcut predictor from Example 3.3. Imagine fine-tuning it on a new NeSy task that also includes the concept of “stop sign” and a new rule based on it, and specifically that whenever a stop sign is detected, the vehicle must stop. When fine-tuned on this new task, the concept extractor may mistakenly learn to equate stop signs and red lights, whose semantics is corrupted.*

Another affected application is neuro-symbolic verification (Xie et al., 2022; Zaid et al., 2023; Morettin et al., 2024; Manginas et al., 2025). In model verification, the goal is to formally verify whether a neural network satisfies some property of interest, such as robustness to adversarial attacks or fairness. This involves writing a formal specification of the property, translating the model into a logical formula (or a similar representation), and then using tools from automated reasoning to check whether the model’s formula is compatible with the specification. NeSy verification is similar, except that the property of interest is specified in terms of high-level concepts. Following the neuro-symbolic setup, their definitions are provided by a trained concept extractor – provided by a third party – that also gets translated into a logical formula for the purpose of verification. Clearly, if the concepts are incorrect, this will affect the meaning of the property to be verified.

Finally, interpretability (B4) also relies on the concepts being grounded appropriately (Marconato et al., 2023b; Koh et al., 2020; Poeta et al., 2023). Unless these possess human-aligned semantics, their meaning might be opaque to stakeholders, impairing understanding:

**Example 3.6**

*In Example 3.3, imagine the input scene portrays a pedestrian. An affected NeSy predictor would (correctly) predict stop, but its explanation would indicate that the decision depends on the presence of a red light. This explanation is misleading, and in fact it does not reflect the input at all.*

4 Theory of Reasoning Shortcuts

In this section, we outline the two mainstream formalizations of RSs. Section 4.2 discusses RSs from an identifiability perspective, investigating under what conditions models achieving high likelihood can in fact learn correct concepts in the infinite sample case. Section 4.3 instead is concerned with guarantees – in terms of empirical risk minimization – for learning correct concepts from finite data. The so-far vague notion of “correct” concept will be clarified in the following sections.

Additional notation

Going forward, we will often treat distributions over a finite set of values as vectors in a simplex. This simplex has one dimension for each value in the set. We will write $\Delta_{\mathcal{C}}$ to indicate the simplex defined by the values contained in $\mathcal{C}$ :

$$

\textstyle\Delta_{\mathcal{C}}=\{{\bm{\mathrm{p}}}\in[0,1]^{|\mathcal{C}|}\mid\sum_{i=1}^{|\mathcal{C}|}p_{i}=1\}

$$

Furthermore, we will write $\mathrm{Vert}(\Delta_{\mathcal{C}})=\{{\bm{\mathrm{p}}}∈\{0,1\}^{|\mathcal{C}|}\mid\sum_{i=1}^{|\mathcal{C}|}p_{i}=1\}$ to indicate the vertices of the simplex $\Delta_{\mathcal{C}}$ . This is simply a collection of one-hot vectors, one for each value in $\mathcal{C}$ .

4.1 Setting and Assumptions

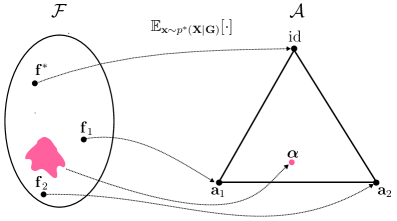

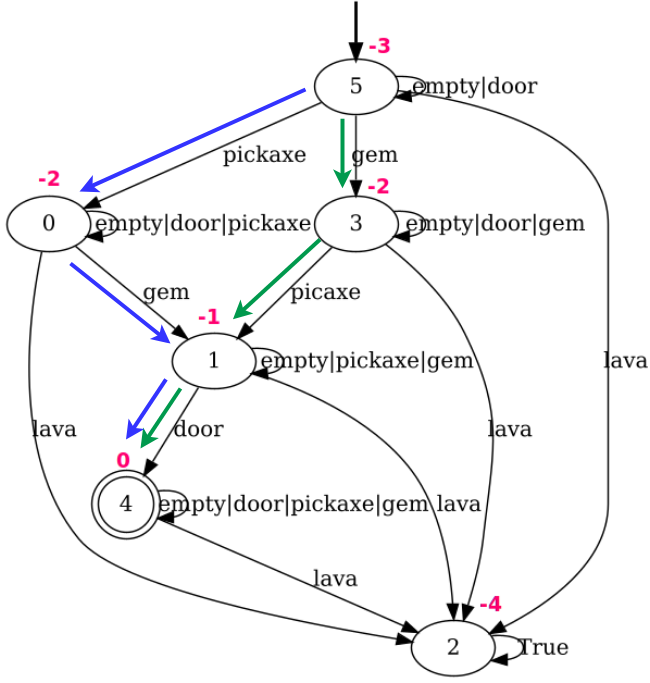

In order to formally describe RSs, we have to clarify the link between the observed data, the concepts it implicitly encodes, and the concepts learned by the predictor. This link is provided by the data generating process, described next, which forms a solid basis for both theoretical approaches. $C_{1}$ $·s$ $C_{k}$ ${\bm{\mathrm{X}}}$ $G_{k}$ $·s$ $G_{1}$ ${\bm{\mathrm{X}}}$ ${\bm{\mathrm{Y}}}$ ${\bm{\mathrm{Y}}}$ $\alpha_{f}$ $f$ $f^{*}$ $\beta$ $\beta^{*}$

Figure 4: Left. The data generation process: $f^{*}$ maps inputs ${\bm{\mathrm{X}}}$ onto (distributions over) ground-truth concepts ${\bm{\mathrm{G}}}$ , and $\beta^{*}$ maps these to (distributions over) labels ${\bm{\mathrm{Y}}}$ . In a standard learning setting, only ${\bm{\mathrm{X}}}$ and ${\bm{\mathrm{Y}}}$ are observed (in light gray), whereas ${\bm{\mathrm{G}}}$ are latent. Right. A NeSy predictor maps inputs ${\bm{\mathrm{X}}}$ to concepts ${\bm{\mathrm{C}}}$ via a learned $f$ and infers labels ${\bm{\mathrm{Y}}}$ via an inference layer $\beta$ . By Eq. 20, doing so entails a map $\alpha_{f}$ (in orange) from ground-truth concepts ${\bm{\mathrm{G}}}$ to learned concepts ${\bm{\mathrm{C}}}$ .

The data generating process

First we distinguish between ground-truth concepts ${\bm{\mathrm{g}}}=(g_{1},...,g_{k})∈\mathcal{C}$ underlying the data and learned concepts ${\bm{\mathrm{c}}}=(c_{1},...,c_{k})∈\mathcal{C}$ . For instance, in Example 3.3 there are two binary concepts encoding the presence of pedestrians and red traffic lights: $g_{\tt ped}$ and $g_{\tt red}$ are the true values, while $c_{\tt ped}$ and $c_{\tt red}$ are the predicted values.

The training examples $({\bm{\mathrm{x}}},{\bm{\mathrm{y}}})$ are sampled from a ground-truth joint distribution $p^{*}({\bm{\mathrm{X}}},{\bm{\mathrm{Y}}})=p^{*}({\bm{\mathrm{Y}}}\mid{\bm{\mathrm{X}}})p^{*}({\bm{\mathrm{X}}})$ , which is determined by the ground-truth concepts ${\bm{\mathrm{g}}}∈\mathcal{C}$ associated with the input ${\bm{\mathrm{x}}}$ . Specifically, the values of the ground-truth concepts are sampled from a ground-truth conditional distribution $p^{*}({\bm{\mathrm{G}}}\mid{\bm{\mathrm{X}}})$ , while the ground-truth labels ${\bm{\mathrm{y}}}$ are sampled from $p^{*}({\bm{\mathrm{Y}}}\mid{\bm{\mathrm{G}}};\mathsf{K})$ . It is assumed that this distribution implements the background knowledge $\mathsf{K}$ , that is, it assigns zero or low probability to labels incompatible with the constraints. For technical reasons, it is assumed it implements the same reasoning process as the target NeSy predictor. It is useful to think of these two distributions as functions: the former as a function $f^{*}:\mathcal{X}→\Delta_{\mathcal{C}}$ mapping each input to a distribution over ground-truth concepts, and the latter as a function $\beta^{*}:\Delta_{\mathcal{C}}→\Delta_{\mathcal{Y}}$ mapping each distribution over concepts to a distribution over labels. Elements $f({\bm{\mathrm{x}}})∈\Delta_{\mathcal{C}}$ are probability distributions $p({\bm{\mathrm{C}}}\mid{\bm{\mathrm{x}}})$ over the possible concept vectors ${\bm{\mathrm{c}}}∈\mathcal{C}$ . Whenever $f({\bm{\mathrm{x}}})$ allocates all probability mass to one specific ${\bm{\mathrm{c}}}∈\mathcal{C}$ , i.e., it is one-hot, it is a vertex of the simplex and as such it belongs to $\mathrm{Vert}(\Delta_{\mathcal{C}})⊂\Delta_{\mathcal{C}}$ . Overall, $p^{*}({\bm{\mathrm{Y}}}\mid{\bm{\mathrm{X}}},{\color[rgb]{1,0,0}\definecolor[named]{pgfstrokecolor}{rgb}{1,0,0}\mathsf{K}})$ results from composing $f^{*}$ and $\beta^{*}$ . A visualization of the data generation process is reported in Fig. 4 (Left). With this in mind, we present the first assumption capturing how data are generated, which will be used throughout the theoretical material.

Assumption 4.1 (Completeness).

Let $f^{*}:\mathcal{X}→\Delta_{\mathcal{C}}$ be the ground-truth concept extractor, and $\beta^{*}:\Delta_{\mathcal{C}}→\Delta_{\mathcal{Y}}$ the inference function induced by the prior knowledge $\mathsf{K}$ . Let $p^{*}({\bm{\mathrm{X}}})$ be the marginal distribution over input variables. Then, we have: $p^{*}({\bm{\mathrm{X}}},{\bm{\mathrm{G}}},{\bm{\mathrm{Y}}})=p^{*}({\bm{\mathrm{Y}}}\mid{\bm{\mathrm{X}}};\mathsf{K})p^{*}({\bm{\mathrm{G}}}\mid{\bm{\mathrm{X}}})p^{*}({\bm{\mathrm{X}}})$ (10) where $p^{*}({\bm{\mathrm{G}}}\mid{\bm{\mathrm{X}}}):=f^{*}({\bm{\mathrm{X}}})$ and $p^{*}({\bm{\mathrm{Y}}}\mid{\bm{\mathrm{X}}};\mathsf{K}):=(\beta^{*}\circ f^{*})({\bm{\mathrm{X}}})$ .

Under Assumption 4.1, the ground-truth concept extractor $f^{*}$ becomes a sufficient statistic of the input ${\bm{\mathrm{X}}}$ for inferring the labels ${\bm{\mathrm{Y}}}$ through its composition with $\beta^{*}$ . In other words, mapping inputs to the concept distribution with $f^{*}$ retains all information necessary to infer the labels. For concreteness, this assumption fails when the concept space is “too restrictive”. For example, in MNIST-Add with two digits, sums above $18$ cannot be generated. The above assumption excludes such cases from the training data.

Simplifying assumptions on the data generation process

While Assumption 4.1 underlies all theoretical works on RSs (Yang et al., 2024; Marconato et al., 2023b), we introduce two additional assumptions that make the theoretical analysis more manageable. The first assumption restricts the ground-truth concept extractor $f^{*}$ : It should assign all probability mass to a single vector of concepts ${\bm{\mathrm{g}}}$ for each input ${\bm{\mathrm{x}}}$ .

Assumption 4.2 (Extrapolability).

The ground-truth concept extractor is a function $f^{*}:\mathcal{X}→\mathrm{Vert}(\Delta_{\mathcal{C}})$ , i.e., for all inputs ${\bm{\mathrm{x}}}∈\mathcal{X}$ , the ground-truth concept distribution $f^{*}({\bm{\mathrm{x}}})$ is a one-hot (deterministic) distribution.

Assumption 4.2 allows us to retrieve ground-truth concepts ${\bm{\mathrm{G}}}$ from inputs ${\bm{\mathrm{X}}}$ via $f^{*}$ .

The second assumption ensures that, with the prior knowledge $\mathsf{K}$ , each vector of concepts ${\bm{\mathrm{g}}}$ is associated with a single label ${\bm{\mathrm{y}}}$ . To formally introduce this assumption, we define, overloading notation,

$$

\beta^{*}({\bm{\mathrm{c}}}):=\beta^{*}(\text{1}\!\left\{{\bm{\mathrm{C}}}={\bm{\mathrm{c}}}\right\}),\;\forall{\bm{\mathrm{c}}}\in\mathcal{C}. \tag{11}

$$

$\beta^{*}:\mathcal{C}→\Delta_{\mathcal{Y}}$ is a map from the set of concepts to distribution over labels. It describes how deterministic distributions are mapped to distributions over labels by the knowledge $\mathsf{K}$ .

Assumption 4.3 (Determinism).

The ground-truth inference layer defines a function $\beta^{*}:\mathcal{C}→\mathrm{Vert}(\Delta_{\mathcal{Y}})$ that maps each concept vector ${\bm{\mathrm{c}}}∈\mathcal{C}$ to a one-hot (deterministic) distribution over labels $\beta^{*}({\bm{\mathrm{c}}})$ .

In words, Assumption 4.3 ensures that $\beta^{*}$ assigns a single label ${\bm{\mathrm{y}}}$ to each concept vector ${\bm{\mathrm{c}}}$ . Assumptions 4.2 and 4.3 often apply in experimental regimes. For MNIST-Add and BDD-OIA, the concepts in the input image can be unambiguously determined (Assumption 4.2 is satisfied), and all concept vectors ${\bm{\mathrm{c}}}$ determine a single output label (Assumption 4.3 is satisfied), e.g., in MNIST-Add we use $Y=C_{1}+C_{2}$ . Together, Assumptions 4.2 and 4.3 ensure that each input ${\bm{\mathrm{x}}}∈\mathcal{X}$ is mapped to a single label ${\bm{\mathrm{y}}}∈\mathcal{Y}$ . The same holds for many common NeSy datasets (Bortolotti et al., 2024).

Before proceeding, we have to define the support of the input and ground-truth concept distributions. We consider the marginal distributions $p^{*}({\bm{\mathrm{X}}})$ and $p^{*}({\bm{\mathrm{G}}}):=\mathbb{E}_{{\bm{\mathrm{x}}}\sim p^{*}({\bm{\mathrm{X}}})}[f^{*}({\bm{\mathrm{x}}})]$ . We indicate the support of input data with $\mathrm{supp}({\bm{\mathrm{X}}})⊂eq\mathcal{X}$ , which contains the inputs with non-zero probability according to $p^{*}({\bm{\mathrm{X}}})$ . Similarly, we denote the support of ground-truth concepts by $\mathrm{supp}({\bm{\mathrm{G}}})⊂eq\mathcal{C}$ .

4.2 Perspective: Identification

In this section, we ask whether a NeSy predictor trained in a standard supervised fashion is guaranteed to learn the ground-truth concept extractor. Specifically, we want to determine whether—depending on the choice of knowledge, training data, and architectural bias—the ground-truth concept extractor is the unique maximum of the likelihood of the training data. If so, then any NeSy predictor that attains maximum likelihood will necessarily learn the ground-truth concept extractor, and thus ground all concepts correctly. This question puts us firmly in the context of identifiability theory; for an expanded presentation, please see Section 7.

Below we provide a revised perspective on the identifiability of RSs (Marconato et al., 2023a, b; Bortolotti et al., 2025) by clarifying the setting, presenting a first non-identifiability result from (Marconato et al., 2023a) in Theorem 4.4, and reviewing the characterization of RSs from (Marconato et al., 2023b; Bortolotti et al., 2025) in Theorems 4.10 and 4.12. All results assume the data follows the generating process described in Fig. 4 and that we have access to possibly infinite amounts of data.

4.2.1 Model class of NeSy predictors

Like in the data generation process, NeSy predictors can be understood as a pair of functions: a concept extractor $f:\mathcal{X}→\Delta_{\mathcal{C}}$ and an inference layer $\beta:\Delta_{\mathcal{C}}→\Delta_{\mathcal{Y}}$ . We indicate with $\mathcal{F}$ the space of learnable concept extractors $f$ . Most theoretical accounts on RSs work in a non-parametric setting, whereby $\mathcal{F}$ contains all possible functions from $\mathcal{X}$ to $\Delta_{\mathcal{C}}$ . In words, they assume the neural network implementing the concept extractor can express any function $f:\mathcal{X}→\Delta_{\mathcal{C}}$ (Marconato et al., 2023b; Yang et al., 2024; Bortolotti et al., 2025). We do the same, but we will relax this assumption when discussing mitigation and awareness strategies in Section 5.3 and Section 5.4. Furthermore, we also assume that, when provided with the prior knowledge $\mathsf{K}$ , the inference layer $\beta$ of a NeSy predictor architecture behaves exactly like the ground-truth inference layer $\beta^{*}$ , i.e.,

$$

\beta({\bm{\mathrm{c}}})=\beta^{*}({\bm{\mathrm{c}}}),\;\forall{\bm{\mathrm{c}}}\in\mathcal{C}. \tag{12}

$$

We denote with $\mathcal{B}$ the space of inference layers that satisfy Eq. 12. The choice of the NeSy predictor architecture (e.g., PNSPs or LTN) determines how the inference layer $\beta$ behaves, as discussed in Section 2.2. A specific NeSy predictor thus amounts to a pair $(f,\beta)∈\mathcal{F}×\mathcal{B}$ , Unless mentioned otherwise, we present the results in the non-parametric setting where $\mathcal{F}$ contains all possible concept extractors that map inputs to concept conditional probabilities (Bortolotti et al., 2025). ; its label distribution is given by:

$$

p_{f}({\bm{\mathrm{Y}}}\mid{\bm{\mathrm{X}}};\mathsf{K}):=(\beta\circ f)({\bm{\mathrm{X}}})\,, \tag{13}

$$

For this class of models, we consider the maximum log-likelihood objective over infinite data:

$$

{\arg\max}_{f\in\mathcal{F}}\ \mathbb{E}_{({\bm{\mathrm{x}}},{\bm{\mathrm{y}}})\sim p^{*}({\bm{\mathrm{X}}},{\bm{\mathrm{Y}}})}[\log p_{f}({\bm{\mathrm{y}}}\mid{\bm{\mathrm{x}}};\mathsf{K})]\,. \tag{14}

$$

It turns out that this problem may not admit a unique solution, as explained next.

4.2.2 Non-identifiability of the concept extractor

We start by presenting the necessary and sufficient conditions for a NeSy predictor to achieve maximum likelihood on data and then show that this can lead to non-identifiability. Before proceeding, for a probability measure $p^{*}({\bm{\mathrm{X}}})$ and any two measurable functions $r({\bm{\mathrm{x}}})$ and $s({\bm{\mathrm{x}}})$ with respect to $p^{*}$ , we will use the shorthand

$$

r({\bm{\mathrm{X}}})=s({\bm{\mathrm{X}}}) \tag{15}

$$

to mean that $r$ takes values equal to $s$ for $p^{*}$ -almost every ${\bm{\mathrm{x}}}∈\mathrm{supp}({\bm{\mathrm{X}}})$ .

Theorem 4.4 (Revisited from (Marconato et al., 2023a)).

Under Assumption 4.1, a NeSy predictor $(f,\beta)∈\mathcal{F}×\mathcal{B}$ attains maximum likelihood with respect to the distribution $p^{*}({\bm{\mathrm{X}}},{\bm{\mathrm{Y}}})$ if and only if $(\beta\circ f)({\bm{\mathrm{X}}})=(\beta^{*}\circ f^{*})({\bm{\mathrm{X}}})\,.$ (16)

This immediately yields the following important corollary: when the same label can be predicted using different concept vectors, we cannot identify the concept extractor, i.e., $f^{*}∈\mathcal{F}$ is not the only choice that leads to obtaining maximum likelihood for the ground-truth inference layer $\beta^{*}$ , but there are alternatives $f≠ f^{*}$ .

Corollary 4.5 (Non-identifiability).

Let $\Delta_{\mathcal{Y}}^{*}⊂eq\Delta_{\mathcal{Y}}$ be the subspace obtained from mapping inputs to labels, which is given by $\Delta^{*}_{\mathcal{Y}}:=(\beta^{*}\circ f^{*})(\mathrm{supp}({\bm{\mathrm{X}}}))$ , and let $\Delta^{*}_{\mathcal{C}}⊂eq\Delta_{\mathcal{C}}$ be the preimage of $\Delta^{*}_{\mathcal{Y}}$ over the ground-truth inference layer $\beta^{*}$ , defined as $\Delta^{*}_{\mathcal{C}}:=\{{\bm{\mathrm{p}}}∈\Delta_{\mathcal{C}}\mid\,\beta^{*}({\bm{\mathrm{p}}})∈\Delta_{\mathcal{Y}}^{*}\}$ . Under Assumption 4.1, if $\beta^{*}$ is not injective from $\Delta_{\mathcal{C}}^{*}$ to $\Delta_{\mathcal{Y}}^{*}$ , then $(\beta^{*}\circ f)({\bm{\mathrm{X}}})=(\beta^{*}\circ f^{*})({\bm{\mathrm{X}}})\mathchoice{\mathrel{\hbox to0.0pt{\kern 3.75pt\kern-5.30861pt$\displaystyle\not$\hss}{\implies}}}{\mathrel{\hbox to0.0pt{\kern 3.75pt\kern-5.30861pt$\textstyle\not$\hss}{\implies}}}{\mathrel{\hbox to0.0pt{\kern 2.625pt\kern-4.48917pt$\scriptstyle\not$\hss}{\implies}}}{\mathrel{\hbox to0.0pt{\kern 1.875pt\kern-3.9892pt$\scriptscriptstyle\not$\hss}{\implies}}}f({\bm{\mathrm{X}}})=f^{*}({\bm{\mathrm{X}}})\,.$ (17)

* Proof*

By construction. If $\beta^{*}:\Delta^{*}_{\mathcal{C}}→\Delta^{*}_{\mathcal{Y}}$ is not injective, we can find ${\bm{\mathrm{p}}},{\bm{\mathrm{p}}}^{\prime}∈\Delta_{\mathcal{C}}^{*}$ such that ${\bm{\mathrm{p}}}≠{\bm{\mathrm{p}}}^{\prime}$ and

$$

\beta^{*}({\bm{\mathrm{p}}})=\beta^{*}({\bm{\mathrm{p}}}^{\prime})\,. \tag{18}

$$

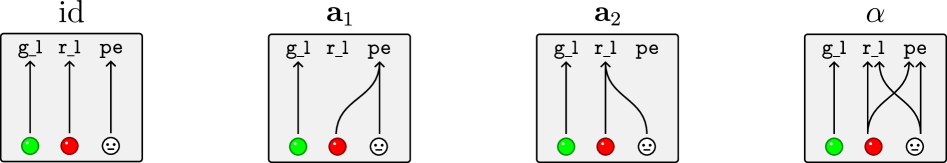

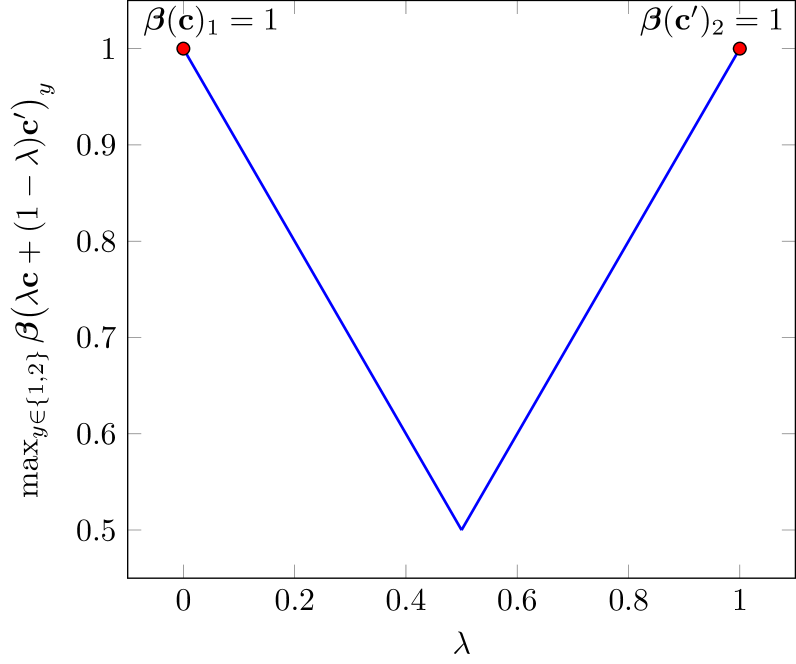

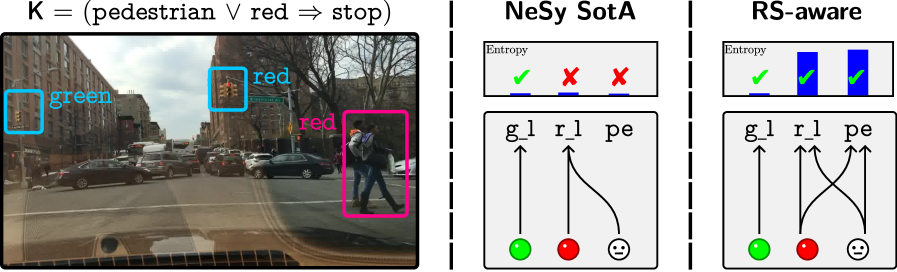

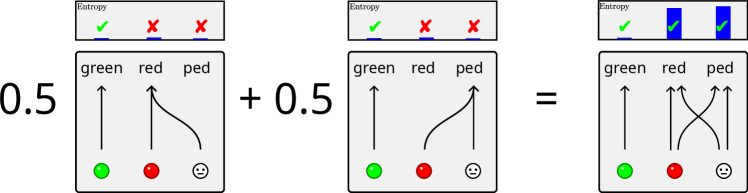

Hence, for all ${\bm{\mathrm{x}}}∈\mathrm{supp}({\bm{\mathrm{X}}})$ such that $f^{*}({\bm{\mathrm{x}}})={\bm{\mathrm{p}}}$ we can construct another function $f∈\mathcal{F}$ such that $f({\bm{\mathrm{x}}})={\bm{\mathrm{p}}}^{\prime}$ . By Eq. 18, the NeSy predictor $(f,\beta^{*})$ will obtain maximum likelihood (Theorem 4.4), but $f({\bm{\mathrm{X}}})≠ f^{*}({\bm{\mathrm{X}}})$ . ∎