# Can LLMs Correct Themselves? A Benchmark of Self-Correction in LLMs

> Equal contributions.†Corresponding authors: Pan Zhou and Lixing Chen.

Abstract

Self-correction of large language models (LLMs) has emerged as a critical component for enhancing their reasoning performance. Although various self-correction methods have been proposed, a comprehensive evaluation of these methods remains largely unexplored, and the question of whether LLMs can truly correct themselves is a matter of significant interest and concern. In this study, we introduce CorrectBench, a benchmark developed to evaluate the effectiveness of self-correction strategies, including intrinsic, external, and fine-tuned approaches, across three tasks: commonsense reasoning, mathematical reasoning, and code generation. Our findings reveal that: 1) Self-correction methods can improve accuracy, especially for complex reasoning tasks; 2) Mixing different self-correction strategies yields further improvements, though it reduces efficiency; 3) Reasoning LLMs gain little from additional self-correction methods while incurring high time costs. Interestingly, a comparatively simple chain-of-thought (CoT) baseline demonstrates competitive accuracy and efficiency. These results underscore the potential of self-correction to enhance LLMs’ reasoning performance while highlighting the ongoing challenge of improving their efficiency. Consequently, we advocate for further research focused on optimizing the balance between reasoning capabilities and operational efficiency. Project Page: https://correctbench.github.io/

1 Introduction

The rapid advancement of large language models (LLMs), exemplified by GPT-3.5 Ye2023ACC and LLaMA 3 Dubey2024TheL3, has precipitated a transformative shift in artificial intelligence (AI), yielding state-of-the-art performance across diverse tasks tie2025survey. Specifically, these tasks include content generation Agossah2023LLMBasedIF, natural language understanding Kuang2024NaturalLU, and complex decision-making Yang2024EvaluatingWM, all of which have been revolutionized by the extensive pretraining and sophisticated architectures of LLMs. Notably, the introduction of frameworks like Chain-of-Thought (CoT) Wei2022ChainOT has further expanded LLMs’ capacity for multi-step reasoning, enabling them to tackle more intricate tasks.

Despite these advancements, ensuring the reliability and accuracy of model outputs, especially for reasoning-intensive tasks, remains a formidable challenge. In response, recent works have focused on self-correction strategies aimed at refining LLMs’ decision-making processes through iterative revision Kamoi2024WhenCL; Lin2024CriticBenchBL. Pioneering approaches such as RARR Gao2022RARRRA, Refiner Paul2023REFINERRF, and CRITIC Gou2023CRITICLL illustrate the potential of integrating feedback loops and corrective components into model architectures. However, these approaches often yield inconsistent gains across different tasks, prompting deeper questions about their correction capability and generalizability. This observation motivates the central question: Can LLMs truly correct themselves? Moreover, it remains unclear whether more intricate self-correction schemes necessarily translate into superior overall performance.

To address these issues, this paper presents CorrectBench, a systematic benchmark for investigating how self-correction methods affect LLM performance across multiple tasks. Building on a recent survey of self-correction approaches Kamoi2024WhenCL, we group such methods into three primary categories (i.e., intrinsic correction, external correction, and fine-tuned correction) and select 11 representative methods from them. Additionally, we establish comparative baselines using both the widely adopted CoT Wei2022ChainOT and a standard prompting strategy denoted as ‘Base’ (detailed in Appendix G.1).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Radar Charts: Model Performance on GPQA Benchmarks

### Overview

The image presents three radar charts comparing the performance of various language models on the GPQA benchmark, across different settings: a baseline model (Base), a model with intrinsic correction (S1), and a model with external correction (S2). Each chart visualizes the performance of multiple models across several tasks, including CS-QA, GSM8K, HotpotQA, AQUA, HumanEval, and MATH.

### Components/Axes

* **Chart Type**: Radar Charts (3 charts side-by-side)

* **Titles**:

* Left Chart: "Base (Baseline) GPQA"

* Middle Chart: "S1 (Intrinsic Correction) GPQA"

* Right Chart: "S2 (External Correction) GPQA"

* **Axes**:

* Radial Axis: Represents performance score, ranging from 0.0 to 0.8, with markers at 0.2, 0.4, 0.6, 0.8.

* Angular Axis: Represents different tasks/benchmarks: CS-QA, GSM8K, HotpotQA, AQUA, HumanEval, MATH. These are arranged clockwise around the circle.

* **Legend**: Located at the bottom of the image. Lists the models and their corresponding line colors:

* Light Blue: LLaMA3.1-8B-Instruct

* Light Yellow: LLaMA3.1-70B-Instruct

* Light Purple: Qwen2.5-7B-Instruct

* Light Red: Qwen2.5-72B-Instruct

* Darker Blue: Claude3.5-Sonnet

* Orange: GPT-3.5

* Green: GPT-4o

* Dashed Light Purple: QWQ-32B-Instruct

* Dashed Dark Blue: DeepSeek-V3

* Dashed Light Blue: DeepSeek-R1

* Dashed Light Pink: o3-mini

### Detailed Analysis

**Chart 1: Base (Baseline) GPQA**

* **LLaMA3.1-8B-Instruct (Light Blue)**: Scores approximately 0.6 on CS-QA, 0.7 on GSM8K, 0.5 on HotpotQA, 0.4 on AQUA, 0.3 on HumanEval, and 0.2 on MATH.

* **LLaMA3.1-70B-Instruct (Light Yellow)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-7B-Instruct (Light Purple)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-72B-Instruct (Light Red)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Claude3.5-Sonnet (Darker Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-3.5 (Orange)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-4o (Green)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **QWQ-32B-Instruct (Dashed Light Purple)**: Scores approximately 0.6 on CS-QA, 0.7 on GSM8K, 0.5 on HotpotQA, 0.4 on AQUA, 0.3 on HumanEval, and 0.2 on MATH.

* **DeepSeek-V3 (Dashed Dark Blue)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-R1 (Dashed Light Blue)**: Scores approximately 0.7 on CS-QA, 0.75 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **o3-mini (Dashed Light Pink)**: Scores approximately 0.5 on CS-QA, 0.6 on GSM8K, 0.4 on HotpotQA, 0.3 on AQUA, 0.2 on HumanEval, and 0.1 on MATH.

**Chart 2: S1 (Intrinsic Correction) GPQA**

* **LLaMA3.1-8B-Instruct (Light Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **LLaMA3.1-70B-Instruct (Light Yellow)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-7B-Instruct (Light Purple)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-72B-Instruct (Light Red)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Claude3.5-Sonnet (Darker Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-3.5 (Orange)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-4o (Green)**: Scores approximately 0.8 on CS-QA, 0.85 on GSM8K, 0.7 on HotpotQA, 0.6 on AQUA, 0.5 on HumanEval, and 0.4 on MATH.

* **QWQ-32B-Instruct (Dashed Light Purple)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-V3 (Dashed Dark Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-R1 (Dashed Light Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **o3-mini (Dashed Light Pink)**: Scores approximately 0.6 on CS-QA, 0.7 on GSM8K, 0.5 on HotpotQA, 0.4 on AQUA, 0.3 on HumanEval, and 0.2 on MATH.

**Chart 3: S2 (External Correction) GPQA**

* **LLaMA3.1-8B-Instruct (Light Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **LLaMA3.1-70B-Instruct (Light Yellow)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-7B-Instruct (Light Purple)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Qwen2.5-72B-Instruct (Light Red)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **Claude3.5-Sonnet (Darker Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-3.5 (Orange)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **GPT-4o (Green)**: Scores approximately 0.8 on CS-QA, 0.9 on GSM8K, 0.7 on HotpotQA, 0.6 on AQUA, 0.5 on HumanEval, and 0.4 on MATH.

* **QWQ-32B-Instruct (Dashed Light Purple)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-V3 (Dashed Dark Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **DeepSeek-R1 (Dashed Light Blue)**: Scores approximately 0.7 on CS-QA, 0.8 on GSM8K, 0.6 on HotpotQA, 0.5 on AQUA, 0.4 on HumanEval, and 0.3 on MATH.

* **o3-mini (Dashed Light Pink)**: Scores approximately 0.6 on CS-QA, 0.7 on GSM8K, 0.5 on HotpotQA, 0.4 on AQUA, 0.3 on HumanEval, and 0.2 on MATH.

### Key Observations

* **Task Performance**: Models generally perform best on GSM8K and CS-QA, and worst on MATH and HumanEval.

* **Model Comparison**: GPT-4o (Green) consistently shows higher performance across all tasks and settings compared to other models. o3-mini (Dashed Light Pink) generally performs the worst.

* **Correction Impact**: Intrinsic (S1) and External (S2) corrections generally improve performance compared to the baseline (Base), with S2 showing a slight edge over S1.

### Interpretation

The radar charts provide a visual comparison of language model performance across different question-answering tasks. The data suggests that:

* **Task Difficulty**: Some tasks are inherently more challenging for these models, as evidenced by the consistently lower scores on MATH and HumanEval.

* **Model Superiority**: GPT-4o demonstrates superior performance, indicating its advanced capabilities in handling diverse question types.

* **Effectiveness of Corrections**: Both intrinsic and external correction methods enhance model performance, suggesting that these techniques are valuable for improving accuracy and reliability. The slight advantage of external correction (S2) may indicate that providing additional context or information during the correction process is beneficial.

* **Model Consistency**: The relative performance of models remains consistent across different correction settings. Models that perform well in the baseline setting tend to maintain their relative advantage in the corrected settings.

</details>

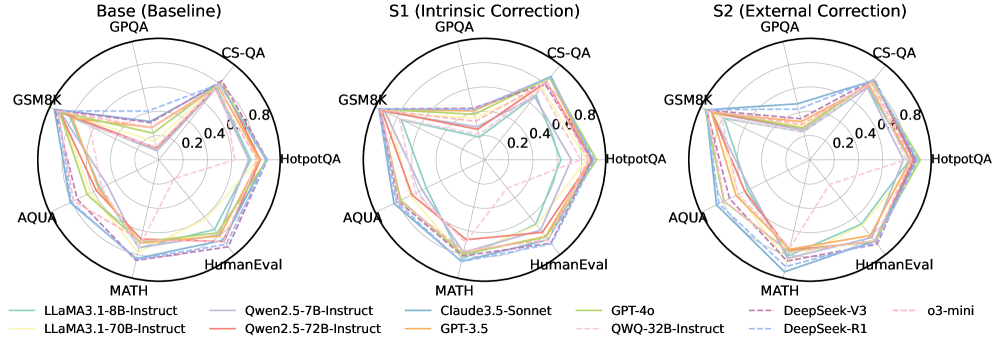

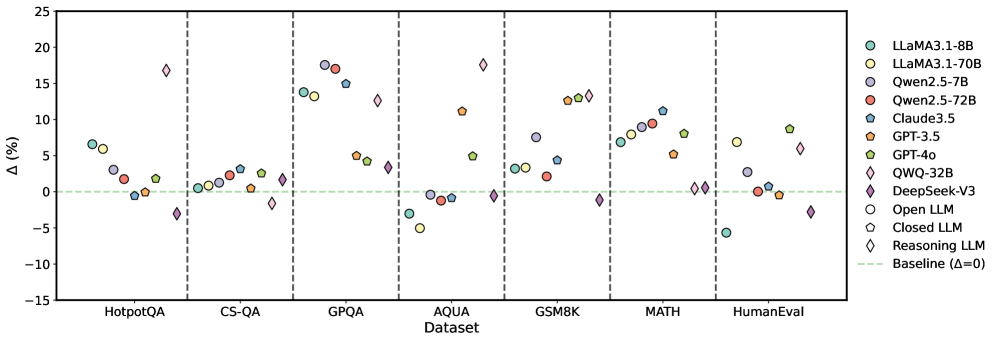

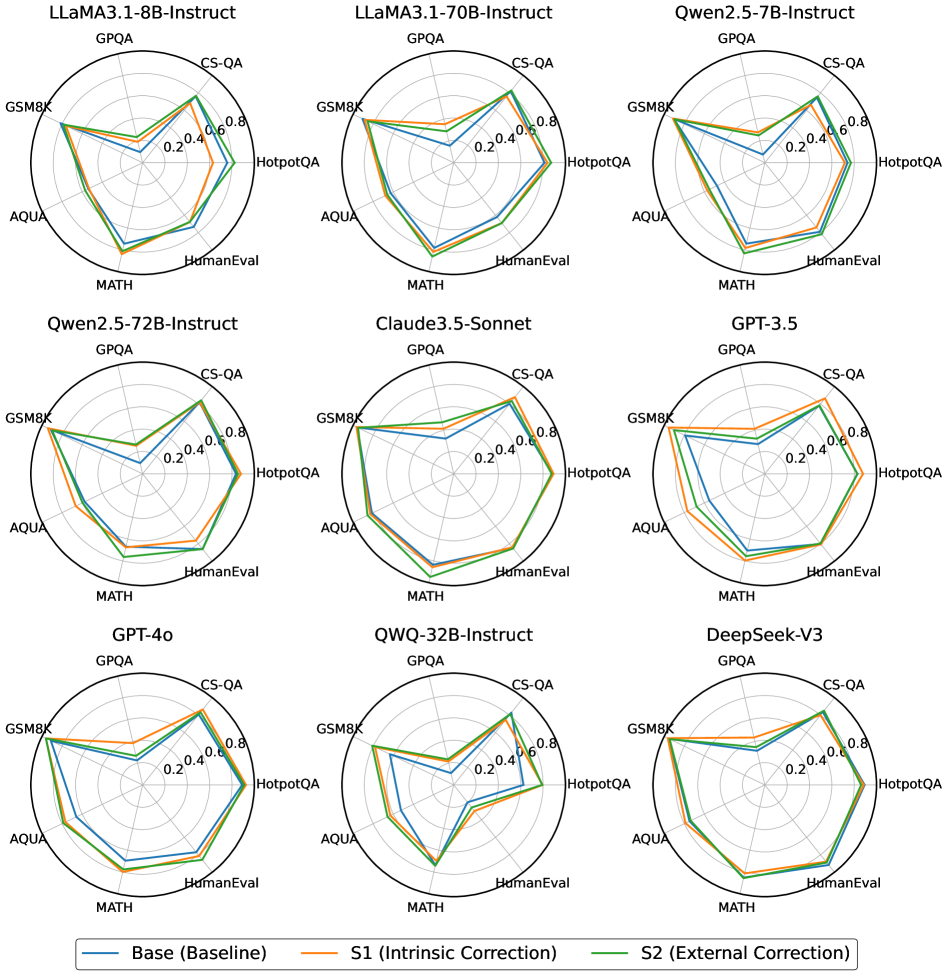

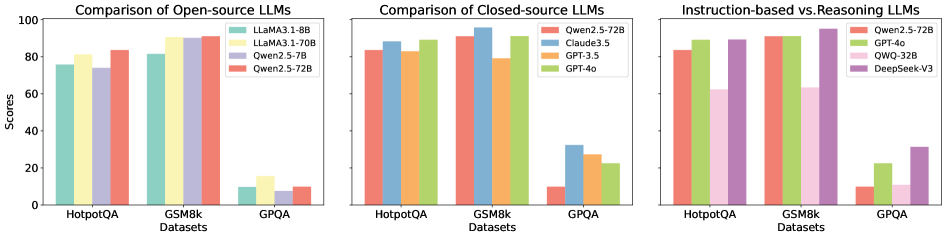

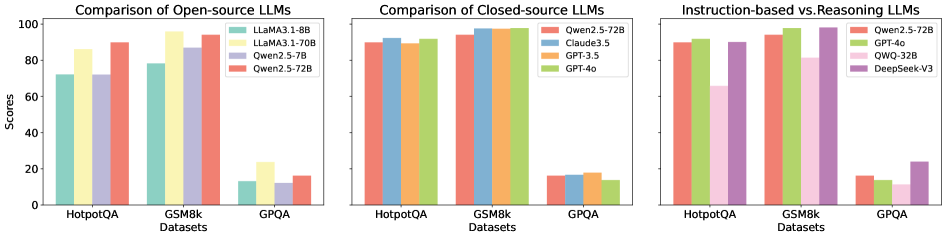

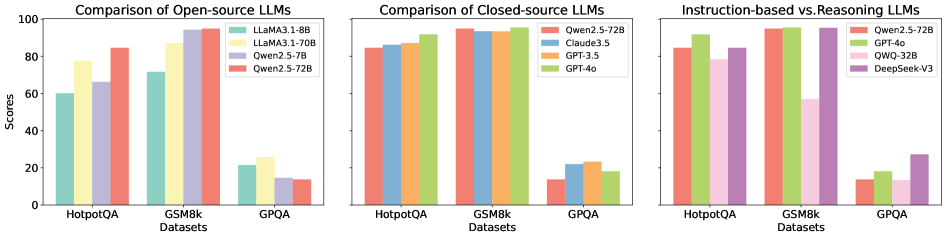

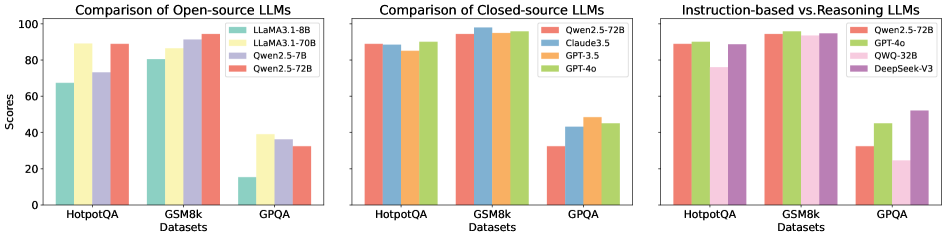

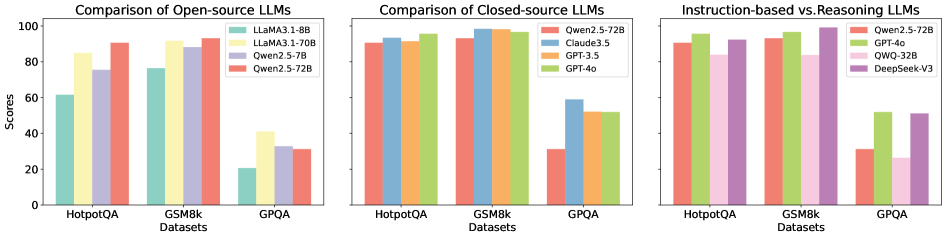

Figure 1: Comparison of different LLMs across various self-correction types and task scenarios.

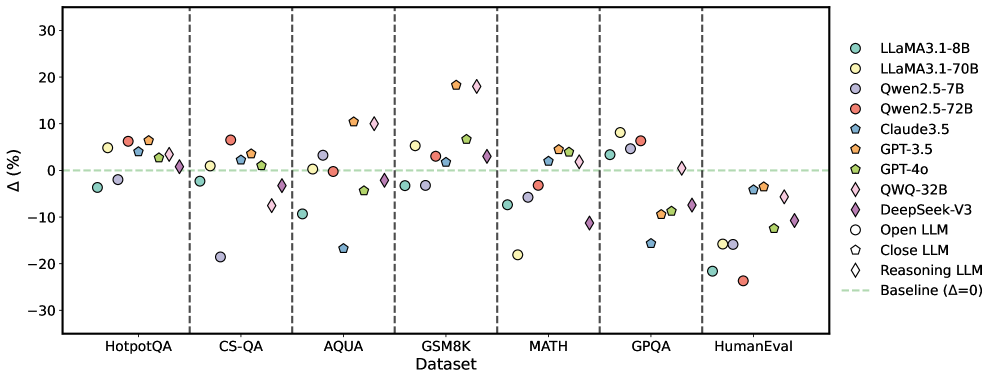

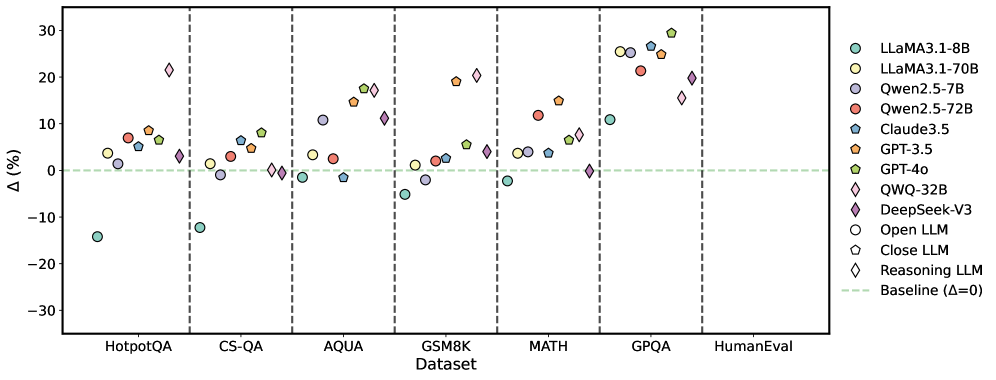

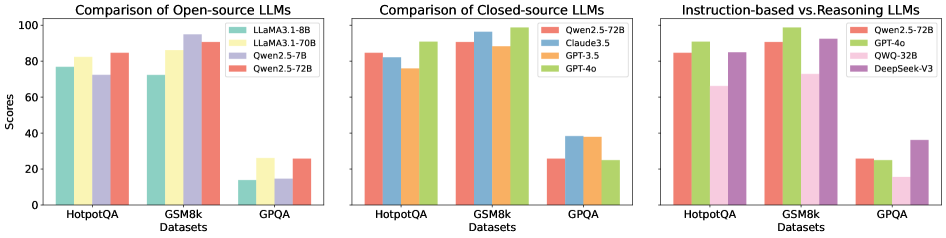

For a rigorous and comprehensive assessment, we construct two curated datasets: CorrectBench-base, which integrates diverse subtasks with 3,825 question-answer pairs drawn from 7 distinct subdatasets, and CorrectBench-test, a curated collection of question-answer pairs specifically tailored for correction-oriented experiments. These subdatasets cover three principal tasks: commonsense reasoning Putri2024CanLG, mathematical reasoning Hendrycks2021MeasuringMP, and code generation Chen2021EvaluatingLL. We then apply these subdatasets to both instruction-based LLMs (e.g., LLaMA 3.1-8B-Instruct meta2024llama3, Qwen 2.5-7B-Instruct Yang2024Qwen25TR, GPT-4o hurst2024gpt, Claude 3.5-Sonnet AhtropicClaude) and reasoning LLMs (e.g., DeepSeek-V3 DeepSeekAI2024DeepSeekV3TR), where this paper defines “reasoning LLMs” as models that are specifically enhanced with complex reasoning capabilities through a post-training optimization process. Figure 1 compares the baseline (‘Base’) performance with the mean performance of the intrinsic and of the external correction methods, revealing that self-correction bolsters overall accuracy (detailed in Figure 8).

Key insights. First, self-correction methods substantially enhance accuracy, particularly in complex reasoning tasks. Meanwhile, mixing multiple methods, while improving accuracy, incurs higher computational costs and reduced efficiency. For reasoning LLMs, these methods offer only marginal gains with increased time usage. Interestingly, the CoT Wei2022ChainOT strategy demonstrates a favorable trade-off between operational efficiency and overall accuracy, challenging the prevailing assumption that more sophisticated correction frameworks inherently produce superior outcomes.

To summarize, our work provides three key contributions:

- A Comprehensive Benchmark. We propose CorrectBench, the first benchmark devised to systematically evaluate the impact of self-correction on LLM inference. Spanning multiple tasks and model categories, CorrectBench offers a robust, reproducible platform for methodological comparisons.

- Two Datasets. We present CorrectBench-base and CorrectBench-test, both meticulously constructed to encompass a broad range of question-answer formats and reasoning scenarios, facilitating thorough assessments of different correction methods.

- Insights and Implications. Our empirical findings show that self-correction substantially advances LLMs’ performance, especially on tasks demanding extensive reasoning. However, the increased computational load of mixing multiple correction strategies must be weighed against potential accuracy gains. Moreover, for reasoning LLMs, additional correction methods provide limited improvements, emphasizing critical cost-efficiency concerns for practical applications.

2 CorrectBench: A Benchmark of Self-Correction in LLMs

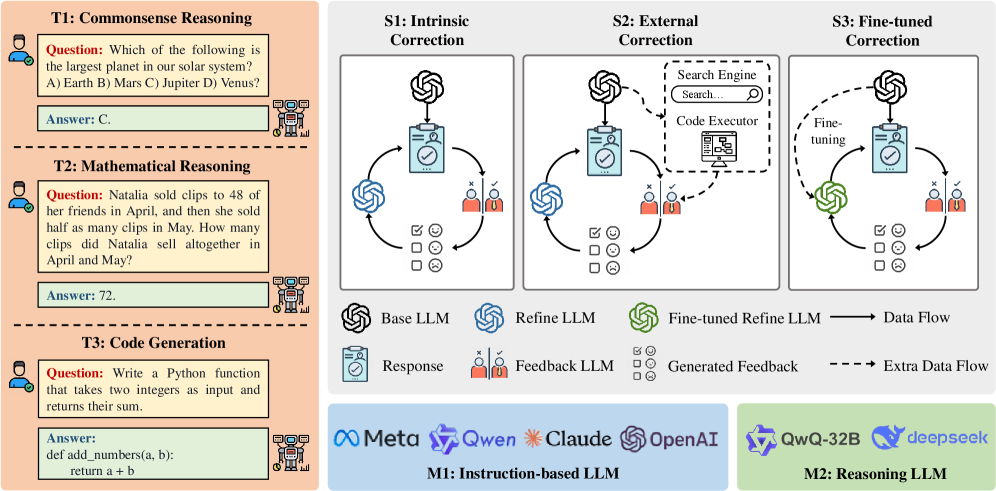

CorrectBench is a systematically designed benchmark that quantifies the extent to which various correction methods improve model outputs in reasoning-intensive scenarios. As illustrated in Figure 2, CorrectBench characterizes self-correction along three principal dimensions: Task Scenario, Self-Correction Type, and LLM Type. The evaluation pipeline begins with selecting a specific task scenario and dataset, followed by applying a chosen correction method, and concludes with assessing the model’s iterative self-correction process across diverse LLMs.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Diagram: LLM Correction and Reasoning Process

### Overview

The image illustrates a comparison between instruction-based and reasoning-based Large Language Models (LLMs), demonstrating different correction mechanisms applied to instruction-based LLMs. The diagram highlights intrinsic correction, external correction, and fine-tuned correction strategies. The image is divided into 3 main sections: Task Examples (T1, T2, T3), Correction Strategies (S1, S2, S3), and Model Types (M1, M2).

### Components/Axes

* **Task Examples (Left Column)**: Shows examples of tasks LLMs might encounter, including Commonsense Reasoning (T1), Mathematical Reasoning (T2), and Code Generation (T3).

* **Correction Strategies (Top Right)**: Illustrates three correction methods: Intrinsic Correction (S1), External Correction (S2), and Fine-tuned Correction (S3). Each correction strategy shows a looping process where a Base LLM produces a Response, Feedback is generated (either internally or externally), and then used to refine the LLM.

* **Model Types (Bottom)**: Differentiates between Instruction-based LLMs (M1) and Reasoning LLMs (M2) by showing logos of companies and specific models within each category.

* **Legend**:

* Base LLM (Swirl Symbol): Represents the initial LLM.

* Refine LLM (Swirl Symbol): Indicates the LLM after the initial refinement process.

* Fine-tuned Refine LLM (Green Swirl Symbol): Represents the final refined LLM after fine-tuning.

* Response (Document Icon): Represents the output or answer provided by the LLM.

* Feedback LLM (Avatar): Person providing the feedback.

* Generated Feedback (Checklist): Represents the feedback used to refine the model.

* Data Flow (Solid Arrow): Indicates the flow of data between components.

* Extra Data Flow (Dashed Arrow): Indicates auxiliary or external data flow.

### Detailed Analysis

**Task Examples (Left)**:

* **T1: Commonsense Reasoning**: Question: "Which of the following is the largest planet in our solar system? A) Earth B) Mars C) Jupiter D) Venus?" Answer: C.

* **T2: Mathematical Reasoning**: Question: "Natalia sold clips to 48 of her friends in April, and then she sold half as many clips in May. How many clips did Natalia sell altogether in April and May?" Answer: 72.

* **T3: Code Generation**: Question: "Write a Python function that takes two integers as input and returns their sum." Answer: `def add_numbers(a, b): return a + b`

**Correction Strategies (Top Right)**:

* **S1: Intrinsic Correction**:

* A "Base LLM" provides a "Response" that is reviewed by the "Feedback LLM". The "Generated Feedback" is used to refine the initial "Base LLM" into a "Refine LLM".

* Feedback is internally generated, with visual icons suggesting positive/negative feedback (check mark, smile, neutral, frown).

* **S2: External Correction**:

* Similar structure to S1.

* Includes a "Search Engine" and "Code Executor" which are used to provide "Extra Data Flow" for the "Generated Feedback." This feedback is used to refine the initial "Base LLM" into a "Refine LLM".

* The "Search Engine" box has a search bar labeled "Search..." inside it.

* The "Code Executor" has an icon resembling a computer with code on the screen.

* **S3: Fine-tuned Correction**:

* A "Base LLM" provides a "Response" that is reviewed by the "Feedback LLM". Then a "Fine-tuning" is performed, using a "Fine-tuned Refine LLM". Then "Generated Feedback" is used to refine the initial "Base LLM".

**Model Types (Bottom)**:

* **M1: Instruction-based LLM**: Includes logos for Meta, Qwen, Claude, and OpenAI.

* **M2: Reasoning LLM**: Includes logos for QwQ-32B and deepseek.

### Key Observations

* The diagram clearly distinguishes between methods for improving LLM performance through intrinsic, external, and fine-tuned corrections.

* The separation of instruction-based and reasoning LLMs suggests different architectural and training approaches.

* The presence of "extra data flow" in external correction highlights the use of external tools like search engines and code executors to enhance the feedback process.

### Interpretation

The diagram illustrates the evolution and sophistication of techniques used to improve LLMs. Instruction-based LLMs, represented by prominent industry players like Meta and OpenAI, benefit from correction strategies that involve internal feedback loops, external knowledge sources, and fine-tuning mechanisms. Reasoning LLMs, represented by QwQ-32B and deepseek, are presented as a distinct category, implicitly suggesting they may employ different core architectures or training paradigms that emphasize reasoning capabilities from the outset.

The progression from intrinsic to external to fine-tuned correction indicates a move towards more complex and resource-intensive methods for improving LLM performance. The external correction strategy, in particular, highlights the importance of leveraging external tools and data to ground LLM responses in real-world knowledge. The process appears cyclical, which suggests a closed-loop adaptive system that learns and improves over time.

</details>

Figure 2: An overview of the CorrectBench framework.

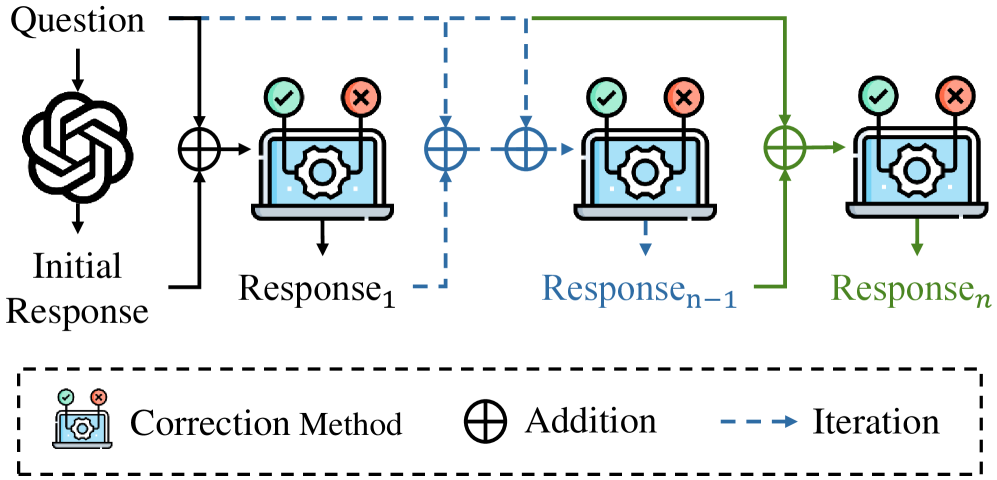

Iterative Self-Correction Paradigm. In a standard LLM evaluation, the model generates an initial response $r_{0}$ to a question $q$ given an initial prompt $p_{0}$, formally $r_{0}=\mathcal{M}(q,p_{0})$, where $\mathcal{M}$ denotes the LLM. In the self-correction paradigm, this process becomes iterative. Specifically, at the $k$-th iteration, $p_{k}=p_{k-1}\cup r_{k-1}$ and $r_{k}=\mathcal{M}(q,p_{k})$, where $p_{k}$ is the updated prompt that includes the previous response $r_{k-1}$. After $K$ iterations, the final output $r_{K}$ reflects the model’s corrected response. This iterative mechanism enables the model to continually refine its output based on newly revealed errors or inconsistencies.
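The recurrence above can be sketched in a few lines of Python. This is a minimal illustration, not the benchmark's implementation: the `Model` callable, the `self_correct` helper, the toy model, and the choice to realize $p_{k-1}\cup r_{k-1}$ as string concatenation are all assumptions made for the example.

```python
from typing import Callable, List

# Hypothetical model interface: maps (question, prompt) to a response string.
Model = Callable[[str, str], str]


def self_correct(model: Model, question: str, initial_prompt: str, num_iters: int) -> List[str]:
    """Return [r_0, ..., r_K] with p_k = p_{k-1} ∪ r_{k-1} and r_k = M(q, p_k)."""
    prompt = initial_prompt
    responses = [model(question, prompt)]  # r_0 = M(q, p_0)
    for _ in range(num_iters):
        # Assumption: the union is realized by appending the previous response to the prompt.
        prompt = prompt + "\nPrevious response: " + responses[-1]
        responses.append(model(question, prompt))  # r_k = M(q, p_k)
    return responses


def toy_model(question: str, prompt: str) -> str:
    """Stand-in for an LLM: wrong on the first try, corrected once it sees a prior attempt."""
    return "72" if "Previous response" in prompt else "24"


history = self_correct(toy_model, "What is 48 + 24?", "Answer the question.", num_iters=2)
print(history)  # ['24', '72', '72']
```

Keeping the full response history, rather than only $r_{K}$, makes it easy to inspect at which iteration an answer changes.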

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Iterative Response Correction

### Overview

The image is a diagram illustrating an iterative process for response correction. It shows a question being processed to generate an initial response, followed by a series of correction methods and additions to refine the response iteratively.

### Components/Axes

* **Question:** Input to the system, leading to the initial response.

* **Initial Response:** The first output generated from the question.

* **Response<sub>1</sub>:** The first corrected response.

* **Response<sub>n-1</sub>:** The (n-1)th corrected response.

* **Response<sub>n</sub>:** The nth corrected response.

* **Correction Method:** Represented by a laptop icon with a gear inside the screen, a green checkmark, and a red "X" symbol.

* **Addition:** Represented by a circled plus sign.

* **Iteration:** Represented by a dashed arrow.

### Detailed Analysis

The diagram starts with a "Question" that feeds into a symbol resembling the ChatGPT logo, which produces an "Initial Response." This initial response is then processed through a "Correction Method" block, labeled "Response<sub>1</sub>". The output of this block is added to the initial response via an "Addition" operation.

The process then iterates. The output of the first addition is fed back into another "Correction Method" block, labeled "Response<sub>n-1</sub>". This block's output is again added to the previous response. This iterative process continues until the final "Correction Method" block, labeled "Response<sub>n</sub>", produces the final corrected response.

The "Correction Method" blocks each have a green checkmark and a red "X" symbol, suggesting a process of validation and rejection or acceptance of certain aspects of the response.

The iteration loops are represented by dashed lines, colored blue and green, indicating the flow of information back into the addition operations.

### Key Observations

* The diagram illustrates an iterative refinement process.

* Each iteration involves a "Correction Method" and an "Addition" operation.

* The use of checkmarks and "X" symbols suggests a filtering or validation mechanism within the "Correction Method."

### Interpretation

The diagram depicts a system where an initial response to a question is iteratively refined through a series of correction and addition steps. The "Correction Method" likely involves evaluating the response and making adjustments based on certain criteria, as indicated by the checkmark and "X" symbols. The "Addition" operation suggests that the corrections are integrated back into the response, leading to a more refined output in each iteration. This iterative approach aims to improve the quality and accuracy of the response over time. The diagram highlights the cyclical nature of the refinement process, where feedback loops are used to continuously improve the response.

</details>

Figure 3: Mixture of different correction methods.

Mixture Framework. While individual self-correction methods can improve model responses, it is plausible that integrating multiple methods may yield further improvements in accuracy. To examine these potential synergies, we propose the mixture framework, illustrated in Figure 3. The response of one correction method serves as input to the next, forming a dynamic pipeline of iterative refinements. This setup enables us to analyze how distinct self-correction methods interact, thereby guiding the development of optimal configurations for improving LLM performance.
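The chaining described here, where each method consumes the previous method's response, might be sketched as follows. The `Corrector` signature, `run_mixture`, and the two toy correctors are hypothetical stand-ins chosen for illustration; they are not the correction methods evaluated in the paper.

```python
from typing import Callable, List, Tuple

# Hypothetical signature: a correction method maps (question, response) to a revised response.
Corrector = Callable[[str, str], str]


def run_mixture(
    question: str, initial_response: str, methods: List[Tuple[str, Corrector]]
) -> List[Tuple[str, str]]:
    """Apply correction methods in order; each receives the previous method's output."""
    trace = [("initial", initial_response)]
    response = initial_response
    for name, method in methods:
        response = method(question, response)
        trace.append((name, response))
    return trace


# Toy stand-ins for real correction methods.
def strip_whitespace(question: str, response: str) -> str:
    return response.strip()


def fix_known_typo(question: str, response: str) -> str:
    return response.replace("Jupitor", "Jupiter")


trace = run_mixture(
    "Which is the largest planet in our solar system?",
    "  Jupitor ",
    [("method_a", strip_whitespace), ("method_b", fix_known_typo)],
)
final_response = trace[-1][1]
print(final_response)  # Jupiter
```

Because the pipeline is an ordered list, the cost of a mixture in this sketch grows with the number of chained methods, which mirrors the accuracy versus efficiency trade-off discussed in the text.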

2.1 Self-Correction Method

CorrectBench comprehensively evaluates three distinct categories of self-correction methods:

S1: Intrinsic Correction. This category focuses on the LLM’s capacity to internally identify and correct errors without external tools. Methods such as RCI Kim2023LanguageMC, Self-Refine Madaan2023SelfRefineIR, CoVe Dhuliawala2023ChainofVerificationRH, and Reflexion Shinn2023ReflexionLA enable the LLM to re-evaluate its prior reasoning steps and resolve inconsistencies based on its internal knowledge.

S2: External Correction. In contrast to S1, S2 (e.g., Reflexion Shinn2023ReflexionLA , RARR Gao2022RARRRA , RATT Zhang2024RATTAT , CRITIC Gou2023CRITICLL ) leverages external resources, such as knowledge bases or Google search tools, to address gaps in the internal representation. This external support can correct factual inaccuracies or logical oversights, though it may constrain the model’s capacity for divergent reasoning.

S3: Fine-tuned Correction. Fine-tuned correction methods including DCoT Puerto2024FineTuningWD , SCORE Zhang2024SmallLM , and SuperCorrect Yang2024SuperCorrectSA enhance LLMs’ self-correction performance through targeted fine-tuning. Although potentially effective, these methods require substantial training and are often limited by the scope and quality of the fine-tuning data.

2.2 Task Scenario

CorrectBench investigates self-correction methods across three representative task scenarios:

T1: Commonsense Reasoning. This scenario probes the model’s capacity to address factual or logical inconsistencies within everyday knowledge domains. Relevant datasets include HotpotQA Yang2018HotpotQAAD, CommonsenseQA Putri2024CanLG (denoted CS-QA hereafter), and the more challenging GPQA Rein2023GPQAAG, which emphasizes complex reasoning.

T2: Mathematical Reasoning. Datasets in this task scenario emphasize the detection and correction of errors in mathematical derivations, algebraic manipulations, and multi-step reasoning. Representative datasets include GSM8K Cobbe2021TrainingVT , AQUA Chen2019AQuAAA , and MATH Hendrycks2021MeasuringMP .

T3: Code Generation. This scenario assesses the LLM’s ability to generate functionally correct and logically consistent code from natural language prompts. For instance, HumanEval Chen2021EvaluatingLL measures the LLM’s ability to detect and correct syntax errors, logical flaws, and other coding mistakes.

2.3 LLM Type

To ensure a broad and realistic appraisal, CorrectBench encompasses two categories of LLMs:

M1: Instruction-Based LLMs. Instruction-based LLMs are designed to follow user-provided instructions to generate relevant outputs, making them highly versatile across diverse tasks. This category includes both open-source and closed-source LLMs, distinguished by their accessibility and design paradigms. Open-source LLMs, such as Qwen 2.5-7B/72B-Instruct Yang2024Qwen25TR and LLaMA 3.1-8B/70B-Instruct meta2024llama3, offer transparency and flexibility for modification, enabling detailed analysis and fine-tuning. Conversely, closed-source LLMs, including OpenAI’s GPT-3.5 ouyang2022training, GPT-4o hurst2024gpt, and Anthropic’s Claude 3.5-Sonnet AhtropicClaude, excel in real-world tasks due to proprietary optimizations, but restrict direct access and customization for research purposes.

M2: Reasoning LLMs. Reasoning LLMs are models specifically enhanced with advanced reasoning capabilities through targeted post-training optimization processes. These models are designed to excel in tasks requiring multi-step logical reasoning, often incorporating integrated self-correction mechanisms to refine their outputs. Representative examples include QWQ-32B-Instruct qwen2, o3-mini, and DeepSeek-R1 DeepSeekAI2024DeepSeekV3TR. DeepSeek-V3 DeepSeekAI2024DeepSeekV3TR, in particular, adopts an innovative approach to distilling reasoning capabilities from long chain-of-thought models, specifically from the DeepSeek-R1 series. By integrating verification and reflection patterns from R1, DeepSeek-V3 achieves substantial improvements in reasoning accuracy while maintaining precise control over output style and length.

2.4 Research Question

This study aims to elucidate the effectiveness of different self-correction methods in enhancing LLMs’ performance, addressing the following core research questions:

[RQ1] To what extent can LLMs achieve accurate results by leveraging intrinsic (S1) and external (S2) self-correction methods without requiring further intervention? (S3 is analyzed separately due to dataset-specific constraints.)

[RQ2] How does mixing multiple self-correction methods influence model accuracy and robustness, and what are the associated computational trade-offs?

[RQ3] For reasoning LLMs with built-in correction mechanisms, to what extent can the above self-correction methods provide additional benefits?

3 Experiment Settings

Dataset Preparation. To ensure consistency and reproducibility, we employ CorrectBench-test for experimental evaluations. For each dataset within CorrectBench-test, we randomly select 100 samples and then refine this selection by excluding a minimal number of outliers or irrelevant instances, yielding a more precise representation of error patterns. Comprehensive details regarding the datasets and preprocessing steps are provided in Appendix B.1 to promote transparency and reproducibility for subsequent research.

Task and Model Selection. CorrectBench adopts a hierarchical strategy to evaluate self-correction across diverse task scenarios and LLM types. Specific datasets are selected to match the characteristics and objectives of each self-correction method, ensuring that the benchmark captures comprehensive error types and correction challenges. Further specifications regarding selections of datasets and LLMs are included in Appendix B.2, ensuring full reproducibility of the evaluation methodology.

Evaluation Metrics. We employ both task-specific and judgment-based metrics to evaluate self-correction capabilities across diverse tasks: 1) Task-Specific Metrics. These metrics are tailored to evaluate model performance across different tasks. For T1, accuracy is computed as $\mathrm{ACC}=\frac{1}{N}\sum_{i=1}^{N}\mathbb{I}(\hat{y}_{i}=y_{i})$, where $N$ is the total number of samples, $y_{i}$ is the ground truth, $\hat{y}_{i}$ is the predicted answer, and $\mathbb{I}(\cdot)$ is the indicator function. For T2, the solve rate is the percentage of problems correctly solved by the model out of the total number of problems. For T3, pass@k evaluates whether at least one of the $k$ generated solutions for a problem passes all test cases. The final pass@k score is calculated as the average pass rate across all problems. 2) Judgment-Based Metrics. In cases where the model’s response is ambiguous or incomplete, we conduct human evaluations, in which evaluators apply stringent criteria to ensure an impartial and thorough assessment. Additionally, we employ GPT-4o under the LLM-as-a-Judge paradigm, providing an automated yet consistent scoring mechanism for large-scale experimental runs.
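As a rough illustration of the task-specific metrics, the sketch below implements accuracy and pass@k exactly as worded above. The function names and toy inputs are hypothetical, and exact string matching is an assumption; pass@k here follows the literal description in the text (at least one of the first $k$ samples passes) rather than any particular estimator. The T2 solve rate can be obtained from the same `accuracy` function, multiplied by 100.

```python
from typing import Sequence


def accuracy(predictions: Sequence[str], references: Sequence[str]) -> float:
    """ACC = (1/N) * sum_i I(y_hat_i == y_i), using exact string match (an assumption)."""
    if len(predictions) != len(references) or not references:
        raise ValueError("predictions and references must be non-empty and equal in length")
    correct = sum(pred == ref for pred, ref in zip(predictions, references))
    return correct / len(references)


def pass_at_k(results_per_problem: Sequence[Sequence[bool]], k: int) -> float:
    """Fraction of problems for which at least one of the first k samples passes all tests."""
    if not results_per_problem:
        raise ValueError("need at least one problem")
    solved = sum(any(results[:k]) for results in results_per_problem)
    return solved / len(results_per_problem)


# Toy data: four multiple-choice answers, and three coding problems with two samples each.
acc = accuracy(["C", "A", "B", "D"], ["C", "B", "B", "D"])
p1 = pass_at_k([[False, True], [False, False], [True, False]], k=1)
p2 = pass_at_k([[False, True], [False, False], [True, False]], k=2)
print(acc)  # 0.75
```

In this toy data, one of the three problems is solved by its first sample and two are solved within two samples, so pass@1 is 1/3 and pass@2 is 2/3.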

4 Empirical Results and Analysis

Table 1: Main results on CorrectBench for the average of multiple LLMs. Values in () indicate the change from the baseline. Blue signifies improvements, and orange indicates declines, where darker shades reflect larger magnitudes. Further details are given in Appendix H.

| Type | Method | HotpotQA( $\uparrow$ ) | CS-QA( $\uparrow$ ) | GPQA( $\uparrow$ ) | GSM8K( $\uparrow$ ) | AQUA( $\uparrow$ ) | MATH( $\uparrow$ ) | HumanEval( $\uparrow$ ) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| - | Base | 80.76 | 79.96 | 18.56 | 86.46 | 61.23 | 75.12 | 72.71 |
| - | CoT | 83.29 ( +2.53 ) | 78.03 ( -1.93 ) | 16.52 ( -2.04 ) | 91.96 ( +5.50 ) | 60.24 ( -0.99 ) | 72.59 ( -2.53 ) | 60.10 ( -12.61 ) |
| S1 | RCI | 79.67 ( -1.09 ) | 76.29 ( -3.67 ) | 19.98 ( +1.42 ) | 87.00 ( +0.54 ) | 67.12 ( +5.89 ) | 74.92 ( -0.20 ) | 67.46 ( -5.25 ) |
| S1 | CoVe | 83.04 ( +2.28 ) | 78.54 ( -1.42 ) | 37.41 ( +18.85 ) | 92.23 ( +5.77 ) | 71.12 ( +9.89 ) | 79.30 ( +4.18 ) | 76.96 ( +4.25 ) |
| S1 | Self-Refine | 85.49 ( +4.73 ) | 81.06 ( +1.10 ) | 40.69 ( +22.13 ) | 91.74 ( +5.28 ) | 69.46 ( +8.23 ) | 81.77 ( +6.65 ) | - |
| S1 | Reflexion-v1 | 69.52 ( -11.24 ) | 63.89 ( -16.07 ) | 19.25 ( +0.69 ) | 67.64 ( -18.82 ) | 48.33 ( -12.90 ) | 65.01 ( -10.11 ) | - |
| S2 | Reflexion-v2 | 87.98 ( +7.22 ) | 82.21 ( +2.25 ) | 26.85 ( +8.29 ) | 89.87 ( +3.41 ) | 68.23 ( +7.00 ) | 81.36 ( +6.24 ) | - |
| S2 | RARR | 85.47 ( +4.71 ) | 80.57 ( +0.61 ) | 36.82 ( +18.26 ) | 88.92 ( +2.46 ) | 66.81 ( +5.58 ) | 82.78 ( +7.66 ) | 77.35 ( +4.64 ) |
| S2 | RATT | 79.59 ( -1.17 ) | 80.81 ( +0.85 ) | 25.90 ( +7.34 ) | 88.08 ( +1.62 ) | 68.06 ( +6.83 ) | 80.74 ( +5.62 ) | 73.44 ( +0.73 ) |
| S2 | CRITIC | - | 81.77 ( +1.81 ) | - | 77.46 ( -9.00 ) | - | - | - |
| - | Average | 83.54 ( +2.78 ) | 80.18 ( +0.22 ) | 31.28 ( +12.72 ) | 85.04 ( -1.42 ) | 68.47 ( +7.24 ) | 80.15 ( +5.03 ) | 73.80 ( +1.09 ) |

4.1 Main Results

Table 1 summarizes the average performance changes attained by various self-correction methods relative to the ‘Base’. Most self-correction methods improve over the ‘Base’ to varying degrees, with particularly pronounced gains on more complex tasks such as GPQA and MATH. For instance, CoVe from S1 yields an improvement of +18.85% on GPQA, whereas simpler tasks like GSM8K exhibit more modest gains (e.g., +5.77% for CoVe). External correction methods (S2) generally achieve higher average gains than S1. For example, Reflexion-v1 (Reflexion without external tools) declines on tasks such as HotpotQA (-11.24%) and AQUA (-12.90%), whereas Reflexion-v2 (Reflexion with external tools) is more effective, yielding improvements of +7.22% on HotpotQA and +7.00% on AQUA. We attribute this to the fact that Reflexion Shinn2023ReflexionLA was originally designed to leverage external tools for enhanced correction, so Reflexion-v1, stripped of these tools, lacks this optimization. This leaves weaker LLMs prone to getting "stuck," producing persistent incorrect outputs and lowering the average score. Finally, S3 generally lags behind these methods, likely due to smaller model sizes and narrower training objectives (details in Section 4.5).
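As a small worked example of how the parenthesized values in Table 1 relate to the baseline, the sketch below recomputes the change for Reflexion-v1 and Reflexion-v2 on two datasets. The scores are copied from Table 1; the helper name `delta_vs_base` is an assumption introduced here for illustration.

```python
from typing import Dict

# Scores copied from Table 1 (averages over multiple LLMs).
BASE: Dict[str, float] = {"GSM8K": 86.46, "AQUA": 61.23}
REFLEXION_V1: Dict[str, float] = {"GSM8K": 67.64, "AQUA": 48.33}
REFLEXION_V2: Dict[str, float] = {"GSM8K": 89.87, "AQUA": 68.23}


def delta_vs_base(method: Dict[str, float], base: Dict[str, float]) -> Dict[str, float]:
    """Return the per-dataset change of a method relative to the baseline."""
    return {name: round(score - base[name], 2) for name, score in method.items()}


v1_delta = delta_vs_base(REFLEXION_V1, BASE)  # negative on both datasets
v2_delta = delta_vs_base(REFLEXION_V2, BASE)  # positive on both datasets
```

The sign flip between the two variants on the same datasets is the pattern the paragraph above attributes to the presence or absence of external tools.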

<details>

<summary>x4.png Details</summary>

### Visual Description

## Scatter Plot: Model Performance Across Datasets

### Overview

The image is a scatter plot comparing the performance of various language models (LLMs) across different datasets. The y-axis represents the change in performance (Δ in percentage), and the x-axis represents the datasets. Each model is represented by a unique color and shape combination, as indicated in the legend on the right. A horizontal dashed green line indicates the baseline performance (Δ=0).

### Components/Axes

* **X-axis:** Datasets: HotpotQA, CS-QA, GPQA, AQUA, GSM8K, MATH, HumanEval.
* **Y-axis:** Δ (%), ranging from -15% to 25% with increments of 5%.
* **Legend (Right side):**
    * Light Blue Circle: LLaMA3.1-8B
    * Yellow Circle: LLaMA3.1-70B
    * Dark Blue Circle: Qwen2.5-7B
    * Red Circle: Qwen2.5-72B
    * Blue Pentagon: Claude3.5
    * Orange Pentagon: GPT-3.5
    * Green Pentagon: GPT-4o
    * Purple Diamond: QWQ-32B
    * Dark Purple Diamond: DeepSeek-V3
    * White Circle: Open LLM
    * White Pentagon: Closed LLM
    * White Diamond: Reasoning LLM
    * Green Dashed Line: Baseline (Δ=0)

### Detailed Analysis

Approximate Δ (%) for each legend entry and dataset:

| Legend entry | HotpotQA | CS-QA | GPQA | AQUA | GSM8K | MATH | HumanEval |
| --- | --- | --- | --- | --- | --- | --- | --- |
| LLaMA3.1-8B | -13 | -8 | 1 | 2 | 11 | 3 | 4 |
| LLaMA3.1-70B | 5 | -6 | 15 | 1 | 10 | 3 | 5 |
| Qwen2.5-7B | -2 | -9 | 1 | 3 | 11 | 3 | 4 |
| Qwen2.5-72B | 5 | 8 | 17 | 4 | 10 | 3 | 4 |
| Claude3.5 | 5 | 8 | 15 | 1 | 11 | 3 | 4 |
| GPT-3.5 | 5 | 8 | 20 | 5 | 10 | 3 | 4 |
| GPT-4o | 3 | 1 | 4 | 2 | 11 | 3 | 4 |
| QWQ-32B | -1 | 11 | 12 | 11 | 11 | 3 | 4 |
| DeepSeek-V3 | -1 | -1 | 17 | -1 | 4 | -4 | 1 |
| Open LLM | -3 | -1 | -5 | 1 | 1 | -6 | 1 |
| Closed LLM | -8 | -1 | -1 | 1 | 1 | -6 | 1 |
| Reasoning LLM | -1 | -1 | 11 | 1 | 1 | -4 | 1 |

### Key Observations

* The performance of different models varies significantly across different datasets.

* GPT-3.5 generally performs well across all datasets, often achieving high scores.

* LLaMA3.1-8B shows the lowest performance on HotpotQA.

* Reasoning LLM shows the lowest performance on MATH.

* The performance of Open LLM and Closed LLM is consistently low across all datasets.

### Interpretation

The scatter plot provides a comparative analysis of various language models' performance on different datasets. The variation in performance highlights the strengths and weaknesses of each model in handling different types of tasks. For example, GPT-3.5 consistently performs well, indicating its robustness across different tasks, while LLaMA3.1-8B struggles with HotpotQA. The plot also reveals that certain models, like Open LLM and Closed LLM, consistently underperform compared to others. This information is valuable for selecting the most appropriate model for a specific task and for identifying areas where model improvement is needed. The "Reasoning LLM" models (marked with diamonds) show a wide range of performance, suggesting that reasoning ability is highly task-dependent.

</details>

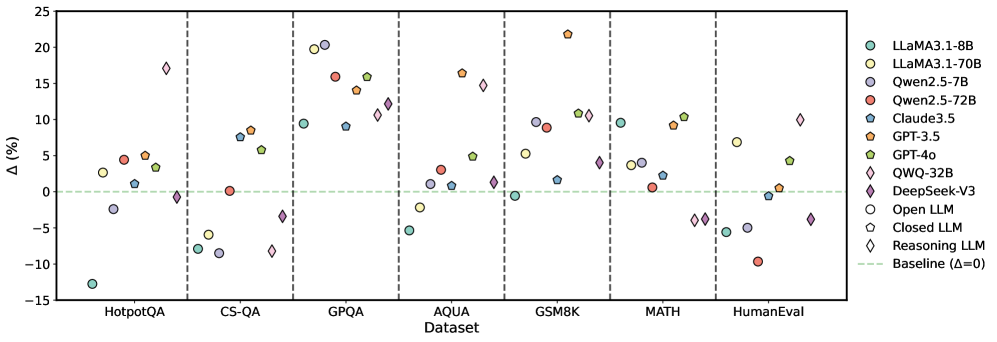

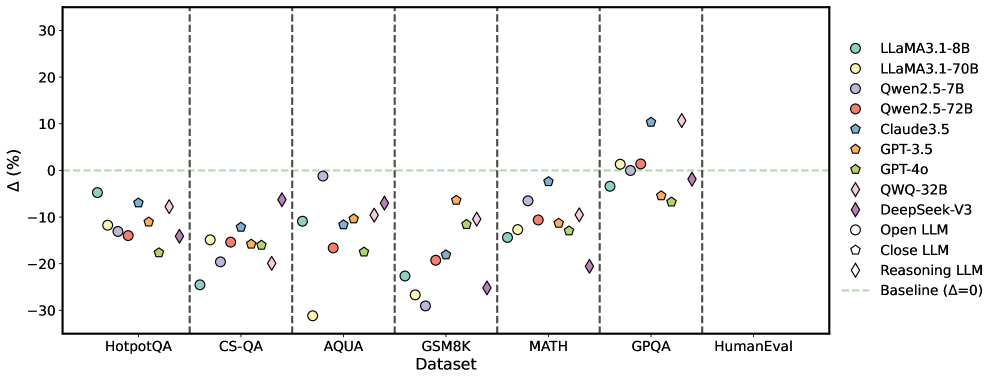

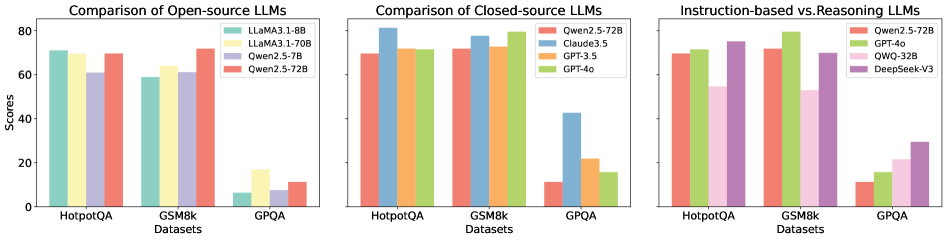

Figure 4: Average performance improvements achieved by S1 across multiple LLMs.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Scatter Plot: Model Performance on Various Datasets

### Overview

The image is a scatter plot comparing the performance (Delta %) of various Large Language Models (LLMs) on different datasets. The x-axis represents the dataset, and the y-axis represents the percentage difference (Delta %). Each model is represented by a unique color and marker. A horizontal dashed line indicates the baseline performance (Delta = 0).

### Components/Axes

* **X-axis:** "Dataset" with categories: HotpotQA, CS-QA, GPQA, AQUA, GSM8K, MATH, HumanEval.
* **Y-axis:** "Δ (%)" with a scale from -15 to 25, incrementing by 5.
* **Legend:** Located on the right side of the plot. It maps colors and markers to specific LLMs:
    * Teal circle: LLaMA3.1-8B
    * Yellow circle: LLaMA3.1-70B
    * Purple circle: Qwen2.5-7B
    * Red circle: Qwen2.5-72B
    * Blue pentagon: Claude3.5
    * Orange pentagon: GPT-3.5
    * Green pentagon: GPT-4o
    * Purple diamond: QWQ-32B
    * Red diamond: DeepSeek-V3
    * White circle: Open LLM
    * White pentagon: Closed LLM
    * White diamond: Reasoning LLM
    * Light green dashed line: Baseline (Δ=0)

### Detailed Analysis

Approximate Δ (%) for each legend entry and dataset:

| Legend entry | HotpotQA | CS-QA | GPQA | AQUA | GSM8K | MATH | HumanEval |
| --- | --- | --- | --- | --- | --- | --- | --- |
| LLaMA3.1-8B | 6 | 2 | 7 | -3 | 1 | 8 | -6 |
| LLaMA3.1-70B | 6 | 2 | 17 | -3 | 13 | 10 | 7 |
| Qwen2.5-7B | 1.5 | 2 | 18 | -1 | 13 | 11 | 7 |
| Qwen2.5-72B | 1.5 | 2 | 15 | -1 | 13 | 9 | 7 |
| Claude3.5 | -1 | 1 | 14 | -1 | 14 | 11 | 7 |
| GPT-3.5 | 2 | 2 | 5 | 11 | 11 | 6 | 1 |
| GPT-4o | 2 | 2 | 5 | 5 | 11 | 7 | 1 |
| QWQ-32B | -3 | 3 | 17 | 13 | -2 | 1 | 7 |
| DeepSeek-V3 | -3 | -1 | -3 | -3 | -2 | 1 | -1 |
| Open LLM | 1 | 2 | 7 | -3 | 1 | 8 | -6 |
| Closed LLM | 2 | 2 | 15 | -1 | 11 | 9 | 1 |
| Reasoning LLM | -3 | -1 | 17 | 13 | -2 | 1 | 7 |

### Key Observations

* The performance of different LLMs varies significantly across different datasets.

* Some models (e.g., LLaMA3.1-70B, Qwen2.5-7B, Qwen2.5-72B, Claude3.5) generally perform well on GPQA, GSM8K, and MATH datasets.

* Models like QWQ-32B and DeepSeek-V3 show relatively lower performance on several datasets, sometimes even below the baseline.

* The "Reasoning LLM" category (white diamond) shows high variance, with strong performance on GPQA, AQUA, GSM8K, and HumanEval, but weaker performance on HotpotQA, CS-QA, and MATH.

### Interpretation

The scatter plot provides a comparative analysis of LLM performance across various question-answering and reasoning datasets. The data suggests that no single model consistently outperforms all others across all tasks. The choice of the "best" model depends heavily on the specific dataset and the type of reasoning or knowledge required. The plot highlights the strengths and weaknesses of each model, which can inform model selection for specific applications. The "Reasoning LLM" category's high variance suggests that this type of model may be highly specialized, excelling in some reasoning tasks but struggling in others. The baseline (Δ=0) serves as a reference point to quickly identify models that perform above or below average on each dataset.

</details>

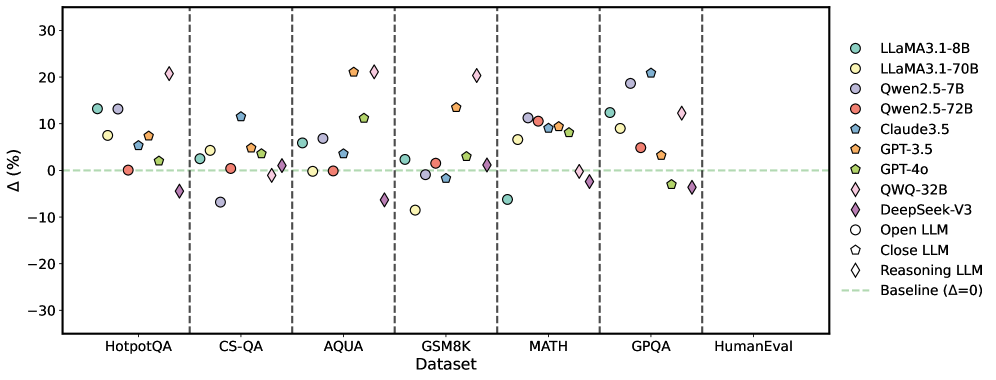

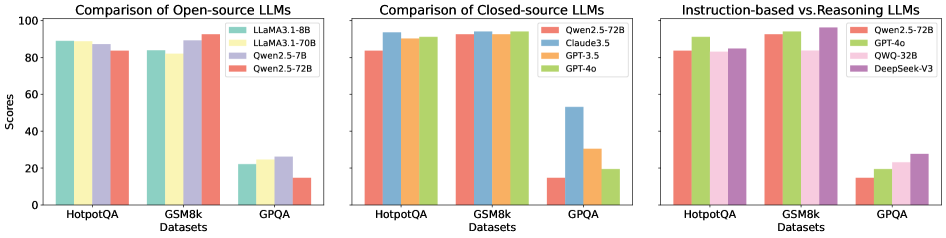

Figure 5: Average performance improvements achieved by S2 across multiple LLMs.

4.2 Results of Intrinsic Correction

Figure 4 illustrates the mean performance gains realized by all S1 methods across nine LLMs and multiple datasets, where y=0 denotes the baseline. For detailed results of each method, refer to Appendix E.1. Although S1 improves accuracy overall, the degree of improvement varies across instruction-based and reasoning LLMs. 1) Instruction-based LLMs. Open-source LLMs exhibit uneven performance gains. For instance, LLaMA3.1-8B-Instruct shows significant declines on AQUA and HumanEval, whereas Qwen2.5-7B-Instruct demonstrates modest gains. These discrepancies likely stem from smaller parameter sizes and weaker instruction-following capabilities. In contrast, closed-source LLMs offer more consistent and stable performance improvements. Notably, GPT-4o shows substantial gains on GPQA, and Claude3.5 achieves similar enhancements, highlighting the robust instruction-following adaptability of closed-source architectures. 2) Reasoning LLMs. DeepSeek-V3’s performance remains close to the baseline across most tasks. While it demonstrates slight improvements on datasets such as CS-QA, GPQA, and MATH, it exhibits marginal declines on others. To further investigate this phenomenon, we conducted additional experiments (see Section 4.8), revealing that DeepSeek-V3’s built-in correction mechanism delivers a strong baseline performance. This high initial performance likely limits the impact of other correction methods. Conversely, QWQ attains considerable improvements on most datasets except CS-QA and MATH, likely reflecting the constraints posed by its smaller parameter size.

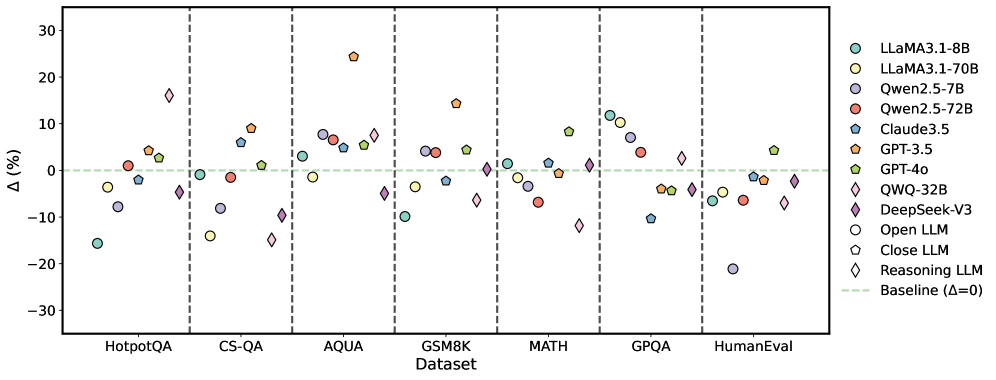

4.3 Results of External Correction

Figure 5 illustrates the average performance improvements achieved by each LLM utilizing external correction methods (S2) across various datasets. The trends observed align closely with those depicted in Figure 4, indicating a consistent enhancement in overall performance. Notably, external correction methods demonstrate greater stability, which can be attributed to their reliance on authoritative external resources. By referencing these resources, S2 effectively mitigates the occurrence of incorrect responses. However, this reliance on external inputs may also limit the LLM’s capacity for divergent or creative reasoning, resulting in steadier but less flexible performance compared to intrinsic correction methods. For comprehensive results for each method, please refer to Appendix E.2.

[RQ1] To what extent can LLMs achieve accurate results by leveraging intrinsic (S1) and external (S2) self-correction methods without requiring further intervention?

Conclusion: Both S1 and S2 enable significant performance gains, particularly for complex tasks requiring multi-step reasoning or domain-specific knowledge. By iteratively refining their responses with these methods, LLMs can effectively correct themselves even without additional fine-tuning.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Stacked Bar Chart: Model Performance on GPQA and MATH Datasets

### Overview

The image presents four stacked bar charts comparing various language models on the GPQA and MATH datasets under two mixture configurations (F1 and F2). The charts are arranged in a 2x2 grid, with one panel per dataset and configuration. Each bar represents a model and is segmented into three components: "Base" performance, "Intrinsic Correction," and "External Correction."

### Components/Axes

* **Titles:** a) GPQA (F1), b) MATH (F1), c) GPQA (F2), d) MATH (F2)
* **Y-axis:** "Percentage (%)", scaled from 0 to 100 with tick marks at 0, 20, 40, 60, 80, and 100.
* **X-axis:** Model names (same for all charts): LLaMA3.1-8B, LLaMA3.1-70B, Qwen2.5-7B, Qwen2.5-72B, Claude3.5, GPT-3.5, GPT-4o, QWQ-32B, DeepSeek-V3.
* **Legend:** Located at the bottom of the image: Base (light teal), Intrinsic Correction (light coral), External Correction (light blue).

### Detailed Analysis

Approximate segment heights (%) per model.

**a) GPQA (F1)**

| Model | Base | Intrinsic | External | Total |
| --- | --- | --- | --- | --- |
| LLaMA3.1-8B | 10 | 15 | 5 | 30 |
| LLaMA3.1-70B | 15 | 10 | 10 | 35 |
| Qwen2.5-7B | 5 | 5 | 10 | 20 |
| Qwen2.5-72B | 10 | 5 | 5 | 20 |
| Claude3.5 | 10 | 25 | 25 | 60 |
| GPT-3.5 | 30 | 10 | 5 | 45 |
| GPT-4o | 20 | 5 | 10 | 35 |
| QWQ-32B | 20 | 5 | 5 | 30 |
| DeepSeek-V3 | 10 | 0 | 0 | 10 |

**b) MATH (F1)**

| Model | Base | Intrinsic | External | Total |
| --- | --- | --- | --- | --- |
| LLaMA3.1-8B | 10 | 0 | 0 | 10 |
| LLaMA3.1-70B | 75 | 5 | 5 | 85 |
| Qwen2.5-7B | 70 | 5 | 5 | 80 |
| Qwen2.5-72B | 70 | 5 | 5 | 80 |
| Claude3.5 | 70 | 5 | 5 | 80 |
| GPT-3.5 | 80 | 5 | 5 | 90 |
| GPT-4o | 70 | 5 | 5 | 80 |
| QWQ-32B | 85 | 5 | 5 | 95 |
| DeepSeek-V3 | 75 | 0 | 0 | 75 |

**c) GPQA (F2)**

| Model | Base | Intrinsic | External | Total |
| --- | --- | --- | --- | --- |
| LLaMA3.1-8B | 10 | 5 | 10 | 25 |
| LLaMA3.1-70B | 25 | 5 | 5 | 35 |
| Qwen2.5-7B | 15 | 5 | 5 | 25 |
| Qwen2.5-72B | 25 | 0 | 5 | 30 |
| Claude3.5 | 30 | 15 | 10 | 55 |
| GPT-3.5 | 10 | 5 | 5 | 20 |
| GPT-4o | 25 | 10 | 10 | 45 |
| QWQ-32B | 5 | 0 | 0 | 5 |
| DeepSeek-V3 | 20 | 10 | 10 | 40 |

**d) MATH (F2)**

| Model | Base | Intrinsic | External | Total |
| --- | --- | --- | --- | --- |
| LLaMA3.1-8B | 75 | 5 | 5 | 85 |
| LLaMA3.1-70B | 75 | 5 | 5 | 85 |
| Qwen2.5-7B | 70 | 5 | 5 | 80 |
| Qwen2.5-72B | 75 | 5 | 5 | 85 |
| Claude3.5 | 75 | 5 | 5 | 85 |
| GPT-3.5 | 80 | 5 | 5 | 90 |
| GPT-4o | 75 | 5 | 5 | 85 |
| QWQ-32B | 85 | 5 | 5 | 95 |
| DeepSeek-V3 | 70 | 5 | 5 | 80 |

### Key Observations

* The MATH dataset generally yields higher percentages than the GPQA dataset across models.

* The "Base" performance component dominates the MATH results, while "Intrinsic Correction" and "External Correction" contribute relatively little.

* On GPQA, the "Intrinsic Correction" and "External Correction" components play a more significant role, especially for models like Claude3.5.

* The performance of different models varies significantly on GPQA, with Claude3.5 and GPT-4o showing relatively higher scores.

* DeepSeek-V3 shows relatively low performance on GPQA compared to MATH.

* QWQ-32B consistently achieves high scores on the MATH dataset.

### Interpretation

The charts illustrate the performance of various language models on two different tasks: GPQA (graduate-level question answering) and MATH (mathematical problem-solving). The stacked bars break down the performance into "Base" (initial performance), "Intrinsic Correction" (improvements from internal mechanisms), and "External Correction" (improvements from external tools or data).

The high "Base" performance on MATH suggests that many models are already proficient in mathematical reasoning. The relatively small contributions from "Intrinsic Correction" and "External Correction" indicate that these models may not benefit significantly from additional correction mechanisms for this task.

In contrast, the GPQA results show a greater reliance on "Intrinsic Correction" and "External Correction," suggesting that the models require more assistance to perform well on this task. The varying performance across models on GPQA highlights the differences in their ability to handle graduate-level question answering.

The difference in performance between the two datasets suggests that MATH is a relatively easier task for these models than GPQA. The data also indicates that certain models, like Claude3.5, are better equipped to leverage correction mechanisms for improved performance on complex tasks like GPQA.

</details>

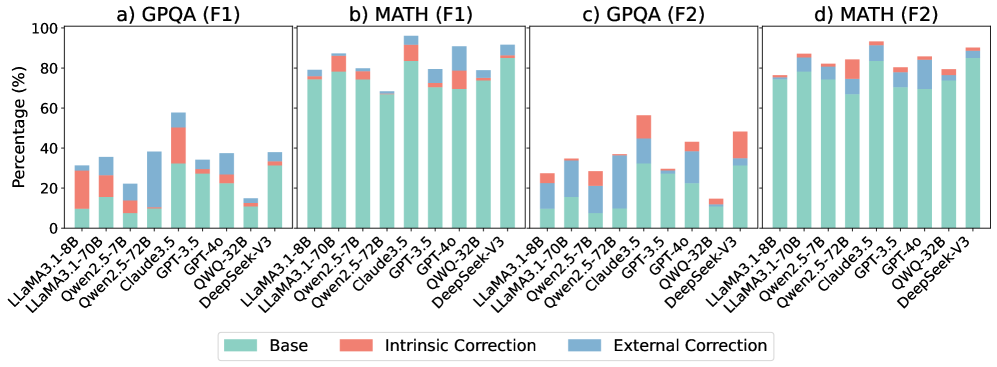

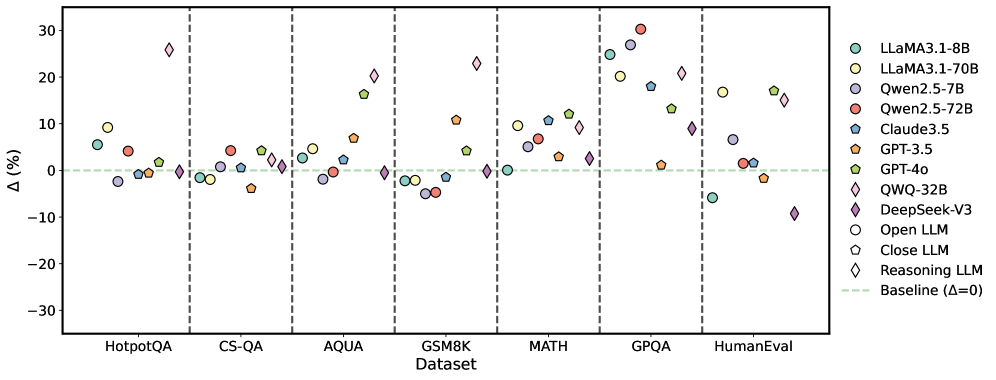

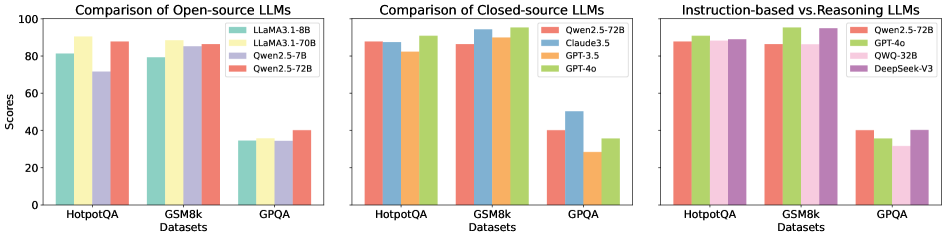

Figure 6: Comparison of different LLMs with mixture methods.

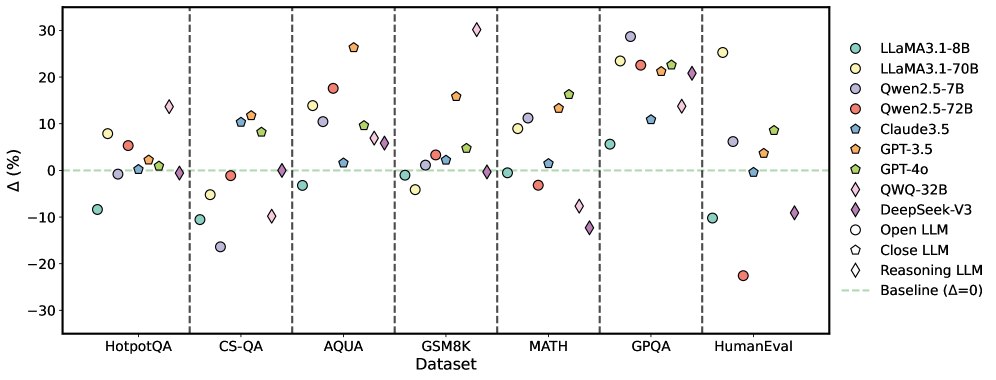

4.4 Results of Mixture Method

We further explore whether mixing multiple self-correction methods yields additive or synergistic performance improvements. Specifically, the responses generated by the baseline method (Base) are used as auxiliary prompts for an intrinsic method (S1), an external method (S2), or a mixture of both (S1 + S2). As shown in Figure 6, we evaluate two representative configurations (F1: ‘Base → S1 → S2’ and F2: ‘Base → S2 → S1’) on two benchmark tasks: GPQA and MATH. The results reveal two key findings. First, applying one or more correction methods consistently improves model performance to varying degrees. Second, S2 generally yields larger performance gains than S1. Notably, complex reasoning tasks, such as GPQA, benefit the most from these mixed methods. However, these mixtures often introduce additional computational overhead. To address the associated efficiency and accuracy trade-offs, we conduct a complementary analysis of response times under different correction methods and model configurations, as detailed in Section 4.7.
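The chaining used in configurations F1 and F2 can be sketched as a simple pipeline in which each stage receives the question and the previous stage's answer. This is only an illustrative sketch: `run_mixture`, the `Corrector` signature, and the toy stand-ins `toy_base`, `toy_s1`, and `toy_s2` are hypothetical names introduced here, and a real S1 or S2 method would call an LLM (and, for S2, external tools) instead of editing strings.

```python
from typing import Callable, List, Sequence

# A corrector takes (question, previous_answer) and returns a revised answer.
Corrector = Callable[[str, str], str]


def run_mixture(
    question: str,
    base: Callable[[str], str],
    correctors: Sequence[Corrector],
) -> List[str]:
    """Run Base, then feed each answer into the next correction stage.

    Returns the full trace of answers so each stage's contribution is visible.
    """
    answer = base(question)
    trace = [answer]
    for correct in correctors:
        answer = correct(question, answer)
        trace.append(answer)
    return trace


# Toy stand-ins (assumptions for illustration only).
def toy_base(question: str) -> str:
    return "4?"


def toy_s1(question: str, answer: str) -> str:
    # Pretend intrinsic correction removes the model's hesitation.
    return answer.replace("?", "")


def toy_s2(question: str, answer: str) -> str:
    # Pretend external correction attaches a verification note.
    return answer + " (checked)"


f1_trace = run_mixture("What is 2 + 2?", toy_base, [toy_s1, toy_s2])  # Base -> S1 -> S2
f2_trace = run_mixture("What is 2 + 2?", toy_base, [toy_s2, toy_s1])  # Base -> S2 -> S1
```

Keeping the whole trace, rather than only the final answer, makes it possible to attribute gains to individual stages, which is the kind of breakdown Figure 6 presents.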

4.5 Results of Fine-tuned Correction

Table 2: Performance of fine-tuned methods on selected datasets.

| Fine-tuned LLM | Method | CS-QA( $\uparrow$ ) | GSM8K( $\uparrow$ ) |
| --- | --- | --- | --- |
| - | Base | 31.40 | 56.75 |
| LLaMA2-7B-hf | DCoT | 29.65(-1.75) | 41.20(-15.55) |
| Gemma-7B-it | SCORE | 43.26(+11.86) | 75.30(+18.55) |
| LLaMA2-13B-chat | SCORE | 41.45(+10.05) | 72.10(+15.35) |
| Qwen2.5-Math-7B-Instruct | SuperCorrect | 46.25(+14.85) | 84.30(+27.55) |

| Fine-tuned LLM | Method | MATH( $\uparrow$ ) | HumanEval( $\uparrow$ ) |
| --- | --- | --- | --- |
| - | Base | 41.71 | 26.25 |
| Qwen2.5-Math-7B-Instruct | SuperCorrect | 70.16(+28.45) | 39.30(+13.05) |

Table 2 summarizes the performance of fine-tuned correction methods (S3), revealing two main observations. First, S3 (e.g., DCoT) often exhibits inconsistent outcomes across diverse tasks. This variability stems from their reliance on fine-tuning with narrowly focused datasets, which restricts their broader applicability. Second, domain-specific fine-tuning proves especially promising for models tailored to specialized tasks. For example, SuperCorrect, fine-tuned on Qwen2.5-Math-7B-Instruct, demonstrates marked improvements in mathematical reasoning (e.g., on GSM8K and MATH), outperforming other methods by a wide margin. This underscores the effectiveness of leveraging task-aligned models, particularly when fine-tuning objectives closely align with the target domain requirements.

Table 3: Performance of correction rate and misjudgment rate.

| Method | Metric | | | | |
| --- | --- | --- | --- | --- | --- |
| CoVe | CR | 31.6 | 36.0 | 52.1 | 40.8 |
| CoVe | MR | 8.1 | 8.0 | 6.7 | 7.5 |
| RARR | CR | 30.7 | 49.3 | 51.3 | 47.1 |
| RARR | MR | 5.5 | 4.3 | 4.5 | 4.5 |

4.6 Results of Correction and Misjudgment

To further assess correction ability, we divide the responses of different models to questions in the three most challenging tasks (GPQA, AQUA, and HotpotQA) into an error-based dataset and a correction-based dataset, corresponding to the wrong and correct question-response pairs, respectively. We select CoVe from S1 and RARR from S2 and evaluate them on Claude 3.5-Sonnet. We define two new metrics: Correction Rate (CR) is the proportion of initially incorrect responses that are successfully corrected, and Misjudgment Rate (MR) is the proportion of initially correct responses that are wrongly changed into incorrect ones. The results in Table 3 show that both methods achieve high CRs and low MRs, indicating that self-correction methods can effectively correct wrong examples with little misjudgment.
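The two metrics can be computed directly from per-question correctness flags recorded before and after correction. The sketch below is a minimal illustration under that assumption; the function name `correction_and_misjudgment_rates` and the boolean-list inputs are introduced here and are not taken from the benchmark code.

```python
from typing import Sequence, Tuple


def correction_and_misjudgment_rates(
    correct_before: Sequence[bool],
    correct_after: Sequence[bool],
) -> Tuple[float, float]:
    """Return (CR, MR) as fractions in [0, 1].

    CR: share of initially wrong responses that are correct after correction.
    MR: share of initially correct responses that are wrong after correction.
    """
    if len(correct_before) != len(correct_after):
        raise ValueError("both sequences must have the same length")
    pairs = list(zip(correct_before, correct_after))
    after_wrong_start = [after for before, after in pairs if not before]
    after_right_start = [after for before, after in pairs if before]
    cr = sum(after_wrong_start) / len(after_wrong_start) if after_wrong_start else 0.0
    mr = (
        sum(1 for after in after_right_start if not after) / len(after_right_start)
        if after_right_start
        else 0.0
    )
    return cr, mr


# Toy flags, not data from the paper: 4 initially wrong, 5 initially correct.
before = [False, False, False, False, True, True, True, True, True]
after = [True, True, False, False, True, True, True, True, False]
cr, mr = correction_and_misjudgment_rates(before, after)
```

In this toy run, two of the four wrong answers are fixed (CR = 0.5) and one of the five correct answers is broken (MR = 0.2).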

<details>

<summary>x7.png Details</summary>

### Visual Description

## Bar Chart: Model Performance on Self-Correction Methods

### Overview

The image is a bar chart comparing the performance of three language models (LLaMA3.1-70B, GPT-4o, and DeepSeek-V3) across various self-correction methods. Performance is measured in time (seconds).

### Components/Axes

* **Y-axis:** "Time (s)" with a scale from 0 to 400 in increments of 100.
* **X-axis:** "Self-correction Methods" with the following categories: Base, CoT, RCI, CoVe, Self-Refine, Reflexion, RARR, RATT.
* **Legend:** Located at the top-left of the chart: LLaMA3.1-70B (light green), GPT-4o (light red), DeepSeek-V3 (light blue).

### Detailed Analysis

The chart displays the time taken by each model under each self-correction method (approximate values, in seconds):

| Method | LLaMA3.1-70B | GPT-4o | DeepSeek-V3 |
| --- | --- | --- | --- |
| Base | ~5 | ~5 | ~8 |
| CoT | ~8 | ~8 | ~15 |
| RCI | ~15 | ~15 | ~60 |
| CoVe | ~28 | ~20 | ~145 |
| Self-Refine | ~70 | ~45 | ~130 |
| Reflexion | ~70 | ~50 | ~105 |
| RARR | ~10 | ~10 | ~55 |
| RATT | ~430 | ~290 | ~430 |

### Key Observations

* For most self-correction methods (Base, CoT, RCI, CoVe, Self-Refine, Reflexion, RARR), LLaMA3.1-70B and GPT-4o have similar performance, while DeepSeek-V3 generally takes more time.

* The RATT method shows a significant increase in time for all models, with LLaMA3.1-70B and DeepSeek-V3 taking approximately the same amount of time, and GPT-4o taking less.

* DeepSeek-V3 shows the highest time consumption for CoVe, Self-Refine, and Reflexion.

### Interpretation

The bar chart illustrates the computational cost (time) associated with different self-correction methods for three language models. The data suggests that the choice of self-correction method can significantly impact the performance of these models. The RATT method, in particular, appears to be computationally expensive for all models. The relative performance of the models varies depending on the specific self-correction method used, indicating that the effectiveness and efficiency of these methods are model-dependent. DeepSeek-V3 generally takes more time than LLaMA3.1-70B and GPT-4o, except for the RATT method where their performance is similar.

</details>

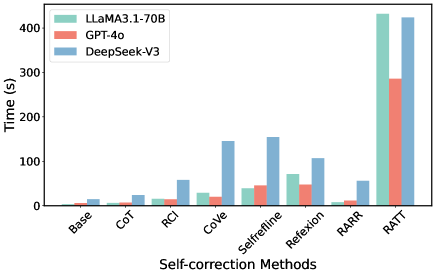

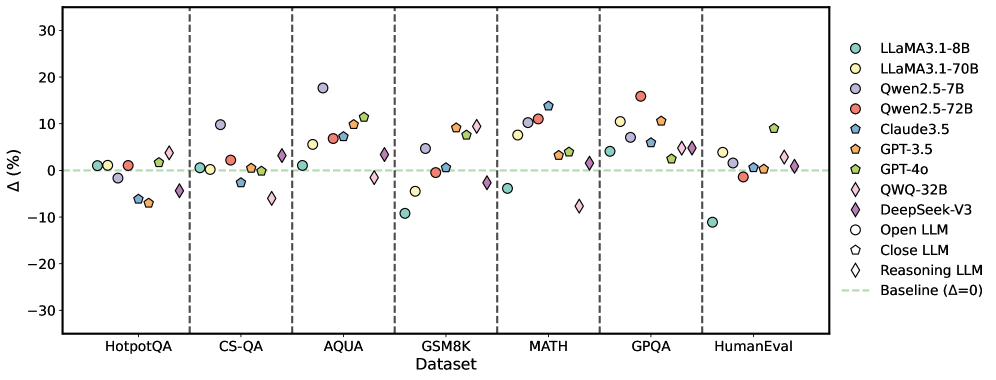

Figure 7: Average response times for LLaMA3.1-70B, GPT-4o, and DeepSeek-V3. Intrinsic (S1) and external (S2) methods generally increase inference duration relative to Base and CoT.

4.7 Results of Response Time

Figure 7 compares the average response times of representative models (LLaMA3.1-70B and GPT-4o from M1, DeepSeek-V3 from M2) under various correction methods. In general, both intrinsic and external methods extend inference times relative to the baseline approaches (Base and CoT), largely due to increased reasoning complexity or reliance on external services (especially for RATT). Moreover, reasoning LLMs, such as DeepSeek-V3, exhibit notably longer execution times than instruction-based models, likely attributable to their built-in correction mechanisms. In contrast, the baseline CoT method achieves notably shorter response times while maintaining reasonable accuracy (see Table 1). This observation underscores that more complex correction strategies do not always yield superior outcomes, highlighting the critical trade-off between model accuracy and computational efficiency. As shown in Table 4, RARR offers a balanced trade-off between efficiency and accuracy, with only 533 tokens and 2 API calls. RATT achieves the highest accuracy, followed by RARR, reflecting the benefit of external retrieval or code execution, while the overhead of the S2 methods remains manageable (below 15% additional search tokens). Bootstrap-based confidence intervals for these results are reported in Appendix B.3.
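For readers unfamiliar with bootstrap confidence intervals, the following is a generic percentile-bootstrap sketch for an accuracy estimate. It is not the procedure from Appendix B.3, which is not reproduced here: the function name `bootstrap_ci`, the number of resamples, the 95% level, and the toy outcome vector are all assumptions chosen for illustration.

```python
import random
from typing import Sequence, Tuple


def bootstrap_ci(
    outcomes: Sequence[int],
    n_resamples: int = 2000,
    alpha: float = 0.05,
    seed: int = 0,
) -> Tuple[float, float]:
    """Percentile bootstrap interval for the mean of 0/1 outcomes."""
    if not outcomes:
        raise ValueError("at least one outcome is required")
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    n = len(outcomes)
    # Resample with replacement and record the mean of each resample.
    means = sorted(sum(rng.choices(outcomes, k=n)) / n for _ in range(n_resamples))
    lower = means[int((alpha / 2) * n_resamples)]
    upper = means[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


# Toy outcomes (hypothetical): 75 correct answers out of 100.
toy_outcomes = [1] * 75 + [0] * 25
low, high = bootstrap_ci(toy_outcomes)
```

An interval of this kind indicates how much an accuracy difference between two methods could be explained by sampling noise alone, which matters when the evaluation set contains only a few hundred samples.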

[RQ2] How does mixing multiple self-correction methods influence model accuracy and robustness, and what are the associated computational trade-offs?

Conclusion: Mixing self-correction methods typically improves accuracy, though at the cost of increased computational overhead. Such mixtures are particularly beneficial for high-precision tasks where the additional runtime is justifiable.

Table 4: Comprehensive resource cost analysis on the MATH dataset (150 samples). Values in green indicate the best trade-off between cost and accuracy, while red highlights the least efficient results. “Efficiency Rank” represents the ratio of Accuracy / (Token Count × API Calls).

| Method | Type | Token Count | API Calls | Search Tokens (%) | Accuracy (%) | Efficiency Rank |
| --- | --- | --- | --- | --- | --- | --- |
| Base | - | 791 | 1.0 | 0 (0%) | 68.5 | 0.0866 |
| CoT | - | 1804 | 1.0 | 0 (0%) | 69.5 | 0.0385 |
| CoVe | S1 | 2019 | 1.0 | 0 (0%) | 75.0 | 0.0371 |
| RCI | S1 | 1780 | 1.2 | 0 (0%) | 70.2 | 0.0328 |
| Reflexion-v1 | S1 | 1460 | 3.5 | 0 (0%) | 72.8 | 0.0143 |
| Reflexion-v2 | S2 | 1712 | 4.0 | 154 (8.25%) | 74.5 | 0.0109 |
| RARR | S2 | 533 | 2.0 | 89 (14.31%) | 76.3 | 0.0716 |
| RATT | S2 | 2185 | 3.0 | 162 (6.9%) | 78.7 | 0.0120 |
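The “Efficiency Rank” column can be recomputed from the formula given in the Table 4 caption. The sketch below does so from the transcribed rows; the variable and function names are illustrative. The recomputed values agree with the reported column to within 1e-4, and the small differences for RCI and Reflexion-v1 are presumably due to the displayed inputs being rounded.

```python
# Rows transcribed from Table 4:
# (method, token_count, api_calls, accuracy_percent, reported_efficiency_rank)
TABLE4 = [
    ("Base", 791, 1.0, 68.5, 0.0866),
    ("CoT", 1804, 1.0, 69.5, 0.0385),
    ("CoVe", 2019, 1.0, 75.0, 0.0371),
    ("RCI", 1780, 1.2, 70.2, 0.0328),
    ("Reflexion-v1", 1460, 3.5, 72.8, 0.0143),
    ("Reflexion-v2", 1712, 4.0, 74.5, 0.0109),
    ("RARR", 533, 2.0, 76.3, 0.0716),
    ("RATT", 2185, 3.0, 78.7, 0.0120),
]


def efficiency_rank(accuracy: float, tokens: float, api_calls: float) -> float:
    """Accuracy / (Token Count x API Calls), as defined in the Table 4 caption."""
    return accuracy / (tokens * api_calls)


scores = {
    name: efficiency_rank(acc, tokens, calls)
    for name, tokens, calls, acc, _reported in TABLE4
}
reported = {name: rep for name, _t, _c, _a, rep in TABLE4}

# Print methods from most to least efficient, next to the reported value.
for name in sorted(scores, key=scores.get, reverse=True):
    print(f"{name:<13} computed={scores[name]:.4f} reported={reported[name]:.4f}")
```

Because the metric divides by both token count and API calls, a method with few tokens and few calls (RARR) scores far higher than methods with similar accuracy but several calls per sample.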

4.8 Results of Reasoning LLMs

Table 5: Comparison of baseline performance among instruction-based and reasoning LLMs. Per-column maxima are bolded; per-column minima are underlined. Per-row maxima are highlighted with blue; per-row minima are highlighted with orange.

| Type | Model | | | | | | | |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Open-source | LLaMA3.1-8B-Instruct | 75.80 | 76.16 | 9.74 | 81.55 | 53.88 | 74.37 | 73.44 |
| | LLaMA3.1-70B-Instruct | 81.28 | 81.88 | 15.62 | 90.63 | 62.65 | 78.21 | 62.18 |
| | Qwen2.5-7B-Instruct | 74.05 | 74.75 | 7.53 | 90.23 | 47.50 | 74.28 | 79.11 |
| | Qwen2.5-72B-Instruct | 83.63 | 81.92 | 9.85 | 91.11 | 57.58 | 66.91 | 86.13 |
| Closed-source | Claude3.5-Sonnet | 88.29 | 80.25 | 32.34 | 95.81 | 81.26 | 83.51 | 84.69 |
| | GPT-3.5 | 82.94 | 77.92 | 27.29 | 79.14 | 55.15 | 70.44 | 80.29 |
| | GPT-4o | 89.16 | 80.65 | 22.49 | 91.15 | 65.82 | 69.54 | 77.04 |
| Reasoning | QWQ-32B | 62.43 | 82.78 | 10.85 | 63.41 | 52.42 | 73.78 | 19.86 |
| | DeepSeek-V3 | 89.29 | 83.35 | 31.35 | 95.12 | 74.79 | 85.02 | 91.67 |
| | DeepSeek-R1 | 88.92 | 79.93 | 41.15 | 92.63 | 80.23 | 84.21 | 89.06 |
| | o3-mini | 81.24 | 74.28 | 27.17 | 92.45 | 78.26 | 67.97 | 85.75 |

To further investigate why DeepSeek-V3 shows limited improvement from self-correction, we compare instruction-based LLMs and reasoning LLMs under the Base setting alone. Table 5 shows that DeepSeek-V3 achieves top-2 or even top-1 performance on most datasets. Combined with the results of Section 4.2, this suggests that reasoning LLMs already incorporate robust intrinsic correction mechanisms, which limits additional gains from external correction steps. In particular, DeepSeek-V3 integrates advanced reflection modules and comprehensive error-detection routines distilled from its earlier R1 series, enabling thorough multi-step reasoning at the outset. This high baseline reduces the scope for further improvement through additional self-correction. Consequently, attempts to augment DeepSeek-V3 with further self-correction methods produce minimal net gains while incurring additional computational overhead.
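The ranking statement can be checked directly against the Base accuracies in Table 5. The following sketch transcribes the seven accuracy columns per model (in table order, without dataset names) and computes a model's rank in each column; `BASE_ACC` and `column_ranks` are illustrative names, not part of CorrectBench.

```python
# Base accuracies transcribed from Table 5: seven dataset columns per model,
# in the same left-to-right order as the table.
BASE_ACC = {
    "LLaMA3.1-8B-Instruct": [75.80, 76.16, 9.74, 81.55, 53.88, 74.37, 73.44],
    "LLaMA3.1-70B-Instruct": [81.28, 81.88, 15.62, 90.63, 62.65, 78.21, 62.18],
    "Qwen2.5-7B-Instruct": [74.05, 74.75, 7.53, 90.23, 47.50, 74.28, 79.11],
    "Qwen2.5-72B-Instruct": [83.63, 81.92, 9.85, 91.11, 57.58, 66.91, 86.13],
    "Claude3.5-Sonnet": [88.29, 80.25, 32.34, 95.81, 81.26, 83.51, 84.69],
    "GPT-3.5": [82.94, 77.92, 27.29, 79.14, 55.15, 70.44, 80.29],
    "GPT-4o": [89.16, 80.65, 22.49, 91.15, 65.82, 69.54, 77.04],
    "QWQ-32B": [62.43, 82.78, 10.85, 63.41, 52.42, 73.78, 19.86],
    "DeepSeek-V3": [89.29, 83.35, 31.35, 95.12, 74.79, 85.02, 91.67],
    "DeepSeek-R1": [88.92, 79.93, 41.15, 92.63, 80.23, 84.21, 89.06],
    "o3-mini": [81.24, 74.28, 27.17, 92.45, 78.26, 67.97, 85.75],
}


def column_ranks(table, model):
    """Return the 1-based rank of `model` in each column (1 = highest accuracy)."""
    ranks = []
    for col, value in enumerate(table[model]):
        # Rank = 1 + number of models with a strictly higher score in this column.
        higher = sum(1 for scores in table.values() if scores[col] > value)
        ranks.append(higher + 1)
    return ranks


ranks = column_ranks(BASE_ACC, "DeepSeek-V3")
print(ranks)
print("top-2 columns:", sum(r <= 2 for r in ranks), "of", len(ranks))
```

Columns where the rank exceeds 2 mark datasets on which other models lead under the Base setting.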

[RQ3] For reasoning LLMs with built-in correction mechanisms, to what extent can the above self-correction methods provide additional benefits?

Conclusion: Reasoning LLMs (e.g., DeepSeek-V3) already embed sophisticated error-detection and correction processes. As a result, additional self-correction methods confer only marginal gains and may increase computational overhead, highlighting a performance ceiling for strong reasoning LLMs.

4.9 Failure Mode Taxonomy and Case Analysis

To better understand why different self-correction strategies succeed or fail, we conducted a supplemental failure-mode analysis on the GPQA (250 samples) and MATH (500 samples) datasets. Six major categories of failure were identified, alongside a residual “Other” category, as summarized in Table 6. Logical Oversight (32.9%) and Factual Inaccuracy (22.0%) dominate, implying that intrinsic corrections (S1) are suitable for reasoning-related errors, while external corrections (S2) excel at factual validation. These findings motivate our adaptive correction controller (Sec. 6), which dynamically selects correction strategies based on detected failure types.

Table 6: Error taxonomy of LLM self-correction failures across GPQA and MATH. Logical and factual errors dominate, suggesting distinct correction strategies (S1 vs. S2).

| Failure Category | Share (%) | Description | Suggested Correction |
| --- | --- | --- | --- |
| Logical Oversight | 32.9 | Reasoning step errors (e.g., misapplied formula) | S1: CoT, RCI |
| Factual Inaccuracy | 22.0 | Outdated or incorrect retrieved evidence | S2: RARR, RATT |
| Over-Reliance on Tools | 14.6 | Excessive external API calls causing inefficiency | S2 (bounded) |
| Ambiguous Output | 14.2 | Incomplete or vague final answer | S1 refinement |
| Contextual Misunderstanding | 10.8 | Misinterpreted question or missing context | S1+S2 hybrid |
| Computational Error | 3.5 | Faulty code execution or symbolic computation | S2 verification |
| Other | 2.0 | Miscellaneous or formatting issues | - |
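The mapping in Table 6 can be read as a lookup from detected failure type to correction strategy. The snippet below is a minimal sketch of that idea only: `select_strategy`, the fallback to CoT for unlisted categories, and the example failure stream are assumptions made for illustration, and how failure types would be detected in practice is not specified here.

```python
from collections import Counter

# Suggested correction per failure category, copied from Table 6.
STRATEGY_BY_FAILURE = {
    "Logical Oversight": "S1: CoT, RCI",
    "Factual Inaccuracy": "S2: RARR, RATT",
    "Over-Reliance on Tools": "S2 (bounded)",
    "Ambiguous Output": "S1 refinement",
    "Contextual Misunderstanding": "S1+S2 hybrid",
    "Computational Error": "S2 verification",
}


def select_strategy(failure_type: str, default: str = "CoT") -> str:
    """Map a detected failure category to a correction strategy.

    Categories without an entry (e.g. "Other") fall back to `default`,
    which is an assumption of this sketch rather than part of Table 6.
    """
    return STRATEGY_BY_FAILURE.get(failure_type, default)


# Hypothetical stream of detected failure types.
detected = ["Logical Oversight", "Factual Inaccuracy", "Other", "Logical Oversight"]
plan = Counter(select_strategy(f) for f in detected)
print(plan)
```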

5 Related Work

Self-Correction Methods. With the continuous development of self-correction techniques wei2025unsupervised ; xu2024llava ; fan2025missing ; li2025vision ; wei2025advancing , researchers have proposed various approaches to enhance the performance of large language models. Intrinsic methods, such as CoVe Dhuliawala2023ChainofVerificationRH and RCI Kim2023LanguageMC , improve the precision and consistency of generated content through self-supervised mechanisms within the model. External methods, such as CRITIC Gou2023CRITICLL , RATT Zhang2024RATTAT , and RARR Gao2022RARRRA , rely on external tools to evaluate the generated outputs and provide feedback that guides the model toward better answers. Fine-tuned methods, such as DCoT Puerto2024FineTuningWD , SuperCorrect Yang2024SuperCorrectSA , and SCORE Zhang2024SmallLM , further enhance performance by fine-tuning the model for specific tasks, enabling more accurate and efficient handling of complex tasks. The continuous evolution of these methods provides diverse options for self-correction. A detailed discussion of the related work is provided in Appendix C.

Correction Benchmarks. Interest in benchmarking the self-correction ability of LLMs tie2025mmmr ; duan2024gtbench ; zheng2024thinking ; duan2024reta ; cao2025survey ; zhou2024comprehensive ; shi2024optimization ; liu2025agentic has prompted the development of specialized benchmarks for different tasks. For instance, CriticBench Lin2024CriticBenchBL evaluates critique ability using discrimination results, but it lacks task-specific fine-grained metrics and relies on costly human annotations or potentially biased GPT-4 outputs. In the realm of vision-language models, VISCO Wu2024VISCOBF focuses on self-correction in multimodal tasks, while Beyond Correctness Zheng2024BeyondCB specifically targets self-correction in large models for code generation. Our CorrectBench focuses on the trade-off between reasoning capability and efficiency, and proposes more generalized and nuanced evaluation methods for complex reasoning tasks.

6 Future Improvements in Self-Correction

Looking ahead, several promising directions can further enhance the robustness and adaptability of self-correction in large language models. (1) Dynamic Adjustment: reinforcement learning or meta-controller mechanisms could dynamically select among correction strategies (S1–S3) based on task complexity or confidence levels, reducing redundant computation through early stopping. (2) Task-Specific Optimization: domain-oriented fine-tuning, such as the 10.2% improvement achieved by SuperCorrect on MATH, suggests the value of adaptive pipelines that align with domain reasoning depth and structure. (3) Human-in-the-Loop Integration: in sensitive fields like medicine or law, coupling automated correction with limited expert feedback could improve factual reliability and ensure accountable model behavior. (4) Meta-Controller Framework: developing a lightweight controller to detect and correct intermediate reasoning inconsistencies may help refine the chain-of-thought process and prevent logical drift. Further analysis and discussions of these future directions are provided in Appendix D.
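As a rough illustration of direction (1), the sketch below applies correction strategies in order of increasing cost and stops as soon as a confidence score passes a threshold. Everything in it is hypothetical: the `Corrector` signature, the threshold of 0.9, and the two toy correctors standing in for an intrinsic (S1) and an external (S2) method are assumptions, not components of CorrectBench.

```python
from typing import Callable, List, Tuple

# A corrector takes (question, current_answer) and returns (new_answer, confidence).
Corrector = Callable[[str, str], Tuple[str, float]]


def correct_with_early_stop(
    question: str,
    draft: str,
    correctors: List[Corrector],
    threshold: float = 0.9,
) -> Tuple[str, int]:
    """Apply correctors in the given order; stop once confidence >= threshold.

    Returns the final answer and the number of correction steps actually run.
    """
    answer, steps = draft, 0
    for corrector in correctors:
        answer, confidence = corrector(question, answer)
        steps += 1
        if confidence >= threshold:
            break  # early stop: skip the remaining, more expensive strategies
    return answer, steps


# Toy stand-in for a cheap intrinsic (S1) check: trusts clean numeric answers.
def cheap_s1(question: str, answer: str) -> Tuple[str, float]:
    cleaned = answer.strip()
    return cleaned, (0.95 if cleaned.isdigit() else 0.4)


# Toy stand-in for a costly external (S2) step that always returns a fixed answer.
def costly_s2(question: str, answer: str) -> Tuple[str, float]:
    return "42", 0.99


print(correct_with_early_stop("6 * 7 = ?", " 42 ", [cheap_s1, costly_s2]))
print(correct_with_early_stop("6 * 7 = ?", "forty-two", [cheap_s1, costly_s2]))
```

In the first call the cheap step is already confident, so the external step is never invoked; in the second call the loop escalates to the external step. A learned controller would replace the fixed ordering and threshold used here.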

7 Conclusion

This paper presents CorrectBench, a comprehensive and extensible benchmark for evaluating the self-correction capabilities of large language models (LLMs) across diverse reasoning-intensive tasks, including commonsense inference, mathematical problem-solving, and code generation. Through systematic evaluation, we demonstrate that modern LLMs are increasingly capable of genuine self-correction, with reasoning-oriented models such as DeepSeek-R1 achieving substantial baseline accuracy and showing consistent improvement through iterative refinement. CorrectBench not only reveals the effectiveness of various correction paradigms (S1, S2, S3) but also exposes critical limitations—such as diminishing returns in deeper correction chains and resource inefficiencies in web-augmented methods. These insights emphasize the necessity for adaptive, cost-aware correction mechanisms that balance efficiency and reasoning depth. Overall, this study provides a unified foundation for understanding and benchmarking LLM self-correction. We hope this work serves as a stepping stone toward more trustworthy and self-improving language models.

Acknowledgments

This work is supported by the National Natural Science Foundation of China (NSFC) under grant No. 62476107.

References

- [1] Alexandre Agossah, Frédérique Krupa, Matthieu Perreira da Silva, and Patrick Le Callet. LLM-based interaction for content generation: A case study on the perception of employees in an IT department. Proceedings of the 2023 ACM International Conference on Interactive Media Experiences, 2023.

- [2] Anthropic. Claude. [Online]. Available: https://www.anthropic.com/claude, 2024.

- [3] Yuntao Bai, Saurav Kadavath, Sandipan Kundu, Amanda Askell, et al. Constitutional AI: Harmlessness from AI feedback. ArXiv, abs/2212.08073, 2022.

- [4] Su Lin Blodgett, Solon Barocas, Hal Daumé III, and Hanna M. Wallach. Language (technology) is power: A critical survey of “bias” in NLP. ArXiv, abs/2005.14050, 2020.

- [5] Yihan Cao, Siyu Li, Yixin Liu, Zhiling Yan, Yutong Dai, Philip Yu, and Lichao Sun. A survey of AI-generated content (AIGC). ACM Computing Surveys, 57(5):1–38, 2025.

- [6] Canyu Chen and Kai Shu. Can LLM-generated misinformation be detected? ArXiv, abs/2309.13788, 2023.

- [7] Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, et al. Evaluating large language models trained on code. ArXiv, abs/2107.03374, 2021.

- [8] Michael Chen, Mike D’Arcy, Alisa Liu, Jared Fernandez, and Doug Downey. Aqua: An adversarially authored question-answer dataset for common sense. 2019.

- [9] Yuxuan Chen, Rongpeng Li, Xiaoxue Yu, Zhifeng Zhao, and Honggang Zhang. Adaptive layer splitting for wireless LLM inference in edge computing: A model-based reinforcement learning approach. arXiv preprint arXiv:2406.02616, 2024.

- [10] Ethan Chern, Steffi Chern, Shiqi Chen, Weizhe Yuan, Kehua Feng, Chunting Zhou, Junxian He, Graham Neubig, and Pengfei Liu. FacTool: Factuality detection in generative AI - a tool augmented framework for multi-task and multi-domain scenarios. ArXiv, abs/2307.13528, 2023.

- [11] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. Training verifiers to solve math word problems. ArXiv, abs/2110.14168, 2021.

- [12] DeepSeek-AI, Aixin Liu, Bei Feng, Bing Xue, Bing-Li Wang, Bochao Wu, et al. DeepSeek-V3 technical report. 2024.

- [13] Shehzaad Dhuliawala, Mojtaba Komeili, Jing Xu, Roberta Raileanu, Xian Li, Asli Celikyilmaz, and Jason Weston. Chain-of-verification reduces hallucination in large language models. ArXiv, abs/2309.11495, 2023.