# World-in-World: World Models in a Closed-Loop World

**Authors**:

- Jiahan Zhang

- Muqing Jiang

- Nanru Dai

- Taiming Lu

- Arda Uzunoglu

- Shunchi Zhang

- Yana Wei

- Jiahao Wang

- Vishal M. Patel

- Paul Pu Liang

- Daniel Khashabi

- Cheng Peng

- Rama Chellappa

- Tianmin Shu

- Alan Yuille

- Yilun Du

- Jieneng Chen (JHU PKU Princeton MIT Harvard)

## Abstract

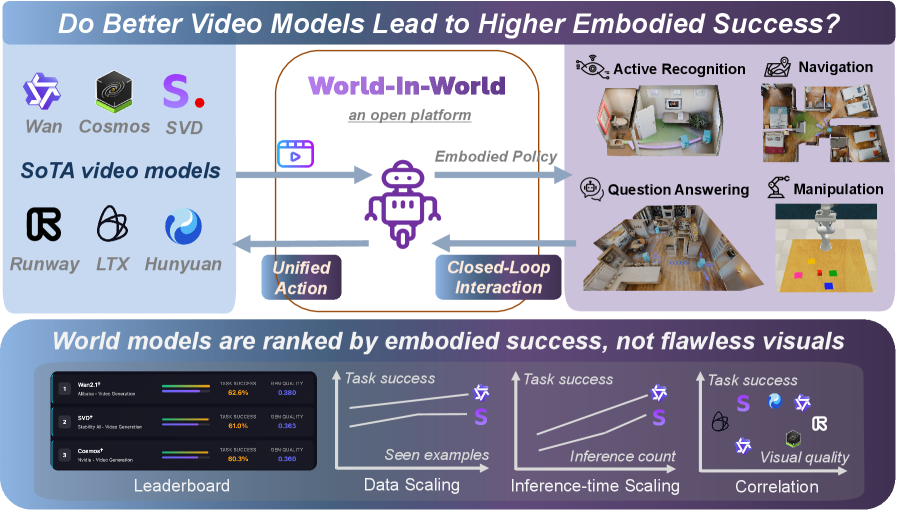

Generative world models (WMs) can now simulate worlds with striking visual realism, which naturally raises the question of whether they can endow embodied agents with predictive perception for decision making. Progress on this question has been limited by fragmented evaluation: most existing benchmarks adopt open-loop protocols that emphasize visual quality in isolation, leaving the core issue of embodied utility unresolved, i.e., do WMs actually help agents succeed at embodied tasks? To address this gap, we introduce World-in-World, the first open platform that benchmarks WMs in a closed-loop world that mirrors real agent-environment interactions. World-in-World provides a unified online planning strategy and a standardized action API, enabling heterogeneous WMs for decision making. We curate four closed-loop environments that rigorously evaluate diverse WMs, prioritize task success as the primary metric, and move beyond the common focus on visual quality; we also present the first data scaling law for world models in embodied settings. Our study uncovers three surprises: (1) visual quality alone does not guarantee task success—controllability matters more; (2) scaling post-training with action-observation data is more effective than upgrading the pretrained video generators; and (3) allocating more inference-time compute allows WMs to substantially improve closed-loop performance. Code will be available at github.com/World-In-World.

footnotetext: Correspondence to “jienengchen01@gmail.com”. We warmly welcome contributions to the open benchmark.

<details>

<summary>x1.png Details</summary>

### Visual Description

## [Technical Diagram & Chart Set]: Do Better Video Models Lead to Higher Embodied Success?

### Overview

The image is a technical visualization exploring the relationship between state-of-the-art (SoTA) video models and embodied AI success, using the *World-In-World* open platform. It includes a conceptual diagram (top) and three analytical charts (bottom) to illustrate model performance, scaling, and correlation.

### Components/Axes

#### Top: Conceptual Diagram (World-In-World Platform)

- **Left: SoTA Video Models**

Logos/names: *Wan, Cosmos, SVD, Runway, LTX, Hunyuan* (labeled “SoTA video models”).

- **Center: World-In-World (Open Platform)**

Robot icon with three connections:

- *Unified Action* (left arrow: video models → platform).

- *Embodied Policy* (right arrow: platform → tasks).

- *Closed-Loop Interaction* (bottom arrow: tasks → platform).

- **Right: Embodied Tasks**

Four tasks with icons:

- *Active Recognition* (room scene).

- *Navigation* (map).

- *Question Answering* (room scene).

- *Manipulation* (robot with blocks).

#### Bottom: Analytical Charts

1. **Leaderboard (Left)**

| Model | Task success (%) | Visual quality (score) |

|-------|------------------|------------------------|

| Wan2.1 | 82.6 | 0.880 |

| SVD* | 81.0 | 0.885 |

| Cosmos* | 80.2 | 0.880 |

2. **Data Scaling (Middle-Left)**

- X-axis: *Seen examples* (increasing).

- Y-axis: *Task success* (increasing).

- Two lines:

- Star icon (Wan2.1): Higher task success, increasing with seen examples.

- “S” icon (SVD*): Lower task success than Wan2.1, also increasing.

3. **Inference-time Scaling (Middle-Right)**

- X-axis: *Inference count* (increasing).

- Y-axis: *Task success* (increasing).

- Two lines (same as Data Scaling): Both increase with inference count, Wan2.1 remains higher.

4. **Correlation (Right)**

- X-axis: *Visual quality* (increasing).

- Y-axis: *Task success* (increasing).

- Points (models):

- *S* (SVD*): High visual quality, high task success.

- Star (Wan2.1): High task success, slightly lower visual quality than SVD*.

- Hexagon (Cosmos*): Lower visual quality, lower task success.

- *R* (Runway), *L* (LTX), *H* (Hunyuan): Lower visual quality, lower task success.

### Detailed Analysis

- **Leaderboard**: Wan2.1 leads in task success (82.6%) despite slightly lower visual quality than SVD* (0.880 vs. 0.885). Cosmos* trails in both metrics.

- **Scaling Charts**: Both *data scaling* (more seen examples) and *inference-time scaling* (more inference) improve task success for Wan2.1 and SVD*, with Wan2.1 consistently outperforming SVD*.

- **Correlation**: Visual quality and task success are positively correlated, but not perfectly (e.g., SVD* has higher visual quality than Wan2.1 but lower task success).

### Key Observations

- Wan2.1 outperforms SVD* and Cosmos* in task success, even with slightly lower visual quality than SVD*.

- Scaling (data or inference) boosts task success for top models.

- Visual quality is a factor but not the sole determinant of embodied success (Wan2.1’s lower visual quality but higher task success suggests other model attributes matter).

### Interpretation

The data suggests **better video models (higher task success) do lead to higher embodied success**, as seen in the leaderboard and scaling trends. The *World-In-World* platform integrates video models with embodied tasks, showing that scaling (data/inference) and model quality (task success) are critical. The correlation chart implies visual quality is important but not sufficient—other factors (e.g., model architecture, training) also drive embodied performance. For embodied AI, optimizing both video model quality and task-specific scaling is key to success.

(Note: All text, labels, and data points are extracted. The diagram’s flow (video models → platform → tasks → platform) and chart trends are detailed to enable full reconstruction of the image’s information.)

</details>

Figure 1: We introduce the first open benchmark to evaluate world models by closed-loop task success, analyze the link between task success and visual quality, and investigate scaling laws.

## 1 Introduction

Recent advances in visual generation have sparked interest in world generation, a field focused on the creation of diverse environments populated with varied scenes and entities, with applications in entertainment, gaming, simulation, and embodied AI. The rapid progress in video generation (Brooks et al., 2024b; Yang et al., 2024b; Wan et al., 2025), 3D scene generation (Fridman et al., 2023; Chung et al., 2023; Yu et al., 2024; Koh et al., 2023; Ling et al., 2025), and 4D scene generation (Bahmani et al., 2024b; Xu et al., 2024; Bahmani et al., 2024a) has demonstrated high-quality individual scene generation, highlighting the potential of these models as world generation systems.

Building on these developments, recent world generation systems (Yang et al., 2023b; Parker-Holder and Fruchter, 2025; Li et al., 2025b; Ye et al., 2025; Lu et al., 2025; He et al., 2025c) show promise as world models for embodied agents. Given an agent’s initial observation and a candidate action, such systems predict the resulting video, thereby estimating the future state of the environment. These action-conditioned simulators mirror human mental models by forecasting future states and can provide missing context under partial observability. As a result, they offer a pathway to improved decision-making for embodied tasks that rely on perception, planning, and control.

Despite this promise, the community lacks a unified benchmark that evaluates visual world models through the lens of embodied interaction. Existing suites emphasize video generation quality (e.g., VBench (Huang et al., 2024)) or visual plausibility (e.g., WorldModelBench (Li et al., 2025a)). The recent WorldScore (Duan et al., 2025) offers a unified assessment for models that take an image and a camera trajectory as input. However, no current benchmark tests whether generated worlds actually enhance embodied reasoning and task performance —for example, helping an agent perceive the environment, plan and execute actions, and replan based on new observations within such a closed loop. Establishing this evaluation framework is essential for tracking genuine progress across the rapidly expanding landscape of visual world models and embodied AI.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Scatter Plot: Model Performance Comparison (Generative Quality vs. Task Success Rate)

### Overview

This image is a scatter plot comparing the performance of various AI models, likely for video or image generation tasks. It plots two key metrics against each other: "Gen. Quality (Aesthetic+Image Quality)" on the horizontal axis and "Task Success Rate (%)" on the vertical axis. Each data point represents a specific model, labeled with its name and categorized by its training paradigm (Zero-shot, Post-trained, or Others).

### Components/Axes

* **Chart Type:** Scatter Plot.

* **X-Axis:**

* **Label:** `Gen. Quality (Aesthetic+Image Quality)↑`

* **Scale:** Linear, ranging from approximately 0.325 to 0.475. The upward arrow (↑) indicates that higher values are better.

* **Major Ticks:** 0.325, 0.350, 0.375, 0.400, 0.425, 0.450, 0.475.

* **Y-Axis:**

* **Label:** `Task Success Rate (%)`

* **Scale:** Linear, ranging from 55% to 63%.

* **Major Ticks:** 55, 56, 57, 58, 59, 60, 61, 62, 63.

* **Legend:** Located in the top-right corner.

* **Pink Circle:** `Zero-shot`

* **Blue Circle:** `Post-trained`

* **Green Square:** `Others`

* **Data Points & Labels:** Each point is labeled with a model name. Some names are followed by a dagger symbol (†), which, based on their blue color, appears to denote a post-trained variant of a base model.

### Detailed Analysis

The plot contains 15 distinct data points. Below is a reconstruction of the data, with approximate coordinates read from the chart. The color/category is confirmed by cross-referencing with the legend.

| Model Name (Label) | Approx. Gen. Quality (X) | Approx. Task Success Rate (Y) | Color/Shape | Category (from Legend) |

| :--- | :--- | :--- | :--- | :--- |

| Wan2.1† | 0.380 | 62.5% | Blue Circle | Post-trained |

| SVD† | 0.365 | 61.0% | Blue Circle | Post-trained |

| Cosmos-P2† | 0.355 | 60.3% | Blue Circle | Post-trained |

| Wan2.2 A14B | 0.455 | 59.5% | Pink Circle | Zero-shot |

| Wan2.1 | 0.475 | 58.2% | Pink Circle | Zero-shot |

| Hunyuan | 0.400 | 57.7% | Pink Circle | Zero-shot |

| SVD | 0.375 | 57.7% | Pink Circle | Zero-shot |

| LTXVideo† | 0.340 | 57.5% | Blue Circle | Post-trained |

| SE3DS | 0.365 | 57.5% | Green Square | Others |

| NWM | 0.325 | 57.3% | Green Square | Others |

| Pathdreamer | 0.340 | 57.0% | Green Square | Others |

| Wan2.2 5B† | 0.380 | 56.3% | Blue Circle | Post-trained |

| LTXVideo | 0.385 | 56.0% | Pink Circle | Zero-shot |

| Wan2.2 5B | 0.395 | 55.3% | Pink Circle | Zero-shot |

| Cosmos-P2 | 0.475 | 55.3% | Pink Circle | Zero-shot |

**Trend Verification:**

* **Post-trained Models (Blue):** This series shows a general trend where higher generative quality correlates with higher task success rate. The line formed by points like LTXVideo†, SVD†, and Wan2.1† slopes upward from left to right.

* **Zero-shot Models (Pink):** This series is more dispersed. There is a cluster of models (Wan2.2 5B, LTXVideo, Cosmos-P2) with lower task success rates (~55-56%) but spanning a wide range of generative quality (0.385 to 0.475). Another cluster (Hunyuan, SVD, Wan2.1, Wan2.2 A14B) has higher task success (~57.7-59.5%) and also spans a wide quality range.

* **Others (Green):** These three models (NWM, Pathdreamer, SE3DS) are clustered in the lower-left quadrant, indicating relatively lower performance on both metrics compared to the top performers.

### Key Observations

1. **Top Performer:** `Wan2.1†` (Post-trained) achieves the highest Task Success Rate (~62.5%) with moderate Generative Quality (~0.380).

2. **Quality Leader:** `Wan2.1` and `Cosmos-P2` (both Zero-shot) tie for the highest Generative Quality (~0.475), but their Task Success Rates are among the lowest (~55.3% and ~58.2% respectively).

3. **Post-training Effect:** For several model families, the post-trained variant (†) significantly outperforms its zero-shot counterpart in Task Success Rate, often with a trade-off in Generative Quality.

* **Example:** `Wan2.1†` vs. `Wan2.1`: Task Success ↑ ~4.3%, Gen. Quality ↓ ~0.095.

* **Example:** `SVD†` vs. `SVD`: Task Success ↑ ~3.3%, Gen. Quality ↓ ~0.010.

4. **Model Family Spread:** The "Wan" model family (Wan2.1, Wan2.2 5B, Wan2.2 A14B) and its post-trained variants are represented across a wide area of the plot, showing significant performance variability based on size and training.

### Interpretation

This chart visualizes a fundamental trade-off in generative AI model development: **optimizing for aesthetic/image quality does not guarantee success on downstream tasks, and vice-versa.**

* **Post-training is highly effective for task performance.** The clear upward trend and superior positioning of the blue "Post-trained" points suggest that specialized fine-tuning is crucial for achieving high task success rates, even if it slightly reduces generic quality metrics.

* **Zero-shot models excel in raw quality.** The highest generative quality scores belong to zero-shot models, indicating these base models are excellent at producing visually pleasing outputs without task-specific tuning.

* **The "Others" category lags behind.** The green-square models occupy a lower-performance region, suggesting they may be older, smaller, or less specialized architectures compared to the Wan, SVD, and Cosmos families highlighted here.

* **Strategic Choice:** The data implies a strategic choice for practitioners: select a **post-trained model** (like Wan2.1†) for applications where task completion is critical, or select a high-quality **zero-shot model** (like Wan2.1) for applications where visual fidelity is the primary concern. The ideal model would be in the top-right corner, a goal not yet achieved by any model in this comparison.

</details>

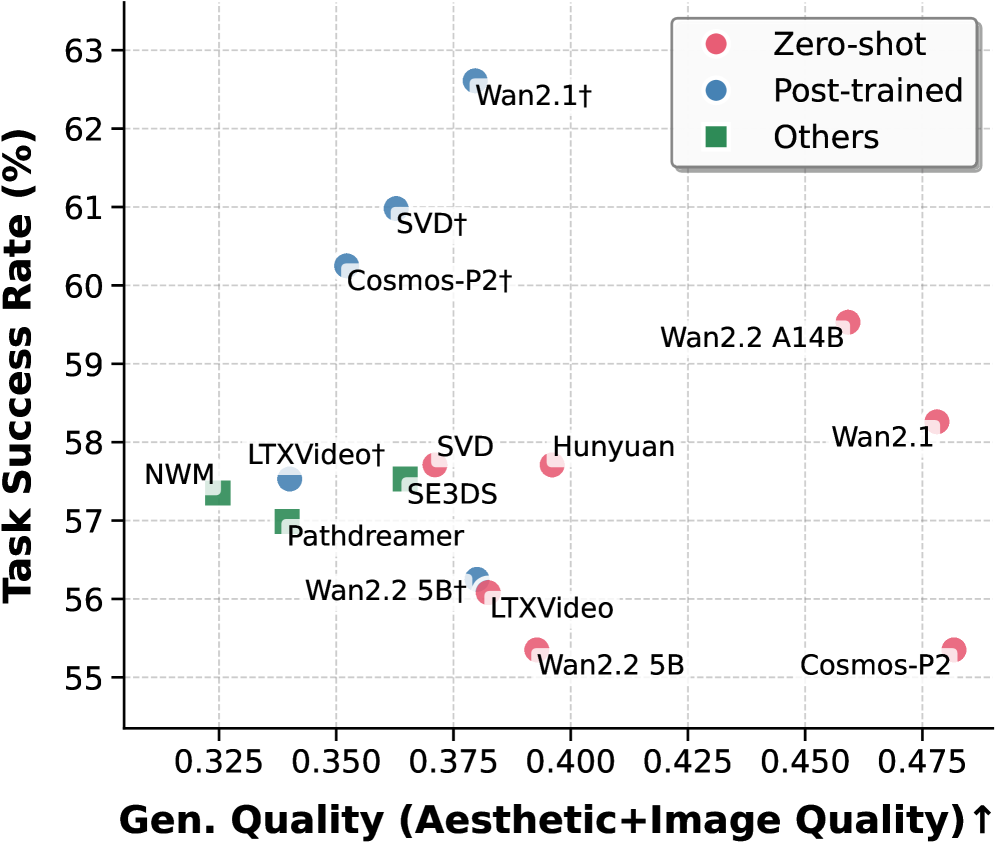

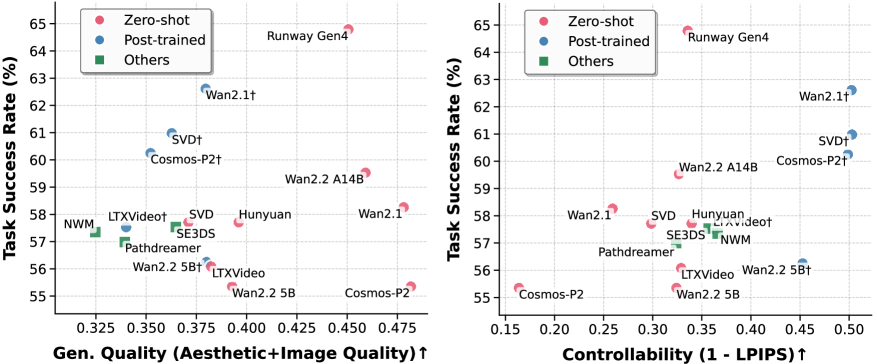

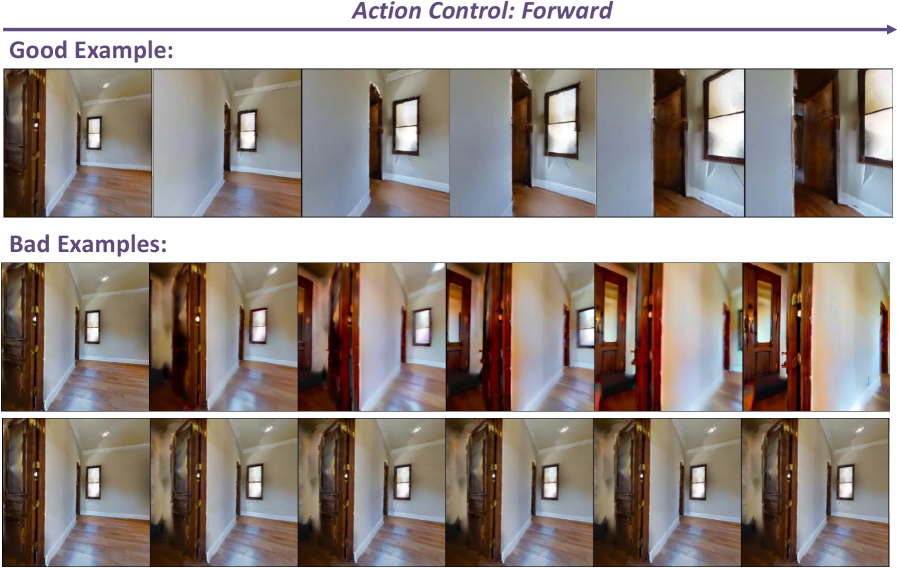

Figure 2: Task success rate vs. generation quality. $\dagger$ : post-trained with extra data. We defend that world models live and die by their closed-loop success, not flawless generated visuals.

In this work, we address this gap by proposing World-in-World, which wraps generative World models In a closed-loop World interface to measure their practical utility for embodied agents. Specifically, we present a unified strategy for closed-loop online planning and a standardized action API to seamlessly integrate diverse world models into closed-loop tasks. The online planning strategy allows the agent to look ahead by anticipating environmental changes and task rewards before committing to an action. The standardized action API harmonizes input modalities expected by different world models, so that each model can be controlled consistently within the same evaluation protocol. In addition, we introduce a post-training protocol that fine-tunes pretrained video generators using a modest amount of action–observation data drawn from the same action space as the downstream tasks, which allows us to examine their adaptation potential and to characterize a data scaling law.

World-in-World offers a fair, closed-loop world interface to evaluate diverse WMs. We benchmark leading video generators (Wan et al., 2025; HaCohen et al., 2024; Kong et al., 2024) alongside task-focused world models (Bar et al., 2025a; Koh et al., 2023, 2021a) in perception, navigation, and manipulation settings. Our findings reveal three consistent trends: (1) high visual quality does not necessarily translate into strong task success; (2) scaling post-training with action-observation data is more effective than upgrading the pretrained video generators; and (3) increasing inference-time compute via online planning substantially improves closed-loop performance. As shown in Figure ˜ 2, world models with strong visual scores do not necessarily bring high success rates, which underscores the need for closed-loop evaluation when judging WM practical value for embodied agents.

Our work makes three main contributions:

- We introduce World-in-World, the first comprehensive closed-loop benchmark that evaluates world models through the lens of embodied interaction, moving beyond the common focus on generation quality.

- We propose a unified closed-loop planning strategy with a unified action API, allowing diverse world models to be seamlessly integrated and evaluated within a single framework across four embodied tasks.

- We discover that high visual quality does not necessarily guarantee task success, and demonstrate how the performance of pretrained video generators can be substantially improved through training-time data scaling and inference-time scaling.

## 2 World-in-World: a Closed-Loop Interface for Visual World Models

Design overview. Our goal is to establish a benchmark that evaluates world-generation methods by their utility for embodied agents. Unlike prior work focused on generative quality, we develop a predictive-control framework to test how well a world model supports online decision-making. The evaluation setting mirrors practical scenarios in embodied AI, emphasizing the interaction between prediction, control, and reward under closed-loop operation.

We detail the unified strategy for closed-loop online planning (Section ˜ 2.1) and the unified action API (Section ˜ 2.2), which together provide a common interface across tasks and models. We then describe our task selection and evaluation protocol (Section ˜ 2.3). Finally, we present a post-training recipe that adapts a pretrained video generator into a more effective embodied world model (Section ˜ 2.4).

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Closed-loop Online Planning System

### Overview

This image is a technical system diagram illustrating a "Closed-loop online planning" framework for an embodied robotic agent. The diagram depicts a cyclical process where the agent uses imagined interactions to propose and revise action plans before executing them in a real environment. The flow is indicated by colored arrows, with a legend differentiating between imagined (purple) and real (teal) interactions.

### Components/Axes

**Legend (Top-Left):**

* **Purple Arrow:** Imagined Interactions

* **Teal Arrow:** Real Interactions

**Main Diagram Components (Spatially arranged from left to right, then looping back):**

1. **Embodied Task Env. (Left):** A dashed box containing icons representing the agent's environment. Icons include a globe, a robotic arm, a person at a desk, and a chat bubble.

2. **Observation Sequence (Top-Left):** A series of image icons labeled `O₁`, `O₂`, `O₃`, ..., `Oₜ`. A purple arrow points from this sequence into the planning system.

3. **Robot Icon (Center-Left):** A simple robot figure. Teal arrows point from the "Embodied Task Env." to the robot and from the robot back to the environment, indicating real interaction.

4. **Action Sequence (Bottom-Left):** A series of directional arrow icons labeled `D*₁`, `D*₂`, `D*₃`, ..., `D*ₜ`. A purple arrow points from the planning system to this sequence.

5. **Planning System (Right, within a large rounded rectangle):** This is the core processing unit, containing four numbered modules:

* **① π proposal:** Represented by a neural network icon. It receives observations (`Oₜ`) and outputs multiple candidate action plans.

* **Candidate Action Plans:** Listed as `Âₜ⁽¹⁾`, `Âₜ⁽²⁾`, ..., `Âₜ⁽ᴹ⁾`.

* **② Unified Action API:** Represented by an icon with an 'A' inside a circle and arrows. It receives the candidate plans.

* **③ World Model gθ:** Represented by a globe icon. It receives text/camera trajectories/actions from the API and outputs possible future states.

* **Possible Future States:** Listed as `Ôₜ⁽¹⁾`, `Ôₜ⁽²⁾`, ..., `Ôₜ⁽ᴹ⁾`.

* **④ π revision:** Represented by a document/gear icon. It receives the possible future states and outputs the final chosen action sequence (`D*ₜ`).

6. **Output Icons (Far Right):** Three small icons vertically aligned: a camera, a path/trajectory symbol, and the letter 'T'. These are connected to the World Model via a purple arrow labeled "Text/Camera traj/Actions".

### Detailed Analysis

**Flow and Relationships:**

The process begins with the agent observing the environment (`O₁...Oₜ`). These observations are fed (purple arrow) into the **π proposal** module (1), which generates `M` candidate action plans (`Âₜ⁽¹⁾...Âₜ⁽ᴹ⁾`). These plans are processed by the **Unified Action API** (2), which interfaces with the **World Model gθ** (3). The World Model simulates the outcomes of each candidate plan, producing `M` corresponding **Possible Future States** (`Ôₜ⁽¹⁾...Ôₜ⁽ᴹ⁾`). These simulated outcomes are evaluated by the **π revision** module (4), which selects the optimal action sequence (`D*₁...D*ₜ`). This chosen sequence is then executed in the real **Embodied Task Env.** via the robot (teal arrows), closing the loop. The cycle repeats with new observations.

**Text Transcription:**

All text in the diagram is in English. Key transcribed text includes:

* Title: "Closed-loop online planning"

* Legend: "Imagined Interactions", "Real Interactions"

* Module Labels: "① π proposal", "② Unified Action API", "③ World Model gθ", "④ π revision"

* Data Labels: `O₁`, `O₂`, `O₃`, `Oₜ`; `Âₜ⁽¹⁾`, `Âₜ⁽²⁾`, `Âₜ⁽ᴹ⁾`; `Ôₜ⁽¹⁾`, `Ôₜ⁽²⁾`, `Ôₜ⁽ᴹ⁾`; `D*₁`, `D*₂`, `D*₃`, `D*ₜ`

* Descriptive Text: "Embodied Task Env.", "Candidate Action Plan 1", "Candidate Action Plan 2", "Candidate Action Plan M", "Possible Future State 1", "Possible Future State 2", "Possible Future State M", "Text/Camera traj/Actions"

### Key Observations

1. **Dual Interaction Loops:** The system explicitly separates internal, simulated planning (purple "Imagined Interactions") from external, physical execution (teal "Real Interactions").

2. **Parallel Hypothesis Testing:** The core of the planning system is parallelized. Both the action proposal (`Âₜ⁽ᴹ⁾`) and the world model's state prediction (`Ôₜ⁽ᴹ⁾`) operate over `M` candidates simultaneously, suggesting a Monte Carlo or beam-search-like approach to planning.

3. **Model-Based Reinforcement Learning Structure:** The architecture follows a classic model-based RL pattern: an actor (π proposal) proposes actions, a world model (gθ) predicts outcomes, and a critic/revision module (π revision) evaluates them to select the best action.

4. **Symbolic and Perceptual Integration:** The World Model takes "Text/Camera traj/Actions" as input, indicating it integrates both symbolic (text) and perceptual (camera) data to make its predictions.

### Interpretation

This diagram represents a sophisticated architecture for an intelligent agent that "thinks before it acts." The key innovation is the **closed-loop online planning** mechanism. Instead of reacting reflexively, the agent uses its internal **World Model (gθ)**—a learned simulator of reality—to imagine the consequences of multiple potential action plans. The **π revision** module then acts as a decision-maker, choosing the plan predicted to lead to the most desirable future state.

The system's power lies in this ability to conduct risk-free, rapid internal simulations. It allows the robot to explore various strategies in its "mind" before committing to a physical action, which is crucial for complex, irreversible, or safety-critical tasks in the real world. The separation of the "Unified Action API" suggests a modular design where different planning strategies or action representations can be plugged in. The entire loop enables continuous adaptation: the agent acts, observes the real outcome, updates its understanding, and refines its future plans accordingly. This is a foundational paradigm for creating autonomous systems that can operate effectively in dynamic and uncertain environments.

</details>

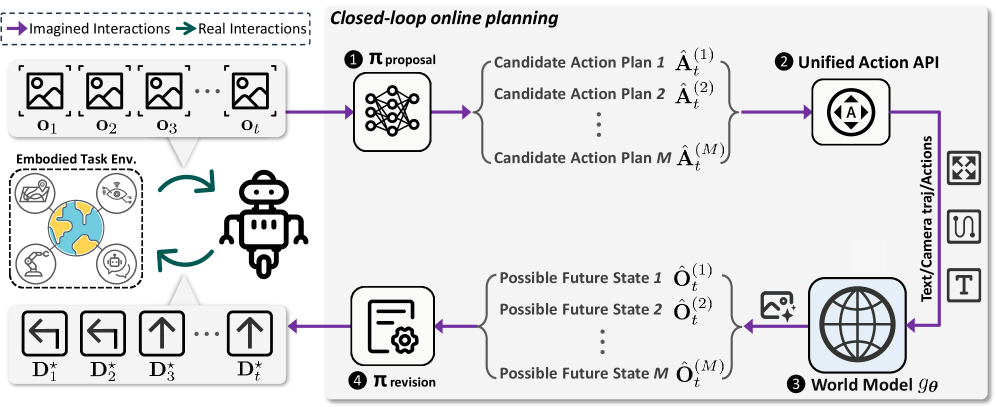

Figure 3: Closed-loop online planning in World-in-World: At time step $t$ , the agent receives the world state, represented by observation $\mathbf{o}_{t}$ , and invokes a proposal policy $\pi_{\text{proposal}}$ (❶) to produce a total of $M$ candidate action plans. The unified action API (❷) transforms each plan into the control inputs required by the world model. The world model (❸) then predicts the corresponding future states as observations $\hat{\mathbf{O}}_{t}$ . The revision policy $\pi_{\text{revision}}$ (❹) evaluates all rollouts and commits to the best, yielding decision $\mathbf{D}^{\star}_{t}$ . This decision is applied in the environment, closing the interaction loop.

### 2.1 Unified Strategy for Closed-Loop Online Planning

In Figure ˜ 3, we present a unified closed-loop strategy that uses visual world models for decision-making. It cycles through proposal, simulation, and revision. In proposal, the agent generates candidate plans; in simulation, each plan is rolled out by the world model to predict counterfactual futures; in revision, the agent scores rollouts and refines its plan. Finally, the agent executes the top-scoring plan in the environment, coupling model-based planning with real execution.

Let $\mathbf{o}_{t}$ denote the agent’s egocentric observation at time step $t$ . The observation can be RGB, RGB-D, or other sensory modalities. For clarity, we use $\mathbf{o}$ as the generic notation throughout. Define the agent’s future potential action sequence of horizon $L$ starting at time step $t$ as $\hat{\mathbf{A}}_{t}\;=\;\bigl[\hat{a}_{t+1},\,\hat{a}_{t+2},\,\dots,\,\hat{a}_{t+L}\bigr],$ where each elementary action $\hat{a}$ is specified in either a continuous action space or a discrete action space, i.e., $\hat{a}\in\mathcal{V}$ , with $\mathcal{V}$ denoting the set of action primitives available to the agent.

Our unified strategy can be formalized as a policy-guided beam search. The beam width corresponds to the number of candidate plans $M$ drawn from the proposal policy $\pi_{\text{proposal}}$ . At time step $t$ , given the current observation $\mathbf{o}_{t}$ and the task goal $\mathrm{g}$ , the proposal policy $\pi_{\text{proposal}}$ samples $M$ candidate action sequences that serve as future candidate plans:

$$

\hat{\mathbf{A}}_{t}^{(m)}\;\sim\;\pi_{\text{proposal}}\bigl(\mathbf{A}\,\big|\,\mathbf{o}_{t},\,\mathrm{g}\bigr),\qquad m=1,\dots,M. \tag{1}

$$

Each candidate plan $\hat{\mathbf{A}}_{t}^{(m)}$ is subsequently transformed by the unified action API $C$ into the control inputs expected by the world model: $I_{t}^{(m)}\;=\;C\bigl(\hat{\mathbf{A}}_{t}^{(m)}\bigr),$ where $I_{t}^{(m)}$ may include textual prompts, camera trajectories, or low-level action sequences, depending on the required format of the chosen world model. The visual world model $g_{\boldsymbol{\theta}}$ then performs a counterfactual rollout based on these control inputs, predicting the future world states $\hat{\mathbf{O}}_{t}^{(m)}$ with horizon $L$ :

$$

\hat{\mathbf{O}}_{t}^{(m)}\;\sim\;g_{\boldsymbol{\theta}}\!\Bigl(\mathbf{O}\,\big|\,\mathbf{o}_{t},\,I_{t}^{(m)}\Bigr),\qquad\hat{\mathbf{O}}_{t}^{(m)}=\bigl[\hat{\mathbf{o}}_{t+1}^{(m)},\,\hat{\mathbf{o}}_{t+2}^{(m)},\,\dots,\,\hat{\mathbf{o}}_{t+L}^{(m)}\bigr]. \tag{2}

$$

Then, the candidate plans and their simulated rollouts $\bigl(\hat{\mathbf{A}}_{t}^{(m)},\hat{\mathbf{O}}_{t}^{(m)}\bigr)$ are evaluated and revised by the revision policy $\pi_{\text{revision}}$ , which assigns a score to each trajectory and selects the decision that maximizes the expected reward. In the most general form, we write

$$

\mathbf{D}^{\star}_{t}=\pi_{\text{revision}}\Bigl(\{\,(\hat{\mathbf{A}}_{t}^{(m)},\,\hat{\mathbf{O}}_{t}^{(m)})\,\}_{m=1}^{M},\,\mathbf{o}_{t},\,\mathrm{g}\Bigr). \tag{3}

$$

Here, $\mathbf{D}^{\star}_{t}$ denotes the best decision according to $\pi_{\text{revision}}$ at time step $t$ . Depending on the task, $\mathbf{D}^{\star}_{t}$ may represent a high-level answer, a recognition result, or a refined sequence of low-level actions, which renders the framework more general than classical Model Predictive Control (MPC) (Morari and H. Lee, 1999), where optimization is restricted to sequences of actions.

A common instantiation implements $\pi_{\text{revision}}$ as a score-and-select operator $S$ . When the decision is an action sequence, selection is performed over the $M$ candidate plans produced at time step $t$ :

$$

m^{\star}=\operatorname*{arg\,max}_{m\in\{1,\dots,M\}}\;S\!\left(\hat{\mathbf{A}}_{t}^{(m)},\,\hat{\mathbf{O}}_{t}^{(m)}\,\big|\,\mathbf{o}_{t},\,\mathrm{g}\right),\qquad\mathbf{D}^{\star}_{t}=\hat{\mathbf{A}}_{t}^{(m^{\star})}. \tag{4}

$$

Here, $S(\cdot)$ denotes a task-specific scoring function that estimates the expected reward or utility of a candidate plan based on its simulated outcomes. Alternatively, $\pi_{\text{revision}}$ may synthesize or update a new decision by aggregating information across the candidate set and their predicted consequences, rather than selecting one candidate verbatim.

Once the best decision $\mathbf{D}^{\star}_{t}$ is executed in the environment, the agent acquires a new observation at time step $t{+}1$ . The unified strategy then re-enters the proposal-simulation-revision loop, using the newly observed state to initiate the next round of proposal, simulation, and revision. In our framework, both $\pi_{\text{proposal}}$ and $\pi_{\text{revision}}$ can be instantiated flexibly: they may be pretrained modules, such as large-scale vision-language models or diffusion policies, or simple rule-based heuristics. In our experiments, we explore multiple instantiations to systematically explore the flexibility and generality of our framework for different tasks.

### 2.2 Unified Action API

In this section, we present a unified action API that transforms an action sequence $\mathbf{A}$ into control inputs $I$ that guide the world model, i.e., $I\!=\!C(\mathbf{A})$ . The action API is designed to be flexible so that the same interface can serve a wide range of world models and tasks. It supports three principal types of control information: (1) text prompt, (2) camera trajectory/viewpoint, and (3) low-level actions, depending on the inputs expected by the chosen world model.

Text prompt. For image-and-text-to-video world models, the controller maps the intended action sequence into a descriptive text prompt. A predefined template converts each primitive action into a phrase, and concatenating these phrases yields the final prompt $I_{\text{text}}$ .

Camera trajectory / viewpoint. For models that consume explicit viewpoints, the controller translates $\mathbf{A}$ into a camera trajectory, e.g., each translation action moves the camera by $0.2\,\text{m}$ , and each rotation action changes the azimuth by $22.5^{\circ}$ . The resulting trajectory is represented as a sequence $\bigl[(x_{k},y_{k},\phi_{k})\bigr]_{k=1}^{K}$ with $(x_{k},y_{k})\in\mathbb{R}^{2}$ and azimuth $\phi_{k}\in\mathbb{R}$ .

Low-level actions. For world models that take discrete or continuous low-level actions as input, the controller maps the action sequence $\mathbf{A}$ to the world model’s action vocabulary, yielding $\mathbf{A}_{\text{world}}$ . This mapping $\mathbf{A}\mapsto\mathbf{A}_{\text{world}}$ applies the necessary transformations to maintain a unique and consistent correspondence between the agent’s actions and the inputs expected by the world model.

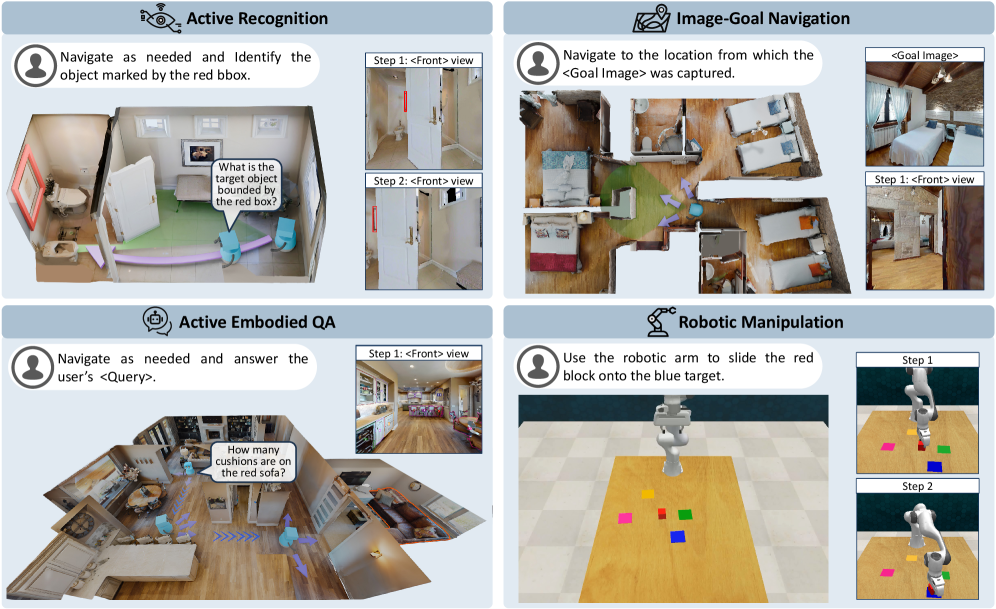

### 2.3 Comprehensive Embodied Tasks

To evaluate the practical utility of visual world models in embodied tasks, we select a diverse set of tasks that span multiple domains and stress distinct capabilities. We focus on four representative tasks: Active Recognition (AR), Active Embodied Question Answering (A-EQA), Image-Goal Navigation (ImageNav), and Robotic Manipulation, as illustrated in Figure ˜ 4. Taken together, these tasks emphasize complementary aspects of embodied intelligence, including perception, navigation, and object-level manipulation, and thus provide a comprehensive testbed for assessing how effectively a visual world model supports online planning and decision-making. Below, we describe the tasks included in our benchmark, and more detailed settings are provided in Appendix ˜ B.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Diagram: Four Embodied AI Task Scenarios

### Overview

The image is a composite diagram divided into four distinct panels, each illustrating a different task for an embodied AI agent (e.g., a robot). The panels are arranged in a 2x2 grid. Each panel contains a title, a task instruction, a primary 3D scene visualization, and, in most cases, a secondary panel showing a first-person ("Front") view or a step-by-step sequence. The overall theme is demonstrating capabilities in navigation, visual understanding, question answering, and physical manipulation within simulated indoor environments.

### Components/Axes

The diagram is segmented into four quadrants:

1. **Top-Left:** "Active Recognition"

2. **Top-Right:** "Image-Goal Navigation"

3. **Bottom-Left:** "Active Embodied QA"

4. **Bottom-Right:** "Robotic Manipulation"

Each quadrant contains:

* A title with an associated icon.

* A text bubble with a task instruction directed at an agent (represented by a person icon).

* A main visual scene (a top-down or isometric view of a room or workspace).

* A secondary visual element (a "Front" view panel or a step-by-step sequence).

### Detailed Analysis

#### Panel 1: Active Recognition (Top-Left)

* **Instruction Text:** "Navigate as needed and Identify the object marked by the red bbox."

* **Main Scene:** A top-down view of a living room. A light blue agent figure is shown with a purple path indicating movement. A red bounding box highlights a picture frame on the wall. A speech bubble from the agent asks: "What is the target object bounded by the red box?"

* **Secondary Panel (Right):** Two stacked images labeled "Step 1: <Front> view" and "Step 2: <Front> view". Both show a first-person perspective looking at a white door with a red vertical handle. The view in Step 2 appears slightly closer or adjusted compared to Step 1.

#### Panel 2: Image-Goal Navigation (Top-Right)

* **Instruction Text:** "Navigate to the location from which the <Goal Image> was captured."

* **Main Scene:** A top-down view of a multi-room apartment (bedroom, bathroom, living area). A light blue agent figure is shown with a purple path leading from a starting point to a goal location in the bedroom. A green cone emanates from the agent, indicating its field of view.

* **Secondary Panel (Right):** Two images. The top is labeled "<Goal Image>" and shows a bedroom with a bed, wooden ceiling, and a window. The bottom is labeled "Step 1: <Front> view" and shows a first-person perspective from within the bedroom, looking towards a doorway.

#### Panel 3: Active Embodied QA (Bottom-Left)

* **Instruction Text:** "Navigate as needed and answer the user's <Query>."

* **Main Scene:** An isometric view of a large, open-plan living and kitchen area. A light blue agent figure is shown with a purple path. A speech bubble from a user (person icon) asks: "How many cushions are on the red sofa?" The red sofa is visible in the scene.

* **Secondary Panel (Right):** A single image labeled "Step 1: <Front> view". It shows a first-person perspective from the kitchen area, looking towards the living room where the red sofa is partially visible.

#### Panel 4: Robotic Manipulation (Bottom-Right)

* **Instruction Text:** "Use the robotic arm to slide the red block onto the blue target."

* **Main Scene:** A top-down view of a wooden table. A white robotic arm is positioned over the table. On the table are several colored blocks: pink, yellow, green, red, and blue. The blue block is square and appears to be the target.

* **Secondary Panel (Right):** Two images showing a sequence. "Step 1" shows the robotic arm's gripper approaching the red block. "Step 2" shows the gripper having pushed the red block so that it is now on top of the blue target block.

### Key Observations

* **Consistent Agent Representation:** The AI agent is consistently visualized as a light blue, simplified humanoid figure in the navigation tasks (Panels 1-3).

* **Path Visualization:** Movement is indicated by a semi-transparent purple path with directional arrows.

* **First-Person Verification:** Three of the four tasks include a "<Front> view" panel, emphasizing the importance of the agent's egocentric visual perspective for completing the task.

* **Task Progression:** The "Robotic Manipulation" panel explicitly shows a two-step action sequence, while the others imply navigation steps.

* **Environment Complexity:** The environments range from a single room (Active Recognition) to a multi-room apartment (Image-Goal Navigation) and a complex open-plan space (Active Embodied QA).

### Interpretation

This diagram serves as a visual taxonomy or set of examples for core challenges in embodied AI. It demonstrates how an intelligent agent must integrate several capabilities:

1. **Perception & Grounding:** Identifying objects (Active Recognition) and understanding spatial relationships from images (Image-Goal Navigation).

2. **Action & Planning:** Generating navigation paths (purple lines) and physical manipulation sequences (sliding the block).

3. **Interactive Reasoning:** Combining navigation with visual question answering (Active Embodied QA), where the agent must move to gather visual information to answer a query.

4. **Multi-Modal Instruction Following:** Each task begins with a natural language instruction that the agent must interpret and execute.

The inclusion of both third-person (top-down) and first-person ("<Front> view") perspectives highlights a key research challenge: bridging the gap between an external observer's understanding of a scene and the agent's limited, on-board sensory input. The tasks progress from pure perception (Recognition) to goal-directed navigation, then to interactive QA, and finally to direct physical interaction, showcasing a hierarchy of complexity in agent capabilities. The clean, simulated environments suggest these are likely benchmark tasks for training and evaluating AI agents in controlled settings before deployment in the real world.

</details>

Figure 4: Top-left: Active Recognition (AR), the agent needs to identify a designated target under occlusions or extreme viewpoints while minimizing navigation cost. Top-right: Image-Goal Navigation (ImageNav), the agent reaches the viewpoint matching a goal image, emphasizing success rate and path efficiency. Bottom-left: Active Embodied Question Answering (A-EQA), the agent answers an open-ended question after active exploration. Bottom-right: Robotic Manipulation, the agent needs to control a robotic arm to complete tasks such as grasping and placement to specified targets.

Active Recognition (AR) is closely related to amodal recognition (Aydemir et al., 2013; Liu et al., 2018; Yang et al., 2019; Fan et al., 2024; Bhattacharjee et al., 2025), in which the agent must identify a designated target that may be observed from extreme viewpoints or be heavily occluded. In addition, AR allows the agent to acquire additional observations through active exploration. All AR experiments are conducted in the Habitat-Sim (Savva et al., 2019), encompassing 551 episodes across 29 scenes from the validation split of Matterport3D (Chang et al., 2017). Within AR, the visual world model assists two decision-making processes. For answering, synthetic views provide auxiliary evidence that helps the agent reason about occlusions and extreme viewpoints that impede recognition. For navigation, rollouts simulate the consequences of potential actions so that the agent can choose a path that is more likely to yield informative observations.

Image-Goal Navigation (ImageNav), also referred to as goal-conditioned visual navigation, requires an embodied agent to reach a target position in a scene given a single reference image that specifies the goal viewpoint. We construct 144 ImageNav episodes from 87 validation scenes of HM3D (Ramakrishnan et al., 2021). In this task, the visual world model exclusively supports navigation decisions. The agent simulates the outcomes of candidate action plans, selects the best option, executes the first segment of that plan, and then replans with the newly observed state in a closed-loop manner.

Active Embodied Question Answering (A-EQA) requires an agent to answer open-ended natural-language questions after actively exploring a 3D environment. Our evaluation set includes 184 questions across 54 indoor scenes from the official OpenEQA split (Majumdar et al., 2024) and the HM3D validation set (Ramakrishnan et al., 2021). As in AR, the visual world model supports both question answering and navigation. For answering, synthetic views generated by the world model provide complementary perspectives that help resolve references to occluded or distant objects. For navigation, the agent simulates high-level action plans using the world model’s predictions to choose exploration strategies likely to reveal question-relevant information.

Robotic Manipulations are fundamental capabilities for embodied agents that must operate in real-world interaction settings. We study how visual world models contribute to closed-loop manipulation planning, evaluating performance on four RLBench (James et al., 2020) tasks with 50 episodes per task. Here, the visual world model supports the agent in assessing candidate $7$ -DoF gripper actions by providing visual evidence about anticipated object motions and interactions, which enables a comparison of alternative plans before execution. The predicted outcomes then guide the selection of actions that are more likely to achieve the specified objective, thereby linking visual prediction accuracy to improvements in manipulation performance.

### 2.4 Exploiting World Models via Post-Training

To evaluate the feasibility of adapting pretrained video generators for embodied tasks, we introduce a post-training procedure that aligns a pretrained model with the domain distribution and action space of target environments. We perform fine-tuning separately on data from two simulators, Habitat-Sim and CoppeliaSim, to match the corresponding task domains. For Habitat-Sim tasks (AR, A-EQA, ImageNav), we post-train on a panoramic action-observation dataset collected from the HM3D (Ramakrishnan et al., 2021) training split. For CoppeliaSim tasks (Robotic Manipulation), we post-train on task demonstrations generated with RLBench (James et al., 2020). To assess generalization rather than memorization, all Habitat-Sim data used for post-training are sourced from scenes that are disjoint from our evaluation scenes, so the scenes in our evaluation tasks remain unseen by the world models after post-training. Additional details regarding the training objective, dataset construction, and training configuration are provided in Appendices ˜ C and D.

## 3 Evaluation Results and Analysis

In this section, we report quantitative results and key observations on the four embodied tasks in Section ˜ 3.1, followed by ablation studies in Section ˜ 3.2. We evaluate visual world models spanning image-based (PathDreamer (Koh et al., 2021b), SE3DS (Koh et al., 2023)) and video-based (SVD (Blattmann et al., 2023a), LTX-Video (HaCohen et al., 2024), Hunyuan (Kong et al., 2024), Wan2.1 (Wan et al., 2025), Wan2.2 (Wan et al., 2025), Cosmos-Predict2 (Agarwal et al., 2025), NWM (Bar et al., 2025a)) approaches, covering major control interfaces. For video-based models, we compare off-the-shelf versions with their post-trained variants.

### 3.1 Benchmark Results

Table 1: Active Recognition (AR) and Image-Goal Navigation (ImageNav) performance across various models and base policies. Higher success rate (SR %), success weighted by path length (SPL %), and lower mean trajectory length (Mean Traj.) are better. “ $\dagger$ ” denotes our post-trained video generators.

| Model Details | AR | ImageNav | | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| Model Type | Method | Control Type | Input Type | #Param. | SR $\uparrow$ | Mean Traj. $\downarrow$ | SR $\uparrow$ | Mean Traj. $\downarrow$ | SPL $\uparrow$ |

| Base Policy | Heuristic (w/o WM) | – | RGB | – | 39.02 | 8.81 | 2.08 | 59.6 | 0.63 |

| + Video Gen. Post-Train | SVD $\dagger$ | Action | RGB; Pano | 1.5B | 60.62 | 5.17 | 20.83 | 58.5 | 11.86 |

| WAN2.1 $\dagger$ | Action | RGB; Pano | 14B | 62.98 | 4.71 | 22.92 | 58.7 | 11.63 | |

| Base Policy | VLM (w/o WM) | – | RGB | 72B | 50.27 | 6.24 | 35.42 | 47.5 | 25.88 |

| + Image Gen. | PathDreamer | Viewpoint | RGB - D; Pano | 0.69B | 56.99 | 5.28 | 36.80 | 47.3 | 26.85 |

| + Image Gen. | SE3DS | Viewpoint | RGB - D; Pano | 1.1B | 57.53 | 5.29 | 36.11 | 47.0 | 26.91 |

| + Video Gen. | NWM | Trajectory | RGB | 1B | 57.35 | 5.68 | 40.28 | 47.1 | 27.83 |

| + Video Gen. Zero-Shot | SVD | Image | RGB | 1.5B | 57.71 | 5.29 | 40.28 | 46.4 | 28.59 |

| LTX - Video | Text | RGB | 2B | 56.08 | 5.37 | 36.81 | 47.5 | 25.85 | |

| Hunyuan | Text | RGB | 13B | 57.71 | 5.21 | 36.11 | 46.8 | 26.89 | |

| Wan2.1 | Text | RGB | 14B | 58.26 | 5.24 | 38.19 | 48.2 | 25.92 | |

| Wan2.2 | Text | RGB | 5B | 55.35 | 5.73 | 38.88 | 46.5 | 28.87 | |

| Cosmos - P2 | Text | RGB | 2B | 55.35 | 5.71 | 36.81 | 47.6 | 25.89 | |

| Wan2.2 | Text | RGB | A14B | 59.53 | 4.91 | 43.05 | 45.8 | 31.46 | |

| Runway Gen4 (proprietary) | Text | RGB | – | 64.79 | 4.06 | - | - | - | |

| + Video Gen. Post-Train | SVD $\dagger$ | Action | RGB; Pano | 1.5B | 60.98 | 5.02 | 43.05 | 46.0 | 30.96 |

| LTX - Video $\dagger$ | Action | RGB; Pano | 2B | 57.53 | 5.49 | 38.89 | 47.4 | 27.47 | |

| WAN2.1 $\dagger$ | Action | RGB; Pano | 14B | 62.61 | 4.73 | 45.14 | 45.8 | 32.10 | |

| Cosmos - P2 $\dagger$ | Action | RGB; Pano | 2B | 60.25 | 5.08 | 41.67 | 45.5 | 30.29 | |

| Wan2.2 $\dagger$ | Action | RGB; Pano | 5B | 56.26 | 5.15 | 38.89 | 46.7 | 28.24 | |

| Wan2.2 $\dagger$ | Action | RGB; Pano | A14B | 62.43 | 4.67 | 46.53 | 44.6 | 34.61 | |

Table 2: Active Embodied Question Answering (A-EQA) performance.

| Model Details | A-EQA Performance | | | |

| --- | --- | --- | --- | --- |

| Model Type | Method | Ans. Score $\uparrow$ | Mean Traj. $\downarrow$ | SPL $\uparrow$ |

| Base Policy | VLM (w/o WM) | 45.7 | 20.4 | 29.6 |

| + Image Gen. | PathDreamer | 46.0 | 20.4 | 29.3 |

| + Image Gen. | SE3DS | 45.8 | 20.3 | 29.4 |

| + Video Gen. | NWM | 47.1 | 20.5 | 30.1 |

| + Video Gen. | Wan2.1 | 45.7 | 20.1 | 28.8 |

| Wan2.2 (5B) | 46.3 | 20.3 | 31.4 | |

| LTX - Video | 46.6 | 20.8 | 29.5 | |

| Cosmos - P2 | 46.6 | 21.0 | 31.3 | |

| Hunyuan | 46.8 | 20.4 | 29.9 | |

| SVD | 46.9 | 20.4 | 29.7 | |

| Wan2.2 (A14B) | 47.2 | 20.7 | 31.9 | |

| + Video Gen. Post-Train | SVD $\dagger$ | 46.4 | 21.1 | 30.1 |

| Cosmos - P2 $\dagger$ | 46.5 | 20.6 | 30.1 | |

| Wan2.2 $\dagger$ (5B) | 47.5 | 20.8 | 30.7 | |

| Wan2.1 $\dagger$ | 48.2 | 20.7 | 31.6 | |

| LTX - Video $\dagger$ | 48.6 | 20.7 | 31.8 | |

| Wan2.2 $\dagger$ (A14B) | 48.4 | 20.2 | 31.9 | |

Table 3: Robotic manipulation performance across various models and base policies.

| Model Details | Manipulation Performance | | |

| --- | --- | --- | --- |

| Model Type | Method | SR $\uparrow$ | Mean Traj. $\downarrow$ |

| Base Policy | VLM (w/o WM) | 44.5 | 2.52 |

| + Video Gen. | SVD | 44.0 | 2.47 |

| LTX - Video | 44.5 | 2.46 | |

| Hunyuan | 44.5 | 2.44 | |

| Wan2.1 | 44.0 | 2.51 | |

| Cosmos - P2 | 44.0 | 2.50 | |

| + Video Gen. Post-Train | SVD $\dagger$ | 46.5 | 2.38 |

| Cosmos - P2 $\dagger$ | 45.0 | 2.40 | |

| Base Policy | 3D - DP (w/o WM) | 24.0 | 5.21 |

| + Video Gen. Post-Train | SVD $\dagger$ | 44.7 | 4.41 |

| Cosmos - P2 $\dagger$ | 38.0 | 4.79 | |

World models can enhance the performance of the base proposal policy. Across AR, A-EQA, ImageNav, and Manipulation, adding a visual world model consistently improves the performance of the base proposal policy (e.g., a VLM policy, a heuristic policy, or a 3D diffusion policy), as shown in Tables ˜ 1, 3 and 3. For example, in AR, the best proprietary model (Runway Gen4) attains an accuracy of $64.79\$ while reducing the mean steps per episode to $4.06$ , compared to the VLM base policy with an accuracy of $50.27\$ and mean steps $6.24$ . Similarly, in ImageNav, the best open-source model Wan2.1 $\dagger$ achieves a success rate of $45.14\$ with an average path length of $45.8$ , outperforming the VLM base policy at $35.42\$ SR and $47.5$ average length. These results support the effectiveness of our World-in-World online planning framework with world models, in which the world model provides simulated future states that inform better decisions.

World models struggle to simulate precise motion and dynamics in manipulation. The gains are less pronounced for Robotic Manipulations (Table ˜ 3), likely because accurately modeling contact-rich interactions and robot kinematics is significantly more challenging than predicting purely view changes. For instance, the best post-trained model on manipulation (SVD $\dagger$ ) reaches an SR of $46.5\$ with a mean trajectory length of $2.38$ , only modestly above the VLM baseline at $44.5\$ SR and $2.52$ mean length. This gap suggests that while current visual world models can effectively guide perception and navigation, capturing fine-grained physical dynamics and action-conditioned object motion remains an open challenge.

Post-training substantially boosts world-model utility. Our post-training adaptation yields consistent improvements. Relative to off-the-shelf Wan2.1, Wan2.1 $\dagger$ raises AR accuracy from $58.26\$ to $62.61\$ and ImageNav SR from $38.19\$ to $45.14\$ (Table ˜ 1). Likewise, SVD $\dagger$ improves AR accuracy from $57.71\$ to $60.98\$ and ImageNav SR from $40.28\$ to $43.05\$ . These gains show that aligning the generative model to the target domain and action space of the specific embodied tasks improves downstream decision-making.

### 3.2 Ablation and Findings

<details>

<summary>x5.png Details</summary>

### Visual Description

## Scatter Plot Comparison: Model Performance on Task Success Rate vs. Generative Quality & Controllability

### Overview

The image contains two side-by-side scatter plots. Both plots share the same Y-axis, "Task Success Rate (%)", but compare it against two different X-axis metrics: "Gen. Quality (Aesthetic+Image Quality) ↑" on the left and "Controllability (1 - LPIPS) ↑" on the right. The plots compare the performance of various AI models, categorized into three groups: Zero-shot (pink circles), Post-trained (blue circles), and Others (green squares). The upward arrows (↑) on the X-axis labels indicate that higher values are better.

### Components/Axes

**Common Elements:**

* **Y-axis:** "Task Success Rate (%)". Scale ranges from 55 to 65, with major ticks at every integer.

* **Legend:** Located in the top-left corner of each plot.

* Pink Circle: Zero-shot

* Blue Circle: Post-trained

* Green Square: Others

* **Data Points:** Each point is labeled with a model name. The color and shape correspond to the legend.

**Left Plot:**

* **X-axis:** "Gen. Quality (Aesthetic+Image Quality) ↑". Scale ranges from 0.325 to 0.475, with major ticks at 0.025 intervals.

**Right Plot:**

* **X-axis:** "Controllability (1 - LPIPS) ↑". Scale ranges from 0.15 to 0.50, with major ticks at 0.05 intervals.

### Detailed Analysis

**Left Plot: Task Success Rate vs. Generative Quality**

* **Trend Verification:** There is a general, weak positive trend. Models with higher Generative Quality scores tend to have slightly higher Task Success Rates, but the correlation is not strong, and there is significant scatter.

* **Data Points (Approximate Coordinates - Gen. Quality, Task Success):**

* **Zero-shot (Pink):**

* Runway Gen4: (0.450, 65.0)

* Wan2.2 A14B: (0.450, 59.5)

* Wan2.1: (0.475, 58.3)

* Hunyuan: (0.400, 58.0)

* Wan2.2 5B: (0.400, 55.0)

* Cosmos-P2: (0.475, 55.0)

* **Post-trained (Blue):**

* Wan2.1†: (0.375, 62.5)

* SVD†: (0.360, 61.0)

* Cosmos-P2†: (0.350, 60.0)

* SVD: (0.375, 57.5)

* Pathdreamer: (0.350, 57.0)

* Wan2.2 5B†: (0.375, 56.0)

* **Others (Green):**

* LTXVideo†: (0.350, 57.5)

* SE3DS: (0.365, 57.3)

* LTXVideo: (0.375, 56.5)

* NWM: (0.325, 57.2)

**Right Plot: Task Success Rate vs. Controllability**

* **Trend Verification:** There is a clearer positive trend compared to the left plot. Models with higher Controllability scores generally achieve higher Task Success Rates.

* **Data Points (Approximate Coordinates - Controllability, Task Success):**

* **Zero-shot (Pink):**

* Runway Gen4: (0.325, 65.0)

* Wan2.2 A14B: (0.325, 59.5)

* Wan2.1: (0.275, 58.3)

* SVD: (0.325, 57.8)

* Hunyuan: (0.350, 57.5)

* LTXVideo: (0.325, 56.0)

* Wan2.2 5B: (0.325, 55.5)

* Cosmos-P2: (0.175, 55.0)

* **Post-trained (Blue):**

* Wan2.1†: (0.500, 62.5)

* SVD†: (0.500, 61.0)

* Cosmos-P2†: (0.500, 60.0)

* Wan2.2 5B†: (0.450, 56.0)

* **Others (Green):**

* LTXVideo†: (0.350, 57.5)

* SE3DS: (0.350, 57.3)

* Pathdreamer: (0.300, 57.0)

* NWM: (0.375, 57.2)

### Key Observations

1. **Top Performer:** "Runway Gen4" (Zero-shot) is the clear outlier, achieving the highest Task Success Rate (~65%) in both plots, with high Generative Quality but only moderate Controllability.

2. **Post-training Effect:** Models with the "†" suffix (indicating post-training) consistently show a significant rightward shift on the Controllability axis (right plot) compared to their base versions, while maintaining or slightly improving Task Success Rate. This effect is less pronounced on the Generative Quality axis.

3. **Metric Correlation:** Task Success Rate appears to have a stronger visual correlation with Controllability than with Generative Quality.

4. **Cluster of "Others":** The green "Others" models (NWM, SE3DS, LTXVideo) cluster in a mid-range for both metrics, generally between 56-58% Task Success Rate.

5. **Cosmos-P2 Anomaly:** The base "Cosmos-P2" model has the lowest Controllability score (~0.175) but a mid-range Generative Quality score, indicating a potential trade-off or specialization in its design.

### Interpretation

This comparative analysis suggests several insights about the evaluated models:

* **The Success-Controlability Link:** The stronger trend in the right plot implies that a model's ability to be precisely controlled (as measured by 1-LPIPS) is a more reliable predictor of its overall task success than its raw aesthetic or image quality. This makes intuitive sense for applied tasks where following instructions is paramount.

* **Value of Post-training:** The dramatic improvement in Controllability for post-trained models (†) highlights the effectiveness of this technique for enhancing steerability without sacrificing—and sometimes even improving—task performance. This is a key finding for model development.

* **Performance vs. Specialization:** "Runway Gen4" demonstrates that it's possible to achieve top-tier task success with a zero-shot model, but its controllability is not the highest. Conversely, post-trained models like "Wan2.1†" and "SVD†" achieve the highest controllability scores, suggesting they may be preferable for applications requiring fine-grained user input.

* **Trade-off Identification:** The position of "Cosmos-P2" suggests a model architecture or training focus that prioritizes generative quality over controllability. This isn't inherently negative but defines its use case.

In summary, the data argues that for maximizing task success in this evaluation framework, optimizing for controllability is likely more impactful than optimizing solely for generative quality, and post-training is a highly effective method for achieving that optimization.

</details>

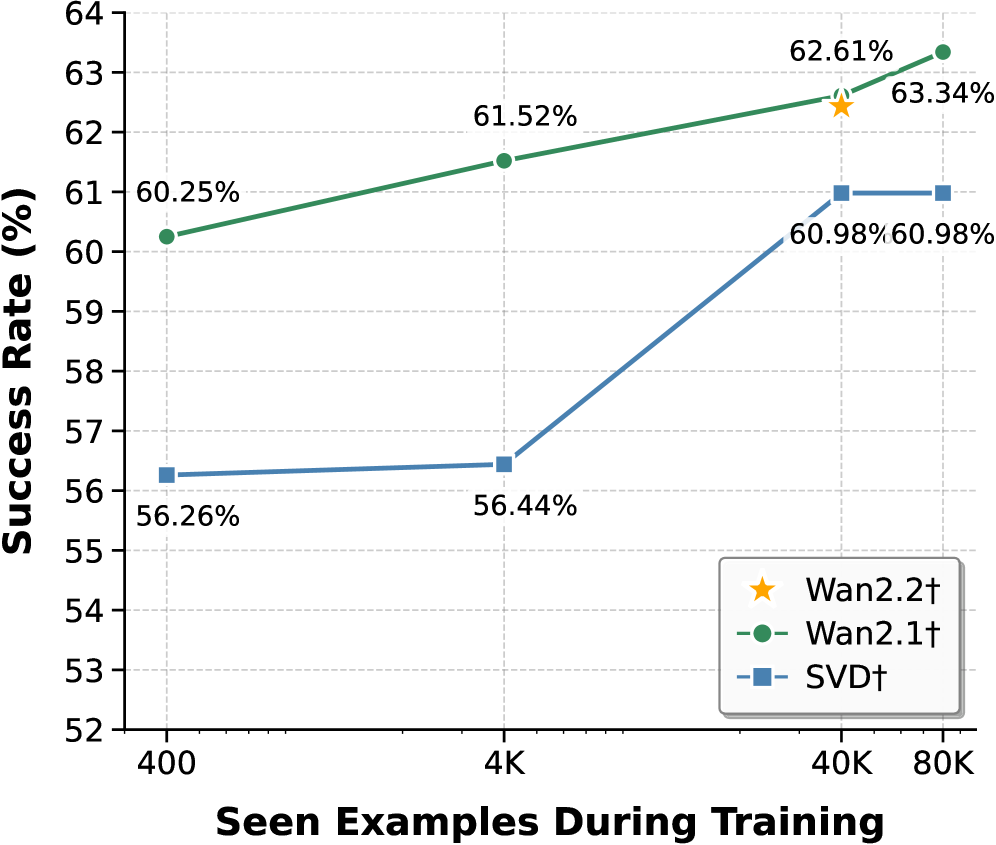

Figure 5: (a) SR vs. generation quality in AR; generation quality is scored as the average of an aesthetic predictor (Akio Kodaira, 2024) and an image-quality predictor (Ke et al., 2021), both trained to match human preferences. (b) SR vs. controllability in AR; controllability is quantified as $1-\mathrm{LPIPS}$ between ground-truth and predicted observations.

Fine-grained controllability matters more than visuals for task success. Although recent off-the-shelf video generators like Wan2.1 produce visually appealing clips, they are driven by text prompts with limited fine-grained low-level controls. Without adaptation, these models yield only small gains on downstream embodied tasks. We further study the relation between controllability and the success rate on AR. Here, controllability is defined as alignment between intended actions and the motions in the model’s predictions. After action-conditioned post-training, alignment improves substantially and SR rises accordingly. Figure ˜ 5 (b) shows a positive correlation: models that respond reliably to low-level controls achieve higher SR. These results indicate that precise control, not just visual quality, is critical for embodied world models to support effective decision-making.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Chart: Model Success Rate vs. Training Examples

### Overview

This image is a line chart comparing the performance of three different models or methods (Wan2.2†, Wan2.1†, and SVD†) as a function of the number of examples seen during training. The chart plots "Success Rate (%)" against "Seen Examples During Training" on a logarithmic scale. The data suggests an analysis of model learning efficiency or scaling behavior.

### Components/Axes

* **X-Axis (Horizontal):** Labeled **"Seen Examples During Training"**. It uses a logarithmic scale with major tick marks at **400**, **4K** (4,000), **40K** (40,000), and **80K** (80,000).

* **Y-Axis (Vertical):** Labeled **"Success Rate (%)"**. It uses a linear scale ranging from **52** to **64**, with major tick marks at every integer value.

* **Legend:** Located in the **bottom-right corner** of the chart area. It contains three entries:

* **Wan2.2†**: Represented by a **yellow star (★)** symbol.

* **Wan2.1†**: Represented by a **green line with circular markers (●)**.

* **SVD†**: Represented by a **blue line with square markers (■)**.

* **Data Series & Points:**

* **Wan2.1† (Green Line):** A solid green line connecting four circular data points.

* **SVD† (Blue Line):** A solid blue line connecting four square data points.

* **Wan2.2† (Yellow Star):** A single, isolated data point marked with a star.

### Detailed Analysis

**Data Series: Wan2.1† (Green Line with Circles)**

* **Trend:** The line shows a consistent, gradual upward slope from left to right, indicating a steady increase in success rate with more training examples.

* **Data Points:**

* At **400** examples: **60.25%**

* At **4K** examples: **61.52%**

* At **40K** examples: **62.61%**

* At **80K** examples: **63.34%**

**Data Series: SVD† (Blue Line with Squares)**

* **Trend:** The line shows a very shallow increase between 400 and 4K examples, followed by a steep upward slope between 4K and 40K examples, and then plateaus (flattens) between 40K and 80K examples.

* **Data Points:**

* At **400** examples: **56.26%**

* At **4K** examples: **56.44%**

* At **40K** examples: **60.98%**

* At **80K** examples: **60.98%**

**Data Point: Wan2.2† (Yellow Star)**

* **Placement:** This is a single data point located at the **40K** examples mark on the x-axis.

* **Value:** **62.61%**. This value is identical to the Wan2.1† data point at the same x-axis position.

### Key Observations

1. **Performance Hierarchy:** At all measured points, the Wan2.1† model (green) achieves a higher success rate than the SVD† model (blue).

2. **Convergence at 40K:** At 40,000 training examples, the performance of Wan2.1† and Wan2.2† is identical (62.61%).

3. **Diminishing Returns for SVD†:** The SVD† model shows no improvement in success rate between 40K and 80K examples, suggesting a performance plateau.

4. **Continuous Improvement for Wan2.1†:** The Wan2.1† model continues to show measurable improvement (from 62.61% to 63.34%) when scaling from 40K to 80K examples.

5. **Initial Gap:** The initial performance gap at 400 examples between Wan2.1† (60.25%) and SVD† (56.26%) is approximately **4 percentage points**.

### Interpretation

This chart demonstrates the scaling laws or data efficiency of different models. The **Wan2.1†** model exhibits superior and more consistent learning, maintaining a positive growth trajectory across the entire observed range. The **SVD†** model benefits significantly from increased data between 4K and 40K examples but hits a ceiling, indicating it may be a less scalable approach or has reached its capacity with the given architecture.

The single data point for **Wan2.2†** is intriguing. Its performance matching Wan2.1† at 40K examples could imply it is a variant or an optimized version that achieves comparable results at that specific data scale. The absence of data points for Wan2.2† at other scales leaves its overall scaling behavior unknown.

**Underlying Message:** For the task measured by "Success Rate," investing in more training data is beneficial for all models, but the returns are model-dependent. Wan2.1† appears to be the most robust and scalable choice among the three, as it continues to improve where SVD† stagnates. The chart provides empirical evidence to guide decisions on model selection and data collection budgets.

</details>

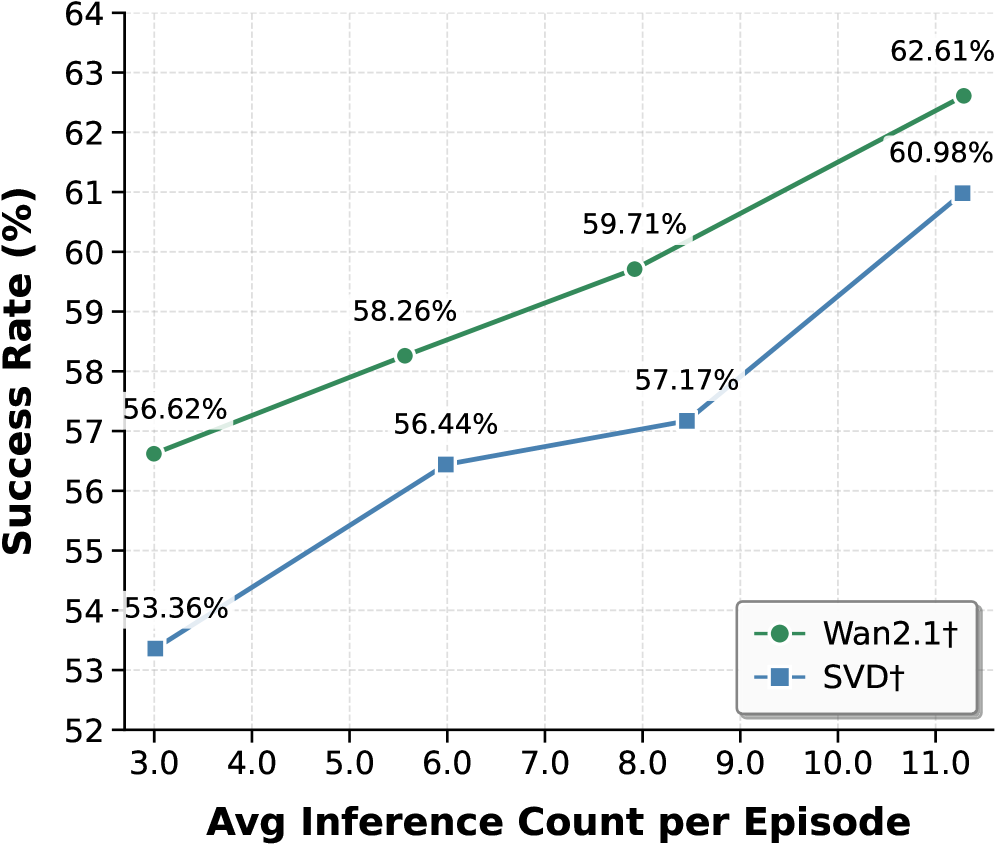

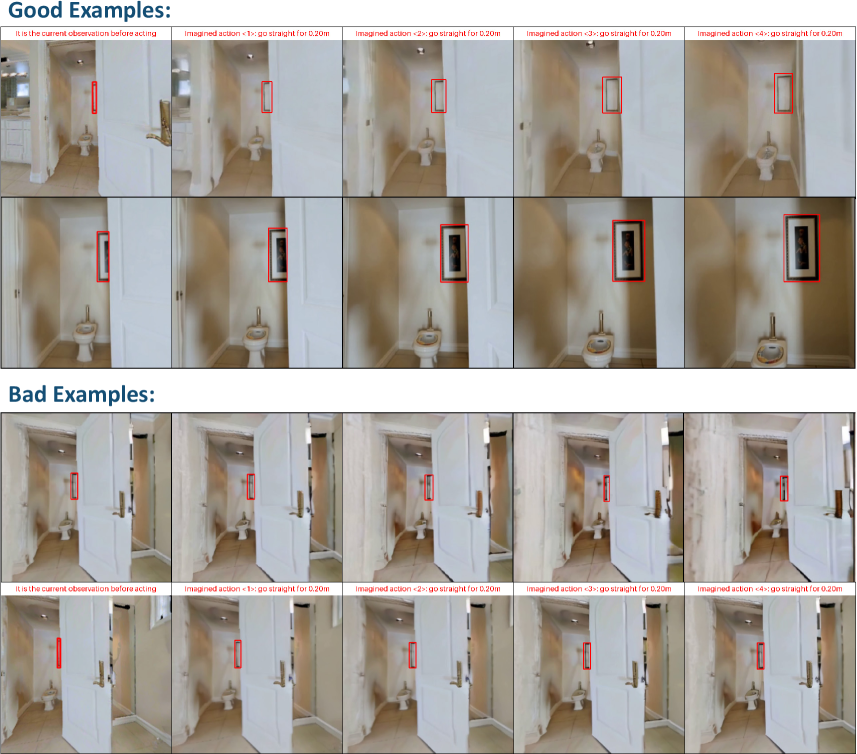

Figure 6: SR vs. seen examples during post-training. SR increases consistently with more downstream data, revealing a clear data-scaling trend for adaptation.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Line Chart: Success Rate vs. Average Inference Count per Episode

### Overview

The image is a line chart comparing the performance of two methods, labeled "Wan2.1†" and "SVD†", by plotting their Success Rate (%) against the Average Inference Count per Episode. The chart demonstrates a positive correlation between the number of inferences and the success rate for both methods.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **X-Axis (Horizontal):**

* **Title:** "Avg Inference Count per Episode"

* **Scale:** Linear, ranging from 3.0 to 11.0.

* **Major Tick Marks:** 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0, 11.0.

* **Y-Axis (Vertical):**

* **Title:** "Success Rate (%)"

* **Scale:** Linear, ranging from 52 to 64.

* **Major Tick Marks:** 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 64.

* **Legend:**

* **Placement:** Bottom-right corner of the chart area.

* **Entry 1:** A green dashed line with a circle marker, labeled "Wan2.1†".

* **Entry 2:** A blue dashed line with a square marker, labeled "SVD†".

* **Data Series & Points:**

* **Series 1 (Wan2.1† - Green line, circle markers):**

* Point 1: x ≈ 3.0, y = 56.62%

* Point 2: x ≈ 6.0, y = 58.26%

* Point 3: x ≈ 8.0, y = 59.71%

* Point 4: x ≈ 11.0, y = 62.61%

* **Series 2 (SVD† - Blue line, square markers):**

* Point 1: x ≈ 3.0, y = 53.36%

* Point 2: x ≈ 6.0, y = 56.44%

* Point 3: x ≈ 8.5, y = 57.17%

* Point 4: x ≈ 11.0, y = 60.98%

### Detailed Analysis

* **Trend Verification:**

* **Wan2.1† (Green):** The line shows a consistent, nearly linear upward slope from left to right. The rate of increase is steady across the measured range.

* **SVD† (Blue):** The line also slopes upward overall. The increase is steeper between the first two points (3.0 to 6.0), then flattens slightly between 6.0 and 8.5, before rising sharply again between 8.5 and 11.0.

* **Data Point Extraction:** All data points are explicitly labeled on the chart with their percentage values. The x-axis positions for the points are approximate based on their visual placement relative to the axis ticks.

### Key Observations

1. **Performance Gap:** The Wan2.1† method consistently achieves a higher Success Rate than the SVD† method at every comparable average inference count.

2. **Converging Trend:** The performance gap between the two methods appears to narrow slightly as the average inference count increases. At x=3.0, the gap is 3.26 percentage points (56.62% vs 53.36%). At x=11.0, the gap is 1.63 percentage points (62.61% vs 60.98%).

3. **SVD†'s Non-Linear Response:** The SVD† method shows a less uniform response to increased inferences, with a notable plateau between approximately 6.0 and 8.5 inferences per episode.

### Interpretation

The chart presents a clear performance comparison in a technical context, likely from a machine learning or AI research paper (suggested by terms like "Inference Count" and "Success Rate"). The data suggests that:

* **Resource-Performance Trade-off:** For both methods, investing more computational resources (higher average inference count per episode) yields a higher success rate. This is a common trade-off in iterative or sampling-based algorithms.

* **Method Superiority:** Wan2.1† is the more effective method under the tested conditions, providing a higher success rate for the same computational budget (inference count).

* **Algorithmic Behavior:** The differing slopes and shapes of the lines hint at underlying algorithmic differences. Wan2.1†'s steady improvement suggests a more predictable scaling behavior. SVD†'s plateau could indicate a point of diminishing returns or a phase where additional inferences provide minimal gain before another performance jump, which might be an important characteristic for system optimization. The narrowing gap at higher inference counts could imply that SVD† benefits more from very large computational budgets, though it remains inferior in the measured range.

</details>

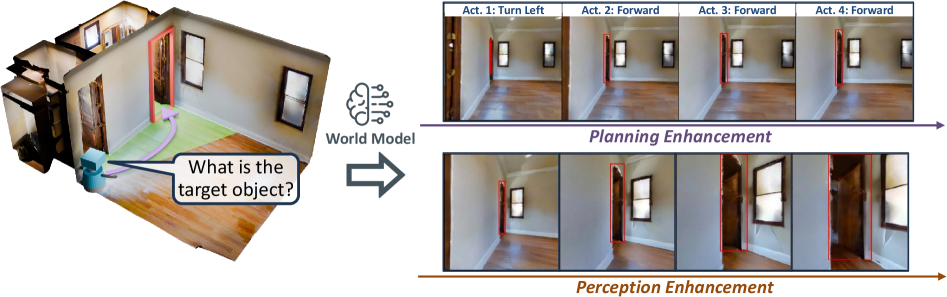

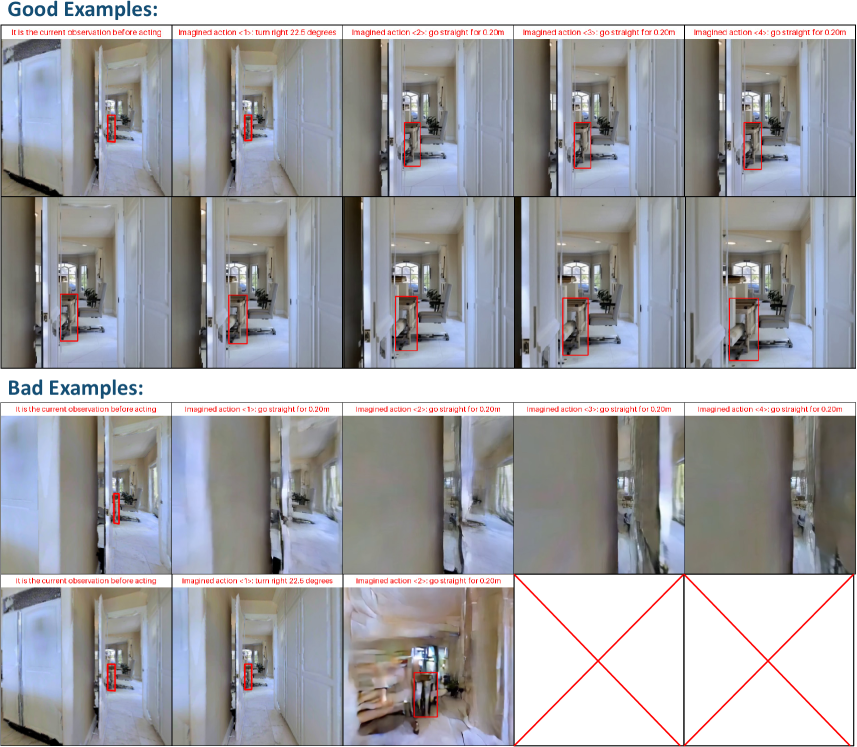

Figure 7: SR vs. average number of world-model inferences per episode. Increasing the inference-time computation allocated to each decision step leads to higher SR.

Data-size scaling for post-trained models. We study how post-training data size affects WM performance (Wan2.2 $\dagger$ , Wan2.1 $\dagger$ , SVD $\dagger$ ). Each WM is post-trained for one epoch on datasets from $400$ to $80\text{K}$ instances. As shown in Figure ˜ 7, more post-training data consistently improves AR performance: Wan2.1 $\dagger$ rises from $60.25\$ to $63.34\$ , and SVD $\dagger$ from $56.80\$ to $60.98\$ . Wan2.2 $\dagger$ (A14B), despite substantially larger web-video pretraining, reaches nearly the same performance as Wan2.1 $\dagger$ after $40\text{K}$ post-training instances, suggesting that scaling action-conditioned post-training is more effective for embodied utility than upgrading the pretrained generator. Moreover, larger models (Wan2.1 $\dagger$ , 14B) benefit more and saturate less than smaller ones (SVD $\dagger$ , 1.5B), indicating greater capacity to absorb action-conditioned supervision.

Inference-time scaling for online planning with world models. Within our online planning framework, the number of world-model inferences (simulated potential futures per episode) directly affects task performance. As shown in Figure ˜ 7, increasing the average inferences per episode for AR yields a clear positive correlation with SR. For example, increasing the average inference count from 3 to 11 improves SR from $53.36\$ to $60.98\$ for SVD $\dagger$ . This suggests that allocating more inference-time computation to simulate potential futures lets the planner make more informed decisions, thereby improving overall performance.

Table 4: Post-training with different input contexts: front view vs. panorama.

| Task | Model | Front View | Panorama | | |

| --- | --- | --- | --- | --- | --- |

| SR $\uparrow$ | Mean Traj. $\downarrow$ | SR $\uparrow$ | Mean Traj. $\downarrow$ | | |

| AR | SVD $\dagger$ | 57.89 | 5.04 | 60.98 | 5.02 |

| Wan2.1 $\dagger$ | 62.25 | 4.82 | 62.61 | 4.73 | |

| Wan2.2 $\dagger$ (5B) | 57.16 | 5.08 | 56.26 | 5.15 | |

| Cosmos-P2 $\dagger$ | 58.98 | 4.94 | 60.25 | 5.08 | |

| ImageNav | SVD $\dagger$ | 38.19 | 47.0 | 43.05 | 46.0 |

| Wan2.1 $\dagger$ | 48.61 | 43.8 | 45.14 | 45.8 | |

| Wan2.2 $\dagger$ (5B) | 40.97 | 45.8 | 38.89 | 46.7 | |

| Cosmos-P2 $\dagger$ | 40.97 | 47.0 | 41.67 | 45.5 | |

Global vs. local context for generation. We study the effect of input context format. Specifically, we compare post-trained models conditioned on panoramic versus front-view input (Table ˜ 4). Panoramic input provides a $360^{\circ}$ field of view, whereas front view offers a focused but limited perspective. For fairness, generated panoramas are converted to perspective views with the same horizontal field of view during evaluation. Although panoramic input offers richer global context, it does not consistently yield large gains across all settings. Likely, panorama-to-perspective conversion introduces resolution loss, degrading downstream perception and planning.

## 4 Discussion and Future Directions

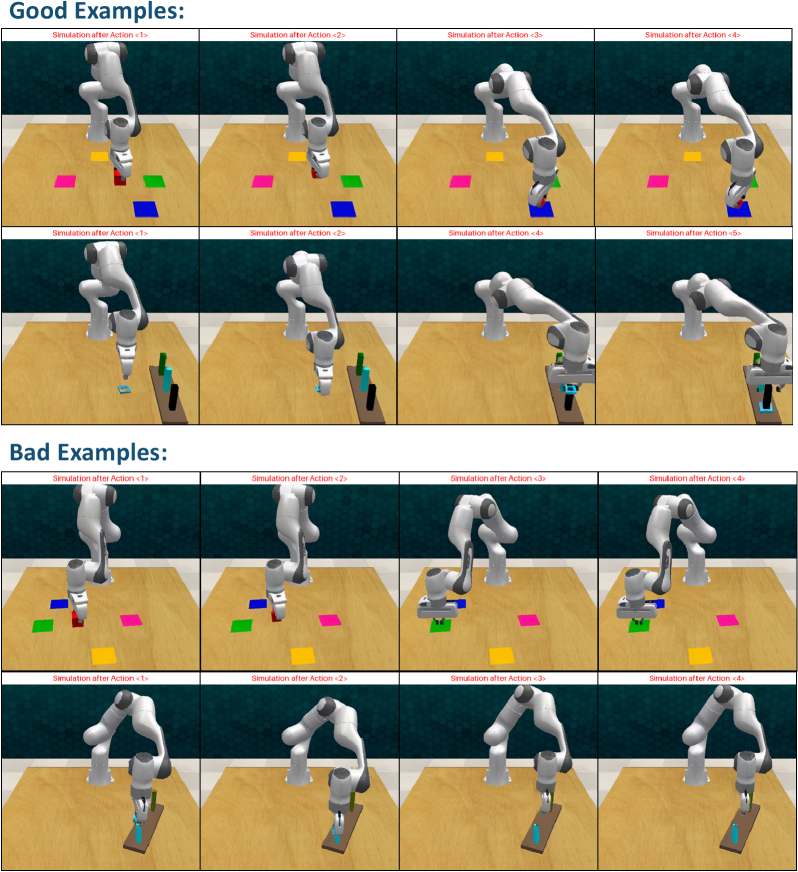

Generalization capacity of world models is critical for practical use. Most video generators are pretrained on web videos. In unseen embodied environments, they may revert to training priors or ignore action controls, yielding plausible but physically or semantically inconsistent rollouts (see Figures ˜ 13 and 14). These deviations mislead planning and reduce success. Larger models or more pretraining data can partly help, but robust generalization remains central. Future work should prioritize strategies and action representations to improve transfer to novel environments, such as unified action representations (Wang et al., 2025b; Zhi et al., 2025; Wang et al., 2025a) and curriculum or domain-specific data collection (Zhao et al., 2025).

Long-horizon planning with world models remains challenging. In our experiments, visual world models simulate short-term changes but struggle on long horizons due to limited mechanisms for accumulating spatiotemporal history. We attempted to alleviate this issue by replacing front-view inputs with panoramas to provide global context, but gains were inconsistent across models and tasks. Future work should better encode and retrieve long-term dependencies, e.g., spatial memory (Zhou et al., 2025b; Xiao et al., 2025; Li et al., 2025c; Yu et al., 2025a; Ren et al., 2025) and episode-level memory (Cai et al., 2025; Guo et al., 2025), to maintain scene-level context and enable coherent planning over extended horizons.

Precise modeling of interactions and dynamics remains difficult. For manipulation, capturing contact-rich interactions, compliance, friction, and state changes of articulated or deformable objects is essential. Current visual world models often miss these details, producing rollouts that violate physics and degrade planning and control—consistent with our observations and prior analyses (Kang et al., 2024). Promising directions include physics-guided motion generation (Chefer et al., 2025; Zhang et al., 2025b; Akkerman et al., 2025) and inferring or generating physical properties to inform action-conditioned predictions (Cao et al., 2025; Gillman et al., 2025; Zhang et al., 2024). Integrating such signals into conditioning pathways may improve fidelity when precise dynamics are required.

Stronger proposal and revision policies set the performance floor. The agent’s overall performance depends on both world-model fidelity and the strength of the proposal and revision policies that select and refine decisions. While simulated rollouts improve decision-making, base policies must be effective to provide a reliable starting point, and strengthening them raises the ceiling. Future work could explore stronger policies (Geng et al., 2025; Kim et al., 2025), and integration strategies that deepen synergy between world models and decision-making (Neary et al., 2025), such as more human-aligned reward models (Wang et al., 2024; Seneviratne et al., 2025; Rocamonde et al., 2023).

## 5 Conclusion

We introduce World-in-World, a closed-loop world interface and benchmark that evaluates generative world models via embodied interaction rather than isolated visual metrics. By unifying heterogeneous controls, our action API enables any world model to serve as perception and planning utilities for an embodied agent. Coupled with a unified closed-loop planning strategy that proposes, simulates, and revises action plans, the benchmark measures agent performance on four demanding tasks. Our experiments reveal large gaps between visual metrics and task success, underscoring the need for closed-loop evaluation, and show that pretrained video generators improve with post-training data scaling and inference-time scaling. We expect World-in-World to guide world models toward not only striking visual realism but also reliable perception, planning, and action in embodied scenarios.

## References

- Agarwal et al. (2025) Niket Agarwal, Arslan Ali, Maciej Bala, Yogesh Balaji, Erik Barker, Tiffany Cai, Prithvijit Chattopadhyay, Yongxin Chen, Yin Cui, Yifan Ding, et al. Cosmos world foundation model platform for physical ai. arXiv preprint arXiv:2501.03575, 2025.

- Akio Kodaira (2024) Sayan Goswami Akio Kodaira. Aesthetic predictor v2.5, May 2024. URL https://github.com/discus0434/aesthetic-predictor-v2-5/.

- Akkerman et al. (2025) Rick Akkerman, Haiwen Feng, Michael J. Black, Dimitrios Tzionas, and Victoria Fernández Abrevaya. Interdyn: Controllable interactive dynamics with video diffusion models. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 12467–12479, 2025.

- Alonso et al. (2024) Eloi Alonso, Adam Jelley, Vincent Micheli, Anssi Kanervisto, Amos Storkey, Tim Pearce, and François Fleuret. Diffusion for world modeling: Visual details matter in atari. In Advances in Neural Information Processing Systems (NeurIPS), 2024.

- Aydemir et al. (2013) Alper Aydemir, Andrzej Pronobis, Moritz Göbelbecker, and Patric Jensfelt. Active visual object search in unknown environments using uncertain semantics. IEEE Transactions on Robotics, 29(4):986–1002, August 2013. ISSN 1941-0468.

- Bahmani et al. (2024a) Sherwin Bahmani, Xian Liu, Wang Yifan, Ivan Skorokhodov, Victor Rong, Ziwei Liu, Xihui Liu, Jeong Joon Park, Sergey Tulyakov, Gordon Wetzstein, et al. Tc4d: Trajectory-conditioned text-to-4d generation. In Proceedings of the European Conference on Computer Vision (ECCV), pages 53–72. Springer, 2024a.

- Bahmani et al. (2024b) Sherwin Bahmani, Ivan Skorokhodov, Victor Rong, Gordon Wetzstein, Leonidas Guibas, Peter Wonka, Sergey Tulyakov, Jeong Joon Park, Andrea Tagliasacchi, and David B Lindell. 4d-fy: Text-to-4d generation using hybrid score distillation sampling. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 7996–8006, 2024b.

- Bai et al. (2025) Shuai Bai, Keqin Chen, Xuejing Liu, Jialin Wang, Wenbin Ge, Sibo Song, Kai Dang, Peng Wang, Shijie Wang, Jun Tang, Humen Zhong, Yuanzhi Zhu, Mingkun Yang, Zhaohai Li, Jianqiang Wan, Pengfei Wang, Wei Ding, Zheren Fu, Yiheng Xu, Jiabo Ye, Xi Zhang, Tianbao Xie, Zesen Cheng, Hang Zhang, Zhibo Yang, Haiyang Xu, and Junyang Lin. Qwen2.5-vl technical report, 2025.

- Bar et al. (2025a) Amir Bar, Gaoyue Zhou, Danny Tran, Trevor Darrell, and Yann LeCun. Navigation world models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025a.

- Bar et al. (2025b) Amir Bar, Gaoyue Zhou, Danny Tran, Trevor Darrell, and Yann LeCun. Navigation world models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2025b.

- Bhattacharjee et al. (2025) Subhransu S. Bhattacharjee, Dylan Campbell, and Rahul Shome. Believing is seeing: Unobserved object detection using generative models, March 2025.

- Blattmann et al. (2023a) Andreas Blattmann, Tim Dockhorn, Sumith Kulal, Daniel Mendelevitch, Maciej Kilian, Dominik Lorenz, Yam Levi, Zion English, Vikram Voleti, Adam Letts, et al. Stable video diffusion: Scaling latent video diffusion models to large datasets. arXiv preprint arXiv:2311.15127, 2023a.

- Blattmann et al. (2023b) Andreas Blattmann, Robin Rombach, Huan Ling, Tim Dockhorn, Seung Wook Kim, Sanja Fidler, and Karsten Kreis. Align your latents: High-resolution video synthesis with latent diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2023b.

- Brooks et al. (2024a) Tim Brooks, Bill Peebles, Connor Holmes, Will DePue, Yufei Guo, Li Jing, David Schnurr, Joe Taylor, Troy Luhman, Eric Luhman, et al. Video generation models as world simulators. OpenAI Blog, 1:8, 2024a.

- Brooks et al. (2024b) Tim Brooks, Bill Peebles, Connor Holmes, Will DePue, Yufei Guo, Li Jing, David Schnurr, Joe Taylor, Troy Luhman, Eric Luhman, et al. Sora: Video generation models as world simulators. OpenAI Blog, 1:8, 2024b.