# Plan Then Retrieve: Reinforcement Learning-Guided Complex Reasoning over Knowledge Graphs

**Authors**: Yanlin Song, Ben Liu, Víctor Gutiérrez-Basulto, Zhiwei Hu, Qianqian Xie, Min Peng, Sophia Ananiadou, Jeff Z. Pan

> School of Computer Science Wuhan University Wuhan China

> Ant Group Hangzhou China

> School of Computer Science and Informatics Cardiff University Cardiff UK

> College of Information Science and Engineering Shanxi Agricultural University Taiyuan China

> School of Artificial Intelligence Wuhan University Wuhan China Center for Language and Information Research Wuhan University Wuhan China

> School of Computer Science University of Manchester Manchester UK

> ILCC, School of Informatics University of Edinburgh Edinburgh UK

## Abstract

Knowledge Graph Question Answering (KGQA) aims to answer natural language questions by reasoning over structured knowledge graphs (KGs). While large language models (LLMs) have advanced KGQA through their strong reasoning capabilities, existing methods continue to struggle to fully exploit both the rich knowledge encoded in KGs and the reasoning capabilities of LLMs, particularly in complex scenarios. They often assume complete KG coverage and lack mechanisms to judge when external information is needed, and their reasoning remains locally myopic, failing to maintain coherent multi-step planning, leading to reasoning failures even when relevant knowledge exists. We propose Graph-RFT, a novel two-stage reinforcement fine-tuning KGQA framework with a “plan–KGsearch–and–Websearch–during–think” paradigm, that enables LLMs to perform autonomous planning and adaptive retrieval scheduling across KG and web sources under incomplete knowledge conditions. Graph-RFT introduces a chain-of-thought (CoT) fine-tuning method with a customized plan–retrieval dataset activates structured reasoning and resolves the GRPO cold-start problem. It then introduces a novel plan–retrieval guided reinforcement learning process integrates explicit planning and retrieval actions with a multi-reward design, enabling coverage-aware retrieval scheduling. It employs a Cartesian-inspired planning module to decompose complex questions into ordered sub-questions, and logical expression to guide tool invocation for globally consistent multi-step reasoning. This reasoning–retrieval process is optimized with a multi-reward combining outcome and retrieval-specific signals, enabling the model to learn when and how to combine KG and web retrieval effectively. Experiments on multiple KGQA benchmarks demonstrate that Graph-RFT achieves superior performance over strong baselines, even with smaller LLM backbones, and substantially improves complex question decomposition, factual coverage, and tool coordination.

Knowledge Graph Question Answering, Large Language Model, Reinforcement Learning copyright: acmlicensed ccs: Computing methodologies Semantic networks

## 1. Introduction

Knowledge Graph Question Answering (KGQA) aims to answer natural language questions by reasoning over structured knowledge graphs (KGs) that store explicit, factual knowledge in the form of triples. KGQA plays a central role in web-based intelligent systems such as search, recommendation, and social platforms (Khorashadizadeh et al., 2024; Zhao et al., 2024; Zeng et al., 2024; Xie et al., 2023, 2024, 2025; Wang et al., 2023), where accurate and explainable reasoning over structured data is crucial. Recent advances in large language models (LLMs) have transformed natural language understanding, reasoning, and generation (Hendrycks et al., 2020; Clark et al., 2018; Guo et al., 2025; Shi et al., 2025; Liu et al., 2025b). Leveraging their strong generalization and reasoning capabilities, recent KGQA methods increasingly rely on LLMs, integrating knowledge graphs as structured, verifiable knowledge sources to enhance factual precision and interpretability (Sun et al., 2023; Jiang et al., 2023; Tan et al., 2025b; Ma et al., 2025; Liu et al., 2025a; Xue et al., 2024).

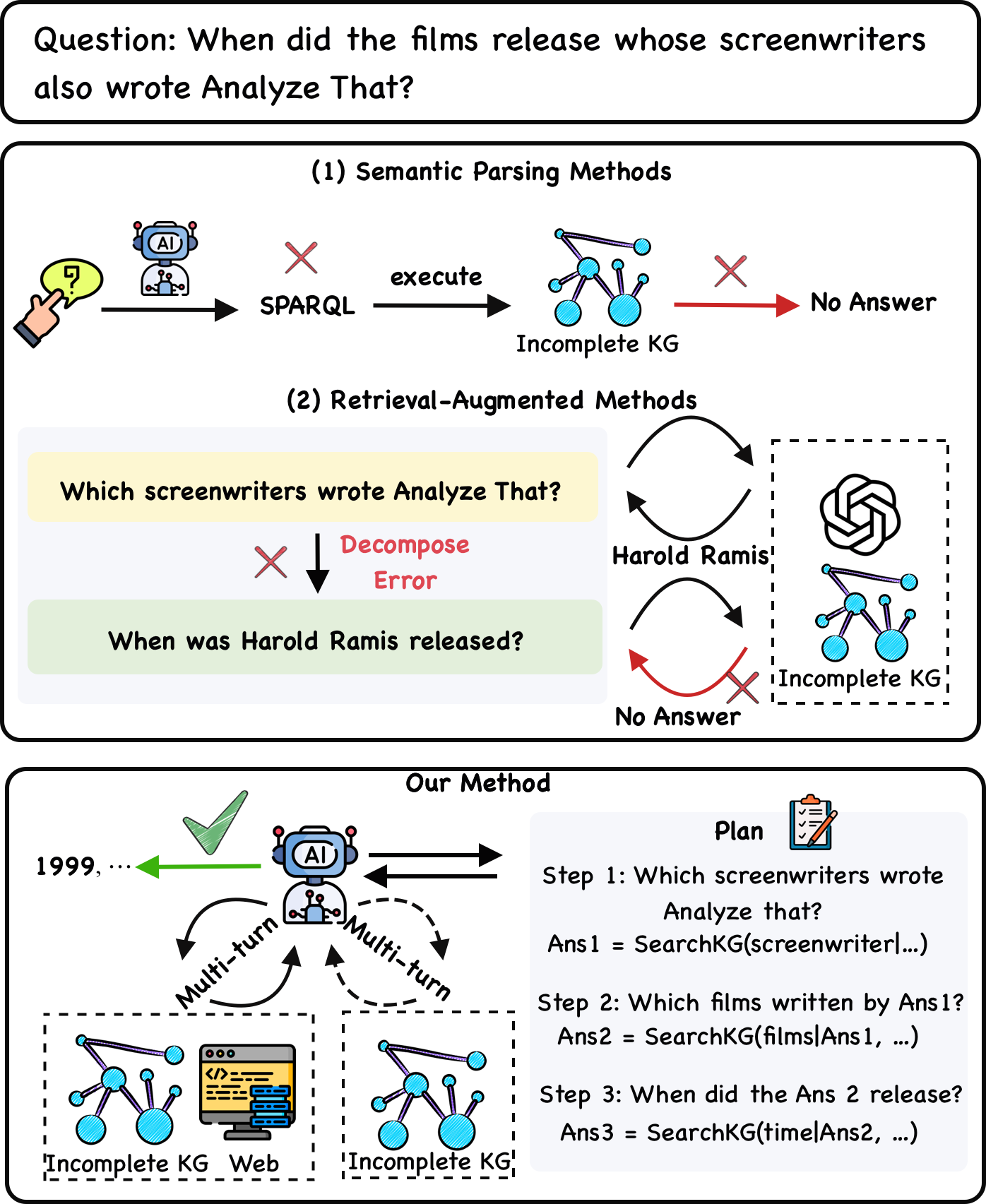

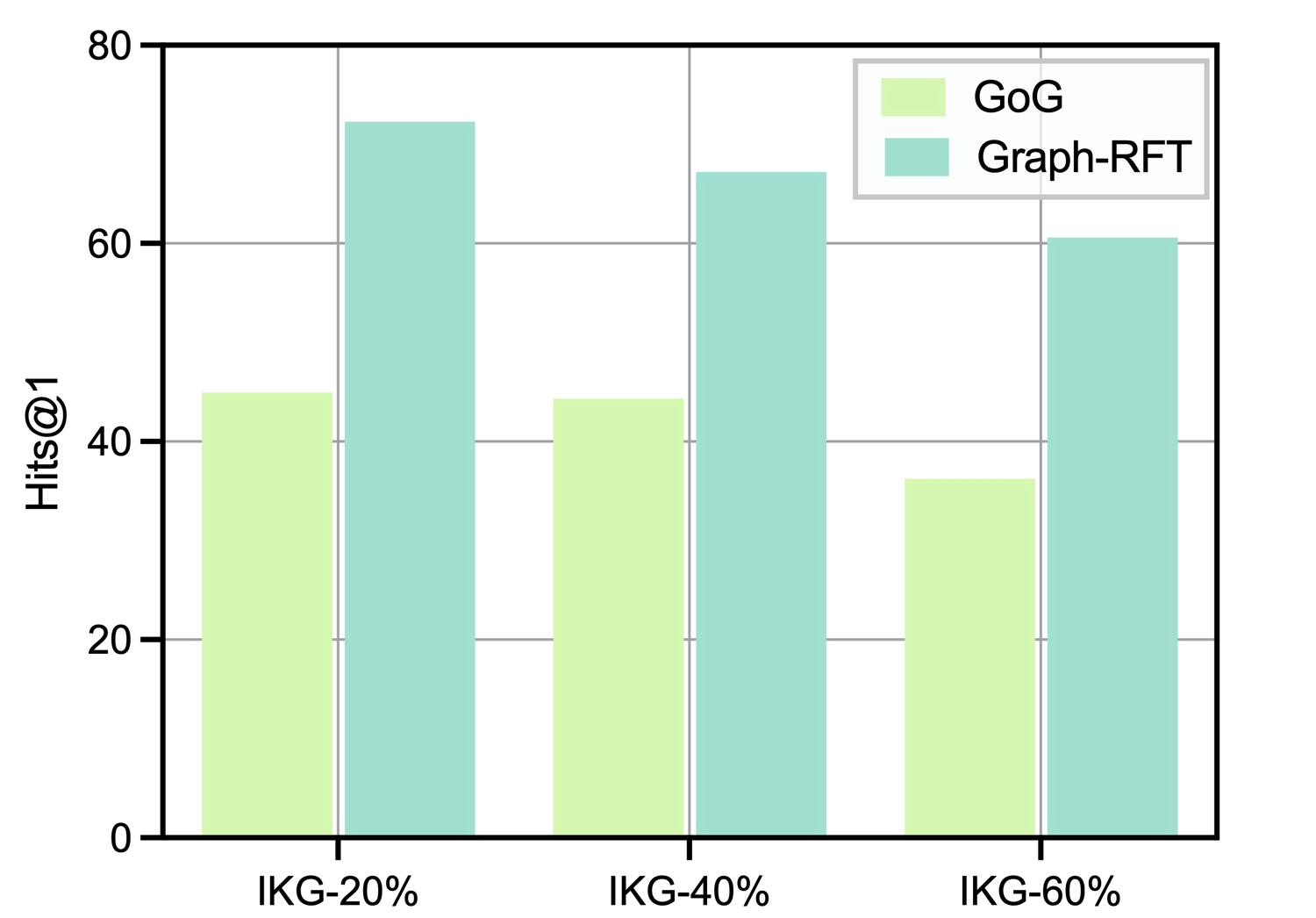

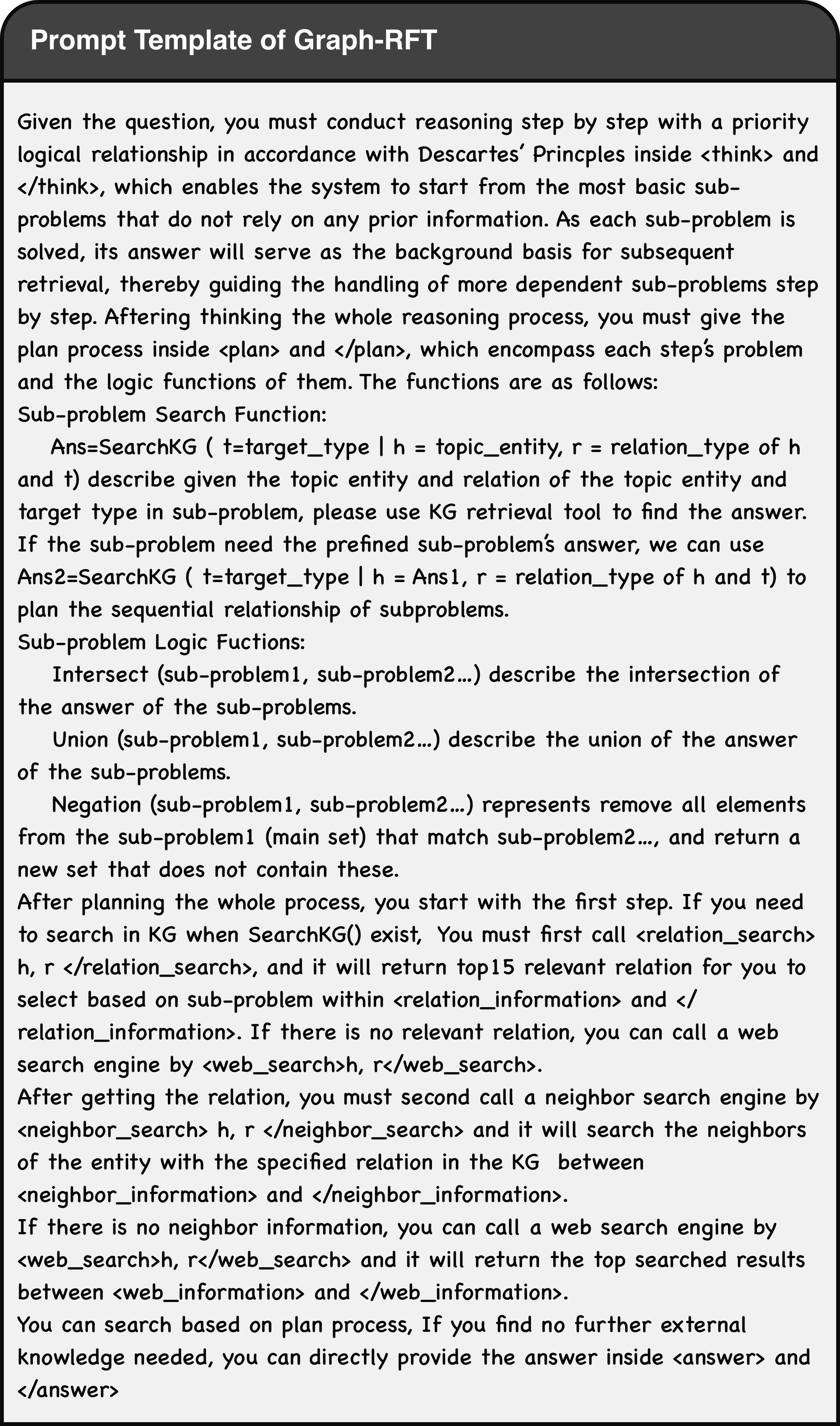

Existing LLM-based KGQA approaches fall into two paradigms: semantic parsing (SP), which translates natural questions into executable logical queries (e.g., SPARQL) and runs them over a KG (Li et al., 2023a; Luo et al., 2023a; Xu et al., 2024), and retrieval-augmented generation (RAG), which retrieves relevant KG triples to condition an LLM’s generation (Tan et al., 2025b; Xu et al., 2024). Despite substantial progress, both paradigms struggle in complex settings for two main reasons. First, they lack a reliable mechanism to judge whether a KG contains the facts necessary to answer a question. SP and RAG methods commonly assume that all required triples are present in the KG, a strong and unrealistic assumption for manually curated graphs. When coverage is incomplete, SP fails to produce correct executable queries, and RAG offers no built-in way to refine retrieval or to decide when to consult external (unstructured) sources. Second, these methods often lack the ability to perform coherent multi-step planning and adaptive retrieval scheduling. For example in Figure 1 (2), an LLM may correctly decompose a question into “Which screenwriters wrote Analyze That?” and retrieve “Harold Ramis,” but then fail to continue the planned decomposition and instead reformulates the follow-up as “When was Harold Ramis released?”, confusing the person with a film title. This failure stems from constrained local decision-making and an absence of coherent multi-step planning, which leads to retrieval and reasoning errors even when the KG contains the necessary facts. The comparison for previous work and Graph-RFT is in Table 1.

<details>

<summary>intro.png Details</summary>

### Visual Description

## Diagram: Comparison of Question Answering Methods Over Incomplete Knowledge Graphs

### Overview

The image is a technical diagram comparing three different approaches to answering a complex, multi-hop natural language question using a knowledge graph (KG). The central example question is: **"When did the films release whose screenwriters also wrote Analyze That?"** The diagram illustrates the failure modes of two existing methods and proposes a new, successful method.

### Components/Axes

The diagram is divided into three main rectangular panels, stacked vertically.

1. **Top Panel (Question):** Contains the primary question in a rounded rectangle.

2. **Middle Panel (Existing Methods):** Contains two sub-sections:

* **(1) Semantic Parsing Methods:** A linear flow from a user query to a failed outcome.

* **(2) Retrieval-Augmented Methods:** A cyclical flow showing a decomposition error leading to a failed outcome.

3. **Bottom Panel (Our Method):** Depicts a successful, multi-turn interactive process with a planning component.

**Key Visual Components & Icons:**

* **User/Query Icon:** A hand pointing at a speech bubble with a question mark (top-left of Semantic Parsing).

* **AI Agent Icon:** A blue robot head labeled "AI". Appears in Semantic Parsing and Our Method.

* **Knowledge Graph (KG) Icon:** A network of connected blue nodes. Labeled "Incomplete KG" in all instances.

* **Red "X" Mark:** Indicates a failure, error, or dead end in the process.

* **Green Checkmark:** Indicates a successful outcome (in "Our Method").

* **Web/Database Icon:** A computer monitor with code and a database symbol (in "Our Method").

* **Plan Icon:** A clipboard with a checklist (in "Our Method").

* **Arrows:** Black arrows indicate process flow. Red arrows indicate a failed path. Green arrow indicates successful output. Dashed arrows indicate multi-turn interaction.

### Detailed Analysis

#### **Section 1: Semantic Parsing Methods**

* **Flow:** User Query → AI Agent → **SPARQL** (with a red X above it, indicating generation failure) → execute → **Incomplete KG** → (red arrow) → **No Answer**.

* **Text Transcription:** "execute", "SPARQL", "Incomplete KG", "No Answer".

* **Interpretation:** This method attempts to directly translate the natural language question into a formal SPARQL query. The red "X" suggests this translation fails, or the generated query fails when executed against an incomplete knowledge graph, resulting in no answer.

#### **Section 2: Retrieval-Augmented Methods**

* **Flow:** This section shows a two-step decomposition that contains a logical error.

1. **Step 1 (Yellow Box):** "Which screenwriters wrote Analyze That?"

2. **Error:** A red "X" and the text **"Decompose Error"** point to the next step.

3. **Step 2 (Green Box):** "When was Harold Ramis released?" (This is an incorrect sub-question; it should ask for films, not the release date of a person).

4. **Retrieval Loop:** The process then enters a loop between an LLM icon (OpenAI logo) and the "Incomplete KG". It retrieves "Harold Ramis" but then fails (red X) to find the answer to the malformed second question, leading to **"No Answer"**.

* **Text Transcription:** "Which screenwriters wrote Analyze That?", "Decompose Error", "When was Harold Ramis released?", "Harold Ramis", "Incomplete KG", "No Answer".

* **Interpretation:** This method tries to break the complex question into simpler sub-questions. However, it makes a critical decomposition error by mis-framing the second sub-question, which leads to an unrecoverable failure when querying the incomplete KG.

#### **Section 3: Our Method**

* **Flow:** This depicts a successful, iterative process.

1. **Central Agent:** The AI agent icon is at the center, engaging in **"Multi-turn"** interaction (labeled on dashed arrows) with two resources: an **"Incomplete KG"** and the **"Web"**.

2. **Planning Component (Right Side):** A "Plan" box outlines a correct three-step decomposition:

* **Step 1:** "Which screenwriters wrote Analyze that?" → `Ans1 = SearchKG(screenwriter|...)`

* **Step 2:** "Which films written by Ans1?" → `Ans2 = SearchKG(films|Ans1, ...)`

* **Step 3:** "When did the Ans 2 release?" → `Ans3 = SearchKG(time|Ans2, ...)`

3. **Successful Output:** A green arrow points from the AI agent to the final answer: **"1999, ..."** with a green checkmark above it.

* **Text Transcription:** "Our Method", "Plan", "Step 1: Which screenwriters wrote Analyze that?", "Ans1 = SearchKG(screenwriter|...)", "Step 2: Which films written by Ans1?", "Ans2 = SearchKG(films|Ans1, ...)", "Step 3: When did the Ans 2 release?", "Ans3 = SearchKG(time|Ans2, ...)", "Multi-turn", "Incomplete KG", "Web", "1999, ...".

* **Interpretation:** The proposed method uses a planning module to correctly decompose the complex question into a logical sequence of sub-questions. The AI agent then interactively gathers information from both an incomplete KG and the web in a multi-turn fashion to answer each sub-question in order, ultimately producing the correct answer (e.g., "1999" for the release year of a film like *Analyze That*).

### Key Observations

1. **Consistent Failure Symbolism:** The red "X" is used uniformly to denote points of failure across different methods.

2. **Spatial Grounding of Legend:** The "Incomplete KG" icon is consistently placed at the point of data retrieval in all three methods. The "AI" agent is positioned as the central processor in the first and third methods.

3. **Critical Error Highlighted:** The "Decompose Error" in the Retrieval-Augmented method is explicitly called out in red text, identifying it as the root cause of failure for that approach.

4. **Answer Specificity:** The successful output "1999, ..." implies the answer is a list of release years, with "1999" being the first and most prominent (likely for *Analyze That* itself or a related film).

### Interpretation

This diagram is an argument for a specific architectural approach to complex question answering over imperfect data. It demonstrates that:

* **Direct translation (Semantic Parsing)** is brittle and fails with incomplete data.

* **Naive decomposition (Retrieval-Augmented)** can introduce logical errors that derail the entire process.

* **The proposed solution** combines **explicit, correct planning** with **interactive, multi-source retrieval**. The key innovation is the agent's ability to iteratively consult both a structured (but incomplete) KG and the unstructured web, guided by a coherent plan, to compensate for the KG's shortcomings. The diagram suggests that robustness in QA systems comes not from a perfect knowledge base, but from a resilient process that can plan, decompose, and gather evidence from multiple sources dynamically. The final answer "1999, ..." serves as proof of concept for the method's efficacy.

</details>

Figure 1. The comparison of Semantic Parsing, Retrieval-Augmented Methods, and Graph-RFT.

To address these challenges, we propose Graph-RFT, a novel two-stage reinforcement fine-tuning framework designed to enhance an LLM’s capabilities in autonomous planning and adaptive retrieval scheduling across multiple tools (specifically, KG search and web search) under conditions of incomplete KGs. In the first stage (Section 3.2), we introduce a chain-of-thought (CoT) (Wei et al., 2022) fine-tuning method that uses a customized plan–retrieval dataset to explicitly train the model to decompose questions, formulate plans, and select retrieval tools. This stage not only activates the model’s reasoning and planning capabilities but also resolves the GRPO (Guo et al., 2025) cold-start problem, enabling stable and meaningful reinforcement rollouts.

In the second stage (Section 3.3), we propose a plan–retrieval guided reinforcement learning method that integrates explicit planning steps and retrieval actions into the model’s reasoning loop, and learns a coverage-aware retrieval policy that dynamically coordinates knowledge graph and web search under incomplete KG conditions. It uses a structured template $⟨$ plan $⟩⟨$ relation_search $⟩⟨$ neighbor_search $⟩$ $⟨$ web_search $⟩$ , to explicitly let the model to alternate between symbolic planning and retrieval actions. The planning module draws inspiration from Cartesian principles (Descartes, 1901), breaking complex questions into logically ordered sub-questions. We further employ logical expressions to represent dependencies among sub-questions and guide the invocation of KG retrieval tools, ensuring globally coherent multi-step reasoning and controllable retrieval flow. For each planned execution step, Graph-RFT invokes both relation-search and neighbor-search tools to perform KG retrieval; when the KG lacks sufficient coverage, the model automatically triggers web search to acquire supplementary unstructured evidence. To optimize the reasoning–retrieval process, we apply a GRPO-based reinforcement learning with a novel multi-reward design: an outcome reward measuring factual correctness and a retrieval-specific reward that evaluates retrieval coverage, precision, and timing. This formulation encourages the model to learn when and how to combine KG and web retrieval, overcoming the limitations of prior SP and RAG methods that assume complete KGs and the absence of coherent multi-step planning.

Table 1. Comparison for main characteristics of previous SP, RAG methods with our Graph-RFT.

| Class | Algorithm | Paradigm | Incomplete KG | Global Planning | Decompose Problems | KG Interaction |

| --- | --- | --- | --- | --- | --- | --- |

| SP | RGR-KBQA (Feng and He, 2025) | SFT | $×$ | $×$ | $×$ | $×$ |

| SP | Rule-KBQA (Zhang et al., 2025) | SFT | $×$ | $×$ | $×$ | $×$ |

| RAG | PoG (Tan et al., 2025b) | ICL | $×$ | $×$ | $\checkmark$ | $\checkmark$ |

| RAG | DoG (Ma et al., 2025) | ICL | $×$ | $×$ | $\checkmark$ | $\checkmark$ |

| RAG | KG-Agent (Jiang et al., 2024) | SFT | $×$ | $×$ | $\checkmark$ | $\checkmark$ |

| Our Method | Graph-RFT | SFT & RL | $\checkmark$ | $\checkmark$ | $\checkmark$ | $\checkmark$ |

Our main contributions are as follows:

- We introduce Graph-RFT, a novel two-stage reinforcement fine-tuning framework for complex reasoning over incomplete KGs. It introduces the novel CoT-based fine-tuning method to activate planning and reasoning capabilities while resolving the GRPO cold-start issue, and a plan–retrieval guided reinforcement learning method to enable coherent multi-step reasoning and adaptive retrieval scheduling across KG and web sources under incomplete KG conditions.

- We propose a plan–retrieval guided reinforcement learning method with a multi-reward design that integrates graph-retrieval, web-retrieval, and penalty signals. This fine-grained feedback enables the model to learn when and how to combine KG and web retrieval for adaptive, coverage-aware reasoning under incomplete KGs.

- Experimental results across several widely used complex reasoning datasets confirm the superior performance of Graph-RFT against strong baselines, even when utilizing weaker LLM backbones (i.e., the 7B series). Furthermore, comprehensive empirical analyses validate Graph-RFT’s effectiveness across multiple critical aspects, including complex question decomposition planning, the identification of missing factual triples, and the appropriate invocation of various tools.

## 2. Related Work

Existing methods for improving LLM-based KGQA can be categorized into two main types: Semantic Parsing (SP) methods and Retrieval Augmented (RA) methods.

SP Methods convert natural language questions into structural queries (e.g., SPARQL) that can be executed on KGs to retrieve answers. Early research efforts (Hu et al., 2017; Xiong et al., 2017; Luo et al., 2025b) in semantic parsing often involved generating query graphs, resembling subgraphs of the KG and allow for direct mapping to logical forms. Lan (Lan and Jiang, 2020) incorporates constraints into the query graph, which effectively narrows the search space and facilitates more flexible query graph generation. Subsequent works (Atif et al., 2023; Schick et al., 2023; Raffel et al., 2020; Wei et al., 2022; Jiang et al., 2022; Lan and Jiang, 2020) utilize sequence-to-sequence models for multi-hop path and SPARQL-expression generation, enhancing the semantic parsing process. Recently, with the emergence of LLMs, various methods unify KGs and LLMs for KGQA.ChatKBQA (Luo et al., 2023a) generates question-aligned logical forms by treating LLMs as semantic parsers and retrieves entities and relations from KGs. RGR-KBQA (Feng and He, 2025) retrieves factual knowledge from KGs to enhance the semantic understanding capabilities of LLMs and finetune them to generate the logical form. Rule-KBQA (Zhang et al., 2025) guides logical form generation via a two-phase process that inducts a rule library and deducts forms via a rule-guided agent. However, these methods depend heavily on the assumption that KGs are complete, which means no answers can be obtained if information is missing.

RA Methods retrieve relevant KG triple based on input questions, then use these triples to guide LLMs to generate accurate answers, emphasizing the dynamic interaction between KG retrieval and LLM reasoning. ToG (Sun et al., 2023) employs an explore-and-exploit approach, which can discover the most promising reasoning paths and return the most likely reasoning results. GoG (Xu et al., 2024) proposes a think-search-generate paradigm, alleviating the incompleteness issue of KGs by using LLMs’ internal knowledge. PoG (Tan et al., 2025b) leverages knowledge reasoning paths as retrieval-augmented inputs for LLMs, thereby alleviating issues related to insufficient domain knowledge and unfaithful reasoning chains. DoG (Ma et al., 2025) introduces a subgraph-focused mechanism that allows LLMs to attempt intermediate answers after each reasoning step, effectively mitigating the drawbacks of lengthy reasoning trajectories. However, most of these approaches depend on powerful closed-source LLM APIs (e.g., GPT-4 (Achiam et al., 2023)), which results in substantial performance degradation when weaker open-source models are used as backbones. KG-Agent (Jiang et al., 2024) develops an iterative reasoning framework that integrates an LLM, a multifunctional toolbox, a KG-based executor, and a knowledge memory module, enabling smaller LLMs to make decisions autonomously. SymAgent (Liu et al., 2025a) conceptualizes the KG as a dynamic environment and is designed to autonomously and effectively integrate the capabilities of both the LLM and the KG. Graph-R1 (Luo et al., 2025a) proposes lightweight knowledge hypergraph construction and retrieval framed as a multi-turn agent–environment interaction, optimizing the reasoning process via an end-to-end reward mechanism. DynaSearcher (Hao et al., 2025) utilizes knowledge graphs as external structured knowledge to guide the search process by explicitly modeling entity relationships, thereby ensuring factual consistency in intermediate queries.

## 3. Methodology

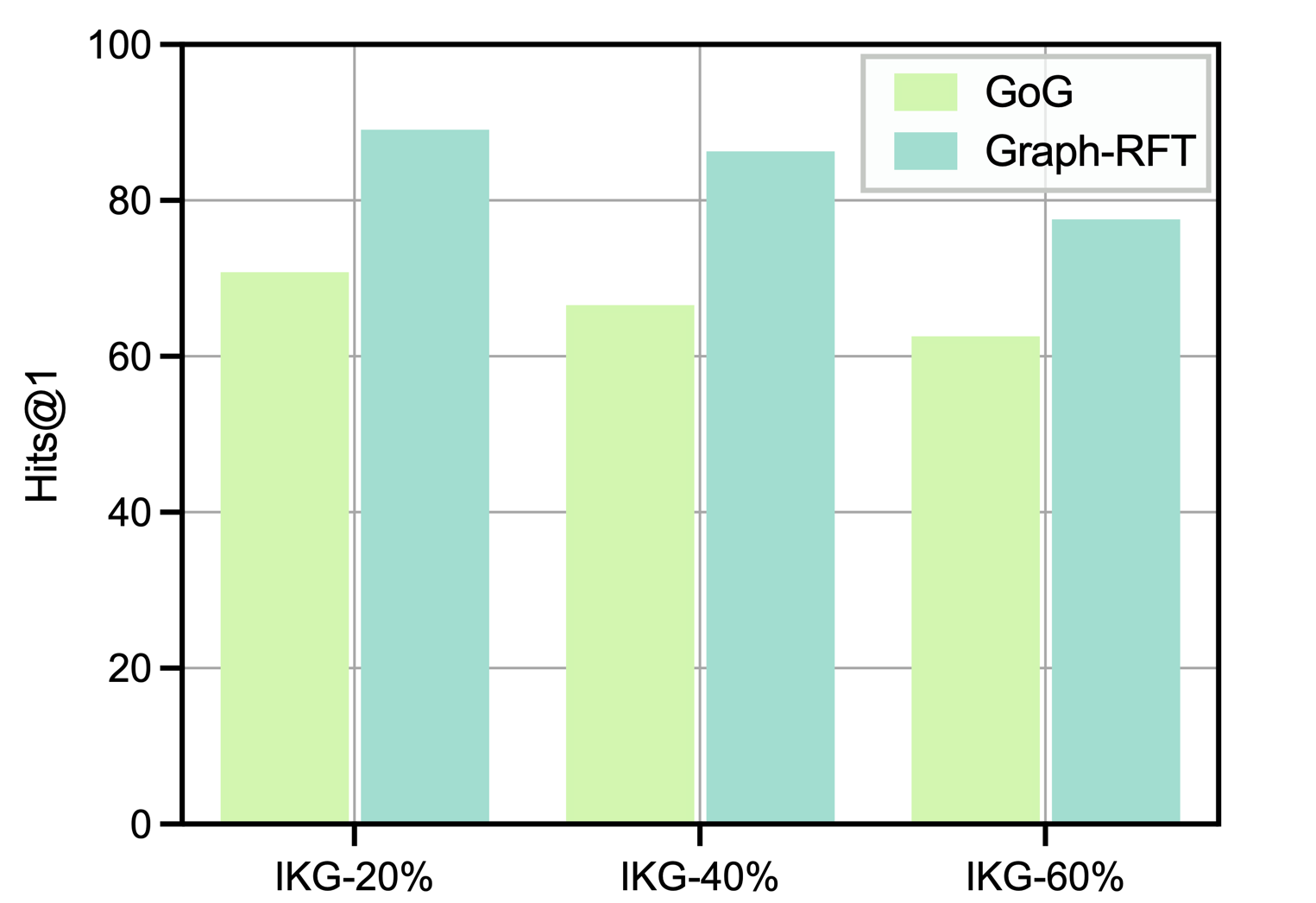

In this section, we introduce Graph-RFT, with autonomous planning and adaptive retrieval capabilities for reasoning over incomplete KGs. The framework is structured around two complementary core stages: (1) CoT Fine-Tuning for Reasoning Activation: we propose a supervised fine-tuning (SFT) method using high-quality CoT plan–retrieval trajectories to activate the model’s structured reasoning and planning abilities while resolving the GRPO cold-start problem. (2) RL-based Reasoning Enhancement: we propose a plan–retrieval guided RL method with a multi-reward design to optimize reasoning and retrieval coordination, enabling the model to perform coherent multi-step planning and adaptively invoke KG and external tools under incomplete knowledge conditions. We start with the definition of the KGQA task.

### 3.1. Task Formulation

Let $D$ be a dataset containing question–answer pairs $(q,a)$ , $G$ a KG containing a set of triples $\{(e_i,r,e_j) |e_i,e_j∈E;r∈R\}$ , where $E$ is a set of entities and $R$ is the set of relations names, and $E$ an external search engine. The KGQA task requires the LLM to generate reasoning trajectories or to iteratively interact with $G$ and $E$ . Formally, for each question $q$ , we generate a reasoning trajectory: $o=(τ_1,τ_2,...,τ_T)$ , where the $t$ -th intermediate reasoning step $τ_t=(s_t,c_t)$ consists of an action $s_t∈\{⟨ think⟩,⟨ plan⟩,$ $⟨ relation\_search⟩,⟨ neighbor\_search⟩,⟨ web\_search⟩,⟨ answer⟩\}$ (cf. Figure 7) and its associated content $c_t$ . The model is expected to repeatedly retrieve knowledge from $G$ and $E$ until reaching the final answer $a$ for the question $q$ .

### 3.2. CoT Fine-Tuning for Reasoning Activation

<details>

<summary>dataset_design.png Details</summary>

### Visual Description

## Diagram: Multi-Step Question-Answering System Using Knowledge Graphs and Web Retrieval

### Overview

The image is a technical flowchart illustrating a multi-step reasoning system designed to answer a complex natural language question. The system decomposes the question into sub-questions, uses a Knowledge Graph (KG) Retriever to find intermediate answers, and supplements with a Web Retriever when the KG is insufficient. The final answer is "Islamic republic".

### Components/Axes

The diagram is organized into several interconnected regions:

1. **Initial Question (Top-Left):** A speech bubble containing the user's query.

2. **Plan (Left Column):** A four-step plan with associated logic functions.

3. **Think Process (Center-Left):** A cartoon figure representing the reasoning engine, with arrows indicating iterative thinking.

4. **KG Retriever Modules (Top-Right and Center):** Two main blocks showing the use of "Relation Search Tool" and "Neighbor Search Tool" on a knowledge graph.

5. **Web Retriever Module (Center-Right):** A block showing web document retrieval when the KG is insufficient.

6. **Process Log (Bottom-Left):** A sequence of XML-like tags:

* `<plan> ... </plan>`

* `<relation_search> Iranian Rail, used_in </relation_search>`

* `<relation_information>common...</relation_information>`

* `<neighbor_search> Iranian Rail, ... </neighbor_search>`

* `<neighbor_information>Iran...</neighbor_information>`

* `<web_search>Iran, form_of_government... </web_search>`

* `<- - - More Steps - - ->`

* `<answer>Islamic republic, ...</answer>`

### Key Observations

1. **Hybrid Retrieval Strategy:** The system explicitly handles KG insufficiency by triggering a Web Retriever, which provides contextual documents that "fulfill" the missing knowledge.

2. **Iterative Reasoning:** The "Think" process and the step-by-step plan show a deliberate, sequential approach to decomposing and solving the complex query.

3. **Graph Query Specificity:** The logic forms use typed variables (`t1`, `h1`, `r1`) and specific relation names (`used_in`, `established_year`, `government_form`), indicating a structured query language for the knowledge graph.

4. **Visual Confirmation:** Green checkmarks in the Step 1 KG diagrams visually confirm the successful identification of "Iran" as the intermediate answer.

5. **Answer Synthesis:** The final answer "Islamic republic" is derived by combining the KG path (Iran -> Islamic Republic) with corroborating evidence from web documents (Doc1, Doc3).

### Interpretation

This diagram demonstrates a sophisticated **neuro-symbolic AI system** for complex question answering. It bridges the gap between unstructured natural language and structured knowledge bases.

* **What it suggests:** The system is designed for high accuracy and interpretability. By breaking the question into logical sub-tasks (`KGSearch`, `Inter`), it makes its reasoning process transparent and auditable, unlike end-to-end neural models.

* **How elements relate:** The **Plan** acts as the master controller. The **Think** process is the execution engine that calls specialized tools (**KG Retriever**, **Web Retriever**). The **Process Log** is the system's "black box" recorder. The flow is cyclical: Plan -> Execute (Think/Retrieve) -> Update State -> Next Plan Step.

* **Notable Anomalies/Insights:**

* The KG contains a path from "Iran" to "Islamic Republic" via the relation `government.form_of_government.countries`, but it's marked as insufficient, possibly because the relation is inverse or the system requires textual confirmation.

* The Web Retriever doesn't just fetch a direct answer; it retrieves documents that provide contextual support (`Doc3` about "theocracy"), which the system uses to validate and enrich the KG-derived answer.

* The system exhibits **Peircean abductive reasoning**: It observes data (KG paths, web docs), formulates a hypothesis ("Islamic Republic" is the government form), and seeks the most plausible explanation that fits all evidence.

In essence, the diagram is a blueprint for an AI that doesn't just retrieve answers but *reasons* its way to them by strategically combining structured knowledge with unstructured information, mimicking a human researcher's methodology.

</details>

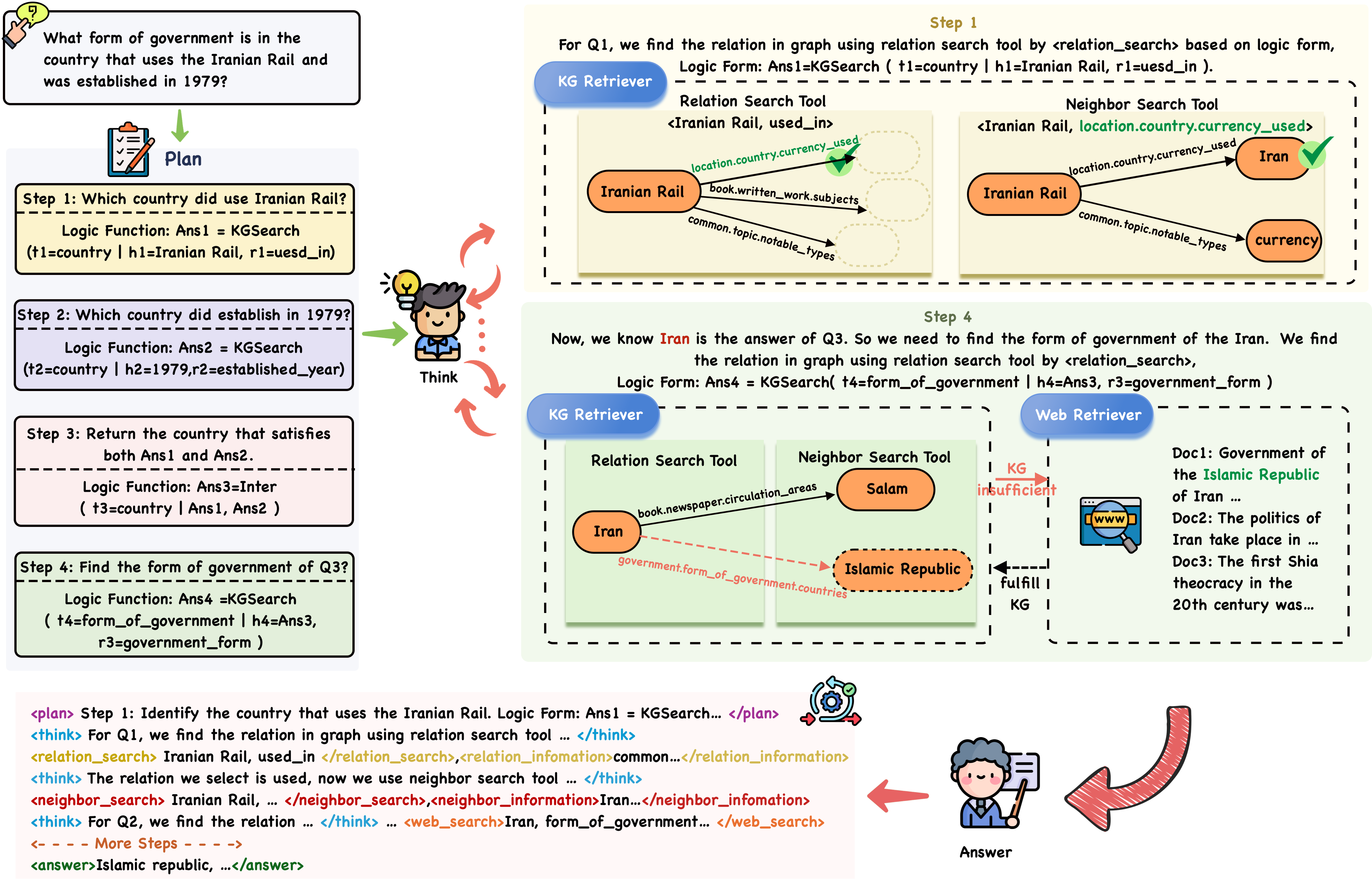

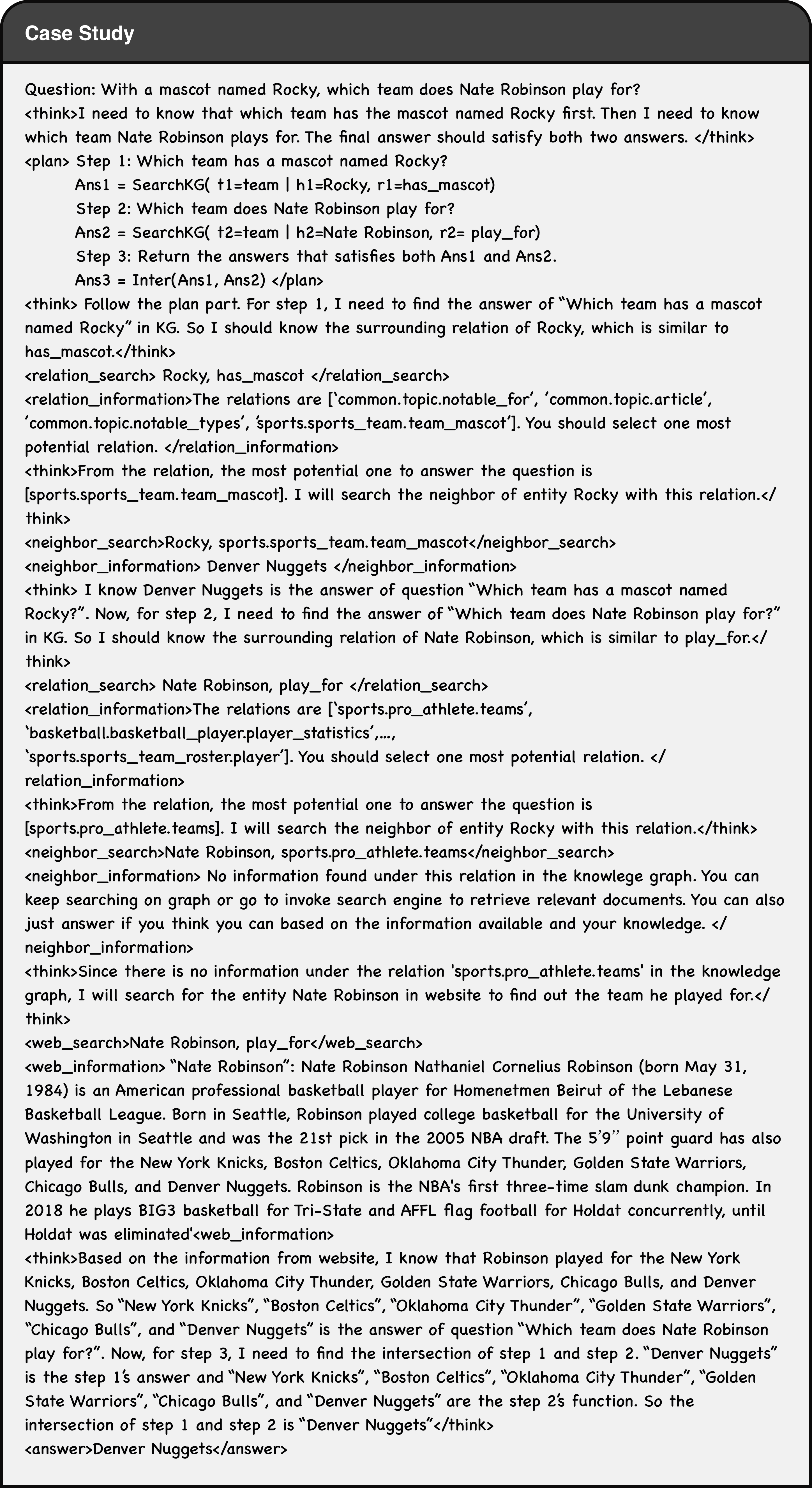

Figure 2. Graph-RFT Reasoning Trajectory for SFT dataset construction and rollout process for RL.

In the initial phase, we propose the CoT finetuning method using a structured reasoning dataset that contains step-by-step planning, reasoning, and retrieval processes. This phase is critical for activating the model’s planning-reasoning capabilities and establishing a robust cold start for subsequent RL. To construct our SFT dataset, as illustrated in Figure 2, we monitor and record the agent’s output throughout a multi-step interactive process with the KG and the external search engine. This approach transforms the complex, multi-step collaboration procedure into a CoT-style trajectory suitable for SFT. In addition, we use a two-stage process to filter the trajectory to get the high quality dataset. The Details regarding the data’s annotation and filtering processes are provided in Appendix A.1. The training objective minimizes:

$$

L_SFT=-∑_t=1^T\logπ_θ(y_t\mid q,y_<t) \tag{1}

$$

where $q$ is the question, $y$ is the concatenated sequence of reasoning steps and the answer, $π_θ$ is the model’s token distribution. The output model $π_CoT$ serves as the initialization for the next stage (Tan et al., 2025a), ensuring a robust foundation for RL.

### 3.3. RL-based Reasoning Enhancement

#### 3.3.1. Rollout with Search Tools

Our approach follows an iterative framework where the LLM alternates between text generation and external search engine queries. Specifically, our method consists of three steps: i) Plan the sub-problem decomposition and tool invocation for complex reasoning in a specific order; ii) Retrieve corresponding answers from the knowledge graph based on the priority and logical functions of these subproblems; iii) Obtain the correct answers by searching external sources for information not available in the knowledge graph.

Plan the Steps. We adhere to the Cartesian principle of breaking down complex problems into distinct steps, while designing logical functions to assist the model in understanding the interrelationships between sub-problems and determining the appropriate timing for tool utilization. In Figure 2, the first two steps correspond to subproblems of higher priority, as they do not rely on answers from other subproblems. Additionally, we use logical functions to represent the parameters for invoking KG retrieval tool, which helps the model gain a clear understanding of the invocation process. Step 3 expresses the logical relationship between the two subproblems through the $\textit{inter}(Ans_1,Ans_2,..,Ans_n)$ function (the intersection of the answers to subquestions), passes the returned results to Step 4, and obtains the final answer by invoking the KG retrieval tool. The planning steps are encapsulated between ¡plan¿ and ¡/plan¿.

Retrieve Facts from the KG. For each single steps obtained through planning, we need to sequentially select relevant triples from the KG. To address this, we design two interactive toolkits.

- Relation Search Tool. This tool retrieves a set of candidate relations for an entity in a given single-hop problem. The LLM encapsulates the entity and the hypothetical relation from the logical function between the relation search tokens ¡relation_search¿ and ¡/relation_search¿. By invoking the relation acquisition toolkit, the set of top 15 relations that are similar to the hypothetical relation is encapsulated between the tokens ¡relation_information¿ and ¡/relation_information¿.

- Neighbor Search Tool. This tool retrieves the tail entity corresponding to a given head entity-relation pair selected by LLMs. The LLM encapsulates the specified head entity and relation between the neighbor search tokens ¡neighbor_search¿ and ¡/neighbor_search¿. By invoking the neighbor acquisition toolkit, the answer is then encapsulated between the tokens ¡neighbor_information¿ and ¡/neighbor_information¿.

Given the obtained single-hop questions (e.g., “Identify the country that uses the Iranian Rail”), we first invoke the Relation Search Tool to retrieve a candidate set of relations associated with the initial (topic) entity. The LLM then reasons over this set and selects the most appropriate relation, for example, choosing “location.country.currency_used” to replace “used_in” in the retrieval function. This greedy strategy simplifies the reasoning process by selecting a locally optimal relation at each step, thereby reducing reliance on global contextual information. Next, the Neighbor Search Tool is called to obtain specific triples and their corresponding answers (e.g., “Iran”). If the LLM determines that the retrieved triples are insufficient to answer the question, or if no answer is found in the knowledge graph (i.e., when ¡neighbor_information¿ and ¡/neighbor_information¿ return “No information in the KG, please use web tool”), the system directly invokes the search engine. The answers to higher-priority (according to the order produced by the subquestion plan) single-hop questions can then be combined with those of lower-priority ones to generate new single-hop questions. By iteratively repeating this process, the system is able to derive answers to complex multi-hop questions. Notably, if the maximum iteration limit is reached without successfully generating an answer, the parametric knowledge of the LLM is used to provide a final response.

When LLMs determine that the knowledge retrieved through KG search tools is insufficient or implausible (such as when the KG lacks relevant information, i.e., the tags ¡neighbor_information¿ and ¡/neighbor_information¿ return “No information in KG, please use web tool.”), the model encapsulates the corresponding head entity and relation, for which no information is available, within the special search tokens ¡web_search¿ and ¡/web_search¿. Subsequently, a search engine is invoked, and the documents retrieved from the Bing website are enclosed within ¡web_information¿ and ¡/web_information¿. This mechanism not only enables the model to acquire the supplementary knowledge required to answer the query but also facilitates the identification of missing triples, which can later be incorporated into the KG to mitigate its incompleteness. In our experiments, we fixed the top- $k$ value (e.g., 3) to control the number of retrieved documents.

#### 3.3.2. Reward Design

To further optimize the above retrieval-planning process, we propose the GRPO (Shao et al., 2024) based RL method with the novel multi-reward design. Prior studies have predominantly employed outcome-based rewards to guide LLMs in developing reasoning abilities and leveraging search engines throughout the reinforcement learning process. However, such supervision alone does not sufficiently capture diverse retrieval-oriented reward mechanisms and is inadequate for effectively guiding retrieval in the presence of incomplete KGs. To address this limitation, we incorporate two key components into our reward design: (i) an outcome-based reward, which directly evaluates the quality of the model’s generated answers; and (ii) a retrieval-specific reward, which encourages the model to discern when and how to extract relevant information from knowledge graphs or web documents.

Table 2. Results of Graph-RFT across various datasets (represent the maximum hop), compared with the state-of-the-art (SOTA) in Supervised Learning (SL) and In-Context Learning (ICL) methods. The highest scores for ICL methods are highlighted in bold, while the second-best results are underlined. The Prior FT (Fine-tuned) SOTA includes the best-known results achieved through supervised learning. CKG denotes using complete knowledge graph and IKG denotes using incomplete KG (IKG-40%).

| Method | Class | LLM | Multi-Hop KGQA | Single-Hop KGQA | | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| CWQ (4-hop) | WebQSP (2-hop) | GrailQA (4-hop) | Simple Questions (1-hop) | | | | | | | |

| Without external knowledge | | | | | | | | | | |

| IO prompt (Sun et al., 2023) | - | GPT-3.5-Turbo | 37.6 | 63.3 | 29.4 | 20.0 | | | | |

| CoT (Sun et al., 2023) | - | GPT-3.5-Turbo | 38.8 | 62.2 | 28.1 | 20.3 | | | | |

| SC (Sun et al., 2023) | - | GPT-3.5-Turbo | 45.4 | 61.1 | 29.6 | 18.9 | | | | |

| CKG | IKG | CKG | IKG | CKG | IKG | CKG | IKG | | | |

| With external knowledge | | | | | | | | | | |

| RoG (Luo et al., 2023b) | SL | Qwen2.5-7B-base | 63.7 | 53.6 | 84.2 | 75.3 | - | - | - | - |

| ChatKBQA (Luo et al., 2023a) | SL | Qwen2.5-7B-base | 72.4 | 37.8 | 75.5 | 47.1 | - | - | - | - |

| KB-BINDER (Li et al., 2023a) | ICL | Codex | - | - | 50.7 | 38.4 | - | - | - | - |

| StructGPT (Jiang et al., 2023) | ICL | GPT-3.5-Turbo | - | - | 76.4 | 60.1 | - | - | - | - |

| ToG/ToG-R (Sun et al., 2023) | ICL | GPT-3.5-Turbo | 47.2 | 37.9 | 76.9 | 63.4 | - | - | - | - |

| ToG/ToG-R (Sun et al., 2023) | ICL | GPT-4 | 71.0 | 56.1 | 80.3 | 71.8 | - | - | - | - |

| PoG (Tan et al., 2025b) | ICL | GPT-4 | 76.8 | 62.6 | 96.7 | 76.4 | 79.2 | 64.1 | 80.2 | 59.5 |

| GoG (Xu et al., 2024) | ICL | GPT-3.5-Turbo | 55.7 | 44.3 | 78.7 | 66.6 | 71.7 | 62.4 | 54.3 | 43.9 |

| GoG (Xu et al., 2024) | ICL | GPT-4 | 75.2 | 60.4 | 84.4 | 80.3 | 76.8 | 69.4 | 68.9 | 56.4 |

| Graph-RFT-instruct | RL | Qwen2.5-7B-instruct | 78.4 | 64.8 | 87.3 | 82.7 | 82.1 | 71.8 | 73.8 | 58.7 |

| Graph-RFT-base | RL | Qwen2.5-7B-base | 80.7 | 67.2 | 90.6 | 86.3 | 84.6 | 73.3 | 76.9 | 62.4 |

Outcome-based Reward. Result-based reward are divided into format reward and answer reward. For format reward, we first define the correct format to ensure that the LLM adopts the predefined iterative workflow of ”Think-Decompose-Retrieval-Search”. The definitions of the model’s thinking process, tool invocation, and final answer output format are shown in Figure 7 in Appendix A.4. For answer rewards $r_ans∈[0,1]$ , we compare the predicted answer in ¡answer¿¡/answer¿ with the ground-truth answer, and use the F1-score between the two sets as the reward to measure its accuracy. The complete accuracy reward $R_acc$ is defined as:

$$

R_acc=\begin{cases}\max(0.1,r_ans),&if format is correct,\\

0,&if format is incorrect.\end{cases} \tag{2}

$$

$$

r_ans=F1(p_ans,a)=\frac{2|p_ans∩\{a\}|}{|p_ans|+|a|} \tag{3}

$$

where $p_ans$ is the predicted answer, and $a$ is the ground truth answer from the $(q,a)$ pair.

Retrieval-specific Reward. Inspired by AutoRefine (Shi et al., 2025), building on the result-based reward described above, we further introduce two retrieval rewards to encourage LLMs to learn how to extract relevant information from both the KG and web documents. Specifically, we introduce a graph retrieval reward $R_graph$ and a document search reward $R_web$ .

$R_graph$ is measured based on the retrieval results contained in the ¡neighbor_information¿¡/neighbor_information¿ block. Specifically, we collect all graph retrieval results from the entire trajectory and concatenate them into a single text sequence:

$$

R_graph=I\bigl(\{a\}∩ o_graph=a\bigr) \tag{4}

$$

$$

o_graph=\bigcup≤ft\{c_t\mid(s_t,c_t)∈ o∧ s_t=<neighbor\_information>\right\} \tag{5}

$$

where $I(·)$ is the indicator function, $o_graph$ is the concatenation of all the KG retrieval information steps.

$R_web$ is defined in a similar way to $R_graph$ . We collect all document retrieval results encapsulated in the ¡web_information¿¡/web_information¿ blocks throughout the entire trajectory and concatenate them into a single text sequence.

$$

R_web=I\bigl(\{a\}∩ o_web=a\bigr) \tag{6}

$$

$$

o_web=\bigcup≤ft\{c_t\mid(s_t,c_t)∈ o∧ s_t=<web\_information>\right\} \tag{7}

$$

where $I(·)$ is the indicator function, $o_graph$ is the concatenation of all the web search information steps.

However, such multi-turn reinforcement learning is unstable. To prevent the model from bypassing graph retrieval and directly turning to document search in cases where the correct result could have been obtained through graph retrieval, we introduce a corresponding penalty for this behavior to help LLMs know when to use different retrieval tools. The overall reward $R_over$ can be formally written as:

$$

R_over=\begin{cases}R_acc,&if R_acc>0,\\

0.1,&if R_acc=0 \& R_graph>0 OR R_acc=0 \& R_web>0,\\

-0.1,&if R_web>0 when CKG OR R_web=0 when IKG\\

0,&if R_acc=R_web=R_graph=0.\end{cases} \tag{8}

$$

Training Objective. By integrating the overall reward with the GRPO training objective (Shao et al., 2024), our proposed learning objective enables dynamic interaction with both the knowledge graph and the search engine during optimization (Jin et al., 2025), thereby facilitating effective LLM search training. The objective is formalized as:

$$

\begin{split}\max_π_{θ}E_x∼D, y∼π_θ(·|x;\mathcal{G,R)}≤ft[r_φ(x,y)\right]-\\

βD_KL≤ft[π_θ(y|x;G,R)\big\|π_ref(y|x;G,R)\right]\end{split} \tag{9}

$$

where $π_θ$ denotes the trainable policy model, $π_ref$ is a fixed reference model, $r_φ$ represents the overall reward function, and $D_KL$ denotes the $KL$ divergence. Here, $x$ is sampled from the dataset $D$ , and $y$ denotes the output sequence interleaving reasoning steps with KG and search engine retrievals. Since the retrieved triples and documents are not generated by the policy model, we mask the retrieval results during loss calculation to prevent the training policy from being biased (Hao et al., 2025).

## 4. Experiments

To evaluate the effectiveness of Graph-RFT, we aim to explore the following four research questions (RQs): (1) RQ1: How does Graph-RFT perform against SOTA baselines on complex reasoning datasets? (2) RQ2: What are the contributions of each Graph-RFT module to overall performance? (3) RQ3: Can Graph-RFT effectively bridge information gaps via retrieval under varying KG incompleteness? (4) RQ4: What are the error types of Graph-RFT?

$\blacktriangleright$ Datasets. We evaluate Graph-RFT on four KGQA datasets, including three multi-hop datasets: CWQ (Talmor and Berant, 2018), WebQSP (Yih et al., 2016), GrailQA (Gu et al., 2021) and one single-hop dataset: SimpleQuestions (Petrochuk and Zettlemoyer, ). We consider two scenarios: Complete Knowledge Graph (CKG) and Incomplete Knowledge Graph (IKG). For CKG, we directly use the datasets provided by GoG (Xu et al., 2024). For IKG, since GoG only provides CWQ and WebQSP, we construct IKG versions of GrailQA and SimpleQuestions by sampling 3,000 multi-hop questions from GrailQA and 3,000 questions from SimpleQuestions. We further generate four IKGs with varying completeness levels IKG-20%, IKG-40%, IKG-60%for example, IKG-40% removes 40% of the critical triples for each question and all relations between the corresponding entity pairs. Freebase (Bollacker et al., 2008) serves as the background KG for all datasets. The impact of using alternative knowledge graphs is discussed in Appendix A.5. $\blacktriangleright$ Evaluation Metrics. Following prior work (Li et al., 2023b; Baek et al., 2023; Jiang et al., 2023; Sun et al., 2023), we adopt exact match accuracy (Hits@1) as the evaluation metric for all datasets. Detailed descriptions of the baselines and implementation settings are provided in Appendix A.2 and Appendix A.3.

### 4.1. Main Results (RQ1)

Table 2 presents our main results on four KGQA datasets. We can observe that Graph-RFT consistently achieves SOTA performance across all settings in IKG, even when built upon smaller backbones such as Qwen2.5-7B-base, outperforming GPT-4-based methods.

$\blacktriangleright$ CKG Setting. Under the CKG setting, where all relevant triples are available, Graph-RFT achieves the strongest overall performance across both multi-hop and single-hop benchmarks. The results reveal that our two-stage RFT which combines CoT-based reasoning activation and plan–retrieval RL—provides consistent advantages over both prompt-only ICL methods and supervised learning baselines. ICL approaches such as PoG and GoG rely purely on prompt engineering and lack any fine-tuning or reinforcement optimization. Although they perform competitively on simpler datasets such as WebQSP and SimpleQuestions, they struggle to generalize across long reasoning chains. In contrast, Graph-RFT-base (Qwen-2.5-7B) achieves substantial gains on complex benchmarks like CWQ (80.7 vs 76.8/75.2) and GrailQA (84.6 vs 79.2/76.8), where multi-step logical dependencies are required. These improvements highlight how explicit CoT supervision enables structured planning, and reinforcement optimization refines the timing and sequencing of retrieval actions. Relative to RoG and ChatKBQA, which are purely supervised fine-tuned models, Graph-RFT demonstrates marked improvements (e.g., CWQ 80.7 vs 72.4/63.7, WebQSP 90.6 vs 84.2/75.5). This suggests that reinforcement learning contributes beyond imitation: by rewarding accurate retrieval scheduling and penalizing inefficient queries, the model learns to maintain global reasoning consistency and to balance exploration between relation-search and neighbor-search tools.

Table 3. Ablation study for the Graph-RFT-base in rollout framework.

| Variants | - | ✓ | - | 49.4 | 69.7 | 58.9 | 53.8 |

| --- | --- | --- | --- | --- | --- | --- | --- |

| - | ✓ | ✓ | 55.3 | 74.2 | 63.5 | 61.7 | |

| ✓ | ✓ | - | 62.6 | 83.4 | 69.1 | 57.3 | |

| Graph-RFT-base | ✓ | ✓ | ✓ | 67.2 | 86.3 | 73.3 | 62.4 |

Table 4. Ablation analysis on Graph-RFT-base SFT and multi-reward design.

| Graph-RFT-base w/o SFT Graph-RFT-base w/o $R_web$ Graph-RFT-base w/o $R_graph$ | 46.4 66.3 65.8 | 74.2 83.5 82.7 | 56.4 72.1 71.6 | 54.7 60.8 61.6 |

| --- | --- | --- | --- | --- |

| Graph-RFT-base | 67.2 | 86.3 | 73.3 | 62.4 |

$\blacktriangleright$ IKG Setting. When the knowledge graph is incomplete, the benefits of Graph-RFT become even more pronounced. Prompt-based ICL approaches such as PoG and GoG (GPT-4) achieve competitive accuracy under complete KGs but degrade significantly once KG coverage is reduced. For example, GoG’s performance drops 7-15 points across CWQ (75.2→60.4) and GrailQA (76.8→69.4), while PoG falls from 76.8→62.6 on CWQ. These methods rely on static prompt patterns and local reasoning, lacking mechanisms to assess KG sufficiency or to seek supplementary evidence. In contrast, Graph-RFT-base (Qwen2.5-7B) remains stable across both settings, outperforms among ICL methods. This resilience arises from our two-stage RFT: CoT fine-tuning teaches explicit question decomposition, and reinforcement optimization learns when and how to invoke external retrievals. SL methods such as RoG and ChatKBQA also decline sharply under IKG (e.g., CWQ: 63.7→53.6; ChatKBQA: 72.4→37.8), as their fixed fine-tuning lacks interactivity with retrieval tools. In contrast, Graph-RFT maintains high accuracy (e.g., CWQ 67.2, WebQSP 86.3), confirming that reinforcement-based retrieval scheduling effectively compensates for missing triples through controlled web augmentation. Moreover, Graph-RFT with Qwen2.5-7b-base outperforms methods without external knowledge (e.g., IO, CoT, SC prompting), demonstrating that KGs play a crucial role in reasoning tasks.

### 4.2. Ablation Studies (RQ2)

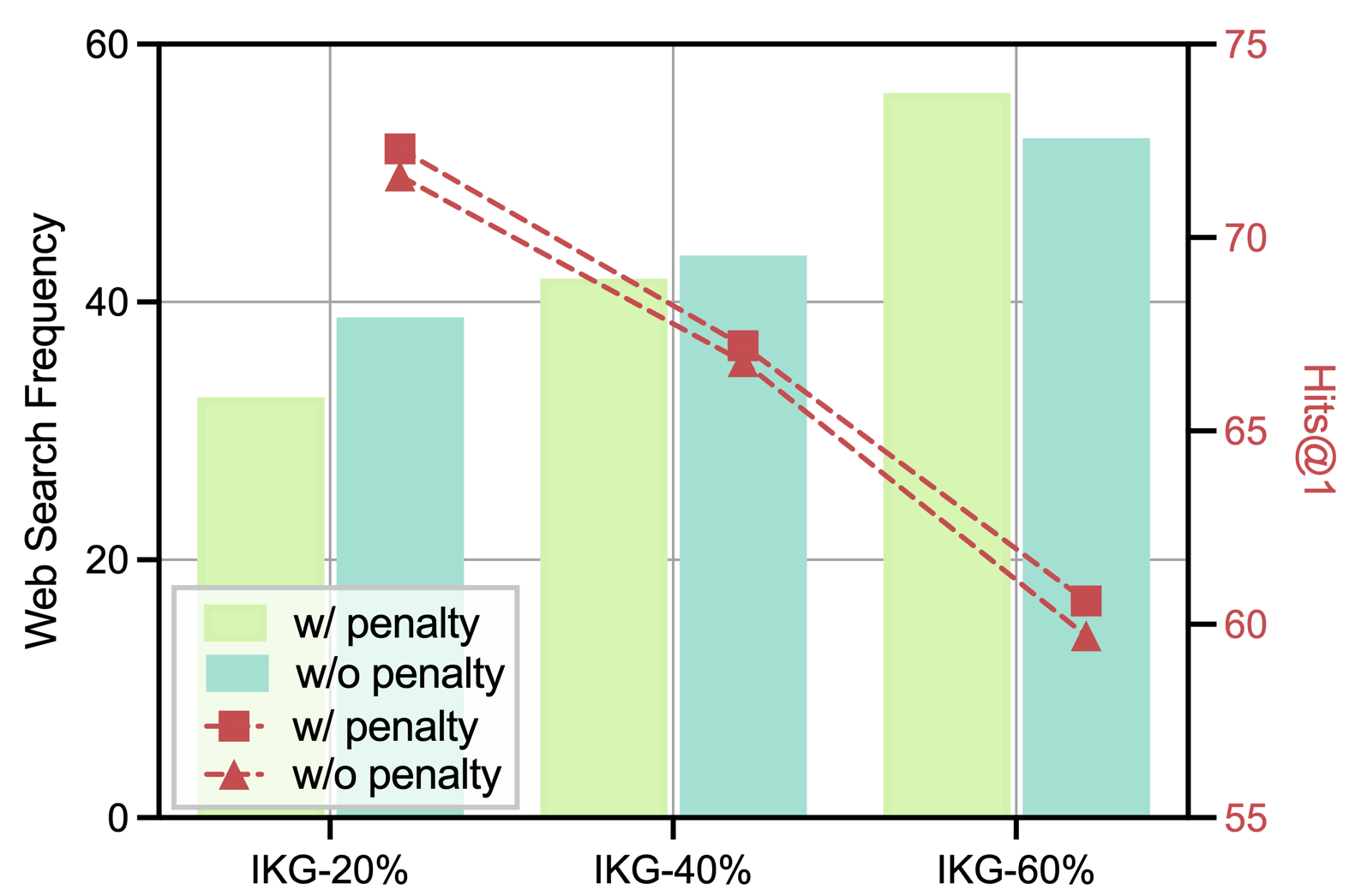

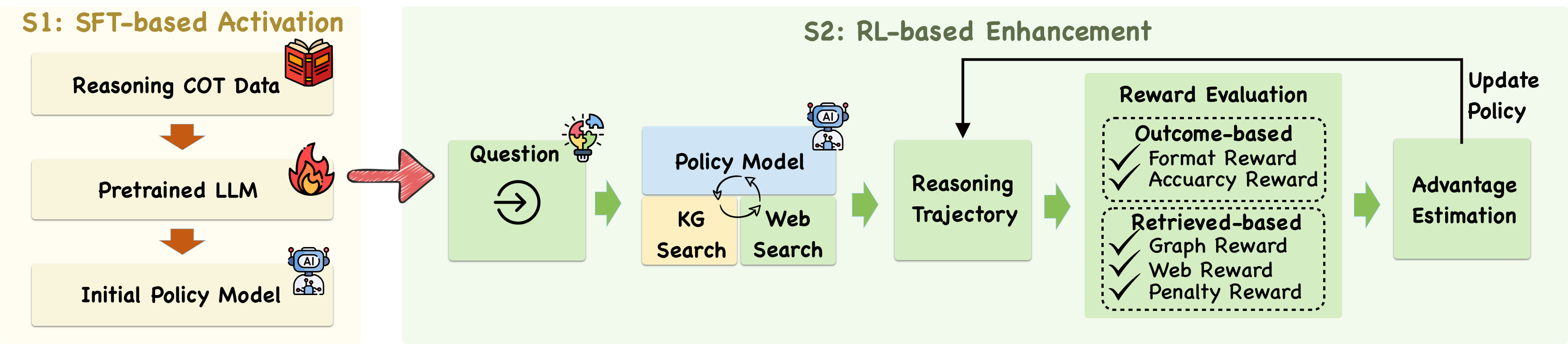

We perform ablation studies to analyze the contributions of Graph-RFT’s key components. Specifically, we examine the effects of the rollout framework, SFT and the multi-reward mechanism in the RL stage. $\blacktriangleright$ Exploration on Rollout Framework. We analyze the contributions of the Planning Steps (PS), KG Retrieval (KR), and Web Retrieval (WR) modules within the rollout framework to Graph-RFT’s performance under the IKG-40% setting. Figure 3 presents model variants obtained by ablating these components, revealing that removing any single module substantially reduces performance. Comparing PS+KR, KR+WR, and KR alone, we observe that PS+KR achieves superior performance on multi-hop datasets, indicating that global planning—including question decomposition with logical operators and tool invocation—provides greater benefit than merely supplementing missing knowledge, thereby validating the effectiveness of the PS module. In contrast, on the single-hop dataset, KR+WR outperforms PS+KR, suggesting that leveraging external unstructured knowledge becomes more critical than global planning for simpler queries, which demonstrates Graph-RFT’s adaptability across tasks of varying complexity. Furthermore, the comparison between KR+WR and KR alone confirms that integrating structured knowledge from the KG with unstructured knowledge from web documents produces substantial improvements. Collectively, these results indicate that each module contributes uniquely, and their synergistic combination significantly enhances the model’s capability for complex reasoning tasks.

|

<details>

<summary>cwq_search.png Details</summary>

### Visual Description

\n

## Dual-Axis Bar and Line Chart: Web Search Frequency vs. Hits@1 Across IKG Percentages

### Overview

This image is a dual-axis combination chart displaying two metrics—**Web Search Frequency** (bar chart, left axis) and **Hits@1** (line chart, right axis)—across three categories on the x-axis: **IKG-20%**, **IKG-40%**, and **IKG-60%**. The chart compares conditions "with penalty" and "without penalty" for both metrics.

### Components/Axes

* **X-Axis (Categories):** Three discrete categories: `IKG-20%`, `IKG-40%`, `IKG-60%`.

* **Primary Y-Axis (Left):** Label: `Web Search Frequency`. Scale: 0 to 60, with major ticks at 0, 20, 40, 60.

* **Secondary Y-Axis (Right):** Label: `Hits@1`. Scale: 55 to 75, with major ticks at 55, 60, 65, 70, 75.

* **Legend (Bottom-Left):** Contains four entries:

1. Light green bar: `w/ penalty`

2. Teal bar: `w/o penalty`

3. Red square marker with dashed line: `w/ penalty`

4. Red triangle marker with dashed line: `w/o penalty`

* **Data Series:**

* **Bars (Web Search Frequency):** Two bars per x-axis category.

* **Lines (Hits@1):** Two dashed red lines with distinct markers, connecting points across the three categories.

### Detailed Analysis

**Web Search Frequency (Bars - Left Axis):**

* **IKG-20%:**

* `w/ penalty` (Light Green): ~32

* `w/o penalty` (Teal): ~39

* **IKG-40%:**

* `w/ penalty` (Light Green): ~42

* `w/o penalty` (Teal): ~44

* **IKG-60%:**

* `w/ penalty` (Light Green): ~57

* `w/o penalty` (Teal): ~53

**Hits@1 (Lines - Right Axis):**

* **Trend Verification:** Both lines show a clear downward trend from left (IKG-20%) to right (IKG-60%).

* **IKG-20%:**

* `w/ penalty` (Red Square): ~72

* `w/o penalty` (Red Triangle): ~71

* **IKG-40%:**

* `w/ penalty` (Red Square): ~67

* `w/o penalty` (Red Triangle): ~66

* **IKG-60%:**

* `w/ penalty` (Red Square): ~61

* `w/o penalty` (Red Triangle): ~60

### Key Observations

1. **Inverse Relationship:** There is a clear inverse relationship between the two metrics. As the IKG percentage increases, **Web Search Frequency increases** for both conditions, while **Hits@1 decreases** for both conditions.

2. **Penalty Effect on Frequency:** The "with penalty" condition results in lower Web Search Frequency at IKG-20% but higher frequency at IKG-60% compared to "without penalty." The crossover occurs between IKG-40% and IKG-60%.

3. **Penalty Effect on Hits@1:** The "with penalty" condition consistently yields a slightly higher Hits@1 score (by approximately 1 point) across all three IKG categories compared to "without penalty."

4. **Magnitude of Change:** The increase in Web Search Frequency from IKG-20% to IKG-60% is substantial (~25 points for "w/ penalty"). The decrease in Hits@1 over the same range is also significant (~11 points for both conditions).

### Interpretation

The data suggests a fundamental trade-off governed by the IKG parameter. Increasing the IKG percentage (which may represent a knowledge graph integration level or a similar parameter) appears to drive more frequent web searches but at the cost of reduced precision in the top result (lower Hits@1).

The "penalty" mechanism introduces a nuanced effect. It initially suppresses search frequency at lower IKG levels but amplifies it at higher levels. Crucially, it provides a consistent, albeit small, boost to result precision (Hits@1) across all settings. This implies the penalty might be a regularization term that discourages excessive or low-quality searches, thereby improving the quality of the top retrieved item even as the quantity of searches changes.

The most notable anomaly is the crossover in Web Search Frequency behavior. This indicates that the system's response to the penalty is not linear and depends heavily on the underlying IKG configuration. For practical application, if the goal is to maximize precision (Hits@1), a lower IKG percentage is better. If the goal is to maximize search frequency (perhaps for coverage or recall), a higher IKG percentage is better, and applying the penalty becomes beneficial only at these higher levels.

</details>

|

<details>

<summary>webqsp_search.png Details</summary>

### Visual Description

## Dual-Axis Combination Chart: Web Search Frequency vs. Hits@1 Across IKG Percentages

### Overview

This image is a dual-axis combination chart displaying two distinct metrics—**Web Search Frequency** (represented by bars) and **Hits@1** (represented by dashed lines with markers)—across three categories on the x-axis: **IKG-20%**, **IKG-40%**, and **IKG-60%**. The chart compares performance "with penalty" and "without penalty" for both metrics.

### Components/Axes

* **X-Axis (Bottom):** Categorical axis with three labels: `IKG-20%`, `IKG-40%`, `IKG-60%`.

* **Primary Y-Axis (Left):** Labeled `Web Search Frequency`. Scale ranges from 10 to 50, with major gridlines at intervals of 10.

* **Secondary Y-Axis (Right):** Labeled `Hits@1` (text is red). Scale ranges from 75 to 90, with major ticks at 75, 80, 85, 90.

* **Legend (Bottom-Left):** Contains four entries:

1. Light green solid bar: `w/ penalty`

2. Teal solid bar: `w/o penalty`

3. Red dashed line with square marker: `w/ penalty`

4. Red dashed line with triangle marker: `w/o penalty`

* **Data Series:**

* **Bars (Web Search Frequency):** Two bars per x-axis category.

* **Lines (Hits@1):** Two dashed red lines connecting markers across the three categories.

### Detailed Analysis

**1. Web Search Frequency (Bars - Left Axis):**

* **Trend:** Both "w/ penalty" and "w/o penalty" series show a clear **upward trend** as the IKG percentage increases.

* **IKG-20%:**

* `w/ penalty` (Light Green): ~28

* `w/o penalty` (Teal): ~31.5

* **IKG-40%:**

* `w/ penalty` (Light Green): ~38.5

* `w/o penalty` (Teal): ~36.5

* **IKG-60%:**

* `w/ penalty` (Light Green): ~43

* `w/o penalty` (Teal): ~42.5

**2. Hits@1 (Lines - Right Axis):**

* **Trend:** Both "w/ penalty" and "w/o penalty" series show a clear **downward trend** as the IKG percentage increases.

* **IKG-20%:**

* `w/ penalty` (Square Marker): ~88.5

* `w/o penalty` (Triangle Marker): ~87.5

* **IKG-40%:**

* `w/ penalty` (Square Marker): ~86

* `w/o penalty` (Triangle Marker): ~85

* **IKG-60%:**

* `w/ penalty` (Square Marker): ~77

* `w/o penalty` (Triangle Marker): ~76.5

### Key Observations

1. **Inverse Relationship:** There is a strong inverse relationship between the two metrics. As **Web Search Frequency increases** with higher IKG percentages, the **Hits@1 score decreases**.

2. **Penalty Effect:** The "with penalty" condition consistently results in a slightly **higher Hits@1 score** than "without penalty" at each IKG level (the square marker line is above the triangle marker line). For Web Search Frequency, the relationship is less consistent; "w/ penalty" is lower at IKG-20%, higher at IKG-40%, and roughly equal at IKG-60%.

3. **Magnitude of Change:** The decline in Hits@1 is particularly steep between IKG-40% and IKG-60%, dropping approximately 9 points for the "w/ penalty" series.

### Interpretation

The chart suggests a fundamental trade-off in the system being measured. Increasing the IKG parameter (which could represent a knowledge graph integration level or a similar variable) leads to more frequent web searches but at the cost of reduced precision in the top result (Hits@1).

The "penalty" mechanism appears to be a tuning parameter that slightly improves precision (Hits@1) across all tested levels without dramatically altering the search frequency trend. The most significant finding is the sharp performance degradation in Hits@1 at the highest IKG level (60%), indicating a potential threshold where the system's ability to return the correct top result is severely compromised, even as it searches more frequently. This could imply that beyond a certain point, integrating more knowledge (or whatever IKG represents) introduces noise or complexity that hinders precise retrieval.

</details>

|

| --- | --- |

| (a) CWQ. | (b) WebQSP. |

Figure 3. Ratio of web search operation in different KG settings under rewards with penalty or not.

|

<details>

<summary>cwq_compare.png Details</summary>

### Visual Description

## Grouped Bar Chart: Performance Comparison of GoG and Graph-RFT Across IKG Percentages

### Overview

The image displays a grouped bar chart comparing the performance of two methods, labeled "GoG" and "Graph-RFT," across three different conditions or datasets. The performance metric is "Hits@1," plotted on the y-axis. The chart visually demonstrates that Graph-RFT consistently achieves a higher Hits@1 score than GoG in all three categories, though both methods show a declining trend as the category percentage increases.

### Components/Axes

* **Chart Type:** Grouped vertical bar chart.

* **Y-Axis:**

* **Label:** "Hits@1"

* **Scale:** Linear scale from 0 to 80, with major tick marks at intervals of 20 (0, 20, 40, 60, 80).

* **X-Axis:**

* **Categories:** Three distinct categories are labeled:

1. `IKG-20%`

2. `IKG-40%`

3. `IKG-60%`

* **Legend:**

* **Position:** Top-right corner of the chart area, enclosed in a box.

* **Entries:**

1. **GoG:** Represented by a light green (pale lime) color.

2. **Graph-RFT:** Represented by a teal (blue-green) color.

* **Grid:** A light gray grid is present, with horizontal lines aligned to the y-axis ticks.

### Detailed Analysis

The chart presents paired bars for each x-axis category. The left bar in each pair is GoG (light green), and the right bar is Graph-RFT (teal).

**1. Category: IKG-20%**

* **GoG (Light Green):** The bar height is approximately **45**. (Visual estimate: slightly above the 40 line).

* **Graph-RFT (Teal):** The bar height is approximately **72**. (Visual estimate: well above the 60 line, closer to 72 than 70 or 75).

* **Trend:** Graph-RFT significantly outperforms GoG in this category.

**2. Category: IKG-40%**

* **GoG (Light Green):** The bar height is approximately **44**. (Visual estimate: appears very slightly lower than the GoG bar for IKG-20%).

* **Graph-RFT (Teal):** The bar height is approximately **67**. (Visual estimate: above the 60 line, but lower than its value for IKG-20%).

* **Trend:** Both methods show a slight decrease compared to IKG-20%. The performance gap remains large.

**3. Category: IKG-60%**

* **GoG (Light Green):** The bar height is approximately **36**. (Visual estimate: clearly below the 40 line).

* **Graph-RFT (Teal):** The bar height is approximately **60**. (Visual estimate: aligns almost exactly with the 60 grid line).

* **Trend:** Both methods show their lowest performance in this category. The decline from IKG-40% to IKG-60% is more pronounced for Graph-RFT than for GoG.

### Key Observations

1. **Consistent Superiority:** Graph-RFT achieves a higher Hits@1 score than GoG in all three measured conditions (IKG-20%, IKG-40%, IKG-60%).

2. **Performance Degradation:** Both methods exhibit a downward trend in performance as the IKG percentage increases from 20% to 60%.

3. **Magnitude of Gap:** The absolute performance gap between Graph-RFT and GoG is largest at IKG-20% (~27 points) and narrows slightly at IKG-60% (~24 points), primarily because Graph-RFT's score drops more steeply.

4. **Relative Stability of GoG:** The GoG method's performance declines more gradually (from ~45 to ~36) compared to Graph-RFT's steeper decline (from ~72 to ~60).

### Interpretation

The data suggests that the "Graph-RFT" method is substantially more effective than the "GoG" method for the task measured by the "Hits@1" metric across the tested scenarios. The "IKG" variable (likely representing some form of "Incomplete Knowledge Graph" or similar concept with a percentage of missing or corrupted data) has a negative impact on both methods' performance. The consistent decline indicates that as the IKG condition becomes more severe (higher percentage), the task becomes more difficult for both models.

The fact that Graph-RFT maintains a significant lead even as performance degrades implies it is more robust to the challenges introduced by the IKG conditions. However, its steeper absolute decline might suggest its performance is more sensitive to the *degree* of IKG compared to GoG, which shows a flatter, more stable, but lower-performing response curve. This chart would be critical in a technical document to argue for the adoption of Graph-RFT over GoG, while also noting the shared vulnerability to increasing IKG percentages.

</details>

|

<details>

<summary>webqsp_compare.png Details</summary>

### Visual Description

## Grouped Bar Chart: Performance Comparison of GoG and Graph-RFT Across IKG Levels

### Overview

This image is a grouped bar chart comparing the performance of two methods, labeled "GoG" and "Graph-RFT," across three different conditions or datasets. The performance metric is "Hits@1," plotted on the y-axis. The chart visually demonstrates that Graph-RFT consistently outperforms GoG across all tested conditions.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis:**

* **Label:** "Hits@1" (likely a performance metric, such as accuracy where the correct answer is ranked first).

* **Scale:** Linear scale from 0 to 100, with major grid lines at intervals of 20 (0, 20, 40, 60, 80, 100).

* **X-Axis:**

* **Categories:** Three distinct categories, labeled:

1. `IKG-20%`

2. `IKG-40%`

3. `IKG-60%`

* These labels likely represent different experimental settings, possibly varying levels of a parameter called "IKG."

* **Legend:**

* **Position:** Top-right corner of the chart area.

* **Entries:**

1. **GoG:** Represented by a light green (pale yellow-green) bar.

2. **Graph-RFT:** Represented by a teal (blue-green) bar.

* **Data Series:** Two series of bars, one for each method, grouped together for each x-axis category.

### Detailed Analysis

The chart presents the following approximate "Hits@1" values for each method and category. Values are estimated based on the bar heights relative to the y-axis grid lines.

**Trend Verification:**

* **GoG (Light Green Bars):** The visual trend shows a slight, consistent decrease in performance as the IKG percentage increases from 20% to 60%.

* **Graph-RFT (Teal Bars):** The visual trend also shows a decrease in performance as IKG percentage increases, but the decline appears more pronounced between IKG-40% and IKG-60%.

**Data Points (Approximate Values):**

| Category | GoG (Hits@1) | Graph-RFT (Hits@1) | Performance Gap (Graph-RFT - GoG) |

| :--------- | :----------- | :------------------ | :-------------------------------- |

| **IKG-20%** | ~70 | ~90 | ~+20 |

| **IKG-40%** | ~66 | ~86 | ~+20 |

| **IKG-60%** | ~62 | ~78 | ~+16 |

### Key Observations

1. **Consistent Superiority:** Graph-RFT achieves a higher "Hits@1" score than GoG in all three categories (IKG-20%, IKG-40%, IKG-60%).

2. **Performance Degradation:** Both methods show a downward trend in performance as the IKG percentage increases. The drop is more significant for Graph-RFT between the 40% and 60% conditions.

3. **Narrowing Gap:** While Graph-RFT maintains a clear lead, the absolute performance gap between the two methods narrows slightly at the highest IKG level (IKG-60%).

4. **High Baseline Performance:** Both methods start with relatively high performance (above 60) at the IKG-20% condition.

### Interpretation

The data suggests that the **Graph-RFT method is more robust and effective** than the GoG method for the task measured by "Hits@1," regardless of the IKG setting tested. The consistent ~20-point lead at lower IKG levels indicates a significant architectural or methodological advantage.

The downward trend for both methods implies that the task becomes more challenging as the "IKG" parameter increases (from 20% to 60%). This could mean IKG represents noise, missing data, or task difficulty. Graph-RFT's steeper decline at the highest level (IKG-60%) might indicate it is more sensitive to extreme conditions, though it still outperforms GoG.

**In essence:** Graph-RFT is the superior model under these test conditions, but its advantage diminishes slightly as the problem difficulty (IKG%) increases. The chart effectively communicates a comparative performance analysis, highlighting both the relative strength of one method and the general impact of a changing experimental variable.

</details>

|

| --- | --- |

| (a) CWQ. | (b) WebQSP. |

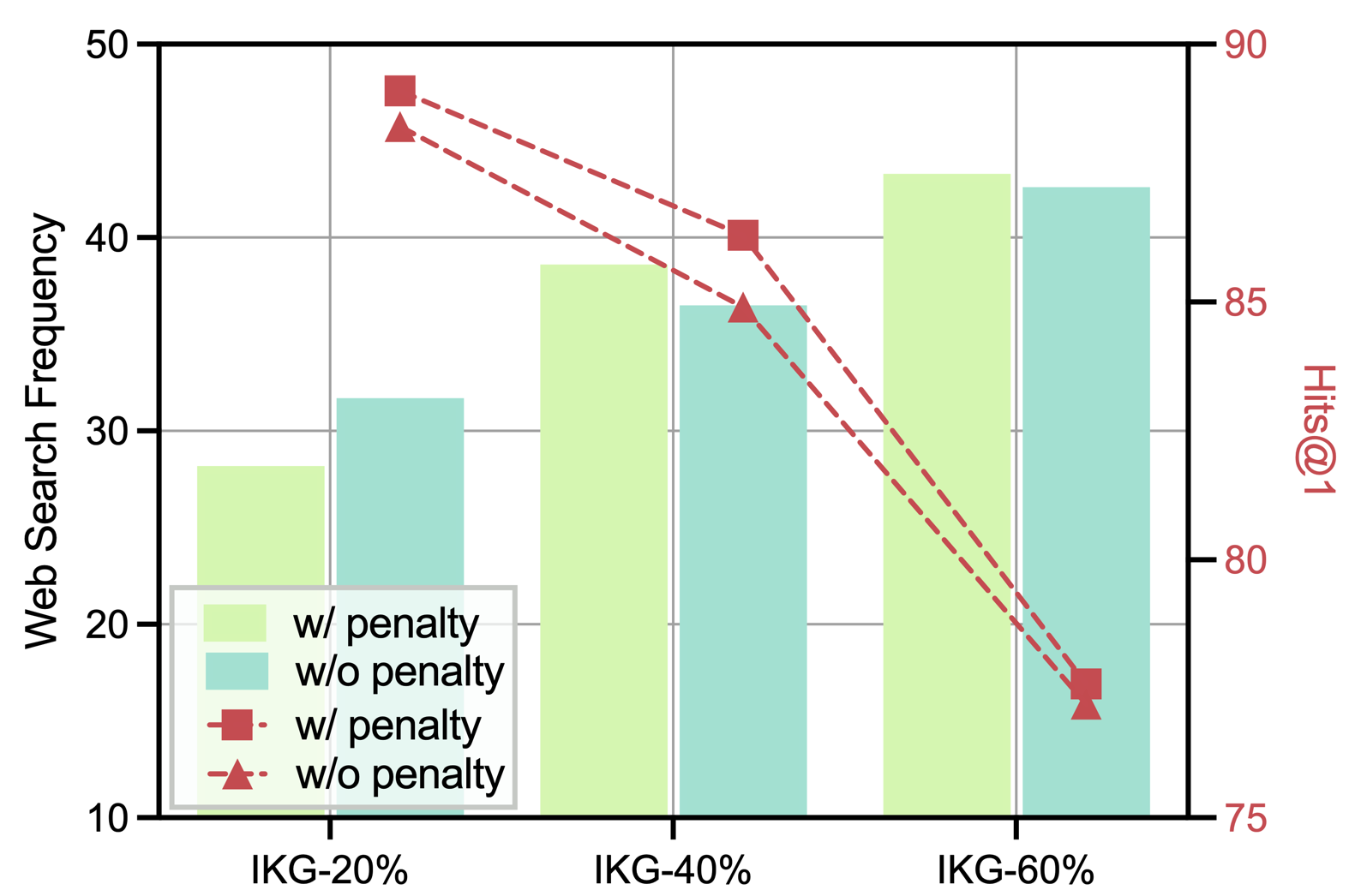

Figure 4. Performance of GoG and our model under different KG settings.

$\blacktriangleright$ Exploration on SFT and Multi-reward Design. We further examine the effect of the SFT stage and the multi-reward mechanism on Graph-RFT’s performance under the IKG-40% setting. Comparing Graph-RFT-base w/o SFT with Graph-RFT-base shows a marked performance drop across all datasets, underscoring the crucial role of SFT in strengthening model capability. Moreover, removing either the web retrieval reward or the graph retrieval reward consistently degrades performance, demonstrating that both rewards effectively guide the LLM to retrieve more semantically relevant triples and external documents. As shown in Figure 3, when the model’s web search frequency approaches the optimal level (e.g., 20% in the IKG-20% setting), performance on CWQ and WebQSP improves. This indicates that Graph-RFT can adaptively regulate web search frequency based on the sufficiency of KG information, reducing the risk of noisy or irrelevant content and thereby enhancing overall effectiveness.

### 4.3. Different KG Incompleteness (RQ3)

The key capabilities of our model lies in performing global planning on complex problems to leverage the knowledge within the KG and identifying knowledge gaps beyond the KG through web search. We examine the performance of Graph-RFT under varying degrees of KG incompleteness and record its external search frequency, as shown in Figure 3. To evaluate the robustness of different methods under KG incompleteness, we compare GoG and Graph-RFT across KGs with different completeness levels, as illustrated in Figure 4. $\blacktriangleright$ Impact of Rewards with or without Penalty. From Figure 3, Graph-RFT increases the frequency of external searches as KG completeness decreases. This behavior reflects the model’s ability to proactively compensate for missing knowledge required for reasoning through web retrieval. Moreover, the model’s search strategy varies notably with question complexity: compared to WebQSP (maximum 2-hop), Graph-RFT conducts significantly more external searches when tackling the more complex multi-hop questions in CWQ (maximum 4-hop). This demonstrates that the model can dynamically adjust its web search frequency according to question complexity, effectively aligning knowledge acquisition with the reasoning demands of the task. $\blacktriangleright$ Impact of Different KG Incompleteness. From Figure 4, we observe that Graph-RFT outperforms GoG across all degrees of incompleteness. This improvement arises because GoG is primarily confined to local decision-making, lacking the global planning needed for effective question understanding and tool invocation in complex problem decomposition. In contrast, when KG triples are missing, GoG relies solely on the LLM’s internal knowledge to generate answers, whereas our model retrieves relevant external documents through web search, thereby supplementing incomplete KG information and improving answer accuracy. Notably, the improvement on CWQ is much more pronounced than on WebQSP, validating Graph-RFT’s strong planning ability and logical decomposition mechanism for tackling complex reasoning tasks.

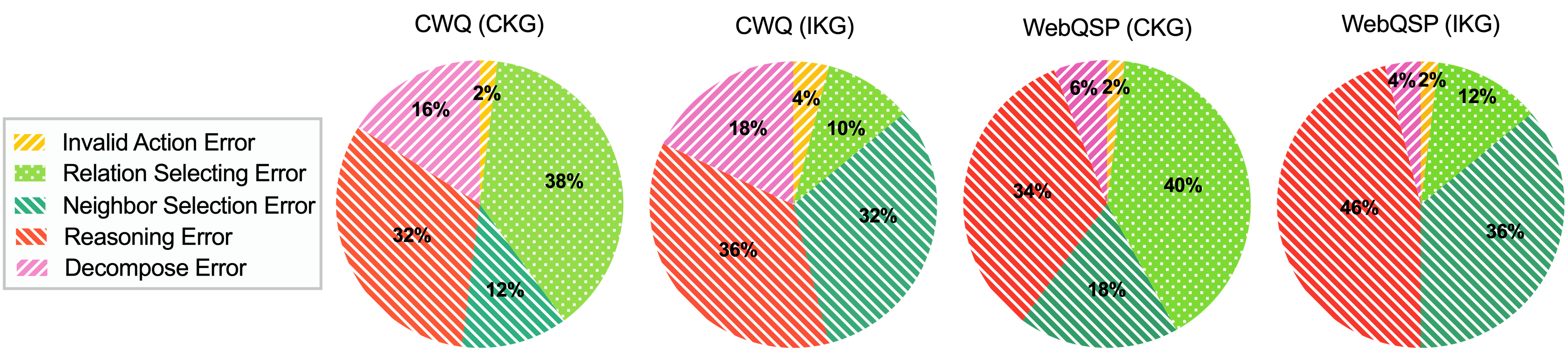

### 4.4. Error Type Analysis (RQ4)

To analyze Graph-RFT’s limitations, we randomly selected 50 failure cases from CWQ and WebQSP under both CKG and IKG settings. These cases are categorized into five types: (1) invalid actions, (2) relation selection errors, (3) neighbor selection errors, (4) reasoning errors, and (5) decomposition errors, with their distribution shown in Figure 6. We can obtain the following findings: (i): reasoning errors—where the LLM follows a correct reasoning process but produces incorrect answers—represent the largest proportion in both datasets and are more prevalent in IKG than in CKG, likely due to answer aliasing and discrepancies between retrieved document answers and reference answers. (ii): neighbor selection errors manifest in two scenarios: on the one hand, when relation selection is correct, neighbor errors are relatively rare in CKG, indicating that the model primarily struggles with relation selection; on the other hand, when the correct relation is not identified, as in IKG, the model often fails to determine whether entities are sufficient for problem-solving, resulting in incorrect neighbor selection. (iii): decomposition errors, arising from flawed planning, occur more frequently on complex problems such as CWQ, whereas invalid action errors (e.g., invoking undefined tools) are effectively managed by the model. The case study is in Appendix A.6.

## 5. Conclusion

We introduced Graph-RFT, a two-stage reinforcement fine-tuning framework for complex reasoning over incomplete knowledge graphs. Graph-RFT unifies structured planning and adaptive retrieval within a single learning paradigm, enabling LLMs to reason coherently and retrieve information dynamically across KGs and web sources. In the first stage, a CoT fine-tuning process with a customized plan–retrieval dataset activates the model’s planning and reasoning capabilities while mitigating the GRPO cold-start problem. In the second stage, a plan–retrieval guided reinforcement learning procedure with a multi-reward design optimizes coverage-aware retrieval scheduling and multi-step logical consistency. Extensive experiments across multiple KGQA benchmarks demonstrate that Graph-RFT consistently outperforms strong baselines, achieving superior accuracy even with smaller LLM backbones. In the future, we will explore enhancing relation filtering performance from KGs and the capability of decomposing problems when dealing with complex reasoning tasks.

Acknowledgements. We thank all anonymous reviewers and area chairs for their comments. This work is supported by the National Natural Science Foundation of China (U23A20316), the CCF-Tencent Rhino-Bird Open Research Fund (CCF-Tencent RAGR20250115), and the computing power resources from Wuhan Dongxihu District Intelligent Computing Center.

## References

- J. Achiam, S. Adler, S. Agarwal, L. Ahmad, I. Akkaya, F. L. Aleman, D. Almeida, J. Altenschmidt, S. Altman, S. Anadkat, et al. (2023) Gpt-4 technical report. arXiv preprint arXiv:2303.08774. Cited by: §2.

- F. Atif, O. El Khatib, and D. Difallah (2023) Beamqa: multi-hop knowledge graph question answering with sequence-to-sequence prediction and beam search. In Proceedings of the 46th International ACM SIGIR Conference on Research and Development in Information Retrieval, pp. 781–790. Cited by: §2.

- J. Baek, A. F. Aji, and A. Saffari (2023) Knowledge-augmented language model prompting for zero-shot knowledge graph question answering. arXiv preprint arXiv:2306.04136. Cited by: §4.

- K. Bollacker, C. Evans, P. Paritosh, T. Sturge, and J. Taylor (2008) Freebase: a collaboratively created graph database for structuring human knowledge. In Proceedings of the 2008 ACM SIGMOD international conference on Management of data, pp. 1247–1250. Cited by: §4.

- P. Clark, I. Cowhey, O. Etzioni, T. Khot, A. Sabharwal, C. Schoenick, and O. Tafjord (2018) Think you have solved question answering? try arc, the ai2 reasoning challenge. arXiv preprint arXiv:1803.05457. Cited by: §1.

- R. Descartes (1901) Discourse on method. Aladdin Book Company. Cited by: §1.

- T. Feng and L. He (2025) Rgr-kbqa: generating logical forms for question answering using knowledge-graph-enhanced large language model. In Proceedings of the 31st International Conference on Computational Linguistics, pp. 3057–3070. Cited by: Table 1, §2.

- Y. Gu, S. Kase, M. Vanni, B. Sadler, P. Liang, X. Yan, and Y. Su (2021) Beyond iid: three levels of generalization for question answering on knowledge bases. In Proceedings of the web conference 2021, pp. 3477–3488. Cited by: §4.

- D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Bi, et al. (2025) Deepseek-r1: incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948. Cited by: §1, §1.

- C. Hao, W. Feng, Y. Zhang, and H. Wang (2025) DynaSearcher: dynamic knowledge graph augmented search agent via multi-reward reinforcement learning. arXiv preprint arXiv:2507.17365. Cited by: §2, §3.3.2.

- D. Hendrycks, C. Burns, S. Basart, A. Zou, M. Mazeika, D. Song, and J. Steinhardt (2020) Measuring massive multitask language understanding. arXiv preprint arXiv:2009.03300. Cited by: §1.

- S. Hu, L. Zou, J. X. Yu, H. Wang, and D. Zhao (2017) Answering natural language questions by subgraph matching over knowledge graphs. IEEE Transactions on Knowledge and Data Engineering 30 (5), pp. 824–837. Cited by: §2.

- J. Jiang, K. Zhou, Z. Dong, K. Ye, W. X. Zhao, and J. Wen (2023) Structgpt: a general framework for large language model to reason over structured data. arXiv preprint arXiv:2305.09645. Cited by: §A.2, §1, Table 2, §4.

- J. Jiang, K. Zhou, W. X. Zhao, Y. Song, C. Zhu, H. Zhu, and J. Wen (2024) Kg-agent: an efficient autonomous agent framework for complex reasoning over knowledge graph. arXiv preprint arXiv:2402.11163. Cited by: Table 1, §2.

- J. Jiang, K. Zhou, W. X. Zhao, and J. Wen (2022) Unikgqa: unified retrieval and reasoning for solving multi-hop question answering over knowledge graph. arXiv preprint arXiv:2212.00959. Cited by: §2.

- B. Jin, H. Zeng, Z. Yue, J. Yoon, S. Arik, D. Wang, H. Zamani, and J. Han (2025) Search-r1: training llms to reason and leverage search engines with reinforcement learning. arXiv preprint arXiv:2503.09516. Cited by: §3.3.2.

- H. Khorashadizadeh, F. Z. Amara, M. Ezzabady, F. Ieng, S. Tiwari, N. Mihindukulasooriya, J. Groppe, S. Sahri, F. Benamara, and S. Groppe (2024) Research trends for the interplay between large language models and knowledge graphs. arXiv preprint arXiv:2406.08223. Cited by: §1.

- Y. Lan and J. Jiang (2020) Query graph generation for answering multi-hop complex questions from knowledge bases. Cited by: §2.

- T. Li, X. Ma, A. Zhuang, Y. Gu, Y. Su, and W. Chen (2023a) Few-shot in-context learning for knowledge base question answering. arXiv preprint arXiv:2305.01750. Cited by: §A.2, §1, Table 2.

- X. Li, R. Zhao, Y. K. Chia, B. Ding, L. Bing, S. Joty, and S. Poria (2023b) Chain of knowledge: a framework for grounding large language models with structured knowledge bases. arXiv preprint arXiv:2305.13269 3. Cited by: §4.

- B. Liu, J. Zhang, F. Lin, C. Yang, M. Peng, and W. Yin (2025a) Symagent: a neural-symbolic self-learning agent framework for complex reasoning over knowledge graphs. In Proceedings of the ACM on Web Conference 2025, pp. 98–108. Cited by: §1, §2.

- Z. Liu, Z. Sun, Y. Zang, X. Dong, Y. Cao, H. Duan, D. Lin, and J. Wang (2025b) Visual-rft: visual reinforcement fine-tuning. arXiv preprint arXiv:2503.01785. Cited by: §1.

- H. Luo, G. Chen, Q. Lin, Y. Guo, F. Xu, Z. Kuang, M. Song, X. Wu, Y. Zhu, L. A. Tuan, et al. (2025a) Graph-r1: towards agentic graphrag framework via end-to-end reinforcement learning. arXiv preprint arXiv:2507.21892. Cited by: §2.

- H. Luo, Z. Tang, S. Peng, Y. Guo, W. Zhang, C. Ma, G. Dong, M. Song, W. Lin, Y. Zhu, et al. (2023a) Chatkbqa: a generate-then-retrieve framework for knowledge base question answering with fine-tuned large language models. arXiv preprint arXiv:2310.08975. Cited by: §A.2, §1, §2, Table 2.

- L. Luo, J. Ju, B. Xiong, Y. Li, G. Haffari, and S. Pan (2025b) Chatrule: mining logical rules with large language models for knowledge graph reasoning. In Pacific-Asia Conference on Knowledge Discovery and Data Mining, pp. 314–325. Cited by: §2.

- L. Luo, Y. Li, G. Haffari, and S. Pan (2023b) Reasoning on graphs: faithful and interpretable large language model reasoning. arXiv preprint arXiv:2310.01061. Cited by: §A.2, Table 2.

- J. Ma, Z. Gao, Q. Chai, W. Sun, P. Wang, H. Pei, J. Tao, L. Song, J. Liu, C. Zhang, et al. (2025) Debate on graph: a flexible and reliable reasoning framework for large language models. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 39, pp. 24768–24776. Cited by: Table 1, §1, §2.

- [28] M. Petrochuk and L. Zettlemoyer Simplequestions nearly solved: a new upperbound and baseline approach. arxiv 2018. arXiv preprint arXiv:1804.08798. Cited by: §4.

- C. Raffel, N. Shazeer, A. Roberts, K. Lee, S. Narang, M. Matena, Y. Zhou, W. Li, and P. J. Liu (2020) Exploring the limits of transfer learning with a unified text-to-text transformer. Journal of machine learning research 21 (140), pp. 1–67. Cited by: §2.

- T. Schick, J. Dwivedi-Yu, R. Dessì, R. Raileanu, M. Lomeli, E. Hambro, L. Zettlemoyer, N. Cancedda, and T. Scialom (2023) Toolformer: language models can teach themselves to use tools. Advances in Neural Information Processing Systems 36, pp. 68539–68551. Cited by: §2.

- Z. Shao, P. Wang, Q. Zhu, R. Xu, J. Song, X. Bi, H. Zhang, M. Zhang, Y. Li, Y. Wu, et al. (2024) Deepseekmath: pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300. Cited by: §3.3.2, §3.3.2.

- Y. Shi, S. Li, C. Wu, Z. Liu, J. Fang, H. Cai, A. Zhang, and X. Wang (2025) Search and refine during think: autonomous retrieval-augmented reasoning of llms. arXiv preprint arXiv:2505.11277. Cited by: §1, §3.3.2.

- J. Sun, C. Xu, L. Tang, S. Wang, C. Lin, Y. Gong, L. M. Ni, H. Shum, and J. Guo (2023) Think-on-graph: deep and responsible reasoning of large language model on knowledge graph. arXiv preprint arXiv:2307.07697. Cited by: §A.2, §1, §2, Table 2, Table 2, Table 2, Table 2, Table 2, §4.