# Understanding AI Trustworthiness: A Scoping Review of AIES & FAccT Articles

**Authors**: Siddharth Mehrotra, Jin Huang, Xuelong Fu, Roel Dobbe, Clara I. Sánchez, Maarten de Rijke

> University of Amsterdam & Delft University of Technology, The Netherlands

> University of Cambridge, United Kingdom

> Delft University of Technology, The Netherlands

> University of Amsterdam, The Netherlands

JAIR Associate Editor: Min-Yen Kan

JAIR Track: Survey Articles

(2025)

Abstract.

Background: Trustworthy AI serves as a foundational pillar for two major AI ethics conferences: AIES and FAccT. However, current research often adopts techno-centric approaches, focusing primarily on technical attributes such as reliability, robustness, and fairness, while overlooking the sociotechnical dimensions critical to understanding AI trustworthiness in real-world contexts.

Objectives: This scoping review aims to examine how the AIES and FAccT communities conceptualize, measure, and validate AI trustworthiness, identifying major gaps and opportunities for advancing a holistic understanding of trustworthy AI systems.

Methods: We conduct a scoping review of AIES and FAccT conference proceedings to date, systematically analyzing how trustworthiness is defined, operationalized, and applied across different research domains. Our analysis focuses on conceptualization approaches, measurement methods, verification and validation techniques, application areas, and underlying values.

Results: While significant progress has been made in defining technical attributes such as transparency, accountability, and robustness, our findings reveal critical gaps. Current research often emphasizes technical precision at the expense of social and ethical considerations. The sociotechnical nature of AI systems remains underexplored, and trustworthiness emerges as a contested concept shaped by those with the power to define it.

Conclusions: An interdisciplinary approach combining technical rigor with social, cultural, and institutional considerations is essential for advancing trustworthy AI. We propose actionable measures for the AI ethics community to adopt holistic frameworks that genuinely address the complex interplay between AI systems and society, ultimately promoting responsible technological development that benefits all stakeholders.

Copyright: CC. DOI: 10.1613/jair.1.xxxxx. Publication month: 10. Journal year: 2025.

1. Introduction

The rapid advancement and widespread adoption of artificial intelligence (AI) have ushered in a new era of technological innovation, bringing both immense potential and significant challenges. As AI increasingly permeates aspects of our lives, from healthcare to criminal justice, the need for trustworthy AI has become paramount. Trustworthy AI, as a concept, encompasses a multifaceted approach to AI systems that prioritizes safety, transparency, and ethical considerations for all stakeholders (10.1145/3555803, 85). It extends beyond technical proficiency, embracing principles like reliability, fairness, explainability, and accountability. This has given rise to dedicated academic venues like the AAAI/ACM Conference on AI, Ethics, and Society (AIES) and ACM Conference on Fairness, Accountability, and Transparency (FAccT), fostering interdisciplinary discourse on AI ethics. It is now clear that a purely technology-centric view of trustworthiness is not enough. Trustworthy AI requires an interdisciplinary perspective that views AI systems as sociotechnical systems.

The sociotechnical nature of AI systems demands a holistic approach to trustworthiness, one that considers not only the technical aspects but also the complex interplay between AI and the broader social, cultural, and institutional contexts in which it operates. (betram, 95) lays out five elements of trustworthiness (being competent, reliable, transparent, and benevolent, and having ethical integrity) and calls for studying these elements in a broader sociotechnical setting. This expanded perspective is particularly crucial for the AI ethics community, which aims to bridge the gap between computer science and other disciplines in addressing AI’s ethical challenges.

In a study by (laufer, 82), 36 self-selecting FAccT affiliates were asked which values FAccT scholarship should address in the near future; participants reflected on the need for broader conceptions of trustworthiness. Central to this discourse is the recognition that the concept of trustworthiness is fundamental to understanding and predicting trust levels in AI systems. It is therefore imperative to critically examine how the AIES & FAccT communities conceptualize and communicate trustworthiness, and to what ends these efforts are directed. This examination raises important questions about the commitments that trustworthy AI research in these venues signifies, or should signify, in the broader context of AI ethics and societal impact.

The study of the trustworthiness of AI systems has been a topic of interest for many years, even before the existence of the AIES & FAccT conferences. Scholars from various disciplines have identified values that determine the attribution of trustworthiness, revealing both similarities and differences across fields. For example, in interpersonal trust, competence, predictability, benevolence, and integrity have been highlighted as crucial values (lahusen2024trust, 79). For public institutions, the list extends to competence and reliability, procedures like transparency and accountability, and results including effectiveness and general welfare (polemi2024challenges, 115). In the context of AI systems, properties such as reliability, robustness, safety, interpretability, explainability, fairness, transparency, and accountability have been identified as trust-relevant (kaur2021requirements, 70, 84).

Trustworthiness has been used to refer to two sides of a coin (e.g., lee2004trust, 84, 86, 98, 146, 122). On the one hand, trustworthiness has been referred to as an objective attribute of the trustee (e.g., zerilli2022transparency, 152, 52, 71, 65, 47). On the other hand, trustworthiness has been referred to as a trustor’s subjective perception of a trustee’s attributes (e.g., mayer1995integrative, 98, 122, 84). Overall, trustworthiness is multifaceted, comprising several elements regardless of whether it is viewed as an inherent quality of the trusted party or as a subjective assessment made by those extending trust (baer2018people, 9, 31, 65, 84). Therefore, by concentrating on trustworthiness, we can assess the qualities and behaviors of AI systems that contribute to their reliability, safety, and ethical alignment.

Despite the extensive research on trustworthy AI (toney, 141), there remains a critical gap in understanding the sociotechnical nature of these systems. Few studies have adequately addressed the complex interplay between technical capabilities and social contexts in which AI operates. This paper aims to address these gaps by conducting a comprehensive scoping review of articles published in AIES & FAccT conferences to date. Through our analysis, we seek to answer several key questions:

1. How is trustworthiness conceptualized in the context of AI systems within AIES & FAccT proceedings?

2. What methodologies are employed to measure, verify, and validate trustworthiness?

3. Which application areas are most prominently represented?

4. What underlying values and ethical considerations drive this body of work?

We explicitly focus on values because an account of value embodiment in AI aids in assessing whether designed AI systems indeed embody a range of moral values (mehrotraaies, 101), e.g., those articulated by the EU High-Level Expert Group (EU_AI_Ethics_Guidelines, 42). To this end, we conduct a thematic analysis of our corpus along three key dimensions (intended, embodied, and realized values), following the framework of (van2020embedding, 114). We use this framework because it overcomes the limitations of focusing solely on intentions (which may not manifest in the system) or outcomes (which may be influenced by external factors) by emphasizing embodied values, i.e., those intentionally and successfully embedded in the system.

Overall, our core contributions are:

1. A systematic analysis of how trustworthiness is conceptualized, measured, and validated within the AIES & FAccT community.

1. Identification of major gaps in current research, particularly regarding the sociotechnical aspects of AI systems.

1. A critical examination of the values and ethical considerations underpinning trustworthy AI research.

1. A discussion of the intellectual and broader impact of AIES & FAccT conferences in studying AI trustworthiness.

2. Related Work

Trust is a much-discussed topic in algorithmic decision-making, especially in the area of AI (mehrotrareview, 99). In the development of trust, the process by which a human assesses the trustworthiness of a system, leading to their perception of trustworthiness, is crucial. Only with an accurate trustworthiness assessment can people base their trust on adequate expectations about a system’s capabilities and limitations and make informed decisions. Trustworthiness of AI systems has been studied from multiple disciplines such as computer science (liu2023trustworthy, 89), psychology (schlicker2022calibrated, 124), public administration (lahusen2024trust, 79), and medicine (zhang2023ethics, 154). Below, we provide a background on studying trustworthiness in four subject areas, namely: computer science, law, social sciences, and humanities. These areas are common in the AIES & FAccT conferences. AIES includes experts from various disciplines such as ethics, philosophy, economics, sociology, psychology, law, history, and politics (AIES2018, 50), while FAccT brings together scholars from computer science, law, social sciences, and humanities (Facct2018, 48). These areas serve as the foundation for our interdisciplinary approach to understanding the multifaceted nature of AI trustworthiness.

2.1. Computer Science

Computer science has predominantly focused on technical perspectives for trustworthy AI applications, emphasizing three characteristics: (i) robustness, fortifying AI models against malicious behavior such as adversarial attacks; (ii) generalization, maintaining performance on unseen out-of-distribution (OOD) data; and (iii) interpretability, improving understanding of AI model predictions (wang2023trustworthy, 148, 104). (mucsanyi2023trustworthy, 104) list common pitfalls in evaluating trustworthy machine learning models, e.g., inconsistent implementations of mathematically identical evaluation metrics, confounding of multiple factors in method comparisons, overlap between training and test samples, and the lack of a validation set. Building on the foundational principles of trustworthy machine learning, recent research has begun to investigate the characteristics that apply to large language models (LLMs) and mechanisms for evaluating their trustworthiness. (liu2023trustworthy, 89) survey key dimensions for assessing LLM trustworthiness: reliability, safety, fairness, resistance to misuse, explainability and reasoning, adherence to social norms, and robustness. They highlight that a key principle for evaluating an LLM’s trustworthiness is the generation of proper test data across these dimensions. Finally, (toney, 141) review similarities and differences between governments’ and researchers’ definitions and frameworks on trustworthy AI. The authors find inconsistencies between policy and research term frequencies, highlighting the different focuses of each group on trustworthy AI. In contrast, our work focuses on how AI trustworthiness is studied within the AIES & FAccT community rather than on the use of the terminology.

Studies in computer science are often tech-centred, focusing primarily on technical methodologies while overlooking the socio-cultural, ethical, and legal dimensions of trustworthiness. Achieving trustworthiness, however, demands interdisciplinary collaboration. Thus, we consider AI systems as socio-technical and explore how the AIES & FAccT community is deepening our insight into the trustworthiness of AI.

2.2. Social Sciences

Social science provides a broad perspective on AI trustworthiness, focusing on its societal, institutional, economic, and political implications and dynamics. For example, (dacon2023you, 28) investigates the impact of AI trustworthiness on various aspects of society, like the environment, human society, and societal values. These implications are abstracted into three social principles: (i) harm prevention, which focuses on ensuring safety, security, reliability, and privacy; (ii) explicability, which emphasizes explainability and transparency of the system; and (iii) fairness, which consists of accountability and the well-being of society and environment. (thiebes2021trustworthy, 138) evaluate AI trustworthiness frameworks and synthesize the recurring themes in five principles: beneficence, non-maleficence, autonomy, justice, and explicability. They also conceptualize the DaRe4TAI framework, which structures and directs data-driven research in trustworthy AI while addressing tensions between the five principles. Similar framework-oriented approaches are pursued by other social science scholars, as they form a crucial connection with policymaking (polemi2024challenges, 115, 78). Like (polemi2024challenges, 115, 78), we adopt this lens in our review: we discuss the results of our corpus analysis with a socio-technical focus, considering the entire ecosystem in which AI systems operate.

2.3. Law

The question of AI trustworthiness is crucial in the legal domain, where even minor inaccuracies can have significant consequences for individuals navigating the complexities of legal processes. Studies have demonstrated the capabilities of LLMs in understanding and responding to natural language queries. However, the tendency of LLMs to generate inaccurate and incomplete information raises serious concerns about their trustworthiness in providing legal guidance (kattnig2024assessing, 69). E.g., (tan2023chatgpt, 134) investigate ChatGPT’s ability to provide legal information using several simulated cases and compared with the performance to that of and find that often leads laypeople to over-trust it. Looking more generally at the use of AI systems in law, (steenhuis2024ai, 130) raises important questions about the trustworthiness of such systems for methods of service delivery, the replacement of routine legal tasks, and essential legal assistance to those who might otherwise go without. Potential answers can be derived from the work of (hagan2020legal, 55), who provides legal design testing metrics to explore the trustworthiness of AI systems, plus a methodology and “design deliverables” based on these metrics with California state courts’ Self Help Centers (hagan2018human, 54). Overall, AI systems hold significant promise for improving accessibility and efficiency in the legal domain but face challenges related to inaccuracies, hallucinations, and over-reliance, raising concerns about trustworthiness.

2.4. Humanities

The study of AI trustworthiness within the humanities emphasizes ethical, philosophical, and cultural aspects, addressing critical issues such as moral agency, responsibility, and the societal impact of AI decision-making (noller2024extended, 107, 117, 25). (balmer2023sociological, 10) critically examines AI’s role in society through creative methodologies, while others explore how AI reshapes creativity, authorship, and cultural interpretation (lim2018ai, 87). Historical and philosophical analyses (e.g., coeckelbergh2022political, 26) provide valuable context for understanding contemporary AI technologies, alongside inquiries into AI consciousness and religious perspectives that highlight cultural and ethical considerations (IsDeweys, 46, 2, 59). These diverse approaches underline the importance of contextualizing AI systems within broader humanistic and historical frameworks to assess their trustworthiness effectively. The integration of ethical, cultural, and philosophical dimensions in evaluating AI trustworthiness offers a more comprehensive understanding of its implications, enabling more informed and responsible development of AI systems.

3. Methodology and Corpus Overview

3.1. Methodology

We employ a Scoping Literature Review (SLR) following the guidelines by (arksey2005scoping, 8) to identify articles studying trustworthiness of AI systems. SLRs guide the gathering and identification of papers in a topic area for scrutiny (kastner2012most, 68), which enables us to perform our thematic investigation of AIES & FAccT scholarship. We use qualitative manual coding and computational corpus analysis, including topic modeling, to extract themes and patterns from the data.

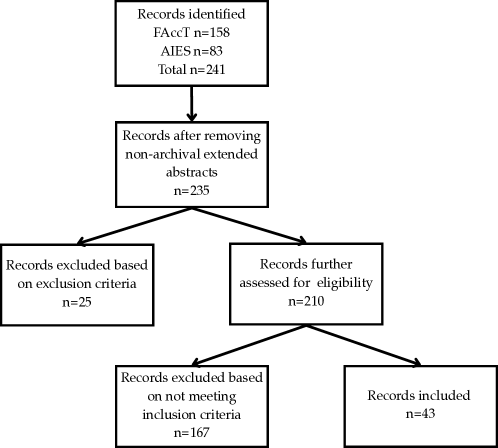

Data Collection. The data consists of peer-reviewed articles published by the AIES & FAccT conferences between 2018 and 2025, downloaded from the ACM website on September 05, 2025. (Note that the proceedings of AIES 2025 were not available when we submitted this manuscript; hence, AIES 2025 articles are excluded from this review.) In total, we obtain 241 articles (83 AIES, 158 FAccT) that include the keyword “trustworthiness” in the full text, excluding references; 6 non-archival extended abstracts are excluded, leaving us with 235 articles for screening.
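The keyword step described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: the heading pattern used to cut off the reference list, the helper names, and the toy documents are all hypothetical. Only the counts in the last lines come from the text.

```python
import re

# Hypothetical rule for locating the reference list; real proceedings
# texts may need a more robust heuristic.
REFERENCES_HEADING = re.compile(
    r"^\s*(references|bibliography)\s*$", re.IGNORECASE | re.MULTILINE
)


def body_without_references(full_text: str) -> str:
    """Return the text that precedes the last references heading, if any."""
    matches = list(REFERENCES_HEADING.finditer(full_text))
    if not matches:
        return full_text
    return full_text[: matches[-1].start()]


def mentions_keyword(full_text: str, keyword: str = "trustworthiness") -> bool:
    """True if the keyword occurs in the body text (case-insensitive)."""
    return keyword.lower() in body_without_references(full_text).lower()


# Toy documents (invented for illustration, not taken from the corpus).
papers = {
    "a": "We study trustworthiness of audits.\nReferences\n[1] Some paper.",
    "b": "We study fairness.\nReferences\n[1] On trustworthiness of AI.",
    "c": "No reference list here, but trustworthiness is discussed.",
}
selected = sorted(pid for pid, text in papers.items() if mentions_keyword(text))
print(selected)  # ['a', 'c']

# Counts reported in the text: 83 AIES + 158 FAccT hits, minus 6 extended abstracts.
identified = 83 + 158
screened = identified - 6
print(identified, screened)  # 241 235
```

Paper `b` is dropped because its only mention of the keyword sits in the reference list, which is the behavior the "excluding references" condition calls for.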

Screening and Selection Criteria. We note several challenges in reviewing the AIES & FAccT papers. The concept of trustworthiness is often embedded throughout papers instead of being the core theme. For example, auditing algorithmic systems or exploring potential biases in AI systems eventually helps in understanding their trustworthiness, but this is often a point of discussion rather than the core contribution. It is therefore difficult to conclude that these articles engage with the core concept. Hence, we aim for a balanced approach to studying trustworthiness by including two types of articles in our analysis: (i) articles that directly address trustworthiness as a central theme, like (liao2022designing, 86, 45, 65, 44), and (ii) articles where trustworthiness is not the main focus, but the authors explain how their findings contribute to a broader understanding of system trustworthiness and, ultimately, user trust.

Therefore, keeping our balanced approach, following prior literature reviews on trust (mehrotrareview, 99, 145, 12) and best research practices to study a particular topic (ajmani, 1, 116), we define our inclusion criteria as:

1. One of the contributions of the article is linked to understanding trustworthiness of an algorithmic system.

1. The article engages with trustworthiness as a component of its measures, as directly influenced by the results, or as a key discussion theme.

Our exclusion criteria are:

1. The article discusses the need for AI trustworthiness without directly defining, measuring, or modeling it.

1. The article is classified as a survey, scoping review, or literature review.

The final corpus consists of 43 papers after applying our inclusion and exclusion criteria. An overview of our review process following the PRISMA protocol (page2021prisma, 110) is shown in Figure 1.

<details>

<summary>x1.png Details</summary>

### Visual Description


## Diagram: Process Flowchart

### Overview

The image depicts a flowchart illustrating a hierarchical process with five stages. The flowchart is presented in a top-down manner, with each stage represented by a rectangular box and connected by arrows indicating the flow of the process. The text within each box appears to be in English, but is somewhat obscured and difficult to read with high precision.

### Components/Axes

The diagram consists of:

* **Boxes:** Five rectangular boxes representing process stages.

* **Arrows:** Lines with arrowheads indicating the direction of the process flow.

* **Text:** Labels within each box describing the process stage.

### Detailed Analysis or Content Details

Due to the image quality, the text is difficult to decipher with absolute certainty. However, I will attempt to transcribe the text within each box to the best of my ability, noting the uncertainty.

1. **Top Box:** "Development of the initial model and data collection"

2. **Second Box:** "Development of the model and data collection"

3. **Third Box (Left Branch):** "Model evaluation and refinement"

4. **Third Box (Right Branch):** "Model application and validation"

5. **Bottom Boxes (Branching from Right Branch):**

* **Left Bottom Box:** "Model implementation and deployment"

* **Right Bottom Box:** "Documentation and reporting"

The arrows indicate a sequential flow:

* From "Development of the initial model and data collection" to "Development of the model and data collection".

* From "Development of the model and data collection" to both "Model evaluation and refinement" and "Model application and validation".

* From "Model application and validation" to both "Model implementation and deployment" and "Documentation and reporting".

### Key Observations

The diagram illustrates a typical iterative process in model development. The branching at the third stage suggests that the process can either focus on refining the model or applying and validating it. The final stage involves both implementation and documentation, indicating a complete process lifecycle.

### Interpretation

This flowchart likely represents a simplified overview of a model development lifecycle, potentially in a scientific or engineering context. The initial stages focus on building the model and gathering data. The subsequent branching suggests a decision point: either refine the model based on evaluation or proceed with application and validation. The final stages emphasize practical implementation and thorough documentation, crucial for reproducibility and knowledge sharing. The diagram highlights the iterative nature of model development, where evaluation and refinement can lead to improvements and better performance. The diagram does not provide any quantitative data or specific details about the model itself, but it effectively communicates the overall process flow.

</details>

Figure 1. Flowchart of the articles reviewing process following the PRISMA protocol (page2021prisma, 110).

Free-form Questions. Following the Goal-Question-Metric (GQM) approach (caldiera1994goal, 11), we analyze the articles along the GQM dimensions that are phrased as questions: (i) what is the goal of the paper, (ii) what are different research questions related to this goal, and (iii) what are metrics to measure some aspects/factors of this goal? This framework provides us with a way to categorize the understanding of trustworthiness in the AIES & FAccT community in the form of generic free-form questions closely tied to the research questions from Section 1.

We employ a thematic analysis to analyze the 43 articles and address our research questions. First, we develop a coding scheme to extract relevant information from each paper. For defining trustworthiness (RQ1), we identify key terms, phrases, and concepts used across papers to formulate common definitional themes. We further link the identified themes with the International Organization for Standardization (ISO) standard 5723 on trustworthiness (ISO_Trustworthiness_Vocabulary_2022, 63), allowing us to track frequency and depth of coverage. The ISO standard is chosen as a reference framework as it represents an internationally recognized benchmark for trustworthiness in technical systems (toney, 141). Furthermore, to understand the drivers of studying trustworthiness, we use both inductive and deductive coding approaches, first allowing themes to emerge naturally from the text then mapping these against common clusters.

For trustworthiness measures (RQ2), we develop a hierarchical coding structure to categorize assessment methods and validation & verification techniques. For application scenarios (RQ3), we employ open coding to identify emerging categories of use cases, followed by axial coding to establish relationships between different application contexts. Finally, to better understand the role of values in AI trustworthiness (RQ4), we analyze intended, embodied, and realized values, following the work of (van2020embedding, 114). According to this framework, intended values refer to the ethical principles and societal benefits that AI systems aim to achieve, embodied values are those integrated into their design and implementation, and realized values are the actual outcomes observed in practice. We use a two-stage coding process in which we first identify explicitly stated intended values related to trustworthiness and then compare these against realized outcomes and embodied values. This involves creating pairs of intended and realized values and analyzing any gaps or alignments between them.
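One way to picture the comparison of intended, embodied, and realized values is as set operations over the codes assigned to each article. The sketch below is only an illustration: the `ValueCoding` record, its field names, the gap categories, and the example values are hypothetical and do not reproduce the authors' coding scheme.

```python
from dataclasses import dataclass, field


@dataclass
class ValueCoding:
    """Value codes assigned to one article (all names are illustrative)."""
    paper_id: str
    intended: set[str] = field(default_factory=set)
    embodied: set[str] = field(default_factory=set)
    realized: set[str] = field(default_factory=set)


def value_gaps(coding: ValueCoding) -> dict[str, set[str]]:
    """Compare intended values against embodied and realized ones."""
    return {
        # Intended values that also show up in observed outcomes.
        "aligned": coding.intended & coding.realized,
        # Intended values that never made it into the design.
        "not_embodied": coding.intended - coding.embodied,
        # Intended values with no corresponding observed outcome.
        "not_realized": coding.intended - coding.realized,
    }


# Hypothetical coding of a single article.
example = ValueCoding(
    paper_id="hypothetical-paper",
    intended={"transparency", "fairness", "accountability"},
    embodied={"transparency", "fairness"},
    realized={"transparency"},
)
gaps = value_gaps(example)
for name in ("aligned", "not_embodied", "not_realized"):
    print(name, sorted(gaps[name]))
```

Representing each dimension as a set keeps the gap analysis explicit: an intended value that is embodied but not realized (here, fairness) is distinguishable from one that was never embodied at all (here, accountability).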

Our coding process is iterative, with initial codes refined over multiple analysis rounds. To ensure reliability, three researchers independently code a subset of papers (25%) and calculate an average Cohen’s Kappa coefficient ($\kappa=0.62$). Through iterative discussions, they update their understanding, recode previous papers to include emergent concepts, and add new papers in small batches of five to improve reliability. The team collaborated for about two months to discuss findings and potential discrepancies in the coding of the articles. Through multiple discussions, discrepancies were re-evaluated and corrected, resulting in a final coefficient of $\kappa=0.91$.
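For readers unfamiliar with the statistic, the following sketch computes Cohen's kappa, $\kappa = (p_o - p_e)/(1 - p_e)$, for two coders and averages it over all coder pairs. The text does not say how the average over three researchers was formed, so the pairwise mean is an assumption; the label names and toy codings are invented and are not the study's data.

```python
from collections import Counter
from itertools import combinations


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two coders who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both coders must label the same non-empty item list")
    n = len(labels_a)
    # Observed agreement: share of items with identical labels.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement from each coder's marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (observed - expected) / (1.0 - expected)


def average_pairwise_kappa(codings):
    """Mean kappa over all coder pairs; `codings` maps coder -> label list."""
    pairs = list(combinations(sorted(codings), 2))
    return sum(cohen_kappa(codings[a], codings[b]) for a, b in pairs) / len(pairs)


# Toy labels for six excerpts (invented for illustration).
codings = {
    "coder_1": ["def", "meas", "def", "val", "meas", "def"],
    "coder_2": ["def", "meas", "val", "val", "meas", "def"],
    "coder_3": ["def", "def", "def", "val", "meas", "def"],
}
print(round(average_pairwise_kappa(codings), 3))
```

Because kappa corrects raw agreement for agreement expected by chance, it is a stricter measure than percent agreement, which is why an increase from 0.62 to 0.91 reflects a substantial convergence among coders.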

3.2. Corpus Overview

First, we perform a metadata analysis on the final corpus of 43 articles, focusing on understanding the trustor’s background, the object of trustworthiness, variables, and study type. Our metadata analysis reveals six interlinked trustors: users of a specific AI system (20), citizens (12), developers (4), designers (3), practitioners (2), and public administrators (2); the number in brackets denotes the count of articles corresponding to a specific dimension. As to the object of trustworthiness, the corpus revolves around studying trustworthiness of AI linked with data sources (19) and institutions developing the AI system (12). The role of trustworthiness as a study variable is almost equally divided among the papers between independent variable (21) and dependent variable (22). Finally, trustworthiness is studied normatively in only one third of the studies, while the remaining two thirds study it empirically.

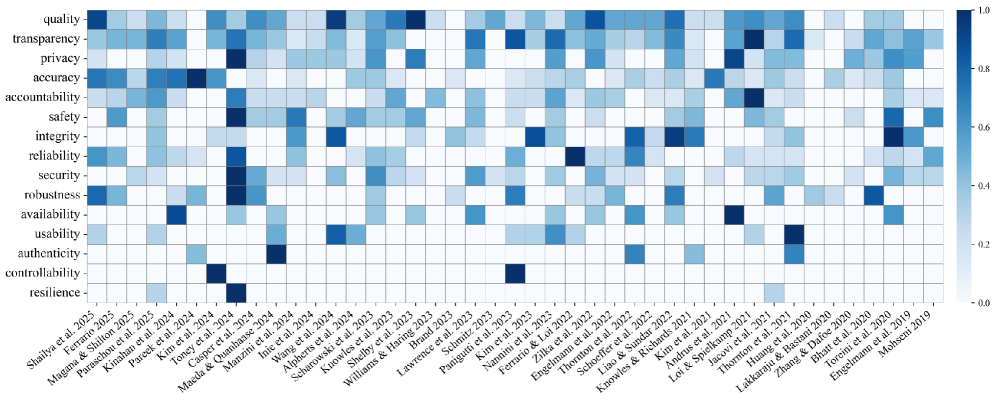

Second, Figure 2 shows a heatmap of the log-normalized, min-max scaled word occurrences of the trustworthiness dimensions defined by (ISO_Trustworthiness_Vocabulary_2022, 63) in the full texts of the included corpus. Dimensions like quality, transparency, and accuracy occur frequently throughout the full texts, while authenticity, controllability, and resilience are mentioned less often. Some articles, like (paraschou2025ties, 112) and (toney, 141), refer to a wide array of dimensions.

<details>

<summary>x2.png Details</summary>

### Visual Description


## Heatmap: Ethical Considerations in AI Research (2019-2025)

### Overview

This image presents a heatmap visualizing the consideration of various ethical factors across a range of AI research papers published between 2019 and 2025. The heatmap uses a color gradient to represent the degree to which each ethical consideration is addressed in the respective papers, with darker shades of red indicating higher consideration and lighter shades of blue indicating lower consideration.

### Components/Axes

* **Y-axis (Vertical):** Lists 15 ethical considerations: quality, transparency, privacy, accuracy, accountability, reliability, safety, integrity, security, robustness, availability, usability, authenticity, controllability, and resilience.

* **X-axis (Horizontal):** Lists AI research papers, identified by author(s) and year of publication, ranging from "Shalia et al. 2025" to "Mohsen 2019".

* **Color Scale (Legend):** Located on the right side of the heatmap, ranging from 0.0 (light blue) to 1.0 (dark red). The scale indicates the level of ethical consideration, with higher values representing greater consideration.

* **Grid Lines:** Horizontal and vertical lines delineate the cells of the heatmap, corresponding to the intersection of ethical considerations and research papers.

### Detailed Analysis

The heatmap displays a matrix of color-coded cells, each representing the ethical consideration score for a specific research paper. The values are approximate, based on visual estimation of the color gradient.

Here's a breakdown of trends and approximate values, reading across the X-axis (papers) for each ethical consideration (Y-axis):

* **Quality:** Generally low consideration across all papers, ranging from approximately 0.05 to 0.3. A slight increase is observed towards the middle of the timeline (2022-2023).

* **Transparency:** Starts low (around 0.1-0.2 in 2019-2021), peaks around 0.6-0.7 for papers in 2023-2024, and then declines slightly.

* **Privacy:** Similar trend to transparency, with a peak around 0.6-0.7 in 2023-2024.

* **Accuracy:** Generally moderate consideration, fluctuating between 0.3 and 0.6.

* **Accountability:** Low to moderate consideration, with a slight increase towards 2023-2024 (0.4-0.6).

* **Reliability:** Similar to accountability, with values ranging from 0.2 to 0.5.

* **Safety:** Moderate consideration, generally between 0.4 and 0.7, with a peak around 2023.

* **Integrity:** Low to moderate consideration, similar to reliability.

* **Security:** Low consideration across most papers, generally below 0.3.

* **Robustness:** Low consideration, similar to security.

* **Availability:** Very low consideration, consistently below 0.2.

* **Usability:** Low consideration, generally below 0.3.

* **Authenticity:** Low consideration, similar to usability.

* **Controllability:** Low consideration, consistently below 0.3.

* **Resilience:** Very low consideration, consistently below 0.2.

**Specific Data Points (Approximate):**

* **Mohsen et al. 2019:** All ethical considerations are very low (0.0-0.2).

* **Engelmann et al. 2020:** Slightly higher consideration for transparency (around 0.3) and accuracy (around 0.3).

* **Tseng et al. 2021:** Moderate consideration for safety (around 0.5).

* **Kim et al. 2023:** High consideration for transparency, privacy, and safety (around 0.7-0.8).

* **Paeguit et al. 2023:** High consideration for transparency and privacy (around 0.7).

* **Shalia et al. 2025:** Moderate consideration for transparency and accuracy (around 0.5-0.6).

### Key Observations

* **Peak in 2023-2024:** There's a noticeable peak in the consideration of transparency, privacy, and safety around 2023-2024. This suggests a growing awareness of these ethical issues during that period.

* **Low Consideration for Availability, Usability, Authenticity, Controllability, and Resilience:** These ethical considerations consistently receive the lowest scores, indicating they are often overlooked in AI research.

* **Variability:** There is significant variability in ethical consideration across different research papers, even within the same year.

* **Quality is consistently low:** Quality is consistently the lowest considered ethical factor.

### Interpretation

The heatmap suggests that while ethical considerations are becoming increasingly important in AI research, the focus is primarily on transparency, privacy, and safety. Other crucial ethical aspects, such as availability, usability, and resilience, are often neglected. The peak in consideration around 2023-2024 could be attributed to increased public awareness of AI ethics, the release of ethical guidelines by organizations, or a growing emphasis on responsible AI development within the research community.

The data highlights a potential imbalance in ethical focus. While addressing transparency, privacy, and safety is essential, a holistic approach to AI ethics requires considering a broader range of factors to ensure the responsible and beneficial deployment of AI technologies. The consistent low scores for availability, usability, and resilience suggest a need for more research and discussion on these often-overlooked ethical dimensions.

The heatmap provides a valuable snapshot of the ethical landscape in AI research, revealing both progress and areas for improvement. It can inform researchers, policymakers, and stakeholders about the current state of ethical consideration and guide future efforts towards more responsible AI development.

</details>

Figure 2. Heatmap of the normalized frequencies of trustworthiness dimensions in each paper of the final corpus ( $N=43$ ).

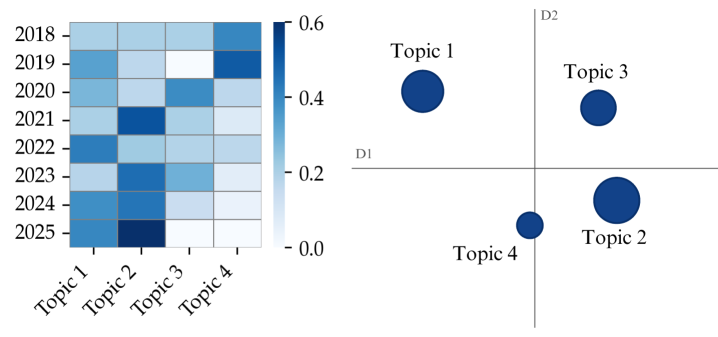

Third, we broaden the analysis and discover themes across the initial keyword-based selection of 235 articles, without extended abstracts, through topic modeling. We apply BERTopic (grootendorst2022bertopic, 53), which leverages c-TF-IDF and the capabilities of the transformer-based language model BERT (devlin2018bert, 29), to analyze topics that are both coherent and contextually meaningful. The generated topics are analyzed using two visualizations as shown in Figure 3. On the one hand, we present the normalized topic distributions over time using a heatmap to capture trends and shifts across publication years. We observe a consistent prevalence and recent increase of topics relating to “explanations” and “accountability”, while there is a relative decline in topics referring to ethics and norms. On the other hand, we visualize the representations and relative distance of the topics in a 2D plot. Notably, topics surrounding “fairness,” “transparency,” and “ethics” lie in closer proximity to each other, while they are more distanced from the topic around “explanations.” Also, we find that although fairness and bias are key ethical concerns, the considerable distance between fairness-related topics and ethical themes suggests that papers tend to emphasize one while giving limited attention to the other.

Topic 1 (72): explanations, human, decision, language, study

Topic 2 (85): transparency, accountability, public, human, technologies

Topic 3 (49): fairness, bias, algorithmic, fair, discrimination

Topic 4 (29): ethics, ethical, moral, responsible, norms

<details>

<summary>x3.png Details</summary>

### Visual Description

## Heatmap & Scatter Plot: Topic Dynamics Over Time

### Overview

The image presents two visualizations: a heatmap on the left showing the intensity of four topics (Topic 1, Topic 2, Topic 3, Topic 4) over the years 2018-2025, and a scatter plot on the right representing the topics in a two-dimensional space defined by dimensions D1 and D2. The heatmap uses a blue color gradient to indicate intensity, with darker blues representing higher values. The scatter plot uses circle size to represent relative importance or magnitude.

### Components/Axes

**Heatmap:**

* **X-axis:** Topics - Topic 1, Topic 2, Topic 3, Topic 4

* **Y-axis:** Years - 2018, 2019, 2020, 2021, 2022, 2023, 2024, 2025

* **Color Scale:** Ranges from 0.0 (lightest blue) to 0.6 (darkest blue).

* **Data Values:** Represent the intensity or prevalence of each topic in each year.

**Scatter Plot:**

* **X-axis:** Dimension D1

* **Y-axis:** Dimension D2

* **Points:** Represent Topics 1, 2, 3, and 4. The size of the circle appears to be proportional to some value.

* **Labels:** Topic 1, Topic 2, Topic 3, Topic 4 are labeled next to their respective points.

### Detailed Analysis or Content Details

**Heatmap Data (Approximate Values):**

| Year | Topic 1 | Topic 2 | Topic 3 | Topic 4 |

|---|---|---|---|---|

| 2018 | 0.5 | 0.3 | 0.2 | 0.4 |

| 2019 | 0.5 | 0.3 | 0.3 | 0.4 |

| 2020 | 0.4 | 0.4 | 0.3 | 0.3 |

| 2021 | 0.3 | 0.5 | 0.4 | 0.2 |

| 2022 | 0.2 | 0.4 | 0.5 | 0.2 |

| 2023 | 0.2 | 0.3 | 0.6 | 0.2 |

| 2024 | 0.2 | 0.2 | 0.5 | 0.3 |

| 2025 | 0.2 | 0.1 | 0.4 | 0.3 |

**Trends in Heatmap:**

* **Topic 1:** Declines steadily from 0.5 in 2018 to 0.2 in 2025.

* **Topic 2:** Initially stable, then declines from 0.4 in 2021 to 0.1 in 2025.

* **Topic 3:** Increases from 0.2 in 2018 to a peak of 0.6 in 2023, then decreases to 0.4 in 2025.

* **Topic 4:** Remains relatively low and stable around 0.2-0.4 throughout the period.

**Scatter Plot Data (Approximate):**

* **Topic 1:** Located at approximately (0.2, 0.5). Medium circle size.

* **Topic 2:** Located at approximately (0.1, -0.3). Largest circle size.

* **Topic 3:** Located at approximately (0.4, 0.4). Medium circle size.

* **Topic 4:** Located at approximately (0.0, -0.1). Smallest circle size.

### Key Observations

* Topic 1 and Topic 2 show a clear decreasing trend in prevalence over time.

* Topic 3 experiences a rise in prevalence, peaking in 2023, then declining.

* Topic 4 remains consistently low in prevalence.

* In the scatter plot, Topic 2 has the largest circle, suggesting it is the most significant or prominent topic based on the dimensions D1 and D2. Topic 4 has the smallest circle.

* Topic 1 and Topic 3 are positioned in the positive quadrant of the scatter plot, while Topic 2 and Topic 4 are in the negative quadrant.

### Interpretation

The visualizations suggest a dynamic shift in topic prevalence over the years 2018-2025. Topics 1 and 2 are losing relevance, while Topic 3 gains prominence before experiencing a decline. Topic 4 remains a minor topic throughout the period.

The scatter plot provides a different perspective, showing the relationships between the topics based on dimensions D1 and D2. These dimensions could represent various characteristics, such as sentiment, complexity, or source. The positioning of the topics suggests that Topic 2 and Topic 4 are distinct from Topic 1 and Topic 3. The circle sizes indicate that Topic 2 is the most significant topic based on these dimensions.

The combination of the heatmap and scatter plot provides a comprehensive view of the topic dynamics. The heatmap shows *how* the topics change over time, while the scatter plot shows *where* they stand in relation to each other. The peak in Topic 3 around 2023 could represent a significant event or trend that drove its increased prevalence. The decline of Topic 1 and Topic 2 could be due to changing interests or the emergence of new topics. The dimensions D1 and D2 likely represent underlying factors that influence the relationships between the topics.

</details>

Figure 3. Visualizations of topics generated using BERTopic applied to the initial keyword-based selection of articles ( $N=235$ ). First, the heatmap (left) shows normalized topic distributions, grouped by year. Values refer to the proportion of documents associated with the topic. Second, the intertopic distance plot (right) shows the relative positions of topics in a 2D space. The representations of topics are reduced to two dimensions using the uniform manifold approximation and projection dimensionality reduction technique, enabling the generation of a 2D plot to visualize the topics.
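The heatmap values in Figure 3 are described as the proportion of documents associated with each topic, grouped by year. The following is a minimal sketch of one plausible reading of that normalization (shares computed within each year), assuming every document has already been assigned a single topic id by a topic model. The function name `topic_share_by_year` and the toy `assignments` are hypothetical illustrations, not the authors' pipeline or data.

```python
from collections import Counter, defaultdict


def topic_share_by_year(assignments):
    """Return {year: {topic: share}}; shares within a year sum to 1.

    `assignments` is an iterable of (year, topic_id) pairs, one per document.
    """
    counts = defaultdict(Counter)
    for year, topic in assignments:
        counts[year][topic] += 1

    shares = {}
    for year, topic_counts in counts.items():
        total = sum(topic_counts.values())
        shares[year] = {t: n / total for t, n in topic_counts.items()}
    return shares


# Hypothetical toy assignments (NOT data from the review).
assignments = [
    (2023, 1), (2023, 1), (2023, 2), (2023, 3),
    (2024, 1), (2024, 2), (2024, 2), (2024, 2), (2024, 4),
]

shares = topic_share_by_year(assignments)
for year in sorted(shares):
    row = ", ".join(f"topic {t}: {s:.2f}" for t, s in sorted(shares[year].items()))
    print(year, row)
```

Normalizing within each year makes years with different numbers of publications comparable, which is why a heatmap of shares can show trends that raw counts would obscure.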

4. Understanding of AI Trustworthiness in the AIES & FAccT Community

4.1. Definition or Conceptualization

To understand an AI system’s trustworthiness, it is crucial to examine how it is defined or conceptualized. Definition refers to how trustworthiness is formally described and articulated in the selected papers, while conceptualization encompasses the broader understanding of trustworthiness, e.g., its role in systems.

First, the AIES & FAccT community presents a rich and multifaceted understanding of AI trustworthiness, as evidenced across numerous studies. Through our coding and thematic analysis, we identify seven key conceptualization themes, summarized in Table 1.

Analysis of the conceptual landscape reveals several critical insights about how AI trustworthiness is understood in contemporary scholarship. First, there exists a fundamental tension between transparency-focused approaches (T1) and technical robustness perspectives (T6), suggesting competing philosophies about whether trustworthiness derives primarily from system interpretability or from demonstrated reliability regardless of internal opacity. Second, the prominence of anthropomorphization themes (T3) alongside ethical considerations (T4) indicates growing recognition that trust in AI cannot be divorced from human psychological tendencies to perceive social relationships with technology, a finding that challenges purely technical or procedural definitions. Third, the emphasis on broader societal implications (T5) and regulatory ecosystems signals an important shift from treating trustworthiness as an individual system property to understanding it as embedded within institutional contexts where harms, accountability, and public opinion are distributed unevenly across populations. Finally, the reliance on established frameworks like Mayer’s model and NIST/ISO definitions (T7) alongside emerging concepts of perceived parasocial relationships suggests the field is simultaneously borrowing from organizational trust theory while grappling with AI-specific phenomena that existing models may not adequately capture. These insights collectively reveal that AI trustworthiness is conceptualized not as a unified construct but as a contested terrain where technical, psychological, ethical, and societal dimensions intersect and sometimes conflict.

Table 1. Understanding of AI Trustworthiness: Key themes in definitions and conceptualizations.

| T1: Emphasis on transparency as a foundational aspect of trustworthiness | Trustworthiness cues (liao2022designing, 86), Good explanation (rayyan-64504518, 105), Open communication (brandj, 16), Transparency, Provenance & connections between them (rayyan-64504562, 139), Computational reliabilism & anti-monitoring (Ferrario, 45), Expressing Uncertainty (Sunnie2024a, 73), Transparency with human oversight (rayyan-64504523, 111), Explanation Trustworthiness (rayyan-64504630, 103, 128), Transparency of algorithmic tools deployed in the criminal justice system (rayyan-64504664, 155) |

| --- | --- |

| T2: The importance of benchmarks, rigorous auditing and compliance mechanisms | White-box and outside-the-box audits (Stephen2024, 21), Certification Labels (nicolas, 121), Performance benchmarks and evaluation measures (rayyan-64504620, 83), Mapping regulatory guidelines to philosophical account of accountability (rayyan-64504624, 90) |

| T3: Perceived Anthropomorphization | (de)Anthropomorphized description of AI system (Nanna2024, 62), Perceived Parasocial Relationships (rayyan-64504506, 92), Facial AI inferences (Severin2022, 40) |

| T4: Ethical considerations, including fairness and respect for user intent | Inclusive & intersectional algorithms (kime, 72), Data leakage & reproducibility (Sean2024, 75), Model interpretability (rayyan-64504617, 81), Understanding of harms and how (and by whom) this has been informed (Bran2023, 76), Interaction between risks and the dispositions of the trustee (rayyan-64504660, 151), Informational fairness (10.1145/3531146.3533218, 126), Achieving fairness (rayyan-64504644, 125), Fairness by design (rayyan-64504611, 61), Fair data procurement (mckane, 4) |

| T5: Broader societal implications: Regulatory Ecosystem and Accountability caused by harms | Social trustworthiness score (Severin2019, 39), Trustworthiness criteria for policy-making (rayyan-64504432, 3), Public trust in AI & institutional trust (Bran2021, 77), AI Assistant design & Organizational practices and third-party governance (rayyan-64504509, 97), sociotechnical harms (rayyan-64504649, 129), Public opinion (rayyan-64504662, 153), Safe, reliable, and acceptable to users and public (magana2025frameworks, 93) |

| T6: Justified reliance on AI’s reliability and technical robustness | Reasonable trust in model’s output (Umang2020, 13), Warranted Trust (jacovi, 65), AI system must maintain to function correctly within its context of application (ferrario2025trustworthiness, 44) |

| T7: Mayer’s (mayer1995integrative, 98) and Lee & See’s model (lee2004trust, 84) & NIST/ISO definition | Ability, Benevolence & Integrity (Sunnie2023, 74, 86, 73, 149, 140, 113, 112), ABI+ (ABI, Predictability) framework (toreini, 142), NIST & ISO Definition (toney, 141) |

We also study the trustworthiness dimensions defined by the ISO trustworthiness standard (ISO_Trustworthiness_Vocabulary_2022, 63) to ensure that our analysis is based on internationally recognized standards. ‘Transparency’ is the most frequently mentioned ISO dimension, appearing in 21 out of 43 papers, followed by ‘accountability’ and ‘explainability’ in 12 papers. The less frequently mentioned ISO dimensions are ‘controllability’ (5), ‘resilience’ (4), ‘robustness’ (4), ‘security’ (4), ‘safety’ (3), ‘reliability’ (3), ‘usability’ (3), and ‘provenance’ (2).
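To put the counts above on a common scale, the short sketch below converts them into shares of the 43-paper corpus. The counts are taken from the preceding paragraph, reading "12 papers" as applying to both ‘accountability’ and ‘explainability’ (an assumption); the helper name `corpus_share` is hypothetical.

```python
CORPUS_SIZE = 43  # size of the final corpus

# Paper counts per ISO dimension, as reported in the paragraph above.
# Assumption: 'accountability' and 'explainability' each appear in 12 papers.
iso_counts = {
    "transparency": 21,
    "accountability": 12,
    "explainability": 12,
    "controllability": 5,
    "resilience": 4,
    "robustness": 4,
    "security": 4,
    "safety": 3,
    "reliability": 3,
    "usability": 3,
    "provenance": 2,
}


def corpus_share(count, corpus_size=CORPUS_SIZE):
    """Percentage of corpus papers that mention a dimension."""
    return 100.0 * count / corpus_size


# Print dimensions from most to least frequently mentioned.
for dim, n in sorted(iso_counts.items(), key=lambda kv: -kv[1]):
    print(f"{dim:<16} {n:>2}/{CORPUS_SIZE}  {corpus_share(n):5.1f}%")
```

Because a paper can mention several dimensions, these shares are not expected to sum to 100%.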

While many studies implicitly link trustworthiness to accuracy, reliability, transparency, and fairness, increasing attention is being given to more nuanced conceptualizations, such as stakeholder-centric approaches and systemic perspectives. Frequent references to ISO dimensions, particularly transparency, accountability, and explainability, underscore their importance in building trustworthy AI systems. However, a more comprehensive and standardized approach to defining and measuring AI trustworthiness is needed for the responsible development and deployment of AI systems.

4.2. Drivers

(anjomshoae2019explainable, 7) have underlined the importance of considering the intended purpose when studying the trustworthiness of AI systems. To this end, this scoping review seeks to understand why the reviewed studies focus on understanding trustworthiness. Most of these works stated their motivation or intended purpose for studying trustworthiness. Table 1 lists the drivers of the 43 papers included in the review, with some papers having more than one driver.

Table 1. The drivers of AI trustworthiness of the primary studies covered by the review.

| D1: Conformity with regulations & Guidelines and ethical considerations | (Stephen2024, 21, 40, 75, 105, 111, 126, 121, 141, 16, 61, 83, 90, 125, 129, 139, 5, 77, 103, 61, 155, 141, 4, 128, 44) |

| --- | --- |

| D2: Promoting honesty | (Severin2019, 39, 151, 3) |

| D3: Trust | (Umang2020, 13, 45, 62, 65, 73, 74, 86, 92, 97, 139, 126, 113, 140, 149, 81, 103, 76, 65, 151, 3, 77, 142, 153, 93, 112) |

| D4: Economic growth & Cultural values | (Severin2019, 39, 16) |

| D5: Reproducibility | (Sean2024, 75) |

| D6: Accuracy | (Aida2023, 94, 72, 3) |

Increasing users’ trust in the system, ethical considerations, conformity with regulations such as those of (EU_AI_Ethics_Guidelines, 42, 127, 136, 137, 66, 144, 43, 147), and accuracy are among the listed motivations for studying trustworthiness. The table reveals that trust and ethical considerations are the most prominent drivers of studying AI trustworthiness. Naturally, trust and trustworthiness go hand in hand to increase users’ confidence in the system by understanding how its reasoning mechanism works (anjomshoae2019explainable, 7). In applications requiring human-AI interaction, honesty and accuracy are among the main drivers for trustworthiness to ensure that AI’s decision-making is reliable and fair (10.1145/3531146.3533218, 126, 113). For public AI systems, trustworthiness drivers are often linked with conformity with regulations and guidelines, and economic growth and cultural values (Severin2019, 39, 16, 77, 90, 153). Finally, (Sean2024, 75) identify reproducibility of their results as a driver for studying the trustworthiness of their system. Overall, these studies provide a holistic overview of the key drivers for studying AI trustworthiness.

4.3. Measurement and Verification & Validation

(jacobs2021measurement, 64) introduce measurement theory for AI/ML systems, where validation and verification are key to ensuring that we measure what we intend to measure. In the context of AI trustworthiness, measurement refers to quantifying specific attributes or characteristics of the AI system, typically aligned with ISO trustworthiness dimensions. Following ISO 9001 (dnv2015iso, 32), verification involves confirming through examination and provision of objective evidence that the specified trustworthiness dimensions are fulfilled. Validation is similar to verification, but must be confirmed under real-world usage conditions.

Measurement. To analyze the measurement techniques for AI trustworthiness, we follow (schlicker2025we, 122)’s distinction between actual and perceived trustworthiness. (schlicker2025we, 122) define a system’s actual trustworthiness as a latent construct that indicates the true value of a system’s trustworthiness (in alignment with the Realistic Accuracy Model (funder1995accuracy, 49)), e.g., benevolence, integrity, and ability (mehrotrareview, 99, 84, 98). In contrast, perceived trustworthiness reflects the result of a trustor’s assessment of the trustee’s actual trustworthiness.

Table 1. The measurement of AI trustworthiness of the primary studies covered by the review.

| Likert scale | McKnight et al.[(HARRISONMCKNIGHT2002297, 58)] (Sunnie2024a, 73), Jian et al. [(Jian01032000, 67)] (rayyan-64504525, 113), Perceived trustworthiness (Own scale) (10.1145/3531146.3533218, 126) |

| --- | --- |

| Reliance behavior | Change in people’s trust judgment (liao2022designing, 86), Agreement between a participant’s answer and that of the AI system (Sunnie2024a, 73, 74, 93) (rayyan-64504525, 113, 149, 83) |

| Actual cues (e.g., precision, recall, and f1-scores) | Positive predictive value (kime, 72), LDA topic modelling (Severin2019, 39), Stochastic model with surrogates (rayyan-64504518, 105), (Aida2023, 94, 142, 75, 21, 111), Metrics for plausibility and faithfulness scores (shailya2025lext, 128) |

| Assessment of impact and alignment with values and concerns | Ethical charters & internal review bodies (rayyan-64504509, 97), Sociotechnical harms taxonomy (rayyan-64504649, 129), Moral groundings for public transparency (rayyan-64504624, 90) |

| Levels of monitoring activities | Monitoring-avoiding relation between trustor & trustee (Ferrario, 45) (toreini, 142), Trustworthiness level across system accuracy ranges (ferrario2025trustworthiness, 44) |

| Communication style and anthropomorphic features | Roleplaying & reciprocal engagement (rayyan-64504506, 92) |

| Qualitative assessment | Interviews & Surveys (nicolas, 121, 13, 139, 62, 81, 149, 3, 153, 40, 4), User satisfaction (rayyan-64504664, 155) |

| Model Cards and AI Assessment Catalog | Information about data leakage & selective inference for EEG (Sean2024, 75), AI Assessment Catalog (rayyan-64504644, 125), NIST AI Risk Management Framework & other national frameworks (toney, 141) |

| Mediator & Trustee role | Social, Institutional and Temporal Embeddedness, Situational Normality, Structural Assurance, Motivation and ABI (lauren, 140) |

Analysis of measurement strategies reveals several important insights about the operationalization of AI trustworthiness. First, the field exhibits a problematic conflation between measuring trust (a psychological state) and measuring trustworthiness (system properties that warrant trust). Reliance behavior and Likert scales capture user perceptions while technical metrics assess objective capabilities, yet the relationship between these remains theoretically underdeveloped. Second, the diversity of approaches, from LDA topic modeling to ethical charters to role-playing exercises, suggests that trustworthiness is inherently multi-faceted and resists reduction to single metrics, yet this proliferation also indicates a lack of consensus on what actually constitutes valid measurement. Third, the emergence of documentation artifacts like model cards (Sean2024, 75) and AI assessment catalogs (rayyan-64504644, 125) represents an important shift toward longitudinal, context-aware evaluation rather than snapshot assessments, implicitly acknowledging that trustworthiness is not static but emerges through ongoing scrutiny. Finally, the inclusion of mediator and trustee role factors (social embeddedness, situational normality, structural assurance) indicates growing sophistication in recognizing that measurement must account for the relational and contextual dimensions of trust rather than treating it as residing solely in the AI system. Together, these developments suggest the field is moving toward more holistic measurement paradigms, though integration across approaches remains elusive.
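The distinction between behavioral measures and technical metrics can be made concrete with a small sketch. It computes a reliance measure (agreement between a participant's answers and the AI's answers) and actual cues (precision, i.e., positive predictive value, together with recall and F1) from different inputs, which is why the two need not move together. The function names and the toy labels are hypothetical and do not reproduce any reviewed paper's protocol.

```python
def agreement_rate(participant_answers, ai_answers):
    """Share of items where the participant's answer equals the AI's answer."""
    if len(participant_answers) != len(ai_answers) or not ai_answers:
        raise ValueError("inputs must be non-empty and of equal length")
    matches = sum(p == a for p, a in zip(participant_answers, ai_answers))
    return matches / len(ai_answers)


def precision_recall_f1(y_true, y_pred, positive=1):
    """Precision (positive predictive value), recall, and F1 for one class."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return precision, recall, f1


# Hypothetical toy data (NOT from any reviewed study).
ground_truth = [1, 0, 1, 1, 0, 0, 1, 0]
ai_predictions = [1, 0, 0, 1, 0, 1, 1, 0]
participant_answers = [1, 0, 0, 1, 1, 1, 1, 0]

# Behavioral measure: how often the participant ends up agreeing with the AI.
reliance = agreement_rate(participant_answers, ai_predictions)

# Technical measure: how good the AI's predictions are against ground truth.
precision, recall, f1 = precision_recall_f1(ground_truth, ai_predictions)

print(f"agreement with AI: {reliance:.3f}")
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

In this toy case the participant agrees with the AI more often than the AI is correct, which is the kind of gap between reliance and system performance that a single combined score would hide.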

Verification and/or Validation. Most of the papers (34 out of 43, roughly 79%) did not (explicitly) provide or adopt verification or validation approaches. Among the remaining 9 papers, surveys are the most widely used verification methodology, appearing in 5 papers (Severin2022, 40, 62, 126, 113, 153). For example, (rayyan-64504525, 113) conduct survey-based between-subjects experiments involving 300 participants to investigate trust development, erosion, and recovery during AI-assisted decision-making. Additionally, (rayyan-64504662, 153) pre-register their experiments to ensure methodological rigor and transparency. (rayyan-64504617, 81) and (rayyan-64504432, 3) verify trustworthiness measurement through user studies with domain experts. Finally, only one paper employs a mixed-methods design consisting of qualitative interviews and a subsequent survey to collect quantitative data (nicolas, 121).

The gap in current research, i.e., the insufficient attention to validation under real-world constraints and the lack of alignment between verification practice and trustworthiness dimensions, can be attributed to two primary factors: the lack of real-world data or representative scenarios (rayyan-64504434, 5) and the absence of multi-disciplinary collaboration (brundage2020toward, 17). The 9 papers that do report verification or validation represent important but limited work in controlled settings, not diverse real-world environments. Our observations resonate with (rayyan-64504434, 5) and (brandj, 16), who have demonstrated the challenges of obtaining representative datasets and how siloed approaches can miss critical sociotechnical dimensions for verification or validation.

4.4. Application Scenarios

AI techniques are applied across domains, including high-stakes ones, making trustworthiness vital to their success. The AIES & FAccT community demonstrates a comprehensive and diverse exploration of trustworthiness across various application scenarios; see Table 2. High-stakes domains, including healthcare and medical applications, financial and economic systems, human resources, governance, law, and justice, are the most prominent application scenarios for studying the trustworthiness of AI systems (22 out of 43). Twelve of the 43 papers do not centre on a specific application scenario but rather discuss AI applications and their impact on society in general. Additionally, (rayyan-64504518, 105) and (rayyan-64504523, 111) examine AI policies with a focus on the explainability of AI systems. (rayyan-64504518, 105) perform a thematic and gap analysis of policies and standards on explainability in the EU, US, and UK and provide a set of recommendations on how to address explainability in regulations for AI systems. (rayyan-64504523, 111) analyze the EU AI Act (european2021proposal, 41) and argue that it neither mandates explainable AI nor bans the use of black-box AI systems; instead, it seeks to achieve its stated policy objectives with a focus on transparency and human oversight.

Table 2. The application scenarios of AI trustworthiness of the primary studies covered by the review.

| Healthcare and medical applications | (liao2022designing, 86, 121, 142, 73, 75, 80, 5, 4) |

| --- | --- |

| Financial and economic systems | (Umang2020, 13, 16, 5, 126, 121, 4) |

| Human resources | (Severin2022, 40, 121, 103, 5, 4) |

| Governance, law, and justice | (rayyan-64504620, 83, 90, 103, 153, 61, 81, 39, 155, 151, 125, 77) |

| Content moderation and information systems | (Umang2020, 13, 91) |

| Personal assistance and interaction | (Lydia2024, 96, 97, 121) |

| Environmental science | (rayyan-64504432, 3, 74, 139) |

| Domain agnostic | (Stephen2024, 21, 62, 65, 76, 77, 113, 141, 72, 129, 92, 140, 142, 103, 40) |

| AI policies and standards | (rayyan-64504518, 105, 111) |

| Facial recognition | (kime, 72) |

| Software engineering and programming | (wangr, 149) |

4.5. Intended, Embodied, and Realized Values

Our analysis reveals a significant gap between the intended, embodied, and realized values of AI systems. While many are developed with good intentions, e.g., promoting transparency, fairness, and accountability, these values are not always reflected in the final product or its impact on society.

Intended Values. The most frequently cited intended values were transparency (26), fairness (19), and accountability (12); 39 articles had more than one intended value for studying AI trustworthiness. Other important values included privacy (8), security (5), and human oversight (5). E.g., (Umang2020, 13) emphasize the importance of transparency and trustworthiness as the intended values of explainable AI. Similarly, (Stephen2024, 21) highlight the role of AI audits in identifying risks and improving transparency, and (Severin2019, 39) examine the intended value of the social credit system to promote honesty and trust in Chinese society. In line with the EU AI Act, (rayyan-64504523, 111) examine the role of explainable AI and state that the intended value is to ensure AI is trustworthy by focusing on transparency and human oversight. This includes enabling users to interpret outputs and manage risks associated with AI systems.

Embodied Values. The embodied values in studying AI trustworthiness are often shaped by the techniques used, the stakeholders involved, and the context of deployment. (rayyan-64504518, 105) examine the embodied value of explainable AI, which is determined by how well it can fulfill its intended purpose without compromising other desiderata, such as accuracy and privacy. (nicolas, 121) examine the embodied value of certification labels, which is determined by how well they can fulfill their intended purpose by reflecting the AI system’s compliance with trustworthiness criteria. (rayyan-64504432, 3) examine the embodied value of AI models, determined by how well they can generate explanations that help understand the relations between visual elements in street view imagery and socio-economic variables such as housing pricing.

Realized Values. The realized values of AI systems can differ significantly from their intended values due to various factors, including design flaws, unintended consequences, and societal biases. For example, (Ferrario, 45) find that the realized value of explainability in AI systems may differ from its intended value, particularly when explainability does not lead to a reduction in monitoring or when it fosters an unjustified belief in the AI’s trustworthiness. Likewise, (Sunnie2023, 74) find that the realized value of AI systems may differ from their intended value due to factors such as malicious manipulation of trustworthiness cues or ill-designed cues that mislead users.

The gap between intended, embodied, and realized values in AI systems raises important questions about the effectiveness of current approaches to embedding values in AI design for studying AI trustworthiness. It highlights the need for more robust methods to evaluate AI’s societal impact.

5. Discussion: Challenges, Open Questions, and Future Directions

This review has examined the multifaceted nature of AI trustworthiness as articulated within the AIES & FAccT community. This section examines the challenges, open questions, and future directions that arise from our analysis.

5.1. Why Do We Need to Rethink AI Trustworthiness?

Several critical gaps and limitations emerge from our analysis in Section 4.1. Despite this rich conceptual terrain, the current literature inadequately addresses several questions: who defines trustworthiness criteria, whose harms count as significant, and how user intent is interpreted all remain largely unexamined, despite the prominence of ethical considerations. Additionally, while there is convergence around components like accuracy and fairness linking to ISO dimensions, the literature largely neglects the power dynamics and structural inequalities that fundamentally shape trust relationships in AI systems (dobbe2021hard, 33).

Moreover, while benchmarks and auditing mechanisms (T2) are emphasized, there is insufficient attention to how compliance-focused approaches might incentivize “ethics washing,” where organizations meet procedural requirements without substantively addressing underlying trust deficits. The conceptualizations also struggle with context-dependency: trustworthiness defined through computational reliability may be necessary but insufficient in domains like criminal justice (as noted in T1), where procedural justice and legitimacy matter as much as accuracy, yet the frameworks provide limited guidance on how to weigh these competing demands situationally.

Current conceptualizations, despite their evolution from purely technical metrics to sociotechnical constructs, fail to fully account for how trust can erode or strengthen over time, particularly in response to system failures or successful interventions (dzhelyova2012temporal, 37). An emphasis on system-level characteristics and user perceptions, while important, has come at the expense of examining broader institutional and systemic factors that influence trustworthiness, such as regulatory frameworks, corporate governance structures, and market incentives. Without addressing these gaps, current approaches to building trustworthy AI risk perpetuating existing biases and power imbalances while failing to establish sustainable trust relationships across user communities (osasona2024reviewing, 109).

Based on our analysis of the corpus, we propose the following measures:

1. Future research must implement longitudinal studies that track trust dynamics over time, and design systems with mechanisms to rebuild trust after failures rather than focusing solely on initial trust establishment.

1. At the heart of AI trustworthiness lies a fundamental distinction between perceived and actual trustworthiness (schlicker2022calibrated, 124). Future research should clearly distinguish them to foster a more unified understanding of AI trustworthiness.

1. Develop comprehensive trustworthiness frameworks that explicitly incorporate institutional accountability measures, regulatory compliance mechanisms, and transparent corporate governance structures.

5.2. Is AI Trustworthiness Just a Checklist?

A critical analysis of the drivers of AI trustworthiness revealed oversights in how the field currently conceptualizes motivations for studying this crucial aspect. While the reviewed literature identifies several key drivers (see Section 4.2), the understanding appears superficial and fails to address several fundamental concerns.

First, the heavy emphasis on regulatory compliance and ethical guidelines, while important, suggests a potentially reactive rather than proactive approach to trustworthiness (tyler2001trust, 143). Many studies treat regulations as a checklist (see representative articles for D3 in Table 1) rather than engaging with the deeper philosophical and societal implications of AI trustworthiness. This compliance-driven approach risks creating a false sense of security while potentially missing emerging challenges not yet addressed by current regulations, such as the evolving nature of biases in dynamic systems, the challenge of ensuring explainability in complex models (saeed2023explainable, 118), attributing accountability in autonomous decision-making (busuioc2021accountable, 18), and impacts on human agency and societal power structures (santoni, 119). That said, in real-world industry settings, compliance requirements often act as crucial entry points and motivators for responsible AI work (diaz2023connecting, 30). When organizational resources and attention are limited, framing trustworthiness as a compliance issue can secure support and budget allocations (caldwell2003organizational, 19).

Second, the classification of drivers reveals a bias toward accuracy and promotion of trust in AI, with a limited focus on end-user needs and societal impacts. While “trust” is frequently cited as a driver, there is inadequate exploration of how to build an appropriate level of trust in AI, that is, one that avoids both over- and under-trust (10.1145/3610578, 102, 99).

Third, we found vagueness in debates about the safety behavior of AI systems and about identifying and diagnosing safety risks in complex social contexts. Safety has been designated as a key component of AI trustworthiness in the EU AI Act (european2021proposal, 41) as well as in the ISO (ISO_Trustworthiness_Vocabulary_2022, 63) and NIST (NIST_AI_Risk_Management_Framework, 106) guidelines. However, in our analysis, system safety does not appear as a prominent measure for trustworthiness except for the mathematical formalism (toney, 141). This raises two key questions: (i) Is safety marred by an underlying vagueness where it is hard to establish whether a system is safe or not (dobbe2021hard, 33)? And (ii) does it even make sense to study the trustworthiness of an AI system without exploring its safety, especially from a sociotechnical lens?

Finally, drivers related to long-term sustainability, environmental impact, and social justice are limited in our analyzed papers. While accuracy is listed as a driver, there is limited attention to the complex relationship between technical accuracy and real-world effectiveness. This limited understanding of drivers profoundly impacts how trustworthiness is studied and implemented in AI systems. Without a more nuanced understanding of why trustworthiness matters, the field risks producing solutions that address surface concerns while failing to engage with deeper systemic challenges. Hence, we propose the following measures:

1. The ideal approach could involve using compliance frameworks as practical starting points while simultaneously cultivating organizational cultures that recognize the broader societal implications of AI systems beyond regulatory requirements.

1. Develop frameworks and metrics that address the appropriateness of trust, ensuring users understand both the capabilities and limitations of AI systems they interact with.

1. Future research must expand beyond these conventional drivers of trustworthiness to include societal concerns, power dynamics, and long-term implications for human-AI interaction.
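To make the notion of appropriate trust in the second measure more concrete for technically oriented readers, the following minimal sketch computes over-reliance and under-reliance rates from logged human-AI decisions. It is an illustration under stated assumptions, not a method taken from the reviewed papers: the `Trial` record, the `reliance_rates` function, and the example data are all hypothetical, and reliance behaviour is only one imperfect proxy for trust.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    """One logged decision (hypothetical record format)."""
    ai_correct: bool     # was the AI recommendation correct?
    user_followed: bool  # did the user adopt the recommendation?


def reliance_rates(trials):
    """Return over- and under-reliance rates for a list of trials.

    over_reliance:  share of incorrect AI recommendations the user followed.
    under_reliance: share of correct AI recommendations the user rejected.
    A rate is None when it cannot be computed (no matching trials).
    """
    wrong = [t for t in trials if not t.ai_correct]
    right = [t for t in trials if t.ai_correct]
    over = sum(t.user_followed for t in wrong) / len(wrong) if wrong else None
    under = sum(not t.user_followed for t in right) / len(right) if right else None
    return {"over_reliance": over, "under_reliance": under}


# Made-up example data, for illustration only.
example_trials = [
    Trial(ai_correct=True, user_followed=True),
    Trial(ai_correct=True, user_followed=False),
    Trial(ai_correct=True, user_followed=True),
    Trial(ai_correct=False, user_followed=True),
    Trial(ai_correct=False, user_followed=False),
]
rates = reliance_rates(example_trials)
print(rates)
```

Reporting the two rates separately, rather than a single "trust" number, keeps over-trust and under-trust visible as distinct failure modes.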

5.3. Measurement Problem in AI Trustworthiness

The critical analysis of measurement, verification, and validation approaches in AI trustworthiness research in Section 4.3 reveals gaps and methodological shortcomings that hinder the establishment of robust frameworks for evaluating the trustworthiness of AI systems and for building an appropriate level of trust. More precisely, addressing these shortcomings requires: (i) evaluating both actual and perceived trustworthiness, i.e., establishing valid and reliable measurements, (ii) identifying methods to make valid comparisons between these two dimensions, and (iii) achieving alignment between them.

First, current research presents a significant gap concerning evaluation of trustworthiness. Prior studies have described the general challenge of aligning actual and perceived trustworthiness (mehrotrareview, 99, 86). They have also identified relevant components that constitute actual and perceived trustworthiness (schlicker_trustworthy_2025, 123). Additionally, previous work has emphasized the necessity for a communication process that conveys information about an AI system and its actual trustworthiness to shape and align trustworthiness perceptions (liao2022designing, 86). However, we still lack understanding of how the evaluation of actual trustworthiness and the successful alignment of this actual trustworthiness with perceived trustworthiness would manifest in practice, based on concrete measures and quantitative values for trustworthiness characteristics both for the AI system and user perceptions.

Second, when trustworthiness is measured using only questionnaires or reliance behaviour, there is still uncertainty about the trustee’s actual trustworthiness (schlicker2025we, 122). Robust methodologies that incorporate objective measures of trustworthiness alongside subjective assessments are essential for developing a comprehensive understanding of trustworthiness in human-AI interaction. However, it remains unclear whether combining actual and perceived trustworthiness yields a clear overview of an AI system’s overall trustworthiness; a simple additive ($2+2=4$) analogy may not fit here. We believe the issue is that there is no single correct way of making individual characteristics commensurable. There is no ground truth for questions such as what the objective trustworthiness value is for a given performance or explainability score of an AI system (Aida2023, 94).

The third problem differs from the first two in a fundamental way. Rather than dealing with how to combine or compare trustworthiness measures and individual traits, it addresses a more basic question: which specific characteristics should be evaluated when assessing trustworthiness in the first place? The challenge here isn’t about creating compatible measurement scales. Instead, it involves establishing comparable sets of characteristics between two sides—the AI system itself and the users who perceive it. Critical questions arise from this challenge: Which performance metrics should be considered? What aspects of transparency matter? How should explainability be evaluated? What privacy measures and safety standards should factor into the assessment of AI system trustworthiness? These questions are all fundamentally connected to this obstacle.

The verification and validation issues are even more concerning, with nearly half of the papers failing to address these crucial aspects. For example, what is the source of cues that inform users about an AI system’s trustworthiness and how can we verify or validate it? One perspective holds that these cues should originate directly from the systems and their creators. Some researchers advocate for defining good cues that technology developers should implement (liao2022designing, 86). These same scholars acknowledge that such indicators must be accurate and honest to be effective. When examining practical applications, they assume this honesty requirement is already met by creators and focus their analysis on additional necessary conditions. This assumption proves problematic in real-world scenarios. System providers cannot simply be expected to present entirely accurate cues about their products’ limitations. Commercial entities operate with sales objectives as their primary driver. When users place excessive confidence in a product beyond what’s justified, achieving proper confidence calibration would require the provider to communicate information that reduces trust. Yet commercial logic creates a strong disincentive for broadcasting unfavorable information; doing so would directly harm revenue and competitive positioning. It seems unlikely, therefore, that providers would intentionally engineer their systems to broadcast cues indicating mediocre or poor trustworthiness to potential users.

We propose the following measures for effective measurement, validation and verification:

1. Our analysis suggests a pressing need for AI system designers and developers to explain their rationale for choosing particular trustworthiness attributes and specific metrics to measure those attributes. They also need to justify their methodology for prioritizing and combining these elements to generate a composite trustworthiness score. In doing so, they should be required to acknowledge their inherent perspectives and the criteria they apply when judging an AI system’s trustworthiness.

1. Greater focus on trustworthiness assessment mechanisms within the trust development process would likely enhance future research efforts to clarify how perceived trustworthiness transforms into actual trust and subsequent trusting behaviors.

1. Those who provide cues to AI system users need to elaborate on, and give reasons for, why certain characteristics of the AI system and trustworthiness cues warrant trust. In addition, understanding how cues are relevant for users, and how users detect and utilize them, forms a key element of verifying and validating the trustworthiness assessment.
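The first measure above asks designers to justify how attributes are prioritized and combined into a composite score. The sketch below illustrates why that justification matters: with the same attribute scores, two different weightings rank two systems in opposite order. Everything here is hypothetical (the `composite_score` function, the attribute names, the scores, and the weights), and a weighted mean is only one of many possible aggregation rules; it is not proposed as the correct one.

```python
def composite_score(scores, weights):
    """Weighted mean of attribute scores (each assumed to lie in [0, 1]).

    `weights` encodes a value judgment about which attributes matter most;
    it is normalized here so only the relative sizes matter.
    """
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same attributes")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(scores[k] * weights[k] for k in scores) / total


# Made-up attribute scores for two hypothetical systems.
system_a = {"accuracy": 0.9, "explainability": 0.4, "privacy": 0.6}
system_b = {"accuracy": 0.7, "explainability": 0.8, "privacy": 0.7}

# Two hypothetical weightings reflecting different priorities.
performance_first = {"accuracy": 3.0, "explainability": 1.0, "privacy": 1.0}
user_first = {"accuracy": 1.0, "explainability": 3.0, "privacy": 1.0}

for name, w in [("performance_first", performance_first), ("user_first", user_first)]:
    a = composite_score(system_a, w)
    b = composite_score(system_b, w)
    preferred = "A" if a > b else "B"
    print(f"{name}: A={a:.2f}, B={b:.2f}, preferred={preferred}")
```

Because the preferred system changes with the weighting, a composite score reported without its weights and their rationale hides the perspective of whoever defined it.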

5.4. Values Gap in AI Trustworthiness

Our critical examination of the values in AI trustworthiness research reveals significant oversights and problematic assumptions that limit our understanding of how values materialize in practice. The analysis of intended values demonstrates a concerning preoccupation with surface-level technical attributes such as transparency and fairness, while giving insufficient attention to deeper systemic values such as social justice, environmental sustainability, and cultural preservation. This narrow focus reflects a persistent bias toward engineering solutions rather than addressing fundamental societal challenges (olteanu2019social, 108). The heavy emphasis on transparency risks becoming a performative gesture rather than a meaningful commitment to openness and accountability, which in turn risks entrenching the problematic capture of the institutions that are meant to support public values (10.1145/3531146.3533241, 51, 150).

The discussion of embodied values highlights weaknesses in current research. The literature often oversimplifies how values are embedded in technical systems, reducing complex social and ethical considerations to fit technical implementations. This simplification risks a false sense of progress while neglecting the deeper challenges of value implementation (stern2002eva, 131). Particularly concerning is the treatment of realized values, where the analysis lacks a systematic framework for understanding value divergence. The literature notes discrepancies between intended and realized values but inadequately theorizes these gaps, attributing them to superficial issues like “design flaws” or “unintended consequences” without addressing structural barriers that hinder the realization of intended values.

The conclusion that a “more holistic approach” is required appears insufficient given the complexity of the challenges at hand. We need rigorous, dynamic frameworks reflecting the contested and evolving nature of values in AI systems. Such frameworks must move beyond static, universal notions of values to address how they are negotiated, implemented, and evaluated in real-world contexts, confronting structures of power. For example, these power structures manifest in multiple dimensions that trustworthiness research must address: (i) corporate concentrations of AI development capacity that privilege certain stakeholders’ definitions of “trustworthiness,” (ii) institutional hierarchies within organizations that determine whose values and concerns shape AI systems, (iii) socio-economic disparities that affect who benefits from or bears risks of AI deployment, (iv) geopolitical imbalances that enable dominant nations to set global AI governance norms, and (v) professional authority structures that privilege technical expertise over lived experience.

We recommend the following measures to reduce the values gap in AI trustworthiness:

1. Implementing participatory design methodologies that meaningfully involve marginalized communities in defining trustworthiness criteria as informed by (harrington2019deconstructing, 57).

1. Developing evaluation frameworks that assess differential impacts across diverse populations rather than assuming universal benefits (carey2017glossary, 20).

1. Establishing transparency mechanisms that reveal how power influences AI system development decisions and provide mechanisms to contest them as showcased by (ehsan2021expanding, 38, 100).

1. Creating accountability structures that redistribute decision-making authority beyond technical experts (busuioc2021accountable, 18) and recognizing that trustworthiness is a contested concept shaped by those with the power to define it (hardin2002trust, 56).
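As one possible starting point for the second measure above (assessing differential impacts rather than assuming universal benefits), the following minimal sketch disaggregates a single metric by population group and reports the largest gap. It is an assumption-laden illustration: the function names, group labels, and records are hypothetical, accuracy is used only as a stand-in for whatever outcome matters in a given context, and which groups to compare is itself a value-laden choice that should involve the affected communities.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per group.

    `records` is an iterable of (group, y_true, y_pred) tuples.
    """
    hits = defaultdict(int)
    counts = defaultdict(int)
    for group, y_true, y_pred in records:
        counts[group] += 1
        hits[group] += int(y_true == y_pred)
    return {g: hits[g] / counts[g] for g in counts}


def largest_gap(per_group):
    """Difference between the best- and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# Made-up records, for illustration only: (group, true label, predicted label).
example_records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 1),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

per_group = accuracy_by_group(example_records)
overall = sum(int(t == p) for _, t, p in example_records) / len(example_records)
print("overall:", overall)
print("per group:", per_group)
print("largest gap:", largest_gap(per_group))
```

The aggregate figure alone would conceal that one group is served noticeably worse than the other, which is the kind of differential impact the measure asks evaluations to surface.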

5.5. Operationalizing Recommendations: Use Cases and Transformations

In the above four subsections, we propose general recommendations and measures for future directions from a sociotechnical perspective. Concretely, these recommendations can be deployed across multiple AI application domains. To make them more actionable for researchers working from technical perspectives, such as machine learning practitioners, we provide several illustrative examples across diverse applications: AI-powered clinical decision support systems and conversational agents for caregiver mental well-being support (AI in healthcare), AI-powered hiring/recruitment systems (AI in recruitment), criminal risk assessment algorithms (AI in criminal justice), and AI-powered adaptive learning platforms and automated assessment systems (AI in education). Table 2 demonstrates how current AI systems are defined, built, and evaluated in these use cases, and illustrates the transformative changes that our proposed recommendations and measures would bring to each domain.

Table 2. Comparison of current AI system practices and practices informed by our proposed recommendations: Illustrative use cases show how our recommendations address limitations in current practices.