## Statistical physics of deep learning: Optimal learning of a multi-layer perceptron near interpolation

Jean Barbier, 1 Francesco Camilli, 2 Minh-Toan Nguyen, 1 Mauro Pastore, 1 and Rudy Skerk 3, ∗

1 The Abdus Salam International Centre for Theoretical Physics

Strada Costiera 11, 34151 Trieste, Italy

2 Alma Mater Studiorum - Universit` a di Bologna, Dipartimento di Matematica

Piazza di Porta S. Donato 5, 40126 Bologna, Italy

3 International School for Advanced Studies

Via Bonomea 265, 34136 Trieste, Italy

For four decades statistical physics has been providing a framework to analyse neural networks. A long-standing question remained on its capacity to tackle deep learning models capturing rich feature learning effects, thus going beyond the narrow networks or kernel methods analysed until now. We positively answer through the study of the supervised learning of a multi-layer perceptron. Importantly, ( i ) its width scales as the input dimension, making it more prone to feature learning than ultra wide networks, and more expressive than narrow ones or ones with fixed embedding layers; and ( ii ) we focus on the challenging interpolation regime where the number of trainable parameters and data are comparable, which forces the model to adapt to the task. We consider the matched teacher-student setting. Therefore, we provide the fundamental limits of learning random deep neural network targets and identify the sufficient statistics describing what is learnt by an optimally trained network as the data budget increases. A rich phenomenology emerges with various learning transitions. With enough data, optimal performance is attained through the model's 'specialisation' towards the target, but it can be hard to reach for training algorithms which get attracted by sub-optimal solutions predicted by the theory. Specialisation occurs inhomogeneously across layers, propagating from shallow towards deep ones, but also across neurons in each layer. Furthermore, deeper targets are harder to learn. Despite its simplicity, the Bayes-optimal setting provides insights on how the depth, non-linearity and finite (proportional) width influence neural networks in the feature learning regime that are potentially relevant in much more general settings.

## I. INTRODUCTION

Neural networks (NNs) are the powerhouse of modern machine learning, with applications in all fields of science and technology. Their use is now widespread in society much beyond the scientific realm. Understanding their expressive power and generalisation capabilities is therefore not only a stimulating intellectual activity, producing surprising results that seem to defy established common sense in statistics and optimisation [1], but is also of major practical and economic importance.

One issue is that even the models dating back to the inception of deep learning [2] are not theoretically well understood when operating in the 'feature learning regime' (a task-dependent term that will be clear later). The simplest deep learning model is the multilayer fully connected feed-forward neural network, also called multi-layer perceptron (MLP). It corresponds to a function F θ ( x ) = v ⊺ σ ( W ( L ) σ ( W ( L -1) · · · σ ( W (1) x ) · · · ) going from R d to R , parametrised by L + 1 matrices θ = ( v ∈ R k L × 1 , ( W ( l ) ∈ R k l × k l -1 ) l ≤ L ), with k l denoting the width of the l -th hidden layer (with k 0 = d ), and an activation function σ ( · ) applied entrywise to vectors.

∗ All authors contributed equally and names are ordered alphabetically. R. Skerk is the student author and has carried the numerical experiments with M.-T. Nguyen in addition to theoretical work. Corresponding author: rskerk@sissa.it

Until now, the quantitative theories for such NNs predicting which relevant features they can extract, how much data n they need to do so and how well they generalise beyond their training data, relied on over-simplified architectural and/or data-abundance assumptions. This prevented to precisely capture the combined role of the depth and non-linearity of NNs when they are trained from sufficiently many data for them to fully express their representation power. This paper offers answers to these questions in a richer scenario than what current statistical approaches could tackle.

## A. A pit in the neural networks landscape

Given the difficulty of the theoretical analysis of NNs, a zoology of tractable simplifications reported here have emerged, each coming with pros and cons.

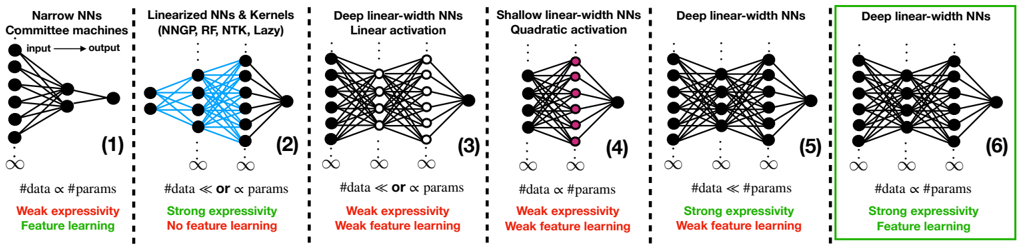

(1) Narrow networks. Triggered by pioneering works employing spin glass techniques to study NNs [3-5], the interest of the statistical physics community for the equilibrium properties of the narrow commitee machines ( L = 1 with k 1 = Θ(1) while n = Θ( d ) → ∞ , (1) in FIG. 1) rose quickly in the nineties [6-20]. This line of classical works is at the inception of the discovery of learning phase transitions (found concurrently also in single-layer architectures with constrained weights or peculiar activations [21-26]). The main issue with narrow NNs is their restricted expressivity. Nevertheless,

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

p

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

→∞

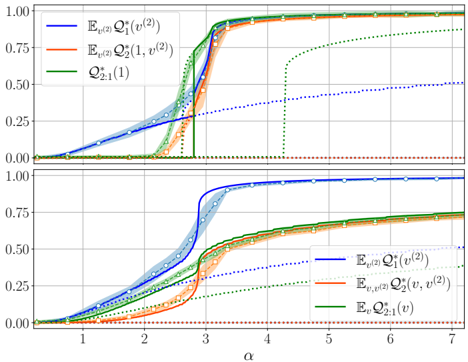

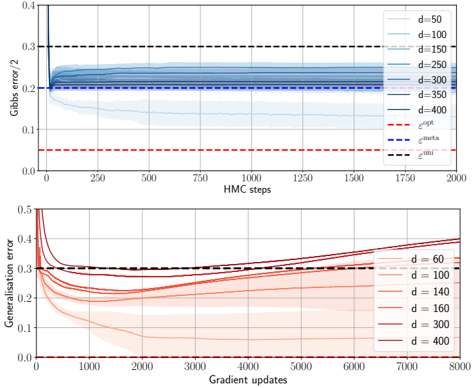

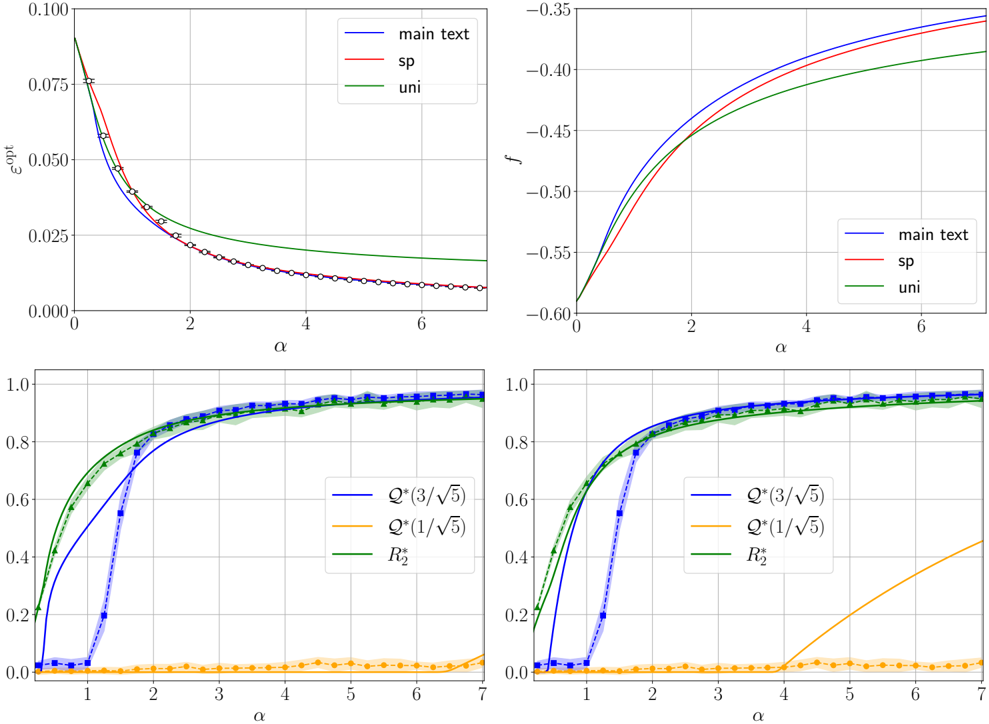

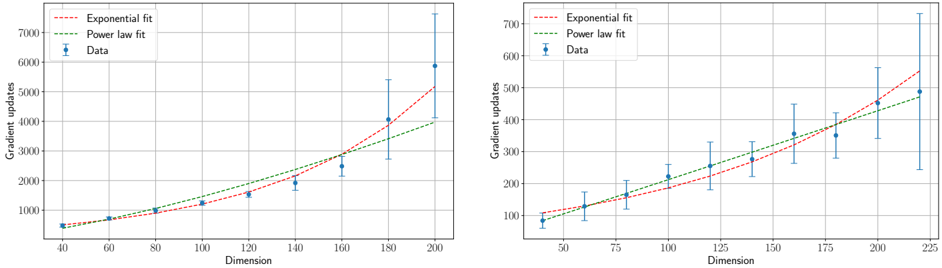

FIG. 1. Classification of models of fully connected feed-forward neural networks analysed in the theoretical literature (see the main text for references). Class ( 1 ) models are very narrow, i.e., with a width independent of the large input dimension d . This includes the perceptron and committee machines studied in the statistical mechanics literature (the latter are linked to the so-called multi-index models). They are analysed near-interpolation, where the number of data and model's parameters are proportional. In this regime, feature learning emerges through phase transitions but these models suffer from their limited expressivity. ( 2 ) encompasses all 'kernel-like models' whose inner weights are frozen to random values (which is represented by the blue colour) either by construction as in the random feature model, or as a consequence of their overwhelming width/overparametrisation (as in neural networks Gaussian processes, or in gradient-based dynamics in the lazy regime, where the weights effectively remain at initialisation and the networks behave as neural tangent kernels). These models are expressive but do not learn task-relevant features due to their effectively frozen embedding layers. However, with readout weights (black last layer) to be O (1 /d ) rather than the standard scaling O (1 / √ d ), feature learning emerges despite the width being infinite. Another tractable simplification are ( 3 ) deep linear networks, where the weights are learnable but activation functions linear (white circled nodes), thus reducing expressivity to that of a linear model, which allows for limited feature learning. A recent simplification is the linear-width shallow network with quadratic activation (pink nodes), ( 4 ). Even if trained near interpolation, it can only learn a quadratic approximation of the target, which limits its expressivity. Moreover, we will see that in the teacher-student setting we consider, it cannot recover the target weights, thus preventing strong feature-learning in this sense. Models ( 5 ) are the same as studied here, but set in the strongly overparametrised 'proportional regime': with a sample size only scaling as the width. Yet, weak forms of feature learning can emerge. The present paper considers fully trainable proportional-width non-linear NNs trained near interpolation , ( 6 ). This is the most challenging regime, where the model expressivity can fully manifest via strong task-adaptation (i.e., recovery of the target).

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Neural Network Architectures: A Comparative Diagram

### Overview

The image presents a comparative diagram of six different neural network architectures, highlighting their structural differences, data-parameter relationships, expressivity, and feature learning capabilities. Each architecture is visually represented with nodes and connections, accompanied by textual descriptions.

### Components/Axes

The diagram is divided into six distinct sections, each representing a different neural network architecture. The sections are numbered (1) through (6).

* **Titles:** Each section has a title describing the network architecture:

* (1) Narrow NNs Committee machines

* (2) Linearized NNs & Kernels (NNGP, RF, NTK, Lazy)

* (3) Deep linear-width NNs Linear activation

* (4) Shallow linear-width NNs Quadratic activation

* (5) Deep linear-width NNs

* (6) Deep linear-width NNs

* **Network Diagrams:** Each section contains a schematic diagram of the neural network, showing nodes (neurons) and connections (synapses).

* **Data-Parameter Relationship:** Each section includes a statement about the relationship between the amount of data and the number of parameters.

* **Expressivity:** Each section indicates whether the network has "Weak expressivity" or "Strong expressivity."

* **Feature Learning:** Each section indicates whether the network exhibits "Feature learning" or "No feature learning" or "Weak feature learning."

* **Infinity Symbol:** An infinity symbol is present below each network diagram.

### Detailed Analysis

**Section 1: Narrow NNs Committee machines**

* **Diagram:** A narrow network with an input layer of approximately 5 nodes, a hidden layer of approximately 5 nodes, and a single output node. Connections are dense between layers. An arrow indicates the flow from "input" to "output".

* **Data-Parameter Relationship:** "#data α #params" (Data is proportional to parameters)

* **Expressivity:** Weak expressivity

* **Feature Learning:** Feature learning

**Section 2: Linearized NNs & Kernels (NNGP, RF, NTK, Lazy)**

* **Diagram:** A network with an input layer of approximately 5 nodes, a hidden layer of approximately 5 nodes, and a single output node. The connections between the input and hidden layers are colored in blue.

* **Data-Parameter Relationship:** "#data << or α params" (Data is much less than or proportional to parameters)

* **Expressivity:** Strong expressivity

* **Feature Learning:** No feature learning

**Section 3: Deep linear-width NNs Linear activation**

* **Diagram:** A deep network with an input layer of approximately 5 nodes, multiple hidden layers (approximately 3), and a single output node. The hidden layers have approximately 5 nodes each. Connections are dense between layers. The nodes in the hidden layers are white.

* **Data-Parameter Relationship:** "#data << or α params" (Data is much less than or proportional to parameters)

* **Expressivity:** Weak expressivity

* **Feature Learning:** Weak feature learning

**Section 4: Shallow linear-width NNs Quadratic activation**

* **Diagram:** A shallow network with an input layer of approximately 5 nodes, a hidden layer of approximately 5 nodes, and a single output node. The nodes in the hidden layer are colored in pink. Connections are dense between layers.

* **Data-Parameter Relationship:** "#data α #params" (Data is proportional to parameters)

* **Expressivity:** Weak expressivity

* **Feature Learning:** Weak feature learning

**Section 5: Deep linear-width NNs**

* **Diagram:** A deep network with an input layer of approximately 5 nodes, multiple hidden layers (approximately 3), and a single output node. The hidden layers have approximately 5 nodes each. Connections are dense between layers.

* **Data-Parameter Relationship:** "#data << #params" (Data is much less than parameters)

* **Expressivity:** Strong expressivity

* **Feature Learning:** Weak feature learning

**Section 6: Deep linear-width NNs**

* **Diagram:** A deep network with an input layer of approximately 5 nodes, multiple hidden layers (approximately 3), and a single output node. The hidden layers have approximately 5 nodes each. Connections are dense between layers. This section is enclosed in a green box.

* **Data-Parameter Relationship:** "#data α #params" (Data is proportional to parameters)

* **Expressivity:** Strong expressivity

* **Feature Learning:** Feature learning

### Key Observations

* The diagram contrasts different neural network architectures based on depth, width, activation functions, and data-parameter relationships.

* Expressivity and feature learning capabilities vary across the architectures.

* The relationship between data and parameters seems to influence expressivity and feature learning.

### Interpretation

The diagram illustrates the trade-offs between different neural network architectures. Narrow networks and deep linear-width networks (Section 6) with a proportional data-parameter relationship exhibit feature learning, while linearized networks prioritize strong expressivity but sacrifice feature learning. The choice of architecture depends on the specific task and the available data. The diagram suggests that the relationship between data size and the number of parameters, along with the network's depth and activation functions, plays a crucial role in determining its expressivity and feature learning capabilities.

</details>

their analysis yielded important insights on NNs learning mechanisms, some of which are also occurring in more expressive models. One of particular importance is the so-called specialisation transition [27, 28], where hidden neurons start learning different features. However, as we will see, in more expressive models a richer phenomenology emerges. This field has since then remained very much alive, with the goal of treating more complex architectures as in the present paper, see [29, 30] for reviews. Narrow NNs are multi-index functions , i.e., functions projecting their argument on a low-dimensional subspace, see [31] for a review. Their study allows to understand which properties of non-linearities makes learning hard for gradient-based or message-passing algorithms [32-36].

(2) Kernel limit: ultra wide and linearised NNs, and the mean-field regime. On the other hand, in the ultra wide limit ( L fixed, k l ≫ n ) fully connected Bayesian NNs behave as kernel machines (the so-called neural network Gaussian processes, NNGPs) [37-41], and hence suffer from these models' limitations. Indeed, kernel machines infer the decision rule by first embedding the data in a fixed a priori feature space, the renowned kernel trick , then operating linear regression/classification over the features. In this respect, they do not learn taskrelevant features and therefore need larger and larger feature spaces and training sets to fit their higher order statistics [42-47]. The same conclusions hold for

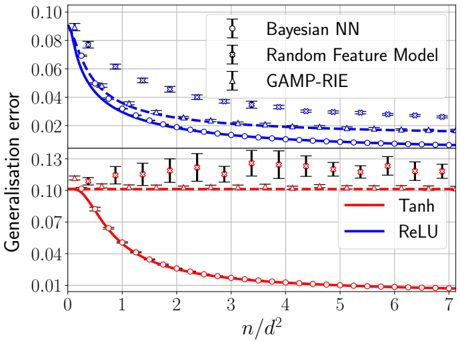

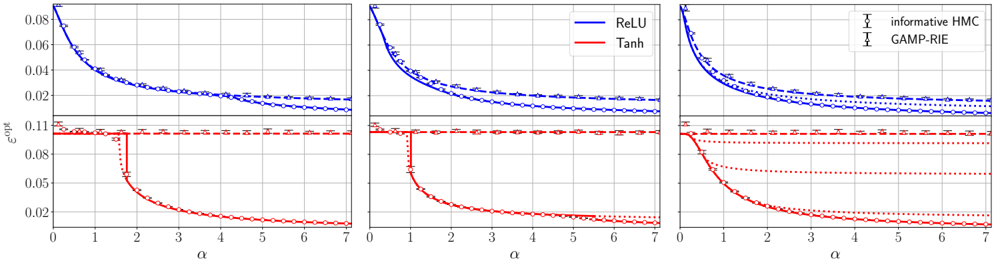

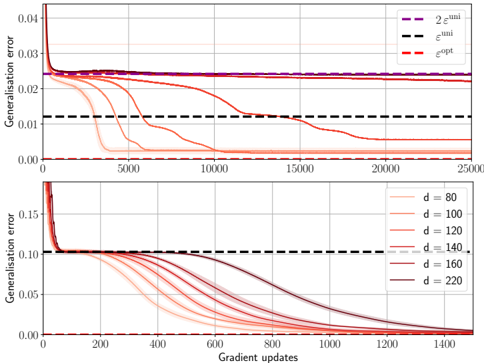

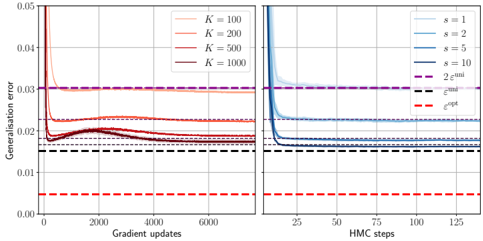

NNs trained with gradient-based methods but linearised around initialisation [48], i.e., with frozen weights represented in blue in FIG. 1. These include the random feature model (RF) with fixed random inner layer [49] (which is a finite size approximation of kernel machines), or the closely related neural tangent kernel (NTK) [50] and lazy regimes [51], see models (2) in FIG. 1. Such models are thus 'effectively linearised' because only the readouts are learnt. FIG. 2 illustrates the importance of feature learning: despite having a larger number of parameters, the best RF model or kernel are outperformed by an optimally trained NN, see also [52, 53].

One way to probe minimal feature learning effects is through perturbative expansions around the ultra wide limit, where k ≫ n but O (1 /k ) corrections are kept [5460]. This connects to expansions around free fields in quantum field theory, where diagrammatic rules are used to manage complex combinatorial sums, see [61, 62] for introductions. Another way to force feature-learning in infinitely wide models is the mean-field scaling obtained by taking the readout weights v vanishingly small ( O (1 /k ) rather than the standard O (1 / √ k ) scaling we consider). Originally proposed as a mean to escape the lazy regime of gradient-based dynamics [51] via a specific weights initialisation [63-69], it was later extended to the Bayesian framework [70-73]. NNs in this scaling converge to kernel machines with data-dependent kernels (rather than fixed a priori, as in the lazy regime). We also

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="3m7ZLQ

z0djGS

XTU/yc wf

>A

C2H

VFN

E

+

R

B

P

K9g

Ik

r

u

J

q

O

M

n

v

p

Y

W

o

D

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

<latexi sh

1\_b

64="oBZS7

VY

JFA0NQ/

ny

g

k

>

C

cj

zGr

q

Iv

H

WM

PT

E

m

f

wR+

K

U

X

O

L

d

pD

u

mention [74, 75] which alternatively rescale the Bayesian likelihood to induce feature learning at infinite width.

(3) Deep linear networks. Another way to linearise networks, thus making them tractable, is by allowing fully trainable weights while placing linear activations in the inner layers. Linear networks are a major theoretical playground from the dynamical perspective [76] but also at the equilibrium (Bayesian) level [70, 77-80]. In the same vein, theoreticians considered linear diagonal networks of the form F diag w , v ( x ) = v ⊺ diag( w ) x [81, 82], which exhibit an implicit bias in gradient descent learning [8386]. A main issue with linear networks, however, is their intrinsically small expressivity, so only weak notions of feature learning can manifest.

(4) Shallow quadratic networks. Various works have recently exploited the fact that a shallow NN with quadratic activation σ ( x ) = x 2 simplifies drastically [8797]. However, we will see that this prevents strong feature learning to emerge. The closest settings to ours are [9496]. There, the analysis based on results for the GLM [98] and matrix denoising [99-102] follows thanks to a specific mapping to a linear matrix sensing problem , where the goal is to infer a Wishart-like matrix given its projection along random rank-one matrices.

(5) Proportional data regime. This overparametrised regime considers a sample size much smaller than the number of model parameters ( L fixed, d large, k l , n = Θ( d )). Recent works show how a limited amount of feature learning makes the network equivalent to optimally regularised kernels [71, 77, 103-105]. MLPs thus reduce to linear networks (GLMs) in the sense conjectured in [106] and proven in [107, 108] and thus suffer from their limitations. This could be a consequence of the fully connected architecture, as, e.g., convolutional networks can learn more informative features in this regime [70, 109111]. In a similar data regime, Yoshino and co-authors have developed a replica theory for overparametrised deep NNs with a non-standard architecture [112-114].

(6) A timely challenge: Deep non-linear networks of linear width trained near interpolation. Despite the wealth of methods developed to study the aforementioned models, none is able to tackle NNs enjoying all the following realistic properties:

- ( P 1 ) a width proportional to the input dimension;

- ( P 2 ) with broad classes of non-linear activations;

- ( P 3 ) with possibly multiple hidden layers;

( P 4 ) learning in the interpolation regime .

The property ( P 1 ) combined with ( P 2 ) , ( P 3 ) allows to capture finite-width effects in NNs that are highly expressive, while still allowing to take the large system limit needed to obtain sharp theoretical predictions. Even if it is not entirely clear whether a finite width improves the performance of Bayesian MLPs compared to their kernel limit [115, 116], it is certainly one of the most natural ways to allow for the emergence of representation learning, which really is the crux of deep learning [2].

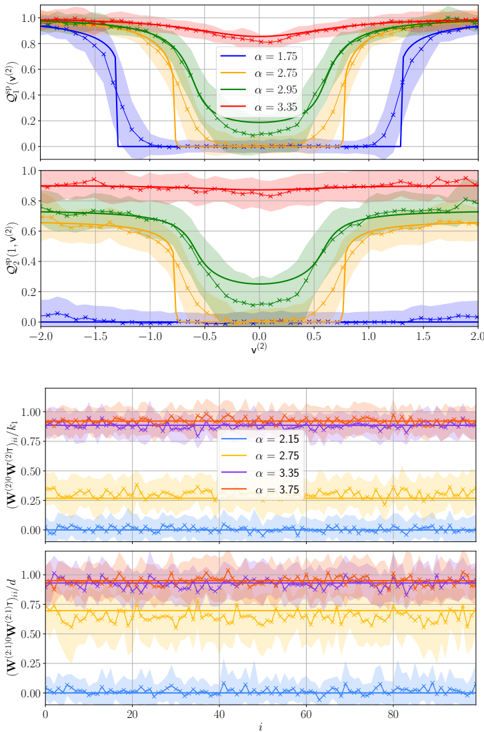

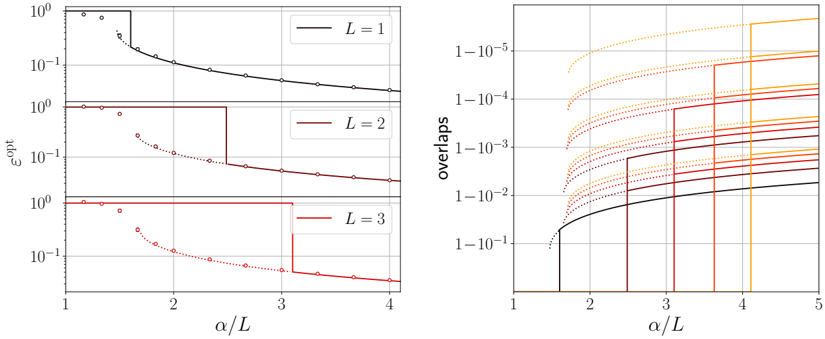

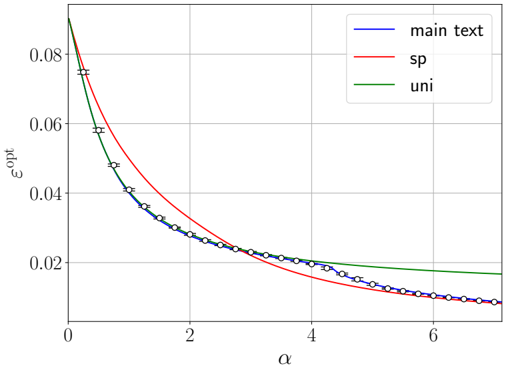

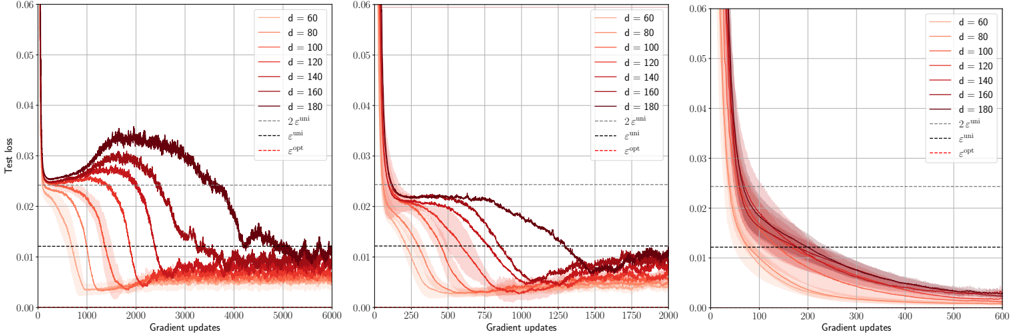

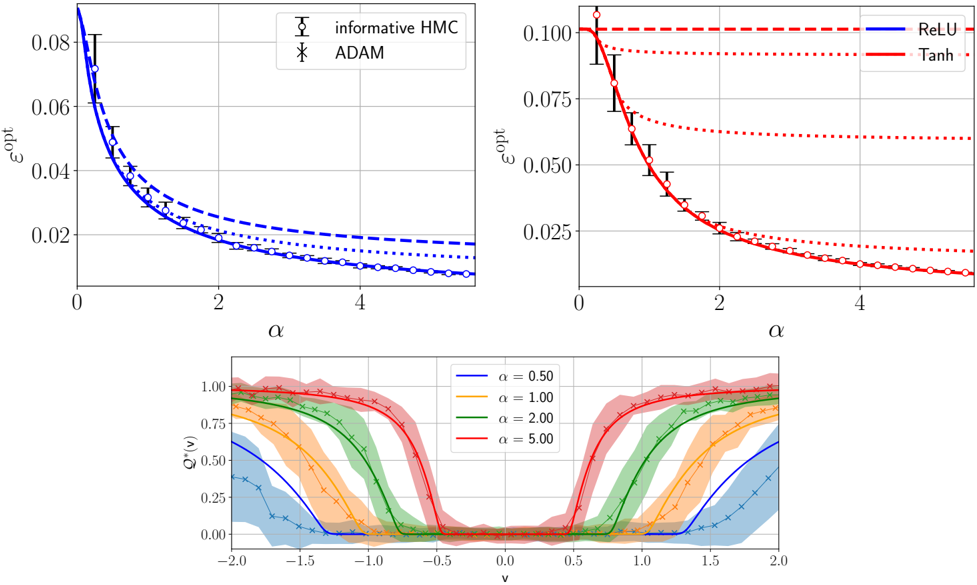

FIG. 2. Bayes-optimal mean-square generalisation error achievable by a two-layer NN F θ ( x ) = v ⊺ σ ( Wx ) as a function of the amount of training data n over the squared input dimension d 2 when d, n and the NN width k all diverge with n/d 2 and k/d → 0 . 5 fixed (solid curves), with activation σ ( x ) = ReLU( x ) and tanh(2 x ) (same setting as right panel of FIG. 5). These theoretical curves follow from the results in Sec. II. The task is regression with standard Gaussian inputs ( x µ ) µ ≤ n and noisy responses ( y µ ) µ ≤ n generated by a target two-layer NN F θ 0 ( x ) with Gaussian random weights and same activation. In the experiments, d = 150 and k = 75. Empty circles are obtained by training the Bayesian NN using Hamiltonian Monte Carlo initialised close to the target (yielding the best achievable error), and then computing its generalisation error on 10 5 test data (error bars are the standard deviation over 10 instances of the training set and target). Crosses show the generalisation error empirically achievable by the random feature model F RF a ∗ ( x ) = a ∗ ⊺ σ ( W RF x ) trained by exact empirical risk minimisation a ∗ = argmin( ∑ µ ≤ n ( y µ -F RF a ( x µ )) 2 + t ∥ a ∥ 2 ) with optimised a ∈ R r and L 2 -regularisation strength t picked by crossvalidation. The fixed Gaussian features matrix W RF ∈ R r × d has width r = 3 kd roughly three times larger than the total number k ( d +1) of parameters of the NN. Triangles are the error of GAMP-RIE [94] extended to generic activations (see App. B4), which reaches the performance of an optimally regularised kernel.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Chart: Generalization Error vs. n/d^2

### Overview

The image is a line chart comparing the generalization error of different models as a function of the ratio n/d^2. The chart displays two distinct sets of models: Bayesian NN, Random Feature Model, and GAMP-RIE in the upper portion, and Tanh and ReLU in the lower portion. The x-axis represents n/d^2, and the y-axis represents the generalization error. Error bars are included for some data series.

### Components/Axes

* **X-axis:** n/d^2, ranging from 0 to 7. Axis markers are present at integer values.

* **Y-axis:** Generalisation error, ranging from 0.01 to 0.10 in the lower portion and from 0.02 to 0.10 in the upper portion, with an additional marker at 0.13.

* **Legend (Top-Right):**

* Bayesian NN (Black, circles): Data points are marked with circles.

* Random Feature Model (Black, crosses): Data points are marked with crosses.

* GAMP-RIE (Black, triangles): Data points are marked with triangles.

* **Legend (Bottom-Right):**

* Tanh (Red, circles): Data points are marked with circles.

* ReLU (Blue, solid line): No data points are explicitly marked on the line.

### Detailed Analysis

**Upper Portion:**

* **Bayesian NN (Blue, circles):** The blue line with circle markers starts at approximately 0.085 at n/d^2 = 0 and decreases to approximately 0.015 at n/d^2 = 7. The trend is downward, indicating decreasing generalization error with increasing n/d^2.

* n/d^2 = 0: ~0.085

* n/d^2 = 1: ~0.045

* n/d^2 = 2: ~0.03

* n/d^2 = 3: ~0.02

* n/d^2 = 7: ~0.015

* **Random Feature Model (Blue, crosses):** The blue dashed line with cross markers starts at approximately 0.09 at n/d^2 = 0 and decreases to approximately 0.03 at n/d^2 = 7. The trend is downward, indicating decreasing generalization error with increasing n/d^2.

* n/d^2 = 0: ~0.09

* n/d^2 = 1: ~0.06

* n/d^2 = 2: ~0.045

* n/d^2 = 3: ~0.035

* n/d^2 = 7: ~0.03

* **GAMP-RIE (Blue, triangles):** The blue dashed line with triangle markers starts at approximately 0.09 at n/d^2 = 0 and decreases to approximately 0.03 at n/d^2 = 7. The trend is downward, indicating decreasing generalization error with increasing n/d^2.

* n/d^2 = 0: ~0.09

* n/d^2 = 1: ~0.05

* n/d^2 = 2: ~0.035

* n/d^2 = 3: ~0.03

* n/d^2 = 7: ~0.025

**Lower Portion:**

* **Tanh (Red, circles):** The red line with circle markers starts at approximately 0.10 at n/d^2 = 0 and decreases to approximately 0.01 at n/d^2 = 7. The trend is downward, indicating decreasing generalization error with increasing n/d^2.

* n/d^2 = 0: ~0.10

* n/d^2 = 1: ~0.04

* n/d^2 = 2: ~0.025

* n/d^2 = 3: ~0.015

* n/d^2 = 7: ~0.01

* **ReLU (Red, triangles):** The red dashed line with triangle markers remains relatively constant at approximately 0.11 across all values of n/d^2. The trend is flat, indicating no significant change in generalization error with increasing n/d^2. Error bars are present.

* n/d^2 = 0: ~0.11

* n/d^2 = 1: ~0.11

* n/d^2 = 2: ~0.115

* n/d^2 = 3: ~0.11

* n/d^2 = 7: ~0.11

### Key Observations

* The generalization error for Bayesian NN, Random Feature Model, GAMP-RIE, and Tanh decreases as n/d^2 increases.

* The generalization error for ReLU remains relatively constant as n/d^2 increases.

* The Tanh model exhibits the lowest generalization error at higher values of n/d^2.

* The ReLU model exhibits a higher, relatively constant generalization error.

### Interpretation

The chart suggests that, for the given models, increasing the ratio of 'n' to 'd^2' generally leads to a reduction in generalization error, implying better model performance with more data relative to the model's complexity. However, this trend is not universal, as demonstrated by the ReLU model, which shows no significant improvement with increasing n/d^2. The Tanh model appears to be the most effective in reducing generalization error as n/d^2 increases, outperforming the other models at higher values of n/d^2. The error bars on the ReLU model indicate some variability in its performance, but the overall trend remains consistent. The Bayesian NN, Random Feature Model, and GAMP-RIE models exhibit similar trends, with decreasing generalization error as n/d^2 increases, but their performance is generally worse than Tanh at higher values of n/d^2.

</details>

The interpolation regime ( P 4 ) means L fixed, d large with k l = Θ( d ) (from ( P 1 )) and n = Θ( d 2 ), i.e., a sample size comparable to the number of trainable parameters. This regime is difficult to analyse for expressive models but also very interesting, because it forces them to adapt to the data in order to perform well. Hence, taskdependent feature learning emerges, thus escaping the reduction to linear models discussed above. Analysing MLPs in the interpolation regime has been an open problem for decades, and is widely recognised as one of the major theoretical challenges in the physics of learning [94, 106]. That statistical mechanics is up to the task is an encouraging signal for physicists working on deep learning [117, 118].

This setting is relevant and timely also from a practical perspective. Indeed, the latest NN architectures

such as generative diffusion and large language models (LLMs) do operate near interpolation: the computeoptimal training scales parameters and tokens in equal proportion [119, 120], with typical sizes ranging in 10 10 -10 12 . These models of utmost interest are highly expressive and also exhibit signs of feature learning [121, 122]. From that perspective, it places them in a similar regime as considered in the present paper. LLMs are far more intricate than MLPs. Yet, they have things in common: in addition to the fact that one of the basic building block of LLMs is actually the MLP (together with the attention head), both correspond to deep non-linear architectures. We thus consider essential to tackle the interpolation regime of MLPs, with the hope that some insights brought forward by our theoretically tractable idealised setting remain qualitatively relevant for the NN architectures deployed in applications.

## B. Main contributions and setting

We address questions pertaining to the foundations of learning theory for NN models possessing all four properties ( P 1 )-( P 4 ). The first one is information-theoretic :

Q1 : Assuming the training data is generated by a target MLP, how much is needed to achieve a certain generalisation performance using an MLP with same architecture and Bayes-optimally trained in a supervised manner?

The answer, provided analytically by Result 2 in Sec. II, yields the Bayes-optimal limits of learning an MLP target function, thus bounding the performance of any model trained on the same data. The setting where the datagenerating process is itself an MLP may look artificial at first, but given the high expressivity and universal approximation property of neural networks, studying their learning provides insights applicable to very general classes of functions. For this reason, and the analytical tractability of the teacher-student scenario explained below, this question has always been a starting point in the statistical physics literature on NNs [5].

Secondly, using statistical physics we will answer another important question concerning interpretability :

Q2 : Given a certain data budget, which target features can the MLP learn?

This is key in order to understand the evolution of the best learning strategy for an MLP as a function of the amount of available data. Consequently, we will precisely explain what a perfectly trained MLP does to beat the random feature model or an optimally regularised kernel (see FIG. 2). In few words, the reason is that given enough data, strong feature learning emerges within the NN, in the sense of recovery of the target weights. This happens through a specialisation phase transition ; in the deep case there will be one transition per layer. This mechanism is not possible with the random feature model, which explains the gap in performance. These insights will follow from the detailed analysis of the suf- ficient statistics (i.e., the order parameters, OPs) of the model as the data increases, obtained from the large deviation perspective provided in Results 1, 3 and 4. The OPs carry more information than merely computing the achievable generalisation performance (see Q1 ).

Finally, based on experiments, we provide in Sec. III algorithmic insights for MLPs with L ≤ 2 layers:

Q3 : Given a reasonable compute and data budget, can practical training algorithms reach optimal performance or are they blocked by statistical-computational gaps?

The short answer is that it depends on the target, in particular on whether its readout weights are discrete.

Answering these questions will provide a phase diagram depicting the optimal performance, the features that are learnt to attain it, and the limitations faced by algorithms, as a function of the data budget, see Sec. III. Before presenting the setting needed to do so, let us emphasise once more that the theoretical component of this paper will be only concerned with static aspects, i.e., generalisation capabilities of trained networks (after a manageable or unconstrained compute time). We do not provide any theoretical claims on how learning occurs during training.

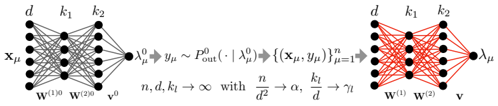

Teacher-student set-up. We consider the supervised learning of an MLP with L hidden layers when the datagenerating model, i.e., the target function (or 'teacher'), is also an MLP of the same form with unknown weights. These are the readouts v 0 ∈ R k and inner weights W ( l )0 ∈ R k l × k l -1 for ℓ ≤ L (with k 0 = d ), drawn entrywise i.i.d. from P 0 v and P 0 W , respectively (the latter being the same law for all ℓ ≤ L ). We assume P 0 W to be centred while P 0 v has mean ¯ v , and both priors have unit second moment. We denote the set of unknown parameters of the target as θ 0 = ( v 0 , ( W ( l )0 ) l ≤ L ).

For a given input vector x µ ∈ R d , for µ ≤ n , the response/label y µ is drawn from a kernel P 0 out :

$$\begin{array} { r l } { y _ { \mu } \sim P _ { o u t } ^ { 0 } ( \, \cdot \, | \, \lambda _ { \mu } ^ { 0 } ) \quad w i t h \quad \lambda _ { \mu } ^ { 0 } \colon = \mathcal { F } _ { \theta ^ { 0 } } ^ { ( L ) } ( x _ { \mu } ) , \quad ( 1 ) } \end{array}$$

where the MLP target function is defined as

$$\begin{array} { r l } & { f a n \, - } \\ & { J \colon \quad \mathcal { F } _ { \theta ^ { 0 } } ^ { ( L ) } ( x ) \colon = \frac { v ^ { 0 \tau } } { \sqrt { k ^ { L } } } \sigma \left ( \frac { W ^ { ( L ) 0 } } { \sqrt { k _ { L - 1 } } } \sigma \left ( \frac { W ^ { ( L - 1 ) 0 } } { \sqrt { k _ { L - 2 } } } \cdots \sigma \left ( \frac { W ^ { ( 1 ) 0 } } { \sqrt { k _ { 0 } } } x \right ) \cdots \right ) . } \end{array}$$

We will analyse the case of an arbitrary number a layers L (that remains d -independent). However, we will give a special attention to the shallow, one hidden layer MLP (we drop layer indices in this case)

$$\begin{array} { r l r } { \mathcal { F } _ { \boldsymbol \theta ^ { 0 } } ^ { ( 1 ) } ( x ) = \frac { 1 } { \sqrt { k } } v ^ { 0 T } \sigma \left ( \frac { 1 } { \sqrt { d } } W ^ { 0 } x \right ) } & { ( 2 ) } \end{array}$$

as well as the MLP with two hidden layers:

$$\begin{array} { r l } { i s } & \mathcal { F } _ { \theta ^ { 0 } } ^ { ( 2 ) } ( x ) = \frac { 1 } { \sqrt { k _ { 2 } } } v ^ { 0 } \tau \sigma \left ( \frac { 1 } { \sqrt { k _ { 1 } } } W ^ { ( 2 ) 0 } \sigma ( \frac { 1 } { \sqrt { d } } W ^ { ( 1 ) 0 } x \right ) . \quad ( 3 ) } \end{array}$$

The kernel can be stochastic or model a deterministic rule if P 0 out ( y | λ ) = δ ( y -f 0 ( λ )) for some function f 0 . Our main example P 0 out ( y | λ ) = exp( -1 2∆ ( y -λ ) 2 ) / √ 2 π ∆ is the linear readout with Gaussian label noise.

<latexi sh

1\_b

64="wg3

pMYuBTvd

Nnf

/

SD5WV

>A

C

X

cj

HL

F

G

oJ

k

q

U

E

+

rZ

K

P

Q

O

m

y

I

z

R

<latexi sh

1\_b

64="cy

OPY3/

H7

JoN+Xmfk

9IGwM

>A

B

V

S8

E

W

K

j0

r

F

v

p

Td

D

Q

g

z

u

n

L

R

q

Z

C

U

<latexi sh

1\_b

64="X

+

8m9TBqInOL

K

H

cG

Ng

>A

VD

S

F

W

r

w

U

E

u

P

0M

kz

f

o

v

d

y

Z

p

C

J

Y

R

Q

j

/

<latexi sh

1\_b

64="

3IrG

XWN2jP/

A

VMcdf

k

Lw

>

B

n

S8

EJ

U

q

F

7Q

o

y

pZ

C

z

Y

u

+O

g

H

D

T

R

v

m

K

<latexi sh

1\_b

64="jUVzSgk

Po

p

7CXJ

I

>A

B+n

c

DL

N

F

r

fq

d

u

ZwT

W

y

R

m

Q3

G

MK

v

E

H

O

Y

/

<latexi sh

1\_b

64="

GXyOmjzY

q

k

u

MR0Fp

BU

>A

n

c

V

NS8

EJ3

W

K

rHo

+w

v

Td

Cf

I

D

PQ

g

/

Z

L

<latexi sh

1\_b

64="H

cN+AI

f

ZYo5kR

M

jr

Q

>

Cz3

V

LT

J

FD

U

d

m

E

S

g

G

O

P

BW/8

X

p2

y9

q

w

u

v

n

K

<latexi sh

1\_b

64="3UN2jorn

T

Y

vC

m

B05IA

>

H

c

VDLSgM

F

X7W+q

d

y

J

k

KE

/Q

R

Z

zu

G

O

P

w

f

p

<latexi sh

1\_b

64="

UCN

d

E

ncjypX

+

2k

>A

Q3

VDL

F

W

Zu

R

mB

T

f

PO

w

S

I

J

o

q

0G

Hv

/

z

r

M

Y

K

g

<latexi sh

1\_b

64="OuD

0k3wJ

g

8S

nU

2Rr9I

>A

CH

c

V

N

M

G

zW/

q

FL

K

Z

p

X

E

T

+

Q

v

f

o

m

y

B

d

Y

j

P

<latexi sh

1\_b

64="m

K

grGu/7JMXTYjC

RI+Z0

>A

c

VHLS

QwFD

W

vUp qo

B

O

k

f

y

5N

n

z

d

P

E

<latexi sh

1\_b

64="cy

OPY3/

H7

JoN+Xmfk

9IGwM

>A

B

V

S8

E

W

K

j0

r

F

v

p

Td

D

Q

g

z

u

n

L

R

q

Z

C

U

<latexi sh

1\_b

64="zqym+d

L

Y

rJ

QT8

I

>A

B

X

c

VD

S

N

F

W

H

p

Rj

K9g

P

Z

GE/

k

U

O2

7u

v

f

C

n

o

M

w

<latexi sh

1\_b

64="

3IrG

XWN2jP/

A

VMcdf

k

Lw

>

B

n

S8

EJ

U

q

F

7Q

o

y

pZ

C

z

Y

u

+O

g

H

D

T

R

v

m

K

<latexi sh

1\_b

64="kKB

vo

G

Rf

TjmQJNECy

U

>A

+X

c

VDLS

F

2pr

Zdu

0W3

Y

/

q

I

n

M

w8

H

O

z

P7

g

<latexi sh

1\_b

64="

GXyOmjzY

q

k

u

MR0Fp

BU

>A

n

c

V

NS8

EJ3

W

K

rHo

+w

v

Td

Cf

I

D

PQ

g

/

Z

L

<latexi sh

1\_b

64="5XrM

F2Y

z+kD

O

/vCR

KNB

g

>A

cjVHLT

J

3U

I

d

m

Z

E

G

f

Sq

o

Pn

p

W

y

w

u

Q

<latexi sh

1\_b

64="gR

8+G

B

qQv

SH3IYc

9y2JE

>A

n

VDL

N

F

U

k

w

K

o

d

Cf

m

O

z

X

M

P

Z

j

p

/

r

u

W

T

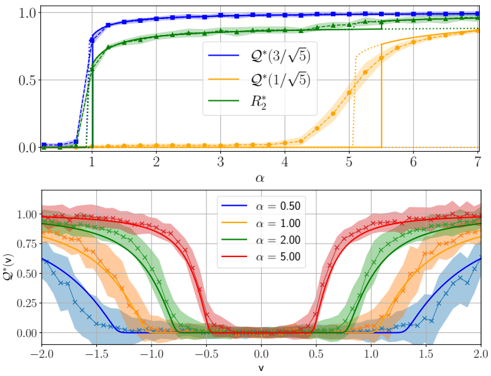

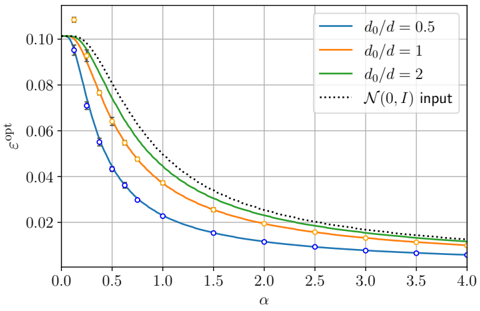

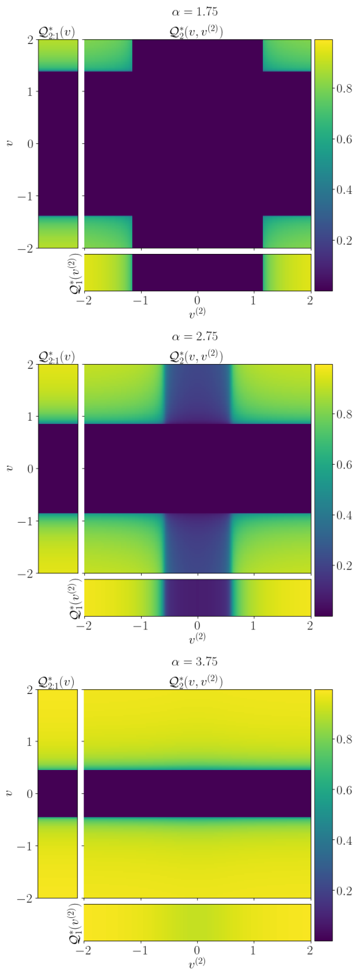

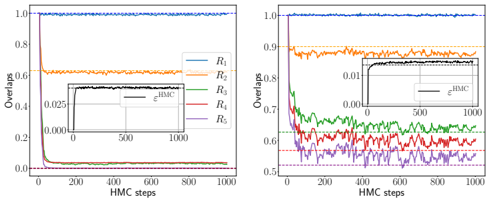

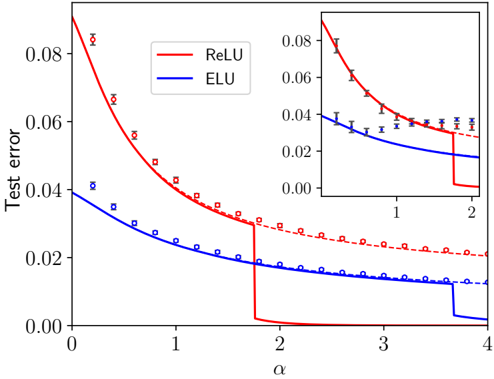

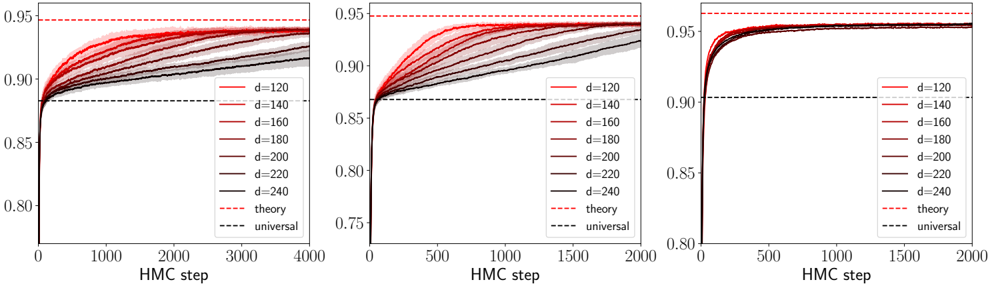

FIG. 3. The teacher-student scenario for the case of two hidden layers. The teacher NN is used to produce the responses given the inputs. A student NN with matched architecture (but who is not aware of the parameters of the teacher) is then trained in a Bayesian manner given the training data. We also display the scaling limit considered given by (4).

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Neural Network Diagram: Forward and Backward Propagation

### Overview

The image depicts a neural network diagram illustrating forward and backward propagation. The diagram is split into two sections, showing the network's state before and after training. The left side represents the initial state with gray connections, while the right side represents the trained state with red connections.

### Components/Axes

* **Nodes:** The diagram features nodes arranged in layers. The number of nodes in each layer varies.

* **Connections:** Lines connecting the nodes represent the weights between neurons. The color of the lines changes from gray (initial state) to red (trained state).

* **Labels:**

* Input Layer: x<sub>μ</sub>

* Hidden Layers: d, k<sub>1</sub>, k<sub>2</sub>

* Output Layer: λ<sub>μ</sub><sup>0</sup> (left), λ<sub>μ</sub> (right)

* Weights: w<sup>(1)0</sup>, w<sup>(2)0</sup> (left), w<sup>(1)</sup>, w<sup>(2)</sup> (right), v<sup>0</sup> (left), v (right)

* **Equations:**

* Left side: λ<sub>μ</sub><sup>0</sup> → y<sub>μ</sub> ~ P<sub>out</sub>(· | λ<sub>μ</sub><sup>0</sup>)

* Center: {(x<sub>μ</sub>, y<sub>μ</sub>)}<sub>μ=1</sub><sup>n</sup>

* Right side: n, d, k<sub>l</sub> → ∞ with n/d<sup>2</sup> → α, k<sub>l</sub>/d → γ<sub>l</sub>

### Detailed Analysis

**Left Side (Initial State):**

* The input layer is labeled x<sub>μ</sub> and has approximately 5 nodes.

* The first hidden layer is labeled 'd' and also has approximately 5 nodes.

* The second hidden layer is labeled 'k<sub>1</sub>' and has approximately 4 nodes.

* The third hidden layer is labeled 'k<sub>2</sub>' and has approximately 5 nodes.

* The output layer is labeled λ<sub>μ</sub><sup>0</sup> and has a single node.

* The connections between the layers are gray, indicating the initial, untrained state of the network.

* The weights associated with the connections are labeled w<sup>(1)0</sup>, w<sup>(2)0</sup>, and v<sup>0</sup>.

**Right Side (Trained State):**

* The input layer is labeled 'd' and has approximately 5 nodes.

* The first hidden layer is labeled 'k<sub>1</sub>' and has approximately 4 nodes.

* The second hidden layer is labeled 'k<sub>2</sub>' and has approximately 5 nodes.

* The output layer is labeled λ<sub>μ</sub> and has a single node.

* The connections between the layers are red, indicating the trained state of the network.

* The weights associated with the connections are labeled w<sup>(1)</sup>, w<sup>(2)</sup>, and v.

**Equations and Relationships:**

* The equation λ<sub>μ</sub><sup>0</sup> → y<sub>μ</sub> ~ P<sub>out</sub>(· | λ<sub>μ</sub><sup>0</sup>) describes the forward pass, where λ<sub>μ</sub><sup>0</sup> is the initial output, y<sub>μ</sub> is the target output, and P<sub>out</sub> is the output distribution.

* The term {(x<sub>μ</sub>, y<sub>μ</sub>)}<sub>μ=1</sub><sup>n</sup> represents the training dataset consisting of input-output pairs.

* The equation n, d, k<sub>l</sub> → ∞ with n/d<sup>2</sup> → α, k<sub>l</sub>/d → γ<sub>l</sub> describes the asymptotic behavior of the network as the number of training samples (n), input dimension (d), and hidden layer sizes (k<sub>l</sub>) approach infinity. α and γ<sub>l</sub> are constants.

### Key Observations

* The color change from gray to red signifies the transition from an untrained to a trained network.

* The diagram illustrates the flow of information from the input layer to the output layer during both forward and backward propagation.

* The equations provide a mathematical description of the network's behavior and training process.

### Interpretation

The diagram illustrates the fundamental concept of training a neural network. The left side represents the initial, random state of the network, where the connections (weights) are untrained. The right side represents the trained network, where the connections have been adjusted to map the input data to the desired output. The equations describe the mathematical relationships between the input, output, and network parameters. The asymptotic behavior equation suggests that the network's performance improves as the size of the network and the amount of training data increase. The diagram effectively visualizes the transformation of a neural network from a random mapping to a learned mapping through the process of training.

</details>

We will first consider i.i.d. standard Gaussian vectors as inputs x µ . In that case the whole data structure is dictated by the input-output relation only, allowing us to focus solely on the influence of the target function on the learning. In Sec. III we generalise the results to include structured data: Gaussian with a covariance and real data (MNIST). The input/output pairs D = { ( x µ , y µ ) } µ ≤ n form the training set for a student network with matching architecture.

The Bayesian student learns via the posterior distribution of the weights matrices θ = ( v , ( W ( l ) ) l ≤ L ) (of same respective sizes as the teacher's) given the training data:

$$d P ( \pm b { \theta } | \, \mathcal { D } ) \colon = \mathcal { Z } ( \mathcal { D } ) ^ { - 1 } d P _ { \theta } ( \pm b { \theta } ) \prod _ { \mu \leq n } P _ { o u t } \left ( y _ { \mu } | \, \lambda _ { \mu } ( \pm b { \theta } ) \right )$$

where dP θ ( θ ) := dP v ( v ) ∏ l ≤ L dP W ( W ( l ) ) (with the notation dP ( M ) := ∏ i,j dP ( M ij )), with post-activations

$$\lambda _ { \mu } ( \boldsymbol \theta ) \colon = \mathcal { F } _ { \boldsymbol \theta } ^ { ( L ) } ( x _ { \mu } ) , \quad \mu \leq n .$$

The posterior normalisation Z ( D ) = Z ( L ) ( D ) for the model with L hidden layers is the partition function, and P W , P v are the priors assumed by the student. We focus on the Bayes-optimal setting P W = P 0 W , P v = P 0 v and P out = P 0 out , but the approach can be extended to account for a mismatch.

As stated above, we study the linear-width regime with quadratically many samples , which places the model in the interpolation regime, i.e., a large size limit

$$\begin{array} { r l } { d , k _ { l } , n \rightarrow + \infty \, w i t h \, \frac { k _ { l } } { d } \rightarrow \gamma _ { l } \, f o r \, l \leq L , \, \frac { n } { d ^ { 2 } } \rightarrow \alpha . ( 4 ) } & { t h e p r i b u m a l y } \end{array}$$

Given the cost of training deep Bayesian MLPs and specific difficulties discussed below associated with an increasing number of layers, we distinguish the cases of one, two and more than two hidden layers for what concerns the hypotheses we impose on the activation σ .

( H 1 ) For shallow NNs with L = 1 hidden layer our results are valid for an arbitrary activation function as long as it admits an expansion in Hermite polynomials with coefficients ( µ ℓ ) ℓ ≥ 0 , see App. A 2:

$$\sigma ( x ) = \sum _ { \ell \geq 0 } \frac { \mu _ { \ell } } { \ell ! } \, H e _ { \ell } ( x ) . \quad \quad ( 5 ) \quad t h a n$$

We also assume it has vanishing 0th Hermite coefficient µ 0 = 0, i.e., that it is centred E z ∼N (0 , 1) σ ( z ) = 0; in

App. B1g we relax this assumption. We will mainly consider tanh, ReLU and Hermite polynomial activations.

Through Hermite expansion, the MLP function can be decomposed as

$$\begin{array} { r l } { \mathcal { F } _ { \theta } ^ { ( 1 ) } ( x ) = \frac { \mu _ { 1 } } { \sqrt { d } } \frac { v ^ { \intercal } W } { \sqrt { k } } x + \frac { \mu _ { 2 } } { 2 d } T r ( \frac { W ^ { \intercal } d i a g ( v ) W } { \sqrt { k } } ( x ^ { \otimes 2 } - I _ { d } ) ) + \cdots } \end{array}$$

where · · · contains terms made of tensors of all orders constructed from θ , contracted with input rank-one tensors ( x ⊗ ℓ ) ℓ . In each such term, at least one tensor is of order ℓ ≥ 3. Therefore an equivalent interpretation of the learning problem of an MLP target is that of a 'tensor sensing problem' where the tensors entering the observed responses, v 0 ⊺ W 0 ∈ R d , W 0 ⊺ diag( v 0 ) W 0 ∈ R d × d , . . . are all constructed from the same fundamental parameters θ 0 (see, e.g., [123]). The first term in the above expansion is called the 'linear term/component'. The one of the target is perfectly learnable in the quadratic data regime we consider. The second term is the 'quadratic term/component'. Both will play a special role because, as we will see, the terms · · · effectively behave as (Gaussian) noise when n = Θ( d 2 ), unless θ 0 is partially recovered. Learning through recovery of θ 0 is called specialisation . In contrast, the linear and quadratic terms are learnable without specialisation. This is reassuring given that we will argue that for many targets, it takes a time growing as exp( c d ) for the network to specialise, for some positive σ -dependent constant c < 1. This separation in algorithmic learnability of first and second components versus all the others is at the root of the emergence of different learning strategies employed by the network, and the crux of the generalisation of a learning algorithm in App. B 4 coined GAMP-RIE (generalised approximate message-passing with rotational invariant estimator) [94]. ( H 2 ) For L = 2 we require µ 0 = µ 2 = 0, which is e.g. the case for odd activations. Our main example is tanh. In the tensor inference problem appearing when expanding all activations, µ 2 = 0 means that no quadratic term is present. However, a 'product term' W (2) W (1) appears (in addition to v ⊺ W (2) W (1) ). We will see in Sec. III that skipping the quadratic term implies that learning terms beyond the linear ones will be possible only through specialisation. However, the presence of the product term will have interesting consequences on the learning curves. Importantly, W (2) W (1) is a matrix learnable partly independently of its factors, and consequently requires its own OP in the analysis.

( H 3 ) For L ≥ 3 we require µ 0 = µ 1 = µ 2 = 0. This does not include standard activations and we consider the hyperbolic tangent after setting µ 1 = 0 in its Hermite decomposition. µ 2 = 0 again entails that learning beyond-linear terms requires the network to specialise, and µ 1 = 0 prevents the multiplication of OPs by avoiding the presence of many product terms.

Related to this last comment, we wish to emphasise that these hypotheses are not due to restrictions of the techniques we develop. The issue is purely practical: relaxing them while increasing the number of layers yields a combinatorial explosion (in L ) of the number of OPs

to track in the theory as well as cumbersome formulas. We have therefore decided to leave for future work the analysis of the most general case, and focus here on these special ones which already yield an extremely rich picture while remaining interpretable.

## C. Replica method and HCIZ combined

A key component of our approach is the way we blend tools from spin glasses (the replica method [124]) and matrix models, in particular, the so-called Harish ChandraItzykson-Zuber (HCIZ) 'spherical' integral [125-127]. Here, we review the growing corpus of works utilising it jointly with the replica method. Let us first define this matrix integral:

$$\begin{array} { r l } & { \mathcal { Z } _ { H C I Z } ^ { ( \beta ) } ( A , B ) \colon = \int d \mu ^ { ( \beta ) } ( 0 ) \exp \left ( \frac { \beta N } { 2 } T r [ O A O ^ { \dagger } B ] \right ) \quad ( 6 ) } \\ & { t h e n j . } \end{array}$$

where β = 2 if A , B are N × N Hermitian matrices, β = 1 if real symmetric. Respectively the integral is over the unitary U ( N ) or orthogonal group O ( N ), w.r.t. the corresponding uniform Haar measure µ ( β ) . For β = 2 it admits a closed form for any N [125] and a known large N limit for β = 1 , 2 [126-129]. It is crucial to analyse matrix models in physics and random geometry [130-132]. In spite of having an 'explicit' limit, it can be tackled only in few cases [100, 133]. However, if one matrix, say A , has small rank compared to N ≫ 1, the corresponding low-rank spherical integral is simple [134, 135].

Spherical integrals were used in the replica method for spin glasses with correlated disorder in the seminal paper [136]. It triggered a long series of works in spin glasses [137-141], in analysing simple NNs [142, 143] or in inference and message-passing algorithms [144-160]. In these papers the degrees of freedom are few vectors (e.g., the replicas of the system forming a low-rank matrix A ) interacting with a quenched rotationally invariant matrix B of rank Θ( N ). Rotational invariance, a crucial property for employing spherical integrals, means distributional invariance under orthogonal transformations, i.e., P ( B ) = P ( OBO ⊺ ) ∀ O ∈ O ( N ) if B is symmetric. Consequently, only the low-rank spherical integral intervenes when integrating B 's eigenvectors.

An active research line tries to include models where the degrees of freedom themselves are linear-rank matrices in addition to the quenched disorder. This presents a whole new challenge. Seminal papers in the context of matrix denoising are [161, 162] which provided a spectral denoising algorithm (on which the GAMP-RIE [94] relies), also analysed in [101, 102]. Extensions to nonsymmetric matrix denoising exist [163, 164]. An early attempt at combining linear-rank spherical integration (where both A , B in (6) have rank Θ( N )) with the replica method is [165], which tried to improve on the replica approach for matrix denoising in [166, 167] that was missing important correlations among variables. It was followed by two concurrent papers yielding intractable [99] or perturbative [100] results for non-Gaussian signals.

Remark 1. No method in the aforementioned papers is satisfactory beyond the realm of denoising problems involving strictly rotational invariant signal matrices (Gaussian, Wishart,...). E.g., the HCIZ/replica combination in the latest works [94, 168] requires it, because after using the replica trick to integrate the quenched disorder, the HCIZ is used directly to integrate the annealed matrix degrees of freedom (representing the replicas of the signal matrix), which is possible by rotational invariance.

Recently, matrix denoising without rotational invariance was analysed in [169] by assuming that the model behaves as a pure matrix model (due to an 'effective rotational invariance') in a first phase, and then as a 'standard' planted mean-field spin glass in a second. The phases were thus treated separately via different formalisms -HCIZ in one phase, a cavity method under mean-field decoupling assumptions in the other- and then joined using a criterion to locate the transition. This approach yielded a good match with numerics. However, we now understand that this treatment can be improved, because the 'matrix nature' of the model and associated correlations discarded by mean-field methods do play a role also in the second phase. Thus, a major conceptual (and technical) issue remained: whether there exists a theory based on a unified formalism able to describe the whole phase diagram of inference/learning problems involving linear-rank matrices which lack rotational invariance. Ideally, it should be able to handle the correlations induced by the matrix nature of the problem while still capturing the phase transitions and symmetry breaking effects connected to its mean-field component. The present paper provides this theory in the context of NNs, through a replica/HCIZ combination of a different nature than previous works, see Sec. IV.

̸

Related to this last point, we emphasise that previous works on extensive-widths shallow NNs ( k = Θ( d c ) for 0 < c ≤ 1) considered either purely quadratic activation [91, 92, 94-97] or, on the contrary, with µ ≤ 2 = 0 [170]. Both settings enjoy intrinsic simplifications. On one hand, the quadratic NN reduces to a matrix sensing problem [94, 96]. It is therefore a 'pure matrix model' with rotational invariance when considering Gaussian weights: the target (and model) only depends on them via W 0 ⊺ W 0 . Therefore, by rotational invariance (from the left and right) of the Gaussian matrix W 0 , it cannot be recovered, so no specialisation transitions can occur. The advantage is that a large toolbox from random matrix theory is then available: the HCIZ integral to study static aspects [94-96], or Oja's flow and matrix Riccati differential equations for the dynamics [92, 97, 171]. On the other hand, [170] considers µ ℓ = 0 for ℓ ≥ 3 only. In this case, the model is 'purely mean-field': strong decoupling phenomena take place which allow a treatment in term of an effective one-body equivalent system as in mean-field spin systems.

In contrast, the techniques we develop in the present paper can deal with truly hybrid models where the two types of characteristics manifest and are taken into ac-

̸

count using a single formalism: the correlations among the entries of the matrix degrees of freedom entering the problem, and specialisation phase transitions induced by mean-field terms. The emerging phase diagram will consequently be extremely rich. In particular, we are able to treat the shallow MLP with generic activation function (Result 1), or the two-layer MLP with µ 1 = 1 (Result II B). The case of L ≥ 3 requires hypothesis ( H 3 ) on σ which, in turn, makes the model 'purely mean-field'.

## D. Organisation of the paper

· Section II first discusses the main hypothesis underlying the theory and the meaning of functions entering it. We then present the theoretical results: replica symmetric formulas for the free entropy and OPs for shallow NNs (Result 1), with two hidden layers (Result 3), or arbitrary L ( Result 4). These provide an answer to Q2 . The Bayes generalisation error is deduced automatically from Result 2 in all cases, thus answering Q1 .

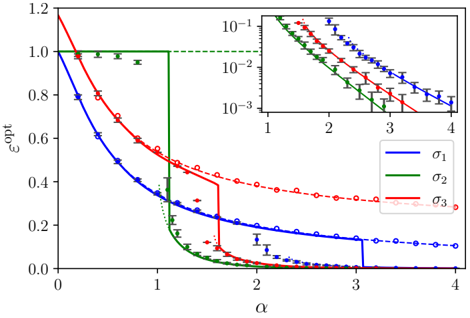

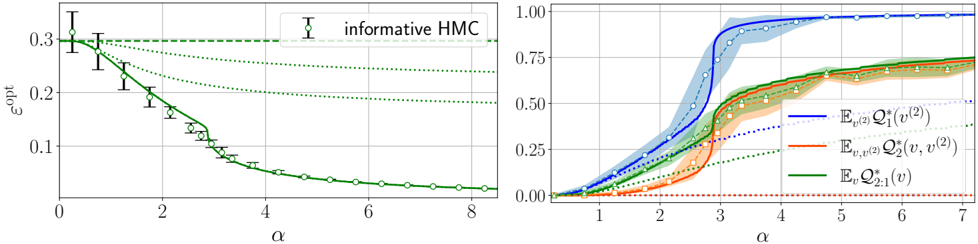

· Section III is the core experimental part. It validates the theory through the numerical exploration of the rich learning phase diagram. Our main message concerning Q2 is as follows. As α increases two phases appear:

( i ) Universal phase. Before a critical sample rate α sp , the NN makes predictions by exploiting specific nonlinear combinations of the teacher's features without disentangling them; effectively, the student learns the best 'quadratic network approximation' of the target. In this phase, performance is (asymptotically) independent of the detailed law of the target hidden weights (hence the term 'universal'). Yet, the (effectively quadratic) NN outperforms kernel ridge regression (and thus the random feature model too, FIG. 2), see [94, 96].

( ii ) Specialisation phase. Increasing the data beyond α sp triggers specialisation transitions: individual hidden units start aligning with target units. Which features specialise first is governed by the readout strengths of the target: stronger features (larger readout amplitudes) emerge earlier. For heterogeneous readouts, this yields a sequence of specialisation events; for homogeneous readouts, a collective transition occurs. If L ≥ 2, possible heterogeneity both in the rows and columns of individual weight matrices induces non-trivial specialisation profiles in each layer. In turn, different layers can experience different phases and do not necessarily specialise concurrently. We will also show that learning propagates from inner to outer hidden layers, because deeper layers require more data to be recovered through specialisation. Consequently, deeper target functions appear harder to learn than shallow ones.

In summary, despite the model's 'matrix nature' at the source of the universal phase, additional mean-fieldlike terms in the free entropy (in information theory parlance, Gaussian scalar channels) imply the existence of specialisation events. These terms depend explicitly on the weights prior and interact with the matrix degrees of freedom, and ultimately break the numerous effective symmetries holding before the transition.

The theoretical phase diagram will be extensively tested against various training algorithms: two Monte Carlo-based Bayesian samplers, a first-order optimisation procedure (ADAM), and a mixed spectral/approximate message-passing algorithm generalising the GAMP-RIE of [94] to accommodate general activation functions σ when L = 1. The performance of these algorithms belonging to different classes, even when sub-optimal, can be exactly (or, for ADAM, at least accurately) predicted by non-equilibrium solutions of the theoretical equations.

Focusing on L ≤ 2 for what pertains algorithmic hardness of learning, Q3 , we will show empirically that specialisation is potentially hard to reach for some target functions, in particular when the readouts are discrete. The tested algorithms fail to find it and instead get trapped by sub-optimal non-specialised solutions, probably due to statistical-computational gaps.

We will also generalise the theory to structured data, i.e., Gaussian with a covariance. It will capture the model's performance when trained from non-Gaussian inputs too. Tests with real (MNIST images) and synthetic data generated by one layer of a NN will confirm it.

· Section IV contains the main steps of our replica theory, with an emphasis on its novel ingredients. Along the derivation, the mixed matrix model/mean-field planted spin glass nature of the problem will become apparent.

· Finally, Section V summarises our contributions and discusses the numerous perspectives this work opens.

The appendices are found after the references.

· Appendix A gathers some important pre-requisites: App. A1 summarises all notations used in the paper (we advise the reader to give it a look before reading the main results); the definition of the Hermite polynomials and Mehler's formula are found in App. A 2; the Nishimori identities in Bayes-optimal inference in App. A 3; the link between free entropy and mutual information in App. A4; and a simplification of the expression for the optimal mean-square generalisation error in App. A 5.

· Appendix B groups all sub-appendices related to the shallow MLP: App. B1 details all the steps of the replica calculation; App. B 2 proposes alternative routes to take care of the entropy of the order parameters associated with the matrix degrees of freedom in the model; App. B3 analyses the large sampling rate limit of the theoretical free entropy; App. B 4 provides the generalisation of the GAMP-RIE algorithm needed to deal with general σ ; App. B5 is an empirical analysis of the hardness of learning shallow targets; App. B 6 is a partial proof for a special case of activation function; finally, App. B 7 provides additional experimental validations of the fact that the readout weights of the model being learnable or fixed has no effect on its optimal performance.

· Appendix C concerns only the deep MLP: App. C 1 is the replica calculation; App. C 2 shows the consistency of the formulas provided for structured inputs with L = 1 in the main, and the ones for a special case of non-Gaussian

data obtainable from the theory for two hidden layers when freezing the first one (which induces a structure for the inputs of the second, learnable layer).

· Appendix D provides all information needed to reproduce the simulations with the provided codes [172].

## II. MAIN RESULTS: THEORY OF THE MLP

We aim at evaluating the expected optimal generalisation error in the teacher-student setting of FIG. 3. Let ( x test , y test ∼ P out ( · | λ 0 test )) be a test sample independent of D drawn using the teacher, where λ 0 test is defined as in (1) with x µ replaced by x test (and similarly for λ test ( θ )). Given a prediction function f , the Bayes estimator for the test response is ˆ y f ( x test , D ) := ⟨ f ( λ test ( θ )) ⟩ , where ⟨ · ⟩ := E [ · | D ]. Then, for a performance measure C : R × R ↦→ R ≥ 0 the Bayes generalisation error is

$$\begin{array} { r l } & { \varepsilon ^ { \mathcal { C } , f } \colon = \mathbb { E } _ { \theta ^ { 0 } , \mathcal { D } , x _ { t e s t } , y _ { t e s t } } \mathcal { C } \left ( y _ { t e s t } , \left \langle f ( \lambda _ { t e s t } ( \theta ) ) \right \rangle \right ) . \quad ( 7 ) \quad a v e r a l T o p } \end{array}$$

The case of square loss C ( y, ˆ y ) = ( y -ˆ y ) 2 with the choice f ( λ ) = ∫ dy y P out ( y | λ ) =: E [ y | λ ] yields the Bayesoptimal mean-square generalisation error:

<!-- formula-not-decoded -->

In order to access ε C , f , ε opt and other relevant observables, one can tackle the computation of the average logpartition function, or free entropy in statistical physics:

$$\begin{array} { r l } { f _ { n } \colon = \frac { 1 } { n } \mathbb { E } _ { \theta ^ { 0 } , \mathcal { D } } \ln \mathcal { Z } ( \mathcal { D } ) . } & { ( 9 ) \quad ( s e c o r m a l i t y ) } \end{array}$$

The mutual information I ( θ 0 ; D ) between the target and data is related to the free entropy f n , see App. A 4.

Before presenting the results we will first detail the main hypothesis for their derivation and explain the physical meaning of the quantities entering them. This will ease their interpretation. We postpone the core of the theoretical derivations to Sec. IV.

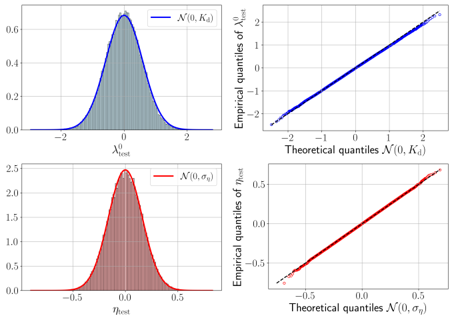

Main hypothesis. Let s be any positive integer independent of d and define a Gaussian vector ( λ a ) s a =0 := ( λ 0 , λ 1 , · · · , λ s ) ⊺ ∼ N ( 0 , K ∗ ) with covariance (for a, b = 0 , . . . , s )

$$( K ^ { * } ) _ { a b } \colon = \mathbb { E } \lambda ^ { a } \lambda ^ { b } = K ^ { * } + ( K _ { d } - K ^ { * } ) \delta _ { a b } . \quad ( 1 0 ) \quad N o t i o n$$

Let ( θ a ) s a =1 be i.i.d. from the posterior dP ( · | D ) and θ 0 are the random target weights. Our main assumption is that there exists a non-random K ∗ s.t., under the randomness of a common test input x test / ∈ D and ( θ a ) s a =0 , the post-activations ( λ test ( θ a )) s a =0 (called 'replicas'), converge in law towards ( λ a ) s a =0 in the limit (4):

$$\begin{array} { r l } { H y p o t h e s i s \colon \exists K ^ { * } | ( \lambda _ { t e s t } ( \theta ^ { a } ) ) _ { a = 0 } ^ { s } \xrightarrow { L a w } ( \lambda ^ { a } ) _ { a = 0 } ^ { s } . ( 1 1 ) } & { t e r i o r i n } \\ { p r e d i c u l y } \end{array}$$

The goal of the replica method will be to derive K ∗ in terms of fundamental low-dimensional OPs capturing the

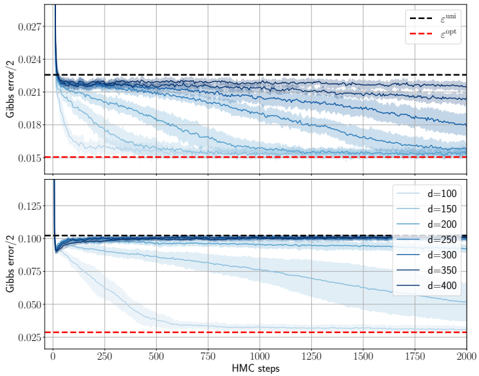

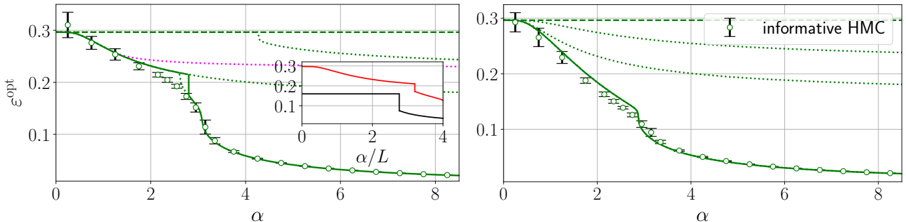

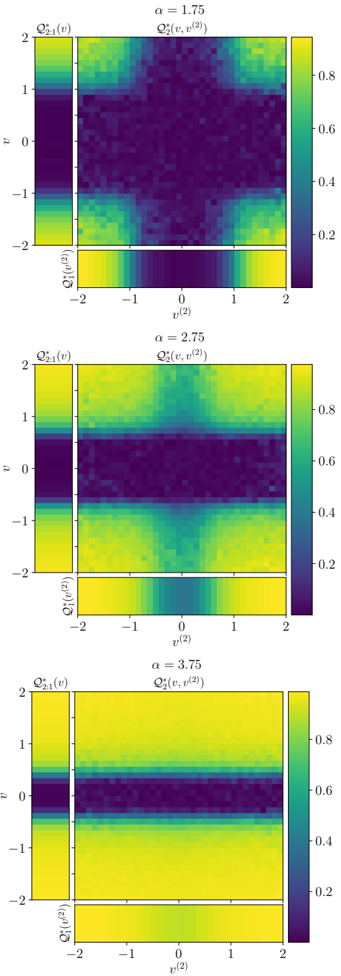

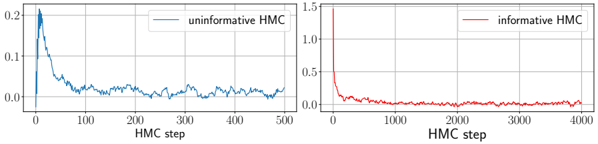

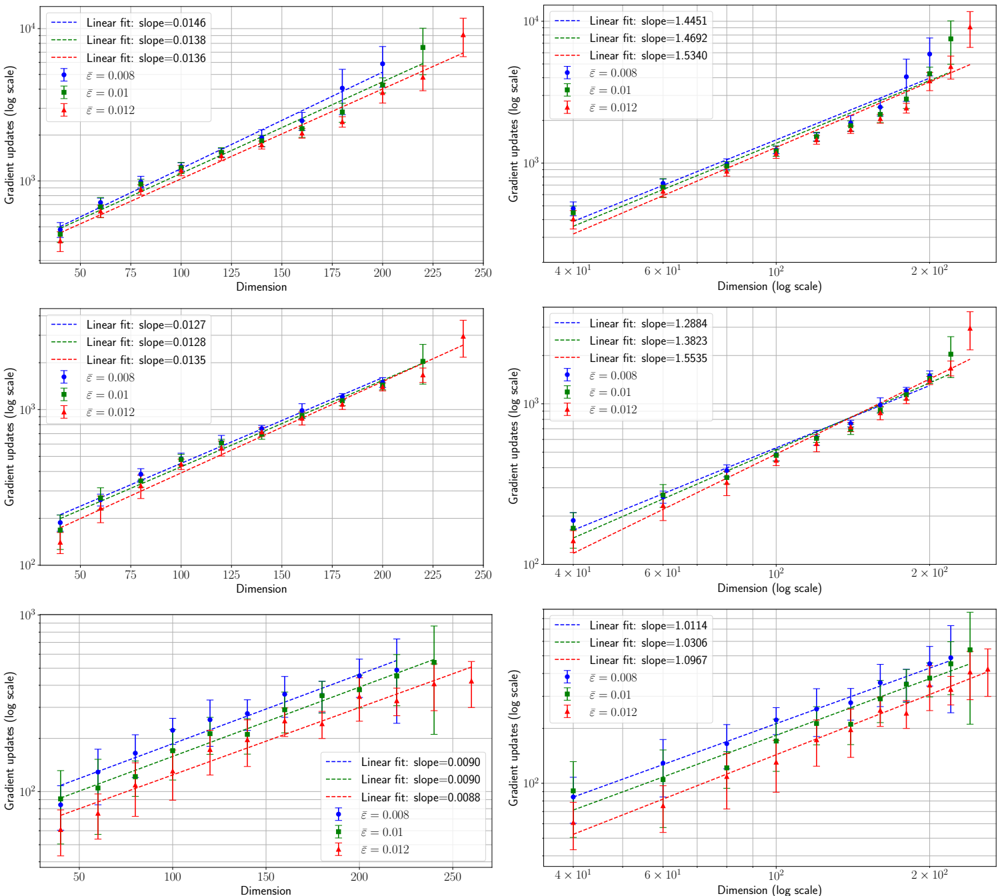

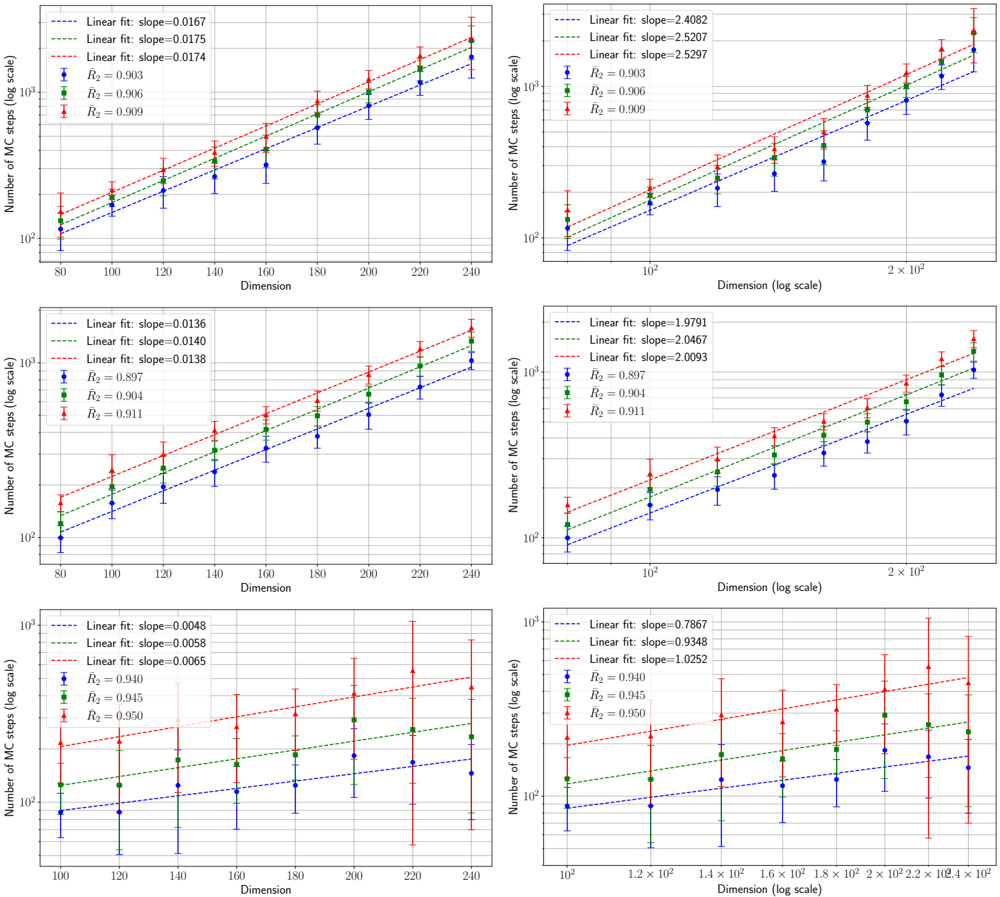

FIG. 4. Experimental evidence for the Gaussian hypothesis. In all experiments, d = 300 , γ = 0 . 5 , α = 3 . 0 , ∆ = 0 . 1 , σ ( x ) = ReLU( x ) -1 / √ 2 π , both readout and inner weights have standard Gaussian prior. Empirical evaluations are based on a test set of size 5 × 10 4 . The results have been averaged over 10 instances of the training set and teacher. Top left : Histogram of the teacher post-activations λ 0 test evaluated on x test compared with the theoretically predicted Gaussian density N (0 , K d ) (see (13) for the definition of K d ). Top right : Quantile-quantile plot comparing the theoretical quantiles of N (0 , K d ) with the empirical ones of λ 0 test . Bottom left : Histogram of the student's projection along the orthogonal direction to the teacher: η test = λ test -[ E x test λ 0 test λ test / E x test ( λ 0 test ) 2 ] λ 0 test ≈ λ test -( K ∗ /K d ) λ 0 test , where λ test ( v , W ) is the student post-activation with both v and W sampled from the posterior via Hamiltonian Monte Carlo, evaluated on the same test set, and compared with the theoretical density N (0 , σ η ), where σ η = K d -K ∗ 2 /K d (see (16) for K ∗ ). Bottom right : Quantile-quantile plot comparing the theoretical quantiles of N (0 , σ η ) with the empirical quantiles of η test .

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Chart Type: Distribution and Quantile-Quantile (Q-Q) Plots

### Overview