# Scaling Latent Reasoning via Looped Language Models

**Authors**: Rui-Jie Zhu, Zixuan Wang, Kai Hua, Tianyu Zhang, Ziniu Li, Haoran Que, Boyi Wei, Zixin Wen, Fan Yin, He Xing, Lu Li, Jiajun Shi, Kaijing Ma, Shanda Li, Taylor Kergan, Andrew Smith, Xingwei Qu, Mude Hui, Bohong Wu, Qiyang Min, Hongzhi Huang, Xun Zhou, Wei Ye, Jiaheng Liu, Jian Yang, Yunfeng Shi, Chenghua Lin, Enduo Zhao, Tianle Cai, Ge Zhang, Wenhao Huang, Yoshua Bengio, Jason Eshraghian

> ridger@ucsc.eduzhangge.eli@bytedance.comhuang.wenhao@bytedance.comjsn@ucsc.edu

1]ByteDance Seed 2]UC Santa Cruz 3]Princeton University 4]Mila - Quebec AI Institute 5]University of Montreal 6]Peking University 7]Carnegie Mellon University 8]University of Pennsylvania 9]Conscium 10]University of Manchester 11]M-A-P \contribution [*]Core Contributors \contribution [†]Corresponding authors

(November 17, 2025)

Abstract

Modern LLMs are trained to “think” primarily via explicit text generation, such as chain-of-thought (CoT), which defers reasoning to post-training and under-leverages pre-training data. We present and open-source Ouro, named after the recursive Ouroboros, a family of pre-trained Looped Language Models (LoopLM) that instead build reasoning into the pre-training phase through (i) iterative computation in latent space, (ii) an entropy-regularized objective for learned depth allocation, and (iii) scaling to 7.7T tokens. Ouro 1.4B and 2.6B models enjoy superior performance that match the results of up to 12B SOTA LLMs across a wide range of benchmarks. Through controlled experiments, we show this advantage stems not from increased knowledge capacity, but from superior knowledge manipulation capabilities. We also show that LoopLM yields reasoning traces more aligned with final outputs than explicit CoT. We hope our results show the potential of LoopLM as a novel scaling direction in the reasoning era.

\correspondence

, , , \checkdata [Project Page & Base / Reasoning Models] http://ouro-llm.github.io

<details>

<summary>x1.png Details</summary>

### Visual Description

## Radar Chart: Model Performance Comparison Across Benchmarks

### Overview

The image contains two radar charts comparing the performance of various language models across multiple benchmarks. The charts are labeled "ARC-C" and "BBH" at the top, with categories arranged radially (ARC-C, BBH, MMLU-Pro, MMLU, Hellaswag, Winogrande, GSM8K, MATH500, HumanEval+). Two charts are presented: one with models up to 4B parameters and another with models up to 12B parameters. The legend distinguishes models by color (e.g., red for "Ours," green for Gemma3 4B, blue for Qwen3 4B).

### Components/Axes

- **Radial Axes**:

- ARC-C, BBH, MMLU-Pro, MMLU, Hellaswag, Winogrande, GSM8K, MATH500, HumanEval+

- **Legend**:

- Red: Ours

- Green: Gemma3 1B / Qwen3 1.7B

- Blue: Gemma3 4B / Qwen3 4B

- Dashed Blue: Gemma3 12B

- Dashed Green: Qwen3 8B

- **Chart Titles**:

- Left chart: "Ouro 2.6B"

- Right chart: "Ouro 2.6B" (same title, but higher performance values)

### Detailed Analysis

#### Left Chart (Ouro 2.6B)

- **ARC-C**: 73.1 (Ours), 66.4 (Gemma3 4B), 65.6 (Qwen3 4B)

- **BBH**: 71.0 (Ours), 60.5 (Gemma3 4B), 55.7 (Qwen3 4B)

- **MMLU-Pro**: 68.9 (Ours), 55.7 (Gemma3 4B), 54.2 (Qwen3 4B)

- **MMLU**: 68.0 (Ours), 58.5 (Gemma3 4B), 56.0 (Qwen3 4B)

- **Hellaswag**: 69.1 (Ours), 59.9 (Gemma3 4B), 58.6 (Qwen3 4B)

- **Winogrande**: 66.8 (Ours), 56.9 (Gemma3 4B), 55.9 (Qwen3 4B)

- **GSM8K**: 64.7 (Ours), 50.5 (Gemma3 4B), 47.0 (Qwen3 4B)

- **MATH500**: 60.9 (Ours), 41.0 (Gemma3 4B), 39.3 (Qwen3 4B)

- **HumanEval+**: 59.9 (Ours), 33.7 (Gemma3 4B), 32.7 (Qwen3 4B)

#### Right Chart (Ouro 2.6B)

- **ARC-C**: 75.7 (Ours), 66.4 (Gemma3 4B), 65.6 (Qwen3 4B)

- **BBH**: 80.5 (Ours), 60.5 (Gemma3 4B), 55.7 (Qwen3 4B)

- **MMLU-Pro**: 66.4 (Ours), 55.7 (Gemma3 4B), 54.2 (Qwen3 4B)

- **MMLU**: 68.0 (Ours), 58.5 (Gemma3 4B), 56.0 (Qwen3 4B)

- **Hellaswag**: 65.6 (Ours), 59.9 (Gemma3 4B), 58.6 (Qwen3 4B)

- **Winogrande**: 65.0 (Ours), 56.9 (Gemma3 4B), 55.9 (Qwen3 4B)

- **GSM8K**: 67.5 (Ours), 50.5 (Gemma3 4B), 47.0 (Qwen3 4B)

- **MATH500**: 90.8 (Ours), 41.0 (Gemma3 4B), 39.3 (Qwen3 4B)

- **HumanEval+**: 64.8 (Ours), 33.7 (Gemma3 4B), 32.7 (Qwen3 4B)

### Key Observations

1. **Performance Trends**:

- "Ours" consistently outperforms other models across all benchmarks in both charts.

- In the right chart, "Ours" achieves near-perfect scores (90.8 on MATH500, 80.5 on BBH).

- Gemma3 4B and Qwen3 4B show similar performance, with slight variations (e.g., Qwen3 4B scores higher in MMLU-Pro: 54.2 vs. 55.7 for Gemma3 4B).

- Dashed lines (Gemma3 12B, Qwen3 8B) indicate lower performance than their smaller counterparts in most categories.

2. **Outliers**:

- Qwen3 1.7B (green) underperforms in MMLU-Pro (48.9) and MATH500 (30.5) compared to other models.

- Gemma3 12B (dashed blue) scores lower than Gemma3 4B in most categories, suggesting diminishing returns with larger model sizes.

### Interpretation

The data demonstrates that the "Ours" model (likely Ouro 2.6B) significantly outperforms existing models (Gemma3 and Qwen3 variants) across diverse benchmarks. This suggests superior architecture or training efficiency. The right chart reveals that larger model sizes (e.g., Gemma3 12B, Qwen3 8B) do not always correlate with better performance, highlighting potential inefficiencies in scaling. The consistent dominance of "Ours" in tasks like MATH500 (90.8) and BBH (80.5) indicates strong reasoning and problem-solving capabilities. The left chart’s lower values (e.g., 60.9 on MATH500) suggest the right chart represents a more optimized or advanced version of the same model.

</details>

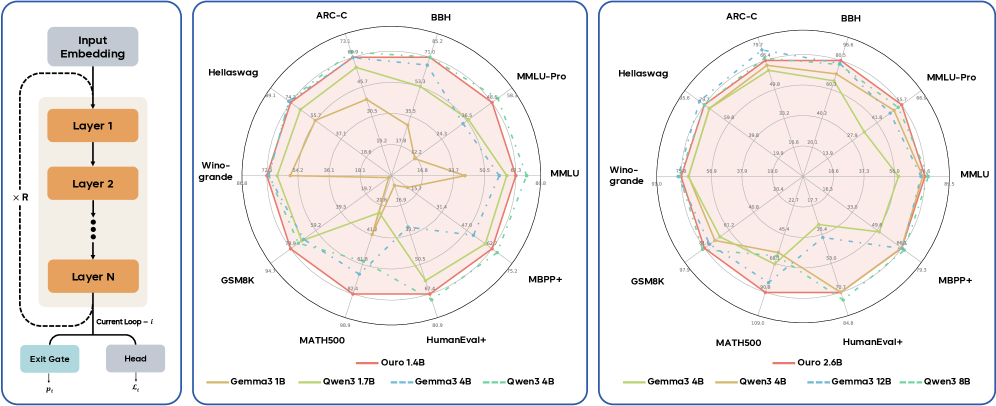

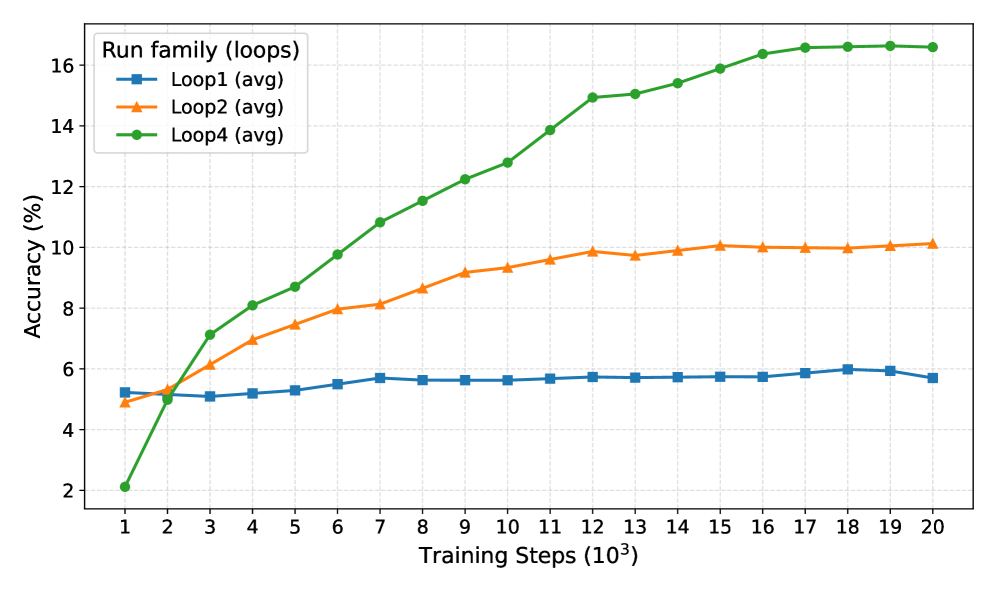

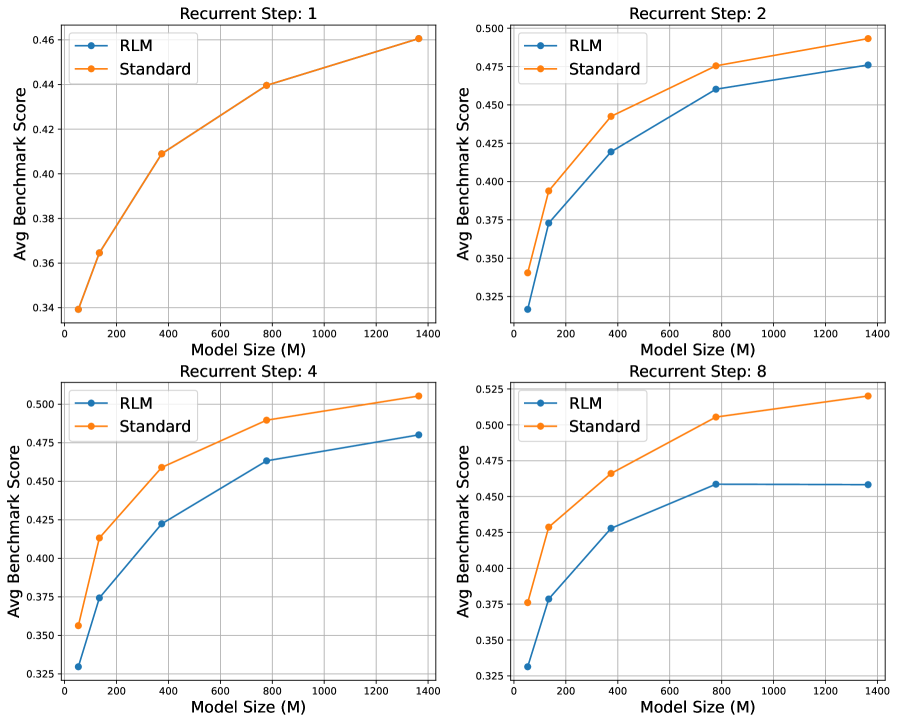

Figure 1: Ouro Looped Language Model performance. (Left) The parameter-shared looped architecture. (Middle & Right) Radar plots comparing the Ouro 1.4B and 2.6B models, both with 4 recurrent steps (red), against individual transformer baselines. Our models demonstrate strong performance comparable to or exceeding much larger baselines.

1 Introduction

The advancement of Large Language Models (LLMs) has historically relied on scaling up model size as the primary driver, accompanied by increases in data and compute [1, 2, 3, 4]. However, deploying models with hundreds of billions of parameters requires extensive infrastructure, increasing latency and cost while limiting accessibility. These factors make parameter efficiency critical: achieving better model capability within a fixed parameter budget. Such models not only mitigate overfitting on finite datasets with fewer trainable parameters, but also enable more practical deployment with lighter infrastructure. To achieve such parameter efficiency, two main avenues have been explored. The first expands the training corpus regardless of model size [5], though data scarcity increasingly limits this path. The second leverages inference-time compute through Chain-of-Thought (CoT) reasoning [6], allowing models to spend more compute on complex problems via extended token generation.

We explore a third pathway based on architectural innovation: achieving dynamic computation within a fixed parameter budget. This is accomplished by recursively applying shared parameters, where a group of weight-tied layers are iteratively reused during the forward pass. We call this the Looped Language Model (LoopLM). The design yields several advantages. First, LoopLM enables adaptive computation via a learned early-exit mechanism: simple inputs can terminate after fewer recurrent steps, while complex ones allocate more iterations. This decouples the compute depth from parameter count. Second, unlike inference-time methods such as CoT, LoopLM scales by deepening its internal computational graph rather than extending the output sequence, avoiding context-length bloat. Finally, LoopLM can improve capacity per parameter and outperform standard transformers of larger sizes when trained on the same data.

An extensive range of prior studies have explored LoopLM at modest scales [7, 8, 9, 10, 11, 12, 13, 14], from the seminal Universal Transformer [15] to recursive Transformers [16] and latent reasoning approaches [17, 18, 19]. Yet whether Looped Language Models translate into frontier-level gains at practically meaningful scales is unproven. To this end, we ask:

Does LoopLM exhibit more favorable scaling behavior (in capabilities, efficiency and safety), compared to non-recursive transformer models?

We show the answer is yes. We characterize LoopLM’s scaling trajectory and saturation behavior, demonstrating that LoopLM offers a more efficient path to higher performance. These claims are evaluated under multi-trillion-token training regimes typical of SoTA foundation models, extending well beyond prior work. Beyond the empirical gains, we analyze the mechanisms behind these improvements by asking the following questions:

- Does the recursive reuse of weights yield the capability gains typically obtained by increasing depth with unshared weights?

- Are LoopLM’s gains monotonic in the number of loops? What are the factors that influence this?

Our Contribution

We address the above questions with a multi-faceted study. We scale LoopLM pre-training to 7.7T tokens and thoroughly investigate its scaling behavior across multiple axes. To enable adaptive computation, we introduce training objectives that enable computationally efficient recurrence while preserving peak performance. We also run controlled ablations to isolate the sources of LoopLM’s gains. Specifically, our contributions are:

- Exceptional parameter efficiency at scale. By pre-training on 7.7T tokens, we demonstrate that 1.4B and 2.6B parameter LoopLMs match 4B and 8B standard transformers on most benchmarks, yielding 2-3 $×$ parameter-efficiency gains that are critical for deployment in resource constrained environments (Figure 1 and Figure 2).

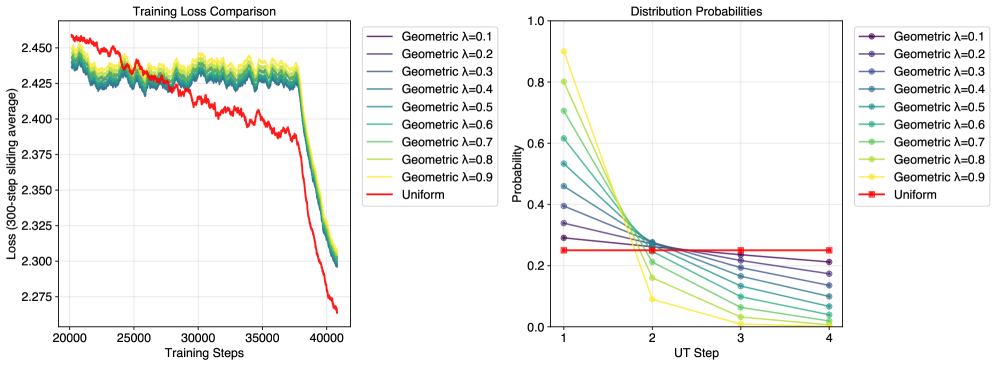

- Entropy-regularized adaptive computation. Adaptive exits tend to collapse to shallow depths or overuse long loops. We avoid this with entropy-reguarization under a uniform prior over exit steps for unbiased depth exploration, followed by a focused training stage that tunes the compute-performance trade-off and allocates steps based on input difficulty.

- Mechanistic understanding of recurrence. Using controlled experiments inspired by the physics-of-LMs framework, we find recurrence does not increase raw knowledge storage (approximately 2 bits per parameter for looped and non-looped models) but dramatically enhances knowledge manipulation capabilities on tasks requiring fact composition and multi-hop reasoning.

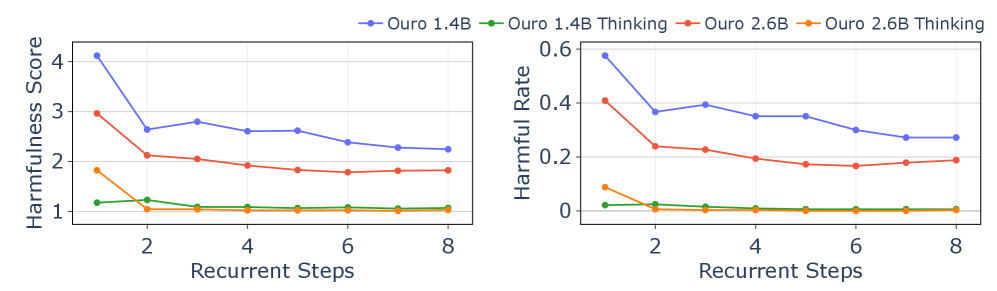

- Improved safety and faithfulness. LoopLM reduces harmfulness on HEx-PHI [20], with safety improving as recurrent steps increase (including extrapolated steps). Compared to CoT, our iterative latent updates produce reasoning traces that are better aligned with final outputs, indicating greater causal faithfulness rather than post-hoc rationalization.

Our study establishes loop depth as a third scaling axis beyond model size and data, and we publicly release the Ouro model family (1.4B and 2.6B parameters) to demonstrate the benefits of LoopLM at scale.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Chart: Model Performance Across Datasets

### Overview

The image contains seven grouped bar charts comparing the performance of seven AI models (Ouro-1.4B, Ouro-2.6B, Owen3-1.7B, Owen3-3.4B, Owen3-3.8B, Deepseek-1.5B, Deepseek-7B) across seven datasets (AIME24, AIME25, Olympiadbench, BeyondAIMe, HLE, SuperGPQA, GPQA). Scores range from 0 to 100, with numerical values displayed on top of each bar.

### Components/Axes

- **X-axis**: Datasets (AIME24, AIME25, Olympiadbench, BeyondAIMe, HLE, SuperGPQA, GPQA)

- **Y-axis**: Score (0–100)

- **Legend**: Located in the bottom-right corner, mapping colors to models:

- Ouro-1.4B: Blue

- Ouro-2.6B: Purple

- Owen3-1.7B: Orange

- Owen3-3.4B: Green

- Owen3-3.8B: Red

- Deepseek-1.5B: Pink

- Deepseek-7B: Brown

- **Bar Groups**: Each dataset chart groups bars by model, with consistent color coding across all charts.

### Detailed Analysis

#### AIME24

- Ouro-2.6B (purple): 64.0 (highest)

- Owen3-3.8B (red): 61.3

- Deepseek-7B (brown): 22.0 (lowest)

#### AIME25

- Owen3-3.8B (red): 66.7 (highest)

- Ouro-2.6B (purple): 63.3

- Deepseek-7B (brown): 23.0

#### Olympiadbench

- Ouro-2.6B (purple): 76.44 (highest)

- Owen3-3.8B (red): 73.18

- Deepseek-7B (brown): 56.44

#### BeyondAIMe

- Owen3-3.8B (red): 39.0 (highest)

- Ouro-2.6B (purple): 34.0

- Deepseek-1.5B (pink): 9.0 (lowest)

#### HLE

- Owen3-3.4B (green): 5.21 (highest)

- Deepseek-1.5B (pink): 2.22 (lowest)

#### SuperGPQA

- Owen3-3.8B (red): 51.89 (highest)

- Ouro-2.6B (purple): 47.37

- Deepseek-7B (brown): 26.50

#### GPQA

- Owen3-3.8B (red): 59.10 (highest)

- Ouro-2.6B (purple): 54.54

- Deepseek-7B (brown): 51.01

### Key Observations

1. **Owen3-3.8B (red)** consistently performs well across most datasets, particularly in AIME25 (66.7) and GPQA (59.10).

2. **Ouro-2.6B (purple)** excels in Olympiadbench (76.44) but underperforms in BeyondAIMe (34.0).

3. **Deepseek-7B (brown)** shows mixed results, with strong performance in GPQA (51.01) but poor results in BeyondAIMe (30.0).

4. **Deepseek-1.5B (pink)** has the lowest score in BeyondAIMe (9.0), suggesting significant dataset-specific limitations.

5. **Owen3-3.4B (green)** dominates HLE (5.21) but underperforms in other datasets.

### Interpretation

The data reveals that model performance is highly dataset-dependent. Owen3-3.8B demonstrates robustness across diverse tasks, while Ouro-2.6B excels in Olympiadbench, possibly due to specialized training. Deepseek models show variability, with the 7B version performing better in GPQA but struggling in BeyondAIMe. The stark drop in Deepseek-1.5B's score in BeyondAIMe (9.0) suggests potential architectural or training mismatches for that dataset. These trends highlight the importance of dataset-specific evaluation in AI model development and the need for models to generalize across tasks.

</details>

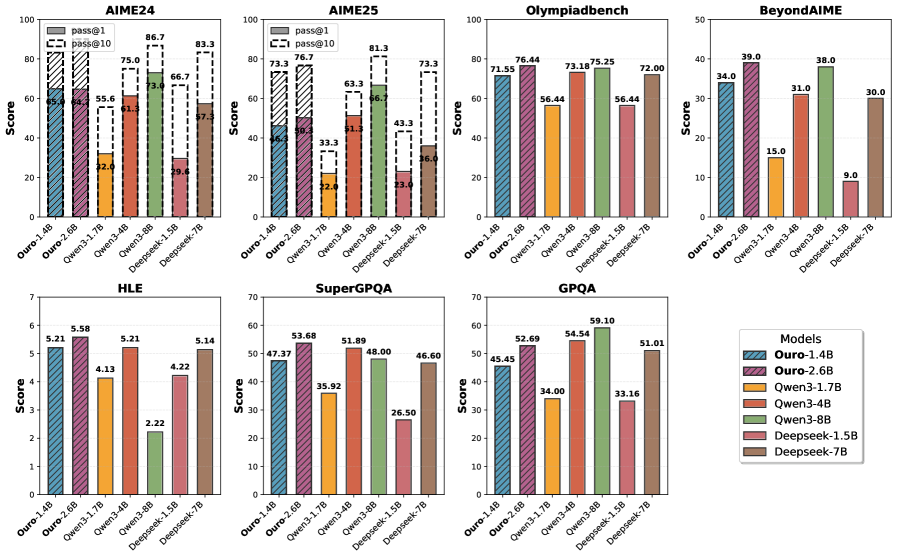

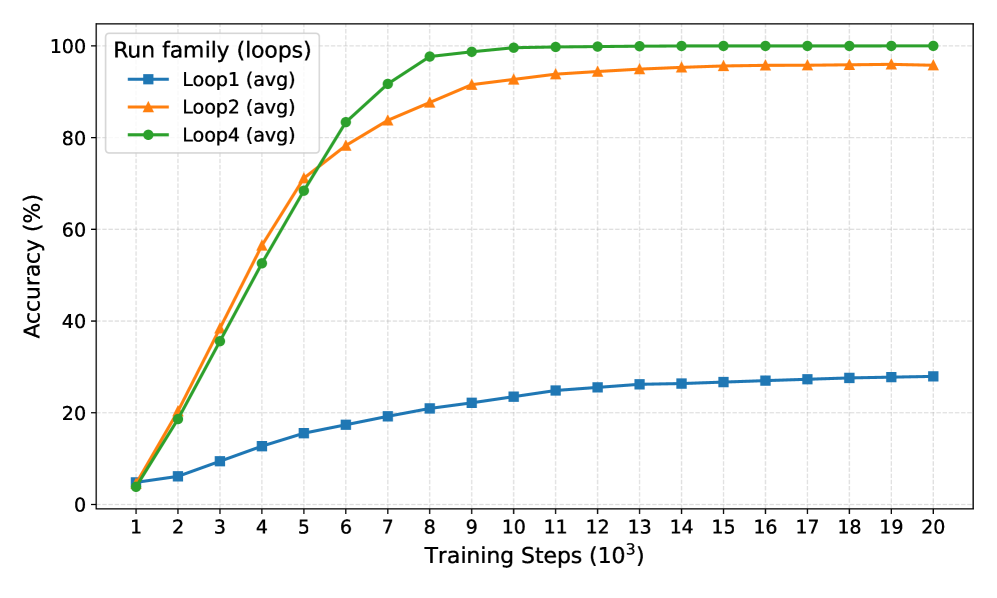

Figure 2: Performance on advanced reasoning benchmarks. Ouro-Thinking models compared with strong baselines such as Qwen3 and DeepSeek-Distill. Ouro-1.4B-Thinking R4 is competitive with 4B models, and Ouro-2.6B-Thinking R4 matches or exceeds 8B models across multiple math and science datasets.

2 Related Works

The core ideas of this architecture have resurfaced in recent literature, with recurrent-depth structures used to improve the efficiency and reasoning capabilities of modern LLMs. For example, Geiping et al. [17] adopts a “recurrent depth” to scale test-time computation in latent space. Similarly, Saunshi et al. [7] demonstrates that “looped transformers” can match the performance of much deeper non-looped models on reasoning tasks, formally connecting looping to the generation of latent thoughts. The approach is refined by converting standard models into “Relaxed Recursive Transformers” with a common base block while injecting unique LoRA adapters across recursive steps [16]. Similar concepts have emerged under different terms, such as “pondering” in continuous space [18] and “inner thinking” for adaptive computation [21]. More advanced variants, such as Mixture-of-Recursions [22] combine recursive parameter efficiency with adaptive, token-level routing.

Across all these works, from the original Universal Transformer to its modern descendants, this emerging line of architectures can be understood in two complementary ways. From one perspective, it behaves like a deep Transformer where the weights of all layers are tied. From another, iteration functions as latent reasoning, where the hidden states form a latent chain of thought that progressively refines the representation to solve a task. Taken together, these results suggest that models can improve their ability to reason by reusing computation internally without having to increase parameter count, shifting scale to substance.

Perspective 1: Parameter Sharing for Model Efficiency.

This view treats LoopLM as parameter sharing: one or more Transformer blocks, or even submodules (e.g., attention, FFN), are reused across the depth of the model, reducing parameters without changing the computation. The most prominent example in the modern transformer era is ALBERT [23], which combines parameter re-use with embedding factorization to drastically reduce the total parameter count. Prior to the widespread adoption of LLMs, parameter sharing was explored extensively in machine translation [24]; Takase et al. [25] systematically studied sharing strategies to balance compression and accuracy. Interest in parameter reuse dropped as models grew larger, but it has resurged to shrink the memory footprint of LLMs. For example, Megrez2 [26] reuses experts across layers in a standard Mixture-of-Experts (MoE) model, and shows a viable path forward for edge LLM deployment with limited memory.

Perspective 2: Latent Reasoning and Iterative Refinement.

Here, the LoopLM’s iteration is viewed as latent reasoning where each step is a non-verbal “thought” that refines the model’s internal representation. Empirically, increasing the number of recurrent steps improves performance on complex reaasoning tasks [17, 7]. Some models make this process explicit by feeding hidden states back into the input. Coconut inserts a “continuous thought” token, which is derived from the previous steps’s last-layer hidden state, so the model can “ponder” in a continuous latent space [27]. CoTFormer interleaves activations back into the input before reapplying this augmented sequence to the shared layers [28]. These explicit feedback loops contrast with implicit LoopLM variants, where the entire thought process is contained within the evolution of hidden states from previous recurrent step to current. Thus, both Perspective 1 (model compression) and Perspective 2 (latent reasoning) leverage shared-parameter iteration to improve parameter efficiency, and are being explored for enhance reasoning and efficient sequence-length expansion (e.g., PHD-Transformer [29]).

3 Learning Adaptive Latent Reasoning with LoopLM

<details>

<summary>x3.png Details</summary>

### Visual Description

## Diagram: Neural Network Training and Inference Architecture

### Overview

The diagram illustrates a neural network architecture with explicit training and inference phases. It emphasizes dynamic computation through exit gates and confidence thresholds, enabling early termination of processing during inference. The architecture includes multiple layers, task-specific heads, and a confidence-driven early exit mechanism.

### Components/Axes

1. **Training Phase (Left Side)**

- **Input Embedding**: Initial data representation

- **Layers (1 to N)**: Stacked processing units

- **Exit Gates**: Decision points at each time step (t=1, t=2, ..., t=T_max)

- **Heads**: Task-specific output modules (L(1), L(2), ..., L(T_max))

- **Loss Function**:

```

L = Σ_{t=1}^{T_max} [p_φ(t|x) · L(t)] - β · H(p_φ(·|x))

```

- First term: Expected task loss

- Second term: Entropy regularization

2. **Inference Phase (Right Side)**

- **Input Embedding**: Same as training

- **Layers (1 to N)**: Identical to training architecture

- **Exit Gates**: Confidence-driven termination points

- **Heads**: Task-specific outputs with cumulative confidence thresholds

- **Confidence Thresholds**:

- CDF₁ = p₁ (t=1)

- CDF₂ = p₁ + p₂ (t=2)

- CDFₙ = p₁ + ... + pₙ (t=n)

### Detailed Analysis

1. **Training Flow**

- Input embedding → Sequential processing through N layers

- At each time step t:

- Exit gate computes probability p_t

- Head L(t) generates task-specific output

- Loss accumulates weighted task losses with entropy regularization

2. **Inference Flow**

- Input embedding → Sequential processing through N layers

- At each time step t:

- Exit gate computes cumulative confidence CDF_t

- If CDF_t < threshold: Continue processing

- If CDF_t ≥ threshold: Early exit with head output

3. **Key Equations**

- Loss function balances task performance (first term) and model uncertainty (second term)

- Confidence thresholds enable adaptive computation during inference

### Key Observations

1. **Dynamic Computation**

- Training phase shows fixed computation path (all layers processed)

- Inference phase introduces early exit capability based on confidence

2. **Confidence Thresholding**

- Cumulative distribution functions (CDFs) determine exit points

- Thresholds increase with processing depth (CDF₁ < CDF₂ < ... < CDFₙ)

3. **Architectural Symmetry**

- Training and inference share identical layer structure

- Exit gates and heads are consistent across both phases

4. **Temporal Progression**

- Time steps (t=1 to t=T_max) represent sequential processing

- Dashed lines in inference indicate optional early termination

### Interpretation

This architecture demonstrates a **confidence-aware neural network** that optimizes computation efficiency without sacrificing accuracy. The training phase learns to balance task performance with model uncertainty through entropy regularization. During inference, the model dynamically terminates processing when confidence thresholds are met, reducing computational cost for easier samples while maintaining accuracy for complex cases.

The early exit mechanism introduces a **computation-accuracy tradeoff** controlled by threshold values. Lower thresholds enable more aggressive early exits (faster but potentially less accurate), while higher thresholds preserve more computation (more accurate but slower). This design is particularly valuable for resource-constrained environments or real-time applications where processing speed is critical.

The entropy regularization term in the loss function prevents overconfidence by encouraging the model to maintain uncertainty awareness, which directly informs the confidence thresholds used during inference. This creates a closed-loop system where training objectives directly enable efficient inference behavior.

</details>

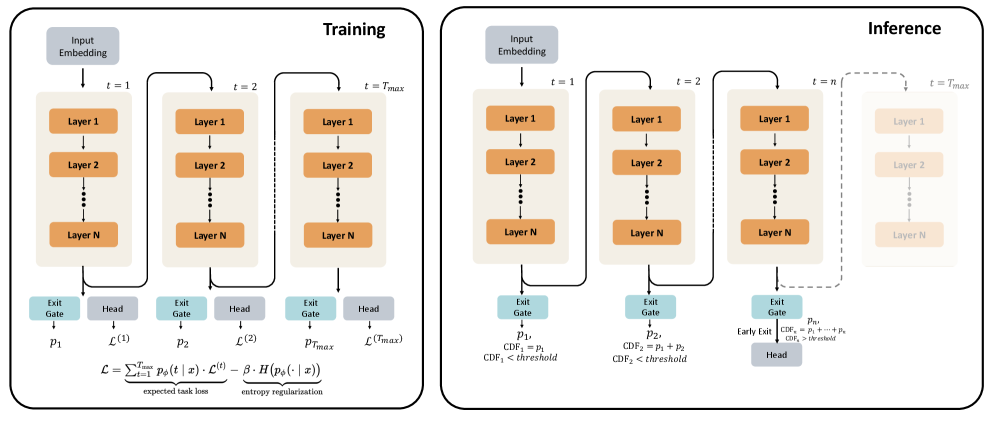

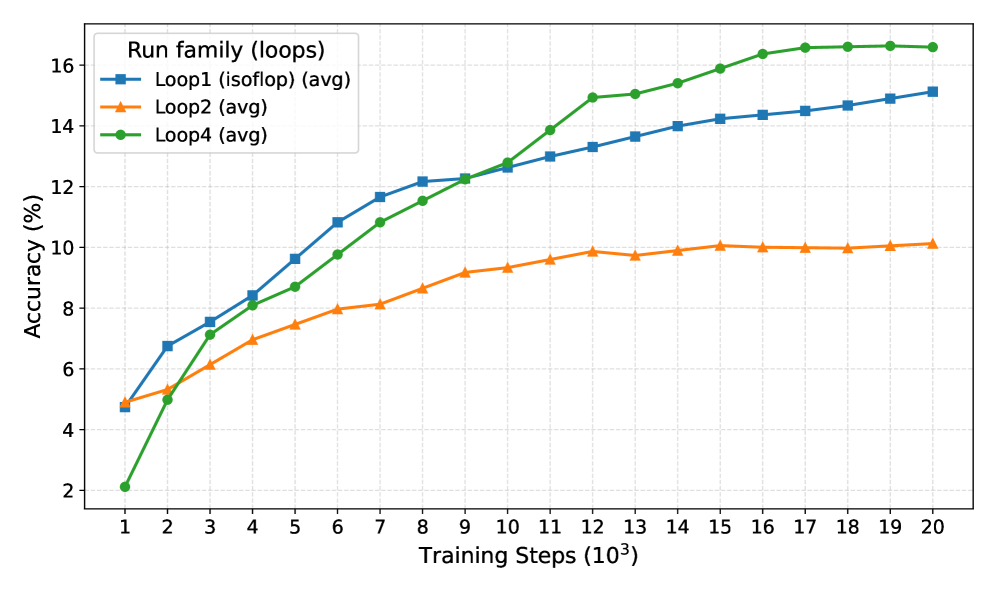

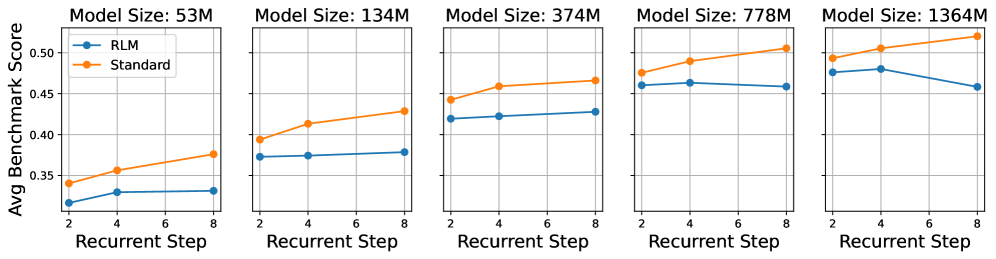

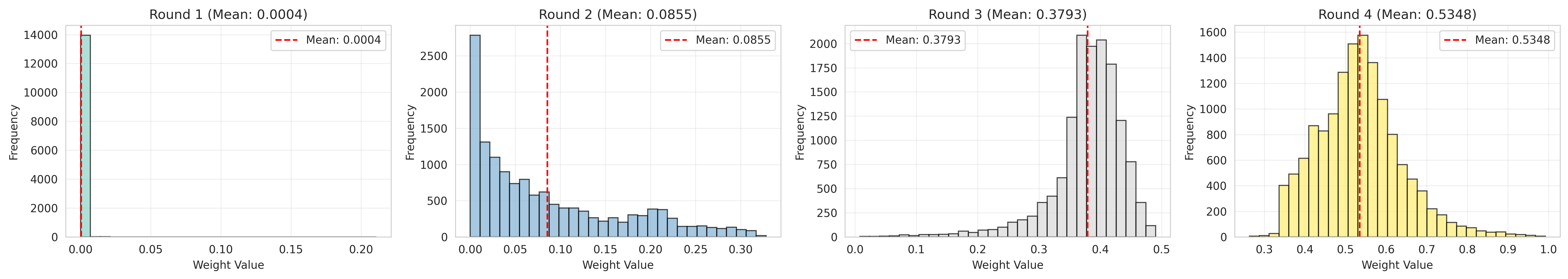

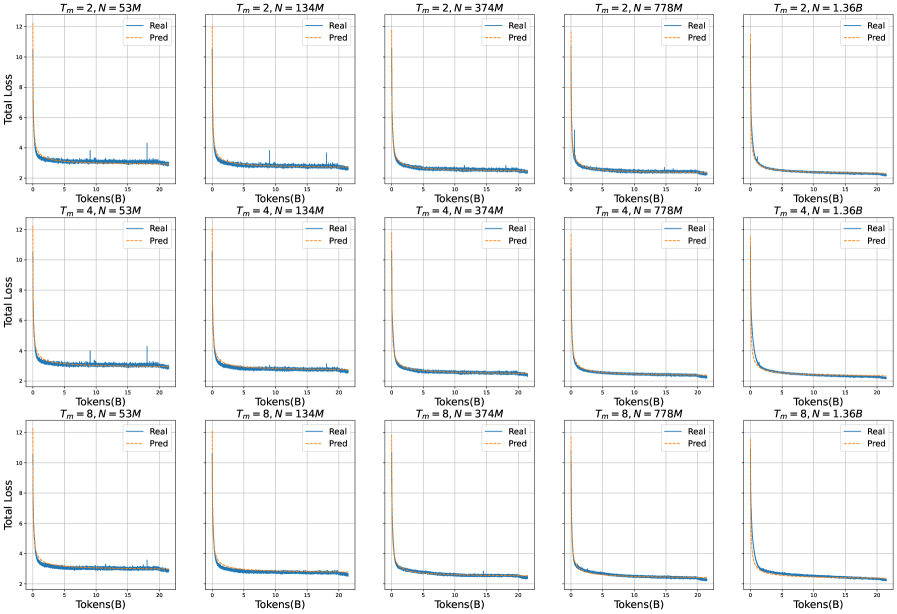

Figure 3: Overview of Looped Language Model (LoopLM) architecture. Left (Training): During training, the model applies a stack of $N$ layers repeatedly for $T_{max}$ recurrent steps. At each recurrent step $\ell$ , an exit gate predicts the probability $p_{\ell}$ of exiting, and a language modeling head $\mathcal{L}_{\ell}$ computes the lanugage modeling loss. Right (Inference): At inference time, the model can exit early based on the accumulated exit probability.

In this section, we formally define the LoopLM architecture on causal Transformers and present our training scheme for adaptive latent reasoning. Figure ˜ 3 illustrates the architecture in training and inference. Our goal is to let the model choose the number of recurrent steps per token and per example, spending less compute on easy inputs and more on hard inputs, without sacrificing accuracy when many steps are available.

3.1 LoopLM Architecture

Let $\mathrm{emb}(·):\mathbb{R}^{|V|}→\mathbb{R}^{d}$ be the token embedding; $\mathcal{T}_{\theta}(·):\mathbb{R}^{M× d}→\mathbb{R}^{M× d}$ a causal transformer layer parameterized by $\theta$ with hidden size $d$ and input length $M$ , and $\mathrm{lmhead}(·):\mathbb{R}^{d}→\mathbb{R}^{|V|}$ be the unembedding layer with vocabulary size $V$ . A non-looped LM stacks $L$ layers, where $\circ$ denotes function composition:

$$

F(\cdot):=\mathrm{lmhead}\circ\mathcal{M}^{L}\circ\mathrm{emb(\cdot)},\quad\qquad\mathcal{M}^{L}(\cdot):=\mathcal{T}_{\theta_{L}}\circ\cdots\circ\mathcal{T}_{\theta_{1}}(\cdot)

$$

Let $t∈\{1,...,T_{\max}\}$ be the number of loop steps (the number of recurrent steps or recurrent depth). The looped model $F^{(t)}$ reuses the same depth- $L$ layer stack $t$ times:

$$

F^{(t)}(\cdot)=\mathrm{lmhead}\circ\underbrace{\mathcal{M}^{L}\circ\mathcal{M}^{L}\circ\cdots\circ\mathcal{M}^{L}}_{t\text{ iterations}}\circ\ \mathrm{emb}(\cdot). \tag{1}

$$

Thus, $t=1$ yields the non-looped model $F^{(1)}\equiv F$ . As shown in Figure 3 (Left), at each recurrent step $t$ , the model produces a language modeling head output. We define the standard cross-entropy loss at single step $t$ as the loss, $\mathcal{L}^{(t)}$ :

$$

\mathcal{L}^{(t)}=\mathbb{E}_{x_{1:M}}\Bigg[\sum_{\ell=1}^{M-1}-\log\,p^{(t)}_{\theta}\!\big(x_{\ell+1}\mid x_{1:\ell}\big)\Bigg], \tag{2}

$$

where $p^{(t)}_{\theta}(·\mid x_{1:\ell})=\mathrm{softmax}\!\big(\mathrm{lmhead}(h^{(t)}_{\ell})\big)$ , $x_{1:\ell}$ denotes the length $-\ell$ prefix of the input (tokens $1$ through $ell$ ), and $h^{(t)}_{\ell}$ is the hidden state after $t$ loops at position $\ell$ . Note that this is the individual loss for a single recurrent step. The total training objective, which combines all steps, is defined in the following sections.

Prior literature [11, 7] has shown that scaling up $t$ is beneficial for reasoning tasks. However, this increases computation, and not all tokens require many steps [30, 31]. Thus, it is crucial to spend the computation budget on the right tokens. This is achieved by the gating mechanism described in the next section.

3.2 Adaptive Computation via Gating Mechanism

To enable adaptive computation, we add an exit gate that runs in parallel with the LM head at each step $t≤ T_{\max}$ (Figure ˜ 3). At each loop $t$ , the gate outputs an instantaneous (per-step) exit probability

$$

\lambda_{t}(x)=\sigma\left(\mathrm{Linear}_{\phi}\left(h^{(t)}\right)\right)\in(0,1)

$$

where $h^{(t)}$ is the final-layer hidden state at step $t$ and $\phi$ are the gate parameters. We define

$$

S_{t}(x)=\prod_{j=1}^{t}\bigl(1-\lambda_{j}(x)\bigr),\qquad S_{0}(x)\equiv 1,

$$

as the survival, or the probability of not exiting in the first $t$ steps. The unnormalized probability of exiting first at step $t$ is then

$$

\tilde{p}_{t}(x)=\lambda_{t}(x)\,S_{t-1}(x),\qquad t=1,\dots,T_{\max}-1.

$$

To obtain a valid discrete distribution over exit steps, we assign the remaining mass to the final step:

$$

p_{\phi}(t\mid x)=\begin{cases}\tilde{p}_{t}(x),&t=1,\dots,T_{\max}-1,\\[3.0pt]

S_{T_{\max}-1}(x),&t=T_{\max},\end{cases}\qquad\text{so that}\quad\sum_{t=1}^{T_{\max}}p_{\phi}(t\mid x)=1. \tag{3}

$$

Inference with Early Exit.

As illustrated in Figure 3 (Right), we infer an exit step from the learned exit distribution $\{p_{\phi}(t\mid x)\}_{t=1}^{T_{\max}}$ , enabling efficient inference. The cumulative exit probability up to step $n$ is:

$$

\mathrm{CDF}(n\mid x)=\sum_{t=1}^{n}p_{\phi}(t\mid x)=1-\prod_{j=1}^{n}\bigl(1-\lambda_{j}(x)\bigr),\quad n<T_{\max},\qquad\mathrm{CDF}(T_{\max}\mid x)=1.

$$

Given a threshold $q∈[0,1]$ , we terminate at the first step where the cumulative probability crosses $q$ :

$$

t_{\mathrm{exit}}(x)=\min\{\,m\in\{1,\dots,T_{\max}\}\,\;:\;\mathrm{CDF}(m\mid x)\geq q\,\}.

$$

The threshold $q$ controls the compute–accuracy tradeoff: smaller $q$ favors earlier exits (less compute), while larger $q$ allows deeper computation. In practice, $q$ may be chosen globally, calibrated per task, or scheduled with a floor/ceiling on steps. This deterministic, quantile-based policy avoids sampling while remaining consistent with the learned distribution.

The gating parameters $\phi$ (and thus $p_{\phi}$ via $\{\lambda_{t}\}$ ) are learned in two stages:

- Stage I: During pre-training, the gates are learned jointly with the LM by optimizing an entropy-regularized objective (Section ˜ 3.3).

- Stage II: We freeze the LM and fine-tune $\phi$ to sharpen $p_{\phi}$ (i.e., adjust depth allocation) without changing token-level predictions.

The complete training objective is described in the next section.

3.3 Stage I: Learning an Entropy-Regularized Objective

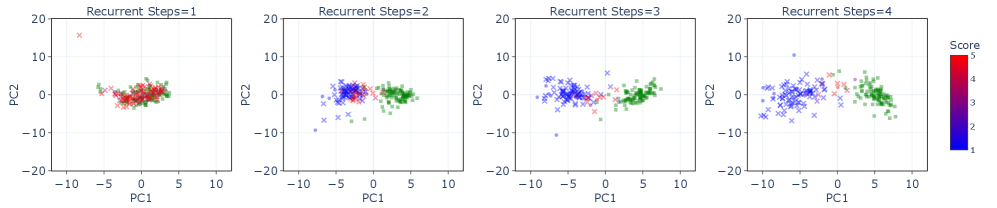

Under naive gradient descent on the next-token prediction loss, deeper loops typically reduce the single-step loss $\mathcal{L}^{(t)}$ from Equation ˜ 2 up to some depth; beyond that, gains diminish and the gradients shift probability mass toward later steps. As $p_{\phi}$ concentrates on late steps, those steps receive more training signal and their losses drop further, which in turn pulls even more mass to the end. This self-reinforcement collapses $p_{\phi}$ onto $t=T_{\rm max}$ . An entropy term penalizes collapse to the deepest step, maintaining enough spread in $p_{\phi}$ to reflect input difficulty.

Given the single-step loss $\mathcal{L}^{(t)}$ and the exit-step distribution $p_{\phi}(t\mid x)$ from Equation ˜ 3, our training objective combines next-token prediction with entropy regularization:

$$

\mathcal{L}=\underbrace{\sum_{t=1}^{T_{\max}}p_{\phi}(t\mid x)\,\mathcal{L}^{(t)}}_{\text{expected task loss}}-\underbrace{\beta\,H\!\left(p_{\phi}(\cdot\mid x)\right)}_{\text{entropy regularization}},\qquad H\!\left(p_{\phi}(\cdot\mid x)\right)=-\sum_{t=1}^{T_{\max}}p_{\phi}(t\mid x)\log p_{\phi}(t\mid x). \tag{4}

$$

Intuitively, the expected task loss weights each $\mathcal{L}^{(t)}$ by the probability of exiting at step $t$ . The coefficient $\beta$ controls the exploration–exploitation trade-off: larger $\beta$ encourages higher-entropy (more exploratory) $p_{\phi}$ , while smaller $\beta$ lets $p_{\phi}(t\mid x)$ place most of its mass on a specific step when the model is confident about the optimal depth.

Alternative perspective: variational inference with a uniform prior.

The objective in Equation ˜ 4 can be viewed as an Evidence Lower Bound (ELBO) loss where the exit step $z∈\{1,...,T_{\max}\}$ is a latent variable whose variational posterior is the learned exit distribution $p_{\phi}(z{=}t\mid x)$ and whose prior is $\pi(t)$ . The negative ELBO is:

$$

\mathcal{L}_{\text{ELBO}}=\sum_{t=1}^{T_{\max}}p_{\phi}(t\mid x)\,\mathcal{L}^{(t)}\;+\;\beta\,\mathrm{KL}\!\big(p_{\phi}(\cdot\mid x)\,\|\,\pi(\cdot)\big).

$$

With a uniform prior $\pi_{t}=1/T_{\max}$ , the KL becomes

$$

\mathrm{KL}\!\big(p_{\phi}(\cdot\mid x)\,\|\,\pi\big)=-H\!\left(p_{\phi}(\cdot\mid x)\right)+\log T_{\max},

$$

so minimizing the ELBO is equivalent (up to the constant $\log T_{\max}$ ) to the objective in Equation ˜ 4. This identifies the entropy term as a KL regularizer and clarifies that the expected loss marginalizes over exit steps, while also linking to adaptive-computation methods such as PonderNet [32], which also optimize an ELBO for dynamic halting.

Why a uniform prior?

Different priors encode different depth preferences. A geometric prior, as in Ref. [32], or Poisson-lognormal priors softly favor earlier halting [17], while a uniform prior is depth-unbiased. We adopt the uniform prior to decouple exit decisions driven by input difficulty from any global compute preference; the entropy term then prevents collapse to always using $T_{\rm max}$ . Empirical comparisons with geometric priors are provided in Appendix Appendix ˜ A.

3.4 Stage II: Focused Adaptive Gate Training

In this stage, we freeze the LM parameters and train only the exit gate to make termination decisions based on realized performance gains. We use a greedy signal that balances marginal improvement from an extra loop against additional compute.

To ensure the gate does not alter LM representations, we compute a detached per-step loss $\mathcal{L}_{i,\mathrm{stop}}^{(t)}$ at each token $i$ and define the loss improvement from step $t\!-\!1$ to $t$ as

$$

I^{(t)}_{i}=\max\!\big(0,\ \mathcal{L}_{i,\mathrm{stop}}^{(t-1)}-\mathcal{L}_{i,\mathrm{stop}}^{(t)}) \tag{5}

$$

where larger $I_{i}^{(t)}$ indicates ongoing improvement; a smaller value indicates that gains have stalled and LoopLM should opt for an early exit. We implement this by computing the ideal continuation probability, a training label that indicates whether to continue (near 1) or exit (near 0):

$$

w^{(t)}_{i}=\sigma(k\cdot(I^{(t)}_{i}-\gamma))

$$

with slope $k=50.0$ and threshold $\gamma=0.005$ , so that $w^{(t)}_{i}\!≈\!1$ recommends continuing and $w^{(t)}_{i}\!≈\!0$ recommends exiting the loop. The adaptive exit loss at step $t$ takes the binary cross-entropy between the gate’s predicted continuation probability $1-\lambda^{(t)}_{i}$ and the ideal label $w^{(t)}_{i}$ , averaged over the sequence length $M$ :

$$

\mathcal{L}^{(t)}_{\text{adaptive}}=-\frac{1}{M}\sum_{i=1}^{M}\!\Big[w^{(t)}_{i}\,\log\bigl(\underbrace{1-\lambda^{(t)}_{i}}_{\begin{subarray}{c}\text{predicted}\\

\text{continuation}\end{subarray}}\bigr)+\bigl(1-w^{(t)}_{i}\bigr)\,\log\bigl(\underbrace{\lambda^{(t)}_{i}}_{\begin{subarray}{c}\text{predicted}\\

\text{exit}\end{subarray}}\bigr)\Big]. \tag{6}

$$

The total adaptive loss averages across recurrent steps:

$$

\mathcal{L}_{\text{adaptive}}=\frac{1}{T_{\max}}\sum_{t=2}^{T_{\max}}\mathcal{L}_{\text{adaptive}}^{(t)}

$$

Significance of our adaptive loss.

The adaptive loss in Equation ˜ 6 trains the gate at step $t$ to match its predictions to the ideal behavior derived from actual performance improvements:

- Predicted probabilities: The gate generates $\lambda^{(t)}_{i}$ (exit probability) and $1-\lambda^{(t)}_{i}$ (continuation probability)

- Target labels: The ideal behavior is encoded as $w^{(t)}_{i}$ (target continuation probability) and $1-w^{(t)}_{i}$ (target exit probability)

This formulation penalizes two failure modes simultaneously:

- Underthinking: the gate exits when it should continue (large label $w^{(t)}_{i}$ , but large predicted exit $\lambda^{(t)}_{i}$ )

- Overthinking: the gate continues when it should exit (small label $w^{(t)}_{i}$ , but small predicted exit $1-\lambda^{(t)}_{i}$ )

Optimizing Equation ˜ 6 trains the gate to choose a greedy exit step that trades additional compute for measured improvement. For empirical evaluations, see Section ˜ 5.4.1.

4 Training Looped Language Models

<details>

<summary>x4.png Details</summary>

### Visual Description

## Flowchart: Ouro Model Training Pipeline

### Overview

The image depicts a bifurcated training pipeline for Ouro models, showing two parallel processes (gold and blue paths) that converge at different stages. The diagram illustrates token quantity transformations and model development phases, with explicit token counts at each stage.

### Components/Axes

- **Paths**:

- Gold path (top): "Upcycle 2.6B" → "Stable Training 3T Tokens" → "CT Annealing 1.4T Tokens" → "LongCT 20B Tokens" → "Mid-Training 300B Tokens" → "Ouro-2.6B" → "Reasoning SFT" → "Ouro-2.6B Thinking"

- Blue path (bottom): "Keep 1.4B" → "Stable Training 3T Tokens" → "CT Annealing 1.4T Tokens" → "LongCT 20B Tokens" → "Mid-Training 300B Tokens" → "Ouro-1.4B" → "Reasoning SFT" → "Ouro-1.4B Thinking"

- **Arrows**: Labeled with token quantities (e.g., "Upcycle 2.6B", "Keep 1.4B")

- **Stages**:

- Warmup → Stable Training → CT Annealing → LongCT → Mid-Training → Ouro → Reasoning SFT → Ouro Thinking

- **Color Coding**:

- Gold (#FFD700) for the 2.6B path

- Blue (#0000FF) for the 1.4B path

### Detailed Analysis

1. **Initial Branching**:

- "Warmup" splits into two paths:

- Gold: "Upcycle 2.6B" (2.6B tokens)

- Blue: "Keep 1.4B" (1.4B tokens)

- Both paths converge at "Stable Training 3T Tokens"

2. **Token Reduction**:

- Gold path reduces from 2.6B → 1.4T (1,400B) → 20B → 300B tokens

- Blue path maintains 1.4B → 1.4T → 20B → 300B tokens

3. **Final Stages**:

- Both paths undergo "Reasoning SFT" before producing:

- Ouro-2.6B Thinking (gold)

- Ouro-1.4B Thinking (blue)

### Key Observations

- **Token Scaling**: The gold path starts with 2.6B tokens but undergoes significant reduction (2.6B → 1.4T → 20B → 300B), while the blue path maintains 1.4B consistency until final stages.

- **Convergence Points**: Both paths share identical stages after initial branching ("Stable Training" onward).

- **Model Output**: Final models differ only in naming (Ouro-2.6B vs. Ouro-1.4B), suggesting token quantity impacts final model size.

### Interpretation

This flowchart represents a token optimization pipeline for large language models. The gold path demonstrates aggressive token reduction (2.6B → 1.4T) through "Upcycle" and "CT Annealing" stages, while the blue path preserves initial token quantity. Both paths converge at "Stable Training" and share identical processing through "LongCT" and "Mid-Training" stages, suggesting these phases standardize token quality regardless of initial quantity. The final "Reasoning SFT" stage appears critical for developing the Ouro models' specialized thinking capabilities, with the token quantity difference (2.6B vs. 1.4B) potentially affecting model performance or specialization depth.

</details>

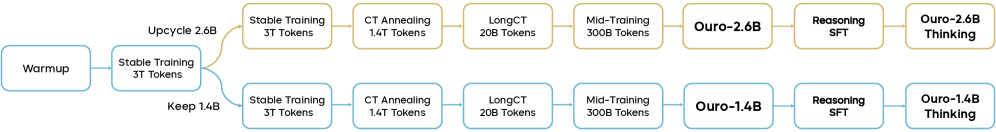

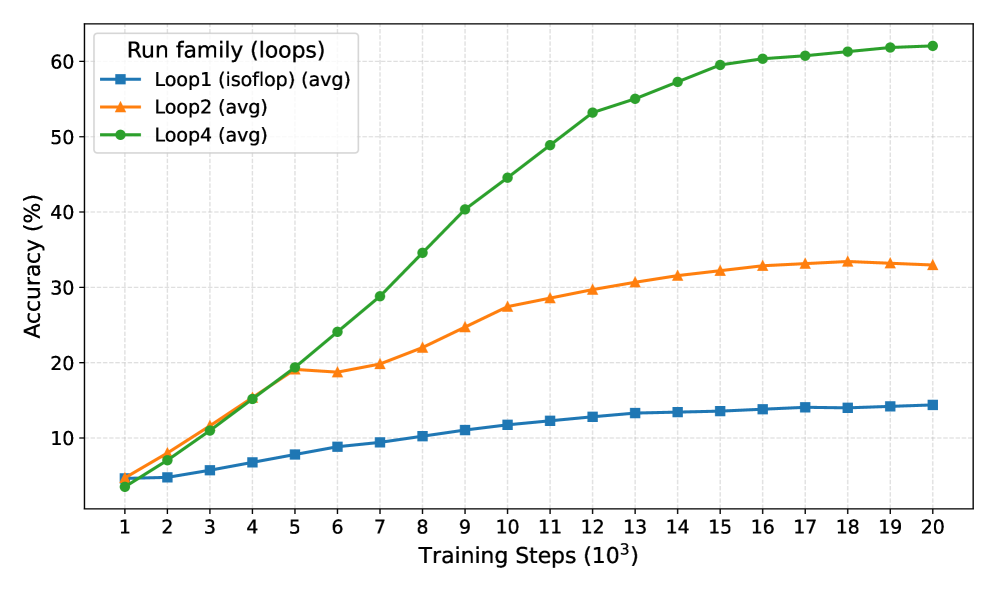

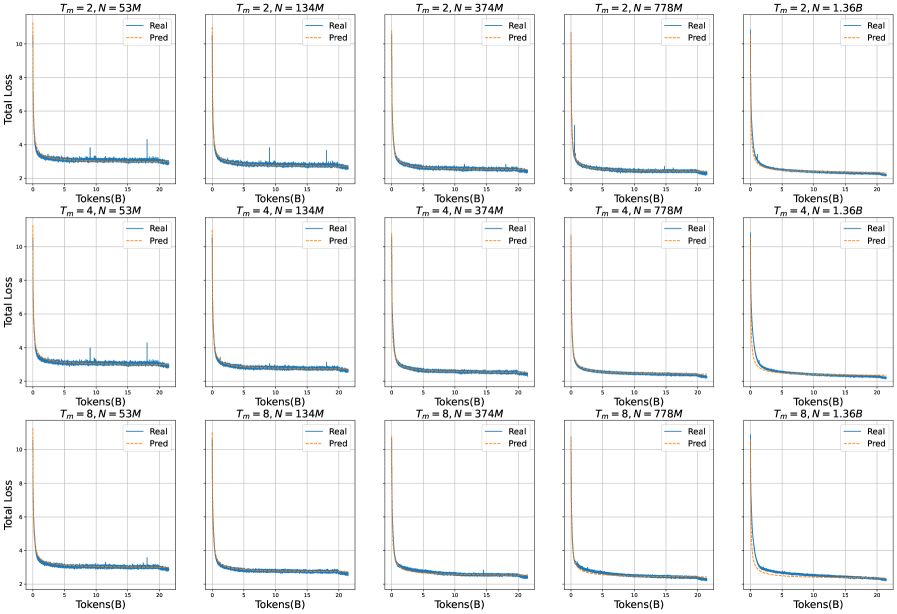

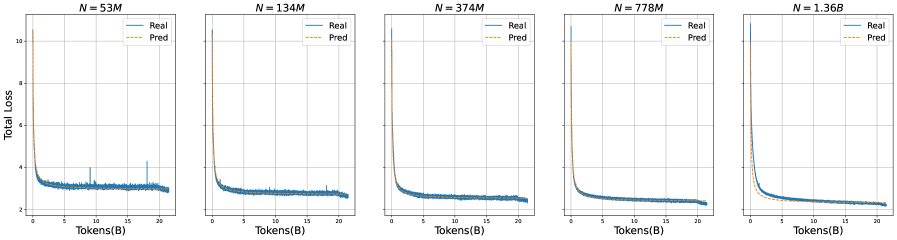

Figure 4: End-to-end Ouro training pipeline: shared warmup → Stable Training → forks into a 1.4B retained path and a 2.6B upcycled path → four shared stages → Reasoning SFT to produce Ouro-Thinking.

Our end-to-end training pipeline for the Ouro model family is shown in Figure 4. In total 7.7T tokens are used to train the base models Ouro-1.4B and Ouro-2.6B. A final Reasoning SFT (Supervised Fine-Tuning) yields the Ouro-1.4B-Thinking and Ouro-2.6B-Thinking variants. This section details the architecture, data composition, and specific configurations used in each of these training stages. A high-level overview of the training recipe of the first four stages is given in Table ˜ 1.

Table 1: Training recipe for Ouro 1.4B and 2.6B.

| Hyperparameters | Stage 1a Pre-train I | Stage 1b Pre-train II | Stage 2 CT Annealing | Stage 3 LongCT | Stage 4 Mid-training |

| --- | --- | --- | --- | --- | --- |

| Learning rate (Final) | $3.0× 10^{-4}$ | $3.0× 10^{-4}$ | $3.0× 10^{-5}$ | $3.0× 10^{-5}$ | $1.0× 10^{-5}$ |

| LR scheduler | Constant | Constant | Cosine Decay | Constant | Cosine Decay |

| Weight decay | 0.1 | | | | |

| Gradient norm clip | 1.0 | | | | |

| Optimizer | AdamW ( $\beta_{1}=0.9$ , $\beta_{2}=0.95$ ) | | | | |

| Batch size (tokens) | 4M $→$ 8M | 8M | | | |

| Sequence length | 4K | 4K | 16K | 64K | 32K |

| Training tokens | 3T | 3T | 1.4T | 20B | 300B |

| Recurrent steps | 8 | 4 | | | |

| $\beta$ for KL divergence | 0.1 | 0.05 | | | |

| RoPE base | 10K | 10K | 40K | 1M | 1M |

| Data Focus | | | | | |

| Web data | High | High | Medium | Low | Low |

| Math & Code | Low | Low | High | Low | High |

| Long-context | None | None | Low | High | Medium |

| SFT-quality | None | None | Low | Low | High |

4.1 Transformer Architecture

The Ouro models use a standard decoder-only Transformer [33], prioritizing a clean implementation of the looped computation mechanism without extraneous modifications. The core architecture consists of a stack of Transformer blocks applied recurrently. Each block uses Multi-Head Attention (MHA) with Rotary Position Embeddings (RoPE) [34]. The feed-forward network (FFN) in each block utilizes a SwiGLU activation [35]. To enhance training stability, which is especially critical for deep recurrent computation, we employ a sandwich normalization structure, placing an RMSNorm layer before both the attention and FFN sub-layers [17]. Both models use a 49,152-token vocabulary from the SmolLM2 model [36]. This tokenizer is optimized for code and Latin-alphabet language. Architectural details are summarized in Table ˜ 2.

Table 2: Ouro model architecture configurations. Both models share the same vocabulary and core component types, differing in parameter count and layer depth.

| Ouro 1.4B | 1.4B | 24 | 2048 | MHA | SwiGLU | RoPE | 49,152 |

| --- | --- | --- | --- | --- | --- | --- | --- |

| Ouro 2.6B | 2.6B | 48 | 2048 | MHA | SwiGLU | RoPE | 49,152 |

4.2 Data

Data sets the capability bounds of foundation models. Our corpus spans web text, mathematics, code, and long-context documents across multiple stages, building core language understanding while strengthening reasoning, coding, and long-context skills. Beyond standard web crawls, we include targeted datasets for mathematical reasoning and code generation to improve complex problem solving. Table ˜ 3 summarizes composition and scale at each training stage.

Table 3: Statistics of the training corpus. Since data are randomly sampled during pre-training, the dataset size does not directly correspond to the total number of seen tokens.

| Nemotron-CC (Web Data) | Stage 1 | 6386 | 4404 |

| --- | --- | --- | --- |

| MAP-CC (Web Data) | Stage 1 | 800 | 780 |

| Ultra-FineWeb-zh (Web Data) | Stage 1 | 120 | 120 |

| OpenCoder-pretrain | Stage 1 | 450 | 450 |

| MegaMath-web | Stage 1 | 247 | 246 |

| MegaMath-high-quailty | Stage 2 | 64 | 64 |

| Nemotron-CC-Math-v1 | Stage 2 | 210 | 210 |

| Nemotron-Code | Stage 2 | 53 | 53 |

| Nemotron-SFT-Code | Stage 2 | 48 | 48 |

| Nemotron-SFT-General | Stage 2 | 87 | 87 |

| OpenCoder-Annealing | Stage 2 | 7 | 7 |

| ProLong-64K | Stage 3 | 20 | 20 |

| Mid-training SFT Mix | Stage 4 | 182 | 90 |

Table 4: Data composition for Stage 1 (Stable Training I & II). Total dataset size: 6T tokens.

| Data Source Proportion (%) | Nemotron-CC 73.4 | MAP-CC 13.0 | Ultra-FineWeb-zh 2.0 | OpenCoder-pretrain 7.5 | MegaMath-web 4.1 |

| --- | --- | --- | --- | --- | --- |

To ensure reproducibility, our training corpus is composed entirely of open-source datasets, with data statistics summarized in Table 4. We partition the data into four stages, each with construction strategies aligned to the Warmup-Stable-Decay (WSD) learning rate scheduler [37] commonly used in modern pre-training.

Stage 1: Pre-training

This stage supports the warmup and stable phases of training. The corpus is primarily composed of Web CommonCrawl (CC) data. Because we sought to train the model on >2T tokens, many popular open corpora are too small (e.g., Fineweb-Edu at 1.3T tokens [38], DCLM at 2.6T tokens [39]). We therefore use Nemotron-CC [40] (6.3T tokens) as the main dataset for the stable-phase. To provide the model with basic Chinese proficiency, we include Ultra-FineWeb-zh [41] and MAP-CC [42]. However, without Chinese vocabulary in the tokenizer, characters would be fragmented into multiple byte-level sub-tokens, so we removed Chinese from Stage 2 onwards. To enhance coding and mathematical abilities, we incorporate OpenCoder [43] and MegaMath [44]. See Table ˜ 4 for dataset proportions in further detail.

Stage 2: Continual Training (CT) Annealing

The CT annealing stage incorporates higher-quality data to enhance the model under the annealing learning rate. Token sequence length is extended to 16K tokens, exceeding the length of most samples to minimize truncation. We construct the corpus from the high-quality subset of Nemotron-CC and augment with HQ MegaMath, Nemotron-CC-Math-v1 [45, 46], OpenCoder-Annealing [43], Nemotron-pre-training-Code-v1 [46], and Nemotron-pre-training-SFT-v1 [46]. Data composition is provided in Table ˜ 5.

Table 5: Data composition for Stage 2 (CT Annealing). Total dataset size: 1.4T tokens.

| Nemotron-CC-high-quailty | 66.5 |

| --- | --- |

| Nemotron-CC-Math-v1 | 15.0 |

| MegaMath-high-quailty | 4.6 |

| OpenCoder-LLM/opc-annealing-corpus | 0.5 |

| Nemotron-pre-training-Code-v1/Synthetic-Code | 3.8 |

| Nemotron-pre-training-SFT-v1/Nemotron-SFT-Code | 3.4 |

| Nemotron-pre-training-SFT-v1/Nemotron-SFT-General | 6.2 |

Stage 3: Long Context Training (LongCT)

The LongCT stage extends the long-context capabilities of the model. We adopt the 64K-length subset of ProLong [47], consisting of 20B tokens, to train the model on longer sequences and improve its ability to handle long contexts.

Stage 4: Mid-training

This stage uses a diverse set of extremely high-quality data, consisting of both $\langle$ Question, Answer $\rangle$ and $\langle$ Question, CoT, Answer $\rangle$ samples, to further develop advanced abilities. We integrate 20+ open-source SFT datasets to boost data breadth, with thorough decontamination to avoid overlap with mainstream evaluation benchmarks. All samples are converted to ChatML to reduce alignment tax in the subsequent post-training stage. After processing, we obtain 182B tokens, from which we randomly sample 90B tokens. To stabilize the training distribution, we replay 30B tokens from Stage 1 and 180B from Stage 2, yielding an effective volume of 300B tokens. Consequently, this stage consolidates and extends capabilities acquired during pre-training under diverse supervised signals.

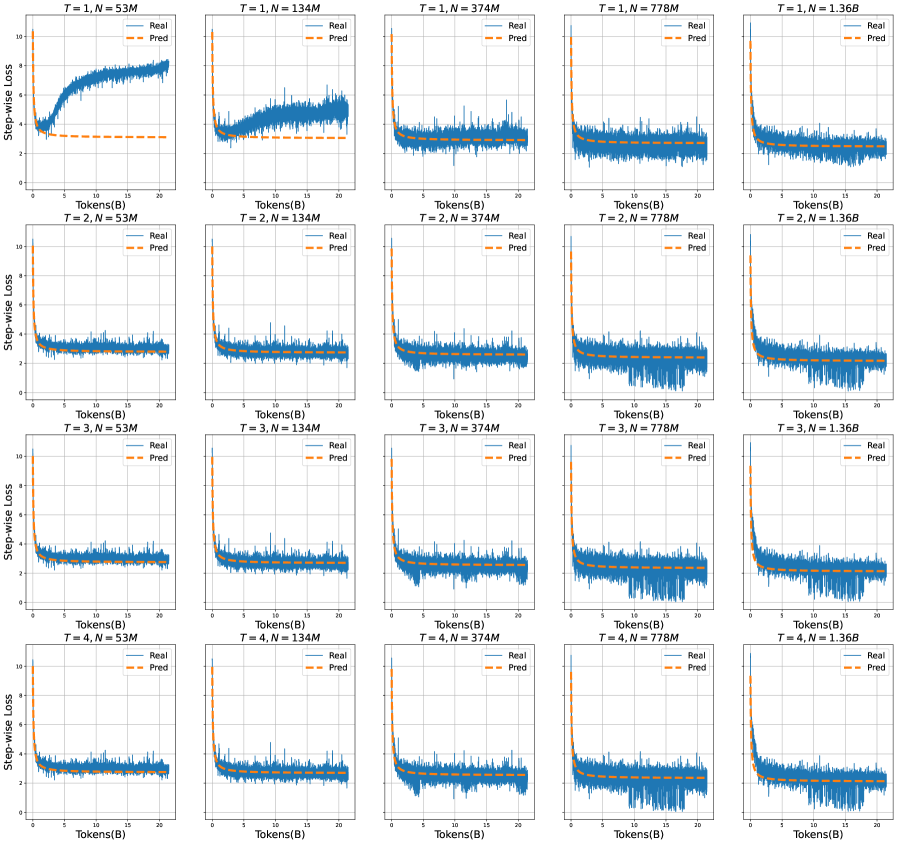

4.3 Training Stability and Adaptive Configuration

We use the flame [48] framework for pre-training, built on torchtitan [49]. During training, we prioritized stability over aggressive scaling, making several key adjustments based on empirical observations of training dynamics. These decisions were critical for achieving stable convergence with recurrent architectures, which exhibit different optimization characteristics compared to standard transformers.

Recurrent Step Reduction for Stability.

Our initial experiments with 8 recurrent steps in Stage 1a (Stable Training I) led to loss spikes and gradient oscillations. We hypothesize this stems from compounded gradient flow through multiple recurrent iterations, which can amplify small perturbations. Consequently, we reduced the recurrent steps from 8 to 4 in Stage 1b (Stable Training II in Figure ˜ 4), which balanced computational depth with training stability.

Batch Size Scaling.

To further enhance stability, we progressively increased the batch size from 4M to 8M tokens. Larger batch sizes provide more stable gradient estimates, which is particularly important for recurrent architectures where gradient flow through multiple iterations can introduce additional variance.

KL Divergence Coefficient Reduction.

We strategically reduced $\beta$ in Equation ˜ 4 from 0.1 in Stage 1a to 0.05 in later stages. This reduction serves dual purposes: (1) it decreases the conflicting gradients between task loss and the KL penalty, leading to more stable optimization, and (2) it reduces the “pull” from the uniform prior, allowing the model greater freedom to explore beneficial depth patterns without being artificially constrained. This adjustment allowed the model to learn useful depth patterns without undue constraint.

Optimization Configuration.

Throughout all stages, we use AdamW optimizer with weight decay set to 0.1, $\beta_{1}=0.9$ , $\beta_{2}=0.95$ , and gradient clipping at 1.0. These conservative settings were chosen specifically to maintain stability with recurrent architectures.

Learning Rate Considerations.

We empirically found that recurrent architectures require smaller learning rates than parameter-matched Transformers. Given compute constraints, we did not run exhaustive LR sweeps, but instead, adopted conservative rates that prioritized stable convergence over potentially faster but riskier schedules.

Sequence Length Progression.

The sequence length is progressively increased across stages: 4K tokens for both pre-training phases, 16K for CT annealing, 64K for long-context training, and 32K for mid-training. This progression stabilizes optimization while expanding context capacity with training throughput.

4.3.1 Stage-wise Training Details

- Stage 1a: Pre-training Phase I (Exploration). We initialize training with 8 recurrent steps. The learning rate follows a Warmup-Stable schedule with a peak of $3× 10^{-4}$ . The sequence length is 4K tokens with an initial batch size of 4M tokens, gradually increased to 8M for stability. During this phase, we observed training instabilities that prompted subsequent architectural adjustments.

- Stage 1b: Pre-training Phase II with Stability-Driven Upcycling. After identifying stability issues in Stage 1a, we reduced the recurrent steps from 8 to 4. To maintain computational efficiency while improving stability, we split our approach into two variants:

- 1.4B Ouro: Uses the original 24 pre-trained layers with 4 recurrent steps

- 2.6B Ouro: Upcycles 24 layers to 48 via layer duplication with 4 recurrent steps

The recurrent nature of our architecture makes this upcycling process particularly smooth, as the shared weights across iterations naturally facilitates layer duplication without the typical instabilities seen in standard transformer upcycling.

- Stage 2: CT Annealing. The learning rate is annealed to $3× 10^{-5}$ while exposing the model to high-quality training data. The recurrent steps remain at 4, having proven optimal for the stability-performance trade-off. The data composition is carefully balanced as shown in Table 5.

- Stage 3: LongCT. The batch size is held at 8M tokens. The reduced KL coefficient ( $\beta=0.05$ ) continues to provide stable training dynamics even with the 64K-length sequences.

- Stage 4: Mid-training. The learning rate is further reduced to $1× 10^{-5}$ with a cosine scheduler to help the model better absorb on this diverse, high-quality dataset.

4.4 Supervised Fine-Tuning

Data Composition.

We perform SFT on a diverse corpus of approximately 8.3M examples drawn from high-quality public datasets. As shown in Table 6, our training mixture emphasizes mathematical reasoning (3.5M examples) and code generation (3.2M examples), while also incorporating scientific reasoning (808K examples) and conversational abilities (767K examples).

For mathematical reasoning, we combine OpenThoughts3 [50] and AceReason-1.1-SFT [51] to provide comprehensive coverage of problem-solving strategies. Our code training data aggregates multiple sources including AceReason-1.1-SFT, OpenCodeReasoning [52], Llama-Nemotron-Post-Training-Dataset [53], and OpenThoughts3, ensuring broad exposure to diverse programming paradigms and reasoning patterns. Scientific reasoning capabilities are developed through OpenThoughts3 and Llama-Nemotron-Post-Training-Dataset, while conversational proficiency is enhanced using the OO1-Chat-747K https://huggingface.co/datasets/m-a-p/OO1-Chat-747K and DeepWriting-20K [54] datasets.

Training Configuration.

We train for 2 epochs with a maximum sequence length of 32K tokens using the LlamaFactory codebase [55]. We employ the Adam optimizer with a learning rate of $2× 10^{-5}$ and $\beta=(0.9,0.95)$ , applying a cosine decay schedule for stable convergence. Training was interrupted due to infrastructure issues; we resumed from the last saved checkpoint with a learning rate close to the original cosine decay schedule.

Table 6: Supervised fine-tuning data composition. The training corpus comprises 8.3M examples across four key capability domains.

| Math | OpenThoughts3, AceReason-1.1-SFT | 3.5M |

| --- | --- | --- |

| Code | AceReason-1.1-SFT, OpenCodeReasoning, Llama-Nemotron-Post-Training-Dataset, OpenThoughts3 | 3.2M |

| Science | OpenThoughts3, Llama-Nemotron-Post-Training-Dataset | 808K |

| Chat | OO1-Chat-747K, DeepWriting-20K | 767K |

4.5 Reinforcement Learning Attempts

Following the SFT stage, we conducted exploratory RLVR (Reinforcement Learning with Verifiable Rewards) alignment experiments using DAPO [56] and GRPO [57] on the DAPO-17K dataset. These attempts did not yield significant performance gains over the final SFT checkpoint. The primary issue stemmed from the model’s dynamic early-exit mechanism. vLLM/SGLang provide fast rollouts via a fixed execution path, which breaks under LoopLM’s variable-depth computation.

We tried two approaches, neither successful:

1. Off-policy rollouts: We generate full four-step rollouts in vLLM, yielding four logit candidates per token. We then selected the first token to exceed the termination threshold to simulate an early exit. For updates, we used the cumulative loss up to that step, discarding later tokens and losses. This off-policy mismatch, i.e., where tokens are produced with the final depth, and losses computed at an earlier depth, did not improve performance.

1. Fixed 4-Round RL: To avoid off-policy issues, we performed rollouts and updates at a fixed four recurrent steps. Training progressed normally but performance did not surpass the SFT checkpoint. A like cause is scale: after having already undergone extensive SFT, these smaller models may have limited headroom for RL gains. Interestingly, the model still used fewer rounds at inference when beneficial despite being trained at four rounds. The mechanism behind this generalization remains unclear.

We will further explore RL alignment for this architecture as we continue to develop infrastructure that can fully support LoopLM’s dynamic computation.

5 Experiments

5.1 Base Model Evaluation

We conduct comprehensive evaluations of the Ouro base models trained on 7.7T tokens using the LoopLM architecture. The evaluation focuses on their performance across general knowledge, reasoning, mathematics, science, coding, and multilingual capabilities. All benchmarks are evaluated using lm-eval-harness [58] and evalplus [59] frameworks with settings detailed in Appendix. C.1.

For the base model baselines, we compare our Ouro models with leading open-source base models, including Qwen2.5 [2], Qwen3 [3], Gemma3 [4], Llama3.1 [5], and Llama3.2 [5] series base models. All models are evaluated using the same evaluation pipeline to ensure fair comparison.

Table 7: Comparison of 1.4B LoopLM model with 1-4B parameter baselines. The best score is bolded, and the second-best is underlined. LoopLM’s column is highlighted in gray.

| Architecture | Gemma3 1B Dense | Llama3.2 1.2B Dense | Qwen2.5 1.5B Dense | Qwen3 1.7B Dense | Qwen2.5 3B Dense | Llama3.2 3B Dense | Qwen3 4B Dense | Gemma3 4B Dense | Ouro 1.4B R4 LoopLM |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| # Params | 1.0B | 1.0B | 1.5B | 1.7B | 3.0B | 3.0B | 4.0B | 4.0B | 1.4B |

| # Tokens | 2T | 9T | 18T | 36T | 18T | 9T | 36T | 4T | 7.7T |

| General Tasks | | | | | | | | | |

| MMLU | 39.85 | 45.46 | 60.99 | 62.46 | 65.62 | 59.69 | 73.19 | 58.37 | 67.35 |

| MMLU-Pro | 11.31 | 11.80 | 29.11 | 37.27 | 37.87 | 33.34 | 51.40 | 34.61 | 48.62 |

| BBH | 30.26 | 30.72 | 43.66 | 53.51 | 55.37 | 39.45 | 70.95 | 66.32 | 71.02 |

| ARC-C | 39.25 | 41.98 | 54.44 | 55.72 | 55.46 | 52.47 | 63.65 | 60.92 | 60.92 |

| HellaSwag | 56.12 | 59.35 | 67.73 | 67.09 | 74.54 | 73.09 | 75.66 | 75.58 | 74.29 |

| Winogrande | 58.72 | 62.75 | 66.77 | 66.30 | 70.17 | 69.14 | 71.19 | 71.07 | 72.30 |

| Math & Coding Tasks | | | | | | | | | |

| GSM8K | 2.05 | 7.05 | 60.73 | 70.28 | 74.60 | 67.20 | 72.86 | 68.69 | 78.92 |

| MATH500 | 41.00 | 7.40 | 17.60 | 25.80 | 42.60 | 40.80 | 59.60 | 68.60 | 82.40 |

| HumanEval | 6.70 | 19.50 | 52.40 | 66.50 | 68.90 | 29.90 | 77.40 | 34.80 | 74.40 |

| HumanEval+ | 5.50 | 17.40 | 46.30 | 59.80 | 62.20 | 26.20 | 70.70 | 29.30 | 67.40 |

| MBPP | 12.40 | 35.70 | 60.30 | 68.00 | 63.00 | 50.30 | 78.80 | 60.60 | 73.00 |

| MBPP+ | 10.10 | 29.10 | 50.00 | 58.50 | 54.20 | 39.70 | 65.90 | 51.10 | 62.70 |

Table 8: Comparison of 2.6B LoopLM model with 3-12B parameter baselines. The best score is bolded, and the second-best is underlined. LoopLM’s column is highlighted in gray.

| Architecture | Qwen2.5 3B Dense | Llama3.2 3B Dense | Qwen3 4B Dense | Gemma3 4B Dense | Qwen2.5 7B Dense | Llama3.1 8B Dense | Qwen3 8B Dense | Gemma3 12B Dense | Ouro 2.6B R4 LoopLM |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| # Total Params | 3.0B | 3.0B | 4.0B | 4.0B | 7.0B | 8.0B | 8.0B | 12.0B | 2.6B |

| # Trained Tokens | 18T | 9T | 36T | 4T | 18T | 15T | 36T | 12T | 7.7T |

| General Tasks | | | | | | | | | |

| MMLU | 65.62 | 59.69 | 73.19 | 58.37 | 74.20 | 73.02 | 76.63 | 72.14 | 74.60 |

| MMLU-Pro | 37.87 | 33.34 | 51.40 | 34.61 | 43.55 | 43.24 | 53.72 | 49.21 | 55.73 |

| BBH | 55.37 | 39.45 | 71.14 | 66.32 | 53.72 | 71.56 | 77.65 | 78.41 | 80.46 |

| ARC-C | 55.46 | 52.47 | 63.65 | 60.75 | 63.65 | 60.75 | 66.10 | 72.44 | 66.40 |

| HellaSwag | 74.54 | 73.09 | 75.66 | 75.58 | 79.98 | 81.97 | 79.60 | 83.68 | 79.69 |

| Winogrande | 70.17 | 69.14 | 71.19 | 71.27 | 76.48 | 77.11 | 76.80 | 77.74 | 75.85 |

| Math & Coding Tasks | | | | | | | | | |

| GSM8K | 74.60 | 67.20 | 72.86 | 68.69 | 81.50 | 78.17 | 83.09 | 77.18 | 81.58 |

| MATH500 | 42.60 | 40.80 | 59.60 | 68.60 | 61.20 | 52.90 | 62.30 | 83.20 | 90.85 |

| HumanEval | 68.90 | 29.90 | 77.70 | 34.80 | 79.30 | 38.40 | 84.80 | 46.30 | 78.70 |

| HumanEval+ | 62.20 | 26.20 | 70.70 | 29.30 | 70.60 | 31.10 | 75.30 | 37.20 | 70.70 |

| MBPP | 63.00 | 50.30 | 78.80 | 60.60 | 73.80 | 62.40 | 79.00 | 73.50 | 80.40 |

| MBPP+ | 54.20 | 39.70 | 65.90 | 51.10 | 63.50 | 51.60 | 67.90 | 66.10 | 66.60 |

Summary of Evaluation Results

Based on the overall evaluation results, we highlight key conclusions about our base models:

1. Our 1.4B parameter Ouro model (with 4 recurrent steps) achieves performance comparable to the 4B Qwen3-Base across most benchmarks. Notably, it matches or exceeds the 4B model on challenging reasoning tasks such as BBH (71.02 vs 70.95), GSM8K (78.92 vs 72.86) and MATH500 (82.40 vs 59.60)

1. The 2.6B parameter Ouro model outperforms dense models up to 8B parameters on reasoning-intensive benchmarks. It achieves 55.73 on MMLU-Pro, 80.46 on BBH and 90.85 on MATH500, surpassing the 8B Qwen3-Base (53.72, 77.65 and 62.30 respectively).

1. The recurrent architecture shows particular strength on tasks requiring multi-step reasoning and knowledge manipulation, with the most pronounced gains observed on MMLU-Pro, BBH, GSM8K and MATH500 benchmarks, validating our hypothesis that iterative computation enhances reasoning capabilities.

5.2 Reasoning Model Evaluation

We evaluate the reasoning capabilities of our Ouro reasoning models (Ouro-Thinking) with 4 recurrent steps on challenging mathematical and scientific benchmarks that require multi-step problem solving and deep reasoning. The evaluation includes AIME 2024/2025 (American Invitational Mathematics Examination), OlympiadBench, GPQA, SuperGPQA, BeyondAIME, and HLE, representing some of the most challenging reasoning tasks in the field.

Table 9: Performance comparison across different benchmarks. For AIME24 and AIME25, we report pass@1/pass@10 metrics. The best score is bolded, and the second-best is underlined.

| Model | AIME24 | AIME25 | Olympiad | Beyond | HLE | Super | GPQA | | |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| pass@1 | pass@10 | pass@1 | pass@10 | bench | AIME | | GPQA | | |

| Ouro-1.4B-Thinking-R4 | 65.0 | 83.3 | 46.3 | 73.3 | 71.6 | 34.0 | 5.21 | 47.4 | 45.5 |

| Ouro-2.6B-Thinking-R4 | 64.7 | 90.0 | 50.3 | 76.7 | 76.4 | 39.0 | 5.58 | 53.7 | 52.7 |

| Qwen3-1.7B | 32.0 | 55.6 | 22.0 | 33.3 | 56.4 | 15.0 | 4.13 | 35.9 | 34.0 |

| Qwen3-4B | 61.3 | 75.0 | 51.3 | 63.3 | 73.2 | 31.0 | 5.21 | 51.9 | 54.5 |

| Qwen3-8B | 73.0 | 86.7 | 66.7 | 81.3 | 75.3 | 38.0 | 2.22 | 48.0 | 59.1 |

| Deepseek-Distill-Qwen-1.5B | 29.6 | 66.7 | 23.0 | 43.33 | 56.44 | 9.0 | 4.2 | 26.5 | 33.2 |

| Deepseek-Distill-Qwen-7B | 57.3 | 83.3 | 36.0 | 73.3 | 72.0 | 30.0 | 5.14 | 46.6 | 51.0 |

Benchmarks.

- AIME 2024/2025 [60]. 30 questions per year from AIME I and II; integer answers 0–999.

- OlympiadBench [61]. Olympiad-level bilingual scientific problems; supports images for multimodal inputs.

- GPQA [62]. 448 graduate-level multiple-choice questions in biology, physics, and chemistry; search-resistant design.

- SuperGPQA [63]. GPQA scaled to about 285 graduate disciplines; curated to remain challenging.

- BeyondAIME [64]. Hard integer-answer math beyond AIME; emphasizes contamination resistance.

- HLE [65]. Multi-disciplinary closed-ended benchmark; expert-written with public splits and a private test set.

Models compared.

We report results for Ouro-1.4B-Thinking and Ouro-2.6B-Thinking, which are LoopLM-based looped language models with iterative depth. As baselines we include Qwen3-1.7B, Qwen3-4B, Qwen3-8B, DeepSeek-Distill-Qwen-1.5B, and DeepSeek-Distill-Qwen-7B. We use size-matched baselines whenever available, otherwise we compare to the next larger widely used model.

Evaluation protocol.

All systems are evaluated with a single in-house harness and identical prompting. We adopt an LLM-as-judge protocol across benchmarks with a fixed rubric and tie-breaking policy. Unless otherwise noted, decoding uses temperature = 1.0 and top_p = 0.7 for every model.

Evaluation results.

Table 9 summarizes outcomes. Iterative reasoning in the LoopLM architecture provides consistent gains on these tasks. The 1.4B Ouro model with 4 recurrent steps reaches 71.55 on OlympiadBench (vs. 73.18 for Qwen3-4B) and 34.0 on BeyondAIME (vs. 31.0 for Qwen3-4B). The 2.6B with 4 recurrent steps variant scores 76.44 on OlympiadBench (vs. 75.25 for Qwen3-8B) and 39.0 on BeyondAIME (vs. 38.0 for Qwen3-8B).

5.3 Performance by Recurrent Depth and Extrapolation

Table 10: Performance of the Ouro 1.4B base model across different recurrent steps (C-QA is CommonsenseQA [66]). Steps 5-8 represent extrapolation, as the model was trained with a maximum of 4 steps. Performance peaks at the trained depth ( $T=4$ ) and then degrades.

| UT Step 1 | ARC-C (25-shot) 37.63 | ARC-E (8-shot) 63.85 | C-QA (10-shot) 44.64 | HellaSwag (10-shot) 55.24 | MMLU (5-shot avg) 41.21 | Winogrande (5-shot) 56.99 |

| --- | --- | --- | --- | --- | --- | --- |

| 2 | 54.86 | 80.30 | 67.98 | 71.15 | 60.43 | 66.69 |

| 3 | 59.47 | 83.33 | 74.37 | 74.07 | 66.71 | 71.35 |

| 4 | 60.92 | 83.96 | 75.43 | 74.29 | 67.45 | 72.30 |

| Extrapolation (Trained on T=4) | | | | | | |

| 5 | 58.96 | 82.91 | 75.35 | 73.72 | 66.64 | 70.32 |

| 6 | 59.73 | 82.58 | 74.94 | 72.77 | 65.77 | 71.03 |

| 7 | 58.96 | 81.99 | 74.28 | 72.35 | 65.28 | 70.09 |

| 8 | 58.19 | 82.07 | 73.55 | 71.60 | 64.49 | 69.30 |

Table 11: Performance of the Ouro 2.6B base model across different recurrent steps (C-QA is CommonsenseQA [66]). Steps 5-8 represent extrapolation, as the model was trained with a maximum of 4 steps. Performance is strongest around the trained depth ( $T=4$ ) and shows varied degradation patterns during extrapolation.

| UT Step 1 | ARC-C (25-shot) 47.95 | ARC-E (8-shot) 72.39 | C-QA (10-shot) 57.58 | HellaSwag (10-shot) 68.94 | MMLU (5-shot avg) 51.55 | Winogrande (5-shot) 61.48 |

| --- | --- | --- | --- | --- | --- | --- |

| 2 | 62.37 | 85.23 | 76.90 | 77.61 | 67.63 | 70.48 |

| 3 | 65.36 | 87.33 | 79.77 | 79.12 | 73.57 | 74.35 |

| 4 | 66.38 | 86.95 | 81.65 | 79.56 | 74.60 | 75.53 |

| Extrapolation (Trained on T=4) | | | | | | |

| 5 | 65.36 | 86.83 | 81.24 | 79.57 | 74.43 | 75.93 |

| 6 | 65.02 | 86.74 | 81.08 | 79.63 | 73.79 | 75.37 |

| 7 | 65.44 | 86.57 | 80.75 | 79.59 | 72.92 | 75.77 |

| 8 | 64.76 | 86.49 | 81.08 | 79.50 | 72.24 | 74.59 |

We analyze the Ouro model’s performance as a function of its recurrent computational depth. Our models were trained with a maximum of 4 recurrent steps ( $T=4$ ). We investigate this behavior for both our base models and our SFT Ouro-Thinking models.

Base Model Performance.

Tables 10 and 11 present the performance of the Ouro 1.4B and 2.6B base models, respectively, evaluated at depths from $T=1$ to $T=8$ .

For both base models, performance on standard benchmarks (e.g., MMLU, ARC-C) generally improves up to the trained depth of $T=4$ . Steps $T=5$ through $T=8$ represent extrapolation beyond the training configuration. As shown in both tables, benchmark performance sees a moderate degradation when extrapolating, with a noticeable drop compared to the peak at $T=4$ .

However, this degradation in task-specific performance contrasts sharply with the model’s safety alignment. As detailed in Section ˜ 7.1, the model’s safety improves as the number of recurrent steps increases, even into the extrapolated regime ( $T>4$ ). This suggests that while the model’s fine-grained knowledge for benchmarks may falter beyond its training depth, the iterative refinement process continues to enhance its safety alignment.

Reasoning Model (SFT) Performance.

Table 12: Performance of Ouro-1.4B-Thinking model by recurrent step. The model was trained at $T=4$ . Performance peaks around $T=4$ or $T=5$ . All scores are percentages (0-100).

| OlympiadBench SuperGPQA AIME 2024 | 2.22 2.03 0.00 | 59.70 33.07 37.33 | 70.67 44.50 62.33 | 71.55 47.37 65.00 | 72.30 48.73 60.67 | 69.48 46.15 50.67 | 69.04 45.29 42.33 | 66.81 42.88 38.67 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| AIME 2025 | 0.33 | 25.00 | 43.33 | 46.30 | 47.00 | 43.00 | 41.00 | 38.00 |

Table 13: Performance of Ouro-2.6B-Thinking model by recurrent step. The model was trained at $T=4$ . Performance peaks at $T=3$ or $T=4$ . All scores are percentages (0-100).

| OlympiadBench SuperGPQA AIME 2024 | 18.96 15.66 3.00 | 68.59 48.58 52.00 | 75.56 56.70 70.33 | 76.44 53.68 64.70 | 71.85 56.45 57.00 | 69.19 55.44 56.33 | 57.63 53.32 49.67 | 39.26 46.84 39.00 |

| --- | --- | --- | --- | --- | --- | --- | --- | --- |

| AIME 2025 | 2.00 | 40.67 | 50.67 | 50.30 | 49.33 | 46.00 | 38.00 | 24.33 |

We conduct a similar analysis on our SFT models, Ouro-Thinking, to see how recurrent depth affects specialized reasoning tasks. Results for the 1.4B and 2.6B models are presented in Table 12 and Table 13, respectively.

We conduct a similar analysis on our SFT models, Ouro-Thinking, to see how recurrent depth affects specialized reasoning tasks. Results for the 1.4B and 2.6B models are presented in Table 12 and Table 13, respectively.

For both SFT models, performance at $T=1$ is very low, confirming that iterative refinement is essential for these complex tasks. Performance generally peaks at or near the trained depth, but shows slightly different patterns. The 1.4B model (Table 12) peaks around $T=4$ or $T=5$ . The 2.6B model (Table 13) tends to peak slightly earlier, at $T=3$ or $T=4$ . Interestingly, neither model peaks strictly at $T=4$ across all tasks, unlike the base model evaluations which are often logit-based. This may suggest that the longer decoding required for these reasoning tasks allows for a more active exploration of capabilities at different recurrent depths. For both models, performance degrades as they extrapolate to deeper, unseen recurrent steps ( $T=6-8$ ), reinforcing that performance is optimized for the depth seen during training.

5.4 Early Exit and Adaptive Computation Efficiency

A defining advantage of the LoopLM architecture lies in its capacity for adaptive computation allocation. Unlike standard transformers with fixed computational budgets, our model can dynamically adjust the number of recurrent steps based on input complexity. This section investigates various strategies for implementing adaptive early exit, comparing their effectiveness in balancing computational efficiency with task performance.

5.4.1 Early Exit Strategies

We explore three distinct approaches to determining when the model should terminate its iterative computation and produce the final output.

Baseline: Static Exit.

The simplest strategy forces the model to exit at a predetermined recurrent step, regardless of the input characteristics. While this approach provides predictable computational costs, it fails to leverage the model’s potential for adaptive resource allocation. We evaluate static exit at steps 1 through 4 to establish performance bounds and understand the relationship between computational depth and accuracy.

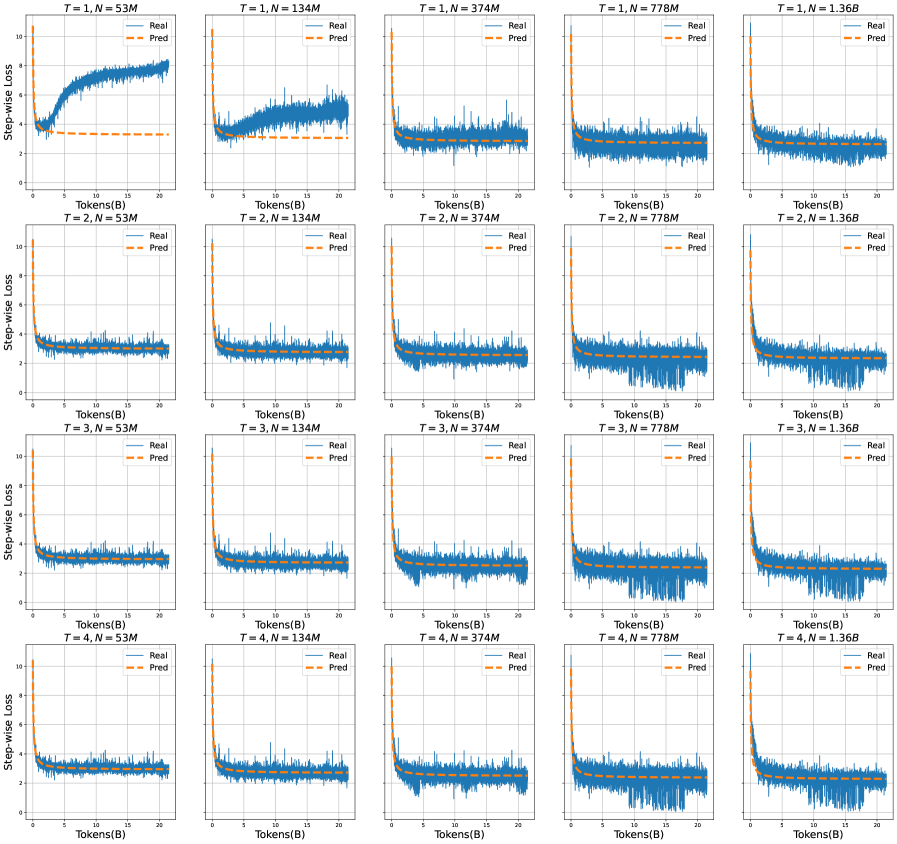

Hidden State Difference Threshold.

This heuristic-based approach monitors the magnitude of representational changes between consecutive recurrent steps. At each step $t$ , we compute $\Delta h_{t}=\|h_{t}-h_{t-1}\|_{2}$ and trigger early exit when $\Delta h_{t}<\epsilon$ for some threshold $\epsilon$ .

<details>

<summary>x5.png Details</summary>

### Visual Description

## Line Graph: Accuracy vs. Average Exit Round

### Overview

The image is a line graph comparing the accuracy of four different methods across varying average exit rounds (1.0 to 4.0). The y-axis represents accuracy (0.40 to 0.65), while the x-axis represents average exit rounds. Four data series are plotted with distinct line styles and colors, as indicated in the legend located in the bottom-right corner.

### Components/Axes

- **X-axis (Horizontal)**: Labeled "Average Exit Round," with markers at 1.0, 2.0, 3.0, and 4.0.

- **Y-axis (Vertical)**: Labeled "Accuracy," with increments of 0.05 from 0.40 to 0.65.

- **Legend**: Positioned in the bottom-right corner, mapping:

- Blue solid line with circles: "Using Ponder Gate (Untrained)"

- Orange solid line with diamonds: "Ponder Gate (Trained)"

- Green solid line with squares: "Using Hidden State Diff"

- Red dashed line with triangles: "Fixed Exit Depth"

### Detailed Analysis

1. **Using Ponder Gate (Untrained)** (Blue):

- Starts at (1.0, 0.55) and increases steadily.

- Data points: (2.0, 0.60), (3.0, 0.65), (4.0, 0.68).

- Trend: Consistent upward slope.

2. **Ponder Gate (Trained)** (Orange):

- Starts at (1.0, 0.40) and rises sharply.

- Data points: (2.0, 0.60), (3.0, 0.65), (4.0, 0.68).

- Trend: Steeper ascent than other lines, surpassing all others by x=4.0.

3. **Using Hidden State Diff** (Green):

- Starts at (1.0, 0.50) and increases gradually.

- Data points: (2.0, 0.60), (3.0, 0.65), (4.0, 0.67).

- Trend: Slightly slower growth compared to blue and orange lines.

4. **Fixed Exit Depth** (Red dashed):

- Starts at (1.0, 0.40) and rises steadily.

- Data points: (2.0, 0.55), (3.0, 0.60), (4.0, 0.65).

- Trend: Linear increase but remains below all other methods.

### Key Observations

- The **Ponder Gate (Trained)** method (orange) demonstrates the highest accuracy by x=4.0, outperforming all other methods.

- The **Using Ponder Gate (Untrained)** (blue) and **Using Hidden State Diff** (green) lines converge closely, with blue slightly ahead at x=4.0.

- **Fixed Exit Depth** (red dashed) lags behind all other methods throughout the range.

- All methods show improvement as average exit rounds increase, but the rate of improvement varies significantly.

### Interpretation

The data suggests that **training the Ponder Gate method** (orange line) yields the most significant performance gains over time, particularly in later exit rounds. The **Using Ponder Gate (Untrained)** and **Using Hidden State Diff** methods exhibit similar trajectories, indicating that hidden state differences may not provide a substantial advantage over untrained Ponder Gate approaches. In contrast, **Fixed Exit Depth** (red dashed) underperforms consistently, implying that dynamic exit strategies (e.g., Ponder Gate) are more effective than static depth-based approaches. The sharp rise of the trained Ponder Gate method highlights the importance of training in optimizing accuracy for this task.

</details>

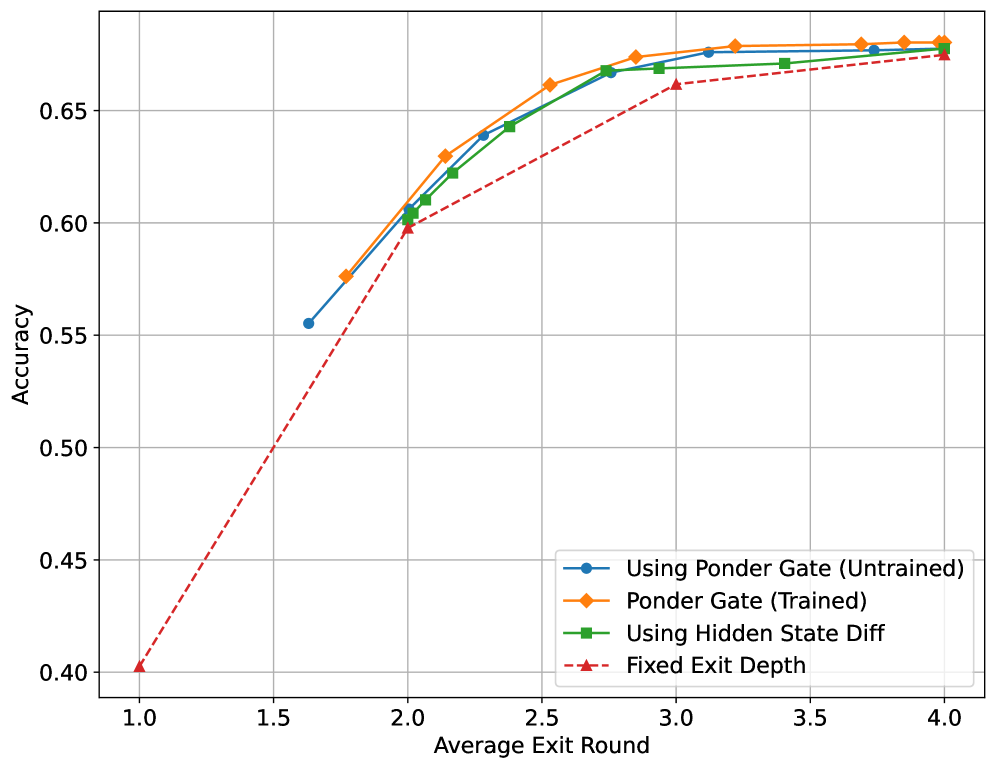

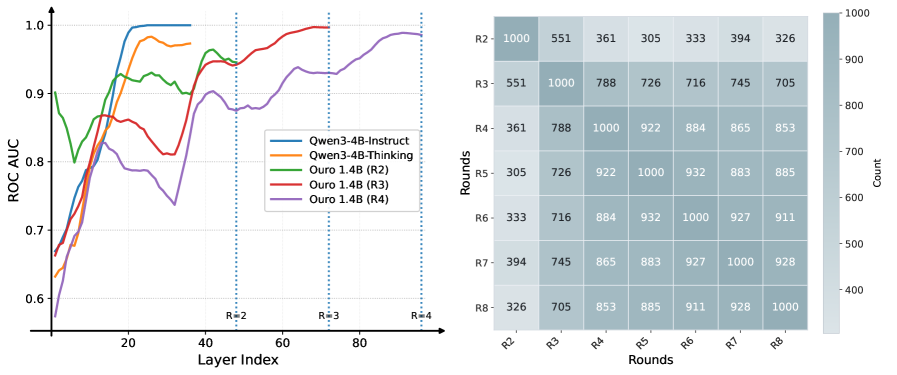

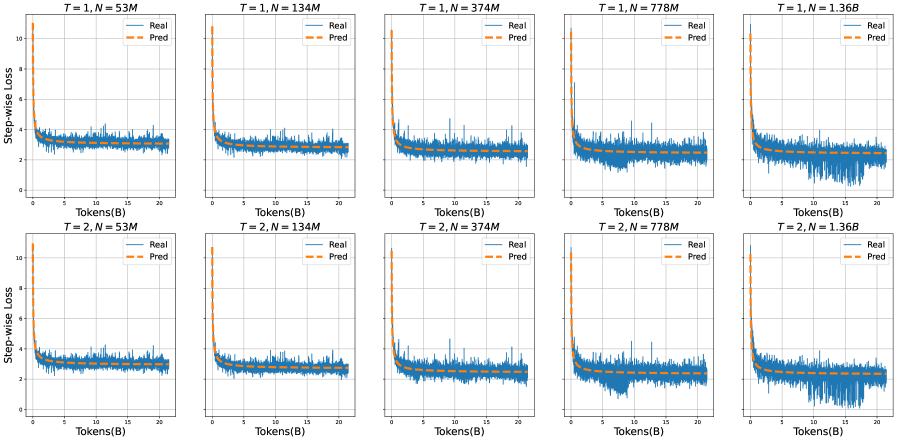

Figure 5: Comparison of early exit strategies on MMLU. We evaluate four approaches across different average exit rounds: static baseline (red triangle), hidden state difference threshold (green squares), Ponder gate from standard pre-training (blue circles), and Ponder gate with specialized adaptive exit training from Section 3.4 (orange diamonds).

Learned Gating with Q-Exit Criterion.

Our primary approach employs the learned exit gate described in Section 4, which produces step-wise halting probabilities $\lambda_{t}$ based on the model’s current hidden states. During inference, we apply the Q-exit criterion: at each step $t$ , we compute the cumulative distribution function $\text{CDF}(t)=\sum_{i=1}^{t}p(i|x)$ and exit when $\text{CDF}(t)$ exceeds the threshold $q∈[0,1]$ . The threshold $q$ serves as a deployment-time hyperparameter that controls the compute-accuracy trade-off without requiring model retraining.

We evaluate this strategy under two training configurations. The untrained configuration uses the gate as trained during our standard pre-training pipeline with the entropy-regularized objective (uniform prior KL loss). This represents the gate’s behavior when jointly optimized with language modeling throughout Stages 1-4. The trained configuration additionally applies the specialized adaptive exit loss described in Section 3.4, which explicitly teaches the gate to base stopping decisions on observed task loss improvements.

Experimental Results.

Figure 5 presents the accuracy-efficiency trade-off curves for all strategies on the MMLU benchmark. By varying the exit threshold (or static exit step for baseline), we obtain multiple operating points for each method, enabling direct comparison at equivalent computational budgets measured by average exit round.

Several key findings emerge from this analysis:

1. The Ponder gate with specialized adaptive exit training achieves the best accuracy at every computational budget, demonstrating that the loss improvement-based training signal described in Section 3.4 provides clear benefits over standard entropy regularization. At an average exit round of 2.5, the specialized training reaches 66% accuracy while the standard gate achieves approximately 64%;

1. Even without specialized training, the Ponder gate from standard pre-training substantially outperforms the static baseline, validating that the entropy-regularized objective with uniform prior successfully enables adaptive computation. The gate learns to differentiate input difficulty through the general training dynamics, though it lacks the explicit supervision to correlate stopping decisions with actual performance improvements. This demonstrates that our base training approach already captures useful signals for resource allocation;

1. The hidden state difference threshold strategy performs surprisingly competitively, closely tracking both gate configurations. At moderate computational budgets (2-3 average rounds), it achieves accuracy within 1%-2% of the specialized trained gate, suggesting that representation stability provides a reasonable proxy for computational convergence. However, the consistently superior performance of the specialized trained gate across all operating points confirms that explicit supervision via the adaptive exit loss captures information beyond what can be inferred from representational dynamics alone.

1. Comparing the untrained and trained gate configurations reveals the value proposition of the specialized training procedure. The gap between these curves, approximately 2%-3% accuracy at most operating points, represents the benefit of teaching the gate to explicitly monitor task loss improvements $I^{(n)}_{t}$ rather than relying solely on entropy regularization to discover stopping policies. This empirical result validates our design choice to introduce the adaptive exit loss as a specialized training objective.

1. The baseline’s monotonic improvement from 1 to 4 rounds confirms the “deeper is better” property while revealing diminishing returns. The dramatic jump from 1.0 to 2 rounds (40% to 60% accuracy) contrasts with the marginal gain from 3 to 4 rounds (67.35% accuracy). This pattern explains why adaptive methods prove effective: most examples achieve near-maximal performance at intermediate depths, with only a minority requiring full computational depth.

5.4.2 KV Cache Sharing for Inference Efficiency

The recurrent nature of our architecture introduces a challenge: naively, each recurrent step requires maintaining its own KV cache, leading to 4 $×$ memory overhead for our 4-step model. We investigate strategies to reduce this overhead through KV cache reuse.

Prefilling Phase

During the prefilling phase (processing the input prompt), we find that all four recurrent steps require their own KV caches, as each step transforms the representations in ways that cannot be approximated by earlier steps. Attempting to reuse KV caches during prefilling leads to performance degradation (>10 points on GSM8K).

Decoding Phase

However, during the decoding phase (auto-regressive generation), we discover that KV cache reuse becomes viable. We explore two strategies:

1. Last-step reuse: Only maintain KV cache from the final (4th) recurrent step

1. First-step reuse: Only maintain KV cache from the first (1st) recurrent step.

1. Averaged reuse: Maintain an averaged KV cache across all four steps

Table 14: KV cache sharing strategies during decoding. Both last-step and averaged strategies achieve minimal performance loss while reducing memory by 4 $×$ .

| Full (4 $×$ cache) First-step only Last-step only | 78.92 18.73 78.85 | 82.40 8.43 80.40 | 1.00 $×$ 4.00 $×$ 4.00 $×$ |

| --- | --- | --- | --- |

| Averaged | 78.73 | 78.52 | 4.00 $×$ |