
## AN EXPRESSIVE, EFFICIENT ATTENTION ARCHITECTURE

TECHNICAL REPORT OF KIMI LINEAR

## Kimi Team

GitHub: https://github.com/MoonshotAI/Kimi-Linear

## ABSTRACT

We introduce Kimi Linear, a hybrid linear attention architecture that, for the first time, outperforms full attention under fair comparisons across various scenarios, including short-context, long-context, and reinforcement learning (RL) scaling regimes. At its core lies Kimi Delta Attention (KDA), an expressive linear attention module that extends Gated DeltaNet [111] with a finer-grained gating mechanism, enabling more effective use of the limited finite-state RNN memory. Our bespoke chunkwise algorithm achieves high hardware efficiency through a specialized variant of the Diagonal-Plus-Low-Rank (DPLR) transition matrices, which substantially reduces computation compared to the general DPLR formulation while remaining more consistent with the classical delta rule.

We pretrain a Kimi Linear model with 3B activated parameters and 48B total parameters, based on a layerwise hybrid of KDA and Multi-Head Latent Attention (MLA). Our experiments show that, with an identical training recipe, Kimi Linear outperforms full MLA by a sizeable margin across all evaluated tasks, while reducing KV cache usage by up to 75% and achieving up to 6× higher decoding throughput at a 1M context length. These results demonstrate that Kimi Linear can serve as a drop-in replacement for full attention architectures with superior performance and efficiency, including on tasks with longer input and output lengths.

To support further research, we open-source the KDA kernel and vLLM implementations¹, and release the pre-trained and instruction-tuned model checkpoints².

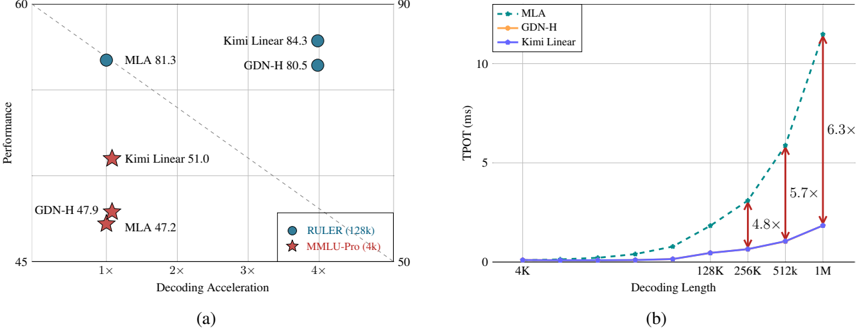

Figure 1: (a) Performance vs. acceleration. Under strictly fair comparisons with 1.4T training tokens, Kimi Linear leads on MMLU-Pro (4k context length, red stars) with a score of 51.0 at similar speed. On RULER (128k context length, blue circles), it is Pareto-optimal, achieving top performance (84.3) with 3.98× acceleration. (b) Time per output token (TPOT) vs. decoding length. Kimi Linear (blue line) maintains a low TPOT, matching GDN-H and outperforming MLA at long sequences. This enables larger batches, yielding a 6.3× faster TPOT (1.84 ms vs. 11.48 ms) than MLA at 1M tokens.


¹ https://github.com/fla-org/flash-linear-attention/tree/main/fla/ops/kda

² https://huggingface.co/moonshotai/Kimi-Linear-48B-A3B-Instruct

## 1 Introduction

As large language models (LLMs) evolve into increasingly capable agents [50], the computational demands of inference, particularly in long-horizon and reinforcement learning (RL) settings, are becoming a central bottleneck. This shift toward RL test-time scaling [95, 33, 80, 74, 53], where models must process extended trajectories, tool-use interactions, and complex decision spaces at inference time, exposes fundamental inefficiencies in standard attention mechanisms. In particular, the quadratic time complexity and the linearly growing key-value (KV) cache of softmax attention introduce substantial computational and memory overheads, hindering throughput, context-length scaling, and real-time interactivity.

Linear attention [48] offers a principled approach to reducing computational complexity but has historically underperformed softmax attention in language modeling, even on short sequences, due to limited expressivity. Recent advances have significantly narrowed this gap, primarily through two innovations: gating or decay mechanisms [92, 16, 114] and the delta rule [84, 112, 111, 71]. Together, these developments have pushed linear attention closer to softmax-level quality on moderate-length sequences. Nevertheless, purely linear structures remain fundamentally constrained by their finite-state capacity, which makes long-sequence modeling and in-context retrieval theoretically challenging [104, 4, 45].

Hybrid architectures that combine softmax and linear attention, using a few global-attention layers alongside predominantly faster linear layers, have thus emerged as a practical compromise between quality and efficiency [57, 100, 66, 12, 32, 81]. However, previous hybrid models often operated at limited scale or lacked comprehensive evaluation across diverse benchmarks. The core challenge remains: to develop an attention architecture that matches or surpasses full attention in quality while achieving substantial efficiency gains in both speed and memory, an essential step toward enabling the next generation of agentic, decoding-heavy LLMs.

In this work, we present Kimi Linear, a hybrid linear attention architecture designed to meet the efficiency demands of agentic intelligence and test-time scaling without compromising quality. At its core lies Kimi Delta Attention (KDA), a hardware-efficient linear attention module that extends Gated DeltaNet [111] with a finer-grained gating mechanism. While GDN, similar to Mamba2 [16], employs a coarse head-wise forget gate, KDA introduces a channel-wise variant in which each feature dimension maintains an independent forgetting rate, akin to Gated Linear Attention (GLA) [114]. This fine-grained design enables more precise regulation of the finite-state RNN memory, unlocking the potential of RNN-style models within hybrid architectures.

Crucially, KDA parameterizes its transition dynamics with a specialized variant of the Diagonal-Plus-Low-Rank (DPLR) matrices [30, 71], enabling a bespoke chunkwise-parallel algorithm that substantially reduces computation relative to general DPLR formulations while remaining consistent with the classical delta rule.

Kimi Linear interleaves KDA with periodic full attention layers in a uniform 3:1 ratio. This hybrid structure reduces memory and KV-cache usage by up to 75% during long-sequence generation while preserving global information flow through the full attention layers. Through matched-scale pretraining and evaluation, we show that Kimi Linear consistently matches or outperforms strong full-attention baselines across short-context, long-context, and RL-style post-training tasks, while achieving up to 6× higher decoding throughput at a 1M context length.
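As a back-of-the-envelope check of the 75% figure, the sketch below lays out a 3:1 interleaving and counts how many layers keep a KV cache that grows with sequence length. It assumes, as a simplification of our own, that every MLA layer caches the same amount per token and that each KDA layer's fixed-size recurrent state is negligible at long context. The helper names and the 8-layer depth are hypothetical.

```python
# Hypothetical helpers: a 3:1 KDA-to-MLA layer schedule and the resulting KV-cache saving.
# Assumption: only MLA layers keep a per-token KV cache; KDA state is constant-size.

def hybrid_schedule(num_layers: int, kda_per_mla: int = 3) -> list[str]:
    """Return layer types, placing one MLA layer after every `kda_per_mla` KDA layers."""
    period = kda_per_mla + 1
    return ["MLA" if (i + 1) % period == 0 else "KDA" for i in range(num_layers)]


def kv_cache_reduction(schedule: list[str]) -> float:
    """Fraction of a full-attention KV cache saved when only MLA layers cache keys/values."""
    return 1.0 - schedule.count("MLA") / len(schedule)


layers = hybrid_schedule(8)
print(layers)
print(f"KV-cache reduction: {kv_cache_reduction(layers):.0%}")
```

Since one layer in every four still caches keys and values, the saving is 1 - 1/4 = 75% regardless of depth, as long as the depth is a multiple of four.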

To facilitate further research, we release open-source KDA kernels with vLLM integration, as well as pre-trained and instruction-tuned checkpoints. These components are drop-in compatible with existing full-attention pipelines and require no modification to caching or scheduling interfaces, lowering the barrier to research on hybrid architectures.

## Contributions

- Kimi Delta Attention (KDA): a linear attention mechanism that refines the gated delta rule with improved recurrent memory management and hardware efficiency.

- The Kimi Linear architecture: a hybrid design adopting a 3:1 KDA-to-global attention ratio, reducing memory footprint while surpassing full-attention quality.

- Fair empirical validation at scale: through 1.4T token training runs, Kimi Linear outperforms full attention and other baselines in short/long context and RL-style evaluations, with full release of kernels, vLLM integration, and checkpoints.

## 2 Preliminary

In this section, we introduce the technical background related to our proposed Kimi Delta Attention.

## 2.1 Notation

In this paper, $\square_t \in \mathbb{R}^{d_k}$ or $\mathbb{R}^{d_v}$, for $\square \in \{q, k, v, o, u, w\}$, denotes the corresponding $t$-th column vector, and $S_t \in \mathbb{R}^{d_k \times d_v}$ denotes the matrix-form memory state. $M$ and $M^-$ denote lower-triangular masks with and without diagonal elements, respectively; for convenience, we also write them as $\mathrm{Tril}$ and $\mathrm{StrictTril}$.

Chunk-wise Formulation. Suppose the sequence is split into $L/C$ chunks, each of length $C$. For $\square \in \{Q, K, V, O, U, W\}$, $\square_{[t]} \in \mathbb{R}^{C \times d}$ stacks the vectors within the $t$-th chunk, and $\square^r_{[t]} = \square_{tC+r}$ is the $r$-th element of the chunk, where $t \in [0, L/C)$ and $r \in [1, C]$. State matrices are re-indexed in the same way, so that $S^i_{[t]} = S_{tC+i}$. Additionally, $S_{[t]} := S^0_{[t]} = S^C_{[t-1]}$, i.e., the initial state of a chunk is the last state of the previous chunk.

Decay Formulation. We define the cumulative decay $\gamma^{i\to j}_{[t]} := \prod_{k=i}^{j} \alpha^k_{[t]}$ and abbreviate $\gamma^{1\to r}_{[t]}$ as $\gamma^r_{[t]}$. Additionally, $A_{[t]} := A^{i/j}_{[t]} \in \mathbb{R}^{C \times C}$ is the matrix with elements $\gamma^i_{[t]}/\gamma^j_{[t]}$. $\mathrm{Diag}(\alpha_t)$ denotes the fine-grained decay, $\mathrm{Diag}(\gamma^{i\to j}_{[t]}) := \prod_{k=i}^{j} \mathrm{Diag}(\alpha^k_{[t]})$, and $\Gamma^{i\to j}_{[t]} \in \mathbb{R}^{C \times d_k}$ is the matrix stacking $\gamma^i_{[t]}$ through $\gamma^j_{[t]}$.
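The chunk notation can be made concrete with a small NumPy sketch. It assumes a single head, a sequence length divisible by the chunk size, and random per-channel decays; all array names are illustrative. It builds the per-chunk cumulative decay $\gamma^r_{[t]}$ (the rows of $\Gamma^{1\to C}_{[t]}$) and, for one channel, the ratio matrix with entries $\gamma^i_{[t]}/\gamma^j_{[t]}$. Its lower-triangular entries never exceed 1, because for $i \ge j$ the ratio is a product of decays in $[0, 1]$.

```python
import numpy as np

rng = np.random.default_rng(0)
L, C, d_k = 8, 4, 3                                  # toy sizes: 2 chunks of length 4
alpha = rng.uniform(0.8, 1.0, size=(L, d_k))         # per-token, per-channel decay

# alpha_chunks[t, r - 1] is alpha_[t]^r = alpha_{tC + r} (chunk t, 1-based position r)
alpha_chunks = alpha.reshape(L // C, C, d_k)

# gamma[t, r - 1] = prod_{k=1..r} alpha_[t]^k, i.e. the rows of Gamma^{1->C}_[t]
gamma = np.cumprod(alpha_chunks, axis=1)

# Ratio matrix for chunk 0, channel 0: A[i, j] = gamma^i / gamma^j
g0 = gamma[0, :, 0]
A = g0[:, None] / g0[None, :]
```

The last row of each chunk's `gamma` is the total decay the chunk applies to the state carried in from the previous chunk.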

## 2.2 Linear Attention and the Gated Delta Rule

Linear Attention as Online Learning. Linear attention [48] maintains a matrix-valued recurrent state that accumulates key-value associations:

$$S _ { t } = S _ { t - 1 } + k _ { t } v _ { t } ^ { \top } , \quad o _ { t } = S _ { t } ^ { \top } q _ { t } .$$

From the fast-weight perspective [84, 85], $S_t$ serves as an associative memory storing transient mappings from keys to values. This update can be viewed as performing gradient descent on the unbounded correlation objective

$$\mathcal { L } _ { t } ( S ) = - \langle S ^ { \top } k _ { t } , v _ { t } \rangle ,$$

which continually reinforces recent key-value pairs without any forgetting. However, such an objective provides no criterion for which memories to erase, and the accumulated state grows unbounded, leading to interference over long contexts.
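The recurrence above can be checked against its parallel form with a short NumPy sketch (function names are our own). Unrolling $S_t = \sum_{i \le t} k_i v_i^\top$ gives $o_t = \sum_{i \le t} (q_t^\top k_i)\, v_i$, i.e. causally masked attention without the softmax. The final state is simply $K^\top V$, a running sum that only grows as more pairs are written, which illustrates the absence of any forgetting.

```python
import numpy as np

def linear_attention_recurrent(q, k, v):
    """S_t = S_{t-1} + k_t v_t^T and o_t = S_t^T q_t (no normalisation, no forgetting)."""
    T, d_k = k.shape
    S = np.zeros((d_k, v.shape[1]))
    out = np.empty((T, v.shape[1]))
    for t in range(T):
        S = S + np.outer(k[t], v[t])
        out[t] = S.T @ q[t]
    return out, S

def linear_attention_parallel(q, k, v):
    """Same outputs from a causally masked (q k^T) v product."""
    scores = np.tril(q @ k.T)          # keep only pairs with i <= t
    return scores @ v

rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((6, 4)) for _ in range(3))
o_rec, S_final = linear_attention_recurrent(q, k, v)
o_par = linear_attention_parallel(q, k, v)
```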

DeltaNet: Online Gradient Descent on Reconstruction Loss. DeltaNet [84] reinterprets this recurrence as online gradient descent on a reconstruction objective:

$$\begin{array} { r } { \mathcal { L } _ { t } ( S ) = \frac { 1 } { 2 } \| S ^ { \top } k _ { t } - v _ { t } \| ^ { 2 } . } \end{array}$$

Taking a gradient step with learning rate $\beta_t$ gives

$$S_t = S_{t-1} - \beta_t \nabla_S \mathcal{L}_t(S_{t-1}) = \left(I - \beta_t k_t k_t^\top\right) S_{t-1} + \beta_t k_t v_t^\top .$$

This rule, the classical delta rule, treats $S$ as a learnable associative memory that continually corrects itself toward the mapping $k_t \mapsto v_t$. The rank-1 update structure, equivalent to a generalized Householder transformation, supports hardware-efficient chunkwise parallelization [11, 112].
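A quick numerical check of the two claims above, as a sketch with illustrative names: the delta rule coincides with one gradient step of size $\beta_t$ on the reconstruction loss, and with $\beta_t = 1$ and a unit-norm key the updated memory retrieves $v_t$ exactly, since $(I - k_t k_t^\top) k_t = 0$ when $\lVert k_t \rVert = 1$.

```python
import numpy as np

def delta_rule_step(S, k, v, beta):
    """One DeltaNet update: S <- (I - beta k k^T) S + beta k v^T."""
    d_k = S.shape[0]
    return (np.eye(d_k) - beta * np.outer(k, k)) @ S + beta * np.outer(k, v)

def reconstruction_grad(S, k, v):
    """Gradient of 0.5 * ||S^T k - v||^2 with respect to S."""
    return np.outer(k, S.T @ k - v)

rng = np.random.default_rng(0)
d_k, d_v, beta = 4, 3, 0.5
S = rng.standard_normal((d_k, d_v))
k = rng.standard_normal(d_k)
k /= np.linalg.norm(k)                 # unit-norm key
v = rng.standard_normal(d_v)

# The delta rule equals one gradient step with learning rate beta
S_gd = S - beta * reconstruction_grad(S, k, v)
S_delta = delta_rule_step(S, k, v, beta)

# With beta = 1 the memory is fully corrected: S_full^T k == v
S_full = delta_rule_step(S, k, v, beta=1.0)
```

Smaller values of $\beta_t$ move the stored value for $k_t$ only part of the way toward $v_t$, which is why $\beta_t$ plays the role of a learning rate.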

Gated DeltaNet as Weight Decay. Although DeltaNet stabilizes learning, it still retains outdated associations indefinitely. Gated DeltaNet (GDN) [111] introduces a scalar forget gate $\alpha_t \in [0, 1]$, yielding

$$S _ { t } = \alpha _ { t } ( I - \beta _ { t } k _ { t } k _ { t } ^ { \top } ) S _ { t - 1 } + \beta _ { t } k _ { t } v _ { t } ^ { \top } .$$

Here, $\alpha_t$ acts as a form of weight decay on the fast weights [8], implementing a forgetting mechanism analogous to data-dependent $L_2$ regularization. This simple yet effective modification provides a principled way to control memory lifespan and mitigate interference, improving both stability and long-context generalization while preserving DeltaNet's parallelizable structure.
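To illustrate the forgetting behavior, the sketch below (names are ours) writes one association under key $e_1$ and then performs several updates with an orthogonal key $e_2$. The projection $I - \beta_t k_t k_t^\top$ leaves the $e_1$ row of the state untouched, so the only change to the old memory comes from the scalar gate: after $n$ steps it is retrieved scaled by $\alpha^n$.

```python
import numpy as np

def gated_delta_step(S, k, v, alpha, beta):
    """Gated DeltaNet: S <- alpha (I - beta k k^T) S + beta k v^T with a scalar alpha."""
    d_k = S.shape[0]
    return alpha * (np.eye(d_k) - beta * np.outer(k, k)) @ S + beta * np.outer(k, v)

d_k, d_v = 4, 2
e = np.eye(d_k)
v_old = np.array([1.0, -2.0])

# Store v_old under key e_1 (beta = 1 writes it exactly into an empty memory)
S = gated_delta_step(np.zeros((d_k, d_v)), e[0], v_old, alpha=1.0, beta=1.0)

# Keep writing unrelated values under the orthogonal key e_2
steps, alpha = 5, 0.9
rng = np.random.default_rng(0)
for _ in range(steps):
    S = gated_delta_step(S, e[1], rng.standard_normal(d_v), alpha=alpha, beta=0.5)

retrieved = S.T @ e[0]                  # equals alpha**steps * v_old
```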

From this perspective, we observe that GDN can be interpreted as a form of multiplicative positional encoding where the transition matrix is data-dependent and learnable, relaxing the orthogonality constraint of RoPE [115].³

³ When the state transition matrix is orthogonal, absolute positional encodings can also be applied independently to $q$ and $k$, and they are converted into relative positional encodings during the attention computation [87].

## 3 Kimi Delta Attention: Improving Delta Rule with Fine-grained Gating

We propose Kimi Delta Attention (KDA), a new gated linear attention variant that refines GDN's scalar decay with a diagonal gate $\mathrm{Diag}(\alpha_t)$, enabling fine-grained control over memory decay and positional awareness (as discussed in §6.1). We begin by introducing the chunkwise parallelization of KDA, showing how a series of rank-1 matrix transformations can be compressed into a dense representation while maintaining stability under diagonal gating. We then highlight the efficiency gains of KDA over the standard DPLR (Diagonal-Plus-Low-Rank) formulation [30, 71].

$$S_t = \left(I - \beta_t k_t k_t^\top\right) \mathrm{Diag}(\alpha_t)\, S_{t-1} + \beta_t k_t v_t^\top \in \mathbb{R}^{d_k \times d_v}, \qquad o_t = S_t^\top q_t \in \mathbb{R}^{d_v} \tag{1}$$
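A single KDA step from Eq. 1 can be sketched in NumPy as follows; the function names are illustrative and are not the released kernel's API. $\mathrm{Diag}(\alpha_t) S_{t-1}$ is applied as a row-wise scaling rather than by forming a diagonal matrix. When every channel shares the same $\alpha_t$, the diagonal gate commutes with $I - \beta_t k_t k_t^\top$ and the step reduces to the GDN update; the last lines compute both updates for comparison.

```python
import numpy as np

def kda_step(S, k, v, alpha, beta):
    """One KDA update (Eq. 1): S <- (I - beta k k^T) Diag(alpha) S + beta k v^T.

    S: (d_k, d_v) state, k: (d_k,), v: (d_v,), alpha: (d_k,) per-channel gate, beta: scalar.
    """
    decayed = alpha[:, None] * S                        # Diag(alpha) @ S
    return decayed - beta * np.outer(k, k @ decayed) + beta * np.outer(k, v)

def gdn_step(S, k, v, alpha, beta):
    """Gated DeltaNet update with a scalar gate: S <- alpha (I - beta k k^T) S + beta k v^T."""
    d_k = S.shape[0]
    return alpha * (np.eye(d_k) - beta * np.outer(k, k)) @ S + beta * np.outer(k, v)

rng = np.random.default_rng(0)
d_k, d_v = 4, 3
S = rng.standard_normal((d_k, d_v))
k = rng.standard_normal(d_k)
k /= np.linalg.norm(k)
v, q = rng.standard_normal(d_v), rng.standard_normal(d_k)

# Per-channel gates: some channels are retained, others forgotten quickly
gates = np.array([1.0, 0.9, 0.5, 0.1])
S_kda = kda_step(S, k, v, alpha=gates, beta=0.5)
o_t = S_kda.T @ q                                       # read-out o_t = S_t^T q_t

# Uniform gate: KDA and GDN give the same state
S_uniform = kda_step(S, k, v, alpha=np.full(d_k, 0.7), beta=0.5)
S_gdn = gdn_step(S, k, v, alpha=0.7, beta=0.5)
```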


## 3.1 Hardware-Efficient Chunkwise Algorithm

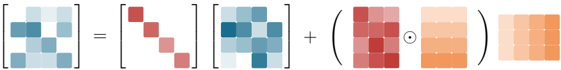

By partially expanding the recurrence for Eq. 1 into a chunk-wise formulation, we have:

$$S^r_{[t]} = \underbrace{\left(\prod_{i=1}^{r} \left(I - \beta^i_{[t]} k^i_{[t]} k^{i\top}_{[t]}\right) \mathrm{Diag}\left(\alpha^i_{[t]}\right)\right)}_{:= P^r_{[t]}} S^0_{[t]} + \underbrace{\sum_{i=1}^{r} \left(\prod_{j=i+1}^{r} \left(I - \beta^j_{[t]} k^j_{[t]} k^{j\top}_{[t]}\right) \mathrm{Diag}\left(\alpha^j_{[t]}\right)\right) \beta^i_{[t]} k^i_{[t]} v^{i\top}_{[t]}}_{:= H^r_{[t]}} \tag{2}$$

WY Representation. The WY representation is typically employed to pack a series of rank-1 updates into a single compact representation [11]. We follow the formulation of $P$ in Comba [40] to reduce the need for an additional matrix inversion in subsequent computations.

$$P^r_{[t]} = \mathrm{Diag}\left(\gamma^r_{[t]}\right) - \sum_{i=1}^{r} \mathrm{Diag}\left(\gamma^{i\to r}_{[t]}\right) k^i_{[t]} w^{i\top}_{[t]}, \qquad H^r_{[t]} = \sum_{i=1}^{r} \mathrm{Diag}\left(\gamma^{i\to r}_{[t]}\right) k^i_{[t]} u^{i\top}_{[t]} \tag{3}$$

where the auxiliary vectors $w_t \in \mathbb{R}^{d_k}$ and $u_t \in \mathbb{R}^{d_v}$ are computed via the following recurrences:

$$w^r_{[t]} = \beta^r_{[t]} \left(\mathrm{Diag}\left(\gamma^r_{[t]}\right) k^r_{[t]} - \sum_{i=1}^{r-1} w^i_{[t]} \left(k^{i\top}_{[t]} \mathrm{Diag}\left(\gamma^{i\to r}_{[t]}\right) k^r_{[t]}\right)\right) \tag{4}$$

$$u^r_{[t]} = \beta^r_{[t]} \left(v^r_{[t]} - \sum_{i=1}^{r-1} u^i_{[t]} \left(k^{i\top}_{[t]} \mathrm{Diag}\left(\gamma^{i\to r}_{[t]}\right) k^r_{[t]}\right)\right) \tag{5}$$
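The WY representation can be checked numerically for a single chunk. The sketch below (our own, with toy sizes) builds $P^C_{[t]}$ and $H^C_{[t]}$ by multiplying out Eq. 2 step by step, with later factors applied on the left, and compares them with the compact form of Eq. 3 using $w$ and $u$ from Eqs. 4 and 5. One assumption matters: we read $\gamma^{i\to r}_{[t]}$ as the ratio $\gamma^r_{[t]}/\gamma^i_{[t]}$, i.e. the decay accumulated after step $i$ up to step $r$; this is the reading under which the two sides agree in our check.

```python
import numpy as np

rng = np.random.default_rng(0)
C, d_k, d_v = 5, 4, 3                                   # toy chunk of 5 tokens
K = rng.standard_normal((C, d_k))
K /= np.linalg.norm(K, axis=1, keepdims=True)
V = rng.standard_normal((C, d_v))
alpha = rng.uniform(0.7, 1.0, size=(C, d_k))
beta = rng.uniform(0.1, 0.9, size=C)
gamma = np.cumprod(alpha, axis=0)                       # row r (0-based) is gamma^{r+1}

# Eq. 2 multiplied out: P = A_C ... A_1, and H accumulated with the same transitions
P = np.eye(d_k)
H = np.zeros((d_k, d_v))
for r in range(C):
    A_r = (np.eye(d_k) - beta[r] * np.outer(K[r], K[r])) @ np.diag(alpha[r])
    P = A_r @ P
    H = A_r @ H + beta[r] * np.outer(K[r], V[r])

# Eqs. 4-5: auxiliary vectors w and u, reading gamma^{i->r} as gamma^r / gamma^i
W = np.zeros((C, d_k))
U = np.zeros((C, d_v))
for r in range(C):
    W[r] = beta[r] * gamma[r] * K[r]
    U[r] = beta[r] * V[r]
    for i in range(r):
        coef = K[i] @ ((gamma[r] / gamma[i]) * K[r])    # k_i^T Diag(gamma^{i->r}) k_r
        W[r] -= beta[r] * coef * W[i]
        U[r] -= beta[r] * coef * U[i]

# Eq. 3 evaluated at the end of the chunk (r = C)
g_end = gamma[-1]
P_wy = np.diag(g_end) - sum(np.outer((g_end / gamma[i]) * K[i], W[i]) for i in range(C))
H_wy = sum(np.outer((g_end / gamma[i]) * K[i], U[i]) for i in range(C))
```

The compact form stores only the $C$ vectors $w$ and $u$ instead of $C$ dense $d_k \times d_k$ products, which is what makes the chunkwise algorithm matmul-friendly.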

UT transform. We apply the UT transform [46] to reduce non-matmul FLOPs, which is crucial to enable better hardware utilization during training.

$$M_{[t]} = \left(I + \mathrm{StrictTril}\left(\mathrm{Diag}\left(\beta_{[t]}\right) \left(\Gamma^{1\to C}_{[t]} \odot K_{[t]}\right) \left(\frac{K_{[t]}}{\Gamma^{1\to C}_{[t]}}\right)^{\top}\right)\right)^{-1} \mathrm{Diag}\left(\beta_{[t]}\right) \tag{6}$$

$$W_{[t]} = M_{[t]} \left(\Gamma^{1\to C}_{[t]} \odot K_{[t]}\right), \qquad U_{[t]} = M_{[t]} V_{[t]} \tag{7}$$

The inverse of a lower-triangular matrix can be computed efficiently, row by row, via forward substitution as in Gaussian elimination [28].
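A sketch of the UT transform for one chunk, assuming we read $\gamma^{i\to r}_{[t]}$ as $\gamma^r_{[t]}/\gamma^i_{[t]}$. The hypothetical helper `invert_unit_lower` performs row-by-row forward substitution on the unit lower-triangular matrix $I + \mathrm{StrictTril}(\cdot)$, and the resulting $W_{[t]}$ and $U_{[t]}$ are compared with the sequential recurrences of Eqs. 4 and 5. The division `K / gamma` is the operation whose precision §3.2 discusses; with the short chunk and mild decays used here it is harmless.

```python
import numpy as np

def invert_unit_lower(T):
    """Invert a unit lower-triangular matrix by forward substitution, one row at a time."""
    n = T.shape[0]
    inv = np.eye(n)
    for i in range(1, n):
        # Row i of T @ inv must equal e_i and T[i, i] = 1, so
        # inv[i] = e_i - sum_{j < i} T[i, j] * inv[j]
        inv[i] -= T[i, :i] @ inv[:i]
    return inv

def ut_transform(K, V, gamma, beta):
    """W and U for one chunk via Eqs. 6-7; gamma[r] holds the cumulative decay gamma^r."""
    C = K.shape[0]
    Kg = gamma * K                                      # Gamma * K (elementwise)
    Kdiv = K / gamma                                    # K / Gamma (elementwise)
    strict = np.tril(beta[:, None] * (Kg @ Kdiv.T), k=-1)
    M = invert_unit_lower(np.eye(C) + strict) * beta[None, :]   # (I + ...)^{-1} Diag(beta)
    return M @ Kg, M @ V

def wy_recurrence(K, V, gamma, beta):
    """Sequential Eqs. 4-5, reading gamma^{i->r} as gamma^r / gamma^i."""
    C = K.shape[0]
    W, U = np.zeros_like(K), np.zeros_like(V)
    for r in range(C):
        W[r] = beta[r] * gamma[r] * K[r]
        U[r] = beta[r] * V[r]
        for i in range(r):
            coef = K[i] @ ((gamma[r] / gamma[i]) * K[r])
            W[r] -= beta[r] * coef * W[i]
            U[r] -= beta[r] * coef * U[i]
    return W, U

rng = np.random.default_rng(0)
C, d_k, d_v = 6, 4, 3
K = rng.standard_normal((C, d_k))
K /= np.linalg.norm(K, axis=1, keepdims=True)
V = rng.standard_normal((C, d_v))
gamma = np.cumprod(rng.uniform(0.8, 1.0, size=(C, d_k)), axis=0)
beta = rng.uniform(0.1, 0.9, size=C)

W_ut, U_ut = ut_transform(K, V, gamma, beta)
W_seq, U_seq = wy_recurrence(K, V, gamma, beta)
```

The transform replaces the inner loop of the recurrence with one small triangular inverse plus two matrix products, moving most of the work onto matmul units.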

Equivalently, in matrix form, the state is updated chunk by chunk:

$$S_{[t+1]} = \mathrm{Diag}\left(\gamma^C_{[t]}\right) S_{[t]} + \left(\Gamma^{i\to C}_{[t]} \odot K_{[t]}\right)^{\top} \left(U_{[t]} - W_{[t]} S_{[t]}\right) \in \mathbb{R}^{d_k \times d_v} \tag{8}$$
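The chunk-level state update of Eq. 8 can be compared with running Eq. 1 token by token. The sketch below is our own: it solves the triangular system with `np.linalg.solve` instead of explicit forward substitution, and it reads $\gamma^{i\to C}_{[t]}$ as $\gamma^C_{[t]}/\gamma^i_{[t]}$, so that row $i$ of $\Gamma^{i\to C}_{[t]}$ is the decay a key written at step $i$ still receives before the chunk ends.

```python
import numpy as np

def kda_state_recurrent(S0, K, V, alpha, beta):
    """Apply Eq. 1 once per token and return the final state."""
    S = S0.copy()
    for r in range(K.shape[0]):
        decayed = alpha[r][:, None] * S
        S = decayed - beta[r] * np.outer(K[r], K[r] @ decayed) + beta[r] * np.outer(K[r], V[r])
    return S

def kda_state_chunk(S0, K, V, alpha, beta):
    """Eq. 8: S_[t+1] = Diag(gamma^C) S_[t] + (Gamma^{i->C} * K)^T (U - W S_[t])."""
    C = K.shape[0]
    gamma = np.cumprod(alpha, axis=0)
    Kg, Kdiv = gamma * K, K / gamma
    lhs = np.eye(C) + np.tril(beta[:, None] * (Kg @ Kdiv.T), k=-1)
    W = np.linalg.solve(lhs, beta[:, None] * Kg)        # Eq. 7: W = M (Gamma * K)
    U = np.linalg.solve(lhs, beta[:, None] * V)         # Eq. 7: U = M V
    decay_to_end = gamma[-1] / gamma                    # row i: gamma^C / gamma^i
    return gamma[-1][:, None] * S0 + (decay_to_end * K).T @ (U - W @ S0)

rng = np.random.default_rng(0)
C, d_k, d_v = 8, 4, 3
K = rng.standard_normal((C, d_k))
K /= np.linalg.norm(K, axis=1, keepdims=True)
V = rng.standard_normal((C, d_v))
alpha = rng.uniform(0.8, 1.0, size=(C, d_k))
beta = rng.uniform(0.1, 0.9, size=C)
S0 = rng.standard_normal((d_k, d_v))

S_rec = kda_state_recurrent(S0, K, V, alpha, beta)
S_chunk = kda_state_chunk(S0, K, V, alpha, beta)
```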


During the output stage, we adopt an inter-block recurrent and intra-block parallel strategy to maximize matrix multiplication throughput, thereby fully utilizing the computational potential of Tensor Cores.

$$O_{[t]} = \underbrace{\left(\Gamma^{1\to C}_{[t]} \odot Q_{[t]}\right) S_{[t]}}_{\text{inter-chunk}} + \underbrace{\mathrm{Tril}\left(\left(\Gamma^{1\to C}_{[t]} \odot Q_{[t]}\right) \left(\frac{K_{[t]}}{\Gamma^{1\to C}_{[t]}}\right)^{\top}\right)}_{\text{intra-chunk}} \underbrace{\left(U_{[t]} - W_{[t]} S_{[t]}\right)}_{\text{``pseudo''-value term}} \in \mathbb{R}^{C \times d_v} \tag{9}$$
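Putting Eqs. 6 to 9 together, the following NumPy sketch (ours, not the released kernel) processes a sequence chunk by chunk: within a chunk all outputs come from dense matrix products, and only the $d_k \times d_v$ state is carried between chunks. It reads $\gamma^{i\to C}_{[t]}$ as $\gamma^C_{[t]}/\gamma^i_{[t]}$, uses `np.linalg.solve` for the triangular system, and is compared with the token-by-token recurrence on a sequence whose final chunk is shorter than $C$.

```python
import numpy as np

def kda_recurrent(Q, K, V, alpha, beta):
    """Token-by-token reference: Eq. 1 followed by o_t = S_t^T q_t."""
    S = np.zeros((K.shape[1], V.shape[1]))
    O = np.empty((K.shape[0], V.shape[1]))
    for t in range(K.shape[0]):
        decayed = alpha[t][:, None] * S
        S = decayed - beta[t] * np.outer(K[t], K[t] @ decayed) + beta[t] * np.outer(K[t], V[t])
        O[t] = S.T @ Q[t]
    return O, S

def kda_chunkwise(Q, K, V, alpha, beta, chunk_size):
    """Recurrent across chunks, parallel (matmul-only) within each chunk."""
    d_k = K.shape[1]
    S = np.zeros((d_k, V.shape[1]))
    O = np.empty((K.shape[0], V.shape[1]))
    for start in range(0, K.shape[0], chunk_size):
        sl = slice(start, start + chunk_size)
        q, k, v, b = Q[sl], K[sl], V[sl], beta[sl]
        n = k.shape[0]                                  # may be < chunk_size at the end
        g = np.cumprod(alpha[sl], axis=0)               # rows of Gamma^{1->C}
        kg, kdiv, qg = g * k, k / g, g * q
        lhs = np.eye(n) + np.tril(b[:, None] * (kg @ kdiv.T), k=-1)
        W = np.linalg.solve(lhs, b[:, None] * kg)       # Eqs. 6-7
        U = np.linalg.solve(lhs, b[:, None] * v)
        pseudo_v = U - W @ S                            # "pseudo"-value term
        O[sl] = qg @ S + np.tril(qg @ kdiv.T) @ pseudo_v            # Eq. 9
        S = g[-1][:, None] * S + ((g[-1] / g) * k).T @ pseudo_v     # Eq. 8
    return O, S

rng = np.random.default_rng(0)
L, C, d_k, d_v = 10, 4, 4, 3                            # last chunk has only 2 tokens
Q = rng.standard_normal((L, d_k))
K = rng.standard_normal((L, d_k))
K /= np.linalg.norm(K, axis=1, keepdims=True)
V = rng.standard_normal((L, d_v))
alpha = rng.uniform(0.8, 1.0, size=(L, d_k))
beta = rng.uniform(0.1, 0.9, size=L)

O_rec, S_rec = kda_recurrent(Q, K, V, alpha, beta)
O_chunk, S_chunk = kda_chunkwise(Q, K, V, alpha, beta, chunk_size=C)
```

The intra-chunk mask is `np.tril` with the diagonal included, because token $r$ reads its own write, whereas the UT system uses the strict mask.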

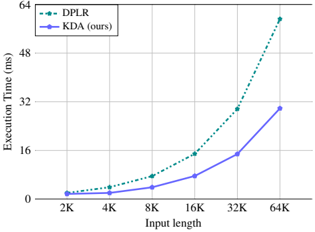

## 3.2 Efficiency Analysis

In terms of representational capacity, KDA aligns with the generalized DPLR formulation, i.e., $S_t = (D - a_t b_t^\top) S_{t-1} + k_t v_t^\top$; both exhibit fine-grained decay behavior. However, such fine-grained decay introduces numerical precision issues in division operations (e.g., the intra-chunk computation in Eq. 9). To address this, prior work such as GLA [114] performs computations in the logarithmic domain and introduces secondary chunking in full precision. This approach, however, prevents full use of half-precision matrix multiplications and significantly reduces operator speed. By binding both $a$ and $b$ to $k$, KDA alleviates this bottleneck: it reduces the number of second-level chunk matrix computations from four to two and eliminates three additional matrix multiplications. As a result, the operator efficiency of KDA improves by roughly 100% compared to the DPLR formulation. A detailed analysis is provided in §6.2.
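The binding of $a$ and $b$ to $k$ can be seen by expanding the KDA transition (our own expansion): $(I - \beta_t k_t k_t^\top)\mathrm{Diag}(\alpha_t) = \mathrm{Diag}(\alpha_t) - (\beta_t k_t)(\mathrm{Diag}(\alpha_t) k_t)^\top$, which is a DPLR matrix with $D = \mathrm{Diag}(\alpha_t)$, $a_t = \beta_t k_t$, and $b_t = \alpha_t \odot k_t$. The sketch below checks this identity numerically; it illustrates only the algebraic tie, not the kernel-level savings.

```python
import numpy as np

rng = np.random.default_rng(0)
d_k = 4
k = rng.standard_normal(d_k)
alpha = rng.uniform(0.5, 1.0, size=d_k)      # per-channel gate
beta = 0.3

# KDA transition from Eq. 1
kda_transition = (np.eye(d_k) - beta * np.outer(k, k)) @ np.diag(alpha)

# The same matrix as D - a b^T, with D, a and b all derived from (alpha, beta, k)
D = np.diag(alpha)
a = beta * k
b = alpha * k                                # Diag(alpha) k
dplr_transition = D - np.outer(a, b)
```

In a general DPLR layer, $a_t$ and $b_t$ are independent projections; here both are determined by the single key $k_t$.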

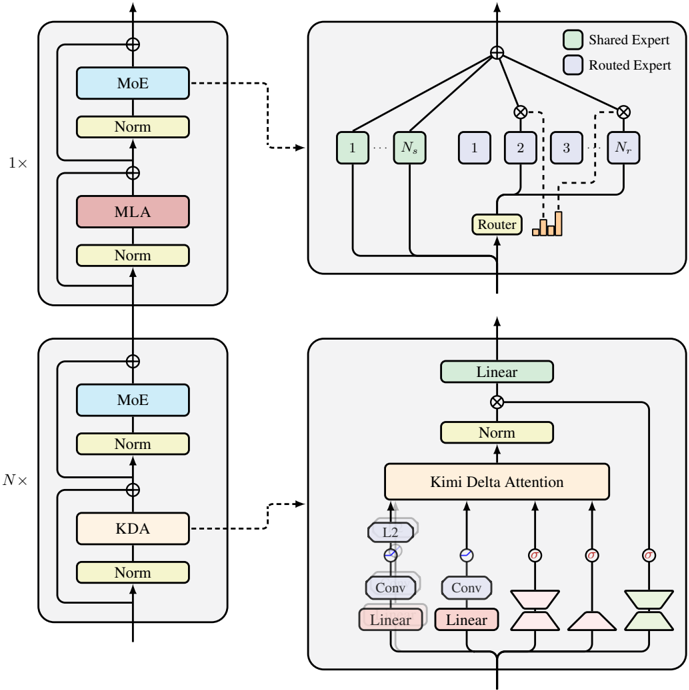

## 4 The Kimi Linear Model Architecture

The main backbone of our model architecture follows Moonlight [62]. In addition to fine-grained gating, we also leverage several components to further improve the expressiveness of Kimi Linear. The overall Kimi Linear architecture is shown in Figure 3.

Neural Parameterization. Let $x_t \in \mathbb{R}^d$ be the input representation of the $t$-th token. The inputs to KDA for each head $h$ are computed as follows:

$$\begin{aligned} q^h_t,\ k^h_t &= \mathrm{L2Norm}\left(\mathrm{Swish}\left(\mathrm{ShortConv}\left(W^h_{q/k} x_t\right)\right)\right) \in \mathbb{R}^{d_k} \\ v^h_t &= \mathrm{Swish}\left(\mathrm{ShortConv}\left(W^h_v x_t\right)\right) \in \mathbb{R}^{d_v} \\ \alpha^h_t &= f\left(W^\uparrow_\alpha W^\downarrow_\alpha x_t\right) \in [0, 1]^{d_k} \\ \beta^h_t &= \mathrm{Sigmoid}\left(W^h_\beta x_t\right) \in [0, 1] \end{aligned}$$

where d k , d v represent the key and value head dimensions, which are set to 128 for all experiments. For q , k , v , we apply a ShortConv followed by a Swish activation, following [111]. The q and k representations are further normalized using L2Norm to ensure eigenvalues stability, as suggested by [112]. The per-channel decay α h t is parameterized via a low-rank projection ( W ↓ α and W ↑ α with rank equal to the head dimension) and a decay function f ( · ) similar to those

Figure 2: Execution time of kernels for varying input lengths, with a uniform batch size of 1 and 16 heads.

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Line Graph: Execution Time vs. Input Length

### Overview

The image is a line graph comparing the execution time (in milliseconds) of two algorithms, **DPLR** (dashed green line) and **KDA (ours)** (solid blue line), across varying input lengths (2K to 64K). The y-axis represents execution time, and the x-axis represents input length in increments of 2K. The legend is positioned in the top-left corner.

---

### Components/Axes

- **X-axis (Input length)**: Labeled "Input length" with markers at 2K, 4K, 8K, 16K, 32K, and 64K.

- **Y-axis (Execution Time)**: Labeled "Execution Time (ms)" with a range from 0 to 64 ms.

- **Legend**: Located in the top-left corner, with:

- **DPLR**: Dashed green line.

- **KDA (ours)**: Solid blue line.

---

### Detailed Analysis

#### Data Points and Trends

1. **DPLR (dashed green)**:

- **2K**: ~0 ms.

- **4K**: ~2 ms.

- **8K**: ~5 ms.

- **16K**: ~10 ms.

- **32K**: ~20 ms.

- **64K**: ~55 ms.

- **Trend**: Gradual increase until 32K, followed by a steep rise after 32K.

2. **KDA (solid blue)**:

- **2K**: ~0 ms.

- **4K**: ~1 ms.

- **8K**: ~3 ms.

- **16K**: ~6 ms.

- **32K**: ~12 ms.

- **64K**: ~18 ms.

- **Trend**: Steady, linear growth with minimal acceleration.

#### Spatial Grounding

- The legend is anchored in the **top-left** corner, clearly associating colors with labels.

- Data points align with their respective lines: green for DPLR, blue for KDA.

- Gridlines are evenly spaced, aiding in visual alignment of values.

---

### Key Observations

1. **DPLR** exhibits a **non-linear scaling** pattern, with execution time remaining low until 32K input length, then spiking sharply at 64K.

2. **KDA** demonstrates **linear scaling**, maintaining a consistent growth rate across all input lengths.

3. At **64K input length**, DPLR's execution time (~55 ms) is **3x higher** than KDA's (~18 ms).

---

### Interpretation

The graph highlights a critical performance divergence between the two algorithms:

- **DPLR** may be optimized for smaller input sizes but becomes inefficient at larger scales, suggesting potential algorithmic bottlenecks (e.g., memory constraints or suboptimal computational complexity).

- **KDA** scales predictably and efficiently, indicating a more robust design for handling large datasets. This could position KDA as the preferred choice for applications requiring high input lengths with predictable performance.

The stark contrast at 64K input length underscores the importance of algorithmic efficiency in real-world scenarios where data size is a variable factor.

</details>

Outputs

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Diagram: Neural Network Architecture with Mixture of Experts and Kimi Delta Attention

### Overview

The diagram illustrates a neural network architecture with two primary configurations: a standard Mixture of Experts (MoE) setup and an enhanced version incorporating Kimi Delta Attention. The architecture includes components like normalization layers, attention mechanisms, and routing logic, with a focus on expert selection and dynamic attention weighting.

### Components/Axes

- **Top Section (1x Configuration)**:

- **MLA (Multi-Head Latent Attention)**: a red token-mixing block with a Norm layer, paired with a blue MoE channel-mixing block that also has a Norm layer.

- **MoE**: a Router dispatches each token to a subset of Routed Experts (orange, numbered 1 to N), while a Shared Expert (green) is applied to every token.

- **Bottom Section (Nx Configuration)**:

- **KDA (Kimi Delta Attention)**: a pink token-mixing block with a Norm layer, paired with an MoE block that also has a Norm layer.

- **Kimi Delta Attention (expanded view)**: Linear projections, short Conv layers, and L2 normalization feed the Kimi Delta Attention operator.

</details>


Figure 3: Illustration of our Kimi Linear model architecture, which consists of a stack of blocks containing a token mixing layer followed by a MoE channel-mixing layer. Specifically, we interleave N KDA layers with one MLA layer for token mixing, where N is set to 3 in our implementation.

used in GDN and Mamba [111, 16]. Before the output projection through $W_o \in \mathbb{R}^{d \times d}$, we apply a head-wise RMSNorm [122] and a data-dependent gating mechanism [79], parameterized as:

$$o_t = W_o \left( \mathrm{Sigmoid}\left( W_g^{\uparrow} W_g^{\downarrow} x_t \right) \odot \mathrm{RMSNorm}\left( \mathrm{KDA}\left( q_t, k_t, v_t, \alpha_t, \beta_t \right) \right) \right)$$

Here, the output gate adopts a low-rank parameterization similar to that of the forget gate. This keeps the parameter count comparable across models while matching the performance of full-rank gating and alleviating the attention sink [79]. The choice of nonlinear activation function is further discussed in §5.2.
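To make the data flow of the gated output concrete, here is a minimal plain-Python sketch for a single head. It assumes the KDA output for the head is already available (`kda_out`), treats `W_g_down` and `W_g_up` as the low-rank factors $W_g^{\downarrow}$ and $W_g^{\uparrow}$, uses hypothetical random weights, and omits the learned RMSNorm scale, multi-head layout, and fused kernels of the real model.

```python
import math
import random

def matvec(W, x):
    """Multiply a matrix stored as a list of rows by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def rms_norm(x, eps=1e-6):
    """RMSNorm without a learned scale: x / sqrt(mean(x^2) + eps)."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v / rms for v in x]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def gated_output(x, kda_out, W_g_down, W_g_up, W_o):
    """o_t = W_o (Sigmoid(W_g_up W_g_down x_t) * RMSNorm(kda_out)) for one head."""
    gate_logits = matvec(W_g_up, matvec(W_g_down, x))  # low rank: d -> r -> d
    gate = [sigmoid(z) for z in gate_logits]            # elementwise, in (0, 1)
    normed = rms_norm(kda_out)
    return matvec(W_o, [g * h for g, h in zip(gate, normed)])

def rand_matrix(rows, cols, rng, scale=0.1):
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)
d, r = 8, 2  # hypothetical head width and gate rank
x = [rng.gauss(0.0, 1.0) for _ in range(d)]
kda_out = [rng.gauss(0.0, 1.0) for _ in range(d)]
o = gated_output(x, kda_out, rand_matrix(r, d, rng), rand_matrix(d, r, rng),
                 rand_matrix(d, d, rng))
print(len(o))  # prints 8
```

With rank $r \ll d$, the gate costs $2dr$ parameters instead of $d^2$, which is what keeps the parameter comparison with ungated baselines fair.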

Hybrid model architecture Long-context retrieval remains the primary bottleneck for pure linear attention; we therefore hybridize KDA with a small number of full global-attention (Full MLA) layers [19]. For Kimi Linear, we chose a layerwise approach (alternating entire layers) over a headwise one (mixing heads within layers) for its simpler infrastructure and greater training stability. Empirically, a uniform 3:1 ratio, i.e., three KDA layers for every full MLA layer, provided the best quality-throughput trade-off. We discuss other hybridization strategies in § 7.2.
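The 3:1 layerwise hybrid can be pictured as a repeating layer schedule. The sketch below is illustrative only: `hybrid_schedule` is a hypothetical helper, not part of the released code, and placing the MLA layer at the end of each group of four is our assumption about ordering.

```python
def hybrid_schedule(num_layers, kda_per_mla=3):
    """Return token-mixer types for a layerwise KDA/MLA hybrid.

    Every (kda_per_mla + 1)-th layer is full attention (MLA); the rest are KDA.
    """
    period = kda_per_mla + 1
    return ["MLA" if (i + 1) % period == 0 else "KDA" for i in range(num_layers)]

layers = hybrid_schedule(8)
print(layers)
# ['KDA', 'KDA', 'KDA', 'MLA', 'KDA', 'KDA', 'KDA', 'MLA']
```

Only the MLA layers keep a KV cache that grows with context length, so under this layout the full-attention cache is a quarter of that of an all-MLA stack of the same depth, consistent with the up to 75% KV-cache reduction reported for Kimi Linear.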

No Position Encoding (NoPE) for MLA Layers. In Kimi Linear, we apply NoPE to all full attention (MLA) layers. This design delegates the entire responsibility for encoding positional information and recency bias (see § 6.1) to the KDA layers. KDA thus serves as the primary position-aware operator, fulfilling a role analogous to, or arguably stronger than, auxiliary components like short convolutions [3] or SWA [76]. Our findings align with prior work [110, 7, 19], which similarly demonstrated that complementing global NoPE attention with a dedicated position-aware mechanism yields competitive long-context performance.

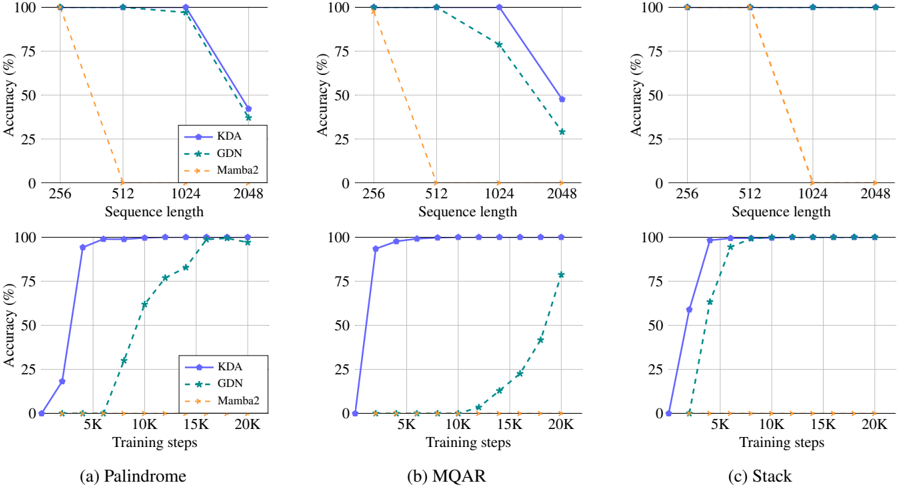

Figure 4: Results on synthetic tasks: Palindrome, multi-query associative recall (MQAR), and state tracking (Stack).

Figure 4 panels: (a) Palindrome, (b) MQAR, (c) Stack. For each task, one plot shows accuracy (%) against training sequence length (256 to 2,048 tokens) and another shows accuracy against training steps (up to 20K), comparing KDA, GDN, and Mamba2.

We note that NoPE offers practical advantages, particularly for MLA. First, NoPE allows MLA layers to be converted into the highly efficient pure Multi-Query Attention (MQA) form during inference. Second, it simplifies long-context training, as it obviates the need for RoPE parameter adjustments such as frequency-base tuning or methods like YaRN [72].
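To see why NoPE makes the MQA conversion possible, consider one MLA head $i$ (notation ours, following the usual MLA formulation rather than this report: $c_s$ is the shared compressed KV latent cached for token $s$, $c^{Q}_t$ the query latent, and $W^{UQ}_i, W^{UK}_i, W^{UV}_i$ the per-head up-projections; softmax scaling is omitted). Without a position-dependent rotation between query and key, the key up-projection folds into the query, and the value up-projection factors out of the weighted sum:

$$
\begin{aligned}
q_{t,i}^\top k_{s,i} &= \left(W^{UQ}_i c^{Q}_t\right)^\top \left(W^{UK}_i c_s\right) = \underbrace{\left((W^{UK}_i)^\top W^{UQ}_i c^{Q}_t\right)^\top}_{\tilde q_{t,i}^\top} c_s, \\
o_{t,i} &= \sum_{s \le t} a_{t,s,i}\, W^{UV}_i c_s = W^{UV}_i \sum_{s \le t} a_{t,s,i}\, c_s .
\end{aligned}
$$

Every head therefore attends over the same cached latent $c_s$, which acts as a single shared key and value (i.e., MQA), with $W^{UV}_i$ absorbed into the output projection. With RoPE the score becomes $(R_t q_{t,i})^\top (R_s k_{s,i}) = q_{t,i}^\top R_{s-t}\, k_{s,i}$, and the position-dependent rotation $R_{s-t}$ sitting between $W^{UQ}_i$ and $W^{UK}_i$ prevents folding them into one fixed query-side matrix; this is why RoPE-based MLA variants typically carry a separate, decoupled positional key component.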

## 5 Experiments

## 5.1 Synthetic tests

We start by evaluating KDA against competing linear attention methods on three synthetic tasks that serve as benchmark tests for long-context performance. Across all experiments, we adopt a consistent model configuration of 2 layers with 2 attention heads, each with a head dimension of 128. For each task, we train the model for at most 20,000 steps with a grid search over learning rates in {5 × 10⁻⁵, 1 × 10⁻⁴, 5 × 10⁻⁴, 1 × 10⁻³}, and report the best-performing training accuracy curves. Specifically, we compare two scenarios: (1) the peak accuracy on each task as the training sequence length increases from 256 to 2,048 tokens; and (2) the convergence speed of KDA, GDN, and Mamba2 at a fixed context length of 1,024 tokens.

Palindrome Palindrome requires the model to reproduce a given sequence of random tokens in reverse order. As illustrated in Table 5.1, given an input like 'O G R S U N E', the model must generate its exact reversal. Such copying tasks are known to be difficult for linear attention models [45], as they struggle to precisely retrieve the entire history from a compressed, fixed-size memory state.
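A minimal data generator for the Palindrome task described above. The token layout is our assumption (a `<SP>` separator, next-token targets, `None` marking unscored positions), as is the 26-letter vocabulary used for random sequences; the exact format used in the experiments may differ.

```python
import random
import string

def palindrome_example(seq):
    """Build (inputs, targets) for next-token training on the reversal task.

    After the <SP> separator the model must emit seq in reverse order; targets[i]
    is the token to predict at position i (None marks positions with no loss).
    """
    rev = list(seq)[::-1]
    inputs = list(seq) + ["<SP>"] + rev[:-1]   # teacher forcing: shifted reversed copy
    targets = [None] * len(seq) + rev          # only the reversed copy is scored
    return inputs, targets

def random_palindrome_example(length, rng):
    """Sample a random sequence over a hypothetical 26-letter vocabulary."""
    return palindrome_example([rng.choice(string.ascii_uppercase) for _ in range(length)])

inputs, targets = palindrome_example(list("OGRSUNE"))
print(" ".join(t for t in targets if t is not None))  # prints E N U S R G O
```

Because every output token depends on an input token an exact distance back, a fixed-size state must store the whole prefix verbatim, which is why this task stresses linear attention.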


Multi Query Associative Recall (MQAR) MQAR assesses the model's ability to retrieve values associated with multiple queries that appear at various positions within the context. For instance, as shown in Table 5.1, the model is asked to recall 0 for the query B and 5 for G. This task is known to be highly correlated with language modeling performance [5].

$$\begin{array}{lccccccccccccccc} \text{Input} & A & 1 & C & 3 & B & 0 & M & 8 & G & 5 & E & 4 & \langle \mathrm{SP} \rangle & B & G \\ \text{Output} & \phi & \phi & \phi & \phi & \phi & \phi & \phi & \phi & \phi & \phi & \phi & \phi & \phi & 0 & 5 \end{array}$$
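The MQAR example in the text (queries B and G must recall 0 and 5) can be generated programmatically. This sketch assumes key-value pairs in context, a `<SP>` separator, then the query keys, with targets aligned to the query positions (`None` stands for φ); `mqar_example` and `random_mqar_example` are hypothetical helpers.

```python
import random
import string

def mqar_example(pairs, queries):
    """Build (inputs, targets) for multi-query associative recall.

    pairs: list of (key, value) tokens in context order.
    queries: keys to look up after the separator.
    """
    memory = dict(pairs)
    context = [tok for kv in pairs for tok in kv]
    inputs = context + ["<SP>"] + list(queries)
    targets = [None] * (len(context) + 1) + [memory[q] for q in queries]
    return inputs, targets

def random_mqar_example(num_pairs, num_queries, rng):
    keys = rng.sample(string.ascii_uppercase, num_pairs)   # distinct keys
    values = [str(rng.randrange(10)) for _ in keys]
    pairs = list(zip(keys, values))
    return mqar_example(pairs, rng.sample(keys, num_queries))

pairs = [("A", "1"), ("C", "3"), ("B", "0"), ("M", "8"), ("G", "5"), ("E", "4")]
inputs, targets = mqar_example(pairs, ["B", "G"])
print([t for t in targets if t is not None])  # ['0', '5']
```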

Table 1: Ablation study on the hybrid ratio of KDA to MLA attention and other key components. We list the training and validation perplexities (lower is better) for comparison. The best-performing configuration, used in our final experiments, is the 3:1 hybrid ratio (first row).

| | Training PPL (↓) | Validation PPL (↓) |
|---|---|---|
| Hybrid ratio 3:1 | 9.23 | 5.65 |
| 0:1 | 9.45 | 5.77 |
| 1:1 | 9.29 | 5.66 |
| 7:1 | 9.23 | 5.70 |
| 15:1 | 9.34 | 5.82 |
| w/o output gate | 9.25 | 5.67 |
| w/ swish output gate | 9.43 | 5.81 |
| w/o convolution layer | 9.29 | 5.70 |

Stack We assess the state-tracking capabilities [27] of each candidate by simulating standard LIFO (last-in, first-out) stack operations. Our setup involves 64 independent stacks, each identified by a unique ID. The model processes a sequence of two kinds of operations: (1) PUSH: an action like `<push> 1 G` adds the element G to stack 1; (2) POP: an action like `<pop> 0 E` requires the model to predict the element E most recently pushed onto stack 0. The objective is to track the states of all stacks accurately and predict the correct element at each pop request.
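A reference generator for the Stack task: it produces a random stream of push and pop operations over independent stacks and records the element each pop must return. The three-token format follows the examples above; the sampling policy (pop with probability 0.5, and only from non-empty stacks) and the 26-letter element vocabulary are our assumptions.

```python
import random
import string

def make_stack_example(num_ops, num_stacks=64, rng=None):
    """Generate (tokens, targets) for the multi-stack LIFO state-tracking task.

    Each operation is three tokens, `<push> id elem` or `<pop> id elem`.
    targets marks the scored tokens: only the element of each pop, which must
    equal the most recently pushed element of that stack.
    """
    if rng is None:
        rng = random.Random(0)
    stacks = [[] for _ in range(num_stacks)]
    tokens, targets = [], []
    for _ in range(num_ops):
        non_empty = [i for i, s in enumerate(stacks) if s]
        if non_empty and rng.random() < 0.5:      # hypothetical 50/50 policy
            sid = rng.choice(non_empty)
            elem = stacks[sid].pop()              # LIFO: top of this stack
            tokens += ["<pop>", str(sid), elem]
            targets += [None, None, elem]
        else:
            sid = rng.randrange(num_stacks)
            elem = rng.choice(string.ascii_uppercase)
            stacks[sid].append(elem)
            tokens += ["<push>", str(sid), elem]
            targets += [None, None, None]
    return tokens, targets

tokens, targets = make_stack_example(6, rng=random.Random(0))
print(" ".join(tokens))
```

Answering a pop correctly requires remembering the full push history of that particular stack, so the model must keep 64 separate states up to date at once.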

Figure 4 shows the final results. Across all tasks, KDA consistently achieves the highest accuracy as the sequence length increases from 256 to 2,048 tokens. In particular, on the Palindrome and recall-intensive MQAR tasks, KDA converges significantly faster than GDN. This confirms the benefits of our fine-grained decay, which enables the model to selectively forget irrelevant information while preserving crucial memories more precisely. We also observe that Mamba2 [16], a typical linear attention that uses only multiplicative decay and lacks a delta rule, fails on all tasks in our model settings.
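The toy sketch below illustrates the two ingredients credited here. It assumes the recurrences in the forms $S_t = (I - \beta_t k_t k_t^\top)\,\mathrm{Diag}(\alpha_t)\,S_{t-1} + \beta_t k_t v_t^\top$ for KDA and $S_t = \alpha_t S_{t-1} + k_t v_t^\top$ for a purely multiplicative-decay (Mamba2-style) memory, both read out as $o_t = S_t^\top q_t$ (our restatement). It is a plain-Python illustration on 2-dimensional states, not the chunkwise kernel.

```python
def read(S, q):
    """Read-out o = S^T q, with S stored as d_k rows of length d_v."""
    return [sum(q[i] * S[i][j] for i in range(len(S))) for j in range(len(S[0]))]

def kda_step(S, k, v, alpha, beta):
    """S <- (I - beta k k^T) Diag(alpha) S + beta k v^T, alpha given per key channel."""
    decayed = [[a * s for s in row] for a, row in zip(alpha, S)]  # Diag(alpha) S
    kS = read(decayed, k)                                        # k^T Diag(alpha) S
    return [[decayed[i][j] - beta * k[i] * kS[j] + beta * k[i] * v[j]
             for j in range(len(v))] for i in range(len(k))]

def additive_step(S, k, v, alpha):
    """Mamba2-style update S <- alpha S + k v^T: scalar decay, no delta rule."""
    return [[alpha * S[i][j] + k[i] * v[j] for j in range(len(v))]
            for i in range(len(k))]

e1, e2 = [1.0, 0.0], [0.0, 1.0]          # orthonormal keys (d_k = 2)
zero = [[0.0, 0.0], [0.0, 0.0]]          # empty state (d_k = d_v = 2)
keep = [1.0, 1.0]                        # no decay on either key channel

# Delta rule: a second write under the same key replaces the first value.
S = kda_step(kda_step(zero, e1, [1.0, 2.0], keep, 1.0), e1, [5.0, 6.0], keep, 1.0)
print(read(S, e1))                       # [5.0, 6.0]

# Purely additive memory: the two values pile up under the same key.
A = additive_step(additive_step(zero, e1, [1.0, 2.0], 1.0), e1, [5.0, 6.0], 1.0)
print(read(A, e1))                       # [6.0, 8.0]

# Channel-wise decay: a decay-only step (beta = 0) forgets key channel 0, keeps channel 1.
S = kda_step(kda_step(zero, e1, [1.0, 2.0], keep, 1.0), e2, [3.0, 4.0], keep, 1.0)
S = kda_step(S, [0.0, 0.0], [0.0, 0.0], [0.0, 1.0], 0.0)
print(read(S, e1), read(S, e2))          # [0.0, 0.0] [3.0, 4.0]
```

A scalar (per-head) decay, as in GDN, would have to shrink both stored associations by the same factor; the per-channel $\alpha_t$ is what lets KDA drop one memory while leaving another untouched.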

## 5.2 Ablation on Key Components of Kimi Linear

We conducted a series of ablation studies by directly comparing different models against the smallest scaling-law configuration (16 heads, 16 layers; see Table 2). All models were trained with the same FLOPs budget and hyperparameters for a fair comparison. We report the training and validation perplexities (PPLs) in Table 1. The validation PPL is calculated on a high-quality dataset whose distribution differs significantly from the pre-training corpus; it therefore emphasizes generalization under distribution shift, which also accounts for the gap between training and validation perplexities.

Output gate We compare our default Sigmoid output gate against two variants: one without gating and one with swish gating. Removing the gate degrades performance, and the swish gate adopted by [111] performs substantially worse than Sigmoid. This is consistent with [79], which also concludes that Sigmoid gating offers superior performance. We therefore adopt Sigmoid gating in all of our experiments, including GDN-H.

Convolution Layer Lightweight depthwise convolutions with a small kernel size (e.g., 4) can be effective at capturing local token dependencies [3] and are widely adopted by many recent architectures [16, 5, 112]. We validate its efficacy in Table 1, demonstrating that convolutional layers continue to play a non-negligible role in hybrid models.
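A minimal sketch of a causal depthwise (per-channel) short convolution with kernel size 4, the kind of operator referred to above. Each channel has its own filter, and position $t$ only sees positions $t-3$ to $t$ (zero-padded at the start), as is usual for autoregressive models; the filter values are hypothetical and any activation applied after the convolution is omitted.

```python
def causal_depthwise_conv(x, weights):
    """Causal depthwise 1D convolution.

    x: list of T timesteps, each a list of C channel values.
    weights: C filters of length K; weights[c][K - 1] multiplies the current step.
    y[t][c] = sum_j weights[c][j] * x[t - (K - 1) + j][c], zero-padded on the left.
    """
    T, C, K = len(x), len(x[0]), len(weights[0])
    y = []
    for t in range(T):
        row = []
        for c in range(C):
            acc = 0.0
            for j in range(K):
                src = t - (K - 1) + j
                if src >= 0:                 # causal: never reads future positions
                    acc += weights[c][j] * x[src][c]
            row.append(acc)
        y.append(row)
    return y

x = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0], [4.0, 40.0], [5.0, 50.0]]  # T=5, C=2
w = [[1.0, 1.0, 1.0, 1.0],   # channel 0: sum over the last 4 steps
     [0.0, 0.0, 1.0, 0.0]]   # channel 1: copy the previous step's value
y = causal_depthwise_conv(x, w)
print([row[0] for row in y])  # [1.0, 3.0, 6.0, 10.0, 14.0]
print([row[1] for row in y])  # [0.0, 10.0, 20.0, 30.0, 40.0]
```

Channel 1 shows why such a layer captures local dependencies cheaply: a single 4-tap filter already implements a "previous token" feature.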

Hybrid ratio We performed an ablation study to determine the optimal hybrid ratio of KDA linear attention layers to MLA full-attention layers. Among the configurations tested, the 3:1 ratio (three KDA layers for every MLA layer) yielded the best results, achieving the lowest training and validation perplexities. We observed clear trade-offs with other ratios: a higher ratio (e.g., 7:1) produced a comparable training perplexity but significantly worse validation performance, while a lower ratio (e.g., 1:1) maintained a similar validation perplexity at the cost of increased inference overhead. Furthermore, the pure full-attention baseline (0:1) performed poorly. Thus, the 3:1 configuration offers the most effective balance between model performance and computational efficiency.

NoPE vs. RoPE As shown in Table 5, Kimi Linear consistently excels on long-context evaluations, whereas Kimi Linear (RoPE) attains similar scores on short-context tasks. We posit that this divergence arises from how positional bias is distributed across depth. In Kimi Linear (RoPE), the global attention layer carries a strong, explicit relative positional signal, while the linear attention (e.g., GDN) contributes a weaker, implicit positional inductive bias. This mismatch yields an overemphasis on short-range order in the global layer, which benefits short contexts but makes the model less flexible when adapting to extended contexts during mid-training. By contrast, Kimi Linear induces a more balanced positional bias across layers, which improves robustness and extrapolation at long range. Accordingly, Kimi Linear achieves the best average score across the long-context benchmarks in Table 5, supporting the NoPE design argued for in the previous section.

Table 2: Model configurations and hyperparameters for scaling law experiments.

| # Act. Params. † | Head | Layer | Hidden | Tokens | lr | batch size ‡ |
|---|---|---|---|---|---|---|
| 653M | 16 | 16 | 1216 | 38.8B | 2.006 × 10⁻³ | 336 |
| 878M | 18 | 18 | 1376 | 59.8B | 1.790 × 10⁻³ | 432 |
| 1.1B | 20 | 20 | 1536 | 85.2B | 1.617 × 10⁻³ | 512 |
| 1.4B | 22 | 22 | 1632 | 102.5B | 1.486 × 10⁻³ | 576 |
| 1.7B | 24 | 24 | 1776 | 128.0B | 1.371 × 10⁻³ | 640 |

Figure 5: The fitted scaling law curves for MLA and Kimi Linear.

Figure 5 plots loss against compute (PFLOP/s-days, log scale) for MLA (blue) and Kimi Linear (red) with dashed fitted curves. The legend gives coefficient 2.3092 with exponent −0.0536 for MLA and coefficient 2.2879 with exponent −0.0527 for Kimi Linear, and an annotation marks the 1.16× compute-efficiency gap.

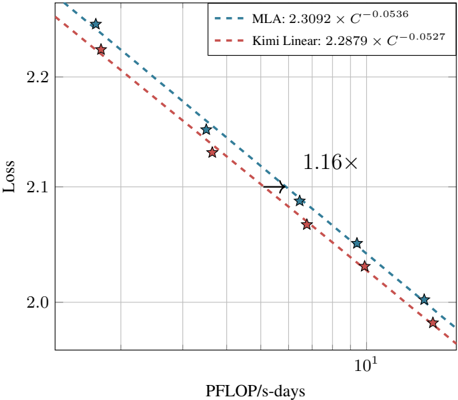

## 5.3 Scaling Law of Kimi Linear

We conducted scaling law experiments on a series of MoE models following the Moonlight [62] architecture. In all experiments, we activated 8 out of 64 experts and utilized the Muon optimizer [62]. Details and hyperparameters are listed in Table 2.

For MLA, following the Chinchilla scaling-law methodology [37], we trained five language models of different sizes, tuning their hyperparameters through grid search to ensure optimal performance for each model. For Kimi Linear, we kept the best hybrid ratio of 3:1 from the ablation in Table 1 and otherwise adhered strictly to the MLA training configuration without modification. As shown in Figure 5, Kimi Linear achieves ~1.16× computational efficiency relative to the MLA baselines under compute-optimal training. We expect that careful hyperparameter tuning would yield even better scaling curves for Kimi Linear.
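One way to read the ~1.16× figure is as a compute multiplier at equal loss. Assuming each fitted curve in Figure 5 has the power-law form $L(C) = a\,C^{b}$ with $C$ in PFLOP/s-days (an assumption; the coefficients are the legend values, MLA $a = 2.3092, b = -0.0536$ and Kimi Linear $a = 2.2879, b = -0.0527$), the compute needed to reach a target loss is $C = (L/a)^{1/b}$, and the ratio of the two is the efficiency gain. This is an illustrative check, not the report's exact procedure.

```python
def compute_for_loss(a, b, loss):
    """Invert L = a * C**b to get the compute C that reaches `loss` (b < 0)."""
    return (loss / a) ** (1.0 / b)

MLA = (2.3092, -0.0536)          # (a, b) read from the Figure 5 legend
KIMI_LINEAR = (2.2879, -0.0527)

def compute_multiplier(loss):
    """How many times more compute MLA needs than Kimi Linear to reach the same loss."""
    return compute_for_loss(*MLA, loss) / compute_for_loss(*KIMI_LINEAR, loss)

for target in (2.0, 2.1):
    print(f"loss {target}: MLA needs {compute_multiplier(target):.2f}x the compute")
```

Under these assumptions the multiplier is roughly 1.14 at a loss of 2.0 and roughly 1.16 at a loss of 2.1. Because the exponents differ slightly, the gap narrows slowly as compute grows within the fitted range, so any single multiplier depends on the loss level at which it is read.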

## 5.4 Experimental Setup

Kimi Linear and baselines settings We evaluate our Kimi Linear model against a full-attention MLA baseline and a hybrid Gated DeltaNet (GDN-H) baseline, all of which share the same architecture, parameter count, and training setup for fair comparisons. The model configuration is largely aligned with Moonlight [62], with the key distinction that MoE sparsity is increased to 32. Each model activates 8 out of 256 experts, including one shared expert, resulting in 48 billion total parameters and 3 billion active parameters per forward pass. The first layer is implemented as a dense layer without MoE, ensuring stable training. To evaluate the effectiveness of NoPE in Kimi Linear, we also introduce a hybrid KDA baseline using RoPE with the same model configuration, referred to as Kimi Linear (RoPE).

Evaluation Benchmarks Our evaluation encompasses five categories of benchmarks, each designed to assess distinct capabilities of the model:

- Language Understanding and Reasoning: HellaSwag [121], ARC-Challenge [14], Winogrande [83], MMLU [36], TriviaQA [47], MMLU-Redux [26], MMLU-Pro [103], GPQA-Diamond [82], BBH [94], and [105].

- Code Generation: LiveCodeBench v6⁴ [44], EvalPlus [60].

- Math & Reasoning: AIME 2025, MATH 500, HMMT 2025, PolyMath-en.

- Long-context: MRCR⁵, RULER [38], Frames [52], HELMET-ICL [118], RepoQA [61], Long Code Arena [13], and LongBench v2 [6].

- Chinese Language Understanding and Reasoning: C-Eval [43] and CMMLU [55].

Evaluation Configurations All models are evaluated at temperature 1.0. For benchmarks with high variance, we report Avg@k. For base models, we employ perplexity-based evaluation for MMLU, MMLU-Redux, GPQA-Diamond, and C-Eval, and generation-based evaluation otherwise. To mitigate the high variance inherent to GPQA-Diamond, we report the mean score across eight independent runs. All evaluations are conducted using our internal framework derived from LM-Harness-Evaluation [10], ensuring consistent settings across all models.
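Avg@k, as used in Table 4 (for example GPQA-Diamond (Avg@8) and AIME 2025 (Avg@64)), is the mean score over k independent sampled runs; at temperature 1.0 individual runs can differ noticeably, and averaging reduces that variance. The per-run numbers below are made up for illustration.

```python
from statistics import mean, stdev

def avg_at_k(run_scores):
    """Avg@k: the mean of k per-run scores, each run sampled independently."""
    if not run_scores:
        raise ValueError("Avg@k needs at least one run")
    return mean(run_scores)

runs = [60.1, 62.4, 61.0, 63.2, 61.8, 62.9, 60.7, 62.5]  # hypothetical per-run accuracies
print(f"Avg@{len(runs)} = {avg_at_k(runs):.2f}, run-to-run std = {stdev(runs):.2f}")
```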

## 5.4.1 Pre-training recipe

Pre-training recipe All models are pretrained using a 4,096-token context window, the MuonClip optimizer, and the WSD learning rate schedule, processing a shared total of 1.4 trillion tokens sampled from the K2 pretraining corpus [50]. The learning rate is set to 1.1 × 10⁻³, and the global batch size is fixed at 32 million tokens. All models also adopt the same annealing schedule and long-context activation phase established in Kimi K2 [50].
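A sketch of a warmup-stable-decay (WSD) schedule at the stated peak learning rate of 1.1 × 10⁻³. The report does not give the warmup length, decay length, decay shape, or final learning rate, so `warmup_frac`, `decay_frac`, the linear decay, and `min_lr_ratio` below are hypothetical. The step count follows from the token budget: 1.4T tokens at 32M tokens per step is roughly 42K to 44K optimizer steps, depending on whether "32 million" means 32 × 10⁶ or 2²⁵ tokens.

```python
def wsd_lr(step, total_steps, peak_lr=1.1e-3, warmup_frac=0.01,
           decay_frac=0.1, min_lr_ratio=0.1):
    """Warmup-Stable-Decay: linear warmup, constant plateau, then linear decay.

    warmup_frac, decay_frac, the linear decay shape and min_lr_ratio are
    hypothetical placeholders; only peak_lr comes from the report.
    """
    warmup_steps = max(1, int(total_steps * warmup_frac))
    decay_start = int(total_steps * (1.0 - decay_frac))
    if step < warmup_steps:                    # warmup: ramp up to the peak
        return peak_lr * (step + 1) / warmup_steps
    if step < decay_start:                     # stable: hold the peak
        return peak_lr
    progress = min(1.0, (step - decay_start) / max(1, total_steps - decay_start))
    return peak_lr * (1.0 - (1.0 - min_lr_ratio) * progress)  # decay toward the floor

tokens, batch_tokens = 1_400_000_000_000, 32_000_000  # assumes 32M = 32 * 10**6
total_steps = tokens // batch_tokens
print(total_steps)  # 43750
print([f"{wsd_lr(s, total_steps):.2e}" for s in (0, 20_000, total_steps - 1)])
```

The long constant plateau is what makes WSD convenient for continued training: a checkpoint taken before the decay phase can be resumed at the peak rate, and the annealing applied only when needed.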

Our final released Kimi Linear checkpoint is pretrained using the same procedure, but with an expanded total of 5.7 trillion tokens to match Moonlight's pretraining token count. In addition, the final checkpoint supports a context length of up to 1 million tokens. We compare the performance of Kimi Linear@5.7T and Moonlight in Appendix D.

## 5.4.2 Post-training recipe

SFT recipe The SFT dataset extends the Kimi K2 [50] SFT data by incorporating additional reasoning tasks, creating a large-scale instruction-tuning dataset that spans diverse domains with a heavy emphasis on math and coding. We employ a multi-stage SFT approach, initially training the model on a broad range of diverse SFT data for general instruction-following, followed by scheduled targeted training on reasoning-intensive data to enhance the model's reasoning capabilities.

RL recipe For the RL training prompt set, we primarily integrate three data sources: mathematics, code, and STEM. The main purpose of this enhancement is to boost the model's reasoning ability. Before conducting RL, we pre-selected data that matches a moderate difficulty level for the starting checkpoint.

A known risk of RL training is the potential degeneration of general capabilities. To mitigate this, we incorporate the PTX loss [70] during RL, following the practice of K2 [50]. This involves concurrent SFT on a high-quality, distributionally diverse dataset during RL. Our PTX dataset spans both reasoning and general-purpose tasks. All data mentioned above are subsets derived from the training recipe of the K2 model [50].

For RL, we use the same algorithm as K1.5 [95], with several additional techniques. We observed that numerical-precision mismatches between the training and inference engines can destabilize RL training, so we introduce truncated importance sampling, which effectively mitigates the policy mismatch between rollout and training [116]. We also dynamically adjust the KL penalty and the mini-batch size (i.e., the number of updates per iteration) to stabilize RL training and avoid entropy collapse [15].
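A minimal sketch of the truncated importance sampling (TIS) weights described above: each sampled token is reweighted by the ratio of its probability under the training engine to its probability under the rollout engine, and the ratio is capped so that rare large mismatches cannot dominate the update. The per-token formulation and the cap value of 2.0 are assumptions here; the report does not specify them.

```python
import math

def tis_weights(train_logprobs, rollout_logprobs, cap=2.0):
    """Truncated IS weights min(pi_train(a) / pi_rollout(a), cap), one per sampled token.

    train_logprobs: log-probs of the sampled tokens under the training engine.
    rollout_logprobs: log-probs of the same tokens as reported by the rollout engine.
    The min is taken in log space so extreme ratios cannot overflow math.exp.
    """
    log_cap = math.log(cap)
    return [math.exp(min(lt - lr, log_cap))
            for lt, lr in zip(train_logprobs, rollout_logprobs)]

train = [math.log(0.50), math.log(0.20), math.log(0.90)]
rollout = [math.log(0.50), math.log(0.05), math.log(0.95)]
print([round(w, 3) for w in tis_weights(train, rollout)])  # [1.0, 2.0, 0.947]
```

In a policy-gradient update, each token's term is multiplied by its weight, and the weights are treated as constants (no gradient flows through them).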

## 5.5 Main results

## 5.5.1 Kimi Linear@1.4T results

Pretrain results We compared our Kimi Linear model against two baselines (MLA and hybrid GDN-H) using a 1.4T pretraining corpus in Table 3. The evaluation focused on three areas: general knowledge, reasoning (math and code), and Chinese tasks. Kimi Linear consistently outperformed both baselines across almost all categories.

⁴ Questions from August 2024 to May 2025.

⁵ https://huggingface.co/datasets/openai/mrcr

- General Knowledge: Kimi Linear scores highest on all of the key benchmarks like BBH, MMLU and HellaSwag.

- Reasoning: It leads in math (GSM8K) and most code tasks (CRUXEval). However, it scores slightly lower on EvalPlus compared to GDN-H.

- Chinese Tasks: Kimi Linear achieves the top scores on CEval and CMMLU.

In summary, Kimi Linear demonstrated the strongest performance, positioning it as a strong alternative to full-attention architectures in short-context pretraining.

Table 3: Performance comparison of Kimi Linear with the full-attention MLA baseline and the hybrid GDN baseline (GDN-H), all after the same pretraining recipe. Kimi Linear outperforms both MLA and GDN-H on nearly all short-context pretraining evaluations. The best result for each benchmark is in bold.

| Type | Benchmark (Base) | MLA | GDN-H | Kimi Linear |
|---|---|---|---|---|
| | Trained Tokens | 1.4T | 1.4T | 1.4T |
| General | HellaSwag | 81.7 | 82.2 | **82.9** |
| General | ARC-challenge | 64.6 | 66.5 | **67.3** |
| General | Winogrande | 78.1 | 77.9 | **78.6** |
| General | BBH | 71.6 | 70.6 | **72.9** |
| General | MMLU | 71.6 | 72.2 | **73.8** |
| General | MMLU-Pro | 47.2 | 47.9 | **51.0** |
| General | TriviaQA | 68.9 | 70.1 | **71.7** |
| Math & Code | GSM8K | 83.7 | 81.7 | **83.9** |
| Math & Code | MATH | **54.7** | 54.1 | **54.7** |
| Math & Code | EvalPlus | 59.5 | **63.1** | 60.2 |
| Math & Code | CRUXEval-I-cot | 51.6 | 56.0 | **56.6** |
| Math & Code | CRUXEval-O-cot | 61.5 | 58.1 | **62.0** |
| Chinese | CEval | 79.3 | 79.1 | **79.5** |
| Chinese | CMMLU | 79.5 | 80.7 | **80.8** |

Table 4: Performance comparison of Kimi Linear with the full-attention MLA baseline and the hybrid GDN baseline (GDN-H), all using the same SFT recipe after pretraining. Kimi Linear outperforms both MLA and GDN-H on most short-context instruction-tuned benchmarks. The best result for each benchmark is in bold.

| Benchmark (Instruct) | MLA | GDN-H | Kimi Linear |
|---|---|---|---|
| Trained Tokens | 1.4T | 1.4T | 1.4T |
| BBH | 68.2 | 68.5 | **69.4** |
| MMLU | 75.7 | 75.6 | **77.0** |
| MMLU-Pro | 65.7 | 64.8 | **67.4** |
| MMLU-Redux | 79.2 | 78.7 | **80.3** |
| GPQA-Diamond (Avg@8) | 57.1 | 58.6 | **62.1** |
| LiveBench (Pass@1) | 45.7 | **46.4** | 45.2 |
| AIME 2025 (Avg@64) | 20.6 | 21.1 | **21.3** |
| MATH500 (Acc.) | 80.8 | **83.0** | 81.2 |
| HMMT2025 (Avg@32) | 11.3 | 11.3 | **12.5** |
| PolyMath-en (Avg@4) | 41.3 | 41.5 | **43.6** |
| LiveCodeBench v6 (Pass@1) | 25.1 | 25.4 | **26.0** |
| EvalPlus | **62.6** | 62.5 | 61.0 |

SFT results After the same supervised fine-tuning (SFT) recipe, Kimi Linear performs strongly on both general and math & code tasks, generally outperforming MLA and GDN-H. On general tasks, it achieves the top scores on the MMLU family of benchmarks, BBH, and GPQA-Diamond. On math & code tasks, it surpasses both baselines on difficult benchmarks such as AIME 2025, HMMT 2025, PolyMath-en, and LiveCodeBench. Despite a few exceptions, such as MATH500 and EvalPlus, these results indicate that Kimi Linear is the strongest of the three models tested after SFT.

Table 5: Comparisons of Kimi Linear with MLA, GDN-H, and Kimi Linear (RoPE) across long-context benchmarks. The last column reports the overall average (↑). All models are trained on 1.4T tokens. Best per-column results are bolded.

| Model | RULER | MRCR | HELMET-ICL | LongBench V2 | Frames | RepoQA | Long Code Arena (Lib) | Long Code Arena (Commit) | Avg. |
|---|---|---|---|---|---|---|---|---|---|
| MLA | 81.3 | 22.6 | 88.0 | **36.1** | **60.5** | 63.0 | 32.8 | **33.2** | 52.2 |
| GDN-H | 80.5 | 23.9 | 85.5 | 32.6 | 58.7 | 63.0 | 34.7 | 30.5 | 51.2 |
| Kimi Linear (RoPE) | 78.8 | 22.0 | 88.0 | 35.4 | 59.9 | 66.5 | 31.3 | 32.5 | 51.8 |
| Kimi Linear | **84.3** | **29.6** | **90.0** | 35.0 | 58.8 | **68.5** | **37.1** | 32.7 | **54.5** |

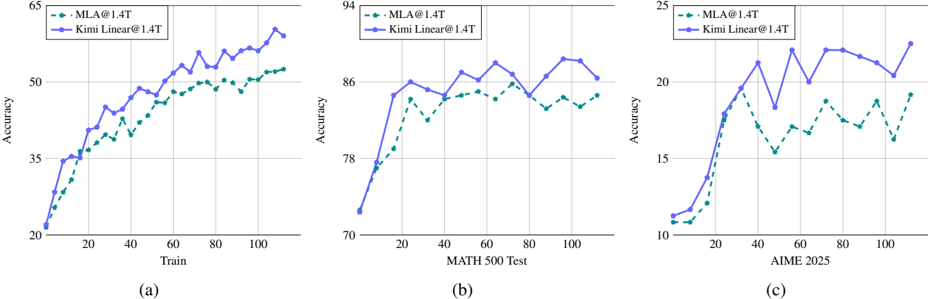

Figure 6: The training and test accuracy curves for Kimi Linear@1.4T and MLA@1.4T during Math RL training. Kimi Linear consistently outperforms the full attention baseline by a sizable margin during the whole RL process.

Figure 6 panels: (a) training accuracy, (b) MATH 500 test accuracy, and (c) AIME 2025 accuracy, each plotted over the course of RL training for MLA@1.4T and Kimi Linear@1.4T.

Long Context Performance Evaluation We evaluate the long-context performance of Kimi Linear against three baseline models, namely MLA, GDN-H, and Kimi Linear (RoPE), on several benchmarks at a 128k context length (see Table 5). The results show Kimi Linear's clear advantage on these long-context tasks. It consistently outperforms MLA and GDN-H, achieving the highest scores on RULER (84.3) and RepoQA (68.5) by a significant margin. This pattern holds across most other tasks, with LongBench V2 and Frames as the exceptions. Overall, Kimi Linear achieves the highest average score (54.5), reinforcing its effectiveness as an attention architecture for long-context scenarios.

RL results To compare the RL convergence properties of Kimi Linear and MLA, we run RLVR (reinforcement learning with verifiable rewards) on the in-house mathematics training set from [50] and evaluate on mathematics test sets (e.g., AIME 2025 and MATH500). The algorithm and all hyperparameters are kept identical to ensure a fair comparison.

As shown in Figure 6, Kimi Linear learns more efficiently than MLA. On the training set, although both models start from similar accuracy, Kimi Linear's training accuracy grows significantly faster than MLA's, and the gap gradually widens. The test sets show the same pattern: on MATH500 and AIME 2025, Kimi Linear improves faster and reaches higher accuracy than MLA. Overall, in reasoning-intensive long-form generation under RL, we empirically observe that Kimi Linear performs significantly better than MLA.

Summary of overall findings During the pretraining and SFT stages, a clear performance hierarchy was established: Kimi Linear outperformed GDN-H, which in turn outperformed MLA. However, this hierarchy shifted in long-context evaluations. While Kimi Linear maintained its top position, GDN-H's performance declined, placing it behind MLA. Furthermore, in the RL stage, Kimi Linear also demonstrated superior performance over MLA. Overall, Kimi Linear consistently ranked as the top performer across all stages, establishing itself as a superior alternative to full attention architectures.

## 5.6 Efficiency Comparison

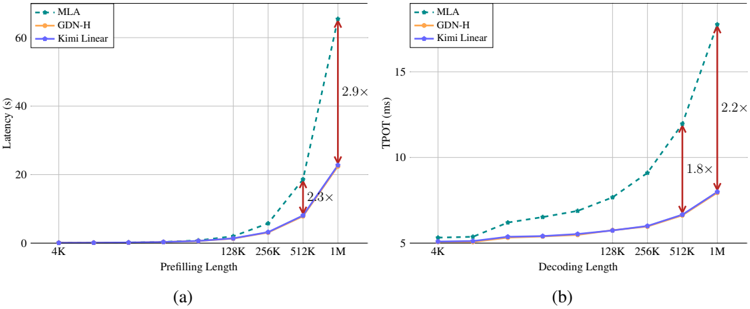

Figure 7: (a) Prefilling time of MLA (full attention), hybrid GDN-H, and our Kimi Linear. (b) Time per output token (TPOT) of MLA, GDN-H, and Kimi Linear during decoding. All tests use a batch size of 1.

<details>

<summary>Image 10 Details</summary>

### Visual Description

Two line charts compare MLA (green dashed line), GDN-H (orange line), and Kimi Linear (blue line). (a) Latency (s) versus prefilling length (4K to 1M), annotated with MLA-to-Kimi Linear latency ratios of 2.3× at 512K and 2.9× at 1M. (b) TPOT (ms) versus decoding length (4K to 1M), with ratio annotations of 1.8× at 512K and 2.2× at 1M.

</details>

Prefilling & Decoding speed We compare the prefilling and decoding times of full-attention MLA [19], GDN-H, and Kimi Linear in Figure 7a and Figure 7b. All models use the Kimi Linear 48B setting, with the same number of layers and attention heads. We observe the following. 1) Despite its finer-grained decay mechanism, Kimi Linear adds negligible latency overhead relative to GDN-H during prefilling: as shown in Figure 7a, their curves are virtually indistinguishable, confirming that our method maintains high efficiency. 2) The hybrid Kimi Linear model shows a clear efficiency advantage over the MLA baseline as sequence length increases. While its speed is comparable to MLA at shorter lengths (4k to 16k), it becomes significantly faster from 128k onwards, and the gap widens at scale: Kimi Linear is 2.3× faster than MLA for 512k sequences and 2.9× faster for 1M sequences. 3) As shown in Figure 1b, Kimi Linear's advantage is most pronounced during decoding: at a 1M context length, it decodes 6× faster than full attention.
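A rough, hypothetical memory model helps explain why the decoding gap grows with context length. During decoding, each full-attention layer reads a per-token cache that grows with the context, whereas a linear-attention layer such as KDA carries a fixed-size recurrent state. The sketch below assumes, purely for illustration, 48 layers with one full-attention layer in every four, 1,152 cached bytes per token per full-attention layer, and a 32 × 128 × 128 state in 16-bit precision per linear layer; none of these numbers is taken from the Kimi Linear configuration, and the function names are hypothetical.

```python
def cache_bytes(context_len: int, num_layers: int, full_attn_every: int,
                kv_bytes_per_token: int, linear_state_bytes: int) -> int:
    """Hypothetical decode-time memory for a layerwise hybrid.

    Every `full_attn_every`-th layer is full attention and caches
    `kv_bytes_per_token` for each token in context; every other layer is a
    linear-attention layer with a fixed-size state of `linear_state_bytes`.
    """
    n_full = num_layers // full_attn_every
    n_linear = num_layers - n_full
    return n_full * context_len * kv_bytes_per_token + n_linear * linear_state_bytes

# Illustrative placeholder sizes, NOT the Kimi Linear configuration.
LAYERS = 48
KV_BYTES_PER_TOKEN = 1152          # per full-attention layer, per token
STATE_BYTES = 32 * 128 * 128 * 2   # heads x d_k x d_v x 2 bytes, per linear layer

def hybrid_to_full_ratio(context_len: int) -> float:
    """Cache of a 1-in-4 hybrid relative to an all-full-attention model."""
    hybrid = cache_bytes(context_len, LAYERS, 4, KV_BYTES_PER_TOKEN, STATE_BYTES)
    full = cache_bytes(context_len, LAYERS, 1, KV_BYTES_PER_TOKEN, STATE_BYTES)
    return hybrid / full

for n in (4_096, 131_072, 1_048_576):
    print(f"{n:>9} tokens: hybrid / full-attention cache = {hybrid_to_full_ratio(n):.3f}")
```

Under these assumptions the ratio approaches one quarter (a 75% reduction) only at long contexts, because the fixed-size states make up a larger share of the total when the context is short.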

## 6 Discussions

## 6.1 Kimi Delta Attention as learnable position embeddings

Standard attention in Transformers is, by design, agnostic to the order of its inputs [99], which necessitates explicit positional encodings [75, 86]. Among the various methods, RoPE [88] has emerged as the de facto standard in modern LLMs owing to its effectiveness [98, 1, 19]. The mechanism of multiplicative positional encodings such as RoPE can be analyzed through a generalized attention formulation:

$$s_{t,i} = q_t^{\top} \left( \prod_{j=i+1}^{t} R_j \right) k_i \tag{11}$$

where the positional relationship between the $t$-th query $q_t$ and the $i$-th key $k_i$ is reflected by the cumulative matrix product. RoPE defines the transformation matrix $R_j$ as a block-diagonal matrix composed of $d_k/2$ 2D rotation matrices $R_j^{k} = \begin{pmatrix} \cos(j\theta_k) & -\sin(j\theta_k) \\ \sin(j\theta_k) & \cos(j\theta_k) \end{pmatrix}$, each with its own angular frequency $\theta_k$. Because rotation matrices are orthogonal and rotations within each 2D block compose additively, $R_{t-i} = R_t R_i^{\top}$. The absolute positional information $R_t$ and $R_i$ can therefore be applied separately to $q_t$ and $k_i$, and it is transformed into the relative position $t-i$ in the attention score, where each 2D block acts as

$$\begin{pmatrix} \cos((t-i)\theta_k) & -\sin((t-i)\theta_k) \\ \sin((t-i)\theta_k) & \cos((t-i)\theta_k) \end{pmatrix}.$$
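The relative-position property can be checked numerically. The minimal NumPy sketch below builds the block-diagonal rotation for a given absolute position, applies $R_t^{\top}$ to $q_t$ and $R_i^{\top}$ to $k_i$ separately, and confirms that the score depends only on $t-i$. It assumes the common frequency schedule $\theta_k = 10000^{-2k/d_k}$, which this section does not specify; the function names are illustrative.

```python
import numpy as np

def rope_matrix(pos: int, d_k: int, base: float = 10000.0) -> np.ndarray:
    """Block-diagonal RoPE matrix R_pos: block b rotates by pos * theta_b.

    theta_b = base ** (-2b / d_k) is the common RoPE schedule (assumed here).
    """
    assert d_k % 2 == 0, "RoPE pairs dimensions, so d_k must be even"
    R = np.zeros((d_k, d_k))
    for b in range(d_k // 2):
        angle = pos * base ** (-2.0 * b / d_k)
        c, s = np.cos(angle), np.sin(angle)
        R[2 * b:2 * b + 2, 2 * b:2 * b + 2] = [[c, -s], [s, c]]
    return R

def rope_score(q: np.ndarray, k: np.ndarray, t: int, i: int) -> float:
    """Rotate q_t and k_i independently by their absolute positions, then dot.

    (R_t^T q)^T (R_i^T k) = q^T R_t R_i^T k = q^T R_{t-i} k, so only t - i matters.
    """
    d_k = q.size
    return float((rope_matrix(t, d_k).T @ q) @ (rope_matrix(i, d_k).T @ k))

rng = np.random.default_rng(0)
q, k = rng.standard_normal(8), rng.standard_normal(8)
# Same offset t - i = 7 at two different absolute positions.
print(rope_score(q, k, 10, 3), rope_score(q, k, 107, 100))
```

Both calls print the same value up to floating-point error, which is exactly the "absolute in, relative out" behavior described above.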

Analogously, linear attention with the gated delta rule can be expressed in a comparable form, given in Eq. 12. Similar forms for other attention variants are summarized in Table 6.

$$o_t = \sum_{i=1}^{t} \left( q_t^{\top} \left( \prod_{j=i+1}^{t} A_j \left( I - \beta_j k_j k_j^{\top} \right) \right) k_i \right) v_i \tag{12}$$
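Eq. 12 can be checked numerically against the recurrence it unrolls. The sketch below assumes a state $S_t \in \mathbb{R}^{d_k \times d_v}$ updated as $S_t = A_t (I - \beta_t k_t k_t^{\top}) S_{t-1} + k_t v_t^{\top}$ with readout $o_t = S_t^{\top} q_t$. The write term is left unscaled to match Eq. 12 as written; the usual delta rule scales it by $\beta_t$, which only rescales each summand. Taking $A_t = \mathrm{Diag}(\alpha_t)$ gives the KDA transition, and a constant $\alpha_t$ recovers GDN. The product is ordered with the most recent transition on the left. All names are illustrative.

```python
import numpy as np

def transition(alpha: np.ndarray, beta: float, k: np.ndarray) -> np.ndarray:
    """A_t (I - beta_t k_t k_t^T) with A_t = Diag(alpha_t); constant alpha gives GDN."""
    d_k = k.size
    return np.diag(alpha) @ (np.eye(d_k) - beta * np.outer(k, k))

def recurrent_outputs(Q, K, V, alphas, betas):
    """Recurrent form: S_t = A_t (I - beta_t k_t k_t^T) S_{t-1} + k_t v_t^T, o_t = S_t^T q_t."""
    n, d_k = K.shape
    S = np.zeros((d_k, V.shape[1]))
    outs = []
    for t in range(n):
        S = transition(alphas[t], betas[t], K[t]) @ S + np.outer(K[t], V[t])
        outs.append(S.T @ Q[t])
    return np.stack(outs)

def unrolled_outputs(Q, K, V, alphas, betas):
    """Eq. 12 form: o_t = sum_i (q_t^T [T_t T_{t-1} ... T_{i+1}] k_i) v_i."""
    n, d_k = K.shape
    outs = []
    for t in range(n):
        o = np.zeros(V.shape[1])
        for i in range(t + 1):
            P = np.eye(d_k)
            for j in range(t, i, -1):  # j = t, t-1, ..., i+1 (most recent on the left)
                P = P @ transition(alphas[j], betas[j], K[j])
            o += (Q[t] @ P @ K[i]) * V[i]
        outs.append(o)
    return np.stack(outs)

# Usage: random inputs with L2-normalized keys and decays in [0.8, 1.0).
rng = np.random.default_rng(0)
n, d_k, d_v = 6, 4, 3
Q = rng.standard_normal((n, d_k))
K = rng.standard_normal((n, d_k))
K /= np.linalg.norm(K, axis=1, keepdims=True)
V = rng.standard_normal((n, d_v))
alphas = rng.uniform(0.8, 1.0, size=(n, d_k))
betas = rng.uniform(0.0, 1.0, size=n)
print(np.allclose(recurrent_outputs(Q, K, V, alphas, betas),
                  unrolled_outputs(Q, K, V, alphas, betas)))
```

The nested loops are quadratic-to-cubic in sequence length and serve only as a reference; they make explicit that the transition product in Eq. 12 plays the role that $\prod_j R_j$ plays in Eq. 11.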

From this perspective, GDN can be interpreted as a form of multiplicative positional encoding whose transition matrix is data-dependent, which relaxes the orthogonality constraint imposed by RoPE and can potentially be more powerful [115].⁶ This offers a potential remedy for the known extrapolation issues of RoPE, whose fixed frequencies can cause overfitting to the context lengths seen during training [108, 72]. Some recent works adopt workarounds such as partial RoPE [7] or even forgo explicit positional encodings entirely (NoPE) [49, 76, 19]. Since GDN plays a role analogous to RoPE, we use NoPE for the global full-attention (MLA) layers in our model, allowing positional information to be captured dynamically by the proposed KDA layers.

⁶ When orthogonality is preserved, absolute positional encodings can be applied independently to $q$ and $k$, and they are automatically transformed into relative positional encodings during the attention computation [87].

Table 6: An overview of attention mechanisms in their mathematically equivalent recurrent ($o_t$) and parallel ($O$) forms. We omit the normalization term and $\beta_t$ for a more concise representation. $M$ denotes the causal mask and $M^{-}$ its strictly lower-triangular part. The function $\phi$ refers to the infinite-dimensional feature map corresponding to the exponential kernel, i.e., $\phi(q)^{\top} \phi(k) = \exp(q^{\top} k)$.

| Mechanism | Recurrent form ($o_t$) | Parallel form ($O$) |
|---|---|---|
| SA [99] | $\sum_{j=1}^{t} \exp(q_t^{\top} k_j)\, v_j$ | $(\exp(QK^{\top}) \odot M)\, V$ |
| SA + RoPE [88] | $\sum_{j=1}^{t} \exp\big(q_t^{\top} (\prod_{s=j+1}^{t} R_s) k_j\big)\, v_j$ | $(\exp(R(Q) R(K)^{\top}) \odot M)\, V$ |
| LA [101] | $\sum_{j=1}^{t} (q_t^{\top} k_j)\, v_j$ | $(QK^{\top} \odot M)\, V$ |
| Mamba2 [16] | $\sum_{j=1}^{t} \big(q_t^{\top} (\prod_{s=j+1}^{t} \alpha_s) k_j\big)\, v_j$ | $(QK^{\top} \odot \mathcal{A} \odot M)\, V$ |
| GLA [114] | $\sum_{j=1}^{t} \big(q_t^{\top} (\prod_{s=j+1}^{t} \mathrm{Diag}(\alpha_s)) k_j\big)\, v_j$ | $((Q \odot \Gamma)(K / \Gamma)^{\top} \odot M)\, V$ |
| DeltaNet [84] | $\sum_{j=1}^{t} \big(q_t^{\top} (\prod_{s=j+1}^{t} (I - k_s k_s^{\top})) k_j\big)\, v_j$ | $(QK^{\top} \odot M)(I + KK^{\top} \odot M^{-})^{-1} V$ |
| FoX [58] | $\sum_{j=1}^{t} \exp(q_t^{\top} k_j) (\prod_{s=j+1}^{t} \alpha_s)\, v_j$ | $(\exp(QK^{\top}) \odot \mathcal{A} \odot M)\, V$ |
| DeltaFormer [125] | $\sum_{j=1}^{t} \big(\phi(q_t)^{\top} (\prod_{s=j+1}^{t} (I - \phi(k_s)\phi(w_s)^{\top})) \phi(k_j)\big)\, v_j$ | $(\exp(QK^{\top}) \odot M)(I + \exp(WK^{\top}) \odot M^{-})^{-1} V$ |
| PaTH-FoX [115] | $\sum_{j=1}^{t} \exp\big(q_t^{\top} (\prod_{s=j+1}^{t} (I - w_s w_s^{\top})) k_j\big) (\prod_{s=j+1}^{t} \alpha_s)\, v_j$ | $(\exp((QK^{\top} \odot M)(I + WW^{\top} \odot M^{-})^{-1}) \odot \mathcal{A} \odot M)\, V$ |
| GDN [111] | $\sum_{j=1}^{t} \big(q_t^{\top} (\prod_{s=j+1}^{t} \alpha_s (I - k_s k_s^{\top})) k_j\big)\, v_j$ | $(QK^{\top} \odot \mathcal{A} \odot M)(I + KK^{\top} \odot \mathcal{A} \odot M^{-})^{-1} V$ |
| Comba [40] | $\sum_{j=1}^{t} \big(q_t^{\top} (\prod_{s=j+1}^{t} (\alpha_s - k_s k_s^{\top})) k_j\big)\, v_j$ | $(QK^{\top} \odot \mathcal{A} \odot M)(I + KK^{\top} \odot \mathcal{A}_{i-1/j} \odot M^{-})^{-1} V$ |
| RWKV7 [71] | $\sum_{j=1}^{t} \big(q_t^{\top} (\prod_{s=j+1}^{t} (\mathrm{Diag}(\alpha_s) - (b_s \odot \hat{k}_s)\hat{k}_s^{\top})) k_j\big)\, v_j$ | $((Q \odot \Gamma)(K / \Gamma)^{\top} \odot M)(I + (\hat{K} \odot \Gamma^{0 \to t-1})(\tilde{K} \odot B / \Gamma)^{\top} \odot M^{-})^{-1} V$ |
| KDA (ours) | $\sum_{j=1}^{t} \big(q_t^{\top} (\prod_{s=j+1}^{t} \mathrm{Diag}(\alpha_s)(I - k_s k_s^{\top})) k_j\big)\, v_j$ | $((Q \odot \Gamma)(K / \Gamma)^{\top} \odot M)(I + (K \odot \Gamma)(K / \Gamma)^{\top} \odot M^{-})^{-1} V$ |
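The parallel forms in Table 6 can be sanity-checked against their recurrent counterparts. Below is a minimal NumPy sketch for the DeltaNet row, with $\beta_t$ omitted as in the table. It assumes $M$ is the causal mask (diagonal included) and reads $M^{-}$ as its strictly lower-triangular part; the function names are illustrative, and `np.linalg.solve` is used for brevity where a dedicated triangular solver could be substituted.

```python
import numpy as np

def deltanet_recurrent(Q, K, V):
    """DeltaNet recurrence with beta omitted: S_t = (I - k_t k_t^T) S_{t-1} + k_t v_t^T, o_t = S_t^T q_t."""
    d_k, d_v = K.shape[1], V.shape[1]
    S = np.zeros((d_k, d_v))
    outs = []
    for q, k, v in zip(Q, K, V):
        S = (np.eye(d_k) - np.outer(k, k)) @ S + np.outer(k, v)
        outs.append(S.T @ q)
    return np.stack(outs)

def deltanet_parallel(Q, K, V):
    """Table 6 parallel form: (QK^T * M)(I + KK^T * M^-)^{-1} V."""
    n = Q.shape[0]
    M = np.tril(np.ones((n, n)))               # causal mask, diagonal included
    M_strict = np.tril(np.ones((n, n)), k=-1)  # M^-: strictly lower-triangular mask
    # Pseudo-values U solve the unit lower-triangular system (I + KK^T * M^-) U = V.
    U = np.linalg.solve(np.eye(n) + (K @ K.T) * M_strict, V)
    return ((Q @ K.T) * M) @ U

rng = np.random.default_rng(1)
Q = rng.standard_normal((8, 4))
K = rng.standard_normal((8, 4))
K /= np.linalg.norm(K, axis=1, keepdims=True)  # unit-norm keys make I - k k^T a projection
V = rng.standard_normal((8, 3))
print(np.allclose(deltanet_recurrent(Q, K, V), deltanet_parallel(Q, K, V)))
```

The identity follows from rewriting the update as $S_t = S_{t-1} + k_t u_t^{\top}$ with $u_t = v_t - S_{t-1}^{\top} k_t$. Then $o_t = \sum_{i \le t} (q_t^{\top} k_i)\, u_i$, and $u_t + \sum_{i<t} (k_t^{\top} k_i)\, u_i = v_t$. Stacking the $u_t$ gives exactly the strictly lower-triangular system solved above.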