# The Ouroboros of Benchmarking: Reasoning Evaluation in an Era of Saturation

**Authors**:

- İbrahim Ethem Deveci (Department of Cognitive Science)

- Ankara, Turkey

- Duygu Ataman (Department of Cognitive Science)

- Ankara, Turkey

## Abstract

The rapid rise of Large Language Models (LLMs) and Large Reasoning Models (LRMs) has been accompanied by an equally rapid increase of benchmarks used to assess them. However, due to both improved model competence resulting from scaling and novel training advances as well as likely many of these datasets being included in pre or post training data, results become saturated, driving a continuous need for new and more challenging replacements. In this paper, we discuss whether surpassing a benchmark truly demonstrates reasoning ability or are we simply tracking numbers divorced from the capabilities we claim to measure? We present an investigation focused on three model families, OpenAI, Anthropic, and Google, and how their reasoning capabilities across different benchmarks evolve over the years. We also analyze performance trends over the years across different reasoning tasks and discuss the current situation of benchmarking and remaining challenges. By offering a comprehensive overview of benchmarks and reasoning tasks, our work aims to serve as a first reference to ground future research in reasoning evaluation and model development.

## 1 Introduction

Benchmarks have long played a central role in evaluating and comparing machine learning models [1]. As models scale up in size and capability, particularly Large Language Models (LLMs) and the specialized Large Reasoning Models (LRMs), many benchmarks quickly saturate, often reaching or surpassing human-level performance. Whether this saturation is driven primarily by improved model capability or dataset contamination is generally unknown. Nevertheless, this quick saturation forces the development of new and more challenging benchmarks that could be used to further compare new model families. In this paper, we investigate several key research questions: How effective are current benchmarks at measuring model capabilities, and does surpassing a benchmark reliably indicate genuine reasoning?

To examine these questions, we select three model families, OpenAI, Anthropic, and Google, and compile performance data from official sources [2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22]. We gather a comprehensive list of 52 benchmarks used in evaluating these models and classify them according to the types of reasoning they aim to evaluate. Analyzing performance trends over the years, we highlight where models improve, where they struggle, and what these trends reveal about the current state of benchmarking. Finally, we discuss the implications of the saturation cycle and emphasize the need for improved evaluation practices that more accurately capture model capabilities.

Our contributions are threefold: (1) we provide a curated list of reasoning benchmarks, classified by the types of reasoning they aim to assess (2) we analyze performance trends over the years to assess benchmarking effectiveness; (3) we examine current landscape of existing benchmarks, identifying which benchmarks have reached high performance thresholds and which seem to remain unsolved.

By situating our analysis within the broader evaluation landscape, our work collects evidence to emphasize the need for reasoning tasks that are more representative of the nature of reasoning process and target evaluation beyond downstream accuracy.

## 2 Benchmark Landscape and Categorization

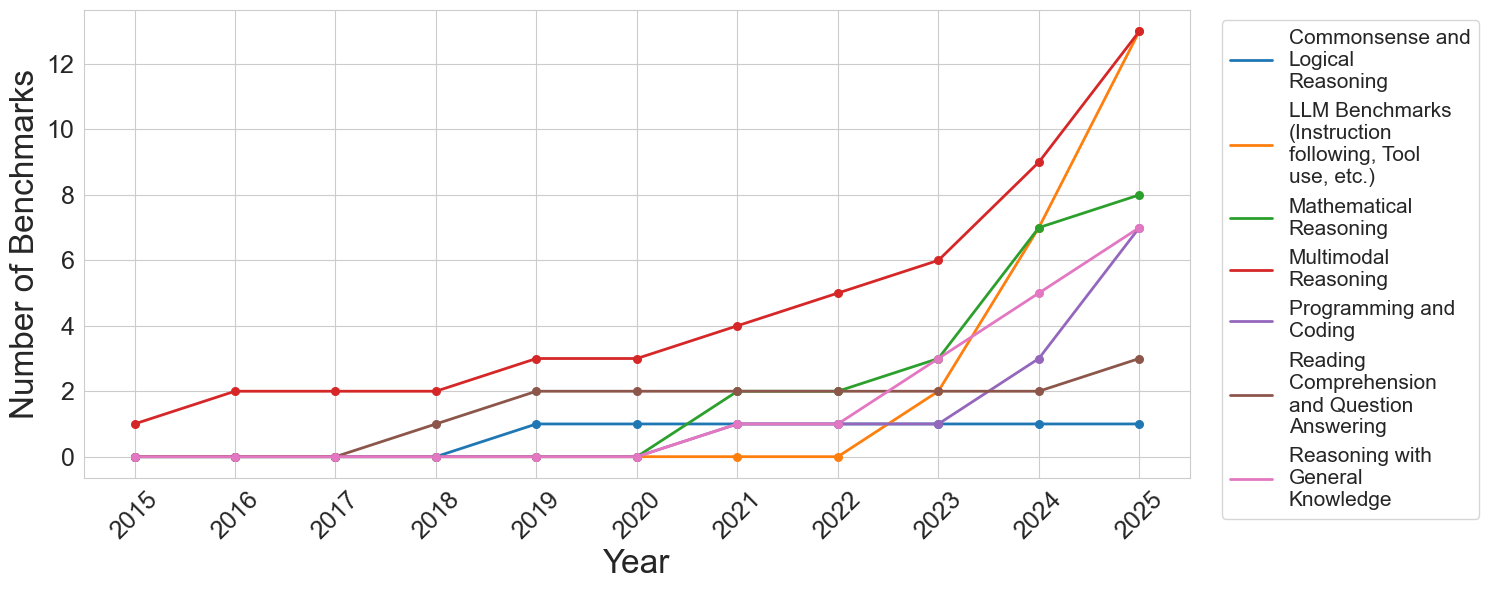

In order to provide a general analysis of how the creation and adoption of reasoning benchmarks have evolved over time, we examine three model families and compile the set of benchmarks employed to evaluate them. Our aim is to provide a comprehensive overview of current benchmarking practices and to trace how the creation and adoption of benchmarks have evolved over time. The complete list of benchmarks, their assigned reasoning types, and short summaries can be found in Appendix A. To facilitate analysis, we categorize benchmarks into seven reasoning types: commonsense and logical reasoning, mathematical reasoning, multimodal reasoning, programming and coding, reading comprehension and question answering, reasoning with general knowledge, and LLM-specific capabilities such as safety, tool use, and instruction following. Figure 1 illustrates a marked increase in benchmark adoption for multimodal reasoning, mathematical reasoning, programming, reasoning with general knowledge, and LLM-specific benchmarks after 2023. In contrast, no new benchmarks in reading comprehension or commonsense reasoning were adopted by these model families during this period. While the literature contains several other benchmarks in these areas [23, 24, 25, 26, 27, 28, 29], our analysis shows they have not been utilized by any of the prominent model families. This likely reflects the evolving understanding of what constitutes reasoning in computational models, in accordance with their current capabilities and what the community deems important to evaluate. Since most models now have direct commercial applications, their performance in more applicable domains, such as coding and tool-use benchmarks, may also motivate the evaluation in certain categories of reasoning tasks.

<details>

<summary>figures/benchmarks_by_year.png Details</summary>

### Visual Description

## Line Chart: Number of Benchmarks by Category (2015-2025)

### Overview

This is a line chart tracking the annual count of distinct AI benchmarks across seven different capability categories from 2015 to 2025. The chart demonstrates a significant and accelerating increase in the total number of benchmarks, particularly from 2022 onward, with the most dramatic growth occurring in the final two years (2024-2025).

### Components/Axes

* **Chart Type:** Multi-line chart.

* **X-Axis (Horizontal):** Labeled "Year". It displays discrete years from 2015 to 2025.

* **Y-Axis (Vertical):** Labeled "Number of Benchmarks". It has a linear scale from 0 to 12, with major gridlines at intervals of 2.

* **Legend:** Positioned to the right of the chart area. It lists seven categories, each associated with a unique colored line:

1. **Commonsense and Logical Reasoning** (Blue line)

2. **LLM Benchmarks (Instruction following, Tool use, etc.)** (Orange line)

3. **Mathematical Reasoning** (Green line)

4. **Multimodal Reasoning** (Red line)

5. **Programming and Coding** (Purple line)

6. **Reading Comprehension and Question Answering** (Brown line)

7. **Reasoning with General Knowledge** (Pink line)

### Detailed Analysis

The following data points are extracted by tracing each colored line against the year markers and the y-axis scale. Values are approximate based on visual alignment with the gridlines.

**1. Commonsense and Logical Reasoning (Blue Line)**

* **Trend:** Flat, then a single step increase.

* **Data Points:** 2015-2018: 0. 2019-2025: 1.

**2. LLM Benchmarks (Instruction following, Tool use, etc.) (Orange Line)**

* **Trend:** Zero for most of the timeline, then explosive growth.

* **Data Points:** 2015-2022: 0. 2023: 2. 2024: 7. 2025: 13.

**3. Mathematical Reasoning (Green Line)**

* **Trend:** Late emergence followed by strong, steady growth.

* **Data Points:** 2015-2020: 0. 2021: 2. 2022: 2. 2023: 3. 2024: 7. 2025: 8.

**4. Multimodal Reasoning (Red Line)**

* **Trend:** Consistent, steady growth throughout the entire period, becoming the category with the highest count by 2025.

* **Data Points:** 2015: 1. 2016: 2. 2017: 2. 2018: 2. 2019: 3. 2020: 3. 2021: 4. 2022: 5. 2023: 6. 2024: 9. 2025: 13.

**5. Programming and Coding (Purple Line)**

* **Trend:** Late emergence with a sharp, recent acceleration.

* **Data Points:** 2015-2021: 0. 2022: 1. 2023: 1. 2024: 3. 2025: 7.

**6. Reading Comprehension and Question Answering (Brown Line)**

* **Trend:** Early emergence, plateau, then a final increase.

* **Data Points:** 2015-2017: 0. 2018: 1. 2019: 2. 2020: 2. 2021: 2. 2022: 2. 2023: 2. 2024: 2. 2025: 3.

**7. Reasoning with General Knowledge (Pink Line)**

* **Trend:** Late emergence with moderate, steady growth.

* **Data Points:** 2015-2020: 0. 2021: 1. 2022: 1. 2023: 3. 2024: 5. 2025: 7.

### Key Observations

1. **Explosive Recent Growth:** The total number of benchmarks across all categories has increased dramatically since 2022. The years 2024 and 2025 show the steepest slopes for most lines.

2. **Category Dominance Shift:** "Multimodal Reasoning" (red) was the leading category for most of the timeline. However, by 2025, "LLM Benchmarks" (orange) has caught up, with both reaching approximately 13 benchmarks.

3. **Emergence of New Categories:** Several categories, notably "LLM Benchmarks," "Programming and Coding," and "Mathematical Reasoning," had zero benchmarks before 2021/2022, indicating these are newer, rapidly developing evaluation areas.

4. **Plateauing Categories:** "Commonsense and Logical Reasoning" (blue) and "Reading Comprehension..." (brown) show much slower growth, suggesting these may be more mature or stable evaluation domains.

5. **2023 as a Pivot Point:** The year 2023 marks an inflection point where the growth rate for nearly all categories (except the two plateauing ones) visibly increases.

### Interpretation

This chart visualizes the rapid evolution and diversification of the AI evaluation landscape. The data suggests a field in a phase of explosive expansion and specialization.

* **The Rise of Capability-Specific Benchmarks:** The late emergence and sharp rise of benchmarks for "LLM Benchmarks" (instruction following, tool use), "Programming," and "Mathematical Reasoning" directly correlate with the release and public adoption of powerful large language models (LLMs) around 2022-2023. The community rapidly developed new tests to measure these newly salient capabilities.

* **Multimodality as a Constant Frontier:** The steady, uninterrupted growth of "Multimodal Reasoning" benchmarks indicates that evaluating AI's ability to integrate different types of information (text, image, etc.) has been a consistent research priority for over a decade, now accelerating.

* **Benchmark Inflation:** The steep upward curves, especially in 2024-2025, may indicate "benchmark inflation"—a proliferation of tests as the field races to keep pace with model capabilities. This raises questions about the consolidation and standardization of evaluation methods.

* **Mature vs. Emerging Domains:** The contrast between the flat lines (blue, brown) and the steeply rising ones (orange, red, green) highlights a shift in research focus from foundational language understanding towards more complex, agentic, and multimodal tasks.

In summary, the chart depicts an AI research field that has moved from establishing basic evaluation metrics to rapidly creating a complex, multi-faceted, and ever-expanding suite of tests to measure increasingly sophisticated and specialized model behaviors.

</details>

Figure 1: Number of benchmarks in different reasoning types over time.

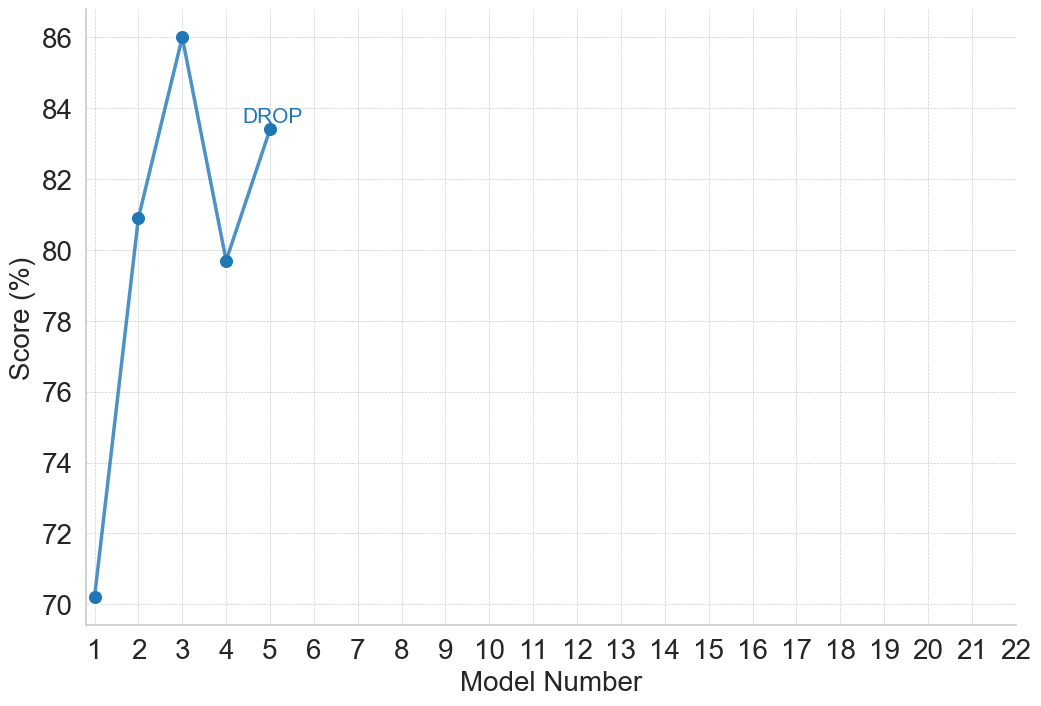

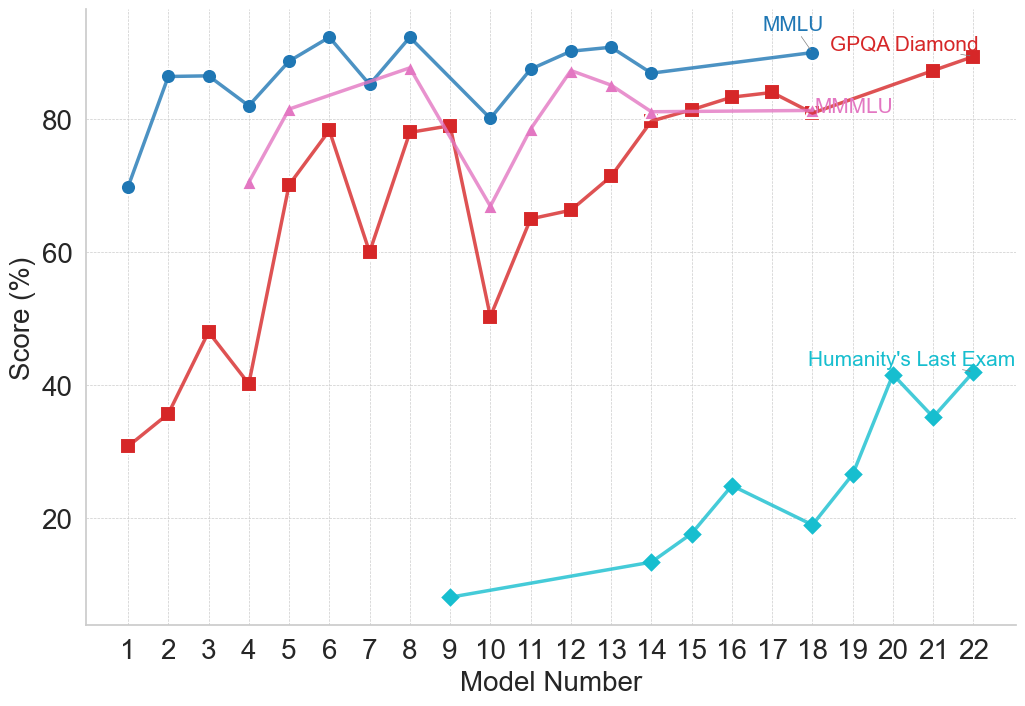

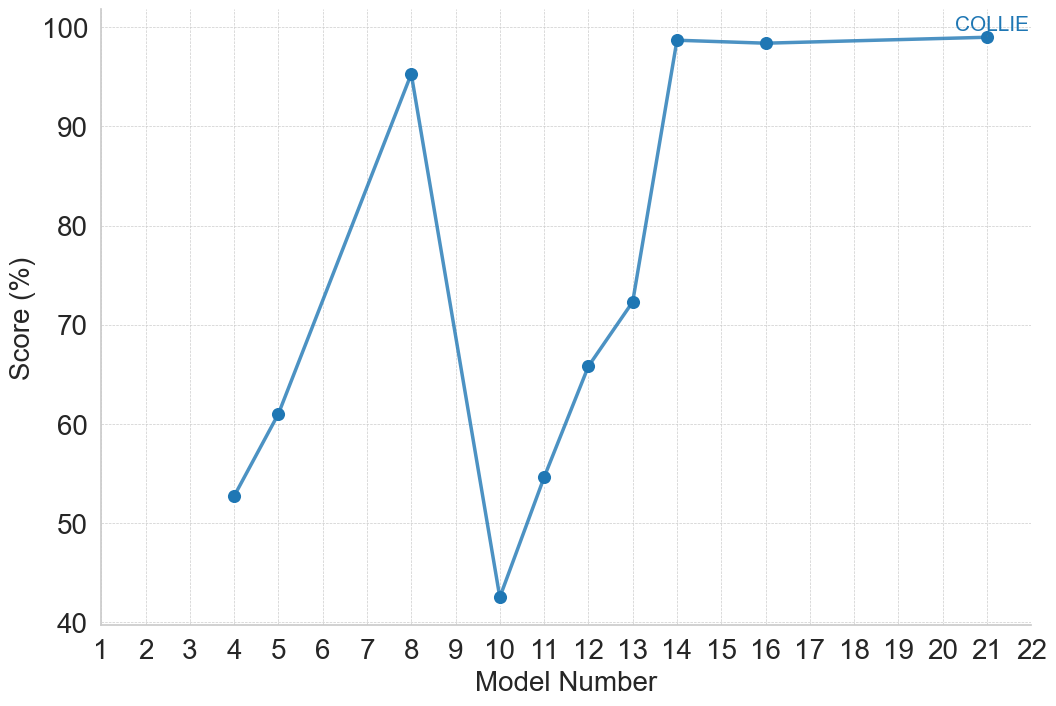

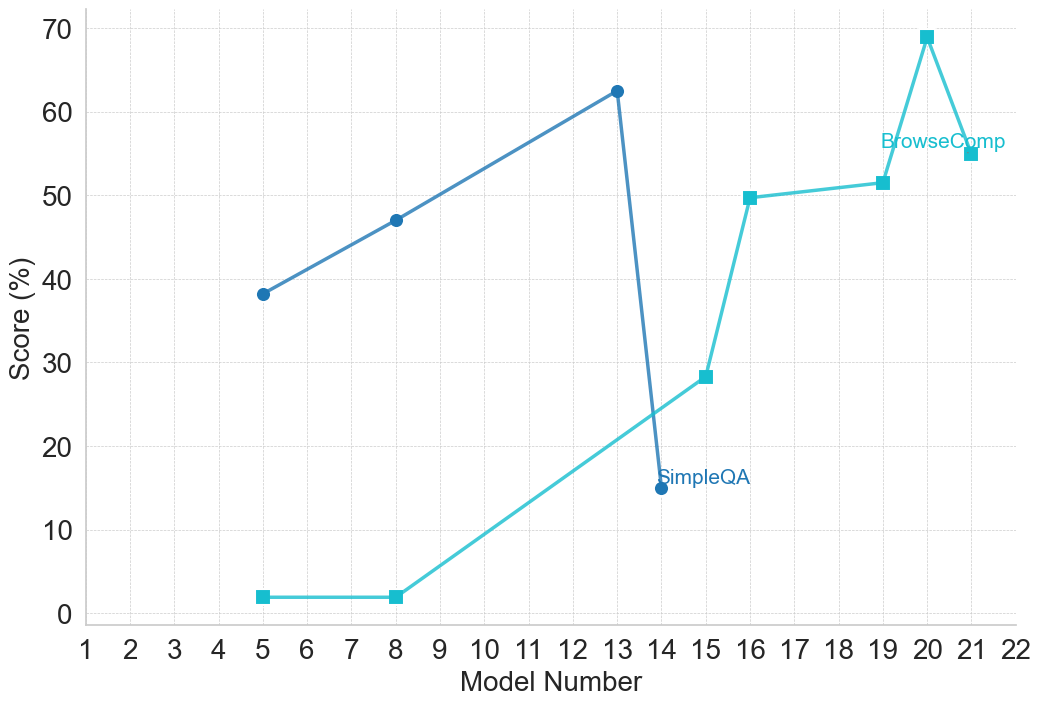

## 3 Performance Trends Across Models

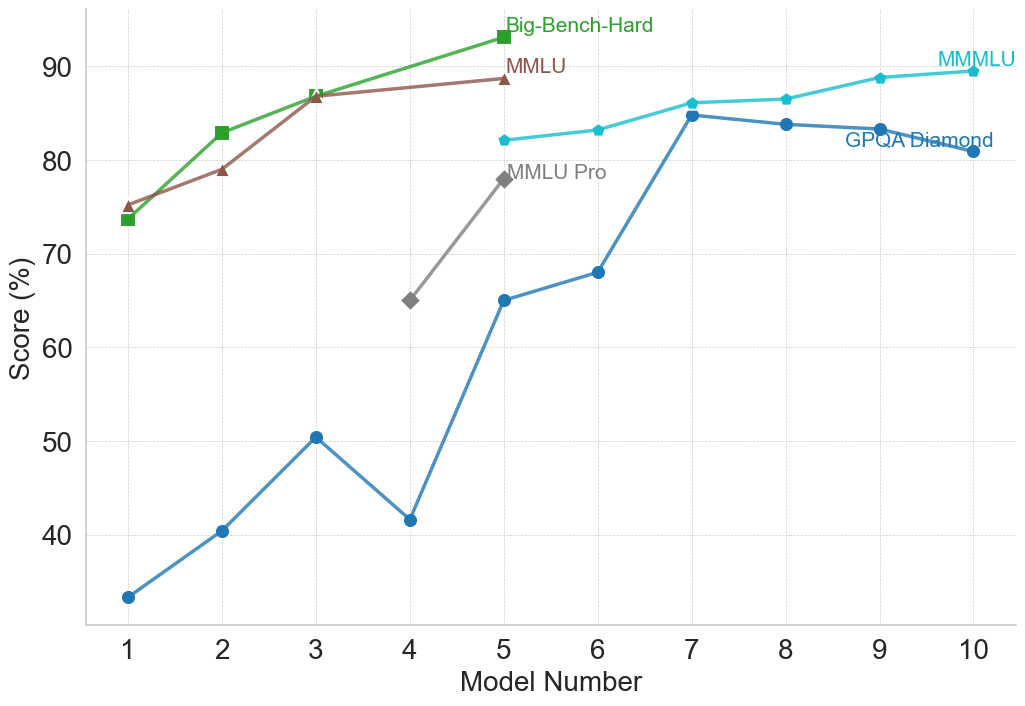

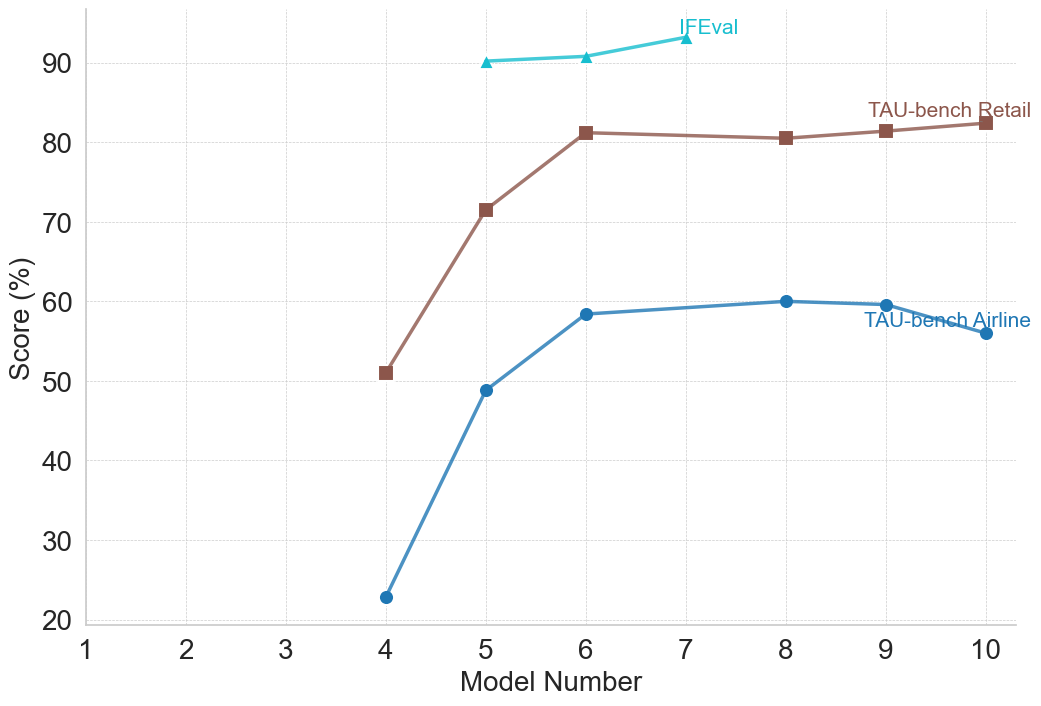

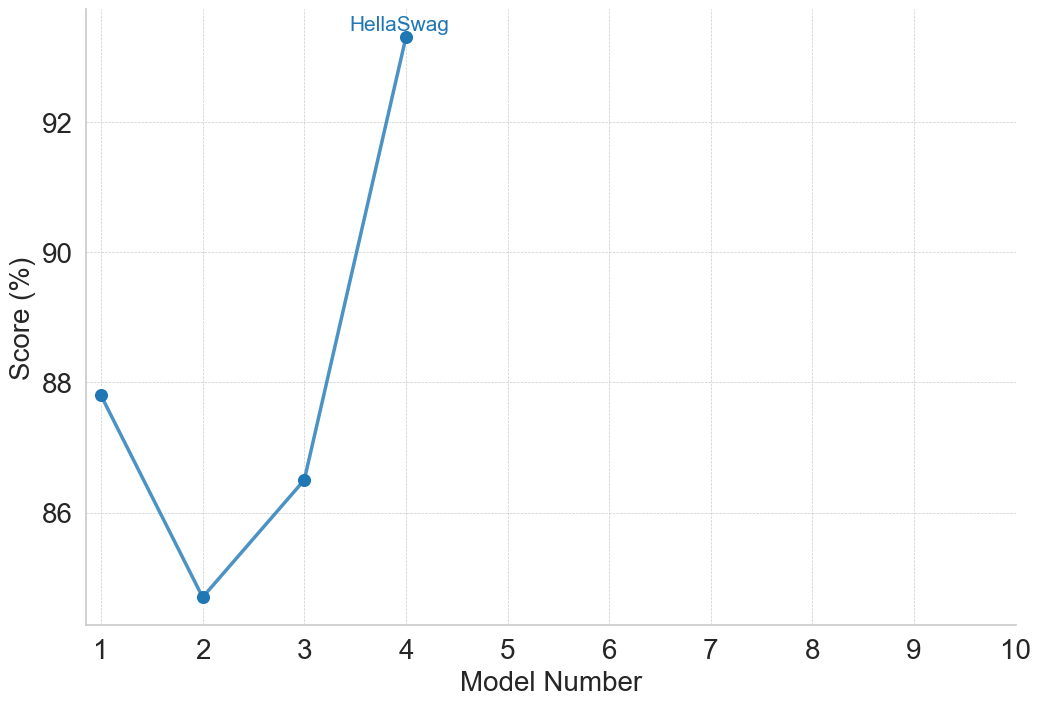

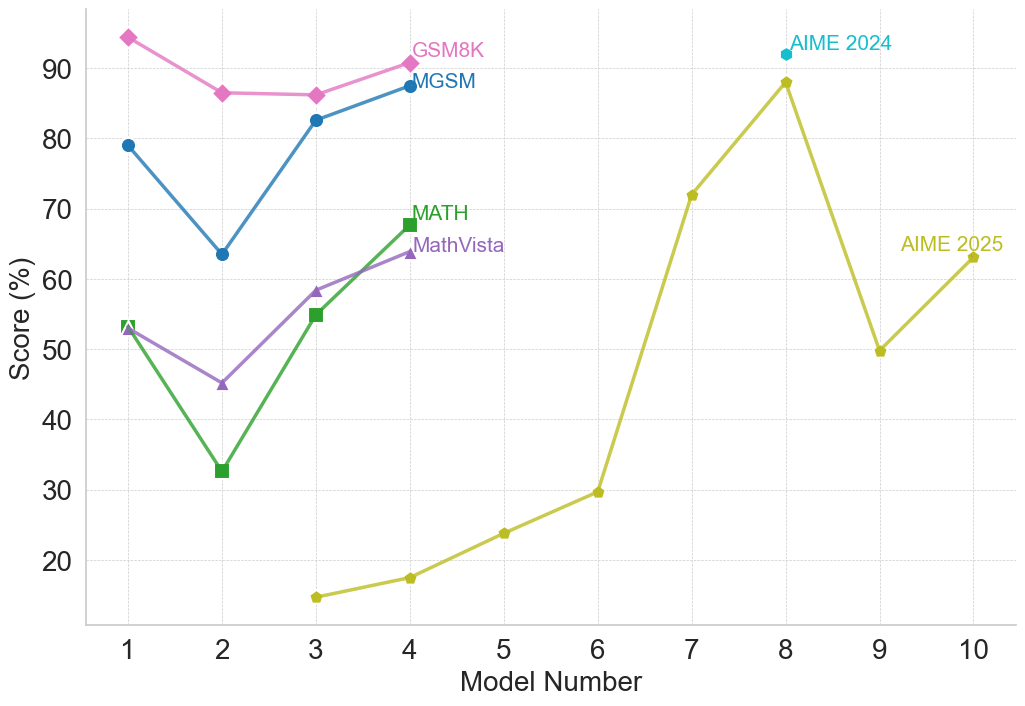

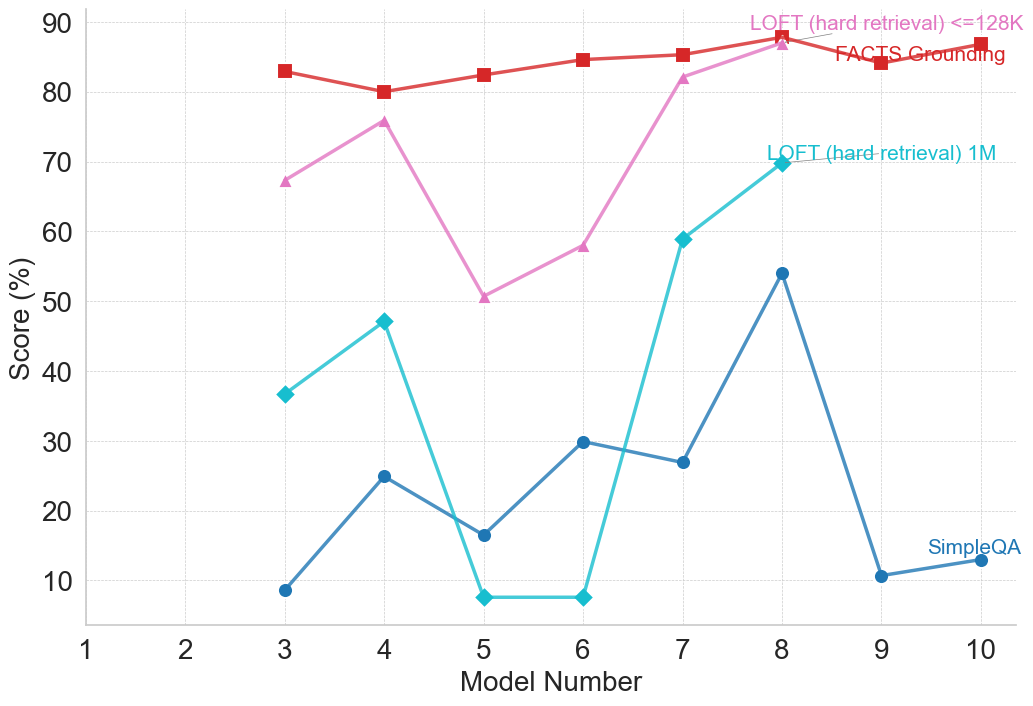

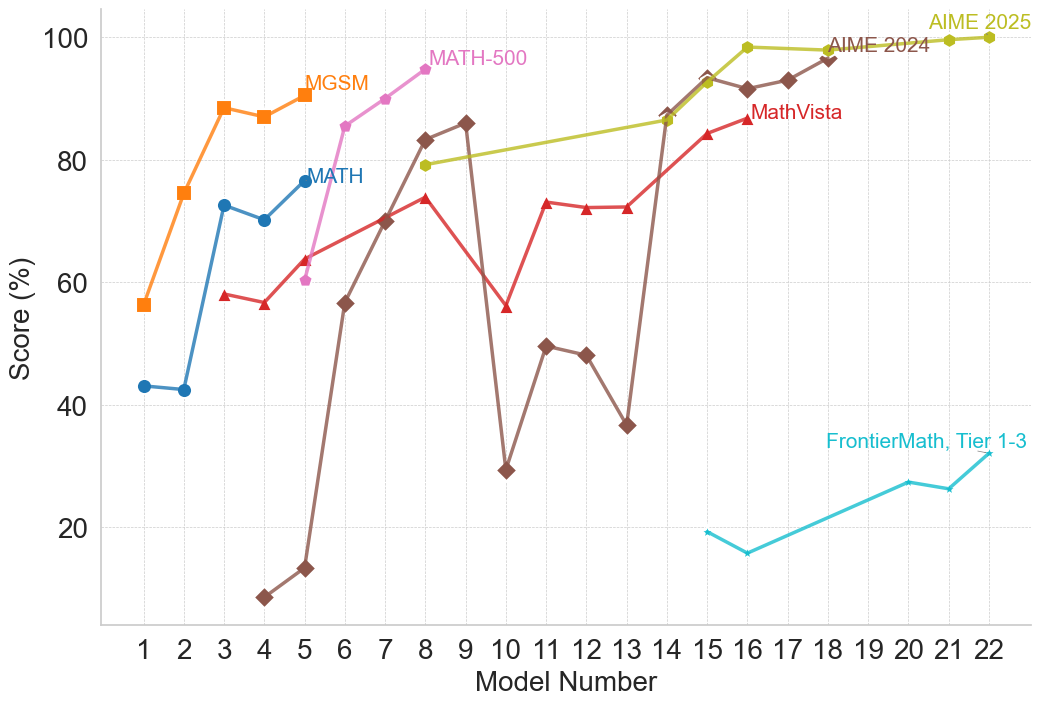

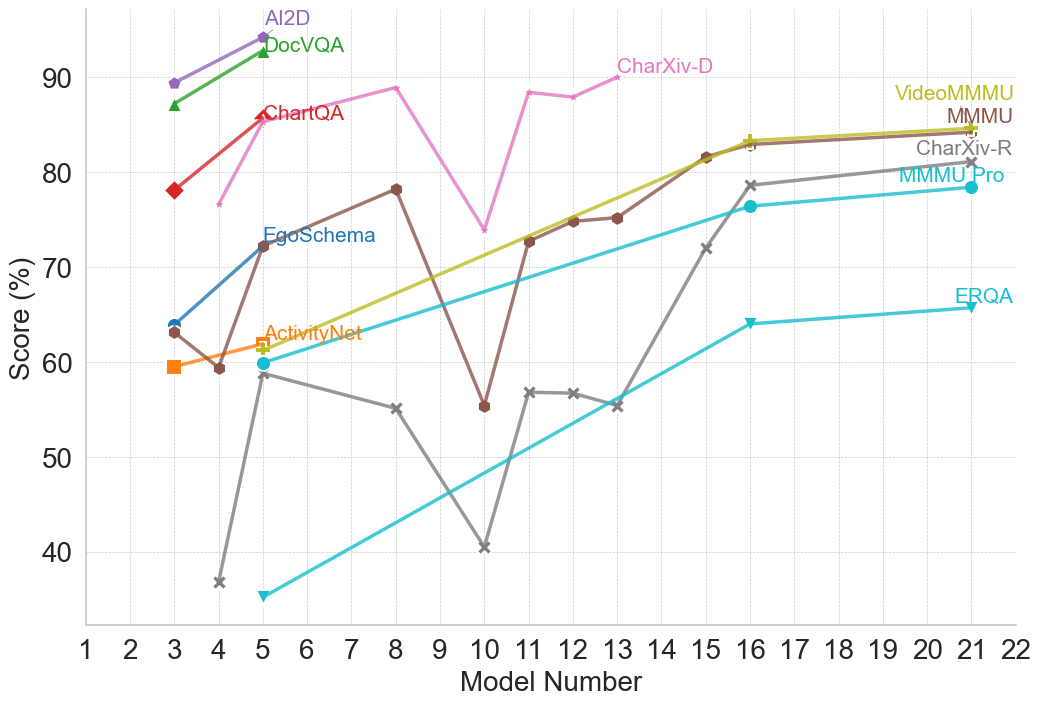

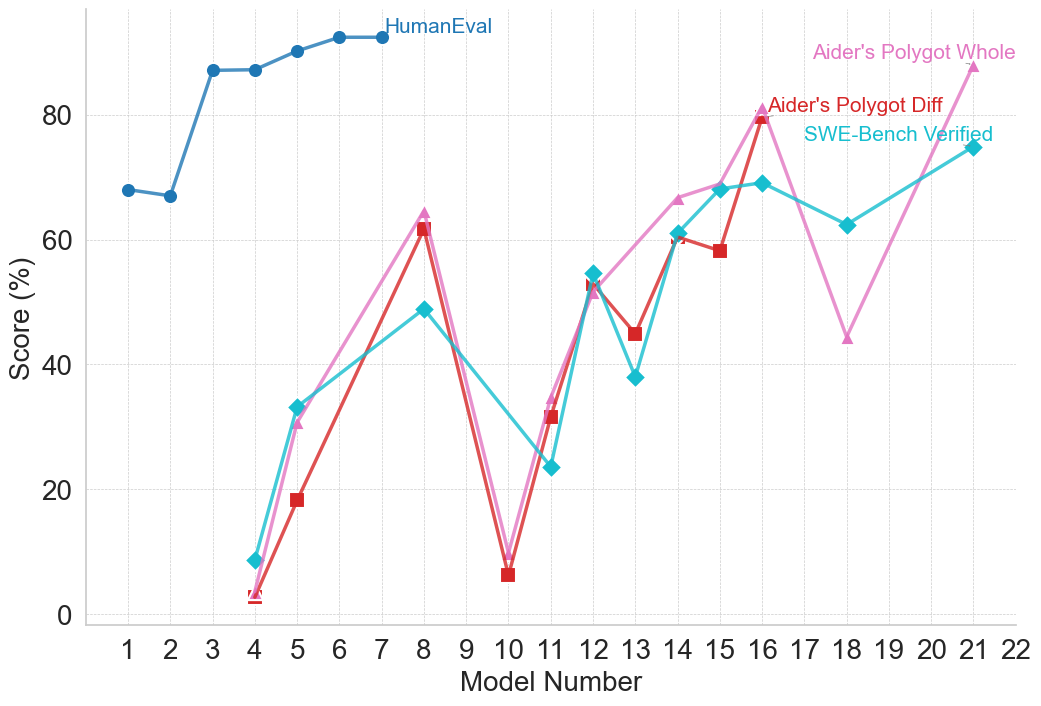

Across all three model families there is a consistent effort to develop newer models or architectural improvements to achieve higher benchmark performance. However, comparing performance across families is challenging, as each family often employs different benchmarks, and even within a single family, benchmarks used can vary between model iterations. This variation appears to stem from two main factors: first, certain benchmarks reach saturation due to high performance; second, benchmark updates or more challenging subsets are introduced, such as the transition from MATH to MATH-500 [30].

We observe a recurring pattern: once a model family achieves a high performance on a particular benchmark, subsequent models tend to use that benchmark less frequently or may discontinue its use entirely. This reflects both practical and conceptual considerations: benchmarks that no longer discriminate between models provide limited evaluative value, and benchmark selection increasingly reflects the evolving understanding of which reasoning tasks remain challenging for current architectures.

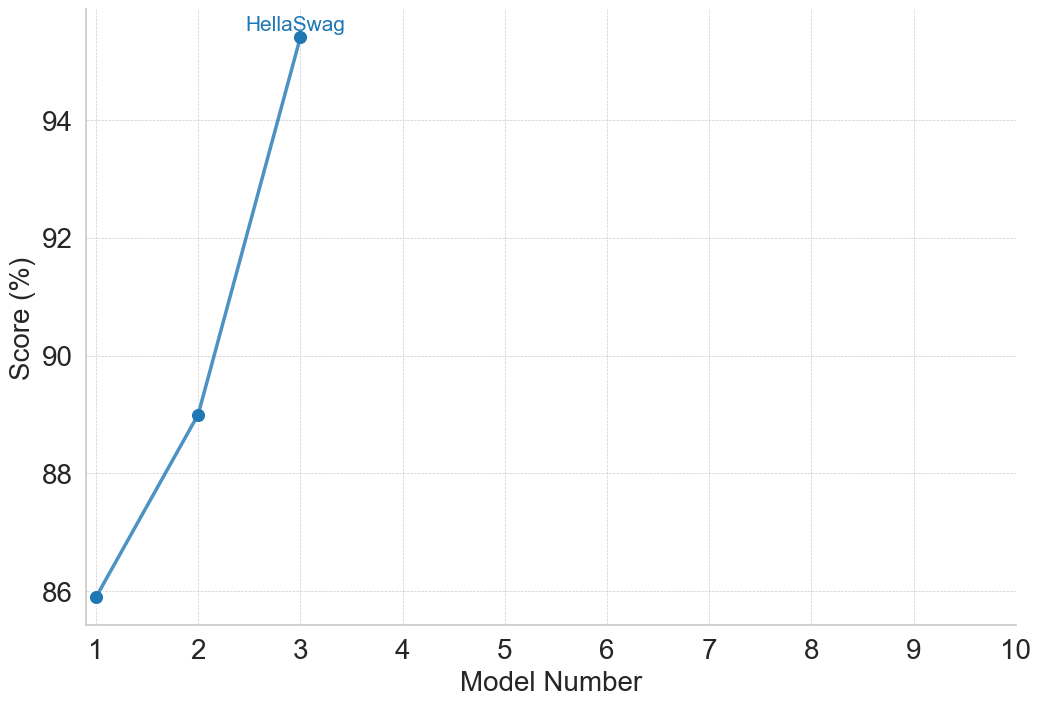

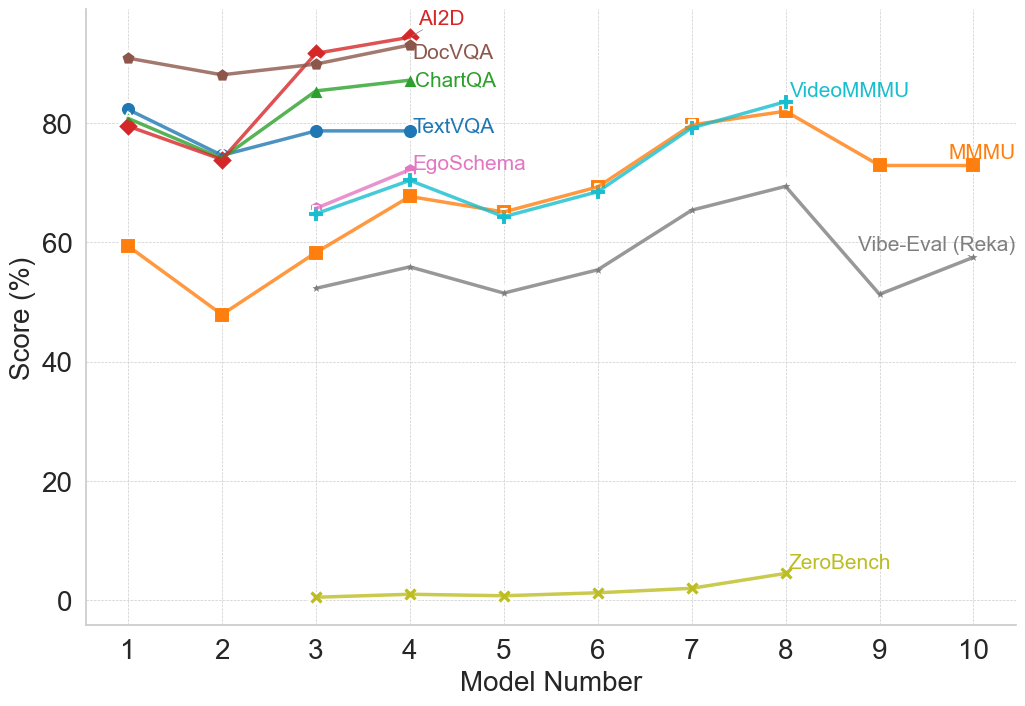

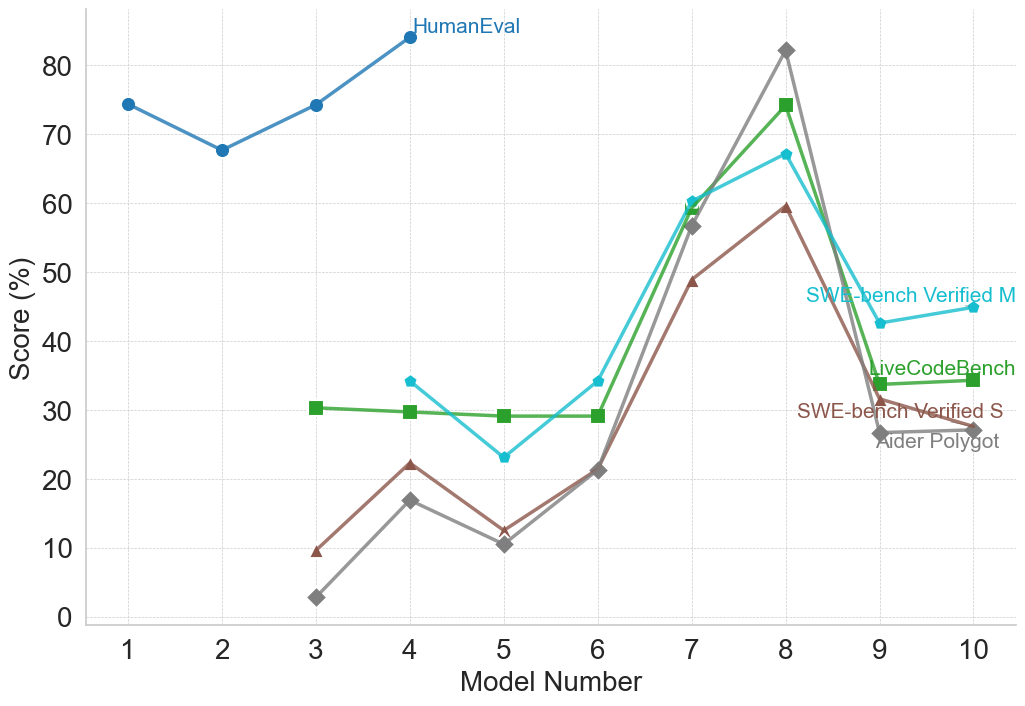

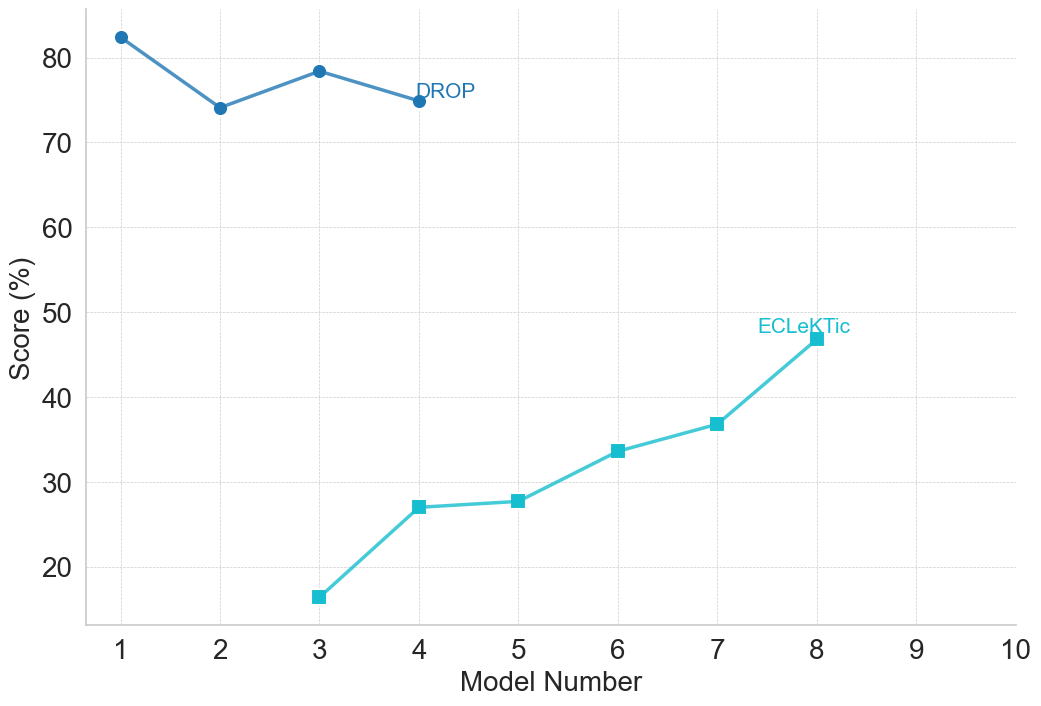

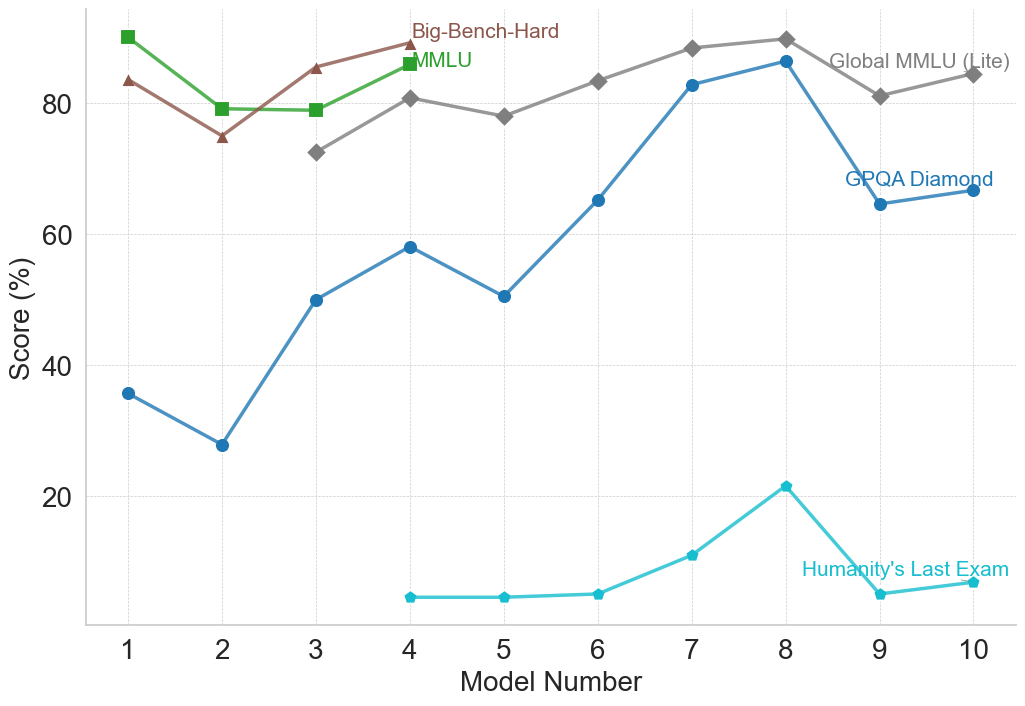

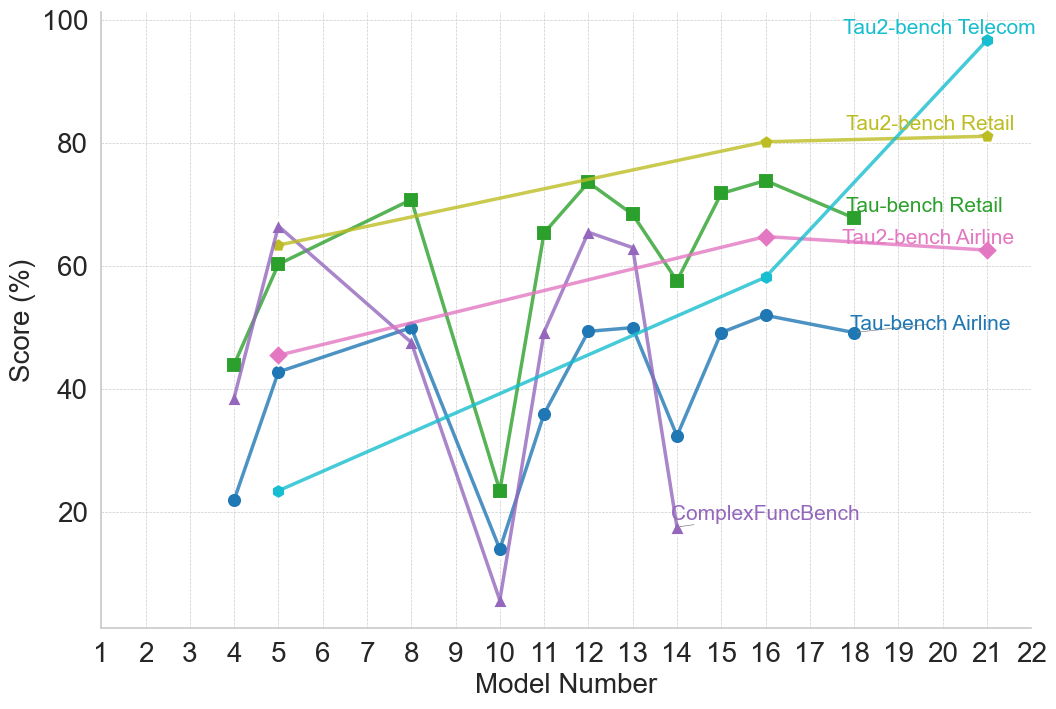

Interestingly, performance trends reveal consistent directional correlations across benchmarks within the same reasoning type. For example, when a model demonstrates improved performance on a benchmark, it generally shows corresponding improvements on other benchmarks of the same type, while lower performance on one benchmark tends to coincide with lower performance on others. Nevertheless, the extent of performance differs across benchmarks, potentially due to variations in problem complexity and the scaling limitations evident in smaller models, as seen within the OpenAI family. This pattern suggests that benchmarks within a reasoning type often capture overlapping aspects of reasoning, so that advances in a models’ capabilities tend to propagate across related tasks. At the same time, variations in the magnitude of performance gains provide insight into the relative difficulty of different benchmarks within the same reasoning type. Detailed plots illustrating performance changes within model families for different reasoning types are provided in Appendix B.

Finally, we note that newer models generally achieve higher performance on previously low-scoring benchmarks. However, the limited overlap of common benchmarks across model families complicates cross-family comparisons. This raises a critical question: if benchmarks are intended to evaluate and compare model capabilities, why are they not consistently adopted or reported across families? If benchmarks are intended to provide a shared measure of capability, their fragmented and selective use undermines that goal and exemplifies the need for more standardized, representative, and domain-informed evaluation frameworks.

## 4 Performance of Models within Benchmarks

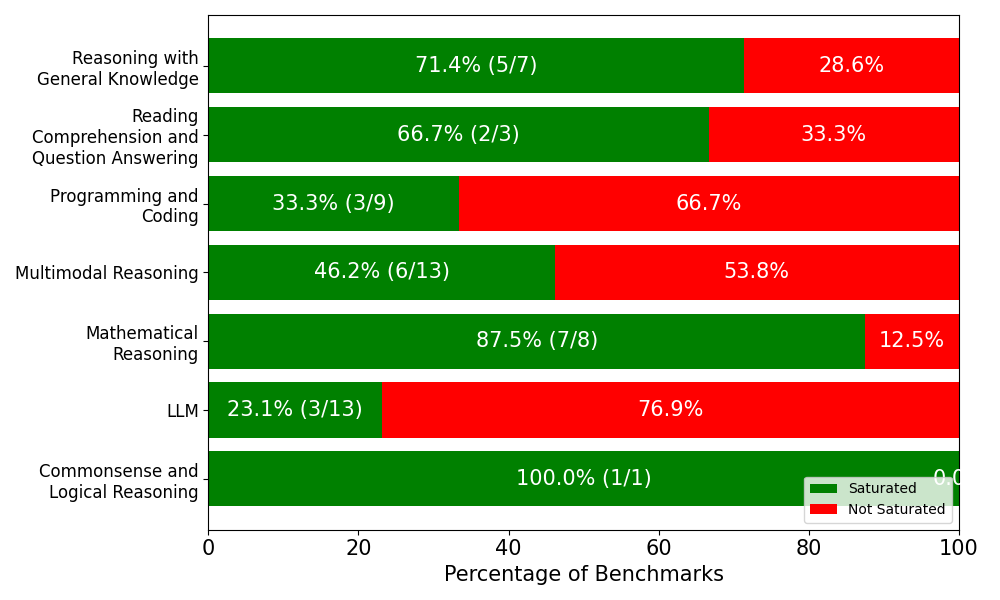

We collect all reported model performances across benchmarks and analyze saturation by defining it as whether a model has achieved at least 80% accuracy on the given benchmark. Out of the full set of benchmarks, we find that 27 benchmarks surpass this threshold in at least one model family, while 25 benchmarks never reach it. The majority of “solved” benchmarks belong to commonsense and logical reasoning, mathematical reasoning, reasoning with general knowledge, and reading comprehension and question answering. By contrast, benchmarks targeting LLM-specific capabilities and programming and coding remain comparatively difficult, with few instances of performance above 80%.

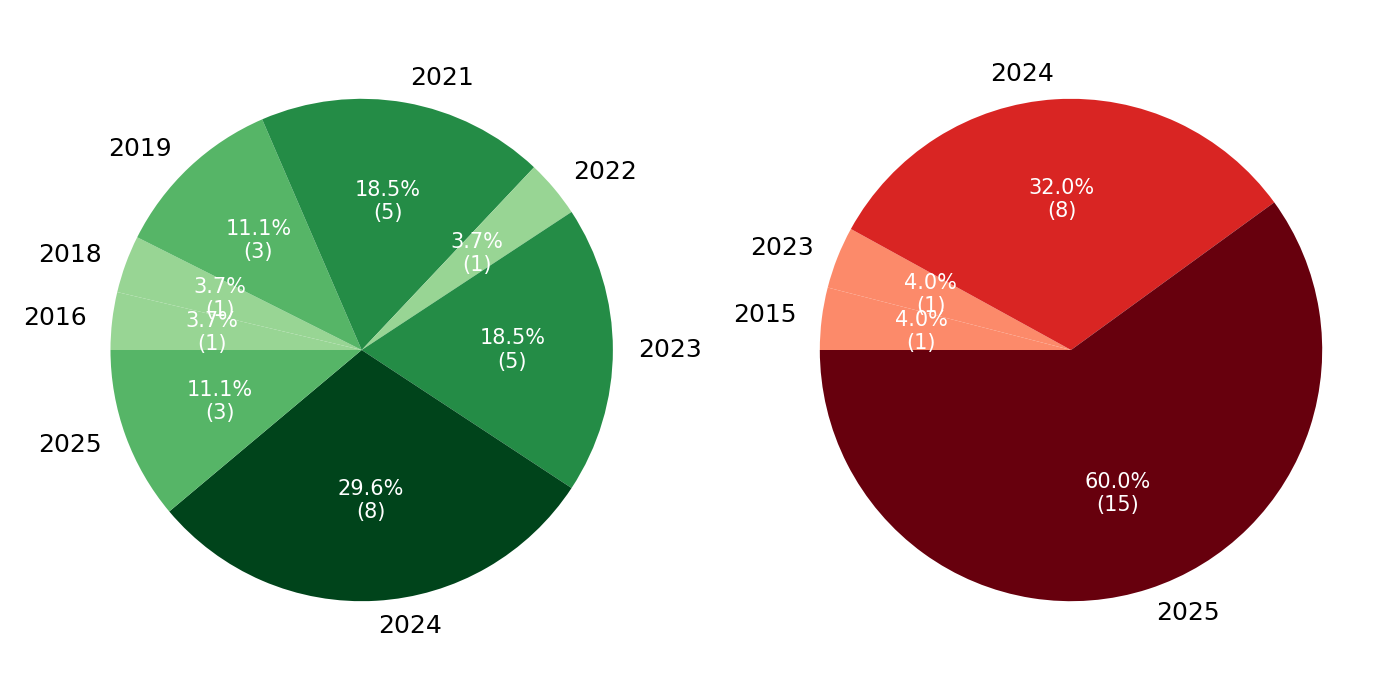

We then examine the release years of benchmarks that never surpass the 80% threshold. The distribution is striking: 60% of unsolved benchmarks were introduced in 2025, 32% in 2024, and only two pre-2023 benchmarks remain unsolved, which are ActivityNet [31] and EgoSchema [32], both multimodal reasoning benchmarks. This distribution suggests a clear trend. Nearly all benchmarks released prior to 2025 have already been surpassed by at least one model family, indicating rapid saturation. By contrast, the benchmarks still below the threshold overwhelmingly correspond to the most recently introduced evaluation tasks.

<details>

<summary>figures/stacked_bar_saturation.png Details</summary>

### Visual Description

## Horizontal Stacked Bar Chart: Benchmark Saturation by Category

### Overview

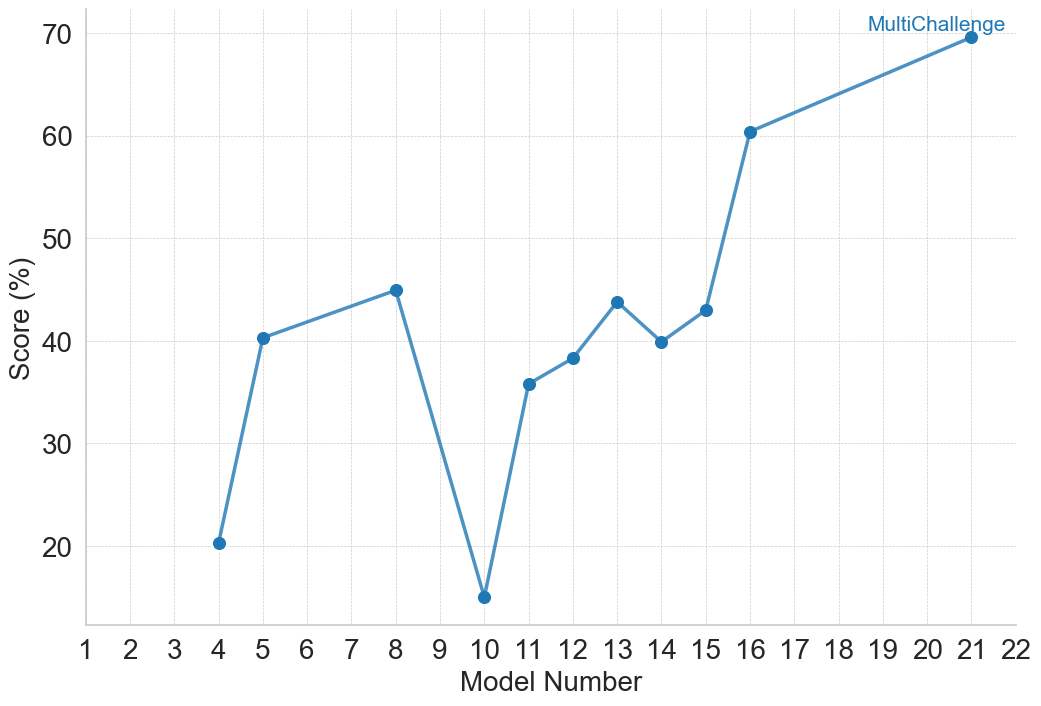

This image is a horizontal stacked bar chart that visualizes the percentage of benchmarks that are "Saturated" versus "Not Saturated" across seven distinct capability categories for an AI model or system. The chart uses a two-color scheme (green for Saturated, red for Not Saturated) to show the proportional split within each category. The overall purpose is to illustrate performance or evaluation results, highlighting areas of strength and weakness.

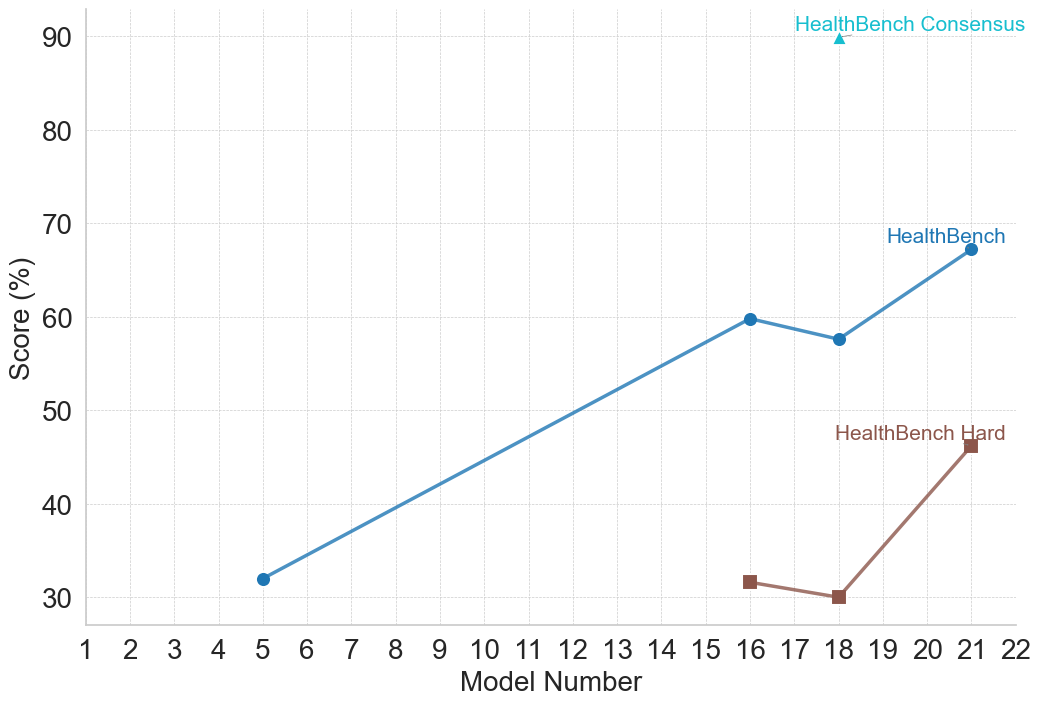

### Components/Axes

* **Chart Type:** Horizontal Stacked Bar Chart.

* **Y-Axis (Vertical):** Lists seven capability categories. From top to bottom:

1. Reasoning with General Knowledge

2. Reading Comprehension and Question Answering

3. Programming and Coding

4. Multimodal Reasoning

5. Mathematical Reasoning

6. LLM

7. Commonsense and Logical Reasoning

* **X-Axis (Horizontal):** Labeled "Percentage of Benchmarks". The scale runs from 0 to 100, with major tick marks at 0, 20, 40, 60, 80, and 100.

* **Legend:** Located in the bottom-right corner of the chart area. It defines the two data series:

* **Green Square:** "Saturated"

* **Red Square:** "Not Saturated"

* **Data Labels:** Each bar segment contains a percentage value. The green "Saturated" segments also include a fraction in parentheses (e.g., "5/7"), indicating the number of saturated benchmarks out of the total benchmarks in that category.

### Detailed Analysis

The chart presents the following data for each category, listed from top to bottom:

1. **Reasoning with General Knowledge**

* **Saturated (Green):** 71.4% (5/7). The green bar extends from 0% to approximately 71.4% on the x-axis.

* **Not Saturated (Red):** 28.6%. The red bar occupies the remainder, from ~71.4% to 100%.

2. **Reading Comprehension and Question Answering**

* **Saturated (Green):** 66.7% (2/3). The green bar extends from 0% to approximately 66.7%.

* **Not Saturated (Red):** 33.3%. The red bar occupies the remainder.

3. **Programming and Coding**

* **Saturated (Green):** 33.3% (3/9). The green bar extends from 0% to approximately 33.3%.

* **Not Saturated (Red):** 66.7%. The red bar is the dominant segment, occupying the majority of the bar.

4. **Multimodal Reasoning**

* **Saturated (Green):** 46.2% (6/13). The green bar extends from 0% to approximately 46.2%.

* **Not Saturated (Red):** 53.8%. The red bar is slightly larger than the green segment.

5. **Mathematical Reasoning**

* **Saturated (Green):** 87.5% (7/8). The green bar is very long, extending from 0% to 87.5%.

* **Not Saturated (Red):** 12.5%. The red segment is a small portion at the end of the bar.

6. **LLM**

* **Saturated (Green):** 23.1% (3/13). The green bar is short, extending from 0% to approximately 23.1%.

* **Not Saturated (Red):** 76.9%. The red bar is the dominant segment, occupying most of the bar.

7. **Commonsense and Logical Reasoning**

* **Saturated (Green):** 100.0% (1/1). The entire bar is green, extending from 0% to 100%.

* **Not Saturated (Red):** 0.0%. No red segment is visible.

### Key Observations

* **Highest Saturation:** "Commonsense and Logical Reasoning" shows 100% saturation, though it is based on only one benchmark (1/1).

* **Lowest Saturation:** "LLM" has the lowest saturation rate at 23.1%.

* **Strong Performance:** "Mathematical Reasoning" (87.5%) and "Reasoning with General Knowledge" (71.4%) also show high saturation rates.

* **Areas for Improvement:** "Programming and Coding" (33.3%) and "LLM" (23.1%) have the lowest saturation rates, indicating these are the most challenging categories where most benchmarks are not yet saturated.

* **Benchmark Count Variation:** The total number of benchmarks per category varies significantly, from 1 ("Commonsense and Logical Reasoning") to 13 ("Multimodal Reasoning" and "LLM"). This affects the statistical weight of each percentage.

### Interpretation

This chart provides a diagnostic snapshot of an AI system's capabilities relative to established benchmarks. "Saturated" likely means the system has reached a performance ceiling or solved the benchmark tasks.

The data suggests the system excels in structured, logical domains like **Commonsense/Logical Reasoning** and **Mathematical Reasoning**, where it has nearly or completely mastered the available tests. It also performs well in **General Knowledge Reasoning**.

Conversely, the system shows significant room for growth in **Programming/Coding** and general **LLM** benchmarks, where over two-thirds of the tasks remain unsaturated. The **Multimodal Reasoning** category sits in the middle, with a near-even split.

The stark contrast between categories highlights the uneven nature of AI capability development. The system's strength in formal logic and math does not directly translate to proficiency in code generation or broad language modeling tasks as measured by these specific benchmarks. The very low benchmark count for "Commonsense and Logical Reasoning" (1/1) is a critical caveat; its 100% score, while positive, is less statistically robust than the high scores in categories with more benchmarks (e.g., Mathematical Reasoning with 8/8). This chart would be essential for guiding future research and development priorities.

</details>

(a) Distribution of benchmarks that models surpassed 80% threshold and those not yet surpassed, grouped by reasoning type.

<details>

<summary>figures/pie_saturation_by_year.png Details</summary>

### Visual Description

\n

## Pie Charts: Yearly Distribution Comparison

### Overview

The image displays two pie charts side-by-side on a white background. The left chart uses a green color palette, and the right chart uses a red color palette. Each chart visualizes the distribution of a dataset across different years, showing both percentage and absolute count (in parentheses) for each year segment.

### Components/Axes

* **Chart Type:** Two pie charts.

* **Left Chart (Green Palette):** Represents data distributed across the years 2016, 2018, 2019, 2021, 2022, 2023, 2024, and 2025.

* **Right Chart (Red Palette):** Represents data distributed across the years 2015, 2023, 2024, and 2025.

* **Labels:** Each segment is labeled with the year, placed outside the pie chart adjacent to its segment.

* **Data Labels:** Inside each segment, the percentage of the total and the absolute count (in parentheses) are displayed in white text.

* **Legend:** There is no separate legend; the year labels serve as the key for each segment.

### Detailed Analysis

#### Left Chart (Green Palette)

This chart has 8 segments. The largest segment is at the bottom.

* **2024:** Darkest green segment, positioned at the bottom (6 o'clock). **29.6% (8)**.

* **2021:** Dark green segment, positioned at the top (12 o'clock). **18.5% (5)**.

* **2023:** Medium-dark green segment, positioned at the right (3 o'clock). **18.5% (5)**.

* **2019:** Medium green segment, positioned at the top-left (10-11 o'clock). **11.1% (3)**.

* **2025:** Medium green segment, positioned at the left (9 o'clock). **11.1% (3)**.

* **2022:** Light green segment, positioned at the top-right (1-2 o'clock). **3.7% (1)**.

* **2018:** Light green segment, positioned at the left (9 o'clock, adjacent to 2025). **3.7% (1)**.

* **2016:** Lightest green segment, positioned at the left (8-9 o'clock). **3.7% (1)**.

**Total Count (Left Chart):** 8 + 5 + 5 + 3 + 3 + 1 + 1 + 1 = **27**.

#### Right Chart (Red Palette)

This chart has 4 segments. The largest segment dominates the bottom half.

* **2025:** Darkest red (maroon) segment, positioned at the bottom (spanning from ~4 o'clock to ~8 o'clock). **60.0% (15)**.

* **2024:** Bright red segment, positioned at the top (spanning from ~10 o'clock to ~2 o'clock). **32.0% (8)**.

* **2023:** Light red (salmon) segment, positioned at the left (9 o'clock). **4.0% (1)**.

* **2015:** Lightest red (peach) segment, positioned at the left (8-9 o'clock, adjacent to 2023). **4.0% (1)**.

**Total Count (Right Chart):** 15 + 8 + 1 + 1 = **25**.

### Key Observations

1. **Concentration of Data:** The right chart shows a heavy concentration in the most recent years, with 2024 and 2025 accounting for 92% of the data (23 out of 25 total). The left chart is more distributed, though 2024 is still the largest single segment.

2. **Year Overlap:** The years 2023, 2024, and 2025 appear in both charts, but with vastly different proportions. For example, 2025 represents 11.1% of the left chart but 60.0% of the right chart.

3. **Color Coding:** The charts use monochromatic color scales (greens and reds) where darker shades correspond to larger segments within each chart.

4. **Total Counts:** The datasets are of similar size (27 vs. 25 items).

### Interpretation

The two pie charts likely represent the composition of two different datasets or categories, broken down by the year of origin or occurrence.

* The **left (green) chart** suggests a dataset with a longer historical tail, containing items from as far back as 2016, but with a clear peak in 2024. The distribution is relatively balanced among the top four years (2021, 2023, 2024, 2025).

* The **right (red) chart** suggests a dataset that is overwhelmingly recent. The year 2025 alone constitutes the majority (60%), and together with 2024, they dominate the set. This could indicate a metric that has surged in the last two years, such as recent sales, new user sign-ups, or current project initiations.

The stark contrast in distribution between the two charts is the primary insight. Without additional context on what the green and red categories represent (e.g., "Product A vs. Product B," "Successes vs. Failures," "Internal vs. External Projects"), the specific meaning is ambiguous. However, the data clearly shows that the "red" category is characterized by extreme recency, while the "green" category has a more established, multi-year history.

</details>

(b) Release years of benchmarks relative to the 80% threshold: left pie shows surpassed benchmarks, right pie shows unsolved benchmarks.

Figure 2: Benchmark saturation dynamics.

This temporal pattern highlights the central dynamic of the saturation cycle: older benchmarks are rapidly mastered and lose discriminative power, while newly introduced benchmarks become the standards for demonstrating progress. Nearly all unsolved benchmarks are recent, highlighting both the accelerating pace of benchmark creation and the difficulty of maintaining evaluations that remain challenging over time. Yet this difficulty seems only temporary. It is highly plausible that within one or two years many of these currently unsolved benchmarks will also be surpassed, at which point model families will shift to alternative or newly designed evaluations to preserve differentiation. Crucially, this pattern reflects the fact that performance gains are often specific to individual benchmarks rather than to the broader reasoning type they are intended to assess. As the analyses indicate, while models often perform consistently and even strongly on benchmarks within a domain, the introduction of a more challenging, novel benchmark frequently leads to a drop in performance. This pattern may arise from the increased difficulty of the new benchmark, or from contamination that inflated performance on earlier benchmarks without truly reflecting generalizable reasoning ability. This situation raises the question of whether what appears as “reasoning ability” is often tied more to benchmark design and prior exposure than to robust mastery of the reasoning type itself. This saturation cycle casts doubt on the long-term evaluation value of benchmarks.

## 5 Discussion: Limitations of Current Benchmarking

Our analysis of three model families demonstrates that benchmark performance has generally increased over time, with newer models achieving higher scores across most reasoning types and benchmarks. However, given that many benchmarks have already been surpassed with high accuracy, we would like to highlight a question originally posed in [25] regarding commonsense reasoning, reframed here for reasoning in general: Have neural language models successfully acquired reasoning, or are we overestimating the true capabilities of machine reasoning? Several studies in the literature show that these models still perform poorly when required to generalize to longer contexts or handle tasks requiring inductive and compositional reasoning [33, 34, 35, 36, 37, 38]. This discrepancy suggests a limitation of current benchmarking practices: improvements in benchmark scores do not necessarily reflect generalizable reasoning ability.

We believe this discrepancy can be reduced by developing more sophisticated, task-specific evaluation metrics that capture intermediate reasoning steps or different modes of error. Additionally, formalizing reasoning for different task types can support these efforts, enabling more structured analyses and clearer assessment of models’ reasoning abilities. Such a formalization enables structured representations of diverse reasoning types and their interrelationships [39, 40, 41], and facilitates the design of layered, targeted evaluation procedures that assess specific reasoning capabilities rather than merely reporting overall accuracy. Furthermore, formal reasoning frameworks can support the development of algorithms that deliver structured feedback to models, guiding the refinement of their reasoning abilities. By integrating formalized reasoning with task-specific evaluations, benchmarking can be conducted in a more targeted and informative manner.

## 6 Limitations

The analysis in our study focuses on 52 benchmarks used by the three model families. Other model families and reasoning-focused models are not fully explored because including them, along with more than two hundred benchmarks identified from other model families and several studies evaluating different types of reasoning in large models, would create a combinatorial explosion of comparisons. This restriction was necessary to maintain the scope of our work on a qualitative evaluation of benchmark design and adoption rather than an exhaustive quantitative analysis of all models and benchmarks. A comprehensive comparison across a wider range of models and benchmarks is left for future work.

## 7 Conclusion

In this work, we analyze 52 benchmarks across three model families, covering multiple reasoning types. Our study reveals the rapid saturation of older benchmarks, selective adoption of new ones, and temporal dynamics that govern the utility of benchmarks in evaluating model performance. While model performance generally improves over time and correlations within reasoning types indicate overlapping evaluation properties, the introduction of more challenging benchmarks generally resets performance, suggesting that apparent reasoning ability is influenced more by extrinsic factors than by mastering the reasoning itself, as supported by other studies. This saturation cycle highlights the limitations of current practices: benchmarks provide only a partial view of model reasoning. Meaningful progress requires formalized reasoning tasks, layered evaluation procedures, and task-specific metrics that go beyond accuracy scores.

## References

- [1] Thomas Liao, Rohan Taori, Deborah Raji, and Ludwig Schmidt. Are we learning yet? a meta review of evaluation failures across machine learning. In J. Vanschoren and S. Yeung, editors, Proceedings of the Neural Information Processing Systems Track on Datasets and Benchmarks, volume 1, 2021.

- [2] Anthropic. Introducing the next generation of claude, March 2024. Accessed: 2025-08-28.

- [3] Anthropic. Claude 3.5 sonnet, June 2024. Accessed: 2025-08-28.

- [4] Anthropic. Introducing claude 4, May 2025. Accessed: 2025-08-28.

- [5] Anthropic. Introducing claude 3.5 haiku, October 2024. Accessed: 2025-08-28.

- [6] Anthropic. Claude 3.7 sonnet and claude code, February 2025. Accessed: 2025-08-28.

- [7] Anthropic. Claude opus 4.1, August 2025. Accessed: 2025-08-28.

- [8] Google DeepMind. Gemini 2.5 flash-lite, June 2025. Accessed: 2025-08-28.

- [9] Gheorghe Comanici, Eric Bieber, Mike Schaekermann, et al. Gemini 2.5: Pushing the frontier with advanced reasoning, multimodality, long context, and next generation agentic capabilities, 2025.

- [10] Google DeepMind. Gemini 2.5: Our most intelligent ai model, March 2025. Accessed: 2025-08-28.

- [11] Gemini Team, Petko Georgiev, Ving Ian Lei, et al. Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context, 2024.

- [12] Gemini Team, Rohan Anil, Sebastian Borgeaud, et al. Gemini: A family of highly capable multimodal models, 2025.

- [13] OpenAI. Openai o1-mini: Advancing cost-efficient reasoning, September 2024. Accessed: 2025-08-28.

- [14] OpenAI. Introducing gpt-4.1 in the api, April 2025. Accessed: 2025-08-28.

- [15] OpenAI. Introducing gpt-4.5, February 2025. Accessed: 2025-08-28.

- [16] OpenAI. gpt-oss-120b & gpt-oss-20b model card, August 2025. Accessed: 2025-08-28.

- [17] OpenAI. Introducing gpt-5, August 2025. Accessed: 2025-08-28.

- [18] OpenAI. Model release notes. Accessed: 2025-08-28.

- [19] OpenAI. Introducing openai o3 and o4-mini, April 2025. Accessed: 2025-08-28.

- [20] OpenAI. Gpt-4o mini: Advancing cost-efficient intelligence, July 2024. Accessed: 2025-08-28.

- [21] OpenAI. Hello gpt-4o, May 2024. Accessed: 2025-08-28.

- [22] OpenAI. Learning to reason with llms, September 2024. Accessed: 2025-08-28.

- [23] Yonatan Bisk, Rowan Zellers, Ronan Le Bras, Jiasen Gao, and Yejin Choi. Piqa: Reasoning about physical commonsense in natural language. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 34, pages 7432–7439, 2020.

- [24] Bill Yuchen Lin, Wangchunshu Zhou, Ming Shen, Pei Zhou, Chandra Bhagavatula, Yejin Choi, and Xiang Ren. CommonGen: A constrained text generation challenge for generative commonsense reasoning. In Trevor Cohn, Yulan He, and Yang Liu, editors, Findings of the Association for Computational Linguistics: EMNLP 2020, pages 1823–1840, Online, November 2020. Association for Computational Linguistics.

- [25] Keisuke Sakaguchi, Ronan Le Bras, Chandra Bhagavatula, and Yejin Choi. Winogrande: an adversarial winograd schema challenge at scale. Commun. ACM, 64(9):99–106, August 2021.

- [26] Alon Talmor, Ori Yoran, Ronan Le Bras, Chandra Bhagavatula, Yoav Goldberg, Yejin Choi, and Jonathan Berant. Commonsenseqa 2.0: Exposing the limits of ai through gamification, 2022.

- [27] Andong Wang, Bo Wu, Sunli Chen, Zhenfang Chen, Haotian Guan, Wei-Ning Lee, Li Erran Li, and Chuang Gan. Sok-bench: A situated video reasoning benchmark with aligned open-world knowledge, 2024.

- [28] Jian Liu, Leyang Cui, Hanmeng Liu, Dandan Huang, Yile Wang, and Yue Zhang. Logiqa: a challenge dataset for machine reading comprehension with logical reasoning. In Proceedings of the Twenty-Ninth International Joint Conference on Artificial Intelligence, IJCAI’20, 2021.

- [29] Weihao Yu, Zihang Jiang, Yanfei Dong, and Jiashi Feng. Reclor: A reading comprehension dataset requiring logical reasoning. In International Conference on Learning Representations, 2020.

- [30] Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric Tang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the math dataset. In J. Vanschoren and S. Yeung, editors, Proceedings of the Neural Information Processing Systems Track on Datasets and Benchmarks, volume 1, 2021.

- [31] Fabian Caba Heilbron, Victor Escorcia, Bernard Ghanem, and Juan Carlos Niebles. Activitynet: A large-scale video benchmark for human activity understanding. In 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pages 961–970, 2015.

- [32] Karttikeya Mangalam, Raiymbek Akshulakov, and Jitendra Malik. Egoschema: A diagnostic benchmark for very long-form video language understanding, 2023.

- [33] Nouha Dziri, Ximing Lu, Melanie Sclar, Xiang Lorraine Li, Liwei Jiang, Bill Yuchen Lin, Peter West, Chandra Bhagavatula, Ronan Le Bras, Jena D. Hwang, Soumya Sanyal, Sean Welleck, Xiang Ren, Allyson Ettinger, Zaid Harchaoui, and Yejin Choi. Faith and fate: limits of transformers on compositionality. In Proceedings of the 37th International Conference on Neural Information Processing Systems, NIPS ’23, Red Hook, NY, USA, 2023. Curran Associates Inc.

- [34] Iman Mirzadeh, Keivan Alizadeh, Hooman Shahrokhi, Oncel Tuzel, Samy Bengio, and Mehrdad Farajtabar. Gsm-symbolic: Understanding the limitations of mathematical reasoning in large language models, 2025.

- [35] Parshin Shojaee, Iman Mirzadeh, Keivan Alizadeh, Maxwell Horton, Samy Bengio, and Mehrdad Farajtabar. The illusion of thinking: Understanding the strengths and limitations of reasoning models via the lens of problem complexity, 2025.

- [36] Jackson Petty, Michael Y. Hu, Wentao Wang, Shauli Ravfogel, William Merrill, and Tal Linzen. Relic: Evaluating compositional instruction following via language recognition, 2025.

- [37] S. Bedi, Y. Jiang, P. Chung, S. Koyejo, and N. Shah. Fidelity of medical reasoning in large language models. JAMA Network Open, 8(8):e2526021, 2025.

- [38] Karthik Valmeekam, Kaya Stechly, Atharva Gundawar, and Subbarao Kambhampati. A systematic evaluation of the planning and scheduling abilities of the reasoning model o1. Transactions on Machine Learning Research, 2025.

- [39] P. N. Johnson-Laird. Mental models: towards a cognitive science of language, inference, and consciousness. Harvard University Press, USA, 1986.

- [40] Patrick Blackburn and Johannes Bos. Representation and Inference for Natural Language: A First Course in Computational Semantics. Center for the Study of Language and Information, Stanford, Calif., 2005.

- [41] Brenden M. Lake, Tomer D. Ullman, Joshua B. Tenenbaum, and Samuel J. Gershman. Building machines that learn and think like people. Behavioral and Brain Sciences, 40:e253, 2017.

- [42] Rowan Zellers, Ari Holtzman, Yonatan Bisk, Ali Farhadi, and Yejin Choi. HellaSwag: Can a machine really finish your sentence? In Anna Korhonen, David Traum, and Lluís Màrquez, editors, Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, pages 4791–4800, Florence, Italy, July 2019. Association for Computational Linguistics.

- [43] Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding, 2021.

- [44] Mirac Suzgun, Nathan Scales, Nathanael Schärli, Sebastian Gehrmann, Yi Tay, Hyung Won Chung, Aakanksha Chowdhery, Quoc Le, Ed Chi, Denny Zhou, and Jason Wei. Challenging BIG-bench tasks and whether chain-of-thought can solve them. In Anna Rogers, Jordan Boyd-Graber, and Naoaki Okazaki, editors, Findings of the Association for Computational Linguistics: ACL 2023, pages 13003–13051, Toronto, Canada, July 2023. Association for Computational Linguistics.

- [45] Dan Hendrycks, Collin Burns, Steven Basart, Andy Zou, Mantas Mazeika, Dawn Song, and Jacob Steinhardt. Measuring massive multitask language understanding, 2021.

- [46] Long Phan, Alice Gatti, Ziwen Han, et al. Humanity’s last exam, 2025.

- [47] Shivalika Singh, Angelika Romanou, Clémentine Fourrier, David Ifeoluwa Adelani, Jian Gang Ngui, Daniel Vila-Suero, Peerat Limkonchotiwat, Kelly Marchisio, Wei Qi Leong, Yosephine Susanto, Raymond Ng, Shayne Longpre, Sebastian Ruder, Wei-Yin Ko, Antoine Bosselut, Alice Oh, Andre Martins, Leshem Choshen, Daphne Ippolito, Enzo Ferrante, Marzieh Fadaee, Beyza Ermis, and Sara Hooker. Global MMLU: Understanding and addressing cultural and linguistic biases in multilingual evaluation. In Wanxiang Che, Joyce Nabende, Ekaterina Shutova, and Mohammad Taher Pilehvar, editors, Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 18761–18799, Vienna, Austria, July 2025. Association for Computational Linguistics.

- [48] David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R. Bowman. Gpqa: A graduate-level google-proof q&a benchmark, 2023.

- [49] Yubo Wang, Xueguang Ma, Ge Zhang, Yuansheng Ni, Abhranil Chandra, Shiguang Guo, Weiming Ren, Aaran Arulraj, Xuan He, Ziyan Jiang, Tianle Li, Max Ku, Kai Wang, Alex Zhuang, Rongqi Fan, Xiang Yue, and Wenhu Chen. Mmlu-pro: A more robust and challenging multi-task language understanding benchmark, 2024.

- [50] Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? try arc, the ai2 reasoning challenge, 2018.

- [51] Omer Goldman, Uri Shaham, Dan Malkin, Sivan Eiger, Avinatan Hassidim, Yossi Matias, Joshua Maynez, Adi Mayrav Gilady, Jason Riesa, Shruti Rijhwani, Laura Rimell, Idan Szpektor, Reut Tsarfaty, and Matan Eyal. Eclektic: a novel challenge set for evaluation of cross-lingual knowledge transfer, 2025.

- [52] Dheeru Dua, Yizhong Wang, Pradeep Dasigi, Gabriel Stanovsky, Sameer Singh, and Matt Gardner. DROP: A reading comprehension benchmark requiring discrete reasoning over paragraphs. In Jill Burstein, Christy Doran, and Thamar Solorio, editors, Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pages 2368–2378, Minneapolis, Minnesota, June 2019. Association for Computational Linguistics.

- [53] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, Christopher Hesse, and John Schulman. Training verifiers to solve math word problems, 2021.

- [54] Freda Shi, Mirac Suzgun, Markus Freitag, Xuezhi Wang, Suraj Srivats, Soroush Vosoughi, Hyung Won Chung, Yi Tay, Sebastian Ruder, Denny Zhou, Dipanjan Das, and Jason Wei. Language models are multilingual chain-of-thought reasoners, 2022.

- [55] Pan Lu, Hritik Bansal, Tony Xia, Jiacheng Liu, Chunyuan Li, Hannaneh Hajishirzi, Hao Cheng, Kai-Wei Chang, Michel Galley, and Jianfeng Gao. Mathvista: Evaluating mathematical reasoning of foundation models in visual contexts, 2024.

- [56] Elliot Glazer, Ege Erdil, Tamay Besiroglu, Diego Chicharro, Evan Chen, Alex Gunning, Caroline Falkman Olsson, Jean-Stanislas Denain, Anson Ho, Emily de Oliveira Santos, Olli Järviniemi, Matthew Barnett, Robert Sandler, Matej Vrzala, Jaime Sevilla, Qiuyu Ren, Elizabeth Pratt, Lionel Levine, Grant Barkley, Natalie Stewart, Bogdan Grechuk, Tetiana Grechuk, Shreepranav Varma Enugandla, and Mark Wildon. Frontiermath: A benchmark for evaluating advanced mathematical reasoning in ai, 2024.

- [57] Xiang Yue, Yuansheng Ni, Kai Zhang, Tianyu Zheng, Ruoqi Liu, Ge Zhang, Samuel Stevens, Dongfu Jiang, Weiming Ren, Yuxuan Sun, Cong Wei, Botao Yu, Ruibin Yuan, Renliang Sun, Ming Yin, Boyuan Zheng, Zhenzhu Yang, Yibo Liu, Wenhao Huang, Huan Sun, Yu Su, and Wenhu Chen. Mmmu: A massive multi-discipline multimodal understanding and reasoning benchmark for expert agi, 2024.

- [58] Aniruddha Kembhavi, Mike Salvato, Eric Kolve, Minjoon Seo, Hannaneh Hajishirzi, and Ali Farhadi. A diagram is worth a dozen images, 2016.

- [59] Ahmed Masry, Do Xuan Long, Jia Qing Tan, Shafiq Joty, and Enamul Hoque. ChartQA: A benchmark for question answering about charts with visual and logical reasoning. In Smaranda Muresan, Preslav Nakov, and Aline Villavicencio, editors, Findings of the Association for Computational Linguistics: ACL 2022, pages 2263–2279, Dublin, Ireland, May 2022. Association for Computational Linguistics.

- [60] Minesh Mathew, Dimosthenis Karatzas, and C. V. Jawahar. Docvqa: A dataset for vqa on document images, 2021.

- [61] Amanpreet Singh, Vivek Natarajan, Meet Shah, Yu Jiang, Xinlei Chen, Dhruv Batra, Devi Parikh, and Marcus Rohrbach. Towards vqa models that can read, 2019.

- [62] Kairui Hu, Penghao Wu, Fanyi Pu, Wang Xiao, Yuanhan Zhang, Xiang Yue, Bo Li, and Ziwei Liu. Video-mmmu: Evaluating knowledge acquisition from multi-discipline professional videos, 2025.

- [63] Piotr Padlewski, Max Bain, Matthew Henderson, Zhongkai Zhu, Nishant Relan, Hai Pham, Donovan Ong, Kaloyan Aleksiev, Aitor Ormazabal, Samuel Phua, Ethan Yeo, Eugenie Lamprecht, Qi Liu, Yuqi Wang, Eric Chen, Deyu Fu, Lei Li, Che Zheng, Cyprien de Masson d’Autume, Dani Yogatama, Mikel Artetxe, and Yi Tay. Vibe-eval: A hard evaluation suite for measuring progress of multimodal language models, 2024.

- [64] Jonathan Roberts, Mohammad Reza Taesiri, Ansh Sharma, Akash Gupta, Samuel Roberts, Ioana Croitoru, Simion-Vlad Bogolin, Jialu Tang, Florian Langer, Vyas Raina, Vatsal Raina, Hanyi Xiong, Vishaal Udandarao, Jingyi Lu, Shiyang Chen, Sam Purkis, Tianshuo Yan, Wenye Lin, Gyungin Shin, Qiaochu Yang, Anh Totti Nguyen, David I. Atkinson, Aaditya Baranwal, Alexandru Coca, Mikah Dang, Sebastian Dziadzio, Jakob D. Kunz, Kaiqu Liang, Alexander Lo, Brian Pulfer, Steven Walton, Charig Yang, Kai Han, and Samuel Albanie. Zerobench: An impossible visual benchmark for contemporary large multimodal models, 2025.

- [65] Zirui Wang, Mengzhou Xia, Luxi He, Howard Chen, Yitao Liu, Richard Zhu, Kaiqu Liang, Xindi Wu, Haotian Liu, Sadhika Malladi, Alexis Chevalier, Sanjeev Arora, and Danqi Chen. Charxiv: Charting gaps in realistic chart understanding in multimodal llms. In A. Globerson, L. Mackey, D. Belgrave, A. Fan, U. Paquet, J. Tomczak, and C. Zhang, editors, Advances in Neural Information Processing Systems, volume 37, pages 113569–113697. Curran Associates, Inc., 2024.

- [66] Xiang Yue, Tianyu Zheng, Yuansheng Ni, Yubo Wang, Kai Zhang, Shengbang Tong, Yuxuan Sun, Botao Yu, Ge Zhang, Huan Sun, Yu Su, Wenhu Chen, and Graham Neubig. Mmmu-pro: A more robust multi-discipline multimodal understanding benchmark, 2025.

- [67] Google DeepMind. Gemini robotics: Bringing ai into the physical world, 2025. Accessed: 2025-08-29.

- [68] Carlos E. Jimenez, John Yang, Alexander Wettig, Shunyu Yao, Kexin Pei, Ofir Press, and Karthik Narasimhan. Swe-bench: Can language models resolve real-world github issues?, 2024.

- [69] Stanford University and Laude Institute. Terminal-bench: A benchmark for ai agents in terminal environments, 2025. Accessed: 2025-08-29.

- [70] Mark Chen, Jerry Tworek, Heewoo Jun, Qiming Yuan, Henrique Ponde de Oliveira Pinto, Jared Kaplan, Harri Edwards, Yuri Burda, Nicholas Joseph, Greg Brockman, Alex Ray, Raul Puri, Gretchen Krueger, Michael Petrov, Heidy Khlaaf, Girish Sastry, Pamela Mishkin, Brooke Chan, Scott Gray, Nick Ryder, Mikhail Pavlov, Alethea Power, Lukasz Kaiser, Mohammad Bavarian, Clemens Winter, Philippe Tillet, Felipe Petroski Such, Dave Cummings, Matthias Plappert, Fotios Chantzis, Elizabeth Barnes, Ariel Herbert-Voss, William Hebgen Guss, Alex Nichol, Alex Paino, Nikolas Tezak, Jie Tang, Igor Babuschkin, Suchir Balaji, Shantanu Jain, William Saunders, Christopher Hesse, Andrew N. Carr, Jan Leike, Josh Achiam, Vedant Misra, Evan Morikawa, Alec Radford, Matthew Knight, Miles Brundage, Mira Murati, Katie Mayer, Peter Welinder, Bob McGrew, Dario Amodei, Sam McCandlish, Ilya Sutskever, and Wojciech Zaremba. Evaluating large language models trained on code, 2021.

- [71] Naman Jain, King Han, Alex Gu, Wen-Ding Li, Fanjia Yan, Tianjun Zhang, Sida Wang, Armando Solar-Lezama, Koushik Sen, and Ion Stoica. Livecodebench: Holistic and contamination free evaluation of large language models for code, 2024.

- [72] Aider. o1 tops aider’s new polyglot leaderboard, 2024. Accessed: 2025-08-29.

- [73] Samuel Miserendino, Michele Wang, Tejal Patwardhan, and Johannes Heidecke. Swe-lancer: Can frontier llms earn $1 million from real-world freelance software engineering?, 2025.

- [74] Shunyu Yao, Noah Shinn, Pedram Razavi, and Karthik Narasimhan. $τ$ -bench: A benchmark for tool-agent-user interaction in real-world domains, 2024.

- [75] Victor Barres, Honghua Dong, Soham Ray, Xujie Si, and Karthik Narasimhan. $τ^2$ -bench: Evaluating conversational agents in a dual-control environment, 2025.

- [76] Shunyu Yao, Howard Chen, Austin W. Hanjie, Runzhe Yang, and Karthik Narasimhan. Collie: Systematic construction of constrained text generation tasks, 2023.

- [77] Jason Wei, Nguyen Karina, Hyung Won Chung, Yunxin Joy Jiao, Spencer Papay, Amelia Glaese, John Schulman, and William Fedus. Measuring short-form factuality in large language models, 2024.

- [78] Alon Jacovi, Andrew Wang, Chris Alberti, Connie Tao, Jon Lipovetz, Kate Olszewska, Lukas Haas, Michelle Liu, Nate Keating, Adam Bloniarz, Carl Saroufim, Corey Fry, Dror Marcus, Doron Kukliansky, Gaurav Singh Tomar, James Swirhun, Jinwei Xing, Lily Wang, Madhu Gurumurthy, Michael Aaron, Moran Ambar, Rachana Fellinger, Rui Wang, Zizhao Zhang, Sasha Goldshtein, and Dipanjan Das. The facts grounding leaderboard: Benchmarking llms’ ability to ground responses to long-form input, 2025.

- [79] Jason Wei, Zhiqing Sun, Spencer Papay, Scott McKinney, Jeffrey Han, Isa Fulford, Hyung Won Chung, Alex Tachard Passos, William Fedus, and Amelia Glaese. Browsecomp: A simple yet challenging benchmark for browsing agents, 2025.

- [80] Lucen Zhong, Zhengxiao Du, Xiaohan Zhang, Haiyi Hu, and Jie Tang. Complexfuncbench: Exploring multi-step and constrained function calling under long-context scenario, 2025.

- [81] Jeffrey Zhou, Tianjian Lu, Swaroop Mishra, Siddhartha Brahma, Sujoy Basu, Yi Luan, Denny Zhou, and Le Hou. Instruction-following evaluation for large language models, 2023.

- [82] Yun He, Di Jin, Chaoqi Wang, Chloe Bi, Karishma Mandyam, Hejia Zhang, Chen Zhu, Ning Li, Tengyu Xu, Hongjiang Lv, Shruti Bhosale, Chenguang Zhu, Karthik Abinav Sankararaman, Eryk Helenowski, Melanie Kambadur, Aditya Tayade, Hao Ma, Han Fang, and Sinong Wang. Multi-if: Benchmarking llms on multi-turn and multilingual instructions following, 2024.

- [83] Jinhyuk Lee, Anthony Chen, Zhuyun Dai, Dheeru Dua, Devendra Singh Sachan, Michael Boratko, Yi Luan, Sébastien M. R. Arnold, Vincent Perot, Siddharth Dalmia, Hexiang Hu, Xudong Lin, Panupong Pasupat, Aida Amini, Jeremy R. Cole, Sebastian Riedel, Iftekhar Naim, Ming-Wei Chang, and Kelvin Guu. Can long-context language models subsume retrieval, rag, sql, and more?, 2024.

- [84] Kaustubh Deshpande, Ved Sirdeshmukh, Johannes Baptist Mols, Lifeng Jin, Ed-Yeremai Hernandez-Cardona, Dean Lee, Jeremy Kritz, Willow E. Primack, Summer Yue, and Chen Xing. MultiChallenge: A realistic multi-turn conversation evaluation benchmark challenging to frontier LLMs. In Wanxiang Che, Joyce Nabende, Ekaterina Shutova, and Mohammad Taher Pilehvar, editors, Findings of the Association for Computational Linguistics: ACL 2025, pages 18632–18702, Vienna, Austria, July 2025. Association for Computational Linguistics.

- [85] Rahul K. Arora, Jason Wei, Rebecca Soskin Hicks, Preston Bowman, Joaquin Quiñonero-Candela, Foivos Tsimpourlas, Michael Sharman, Meghan Shah, Andrea Vallone, Alex Beutel, Johannes Heidecke, and Karan Singhal. Healthbench: Evaluating large language models towards improved human health, 2025.

## Appendix A Reasoning Benchmarks

Table 1: Taxonomy of benchmarks used in this study.

| HellaSwag [42] | Commonsense and Logical Reasoning | 2019 | Multiple-choice task: choose the most plausible sentence continuation. |

| --- | --- | --- | --- |

| MMLU [43] | Reasoning with General Knowledge | 2021 | Multiple-choice task: answer questions across 57 domains to test knowledge and problem-solving. |

| Big-Bench-Hard [44] | Reasoning with General Knowledge | 2023 | Open-generation task: solve difficult BIG-Bench problems testing multi-step reasoning and problem-solving. |

| MMMLU [45] | Reasoning with General Knowledge | 2024 | Multiple-choice task: answer 57 domain questions translated into 14 languages to test multilingual knowledge and problem-solving. |

| Humanity’s Last Exam [46] | Reasoning with General Knowledge | 2025 | Multi-modal task: answer closed-ended questions across many subjects to test verifiable knowledge. |

| Global MMLU (Lite) [47] | Reasoning with General Knowledge | 2025 | Multiple-choice task: answer 42-language questions with culturally sensitive labeling to test equitable multilingual knowledge. |

| GPQA Diamond [48] | Reasoning with General Knowledge | 2023 | Multiple-choice task: answer 448 expert-level science questions in biology, physics, and chemistry that are Google-proof and highly challenging. |

| MMLU Pro [49] | Reasoning with General Knowledge | 2024 | Multiple-choice task: extended from MMLU, answer more challenging reasoning questions with 10 options across diverse domains. |

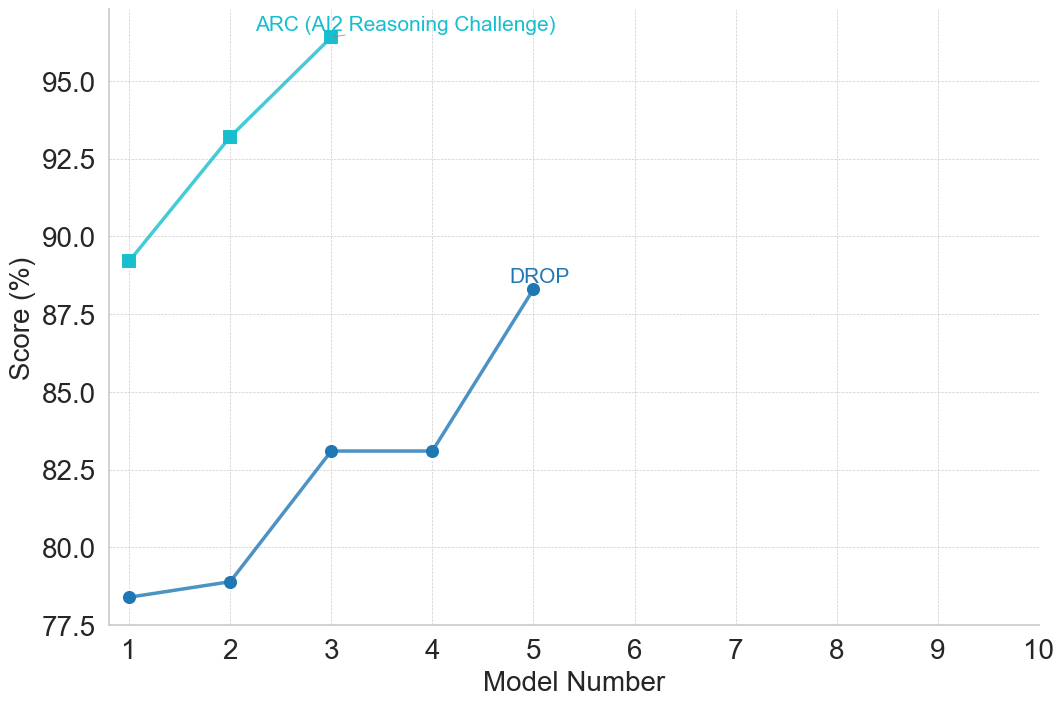

| ARC (AI2 Reasoning Challenge) [50] | Reading Comprehension and Question Answering | 2018 | Multiple-choice task: answer grade-school science questions requiring advanced knowledge and reasoning beyond simple retrieval. |

| ECLeKTic [51] | Reading Comprehension and Question Answering | 2025 | Closed-book QA task: answer 12-language questions to test cross-lingual knowledge transfer. |

| DROP [52] | Reading Comprehension and Question Answering | 2019 | Open-ended QA task: answer 96k English questions requiring discrete reasoning over paragraph content. |

| GSM8K [53] | Mathematical Reasoning | 2021 | Open-ended QA task: solve grade-school problems requiring multi-step mathematical reasoning. |

| MATH [30] | Mathematical Reasoning | 2021 | Open-ended QA: solve 12,500 challenging competition problems with step-by-step solutions to test advanced mathematical reasoning. |

| MATH 500 [30] | Mathematical Reasoning | 2024 | Open-ended QA: Challenging subset of MATH benchmark. |

| MGSM [54] | Mathematical Reasoning | 2023 | Open-ended QA: solve 250 GSM8K problems translated into 10 languages. |

| MathVista [55] | Mathematical Reasoning | 2024 | Open-ended multimodal QA: solve 6,141 math problems requiring visual and compositional reasoning. |

| AIME 2024 | Mathematical Reasoning | 2024 | Open-ended QA: solve challenging competition-level mathematics problems. |

| AIME 2025 | Mathematical Reasoning | 2025 | Open-ended QA: solve challenging competition-level mathematics problems. |

| FrontierMath [56] | Mathematical Reasoning | 2024 | Open-ended QA: tests advanced mathematical reasoning across diverse and expert-level domains, requiring multi-step problem solving and deep mathematical knowledge. |

| MMMU [57] | Multimodal Reasoning | 2024 | Question answering task: multimodal multiple-choice and open-ended questions across 30 subjects requiring advanced reasoning and domain-specific knowledge. |

| AI2D [58] | Multimodal Reasoning | 2016 | Open-ended QA: multimodal questions with 5,000 diagrams and 15,000 Q&A pairs requiring diagram structure understanding and reasoning. |

| ChartQA [59] | Multimodal Reasoning | 2022 | Open-ended QA: multimodal questions with 32.7K chart-based problems requiring visual and logical reasoning. |

| EgoSchema [32] | Multimodal Reasoning | 2023 | Multiple-choice QA: multimodal questions with 5,000 long-form video clips requiring understanding of human activity and temporal reasoning. |

| DocVQA [60] | Multimodal Reasoning | 2021 | Open-ended QA: multimodal questions with 50,000 document images requiring reading and interpreting document layout and structure. |

| TextVQA [61] | Multimodal Reasoning | 2019 | Open-ended QA: multimodal questions with 45,336 images requiring reading and reasoning about embedded text. |

| VideoMMMU [62] | Multimodal Reasoning | 2025 | Open-ended QA: multimodal questions with 300 expert-level videos and 900 Q&A pairs assessing knowledge acquisition through perception, comprehension, and adaptation. |

| Vibe-Eval [63] | Multimodal Reasoning | 2024 | Open-ended QA: multimodal questions, testing visual understanding and multimodal chat capabilities. |

| ZeroBench [64] | Multimodal Reasoning | 2025 | Open-ended QA: multimodal questions with 434 visual reasoning problems designed to be impossible for current LMMs. |

| CharXiv [65] | Multimodal Reasoning | 2024 | Open-ended QA: multimodal questions with 2,323 charts requiring descriptive analysis and complex reasoning. |

| MMMU Pro [66] | Multimodal Reasoning | 2025 | QA task: multimodal multiple-choice and open-ended questions, extended from MMMU, testing integrated visual and textual reasoning. |

| ActivityNet [31] | Multimodal Reasoning | 2015 | Multiple-choice and open-ended QA: evaluates recognition and understanding of complex human activities in untrimmed videos, testing visual perception and temporal reasoning. |

| ERQA [67] | Multimodal Reasoning | 2025 | Multiple-choice QA: evaluates embodied reasoning and spatial understanding in real-world scenarios, requiring models to integrate text and visual inputs to select the correct answer. |

| SWE-bench Verified [68] | Programming and Coding | 2024 | Open-ended QA: answer 2,294 software engineering problems requiring multi-file code edits and complex reasoning. |

| Terminal-bench [69] | Programming and Coding | 2025 | Open-ended QA: answer complex tasks in terminal environments using text-based commands and reasoning. |

| HumanEval [70] | Programming and Coding | 2021 | Open-ended QA: answer Python programming problems from docstrings requiring functional code synthesis. |

| LiveCode Bench [71] | Programming and Coding | 2025 | Open-ended QA: answer 600+ coding problems from contests, testing generation, self-repair, execution, and test prediction. |

| Aider Polygot [72] | Programming and Coding | 2024 | Open-ended QA: answer 225 difficult coding problems in C++, Go, Java, JavaScript, Python, and Rust. |

| SWE-Lancer [73] | Programming and Coding | 2025 | Open-ended QA: answer 1,400 freelance software engineering tasks, including implementation and managerial decisions, with real-world evaluation. |

| SWE-Lancer Diamond [73] | Programming and Coding | 2025 | Open-ended QA: answer tasks from the public SWE-Lancer Diamond split, including implementation and managerial software engineering problems. |

| TAU-bench [74] | Tool Use – LLM | 2024 | Open-ended QA: tests reasoning, consistency, and rule-following in dynamic, tool-assisted human-agent interactions. |

| TAU2-bench [75] | Tool Use – LLM | 2025 | Open-ended QA: tests multi-turn reasoning, coordination, and communication in dual-control environments where both agent and user act with tools. |

| COLLIE [76] | Constrained Text Generation – LLM | 2023 | Open-ended QA: answer 2,080 prompts requiring constrained text generation with compositional, grammar-based, and reasoning challenges. |

| SimpleQA [77] | Factuality – LLM | 2024 | Factual QA benchmark designed to test factual accuracy and knowledge calibration. |

| FACTS Grounding [78] | Factuality – LLM | 2024 | Open-ended QA: answer questions requiring LLMs to generate factually accurate and well-grounded responses from provided source material. |

| BrowseComp [79] | Factuality – LLM | 2025 | Open-ended QA: answer 1,266 questions by persistently navigating the internet to find hard-to-locate information. |

| ComplexFunc Bench [80] | Tool Use – LLM | 2025 | Open-ended QA: answer complex function-calling tasks in five real-world scenarios requiring multi-step reasoning, parameter management, and long-context handling. |

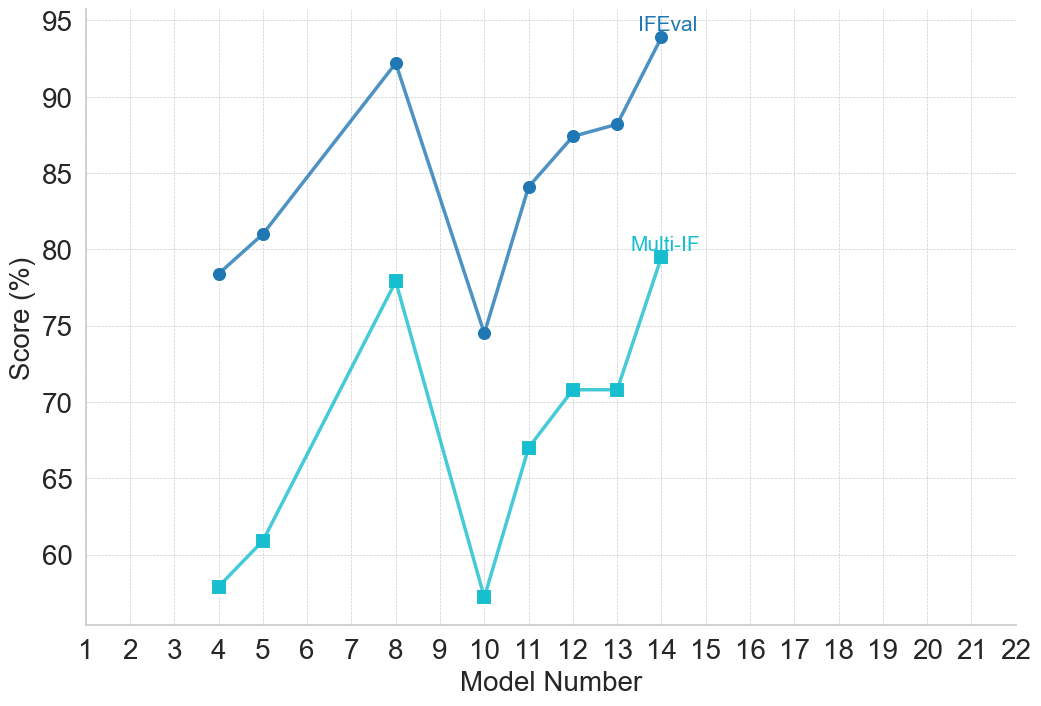

| IFEval [81] | Instruction Following – LLM | 2023 | Open-ended QA: answer 500 prompts requiring LLMs to follow verifiable natural language instructions. |

| Multi-IF [82] | Instruction Following – LLM | 2024 | Open-ended QA: answer 4,501 multilingual multi-turn prompts requiring accurate instruction-following across languages and conversation turns. |

| LOFT [83] | Long-Context – LLM | 2024 | Open-ended QA: answer real-world tasks requiring reasoning and in-context retrieval over millions of tokens. |

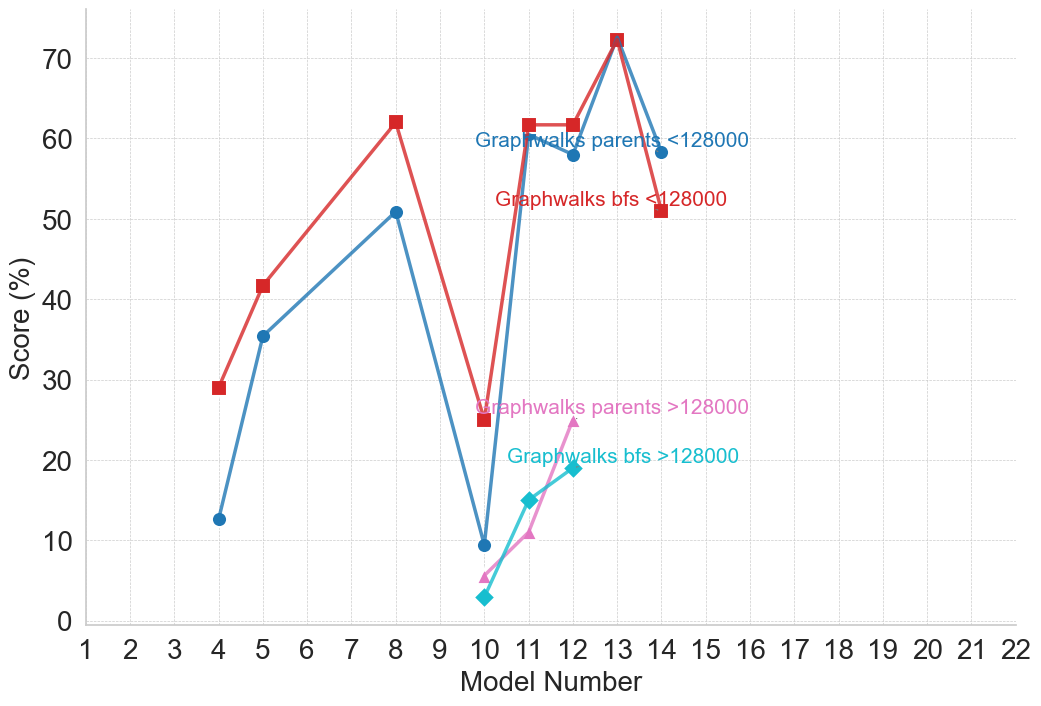

| Graphwalks [14] | Long-Context – LLM | 2025 | Open-ended QA: perform multi-hop reasoning across a graph of millions of tokens to answer questions requiring breadth-first traversal. |

| Multi Challenge [84] | Multi-turn Conversation – LLM | 2025 | Open-ended QA: answer multi-turn conversation prompts requiring instruction-following, context management, and in-context reasoning. |

| HealthBench [85] | Safety – LLM | 2025 | Open-ended QA: evaluates LLMs on multi-turn healthcare conversations, requiring factual reasoning, safety awareness, and context-sensitive decision-making across diverse medical contexts. |

## Appendix B Performance of Models

<details>

<summary>figures/claude_2_plots/claude_performance_Commonsense_and_Logical_Reasoning.png Details</summary>

### Visual Description

## Line Chart: Model Performance on HellaSwag Benchmark

### Overview

The image displays a line chart plotting the performance scores of three sequential models on the HellaSwag benchmark. The chart shows a clear, steep upward trend in scores across the first three model iterations. The data series is labeled "HellaSwag," indicating the specific evaluation dataset used.

### Components/Axes

* **Chart Type:** Line chart with data points marked by filled blue circles.

* **X-Axis (Horizontal):**

* **Label:** "Model Number"

* **Scale:** Linear, integer values from 1 to 10.

* **Markers:** Major ticks and labels at every integer from 1 to 10.

* **Y-Axis (Vertical):**

* **Label:** "Score (%)"

* **Scale:** Linear, percentage values.

* **Range:** Displayed from 86% to 94%, with major ticks and labels at 86, 88, 90, 92, and 94.

* **Data Series:**

* **Label:** "HellaSwag" (text label positioned near the top data point).

* **Color:** Medium blue (approximately #4A90D9).

* **Style:** Solid line connecting three data points.

* **Grid:** Light gray, dashed horizontal and vertical grid lines are present.

### Detailed Analysis

The chart contains data for only the first three model numbers. The line and data points are positioned as follows:

1. **Model Number 1:**

* **Position:** Bottom-left of the plotted data.

* **Score:** 86% (the point sits exactly on the 86% grid line).

* **Trend Start:** This is the baseline score.

2. **Model Number 2:**

* **Position:** Center of the plotted data.

* **Score:** 89% (the point is positioned exactly halfway between the 88% and 90% grid lines).

* **Trend:** The line slopes upward from Model 1 to Model 2, indicating a +3 percentage point improvement.

3. **Model Number 3:**

* **Position:** Top-right of the plotted data.

* **Score:** 95% (the point is positioned above the 94% grid line. Based on the axis scaling, the value is estimated to be 95%).

* **Trend:** The line slopes upward steeply from Model 2 to Model 3, indicating a +6 percentage point improvement. The label "HellaSwag" is placed just above and to the right of this data point.

**Spatial Grounding:** The "HellaSwag" label is located in the top-center area of the chart, directly associated with the highest data point (Model 3, 95%). The data series uses a single, consistent blue color for both the line and the points.

### Key Observations

* **Steep Positive Trend:** The performance improves dramatically with each model iteration. The rate of improvement accelerates, with the gain from Model 2 to 3 (+6%) being double the gain from Model 1 to 2 (+3%).

* **Limited Data Range:** Data is only provided for Model Numbers 1, 2, and 3. The x-axis extends to Model Number 10, but no data is plotted for models 4 through 10, leaving their performance unknown.

* **High Final Score:** The score for Model 3 (95%) is very high, suggesting near-ceiling performance on this particular benchmark.

* **Chart Simplicity:** The chart is minimal, containing only one data series without a separate legend box; the series is identified by a direct label.

### Interpretation

This chart demonstrates a strong, positive correlation between model iteration number and performance on the HellaSwag benchmark, which tests commonsense reasoning. The data suggests that successive versions of the model (1 → 2 → 3) have made significant and accelerating progress on this specific task.

The most notable insight is the non-linear improvement. The jump from 89% to 95% between the second and third models is particularly substantial, indicating a potential breakthrough or the compounding effect of architectural or training data improvements. The absence of data beyond Model 3 creates an open question: does this trend of rapid improvement continue, plateau, or reverse for later models? The empty axis space from 4 to 10 visually emphasizes this unknown. The high final score of 95% implies that further gains on this benchmark may become increasingly difficult, potentially approaching the limit of what the benchmark can measure.

</details>

(a) Commonsense and Logical Reasoning

<details>

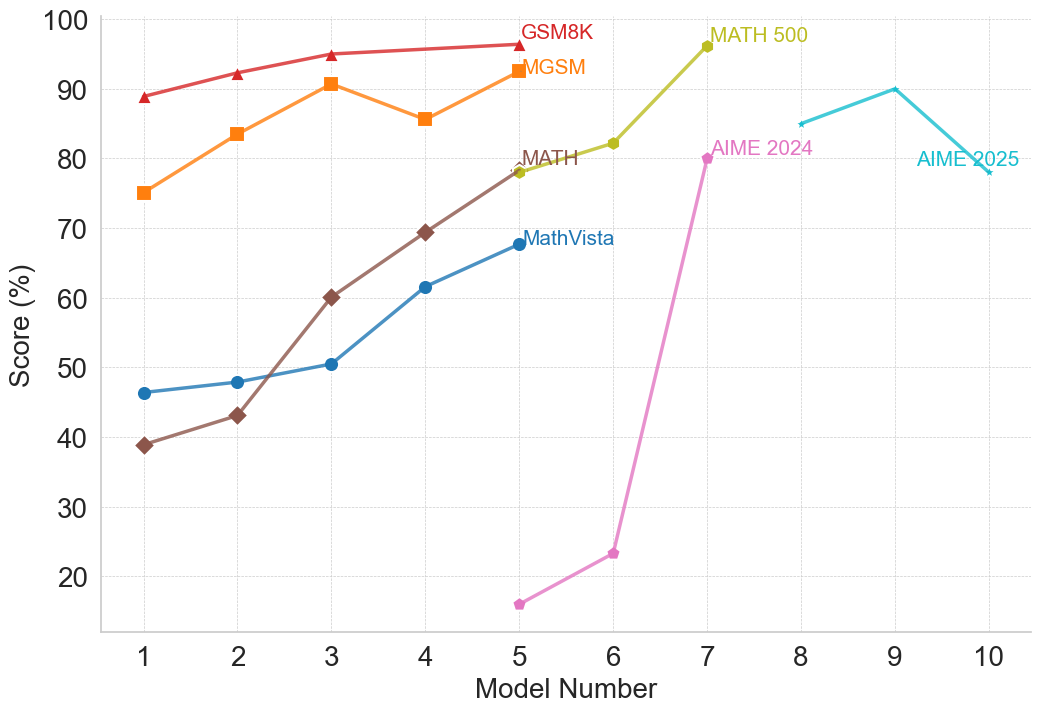

<summary>figures/claude_2_plots/claude_performance_Mathematical_Reasoning.png Details</summary>

### Visual Description

## Multi-Line Chart: Model Performance Across Mathematical Benchmarks

### Overview

The image is a multi-line chart plotting the performance scores (in percentage) of ten different models (labeled 1 through 10) across seven distinct mathematical reasoning benchmarks. Each benchmark is represented by a uniquely colored line with specific markers. The chart illustrates how model performance varies and generally improves across the sequence of models for most tasks.

### Components/Axes

* **X-Axis:** Labeled "Model Number". It is a categorical axis with discrete integer markers from 1 to 10.

* **Y-Axis:** Labeled "Score (%)". It is a linear scale ranging from 0 to 100, with major gridlines at intervals of 10%.

* **Legend/Series Labels:** The legend is embedded directly within the chart area, with labels placed near the end of their respective lines. The series are:

1. **GSM8K:** Red line with upward-pointing triangle markers.

2. **MGSM:** Orange line with square markers.

3. **MATH:** Brown line with diamond markers.

4. **MathVista:** Blue line with circle markers.

5. **MATH 500:** Yellow-green line with circle markers.

6. **AIME 2024:** Pink line with circle markers.

7. **AIME 2025:** Cyan line with circle markers.

### Detailed Analysis

**Data Series Trends and Approximate Values:**

1. **GSM8K (Red, Triangles):**

* **Trend:** Consistently high and slowly increasing.

* **Data Points:** Model 1: ~89%, Model 2: ~92%, Model 3: ~95%, Model 4: ~96%, Model 5: ~97%.

2. **MGSM (Orange, Squares):**

* **Trend:** Generally upward with a notable dip at Model 4.

* **Data Points:** Model 1: ~75%, Model 2: ~84%, Model 3: ~91%, Model 4: ~86%, Model 5: ~93%.

3. **MATH (Brown, Diamonds):**

* **Trend:** Strong, steady upward slope.

* **Data Points:** Model 1: ~39%, Model 2: ~43%, Model 3: ~60%, Model 4: ~69%, Model 5: ~78%.

4. **MathVista (Blue, Circles):**

* **Trend:** Steady, moderate upward slope.

* **Data Points:** Model 1: ~46%, Model 2: ~48%, Model 3: ~50%, Model 4: ~61%, Model 5: ~68%.

5. **MATH 500 (Yellow-Green, Circles):**

* **Trend:** Sharp increase between Model 6 and Model 7.

* **Data Points:** Model 6: ~82%, Model 7: ~96%. (Data only present for these two models).

6. **AIME 2024 (Pink, Circles):**

* **Trend:** Very sharp, dramatic increase from a low base.

* **Data Points:** Model 5: ~16%, Model 6: ~23%, Model 7: ~80%.

7. **AIME 2025 (Cyan, Circles):**

* **Trend:** Increases to a peak at Model 9, then declines.

* **Data Points:** Model 8: ~85%, Model 9: ~90%, Model 10: ~78%.

### Key Observations

* **Performance Hierarchy:** For the models where data is available (Models 1-5), GSM8K and MGSM consistently yield the highest scores, while MATH and MathVista start lower but show significant improvement.

* **Dramatic Improvements:** The most striking improvements are seen in the AIME 2024 series (from ~23% at Model 6 to ~80% at Model 7) and the MATH 500 series (from ~82% to ~96% between Models 6 and 7).

* **Non-Linear Progression:** Performance does not always improve monotonically. MGSM shows a dip at Model 4, and AIME 2025 peaks at Model 9 before falling at Model 10.

* **Benchmark Introduction Points:** Different benchmarks appear to be evaluated on different subsets of models. GSM8K, MGSM, MATH, and MathVista are plotted for Models 1-5. MATH 500 and AIME 2024 are plotted for Models 5-7. AIME 2025 is plotted for Models 8-10.

### Interpretation

This chart visualizes the progression of capability across a series of AI models on standardized mathematical reasoning tasks. The data suggests several key insights:

1. **General Upward Trajectory:** The overarching trend is one of improvement, indicating that successive models (as numbered) generally become better at solving mathematical problems. This is most clearly seen in the steady climbs of the MATH and MathVista benchmarks.

2. **Task-Dependent Performance:** Models excel at different tasks to varying degrees. Foundational arithmetic (GSM8K) appears to be a strength early on, while more complex competition-style problems (AIME) show explosive growth later, suggesting a phase shift in capability for those specific tasks.

3. **Potential Evaluation Shifts:** The disjointed plotting of benchmarks (e.g., AIME starting at Model 5 or 8) may indicate when these evaluation suites were introduced or became relevant to the model development cycle. The sharp jumps in AIME 2024 and MATH 500 could correspond to a significant architectural or training breakthrough that specifically benefited those types of problems.

4. **The AIME 2025 Anomaly:** The decline in AIME 2025 score from Model 9 to Model 10 is a notable outlier. This could indicate a limitation, a trade-off in model specialization, or simply noise in the evaluation. It raises the question of whether performance on future-dated benchmarks (like AIME 2025) follows the same improvement pattern as historical ones.

In essence, the chart documents a narrative of advancing AI mathematical reasoning, highlighting both consistent progress and moments of dramatic, task-specific breakthrough, while also hinting at the complexities and potential plateaus in scaling model capabilities.

</details>

(b) Mathematical Reasoning

<details>

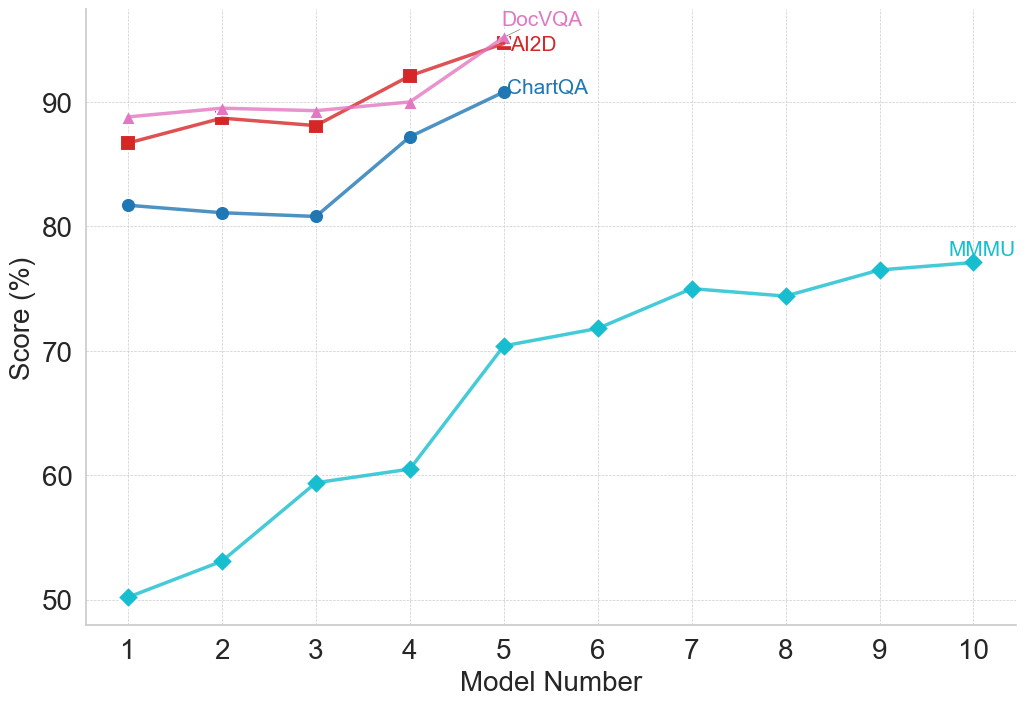

<summary>figures/claude_2_plots/claude_performance_Multimodal_Reasoning.png Details</summary>

### Visual Description

## Line Chart: Model Performance Across Four Benchmarks

### Overview

The image is a line chart comparing the performance scores (in percentage) of ten different models (labeled 1 through 10) across four distinct benchmarks: DocVQA, AI2D, ChartQA, and MMMU. The chart illustrates how scores change as the model number increases.

### Components/Axes

* **Chart Type:** Multi-series line chart with markers.

* **X-Axis:** Labeled "Model Number". It has discrete integer markers from 1 to 10.

* **Y-Axis:** Labeled "Score (%)". It has major gridlines and labels at 50, 60, 70, 80, and 90.

* **Legend:** Positioned in the top-right quadrant of the chart area. It contains four entries:

* **DocVQA:** Pink line with upward-pointing triangle markers.

* **AI2D:** Red line with square markers.

* **ChartQA:** Blue line with circle markers.

* **MMMU:** Cyan (light blue) line with diamond markers.

* **Grid:** A light gray, dashed grid is present for both horizontal and vertical axes.

### Detailed Analysis

**Data Series and Approximate Values:**

1. **MMMU (Cyan line, diamond markers):**

* **Trend:** Shows a strong, consistent upward trend across all ten models, with a slight plateau between models 7 and 8.

* **Data Points (Approximate):**

* Model 1: 50%

* Model 2: 53%

* Model 3: 59%

* Model 4: 60%

* Model 5: 70%

* Model 6: 72%

* Model 7: 75%

* Model 8: 74%

* Model 9: 76%

* Model 10: 77%

2. **ChartQA (Blue line, circle markers):**

* **Trend:** Starts with a slight dip, then increases sharply. Data is only plotted for models 1 through 5.

* **Data Points (Approximate):**

* Model 1: 82%

* Model 2: 81%

* Model 3: 81%

* Model 4: 87%

* Model 5: 91%

3. **AI2D (Red line, square markers):**

* **Trend:** Generally upward with a minor dip at model 3. Data is only plotted for models 1 through 5.

* **Data Points (Approximate):**

* Model 1: 87%

* Model 2: 89%

* Model 3: 88%

* Model 4: 92%

* Model 5: 95%

4. **DocVQA (Pink line, triangle markers):**

* **Trend:** Shows a steady, slight upward trend. Data is only plotted for models 1 through 5.

* **Data Points (Approximate):**

* Model 1: 89%

* Model 2: 90%

* Model 3: 90%

* Model 4: 90%

* Model 5: 95%

### Key Observations

1. **Performance Hierarchy:** For the first five models, DocVQA and AI2D consistently achieve the highest scores, followed by ChartQA, with MMMU scoring significantly lower.

2. **Convergence at Model 5:** At Model 5, the scores for DocVQA and AI2D converge at approximately 95%, the highest point on the chart. ChartQA also peaks here at ~91%.

3. **MMMU's Unique Trajectory:** The MMMU benchmark is the only one plotted for all ten models. It shows the most dramatic relative improvement, starting at 50% and ending at 77%, a 27-percentage-point gain.

4. **Data Completeness:** The ChartQA, AI2D, and DocVQA series are incomplete, providing data only for models 1-5. This prevents comparison with MMMU for models 6-10.

5. **Plateau in MMMU:** The MMMU score shows a near-plateau between Model 7 (75%) and Model 8 (74%), before resuming a slight upward trend.

### Interpretation

This chart likely visualizes the progression of capability across a series of increasingly advanced or larger AI models (represented by "Model Number") on specific multimodal understanding tasks.

* **Benchmark Difficulty:** The consistently lower scores for MMMU suggest it is a more challenging benchmark for these models compared to DocVQA, AI2D, and ChartQA, which may test more specialized or constrained skills.

* **Model Improvement:** The general upward trend for all benchmarks indicates that successive models (higher model numbers) demonstrate improved performance. The steep rise in MMMU scores suggests particular advancements in the capabilities it measures.

* **Specialization vs. Generalization:** The high, converging scores of DocVQA and AI2D at Model 5 might indicate that models have reached a performance ceiling on these specific tasks, or that the models are highly optimized for them. The continued, steady rise of MMMU suggests ongoing progress in a broader or more complex domain of understanding.

* **Missing Data:** The absence of data for ChartQA, AI2D, and DocVQA beyond Model 5 is a significant limitation. It is unclear if these benchmarks were not evaluated, if the models failed, or if the data was simply not included in this visualization. This prevents a full comparison of model evolution across all tasks for the complete set of ten models.

</details>

(c) Multimodal Reasoning

<details>

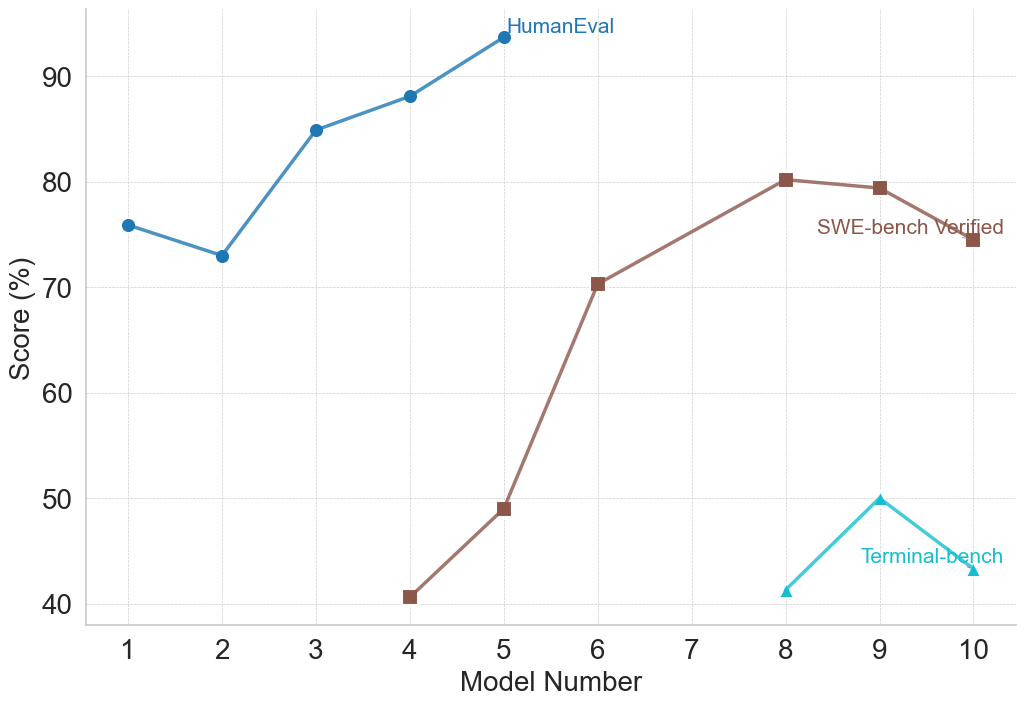

<summary>figures/claude_2_plots/claude_performance_Programming_and_Coding.png Details</summary>

### Visual Description

## Line Chart: Model Performance Across Three Benchmarks

### Overview

This image is a line chart comparing the performance scores (in percentage) of ten different models (labeled 1 through 10) on three distinct evaluation benchmarks: HumanEval, SWE-bench Verified, and Terminal-bench. The chart visualizes how model capabilities vary across these different testing domains.

### Components/Axes

* **X-Axis:** Labeled "Model Number". It has discrete integer markers from 1 to 10.

* **Y-Axis:** Labeled "Score (%)". It has a linear scale with major grid lines at intervals of 10%, ranging from 40% to 90%.

* **Legend:** Located in the top-right quadrant of the chart area. It defines three data series:

* **HumanEval:** Blue line with circular markers.

* **SWE-bench Verified:** Brown line with square markers.

* **Terminal-bench:** Cyan (light blue) line with triangular markers.

### Detailed Analysis

**1. HumanEval (Blue Line, Circle Markers)**

* **Trend:** Shows an overall upward trend with a notable dip at Model 2. Performance is consistently the highest among the three benchmarks for the models where data is present.

* **Data Points (Approximate):**

* Model 1: ~76%

* Model 2: ~73% (Dip)

* Model 3: ~85%

* Model 4: ~88%

* Model 5: ~94% (Peak)

* *No data points are plotted for Models 6 through 10.*

**2. SWE-bench Verified (Brown Line, Square Markers)**

* **Trend:** Shows a strong, generally upward trend from Model 4 to Model 8, followed by a slight decline. Data is only present for Models 4, 5, 6, 8, 9, and 10.

* **Data Points (Approximate):**

* Model 4: ~41%

* Model 5: ~49%

* Model 6: ~70%

* Model 7: *No data point.*

* Model 8: ~80% (Peak)

* Model 9: ~79%

* Model 10: ~75%

**3. Terminal-bench (Cyan Line, Triangle Markers)**

* **Trend:** Shows a sharp increase from Model 8 to Model 9, followed by a decrease to Model 10. Data is only present for the last three models.

* **Data Points (Approximate):**

* Models 1-7: *No data points.*

* Model 8: ~41%

* Model 9: ~50% (Peak)

* Model 10: ~43%

### Key Observations

1. **Benchmark Specificity:** Models are not evaluated on all benchmarks. HumanEval data is only for Models 1-5, SWE-bench for Models 4-10 (except 7), and Terminal-bench only for Models 8-10. This suggests the benchmarks may test different skills or were applied to different model generations.

2. **Performance Hierarchy:** For the models where direct comparison is possible (Models 4 and 5), HumanEval scores are significantly higher than SWE-bench Verified scores. For Models 8-10, SWE-bench scores are substantially higher than Terminal-bench scores.

3. **Peak Performance:** Each benchmark's peak score is achieved by a different model: HumanEval peaks at Model 5 (~94%), SWE-bench at Model 8 (~80%), and Terminal-bench at Model 9 (~50%).