# Where Do LLMs Still Struggle? An In-Depth Analysis of Code Generation Benchmarks

**Authors**: Amir Molzam Sharifloo<sup>1</sup>, Maedeh Heydari<sup>1</sup>, Parsa Kazerooni<sup>1</sup>, Daniel Maninger<sup>1,2</sup>, Mira Mezini

## Abstract

Large Language Models (LLMs) have achieved remarkable success in code generation, and the race to improve their performance has become a central focus of AI research. Benchmarks and leaderboards are increasingly popular, offering quantitative rankings of LLMs. However, they provide limited insight into the tasks that LLMs consistently fail to solve—information that is crucial for understanding current limitations and guiding the development of more capable models. To address this gap, we examined code generation tasks across four popular benchmarks, identifying those that major LLMs are most likely to fail. To understand the causes of these failures, we investigated whether the static complexity of solution code contributes to them, followed by a systematic inspection of 114 tasks that LLMs consistently struggled with. Our analysis revealed four recurring patterns of weaknesses in LLMs, as well as common complications within benchmark tasks that most often lead to failure.

## I Introduction

Large Language Models (LLMs) are rapidly transforming software development by automating code generation tasks. Trained on massive corpora of open-source code, these systems are now widely adopted in practice—recent surveys indicate that many developers regularly use AI-assisted coding tools [1]. As the adoption of code-generating LLMs accelerates, rigorous benchmarking becomes increasingly critical. Effective benchmarks provide a standardized framework to evaluate model capabilities, track progress over time, and compare systems on common grounds.

A variety of benchmarks have been proposed to evaluate LLM code generation [2, 3, 4, 5, 6]. Early efforts, such as Mostly Basic Python Problems (MBPP) [3] and HumanEval [2], focus on functional correctness for small Python programs, probing models’ understanding of algorithms, language, and basic libraries. Extensions like MBPP+ and HumanEval+ [7] increase test coverage to reveal edge cases, while HumanEval Pro and MBPP Pro [8] introduce compositional tasks requiring multiple function calls, testing reasoning across generation steps. Other benchmarks emphasize scale and realism: APPS [9] offers thousands of competitive programming problems with multiple test cases, and LiveCodeBench [4] continuously updates problems from platforms like LeetCode and AtCoder. BigCodeBench [5] evaluates use of external libraries across domains, while repository-level benchmarks such as SWE-bench [6] assess LLM performance on multi-file software development tasks.

Despite the widespread adoption of these benchmarks, there has been little systematic analysis of their tasks and of the cases where LLMs fail to generate correct code. Identifying failure-inducing tasks and understanding the underlying causes of the failures is essential for guiding both benchmark design and model development. Prior work [10] analyzed the errors that LLMs made when generating code for the HumanEval benchmark and reported both syntactic and semantic mistakes. However, its scope was limited to HumanEval and did not consider more diverse or challenging benchmarks. This paper addresses the gap with an empirical study of popular function-level code generation benchmarks, aiming to answer the following research questions:

- RQ1: Which tasks do LLMs consistently fail to solve?

- RQ2: To what extent can these failures be explained by the complexity of the solution code?

- RQ3: Which additional factors, beyond code complexity, influence LLM failures?

To investigate these questions, we conducted a task-level study of four widely used benchmarks (MBPP, HumanEval, BigCodeBench, and LiveCodeBench) and evaluated six representative advanced LLMs selected to reflect diverse and popular code generation scenarios (Claude Sonnet-4, DeepSeek-V3, Qwen3-Coder, GPT-4o, Llama-3.3-70B, and Mistral-3.2-24B). We additionally implemented a tool to measure task complexity from the corresponding solution code, which we used to examine its correlation with model failures. Interestingly, complexity alone did not fully account for these failures, suggesting that additional factors play a significant role. Analyzing the 114 consistently failed tasks across benchmarks, we identified four recurring failure patterns: wrong problem mapping, flawed or incomplete algorithm design, edge case mishandling, and formatting mistakes. This analysis reveals how factors beyond solution complexity contribute to LLM failures. We further observed that some benchmark tasks are extremely ambiguous, so that certain failures do not necessarily reflect actual model weaknesses. Our contributions are as follows:

- (1) Detailed task-level experimental results for four widely used benchmarks evaluated on six major LLMs.

- (2) A method for measuring solution code complexity and analyzing its correlation with LLM failure rates.

- (3) Fine-grained failure inspection of 114 tasks, revealing patterns of weaknesses in LLMs and challenges arising from benchmark design.

The experimental data and analysis scripts are publicly released on GitHub to support reproducibility and future research: https://github.com/breath24/FailureBench

## II Experimental Setup

Benchmarks: We conducted a survey of existing code generation benchmarks and selected those that are both widely adopted in the research community and equipped with executable test cases, enabling reliable evaluation of generated code. Our final selection comprises HumanEval (5,845 citations as of August 2025), MBPP (2,142), LiveCodeBench (524), and BigCodeBench (240), representing a diverse yet widely used set of benchmarks for code generation. In this paper, we focused on benchmarks that specialize in function generation, where a natural language problem is given (with or without contextual code) and the model is required to generate a function that implements the expected functionality.

We used the latest available version of each benchmark to minimize data contamination, thereby increasing the likelihood of observing failures. The APPS benchmark was excluded, as it is widely used for training and would risk contamination. Due to the large number of tasks in MBPP, we relied on a subset of 378 tasks, used in recent extensions such as MBPP+. For LiveCodeBench, we used LCB-V6, which consists of 175 programming problems released between January and April 2025. For BigCodeBench, we focused on the BCB-Hard subset (148 tasks) using instruct prompts, which contains the most difficult tasks to challenge LLMs. In total, we experimented with 865 tasks across four benchmarks.

Models: As for the LLMs, we chose six representative advanced LLMs—namely Claude Sonnet-4, DeepSeek-V3, Qwen3-Coder, GPT-4o, Llama-3.3-70B and Mistral-3.2-24B—from different projects that have been widely used. Unfortunately, we excluded Gemini and Grok due to our limited budget and their costly inference fees.

Evaluation Procedure: To identify which tasks lead to failure, we ran the benchmarks on the selected LLMs and collected the generated code. Each task was evaluated using a single generated solution per model (pass@1): a task was classified as a success if the solution passed all test cases, and as a failure otherwise. For failed tasks, additional details were recorded for further analysis. Table I reports the number of failures for each model across the benchmarks.

TABLE I: The number of task failures across benchmarks.

| Model | HumanEval | MBPP | LCB | BCB-Hard |
| --- | --- | --- | --- | --- |
| Claude Sonnet-4 | 2 | 11 | 54 | 109 |
| Qwen3-Coder | 7 | 9 | 73 | 102 |
| DeepSeek-V3 | 14 | 9 | 76 | 107 |
| GPT-4o | 25 | 14 | 85 | 107 |
| Mistral-3.2-24B | 28 | 23 | 110 | 114 |
| Llama-3.3-70B | 32 | 32 | 100 | 101 |
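The counts in Table I can be turned into failure rates by dividing by the benchmark sizes reported in this section (164, 378, 175, and 148 tasks). The following is a minimal sketch of that arithmetic; the names `TASKS`, `FAILURES`, and `failure_rate` are illustrative and are not taken from the released scripts.

```python
# Benchmark sizes as stated in this section.
TASKS = {"HumanEval": 164, "MBPP": 378, "LCB": 175, "BCB-Hard": 148}

# Failure counts copied from Table I.
FAILURES = {
    "Claude Sonnet-4": {"HumanEval": 2, "MBPP": 11, "LCB": 54, "BCB-Hard": 109},
    "Qwen3-Coder": {"HumanEval": 7, "MBPP": 9, "LCB": 73, "BCB-Hard": 102},
    "DeepSeek-V3": {"HumanEval": 14, "MBPP": 9, "LCB": 76, "BCB-Hard": 107},
    "GPT-4o": {"HumanEval": 25, "MBPP": 14, "LCB": 85, "BCB-Hard": 107},
    "Mistral-3.2-24B": {"HumanEval": 28, "MBPP": 23, "LCB": 110, "BCB-Hard": 114},
    "Llama-3.3-70B": {"HumanEval": 32, "MBPP": 32, "LCB": 100, "BCB-Hard": 101},
}


def failure_rate(model: str, benchmark: str) -> float:
    """Percentage of tasks in `benchmark` that `model` failed (100 - pass@1)."""
    return 100.0 * FAILURES[model][benchmark] / TASKS[benchmark]


if __name__ == "__main__":
    for model in FAILURES:
        rates = ", ".join(
            f"{bench}: {failure_rate(model, bench):.1f}%" for bench in TASKS
        )
        print(f"{model}: {rates}")
```

Because each task is judged on a single sample, the pass@1 score of a model on a benchmark is simply 100 minus this failure rate.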

Results: To provide a categorization of task difficulty based on actual LLM performance, we further classified tasks according to the number of models that failed to solve them, resulting in seven categories ranging from 0 to 6, where 0 represents tasks solved by all models, and 6 represents tasks for which all models failed to generate correct code. Table II shows the number of failures in each category.

TABLE II: Distribution of model failures across benchmarks. For each benchmark, columns 0–6 indicate the number of models that failed a task: 0 = solved by all models, 1 = failed by 1 model, …, 6 = failed by all models. This provides a difficulty categorization of tasks.

- HumanEval: Out of 164 tasks, 113 were solved correctly by every model. Only one task was failed by all models. Claude Sonnet-4 performed exceptionally well, with only two failures, while Llama-3.3-70B had the most failures (32), including tasks that other models were able to solve.

- MBPP: Of the 378 tasks, 318 were solved by every model. Two tasks were not solved by any of the models. Qwen3-Coder and DeepSeek-V3 emerged as the best models (9 failures each), while Llama-3.3-70B failed the highest number of tasks (32).

- LiveCodeBench: Of the 175 tasks, 35 could not be solved correctly by any model, while 43 were solved correctly by every model. Claude Sonnet-4 demonstrated the strongest performance with only 54 failures, while Mistral-3.2-24B produced the most failures (110).

- BCB-Hard: In this benchmark, only 14 tasks were correctly solved by all models, while 76 tasks were failed by all models. Every model failed most of the 148 tasks, with failure rates ranging from 68% to 77%.
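The difficulty categorization described above (tasks grouped by how many models failed them) can be sketched as follows. This is an illustrative reconstruction, not the authors' script: the input layout (`{model: {task_id: passed}}`) and the function names are assumptions.

```python
from collections import Counter


def failure_counts(results):
    """Map each task id to the number of models that failed it.

    `results` is assumed to look like {model_name: {task_id: passed_bool}}.
    """
    counts = Counter()
    for per_task in results.values():
        for task_id, passed in per_task.items():
            counts[task_id] += 0 if passed else 1
    return dict(counts)


def difficulty_histogram(results):
    """hist[k] = number of tasks failed by exactly k models (k = 0..n_models)."""
    hist = [0] * (len(results) + 1)
    for n_failed in failure_counts(results).values():
        hist[n_failed] += 1
    return hist


def consistently_failed(results):
    """Task ids that every model failed (the last category)."""
    n_models = len(results)
    return sorted(t for t, k in failure_counts(results).items() if k == n_models)


# Toy data with three hypothetical models, for illustration only.
toy = {
    "model_a": {"t1": True, "t2": False, "t3": False},
    "model_b": {"t1": True, "t2": True, "t3": False},
    "model_c": {"t1": True, "t2": True, "t3": False},
}
print(difficulty_histogram(toy))
print(consistently_failed(toy))
```

With six models the histogram has seven entries, matching categories 0 to 6; the last entry counts the tasks that no model solved.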

RQ1: Which tasks do LLMs consistently fail to solve?

Our experiments identified 114 tasks across four benchmarks that all models consistently failed. BCB-Hard had the most failures, followed by LiveCodeBench.

## III Code Complexity Analysis

Solution code complexity provides a quantitative lens on task difficulty, independent of natural language prompts. To explore whether this complexity correlates with LLM performance, we analyzed ground-truth solutions across benchmarks. We first describe the complexity metrics we use, then report descriptive statistics, followed by correlation and regression analyses to examine their relationship with model failures.

### III-A Complexity Measurement

We designed and implemented an algorithm to quantitatively measure the complexity of solution code. We used a set of different code complexity dimensions, each of which captures a distinct facet of what makes a code snippet more cognitively or technically challenging. The dimensions are defined as follows:

- Cyclomatic Complexity (CC): Measures the intricacy of control flow constructs, including conditionals (if), loops (for, while), and branching. A higher CC value indicates more involved execution paths and decision points.

- Data Structures: Assesses the extent and variety of data structure usage, such as lists, dictionaries, sets, and user-defined containers.

- Function Calls: Captures the frequency of function invocation.

- Code Length: Serves as a surface-level proxy for overall complexity, as longer code may correspond to richer logic.

- Nesting Depth: Reflects the maximum depth of syntactic nesting, such as loops within conditionals or recursive calls embedded in other structures.

- Recursions: Specifically tracks the presence and frequency of recursive functions.

We use static analysis of abstract syntax trees to compute each of these metrics, averaging values across all tasks in a benchmark. Table III presents the results of these measurements. Recursion is extremely rare across benchmarks, and the use of data structures is relatively limited. LiveCodeBench has the highest or nearly the highest value for every metric, indicating that its solutions are the most complex.

TABLE III: Average code complexity metrics across benchmarks.

| Metric | HumanEval | MBPP | LCB | BCB-Hard |
| --- | --- | --- | --- | --- |
| CC | 3.34 | 2.58 | 9 | 4.66 |
| Data Structures | 0.26 | 0.10 | 0.62 | 0.69 |
| Function Calls | 3.10 | 2.07 | 12.97 | 11.89 |
| Length | 6.30 | 6.68 | 29.54 | 15.91 |
| Nesting Depth | 8.37 | 8.70 | 10.48 | 9.42 |
| Recursions | 0.09 | 0.09 | 0.25 | 0.03 |
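To make the six dimensions concrete, the sketch below computes rough analogues of them with Python's standard `ast` module. It is not the authors' tool, and every counting rule is an assumption: which node types count as decision points or data structures, measuring length in non-blank lines, and measuring nesting as raw syntax-tree depth (chosen here because the averages in Table III, around 8 to 12, look larger than typical control-flow nesting).

```python
import ast


def cyclomatic_complexity(tree):
    """1 + number of decision points (branches, loops, boolean operators)."""
    cc = 1
    for node in ast.walk(tree):
        if isinstance(node, (ast.If, ast.For, ast.While, ast.IfExp,
                             ast.ExceptHandler, ast.comprehension)):
            cc += 1
        elif isinstance(node, ast.BoolOp):
            cc += len(node.values) - 1  # `a and b and c` adds two branches
    return cc


def ast_depth(node):
    """Number of edges from `node` down to its deepest descendant."""
    children = list(ast.iter_child_nodes(node))
    return 1 + max(map(ast_depth, children)) if children else 0


def count_recursive_calls(tree):
    """Call sites where a function invokes itself by name (direct recursion)."""
    total = 0
    for fn in ast.walk(tree):
        if isinstance(fn, (ast.FunctionDef, ast.AsyncFunctionDef)):
            for node in ast.walk(fn):
                if (isinstance(node, ast.Call)
                        and isinstance(node.func, ast.Name)
                        and node.func.id == fn.name):
                    total += 1
    return total


def measure(source):
    """Return the six (assumed) complexity metrics for one solution."""
    tree = ast.parse(source)
    nodes = list(ast.walk(tree))
    containers = (ast.List, ast.Dict, ast.Set,
                  ast.ListComp, ast.DictComp, ast.SetComp)
    return {
        "cc": cyclomatic_complexity(tree),
        "data_structures": sum(isinstance(n, containers) for n in nodes),
        "function_calls": sum(isinstance(n, ast.Call) for n in nodes),
        "length": sum(1 for line in source.splitlines() if line.strip()),
        "nesting_depth": ast_depth(tree),
        "recursions": count_recursive_calls(tree),
    }


SAMPLE = '''
def fact(n):
    if n <= 1:
        return 1
    return n * fact(n - 1)
'''
print(measure(SAMPLE))
```

Averaging the dictionaries returned by `measure` over all ground-truth solutions of a benchmark would give one column in the style of Table III, although the absolute numbers depend on the counting rules chosen.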

### III-B Correlation Analysis

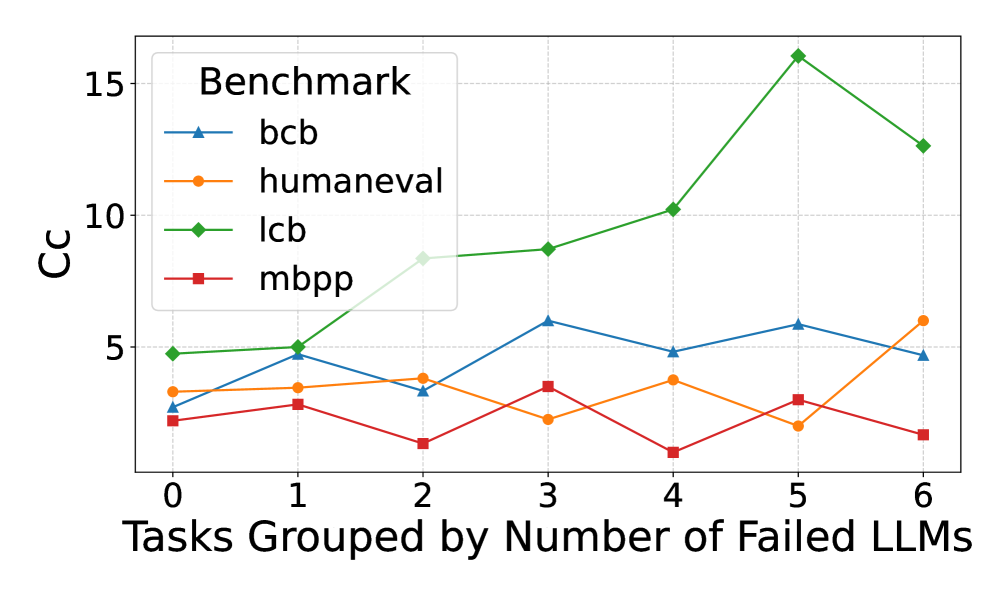

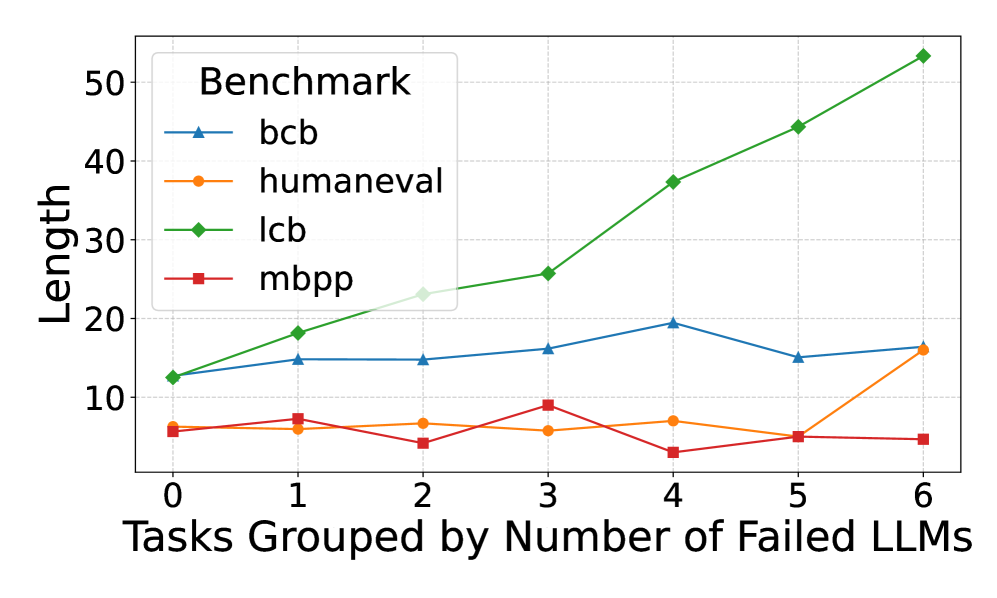

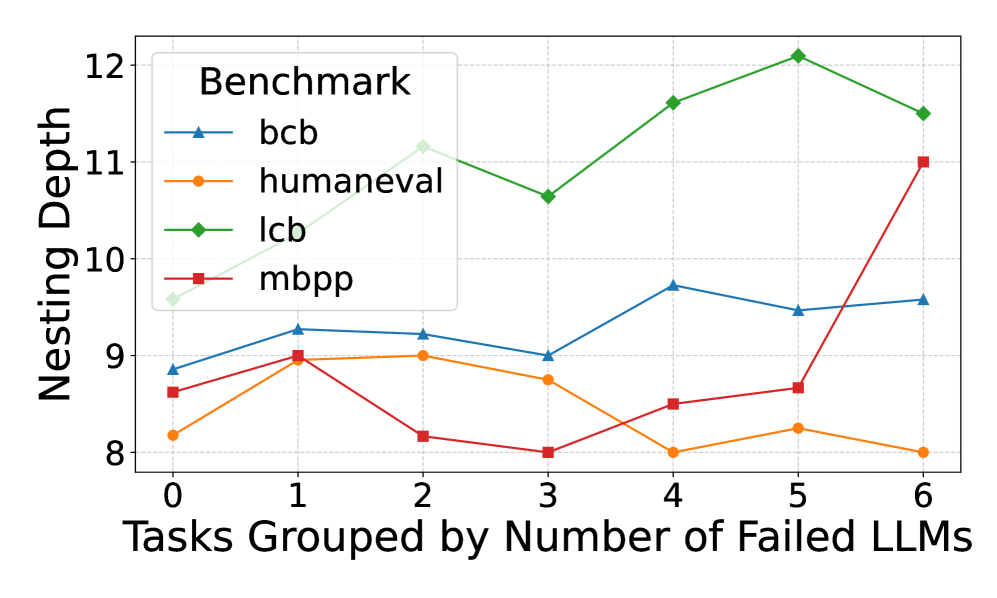

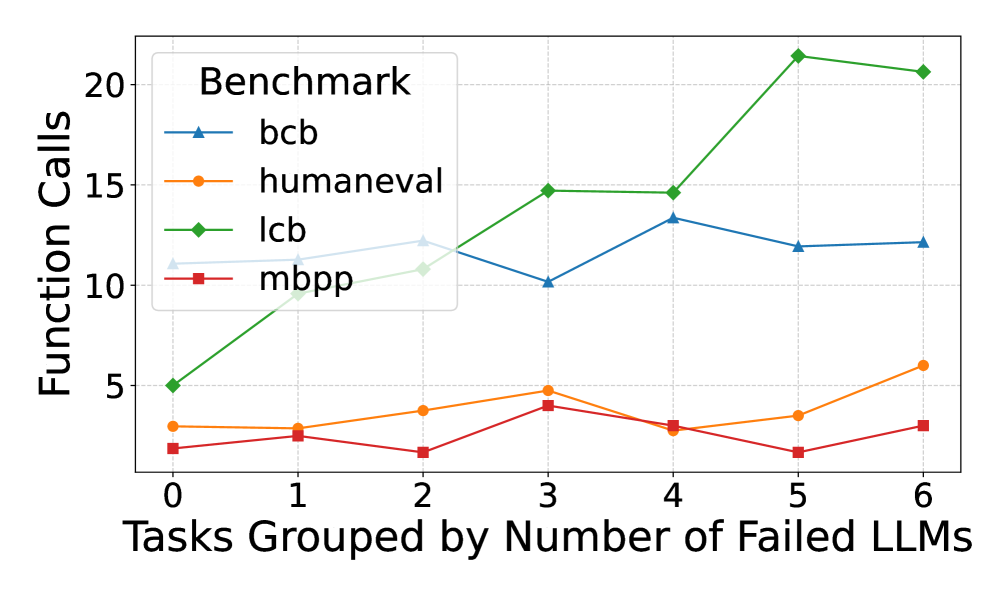

Figure 1 illustrates how average metric values vary across tasks grouped by the number of LLMs that failed to solve them. LiveCodeBench shows a clear positive relationship between code complexity metrics and failure rate, whereas no such trend is evident in the other benchmarks.

To quantify these relationships, we computed Spearman's rank correlation and fitted linear regressions between benchmark failure rates and individual metrics. The Spearman results indicate that LiveCodeBench failures are positively correlated with code complexity metrics, whereas correlations remain weak and statistically non-significant for HumanEval, MBPP, and BCB-Hard. In the regression analysis, the explanatory power of all metrics is negligible in HumanEval and MBPP, with consistently low $R^{2}$ values and non-significant p-values, indicating that these metrics do not meaningfully account for failure rates. BCB-Hard exhibits slightly stronger signals: metrics such as length and nesting depth achieve modest $R^{2}$ values ( $\approx 0.14$ – $0.15$ ) with borderline p-values ( $\approx 0.07$ – $0.09$ ), suggesting weak but potentially meaningful associations, although none reaches conventional thresholds for statistical significance. LiveCodeBench displays the strongest relationships: several metrics, including cyclomatic complexity, function calls, and length, show relatively high $R^{2}$ values (up to $0.32$ ) alongside highly significant p-values ( $<10^{-6}$ ), indicating robust linear associations with failure rate.
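The two statistics used here can be illustrated with a small, dependency-free sketch. It assumes one observation per task (a metric value and the number of models that failed the task) and uses made-up toy numbers; it does not reproduce the paper's data or its p-values. Spearman's coefficient is computed as the Pearson correlation of average ranks, and for a simple one-predictor linear regression $R^{2}$ equals the squared Pearson correlation.

```python
def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of positions i..j, 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def r_squared(x, y):
    """R^2 of the least-squares line y = a + b*x (single predictor)."""
    return pearson(x, y) ** 2


# Hypothetical toy data: solution length vs. number of failing models.
length = [4, 6, 5, 9, 12, 15, 20]
failed = [0, 0, 1, 2, 3, 5, 6]
print(f"Spearman rho = {spearman(length, failed):.2f}")
print(f"R^2          = {r_squared(length, failed):.2f}")
```

Significance testing is omitted from the sketch; statistical libraries such as SciPy (`scipy.stats.spearmanr`, `scipy.stats.linregress`) report p-values alongside these coefficients.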

<details>

<summary>x1.png Details</summary>

### Visual Description

## Line Chart: Cyclomatic Complexity (Cc) vs. Number of Failed LLMs

### Overview

The image is a line chart comparing the average cyclomatic complexity ("Cc") of the solutions in four benchmarks, with tasks grouped by the number of Large Language Models (LLMs) that failed them. The chart illustrates how this complexity metric varies as task difficulty (in terms of LLM failures) increases.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:** Labeled "Tasks Grouped by Number of Failed LLMs". It has discrete integer markers from 0 to 6.

* **Y-Axis:** Labeled "Cc". It has numerical markers at 0, 5, 10, and 15.

* **Legend:** Located in the top-left corner of the plot area, titled "Benchmark". It contains four entries:

* `bcb`: Blue line with upward-pointing triangle markers.

* `humaneval`: Orange line with circle markers.

* `lcb`: Green line with diamond markers.

* `mbpp`: Red line with square markers.

* **Grid:** A light gray grid is present in the background.

### Detailed Analysis

**1. `bcb` (Blue line, triangle markers):**

* **Trend:** Fluctuates without a strong overall upward or downward trend. It shows a general pattern of rising and falling between adjacent points.

* **Data Points (Approximate):**

* x=0: Cc ≈ 2.5

* x=1: Cc ≈ 4.8

* x=2: Cc ≈ 3.2

* x=3: Cc ≈ 5.9

* x=4: Cc ≈ 4.8

* x=5: Cc ≈ 5.7

* x=6: Cc ≈ 4.7

**2. `humaneval` (Orange line, circle markers):**

* **Trend:** Relatively flat with minor fluctuations for x=0 to x=4, followed by a noticeable dip at x=5 and a sharp increase at x=6.

* **Data Points (Approximate):**

* x=0: Cc ≈ 3.3

* x=1: Cc ≈ 3.4

* x=2: Cc ≈ 3.9

* x=3: Cc ≈ 2.3

* x=4: Cc ≈ 3.8

* x=5: Cc ≈ 1.9

* x=6: Cc ≈ 6.0

**3. `lcb` (Green line, diamond markers):**

* **Trend:** Shows a clear and strong upward trend, especially from x=2 onwards. It peaks at x=5 before declining at x=6. This series has the highest Cc values across the chart.

* **Data Points (Approximate):**

* x=0: Cc ≈ 4.8

* x=1: Cc ≈ 5.0

* x=2: Cc ≈ 8.3

* x=3: Cc ≈ 8.7

* x=4: Cc ≈ 10.2

* x=5: Cc ≈ 16.0 (Peak)

* x=6: Cc ≈ 12.6

**4. `mbpp` (Red line, square markers):**

* **Trend:** Generally the lowest series. It fluctuates at a low level, with a notable dip at x=4.

* **Data Points (Approximate):**

* x=0: Cc ≈ 2.0

* x=1: Cc ≈ 2.7

* x=2: Cc ≈ 1.0

* x=3: Cc ≈ 3.5

* x=4: Cc ≈ 0.5 (Lowest point on the entire chart)

* x=5: Cc ≈ 2.9

* x=6: Cc ≈ 1.3

### Key Observations

1. **Dominant Series:** The `lcb` benchmark (green) demonstrates significantly higher Cc values than the others, particularly for tasks where 2 or more LLMs failed (x ≥ 2). Its peak at x=5 (Cc ≈ 16) is the highest value recorded.

2. **Lowest Series:** The `mbpp` benchmark (red) shows the lowest Cc values in most groups (`humaneval` is lower at x=3 and x=5), with its lowest point occurring at x=4.

3. **Divergence at High Difficulty:** At the highest task difficulty shown (x=6), the Cc values diverge sharply: `lcb` remains high (≈ 12.6), `humaneval` spikes upward (≈ 6.0), while `bcb` (≈ 4.7) and `mbpp` (≈ 1.3) are lower.

4. **Anomaly:** The `humaneval` series shows an unexpected sharp increase at x=6 after a dip at x=5, breaking its previously stable trend.

5. **Crossing Points:** The `bcb` and `humaneval` lines cross several times, indicating similar Cc levels that alternate from group to group.

### Interpretation

In this chart, "Cc" is the average cyclomatic complexity of the ground-truth solutions in each task group. It is a property of the tasks, not a performance score.

* **`lcb`:** Average Cc rises substantially as more LLMs fail, so harder LiveCodeBench tasks tend to have solutions with more involved control flow.

* **`bcb`, `humaneval`, `mbpp`:** Cc fluctuates without a clear trend, so cyclomatic complexity does not track the number of failing LLMs in these benchmarks.

* **Small Groups:** The `humaneval` value at x=6 comes from a single task and the `mbpp` value at x=6 from two tasks, so those points should be read with caution.

* **Overall Implication:** Solution complexity is associated with LLM failures in `lcb` but not in the other three benchmarks.

</details>

(a) Cyclomatic Complexity

<details>

<summary>x2.png Details</summary>

### Visual Description

## Line Chart: Benchmark Length vs. Number of Failed LLMs

### Overview

This is a line chart comparing the average solution code length ("Length") across four benchmarks as a function of task difficulty. Task difficulty is quantified by grouping tasks based on the number of Large Language Models (LLMs) that failed to solve them.

### Components/Axes

* **Chart Title:** None visible at the top of the chart area. The primary label is the legend title.

* **Legend:** Located in the top-left corner of the chart area. It is titled "**Benchmark**" and contains four entries:

* `bcb`: Blue line with upward-pointing triangle markers.

* `humaneval`: Orange line with circle markers.

* `lcb`: Green line with diamond markers.

* `mbpp`: Red line with square markers.

* **X-Axis:**

* **Label:** "Tasks Grouped by Number of Failed LLMs"

* **Scale:** Linear, integer values from 0 to 6.

* **Ticks:** 0, 1, 2, 3, 4, 5, 6.

* **Y-Axis:**

* **Label:** "Length"

* **Scale:** Linear, ranging from approximately 0 to 55.

* **Ticks:** 10, 20, 30, 40, 50.

* **Grid:** A light gray grid is present, aligned with the major ticks on both axes.

### Detailed Analysis

The chart plots four data series. Below is a point-by-point extraction for each series, with values approximated from the grid.

**1. Series: `lcb` (Green line, diamond markers)**

* **Trend Verification:** This line shows a strong, consistent upward trend. It starts level with `bcb` and ends as the highest by a significant margin.

* **Data Points:**

* x=0: y ≈ 12

* x=1: y ≈ 18

* x=2: y ≈ 23

* x=3: y ≈ 26

* x=4: y ≈ 37

* x=5: y ≈ 44

* x=6: y ≈ 53

**2. Series: `bcb` (Blue line, triangle markers)**

* **Trend Verification:** This line shows a moderate, generally stable trend with a slight peak in the middle. It remains in the middle range throughout.

* **Data Points:**

* x=0: y ≈ 12 (appears to start at the same point as `lcb`)

* x=1: y ≈ 15

* x=2: y ≈ 15

* x=3: y ≈ 16

* x=4: y ≈ 19

* x=5: y ≈ 15

* x=6: y ≈ 16

**3. Series: `humaneval` (Orange line, circle markers)**

* **Trend Verification:** This line is relatively flat and low for most of the range, with a sharp increase at the final data point.

* **Data Points:**

* x=0: y ≈ 6

* x=1: y ≈ 6

* x=2: y ≈ 7

* x=3: y ≈ 6

* x=4: y ≈ 7

* x=5: y ≈ 5

* x=6: y ≈ 16

**4. Series: `mbpp` (Red line, square markers)**

* **Trend Verification:** This line is low and volatile, showing no clear upward or downward trend. It has a notable dip at x=4.

* **Data Points:**

* x=0: y ≈ 6 (appears to start at the same point as `humaneval`)

* x=1: y ≈ 7

* x=2: y ≈ 4

* x=3: y ≈ 9

* x=4: y ≈ 3

* x=5: y ≈ 5

* x=6: y ≈ 4

### Key Observations

1. **Divergent Behavior of `lcb`:** The `lcb` benchmark exhibits a near-linear, strong positive correlation between the "Length" metric and the number of failed LLMs. This is the most prominent trend in the chart.

2. **Stability of `bcb`:** The `bcb` benchmark shows relative stability, with "Length" values fluctuating within a narrow band (roughly 12-19) regardless of task difficulty group.

3. **Low-Value Benchmarks:** Both `humaneval` and `mbpp` maintain low "Length" values (mostly below 10) for tasks where 0-5 LLMs failed. `humaneval` shows a significant jump at the most difficult task group (6 failed LLMs).

4. **Anomaly in `mbpp`:** The `mbpp` series has a distinct local minimum at x=4 (y≈3), which is the lowest recorded value on the entire chart.

5. **Convergence at Start:** At x=0 (tasks no LLMs failed), the `lcb` and `bcb` series start at approximately the same point (y≈12), as do the `humaneval` and `mbpp` series (y≈6).

### Interpretation

The chart relates the average length of the ground-truth solution code to task difficulty, where the "Number of Failed LLMs" serves as a proxy for difficulty.

* **What the data suggests:** In `lcb`, solution length grows steadily with difficulty: tasks that more LLMs fail have substantially longer solutions.

* **Contrast with other benchmarks:** `bcb`, `humaneval`, and `mbpp` do not show this scaling. Their solution lengths are largely independent of the number of failing LLMs, except for the final `humaneval` point.

* **Small groups:** The `humaneval` point at x=6 reflects a single task and the `mbpp` point at x=6 reflects two tasks, so neither indicates a trend on its own.

* **Notable implication:** Length is a useful indicator of difficulty in `lcb` only. The dip in `mbpp` at x=4 shows that tasks failed by several LLMs can still have very short solutions.

</details>

(b) Length

<details>

<summary>x3.png Details</summary>

### Visual Description

## Line Chart: Nesting Depth vs. Number of Failed LLMs

### Overview

This is a line chart comparing the "Nesting Depth" of code across four different programming benchmarks (bcb, humaneval, lcb, mbpp) as a function of the number of Large Language Models (LLMs) that failed to solve a given task. The chart suggests an analysis of code complexity in relation to task difficulty for AI models.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **X-Axis:**

* **Label:** "Tasks Grouped by Number of Failed LLMs"

* **Scale:** Linear, integer values from 0 to 6.

* **Interpretation:** Represents groups of tasks. The value indicates how many LLMs failed on those tasks (e.g., "0" means tasks all LLMs solved, "6" means tasks all LLMs failed).

* **Y-Axis:**

* **Label:** "Nesting Depth"

* **Scale:** Linear, ranging from 8 to 12.

* **Interpretation:** A measure of code complexity, likely the maximum depth of nested control structures (like loops, conditionals) in the solution code.

* **Legend:**

* **Title:** "Benchmark"

* **Position:** Top-left corner of the plot area.

* **Entries:**

1. **bcb:** Blue line with upward-pointing triangle markers.

2. **humaneval:** Orange line with circle markers.

3. **lcb:** Green line with diamond markers.

4. **mbpp:** Red line with square markers.

### Detailed Analysis

**Data Series Trends and Approximate Values:**

1. **bcb (Blue, Triangle):**

* **Trend:** Shows a moderate, fluctuating upward trend. It starts in the middle of the pack, peaks at x=4, and ends as the third highest.

* **Data Points (x, y ≈):**

* (0, 8.8)

* (1, 9.3)

* (2, 9.2)

* (3, 9.0)

* (4, 9.7)

* (5, 9.5)

* (6, 9.6)

2. **humaneval (Orange, Circle):**

* **Trend:** Shows a slight overall downward trend. It starts low, peaks at x=1 and x=2, then generally declines, ending as the lowest series.

* **Data Points (x, y ≈):**

* (0, 8.2)

* (1, 9.0)

* (2, 9.0)

* (3, 8.8)

* (4, 8.0)

* (5, 8.2)

* (6, 8.0)

3. **lcb (Green, Diamond):**

* **Trend:** Shows a strong, consistent upward trend. It starts as the highest series and maintains the highest nesting depth throughout, peaking at x=5.

* **Data Points (x, y ≈):**

* (0, 9.6)

* (1, 10.3)

* (2, 11.2)

* (3, 10.7)

* (4, 11.6)

* (5, 12.1)

* (6, 11.5)

4. **mbpp (Red, Square):**

* **Trend:** Shows a volatile trend with a sharp increase at the end. It starts low, dips at x=2 and x=3, recovers slightly through x=5, then jumps at x=6, ending as the second highest.

* **Data Points (x, y ≈):**

* (0, 8.6)

* (1, 9.0)

* (2, 8.2)

* (3, 8.0)

* (4, 8.5)

* (5, 8.7)

* (6, 11.0)

### Key Observations

1. **Benchmark Hierarchy:** The `lcb` benchmark consistently exhibits the highest nesting depth across all task difficulty groups, suggesting its solutions are structurally more complex.

2. **Difficulty Correlation:** For the `lcb` and, to a lesser extent, `bcb` benchmarks, there is a positive correlation between the number of failed LLMs (task difficulty) and the nesting depth of the solutions. This implies that tasks harder for AI models may require more complex code structures.

3. **Anomaly - mbpp Spike:** The `mbpp` series shows a significant outlier behavior. Its nesting depth is relatively low and stable for tasks where 0-5 LLMs failed, but it spikes dramatically (from ~8.7 to 11.0) for the hardest task group (6 failed LLMs). This suggests a subset of very difficult `mbpp` tasks that require a sudden jump in structural complexity.

4. **Convergence at Low Difficulty:** For tasks solved by all LLMs (x=0), the nesting depths are relatively clustered between 8.2 and 9.6. The spread widens significantly as task difficulty increases.

### Interpretation

This chart provides a technical lens into the relationship between AI model performance and code structure. The data suggests that **task difficulty for LLMs is not always aligned with human-perceived code complexity**, but a correlation exists for certain benchmarks.

* The `lcb` benchmark's high and rising nesting depth indicates it may contain problems that are inherently complex to structure, which also makes them challenging for LLMs.

* The `mbpp` spike at x=6 rests on only two tasks (the ones no model solved), so it is weak evidence of a general trend and may simply reflect the particular structure of those two solutions.

* The relative stability of `humaneval` suggests its difficulty for LLMs may be driven more by other factors (e.g., semantic understanding, edge cases) rather than deep structural nesting.

In summary, the chart moves beyond simple pass/fail metrics to show how the *nature* of the code solution (its nesting depth) varies with task difficulty across different standardized benchmarks. This is valuable for understanding the strengths and weaknesses of LLM code generation.

</details>

(c) Nesting Depth

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Function Calls vs. Tasks Grouped by Number of Failed LLMs

### Overview

This is a line chart comparing the average number of function calls in the solution code of four benchmarks (`bcb`, `humaneval`, `lcb`, `mbpp`), with tasks grouped by the number of Large Language Models (LLMs) that failed on them. The chart plots the relationship between task difficulty (in terms of LLM failure count) and this complexity metric for each benchmark.

### Components/Axes

* **X-Axis:** Labeled "Tasks Grouped by Number of Failed LLMs". It has discrete integer markers from 0 to 6.

* **Y-Axis:** Labeled "Function Calls". It has a linear scale with major gridlines and labels at 0, 5, 10, 15, and 20.

* **Legend:** Located in the top-left corner of the chart area, titled "Benchmark". It contains four entries:

* `bcb`: Blue line with upward-pointing triangle markers.

* `humaneval`: Orange line with circle markers.

* `lcb`: Green line with diamond markers.

* `mbpp`: Red line with square markers.

### Detailed Analysis

Data points are approximate values read from the chart's gridlines.

**1. `bcb` (Blue line, triangle markers):** Relatively stable, hovering between 10 and 13 function calls, with a notable dip at x=3.

**2. `humaneval` (Orange line, circle markers):** Shows a slight, gradual upward trend from left to right, with a dip at x=4.

**3. `lcb` (Green line, diamond markers):** Exhibits a strong, consistent upward trend. The slope increases significantly after x=4, reaching the highest values on the chart.

**4. `mbpp` (Red line, square markers):** Remains relatively flat and low, fluctuating between 2 and 4 function calls with no strong directional trend.

| Failed LLMs (x) | `bcb` | `humaneval` | `lcb` | `mbpp` |
| --- | --- | --- | --- | --- |
| 0 | ~11 | ~3 | ~5 | ~2 |
| 1 | ~12 | ~3 | ~10 | ~3 |
| 2 | ~12 | ~4 | ~11 | ~2 |
| 3 | ~10 | ~5 | ~15 | ~4 |
| 4 | ~13 | ~3 | ~15 | ~3 |
| 5 | ~12 | ~4 | ~21 | ~2 |
| 6 | ~12 | ~6 | ~21 | ~3 |

### Key Observations

1. **Divergent Trends:** The benchmarks behave very differently. For `lcb`, the number of function calls rises from ~5 to ~21 as more LLMs fail, while for `mbpp` it stays between ~2 and ~4 regardless of failure count.

2. **Gap at High Difficulty:** At the highest difficulty levels (x=5 and x=6), `lcb` reaches ~21 calls while `mbpp` stays at ~2 to ~3 calls, a difference of roughly 7 to 10 times.

3. **Stability vs. Growth:** `bcb` and `mbpp` show relatively stable call counts across difficulty levels. `humaneval` shows slight growth, and `lcb` shows strong growth.

4. **Anomaly at x=3:** There is a notable dip for `bcb` at x=3, while `mbpp` shows a local peak at the same point and `lcb` reaches a plateau that continues to x=4. This suggests that tasks failed by exactly 3 LLMs may differ between benchmarks in some respect.

### Interpretation

This chart relates a static metric of the solution code (the number of function calls) to task difficulty for the four benchmarks (`bcb`, `humaneval`, `lcb`, `mbpp`). The "Number of Failed LLMs" serves as a proxy for task hardness.

The data suggests that in `lcb`, harder tasks (those failed by more LLMs) tend to have solutions with many more function calls: the count roughly quadruples between x=0 and x=6. In contrast, `mbpp` solutions contain few function calls, and the count is largely independent of task difficulty.

The `bcb` benchmark has a comparatively high but stable count (about 10 to 13) across all groups, with a dip at x=3. The `humaneval` benchmark shows only a slight increase with difficulty, interrupted by a dip at x=4.

For a reader, this implies that the function-call count is informative about task difficulty mainly for `lcb`; for the other three benchmarks it says little about which tasks LLMs fail.

</details>

(d) Function Calls

Figure 1: Comparison of benchmark code metrics for tasks, grouped by the number of LLMs that failed them. LiveCodeBench shows a positive correlation between code complexity and failure rate, while other benchmarks do not.

RQ2: To what extent are these failures explained by solution complexity?

Failures show only a weak association with solution code complexity across HumanEval, MBPP, and BCB-Hard. These results suggest that code complexity alone cannot systematically explain LLM failures across benchmarks, and that semantic task properties and benchmark-specific factors likely play a more significant role.

## IV Failure Inspection

We conducted a detailed task-by-task analysis of consistently failed tasks, examining the code generated by each model together with the corresponding failed test cases. This close inspection allowed us to uncover recurring patterns and better understand systematic challenges faced by LLMs across benchmarks.

LLM Failure Patterns: Across the consistently failed tasks, we identified four main failure modes:

- Wrong problem mapping occurs when models interpret a task as belonging to the wrong problem class. For example, in HumanEval/132, the task is to determine whether a string of square brackets contains a valid subsequence with at least one nested pair. All models incorrectly mapped this to the standard “balanced brackets” class of problems and applied a stack-based early-return strategy: pushing opening brackets, popping on closing brackets, and returning True as soon as a nested configuration was locally detected. This approach failed because it identified only the first instance of nesting rather than ensuring that sufficient nesting occurred, as required by the canonical solution. This illustrates a common bias in LLMs toward familiar problem types, which can lead them to overlook details specified in the prompt.

- Flawed or incomplete algorithm design arises when LLMs take the correct approach but include a flawed or incomplete set of steps. For example, task BCB-Hard/945 requires generating a time series of sales data starting from a specified date, using regression to forecast future sales. LLMs correctly implemented data processing and regression, but did not incorporate mechanisms to handle non-monotonic trends.

- Edge case mishandling refers to failures where the code generated by an LLM cannot correctly handle uncommon or boundary scenarios. For example, BCB-Hard/964 requires converting files with multiple extensions from a source directory into CSV files in a target directory, but all models failed the nested subdirectory test case because their code only iterated over top-level files rather than recursively traversing subfolders.

- Formatting mistakes arise when the underlying algorithm is correct, but solutions are rejected because of strict input/output requirements. For instance, LiveCodeBench/3736 required results to be returned as string literals (e.g., "23"), whereas the models produced unquoted digits.
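The edge case mishandling pattern can be made concrete with a minimal sketch. The code below is not taken from BCB-Hard/964 or from any model output; the directory layout and the helper names `list_top_level`, `list_recursive`, `build_demo_tree`, and `demo` are hypothetical. It only contrasts iterating over top-level files with recursively traversing subfolders.

```python
import tempfile
from pathlib import Path


def list_top_level(source_dir: Path) -> list[str]:
    """Return names of files directly inside source_dir (misses nested files)."""
    return sorted(p.name for p in source_dir.iterdir() if p.is_file())


def list_recursive(source_dir: Path) -> list[str]:
    """Return names of files anywhere below source_dir, including subfolders."""
    return sorted(p.name for p in source_dir.rglob("*") if p.is_file())


def build_demo_tree(root: Path) -> None:
    """Create a hypothetical layout with one top-level file and one nested file."""
    (root / "report.txt").write_text("top level")
    nested = root / "archive" / "2024"
    nested.mkdir(parents=True)
    (nested / "notes.txt").write_text("nested")


def demo() -> tuple[list[str], list[str]]:
    """Run both listings on a temporary directory and return their results."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        build_demo_tree(root)
        return list_top_level(root), list_recursive(root)


if __name__ == "__main__":
    top, everything = demo()
    print(top)         # ['report.txt']
    print(everything)  # ['notes.txt', 'report.txt']
```

A test that places input files in a nested subdirectory would pass only with the recursive variant, which mirrors the kind of boundary scenario described above.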

Table IV reports how often each pattern was observed in each benchmark. A single task can contribute to more than one pattern, because we analyzed the outputs of all LLMs and each model produced different solution code.

TABLE IV: Number of failure patterns across benchmarks.

| Failure Pattern | HumanEval | MBPP | LCB | BCB-Hard |
| --- | --- | --- | --- | --- |
| Wrong Problem Mapping | 1 | 0 | 20 | 24 |
| Flawed/Incomplete Algorithm | 0 | 1 | 31 | 35 |
| Formatting Mistakes | 0 | 0 | 10 | 32 |
| Edge Case Mishandling | 0 | 1 | 1 | 27 |
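As a reading aid, the row and column totals of Table IV can be tallied with a few lines of Python. The counts are copied from the table; the names `TABLE_IV`, `totals_per_pattern`, and `totals_per_benchmark` are introduced here only for illustration.

```python
# Counts copied from Table IV; rows are failure patterns, columns are benchmarks.
BENCHMARKS = ["HumanEval", "MBPP", "LCB", "BCB-Hard"]
TABLE_IV = {
    "Wrong Problem Mapping": [1, 0, 20, 24],
    "Flawed/Incomplete Algorithm": [0, 1, 31, 35],
    "Formatting Mistakes": [0, 0, 10, 32],
    "Edge Case Mishandling": [0, 1, 1, 27],
}


def totals_per_pattern(table: dict[str, list[int]]) -> dict[str, int]:
    """Sum each row: how often a pattern occurs across all benchmarks."""
    return {pattern: sum(counts) for pattern, counts in table.items()}


def totals_per_benchmark(table: dict[str, list[int]], names: list[str]) -> dict[str, int]:
    """Sum each column: how many pattern occurrences a benchmark contributes."""
    return {name: sum(row[i] for row in table.values()) for i, name in enumerate(names)}


if __name__ == "__main__":
    print(totals_per_pattern(TABLE_IV))
    print(totals_per_benchmark(TABLE_IV, BENCHMARKS))
    print(sum(totals_per_pattern(TABLE_IV).values()))  # 183
```

With these counts, the table sums to 183 pattern occurrences, of which LCB and BCB-Hard account for 180. The total exceeds the 114 inspected tasks mentioned in the abstract, which is consistent with a single task contributing to more than one pattern.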

Ambiguous Prompt & Restricted Test: Many failures in BCB-Hard arise from task ambiguity, where prompts are underspecified and tests are overspecified, forcing models to make reasonable assumptions that nonetheless lead to failure. For instance, models sometimes assumed specific column names in the output CSV, which the test cases did not expect. A task is passed only when a model’s assumptions happen to match the hidden expectations or when the generated code is flexible enough to satisfy alternative assumptions imposed by the tests.

Additionally, we investigated why Llama-3.3-70B outperformed other models on BCB-Hard, particularly Claude Sonnet-4, which demonstrated stronger performance across the remaining benchmarks. We did this by examining tasks that Llama-3.3-70B solved correctly while others failed. A closer examination of these tasks revealed that Llama’s success often stemmed from a simple or literal interpretation of the prompts, whereas stronger models tended to rely on conventional coding practices or added assumptions that were reasonable in general but not aligned with the benchmark’s strict test cases. For instance, in BCB-Hard/147, Claude Sonnet-4 skipped network and broadcast IP addresses following standard Python networking conventions, while Llama-3.3-70B iterated over all addresses literally as requested in the prompt. This may illustrate a broader phenomenon: more capable models can sometimes over-optimize for general correctness or practicality, inadvertently violating strict benchmark test cases, whereas simpler models may succeed by adhering more literally to the task instructions.
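The two readings of the BCB-Hard/147 prompt can be illustrated with Python's standard `ipaddress` module. This is a minimal sketch, not the code produced by either model; the example range `192.168.0.0/30` and the function names are assumptions made for illustration.

```python
import ipaddress


def literal_addresses(cidr: str) -> list[str]:
    """Literal reading: every address in the range, including both ends."""
    network = ipaddress.ip_network(cidr)
    return [str(ip) for ip in network]


def usable_host_addresses(cidr: str) -> list[str]:
    """Conventional reading: usable hosts only.

    For a prefix of this size, hosts() leaves out the network address
    and the broadcast address.
    """
    network = ipaddress.ip_network(cidr)
    return [str(ip) for ip in network.hosts()]


if __name__ == "__main__":
    cidr = "192.168.0.0/30"  # hypothetical example range
    print(literal_addresses(cidr))
    # ['192.168.0.0', '192.168.0.1', '192.168.0.2', '192.168.0.3']
    print(usable_host_addresses(cidr))
    # ['192.168.0.1', '192.168.0.2']
```

Both variants are defensible in general, but a test that expects one result per address in the range would accept only the literal variant.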

RQ3: Which additional task characteristics, beyond solution complexity, influence LLM failures?

We identified four recurring failure patterns that highlight current LLM weaknesses: wrong problem mapping, flawed or incomplete algorithm design, edge case mishandling, and formatting mistakes. Underspecified prompts and overspecified tests in benchmark tasks are additional factors that contribute to failures.

## V Limitations and Future Directions

In this section, we briefly discuss several limitations of our study and outline future directions:

1. Our experiments are currently limited to four benchmarks. We plan to extend them with further function-level as well as repository-level benchmarks, such as SWE-bench [6], which requires solving coding issues across multiple files.

2. We will broaden our study to cover a wide range of models, from small to large scale, to examine what types of failures arise with different architectures and sizes.

3. Our complexity measurement focuses on solution code, but it could be extended to include prompt complexity.

4. Recursion and the use of data structures are underrepresented across existing benchmarks. Future work could involve creating specialized benchmarks to address this gap.

5. Building on failure pattern insights (e.g., edge case handling and problem mapping), we plan to design targeted training strategies to improve LLM performance.

6. We also plan to design benchmarks based on common failure patterns to more effectively discriminate between model capabilities.

## Acknowledgment

This work was funded by the Hessian Ministry of Higher Education, Research, Science and the Arts within the cluster project The Third Wave of Artificial Intelligence (3AI), by the National Research Center for Applied Cybersecurity ATHENE within the project Foundational Models for Secure Software Development, and by the LOEWE initiative (Hesse, Germany) [LOEWE/4a//519/05/00.002(0013)/95].

## References

- [1] J. Ji, J. Jun, M. Wu, and R. Gelles, “Cybersecurity risks of AI-generated code,” Center for Security and Emerging Technology, Tech. Rep., November 2024. [Online]. Available: https://cset.georgetown.edu/wp-content/uploads/CSET-Cybersecurity-Risks-of-AI-Generated-Code.pdf

- [2] M. Chen, J. Tworek, H. Jun, Q. Yuan, H. P. de Oliveira Pinto, J. Kaplan, H. Edwards, Y. Burda, N. Joseph, G. Brockman, A. Ray, R. Puri, G. Krueger, M. Petrov, H. Khlaaf, G. Sastry, P. Mishkin, B. Chan, S. Gray, N. Ryder, M. Pavlov, A. Power, L. Kaiser, M. Bavarian, C. Winter, P. Tillet, F. P. Such, D. Cummings, M. Plappert, F. Chantzis, E. Barnes, A. Herbert-Voss, W. H. Guss, A. Nichol, A. Paino, N. Tezak, J. Tang, I. Babuschkin, S. Balaji, S. Jain, W. Saunders, C. Hesse, A. N. Carr, J. Leike, J. Achiam, V. Misra, E. Morikawa, A. Radford, M. Knight, M. Brundage, M. Murati, K. Mayer, P. Welinder, B. McGrew, D. Amodei, S. McCandlish, I. Sutskever, and W. Zaremba, “Evaluating large language models trained on code,” 2021. [Online]. Available: https://arxiv.org/abs/2107.03374

- [3] J. Austin, A. Odena, M. Nye, M. Bosma, H. Michalewski, D. Dohan, E. Jiang, C. Cai, M. Terry, Q. Le, and C. Sutton, “Program synthesis with large language models,” 2021. [Online]. Available: https://arxiv.org/abs/2108.07732

- [4] S. Jain, S. B. Kong, E. Zelikman, A. Chen, T. Chen, A. Svyatkovskiy, and C. Sutton, “LiveCodeBench: Holistic and contamination-free evaluation of LLMs for code,” 2024. [Online]. Available: https://arxiv.org/abs/2404.00699

- [5] T. Y. Zhuo, M. C. Vu, J. Chim, H. Hu, W. Yu, R. Widyasari, I. N. B. Yusuf, H. Zhan, J. He, I. Paul, S. Brunner, C. Gong, T. Hoang, A. R. Zebaze, X. Hong, W.-D. Li, J. Kaddour, M. Xu, Z. Zhang, P. Yadav, N. Jain, A. Gu, Z. Cheng, J. Liu, Q. Liu, Z. Wang, B. Hui, N. Muennighoff, D. Lo, D. Fried, X. Du, H. de Vries, and L. V. Werra, “BigCodeBench: Benchmarking code generation with diverse function calls and complex instructions,” 2025. [Online]. Available: https://arxiv.org/abs/2406.15877

- [6] C. E. Jimenez, J. Yang, A. Wettig, S. Yao, K. Pei, O. Press, and K. Narasimhan, “SWE-bench: Can language models resolve real-world GitHub issues?” 2024. [Online]. Available: https://arxiv.org/abs/2310.06770

- [7] J. Liu, C. S. Xia, Y. Wang, and L. Zhang, “Is your code generated by ChatGPT really correct? Rigorous evaluation of large language models for code generation,” in Proceedings of the 37th International Conference on Neural Information Processing Systems, ser. NIPS ’23. Red Hook, NY, USA: Curran Associates Inc., 2023.

- [8] Z. Yu, Y. Zhao, A. Cohan, and X.-P. Zhang, “HumanEval Pro and MBPP Pro: Evaluating large language models on self-invoking code generation,” 2024. [Online]. Available: https://arxiv.org/abs/2412.21199

- [9] D. Hendrycks, S. Basart, S. Kadavath, M. Mazeika, A. Arora, E. Guo, C. Burns, S. Puranik, H. He, D. Song, and J. Steinhardt, “Measuring coding challenge competence with APPS,” 2021. [Online]. Available: https://arxiv.org/abs/2105.09938

- [10] Z. Wang, Z. Zhou, D. Song, Y. Huang, S. Chen, L. Ma, and T. Zhang, “Towards understanding the characteristics of code generation errors made by large language models,” in 2025 IEEE/ACM 47th International Conference on Software Engineering (ICSE). Los Alamitos, CA, USA: IEEE Computer Society, May 2025, pp. 2587–2599. [Online]. Available: https://doi.ieeecomputersociety.org/10.1109/ICSE55347.2025.00180