# The Causal Round Trip: Generating Authentic Counterfactuals by Eliminating Information Loss

**Authors**:

- Rui Wu wurui22@mail.ustc.edu.cn (School of Management, University of Science and Technology of China)

- Lizheng Wang lzwang@ustc.edu.cn (School of Management, University of Science and Technology of China)

- Yongjun Li lionli@ustc.edu.cn (School of Management, University of Science and Technology of China)

> Corresponding author.

## Abstract

Judea Pearl’s vision of Structural Causal Models (SCMs) as engines for counterfactual reasoning hinges on faithful abduction: the precise inference of latent exogenous noise. For decades, operationalizing this step for complex, non-linear mechanisms has remained a significant computational challenge. The advent of diffusion models, powerful universal function approximators, offers a promising solution. However, we argue that their standard design, optimized for perceptual generation over logical inference, introduces a fundamental flaw for this classical problem: an inherent information loss we term the Structural Reconstruction Error (SRE). To address this challenge, we formalize the principle of Causal Information Conservation (CIC) as the necessary condition for faithful abduction. We then introduce BELM-MDCM, the first diffusion-based framework engineered to be causally sound by eliminating SRE by construction through an analytically invertible mechanism. To operationalize this framework, a Targeted Modeling strategy provides structural regularization, while a Hybrid Training Objective instills a strong causal inductive bias. Rigorous experiments demonstrate that our Zero-SRE framework not only achieves state-of-the-art accuracy but, more importantly, enables the high-fidelity, individual-level counterfactuals required for deep causal inquiries. Our work provides a foundational blueprint that reconciles the power of modern generative models with the rigor of classical causal theory, establishing a new and more rigorous standard for this emerging field.

Keywords: Causal Inference, Diffusion Models, Causal Information Conservation, Structural Causal Models, Counterfactual Generation, BELM, Structural Reconstruction Error

## 1 Introduction

The fundamental challenge of causal inference, as articulated by rubin1974estimating, is our inability to simultaneously observe an individual’s potential outcomes. Generating authentic counterfactuals is thus the field’s grand challenge. Structural Causal Models (SCMs), introduced by pearl2009causality, provide the formal language for this pursuit. An SCM posits that an outcome $V_i$ is generated by a function of its parents $Pa_i$ and a unique exogenous noise variable $U_i$. This noise, $U_i$, represents the primordial causal information: the collection of unobserved factors unique to an individual. This concept aligns directly with the long-standing focus in econometrics on unobserved individual heterogeneity, a central challenge in structural modeling for decades (heckman2001micro). Pearl’s framework for causal reasoning, the Abduction-Action-Prediction cycle, hinges on the fidelity of the first step: abduction. To answer any “what if” question, one must first perfectly infer this primordial information $U_i$ from an observed outcome $v_i$. For decades, while this theoretical blueprint was clear, its practical realization for complex, non-linear mechanisms remained a major computational hurdle, often addressed in econometrics through strong parametric assumptions or linear approximations (angrist2008mostly).

The advent of deep generative models, particularly diffusion models (ho2020denoising), offers a powerful new hope for bridging this gap. As near-universal function approximators, they possess the expressive power to learn the complex, non-linear functions that have long challenged classical methods (chao2023interventional; sanchez2022dcms). However, this promise is shadowed by a critical, yet overlooked, “impedance mismatch.” These models were engineered for perceptual tasks like image synthesis, where visual plausibility is paramount, not for the logical rigor demanded by causal abduction. We argue that their standard design, which relies on approximate inversion schemes like DDIM (song2021denoising), is fundamentally at odds with the strict requirements of this classical causal problem.

In this work, we diagnose and resolve this conflict. We begin by giving the classic requirement for faithful abduction a modern name: Causal Information Conservation (CIC). We define CIC operationally as the lossless, deterministic recovery of the exogenous noise variable $U$. Its novelty lies in its application as a design principle and diagnostic tool for the diffusion model paradigm in causality, rather than as a formal information-theoretic quantity. Connecting this operational principle to formal measures, such as mutual information, is a compelling avenue for future research. Our core contribution is the identification that standard diffusion models systematically violate this principle due to an inherent algorithmic flaw. We formalize this flaw as the Structural Reconstruction Error (SRE), a quantifiable information loss that imposes a hard theoretical ceiling on the fidelity of any counterfactual generated by such methods. The SRE is not an estimation error to be solved with more data, but a structural defect in the tool itself.

To solve the long-standing challenge of operationalizing faithful abduction, we introduce BELM-MDCM. It is not merely a new model, but the first diffusion-based framework re-engineered from first principles to be causally sound. Architected around an analytically invertible sampler (liu2024belm), it is the first Zero-SRE causal framework by construction. This design choice reconciles the expressive power of modern diffusion models with the logical rigor of Pearl’s causal theory, ensuring the abduction step is lossless. Our primary contributions are therefore:

1. Diagnosing a Fundamental Barrier in a Classic Problem. We are the first to identify that standard diffusion models, when applied to the classic problem of SCM abduction, suffer from a structural flaw we term the Structural Reconstruction Error (SRE), which violates the foundational principle of Causal Information Conservation.

1. Proposing the First Causally-Sound Diffusion Framework. We introduce BELM-MDCM, the first framework to eliminate SRE by design. By leveraging an analytically invertible mechanism, it ensures that the power of diffusion models can be applied to causality without compromising the integrity of the abduction process.

1. Developing a Principled Methodology to Operationalize the Framework. To make our Zero-SRE framework practical and robust, we introduce two synergistic innovations: a Targeted Modeling strategy to manage complexity and a Hybrid Training Objective to provide a strong causal inductive bias, both supported by our theoretical analysis.

Through a comprehensive experimental evaluation, we demonstrate that BELM-MDCM not only sets a new state-of-the-art in estimation accuracy but, more critically, unlocks the generation of authentic individual-level counterfactuals for deep causal inquiries. By providing a foundational blueprint that resolves a core tension between modern machine learning and classical causal theory, our work establishes a new, more rigorous standard for this research direction.

### 1.1 The Inversion Challenge in Diffusion-Based Causality

Diffusion models (ho2020denoising) are powerful generative models that learn to reverse a fixed, gradual noising process. They train a neural network, $ε_θ(x_t,t)$ , to predict the noise component of a corrupted sample $x_t$ by optimizing a simple mean-squared error objective:

$$

L_{\text{simple}}(θ)=\mathbb{E}_{t,x_0,\boldsymbol{ε}}\bigg[\Big\|\boldsymbol{ε}-ε_θ\big(\sqrt{\bar{α}_t}\,x_0+\sqrt{1-\bar{α}_t}\,\boldsymbol{ε},t\big)\Big\|^2\bigg] \tag{1}

$$

where $\bar{α}_t$ defines the noise schedule and $\boldsymbol{ε}∼N(0,I)$ . This trained network is then used to iteratively denoise a variable from pure noise back to a clean sample. A standard deterministic method for this generative process is the Denoising Diffusion Implicit Model (DDIM) (song2021denoising):

$$

x_{t-1}=\sqrt{\bar{α}_{t-1}}\left(\frac{x_t-\sqrt{1-\bar{α}_t}\,ε_θ(x_t,t)}{\sqrt{\bar{α}_t}}\right)+\sqrt{1-\bar{α}_{t-1}}·ε_θ(x_t,t) \tag{2}

$$

However, causal abduction requires the inverse operation: encoding an observed data point $x_0$ into its latent noise code $x_T$ . Standard frameworks (chao2023interventional) use the DDIM inversion, which only approximates this path:

$$

x_{t+1}=\sqrt{\bar{α}_{t+1}}\left(\frac{x_t-\sqrt{1-\bar{α}_t}\,ε_θ(x_t,t)}{\sqrt{\bar{α}_t}}\right)+\sqrt{1-\bar{α}_{t+1}}·ε_θ(x_t,t) \tag{3}

$$

This inversion is approximate because it relies on the noise prediction $ε_θ(x_t,t)$ remaining constant across the step, which introduces discretization errors that accumulate (liu2022pseudo). This structural flaw, which we term the Structural Reconstruction Error (SRE), systematically corrupts the inferred exogenous noise $U_i$ . The initial error in the abduction step then propagates through the entire Abduction-Action-Prediction cycle, compromising the fidelity of the final counterfactual.
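The round-trip gap described above can be observed numerically with a minimal sketch. Everything below is an illustrative assumption rather than the paper's setup: the linear schedule in `alpha_bar`, the scalar state, and the `tanh` stand-in for a trained noise predictor `eps_model` are all hypothetical. The update in `ddim_step` follows Eqs. (2) and (3), which differ only in the direction of the time index.

```python
import math

T = 50  # number of diffusion steps (illustrative)

def alpha_bar(t):
    # Hypothetical linear schedule: 1.0 at t=0, decreasing to 0.05 at t=T.
    return 1.0 - 0.95 * t / T

def eps_model(x, t):
    # Stand-in for a trained noise predictor (assumption, not a real network).
    return math.tanh(x)

def ddim_step(x, t_from, t_to):
    # One deterministic DDIM update; t_to = t_from - 1 gives Eq. (2),
    # t_to = t_from + 1 gives the inversion step of Eq. (3).
    a_from, a_to = alpha_bar(t_from), alpha_bar(t_to)
    eps = eps_model(x, t_from)
    x0_pred = (x - math.sqrt(1.0 - a_from) * eps) / math.sqrt(a_from)
    return math.sqrt(a_to) * x0_pred + math.sqrt(1.0 - a_to) * eps

def encode(x0):
    # Approximate abduction: x_0 -> x_T via repeated DDIM inversion steps.
    x = x0
    for t in range(T):
        x = ddim_step(x, t, t + 1)
    return x

def decode(x_T):
    # Generation: x_T -> x_0 via repeated DDIM steps.
    x = x_T
    for t in range(T, 0, -1):
        x = ddim_step(x, t, t - 1)
    return x

x0 = 1.5
single_step_error = abs(ddim_step(ddim_step(x0, 0, 1), 1, 0) - x0)
round_trip_error = abs(decode(encode(x0)) - x0)
print(single_step_error, round_trip_error)
```

The inversion step evaluates the predictor at $(x_t,t)$ while the generative step evaluates it at $(x_{t+1},t+1)$, so the two updates are not algebraic inverses of each other even though the network is held fixed.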

### 1.2 Our Solution: A Zero-SRE Causal Framework

To eliminate SRE by construction, we build our framework upon an analytically invertible sampler: the Bidirectional Explicit Linear Multi-step (BELM) sampler (liu2024belm). BELM overcomes the “memoryless” limitation of single-step samplers like DDIM by using a history of noise predictions, a principle grounded in classical theory for solving ODEs (hairer2006solving).

Specifically, we employ a second-order BELM. During decoding, it computes a more stable effective noise, $\boldsymbol{ε}_{\text{eff}}$, using predictions from the current and previous timesteps:

$$

\boldsymbol{ε}_{\text{eff}}=\frac{3}{2}ε_θ(x_t,t)-\frac{1}{2}ε_θ(x_{t+1},t+1) \tag{4}

$$

This improved estimate is then used in a DDIM-like update. The key innovation is that the corresponding encoding process is constructed to be the exact algebraic inverse of this decoding process, guaranteeing that the round-trip is lossless, i.e., $H(T(x_0))=x_0$ . While the original work on BELM focused on general generative tasks, we are the first to identify, leverage, and theoretically justify its analytical invertibility as the key to satisfying the principle of Causal Information Conservation for rigorous counterfactual generation. Our choice of a second-order BELM represents a deliberate trade-off, providing substantial accuracy gains over single-step methods while maintaining practical efficiency (liu2024belm), making it ideal for our causal framework.
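The principle of an exactly invertible multi-step update can be illustrated with a minimal sketch. It does not use BELM's actual coefficients or Eq. (4): it assumes a generic leapfrog-style relation $x_{t-1}=x_{t+1}-2h\,ε_θ(x_t,t)$, chosen only because it can be solved for $x_{t+1}$ in closed form. The step size `STEP`, the scalar state, and the `eps_model` stand-in are hypothetical.

```python
import math

T = 50       # number of steps (illustrative)
STEP = 0.02  # step size h (illustrative)

def eps_model(x, t):
    # Stand-in for a trained, fixed noise predictor (assumption).
    return math.tanh(x) + 0.01 * t

def decode_step(x_next, x_cur, t):
    # Two-step explicit update: x_{t-1} = x_{t+1} - 2h * eps(x_t, t).
    return x_next - 2.0 * STEP * eps_model(x_cur, t)

def encode_step(x_prev, x_cur, t):
    # The same relation solved for x_{t+1}; no approximation is introduced.
    return x_prev + 2.0 * STEP * eps_model(x_cur, t)

def encode(x0, x1):
    # Runs t = 1..T-1 forward and returns the latent pair (x_{T-1}, x_T).
    xs = [x0, x1]
    for t in range(1, T):
        xs.append(encode_step(xs[t - 1], xs[t], t))
    return xs[T - 1], xs[T]

def decode(x_tm1, x_t):
    # Runs t = T-1..1 backward and returns the pair (x_0, x_1).
    x_cur, x_next = x_tm1, x_t
    for t in range(T - 1, 0, -1):
        x_prev = decode_step(x_next, x_cur, t)
        x_next, x_cur = x_cur, x_prev
    return x_cur, x_next

x0 = 1.5
x1 = x0 + STEP * eps_model(x0, 0)  # bootstrap second state with one Euler step
latent = encode(x0, x1)
x0_rec, x1_rec = decode(*latent)
print(abs(x0_rec - x0))
```

Because each decode step is an algebraic rearrangement of the matching encode step, the round trip recovers the input up to floating-point rounding, regardless of how accurate `eps_model` is. The price of a two-step scheme is that the state is a pair of consecutive iterates rather than a single point.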

### 1.3 Methodological Gaps in Applying Invertible SCMs

However, achieving high-fidelity causal inference requires more than a simple substitution of one sampler for another. The principle of analytical invertibility, while theoretically sound, exposes new challenges in practical SCM implementation that our framework is designed to address.

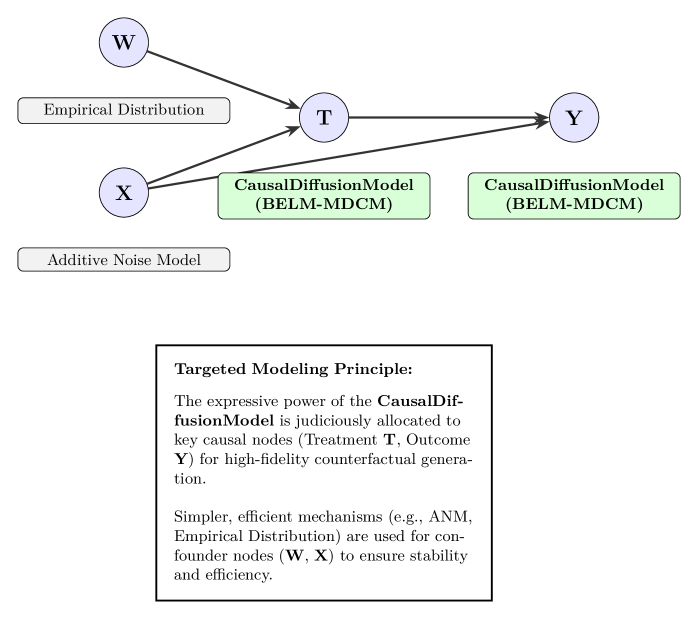

The Challenge of Model Specification: Targeted Modeling.

A key decision in SCM construction is assigning a causal mechanism to each node. Naively applying a complex, computationally expensive BELM-based diffusion model to every node in the causal graph is suboptimal. This motivates our Targeted Modeling strategy, where model complexity is treated as a resource to be allocated judiciously across the graph.

The Challenge of Downstream Tasks: Hybrid Training.

The second challenge arises from a fundamental mismatch in objectives. A diffusion model is trained on a generative objective, $L_{\text{diffusion}}(θ)$, while a downstream predictive task is optimized using a discriminative loss, $L_{\text{task}}(φ)$. These two objectives are not aligned. This “objective mismatch” motivates our Hybrid Training strategy, which seeks to unify these two goals.

## 2 Theoretical Analysis: An Operator-Theoretic Framework

To formalize our thesis that Causal Information Conservation is paramount and its violation via Structural Reconstruction Error is a fundamental barrier, we develop a rigorous operator-theoretic framework. This perspective is essential for analyzing the fidelity of the causal mapping process itself, moving beyond simple prediction errors. We present the first formal analysis that decomposes the counterfactual error in diffusion-based causal models to explicitly isolate the SRE, proving how our Zero-SRE design eliminates this critical structural limitation.

Our analysis first establishes the conditions for perfect counterfactual generation (§ 2.1-§ 2.3) and proves that standard methods produce a non-zero SRE, which our sampler eliminates by construction (Propositions 5 and 6; § 2.4). The centerpiece is a novel error decomposition theorem that isolates the SRE, motivating our Zero-SRE design (§ 2.5-§ 2.7). We conclude with learnability guarantees and a discussion of implications for advanced causal tasks like transportability (§ 2.8-§ 2.10).

### 2.1 Problem Formulation and Causal Operators

Let $(Ω,F,P)$ be a probability space. We consider endogenous variables $V$ as elements of the Hilbert space of square-integrable random variables, $X:=L^2(Ω,ℝ^d)$ . Unless otherwise specified, all vector norms $\|·\|$ in the subsequent analysis refer to the standard Euclidean ( $L_2$ ) norm.

**Definition 1 (Functional SCM Operator)**

*A Structural Causal Model is defined by a set of unknown, true functional operators $\{F_i\}_{i=1}^d$, where each $F_i:X^{pa_i}×U_i→X_i$ is a map such that $V_i:=F_i(Pa_i,U_i)$, with $Pa_i$ being the set of parent random variables and $U_i$ an exogenous noise variable. We establish the convention that the corresponding lowercase bold letter, $\mathbf{pa}_i$, denotes a specific vector of observed values for these parents.*

Our goal is to learn a model parameterized by $θ$ that approximates this SCM. Our model consists of a pair of conditional operators for each variable $V_i$ :

1. A decoder (generative) operator $H_θ:U×X^p→X$ , which aims to approximate $F$ .

1. An encoder (inference) operator $T_θ:X×X^p→U$ , which aims to perform abduction by inferring the latent noise.

These operators are realized by solving the probability flow ODE (Appendix A). The decoder $H_θ$ solves the ODE from $t=T$ to $t=0$ , while the encoder $T_θ$ solves it from $t=0$ to $t=T$ . Our BELM sampler is a high-fidelity numerical solver designed such that these forward and backward operations are exact algebraic inverses.

### 2.2 Identifiability and Exact Counterfactual Generation

We adapt principles from identifiable generative modeling (chao2023interventional) to formalize the conditions for exact counterfactuals. This requires assuming the SCM is invertible with respect to its noise term, a condition discussed in Section 2.11.

**Theorem 2 (Identifiability via Statistical Independence)**

*Consider an SCM operator $X:=F(Pa,U)$ where $U⊥⊥Pa$ and $F$ is invertible w.r.t. $U$. If a learned encoder $T_θ$ (with sufficient capacity) yields a latent representation $Z=T_θ(X,Pa)$ that is statistically independent of the parents $Pa$, then $Z$ is an isomorphic representation of the exogenous noise $U$.*

### 2.3 Geometric Inductive Bias for Identifiability

The score-matching objective’s geometric inductive biases strengthen our identifiability argument. We leverage the principle of implicit regularization, where optimizers favor “simpler” functions (hochreiter1997flat; neyshabur2018pac). This simplicity bias is a cornerstone of modern deep learning theory that, while empirically supported, remains an active and not yet universally proven area of research; our conclusions are conditioned on its validity, as discussed further in Section 2.11. Under this bias, the model is expected to learn the most parsimonious geometric transformation required to explain the data.

Considering the local geometry of the data density $p(x)$ provides powerful intuition. In a local region $R$ , if the data is isotropic (spherically symmetric), the simplest score function is a radial vector field, yielding a conformal map. If the structure is simply anisotropic (e.g., ellipsoidal), the model is biased towards learning a local affine map. This refines the notion of a purely conformal bias and leads to the following proposition.

**Proposition 3 (Implicit Bias towards Simple Geometric Maps)**

*Assume (A1) the true data density $p(x)$ is smooth ( $C^2$ ) and (A2) the optimization process has a simplicity bias (e.g., favoring low-complexity solutions, see Appendix H).

1. If there exists a local region $R$ where $p(x)$ is isotropic, the optimal learned score function is a radial vector field, and the flow map it generates is a conformal map on $R$ .

1. If we relax the condition to a local region $R$ where $p(x)$ has an ellipsoidal structure, the optimal learned score function is normal to the ellipsoidal iso-contours, and the flow map it generates is a local affine transformation on $R$ .*

The formal argument is detailed in Appendix H. This proposition is significant: it suggests that the model defaults to learning the most parsimonious, well-behaved, and locally invertible map that can explain the data’s geometry. This bias is crucial for the abduction step, as it prevents the pathological distortions that would corrupt the inferred causal noise $U$ .

**Theorem 4 (Operator Isomorphism Guarantees Exact Counterfactuals)**

*Let the conditions of Theorem 2 hold. If the learned operator pair $(T_θ,H_θ)$ constitutes a conditional isomorphism (i.e., $H_θ(T_θ(·,pa),pa)=I$ , the identity operator), then the model’s prediction under an intervention $do(Pa:=\boldsymbol{α})$ is exact.*

Proof A full proof, covering cases for different dimensions of the exogenous noise variable, is provided in Appendix B.

### 2.4 Analysis of Inversion Fidelity

We now formally analyze the inversion error. We prove that standard approximate schemes produce a non-zero SRE (Proposition 5), whereas our chosen sampler eliminates it by construction (Proposition 6).

**Proposition 5 (Structural Error of Approximate Inversion)**

*Let $T_{\text{DDIM}}$ be the operator for one step of DDIM inversion from $x_t$ to $x_{t+1}$, and $H_{\text{DDIM}}$ be the generative step operator from $x_{t+1}$ to $x_t$. The single-step reconstruction error is non-zero and of second order in the time step $Δt$:

$$

(H_{\text{DDIM}}∘T_{\text{DDIM}})(x_t)-x_t=O((Δt)^2)

$$

This error accumulates over the full trajectory, leading to a non-zero Structural Reconstruction Error.*

Proof See Appendix C for a rigorous proof.

**Proposition 6 (Analytical Invertibility of the Sampler)**

*Let $T_{\text{BELM}}$ and $H_{\text{BELM}}$ be the operators corresponding to the full-trajectory BELM sampler for inference and generation, respectively. For a fixed noise prediction network $ε_θ$, the operators are exact algebraic inverses:

$$

H_{\text{BELM}}∘T_{\text{BELM}}=I

$$*

Proof The proof follows from the algebraic construction of the BELM update rules, as detailed in Appendix C.

### 2.5 Error Decomposition for Counterfactual Estimation

This brings us to our central theoretical result: an error decomposition theorem that rigorously partitions the total counterfactual error. This decomposition isolates the SRE and mathematically demonstrates why its elimination is critical.

**Definition 7 (Counterfactual Error Components)**

*We formally define the two primary sources of error in counterfactual estimation for the invertible case:

1. The Structural Reconstruction Error ( $E_{SR}$ ) measures the information loss from the model’s abduction-action cycle on a given sample $X$:

$$

E_{SR}(X):=\|(H_θ∘T_θ-I)X\|^2

$$

1. The Latent Space Invariance Error ( $E_{LSI}$ ) measures the failure of the learned latent space to remain invariant under interventions on parent variables:

$$

E_{LSI}:=\|T_θ(X,Pa)-T_θ(X_{\boldsymbol{α}}^{\text{true}},\boldsymbol{α})\|^2

$$*

**Theorem 8 (Counterfactual Error Bound)**

*Let a model be defined by $(T_θ,H_θ)$ and the true SCM by $F$. Assume the decoder $H_θ$ is $L_H$-Lipschitz. The expected squared error of the model’s counterfactual prediction $\hat{X}_{\boldsymbol{α}}$ is bounded by the expectation of the two error components:

$$

\mathbb{E}\left[\|\hat{X}_{\boldsymbol{α}}-X_{\boldsymbol{α}}^{\text{true}}\|^2\right]≤ 2\,\mathbb{E}\left[E_{SR}(X_{\boldsymbol{α}}^{\text{true}})\right]+2L_H^2\,\mathbb{E}\left[E_{LSI}\right]

$$*

Proof The proof is in Appendix D.
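One way to see where the two terms of the bound come from is the following sketch; the authoritative argument remains the one in Appendix D. It assumes that the counterfactual prediction is obtained by abduction on the factual sample followed by decoding under the intervention, $\hat{X}_{\boldsymbol{α}}=H_θ(T_θ(X,Pa),\boldsymbol{α})$, and that $E_{SR}$ is evaluated with the decoder conditioned on $\boldsymbol{α}$. Adding and subtracting the round trip of the true counterfactual gives

$$

\hat{X}_{\boldsymbol{α}}-X_{\boldsymbol{α}}^{\text{true}}=\Big[H_θ\big(T_θ(X,Pa),\boldsymbol{α}\big)-H_θ\big(T_θ(X_{\boldsymbol{α}}^{\text{true}},\boldsymbol{α}),\boldsymbol{α}\big)\Big]+\Big[H_θ\big(T_θ(X_{\boldsymbol{α}}^{\text{true}},\boldsymbol{α}),\boldsymbol{α}\big)-X_{\boldsymbol{α}}^{\text{true}}\Big]

$$

The squared norm of the second bracket is $E_{SR}(X_{\boldsymbol{α}}^{\text{true}})$. Since $H_θ$ is $L_H$-Lipschitz in its latent argument, the squared norm of the first bracket is at most $L_H^2\,E_{LSI}$. Applying $\|a+b\|^2≤ 2\|a\|^2+2\|b\|^2$ then yields

$$

\|\hat{X}_{\boldsymbol{α}}-X_{\boldsymbol{α}}^{\text{true}}\|^2≤ 2L_H^2\,E_{LSI}+2\,E_{SR}(X_{\boldsymbol{α}}^{\text{true}})

$$

and taking expectations on both sides recovers the form of the bound in Theorem 8.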

**Remark 9 (Elimination of Structural Error)**

*By Proposition 6, the Structural Reconstruction Error for BELM-MDCM is identically zero. This is the central theoretical advantage of our framework. It disentangles the error sources, allowing us to isolate the entire modeling challenge to learning a high-quality score function ( $ε_θ$ ) without the confounding factor of an imperfect inversion algorithm. Any remaining error is now purely a function of statistical estimation, not a structural bias of the model itself.*

**Proposition 10 (Bound on Latent Space Invariance Error)**

*We assume the learned score network, $\boldsymbol{ε}_θ$ , is Lipschitz continuous, ensuring the existence and uniqueness of the probability flow ODE solution via the Picard-Lindelöf theorem. Under standard integrability conditions (Fubini’s theorem), the Latent Space Invariance Error is bounded by the expected score-matching loss:

$$

\mathbb{E}\left[E_{LSI}\right]≤ C^\prime·\mathbb{E}\left[\|\boldsymbol{ε}_θ-\boldsymbol{ε}^*\|^2\right]

$$

for some constant $C^\prime$ , where $\boldsymbol{ε}^*$ is the true score function.*

Proof The proof is in Appendix D. This proposition formally establishes that by eliminating structural error, the causal fidelity of BELM-MDCM is directly and provably controlled by its ability to accurately learn the data’s score function.

### 2.6 Decomposing Error: A Motivation for Empirical Validation

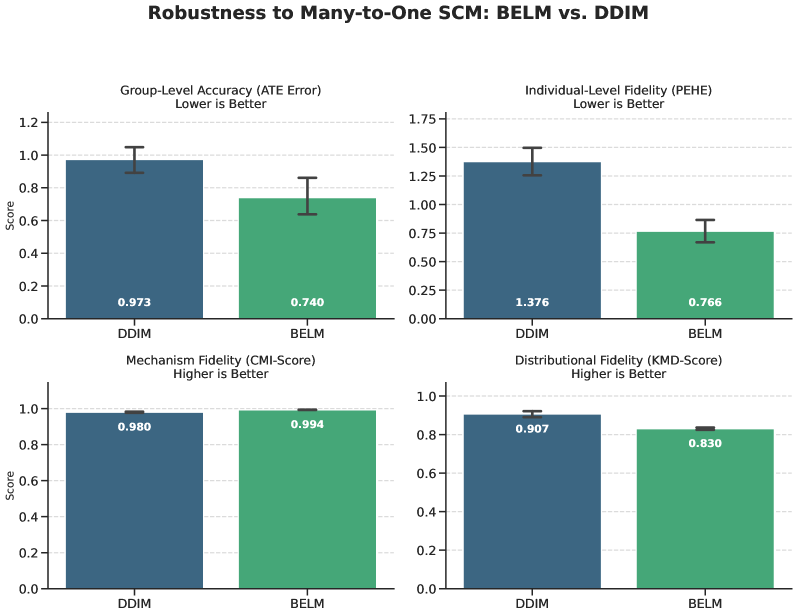

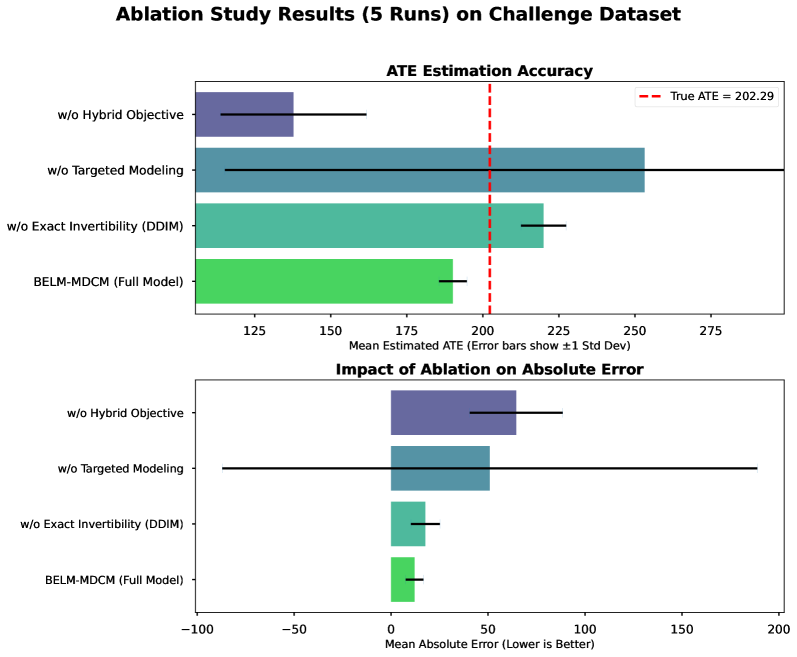

The error decomposition in Theorem 8 provides a clear strategy for empirical validation by isolating two distinct error sources: the Structural Reconstruction Error ( $E_{SR}$ ) and the Latent Space Invariance Error ( $E_{LSI}$ ). While developing a single score combining these is future work, these components directly motivate our empirical investigations. Our ablation study (Section 5.4.2) is designed to measure the impact of a non-zero $E_{SR}$, while our stress-test (Section 5.4.1) probes robustness when latent space invariance is challenged by a non-invertible SCM.

### 2.7 Theoretical Roles of Targeted Modeling and Hybrid Training

With algorithmic error eliminated by our Zero-SRE design, the challenge becomes minimizing the modeling error ( $E_{LSI}$ ). Our two methodological innovations, Targeted Modeling and Hybrid Training, are principled strategies for this purpose.

Targeted Modeling as Formal Complexity Control.

Our Targeted Modeling strategy acts as a form of structural regularization. The finite sample bound in Theorem 15 is governed by the Rademacher complexity $\mathfrak{R}_n(F_Θ)$ of the entire SCM’s hypothesis space. By assigning low-complexity models to a subset of nodes, we directly constrain the overall complexity.

**Remark 11 (Effect on Generalization Bound)**

*Our Targeted Modeling strategy is formally justified as a complexity control mechanism. The Rademacher complexity of a composite SCM is bounded by the sum of the complexities of its individual mechanisms (mohri2018foundations). By strategically substituting a high-complexity diffusion model $F_{\text{diff}}$ with a lower-complexity alternative $F_{\text{simple}}$ for non-critical nodes, Targeted Modeling directly reduces this upper bound. This leads to a tighter generalization bound and improves the statistical efficiency of the overall SCM.*

Hybrid Training as a Weighted Score-Matching Objective.

The Hybrid Training Objective, $L_{\text{total}}=L_{\text{diffusion}}+λ· L_{\text{task}}$, imparts a crucial inductive bias for learning a causally salient score function. The task-specific loss acts as a conductor’s baton, forcing the model to prioritize learning an accurate score function in regions of the data manifold most critical to the causal question. We formalize this by proposing that the auxiliary loss implicitly implements a weighted score-matching objective.

**Proposition 12 (Hybrid Objective as a Weighted Score-Matching Regularizer)**

*The auxiliary task loss $L_{\text{task}}$ provides a lower bound for the model’s error, weighted by a function reflecting the causal salience of the data manifold. Minimizing the hybrid objective $L_{\text{total}}$ is thereby equivalent to solving a weighted score-matching problem that prioritizes accuracy in causally salient regions, leading to a smaller effective Latent Space Invariance Error. (A rigorous proof is provided in Appendix E.)*

This proposition formally grounds our hybrid training strategy, revealing that the task-specific loss intelligently forces the diffusion model to prioritize accuracy in regions of the data manifold most critical to the causal question. This reinforces the CIC principle by avoiding information loss where it matters most, effectively implementing the simplicity bias principle from Section 2.3.
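The hybrid objective $L_{\text{diffusion}}+λ· L_{\text{task}}$ can be sketched as a plain weighted sum of two losses. The sketch assumes mean-squared error for both terms; the form of the task loss, the function names, and the default value of `lam` are hypothetical choices made only for illustration.

```python
def mse(pred, target):
    # Mean-squared error between two equal-length sequences of floats.
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)

def hybrid_loss(eps_pred, eps_true, y_pred, y_true, lam=0.5):
    # Generative term: noise-prediction error, as in the simple diffusion loss.
    l_diffusion = mse(eps_pred, eps_true)
    # Task term: downstream prediction error (MSE is an assumption here).
    l_task = mse(y_pred, y_true)
    # lam trades off generative fidelity against task accuracy.
    return l_diffusion + lam * l_task

total = hybrid_loss([0.0, 1.0], [0.0, 0.0], [1.0], [3.0], lam=0.5)
print(total)
```

Setting `lam` to zero recovers the purely generative objective, so the weight can be read as the strength of the task-driven inductive bias.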

We can deepen this insight by analyzing its information-theoretic implications.

**Proposition 13 (Disentanglement via Hybrid Objective)**

*Information-theoretically, the hybrid objective provides a strong inductive bias towards learning a disentangled latent representation. It encourages a “division of labor” where the task-specific component explains variance from the parents $Pa$, while the diffusion component’s latent code $Z=T_θ(V,Pa)$ models the residual information. This implicitly pushes $Z$ towards being independent of $Pa$, a crucial step towards satisfying the identifiability conditions.*

Proof A detailed information-theoretic argument is provided in Appendix E.

### 2.8 BELM-MDCM as a Unifying Framework

The principle of Causal Information Conservation also unifies our framework with classical models. Simpler models like Additive Noise Models (ANMs) can be seen as special cases where this principle is met trivially, positioning our work as a generalization of established causal principles. For instance, in a classic ANM (hoyer2009nonlinear), $V_i=f_i(Pa_i)+U_i$ , the noise is recovered by a direct, lossless inversion: $U_i=V_i-f_i(Pa_i)$ . Our framework generalizes this principle to arbitrarily complex, non-additive mechanisms, offering a flexible, non-parametric extension to classical structural equation models (wooldridge2010econometric). The importance of noise distributions, particularly non-Gaussianity, for identifiability in linear models is also a well-established principle (shimizu2006linear).
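The ANM special case makes the Abduction-Action-Prediction cycle easy to trace by hand. The sketch below assumes the mechanism `f` is known and scalar; the particular form of `f` and the observed values are hypothetical.

```python
import math

def f(pa):
    # Hypothetical non-linear mechanism f_i(Pa_i).
    return math.sin(pa) + 0.5 * pa ** 2

def abduct(v, pa):
    # Abduction: U_i = V_i - f_i(Pa_i), a lossless inversion for an ANM.
    return v - f(pa)

def predict(pa, u):
    # Prediction: V_i = f_i(Pa_i) + U_i.
    return f(pa) + u

pa_obs, v_obs = 1.0, 2.0        # observed parent value and outcome
u = abduct(v_obs, pa_obs)       # step 1: recover the individual's noise
pa_cf = 2.0                     # step 2: action do(Pa := 2.0)
v_cf = predict(pa_cf, u)        # step 3: counterfactual outcome
v_rec = predict(pa_obs, u)      # sanity check: factual round trip
print(u, v_cf, v_rec)
```

The factual round trip returns the observed outcome up to floating-point rounding, which is the ANM analogue of zero Structural Reconstruction Error.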

### 2.9 Learnability and Statistical Guarantees

We now provide asymptotic and finite-sample learnability guarantees for our SCM framework.

**Proposition 14 (Asymptotic Consistency)**

*Under standard regularity conditions, as the number of data samples $n→∞$ and model capacity $N→∞$ , the learned operators $(\hat{T}_n,\hat{H}_n)$ are consistent estimators of the ideal operators $(T^*,H^*)$ : $\hat{T}_n\xrightarrow{p}T^*$ and $\hat{H}_n\xrightarrow{p}H^*$ .*

**Theorem 15 (Finite Sample Bound for Causal Diffusion SCMs)**

*Let an SCM consist of $d$ endogenous nodes, with a causal graph having a maximum in-degree of $d_{\text{in}}^{\text{max}}$. Assume each causal mechanism is implemented by a score network $ε_θ$ that is an $L$-layer MLP with ReLU activations, and the spectral norm of each weight matrix is bounded by $B$. Let the input space be appropriately normalized. Let the loss function be bounded by $M$. Then, for the parameters $\hat{θ}_n$ learned from $n$ samples, the excess risk is bounded with probability at least $1-δ$:

$$

R(\hat{θ}_n)-R(θ^*)≤ C·\frac{d· L· B^L·\sqrt{d_{\text{in}}^{\text{max}}+d_{\text{embed}}+1}}{\sqrt{n}}+M\sqrt{\frac{\log(1/δ)}{2n}}

$$

where $C$ is a constant independent of the network architecture and sample size, and $d_{\text{embed}}$ is the dimension of the time embedding.*

Proof The proof, which combines the sub-additivity of Rademacher complexity over the SCM with standard bounds for deep neural networks (bartlett2017spectrally; neyshabur2018pac), is detailed in Appendix G.

**Remark 16 (Interpretation of the Bound)**

*This refined bound quantitatively links the generalization error to:

1. Causal Complexity ( $d·\sqrt{d_{\text{in}}^{\text{max}}}$ ): The error scales with the number of causal mechanisms ( $d$ ) and the graph’s complexity ( $d_{\text{in}}^{\text{max}}$ ), formalizing the intuition that more complex causal systems are harder to learn.

1. Network Complexity ( $L· B^L$ ): The error scales with the depth and spectral norm of the score networks. This provides direct theoretical grounding for our Targeted Modeling strategy, as using simpler models tightens this generalization bound.*
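The scaling behaviour in Remark 16 can be explored by evaluating the right-hand side of the bound in Theorem 15 directly. The constant $C$ is not specified, so the sketch sets `C=1.0` as a placeholder; all numeric inputs are hypothetical and the absolute values carry no meaning beyond relative comparison.

```python
import math

def excess_risk_bound(n, d, L, B, d_in_max, d_embed, M, delta, C=1.0):
    # First term: complexity of the SCM's score networks (C is a placeholder).
    complexity = C * d * L * B ** L * math.sqrt(d_in_max + d_embed + 1) / math.sqrt(n)
    # Second term: confidence term for a loss bounded by M.
    confidence = M * math.sqrt(math.log(1.0 / delta) / (2.0 * n))
    return complexity + confidence

base = dict(d=4, B=1.5, d_in_max=2, d_embed=13, M=1.0, delta=0.05)
deep = excess_risk_bound(n=10_000, L=3, **base)
shallow = excess_risk_bound(n=10_000, L=2, **base)
more_data = excess_risk_bound(n=40_000, L=3, **base)
print(deep, shallow, more_data)
```

Both terms decay as $1/\sqrt{n}$, so quadrupling the sample size halves the bound, while reducing depth shrinks only the first term. With $B>1$ the factor $B^L$ grows exponentially in depth, which is why swapping a deep score network for a simpler mechanism tightens the bound noticeably.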

### 2.10 Implications for Causal Transportability

Causal Information Conservation also provides a foundation for transportability, that is, applying knowledge from a source domain $S$ to a target domain $T$ (pearl2014transportability). Transportability requires separating invariant causal knowledge from domain-specific mechanisms. By losslessly recovering the exogenous noise $U$ (the invariant “causal essence”), our framework achieves this separation by design; the decoders $H_θ$ represent the domain-specific mechanisms. This insight is formalized in the following theorem.

**Theorem 17 (Condition for Lossless Causal Transport)**

*Let a source domain $S$ and a target domain $T$ be described by SCMs $M^S$ and $M^T$ , respectively. Assume the following conditions hold:

1. Shared Structure: Both domains share the same causal graph $G$ and the same exogenous noise distributions $\{p_i(U_i)\}$. The domains differ only in a subset of causal mechanisms $K_{\text{changed}}$.

1. Noise Independence: The exogenous noise variables $\{U_i\}_{i=1}^d$ are mutually independent.

1. Information Conservation: A model $(T_θ,H_θ)$ trained on data from $S$ satisfies the Causal Information Conservation principle, achieving zero Structural Reconstruction Error.

Then, causal knowledge can be losslessly transported from $S$ to $T$ by re-learning only the operators $\{T_{θ_k},H_{θ_k}\}$ corresponding to the changed mechanisms $k∈K_{\text{changed}}$, while directly reusing all operators for invariant mechanisms.*

Proof The proof is provided in Appendix F.

### 2.11 Discussion of Assumptions

Our framework rests on several key assumptions, which we now critically examine.

Our geometric inductive bias argument (Proposition 3) rests on the principle of simplicity bias. While this principle is a cornerstone of modern deep learning theory with substantial empirical backing, it remains an active area of research and is not a universally proven theorem. Our conclusions are therefore conditioned on the validity of this powerful but conjectural assumption.

The cornerstone of our identifiability theory (Theorem 2) is the SCM’s invertibility with respect to its noise term $U$ . This is a strong assumption; when violated (e.g., by a many-to-one function), the abduction task becomes ill-posed.

To address this foundational challenge, we provide an exhaustive theoretical treatment in Appendix C. There, we formalize the irreducible “representational error” and derive a tighter, more general error bound (Theorem 21). More importantly, we propose a concrete mitigation strategy: a novel prior-matching regularizer (Definition 23), theoretically shown to reduce the error by encouraging the learned encoder to approximate the ideal Maximum a Posteriori (MAP) solution (Proposition 24). This highlights a primary contribution: even in the challenging non-invertible case, BELM-MDCM’s zero-SRE design eliminates the algorithmic error, thereby isolating the more fundamental representational challenge. Our stress-test in Section 5.4.1 empirically confirms this advantage; empirically validating the regularizer is a clear direction for future work.

Our identifiability proof is dimension-dependent, leveraging Liouville’s theorem for $d≥ 3$ and requiring stronger assumptions like asymptotic linearity for the special case of $d=2$ . Other assumptions, such as Lipschitz continuity of the score network, are mild regularity conditions standard in deep generative model analysis and can be encouraged through architectural choices like spectral normalization.

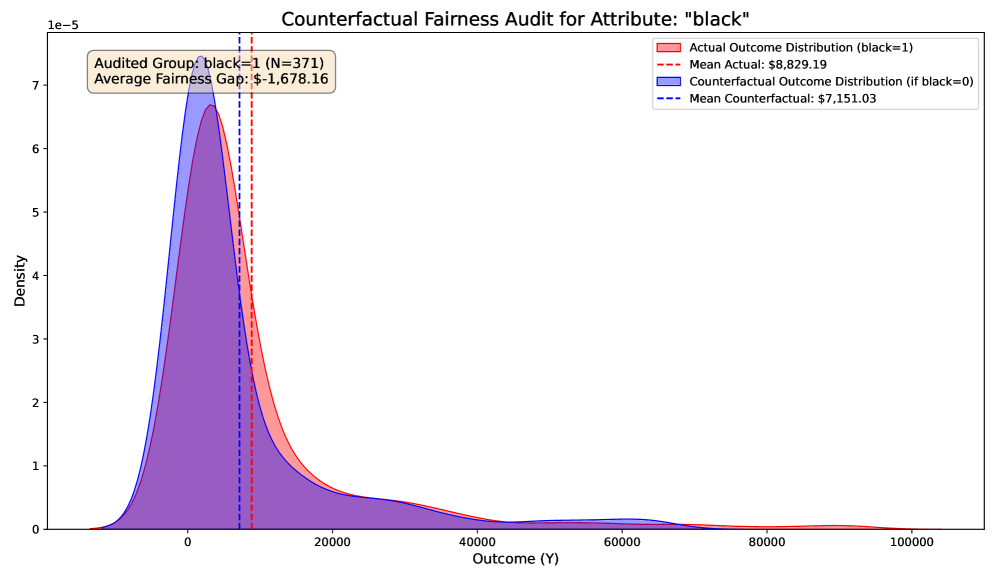

## 3 Architectural Design and Training

The BELM-MDCM architecture embodies our core principles through a non-monolithic, theoretically-motivated design. Its central philosophy is Targeted Modeling: judiciously allocating the expressive power of our Zero-SRE CausalDiffusionModel to nodes of causal interest (e.g., Treatment T, Outcome Y), while using simpler, efficient mechanisms for confounders, as illustrated in Figure 1. This strategy provides practical complexity control, tightening the generalization bound as established in Theorem 15.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Causal Modeling Framework with Targeted Model Allocation

### Overview

The image displays a technical diagram illustrating a causal inference modeling framework. It depicts a causal graph with four nodes (W, X, T, Y) and specifies the modeling techniques applied to the relationships between them. A separate text box explains the "Targeted Modeling Principle" that guides this allocation of models.

### Components/Axes

**Nodes (Causal Variables):**

* **W**: Located at the top-left of the diagram.

* **X**: Located below node W on the left side.

* **T**: Located in the center of the diagram.

* **Y**: Located on the right side of the diagram.

**Directed Edges (Causal Relationships) & Associated Models:**

1. **W → T**: An arrow from W to T. The associated model annotation, placed to the left of the arrow, is a white box labeled: **"Empirical Distribution"**.

2. **X → T**: An arrow from X to T. The associated model annotation, placed along the arrow, is a green box labeled: **"CausalDiffusionModel (BELM-MDCM)"**.

3. **T → Y**: An arrow from T to Y. The associated model annotation, placed along the arrow, is a green box labeled: **"CausalDiffusionModel (BELM-MDCM)"**.

4. **X → Y**: A direct arrow from X to Y. No specific model annotation is attached to this edge.

**Additional Annotation:**

* A white box labeled **"Additive Noise Model"** is positioned below node X. Its placement suggests it may be a general model type or associated with the confounder nodes, but it is not directly linked to a specific arrow in this diagram.

**Text Box (Principle Explanation):**

Located at the bottom of the image, a bordered text box contains the following text:

> **Targeted Modeling Principle:**

>

> The expressive power of the **CausalDiffusionModel** is judiciously allocated to key causal nodes (Treatment **T**, Outcome **Y**) for high-fidelity counterfactual generation.

>

> Simpler, efficient mechanisms (e.g., ANM, Empirical Distribution) are used for confounder nodes (**W**, **X**) to ensure stability and efficiency.

### Detailed Analysis

The diagram presents a structured approach to causal modeling:

* **Key Causal Pathway (T → Y):** The relationship from Treatment (T) to Outcome (Y) is modeled using the most complex and expressive model, the **CausalDiffusionModel (BELM-MDCM)**.

* **Treatment Assignment Mechanism (W → T, X → T):** The influences on the Treatment node are modeled differently. The influence from confounder **W** is modeled with a simple **Empirical Distribution**. The influence from confounder **X** is modeled with the complex **CausalDiffusionModel (BELM-MDCM)**.

* **Direct Confounder Effect (X → Y):** A direct causal path from confounder X to Outcome Y is shown, but no specific model is assigned to it in this diagram.

* **Model Types:** Two primary model types are named:

1. **CausalDiffusionModel (BELM-MDCM):** A complex, expressive model.

2. **Simpler Mechanisms:** Explicitly mentioned are **ANM** (Additive Noise Model) and **Empirical Distribution**.

### Key Observations

1. **Asymmetric Model Allocation:** The framework does not apply the most powerful model uniformly. It strategically allocates the **CausalDiffusionModel** to the edges **X → T** and **T → Y**, which are deemed critical for high-fidelity generation of counterfactuals involving the Treatment and Outcome.

2. **Confounder Handling:** Confounder nodes (W, X) are generally associated with simpler models (Empirical Distribution, ANM) to prioritize stability and computational efficiency, with the notable exception of the path from X to T.

3. **Visual Coding:** Green boxes are used exclusively for the **CausalDiffusionModel**, creating a clear visual distinction from the white boxes used for simpler models and node labels.

4. **Principle Justification:** The text box provides the explicit rationale for the design, linking model complexity to the importance of the causal relationship for the end goal of counterfactual generation.

### Interpretation

This diagram represents a **pragmatic and resource-aware strategy for causal inference**. The core insight is that not all parts of a causal graph require the same level of modeling sophistication. By applying a high-fidelity, likely computationally intensive model (**CausalDiffusionModel**) only to the most critical pathways (those directly defining the treatment effect and its assignment from key confounders), the framework aims to achieve accurate counterfactual predictions. Meanwhile, using simpler, well-understood models for other relationships (like the empirical distribution of W) reduces overall complexity, improves stability, and conserves computational resources. This "targeted" approach reflects a common engineering trade-off between accuracy and efficiency in complex system modeling. The diagram serves as a blueprint for implementing such a hybrid causal model.

</details>

Figure 1: Illustration of the Targeted Modeling Principle. The expressive CausalDiffusionModel is judiciously allocated to key causal nodes (Treatment T, Outcome Y) for high-fidelity counterfactual generation. Simpler, efficient mechanisms (e.g., ANM, Empirical Distribution) are used for confounder nodes (W, X) to ensure stability and efficiency.
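The allocation rule behind Targeted Modeling can be sketched as a simple lookup over the causal graph. This is only an illustrative sketch: the function name `assign_mechanisms`, the string labels, and the toy graph (including the assumed edge W → X) are hypothetical and not part of the released implementation.

```python
def assign_mechanisms(parents, key_nodes):
    """Pick a mechanism type per node following the Targeted Modeling idea.

    parents:   dict mapping node name -> list of parent names (hypothetical graph)
    key_nodes: nodes of causal interest (e.g., treatment and outcome)
    """
    mechanisms = {}
    for node, pa in parents.items():
        if not pa:
            # Root (exogenous) nodes: non-parametric empirical distribution.
            mechanisms[node] = "EmpiricalDistribution"
        elif node in key_nodes:
            # Nodes central to the causal query: expressive diffusion mechanism.
            mechanisms[node] = "CausalDiffusionModel"
        else:
            # Remaining endogenous nodes: a simpler additive noise model.
            mechanisms[node] = "AdditiveNoiseModel"
    return mechanisms


# Toy graph; the edge W -> X is an assumption made for illustration only.
graph = {"W": [], "X": ["W"], "T": ["W", "X"], "Y": ["X", "T"]}
allocation = assign_mechanisms(graph, key_nodes={"T", "Y"})
```

Keeping the expressive model on T and Y only is what limits overall model complexity in this sketch; every other node falls back to a cheaper mechanism.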

<details>

<summary>x2.png Details</summary>

### Visual Description

## System Architecture Diagram: Causal Identification Pipeline

### Overview

The image displays a technical flowchart illustrating a multi-stage data processing and machine learning pipeline designed for causal identification. The diagram flows from left to right, starting with raw input data, moving through pre-processing, embedding, training, and post-processing stages, and culminating in a causal identification result. The architecture is modular, with distinct colored blocks representing different functional units.

### Components/Flow

The diagram is organized into five horizontal phases, labeled at the bottom: **Pre-Processing**, **Embedding**, **Train**, **Post-Processing**, and **Results**.

**1. Input Data (Far Left):**

* **`x_num`**: A numerical input vector. The example values shown are `50`, `257`, and `-3.0`.

* **`x_cat1`**: A categorical input with example value `M`.

* **`x_cat2`**: A categorical input with example value `woman`.

* **`x_cat3`**: A categorical input represented by a blue triangle icon.

**2. Pre-Processing Stage:**

* **`StandardScaler`** (Yellow block): Receives the `x_num` input. A "Select" arrow points from the input line to this block, indicating a selection or routing mechanism.

* **`OneHotEncoder`** (Peach block): Receives all three categorical inputs (`x_cat1`, `x_cat2`, `x_cat3`).

**3. Embedding Stage:**

* **`Connection`** (Grey block): Performs a concatenation operation, denoted by the symbol `⊕`. It combines the processed numerical data (`x_num`) and the processed categorical data (`x_cat`) into a single representation.

* **`Timestep embedding`** (Green block): An independent module that feeds into the next stage.

**4. Train Stage:**

* **`BELM-MDCM module`** (Light blue, tall vertical block): This is the central processing unit. It receives two inputs:

1. The concatenated data from the `Connection` block.

2. The output from the `Timestep embedding` block.

* **`Noisy Target Variable`** (Light cyan block): This input also feeds directly into the `BELM-MDCM module`.

**5. Post-Processing & Results Stage:**

* **`Inverse Transformation`** (White block): Processes the output from the `BELM-MDCM module`.

* **`Causal Identification`** (Pink, tall vertical block): The final output stage of the pipeline, receiving the transformed data.

**Flow Direction:** Arrows clearly indicate a unidirectional data flow from the inputs on the left, through the sequential processing blocks, to the final output on the right. The `Timestep embedding` and `Noisy Target Variable` provide auxiliary inputs to the central training module.

### Detailed Analysis

* **Data Transformation Path:** Numerical data (`x_num`) is scaled via `StandardScaler`. Categorical data (`x_cat`) is one-hot encoded. These parallel streams are then fused (`Connection`).

* **Core Model:** The `BELM-MDCM module` is the primary computational engine, integrating the fused data features, temporal information (`Timestep embedding`), and the target variable (`Noisy Target Variable`).

* **Output Processing:** The model's output undergoes an `Inverse Transformation` before being used for the final task of `Causal Identification`.

* **Visual Coding:** Colors are used to group related functions: yellow/peach for pre-processing, green for embedding, blue for the core model, and pink for the final result.

### Key Observations

1. **Hybrid Data Handling:** The pipeline explicitly separates and then recombines numerical and categorical data streams, a common practice in tabular data modeling.

2. **Temporal Component:** The inclusion of a `Timestep embedding` suggests the model is designed to handle sequential or time-series data.

3. **Noise in Target:** The `Noisy Target Variable` input implies the model is robust to or specifically designed for learning from imperfect or noisy labels.

4. **Modular Design:** Each major step (scaling, encoding, embedding, core modeling, transformation) is encapsulated in its own block, suggesting a flexible and interpretable architecture.

### Interpretation

This diagram represents a sophisticated machine learning pipeline for **causal inference** from structured, likely temporal, data. The process begins by standardizing and encoding raw features, then projects them into a learned embedding space alongside temporal information. The core `BELM-MDCM module` (the acronym is not defined in the image) presumably performs the main causal discovery or estimation task, potentially using a method robust to target noise. The final `Inverse Transformation` likely maps the model's internal representations back into an interpretable causal effect or graph.

The pipeline's structure suggests an application where understanding the cause-and-effect relationships between variables (e.g., in economics, healthcare, or social science) is critical, and the data contains both static features and a time dimension. The explicit handling of a noisy target indicates a practical consideration for real-world data where ground truth may be uncertain.

</details>

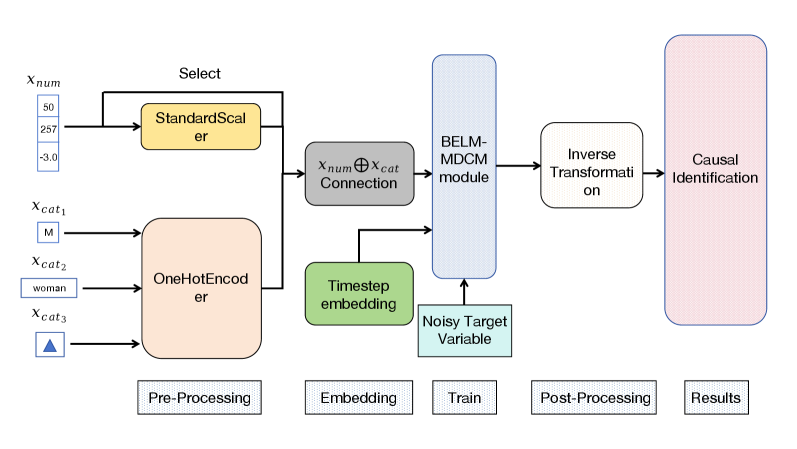

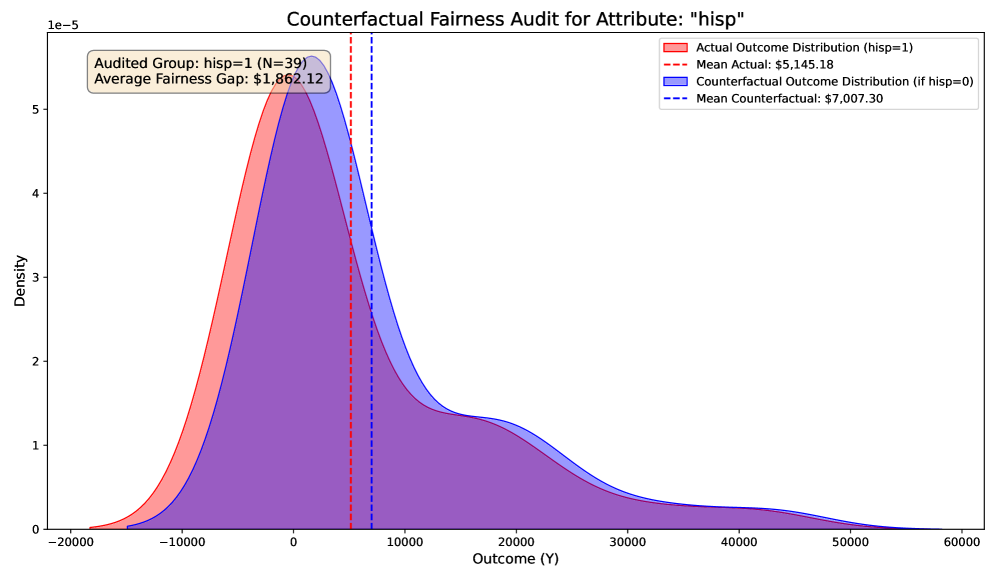

Figure 2: The detailed internal architecture of the CausalDiffusionModel. This diagram illustrates the end-to-end workflow of the causal mechanism designed for key nodes like Treatment T and Outcome Y, detailing the pre-processing, embedding, training, and post-processing stages.

The internal architecture of the CausalDiffusionModel itself, depicted in Figure 2, is engineered to learn the complex, non-linear mapping $v_i:=f_i(pa_i,u_i)$ with high fidelity.

### 3.1 Mechanism for Exogenous Nodes

Exogenous nodes (without parents in the causal graph $G$) are modeled non-parametrically via the Empirical Distribution of the observed data. This approach avoids distributional assumptions and provides a robust foundation for the Structural Causal Model (SCM).
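Sampling from an empirical distribution amounts to resampling the observed values with replacement. The snippet below is a minimal sketch of that idea; the helper name `sample_exogenous` and the toy data are assumptions made for illustration.

```python
import numpy as np


def sample_exogenous(observed, n_samples, rng):
    """Draw n_samples values by resampling the observed data with replacement."""
    observed = np.asarray(observed)
    idx = rng.integers(0, len(observed), size=n_samples)
    return observed[idx]


rng = np.random.default_rng(0)
w_observed = np.array([0.2, 1.5, -0.7, 3.1])  # toy observations of a root node
w_samples = sample_exogenous(w_observed, n_samples=1000, rng=rng)
```

Every generated value is one that was actually observed, which is why no distributional assumption is needed.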

### 3.2 Mechanism for Endogenous Nodes: The CausalDiffusionModel

For endogenous nodes $V_i$, particularly those central to the causal query (treatment, outcome, key mediators), we employ our bespoke CausalDiffusionModel to learn the functional mapping $v_i:=f_i(pa_i,u_i)$.

#### 3.2.1 Conditioning via Parent Node Transformation

The denoising process is conditioned on the parent nodes $pa_i$, which are transformed into a fixed-dimensional conditioning vector $c∈ℝ^{d_c}$. A ColumnTransformer handles heterogeneous data types: continuous parents are standardized (StandardScaler) to unify scales, while categorical parents are one-hot encoded (OneHotEncoder) to prevent artificial ordinality. The resulting vectors are concatenated into $c$, which remains constant for a given sample’s diffusion trajectory.
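The construction of the conditioning vector can be sketched as follows. To keep the example self-contained it uses plain NumPy stand-ins for the standardization and one-hot steps rather than the scikit-learn transformers named above; the helper names and the toy parent columns are assumptions.

```python
import numpy as np


def standardize(col):
    """Zero-mean, unit-variance scaling of a 1-D continuous column."""
    col = np.asarray(col, dtype=float)
    std = col.std()
    # Guard against constant columns to avoid division by zero.
    return ((col - col.mean()) / (std if std > 0 else 1.0)).reshape(-1, 1)


def one_hot(col):
    """One-hot encode a 1-D categorical column (categories in sorted order)."""
    categories, codes = np.unique(np.asarray(col), return_inverse=True)
    return np.eye(len(categories))[codes]


# Toy parents: one continuous and one categorical column (illustrative values).
age = [50, 25, 37]
sex = ["M", "F", "M"]

# Concatenate the processed blocks into the conditioning matrix, one row per sample.
c = np.hstack([standardize(age), one_hot(sex)])
```

Here each row of `c` has dimension $d_c=3$: one standardized value plus two one-hot indicators.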

#### 3.2.2 The Denoising Process

The core of the CausalDiffusionModel is a denoising network $ε_θ(v_t,t,c)$, implemented as a Residual MLP (he2016deep). It takes as input the noisy variable $v_t$, a sinusoidal Time Embedding of timestep $t$, and the conditioning vector $c$. Before the diffusion process, the target variable $V_i$ is also preprocessed (standardized for continuous values or label-encoded for categorical ones). The denoising process is driven by the BELM sampler, ensuring a mathematically exact and stable inversion path as established in Section 2.
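For readers unfamiliar with sinusoidal time embeddings, a common formulation is sketched below. The embedding dimension and the `max_period` frequency schedule are assumptions following the usual Transformer-style convention; the text above does not fix them.

```python
import numpy as np


def sinusoidal_time_embedding(t, dim, max_period=10000.0):
    """Map timesteps t (length B) to embeddings of shape [B, dim]."""
    assert dim % 2 == 0, "dim must be even: half sine, half cosine channels"
    half = dim // 2
    # Geometrically spaced frequencies from 1 down to roughly 1 / max_period.
    freqs = np.exp(-np.log(max_period) * np.arange(half) / half)
    angles = np.asarray(t, dtype=float)[:, None] * freqs[None, :]
    return np.concatenate([np.sin(angles), np.cos(angles)], axis=1)


emb = sinusoidal_time_embedding([0, 10, 999], dim=16)
```

In a network of the kind described, this embedding would typically be combined with $v_t$ and $c$ before the residual blocks; how exactly they are fused is not specified here.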

#### 3.2.3 Hybrid Training Objective

We introduce a Hybrid Training Objective to reconcile generative fidelity with predictive accuracy. As established in our theoretical analysis (Proposition 12), this is more than a standard multi-task learning scheme; it acts as a powerful inductive bias, creating a weighted score-matching objective that prioritizes accuracy in causally salient regions of the data manifold. The total loss is a linearly weighted combination:

$$

L_{\text{total}}=L_{\text{diffusion}}+λ· L_{\text{task}} \tag{5}

$$

where $L_{\text{diffusion}}$ is the noise prediction error (Equation 1). The auxiliary loss $L_{\text{task}}$ is a Mean Squared Error for continuous nodes ($L_{\text{regression}}$) and a Cross-Entropy loss for discrete nodes ($L_{\text{classification}}$).
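A small numerical sketch of Equation 5 for a continuous node is given below. It assumes the usual squared-error form for the noise prediction term and treats the task prediction `y_pred` as given; how that prediction is obtained from the network is not specified in this section, and all values are toy numbers.

```python
import numpy as np


def hybrid_loss(eps_pred, eps_true, y_pred, y_true, lam):
    """L_total = L_diffusion + lam * L_task (regression case)."""
    l_diffusion = np.mean((eps_pred - eps_true) ** 2)  # noise prediction error
    l_task = np.mean((y_pred - y_true) ** 2)           # auxiliary MSE
    return float(l_diffusion + lam * l_task)


eps_pred = np.array([0.1, -0.2])
eps_true = np.array([0.0, 0.0])
y_pred = np.array([1.0, 2.0])
y_true = np.array([1.5, 2.0])

# L_diffusion = 0.025, L_task = 0.125, so the total is 0.025 + 0.5 * 0.125.
total = hybrid_loss(eps_pred, eps_true, y_pred, y_true, lam=0.5)
```

For a discrete node, the MSE in `l_task` would be replaced by a cross-entropy term; setting `lam=0` recovers the plain diffusion objective.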

#### 3.2.4 Decoding and Counterfactual Generation

For generation, the BELM sampler produces an output in the normalized space. This is then mapped back to the original data domain using the inverse transformations of the pre-fitted preprocessors (StandardScaler for continuous, LabelEncoder for categorical). For categorical outputs, the continuous value is rounded and clipped to the valid class range before the inverse mapping, ensuring that generated (counterfactual) data is interpretable and resides in the correct space.
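The decoding step can be sketched in a few lines. The helpers below are illustrative stand-ins for the inverse transforms of the fitted preprocessors; the function names, the class list, and the sample values are assumptions.

```python
import numpy as np


def decode_continuous(z, mean, std):
    """Invert standardization: map normalized values back to the data scale."""
    return np.asarray(z, dtype=float) * std + mean


def decode_categorical(z, classes):
    """Round to the nearest class index, clip to the valid range, then look up labels."""
    idx = np.clip(np.rint(z), 0, len(classes) - 1).astype(int)
    return np.asarray(classes)[idx]


y_cont = decode_continuous([0.0, 1.0], mean=10.0, std=2.0)
# The out-of-range outputs -0.4 and 2.7 are pulled back to the first and last class.
y_cat = decode_categorical([-0.4, 0.6, 2.7], classes=["a", "b", "c"])
```

Clipping matters because the sampler operates in a continuous space and can overshoot the encoded class range.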

## 4 New Evaluation Metrics for Generative Causal Models

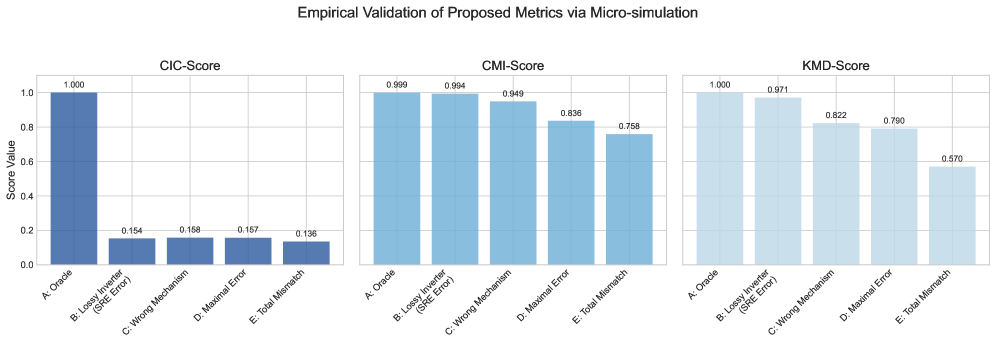

The principle of Causal Information Conservation demands new evaluation dimensions that traditional metrics like ATE and PEHE cannot capture. An accurate ATE score, for instance, could arise from a model with high SRE where individual errors fortuitously cancel out at the population level. To move beyond mere outcome accuracy and directly assess a model’s adherence to our foundational principle, we propose a new, theoretically-grounded evaluation framework.

### 4.1 Causal Information Conservation Score (CIC-Score)

The Causal Information Conservation Score (CIC-Score) is a direct empirical diagnostic for the Structural Reconstruction Error. It quantifies a framework’s adherence to the CIC principle by disentangling algorithmic information loss (from an imperfect inversion process) from modeling error (from the statistical challenge of learning the true causal mechanism). We define the score, bounded in $[0,1]$, using an exponential formulation:

$$

\text{CIC-Score}=\exp\left(-\left(δ_U+δ_{\text{SRE}}\right)\right)

$$

The error components are designed to isolate distinct failure modes:

- $δ_U$, the Relative Noise Recovery Error, quantifies the modeling error. It measures how well the trained network approximates the true score function, reflected in the fidelity of the recovered noise $\hat{U}$ versus the ground-truth $U_{\text{true}}$:

$$

δ_U=\frac{E[\|\hat{U}_{\text{scaled}}-U_{\text{true, scaled}}\|^2]}{E[\|U_{\text{true, scaled}}\|^2]}

$$

This term captures all inaccuracies from finite data and imperfect optimization.

- $δ_{\text{SRE}}$, the Normalized Structural Error, exclusively quantifies the algorithmic error inherent to the inversion process itself. Its definition is model-dependent to allow for fair comparisons:

  - For frameworks with analytical invertibility (e.g., our BELM-MDCM, ANMs), the algorithm introduces no information loss, so we set $δ_{\text{SRE}}≡0$ by construction. Any observed reconstruction error is a symptom of modeling error, already captured by $δ_U$.

  - For frameworks relying on approximate inversion (e.g., DDIM), $δ_{\text{SRE}}$ is empirically measured to quantify this inherent algorithmic flaw:

$$

δ_{\text{SRE}}=\frac{E[\|(H_θ∘T_θ-I)X\|^2]}{E[\|X\|^2]}

$$

This principled decomposition allows the CIC-Score to fairly assess different frameworks by isolating structural design advantages from the universal challenge of model training.
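The computation of the CIC-Score from samples can be sketched as follows. The sketch assumes that the noise arrays are already on the scaled representation and that `x_roundtrip` holds the result of encoding and then decoding `x`; the function names and toy arrays are hypothetical.

```python
import numpy as np


def relative_sq_error(estimate, reference):
    """E[||estimate - reference||^2] / E[||reference||^2], with samples as rows."""
    num = np.mean(np.sum((estimate - reference) ** 2, axis=1))
    den = np.mean(np.sum(reference ** 2, axis=1))
    return num / den


def cic_score(u_hat, u_true, x=None, x_roundtrip=None):
    """exp(-(delta_U + delta_SRE)); delta_SRE is 0 when no round trip is supplied."""
    delta_u = relative_sq_error(u_hat, u_true)
    # Analytically invertible frameworks pass no round trip: delta_SRE = 0 by construction.
    delta_sre = 0.0 if x is None else relative_sq_error(x_roundtrip, x)
    return float(np.exp(-(delta_u + delta_sre)))


u_true = np.array([[1.0], [-1.0]])
x = np.array([[2.0], [2.0]])
x_roundtrip = np.array([[2.2], [1.8]])  # imperfect inversion (toy values)

score_exact = cic_score(u_true, u_true)                  # perfect noise recovery
score_approx = cic_score(u_true, u_true, x, x_roundtrip)  # adds delta_SRE = 0.01
```

With perfect noise recovery, the only penalty in the second call comes from the round-trip error, which is exactly the separation of failure modes the score is meant to expose.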

### 4.2 Causal Mechanism Fidelity Score (CMF-Score)

A generative causal model’s core promise is to learn true causal mechanisms, not just outcomes. Naïve metrics like pairwise correlations fail to capture the non-linear, multi-variable, and directional nature of causality. We therefore propose the Causal Mechanism Fidelity (CMF) score, a hierarchical framework with two levels of increasing rigor.

#### 4.2.1 Level 1 (Pragmatic): The Conditional Mutual Information Score (CMI-Score)

The Conditional Mutual Information (CMI), $I(V_i;V_j|Pa_j∖\{V_i\})$, is a non-parametric, non-linear measure of the direct influence a parent $V_i$ has on its child $V_j$ after accounting for all other parents. The CMI-Score evaluates whether this influence is preserved. For a single mechanism $V_j$, it is the average consistency across all parent-child edges:

$$

\text{CMI-Score}(V_j)=\frac{1}{|Pa_j|}∑_{V_i∈Pa_j}\left(1-\frac{\left|I_{\text{obs}}(V_i;V_j|·)-I_{\text{cf}}(V_i;V^\prime_j|·)\right|}{I_{\text{obs}}(V_i;V_j|·)+ε}\right)

$$

where $I_{\text{obs}}$ and $I_{\text{cf}}$ are the CMI values from observational and counterfactual data, respectively, and $ε$ is a small positive constant for numerical stability. The final CMI-Score is the average over all SCM mechanisms.
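Given CMI estimates for each parent-child edge, the per-mechanism score reduces to a short average. The sketch below takes those estimates as inputs (the choice of CMI estimator is outside its scope), and the numerical values are purely illustrative.

```python
def cmi_score(cmi_obs, cmi_cf, eps=1e-8):
    """Average relative consistency of CMI values across the parents of one node."""
    terms = [1.0 - abs(o - c) / (o + eps) for o, c in zip(cmi_obs, cmi_cf)]
    return sum(terms) / len(terms)


# Two parents: the first edge loses 20% of its CMI, the second is preserved.
score = cmi_score(cmi_obs=[0.5, 0.2], cmi_cf=[0.4, 0.2])
```

Here the two per-edge terms are roughly 0.8 and 1.0, giving a mechanism-level score close to 0.9.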

#### 4.2.2 Level 2 (Gold Standard): The Kernelized Mechanism Discrepancy (KMD) Score

To rigorously compare entire conditional distributions, we use the Maximum Mean Discrepancy (MMD) (gretton2012kernel), a kernel-based statistical test for distributional equality. The KMD-Score applies this test to the conditional distributions $p(V_j|Pa_j)$ that define each causal mechanism, measuring the discrepancy between the learned and observed conditionals. The final score is mapped to a similarity measure in $[0,1]$:

$$

\text{KMD-Score}=\exp\left(-γ·E_{pa_j∼p(\mathbf{Pa}_j)}\left[\text{MMD}\left(p(V_j|pa_j),p_θ(V_j|pa_j)\right)\right]\right)

$$

where $γ$ is a scaling parameter. A score of 1 indicates that the learned conditional mechanism is statistically indistinguishable from the observed one.
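One way to estimate this score is sketched below under simplifying assumptions that are ours, not prescribed by the definition: the parents take a small number of discrete values (so the expectation becomes a frequency-weighted average over strata), the child is one-dimensional, and MMD is computed with an RBF kernel of fixed bandwidth using the biased V-statistic.

```python
import numpy as np


def rbf_mmd2(x, y, bandwidth=1.0):
    """Biased (V-statistic) estimate of squared MMD between 1-D samples."""
    def k(a, b):
        d2 = (a[:, None] - b[None, :]) ** 2
        return np.exp(-d2 / (2.0 * bandwidth ** 2))
    return k(x, x).mean() + k(y, y).mean() - 2.0 * k(x, y).mean()


def kmd_score(obs_by_parent, gen_by_parent, gamma=1.0):
    """exp(-gamma * E_pa[MMD]) with the expectation taken over parent strata."""
    n_total = sum(len(v) for v in obs_by_parent.values())
    expected_mmd = 0.0
    for pa, obs in obs_by_parent.items():
        # Clamp tiny negative values caused by floating-point error before the root.
        mmd = np.sqrt(max(rbf_mmd2(obs, gen_by_parent[pa]), 0.0))
        expected_mmd += (len(obs) / n_total) * mmd
    return float(np.exp(-gamma * expected_mmd))


# Toy child samples grouped by a binary parent value.
obs = {0: np.array([0.0, 0.1, -0.1]), 1: np.array([1.0, 1.2])}
gen_good = {k: v.copy() for k, v in obs.items()}  # matches the observed conditionals
gen_bad = {k: v + 2.0 for k, v in obs.items()}    # shifted conditionals

score_good = kmd_score(obs, gen_good)
score_bad = kmd_score(obs, gen_bad)
```

For continuous or high-dimensional parents, the stratification would need to be replaced by binning or a conditional kernel estimator.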

Complementary and Validated Evaluation Metrics.

Our proposed metrics complement, rather than replace, traditional ones like ATE and PEHE. They evaluate distinct facets of performance: while ATE/PEHE measure outcome accuracy, the CMF-Score assesses mechanism fidelity. This distinction is critical, as a model can achieve an accurate ATE estimate via fortuitous error cancellation despite failing to learn the true data-generating process. To ensure our metrics are practically reliable, we conducted a controlled micro-simulation study, detailed in Appendix J. The results provide strong empirical evidence for their validity and complementary roles: the CIC-Score acts as a high-sensitivity SRE detector; the CMI-Score robustly tracks the fidelity of causal associations; and the KMD-Score serves as a final arbiter of distributional similarity. This validation confirms that our evaluation framework offers a more complete, nuanced, and reliable assessment of generative causal models.

## 5 Experiments

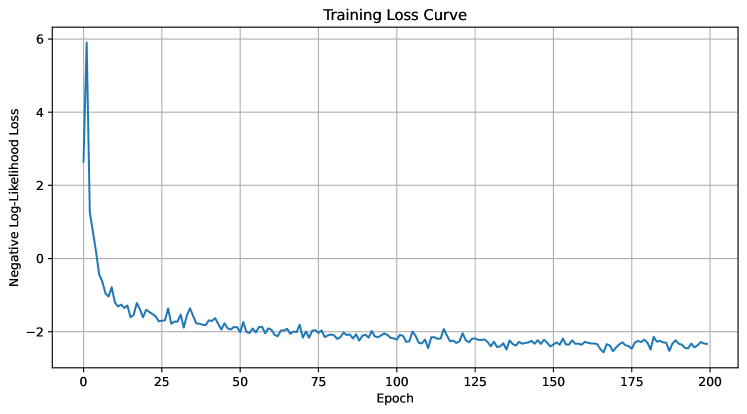

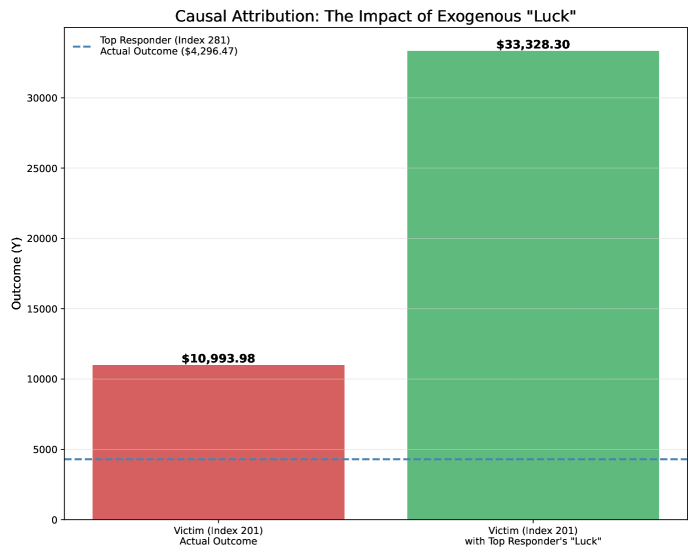

Our empirical evaluation is designed as a comprehensive test of our central thesis: that eliminating SRE is a necessary condition for generating authentic counterfactuals and unlocks analytical capabilities beyond the reach of conventional methods. We structured the study as a four-act narrative to rigorously test our claims. Act I establishes our model’s state-of-the-art predictive fidelity on standard benchmarks. Act II provides a deep diagnostic analysis, using our proposed metrics as empirical evidence for the destructive effect of SRE. Act III showcases the unique capabilities unlocked by an information-conserving framework. Finally, Act IV validates the framework’s robustness through a series of stress tests and a full ablation study.

Evaluation Protocol.

For a rigorous evaluation, we employ two complementary protocols. This distinction is crucial, as it separates the assessment of our methodology’s peak performance from the diagnostic analysis of its components.

1. Ensemble Evaluation for SOTA Performance: To benchmark against state-of-the-art methods (specifically, ITE estimation in Section 5.1.3), we adopt the standard Deep Ensemble methodology. We train N=5 independent models and report the final metric (e.g., PEHE) on the ensembled prediction.

2. Individual Model Evaluation for Diagnostic Analysis: In all other experiments where the goal is a fair, apples-to-apples architectural comparison or stability assessment, we report the mean and standard deviation of metrics from individual model instances across N=5 runs. This isolates the effect of design choices from the gains of ensembling.
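The difference between the two protocols can be made concrete with a toy example. The sketch assumes that ensembling means averaging the per-unit ITE predictions of the independently trained models and that PEHE is reported as a root mean squared error; the numbers are illustrative and use two models rather than five for brevity.

```python
import numpy as np


def pehe(ite_hat, ite_true):
    """Root mean squared error between estimated and true individual effects."""
    return float(np.sqrt(np.mean((ite_hat - ite_true) ** 2)))


ite_true = np.array([1.0, 2.0, 3.0])
# Rows: independently trained models; columns: units (toy predictions).
ite_preds = np.array([[1.2, 2.1, 2.7],
                      [0.8, 1.9, 3.3]])

# Protocol 1: score the averaged (ensembled) prediction once.
ensemble_pehe = pehe(ite_preds.mean(axis=0), ite_true)

# Protocol 2: score each model separately, then report mean and std.
individual = np.array([pehe(p, ite_true) for p in ite_preds])
mean_pehe, std_pehe = individual.mean(), individual.std()
```

In this toy case the individual errors cancel in the average, so the ensembled PEHE is far lower than either individual score, which is why the two protocols are reported separately.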

We estimate the Average Treatment Effect (ATE) throughout our experiments using a standard counterfactual imputation procedure, the pseudo-code for which is detailed in Algorithm 1 in Appendix K.
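As a generic illustration of counterfactual imputation (not a reproduction of Algorithm 1), the sketch below runs abduction, action, and prediction on a toy SCM whose outcome mechanism is analytically invertible in its noise. The mechanism `f_y`, its coefficients, and the simulated data are all assumptions made for the example.

```python
import numpy as np


def f_y(x, t, u):
    """Hypothetical outcome mechanism, invertible in the noise u."""
    return 2.0 * t + x + u


def abduct_u(x, t, y):
    """Abduction: recover the exogenous noise by inverting f_y exactly."""
    return y - 2.0 * t - x


rng = np.random.default_rng(0)
n = 200
x = rng.normal(size=n)               # confounder
t = rng.integers(0, 2, size=n)       # binary treatment
y = f_y(x, t, rng.normal(size=n))    # factual outcomes

u_hat = abduct_u(x, t, y)            # step 1: abduction
y1 = f_y(x, 1, u_hat)                # steps 2-3: do(T=1), then predict
y0 = f_y(x, 0, u_hat)                # steps 2-3: do(T=0), then predict
ate = float(np.mean(y1 - y0))        # average of individual-level effects
```

Because the inversion is exact, re-applying the mechanism to the factual treatment reproduces the observed outcomes, and the imputed effect equals the true coefficient on the treatment.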

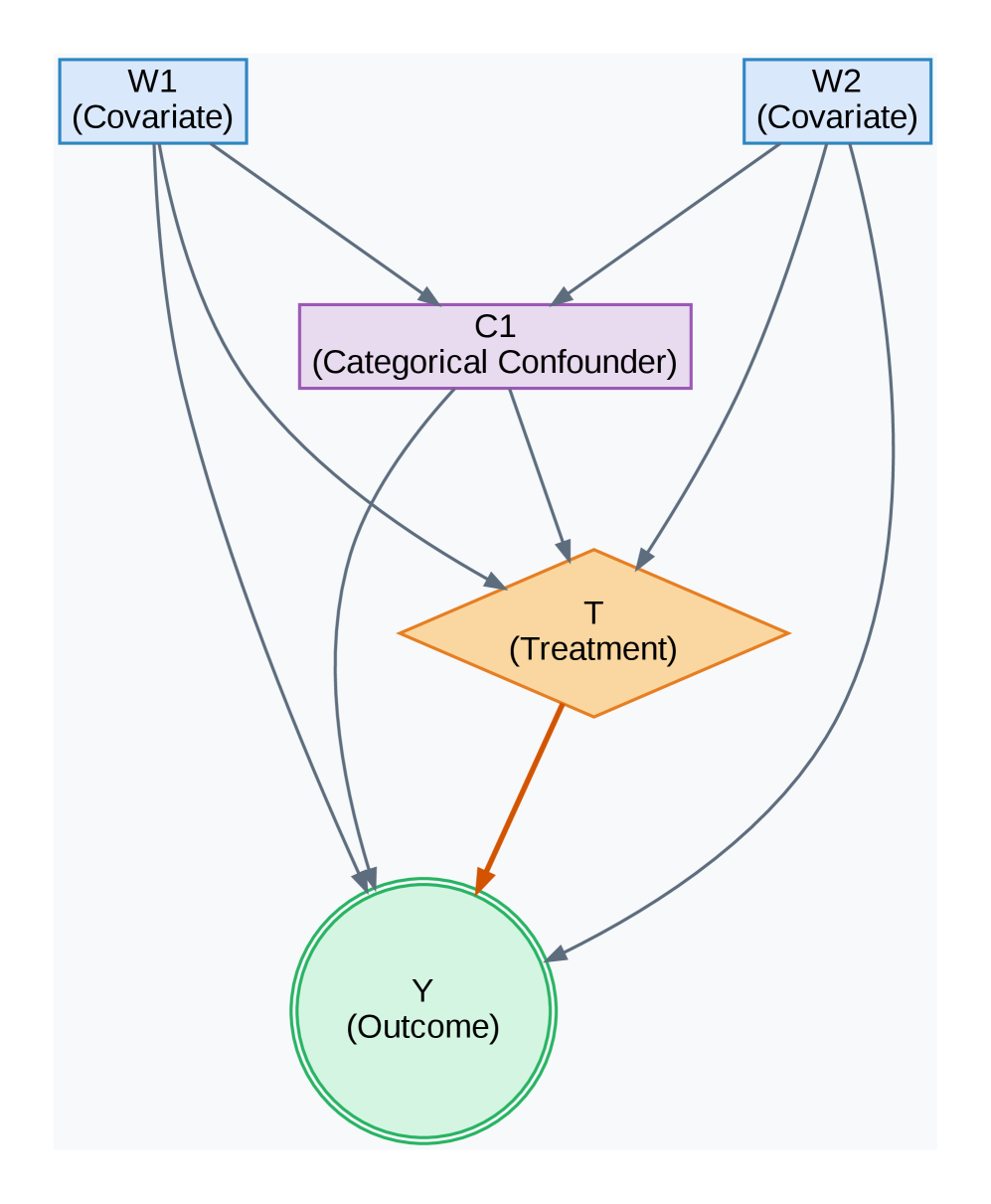

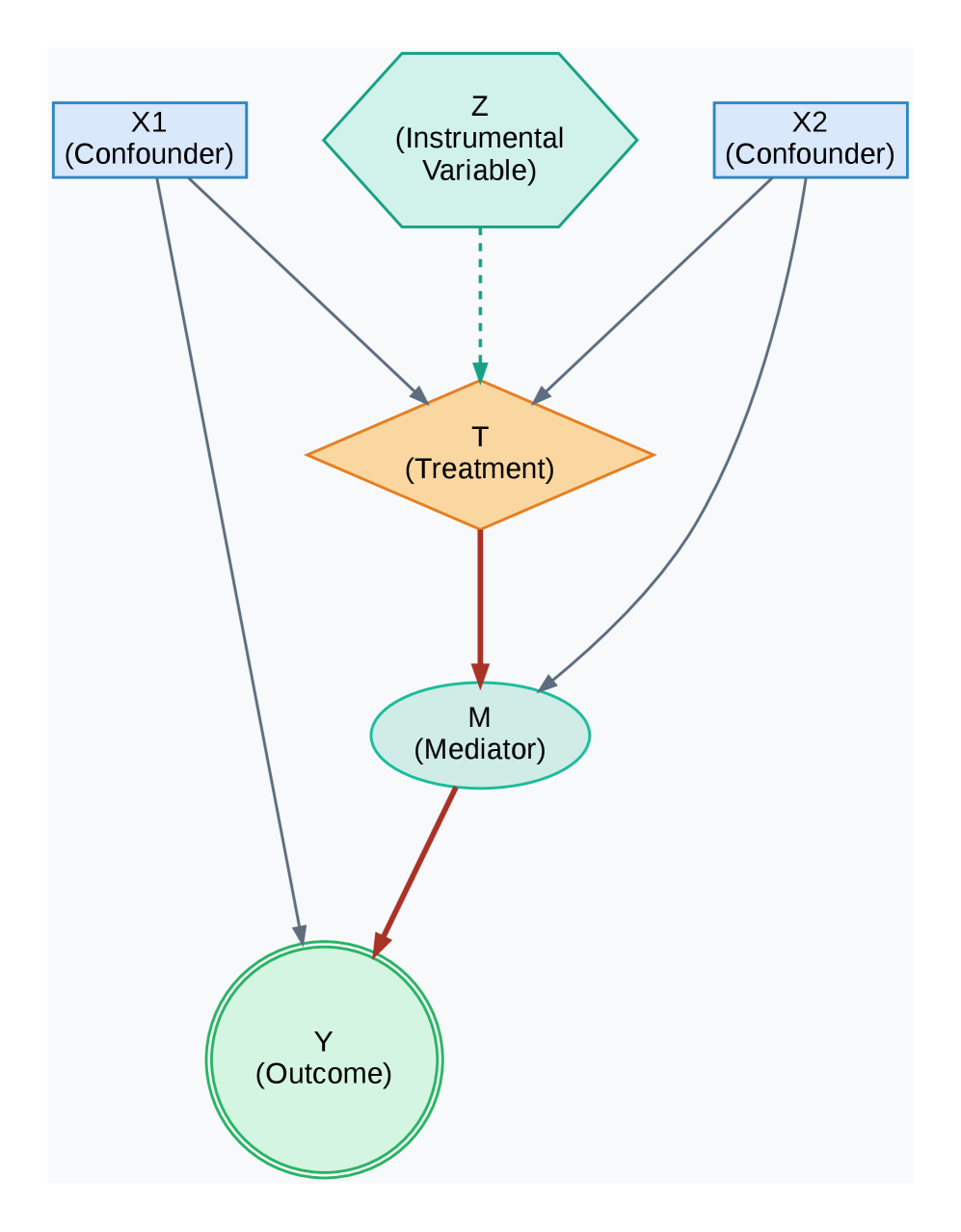

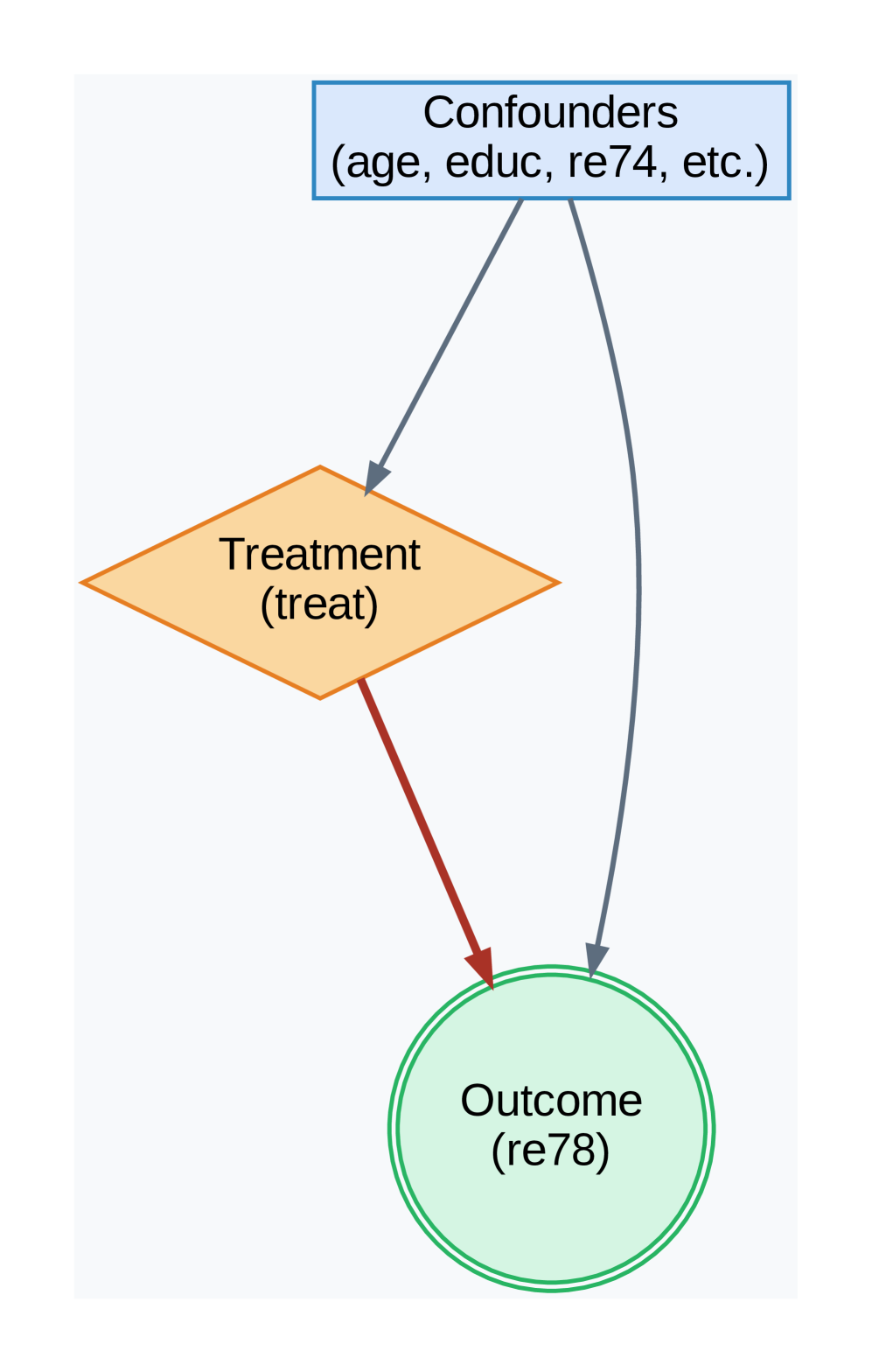

Baseline Estimators.

The Directed Acyclic Graphs (DAGs) for our experiments are shown in Figure 3. We benchmark BELM-MDCM against a suite of baselines from the DoWhy library (sharma2022dowhy), spanning classical statistical methods to state-of-the-art machine learning estimators to ensure a comprehensive comparison.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Directed Acyclic Graph (DAG): Causal Model with Covariates and Confounder

### Overview

The image displays a directed acyclic graph (DAG), a type of causal diagram used in statistics and epidemiology to represent hypothesized causal relationships between variables. The diagram illustrates a model where two covariates (W1, W2) influence a categorical confounder (C1) and the treatment (T), which in turn affects the outcome (Y). The confounder also directly influences both the treatment and the outcome.

### Components/Axes

The diagram consists of five nodes connected by directed arrows (edges). There are no numerical axes, as this is a conceptual model.

**Nodes (Variables):**

1. **W1 (Covariate)**: Located in the top-left corner. Represented by a light blue rectangle with a blue border.

2. **W2 (Covariate)**: Located in the top-right corner. Represented by a light blue rectangle with a blue border.

3. **C1 (Categorical Confounder)**: Located in the center, below W1 and W2. Represented by a light purple rectangle with a purple border.

4. **T (Treatment)**: Located in the center, below C1. Represented by an orange diamond shape.

5. **Y (Outcome)**: Located at the bottom center. Represented by a light green circle with a double green border.

**Edges (Directed Relationships):**

The arrows indicate the direction of hypothesized causal influence. All arrows are grey except for one.

* From **W1** to **C1** (grey arrow).

* From **W1** to **T** (grey arrow).

* From **W1** to **Y** (grey arrow).

* From **W2** to **C1** (grey arrow).

* From **W2** to **T** (grey arrow).

* From **W2** to **Y** (grey arrow).

* From **C1** to **T** (grey arrow).

* From **C1** to **Y** (grey arrow).

* From **T** to **Y** (a thicker, **orange** arrow, visually emphasizing the primary causal path of interest).

### Detailed Analysis

The diagram explicitly maps the following causal pathways:

1. **Direct Effects of Covariates**: Both covariates (W1, W2) have direct causal paths to all other variables in the model: the confounder (C1), the treatment (T), and the outcome (Y).

2. **Role of the Confounder**: The categorical confounder (C1) is influenced by the covariates and, in turn, exerts a direct causal influence on both the treatment (T) and the outcome (Y). This creates a "backdoor path" from T to Y via C1, which must be controlled for to estimate the true effect of T on Y.

3. **Primary Causal Path**: The central relationship of interest is the direct effect of the Treatment (T) on the Outcome (Y), indicated by the distinct, thicker orange arrow.

4. **Common Causes**: W1 and W2 act as common causes (or sources of variation) for both the confounder and the treatment, and they also directly affect the outcome.

### Key Observations

* **Visual Emphasis**: The arrow from **T to Y** is the only one colored orange and is thicker than the others. This design choice highlights it as the primary causal relationship the model is designed to investigate.

* **Node Coding**: Each variable type is encoded with a distinct shape and color: rectangles for covariates/confounder, a diamond for treatment, and a circle for outcome. This follows common conventions in causal diagrams.

* **Complex Confounding Structure**: The model depicts a scenario where simple adjustment for C1 may not be sufficient to block all confounding, as the covariates W1 and W2 also create additional paths between T and Y.

### Interpretation

This DAG represents a causal inference model for estimating the effect of a treatment (T) on an outcome (Y) in the presence of a categorical confounder (C1) and two covariates (W1, W2).

The diagram suggests that to isolate the true causal effect of T on Y (the orange path), an analyst must account for all common causes of T and Y. In this model, those are:

1. The direct confounder **C1**.

2. The covariates **W1** and **W2**, which affect both T and Y directly and also indirectly through C1.

The model implies that a valid analysis (e.g., using regression adjustment, stratification, or propensity score methods) would need to condition on W1, W2, and C1 to block the "backdoor" non-causal associations between T and Y. The diagram serves as a visual blueprint for specifying a statistical model or identifying the necessary variables for a causal analysis. It underscores that the relationship between T and Y is not isolated but is part of a network of influences.

</details>

(a) PSM Failure Scenario

<details>

<summary>x4.png Details</summary>

### Visual Description

## Causal Diagram: Instrumental Variable with Mediation

### Overview

The image displays a directed acyclic graph (DAG) illustrating a causal model. It depicts the relationships between several variables: two confounders (X1, X2), an instrumental variable (Z), a treatment (T), a mediator (M), and an outcome (Y). The diagram uses distinct shapes and colors to denote different variable types and employs arrows (solid and dashed) to represent hypothesized causal pathways.

### Components/Axes

The diagram is composed of six labeled nodes connected by directional arrows. There are no traditional axes, as this is a conceptual model, not a data chart.

**Nodes (Variables):**

1. **X1 (Confounder):** Located in the top-left corner. Represented by a light blue rectangle.

2. **X2 (Confounder):** Located in the top-right corner. Represented by a light blue rectangle.

3. **Z (Instrumental Variable):** Located in the top-center. Represented by a teal hexagon.

4. **T (Treatment):** Located in the center. Represented by an orange diamond.

5. **M (Mediator):** Located directly below T. Represented by a teal oval.

6. **Y (Outcome):** Located at the bottom-center. Represented by a light green circle with a double outline.

**Arrows (Causal Pathways):**

* **Solid Grey Arrows:**

* From X1 to T.

* From X1 to Y.

* From X2 to T.

* From X2 to Y.

* From X2 to M.

* **Dashed Teal Arrow:**

* From Z to T.

* **Solid Red Arrows:**

* From T to M.

* From M to Y.

### Detailed Analysis

The diagram explicitly defines the role of each variable and maps the proposed causal flows.

* **Confounders (X1, X2):** These variables are shown to influence both the Treatment (T) and the Outcome (Y) directly, which is the classic definition of a confounder. X2 also has a direct path to the Mediator (M).

* **Instrumental Variable (Z):** This variable is connected *only* to the Treatment (T) via a dashed arrow. The dashed line may indicate a specific assumption, such as a weaker or conditional relationship, or simply differentiate it visually. Crucially, Z has no direct path to the Outcome (Y) or the Mediator (M), satisfying the core exclusion restriction assumption for an instrument.

* **Treatment (T):** This is the central intervention or exposure variable. It is influenced by the confounders (X1, X2) and the instrumental variable (Z). It then influences the Outcome (Y) through two pathways: a direct path (not shown) and an indirect path via the Mediator (M).

* **Mediator (M):** This variable sits on the causal pathway between Treatment (T) and Outcome (Y). The red arrows from T to M and M to Y highlight this specific mediated effect.

* **Outcome (Y):** The final variable of interest. It is influenced directly by the confounders (X1, X2) and the mediator (M). The double circle around Y emphasizes its status as the primary endpoint.

### Key Observations

1. **Variable Typing by Shape:** The diagram uses a consistent visual language: rectangles for confounders, a hexagon for the instrument, a diamond for the treatment, an oval for the mediator, and a circle for the outcome.

2. **Pathway Highlighting:** The causal path from Treatment (T) to Outcome (Y) via the Mediator (M) is emphasized with red arrows, drawing attention to the mediation analysis component of the model.

3. **Instrumental Variable Isolation:** The instrumental variable Z is visually and structurally isolated, connecting only to T. This is a critical feature for its valid use in causal inference.

4. **Complex Confounding:** The model accounts for confounding from two separate sources (X1 and X2), with X2 having a more complex role as it also affects the mediator.

### Interpretation

This diagram represents a sophisticated causal inference framework that combines **instrumental variable (IV) analysis** with **mediation analysis**.

* **Purpose:** The model is designed to estimate the causal effect of a Treatment (T) on an Outcome (Y) in the presence of unmeasured confounding, using Z as an instrument. Furthermore, it seeks to decompose this total effect into a direct effect and an indirect effect that flows through the Mediator (M).

* **Relationships:** The structure suggests that while Z can be used to isolate variation in T that is not confounded by X1 or X2, the effect of T on Y is not entirely direct. A portion of T's influence is transmitted through changing M, which in turn affects Y.

* **Notable Implications:**

* The direct arrow from X2 to M indicates that the mediator itself is confounded. This is an important consideration for mediation analysis, as it violates the "no mediator-outcome confounder" assumption unless X2 is measured and controlled.

* The absence of a direct arrow from Z to Y or M is the key assumption that makes Z a valid instrument. If this assumption is violated (e.g., Z affects Y through a path other than T), the IV estimates would be biased.

* This model would be used in fields like epidemiology, economics, or social sciences to answer questions such as: "What is the effect of a job training program (T) on future earnings (Y), and how much of that effect works through increasing skills (M), while accounting for pre-existing ability (X1, X2) using an instrument like random assignment to the program (Z)?"

</details>

(b) Ablation Study Scenario

<details>

<summary>x5.png Details</summary>

### Visual Description

## Causal Diagram: Confounding Structure in Treatment-Outcome Analysis

### Overview

The image is a directed acyclic graph (DAG) illustrating a classic confounding structure in causal inference. It depicts the relationships between a set of confounding variables, a treatment variable, and an outcome variable. The diagram is designed to show how external factors can influence both the treatment assignment and the observed outcome, thereby complicating the estimation of the true causal effect of the treatment.

### Components/Axes

The diagram consists of three primary nodes connected by directional arrows (edges).

1. **Top Node (Confounders):**

* **Shape & Color:** A blue rectangle with a solid border.

* **Label Text:** "Confounders (age, educ, re74, etc.)"

* **Position:** Centered at the top of the diagram.

* **Function:** Represents a set of pre-treatment variables that are potential common causes of both the treatment and the outcome. The listed examples are "age," "educ" (likely education), and "re74" (likely real earnings in 1974).

2. **Middle-Left Node (Treatment):**

* **Shape & Color:** An orange diamond (rhombus) with a solid border.

* **Label Text:** "Treatment (treat)"

* **Position:** Located below and to the left of the Confounders node.

* **Function:** Represents the intervention or exposure variable of interest, labeled "treat."

3. **Bottom-Right Node (Outcome):**

* **Shape & Color:** A light green circle with a double-line border.

* **Label Text:** "Outcome (re78)"

* **Position:** Located below and to the right of the Treatment node, and directly below the Confounders node.

* **Function:** Represents the measured result variable, labeled "re78" (likely real earnings in 1978).

**Arrows (Edges):**

* **From Confounders to Treatment:** A solid gray arrow points from the bottom of the Confounders rectangle to the top corner of the Treatment diamond. This indicates that the confounding variables influence the assignment of the treatment.

* **From Confounders to Outcome:** A solid gray arrow points from the bottom of the Confounders rectangle to the top of the Outcome circle. This indicates that the confounding variables also directly influence the outcome.

* **From Treatment to Outcome:** A solid **red** arrow points from the bottom corner of the Treatment diamond to the left side of the Outcome circle. This represents the direct causal path of interest—the effect of the treatment on the outcome.

### Detailed Analysis

The diagram explicitly maps the flow of influence:

1. **Confounders → Treatment:** The gray arrow establishes that variables like age, education, and prior earnings affect who receives the treatment. This is a source of selection bias.

2. **Confounders → Outcome:** The second gray arrow shows these same variables also affect the outcome (e.g., earnings in 1978) independently of the treatment.

3. **Treatment → Outcome:** The red arrow highlights the primary causal relationship under study. However, its effect is "confounded" by the two gray paths originating from the Confounders node.

The use of distinct shapes (rectangle, diamond, circle) and colors (blue, orange, green) visually separates the three types of variables. The red color of the Treatment→Outcome arrow emphasizes it as the focal relationship.

### Key Observations

* **Classic Backdoor Path:** The diagram visually defines a "backdoor path" from Treatment to Outcome via the Confounders: Treatment ← Confounders → Outcome. This open path creates a non-causal association between treatment and outcome.

* **Visual Emphasis:** The red arrow draws the viewer's eye to the direct causal effect, while the gray arrows represent the confounding bias that must be controlled for in analysis.

* **Variable Naming:** The labels "re74" and "re78" strongly suggest this diagram is based on a well-known econometric dataset (likely the Lalonde dataset) studying the effect of a job training program ("treat") on subsequent earnings ("re78"), with prior earnings ("re74") and demographics as confounders.

### Interpretation

This diagram is a foundational tool in causal inference, particularly for observational studies. It argues that a naive comparison of outcomes between treated and untreated groups would be biased because the groups differ systematically in their confounding characteristics (age, education, prior earnings).

The diagram dictates the analytical strategy: to estimate the true causal effect of "treat" on "re78," one must "block" the backdoor path. This is achieved by statistically **controlling for** (adjusting for) the variables in the "Confounders" set. Methods like regression adjustment, matching, or stratification aim to create a comparison in which the confounders are balanced across treatment groups, which blocks the backdoor path through the gray arrows and isolates the red causal path.

In essence, the image is not just a flowchart but a **causal model** that encodes assumptions about the data-generating process. It visually justifies the need for specific statistical controls and warns against interpreting raw associations as causal effects.

</details>

(c) Lalonde Confounding Structure

Figure 3: Directed Acyclic Graphs (DAGs) for key experiments. (a) A structure designed to challenge propensity score methods. (b) A mediation structure used for the ablation study. (c) The standard confounding structure assumed for both Lalonde-based experiments.
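As a rough illustration of why the confounding structure in panel (c) calls for adjustment, the following sketch simulates a single confounder and compares a naive difference in means with a regression-adjusted estimate. The data-generating process, its coefficients, and the function name `simulate_and_estimate` are hypothetical; they are not taken from the Lalonde data or from the BELM-MDCM implementation.

```python
import numpy as np


def simulate_and_estimate(n=5000, true_ate=2.0, seed=0):
    """Compare naive and regression-adjusted ATE estimates on toy data."""
    rng = np.random.default_rng(seed)

    # Hypothetical confounder: raises both treatment probability and outcome.
    x = rng.normal(size=n)
    p_treat = 1.0 / (1.0 + np.exp(-2.0 * x))
    t = rng.binomial(1, p_treat)
    y = true_ate * t + 3.0 * x + rng.normal(size=n)

    # Naive difference in means leaves the backdoor path T <- X -> Y open.
    naive = y[t == 1].mean() - y[t == 0].mean()

    # Regression adjustment: include the confounder as a regressor.
    design = np.column_stack([np.ones(n), t, x])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    adjusted = coef[1]  # coefficient on the treatment indicator
    return naive, adjusted


naive_ate, adjusted_ate = simulate_and_estimate()
print(f"naive: {naive_ate:.2f}, adjusted: {adjusted_ate:.2f}")
```

In this linear toy setting, the adjusted estimate should land close to the simulated effect while the naive estimate is inflated by the confounder. Real benchmarks such as Lalonde involve many covariates and non-linear relationships, so a linear adjustment of this kind is only a conceptual baseline.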

### 5.1 Act I: Establishing State-of-the-Art Predictive Fidelity

We first establish that our principled design achieves superior predictive fidelity on standard causal inference benchmarks.

#### 5.1.1 Robustness in Non-Linear Confounding Scenarios

We tested our model in a challenging synthetic scenario (Figure 3(a)) designed with highly non-linear confounding to cause propensity-based methods to fail. Table 1 shows the results. While Causal Forest is exceptionally accurate on this specific DGP, our BELM-MDCM framework secures its position as the second most accurate method, delivering a highly stable and competitive ATE estimate. Crucially, it significantly outperforms the entire suite of propensity-based methods and powerful estimators like DML in accuracy. The high standard deviation of DML highlights its unreliability in this context, validating our model as a robust estimator where traditional approaches are compromised.

Table 1: ATE Estimation on the PSM Failure Scenario (True ATE = 5000). We report the mean ATE and standard deviation across multiple runs.

| Method | Estimated ATE (Mean $±$ Std) | Absolute Error |
| --- | --- | --- |
| Causal Forest | 4895.77 $±$ 69.26 | 104.23 |
| Propensity Score Stratification | 5309.38 $±$ 185.36 | 309.38 |
| Linear Regression | 5348.82 $±$ 23.23 | 348.82 |
| Propensity Score Matching | 5353.93 $±$ 191.36 | 353.93 |
| Inverse Propensity Weighting | 5385.68 $±$ 52.03 | 385.68 |
| Double Machine Learning | 4285.63 $±$ 550.97 | 714.37 |
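The summary columns in Table 1 can be reproduced from per-run estimates roughly as follows. This is a minimal sketch: the run values are invented for illustration, the helper name `summarize_ate_runs` is hypothetical, and the use of the sample standard deviation (`ddof=1`) is an assumption, since the text does not state which convention was used. The absolute error is taken between the mean estimate and the true ATE, which matches the tabulated values (for example, |4895.77 - 5000| = 104.23).

```python
import numpy as np


def summarize_ate_runs(estimates, true_ate):
    """Return (mean, std, absolute error of the mean) for a list of ATE estimates."""
    estimates = np.asarray(estimates, dtype=float)
    mean = estimates.mean()
    std = estimates.std(ddof=1)  # assumption: sample standard deviation
    abs_error = abs(mean - true_ate)
    return mean, std, abs_error


# Hypothetical per-run estimates; these are not values from the paper.
runs = [4900.0, 4950.0, 4800.0, 5000.0, 4850.0]
mean_ate, std_ate, abs_err = summarize_ate_runs(runs, true_ate=5000.0)
print(f"{mean_ate:.2f} ± {std_ate:.2f} | abs. error {abs_err:.2f}")
```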

#### 5.1.2 Accuracy and Robustness on Real-World Observational Data

We next evaluated our framework on the canonical Lalonde dataset (LaLonde, 1986), a challenging real-world benchmark with a known RCT ground truth. Table 2 reports the results. BELM-MDCM achieved a mean ATE estimate of 1567.36 $±$ 201.62, the lowest error among all methods that correctly identified the treatment effect’s positive direction. More critically, the results show a stark contrast in reliability. The classical methods returned estimates that were near zero or negative, Double Machine Learning did not produce a numeric estimate, and the Causal Forest baseline was highly unstable (standard deviation of 785.59). In contrast, BELM-MDCM was far more robust, with a standard deviation approximately four times lower than that of Causal Forest. This performance on a canonical benchmark indicates that our framework delivers accurate estimates with the consistency essential for trustworthy causal inference.

Table 2: ATE Estimation Stability on the Lalonde Dataset (RCT Benchmark ATE $≈ 1794$ ). Results for all models are reported as Mean $±$ Standard Deviation across 5 independent runs.

| Method | Estimated ATE (Mean $±$ Std) | Absolute Error |
| --- | --- | --- |
| Causal Forest | 1085.30 $±$ 785.59 | 708.70 |
| Linear Regression | 46.33 $±$ 76.80 | 1747.67 |
| Propensity Score Matching | -3.96 $±$ 118.37 | 1797.96 |
| Propensity Score Stratification | -35.54 $±$ 81.44 | 1829.54 |
| Propensity Score Weighting | -122.55 $±$ 50.51 | 1916.55 |
| Double Machine Learning | nan $±$ nan | nan |

#### 5.1.3 High-Fidelity ITE Estimation and Stability Analysis

Objective. We evaluate performance at the individual level using a semi-synthetic version of the Lalonde dataset. This experiment leverages real-world covariates and assumes the causal structure depicted in Figure 3(c). To rigorously assess both accuracy and reliability, we follow our Individual Model Evaluation protocol, reporting the mean and standard deviation of performance across 5 independent runs for each method.

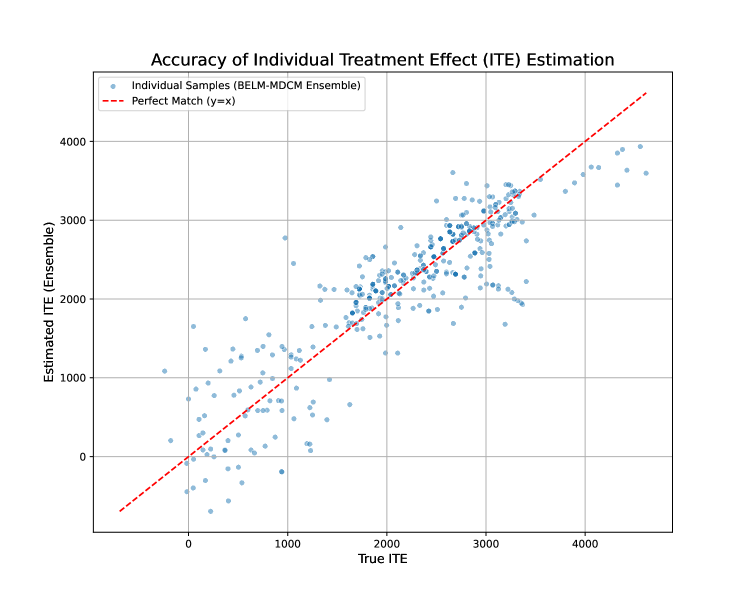

Results. The PEHE results, presented in Table 3, confirm the exceptional fidelity and robustness of our framework. BELM-MDCM achieves the lowest average PEHE score of 537.84 and demonstrates remarkable stability with the lowest standard deviation of just 60.11. This performance is closely followed by Causal Forest. However, the results also highlight the instability of other meta-learners; X-Learner, in particular, exhibits extremely high variance, with a standard deviation more than three times larger than its competitors, rendering its single-run estimates unreliable. This highlights the dual advantage of our framework: superior accuracy combined with consistent, trustworthy performance. Figure 4 provides visual confirmation, showing the tight clustering of our model’s ensembled ITE estimates around the ground truth.
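For reference, PEHE (Precision in Estimation of Heterogeneous Effects) is commonly computed as the root mean squared difference between estimated and true individual treatment effects. The sketch below assumes that root form; the function name `pehe` and the toy arrays are hypothetical and do not reproduce the values in Table 3.

```python
import numpy as np


def pehe(ite_true, ite_estimated):
    """Root mean squared error between true and estimated ITEs (assumed root form)."""
    ite_true = np.asarray(ite_true, dtype=float)
    ite_estimated = np.asarray(ite_estimated, dtype=float)
    if ite_true.shape != ite_estimated.shape:
        raise ValueError("true and estimated ITE arrays must have the same shape")
    return float(np.sqrt(np.mean((ite_estimated - ite_true) ** 2)))


# Toy example: three individuals with known (simulated) treatment effects.
true_effects = [0.0, 1000.0, 2000.0]
estimated_effects = [100.0, 900.0, 2200.0]
score = pehe(true_effects, estimated_effects)
print(f"PEHE: {score:.2f}")
```

Because the metric needs the true individual effects, it can only be evaluated where counterfactual outcomes are known, which is why the experiment relies on a semi-synthetic version of the dataset.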

<details>

<summary>x6.png Details</summary>

### Visual Description

## Scatter Plot: Accuracy of Individual Treatment Effect (ITE) Estimation

### Overview

The image is a scatter plot evaluating the performance of an ensemble model (BELM-MDCM Ensemble) in estimating Individual Treatment Effects (ITE). It compares the model's estimated ITE values against the known true ITE values for a set of individual samples. The plot includes a reference line representing perfect prediction.

### Components/Axes

* **Chart Title:** "Accuracy of Individual Treatment Effect (ITE) Estimation" (centered at the top).

* **X-Axis:**

* **Label:** "True ITE"

* **Scale:** Linear, ranging from approximately -500 to 4500.

* **Major Tick Marks:** 0, 1000, 2000, 3000, 4000.

* **Y-Axis:**

* **Label:** "Estimated ITE (Ensemble)"

* **Scale:** Linear, ranging from approximately -500 to 4500.

* **Major Tick Marks:** 0, 1000, 2000, 3000, 4000.

* **Legend:** Located in the top-left corner of the plot area.

* **Item 1:** A blue dot labeled "Individual Samples (BELM-MDCM Ensemble)".

* **Item 2:** A red dashed line labeled "Perfect Match (y=x)".

* **Grid:** A light gray grid is present, aligned with the major tick marks on both axes.

### Detailed Analysis

* **Data Series (Individual Samples):** The plot contains several hundred light blue, semi-transparent circular data points. Each point represents a single sample, plotting its True ITE (x-coordinate) against its Estimated ITE from the ensemble model (y-coordinate).

* **Reference Line (Perfect Match):** A red dashed diagonal line runs from the bottom-left to the top-right of the plot. This line represents the ideal scenario where the estimated value perfectly equals the true value (y = x).

* **Data Distribution & Trend:**

* **Visual Trend:** The cloud of blue data points shows a strong, positive linear trend. The points are generally clustered along the red "Perfect Match" line.

* **Spread:** The points are not perfectly on the line, indicating estimation error. The spread (vertical distance from the line) appears relatively consistent across the range of True ITE values, though there may be slightly more dispersion at the higher end (True ITE > 3000).

* **Range:** The data spans a wide range of ITE values. True ITE values extend from just below 0 to approximately 4500. Estimated ITE values show a similar range.

* **Density:** The highest density of points appears in the central region, roughly between True ITE values of 1500 and 3000.

### Key Observations

1. **Strong Positive Correlation:** There is a clear and strong positive correlation between the True ITE and the Estimated ITE. This indicates the ensemble model's estimates are generally well-calibrated and move in the correct direction relative to the true values.

2. **Model Bias:** The data points are distributed fairly evenly on both sides of the perfect match line across the entire range. There is no obvious systematic over-estimation (points consistently above the line) or under-estimation (points consistently below the line).

3. **Estimation Variance:** While the correlation is strong, there is visible variance. For any given True ITE value, the corresponding estimates show a range of values. For example, at a True ITE of ~2000, estimates range from approximately 1500 to 2500.

4. **Outliers:** A few points deviate more significantly from the trend line. For instance, there are points near True ITE ≈ 500 with Estimated ITE near 1500, and points near True ITE ≈ 3000 with Estimated ITE near 2000. These represent samples where the model's estimation was less accurate.

### Interpretation