# Self-Correcting Large Language Models: Generation vs. Multiple Choice

> Work was done prior to joining Amazon.

## Abstract

Large language models have recently demonstrated remarkable abilities to self-correct their responses through iterative refinement, often referred to as self-consistency or self-reflection. However, the dynamics of this self-correction mechanism may differ substantially depending on whether the model is tasked with open-ended text generation or with selecting the most appropriate response from multiple predefined options. In this paper, we conduct a systematic investigation of these two paradigms by comparing performance trends and error-correction behaviors across various natural language understanding and reasoning tasks, covering language models of different scales and families. Our experimental results reveal distinct patterns of improvement and failure modes:

While open-ended generation often benefits from the flexibility of re-interpretation and compositional refinement, multiple-choice selection can leverage clearer solution boundaries but may be limited by the provided options. This contrast also reflects the dual demands faced by emerging agentic LLM applications: effective agents must not only generate and refine open-ended plans or explanations, but also make reliable discrete choices when operating within constrained action spaces. Our findings therefore highlight that the design of self-correction mechanisms should take into account the interaction between task structure and output space, with implications for both knowledge-intensive reasoning and decision-oriented applications of LLMs. Code and experiments are available at https://github.com/rahmanidashti/llm-self-correction


Hossein A. Rahmani $\dagger$, Satyapriya Krishna $\ddagger$ (work was done prior to joining Amazon), Xi Wang $\nabla$, Mohammadmehdi Naghiaei $\diamondsuit$, Emine Yilmaz $\dagger$

$\dagger$ University College London, $\ddagger$ Amazon AGI, $\nabla$ University of Sheffield, $\diamondsuit$ University of Southern California

{hossein.rahmani.22, emine.yilmaz}@ucl.ac.uk, skrishna@g.harvard.edu, xi.wang@sheffield.ac.uk, naghiaei@usc.edu

## 1 Introduction

Recent advances in Large Language Models (LLMs) have shown that iterative self-correction, where a model re-examines and revises its output under a self-reflection framework, can lead to significant performance gains across a variety of tasks (Madaan et al., 2023; Cook et al., 2024; Shinn et al., 2023; Gou et al., 2024, inter alia). This emergent ability is often attributed to the models’ capacity to integrate chain-of-thought reasoning (Kamoi et al., 2024; Chang et al., 2024; Wei et al., 2022), which lets them refine their own outputs much as a human proofreader or mentor would. In terms of performance validation, existing studies on self-correction have generally focused on free-form text generation (Huang et al., 2023; Madaan et al., 2023; Zelikman et al., 2022; Ma et al., 2025; Kumar et al., 2025; Krishna et al., 2024, inter alia), such as dialogue response, code optimization, and acronym generation. These tasks align naturally with the next-token prediction objective on which language models are trained.

However, as LLM applications expand, evaluation restricted to free-form generation offers an incomplete picture. For instance, researchers at NVIDIA advocate deploying smaller language models in agentic systems for tasks such as API calls and orchestration with external tools, motivated by sustainability and efficiency considerations (Belcak et al., 2025). This highlights the need to examine self-correction beyond open-ended generation. In this study, we categorize natural language modeling tasks into two broad paradigms: free-form text generation and multiple-choice prediction. The former treats modeling as unconstrained sequence generation over the full vocabulary, while the latter frames it as classification over a fixed set of candidate answers. These paradigms are complementary: multiple-choice tasks test precise discrimination under constraints, whereas free-form tasks assess expressive generation, and together they capture the main modes of LLM use in applications such as question answering, reasoning, and open-ended dialogue.

In this paper, we investigate how self-correction unfolds in open-ended generation compared with multiple-choice question scenarios. We hypothesize that while open-ended generation may benefit from enhanced flexibility and creativity, it also faces a larger search space and the risk of compounding errors. By contrast, multiple-choice settings operate in a constrained space, which can reduce semantic drift but limits the scope for creative corrections. Our study explores how these factors interact with iterative refinement, shedding light on whether self-correction aligns more naturally with an unconstrained or a constrained output space.

To address these questions, we conduct comprehensive experiments on two datasets of a different nature, one focusing on knowledge-intensive question answering and the other on reasoning-oriented problems. We perform iterative inference, giving the model multiple opportunities to re-evaluate and revise its answer. By comparing error rates, consistency across iterations, and eventual convergence in each paradigm, we expose nuanced trade-offs in how LLMs adapt to different output constraints under a self-correction regime. Our results provide practical insights for the design and deployment of LLM-based systems, highlighting opportunities to better harness or tailor self-correction behaviors for diverse application settings. Furthermore, we discuss how our findings inform the broader research agenda of aligning emergent capabilities in large-scale models with varied real-world task requirements.

## 2 Related Works

Iterative Reasoning and Self-correction in LLMs.

Large language models first showed an emergent ability to reason step-by-step when prompted with chain-of-thought (CoT) examples (Wei et al., 2022). Shortly after, Wang et al. (2023) demonstrated that sampling several independent reasoning traces and selecting the majority answer—dubbed self-consistency (SC)—boosts accuracy on arithmetic and commonsense tasks. Follow-up studies made the correction loop explicit by asking the model to critique its own draft before rewriting it, leading to sizeable gains in factual QA and code generation (Madaan et al., 2023). Variants that call external tools such as Python or knowledge bases during the critique stage further reduce hallucinations in open-ended generation (Chen et al., 2023; Yao et al., 2023; Gou et al., 2024). These works collectively suggest that LLMs can act as both solver and reviewer, but they focus almost exclusively on free-form text outputs.
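The majority-vote step of self-consistency can be sketched in a few lines of Python. This is a minimal illustration, not the implementation of Wang et al. (2023). `sample_answer` is a hypothetical callable standing in for one stochastic chain-of-thought decode that returns only the extracted final answer. Answers are compared by exact string match, which is an assumption: practical pipelines usually normalize answers first.

```python
from collections import Counter
from typing import Callable, List


def self_consistency(
    sample_answer: Callable[[str], str], question: str, n_samples: int = 5
) -> str:
    """Majority vote over n independently sampled final answers.

    `sample_answer` is hypothetical: one temperature-sampled CoT decode that
    returns only the extracted final answer string.
    """
    answers: List[str] = [sample_answer(question) for _ in range(n_samples)]
    # most_common orders equal counts by first occurrence, so ties go to the
    # answer that was sampled first.
    return Counter(answers).most_common(1)[0][0]


# Usage with a scripted stand-in for the sampler.
scripted = iter(["12", "15", "12", "12", "9"])
print(self_consistency(lambda q: next(scripted), "What is 3 * 4?"))  # prints 12
```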

Verification-based Refinement.

Instead of trusting the model’s final token distribution, several papers add lightweight verifiers. Cobbe et al. (2021) attach unit tests to code synthesis; Dixit et al. (2023) use factuality checkers for summarization; Pryzant (2023) adopts entailment models for reading comprehension. The common pattern is a two-step pipeline in which the LLM proposes an answer and a cheaper or more precise module then scores it. Our work keeps the entire loop inside the language model, isolating the effect of the output format itself (generation vs. multiple-choice) from external verification.
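The two-step propose-then-verify pattern shared by these methods can be sketched as follows. Both callables are hypothetical placeholders. `propose` stands in for an LLM that returns `n` candidate answers. `verify` stands in for an external scorer, such as a unit-test runner, factuality checker, or entailment model. The setup studied in this paper differs in that it has no external `verify` step.

```python
from typing import Callable, List, Tuple


def propose_then_verify(
    propose: Callable[[str, int], List[str]],
    verify: Callable[[str, str], float],
    x: str,
    n: int = 4,
) -> Tuple[str, float]:
    """Let the LLM propose n candidates, then keep the one the external verifier scores highest."""
    candidates = propose(x, n)
    scored = [(c, verify(x, c)) for c in candidates]
    # max() returns the first candidate among equally scored ones.
    return max(scored, key=lambda pair: pair[1])


# Usage: a toy "unit test" verifier that checks the arithmetic directly.
best = propose_then_verify(
    propose=lambda x, n: ["3", "4", "5"][:n],
    verify=lambda x, c: 1.0 if int(c) == 2 + 2 else 0.0,
    x="2 + 2 = ?",
)
print(best)  # prints ('4', 1.0)
```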

Answer Selection and Multiple-Choice Prompting.

Tasks with a closed candidate set (e.g., MMLU (Hendrycks et al., 2021), ARC (Clark et al., 2018)) are typically solved by mapping each option to an independent prompt and picking the highest-logit answer (Brown et al., 2020). Several groups have tried to retrofit iterative reasoning onto this template. Zhu et al. (2024) prepend a self-explanation, rescore the options with the explanation as additional context, and report modest but consistent gains. Li et al. (2024) show that calibrating logits with contrastive rationales helps smaller models, while Pan et al. (2023) explore ensembling diverse rationales. Yet a systematic comparison of correction dynamics in open versus closed output spaces is missing; our study provides that head-to-head analysis.

Bridging the paradigms.

Contemporary benchmarks increasingly mix free-form and categorical sub-tasks: TruthfulQA, for example, has both short-answer and multiple-choice splits (Lin et al., 2022). Deployment settings such as tutoring agents or search assistants likewise alternate between generating explanations and selecting the best passages. Understanding whether self-correction behaves differently under these two regimes is therefore more than a methodological curiosity: it affects prompt engineering, compute budgeting, and safety guardrail design. By re-implementing the main correction strategies from the literature under a unified experimental budget, we show that the shape of the output space itself controls how much an LLM can benefit from extra reflection rounds.

## 3 Open-ended Generation vs. Multiple-Choice Answer Selection

Large language models are increasingly expected to handle a wide spectrum of downstream tasks, ranging from unconstrained natural language generation, such as open-domain question answering, to highly structured classification problems, like sentiment analysis. Two of the most commonly encountered settings are (i) open-ended generation, where the model must produce a free-form text response, and (ii) multiple-choice answer selection, where it must select a single correct option from a predefined set of choices. While these two paradigms are often operationalized using the same model architecture and weights, they impose fundamentally different constraints on the output space and influence how self-correction unfolds over successive inference steps. This section formalizes these two paradigms, describes how self-correction mechanisms are instantiated within each, and presents qualitative differences that help explain the empirical patterns observed in Section 5.

Open-Ended Generation.

In the open-ended generation setting, the model is required to produce an output sequence $y^{(0)}=(y^{(0)}_{1},\ldots,y^{(0)}_{T})\in\mathcal{V}^{*}$ , where $\mathcal{V}$ denotes the vocabulary and $T$ is the (variable) sequence length. The generation is conditioned on an input $x$ , which may correspond to a question, prompt, or instruction, such that the model defines a conditional distribution:

$p(y^{(0)}\mid x)=\prod_{t=1}^{T}p(y^{(0)}_{t}\mid y^{(0)}_{<t},x)$

This formulation captures the standard auto-regressive decoding process for open-ended text generation. The generated sequence may consist of a sentence, paragraph, or longer passage, and there are no explicit structural constraints beyond syntactic plausibility and task relevance.
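The factorization above can be evaluated directly by summing per-token log-probabilities. This avoids the numerical underflow caused by multiplying many small probabilities. In this minimal sketch, `model` is a hypothetical callable that returns a next-token distribution (a dict from token to probability) given the prefix and the input. A real LLM would supply these values from its softmax outputs.

```python
import math
from typing import Callable, Dict, Sequence

# Hypothetical next-token model: (prefix y_<t, input x) -> {token: probability}.
NextTokenModel = Callable[[Sequence[str], str], Dict[str, float]]


def sequence_log_prob(model: NextTokenModel, x: str, y: Sequence[str]) -> float:
    """log p(y | x) = sum_t log p(y_t | y_<t, x)."""
    total = 0.0
    for t, token in enumerate(y):
        dist = model(y[:t], x)  # distribution conditioned on the prefix y_<t
        total += math.log(dist[token])
    return total


def toy_model(prefix: Sequence[str], x: str) -> Dict[str, float]:
    # After "Paris" the toy model is confident the answer ends; otherwise it is unsure.
    if prefix and prefix[-1] == "Paris":
        return {"Paris": 0.1, "<eos>": 0.9}
    return {"Paris": 0.5, "<eos>": 0.5}


lp = sequence_log_prob(toy_model, "Capital of France?", ["Paris", "<eos>"])
print(round(math.exp(lp), 3))  # prints 0.45
```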

Self-correction in this paradigm typically proceeds by prompting the model to critique its initial output, either via explicit instructions (“identify any flaws”) or implicit prompting strategies (“think step by step”), followed by a new generation $y^{(1)}$. This iterative process can be repeated multiple times, resulting in a sequence $\{y^{(k)}\}_{k=0}^{K}$, where each revised answer aims to improve upon the previous one. A final answer can be selected using majority voting, log-probability re-ranking, or verifier-based scoring. Because generation is unconstrained, each iteration can introduce new content, restructure previous arguments, or expand omitted details. While this offers flexibility and the potential for substantial improvements, it also opens the door to risks such as semantic drift (Ji et al., 2023a,b), where the answer becomes misaligned with the original question over time, and hallucination, where fictitious facts are introduced in an attempt to improve fluency or apparent coherence. These failure modes tend to accumulate if the model “over-corrects” by deviating from the initial context (Spataru, 2024).
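A minimal sketch of this loop, assuming a hypothetical `generate(prompt) -> str` callable for the LLM. The critique-and-revise prompt wording is an illustrative assumption, not the prompt used in the experiments. The final answer is chosen by majority vote over the trajectory $\{y^{(k)}\}_{k=0}^{K}$, which is one of the selection rules listed above.

```python
from collections import Counter
from typing import Callable, List


def self_correct_generation(
    generate: Callable[[str], str], x: str, k_rounds: int = 3
) -> List[str]:
    """Return the trajectory [y^(0), ..., y^(K)] produced by critique-and-revise rounds."""
    trajectory = [generate(x)]
    for _ in range(k_rounds):
        # Condition on the full history (x, y^(0), ..., y^(k-1)).
        drafts = "\n".join(f"Draft {i}: {y}" for i, y in enumerate(trajectory))
        prompt = (
            f"Question: {x}\n{drafts}\n"
            "Identify any flaws in the latest draft, then write an improved answer."
        )
        trajectory.append(generate(prompt))
    return trajectory


def majority_vote(trajectory: List[str]) -> str:
    """Most frequent answer across rounds (ties go to the earliest answer)."""
    return Counter(trajectory).most_common(1)[0][0]


def fake_llm(prompt: str) -> str:
    # Scripted stand-in: answers wrongly at first, then fixes itself once asked to critique.
    return "Paris" if "Identify any flaws" in prompt else "Lyon"


traj = self_correct_generation(fake_llm, "What is the capital of France?")
print(traj)                 # prints ['Lyon', 'Paris', 'Paris', 'Paris']
print(majority_vote(traj))  # prints Paris
```

Returning the whole trajectory rather than only $y^{(K)}$ keeps every intermediate revision available. This makes it possible to apply any of the selection rules, or to inspect where drift begins.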

Multiple-Choice Answer Selection.

By contrast, the multiple-choice setting restricts the output space to a finite set of candidate answers $A=\{a_{1},a_{2},\ldots,a_{M}\}$. For each question $x$, the model computes a logit vector $\ell(x)\in\mathbb{R}^{M}$, from which a softmax distribution is derived, and selects the most probable answer. Self-correction in this paradigm does not involve rewriting text; rather, it revisits the initial logits after incorporating additional information. One common strategy is to generate a rationale $r^{(k)}$ for why a particular answer is correct, concatenate this rationale to the original prompt, and recompute the logits to obtain $\ell^{(k+1)}(x,r^{(k)})$ (Huang et al., 2023; Liu et al., 2024). Over successive iterations, this allows the model to refine its beliefs based on its own reasoning. However, since the answer set is fixed, the model cannot explore novel hypotheses or restructure the space of answers; it can only shift probability mass among existing options. This bounded output space makes multiple-choice settings more stable and less prone to semantic drift, but also potentially less effective at recovering from early errors, especially if the correct answer has low initial probability and the generated rationales fail to meaningfully influence the logits.
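The rationale-conditioned rescoring loop can be sketched as below. Both `score_options`, which returns one logit per option for a prompt, and `explain`, which returns a rationale $r^{(k)}$ for the current choice, are hypothetical stand-ins for LLM calls. As in the description above, each round conditions only on the original question plus the latest rationale. Because the option list never changes, the loop can only redistribute probability among $a_1,\dots,a_M$.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in logits]
    z = sum(exps)
    return [e / z for e in exps]


def self_correct_multiple_choice(
    score_options: Callable[[str, Sequence[str]], List[float]],
    explain: Callable[[str, str], str],
    x: str,
    options: Sequence[str],
    k_rounds: int = 2,
) -> List[Tuple[str, List[float]]]:
    """Return (chosen option, softmax probabilities) for rounds k = 0..K."""
    prompt = x
    history: List[Tuple[str, List[float]]] = []
    for k in range(k_rounds + 1):
        probs = softmax(score_options(prompt, options))
        best = max(range(len(options)), key=probs.__getitem__)
        history.append((options[best], probs))
        if k < k_rounds:
            rationale = explain(prompt, options[best])  # r^(k)
            # Original question + latest rationale -> logits l^(k+1)(x, r^(k)).
            prompt = f"{x}\nRationale: {rationale}"
    return history


# Usage: a scripted scorer whose preference flips once any rationale is present.
def toy_scores(prompt: str, options: Sequence[str]) -> List[float]:
    return [0.0, 2.0, 0.0] if "Rationale:" in prompt else [1.0, 0.5, 0.0]


rounds = self_correct_multiple_choice(
    toy_scores, lambda p, a: f"{a} fits best", "Q?", ["A", "B", "C"]
)
print([choice for choice, _ in rounds])  # prints ['A', 'B', 'B']
```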

Qualitative Differences.

The two paradigms, i.e., open-ended generation and multiple-choice selection, exhibit distinct self-correction dynamics due to their differing output constraints. In open-ended generation, performance gains are typically front-loaded, with the most significant improvements occurring in the first few iterations as the model repairs inconsistencies or fills in missing details (Cook et al., 2024; Huang et al., 2023; Gou et al., 2024). However, this flexibility also increases the risk of semantic drift in later rounds (Spataru, 2024): if the model’s revisions start to go off-topic or introduce inaccuracies, the session can degrade without external intervention. In contrast, multiple-choice tasks show steadier, more incremental improvements, benefiting from the stability of a fixed answer set. They may, however, suffer from logit inertia when the correct option is initially underweighted: it is hard to move the model to a low-probability answer unless a compelling rationale shifts the balance. Generation also tends to be more compute-intensive because each iteration produces longer outputs, whereas multiple-choice achieves better accuracy-to-token efficiency by focusing on short discriminative outputs. Finally, model scale interacts differently with each format. Larger models can better mitigate drift in generation through coherent reasoning chains, while smaller models perform more reliably in multiple-choice settings due to the structured output space and the guidance provided by explicit options.
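Logit inertia can be illustrated with a back-of-the-envelope calculation. It relies on a simplifying assumption that is not part of the experiments: a rationale only adds a boost $\delta$ to the logit of one option $j$, while the other logits stay fixed. Because softmax ratios depend only on logit differences, $\sigma_{\text{top}}/\sigma_j = e^{\ell_{\text{top}}-\ell_j}$. Option $j$ therefore becomes the argmax only if $\delta > \max_{i\neq j}\ell_i - \ell_j$. The helper name `flip_margin` and the logit values are hypothetical.

```python
import math
from typing import Sequence


def flip_margin(logits: Sequence[float], j: int) -> float:
    """Logit boost at which option j ties the strongest rival; any larger boost makes j the argmax.

    Assumes only option j's logit moves; returns 0.0 if j is already ranked first.
    """
    rival = max(v for i, v in enumerate(logits) if i != j)
    return max(0.0, rival - logits[j])


logits = [3.0, 0.5, 0.0, -1.0]  # the correct option (index 1) is initially underweighted
margin = flip_margin(logits, 1)
print(margin)                      # prints 2.5
print(round(math.exp(margin), 1))  # prints 12.2
```

In this toy case, the rationale would have to raise option 1's odds against option 0 roughly twelvefold before the prediction changes. This is the kind of barrier the paragraph above refers to as logit inertia.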

Understanding these qualitative and quantitative differences between the two paradigms is crucial for designing robust systems that use LLMs in iterative inference settings. Depending on the task requirements, whether correctness, stability, creativity, or inference budget is the primary constraint, one or the other format may be more appropriate, and self-correction strategies should be tailored accordingly.

## 4 Experimental Setup

Problem Statement.

In this study, we aim to evaluate the dynamics of iterative self-correction under open-ended generation and multiple-choice selection across representative tasks. Let $x\in\mathcal{X}$ denote an input instance (e.g., a question) with ground-truth answer $y^{\star}$. An LLM parameterised by $\theta$ produces an initial response $y^{(0)}$ whose format depends on the task paradigm. For open-ended generation, the model outputs a sequence $y^{(0)}\in\mathcal{V}^{*}$ with

$p_{\theta}\big(y^{(0)}\mid x\big)=\prod_{t=1}^{T}p_{\theta}\big(y^{(0)}_{t}\mid y^{(0)}_{<t},x\big)$

In contrast, for multiple-choice selection, the model selects $y^{(0)}\in A=\{a_{1},\dots,a_{M}\}$ from logits $\ell(x)\in\mathbb{R}^{M}$:

$y^{(0)}=\arg\max_{a_{i}\in A}\ell_{i}(x),\qquad\sigma_{i}^{(0)}(x)=\frac{e^{\ell_{i}(x)}}{\sum_{j=1}^{M}e^{\ell_{j}(x)}}$

Under iterative self-correction, given the history $\mathcal{H}^{(k-1)}=(x,y^{(0)},\dots,y^{(k-1)})$, the model produces a revision

$y^{(k)}\sim p_{\theta}\big(\cdot\mid\mathcal{H}^{(k-1)}\big),\qquad k=1,\dots,K$

We study the sequence $\mathcal{Y}(x)=\{y^{(k)}\}_{k=0}^{K}$ and aim to maximize task accuracy of the terminal output $y^{(K)}$ over $x\sim\mathcal{D}$ . We seek to observe how performance evolves with successive self-correction iterations and how error correction or degradation manifests in each paradigm. To that end, we set up experiments on two distinct question-answering benchmarks and examine multiple LLMs under various prompting strategies.
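Given the per-instance trajectories $\mathcal{Y}(x)$, this sketch computes two quantities: the per-iteration accuracy curve, and the number of corrections versus degradations between consecutive rounds. It assumes every trajectory has the same length $K+1$ and that correctness means exact string match with $y^{\star}$. Open-ended answers would in practice need a normalizer or judge, which is outside this sketch. The function names are hypothetical.

```python
from typing import Dict, List, Sequence


def accuracy_by_iteration(
    trajectories: Sequence[Sequence[str]], gold: Sequence[str]
) -> List[float]:
    """Accuracy of y^(k) over the dataset for each k = 0..K."""
    n_rounds = len(trajectories[0])
    return [
        sum(traj[k] == y for traj, y in zip(trajectories, gold)) / len(gold)
        for k in range(n_rounds)
    ]


def flip_counts(
    trajectories: Sequence[Sequence[str]], gold: Sequence[str]
) -> Dict[str, int]:
    """Count wrong->right (corrections) and right->wrong (degradations) between consecutive rounds."""
    counts = {"wrong_to_right": 0, "right_to_wrong": 0}
    for traj, y in zip(trajectories, gold):
        for prev, curr in zip(traj, traj[1:]):
            if prev != y and curr == y:
                counts["wrong_to_right"] += 1
            elif prev == y and curr != y:
                counts["right_to_wrong"] += 1
    return counts


trajs = [["A", "B", "B"], ["C", "C", "D"]]
gold = ["B", "C"]
print(accuracy_by_iteration(trajs, gold))  # prints [0.5, 1.0, 0.5]
print(flip_counts(trajs, gold))            # prints {'wrong_to_right': 1, 'right_to_wrong': 1}
```

Tracking flips in addition to accuracy separates two cases that yield the same accuracy curve. In one, a round fixes some answers and breaks others. In the other, a round leaves every answer unchanged.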

<details>

<summary>x1.png Details</summary>

Line chart: Accuracy (%) vs. Iteration (0 to 5) for Generation and Multiple-choice.

</details>

(a) Baseline

<details>

<summary>x2.png Details</summary>

Line chart: Accuracy (%) vs. Iteration (0 to 5) for Generation and Multiple-choice.

</details>

(b) CoT

<details>

<summary>x3.png Details</summary>

Line chart: Accuracy (%) vs. Iteration (0 to 5) for Generation and Multiple-choice.

</details>

(c) SC

<details>

<summary>x4.png Details</summary>

Line chart: Accuracy (%) vs. Iteration (0 to 5) for Generation and Multiple-choice.

</details>

(d) Baseline

<details>

<summary>x5.png Details</summary>

Line chart: accuracy (y-axis, 0.0 to 1.0) versus self-correction iteration (x-axis, 0 to 5) for the Generation and Multiple-choice settings, with shaded variability bands.
</details>

(e) CoT

<details>

<summary>x6.png Details</summary>

Line chart: accuracy (y-axis, 0.0 to 1.0) versus self-correction iteration (x-axis, 0 to 5) for the Generation and Multiple-choice settings, with shaded variability bands.
</details>

(f) SC

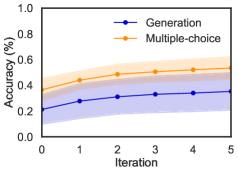

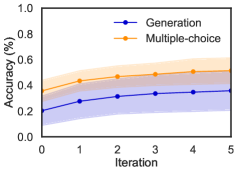

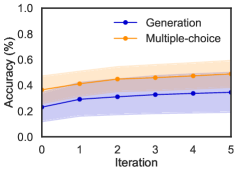

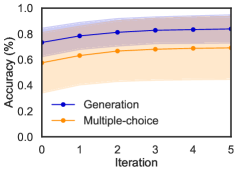

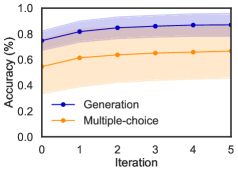

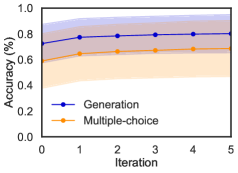

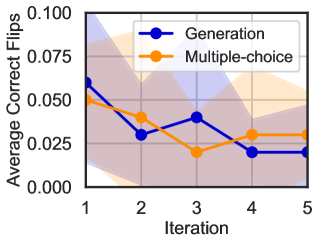

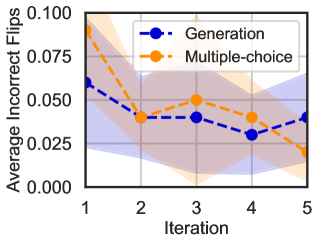

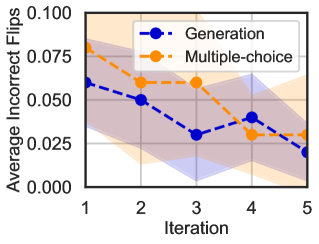

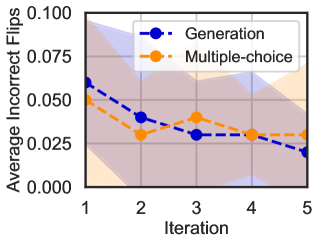

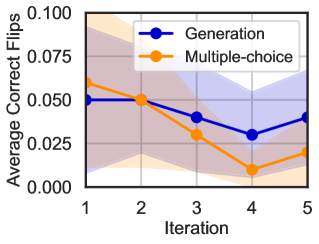

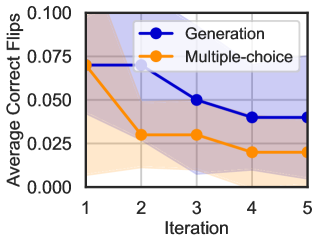

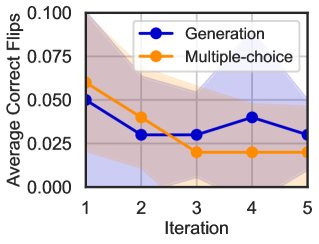

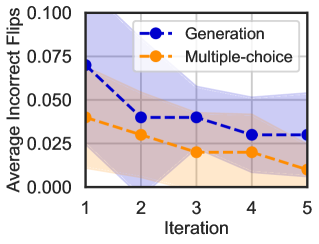

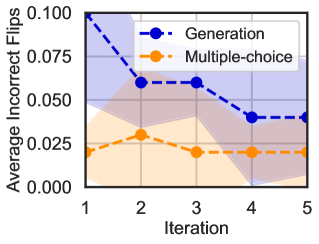

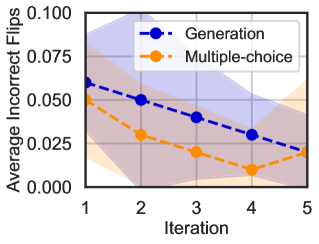

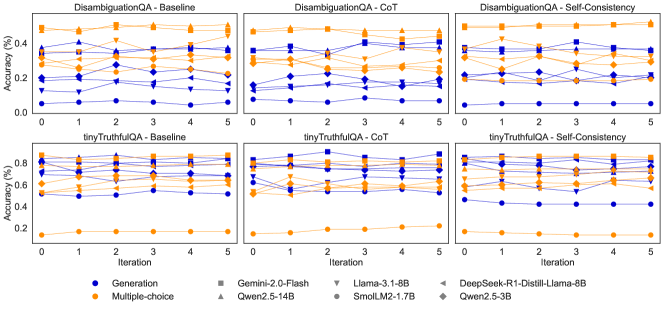

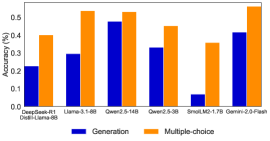

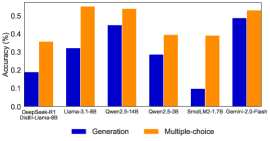

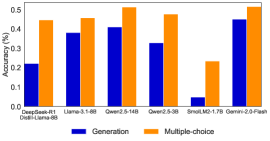

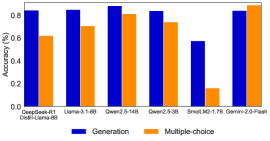

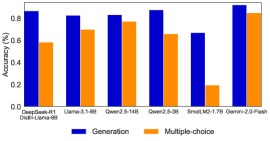

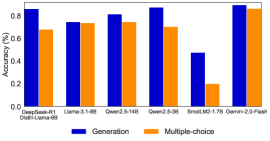

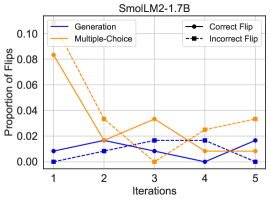

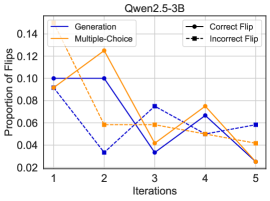

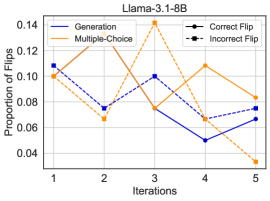

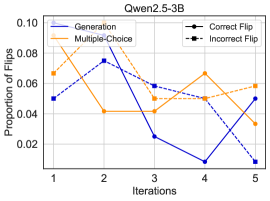

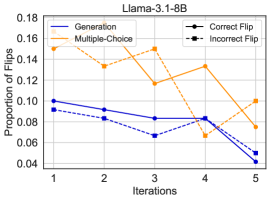

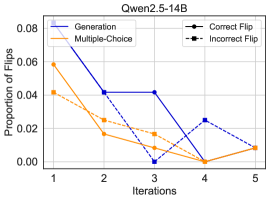

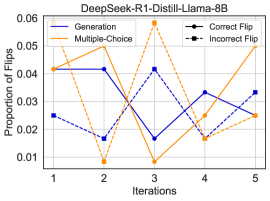

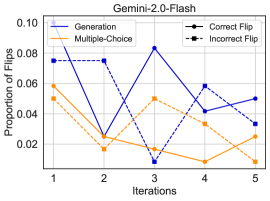

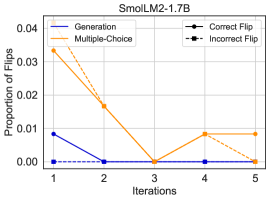

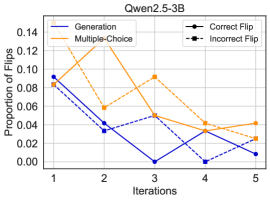

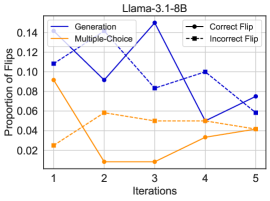

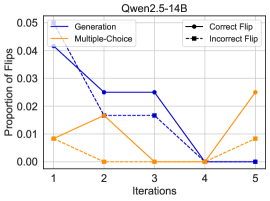

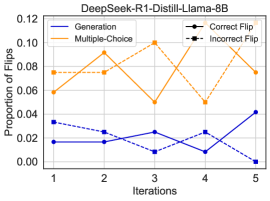

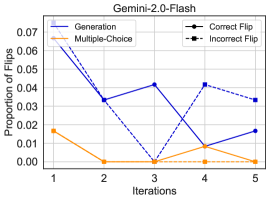

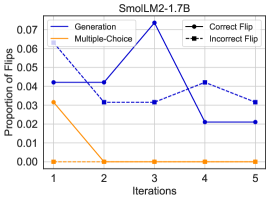

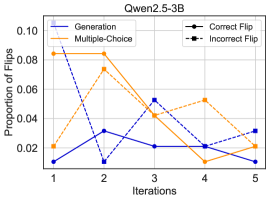

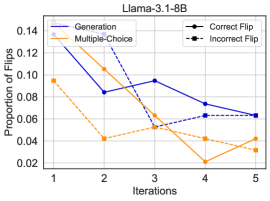

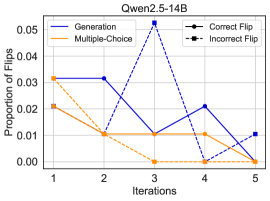

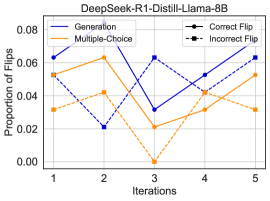

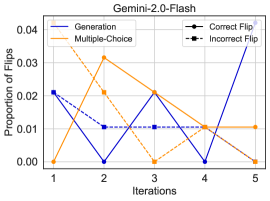

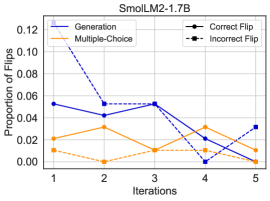

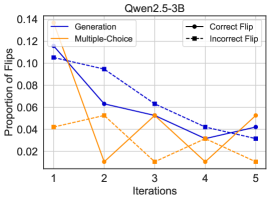

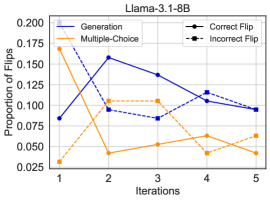

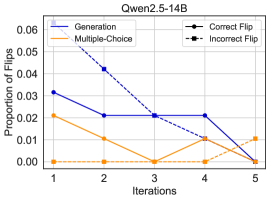

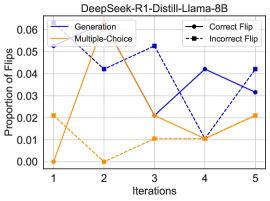

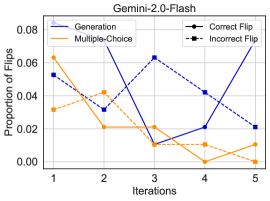

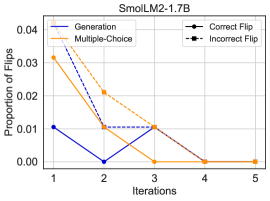

Figure 1: Average cumulative accuracy on the generation and multiple-choice formats across self-correction iterations. (Top) On the DisambiguationQA dataset, models perform better on the multiple-choice task as they iteratively self-correct their responses; (bottom) on the tinyTruthfulQA dataset, models perform better on the generation task.

Research Questions.

Our study is guided by the following three research questions:

- RQ1: How do self-correction dynamics differ between open-ended and multiple-choice tasks?

- RQ2: How do model scale and prompting strategy influence self-correction across the two paradigms?

- RQ3: How does iterative self-correction affect correctness, stability, and semantic drift, and what mechanisms explain these effects?

Datasets.

We evaluate on two benchmarks, DisambiguationQA and tinyTruthfulQA, that each provide parallel formulations for both multiple-choice questions and open-ended generation. This allows us to study self-correction dynamics under consistent task content but different output constraints.

- DisambiguationQA Kazemi et al. (2025) is typically phrased in multiple-choice form, where each question presents a pronoun or reference with referential ambiguity and provides four candidate referents. However, the same questions can also be cast into an open-ended format by asking models to generate the referent rather than choose among options. Thus, DisambiguationQA instantiates a scenario where the answer space is tightly constrained but also amenable to open-ended generation in a parallel setup.

- tinyTruthfulQA Polo et al. (2024) is a challenging subset of the TruthfulQA benchmark Lin et al. (2022) focused on short-form factual queries that tend to provoke false or misleading answers from LLMs. While TruthfulQA is usually evaluated via free-form generation, where models must produce a truthful answer, a multiple-choice variant has also been developed, offering for each question a small set of candidate answers drawn from the same reference answer pool. Therefore, tinyTruthfulQA inherits this dual-format nature, where the same questions support both open-ended and multiple-choice instantiations. This dataset exemplifies scenarios requiring knowledge retrieval and precision in generation.

By evaluating on both datasets, we cover one case where the ground-truth answer lies within a closed set of options and one case where the answer must be generated. We can therefore compare how iterative self-correction dynamics differ when the model's output is tightly constrained versus freely generative.
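To make the parallel setup concrete, the following minimal Python sketch renders a single item in both formats. The `Item` schema, the prompt wording, and the toy question are hypothetical illustrations: they are not the benchmarks' actual data format, a real DisambiguationQA question, or the exact prompts we use (those are in Appendix A.2).

```python
from dataclasses import dataclass
from typing import List

LETTERS = "ABCDEFGHIJKLMNOPQRSTUVWXYZ"


@dataclass
class Item:
    """A single question with candidate options and its gold answer (hypothetical schema)."""
    question: str
    options: List[str]
    answer: str  # gold answer, stored as the text of one of the options


def multiple_choice_prompt(item: Item) -> str:
    """Constrained format: show the labelled options and ask the model to pick one."""
    option_lines = [f"({LETTERS[i]}) {opt}" for i, opt in enumerate(item.options)]
    return "\n".join([item.question, *option_lines, "Select the correct option."])


def generation_prompt(item: Item) -> str:
    """Open-ended format: same question, options withheld, so the model must produce the answer."""
    return f"{item.question}\nAnswer in your own words."


def gold_letter(item: Item) -> str:
    """Option letter of the gold answer, used to score multiple-choice outputs."""
    return LETTERS[item.options.index(item.answer)]


# Toy item written for illustration only; it is not taken from DisambiguationQA.
toy = Item(
    question="The nurse told the patient that she would be discharged soon. Who will be discharged?",
    options=["The nurse", "The patient", "Ambiguous"],
    answer="The patient",
)
print(multiple_choice_prompt(toy))
print(generation_prompt(toy))
print(gold_letter(toy))  # prints B
```

Because both prompts are derived from the same `Item`, any difference in self-correction behaviour can be attributed to the output format rather than to the question content.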

Models.

We evaluate the dynamics of iterative self-correction under unconstrained generation and multiple-choice selection using six pre-trained language models ranging from small to large parameter counts: SmolLM2-1.7B Allal et al. (2025), Qwen2.5-3B Qwen et al. (2025), Llama-3.1-8B Grattafiori et al. (2024), Qwen2.5-14B Qwen et al. (2025), DeepSeek-R1-Distill-Llama-8B Guo et al. (2025), and Gemini-2.0-Flash Comanici et al. (2025). These models span diverse families and scales, from distilled smaller models to state-of-the-art large models. For each model and dataset, we compare the three prompting strategies described above: a direct Baseline prompt, zero-shot chain-of-thought (CoT) prompting Kojima et al. (2022), and our iterative SC procedure, which reviews and refines the model's own previous response for up to five rounds. We run all models through Hugging Face except Gemini-2.0-Flash, which we access through its API.

Prompts.

In our experiments, we use simplified prompts to minimize the influence of prompt design on performance across tasks and keep the focus on the self-correction mechanism Huang et al. (2023). Specifically, we apply a basic prompt for the Baseline method and adopt zero-shot Chain-of-Thought (CoT) prompting Kojima et al. (2022) for both the CoT and Self-Consistency (SC) approaches. The initial prompts are used for the first attempt (iteration 0) under each strategy and differ only in whether the model is encouraged to produce an explicit chain of reasoning before the final answer. For subsequent iterations, we prepend instructions asking the model to review its prior attempts. In both variants, the model is reminded of its earlier answers (which are included in the conversation context) and encouraged to refine them; the CoT variant additionally retains the directive to reason step by step during revision. Our full prompts can be found in Appendix A.2.
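The iteration scheme described above can be sketched as a small chat loop. This is a minimal illustration under stated assumptions, not our exact implementation: `generate` is a hypothetical callable standing in for any chat backend, `REVIEW_INSTRUCTION` is placeholder wording (the real prompts are in Appendix A.2), and only the CoT trigger phrase is taken from Kojima et al. (2022).

```python
from typing import Callable, Dict, List

Message = Dict[str, str]  # chat message: {"role": ..., "content": ...}

# Zero-shot CoT trigger from Kojima et al. (2022).
COT_TRIGGER = "Let's think step by step."
# Hypothetical review instruction; the actual wording is given in Appendix A.2.
REVIEW_INSTRUCTION = "Review your previous answer and correct it if it is wrong."


def iterative_self_correction(
    question: str,
    generate: Callable[[List[Message]], str],
    use_cot: bool = False,
    max_rounds: int = 5,
) -> List[str]:
    """Return the answer at iteration 0 followed by up to `max_rounds` revised answers."""

    def with_cot(text: str) -> str:
        # Only the CoT variant asks for step-by-step reasoning.
        return f"{text} {COT_TRIGGER}" if use_cot else text

    # Iteration 0: the initial prompt for the chosen strategy.
    messages: List[Message] = [{"role": "user", "content": with_cot(question)}]
    answers: List[str] = []
    for iteration in range(max_rounds + 1):
        reply = generate(messages)
        answers.append(reply)
        # Keep every earlier answer in the conversation context.
        messages.append({"role": "assistant", "content": reply})
        if iteration < max_rounds:
            # Later iterations: prepend the review instruction to the question.
            prompt = f"{REVIEW_INSTRUCTION}\n\n{question}"
            messages.append({"role": "user", "content": with_cot(prompt)})
    return answers
```

The function makes no model calls itself, so any backend (for example a wrapper around a Hugging Face chat model or an API client) can be passed as `generate`. Keeping the whole conversation in `messages` is what lets the model see all of its earlier answers at each revision.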

Final Answer Extraction.

For all problems, we append the suffix "The final answer is:" to the prompt text so that the model produces its final answer in a format we can easily extract. Further details are given in Appendix A.1.
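A minimal extraction sketch, assuming the model echoes the suffix verbatim (case-insensitively) and that multiple-choice answers begin with an option letter such as `(B)`. The function names and the cleanup rules are hypothetical; the exact procedure we use is described in Appendix A.1.

```python
import re
from typing import Optional

# Matches the answer suffix and captures the rest of the line that follows it.
_FINAL = re.compile(r"The final answer is:?\s*(.+)", re.IGNORECASE)
# An option letter, optionally in parentheses, followed by a non-word character or end of string.
_LETTER = re.compile(r"\(?([A-Z])\)?(?:\W|$)")


def extract_final_answer(text: str) -> Optional[str]:
    """Return the text after the last 'The final answer is:' marker, or None if absent."""
    matches = _FINAL.findall(text)
    if not matches:
        return None
    # Use the last marker, since revised responses may restate earlier answers.
    answer = matches[-1].strip()
    # Drop surrounding spaces, trailing periods, markdown bold markers, and quotes.
    answer = answer.strip(" .*\"'")
    return answer or None


def extract_choice_letter(answer: str) -> Optional[str]:
    """For multiple-choice outputs, return a leading option letter like 'B' from '(B) ...'."""
    m = _LETTER.match(answer)
    return m.group(1) if m else None
```

Taking the last marker rather than the first is a design choice for multi-turn self-correction, where a response may quote a previous answer before stating its revised one.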

## 5 Results

<details>

<summary>x7.png Details</summary>

Line chart: average correct flips (y-axis, 0.000 to 0.100) versus self-correction iteration (x-axis, 1 to 5) for the Generation and Multiple-choice settings, with shaded variability bands.
</details>

(a) Baseline

<details>

<summary>x8.png Details</summary>

Line chart: average correct flips (y-axis, 0.000 to 0.100) versus self-correction iteration (x-axis, 1 to 5) for the Generation and Multiple-choice settings, with shaded variability bands.
</details>

(b) CoT

<details>

<summary>x9.png Details</summary>

Line chart: average correct flips (y-axis, 0.000 to 0.100) versus self-correction iteration (x-axis, 1 to 5) for the Generation and Multiple-choice settings, with shaded variability bands.
</details>

(c) SC

<details>

<summary>x10.png Details</summary>

Line chart: average incorrect flips (y-axis, 0.000 to 0.100) versus self-correction iteration (x-axis, 1 to 5) for the Generation and Multiple-choice settings, with shaded variability bands.

4. **Confidence Interval Behavior:** For both series, the shaded confidence intervals are widest at the first iteration and become progressively narrower, suggesting that the variance in performance decreases as the number of iterations increases.

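5. **Crossover Point:** The two lines meet at iteration 2 (both ~0.040), but "Multiple-choice" stays at or above "Generation" through iteration 4. The only crossover occurs between iterations 4 and 5, where "Multiple-choice" drops below "Generation", so which method is superior depends on the stage of the process.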
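Where two series swap order can be checked mechanically. The sketch below, using the approximate values read from this chart, reports a crossover only when the sign of the difference changes; iterations where the two values tie (within a hypothetical tolerance `tol`) are skipped, so lines that merely touch do not count. The helper `crossovers` is illustrative, not part of the paper's code.

```python
from typing import List, Tuple


def crossovers(a: List[float], b: List[float], tol: float = 1e-9) -> List[Tuple[int, int]]:
    """Return 1-based iteration pairs (i, j) between which a - b changes sign.

    Ties (|a - b| <= tol) are skipped, so lines that touch without
    swapping order are not reported as crossovers.
    """
    result: List[Tuple[int, int]] = []
    prev_sign, prev_iter = 0, 0
    for i, (x, y) in enumerate(zip(a, b), start=1):
        d = x - y
        sign = 0 if abs(d) <= tol else (1 if d > 0 else -1)
        if sign == 0:
            continue  # tie: keep comparing against the last strict ordering
        if prev_sign != 0 and sign != prev_sign:
            result.append((prev_iter, i))
        prev_sign, prev_iter = sign, i
    return result


# Approximate Average Incorrect Flips read from this chart.
generation = [0.060, 0.040, 0.040, 0.030, 0.040]
multiple_choice = [0.090, 0.040, 0.050, 0.040, 0.020]
print(crossovers(multiple_choice, generation))  # [(4, 5)]
```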

### Interpretation

The chart traces incorrect flips across self-correction iterations for the two approaches. The "Multiple-choice" method starts with a worse (higher) rate but improves more dramatically, reaching the lowest value by the final iteration. The "Generation" method improves more moderately and ends at a higher rate (~0.040 versus ~0.020), after a slight uptick at iteration 5.

The narrowing confidence intervals suggest that both methods become more consistent as they are applied iteratively. The crossover is critical: it indicates that the better method depends on the stage of the process. "Generation" has the lower or equal rate through iteration 4, so it may be preferable when only a few iterations are run. At iteration 5, the last one plotted, "Multiple-choice" is the more effective strategy for minimizing incorrect flips. The data suggest an initial phase of rapid improvement for "Multiple-choice", followed by a refinement phase in which it overtakes "Generation".

</details>

(d) Baseline

<details>

<summary>x11.png Details</summary>

### Visual Description

## Line Chart: Average Incorrect Flips Over Iterations

### Overview

The image is a line chart comparing the performance of two methods, "Generation" and "Multiple-choice," across five iterations. The performance metric is the "Average Incorrect Flips," where a lower value indicates better performance. The chart includes shaded regions around each line, likely representing confidence intervals or variability.

### Components/Axes

* **Chart Type:** Line chart with two data series and shaded error bands.

* **X-Axis:**

* **Label:** "Iteration"

* **Scale:** Discrete, linear scale from 1 to 5.

* **Markers:** Ticks at integers 1, 2, 3, 4, 5.

* **Y-Axis:**

* **Label:** "Average Incorrect Flips"

* **Scale:** Linear scale from 0.000 to 0.100.

* **Markers:** Ticks at 0.000, 0.025, 0.050, 0.075, 0.100.

* **Legend:**

* **Position:** Top-right corner of the plot area.

* **Series 1:** "Generation" - Represented by a blue dashed line with circular markers.

* **Series 2:** "Multiple-choice" - Represented by an orange dashed line with circular markers.

* **Data Series & Shading:**

* The "Generation" series has a blue shaded area around its line.

* The "Multiple-choice" series has an orange shaded area around its line.

* The shaded areas overlap substantially, particularly in later iterations.

### Detailed Analysis

**Trend Verification:**

* **Generation (Blue Line):** The line shows an overall downward trend from iteration 1 to 5, with a notable dip at iteration 3 and a slight rise at iteration 4 before falling again.

* **Multiple-choice (Orange Line):** The line shows a general downward trend: it plateaus between iterations 2 and 3, drops sharply at iteration 4, and then holds steady through iteration 5.

**Data Point Extraction (Approximate Values):**

| Iteration | Generation (Avg. Incorrect Flips) | Multiple-choice (Avg. Incorrect Flips) |
| :--- | :--- | :--- |
| 1 | ~0.060 | ~0.080 |
| 2 | ~0.050 | ~0.060 |
| 3 | ~0.030 | ~0.060 |
| 4 | ~0.040 | ~0.030 |
| 5 | ~0.020 | ~0.030 |

**Shaded Region Analysis:**

* The shaded regions (likely confidence intervals) are widest at iteration 1 for both series, suggesting higher initial variability.

* The bands narrow considerably by iteration 5, indicating more consistent results as iterations progress.

* The blue and orange shaded areas overlap substantially from iteration 2 onward, suggesting the performance difference between the two methods may not be statistically significant at many points.
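The chart does not state what the shaded bands represent. Assuming they are 95% confidence intervals of the mean computed over several runs or models, a percentile bootstrap is one common way to produce such bands; the sketch below shows that technique. The per-run values and the helper `bootstrap_ci` are hypothetical, not taken from the paper.

```python
import random
import statistics
from typing import List, Tuple


def bootstrap_ci(values: List[float], n_resamples: int = 2000,
                 alpha: float = 0.05, seed: int = 0) -> Tuple[float, float, float]:
    """Return (mean, lower, upper) using a percentile bootstrap of the mean."""
    rng = random.Random(seed)  # fixed seed so the band is reproducible
    # Resample with replacement and record the mean of each resample.
    resampled_means = sorted(
        statistics.fmean(rng.choices(values, k=len(values)))
        for _ in range(n_resamples)
    )
    lower = resampled_means[int((alpha / 2) * n_resamples)]
    upper = resampled_means[int((1 - alpha / 2) * n_resamples) - 1]
    return statistics.fmean(values), lower, upper


# Hypothetical incorrect-flip rates from five runs at a single iteration.
rates = [0.02, 0.05, 0.08, 0.04, 0.06]
mean, lower, upper = bootstrap_ci(rates)
print(f"mean={mean:.3f}, 95% CI=({lower:.3f}, {upper:.3f})")
```

With only a handful of runs per iteration, such bands are wide, which is consistent with the broad early-iteration regions described here; if the figure instead shows a standard deviation, the band would be computed differently.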

### Key Observations

1. **Initial Performance Gap:** At iteration 1, the "Multiple-choice" method has a higher average error (~0.080) compared to the "Generation" method (~0.060).

2. **Convergence:** By iteration 5, the performance of both methods converges to a similar low error rate (between ~0.020 and ~0.030).

3. **Non-Monotonic Improvement:** The "Generation" method does not improve linearly; its error rate increases slightly from iteration 3 to 4 before decreasing again.

4. **Plateau in Multiple-choice:** The "Multiple-choice" method shows no improvement between iterations 2 and 3, maintaining an error rate of ~0.060.

5. **Reducing Variability:** The narrowing of the shaded bands for both series indicates that the results become more precise and less variable with more iterations.
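The non-monotonic steps and plateaus listed above can be read directly off the table. This sketch labels each step between consecutive iterations as a decrease, plateau, or increase, using the approximate table values; the helper name `classify_steps` and its tolerance are illustrative assumptions.

```python
from typing import List, Tuple


def classify_steps(series: List[float], tol: float = 1e-9) -> List[Tuple[int, int, str]]:
    """Label each step (i -> i + 1, 1-based) as 'decrease', 'plateau', or 'increase'."""
    steps: List[Tuple[int, int, str]] = []
    for i in range(1, len(series)):
        delta = series[i] - series[i - 1]
        if abs(delta) <= tol:
            label = "plateau"
        elif delta > 0:
            label = "increase"
        else:
            label = "decrease"
        steps.append((i, i + 1, label))
    return steps


# Approximate readings from the table (Average Incorrect Flips).
generation = [0.060, 0.050, 0.030, 0.040, 0.020]
multiple_choice = [0.080, 0.060, 0.060, 0.030, 0.030]
for name, series in [("Generation", generation), ("Multiple-choice", multiple_choice)]:
    print(name, classify_steps(series))
```

Because the values are approximate chart readings, a larger `tol` may be more appropriate than the near-zero default.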

### Interpretation

The chart demonstrates that both the "Generation" and "Multiple-choice" methods reduce the "Average Incorrect Flips" over successive self-correction iterations.

* **Relative Efficacy:** The "Generation" method starts with a performance advantage. However, the "Multiple-choice" method improves more steeply between iterations 3 and 5, dropping below "Generation" at iteration 4 (~0.030 versus ~0.040) and ending within ~0.010 of it at iteration 5.

* **Convergence and Reliability:** The convergence of the lines and the narrowing of the confidence bands by iteration 5 suggest that given enough iterations, both methods achieve a similar, reliable, and low-error outcome. The initial higher variability diminishes, indicating the process stabilizes.

* **Practical Implication:** If the goal is to minimize errors within a few iterations, the "Generation" method appears superior initially. If the process runs for all five plotted iterations, the choice between methods may matter less, judging by this final error metric alone. The overlapping confidence intervals caution against declaring either method definitively better at most individual iterations without further statistical analysis. Overall, both methods become more consistent and accurate over time.

</details>

(e) CoT

<details>

<summary>x12.png Details</summary>

### Visual Description

## Line Chart: Average Incorrect Flips Over Iterations

### Overview

The image is a line chart comparing the performance of two methods, "Generation" and "Multiple-choice," across five iterations. The performance metric is the "Average Incorrect Flips," where a lower value indicates better performance. Each data series is represented by a dashed line with circular markers and includes a shaded region indicating the confidence interval or variability around the mean.

### Components/Axes

* **Chart Type:** Line chart with confidence intervals.

* **X-Axis (Horizontal):**

* **Label:** "Iteration"

* **Scale:** Discrete, linear scale from 1 to 5.

* **Markers:** 1, 2, 3, 4, 5.

* **Y-Axis (Vertical):**

* **Label:** "Average Incorrect Flips"

* **Scale:** Linear scale from 0.000 to 0.100.

* **Markers:** 0.000, 0.025, 0.050, 0.075, 0.100.

* **Legend:**

* **Position:** Top-right corner of the chart area.

* **Series 1:** "Generation" - Represented by a blue dashed line with blue circular markers (●).

* **Series 2:** "Multiple-choice" - Represented by an orange dashed line with orange circular markers (●).

* **Visual Elements:**

* **Shaded Regions:** A semi-transparent blue shaded area surrounds the "Generation" line, and a semi-transparent orange shaded area surrounds the "Multiple-choice" line. These represent the range of uncertainty (e.g., standard deviation or confidence interval) for each series.

### Detailed Analysis

**Data Series: Generation (Blue)**

* **Trend:** Shows a steady downward trend: the value declines at every step except between Iterations 3 and 4, where it holds at ~0.030.

* **Data Points (Approximate):**

* Iteration 1: ~0.060

* Iteration 2: ~0.040

* Iteration 3: ~0.030

* Iteration 4: ~0.030

* Iteration 5: ~0.020

* **Confidence Interval:** The blue shaded region is widest at Iteration 1 (spanning roughly 0.035 to 0.085) and narrows progressively, becoming tightest at Iteration 5.

**Data Series: Multiple-choice (Orange)**

* **Trend:** Shows an initial decrease, a slight increase at Iteration 3, and then stabilizes.

* **Data Points (Approximate):**

* Iteration 1: ~0.050

* Iteration 2: ~0.030

* Iteration 3: ~0.040

* Iteration 4: ~0.030

* Iteration 5: ~0.030

* **Confidence Interval:** The orange shaded region is also widest at the start (spanning roughly 0.000 to 0.075 at Iteration 1) and narrows over time, though it remains slightly wider than the blue region at Iteration 5.

### Key Observations

1. **Initial Performance:** At Iteration 1, the "Multiple-choice" method starts with a lower average incorrect flips value (~0.050) compared to the "Generation" method (~0.060).

2. **Convergence:** By Iteration 2, both methods have similar performance (~0.030 for Multiple-choice, ~0.040 for Generation). Their confidence intervals overlap substantially from Iteration 2 onward.

3. **Final Performance:** At Iteration 5, the "Generation" method achieves the lowest observed value (~0.020), while the "Multiple-choice" method plateaus at ~0.030.

4. **Variability:** Both methods show high initial variability (wide shaded areas), which decreases with more iterations, suggesting the results become more consistent over time.

5. **Crossover:** The "Generation" line crosses below the "Multiple-choice" line between Iteration 2 and Iteration 3 and stays at or below it for the remaining iterations (the two tie at ~0.030 at Iteration 4).

### Interpretation

The chart demonstrates that both the "Generation" and "Multiple-choice" methods are effective at reducing the "Average Incorrect Flips" over successive iterations. The "Generation" method shows a more consistent and ultimately greater improvement, starting from a worse position but ending with the best performance. The "Multiple-choice" method improves quickly but then hits a plateau.

The substantial overlap in the confidence intervals, especially from Iteration 2 to 4, suggests that the performance difference between the two methods may not be statistically significant during those stages. The narrowing of the shaded regions indicates that the evaluation of both methods becomes more precise or stable with more iterations.
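Interval overlap is easy to check mechanically. The sketch below uses the approximate Iteration 1 band limits given in the Detailed Analysis for this chart and treats the bands as closed intervals; the helper `interval_overlap` is hypothetical. Overlap is only a coarse screen: two 95% confidence intervals can overlap even when a direct test of the difference is significant, and if both formats are evaluated on the same questions, a paired test would be the more appropriate follow-up.

```python
from typing import Optional, Tuple

Interval = Tuple[float, float]


def interval_overlap(a: Interval, b: Interval) -> Optional[Interval]:
    """Return the overlapping range of two closed intervals, or None if disjoint."""
    lo = max(a[0], b[0])
    hi = min(a[1], b[1])
    return (lo, hi) if lo <= hi else None


# Approximate Iteration 1 bands read from the chart.
generation_band = (0.035, 0.085)
multiple_choice_band = (0.000, 0.075)
print(interval_overlap(generation_band, multiple_choice_band))  # (0.035, 0.075)
```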

**Underlying Narrative:** Per the figure caption, these curves track iterative self-correction on DisambiguationQA, where an incorrect flip is a revision that changes a correct answer into an incorrect one. The downward trend suggests that later self-correction rounds less often overturn correct answers. The "Generation" approach, while noisier initially, may have more room for improvement on this task, whereas the plateau of the "Multiple-choice" method could indicate that it reaches its best performance sooner but has less room for further refinement.

</details>

(f) SC

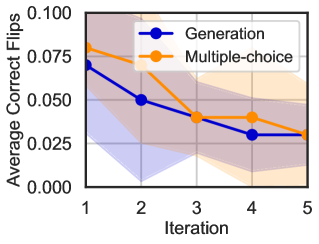

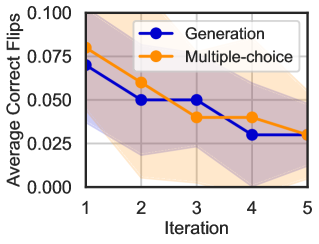

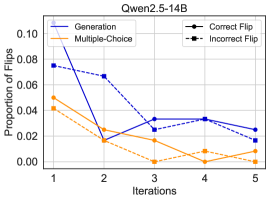

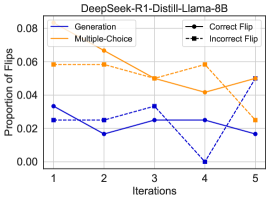

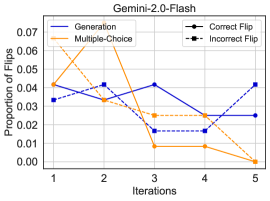

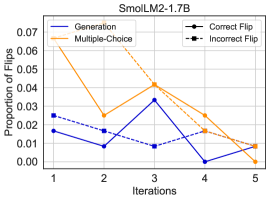

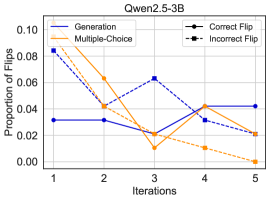

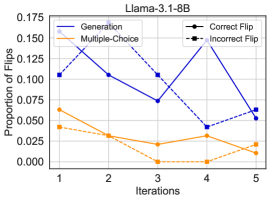

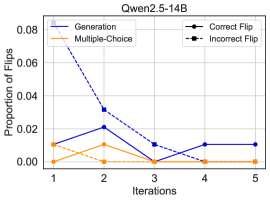

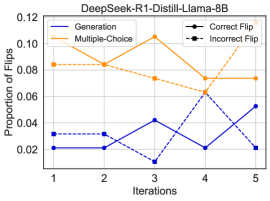

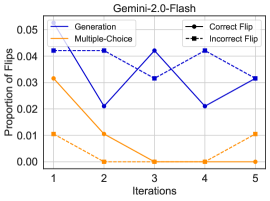

Figure 2: Average Correct and Incorrect Flips on DisambiguationQA

We now analyze the results in relation to our three research questions.

Improvement Patterns Across Iterations (RQ1).