# RPM-MCTS: Knowledge-Retrieval as Process Reward Model with Monte Carlo Tree Search for Code Generation

**Authors**: Yuanyuan Lin\equalcontrib, Xiangyu Ouyang\equalcontrib, Teng Zhang, Kaixin Sui

> Corresponding Author

Abstract

Tree search-based methods have made significant progress in enhancing the code generation capabilities of large language models. However, due to the difficulty in effectively evaluating intermediate algorithmic steps and the inability to locate and timely correct erroneous steps, these methods often generate incorrect code and incur increased computational costs. To tackle these problems, we propose RPM-MCTS, an effective method that utilizes Knowledge- R etrieval as P rocess Reward M odel based on M onte C arlo T ree S earch to evaluate intermediate algorithmic steps. By utilizing knowledge base retrieval, RPM-MCTS avoids the complex training of process reward models. During the expansion phase, similarity filtering is employed to remove redundant nodes, ensuring diversity in reasoning paths. Furthermore, our method utilizes sandbox execution feedback to locate erroneous algorithmic steps during generation, enabling timely and targeted corrections. Extensive experiments on four public code generation benchmarks demonstrate that RPM-MCTS outperforms current state-of-the-art methods while achieving an approximately 15% reduction in token consumption. Furthermore, full fine-tuning of the base model using the data constructed by RPM-MCTS significantly enhances its code capabilities.

Introduction

Code generation aims at understanding problem descriptions in natural language and generating the corresponding code snippets. In recent years, large language models (LLMs) have demonstrated remarkable performance in code generation tasks (zhang2024unifying). For code generation, the early methods involve dividing code planning and synthesis into two phases using chain-of-thought or tree structures (wei2022chain; jiang2024self; zelikman2023parsel). wang2024planning have demonstrated that providing LLMs with a correct solution can significantly enhance model performance, even when these solutions consist of incomplete plans, i.e., for solutions, correctness is preferred over completeness, and the key to enhancing the code generation capability of LLMs lies in generating correct plans.

Programming languages possess their own inherent logical structures and tightly interconnected knowledge, which makes it essential not to overlook long-range dependencies within the code. Previous work has shown that rotary position embedding does not always lead to attention weights decaying with relative distance (barbero2024round). Concurrently, through exploring the attention distribution between tokens, we have experimentally demonstrated that selecting algorithmic steps as the basic units is a superior choice. Therefore, our objective focuses on how to accurately generate intermediate algorithmic steps.

However, a limitation of previous methods lies in the lack of an evaluation and correction mechanism for intermediate algorithmic steps, which fails to guarantee the correctness of these steps (lu2025lsr; li2025structured). One way to tackle this issue is to use a value function or reward model to verify reasoning traces for correctness, which then serves as a learning signal for self-training (lightman2023let; wang2023math). However, training a reliable reward model to verify every step in a reasoning trace generally depends on dense human-generated annotations per reasoning step (lightman2023let), which does not scale well.

Unlike other reasoning tasks, code generation benefits from the homogeneity of algorithmic workflows across different problem categories. This allows us to leverage historical experience from a knowledge base containing numerous correct algorithmic steps to evaluate the process reward of expansion steps. Additionally, code generation typically benefits from detailed feedback provided by compilers. Consequently, in this paper, we propose RPM-MCTS, which optimizes the Monte Carlo Tree Search (MCTS) algorithm using external information feedback. Our method utilizes the knowledge base for intermediate algorithmic step-level evaluation and employs sandbox feedback for result-level assessment of complete code. Specifically, the root node of the Monte Carlo tree represents the coding problem, while all other nodes represent individual algorithmic steps. During each iteration, multiple distinct potential next steps are generated based on the current reasoning path. Node selection is guided by historical experience from the knowledge base, enabling faster discovery of high-value search paths. In the simulation phase, complete code is generated and evaluated using sandbox and model feedback to update node values. Notably, during simulation, we localize erroneous steps within the full algorithmic workflow and incorporate newly generated correct steps into the tree, thereby reducing token consumption. After multiple iterations, the highest-scoring path from root to leaf is selected, ultimately yielding a complete solution alongside its corresponding code. The contributions are summarized as follows:

- We propose RPM-MCTS, which leverages knowledge base retrieval scores to evaluate intermediate algorithmic steps, steering LLMs to explore high-value reasoning paths more effectively.

- We leverage sandbox feedback during the simulation phase to evaluate code generated from reasoning steps, localize errors, and truncate simulations, thereby reducing computational costs.

- We conduct extensive experiments and show that RPM-MCTS is superior to state-of-the-art methods. Moreover, we verify that base models fine-tuned with data generated by RPM-MCTS enjoy greater code capabilities.

Related Work

Monte Carlo Tree Search.

As the extension of Chain-of-Thought (CoT) (wei2022chain), Tree-of-Thought (ToT) (yao2023tree) enhances the reasoning and planning capabilities of LLMs by exploring different thought paths within a tree structure. Subsequently, Monte Carlo Tree Search has served as a search algorithm to more effectively guide LLMs in exploring intermediate sub-steps (zhao2023large; hao2023reasoning; zhou2023language; ding2023everything). ReST-MCTS* (zhang2024rest) combines process reward guidance with Monte Carlo Tree Search to collect high-quality reasoning trajectories and step-by-step values for training strategy and reward models. SRA-MCTS (xu2024sra) further extends this to the field of code generation, using Monte Carlo Tree Search to generate intermediate reasoning steps and conducting iterative self-evaluation to synthesize training data for supervised fine-tuning. However, relying solely on model self-evaluation introduces biases and hallucinations, and small-scale LLMs exhibit limited instruction-following capabilities. RethinkMCTS (li2025rethinkmcts) is another prior work that also uses execution feedback but employs a patching strategy. If this patch fails, the search may proceed on an incorrect path, making it less suitable for generating high-quality SFT data.

Process Evaluation.

In heuristic search, a robust reasoning process needs to have self-evaluation capabilities, and the evaluation results are further used to guide the search. Early work mainly focused on outcome-level evaluation (cobbe2021training), that is, evaluating the complete solution after the reasoning is completed. Outcome-level evaluation is simple to implement but often requires more detailed assessment. Step-level evaluation (lightman2023let; wang2023math; gao2024llm) emphasizes the assessment of individual reasoning steps. In tree search algorithms, process evaluation is widely used to guide search trajectories. Logic-RL (xie2025logic) optimizes path selection by implementing state scoring in beam search. Furthermore, step-level evaluation has proven its effectiveness in both error correction and the summarization of reasoning steps. zheng2024makes developed a method capable of accurately locating inaccuracies in specific reasoning steps, thereby providing more precise and actionable feedback for comprehensive evaluation.

Method

In this section, we elaborate on the proposed modified MCTS that incorporates the knowledge base as a process reward model. The methodology comprises three key components: knowledge base construction, RPM-MCTS, and code generation. First, knowledge base retrieval scores circumvent random selection during node expansion. Then, in the expansion phase, nodes are filtered based on similarity metrics to eliminate redundant candidates. Finally, during the simulation phase, the algorithm performs error reflection and retains nodes with verified correct reasoning. These collective strategies enable faster exploration of higher-quality algorithmic steps.

Knowledge Base Construction

In this section, we introduce the construction of a retrievable global knowledge base designed to mitigate hallucination during the planning process. Due to the homogeneity of algorithms within the same category, where fundamental principles and methods are relatively similar, we utilize a knowledge base containing numerous correct algorithms across diverse categories. This serves as the evaluation model for intermediate algorithmic steps in RPM-MCTS, eliminating the need to train a separate process reward model.

We use the training set data from APPS (hendrycks2021measuring) and CodeContests (li2022competition), which contain coding problems paired with their correctly implemented solutions. We utilize the Claude Sonnet 3.7 to generate the correct algorithmic steps corresponding to the correct code and decompose them step by step. We sequentially concatenate the problems by rolling them out according to the algorithmic steps. Specifically, for problem $p_{i}$ with $n_{i}$ algorithmic steps and $a_{i}^{(j)}$ corresponding to the $j$ -th step, we have

$$

\displaystyle\mathcal{K}_{i}=\{\mathrm{concat}(p_{i},a_{i}^{(1)},\ldots,a_{i}^{(j)}),~j=1,2,\ldots,n_{i}\}, \tag{1}

$$

and $\mathcal{K}=\uplus_{i=1}^{n}\mathcal{K}_{i}$ is the knowledge base with cardinality $n$ .

To enhance retrieval efficiency and improve retrieval precision by distinguishing between problems with similar descriptions but different algorithmic solutions, we organize the knowledge base into 14 distinct algorithm categories and store them as vector database by using the BGE (xiao2024c) embedding model.

RPM-MCTS

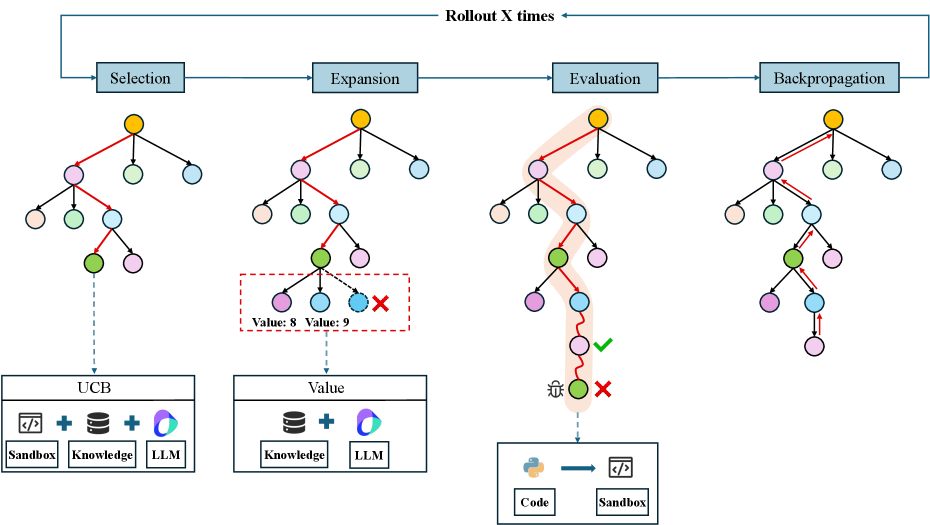

We propose an enhanced MCTS method, named RPM-MCTS. In this method, the root node represents the problem, while all other nodes represent an algorithmic step. Specifically, the method comprises four distinct phases: Selection, Expansion, Evaluation and Reflection, and Backpropagation, as shown in Figure 6. These phases are performed on a search tree composed of tree nodes and are iterated multiple times, with each iteration generating a concrete algorithmic step.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Monte Carlo Tree Search (MCTS)

### Overview

The image illustrates the Monte Carlo Tree Search (MCTS) algorithm, showing the four main stages: Selection, Expansion, Evaluation, and Backpropagation. The diagram depicts a tree structure that is explored and updated during the search process. The process is repeated "Rollout X times".

### Components/Axes

* **Title:** None explicitly given, but the diagram depicts the Monte Carlo Tree Search (MCTS) algorithm.

* **Stages (from left to right):**

* Selection

* Expansion

* Evaluation

* Backpropagation

* **Nodes:** Represented as colored circles. Colors include:

* Yellow (root node)

* Pink

* Green

* Light Blue

* **Edges:** Represented as black lines, with red arrows indicating the path taken during the search.

* **Rollout:** The entire process is repeated "Rollout X times", as indicated by a blue arrow looping from Backpropagation back to Selection.

* **UCB (Selection Stage):**

* Sandbox icon

* Knowledge icon (database)

* LLM icon (purple/blue swirl)

* **Value (Expansion Stage):**

* Knowledge icon (database)

* LLM icon (purple/blue swirl)

* **Evaluation Stage:**

* Python icon

* Arrow pointing to Sandbox icon

* **Value Indicators (Expansion Stage):**

* "Value: 8"

* "Value: 9"

* **Evaluation Indicators (Evaluation Stage):**

* Bug icon

* Green checkmark

* Red X

### Detailed Analysis

**1. Selection:**

* Starts at the yellow root node.

* A red arrow indicates the path taken down the tree.

* The path goes from the yellow node to a pink node, then to a green node.

* A dashed blue arrow points from the green node to a box labeled "UCB".

* The "UCB" box contains icons for "Sandbox", "Knowledge", and "LLM", connected by plus signs.

**2. Expansion:**

* Starts at the yellow root node.

* A red arrow indicates the path taken down the tree.

* The path goes from the yellow node to a pink node, then to a green node.

* From the green node, there are two possible expansions:

* A pink node with "Value: 8"

* A light blue node with "Value: 9" and a red "X" indicating a failed expansion.

* A dashed blue arrow points from the green node to a box labeled "Value".

* The "Value" box contains icons for "Knowledge" and "LLM", connected by a plus sign.

**3. Evaluation:**

* Starts at the yellow root node.

* A red arrow indicates the path taken down the tree. The path is highlighted with a light red background.

* The path goes from the yellow node to a pink node, then to a green node, then to a pink node.

* The path continues down to a light blue node, where a bug icon is present.

* The path ends at a green node with a green checkmark and a pink node with a red "X".

* A dashed blue arrow points from the pink node with the red "X" to a box containing a Python icon, an arrow, and a "Sandbox" icon. The box is labeled "Code" and "Sandbox" respectively.

**4. Backpropagation:**

* Starts at the yellow root node.

* Red arrows indicate the path taken back up the tree.

* The path goes from a pink node to a light blue node, then to a green node, then to a pink node, and finally to the yellow node.

### Key Observations

* The diagram illustrates the iterative nature of MCTS, with the "Rollout X times" loop.

* Each stage of MCTS is clearly represented with its corresponding actions and data.

* The diagram highlights the use of UCB for node selection and value estimation for node expansion.

* The evaluation stage shows the interaction between code execution (Python) and a sandbox environment.

* The backpropagation stage shows how the results of the evaluation are propagated back up the tree.

### Interpretation

The diagram provides a visual representation of the MCTS algorithm, which is commonly used in decision-making problems, particularly in game playing and reinforcement learning. The diagram shows how the algorithm explores the search space by iteratively selecting, expanding, evaluating, and backpropagating information through a tree structure. The use of UCB in the selection stage helps to balance exploration and exploitation, while the value estimation in the expansion stage provides a way to prioritize promising nodes. The evaluation stage simulates the outcome of actions, and the backpropagation stage updates the values of the nodes in the tree based on the simulation results. The "Rollout X times" loop indicates that the algorithm repeats these steps multiple times to refine its search and improve its decision-making. The diagram also highlights the use of a sandbox environment for code execution during the evaluation stage, which is important for security and isolation.

</details>

Figure 1: Overview of RPM-MCTS. (a) Selection: Select a leaf node according to Eqn. (2). (b) Expansion: After selecting a node, expand multiple child nodes, and use knowledge base retrieval scores and LLM evaluation to select nodes for simulation. The node color represents similarity magnitude. (c) Evaluation: Generate complete reasoning steps for the selected node, generate code strictly in accordance with these reasoning steps, and use a sandbox for information feedback. (d) Backpropagation: Propagate the reward scores backward. The yellow root node represents the problem, and the remaining nodes represent each reasoning step.

Selection.

In the selection phase, a leaf node is selected from the current tree for further expansion according to the selection score, which is defined as a weighted combination of the Upper Confidence Bound (UCB) (silver2017mastering) and the knowledge base retrieval score:

$$

\displaystyle\mathrm{SelectionScore}(s,a)=\mathrm{UCB}(s,a)+\alpha K(s,a), \tag{2}

$$

where $(s,a)$ denotes a state-action pair with $s$ containing the description of the problem and previously generated algorithmic steps and $a$ representing the new step at the current node. The parameter $\alpha$ is for balancing the two terms.

UCB is a classical multi-armed bandit algorithm and well performed in addressing the exploration-exploitation trade-off. UCB selects actions by computing an upper confidence estimate of each action’s potential reward:

$$

\displaystyle\mathrm{UCB}(s,a)=Q(s,a)+\beta\sqrt{\frac{\log N(s)}{1+N(s,a)}}, \tag{3}

$$

where $Q(s,a)$ represents the empirical mean cumulative reward after taking action $a$ from state $s$ , $N(s)$ is the number of times state $s$ has been explored in the current context, and $N(s,a)$ is the number of times action $a$ has been taken in state $s$ . The parameter $\beta$ is for trading off the exploitation (the former term) and exploration (the latter term).

The knowledge base retrieval score $K(s,a)$ is obtained by retrieving the concatenated $(s,a)$ pair from the knowledge base. Specifically, let $f$ denote the embedding model that maps $(s,a)$ to a vector with the same dimension as the knowledge base. Given the preceding reasoning path, the knowledge base retrieval score for the current node is calculated as follows:

$$

\displaystyle K(s,a)=\max\left(0,\max_{k\in\mathcal{K}}\frac{f((s,a))\cdot k}{\|f((s,a))\|\cdot\|k\|}\right). \tag{4}

$$

The knowledge base similarity score $K(s,a)$ enables acquisition of step-wise assessments prior to the evaluation phase. In other words, when newly generated nodes remain unexplored, we prioritize leveraging historically validated solutions through knowledge base retrieval scores to identify higher-value nodes.

Starting from the root node, we recursively select the child node with the maximum $\mathrm{SelectionScore}$ value at each branching point. Selection ties are resolved stochastically. Each iteration advances to the highest-scoring child node until reaching a leaf node.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Composite Image: Python Factorial Calculation

### Overview

The image presents a multi-faceted view of a Python program designed to calculate the factorial of 5. It includes a heatmap-like visualization of code relationships, the Python code itself with annotations, and a series of visualizations that appear to represent program execution or state.

### Components/Axes

**Image (a): Heatmap-like Visualization**

* **Axes:** The x and y axes are labeled with Python code snippets. The labels are:

* `n = 5`

* `p = 1`

* `for`

* `in`

* `range`

* `(1`

* `n`

* `+`

* `1):`

* `p =`

* `p *`

* `i`

* `print`

* `(p)`

* `\n`

* **Color Scale:** A vertical color bar on the right ranges from approximately 0.0 (dark purple) to 0.8 (yellow). The scale is marked with values 0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, and 0.8.

* **Red Rectangles:** Three red rectangles highlight specific columns in the heatmap, corresponding to the code snippets `n = 5`, `p = p * i`, and `print(p)`.

**Image (b): Python Code with Annotations**

* **Code:** The Python code is presented as follows:

1. `n = 5`

2. `p = 1`

3. `for i in range(1, n + 1):`

4. `p = p * i`

5. `print(p)`

* **Annotations:** The code lines are annotated with circled numbers: ①, ②, ③, and ④. These correspond to the steps outlined above the code:

* ① Input the value of n

* ② Iterate from 1 to n

* ③ Calculate the factorial

* ④ Output the result

* **Background Colors:** Each line of code has a distinct background color:

* Line 1 (`n = 5`): Light pink

* Line 2 (`p = 1`): Light green

* Line 3 (`for i in range(1, n + 1):`): Light blue

* Line 4 (`p = p * i`): Light green

* Line 5 (`print(p)`): Light orange

**Image (c): Visualizations**

* **Arrangement:** Image (c) consists of two rows of visualizations. The top row appears to be pink and the bottom row appears to be gray. Each row contains a series of similar visualizations.

### Detailed Analysis or ### Content Details

**Image (a): Heatmap-like Visualization**

* The heatmap shows the relationships between different parts of the code. The intensity of the color at each intersection indicates the strength of the relationship.

* The highlighted columns show the relationships of `n = 5`, `p = p * i`, and `print(p)` with all other code snippets.

* The brightest (yellow) cells indicate the strongest relationships. For example, the intersection of `n = 5` with itself is bright, as is the intersection of `p = p * i` with itself.

**Image (b): Python Code with Annotations**

* The code calculates the factorial of 5.

* `n` is initialized to 5.

* `p` is initialized to 1.

* The `for` loop iterates from 1 to `n + 1` (i.e., 1 to 6).

* In each iteration, `p` is multiplied by `i`.

* Finally, `p` (the factorial of 5) is printed.

**Image (c): Visualizations**

* The visualizations in image (c) are difficult to interpret without additional context. They may represent the state of variables or the flow of execution during the program's execution.

* The top row (pink) and bottom row (gray) may represent different aspects of the program's state.

### Key Observations

* The heatmap highlights the relationships between different parts of the code.

* The annotations in image (b) clearly explain the steps involved in calculating the factorial.

* The visualizations in image (c) are abstract and require further context for interpretation.

### Interpretation

The image provides a comprehensive view of a simple Python program. The heatmap offers a visual representation of code relationships, while the annotated code clearly explains the algorithm. The visualizations in image (c) likely represent the program's execution, but their specific meaning is unclear without additional information. The combination of these elements provides a multi-faceted understanding of the program's functionality.

</details>

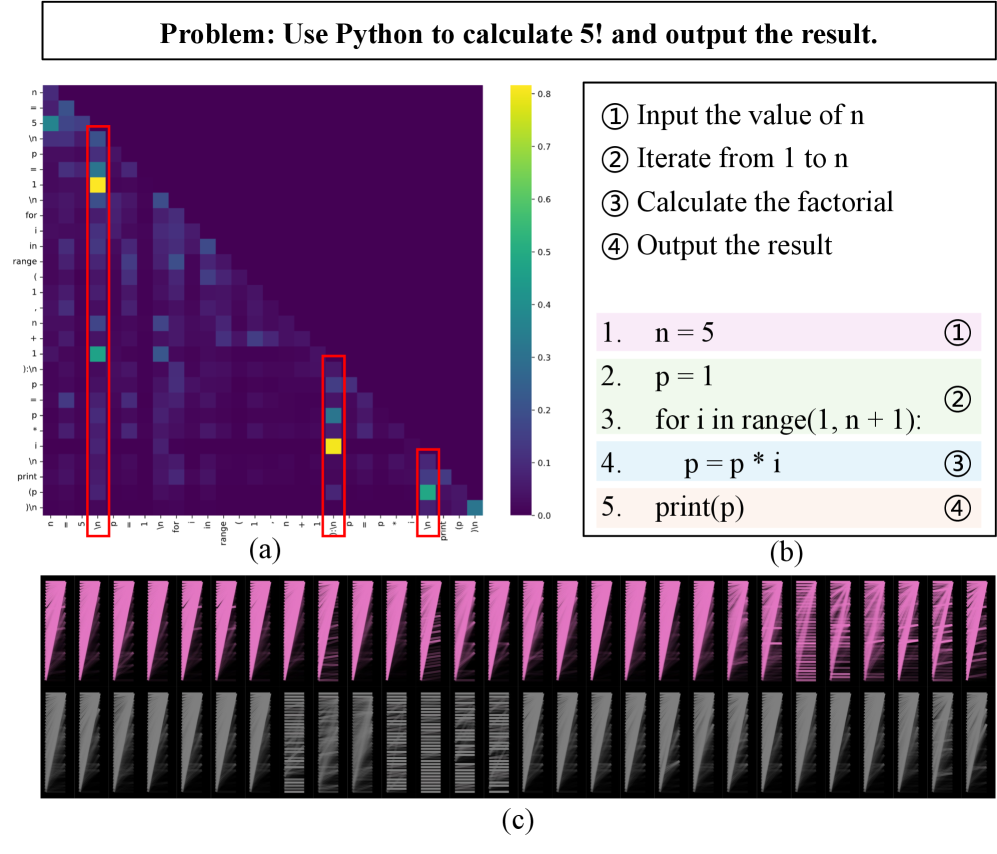

Figure 2: (a) Token-level attention heatmap for code corresponding to the programming problem. (b) Algorithmic steps and corresponding code for the programming problem. (c) Attention sink phenomenon.

Expansion.

Upon selecting a leaf node during the selection phase, the expansion phase aims to generate remaining child nodes, thereby expanding the search scope of the entire tree. Since the attention weights between tokens do not always decay with relative distance (barbero2024round), we conduct an in-depth study on the attention mechanism between tokens in LLMs during code generation to reveal the influencing factors among tokens. As shown in Figure 7, it can be observed that certain tokens have a profound impact on subsequent code generation. It can thus be inferred that these key tokens can summarize and interpret the information of previously generated tokens, and have higher reference value for subsequent token generation. Meanwhile, relevant studies (barbero2025llms; xiao2023efficient) have shown that modern LLMs exhibit the phenomenon of “attention sink”. Specifically, numerous attention heads allocate a disproportionate share of weights, such as exceeding 30% or even 80%, to the beginning-of-sequence token ⟨bos⟩, despite its primary function as a sequence delimiter with minimal semantic content. Therefore, to facilitate our examination of inter-token dependencies in code generation tasks, we selectively visualize token attention mechanisms at designated layers. Figure 7 (c) shows that attention not only sinks to ⟨bos⟩ but also peaks at algorithmic step boundaries, justifying that algorithmic step blocks are more effective basic processing units in code generation tasks. Therefore, we select algorithmic steps as the basic units for expansion.

To ensure diversity in generated steps during the expansion phase, we implement a sampling decoding strategy that sequentially generates each child node. Specifically, to prevent repetitive generation by the LLM, we iteratively provide all previously generated steps as context when producing each new step. The input for the LLM is

$$

\displaystyle\mathrm{concat}(s,a_{1},\ldots,a_{i},g),~i=1,2,\ldots,b \tag{5}

$$

where $g$ represents the reflection in the simulation phase, and $b$ denotes the maximum number of branches each node can expand.

After expanding $b$ nodes, we employ cosine similarity for filtering to reduce computational costs by avoiding simulations on redundant nodes. Specifically, we map the reasoning steps of the $b$ nodes to vectors using the embedding model $\mathcal{E}$ and calculate the cosine similarities between these $b$ nodes. When the similarity exceeds a predetermined threshold, the node is identified as redundant and filtered out. This method effectively reduces the search space and enhances algorithmic efficiency while maintaining diversity.

Evaluation and Reflection.

During the evaluation phase, simulation and evaluation are performed for the selected leaf nodes. We provide the LLM with the algorithmic steps $s$ already generated for the node and its ancestor nodes, enabling the LLM to strictly follow the generated steps and continue simulating to complete all remaining steps. We search for the thoughts and evaluate with the code generated following the thoughts.

The generated code undergoes sandbox evaluation using public test cases. However, since public test cases only cover a subset of possible scenarios, the code may fail on unseen cases, such as boundary conditions or performance issues. We therefore employ the LLM to analyze the complete algorithmic steps based on sandbox feedback.

We assess the steps generated during the expansion phase through two components, which are the pass rate on public test cases and LLM evaluation. The final evaluation score is obtained by weighted summation of these two scores. The formula is as follows:

$$

\displaystyle Q(s,a)=\gamma\cdot r_{\text{exec}}+(1-\gamma)\cdot r_{\text{LLM}} \tag{6}

$$

where $r_{\text{exec}}$ denotes the pass rate on public test cases, $r_{\text{LLM}}$ represents the score from LLM evaluation based on the sandbox feedback results and complete steps provided to the LLM, and $\gamma$ indicates the weight controlling these two parts of the scores.

For code that fails to public test cases, we isolate erroneous algorithmic steps by decomposing the code into blocks and sequentially debugging each block via LLM analysis with public test inputs (zhong2024debug). We retain all correct steps generated during the simulation phase, truncated before the first erroneous step. These validated steps are then incorporated into the MCTS tree as expanded nodes.

The entire RPM-MCTS process is terminated when the solution passes all public test cases and achieves a high LLM evaluation score. Otherwise, node updates and reflection are performed, and the RPM-MCTS process proceeds until the maximum iteration count is reached.

Backpropagation.

The objective of backpropagation is to update the reward values of nodes upon completion of state value evaluation. We propagate reward values backward from leaf nodes to the root node, updating the state estimates of all nodes along the path. For newly generated nodes during the expansion phase, they collectively update their parent node. As the number of simulations increases, these value estimates become increasingly accurate. This process repeats until the preset maximum simulation count is reached, ultimately resulting in a search tree that records the state value and visit count for each node.

Generate Code

Termination of the RPM-MCTS process occurs under two conditions: 1) If all public test cases are passed and LLM analysis confirms robustness to unseen edge cases before reaching maximum iterations, the code generated during the simulation phase is retained. 2) When maximum iterations are reached without meeting termination criteria, the leaf node with the highest state value is selected, its ancestral path is traced, and the LLM is instructed to generate code by rigorously adhering to the algorithmic steps assembled from this path.

Experiments

Experimental Settings

Datasets.

For the construction of the knowledge base, we use the train set splits of APPS (hendrycks2021measuring) and CodeContests (li2022competition) as data sources. After validation and filtering, we obtained 11,038 samples with a total of 82,923 steps. For benchmarking, we used the test set splits of APPS and CodeContests, as well as HumanEval+ (liu2023your) and MBPP+ (liu2023your). The APPS dataset contains three difficulty levels: introductory, interview, and competition. We selected 150 validated samples from each difficulty level. The CodeContests dataset consists of competitive programming problems collected from contest websites such as Codeforces. Additionally, HumanEval (chen2021evaluating) and MBPP (austin2021program) are widely recognized benchmarks in the code generation domain, while HumanEval+ and MBPP+ introduce a larger number of test cases to enable more accurate evaluations. We utilized Claude Sonnet 3.7 to convert all datasets into a unified format, which primarily includes the problem statement, public test cases, private test cases, and standard solution. To facilitate sandbox execution, we transformed datasets with standard input-output problems into function definitions with docstrings. For datasets without public test cases, we selected the first two private test cases as public test cases.

Baselines.

We selected the following methods as baselines for comparison. Base LLM refers to directly prompting the LLM to output solution code using the problem statement and public test cases as input. LDB (zhong2024debug) leverages the LLM to track intermediate variables during code execution to iteratively improve the code. ToT (yao2023tree) performs a search of thought steps using DFS or BFS before generating the final code. SRA-MCTS (xu2024sra) combines LLM with MCTS to explore intermediate reasoning steps. The complete steps obtained by SRA-MCTS are used as input to prompt the LLM to directly infer and output the solution code for evaluation.

Implementation Details.

We use two large-parameter backbone models, Qwen3-235B-A22B (yang2025qwen3) and Claude Sonnet 3.7, alongside a smaller-parameter model, Qwen3-8B. In the code generation domain, pass@k (chen2024survey) is a widely used metric, and we adopted pass@1 as the evaluation metric. The rollout, i.e., maximum number of iterations, was set to 5 for all methods. The branching factor $b$ for tree-based methods was set to 3. The exploration constant $\beta$ for UCB was set to 0.5. In RPM-MCTS, the weight of the knowledge base retrieval score $\alpha$ was set to 0.5, and the similarity filtering threshold was set to 0.85.

Main Results

| Method Qwen3-8B Base LLM | APPS-Intro. 56.7 | APPS-Interv. 35.3 | APPS-Comp. 29.3 | CodeContests 10.7 | HumanEval+ 75.6 | MBPP+ 72.2 | Average 52.1 |

| --- | --- | --- | --- | --- | --- | --- | --- |

| LDB | 64.0 (+7.3) | 42.0 (+6.7) | 28.0 (-1.3) | 11.3 (+0.7) | 78.1 (+2.4) | 70.1 (-2.1) | 53.5 (+1.4) |

| ToT | 69.3 (+12.7) | 54.0 (+18.7) | 41.3 (+12.0) | 17.3 (+6.7) | 82.3 (+6.7) | 70.4 (-1.9) | 59.0 (+6.9) |

| SRA-MCTS | 67.3 (+10.7) | 42.7 (+7.3) | 29.3 (+0.0) | 16.0 (+5.3) | 73.8 (-1.8) | 65.9 (-6.4) | 52.8 (+0.7) |

| Ours w/o KB | 76.7 (+20.0) | 56.7 (+21.3) | 40.7 (+11.3) | 22.3 (+11.6) | 82.3 (+6.7) | 78.3 (+6.1) | 63.5 (+11.4) |

| Ours | 77.3 (+20.7) | 60.0 (+24.7) | 43.6 (+14.3) | 23.0 (+12.3) | 83.5 (+7.9) | 76.2 (+4.0) | 64.0 (+11.9) |

| Qwen3-235B-A22B | | | | | | | |

| Base LLM | 78.0 | 54.7 | 42.7 | 24.0 | 86.0 | 78.8 | 64.6 |

| LDB | 78.7 (+0.7) | 61.3 (+6.7) | 47.3 (+4.7) | 25.3 (+1.3) | 86.0 (+0.0) | 78.8 (+0.0) | 66.4 (+1.8) |

| ToT | 84.7 (+6.7) | 62.7 (+8.0) | 57.3 (+14.7) | 27.3 (+3.3) | 85.4 (-0.6) | 75.4 (-3.4) | 67.7 (+3.1) |

| SRA-MCTS | 76.0 (-2.0) | 52.7 (-2.0) | 44.0 (+1.3) | 24.7 (+0.7) | 85.4 (-0.6) | 70.9 (-7.9) | 61.7 (-3.0) |

| Ours w/o KB | 88.0 (+10.0) | 72.0 (+17.3) | 52.0 (+9.3) | 34.7 (+10.7) | 86.6 (+0.6) | 79.9 (+1.1) | 71.3 (+6.7) |

| Ours | 86.7 (+8.7) | 67.3 (+12.7) | 59.3 (+16.7) | 36.7 (+12.7) | 87.8 (+1.8) | 81.2 (+2.4) | 72.3 (+7.7) |

| Claude Sonnet 3.7 | | | | | | | |

| Base LLM | 78.7 | 56.0 | 59.3 | 31.3 | 82.9 | 77.8 | 67.3 |

| LDB | 82.0 (+3.3) | 64.7 (+8.7) | 73.3 (+14.0) | 33.3 (+2.0) | 88.4 (+5.5) | 77.0 (-0.8) | 71.5 (+4.2) |

| ToT | 84.0 (+5.3) | 68.0 (+12.0) | 66.0 (+6.7) | 39.3 (+8.0) | 86.0 (+3.1) | 74.6 (-3.2) | 70.8 (+3.6) |

| SRA-MCTS | 83.3 (+4.7) | 63.3 (+7.3) | 62.0 (+2.7) | 36.0 (+4.7) | 81.1 (-1.8) | 74.3 (-3.4) | 68.4 (+1.1) |

| Ours w/o KB | 92.0 (+13.3) | 73.3 (+17.3) | 78.0 (+18.7) | 42.7 (+11.3) | 86.6 (+3.7) | 79.1 (+1.3) | 76.2 (+8.9) |

| Ours | 92.0 (+13.3) | 74.0 (+18.0) | 81.3 (+22.0) | 46.0 (+14.7) | 89.0 (+6.1) | 81.0 (+3.2) | 78.1 (+10.9) |

Table 1: Performance comparison of all methods across different backbone models on code generation benchmarks. Values in parentheses indicate the improvement over the base LLM.

Our method achieves the most significant improvements across different backbone models and datasets. As shown in Table 3, Qwen3-8B achieves an average improvement of 11.90%, Qwen3-235B-A22B achieves an average improvement of 7.71%, and Claude Sonnet 3.7 achieves an average improvement of 10.86%. Since the base Qwen3-8B performs worse than the other two larger base LLMs across all datasets, especially on simpler datasets, the Qwen3-8B shows the most significant improvement when using RPM-MCTS. On the two more challenging datasets, APPS-competition and CodeContests, Qwen3-8B achieves an average improvement of 13.3%, Qwen3-235B-A22B achieves an average improvement of 14.67%, and Claude Sonnet 3.7 achieves an average improvement of 18.34%. This is because Qwen3-8B has weaker evaluation scoring capabilities, while larger LLMs have relatively stronger evaluation capabilities, resulting in greater gains. This demonstrates that the more difficult the task, the more accurate evaluation of intermediate algorithm steps is required.

LDB achieves greater improvements on simpler datasets compared to more challenging ones. We found that this is because, for more difficult problems, through multiple rounds of execution feedback, LLMs often only modify code conditions to pass public test cases rather than thinking about modifying the actual logic of the code. SRA-MCTS shows performance improvements on more challenging datasets but declines on simpler ones. The reason is that for simple problems, LLM evaluation scores are always perfect or near-perfect, prematurely ending the search for steps, resulting in incomplete or lower-quality reasoning steps.

Comparing the results across three different difficulty levels in the APPS dataset, it can be observed that for the two larger LLMs, as the difficulty increases, our method brings more significant performance improvements. The higher the difficulty of the problem, the more guidance the LLM needs to avoid getting lost in complex reasoning chains. This demonstrates the effectiveness of our method in evaluating intermediate steps, helping LLMs enhance their evaluation capabilities and further unlocking the vast potential code knowledge and reasoning abilities inherent in LLMs.

For fair comparison, even without using knowledge base retrieval scores as rewards, our method outperforms other baselines. Experimental results show that overall, especially on the two most challenging datasets, incorporating the knowledge base further stabilizes and improves performance. The reason is that LLM evaluation of intermediate steps in complex problems is unreliable, and random exploration struggles to find the correct solution path. Therefore, leveraging the knowledge base to use the reasoning patterns of historically similar problems as guidance helps direct the search. This demonstrates the effectiveness of using knowledge base retrieval scores as rewards for intermediate process evaluation.

On a few simpler datasets, performance slightly improves when knowledge base retrieval scores are not used. We analyze that this is because, in simple tasks, LLMs can already accurately evaluate the quality of generated paths. In this case, introducing knowledge base rewards, while aiming to provide additional prior information, may retrieve historical cases that are textually similar but logically different in their solutions, introducing noise into MCTS node selection. In contrast, for complex tasks, LLM evaluation capabilities for intermediate steps are limited, the search space is vast, and solutions are sparse. The structured priors provided by the knowledge base effectively guide the search direction, significantly improving success rates. This phenomenon indicates that the effectiveness of knowledge base rewards depends on the balance between task difficulty and LLM evaluation confidence.

Ablation Study

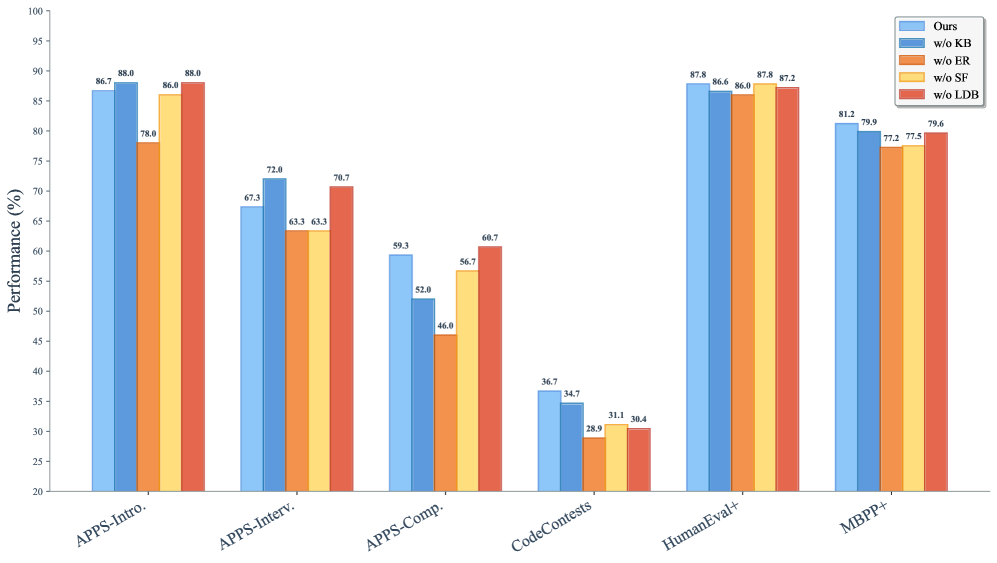

We conduct ablation experiments using Qwen3-235B-A22B as the backbone model to evaluate performance, and the results are shown in Figure 8.

w/o KB indicates that only LLM evaluation is used in selection, without knowledge base retrieval. Compared to the complete method, the overall performance slightly decreased, with an average drop of 1.05%. The decline was most significant on the two more challenging datasets, with an average drop of 4.67%. This indicates that large models still face challenges with complex problems. By introducing a knowledge base to compare the generated reasoning steps with the correct reasoning steps of similar problems in the knowledge base, the self-assessment capability for complex problems can be improved.

w/o ER means that the execution rewards of public test cases in the sandbox are not used during the simulation phase. This resulted in the largest overall performance drop, highlighting that the core of RPM-MCTS reflection lies in the detailed feedback provided by the code execution environment. In fact, previous research (huang2023large) has already pointed out that without external feedback, LLMs lack the ability to self-correct their reasoning processes.

<details>

<summary>x3.png Details</summary>

### Visual Description

## Bar Chart: Performance Comparison

### Overview

The image is a bar chart comparing the performance of a model ("Ours") against four variants where specific components (KB, ER, SF, LDB) have been removed. The chart displays performance percentages across five different tasks: APPS-Intro, APPS-Interv, APPS-Comp, CodeContests, HumanEval+, and MBPP+.

### Components/Axes

* **Y-axis:** "Performance (%)", ranging from 20% to 100% in increments of 5%.

* **X-axis:** Categorical axis representing the different tasks: APPS-Intro, APPS-Interv, APPS-Comp, CodeContests, HumanEval+, and MBPP+.

* **Legend:** Located at the top-right of the chart.

* Blue: "Ours"

* Darker Blue: "w/o KB"

* Orange: "w/o ER"

* Yellow: "w/o SF"

* Red: "w/o LDB"

### Detailed Analysis

Here's a breakdown of the performance for each task and model variant:

* **APPS-Intro:**

* Ours (Blue): 86.7%

* w/o KB (Darker Blue): 88.0%

* w/o ER (Orange): 78.0%

* w/o SF (Yellow): 86.0%

* w/o LDB (Red): 88.0%

* **APPS-Interv:**

* Ours (Blue): 67.3%

* w/o KB (Darker Blue): 72.0%

* w/o ER (Orange): 63.3%

* w/o SF (Yellow): 63.3%

* w/o LDB (Red): 70.7%

* **APPS-Comp:**

* Ours (Blue): 59.3%

* w/o KB (Darker Blue): 52.0%

* w/o ER (Orange): 46.0%

* w/o SF (Yellow): 56.7%

* w/o LDB (Red): 60.7%

* **CodeContests:**

* Ours (Blue): 36.7%

* w/o KB (Darker Blue): 34.7%

* w/o ER (Orange): 28.9%

* w/o SF (Yellow): 31.1%

* w/o LDB (Red): 30.4%

* **HumanEval+:**

* Ours (Blue): 87.8%

* w/o KB (Darker Blue): 86.6%

* w/o ER (Orange): 86.0%

* w/o SF (Yellow): 87.8%

* w/o LDB (Red): 87.2%

* **MBPP+:**

* Ours (Blue): 81.2%

* w/o KB (Darker Blue): 79.9%

* w/o ER (Orange): 77.2%

* w/o SF (Yellow): 77.5%

* w/o LDB (Red): 79.6%

### Key Observations

* The "Ours" model generally performs competitively across all tasks.

* Removing KB ("w/o KB") sometimes improves performance (APPS-Intro), but can also decrease it (APPS-Comp).

* Removing ER ("w/o ER") consistently results in lower performance compared to "Ours".

* Removing SF ("w/o SF") results in performance close to "Ours".

* Removing LDB ("w/o LDB") results in performance close to "Ours".

* The performance varies significantly across different tasks, with CodeContests showing the lowest scores.

### Interpretation

The chart indicates the relative importance of the different components (KB, ER, SF, LDB) for the model's performance on various programming tasks. Removing ER consistently hurts performance, suggesting it is a crucial component. Removing KB has a mixed impact, indicating it might be more specialized for certain tasks. SF and LDB appear to have less impact on overall performance. The wide range of performance across tasks highlights the model's varying effectiveness depending on the specific challenge. The "Ours" model serves as a benchmark, and the other bars show the impact of ablating different components.

</details>

Figure 3: Ablation study results on different benchmarks.

w/o SF refers to the removal of similarity filtering, i.e., not discarding similar child nodes during the expansion phase. The results show that filtering out repeated intermediate algorithmic steps based on similarity allows resources to be better allocated to exploring new steps, thereby improving performance while reducing computational costs.

w/o LDB denotes not using LDB to locate erroneous steps in our method. The average performance drop was minimal, indicating that removing LDB has little impact on our method. With execution feedback, LLMs are already capable of accurately locating errors. However, in a few cases, LDB still helps in pinpointing erroneous steps.

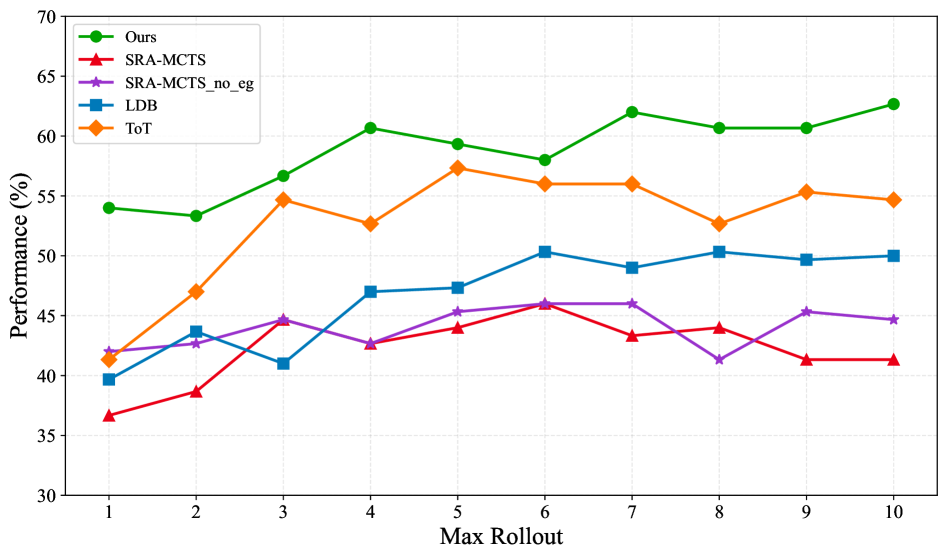

Performance vs. Rollout

We explore the results of different values of the hyperparameter rollout on Qwen3-235B-A22B, as shown in Figure 9. Since SRA-MCTS is prone to premature termination due to self-overestimation by the model, we set its end gate value to exceed the maximum possible score, allowing it to reach the maximum number of iterations whenever possible. we denote this variant as SRA-MCTS_no_eg. The results show that in the early stages, all methods exhibit significant performance improvements as the rollout increases, after which the performance gradually stabilizes. Notably, RPM-MCTS exhibits better performance even with a rollout of 1. This is because it enjoys two advantages in its first rollout: proactive guidance via its Knowledge Base during the selection phase, and wrong step truncation with rethink-based regeneration during the simulation phase. This allows it to perform at least one round of verification and reflection and generate complete code. Moreover, for simpler problems, RPM-MCTS can often arrive at the correct answer with only a single simulation, whereas traditional tree search methods tend to require multiple unnecessary expansions even for straightforward tasks.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Chart: Performance vs. Max Rollout

### Overview

The image is a line chart comparing the performance (in percentage) of five different methods ("Ours", "SRA-MCTS", "SRA-MCTS_no_eg", "LDB", and "ToT") against the "Max Rollout" parameter, which ranges from 1 to 10. The chart shows how the performance of each method changes as the "Max Rollout" increases.

### Components/Axes

* **X-axis:** "Max Rollout", with integer values from 1 to 10.

* **Y-axis:** "Performance (%)", with values ranging from 30 to 70, in increments of 5.

* **Legend:** Located in the top-left corner, it identifies each line by color and label:

* Green: "Ours"

* Red: "SRA-MCTS"

* Purple: "SRA-MCTS_no_eg"

* Blue: "LDB"

* Orange: "ToT"

### Detailed Analysis

* **"Ours" (Green):** The line generally slopes upward, indicating increasing performance with higher "Max Rollout".

* Rollout 1: ~54%

* Rollout 3: ~57%

* Rollout 7: ~62%

* Rollout 10: ~63%

* **"SRA-MCTS" (Red):** The line fluctuates, showing an initial increase followed by a decrease and then stabilization.

* Rollout 1: ~37%

* Rollout 3: ~43%

* Rollout 7: ~43%

* Rollout 10: ~41%

* **"SRA-MCTS_no_eg" (Purple):** The line remains relatively flat, indicating little change in performance with increasing "Max Rollout".

* Rollout 1: ~42%

* Rollout 5: ~45%

* Rollout 10: ~45%

* **"LDB" (Blue):** The line generally slopes upward, showing increasing performance with higher "Max Rollout", but plateaus after Rollout 6.

* Rollout 1: ~40%

* Rollout 6: ~50%

* Rollout 10: ~50%

* **"ToT" (Orange):** The line increases sharply initially, then decreases slightly after Rollout 5.

* Rollout 1: ~42%

* Rollout 5: ~58%

* Rollout 10: ~55%

### Key Observations

* "Ours" consistently outperforms the other methods across all "Max Rollout" values.

* "SRA-MCTS" has the lowest performance among the methods.

* "SRA-MCTS_no_eg" shows the least variation in performance.

* "LDB" and "ToT" show initial improvements, but their performance plateaus or decreases slightly at higher "Max Rollout" values.

### Interpretation

The chart suggests that increasing the "Max Rollout" parameter generally improves the performance of the methods, but the extent of improvement varies. The "Ours" method demonstrates the highest and most consistent performance gains with increasing "Max Rollout". The "SRA-MCTS" method consistently underperforms compared to the others. The "SRA-MCTS_no_eg" method's stable performance suggests it may be less sensitive to changes in the "Max Rollout" parameter. The plateauing of "LDB" and "ToT" indicates that there may be a point of diminishing returns for these methods with increasing "Max Rollout".

</details>

Figure 4: Performance comparison across different maximum rollout values.

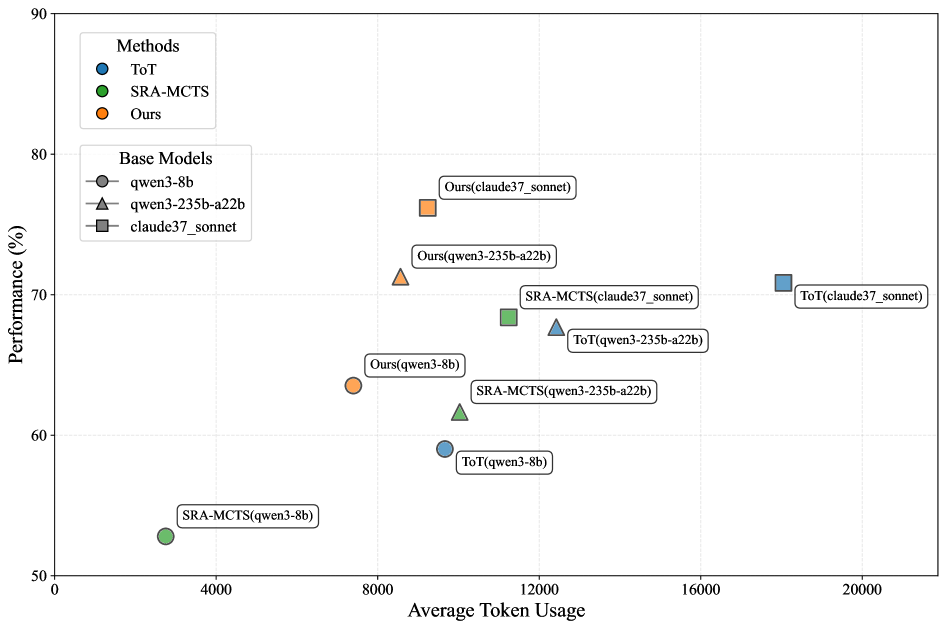

Token Efficiency Analysis

Figure 10 shows the average token usage of different methods across all benchmark datasets. Our method reduces token consumption by approximately 15% compared to the previous MCTS method on both Qwen3-235B-A22B and Claude Sonnet 3.7. This improvement is attributed to: 1) The knowledge base retrieval scoring prioritizes more correct nodes, avoiding exploration of invalid branches. 2) Similarity filtering eliminates duplicate intermediate reasoning steps, enabling dynamic pruning of the Monte Carlo tree and reducing redundant path generation. 3) The simulation phase leverages sandbox feedback to pinpoint erroneous steps, while retaining the verified correct ones. Overall, RPM-MCTS achieves enhanced search efficiency and generation quality through knowledge base guidance and execution feedback.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Scatter Plot: Performance vs. Average Token Usage

### Overview

The image is a scatter plot comparing the performance (in percentage) of different methods (ToT, SRA-MCTS, and Ours) against their average token usage. The data points are further categorized by the base models used (qwen3-8b, qwen3-235b-a22b, and claude37_sonnet).

### Components/Axes

* **Title:** There is no explicit title.

* **X-axis:** Average Token Usage, ranging from 0 to 20000, with gridlines at intervals of 4000.

* **Y-axis:** Performance (%), ranging from 50 to 90, with gridlines at intervals of 10.

* **Legend (Top-Left):**

* **Methods:**

* Blue circle: ToT

* Green circle: SRA-MCTS

* Orange circle: Ours

* **Base Models:**

* Gray circle: qwen3-8b

* Gray triangle: qwen3-235b-a22b

* Gray square: claude37\_sonnet

### Detailed Analysis

The data points are scattered across the plot, each representing a specific method and base model combination. The position of each point indicates its performance and average token usage.

* **ToT (Blue):**

* ToT(qwen3-8b): Performance ~60%, Token Usage ~10000

* ToT(qwen3-235b-a22b): Performance ~68%, Token Usage ~13000

* ToT(claude37\_sonnet): Performance ~70%, Token Usage ~19000

* Trend: As the base model changes from qwen3-8b to qwen3-235b-a22b to claude37_sonnet, both performance and token usage increase.

* **SRA-MCTS (Green):**

* SRA-MCTS(qwen3-8b): Performance ~53%, Token Usage ~3000

* SRA-MCTS(qwen3-235b-a22b): Performance ~63%, Token Usage ~11000

* SRA-MCTS(claude37\_sonnet): Performance ~69%, Token Usage ~12000

* Trend: As the base model changes from qwen3-8b to qwen3-235b-a22b to claude37_sonnet, both performance and token usage increase.

* **Ours (Orange):**

* Ours(qwen3-8b): Performance ~64%, Token Usage ~7500

* Ours(qwen3-235b-a22b): Performance ~71%, Token Usage ~9000

* Ours(claude37\_sonnet): Performance ~75%, Token Usage ~9000

* Trend: As the base model changes from qwen3-8b to qwen3-235b-a22b to claude37_sonnet, performance increases, but token usage remains relatively stable.

### Key Observations

* The "Ours" method generally achieves higher performance with lower token usage compared to "ToT" and "SRA-MCTS" when using the same base model.

* Using the "claude37\_sonnet" base model generally results in higher performance but also higher token usage across all methods.

* SRA-MCTS with qwen3-8b has the lowest token usage and performance.

### Interpretation

The scatter plot visualizes the trade-off between performance and token usage for different methods and base models. The "Ours" method appears to be more efficient, achieving better performance with fewer tokens. The choice of base model significantly impacts both performance and token usage, with "claude37\_sonnet" generally leading to higher performance at the cost of increased token consumption. The data suggests that optimizing the method and base model selection is crucial for balancing performance and computational cost.

</details>

Figure 5: Comparison of token consumption and performance across different methods and models.

Reasoning Steps for Data Distillation

Our method is training-free, yet the algorithmic synthesized reasoning steps it produces can also be used for supervised fine-tuning. Based on Doubao-1.5-pro-32K model, we utilize RPM-MCTS for data distillation and construct a dataset of 2.4k code generation samples with reasoning steps. This distilled data is then combined with a foundational dataset of 170k samples to perform full fine-tuning on Doubao-1.5-pro-32K. Benchmark results in Table 4 demonstrate that the training data generated using RPM-MCTS significantly enhances the code capabilities of the base model.

| SWE-Bench (jimenez2024swebench) MBPP+ (liu2023your) LiveCodeBench (jain2024livecodebench) | 37.6 75.4 46.2 | 38.5 76.7 50.5 | +0.9 +1.3 +4.3 |

| --- | --- | --- | --- |

| Aider (aider2024polyglot) | 17.3 | 22.2 | +4.9 |

| McEval (mceval) | 57.5 | 61.2 | +3.7 |

Table 2: Supervised fine-tuning results on Doubao-1.5-pro-32K model using RPM-MCTS synthesized data.

Conclusion

In this paper, we propose RPM-MCTS, which leverages a knowledge base and external sandbox feedback to directly obtain accurate reward values without requiring additional training of a process reward model. During the search process, errors are identified and promptly corrected. Experimental results demonstrate that RPM-MCTS outperforms current state-of-the-art methods under more constrained search budgets. Additionally, we construct training data using RPM-MCTS and perform full fine-tuning on base model, which significantly enhances code capabilities of the base model.

A limitation of RPM-MCTS is that code solvable in a single line may be divided into multiple lines due to the step-by-step approach, without impacting correctness. In the future, during the evaluation phase of MCTS, we can dynamically adjust the weights of external rewards from the knowledge base and sandbox, based on the uncertainty of LLMs.

Acknowledgments

This work was supported by the National Science and Technology Major Project (2022ZD0114803), the Natural Science Foundation of Wuhan (2023010201020229), the Fundamental Research Funds for the Central Universities (NO.NJ2023032), and the Major Program (JD) of Hubei Province (2023BAA024).

Appendix A Topic Categories

Table 3 presents the classification of algorithms in our knowledge base, which is divided into 14 categories. These categories were derived from the most common algorithmic tags on popular programming websites. The final knowledge base comprises a total of 82,923 items.

| Data Structures | 750 |

| --- | --- |

| Algorithm Strategies | 1218 |

| String Processing | 1676 |

| Sorting and Searching | 542 |

| Graph Theory | 977 |

| Bit Manipulation | 403 |

| Mathematics and Number Theory | 1658 |

| Computational Geometry | 611 |

| Optimization Problems | 1310 |

| Two-Pointer Techniques | 213 |

| Dynamic Programming | 836 |

| Recursion and Backtracking | 226 |

| Hashing Techniques | 316 |

| Other | 302 |

Table 3: Knowledge base data categories and statistics.

Appendix B Dataset Details

As detailed in Table 4, our knowledge base was built from the CodeContests-Train and APPS-Train datasets, which together provide 11,038 training samples. The model’s performance was then benchmarked against a test set consisting of six standard benchmarks: the APPS test splits (by difficulty), the CodeContests test split, HumanEval+, and MBPP+.

| | Dataset | Samples |

| --- | --- | --- |

| Knowledge Base | CodeContests-Train | 7368 |

| APPS-Train | 3670 | |

| Test Set | APPS-Test-Introductory | 150 |

| APPS-Test-Interview | 150 | |

| APPS-Test-Competition | 150 | |

| CodeContests-Test | 150 | |

| HumanEval+ | 164 | |

| MBPP+ | 378 | |

Table 4: Dataset statistics.

Appendix C Prompts

Figure 6 - 9 demonstrate selected key prompts used in our method. The prompt in Figure 6 generates the next step. The prompt in Figure 7 completes full steps. The prompt in Figure 8 analyzes execution errors to locate error steps. The prompt in Figure 9 translates the full steps into code.

Appendix D Algorithm Details

Algorithm 1 provides the detailed pseudocode for our proposed RPM-MCTS. The algorithm follows the canonical MCTS structure, iteratively performing four phases consisting of Selection, Expansion, Evaluation, and Backpropagation. A key component is the EVALUATE_NODE function. Instead of a traditional random rollout, this function generates a complete code solution from the current path, executes it in a sandboxed environment, and assesses its correctness. If the execution fails, the algorithm activates a reflection mechanism that identifies the erroneous step, prunes the incorrect sub-path, and updates its understanding to guide future searches.

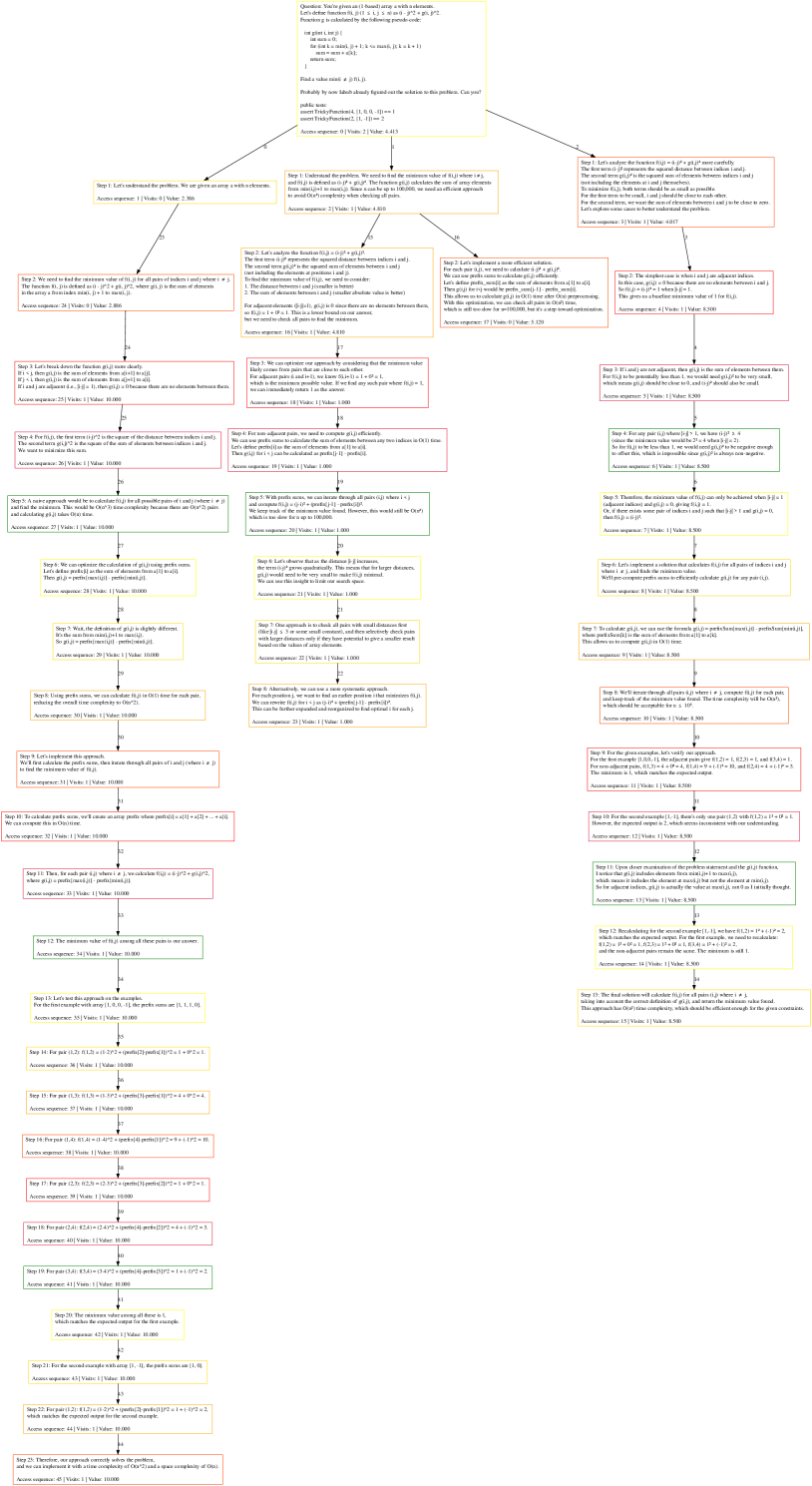

Appendix E Case Study

We present a case study of our method in Figure 10. This figure provides a visualization of the final state of the Monte Carlo Tree after the search process concludes. In the tree, the root node represents the problem statement, while subsequent nodes correspond to individual reasoning steps. Furthermore, each node is annotated with its access sequence, visit count, and value. After four search iterations, our method successfully identifies the correct reasoning path to the solution, which corresponds to the leftmost path in the figure.

Prompt for Generating Next Step

Your task is to provide the correct next step based on the previous incorrect code used to solve the problem and a reflection, for a given programming problem and its existing solution steps (which are incomplete). Let’s think step by step. But you only generate one step at a time. We aim to decompose complex problems into a series of simpler subproblems and sequentially generate the corresponding steps to solve each subproblem. All the substeps should be combined in a way that avoids contradictions, forming a coherent solution to the original complex problem. Input format (n steps): •

Problem: {problem} •

Existing steps: {existing_steps} •

Analysis: {reflection} •

History: {history} The historical content is the solution proposed earlier. To ensure the diversity of solutions, please do not generate ideas identical to those in the historical content. Guidelines: •

The steps you generate will be passed to a code generation model, so they should be structured in a way that is easy for the model to understand. •

Keep each step concise and focused, avoiding the inclusion of too much information at once. Ensure clear organization and logical progression in your reasoning. •

Important: You can use very little code as detailed explanations in your answers, but you cannot just write code. •

If your answer includes code, it will cause unforeseen losses! •

Your answer should be based on the given analysis. Only if the analysis is wrong can you answer it in your own way. •

If no existing steps are provided, you should output the first step based on the given analysis. •

If there are existing steps, output the next step (Step n+1) that logically follows the provided analysis and the previous steps. Output format: •

Next step: … Your response should only generate solutions to the problem, without any extra words.

Figure 6: Prompt for Generating Next Step.

Prompt for Generating Full Steps

Your task is to take a programming problem and incomplete solution steps (not a full answer), then continue from the provided steps to complete all remaining steps and generate the complete final solution. Let’s think step by step. We aim to decompose complex problems into a series of simpler subproblems and sequentially generate the corresponding steps to solve each subproblem. All the substeps should be combined in a way that avoids contradictions, forming a coherent solution to the original complex problem. Note: Do not modify the existing solution steps. Input format (n steps): •

Problem: {problem} •

Existing steps: {existing_steps} Guidelines: •

If n is equal to 0, you need to start from scratch and analyze the solution idea briefly, and then output the complete answer. •

Otherwise, you need to output the complete answer that you think is correct following the train of thought of the existing steps. •

Each step generated should be concise and focused, addressing only a small part of the solution. Avoid making the steps too complex or combining multiple ideas into one. •

The complete solution should consist of at least three steps, so don’t skip any essential steps. •

Your output should be clear and systematic, with each step described one at a time to ensure logical progression. •

Note: You are only allowed to describe the reasoning steps in natural language. Do not output any code. Output format: •

Step 1: … •

Step 2: … •

… •

Step n: … •

Step n + 1: … •

… Among them, Step 1 to Step n are consistent with the existing steps. Continue to generate based on the existing steps to obtain a complete answer. The following is the input. Please output according to the specified output format, do not output unnecessary information, and do not repeat the question. Note: Your output should start from Step 1 and include all the steps, not just the next step.

Figure 7: Prompt for Generating Full Steps.

Prompt for Code Debugging and Analysis

The following is a Python code problem, which includes the thoughts and code for solving the problem, as well as the return results of debugging for a failed test case. Input: •

Python code problem: {problem} •

Thoughts: {solution} •

Code: {code} •

Test case debug information: {exec_result} The debugging process is to first split the code into block-level code according to the AST. If the block-level code is correct after debugging analysis, the ”correct” field is True, otherwise it is False. The ”explanation” field is the analysis of the block-level code debugging. Guidelines: Your task is to determine which specific step is written incorrectly based on the debug return results and conduct an analysis and summary. The correctly generated code and corresponding thought processes will be retained, while the incorrect code and corresponding thought processes will be discarded. You need to analyze and summarize the points to note so that subsequent thought processes can be generated based on the correct thought processes to correct the previous errors. Output format: Your output consists of two parts: •

1. Which specific step went wrong. Wrap it with the <step_n>x</step_n> XML tag, where x represents the specific number of the first erroneous step. If there are multiple erroneous steps in the thought process, only output the number of the first erroneous step. Do not output any extra content. •

2. Analyze and summarize the points to note. The final output should look like this: <step_n>x</step_n>..., where … represents the generated analysis.

Figure 8: Prompt for analyzing debugging results and identifying errors.

Prompt for Code Implementation

You will play the role of a code implementer, writing a complete code based on the given problem and the step-by-step analysis of the problem. Your code must strictly follow the analysis steps provided and should not include your own opinions. Rules: •

Importing function libraries(like: import math) and output function code only, without main function so that I can call your generated functions directly. •

The output code should be wrapped with code blocks (like “‘python). Example: “‘python\ndef add(a, b):\n return a + b\n“‘. Input: •

question: {question} •

analysis: {analysis}

Figure 9: Prompt for generating code based on steps.

Algorithm 1 The RPM-MCTS Algorithm for Code Generation

Input: Problem description $P$ , total iterations $I$ , branching factor $B$ , success threshold $\theta_{succ}$ Output: The best generated code solution $C_{best}$

1: $v_{root}←\text{CREATE\_NODE}(P)$

2: for $i← 1$ to $I$ do

3: // 1. Selection

4: $v_{l}← v_{root}$

5: while $v_{l}$ is fully expanded do

6: $v_{l}←\text{SELECT\_BEST\_CHILD\_UCB}(v_{l})$

7: end while

8:

9: // 2. Expansion

10: if $v_{l}$ is not a terminal node then

11: $S_{gen}←\emptyset,H_{hist}←\emptyset$

12: for $j← 1$ to $B$ do

13: $s_{new}←\text{GENERATE\_NEXT\_STEP}(P,\text{path}(v_{l}),H_{hist})$ {Generate diverse steps}

14: Add $s_{new}$ to $S_{gen}$ and $H_{hist}$

15: end for

16: $S_{unique}←\text{FILTER\_SIMILAR\_STEPS}(S_{gen})$ {Filter semantic duplicates}

17: for each step $s$ in $S_{unique}$ do

18: $v_{c}←\text{ADD\_CHILD}(v_{l},s)$

19: $v_{c}.value←\text{GET\_INITIAL\_VALUE}(\text{path}(v_{c}))$ {Score from knowledge base & LLM}

20: end for

21: Mark $v_{l}$ as fully expanded

22: end if

23:

24: // 3. Evaluation (Simulation)

25: $v_{r}←\text{SELECT\_BEST\_CHILD\_UCB}(v_{l})$

26: if $v_{r}$ is not NULL then

27: $is\_solved,Q_{final}←\text{EVALUATE\_NODE}(v_{r},P,\theta_{succ})$

28: if $is\_solved$ then

29: return $\text{GET\_SOLUTION}(v_{r})$ {Optimal solution found, terminate early}

30: end if

31: else

32: $Q_{final}← v_{l}.value$ {Use parent value if no children to evaluate}

33: end if

34:

35: // 4. Backpropagation

36: $\text{BACKPROPAGATE}(v_{r},Q_{final})$

37: end for

38: return $\text{GET\_BEST\_SOLUTION}(v_{root})$ {Return best solution after all iterations}

39:

40: Function EVALUATE_NODE( $v,P,\theta_{succ}$ )

41: $\pi_{s}←\text{path}(v)$

42: $S_{full},C←\text{GENERATE\_FULL\_SOLUTION}(\pi_{s})$

43: $r_{exec},res_{sb}←\text{EXECUTE\_CODE}(C)$ {Evaluate in sandbox}

44: $r_{llm},f←\text{EVALUATE\_WITH\_LLM}(S_{full},C,res_{sb})$ { $f$ is reflection}

45: $Q_{comb}←\gamma· r_{exec}+(1-\gamma)· r_{llm}$ {Weighted combined value}

46: if $r_{exec}$ is SUCCESS and $r_{llm}≥\theta_{succ}$ then

47: $\text{ADD\_SOLUTION\_TO\_TREE}(v,S_{full})$

48: return true, $Q_{comb}$

49: end if

50: if $r_{exec}$ is FAILURE then

51: $idx_{err}←\text{LOCATE\_ERROR\_STEP}(S_{full},res_{sb},f)$

52: $S_{pruned}←\text{PRUNE\_STEPS}(S_{full},idx_{err})$

53: $\text{ADD\_SOLUTION\_TO\_TREE}(v,S_{pruned})$ {Add correct partial path}

54: $\text{UPDATE\_REFLECTION\_IN\_TREE}(v,f)$

55: end if

56: return false, $Q_{comb}$ {Return failure if not solved}

<details>

<summary>x6.png Details</summary>

### Visual Description

## Algorithm Flowchart: Tricky Function Optimization

### Overview

The image presents a flowchart outlining the steps to optimize a "tricky function" involving array elements. The flowchart details different approaches, optimizations, and considerations for calculating the function efficiently. The nodes are color-coded to represent different stages or types of operations.

### Components/Axes

* **Nodes:** Represent individual steps or decisions in the algorithm. Each node contains a description of the step and information about access sequence and value.

* **Edges:** Arrows connecting the nodes, indicating the flow of the algorithm.

* **Color Coding:**

* **Yellow:** Initial problem statement and high-level understanding.

* **Orange:** Analysis and optimization strategies.

* **Green:** Implementation and calculation steps.

* **Red:** Examples and verification steps.

* **Access Sequence:** A counter indicating the order in which the steps are accessed.

* **Value:** A numerical value associated with each step, likely representing a cost or metric.

### Detailed Analysis or ### Content Details

**Node 0 (Yellow):**

* Text: "Question: You're given an [0-based] array a with n elements. Let's define function f(i, j) = (i - j)^2 + g(i, j)^2. The function g(i, j) is the sum of a[i+1] + a[i+2] + ... + a[j-1] elements by their following pseudo code: int g(int i, int j) { int sum = 0; for (int k = i+1; k < j; k++) { sum += a[k]; } return sum; } Find a value min( f(i, j) ). Probably now lately already figured out the solution to this problem. Can you? public static void note: insertTrickyFunction1(1, 0, 0) // row 1 insertTrickyFunction2(1, 1, 0) // row 2 Access sequence: 0 | Visits: 2 | Value: 4.413"

* Position: Top-center of the image.

**Node 1 (Yellow):**

* Text: "Step 1: Let's understand the problem. We are given an array a with n elements. Access sequence: 1 | Visits: 1 | Value: 2.386"

* Position: Below and to the left of Node 0.

**Node 2 (Yellow):**

* Text: "Step 1: Let's analyze the function f(i, j) = (i - j)^2 + g(i, j)^2 more carefully. The first term (i - j)^2 is the square of the distance between indices i and j. The second term g(i, j) is the squared sum of elements between indices i and j (not including the elements at i and j themselves). Therefore, for the first term to be small, i and j should be close to each other. For the second term to be small, the elements between i and j should be close to zero. Let's explore some cases to better understand the problem. Access sequence: 2 | Visits: 1 | Value: 4.017"

* Position: Below and to the right of Node 0.

**Node 3 (Orange):**

* Text: "Step 2: We need to find the minimum value of f(i, j) for all pairs of indices i and j where i < j. The function f(i, j) is defined as (i - j)^2 + g(i, j)^2. The function g(i, j) calculates the sum of array elements between indices i and j. To avoid O(n^2) complexity when checking all pairs, we need an efficient approach. Access sequence: 2 | Visits: 1 | Value: 4.810"

* Position: Below Node 1.

**Node 4 (Orange):**

* Text: "Step 2: The simplest case is when i and j are adjacent indices. In this case, there are no elements between i and j. This gives us a baseline minimum value of 1 for f(i, j). Access sequence: 4 | Visits: 1 | Value: 8.500"

* Position: Below Node 2.

**Node 5 (Orange):**

* Text: "Step 2: Let's analyze the function g(i, j) = a[i+1] + a[i+2] + ... + a[j-1]. The second term g(i, j)^2 is the squared sum of elements between i and j (not including the elements at positions i and j). Therefore, when choosing which pairs (i, j) to consider: 1. The distance between i and j (smaller is better). 2. The sum of elements between i and j (smaller absolute value is better). Step 3: If g(i, j) = 0 since there are no elements between them, then f(i, j) = (i - j)^2 + 0. Therefore, we need to check all pairs to find the minimum. Access sequence: 16 | Visits: 1 | Value: 4.810"

* Position: Below Node 3.

**Node 6 (Orange):**

* Text: "Step 2: Let's implement a more efficient solution. Define prefix sums: prefix[i] = a[0] + a[1] + ... + a[i]. We can use prefix sums to calculate g(i, j) efficiently. Let's define prefix[i] = a[0] + a[1] + ... + a[i]. This allows us to calculate g(i, j) in O(1) time after O(n) preprocessing, which is still too slow since n = 100,000, but it's a step toward optimization. Access sequence: 17 | Visits: 1 | Value: 5.125"

* Position: Below Node 4.

**Node 7 (Orange):**

* Text: "Step 3: If i and j are not adjacent, then g(i, j) is the sum of elements between them. For f(i, j) to be potentially less than 1, we would need g(i, j) to be very small, which means g(i, j) should be close to 0, and i and j should also be small. Access sequence: 6 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 4.

**Node 8 (Orange):**

* Text: "Step 4: For pairs (i, j) where |i - j| > 1, we have (i - j)^2 > 1. So the sum must be close to 1, and g(i, j)^2 should be negative enough to offset this, which is impossible since g(i, j)^2 is always non-negative. Access sequence: 7 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 7.

**Node 9 (Orange):**

* Text: "Step 5: Therefore, the minimum value of f(i, j) can only be achieved when j = i + 1 (adjacent indices) and g(i, j) = 0, giving f(i, j) = 1. Or, if there exist some pairs of indices i and j such that (i - j)^2 + 1 and g(i, j) = 0, then f(i, j) = 1. Access sequence: 7 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 8.

**Node 10 (Orange):**

* Text: "Step 6: Let's implement a solution that calculates f(i, j) for all pairs of indices i and j where j = i + 1, and finds the minimum value. We'll pre-compute prefix sums to efficiently calculate g(i, j) for any pair (i, j). Access sequence: 8 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 9.

**Node 11 (Orange):**

* Text: "Step 7: To calculate g(i, j), we can use the formula g(i, j) = prefix[max(i, j) - 1] - prefix[min(i, j)]. This allows us to compute g(i, j) in O(1) time. Access sequence: 9 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 10.

**Node 12 (Orange):**

* Text: "Step 8: We'll iterate through all pairs (i, j) where j = i + 1, and compute f(i, j) for each pair, which should be acceptable for n = 10^5. The time complexity will be O(n^2). Access sequence: 10 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 11.

**Node 13 (Red):**

* Text: "Step 9: For the given examples, let's verify our approach. For the first example [1, 0, 0, -1], the adjacent pairs give (1, 2) = 1, (2, 3) = 1, and (3, 4) = 1. The minimum is 1, which matches the expected output. Access sequence: 11 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 12.

**Node 14 (Red):**

* Text: "Step 10: For the second example [1, 1, -1, 1], there's only one pair (1, 2) with (1, 2) = 1 + 3^2 = 0. However, the expected output is 2, which seems inconsistent with our understanding. Access sequence: 12 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 13.

**Node 15 (Red):**

* Text: "Step 11: Upon closer examination of the problem statement and the g(i, j) function, I notice that g(i, j) includes elements between i and j, and the non-adjacent pairs remain the same. The minimum is still 1. Access sequence: 13 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 14.

**Node 16 (Red):**

* Text: "Step 12: Recalculating for the second example [1, 1, -1, 1], we have f(1, 2) = (1 - 2)^2 + 1^2 = 2, which matches the expected output. For the first example, no non-adjacent pairs remain the same. The minimum is still 1. Access sequence: 14 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 15.

**Node 17 (Red):**

* Text: "Step 13: The final solution will calculate f(i, j) for all pairs (i, j) where j = i + 1, and finds the minimum value. This approach has O(n^2) time complexity, which should be efficient enough for the given constraints. Access sequence: 15 | Visits: 1 | Value: 8.500"

* Position: Below and to the right of Node 16.

**Node 18 (Orange):**

* Text: "Step 3: We can optimize our approach by considering that the minimum value is likely to be found for adjacent pairs (i and i+1). For adjacent pairs (i and i+1), we know f(i, i+1) = 1 + g(i, i+1)^2. Therefore, we can immediately return 1 as the answer. Access sequence: 17 | Visits: 1 | Value: 1.000"

* Position: Below Node 5.

**Node 19 (Orange):**

* Text: "Step 4: For non-adjacent pairs, we need to compute g(i, j) efficiently. We can use prefix sums to calculate the sum of elements between any two indices in O(1) time. Let's define prefix[i] as the sum of elements from a[1] to a[i]. Then g(i, j) for i < j can be calculated as prefix[j - 1] - prefix[i]. Access sequence: 19 | Visits: 1 | Value: 1.000"

* Position: Below Node 18.

**Node 20 (Orange):**

* Text: "Step 5: With prefix sums, we can iterate through all pairs (i, j) where i < j and compute g(i, j) in O(1) time. We keep track of the minimum value found. However, this would still be O(n^2). Access sequence: 20 | Visits: 1 | Value: 1.000"

* Position: Below Node 19.

**Node 21 (Orange):**

* Text: "Step 6: Let's observe that as the distance |i - j| increases, the term (i - j)^2 grows quadratically. This means that for larger distances, g(i, j) would have to be very small to avoid removal. We can use this insight to limit our search space. Access sequence: 21 | Visits: 1 | Value: 1.000"

* Position: Below Node 20.

**Node 22 (Orange):**

* Text: "Step 7: One approach is to check all pairs with small distances first. For larger distances, we only check pairs with relatively small g(i, j) values. This may potentially give us a smaller search space based on the values of array elements. Access sequence: 22 | Visits: 1 | Value: 1.000"

* Position: Below Node 21.

**Node 23 (Orange):**

* Text: "Step 8: Alternatively, we can use a more systematic approach. For each position i, we want to find the position j that minimizes f(i, j). We can rewrite f(i, j) as (i - j)^2 + (prefix[j - 1] - prefix[i])^2. This can be further expanded and manipulated to find optimal j for each i. Access sequence: 23 | Visits: 1 | Value: 1.000"

* Position: Below Node 22.

**Node 24 (Orange):**

* Text: "Step 4: Let's break down the function g(i, j) more clearly. Let's define prefix[i] as the sum of elements from a[1] to a[i]. If i and j are adjacent (j = i + 1), then g(i, j) = 0 because there are no elements between them. If i and j are adjacent (j = i + 1), then g(i, j) = 0 because there are no elements between them. Access sequence: 25 | Visits: 1 | Value: 10.000"

* Position: Below Node 5.

**Node 25 (Orange):**

* Text: "Step 4: For f(i, j), the first term (i - j)^2 is the square of the distance between indices i and j. The second term g(i, j)^2 is the square of the sum of elements between indices i and j. We want to minimize this sum. Access sequence: 26 | Visits: 1 | Value: 10.000"

* Position: Below Node 24.

**Node 26 (Orange):**

* Text: "Step 5: A naive approach would be to calculate f(i, j) for all possible pairs of i and j (where i < j) and find the minimum value. This has O(n^2) time complexity because there are O(n^2) pairs and calculating g(i, j) takes O(n) time. Access sequence: 27 | Visits: 1 | Value: 10.000"

* Position: Below Node 25.

**Node 27 (Green):**

* Text: "Step 6: We can optimize the calculation of g(i, j) using prefix sums. Let's define prefix[i] as the sum of elements from a[1] to a[i]. Then g(i, j) = prefix[max(i, j) - 1] - prefix[min(i, j)]. Access sequence: 28 | Visits: 1 | Value: 10.000"

* Position: Below Node 26.

**Node 28 (Green):**

* Text: "Step 7: Wait, the definition of g(i, j) is slightly different. It's the sum from a[i+1] to a[j-1], not a[i] to a[j]. So g(i, j) = prefix[j - 1] - prefix[i]. Access sequence: 29 | Visits: 1 | Value: 10.000"