# Language-conditioned world model improves policy generalization by reading environmental descriptions

**Authors**:

- Anh (Joe) Nguyen (Oregon State University)

- &Stefan Lee (Oregon State University)

LAW 2025: Bridging Language, Agent, and World Models

## Abstract

To interact effectively with humans in the real world, it is important for agents to understand language that describes the dynamics of the environment—that is, how the environment behaves —rather than just task instructions specifying what to do. For example, a cargo-handling robot might receive a statement like "the floor is slippery so pushing any object on the floor will make it slide faster than usual". Understanding this dynamics-descriptive language is important for human-agent interaction and agent behavior. Recent work [20, 40, 6] address this problem using a model-based approach: language is incorporated into a world model, which is then used to learn a behavior policy. However, these existing methods either do not demonstrate policy generalization to unseen language or rely on limiting assumptions. For instance, assuming that the latency induced by inference-time planning is tolerable for the target task or that expert demonstrations are available. Expanding on this line of research, we focus on improving policy generalization from a language-conditioned world model while dropping these assumptions. We propose a model-based reinforcement learning approach, where a language-conditioned world model is trained through interaction with the environment, and a policy is learned from this model—without planning or expert demonstrations. Our method proposes L anguage-aware E ncoder for D reamer W orld M odel (LED-WM) built on top of DreamerV3 [13]. LED-WM features an observation encoder that uses an attention mechanism to explicitly ground language descriptions to entities in the observation. We show that policies trained with LED-WM generalize more effectively to unseen games described by novel dynamics and language compared to other baselines in several settings in two environments: MESSENGER and MESSENGER-WM. To highlight how the policy can leverage the trained world model before real-world deployment, we demonstrate the policy can be improved through fine-tuning on synthetic test trajectories generated by the world model.

## 1 Introduction

We envision a future where humans can seamlessly command AI agents through natural language to automate repetitive tasks in the real world. Traditionally, language has been used to specify task instructions, such as telling a navigation robot to "go to the door" [2, 17, 1]. However, language can also offer valuable information about environments. Such environmental description not only makes human interaction more natural, but also provides important contextual information about how the environment changes over time. It informs the agent about how the environment behaves —its dynamics, the current state of the world, and how various entities interact with each other and with the agent—not just what to do.

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Game Scenario Grid with Legend

### Overview

The image displays a diagram composed of two main sections: a grid-based map on the left and an explanatory legend box on the right. The grid contains several pixel-art style icons representing different entities, with dashed lines indicating movement paths. The legend provides definitions for three key elements within the scenario.

### Components/Axes

**Grid Section (Left):**

* **Structure:** A 10x10 square grid with light gray lines on a white background.

* **Icons & Elements:**

1. **Researcher Icon:** A pixel-art figure of a person with brown hair, wearing a white lab coat, located in the top row, 7th column from the left.

2. **Plane Icons:** Three identical white airplane icons. One is in the 4th row, 5th column. Two are in the 5th row, columns 4 and 5.

3. **Ferry Icon:** A blue and white boat/ferry icon located in the 3rd row, 3rd column.

4. **Submarine Icon:** A gray submarine icon located in the 8th row, 3rd column.

5. **Dashed Lines with Arrows:**

* A vertical dashed line with an upward-pointing arrowhead runs from the submarine (row 8, col 3) up to the researcher (row 1, col 7). The line travels straight up column 3 to row 5, then turns right to column 7, then continues up to the researcher.

* A separate vertical dashed line with an upward-pointing arrowhead extends from the plane in row 4, column 5, going straight up off the top of the grid.

**Legend Box (Right):**

* **Structure:** A rectangular box with a black border, positioned to the right of the grid.

* **Content (Transcribed Text):**

* The plane going away from you carries out a message

* The researcher who doesn't move is final goal

* The ferry chasing you is an enemy

### Detailed Analysis

**Spatial Grounding & Element Relationships:**

* The **Researcher** (top-center) is the static endpoint of the primary dashed path.

* The **Submarine** (bottom-left) is the starting point of the primary dashed path, which leads to the Researcher. This suggests the submarine is the player-controlled unit.

* The **Plane** in the center (row 4, col 5) has its own path leading away from the grid, consistent with the legend's description of it "going away."

* The **Ferry** is positioned above and to the left of the submarine's starting point, in a location that could be interpreted as "chasing" or intercepting the path to the goal.

* The two additional **Plane** icons in row 5 are static and do not have associated paths.

### Key Observations

1. **Path Logic:** The primary dashed path is not a straight line. It moves vertically from the submarine, then makes a 90-degree right turn, then another 90-degree turn upward to reach the researcher. This could indicate an obstacle or a specific route requirement.

2. **Icon States:** The legend defines dynamic roles ("going away," "chasing") for the plane and ferry, but the diagram shows them as static icons. Their movement is implied by the text and the path lines, not by animation.

3. **Unexplained Elements:** The two additional plane icons in row 5 are not referenced in the legend. Their purpose is unclear from the provided information.

### Interpretation

This diagram appears to be a **schematic for a simple game or puzzle scenario**. It visually defines the core rules and objectives using a grid map and a legend.

* **Objective:** The player (likely controlling the submarine) must navigate to the stationary researcher (the "final goal").

* **Conflict:** An enemy ferry is present, presumably acting as an obstacle or threat to be avoided.

* **Narrative Element:** A plane is delivering a message, adding a layer of story or a secondary objective.

* **Game Mechanics Implied:** The grid suggests turn-based or tile-based movement. The dashed lines illustrate the intended or possible paths for key entities. The legend translates the visual symbols into game roles (goal, enemy, messenger).

The diagram efficiently communicates a game state or level design by separating the visual layout (grid) from the semantic rules (legend). The spatial arrangement creates immediate tension: the player's path to the goal is not direct and passes near the enemy ferry.

</details>

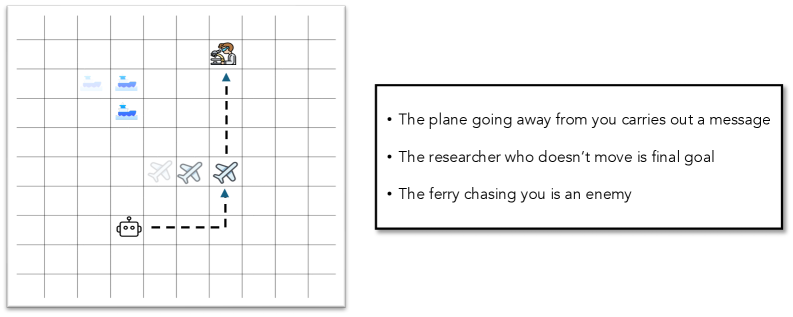

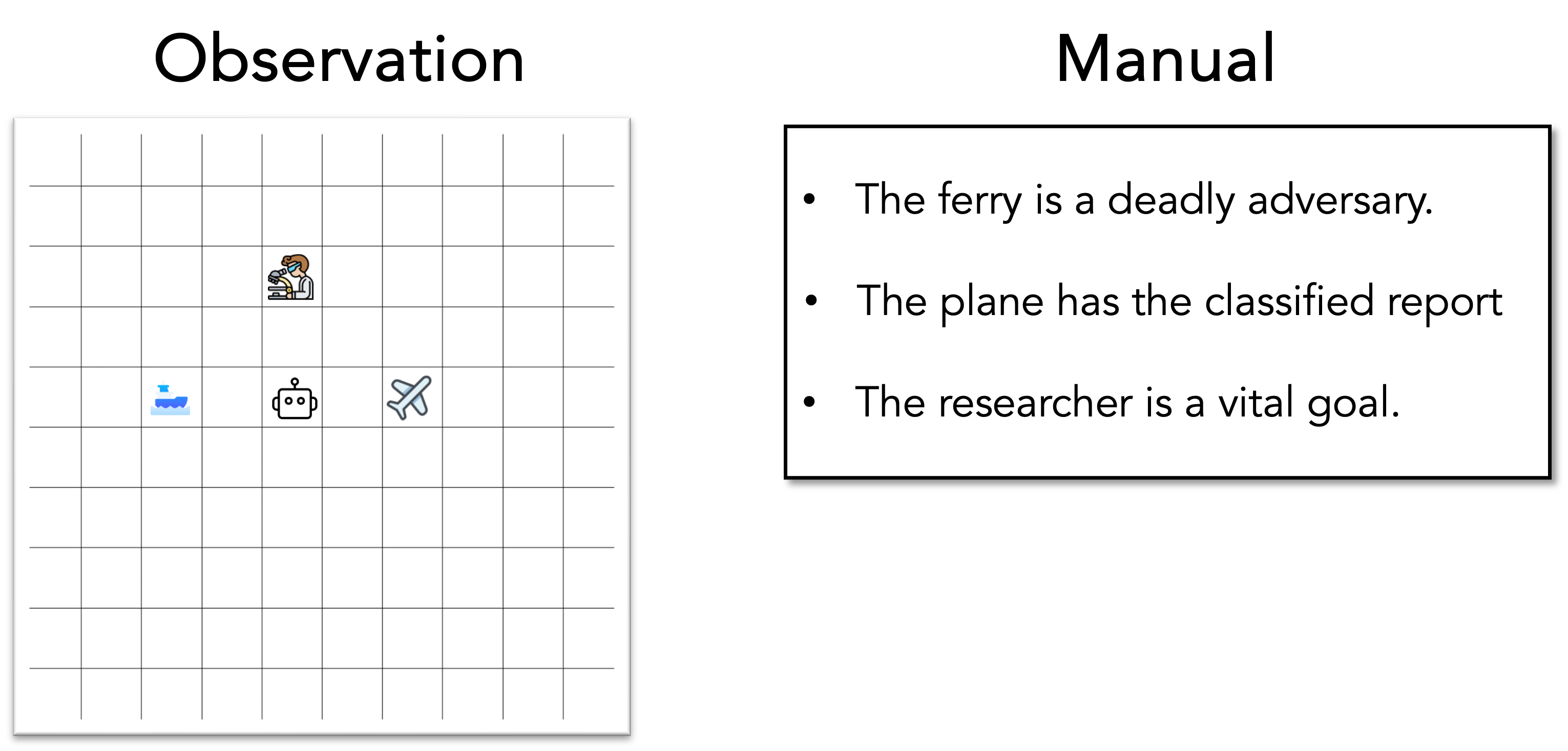

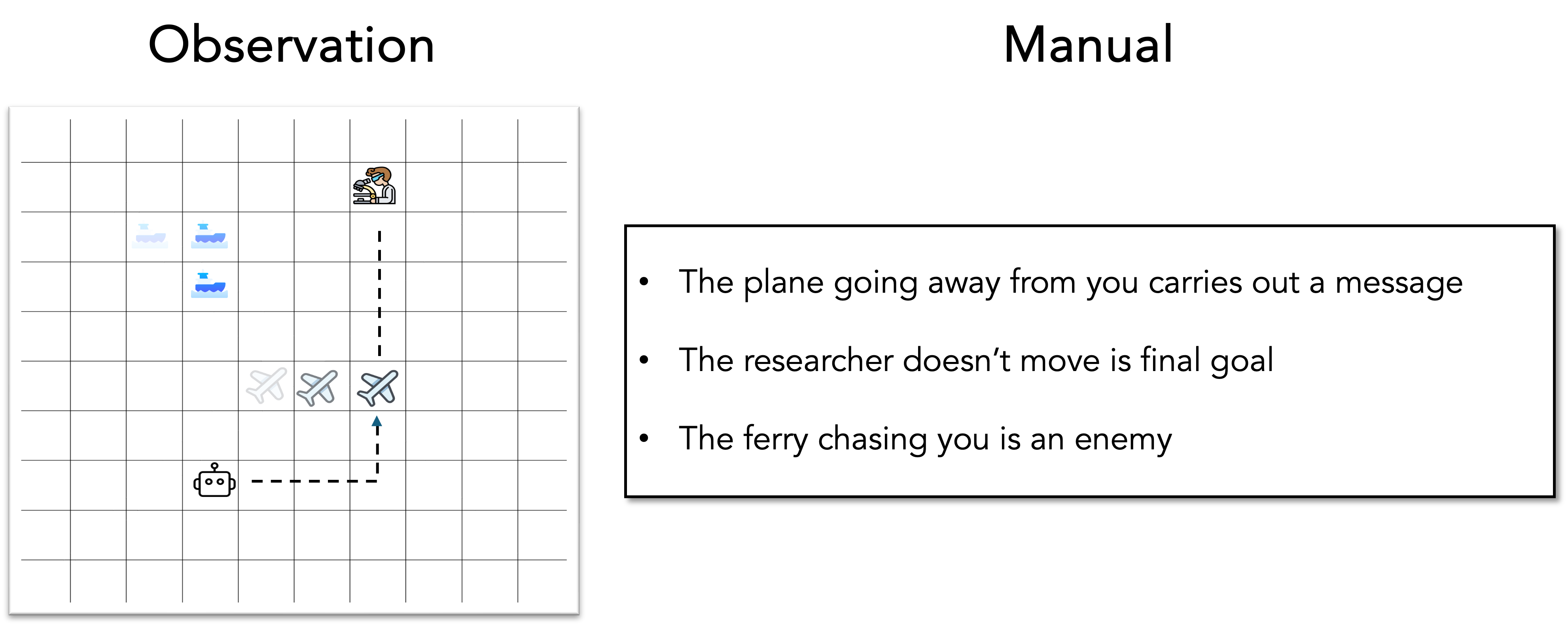

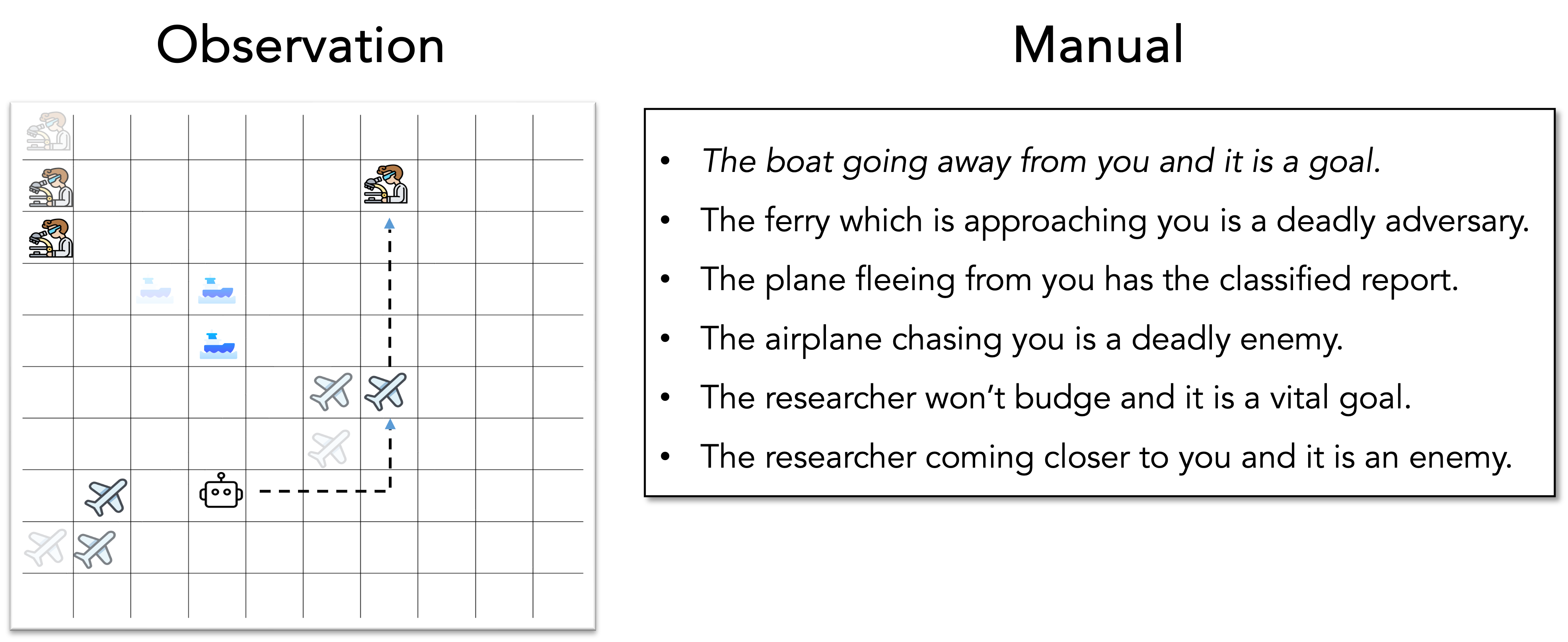

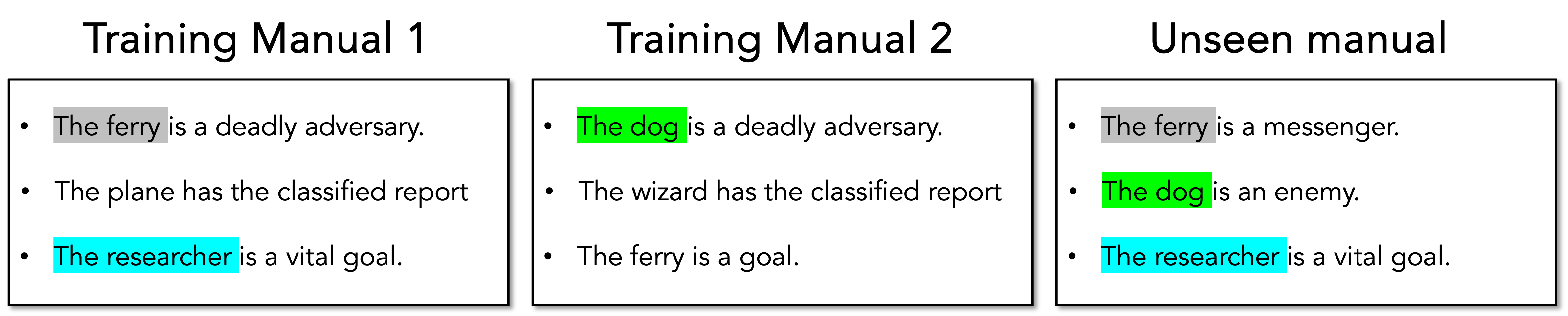

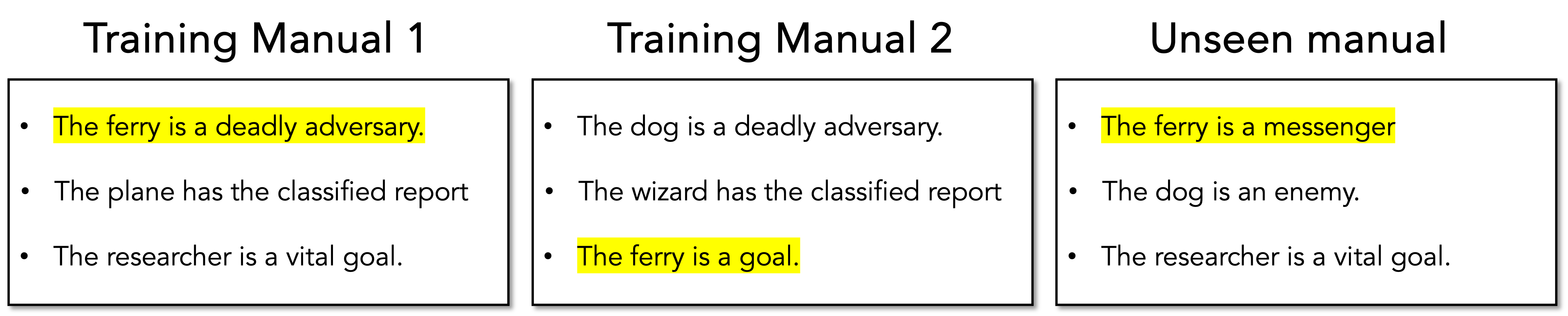

Figure 1: An example of dynamics-descriptive language in a game play. The observation includes a 10 $\times$ 10 grid-world with three entities represented by their associated symbols: (ferry -

<details>

<summary>figures/ferry.png Details</summary>

### Visual Description

## Illustration: Stylized Ship Icon

### Overview

The image is a flat, stylized digital illustration of a ship on water. It is not a chart, diagram, or document containing textual data. The image is purely graphical and symbolic, using simplified shapes and a limited color palette to represent a vessel at sea. There is no embedded text, numerical data, labels, or legends present.

### Components/Axes

As this is an illustration and not a data visualization, there are no axes, scales, or legends. The visual components are:

1. **Ship Structure:**

* **Hull:** A large, solid blue shape forming the main body of the ship. It has a curved bow (front) on the right and a flat stern (back) on the left.

* **Superstructure:** A white, rectangular block sitting atop the hull, representing the bridge or accommodation area.

* **Funnel/Stack:** A bright blue, tapered cylindrical shape positioned on top of the superstructure.

* **Mast/Antenna:** A thin, white vertical line extending upwards from the top of the funnel.

2. **Water:** A light blue, wavy band at the bottom of the image, representing the sea surface. The waves are depicted as a series of connected, smooth curves.

3. **Background:** A solid, light beige or off-white color fills the space behind the ship and above the water.

### Detailed Analysis

* **Color Palette:** The illustration uses a monochromatic blue scheme for the subject, with white accents, against a neutral background.

* Hull: Approximate color value is a medium-dark blue (e.g., #4A6FE3).

* Funnel: A brighter, cyan-like blue (e.g., #00B4FF).

* Superstructure & Mast: White.

* Water: A very light, pastel blue (e.g., #D6F0FF).

* Background: Light beige (e.g., #F5F2EB).

* **Style:** The design is minimalist and iconic, using geometric shapes with soft, rounded corners. There is no shading, texture, or perspective detail, giving it a clean, modern, app-icon-like appearance.

* **Composition:** The ship is centered horizontally and occupies the middle vertical third of the image. The water line sits in the lower third.

### Key Observations

* The image contains **zero textual information**. There are no labels, titles, annotations, or numbers.

* It is a **symbolic representation**, not a technical diagram. It conveys the concept of "ship" or "maritime" rather than specific technical details.

* The design is **non-literal**. For example, the ship lacks specific features like portholes, railings, or a defined deck, and the water is a stylized pattern.

### Interpretation

This image functions as a **visual icon or symbol**. Its purpose is to be immediately recognizable as a ship, likely for use in a user interface, logo, or informational graphic where a simple maritime metaphor is needed. The choice of a calm, blue color palette and smooth shapes suggests themes of transport, travel, logistics, or the sea in a friendly, approachable, and non-technical context. The absence of data or text means its informational content is purely connotative, relying on the viewer's cultural understanding of the ship symbol.

</details>

), (plane -

<details>

<summary>figures/plane.png Details</summary>

### Visual Description

## Icon: Airplane Symbol

### Overview

The image displays a stylized, flat-design icon of a commercial airplane viewed from a top-down perspective. The icon is presented against a plain, light grey background. It contains no textual information, data, charts, or diagrams. It is a purely graphical symbol.

### Components/Axes

* **Primary Subject:** A single airplane icon.

* **Background:** A solid, uniform light grey field (approximate hex: #f0f0f0).

* **Legend/Labels:** None present.

* **Axes/Scale:** Not applicable.

### Detailed Analysis

The icon is constructed with the following visual elements:

* **Outline:** A thick, dark grey (approximate hex: #3a3a3a) border defines the entire shape of the airplane.

* **Fill:** The interior of the airplane is filled with a very light, cool blue (approximate hex: #e6f3ff).

* **Detail:** Two slightly darker blue (approximate hex: #a8c8e8) rectangular stripes are placed on the wings, suggesting engine nacelles or wing markings. One stripe is on the upper-left wing, and the other is on the lower-right wing.

* **Orientation:** The airplane is oriented diagonally, with its nose pointing towards the top-right corner of the image frame and its tail towards the bottom-left.

* **Spatial Grounding:** The icon is centered within the square image frame. The wings extend towards the top-left and bottom-right corners. The fuselage runs diagonally from the bottom-left to the top-right.

### Key Observations

1. **Simplicity:** The design is minimalist, using only three colors (dark grey outline, light blue fill, medium blue detail) and basic geometric shapes to represent the aircraft.

2. **Style:** It follows a common "flat design" or "outline icon" aesthetic, suitable for user interfaces, signage, or informational graphics.

3. **Clarity:** The symbol is immediately recognizable as an airplane due to its distinct silhouette: a central fuselage, two swept-back wings, and a tail assembly.

4. **Lack of Data:** The image contains no quantitative information, trends, labels, or text to extract. It is a symbolic representation, not a data visualization.

### Interpretation

This image serves as a **symbolic identifier**, not a source of factual data. Its meaning is derived from universal visual conventions.

* **Purpose:** The icon is designed to represent concepts related to air travel, aviation, airports, flight, or transportation in a quick, universally understandable manner. It would typically be used in contexts like navigation menus, maps, informational brochures, or signage.

* **Design Choices:** The diagonal orientation conveys a sense of motion or dynamism. The thick outline ensures visibility at small sizes, and the limited color palette aids in clear recognition and potential branding consistency.

* **Underlying Information:** The image itself provides no underlying data trends or investigative findings. Its "information" is purely semiotic: it is a signifier for the concept of an airplane. Any additional meaning (e.g., "departures," "airline logo," "travel section") would be entirely dependent on the context in which this icon is placed.

</details>

), (researcher -

<details>

<summary>figures/scientist.png Details</summary>

### Visual Description

## Icon/Illustration: Scientist with Microscope

### Overview

This is a flat-design, stylized icon or illustration depicting a scientist (or lab technician) looking into a microscope. The image contains no textual information, data, charts, or diagrams. It is a symbolic representation of scientific research, laboratory work, or analysis.

### Components/Axes

* **Primary Subject:** A human figure, shown from the chest up, in profile facing left.

* **Key Object:** A compound microscope positioned in front of the figure.

* **Background:** A solid, light gray background (`#f0f0f0` approximate).

* **Style:** Bold, black outlines define all shapes. Colors are flat with no gradients or shading.

### Detailed Analysis

**Figure Details:**

* **Hair:** Brown, styled with a prominent curl or wave on top.

* **Face:** No facial features are depicted (eyes, nose, mouth are absent).

* **Eyewear:** Large, bright blue safety goggles or glasses.

* **Clothing:** A white lab coat with a black outline. The collar and front seam are indicated.

* **Pose:** The figure is leaning forward, with their head positioned to look into the microscope's eyepiece. Their right arm is bent, with the hand resting on the microscope's arm or focus knob.

**Microscope Details:**

* **Body/Arm:** A curved, yellow arm connects the base to the head.

* **Head/Optical Tube:** Gray, containing the eyepiece (ocular lens) and objective lenses.

* **Stage:** A flat, gray platform where a sample would be placed.

* **Base:** A gray, rectangular base supporting the structure.

* **Knobs:** A prominent, circular yellow knob (likely the coarse focus) is visible on the side of the arm. A smaller black circle may represent a fine focus knob.

* **Nosepiece:** A gray, rotating turret holding the objective lenses is implied below the head.

**Spatial Grounding:**

* The **microscope** occupies the left and central portion of the frame.

* The **scientist** is positioned on the right side, overlapping the microscope.

* The **yellow focus knob** is located at the junction of the arm and the base, slightly below the center of the image.

* The **blue goggles** are the most saturated color element, positioned in the upper-right quadrant of the figure's head.

### Key Observations

1. **Absence of Text:** The image contains zero textual elements—no labels, titles, legends, or annotations.

2. **Symbolic, Not Literal:** The illustration is an archetype. It uses universal symbols (lab coat, microscope, goggles) to convey the concept of "scientist" or "research" rather than depicting a specific person or equipment model.

3. **Simplified Form:** Complex details of the microscope (like specific lenses, adjustment screws, or a light source) and the human figure (facial features, fingers) are omitted for clarity and iconographic impact.

4. **Color Palette:** The palette is limited and functional: white (coat), brown (hair), blue (goggles), yellow (microscope arm/knob), gray (microscope body/background), and black (outlines).

### Interpretation

This image functions as a **visual metaphor**. Its purpose is to quickly and universally communicate ideas related to:

* **Scientific Research & Discovery:** The act of close examination and analysis.

* **Laboratory Work:** A standard setting for biological, chemical, or medical investigation.

* **Precision & Scrutiny:** The microscope symbolizes looking deeper into a subject, studying details invisible to the naked eye.

* **Expertise & Analysis:** The figure represents a trained professional engaged in technical work.

The lack of specific data or text means its information is purely **connotative**. It doesn't present facts but evokes a field of knowledge and a set of activities. In a technical document, this icon would likely serve as a section header, a button label, or an illustrative element to denote a related topic (e.g., "Lab Results," "Microscopic Analysis," "Research Methods"). Its effectiveness lies in its immediate recognizability and its clean, unambiguous design.

</details>

) and one agent (depicted by

<details>

<summary>figures/bot.png Details</summary>

### Visual Description

## Icon/Symbol: Robot Head Line Drawing

### Overview

The image is a simple, monochromatic line drawing of a stylized robot head, presented as a black icon on a light gray background. It contains no textual information, data, charts, or diagrams. The design is minimalist and symbolic, intended to represent a robot or artificial intelligence concept.

### Components

The icon is composed of the following geometric elements:

1. **Head Outline:** A square with rounded corners, drawn with a thick black line.

2. **Eyes:** Two identical, solid black circles placed symmetrically within the head outline, positioned in the upper half.

3. **Antenna:** A vertical line extending from the top center of the head, terminating in a small, hollow circle (a ring).

4. **Ears/Side Panels:** Two identical, vertical rectangular shapes with rounded outer edges, attached to the left and right sides of the head outline. They are drawn with the same line thickness as the head.

### Detailed Analysis / Content Details

* **Textual Content:** None. The image contains no words, labels, numbers, or characters in any language.

* **Data Content:** None. This is not a chart, graph, or data visualization.

* **Color:** The image uses a two-tone palette: black (#000000) for all lines and shapes, and a uniform light gray (approximately #E5E5E5) for the background.

* **Line Style:** All lines are of consistent, medium-heavy weight with no variation. Corners on the head and ears are rounded.

### Key Observations

* The design is highly symmetrical along the vertical axis.

* The icon uses universal, simple geometric shapes (square, circle, rectangle) for immediate recognizability.

* The lack of a mouth or other facial features gives it a neutral, non-expressive appearance.

* The antenna is a classic visual shorthand for "robot" or "wireless communication."

### Interpretation

This image serves as a **symbol or icon**, not a carrier of factual data or complex information. Its purpose is purely representational.

* **What it represents:** The combination of a boxy head, circular eyes, and an antenna is a widely understood visual metaphor for a robot, AI, or automated system. The simplicity suggests it could be used as an app icon, a logo element, or in user interface design to denote a bot, AI assistant, or automated process.

* **Design Intent:** The clean, bold lines and lack of detail ensure the icon remains legible at very small sizes (e.g., a favicon or mobile app icon). The rounded corners soften the mechanical feel, making it appear more friendly or approachable.

* **Notable Absence:** The lack of any text or unique identifying marks means this icon is generic. It does not represent a specific brand, product, or dataset. Its meaning is derived entirely from cultural conventions around how robots are depicted.

</details>

). The observation also has a manual on the right, which describes the dynamics of the game. The agent can navigate the grid using five actions: left, right, up, down, and stay. The agent can only interact with entities when it is in the same grid cell as the entity. The agent’s task is to identify roles of all entities from the manual, go to the messenger, then go to the goal, while avoiding the enemy. Shaded icons indicate one possible scenario of entity movement over time. By observing entity movement patterns and grounding language to entities based on their behaviors, the agent can infer the roles assigned to each entity: (ferry-enemy), (plane-messenger), and (researcher-goal). The agent can then execute an appropriate plan to complete the task. The dashed line in the grid shows such a possible plan.

We illustrate dynamics-descriptive language by using a simple 2D grid-based game in Figure ˜ 1, instantiated by MESSENGER S2 [14]. This is the setting of our testbed environments and will be detailed in Section ˜ 3.1 Each game instance consists of several entities, an agent positioned in a grid-world observation, and a language manual. Each entity has a role among messenger, goal, and enemy. The agent acts as a courier, tasked with picking up a message from the messenger and delivering it to the goal while avoiding the enemy. The manual provides descriptions of the entity attributes, helping the agent understand the environment’s dynamics: what the roles of entities are and how the environment changes as the agent interacts with them. To succeed, the agent must interpret the language manual, identify the entities, and infer their respective roles based on observed behaviors.

Language is valuable because it allows for the description of novel games by recombining known concepts. For instance, consider Figure ˜ 1 as the training reference game and the following example manual: The ship going away from you is the goal you need to go to. The stationary plane is an enemy. The scientist won’t move and has an important message. The example manual describes an unseen dynamics game with known concepts derived from the reference game. To succeed in this environment—where the dynamics have changed but the rules remains the same—the agent must adopt a different behavior than in the reference game. This manual also produces novel surface-level language through synonyms (e.g. "researcher" vs "scientist") and paraphrases ("won’t move" vs "stationary"),

We want to study language grounding and how it affects agent generalizability. Therefore, we abstract away our observation to a discrete grid-world, thus simplifying perception complexity, similar to existing work [14, 26, 20, 40, 6]. Our goal is to develop an agent capable of understanding dynamics-descriptive language by grounding it to discrete entities. More importantly, we aim for the agent to generalize to unseen games described by unseen dynamics and/or novel language, allowing it to adapt agent behavior to new environmental changes.

In the current literature, there are two main approaches to building such an agent: model-free and model-based approach. Model-free methods [14, 26] directly map language to a policy. Language grounding is thus based entirely on policy learning signals, without modeling the environment dynamics. This might be challenging for agent to learn complex mapping from dynamics-descriptive language to action. Meanwhile, model-based methods like EMMA-LWM [40], Reader [6], and Dynalang [20] build a world model [11] simulating trajectories, which are then used to train a policy. Dynamics-descriptive language is incorporated into the world model, enabling it to use language to predict environmental changes.

However, these existing works have some limitations. EMMA-LWM requires expert demonstrations—a constraint that may not be always feasible for real-life tasks. Reader assumes inference-time latency is tolerable for the target tasks. This is because Reader uses a Monte Carlo Tree Search (MCTS) to look ahead and generate a full plan. This approach may not be practical for applications that require quick policy responses. Last, we show that the policy learned from Dynalang fails to generalize over unseen games in Section ˜ 5.1. To address these limitations, we adopt a model-based reinforcement learning (MBRL) approach that builds a language-grounded world model from interaction with the environment, and then use this world model to train a policy. In contrast to previous methods, our approach does not require expert demonstrations, avoids expensive inference-time planning, and can generalize to unseen games.

We propose L anguage-aware E ncoder for D reamer W orld M odel (LED-WM), building on a MBRL framework: DreamerV3 [13]. LED-WM introduces a new encoder for DreamerV3 that explicitly grounds entities to their language descriptions, using a simple yet effective attention mechanism. In this paper, we make the following contributions:

- We show that a language-conditioned MBRL without an explicit language grounding to entities, instantiated by Dynalang [20], fails to generalize over unseen games (see Section ˜ 5.1).

- By using an attention mechanism in LED-WM to do language grounding, we show that a policy trained from LED-WM can generalize over unseen games better than model-free and model-based baselines in several settings MESSENGER and MESSENGER-WM (see Section ˜ 4).

- We demonstrate that given a trained LED-WM, we can improve a trained policy by fine-tuning it in synthetic test trajectories generated by the world model (see Section ˜ 5.2).

## 2 Background

Problem formulation.

We define our problem as a language-conditioned Markov Decision Process, represented by a tuple with common notations: $(\mathcal{S},\mathcal{A},r,T,\gamma,H)$ . $\mathcal{S}$ represents the state space where each state has a 10 $\times$ 10 grid-world observation containing entity symbols and an agent (e.g.

<details>

<summary>figures/ferry.png Details</summary>

### Visual Description

## Illustration: Stylized Ship Icon

### Overview

The image is a flat, stylized digital illustration of a ship on water. It is not a chart, diagram, or document containing textual data. The image is purely graphical and symbolic, using simplified shapes and a limited color palette to represent a vessel at sea. There is no embedded text, numerical data, labels, or legends present.

### Components/Axes

As this is an illustration and not a data visualization, there are no axes, scales, or legends. The visual components are:

1. **Ship Structure:**

* **Hull:** A large, solid blue shape forming the main body of the ship. It has a curved bow (front) on the right and a flat stern (back) on the left.

* **Superstructure:** A white, rectangular block sitting atop the hull, representing the bridge or accommodation area.

* **Funnel/Stack:** A bright blue, tapered cylindrical shape positioned on top of the superstructure.

* **Mast/Antenna:** A thin, white vertical line extending upwards from the top of the funnel.

2. **Water:** A light blue, wavy band at the bottom of the image, representing the sea surface. The waves are depicted as a series of connected, smooth curves.

3. **Background:** A solid, light beige or off-white color fills the space behind the ship and above the water.

### Detailed Analysis

* **Color Palette:** The illustration uses a monochromatic blue scheme for the subject, with white accents, against a neutral background.

* Hull: Approximate color value is a medium-dark blue (e.g., #4A6FE3).

* Funnel: A brighter, cyan-like blue (e.g., #00B4FF).

* Superstructure & Mast: White.

* Water: A very light, pastel blue (e.g., #D6F0FF).

* Background: Light beige (e.g., #F5F2EB).

* **Style:** The design is minimalist and iconic, using geometric shapes with soft, rounded corners. There is no shading, texture, or perspective detail, giving it a clean, modern, app-icon-like appearance.

* **Composition:** The ship is centered horizontally and occupies the middle vertical third of the image. The water line sits in the lower third.

### Key Observations

* The image contains **zero textual information**. There are no labels, titles, annotations, or numbers.

* It is a **symbolic representation**, not a technical diagram. It conveys the concept of "ship" or "maritime" rather than specific technical details.

* The design is **non-literal**. For example, the ship lacks specific features like portholes, railings, or a defined deck, and the water is a stylized pattern.

### Interpretation

This image functions as a **visual icon or symbol**. Its purpose is to be immediately recognizable as a ship, likely for use in a user interface, logo, or informational graphic where a simple maritime metaphor is needed. The choice of a calm, blue color palette and smooth shapes suggests themes of transport, travel, logistics, or the sea in a friendly, approachable, and non-technical context. The absence of data or text means its informational content is purely connotative, relying on the viewer's cultural understanding of the ship symbol.

</details>

,

<details>

<summary>figures/plane.png Details</summary>

### Visual Description

## Icon: Airplane Symbol

### Overview

The image displays a stylized, flat-design icon of a commercial airplane viewed from a top-down perspective. The icon is presented against a plain, light grey background. It contains no textual information, data, charts, or diagrams. It is a purely graphical symbol.

### Components/Axes

* **Primary Subject:** A single airplane icon.

* **Background:** A solid, uniform light grey field (approximate hex: #f0f0f0).

* **Legend/Labels:** None present.

* **Axes/Scale:** Not applicable.

### Detailed Analysis

The icon is constructed with the following visual elements:

* **Outline:** A thick, dark grey (approximate hex: #3a3a3a) border defines the entire shape of the airplane.

* **Fill:** The interior of the airplane is filled with a very light, cool blue (approximate hex: #e6f3ff).

* **Detail:** Two slightly darker blue (approximate hex: #a8c8e8) rectangular stripes are placed on the wings, suggesting engine nacelles or wing markings. One stripe is on the upper-left wing, and the other is on the lower-right wing.

* **Orientation:** The airplane is oriented diagonally, with its nose pointing towards the top-right corner of the image frame and its tail towards the bottom-left.

* **Spatial Grounding:** The icon is centered within the square image frame. The wings extend towards the top-left and bottom-right corners. The fuselage runs diagonally from the bottom-left to the top-right.

### Key Observations

1. **Simplicity:** The design is minimalist, using only three colors (dark grey outline, light blue fill, medium blue detail) and basic geometric shapes to represent the aircraft.

2. **Style:** It follows a common "flat design" or "outline icon" aesthetic, suitable for user interfaces, signage, or informational graphics.

3. **Clarity:** The symbol is immediately recognizable as an airplane due to its distinct silhouette: a central fuselage, two swept-back wings, and a tail assembly.

4. **Lack of Data:** The image contains no quantitative information, trends, labels, or text to extract. It is a symbolic representation, not a data visualization.

### Interpretation

This image serves as a **symbolic identifier**, not a source of factual data. Its meaning is derived from universal visual conventions.

* **Purpose:** The icon is designed to represent concepts related to air travel, aviation, airports, flight, or transportation in a quick, universally understandable manner. It would typically be used in contexts like navigation menus, maps, informational brochures, or signage.

* **Design Choices:** The diagonal orientation conveys a sense of motion or dynamism. The thick outline ensures visibility at small sizes, and the limited color palette aids in clear recognition and potential branding consistency.

* **Underlying Information:** The image itself provides no underlying data trends or investigative findings. Its "information" is purely semiotic: it is a signifier for the concept of an airplane. Any additional meaning (e.g., "departures," "airline logo," "travel section") would be entirely dependent on the context in which this icon is placed.

</details>

,

<details>

<summary>figures/scientist.png Details</summary>

### Visual Description

## Icon/Illustration: Scientist with Microscope

### Overview

This is a flat-design, stylized icon or illustration depicting a scientist (or lab technician) looking into a microscope. The image contains no textual information, data, charts, or diagrams. It is a symbolic representation of scientific research, laboratory work, or analysis.

### Components/Axes

* **Primary Subject:** A human figure, shown from the chest up, in profile facing left.

* **Key Object:** A compound microscope positioned in front of the figure.

* **Background:** A solid, light gray background (`#f0f0f0` approximate).

* **Style:** Bold, black outlines define all shapes. Colors are flat with no gradients or shading.

### Detailed Analysis

**Figure Details:**

* **Hair:** Brown, styled with a prominent curl or wave on top.

* **Face:** No facial features are depicted (eyes, nose, mouth are absent).

* **Eyewear:** Large, bright blue safety goggles or glasses.

* **Clothing:** A white lab coat with a black outline. The collar and front seam are indicated.

* **Pose:** The figure is leaning forward, with their head positioned to look into the microscope's eyepiece. Their right arm is bent, with the hand resting on the microscope's arm or focus knob.

**Microscope Details:**

* **Body/Arm:** A curved, yellow arm connects the base to the head.

* **Head/Optical Tube:** Gray, containing the eyepiece (ocular lens) and objective lenses.

* **Stage:** A flat, gray platform where a sample would be placed.

* **Base:** A gray, rectangular base supporting the structure.

* **Knobs:** A prominent, circular yellow knob (likely the coarse focus) is visible on the side of the arm. A smaller black circle may represent a fine focus knob.

* **Nosepiece:** A gray, rotating turret holding the objective lenses is implied below the head.

**Spatial Grounding:**

* The **microscope** occupies the left and central portion of the frame.

* The **scientist** is positioned on the right side, overlapping the microscope.

* The **yellow focus knob** is located at the junction of the arm and the base, slightly below the center of the image.

* The **blue goggles** are the most saturated color element, positioned in the upper-right quadrant of the figure's head.

### Key Observations

1. **Absence of Text:** The image contains zero textual elements—no labels, titles, legends, or annotations.

2. **Symbolic, Not Literal:** The illustration is an archetype. It uses universal symbols (lab coat, microscope, goggles) to convey the concept of "scientist" or "research" rather than depicting a specific person or equipment model.

3. **Simplified Form:** Complex details of the microscope (like specific lenses, adjustment screws, or a light source) and the human figure (facial features, fingers) are omitted for clarity and iconographic impact.

4. **Color Palette:** The palette is limited and functional: white (coat), brown (hair), blue (goggles), yellow (microscope arm/knob), gray (microscope body/background), and black (outlines).

### Interpretation

This image functions as a **visual metaphor**. Its purpose is to quickly and universally communicate ideas related to:

* **Scientific Research & Discovery:** The act of close examination and analysis.

* **Laboratory Work:** A standard setting for biological, chemical, or medical investigation.

* **Precision & Scrutiny:** The microscope symbolizes looking deeper into a subject, studying details invisible to the naked eye.

* **Expertise & Analysis:** The figure represents a trained professional engaged in technical work.

The lack of specific data or text means its information is purely **connotative**. It doesn't present facts but evokes a field of knowledge and a set of activities. In a technical document, this icon would likely serve as a section header, a button label, or an illustrative element to denote a related topic (e.g., "Lab Results," "Microscopic Analysis," "Research Methods"). Its effectiveness lies in its immediate recognizability and its clean, unambiguous design.

</details>

,

<details>

<summary>figures/bot.png Details</summary>

### Visual Description

## Icon/Symbol: Robot Head Line Drawing

### Overview

The image is a simple, monochromatic line drawing of a stylized robot head, presented as a black icon on a light gray background. It contains no textual information, data, charts, or diagrams. The design is minimalist and symbolic, intended to represent a robot or artificial intelligence concept.

### Components

The icon is composed of the following geometric elements:

1. **Head Outline:** A square with rounded corners, drawn with a thick black line.

2. **Eyes:** Two identical, solid black circles placed symmetrically within the head outline, positioned in the upper half.

3. **Antenna:** A vertical line extending from the top center of the head, terminating in a small, hollow circle (a ring).

4. **Ears/Side Panels:** Two identical, vertical rectangular shapes with rounded outer edges, attached to the left and right sides of the head outline. They are drawn with the same line thickness as the head.

### Detailed Analysis / Content Details

* **Textual Content:** None. The image contains no words, labels, numbers, or characters in any language.

* **Data Content:** None. This is not a chart, graph, or data visualization.

* **Color:** The image uses a two-tone palette: black (#000000) for all lines and shapes, and a uniform light gray (approximately #E5E5E5) for the background.

* **Line Style:** All lines are of consistent, medium-heavy weight with no variation. Corners on the head and ears are rounded.

### Key Observations

* The design is highly symmetrical along the vertical axis.

* The icon uses universal, simple geometric shapes (square, circle, rectangle) for immediate recognizability.

* The lack of a mouth or other facial features gives it a neutral, non-expressive appearance.

* The antenna is a classic visual shorthand for "robot" or "wireless communication."

### Interpretation

This image serves as a **symbol or icon**, not a carrier of factual data or complex information. Its purpose is purely representational.

* **What it represents:** The combination of a boxy head, circular eyes, and an antenna is a widely understood visual metaphor for a robot, AI, or automated system. The simplicity suggests it could be used as an app icon, a logo element, or in user interface design to denote a bot, AI assistant, or automated process.

* **Design Intent:** The clean, bold lines and lack of detail ensure the icon remains legible at very small sizes (e.g., a favicon or mobile app icon). The rounded corners soften the mechanical feel, making it appear more friendly or approachable.

* **Notable Absence:** The lack of any text or unique identifying marks means this icon is generic. It does not represent a specific brand, product, or dataset. Its meaning is derived entirely from cultural conventions around how robots are depicted.

</details>

in Figure ˜ 1). Each state also has a language manual $L$ , describing environment dynamics: transition function $T(s^{\prime}|s,a)$ and reward function $r(s,a)$ . $L$ consists of $N$ sentences associated with $N$ entities, where each sentence describes the dynamics of each entity. An example of a state is shown in Figure ˜ 1. Action space $\mathcal{A}=\{\texttt{up, down, right, left, stay}\}$ is discrete. The agent must take a sequence of actions $a_{t}\in\mathcal{A}$ over a horizon $H$ , where time step $t\in[1..H]$ , resulting in a state-action trajectory $(s_{1},a_{1},\ldots,s_{H},a_{H})$ . Our goal is to find a policy $\pi:\mathcal{S}\times L\rightarrow\mathcal{A}$ that maximizes the expected sum of discounted rewards: $\mathbb{E}_{\pi,L}\left[\sum_{t=1}^{H}\gamma^{t-1}r(s_{t},a_{t})\right].$

World model DreamerV3.

We base our world model on DreamerV3 [13], which uses Recurrent State-Space Model (RSSM) [12] to build a recurrent world model. DreamerV3 receives a sequence of observations and predicts latent representations of future observations given actions. Specifically, at a time step $t$ , DreamerV3 receives an observation $x_{t}$ , an action $a_{t}$ , and history information $h_{t}$ . These inputs are compressed into a latent representation $z_{t}$ and fed to RSSM with the action $a_{t}$ to predict the next latent representation $z_{t+1}$ . The world model has the following components:

$$

\displaystyle\raisebox{25.00003pt}{

$\text{RSSM}~~\begin{cases}\hphantom{A}\\[-6.0pt]

\hphantom{A}\\[-6.0pt]

\hphantom{A}\end{cases}$}\begin{aligned} &\text{Sequence model:}&\quad h_{t}&=f_{\phi}(h_{t-1},z_{t-1},a_{t-1})\\

&\text{Encoder:}&\quad z_{t}&\sim q_{\phi}(z_{t}\mid h_{t},x_{t})\\

&\text{Dynamics predictor:}&\quad\hat{z}_{t}&\sim p_{\phi}(\hat{z}_{t}\mid h_{t})\\

&\text{Reward predictor:}&\quad\hat{r}_{t}&\sim p_{\phi}(\hat{r}_{t}\mid h_{t},z_{t})\\

&\text{Continue predictor:}&\quad\hat{c}_{t}&\sim p_{\phi}(\hat{c}_{t}\mid h_{t},z_{t})\\

&\text{Decoder:}&\quad\hat{x}_{t}&\sim p_{\phi}(\hat{x}_{t}\mid h_{t},z_{t})\\

\end{aligned} \tag{1}

$$

In this work, we propose to change the encoder of DreamerV3 to better leverage language grounding to learn a more robust world model.

## 3 Environment setup

We adopt MESSENGER [14] and MESSENGER-WM [40] as our test bed environments. Both environments have the same setup as the example game in Figure ˜ 1. To succeed in the game, the agent must understand the language manuals $L$ and use reward and transitional signals to ground the roles, entity names and movement types to the entity symbols in the observation.

### 3.1 MESSENGER

Overview.

As shown in Figure ˜ 1, MESSENGER [14] is a 10 $\times$ 10 grid-world environment. We refer the readers to Figure ˜ 1 for game rules and setup, and Section ˜ C.1.1 for environment dynamics and action. Each game includes a language manual and an observation containing entities and a single agent. For more details about language grounding to entities, we refer the readers to Section ˜ C.1.2.

Evaluation settings.

MESSENGER offers four stages (stage S1, S2, S2-dev, S3) with different levels of generalization for test games. Each stage has its own training set, test set, and development set, all of which are described in detail in Section ˜ C.1.

### 3.2 MESSENGER-WM

Overview.

While providing multiple stages to evaluate policy generalization over out-of-distribution dynamics, MESSENGER does not include a setting for compositional generalization dynamics. To bridge this gap, MESSENGER-WM [40], derived from MESSENGER S2, enables evaluation at compositional generalization for world model and policy. Together, these two environments offer a comprehensive framework for assessing generalization, under varying levels of unseen games.

Evaluation settings.

MESSENGER-WM has three different evaluation settings with different levels of generalization: NewCombo, NewAttr, and NewAll. All settings share the same training set. More details about the evaluation settings are provided in Section ˜ C.3.2 and the original paper [40].

### 3.3 Evaluating generalization to unseen games

Table 1: Summary of generalization capabilities over unseen games in MESSENGER and MESSENGER-WM across stages. Examples with visualizations are provided in Section ˜ C.2.

| | MESSENGER | MESSENGER-WM | | | | | |

| --- | --- | --- | --- | --- | --- | --- | --- |

| S1 | S2 | S2-dev | S3 | New | New | New | |

| Combo | Attr | All | | | | | |

| Novel combinations of known entities | ✓ | ✓ | ✓ | ✓ | ✓ | ✗ | ✓ |

| Novel language (synonyms and paraphrase) | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

| Novel entity-role assignments | ✓ | ✓ | ✓ | ✓ | ✗ | ✓ | ✓ |

| Novel entity-movement-role assignments | ✗ | ✓ | ✓ | ✓ | ✗ | ✓ | ✓ |

| Novel game dynamics of known movement behaviors | ✗ | ✓ | ✗ | ✗ | ✗ | ✓ | ✓ |

| Novel game dynamics from one training dynamic | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ |

Together, MESSENGER and MESSENGER-WM offer different levels of generalization in test games. We summarize these in Table ˜ 1 and below:

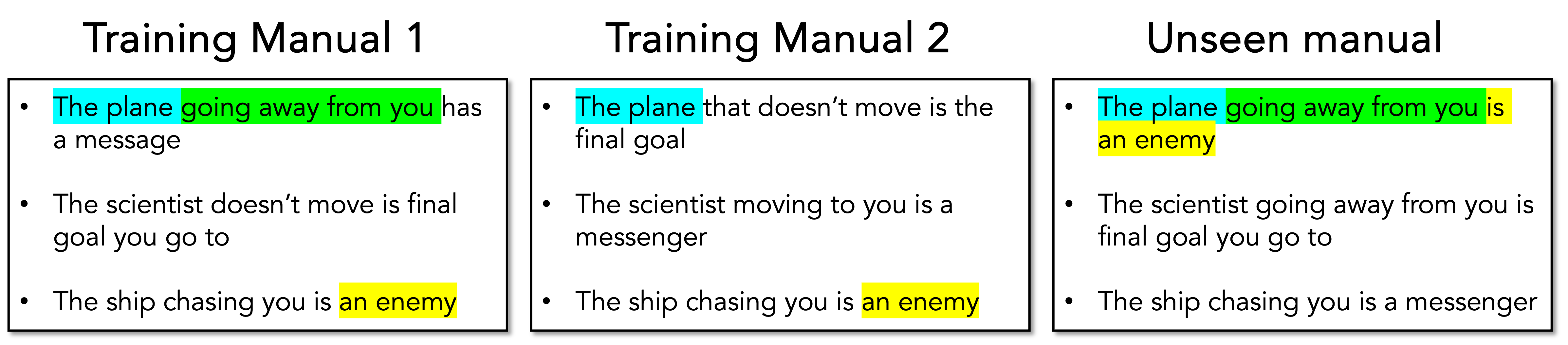

- Novel language: the test manual uses synonyms and paraphrases to create novel language through surface structure.

- Novel combinations of known entities: the test game involve entities that appear in training set but never appear together in one training game.

- Novel entity-role assignments: at least one entity in the test game has a different role from its roles in training games.

- Novel entity-role-movement assignment: at least one entity in the test game has a novel combination of entity-role-movement assignment.

- Novel combinations of known movement behaviors (novel game dynamics): the test game has a novel movement combinations of entities, e.g. (chaser-chaser-chaser) for three entities.

- Novel game dynamics from one training dynamic: Game dynamic is defined by the combination of entity movements. In the training set, there is only one such combination (chasing-fleeing-stationary) across all training games. The test game meanwhile has a novel dynamic, e.g. (chaser-chaser-chaser). This is also the difference between MESSENGER-WM and MESSENGER, which can be found more detailed in Section ˜ C.3.1

See Section ˜ C.2 for visualizations of these settings.

## 4 Method: L anguage-aware E ncoder for D reamer W orld M odel (LED-WM)

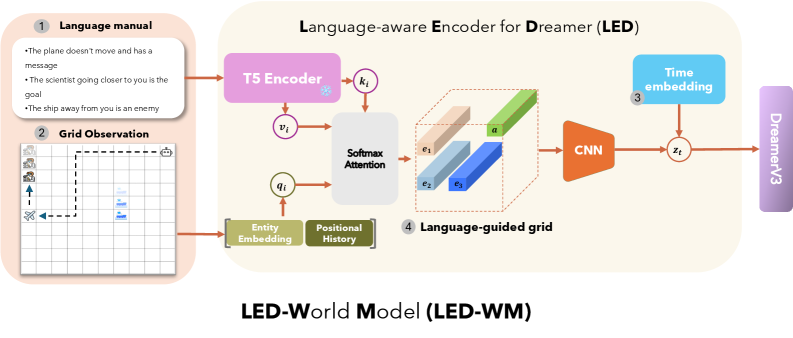

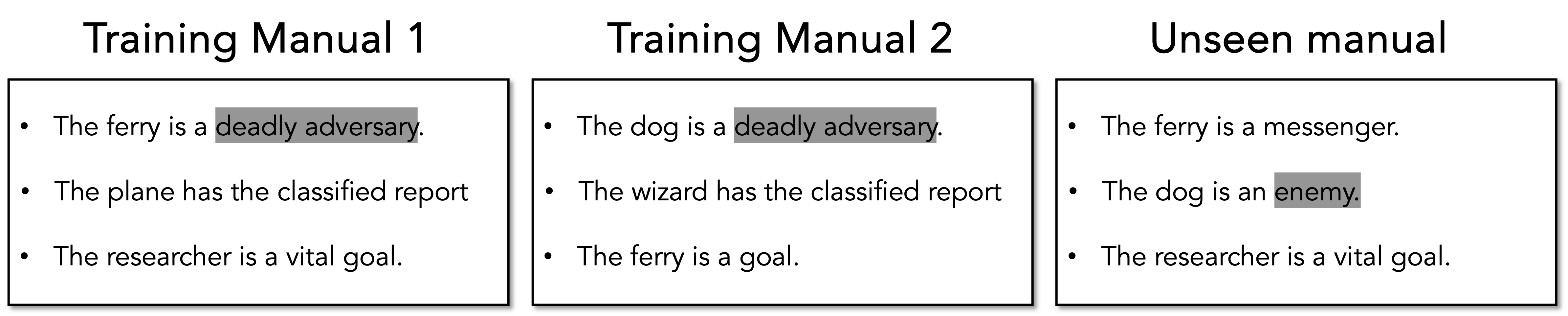

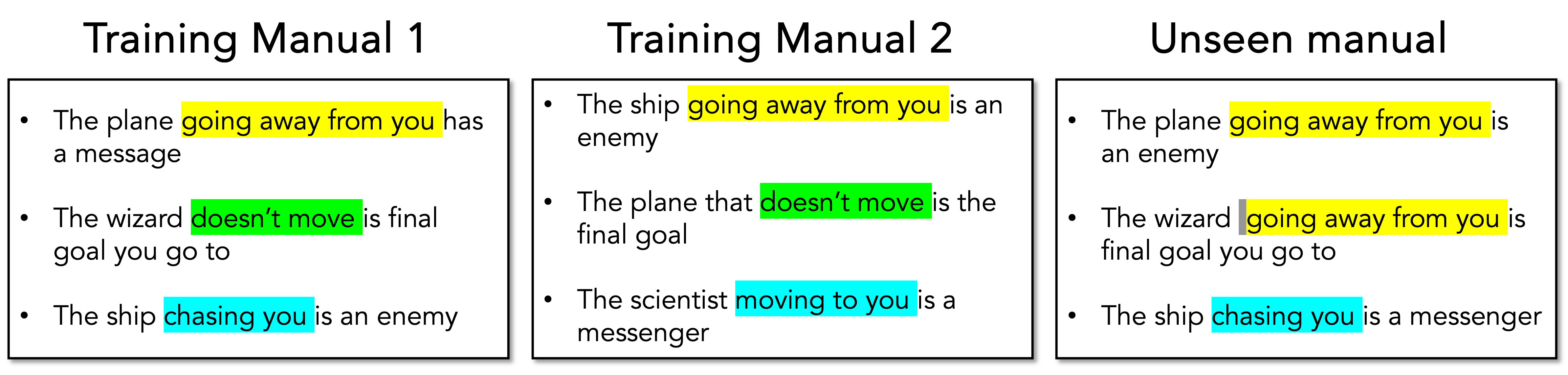

To generalize policy across unseen games, we aim to develop a world model capable of doing language grounding to entities in a game. Inspired by EMMA [14], we propose L anguage-aware E ncoder for D reamer (LED), which uses cross-modal attention to align game entities with sentences. The resulting vectors are then placed back into their original entity locations, producing a language-aware grid observation. This grid is passed through a CNN encoder to extract observation features, which are used by the other components of DreamerV3. We call this overall model LED- W orld M odel (LED-WM). We provide an overview of the encoder LED and the world model LED-WM in Figure ˜ 2 and describe each component in the following sections.

<details>

<summary>x2.png Details</summary>

### Visual Description

\n

## Diagram: Language-aware Encoder for Dreamer (LED) / LED-World Model (LED-WM)

### Overview

This image is a technical system architecture diagram illustrating the **LED-World Model (LED-WM)**. It details a pipeline that processes two primary inputs—a natural language manual and a grid-based visual observation—to produce a latent representation (`z_i`) that is fed into the "DreamerV3" agent. The system's purpose is to create a language-aware world model, enabling an AI agent to understand and act upon instructions within a grid-world environment.

### Components/Axes

The diagram is organized into a flow from left to right, with inputs on the left and the final output on the right. It is segmented into four numbered, key components:

1. **Language manual (Top-Left):** A text box containing three example instruction sentences.

2. **Grid Observation (Bottom-Left):** A visual representation of a 2D grid-world state.

3. **Time embedding (Top-Right):** A module providing temporal information.

4. **Language-guided grid (Center):** The core processed representation combining language and visual data.

**Key Processing Blocks & Labels:**

* **T5 Encoder:** Processes the language manual. It outputs a key vector `k_i`.

* **Entity Embedding & Positional History:** Processes the Grid Observation. It outputs a value vector `v_i` and a query vector `q_i`.

* **Softmax Attention:** A mechanism that takes `q_i`, `k_i`, and `v_i` as inputs.

* **CNN (Convolutional Neural Network):** Processes the output of the attention mechanism.

* **DreamerV3:** The final destination for the processed latent vector `z_i`.

**Data Flow & Variables:**

* `k_i`, `v_i`, `q_i`: Key, Value, and Query vectors for the attention mechanism.

* `e_1`, `e_2`, `e_3`: Represent entity embeddings within the 3D "Language-guided grid."

* `a`: Represents an attribute or feature dimension within the grid.

* `z_i`: The final latent state vector output by the CNN, combined with the Time embedding.

### Detailed Analysis

**1. Language Manual (Component 1):**

* **Content:** Contains three bulleted sentences:

* "The plane doesn't move and has a message"

* "The scientist going closer to you is the goal"

* "The ship away from you is an enemy"

* **Function:** Serves as the natural language instruction or context for the agent's task.

**2. Grid Observation (Component 2):**

* **Visual:** A 10x10 grid (approximate) with a light gray background and darker grid lines.

* **Elements:**

* **Icons:** A blue airplane (top-left), a blue scientist figure (center), and a blue ship (bottom-right).

* **Annotations:** A dashed black arrow points from the scientist towards the left. A dashed black rectangle outlines a 3x3 area in the top-left quadrant.

* **Function:** Represents the current visual state of the environment observed by the agent.

**3. Core Processing Pipeline:**

* The **T5 Encoder** processes the language manual, generating a key `k_i`.

* The **Grid Observation** is processed by **Entity Embedding** and **Positional History** modules, generating a value `v_i` and a query `q_i`.

* These three vectors (`q_i`, `k_i`, `v_i`) are fed into a **Softmax Attention** block. This suggests the model is performing cross-modal attention, aligning linguistic concepts (from the manual) with visual entities (from the grid).

* The output of the attention block is visualized as a **3D "Language-guided grid" (Component 4)**. This is a conceptual tensor with dimensions labeled `e_1`, `e_2`, `e_3` (likely representing different entities or features) and an attribute dimension `a`.

* This 3D grid is processed by a **CNN**, which flattens and transforms it into a latent vector `z_i`.

* A **Time embedding (Component 3)** is added to `z_i` (indicated by the `+` symbol), incorporating temporal context.

* The final vector `z_i` (now time-aware) is the output, directed to **DreamerV3**.

### Key Observations

* **Multimodal Fusion:** The architecture explicitly fuses linguistic and visual information early in the pipeline via an attention mechanism, rather than processing them separately.

* **Structured Representation:** The "Language-guided grid" is a key intermediate representation, suggesting the model constructs a structured, entity-centric view of the world informed by language.

* **Temporal Awareness:** The explicit addition of a Time embedding indicates the model is designed for sequential decision-making tasks where timing is crucial.

* **Spatial Grounding:** The dashed arrow and rectangle in the Grid Observation imply the system may track relationships (e.g., "going closer") and regions of interest defined by the language.

### Interpretation

The LED-WM diagram presents a method for grounding natural language instructions in a visual, interactive environment. The system does not merely caption the image or parse the text in isolation; it actively uses the language to *guide* its perception of the grid world.

* **How it works:** The language manual defines goals ("the scientist... is the goal") and threats ("the ship... is an enemy"). The attention mechanism likely weights different parts of the visual grid (e.g., the scientist icon, the ship icon) based on their relevance to these linguistic concepts. The resulting "Language-guided grid" is a rich, context-aware representation where visual features are annotated with semantic meaning derived from the instructions.

* **Why it matters:** This approach is critical for creating AI agents that can follow open-ended, natural language commands in games, simulations, or robotics. By building a world model (`z_i`) that is inherently language-aware, the downstream planner (DreamerV3) can make decisions that are directly aligned with high-level human instructions.

* **Notable Design Choice:** The use of a T5 Encoder (a powerful text-to-text model) suggests the language understanding component is substantial, capable of interpreting complex instructions beyond simple keywords. The transformation of a 2D grid observation into a 3D entity-attribute tensor for CNN processing indicates a sophisticated approach to spatial reasoning.

</details>

Figure 2: Overview of our proposed world model LED-WM. The world model input consists of:

<details>

<summary>x7.png Details</summary>

### Visual Description

Icon/Small Image (23x22)

</details>

a language manual $L$ ,

<details>

<summary>x8.png Details</summary>

### Visual Description

Icon/Small Image (22x22)

</details>

a grid-world observation representing entity and agent symbols, and

<details>

<summary>x9.png Details</summary>

### Visual Description

Icon/Small Image (22x22)

</details>

the current time step $t$ . Entity, agent symbols, and time step are encoded using learned embeddings, while $L$ is encoded via a frozen T5 encoder. To represent each entity, we employ a multi-layer perceptron (MLP) that processes the entity embedding and its temporal information, capturing its movement pattern relative to the agent, to produce a query vector. We apply an attention network between the query vectors and the sentence embeddings to align each entity with its corresponding sentence. The resulting vectors are then put into their respective entity positions. This produces

<details>

<summary>x10.png Details</summary>

### Visual Description

Icon/Small Image (22x22)

</details>

a language-grounded grid $G_{l}$ , which is then processed by a CNN. The extracted feature vector is flattened and concatenated with the time embedding to form final observation representation $x_{t}$ .

### 4.1 Observational inputs

The input to the world model consists of a natural language manual $L$ , a grid observation $o_{t}$ of size $10\times 10$ , containing symbolic entities, and the current time step $t$ . The manual

<details>

<summary>x11.png Details</summary>

### Visual Description

Icon/Small Image (24x23)

</details>

comprises $N$ sentences, each describing the dynamic of one of the $N$ entities in the observation. Following Lin et al. [20], $N$ sentences in $L$ are encoded using a T5 encoder [30], resulting in $N$ frozen sentence embeddings, denoted by $s_{1},s_{2},...,s_{N}$ . In the grid

<details>

<summary>x12.png Details</summary>

### Visual Description

Icon/Small Image (23x23)

</details>

, the $N$ entities and the agent, represented by entity symbols, are encoded using a learned entity embedding vectors initialized with random weights. This ensures the agent does not have prior knowledge about entity identities, requiring it to infer entities based on the language. This results in $N$ symbol embeddings $sb_{1},sb_{2},...,sb_{N}\in\mathbb{R}^{d_{sb}}$ and a single agent embedding $a\in\mathbb{R}^{d_{sb}}$ . The current time step $t$

<details>

<summary>x13.png Details</summary>

### Visual Description

Icon/Small Image (23x23)

</details>

is encoded as $time_{t}$ using a learned time embedding, also initialized with random weights.

To build position history of each entity $i$ , we capture temporal dynamics by constructing an array $D_{i}$ temporally, with length corresponding to the maximum possible steps in the environment and initial values of $-1$ . At time step $t$ , let the 2D coordinate of the entity $i$ be $p^{t}_{i}$ and that of the agent be $p^{t}_{a}$ . To determine the relative direction of the entity’s movement with respect to the agent, we compute the dot product:

$$

D_{i}^{t}=\frac{p^{t}_{i}-p^{t}_{a}}{\|p^{t}_{i}-p^{t}_{a}\|}\cdot\frac{p^{t}_{i}-p^{t-1}_{i}}{||p^{t}_{i}-p^{t-1}_{i}||},\forall i\in[1..N],\forall t, \tag{2}

$$

where the first term is a normalized vector from the agent to the entity $i$ , and the second term is a normalized velocity vector of the entity $i$ . This dot product quantifies the alignment between the entity’s direction of motion and its position relative to the agent at each time step $t$ .

### 4.2 LED: Building a language-aware encoder

We construct a language-grounded grid representation that aligns the language manual $L$ , which consists of $N$ sentence embeddings, with the observation $o_{t}$ , which includes $N$ entity embeddings and one agent embedding. To align the sentence embeddings with the entity embeddings, we use an attention network. The values are obtained through a linear transformation of the sentence embeddings $s_{i}$ . Meanwhile, the queries are obtained through a multi-layer perception (MLP) applied to the entity embeddings $sb_{i}$ and temporal array $D_{e}$ . Likewise, the keys are obtained through an MLP applied to the sentence embeddings $s_{i}$ :

$$

\displaystyle q_{i} \displaystyle=\text{MLP}([sb_{i},D_{e}]), \displaystyle k_{i} \displaystyle=\text{MLP}(s_{i}), \displaystyle v_{i} \displaystyle=W_{v}s_{i}, \displaystyle q_{i} \displaystyle\in\mathbb{R}^{d}, \displaystyle k_{i} \displaystyle\in\mathbb{R}^{d}, \displaystyle v_{i} \displaystyle\in\mathbb{R}^{d_{\text{val}}}, \tag{3}

$$

where $d$ and $d_{val}$ denote the dimensions of the query/key and value vectors, respectively. We then apply scaled dot-product attention [35]. Given $K\in R^{N\times d}$ as the key matrix where the row $i$ of $K$ is $k_{i}^{T}$ , attention scores $\gamma_{i}\in R^{N}$ and resulting vector $e_{i}\in R^{d_{a}}$ for each entity $i$ are calculated as:

$$

\displaystyle\gamma_{i}=softmax\left(\frac{q_{i}\cdot K}{\sqrt{d}}\right),\quad e_{i}=\sum_{j=1}^{N}\gamma_{ij}v_{j}, \tag{5}

$$

This attention aligns entity symbols in the observation with sentences in the manual based on attribute language descriptions such as movement (e.g., chaser, moving away, stationary) and entity name (e.g., dog, wizard). The resulting $e_{i}$ from the attention is able to represent an associated role for entity $i$ such as enemy, messenger, goal, which is vital information for world model and policy learning.

To retain the spatial information of entities, we place the resulting vectors $e_{i}$ back into the original positions of their corresponding entities in the grid observation. This produces

<details>

<summary>x14.png Details</summary>

### Visual Description

Icon/Small Image (23x23)

</details>

a language-aware grid observation $G_{l}$ of size $h\times w\times d_{val}$ . We then use a CNN encoder to extract a feature map, which is subsequently flattened and concatenated with the time embedding $\text{time}_{t}$ . The combined representation is processed through an MLP to obtain the final feature representation $x_{t}$ for the observation $o_{t}$ at time step $t$ :

$$

\displaystyle x_{t} \displaystyle=\text{MLP}(\text{Flatten}([\text{CNN}(G_{l})),\text{time}_{t}]) \tag{6}

$$

Denoting $\phi$ as the parameters of LED-WM, we can find stochastic variable $z_{t}$ as the function of $x_{t}$ :

$$

\displaystyle z_{t}\sim q_{\phi}(z_{t}|h_{t},x_{t}), \tag{7}

$$

which now replaces the encoder in DreamerV3, as shown in Equation ˜ 1.

### 4.3 LED-WM: Combining LED with Dreamerv3

We replace DreamerV3’s encoder with LED, resulting in our world model LED-WM. We adopt world model and policy learning from DreamerV3. However, we make the following changes to the original architecture to improve policy generalization and sample efficiency: we omit the reconstruction decoder (Decoder in Equation ˜ 1) and adopt multi-step prediction for reward and continue prediction [15, 27]. For more details, we refer the readers to Appendix ˜ D for world model loss and Appendix ˜ E for training procedure.

## 5 Experiments

We want to answer the following questions: 1) Can a policy trained on our world model LED-WM generalize to unseen games? (see Section ˜ 5.1), and 2) Can the world model LED-WM generalize to unseen games? (see Section ˜ 5.2) To answer these, we use two environments: MESSENGER and MESSENGER-WM, which are detailed in Section ˜ 3. We detail the training settings in Appendix ˜ A.

### 5.1 Policy generalization trained from LED-WM

#### 5.1.1 Policy baselines

As baselines, we adopt the following model-free (EMMA,CRL) and model-based (Dynalang, EMMA-LWM) methods:

- EMMA [14] uses attention between entities and sentences to generate language-conditioned observation to the policy. The policy is trained via curriculum learning where the agent is initialized with parameters learned from previous easier game settings. We report EMMA with curriculum learning from the original paper and EMMA without curriculum learning from [40].

- CRL [26] develops a specialized constraint for MESSENGER to overcome spurious correlations between entity identities and their roles in the training data. It has the state-of-the-art win rate performance in test environments of MESSENGER.

- Dynalang [20] use soft actor-critic for policy learning. Because the paper does not report policy generalization performance in MESSENGER, we first reproduce Dynalang using published code and train to convergence according to published hyperparameters and training steps. We then report its policy performance on test environment of MESSENGER in Table ˜ 2.

- EMMA-LWM [40] built a language-conditioned world model. A policy is trained with simulated trajectories from this world model through online imitation learning and filtered behavior cloning. Both methods require expert demonstrations. Online imitation learning is where the expert supervises the optimal action to take in simulated states (from the world model). Meanwhile, in filtered behavior cloning, the expert uses only states from its own expert plan. The agent then only chooses plans that achieve the highest returns according to the world models to imitate.

#### 5.1.2 Evaluation metrics

- Win Rates for MESSENGER: To make our comparison consistent with reported results from EMMA [14] and CRL [26], we adopt win rate as the metric in MESSENGER. Win rate is calculated as the average number of games won by the agent over 1000 episodes.

- Average Sum of Scores for MESSENGER-WM: Likewise to be consistent with EMMA-LWM [40] studying MESSENGER-WM, we adopt average sum of scores as the metric. For each game configuration, we run the policy for 60 trials We find that 60 trials are enough to find a stable average sum of scores to evaluate a policy given a particular game configuration. and compute the average sum of scores. This process is repeated for 1000 games, and we report the average sum across all games.

Table 2: Policy generalization in MESSENGER in terms of win rate. Note that other methods (Dynalang, CRL and LED-WM) do not use curriculum training. Results of Dynalang and LED-WM (∗) are rounded to second decimal place, while results for CRL and EMMA are taken from their original papers. Results are recorded across five training seeds.

| Dynalang ∗ CRL EMMA (w/o curriculum) | 0.03 ± 0.02 88 ± 2.5 85 ± 1.4 | 0.04 ± 0.05 76 ± 5 45 ± 12 | – – – | 0.03 ± 0.05 32 ± 1.9 10 ± 0.8 |

| --- | --- | --- | --- | --- |

| EMMA(w/ curriculum) | 88 ± 2.3 | 95 ± 0.4 | – | 22 ± 3.8 |

| LED-WM (Ours) ∗ | 100 ± 0 | 51.6 ± 2.7 | 96.6 ± 1.0 | 34.97 ± 1.73 |

#### 5.1.3 Results

We report the win rate performance of our method and other baselines for MESSENGER in Table ˜ 2 and the average sum of scores for MESSENGER-WM in Table ˜ 3.

In MESSENGER-WM, LED-WM outperforms EMMA-LWM in all settings without using any expert demonstrations. In MESSENGER, Dynalang fails to generalize to unseen games. We hypothesize that this is because Dynalang lacks an explicit mechanism to ground language to each entity. Meanwhile, LED-WM is better than other baselines in S1 and comparable to CRL in S3.

However, LED-WM underperforms CRL in S2, where the agent is trained on only one movement combination chasing-fleeing-stationary but is evaluated over different unseen movement combinations (unseen dynamics - see Table ˜ 1). In contrast, LED-WM performs well on S2-dev, where its setting is similar to S2, but its test dynamics are the same as the training games. We hypothesize that this occurs because CRL incorporates an explicit mechanism to mitigate the data bias in S2 that there is only one movement combination in the training data and spurious correlations between entity identities and their roles. For instance, the assumption "a dog is always a goal". Therefore, this mechanism might enhance generalization in test scenarios where the dog is either a friend or an enemy. Incorporating such a mechanism in LED-WM might be a promising direction for future work.

Table 3: Policy generalization in MESSENGER-WM in terms of average sum of scores. EMMA-LWM results are taken from its original paper [40]. Results are recorded across five training seeds.

| EMMA-LWM Online IL Filtered BC (near-optimal) | 1.01 $\pm$ 0.12 1.18 $\pm$ 0.10 | 0.96 $\pm$ 0.17 0.75 $\pm$ 0.20 | 0.62 $\pm$ 0.21 0.44 $\pm$ 0.18 |

| --- | --- | --- | --- |

| Filtered BC (suboptimal) | 0.98 $\pm$ 0.13 | 0.29 $\pm$ 0.25 | 0.13 $\pm$ 0.19 |

| LED-WM (Ours) | 1.31 $\pm$ 0.05 | 1.15 $\pm$ 0.08 | 1.16 $\pm$ 0.02 |

### 5.2 World model generalization

To evaluate the generalization of a world model, one pragmatic metric is to measure how its generated rollouts on unseen dynamics benefit policy learning. If the world model can generalize to unseen dynamics in test games, which effectively simulates these dynamics, a policy finetuned on these rollouts should improve in new games.

Finetuning procedure.

Given a trained LED-WM, a trained policy from LED-WM, and a test game, LED-WM takes the initial observation and the manual as input to generate 60 synthetic trajectories. These trajectories are then used to determine whether the policy should be finetuned on this game. We estimate the value of the trained policy from the world model and finetune the policy if the estimated value is smaller than a pre-defined threshold. For each gradient update on the policy finetune, we generate 60 synthetic trajectories. We repeat this process in 2000 optimization steps. We illustrate the finetuning procedure in Appendix ˜ F with a Python-like format.

Table 4: World model generalization over S2-dev and S3 (MESSENGER) through finetune procedure. Finetune results are recorded in average sum of scores across five seeds.

| LED-WM (Ours) After finetune | 1.500 $\pm$ 0 - | - - | 1.4478 $\pm$ 0.01 1.4513 $\pm$ 0.01 | –0.11 $\pm$ 0.05 -0.01 $\pm$ 0.12 |

| --- | --- | --- | --- | --- |

Evaluation metrics and results.

We adopt the average sum of scores due to its robustness to the stochasticity of the environment. We show policy finetune results in Table ˜ 4 for MESSENGER. In MESSENGER, we show that the finetuning procedure improves the trained policy in S2-dev, In S2-dev, we use Wilcoxon signed-rank [37] and hierarchical bootstrap sampling [8] corresponding to two levels of hierarchies in our experiments (episodes and run trials): at a 95% confidence level, hierarchical sampling indicates an improvement between 0.014 and 0.019. and in S3, demonstrating that the world model is generalizable to test trajectories. However, the absolute policy improvement is still limited in our experiments.

## 6 Related work

Due to space limit, we provide a detailed related work in Appendix ˜ B. In this section, we briefly review related work on language-conditioned dynamics using model-based approach. Recent efforts focus on integrating dynamics-descriptive language into world models, resulting in language-conditioned world models. Dynalang [20] shows that such world model improves policy’s sample efficiency compared to model-free approaches. However, it does not demonstrate policy generalization in unseen games. Reader [6] shows that a MCTS planner can generalize to unseen games using a language-conditioned world model. Despite this, its environment (RTFM [43]) does not require language grounding to entities. Zhang et al. [40] introduce MESSENGER-WM, a compositional benchmark based on MESSENGER, and EMMA-LWM—a policy can generalize over unseen games from a language-based world model and expert demonstrations. Though sharing this same goal of policy generalization with our work, these studies rely on limiting assumptions. Planning with an MCTS tree in Reader, involves incurring computational cost to generate plans in inference time. This approach may not be practical for applications that require quick policy responses. On the other hand, EMMA-LWM requires expert demonstrations to use imitation learning and behavior cloning. This assumption may not always be feasible for every application. In contrast, our work lifts these assumptions and demonstrates policy generalization over unseen games in two environments that require language grounding: MESSENGER and MESSENGER-WM.

## 7 Conclusion

We develop an agent that can understand dynamics-descriptive language in interactive tasks. We adopt a model-based reinforcement learning (MBRL) approach, where a language-conditioned world model is trained through interactions with the environment, and a policy is learned from this world model. Unlike existing works, we do not require expert demonstrations or expensive planning during inference. Our method proposes Language-aware Encoder for Dreamer World Model (LED-WM). LED-WM adopts an attention mechanism to explicitly align language description to entities in the observation. We show that policies trained with LED-WM can generalize better to unseen games than existing baselines. We can also further improve the trained policy through fine-tuning on synthetic test trajectories generated by the world model.

## 8 Acknowledgment

We thank everyone from VIRL lab (Oregon State University), especially Skand and Akhil for their valuable feedback and discussions. The first author was personally supported by Amanda Putiza, Nguyen Thi Ngoc Anh, Ngo Thi Bich Lan, Tran Thanh Nhu, Bui Thuy Tien, and Nguyen Hoang Kieu Anh. This work is supported by NSF CAREER Award 2339676. We also thank the anonymous reviewers for their valuable feedback and suggestions.

## References

- Anderson et al. [2017] Peter Anderson, Qi Wu, Damien Teney, Jake Bruce, Mark Johnson, Niko Sünderhauf, Ian Reid, Stephen Gould, and Anton van den Hengel. Vision-and-language navigation: Interpreting visually-grounded navigation instructions in real environments. arXiv [cs.CV], November 2017.

- Bisk et al. [2016] Yonatan Bisk, Deniz Yuret, and Daniel Marcu. Natural language communication with robots. In Kevin Knight, Ani Nenkova, and Owen Rambow, editors, Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pages 751–761. Association for Computational Linguistics, June 2016.

- Cao et al. [2023] Tianshi Cao, Jingkang Wang, Yining Zhang, and Sivabalan Manivasagam. Zero-shot compositional policy learning via language grounding. arXiv, April 2023.

- Cheng et al. [2023] Ching-An Cheng, Andrey Kolobov, Dipendra Misra, Allen Nie, and Adith Swaminathan. LLF-bench: Benchmark for interactive learning from language feedback. arXiv, December 2023.

- Chevalier-Boisvert et al. [2019] Maxime Chevalier-Boisvert, Dzmitry Bahdanau, Salem Lahlou, Lucas Willems, Chitwan Saharia, Thien Huu Nguyen, and Yoshua Bengio. BabyAI: A platform to study the sample efficiency of grounded language learning. arXiv, December 2019.

- Dainese et al. [2023] Nicola Dainese, Pekka Marttinen, and Alexander Ilin. Reader: Model-based language-instructed reinforcement learning. In Houda Bouamor, Juan Pino, and Kalika Bali, editors, Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, page 16583–16599, Singapore, December 2023. Association for Computational Linguistics.

- Dainese et al. [2024] Nicola Dainese, Matteo Merler, Minttu Alakuijala, and Pekka Marttinen. Generating code world models with large language models guided by monte carlo tree search. arXiv, October 2024.

- Davison and Hinkley [2013] A C Davison and D V Hinkley. Cambridge series in statistical and probabilistic mathematics: Bootstrap methods and their application series number 1. Cambridge University Press, Cambridge, England, June 2013.

- Du et al. [2023] Yilun Du, Mengjiao Yang, Bo Dai, Hanjun Dai, Ofir Nachum, Joshua B Tenenbaum, Dale Schuurmans, and Pieter Abbeel. Learning universal policies via text-guided video generation. arXiv, November 2023.

- Goyal et al. [2019] Prasoon Goyal, Scott Niekum, and Raymond J Mooney. Using natural language for reward shaping in reinforcement learning. arXiv, May 2019.

- Ha and Schmidhuber [2018] David Ha and Jürgen Schmidhuber. World models. March 2018.

- Hafner et al. [2018] Danijar Hafner, Timothy Lillicrap, Ian Fischer, Ruben Villegas, David Ha, Honglak Lee, and James Davidson. Learning latent dynamics for planning from pixels. arXiv [cs.LG], November 2018.

- Hafner et al. [2024] Danijar Hafner, Jurgis Pasukonis, Jimmy Ba, and Timothy Lillicrap. Mastering diverse domains through world models - new 2024. arXiv, April 2024.

- Hanjie et al. [2021] Austin W Hanjie, Victor Zhong, and Karthik Narasimhan. Grounding language to entities and dynamics for generalization in reinforcement learning. arXiv, June 2021.

- Hansen et al. [2023] Nicklas Hansen, Hao Su, and Xiaolong Wang. TD-MPC2: Scalable, robust world models for continuous control. arXiv, October 2023.

- Kaiser et al. [2020] Lukasz Kaiser, Mohammad Babaeizadeh, Piotr Milos, Blazej Osinski, Roy H Campbell, Konrad Czechowski, Dumitru Erhan, Chelsea Finn, Piotr Kozakowski, Sergey Levine, Afroz Mohiuddin, Ryan Sepassi, George Tucker, and Henryk Michalewski. Model-based reinforcement learning for atari. arXiv, February 2020.

- Krantz and Lee [2022] Jacob Krantz and Stefan Lee. Sim-2-sim transfer for vision-and-language navigation in continuous environments. arXiv, April 2022.

- Krantz et al. [2022] Jacob Krantz, Shurjo Banerjee, Wang Zhu, Jason Corso, Peter Anderson, Stefan Lee, and Jesse Thomason. Iterative vision-and-language navigation. arXiv [cs.CV], October 2022.

- Krantz et al. [2023] Jacob Krantz, Shurjo Banerjee, Wang Zhu, Jason Corso, Peter Anderson, Stefan Lee, and Jesse Thomason. Iterative vision-and-language navigation. In 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 14921–14930, Vancouver, BC, Canada, June 2023. IEEE.

- Lin et al. [2023] Jessy Lin, Yuqing Du, Olivia Watkins, Danijar Hafner, Pieter Abbeel, Dan Klein, and Anca Dragan. Learning to model the world with language. arXiv [cs.CL], July 2023.

- Liu et al. [2023] Bingbin Liu, Jordan T Ash, Surbhi Goel, Akshay Krishnamurthy, and Cyril Zhang. Transformers learn shortcuts to automata. arXiv, May 2023.

- McCallum et al. [2023] Sabrina McCallum, Max Taylor-Davies, Stefano V Albrecht, and Alessandro Suglia. Is feedback all you need? leveraging natural language feedback in goal-conditioned reinforcement learning. arXiv, December 2023.

- Mehta et al. [2024] Nikhil Mehta, Milagro Teruel, Patricio Figueroa Sanz, Xin Deng, Ahmed Hassan Awadallah, and Julia Kiseleva. Improving grounded language understanding in a collaborative environment by interacting with agents through help feedback. arXiv, February 2024.

- Nguyen et al. [2022] Khanh Nguyen, Yonatan Bisk, and Hal Daumé, III. A framework for learning to request rich and contextually useful information from humans. arXiv, June 2022.

- Parakh et al. [2023] Meenal Parakh, Alisha Fong, Anthony Simeonov, Tao Chen, Abhishek Gupta, and Pulkit Agrawal. Lifelong robot learning with human assisted language planners. arXiv, October 2023.