# Adversarial Signed Graph Learning with Differential Privacy

**Authors**: Haobin Ke, Sen Zhang, Qingqing Ye, Xun Ran, Haibo Hu

> The Hong Kong Polytechnic University, Hung Hom, Hong Kong, haobin.ke@connect.polyu.hk

> The Hong Kong Polytechnic University, Hung Hom, Hong Kong, senzhang@polyu.edu.hk

> The Hong Kong Polytechnic University, Hung Hom, Hong Kong, qqing.ye@polyu.edu.hk

> The Hong Kong Polytechnic University, Hung Hom, Hong Kong, qi-xun.ran@connect.polyu.hk

> The Hong Kong Polytechnic University; Research Centre for Privacy and Security Technologies in Future Smart Systems, PolyU, Hung Hom, Hong Kong, haibo.hu@polyu.edu.hk


Abstract.

Signed graphs with positive and negative edges can model complex relationships in social networks. Leveraging balance theory, which deduces edge signs for multi-hop node pairs, signed graph learning can generate node embeddings that preserve both structural and sign information. However, training on sensitive signed graphs raises significant privacy concerns, as model parameters may leak private link information. Existing protection methods with differential privacy (DP) typically rely on edge or gradient perturbation for unsigned graph protection. Yet, they are not well-suited for signed graphs, mainly because edge perturbation tends to cause cascading errors in edge sign inference under balance theory, while gradient perturbation increases sensitivity due to node interdependence and gradient polarity changes caused by sign flips, resulting in larger noise injection. In this paper, motivated by the robustness of adversarial learning to noisy interactions, we present ASGL, a privacy-preserving adversarial signed graph learning method that preserves high utility while achieving node-level DP. We first decompose signed graphs into positive and negative subgraphs based on edge signs, and then design a gradient-perturbed adversarial module to approximate the true signed connectivity distribution. In particular, the gradient perturbation helps mitigate cascading errors, while the subgraph separation facilitates sensitivity reduction. Further, we devise a constrained breadth-first search tree strategy that fuses with balance theory to identify the edge signs between generated node pairs. This strategy also enables gradient decoupling, thereby effectively lowering gradient sensitivity. Extensive experiments on real-world datasets show that ASGL achieves favorable privacy-utility trade-offs across multiple downstream tasks. Our code and data are available at https://github.com/KHBDL/ASGL-KDD26.

Keywords: Differential privacy, Adversarial signed graph learning, Constrained breadth first search-trees, Balanced theory.

Conference: Proceedings of the 32nd ACM SIGKDD Conference on Knowledge Discovery and Data Mining V.1 (KDD ’26), August 09–13, 2026, Jeju Island, Republic of Korea. Copyright: cc. DOI: 10.1145/3770854.3780282. ISBN: 979-8-4007-2258-5/2026/08. CCS: Security and privacy, Data anonymization and sanitization.

1. Introduction

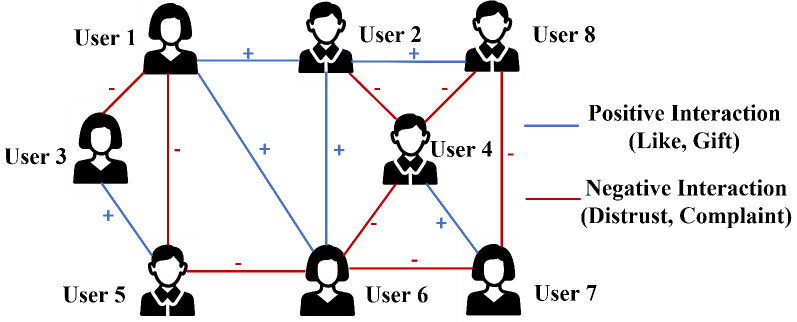

The signed graph is a common and widely adopted graph structure that can represent both positive and negative relationships using signed edges (19; 20; 21). For example, in online social networks shown in Fig. 1, while user interactions reflect positive relationships (e.g., like, trust, friendship), negative relationships (e.g., dislike, distrust, complaint) also exist. Signed graphs provide more expressive power than unsigned graphs to capture such complex user interactions.

Recently, some studies (22; 23; 24) have explored signed graph learning methods, aiming to obtain low-dimensional vector representations of nodes that preserve key signed graph properties: neighbor proximity and structural balance. These embeddings are subsequently applied to downstream tasks such as edge sign prediction, node clustering, and node classification. Among existing signed graph learning methods, balance theory (27) has proven effective in identifying the edge signs between the source node and multi-hop neighbor nodes. It is leveraged in graph neural network (GNN)-based models to guide message passing across signed edges, ensuring that information aggregation is aligned with the node proximity (36; 38; 39). Moreover, to enhance the robustness and generalization capability of deep learning models, the adversarial graph embedding model (03; 14) learns the underlying connectivity distribution of signed graphs by generating high-quality node embeddings that preserve signed node proximity.

Despite their ability to effectively capture signed relationships between nodes, graph learning models remain vulnerable to link stealing attacks (25; 42; 43), which aim to infer the existence of links between arbitrary node pairs in the training graph. For instance, in online social graphs, such attacks may reveal whether two users share a friendly or adversarial relationship, compromising user privacy and damaging personal or professional reputations.

<details>

<summary>x1.png Details</summary>

### Visual Description

# Technical Document Extraction: Social Interaction Network Diagram

## 1. Document Overview

This image is a social network graph (node-edge diagram) illustrating the relationships between eight distinct users. The diagram uses color-coded and symbol-labeled edges to represent the nature of interactions between individuals.

## 2. Legend and Key Components

The legend is located in the middle-right section of the image.

| Visual Element | Label | Description/Examples |
| :--- | :--- | :--- |
| **Blue Line with '+'** | **Positive Interaction** | Like, Gift |
| **Red Line with '-'** | **Negative Interaction** | Distrust, Complaint |

### Node Identification

There are 8 nodes, represented by human silhouettes and labeled "User 1" through "User 8".

* **Female Silhouettes:** User 1, User 3, User 6, User 7.

* **Male Silhouettes:** User 2, User 4, User 5, User 8.

---

## 3. Network Topology and Interaction Data

The following table reconstructs the connections (edges) between users based on the spatial layout and color/symbol coding.

| Source Node | Target Node | Interaction Type | Symbol | Color |
| :--- | :--- | :--- | :--- | :--- |
| User 1 | User 2 | Positive | + | Blue |
| User 1 | User 3 | Negative | - | Red |
| User 1 | User 5 | Negative | - | Red |
| User 1 | User 6 | Positive | + | Blue |
| User 2 | User 4 | Negative | - | Red |
| User 2 | User 6 | Positive | + | Blue |
| User 2 | User 8 | Positive | + | Blue |
| User 3 | User 5 | Positive | + | Blue |
| User 4 | User 6 | Negative | - | Red |
| User 4 | User 7 | Positive | + | Blue |
| User 4 | User 8 | Negative | - | Red |
| User 5 | User 6 | Negative | - | Red |
| User 6 | User 7 | Negative | - | Red |
| User 7 | User 8 | Negative | - | Red |

---

## 4. Component Isolation and Spatial Analysis

### Region 1: Upper Tier (Users 1, 2, 8)

* **User 1 to User 2:** A horizontal blue line indicates a positive interaction.

* **User 2 to User 8:** A horizontal blue line indicates a positive interaction.

* *Trend:* The top row of the network is characterized primarily by positive horizontal connections.

### Region 2: Central/Diagonal Interactions

* **User 1 to User 6:** A long diagonal blue line (+) indicates a positive relationship spanning the height of the graph.

* **User 2 to User 4 & User 4 to User 8:** These form a "V" shape of red lines (-), indicating negative interactions centered on User 4 from the upper tier.

* **User 4 to User 6 & User 4 to User 7:** User 4 has a negative interaction with User 6 and a positive interaction with User 7.

### Region 3: Lower Tier (Users 3, 5, 6, 7)

* **User 3 to User 5:** A diagonal blue line (+) indicates a positive interaction.

* **User 5 to User 6:** A horizontal red line (-) indicates a negative interaction.

* **User 6 to User 7:** A horizontal red line (-) indicates a negative interaction.

* *Trend:* The bottom-right of the graph (Users 5, 6, 7) is dominated by negative horizontal interactions.

### Region 4: Vertical Interactions

* **User 1 to User 5:** A vertical red line (-) indicates a negative interaction.

* **User 2 to User 6:** A vertical blue line (+) indicates a positive interaction.

* **User 8 to User 7:** A vertical red line (-) indicates a negative interaction.

---

## 5. Summary of Findings

* **Total Nodes:** 8

* **Total Edges:** 14

* **Positive Edges (Blue):** 6

* **Negative Edges (Red):** 8

* **Most Connected Node:** User 6 (5 connections), followed by User 1, User 2, and User 4 (4 connections each).

* **Least Connected Node:** User 3 (2 connections).

</details>

Figure 1. A signed social graph with blue edges for positive links and red edges for negative links.

Differential privacy (DP) (06) is a rigorous privacy framework that guarantees statistically indistinguishable outputs regardless of whether any individual's data is present. Such a guarantee is achieved through sufficient perturbation while maintaining provable privacy bounds and computational feasibility. Existing privacy-preserving graph learning methods with DP can be categorized into two types based on the perturbation mechanism: one applies edge perturbation (53) to protect the link information by modifying the graph structure, and the other adopts gradient perturbation (54; 52) to obscure the relationships between nodes during model training. However, these methods are not well-suited for signed graph learning due to the following two challenges:

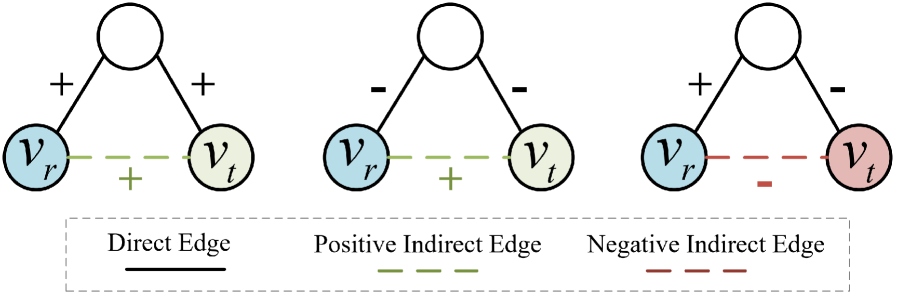

- Cascading error: As illustrated in Fig. 2, balance theory facilitates the inference of the edge sign between two unconnected nodes by computing the product of edge signs along a path. However, existing methods that use edge perturbation to protect link information may alter the sign of any edge along the path, thereby leading to incorrect inference of edge signs under balance theory. Such a local error can further propagate along the path, resulting in cascading errors in edge sign inference.

- High sensitivity: Although gradient perturbation methods avoid directly perturbing edges and may therefore mitigate cascading errors, they are still ill-suited for signed graph learning because the node interdependence in signed graphs leads to high gradient sensitivity. The presence or absence of a node affects the gradient updates of itself and its neighbors. Furthermore, an edge change may induce sign flips that reverse gradient polarity within the loss function (see Eq. (10) for details), resulting in higher sensitivity compared to unsigned graphs. This increased sensitivity requires larger noise for privacy protection, thereby reducing data utility.

To address these challenges, we turn to an adversarial learning-based approach for private signed graph learning. The core motivation is that the adversarial method generates node embeddings by approximating the true connectivity distribution, making it naturally robust to noisy interactions during optimization. Accordingly, we propose ASGL, a differentially private adversarial signed graph learning method that achieves high utility while maintaining node-level differential privacy. Within ASGL, the signed graph is first decomposed into positive and negative subgraphs based on edge signs. These subgraphs are then processed through an adversarial learning module with shared model parameters, enabling both positive and negative node pairs to be mapped into a unified embedding space while effectively preserving signed proximity. Based on this, we develop the adversarial learning module with differentially private stochastic gradient descent (DPSGD), which generates private node embeddings that closely approximate the true signed connectivity distribution. In particular, the gradient perturbation helps mitigate cascading errors, while the subgraph separation avoids gradient polarity reversals induced by edge sign flips within the loss function, thereby reducing the sensitivity to changes in edge signs. Considering that node interdependence further increases gradient sensitivity, we design a constrained breadth-first search (BFS) tree strategy within adversarial learning. This strategy integrates balance theory to identify the edge signs between generated node pairs, while also constraining the receptive fields of nodes to enable gradient decoupling, thereby effectively lowering gradient sensitivity and reducing noise injection. Our main contributions are listed as follows:

- We present a privacy-preserving adversarial learning method for signed graphs, called ASGL. To the best of our knowledge, it is the first work to ensure node-level differential privacy for signed graph learning while preserving high data utility.

- To mitigate cascading errors, we develop the adversarial learning module with DPSGD, which generates private node embeddings that closely approximate the true signed connectivity distribution. This approach avoids direct perturbation of the edge structure, which helps mitigate cascading errors and prevents gradient polarity reversals in the loss function.

- To further reduce the sensitivity caused by complex node relationships, we design a constrained breadth-first search tree strategy that integrates balance theory to identify edge signs between generated node pairs. This strategy also constrains the receptive fields of nodes, enabling gradient decoupling and effectively lowering gradient sensitivity.

- Extensive experiments demonstrate that our method achieves favorable privacy-accuracy trade-offs and significantly outperforms state-of-the-art methods in edge sign prediction and node clustering tasks. Additionally, we conduct link stealing attacks, demonstrating that ASGL exhibits stronger resistance to such attacks across all datasets.

The remainder of this paper is organized as follows. Section 2 describes the preliminaries of our solution. The problem statement is introduced in Section 3. Our proposed solution and its privacy analysis are presented in Section 4. The experimental results are reported in Section 5. We discuss related work in Section 6, followed by the conclusion in Section 7.

2. Preliminaries

In this section, we provide an overview of signed graphs, differential privacy, and DPSGD. Additionally, the vanilla adversarial graph learning is introduced in App. A, and the frequently used notations are summarized in Table 5 (See App. B).

2.1. Signed Graph with Balance Theory

A signed graph is denoted as $\mathcal{G}=(V,E^{+},E^{-})$, where $V$ is the set of nodes, and $E^{+}$ and $E^{-}$ represent the positive and negative edge sets, respectively. An edge $e_{ij}=(v_{i},v_{j})\in E^{+}$ (resp. $E^{-}$) represents a positive (resp. negative) link between nodes $v_{i},v_{j}\in V$. Notably, $E^{+}\cap E^{-}=\emptyset$ ensures that no node pair maintains both positive and negative relationships simultaneously. The objective of signed graph embedding is to learn a mapping function $f:V\to\mathbb{R}^{k}$ that projects each node $v\in V$ into a low-dimensional ($k$-dimensional) vector while preserving both the structural and sign properties of the original signed graph. In other words, node pairs connected by positive edges should be embedded closely, while those connected by negative edges should be placed farther apart in the embedding space.
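As a minimal sketch of this definition, the Python snippet below stores a signed graph as a map from node pairs to signs and splits it into the two edge sets. The function name `split_signed_graph`, the dictionary layout, the toy edges, and the choice to treat edges as undirected are illustrative assumptions, not part of the original formulation.

```python
from typing import Dict, Set, Tuple

Edge = Tuple[int, int]


def split_signed_graph(signed_edges: Dict[Edge, int]) -> Tuple[Set[Edge], Set[Edge]]:
    """Split {(i, j): +1 or -1} into the edge sets (E_plus, E_minus).

    Assumption: edges are undirected, so (i, j) and (j, i) denote the same pair.
    """
    e_plus: Set[Edge] = set()
    e_minus: Set[Edge] = set()
    for (i, j), sign in signed_edges.items():
        if sign not in (1, -1):
            raise ValueError(f"edge ({i}, {j}) has invalid sign {sign}")
        edge = (min(i, j), max(i, j))  # canonical order for an undirected pair
        (e_plus if sign == 1 else e_minus).add(edge)
    # E+ and E- must be disjoint: no pair may be both positive and negative.
    conflict = e_plus & e_minus
    if conflict:
        raise ValueError(f"pairs with both signs: {sorted(conflict)}")
    return e_plus, e_minus


# Hypothetical toy graph with four users.
toy_edges = {(1, 2): 1, (1, 3): -1, (1, 5): -1, (3, 5): 1}
E_plus, E_minus = split_signed_graph(toy_edges)
print(sorted(E_plus))   # [(1, 2), (3, 5)]
print(sorted(E_minus))  # [(1, 3), (1, 5)]
```

The disjointness check mirrors the condition $E^{+}\cap E^{-}=\emptyset$: an input that assigns both signs to the same pair is rejected rather than silently resolved.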

<details>

<summary>x2.png Details</summary>

### Visual Description

# Technical Document Extraction: Graph Edge Relationship Diagram

## 1. Document Overview

This image is a technical diagram illustrating the relationship between direct edges and indirect edges in a graph structure, likely representing social balance theory or signed network analysis. It consists of three distinct graph scenarios and a legend.

---

## 2. Component Isolation

### A. Legend (Footer Region)

Located at the bottom of the image within a dashed rectangular border.

* **Direct Edge:** Represented by a solid black line.

* **Positive Indirect Edge:** Represented by a dashed green line.

* **Negative Indirect Edge:** Represented by a dashed red line.

### B. Graph Scenarios (Main Chart Region)

The image contains three triangular graph structures. Each graph features a top unlabeled white node connected to two bottom nodes ($v_r$ and $v_t$) via direct edges. An indirect edge connects $v_r$ and $v_t$.

#### Scenario 1: Positive Indirect Relationship (Left)

* **Nodes:**
  * Top: Unlabeled (White)
  * Bottom Left: $v_r$ (Light Blue)
  * Bottom Right: $v_t$ (Light Green)
* **Direct Edges (Solid Black):**
  * Top to $v_r$: Labeled with a plus sign (**+**)
  * Top to $v_t$: Labeled with a plus sign (**+**)
* **Indirect Edge (Dashed Green):**
  * Between $v_r$ and $v_t$: Labeled with a plus sign (**+**)
* **Logic:** Two positive direct connections result in a **Positive Indirect Edge**.

#### Scenario 2: Positive Indirect Relationship (Center)

* **Nodes:**
  * Top: Unlabeled (White)
  * Bottom Left: $v_r$ (Light Blue)
  * Bottom Right: $v_t$ (Light Green)
* **Direct Edges (Solid Black):**
  * Top to $v_r$: Labeled with a minus sign (**-**)
  * Top to $v_t$: Labeled with a minus sign (**-**)
* **Indirect Edge (Dashed Green):**
  * Between $v_r$ and $v_t$: Labeled with a plus sign (**+**)
* **Logic:** Two negative direct connections result in a **Positive Indirect Edge** (The "enemy of my enemy is my friend" principle).

#### Scenario 3: Negative Indirect Relationship (Right)

* **Nodes:**
  * Top: Unlabeled (White)
  * Bottom Left: $v_r$ (Light Blue)
  * Bottom Right: $v_t$ (Light Red/Pink)
* **Direct Edges (Solid Black):**
  * Top to $v_r$: Labeled with a plus sign (**+**)
  * Top to $v_t$: Labeled with a minus sign (**-**)
* **Indirect Edge (Dashed Red):**
  * Between $v_r$ and $v_t$: Labeled with a minus sign (**-**)
* **Logic:** One positive and one negative direct connection result in a **Negative Indirect Edge**.

---

## 3. Data Summary Table

| Scenario | Edge (Top to $v_r$) | Edge (Top to $v_t$) | Resulting Indirect Edge ($v_r$ to $v_t$) | Indirect Edge Type |
| :--- | :--- | :--- | :--- | :--- |
| 1 | Positive (+) | Positive (+) | Positive (+) | Dashed Green |
| 2 | Negative (-) | Negative (-) | Positive (+) | Dashed Green |
| 3 | Positive (+) | Negative (-) | Negative (-) | Dashed Red |

---

## 4. Technical Observations

* **Node Color Coding:** The node $v_r$ is consistently light blue. The node $v_t$ changes color based on the relationship: light green for positive indirect relationships and light red for negative indirect relationships.

* **Mathematical Pattern:** The indirect edge sign follows the rules of multiplication for signed integers:
  * $(+) \times (+) = (+)$
  * $(-) \times (-) = (+)$
  * $(+) \times (-) = (-)$

</details>

Figure 2. The signs of multi-hop connections based on balance theory.

Balance theory (27) is a well-established standard to describe the signed relationships of unconnected node pairs. It is commonly summarized by four intuitive rules: “A friend of my friend is my friend,” “A friend of my enemy is my enemy,” “An enemy of my friend is my enemy,” and “An enemy of my enemy is my friend.” Based on these rules, balance theory can deduce the signs of multi-hop connections. As shown in Fig. 2, given a path $P_{rt}:v_{r}\to v_{t}$ from rooted node $v_{r}$ to target node $v_{t}$, the sign of the indirect relationship between $v_{r}$ and $v_{t}$ can be inferred by iteratively applying balance theory. Specifically, the sign of the multi-hop connection corresponds to the product of the signs of the edges along the path.
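The product rule can be illustrated with a short Python sketch. The helper `path_sign`, the node labels, and the toy sign table are hypothetical and serve only to show how the sign of an indirect relationship follows from the edge signs along a path.

```python
from typing import Dict, FrozenSet, Sequence


def path_sign(path: Sequence[str], sign: Dict[FrozenSet[str], int]) -> int:
    """Sign of the indirect relation between path[0] and path[-1].

    Multiplies the +1/-1 signs of consecutive edges along the path.
    """
    result = 1
    for u, v in zip(path, path[1:]):
        result *= sign[frozenset((u, v))]
    return result


# Hypothetical undirected signed edges, keyed by the unordered node pair.
toy_sign = {
    frozenset(("r", "a")): +1,
    frozenset(("a", "t")): -1,
    frozenset(("r", "b")): -1,
    frozenset(("b", "s")): -1,
    frozenset(("s", "u")): -1,
}

print(path_sign(["r", "a", "t"], toy_sign))       # -1: an enemy of my friend
print(path_sign(["r", "b", "s"], toy_sign))       # 1: an enemy of my enemy
print(path_sign(["r", "b", "s", "u"], toy_sign))  # -1: three negative edges
```

Because the result is a product, changing the sign of any single edge on a path flips the inferred sign of every indirect relationship that uses that edge.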

2.2. Differential Privacy

Differential Privacy (DP) (04) provides a rigorous mathematical framework for quantifying the privacy guarantees of algorithms operating on sensitive data. Informally, it bounds how much the output distribution of a mechanism can change in response to small changes in its input. When applying DP to signed graph data, the definition of adjacent databases typically considers two signed graphs, $\mathcal{G}$ and $\mathcal{G^{\prime}}$ , which are regarded as adjacent graphs if they differ by at most one edge or one node with its associated edges.

**Definition 1 (Edge (Node)-level DP (05))**

*Given $\epsilon>0$ and $\delta>0$, a graph analysis mechanism $\mathcal{M}$ satisfies edge- or node-level $(\epsilon,\delta)$-DP if, for any two adjacent graph datasets $\mathcal{G}$ and $\mathcal{G^{\prime}}$ that differ only by an edge or a node with its associated edges, and for any set of possible outputs $S\subseteq \text{Range}(\mathcal{M})$, it holds that

$$

\displaystyle\text{Pr}[\mathcal{M}(\mathcal{G})\in S]\leq e^{\epsilon}\text{Pr}[\mathcal{M}(\mathcal{G^{\prime}})\in S]+\delta. \tag{1}

$$

Here, $\epsilon$ is the privacy budget (i.e., privacy cost), where smaller values indicate stronger privacy protection but greater utility reduction. The parameter $\delta$ denotes the probability that the privacy guarantee may not hold, and is typically set to be negligible. In other words, $\delta$ allows for a negligible probability of privacy leakage, while ensuring the privacy guarantee holds with high probability.*

**Remark 1**

*Note that satisfying node-level DP is much more challenging than satisfying edge-level DP, as removing a single node may, in the worst case, remove $|V|-1$ edges, where $|V|$ denotes the total number of nodes. Consequently, node-level DP requires injecting substantially more noise.*

Two fundamental properties of DP are useful for the privacy analysis of complex algorithms: (1) Post-Processing Property (06): If a mechanism $\mathcal{M}(\mathcal{G})$ satisfies $(\epsilon,\delta)$-DP, then for any function $f$ that accesses the private dataset $\mathcal{G}$ only through the output of $\mathcal{M}$, the composition $f(\mathcal{M}(\mathcal{G}))$ also satisfies $(\epsilon,\delta)$-DP; (2) Composition Property (06): If $\mathcal{M}(\mathcal{G})$ and $f(\mathcal{G})$ satisfy $(\epsilon_{1},\delta_{1})$-DP and $(\epsilon_{2},\delta_{2})$-DP, respectively, then the combined mechanism $\mathcal{F}(\mathcal{G})=(\mathcal{M}(\mathcal{G}),f(\mathcal{G}))$, which outputs both results, satisfies $(\epsilon_{1}+\epsilon_{2},\delta_{1}+\delta_{2})$-DP.
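The composition property amounts to simple bookkeeping, which the following Python sketch makes concrete. The function name `compose_budgets` and the example budgets are hypothetical; the sketch implements only the additive bound stated above, not a tighter accountant.

```python
from typing import Iterable, Tuple


def compose_budgets(budgets: Iterable[Tuple[float, float]]) -> Tuple[float, float]:
    """Total (epsilon, delta) when the outputs of several DP mechanisms are released.

    Uses the additive composition bound: epsilons and deltas are summed.
    """
    eps_total, delta_total = 0.0, 0.0
    for eps, delta in budgets:
        if eps <= 0 or delta < 0:
            raise ValueError("expected epsilon > 0 and delta >= 0")
        eps_total += eps
        delta_total += delta
    return eps_total, delta_total


# Two hypothetical mechanisms run on the same graph.
eps, delta = compose_budgets([(0.5, 1e-5), (1.0, 1e-5)])
print(eps, delta)  # 1.5 2e-05
```

Post-processing needs no bookkeeping at all: any computation applied only to the released outputs keeps the same $(\epsilon,\delta)$.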

2.3. DPSGD

A common approach to differentially private training combines noisy stochastic gradient descent with the Moments Accountant (MA) (02). This approach, known as DPSGD, has been widely adopted for releasing private low-dimensional representations, as MA effectively mitigates excessive privacy loss during iterative optimization. Formally, for each sample $x_{i}$ in a batch of size $B$, we compute its gradient $\nabla\mathcal{L}_{i}(\theta)$, denoted as $\nabla(x_{i})$ for simplicity. Gradient sensitivity refers to the maximum change in the output of the gradient function resulting from a change in a single sample. To control the sensitivity of $\nabla(x_{i})$, the $\ell_{2}$ norm of each gradient is clipped by a threshold $C$. These clipped gradients are then aggregated and perturbed with Gaussian noise $\mathcal{N}(0,\sigma^{2}C^{2}\mathbf{I})$ to satisfy the DP guarantee. Finally, the average noisy gradient $\tilde{\nabla}_{B}$ is used to update the model parameters $\theta$. This process is given by:

$$

\displaystyle{\tilde{\nabla}_{B}}\leftarrow\frac{1}{B}\Big(\sum_{i=1}^{B}\text{Clip}_{C}(\nabla(x_{i}))+\mathcal{N}\left(0,\sigma^{2}C^{2}\mathbf{I}\right)\Big). \tag{2}

$$

Here, $\text{Clip}_{C}(\nabla(x_{i}))=\nabla(x_{i})/\max\left(1,\frac{\|\nabla(x_{i})\|_{2}}{C}\right)$.
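To make Eq. (2) concrete, the sketch below computes one noisy batch gradient in plain Python. It is an illustration under simplifying assumptions: per-sample gradients are given as lists of floats, the names `clip_gradient` and `noisy_batch_gradient` are hypothetical, and privacy accounting (for example with MA) is omitted entirely.

```python
import math
import random
from typing import List, Sequence


def clip_gradient(grad: Sequence[float], C: float) -> List[float]:
    """Clip_C(g) = g / max(1, ||g||_2 / C), so the result has l2 norm at most C."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = max(1.0, norm / C)
    return [g / scale for g in grad]


def noisy_batch_gradient(
    per_sample_grads: Sequence[Sequence[float]],
    C: float,
    sigma: float,
    rng: random.Random,
) -> List[float]:
    """Eq. (2): (1/B) * (sum of clipped gradients + N(0, sigma^2 C^2 I))."""
    B = len(per_sample_grads)
    dim = len(per_sample_grads[0])
    total = [0.0] * dim
    for grad in per_sample_grads:
        clipped = clip_gradient(grad, C)
        for k in range(dim):
            total[k] += clipped[k]
    # One independent Gaussian draw per coordinate, standard deviation sigma * C.
    noisy = [total[k] + rng.gauss(0.0, sigma * C) for k in range(dim)]
    return [x / B for x in noisy]


# Hypothetical per-sample gradients: the first exceeds the clipping threshold.
toy_grads = [[3.0, 4.0], [0.3, 0.4]]
print(clip_gradient(toy_grads[0], C=1.0))  # rescaled to unit norm
print(noisy_batch_gradient(toy_grads, C=1.0, sigma=1.0, rng=random.Random(0)))
```

Clipping is what bounds the contribution of any single sample to the sum by $C$, which is why the noise standard deviation is expressed as a multiple $\sigma$ of $C$.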

3. Problem Definition and Existing Solutions

3.1. Problem Definition

Instead of publishing a sanitized version of original node embeddings, we aim to release a privacy-preserving ASGL model trained on raw signed graph data with node-level DP guarantees, enabling data analysts to generate task-specific node embeddings.

Threat Model. We consider a black-box attack (42), where the attacker can query the trained model and observe its outputs with no access to its internal architecture or parameters. The attacker attempts to infer the presence of specific nodes or edges in the training graph solely from model outputs. This setting reflects a more practical attack surface compared to the white-box scenario (11).

Privacy Model. Signed graph data encodes both positive and negative relationships between nodes, which differs from tabular or image data. Therefore, it is necessary to adapt the standard definition of node-level DP (See Definition 1) to ensure black-box adversaries cannot determine whether a specific node and its associated signed edges are present in the training data. To this end, we define the differentially private adversarial signed graph learning model as follows.

**Definition 2 (Adversarial signed graph learning model under node-level DP)**

*The vanilla process of graph adversarial learning is illustrated in App. A. Let $\theta_{D}$ denote the discriminator parameters, whose $r$-th row corresponds to the $k$-dimensional vector $\mathbf{d}_{v_{r}}$ of node $v_{r}$, that is, $\mathbf{d}_{v_{r}}\in\theta_{D}$. The discriminator module $L_{D}$ satisfies node-level $(\epsilon,\delta)$-DP if, for any two adjacent signed graphs $\mathcal{G}$ and $\mathcal{G}^{\prime}$ that differ only in one node with its associated signed edges, and for all possible $\theta_{s}\subseteq \text{Range}(L_{D})$, we have

$$

\displaystyle\text{Pr}[L_{D}(\mathcal{G})\in\theta_{s}]\leq e^{\epsilon}\text{Pr}[L_{D}(\mathcal{G^{\prime}})\in\theta_{s}]+\delta, \tag{3}

$$

where $\theta_{s}$ denotes a set of possible values of $\theta_{D}$.*

In particular, the generator $G$ is trained based on the feedback from the differentially private discriminator $D$. According to the post-processing property of DP (08; 12), the generator module $L_{G}$ also satisfies node-level $(\epsilon,\delta)$-DP. By the same property, the privacy guarantee is preserved in the generated signed node embeddings and their downstream usage.

<details>

<summary>x3.png Details</summary>

### Visual Description

# Technical Document Extraction: Graph Neural Network Architecture Diagram

This document provides a detailed technical extraction of the provided image, which illustrates a Generative Adversarial Network (GAN) framework for graph embedding, specifically handling positive and negative edges with Differential Privacy (DPSGD).

## 1. Legend and Symbol Definitions

Located at the top-left of the image:

* **Tan circle with center dot**: Rooted node ($v_r$)

* **Blue line**: Positive edge

* **Red line**: Negative edge

Located at the far right (Discriminator outputs):

* **Solid Blue circle**: Real positive edge

* **Patterned Blue circle**: Fake positive edge

* **Solid Red circle**: Real negative edge

* **Patterned Red circle**: Fake negative edge

---

## 2. Component Segmentation and Flow

The diagram is divided into three primary horizontal stages, labeled at the bottom as (i), (ii), and (iii).

### Stage (i): Graph Decomposition

* **Input**: "The original graph $\mathcal{G}$" containing a central rooted node $v_r$ connected by both blue (positive) and red (negative) edges.

* **Process**: The original graph is split into two distinct subgraphs:
  1. **The positive graph $\mathcal{G}^+$**: Contains only nodes and blue edges.
  2. **The negative graph $\mathcal{G}^-$**: Contains only nodes and red edges.

### Stage (ii): Generative Process and Path Sampling

This stage describes the dual generator architecture ($G^+$ and $G^-$).

* **Embedding Space ($\theta_G$)**: A central block that provides parameters to and receives updates from the generators.

* **Positive Path Generation ($G^+$)**:
  * **Input**: Data from the positive graph $\mathcal{G}^+$.
  * **Function**: Calculates $P_{T_{v_r}}^+(v_i | v_r)$ (Positive relevance probability).
  * **Output (iv)**: "Path from Constrained BFS-tree".
    * Constraints: Max path length $L=4$, Max path amount $N=2$.
    * Visual: Shows a sequence of nodes starting from a rooted node.
  * **Result**: Produces "Fake positive edges" (represented by two light blue circles connected by a dashed line).
  * **Direct Link**: "Real positive edges" are pulled directly from the positive graph $\mathcal{G}^+$ for comparison.
* **Negative Path Generation ($G^-$)**:
  * **Input**: Data from the negative graph $\mathcal{G}^-$.
  * **Function**: Calculates $P_{T_{v_r}}^-(v_i | v_r)$ (Negative relevance probability).
  * **Output (iv)**: "Path from Constrained BFS-tree".
    * Constraints: Max path length $L=4$, Max path amount $N=2$.
  * **Result**: Produces "Fake negative edges" (represented by two light red circles connected by a dashed line).
  * **Direct Link**: "Real negative edges" are pulled directly from the negative graph $\mathcal{G}^-$ for comparison.

* **Central Output**: A red arrow points from the Embedding Space to a box labeled **"Downstream tasks"**.

### Stage (iii): Discriminator and Differentially Private Training

This stage describes the evaluation and optimization process using Differentially Private Stochastic Gradient Descent (DPSGD).

* **Discriminators ($D^+$ and $D^-$)**:
  * $D^+$ receives both Real and Fake positive edges.
  * $D^-$ receives both Real and Fake negative edges.
* **Optimization Flow**:
  1. **Gradient Calculation**: Gradients ($\nabla_{v_1}, \nabla_{v_2}, \dots, \nabla_{v_n}$) are computed.
  2. **Gradient Clipping**: Represented by a scissor icon on the gradient arrows.
  3. **DPSGD Process**:
     * Formula: $\frac{1}{n} \sum + \text{Noise Addition}$
     * The noise addition is visualized as a Gaussian distribution curve.
     * Formula snippet: $\mathcal{N}(0, (\frac{2\sigma C}{n})^2 I)$
  4. **Embedding Space ($\theta_D$)**: The processed gradients update a separate discriminator embedding space.
  5. **Feedback Loop**: Red dashed arrows labeled **"Guidance: Post-processing"** flow from the Discriminators back to the Generators' Embedding Space ($\theta_G$).

---

## 3. Mathematical Notations and Labels

* **Root Node**: $v_r$

* **Probability Functions**: $P_{T_{v_r}}^+(v_i | v_r)$ and $P_{T_{v_r}}^-(v_i | v_r)$

* **Embedding Parameters**: $\theta_G$ (Generator) and $\theta_D$ (Discriminator)

* **Training Mechanism**: DPSGD (Differentially Private Stochastic Gradient Descent)

* **BFS Constraints**: $L=4$ (Length), $N=2$ (Amount)

## 4. Summary of Logic and Trends

The system employs a **Symmetric Dual-GAN architecture**. The "Positive" and "Negative" branches mirror each other exactly in structure but process different edge types. The trend of the data flow is from left to right (Decomposition $\rightarrow$ Generation $\rightarrow$ Discrimination), with a critical feedback loop (Guidance) returning to the center to refine the embeddings. The inclusion of Gradient Clipping and Noise Addition indicates a focus on privacy-preserving graph representation learning.

</details>

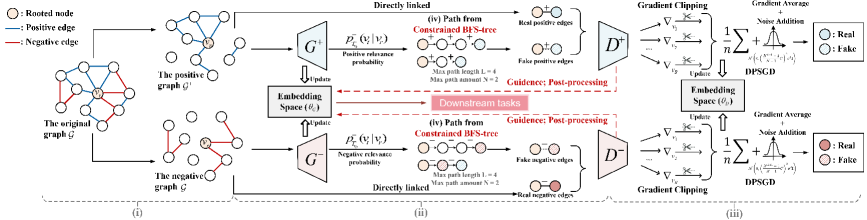

Figure 3. Overview of the ASGL framework: (i) The process decomposes a signed graph into positive and negative subgraphs, (ii) then maps node pairs into a unified embedding space while preserving signed proximity. To ensure privacy, (iii) adversarial learning module with DPSGD generates private node embeddings that approximate true connectivity without cascading errors. (iv) A constrained BFS-tree strategy manages node receptive field, reduces gradient noise, and improves model utility.
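The idea behind part (iv) of Fig. 3, bounding both the length and the number of paths explored from a rooted node, can be sketched as follows. This is not the authors' algorithm: the traversal order, the leaf selection rule, and the names `build_adjacency` and `constrained_bfs_paths` are assumptions made purely for illustration, and the parameters `max_len` and `max_paths` play the roles of the path length and path amount limits.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

SignedEdges = Dict[Tuple[int, int], int]


def build_adjacency(signed_edges: SignedEdges) -> Dict[int, Dict[int, int]]:
    """Undirected adjacency map: adj[u][v] is the sign (+1 or -1) of edge (u, v)."""
    adj: Dict[int, Dict[int, int]] = {}
    for (u, v), s in signed_edges.items():
        adj.setdefault(u, {})[v] = s
        adj.setdefault(v, {})[u] = s
    return adj


def constrained_bfs_paths(
    adj: Dict[int, Dict[int, int]], root: int, max_len: int, max_paths: int
) -> List[Tuple[List[int], int]]:
    """Up to max_paths root-to-leaf paths of a BFS tree truncated at depth max_len.

    Each path is returned with its sign, the product of its edge signs.
    """
    parent: Dict[int, Optional[int]] = {root: None}
    depth = {root: 0}
    queue = deque([root])
    while queue:
        u = queue.popleft()
        if depth[u] == max_len:
            continue  # length constraint: do not expand beyond max_len hops
        for v in sorted(adj.get(u, {})):
            if v not in parent:
                parent[v] = u
                depth[v] = depth[u] + 1
                queue.append(v)
    internal = {p for p in parent.values() if p is not None}
    leaves = sorted(v for v in parent if v not in internal and v != root)
    results: List[Tuple[List[int], int]] = []
    for leaf in leaves[:max_paths]:  # amount constraint: keep at most max_paths
        path = [leaf]
        while parent[path[-1]] is not None:
            path.append(parent[path[-1]])
        path.reverse()
        sign = 1
        for a, b in zip(path, path[1:]):
            sign *= adj[a][b]
        results.append((path, sign))
    return results


# Hypothetical toy graph; node 5 lies three hops from the root.
toy_adj = build_adjacency({(0, 1): 1, (0, 2): -1, (1, 3): -1, (2, 4): -1, (3, 5): 1})
toy_paths = constrained_bfs_paths(toy_adj, root=0, max_len=2, max_paths=2)
print(toy_paths)  # [([0, 1, 3], -1), ([0, 2, 4], 1)]
```

In this toy run node 5 is never reached, so under these assumptions it cannot influence anything computed for root 0, which is the intuition behind restricting a node's receptive field.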

3.2. Existing Solutions

To the best of our knowledge, existing differentially private graph learning methods follow two main tracks: gradient perturbation and edge perturbation. In the first category, Yang et al. (54) introduce a privacy-preserving generative model that incorporates generative adversarial networks (GAN) or variational autoencoders (VAE) with DPSGD to protect edge privacy, while Xiang et al. (52) design a node sampling mechanism that adds Laplace noise to per-subgraph gradients, achieving node-level DP. For the edge perturbation-based methods, Lin et al. (53) use randomized response to perturb the adjacency matrix for edge-level privacy, and EDGERAND (42) perturbs the graph structure while preserving sparsity by clipping the adjacency matrix according to a privacy-calibrated graph density.
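For intuition about the edge perturbation track, the sketch below applies textbook binary randomized response to an unsigned adjacency matrix: each potential edge bit is kept with probability $e^{\epsilon}/(1+e^{\epsilon})$ and flipped otherwise. It is a generic illustration, not the exact procedure of (53) or of EDGERAND; the function name and the symmetric, zero-diagonal treatment are assumptions.

```python
import math
import random
from typing import List


def randomized_response_adjacency(
    adj: List[List[int]], epsilon: float, rng: random.Random
) -> List[List[int]]:
    """Perturb an undirected 0/1 adjacency matrix with binary randomized response.

    Only the upper triangle is sampled; the result is mirrored to stay symmetric.
    """
    n = len(adj)
    keep_prob = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    noisy = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            bit = adj[i][j]
            if rng.random() >= keep_prob:
                bit = 1 - bit  # report the opposite of the true bit
            noisy[i][j] = noisy[j][i] = bit
    return noisy


# Hypothetical 4-node path graph 0-1-2-3.
toy_matrix = [
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
]
print(randomized_response_adjacency(toy_matrix, epsilon=1.0, rng=random.Random(0)))
```

Because every bit is flipped independently, an existing edge can vanish and a spurious one can appear anywhere in the graph, which is the kind of structural change that the limitation discussed next concerns.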

Limitation. The aforementioned solutions are not directly applicable to signed graphs. This is primarily because edge perturbation can lead to cascading errors when inferring edge signs under balance theory. Moreover, gradient perturbation often suffers from high sensitivity caused by complex node dependencies and gradient polarity reversal from edge sign flips, leading to excessive noise and degraded model utility.

4. Our Proposal: ASGL

To tackle the above limitations, we present ASGL, a DP-based adversarial signed graph learning model that integrates a constrained BFS-tree strategy to achieve favorable utility-privacy tradeoffs.

4.1. Overview

The ASGL framework, illustrated in Fig. 3, comprises three steps:

- Private Adversarial Signed Graph Learning. The signed graph $\mathcal{G}$ is first split into positive and negative subgraphs, $\mathcal{G}^{+}$ and $\mathcal{G}^{-}$ , based on edge signs. Subsequently, two discriminators, $D^{+}$ and $D^{-}$ , sharing parameters $\theta_{D}$ , are trained to distinguish real from fake positive and negative edges. Guided by $D^{+}$ and $D^{-}$ , two generators $G^{+}$ and $G^{-}$ with shared parameters $\theta_{G}$ generate node embeddings that approximate the true connectivity distribution. To ensure node-level DP, we apply gradient perturbation during discriminator training instead of directly perturbing edges. This strategy mitigates cascading errors and prevents gradient polarity reversals caused by edge sign flips, thereby reducing gradient sensitivity. By the post-processing property, the generators also preserve node-level DP.

- Optimization via Constrained BFS-tree. To further reduce gradient sensitivity and the required noise scale, ASGL employs a constrained BFS-tree strategy. By empirically limiting the number and length of paths, each node’s receptive field is restricted, which reduces node dependency and enables gradient decoupling. This significantly lowers gradient sensitivity and enhances model utility under differential privacy constraints.

- Privacy Accounting and Complexity Analysis. The complete training process for ASGL is outlined in Algorithm 2 (see App. F.3). Based on this, we present a comprehensive privacy accounting and computational complexity analysis for ASGL.

4.2. Private Adversarial Signed Graph Learning

Motivated by (03; 14), a signed graph $\mathcal{G}$ is first divided into a positive subgraph $\mathcal{G}^{+}$ and a negative subgraph $\mathcal{G}^{-}$ according to edge signs. Let $\mathcal{N}(v_{r})$ be the set of neighbor nodes directly connected to node $v_{r}$ . We denote the true positive and negative connectivity distributions of $v_{r}$ over its neighborhood $\mathcal{N}(v_{r})$ as the conditional probabilities $p_{\text{true}}^{+}(·|v_{r})$ and $p_{\text{true}}^{-}(·|v_{r})$ , which capture the preference of $v_{r}$ to connect with other nodes in $V$ . The adversarial learning for the signed graph $\mathcal{G}$ is conducted by two adversarial learning modules:

Generators $G^{+}$ and $G^{-}$ : By optimizing the shared parameters $\theta_{G}$ , the generators $G^{+}$ and $G^{-}$ aim to approximate the underlying true connectivity distribution and generate the most likely but unconnected nodes $v_{t}\notin\mathcal{N}(v_{r})$ that are relevant to a given node $v_{r}$ . To this end, we estimate the relevance probabilities of these fake node pairs. (The term "fake" indicates that although a node $v$ selected by the generator is relevant to $v_{r}$ , there is no actual edge between them.) Specifically, for the implementation of $G^{+}$ , given the fake positive node pairs $(v_{r},v_{t})^{+}$ , we use the graph softmax function (03) to calculate the fake positive connectivity probability:

$$

p^{+}_{\text{fake}}(v_{t}|v_{r})=G^{+}\left(v_{t}|v_{r};\theta_{G}\right)=\sigma(\mathbf{g}_{v_{t}}^{\top}\mathbf{g}_{v_{r}})=\frac{1}{1+\exp({-\mathbf{g}_{v_{t}}^{\top}\mathbf{g}_{v_{r}})}}, \tag{4}

$$

where $\mathbf{g}_{v_{t}},\mathbf{g}_{v_{r}}∈\mathbb{R}^{k}$ are the $k$ -dimensional vectors of nodes $v_{t}$ and $v_{r}$ , respectively, and $\theta_{G}$ is the union of all $\mathbf{g}_{v}$ ’s. The output $G^{+}(v_{t}|v_{r};\theta_{G})$ increases with the decrease of the distance between $v_{r}$ and $v_{t}$ in the embedding space of the generator $G^{+}$ . Similarly, for the generator $G^{-}$ , given the fake negative node pairs $(v_{r},v_{t})^{-}$ , we estimate their fake negative connectivity probability:

$$

p^{-}_{\text{fake}}(v_{t}|v_{r})=G^{-}(v_{t}|v_{r};\theta_{G})=1-\sigma(\mathbf{g}_{v_{t}}^{\top}\!\mathbf{g}_{v_{r}})=\frac{\exp{(-\mathbf{g}_{v_{t}}^{\top}\!\mathbf{g}_{v_{r}}})}{1+\exp{(-\mathbf{g}_{v_{t}}^{\top}\!\mathbf{g}_{v_{r}}})}. \tag{5}

$$

Here, Eq. (5) ensures that node pairs with higher negative connectivity probabilities are mapped farther apart in the embedding space of $G^{-}$ . Since generators $G^{+}$ and $G^{-}$ share the parameters $\theta_{G}$ , they jointly learn the proximity and separation of positive and negative node pairs in a unified embedding space, respectively.
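As a minimal sketch of Eqs. (4) and (5), the snippet below evaluates both generator outputs on toy embeddings. The helper names (`sigmoid`, `dot`, `p_fake_pos`, `p_fake_neg`) and the three-dimensional vectors are illustrative assumptions, not part of the ASGL implementation.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function, written to stay stable for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def p_fake_pos(g_t, g_r):
    """Eq. (4): sigma(g_t^T g_r)."""
    return sigmoid(dot(g_t, g_r))


def p_fake_neg(g_t, g_r):
    """Eq. (5): 1 - sigma(g_t^T g_r)."""
    return 1.0 - sigmoid(dot(g_t, g_r))


# Toy embeddings (hypothetical values chosen only for illustration).
g_r = [0.5, -0.2, 0.1]
g_close = [0.6, -0.1, 0.2]   # roughly aligned with g_r
g_far = [-0.7, 0.3, -0.4]    # points away from g_r

pos_close = p_fake_pos(g_close, g_r)
pos_far = p_fake_pos(g_far, g_r)
neg_far = p_fake_neg(g_far, g_r)
```

Because both generators read the same vectors, the two probabilities for any pair sum to one, so a pair that scores high under $G^{+}$ necessarily scores low under $G^{-}$.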

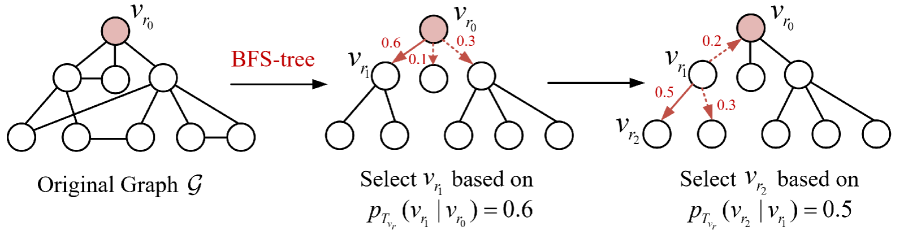

Notably, the aforementioned fake node pairs $(v_{r},v_{t})^{+}$ and $(v_{r},v_{t})^{-}$ are sampled by a breadth-first search (BFS)-tree strategy (27). Compared to depth-first search (DFS) (56), BFS ensures more uniform exploration of neighboring nodes and can be integrated with random walk techniques (29) to optimize computational efficiency. Specifically, we perform BFS on the positive subgraph $\mathcal{G}^{+}$ to construct a BFS-tree $T^{+}_{v_{r}}$ rooted from node $v_{r}$ . Then, we calculate the positive relevance probability of node $v_{r}$ with its neighbors $v_{k}∈\mathcal{N}({v_{r}})$ :

$$

p^{+}_{T^{+}_{v_{r}}}(v_{k}|v_{r})=\frac{\exp\left(\mathbf{g}_{v_{k}}^{\top}\mathbf{g}_{v_{r}}\right)}{\sum_{v_{k}\in\mathcal{N}({v_{r}})}\exp\left(\mathbf{g}_{v_{k}}^{\top}\mathbf{g}_{v_{r}}\right)}, \tag{6}

$$

which is actually a softmax function over $\mathcal{N}({v_{r}})$ . To further sample node pairs unconnected in $T^{+}_{v_{r}}$ as fake positive edges, we perform a random walk at $T^{+}_{v_{r}}$ : Starting from the root node $v_{r}$ , a path $P_{rt}:v_{r}→ v_{t}$ is built by iteratively selecting the next node based on the transition probabilities defined in Eq. (6). The resulting unconnected node pair $(v_{r},v_{t})^{+}$ is treated as a fake positive edge, and App. E provides an example of this process. Given the node pair $(v_{r},v_{t})^{+}$ , the generator $G^{+}$ estimates $p^{+}_{\text{fake}}(v_{t}|v_{r})$ according to Eq. (4).
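The sampling procedure above can be sketched as follows. This is a simplified illustration under stated assumptions: the walk only descends to BFS-tree children, it stops at a leaf or after `max_len` steps, and the graph and embeddings are toy values. The exact stopping rule is described in App. E and is not reproduced here; `bfs_tree`, `transition_probs`, and `random_walk` are hypothetical helper names.

```python
import math
import random
from collections import deque


def bfs_tree(adj, root):
    """Return the child lists of the BFS-tree of `adj` rooted at `root`."""
    children = {root: []}
    queue = deque([root])
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in children:      # first visit: w becomes a child of u
                children[w] = []
                children[u].append(w)
                queue.append(w)
    return children


def transition_probs(emb, current, candidates):
    """Softmax of inner products over the candidate next nodes, as in Eq. (6)."""
    scores = [sum(a * b for a, b in zip(emb[c], emb[current])) for c in candidates]
    top = max(scores)                  # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [w / total for w in weights]


def random_walk(children, emb, root, max_len, rng):
    """Walk down the tree from `root` for at most `max_len` edges."""
    path = [root]
    while len(path) - 1 < max_len and children[path[-1]]:
        cands = children[path[-1]]
        probs = transition_probs(emb, path[-1], cands)
        path.append(rng.choices(cands, weights=probs, k=1)[0])
    return path


# Toy positive subgraph (undirected adjacency lists) and random embeddings.
adj_pos = {0: [1, 2], 1: [0, 3], 2: [0, 4], 3: [1, 5], 4: [2], 5: [3]}
rng = random.Random(0)
emb = {v: [rng.uniform(-1.0, 1.0) for _ in range(4)] for v in adj_pos}

tree = bfs_tree(adj_pos, root=0)
path = random_walk(tree, emb, root=0, max_len=3, rng=rng)
fake_positive_pair = (path[0], path[-1])
```

In a BFS-tree, a node at depth two or more lies at shortest-path distance two or more from the root, so the endpoint of any such walk is guaranteed not to be a direct neighbor of $v_{r}$.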

Similarly, we also establish a BFS-tree $T^{-}_{v_{r}}$ rooted at node $v_{r}$ in the negative subgraph $\mathcal{G}^{-}$ . To obtain the negative node pair $(v_{r},v_{t})^{-}$ , we perform a random walk on $T^{-}_{v_{r}}$ according to the following transition probability (i.e., negative relevance probability):

$$

p^{-}_{T^{-}_{v_{r}}}(v_{k}|v_{r})=\frac{1-\exp\left(\mathbf{g}_{v_{k}}^{\top}\mathbf{g}_{v_{r}}\right)}{\sum_{v_{k}\in\mathcal{N}({v_{r}})}\left(1-\exp\left(\mathbf{g}_{v_{k}}^{\top}\mathbf{g}_{v_{r}}\right)\right)}. \tag{7}

$$

In particular, the edge sign of the negative node pair $(v_{r},v_{t})^{-}$ depends on the length of the path $P_{rt}:v_{r}→ v_{t}$ . According to the balance theory introduced in Section 2.1, the edge signs of multi-hop node pairs correspond to the product of the edge signs along the path. Accordingly, the rules for generating fake negative edges within $P_{rt}$ are defined as follows: (1) If the path length of $P_{rt}$ is odd, a node pair $(v_{r},v_{t})^{-}$ for the rooted node $v_{r}$ and the last node $v_{t}$ is selected as a fake negative pair; (2) If the path length of $P_{rt}$ is even, a node pair $(v_{r},v_{t})^{-}$ for the rooted node $v_{r}$ and the second last node $v_{t}$ is selected as a fake negative pair. The resulting node pair $(v_{r},v_{t})^{-}$ is then used to compute $p^{-}_{\text{fake}}(v_{t}|v_{r})$ according to Eq. (5).
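The two parity rules can be written compactly. The sketch below assumes a path is given as the list of visited nodes on the negative BFS-tree, so its length in edges is `len(path) - 1`; the function names are hypothetical.

```python
def path_sign(n_edges: int) -> int:
    """Sign implied by balance theory for a path of `n_edges` negative edges."""
    return -1 if n_edges % 2 == 1 else 1


def fake_negative_pair(path):
    """Select (v_r, v_t) from a walk on the negative BFS-tree.

    Odd path length  -> pair the root with the last node.
    Even path length -> pair the root with the second-to-last node.
    """
    n_edges = len(path) - 1
    if n_edges < 1:
        return None                      # the walk never left the root
    v_t = path[-1] if n_edges % 2 == 1 else path[-2]
    return (path[0], v_t)


odd_pair = fake_negative_pair([0, 1, 2, 3])       # 3 edges
even_pair = fake_negative_pair([0, 1, 2, 3, 4])   # 4 edges
```

In both cases the selected node sits an odd number of negative edges away from the root, so the product of edge signs along the path is negative, which is what the rule is designed to guarantee.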

Discriminators $D^{+}$ and $D^{-}$ : This module tries to distinguish between real node pairs and fake node pairs synthesized by the generators $G^{+}$ and $G^{-}$ . Accordingly, the discriminators $D^{+}$ and $D^{-}$ estimate the likelihood that positive and negative edges exist between $v_{r}$ and $v\in V$ , respectively, denoted as:

$$

D^{+}(v_{r},v|\theta_{D})=\sigma(\mathbf{d}_{v}^{\top}\mathbf{d}_{v_{r}})=\frac{1}{1+\exp({-\mathbf{d}_{v}^{\top}\mathbf{d}_{v_{r}})}}, \tag{8}

$$

$$

D^{-}(v,v_{r}|\theta_{D})=1-\sigma(\mathbf{d}_{v}^{\top}\mathbf{d}_{v_{r}})=\frac{\exp({-\mathbf{d}_{v}^{\top}\mathbf{d}_{v_{r}})}}{1+\exp({-\mathbf{d}_{v}^{\top}\mathbf{d}_{v_{r}})}}, \tag{9}

$$

where $\mathbf{d}_{v},\mathbf{d}_{v_{r}}∈\mathbb{R}^{k}$ are vectors corresponding to the $v$ -th and $v_{r}$ -th rows of shared parameters $\theta_{D}$ , respectively. $\sigma(·)$ represents the sigmoid function of the inner product of these two vectors.

In summary, given real positive and real negative edges sampled from $p_{\text{true}}^{+}(·|v_{r})$ and $p_{\text{true}}^{-}(·|v_{r})$ , along with fake positive and fake negative edges generated from generators $G^{+}/G^{-}$ , the adversarial learning pairs $(D^{+},G^{+})$ and $(D^{-},G^{-})$ , operating on the positive subgraph $\mathcal{G}^{+}$ and the negative subgraph $\mathcal{G}^{-}$ , respectively, engage in a four-player mini-max game with the joint loss function:

$$

\begin{aligned}
\min_{\theta_{G}}\max_{\theta_{D}}\;&L\left(G^{+},G^{-},D^{+},D^{-}\right)\\
=\;&\sum_{v_{r}\in V^{+}}\Big(\mathbb{E}_{v\sim p_{\text{true}}^{+}\left(\cdot\mid v_{r}\right)}\left[\log D^{+}\left(v,v_{r}\mid\theta_{D}\right)\right]\\
&\qquad+\mathbb{E}_{v\sim G^{+}\left(\cdot\mid v_{r};\theta_{G}\right)}\left[\log\left(1-D^{+}\left(v,v_{r}\mid\theta_{D}\right)\right)\right]\Big)\\
+\;&\sum_{v_{r}\in V^{-}}\Big(\mathbb{E}_{v\sim p_{\text{true}}^{-}\left(\cdot\mid v_{r}\right)}\left[\log D^{-}\left(v,v_{r}\mid\theta_{D}\right)\right]\\
&\qquad+\mathbb{E}_{v\sim G^{-}\left(\cdot\mid v_{r};\theta_{G}\right)}\left[\log\left(1-D^{-}\left(v,v_{r}\mid\theta_{D}\right)\right)\right]\Big).
\end{aligned} \tag{10}

$$

Based on Eq. (10), the parameters $\theta_{D}$ and $\theta_{G}$ are updated alternately by maximizing and minimizing the joint loss function. Competition between $G$ and $D$ results in mutual improvement until the fake node pairs generated by $G$ are indistinguishable from the real ones, thus approximating the true connectivity distribution. Lastly, the learned node embeddings $\mathbf{g}_{v}∈\theta_{G}$ are used in downstream tasks.

How to Achieve DP? Given real and fake positive/negative edges of the node $v_{i}$ , the corresponding node embedding $\mathbf{d}_{v_{i}}∈\theta_{D}$ is updated by ascending gradients of the joint loss function in Eq. (10):

$$

\frac{\partial L_{D}}{\partial\mathbf{d}_{v_{i}}}=\begin{cases}
\partial\log{D^{+}(v_{i},v_{j}|\theta_{D})}/{\partial\mathbf{d}_{v_{i}}}=[1-\sigma(\mathbf{d}_{v_{j}}^{\top}\mathbf{d}_{v_{i}})]\mathbf{d}_{v_{j}}, & \text{if }\left(v_{i},v_{j}\right)\text{ is a real positive edge from }\mathcal{G}^{+};\\
\partial\log{(1-D^{+}(v_{i},v_{j}|\theta_{D}))}/{\partial\mathbf{d}_{v_{i}}}=-\sigma(\mathbf{d}_{v_{j}}^{\top}\mathbf{d}_{v_{i}})\mathbf{d}_{v_{j}}, & \text{if }\left(v_{i},v_{j}\right)\text{ is a fake positive edge from }G^{+};\\
\partial\log{D^{-}(v_{i},v_{j}|\theta_{D})}/{\partial\mathbf{d}_{v_{i}}}=-\sigma(\mathbf{d}_{v_{j}}^{\top}\mathbf{d}_{v_{i}})\mathbf{d}_{v_{j}}, & \text{if }\left(v_{i},v_{j}\right)\text{ is a real negative edge from }\mathcal{G}^{-};\\
\partial\log{(1-D^{-}(v_{i},v_{j}|\theta_{D}))}/{\partial\mathbf{d}_{v_{i}}}=[1-\sigma(\mathbf{d}_{v_{j}}^{\top}\mathbf{d}_{v_{i}})]\mathbf{d}_{v_{j}}, & \text{if }\left(v_{i},v_{j}\right)\text{ is a fake negative edge from }G^{-}.
\end{cases} \tag{11}

$$
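The four closed-form gradients in Eq. (11) can be checked numerically. The sketch below compares each of them against a central finite difference of the corresponding log-term from Eq. (10); the case labels, helper names, and toy vectors are illustrative assumptions.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def objective(d_i, d_j, case):
    """Log-term of Eq. (10) contributed by one node pair."""
    s = sigmoid(dot(d_j, d_i))
    d_pos, d_neg = s, 1.0 - s            # Eqs. (8) and (9)
    return {
        "real_pos": math.log(d_pos),
        "fake_pos": math.log(1.0 - d_pos),
        "real_neg": math.log(d_neg),
        "fake_neg": math.log(1.0 - d_neg),
    }[case]


def analytic_grad(d_i, d_j, case):
    """Closed-form gradient with respect to d_i, as listed in Eq. (11)."""
    s = sigmoid(dot(d_j, d_i))
    coeff = {
        "real_pos": 1.0 - s,
        "fake_pos": -s,
        "real_neg": -s,
        "fake_neg": 1.0 - s,
    }[case]
    return [coeff * x for x in d_j]


def numeric_grad(d_i, d_j, case, h=1e-6):
    """Central finite-difference approximation of the same gradient."""
    grad = []
    for k in range(len(d_i)):
        up = list(d_i)
        up[k] += h
        down = list(d_i)
        down[k] -= h
        grad.append((objective(up, d_j, case) - objective(down, d_j, case)) / (2 * h))
    return grad


d_i = [0.3, -0.5, 0.8]
d_j = [-0.2, 0.4, 0.6]
max_error = max(
    abs(a - n)
    for case in ("real_pos", "fake_pos", "real_neg", "fake_neg")
    for a, n in zip(analytic_grad(d_i, d_j, case), numeric_grad(d_i, d_j, case))
)
```

The coefficients for a real positive edge and a real negative edge have opposite signs, which makes the gradient polarity reversal caused by an edge sign flip explicit.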

According to Definition 1, achieving node-level differential privacy in adversarial signed graph learning requires adding Gaussian noise to the sum of clipped gradients over a batch of nodes. The resulting noisy gradient $\tilde{\nabla}{L_{D}}$ is formulated as:

$$

{\tilde{\nabla}{L_{D}}}=\frac{1}{B}\Big(\sum_{v_{i}\in V_{B}}\text{Clip}_{C}(\frac{\partial L_{D}}{\partial\mathbf{d}_{v_{i}}})+\mathcal{N}\left(0,B^{2}C^{2}\sigma^{2}\mathbf{I}\right)\Big), \tag{12}

$$

where $V_{B}$ denotes the batch set of nodes, with batch size $B=|V_{B}|$ . $C$ is the clipping threshold to control gradient sensitivity. The fact that the gradient sensitivity reaches $BC$ is explained in Section 4.3.
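A minimal sketch of the clip-then-perturb step in Eq. (12) is given below. It assumes each per-node gradient is a plain list of floats of equal length and exposes the sensitivity as a parameter, so that the worst case $BC$ corresponds to `sensitivity = B * C`. The function names are hypothetical.

```python
import math
import random


def clip(vec, c):
    """Scale `vec` so that its l2-norm is at most `c`."""
    norm = math.sqrt(sum(x * x for x in vec))
    scale = min(1.0, c / norm) if norm > 0 else 1.0
    return [x * scale for x in vec]


def noisy_gradient(per_node_grads, c, sigma, sensitivity, rng):
    """Sum the clipped gradients, add Gaussian noise, and average over the batch."""
    batch_size = len(per_node_grads)
    dim = len(per_node_grads[0])
    total = [0.0] * dim
    for grad in per_node_grads:
        for k, x in enumerate(clip(grad, c)):
            total[k] += x
    std = sensitivity * sigma            # noise scale is sensitivity times sigma
    return [(total[k] + rng.gauss(0.0, std)) / batch_size for k in range(dim)]


grads = [[3.0, 4.0], [0.3, 0.4]]
C = 1.0
# With sigma = 0 the output is just the mean of the clipped gradients.
noiseless = noisy_gradient(grads, c=C, sigma=0.0, sensitivity=len(grads) * C,
                           rng=random.Random(0))
# Worst-case sensitivity B * C, as in Eq. (12).
noisy = noisy_gradient(grads, c=C, sigma=1.0, sensitivity=len(grads) * C,
                       rng=random.Random(0))
```

Since the noise standard deviation grows linearly with the sensitivity, any reduction of the sensitivity below $BC$ translates directly into less injected noise for the same $\sigma$.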

**Remark 2**

*To achieve node-level DP, we perturb discriminator gradients instead of signed edges, avoiding cascading errors and gradient polarity reversals from edge sign flips (see Eq. (10)), which reduces gradient sensitivity. Furthermore, generators also preserve DP under discriminator guidance via the post-processing property of DP.*

4.3. Optimization via Constrained BFS-Tree

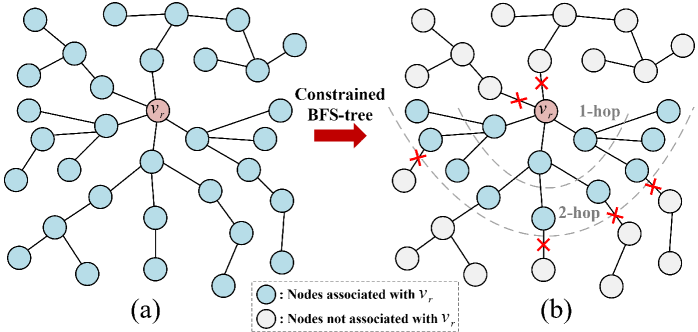

According to Eq. (11), in graph adversarial learning, the interdependence among samples implies that modifying a single node $v_{i}$ may affect the gradients of multiple other nodes $v_{j}$ within the same batch. This interdependence also exists among the fake node pairs generated along the BFS-tree paths. Consequently, in the worst case illustrated in Fig. 4 (a), all node samples within a batch may become interrelated due to the BFS-tree, so the gradient sensitivity of the discriminators $D$ can be as high as $BC$ . Such high sensitivity necessitates injecting substantial noise to satisfy node-level DP, hindering effective optimization and reducing model utility.

<details>

<summary>x4.png Details</summary>

### Visual Description

# Technical Document Extraction: Constrained BFS-tree Diagram

This image illustrates the process of generating a **Constrained BFS-tree** (Breadth-First Search tree) from a general graph structure. The diagram is divided into two main stages, labeled (a) and (b), connected by a process indicator.

## 1. Component Isolation

### Region 1: Initial Graph (a)

* **Location:** Left side of the image.

* **Description:** A graph consisting of a central root node and multiple branching paths.

* **Root Node:** Labeled $v_r$, colored in a light reddish-brown/pink.

* **Peripheral Nodes:** All other nodes are colored light blue.

* **Structure:** Every peripheral node in this panel is colored blue, indicating that all of them are currently associated with the root $v_r$.

### Region 2: Transition Process

* **Location:** Center, between (a) and (b).

* **Text Label:** "Constrained BFS-tree"

* **Visual Element:** A thick red arrow pointing from left to right, indicating a transformation or filtering process.

### Region 3: Constrained Graph (b)

* **Location:** Right side of the image.

* **Description:** The same graph structure as (a), but with specific constraints applied to node association.

* **Root Node:** Labeled $v_r$, colored light reddish-brown/pink.

* **Node States:** Nodes are now differentiated into two colors: light blue and light grey.

* **Spatial Markers:**

* **1-hop:** A dashed grey arc indicating the first level of neighbors from the root.

* **2-hop:** A dashed grey arc indicating the second level of neighbors from the root.

* **Constraint Markers:** Red "X" marks are placed on specific edges. These marks indicate where the "association" with $v_r$ is severed or blocked.

### Region 4: Legend

* **Location:** Bottom center.

* **Symbol 1:** Light blue circle: "Nodes associated with $V_r$"

* **Symbol 2:** Light grey circle: "Nodes not associated with $V_r$"

---

## 2. Data and Flow Analysis

### Process Flow

The diagram demonstrates how a global graph (a) is pruned into a constrained tree (b). The constraints appear to be based on both distance (hops) and specific edge-level restrictions (the red X marks).

### Trend and Logic Verification

1. **Distance Constraint:** In panel (b), nodes within the "1-hop" and "2-hop" radius are generally blue (associated), while nodes beyond the 2-hop radius transition to grey (not associated).

2. **Edge Constraint:** Even within the hop boundaries, if an edge is marked with a **red X**, the downstream nodes (and the node immediately following the X) turn grey.

* *Example:* In the top-left quadrant of (b), a red X appears on the edge directly connected to $v_r$. Consequently, that entire branch is grey.

* *Example:* In the bottom-right quadrant, a red X appears after the 2-hop boundary, turning the subsequent nodes grey.

### Node Association Summary

| Node Color | Status | Condition in (b) |
| :--- | :--- | :--- |
| **Light Blue** | Associated with $v_r$ | Within hop limits AND no red X on the path from $v_r$. |
| **Light Grey** | Not associated with $v_r$ | Outside hop limits OR path is blocked by a red X. |

---

## 3. Textual Transcription

* **Labels:**

* `(a)`: Identifier for the initial state.

* `(b)`: Identifier for the constrained state.

* $v_r$: The root node identifier (appears in both graphs).

* `Constrained BFS-tree`: The name of the process/result.

* `1-hop`: Distance marker for the first neighbor level.

* `2-hop`: Distance marker for the second neighbor level.

* **Legend Text:**

* `Nodes associated with V_r` (Note: The legend uses capital $V_r$ while the nodes use lowercase $v_r$).

* `Nodes not associated with V_r`.

</details>

Figure 4. The receptive field of node $v_{r}$ within a batch is illustrated in two cases: (a) An unconstrained BFS tree, and the receptive field size of $v_{r}$ is $B=|V_{B}|=34$ ; (b) A constrained BFS tree with path length $L=2$ , path amount $N=3$ of each node, and the receptive field size of $v_{r}$ is $\sum_{l=0}^{L}N^{l}=13$ .

To address the aforementioned challenge, we introduce the constrained BFS-tree strategy: As illustrated in Algorithm 1 (see App. F.2), when performing a random walk on the BFS-tree $T^{+}_{v_{r}}$ or $T^{-}_{v_{r}}$ rooted at $v_{r}∈ V_{tr}$ to generate multiple unique paths, we also limit both the number of sampled paths and their lengths by $N$ and $L$ . Following this, the training set of subgraphs $S_{tr}$ composed of constrained paths is obtained. The rationale behind these settings is discussed below.

**Theorem 1**

*By constraining both the number and length of paths generated via random walks on the BFS-trees to $N$ and $L$ , respectively, the gradient sensitivity $\Delta_{{g}}$ of the discriminator can be reduced from $BC$ to $\frac{N^{L+1}-1}{N-1}C$ . Empirical results in Section 5 demonstrate that ASGL achieves satisfactory performance even with a relatively small receptive field. Specifically, when setting $N=3$ and $L=4$ , that is, $\frac{N^{L+1}-1}{N-1}=121<B=256$ , ASGL still achieves good model utility. Thus, the noisy gradient $\tilde{\nabla}{L_{D}}$ of the discriminator within a mini-batch $\mathcal{B}_{t}$ is denoted as:

$$

\displaystyle{\tilde{\nabla}{L_{D}}}=\frac{1}{|\mathcal{B}_{t}|}\Big(\sum_{v\in\mathcal{B}_{t}}\text{Clip}_{C}(\frac{\partial L_{D}}{\partial\mathbf{d}_{v}})+\mathcal{N}\left(0,\Delta_{{g}}^{2}\sigma^{2}\mathbf{I}\right)\Big), \tag{13}

$$

where the gradient sensitivity $\Delta_{{g}}=\frac{N^{L+1}-1}{N-1}C$ .*
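The receptive-field size used in Theorem 1 is a geometric sum, which the short sketch below evaluates for the settings quoted in the text. The function names are hypothetical, and the comparison with $B=256$ uses the batch size mentioned in the theorem.

```python
def receptive_field_size(n_paths: int, max_len: int) -> int:
    """R_{N,L} = sum_{l=0}^{L} N^l, equal to (N^{L+1} - 1) / (N - 1) for N > 1."""
    return sum(n_paths ** level for level in range(max_len + 1))


def gradient_sensitivity(n_paths: int, max_len: int, clip_c: float) -> float:
    """Sensitivity bound of Theorem 1: R_{N,L} * C."""
    return receptive_field_size(n_paths, max_len) * clip_c


r_fig4 = receptive_field_size(3, 2)      # setting of Fig. 4 (b)
r_thm1 = receptive_field_size(3, 4)      # setting quoted in Theorem 1
noise_ratio = 256 / r_thm1               # worst-case B * C versus R_{N,L} * C
```

With $N=3$ and $L=4$, the bound replaces a factor of 256 by 121, so the Gaussian noise standard deviation shrinks by a factor of roughly 2.1 for the same $\sigma$ and $C$.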

Proof of Theorem 1. Let the sum of clipped gradients of batch subgraphs be $g_{t}(\mathcal{G})=\sum_{v\in\mathcal{B}_{t}}\text{Clip}_{C}(\frac{\partial L_{D}}{\partial\mathbf{d}_{v}})$ , where $\mathcal{B}_{t}$ represents any choice of batch subgraphs from $S_{tr}$ . Consider a node-level adjacent graph $\mathcal{G}^{\prime}$ formed by removing a node $v^{*}$ with its associated edges from $\mathcal{G}$ . We obtain the corresponding training sets of subgraphs $S_{tr}$ and $S_{tr}^{\prime}$ via the SAMPLE-SUBGRAPHS method in Algorithm 1, denoted as:

$$

\begin{aligned}
S_{tr}&=\text{SAMPLE-SUBGRAPHS}(\mathcal{G},V_{tr},N,L),\\
S_{tr}^{\prime}&=\text{SAMPLE-SUBGRAPHS}(\mathcal{G}^{\prime},V_{tr},N,L).
\end{aligned} \tag{14}

$$

The only subgraphs that differ between $S_{tr}$ and $S_{tr}^{\prime}$ are those that involve the node $v^{*}$ . Let $S(v^{*})$ denote the set of such subgraphs, i.e., $S(v^{*})=S_{tr}\setminus S_{tr}^{\prime}$ . According to Lemma 1 in App. G, the number of such subgraphs $S(v^{*})$ is at most $R_{N,L}$ . Thus, in any mini-batch training, the only gradient terms $\frac{∂ L_{D}}{∂\mathbf{d}_{v}}$ affected by the removal of node $v^{*}$ are those associated with the subgraphs in $(S(v^{*})\cap\mathcal{B}_{t})$ :

$$

\begin{aligned}
g_{t}(\mathcal{G})-g_{t}(\mathcal{G}^{\prime})&=\sum_{v\in\mathcal{B}_{t}}\text{Clip}_{C}(\frac{\partial L_{D}}{\partial\mathbf{d}_{v}})-\sum_{v^{\prime}\in\mathcal{B}_{t}^{\prime}}\text{Clip}_{C}(\frac{\partial L_{D}}{\partial\mathbf{d}_{v^{\prime}}})\\
&=\sum_{v,v^{\prime}\in(S(v^{*})\cap\mathcal{B}_{t})}\Big[\text{Clip}_{C}(\frac{\partial L_{D}}{\partial\mathbf{d}_{v}})-\text{Clip}_{C}(\frac{\partial L_{D}}{\partial\mathbf{d}_{v^{\prime}}})\Big],
\end{aligned} \tag{15}

$$

where $\mathcal{B}_{t}^{\prime}=\mathcal{B}_{t}\setminus(S(v^{*})\cap\mathcal{B}_{t})$ . Since each gradient term is clipped to have an $\ell_{2}$ -norm of at most $C$ , it holds that:

$$

||\text{Clip}_{C}(\frac{\partial L_{D}}{\partial\mathbf{d}_{v}})-\text{Clip}_{C}(\frac{\partial L_{D}}{\partial\mathbf{d}_{v^{\prime}}})||_{F}\leq C. \tag{16}

$$

In the worst case, all subgraphs in $S(v^{*})$ appear in $\mathcal{B}_{t}$ , so we bound the $\ell_{2}$ -norm of the following quantity based on Lemma 2 in App. G:

$$

||g_{t}(\mathcal{G})-g_{t}(\mathcal{G}^{\prime})||_{F}\leq C\cdot R_{N,L}=C\cdot\frac{N^{L+1}-1}{N-1}. \tag{17}

$$

The same reasoning applies when $\mathcal{G}^{\prime}$ is obtained by adding a new node $v^{*}$ to $\mathcal{G}$ . Since $\mathcal{G}$ and $\mathcal{G}^{\prime}$ are arbitrary node-level adjacent graphs, the proof is complete.

4.4. Privacy and Complexity Analysis

The complete training process for ASGL is outlined in Algorithm 2 (see App. F.3). In this section, we present a comprehensive privacy analysis and computational complexity analysis for ASGL.

Privacy Accounting. We adopt the functional perspective of Rényi Differential Privacy (RDP; see App. C) to analyze the privacy budget of ASGL, as summarized below:

**Theorem 2**

*Given the size of the training set $N_{tr}$ , the number of epochs $n^{epoch}$ , the number of discriminator iterations $n^{iter}$ , the batch size $B_{d}$ , the maximum path length $L$ , and the maximum path number $N$ , over $T=n^{epoch}n^{iter}$ iterations, Algorithm 2 satisfies node-level $(\alpha,2T\gamma)$ -RDP, where $\gamma=\frac{1}{\alpha-1}\ln\left(\sum_{i=0}^{R_{N,L}}\beta_{i}\left(\exp{\frac{\alpha(\alpha-1)i^{2}}{2\sigma^{2}R_{N,L}^{2}}}\right)\right)$ , $R_{N,L}=\frac{N^{L+1}-1}{N-1}$ and $\beta_{i}=\binom{R_{N,L}}{i}\binom{N_{tr}-R_{N,L}}{B_{d}-i}/{\binom{N_{tr}}{B_{d}}}$ . Please refer to App. I for the proof.*
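To make the bound in Theorem 2 concrete, the sketch below evaluates $\gamma$ and the total RDP cost $2T\gamma$ directly from the stated formula. The weights $\beta_{i}$ are hypergeometric probabilities, so terms with $i>B_{d}$ vanish and the sum is truncated at $\min(R_{N,L},B_{d})$. All numeric settings (`n_tr=1000`, `b_d=64`, `alpha=8`, and so on) are hypothetical values chosen for illustration, not the paper's configuration.

```python
import math


def receptive_field(n_paths, max_len):
    """R_{N,L} = (N^{L+1} - 1) / (N - 1), computed as a geometric sum."""
    return sum(n_paths ** level for level in range(max_len + 1))


def beta_weights(n_tr, b_d, r):
    """beta_i: probability that i of the r affected subgraphs fall in a batch."""
    denom = math.comb(n_tr, b_d)
    return [
        math.comb(r, i) * math.comb(n_tr - r, b_d - i) / denom
        for i in range(min(r, b_d) + 1)
    ]


def rdp_gamma(alpha, sigma, n_tr, b_d, n_paths, max_len):
    """Per-iteration gamma from Theorem 2 (requires alpha > 1 and r <= n_tr)."""
    r = receptive_field(n_paths, max_len)
    total = sum(
        beta * math.exp(alpha * (alpha - 1) * i * i / (2 * sigma ** 2 * r ** 2))
        for i, beta in enumerate(beta_weights(n_tr, b_d, r))
    )
    return math.log(total) / (alpha - 1)


def total_rdp(gamma, n_epoch, n_iter):
    """Accumulated cost 2 * T * gamma with T = n_epoch * n_iter."""
    return 2 * n_epoch * n_iter * gamma


# Hypothetical settings for illustration only.
weights = beta_weights(n_tr=1000, b_d=64, r=receptive_field(3, 2))
gamma_small_noise = rdp_gamma(alpha=8, sigma=1.0, n_tr=1000, b_d=64, n_paths=3, max_len=2)
gamma_large_noise = rdp_gamma(alpha=8, sigma=5.0, n_tr=1000, b_d=64, n_paths=3, max_len=2)
budget = total_rdp(gamma_large_noise, n_epoch=10, n_iter=10)
```

As expected from the formula, a larger noise multiplier $\sigma$ yields a smaller $\gamma$, and the accumulated cost grows linearly with the number of iterations $T$.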

Complexity Analysis. To analyze the time complexity of training ASGL (App. F.3), we break down the major computations. The outer loop runs for $n^{\text{epoch}}$ epochs, and in each epoch, the discriminators $D^{+}$ and $D^{-}$ are trained for $n^{\text{iter}}$ iterations. Each iteration samples a batch of $B_{d}$ real and fake edges to update $\theta_{D}$ , with DP cost updates incurring complexity $\mathcal{O}(B_{d}k\xi)$ , where $\xi$ is the sampling probability and $k$ is the embedding dimension (08; 17). Thus, each epoch of $D^{+}$ or $D^{-}$ costs $\mathcal{O}(n^{\text{iter}}B_{d}k(1+\xi))$ . For the generators $G^{+}$ and $G^{-}$ , each iteration samples $B_{g}$ fake edges to update $\theta_{G}$ , resulting in per-epoch complexity $\mathcal{O}(n^{\text{iter}}B_{g}k)$ . In total, ASGL’s overall time complexity over $n^{\text{epoch}}$ epochs is: $\mathcal{O}\left(2n^{\text{epoch}}n^{\text{iter}}(B_{d}+B_{g})(1+\xi)k\right)$ . This complexity is linear in the number of iterations and batch size, demonstrating the scalability of ASGL for large-scale graphs.

5. Experiments

In this section, we design experiments to answer the following questions: (1) How do key parameters affect the performance of ASGL (see Section 5.2)? (2) How much does the privacy budget affect the performance of ASGL and other private signed graph learning models in edge sign prediction (see Section 5.3)? (3) How much does the privacy budget affect the performance of ASGL and other baselines in node clustering (see Section 5.4)? (4) How resilient is ASGL against link stealing attacks (see Section 5.5)?

Table 1. Overview of the datasets

| Dataset | Nodes | Edges | Positive edges | Negative edges |
| --- | --- | --- | --- | --- |
| Bitcoin-Alpha | 3,783 | 14,081 | 12,769 (90.7%) | 1,312 (9.3%) |
| Bitcoin-OTC | 5,881 | 21,434 | 18,281 (85.3%) | 3,153 (14.7%) |
| WikiRfA | 11,258 | 185,627 | 144,451 (77.8%) | 41,176 (22.2%) |
| Slashdot | 13,182 | 36,338 | 30,914 (85.1%) | 5,424 (14.9%) |
| Epinions | 131,828 | 841,372 | 717,690 (85.3%) | 123,682 (14.7%) |

5.1. Experimental Settings

Datasets. To comprehensively evaluate our ASGL method, we conduct extensive experiments on five real-world datasets, namely Bitcoin-Alpha (collected from https://snap.stanford.edu/data), Bitcoin-OTC, WikiRfA, Slashdot (collected from https://www.aminer.cn), and Epinions. These datasets are treated as undirected signed graphs, with their detailed statistics summarized in Table 1 and App. J.1.

Competitive Methods. To the best of our knowledge, this work is the first to address the problem of differentially private signed graph learning while aiming to preserve model utility. Due to the absence of prior studies in this area, we construct baselines by integrating four state-of-the-art signed graph learning methods—SGCN (36), SiGAT (38), LSNE (37), and SDGNN (39) —with the DPSGD mechanism. Since these models primarily leverage structural information, we further include the private graph learning method GAP (40), using Truncated SVD-generated spectral features (36) as input to ensure a fair comparison involving node features.

Evaluation Metrics. For edge sign prediction tasks, we follow the evaluation procedures in (14; 38; 39). Specifically, we first generate embedding vectors for all nodes in the training set using each comparative method. Then, we train a logistic regression classifier using the concatenated embeddings of node pairs as input features. Finally, we use the trained classifier to predict edge signs in the test set for each method. Considering the class imbalance between positive and negative edges (see Table 1), we adopt the area under curve (AUC) as the evaluation metric to ensure a fair comparison.

For node clustering, to fairly evaluate the clustering effect of node embeddings, we compute the average cosine distance for both positive and negative node pairs: $\text{CD}^{+}=\sum_{(v_{i},v_{j})∈ E^{+}}Cos(\mathbf{Z}_{i},\mathbf{Z}_{j})/|E^{+}|$ and $\text{CD}^{-}=\sum_{(v_{n},v_{m})∈ E^{-}}Cos(\mathbf{Z}_{n},\mathbf{Z}_{m})/|E^{-}|$ , where $\mathbf{Z}_{i}$ is the node embedding generated by each comparative method, and $Cos(·)$ represents the cosine distance between node embeddings. Then we propose the symmetric separation index (SSI) to measure the clustering degree between the embeddings of positive and negative node pairs in the test set, denoted as $\text{SSI}=1/(|\text{CD}^{+}-1|+|\text{CD}^{-}+1|)$ . A higher SSI indicates better structural proximity, with positive node pairs more tightly clustered and negative pairs more clearly separated in the unified embedding space.
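The SSI computation can be sketched as follows. One assumption is made explicit here: $Cos(\cdot)$ is implemented as cosine similarity with range $[-1,1]$, which is what the targets $1$ and $-1$ in the SSI formula imply. The embeddings and edge lists are toy values, and the helper names are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine of the angle between two non-zero vectors."""
    num = sum(x * y for x, y in zip(a, b))
    den = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return num / den


def mean_cosine(emb, edges):
    """Average cosine over a list of node pairs (CD+ or CD-)."""
    return sum(cosine(emb[i], emb[j]) for i, j in edges) / len(edges)


def ssi(emb, pos_edges, neg_edges):
    """SSI = 1 / (|CD+ - 1| + |CD- + 1|); undefined if both terms are zero."""
    cd_pos = mean_cosine(emb, pos_edges)
    cd_neg = mean_cosine(emb, neg_edges)
    return 1.0 / (abs(cd_pos - 1.0) + abs(cd_neg + 1.0))


# Toy two-dimensional embeddings (illustrative values only).
emb = {0: [1.0, 0.0], 1: [1.0, 0.2], 2: [-1.0, 0.1], 3: [0.0, 1.0]}
ssi_separated = ssi(emb, pos_edges=[(0, 1)], neg_edges=[(0, 2)])  # negative pair nearly opposite
ssi_mixed = ssi(emb, pos_edges=[(0, 1)], neg_edges=[(0, 3)])      # negative pair orthogonal
```

The first configuration places the negative pair in nearly opposite directions and therefore receives a much higher SSI than the second, where the negative pair is merely orthogonal.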

Parameter Settings. For both edge sign prediction and node clustering tasks, we set the dimensionality of all node embeddings, $\mathbf{d}_{v}$ and $\mathbf{g}_{v}$ , to 128, following standard practice in prior work (41; 14). ASGL adopts DPSGD-based optimization, where the total number of training epochs is determined by the moments accountant (MA) (04), which offers tighter privacy tracking across multiple iterations. We set the iteration number $n^{iter}$ to 10 for Bitcoin-Alpha and Bitcoin-OTC, 15 for WikiRfA and Slashdot, and 20 for Epinions. Since all comparative methods are trained using DPSGD, their number of training epochs depends on the privacy budget. As discussed in Section 5.2, the maximum path number $N$ and path length $L$ are varied to analyze their impact on ASGL’s utility. For privacy parameters, we follow (02; 51; 08) by fixing $\delta=10^{-5}$ and $C=1$ , and vary the privacy budget $\epsilon∈\{1,2,...,6\}$ to evaluate utility under different privacy levels. To ensure fair comparison, we modify the official GitHub implementations of all baselines and adopt the best hyperparameter settings reported in their original papers. To minimize random errors, each experiment is repeated five times.

5.2. Impact of Key Parameters

In this section, we perform experiments on two datasets by varying the maximum number $N$ and the maximum length $L$ of paths in the BFS-trees, providing a rationale for parameter selection.

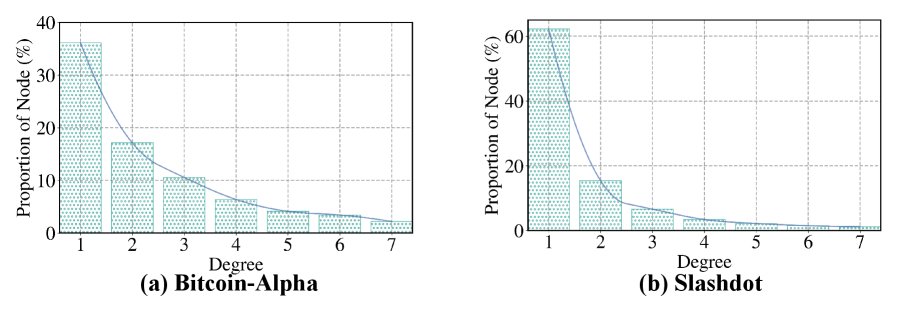

5.2.1. The effect of the parameter $N$

As discussed in Section 4.3, the greater the number of neighbors a rooted node has, the more paths can be obtained through random walks. Therefore, the maximum number of paths $N$ also depends on the node degrees. As shown in Fig. 8 (see App. J.2), for the Bitcoin-Alpha and Slashdot datasets, most nodes in signed graphs have degrees below 3. In addition, we investigate the impact of $N$ by varying its value within $\{2,3,4,5,6\}$ . As shown by the average AUC results in Table 2, the proposed ASGL method achieves optimal edge prediction performance at $N=3$ for Bitcoin-Alpha and $N=4$ for Slashdot. Considering both gradient sensitivity and computational efficiency, we adopt $N=3$ for subsequent experiments.

5.2.2. The effect of the parameter $L$

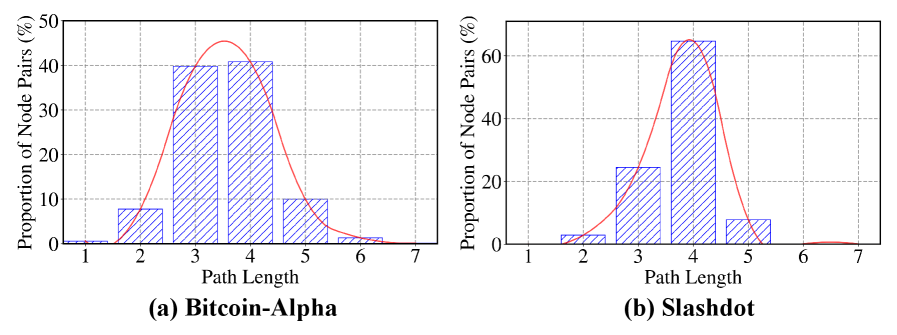

In this experiment, we evaluate the impact of the path length $L$ on the utility of ASGL by varying its value. As shown in Table 3, ASGL achieves the best performance on both datasets when $L=4$ . This result is closely aligned with the structural characteristics of the signed graphs: As summarized in Fig. 9 (see App. J.2), most node pairs in these datasets exhibit maximum path lengths of 3 or 4. Therefore, in subsequent experiments, we set $L=4$ , as it adequately covers the receptive field of most nodes.

Table 2. Summary of average AUC with different maximum path counts $N$ under $\epsilon=3$ and $L=3$ . (BOLD: Best)

| Dataset | $N=2$ | $N=3$ | $N=4$ | $N=5$ | $N=6$ |
| --- | --- | --- | --- | --- | --- |
| Bitcoin-Alpha | 0.8025 | **0.8562** | 0.8557 | 0.8498 | 0.8553 |
| Slashdot | 0.7723 | 0.8823 | **0.8888** | 0.8871 | 0.8881 |

Table 3. Summary of average AUC with different path lengths $L$ under $\epsilon=3$ and $N=3$ . (BOLD: Best)

| Dataset | | | | | |
| --- | --- | --- | --- | --- | --- |
| Bitcoin-Alpha | 0.7409 | 0.8443 | **0.8587** | 0.8545 | 0.8516 |
| Slashdot | 0.7629 | 0.8290 | **0.8833** | 0.8809 | 0.8807 |

<details>

<summary>x5.png Details</summary>

### Visual Description

# Technical Data Extraction: Performance Comparison of ASGL vs. Baselines

This document provides a comprehensive extraction of data from a series of five line charts comparing the performance of various graph neural network models under different privacy constraints.

## 1. Document Metadata & Global Components

* **Image Type:** Multi-panel line graph (5 subplots).

* **Primary Language:** English.

* **Global Legend (Top Center):**

* **SDGNN:** Grey line with open circles ($\circ$).

* **SiGAT:** Pink line with open circles ($\circ$).

* **SGCN:** Blue line with open circles ($\circ$).

* **GAP:** Green line with open squares ($\square$).

* **LSNE:** Gold/Yellow line with open diamonds ($\diamond$).

* **ASGL (Proposed):** Red line with open triangles ($\triangle$).

* **Common X-Axis:** Privacy budget ($\epsilon$), ranging from 1 to 6.

* **Common Y-Axis:** AUC (Area Under the Curve), ranging generally from 0.50 to 0.90.

---

## 2. Component Isolation & Data Extraction

### (a) Bitcoin_Alpha

* **Trend Analysis:** All models show an upward trend as the privacy budget increases. **ASGL (Proposed)** maintains the highest AUC throughout, starting at ~0.75 and plateauing near 0.86. **LSNE** shows the most significant growth, starting as the lowest performer and surpassing GAP and SGCN by $\epsilon=6$.

* **Approximate Data Points (AUC):**

| Model | $\epsilon=1$ | $\epsilon=2$ | $\epsilon=3$ | $\epsilon=4$ | $\epsilon=5$ | $\epsilon=6$ |

| :--- | :---: | :---: | :---: | :---: | :---: | :---: |

| **ASGL** | 0.75 | 0.81 | 0.83 | 0.86 | 0.86 | 0.86 |

| **SiGAT** | 0.71 | 0.73 | 0.73 | 0.75 | 0.79 | 0.82 |

| **SDGNN** | 0.68 | 0.69 | 0.71 | 0.73 | 0.80 | 0.85 |

| **GAP** | 0.57 | 0.60 | 0.64 | 0.71 | 0.73 | 0.73 |

| **SGCN** | 0.52 | 0.55 | 0.64 | 0.69 | 0.73 | 0.77 |

| **LSNE** | 0.51 | 0.54 | 0.60 | 0.65 | 0.76 | 0.81 |

### (b) Bitcoin_OTC

* **Trend Analysis:** **ASGL** dominates, reaching a peak of ~0.88. **SDGNN** and **SiGAT** follow closely. **SGCN** and **GAP** show a sharp increase between $\epsilon=2$ and $\epsilon=4$.

* **Approximate Data Points (AUC):**

| Model | $\epsilon=1$ | $\epsilon=2$ | $\epsilon=3$ | $\epsilon=4$ | $\epsilon=5$ | $\epsilon=6$ |

| :--- | :---: | :---: | :---: | :---: | :---: | :---: |

| **ASGL** | 0.80 | 0.85 | 0.85 | 0.85 | 0.87 | 0.88 |

| **SDGNN** | 0.77 | 0.79 | 0.79 | 0.82 | 0.83 | 0.86 |

| **SiGAT** | 0.70 | 0.73 | 0.79 | 0.84 | 0.86 | 0.87 |

| **SGCN** | 0.56 | 0.58 | 0.67 | 0.75 | 0.76 | 0.78 |

| **GAP** | 0.58 | 0.58 | 0.65 | 0.68 | 0.70 | 0.74 |

| **LSNE** | 0.51 | 0.54 | 0.65 | 0.81 | 0.87 | 0.88 |

### (c) WikiRfA

* **Trend Analysis:** **ASGL** shows a steep improvement from $\epsilon=1$ to $\epsilon=3$, then plateaus. **LSNE** shows a steady linear-like climb, eventually matching **SDGNN** at $\epsilon=6$. **GAP** remains the lowest performer with very slow growth.

* **Approximate Data Points (AUC):**

| Model | $\epsilon=1$ | $\epsilon=2$ | $\epsilon=3$ | $\epsilon=4$ | $\epsilon=5$ | $\epsilon=6$ |

| :--- | :---: | :---: | :---: | :---: | :---: | :---: |

| **ASGL** | 0.67 | 0.77 | 0.80 | 0.80 | 0.80 | 0.81 |

| **SDGNN** | 0.66 | 0.71 | 0.72 | 0.72 | 0.76 | 0.79 |

| **SiGAT** | 0.63 | 0.65 | 0.71 | 0.73 | 0.74 | 0.80 |

| **SGCN** | 0.51 | 0.65 | 0.65 | 0.70 | 0.71 | 0.71 |

| **LSNE** | 0.51 | 0.53 | 0.61 | 0.66 | 0.74 | 0.79 |

| **GAP** | 0.54 | 0.55 | 0.56 | 0.57 | 0.58 | 0.60 |

### (d) Slashdot

* **Trend Analysis:** **ASGL** and **SDGNN** are the top performers, converging at ~0.89. **LSNE** exhibits a "S-curve" trend, starting very low and rising sharply between $\epsilon=2$ and $\epsilon=4$.

* **Approximate Data Points (AUC):**

| Model | $\epsilon=1$ | $\epsilon=2$ | $\epsilon=3$ | $\epsilon=4$ | $\epsilon=5$ | $\epsilon=6$ |

| :--- | :---: | :---: | :---: | :---: | :---: | :---: |

| **ASGL** | 0.79 | 0.86 | 0.88 | 0.89 | 0.89 | 0.89 |

| **SDGNN** | 0.76 | 0.84 | 0.87 | 0.88 | 0.89 | 0.89 |

| **SiGAT** | 0.71 | 0.79 | 0.84 | 0.84 | 0.85 | 0.85 |

| **LSNE** | 0.57 | 0.62 | 0.76 | 0.78 | 0.78 | 0.78 |

| **SGCN** | 0.57 | 0.62 | 0.67 | 0.72 | 0.78 | 0.81 |

| **GAP** | 0.61 | 0.64 | 0.69 | 0.71 | 0.74 | 0.75 |

### (e) Epinions

* **Trend Analysis:** **ASGL** leads significantly in the low-privacy budget range ($\epsilon=2, 3$). By $\epsilon=6$, **ASGL**, **SDGNN**, **SiGAT**, and **LSNE** all converge around 0.84-0.87. **GAP** remains significantly lower than the others.

* **Approximate Data Points (AUC):**

| Model | $\epsilon=1$ | $\epsilon=2$ | $\epsilon=3$ | $\epsilon=4$ | $\epsilon=5$ | $\epsilon=6$ |

| :--- | :---: | :---: | :---: | :---: | :---: | :---: |

| **ASGL** | 0.68 | 0.82 | 0.85 | 0.87 | 0.87 | 0.87 |

| **SDGNN** | 0.68 | 0.72 | 0.72 | 0.85 | 0.85 | 0.87 |

| **SiGAT** | 0.68 | 0.71 | 0.71 | 0.75 | 0.79 | 0.84 |

| **LSNE** | 0.51 | 0.61 | 0.76 | 0.85 | 0.86 | 0.86 |

| **SGCN** | 0.62 | 0.65 | 0.70 | 0.75 | 0.81 | 0.84 |

| **GAP** | 0.59 | 0.61 | 0.63 | 0.63 | 0.65 | 0.67 |

---

## 3. Summary of Findings

Across all five datasets (Bitcoin_Alpha, Bitcoin_OTC, WikiRfA, Slashdot, and Epinions), the **ASGL (Proposed)** model consistently outperforms or matches the baseline models. It is particularly robust at lower privacy budgets ($\epsilon < 3$), where other models like LSNE and SGCN show significantly lower performance. As the privacy budget increases (allowing for less noise), all models generally improve, with most converging toward a high AUC between 0.80 and 0.90.

</details>

Figure 5. AUC vs. Privacy cost ( $\epsilon$ ) of private signed graph learning methods in edge sign prediction.

<details>

<summary>x6.png Details</summary>

### Visual Description

# Technical Data Extraction: Performance Comparison of Signed Graph Embedding Models

This document provides a comprehensive extraction of data from a series of five bar charts comparing the performance of various signed graph embedding models across different datasets.

## 1. Global Legend and Metadata

The charts share a common legend located at the top of the image. Each model is represented by a specific color and hatching pattern.

| Model Name | Color/Pattern Description |

| :--- | :--- |

| **SGCN** | Light Blue with diagonal stripes (bottom-left to top-right) |

| **SDGNN** | Light Green with vertical stripes |

| **SiGAT** | Pink with horizontal stripes |

| **LSNE** | Yellow/Gold with dotted pattern |

| **GAP** | Purple with horizontal dashed lines |

| **ASGL** | Red/Brown with cross-hatch (diamond) pattern |

**Common Y-Axis:** SSI (Symmetric Separation Index)

**Common X-Axis Categories:** $e=1$, $e=2$, $e=4$ (values of the privacy budget $\epsilon$, as stated in the figure caption).

---

## 2. Component Analysis by Dataset

### (a) Bitcoin_Alpha

* **Trend:** All models show a general upward trend as $e$ increases. The **ASGL** model (red cross-hatch) consistently outperforms all other models, with its lead widening significantly at $e=4$.

* **Data Points (Approximate SSI):**

* **$e=1$:** Models cluster between 0.43 and 0.51. ASGL is highest (~0.51).

* **$e=2$:** Models cluster between 0.47 and 0.54. ASGL is highest (~0.54).

* **$e=4$:** ASGL shows a sharp increase to ~0.67. GAP is second at ~0.62. Others are near 0.50.

### (b) Bitcoin_OTC

* **Trend:** Similar to Bitcoin_Alpha, there is a positive correlation between $e$ and SSI. **ASGL** maintains a dominant lead across all categories.

* **Data Points (Approximate SSI):**

* **$e=1$:** Range 0.45 to 0.52. ASGL is highest (~0.52).

* **$e=2$:** Range 0.47 to 0.68. ASGL shows a significant jump to ~0.68.

* **$e=4$:** ASGL reaches its peak at ~0.77. GAP follows at ~0.70.

### (c) WikiRfA

* **Trend:** The performance gap between ASGL and other models is more pronounced here. While other models remain relatively flat or show modest gains, **ASGL** scales aggressively.

* **Data Points (Approximate SSI):**

* **$e=1$:** Most models are below 0.50; ASGL is at ~0.51.

* **$e=2$:** ASGL rises to ~0.56.

* **$e=4$:** ASGL reaches ~0.60. GAP and LSNE show moderate improvements to ~0.56 and ~0.55 respectively.

### (d) Slashdot

* **Trend:** This dataset shows the most dramatic performance increase for the **ASGL** model at $e=4$.

* **Data Points (Approximate SSI):**

* **$e=1$:** Models are tightly grouped between 0.47 and 0.51.

* **$e=2$:** ASGL moves to ~0.55.

* **$e=4$:** ASGL reaches ~0.60. Interestingly, SDGNN and LSNE show a significant jump at this stage to ~0.57, outperforming GAP.

### (e) Epinions

* **Trend:** Higher baseline SSI values compared to other datasets. **ASGL** and **GAP** are the top performers, ending nearly equal at $e=4$.

* **Data Points (Approximate SSI):**

* **$e=1$:** Range 0.48 to 0.61. GAP and ASGL are tied for highest (~0.61).

* **$e=2$:** ASGL takes a clear lead at ~0.67.

* **$e=4$:** ASGL and GAP are nearly tied at the highest point of ~0.68. LSNE follows at ~0.63.

---

## 3. Summary of Findings

1. **Top Performer:** The **ASGL** model (red cross-hatch) achieves the highest or tied-highest SSI on all five datasets at every tested value ($e=1, 2, 4$); on Epinions it is tied with GAP at $e=1$ and nearly tied at $e=4$.

2. **Scalability:** The performance of ASGL improves significantly as the parameter $e$ increases, often showing a steeper growth curve than baseline models like SGCN or SiGAT.

3. **Secondary Models:** **GAP** (purple) is the second-best performer on most datasets, particularly on the Bitcoin and Epinions datasets; on Slashdot at $e=4$, SDGNN and LSNE outperform it.

4. **Baseline Stability:** Models like **SGCN** and **SDGNN** show the least variance, maintaining relatively stable but lower SSI scores across different values of $e$.

</details>

Figure 6. Symmetric separation index (SSI) vs. Privacy cost ( $\epsilon$ ) of private signed graph learning methods in node clustering.

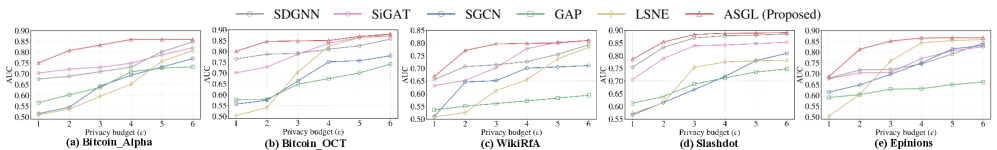

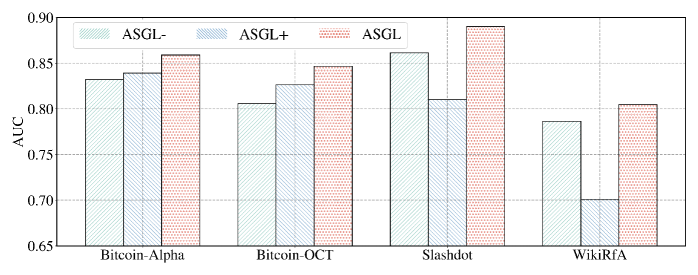

5.3. Impact of Privacy Budget on Edge Sign Prediction

To evaluate the effectiveness of different private graph learning methods on edge sign prediction, we compare their AUC scores under privacy budgets $\epsilon$ ranging from 1 to 6, as shown in Fig. 5 and Table 6 (see App. J.3). The proposed ASGL consistently outperforms all baselines across all privacy levels and datasets, owing to its ability to generate node embeddings that preserve connectivity distributions while satisfying DP guarantees. Although SDGNN achieves the second-best performance, it exhibits a noticeable gap from ASGL under limited privacy budgets ($\epsilon<4$). SiGAT, SGCN, and LSNE employ the moments accountant (MA) to mitigate excessive privacy budget consumption, yet they still suffer from poor convergence and degraded utility under limited privacy budgets. GAP adopts aggregation perturbation to ensure node-level DP, but the noise injected into neighborhood information limits its ability to capture the structural information needed for edge prediction tasks.
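For readers unfamiliar with the metric, the sketch below shows how an AUC score for edge sign prediction can be computed from node embeddings. It assumes, purely for illustration, that a node pair is scored by the inner product of its two embeddings and that positive edges carry label 1 and negative edges label 0; the names `auc_score` and `sign_scores` and the toy embeddings are hypothetical and do not reproduce the paper's evaluation pipeline.

```python
def auc_score(labels, scores):
    """AUC as the probability that a positive edge outranks a negative one.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative edge")
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

def sign_scores(emb, pairs):
    """Score each node pair by the inner product of its embeddings (assumed rule)."""
    return [sum(a * b for a, b in zip(emb[u], emb[v])) for u, v in pairs]

# Hypothetical 2-d embeddings: nodes 0 and 1 are close, as are nodes 2 and 3.
emb = {0: [1.0, 0.2], 1: [0.9, 0.1], 2: [-1.0, 0.3], 3: [-0.8, -0.1]}
pairs = [(0, 1), (2, 3), (0, 2), (1, 3)]
labels = [1, 1, 0, 0]  # 1 = positive edge, 0 = negative edge
print(auc_score(labels, sign_scores(emb, pairs)))
```

An AUC of 0.5 corresponds to random guessing, which is why the values near 0.51 at $\epsilon=1$ for some baselines indicate that the injected noise has removed almost all sign information.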

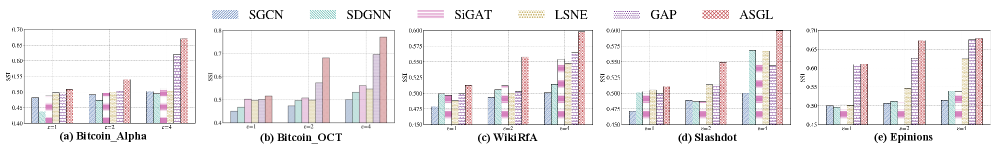

5.4. Impact of Privacy Budget on Node Clustering

To further examine the capability of ASGL in preserving signed node proximity, we conduct a fair comparison across multiple private graph learning methods using the SSI metric. As shown in Fig. 6 and Table 7 (see App. J.4), ASGL consistently outperforms all baselines across different datasets and privacy budgets, demonstrating that ASGL is capable of generating node embeddings that effectively preserve signed node proximity. Notably, GAP achieves the second-best clustering performance on most datasets (excluding Slashdot), benefiting from its ability to leverage node features for clustering nodes. Nevertheless, to guarantee node-level DP, GAP needs to repeatedly query sensitive graph information in every training iteration, resulting in significantly higher privacy costs.

5.5. Resilience Against Link Stealing Attack

To assess the effectiveness of ASGL in preserving the privacy of edge information, we perform link stealing attacks (LSA) across all datasets and compare the resilience of all methods to such attacks in edge sign prediction tasks. The LSA setup is detailed in App. J.5. Attack performance is measured by the AUC score, averaged over five independent runs. Table 4 summarizes the effectiveness of LSA on various trained target models and datasets. As the privacy budget $\epsilon$ increases, the average AUC of LSA consistently increases, indicating weaker privacy protection of the target models and a higher attack success rate. Overall, the average AUC of the attack is close to 0.50 in most cases, indicating unsuccessful edge inference and the robustness of DP against such an attack. When $\epsilon=3$, ASGL demonstrates stronger resistance to LSA across most datasets, with AUC values consistently below 0.57. This suggests that ASGL offers defense performance comparable to other differentially private graph learning methods.
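As a rough illustration of how such an attack can be scored, the sketch below implements a generic similarity-based link stealing attack: the adversary guesses that two nodes are linked when their released embeddings are similar, and the guess quality is summarized by AUC. This is an assumption made for exposition only; the actual setup in App. J.5 is not reproduced here, and `cosine`, `attack_auc`, and the toy embeddings are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two embedding vectors (0.0 if either is all-zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def attack_auc(emb, edges, non_edges):
    """AUC of an attacker who ranks node pairs by embedding similarity."""
    pos = [cosine(emb[u], emb[v]) for u, v in edges]
    neg = [cosine(emb[u], emb[v]) for u, v in non_edges]
    # Probability that a true edge is ranked above a non-edge (ties count half).
    wins = sum((p > q) + 0.5 * (p == q) for p in pos for q in neg)
    return wins / (len(pos) * len(neg))

edges = [(0, 1), (2, 3)]      # pairs that are truly linked (hypothetical)
non_edges = [(0, 2), (1, 3)]  # pairs that are not linked (hypothetical)

# Embeddings that mirror the graph structure leak the links to the attacker.
leaky = {0: [1.0, 0.0], 1: [0.9, 0.1], 2: [0.0, 1.0], 3: [0.1, 0.9]}
# Embeddings that carry no structural signal leave the attacker guessing.
flat = {i: [1.0, 1.0] for i in range(4)}

print(attack_auc(leaky, edges, non_edges), attack_auc(flat, edges, non_edges))
```

Under this reading, an attack AUC close to 0.50 means the released embeddings reveal essentially nothing about individual links, while values approaching 1.0 indicate substantial leakage.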

Table 4. The average AUC of LSA on different methods and datasets. (BOLD: Best resilience against LSA)

| $\epsilon$ | Dataset | | | | | | |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 1 | Bitcoin-Alpha | 0.5072 | 0.7091 | 0.5079 | 0.5145 | 0.5404 | **0.5053** |
| 1 | Bitcoin-OTC | **0.5081** | 0.7118 | 0.5119 | 0.5409 | 0.5660 | 0.5466 |
| 1 | Slashdot | 0.5538 | 0.8232 | 0.5551 | 0.5609 | 0.5460 | **0.5325** |
| 1 | WikiRfA | **0.5148** | 0.5424 | 0.5427 | 0.5293 | 0.5470 | 0.5302 |
| 1 | Epinions | 0.7877 | 0.6329 | 0.5114 | 0.5129 | 0.5188 | **0.5092** |
| 3 | Bitcoin-Alpha | 0.5547 | 0.7514 | 0.5533 | 0.5542 | 0.5598 | **0.5430** |
| 3 | Bitcoin-OTC | 0.5655 | 0.7273 | 0.5684 | 0.5734 | 0.5765 | **0.5612** |
| 3 | Slashdot | 0.5742 | 0.8394 | 0.6267 | 0.5730 | 0.6464 | **0.5634** |
| 3 | WikiRfA | **0.5276** | 0.5466 | 0.5542 | 0.5696 | 0.5772 | 0.5624 |
| 3 | Epinions | 0.7981 | 0.6456 | 0.5588 | 0.5629 | 0.5665 | **0.5542** |

6. Related Work