# Modal Logical Neural Networks

**Authors**: Antonin Sulc

## MODAL LOGICAL NEURAL NETWORKS

Antonin Sulc Lawrence Berkeley National Lab Berkeley, CA, USA

## ABSTRACT

We propose Modal Logical Neural Networks (MLNNs), a neurosymbolic framework that integrates deep learning with the formal semantics of modal logic, enabling reasoning about necessity and possibility. Drawing on Kripke semantics, we introduce specialized neurons for the modal operators □ and ♢ that operate over a set of possible worlds, enabling the framework to act as a differentiable 'logical guardrail.' The architecture is highly flexible: the accessibility relation between worlds can either be fixed by the user to enforce known rules or, as an inductive feature, be parameterized by a neural network. This allows the model to optionally learn the relational structure of a logical system from data while simultaneously performing deductive reasoning within that structure.

This versatile construction is designed for flexibility. The entire framework is differentiable from end to end, with learning driven by minimizing a logical contradiction loss. This not only makes the system resilient to inconsistent knowledge but also enables it to learn nonlinear relationships that can help define the logic of a problem space. We illustrate MLNNs on four case studies: grammatical guardrailing, multi-agent epistemic trust, detecting constructive deception in natural language negotiation, and combinatorial constraint satisfaction in Sudoku. These experiments demonstrate how enforcing or learning accessibility can increase logical consistency and interpretability without changing the underlying task architecture.

## 1 INTRODUCTION

Modern neural networks, particularly large language models, have achieved remarkable success in learning complex statistical patterns from vast datasets. However, their reliance on purely datadriven learning presents a critical challenge in high-stakes environments. These models can produce outputs that are statistically plausible yet logically incoherent, factually incorrect, or in violation of fundamental domain constraints. This inherent unpredictability poses a significant barrier to their deployment in safety-critical applications such as autonomous systems, medical diagnostics, or legal reasoning, where adherence to explicit rules and principles is not just desirable, but essential. The core of this problem is a methodological gap: a lack of a native mechanism within these architectures to enforce declarative, symbolic knowledge and guarantee that outputs conform to a set of verifiable logical rules.

This paper addresses this gap by turning to modal logic, a powerful extension of classical logic designed for reasoning about concepts like necessity and possibility. While standard logic deals with propositions that are simply true or false in a single, fixed reality, modal logic introduces a framework of 'possible worlds' to reason about qualified truths. For instance, in the context of an autonomous vehicle, a temporal logic rule might state □ ( ¬ moving ∧ red light ) , meaning 'it is necessarily true at all future moments that the vehicle is not moving while the traffic light is red.' This is a far stronger and more useful constraint than a simple statistical correlation. Similarly, epistemic logic, another form of modal logic, reasons about knowledge, where a statement like K a ϕ means 'agent a knows that ϕ is true.' These logics provide the formal language needed to specify the complex, nuanced rules that govern real-world systems, but integrating them into differentiable, learnable models remains an open challenge.

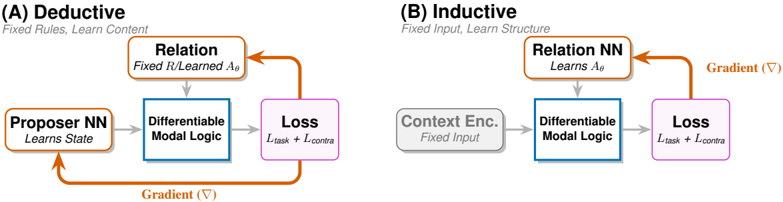

Figure 1: MLNN learning modes executing the Upward-Downward inference algorithm. (A) Deductive: Enforces fixed axioms by updating state representations (Proposer NN). (B) Inductive: Discovers relational structure ( A θ ) by updating the Relation NN. Gray arrows denote the forward differentiable inference (performing Upward aggregation and Downward constraint propagation); Orange arrows denote the gradient flow minimizing logical contradiction. Key: The central 'Differentiable Modal Logic' block evaluates □ and ♢ operators over truth bounds [ L, U ] across the world set W . The accessibility relation (top) determines which worlds participate in modal aggregation. In mode (A), the relation is fixed (denoted R ), while in mode (B), it is learned ( A θ ).

<details>

<summary>Image 1 Details</summary>

### Visual Description

## Diagram: Deductive vs. Inductive Neural Network Architectures

### Overview

The image compares two neural network architectures: **Deductive** (A) and **Inductive** (B). Both involve a **Differentiable Modal Logic** component and a **Loss** function, but differ in their structural design principles and learning objectives.

### Components/Axes

#### (A) Deductive

- **Title**: "Deductive"

- **Subtitle**: "Fixed Rules, Learn Content"

- **Components**:

1. **Proposer NN**: "Learns State" (orange box with bidirectional arrow).

2. **Relation**: "Fixed R/Learned Aθ" (orange box with unidirectional arrow to Differentiable Modal Logic).

3. **Differentiable Modal Logic**: Central blue box with gradient (∇) annotation.

4. **Loss**: "L_task + L_contra" (purple box).

- **Flow**:

- Proposer NN → Relation → Differentiable Modal Logic → Loss.

- Gradient (∇) flows from Loss back to Proposer NN.

#### (B) Inductive

- **Title**: "Inductive"

- **Subtitle**: "Fixed Input, Learn Structure"

- **Components**:

1. **Context Enc.**: "Fixed Input" (gray box with unidirectional arrow).

2. **Relation NN**: "Learns Aθ" (orange box with unidirectional arrow to Differentiable Modal Logic).

3. **Differentiable Modal Logic**: Central blue box (shared with Deductive).

4. **Loss**: "L_task + L_contra" (purple box).

- **Flow**:

- Context Enc. → Relation NN → Differentiable Modal Logic → Loss.

- Gradient (∇) flows from Loss back to Relation NN.

### Detailed Analysis

- **Deductive Architecture**:

- **Proposer NN** learns the system state, suggesting dynamic adaptation.

- **Relation** combines fixed rules (R) with learned parameters (Aθ), indicating hybrid reasoning.

- Gradient (∇) propagates through the entire loop, enabling end-to-end training.

- **Inductive Architecture**:

- **Context Enc.** processes fixed input data, emphasizing structured input handling.

- **Relation NN** learns parameters (Aθ) directly, focusing on structural adaptation.

- Gradient (∇) is localized to the Relation NN and Loss, limiting backpropagation scope.

### Key Observations

1. **Shared Elements**:

- Both architectures use **Differentiable Modal Logic** as a core component.

- Loss function combines task-specific (L_task) and contrastive (L_contra) objectives.

2. **Divergent Designs**:

- **Deductive** prioritizes learning content (state) while retaining fixed rules.

- **Inductive** focuses on learning structure (Aθ) from fixed inputs.

3. **Gradient Flow**:

- Deductive uses a closed-loop gradient (∇) for holistic optimization.

- Inductive restricts gradients to the Relation NN and Loss, simplifying training.

### Interpretation

The diagram illustrates a trade-off between **rule-based reasoning** (Deductive) and **data-driven adaptation** (Inductive). The Deductive approach leverages fixed rules for stability while learning state-specific content, whereas the Inductive method optimizes structural parameters (Aθ) to generalize from fixed inputs. The shared use of Differentiable Modal Logic suggests a unified framework for integrating symbolic reasoning with neural learning. The gradient annotations highlight differences in optimization strategies, with Deductive favoring global updates and Inductive focusing on localized parameter tuning.

</details>

We introduce Modal Logical Neural Networks (MLNNs) 1 , a neurosymbolic framework that bridges this divide. Building upon the weighted real-valued logic of Logical Neural Networks (LNNs) Riegel et al. (2020), we propose a distinct architecture centered on Kripke semantics that reasons over possible worlds. This moves beyond purely statistical learning to a hybrid approach, allowing users to impose explicit modal logic constraints on a model's behavior. A defining feature of this architecture is its flexibility: the accessibility relation between worlds can be parameterized by a neural network. This design enables MLNNs to simultaneously perform deductive reasoning based on user-defined axioms and inductively learn the relational structure that best explains the data. Consequently, this work shows modeling multi-agent systems: by interpreting the learnable accessibility relation as a dynamic graph of trust or information flow, the framework can formalize how agent behavior and communication evolve in response to one another.

This approach enables the creation of differentiable 'guardrails' for powerful statistical models. By framing learning as the dual objective of fitting data and minimizing logical contradiction, MLNNs can steer models toward outputs that are both statistically likely and logically sound. In this paradigm, we explicitly accept a trade-off: we may sacrifice a margin of raw statistical accuracy to achieve higher logical coherence and explainability. We demonstrate this capability through a series of experiments: reducing specific grammatical errors made by a sequence tagger, training a classifier to logically abstain on ambiguous inputs, solving highly non-convex Sudoku puzzles as a multi-world constraint problem, and learning relational knowledge structures in multi-agent systems, and identifying deceptive strategies in human negotiation benchmarks.

Beyond these demonstrated applications, the MLNN framework has potential relevance for a broader range of domains where relational structure and logical constraints are paramount. In bioinformatics, the framework could model gene regulatory networks with temporal and epistemic uncertainties about gene expression states. In climate science, MLNNs could reason about alternative future scenarios (possible worlds) under different policy interventions, enabling formal verification of climate model predictions. For autonomous systems, the framework offers a path toward verifying temporal-epistemic properties of multi-robot teams, where each robot's knowledge and beliefs must be coordinated. In financial networks, MLNNs could learn trust and influence relationships among actors from transaction data, providing interpretable representations of systemic risk.

MLNNs offer a methodological step toward building AI systems that are not only powerful pattern recognizers but also more predictable and verifiable reasoners. In the following sections, we formalize the MLNN architecture (Section 3), analyze its theoretical properties (Section 4), and demonstrate its capabilities across distinct case studies (Section 5).

1 Code available at https://github.com/sulcantonin/MLNN public.git

## 2 RELATED WORK

## 2.1 CLASSICAL (SINGLE-WORLD) NEUROSYMBOLIC LOGIC

Our work utilizes the foundational principles of the Logical Neural Network (LNN) framework Riegel et al. (2020). LNNs establish a one-to-one correspondence between neurons and the components of logical formulae, creating a highly disentangled and interpretable representation. They employ a weighted real-valued logic, where neurons compute truth values within the continuous interval [0 , 1] and use truth bounds [ L, U ] to represent uncertainty and enable an open-world assumption. Inference is performed via a provably convergent Upward-Downward algorithm, and learning is driven by a novel loss function that minimizes logical contradiction ( L > U ).

Other prominent neurosymbolic systems designed for First-Order Logic (FOL) or probabilistic logic also operate on a single-world assumption. Frameworks like Logic Tensor Networks (LTNs) ground FOL formulae in tensors Serafini and d'Avila Garcez (2016), Markov Logic Networks (MLNs) merge FOL with probabilistic graphical models by weighting clauses as features Richardson and Domingos (2006), and ProbLog integrates probabilistic reasoning with logic programming De Raedt et al. (2007). While powerful, these systems share a common characteristic: their logic is defined over a single, fixed reality.

Sophisticated reasoning often demands evaluating statements across dynamic contexts, such as temporal futures or epistemic beliefs, rather than within a static snapshot. By incorporating Kripke semantics Fagin et al. (1995), our framework evaluates propositions over connected possible worlds. This architecture captures structural relationships inexpressible in single-world models, enabling the richer analysis of complex domains like demonstrated in experiment section.

## 2.2 NEURAL MODELS FOR MODAL LOGIC

The concept of representing modal logic in neural networks was pioneered by Connectionist Modal Logic (CML) Garcez et al. (2007). CML represents Kripke models by using an ensemble of neural networks, with one network dedicated to each possible world. In this framework, the modal operators ( □ , ♢ ) are implemented by structurally propagating information between these networks based on a fixed accessibility relation, which is typically derived a priori from a logic program. Consequently, the learning task in CML is restricted to the internal propositions of each world within this fixed topology.

Modal Logical Neural Networks (MLNNs) advance this paradigm in two fundamental ways. First, rather than relying on binary truth values, they incorporate a weighted, real-valued logic capable of modeling uncertainty and actively minimizing logical inconsistencies. Second, and most significantly, MLNNs treat the accessibility relation, the structural rules defining how different possible worlds connect, as a flexible, learnable component. Unlike CML, which relies on a pre-determined logical structure, MLNNs employ fully differentiable modal operators. This allows the entire reasoning pipeline to be trained end-to-end, enabling the system to be embedded within larger neural architectures and to inductively discover complex logical structures, such as epistemic trust or temporal dependencies, directly from data.

Key distinction from CML: The differentiability of MLNN operators enables joint optimization of logical structure and task performance. In CML, the accessibility relation R is fixed before training, meaning the network can only learn propositional content within a predetermined logical structure. In contrast, MLNNs can simultaneously learn what is true (propositional content) and how worlds relate (accessibility structure), guided by a unified objective that balances task loss and logical consistency. This joint optimization is impossible in CML's architecture.

## 2.3 MODAL LOGIC AS A CONSTRAINT OR TOOL FOR NEURAL NETS

Other recent work has used modal logics, particularly temporal and epistemic logics, around neural networks rather than as the network architecture itself. For example, LTLfNet trains a recursive neural network to decide the satisfiability of Linear Temporal Logic on finite traces (LTLf) Luo et al. (2022). Here, the logic is the input to be classified, not a persistent multi-world state for reasoning.

In epistemic reinforcement learning, modal logic has been used as an external model-checking layer or 'shield' to verify an agent's beliefs (doxastic states) and guide its actions Engesser et al. (2025). In such systems, the agent itself is often a standard neural network, and the accessibility relation (defining an agent's beliefs) is symbolically defined, not learned via gradient descent as A θ is in our framework. These approaches demonstrate the utility of modal logic for specification and verification, but do not provide a unified, differentiable Kripke-style architecture.

## 2.4 MODAL LOGIC AS AN ANALYSIS TOOL FOR GNNS

While theoretical studies have established an expressive equivalence between GNNs and certain fragments of modal logic Nunn and Schwarzentruber (2023), the two frameworks differ fundamentally in their operational direction. Their approach leverage this duality for post-hoc analysis: they train standard GNNs on data and subsequently attempt to extract or explain logical behaviors from the learned weights. In contrast, MLNNs natively embed modal semantics into the neural architecture itself. Rather than relying on a statistical model to approximate logical rules as an emergent property of the data, MLNNs enforce these rules as differentiable constraints.

Advantage over GNN+verifier pipelines: Recent work has used modal logic as a specification language for GNN verification (e.g., Nunn and Schwarzentruber (2023)). Such approaches train a GNN first, then apply an external modal logic verifier to check properties post-hoc. MLNNs offer an integrated alternative: modal constraints are enforced during training via the contradiction loss, not verified afterward. This has several implications: (1) the model is guided toward satisfying constraints during optimization rather than potentially failing verification later; (2) the learned accessibility relation A θ provides an interpretable structure that emerges from the learning process; and (3) the framework avoids the computational overhead of running a separate verification pipeline.

Similarly,Barcel´ o et al. (2020) identified the limitations of the equivalence between GNNs and graded modal logic. They demonstrated that this logical fragment is inherently restricted to local neighborhoods, preventing the representation of global properties without explicit readout mechanisms. Our framework addresses this theoretical constraint by parameterizing the accessibility relation ( A θ ), enabling the model to learn the global connectivity needed for broader logical expressivity.

## 2.5 POSITIONING OF MLNNS

To clarify the specific advances of MLNNs relative to prior work, we provide a systematic comparison in Table 1. This comparison highlights the unique combination of capabilities that MLNNs offer.

Table 1: Comparison of MLNNs with related neurosymbolic and modal logic systems. MLNNs uniquely combine all listed capabilities.

| Capability | CML | LNN | GNN+Verifier | Shielding | MLNN(Ours) |

|--------------------------------------|-----------|-------|----------------|-------------|--------------|

| Real-valued truth bounds [ L,U ] | ✗ | ✓ | ✗ | ✗ | ✓ |

| Explicit □ / ♢ operators | ✓ | ✗ | ✗ ∗ | ✓ | ✓ |

| Multiple possible worlds | ✓ | ✗ | ✗ | ✓ | ✓ |

| Learnable accessibility A θ | ✗ | N/A | ✗ | ✗ | ✓ |

| End-to-end differentiable | Partial † | ✓ | ✗ | ✗ | ✓ |

| Contradiction-driven learning | ✗ | ✓ | ✗ | ✗ | ✓ |

| Joint structure+content learning | ✗ | N/A | ✗ | ✗ | ✓ |

| Axiomatic regularization (T, S4, S5) | ✗ | N/A | N/A | Fixed | ✓ |

| Scalable (metric learning) | ✗ | ✓ | ✓ | ✗ | ✓ |

| Combinatorial Opt. (e.g., Sudoku) ‡ | ✗ | ✗ | ✗ | ✗ | ✓ |

MLNNs synthesize and extend ideas from multiple research threads. Our framework makes two primary contributions to this landscape. First, it brings full Kripke semantics to neurosymbolic

learning by introducing sound, differentiable □ and ♢ neurons that operate over a set of possible worlds, inheriting the LNN's [ L, U ] bounds and contradiction-driven learning. Second, unlike CML or shielding-based approaches, MLNNs uniquely parameterize the accessibility relation itself as A θ , allowing the logical structure to be learned from data. This A θ can also be optionally regularized to approximate the relational properties of standard modal systems, such as T (reflexivity) or S4 (transitivity), as discussed in Section 4.3.

Relationship to LNNs: The extension from LNNs to MLNNs is nontrivial. The introduction of multiple worlds and accessibility relations changes the inference algorithm in several key ways: (1) the Upward pass must now aggregate truth values across worlds via the modal operators, weighted by A θ ; (2) the Downward pass must propagate constraints not just within a formula tree but across the accessibility structure; and (3) the contradiction loss now accumulates across all world-formula pairs, allowing contradictions in one world to influence learning in accessible worlds. We detail these changes in Section 3 and prove their soundness in Section 4.

This makes MLNN a general-purpose, differentiable modal reasoning layer designed to enforce logical coherence. This capability complements purely statistical approaches. For instance, while Conformal Prediction (CP) provides statistical guarantees for abstention Angelopoulos and Bates (2021), MLNNs enable axiomatic detection of the unknown (as shown in Section 5.2) based on user-defined axioms, providing a form of logical interpretability absent in statistical methods.

To the best of our knowledge, there is currently no neurosymbolic framework that simultaneously (i) implements Kripke-style modal semantics with explicit □ / ♢ operators, (ii) maintains LNN-style lower/upper truth bounds for open-world reasoning, and (iii) treats the accessibility relation itself as a differentiable, learnable component trained jointly with the task. Existing systems either handle only propositional or first-order constraints, rely on symbolic (non-differentiable) modal reasoning, or assume a fixed accessibility relation defined a priori. As a consequence, there is no directly comparable 'drop-in' baseline for MLNNs; in our experiments we therefore focus on controlled comparisons to simplified variants of our own model and to standard non-symbolic baselines that address overlapping subtasks.

## 3 METHOD: MODAL LOGICAL NEURAL NETWORKS

## 3.1 PRELIMINARIES AND NOTATION

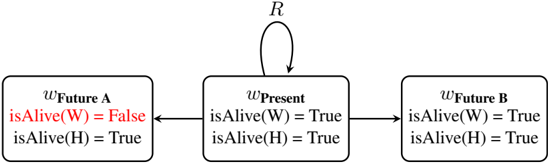

The power of modal logic stems from Kripke semantics, which extends classical logic by evaluating propositions across multiple 'possible worlds'. Formally, a Kripke model is a tuple M = ⟨ W,R,V ⟩ , where W is a set of possible worlds, R is a binary accessibility relation on W (i.e., R ⊆ W × W ), and V is a valuation function that assigns a truth value to each atomic proposition p ∈ P in each world w ∈ W . An MLNN instantiates a differentiable, learnable version of this model. A 'world' is a flexible concept representing an agent's belief, a moment in time, or a specific context.

We formalize our framework using the following notation. Let W be a finite set of possible worlds and T be a finite set of discrete time steps, which together define a set of spacetime states S = W × T . Atomic propositions and logical formulae are denoted by p and ϕ , respectively, with their truth values represented by continuous lower and upper bounds [ L, U ] ⊆ [0 , 1] . The structural relationships between worlds are defined by a crisp binary accessibility relation R ⊆ W × W (used when the relation is fixed and given) or a learnable, neurally parameterized accessibility matrix A θ (used when the relation is learned), which may be applied as a masked matrix ˜ A . We use R exclusively for fixed relations and A θ for learnable relations throughout this paper. We utilize the standard modal operators □ (necessity) and ♢ (possibility), which instantiate as K a ϕ ('Agent a knows ϕ ') in epistemic logic or Gϕ ('Globally') and Fϕ ('Finally') in temporal logic. The soft logic aggregations are controlled by a temperature parameter τ , and the model is trained by minimizing a total loss composed of a task loss L task and a logical contradiction loss L contra, balanced by a weighting hyperparameter β .

## 3.2 DIFFERENTIABLE KRIPKE SEMANTICS

In an MLNN, the components of a Kripke model are realized as differentiable tensors. For each proposition p , the MLNN stores a tensor of truth bounds of shape ( | W | , 2) , where each row

[ L p,w , U p,w ] represents the truth bounds of p in world w . The accessibility relation, which defines which worlds can 'see' each other, dictates the function of modal operators. A key feature of the MLNN is the option to make this relation a learnable component, parameterized by a neural network, A θ . This allows the model to learn the underlying relational physics of the problem domain-such as which agents trust each other or how time flows-directly from data, guided by the goal of achieving logical consistency.

## 3.2.1 MODAL OPERATORS: THE NECESSITY AND POSSIBILITY NEURONS

The modal operators are specialized neurons that aggregate information across worlds. While classical logic uses hard minimums and maximums over a fixed set of neighbors, we employ differentiable relaxations over the weighted accessibility matrix ˜ A to allow gradients to propagate through the structural decision boundaries. To ensure the soundness of our bounds (as shown in Theorem 1), we define a set of differentiable, monotonic operators. Let x = { x i } be a set of truth values from all worlds. We define:

- softmin τ ( x ) = -τ log ∑ i exp( -x i /τ ) , which is a sound lower bound on min( x ) .

- softmax τ ( x ) = τ log ∑ i exp( x i /τ ) , which is a sound upper bound on max( x ) .

- conv-pool τ ( x, z ) = ∑ i w i x i , where w i = softmax( z i /τ ) . This is a convex pooling (a weighted average). When z = x , this is a lower bound on max( x ) . When z = -x , this is an upper bound on min( x ) .

We use these to define the modal neuron bounds (default τ = 0 . 1 unless stated).

The □ (Necessity) Neuron In differentiable Kripke semantics, □ ϕ represents a weighted universal quantification: ϕ must be true in all worlds to the degree that they are accessible. We implement this using the differentiable implication (1 -˜ A w,w ′ + truth ) . Intuitively, this acts as a 'weakest link' detector: if a world is highly accessible ( ˜ A ≈ 1 ) but ϕ is false, the score collapses.

The ♢ (Possibility) Neuron Dually, ♢ ϕ represents a weighted existential quantification: ϕ must be true in at least one highly accessible world. We implement this using a differentiable conjunction. Functionally, this acts as an 'evidence scout': the neuron activates if it finds any world that is both accessible and where ϕ is true.

$$L _ { \phi , w } = conv-pool ( A _ { w , w } + L _ { \phi , w } - 1 , . . ) ( Sound, ( A _ { w , w } + U _ { \phi , w } - 1 ) ( Weighted, A _ { w , w } + U _ { \phi , w } - 1 )$$

This formulation ensures that the bounds respect the fundamental modal duality ♢ ϕ ≡ ¬ □ ¬ ϕ via the identity softmax( x ) = 1 -softmin(1 -x ) .

## 3.3 FLEXIBLE AND LEARNABLE ACCESSIBILITY RELATIONS

A key capability of MLNNs is the ability to treat the accessibility relation as a learnable parameter, rather than a fixed part of the model structure. In classical modal logic, R is a fixed, given structure. In an MLNN, this fixed R can be replaced by a learnable, weighted relation A θ . The flexibility to use either a fixed or learnable structure is crucial; we use fixed logical rules in our grammatical guardrailing and axiomatic detection of the unknown, while the learnable relation A θ is showcased in our multi-agent epistemic trust analysis.

We parameterize this relation with a neural network, A θ : W × W → [0 , 1] . For smaller domains, this can be instantiated as a direct matrix of learnable logits passed through a sigmoid function. For scalable applications, we can employ a metric learning parameterization, where a neural encoder

maps each world w to a latent embedding h w ∈ R d . The accessibility score is then defined by a kernel function, such as A θ ( w i , w j ) = σ ( h ⊤ w i h w j ) , effectively determining logical access via geometric proximity. This factorized form reduces the parameter space from quadratic to linear with respect to | W | .

Initialization of A θ The initialization of A θ can significantly affect convergence speed and final performance. We recommend the following strategies based on domain knowledge:

- Default (no prior): Initialize logits with small random values (e.g., N (0 , 0 . 01) ), yielding A θ ≈ 0 . 5 uniformly.

- Reflexivity prior: When reflexivity is expected (e.g., epistemic logic), initialize diagonal entries with positive bias (e.g., logits = 2 . 0 ) so A θ ( w,w ) ≈ 0 . 88 .

- Distrust prior: For adversarial domains (e.g., Diplomacy), initialize with negative bias (e.g., logits = -2 . 0 ) so A θ ≈ 0 . 12 , encoding a prior of skepticism.

- Identity prior: Initialize as identity matrix when agents should initially only 'see' themselves.

For differentiability, we use these weights directly in a soft aggregation. The necessity neuron, for example, becomes a weighted soft minimum, see Equation 1. The truth value L ϕ,w j from a target world w j is incorporated into the minimum at w i using a differentiable implication 1 -( ˜ A ) ij + L ϕ,w j . If ( ˜ A ) ij ≈ 1 (full access), the term (1 -( ˜ A ) ij ) is near 0, and L ϕ,w j fully participates in the minimum. If ( ˜ A ) ij ≈ 0 (no access), the term is near 1, effectively removing L ϕ,w j from consideration.

This formulation is a weighted generalization of a logical conjunction, adapted from the LNN framework's operator for universal quantification. Its use preserves the monotonicity required for the Upward-Downward algorithm, as it is a composition of monotonic functions (soft-min and a linear term in L ), which we rely on in Section 4.2. In line with the base LNN framework, we assume that all neuron activations are clipped to the interval [0 , 1] to maintain valid truth values.

The parameters θ of this accessibility network are learnable, updated via gradient descent on the system's overall contradiction loss. This is the core inductive capability of the MLNN. It allows the model to discover the logical structure that best resolves contradictions in the data. For example, it can learn whether the relation should be reflexive (by learning high values on the diagonal) or symmetric, all driven by the objective of finding a maximally consistent logical theory.

## 3.4 MODAL INFERENCE AND LEARNING

We extend the Upward-Downward algorithm from Riegel et al. (2020) to handle modal operators. During the upward pass, the truth of a modal formula is determined by aggregating its subformula's truth from accessible worlds, as shown in the equations above.

Downward Pass During the downward pass, a parent modal formula's truth constrains its subformula in accessible worlds. This propagation is also monotonic. For the necessity operator, if the lower bound L □ ϕ,w is high, it implies a strong belief that ϕ must be true in all accessible worlds. This propagates a new, tighter lower bound to all children:

$$L ( t + 1 ) _ { \phi , w } ^ { - } + \max ( L ( t ) _ { \phi , w } ^ { - } , L ( t ) _ { \phi } ^ { - } )$$

Dually, a low upper bound U ♢ ϕ,w implies ϕ must be false in all accessible worlds:

$$, w ) \nu w ^ { s . t . } ( A ) _ { w , w ^ { s . t . } } > 0$$

Cross-World Contradiction Propagation Akey difference from single-world LNNs is how contradictions propagate across the Kripke structure. In a standard LNN, a contradiction ( L > U ) affects only the formula tree containing that proposition. In an MLNN, contradictions can propagate across worlds via the accessibility relation:

1. Direct contradiction: If L ϕ,w > U ϕ,w in world w , this contributes to L contra .

2. Modal propagation: If □ ϕ has a high lower bound at w , but some accessible world w ′ has ϕ with a low upper bound, the modal operator creates a 'cross-world' tension that manifests as a gradient signal.

3. Accessibility learning: When A θ is learnable, the gradient from this cross-world tension flows back to modify A θ , potentially 'severing' logical access to resolve the contradiction.

This mechanism is what enables inductive learning of relational structure: the model can learn to adjust which worlds are accessible to minimize logical contradictions.

The entire system is trained end-to-end via joint optimization. The total loss combines a standard task-specific loss (e.g., cross-entropy) with the logical contradiction loss. This loss is theoretically grounded in the principle of reductio ad absurdum. A state where the lower bound L exceeds the upper bound U is a logical impossibility. Theoretically, the minimization of L contra can be viewed as a differentiable search for a satisfying interpretation of the logical theory. Under the assumption that a fully consistent Kripke model exists that is representable by the network, gradient descent is guided towards a parameterization θ and a set of truth bounds that satisfy all axioms simultaneously. The total loss is formulated as:

$$\int _ { 0 } ^ { 1 } \int _ { 0 } ^ { 1 } L_{task} + B L_{contra} d A = 1$$

where β is a hyperparameter that balances statistical learning and logical consistency. Gradients from both loss terms flow back through the entire network, including the parameters θ of the accessibility relation A θ , allowing the logical structure itself to be learned.

Guidance on Setting β The hyperparameter β controls the trade-off between task performance and logical consistency. We provide the following guidelines:

- Compute relative scales: Before training, estimate the typical magnitudes of L task and L contra on a validation batch. Set β so that β · L contra is comparable to L task .

- Constraint-critical tasks: When logical consistency is paramount (e.g., safety constraints), use β ∈ [0 . 5 , 1 . 0] or higher.

- Accuracy-critical tasks: When task performance dominates, use β ∈ [0 . 1 , 0 . 3] .

- Adaptive scheduling: Consider increasing β over training epochs, allowing the model to first learn task-relevant features before enforcing strict logical consistency.

## 3.5 NEURAL PARAMETERIZATION FOR TEMPORAL EPISTEMIC LOGIC

Aparticularly powerful instantiation of the MLNN framework is for temporal epistemic logic, which combines reasoning about knowledge (epistemic) and time (temporal). This is essential for modeling scenarios like multiagent planning or verifying the behavior of autonomous systems over time.

Worlds as Spacetime Points Instead of a simple set of worlds W , we consider a set of world-time points S = W × T , where W is a set of agents and T = { t 0 , . . . , t m } is a set of discrete time steps. A 'world' in this context is a tuple ( w,t ) , representing the state of agent w at time t . Propositional truth bounds are now tensors of shape ( | W | × | T | , 2) . While we typically use discrete time steps for simplicity, it is worth noting that continuous representations of time are also possible within this framework, for instance by parameterizing A θ as a function of a continuous variable ∆ t (e.g., via Neural ODEs), though we leave this exploration for future work.

Multiple Accessibility Relations We introduce distinct, learnable neural models for the different accessibility relations. First, Epistemic Accessibility ( A a θ ) defines, for each agent a , a separate neural network that computes a matrix defining which states the agent considers possible. The operator K a ϕ ('agent a knows ϕ ') is then implemented as a □ neuron operating with the A a θ relation. Second, Temporal Accessibility ( A T θ ) is a network that computes a matrix governing the flow of time. Common temporal operators like Gϕ (' ϕ is always true in the future') and Fϕ (' ϕ is eventually true in the future') are implemented as □ and ♢ neurons, respectively, using the A T θ relation.

Composite Modal Operators This structure allows for rich, composite operators. For example, the statement 'Agent a will always know that ϕ is true' ( GK a ϕ ) is implemented as a nested aggregation. First, the epistemic neuron for K a ϕ is computed at each future time step. Then, the temporal neuron for G (Globally/Always) aggregates these intermediate results. This compositional approach allows the MLNN to learn and reason about complex specifications involving how agents' knowledge evolves over time.

Having defined the architecture and learning mechanism of an MLNN, we now turn to a formal analysis of its soundness, convergence, and expressivity.

## 4 THEORETICAL ANALYSIS

## 4.1 SOUNDNESS OF MLNN BOUNDS

We first establish the formal setting and then prove that the bounds computed by an MLNN are sound with respect to a probabilistic semantics over Kripke models. This extends Theorem 2 from the LNN paper Riegel et al. (2020).

Assumptions Throughout this section, we assume: (1) finite world set W ; (2) bounded continuous operators with monotone updates; (3) fixed temperature τ > 0 ; and (4) bounded accessibility weights A θ ( w,w ′ ) ∈ [0 , 1] .

Definitions Let a set of atomic propositions be given over a set of worlds W .

Definition 1 (Classical Kripke Interpretation) . A classical Kripke interpretation g assigns a crisp truth value { T, F } to each proposition p in each world w ∈ W . Let G be the set of all such interpretations.

Definition 2 (Probabilistic Kripke Model) . Aprobabilistic Kripke model is a probability distribution p ( · ) over G . For any modal formula σ and world w , we define S σ,w = { g ∈ G | g ( σ, w ) = T } as the set of interpretations where σ is true at w .

Definition 3 (Consistent Probabilistic Model) . Given an MLNN initialized with a theory Γ 0 = { ( σ, L 0 ( σ ) , U 0 ( σ )) } , a probabilistic Kripke model p is consistent with Γ 0 if for any formula σ and world w : L 0 ( σ, w ) ≤ p ( S σ,w ) ≤ U 0 ( σ, w ) . Let P Γ 0 denote the set of all such consistent models.

We first establish a key lemma regarding the monotonicity of modal operators.

Lemma 1 (Monotonicity of Modal Operators) . The modal operators □ and ♢ , as defined in Section 3, are monotonic with respect to their input bounds when composed with the accessibility weights ˜ A . Specifically:

1. L □ ϕ,w is monotonically non-decreasing in each L ϕ,w ′ and non-increasing in each ˜ A w,w ′ .

2. U □ ϕ,w is monotonically non-decreasing in each U ϕ,w ′ .

3. L ♢ ϕ,w is monotonically non-decreasing in each L ϕ,w ′ and each ˜ A w,w ′ .

4. U ♢ ϕ,w is monotonically non-decreasing in each U ϕ,w ′ .

Proof. We verify each case:

(1) For L □ ϕ,w = softmin τ ((1 -˜ A w,w ′ ) + L ϕ,w ′ ) : The softmin function is monotonically nondecreasing in each of its arguments (since ∂ softmin /∂x i ≥ 0 ). The term (1 -˜ A w,w ′ ) + L ϕ,w ′ is linear and non-decreasing in L ϕ,w ′ and non-increasing in ˜ A w,w ′ . By composition, L □ ϕ,w is nondecreasing in L ϕ,w ′ and non-increasing in ˜ A w,w ′ .

(2) For U □ ϕ,w = conv-pool τ ((1 -˜ A w,w ′ ) + U ϕ,w ′ , -) : The convex pooling is a weighted average with non-negative weights summing to 1. It is therefore non-decreasing in each input. Since the input (1 -˜ A w,w ′ ) + U ϕ,w ′ is linear and non-decreasing in U ϕ,w ′ , the composition is non-decreasing.

(3) For L ♢ ϕ,w = conv-pool τ ( ˜ A w,w ′ + L ϕ,w ′ -1 , +) : By similar reasoning, conv-pool is nondecreasing in each input, and the input ˜ A w,w ′ + L ϕ,w ′ -1 is non-decreasing in both ˜ A w,w ′ and L ϕ,w ′ .

(4) For U ♢ ϕ,w = softmax τ ( ˜ A w,w ′ + U ϕ,w ′ -1) : Softmax is non-decreasing in each argument, and the input is non-decreasing in U ϕ,w ′ .

Theorem 1 (Soundness of MLNN Bounds) . Let an MLNN be initialized with a theory Γ 0 = { ( σ, L 0 ( σ ) , U 0 ( σ )) } . If P Γ 0 is non-empty, then for any formula ϕ and world w , the bounds [ L ϕ,w , U ϕ,w ] computed by the MLNN after convergence satisfy:

$$\rho ( S _ { \phi , w } ) a L _ { \phi , w } \leq i n f _ { p E P r _ { 0 } }$$

Proof. The proof extends the logic of Lemma 1 and Theorem 2 from the LNN supplementary material by showing that each update step preserves the set of consistent probabilistic models P Γ . This property has been established for classical connectives; we must check that it holds for the modal operators as defined in Section 3.1. For any p ∈ P Γ , we know by induction that L ϕ,w ′ ≤ p ( S ϕ,w ′ ) ≤ U ϕ,w ′ .

For L □ ϕ,w = softmin( L ) : By classical modal semantics, p ( S □ ϕ,w ) = min w ′ : R ( w,w ′ ) p ( S ϕ,w ′ ) for crisp accessibility. For weighted accessibility, this generalizes to a weighted minimum. The sound bound satisfies inf p ( S □ ϕ,w ) ≥ min w ′ p ( S ϕ,w ′ ) ≥ min w ′ L ϕ,w ′ . Since softmin( L ) ≤ min( L ) , we have L □ ϕ,w ≤ min w ′ L ϕ,w ′ ≤ inf p ( S □ ϕ,w ) . This bound is sound .

For U □ ϕ,w = conv-pool( U, -U ) : The sound bound requires sup p ( S □ ϕ,w ) ≤ min w ′ p ( S ϕ,w ′ ) ≤ min w ′ U ϕ,w ′ . A convex pooling conv-pool( U ) = ∑ w i U i (with ∑ w i = 1 , w i ≥ 0 ) is always greater than or equal to min( U ) . Thus, U □ ϕ,w ≥ min w ′ U ϕ,w ′ ≥ sup p ( S □ ϕ,w ) . This bound is sound .

For L ♢ ϕ,w = conv-pool( L, L ) : By modal semantics, p ( S ♢ ϕ,w ) = max w ′ : R ( w,w ′ ) p ( S ϕ,w ′ ) . The sound bound requires inf p ( S ♢ ϕ,w ) ≥ max w ′ p ( S ϕ,w ′ ) ≥ max w ′ L ϕ,w ′ . A convex pooling conv-pool( L ) = ∑ w i L i is always less than or equal to max( L ) . Thus, L ♢ ϕ,w ≤ max w ′ L ϕ,w ′ ≤ inf p ( S ♢ ϕ,w ) . This bound is sound .

For U ♢ ϕ,w = softmax( U ) : The sound bound requires sup p ( S ♢ ϕ,w ) ≤ max w ′ p ( S ϕ,w ′ ) ≤ max w ′ U ϕ,w ′ . Since softmax( U ) ≥ max( U ) , we have U ♢ ϕ,w ≥ max w ′ U ϕ,w ′ ≥ sup p ( S ♢ ϕ,w ) . This bound is sound .

Behavior as τ → 0 : In the limit τ → 0 , softmin τ → min and softmax τ → max . The bounds become tight, recovering classical (crisp) modal semantics. For any fixed τ > 0 , the bounds remain sound but may be looser than the crisp case.

A complete proof with all intermediate steps is provided in Appendix A.1.

## 4.2 CONVERGENCE OF MLNN INFERENCE

Theorem 2 (Convergence of MLNN Inference) . For a finite set of propositions and a finite set of worlds, the MLNN Upward-Downward inference algorithm converges to a fixed point.

Proof. The proof relies on the monotonic nature of the bound update operations, established in Lemma 1. The network consists of a finite set of neurons k , each storing truth bounds [ L k , U k ] . The Upward-Downward algorithm Riegel et al. (2020) is an iterative application of bound update functions f k .

Each update function f k (for ∧ , ∨ , → , □ , ♢ ) is a composition of monotonic functions. The base LNN operators are known to be monotonic Riegel et al. (2020). By Lemma 1, the new modal operators, softmin , softmax , and conv-pool , composed with the accessibility weights ˜ A , are also monotonic with respect to their inputs. Therefore, the entire update function for any bound is monotonic.

During inference, each lower bound L k forms a non-decreasing sequence, L ( t +1) k ≥ L ( t ) k , which is bounded above by 1. Simultaneously, each upper bound U k forms a non-increasing sequence,

U ( t +1) k ≤ U ( t ) k , which is bounded below by 0. By the monotone convergence theorem, each of these bounded monotonic sequences must converge to a limit. Since the number of bounds in the network is finite, the joint state of all bounds must converge to a global fixed point.

## 4.3 EXPRESSIVITY AND GUARANTEES OF THE LEARNABLE RELATION

The parametrized accessibility relation A θ is a particularly powerful feature for interoperability, allowing us to maintain the classification strength of neural networks while adhering to symbolic structures. This capability is theoretically grounded in the Universal Approximation Theorem Cybenko (1989); Hornik (1991), which posits that a neural network with sufficient capacity (as used for A θ ) can approximate the characteristic function of any arbitrary accessibility relation R . This flexibility allows the MLNN to inductively discover the appropriate modal logic system (e.g., T , S4 , S5 ) that best explains the data by minimizing contradiction.

This learning can be guided. We can enforce specific modal axioms by adding regularization terms to the contradiction loss that penalize violations of the corresponding relational properties. For example, the axiom T , □ ϕ → ϕ , requires the relation to be reflexive ( wRw ). This can be encouraged by a regularization loss:

$$L _ { r } = \sum _ { i = 1 } ^ { | W | } ( 1 - A _ { 0 } ( w _ { 1 } , w _ { 2 } ) ^ { 2 }$$

Minimizing L T forces each diagonal term toward 1, directly encouraging reflexivity.

Similarly, axiom 4 , □ ϕ → □□ ϕ , requires transitivity. This can be encouraged by:

$$L _ { 4 } = \sum _ { i , j } ^ { L _ { 4 } } max ( 0 , A _ { 2 } ) _ { i } - A _ { 6 } ( w _ { i } )$$

which enforces a soft version of transitivity where a direct path A θ ( w i , w j ) must be at least as strong as any two-step path ( A 2 θ ) ij .

An optional symmetry regularizer for axiom B can also be applied:

$$\sum _ { i < j } ( A _ { 0 } ( w _ { i } , w _ { j } ) - A _ { 0 } ( w _ { j } ,$$

By combining these regularizers with weights ( λ T , λ 4 , λ S ) , A θ can be trained to learn a relation that is a soft approximation of an S4 (reflexive, transitive) or S5 (equivalence) relation.

Trade-offs in Axiomatic Regularization It is important to be precise about the guarantees provided by these regularizers. As λ T → ∞ , the learned A θ approaches a relation that minimizes L T , but this is balanced against the task loss and contradiction loss. The regularizers provide soft guidance, not hard constraints.

Empirical Analysis of Axiomatic Regularization We analyzed the trade-off between task performance and axiomatic compliance by applying three distinct modal regularizers-Reflexivity ( T ), Transitivity ( 4 ), and Symmetry ( B )-to the synthetic ring task. We swept the regularization weight λ ∈ [0 , 10] for each axiom.

As summarized in Table 7, we observe a consistent 'tug-of-war' across all three logical constraints. At λ = 0 , the model prioritizes the ground-truth ring structure (minimizing MSE) while violating the axioms (high ϵ ). As λ increases to 10.0, the model successfully minimizes the axiomatic errors ( ϵ R , ϵ 4 , ϵ B ) but incurs a penalty in structure MSE. Notably, Symmetry ( B ) is the easiest to satisfy ( ϵ B → 0 . 00 ), while Reflexivity ( T ) faces the strongest resistance from the data topology ( ϵ R plateaus at 0.461).

In practice, we observe that:

1. For moderate λ values, the model finds a balance that approximately satisfies axioms while fitting the data.

2. Very high λ values can harm task performance if the 'correct' relation for the task does not strictly satisfy the axiom.

3. The learned theory is constrained by the expressive power of the axioms and the information available in the data.

The guarantees are therefore about logical consistency with the specified axioms (soundness relative to the soft constraints), not about semantic 'correctness' or completeness in an absolute sense.

## 4.4 COMPLEXITY ANALYSIS

Proposition 1 (Computational Complexity) . The computational cost for a single modal neuron ( □ or ♢ ) in an MLNN scales as O ( | W | ) for a fixed sparse accessibility relation, or O ( | W | 2 ) if a dense learnable relation A θ is explicitly materialized. The complexity for one full inference pass over a network with N formulae is therefore bounded by O ( N · | W | 2 ) in the naive dense case.

While this quadratic scaling appears restrictive, the MLNN framework fundamentally bypasses this bottleneck through metric learning parameterizations. Rather than enumerating pairwise links in a static N × N matrix, the accessibility relation can be defined intensionally via a kernel function or geometric distance over latent state embeddings ϕ : W → R d (where d ≪| W | ). This shift moves the problem from relational enumeration to representation learning, reducing the parameter space to O ( | W | · d ) .

We empirically validate this linear scaling behavior in Section 5.6, demonstrating that the metric parameterization enables training on graphs with N = 20 , 000 nodes on a single GPU, whereas the dense formulation fails due to memory constraints at N = 10 , 000 .

In this geometric regime, retrieving accessible worlds transforms from a row-scan to a nearestneighbor search, allowing the use of approximate search algorithms (e.g., Locality Sensitive Hashing) to reduce runtime complexity to O ( | W | log | W | ) or even linear time. Although the scale of the multi-agent scenarios in this study did not necessitate this optimization, allowing us to compute the full exact relation, this theoretical property ensures that MLNNs remain viable for tasks with massive state spaces, provided the accessibility relation exhibits underlying geometric structure.

## 5 EXPERIMENTS

We conducted a series of experiments to validate the deductive reasoning, inductive learning, and combined capabilities of MLNNs across diverse problem domains. As no canonical benchmarks exist for evaluating differentiable modal reasoning with a learnable accessibility relation, we construct a set of reference tasks by adapting existing datasets and logical puzzles so that the underlying queries are genuinely modal (involving necessity, possibility, or epistemic structure) rather than purely propositional. Each task is designed to isolate a particular capability: enforcing fixed symbolic constraints (Sections 5.1, 5.2 and 5.7) and learning relational structure from data (Sections 5.3, 5.4, and 5.5). Section 5.7 specifically evaluates the framework's ability to navigate non-convex optimization landscapes with rigid, predefined rules. Our goal is not to establish state-of-the-art performance on these datasets, but to provide clear, controlled studies of when and how modal structure matters. Accordingly, we report comparisons against (i) ablated variants of our architecture (e.g., without modality or without a learnable accessibility relation) and (ii) standard neural baselines with identical propositional backbones (e.g., a BiLSTM), so that any observed differences can be attributed to the modal components.

Statistical Reporting Unless otherwise noted, all quantitative results report mean ± standard deviation over 5 independent runs with different random seeds. We specify hyperparameters in Appendix A. For key comparisons, we report 95% confidence intervals and perform paired t-tests where appropriate.

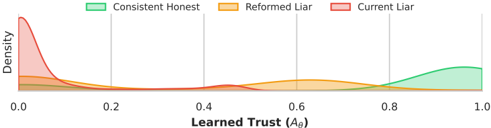

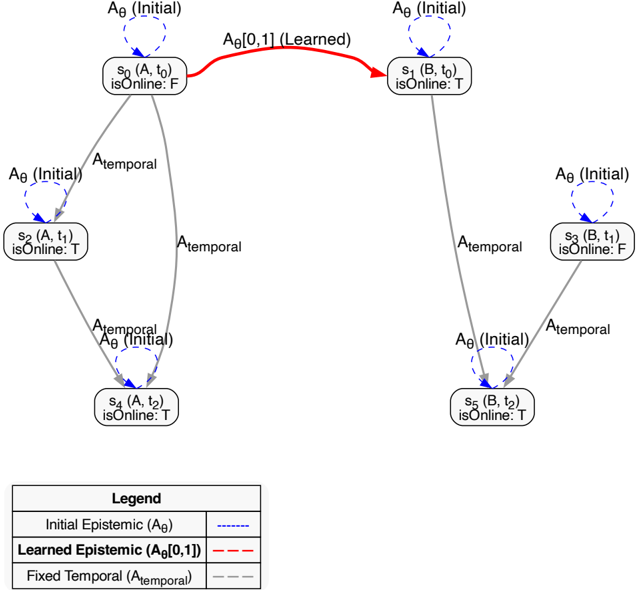

## 5.1 CASE STUDY: ENFORCING SYMBOLIC CONSTRAINTS OVER STATISTICAL PRIORS

This experiment investigates the MLNN's capacity to act as a mechanism for enforcing user-defined policies over a statistical model. While deep learning models like LSTMs maximize likelihood based

on data distribution, safety-critical applications often require adherence to explicit rules regardless of that distribution. We utilize Part-of-Speech (POS) tagging as a proxy task to demonstrate this control, defining a set of rigid logical constraints (e.g., tagging a determiner followed by a verb: 'the / DET go / VERB') to test the framework's ability to override statistical patterns.

Motivation and Real-World Relevance This case study is intentionally stylized: starting from a standard POS-tagging setup, we impose a set of deliberately rigid grammatical constraints to create a setting where modal policies explicitly conflict with natural data statistics. While the specific axioms are simplified, the underlying capability-enforcing user-defined constraints that override learned statistical patterns-has direct applications in safety-critical domains. For instance, in medical NLP, one might enforce constraints like 'a drug dosage must always be followed by a unit' or in legal document processing, 'a contract clause must not contradict the preamble.' The POS task serves as a controlled proxy for these scenarios.

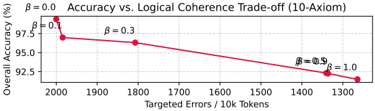

Methodology We compared a baseline BiLSTM tagger against a structurally-aware MLNN tagger. The MLNN used the same BiLSTM architecture as its 'proposer' network (the 'Real' world) but was augmented with two additional, specialized latent worlds: a 'Pessimistic' world ( w 1 ), designed to penalize violations of necessity ( □ ) axioms, and an 'Exploratory' world ( w 2 ) to add noise. Both models were trained on the same supervised data, but the MLNN's loss function was a weighted sum of the standard supervised loss and the logical contradiction loss, L total = L task + βL contra. We swept β ∈ { 0 , 0 . 1 , 0 . 3 , 0 . 5 , 0 . 9 , 1 . 0 } to trace the trade-off between statistical accuracy and logical consistency.

The MLNN guardrail functions by creating a multi-world Kripke structure where the accessibility relations allow modal axioms to inspect alternative possibilities. For example, the axiom □ ¬ ( DET i ∧ VERB i +1 ) is evaluated by checking the truth bounds across all accessible worlds (including w 1 , where penalties are active). The resulting contradiction loss, L contra, penalizes the model's 'Real' world propositions if they lead to a system-wide logical inconsistency, effectively steering the BiLSTM proposer away from outputting tags that violate the policy, even if those tags are statistically probable.

We tested the scalability of this approach with sets of 3, 6, and 10 axioms. It is important to note that these axioms are deliberately rigid simplifications of grammar (detailed in Appendix A.3).

Results and Evaluation The results, summarized in Table 2, demonstrate the MLNN's capability to enforce constraints. The baseline BiLSTM ( β = 0 ) achieved high token-level accuracy (99.38% ± 0.02%) by closely fitting the natural language data, but consequently committed thousands of violations against our rigid axiom set (2000.07 ± 45.3 per 10k tokens).

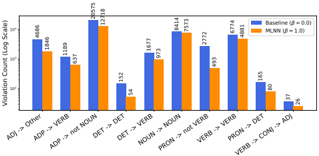

As β increased, the MLNN prioritized the logical policy over the data distribution. In the 10-axiom experiment, increasing β to 1.0 reduced the number of policy violations by 36.8% . Crucially, this compliance came at the cost of overall accuracy (dropping to 91.49% ± 0.31%), which serves as a quantitative measure of the 'Cost of Alignment'. This trade-off, plotted in Figure 3, confirms that the logic component is strong enough to suppress learned statistical patterns (such as intervening adjectives flagged by simplified axioms) to satisfy the user's constraints. This effect was even stronger in the 6-axiom experiment, yielding a 49.6% reduction in violations. The per-axiom breakdown is shown in Figure 2.

Table 2: Analysis of MLNN as a constraint enforcement mechanism. We report metrics for the 10-axiom setting, sweeping the contradiction loss weight β . Results show mean ± std over 5 runs. The drop in Overall Accuracy reflects the model diverging from the data distribution to satisfy the strict logical policy.

| Model (10 Axioms) | Overall Acc. (%) | Policy Violations / 10k | Violation Reduction | ECE (%) |

|----------------------------------|--------------------|---------------------------|-----------------------|-------------|

| Baseline (Non-modal, β = 0 . 0 ) | 99.38 ± 0.02 | 2000.07 ± 45.3 | - | 0.49 ± 0.03 |

| MLNN ( β = 0 . 1 ) | 96.98 ± 0.15 | 1984.24 ± 52.1 | 0.7% | 1.69 ± 0.08 |

| MLNN ( β = 0 . 3 ) | 96.31 ± 0.22 | 1807.10 ± 48.7 | 9.3% | 1.94 ± 0.11 |

| MLNN ( β = 1 . 0 ) | 91.49 ± 0.31 | 1264.26 ± 61.2 | 36.8% | 4.59 ± 0.24 |

Figure 2: Per-axiom violation counts (log scale) for the 10-axiom experiment, comparing the baseline BiLSTM with the MLNN guardrail ( β = 1 . 0 ). The MLNN significantly reduces violations for all targeted axioms.

<details>

<summary>Image 2 Details</summary>

### Visual Description

## Bar Chart: Violation Counts by Grammatical Structure (Baseline vs MLNN)

### Overview

The chart compares violation counts (on a logarithmic scale) between two models: Baseline (β=0.0) and MLNN (β=1.0) across 10 grammatical structure categories. The y-axis uses a log scale (10–1000), and the x-axis lists grammatical relationships (e.g., "Adj -> Other", "ADP -> VERB").

### Components/Axes

- **X-axis**: Grammatical structure categories (10 total):

1. Adj -> Other

2. ADP -> VERB

3. ADP -> not NOUN

4. DET -> DET

5. DET -> VERB

6. NOUN -> NOUN

7. PRON -> not VERB

8. VERB -> VERB

9. PRON -> DET

10. VERB -> CONJ -> ADJ

- **Y-axis**: Violation Count (Log Scale, 10–1000)

- **Legend**:

- Blue bars: Baseline (β=0.0)

- Orange bars: MLNN (β=1.0)

- **Legend Position**: Top-right corner

### Detailed Analysis

1. **Adj -> Other**:

- Baseline: 4,686

- MLNN: 1,846

2. **ADP -> VERB**:

- Baseline: 1,109

- MLNN: 637

3. **ADP -> not NOUN**:

- Baseline: 30,573 (highest)

- MLNN: 12,318

4. **DET -> DET**:

- Baseline: 152

- MLNN: 54

5. **DET -> VERB**:

- Baseline: 1,677

- MLNN: 973

6. **NOUN -> NOUN**:

- Baseline: 8,414

- MLNN: 7,573

7. **PRON -> not VERB**:

- Baseline: 2,772

- MLNN: 493

8. **VERB -> VERB**:

- Baseline: 6,774

- MLNN: 4,881

9. **PRON -> DET**:

- Baseline: 165

- MLNN: 80

10. **VERB -> CONJ -> ADJ**:

- Baseline: 37

- MLNN: 26

### Key Observations

- **Baseline Dominance**: Baseline violations consistently exceed MLNN across all categories, with the largest gap in "ADP -> not NOUN" (30,573 vs 12,318).

- **MLNN Reduction**: MLNN reduces violations by 50–90% in most categories (e.g., "Adj -> Other" drops from 4,686 to 1,846).

- **Lowest Violations**: "VERB -> CONJ -> ADJ" has the smallest counts (37 vs 26), suggesting both models perform well here.

- **Outlier**: "ADP -> not NOUN" has the highest violation count for Baseline, indicating potential grammatical ambiguity in this structure.

### Interpretation

The data demonstrates that MLNN significantly reduces grammatical violations compared to Baseline, particularly in complex structures like "ADP -> not NOUN" and "PRON -> not VERB". The logarithmic scale emphasizes the disparity in violation magnitudes, with MLNN showing stronger performance in high-violation categories. The minimal violations in "VERB -> CONJ -> ADJ" suggest this structure is inherently less ambiguous. The consistent trend across categories implies MLNN’s β=1.0 parameter effectively constrains ungrammatical constructions.

</details>

<details>

<summary>Image 3 Details</summary>

### Visual Description

## Line Graph: Accuracy vs. Logical Coherence Trade-off (10-Axiom)

### Overview

The image is a line graph illustrating the trade-off between overall accuracy and logical coherence as a function of targeted errors per 10,000 tokens. The graph shows a single red line connecting five data points, each labeled with a β (beta) value. The x-axis represents "Targeted Errors / 10k Tokens" (ranging from 1300 to 2000), and the y-axis represents "Overall Accuracy (%)" (ranging from 92.5% to 97.5%). The legend on the right maps β values (0.0, 0.1, 0.3, 0.5, 1.0) to specific data points.

### Components/Axes

- **X-axis**: "Targeted Errors / 10k Tokens" (values: 1300, 1400, 1500, 1600, 1700, 1800, 1900, 2000).

- **Y-axis**: "Overall Accuracy (%)" (values: 92.5%, 93.0%, 93.5%, 94.0%, 94.5%, 95.0%, 95.5%, 96.0%, 96.5%, 97.0%, 97.5%).

- **Legend**: Located on the right, with β values (0.0, 0.1, 0.3, 0.5, 1.0) mapped to distinct data points.

- **Line**: A single red line connects all data points, showing a downward trend.

### Detailed Analysis

- **Data Points**:

- β = 0.0: (2000, 97.5%)

- β = 0.1: (1900, ~97.0%)

- β = 0.3: (1800, ~96.5%)

- β = 0.5: (1700, ~96.0%)

- β = 1.0: (1300, 92.5%)

- **Trend**: The red line slopes downward linearly from left to right, indicating a consistent decrease in accuracy as targeted errors decrease (β increases).

### Key Observations

1. **Linear Relationship**: The trade-off between accuracy and logical coherence is linear, with no curvature in the line.

2. **Accuracy Decline**: Accuracy drops by ~5% as targeted errors decrease from 2000 to 1300 tokens.

3. **β Parameter**: Higher β values correspond to lower targeted errors and lower accuracy, suggesting β controls the balance between accuracy and coherence.

### Interpretation

The graph demonstrates that reducing targeted errors (via higher β values) improves logical coherence but reduces overall accuracy. This trade-off implies that optimizing for coherence (e.g., in AI models) may come at the cost of accuracy. The linear trend suggests a predictable, proportional relationship between β and the trade-off effect. The absence of other lines in the graph (despite the legend listing multiple β values) may indicate a simplified visualization or focus on a single β trajectory.

</details>

Figure 3: Trade-off between Data Fidelity (Accuracy) and Policy Adherence (Logic) in the 10axiom task. As the logical contradiction weight ( β ) increases, the model sacrifices raw accuracy to strictly enforce the user-defined constraints.

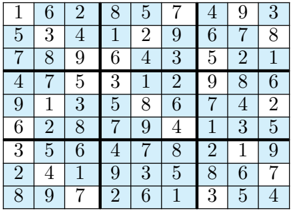

## 5.2 REASONING FOR LOGICAL INDETERMINACY

This experiment tests a key capability of MLNNs: robustly detecting ambiguous or out-of-scope inputs by executing user-defined logical rules, rather than failing unpredictably. Standard classifiers operate under a 'closed-world assumption,' forcing a choice between the classes they were trained on. We demonstrate how an MLNN can be designed to explicitly handle 'open-world' ambiguity by reasoning about its own logical definitions.

Motivation and Task Design Justification This experiment is designed as a controlled probe of logical indeterminacy rather than a realistic dialect-identification benchmark. We tasked a model with classifying English sentences as American (AmE) or British (BrE). The challenge arises with 'Neutral' sentences-those containing either no dialectal indicators (e.g., 'The cat sat on the mat') or a contradictory mix of both (e.g., 'My favorite lorry has a new color'). A standard classifier trained only on clear AmE/BrE examples will fail catastrophically, as it has no concept of 'Neutral' and will assign one of the two labels based on spurious statistical noise.

While this specific task is synthetic, the underlying capability has practical applications. In safetycritical classification (e.g., medical diagnosis), a system should abstain when inputs fall outside its training distribution rather than making confident but unreliable predictions. Unlike statistical uncertainty quantification methods like Conformal Prediction, which provide coverage guarantees based on calibration data, MLNNs enable semantic abstention based on user-defined logical rules. This means the abstention criterion is interpretable and can be specified a priori (e.g., 'abstain if symptoms are contradictory') rather than learned post-hoc from held-out data.

Methodology We compared three models: (1) A baseline BiLSTM classifier. (2) The same BiLSTM classifier augmented with Conformal Prediction (CP), providing a strong statistical abstention baseline. We used a non-conformity score of 1 -max ( softmax ) and set the error rate α = 0 . 05 . (3) An MLNN-based reasoner. All models that required training were trained only on sentences clearly labeled as AmE or BrE (1,771 sentences).

To isolate and test the framework's core deductive capabilities, the MLNN in this experiment uses a fixed, user-defined Kripke model. This model is simulated by applying different certainty thresholds to the output of a pre-trained 'expert' network, effectively creating multiple 'worlds' of belief.

The reasoning system consists of two key stages. First, the Valuation Function is realized by a pre-trained BiLSTM. For any given sentence, this network analyzes the text and outputs continuous truth bounds in [0 , 1] for two atomic propositions: 'HasAmE' and 'HasBrE'. For example, for the sentence 'My favorite truck...', it might output a high value for 'HasAmE' and a low one for 'HasBrE'. Second, this output feeds into a Kripke Model & Axioms stage. The MLNN reasoner is not trained; instead, it applies a fixed deductive logic. It simulates a three-world Kripke model (Real, Skeptical, Credulous) by applying different certainty thresholds to the predictor's scores. A proposition is considered necessarily true ( □ P ) if its score exceeds 0.9, and possibly true ( ♢ P ) if its score exceeds 0.1. These derived modal truth values are then used to evaluate the final classification axioms, such as: □ ( HasAmE ) ∧ ¬ ♢ ( HasBrE ) → IsAmE, and the abstention rule ( ♢ ( HasAmE ) ∧

<details>

<summary>Image 4 Details</summary>

### Visual Description

## Line Chart: Risk-Coverage Curve (Dialect Task)

### Overview

The chart compares two approaches for risk-coverage performance in a dialect task: "BiLSTM + Conformal Prediction (CP)" and "Modal MLNN Reasoner (Fixed Point)". The y-axis represents "Selective Risk (1 - Accuracy on non-abstained)" (0.00–1.00), while the x-axis represents "Risk-Coverage" (0.0–1.0). The blue line (BiLSTM + CP) shows a steep initial increase followed by a plateau, while the red data point (Modal MLNN) remains at the origin.

### Components/Axes

- **Y-Axis**: "Selective Risk (1 - Accuracy on non-abstained)" (0.00–1.00, increments of 0.25)

- **X-Axis**: "Risk-Coverage" (0.0–1.0, increments of 0.2)

- **Legend**:

- Blue line: "BiLSTM + Conformal Prediction (CP)"

- Red circle: "Modal MLNN Reasoner (Fixed Point)"

- **Placement**: Legend is positioned in the bottom-right corner.

### Detailed Analysis

- **BiLSTM + CP (Blue Line)**:

- Starts at ~0.75 on the y-axis at x=0.0.

- Rises sharply to 1.00 by x=0.2.

- Remains flat at 1.00 for x=0.2–1.0.

- **Modal MLNN Reasoner (Red Circle)**:

- Single data point at (x=0.0, y=0.00).

### Key Observations

1. The BiLSTM + CP approach achieves near-perfect risk coverage (y=1.00) after x=0.2.

2. The Modal MLNN Reasoner shows no risk coverage (y=0.00) at x=0.0.

3. The blue line’s steep ascent suggests rapid improvement in performance with increasing risk-coverage.

### Interpretation

The chart demonstrates that the BiLSTM + Conformal Prediction method outperforms the Modal MLNN Reasoner in balancing risk and coverage for dialect tasks. The abrupt plateau at y=1.00 for BiLSTM + CP implies it achieves full accuracy on non-abstained data once risk-coverage exceeds 20% (x=0.2). In contrast, the Modal MLNN Reasoner fails to cover any risk at the measured point, highlighting a critical limitation. This suggests BiLSTM + CP is more robust for dialect tasks requiring high accuracy under constrained risk.

</details>

Coverage (1 - Abstention Rate)

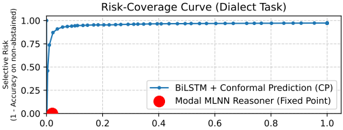

Figure 4: Risk-Coverage curve comparing CP with the rule-based MLNN Reasoner on dialect task. The CP model's performance (blue line) shows a trade-off of coverage and risk as threshold varies. The MLNN operates at a single, fixed point (red circle) achieving near-perfect selective accuracy (low risk).

<details>

<summary>Image 5 Details</summary>

### Visual Description

## Line Chart: MLNN Training: L_contradiction and Aθ vs. Epoch

### Overview

The chart visualizes the training dynamics of a Multi-Layer Neural Network (MLNN) over 30 epochs, comparing two metrics: **Contradiction Loss (L_contradiction)** and **Accessibility Weight (Aθ)**. Two accessibility weight scenarios are plotted: one where Aθ transitions from A to B (Aθ[0,1]) and another where it remains within A (Aθ[0,0]).

### Components/Axes

- **X-axis**: "Epoch" (0 to 30, linear scale).

- **Left Y-axis**: "Contradiction Loss (L_contradiction)" (0.00 to 0.50, linear scale).

- **Right Y-axis**: "Accessibility Weight (Aθ)" (0.0 to 1.0, linear scale).

- **Legend**: Located at the top-left of the chart, with three entries:

- Green line: "L_contradiction"

- Blue line: "Aθ[0, 1] (A,t0 → B,t0)"

- Red dashed line: "Aθ[0, 0] (A,t0 → A,t0)"

### Detailed Analysis

1. **L_contradiction (Green Line)**:

- Starts at **0.5** (epoch 0) and decreases exponentially to **0.0** by epoch 30.

- Slope: Steep decline initially, flattening as epochs increase.

2. **Aθ[0,1] (Blue Line)**:

- Starts at **0.0** (epoch 0) and rises sharply to **1.0** by epoch 20.

- Plateaus at **1.0** from epoch 20 to 30.

- Slope: Linear ascent until epoch 20, then horizontal.

3. **Aθ[0,0] (Red Dashed Line)**:

- Remains constant at **1.0** across all epochs.

- No slope; horizontal line.

### Key Observations

- **L_contradiction** decreases monotonically, indicating improved model performance over time.

- **Aθ[0,1]** (A→B transition) increases accessibility weight rapidly, while **Aθ[0,0]** (A→A) remains static.

- The blue and red lines intersect at epoch 15, where Aθ[0,1] surpasses Aθ[0,0].

### Interpretation

The chart demonstrates that:

1. **Contradiction Loss Reduction**: The model’s ability to resolve contradictions improves significantly with training, suggesting effective learning.

2. **Accessibility Dynamics**:

- Transitioning from A to B (Aθ[0,1]) enhances accessibility weight, implying better generalization or adaptability.

- Staying within A (Aθ[0,0]) does not improve accessibility, highlighting the importance of cross-domain transitions for robustness.

3. **Intersection at Epoch 15**: The crossover point suggests that A→B transitions become more critical than A→A stability after 15 epochs.

This analysis aligns with MLNN training principles, where reducing loss and optimizing accessibility weights are critical for model efficacy.

</details>

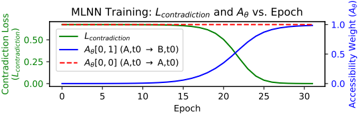

Figure 5: MLNN training dynamics for the epistemic learning task. As the Contradiction Loss (green) decreases, the model is forced to learn a new epistemic link. The targeted accessibility weight A θ [0 , 1] (blue) increases from 0 to ≈ 1.0 to satisfy the axiom. A control weight, A θ [0 , 0] (red, dashed), remains unchanged.

♢ ( HasBrE )) ∨ ( ¬ ♢ ( HasAmE ) ∧ ¬ ♢ ( HasBrE )) → IsNeutral. The class with the highest final truth value is chosen.

Results and Evaluation The results, shown in Table 3, demonstrate a qualitative difference in behavior. The baseline BiLSTM, as predicted, failed completely on the Neutral class, achieving 0% recall and forcing guesses based on statistical noise, resulting in an overall accuracy of just 2.6%. The Conformal Prediction baseline, a robust statistical method for abstention, performed better but was still unable to reliably identify all Neutral sentences. While its abstentions were precise (98% precision), it achieved only 34% recall on the unseen Neutral class.

The MLNN reasoner, in contrast, achieved the designed detection of unknown inputs. It correctly executed its logical axioms, classifying all 9,207 unseen Neutral sentences correctly for a recall of 100%. This highlights a key strength of the framework: the MLNN allows a user to define the logic of abstention semantically (e.g., 'abstain if A and B are true'), rather than relying on a post-hoc statistical uncertainty threshold. This provides a framework for building systems that know what they do not know, based on explicit rules. The risk-coverage curve in Figure 4 further illustrates this: while CP provides a smooth trade-off between statistical accuracy and coverage, the MLNN's rule-based approach operates at a single, logically-defined point of near-zero risk (high accuracy on non-abstained items) by correctly identifying all logically indeterminate data as per its rules.

Table 3: Performance on the 3-class dialect task (AmE, BrE, Neutral). All models were trained only on AmE/BrE data. P/R/F1 refer to Precision, Recall, and F1-Score for each class. Results show mean over 5 runs (std < 0.01 for all metrics).

| Model | AmE (P/R/F1) | BrE (P/R/F1) | Neutral (P/R/F1) | Overall Acc. |

|----------------------------|----------------|----------------|--------------------|----------------|

| Baseline BiLSTM | .03/.97/.05 | .03/.34/.06 | .00/.00/.00 | 2.6% |

| BiLSTM + CP ( α = 0 . 05 ) | .03/.78/.06 | .00/.00/.00 | .98/.34/.51 | 35.1% |

| MLNN Reasoner | 1.00/.72/.84 | 1.00/.58/.73 | .99/1.00/1.00 | 99.1% |

## 5.3 LEARNING EPISTEMIC RELATIONS AND EVALUATING COMPOSITE OPERATORS

Motivation This controlled toy model isolates the inductive aspect of our framework: learning an epistemic accessibility relation and evaluating nested modal operators in a minimal setting where the ground truth is known. A core claim of the MLNN framework is its ability to inductively learn the structure of modal logic, particularly the accessibility relation A θ , by minimizing logical contradictions. Furthermore, the framework proposes a method for evaluating complex, nested modal formulae involving different logical systems (e.g., temporal and epistemic, see Section 3.5). This experiment provides a focused demonstration of these two capabilities in a controlled setting.

Methodology We constructed a simple spacetime Kripke model with two agents (A, B) over three discrete time steps ( t 0 , t 1 , t 2 ) , resulting in 6 'spacetime' states. A single proposition, 'isOnline', was defined with varying truth values across these states. The model was given a fixed temporal relation

R temporal (encoding the flow of time) and a learnable epistemic relation A θ , which was initialized to be 'siloed' (agents only see themselves). The training objective was to resolve a single, targeted logical contradiction: we asserted the axiom that 'Agent A at t 0 must consider it possible that the system is online' ( ♢ epistemic ( isOnline ) at s 0 ). This was a contradiction because s 0 was initially isolated and 'isOnline' was False in its own state. The model could only resolve this by learning a new epistemic link. Full details of the model, states, and axiom are in Appendix A.6.

Results and Evaluation The training successfully resolved the contradiction, validating the inductive learning claim. As shown in Figure 5, the contradiction loss converged to near-zero (0.003 ± 0.001 over 5 runs). This was driven by the targeted modification of the A θ matrix: the model learned the specific accessibility link from s 0 (Agent A, t 0 ) to s 1 (Agent B, t 0 ), where 'isOnline' was True. This specific weight A θ [0 , 1] increased from 0.0 to 0.99 ± 0.01, while unrelated weights remained unchanged (mean change < 0.02), demonstrating the localized nature of the gradient updates. Posttraining evaluation confirmed the model's resulting logical coherence. It correctly satisfied the training axiom, deduced the related 'knows' formula ( K ( isOnline ) ) as False, and successfully evaluated both standard temporal operators and complex, nested K ( G ( isOnline )) formulae. A generalization check further confirmed that the training did not corrupt the epistemic isolation of unrelated states.

Discussion This experiment, while simple, validates two key aspects of the MLNN framework. First, it demonstrates that the learnable accessibility relation A θ can indeed be modified via gradient descent on a contradiction loss to discover relational structures required by logical axioms. The model effectively learned an inter-agent dependency (A's knowledge depending on B's state) without direct supervision, purely from a logical constraint. Second, it confirms the mechanism for evaluating composite modal operators by nested computation, allowing different logical systems (temporal, epistemic) defined by distinct accessibility matrices to interact correctly within the unified spacetime Kripke model.

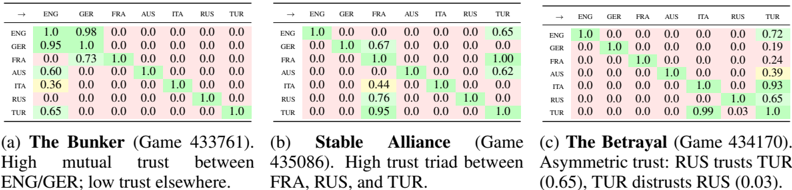

## 5.4 CASE STUDY IN REAL DIPLOMACY GAMES: LEARNING EPISTEMIC TRUST

This case study uses real Diplomacy game logs as a qualitatively richer source of epistemic structure. The task is designed to probe whether MLNNs can recover interpretable patterns of 'who trusts whom', not to compete with specialized Diplomacy agents.

To validate our framework's ability to model complex epistemic states from real-world data, we apply the MLNN to game logs from the 'in-the-wild' domain of Diplomacy Bakhtin et al. (2022). This domain provides a rich testbed for multi-agent systems defined by unstructured natural language negotiation, hidden information, and strategic deception. Our objective is to demonstrate that an MLNN can inductively recover interpretable social structures (alliances, distrust, and deception) purely by minimizing logical contradictions between agents' communicated intent and their subsequent actions. It demonstrates how MLNNs transform communication from natural text into a logical mechanism.

## Comparison with Graph Inference Techniques

Methodological Pipeline: Self-Supervised Logical Consistency Wemodel the game as a Kripke structure where the accessibility relation A θ (representing trust) is latent and must be learned. The learning process is driven by a self-supervised objective that detects discrepancies between word and deed (Figure 10).

The pipeline begins by embedding the dialogue history between agents using a pre-trained transformer. This embedding is passed through a neural head to estimate a scalar truth value P intent ∈ [0 , 1] , representing the probability of cooperative intent. Based on the pragmatic structure of the game, we treat the existence of private negotiation as a prima facie assertion of cooperation ( P ≈ 1 . 0 ). Simultaneously, we extract the ground-truth physical actions Q action from the game logs, flagging moves as either hostile ( Q = 0 . 0 ) or cooperative ( Q = 1 . 0 ).

The core of the framework is the enforcement of the consistency axiom □ ( Intent → Action ) . This modal formula asserts that if an agent signals cooperation, it is necessarily true that their actions will align with that signal relative to the trust level. The MLNN computes the logical contradiction loss for this operator. If an agent professes cooperation ( P ≈ 1 . 0 ) but performs a hostile action

Figure 6: Learned Epistemic Accessibility ( A θ ) matrices for three distinct game scenarios. Rows represent the 'Trustor' and columns the 'Trustee'. Green cells indicate high learned trust, red cells indicate low trust. The model inductively recovers different social topologies-isolation, stable cooperation, and asymmetric deception-without supervision.

<details>

<summary>Image 6 Details</summary>

### Visual Description

## Heatmap Analysis: Trust Dynamics Across Language Groups

### Overview

Three comparative heatmaps visualize trust relationships between language groups (ENG, GER, FRA, AUS, ITA, RUS, TUR) across three distinct scenarios: "The Bunker" (Game 433761), "Stable Alliance" (Game 435086), and "The Betrayal" (Game 434170). Values represent trust scores (0.0-1.0), with green highlighting higher trust and yellow indicating moderate trust.

### Components/Axes