# Sparse Attention Post-Training for Mechanistic Interpretability

**Authors**: Florent Draye, Anson Lei, Hsiao-Ru Pan, Ingmar Posner, Bernhard Schölkopf

## Abstract

We introduce a simple post-training method that makes transformer attention sparse without sacrificing performance. Applying a flexible sparsity regularisation under a constrained-loss objective, we show on models up to 7B parameters that it is possible to retain the original pretraining loss while reducing attention connectivity to $\approx 0.4\$ of its edges. Unlike sparse-attention methods designed for computational efficiency, our approach leverages sparsity as a structural prior: it preserves capability while exposing a more organized and interpretable connectivity pattern. We find that this local sparsity cascades into global circuit simplification: task-specific circuits involve far fewer components (attention heads and MLPs) with up to 100× fewer edges connecting them. Additionally, using cross-layer transcoders, we show that sparse attention substantially simplifies attention attribution, enabling a unified view of feature-based and circuit-based perspectives. These results demonstrate that transformer attention can be made orders of magnitude sparser, suggesting that much of its computation is redundant and that sparsity may serve as a guiding principle for more structured and interpretable models.

Machine Learning, ICML

## 1 Introduction

Scaling has driven major advances in artificial intelligence, with ever-larger models trained on internet-scale datasets achieving remarkable capabilities across domains. Large language models (LLMs) now underpin applications from text generation to question answering, yet their increasing complexity renders their internal mechanisms largely opaque (Bommasani, 2021). Methods of mechanistic interpretability have been developed to address this gap by reverse-engineering neural networks to uncover how internal components implement specific computations and behaviors. Recent advances in this area have successfully identified interpretable circuits, features, and algorithms within LLMs (Nanda et al., 2023; Olsson et al., 2022), showing that large complex models can, in part, be understood mechanistically, opening avenues for improving transparency, reliability, and alignment (Bereska and Gavves, 2024).

<details>

<summary>x1.png Details</summary>

### Visual Description

## Diagram: Neural Network Sparsity Regularization Comparison

### Overview

The image is a technical diagram comparing the internal connectivity of two neural network models—a "Base Model" and a "Sparse Model"—before and after a process labeled "Sparsity-Regularised Finetuning." It visually demonstrates how the finetuning process drastically reduces the number of active connections (weights) within the network while maintaining the same input-output function for a simple arithmetic task.

### Components/Axes

The diagram is divided into two primary rectangular panels, stacked vertically, with a large curved arrow connecting them on the left.

1. **Top Panel: "Base Model"**

* **Title:** "Base Model" (top-left corner of the panel).

* **Input Sequence (Bottom):** `3 6 + 2 8 = ? ? ? ? ?`

* **Output Sequence (Top):** `3 6 + 2 8 = 0 0 0 6 4`

* **Network Structure:** A grid of nodes representing neurons, organized into four horizontal rows labeled from bottom to top: "Layer 0", "Layer 1", "Layer 2", "Layer 3".

* **Connections:** A dense web of light blue lines connects nodes between adjacent layers. Several connections are highlighted in a darker, more prominent blue, indicating stronger or more significant weights. The connections are dense and distributed across all layers.

2. **Bottom Panel: "Sparse Model"**

* **Title:** "Sparse Model" (top-left corner of the panel).

* **Input Sequence (Bottom):** `3 6 + 2 8 = ? ? ? ? ?`

* **Output Sequence (Top):** `3 6 + 2 8 = 0 0 0 6 4`

* **Network Structure:** Identical grid layout and layer labels ("Layer 0" to "Layer 3") as the Base Model.

* **Connections:** The vast majority of connections are absent. Only a small cluster of dark blue lines remains, almost exclusively connecting nodes from the input sequence directly to nodes in "Layer 0". A single, isolated connection is visible between Layer 1 and Layer 2 on the far right.

3. **Connecting Element:**

* A large, black, curved arrow points from the "Base Model" panel down to the "Sparse Model" panel.

* **Label:** The text "Sparsity-Regularised Finetuning" is written vertically along the left side of this arrow.

### Detailed Analysis

* **Task:** Both models are performing the same arithmetic task: calculating `36 + 28`. The correct answer, `00064` (likely representing a 5-digit output format), is shown as the target output for both.

* **Connectivity Trend - Base Model:** The Base Model exhibits a "dense" or fully-connected-like architecture. Information flows through a complex, distributed network of connections across all four layers. The darker blue lines suggest certain pathways are more active or important for this specific computation.

* **Connectivity Trend - Sparse Model:** The Sparse Model exhibits extreme sparsity. The finetuning process has pruned nearly all connections. The remaining active pathways are highly localized:

* **Primary Cluster:** A fan of dark blue connections originates from the nodes representing the digits `3`, `6`, `+`, `2`, `8` in the input line and converges onto a subset of nodes in **Layer 0**. This suggests the model has learned to perform the core computation using direct, early-layer feature extraction.

* **Isolated Connection:** One dark blue line connects a node in Layer 1 to a node in Layer 2 on the far right side of the grid. This may represent a minimal residual pathway for propagating the result.

* **Output Consistency:** Crucially, the output `0 0 0 6 4` is identical in both models, indicating that the sparse model retains the functional capability of the dense base model for this task.

### Key Observations

1. **Dramatic Pruning:** The "Sparsity-Regularised Finetuning" process removes the vast majority of neural connections, transforming a dense network into a highly sparse one.

2. **Functional Preservation:** Despite the massive reduction in parameters (connections), the sparse model produces the exact same correct output for the given input.

3. **Localization of Computation:** The remaining computation in the sparse model is heavily concentrated in the first layer (Layer 0), with direct connections from the input. This implies the essential logic for solving `36 + 28` can be encoded with very few, direct transformations.

4. **Architectural Insight:** The diagram suggests that for this specific, simple algorithmic task, a dense network is over-parameterized. The true computational "circuit" required is small and can be isolated via sparsity-inducing techniques.

### Interpretation

This diagram is a powerful visual argument for the efficacy of **sparsity regularization** in neural network training. It demonstrates that:

* **Efficiency through Sparsity:** Large, dense models contain significant redundancy. Techniques like sparsity regularization can identify and preserve only the essential sub-network (a "winning ticket") needed for a specific task, leading to models that are potentially much smaller and faster.

* **Mechanistic Understanding:** The process acts as a form of "circuit discovery." By pruning away unused connections, it reveals the minimal computational graph the network uses to solve the problem. Here, the core arithmetic appears to be handled by direct input-to-first-layer mappings.

* **Generalization vs. Specialization:** While shown for a simple task, the principle raises questions about how such sparse sub-networks might generalize to more complex problems. The diagram highlights a trade-off: the sparse model is highly efficient for this task but may lack the distributed representation that could be beneficial for broader reasoning.

In essence, the image moves beyond showing *that* sparsity works to illustrating *how* it works—by surgically removing non-essential pathways and leaving behind a lean, functional core.

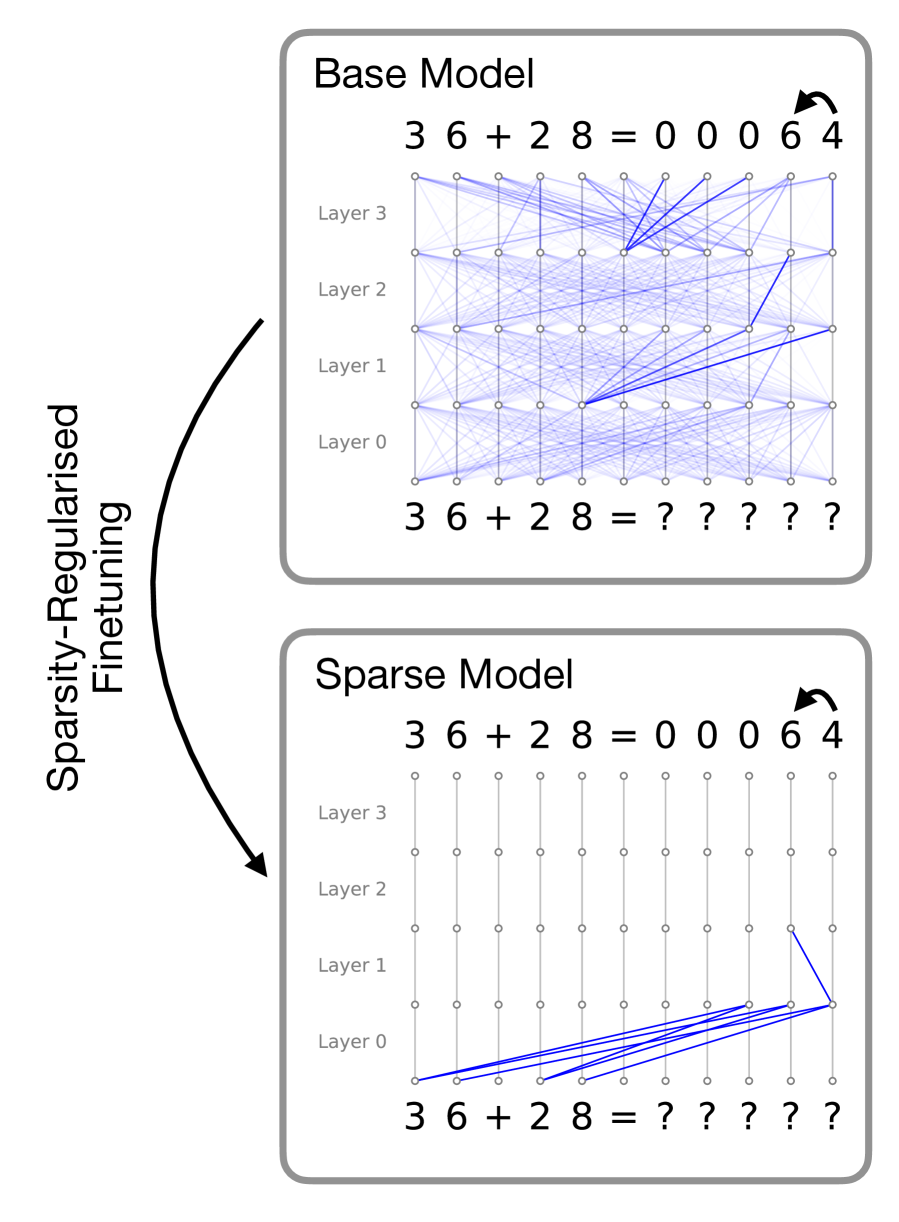

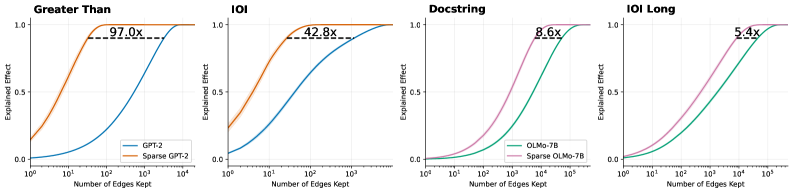

</details>

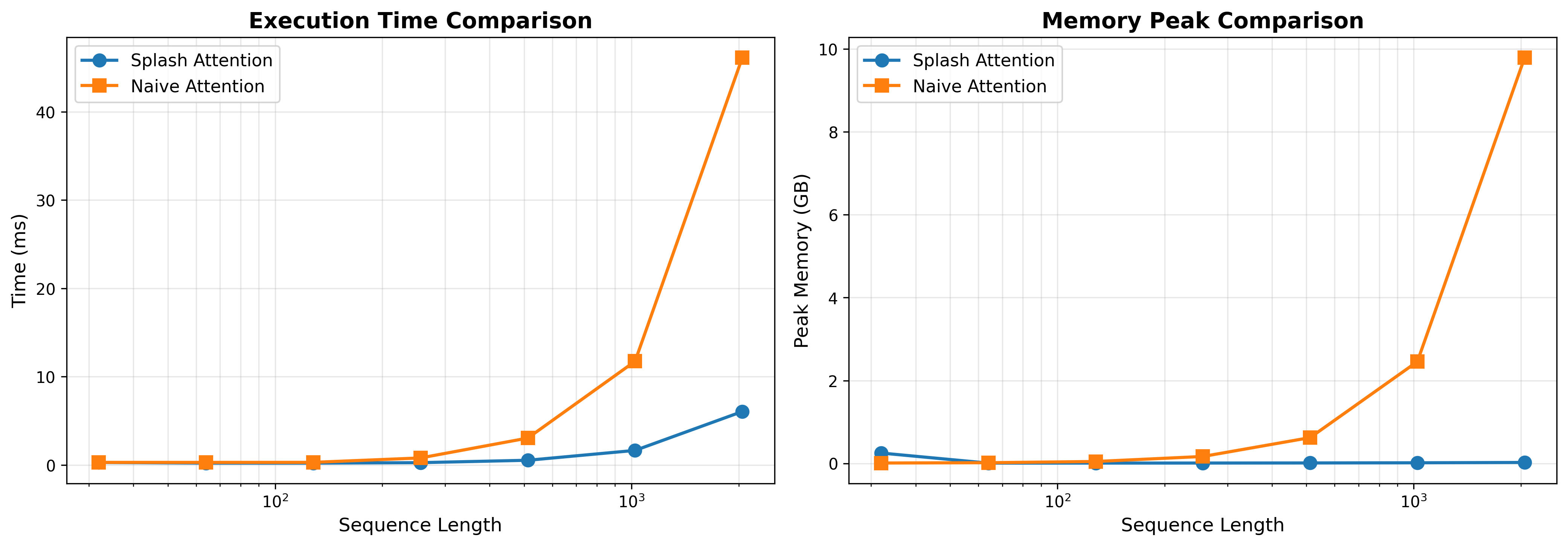

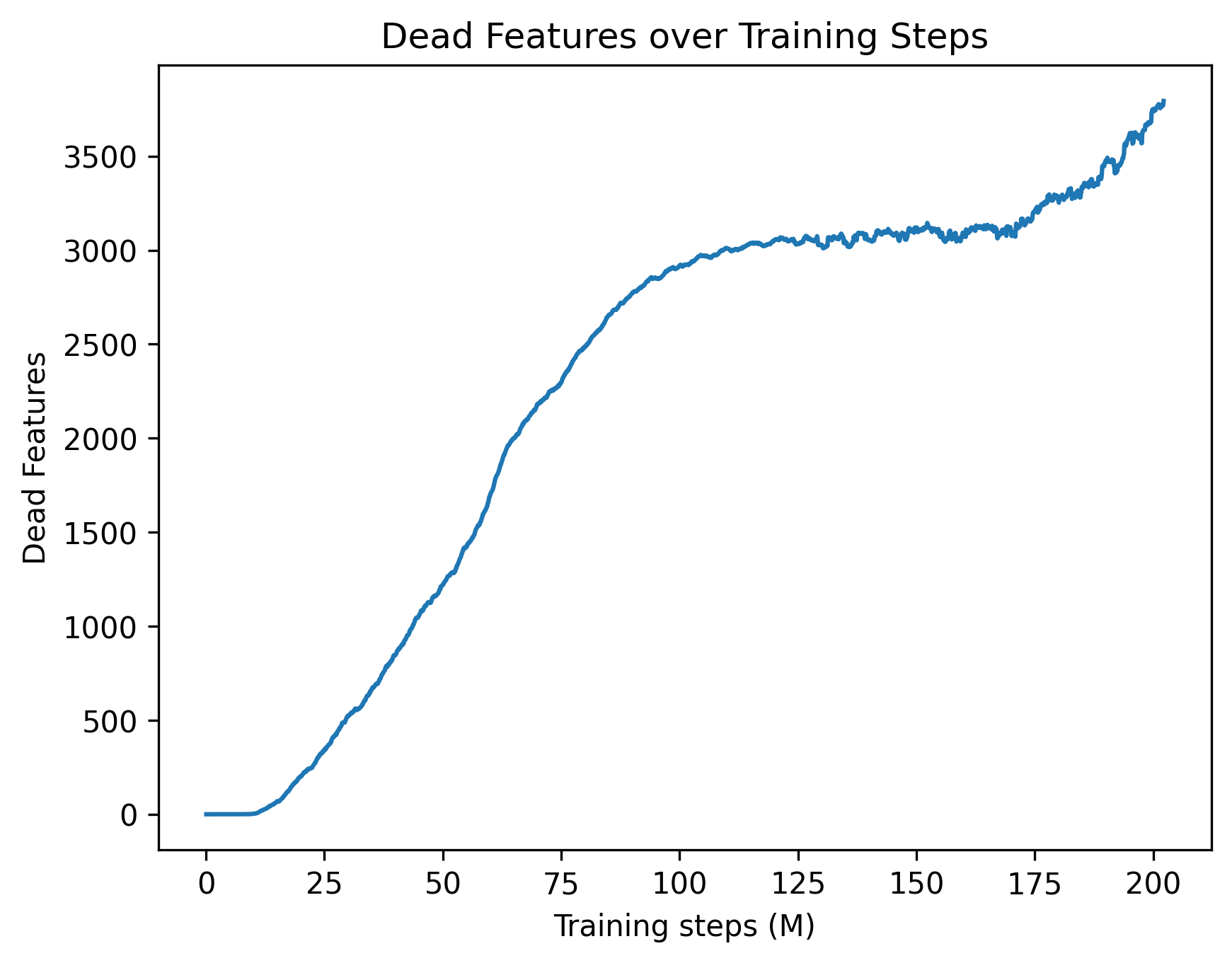

Figure 1: Visualised attention patterns for a 4-layer toy model trained on a simple 2-digit addition task. The main idea of this work is to induce sparse attention between tokens via a post-training procedure that optimizes for attention sparsity while maintaining model performance. In this example, while both models are able to correctly predict the sum, the sparse model solves the problem with a naturally interpretable circuit. Details of this toy setup and more examples are provided in Appendix A

However, interpretability is bottlenecked by the model itself: even with sophisticated reverse-engineering techniques that can faithfully reveal internal algorithms, the underlying computations implemented by large models can still remain highly complex and uninterpretable. Circuits for seemingly simple tasks may span hundreds of interacting attention heads and MLPs with densely intertwined contributions across layers (Conmy et al., 2023), and features can influence each other along combinatorially many attention-mediated paths, complicating attention attribution (Kamath et al., 2025). To exemplify this, Figure 1 (top) illustrates the attention patterns of a small, single-head transformer trained on a simple two-digit addition task. Here, the model has learned to solve the task in a highly diffused manner, where information about each token is dispersed across all token locations, rendering the interpretation of the underlying algorithm extremely difficult even in this simple case.

The crux of the problem is that models are not incentivised to employ simple algorithms during training. In this work, we advocate for directly embedding interpretability constraints into model design in a way that induces simple circuits while preserving performance. We focus our analysis on attention mechanisms and investigate sparsity regularisation on attention patterns, originally proposed in (Lei et al., 2025), as an inductive bias. To demonstrate how sparse attention patterns can give rise to interpretable circuits, we return to the two-digit addition example: Figure 1 (bottom) shows the attention patterns induced by penalising attention edges during training. Here, the sparsity inductive bias forces the model to solve the problem with much smaller, intrinsically interpretable computation circuits.

In this work, we investigate using this sparsity regularisation scheme as a post-training strategy for pre-trained LLMs. We propose a practical method for fine-tuning existing models without re-running pretraining, offering a flexible way to induce sparse attention patterns and enhance interpretability. We show, on models of up to 7B parameters, that our proposed procedure preserves the performance of the base models on pretraining data while reducing the effective attention map to less than $0.5\$ of its edges. To evaluate our central hypothesis that sparse attention facilitates interpretability, we consider two complementary settings. First, we study circuit discovery, where the objective is to identify the minimal set of components responsible for task performance (Conmy et al., 2023). We find that sparsified models yield substantially simpler computational graphs: the resulting circuits explain model behaviour using up to four times fewer attention heads and up to two orders of magnitude fewer edges. Second, using cross-layer transcoders (Ameisen et al., 2025), we analyse attribution graphs, which capture feature-level interactions across layers. In this setting, sparse attention mitigates the attention attribution problem by making it possible to identify which attention heads give rise to a given edge, owing to the reduced number of components mediating each connection. We argue that this clarity enables a tighter integration of feature-based and circuit-based perspectives, allowing feature interactions to be understood through explicit, tractable circuits. Taken together, these results position attention sparsity as an effective and practical inductive tool for surfacing the minimal functional backbone underlying model behaviour.

## 2 Related Work

### 2.1 Sparse Attention

As self-attention is a key component of the ubiquitous Transformer architecture, a large number of variants of attention mechanisms have been explored in the literature. Related to our approach are sparse attention methods, which are primarily designed to alleviate the quadratic scaling of vanilla self-attention. These methods typically rely on masks based on fixed local and strided patterns (Child et al., 2019) or sliding-window and global attention patterns (Beltagy et al., 2020; Zaheer et al., 2020) to constrain the receptive field of each token. While these approaches are successful in reducing the computational complexity of self-attention, they require hand-defined heuristics that do not reflect the internal computations learned by the model.

Beyond these fixed-pattern sparse attention methods, Top- $k$ attention, which enforces sparsity by dynamically selecting the $k$ most relevant keys per query based on their attention scores, has also been explored (Gupta et al., 2021; DeepSeek-AI, 2025). While Top- $k$ attention enables learnable sparse attention, the necessity to specify $k$ limits its scope for interpretability for two reasons. First, selecting the optimal $k$ is difficult, and setting $k$ too low can degrade model performance. Second, and more fundamentally, Top-k attention does not allow the model to choose different $k$ for different attention heads based on the context. We argue that this flexibility is crucial for maintaining model performance.

More recently, gated attention mechanisms (Qiu et al., 2025) provide a scalable and performant framework for inducing sparse attention. In particular, Lei et al. (2025) introduce a sparsity regularisation scheme for world modelling that reveals sparse token dependencies. We adopt this method and examine its role as an inductive bias for interpretability.

### 2.2 Circuit Discovery

Mechanistic interpretability seeks to uncover how internal components of LLMs implement specific computations. Ablation studies assess performance drops from removing components (Nanda et al., 2023), activation patching measures the effect of substituting activations (Zhang and Nanda, 2023), and attribution patching scales this approach via local linearisation (Syed et al., 2024). Together, these approaches allow researchers to isolate sub-circuits, minimal sets of attention heads and MLPs that are causally responsible for a given behavior or task (Conmy et al., 2023). Attention itself plays a dual role: it both routes information and exposes interpretable relational structure, making it a key substrate for mechanistic study. Our work builds on this foundation by leveraging sparsity to simplify these circuits, amplifying the interpretability of attention-mediated computation while preserving model performance.

### 2.3 Attribution Graph

Mechanistic interpretability has gradually shifted from an emphasis on explicit circuit discovery towards the analysis of internal representations and features. Recent work on attribution graphs and circuit tracing seeks to reunify these perspectives by approximating MLP outputs as sparse linear combinations of features and computing causal effects along linear paths between them (Dunefsky et al., 2024; Ameisen et al., 2025; Lindsey et al., 2025b). This framework enables the construction of feature-level circuits spanning the computation from input embeddings to final token predictions. Within attribution graphs, edges correspond to direct linear causal relationships between features. However, these relationships are mediated by attention heads that transmit information across token positions. Identifying which attention heads give rise to a particular edge, and understanding why they do so, is essential, as this mechanism forms a fundamental component of the computational graph (Kamath et al., 2025). A key limitation of current attribution-based approaches is that individual causal edges are modulated by dozens of attention components. We show that this leads to feature-to-feature influences that are overly complex, rendering explanations in terms of other features in the graph both computationally expensive and conceptually challenging.

## 3 Method

Our main hypothesis is that post-training existing LLMs to encourage sparse attention patterns leads to the emergence of more interpretable circuits. In order to instantiate this idea, we require a post-training pipeline that satisfies three main desiderata:

1. To induce sparse message passing between tokens, we need an attention mechanism that can ‘zero-out’ attention edges, which in turn enables effective $L_{0}$ -regularisation on the attention weights. This is in contrast to the standard softmax attention mechanism, where naive regularisation would result in small but non-zero attention weights that still allow information flow between tokens.

1. The model architecture needs to be compatible with the original LLM such that the pre-trained LLM weights can be directly loaded at initialisation.

1. The post-training procedure needs to ensure that the post-trained models do not lose prediction performance compared to their fully-connected counterparts.

To this end, we leverage the Sparse Transformer architecture in the SPARTAN framework proposed in (Lei et al., 2025), which uses sparsity-regularised hard attention instead of the standard softmax attention. In the following subsections, we describe the Sparse Transformer architecture and the optimisation setup, highlighting how this approach satisfies the above desiderata.

### 3.1 Sparse Attention Layer

Given a set of token embeddings, the Sparse Transformer layer computes the key, query, and value embeddings, $\{k_{i},q_{i},v_{i}\}$ , via linear projections, analogous to the standard Transformer. Based on the embeddings, we sample a binary gating matrix from a learnable distribution parameterised by the keys and queries,

$$

A_{ij}\sim\mathrm{Bern}(\sigma(q_{i}^{T}k_{j})), \tag{1}

$$

where $\mathrm{Bern}(\cdot)$ is the Bernoulli distribution and $\sigma(\cdot)$ is the logistic sigmoid function. This sampling step can be made differentiable via the Gumbel Softmax trick (Jang et al., 2017). This binary matrix acts as a mask that controls the information flow across tokens. Next, the message passing step is carried out in the same way as standard softmax attention, with the exception that we mask out the value embeddings using the sampled binary mask,

$$

\mathrm{SparseAttn}(Q,K,V)=\bigg[A\odot\mathrm{softmax}(\frac{QK^{T}}{\sqrt{d_{k}}})\bigg]V, \tag{2}

$$

where $d_{k}$ is the dimension of the key embeddings and $\odot$ denotes element-wise multiplication. During training, we regularise the expected number of edges between tokens based on the distribution over the gating matrix. Concretely, the expected number of edges for each layer can be calculated as

$$

\mathbb{E}\big[|A|\big]=\sum_{i,j}\sigma(q^{T}_{i}k_{j}). \tag{3}

$$

Note that during the forward pass, each entry of $A$ is a hard binary sample that zeros out attention edges, which serves as an effective $L_{0}$ regularisation. Moreover, since the functional form of the sparse attention layer after the hard sampling step is the same as standard softmax attention, pre-trained model weights can be directly used without alterations. Technically, the sampled $A$ affects the computation. This can be mitigated by adding a positive bias term inside the sigmoid function to ensure all gates are open at initialisation. Experimentally, we found this to be unnecessary as the models quickly recover their original performance within a small number of gradient steps.

### 3.2 Constrained Optimisation

In order to ensure that the models do not lose prediction performance during the post-training procedure, as per desideratum 3, we follow the approach proposed in (Lei et al., 2025), which employs the GECO algorithm (Rezende and Viola, 2018). Originally developed in the context of regularising VAEs, the GECO algorithm places a constraint on the performance of the model and uses a Lagrangian multiplier to automatically find the right strength of regularisation during training. Concretely, we formulate the learning process as the following optimisation problem,

$$

\min_{\theta}\sum_{l}\mathbb{E}\big[|A_{l}|\big]\qquad s.t.\quad CE\leq\tau, \tag{4}

$$

where $A_{l}$ denotes the gating matrix at layer $l$ , $CE$ is the standard next token prediction cross-entropy loss, and $\tau$ is the required target loss, and $\theta$ is the model parameters. In practice, we set this target as the loss of the pre-trained baseline models. We solve this optimisation problem via Lagrangian relaxation, yielding the following max-min objective,

$$

\max_{\lambda>0}\min_{\theta}\bigg[\sum_{l}\mathbb{E}\big[|A_{l}|\big]+\lambda(CE-\tau)\bigg]. \tag{5}

$$

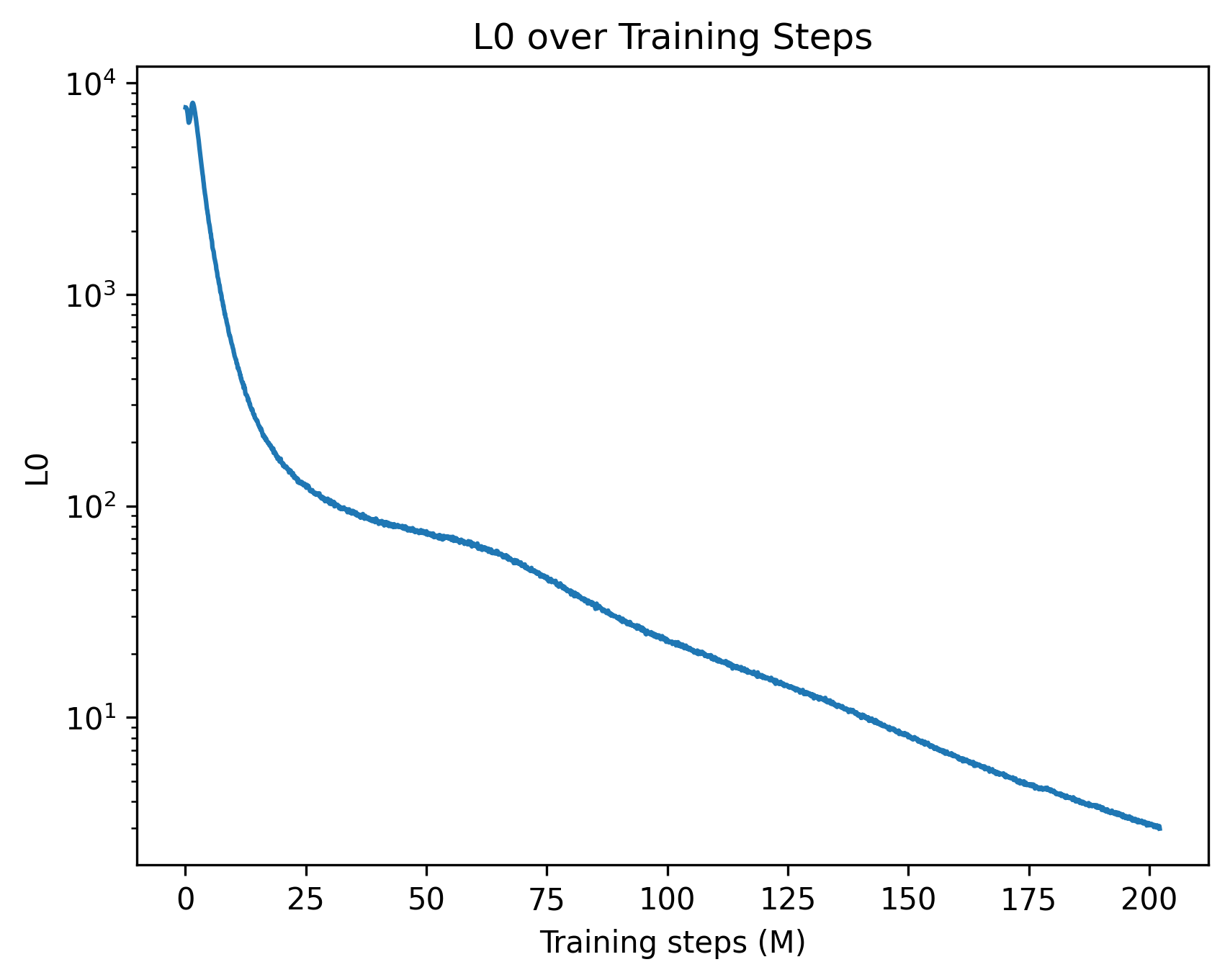

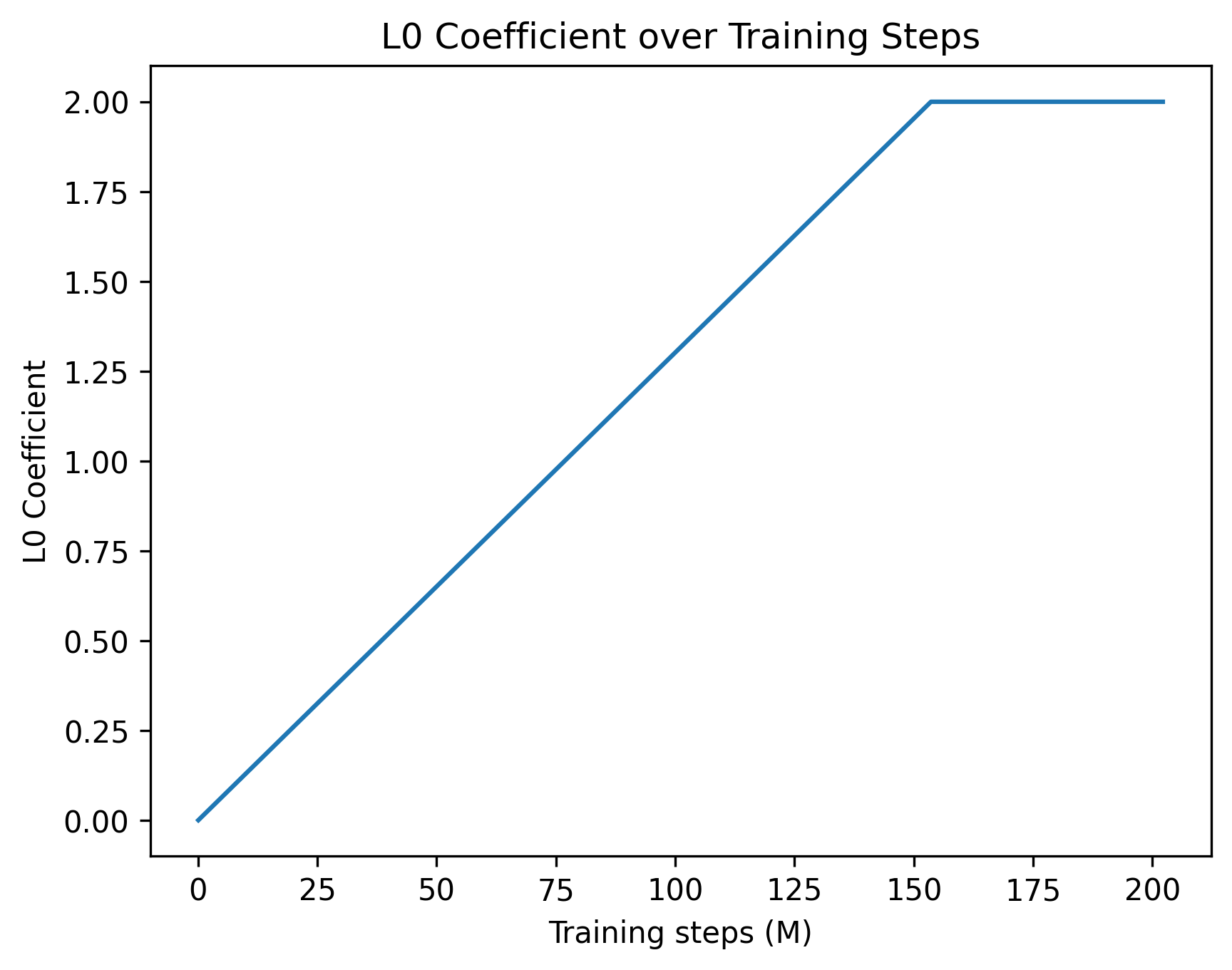

This can be solved by taking gradient steps on $\theta$ and $\lambda$ alternately. During training, updating $\lambda$ automatically balances the strength of the sparsity regularisation: when $CE$ is lower than the threshold, $\lambda$ decreases, and hence more weight is given to the sparsity regularisation term. This effectively acts as an adaptive schedule which continues to increase the strength of the regularisation until the model performance degrades. Here, the value of $\tau$ is selected as a hyperparameter to ensure that the sparse model’s performance remains within a certain tolerance of the original base model. In practice, the choice of $\tau$ controls a trade off between sparsity and performance: picking a tight $\tau$ can lead to a slower training process, whereas a higher tolerance can substantially speed up training at the cost of potentially harming model performance. In Appendix C, we provide further discussion on this optimisation process and its training dynamics.

### 3.3 Practical Considerations

One of the main strengths of our proposed method is that, architecturally, the only difference between a sparse Transformer and a normal one lies in how the dot-product attention is computed. As such, most practical training techniques for optimising Transformers can be readily adapted to our setting. In our experiments, we find the following techniques helpful for improving computational efficiency and training stability.

#### LoRA finetuning (Hu et al., 2022) .

Low rank finetuning techniques can significantly reduce the computational requirements for training large models. In our experiments, we verify on a 7B parameter model that LoRA finetuning is sufficiently expressive for inducing sparse attention patterns.

#### FlashAttention (Dao, 2023)

FlashAttention has become a standard method for reducing the memory footprint of dot-product attention mechanisms. In Appendix B, we discuss how the sampled sparse attention can be implemented in an analogous manner.

#### Distillation (Gu et al., 2024) .

Empirically, we find that adding an auxiliary distillation loss based on the KL divergence between the base model and the sparse model improves training stability and ensures that the behaviour of the model remains unchanged during post-training.

<details>

<summary>x2.png Details</summary>

### Visual Description

## Bar Chart: Benchmark Comparison

### Overview

The image is a grouped bar chart titled "Benchmark Comparison." It compares the accuracy of two models, "OLMo-7B" and "Sparse OLMo-7B," across four different benchmark datasets. The chart uses a vertical bar format with a clear legend and labeled axes.

### Components/Axes

* **Title:** "Benchmark Comparison" (centered at the top).

* **Y-Axis:** Labeled "Accuracy." The scale runs from 0.0 to 1.0, with major tick marks at intervals of 0.2 (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

* **X-Axis:** Lists four benchmark categories. The labels are rotated approximately 45 degrees for readability. From left to right: "TruthfulQA", "PIQA", "OpenBookQA", "ARC-Easy".

* **Legend:** Located in the top-right corner of the plot area. It defines the two data series:

* A teal/green square labeled "OLMo-7B".

* A mauve/pink square labeled "Sparse OLMo-7B".

### Detailed Analysis

The chart presents accuracy scores for two models on four tasks. For each benchmark, the "OLMo-7B" bar (teal) is positioned to the left of the "Sparse OLMo-7B" bar (mauve).

**1. TruthfulQA**

* **Trend:** Both models show the lowest performance on this benchmark compared to the others.

* **Data Points:**

* OLMo-7B: Accuracy is approximately **0.24**.

* Sparse OLMo-7B: Accuracy is approximately **0.24**. The bars appear nearly identical in height.

**2. PIQA**

* **Trend:** This benchmark yields the highest accuracy scores for both models.

* **Data Points:**

* OLMo-7B: Accuracy is approximately **0.80**.

* Sparse OLMo-7B: Accuracy is approximately **0.79**. The sparse model's bar is marginally shorter.

**3. OpenBookQA**

* **Trend:** Performance is moderate, lower than PIQA and ARC-Easy but higher than TruthfulQA.

* **Data Points:**

* OLMo-7B: Accuracy is approximately **0.37**.

* Sparse OLMo-7B: Accuracy is approximately **0.35**. A small but visible gap exists, with the sparse model scoring lower.

**4. ARC-Easy**

* **Trend:** The second-highest performing benchmark for both models.

* **Data Points:**

* OLMo-7B: Accuracy is approximately **0.59**.

* Sparse OLMo-7B: Accuracy is approximately **0.57**. Again, the sparse model shows a slight decrease.

### Key Observations

* **Consistent Performance Gap:** Across all four benchmarks, the "Sparse OLMo-7B" model consistently achieves a slightly lower accuracy score than the standard "OLMo-7B" model. The difference is small but visually apparent in three of the four categories (PIQA, OpenBookQA, ARC-Easy).

* **Benchmark Difficulty Hierarchy:** The relative difficulty of the benchmarks is consistent for both models. From easiest to hardest (highest to lowest accuracy): PIQA > ARC-Easy > OpenBookQA > TruthfulQA.

* **No Outliers:** The data follows a clear pattern without any anomalous spikes or drops that break the trend.

### Interpretation

This chart demonstrates the impact of model sparsification on performance across diverse reasoning and knowledge tasks. The key takeaway is that **sparsifying the OLMo-7B model results in a minor but consistent reduction in accuracy** across all tested benchmarks.

The data suggests a trade-off: the "Sparse OLMo-7B" likely offers advantages in computational efficiency (memory, speed) at the cost of a small performance penalty. The fact that the performance drop is uniform and small indicates that the sparsification technique preserves the model's core capabilities effectively. The benchmark hierarchy (PIQA being easiest, TruthfulQA hardest) reveals the relative challenges these tasks pose to this class of language models, independent of their size or sparsity.

</details>

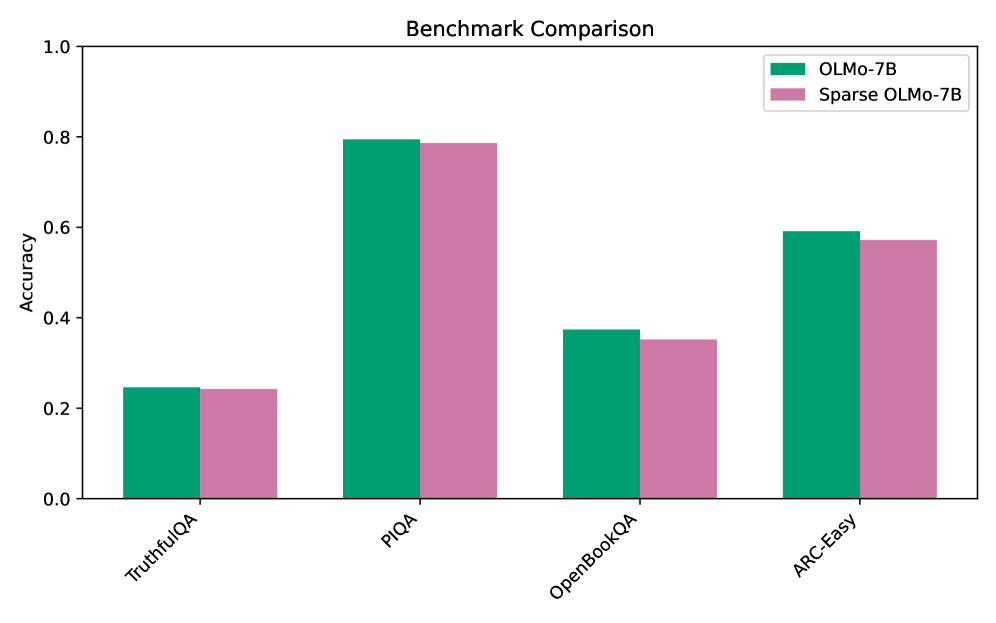

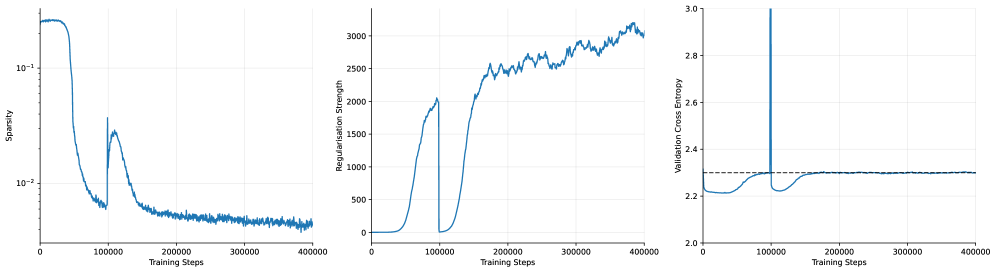

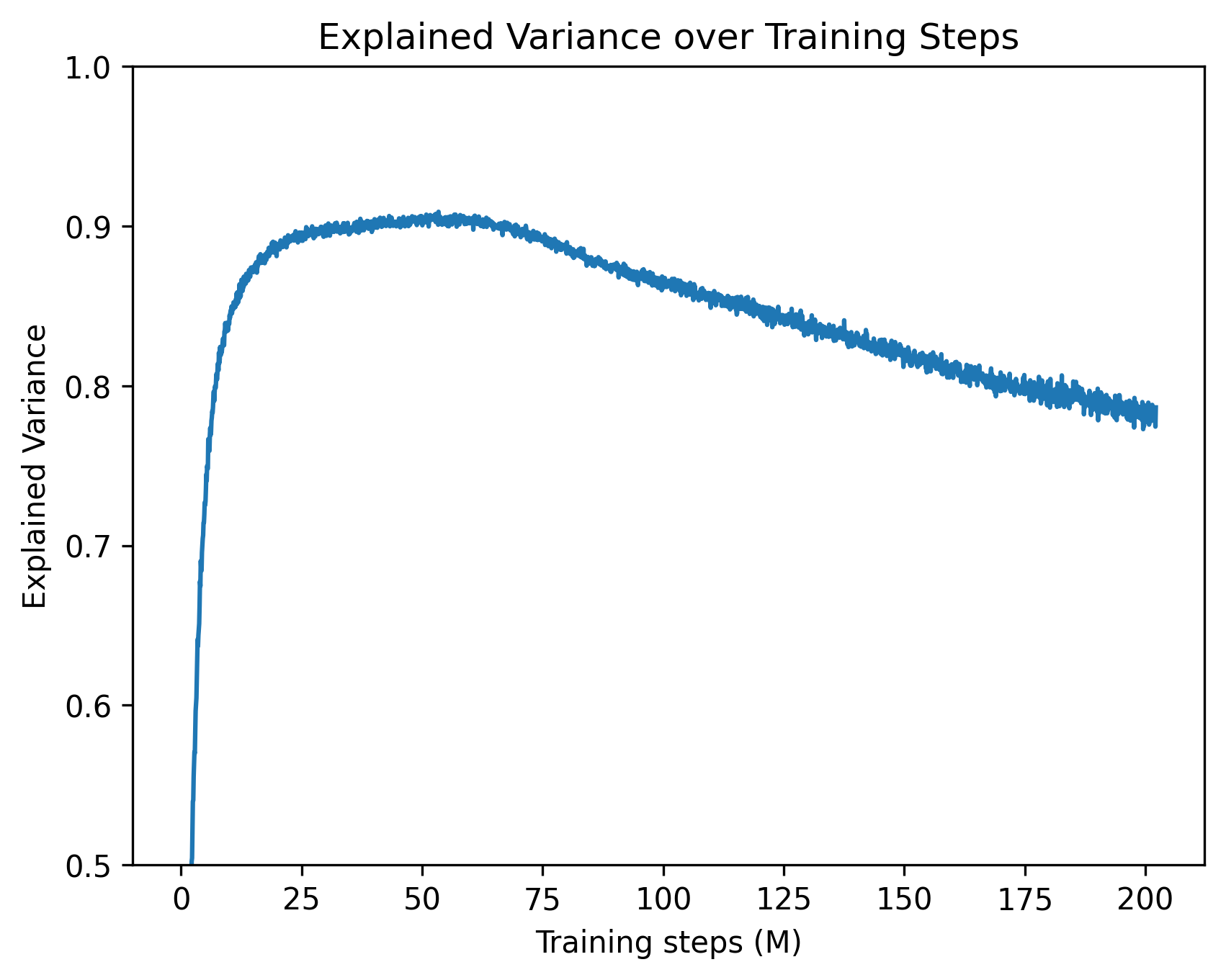

Figure 2: Comparison of model performance between the base OLMo model and the sparsified model evaluated on the various benchmarks. Across all tasks, the performance of the sparse model remains comparable with the base model despite using substantially fewer attention edges.

## 4 Experiments

To evaluate the effectiveness of our post-training pipeline, we finetune pre-trained LLMs and compare their prediction performance and interpretability before and after applying sparsity regularisation. We perform full finetuning on a GPT-2 base model (Radford et al., 2019) (124M parameters) on the OpenWebText dataset (Gokaslan and Cohen, 2019). To investigate the generality and scalability of our method, we perform LoRA finetuning on the larger OLMo-7B model (Groeneveld et al., 2024) on the Dolma dataset (Soldaini et al., 2024), which is the dataset on which the base model was trained. The GPT-2 model and the OLMo model are trained on sequences of length 64 and 512, respectively. In the following subsections, we first present a quantitative evaluation of model performance and sparsity after sparse post-training. We then conduct two interpretability studies, using activation patching and attribution graphs, to demonstrate that our method enables the discovery of substantially smaller circuits.

### 4.1 Model Performance and Sparsity

We begin by evaluating both performance retention and the degree of sparsity achieved by post-training. We set cross-entropy targets of 3.50 for GPT-2 (base model: 3.48) and 2.29 for OLMo (base model: 2.24). After training, the mean cross-entropy loss for both models remains within $\pm 0.01$ of the target, indicating that the dual optimisation scheme effectively enforces a tight performance constraint. To quantify the sparsity achieved by the models, we evaluate them on the validation split of their respective datasets and compute the mean number of non-zero attention edges per attention head. We find that the sparsified GPT-2 model activates, on average, only 0.22% of its attention edges, while the sparsified OLMo model activates 0.44%, indicating substantial sparsification in both cases. Table 1 provides a summary of the results. To further verify that this drastic reduction in message passing between tokens does not substantially alter model behaviour, we evaluate the sparsified OLMo model on a subset of the benchmarks used to assess the original model. As shown in Figure 2, the sparse model largely retains the performance of the base model across a diverse set of tasks. In sum, our results demonstrate that sparse post-training is effective in consolidating information flow into a small number of edges while maintaining a commensurate level of performance.

| GPT-2 | 3.48 | 3.50 | 3.501 | 0.22% |

| --- | --- | --- | --- | --- |

| OLMo | 2.24 | 2.29 | 2.287 | 0.44% |

Table 1: Performance and sparsity of post-trained models. Final cross-entropy losses closely match the specified targets, while attention sparsity is substantially increased.

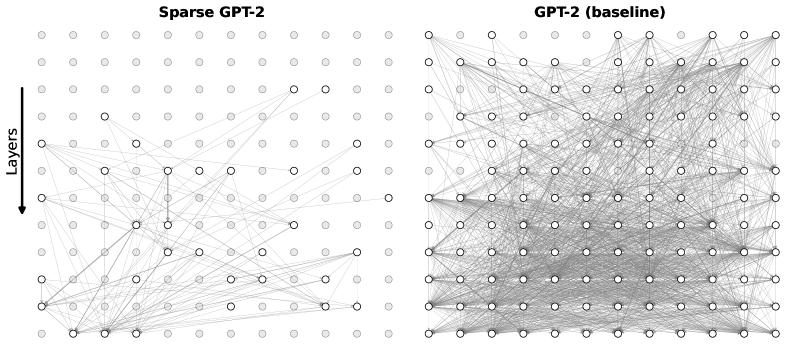

### 4.2 Circuit Discovery with Activation Patching

<details>

<summary>x3.png Details</summary>

### Visual Description

## Attention Pattern Visualization: GPT-2 vs. Sparse GPT-2

### Overview

The image displays a comparative visualization of attention patterns from two transformer models: a standard "GPT2" model and a "Sparse GPT2" model. The visualization consists of two distinct panels, each containing a grid of small, square subplots. Each subplot represents the attention pattern of a specific attention head within a specific layer of the model. The patterns are depicted as heatmaps where dark blue dots indicate high attention weights between token positions, and white space indicates low or zero attention.

### Components/Axes

* **Panel Titles:** The top panel is labeled **"GPT2"**. The bottom panel is labeled **"Sparse GPT2"**.

* **Subplot Labels:** Each subplot has a unique identifier in its top-left corner, following the format `L[Layer Number]H[Head Number]`. For example, `L0H0` denotes Layer 0, Head 0.

* **Subplot Content:** Each subplot is a square matrix. The x-axis and y-axis of these matrices represent token positions in a sequence (e.g., from the first token to the last). The color intensity at coordinate (i, j) represents the attention weight from token `i` to token `j`.

* **Color Scale:** A monochromatic blue scale is used. Dark blue signifies high attention weight, while white signifies low or zero attention weight. No explicit color bar legend is provided.

### Detailed Analysis

#### **GPT2 Panel (Top)**

This panel contains a large grid of 59 attention head visualizations, arranged in 7 rows. The number of subplots per row varies (Row 1: 10, Row 2: 10, Row 3: 10, Row 4: 10, Row 5: 10, Row 6: 9, Row 7: 7).

**Complete List of Head Labels (Row-wise, Left to Right):**

* **Row 1:** L0H0, L0H2, L5H5, L0H7, L0H1, L0H10, L0H3, L8H5, L1H3

* **Row 2:** L7H6, L3H10, L4H8, L2H11, L5H8, L6H7, L11H0, L6H10, L7H3

* **Row 3:** L3H0, L5H9, L7H1, L2H10, L7H2, L8H10, L7H10, L0H5, L0H9

* **Row 4:** L6H6, L7H11, L2H9, L1H4, L6H11, L3H8, L5H3, L7H7, L1H10

* **Row 5:** L9H5, L6H9, L4H9, L1H2, L11H10, L4H3, L6H1, L5H6, L11H4

* **Row 6:** L3H11, L6H8, L4H7, L0H6, L3H1, L5H7, L10H2, L1H0, L5H1

* **Row 7:** L3H6, L6H3, L11H11, L5H2, L3H2, L2H3, L0H11

**Visual Trend & Pattern Distribution:**

The attention patterns in the standard GPT-2 model are highly diverse:

1. **Diagonal Patterns:** Many heads (e.g., L5H5, L0H1, L0H3, L7H2, L5H6) show a strong, clean diagonal line. This indicates a "local" or "previous-token" attention pattern, where each token attends primarily to itself or the immediately preceding token.

2. **Vertical/Horizontal Lines:** Some heads (e.g., L0H7, L6H7, L3H1) show vertical or horizontal lines. A vertical line means a specific token is attended to by all other tokens (a "global" or "summary" token). A horizontal line means a specific token attends to all other tokens.

3. **Scattered/Diffuse Patterns:** A significant number of heads (e.g., L0H0, L7H6, L3H10, L9H5) exhibit scattered, diffuse attention across the matrix, suggesting more complex, non-local relationships.

4. **Blocky/Clustered Patterns:** Some heads (e.g., L4H8, L2H11, L1H4) show attention concentrated in blocks or clusters, indicating attention within phrases or syntactic units.

#### **Sparse GPT2 Panel (Bottom)**

This panel contains a single row of 9 attention head visualizations.

**Complete List of Head Labels (Left to Right):**

L0H5, L5H1, L4H11, L6H8, L5H5, L1H0, L6H9, L3H4, L5H6

**Visual Trend & Pattern Distribution:**

The attention patterns in the Sparse GPT-2 model are strikingly uniform and distinct from the standard model:

1. **Dominant Diagonal:** **Every single head** (L0H5, L5H1, L4H11, L6H8, L5H5, L1H0, L6H9, L3H4, L5H6) displays a very clean, sharp diagonal line. This is the defining characteristic of this panel.

2. **Sparsity:** The off-diagonal areas are almost entirely white, indicating near-zero attention weights. This visual sparsity is the direct result of the "Sparse" modification, which likely prunes or masks attention connections to enforce this diagonal, local pattern.

3. **Consistency:** There is almost no variation in pattern type across the sampled heads. The sparsity constraint appears to have homogenized the attention behavior towards a strict local focus.

### Key Observations

1. **Pattern Diversity vs. Uniformity:** The most striking contrast is between the high diversity of attention patterns in standard GPT-2 and the extreme uniformity in Sparse GPT-2.

2. **Sparsity Enforcement:** The Sparse GPT-2 visualization provides clear visual evidence of a sparsity mechanism at work, successfully limiting attention to a local, diagonal band.

3. **Layer/Head Specificity:** In the standard GPT-2 panel, patterns are not strictly organized by layer. For example, Layer 0 contains both diagonal (L0H1, L0H3) and diffuse (L0H0) heads. This suggests functional specialization occurs at the head level, not uniformly across a layer.

4. **Potential Redundancy:** The Sparse GPT-2 panel shows multiple heads (e.g., L5H5 and L5H6) with nearly identical diagonal patterns, suggesting potential redundancy in the sparse model's attention heads.

### Interpretation

This visualization is a powerful diagnostic tool for understanding the internal mechanics of transformer models.

* **What the data demonstrates:** It provides direct visual proof of how a "sparse" attention modification alters model behavior. The standard GPT-2 model utilizes a rich repertoire of attention strategies (local, global, clustered, diffuse) to build its representations. In contrast, the Sparse GPT-2 model is constrained to a much simpler, local-only strategy.

* **Relationship between elements:** The two panels serve as a controlled comparison. By showing heads from similar layers (e.g., L5H5 appears in both), the image isolates the effect of the sparsity constraint. The diversity in the top panel is the "baseline" behavior, while the uniformity in the bottom panel is the "constrained" outcome.

* **Implications and Anomalies:**

* **Efficiency vs. Capability:** The sparse model's patterns suggest a potential gain in computational efficiency (fewer non-zero operations) but raise questions about its ability to model long-range dependencies, which are crucial for tasks like coreference resolution or document-level understanding.

* **Interpretability:** The sparse patterns are far easier to interpret (each token focuses only on its immediate context), which could be beneficial for model transparency and debugging.

* **Investigative Question:** A key follow-up question would be: Does this enforced locality hurt the model's performance on downstream tasks that require non-local reasoning? The visualization alone cannot answer this, but it frames the critical hypothesis to test. The homogeneity in the sparse model might also indicate an over-regularization, where useful, diverse attention patterns have been inadvertently suppressed.

</details>

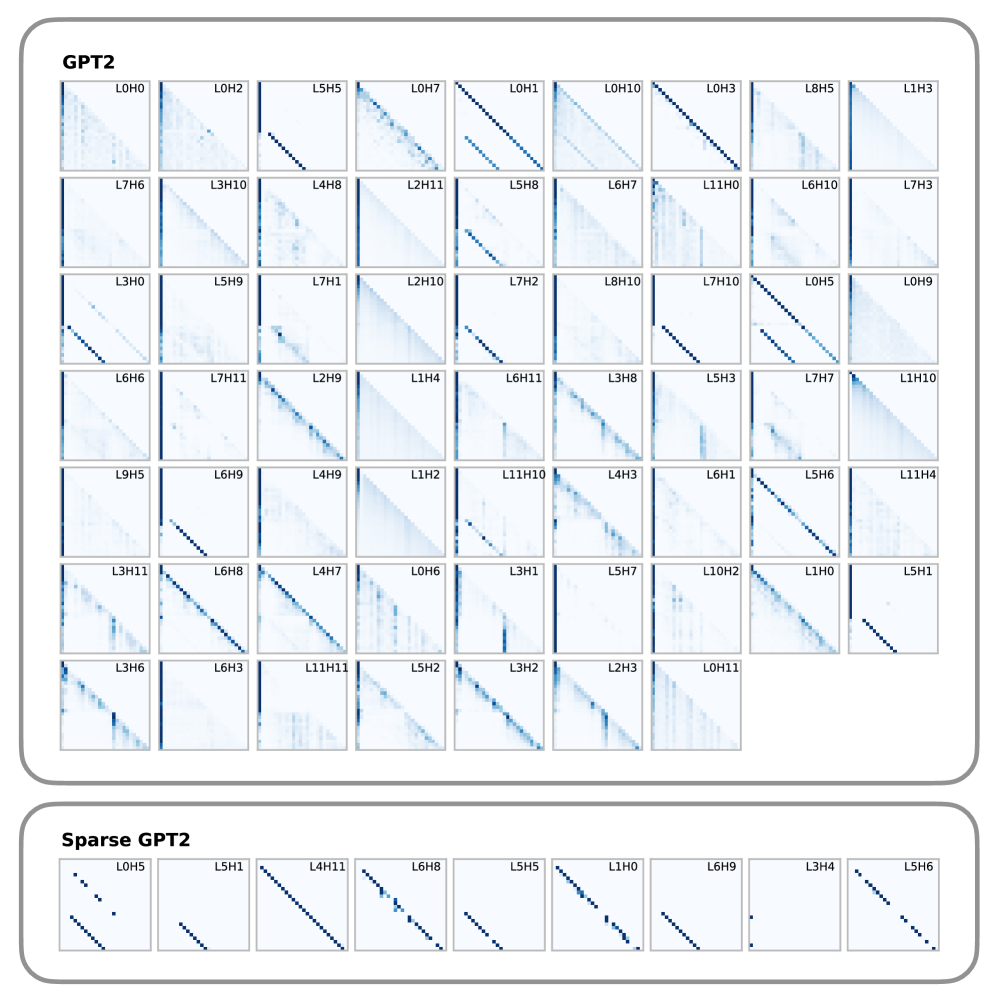

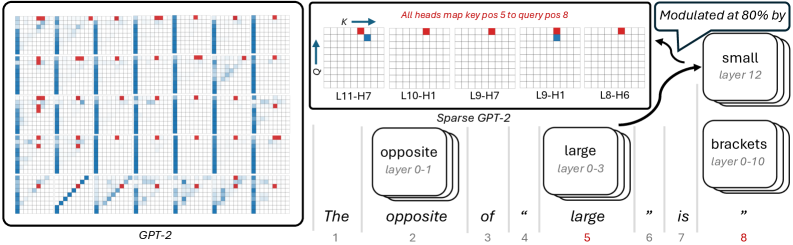

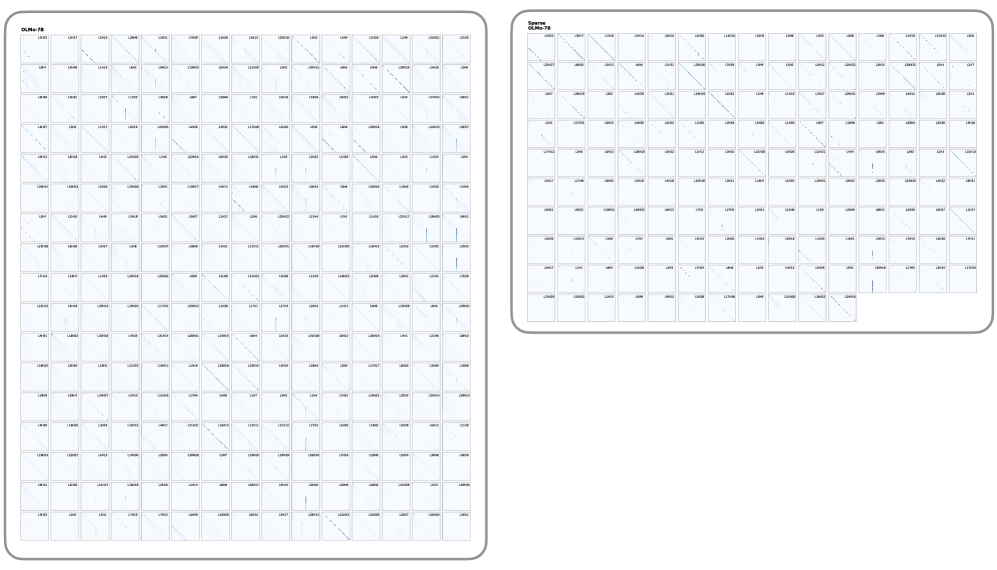

Figure 3: Attention patterns of the heads required to explain 90% of model behaviour on a copy task. The sparse model requires substantially fewer attention heads. Moreover, the selected heads exhibit the characteristic ‘induction head’ pattern: each token attends to a previous token at a fixed relative offset, effectively copying information forward through the sequence, a pattern well known to implement the copy mechanism in transformer models. Equivalent plots for OLMo can be found in Appendix D.

<details>

<summary>x4.png Details</summary>

### Visual Description

## Line Charts: Comparative Efficiency of Dense vs. Sparse Transformer Models

### Overview

The image displays a set of four line charts arranged horizontally, comparing the performance of dense and sparse versions of two language models (GPT-2 and OLMo-7B) across four different evaluation tasks. The charts plot the "Explained Effect" (y-axis) against the "Number of Heads Kept" (x-axis), demonstrating how model performance scales with the number of attention heads retained after pruning. Each chart includes an annotation indicating a speedup factor.

### Components/Axes

* **Chart Titles (Top, Left to Right):** "Greater Than", "IOI", "Docstring", "IOI Long".

* **Y-Axis (All Charts):** Label: "Explained Effect". Scale: 0.0 to 1.0, with major ticks at 0.0, 0.5, and 1.0.

* **X-Axis (All Charts):** Label: "Number of Heads Kept".

* Charts 1 & 2 ("Greater Than", "IOI"): Scale 0 to 100, with major ticks at 0, 50, 100.

* Charts 3 & 4 ("Docstring", "IOI Long"): Scale 0 to 1000, with major ticks at 0, 250, 500, 750, 1000.

* **Legends (Bottom-Right of each chart):**

* Charts 1 & 2: Blue line = "GPT-2", Orange line = "Sparse GPT-2".

* Charts 3 & 4: Green line = "OLMo-7B", Pink line = "Sparse OLMo-7B".

* **Annotations (Within each chart):** A horizontal dashed black line connects a point on the sparse model's curve to a point on the dense model's curve at the same y-value (Explained Effect ≈ 0.95). A text label above this line indicates the speedup factor: "4.5x", "2.2x", "2.2x", and "1.4x" respectively.

### Detailed Analysis

**Chart 1: Greater Than**

* **Trend:** Both lines show a sigmoidal increase. The orange "Sparse GPT-2" line rises much more steeply from the origin than the blue "GPT-2" line.

* **Data Points (Approximate):** The Sparse GPT-2 curve reaches an Explained Effect of ~0.95 with approximately 22 heads kept. The standard GPT-2 curve reaches the same effect with approximately 100 heads kept.

* **Annotation:** The dashed line and label "4.5x" indicate that Sparse GPT-2 achieves the target performance with roughly 4.5 times fewer heads than standard GPT-2.

**Chart 2: IOI**

* **Trend:** Similar sigmoidal pattern. The orange "Sparse GPT-2" line again has a steeper initial ascent.

* **Data Points (Approximate):** Sparse GPT-2 reaches Explained Effect ~0.95 with ~45 heads. Standard GPT-2 reaches it with ~100 heads.

* **Annotation:** The "2.2x" label indicates a 2.2x efficiency gain for the sparse model on this task.

**Chart 3: Docstring**

* **Trend:** Both lines increase, but with more noise/fluctuation than the GPT-2 charts. The pink "Sparse OLMo-7B" line rises more sharply initially.

* **Data Points (Approximate):** Sparse OLMo-7B reaches Explained Effect ~0.95 with ~250 heads. Standard OLMo-7B reaches it with ~550 heads.

* **Annotation:** The "2.2x" label indicates a 2.2x efficiency gain.

**Chart 4: IOI Long**

* **Trend:** Both lines show a sharp, almost step-like increase. The pink "Sparse OLMo-7B" line's ascent is significantly earlier and steeper.

* **Data Points (Approximate):** Sparse OLMo-7B reaches Explained Effect ~0.95 with ~400 heads. Standard OLMo-7B reaches it with ~560 heads.

* **Annotation:** The "1.4x" label indicates a 1.4x efficiency gain, the smallest among the four tasks.

### Key Observations

1. **Consistent Superiority of Sparse Models:** In all four tasks, the sparse model variant (orange/pink) achieves a high "Explained Effect" with fewer attention heads than its dense counterpart (blue/green).

2. **Task-Dependent Speedup:** The efficiency gain (speedup factor) varies significantly by task, ranging from 1.4x to 4.5x. The "Greater Than" task shows the most dramatic benefit from sparsification.

3. **Performance Ceiling:** All models eventually approach an Explained Effect of 1.0, indicating that with enough heads, both dense and sparse models can fully explain the effect. The key difference is the *rate* of convergence.

4. **Model Architecture Difference:** The OLMo-7B charts (3 & 4) operate on a scale of hundreds of heads (x-axis up to 1000), while the GPT-2 charts (1 & 2) operate on a scale of tens of heads (x-axis up to 100), reflecting the different sizes/architectures of the base models.

### Interpretation

This set of charts provides strong empirical evidence for the **efficiency of sparse attention mechanisms** in transformer language models. The "Explained Effect" likely measures how well a subset of attention heads can replicate the full model's behavior on specific linguistic tasks (e.g., "Greater Than" for numerical comparison, "IOI" for indirect object identification).

The core finding is that **pruning (sparsifying) the model retains the most critical computational pathways**. The sparse models reach high performance with a fraction of the active parameters (heads), suggesting that dense models contain significant redundancy. The varying speedup factors (1.4x to 4.5x) imply that the "importance" or "dispersal" of critical information across heads is task-dependent. Some tasks ("Greater Than") may rely on a very small, core set of heads that are preserved in the sparse model, while others ("IOI Long") may have their critical functions more distributed, leading to a smaller efficiency gain upon pruning.

From a practical standpoint, this data supports the use of sparse models for **reducing computational cost and memory footprint** during inference without a proportional loss in performance on targeted tasks. The charts serve as a validation of the sparsification technique used, showing it successfully identifies and preserves the most salient model components.

</details>

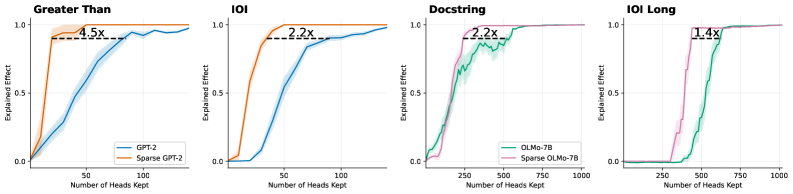

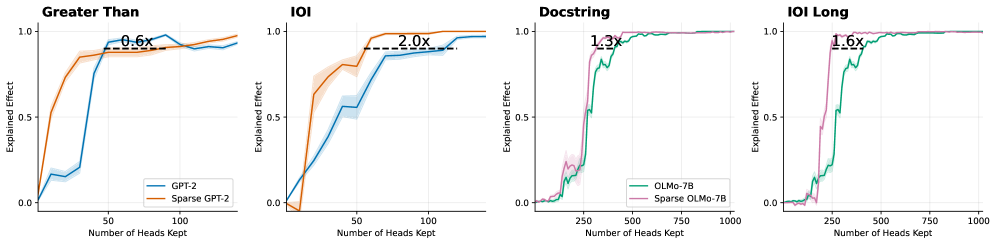

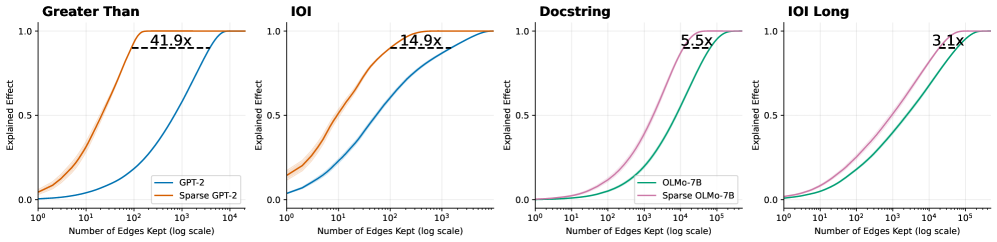

Figure 4: Logit attribution keeping only the top- $k$ attention heads. Dotted line annotates the number of attention heads needed to explain 90% of the logit difference. Sparse models yields 1.4 $\times$ to 4.5 $\times$ smaller circuits. Shaded areas show standard error across 20 prompts.

<details>

<summary>x5.png Details</summary>

### Visual Description

## Comparative Analysis of Sparse vs. Dense Model Efficiency

### Overview

The image displays a 1x4 grid of line charts comparing the performance of standard ("dense") neural network models against their "sparse" counterparts. Each chart plots the "Explained Effect" (y-axis) against the "Number of Edges Kept" (x-axis, logarithmic scale). The central finding is that sparse models achieve the same level of explained effect with significantly fewer parameters (edges), with the efficiency gain (multiplier) varying by task.

### Components/Axes

* **Chart Type:** 4 separate line charts arranged horizontally.

* **X-Axis (All Charts):** "Number of Edges Kept". Scale is logarithmic (base 10).

* Charts 1 & 2 ("Greater Than", "IOI"): Range from 10⁰ (1) to 10⁴ (10,000).

* Charts 3 & 4 ("Docstring", "IOI Long"): Range from 10¹ (10) to 10⁵ (100,000).

* **Y-Axis (All Charts):** "Explained Effect". Linear scale from 0.0 to 1.0.

* **Legends:** Located in the bottom-right corner of each subplot.

* Chart 1: Blue line = "GPT-2", Orange line = "Sparse GPT-2"

* Chart 2: Blue line = "GPT-2", Orange line = "Sparse GPT-2"

* Chart 3: Green line = "OLMo-7B", Pink line = "Sparse OLMo-7B"

* Chart 4: Green line = "OLMo-7B", Pink line = "Sparse OLMo-7B"

* **Annotations:** Each chart contains a dashed horizontal line near the top (y ≈ 0.95-0.98) with a multiplier value (e.g., "97.0x") indicating the relative efficiency of the sparse model.

### Detailed Analysis

**Chart 1: "Greater Than"**

* **Trend Verification:** The orange "Sparse GPT-2" curve rises steeply from the left, reaching near-maximum explained effect (~0.95) at approximately 10² edges. The blue "GPT-2" curve rises much more gradually, reaching the same effect level at nearly 10⁴ edges.

* **Key Data Point/Annotation:** "97.0x". This indicates the sparse model requires roughly 97 times fewer edges to achieve the same high explained effect as the dense model on this task.

* **Spatial Grounding:** The dashed line and "97.0x" label are positioned in the upper center of the plot area.

**Chart 2: "IOI"**

* **Trend Verification:** Similar pattern to Chart 1. The orange "Sparse GPT-2" curve is significantly to the left of the blue "GPT-2" curve, indicating superior parameter efficiency.

* **Key Data Point/Annotation:** "42.8x". The efficiency gain is substantial but less extreme than for the "Greater Than" task.

* **Spatial Grounding:** Annotation is centered in the upper plot area.

**Chart 3: "Docstring"**

* **Trend Verification:** The pink "Sparse OLMo-7B" curve is to the left of the green "OLMo-7B" curve, but the gap between them is narrower than in the first two charts. Both curves have a sigmoidal shape.

* **Key Data Point/Annotation:** "8.6x". The sparse model is still more efficient, but the advantage is an order of magnitude smaller than for the GPT-2 models on the previous tasks.

* **Spatial Grounding:** Annotation is centered in the upper plot area.

**Chart 4: "IOI Long"**

* **Trend Verification:** The pink "Sparse OLMo-7B" curve is again to the left of the green "OLMo-7B" curve. The curves are closer together than in Chart 3.

* **Key Data Point/Annotation:** "5.4x". This represents the smallest efficiency multiplier among the four charts.

* **Spatial Grounding:** Annotation is centered in the upper plot area.

### Key Observations

1. **Universal Efficiency Gain:** In all four tasks, the sparse model variant achieves any given level of "Explained Effect" with fewer edges than its dense counterpart.

2. **Diminishing Multiplier:** The efficiency multiplier decreases dramatically across the charts: 97.0x → 42.8x → 8.6x → 5.4x.

3. **Task/Model Dependency:** The magnitude of the sparsification benefit is highly dependent on both the model architecture (GPT-2 vs. OLMo-7B) and the specific task ("Greater Than" vs. "IOI" vs. "Docstring" vs. "IOI Long").

4. **Curve Shape:** All curves are sigmoidal (S-shaped), indicating a phase transition where explanatory power rapidly increases after a certain threshold of edges is retained.

### Interpretation

This data provides strong empirical evidence for the **pruning hypothesis** in neural networks: that a significant portion of a model's parameters (edges) are redundant for specific tasks. The "Explained Effect" likely measures how well a subnetwork (defined by the kept edges) can replicate the full model's behavior or performance.

The key insight is that **the degree of redundancy is not constant**. The massive 97x multiplier for "Greater Than" suggests this is a relatively simple, localized computation that can be encoded in a very small sub-circuit of GPT-2. In contrast, the "IOI Long" task (likely a more complex, long-range dependency problem) shows less redundancy (5.4x), implying its solution is more distributed across the network's parameters.

The transition from GPT-2 to the larger OLMo-7B model, and from simpler to more complex tasks, shows a clear trend: **as task complexity and/or model scale increases, the relative efficiency gain from sparsification decreases, though it remains positive.** This has profound implications for model compression and efficient AI, suggesting that while pruning is universally beneficial, its most dramatic savings are found in simpler cognitive tasks or within smaller models. The consistent sigmoidal curves further suggest there exists a critical minimal subnetwork size required to solve a task, below which performance collapses.

</details>

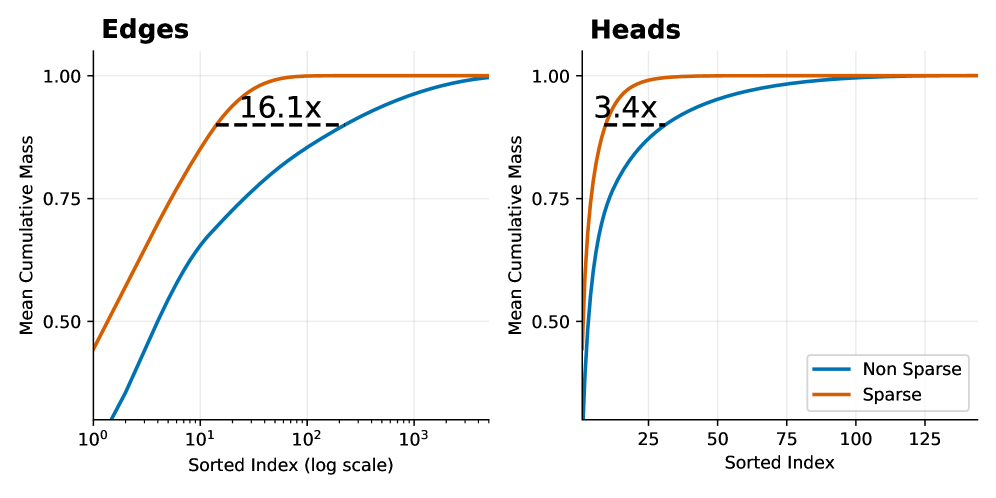

Figure 5: Logit attribution per sentence keeping only the top- $k$ attention edges. Sparse models yields 5.4 $\times$ to 97 $\times$ smaller circuits. Shaded area shows standard error across 20 prompts.

We begin by outlining the experimental procedure used for circuit discovery. Activation patching (Nanda et al., 2023) is a widely used technique for identifying task-specific circuits in transformer models. In a typical setup, the model is evaluated on pairs of prompts: a clean prompt, for which the model predicts a correct target token, and a corrupted prompt that shares the overall structure of the clean prompt but is modified to induce an incorrect prediction. Here, the goal is to find the set of model components that is responsible for the model’s preference for the correct answer over the wrong one, as measured by the logit difference between the corresponding tokens. In activation patching, individual model components, such as attention heads and individual edges, can be ’switched-off’ by patching activation at the specific positions. Circuit discovery amounts to finding a set of components whose replacement causes the model’s prediction to shift from the correct to the corrupted answer.

Since searching over every possible subset of model components is infeasible due to the exponential number of potential subsets, we adopt a common heuristic to rank each model component. Specifically, for each individual component, we compute an importance score by replacing the activations of the component with the corrupted activations and measuring its effect on the logit difference. In our experiments, we use this ranking to select the top- $k$ components and intervene on the model by freezing all remaining components, with the goal of identifying the minimal set that accounts for at least 90% of the model’s preference for the correct prediction. Note that these importance scores can be computed at two levels: (i) a single-sentence level, using a single pair of correct and corrupted inputs, and (ii) a global level, obtained by averaging scores across many task variants. In our experiments, we report the results using single-sentence scores. In Appendix D, we also provide results using the global scores, which are largely consistent with our main results. There are also two standard approaches for freezing component activations: setting the activation to zero or replacing it with a mean activation value (Conmy et al., 2023). We evaluate both variants for each model and report results for the patching strategy that yields the smallest circuits.

We first focus on the copy task with the following prompt: "AJEFCKLMOPQRSTVWZS, AJEFCKLMOPQRSTVWZ", where the model has to copy the letter S to the next token position. This task is well studied and is widely believed to be implemented by emergent induction heads (Elhage et al., 2021), which propagate token information forward in the sequence. Figure 3 illustrates the attention patterns of the set of attention heads that explains this prompt for the sparse and base GPT-2 models. See Appendix D for analogous results for the OLMo models. The sparse model admits a substantially smaller set of attention heads (9 heads) than its fully connected counterpart (61 heads). Moreover, the identified heads in the sparse model exhibit cleaner induction head patterns, with each token attending to a single prior position at a fixed relative offset. These results illustrate how sparsification facilitates interpretability under simple ranking-based methods and support our hypothesis that sparse post-training yields models that are more amenable to mechanistic interpretability techniques.

To further verify our hypothesis, we repeat the experiment on classical circuit discovery tasks. For GPT-2, we evaluate variants of the Indirect Object Identification (IOI) task, in which the model copies a person’s name from the start of a sentence, and the Greater Than task, in which the model predicts a number that is larger than a previously mentioned number. To further assess the scalability of our approach, we investigate more challenging and longer horizon tasks for OLMo, including a longer context IOI task and a Docstring task where the model needs to predict an argument name in a Docstring based on an implemented function. Details of each task can be found in Appendix E. Figure 4 and 5 show the fraction of model behaviour explained as a function of the number of retained model components (attention heads and attention edges, respectively). Across all tasks and models, the sparse models consistently produce significantly smaller circuits, as measured by the number of model components needed to explain 90% of model prediction. This further corroborates our claim that sparse models lead to simpler and more interpretable internal circuits.

### 4.3 Attribution-graph

Next, we present a more fine-grained, feature-level investigation of whether sparsity in attention leads to interpretable circuits in practice using cross-layer transcoders (CLTs). Since training CLTs on OLMo-7B is computationally prohibitive The largest open-source CLT is on Gemma-2B at the time of writing., we focus our analysis on the GPT-2 models. For the rest of the section, we perform analysis on CLTs trained on the sparse and base GPT-2 models, trained with an expansion factor of $32$ and achieve above $80\$ replacement score measured with Circuit Tracer (Hanna et al., 2025). See Appendix F and G for details on training and visualisation.

We study the problem of attention attribution, which seeks to understand how edges between features are mediated. The key challenge here is that any given edge can be affected by a large number of model components, making mediation circuits difficult to analyse both computationally and conceptually: computationally, exhaustive enumeration is costly; conceptually, the resulting circuits are often large and uninterpretable. In this experiment, we demonstrate that sparse attention patterns induced via post-training substantially alleviate these challenges, as the vast majority of attention components have zero effect on the computation.

As in (Ameisen et al., 2025), we define the total attribution score between feature $n$ at layer $\ell$ and position $k$ , and feature $n^{\prime}$ at layer $\ell^{\prime}$ and position $k^{\prime}$ as

$$

a_{\ell,k,n}^{\ell^{\prime},k^{\prime},n^{\prime}}=f_{k,n}^{\ell}\;J_{\ell,k}^{\ell^{\prime},k^{\prime}}\;g_{k^{\prime},n^{\prime}}^{\ell^{\prime}}. \tag{6}

$$

Here, $f_{k,n}^{\ell}$ denotes the decoder vector corresponding to feature $n$ at layer $\ell$ and position $k$ , and $g_{k^{\prime},n^{\prime}}^{\ell^{\prime}}$ is the corresponding encoder vector for feature $n^{\prime}$ at layer $\ell^{\prime}$ and position $k^{\prime}$ . The term $J_{\ell,k}^{\ell^{\prime},k^{\prime}}$ is the Jacobian from the MLP output at $(\ell,k)$ to the MLP input at $(\ell^{\prime},k^{\prime})$ . This Jacobian is computed during a forward pass in which all nonlinearities are frozen using stop-gradient operations. Under this linearisation, the attribution score represents the sum over all linear paths from the source feature to the target feature.

To analyse how this total effect between two features is mediated by each model component, we define the component-specific attribution by subtracting the contribution of all paths that do not pass through the component:

$$

a_{\ell,k,n}^{\ell^{\prime},k^{\prime},n^{\prime}}(h)=f_{k,n}^{\ell}\;J_{\ell,k}^{\ell^{\prime},k^{\prime}}\;g_{k^{\prime},n^{\prime}}^{\ell^{\prime}}-f_{k,n}^{\ell}\;\bigl[J_{\ell,k}^{\ell^{\prime},k^{\prime}}\bigr]_{h}\;g_{k^{\prime},n^{\prime}}^{\ell^{\prime}}.

$$

Here, $\bigl[J_{\ell,k}^{\ell^{\prime},k^{\prime}}\bigr]_{h}$ denotes a modified Jacobian computed under the same linearization as above, but with the specific attention component $h$ additionaly frozen via stop-gradient. As such, these component-specific scores quantifies how much each model component impacts a particular edge between features.

Empicially, we evaluate the method on ten pruned attribution graphs, computed on the IOI, greater-than, completion, and category tasks. Similar to our previous circuit discovery experiment, we compute attribution scores on the level of attention heads as well as individual key–query pairs. In practice, attention sparsity yields substantial computational savings: because inactive key–query pairs are known a priori to have exactly zero attribution score, attribution need only be computed for a small subset of components. This reduces the computation time per attribution graph from several hours to several minutes.

<details>

<summary>x6.png Details</summary>

### Visual Description

## Line Charts: Edges and Heads Cumulative Mass Comparison

### Overview

The image displays two side-by-side line charts comparing the "Mean Cumulative Mass" of "Sparse" versus "Non Sparse" methods across two different domains: "Edges" (left chart) and "Heads" (right chart). Both charts plot cumulative mass against a sorted index, demonstrating that the Sparse method achieves higher cumulative mass with fewer elements.

### Components/Axes

**Common Elements:**

* **Y-Axis (Both Charts):** Labeled "Mean Cumulative Mass". The scale ranges from 0.50 to 1.00, with major tick marks at 0.50, 0.75, and 1.00.

* **Legend (Located in bottom-right of the "Heads" chart):**

* **Blue Line:** "Non Sparse"

* **Orange Line:** "Sparse"

**Left Chart: "Edges"**

* **Title:** "Edges" (top-left).

* **X-Axis:** Labeled "Sorted Index (log scale)". It is a logarithmic scale with major tick marks at 10⁰ (1), 10¹ (10), 10² (100), and 10³ (1000).

* **Annotation:** A dashed black horizontal line connects the two curves near the top. The text "16.1x" is placed above this line, indicating a multiplicative factor.

**Right Chart: "Heads"**

* **Title:** "Heads" (top-left).

* **X-Axis:** Labeled "Sorted Index". It is a linear scale with major tick marks at 25, 50, 75, 100, and 125.

* **Annotation:** A dashed black horizontal line connects the two curves near the top. The text "3.4x" is placed above this line, indicating a multiplicative factor.

### Detailed Analysis

**Trend Verification & Data Points:**

* **General Trend (Both Charts):** The "Sparse" (orange) line rises more steeply and plateaus at a cumulative mass of 1.00 much earlier (at a lower sorted index) than the "Non Sparse" (blue) line. This indicates the Sparse method concentrates mass in fewer top-ranked elements.

* **"Edges" Chart (Log Scale):**

* The Sparse line starts at approximately 0.45 at index 1 (10⁰) and reaches near 1.00 by index ~100 (10²).

* The Non Sparse line starts near 0 at index 1 and rises more gradually, reaching near 1.00 by index ~1000 (10³).

* The "16.1x" annotation suggests that to achieve a specific high cumulative mass (visually estimated at ~0.95), the Non Sparse method requires approximately 16.1 times more sorted elements than the Sparse method.

* **"Heads" Chart (Linear Scale):**

* The Sparse line starts at approximately 0.50 at index 0 and reaches near 1.00 by index ~25.

* The Non Sparse line starts near 0 at index 0 and rises more gradually, reaching near 1.00 by index ~125.

* The "3.4x" annotation suggests that to achieve a specific high cumulative mass (visually estimated at ~0.95), the Non Sparse method requires approximately 3.4 times more sorted elements than the Sparse method.

### Key Observations

1. **Consistent Superiority of Sparse:** In both domains (Edges and Heads), the Sparse method demonstrates superior efficiency, accumulating mass significantly faster.

2. **Magnitude of Difference:** The efficiency gain is more dramatic for "Edges" (16.1x) than for "Heads" (3.4x), as indicated by the annotations.

3. **Scale Context:** The "Edges" chart uses a logarithmic x-axis, compressing a wide range of indices (1 to 1000+), while the "Heads" chart uses a linear axis over a narrower range (0 to ~140). This difference in scale is crucial for interpreting the absolute index values.

4. **Convergence:** Both methods in both charts eventually converge to a cumulative mass of 1.00, meaning all mass is accounted for when considering all elements.

### Interpretation

These charts likely visualize the concept of **sparsity** in a technical context, such as neural network pruning, graph theory, or attention mechanisms. The "Mean Cumulative Mass" represents the proportion of a total quantity (e.g., weight magnitude, signal strength, importance score) captured by the top-ranked elements.

* **What the Data Suggests:** The Sparse method is highly effective at identifying and concentrating the most significant elements. A small subset of "Sparse" edges or heads contains the vast majority of the "mass" or importance. The Non Sparse distribution is more diffuse, requiring many more elements to capture the same amount of information.

* **Relationship Between Elements:** The "Edges" and "Heads" likely refer to different components of a system (e.g., edges in a graph neural network, attention heads in a transformer). The analysis shows sparsity is beneficial in both, but the effect is more pronounced for edges.

* **Notable Anomalies/Outliers:** There are no apparent outliers in the smooth curves. The key anomaly is the stark difference in the rate of accumulation between the two methods, which is the central point of the visualization.

* **Practical Implication:** The multipliers (16.1x, 3.4x) quantify potential efficiency gains. For example, if one can achieve 95% of the performance using only the top 10% of elements identified by the Sparse method, this translates to significant computational savings or model compression. The charts provide empirical evidence for the effectiveness of a sparsity-inducing technique.

</details>

Figure 6: Mean cumulative distribution of the component scores that mediate an attribution graph edge. The components are on the left key-query pairs within a head, and on the right full attention heads.

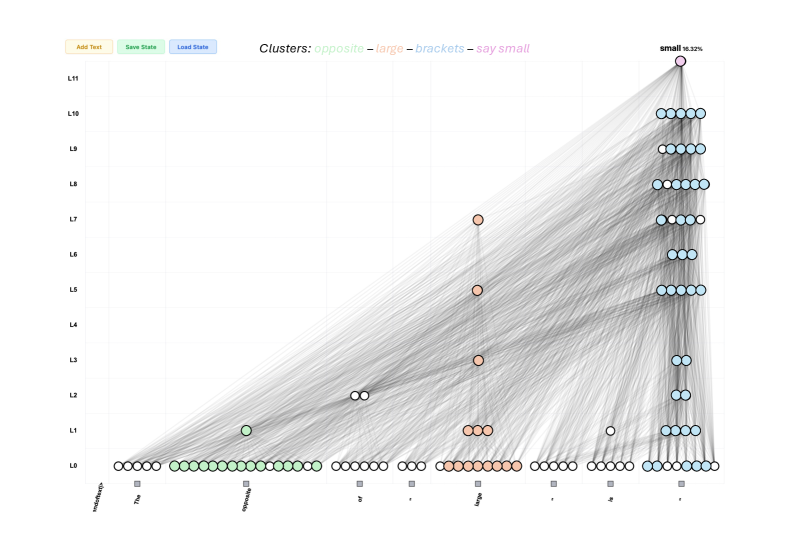

In terms of circuit size, Figure 6 shows the mean cumulative distribution of component attribution scores for each edge in the attribution graph. We find that, to reach a cumulative attribution threshold of $90\$ , the sparse model on average requires $16.1\times$ fewer key–query pairs and $3.4\times$ fewer attention heads when compared to the dense GPT-2 model, supporting our hypothesis that sparse attention patterns leads to simpler mediation circuits.

<details>

<summary>x7.png Details</summary>

### Visual Description

## Diagram: Attention Pattern Comparison (GPT-2 vs. Sparse GPT-2)

### Overview

The image is a technical diagram comparing the attention head patterns of a standard GPT-2 model (left panel) and a "Sparse GPT-2" model (right panel). It visualizes how different attention heads in various layers map key positions to query positions for a specific input sentence. The diagram includes a main heatmap-style grid, a detailed breakout of specific heads, and a flow diagram linking words to model layers.

### Components/Axes

**Left Panel (GPT-2):**

* **Label:** "GPT-2" (bottom center).

* **Grid:** A 12x12 grid representing attention heads (likely layers x heads or a similar mapping). The grid contains colored squares.

* **Color Legend (Implied):** Blue and red squares. The right panel clarifies that red indicates a specific mapping.

**Right Panel (Sparse GPT-2):**

* **Label:** "Sparse GPT-2" (bottom center).

* **Top Annotation:** "All heads map key pos 5 to query pos 8" (red text, top center).

* **Axes:** A small coordinate system in the top-left corner with:

* Vertical axis labeled "Q" (Query) with an upward arrow.

* Horizontal axis labeled "K" (Key) with a rightward arrow.

* **Grids:** Five smaller 12x12 grids arranged horizontally, each labeled with a specific attention head:

* L11-H7 (Layer 11, Head 7)

* L10-H1 (Layer 10, Head 1)

* L9-H7 (Layer 9, Head 7)

* L9-H1 (Layer 9, Head 1)

* L8-H6 (Layer 8, Head 6)

* **Legend/Key:** A red square and a blue square in the top-left grid (L11-H7). The annotation confirms red squares represent the mapping from key position 5 to query position 8.

* **Flow Diagram Components:**

* **Input Sentence:** "The opposite of \" large \" is \" \"" with positional indices 1 through 8 below each token. The word "large" (position 5) is highlighted in red.

* **Word Boxes:** Three rounded rectangles representing words and their associated layer ranges:

* "opposite" / "layer 0-1"

* "large" / "layer 0-3"

* "brackets" / "layer 0-10"

* **Modulation Box:** A box labeled "small" / "layer 12" with an arrow pointing to the "large" box, annotated with "Modulated at 80% by".

* **Connecting Lines:** Lines connect the words in the sentence to their respective boxes and show the flow of information/modulation.

### Detailed Analysis

**1. GPT-2 Attention Grid (Left Panel):**

* **Visual Trend:** The grid shows a pattern of vertical blue lines and scattered red squares. The blue lines suggest consistent attention patterns across certain positions for many heads. The red squares, representing the specific mapping from key pos 5 to query pos 8, are distributed across various heads without a single dominant pattern.

**2. Sparse GPT-2 Attention Grids (Right Panel - Top):**

* **Trend Verification:** For the five highlighted heads (L11-H7, L10-H1, L9-H7, L9-H1, L8-H6), the red squares (mapping K5->Q8) are consistently present. Their placement varies slightly but is generally in the upper-right quadrant of each head's grid.

* **Data Points (Approximate Grid Coordinates for Red Square):**

* L11-H7: (Q≈9, K≈5)

* L10-H1: (Q≈9, K≈5)

* L9-H7: (Q≈9, K≈5)

* L9-H1: (Q≈9, K≈5)

* L8-H6: (Q≈9, K≈5)

* **Observation:** The red squares are highly aligned across these specific heads in the Sparse model, indicating a focused, shared attention mechanism on the target position pair (5->8).

**3. Flow Diagram (Right Panel - Bottom):**

* **Component Isolation:**

* **Header/Context:** The sentence "The opposite of \" large \" is \" \"" defines the input. Token 5 ("large") is the focal key position.

* **Main Processing Flow:** The word "opposite" is processed in early layers (0-1). The word "large" is processed across layers 0-3. The word "brackets" (likely referring to the quotation marks) is processed across a wide range of layers (0-10).

* **Modulation:** A separate "small" component in layer 12 modulates the processing of "large" at an 80% rate. This suggests a late-layer, high-influence adjustment.

### Key Observations

1. **Focused vs. Distributed Attention:** The standard GPT-2 shows a distributed pattern for the K5->Q8 mapping (red squares scattered). The Sparse GPT-2 shows a highly concentrated pattern for the same mapping across the five highlighted heads.

2. **Layer Specialization:** The flow diagram indicates different words are active in different layer ranges. "Brackets" have the widest layer engagement (0-10), while "opposite" is limited to the earliest layers (0-1).

3. **Late-Stage Modulation:** A significant modulation (80%) occurs at the final layer (12) specifically targeting the processing of the word "large".

4. **Positional Consistency:** In the Sparse GPT-2 heads shown, the red square (K5->Q8) appears at a consistent relative position within each head's grid (around Q=9, K=5).

### Interpretation

This diagram illustrates a key difference in how sparse attention models can function compared to standard transformers. The data suggests that **Sparse GPT-2 achieves efficiency by forcing multiple attention heads across different layers to specialize in the same critical token-to-token mapping** (here, connecting the key at position 5 ["large"] to the query at position 8). This creates a robust, redundant pathway for that specific linguistic relationship.

In contrast, the standard GPT-2's attention for the same mapping is more diffuse, spread across many heads without strong consensus. The flow diagram provides a mechanistic hypothesis: early layers handle basic word relationships ("opposite"), mid-layers process core semantic content ("large"), and a late, powerful modulation (80% from "small" layer 12) fine-tunes the output based on that core content. The wide layer engagement for "brackets" suggests punctuation or structural tokens require sustained processing throughout the network.

The **outlier** is the "brackets" component, which has an unusually broad layer range (0-10), indicating that syntactic or structural elements may be processed in a fundamentally different, more persistent manner than content words. The **notable trend** is the engineered sparsity leading to head specialization, which is the core concept the diagram aims to demonstrate.

</details>

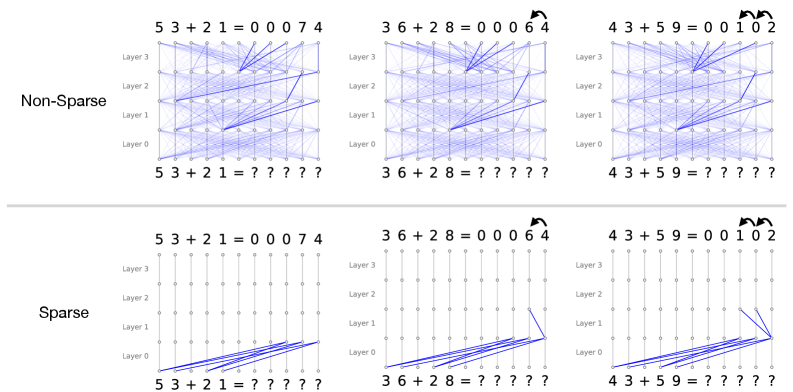

Figure 7: Sketch of the attribution graph for the sentence “The opposite of ‘large’ is”. The cluster of features associated with large at token position 5 maps directly to the final next-token prediction logit small. We show the attention patterns of all key–query pairs required to account for $80\$ of the cumulative attribution score. In the sparse-attention setting, this corresponds to five attention heads, compared to more than forty heads in the dense-attention case. In the sparse model, these heads read from token position 5 and write directly to the last token residual stream at token position 8. These heads thus compute in parallel and provide a clear picture of the internal computation.

Next, we present a qualitative case-study to showcase the benefits of sparse attention patterns. For a given key–query pair, we compute the causal effect from all other features in the attribution graph to both the key and the query vectors. Figure 7 illustrates this analysis for the prompt “The opposite of ‘large’ is”. The resulting attribution graph decomposes into four coherent clusters of features: features related to opposite, features related to large, features activating on bracketed tokens, and the final next-token logit corresponding to small (see Appendix H for example of features and visualization).

Here, the features in the large cluster are directly connected to the small logit. The key question is then to understand how this connection from the large to the small logit comes about. To this end, we analyse their mediation structure. We find that $80\$ of the cumulative attribution score of the edges connecting the large cluster to the small logit is mediated by the same five late layer attention key–query pairs. These attention components map features from token position $5$ directly into the final-layer residual stream at position $8$ , and thus operate in parallel.

For these five key–query pairs, we then compute the causal influence of all other features in the graph on their key and query vectors. The query vectors are primarily modulated by features associated with bracketed tokens in the last token position, while the key vectors are driven by strongly active features in both the opposite and large clusters, as shown in Figure 8.These results are in agreement with the recent work on attention attribution and the ”opposite of” attribution graph (Kamath et al., 2025). In stark contrast, Figure 7 (left) shows that a similar (and more computationally expensive) analysis on the dense model produces a much more complicated circuit. This case study illustrates the potential of sparse attention in the context of attribution graphs, as it enables a unified view of features and circuits. By jointly analyzing feature activations, attention components, and their mediating roles, we obtain a more faithful picture of the computational graph underlying the model’s input–output behavior.

## 5 Conclusion

Achieving interpretability requires innovations in both interpretation techniques and model design. We investigate how large models can be trained to be intrinsically interpretable. We present a flexible post-training procedure that sparsifies transformer attention while preserving the original pretraining loss. By minimally adapting the architecture, we apply a sparsity penalty under a constrained-loss objective, allowing pre-trained model to reorganise its connectivity into a much more selective and structured pattern.

$\rightarrow$ Query

⬇

1. large (pos 5)

2. large (pos 5)

3. quantities (pos 5)

4. comparison (pos 3)

5. opposite (pos 3)

$\rightarrow$ Key

⬇

1. bracket (pos 8)

2. bracket (pos 8)

3. bracket (pos 8)

4. bracket (pos 8)

5. bracket (pos 8)

Figure 8: Minimal description of the top5 features activating the query and the key vectors for the attention head L8-H6 from Figure 7.

Mechanistically, this induced sparsity gives rise to substantially simpler circuits: task-relevant computation concentrates into a small number of attention heads and edges. Across a range of tasks and analyses, we show that sparsity improves interpretability at the circuit level by reducing the number of components involved in specific behaviours. In circuit discovery experiments, most of the model’s behaviour can be explained by circuits that are orders of magnitude smaller than in dense models; in attribution graph analyses, the reduced number of mediating components renders attention attribution tractable. Together, these results position sparse post-training of attention as a practical and effective tool for enhancing the mechanistic interpretability of pre-trained models.

#### Limitations and Future Work.

One limitation of the present investigation is that, while we deliberately focus on sparsity as a post-training intervention, it remains an open question whether injecting a sparsity bias directly during training would yield qualitatively different or simpler circuit structures. Also, a comprehensive exploration of the performance trade-offs for larger models and for tasks that require very dense or long-range attention patterns would be beneficial, even if beyond the computational means currently at our disposal. Moreover, our study is primarily restricted to sparsifying attention patterns, the underlying principle of leveraging sparsity to promote interpretability naturally extends to other components of the transformer architecture. As such, combining the proposed method with complementary approaches for training intrinsically interpretable models, such as Sparse Mixture-of-Experts (Yang et al., 2025), sparsifying model weights (Gao et al., 2024), or limiting superposition offers a promising direction for future work. Another exciting avenue for future work is to apply the sparsity regularisation framework developed here within alternative post-training paradigms, such as reinforcement learning (Ouyang et al., 2022; Zhou et al., 2024) or supervised fine-tuning (Pareja et al., 2025).

## Impact Statement

This paper presents work whose goal is to advance the field of Machine Learning. There are many potential societal consequences of our work, none which we feel must be specifically highlighted here.

## Acknowledgment

F. D. acknowledges support through a fellowship from the Hector Fellow Academy. A. L. is supported by an EPSRC Programme Grant (EP/V000748/1). I. P. holds concurrent appointments as a Professor of Applied AI at the University of Oxford and as an Amazon Scholar. This paper describes work performed at the University of Oxford and is not associated with Amazon.

## References

- E. Ameisen, J. Lindsey, A. Pearce, W. Gurnee, N. L. Turner, B. Chen, C. Citro, D. Abrahams, S. Carter, B. Hosmer, J. Marcus, M. Sklar, A. Templeton, T. Bricken, C. McDougall, H. Cunningham, T. Henighan, A. Jermyn, A. Jones, A. Persic, Z. Qi, T. Ben Thompson, S. Zimmerman, K. Rivoire, T. Conerly, C. Olah, and J. Batson (2025) Circuit tracing: revealing computational graphs in language models. Transformer Circuits Thread. External Links: Link Cited by: Appendix F, Appendix G, Appendix G, §1, §2.3, §4.3.

- I. Beltagy, M. E. Peters, and A. Cohan (2020) Longformer: the long-document transformer. arXiv preprint arXiv:2004.05150. Cited by: §2.1.

- L. Bereska and E. Gavves (2024) Mechanistic interpretability for ai safety–a review. arXiv preprint arXiv:2404.14082. Cited by: §1.

- R. e. al. Bommasani (2021) On the opportunities and risks of foundation models. ArXiv. External Links: Link Cited by: §1.

- R. Child, S. Gray, A. Radford, and I. Sutskever (2019) Generating long sequences with sparse transformers. arXiv preprint arXiv:1904.10509. Cited by: §2.1.